text stringlengths 454 608k | url stringlengths 17 896 | dump stringclasses 91

values | source stringclasses 1

value | word_count int64 101 114k | flesch_reading_ease float64 50 104 |

|---|---|---|---|---|---|

Opened 4 years ago

Closed 4 years ago

#7521 closed defect (fixed)

ExtractUrlPlugin: setup.py doesn't work if you haven't already installed it

Description

Your setup.py contains this:

from tracextracturl.extracturl import __revision__ as coderev from tracextracturl.macro import __revision__ as macrorev

setup.py should never import from your package. If you're lucky it works, but this can cause a host of problems. For one, pip can't determine the dependencies of your plugin unless everything your plugin imports is already installed, which makes installing a little difficult...

Attachments (0)

Change History (1)

comment:1 Changed 4 years ago by martin_s

- Resolution set to fixed

- Status changed from new to closed

Note: See TracTickets for help on using tickets.

Thanks for reporting this issue. Fixed in [8393]. | http://trac-hacks.org/ticket/7521 | CC-MAIN-2014-52 | refinedweb | 131 | 60.31 |

Introducing TurboSignals

My.

Basics

TurboSignals is a simple library. It includes signal classes for up to ten parameters (Signal0, Signal1, …, Signal10) as well as a class for var args (SignalN). These are paired with slot interfaces (Slot0, Slot1, …, Slot10 and SlotN) that you implement in order to receive the signal’s callback. The purpose of an explicit slot type is to avoid using a Function variable, which is very slow. This was shown in my article on Runnables along with how Runnables are a good strategy for avoiding that slowdown. Some helper classes (FunctionSlot0, FunctionSlot1, …, FunctionSlot10 and FunctionSlotN), however slow, are provided for when you really want to provide an arbitrary function.

Advanced Features

One nicety of TurboSignals is that the dispatch operation is “safe” insomuch as that calls to addSlot, removeSlot, and removeAllSlots will not affect which slots are called. One drawback though is that parameters to dispatch are all untyped (*) and therefore there is no compile-time checking of the parameters and the possibility exists that there will be type errors at runtime. This is true too in the Event/EventDispatcher system as well as as3signals, only the latter explicitly checks for this problem at runtime to give more informative errors.

Usage Example (faster)

This example runs at maximum speed, which is probably not needed for simple button clicks. implements Slot0 { public function MainMenu(button:Button) { button.clicked.addSlot(this); } public function onSignal0(): void { trace("button was clicked"); } }

Usage Example (slower)

This example allows you to make your callback private and name it as you wish, courtesy of the FunctionSlot0 adapter class. { public function MainMenu(button:Button) { button.clicked.addSlot(new FunctionSlot0(onButtonClicked)); } private function onButtonClicked(): void { trace("button was clicked"); } }

Usage Example (complex)

This example runs at maximum speed with multiple buttons.

import com.jacksondunstan.signals.*; public class Button extends Sprite { public var clicked:Signal1 = new Signal1(); public function Button() { addEventListener(MouseEvent.MOUSE_DOWN, onMouseDown); } private function onMouseDown(ev:MouseEvent): void { this.clicked.dispatch(this); } } public class MainMenu implements Slot1 { private var __button1:Button; private var __button2:Button; public function MainMenu(button1:Button, button2:Button) { __button1 = button1; __button2 = button2; button1.clicked.addSlot(this); button2.clicked.addSlot(this); } public function onSignal1(target:*): void { if (target == __button1) { trace("button 1 was clicked"); } else if (target == __button2) { trace("button 2 was clicked"); } } }

Parameter Passing Strategy

As you can see from above, functionality from alternative systems can be emulated in TurboSignals by simply adding more event parameters. The Event/EventDispatcher system’s Event.target is attained by simply passing a reference to this as a parameter to dispatch. Likewise, the type field can easily be passed. You may also choose to pass objects like Event that include these too, which may help with speed as multiple arguments slow down the dispatch operation.

Performance Data

The TurboSignals distribution includes a suite of performance tests for TurboSignals as well as as3signals and Event/EventDispatcher. Here are the results for the first version:

TurboSignals – 1 Listener (1000000 dispatches)

TurboSignals – 10 Listeners (1000000 dispatches)

TurboSignals – 1 Function Listener (1000000 dispatches)

TurboSignals – 10 Function Listeners (1000000 dispatches)

as3signals – 1 Function Listener (1000000 dispatches)

as3signals – 10 Function Listeners (1000000 dispatches)

Event/EventDispatcher – 1 Function Listener (1000000 dispatches)

Event/EventDispatcher – 10 Function Listeners (1000000 dispatches)

Performance Graphs

Performance Analysis

The latest version of as3signals (as of today, 2/15/2010) goes a long way to improve performance of the Event/EventDispatcher system, especially on Mac OS X. TurboSignals goes a lot further though and nearly matches the speed of its inspiration: the simple list of Runnables. TurboSignals manages to dispatch events about 17 times faster than as3signals when implementing the slot directly and about 3 times faster than as3signals when using a Function variable to allow for a the callback to be private, named, or anonymous. That said, as3signals is itself 4-13x faster than the Event/EventDispatcher system. So if you are planning on dispatching frequently or to many listeners, you should definitely take a look at TurboSignals.

#1 by Piergiorgio Niero on February 16th, 2010 · | Quote

What about adding\removing listeners at runtime?

is there any possibility to “(un)subscribe” (from)to a signal at runtime?

I think the interface implementation way only would become a lot limitating for medium\large projects.

is there any way to workaround that?

#2 by jackson on February 16th, 2010 · | Quote

Adding/subscribing is done by addSlot and removing/unsubscribing is done either by removeSlot or removeAllSlots.

You can work around implementing your own slot only at the cost of a speed hit. The primary way of doing this is to use FunctionSlotX classes, where X is 0-10 or N. See the example titled “Usage Example (Slower)” and the corresponding performance data and graphs related to “function listeners”. The idea is that TurboSignals gives you the option to implement the slot interface yourself for maximum speed where you really need it (see the “Why Would I Need Speed?” section of the TurboSignals project page.

#3 by whitered on February 16th, 2010 · | Quote

The next step to improve performance is to use Vector instead of Array. And here is my extremelly simple implementation of signals:

#4 by jackson on February 16th, 2010 · | Quote

I originally used Vector instead of Array in my last article: Callback Strategies. I switched to Array to make the library usable in Flash 9 rather than restricting it to Flash 10. The performance difference was negligible anyhow.

I did quite a lot of looking around for other implementations of signals and slots whilst working on my own, but didn’t find yours. Thanks for the link! It is indeed a very simple implementation, but sometimes that’s all you want. One performance-related aspect that has helped Robert Penner (and myself, I suppose) is to only copy the callbacks list when it actually needs to be copied. That is, set a flag indicating if you’re dispatching and if it is true in your addCallback or removeCallback then you can copy then. Robert Penner claims that doubled his performance!

#5 by whitered on February 16th, 2010 · | Quote

wow, thanks for useful idea. I’ll surely apply it.

#6 by whitered on February 17th, 2010 · | Quote

trying to implement this optimization on my project, I’ve found a bug that exists in TurboSignals. If we redispatch the signal that is already dispatched, its __slotsNeedCopying flag will be reset, so its slots can be changed on the run. See the demo:

#7 by jackson on February 17th, 2010 · | Quote

Nice find! I will look into this shortly and reply here with any resolution I come up with. If you have a way of resolving it, I do welcome patches.

Thank you for the detailed example!

#8 by whitered on February 17th, 2010 · | Quote

just clone your slots if __slotsNeedCopying in dispatch() method as you do in other ones

#9 by jackson on February 17th, 2010 · | Quote

That would work, but I’d prefer not to copy the slots unless it’s really necessary or it helps performance. I implemented the fix by adding a __numDispatchesInProgress integer that I use to determine if I can safely set __slotsNeedCopying to false after the dispatch is done. I also added a unit test based on your demo code above. Version 1.0.1 is now up on the project page and Google Code and it should run at virtually the same speed as version 1.0.

#10 by whitered on February 18th, 2010 · | Quote

This implementation has a hidden danger: signal become broken when a slot throw an error. In that case signal’s __numDispatchesInProgress counter will always be greater than in should be so the signal will often clone its slots when it isn’t necessary.

I think redispatches happens rather rarely so I’ve decided to keep things simple and to clone my callbacks in dispatch method on redispatches.

#11 by jackson on February 18th, 2010 · | Quote

Yes, it would lead to lots of slot list copying later on and it would also lead to the rest of the slots not being called. The former is a performance problem and the latter is a correctness problem. Unfortunately, the only way I know of to fix the correctness problem is to wrap the slot call in a try/catch block. As I’ve shown before, this would introduce a big performance problem itself.

Personally I think that you should expect uncaught exceptions to do bad things to your program. TurboSignals is but a drop in the ocean of code that isn’t handling thrown errors. Luckily with TurboSignals you get off easy: the rest of your slots don’t get called and it performs slower from then on, but at least it never crashes.

I’d welcome anyone to reply here with comments on how TurboSignals should handle uncaught errors thrown by slots.

#12 by whitered on February 18th, 2010 · | Quote

Yes, this is true that you should expect uncaught exceptions to do bad things. But I think that you should not expect bad things from exception that was caught and handled.

Code like that can delude a developer:

On the other hand this implementation will result in slower dispatching but do it clearly and in very rare situations (recursive dispatchings)

Not but what to wrap the slot call in a try/catch block is the worst idea as I think.

#13 by jackson on February 18th, 2010 · | Quote

I like your logic a lot and I can totally see this point of view. It seems that in this situation, as you point out, we must choose where to optimize. A case like you show above could very well happen and the current penalty in TurboSignals would be slower addSlot, removeSlot, and removeAllSlots calls from then on. This is indeed a steep penalty, so avoiding it as you have optimizes to remove this penalty. But in the process of optimizing that way you have, as you again have pointed out, made recursive dispatching slower.

So the question is this: should we optimize for recursive dispatching (TurboSignals’ current way) or for thrown errors (the way the signal class you linked works)? To me the matter comes down to the legitimacy of the two uses cases. In my view, errors are bad but recursive dispatching is a valid feature of TurboSignals. I prefer not to punish the valid uses of TurboSignals for the sake of the invalid uses. You can, of course, do whatever you’d like to in your own signal class. :)

#14 by Robert Penner on February 16th, 2010 · | Quote

This is great! I love your creative thinking and passion for performance testing. The more options, the better.

#15 by Robert Penner on February 16th, 2010 · | Quote

I noticed you’re using AsUnit 4 alpha for your unit tests. I looked through your test code and it looks really good so far (there’s a lot of it). I’d love to hear your thoughts on unit testing and the role it played in TurboSignals development.

#16 by jackson on February 16th, 2010 · | Quote

TurboSignals seemed to be a good place to apply unit testing since its functionality is what I call “pure data”. That is, there is no graphical or audio output that can only be properly judged by the human eye and ear, no interaction requiring precision timing (especially with Flash Player!), and so forth. Further, I didn’t have any (public) application to start using TurboSignals in, so I needed some test application. I started off with a homebrew set of tests like I’ve done in previous articles, but it started to get messy, overly verbose, and difficult to scale after about a dozen tests. All of this pointed toward using some unit testing framework.

I went with AsUnit as it’s clearly one of the most popular choices and the little I’ve seen of it before (mostly from you!) looked pretty good. I couldn’t find a SWC build of it though and didn’t feel like setting up Ruby just to do the build, so I actually took your build of version 4’s alpha out of as3signals. It seems to run fast enough, very consistently, and was easy to set up.

All in all I’d say this was a good place to use unit testing and it’s been quite successful. It certainly isn’t a project built with TDD as I wrote all of the code before the tests, but the validation part of it is very nice. :)

#17 by Joa Ebert on February 16th, 2010 · | Quote

Hey, the TurboSignals look very promising and I like the approach of having classes like Signal0. Scala does this as well very successful. It is a shame you can not apply more syntactit sugar.

Did you try what happens when you are using linked lists and object pooling approaches as well? I think your life will be much easier if your listeners are nodes of a linked list. Traversal should be faster and removing listeners is not a big deal as well. It can work without a copy. Most of the time you are removing a listener in its callback so that can be even done in O(1).

#18 by jackson on February 16th, 2010 · | Quote

I haven’t tried linked lists yet, but I’m willing to give it a go. Earlier on in development I tried making the iterator a private field and then checking the index to remove from against it to see if it was at or before the current callback. In such a case there’s no need to copy the list, which would be a nice optimization as far as speed, garbage creation, and (instantaneous) memory footprint. This turned out to be disastrous to performance though as accessing a field is quite a bit slower than accessing a local variable.

So it seems as though I’d still need to copy the list on addSlot/removeSlot/removeAllSlots if I switched from Array to a linked list. In my my implementation from December it looked like both copying and traversing were slower in linked lists. Do you have some suggestions on how to make the linked list implementation fast enough to make it worthwhile here? I’d much appreciate any suggestions you could give.

#19 by matthew on February 16th, 2010 · | Quote

It seems to me that the speed benefits aren’t worth the cost in readability and required code.

For one, this system requires you to check the target in the callback whenever you have multiple dispatchers using the same signal number. That could get out of hand pretty fast, as your example with two buttons shows. Chances are that every listener for more than one signal would need to act as a switch, delegating to other functions based on the target.

It also couples the implementation of the listener and dispatcher in a strange, non-semantic way, requiring your listener to know an arbitrary piece of metadata (the slot number) in addition to the event type (“clicked”). A tiny change in the dispatcher’s implementation (the slot number of a particular event) would require huge, cascading changes across an entire codebase.

If you’re really in need of the performance benefits gained from using the Observer pattern, maybe it would be better to use tools that would facilitate that (templates, snippets, macros, etc.)

#20 by jackson on February 16th, 2010 · | Quote

Check out the example titled “Usage Example (Slower)” using FunctionSlot. That shows how to use TurboSignals like you’d use Event/EventDispatcher or as3signals with an arbitrary Function callback. Then check out the performance data to see how TurboSignals is still faster than Event/EventDispatcher or as3signals, even when using FunctionSlots. If you only used Signal1 with FunctionSlot1 to dispatch Event objects (or similar), you’d still realize a speed gain without any of the drawbacks you mention.

But the important part about TurboSignals is that it gives you a choice to go even further and speed up your callbacks by implementing the slot directly. Yes, you lose some code cleanliness in the process, but sometimes it’s worth it. By providing FunctionSlot classes in TurboSignals in addition to Slot interfaces, I leave that choice up to the individual programmer on his or her own project.

#21 by Alec McEachran on February 16th, 2010 · | Quote

Hi Jackson,

Congratulations, this is really interesting work. While I don’t imagine I’ll use it in many places in projects I work on since the syntax is somewhat confusing for team environments, I am definitely going to give this a good hard look for game loops, and other performance critical code.

Now I understand why you were holding back running as3signals vs Event analysis earlier!

This is a great addition to the as3 canon. Thanks.

#22 by jackson on February 16th, 2010 · | Quote

I’d like to hear your thoughts on how to improve the syntax. Personally, I think the “Usage Example (Slower)” code shows how to use it quite similarly to Event/EventDispatcher and as3signals and the performance data shows that even there you get a nice speedup.

#23 by Winx Alex on March 24th, 2010 · | Quote

For all this we can conclude that events run faster if they are called on object(not true pointer or thru EventDispatcher) but object.function(args).

So you need to make few changes in original Robert Panner code. Let took Signal.as and

– “add” function should took add(object,”nameOftheFunction”)

-listeners array would take now object-“nameOftheFunction” pair instead of pointer of the function

-“dispatch” function

in instead of listener.apply(null, valueObjects);

should make object[“nameOftheFunction”](valueObjects) or something like that maybe with object[“nameOftheFunction”].apply(object,valueObjects)…..

#24 by jackson on March 24th, 2010 · | Quote

It would be fantastic if this would result in a dramatic speedup similar to the speedup you get in TurboSignals. This would relieve the programmer of the need to create Slot derivatives and allow them to name callback functions however they wish, both downsides of using TurboSignals. However, I believe that there are two reasons why this would result in code that runs at the same speed or possibly even slower than as3signals does right now. Firstly, the dynamic access (object["nameOfTheFunction"]) is quite slow. Secondly, what you get back is indeed just a Function variable, so calling it (.apply(object,valueObjects)) would be as slow as the original (listener.apply(null, valueObjects).

What makes TurboSignals fast is the strong guarantee that the compiler and JIT are afforded by using a typed object: the Slot class. Removing the typing causes the slowdowns you see in both as3signals and EventDispatcher, although the latter has many more problems than just weak typing.

#25 by Winx Alex on March 25th, 2010 · | Quote

Could you make test.

object[“nameOfTheFunction”])

vs

object.nameOfTheFunction().

so we see what is quite slow. Everything is compromise. Little bit slower but cleaner. Except in atom accelerator :)

#26 by jackson on March 26th, 2010 · | Quote

Sure. Given these simple slots:

I wrote this simple test:

And got these results:

So it’s about 40x slower than a direct call. That’s a bit much of a compromise for TurboSignals. :)

#27 by eco_bach on November 21st, 2010 · | Quote

Hi Jackson

Interesting comparison between AS3Signals. What about bubbling? I absolutely rely on event bubbling in all my projects. Is this supported by TurboSignals?

#28 by jackson on November 21st, 2010 · | Quote

Bubbling is, unfortunately, not supported right now. It would certainly be slow, but so are

FunctionSlots. The key would be to add a new set of signal classes analogous to as3signals’

DeluxeSignalso as to not slow down the regular signals. TurboSignals is open source, want to volunteer? :)

#29 by Mark on February 18th, 2011 · | Quote

Hi I am trying to use turbosignals using your example “USAGE EXAMPLE (COMPLEX)” in my own project.

I think 2 things are wrong in this example:

The clicked Signal1 never gets instantiated and gives an object-null error when trying to call the addSlot function. For the example it would be an idea to add somewhere ‘new Signal1()’

Another thing which cost me more time to explore;

I have a class called Enemy, which have a Signal1. I also have a class called Game which implements Slot1.

When I use a type in the onSignal1 function in the Game class like this:

..I get this Error: “Interface method onSignal1 in namespace com.jacksondunstan.signals:Slot1 is implemented with an incompatible signature in class Game.”

It is fixed when I use this, but then I lose my completion:

How is it possible your example uses a type at that place?

I am using flex_sdk_4.1.0.16076, compiling as FP10.1

#30 by jackson on February 18th, 2011 · | Quote

These are both excellent points. I’m amazed that in over a year, no one caught these errors in the examples.

I’ve updated the article to actually create the signals and to remove the type from the

Signal1callback, which is unfortunately necessary for compilation. If you want to get the type back, you’ll need to do a cast. Also, if you use a

FunctionSlotto mimic the

EventDispatcheror as3signals approach, you can still type the parameter. You give up some speed—as shown in the article—and some compile-time checking—it can’t make sure your arguments are of the correct types, the same problem you have with

EventDispatcherand as3signals—but you do at least get to give your argument an explicit type.

I wish I know of a way that was fast, flexible, and type-safe. Unfortunately, AS3 seems to force compromise here:

TurboSignalswith the types explicitly stated instead of using

*

TurboSignals

The key seems to be that all of AS3’s dynamic functionality disposes of all the compile-time checking.

#31 by Mark on February 18th, 2011 · | Quote

Ah! I thought I was doing something wrong, since I have never used any kind of signals.

Making an own typesafe signal would be the answer, but casting is not a problem I think. The pure turbosignals without functionslots already loses some readability, so it feels a bit dirty anyway. The functionslots are a better solution for that.

I have explorer the classes to see how it worked. I am not a performance expert but I think some small things could be improved.

– Use vectors instead of arrays (who uses fp9 anyway)

– Optimise the dispatch function:

– Remove the var from function, and if you use a vector, there would be no need to cast to type inside the loop.

– Remove the default value 0 of i

I don’t see any reason why

__numDispatchesInProgresscounts up and down in the same function, It will always end as 0? What does slotsNeedCopying mean?

I think the signals are a very great concept. I really like the fact you don’t have to create event-types (Strings), but the signals describe the event itself. They are just very simple. (

clicked:Signal).

BTW In most of my apps I create a singleton which extends

EventDispatcher, to have global events (bad, but very very handy ☺). How do signals perform when they are static?

#32 by jackson on February 18th, 2011 · | Quote

I’ve learned more about AS3 performance the last year and now see that you have some good points. For example, defaulting

ito 0 is pointless. The cast is also not helping and a

Vectorcould be used if Flash Player 9 support is dropped. As for removing the locally-cached

__slotscopy, that was done intentionally because local variable access is much quicker than field access. As for

__numDispatchesInProgressand

__slotsNeedCopying, they are there to protect against modifications to the signal during the dispatch. Without them, certain conditions (errors during dispatch, adding/removing slots during dispatch, dispatching during dispatch) can corrupt the signal’s state.

Signals should perform the same when they are static, but the static lookup (e.g.

MyGlobalSignals.enterFrame) is more expensive.

#33 by Mark on February 19th, 2011 · | Quote

Thanks for explaining, I wonder how much MyGlobalSignals.enterFrame.dispatch() vs MyGlobalEventDispatcher.dispatchEvent(new CustomEvent()) performs.

#34 by jackson on February 19th, 2011 · | Quote

The dispatch performance should be the same as the non-static dispatch performance you see in the article. Only the static access (when you add slots, remove slots, or call

dispatch) would be slowed down.

#35 by Mark on February 23rd, 2011 · | Quote

How do you remove a slot if you are using the FunctionSlot ?

The only way to remove it is to create a variable of the functionslot, like this?

It would be great if there is an alternative to this. Maybe a FunctionSignal or something would be a great addition, which creates FunctionSlots inside the class like addSlot(func);

#36 by Mark on February 23rd, 2011 · | Quote

Just tried to create my own FunctionSignal1, all slots are now functions, so semantically they are a bit different but the idea is clear.

I don’t know if this is performing as fast as the normal Signal1 with a FunctionSignal, haven’t tested that, but I think this is more useable in normal projects since you can normally pass the listener function.

#37 by jackson on February 23rd, 2011 · | Quote

TurboSignals is meant as a fast alternative to

EventDispatcherand as3signals. If you’re going to use

Functionobjects directly, you’ve given up most of the speed advantages. If speed is not your goal, consider

EventDispatcheror as3signals instead.

#38 by Mark on February 23rd, 2011 · | Quote

I am still using the FunctionSlot and the dispatch function still calls the function from the interface. I think removing the slots now is more simple, since the example does not show how to remove slots. Do you this this is slower? Sorry if i removed the turbo from the signal :) I think these signals are more readable then CustomEvents anyway, I am starting to like the idea of this lightweight event alternative.

#39 by whattatorpe on April 5th, 2012 · | Quote

One year old thread… well, I’ll try anyways…

I’m not a hardcore programmer, so maybe my question is kind of illiterate:

This way Turbosignals or Signals work, isn’t all this against encapsulation?

Let’s picture a menu and a menuButton for folding or unfolding it.

I use the flash IDE events (addEventListener, dispatchEvent… you’ll know better) and doing so, menuButton need no public vars and I can reuse it everywhere, as long as I add addEventListener (custom_event). I can reuse it in other projects, classes, clips on the stage, and if I remove it or simply don’t use it, I don’t need to make any changes in the code besides removing new:menuButton

But using Turbosignals I cannot move my menuButton between classes or even different projects, I have first to implement access to every public var:Turbosigna in menuButtnl, or, if I later regret, I end up with a lot of referenceless variables I have to deal with.

Is it that I’m missing something, or, to say it clearly, the problem is that I do need serious programming tuition?

Anyways, as far as the parts I’m able to understand, great blog

#40 by jackson on April 5th, 2012 · | Quote

Thanks for the kind words about the site. The way I see it, there is only one major stylistic difference between TurboSignals and

EventDispatcher: TurboSignals does not use

Stringevent names. Consider these two simple classes:

And some code that uses them:

If I understand your question correctly, you’re wondering about how the user will change when the button changes. Say the two events are replaced by a single “click” event. Here’s what the button classes would look like:

And here’s the user code:

To me, it’s about the same level of maintenance required by both versions.

#41 by whattatorpe on April 7th, 2012 · | Quote

A year-old thread and I get an answer the same day… I don’t know how to say how awsome I find this. Danke!!

I think you undestood my question even better than I did. After a while fiddling both with ED and TS, yep, code is moved a bit here and there, but as long as you don’t rely on event bubbling things don’t change that much.

I guess that when I saw that public variables had to be declared, it came to my mind all this things actual programmers say about how bad dependencies are for encapsulation and so, but after a while dealing with your code I think I’m rather uninformed on the subject.

Thanks again for sharing your knowledge.

#42 by jackson on April 7th, 2012 · | Quote

No problem; I’m happy to help. :)

One more thought on the subject: you could separate your classes even further by using an interface. For example:

This is a lot harder and messier than with

EventDispatchersince classes using that approach don’t have any strongly-typed definition of what events they dispatch. I’ll leave it up to you to think about how you’d even go about making an interface like

Clickablewith the

EventDispatchersystem. :)

#43 by Shay Pierce on November 1st, 2012 · | Quote

Hey Jackson,

This system seems very interesting and pragmatic; I had meant to take a stab at integrating it into an event-driven game of mine for a while, and finally took a stab at it the other day. However I found it to be rather cumbersome to convert existing EventDispatcher-based code over, and I became very concerned about the lack of specificity in the function names.

I’d say that the strangest-feeling part of all this is the arbitrary-feeling-yet-constant intrusion of the concern around the “number of parameters”. In particular it bugged me that, for an object that listened for many different events (which I have at least a couple of), all of those unrelated events that happened to share the same number of parameters would all call the same function… without even an event-type string being provided to distinguish them. (Obviously the FunctionSlot wrapper can be used to mitigate this; but even that has the painful clunkiness of worrying about the number of parameters.)

Maybe it’s silly to assume a developer of your expertise hasn’t considered this, but I have to ask: have you looked at the use of the “args” variable array, to support an arbitrary number of parameters to a function?

It seems like at least providing this as a (slightly slower?) alternative would allow many things to be a great deal cleaner. Of course I don’t know the performance implications of using a function like that instead of a normal one, and in fact I haven’t checked whether interfaces play well with that feature. Just curious if you’ve tried it.

Thanks for creating the library, I think I’ll be using it selectively in my game (and/or exploring the method I just described above, heh!). | https://jacksondunstan.com/articles/585?replytocom=604 | CC-MAIN-2020-16 | refinedweb | 5,175 | 60.24 |

Good time of day everyone.I want to create plugin for ST3. But I am not familar with Python3. I decided to write it in other script language. So, I need code snippet to run my script (external program) in backround mode and to do something when script is finished.

I have:

class FuncThread(threading.Thread):

def __init__(self, target):

self._target = target

threading.Thread.__init__(self)

def run(self):

self._target()[/code]

Somewhere in "run"

[code]

thread = threading.Thread(target = my_long_calculate, args = (self.view, edit))

thread.start()

[/code]

my_long_calculate looks approximately as:

[code]subprocess.Popen(cmd, stdout=subprocess.PIPE, startupinfo=startupinfo).communicate()[0]

But my ST still is hanging (locked) when my_long_calculate() is running. What am I doing wrong?

What on earth are you doing that would make it easier to shell out to a different process than to clutz through Python?

Also, how are you planning to turn the script's output into changes to Sublime Text?

It is not easier! I told that I dont known python (I dont understand languages without curly brackets). I'm sure that my task can be solved in Python, but I dont know it, everything is simple.

This is example ST3 plugin "Hello from node.js"gist.github.com/unlight/6262932

run view.run_command("hello_node") command in ST console.

You don't really show enough code to see what is wrong. Also, you are not supposed to retain or pass the "edit" object between threads. Instead, call view.run_command of a second TextCommand that will apply the appropriate changes.

As an aside, using the sublime.set_timeout_async function might be simpler than manually creating a thread.

@sapphirehamsterI upload full code of test plugin on github: github.com/unlight/HelloNodeI used

sublime.set_timeout_async(lambda: self.view.run_command("long_loop"), 0)

In long_loop command occurs "long calculation" which I emulate by Javascript setTimeout() function, and in this time ST3 is "hanging" (cannot move cursor, cannot write something, etc.)

I think you may have misunderstood when I said it needs to run a second TextCommand. You need to call set_timeout_async on your own function that will do the long computation, and then call the TextCommand. It would look something roughly like this:

class ExampleNodeCommand(sublime_plugin.TextCommand):

def run(self, edit):

sublime.set_timeout_async(self.long_command, 0)

def long_command(self):

args = [get_node_path(), PLUGIN_FOLDER + "/longloop.js"]

for region in self.view.sel():

output = run_process(args)

pos = region.begin()

self.view.run_command('example_node2', {'pos': pos, 'output': output})

class ExampleNode2Command(sublime_plugin.TextCommand):

def run(self, edit, pos=None, output=None):

self.view.insert(edit, pos, output)

I didn't test this, so there may be typos, but it's the general idea. Also, you may have problems if there are multiple cursors (the positions may shift, so you may want to iterate over the Selection in reverse).

Nice. It works! Thank you very much.

thank you for your comment and example code, this has been very helpful for me! kudos! | https://forum.sublimetext.com/t/execute-external-program-in-background-non-blocking-thread/11122 | CC-MAIN-2016-07 | refinedweb | 489 | 53.58 |

Hello everyone,

Im new to java programming and especially new to this forum. Im taking my first college computer science course. I did very well on my first exam (loops, methods, keyboard input, types, etc). However, this question seriously has me stumped. How do I make the quarter circle/square? I attached a picture of the question.

Heres what I know:

- I know I will need a loop

- Need to declare Random integer- how do I do this?

- I have no idea where to start

Code for program:

import java.util.Random; class Dogs { public static void main (String[] args) { Random integer r = new Random // Completely wrong probably.

Any help or suggestions will be greatly appreciated. Thank you in advance. | http://www.javaprogrammingforums.com/java-theory-questions/32979-would-appreciate-help-beginner-programmer.html | CC-MAIN-2016-30 | refinedweb | 120 | 69.18 |

Mutliple thread access to object method

Discussion in 'Java' started by Crouchez, Aug 30, 2007.

Want to reply to this thread or ask your own question?It takes just 2 minutes to sign up (and it's free!). Just click the sign up button to choose a username and then you can ask your own questions on the forum.

- Similar Threads

mutliple CPUs, threading and JVM 1.4.2David, Nov 3, 2004, in forum: Java

- Replies:

- 0

- Views:

- 470

- David

- Nov 3, 2004

[nio] Mutliple Messages in ByteBufferMichael B Allen, Dec 13, 2004, in forum: Java

- Replies:

- 1

- Views:

- 463

- Esmond Pitt

- Dec 13, 2004

mutliple schemas/namespaces - xml validationDominique, Jun 29, 2004, in forum: XML

- Replies:

- 3

- Views:

- 502

- Dominique

- Jun 29, 2004

Mutliple Drop down list boxesAmi, Aug 25, 2006, in forum: ASP .Net

- Replies:

- 1

- Views:

- 421

- =?Utf-8?B?QXVndXN0aW4gUHJhc2FubmE=?=

- Aug 25, 2006

Error logging for mutliple sites on same server, Nov 6, 2006, in forum: ASP .Net

- Replies:

- 3

- Views:

- 331

- Phil Wilks

- Nov 7, 2006 | http://www.thecodingforums.com/threads/mutliple-thread-access-to-object-method.533799/ | CC-MAIN-2015-22 | refinedweb | 171 | 78.48 |

Opened 14 years ago

Closed 12 years ago

Last modified 12 years ago

#6447 closed (fixed)

cache backends that are primarily for local dev should enforce same key restrictions as memcached

Description

Using a locmem:// or postgres cache backend, I can generate a cache key using the following expression:

repr(f.__module__) + repr(f.__name__) + repr(args) + repr(kwargs)

but if I configure memcached, it fails silently, causing an empty page to be sent to the client.

I have confirmed this is the source of the problem by generating an md5.hexdigest of the above key - in which case, memcached works correctly.

I have included the context for the above key, in case it is relevant. It is a decorator method which caches the return value of an arbitrary function, assuming its value depeonds solely on its parameters, and that the method never returns None

from django.core.cache import cache as _djcache def cache(seconds = 3600): def doCache(f): def x(*args, **kwargs): key = md5(repr(f.__module__) + repr(f.__name__) + repr(args) + repr(kwargs)).hexdigest() result = _djcache.get(key) if result is None: result = f(*args, **kwargs) _djcache.set(key, result, seconds) return result return x return doCache

Attachments (5)

Change History (20)

comment:1 Changed 14 years ago by

comment:2 Changed 14 years ago by

Decision needed. It appears that John has a good point. I'd suggest to either fix the code or add a note to the docs. At the very least I'd suggest adding a note to the docs. I don't feel qualified in this area to attempt to take a shot at adding this to the docs.

comment:3 Changed 14 years ago by

comment:4 Changed 14 years ago by

This ticket was apparently closed because #7460 dealt with bad keys and a solution was committed for that ticket. The problem is that this happened only at the template tag interface. Applications that use the low level interface to the cache system still break with bad keys. The documentation for the cache system still does not mention this limitation of the memcache backend, and having inconsistent keying limitations (especially one of such a basic level as whitespace vs no whitespace) in various backends makes it much harder to build reusable apps (a cache key that works for my localmem:// cache may not work with the memcached backend being used on someone elses server).

Ideally, I'd like to see the memcached backend have similar logic applied to it as was used in in #7460 (e.g. use urlquote to ensure keys have no spaces in them) so that all interfaces are protected from spaces. Lacking that, I'd suggest an update to the cache docs that draws attention to memcached's limitation and provides a recommendation to application programmers who are writing applications that use the low-level cache layer to always escape their keys if their app might be used on a server that utilizes memcached.

comment:5 Changed 12 years ago by

Following discussion with jacobkm and jezdez in IRC, the plan is to leave the memcached backend unmodified (as any key mangling there could slow down a critical code path), but modify the other builtin backends (locmem, dummy, file, db) so they throw an error (or perhaps just a warning?) if you pass them a key that would be invalid on memcached. This discourages/prevents writing cache code that would not be portable to memcached.

I'm working on a patch.

comment:6 Changed 12 years ago by

comment:7 Changed 12 years ago by

Marking as accepted per the above IRC conversation with core committers.

Changed 12 years ago by

patch to enforce same key restrictions on all cache backends

comment:8 Changed 12 years ago by

- I chose to make an invalid key an exception, rather than a warning. My feeling is cache code ought to be backend-portable, so if it'll blow up on memcached it might as well blow up elsewhere too. I'll change this if core devs prefer it as a warning.

- If the idea is that cache-code ought to be backend-portable, is there any reason for the dummy backend to still accept *args, kwargs on most of its methods, where the other backends do not? What's the rationale for keeping this?

- As noted before, the design decision here is not to apply any additional key checking to the memcached backend, for speed reasons. The restrictions applied match memcached's restrictions (it appears that some control characters are accepted in keys by memcached itself, but python-memcached applies the same restrictions we apply here), so the result should be the same on all backends, except that memcached will raise a different exception.

Changed 12 years ago by

same as previous patch, with added doc note re key restrictions

Changed 12 years ago by

updated patch, applies to trunk

comment:9 Changed 12 years ago by

comment:10 Changed 12 years ago by

comment:11 Changed 12 years ago by

Since Django supports third-party cache backends, we can't control (thankfully!) what possible external caches people might choose to use in production. So an error here, restricting everybody to memcache's particular limitations, feels inappropriate. A warning -- in it's own class so that it can be easily silenced via warnings.ignore() -- would be my preference. Definitely warning people of importabilities is a good plan. Forcing them to effectively choose memcache for now and forever is bad.

Changed 12 years ago by

updated patch using warnings

comment:12 Changed 12 years ago by

Added a new patch following design discussion with Russell K-M, Malcolm T, Jannis L, and Jeremy Dunck.

Changed 12 years ago by

fixed a couple documentation issues in the previous patch

comment:13 Changed 12 years ago by

comment:14 Changed 12 years ago by

(In [13766]).

comment:15 Changed 12 years ago by

(In [13767]) [1.2.X].

Backport of r13766 from trunk.

The problem is that memcached doesn't allow whitespace in keys. I ran into this a while back, and dealing with it in your code is the right solution. Changing the memcached backend to do it makes examining the cache next to impossible, as Jacob explained while closing #3241 as "wontfix". You could try

urllib.quote(key)or

re.sub('\s+','',key)if you want to make the keys less opaque (and if you know they'll end up under 250 characters, I suppose). | https://code.djangoproject.com/ticket/6447 | CC-MAIN-2022-27 | refinedweb | 1,086 | 59.43 |

hey,

some people already wondered (including me) about why you had to set CCVER=gcc34 manually when building -Devel nrelease isos on -Release.

This is the situation:

release1.2:/usr/src/nrelease % echo $CCVER

CCVER: Undefined variable.

release1.2:/usr/src/nrelease % grep CCVER /etc/make.conf

release1.2:/usr/src/nrelease % make release

...

( cd /usr/src/nrelease/..; CCVER=gcc2 make buildworld )

...

>>> stage 4a: populating /usr/obj/usr/src/world_i386/usr/include

...

===> include/rpcsvc

rpcgen -C -h -DWANT_NFS3 /usr/src/include/rpcsvc/key_prot.x -o key_prot.h

cpp: in path [/usr/obj/usr/src/ctools_i386_i386]/usr/libexec/gcc2/cpp: No such file or directory

*** Error code 1

...

So, why does it pass CCVER=gcc2? This is because:

release1.2:/usr/src % grep ^CCVER /usr/share/mk/bsd.cpu.mk CCVER ?= gcc2

Okay. This is a default setting for building when no CCVER is set. This should be overridden for -Devel builds. It is!

release1.2:/usr/src % tail Makefile

# # Build compatibility overrides # .ifdef CCVER .if ${CCVER} == "gcc2" CCVER= gcc34 .endif .endif

It replaces CCVER=gcc2 with CCVER=gcc34! Still this doesn't work (see above). This is why:

% cat Makefile FOO?= gcc2 .makeenv FOO .if ${FOO} == "gcc2" FOO= gcc34 .endif all: @echo FOO=${FOO}, env FOO=$${FOO} % make FOO=gcc34, env FOO=gcc34 % make FOO=gcc2 FOO=gcc2, env FOO=gcc2 % env FOO=gcc2 make FOO=gcc34, env FOO=gcc2.

How do we solve this?

cheers simon | http://leaf.dragonflybsd.org/mailarchive/bugs/2005-08/msg00101.html | CC-MAIN-2015-06 | refinedweb | 237 | 55.4 |

Deploy a Sample Custom Skill as a Web Service

The Java samples provided with the Alexa Skills Kit can be hosted as web services. This document provides the steps this scenario – both running as sample on the command line, as well as deploying a sample as a web service with AWS Elastic Beanstalk, a service offering by Amazon Web Services.

Note that it is generally faster and easier to deploy and test the samples as AWS Lambda functions on AWS Lambda (a service offering by Amazon Web Services). You can use Lambda with either the Java or Node.js samples. See Deploying a Sample Custom Skill to AWS Lambda for detailed steps.

The samples are available in GitHub repositories. For details about getting the samples and the specific samples included, see Using the Alexa Skills Kit Samples (Custom Skills).

- System Requirements

- Building and Running a Sample from the Command Line

- Setting up Hello World on Elastic Beanstalk

- Creating the Maven Webapp Project and Importing the Code

- Adding a SpeechletServlet and Setting up Servlet Mapping

- Deploying the Sample to Elastic Beanstalk

- Configuring the Elastic Beanstalk Environment to Use HTTPS

- Registering the Sample in the Developer Portal

- Testing the Sample

- Accessing Log Files

- Next Steps

System Requirements

Setting up and running the samples requires the following:

- The Java library (

alexa-skills-kit-x.x.jar), provided in the

repodirectory.

- Java SE Development Kit (JDK) 7 or later. To download the JDK, visit.

- Maven for dependency management and building the samples.

Additional requirements depend on how you want to test the samples. See the relevant sections below:

Building and Running a Sample from the Command Line

These steps show how to build and run the Hello World sample from the command line on the Linux platform. Doing this lets you send HTTP requests using a command line tool such as

cURL.

The

Launcher class provided in the

src/main/java folder starts a Jetty server that can host a

Speechlet for one of the samples, such as the

HelloWorldSpeechlet. This is a convenient way to run the samples from the command line.

Note that running the sample in this way does not normally make it available through an Internet-accessible endpoint, so you cannot test your code with an actual device in this way. Testing on the command line can still be useful for understanding the requests and responses used by the web service for an Alexa skill. For an example of deploying the sample to the cloud instead, see Setting up Hello World on Elastic Beanstalk, below.

Running Hello World from the command line requires these tools, in addition to the Java requirements noted earlier:

To run a sample from the command line, do the following:

- Download or clone the amzn/alexa-skills-kit-java GitHub repository.

- Create a self-signed certificate, as described in Testing a Custom Skill.

- Use the private key and certificate to set up a Java KeyStore and include information about the KeyStore in the

pom.xmlfile for the samples. See Setting Up an SSL/TLS Java KeyStore.

- Build and run the sample using Maven, then use

cURLto send requests to the sample and note the responses. See Running the Sample from the Command Line.

In these instructions,

<alexa-skills-kit-folder> is the folder you cloned from the GitHub repository.

Setting Up an SSL/TLS Java KeyStore

Follow these steps to create a Java KeyStore and to configure the Hello World sample to build using that KeyStore:

- Create a private key and self-signed certificate. See the detailed instructions in Testing a Custom Skill.

Use the following

opensslcommand to create a PKCS #12 archive file from your private key and certificate.Replace the

private-key.pemand

certificate.pemvalues shown here with the filenames for your key and certificate. Specify a password for the archive when prompted.

openssl pkcs12 \ -keypbe PBE-SHA1-3DES \ -certpbe PBE-SHA1-3DES \ -inkey private-key.pem \ -in certificate.pem -export \ -out keystore.pkcs12 \

Indented para…

Use the following

keytoolcommand to import the PKCS #12 file into a Java KeyStore, specifying a password for both the destination KeyStore and source PKCS #12 archive:

$JAVA_HOME/bin/keytool \ -importkeystore \ -destkeystore java-keystore.jks \ -srckeystore keystore.pkcs12 \ -srcstoretype PKCS12 \

Edit the

pom.xmlfile in

<alexa-skills-kit-folder>

/samplesto provide settings for the

javax.net.ssl.keyStoreand

javax.net.ssl.keyStorePasswordsystem properties. Set the values to the path to the KeyStore and password created in the previous step.

<java classname="Launcher" classpathref="java.sdk.classpath" fork="true"> <sysproperty key="javax.net.ssl.keyStore" value="/insert/your/path/java-keystore.jks" /> <sysproperty key="javax.net.ssl.keyStorePassword" value="insert_your_password" /> </java>

Running the Sample from the Command Line

Build and run the sample using Maven (

mvn):

- In a command-line terminal, navigate to

<alexa-skills-kit-folder>

/samples. This folder contains the

pom.xmlfile you previously edited.

- Build the samples with the following Maven command:

mvn assembly:assembly -DdescriptorId=jar-with-dependencies package

- Once the samples are successfully built, run the sample with the following Maven command:

mvn exec:java -Dexec.executable=”java” -DdisableRequestSignatureCheck=true

The sample starts a server using the

Launcher class. You can then use the command-line utility

cURL to transmit

HTTPS requests to the sample to test its behavior. Note that the

Maven command to run the samples includes the

disableRequestSignature flag. This is because the

SpeechletServlet included in the Java library only accepts incoming HTTPS requests that are signed by Alexa. The

disableRequestSignature flag temporarily disables this signature verification.

To send a request using

cURL, open a new terminal window and transmit a request to formatted as JSON. For example (JSON shown on multiple lines for clarity):

curl -v -k --data-binary '{ "version": "1.0", "session": { "new": true, "sessionId": "session1234", "application": { "applicationId": "amzn1.echo-sdk-ams.app.1234" }, "attributes": {}, "user": { "userId": null } }, "request": { "type": "LaunchRequest", "requestId": "request5678", "timestamp": "2015-05-13T12:34:56Z" } }'

For details about the JSON syntax for a launch request, see JSON Interface Reference for Custom Skills.

The Hello World sample generates a simple response to all requests that includes text meant to be spoken back by Alexa and a card displayed in the Alexa App. The body of this response is in JSON format, similar to the following:

{ "version": "1.0", "response": { "outputSpeech": { "type": "PlainText", "text": "Welcome to the Alexa Skills Kit, you can say hello" }, "card": { "type": "Simple", "title": "HelloWorld", "content": "Welcome to the Alexa Skills Kit, you can say hello" }, "reprompt": { "outputSpeech": { "type": "PlainText", "text": "Welcome to the Alexa Skills Kit, you can say hello" } }, "shouldEndSession": false }, "sessionAttributes": {} }

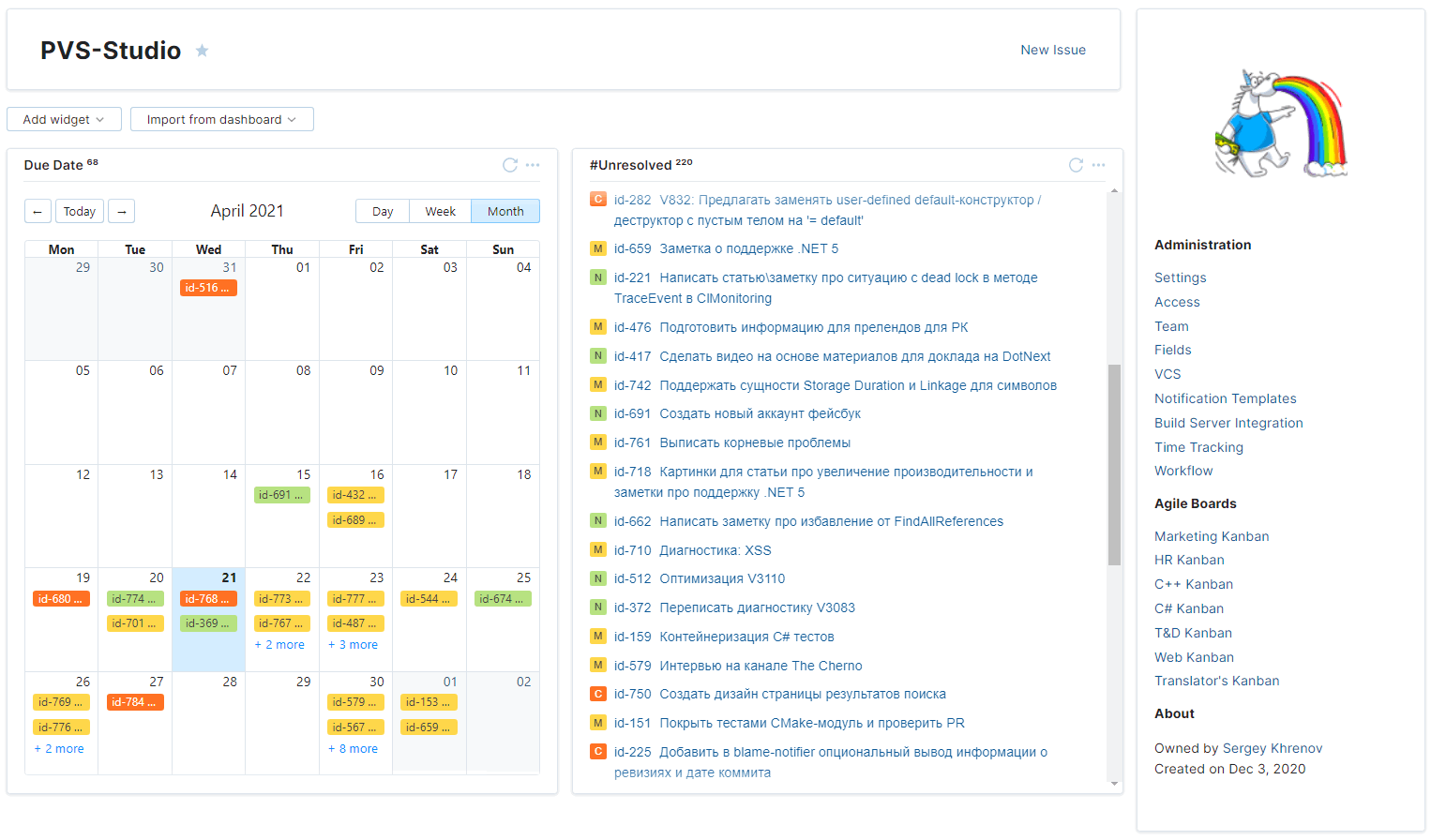

Setting up Hello World on Elastic Beanstalk

To test a skill with the Service Simulator or an Alexa-enabled device, you need to host the web service for the Alexa skill at an Internet-accessible endpoint. There are many different ways you can accomplish this. One possible method is to host the skill on AWS Elastic Beanstalk (a service offering by Amazon Web Services). These steps walk you through setting up the Hello World sample on Elastic Beanstalk.

Deploying Hello World to AWS Elastic Beanstalk requires the following tools, in addition to the Java requirements noted earlier:

- OpenSSL

- An account on Amazon Web Services (AWS)

- AWS Toolkit for Eclipse (note that this plug-in requires Eclipse Java EE)

- AWS Command Line Interface

- Maven and Maven Integration for Eclipse

In these instructions,

<alexa-skills-kit-folder> is the folder you cloned from the GitHub repository.

To deploy a sample to Elastic Beanstalk, do the following:

- Download or clone the amzn/alexa-skills-kit-java GitHub repository.

- If you do not already have an account on AWS, go to Amazon Web Services and create an account.

- Install the AWS Toolkit for Eclipse.

Configure the AWS Toolkit for Eclipse with your account credentials: a. Log in to the AWS Console and navigate to Identity and Access Management (IAM). b. Create a new IAM user and grant the user full access to Elastic Beanstalk. c. Select the option to generate an access key for the new user. Choose to download these credentials. d. In Eclipse, click on Window and then Preferences. In the AWS Toolkit section, enter your Access Key ID and Secret Access Key.

Run Maven once to add the Alexa Skills Kit Java library (

alexa-skills-kit-x.x.jar) to your local repository: a. In a command line, navigate to

<alexa-skills-kit-folder>

/samples. b. Run the command:

mvn install.

This copies the compiled

jarfile from

<alexa-skills-kit-folder>

/repointo your local Maven repository, so it will be available when you build the sample later.

- Create a new Maven project using the

maven-archetype-webapparchetype and import the sample code. See Creating the Maven Webapp Project and Importing the Code.

- Add a class that extends

SpeechletServletto host the

HelloWorldSpeechletand set up servlet mapping in

web.xml. See Adding a SpeechletServlet and Setting up Servlet Mapping.

- Deploy the project to AWS Elastic Beanstalk. See Deploying the Sample to Elastic Beanstalk.

- Configure the new Elastic Beanstalk environment to use HTTPS. See Configuring the Elastic Beanstalk Environment to Use HTTPS.

- Register the sample in the Developer Portal. See Registering the Sample in the Developer Portal.

- Test the skill with either the Service Simulator on the developer portal, or an Alexa-enabled device registered to your developer portal account. See Testing the Sample.

Creating the Maven Webapp Project and Importing the Code

The Maven

maven-archetype-webapp archetype creates a new project that is structured as a web application and set up to build with Maven.

When setting up your project, note that you can drag files and folders from your file system into Eclipse. When prompted, select the option to copy the files into the project.

- In Eclipse, click File > New > Maven Project.

- If this option is not available, choose File > New > Project, expand Maven and select the Maven Project option.

- If Maven is not shown in the New Project dialog box, make sure that your version of Eclipse includes Maven Integration for Eclipse.

- Select a location for the project. Make sure the Create simple project option is not selected.

- When prompted to select an archetype, select maven-archetype-webapp. Note that the list of archetypes is long, so you may want to filter it by typing “webapp” in the filter box.

- Enter a Group Id and Artifact Id for the project. For instance, you can set both of these fields to alexa-skills-kit-samples.

This creates a new, blank project set up as a web app. You now need to add the sample source code and dependencies:

- The project should have a

src/mainfolder. If

maindoes not already contain a

javafolder, create a new folder called

java. The end result should be a structure like this:

src/main/java.

- Copy the

helloworldfolder from

<alexa-skills-kit-folder>

/samples/src/main/javainto the

src/main/javafolder in the new project.

- You can do this either by dragging

helloworldinto eclipse and selecting the Copy files and folders option, or copying the folder within the file system.

- The project should now contain a single package called

helloworld, containing two classes (

HelloWorldSpeechletand

HelloWorldSpeechletRequestStreamHandler. Note that the second class is provided for Lambda and not used in this example).

- Copy the file

<alexa-skills-kit-folder>

/samples/pom.xmlinto the root of the Maven project, replacing the

pom.xmlthat was generated when creating the project. The

pom.xmlprovided with the samples is already setup with all of the dependencies necessary to build the sample. However, it is set up to build a JAR file rather than a WAR.

- Edit the

pom.xmlfile and make the following changes:

- Change the

<packaging>setting to

war.

- Under

<build>, add the following line:

<finalName>alexa-skills-kit-samples</finalName>

- Right-click on the project, click Maven and then click Update Project.

Adding a SpeechletServlet and Setting up Servlet Mapping

The Java library includes a

SpeechletServlet class. This implementation of a Java EE servlet handles serializing and deserializing the body of the HTTP request and calls the appropriate

Speechlet methods based on the request (

onLaunch(),

onIntent, and so on).

To host your web service on Elastic Beanstalk, you need to extend this class and add a constructor that instantiates the appropriate

Speechlet class in the sample (

HelloWorldSpeechlet). Then, set up servlet mapping in the

web.xml file for the project.

To create the

SpeechletServlet:

- In the

helloworldpackage, create a new class that extends

com.amazon.speech.speechlet.servlet.SpeechletServlet. For this example, name the new class

HelloWorldServlet.

- Implement a default constructor for the new class that instantiates

HelloWorldSpeechletby calling

setSpeechlet().

The

HelloWorldServlet class should look like this:

package helloworld; import com.amazon.speech.speechlet.servlet.SpeechletServlet; public class HelloWorldServlet extends SpeechletServlet { public HelloWorldServlet() { this.setSpeechlet(new HelloWorldSpeechlet()); } }

To set up the servlet mapping:

- Open the

src/main/webapp/WEB-INF/web.xmlfile in the project.

- Add the

<servlet>and

<servlet-mapping>tags to identify the servlet class and map the class to a URL.

The

<servlet> and

<servlet-mapping> sections should look like this:

<servlet> <servlet-name>HelloWorldServlet</servlet-name> <servlet-class>helloworld.HelloWorldServlet</servlet-class> </servlet> <servlet-mapping> <servlet-name>HelloWorldServlet</servlet-name> <url-pattern>/hello</url-pattern> </servlet-mapping>

After making all of these updates, save all files, then right-click on the project, click Maven and then click Update Project.

At this point, the project should be fully set up. Do a build just to ensure that everything builds correctly. Right-click the project, click Run As and then click Maven install. Verify that a

war file is created in the

target folder for the project.

Deploying the Sample to Elastic Beanstalk

You can deploy web project directly from Eclipse if you are using the AWS Toolkit for Eclipse. The initial deployment creates a new application and environment. An environment represents a particular version of a web service, deployed onto AWS resources. The environment name is used to determine the endpoint for the web service. See Elastic Beanstalk Components for more information.

Once the web service is deployed, you can make code changes in Eclipse and re-deploy to the same environment. This updates the environment with a new version of your web service.

AWS Pricing: There is no extra charge to use Elastic Beanstalk. You pay for the actual AWS resources your application uses (such as EC2). Note that pricing for EC2 usage differs between regions – see Amazon EC2 Pricing for details.

To deploy the Hello World sample to Elastic Beanstalk:

- Use Maven to build a

warfile for the project: Right-click the project, click Run As and then click Maven install. Verify that a

warfile is created in the

targetfolder for the project.

- In the Eclipse Project Explorer, right-click the project and click Amazon Web Services then Deploy to AWS Elastic Beanstalk.

- Select the Manually define a new server option.

- For the server type, select AWS Elastic Beanstalk for Tomcat 8, then click Next.

Select the Region where you want to deploy the application.

Note: All calls from Alexa come from the US East (N. Virginia) AWS region. Therefore, for best performance and reduced cost, create your Elastic Beanstalk environment in the US East (N. Virginia) region.

- Enter an Application Name and Environment Name and click Finish.

Deploying the application to Elastic Beanstalk may take several minutes.

At this point, you should be able to connect to the environment in a browser. However, your service cannot take requests from Alexa until you configure the environment to use HTTPS.

Configuring the Elastic Beanstalk Environment to Use HTTPS

To meet the security requirements of Alexa, the endpoint for your web service must present a valid SSL certificate. When testing on Elastic Beanstalk, you can use a self-signed certificate for this. You need to configure the Elastic Beanstalk environment to present the signed certificate.

You do this by uploading your certificate as a server certificate and then configuring the Elastic Beanstalk Load Balancer for your environment to use HTTPS.

- Create a private key and self-signed certificate.

- See the detailed instructions in Testing a Custom Skill. Be sure to specify the domain name for your new Elastic Beanstalk endpoint when creating the certificate.

- Make a note of the

.pemfiles for your certificate and private key.

Install and configure the AWS Command Line Interface if you have not already done so.

Use the following AWS command to upload your self-signed certificate to AWS as a server certificate.

aws iam upload-server-certificate \ --server-certificate-name CertificateName \ --certificate-body \ --private-key

- For the

server-certificate-name, specify a name to identify the certificate in AWS.

- For the

certificate-body, specify the path and filename of the

.pemfile for the certificate.

- For the

private-key, specify the path and filename of the

.pemfile for your private key.

- Log in to the AWS Management Console. Navigate to Elastic Beanstalk and then navigate to your new environment.

- In the left menu, click Configuration, then open the Load Balancing section.

Make the following changes:

- Set Secure listener port to 443.

- Set Protocol to HTTPS.

- From the SSL Certificate ID drop-down list, select the name of the server certificate you uploaded in step 3.

- Save your changes. Elastic Beanstalk automatically updates your environment.

- Verify you can connect to your environment’s URL from a browser using the.

Registering the Sample in the Developer Portal

Finally, you need to register the sample in the developer portal. This makes it possible for you to test it. You don’t need to fully complete all registration fields. For testing, you must enter at a minimum:

- Skill Information: Skill Type (Custom Interaction Model), Name, and Invocation Name.

- Interaction Model: Intent Schema, Sample Utterances, and Custom Slot Types.

- Configuration: Endpoint. Select the HTTPS option and enter the URL for your service’s endpoint.

- SSL Certificate: Select one of the options. If you want to use a self-signed SSL certificate for testing, create that certificate and upload it to the Developer Portal.

- Test: Set to Enabled.

For the steps to register the skill, see Registering and Managing Custom Skills in the Developer Portal. Use the values in the following table.

Testing the Sample

Once the sample is deployed to Elastic Beanstalk, configured for HTTPS, and registered on the developer portal, you should be able to invoke the skill by saying one of the phrases for interacting with skills:

- “Alexa, ask <invocation name> to <command>.”

- “Alexa, tell <invocation name> to <command>.”

- “Alexa, talk to <invocation name>”

For a list of supported phrases, see Supported Phrases to Begin a Conversation. For details about the provided samples and possible intents, see Using the Alexa Skills Kit Samples (Custom Skills).

If you want to experiment with changes, make your code changes in the project and then re-deploy the code to Elastic Beanstalk. You can re-deploy to the same Elastic Beanstalk environment:

- In the Eclipse Project Explorer, right-click the project and choose Amazon Web Services > Deploy to AWS Elastic Beanstalk.

- In the Run On Server dialog box, select Choose an Existing Server, select the existing environment to which you want to deploy the web service, then click Finish.

Accessing Log Files

Log files can be very useful when debugging and troubleshooting. In an Elastic Beanstalk configuration set up as described here, you can access log files for the web service in three ways:

- View a snapshot of the log files from the AWS Console. This snapshot includes the last 100 lines from each log file, all in a single view or download.

- Download all the logs from AWS Console.

- Configure your Beanstalk environment to automatically publish the logs to AWS S3 bucket.

For details about these different options, see Working with Logs.

The provided samples use SLF4J and log4j for logging. By default this writes log messages to the console terminal. To write log messages to a log file stored with your Elastic Beanstalk environment, you need to configure the logger with a

log4j.properties configuration file. Add this file to the

Java Resources\src directory in your Java Web Project.

For example, the following

log4j.properties file configures the logger to write log entries to a file called

/var/log/tomcat8/helloworld.log:

log4j.logger.helloworld=info log4j.rootLogger=info, tail log4j.appender.tail=org.apache.log4j.FileAppender log4j.appender.tail.File=${catalina.base}/logs/helloworld.log log4j.appender.tail.layout=org.apache.log4j.PatternLayout log4j.appender.tail.layout.ConversionPattern=%d: %-5p %c{1} - %m%n

See SLF4J and log4j for information about configuring logging.

The

HelloWorldSpeechlet writes a log entry for each type of request the service receives:

private static final Logger log = LoggerFactory.getLogger(HelloWorldSpeechlet.class); ... @Override public SpeechletResponse onLaunch(final LaunchRequest request, final Session session) throws SpeechletException { log.info("onLaunch requestId={}, sessionId={}", request.getRequestId(), session.getSessionId()); ... }

Using the above configuration and launching Hello World generates log entries similar to the following (as viewed in the snapshot of the last 100 lines of logs in Elastic Beanstalk):

------------------------------------- /var/log/tomcat8/helloworld.log ------------------------------------- 2015-03-27 18:06:48,976: INFO HelloWorldSpeechlet - onSessionStarted 2015-03-27 18:06:49,087: INFO HelloWorldSpeechlet - onLaunch | https://developer.amazon.com/docs/custom-skills/deploy-a-sample-skill-as-a-web-service.html | CC-MAIN-2017-43 | refinedweb | 3,508 | 56.96 |

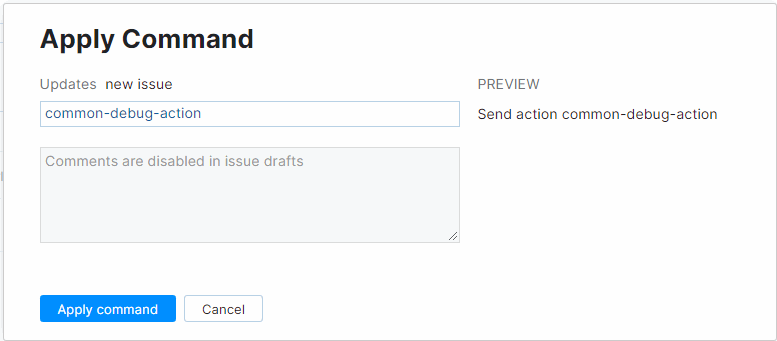

JSP forwarding

JSP forwarding What is JSP forwarding?

Hi,

You can use the JSP forward tag for jsp forwarding. This will help you forward your request to some other page on the same server.

Here is the code for jsp forwarding

problem in forwarding response

problem in forwarding response My response is not getting displayed. i have used RequestDispatcher.forward,RequestDispatcher.include and response.sendRedirect.There is no error on console nor any javascript error

Redirecting and forwarding to views

In this section, you will learn about redirecting and forwarding views through prefixes

Array Creation - JSP-Servlet

Array Creation hi i have a requirement in which i need to convert... 1000 comma separated string values now i convert it into single array like

String x[]=csvvalue.split(",");

this gives me an array containing all the strings

JSP Array

JSP Array

.... The below example gives you a

demo on use of Array in JSP.

Understand with Example

The Tutorial illustrate an example from 'JSP Array'. To understand the

example we

Array problem - JSP-Servlet

Array problem Respected Sir/Madam,

I am having a pop up window in which the database values are available.. Its in the format of:

One radio button ID Name

Like this there is a table with n

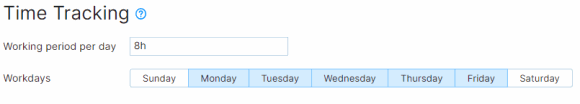

JSP Array Length

JSP Array Length

JSP Array Length is performed when you want to compute the length of array in

JSP.

Understand with Example

The Tutorial to access JQuery array in jsp page?

how to access JQuery array in jsp page? JQury Array:

var elems =this.value;

var arr = jQuery.makeArray(elems);

How to get in jsp page

getting html list in a array

getting html list in a array hi i want to get html unordered list in a array using jsp

Convert a tsring to an array - JSP-Interview Questions

want to convert to an array that i wwant to process it character by chracter...:

The String is strContent

the array is Mystr

F11633 is a text file..., 0);

}

jsp:include to Collection

The values into Array

Java

Struts

JSP

J2EE

Download this example...

Array to Collection

In this example we are converting values of an array into

collection create an array in which no element is duplicate.

if duplicate then tell to user duplicate.

duplicacy is tell to user during when he enter the element

retrieve JSON array objects in javascript

retrieve JSON array objects in javascript I am getting one value by JSON array, but how can i store multiple values in json array and how can i...", how? please ignore my db

connection in jsp

JSONObject

How to pass Array of string from action class to jsp page

How to pass Array of string from action class to jsp page this is my action class

package login.ipm;

import java.sql.*;

import java.util.ArrayList... jsp page is

<%--

Document : select_service

Created on : Aug 31

Passing a 2 Dimentional Array From one Jsp to Another Jsp file and Retreving it

Passing a 2 Dimentional Array From one Jsp to Another Jsp file and Retreving it Hi

I have a 2 dimensional integer array declared and values are dynamically stored into it in one jsp file .I passed this array into another Jsp

PHP Array Operator

] => JSP [P] => PHP [A] => ASP )

Values of array c are:

Array ( [S] => JSP [H] => PHP [P] => ASP )

Union of arrays a and b: Array ( [J... of arrays b and c: Array ( [S] => JSP [H] => PHP [P] => ASP [j] =>

Storing content from file path to an array

the contents in this text file to an array. I am using jsp.

I can access my path but how to store the contents in an array?

I am looking forward in hearing from you

saving form bean with Array of objects (collection) - Struts

saving form bean with Array of objects (collection) Hi all... thanks..:) I am facing problem to capture my array of objects(Order) in form bean into action class, the array i get from form is NULL..:( Let me explain

jsp - JSP-Servlet

JSP associative array Does JSP objects acts like associative array

JSP - JSP-Servlet

one JSP file to another file.This will redirect to the different page without... or forwarding. This is the servlet API equivalent to SSI includes.

The uri

Forwarding Messages using Java Mail

Forwarding Messages using Java Mail

This Example shows you how to forward a message using javamail api. there is no method to forward a mail from one user to another

PHP Push Array to Array

PHP Push Array to Array array_push() function using array in PHP

Declaring string array

Declaring string array

An array is the collection of same data type.

Suppose if we have a declare an array of type String

JSP - JSP-Servlet

Difference between jsp forward and sendRedirect What is difference between jsp forward and send Redirect? Difference between jsp forward and sendRedirectJSP forward action (<jsp: forward/>)jsp forward action

jsp forward action tag

application context as the forwarding JSP file.

Syntax of forward action Tag:

<...jsp forward action tag Defined jsp forward action tag ?

The <jsp:forward> element forwards the request object containing

JavaScript Array of checkboxes

JavaScript Array of checkboxes

... that help you in

understanding JavaScript Array of checkboxes. For this we...;br>

<input type=checkbox name=scripts value='Jsp

java - JSP-Interview Questions

file, another JSP file, or a servlet. It should be noted that the target file must be in the same application context as the forwarding JSP file...() and forward() methods? Hi

JSP forward action transfers the control

Access value of array using OGNL in struts2.

Access value of array using OGNL in struts2.

Here, you will see how to access value of array in struts2 using OGNL.

1-index.jsp

<%@taglib...] : <s:property<br/>

Value array list

jsp forward not functioning

".

Is it because of that, the jsp:forward is not forwarding...jsp forward not functioning If the form in AddStudent.jsp... the student in the database (works OK)

}

%>}else{%>

<jsp

array example

array example giving input from outside for array example

Array sort

Array sort Program that uses a function to sort an array of integers

Attendance Generation - JSP-Servlet

no forwarding of leaves for next month otherwise (no loss of pay)

2 if i used more than 2 leaves no forwarding of leaves for next month but loss of pay should

length in array

length in array wat s length in array

Array of structure

Array of structure create employment details with necessary field using array of structure

array ADT

array ADT Write a program using array_ADT to retrieve a list of elements from a file

array ADT

array ADT Write a program using array_ADT to retrieve a list of elements from a file

Java array

Java array How can one prove that the array is not null but empty

Array Sorter

Array Sorter I need a program that will ask the user to either "Enter an Array" or to "Exit the program" If the user want to enter an array the are asked to enter ten numbers. Then those ten numbers are stored in an array

Array and input

Array and input if this is my array

int briefcases [ ]={1,2,3,4,5,6,7,8,9,10,12,13,14,15,16,17,18,19,20,21,22,23,24,25,26};

i want to ask user to input one of the above numbers then i want to print the array without the number

array ADT

array ADT Write a program using array_ADT to retrieve a list of URLs from a file. In your program take an array index as the input and delete the entry corresponding to that index

Computer - JSP-Interview Questions

ForwardServlet extends HttpServlet{

private static final String forwardTo = "/jsp/ResultServlets";

private static final String includeIn = "/jsp/ResultServlets... ServletException, IOException {

String mode = req.getParameter("mode");

// Forwarding

using array

using array Circular left shift array element by one position

array string

array string how to sort strings with out using any functions

length in array

length in array hi

wat is length in array and where s it used