text stringlengths 454 608k | url stringlengths 17 896 | dump stringclasses 91

values | source stringclasses 1

value | word_count int64 101 114k | flesch_reading_ease float64 50 104 |

|---|---|---|---|---|---|

score:2

In my case this is occur bcoz of i declared

<form> inside another

<form/> tag.

score:5

You can portal a form like this:

import Portal from '@material-ui/core/Portal'; const FooComponent = (props) => { const portalRef = useRef(null); return <> <form> First form <div ref={portalRef} /> </form> <Portal container={portalRef.current}> <form>Another form here</form> </Portal> </>; }

In the example above I use the react material-ui Portal component. But you can try to implement it with React Portals as well

score:24

I faced this issue when using

ant design table and turns out its not ant design which throws the warning. It's the web standards description

"Every form must be enclosed within a FORM element. There can be several forms in a single document, but the FORM element can't be nested."

So, there should not be a form tag inside a form tag.

To solve the issue (in our case), remove the

Form tag inside the DynamicFieldSet "return" and replace with a

div tag

Hope it helps :)

Source: stackoverflow.com

Related Query

- React: how to use child FormItem components without getting Warning: validateDOMNesting: <form> cannot appear as a descendant of <form>

- How to use async await in React components without useEffect

- How does react components use data without passing as props

- How to use React without unsafe inline JavaScript/CSS code?

- How to get values from child components in React

- How do I make components in React Native without using JSX?

- How to use SCSS variables into my React components

- How to style child components in React with CSS Modules

- How to use react j s components in react native app?

- How will React 0.14's Stateless Components offer performance improvements without shouldComponentUpdate?

- How can I dispatch from child components in React Redux?

- How to handle click events on child components in React.js, is there a React "way"?

- How to create custom React Native components with child nodes

- How to use redux-toolkit createSlice with React class components

- How to use jinja2 server side rendering alongside react without violating inline-script CSP

- How to use Media Queries inside a React Styled Components Keyframe?

- How to deal with React Native animated.timing in same child components

- How to use react components from other files

- how to import data in react from a js file to use in components

- React Typescript how send props and use it in child component

- How can I use React Material UI's transition components to animate adding an item to a list?

- How I can render react components without jsx format?

- How to update (re-render) the child components in React when the parent's state change?

- How to style child components in react styled-components

- How to use this.refs for a list of child components

- How does React re-use child components / keep the state of child components when re-rendering the parent component?

- How to traverse all React components including DOM components without TestUtils.findAllInRenderedTree?

- how to use curly brackets without it being an expression in react

- How to use this.props in React Styled Components

- How to use client.query without JSX components in Apollo Client?

More Query from same tag

- Enforce prop should be either of two functional components

- add a plugin from a library in tailwind

- Delete from array React

- passing function between a parent component and child component problem

- Why componentWillMount should not be used?

- Redux mapDispatch ToProps. What data should i pass as an argument?

- How to use Media Queries inside a React Styled Components Keyframe?

- how can I pass a useState props using TypeScript to a child component

- State is not upating in renderer() component

- useState with an argument in it's array is breaking a setInterval and makes it glitchy and erratic

- Updating Multiple Documents In Mongoose With Different Values Each -- Express Js

- Working with the same ReactJS form component on the same page

- next.js swr I do not want to execute it only at the time of initial drawing

- How to add header to axios.create in react/redux app

- How to show a message after Redux dispatch

- How to communicate between React components which do not share a parent?

- React Antd table rendering before data is populated

- React-UseState hook-> state update does not re-render the page immediately

- How can I use create-react-app with Spring Boot?

- I have an object. I need to print its name as <option> on an <selector> but return another variable

- Server side rendering with loadable components not working

- Trying to return a promise from an action creator dispatched from mapDispatchToProps

- Legend not displaying on Line or Bar chart - React-Chartjs-2

- TypeScript is not recognizing reducer, shows type as any on hover

- module import work with react in dev but not in build

- Can't get the cookies from golang server inside the react js project

- the props change when the state change

- React semantic ui table.row set row active onclick

- React and express

- React.js, Displaying Two Svg Images Next To Each Other With Space In Between | https://www.appsloveworld.com/reactjs/100/12/react-how-to-use-child-formitem-components-without-getting-warning-validatedomn | CC-MAIN-2022-40 | refinedweb | 851 | 50.57 |

Rails 5.1 loves Javascript

Ruby/Rails developers have had a controversial relationship with Javascript for the past years. True or false, Javascript was accused of being the holdback for web; we all know that this is not true anymore. The language has improved significantly over the past few years; partly due to advent of ES6.

Furthermore, the rivalry between Rails and Javascript doesn’t exist anymore. If you want to create ambitious web applications the only option is Javascript! Basically, there is no other choice.

This makes it possible for the two ecosystems to thrive next to each other. Javascript is great for frontend work; also it could be an option for backend implementation; however some people prefer to write their backend in other languages. For that, Ruby/Rails could be a great choice.

Apparently that hate relationship is improving now with onset of Rails 5.1. The first beta version of 5.1 is out and it comes with out of the box support for Webpack/React/Angular/Vue.js. Surprise!

Rails 5.1 is a huge step in the right direction. It includes

webpacker gem which lets us to use Webpack to manage app-like JavaScript modules in Rails.

Webpacker makes it easy to use the JavaScript preprocessor and bundler Webpack to manage application-like JavaScript in Rails.

The nice thing about webpacker is that it coexists with asset pipeline, as the purpose is to use it for app-like Javascript (served from

app/javascript/packs directory), not images, css or even Javascript snippets. These will continue to be served from

app/assets directory. More details on this later.

Let’s see how we can harness the power of Rails 5.1 to create a Rails/React app. Note that Rails 5.1 is a beta version; so let’s not rely on it for production.

First thing to do is to install Rails 5.1. Let’s create a

Gemfile with the following content:

source ''

ruby '2.2.5'

gem 'rails', github: 'rails/rails'

This makes sure that we are pulling the most recent version of rails from master branch on github (at this moment it’s Rails 5.1.0beta1).

Then we just need to run

bundle install to install Rails.

Voila!

Now that we have Rails 5.1 installed, we can run the following to create a React friendly Rails app:

rails new myapp --webpack=react

When this command succeeds we have a new folder named myapp in the current directory. We need to

cd into it and run

bundle install to install all dependencies. The next necessary step is to install javascript dependencies via yarn or npm. If we settle on yarn for this, we just need to run

yarn, this will install our Javascript dependencies.

Note: to use webpacker with angular and Vue create your new app as following:

rails new myapp --webpack=angular #webpacker with angular

rails new myapp --webpack=vue #webpacker with Vue.js

At this point, Rails has created a javascript friendly app for us. If you look under

app/javascript you see a new folder named

packs with two files in it (application.js and hello_react.js). This packs folder is a new thing added in Rails 5.1.

app/javascript

└── packs

├── application.js

└── hello_react.js1 directory, 2 files

Everything under

packs directory is automatically compiled by Webpack. The best practice is to place actual application logic in a relevant structure within

app/javascript and only use these pack files to reference that code so it’ll be compiled. Let’s have a look at

hello_react.js:

import React from 'react'

import ReactDOM from 'react-dom'class Hello extends React.Component {

render() {

return <div>Hello {this.props.name}!</div>

}

}document.addEventListener("DOMContentLoaded", e => {

ReactDOM.render(<Hello name="React" />, document.body.appendChild(document.createElement('div')))

})

It’s a react component! Rails has generated us a sample react component that we can render in a view whenever we want. We can even write

jsx in it. What could be better!?

This react component only renders

Hello React but we can make complex components if we need to.

The next step is to render our component using Rails. Let’s create a Rails controller:

bundle exec rails g controller Pages index

This command created a

PagesController with an index method in it. It also creates us a view template at

app/views/pages/index.html.erb. To render our React component, we just need to replace the content of the file with:

<%= javascript_pack_tag 'hello_react' %>

This tells Rails to embed our React component in this view.

We have everything ready now. We just need to run our rails server in one terminal and run our webpack-dev-server in another one; but before that let’s add a small tweak to our

config/environments/development.rb to enable javascript_pack_tag to load assets from webpack-dev-server:

config.x.webpacker[:dev_server_host] = ""

We are ready to run our servers now. In one terminal, run

bundle exec rails server to start Rails server and in the other one run:

./bin/webpack-dev-server --host 127.0.0.1

If you open your browser and navigate to you should see

Hello React on your screen.

Note: you may face

No data received ERR_EMPTY_RESPONSE error when running your server. Have a look at this issue for solutions.

Dissecting Webpacker

According to the docs:

Webpacker ships with three binstubs:

./bin/webpack,

./bin/webpack-watcherand

./bin/webpack-dev-server. They're thin wrappers around the standard webpack.js executable, just to ensure that the right configuration file is loaded.

./bin/webpack-dev-server will launch a Webpack Dev Server listening on serving our pack files. It will recompile the files as we make changes. We set

config.x.webpacker[:dev_server_host] in our

development.rb to tell Webpacker to load our packs from the Webpack Dev Server. This setup allows us to leverage advanced Webpack features, such as Hot Module Replacement. If we want to enable Hot Module Loading we just need to send a

--hot option to webpack-dev-server binstub when running it:

./bin/webpack-dev-server --hot --host 127.0.0.1

Webpacker provides us with a default set of configuration for development and production environments. If you look under

config/webpack you see:

config/webpack

├── development.js

├── production.js

└── shared.js0 directories, 3 files

Feel free to change them according to your needs but in most basic cases the default configs are good enough.

Linking to Sprockets’ assets

Lots of times we need to load normal assets that are served using asset pipeline. To do so, we just need to add

.erb extension to our javascript file, then we can use Sprockets’ asset helpers to load the asset:

// app/javascript/my_pack/example.js.erb

<% helpers = ActionController::Base.helpers %>

var catImagePath = "<%= helpers.image_path('kitten.png') %>";

According to the docs, this has been enabled by the

rails-erb-loader loader rule in

config/webpack/shared.js.

Deploying to Heroku

The first step to deploy our app on Heroku is to add a

Procfile with following content:

web: bundle exec puma -p $PORT

After that we just need to create a new heroku app and add respective buildpacks for node and ruby:

heroku create

heroku buildpacks:add --index 1 heroku/nodejs

heroku buildpacks:add --index 2 heroku/ruby

After that we can push our app to heroku with:

git push heroku master

Done!

You can find the full source code for this example here.

Resources: | https://daqo.medium.com/rails-5-1-loves-javascript-a1d84d5318b?readmore=1&source=user_profile---------6---------------------------- | CC-MAIN-2021-39 | refinedweb | 1,246 | 58.08 |

Jaime Rodriguez On Windows Store apps, Windows Phone, HTML and XAML.

As usual, raw, unedited, useful info from the Microsoft’s internal WPF discussions lists.

Answer: You must use call the EnableModelessKeyboardInterop method for keyboard to work.

Answer: That's right - we do a number of things to sync with rendering. Time only "changes" at the start of a render pass, which is scheduled differently based on a number of factors (Desktop Window Manager present and enabled? Monitor refresh rate, desired framerate for animations, etc), and then too the time chosen is actually "in the future" a bit because we're trying to produce a set of changes that will be correct when they hit the screen. To do this, we estimate the future presentation time for a given UI thread's render pass. My guess is that this is what they're seeing in this case

Answer:Blend’s behaviors are an attached property, but publicly they are not exposed as a DP- you can do attached properties that are not DP’s by having just the static GetProperty/SetProperty.We use this syntax to keep the behavior syntax smaller- by not using a real DP here we were able to default the collection to having a value and remove 2 lines of XAML. If this were a real DP then you’d have to add the collection to the XAML as well:

<Button>

<i:Interaction.Behaviors>

<i:BehaviorCollection>

<s:SimpleBehavior/>

</i:BehaviorCollection>

</i:Interaction.Behaviors>

</Button>

In addition behaviors cannot be used inside of styles; the core issue is that we intentionally made behaviors not sharable- you can’t apply the same behavior to multiple elements. The reason is that if you use the WPF animation API as an example that it adds a ton of complexity to make the types sharable and it really detracts from the level of simplicity that we were looking for in Behaviors.

In WPF, everything applied through a style is shared across each element that it’s applied to. To get around this in early prototypes I used a trick with Freezables and the CoerceValueCallback to clone the behaviors every time they’re applied to an element and are already applied to something else, but none of this is present in SL and it can lead to some unexpected runtime behavior.

Answer:We don’t have any automatic selection logic. You can iterate over all of the frames and find the one you want based upon each frame’s properties. You should be able to get them all from a frame by doing frame.Decoder.Frames. Alternatively, you can just do BitmapDecoder.Create and read the frames that way rather than indirectly through BitmapFrame.Create.Note: pre-Win7 WIC does not support Vista’s PNG icon frames. If you hit a PNG frame you will get an exception on Vista and XP.

1. Is there a way to get WPF to listen to these changes given that WPF does not appear to subscribe to change events via PropertyDescriptor.AddValueChanged? It appears that WPF will bind to the properties offered through the type descriptor, but WPF does not monitor that type descriptor for value changes. It does appear to listen to INotifyPropertyChanged events, but this is not that useful if we are extending and object with custom properties.

2. Is there a way to have WPF bypass ICustomTypeDescriptor.GetProperties() when setting up a binding? In this case, custom types may hide the thing we actually want to bind to, so it would be useful to force WPF not to use the custom descriptor during binding.

Answer:1. WPF will listen to ValueChanged if the object doesn’t implement INotifyPropertyChanged. If both are available we only listen to INPC, to avoid duplicate notifications. There are objects that expose both – chiefly ADO.Net’s DataRowView – so it’s a real issue. If you have appropriate access, you can get the object to raise the PropertyChanged event with a property name for your custom property. But if it’s not your object (and its OnPropertyChanged method is private), you’re out of luck.

2. No. We actually call TypeDescriptor.GetProperties(item), which in turn calls ICTD.GetProperties, so it’s out of our hands. Usually people want the custom descriptor to override the native one; we don’t have any way to ask for the other way around. (I’m curious what your scenario is, though. This is the first time someone’s asked for this.)

Subject: Binding to dictionary with multiple indexers If I add a second indexer to a collection class, it will no longer bind to WPF FrameworkElement such that its index path can be referenced. The following works fine on regular collection, But I add a second indexer, or if the collection is keyed, the binding no longer works:

Path=[0].

Answer: Your two indexers have different signatures, probably something like

public object this[int index] { …}

public object this[string s] {…}

The property path is declared in XAML, where everything is a string. So when you say “Path=[0]”, WPF has to decide whether you mean the first indexer with argument (int)0, or the second indexer with argument (string)”0”. There’s nothing in the XAML to indicate which one you mean, so I think we choose the line of least resistance and pick the second indexer – it requires no type conversion.

At any rate, you can provide the missing guidance by saying

Path=[(sys:Int32)0]

assuming you’ve previously declared

xmlns:sys=”clr-namespace:System;assembly=mscorlib”

This says what you think it does: “use the indexer that takes an int argument”.

Subject: SketchFlow transitions between screens? Is it possible to create transitions between screens in SketchFlow?

Answer: It should be doing this by default. The default transition is a fade, if you right-click a navigation connection in the map, there are a few more to choose from “Transition Styles”.

Subject: RE: How to improve the text rendering of your .NET 4.0 WPF Applications

Answer: Important caveat: Do not use TextFormattingMode=”Display” on text that is going to be scaled by a RenderTransform (or scaled in any way other than by changing the font size). It will end up blurry.

Some more recommendations:

Subject: RE: Partner's question about WPF-Image convert & Flash support

Answer:

There are external EMF->XAML converters:

Indeed, WPF does not provide any native flash rendering. I doubt we ever will. Some options: 1) Host a web browser. Yes this is HWND interop code, so there are some compromises: the infamous airspace issues being the most prominent.

2) For display only, you might look at a DirectShow filter, like:

Subject: Property increment value i nBlend If you expose a numeric property on a type and it is available in the property window you can change the value by dragging the cursor up, down, left and right. Is there a way to tell Blend what the incremental value should be?

Yes, you need to supply a design-time assembly that uses the NumberIncrementsAttribute.

public sealed class NumberIncrementsAttribute : Attribute, IIndexableAttribute

Name:

Microsoft.Windows.Design.PropertyEditing.NumberIncrementsAttribute

Assembly:

Microsoft.Windows.Design.Interaction, Version=4.0.0.0

Subject: pack: registration?

I forget, how do you open a pack: URI? I tried using WebRequest.Create, but that gave me an error saying that pack: wasn’t registered… Then I tried using PackUriHelper to cause it’s static cctor to run, to try and get the prefix registered… PackWebRequest isn’t constructable (from what I can see)…

[Multiple interesting data points]

#1 System.Windows.Application has a static ctor where ResourceContainer package is added to PreloadedPackages so that downstream PackWebRequestFactory can find it. So you need to be running in the context of an Avalon application to get this.

#2 If you use PackUriHelper class, the “pack:” prefix gets registered with the System.Uri class and this helps in performing the correct parsing and construction of System.Uri objects for pack Uris.

The other registration is of the pack: scheme with WebRequest so that you can use WebRequest.Create method to return the PackWebRequest object. This can be done in your code. PackWebRequestFactory does not register it. You could use PackWebRequestFactory directly to get the PackWebRequest too –

PackWebRequest request = (PackWebRequest)((IWebRequestCreate)new PackWebRequestFactory()).Create(packUri);

Subject: RichTextBox viewable area

Is there any way to get the viewable area in a RichTextBox?

Answer:

TextPointer upperLeftCorner = rtb.GetPositionFromPoint(new Point(0, 0), true /* snapToText */); TextPointer lowerRightCorner = rtb.GetPositionFromPoint(new Point(rtb.ActualWidth, rtb.ActualHeight), true /* snapToText */);

You could refine this to get a tighter fit by looking at RichTextBox.ViewportWidth/ViewportHeight, to omit space for surrounding chrome like the possibly visible ScollViewer or Border. But for optimizing a property set on the viewable area first, including a small amount of extra content around the viewport is probably fine.

Subject: ValidatesOnDataErrors not working on bindings inside an ItemsControl.

I noticed that ValidatesOnDataErrors is not working on bindings inside an ItemsControl.ItemTemplate. I confirmed this problem has been fixed in Framework 4.0 but our target is 3.5.

the workaround is to use the “long” form of ValidatesOnDataErrors:

<Binding.ValidationRules>

<DataErrorValidationRule/>

</Binding.ValidationRules>

Happy coding!! | http://blogs.msdn.com/b/jaimer/archive/2009/11.aspx | CC-MAIN-2013-20 | refinedweb | 1,534 | 55.24 |

The Serial Programming Guide for POSIX Operating Systems will teach you how to successfully, efficiently, and portably program the serial ports on your UNIX® workstation or PC. Each chapter provides programming examples that use the POSIX (Portable Standard for UNIX) terminal control functions and should work with very few modifications under IRIX®, HP-UX, SunOS®, Solaris®, Digital UNIX®, Linux®, and most other UNIX operating systems. The biggest difference between operating systems that you will find is the filenames used for serial port device and lock files.

This guide is organized into the following chapters and appendices:

This chapter introduces serial communications, RS-232 and other standards that are used on most computers as well as how to access a serial port from a C program.

Computers transfer information (data) one or more bits at a time. Serial refers to the transfer of data one bit at a time. Serial communications include most network devices, keyboards, mice, MODEMs, and terminals.

When doing serial communications each word (i.e. byte or character) of data you send or receive is sent one bit at a time. Each bit is either on or off. The terms you'll hear sometimes are mark for the on state and space for the off state.

The speed of the serial data is most often expressed as bits-per-second ("bps") or baudot rate ("baud"). This just represents the number of ones and zeroes that can be sent in one second. Back at the dawn of the computer age, 300 baud was considered fast, but today computers can handle RS-232 speeds as high as 430,800 baud! When the baud rate exceeds 1,000, you'll usually see the rate shown in kilo baud, or kbps (e.g. 9.6k, 19.2k, etc). For rates above 1,000,000 that rate is shown in megabaud, or Mbps (e.g. 1.5Mbps).

When referring to serial devices or ports, they are either labeled as Data Communications Equipment ("DCE") or Data Terminal Equipment ("DTE"). The difference between these is simple - every signal pair, like transmit and receive, is swapped. When connecting two DTE or two DCE interfaces together, a serial null-MODEM cable or adapter is used that swaps the signal pairs.

RS-232 is a standard electrical interface for serial communications defined by the Electronic Industries Association ("EIA"). RS-232 actually comes in 3 different flavors (A, B, and C) with each one defining a different voltage range for the on and off levels. The most commonly used variety is RS-232C, which defines a mark (on) bit as a voltage between -3V and -12V and a space (off) bit as a voltage between +3V and +12V. The RS-232C specification says these signals can go about 25 feet (8m) before they become unusable. You can usually send signals a bit farther than this as long as the baud is low enough.

Besides wires for incoming and outgoing data, there are others that provide timing, status, and handshaking:

Two standards for serial interfaces you may also see are RS-422 and RS-574. RS-422 uses lower voltages and differential signals to allow cable lengths up to about 1000ft (300m). RS-574 defines the 9-pin PC serial connector and voltages.

The RS-232 standard defines some 18 different signals for serial communications. Of these, only six are generally available in the UNIX environment.

Technically the logic ground is not a signal, but without it none of the other signals will operate. Basically, the logic ground acts as a reference voltage so that the electronics know which voltages are positive or negative.

The TXD signal carries data transmitted from your workstation to the computer or device on the other end (like a MODEM). A mark voltage is interpreted as a value of 1, while a space voltage is interpreted as a value of 0.

The RXD signal carries data transmitted from the computer or device on the other end to your workstation. Like TXD, mark and space voltages are interpreted as 1 and 0, respectively.

The DCD signal is received from the computer or device on the other end of your serial cable. A space voltage on this signal line indicates that the computer or device is currently connected or on line. DCD is not always used or available.

The DTR signal is generated by your workstation and tells the computer or device on the other end that you are ready (a space voltage) or not-ready (a mark voltage). DTR is usually enabled automatically whenever you open the serial interface on the workstation.

The CTS signal is received from the other end of the serial cable. A space voltage indicates that is alright to send more serial data from your workstation.

CTS is usually used to regulate the flow of serial data from your workstation to the other end.

The RTS signal is set to the space voltage by your workstation to indicate that more data is ready to be sent.

Like CTS, RTS helps to regulate the flow of data between your workstation and the computer or device on the other end of the serial cable. Most workstations leave this signal set to the space voltage all the time.

For the computer to understand the serial data coming into it, it needs some way to determine where one character ends and the next begins. This guide deals exclusively with asynchronous serial data.

In asynchronous mode the serial data line stays in the mark (1) state until a character is transmitted. A start bit preceeds each character and is followed immediately by each bit in the character, an optional parity bit, and one or more stop bits. The start bit is always a space (0) and tells the computer that new serial data is available. Data can be sent or received at any time, thus the name asynchronous.

Figure 1 - Asynchronous Data Transmission

The optional parity bit is a simple sum of the data bits indicating whether or not the data contains an even or odd number of 1 bits. With even parity, the parity bit is 0 if there is an even number of 1's in the character. With odd parity, the parity bit is 0 if there is an odd number of 1's in the data. You may also hear the terms space parity, mark parity, and no parity. Space parity means that the parity bit is always 0, while mark parity means the bit is always 1. No parity means that no parity bit is present or transmitted.

The remaining bits are called stop bits. There can be 1, 1.5, or 2 stop bits between characters and they always have a value of 1. Stop bits traditionally were used to give the computer time to process the previous character, but now only serve to synchronize the receiving computer to the incoming characters.

Asynchronous data formats are usually expressed as "8N1", "7E1", and so forth. These stand for "8 data bits, no parity, 1 stop bit" and "7 data bits, even parity, 1 stop bit" respectively.

Full duplex means that the computer can send and receive data simultaneously - there are two separate data channels (one coming in, one going out).

Half duplex means that the computer cannot send or receive data at the same time. Usually this means there is only a single data channel to talk over. This does not mean that any of the RS-232 signals are not used. Rather, it usually means that the communications link uses some standard other than RS-232 that does not support full duplex operation.

It is often necessary to regulate the flow of data when transferring data between two serial interfaces. This can be due to limitations in an intermediate serial communications link, one of the serial interfaces, or some storage media. Two methods are commonly used for asynchronous data.

The first method is often called "software" flow control and uses special characters to start (XON or DC1, 021 octal) or stop (XOFF or DC3, 023 octal) the flow of data. These characters are defined in the American Standard Code for Information Interchange ("ASCII"). While these codes are useful when transferring textual information, they cannot be used when transferring other types of information without special programming.

The second method is called "hardware" flow control and uses the RS-232 CTS and RTS signals instead of special characters. The receiver sets CTS to the space voltage when it is ready to receive more data and to the mark voltage when it is not ready. Likewise, the sender sets RTS to the space voltage when it is ready to send more data. Because hardware flow control uses a separate set of signals, it is much faster than software flow control which needs to send or receive multiple bits of information to do the same thing. CTS/RTS flow control is not supported by all hardware or operating systems.

Normally a receive or transmit data signal stays at the mark voltage until a new character is transferred. If the signal is dropped to the space voltage for a long period of time, usually 1/4 to 1/2 second, then a break condition is said to exist.

A break is sometimes used to reset a communications line or change the operating mode of communications hardware like a MODEM. Chapter 3, Talking to MODEMs covers these applications in more depth.

Unlike asynchronous data, synchronous data appears as a constant stream of bits. To read the data on the line, the computer must provide or receive a common bit clock so that both the sender and receiver are synchronized.

Even with this synchronization, the computer must mark the beginning of the data somehow. The most common way of doing this is to use a data packet protocol like Serial Data Link Control ("SDLC") or High-Speed Data Link Control ("HDLC").

Each protocol defines certain bit sequences to represent the beginning and end of a data packet. Each also defines a bit sequence that is used when there is no data. These bit sequences allow the computer see the beginning of a data packet.

Because synchronous protocols do not use per-character synchronization bits they typically provide at least a 25% improvement in performance over asynchronous communications and are suitable for remote networking and configurations with more than two serial interfaces.

Despite the speed advantages of synchronous communications, most RS-232 hardware does not support it due to the extra hardware and software required.

Like all devices, UNIX provides access to serial ports via device files. To access a serial port you simply open the corresponding device file.

Each serial port on a UNIX system has one or more device files (files in the /dev directory) associated with it:

Since a serial port is a file, the open(2) function is used to access it. The one hitch with UNIX is that device files are usually not accessable by normal users. Workarounds include changing the access permissions to the file(s) in question, running your program as the super-user (root), or making your program set-userid so that it runs as the owner of the device file.

For now we'll assume that the file is accessable by all users. The

code to open serial port 1 on an

sgi® workstation running

IRIX is:

Listing 1 - Opening a serial port.

f1", O_RDWR | O_NOCTTY | O_NDELAY); if (fd == -1) { /* * Could not open the port. */ perror("open_port: Unable to open /dev/ttyf1 - "); } else fcntl(fd, F_SETFL, 0); return (fd); }

Other systems would require the corresponding device file name, but otherwise the code is the same.

You'll notice that when we opened the device file we used two other flags along with the read+write mode:

fd = open("/dev/ttyf1", O_RDWR | O_NOCTTY | O_NDELAY);

The O_NOCTTY flag tells UNIX that this program doesn't want to be the "controlling terminal" for that port. If you don't specify this then any input (such as keyboard abort signals and so forth) will affect your process. Programs like getty(1M/8) use this feature when starting the login process, but normally a user program does not want this behavior.

The O_NDELAY flag tells UNIX that this program doesn't care what state the DCD signal line is in - whether the other end of the port is up and running. If you do not specify this flag, your process will be put to sleep until the DCD signal line is the space voltage.

Writing data to the port is easy - just use the write(2) system call to send data it:

n = write(fd, "ATZ\r", 4); if (n < 0) fputs("write() of 4 bytes failed!\n", stderr);

The write function returns the number of bytes sent or -1 if an error occurred. Usually the only error you'll run into is EIO when a MODEM or data link drops the Data Carrier Detect (DCD) line. This condition will persist until you close the port.

Reading data from a port is a little trickier. When you operate the port in raw data mode, each read(2) system call will return however many characters are actually available in the serial input buffers. If no characters are available, the call will block (wait) until characters come in, an interval timer expires, or an error occurs. The read function can be made to return immediately by doing the following:

fcntl(fd, F_SETFL, FNDELAY);

The FNDELAY option causes the read function to return 0 if no characters are available on the port. To restore normal (blocking) behavior, call fcntl() without the FNDELAY option:

fcntl(fd, F_SETFL, 0);

This is also used after opening a serial port with the O_NDELAY option.

To close the serial port, just use the close system call:

close(fd);

Closing a serial port will also usually set the DTR signal low which causes most MODEMs to hang up.

This chapter discusses how to configure a serial port from C using the POSIX termios interface.(3) and tcsetattr(3). These get and set terminal attributes, respectively; you provide a pointer to a termios structure that contains all of the serial options available: baud rate constants (CBAUD, B9600, etc.) are used for older interfaces that lack the c_ispeed and c_ospeed members. See the next section for information on the POSIX functions used to set the baud rate.

Never initialize the c_cflag (or any other flag) member directly; you should always use the bitwise AND, OR, and NOT operators to set or clear bits in the members. Different operating system versions (and even patches) can and do use the bits differently, so using the bitwise operators will prevent you from clobbering a bit flag that is needed in a newer serial driver.

The baud rate is stored in different places depending on the operating system. Older interfaces store the baud rate in the c_cflag member using one of the baud rate constants in table 4, while newer implementations provide the c_ispeed and c_ospeed members that contain the actual baud rate value.

The cfsetospeed(3) and cfsetispeed(3) functions are provided to set the baud rate in the termios structure regardless of the underlying operating system interface. Typically you'd use the following code to set the baud rate:

Listing 2 - Settingflag |= (CLOCAL | CREAD); /* * Set the new options for the port... */ tcsetattr(fd, TCSANOW, &options);

The tcgetattr(3) function fills the termios structure you provide with the current serial port configuration. After we set the baud rates and enable local mode and serial data receipt, we select the new configuration using tcsetattr(3). The TCSANOW constant specifies that all changes should occur immediately without waiting for output data to finish sending or input data to finish receiving. There are other constants to wait for input and output to finish or to flush the input and output buffers.

Most systems do not support different input and output speeds, so be sure to set both to the same value for maximum portability.

Unlike the baud rate, there is no convienience function to set the character size. Instead you must do a little bitmasking to set things up. The character size is specified in bits:

options.c_cflag &= ~CSIZE; /* Mask the character size bits */ options.c_cflag |= CS8; /* Select 8 data bits */

Like the character size you must manually set the parity enable and parity type bits. UNIX serial drivers support even, odd, and no parity bit generation. Space parity can be simulated with clever coding.

options.c_cflag &= ~PARENB options.c_cflag &= ~CSTOPB options.c_cflag &= ~CSIZE; options.c_cflag |= CS8;

options.c_cflag |= PARENB options.c_cflag &= ~PARODD options.c_cflag &= ~CSTOPB options.c_cflag &= ~CSIZE; options.c_cflag |= CS7;

options.c_cflag |= PARENB options.c_cflag |= PARODD options.c_cflag &= ~CSTOPB options.c_cflag &= ~CSIZE; options.c_cflag |= CS7;

options.c_cflag &= ~PARENB options.c_cflag &= ~CSTOPB options.c_cflag &= ~CSIZE; options.c_cflag |= CS8;

Some versions of UNIX support hardware flow control using the CTS (Clear To Send) and RTS (Request To Send) signal lines. If the CNEW_RTSCTS or CRTSCTS constants are defined on your system then hardware flow control is probably supported. Do the following to enable hardware flow control:

options.c_cflag |= CNEW_RTSCTS; /* Also called CRTSCTS */

Similarly, to disable hardware flow control:

options.c_cflag &= ~CNEW_RTSCTS;

The local modes member c_lflag controls how input characters are managed by the serial driver. In general you will configure the c_lflag member for canonical or raw input.flag |= (ICANON | ECHO | ECHOE);

Raw input is unprocessed. Input characters are passed through exactly as they are received, when they are received. Generally you'll deselect the ICANON, ECHO, ECHOE, and ISIG options when using raw input:

options.c_lflag &= ~(ICANON | ECHO | ECHOE | ISIG);

Never enable input echo (ECHO, ECHOE) when sending commands to a MODEM or other computer that is echoing characters, as you will generate a feedback loop between the two serial interfaces!

The input modes member c_iflag controls any input processing that is done to characters received on the port. Like the c_cflag field, the final value stored in c_iflag is the bitwise OR of the desired options.

You should enable input parity checking when you have enabled parity in the c_cflag member (PARENB). The revelant constants for input parity checking are INPCK, IGNPAR, PARMRK , and ISTRIP. Generally you will select INPCK and ISTRIP to enable checking and stripping of the parity bit:

options.c_iflag |= (INPCK | ISTRIP); (000 octal) is sent to your program before every character with a parity error. Otherwise, a DEL (177 octal) and NUL character is sent along with the bad character.

Software flow control is enabled using the IXON, IXOFF , and IXANY constants:

options.c_iflag |= (IXON | IXOFF | IXANY);

To disable software flow control simply mask those bits:

options.c_iflag &= ~(IXON | IXOFF | IXANY);

The XON (start data) and XOFF (stop data) characters are defined in the c_cc array described below.

The c_oflag member contains output filtering options. Like the input modes, you can select processed or raw data output.

Processed output is selected by setting the OPOST option in the c_oflag member:

options.c_oflag |= OPOST;

Of all the different options, you will only probably use the ONLCR option which maps newlines into CR-LF pairs. The rest of the output options are primarily historic and date back to the time when line printers and terminals could not keep up with the serial data stream!

Raw output is selected by resetting the OPOST option in the c_oflag member:

options.c_oflag &= ~OPOST;

When the OPOST option is disabled, all other option bits in c_oflag are ignored.

The c_cc character array contains control character definitions as well as timeout parameters. Constants are defined for every element of this array.

The VSTART and VSTOP elements of the c_cc array contain the characters used for software flow control. Normally they should be set to DC1 (021 octal) and DC3 (023 octal) which represent the ASCII standard XON and XOFF characters.

UNIX serial interface drivers provide the ability to specify character and packet timeouts. Two elements of the c_cc array are used for timeouts: VMIN and VTIME. Timeouts are ignored in canonical input mode or when the NDELAY option is set on the file via open or fcntl.

VMIN specifies the minimum number of characters to read. If it is set to 0, then the VTIME value specifies the time to wait for every character read. Note that this does not mean that a read call for N bytes will wait for N characters to come in. Rather, the timeout will apply to the first character and the read call will return the number of characters immediately available (up to the number you request).. This method allows you to tell the serial driver you need exactly N bytes and any read call will return 0 or N bytes. However, the timeout only applies to the first character read, so if for some reason the driver misses one character inside the N byte packet then the read call could block forever waiting for additional input characters.

VTIME specifies the amount of time to wait for incoming characters in tenths of seconds. If VTIME is set to 0 (the default), reads will block (wait) indefinitely unless the NDELAY option is set on the port with open or fcntl.

This chapter covers the basics of dialup telephone Modulator/Demodulator (MODEM) communications. Examples are provided for MODEMs that use the defacto standard "AT" command set.

MODEMs are devices that modulate serial data into frequencies that can be transferred over an analog data link such as a telephone line or cable TV connection. A standard telephone MODEM converts serial data into tones that can be passed over the phone lines; because of the speed and complexity of the conversion these tones sound more like loud screeching if you listen to them.

Telephone MODEMs are available today that can transfer data across a telephone line at nearly 53,000 bits per second, or 53kbps. In addition, most MODEMs use data compression technology that can increase the bit rate to well over 100kbps on some types of data.

The first step in communicating with a MODEM is to open and configure the port for raw input:

Listing 3 - Configuring the port for raw input.

int fd; struct termios options; /* open the port */ fd = open("/dev/ttyf1", O_RDWR | O_NOCTTY | O_NDELAY););

Next you need to establish communications with the MODEM. The best way to do this is by sending the "AT" command to the MODEM. This also allows smart MODEMs to detect the baud you are using. When the MODEM is connected correctly and powered on it will respond with the response "OK".

Listing 4 - Initializing the MODEM.\r", 3) < 3)); }

Most MODEMs support the "AT" command set, so called because each command starts with the "AT" characters. Each command is sent with the "AT" characters starting in the first column followed by the specific command and a carriage return (CR, 015 octal). After processing the command the MODEM will reply with one of several textual messages depending on the command.

The ATD command dials the specified number. In addition to numbers and dashes you can specify tone ("T") or pulse ("P") dialing, pause for one second (","), and wait for a dialtone ("W"):

ATDT 555-1212 ATDT 18008008008W1234,1,1234 ATD T555-1212WP1234

The MODEM will reply with one of the following messages:

NO DIALTONE BUSY NO CARRIER CONNECT CONNECT baud

The ATH command causes the MODEM to hang up. Since the MODEM must be in "command" mode you probably won't use it during a normal phone call.

Most MODEMs will also hang up if DTR is dropped; you can do this by setting the baud to 0 for at least 1 second. Dropping DTR also returns the MODEM to command mode.

After a successful hang up the MODEM will reply with "NO CARRIER". If the MODEM is still connected the "CONNECT" or "CONNECT baud" message will be sent.

First and foremost, don't forget to disable input echoing. Input echoing will cause a feedback loop between the MODEM and computer.

Second, when sending MODEM commands you must terminate them with a carriage return (CR) and not a newline (NL). The C character constant for CR is "\r".

Finally, when dealing with a MODEM make sure you use a baud that the MODEM supports. While many MODEMs do auto-baud detection, some have limits (19.2kbps is common) that you must observe.

This chapter covers advanced serial programming techniques using the ioctl(2) and select(2) system calls.

In Chapter 2, Configuring the Serial Port we used the tcgetattr and tcsetattr functions to configure the serial port. Under UNIX these functions use the ioctl(2) system call to do their magic.

The ioctl system call takes three arguments:

int ioctl(int fd, int request, ...);

The fd argument specifies the serial port file descriptor.

The request argument is a constant defined in the

<termios.h> header file and is typically one of the following:

The

TIOCMGET ioctl gets the current "MODEM"

status bits, which consist of all of the RS-232 signal lines except

RXD and TXD:

To get the status bits, call ioctl with a pointer to an integer to hold the bits:

Listing 5 - Getting the MODEM status bits.

#include <unistd.h> #include <termios.h> int fd; int status; ioctl(fd, TIOCMGET, &status);

The

TIOCMSET ioctl sets the "MODEM" status bits

defined above. To drop the DTR signal you can do:

Listing 6 - Dropping DTR with the TIOCMSET ioctl.

#include <unistd.h> #include <termios.h> int fd; int status; ioctl(fd, TIOCMGET, &status); status &= ~TIOCM_DTR; ioctl(fd, TIOCMSET, status);

The bits that can be set depend on the operating system, driver, and modes in use. Consult your operating system documentation for more information.

The

FIONREAD ioctl gets the number of bytes in

the serial port input buffer. As with

TIOCMGET you pass in

a pointer to an integer to hold the number of bytes:

Listing 7 - Getting the number of bytes in the input buffer.

#include <unistd.h> #include <termios.h> int fd; int bytes; ioctl(fd, FIONREAD, &bytes);

This can be useful when polling a serial port for data, as your program can determine the number of bytes in the input buffer before attempting a read.

While simple applications can poll or wait on data coming from the serial port, most applications are not simple and need to handle input from multiple sources.

UNIX provides this capability through the select(2) system call. This system call allows your program to check for input, output, or error conditions on one or more file descriptors. The file descriptors can point to serial ports, regular files, other devices, pipes, or sockets. You can poll to check for pending input, wait for input indefinitely, or timeout after a specific amount of time, making the select system call extremely flexible.

Most GUI Toolkits provide an interface to select; we will discuss the X Intrinsics ("Xt") library later in this chapter.

The select system call accepts 5 arguments:

int select(int max_fd, fd_set *input, fd_set *output, fd_set *error, struct timeval *timeout);

The max_fd argument specifies the highest numbered file

descriptor in the input, output, and error sets.

The input, output, and error arguments specify

sets of file descriptors for pending input, output, or error

conditions; specify

NULL to disable monitoring for the

corresponding condition. These sets are initialized using three macros:

FD_ZERO(fd_set); FD_SET(fd, fd_set); FD_CLR(fd, fd_set);

The FD_ZERO macro clears the set entirely. The FD_SET and FD_CLR macros add and remove a file descriptor from the set, respectively.

The timeout argument specifies a timeout value which consists

of seconds (timeout.tv_sec) and microseconds (timeout.tv_usec

). To poll one or more file descriptors, set the seconds and

microseconds to zero. To wait indefinitely specify

NULL

for the timeout pointer.

The select system call returns the number of file descriptors that have a pending condition, or -1 if there was an error.

Suppose we are reading data from a serial port and a socket. We want to check for input from either file descriptor, but want to notify the user if no data is seen within 10 seconds. To do this we'll need to use the select system call:

Listing 8 - Using SELECT to process input from more than one source.

#include <unistd.h> #include <sys/types.h> #include <sys/time.h> #include <sys/select.h> int n; int socket; int fd; int max_fd; fd_set input; struct timeval timeout; /* Initialize the input set */ FD_ZERO(input); FD_SET(fd, input); FD_SET(socket, input); max_fd = (socket > fd ? socket : fd) + 1; /* Initialize the timeout structure */ timeout.tv_sec = 10; timeout.tv_usec = 0; /* Do the select */ n = select(max_fd, NULL, NULL, ; /* See if there was an error */ if (n 0) perror("select failed"); else if (n == 0) puts("TIMEOUT"); else { /* We have input */ if (FD_ISSET(fd, input)) process_fd(); if (FD_ISSET(socket, input)) process_socket(); }

You'll notice that we first check the return value of the select system call. Values of 0 and -1 yield the appropriate warning and error messages. Values greater than 0 mean that we have data pending on one or more file descriptors.

To determine which file descriptor(s) have pending input, we use the FD_ISSET macro to test the input set for each file descriptor. If the file descriptor flag is set then the condition exists (input pending in this case) and we need to do something.

The X Intrinsics library provides an interface to the select system call via the XtAppAddInput(3x) and XtAppRemoveInput(3x) functions:

int XtAppAddInput(XtAppContext context, int fd, int mask, XtInputProc proc, XtPointer data); void XtAppRemoveInput(XtAppContext context, int input);

The select system call is used internally to implement timeouts, work procedures, and check for input from the X server. These functions can be used with any Xt-based toolkit including Xaw, Lesstif, and Motif.

The proc argument to XtAppAddInput specifies the function to call when the selected condition (e.g. input available) exists on the file descriptor. In the previous example you could specify the process_fd or process_socket functions.

Because Xt limits your access to the select system call, you'll need to implement timeouts through another mechanism, probably via XtAppAddTimeout(3x).

This appendix provides pinout information for many of the common serial ports you will find.

RS-232 comes in three flavors (A, B, C) and uses a 25-pin D-Sub connector:

Figure 2 - RS-232 Connector

RS-422 also uses a 25-pin D-Sub connector, but with differential signals:

Figure 3 - RS-422 Connector

The RS-574 interface is used exclusively by PC manufacturers and uses a 9-pin male D-Sub connector:

Figure 4 - RS-574 Connector

Older SGI equipment uses a 9-pin female D-Sub connector. Unlike RS-574, the SGI pinouts nearly match those of RS-232:

Figure 5 - SGI 9-Pin Connector

The SGI Indigo, Indigo2, and Indy workstations use the Apple 8-pin MiniDIN connector for their serial ports:

Figure 6 - SGI 8-Pin Connector

This chapter lists the ASCII control codes and their names. | https://www.cmrr.umn.edu/~strupp/serial.html | CC-MAIN-2019-51 | refinedweb | 5,166 | 62.07 |

Play a multimedia file in J2ME Program (Audio/Video) using MMAPI

By: Vikram Goyal Printer Friendly Format.

//A Simple MMAPI MIDlet

import javax.microedition.midlet.MIDlet;

import javax.microedition.media.Manager;

import javax.microedition.media.Player;

public class SimplePlayer extends MIDlet {

public void startApp() {

try {

Player player =

Manager.createPlayer(getClass().getResourceAsStream("/media/audio/chapter3/baby.wav"),"audio/x-wav");

player.start();

} catch(Exception e) {

e.printStackTrace();

}

}

public void pauseApp() {

}

public void destroyApp(boolean unconditional) {

}

}

To keep things simple at this stage, the media file is played by creating an InputStream on a wav file, which is embedded in the MIDlet’s JAR. This media file is kept in the folder media/audio/chapter3 and is called baby.wav (which is the sound of a baby crying).

Of course, you don’t need to play an audio file only. You can substitute the wav file with a video file, provided the emulator supports the format of the video file. The video will not show anywhere, because this listing doesn’t provide a mechanism to show the video. You can substitute the wav file for a midi, tone, or any other supported audio format. The point is that playing multimedia files using the MMAPI is as simple as creating a Player instance using the Manager class and calling method start() on. when iam trying to executing above program i am ge

View Tutorial By: BHAGYALAXMI at 2008-10-11 06:45:57

2. pls send simple to hard j2me coding

View Tutorial By: Seenu at 2008-10-29 15:19:03

3. java.lang.IllegalArgumentException means usually t

View Tutorial By: Seink at 2008-11-12 11:03:00

4. me too got the same problem....

but m sure

View Tutorial By: HEHE at 2008-11-19 01:49:04

5. me too got the same problem....

but m sure

View Tutorial By: HEHE at 2008-11-19 01:50:15

6. I am not found a J2ME file for playing a audio pla

View Tutorial By: S.M. ASHIQUER RAHMAN at 2008-11-22 22:33:43

7. I want to say, that you have to give complete code

View Tutorial By: Jasvir Yadav at 2009-09-05 07:23:15

8. You are totally learning yourself

The code

View Tutorial By: Narayan at 2009-09-14 03:28:35

9. very good helped me a lot

View Tutorial By: Swaran at 2009-12-30 09:51:36

10. I agree with BHAGYALAXMI. I faced the same problem

View Tutorial By: Alok at 2010-01-10 12:03:37

11. Even i got d same error. can u plz tell me where t

View Tutorial By: Priya at 2011-03-14 12:16:54

12. java.lang.IllegalArgumentException

at java

View Tutorial By: gopal at 2011-03-21 00:45:51

13. I run the code . It's good.

View Tutorial By: Golam Rabbi at 2011-07-12 07:06:00

14. @All

You need to first keep your .w

View Tutorial By: Nithya at 2011-08-31 02:27:48

15. What exactly is d directry for d folder Media?? It

View Tutorial By: Oraclematrix at 2012-01-15 21:40:54

16. javax.microedition.media.MediaException: Malformed

View Tutorial By: adi at 2012-04-01 11:51:17

17. Put *.wav at where store java file and nnsert thes

View Tutorial By: BAE at 2012-09-18 12:26:55

18. one have to store .wav file in res folder of your

View Tutorial By: swetha at 2013-06-14 06:47:57

19. public void playSound(String filename) {

View Tutorial By: Rowan at 2014-07-17 10:26:02 | https://www.java-samples.com/showtutorial.php?tutorialid=830 | CC-MAIN-2020-05 | refinedweb | 611 | 67.96 |

On Thu, Aug 5, 2010 at 2:49 AM, Ivan Lazar Miljenovic <ivan.miljenovic at gmail.com> wrote: > As part of an attempt at resolving the FGL vs inductive-graphs naming > mess, one solution that Edward Kmett and I thrashed out will involve > utilising a new top-level module namespace of Graph.* instead of using > Data.Graph.* as currently found in FGL. However, Don Stewart > recommended that I ask of the collective wisdom that is the libraries > mailing list before moving ahead with this proposal. > > The three main reasons for such a new top-level namespace are: > > * Avoid module clashes (ala mtl and monads-{fd,tf}): for my > still-vapourware graph classes library I would have to use > Data.Graph.Classes or some such due to Data.Graph being used by the > containers library; the new library Thomas Bereknyei are working on > can then use Graph.Inductive to avoid clashing with FGL's > Data.Graph.Inductive. > > * Reduce the length of module names: this reason might not mean as > much and the net benefit would be minimal, but something like > Graph.Inductive.Algorithms.Directed is a bit nicer than > Data.Graph.Inductive.Algorithms.Directed > > * Not all graph-related modules are necessarily strictly about > data-types, etc. (and I can't find any distinction about what defines > the Data.* namespace anyway); e.g. my graphviz library currently uses > Data.GraphViz.*, though it should arguably go under Graphics.* instead > (it's currently under Data.* because that's how I found it when I took > over maintainership). > > So, am I able to start using Graph as a new top-level namespace? The > end goal is that eventually all graph-related libraries will use this > namespace (whereas currently most such libraries use Data.Graph.*, > graphviz being the notable exception). Personally, I dislike this proposal. Yes, there are an awful lot of modules a well-developed graph ecosystem will have. But this is true of lists, or arrays, or any other extremely common and useful abstraction. (Should we have a toplevel Monad.* namespace because there are so ridiculously many monad libraries and transformers and whatnot?) Clashes are more an argument for merging and improving libraries, or at least maintainers coordinating with each other. -- gwern | http://www.haskell.org/pipermail/libraries/2010-August/014033.html | CC-MAIN-2014-15 | refinedweb | 368 | 59.4 |

How do you use existing (but not basic) Qml types (for example Rectangle, Buttons, etc.) as properties for custom C++ - defined types?

- mahlersand

Seems like a stupid question, but I have not found anything about this. There is a page in the documentation that shows how to use custom types as properties for C++ - defined types, but what do I do if I want, for example, a Rectangle as a background for an Item?

- p3c0 Moderators

Hi @mahlersand

If I understood you correctly, you cant use

Rectangle,

Buttonsdirectly inside C++ defined types as they don't have a public C++ API's. if you are using

QQuickPaintedItemthen you can draw your own rectangle using various

QPaintermethods.

- mahlersand

@p3c0 Yes, it's just that i wanted to avoid that, as i wanted the

Rectangleto be accessible and modifiable from within the QML code. That is currently only (theoretically) possible through the private classes

#include <QtQuick/5.5.0/QtQuick/private/qquickrectangle_p.h>, but it is not really working.

- p3c0 Moderators

@mahlersand Using private classes is not recommended as its implementation may change from version to version. The best would be to use

QQuickPaintedItemand create your own custom types. | https://forum.qt.io/topic/58994/how-do-you-use-existing-but-not-basic-qml-types-for-example-rectangle-buttons-etc-as-properties-for-custom-c-defined-types/1 | CC-MAIN-2018-09 | refinedweb | 197 | 54.22 |

import "golang.org/x/text/internal/gen"

Package gen contains common code for the various code generation tools in the text repository. Its usage ensures consistency between tools.

This package defines command line flags that are common to most generation tools. The flags allow for specifying specific Unicode and CLDR versions in the public Unicode data repository ().

A local Unicode data mirror can be set through the flag -local or the environment variable UNICODE_DIR. The former takes precedence. The local directory should follow the same structure as the public repository.

IANA data can also optionally be mirrored by putting it in the iana directory rooted at the top of the local mirror. Beware, though, that IANA data is not versioned. So it is up to the developer to use the right version.

UnicodeVersion reports the requested CLDR version.

Init performs common initialization for a gen command. It parses the flags and sets up the standard logging parameters.

IsLocal reports whether data files are available locally.

func Open(urlRoot, subdir, path string) io.ReadCloser

Open opens subdir/path if a local directory is specified and the file exists, where subdir is a directory relative to the local root, or fetches it from urlRoot/path otherwise. It will call log.Fatal if there are any errors.

func OpenCLDRCoreZip() io.ReadCloser

OpenCLDRCoreZip opens the CLDR core zip file. It will call log.Fatal if there are any errors.

func OpenIANAFile(path string) io.ReadCloser

OpenIANAFile opens the requested IANA file. The file is specified relative to the IANA root, which is typically either or the iana directory in the local mirror. It will call log.Fatal if there are any errors.

func OpenUCDFile(file string) io.ReadCloser

OpenUCDFile opens the requested UCD file. The file is specified relative to the public Unicode root directory. It will call log.Fatal if there are any errors.

func OpenUnicodeFile(category, version, file string) io.ReadCloser

OpenUnicodeFile opens the requested file of the requested category from the root of the Unicode data archive. The file is specified relative to the public Unicode root directory. If version is "", it will use the default Unicode version. It will call log.Fatal if there are any errors.

Repackage rewrites a Go file from belonging to package main to belonging to the given package.

UnicodeVersion reports the requested Unicode version.

WriteCLDRVersion writes a constant for the CLDR version from which the tables are generated.

WriteGo prepends a standard file comment and package statement to the given bytes, applies gofmt, and writes them to w.

WriteGoFile prepends a standard file comment and package statement to the given bytes, applies gofmt, and writes them to a file with the given name. It will call log.Fatal if there are any errors.

WriteUnicodeVersion writes a constant for the Unicode version from which the tables are generated.

type CodeWriter struct { Size int Hash hash.Hash32 // content hash // contains filtered or unexported fields }

CodeWriter is a utility for writing structured code. It computes the content hash and size of written content. It ensures there are newlines between written code blocks.

func NewCodeWriter() *CodeWriter

NewCodeWriter returns a new CodeWriter.

func (w *CodeWriter) Write(p []byte) (n int, err error)

func (w *CodeWriter) WriteArray(x interface{})

WriteArray writes an array value.

func (w *CodeWriter) WriteComment(comment string, args ...interface{})

WriteComment writes a comment block. All line starts are prefixed with "//". Initial empty lines are gobbled. The indentation for the first line is stripped from consecutive lines.

func (w *CodeWriter) WriteConst(name string, x interface{})

WriteConst writes a constant of the given name and value.

WriteGo appends the buffer with the total size of all created structures and writes it as a Go file to the the given writer with the given package name.

func (w *CodeWriter) WriteGoFile(filename, pkg string)

WriteGoFile appends the buffer with the total size of all created structures and writes it as a Go file to the the given file with the given package name.

func (w *CodeWriter) WriteSlice(x interface{})

WriteSlice writes a slice value.

func (w *CodeWriter) WriteString(s string)

WriteString writes a string literal.

func (w *CodeWriter) WriteType(x interface{}) string

WriteType writes a definition of the type of the given value and returns the type name.

func (w *CodeWriter) WriteVar(name string, x interface{})

WriteVar writes a variable of the given name and value.

Package gen imports 21 packages (graph) and is imported by 2 packages. Updated 2017-08-15. Refresh now. Tools for package owners. | http://godoc.org/golang.org/x/text/internal/gen | CC-MAIN-2017-34 | refinedweb | 746 | 60.51 |

interactive_add_button_layout 1.0.0

Custom Layout with interactive add button to impove your UI and UX .

Interactive Add button layout #

Custom Layout with interactive add button to impove your UI and UX .

inspired from Oleg Frolov.

Usage #

Import the Package #

add this dependencies to your app

dependencies: interactive_add_button_layout: ^0.1.0

Use the Package #

add this import statement

import 'package:interactive_add_button_layout/interactive_add_button_layout.dart';

The layout need to be the root layout of your widget (screen)

and Now to use it, add this code to your widget :

return Scaffold( ... body: AddButtonLayout( parameters )

The layout has 6 parameters which are :

child: you know what is that xD, in case you don't it's the child of the layout which mean that the layout is his parent .

row: a List of Widgets to be diplayed in a row for the Row layout .

column: a List of Widgets to be diplayed in a column for the Column layout .

onPressed: the function to be called when the user click the add button .

color: the color of the layout (color of the background), by default it's

Color(0xff2A1546).

btnColor: the color of the add button

the

row and

column and

child are required !

Example : #

you can find a demo app in

./example

Gif #

Contribution #

Feel free to contribute, to report a bug or to suggest a feature, Thank you :) | https://pub.dev/packages/interactive_add_button_layout | CC-MAIN-2020-34 | refinedweb | 225 | 55.95 |

Share code: Serving files over HTTP

- Webmaster4o

Note: This has been done before, but there were a few improvements I wanted to make to what had been done before.

This script is an editor action, called "Serve Files." It has three improvements over existing implementations:

- Serve every file from the directory of your script at its filename

- Serve a zip of the entire directory in which your script is contained at

/. This is useful for copying a lot of files to a computer at once

- Printing your local IP to the console, to make it easier to see where you should go on your computer

It will also clean up the zip it creates after you stop the server.

Here's the script:

import os import shutil import socket import urllib.parse import dialogs import editor import flask # SETUP # Resolve local IP by connecting to Google's DNS server s = socket.socket(socket.AF_INET, socket.SOCK_DGRAM) s.connect(("8.8.8.8", 80)) localip = s.getsockname()[0] s.close() # Initialize Flask app = flask.Flask(__name__) # Calculate path details directory, filename = os.path.split(editor.get_path()) dirpath, dirname = os.path.split(directory.rstrip("/")) zippath = os.path.abspath("./{}.zip".format(dirname)) # Make an archive shutil.make_archive(dirname, "zip", dirpath, dirname) # Configure server routings @app.route("/") def index(): """Serve a zip of it all from the root""" return flask.send_file(zippath) @app.route("/<path:path>") def serve_file(path): """Serve individual files from elsewhere""" return flask.send_from_directory(directory, path) # Run the app print("Running at {}".format(localip)) app.run(host="0.0.0.0", port=80) # After it finishes os.remove(zippath)

- Webmaster4o

I think Flask tries to get the

Content-Typecorrect, but I'm not sure if there's a header for forcing download. I'll look into it. 👍 | https://forum.omz-software.com/topic/3326/share-code-serving-files-over-http | CC-MAIN-2017-26 | refinedweb | 296 | 58.69 |

In this tutorial, we will learn about the well built relationship between pointers and arrays in C/C++ programming. Pointers are variables that are used to store the address of a variable/function and even arrays that are blocks holding sequential data. We can operate an array using pointers in a very interesting and useful manner.

Before moving ahead make sure to go through the following introductory reading about pointers:

Pointers with arrays

When we declare any array, we have to specify its data type and size. For example, an integer array named ‘array’ of size 5 will be declared as follows:

int array[5];

This means that we have indeed created 5 integer variables named array[0], array[1], array[2], array[3] and array[4]. These five integers will be stored in the memory as a block of five consecutive integers. The figure below shows the horizontal memory for example purposes.

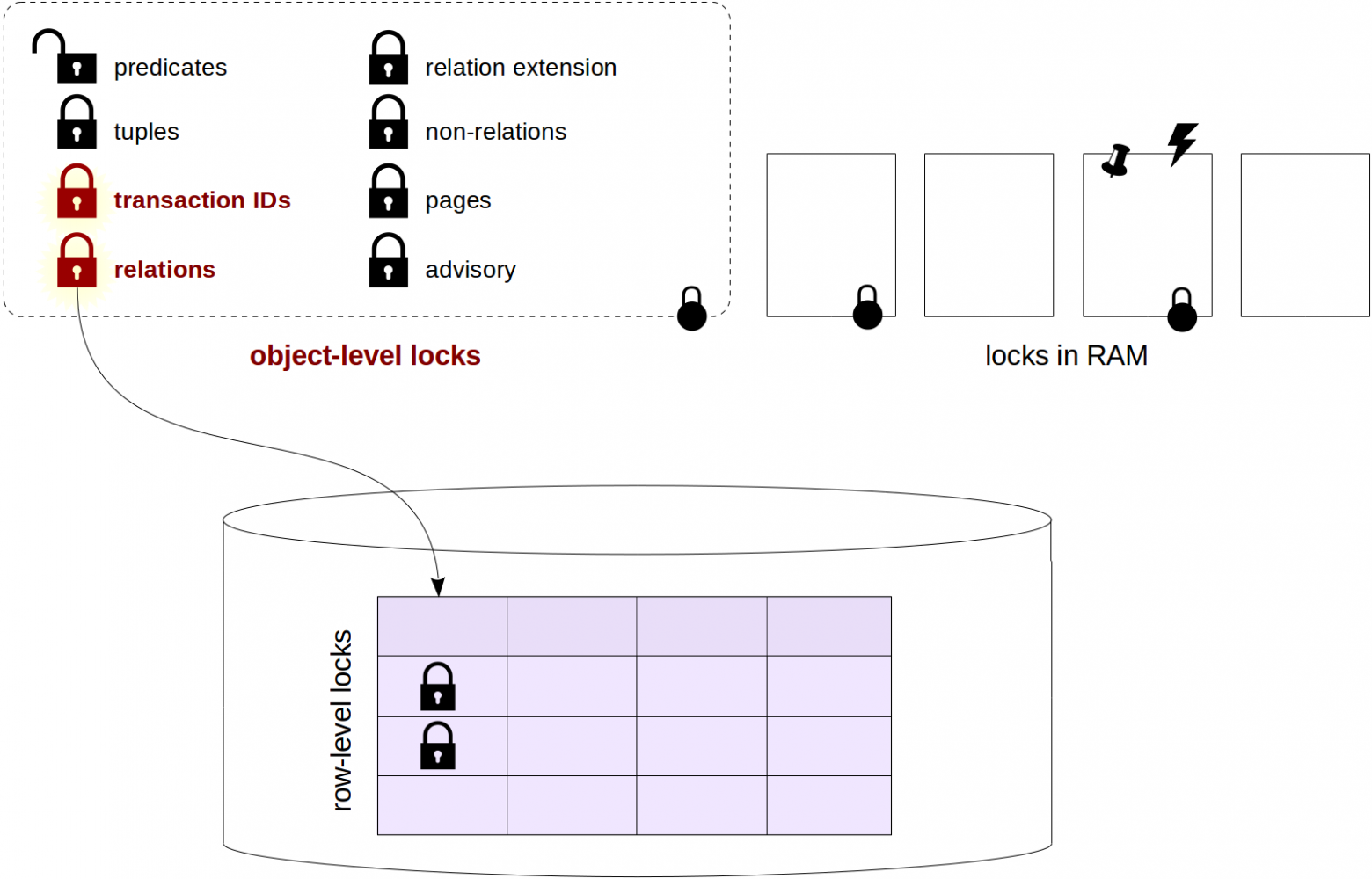

For example, we are showing that array[0] is stored at 100, array[1] will be 4 bytes ahead of array[0] at 104 because integer variables need 4 bytes of memory. Thus, array[2] will be at address 108, array[3] will be at address112 and array[4] will be at address 114. . The overall size of the array will be 20 bytes(5×4) and these 20 bytes will form one consecutive block as shown in the section of the memory in which the array is stored.

Similarly, we can also show the memory horizontally from left to right as well. We will increase the address while moving from left to right. Additionally, the array[5] has the following values: {10,11,12,13,14}.

The following table shows the addresses and values for each index.

Note: The address locations are just for example purposes and do not depict exact locations.

Important: The array elements are stored in contiguous memory locations, each element occupying four bytes, since it is an integer array.

Now for observational purposes let use the horizontal representation of the memory. This time we will show the memory a little bit more extended to the right so that we can accommodate more variables. Let’s say we have an integer variable ‘x’ and its value is set to ‘100’. For example, x is located at address 200. Now if we have a pointer to integer ‘ptr’. In ‘ptr’ we want to store the address of ‘x’. This will be accomplished through the lines of code given below.

int x = 100; int *ptr; ptr = &x;

You can also view it in the horizontal memory segment as shown below:

Now if we print ptr as an output then the value in ptr would be equal to 200. You can use the following statement to print the value of ‘ptr.’ This is the address of the variable ‘x’.

printf("\n%d",ptr);

If we deference ptr and print the value stored in this location then the value will be equal to 100. You can use the following statement to print the value. Use an asterisk sign in front of the pointer variable to access the value of the variable it points to.

printf("\n%d",*ptr);

Additionally, we also know that we can increment/decrement a pointer variable by a constant. So we can do something like this:

ptr=ptr+1; printf(ptr);

This will take us to the address of the next integer. As integers take up 4 bytes of memory so now the next index will be at 204. If we want to print ‘ptr’ then the output should be 204.

If we try to dereference ‘ptr’, and try to print *ptr then we do not know the value at this address. We can not say what will be printed. We know that ‘x’ is at address 200 but do not know the value of the variable at the next address (204) location.

Assigning an array to a pointer

However, this can be known when working with arrays and pointers. For this integer array (array[5]), also shown in the figure located at address 100. We will now declare a pointer to an integer called ‘ptr.’ Next, we will store the address of the first element of the array in this pointer. This will be achieved by putting an ampersand operator(&) in front of array[0]. Then printing ‘ptr’ will give us the output 100.

int array[5]={10,11,12,13,14}; int *ptr; ptr = &array[0]; printf("\n%d",ptr);

Printing *ptr will give 10 in the output.

printf("\n%d",*ptr);

If I want to print (ptr+1) then the address would be 204 and if i try to dereference (p+1) and try to print this value then it will be ’11.’

printf("\n%d",ptr+1); printf("\n%d",*(ptr+1));

Similarly, if we wanted to access the third element of the array we could print (ptr+2).

Thus, using pointer arithmetic makes sense in the case of arrays, as we know the value in the adjacent location.

Accessing the address of the first element of an array

One more property of the array is that by just using the name of the array we can access the address of the first element of the array. Then ‘array’ gives us the pointer to the first element in the array.

For example:

ptr =array;

In fact we do not even need to take this address in another pointer variable. If we simply print ‘array’ then this gives us nothing but the address of the first element of the array which is 100 in our case.

int array[5]={10,11,12,13,14}; int *ptr; ptr = array; printf("\n%d",array);

Assigning an array to a pointer is equal to assigning the memory address of the first value in the array to the pointer

How to get the memory address and value of an array element?

- To obtain the value of the first element of the array we will have to deference ‘array’ as shown below. This will give us the value of the first element of the array which is 10 in our case.

printf("\n%d",*array);

- If we want to print (array+1) then this will give us the address of the second element in the array i.e.104 and *(a+1) will give us the value of the second element of the array i.e. ’11’ in our case.

printf("\n%d",array+1); printf("\n%d",*(array+1));

For an element in the array at index ‘i’, we can retrieve the address of this particular element in the memory using either &array[i] or (array+i). These two will give us the address of array[i].

The value of array[i] can be retrieved using either array[i] or *(array+i). This is an important concept. Both statements are equivalent.

- The address of the first element in the array can also be called the base address. Simply using the variable name e.g. ‘array’ gives us the base address of the array.

Let us look at some example C codes to further understand the concepts of pointers with arrays.

Subscript notation vs Pointers. Which one to choose when accessing values?

As you noticed, we used two different methods to locate the memory addresses of the elements of the array as well as accessing the value of the elements. We used both subscript notation and pointers to access the values of elements. Although we obtained the same result in either case but using one of them has a slightly higher advantage over the other, depending upon the way you want to access the elements.

- If you want to access elements in an array in a particular fixed order e.g. from beginning to end or using some other logic, then using pointers is clearly a better option. Moreover, using pointers to access elements in arrays is more faster than using subscript notation.

- However, if there is no fixed logic being used to access the elements then it is very easy to use the subscript notation instead of working with pointers. It is simpler and easier to program.

Example Codes for pointers with arrays

In our first example program, we have an integer array called ‘num’. If we simply print ‘num’ then it should give us the address of the first element in the array. We can also obtain the address of the first element in the array by using the ampersand operator in front of num[0]. Printing num[0] will print the first element in the array. Moreover, we can also access the first element in the array by using an asterisk operator in front of the variable name ‘num.’