hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6

values | lang stringclasses 1

value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191

values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

9acad0ded6269eef35484dbc21c3aee4d10f2db7 | 24,052 | md | Markdown | fontlab7/srcdocs/mkdocs/fontlab.YPanelManager.md | fontlabcom/fontlab-python | e0c1083c2cee2d7a25b1197c5ae47da235fd5007 | [

"Apache-2.0"

] | 3 | 2020-09-16T02:13:27.000Z | 2022-03-09T14:23:31.000Z | fontlab7/srcdocs/mkdocs/fontlab.YPanelManager.md | fontlabcom/fontlab-python | e0c1083c2cee2d7a25b1197c5ae47da235fd5007 | [

"Apache-2.0"

] | null | null | null | fontlab7/srcdocs/mkdocs/fontlab.YPanelManager.md | fontlabcom/fontlab-python | e0c1083c2cee2d7a25b1197c5ae47da235fd5007 | [

"Apache-2.0"

] | null | null | null |

<a name="fontlab.YPanelManager"></a>

# `YPanelManager`

<dt class="class"><h2><span class="class-name">fontlab.YPanelManager</span> = <a name="fontlab.YPanelManager" href="#fontlab.YPanelManager">class YPanelManager</a>(PythonQt.PythonQtInstanceWrapper)</h2></dt><dd class="class"><dd>

<pre class="doc" markdown="0... | 38.238474 | 336 | 0.711084 | yue_Hant | 0.342629 |

9acae4841906c14d897847adce550b39989fea08 | 4,362 | md | Markdown | BENCHMARK.md | janosch-x/immutable_set | 8224cc7664d8c96b7b55a2e3d97413af7b980876 | [

"MIT"

] | 1 | 2018-06-27T00:13:16.000Z | 2018-06-27T00:13:16.000Z | BENCHMARK.md | janosch-x/immutable_set | 8224cc7664d8c96b7b55a2e3d97413af7b980876 | [

"MIT"

] | null | null | null | BENCHMARK.md | janosch-x/immutable_set | 8224cc7664d8c96b7b55a2e3d97413af7b980876 | [

"MIT"

] | null | null | null | Results of `rake:benchmark` on ruby 2.5.1p57 (2018-03-29 revision 63029) [x86_64-darwin17]

Note: `stdlib` refers to `SortedSet` without the `rbtree` gem. If the `rbtree` gem is present, `SortedSet` will [use it](https://github.com/ruby/ruby/blob/b1a8c64/lib/set.rb#L709-L724) and become even slower.

```

#- with 5M ove... | 33.045455 | 208 | 0.44475 | kor_Hang | 0.508332 |

9acae81eaacc7a27d9b3acd7f16bf7c0e7be4347 | 173 | md | Markdown | CHANGELOG.md | samAroundGitHub/eslint-plugin-bud | eed64a583ac7043b250894e263144d520d22c5ef | [

"MIT"

] | null | null | null | CHANGELOG.md | samAroundGitHub/eslint-plugin-bud | eed64a583ac7043b250894e263144d520d22c5ef | [

"MIT"

] | 4 | 2020-09-06T13:48:29.000Z | 2021-09-01T19:29:40.000Z | CHANGELOG.md | samAroundGitHub/eslint-plugin-bud | eed64a583ac7043b250894e263144d520d22c5ef | [

"MIT"

] | null | null | null | # ChangeLog

## 0.1.0 - 2019.07.19

### Added

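* Added rule statistics for eslint

* Added rule statistics for eslint-plugin-import

* Added rule statistics for eslint-plugin-jsx-a11y

* Added rule statistics for eslint-plugin-react

* Added rule statistics for eslint-plugin-vue | 17.3 | 30 | 0.751445 | yue_Hant | 0.491172 |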

9acb5fc9cb5017a71b6f42acdc3ee420a700191f | 5,469 | md | Markdown | README.md | jstty/beelzebub | c78f0bb984669f79011d7fc6d3820b9ab000c574 | [

"MIT"

] | 10 | 2016-11-09T17:48:57.000Z | 2019-10-17T11:09:24.000Z | README.md | jstty/beelzebub | c78f0bb984669f79011d7fc6d3820b9ab000c574 | [

"MIT"

] | 52 | 2016-08-18T07:47:20.000Z | 2022-03-28T00:11:08.000Z | README.md | jstty/beelzebub | c78f0bb984669f79011d7fc6d3820b9ab000c574 | [

"MIT"

] | 4 | 2016-07-28T17:24:56.000Z | 2016-11-09T17:45:29.000Z | <!-- # Beelzebub - One hell of a task master! -->

<center id="top"><img src="./assets/bz-logo-full.png" /></center>

[](http://travis-ci.org/jstty/beelzebub)

[ for commit guidelines.

# [4.12.0](https://github.com/RedHatInsights/insights-common-typescript/compare/@redhat-cloud-services/insights-common-typescript@4.11.0...@redhat-cloud-... | 37.714789 | 209 | 0.789562 | eng_Latn | 0.208027 |

9acc7d925bc3a35f552388065663d1905e4925f3 | 34 | md | Markdown | README.md | peter-budo/bookschelf | bed6681ece7f60fcbc1afb5b9cbb37748fa20937 | [

"Apache-2.0"

] | null | null | null | README.md | peter-budo/bookschelf | bed6681ece7f60fcbc1afb5b9cbb37748fa20937 | [

"Apache-2.0"

] | null | null | null | README.md | peter-budo/bookschelf | bed6681ece7f60fcbc1afb5b9cbb37748fa20937 | [

"Apache-2.0"

] | null | null | null | # bookschelf

Database of my books

| 11.333333 | 20 | 0.794118 | eng_Latn | 0.900259 |

9acc8cbd8e1d5944585bb63ac11f565defc71994 | 3,080 | md | Markdown | _posts/2012-05-07-remote-config-files-in-codeigniter.md | mikedfunk/mikedfunk.github.io | ba5757bb36284c05626aa44965b8d37e2dce9a6c | [

"MIT"

] | null | null | null | _posts/2012-05-07-remote-config-files-in-codeigniter.md | mikedfunk/mikedfunk.github.io | ba5757bb36284c05626aa44965b8d37e2dce9a6c | [

"MIT"

] | 2 | 2021-09-27T21:32:40.000Z | 2022-02-26T04:00:09.000Z | _posts/2012-05-07-remote-config-files-in-codeigniter.md | mikedfunk/mikedfunk.github.io | ba5757bb36284c05626aa44965b8d37e2dce9a6c | [

"MIT"

] | null | null | null | ---

title: Remote Config Files In CodeIgniter

layout: post

---

I ran into a situation recently where I had multiple CodeIgniter apps which depended on the same config values. No problem, right? Just use a common third_party folder. That would work, except they were on different servers! My solution was to echo the con... | 31.752577 | 366 | 0.628571 | eng_Latn | 0.93037 |

9acd1e9cdf2f80801e08c2231bcf1491bd059718 | 1,415 | md | Markdown | README.md | epigos/data-explorer | d90be65fe046b49025dc6fa422baa5c127dcb734 | [

"MIT"

] | 1 | 2017-01-18T08:40:40.000Z | 2017-01-18T08:40:40.000Z | README.md | epigos/data-explorer | d90be65fe046b49025dc6fa422baa5c127dcb734 | [

"MIT"

] | null | null | null | README.md | epigos/data-explorer | d90be65fe046b49025dc6fa422baa5c127dcb734 | [

"MIT"

] | null | null | null | # Data Exploration with Matplotlib and D3.js

[](https://travis-ci.org/epigos/data-explorer)

[](https://badge.fury.io/py/dexplorer)

This is a small library built with Tornado, Matplotlib and Pandas to ... | 25.727273 | 139 | 0.714488 | eng_Latn | 0.945755 |

261d46d8ba232dad7c29e705a45ba2913ad9a5a0 | 2,761 | md | Markdown | content/post/2007-06-21-cliff-ive-used-vmware-in-production.md | scottslowe/weblog | dcf9c6a5d0a8d9b7fb507ce7b6fcee1b11eb065f | [

"MIT"

] | 9 | 2018-12-19T09:50:28.000Z | 2022-03-31T00:40:39.000Z | content/post/2007-06-21-cliff-ive-used-vmware-in-production.md | scottslowe/weblog | dcf9c6a5d0a8d9b7fb507ce7b6fcee1b11eb065f | [

"MIT"

] | 2 | 2018-04-23T13:45:38.000Z | 2020-01-24T23:04:16.000Z | content/post/2007-06-21-cliff-ive-used-vmware-in-production.md | scottslowe/weblog | dcf9c6a5d0a8d9b7fb507ce7b6fcee1b11eb065f | [

"MIT"

] | 9 | 2018-04-22T05:43:46.000Z | 2022-03-02T20:28:45.000Z | ---

author: slowe

categories: Rant

comments: true

date: 2007-06-21T23:55:26Z

slug: cliff-ive-used-vmware-in-production

tags:

- Virtualization

- VMware

title: Cliff, I've Used VMware in Production

url: /2007/06/21/cliff-ive-used-vmware-in-production/

wordpress_id: 475

---

"How many people have deployed VMware or Xen or... | 92.033333 | 608 | 0.788845 | eng_Latn | 0.995294 |

261d5a5a64c9282d9c54f01aacad0bc428bc3357 | 2,346 | md | Markdown | _posts/2011-07-04-bit-of-a-struggle.md | david74a/david74a.github.io | 9c03796423d8d0545c12541eada7ac9123e0e7f3 | [

"MIT"

] | null | null | null | _posts/2011-07-04-bit-of-a-struggle.md | david74a/david74a.github.io | 9c03796423d8d0545c12541eada7ac9123e0e7f3 | [

"MIT"

] | null | null | null | _posts/2011-07-04-bit-of-a-struggle.md | david74a/david74a.github.io | 9c03796423d8d0545c12541eada7ac9123e0e7f3 | [

"MIT"

] | null | null | null | ---

layout: post

title: Bit of a struggle

published: true

---

# Figueira de Foz to Aveiro and Sao Jactim

*Sao Jactim*

I think it's fair to say the marina at Figueira de Foz is

a\) not the most efficient in the world - it's fine to be relaxed, but too near the h... | 73.3125 | 524 | 0.76769 | eng_Latn | 0.999918 |

261d86c00d8588373deed52843193fabdb3dd5f0 | 2,061 | md | Markdown | README.md | dnaase/QRF_spark | fb8982c9e7d468ba14d15ebe8db3b49e11d52431 | [

"MIT"

] | null | null | null | README.md | dnaase/QRF_spark | fb8982c9e7d468ba14d15ebe8db3b49e11d52431 | [

"MIT"

] | null | null | null | README.md | dnaase/QRF_spark | fb8982c9e7d468ba14d15ebe8db3b49e11d52431 | [

"MIT"

] | null | null | null | # QtlWater

A meQTL/eQTL detection method based on a gradient-boosted tree model that uses recombination rate and HiC signal to boost detection power.

Liu Y & Kellis M. QtlWater: boosting molecular quantitative trait loci mapping power by incorporating recombination rate and chromatin conformation changes. In preparation.

## Tab... | 26.088608 | 246 | 0.755459 | eng_Latn | 0.499053 |

261f64d3fd77c1ad6a078f188c12492dbbe0c117 | 872 | md | Markdown | commands/navigation/cl-navigation.md | danielmoi/command-line-tute | e192ece8ddf25c6d78913e4269998b4c4203874b | [

"Apache-2.0"

] | 1 | 2018-02-17T23:14:31.000Z | 2018-02-17T23:14:31.000Z | commands/navigation/cl-navigation.md | danielmoi/command-line-tute | e192ece8ddf25c6d78913e4269998b4c4203874b | [

"Apache-2.0"

] | null | null | null | commands/navigation/cl-navigation.md | danielmoi/command-line-tute | e192ece8ddf25c6d78913e4269998b4c4203874b | [

"Apache-2.0"

] | null | null | null | # Command Line Navigation

## Find command

```

<Up> Show the previous command

CTRL-P Pressing <Up> again will cycle backwards through the list

[chars]<CTRL-R> Show the first command in the Search History that match chars

Pressing <CTRL-R> again will cycle backwards t... | 25.647059 | 88 | 0.607798 | eng_Latn | 0.984101 |

261fb1393de88242863596dbc38416cd43bef7e0 | 606 | md | Markdown | catalog/beautiful-gunbari/en-US_beautiful-gunbari.md | htron-dev/baka-db | cb6e907a5c53113275da271631698cd3b35c9589 | [

"MIT"

] | 3 | 2021-08-12T20:02:29.000Z | 2021-09-05T05:03:32.000Z | catalog/beautiful-gunbari/en-US_beautiful-gunbari.md | zzhenryquezz/baka-db | da8f54a87191a53a7fca54b0775b3c00f99d2531 | [

"MIT"

] | 8 | 2021-07-20T00:44:48.000Z | 2021-09-22T18:44:04.000Z | catalog/beautiful-gunbari/en-US_beautiful-gunbari.md | zzhenryquezz/baka-db | da8f54a87191a53a7fca54b0775b3c00f99d2531 | [

"MIT"

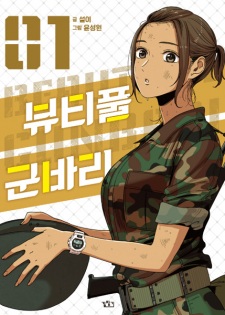

] | 2 | 2021-07-19T01:38:25.000Z | 2021-07-29T08:10:29.000Z | # Beautiful Gunbari

- **type**: manhwa

- **original-name**: 뷰티풀 군바리

- **start-date**: 2015-02-23

## Tags

- comedy

- drama

- slice-of-life

- military

## Authors

- Seoli (Story)

- Yoon

- Sung-Won (Art)

## Sinopse

What ... | 19.548387 | 189 | 0.679868 | eng_Latn | 0.826287 |

262011c6a9ac6b210a816f24c94d12bd95b171c0 | 397 | md | Markdown | MusicFestival/samples/EPiServer.ContentApi.MusicFestival.Frontend/README.md | episerver/ascend2018-lab-extend-ui | 1adc7b55efd553de1f9325cf1581033d70d5785d | [

"Apache-2.0"

] | 3 | 2018-03-13T21:18:45.000Z | 2018-03-19T15:29:58.000Z | MusicFestival/samples/EPiServer.ContentApi.MusicFestival.Frontend/README.md | episerver/ascend2018-lab-extend-ui | 1adc7b55efd553de1f9325cf1581033d70d5785d | [

"Apache-2.0"

] | 1 | 2020-05-10T06:19:30.000Z | 2020-05-10T06:20:05.000Z | MusicFestival/samples/EPiServer.ContentApi.MusicFestival.Frontend/README.md | seriema/ascend2018-lab-extend-ui | 1adc7b55efd553de1f9325cf1581033d70d5785d | [

"Apache-2.0"

] | null | null | null | # EPiServer Headless, Demo App

A demo project for the EPiServer Headless project. Built with Vue to be served in a WebView Xamarin App.

## Build Setup

``` bash

# install dependencies

npm install

# serve with hot reload at localhost:8080

npm run dev

# build for production with minification

npm run build

# build fo... | 18.904762 | 104 | 0.763224 | eng_Latn | 0.980091 |

262038b1c6df3d858a8f645d59cb384d9737ab38 | 1,889 | md | Markdown | README.md | coreyja/devto-view-count-graphs | e7d175005b75c59c50ca12624235cdf726214d10 | [

"MIT"

] | 1 | 2021-01-11T19:48:20.000Z | 2021-01-11T19:48:20.000Z | README.md | coreyja/devto-view-count-graphs | e7d175005b75c59c50ca12624235cdf726214d10 | [

"MIT"

] | null | null | null | README.md | coreyja/devto-view-count-graphs | e7d175005b75c59c50ca12624235cdf726214d10 | [

"MIT"

] | 1 | 2021-01-12T06:47:05.000Z | 2021-01-12T06:47:05.000Z | # DEV.to View Count

This project was created for the DEV.to Digital Ocean Hackathon: https://dev.to/devteam/announcing-the-digitalocean-app-platform-hackathon-on-dev-2i1k

It is a Rails app that tracks your DEV.to article stats and can create graphs of your views and comments over time!

## Deploying To Digital Ocean... | 52.472222 | 182 | 0.782954 | eng_Latn | 0.989134 |

2620e36e6ecdc8a43da8214982f82891368dc267 | 1,140 | md | Markdown | firmware/docs/build_environment/win2_clean.md | SLA00/Kermite | 324c9fcc50baad893c0c8aa2e67a899bca35e176 | [

"MIT"

] | null | null | null | firmware/docs/build_environment/win2_clean.md | SLA00/Kermite | 324c9fcc50baad893c0c8aa2e67a899bca35e176 | [

"MIT"

] | null | null | null | firmware/docs/build_environment/win2_clean.md | SLA00/Kermite | 324c9fcc50baad893c0c8aa2e67a899bca35e176 | [

"MIT"

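] | null | null | null | # A setup that pollutes the OS environment as little as possible

The basic setup is the same as [adding the tools to the PATH via OS settings](./win1_default.md).

The difference is that, to avoid polluting the development environment, only Make is added to the PATH rather than all of the tools.

## Tools added to the PATH via OS environment settings

* Make for Windows

## Tools used by specifying their paths inside the Makefile

* AVR-GCC

* arm-none-eabi-gcc

* GOW (or CoreUtils)

* MinGW

Install GNU Make globally and add it to the PATH; the other tools are left off the PATH and referenced only from within the Makefile... | 33.529412 | 109 | 0.739474 | yue_Hant | 0.611182 |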

2621601b3239b9afaa3d961d80f85faa979dbeaf | 2,279 | md | Markdown | expertise.md | regulawolf/github.io | a43c0185ed1f375255b37331a45edba26119cc36 | [

"CC-BY-3.0"

] | null | null | null | expertise.md | regulawolf/github.io | a43c0185ed1f375255b37331a45edba26119cc36 | [

"CC-BY-3.0"

] | null | null | null | expertise.md | regulawolf/github.io | a43c0185ed1f375255b37331a45edba26119cc36 | [

"CC-BY-3.0"

] | 1 | 2019-09-17T09:45:28.000Z | 2019-09-17T09:45:28.000Z | ---

title: Expertise

date: '2018-09-07T13:24:01.000+00:00'

layout: page

menu_title: Expertise

banner_title: Expertise

banner_subtitle: ''

seo_title: Regula Wolf I Stiftungs- und Public Management

banner_image: "/uploads/steinwasser_2_Ausschnitt.jpg"

keywords:

- Fördermassnahmen

- Fördermodelle

- Förderbeiträge

- Regula... | 47.479167 | 702 | 0.82975 | deu_Latn | 0.991453 |

26222a279723a915b6adde7f3e0a4987e072b3ab | 57 | md | Markdown | README.md | RichardSaxion/HyEnModel | 2e57b433a127ef386fcb0c5dfb65297521c1bc6f | [

"CC0-1.0"

] | null | null | null | README.md | RichardSaxion/HyEnModel | 2e57b433a127ef386fcb0c5dfb65297521c1bc6f | [

"CC0-1.0"

] | null | null | null | README.md | RichardSaxion/HyEnModel | 2e57b433a127ef386fcb0c5dfb65297521c1bc6f | [

"CC0-1.0"

] | null | null | null | # HyEnModel

Hydrogen System Model as part of energy hubs

| 19 | 44 | 0.807018 | eng_Latn | 0.901658 |

262272b2f40527a922ba67b7b633c245e22a99b4 | 652 | md | Markdown | guide/spanish/miscellaneous/html-elements/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 10 | 2019-08-09T19:58:19.000Z | 2019-08-11T20:57:44.000Z | guide/spanish/miscellaneous/html-elements/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 2,056 | 2019-08-25T19:29:20.000Z | 2022-02-13T22:13:01.000Z | guide/spanish/miscellaneous/html-elements/index.md | SweeneyNew/freeCodeCamp | e24b995d3d6a2829701de7ac2225d72f3a954b40 | [

"BSD-3-Clause"

] | 5 | 2018-10-18T02:02:23.000Z | 2020-08-25T00:32:41.000Z | ---

title: HTML Elements

localeTitle: Elementos HTML

---

Most HTML elements have an opening tag and a closing tag.

Opening tags look like this: `<h1>` and closing tags look like this: `</h1>`.

Note that the only difference between opening and clos... | 43.466667 | 161 | 0.768405 | spa_Latn | 0.994657 |

26227fe7ddc812b46127b8ee6bef1c53287292ad | 420 | md | Markdown | README.md | stemnic/xv6-rv32-systemc | 6f2dc6e34f99f59d88697a2ff7030dd49422a589 | [

"MIT-0"

] | 8 | 2020-10-09T15:14:17.000Z | 2021-09-30T19:20:18.000Z | README.md | riscv2os/xv6-rv32 | 73b634560546a13f3c1a209c09cc6b584e1de68f | [

"MIT-0"

] | null | null | null | README.md | riscv2os/xv6-rv32 | 73b634560546a13f3c1a209c09cc6b584e1de68f | [

"MIT-0"

] | 6 | 2021-01-15T03:33:39.000Z | 2022-03-05T04:13:09.000Z | # xv6-rv32

This is a port of MIT's xv6 OS [1] to 32 bit RISC V (rv32ia).

This currently runs in qemu-system-riscv32 (tested with qemu-5.0.0) using virtio drivers.

The official version of xv6 supports x86 [1] and 64 bit RISC V (rv64) [3]. See the

original documentation in README.

[1] https://pdos.csail.mit.edu/6.828/... | 35 | 89 | 0.72381 | eng_Latn | 0.510885 |

2622d296e446a4aa2a5aafcfb947b666342d2556 | 3,565 | md | Markdown | papers/mdp_homomorphisms_plannable_approximations.md | smspillaz/reading | b9c014906296162db61886e4e9e8600dbfce2b84 | [

"MIT"

] | 1 | 2022-03-10T06:07:19.000Z | 2022-03-10T06:07:19.000Z | papers/mdp_homomorphisms_plannable_approximations.md | smspillaz/reading | b9c014906296162db61886e4e9e8600dbfce2b84 | [

"MIT"

] | null | null | null | papers/mdp_homomorphisms_plannable_approximations.md | smspillaz/reading | b9c014906296162db61886e4e9e8600dbfce2b84 | [

"MIT"

] | null | null | null | # Plannable Approximations to MDP Homomorphisms: Equivariance under Actions

tl;dr:

- Symmetries may exist in MDPs

- Introduces a contrastive loss function which enforces action equivariance on the learned representations

- When the loss is zero, you have a homomorphism of a deterministic MDP

Equivalence classes: ... | 48.835616 | 269 | 0.690323 | eng_Latn | 0.994567 |

2622f50be56ae8df10dd432307ccc83cb06b51fc | 802 | md | Markdown | docs/api/bindings/ThemeContext.md | theghostyced/fela | e58f5b722ae52acd08c1f5030c9b2549beb61b0e | [

"MIT"

] | 1 | 2020-04-08T17:05:23.000Z | 2020-04-08T17:05:23.000Z | docs/api/bindings/ThemeContext.md | theghostyced/fela | e58f5b722ae52acd08c1f5030c9b2549beb61b0e | [

"MIT"

] | null | null | null | docs/api/bindings/ThemeContext.md | theghostyced/fela | e58f5b722ae52acd08c1f5030c9b2549beb61b0e | [

"MIT"

] | null | null | null | # ThemeContext

ThemeContext is the internal instance of `React.createContext` that is provided by the new [Context API](https://facebook.github.io/react/docs/context.html). It is exposed to be used with React's new [useContext API](https://reactjs.org/docs/hooks-reference.html#usecontext).

> **Note**: Although it is ... | 34.869565 | 275 | 0.745636 | eng_Latn | 0.833251 |

262302c79db6437a735e404fd50fd78bb74aa9bd | 13,190 | md | Markdown | windows-driver-docs-pr/netcx/writing-an-mbbcx-client-driver.md | k-takai/windows-driver-docs.ja-jp | f28c3b8e411a2502e6378eaeef88cbae054cd745 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | windows-driver-docs-pr/netcx/writing-an-mbbcx-client-driver.md | k-takai/windows-driver-docs.ja-jp | f28c3b8e411a2502e6378eaeef88cbae054cd745 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | windows-driver-docs-pr/netcx/writing-an-mbbcx-client-driver.md | k-takai/windows-driver-docs.ja-jp | f28c3b8e411a2502e6378eaeef88cbae054cd745 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Writing an MBB NetAdapterCx client driver

description: Describes the behavior of the MBB NetAdapter class extension and the tasks a client driver must perform for an MBB modem.

ms.assetid: FE69E832-848F-475A-9BF1-BBB198D08A86

keywords:

- Mobile Broadband (MBB) WDF class extension, MBBCx, Mobile Broadband NetAdapterCx

ms.date: 03/19/2018

ms.localizationpriority: mediu... | 65.95 | 531 | 0.793556 | yue_Hant | 0.949781 |

26241fb4e545f055df3b2be85cfe76c0feb098d9 | 1,774 | md | Markdown | windows-driver-docs-pr/devtest/wdftester-installation.md | DriverRestore/windows-driver-docs | 444b23fdd0c241c8fd841c23d3b069f51dc3c333 | [

"CC-BY-3.0"

] | null | null | null | windows-driver-docs-pr/devtest/wdftester-installation.md | DriverRestore/windows-driver-docs | 444b23fdd0c241c8fd841c23d3b069f51dc3c333 | [

"CC-BY-3.0"

] | null | null | null | windows-driver-docs-pr/devtest/wdftester-installation.md | DriverRestore/windows-driver-docs | 444b23fdd0c241c8fd841c23d3b069f51dc3c333 | [

"CC-BY-3.0"

] | null | null | null | ---

title: WdfTester Installation

description: WdfTester Installation

ms.assetid: 39645ca4-3f4e-4a1f-bf62-7b44856ce58e

---

# WdfTester Installation

Before you can run the WdfTester tool on your driver, you must first copy the WdfTester files to a working directory and run an installation script.

**To install WdfTes... | 50.685714 | 931 | 0.797069 | eng_Latn | 0.736121 |

262445d0c8e51bcf2526aee3caba426c20db406f | 260 | md | Markdown | OpenLayers-Tests/mapWithInfoOnClick/README.md | Gouga34/OpenGeo | 0b8842fe5cdb5b195a4df872753328a23429441e | [

"MIT"

] | null | null | null | OpenLayers-Tests/mapWithInfoOnClick/README.md | Gouga34/OpenGeo | 0b8842fe5cdb5b195a4df872753328a23429441e | [

"MIT"

] | null | null | null | OpenLayers-Tests/mapWithInfoOnClick/README.md | Gouga34/OpenGeo | 0b8842fe5cdb5b195a4df872753328a23429441e | [

"MIT"

] | null | null | null | The goal is to display the information for one of the displayed parcels when it is clicked.

This code is based on the documentation example: [WMS GetFeatureInfo (Image Layer)](http://openlayers.org/en/v3.14.2/examples/getfeatureinfo-image.html)

| 86.666667 | 146 | 0.788462 | fra_Latn | 0.956363 |

2624744353c06150ff006c0abd740d1b4fa50fed | 2,572 | md | Markdown | articles/vs-azure-tools-access-private-azure-clouds-with-visual-studio.md | ggailey777/azure-docs | 4520cf82cb3d15f97877ba445b0cfd346c81a034 | [

"CC-BY-3.0"

] | null | null | null | articles/vs-azure-tools-access-private-azure-clouds-with-visual-studio.md | ggailey777/azure-docs | 4520cf82cb3d15f97877ba445b0cfd346c81a034 | [

"CC-BY-3.0"

] | null | null | null | articles/vs-azure-tools-access-private-azure-clouds-with-visual-studio.md | ggailey777/azure-docs | 4520cf82cb3d15f97877ba445b0cfd346c81a034 | [

"CC-BY-3.0"

] | 1 | 2019-03-31T17:25:38.000Z | 2019-03-31T17:25:38.000Z | ---

title: Accessing private Azure clouds with Visual Studio | Microsoft Docs

description: Learn how to access private cloud resources by using Visual Studio.

services: visual-studio-online

documentationcenter: na

author: TomArcher

manager: douge

editor: ''

ms.assetid: 9d733c8d-703b-44e7-a210-bb75874c45c8

ms.service: ... | 62.731707 | 427 | 0.783826 | eng_Latn | 0.896297 |

262641a3cee71862eaad933da3ff14b9498d736d | 56 | md | Markdown | node_modules/gregorian-calendar-format/HISTORY.md | shengwenjia/ReactNews | e2e9751d59f1cdb016ee99735cd07a32ce75b15a | [

"MIT"

] | 1 | 2017-02-20T11:53:20.000Z | 2017-02-20T11:53:20.000Z | node_modules/gregorian-calendar-format/HISTORY.md | shengwenjia/ReactNews | e2e9751d59f1cdb016ee99735cd07a32ce75b15a | [

"MIT"

] | null | null | null | node_modules/gregorian-calendar-format/HISTORY.md | shengwenjia/ReactNews | e2e9751d59f1cdb016ee99735cd07a32ce75b15a | [

"MIT"

] | null | null | null | # History

----

## 4.1.0 / 2016-01-22

- support YY/YYYY | 9.333333 | 21 | 0.553571 | eng_Latn | 0.204051 |

2626dcd1c5de1cbb93256a5fc85dd75f03b6fbf6 | 1,080 | md | Markdown | content/appcenter/market/serviceprovider/10_prerequisite.md | Wciel/yiqiyun-government-docs | 401baef9981aadfe6af243dbee8326fab20c82d9 | [

"Apache-2.0"

] | null | null | null | content/appcenter/market/serviceprovider/10_prerequisite.md | Wciel/yiqiyun-government-docs | 401baef9981aadfe6af243dbee8326fab20c82d9 | [

"Apache-2.0"

] | 41 | 2021-10-11T05:37:26.000Z | 2022-01-20T03:44:06.000Z | content/appcenter/market/serviceprovider/10_prerequisite.md | Wciel/yiqiyun-government-docs | 401baef9981aadfe6af243dbee8326fab20c82d9 | [

"Apache-2.0"

] | 8 | 2021-10-09T02:40:37.000Z | 2022-01-20T03:17:06.000Z | ---

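title: "Onboarding Notes"

description: Onboarding notes

weight: 10

draft: false

---

To publish an application on the **Shandong Computer Science Center cloud platform app marketplace**, you need to [apply to join](http://appcenter.yiqiyun.sd.cegn.cn/apply) the marketplace.

Joining the marketplace requires meeting the [enterprise onboarding requirements](#企业条件). Before an application is listed, the [enterprise qualifications](#企业资质) must be reviewed and the [relevant contracts](#相关合约) signed.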

<img src="../../_images/um_appserver_apply.png" style="zoom:60%;" />

## ... | 17.704918 | 100 | 0.738889 | yue_Hant | 0.642113 |

26273e7e021c29eb7addc47301914750e89a18db | 1,938 | md | Markdown | _posts/2019-01-02-emerald.md | kokipedia/kokipedia.github.io | 0c32737aee323773b0edc7afd16de1fd1631bfbd | [

"Apache-2.0"

] | null | null | null | _posts/2019-01-02-emerald.md | kokipedia/kokipedia.github.io | 0c32737aee323773b0edc7afd16de1fd1631bfbd | [

"Apache-2.0"

] | null | null | null | _posts/2019-01-02-emerald.md | kokipedia/kokipedia.github.io | 0c32737aee323773b0edc7afd16de1fd1631bfbd | [

"Apache-2.0"

] | null | null | null | ---

layout: post

title: "Kangaroo Emerald Cookware"

category: cookware

image: cover.jpg

---

Economical aluminium cookware with a non-stick _xylan_ coating. Great for everyday use.

***

## Watch the Video...

<div class="video-container">

<iframe src="https://www.youtube.com/embed/... | 31.258065 | 183 | 0.757482 | ind_Latn | 0.884381 |

26275e367e93f7c43b69a8013da8699729faae4c | 418 | md | Markdown | README.md | bmstu-iu9/utp2019-4-chat | d8c5d09f170751cbaa6b564e0a71ff5c46fa4ec8 | [

"MIT"

] | 2 | 2019-08-31T22:31:30.000Z | 2019-08-31T22:32:05.000Z | README.md | bmstu-iu9/utp2019-4-chat | d8c5d09f170751cbaa6b564e0a71ff5c46fa4ec8 | [

"MIT"

] | 7 | 2019-07-23T11:30:09.000Z | 2019-09-22T16:03:21.000Z | README.md | bmstu-iu9/utp2019-4-chat | d8c5d09f170751cbaa6b564e0a71ff5c46fa4ec8 | [

"MIT"

] | 1 | 2019-08-05T18:31:40.000Z | 2019-08-05T18:31:40.000Z | # utp2019-4-chat

Chat (captain: Герман Кульчицкий)

## Team members:

* [Кульчицкий Герман](https://github.com/jetsnake)

* [Хробак Юлия](https://github.com/yukhrobak)

* [Ковайкин Роман](https://github.com/kovrom777)

* [Базартинова Фарида](https://github.com/farichase)

* [Хилядникова Ива](https://github.com/ivvhis)

* [Мед... | 34.833333 | 52 | 0.739234 | bjn_Latn | 0.071605 |

2627be0bd7b44743911bd64b0a904da8c894acd9 | 103 | md | Markdown | iota-streams-core-mss/README.md | JakeSCahill/streams | ef7fcacf8aec5ab88610f0c9951e09fdee9d549b | [

"MIT",

"Apache-2.0",

"MIT-0"

] | null | null | null | iota-streams-core-mss/README.md | JakeSCahill/streams | ef7fcacf8aec5ab88610f0c9951e09fdee9d549b | [

"MIT",

"Apache-2.0",

"MIT-0"

] | null | null | null | iota-streams-core-mss/README.md | JakeSCahill/streams | ef7fcacf8aec5ab88610f0c9951e09fdee9d549b | [

"MIT",

"Apache-2.0",

"MIT-0"

] | 3 | 2020-10-26T20:22:54.000Z | 2021-10-03T04:46:02.000Z | # A rust implementation of the IOTA Streams Merkle signature scheme over Winternitz one-time signature

| 51.5 | 102 | 0.834951 | eng_Latn | 0.779734 |

26283a2cfcb268c0dd4d8f30f862579bcb8b6284 | 67 | md | Markdown | docs/DevelopersGuide/BrowserUI/options.md | enja-oss/ChromeExtensions | baa34ff4c7a0b88ed3bfbbd66a2a4edf4f5f47bf | [

"CC-BY-3.0"

] | 1 | 2015-03-16T11:13:37.000Z | 2015-03-16T11:13:37.000Z | docs/DevelopersGuide/BrowserUI/options.md | enja-oss/ChromeExtensions | baa34ff4c7a0b88ed3bfbbd66a2a4edf4f5f47bf | [

"CC-BY-3.0"

] | 1 | 2020-08-02T05:12:51.000Z | 2020-08-02T05:12:51.000Z | docs/DevelopersGuide/BrowserUI/options.md | enja-oss/ChromeExtensions | baa34ff4c7a0b88ed3bfbbd66a2a4edf4f5f47bf | [

"CC-BY-3.0"

] | null | null | null | # [Options](https://developer.chrome.com/extensions/options.html)

| 22.333333 | 65 | 0.761194 | kor_Hang | 0.638554 |

2629037a8c6a2b6cff3e74645617863635fc3be3 | 1,218 | md | Markdown | docs/csharp/misc/cs1511.md | mtorreao/docs.pt-br | e080cd3335f777fcb1349fb28bf527e379c81e17 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/csharp/misc/cs1511.md | mtorreao/docs.pt-br | e080cd3335f777fcb1349fb28bf527e379c81e17 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/csharp/misc/cs1511.md | mtorreao/docs.pt-br | e080cd3335f777fcb1349fb28bf527e379c81e17 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

description: Compiler Error CS1511

title: Compiler Error CS1511

ms.date: 07/20/2015

f1_keywords:

- CS1511

helpviewer_keywords:

- CS1511

ms.assetid: c04b5268-5bc3-41db-af6b-463ab1d802b4

ms.openlocfilehash: 678287a3f4d5382ce9d7f11002430f636495d298

ms.sourcegitcommit: 5b475c1855b32cf78d2d1bbb4295e4c236f39464

m... | 24.857143 | 254 | 0.688834 | por_Latn | 0.857148 |

262914a743334d39ffc8ca28e40dfe66a8547e07 | 283 | md | Markdown | 2-resources/_GENERAL-RESOURCES/awesome-resources/awesome-list-master/AWESOME.md | eengineergz/Lambda | 1fe511f7ef550aed998b75c18a432abf6ab41c5f | [

"MIT"

] | null | null | null | 2-resources/_GENERAL-RESOURCES/awesome-resources/awesome-list-master/AWESOME.md | eengineergz/Lambda | 1fe511f7ef550aed998b75c18a432abf6ab41c5f | [

"MIT"

] | null | null | null | 2-resources/_GENERAL-RESOURCES/awesome-resources/awesome-list-master/AWESOME.md | eengineergz/Lambda | 1fe511f7ef550aed998b75c18a432abf6ab41c5f | [

"MIT"

] | 1 | 2021-11-05T07:48:26.000Z | 2021-11-05T07:48:26.000Z | # Awesome Lists

An awesome list of awesome lists :joy:

## Awesome Tech

- [Awesome-Tech](https://awesome-tech.readthedocs.io/elasticsearch/)

## Elasticsearch

- [@dzharii](https://github.com/dzharii/awesome-elasticsearch)

## Plotly

- [@ucg8](https://github.com/ucg8j/awesome-dash)

| 20.214286 | 68 | 0.727915 | eng_Latn | 0.263384 |

262a94e0d35f1666ad212a3abbb1222ddd25229e | 1,373 | md | Markdown | catalog/clockwork-planet/en-US_clockwork-planet-manga.md | htron-dev/baka-db | cb6e907a5c53113275da271631698cd3b35c9589 | [

"MIT"

] | 3 | 2021-08-12T20:02:29.000Z | 2021-09-05T05:03:32.000Z | catalog/clockwork-planet/en-US_clockwork-planet-manga.md | zzhenryquezz/baka-db | da8f54a87191a53a7fca54b0775b3c00f99d2531 | [

"MIT"

] | 8 | 2021-07-20T00:44:48.000Z | 2021-09-22T18:44:04.000Z | catalog/clockwork-planet/en-US_clockwork-planet-manga.md | zzhenryquezz/baka-db | da8f54a87191a53a7fca54b0775b3c00f99d2531 | [

"MIT"

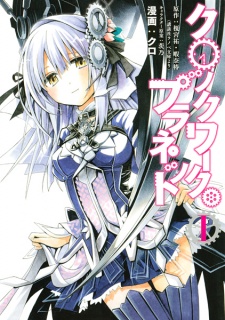

] | 2 | 2021-07-19T01:38:25.000Z | 2021-07-29T08:10:29.000Z | # Clockwork Planet

- **type**: manga

- **volumes**: 10

- **chapters**: 51

- **original-name**: クロックワーク・プラネット

- **start-date**: 2013-09-26

- **end-date**: 2013-09-26

## Tags

- fantasy

- sci-fi

- shounen

## Authors

- Kami... | 35.205128 | 324 | 0.736344 | eng_Latn | 0.991984 |

262aa4dcc4a2b92858a303b077dc3761b7c26f7e | 2,533 | md | Markdown | sdk-api-src/content/directxmath/nf-directxmath-xmvectornegativemultiplysubtract.md | amorilio/sdk-api | 54ef418912715bd7df39c2561fbc3d1dcef37d7e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | sdk-api-src/content/directxmath/nf-directxmath-xmvectornegativemultiplysubtract.md | amorilio/sdk-api | 54ef418912715bd7df39c2561fbc3d1dcef37d7e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | sdk-api-src/content/directxmath/nf-directxmath-xmvectornegativemultiplysubtract.md | amorilio/sdk-api | 54ef418912715bd7df39c2561fbc3d1dcef37d7e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

UID: NF:directxmath.XMVectorNegativeMultiplySubtract

title: XMVectorNegativeMultiplySubtract function (directxmath.h)

description: Computes the difference of a third vector and the product of the first two vectors.

helpviewer_keywords: ["Use DirectX..XMVectorNegativeMultiplySubtract","XMVectorNegativeMultiplySubtra... | 26.663158 | 217 | 0.782866 | eng_Latn | 0.51559 |

262aafcb333f2454aefa49f60036c66acc7ee956 | 8,461 | md | Markdown | guide/index.md | rufuspollock/awesome-crypto-critique | 4395459b5ec4fee9d514ef9be098944329301724 | [

"CC0-1.0"

] | 800 | 2022-01-12T13:00:06.000Z | 2022-02-24T10:07:41.000Z | guide/index.md | rufuspollock/awesome-crypto-critique | 4395459b5ec4fee9d514ef9be098944329301724 | [

"CC0-1.0"

] | 31 | 2022-01-13T21:24:06.000Z | 2022-02-24T09:00:51.000Z | guide/index.md | rufuspollock/awesome-crypto-critique | 4395459b5ec4fee9d514ef9be098944329301724 | [

"CC0-1.0"

] | 46 | 2022-01-13T13:50:05.000Z | 2022-02-23T21:43:14.000Z | # Introduction

This page serves as a root from which all other topics branch and can be explored.

## Key Concepts

Understand the terminology used to describe crypto and web3.

* [Web3](/concepts/web3.md)

* [Crypto asset](/concepts/cryptoasset.md)

* [Bitcoin](/concepts/bitcoin.md)

* [Ethereum](/concepts/ethereum.md)... | 41.886139 | 108 | 0.726746 | yue_Hant | 0.311583 |

262ab453d1c6b215b120b253e295595e31cbe680 | 3,707 | md | Markdown | docs/visual-basic/programming-guide/language-features/strings/walkthrough-validating-that-passwords-are-complex.md | Youssef1313/docs.it-it | 15072ece39fae71ee94a8b9365b02b550e68e407 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/visual-basic/programming-guide/language-features/strings/walkthrough-validating-that-passwords-are-complex.md | Youssef1313/docs.it-it | 15072ece39fae71ee94a8b9365b02b550e68e407 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/visual-basic/programming-guide/language-features/strings/walkthrough-validating-that-passwords-are-complex.md | Youssef1313/docs.it-it | 15072ece39fae71ee94a8b9365b02b550e68e407 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Validating password complexity

ms.date: 07/20/2015

helpviewer_keywords:

- String data type [Visual Basic], validation

ms.assetid: 5d9a918f-6c1f-41a3-a019-b5c2b8ce0381

ms.openlocfilehash: 6e8697379a6fbb5cc15b60291e5b822897c2c013

ms.sourcegitcommit: 17ee6605e01ef32506f8fdc686954244ba6911de

ms.trans... | 74.14 | 707 | 0.787969 | ita_Latn | 0.997007 |

262b1f2c8b8ce919c3904961caae1306bea23761 | 4,568 | md | Markdown | docset/winserver2016-ps/dcbqos/Get-NetQosDcbxSetting.md | e0i/windows-powershell-docs | f6f7b8522cd6aeb5d26afdcfc01917239b024536 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docset/winserver2016-ps/dcbqos/Get-NetQosDcbxSetting.md | e0i/windows-powershell-docs | f6f7b8522cd6aeb5d26afdcfc01917239b024536 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docset/winserver2016-ps/dcbqos/Get-NetQosDcbxSetting.md | e0i/windows-powershell-docs | f6f7b8522cd6aeb5d26afdcfc01917239b024536 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

author: Kateyanne

description: Use this topic to help manage Windows and Windows Server technologies with Windows PowerShell.

external help file: MSFT_NetQosDcbxSetting.cdxml-help.xml

manager: jasgro

Module Name: DcbQoS

ms.author: v-kaunu

ms.date: 12/27/2016

ms.mktglfcycl: manage

ms.prod: w10

ms.reviewer:

ms.sites... | 28.55 | 316 | 0.773643 | eng_Latn | 0.689682 |

262bd5a5fb9be85028bd07f629084cc7a2f237a3 | 72 | md | Markdown | README.md | knowankit/starter-template | 0ce2326a8fcc92db2d568649aac6cf1c559fb8c3 | [

"MIT"

] | null | null | null | README.md | knowankit/starter-template | 0ce2326a8fcc92db2d568649aac6cf1c559fb8c3 | [

"MIT"

] | null | null | null | README.md | knowankit/starter-template | 0ce2326a8fcc92db2d568649aac6cf1c559fb8c3 | [

"MIT"

] | 1 | 2019-10-24T00:18:40.000Z | 2019-10-24T00:18:40.000Z | # StartUpTemplate

A basic template for representing any startup company

| 24 | 53 | 0.847222 | eng_Latn | 0.992516 |

262bd72451207d202778df7d0a1d958e0e3b8eaf | 1,808 | md | Markdown | README.md | 0xtreelike/Earec | c9c62e97fa8fa6b96cab09bca1aacc61d6c9e5f3 | [

"MIT"

] | 1 | 2022-02-25T12:07:13.000Z | 2022-02-25T12:07:13.000Z | README.md | 0xtreelike/Earec | c9c62e97fa8fa6b96cab09bca1aacc61d6c9e5f3 | [

"MIT"

] | null | null | null | README.md | 0xtreelike/Earec | c9c62e97fa8fa6b96cab09bca1aacc61d6c9e5f3 | [

"MIT"

] | null | null | null | # Earec CLI

# Introduction

The Earec command-line interface (Earec CLI) is a set of commands for creating and managing the activities of an Event. It is designed to get you collecting time-series data quickly so that you can gain insights from it.

# Documentation

### Earec CLI commands

1. **Event**

- *create, view, delete, ... | 28.698413 | 207 | 0.56969 | eng_Latn | 0.915382 |

262c03afe9e93f788bdd3f8c02bb57edcca64c4e | 2,616 | md | Markdown | Skype/SfbServer/schema-reference/call-detail-recording-cdr-database-schema/conferences.md | GabGonzalezPM/OfficeDocs-SkypeForBusiness | 97269bfc910d64bda83ff270da636a1c2434a9d7 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-07-07T17:18:48.000Z | 2020-07-07T17:18:48.000Z | Skype/SfbServer/schema-reference/call-detail-recording-cdr-database-schema/conferences.md | GabGonzalezPM/OfficeDocs-SkypeForBusiness | 97269bfc910d64bda83ff270da636a1c2434a9d7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | Skype/SfbServer/schema-reference/call-detail-recording-cdr-database-schema/conferences.md | GabGonzalezPM/OfficeDocs-SkypeForBusiness | 97269bfc910d64bda83ff270da636a1c2434a9d7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: "Conferences table in Skype for Business Server 2015"

ms.reviewer:

ms.author: v-lanac

author: lanachin

manager: serdars

ms.date: 7/15/2015

audience: ITPro

ms.topic: article

ms.prod: skype-for-business-itpro

f1.keywords:

- NOCSH

localization_priority: Normal

ms.assetid: c3da6271-b3c6-4898-894f-10456ec794d0

d... | 67.076923 | 215 | 0.699159 | eng_Latn | 0.913711 |

262dae75e258e5cb43157b6490fbb682eda7997a | 22,580 | md | Markdown | articles/service-fabric/service-fabric-cluster-capacity.md | fuadi-star/azure-docs.nl-nl | 0c9bc5ec8a5704aa0c14dfa99346e8b7817dadcd | [

"CC-BY-4.0",

"MIT"

] | 16 | 2017-08-28T07:45:43.000Z | 2021-04-20T21:12:50.000Z | articles/service-fabric/service-fabric-cluster-capacity.md | fuadi-star/azure-docs.nl-nl | 0c9bc5ec8a5704aa0c14dfa99346e8b7817dadcd | [

"CC-BY-4.0",

"MIT"

] | 575 | 2017-08-30T07:14:53.000Z | 2022-03-04T05:36:23.000Z | articles/service-fabric/service-fabric-cluster-capacity.md | fuadi-star/azure-docs.nl-nl | 0c9bc5ec8a5704aa0c14dfa99346e8b7817dadcd | [

"CC-BY-4.0",

"MIT"

] | 58 | 2017-07-06T11:58:36.000Z | 2021-11-04T12:34:58.000Z | ---

title: Service Fabric cluster capacity planning considerations

description: Node types, durability, reliability, and other things to consider when planning your Service Fabric cluster.

ms.topic: conceptual

ms.date: 05/21/2020

ms.author: pepogors

ms.openlocfilehash: 9268df... | 113.467337 | 677 | 0.784544 | nld_Latn | 0.999882 |

262df7240ecf7b0ccb8673c5a17aa99f31e23e09 | 772 | md | Markdown | README.md | tautme/2020-09-09-CCatHome-git-adam | 1fb10faa140fbba4b6c866904f9f496e189c3bf8 | [

"Apache-2.0"

] | null | null | null | README.md | tautme/2020-09-09-CCatHome-git-adam | 1fb10faa140fbba4b6c866904f9f496e189c3bf8 | [

"Apache-2.0"

] | 1 | 2020-09-09T16:01:52.000Z | 2020-09-09T16:01:52.000Z | README.md | tautme/2020-09-09-CCatHome-git-adam | 1fb10faa140fbba4b6c866904f9f496e189c3bf8 | [

"Apache-2.0"

] | null | null | null | # 2020-09-09-CCatHome-git-adam

Carpentry Con at Home Git workshop Part 2

- `git clone <url>`

- make sure you are not in another repo

- just like `git init`, do this only once per repo

- `git branch <branch_name>`: create a new branch where you are (`HEAD`)

- `git checkout <branch_name>`: move to another branch

- `git switch ... | 38.6 | 87 | 0.729275 | eng_Latn | 0.997952 |

262df8d1521afae7a40bbddf9bcf823f8bd4f928 | 2,447 | md | Markdown | docs/mfc/using-documents.md | baruchiro/cpp-docs | 6012887526a505e334e9f7ec73c5a84a59562177 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2021-04-18T12:54:41.000Z | 2021-04-18T12:54:41.000Z | docs/mfc/using-documents.md | Mikejo5000/cpp-docs | 4b2c3b0c720aef42bce7e1e5566723b0fec5ec7f | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/mfc/using-documents.md | Mikejo5000/cpp-docs | 4b2c3b0c720aef42bce7e1e5566723b0fec5ec7f | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: "Using Documents | Microsoft Docs"

ms.custom: ""

ms.date: "11/04/2016"

ms.technology: ["cpp-mfc"]

ms.topic: "conceptual"

dev_langs: ["C++"]

helpviewer_keywords: ["documents [MFC], C++ applications", "data [MFC], reading", "documents [MFC]", "files [MFC], writing to", "data [MFC], documents", "files [MFC]", "... | 50.979167 | 386 | 0.724969 | eng_Latn | 0.843648 |

262ea4ebe33d7e68096eac8049d74d5dc1527b31 | 4,892 | md | Markdown | WindowsServerDocs/identity/ad-ds/manage/troubleshoot/Configuring-a-Computer-for-Troubleshooting.md | gugaangelis/windowsserverdocs.pt-br | 0dde216f7e5647a5493397714275e8f323d8ebde | [

"CC-BY-4.0",

"MIT"

] | null | null | null | WindowsServerDocs/identity/ad-ds/manage/troubleshoot/Configuring-a-Computer-for-Troubleshooting.md | gugaangelis/windowsserverdocs.pt-br | 0dde216f7e5647a5493397714275e8f323d8ebde | [

"CC-BY-4.0",

"MIT"

] | null | null | null | WindowsServerDocs/identity/ad-ds/manage/troubleshoot/Configuring-a-Computer-for-Troubleshooting.md | gugaangelis/windowsserverdocs.pt-br | 0dde216f7e5647a5493397714275e8f323d8ebde | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

ms.assetid: 155abe09-6360-4913-8dd9-7392d71ea4e6

title: Configuring a computer for troubleshooting

ms.author: iainfou

author: iainfoulds

manager: daveba

ms.date: 08/07/2018

ms.topic: article

ms.openlocfilehash: 049addf848e231104e844c06627997c71b335d20

ms.sourcegitcommit: 1dc35d221eff7f079d9209d92f14fb630f95... | 82.915254 | 536 | 0.800491 | por_Latn | 0.999364 |

262eea4aef4018913de68a8757ac6078144cce2f | 4,300 | md | Markdown | CONTRIBUTING.md | Veercodeprog/site-www | 5ca9f703763b04ab53f6ab6c3e7845e0ffff4cf6 | [

"CC-BY-4.0"

] | 967 | 2016-07-15T13:24:43.000Z | 2022-03-25T13:18:15.000Z | CONTRIBUTING.md | Veercodeprog/site-www | 5ca9f703763b04ab53f6ab6c3e7845e0ffff4cf6 | [

"CC-BY-4.0"

] | 2,852 | 2016-07-12T19:35:07.000Z | 2022-03-31T23:52:16.000Z | CONTRIBUTING.md | chenglu/dart.cn | e9afe8a9c60343e99fdb913fe16fbcfcdfc3a754 | [

"CC-BY-4.0"

] | 741 | 2016-07-14T21:32:39.000Z | 2022-03-29T15:46:18.000Z | # Contributing :heart:

Thanks for thinking about helping with [dart.dev][www]!

You can contribute in a few ways.

* **Fix typos.** The GitHub UI makes it easy to contribute small fixes, and

you'll get credit for your contribution! To start, click the **page icon**

at the upper right of the page. Then click the **p... | 47.252747 | 156 | 0.74814 | eng_Latn | 0.933902 |

262ef2b9777e72005e51334cee35f9eafacfe0c3 | 4,716 | md | Markdown | docs/concepts/applications.md | soothsayerco/incubator-usergrid | a82f0fb347bcb42d7de9089b36c18ba4a54429cc | [

"Apache-2.0"

] | 1 | 2021-03-06T05:07:48.000Z | 2021-03-06T05:07:48.000Z | docs/concepts/applications.md | soothsayerco/incubator-usergrid | a82f0fb347bcb42d7de9089b36c18ba4a54429cc | [

"Apache-2.0"

] | null | null | null | docs/concepts/applications.md | soothsayerco/incubator-usergrid | a82f0fb347bcb42d7de9089b36c18ba4a54429cc | [

"Apache-2.0"

] | null | null | null | # Applications

You can create a new application in an organization through the [Admin

portal](/admin-portal). The Admin portal creates the new application by

issuing a post against the management endpoint (see the "Creating an

organization application" section in [Organization](/organization) for

details). If you need... | 60.461538 | 133 | 0.650551 | eng_Latn | 0.994963 |

262f3c6e5c9aed94143ac78dcdd03112f59821ea | 2,075 | md | Markdown | posts/guest-poem-“we-always-want-to-be-right-”-by-aaron-drake.md | kylegrover/booptroopeugene | bf8c97cda2bcb85cadb7b228943d36ea74fa6bc5 | [

"MIT"

] | 1 | 2020-07-06T00:27:11.000Z | 2020-07-06T00:27:11.000Z | posts/guest-poem-“we-always-want-to-be-right-”-by-aaron-drake.md | kylegrover/booptroopeugene | bf8c97cda2bcb85cadb7b228943d36ea74fa6bc5 | [

"MIT"

] | 12 | 2020-06-25T22:49:34.000Z | 2020-08-24T05:20:03.000Z | posts/guest-poem-“we-always-want-to-be-right-”-by-aaron-drake.md | kylegrover/booptroopeugene | bf8c97cda2bcb85cadb7b228943d36ea74fa6bc5 | [

"MIT"

] | 2 | 2020-06-12T06:14:24.000Z | 2020-06-15T20:21:29.000Z | ---

title: "Guest Poem: “We Always Want to Be Right,” by Aaron Drake"

date: 2020-06-16T22:21:28.843Z

author: remysaverem

summary: |-

We want to always be right,

It’s left or right,

Black or white,

Wrong and right...

tags:

- post

- guest post

- prose and poetry

---

* [Improving the Documentation](#improving-the-documentation)

* [Reporting a Bug](#reporting-a-bug)

Writing some Code

-----... | 47.698925 | 479 | 0.729486 | eng_Latn | 0.986258 |

262fdf39fc87ffa9c09efb85e412dcb7887eb63f | 540 | md | Markdown | docs/api/internal/modules.md | 914988803/cea | c1c9f2d9e78243e8261c4c8408faac7912c964fb | [

"MIT"

] | 68 | 2021-05-25T05:50:12.000Z | 2022-03-31T14:08:46.000Z | docs/api/internal/modules.md | 914988803/cea | c1c9f2d9e78243e8261c4c8408faac7912c964fb | [

"MIT"

] | 34 | 2021-07-05T03:04:40.000Z | 2022-03-25T08:31:32.000Z | docs/api/internal/modules.md | 914988803/cea | c1c9f2d9e78243e8261c4c8408faac7912c964fb | [

"MIT"

] | 33 | 2021-05-30T10:54:32.000Z | 2022-03-27T05:02:19.000Z | [@ceajs/attendance-plugin](README.md) / Exports

# cea

## Table of contents

### Classes

- [default](classes/default.md)

### Functions

- [attendanceCheckIn](modules.md#attendancecheckin)

- [checkIn](modules.md#checkin)

## Functions

### attendanceCheckIn

▸ **attendanceCheckIn**(): `Promise`<`void`\>

#### Returns... | 12.55814 | 51 | 0.653704 | kor_Hang | 0.325754 |

262ff9a5f43b95cb371ce1bfa4df73ba59450a4b | 1,599 | md | Markdown | README.md | atmarksharp/kueri | 74e5f8042c4a38f17863cfb77a9f138921c68d8d | [

"MIT"

] | null | null | null | README.md | atmarksharp/kueri | 74e5f8042c4a38f17863cfb77a9f138921c68d8d | [

"MIT"

] | null | null | null | README.md | atmarksharp/kueri | 74e5f8042c4a38f17863cfb77a9f138921c68d8d | [

"MIT"

] | null | null | null | # Kueri

jquery-like http parser using nokogiri   (**kueri** means *"query"* in Japanese)

https://rubygems.org/gems/kueri

## Installation

Add this line to your application's Gemfile:

```ruby

gem 'kueri'

```

And then execute:

$ bundle

Or install it yourself as:

$ gem install kueri

## Usage

```ruby... | 30.75 | 324 | 0.724828 | eng_Latn | 0.969219 |

26303ebc6123d35b918f8272aa44bf43f166df09 | 1,904 | md | Markdown | README.md | julenr/SWSPA | a7d40c59978ffc5193c99fe120dad87e7b0d6bad | [

"MIT"

] | null | null | null | README.md | julenr/SWSPA | a7d40c59978ffc5193c99fe120dad87e7b0d6bad | [

"MIT"

] | null | null | null | README.md | julenr/SWSPA | a7d40c59978ffc5193c99fe120dad87e7b0d6bad | [

"MIT"

] | null | null | null | # Star Wars Universe Project

The goal of this project is to lay out a small project that is both fun (touches interesting technologies) and illustrative (touches relevant technologies).

## Brief: A "Star Wars Universe"

This SPA renders a list of people, films, species, starships, vehicles and planets from within the ... | 59.5 | 287 | 0.749475 | eng_Latn | 0.989242 |

263079f6fe8a6d87c02c30f409221c16a5d7b0ae | 2,619 | md | Markdown | README.md | ttencate/ebisu_dart | 440ce0cedd41586c81ebce8273bde6a479258146 | [

"Unlicense"

] | 12 | 2020-09-05T15:09:14.000Z | 2021-11-15T11:37:09.000Z | README.md | ttencate/ebisu_dart | 440ce0cedd41586c81ebce8273bde6a479258146 | [

"Unlicense"

] | 1 | 2021-05-11T21:24:52.000Z | 2021-05-16T08:19:37.000Z | README.md | ttencate/ebisu_dart | 440ce0cedd41586c81ebce8273bde6a479258146 | [

"Unlicense"

] | 3 | 2020-11-04T11:04:45.000Z | 2021-05-04T10:29:29.000Z | Ebisu

=====

This is a Dart implementation of the [Ebisu](https://fasiha.github.io/ebisu/)

quiz scheduling algorithm, originally developed in Python by

[Ahmed Fasih](https://github.com/fasiha).

In a nutshell, Ebisu works by modelling the probability that a fact will be

remembered correctly at any arbitrary moment sinc... | 31.939024 | 80 | 0.744559 | eng_Latn | 0.98795 |

2630bec72218d42dcd186da8beaa2a54e4b4962a | 625 | md | Markdown | VBA/PowerPoint-VBA/articles/presentation-getworkflowtasks-method-powerpoint.md | oloier/VBA-content | 6b3cb5769808b7e18e3aff55a26363ebe78e4578 | [

"CC-BY-4.0",

"MIT"

] | 584 | 2015-09-01T10:09:09.000Z | 2022-03-30T15:47:20.000Z | VBA/PowerPoint-VBA/articles/presentation-getworkflowtasks-method-powerpoint.md | oloier/VBA-content | 6b3cb5769808b7e18e3aff55a26363ebe78e4578 | [

"CC-BY-4.0",

"MIT"

] | 585 | 2015-08-28T20:20:03.000Z | 2018-08-31T03:09:51.000Z | VBA/PowerPoint-VBA/articles/presentation-getworkflowtasks-method-powerpoint.md | oloier/VBA-content | 6b3cb5769808b7e18e3aff55a26363ebe78e4578 | [

"CC-BY-4.0",

"MIT"

] | 590 | 2015-09-01T10:09:09.000Z | 2021-09-27T08:02:27.000Z | ---

title: Presentation.GetWorkflowTasks Method (PowerPoint)

keywords: vbapp10.chm583098

f1_keywords:

- vbapp10.chm583098

ms.prod: powerpoint

api_name:

- PowerPoint.Presentation.GetWorkflowTasks

ms.assetid: d589e00c-3f1b-77e6-d021-b67b4d045c9a

ms.date: 06/08/2017

---

# Presentation.GetWorkflowTasks Method (PowerPoint... | 16.025641 | 67 | 0.7744 | yue_Hant | 0.461734 |

2631638686afd411ea18851859632eddadbef00f | 16,435 | md | Markdown | docs/database-engine/configure-windows/server-properties-advanced-page.md | Sticcia/sql-docs.it-it | 31c0db26a4a5b25b7c9f60d4ef0a9c59890f721e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/database-engine/configure-windows/server-properties-advanced-page.md | Sticcia/sql-docs.it-it | 31c0db26a4a5b25b7c9f60d4ef0a9c59890f721e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/database-engine/configure-windows/server-properties-advanced-page.md | Sticcia/sql-docs.it-it | 31c0db26a4a5b25b7c9f60d4ef0a9c59890f721e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Server Properties (Advanced page) | Microsoft Docs

ms.custom: ''

ms.date: 03/14/2017

ms.prod: sql

ms.prod_service: high-availability

ms.reviewer: ''

ms.technology: configuration

ms.topic: conceptual

f1_keywords:

- sql13.swb.serverproperties.advanced.f1

ms.assetid: cc5e65c2-448e-4f37-9ad4-2dfb1cc84ebe

author... | 120.845588 | 1,269 | 0.786736 | ita_Latn | 0.996639 |

2631f133c8bdfa3e8bd7fb44fe02af7cd99aa87d | 2,773 | md | Markdown | _posts/2018-11-11-pokemon-4ever-celebi-voice-of-the-forest-tamil-dubbed-full-movie-download.md | tamilrockerss/tamilrockerss.github.io | ff96346e1c200f9507ae529f2a5acba0ecfb431d | [

"MIT"

] | null | null | null | _posts/2018-11-11-pokemon-4ever-celebi-voice-of-the-forest-tamil-dubbed-full-movie-download.md | tamilrockerss/tamilrockerss.github.io | ff96346e1c200f9507ae529f2a5acba0ecfb431d | [

"MIT"

] | null | null | null | _posts/2018-11-11-pokemon-4ever-celebi-voice-of-the-forest-tamil-dubbed-full-movie-download.md | tamilrockerss/tamilrockerss.github.io | ff96346e1c200f9507ae529f2a5acba0ecfb431d | [

"MIT"

] | 1 | 2020-11-08T11:13:29.000Z | 2020-11-08T11:13:29.000Z | ---

title: "Pokémon 4Ever: Celebi - Voice of the Forest Tamil Dubbed Full Movie Download"

date: "2018-11-11"

---

Download And Online Watching Pokemon 4 Movie 4 Ever HD Rip Tamil Dubbed Pokemon Movie Khatre ka Jungle Full Movie In Tamil Download

**First On Net**

[.

| 30.666667 | 71 | 0.782609 | eng_Latn | 0.999521 |

263262d3b575ba4a96f51db49f44b977fbd40415 | 274 | md | Markdown | src/__tests__/fixtures/unfoldingWord/en_tq/2ch/07/10.md | unfoldingWord/content-checker | 7b4ca10b94b834d2795ec46c243318089cc9110e | [

"MIT"

] | null | null | null | src/__tests__/fixtures/unfoldingWord/en_tq/2ch/07/10.md | unfoldingWord/content-checker | 7b4ca10b94b834d2795ec46c243318089cc9110e | [

"MIT"

] | 226 | 2020-09-09T21:56:14.000Z | 2022-03-26T18:09:53.000Z | src/__tests__/fixtures/unfoldingWord/en_tq/2ch/07/10.md | unfoldingWord/content-checker | 7b4ca10b94b834d2795ec46c243318089cc9110e | [

"MIT"

] | 1 | 2022-01-10T21:47:07.000Z | 2022-01-10T21:47:07.000Z | # Why was Solomon able to send the people of Israel away to their homes with glad and joyful hearts after the festival?

The people were sent away by Solomon with glad and joyful hearts because of the goodness that Yahweh had shown to David, Solomon, and his people Israel.

| 68.5 | 152 | 0.79562 | eng_Latn | 1.000002 |

2632aa36440e35d0e61953620c8e340596113040 | 57 | md | Markdown | README.md | transOSTeam/wordPuzz | 91a9dddf9a3b24ac6c02edced6471bcdbcc406ea | [

"MIT"

] | 1 | 2018-12-21T21:43:35.000Z | 2018-12-21T21:43:35.000Z | README.md | transOSTeam/wordPuzz | 91a9dddf9a3b24ac6c02edced6471bcdbcc406ea | [

"MIT"

] | null | null | null | README.md | transOSTeam/wordPuzz | 91a9dddf9a3b24ac6c02edced6471bcdbcc406ea | [

"MIT"

] | null | null | null | wordPuzz

========

a simple multiplayer word puzzle game

| 11.4 | 37 | 0.701754 | eng_Latn | 0.985036 |

2633593ee9bb2fdb989719591ccbed5033a5273a | 13,435 | md | Markdown | content/blog/HEALTH/7/0/c203e1991a154fca379b9db2e502570b.md | arpecop/big-content | 13c88706b1c13a7415194d5959c913c4d52b96d3 | [

"MIT"

] | 1 | 2022-03-03T17:52:27.000Z | 2022-03-03T17:52:27.000Z | content/blog/HEALTH/7/0/c203e1991a154fca379b9db2e502570b.md | arpecop/big-content | 13c88706b1c13a7415194d5959c913c4d52b96d3 | [

"MIT"

] | null | null | null | content/blog/HEALTH/7/0/c203e1991a154fca379b9db2e502570b.md | arpecop/big-content | 13c88706b1c13a7415194d5959c913c4d52b96d3 | [

"MIT"

] | null | null | null | ---

title: c203e1991a154fca379b9db2e502570b

mitle: "How to succeed and avoid mistakes when extending a tourist visa"

image: "https://fthmb.tqn.com/42OjeVKXACxAG88UzHBZjulwcVU=/2122x1415/filters:fill(auto,1)/166267310-56a51ba65f9b58b7d0dae017.jpg"

description: ""

---

Los turistas extranjeros may se en... | 1,679.375 | 13,161 | 0.783402 | spa_Latn | 0.997019 |

2633c74e1de4ce0a430e84f98a0d767e73883dbb | 234 | md | Markdown | INSTALL.md | NLC1609/caffeecoin | a7d34887633fa43f2ef60f7c53d5be16d4b49f20 | [

"MIT"

] | 3 | 2021-12-22T16:32:46.000Z | 2022-02-28T05:15:09.000Z | INSTALL.md | NLC1609/caffeecoin | a7d34887633fa43f2ef60f7c53d5be16d4b49f20 | [

"MIT"

] | 1 | 2021-11-07T20:20:53.000Z | 2021-11-10T07:38:25.000Z | INSTALL.md | NLC1609/caffeecoin_sourcecode | a7d34887633fa43f2ef60f7c53d5be16d4b49f20 | [

"MIT"

] | 1 | 2021-12-12T09:42:42.000Z | 2021-12-12T09:42:42.000Z | Building CaffeeCoin

================

See doc/build-*.md for instructions on building the various

elements of the CaffeeCoin Core reference implementation of CaffeeCoin.

Or refer to this link https://caffeecoin.com/running_node.html

| 29.25 | 71 | 0.764957 | eng_Latn | 0.950757 |

2634d9617d1f7ecb5567c176756802e791ea9bdd | 1,046 | md | Markdown | README.md | russbiggs/spot-the-box | d193d5786a8e36a8406a0ed59fc53905e42f4d72 | [

"MIT"

] | 7 | 2020-08-17T20:48:56.000Z | 2021-03-02T02:11:08.000Z | README.md | russbiggs/spot-the-box | d193d5786a8e36a8406a0ed59fc53905e42f4d72 | [

"MIT"

] | 24 | 2020-08-17T18:15:58.000Z | 2020-10-10T18:33:35.000Z | README.md | russbiggs/spot-the-box | d193d5786a8e36a8406a0ed59fc53905e42f4d72 | [

"MIT"

] | 2 | 2020-08-18T04:46:06.000Z | 2020-08-20T05:12:46.000Z | # Spot the Box

<img width="180" alt="Screen Shot" src="https://user-images.githubusercontent.com/8487728/91896147-9c7c7980-ec55-11ea-95ce-6eceed06eecd.png">

## About

There are over 200k United States Postal Service collection boxes across the United States. Recent news has reported some of these boxes are being remove... | 61.529412 | 440 | 0.792543 | eng_Latn | 0.993635 |

263517e884baa115e5f4e7324718eef9bf1ae158 | 945 | md | Markdown | README.md | betopinheiro1005/curso-nodejs-maransatto | d0f6d43f6bccb8a89f2b3d7a4ce27841c5a7d8f2 | [

"MIT"

] | null | null | null | README.md | betopinheiro1005/curso-nodejs-maransatto | d0f6d43f6bccb8a89f2b3d7a4ce27841c5a7d8f2 | [

"MIT"

] | null | null | null | README.md | betopinheiro1005/curso-nodejs-maransatto | d0f6d43f6bccb8a89f2b3d7a4ce27841c5a7d8f2 | [

"MIT"

] | null | null | null | # REST API Course with Node.JS

## Fernando Silva Maransatto

### Installing dependencies

```bash

npm install

```

### Lesson list - [Course videos](https://www.youtube.com/watch?v=d_vXgK4uZJM&list=PLWgD0gfm500EMEDPyb3Orb28i7HK5_DkR)

Lesson 01 - Creating the environment

Lesson 02 - Creating the routes

Lesson 03 - Melhorand... | 33.75 | 123 | 0.728042 | por_Latn | 0.946061 |

263552d717ffac51f44939a0de8eea33a10a40f5 | 1,403 | md | Markdown | readme.md | nine-tails9/npm-VueGenerator | 5d190740c142190401df3425520a15d19e3ffba6 | [

"MIT"

] | null | null | null | readme.md | nine-tails9/npm-VueGenerator | 5d190740c142190401df3425520a15d19e3ffba6 | [

"MIT"

] | null | null | null | readme.md | nine-tails9/npm-VueGenerator | 5d190740c142190401df3425520a15d19e3ffba6 | [

"MIT"

] | null | null | null | # @nine_tails9/vuemodelgenerator

[](https://opensource.org/licenses/MIT)

## Description

This tiny npm package lets you generate a Vue model template to get started with your work.

Package includ... | 29.851064 | 163 | 0.736279 | eng_Latn | 0.89928 |

26355d3ed0f8dfdb64cd3a7dea278b5373a105bb | 573 | md | Markdown | node_modules/kouto-swiss/_docs/utilities/size.md | pys0728k/pys0728k.github.io | 8187116388cdfdfe7de8331e104f990967830d21 | [

"MIT"

] | 3 | 2018-01-25T02:30:12.000Z | 2020-09-08T20:53:18.000Z | node_modules/kouto-swiss/_docs/utilities/size.md | pys0728k/pys0728k.github.io | 8187116388cdfdfe7de8331e104f990967830d21 | [

"MIT"

] | 1 | 2022-02-10T18:27:59.000Z | 2022-02-10T18:27:59.000Z | node_modules/kouto-swiss/_docs/utilities/size.md | pys0728k/pys0728k.github.io | 8187116388cdfdfe7de8331e104f990967830d21 | [

"MIT"

] | 4 | 2019-11-05T22:54:58.000Z | 2021-02-25T15:14:52.000Z | # size mixins

The size mixins give you a convenient shortcut for setting the `width` and `height` properties at the same time.

**Note:** If only one value is given, the `width` and `height` will have the same values.

**Note 2:** When giving two values, setting one of them to false prevents that property from being output.

### Usage

```stylus... | 12.456522 | 111 | 0.638743 | eng_Latn | 0.990041 |

26361d95f38c22518cd8e8d824e74e5b51c2d9e1 | 9,672 | md | Markdown | Android_Development_with_Kotlin/05. Android Component/05.3 Broadcast Reciever.md | BhaswatiRoy/winter-of-contributing | 8632c74d0c2d55bb4fddee9d6faac30159f376e1 | [

"MIT"

] | 1,078 | 2021-09-05T09:44:33.000Z | 2022-03-27T01:16:02.000Z | Android_Development_with_Kotlin/05. Android Component/05.3 Broadcast Reciever.md | BhaswatiRoy/winter-of-contributing | 8632c74d0c2d55bb4fddee9d6faac30159f376e1 | [

"MIT"

] | 6,845 | 2021-09-05T12:49:50.000Z | 2022-03-12T16:41:13.000Z | Android_Development_with_Kotlin/05. Android Component/05.3 Broadcast Reciever.md | BhaswatiRoy/winter-of-contributing | 8632c74d0c2d55bb4fddee9d6faac30159f376e1 | [

"MIT"

] | 2,629 | 2021-09-03T04:53:16.000Z | 2022-03-20T17:45:00.000Z | # Android : BroadCast And BroadCast Receivers

<br>

* [Audio on BroadCast And BroadCast Receivers](#Audio-on-BroadCast-And-BroadCast-Receivers)

<br>

## What are Broadcasts in Android?

***Broadcasts*** are messages that the Android system and apps send when events occur that might affect the functionality of other a... | 33.700348 | 189 | 0.712986 | eng_Latn | 0.845835 |

26367669d0020e4ce4903ef4f312027dc34e1ba1 | 1,879 | md | Markdown | pages/services/spark/2.3.1-2.2.1-2/history-server/index.md | abudnik/dcos-docs-site | 37758cb4cb688751b76957c44834fe86557b37ae | [

"Apache-2.0"

] | 1 | 2019-04-12T10:30:56.000Z | 2019-04-12T10:30:56.000Z | pages/services/spark/2.3.1-2.2.1-2/history-server/index.md | abudnik/dcos-docs-site | 37758cb4cb688751b76957c44834fe86557b37ae | [

"Apache-2.0"

] | null | null | null | pages/services/spark/2.3.1-2.2.1-2/history-server/index.md | abudnik/dcos-docs-site | 37758cb4cb688751b76957c44834fe86557b37ae | [

"Apache-2.0"

] | null | null | null | ---

layout: layout.pug

navigationTitle: History Server

excerpt: Enabling HDFS for the Spark History Server

title: Spark History Server

menuWeight: 30

model: /services/spark/data.yml

render: mustache

---

DC/OS {{ model.techName }} includes the [Spark History Server][3]. Because the history server requires HDFS, you mus... | 31.847458 | 185 | 0.67323 | eng_Latn | 0.377591 |

26368568495635222a5a8d420871618fee199ca9 | 6,311 | md | Markdown | Lync/LyncServer/lync-server-2013-registration-table.md | chethankumarshetty1986/OfficeDocs-SkypeForBusiness | 7387d631cf895992906a46d3b7576a2ac76f5b4d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | Lync/LyncServer/lync-server-2013-registration-table.md | chethankumarshetty1986/OfficeDocs-SkypeForBusiness | 7387d631cf895992906a46d3b7576a2ac76f5b4d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | Lync/LyncServer/lync-server-2013-registration-table.md | chethankumarshetty1986/OfficeDocs-SkypeForBusiness | 7387d631cf895992906a46d3b7576a2ac76f5b4d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: 'Lync Server 2013: Registration table'

description: "Lync Server 2013: Registration table."

ms.reviewer:

ms.author: v-lanac

author: lanachin

f1.keywords:

- NOCSH

TOCTitle: Registration table

ms:assetid: 05ff9dd3-1aaa-4af0-bd69-8789fb8eaeb3

ms:mtpsurl: https://technet.microsoft.com/en-us/library/Gg398114(v=O... | 32.530928 | 250 | 0.676755 | eng_Latn | 0.378478 |

2636a56ef0572b535bc778ab59d906ea4fbf9647 | 41 | md | Markdown | README.md | DanilMarchyshyn/python_traning | 696b672978708f81c5e5d2b655111bc45da06022 | [

"Apache-2.0"

] | null | null | null | README.md | DanilMarchyshyn/python_traning | 696b672978708f81c5e5d2b655111bc45da06022 | [

"Apache-2.0"

] | null | null | null | README.md | DanilMarchyshyn/python_traning | 696b672978708f81c5e5d2b655111bc45da06022 | [

"Apache-2.0"

] | null | null | null | # Repository for Python_Traning tesT

Vova | 20.5 | 36 | 0.853659 | eng_Latn | 0.587864 |

2637312b935a3f675df3f219f2c872284b08b26e | 98 | md | Markdown | bin/dns/record-set/addRecord/examples.md | ad-m/h1-cli | 2de33110baeeb342b5680ccf7b51acb41e25f5e0 | [

"MIT"

] | null | null | null | bin/dns/record-set/addRecord/examples.md | ad-m/h1-cli | 2de33110baeeb342b5680ccf7b51acb41e25f5e0 | [

"MIT"

] | 3 | 2017-09-06T21:49:12.000Z | 2018-06-04T13:24:33.000Z | bin/dns/record-set/addRecord/examples.md | ad-m/h1-cli | 2de33110baeeb342b5680ccf7b51acb41e25f5e0 | [

"MIT"

] | 2 | 2018-06-15T14:45:03.000Z | 2020-05-29T21:46:01.000Z | ```bash

{{command_name}} --zone-name 'my-domain.tld' --name subdomain --value '{{dns_value}}'

```

| 24.5 | 85 | 0.632653 | eng_Latn | 0.295994 |

2637616ef5e9782e34e29c6b8ca6749449a5863b | 1,011 | md | Markdown | README.md | TimeSeriesPrediction/time-series-web-client | 7ab0faae71153ee2863b59020fcc571a34e5ac4c | [

"MIT"

] | null | null | null | README.md | TimeSeriesPrediction/time-series-web-client | 7ab0faae71153ee2863b59020fcc571a34e5ac4c | [

"MIT"

] | 4 | 2017-07-26T13:06:42.000Z | 2017-09-26T08:32:04.000Z | README.md | TimeSeriesPrediction/time-series-web-client | 7ab0faae71153ee2863b59020fcc571a34e5ac4c | [

"MIT"

] | null | null | null | # Time Series Prediction Web Client

[](https://travis-ci.org/TimeSeriesPrediction/time-series-web-client)

[](https://david-dm.o... | 91.909091 | 185 | 0.801187 | yue_Hant | 0.391661 |

2637fb1397d0463a6b4af52514654ab3be1df699 | 85 | md | Markdown | README.md | 3kg4kR/brazil-geodata-amcharts4 | c959e1cffd882e32be090715c07c865be19a4fbf | [

"MIT"

] | 2 | 2021-09-17T12:06:32.000Z | 2021-09-17T12:07:09.000Z | README.md | igor-sillva/brazil-geodata-amcharts4 | c959e1cffd882e32be090715c07c865be19a4fbf | [

"MIT"

] | null | null | null | README.md | igor-sillva/brazil-geodata-amcharts4 | c959e1cffd882e32be090715c07c865be19a4fbf | [

"MIT"

] | null | null | null | # brazil-geodata-amcharts4

Geodata for all Brazilian municipalities for Amcharts4

| 28.333333 | 57 | 0.847059 | por_Latn | 0.989418 |

2638b0be76bb5f89993b1a818db84bfb2f62f135 | 29 | md | Markdown | README.md | AndresIturria/discord-update-channel-name | cb5930322076978b99b96f5eb7d026aefeacc21d | [

"Apache-2.0"

] | null | null | null | README.md | AndresIturria/discord-update-channel-name | cb5930322076978b99b96f5eb7d026aefeacc21d | [

"Apache-2.0"

] | null | null | null | README.md | AndresIturria/discord-update-channel-name | cb5930322076978b99b96f5eb7d026aefeacc21d | [

"Apache-2.0"

] | null | null | null | # discord-update-channel-name | 29 | 29 | 0.827586 | eng_Latn | 0.58732 |

2638cb7fa5e373655c32b9ddc0065ee42c2c58d7 | 230 | md | Markdown | src/Introduction01/README.md | y-uti/dpmt | e65b2637078ce021e1e5a73b0e888fd3314ae8fe | [

"MIT"

] | null | null | null | src/Introduction01/README.md | y-uti/dpmt | e65b2637078ce021e1e5a73b0e888fd3314ae8fe | [

"MIT"

] | null | null | null | src/Introduction01/README.md | y-uti/dpmt | e65b2637078ce021e1e5a73b0e888fd3314ae8fe | [

"MIT"

] | null | null | null | Introduction 1

==============

- List I1-6, I1-7

- Skipped because there is no equivalent of the Runnable interface

- List I1-8

- Skipped because there is no equivalent of the ThreadFactory class

- List I1-10

- Skipped because there is no feature equivalent to the synchronized keyword

- List I1-11, I1-12

- Skipped because it is an incorrect program from a practice exercise

| 19.166667 | 36 | 0.708696 | yue_Hant | 0.300703 |

26398d5f9185205b0c114ad3e7ebcce168586698 | 9,535 | md | Markdown | articles/service-fabric/run-to-completion.md | tsunami416604/azure-docs.hu-hu | aeba852f59e773e1c58a4392d035334681ab7058 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/service-fabric/run-to-completion.md | tsunami416604/azure-docs.hu-hu | aeba852f59e773e1c58a4392d035334681ab7058 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/service-fabric/run-to-completion.md | tsunami416604/azure-docs.hu-hu | aeba852f59e773e1c58a4392d035334681ab7058 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: RunToCompletion semantics in Service Fabric

description: Describes RunToCompletion semantics in Service Fabric.

author: shsha-msft

ms.topic: conceptual

ms.date: 03/11/2020

ms.author: shsha

ms.openlocfilehash: 6f2f6aa4380fcf6909957118bf682275350ce68c

ms.sourcegitcommit: a43a59e44c14d349d597c3d2fd2bc7... | 70.110294 | 620 | 0.807446 | hun_Latn | 0.996354 |

263a56f21bb2a27613da108be4afe2dfee2cd4a6 | 2,532 | md | Markdown | windows-driver-docs-pr/image/scannerconfiguration.md | AnLazyOtter/windows-driver-docs.zh-cn | bdbf88adf61f7589cde40ae7b0dbe229f57ff0cb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | windows-driver-docs-pr/image/scannerconfiguration.md | AnLazyOtter/windows-driver-docs.zh-cn | bdbf88adf61f7589cde40ae7b0dbe229f57ff0cb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | windows-driver-docs-pr/image/scannerconfiguration.md | AnLazyOtter/windows-driver-docs.zh-cn | bdbf88adf61f7589cde40ae7b0dbe229f57ff0cb | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: ScannerConfiguration element

description: The required ScannerConfiguration element is a collection of elements that describe the configurable features of the scanner.

ms.assetid: 79c26d0d-ebee-4baf-8689-f5bae088883d

keywords:

- ScannerConfiguration element Imaging Devices

topic_type:

- apiref

api_name:

- wscn ScannerConfiguration

api_type:

- Schema

ms.date: 11/28/2017

ms.localizationpriority: medium

ms... | 22.017391 | 253 | 0.695498 | yue_Hant | 0.236062 |

263b095f1acc601501b4c52d92baf7ce475247ae | 555 | md | Markdown | README.md | pkcsecurity/dvcs-smackdown | 73c8fc4d3afd224877fe1cea808d493ec257f4a4 | [

"Unlicense"

] | null | null | null | README.md | pkcsecurity/dvcs-smackdown | 73c8fc4d3afd224877fe1cea808d493ec257f4a4 | [

"Unlicense"

] | null | null | null | README.md | pkcsecurity/dvcs-smackdown | 73c8fc4d3afd224877fe1cea808d493ec257f4a4 | [

"Unlicense"

] | null | null | null | # DVCS Smackdown

This is an example website for Bootstrap that contains HTML, JS, and CSS,

that we can all modify as desired.

Please, **use this as a chance to dry-fire the DVCS systems**, so submit

pull requests, fork, add things, blow things up, get a feel for it all

so we can have (experiential) personal preferenc... | 30.833333 | 74 | 0.731532 | eng_Latn | 0.987089 |

263bef494c819ec2cf7999c521da41862001497d | 5,278 | md | Markdown | docs/sympl/assignment-to-globals-and-locals.md | zspitz/dlr | e6a0ca5616c11b5f93e55b9e7c9d5542923d7335 | [

"Apache-2.0"

] | null | null | null | docs/sympl/assignment-to-globals-and-locals.md | zspitz/dlr | e6a0ca5616c11b5f93e55b9e7c9d5542923d7335 | [

"Apache-2.0"

] | null | null | null | docs/sympl/assignment-to-globals-and-locals.md | zspitz/dlr | e6a0ca5616c11b5f93e55b9e7c9d5542923d7335 | [

"Apache-2.0"

] | null | null | null | ---

sort: 4

title: Assignment to Globals and Locals

---

# 4 Assignment to Globals and Locals

We already discussed lexical and global variables in general in section 3.5. This section discusses adding variable assignment to Sympl. This starts with the keyword **set**, for which we won't discuss lexical scanning or parsing. As ... | 83.777778 | 750 | 0.729443 | eng_Latn | 0.995428 |

263c09805a0f7a609ed4791a23f7463905040313 | 53 | md | Markdown | README.md | lffranca/gonga-admin | 1ab5d4440df1c5ba6edbbfd5cc41219fed22e116 | [

"MIT"

] | 1 | 2021-11-08T18:19:16.000Z | 2021-11-08T18:19:16.000Z | README.md | lffranca/gonga-admin | 1ab5d4440df1c5ba6edbbfd5cc41219fed22e116 | [

"MIT"

] | null | null | null | README.md | lffranca/gonga-admin | 1ab5d4440df1c5ba6edbbfd5cc41219fed22e116 | [

"MIT"

] | null | null | null | # gonga

More than just another GUI to Kong Admin API

| 17.666667 | 44 | 0.773585 | eng_Latn | 0.984998 |

263c77cf0b9a28d75efea47dd93353099ca17081 | 597 | md | Markdown | README.md | nrfta/go-gqlgen-helpers | e72bf33098cb3c6f844c72a0325cf375a0e0d529 | [

"MIT"

] | null | null | null | README.md | nrfta/go-gqlgen-helpers | e72bf33098cb3c6f844c72a0325cf375a0e0d529 | [

"MIT"

] | 2 | 2021-12-20T00:15:34.000Z | 2021-12-20T00:15:34.000Z | README.md | nrfta/go-gqlgen-helpers | e72bf33098cb3c6f844c72a0325cf375a0e0d529 | [

"MIT"

] | null | null | null | # go-gqlgen-helpers

## Install

```sh

go get -u "github.com/nrfta/go-gqlgen-helpers"

```

### GraphQL gqlgen error handling

```go

// ...

router.Handle("/graphql",

handler.GraphQL(

NewExecutableSchema(Config{Resolvers: &Resolver{}}),

... | 16.583333 | 90 | 0.700168 | yue_Hant | 0.226563 |

263d551dc2cc0cfbd076e1ee7236212cdb1b9732 | 1,901 | md | Markdown | _posts/leetcode/2021-06-15-minimum-path-sum.md | GracefulSoul/GracefulSoul.github.io | a084e18c1df3804866de8c8dcd9093a6babc017f | [

"MIT"

] | 2 | 2018-01-15T07:42:25.000Z | 2021-02-21T07:06:12.000Z | _posts/leetcode/2021-06-15-minimum-path-sum.md | GracefulSoul/GracefulSoul.github.io | a084e18c1df3804866de8c8dcd9093a6babc017f | [

"MIT"

] | 1 | 2021-10-18T02:42:16.000Z | 2021-10-18T02:42:16.000Z | _posts/leetcode/2021-06-15-minimum-path-sum.md | GracefulSoul/GracefulSoul.github.io | a084e18c1df3804866de8c8dcd9093a6babc017f | [

"MIT"

] | null | null | null | ---

title: "Leetcode Java Minimum Path Sum"

excerpt: "Leetcode Minimum Path Sum Java solution"

last_modified_at: 2021-06-15T12:30:00

header:

image: /assets/images/leetcode/minimum-path-sum.png

categories:

- Leetcode

tags:

- Programming

- Leetcode

- Java

toc: true

toc_ads: true

toc_sticky: true

use_math: true

---

#... | 26.041096 | 157 | 0.618096 | kor_Hang | 1.000004 |

263d946b40733f2df8dca759b03c48935827ee61 | 3,509 | md | Markdown | _posts/2019-03-03-Download-2003-lancer-es-manual.md | Anja-Allende/Anja-Allende | 4acf09e3f38033a4abc7f31f37c778359d8e1493 | [

"MIT"

] | 2 | 2019-02-28T03:47:33.000Z | 2020-04-06T07:49:53.000Z | _posts/2019-03-03-Download-2003-lancer-es-manual.md | Anja-Allende/Anja-Allende | 4acf09e3f38033a4abc7f31f37c778359d8e1493 | [

"MIT"

] | null | null | null | _posts/2019-03-03-Download-2003-lancer-es-manual.md | Anja-Allende/Anja-Allende | 4acf09e3f38033a4abc7f31f37c778359d8e1493 | [

"MIT"

] | null | null | null | ---

layout: post

comments: true

categories: Other

---

## Download 2003 lancer es manual book

Indeed, "I am the North Wind, just went bing-bong. No, in spite of her performance "Well, I need a suit of interested, "but I do have a story 2003 lancer es manual you, with her incomprehensible yammering about talking books ... | 389.888889 | 3,414 | 0.784269 | eng_Latn | 0.999924 |

263d9ae00b62ce9ee6d3a841da29112dbf601c07 | 9,830 | md | Markdown | README.md | zz2585/CommonOwnerReplication | e952eba5af27141b0f7a38959f63d22d029e03ed | [

"MIT"

] | 23 | 2020-06-24T21:07:35.000Z | 2022-02-25T10:58:15.000Z | README.md | zz2585/CommonOwnerReplication | e952eba5af27141b0f7a38959f63d22d029e03ed | [

"MIT"

] | 1 | 2020-06-29T16:56:06.000Z | 2020-06-29T16:56:06.000Z | README.md | zz2585/CommonOwnerReplication | e952eba5af27141b0f7a38959f63d22d029e03ed | [

"MIT"

] | 14 | 2020-06-24T19:46:57.000Z | 2022-03-24T17:06:44.000Z | # Replication Instructions for: Common Ownership in America: 1980-2017

Backus, Conlon and Sinkinson (2020)

AEJMicro-2019-0389

openicpsr-120083

A copy of the paper is here: https://chrisconlon.github.io/site/common_owner.pdf

### Open ICPSR Install Instructions

1. Download and unzip the repository.

2. All required file... | 44.681818 | 382 | 0.768973 | eng_Latn | 0.979257 |

263d9d2941bd2b8eb839650647960a94976947a9 | 10,286 | md | Markdown | design/libraries/nodes-and-kinds.md | olizilla/ipld | 28cf1e3910053d77f8e100f06ce0459227b5d62e | [

"Apache-2.0",

"MIT-0",

"MIT"

] | 1,002 | 2016-10-18T17:17:01.000Z | 2022-03-28T00:13:05.000Z | design/libraries/nodes-and-kinds.md | olizilla/ipld | 28cf1e3910053d77f8e100f06ce0459227b5d62e | [

"Apache-2.0",

"MIT-0",

"MIT"

] | 154 | 2016-10-19T17:45:13.000Z | 2022-03-29T22:35:05.000Z | design/libraries/nodes-and-kinds.md | olizilla/ipld | 28cf1e3910053d77f8e100f06ce0459227b5d62e | [

"Apache-2.0",

"MIT-0",

"MIT"