hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6 values | lang stringclasses 1 value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191 values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

26708653069d73c0f57db85b1ecf6ed890646522 | 5,423 | md | Markdown | index.md | overwatchTO/overwatchto.github.io | 74665751040a4f7ebcbc7fddc071e0628895f539 | [

"MIT"

] | null | null | null | index.md | overwatchTO/overwatchto.github.io | 74665751040a4f7ebcbc7fddc071e0628895f539 | [

"MIT"

] | null | null | null | index.md | overwatchTO/overwatchto.github.io | 74665751040a4f7ebcbc7fddc071e0628895f539 | [

"MIT"

] | null | null | null | ---

layout: default

---

<div class="container-fluid">

<div class="row banner">

<div class="col-12 col-md-8">

<h1>Toronto Overwatch Beer League</h1>

<h4>Toronto's first social Overwatch LAN gaming league</h4>

<!-- <div class="text-center" style="padding-top:3em;"><a href="{{ site.baseurl }}/join/" class="btn btn-primary primary-cta">Sign up for TOBL</a></div> -->

<!-- <p class="text-center" style="font-size:80%;"><small>Registration for Season 5 is closed.</small></p> -->

</div>

<div class="col-12 col-md-4">

<div>

<img src="{{ site.baseurl }}/images/logo_white.png" class="img-responsive banner-logo" alt="Toronto Overwatch Beer League logo">

</div>

</div>

</div>

</div>

<div class="container">

<div class="row page-section">

<div class="col-12">

<h1 class="text-center">How it works</h1>

</div>

</div>

<div class="row">

<div class="col-10 col-sm-8 col-md-4 mx-auto">

<div class="feature">

<div class="text-center">

<img class="feature-icon" style="margin-top:-55px;" src="{{ site.baseurl }}/images/notes-icon.svg" alt="Notes icon">

</div>

<p class="text-center" style="margin-top:-26px;"><a href="{{ site.baseurl }}/join/">Create a team</a> or <a href="{{ site.baseurl }}/join/">sign up as an individual</a> and get drafted.</p>

</div>

</div>

<div class="col-10 col-sm-8 col-md-4 mx-auto">

<div class="feature">

<div class="text-center" style="margin-top:-5px;">

<img class="feature-icon" src="{{ site.baseurl }}/images/overwatch_logo.png" alt="Overwatch Logo">

</div>

<p class="text-center">Compete weekly in an organized Overwatch league. See the details <a href="{{ site.baseurl }}/league/">here</a>.</p>

</div>

</div>

<div class="col-10 col-sm-8 col-md-4 mx-auto">

<div class="feature">

<div class="text-center">

<img class="feature-icon" src="{{ site.baseurl }}/images/hive_logo.jpg" alt="The Hive eSports Logo" style="width:130px;">

</div>

<p class="text-center">Play on LAN at <a href="https://www.facebook.com/thehiveesports/">The Hive Esports</a>. Have fun and socialize.</p>

</div>

</div>

</div>

</div>

<div class="jumbotron-fluid">

<div class="container center-vertical">

<div class="row justify-content-center">

<div class="col-10 col-md-8 col-lg-6">

<div class="mailing-list-panel">

<h1>Stay notified</h1>

<!-- <p>Individual registration is now open! Sign up <a href="http://overwatchtoronto.org/join/" style="color:white">here</a> or join our mailing list for future beer league updates.</p> -->

<p>Registration opens soon. Sign up for our mailing list and you'll be the first to know.</p>

<!-- Begin MailChimp Signup Form -->

<style type="text/css">

#mc_embed_signup{/*background:#fff; clear:left; font:14px Helvetica,Arial,sans-serif;*/ width:100%;}

</style>

<div id="mc_embed_signup">

<form action="https://overwatchtoronto.us17.list-manage.com/subscribe/post?u=8b3de13b281e00b24f345f7e5&id=96eab85b72" method="post" id="mc-embedded-subscribe-form" name="mc-embedded-subscribe-form" class="validate" target="_blank" novalidate>

<div id="mc_embed_signup_scroll" class="mx-auto">

<div class="form-group">

<label for="mce-EMAIL" class="mailing-list-label">Email address</label>

<input type="email" value="" name="EMAIL" class="email form-control" id="mce-EMAIL" required>

</div>

<!-- real people should not fill this in and expect good things - do not remove this or risk form bot signups-->

<div style="position: absolute; left: -5000px;" aria-hidden="true"><input type="text" name="b_8b3de13b281e00b24f345f7e5_96eab85b72" tabindex="-1" value=""></div>

<div class="form-group">

<div class="clear">

<input type="submit" value="Subscribe" name="subscribe" id="mc-embedded-subscribe" class="button btn btn-block">

</div>

</div>

</div>

</form>

</div>

<!--End mc_embed_signup-->

</div>

</div>

</div>

</div>

</div>

<div class="container">

<div class="row page-section-no-line">

<div class="col-10 col-sm-10 col-md-8 col-lg-6 mx-auto">

<h1 class="text-center">The Hive Esports</h1>

<p>All of our matches take place on LAN at <a href="https://www.facebook.com/thehiveesports/">The Hive Esports</a>. Meet other Overwatch players, talk strategy, and enjoy some food and drink while socializing.</p>

<p>The Hive boasts 7,000 square feet, 21 high-end gaming PCs, 30 TVs, retro gaming machines, and a full-service kitchen. It is located just two minutes from St. Clair Station.</p>

</div>

</div>

<div class="row">

<div class="col-10 col-sm-10 col-md-8 col-lg-6 mx-auto">

<div class="map-responsive">

<iframe

width="600"

height="450"

frameborder="0" style="border:0"

src="https://www.google.com/maps/embed?pb=!1m18!1m12!1m3!1d2885.1084227746137!2d-79.39813908425393!3d43.68750927912014!2m3!1f0!2f0!3f0!3m2!1i1024!2i768!4f13.1!3m3!1m2!1s0x882b335b46a62d57%3A0x7299f91389e798f8!2sThe+Hive!5e0!3m2!1sen!2sca!4v1534813943290" allowfullscreen>

</iframe>

</div>

</div>

</div>

</div>

<div style="padding-bottom:4em"></div>

| 46.75 | 273 | 0.627697 | eng_Latn | 0.379376 |

267140362bd31e16d12d1edd40c904a2f981c9ee | 822 | md | Markdown | README.md | codetin/DarkPlusPlus | 6f935a6c78677fe5766bea7c436a6d9b6b421fae | [

"MIT"

] | 30 | 2022-03-13T08:29:22.000Z | 2022-03-29T04:27:22.000Z | README.md | codetin/DarkPlusPlus | 6f935a6c78677fe5766bea7c436a6d9b6b421fae | [

"MIT"

] | null | null | null | README.md | codetin/DarkPlusPlus | 6f935a6c78677fe5766bea7c436a6d9b6b421fae | [

"MIT"

] | null | null | null | # Dark++

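Dark++ is a personal theme based on Dark+ (the default VS Code theme).

I liked how it turned out in my own use, so I am sharing it.

If you like it too, please give it a star on GitHub.

The original README is written in Chinese; non-Chinese readers may want to view it with translation software.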

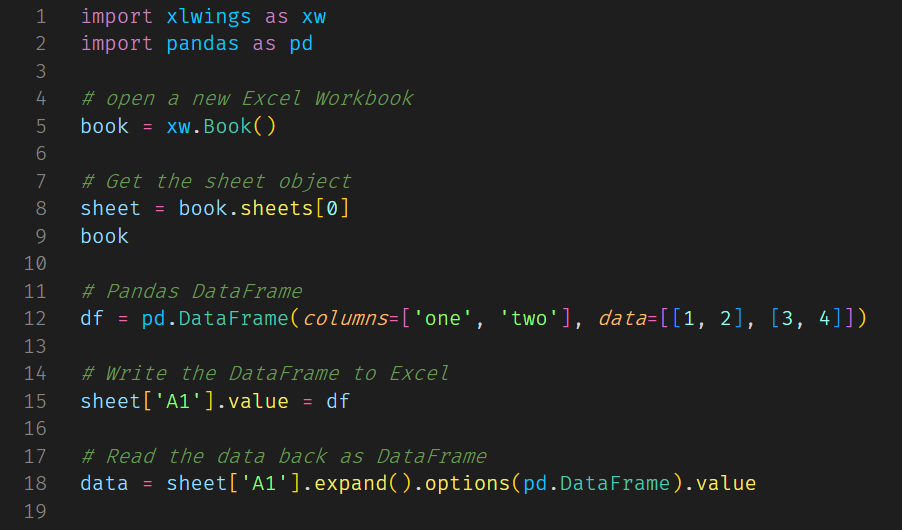

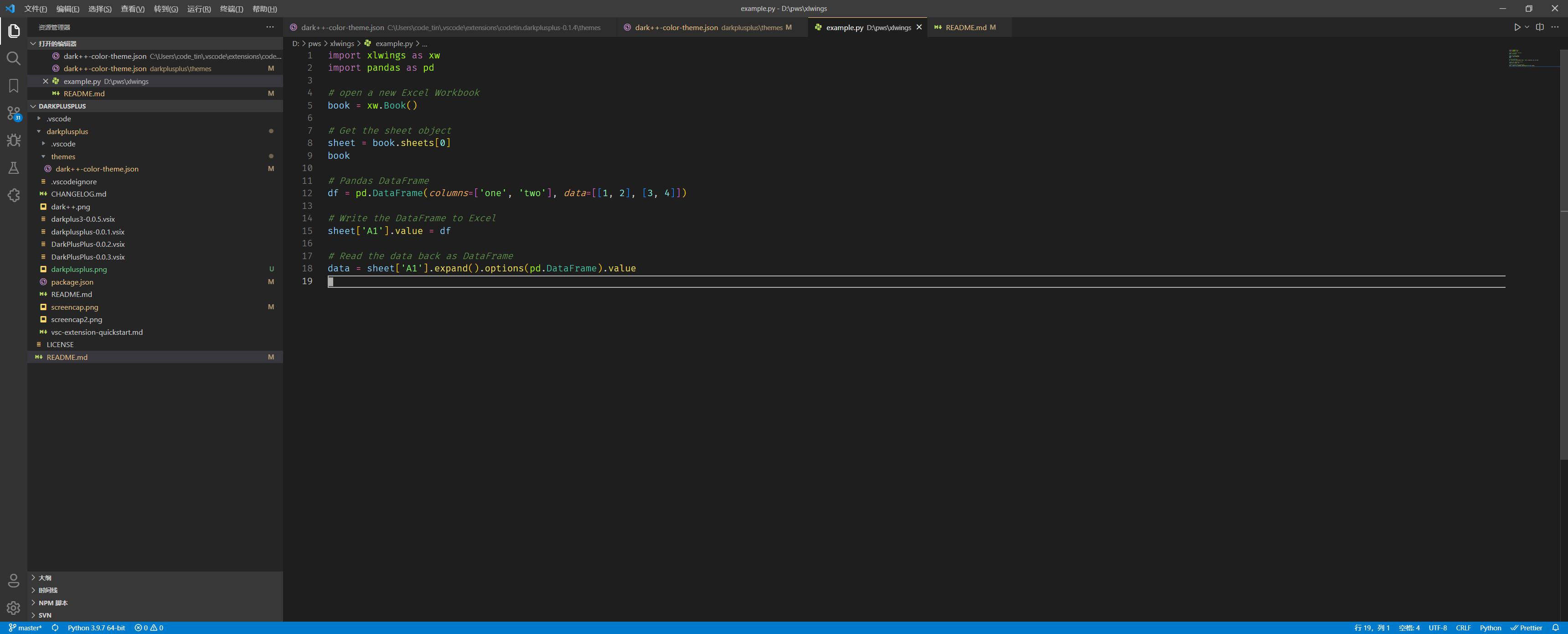

### Syntax highlighting example

### VS Code interface screenshot

# GitHub source code

## [Github](https://github.com/codetin/DarkPlusPlus.git)

# Changelog

## 0.2.1 2021-09-23

Added support for string.quoted.docstring.multi.python

## 0.1.7 2021-09-22

Separated the class color from variable

Added a color for property

## 0.0.5 2021-02-18

Updated some colors

Added a colored edge strip on tabs

## 0.0.4 2019-12-16

There are simply too many themes named Dark++, so this one was renamed to Dark+3 😄

## 0.0.3 2019-12-16

set constant.numeric to #91afd1

## 0.0.2 2019-12-16

### meta.function color

## 0.0.1 2019-12-16

### make meta.function color

| 16.117647 | 95 | 0.701946 | yue_Hant | 0.967174 |

26715f6739d597f87a3d3835dc4b7dc2f86c51ef | 9,663 | md | Markdown | src/pages/java/index.md | yashinnhl/guide | 755226f505063f5bb7aa246e9a557c908f57b2d7 | [

"BSD-3-Clause"

] | 1 | 2018-09-16T18:41:26.000Z | 2018-09-16T18:41:26.000Z | src/pages/java/index.md | yashinnhl/guide | 755226f505063f5bb7aa246e9a557c908f57b2d7 | [

"BSD-3-Clause"

] | 7 | 2020-07-19T10:17:15.000Z | 2022-02-26T01:49:48.000Z | src/pages/java/index.md | yashinnhl/guide | 755226f505063f5bb7aa246e9a557c908f57b2d7 | [

"BSD-3-Clause"

] | 2 | 2018-03-15T20:44:04.000Z | 2019-07-12T06:57:41.000Z | ---

title: Java

---

**What is Java?**

<a href='https://www.oracle.com/java/index.html' target='_blank' rel='nofollow'>Java</a> is a programming language developed in 1995 by <a href='https://en.wikipedia.org/wiki/Sun_Microsystems' target='_blank' rel='nofollow'>Sun Microsystems</a>, a company later acquired by <a href='http://www.oracle.com/index.html' target='_blank' rel='nofollow'>Oracle</a>. It is now a full platform with many standard APIs, open source APIs, tools, and a huge developer community, and it is used by companies big and small to build trusted enterprise solutions. <a href='https://www.android.com/' target='_blank' rel='nofollow'>Android</a> application development is largely done with Java and its ecosystem. To learn more about Java, read <a href='https://java.com/en/download/faq/whatis_java.xml' target='_blank' rel='nofollow'>this</a> and <a href='http://tutorials.jenkov.com/java/what-is-java.html' target='_blank' rel='nofollow'>this</a>.

## Version

At the time of writing, the latest version is <a href='http://www.oracle.com/technetwork/java/javase/overview' target='_blank' rel='nofollow'>Java 9</a>, which was released in 2017 with <a href='https://docs.oracle.com/javase/9/whatsnew/toc.htm#JSNEW-GUID-C23AFD78-C777-460B-8ACE-58BE5EA681F6' target='_blank' rel='nofollow'>various improvements</a> over the previous version, Java 8. However, this wiki uses Java 8 for all tutorials.

Java is also divided into several "Editions":

* <a href='http://www.oracle.com/technetwork/java/javase/overview/index.html' target='_blank' rel='nofollow'>SE</a> - Standard Edition - for desktop and standalone server applications

* <a href='http://www.oracle.com/technetwork/java/javaee/overview/index.html' target='_blank' rel='nofollow'>EE</a> - Enterprise Edition - for developing and executing Java components that run embedded in a Java server

* <a href='http://www.oracle.com/technetwork/java/embedded/javame/overview/index.html' target='_blank' rel='nofollow'>ME</a> - Micro Edition - for developing and executing Java applications on mobile phones and embedded devices

## Installation: JDK or JRE?

Download the latest Java binaries from the <a href='http://www.oracle.com/technetwork/java/javase/downloads/jdk8-downloads-2133151.html' target='_blank' rel='nofollow'>official website</a>. Here you may face a question: which one should you download, the JDK or the JRE? JRE stands for Java Runtime Environment, which contains the platform-dependent Java Virtual Machine needed to run Java code. JDK stands for Java Development Kit, which consists of most of the development tools, most importantly the compiler `javac`, and also includes the JRE. For an average user the JRE is sufficient, but since we will be developing with Java, we will download the JDK.

## Platform specific installation instructions

### Windows

* Download the relevant <a href='https://en.wikipedia.org/wiki/Windows_Installer' target='_blank' rel='nofollow'>.msi</a> file (x86 / i586 for 32bits, x64 for 64bits)

* Run the .msi file. It is an installer that will install Java on your system!

### Linux

* Download the relevant <a href='http://www.cyberciti.biz/faq/linux-unix-bsd-extract-targz-file/' target='_blank' rel='nofollow'>tar.gz</a> file for your system and extract it:

```bash
$ tar zxvf jdk-8uversion-linux-x64.tar.gz
```

* On <a href='https://en.wikipedia.org/wiki/List_of_Linux_distributions#RPM-based' target='_blank' rel='nofollow'>RPM-based Linux platforms</a>, download the relevant <a href='https://en.wikipedia.org/wiki/RPM_Package_Manager' target='_blank' rel='nofollow'>.rpm</a> file and install it:

```bash
$ rpm -ivh jdk-8uversion-linux-x64.rpm
```

* Users can choose between OpenJDK, an open source version of Java, and the Oracle JDK. OpenJDK is in active development and in sync with the Oracle JDK; the two differ mainly in <a href='http://openjdk.java.net/faq/' target='_blank' rel='nofollow'>licensing</a>. However, a few developers have complained about the stability of OpenJDK. Instructions for **Ubuntu**:

OpenJDK installation:

```bash
sudo apt-get install openjdk-8-jdk
```

Oracle JDK installation:

```bash
sudo add-apt-repository ppa:webupd8team/java
sudo apt-get update
sudo apt-get install oracle-java8-installer
```

### Mac

* Either download the Mac OS X .dmg installer from Oracle Downloads

* Or use <a href='http://brew.sh/' target='_blank' rel='nofollow'>Homebrew</a> to <a href='http://stackoverflow.com/a/28635465/2861269' target='_blank' rel='nofollow'>install</a> it:

```bash
brew tap caskroom/cask
brew install brew-cask
brew cask install java
```

### Verify Installation

Verify that Java has been properly installed on your system by opening Command Prompt (Windows), Windows PowerShell, or Terminal (macOS and Unix-like systems) and checking the versions of the Java runtime and compiler:

```bash
$ java -version
java version "1.8.0_66"
Java(TM) SE Runtime Environment (build 1.8.0_66-b17)
Java HotSpot(TM) 64-Bit Server VM (build 25.66-b17, mixed mode)

$ javac -version
javac 1.8.0_66
```

**Tip**: If you get an error such as "Command Not Found" for `java`, `javac`, or both, don't panic; it just means your system PATH is not set properly. For Windows, see <a href='http://stackoverflow.com/questions/15796855/java-is-not-recognized-as-an-internal-or-external-command' target='_blank' rel='nofollow'>this StackOverflow answer</a> or <a href='http://javaandme.com/' target='_blank' rel='nofollow'>this article</a> on how to set it. There are also guides for <a href='http://stackoverflow.com/questions/9612941/how-to-set-java-environment-path-in-ubuntu' target='_blank' rel='nofollow'>Ubuntu</a> and <a href='http://www.mkyong.com/java/how-to-set-java_home-environment-variable-on-mac-os-x/' target='_blank' rel='nofollow'>Mac</a>. If you still can't figure it out, don't worry, just ask us in our <a href='https://gitter.im/FreeCodeCamp/java' target='_blank' rel='nofollow'>Gitter room</a>!

## JVM

Now that we are done with the installation, let's first look at the nitty-gritty of the Java ecosystem. Java is an <a href='http://stackoverflow.com/questions/1326071/is-java-a-compiled-or-an-interpreted-programming-language' target='_blank' rel='nofollow'>interpreted and compiled</a> language: the code we write is compiled to bytecode, which is then interpreted at run time. We write the code in .java files, and the Java compiler turns them into <a href='https://en.wikipedia.org/wiki/Java_bytecode' target='_blank' rel='nofollow'>bytecode</a>, which runs on a Java Virtual Machine (JVM). Bytecode files typically have a .class extension.

Java is a fairly secure language because it doesn't let your program run directly on the machine. Instead, your program runs on a virtual machine called the JVM. The JVM exposes several APIs for low-level machine interactions, but beyond those you cannot manipulate machine instructions explicitly. This is a big security benefit.

Also, once your code is compiled to bytecode, it can run on any Java VM. The virtual machine itself is machine dependent, i.e. it has different implementations for Windows, Linux, and Mac. But thanks to the VM, your program is guaranteed to run on any of these systems. This philosophy is called <a href='https://en.wikipedia.org/wiki/Write_once,_run_anywhere' target='_blank' rel='nofollow'>"Write Once, Run Anywhere"</a>.

## Hello World!

Let's write a sample Hello World application. Open any editor / IDE of choice and create a file `HelloWorld.java`.

```java
public class HelloWorld {
    public static void main(String[] args) {
        // Prints "Hello, World" to the terminal window.
        System.out.println("Hello, World");
    }
}
```

**N.B.** Keep in mind that in Java the file name must be **exactly the same as the name of the public class**, or the file will not compile!

Now open the terminal / Command Prompt, change your current directory to the directory where your file is located, and compile the file:

```bash
$ javac HelloWorld.java
```

Now run the program using the `java` command:

```bash
$ java HelloWorld
Hello, World
```

Congrats! Your first Java program has run successfully. Here we are just printing a string by passing it to the `System.out.println` API. We will cover all the concepts in the code, but you are welcome to take a <a href='https://docs.oracle.com/javase/tutorial/getStarted/application/' target='_blank' rel='nofollow'>closer look</a>! If you have any doubts or need additional help, feel free to contact us anytime in our <a href='https://gitter.im/FreeCodeCamp/java' target='_blank' rel='nofollow'>Gitter Chatroom</a>!

## Documentation

Java is heavily <a href='https://docs.oracle.com/javase/8/docs/' target='_blank' rel='nofollow'>documented</a>, as it supports a huge number of APIs. If you are using a major IDE such as Eclipse or IntelliJ IDEA, you will find the Java documentation included within it.

Also, here is a list of free IDEs for Java coding:

* <a href='https://netbeans.org/' target='_blank' rel='nofollow'>NetBeans</a>

* <a href='https://eclipse.org/' target='_blank' rel='nofollow'>Eclipse</a>

* <a href='https://www.jetbrains.com/idea/features/' target='_blank' rel='nofollow'>IntelliJ IDEA</a>

* <a href='https://developer.android.com/studio/index.html' target='_blank' rel='nofollow'>Android Studio</a>

* <a href='https://www.bluej.org/' target='_blank' rel='nofollow'>BlueJ</a>

* <a href='http://www.jedit.org/' target='_blank' rel='nofollow'>jEdit</a>

* <a href='http://www.oracle.com/technetwork/developer-tools/jdev/overview/index-094652.html' target='_blank' rel='nofollow'>Oracle JDeveloper</a>

| 79.204918 | 935 | 0.746766 | eng_Latn | 0.917044 |

26738e973f87a7005f22f0c263ed0fb7ab8550dd | 4,470 | md | Markdown | docs/data/odbc/recordset-locking-records-odbc.md | Mdlglobal-atlassian-net/cpp-docs.it-it | c8edd4e9238d24b047d2b59a86e2a540f371bd93 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/data/odbc/recordset-locking-records-odbc.md | Mdlglobal-atlassian-net/cpp-docs.it-it | c8edd4e9238d24b047d2b59a86e2a540f371bd93 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/data/odbc/recordset-locking-records-odbc.md | Mdlglobal-atlassian-net/cpp-docs.it-it | c8edd4e9238d24b047d2b59a86e2a540f371bd93 | [

"CC-BY-4.0",

"MIT"

] | 1 | 2020-05-28T15:54:57.000Z | 2020-05-28T15:54:57.000Z | ---

title: 'Recordset: Locking Records (ODBC)'

ms.date: 11/04/2016

helpviewer_keywords:

- locks [C++], recordsets

- optimistic locking

- pessimistic locking in ODBC

- recordsets [C++], locking records

- optimistic locking, ODBC

- ODBC recordsets [C++], locking records

- data [C++], locking

ms.assetid: 8fe8fcfe-b55a-41a8-9136-94a7cd1e4806

ms.openlocfilehash: abd5f817ad321241df2d8565bd6bf346c0792088

ms.sourcegitcommit: c123cc76bb2b6c5cde6f4c425ece420ac733bf70

ms.translationtype: MT

ms.contentlocale: it-IT

ms.lasthandoff: 04/14/2020

ms.locfileid: "81366967"

---

# <a name="recordset-locking-records-odbc"></a>Recordset: Locking Records (ODBC)

This topic applies to the MFC ODBC classes.

This topic explains:

- [The kinds of record locking available.](#_core_record.2d.locking_modes)

- [How to lock records in your recordset during updates.](#_core_locking_records_in_your_recordset)

When you use a recordset to update a record on the data source, your application can lock the record so that no other user can update it at the same time. The state of a record updated by two users at the same time is undefined unless the system can guarantee that two users cannot update a record simultaneously.

> [!NOTE]

> This topic applies to objects derived from `CRecordset` in which bulk row fetching has not been implemented. If you have implemented bulk row fetching, some of the information does not apply. For example, you cannot call the `Edit` and `Update` member functions. For more information about bulk row fetching, see [Recordset: Fetching Records in Bulk (ODBC)](../../data/odbc/recordset-fetching-records-in-bulk-odbc.md).

## <a name="record-locking-modes"></a><a name="_core_record.2d.locking_modes"></a>Record-Locking Modes

The database classes provide two [record-locking modes](../../mfc/reference/crecordset-class.md#setlockingmode):

- Optimistic locking (the default)

- Pessimistic locking

Updating a record occurs in three steps:

1. You begin the operation by calling the [Edit](../../mfc/reference/crecordset-class.md#edit) member function.

1. You change the appropriate fields of the current record.

1. You end the operation, and normally commit the update, by calling the [Update](../../mfc/reference/crecordset-class.md#update) member function.

Optimistic locking locks the record on the data source only during the `Update` call. If you use optimistic locking in a multiuser environment, the application should handle an `Update` failure condition. Pessimistic locking locks the record as soon as you call `Edit` and does not release it until you call `Update` (failures are indicated through the `CDBException` mechanism, not by a value of FALSE returned by `Update`). Pessimistic locking carries a potential performance penalty for other users, because concurrent access to the same record might have to wait until your application's `Update` process completes.

## <a name="locking-records-in-your-recordset"></a><a name="_core_locking_records_in_your_recordset"></a>Locking Records in Your Recordset

If you want to change a recordset object's [locking mode](#_core_record.2d.locking_modes) from the default, you must change the mode before you call `Edit`.

#### <a name="to-change-the-current-locking-mode-for-your-recordset"></a>To change the current locking mode for your recordset

1. Call the [SetLockingMode](../../mfc/reference/crecordset-class.md#setlockingmode) member function, specifying either `CRecordset::optimistic` or `CRecordset::pessimistic`.

The new locking mode remains in effect until you change it again or the recordset is closed.

> [!NOTE]

> Relatively few ODBC drivers currently support pessimistic locking.

## <a name="see-also"></a>See also

[Recordset (ODBC)](../../data/odbc/recordset-odbc.md)<br/>

[Recordset: Performing a Join (ODBC)](../../data/odbc/recordset-performing-a-join-odbc.md)<br/>

[Recordset: Adding, Updating, and Deleting Records (ODBC)](../../data/odbc/recordset-adding-updating-and-deleting-records-odbc.md)

| 62.957746 | 690 | 0.788814 | ita_Latn | 0.99441 |

2673c4a31b9db141190904be6575e5481c0ecdca | 697 | md | Markdown | README.md | mtrobregado/Vaccine-Carrier-Cold-Box-Temperature-Monitoring | 98f8b6c888a59a7be2202ac0f47df22705b44333 | [

"MIT"

] | 1 | 2021-10-01T10:43:12.000Z | 2021-10-01T10:43:12.000Z | README.md | mtrobregado/Vaccine-Carrier-Cold-Box-Temperature-Monitoring | 98f8b6c888a59a7be2202ac0f47df22705b44333 | [

"MIT"

] | null | null | null | README.md | mtrobregado/Vaccine-Carrier-Cold-Box-Temperature-Monitoring | 98f8b6c888a59a7be2202ac0f47df22705b44333 | [

"MIT"

] | 1 | 2022-01-02T07:34:58.000Z | 2022-01-02T07:34:58.000Z |

Project Name: Vaccine Carrier Cold Box Temperature Monitoring

Author: Markel Robregado

Lemuel Adane

Date Created: 8/5/2021

Project Description:

- The AWS IoT EduKit collects sensor data via UART from a Texas Instruments Sub-1 GHz sensor network

and sends this data to AWS IoT. The Sub-1 GHz sensor network consists of collector and sensor nodes.

Each sensor node is attached to a vaccine carrier cold box.

Hardware:

- AWS IoT EduKit

- CC1352R Launchpad (collector)

- LPSTK-CC1352R (sensor node connected to vaccine carrier cold box)

Pre-requisites:

- See the getting started guide at https://edukit.workshop.aws/en/getting-started.html | 36.684211 | 100 | 0.730273 | eng_Latn | 0.858046 |

2673e923b4a002176390ea5e9dff7914d2b0a52c | 1,021 | md | Markdown | course-details.md | amyschoen/intro-to-html | 02e583e2b52ab9dd7cd88112a0ad3118543ba1f1 | [

"CC-BY-4.0"

] | null | null | null | course-details.md | amyschoen/intro-to-html | 02e583e2b52ab9dd7cd88112a0ad3118543ba1f1 | [

"CC-BY-4.0"

] | null | null | null | course-details.md | amyschoen/intro-to-html | 02e583e2b52ab9dd7cd88112a0ad3118543ba1f1 | [

"CC-BY-4.0"

] | null | null | null | If you are an aspiring developer, creating a simple webpage is a great way to take your first steps into the exciting world of programming. The first skill you should learn is HTML.

HTML is the markup language that forms the backbone of the internet. In this course, you will build a clean, stunning webpage using HTML. As you build your page, we will show you how you can use GitHub Pages to host your website free of charge. Your new HTML skills will form an important foundation in your journey as a new developer.

In this course, you'll learn how to:

- Make a simple HTML website

- Use foundational HTML concepts, like tags, headers, lists, images, and links

- Publish your page to the web using GitHub Pages

This course has a dedicated message board on the [GitHub Community]({{ communityBoard }}) website. If you want to discuss this course with GitHub Trainers or other participants, create a post there. The message board can also be used to troubleshoot any issue you encounter while taking this course.

| 85.083333 | 335 | 0.789422 | eng_Latn | 0.999825 |

26751f1b40e7adbda194d7feb5fe1a24a697184e | 1,946 | md | Markdown | _draft/Algorithm/2021-03-25-leetcode-03.md | kangyongseok/f-log.github.io | d33616b3fb8bdd06163385aa1298289f81a42bab | [

"MIT"

] | 1 | 2019-03-18T14:06:33.000Z | 2019-03-18T14:06:33.000Z | _draft/Algorithm/2021-03-25-leetcode-03.md | kangyongseok/kangyongseok.github.io | d33616b3fb8bdd06163385aa1298289f81a42bab | [

"MIT"

] | null | null | null | _draft/Algorithm/2021-03-25-leetcode-03.md | kangyongseok/kangyongseok.github.io | d33616b3fb8bdd06163385aa1298289f81a42bab | [

"MIT"

] | null | null | null | ---

title: "Algorithm - Maximum Depth of Binary Tree"

categories:

- Algorithm

tags:

- Algorithm

- coding

- javascript

- leetcode

- algorithm solutions

- practice

- DFS (depth-first search)

- recursion

toc: true

toc_sticky: true

comments: true

---

Given the root of a binary tree, return its maximum depth.

A binary tree's maximum depth is the number of nodes along the longest path from the root node down to the farthest leaf node.

## Example 1:

```console

Input: root = [3,9,20,null,null,15,7]

Output: 3

```

## Example 2:

```console

Input: root = [1,null,2]

Output: 2

```

## Example 3:

```console

Input: root = []

Output: 0

```

## Example 4:

```console

Input: root = [0]

Output: 1

```

## Constraints:

The number of nodes in the tree is in the range [0, 10^4].

-100 <= Node.val <= 100

## Solution

Finding the maximum depth of the nodes in a binary tree is a problem suited to DFS (depth-first search), so a recursive approach is used.

```javascript
/**
 * Definition for a binary tree node.
 * function TreeNode(val, left, right) {
 *     this.val = (val===undefined ? 0 : val)
 *     this.left = (left===undefined ? null : left)
 *     this.right = (right===undefined ? null : right)
 * }
 */
/**
 * @param {TreeNode} root
 * @return {number}
 */
var maxDepth = function(root) {
    // An empty tree has depth 0.
    if (!root) return 0;
    // Deepest level reached so far.
    let max = 0;
    // Walk the tree depth-first, carrying the depth of the current node.
    (function calcDepth(root, currentCount = 0) {
        ++currentCount;
        if (max < currentCount) max = currentCount;
        if (root.left) calcDepth(root.left, currentCount);
        if (root.right) calcDepth(root.right, currentCount);
    })(root);
    return max;
};
```

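>I am not sure why this works: in JavaScript, `![]` on an empty array evaluates to `false`, because the array object itself still exists. On LeetCode, however, the check evaluates to `true` and the empty input is detected. Presumably LeetCode converts the input `[]` into a `null` root rather than passing an empty array, so `!root` is `true`.

I created a recursive function named `calcDepth` that takes the root of the current subtree as its argument. Each call means a node exists at that level, so the count is incremented by 1 on every call to track the depth.

A node can have both a left and a right child. If the left subtree is deeper, the last count computed would be the shallower depth of the right subtree. So I added a `max` variable that keeps track of the deepest depth seen so far.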
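
The same depth-first idea can also be written without a shared `max` variable, by letting each call return the depth of its own subtree. The sketch below is in Python rather than JavaScript, and the `TreeNode` class and the `max_depth` name are stand-ins invented for illustration, not LeetCode's own definitions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TreeNode:
    """Hypothetical stand-in for LeetCode's TreeNode, defined here for illustration."""
    val: int = 0
    left: Optional["TreeNode"] = None
    right: Optional["TreeNode"] = None


def max_depth(root: Optional[TreeNode]) -> int:
    """Return the number of nodes on the longest root-to-leaf path."""
    if root is None:  # an empty tree has depth 0
        return 0
    # The depth of a node is 1 plus the depth of its deeper subtree.
    return 1 + max(max_depth(root.left), max_depth(root.right))


# Example 1 from above: root = [3,9,20,null,null,15,7]
example = TreeNode(3, TreeNode(9), TreeNode(20, TreeNode(15), TreeNode(7)))
print(max_depth(example))  # 3
```

Returning the depth from each call keeps all state on the call stack, at the cost of visiting both subtrees before any comparison is made.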

| 21.622222 | 149 | 0.640288 | kor_Hang | 0.999071 |

26770d8ac0b64e9835f7398768d0f81de9383132 | 22 | md | Markdown | doc/source/paddlerec/contribute.md | michaelwang123/PaddleRec | 4feb0a7f962e918bdfa4f7289a9ddfd08d459824 | [

"Apache-2.0"

] | 2,739 | 2020-04-28T05:12:48.000Z | 2022-03-31T16:01:49.000Z | doc/contribute.md | hzj1558718/PaddleRec | 927e363e42ab55dd2961b0fbbb23c2578d289105 | [

"Apache-2.0"

] | 205 | 2020-05-14T13:29:14.000Z | 2022-03-31T13:01:50.000Z | doc/contribute.md | hzj1558718/PaddleRec | 927e363e42ab55dd2961b0fbbb23c2578d289105 | [

"Apache-2.0"

] | 545 | 2020-05-14T13:19:13.000Z | 2022-03-24T07:53:05.000Z | # Contributing Code to PaddleRec

> Placeholder

| 7.333333 | 16 | 0.681818 | vie_Latn | 0.385531 |

2677d83fd22770ad7ba8318a498dd7db82fcd2bf | 876 | md | Markdown | README.md | tstirrat15/predictit_538_odds | dc4ab8263ebbdd209fa03a6965c31c3a69c2cd1b | [

"MIT"

] | null | null | null | README.md | tstirrat15/predictit_538_odds | dc4ab8263ebbdd209fa03a6965c31c3a69c2cd1b | [

"MIT"

] | null | null | null | README.md | tstirrat15/predictit_538_odds | dc4ab8263ebbdd209fa03a6965c31c3a69c2cd1b | [

"MIT"

] | null | null | null | # Introduction

### Compare PredictIt shares, betting odds, 538 models, Economist models, and 538 polling in the 2020 U.S. Presidential election.

A simple Python script that compares, by state:

* The latest [general election polling](https://projects.fivethirtyeight.com/polls/president-general/) used by 538

* 538 [presidential election forecast](https://projects.fivethirtyeight.com/2020-election-forecast/)

* The Economist [presidential election forecast](https://projects.economist.com/us-2020-forecast/president)

* Current PredictIt [share prices](https://www.predictit.org/markets/13/Prez-Election)

* The [implied probabilities](https://help.smarkets.com/hc/en-gb/articles/214058369-How-to-calculate-implied-probability-in-betting) of gambling odds
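
As a rough illustration of the last item, an implied probability can be derived from decimal odds as `1 / odds`. The sketch below is not taken from this script: the function names and the sample odds are made up for illustration, and it assumes decimal (European) odds.

```python
from typing import List


def implied_probability(decimal_odds: float) -> float:
    """Convert decimal (European) odds to an implied probability."""
    if decimal_odds <= 1.0:
        raise ValueError("decimal odds must be greater than 1.0")
    return 1.0 / decimal_odds


def normalize(probabilities: List[float]) -> List[float]:
    """Rescale implied probabilities so that they sum to 1."""
    total = sum(probabilities)
    return [p / total for p in probabilities]


# Made-up odds for a two-candidate race, for illustration only.
raw = [implied_probability(1.5), implied_probability(2.5)]
print(raw)
print(normalize(raw))
```

The raw values here add up to more than 1, which reflects the bookmaker's margin; rescaling gives roughly 0.625 and 0.375, which are easier to compare with forecast probabilities.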

## To run this script, first install the necessary libraries via pip:

```

pip install requests pandas numpy datetime

``` | 54.75 | 149 | 0.789954 | eng_Latn | 0.767954 |

26781ddc9a92ec665d7cc2b216ea42dc63be017a | 6,206 | md | Markdown | articles/virtual-machines/workloads/oracle/oracle-disaster-recovery.md | Kraviecc/azure-docs.pl-pl | 4fffea2e214711aa49a9bbb8759d2b9cf1b74ae7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/virtual-machines/workloads/oracle/oracle-disaster-recovery.md | Kraviecc/azure-docs.pl-pl | 4fffea2e214711aa49a9bbb8759d2b9cf1b74ae7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/virtual-machines/workloads/oracle/oracle-disaster-recovery.md | Kraviecc/azure-docs.pl-pl | 4fffea2e214711aa49a9bbb8759d2b9cf1b74ae7 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Overview of an Oracle disaster recovery scenario in your Azure environment | Microsoft Docs

description: A disaster recovery scenario for an Oracle Database 12c database in your Azure environment

author: dbakevlar

ms.service: virtual-machines

ms.subservice: oracle

ms.collection: linux

ms.topic: article

ms.date: 08/02/2018

ms.author: kegorman

ms.openlocfilehash: 68b5b9dfd205628c9d7c430df4c0230267752e01

ms.sourcegitcommit: b4647f06c0953435af3cb24baaf6d15a5a761a9c

ms.translationtype: MT

ms.contentlocale: pl-PL

ms.lasthandoff: 03/02/2021

ms.locfileid: "101669948"

---

# <a name="disaster-recovery-for-an-oracle-database-12c-database-in-an-azure-environment"></a>Disaster recovery for an Oracle Database 12c database in an Azure environment

## <a name="assumptions"></a>Assumptions

- You understand Oracle Data Guard design and Azure environments.

## <a name="goals"></a>Goals

- Design the topology and configuration that meet your disaster recovery (DR) requirements.

## <a name="scenario-1-primary-and-dr-sites-on-azure"></a>Scenario 1: Primary and DR sites on Azure

A customer has an Oracle database set up on the primary site. The DR site is in a different region. The customer uses Oracle Data Guard for quick recovery between these sites. The primary site also has a secondary database for reporting and other uses.

### <a name="topology"></a>Topology

Here is a summary of the Azure setup:

- Two sites (a primary site and a DR site)

- Two virtual networks

- Two Oracle databases with Data Guard (primary and standby)

- Two Oracle databases with Golden Gate or Data Guard (primary site only)

- Two application services, one on the primary site and one on the DR site

- An *availability set*, which is used for the database and application service on the primary site

- One jumpbox on each site, which restricts access to the private network and allows sign-in only by an administrator

- A jumpbox, application service, database, and VPN gateway on separate subnets

- Network security groups (NSGs) enforced on the application and database subnets

## <a name="scenario-2-primary-site-on-premises-and-dr-site-on-azure"></a>Scenario 2: Primary site on-premises and DR site on Azure

A customer has an on-premises Oracle database setup (primary site). The DR site is on Azure. Oracle Data Guard is used for quick recovery between these sites. The primary site also has a secondary database for reporting and other uses.

There are two approaches to this setup.

### <a name="approach-1-direct-connections-between-on-premises-and-azure-requiring-open-tcp-ports-on-the-firewall"></a>Approach 1: Direct connections between on-premises and Azure, requiring open TCP ports on the firewall

We don't recommend direct connections because they expose the TCP ports to the outside world.

#### <a name="topology"></a>Topology

Here is a summary of the Azure setup:

- One DR site

- One virtual network

- One Oracle database with Data Guard (active)

- One application service on the DR site

- One jumpbox, which restricts access to the private network and allows sign-in only by an administrator

- A jumpbox, application service, database, and VPN gateway on separate subnets

- Network security groups (NSGs) enforced on the application and database subnets

- An NSG policy/rule that allows inbound TCP port 1521 (or a user-defined port)

- An NSG policy/rule that restricts access to the virtual network to the on-premises IP addresses only (database or application)

### <a name="approach-2-site-to-site-vpn"></a>Approach 2: Site-to-site VPN

A site-to-site VPN is the better approach. For more information about setting up a VPN, see [Create a virtual network with a Site-to-Site VPN connection using CLI](../../../vpn-gateway/vpn-gateway-howto-site-to-site-resource-manager-cli.md).

#### <a name="topology"></a>Topology

Here is a summary of the Azure setup:

- One DR site

- One virtual network

- One Oracle database with Data Guard (active)

- One application service on the DR site

- One jumpbox, which restricts access to the private network and allows sign-in only by an administrator

- A jumpbox, application service, database, and VPN gateway on separate subnets

- Network security groups (NSGs) enforced on the application and database subnets

- A site-to-site VPN connection between on-premises and Azure

## <a name="additional-reading"></a>Additional reading

- [Design and implement an Oracle database on Azure](oracle-design.md)

- [Configure Oracle Data Guard](configure-oracle-dataguard.md)

- [Configure Oracle Golden Gate](configure-oracle-golden-gate.md)

- [Oracle backup and recovery](./oracle-overview.md)

## <a name="next-steps"></a>Next steps

- [Tutorial: Create highly available VMs](../../linux/create-cli-complete.md)

- [Explore VM deployment Azure CLI samples](https://github.com/Azure-Samples/azure-cli-samples/tree/master/virtual-machine) | 60.252427 | 322 | 0.810345 | pol_Latn | 0.999906 |

26783cd35296526f02f6a5d96523a5fb9426f44b | 5,200 | md | Markdown | articles/cognitive-services/Translator/custom-translator/how-to-view-system-test-results.md | gliljas/azure-docs.sv-se-1 | 1efdf8ba0ddc3b4fb65903ae928979ac8872d66e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/cognitive-services/Translator/custom-translator/how-to-view-system-test-results.md | gliljas/azure-docs.sv-se-1 | 1efdf8ba0ddc3b4fb65903ae928979ac8872d66e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/cognitive-services/Translator/custom-translator/how-to-view-system-test-results.md | gliljas/azure-docs.sv-se-1 | 1efdf8ba0ddc3b4fb65903ae928979ac8872d66e | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: View system test results and deployment - Custom Translator

titleSuffix: Azure Cognitive Services

description: When your training is complete, review the system tests to analyze your training results. If you're satisfied with the training results, place a deployment request for the trained model.

author: swmachan

manager: nitinme

ms.service: cognitive-services

ms.subservice: translator-text

ms.date: 05/26/2020

ms.author: swmachan

ms.topic: conceptual

ms.openlocfilehash: 3361241bf0a330abc18701f93460208b8804a7dc

ms.sourcegitcommit: fc718cc1078594819e8ed640b6ee4bef39e91f7f

ms.translationtype: MT

ms.contentlocale: sv-SE

ms.lasthandoff: 05/27/2020

ms.locfileid: "83994270"

---

# <a name="view-system-test-results"></a>View system test results

When your training is complete, review the system tests to analyze your training results. If you're satisfied with the training results, place a deployment request for the trained model.

## <a name="system-test-results-page"></a>System test results page

Select a project, select the Models tab of that project, locate the model you want to use, and finally select the Test tab.

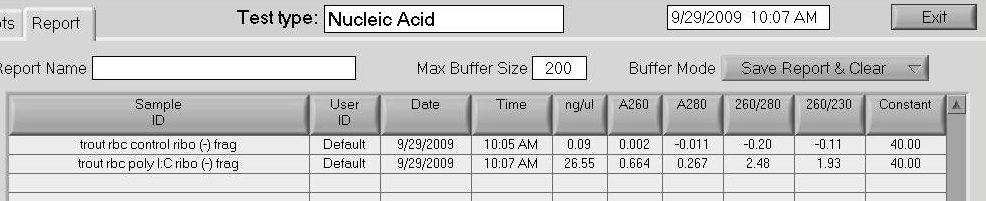

The Test tab shows:

1. **System Test Results:** The result of the test process in the training. The test process produces the BLEU score.

**Sentence Count:** How many parallel sentences were used in the test set.

**BLEU Score:** The BLEU score generated for a model after training is complete.

**Status:** Indicates whether the test process is complete or in progress.

2. Click the System Test Results to go to the test result details page. This page shows the machine translation of the sentences that were part of the test dataset.

3. The table on the test result details page has two columns, one for each language in the pair. The column for the source language shows the sentence to be translated. The column for the target language contains two sentences in each row.

**Ref:** This sentence is the reference translation of the source sentence, as given in the test dataset.

**MT:** This sentence is the automatic translation of the source sentence, produced by the model built after the training was completed.

## <a name="download-test"></a>Download test

Click the Download Translations link to download a zip file. The zip file contains the machine translations of the source sentences in the test dataset.

The downloaded zip archive contains three files.

1. **custom.mt.txt:** This file contains machine translations of the source-language sentences into the target language, produced by the model trained with the user's data.

2. **ref.txt:** This file contains the user-provided translations of the source-language sentences into the target language.

3. **source.txt:** This file contains the sentences in the source language.

## <a name="deploy-a-model"></a>Deploy a model

To request a deployment:

1. Select a project and go to the Models tab.

2. For a successfully trained model that is not yet deployed, a "Deploy" button is shown.

3. Click Deploy.

4. Select **Deployed** for the regions where you want your model to be deployed, and then click Save. You can select **Deployed** for multiple regions.

5. You can view the status of your model in the "Status" column.

>[!Note]

>Custom Translator supports 10 deployed models within a workspace at any point in time.

## <a name="update-deployment-settings"></a>Update deployment settings

To update the deployment settings:

1. Select a project and go to the **Models** tab.

2. For a successfully deployed model, an **Update** button is shown.

3. Select **Update**.

4. Select **Deployed** or **Undeployed** for the region or regions where you want your model deployed or undeployed, and then click **Save**.

>[!Note]

>If you select **Undeployed** for all regions, the model is undeployed from all regions and put into an undeployed state. It is then unavailable for use.

## <a name="next-steps"></a>Next steps

- Start using your deployed custom translation model via [Translator v3](https://docs.microsoft.com/azure/cognitive-services/translator/reference/v3-0-translate?tabs=curl).

- Learn [how to manage settings](how-to-manage-settings.md) to share your workspace and manage your subscription key.

- Learn [how to migrate your workspace and project](how-to-migrate.md) from [Microsoft Translator Hub](https://hub.microsofttranslator.com)

| 48.148148 | 224 | 0.776346 | swe_Latn | 0.999701 |

2678a113f1998c15104ccfda769f4896392bfd37 | 55 | md | Markdown | README.md | NyeGuy/A4G | 598f0ddbf2f1895e746bee8edd923ff42834f7f0 | [

"MIT"

] | null | null | null | README.md | NyeGuy/A4G | 598f0ddbf2f1895e746bee8edd923ff42834f7f0 | [

"MIT"

] | null | null | null | README.md | NyeGuy/A4G | 598f0ddbf2f1895e746bee8edd923ff42834f7f0 | [

"MIT"

] | null | null | null | # A4G

Art and Animation for Games - SCAD Game Pipeline

| 18.333333 | 48 | 0.763636 | kor_Hang | 0.652586 |

2678fd789d6d6cbd14c82c88c42303fa1fded2f8 | 5,336 | md | Markdown | 2018/articles/ComplementProgramming.md | tutlane/Writing | 66e984101e342e2c8923c911224f779861c33dc2 | [

"CC-BY-4.0"

] | 208 | 2018-04-05T17:17:29.000Z | 2022-03-31T17:51:19.000Z | 2018/articles/ComplementProgramming.md | tutlane/Writing | 66e984101e342e2c8923c911224f779861c33dc2 | [

"CC-BY-4.0"

] | 73 | 2017-01-28T15:58:14.000Z | 2018-03-03T16:32:20.000Z | 2018/articles/ComplementProgramming.md | tutlane/Writing | 66e984101e342e2c8923c911224f779861c33dc2 | [

"CC-BY-4.0"

] | 85 | 2018-06-18T15:31:48.000Z | 2022-02-27T13:20:47.000Z | # Complement Programming to any topic you like

[<img src="https://images.unsplash.com/photo-1494253109108-2e30c049369b?ixlib=rb-0.3.5&ixid=eyJhcHBfaWQiOjEyMDd9&s=02261b49dc587eaecb3dfae7ccfbbcaa&auto=format&fit=crop&w=2250&q=80">](

https://unsplash.com/photos/5E5N49RWtbA)

Photo by Cody Davis on Unsplash - https://unsplash.com/photos/5E5N49RWtbA

Since I started programming on my own while studying law and working in tax, I thought it was important to see coding not only as a path to already established programming jobs, but also as a way to create your own role. Here are some thoughts on how to complement programming with other fields of expertise.

(This article was created as brainstorming for a talk I gave)

## Table of Contents

<!-- TOC -->

- [Complement Programming to any topic you like](#complement-programming-to-any-topic-you-like)

- [Table of Contents](#table-of-contents)

- [Definitions](#definitions)

- [Why are we using programming?](#why-are-we-using-programming)

- [What are some basic principles for law?](#what-are-some-basic-principles-for-law)

- [Combining principles - an example](#combining-principles---an-example)

- [Legal Tech available](#legal-tech-available)

<!-- /TOC -->

## Definitions

First of all, it's important to define the term programming as I am going to use it. I don't mean only the act of writing code and building an application, but more broadly the use of new technology (which often involves programming) to improve the status quo. It is basically the idea of digitalization, put more concisely.

## Why are we using programming?

Loops, conditionals, variables and functions are all concepts that help to abstract a problem and solve it in the language of a computer.

Why are we using a computer? Because it is faster. Way faster. Or better, it is as fast as you like. ;) It also runs as long as you like and never gets tired.

So why not use it for other things than browsing the internet or playing computer games?

As a young programmer you tend to look only at things that are already available and try to work with them. It is better to use your creativity and build something new.

For example: Law.

## What are some basic principles for law?

Elon Musk has said that he always reasons from first principles rather than by analogy. So what are some first principles of law?

This depends heavily on the legal system of your state. For simplicity, let's assume an established, working democracy. This means that the law is made by the people living in that state. Let's also assume that people, and all processes around the law, are bound by the law itself.

A first distinction has to be made: what type of law are we dealing with? This is important because the purpose and structure of a law depend on its goals.

To illustrate this, consider civil law and public law. Both regulate the behavior of people, and both provide guidelines on how to behave correctly toward others. The main difference is that civil law regulates how people deal with other people, whereas public law addresses the rights of an individual against the state (which is ultimately the biggest group of people). Legislation therefore has to take the imbalance of power into account. In civil law it can be fair to treat similar people similarly. In public law, by contrast, the individual is given many more rights in a case against the state to compensate for that imbalance.

A great example of a field that combines well with programming is tax law. Tax law not only offers a use case for natural language processing, but also for actually calculating a tax burden.

## Combining principles - an example

Let's say we want to reduce the tax burden of an individual. How can this be done using knowledge in programming?
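
One possible starting point, sketched here under invented assumptions, is to model a tax schedule as data and compare scenarios in code. The brackets, rates, and function names below are hypothetical and do not correspond to any real tax code.

```python
# Hypothetical progressive schedule: (upper limit of bracket, marginal rate).
# These numbers are invented for illustration and match no real tax code.
BRACKETS = [
    (10_000, 0.00),
    (30_000, 0.20),
    (float("inf"), 0.40),
]


def tax_due(taxable_income):
    """Tax owed under BRACKETS, taxing each slice of income at its own rate."""
    tax = 0.0
    lower = 0.0
    for upper, rate in BRACKETS:
        if taxable_income <= lower:
            break
        taxed_slice = min(taxable_income, upper) - lower
        tax += taxed_slice * rate
        lower = upper
    return tax


def saving_from_deduction(income, deduction):
    """How much less tax is owed when `deduction` is subtracted from income."""
    return tax_due(income) - tax_due(max(income - deduction, 0.0))
```

With the rules expressed this way, a question such as "what is this deduction worth to this person?" becomes a function call, and many scenarios can be compared automatically.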

## Legal Tech available

First, look at your available market. In my case these are Austrian law firms or international companies that have a subsidiary in Austria. I realized that, apart from document management, new technology is rarely used.

Looking at the international market for technical solutions, software already exists for:

- Contract Review ({LawGeex})

- Legal Analytics (Brightflag)

- Prediction Software (Lexpredict)

- Improved Search/eDiscovery (Brainspace)

- Legal Research (Ross)

- Contract Due Diligence (kira)

- Intellectual Property (TrademarkNow)

- Improved Customer Management/eBilling (Smokeball)

- Expertise Automation/Robot Lawyer (Lisa)

After having a look at some of these implementations it is much easier to see possibilities for your own ideas and create your own.

---

Thanks for reading my article! Feel free to leave any feedback!

---

Daniel is an LL.M. student in business law, working as a software engineer and organizer of tech-related events in Vienna.

His current personal learning efforts focus on machine learning.

Connect on:

- [LinkedIn](https://www.linkedin.com/in/createdd)

- [Github](https://github.com/DDCreationStudios)

- [Medium](https://medium.com/@ddcreationstudi)

- [Twitter](https://twitter.com/DDCreationStudi)

- [Steemit](https://steemit.com/@createdd)

- [Hashnode](https://hashnode.com/@DDCreationStudio)

<!-- Written by Daniel Deutsch (deudan1010@gmail.com) --> | 53.36 | 709 | 0.776799 | eng_Latn | 0.999006 |

2679e49036e90ff50cf952a9f2c401b48c53564c | 4,806 | md | Markdown | _posts/http/2018-03-04-cors-session.md | Raoul1996/raoul1996.github.io | 6760b7c2ef88130d66eb024d391b5b3f48de64e8 | [

"MIT"

] | 1 | 2018-03-16T15:55:35.000Z | 2018-03-16T15:55:35.000Z | _posts/http/2018-03-04-cors-session.md | Raoul1996/raoul1996.github.io | 6760b7c2ef88130d66eb024d391b5b3f48de64e8 | [

"MIT"

] | 12 | 2016-12-14T17:52:41.000Z | 2018-05-29T07:16:11.000Z | _posts/http/2018-03-04-cors-session.md | Raoul1996/raoul1996.github.io | 6760b7c2ef88130d66eb024d391b5b3f48de64e8 | [

"MIT"

] | null | null | null | ---

layout: post

title: Implementing a Session-based image CAPTCHA in a project with separate front end and back end

category: Http

keyword: Http session captcha

---

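I had long heard that Session is full of pitfalls. While recently building an image CAPTCHA with Node, I fell right into one, so here are my notes.

### Environment

- Eggjs 2.3.0+

- Vue 2.5.11+

- [Live voting site](https://votes.raoul1996.cn)

- [Live back end](https://api.raoul1996.cn)

- [Back-end repository](https://github.com/Raoul1996/egg-vote)

- [Front-end repository](https://github.com/Raoul1996/vue-vote)

### What is a Session

This really is a well-worn question. Because HTTP is a stateless protocol, the server needs some mechanism to identify the user whenever it wants to keep track of state, and this is where a Session can be used.

#### Common use cases

- Shopping carts

- Image CAPTCHAs

- User tracking

- And so on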

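### How the server identifies a session

Here we have to mention Cookies, another well-worn topic. First, put yourself in the server's position: how would we identify the client?

Because HTTP is a stateless protocol, the client has to tell the server who it is on every request.

Typically, the server creates a session the first time a user visits. Every Session has a unique Session Id that identifies it.

After creating the Session, the server tells the client, by some means, to record the Session Id. On every later request, the client has to send the Session Id back to the server so that the server can identify it.

The question now is: **how is the Session Id sent back to the server?**

Cookies are well suited to this job. When the browser accesses a domain, it automatically attaches the Cookies for that domain and its parent domains by default, with no extra work on our part.

So we can write the Session Id into a cookie and have the server verify it on each request to identify the user's login state.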
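
The round trip described above can be sketched roughly as follows. This is a framework-free illustration in Python rather than the Egg implementation; the cookie name `SESSION_ID`, the in-memory `SESSIONS` dictionary, and the `handle_request` function are assumptions made for this example.

```python
import secrets
from http.cookies import SimpleCookie

# In-memory session store: session id -> data kept on the server.
SESSIONS = {}


def handle_request(cookie_header):
    """Return (session_data, set_cookie_value_or_None) for one request."""
    cookie = SimpleCookie(cookie_header or "")
    morsel = cookie.get("SESSION_ID")
    if morsel is not None and morsel.value in SESSIONS:
        # Known client: the cookie carried a valid session id back to us.
        return SESSIONS[morsel.value], None
    # First visit (or unknown id): create a session and ask the client to store its id.
    session_id = secrets.token_hex(16)
    SESSIONS[session_id] = {}
    return SESSIONS[session_id], f"SESSION_ID={session_id}; HttpOnly; Path=/"
```

Only the random id travels in the cookie. Values such as the expected captcha text stay in the server-side store, which is why the client cannot read or change them.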

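#### What if the client has disabled cookies?

The usual approach is to add the Session Id to the URL so that the server can read it there, but that isn't used here, so I won't go into it.

### How the server stores Sessions

By default, a Session is stored in the server's memory and the Session Id is written to the client's Cookie. This keeps the key data held in the Session out of the client's reach so that it cannot be tampered with. Keeping Sessions in memory inevitably causes some problems, though. For example, how do you share Sessions? If the service is deployed as a cluster, Sessions held in memory cannot be shared.

#### Persistent Session storage

Sessions can be stored in files or in various databases. Node projects generally store them in Redis. Since there is no need for persistent storage for now, I won't discuss it here.

Egg supports storing Sessions in Redis, but the session is currently only used to hold the captcha value, so there is no need for Redis; keeping it in memory the traditional way is fine.

### Front-end and back-end code for sending Cookies cross-origin

The back end uses the `egg-cors` module to add entries such as `Access-Control-Allow-Headers`, `Access-Control-Allow-Methods`, `Access-Control-Allow-Origin`, and `Access-Control-Allow-Credentials: true` to the response headers.

The front end uses `axios` for cross-origin requests.

#### Front-end implementation

[Front-end axios configuration file](https://github.com/Raoul1996/vue-vote/blob/master/src/service/axios.js)

Only one line of configuration matters: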

```js

// axios.js

instance.defaults.withCredentials = true

```

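If you haven't defined an `axios` `instance`, set the option directly on `axios`.

#### Front-end configuration explained

We need to send Cookies with cross-origin requests so that the server can read the Session Id stored in the Cookie. So the question is:

**How does a cross-origin request carry Cookies?**

The [`XMLHttpRequest.withCredentials`](https://developer.mozilla.org/zh-CN/docs/Web/API/XMLHttpRequest/withCredentials) property is a `Boolean` that defaults to false. It indicates whether cross-site Access-Control requests should be made using credentials such as cookies, authorization headers, or TLS client certificates. **Setting withCredentials has no effect on same-site requests.**

**In addition, this flag is also used to indicate that cookies in the response are to be ignored. The default is false.**

What does that mean?

If `XMLHttpRequest.withCredentials` is not `true` before an XMLHttpRequest is sent to another domain, the response cannot set Cookies for its own domain. No matter which `Access-Control-` headers are set, Cookies from the other domain cannot be stored.

#### Back-end implementation

[Back-end CORS configuration](https://github.com/Raoul1996/egg-vote/blob/master/config/config.default.js#L86), [back-end domain whitelist for production](https://github.com/Raoul1996/egg-vote/blob/master/config/config.prod.js#L15)

There are two key pieces of configuration, explained below.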

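```js
// config.default.js
module.exports = app => {
  // Egg uses the object returned by this function as the config
  const exports = {}

  // Allow credentialed (cookie-carrying) cross-origin requests
  exports.cors = {
    allowMethods: 'GET,HEAD,PUT,POST,DELETE,PATCH,OPTIONS',
    credentials: true
  }

  return exports
}
```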

```js
// config.prod.js
module.exports = app => {
  // Egg uses the object returned by this function as the config
  const exports = {}

  // Origins that are allowed to make cross-origin requests
  const domainWhiteList = []
  const portList = [8080, 7001]
  portList.forEach(port => {
    domainWhiteList.push(`http://localhost:${port}`)
    domainWhiteList.push(`http://127.0.0.1:${port}`)
  })
  domainWhiteList.push('https://votes.raoul1996.cn')
  domainWhiteList.push('http://egg.raoul1996.cn')

  exports.security = {domainWhiteList}

  return exports
}
```

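#### Back-end configuration explained

The configuration in [`config.default.js`](https://github.com/Raoul1996/egg-vote/blob/master/config/config.default.js#L86) adds `Access-Control-Allow-Credentials: true` to responses, which tells the browser that the server allows cross-origin requests to include Cookies.

[`config.prod.js`](https://github.com/Raoul1996/egg-vote/blob/master/config/config.prod.js#L15) configures the whitelist of domains that may make cross-origin requests. This relies on the [internal implementation of the `egg-cors` module](https://github.com/eggjs/egg-cors/blob/master/app.js).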

```js

// egg-cors internal implementation

'use strict';

module.exports = app => {

// put before other core middlewares

app.config.coreMiddlewares.unshift('cors');

// if security plugin enabled, and origin config is not provided, will only allow safe domains support CORS.

app.config.cors.origin = app.config.cors.origin || function corsOrigin(ctx) {

const origin = ctx.get('origin');

if (!ctx.isSafeDomain || ctx.isSafeDomain(origin)) {

return origin;

}

return '';

};

};

```

Once Cookies are allowed, the browser no longer accepts `*` as the value of `Access-Control-Allow-Origin`, which is why the steps above are needed.
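
The same rule can be sketched outside of Egg: echo the request's origin back only when it is on a whitelist. The Python below is an illustration, not the `egg-cors` code; the `ALLOWED_ORIGINS` set and the `cors_headers` function are hypothetical names chosen for this example.

```python
# Hypothetical whitelist for this example; the real one lives in config.prod.js.
ALLOWED_ORIGINS = {"https://votes.raoul1996.cn", "http://localhost:8080"}


def cors_headers(request_origin):
    """Build CORS response headers for a credentialed cross-origin request."""
    if request_origin not in ALLOWED_ORIGINS:
        # Unknown origin: send no CORS headers, so the browser blocks the response.
        return {}
    return {
        # Must be the exact origin, never "*", when credentials are allowed.
        "Access-Control-Allow-Origin": request_origin,
        "Access-Control-Allow-Credentials": "true",
        # Tell caches that the response differs per Origin.
        "Vary": "Origin",
    }
```

Echoing the origin keeps credentialed requests working for trusted front ends without opening the API to every site.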

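### Image CAPTCHA implementation

I have wrapped the image CAPTCHA implementation for Egg in a small [plugin](https://www.npmjs.com/package/egg-captcha) based on [`ccap`](https://www.npmjs.com/package/ccap); see [here](https://www.npmjs.com/package/egg-captcha).

### Afterword

There is still a lot I haven't studied in depth, such as the origins of the Session mechanism and Session persistence.

### References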

1. [vue2 前后端分离项目ajax跨域session问题解决](https://segmentfault.com/a/1190000009208644)

2. [跨域资源共享 CORS 详解](http://www.ruanyifeng.com/blog/2016/04/cors.html)

3. [XMLHttpRequest.withCredentials](https://developer.mozilla.org/zh-CN/docs/Web/API/XMLHttpRequest/withCredentials)

| 29.666667 | 290 | 0.752185 | yue_Hant | 0.863887 |

267a0c29f9ded5914d3c36fb1dd1c785227a3aba | 1,069 | md | Markdown | treebanks/pl_pdb/pl_sz-dep-csubj.md | EmanuelUHH/docs | 641bd749c85e54e841758efa7084d8fdd090161a | [

"Apache-2.0"

] | 204 | 2015-01-20T16:36:39.000Z | 2022-03-28T00:49:51.000Z | treebanks/pl_pdb/pl_sz-dep-csubj.md | EmanuelUHH/docs | 641bd749c85e54e841758efa7084d8fdd090161a | [

"Apache-2.0"

] | 654 | 2015-01-02T17:06:29.000Z | 2022-03-31T18:23:34.000Z | treebanks/pl_pdb/pl_sz-dep-csubj.md | EmanuelUHH/docs | 641bd749c85e54e841758efa7084d8fdd090161a | [

"Apache-2.0"

] | 200 | 2015-01-16T22:07:02.000Z | 2022-03-25T11:35:28.000Z | ---

layout: base

title: 'Statistics of csubj in UD_Polish-SZ'

udver: '2'

---

## Treebank Statistics: UD_Polish-SZ: Relations: `csubj`

This relation is universal.

4 nodes (0%) are attached to their parents as `csubj`.

3 instances of `csubj` (75%) are right-to-left (child precedes parent).

Average distance between parent and child is 4.25.

The following 1 pairs of parts of speech are connected with `csubj`: <tt><a href="pl_sz-pos-NOUN.html">NOUN</a></tt>-<tt><a href="pl_sz-pos-VERB.html">VERB</a></tt> (4; 100% instances).

~~~ conllu

# visual-style 1 bgColor:blue

# visual-style 1 fgColor:white

# visual-style 4 bgColor:blue

# visual-style 4 fgColor:white

# visual-style 4 1 csubj color:blue

1 To to VERB pred _ 4 csubj _ _

2 była być AUX praet:sg:f:imperf Aspect=Imp|Gender=Fem|Number=Sing|Tense=Past|VerbForm=Part|Voice=Act 4 cop _ _

3 istna istny ADJ adj:sg:nom:f:pos Case=Nom|Degree=Pos|Gender=Fem|Number=Sing 4 amod _ _

4 makabra makabra NOUN subst:sg:nom:f Case=Nom|Gender=Fem|Number=Sing 0 root _ SpaceAfter=No

5 ! ! PUNCT interp _ 4 punct _ _

~~~

| 31.441176 | 185 | 0.725912 | eng_Latn | 0.555153 |

267e72ec35316833aa1246c16f1b5651cf150b1b | 6,296 | md | Markdown | docs/framework/windows-workflow-foundation/store-extensibility.md | adamsitnik/docs.pl-pl | c83da3ae45af087f6611635c348088ba35234d49 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/windows-workflow-foundation/store-extensibility.md | adamsitnik/docs.pl-pl | c83da3ae45af087f6611635c348088ba35234d49 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | docs/framework/windows-workflow-foundation/store-extensibility.md | adamsitnik/docs.pl-pl | c83da3ae45af087f6611635c348088ba35234d49 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Store extensibility

ms.date: 03/30/2017

ms.assetid: 7c3f4a46-4bac-4138-ae6a-a7c7ee0d28f5

ms.openlocfilehash: 46c1ea40925a5c79180171da9a705d7e6b7c8b89

ms.sourcegitcommit: 9b552addadfb57fab0b9e7852ed4f1f1b8a42f8e

ms.translationtype: MT

ms.contentlocale: pl-PL

ms.lasthandoff: 04/23/2019

ms.locfileid: "61641609"

---

# <a name="store-extensibility"></a>Store extensibility

<xref:System.Activities.DurableInstancing.SqlWorkflowInstanceStore> allows users to promote custom, application-specific properties that can be used to query for instances in the persistence database. Promoting a property makes its value available in a special view in the database. These promoted properties (properties that can be used in user queries) can be of simple types such as Int64, Guid, String, and DateTime, or of a serialized binary type (byte[]).

The <xref:System.Activities.DurableInstancing.SqlWorkflowInstanceStore> class has the <xref:System.Activities.DurableInstancing.SqlWorkflowInstanceStore.Promote%2A> method, which you can use to promote a property so that it can be used in queries. The following is an end-to-end example of store extensibility.

1. In this example scenario, a document processing (DP) application has workflows, each of which uses custom activities for document processing. These workflows have a set of state variables that need to be made visible to the end user. To achieve this, the DP application provides an extension of type <xref:System.Activities.Persistence.PersistenceParticipant>, which activities use to supply the state variables.

```csharp

class DocumentStatusExtension : PersistenceParticipant

{

public string DocumentId;

public string ApprovalStatus;

public string UserName;

public DateTime LastUpdateTime;

}

```

2. The new extension is then added to the host.

```csharp

static Activity workflow = CreateWorkflow();

WorkflowApplication application = new WorkflowApplication(workflow);

DocumentStatusExtension documentStatusExtension = new DocumentStatusExtension ();

application.Extensions.Add(documentStatusExtension);

```

For more information about adding a custom persistence participant, see the [Persistence Participants](persistence-participants.md) sample.

3. The custom activities in the DP application populate the various status fields in the **Execute** method.

```csharp

protected override void Execute(CodeActivityContext context)

{

// ...

context.GetExtension<DocumentStatusExtension>().DocumentId = Guid.NewGuid().ToString();

context.GetExtension<DocumentStatusExtension>().UserName = "John Smith";

context.GetExtension<DocumentStatusExtension>().ApprovalStatus = "Approved";

context.GetExtension<DocumentStatusExtension>().LastUpdateTime = DateTime.Now;

// ...

}

```

4. When a workflow instance reaches a persistence point, the **CollectValues** method of the **DocumentStatusExtension** persistence participant saves these properties into the persistence data collection.

```csharp

class DocumentStatusExtension : PersistenceParticipant

{

static readonly XNamespace xNS = XNamespace.Get("http://contoso.com/DocumentStatus");

protected override void CollectValues(out IDictionary<XName, object> readWriteValues, out IDictionary<XName, object> writeOnlyValues)

{

readWriteValues = new Dictionary<XName, object>();

readWriteValues.Add(xNS.GetName("UserName"), this.UserName);

readWriteValues.Add(xNS.GetName("ApprovalStatus"), this.ApprovalStatus);

readWriteValues.Add(xNS.GetName("DocumentId"), this.DocumentId);

readWriteValues.Add(xNS.GetName("LastModifiedTime"), this.LastUpdateTime);

writeOnlyValues = null;

}

// ...

}

```

> [!NOTE]

> All these properties are passed to **SqlWorkflowInstanceStore** by the persistence framework through the **SaveWorkflowCommand.InstanceData** collection.

5. The DP application initializes the SQL Workflow Instance Store and calls the **Promote** method to promote this data.

```csharp

SqlWorkflowInstanceStore store = new SqlWorkflowInstanceStore(connectionString);

List<XName> variantProperties = new List<XName>()

{

xNS.GetName("UserName"),

xNS.GetName("ApprovalStatus"),

xNS.GetName("DocumentId"),

xNS.GetName("LastModifiedTime")

};

store.Promote("DocumentStatus", variantProperties, null);

```

Based on this promotion information, **SqlWorkflowInstanceStore** places the data properties in the columns of the [InstancePromotedProperties](#InstancePromotedProperties) view.

6. To query a subset of the data from the promotion table, the DP application adds a customized view on top of the promotion view.

```sql

create view [dbo].[DocumentStatus] with schemabinding

as

select P.[InstanceId] as [InstanceId],

P.Value1 as [UserName],

P.Value2 as [ApprovalStatus],

P.Value3 as [DocumentId],

P.Value4 as [LastUpdatedTime]

from [System.Activities.DurableInstancing].[InstancePromotedProperties] as P

where P.PromotionName = N'DocumentStatus'

go

```
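
The custom view above relies on promoted properties landing in the `Value1`, `Value2`, ... columns in the order in which they were passed to **Promote**. The following Python sketch only illustrates that mapping; `promoted_columns` is a hypothetical helper written for this example and is not part of any workflow API.

```python
def promoted_columns(variant_properties, binary_properties=()):
    """Map promoted property names to the view columns they are expected to occupy."""
    if len(variant_properties) > 32 or len(binary_properties) > 32:
        raise ValueError("at most 32 properties of each kind can be promoted")
    columns = {}
    for index, name in enumerate(variant_properties, start=1):
        columns[name] = f"Value{index}"  # sql_variant columns Value1..Value32
    for index, name in enumerate(binary_properties, start=33):
        columns[name] = f"Value{index}"  # varbinary(max) columns Value33..Value64
    return columns
```

For the four properties promoted in step 5, this yields `Value1` through `Value4`, which matches the column aliases used in the `DocumentStatus` view.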

## <a name="InstancePromotedProperties"></a> [System.Activities.DurableInstancing.InstancePromotedProperties] view

|Column name|Column type|Description|

|-----------------|-----------------|-----------------|

|InstanceId|GUID|The workflow instance that this promotion belongs to.|

|PromotionName|nvarchar(400)|The name of the promotion itself.|

|Value1, Value2, Value3,..., Value32|sql_variant|The value of the promoted property itself. Most SQL primitive data types, except binary blobs and strings longer than 8000 bytes, fit in sql_variant.|

|Value33, Value34, Value35,..., Value64|varbinary(max)|The value of promoted properties that are explicitly declared as varbinary(max).|

| 52.466667 | 567 | 0.752859 | pol_Latn | 0.991703 |

267eca8ebcf2ec075aced8aeb348a2603305c7cc | 4,544 | md | Markdown | includes/virtual-wan-tutorial-s2s-site-include.md | sonquer/azure-docs.pl-pl | d8159cf8e870e807bd64e58188d281461b291ea8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | includes/virtual-wan-tutorial-s2s-site-include.md | sonquer/azure-docs.pl-pl | d8159cf8e870e807bd64e58188d281461b291ea8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | includes/virtual-wan-tutorial-s2s-site-include.md | sonquer/azure-docs.pl-pl | d8159cf8e870e807bd64e58188d281461b291ea8 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: include file

description: include file

services: virtual-wan

author: cherylmc

ms.service: virtual-wan

ms.topic: include

ms.date: 11/04/2019

ms.author: cherylmc

ms.custom: include file

ms.openlocfilehash: 4bcee1097010bb8746b11185a470ca2584485c3f

ms.sourcegitcommit: c22327552d62f88aeaa321189f9b9a631525027c

ms.translationtype: MT

ms.contentlocale: pl-PL

ms.lasthandoff: 11/04/2019

ms.locfileid: "73488844"

---

1. On the portal page for your virtual WAN, in the **Connectivity** section, select **VPN sites** to open the VPN sites page.

2. On the **VPN sites** page, click **+Create site**.

3. On the **Create VPN site** page, on the **Basics** tab, complete the following fields:

    * **Region**: Previously referred to as location. This is the location in which you want to create the site resource.
    * **Name**: The name by which you want to refer to your on-premises site.
    * **Device vendor**: The name of the VPN device vendor (for example: Citrix, Cisco, Barracuda). Providing it helps the Azure team better understand your environment, so that it can add further optimizations or help you troubleshoot.
    * **Border Gateway Protocol**: Enabling this setting means that all connections from the site are BGP enabled. You later set up the BGP information for each link from the VPN site in the Links section. Configuring BGP on a virtual WAN is equivalent to configuring BGP on an Azure virtual network gateway VPN. Your on-premises BGP peer address must not be the same as the public IP address of your VPN device or the virtual network address space of the VPN site. Use a different IP address on the VPN device for the BGP peer IP. It can be an address assigned to the loopback interface on the device. However, it cannot be an APIPA (169.254.x.x) address. Specify this address in the corresponding VPN site that represents the location. For BGP prerequisites, see [About BGP with Azure VPN Gateway](../articles/vpn-gateway/vpn-gateway-bgp-overview.md). Once the BGP setting of the VPN site is enabled, you can always edit a VPN connection to update its BGP parameters (the peering IP on the link and the AS number).
    * **Private address space**: The IP address space located on your on-premises site. Traffic destined for this address space is routed to your on-premises site. This is required when BGP is not enabled for the site.
    * **Hubs**: The hub that you want your site to connect to. A site can only be connected to hubs that have a VPN gateway. If you do not see a hub, create a VPN gateway in that hub first.

4. Select **Links** to add information about the physical links at the branch. If you have a Virtual WAN partner CPE device, check with the partner to see whether this information is exchanged with Azure as part of the branch information upload set up from their systems.

    * **Link name**: A name you want to give the physical link at the VPN site. Example: mylink1.
    * **Provider name**: The name of the provider of the physical link at the VPN site. Example: ATT, Verizon.
    * **Speed**: The speed of the VPN device at the branch location. Example: 50, which means that 50 Mbps is the speed of the VPN device at the branch site.
    * **IP address**: The public IP address of the on-premises device that uses this link. Optionally, you can provide the private IP address of an on-premises VPN device that is behind ExpressRoute.

5. You can use the checkbox to delete or add links. Four links per VPN site are supported. For example, if you have four ISPs (Internet service providers) at the branch location, you can create four links, one for each ISP, and provide the information for each link.

6. Once you have finished filling out the fields, select **Review + create** to verify and create the site.

7. View the status on the VPN sites page. The site goes to **Connection Needed** because it has not yet been connected to the hub. | 113.6 | 1,248 | 0.797315 | pol_Latn | 0.999982 |

268058bfb0f4d8e0283a4015d1eae307138ea76b | 342 | md | Markdown | src/base/README.md | getsedona/sedona-components | d16aae7b20c8ef6727d789316652b8d0d25ffd87 | [

"MIT"

] | 1 | 2019-12-03T12:51:05.000Z | 2019-12-03T12:51:05.000Z | src/base/README.md | getsedona/sedona-components | d16aae7b20c8ef6727d789316652b8d0d25ffd87 | [

"MIT"

] | 2 | 2019-09-08T11:32:32.000Z | 2020-05-15T08:59:49.000Z | src/base/README.md | getsedona/sedona-components | d16aae7b20c8ef6727d789316652b8d0d25ffd87 | [

"MIT"

] | null | null | null | # base

Base markup tags.

[Markup](https://github.com/getsedona/sedona-components/blob/master/src/base/examples.html) · [Example](https://getsedona.github.io/sedona-components/base.html)

## Usage

```js

// index.js

import "sedona-components/src/base";

```

```less

// index.less

@import "~sedona-components/src/base/index";

```

| 19 | 161 | 0.722222 | zul_Latn | 0.08823 |

26815e65202d4280c13a5c0fa0e6fd596e3a2ba6 | 6,514 | md | Markdown | source/02_language_guide/20_Extensions.md | slidoooor/the-swift-programming-language-in-chinese | c173d544cd45154f385da5dd6dc970e2fa3e473e | [

"CC-BY-4.0"

] | 1 | 2020-02-10T02:56:36.000Z | 2020-02-10T02:56:36.000Z | source/02_language_guide/20_Extensions.md | slidoooor/the-swift-programming-language-in-chinese | c173d544cd45154f385da5dd6dc970e2fa3e473e | [

"CC-BY-4.0"

] | null | null | null | source/02_language_guide/20_Extensions.md | slidoooor/the-swift-programming-language-in-chinese | c173d544cd45154f385da5dd6dc970e2fa3e473e | [

"CC-BY-4.0"

] | null | null | null | # Extensions

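*Extensions* add new functionality to an existing class, structure, enumeration, or protocol type. This includes the ability to extend types for which you do not have access to the original source code (known as *retroactive modeling*). Extensions are similar to categories in Objective-C. (Unlike Objective-C categories, Swift extensions do not have names.)

Extensions in Swift can:

- Add computed instance properties and computed type properties
- Define instance methods and type methods
- Provide new initializers
- Define subscripts
- Define and use new nested types
- Make an existing type conform to a protocol

In Swift, you can even extend a protocol to provide implementations of its requirements, or to add functionality that conforming types can take advantage of. For more details, see [Protocol Extensions](https://docs.swift.org/swift-book/LanguageGuide/Protocols.html#ID521).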
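
As a minimal sketch of extending a protocol, the example below assumes a hypothetical `Describable` protocol and `Planet` structure (neither is part of the Swift standard library). The protocol extension supplies a default implementation that a conforming type picks up without writing it itself:

```swift
// Hypothetical protocol used only for illustration.
protocol Describable {
    var name: String { get }
    func describe() -> String
}

// The protocol extension supplies a default implementation of the requirement.
extension Describable {
    func describe() -> String {
        return "This is \(name)."
    }
}

// Planet relies on the default; it only has to provide `name`.
struct Planet: Describable {
    let name: String
}

let earthDescription = Planet(name: "Earth").describe()
print(earthDescription)
```

A conforming type can still provide its own `describe()` when the default is not suitable.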

> Note
>
> Extensions can add new functionality to a type, but they cannot override existing functionality.

## Extension Syntax {#extension-syntax}

Declare extensions with the `extension` keyword:

```swift
extension SomeType {
    // new functionality to add to SomeType goes here
}
```

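An extension can extend an existing type to make it adopt one or more protocols. To add protocol conformance, write the protocol names the same way as you write them for a class or structure:

```swift
extension SomeType: SomeProtocol, AnotherProtocol {
    // implementation of protocol requirements goes here
}
```

Adding protocol conformance in this way is described in [Adding Protocol Conformance with an Extension](https://docs.swift.org/swift-book/LanguageGuide/Protocols.html#ID277).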
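
For a concrete instance of that syntax, the sketch below assumes a hypothetical `TextRepresentable` protocol and makes the built-in `Int` type conform to it. The protocol and property names are illustrative only:

```swift
// Hypothetical protocol used only for illustration.
protocol TextRepresentable {
    var textualDescription: String { get }
}

// Retroactively make the built-in Int type conform to the protocol.
extension Int: TextRepresentable {
    var textualDescription: String {
        return "The number \(self)"
    }
}

let text = 7.textualDescription
print(text)
```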

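An extension can be used to extend an existing generic type, as described in [Extending a Generic Type](https://docs.swift.org/swift-book/LanguageGuide/Generics.html#ID185). You can also extend a generic type to add functionality conditionally, as described in [Extensions with a Generic Where Clause](https://docs.swift.org/swift-book/LanguageGuide/Generics.html#ID553).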
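
A short sketch of a conditional extension: the hypothetical `occurrences(of:)` method below is added to `Array` only when its elements are `Equatable`, so it is unavailable on arrays of types that cannot be compared:

```swift
// Adds a method only to arrays whose elements can be compared for equality.
extension Array where Element: Equatable {
    // Hypothetical helper: counts how many elements equal the given value.
    func occurrences(of value: Element) -> Int {
        var count = 0
        for element in self where element == value {
            count += 1
        }
        return count
    }
}

let twos = [1, 2, 2, 3, 2].occurrences(of: 2)
print(twos)
```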

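> Note
>
> If you define an extension to add new functionality to an existing type, the new functionality is available on all existing instances of that type, even if they were created before the extension was defined.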

## Computed Properties {#computed-properties}

Extensions can add computed instance properties and computed type properties to existing types. This example adds five computed instance properties to Swift's built-in `Double` type, to provide basic support for working with distance units:

```swift
extension Double {
    var km: Double { return self * 1_000.0 }
    var m: Double { return self }
    var cm: Double { return self / 100.0 }
    var mm: Double { return self / 1_000.0 }
    var ft: Double { return self / 3.28084 }
}
let oneInch = 25.4.mm
print("One inch is \(oneInch) meters")
// Prints "One inch is 0.0254 meters"
let threeFeet = 3.ft
print("Three feet is \(threeFeet) meters")
// Prints "Three feet is 0.914399970739201 meters"
```

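These computed properties express that a `Double` value should be treated as a length in a certain unit. Although they are implemented as computed properties, their names can be appended to a floating-point literal with dot syntax, as a way to use that literal to perform distance conversions.

In this example, a `Double` value of `1.0` represents "one meter". This is why the `m` computed property returns `self`: the expression `1.m` is considered to calculate a `Double` value of `1.0`.

Other units require some conversion to be expressed in meters. One kilometer is the same as 1,000 meters, so the `km` computed property multiplies the value by `1_000.0` to convert kilometers into meters. Similarly, there are 3.28084 feet in a meter, so the `ft` computed property divides the underlying `Double` value by `3.28084` to convert feet into meters.

These properties are read-only computed properties, so for brevity they are written without the `get` keyword. Their return value is of type `Double`, and they can be used in mathematical calculations wherever a `Double` is accepted:

```swift
let aMarathon = 42.km + 195.m
print("A marathon is \(aMarathon) meters long")
// Prints "A marathon is 42195.0 meters long"
```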

> Note
>
> Extensions can add new computed properties, but they cannot add stored properties or add property observers to existing properties.
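
The example above only adds computed instance properties. As a sketch of a computed *type* property, the extension below adds a hypothetical `feetPerMeter` property to `Double` itself, plus a hypothetical instance property that uses it. Neither name comes from the standard library:

```swift
extension Double {
    // Computed type property: evaluated on each access, no storage is added.
    static var feetPerMeter: Double { return 3.28084 }

    // Computed instance property built on top of the type property.
    var metersAsFeet: Double { return self * Double.feetPerMeter }
}

let tenMetersInFeet = 10.0.metersAsFeet
print(tenMetersInFeet)
```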

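## Initializers {#initializers}

Extensions can add new initializers to existing types. This lets you extend other types to accept your own custom types as initializer parameters, or provide additional initialization options that were not part of the type's original implementation.

Extensions can add new convenience initializers to a class, but they cannot add new designated initializers or deinitializers to a class. Designated initializers and deinitializers must always be provided by the original class implementation.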
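
The sketch below illustrates that rule with a hypothetical `Temperature` class: the designated initializer lives in the class itself, and the extension adds only a convenience initializer that delegates to it:

```swift
// Hypothetical class used only for illustration.
class Temperature {
    var celsius: Double

    // Designated initializer: must live in the original class implementation.
    init(celsius: Double) {
        self.celsius = celsius
    }
}

extension Temperature {
    // Convenience initializer added by the extension; it delegates to the
    // designated initializer above.
    convenience init(fahrenheit: Double) {
        self.init(celsius: (fahrenheit - 32.0) / 1.8)
    }
}

let boiling = Temperature(fahrenheit: 212.0)
print(boiling.celsius)
```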

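If you use an extension to add an initializer to a value type that provides default values for all of its stored properties and does not define any custom initializers, you can call the default initializer and memberwise initializer for that value type from within your extension's initializer. This would not be the case if you had written the initializer as part of the value type's original implementation, as described in [Initializer Delegation for Value Types](https://docs.swift.org/swift-book/LanguageGuide/Initialization.html#ID215).

If you use an extension to add an initializer to a structure that was declared in another module, the new initializer cannot access `self` until it calls an initializer from the defining module.

The example below defines a custom `Rect` structure to represent a geometric rectangle. It also defines two supporting structures, `Size` and `Point`, both of which provide default values of `0.0` for all of their properties: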

```swift
struct Size {
    var width = 0.0, height = 0.0
}
struct Point {
    var x = 0.0, y = 0.0
}
struct Rect {
    var origin = Point()
    var size = Size()
}
```

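Because the `Rect` structure provides default values for all of its properties, it automatically receives a default initializer and a memberwise initializer, as described in [Default Initializers](https://docs.swift.org/swift-book/LanguageGuide/Initialization.html#ID213). These initializers can be used to create new `Rect` instances:

```swift
let defaultRect = Rect()
let memberwiseRect = Rect(origin: Point(x: 2.0, y: 2.0),
                          size: Size(width: 5.0, height: 5.0))
```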

You can extend the `Rect` structure to provide an additional initializer that takes a specific center point and size:

```swift
extension Rect {
    init(center: Point, size: Size) {
        let originX = center.x - (size.width / 2)
        let originY = center.y - (size.height / 2)
        self.init(origin: Point(x: originX, y: originY), size: size)
    }
}
```

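This new initializer starts by calculating an appropriate origin point from the provided `center` and `size`. It then calls the structure's automatic memberwise initializer `init(origin:size:)`, which stores the new origin and size values in the appropriate properties:

```swift
let centerRect = Rect(center: Point(x: 4.0, y: 4.0),
                      size: Size(width: 3.0, height: 3.0))
// centerRect's origin is (2.5, 2.5) and its size is (3.0, 3.0)
```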

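> Note
>
> If you provide a new initializer with an extension, you are still responsible for making sure that each instance is fully initialized once the initializer completes.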

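## Methods {#methods}

Extensions can add new instance methods and type methods to existing types. The following example adds a new instance method called `repetitions` to the `Int` type:

```swift
extension Int {
    func repetitions(task: () -> Void) {
        for _ in 0..<self {
            task()
        }
    }
}
```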

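The `repetitions(task:)` method takes a single argument of type `() -> Void`, which indicates a function that has no parameters and does not return a value.

After defining this extension, you can call the `repetitions(task:)` method on any integer to perform a task that many times:

```swift
3.repetitions {
    print("Hello!")
}
// Hello!
// Hello!
// Hello!
```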
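
The example above adds an instance method. As a sketch of the other case, a type method, the extension below adds a hypothetical `sum(upTo:)` method that is called on the `Int` type itself rather than on a value:

```swift
extension Int {
    // Hypothetical type method: called on the Int type itself, not on a value.
    static func sum(upTo limit: Int) -> Int {
        var total = 0
        // stride produces no values when limit is below 1, so the sum stays 0.
        for value in stride(from: 1, through: limit, by: 1) {
            total += value
        }
        return total
    }
}

let total = Int.sum(upTo: 4)
print(total)
```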

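### Mutating Instance Methods {#mutating-instance-methods}

Instance methods added with an extension can also modify (or *mutate*) the instance itself. Structure and enumeration methods that modify `self` or its properties must be marked as `mutating`, just like mutating methods from an original implementation.

The example below adds a new mutating method called `square` to Swift's `Int` type, which squares the original value:

```swift
extension Int {
    mutating func square() {
        self = self * self
    }
}
var someInt = 3
someInt.square()
// someInt is now 9
```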
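
The same rule applies to enumerations. As a minimal sketch, the hypothetical `LightSwitch` enumeration below gains a mutating `toggle()` method through an extension:

```swift
// Hypothetical enumeration used only for illustration.
enum LightSwitch {
    case off, on
}

extension LightSwitch {
    // Assigning to self is allowed because the method is marked mutating.
    mutating func toggle() {
        switch self {
        case .off:
            self = .on
        case .on:
            self = .off
        }
    }
}

var hallLight = LightSwitch.off
hallLight.toggle()
print(hallLight)
```

Because the method mutates the value, it can only be called on a variable declared with `var`, not on a constant declared with `let`.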

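## Subscripts {#subscripts}

Extensions can add new subscripts to an existing type. The example below adds an integer subscript to Swift's built-in `Int` type. The subscript `[n]` returns the decimal digit `n` places in from the right of the number:

- `123456789[0]` returns `9`
- `123456789[1]` returns `8`

...and so on: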

```swift

extension Int {

subscript(digitIndex: Int) -> Int {