hexsha stringlengths 40 40 | size int64 5 1.04M | ext stringclasses 6 values | lang stringclasses 1 value | max_stars_repo_path stringlengths 3 344 | max_stars_repo_name stringlengths 5 125 | max_stars_repo_head_hexsha stringlengths 40 78 | max_stars_repo_licenses listlengths 1 11 | max_stars_count int64 1 368k ⌀ | max_stars_repo_stars_event_min_datetime stringlengths 24 24 ⌀ | max_stars_repo_stars_event_max_datetime stringlengths 24 24 ⌀ | max_issues_repo_path stringlengths 3 344 | max_issues_repo_name stringlengths 5 125 | max_issues_repo_head_hexsha stringlengths 40 78 | max_issues_repo_licenses listlengths 1 11 | max_issues_count int64 1 116k ⌀ | max_issues_repo_issues_event_min_datetime stringlengths 24 24 ⌀ | max_issues_repo_issues_event_max_datetime stringlengths 24 24 ⌀ | max_forks_repo_path stringlengths 3 344 | max_forks_repo_name stringlengths 5 125 | max_forks_repo_head_hexsha stringlengths 40 78 | max_forks_repo_licenses listlengths 1 11 | max_forks_count int64 1 105k ⌀ | max_forks_repo_forks_event_min_datetime stringlengths 24 24 ⌀ | max_forks_repo_forks_event_max_datetime stringlengths 24 24 ⌀ | content stringlengths 5 1.04M | avg_line_length float64 1.14 851k | max_line_length int64 1 1.03M | alphanum_fraction float64 0 1 | lid stringclasses 191 values | lid_prob float64 0.01 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

a9055b023251b541f212a42e72fd6a986c80651d | 9,995 | md | Markdown | TEDx/Titles_starting_A_to_O/Creating_people_safe_roads_Jaimison_Sloboden_TEDxJacksonville.md | gt-big-data/TEDVis | 328a4c62e3a05c943b2a303817601aebf198c1aa | [

"MIT"

] | 91 | 2018-01-24T12:54:48.000Z | 2022-03-07T21:03:43.000Z | cleaned_tedx_data/Titles_starting_A_to_O/Creating_people_safe_roads_Jaimison_Sloboden_TEDxJacksonville.md | nadaataiyab/TED-Talks-Nutrition-NLP | 4d7e8c2155e12cb34ab8da993dee0700a6775ff9 | [

"MIT"

] | null | null | null | cleaned_tedx_data/Titles_starting_A_to_O/Creating_people_safe_roads_Jaimison_Sloboden_TEDxJacksonville.md | nadaataiyab/TED-Talks-Nutrition-NLP | 4d7e8c2155e12cb34ab8da993dee0700a6775ff9 | [

"MIT"

] | 18 | 2018-01-24T13:18:51.000Z | 2022-01-09T01:06:02.000Z |

[Applause]

I just did one of the most dangerous

things you can do in Jacksonville I rode

my bike to this event

the City of Jacksonville has been ranked

the fourth worst city in the United

States for pedestrian and bicycle

fatalities per capita according to Smart

Growth America's Dangerous by Design

report as a traffic engineer I took a

deeper dive into that issue and the

problem is much worse than that

the map you see behind me is pedestrian

and bicycle crashes that have occurred

in the last 10 years that's 7,269

and Counting most of these crashes

result in some type of injury many of

them result in significant injury and

even death what I'm here to tell you

today is that a lot of what has to do

with this problem has to do with our

values and that what we need to decide

moving forward is that people matter

more than cars to further illustrate I'm

going to talk about two tragic events

that have occurred the first one only

happened less than two months ago a

bicyclist was on his way to work riding

along when he swayed into traffic he was

struck and critically injured where was

he supposed to ride this is that

location this is the traffic kind of

condition that was going on at the time

there's no extra space for that

bicyclist to be the second event

happened this summer miss Angie Sanders

aged 68 was on her way home from work

and she stopped at Mayport Road at the

Mayport Plaza stopped to do some

shopping

there's no crosswalk at that location

when you step out she looked to the

right looked to the left and the safe

place to cross is a quarter mile in each

direction so for her to make the safe

journey

a quarter mile is about a ten minute

walk

stop wait for the light cross and then a

10-minute walk back to where she really

wanted to be Mayport road is a six-lane

Road with a real narrow median so

there's six lanes to cross this day

she's very tired

she looked it up either way and made the

choice to cross at that location she got

through the first lane the second lane

and then by the third lane she didn't

make it

these events are happening very

frequently in our city over 7,000

crashes with this potential why our

values and I'm going to demonstrate

examples of how our values of how we've

placed to build our space has

contributed to this problem we need to

decide that people matter more than cars

so one thing we know is we have a

problem how did we get here it really

goes back to post-World War II the

automobile came along the promise of

this American Dream the freedom that it

afforded us the suburban lifestyle it

was great freedom of movement

independence all that it was wonderful

so we started setting about building our

cities around that system in Florida it

came a little bit later than post-World

War II because we needed this how many

of us would really be living here if we

didn't have that by 1960 eighty percent

of all the cars in Florida had air

conditioning our houses were air-conditioned

our buildings were air-conditioned our

cars were air-conditioned we had this

lifestyle of Independence and freedom

and mobility and so we never had to get

out and walk around so our economy

our highway departments got really good

at giving us that designing roads our

highway department set about giving us

a first-class highway system for cars I

relate to this really well because as a

civil engineer I was trained to give you

that okay it's really hard you think you

might have more cars add some more lanes

if you really want to make sure that

people can go fast and continue on their

trip provide no interruption to that

facility no crosswalks no lights almost

like a freeway so we have valued that

lifestyle for a very long time until we

kind of reach this tipping point where

we have this crisis of crashes and

fatalities so it's not just about the

roads we need to design our communities

a little bit differently as well so

there is kind of that land component

that we have to make in concert gonna

take a little bit of a segue and talk

about in a parallel example of how we

value and our values kind of relate 20

years ago as a young traffic engineer

in Minnesota I worked for the city of

Arden Hills and they had a new land

development happening and they were

going to put a road through a parkland

and this City Council was very concerned

about the animal species that were in

that Park so they wanted to make sure

that we took care of all the animals and

I learned all about Florida well before

I moved here that we are great in this

state at taking care of animals

environmentalists have figured out

understood that we need to consolidate

all of the lands and habitat so that the

animals have a place that naturally

takes them to a place where they're

gonna cross the road and then we spend a

lot of money building a lot of crossings

like this panther crossing we value the

panther as we should there's only 200 of them

left in the state and they are precious

to us and so we take care of them we

take care of the bear we take care of

snakes we can

take care of turtles and we even take

care of the little bitty Perdido Key beach

mouse okay

just learn this a couple weeks ago we

actually put PVC pipes underneath the

road to make sure that they can get

across another example of how we show

our values and this is something that

really hit me in the last two years I

was working for the Jacksonville

Transportation Authority on providing

safe streets around the bus stops it was

a big program called Complete Streets

and as we were going out and doing

community meetings and talking to people

I was paying a little bit more direct

attention to what was going on in the

media and what I saw was an article

that looked like this and this has been

repeated you know periodically but the

Jacksonville Sheriff's Office they've

seen the same media report about us

being one of the worst cities in the

country so they're doing their part to

try and fix the problem and the

sheriff's office duty and role is to

enforce so they set about enforcing

jaywalking but ironically at the same

time I came across this article that the

city of St. Paul they were addressing

pedestrian safety issues and they were

out there enforcing vehicles that were

not yielding to pedestrians in the

crosswalk and that was their campaign so

they said that walking is important and

they value that perhaps it would be good

if JSO had more crosswalks to enforce

we're working on this problem it's

simple

there's kind of four main areas one we

start with the width of our road for

automobiles while there's some roads

that we'll need and continue to have to

carry a lot of cars we have many streets

in the city of Jacksonville that no

longer need that number of lanes reduce

them

the other thing we can do is minimize

the width of these lanes to make the

crosswalks a shorter distance and easier

to travel so you don't have to be a

track star to make it across we also

need to modernize our bike system

actually have one as a start

but what's really transformed in our

design community and it's global it's not

just United States but we're seeing more

examples that it's necessary to provide

kind of protected bike lanes not just

that three foot strip of pavement that

you're not sure if it's a shoulder or a

place you should be riding your bike my

five year old daughter should be able to

ride her bike on that on that trail on

that system on that road and then

finally we cannot ignore kind of the

land use around there because it's not

just a road problem it's a value problem

but it's also how we create the space

the Mayport Plaza strip mall kind of not

connected to anything not really

promoting walkability the things I need

to educate you on is about my industry

we try and make things easier for

everyone to intuitively understand what

we mean and I'm often reminded by my

family and friends that they have no

idea what I'm talking about

so my education to you is we in our

industry are always seeking public

consent input and an interest so what

you need to do for us to really help

move this along is look for a Complete

Streets project advertisement a lane

elimination study a road diet project a

context-sensitive design or even a

streetscape project all these projects

kind of we coin these different terms

but they all kind of mean the same thing

we're out there trying to reinvent the

space for better walkability and

bikeability

and safety but don't ignore and you

should really the big red flag to put

out there is if you see a capacity

project the red flag on that is the

capacity may be needed

you know the additional capacity may be

needed but the way we're designing our

space now is we are not doing capacity

at the exclusion of people walking and

biking so those first four that I

mentioned you should be really active

and supportive behind and then the

capacity type projects you should be a

little bit skeptical and ready to go

after it if need be this is a political

will problem that we face the

agencies are really making an effort

City of Jacksonville just recently

adopted what I think is a modern and

very good bicycle and pedestrian master

plan the Jacksonville Transportation

Authority has just made Complete Streets

their number one priority to make sure

that they're taking care of their

pedestrian and their transit customers

the Florida Department of Transportation

took great notice because the state of

Florida is far and away the worst state

in the country in this area and so their

way of addressing it is a policy change

and a complete rewrite of all the sacred

Scrolls of design manuals and all that

so they have become a Complete Streets

agency these are all great plans and

great ideas but they're not going to

happen unless we here get behind that

and make it happen

so finally we the people of Jacksonville

people matter more than cars thank you


| 36.881919 | 47 | 0.801901 | eng_Latn | 0.999997 |

a906540522189932b41049d133fb4519c8fa6ae4 | 49 | md | Markdown | README.md | epfl-dlab/laughing-head | 80aa0b88814508fcb7d3f7bd7cb16da08e0188d5 | [

"Apache-2.0"

] | 1 | 2021-12-09T07:30:09.000Z | 2021-12-09T07:30:09.000Z | README.md | epfl-dlab/laughing-head | 80aa0b88814508fcb7d3f7bd7cb16da08e0188d5 | [

"Apache-2.0"

] | null | null | null | README.md | epfl-dlab/laughing-head | 80aa0b88814508fcb7d3f7bd7cb16da08e0188d5 | [

"Apache-2.0"

] | null | null | null | # laughing-head

Code for the laughing head paper

| 16.333333 | 32 | 0.795918 | eng_Latn | 0.995734 |

a906bf773f6e7dad1935b97db02289a1bbeb28d7 | 189 | md | Markdown | README.md | MayanMisfit/eleventy-taunt | 7f794ce126b0ce477bb5c5f59d03e748e629b075 | [

"MIT"

] | null | null | null | README.md | MayanMisfit/eleventy-taunt | 7f794ce126b0ce477bb5c5f59d03e748e629b075 | [

"MIT"

] | null | null | null | README.md | MayanMisfit/eleventy-taunt | 7f794ce126b0ce477bb5c5f59d03e748e629b075 | [

"MIT"

] | null | null | null | # Eleventy Taunt Theme

Custom 11ty portfolio theme for designer & developer blogs.

A live demo can be found here: <a href="https://taunt11ty.netlify.com/">taunt11ty.netlify.com/</a>

| 31.5 | 99 | 0.73545 | eng_Latn | 0.655181 |

a9079ed786a2effec90bfef6613d8d7fa3a47ad0 | 7,159 | md | Markdown | README.md | hjk22/smallLinearSolverBatched | 3d83dd5ce5cd6ce95c3a4d34aeade861729729ed | [

"BSD-3-Clause"

] | null | null | null | README.md | hjk22/smallLinearSolverBatched | 3d83dd5ce5cd6ce95c3a4d34aeade861729729ed | [

"BSD-3-Clause"

] | null | null | null | README.md | hjk22/smallLinearSolverBatched | 3d83dd5ce5cd6ce95c3a4d34aeade861729729ed | [

"BSD-3-Clause"

] | null | null | null | # GPU Linear Solver Small Batched

This project contains functions to solve large quantities of small square linear systems (NxN with N<32, single precision, dense) on a GPU through the CUDA programming model.

## Building

The software is written for Linux and is built in Release mode through the use of makefiles (debug builds are not supported).

The software requires a number of dependencies to be installed to build:

* gcc version 6.1.0+, lower versions might work but have not been tested.

* CUDA toolkit version 9 or later, lower versions might work but have not been tested. For optimal performance use CUDA 10+.

A guide on installing CUDA on Linux is available here: https://docs.nvidia.com/cuda/cuda-installation-guide-linux/index.html

Pay particular attention to the post-installation actions (https://docs.nvidia.com/cuda/cuda-installation-guide-linux/index.html#post-installation-actions), which are required to make the CUDA compiler and libraries visible.

The following dependencies are needed only for the tester, not for the solver functions themselves (the tester code must be stubbed out to avoid them):

* Lapack 3.8.0+, lower versions might work but have not been tested.

* Blas 3.8.0+, lower versions might work but have not been tested.

* gfortran (if using lapack and blas)

* OpenMP. This dependency can easily be avoided by commenting out the few lines of code where it is used.

After the dependencies are installed the following files will need to be edited to match the system configurations:

* Release/objects.mk Here the Lapack, Blas, and intel64 (if using the Intel version of Lapack and Blas) library paths are to be redefined. In general the libraries are defined here, so if something changes regarding them this file needs to be edited accordingly.

* Release/makefile This file needs to be edited to select the correct Nvidia target architecture. Specifically, in line 61, arch= and code= need to be changed according to these guidelines:

https://docs.nvidia.com/cuda/cuda-compiler-driver-nvcc/index.html#options-for-steering-gpu-code-generation .

If OpenMP is to be removed, also remove the -Xcompiler -fopenmp options from that line (they pass -fopenmp to the gcc compiler after nvcc completes compilation of the kernels).

* Release/src/subdir.mk This file contains all the files that need to be built and their dependencies, as well as the nvcc compilation invocations. Lapack library paths need to be properly defined here.

Nvidia target architecture needs to be defined here as well.
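As a rough illustration of the `arch=` and `code=` values mentioned above, the following shell sketch derives an nvcc `-gencode` flag from a compute capability. The helper name `gencode_flag` and the example value 3.7 are assumptions made for illustration and are not part of this repository; consult the nvcc guidelines linked above for the value that matches your GPU.

```shell
# Hypothetical helper (not part of this repository): turn a compute
# capability such as "3.7" into the matching nvcc -gencode flag.
gencode_flag() {
  cc="$1"                               # compute capability, e.g. 3.7
  sm=$(printf '%s' "$cc" | tr -d '.')   # drop the dot: 3.7 becomes 37
  printf '%s\n' "-gencode arch=compute_${sm},code=sm_${sm}"
}

gencode_flag 3.7
```

If the makefiles use the `-gencode arch=...,code=...` form, the printed value shows what the edited setting might look like.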

To build the program cd to Release and execute

`make clean`

followed by

`make`

The binary will show up as Release/gpulinearsolversmallbatched .

## Using the current tester

The binary can be compiled in two configurations: manual and automatic tester.

In manual mode usage is

`gpulinearsolversmallbatched <matrix size> <number of linear systems> <number of openMP threads for CPU test>`

eg:

`gpulinearsolversmallbatched 4 10000 8`
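To run the manual tester over several matrix sizes, a small loop can generate the command lines. This is only a sketch: the function name `print_sweep` and the chosen sizes are assumptions, and the sizes stay below 32 to respect the N<32 limit stated at the top of this README.

```shell
# Sketch: print one manual-mode command line per matrix size.
# The sizes are illustrative; all of them satisfy N<32.
print_sweep() {
  systems="$1"   # number of linear systems per run
  threads="$2"   # OpenMP threads for the CPU test
  for n in 4 8 16 31; do
    echo "./gpulinearsolversmallbatched $n $systems $threads"
  done
}

print_sweep 10000 8
```

The function only prints the commands, so they can be reviewed first; piping the output to `sh` from the Release directory would execute them.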

In automatic mode the tester will generate a file results.csv with all the test results. The test parameters need to be configured from code before build.

## Usage on Galileo supercomputer.

The program needs some modules to be loaded:

`module load autoload git cuda gnu lapack blas`

Clone the repository, or copy it some other way, and cd to Release

`cd Release`

To build use

`make clean`

`make`

To run the program on the GPU nodes use the following command:

`srun --nodes=1 --ntasks-per-node=1 --cpus-per-task=1 --mem=6G --time=20 --partition=gll_usr_gpuprod --gres=gpu:kepler:1 ./gpulinearsolversmallbatched`

adding command line parameters at the end if in manual mode.

Change --cpus-per-task= to increase the number of physical CPU cores available for OpenMP to use.

Change --mem= to increase the maximum usable memory (the GPU is limited to a little less than 12G, and the program is currently limited by int memory pointers).

Change --time= to set the time limit of the test in minutes. The autotester might need 20-30 minutes; for the manual tester, 3 minutes is already plenty. The limit mostly ensures that the program does not go into an infinite loop while on a work node.

Change --gres=gpu:kepler: to change the number of GPUs requested (in case of adding multi gpu support).
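The adjustable options above can be collected in a small helper that assembles the `srun` command line. This is a sketch under stated assumptions: the function name `build_srun_cmd` and its argument order are made up for illustration, while the flags and the partition are the ones used in this README.

```shell
# Sketch: assemble the srun command from the adjustable options.
# Requires at least three arguments: cpus, memory, minutes.
# Any further arguments are passed to the binary (manual mode).
build_srun_cmd() {
  cpus="$1"      # --cpus-per-task: physical cores available to OpenMP
  mem="$2"       # --mem: maximum usable memory, e.g. 6G
  minutes="$3"   # --time: time limit in minutes
  shift 3
  printf '%s' "srun --nodes=1 --ntasks-per-node=1 --cpus-per-task=${cpus} --mem=${mem} --time=${minutes} --partition=gll_usr_gpuprod --gres=gpu:kepler:1 ./gpulinearsolversmallbatched"
  for arg in "$@"; do
    printf ' %s' "$arg"
  done
  printf '\n'
}

build_srun_cmd 8 6G 3 16 1000 8
```

The helper only prints the command, so it can be checked before it is submitted.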

To perform a profiling run, add `cudaProfilerStart()` and `cudaProfilerStop()` before and after the section of interest, rebuild, and use the following command:

`srun --nodes=1 --ntasks-per-node=1 --cpus-per-task=1 --mem=6G --time=3 --partition=gll_usr_gpuprod --gres=gpu:kepler:1 nvprof --export-profile timeline.prof -f --profile-from-start off --cpu-profiling off ./gpulinearsolversmallbatched 16 1000 1`

This exports the profiling data to ./timeline.prof, which can be imported into the Visual Profiler. The Visual Profiler can import the file even when running on a Windows machine (use scp to get the file).

## Code structure and configurations

`main` is located in `testing_sgesv_batched.cpp`, along with all the testing code.

This is the file that needs to be edited to remove lapack, blas and openmp dependencies.

To switch to the manual tester, define the macro MANUAL_TEST in testing_sgesv_batched.cpp; to use the automatic tester, comment out the macro definition.

Various macros with comments are present at the beginning of the file to change the manual test behavior.

The manual test is performed by the function `gpuLinearSolverBatched_tester` while the autotester is performed by `gpuCSVTester` which is the function that needs to be edited to change the parameters of the autotest.

To solve on the GPU, the user needs to prepare the linear systems in host memory and call `gpuLinearSolverBatched`, which is in `linearSolverSLU_batched.cpp`.

This function allocates the device memory, transfers the data, and then calls `linearSolverSLU_batched` (same file), which takes pointers to device memory and starts executing the different phases.

To perform LU decomposition the function `linearDecompSLU_batched` (in file `linearDecompSLU_batched.cpp`) is called, which in turn calls `magma_sgetrf_batched_smallsq_shfl` (`tinySLUfactorization_batched.cu`).

`magma_sgetrf_batched_smallsq_shfl` then launches the kernel `sgetrf_batched_smallsq_shfl_kernel`, which contains the main computation of the program: the LU factorization.

After the factorization is complete, control returns to `linearSolverSLU_batched`, which then calls `linearSolverFactorizedSLU_batched` (`linearSolverFactorizedSLU_batched.cpp`).

This function uses several other inexpensive functions to manipulate the factorized data and obtain the solution of the linear systems by forward and backward substitution.

These functions are located in `set_pointer.cu`, `strsv_batched.cu`, `linearSolverFactorizedSLUutils.cu`

As for the remaining files, `operation_batched.h` contains the declarations of most of the host batched functions listed above, and `utils.cpp`, `utilscu.cuh`, and `utils.h` contain utility functions that are used throughout the code.

`testing.h`, `flops.h`, `magma_types.h` instead contain important magma definitions that are used throughout the code.

| 57.272 | 265 | 0.789077 | eng_Latn | 0.997471 |

a909e7bed823c200dba492a7aeb65a7824e4d508 | 3,203 | md | Markdown | includes/virtual-machines-common-classic-agents-and-extensions.md | allanfann/azure-docs.zh-tw | c66e7b6d1ba48add6023a4c08cc54085e3286aa3 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | includes/virtual-machines-common-classic-agents-and-extensions.md | allanfann/azure-docs.zh-tw | c66e7b6d1ba48add6023a4c08cc54085e3286aa3 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | includes/virtual-machines-common-classic-agents-and-extensions.md | allanfann/azure-docs.zh-tw | c66e7b6d1ba48add6023a4c08cc54085e3286aa3 | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

author: cynthn

ms.service: virtual-machines

ms.topic: include

ms.date: 10/26/2018

ms.author: cynthn

ms.openlocfilehash: 9158e6bfe07fc5d06b0685d77eff26644b594a8b

ms.sourcegitcommit: 3102f886aa962842303c8753fe8fa5324a52834a

ms.translationtype: MT

ms.contentlocale: zh-TW

ms.lasthandoff: 04/23/2019

ms.locfileid: "61485263"

---

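VM extensions can help you:

* Modify security and identity features, such as resetting account values and using antimalware

* Start, stop, or configure monitoring and diagnostics

* Reset or install connectivity features, such as RDP and SSH

* Diagnose, monitor, and manage your VMs

There are many other features as well. New VM extensions are released regularly. This article describes the Azure VM Agents for Windows and Linux, and how they support VM extensions. For a list of VM extensions by feature category, see [Azure VM extensions and features](../articles/virtual-machines/extensions/features-windows.md).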

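## <a name="azure-vm-agents-for-windows-and-linux"></a>Azure VM Agents for Windows and Linux

The Azure Virtual Machine Agent (VM Agent) is a secure, lightweight process that installs, configures, and removes VM extensions on instances of Azure virtual machines. The VM Agent acts as the secure local control service for your Azure VM. The extensions that the agent loads provide specific features to increase your productivity when using the instance.

There are two Azure VM Agents, one for Windows VMs and one for Linux VMs.

If you want a virtual machine instance to use one or more VM extensions, the instance must have an installed VM Agent. A virtual machine created by using the Azure portal and an image from the **Marketplace** automatically gets a VM Agent installed during the creation process. If a virtual machine instance lacks a VM Agent, you can install the VM Agent after the virtual machine instance is created. Alternatively, you can install the agent in a custom VM image that you upload later.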

> [!IMPORTANT]

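> These VM Agents are very lightweight services that enable secured administration of virtual machine instances. There might be cases in which you do not want the VM Agent. If so, be sure to create VMs that do not have the VM Agent installed by using the Azure CLI or PowerShell. Although the VM Agent can be removed physically, the behavior of VM extensions on the instance is then undefined. As a result, removing an installed VM Agent is not supported.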

>

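The VM Agent is enabled in the following situations:

* When you create an instance of a VM by using the Azure portal and selecting an image from the **Marketplace**,

* When you create an instance of a VM by using the [New-AzureVM](https://msdn.microsoft.com/library/azure/dn495254.aspx) or the [New-AzureQuickVM](https://msdn.microsoft.com/library/azure/dn495183.aspx) cmdlet. You can create a VM without a VM Agent by adding the **-DisableGuestAgent** parameter to the [Add-AzureProvisioningConfig](https://msdn.microsoft.com/library/azure/dn495299.aspx) cmdlet.

* When you manually download and install the VM Agent on an existing VM instance, and set the **ProvisionGuestAgent** value to **true**. You can use this technique for both Windows and Linux agents, by using a PowerShell command or a REST call. (If you do not set the **ProvisionGuestAgent** value after manually installing the VM Agent, the addition of the VM Agent is not detected properly.) The following code example shows how to do this using PowerShell, where the `$svc` and `$name` arguments have already been determined: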

$vm = Get-AzureVM –ServiceName $svc –Name $name

$vm.VM.ProvisionGuestAgent = $TRUE

Update-AzureVM –Name $name –VM $vm.VM –ServiceName $svc

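* When you create a VM image that includes an installed VM Agent. After the image with the VM Agent exists, you can upload that image to Azure. For a Windows VM, download the [Windows VM Agent .msi file](https://go.microsoft.com/fwlink/?LinkID=394789), and then install the VM Agent. For a Linux VM, install the VM Agent from the GitHub repository located at <https://github.com/Azure/WALinuxAgent>. For more information on how to install the VM Agent on Linux, see the [Azure Linux VM Agent User Guide](../articles/virtual-machines/extensions/agent-linux.md).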

> [!NOTE]

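> In PaaS, the VM Agent is called **WindowsAzureGuestAgent**, and is available on both Web and Worker Role VMs. (For more information, see [Azure Role Architecture](https://blogs.msdn.com/b/kwill/archive/2011/05/05/windows-azure-role-architecture.aspx).) The VM Agent for Role VMs can now add extensions to the cloud service VMs in the same way that it does for persistent virtual machines. The biggest difference between VM extensions on role VMs and on persistent VMs is when the VM extensions are added. With role VMs, extensions are added first to the cloud service, then to the deployments within that cloud service.

>

> Use the [Get-AzureServiceAvailableExtension](https://msdn.microsoft.com/library/azure/dn722498.aspx) cmdlet to list all available role VM extensions.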

>

>

## <a name="find-add-update-and-remove-vm-extensions"></a>Find, add, update, and remove VM extensions

For details on these tasks, see [Add, find, update, and remove Azure VM extensions](../articles/virtual-machines/windows/classic/manage-extensions.md?toc=%2fazure%2fvirtual-machines%2fwindows%2fclassic%2ftoc.json).

| 57.196429 | 370 | 0.778021 | yue_Hant | 0.994542 |

a90a796ab5536b8a7eba042a90e64c9492c94401 | 496 | md | Markdown | README.md | vitaly-t/dev-distribution | b12123ee0d41b44c580c2a8630c7bfd99be5aa93 | [

"MIT"

] | null | null | null | README.md | vitaly-t/dev-distribution | b12123ee0d41b44c580c2a8630c7bfd99be5aa93 | [

"MIT"

] | null | null | null | README.md | vitaly-t/dev-distribution | b12123ee0d41b44c580c2a8630c7bfd99be5aa93 | [

"MIT"

] | null | null | null | Calculate distribution rate of [Dev]('https://devtoken.rocks/')

[](https://travis-ci.org/frame00/dev-distribution)

# Getting Started

How to install:

```bash

git clone git@github.com:frame00/dev-distribution.git

cd dev-distribution

npm i

```

# How to Calculate

Distribution rate calculation:

```bash

npm run calc 2018-07-20 2018-08-19 1000000

```

`npm run calc <start date> <end date> <total distributions>`
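The example above passes both dates in `YYYY-MM-DD` form. Assuming that is the expected format (the README does not say so explicitly), a thin wrapper could check the arguments before calling the script. The names `is_iso_date` and `run_calc` are hypothetical and not part of this repository.

```shell
# Hypothetical helpers (not part of this repository).
# is_iso_date: succeed only if the argument looks like YYYY-MM-DD.
is_iso_date() {
  case "$1" in
    [0-9][0-9][0-9][0-9]-[0-9][0-9]-[0-9][0-9]) return 0 ;;
    *) return 1 ;;
  esac
}

# run_calc: check the shape of both dates, then hand off to `npm run calc`.
# Only the pattern is checked, not whether the date exists on the calendar.
run_calc() {
  start="$1"; end="$2"; total="$3"
  if ! is_iso_date "$start" || ! is_iso_date "$end"; then
    echo "dates must look like YYYY-MM-DD" >&2
    return 1
  fi
  npm run calc "$start" "$end" "$total"
}
```

With these definitions loaded, `run_calc 2018-07-20 2018-08-19 1000000` would behave like the command shown above, while a malformed date is rejected before npm starts.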

| 20.666667 | 131 | 0.741935 | eng_Latn | 0.326334 |

a90a8542abc9e8e7d95bedeaacdc157bd759f218 | 4,782 | md | Markdown | Instructions/Labs/LAB_05_Making_changes_by_using_CIM_and_WMI.md | MicrosoftLearning/AZ-040T00-Automating-Administration-with-PowerShell.ja-jp | cfe7e0c5cbd0c3ad7f73b68cc874a46c3ac9eee3 | [

"MIT"

] | null | null | null | Instructions/Labs/LAB_05_Making_changes_by_using_CIM_and_WMI.md | MicrosoftLearning/AZ-040T00-Automating-Administration-with-PowerShell.ja-jp | cfe7e0c5cbd0c3ad7f73b68cc874a46c3ac9eee3 | [

"MIT"

] | 2 | 2022-03-23T03:31:34.000Z | 2022-03-31T02:15:21.000Z | Instructions/Labs/LAB_05_Making_changes_by_using_CIM_and_WMI.md | MicrosoftLearning/AZ-040T00-Automating-Administration-with-PowerShell.ja-jp | cfe7e0c5cbd0c3ad7f73b68cc874a46c3ac9eee3 | [

"MIT"

] | null | null | null | ---

lab:

title: 'Lab: Querying information by using WMI and CIM'

module: 'Module 5: Querying management information by using CIM and WMI'

ms.openlocfilehash: 29a429a264ef6b6b61ca69ce9a3ca90644e89f32

ms.sourcegitcommit: a95a9bb3a7919b785df0574c3407f4b6c3bea9f5

ms.translationtype: HT

ms.contentlocale: ja-JP

ms.lasthandoff: 11/08/2021

ms.locfileid: "132116732"

---

# <a name="lab-querying-information-by-using-wmi-and-cim"></a>Lab: Querying information by using WMI and CIM

## <a name="scenario"></a>Scenario

You need to query management information from several computers. You start by querying the local computer and one test computer in your environment.

## <a name="objectives"></a>Objectives

After completing this lab, you'll be able to:

- Query information by using Windows Management Instrumentation (WMI) commands.

- Query information by using Common Information Model (CIM) commands.

- Invoke methods by using WMI and CIM commands.

## <a name="estimated-time-45-minutes"></a>Estimated time: 45 minutes

## <a name="lab-setup"></a>Lab setup

Virtual machines: **AZ-040T00A-LON-DC1** and **AZ-040T00A-LON-CL1**

User name: **Adatum\\Administrator**

Password: **Pa55w.rd**

For this lab, you'll use the provided virtual machine environment. Before you begin the lab, complete the following steps:

1. Open **LON-DC1** and sign in as **Adatum\\Administrator** with the password **Pa55w.rd**.

1. Repeat step 1 for **LON-CL1**.

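## <a name="exercise-1-querying-information-by-using-wmi"></a>Exercise 1: Querying information by using WMI

### <a name="scenario-1"></a>Scenario 1

In this exercise, you'll discover repository classes and query them by using WMI commands.

The main tasks for this exercise are:

1. Query IP addresses.

1. Query operating system version information.

1. Query computer system hardware information.

1. Query service information.

### <a name="task-1-query-ip-addresses"></a>Task 1: Query IP addresses

1. On **LON-CL1**, start Windows PowerShell as an administrator.

1. IP addresses are part of a network adapter's configuration. Using the keyword **configuration**, find a repository class that lists the IP addresses being used by the local computer.

1. Using a WMI command and the class that you discovered in the previous step, display a list of statically configured IP addresses.

### <a name="task-2-query-operating-system-version-information"></a>Task 2: Query operating system version information

1. Using the keyword **operating**, find a repository class that lists operating system version information. Sort the results by the **name** property.

1. Display a list of properties for the class that you discovered in the previous step.

1. Make a note of the properties that contain the operating system version, the service pack major version, and the operating system build number.

1. Using a WMI command and the class that you discovered in step 1, display the local operating system's version, service pack major version, and build number.

### <a name="task-3-query-computer-system-hardware-information"></a>Task 3: Query computer system hardware information

1. Using the keyword **system**, find a repository class that contains computer system information.

1. Display a list of properties and property values for the class that you discovered in the previous step.

1. Using the list of properties and a WMI command, display the local computer's manufacturer, model, and total physical memory. Label the total physical memory column **RAM**.

### <a name="task-4-query-service-information"></a>Task 4: Query service information

1. Using the keyword **service**, find a repository class that contains service information.

1. Display a list of properties and property values for the class that you discovered in the previous step.

1. Using the list of properties and a WMI command, display the service name, status (running or stopped), and sign-in name for all services whose names start with **S**.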

## <a name="exercise-2-querying-information-by-using-cim"></a>Exercise 2: Querying information by using CIM

### <a name="scenario-2"></a>Scenario 2

In this exercise, you'll discover new repository classes and query them by using CIM commands.

The main tasks for this exercise are:

1. Query user accounts.

1. Query BIOS information.

1. Query network adapter configuration information.

1. Query user group information.

### <a name="task-1-query-user-accounts"></a>Task 1: Query user accounts

1. Using a CIM command and the keyword **user**, find a repository class that lists user accounts.

1. Using a CIM command, display a list of properties for the class that you discovered in the previous step.

1. Using a CIM command and the list of properties, display a list of user accounts in a table. Include columns for the account caption, domain, security ID, full name, and name. The full name column might be blank for some or all accounts.

### <a name="task-2-query-bios-information"></a>Task 2: Query BIOS information

1. Using the keyword **bios** and a CIM command, find a repository class that contains BIOS information.

1. Using a CIM command and the class that you discovered in the previous step, display a list of all available BIOS information.

### <a name="task-3-query-network-adapter-configuration-information"></a>Task 3: Query network adapter configuration information

1. Using a CIM command, display all local instances of the `Win32_NetworkAdapterConfiguration` class.

1. Using a CIM command, display all instances of the `Win32_NetworkAdapterConfiguration` class that exist on **LON-DC1**.

### <a name="task-4-query-user-group-information"></a>Task 4: Query user group information

1. Using a CIM command and the keyword **group**, find a class that lists user groups.

1. Using a CIM command, display a list of user groups that exist on **LON-DC1**.

## <a name="exercise-3-invoking-methods"></a>Exercise 3: Invoking methods

### <a name="scenario-3"></a>Scenario 3

In this exercise, you'll use WMI and CIM commands to invoke methods of repository objects.

The main tasks for this exercise are:

1. Invoke a CIM method.

1. Invoke a WMI method.

### <a name="task-1-invoke-a-cim-method"></a>Task 1: Invoke a CIM method

- Using a CIM command and the `Reboot` method of `Win32_OperatingSystem`, restart **LON-DC1** remotely from **LON-CL1**.

### <a name="task-2-invoke-a-wmi-method"></a>Task 2: Invoke a WMI method

1. Using the `Get-Service` cmdlet, check the **StartType** property of the **WinRM** service.

1. Using a WMI command and the `ChangeStartMode` method of `Win32_Service`, change the start mode of the **WinRM** service to **Automatic**.

1. Using the `Get-Service` cmdlet, verify that the **StartType** property of the **WinRM** service has been updated to **Automatic**.

| 35.422222 | 138 | 0.765997 | yue_Hant | 0.57591 |

a90a86d4c411eddbce6e9c8a5c3cd6b0b8e84c0d | 321 | md | Markdown | packages/cli/readme.md | pelletier197/magidocql | b73bad46f39793b1b230b86eccca97823f5736eb | [

"MIT"

] | 2 | 2022-02-26T15:19:20.000Z | 2022-02-27T00:08:47.000Z | packages/cli/readme.md | pelletier197/magidocql | b73bad46f39793b1b230b86eccca97823f5736eb | [

"MIT"

] | null | null | null | packages/cli/readme.md | pelletier197/magidocql | b73bad46f39793b1b230b86eccca97823f5736eb | [

"MIT"

] | null | null | null | # Magidoc CLI

Magidoc CLI is a NodeJS CLI application that can be used to generate beautiful and fully customizable GraphQL documentation websites from scratch.

## Documentation

Read our [documentation](https://magidoc-org.github.io/magidoc/introduction/welcome) for more details about how to get started with Magidoc. | 64.2 | 147 | 0.813084 | eng_Latn | 0.971805 |

a90b42d53e0cca4d8ce31efc945308698a341b11 | 641 | md | Markdown | reactor/README.md | spy16/fusion | f50f2731eed85b33d78cf08272c59b064f62ca5e | [

"MIT"

] | 17 | 2020-07-21T13:52:14.000Z | 2021-06-30T20:38:34.000Z | reactor/README.md | spy16/fusion | f50f2731eed85b33d78cf08272c59b064f62ca5e | [

"MIT"

] | null | null | null | reactor/README.md | spy16/fusion | f50f2731eed85b33d78cf08272c59b064f62ca5e | [

"MIT"

] | 1 | 2020-12-25T09:40:23.000Z | 2020-12-25T09:40:23.000Z | # Reactor

`reactor` is a stream processing tool built using [💥 fusion](https://github.com/spy16/fusion).

## Usage

1. Install `reactor` using `go get -u -v github.com/spy16/fusion/reactor`

2. Create a config file `my_config.json` by referring to config files in [samples](./samples)

3. Run `reactor -config my_config.json`. When you run this, `reactor` will:

   1. Parse the proto message and create a message descriptor.

   2. Connect to the Kafka cluster and subscribe to the given topic name.

   3. Parse every message body as protobuf using the descriptor created in step 1, and log a JSON-formatted version to `stdout`.

| 42.733333 | 113 | 0.733229 | eng_Latn | 0.975876 |

a90bd788412953ab8551f7ef585d179a0343a88a | 1,370 | md | Markdown | langs/ko-kr/tutorials/stores_nested_reactivity/lesson.md | lechuckroh/solid-docs | de63c17ee666537f644520ac6cf99acd74356af0 | [

"MIT"

] | null | null | null | langs/ko-kr/tutorials/stores_nested_reactivity/lesson.md | lechuckroh/solid-docs | de63c17ee666537f644520ac6cf99acd74356af0 | [

"MIT"

] | null | null | null | langs/ko-kr/tutorials/stores_nested_reactivity/lesson.md | lechuckroh/solid-docs | de63c17ee666537f644520ac6cf99acd74356af0 | [

"MIT"

] | null | null | null | One of the reasons Solid can provide fine-grained reactivity is that it handles nested updates independently.

Suppose you have a list of users and update one user's name. Solid updates only the location of that name in the DOM, without diffing the list itself. Very few UI frameworks, even reactive ones, can do this.

But how is this possible? In this example, a signal holds a list of todos. To mark a todo as completed, we replace the existing item with a clone of the todo. Most frameworks work this way, but it is wasteful: as the `console.log` shows, the list is diffed again and the DOM elements are recreated.

```js
const toggleTodo = (id) => {
  setTodos(
    todos().map((todo) => (todo.id !== id ? todo : { ...todo, completed: !todo.completed })),
  );
};
```

In a fine-grained library like Solid, on the other hand, we initialize the data with nested signals like this:

```js
const addTodo = (text) => {
  const [completed, setCompleted] = createSignal(false);
  setTodos([...todos(), { id: ++todoId, text, completed, setCompleted }]);
};
```

Now we can update the completed state by calling `setCompleted`, with no additional diffing. This works because the complexity has moved from the view to the data, so Solid knows exactly how the data changes.

```js
const toggleTodo = (id) => {
  const index = todos().findIndex((t) => t.id === id);
  const todo = todos()[index];
  if (todo) todo.setCompleted(!todo.completed())
}
```

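If you now change the remaining references to `todo.completed` into `todo.completed()`, the `console.log` in the example runs only on creation and no longer runs when you toggle a todo.

This approach requires manual mapping, and until now it was the only option available. Thanks to proxies, however, most of this work can now happen in the background without manual effort. The next tutorial shows how.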
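The nested-signal idea can be tried outside a browser with a small sketch. The `createSignal` below is a hypothetical stand-in (a plain getter/setter pair), not Solid's implementation, and the todo texts are made up. It only illustrates that toggling a todo changes the nested value while the list and its items keep their identity, which is why no list diffing is needed.

```javascript
// Hypothetical stand-in for a signal: a getter/setter pair around one value.
// This is NOT Solid's createSignal; it only mimics the calling convention.
function createSignal(initial) {
  let value = initial;
  const read = () => value;
  const write = (next) => {
    value = next;
    return value;
  };
  return [read, write];
}

let todoId = 0;
const [todos, setTodos] = createSignal([]);

// Each todo carries its own nested "completed" signal.
const addTodo = (text) => {
  const [completed, setCompleted] = createSignal(false);
  setTodos([...todos(), { id: ++todoId, text, completed, setCompleted }]);
};

// Toggling touches only the nested signal; the list is never replaced.
const toggleTodo = (id) => {
  const todo = todos().find((t) => t.id === id);
  if (todo) todo.setCompleted(!todo.completed());
};

addTodo("write docs");
addTodo("ship release");
const listBefore = todos();
toggleTodo(1);

const sameList = todos() === listBefore; // list array was not recreated
const firstCompleted = todos()[0].completed();
const secondCompleted = todos()[1].completed();
console.log(sameList, firstCompleted, secondCompleted);
```

In real Solid code the getter would also register the reading computation as a subscriber; the stand-in leaves that out to keep the sketch short.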

| 39.142857 | 197 | 0.681022 | kor_Hang | 1.00001 |

a90c412b542d436219be42cd6a551acbd7f37a42 | 750 | md | Markdown | README.md | runzezhang/React-Native-App-with-Redux-Axios-React-Navigation | 4bd8136e22244080885ca90dee2b48909dd3f231 | [

"MIT"

] | null | null | null | README.md | runzezhang/React-Native-App-with-Redux-Axios-React-Navigation | 4bd8136e22244080885ca90dee2b48909dd3f231 | [

"MIT"

] | null | null | null | README.md | runzezhang/React-Native-App-with-Redux-Axios-React-Navigation | 4bd8136e22244080885ca90dee2b48909dd3f231 | [

"MIT"

] | null | null | null | # Complete React Native App Demo

This is a complete demo app that shows developers how to build a React Native app with Expo using Redux, Axios, and React Navigation.

# Functions

1. Register / Login / Logout

2. CRUD functions

3. Multi Inputs form, text, textarea, single select, multi select, checkbox etc.

4. Pull Refresh List

5. Signature, Image View / Upload

6. Map View

7. Tab / Stack Nav

8. Axios HTTP Request

9. Redux State Management

10. Multi Language

11. Rating

12. Payment

# Components

1. React Native

2. React Navigation

3. Redux

4. Axios

5. react-native-calendars

6. react-native-datepicker

7. react-native-easy-toast

8. react-native-elements

9. react-native-picker-select

10. react-native-ratings

11. react-navigation-collapsible

12. tipsi-stripe

| 25.862069 | 125 | 0.772 | eng_Latn | 0.629043 |

a90c7fafc2eff3b6f542aa0cd1957aa3058f66c3 | 2,595 | md | Markdown | README.md | cncjs/cncjs-pendant-raspi-gpio | 42a535aece710fecccbd9d7e2a27abbf937f2aa2 | [

"MIT"

] | 8 | 2018-04-14T21:38:47.000Z | 2021-08-21T00:38:54.000Z | README.md | cncjs/cncjs-pendant-raspi-gpio | 42a535aece710fecccbd9d7e2a27abbf937f2aa2 | [

"MIT"

] | 1 | 2021-04-26T21:54:48.000Z | 2021-04-26T21:54:48.000Z | README.md | cncjs/cncjs-pendant-raspi-gpio | 42a535aece710fecccbd9d7e2a27abbf937f2aa2 | [

"MIT"

] | 8 | 2018-02-26T01:00:50.000Z | 2021-08-01T18:36:37.000Z | # cncjs-pendant-raspi-gpio

Simple Raspberry Pi GPIO Pendant control for CNCjs.

[](https://nodei.co/npm/cncjs-pendant-raspi-gpio/)

## Installation

#### NPM Install (local)

```
npm install cncjs-pendant-raspi-gpio
```

#### NPM Install (global) [Recommended]

```
sudo npm install -g cncjs-pendant-raspi-gpio@latest --unsafe-perm --build-from-source
```

#### Manual Install

```
# Clone Repository
cd ~/
#wget https://github.com/cncjs/cncjs-pendant-raspi-gpio/archive/master.zip
#unzip master.zip
git clone https://github.com/cncjs/cncjs-pendant-raspi-gpio.git
cd cncjs-pendant-raspi-gpio*
npm install
```

## Usage

Run `bin/cncjs-pendant-raspi-gpio` to start. Pass --help to `cncjs-pendant-raspi-gpio` for more options.

Examples:

```
bin/cncjs-pendant-raspi-gpio --help
node bin/cncjs-pendant-raspi-gpio --port /dev/ttyUSB0
```

#### Auto Start

###### Install [Production Process Manager [PM2]](http://pm2.io)

```
# Install PM2
sudo npm install -g pm2

# Setup PM2 Startup Script
# sudo pm2 startup  # To Start PM2 as root
pm2 startup  # To start PM2 as pi / current user
#[PM2] You have to run this command as root. Execute the following command:
sudo env PATH=$PATH:/usr/bin /usr/lib/node_modules/pm2/bin/pm2 startup systemd -u pi --hp /home/pi

# Start the pendant with PM2
## pm2 start ~/.cncjs/cncjs-pendant-raspi-gpio/bin/cncjs-pendant-raspi-gpio -- --port /dev/ttyUSB0
pm2 start $(which cncjs-pendant-raspi-gpio) -- --port /dev/ttyUSB0

# Set current running apps to startup
pm2 save

# Get list of PM2 processes
pm2 list
```

#### Button Presses

1. G-Code: M9

2. G-Code: M8

3. G-Code: M7

4. G-Code: $X "Unlock"

5. G-Code: $X "Unlock"

6. G-Code: $SLP "Sleep"

7. G-Code: $SLP "Sleep"

8. G-Code: $H "Home"

#### Press & Hold

- 3 Sec: sudo poweroff "Shutdown"

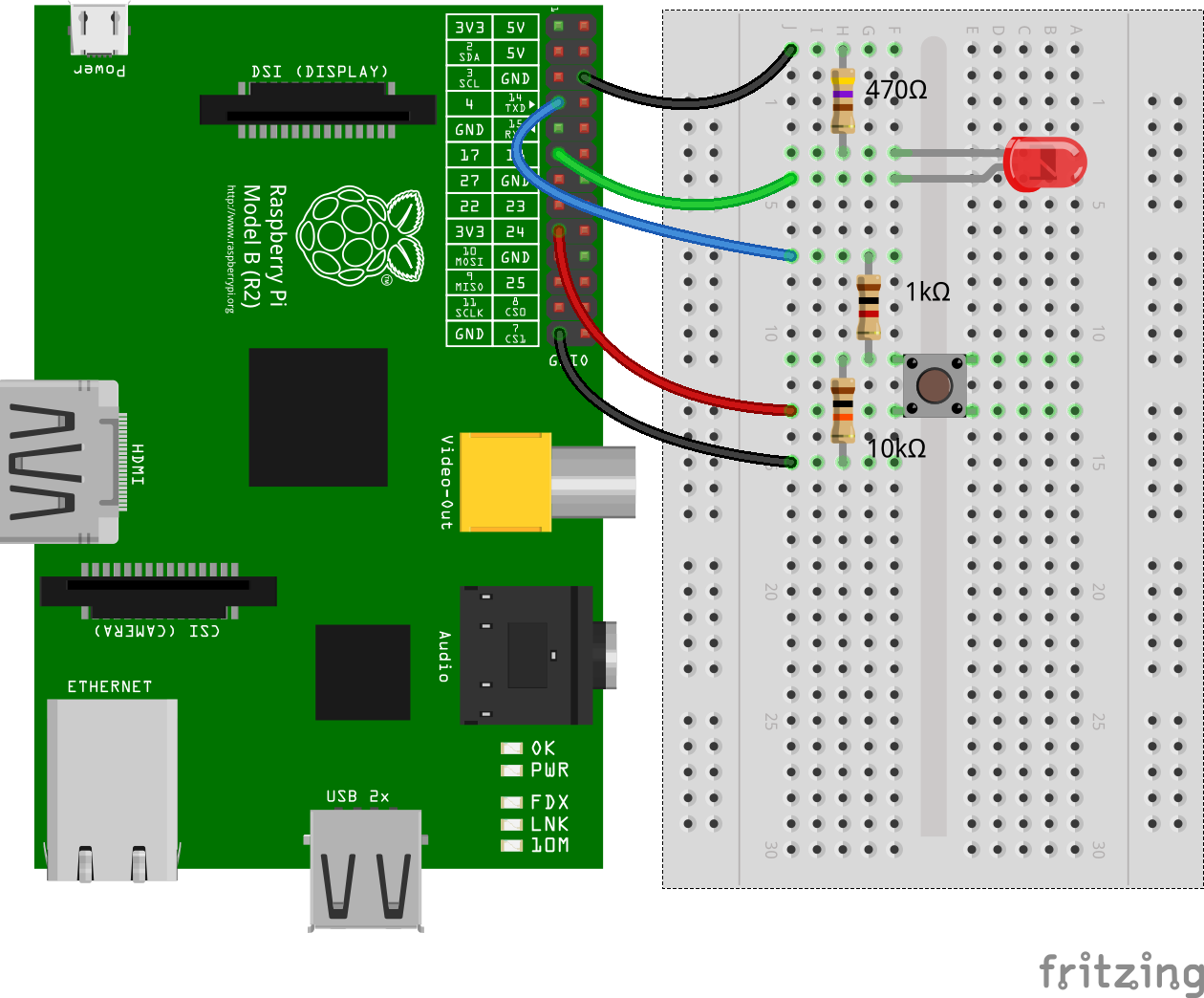

## Wiring

See the [fivdi/onoff](https://www.npmjs.com/package/onoff) Raspberry Pi GPIO Node.js package for more information.

| 29.488636 | 120 | 0.720617 | kor_Hang | 0.172414 |

a90d0519a5037409cdbe35b37e9b9992b64f8524 | 9,335 | md | Markdown | articles/event-grid/move-system-topics-across-regions.md | niklasloow/azure-docs.sv-se | 31144fcc30505db1b2b9059896e7553bf500e4dc | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/event-grid/move-system-topics-across-regions.md | niklasloow/azure-docs.sv-se | 31144fcc30505db1b2b9059896e7553bf500e4dc | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/event-grid/move-system-topics-across-regions.md | niklasloow/azure-docs.sv-se | 31144fcc30505db1b2b9059896e7553bf500e4dc | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Move Azure Event Grid system topics to another region

description: This article shows you how to move Azure Event Grid system topics from one region to another region.

ms.topic: how-to

ms.custom: subject-moving-resources

ms.date: 08/28/2020

ms.openlocfilehash: eb6029b206e7d47789371ee81e75c4e05c69ee65

ms.sourcegitcommit: 656c0c38cf550327a9ee10cc936029378bc7b5a2

ms.translationtype: MT

ms.contentlocale: sv-SE

ms.lasthandoff: 08/28/2020

ms.locfileid: "89087201"

---

# <a name="move-azure-event-grid-system-topics-to-another-region"></a>Move Azure Event Grid system topics to another region

You might want to move your resources to another region for a number of reasons. For example, to take advantage of a new Azure region, to meet internal policy and governance requirements, or in response to capacity planning requirements.

Here are the high-level steps covered in this article:

- **Export the resource group** that contains the Azure Storage account and its associated system topic to a Resource Manager template. You can also export a template only for the system topic. If you go this route, remember to move the Azure event source (in this example, an Azure Storage account) to the other region before moving the system topic. Then, in the exported template for the system topic, update the external ID for the storage account in the target region.

- **Modify the template** to add the `endpointUrl` property to point to a webhook that subscribes to the system topic. When the system topic is exported, its subscription (in this case, a webhook) is also exported to the template, but the `endpointUrl` property isn't included. So, you need to update it to point to the endpoint that subscribes to the topic. Also, update the value of the `location` property to the new location or region. For other types of event handlers, you need to update only the location.

- **Use the template to deploy resources** to the target region. You'll specify names for the storage account and the system topic to be created in the target region.

- **Verify the deployment**. Verify that the webhook is invoked when you upload a file to the blob storage in the target region.

- **Complete the move** by deleting resources (event source and system topic) from the source region.

## <a name="prerequisites"></a>Prerequisites

- Complete the [Quickstart: Route Blob storage events to web endpoint with the Azure portal](blob-event-quickstart-portal.md) in the source region. This step is **optional**. Do it to test the steps in this article. Keep the storage account in a separate resource group from the App Service and App Service plan.

- Ensure that the Event Grid service is available in the target region. See [Products available by region](https://azure.microsoft.com/global-infrastructure/services/?products=event-grid&regions=all).

## <a name="prepare"></a>Prepare

To get started, export a Resource Manager template for the resource group that contains the system event source (Azure Storage account) and its associated system topic.

1. Sign in to the [Azure portal](https://portal.azure.com).

2. Select **Resource groups** on the left menu. Then, select the resource group that contains the event source for which the system topic was created. In the following example, it's the **Azure Storage** account. The resource group contains the storage account and its associated system topic.

    :::image type="content" source="./media/move-system-topics-across-regions/resource-group-page.png" alt-text="Resource group page":::

3. On the left menu, select **Export template** under **Settings**, and then select **Download** on the toolbar.

    :::image type="content" source="./media/move-system-topics-across-regions/export-template-menu.png" alt-text="Storage account - Export template":::

4. Locate the **.zip** file that you downloaded from the portal, and unzip that file to a folder of your choice. This zip file contains the template and parameters JSON files.

5. Open **template.json** in an editor of your choice.

6. The URL for the webhook isn't exported to the template. So, do the following steps:

    1. In the template file, search for **WebHook**.

    2. In the **Properties** section, add a comma (`,`) character at the end of the last line. In this example, it's `"preferredBatchSizeInKilobytes": 64`.

    3. Add the `endpointUrl` property with the value set to your webhook URL as shown in the following example.

        ```json
        "destination": {
            "properties": {
                "maxEventsPerBatch": 1,
                "preferredBatchSizeInKilobytes": 64,
                "endpointUrl": "https://mysite.azurewebsites.net/api/updates"
            },
            "endpointType": "WebHook"
        }
        ```

    > [!NOTE]
    > For other types of event handlers, all properties are exported to the template. You only need to update the `location` property to the target region as shown in the next step.

7. Update `location` for the **storage account** resource to the target region or location. To obtain location codes, see [Azure locations](https://azure.microsoft.com/global-infrastructure/locations/). The code for a region is the region name with no spaces, for example, `West US` is equal to `westus`.

    ```json
    "type": "Microsoft.Storage/storageAccounts",
    "apiVersion": "2019-06-01",
    "name": "[parameters('storageAccounts_spegridstorage080420_name')]",
    "location": "westus",
    ```

8. Repeat the step to update `location` for the **system topic** resource in the template.

    ```json
    "type": "Microsoft.EventGrid/systemTopics",
    "apiVersion": "2020-04-01-preview",
    "name": "[parameters('systemTopics_spegridsystopic080420_name')]",
    "location": "westus",
    ```

9. **Save** the template.
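The manual template edits above could also be scripted. The following is a minimal sketch under stated assumptions: `retargetTemplate` is a hypothetical helper, and the trimmed-down `exported` object (including its resource names and the `eastus` source region) is made up for illustration and is much smaller than a real exported template. It sets `location` on every resource that has one and restores `endpointUrl` on webhook destinations.

```javascript
// Hypothetical helper: returns a copy of an exported template with resources
// moved to targetLocation and the webhook endpoint URL restored.
function retargetTemplate(template, targetLocation, webhookUrl) {
  // Deep copy so the exported template object is left untouched.
  const copy = JSON.parse(JSON.stringify(template));
  for (const resource of copy.resources ?? []) {
    // Only resources that already carry a location are updated.
    if (typeof resource.location === "string") {
      resource.location = targetLocation;
    }
    // Webhook subscriptions are exported without endpointUrl, so add it back.
    const destination = resource.properties?.destination;
    if (destination?.endpointType === "WebHook") {
      destination.properties = { ...destination.properties, endpointUrl: webhookUrl };
    }
  }
  return copy;
}

// Made-up, trimmed-down stand-in for the contents of template.json.
const exported = {
  resources: [
    { type: "Microsoft.Storage/storageAccounts", name: "examplestorage", location: "eastus" },
    { type: "Microsoft.EventGrid/systemTopics", name: "exampletopic", location: "eastus" },
    {
      type: "Microsoft.EventGrid/systemTopics/eventSubscriptions",
      name: "exampletopic/examplesub",
      properties: {
        destination: {
          properties: { maxEventsPerBatch: 1, preferredBatchSizeInKilobytes: 64 },
          endpointType: "WebHook",
        },
      },
    },
  ],
};

const webhookUrl = "https://mysite.azurewebsites.net/api/updates";
const patched = retargetTemplate(exported, "westus", webhookUrl);
console.log(JSON.stringify(patched, null, 2));
```

A real template would be read from and written back to disk, and it is worth reviewing the result by hand before deploying it.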

## <a name="recreate"></a>Recreate

Deploy the template to create a storage account and a system topic for the storage account in the target region.

1. In the Azure portal, select **Create a resource**.

2. In **Search the Marketplace**, type **template deployment**, and then press **ENTER**.

3. Select **Template deployment**.

4. Select **Create**.

5. Select **Build your own template in the editor**.

6. Select **Load file**, and then follow the instructions to load the **template.json** file that you downloaded in the last section.

7. Select **Save** to save the template.

8. On the **Custom deployment** page, follow these steps.

    1. Select an Azure **subscription**.

    2. Select an existing **resource group** in the target region or create one.

    3. For **Region**, select the target region. If you selected an existing resource group, this setting is read-only.

    4. For the **system topic name**, enter a name for the system topic that will be associated with the storage account.

    5. For the **storage account name**, enter a name for the storage account to be created in the target region.

        :::image type="content" source="./media/move-system-topics-across-regions/deploy-template.png" alt-text="Deploy Resource Manager template":::

    6. Select **Review + create** at the bottom of the page.

    7. On the **Review + create** page, review the settings, and select **Create**.

## <a name="verify"></a>Verify

1. After the deployment succeeds, select **Go to resource group**.

1. On the **Resource group** page, verify that the event source (in this example, the Azure Storage account) and the system topic are created.

1. Upload a file to a container in the Azure Blob storage, and verify that the webhook has received the event. For more information, see [Send an event to your endpoint](blob-event-quickstart-portal.md#send-an-event-to-your-endpoint).

## <a name="discard-or-clean-up"></a>Discard or clean up

To complete the move, delete the resource group that contains the storage account and its associated system topic in the source region.

If you want to start over, delete the resource group in the target region, and repeat the steps in the [Prepare](#prepare) and [Recreate](#recreate) sections of this article.

To delete a resource group (source or target) by using the Azure portal:

1. In the search window at the top of the Azure portal, type **Resource groups**, and select **Resource groups** from the search results.

2. Select the resource group to delete, and select **Delete** from the toolbar.

    :::image type="content" source="./media/move-system-topics-across-regions/delete-resource-group-button.png" alt-text="Delete resource group":::

3. On the confirmation page, enter the name of the resource group, and select **Delete**.

## <a name="next-steps"></a>Next steps

You learned how to move an Azure event source and its associated system topic from one region to another region. See the following articles for moving custom topics, domains, and partner namespaces across regions.

- [Move custom topics across regions](move-custom-topics-across-regions.md).

- [Move domains across regions](move-domains-across-regions.md).

- [Move partner namespaces across regions](move-partner-namespaces-across-regions.md).

To learn more about moving resources between regions and disaster recovery in Azure, see the following article: [Move resources to a new resource group or subscription](../azure-resource-manager/management/move-resource-group-and-subscription.md).

| 75.282258 | 523 | 0.749652 | swe_Latn | 0.999175 |

a90ddf0d9ad4e5caf369b256369b93a90982e82c | 4,260 | md | Markdown | bio.md | julbinb/julbinb.github.io | f3906955a9bd808772638d995f29d9db0630c06f | [

"MIT"

] | null | null | null | bio.md | julbinb/julbinb.github.io | f3906955a9bd808772638d995f29d9db0630c06f | [

"MIT"

] | null | null | null | bio.md | julbinb/julbinb.github.io | f3906955a9bd808772638d995f29d9db0630c06f | [

"MIT"

] | null | null | null | ---

layout: category

title: Bio

---

## Education

I received both BS and MS from the {{site.data.links.mdlinks.sfedu}}

(former Rostov State University), Rostov-on-Don, Russia.

My department, Faculty of Mathematics, Mechanics and Computer Science (MMCS),

is now called [I. I. Vorovich Institute for Mathematics, Mechanics and Computer Science]({{site.data.links.places.mmcs.link}}).

* **MS in Computer Science (2012–2014)**

Thesis: Модель концептов в императивном языке программирования (A model of concepts for an imperative programming language).

{% include link-button.html name="thesis PDF (in Russian)" link="files/thesis/belyakova-MS-2014_net-concepts.pdf" small="true" %}

{% include link-button.html name="slides PDF (in Russian)" link="files/thesis/belyakova-MS-2014_net-concepts-slides.pdf" small="true" %}

* **BS in Computer Science (2008–2012)**

Thesis: Автоматическое построение ограничений в модельном языке программирования с шаблонами функций и автовыводом типов (Automatic constraints collection in

a programming language with generic functions and type inference).

{% include link-button.html name="thesis PDF (in Russian)" link="files/thesis/belyakova-BS-2012_PollyTL.pdf" small="true" %}

{% include link-button.html name="slides PDF (in Russian)" link="files/thesis/belyakova-BS-2012_PollyTL-slides.pdf" small="true" %}

## Employment

* *Research Assistant.*

Faculty of Information Technology ([FIT]({{site.data.links.places.fitcvut.link}})),

Czech Technical University in Prague ([CVUT]({{site.data.links.places.cvut.link}})).

September 2017−July 2018.

* *Research Assistant.*

Khoury College of Computer Sciences ([Khoury]({{site.data.links.places.khoury.link}})),

Northeastern University ([NEU]({{site.data.links.places.neu.link}})).

January−July 2017.

* *Teaching Assistant, Lecturer.*

[Department of Computer Science and Computational Experiment](http://sfedu.ru/www/rsu$elements$.info?p_es_id=2001100000000),

I. I. Vorovich Institute for Mathematics, Mechanics and Computer Science ([MMCS]({{site.data.links.places.mmcs.link}})),

Southern Federal University ([SFedU]({{site.data.links.places.sfedu.link}})).

2014−2016.

* *Part Time Programmer.* Angstrem-SFedU Laboratory.

2012−2013.

## Bio

> My actual name in Russian is _Юлия_, transliterated as _Yulia_.

> I have been using _Julia_ as my professional name because

> it seems easier to pronounce, but I equally like both.

---

I was born in 1991 in the city of [Rostov-on-Don](https://en.wikipedia.org/wiki/Rostov-on-Don), Russia, where I grew up and spent most of my life so far.

In 2008, I finished high school and started a CS undergraduate program at the

{{site.data.links.mdlinks.mmcs}} ({{site.data.links.mdlinks.sfedu}}).

During my undergrad, I got involved in programming languages research

and teaching, thanks to my advisor

[Stanislav Mikhalkovich]({{site.data.links.people.ssm.link}}).

(Stanislav is also the main person behind the teaching programming language

and IDE [PascalABC.NET]({{site.data.links.websites.pascalabc}}),

which is not to be confused with the old Pascal.)

I was lucky to have studied and then worked with several great faculty

who were interested in PL. Special thanks to

[Vitaly Bragilevsky]({{site.data.links.people.vitaly.link}}),

{{site.data.links.mdlinks.artem}},

and [Stanislav Mikhalkovich]({{site.data.links.people.ssm.link}}).

In 2012–2016, I was happily teaching undergraduate CS courses at my alma mater,

{{site.data.links.mdlinks.mmcs}}.

While teaching half-time, I also entered a PhD program, although

I later started anew at [Northeastern]({{site.data.links.places.neu.link}}).

(Feel free to ask me about this.)

At the [ECOOP conference in 2016](https://2016.ecoop.org/), I met

{{site.data.links.mdlinks.janvitek}} who later became my PhD advisor.

I did a research internship with him in Boston in 2017 and then spent a year in

[Prague](https://en.wikipedia.org/wiki/Prague) as a researcher at the

[Czech Technical University]({{site.data.links.places.cvut.link}}).

In September 2018, I started a PhD in Computer Science

at {{site.data.links.mdlinks.neu}},

and I have been living in Boston since then.

---

* [CV of failures](failures)

* [Personal](personal)

| 48.409091 | 159 | 0.75 | eng_Latn | 0.864934 |

a90e086ef680c01aac6d84d9683c1010fe043382 | 207 | md | Markdown | playbooks/README.md | jtudelag/casl-ansible | a114e7baf207e9ac937c6e882b244952b13f6ef8 | [

"Apache-2.0"

] | 137 | 2016-10-26T23:01:24.000Z | 2022-03-19T19:37:49.000Z | playbooks/README.md | darthlukan/advanced-openshift-deployment-homework | 94593c51d206cc05bb65ef6360814afa94728f4e | [

"Apache-2.0"

] | 272 | 2016-10-12T19:56:42.000Z | 2020-08-27T19:52:59.000Z | playbooks/README.md | darthlukan/advanced-openshift-deployment-homework | 94593c51d206cc05bb65ef6360814afa94728f4e | [

"Apache-2.0"

] | 116 | 2016-10-12T19:30:24.000Z | 2021-07-12T12:53:06.000Z | # The CASL Ansible playbooks

## openshift-cluster-seed.yml (openshift-applier)

This playbook (and its supporting components) has been moved to a separate repo: https://github.com/redhat-cop/openshift-applier

| 34.5 | 125 | 0.792271 | eng_Latn | 0.949594 |

a90e20a3ccea4800ab576e49b3fa9fae229a00c1 | 2,922 | md | Markdown | learn-bizapps-pr/power-bi/ai-visuals-power-bi/includes/4-decomposition-tree.md | caoyongxu/learn-bizapps-pr-test | d92ce2adf403add16c2c426d6e62a56c84f08eba | [

"CC-BY-4.0",

"MIT"

] | null | null | null | learn-bizapps-pr/power-bi/ai-visuals-power-bi/includes/4-decomposition-tree.md | caoyongxu/learn-bizapps-pr-test | d92ce2adf403add16c2c426d6e62a56c84f08eba | [

"CC-BY-4.0",

"MIT"

] | null | null | null | learn-bizapps-pr/power-bi/ai-visuals-power-bi/includes/4-decomposition-tree.md | caoyongxu/learn-bizapps-pr-test | d92ce2adf403add16c2c426d6e62a56c84f08eba | [

"CC-BY-4.0",

"MIT"

] | 3 | 2022-03-31T08:41:43.000Z | 2022-03-31T09:19:05.000Z | The **Decomposition Tree** visual automatically aggregates your data and lets you drill down into your dimensions so that you can view your data across multiple dimensions. Because **Decomposition Tree** is an AI visual, you can use it for improvised exploration and conducting root cause analysis.

In this example, you've built visuals for the Supply Chain team, but the visuals do not answer all the team's questions. In particular, the team wants to be able to analyze the percentage of products that the organization has on back order, in other words, the percentage of products that are out of stock. The **Decomposition Tree** visual can help you accomplish that task.

Add the **Decomposition Tree** visual to your report by selecting the **Decomposition Tree** icon on the **Visualization** pane. Then, in the **Analyze** field well, add the measure or aggregate that you want to analyze. In the **Explain by** field well, add the dimension(s) that you want to drill down into. In this case, you want to analyze the **Sales** field by drilling down into a number of dimensions, such as **Country**, **City**, and **Product**, as illustrated in the following image.

> [!div class="mx-imgBorder"]

> [](../media/4-use-decomposition-tree-visual-ss.png#lightbox)

The visual updates according to the fields that you added and displays the analysis summary result. In this case, the value of sales is USD 13,499,680.00. You can select the plus (**+**) sign, which will present the drill-down options that you have added. You can select any of the fields in the drop-down list to drill down into the data and see how it contributed to the overall result.

At the top of the list of dimensions that you added are two additional options that are marked with lightbulb icons. These options are referred to as *AI splits*, and they'll automatically find high and low values in the data for you.

> [!div class="mx-imgBorder"]

> [](../media/4-ai-split-options-ss.png#lightbox)

AI splits work by considering all available fields and determining which one to drill into to get the highest/lowest value of the measure that is being analyzed. You can use the results of these splits to find out where you should look next in the data. The following image illustrates the result of selecting the **High value** AI split.

> [!div class="mx-imgBorder"]

> [](../media/4-apply-ai-split-decomposition-tree-ss.png#lightbox)
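The idea behind a high-value split can be sketched in a few lines. This is only an illustration of "try every field and pick the one with the highest value of the measure"; it is not Power BI's actual algorithm, and `highValueSplit`, the field names, and the sales figures are all made up for the example.

```javascript
// Hypothetical sketch: for each dimension, sum the measure per distinct value
// and remember the (dimension, value) pair with the largest total.
function highValueSplit(rows, dimensions, measure) {
  let best = null;
  for (const dimension of dimensions) {
    const totals = new Map();
    for (const row of rows) {
      const key = row[dimension];
      totals.set(key, (totals.get(key) ?? 0) + row[measure]);
    }
    for (const [value, total] of totals) {
      if (best === null || total > best.total) {
        best = { dimension, value, total };
      }
    }
  }
  return best;
}

// Made-up sample data.
const sales = [
  { Country: "USA", Product: "Bike", Sales: 500 },
  { Country: "USA", Product: "Helmet", Sales: 100 },
  { Country: "Canada", Product: "Bike", Sales: 300 },
  { Country: "Canada", Product: "Helmet", Sales: 50 },
];

const split = highValueSplit(sales, ["Country", "Product"], "Sales");
console.log(split);
```

With this sample data, drilling into `Product` gives a larger single total than drilling into `Country`, so the sketch picks `Product`. A low-value split would use the same loop with the comparison reversed.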

For more information, see [Create and view decomposition tree visuals in Power BI](https://docs.microsoft.com/power-bi/visuals/power-bi-visualization-decomposition-tree/?azure-portal=true). | 132.818182 | 496 | 0.770021 | eng_Latn | 0.998593 |

a90ee21f345ef2c140e326a16441b14172c6b07e | 73 | md | Markdown | README.md | kintoe2e/node-hello-world | f194cab3ce761189c42bcc9d9609f5ba1381f817 | [

"MIT"

] | null | null | null | README.md | kintoe2e/node-hello-world | f194cab3ce761189c42bcc9d9609f5ba1381f817 | [

"MIT"

] | null | null | null | README.md | kintoe2e/node-hello-world | f194cab3ce761189c42bcc9d9609f5ba1381f817 | [

"MIT"

] | 1 | 2019-12-16T11:02:52.000Z | 2019-12-16T11:02:52.000Z | # node-hello-world

Hello World with Node.js

## Execute

`node server.js` | 12.166667 | 24 | 0.726027 | nld_Latn | 0.205436 |

a90f559587a9126566059ddfead105c6115f401a | 2,512 | md | Markdown | play/roles/rbenv/README.md | marcusramberg/dotfiles | 07a0576eaa0a233a2125ddcb2bb04a5fe5033674 | [

"MIT"

] | 1 | 2020-10-14T00:06:54.000Z | 2020-10-14T00:06:54.000Z | play/roles/rbenv/README.md | marcusramberg/dotfiles | 07a0576eaa0a233a2125ddcb2bb04a5fe5033674 | [

"MIT"

] | null | null | null | play/roles/rbenv/README.md | marcusramberg/dotfiles | 07a0576eaa0a233a2125ddcb2bb04a5fe5033674 | [

"MIT"

] | 2 | 2015-08-06T07:45:48.000Z | 2017-01-04T17:47:16.000Z | rbenv
========

Role for installing [rbenv](https://github.com/sstephenson/rbenv).

Role ready status
------------

[](https://travis-ci.org/zzet/ansible-rbenv-role)

Requirements
------------

none

Role Variables
--------------

Default variables are:

    rbenv:
      env: system
      version: v0.4.0
      ruby_version: 2.0.0-p247

    rbenv_repo: "git://github.com/sstephenson/rbenv.git"

    rbenv_plugins:
      - { name: "rbenv-vars",
          repo: "git://github.com/sstephenson/rbenv-vars.git",
          version: "v1.2.0" }

      - { name: "ruby-build",
          repo: "git://github.com/sstephenson/ruby-build.git",
          version: "v20131225.1" }

      - { name: "rbenv-default-gems",
          repo: "git://github.com/sstephenson/rbenv-default-gems.git",
          version: "v1.0.0" }

      - { name: "rbenv-installer",
          repo: "git://github.com/fesplugas/rbenv-installer.git",
          version: "8bb9d34d01f78bd22e461038e887d6171706e1ba" }

      - { name: "rbenv-update",
          repo: "git://github.com/rkh/rbenv-update.git",
          version: "32218db487dca7084f0e1954d613927a74bc6f2d" }

      - { name: "rbenv-whatis",
          repo: "git://github.com/rkh/rbenv-whatis.git",
          version: "v1.0.0" }

      - { name: "rbenv-use",
          repo: "git://github.com/rkh/rbenv-use.git",
          version: "v1.0.0" }

    rbenv_root: "{% if rbenv.env == 'system' %}/usr/local/rbenv{% else %}~/.rbenv{% endif %}"

    rbenv_users: []

Description:

- ` rbenv.env ` - Type of rbenv installation. Allows 'system' or 'user' values

- ` rbenv.version ` - Version of rbenv to install (tag from [rbenv releases page](https://github.com/sstephenson/rbenv/releases))

- ` rbenv.ruby_version ` - Version of ruby to install as global rbenv ruby

- ` rbenv_repo ` - Repository with source code of rbenv to install

- ` rbenv_plugins ` - Array of Hashes with information about plugins to install

- ` rbenv_root ` - Install path

- ` rbenv_users ` - Array of Hashes with users for multiuser install. User must be present in the system

Example:

    rbenv_users:
      - { name: "user", home: "/home/user/", comment: "Deploy user" }

Dependencies
------------

none

License
-------

MIT

Author Information
------------------

[Andrew Kumanyaev](http://github.com/zzet)


| 27.010753 | 133 | 0.624204 | kor_Hang | 0.124502 |

a910193514e303f42dd61f310ad3a02bae497e03 | 373 | md | Markdown | JS/20. Array.find() and Array.findIndex().md | AbdallahHemdan/TIL | 0a94d4897ed5ee21e5618fe0791a606772c99701 | [

"MIT"

] | 32 | 2020-04-26T16:08:25.000Z | 2022-01-07T06:55:00.000Z | JS/20. Array.find() and Array.findIndex().md | Rowida46/TIL | 0a94d4897ed5ee21e5618fe0791a606772c99701 | [

"MIT"

] | null | null | null | JS/20. Array.find() and Array.findIndex().md | Rowida46/TIL | 0a94d4897ed5ee21e5618fe0791a606772c99701 | [

"MIT"

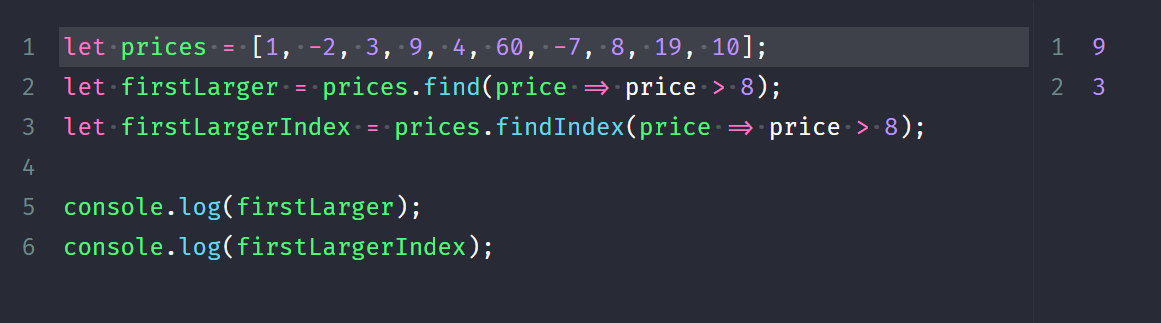

] | 8 | 2020-06-16T00:12:42.000Z | 2021-10-31T15:02:41.000Z | # Array.find() and Array.findIndex()
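As a quick illustration of the two methods named in the title, here is a minimal sketch with made-up sample data. `find` returns the first element that satisfies the callback (or `undefined`), while `findIndex` returns that element's index (or `-1`).

```javascript
// Made-up sample data for illustration.
const users = [
  { id: 1, name: "Ada" },
  { id: 2, name: "Grace" },
  { id: 3, name: "Linus" },
];

// find returns the first matching element itself.
const found = users.find((user) => user.id === 2);

// findIndex returns the position of the first matching element.
const foundIndex = users.findIndex((user) => user.id === 2);

// When nothing matches, find gives undefined and findIndex gives -1.
const missing = users.find((user) => user.id === 99);
const missingIndex = users.findIndex((user) => user.id === 99);

console.log(found, foundIndex, missing, missingIndex);
```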

| 46.625 | 110 | 0.801609 | yue_Hant | 0.13959 |

a9109262bf908921ff25223cce9684e821ae8c56 | 3,568 | md | Markdown | data/readme_files/maK-.scantastic-tool.md | DLR-SC/repository-synergy | 115e48c37e659b144b2c3b89695483fd1d6dc788 | [

"MIT"

] | 5 | 2021-05-09T12:51:32.000Z | 2021-11-04T11:02:54.000Z | data/readme_files/maK-.scantastic-tool.md | DLR-SC/repository-synergy | 115e48c37e659b144b2c3b89695483fd1d6dc788 | [

"MIT"

] | null | null | null | data/readme_files/maK-.scantastic-tool.md | DLR-SC/repository-synergy | 115e48c37e659b144b2c3b89695483fd1d6dc788 | [

"MIT"

] | 3 | 2021-05-12T12:14:05.000Z | 2021-10-06T05:19:54.000Z | # scantastic-tool

## It's bloody scantastic

If you like this and are feeling a bit(coin) generous - 1JdSGqg2zGTbpFMJPLbWoXg7Nng3z1Qp58

It works for me: http://makthepla.net/scantastichax.png

- Dependencies: (DIY - I ain't supportin shit)

- Masscan - https://github.com/robertdavidgraham/masscan

- Nmap - https://nmap.org/download.html

- ElasticSearch - http://www.elasticsearch.org/guide/en/elasticsearch/guide/current/_installing_elasticsearch.html

- Kibana - http://www.elasticsearch.org/overview/kibana/installation/

This tool can be used to store masscan or nmap data in Elasticsearch

(the scantastic plugin shown in the image is not included here).

It also performs distributed directory brute-forcing.

All your base are belong to us. I might maintain or improve this over time. MIGHT.

## Quickstart

### Example usage

Run and import a scan of home /24 network

```

./scantastic.py -s -H 192.168.1.0/24 -p 80,443 -x homescan.xml (with masscan)

./scantastic.py -ns -H 192.168.1.0/24 -p 80,443 -x homescan.xml (with nmap)

```

Export homescan to a list of urls

```

./scantastic.py -eurl -x homescan.xml > urlist (with masscan)

./scantastic.py -nurl -x homescan.xml > urlist (with nmap)

```
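What the export step does can be sketched in Python. This is not scantastic's actual code: the function name `urls_from_scan_xml`, the rule that port 443 means HTTPS, and the sample XML are all assumptions made for illustration. The sketch relies on masscan's XML output following the nmap layout (`host` / `address` / `ports` / `port` / `state`).

```python
import xml.etree.ElementTree as ET


def urls_from_scan_xml(xml_text):
    """Return one URL per open port found in nmap-style XML output."""
    urls = []
    root = ET.fromstring(xml_text)
    for host in root.iter("host"):
        address = host.find("address")
        if address is None:
            continue
        ip = address.get("addr")
        for port in host.iter("port"):
            state = port.find("state")
            # Skip ports that are not reported as open.
            if state is None or state.get("state") != "open":
                continue
            portid = port.get("portid")
            # Assumption: 443 is HTTPS, every other port is plain HTTP.
            scheme = "https" if portid == "443" else "http"
            urls.append(f"{scheme}://{ip}:{portid}/")
    return urls


# Hand-written sample in the nmap XML layout (not real scan output).
SAMPLE = """<nmaprun>
  <host><address addr="192.168.1.10" addrtype="ipv4"/>
    <ports>
      <port protocol="tcp" portid="80"><state state="open"/></port>
      <port protocol="tcp" portid="443"><state state="open"/></port>
      <port protocol="tcp" portid="8080"><state state="closed"/></port>
    </ports>
  </host>
</nmaprun>"""

if __name__ == "__main__":
    for url in urls_from_scan_xml(SAMPLE):
        print(url)
```

Printing one URL per line matches the shape of the `urlist` file that the next step reads.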

Brute-force the URL list with a wordlist and store the results in the index homescan,

using 10 threads (the default is 1 thread)

```

./scantastic.py -d -u urlist -w some_wordlist -i homescan -t 10

```
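A minimal sketch of threaded directory brute-forcing, assuming a simple model where every base URL is combined with every wordlist entry and only HTTP 200 answers are kept. The names `candidate_urls`, `http_status` and `brute_force`, the default user agent string, and the "200 only" rule are hypothetical and are not taken from scantastic itself; inserting the hits into an Elasticsearch index is left out.

```python
from concurrent.futures import ThreadPoolExecutor
import urllib.error
import urllib.request


def candidate_urls(base_urls, words):
    """Combine every base URL with every wordlist entry."""
    return [
        base.rstrip("/") + "/" + word.strip("/")
        for base in base_urls
        for word in words
    ]


def http_status(url, agent="example-agent"):
    """Hypothetical probe: return the HTTP status code, or None on failure."""
    request = urllib.request.Request(url, headers={"User-Agent": agent})
    try:
        with urllib.request.urlopen(request, timeout=5) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # The server answered, just not with a success code.
        return error.code
    except OSError:
        # Connection refused, DNS failure, timeout and similar.
        return None


def brute_force(base_urls, words, threads=1, probe=http_status):
    """Probe all candidates with a thread pool and keep those answering 200."""
    urls = candidate_urls(base_urls, words)
    with ThreadPoolExecutor(max_workers=threads) as pool:
        # pool.map preserves input order, so statuses line up with urls.
        statuses = list(pool.map(probe, urls))
    return [url for url, status in zip(urls, statuses) if status == 200]
```

Passing the probe as a parameter keeps the thread-pool logic testable without network access: a stand-in such as `lambda url: 200 if url.endswith("/admin") else 404` can replace `http_status`.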

```

root@ubuntu:~/scantastic-tool# ./scantastic.py -h

usage: scantastic.py [-h] [-v] [-d] [-s] [-noes] [-sl] [-in] [-e] [-eurl]

[-del] [-H HOST] [-p PORTS] [-x XML] [-w WORDS] [-u URLS]

[-t THREADS] [-esh ESHOST] [-esp PORT] [-i INDEX]

[-a AGENT]

optional arguments:

-h, --help show this help message and exit

-v, --version Version information

-d, --dirb Run directory brute force. Requires --urls & --words

-s, --scan Run masscan on single range. Specify --host & --ports

& --xml

-ns, --nmap Run Nmap on a single range specify -H & -p

-noes, --noelastics Run scan without elasticsearch insertion

-sl, --scanlist Run masscan on a list of ranges. Requires --host &

--ports & --xml

-nsl, --nmaplist Run Nmap on a list of ranges -H & -p & -x

-in, --noinsert Perform a scan without inserting to elasticsearch

-e, --export Export a scan XML into elasticsearch. Requires --xml

-eurl, --exporturl Export urls to scan from XML file. Requires --xml

-nurl, --exportnmap Export urls from nmap XML, requires -x

-del, --delete Specify an index to delete.

-H HOST, --host HOST Scan this host or list of hosts

-p PORTS, --ports PORTS

Specify ports in masscan format. (ie.0-1000 or

80,443...)

-x XML, --xml XML Specify an XML file to store output in

-w WORDS, --words WORDS

Wordlist to be used with --dirb

-u URLS, --urls URLS List of Urls to be used with --dirb

-t THREADS, --threads THREADS

Specify the number of threads to use.

-esh ESHOST, --eshost ESHOST

Specify the elasticsearch host

-esp PORT, --port PORT

Specify ElasticSearch port

-i INDEX, --index INDEX

Specify the ElasticSearch index

-a AGENT, --agent AGENT

Specify a User Agent for requests

```

By default, scan results are imported into Elasticsearch when a scan completes; use -noes or -in to skip the import.

| 38.782609 | 115 | 0.629204 | eng_Latn | 0.771463 |

a9118d3d75a9872a0c13a956fb12ee45f7fc6ef0 | 191 | md | Markdown | _pages/TrainingOverview.md | 18F/formservice-trainingmaterials | 96a753f1c82cbc0ec4ac97dfb9b3c464dad7e367 | [

"CC0-1.0"

] | 2 | 2021-02-03T00:33:28.000Z | 2022-02-19T08:04:57.000Z | _pages/TrainingOverview.md | 18F/formservice-trainingmaterials | 96a753f1c82cbc0ec4ac97dfb9b3c464dad7e367 | [

"CC0-1.0"

] | 36 | 2021-02-03T00:32:41.000Z | 2022-03-21T09:09:22.000Z | _pages/TrainingOverview.md | 18F/formservice-trainingmaterials | 96a753f1c82cbc0ec4ac97dfb9b3c464dad7e367 | [

"CC0-1.0"

] | null | null | null | ---

layout: training

sidenav: false

title: Training Overview

parent: contact

redirect_from:

- /documentation/training

- /training

- /docs/help/

---

Some stuff for the training overview | 15.916667 | 36 | 0.73822 | eng_Latn | 0.926056 |

a911e947eb9c950a323023d1b20148ad5726d301 | 3,467 | md | Markdown | articles/cognitive-services/Content-Moderator/Review-Tool-User-Guide/human-in-the-loop.md | changeworld/azure-docs.cs-cz | cbff9869fbcda283f69d4909754309e49c409f7d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/cognitive-services/Content-Moderator/Review-Tool-User-Guide/human-in-the-loop.md | changeworld/azure-docs.cs-cz | cbff9869fbcda283f69d4909754309e49c409f7d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | articles/cognitive-services/Content-Moderator/Review-Tool-User-Guide/human-in-the-loop.md | changeworld/azure-docs.cs-cz | cbff9869fbcda283f69d4909754309e49c409f7d | [

"CC-BY-4.0",

"MIT"

] | null | null | null | ---

title: Learn Review tool concepts - Content Moderator

titleSuffix: Azure Cognitive Services

description: Learn about the Content Moderator Review tool, a website that coordinates combined AI and human review.

services: cognitive-services

author: PatrickFarley

manager: mikemcca

ms.date: 03/15/2019

ms.service: cognitive-services

ms.subservice: content-moderator

ms.topic: conceptual

ms.author: pafarley

ms.openlocfilehash: a23e6d46ee6e79fd7a5cabf4434c561f7d83b31b

ms.sourcegitcommit: 2ec4b3d0bad7dc0071400c2a2264399e4fe34897

ms.translationtype: MT

ms.contentlocale: cs-CZ

ms.lasthandoff: 03/28/2020

ms.locfileid: "76169504"

---

# <a name="content-moderator-review-tool"></a>Content Moderator Review tool

Azure Content Moderator provides services that combine machine-learning content moderation with human review, and the [Review tool](https://contentmoderator.cognitive.microsoft.com) website is a user-friendly front end that gives detailed access to these services.

## <a name="what-it-does"></a>What it does

The [Review tool](https://contentmoderator.cognitive.microsoft.com), when used together with the machine-assisted moderation APIs, lets you carry out the following tasks in the content moderation process:

- Use one set of tools to moderate content in multiple formats (text, image, and video).

- Automate the creation of human [reviews](../review-api.md#reviews) when moderation API results come in.

- Assign or escalate content reviews to multiple review teams, organized by content category or experience level.

- Use default or custom [logic filters](../review-api.md#workflows) (workflows) to sort and track content without writing any code.

- In addition to the Content Moderator APIs, use [connectors](./configure.md#connectors) to process content with the Microsoft PhotoDNA, Text Analytics, and Face services.

- Build your own connector to create workflows for any API or business process.

- Get key performance metrics for your content moderation processes.

## <a name="review-tool-dashboard"></a>Review tool dashboard

On the **Dashboard** tab, you can see key metrics for the content reviews done in the tool. It shows the number of total, completed, and pending reviews for image, text, and video content. You can also see a breakdown of the users and teams that have completed reviews, as well as the moderation tags that have been applied.

## <a name="review-tool-credentials"></a>Review tool credentials

When you sign up with the [Review tool](https://contentmoderator.cognitive.microsoft.com), you are prompted to select an Azure region for your account. This is because the [Review tool](https://contentmoderator.cognitive.microsoft.com) generates a free trial key for Azure Content Moderator services; you need this key to access any of the services from a REST call or a client SDK. You can view your key and the API endpoint URL by selecting **Settings** > **Credentials**.

## <a name="next-steps"></a>Next steps

To learn how to access Review tool resources and change settings, see [Configure the Review tool](./configure.md). | 66.673077 | 517 | 0.80848 | ces_Latn | 0.999505 |

a9123c973dc2071c40611e46b5e1098cefb88327 | 387 | md | Markdown | README.md | d605-upjv/veille-concurrentielle | d5d3434008ba7b4c67577f29d46c35314e9378f9 | [

"MIT"

] | null | null | null | README.md | d605-upjv/veille-concurrentielle | d5d3434008ba7b4c67577f29d46c35314e9378f9 | [

"MIT"

] | null | null | null | README.md | d605-upjv/veille-concurrentielle | d5d3434008ba7b4c67577f29d46c35314e9378f9 | [

"MIT"

] | null | null | null | # veille-concurrentielle

The goal of this project is to design a software tool that collects the selling prices of a list of products across a set of shops and marketplaces. Such a tool can show, for example, whether competitors sell the products offered in one's own online shop at higher or lower prices, so that one's own prices can be adjusted accordingly.

| 129 | 361 | 0.816537 | fra_Latn | 0.995533 |

a91269153ce9082edf13252539f4e34a974440c7 | 1,848 | md | Markdown | README.md | minhkhoa92/signal_U_fiddle | eb0a4041656078db1d3f0f7f3b8643ef8fccef99 | [

"FSFAP"

] | null | null | null | README.md | minhkhoa92/signal_U_fiddle | eb0a4041656078db1d3f0f7f3b8643ef8fccef99 | [

"FSFAP"

] | null | null | null | README.md | minhkhoa92/signal_U_fiddle | eb0a4041656078db1d3f0f7f3b8643ef8fccef99 | [

"FSFAP"

] | null | null | null | ## Synopsis

The code in this repository is unfinished. It is meant to demonstrate signals between processes on UNIX-like operating systems. The program's specification is more or less complete, so no new elements are likely to be introduced.

The code uses POSIX functions and, to a much lesser extent, SysV functions.

## Code Example

This is from test\_signal.c

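```
#include <signal.h>
#include <stdio.h>
#include <stdlib.h>
#include <unistd.h>

void handler(int signo);

int main(int argc, char **argv)
{
    (void)argc;
    (void)argv;

    /* Print the parent's and our own PID so another process can signal us. */
    printf("%d\n", (int)getppid());
    printf("%d\n", (int)getpid());

    /* Install the handler for SIGUSR1; signal() returns SIG_ERR on failure. */
    if (signal(SIGUSR1, handler) == SIG_ERR)
    {
        exit(EXIT_FAILURE);
    }
    else
        pause(); /* Sleep until a signal is delivered. */

    return 0;
}

void handler(int signo)
{
    (void)signo;
    /* printf() is not async-signal-safe; tolerated in this small demo only. */
    printf("see if this reaches\n");
}
```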

The essential calls are pause() and signal(SIGUSR1, func\_name). The former suspends the process until a signal is delivered, and the latter installs the handler function func\_name for the signal number SIGUSR1.
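The same install-a-handler-then-wait pattern, together with the sending side, can be sketched in Python. This is an illustration only and not part of the repository, whose code is C; the names `received` and `send_and_wait` and the polling timeout are assumptions. The sketch has to run in the main thread on a POSIX system.

```python
import os
import signal
import time

received = []


def handler(signo, frame):
    """Record the number of the delivered signal."""
    received.append(signo)


def send_and_wait(timeout=2.0):
    """Install the handler, send SIGUSR1 to this process, wait for delivery."""
    previous = signal.signal(signal.SIGUSR1, handler)
    try:
        # Sending side: the equivalent of kill(pid, SIGUSR1) in C.
        os.kill(os.getpid(), signal.SIGUSR1)
        deadline = time.monotonic() + timeout
        # Poll until the handler has run or the timeout expires.
        while not received and time.monotonic() < deadline:
            time.sleep(0.01)
        return list(received)
    finally:
        # Restore whatever handler was installed before, if it is known.
        if previous is not None:
            signal.signal(signal.SIGUSR1, previous)


if __name__ == "__main__":
    print(send_and_wait())
```

The sketch polls with a timeout instead of calling `signal.pause()` because the process signals itself: the handler may already have run before a pause would start, and the pause would then block until some other signal arrived.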

## Motivation

I already solve my concurrency problems with semaphores and do all my messaging through shared memory, message queues, pipes and sockets, so I wanted to add something as small as signals to my repertoire.

## Installation

Some of the source files are not expected to compile.

server\_and\_monitor\_posix\_sem\_shm.c is supposed to be used like this:

usage: command <no>|reader <no>|reader <no>|reader <no>|reader [...]

## Contributors

Copied from https://www.softprayog.in/programming/interprocess-communication-using-posix-shared-memory-in-linux

Copyright © 2007-2017 SoftPrayog.in. All Rights Reserved.

Suggestion to use malloc\_stats() from www.linuxjournal.com/article/6390

And me, Luu Minh Khoa Ngo

## License