issue_owner_repo listlengths 2 2 | issue_body stringlengths 0 261k ⌀ | issue_title stringlengths 1 925 | issue_comments_url stringlengths 56 81 | issue_comments_count int64 0 2.5k | issue_created_at stringlengths 20 20 | issue_updated_at stringlengths 20 20 | issue_html_url stringlengths 37 62 | issue_github_id int64 387k 2.46B | issue_number int64 1 127k |

|---|---|---|---|---|---|---|---|---|---|

[

"MonetDB",

"MonetDB"

] | The database version we are using is 11.43.5 Jan2022 and we are getting this error in many of the tables in Monet DB

when try to select table from the DB we are getting error

sql>select *from "DM_POS_TSV_MRT_P6"."OUTLET_CSL_VISIBILITY";

GDK reported error: BATproject2: does not match always

few often we are facing these kind of issues in many of the DB's In monet with only new verison 11.43.5 jan2022 version

Some times not reproducible the same issue and at the time of loading the data we are getting this error and this is making big issue

can you please provide the solution for the above error

2022-07-18 14:18:02 ERR DB_TSV23_P2_A[40136]: #client1709: BATproject2: !ERROR: does not match always

2022-07-18 14:18:02 ERR DB_TSV23_P2_A[40136]: #client1709: createExceptionInternal: !ERROR: MALException:algebra.projection:GDK reported error: BATproject2: does not match always

| !ERROR: MALException:algebra.projection:GDK reported error: BATproject2: does not match always | https://api.github.com/repos/MonetDB/MonetDB/issues/7315/comments | 2 | 2022-07-18T20:58:44Z | 2022-07-27T15:44:30Z | https://github.com/MonetDB/MonetDB/issues/7315 | 1,308,619,862 | 7,315 |

[

"MonetDB",

"MonetDB"

] | **Is your feature request related to a problem? Please describe.**

The ODBC driver shows the password, which is pretty unsafe if someone watches your screen

**Describe the solution you'd like**

the common dot instead of the password

**Describe alternatives you've considered**

None

**Additional context**

None

| ODBC Driver : please mask/hide password | https://api.github.com/repos/MonetDB/MonetDB/issues/7314/comments | 2 | 2022-07-09T13:16:11Z | 2024-06-27T13:17:44Z | https://github.com/MonetDB/MonetDB/issues/7314 | 1,299,680,725 | 7,314 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

I cannot see the columns of a table in Alteryx. Alteryx uses an ODBC connexion to retrieve the information. On #6800 , Martin suggests that can be linked to SQLColums() function issue.

Here are the ODBC logs if that can help

[SQL.LOG](https://github.com/MonetDB/MonetDB/files/9027104/SQL.LOG)

**To Reproduce**

1/Create ODBC Connection to a monetdb database

2/Open Alteryx, use a data input box and connect to the odbc database configured in 1/

**Expected behavior**

-no error message in logs

-columns appeared in Alteryx

**Screenshots**

**Software versions**

- MonetDB version number : 11.43.9

- odbc driver : 11.43.9.01

- OS and version: Windows 10

- Installed from release package

- Alteryx 2022.1

**Issue labeling **

ODBC driver

**Additional context**

For testing, you can download a version of Alteryx, there is a trial for a few days. Or just contact me, it would be a pleasure.

| ODBC SQLColums() issue with Alteryx | https://api.github.com/repos/MonetDB/MonetDB/issues/7313/comments | 14 | 2022-07-01T08:04:01Z | 2022-08-06T09:29:26Z | https://github.com/MonetDB/MonetDB/issues/7313 | 1,291,060,714 | 7,313 |

[

"MonetDB",

"MonetDB"

] | **Is your feature request related to a problem? Please describe.**

Once you click on ok, the ODBC window closes and you have to open an other sotfware to test the configuration. If it's wrong, we have to reopen the window

**Describe the solution you'd like**

A button to test the configuration

**Describe alternatives you've considered**

None

**Additional context**

None

| Test Button for ODBC Driver | https://api.github.com/repos/MonetDB/MonetDB/issues/7312/comments | 4 | 2022-07-01T05:54:23Z | 2024-08-06T11:18:09Z | https://github.com/MonetDB/MonetDB/issues/7312 | 1,290,944,362 | 7,312 |

[

"MonetDB",

"MonetDB"

] | null | Missing `REGEXP_REPLACE` function. | https://api.github.com/repos/MonetDB/MonetDB/issues/7311/comments | 5 | 2022-06-27T03:55:56Z | 2024-06-27T13:17:42Z | https://github.com/MonetDB/MonetDB/issues/7311 | 1,285,195,290 | 7,311 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

```

ubuntu@ip-172-31-2-193:~$ cat $HOME/.monetdb

name=monetdb

password=monetdb

ubuntu@ip-172-31-2-193:~$ mclient -d voc

.monetdb:1: unknown property: name

user(ubuntu):

```

https://www.monetdb.org/documentation-Jan2022/user-guide/get-started/login-to-monetdb/ | "Getting started" is wrong | https://api.github.com/repos/MonetDB/MonetDB/issues/7310/comments | 1 | 2022-06-27T02:38:50Z | 2022-06-27T09:38:40Z | https://github.com/MonetDB/MonetDB/issues/7310 | 1,285,151,941 | 7,310 |

[

"MonetDB",

"MonetDB"

] | ERROR: type should be string, got "https://www.monetdb.org/easy-setup/ubuntu-debian/\r\n\r\nThe step\r\n```\r\nmonetdb release mydb\r\n```\r\n\r\nprints:\r\n\r\n```\r\nmonetdbd: unknown command: release\r\n```\r\n\r\nFull log:\r\n\r\n```\r\nubuntu@ip-172-31-9-151:~$ echo \"deb https://dev.monetdb.org/downloads/deb/ $(lsb_release -cs) monetdb\" >> /etc/apt/sources.list.d/monetdb.list\r\n-bash: /etc/apt/sources.list.d/monetdb.list: Permission denied\r\nubuntu@ip-172-31-9-151:~$ echo \"deb https://dev.monetdb.org/downloads/deb/ $(lsb_release -cs) monetdb\" | sudo tee /etc/apt/sources.list.d/monetdb.list\r\ndeb https://dev.monetdb.org/downloads/deb/ jammy monetdb\r\nubuntu@ip-172-31-9-151:~$ sudo wget --output-document=/etc/apt/trusted.gpg.d/monetdb.gpg https://www.monetdb.org/downloads/MonetDB-GPG-KEY.gpg\r\n--2022-06-26 02:04:16-- https://www.monetdb.org/downloads/MonetDB-GPG-KEY.gpg\r\nResolving www.monetdb.org (www.monetdb.org)... 192.16.197.137\r\nConnecting to www.monetdb.org (www.monetdb.org)|192.16.197.137|:443... connected.\r\nHTTP request sent, awaiting response... 200 OK\r\nLength: 2032 (2.0K) [application/pgp-signature]\r\nSaving to: ‘/etc/apt/trusted.gpg.d/monetdb.gpg’\r\n\r\n/etc/apt/trusted.gpg.d/monetdb.gpg 100%[==========================================================================================>] 1.98K --.-KB/s in 0s \r\n\r\n2022-06-26 02:04:16 (3.32 GB/s) - ‘/etc/apt/trusted.gpg.d/monetdb.gpg’ saved [2032/2032]\r\n\r\nubuntu@ip-172-31-9-151:~$ sudo apt-get update\r\nHit:1 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy InRelease\r\nGet:2 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates InRelease [109 kB]\r\nGet:3 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-backports InRelease [99.8 kB]\r\nGet:4 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy/universe amd64 Packages [14.1 MB]\r\nGet:5 http://security.ubuntu.com/ubuntu jammy-security InRelease [110 kB] \r\nGet:7 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy/universe Translation-en [5652 kB] \r\nGet:6 https://www.monetdb.org/downloads/deb jammy InRelease [61.7 kB] \r\nGet:8 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy/universe amd64 c-n-f Metadata [286 kB]\r\nGet:9 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy/multiverse amd64 Packages [217 kB]\r\nGet:10 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy/multiverse Translation-en [112 kB]\r\nGet:11 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy/multiverse amd64 c-n-f Metadata [8372 B] \r\nGet:12 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/main amd64 Packages [323 kB] \r\nGet:13 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/main Translation-en [78.1 kB] \r\nGet:14 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/main amd64 c-n-f Metadata [5552 B] \r\nGet:15 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/restricted amd64 Packages [194 kB] \r\nGet:16 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/restricted Translation-en [29.5 kB]\r\nGet:17 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/universe amd64 Packages [131 kB] \r\nGet:18 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/universe Translation-en [46.6 kB]\r\nGet:19 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/universe amd64 c-n-f Metadata [2680 B] \r\nGet:20 https://www.monetdb.org/downloads/deb jammy/monetdb amd64 Packages [25.1 kB] \r\nGet:21 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/multiverse amd64 Packages [4192 B] \r\nGet:22 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/multiverse Translation-en [1016 B]\r\nGet:23 http://security.ubuntu.com/ubuntu jammy-security/main amd64 Packages [191 kB] \r\nGet:24 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-updates/multiverse amd64 c-n-f Metadata [232 B]\r\nGet:25 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-backports/main amd64 c-n-f Metadata [112 B]\r\nGet:26 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-backports/restricted amd64 c-n-f Metadata [116 B]\r\nGet:27 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-backports/universe amd64 Packages [4844 B]\r\nGet:28 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-backports/universe Translation-en [7932 B]\r\nGet:29 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-backports/universe amd64 c-n-f Metadata [236 B]\r\nGet:30 http://eu-central-1.ec2.archive.ubuntu.com/ubuntu jammy-backports/multiverse amd64 c-n-f Metadata [116 B]\r\nGet:31 http://security.ubuntu.com/ubuntu jammy-security/main Translation-en [45.9 kB] \r\nGet:32 http://security.ubuntu.com/ubuntu jammy-security/main amd64 c-n-f Metadata [3108 B]\r\nGet:33 http://security.ubuntu.com/ubuntu jammy-security/restricted amd64 Packages [167 kB]\r\nGet:34 http://security.ubuntu.com/ubuntu jammy-security/restricted Translation-en [25.3 kB]\r\nGet:35 http://security.ubuntu.com/ubuntu jammy-security/universe amd64 Packages [78.1 kB]\r\nGet:36 http://security.ubuntu.com/ubuntu jammy-security/universe Translation-en [27.7 kB]\r\nGet:37 http://security.ubuntu.com/ubuntu jammy-security/universe amd64 c-n-f Metadata [1668 B]\r\nGet:38 http://security.ubuntu.com/ubuntu jammy-security/multiverse amd64 Packages [4192 B]\r\nGet:39 http://security.ubuntu.com/ubuntu jammy-security/multiverse Translation-en [900 B]\r\nGet:40 http://security.ubuntu.com/ubuntu jammy-security/multiverse amd64 c-n-f Metadata [228 B]\r\nFetched 22.1 MB in 2s (9461 kB/s) \r\nReading package lists... Done\r\nubuntu@ip-172-31-9-151:~$ sudo apt-get install monetdb5-sql monetdb-client\r\nReading package lists... Done\r\nBuilding dependency tree... Done\r\nReading state information... Done\r\nThe following additional packages will be installed:\r\n libmonetdb-client25 libmonetdb-stream25 libmonetdb25 monetdb5-server\r\nThe following NEW packages will be installed:\r\n libmonetdb-client25 libmonetdb-stream25 libmonetdb25 monetdb-client monetdb5-server monetdb5-sql\r\n0 upgraded, 6 newly installed, 0 to remove and 11 not upgraded.\r\nNeed to get 4237 kB of archives.\r\nAfter this operation, 15.4 MB of additional disk space will be used.\r\nDo you want to continue? [Y/n] \r\nGet:1 https://www.monetdb.org/downloads/deb jammy/monetdb amd64 libmonetdb-stream25 amd64 11.43.15 [116 kB]\r\nGet:2 https://www.monetdb.org/downloads/deb jammy/monetdb amd64 libmonetdb-client25 amd64 11.43.15 [134 kB]\r\nGet:3 https://www.monetdb.org/downloads/deb jammy/monetdb amd64 libmonetdb25 amd64 11.43.15 [1779 kB]\r\nGet:4 https://www.monetdb.org/downloads/deb jammy/monetdb amd64 monetdb-client amd64 11.43.15 [178 kB]\r\nGet:5 https://www.monetdb.org/downloads/deb jammy/monetdb amd64 monetdb5-server amd64 11.43.15 [1821 kB]\r\nGet:6 https://www.monetdb.org/downloads/deb jammy/monetdb amd64 monetdb5-sql amd64 11.43.15 [209 kB]\r\nFetched 4237 kB in 0s (11.9 MB/s) \r\nSelecting previously unselected package libmonetdb-stream25.\r\n(Reading database ... 63612 files and directories currently installed.)\r\nPreparing to unpack .../0-libmonetdb-stream25_11.43.15_amd64.deb ...\r\nUnpacking libmonetdb-stream25 (11.43.15) ...\r\nSelecting previously unselected package libmonetdb-client25.\r\nPreparing to unpack .../1-libmonetdb-client25_11.43.15_amd64.deb ...\r\nUnpacking libmonetdb-client25 (11.43.15) ...\r\nSelecting previously unselected package libmonetdb25.\r\nPreparing to unpack .../2-libmonetdb25_11.43.15_amd64.deb ...\r\nUnpacking libmonetdb25 (11.43.15) ...\r\nSelecting previously unselected package monetdb-client.\r\nPreparing to unpack .../3-monetdb-client_11.43.15_amd64.deb ...\r\nUnpacking monetdb-client (11.43.15) ...\r\nSelecting previously unselected package monetdb5-server.\r\nPreparing to unpack .../4-monetdb5-server_11.43.15_amd64.deb ...\r\nUnpacking monetdb5-server (11.43.15) ...\r\nSelecting previously unselected package monetdb5-sql.\r\nPreparing to unpack .../5-monetdb5-sql_11.43.15_amd64.deb ...\r\nUnpacking monetdb5-sql (11.43.15) ...\r\nSetting up libmonetdb-stream25 (11.43.15) ...\r\nSetting up libmonetdb25 (11.43.15) ...\r\nSetting up libmonetdb-client25 (11.43.15) ...\r\nSetting up monetdb5-server (11.43.15) ...\r\nAdding group `monetdb' (GID 121) ...\r\nDone.\r\nWarning: The home dir /var/lib/monetdb you specified already exists.\r\nAdding system user `monetdb' (UID 114) ...\r\nAdding new user `monetdb' (UID 114) with group `monetdb' ...\r\nThe home directory `/var/lib/monetdb' already exists. Not copying from `/etc/skel'.\r\nadduser: Warning: The home directory `/var/lib/monetdb' does not belong to the user you are currently creating.\r\nSetting up monetdb-client (11.43.15) ...\r\nSetting up monetdb5-sql (11.43.15) ...\r\nProcessing triggers for man-db (2.10.2-1) ...\r\nProcessing triggers for libc-bin (2.35-0ubuntu3) ...\r\nScanning processes... \r\nScanning linux images... \r\n\r\nRunning kernel seems to be up-to-date.\r\n\r\nNo services need to be restarted.\r\n\r\nNo containers need to be restarted.\r\n\r\nNo user sessions are running outdated binaries.\r\n\r\nNo VM guests are running outdated hypervisor (qemu) binaries on this host.\r\nubuntu@ip-172-31-9-151:~$ sudo monetdbd create /var/lib/monetdb\r\nubuntu@ip-172-31-9-151:~$ sudo monetdbd start /var/lib/monetdb\r\nubuntu@ip-172-31-9-151:~$ sudo monetdbd create test\r\nubuntu@ip-172-31-9-151:~$ sudo monetdbd start test\r\nmonetdbd: binding to stream socket port 50000 failed: Address already in use\r\nubuntu@ip-172-31-9-151:~$ sudo monetdbd release test\r\nmonetdbd: unknown command: release\r\nusage: monetdbd command [ command-options ] <dbfarm>\r\n where command is one of:\r\n create, start, stop, get, set, version or help\r\n use the help command to get help for a particular command\r\n The dbfarm to operate on must always be given to\r\n monetdbd explicitly.\r\n```" | Easy setup from the documentation does not work | https://api.github.com/repos/MonetDB/MonetDB/issues/7309/comments | 6 | 2022-06-26T02:09:36Z | 2022-07-13T17:59:19Z | https://github.com/MonetDB/MonetDB/issues/7309 | 1,284,773,265 | 7,309 |

[

"MonetDB",

"MonetDB"

] | The following consecutive gdb statements cause a segmentation fault to occur in `bat_storage.c` on `Jan2022` when built in debug mode.

You can run it as a script by copy pasting the content of the commands into in a file `reproduction-steps.gdb` and then execute

```

sed '/^$/d' reproduction-steps.gdb | gdb mserver5

````

Above commands removes the empty lines from the file and executes the statements in gdb interactively. Empty lines execute the previous commands in interactive mode which can be problematic for the reproduction so therefore they are removed.

Side note: we cannot unfortunately execute the steps as a gdb command file, e.g. `gdb -command reproduction-steps.gdb mserver5` because the `interrrupt` command simply does not work as expected when called from a command file and the combination of break and call as I am using below is considered an error in gdb scripted mode.

```

set pagination off

set non-stop on

shell rm -rf /tmp/devdb

break SQLprelude

run --dbpath=/tmp/devdb

finish

del

# create client 0

p MCinitClient((oid) 0, NULL, NULL)

set $c0 = $0

call $c0->usermodule = userModule()

#Set up the initial state of the database:

# The database consists of a single column table

# This columns consist of the 3 values in the same fysical order as SPECIFIED in VALUES expression

print SQLstatementIntern($c0, "CREATE TABLE foo(i) AS VALUES (10), (20), (30);", "foobar", 1, 0, NULL)

p (MT_Id*) GDKmalloc_internal(sizeof(MT_Id), false)

set $tid = $0

#create client 1 that executes in its own thread

call MT_create_thread($tid, profilerHeartbeat, NULL, MT_THR_DETACHED, "heartbeat")

set $t1 = *$tid

p MCinitClient((oid) 0, NULL, NULL)

set $c1 = $0

call $c1->usermodule = userModule()

thread $t1

interrupt

# client 1 wants to append a value at the end of foo

break log_storage

call SQLstatementIntern($c1, "INSERT INTO foo VALUES (40);", "foobar", 1, 0, NULL)

del

# breaks when client 1 is about to read the segment structure of foo.

#create client 2 that executes in its own thread

call MT_create_thread($tid, profilerHeartbeat, NULL, MT_THR_DETACHED, "heartbeat")

set $t2 = *$tid

p MCinitClient((oid) 0, NULL, NULL)

set $c2 = $0

call $c2->usermodule = userModule()

thread $t2

interrupt

#client 2 concurrently to client 1 wants to delete the original middle value from foo

break split_segment

call SQLstatementIntern($c2, "DELETE FROM foo WHERE i = 20;", "foobar", 1, 0, NULL)

del

# breaks when client 2 is about to modify some pointer in the structure of the segments of foo

watch o->next

continue

del

# we mimic a pointer which is in the process of being written to be set to invalid address 1 (I know: its UD)

p o->next = (segment*) 1

# now back to client 1 which continue executing log_storage

# which has to read the segments of foo which is currently being modified

# and. ...

thread $t1

finish

# poof.

``` | Race condition in MVCC transaction management | https://api.github.com/repos/MonetDB/MonetDB/issues/7308/comments | 2 | 2022-06-23T11:15:17Z | 2024-06-27T13:17:41Z | https://github.com/MonetDB/MonetDB/issues/7308 | 1,282,237,117 | 7,308 |

[

"MonetDB",

"MonetDB"

] |

By default, we used to get the timings after any SQL execution from mclient. In MonetDB-11.43.5, we no longer see that. Do we need to add a parameter or is it being removed from the default?

OLD Version -

---------------------

sql>COPY select * from "tstsql_1" INTO '/nztoexa/di_export/DATA_TO_EXPORT/tstsql_2.dat' using delimiters '|','\n','"' NULL AS '';

10 affected rows (6.822ms) ---->>>> missing in new version mclient

New Version -

----------------------

sql>COPY select * from "tstsql_1" INTO '/nztoexa/di_export/DATA_TO_EXPORT/tstsql_1.dat' using delimiters '|','\n','"' NULL AS '';

sql>

| SQL output time not being displayed by default | https://api.github.com/repos/MonetDB/MonetDB/issues/7307/comments | 1 | 2022-06-20T11:50:37Z | 2022-07-06T07:52:57Z | https://github.com/MonetDB/MonetDB/issues/7307 | 1,276,808,658 | 7,307 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

ODBC Driver assertion failed

**To Reproduce**

command to start odbc client:

```sh

apt install unixodbc

# config MonetDB in $HOME/.odbc.ini

...

isql monetdb -v

```

Input the following statements:

```sql

SELECT avg(42) over (order by row_number() over ());

SELECT 1;

```

It will end up with an assertion failure:

```

+---------------------------------------+

| Connected! |

| |

| sql-statement |

| help [tablename] |

| quit |

| |

+---------------------------------------+

SQL> SELECT avg(42) over (order by row_number() over ())

[37000][MonetDB][ODBC Driver 11.44.0]unexpected end of file

[ISQL]ERROR: Could not SQLPrepare

SQL> SELECT 1

isql: /root/MonetDB/clients/odbc/driver/ODBCStmt.c:194: destroyODBCStmt: Assertion `stmt->Dbc->FirstStmt' failed.

fish: “isql monetdb -v” terminated by signal SIGABRT (Abort)

```

**Expected behavior**

```

+---------------------------------------+

| Connected! |

| |

| sql-statement |

| help [tablename] |

| quit |

| |

+---------------------------------------+

SQL> SELECT avg(42) over (order by row_number() over ())

[37000][MonetDB][ODBC Driver 11.44.0]unexpected end of file

[ISQL]ERROR: Could not SQLPrepare

SQL> SELECT 1

+-----+

| %2 |

+-----+

| 1 |

+-----+

SQLRowCount returns 1

1 rows fetched

```

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Software versions**

- ODBC Driver version number: MonetDB ODBC Driver 11.44.0

- OS and version: Ubuntu 20.04, UnixODBC 2.3.6

- - MonetDB Server version number (I think that the server version doesn't matter): MonetDB Database Server v11.43.13 (hg id: ce33b6b12cd6)

**Issue labeling **

Make liberal use of the labels to characterise the issue topics. e.g. identify severity, version, etc..

| ODBC Driver Assertion `stmt->Dbc->FirstStmt' Failed | https://api.github.com/repos/MonetDB/MonetDB/issues/7306/comments | 8 | 2022-06-19T01:18:36Z | 2024-06-27T13:17:40Z | https://github.com/MonetDB/MonetDB/issues/7306 | 1,275,924,048 | 7,306 |

[

"MonetDB",

"MonetDB"

] | When executing my python UDF in MonetDB on the 8-core machine, no matter using PYTHON or PYTHON_MAP, monetDB only use 1 or at most 2 cores at 100% and is super slow. The integers is just a dummy table for starting app(). The code is as follows:

DROP TABLE integers;

CREATE TABLE integers(i INTEGER);

INSERT INTO integers VALUES (1);

DROP FUNCTION app;

CREATE FUNCTION app() RETURNS STRING LANGUAGE PYTHON_MAP

{

//bootstrap my application which will keep running in the background

return 'OK'

};

SELECT app() from integers;

is there a way to parallelize app() to use all cores?

Thanks! | can we use all the cores for parallel execution of a stand-alone python UDF | https://api.github.com/repos/MonetDB/MonetDB/issues/7305/comments | 1 | 2022-06-16T13:47:59Z | 2022-08-06T09:55:59Z | https://github.com/MonetDB/MonetDB/issues/7305 | 1,273,591,755 | 7,305 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Concurrent creation of remote tables does not return a concurrency conflict error, it runs, but leads to errors.

**To Reproduce**

1) Open 3 databases (db1, db2, db3) with 2 local tables to 2 of them (db1, db2)

2) Concurrently create 2 remote tables to the third database (db3).

The create queries will run, but when selecting a remote table, it connects to the wrong database and fails to find it.

It seems a concurrency issue, however no error is returned during the create remote table queries.

**Software versions**

- MonetDB 11.44.0

- Ubuntu 22.04

| Concurrent creation of remote tables. | https://api.github.com/repos/MonetDB/MonetDB/issues/7304/comments | 6 | 2022-06-15T13:42:33Z | 2024-06-07T12:43:33Z | https://github.com/MonetDB/MonetDB/issues/7304 | 1,272,259,005 | 7,304 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

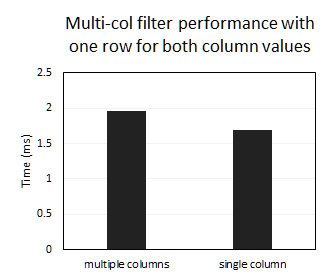

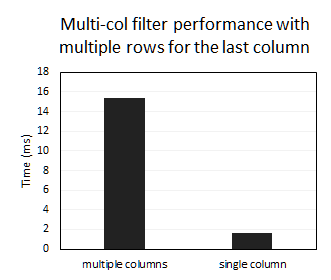

The performance of multi-column filters is considerably slower than if we were to filter by a single column encoding those multiple values using, for example, a string. I am not sure if MonetDB implements multi-column indexes to solve this.

**To Reproduce**

- Create test data where each row has both unique k1 and k2:

```sql

CREATE TABLE Test (k1 int, k2 int, v int, k1k2 varchar(22));

INSERT INTO Test

SELECT value AS k1, value AS k2, value AS v,

value || '.' || value AS k1k2 -- concatenated k1 and k2

FROM generate_series(1, 10000000);

-- not sure if this index is actually created

CREATE INDEX Test_index on Test (k1, k2);

SELECT *

FROM Test

LIMIT 3;

+------+------+------+------+

| k1 | k2 | v | k1k2 |

+======+======+======+======+

| 1 | 1 | 1 | 1.1 |

| 2 | 2 | 2 | 2.2 |

| 3 | 3 | 3 | 3.3 |

+------+------+------+------+

-- as we can see, both k1 and k2 are unique across all rows

```

- The performance of filtering using multiple columns and the single encoded column are similar:

```sql

-- multiple columns

SELECT *

FROM Test

WHERE k1 = 5555555 AND k2 = 5555555;

-- single column

SELECT *

FROM Test

WHERE k1k2 = '5555555.5555555';

```

- Create test data with the second example where the last column in the filter is not unique:

```sql

DROP TABLE Test;

CREATE TABLE Test (k1 int, k2 int, v int, k1k2 varchar(22));

INSERT INTO Test

SELECT value AS k1, 1 AS k2, value AS v, -- k2 is 1 for all rows

value || '.' || 1 AS k1k2 -- concatenated k1 and k2

FROM generate_series(1, 10000000);

-- not sure if this index is actually created

CREATE INDEX Test_index ON Test (k1, k2);

SELECT *

FROM Test

LIMIT 3;

+------+------+------+------+

| k1 | k2 | v | k1k2 |

+======+======+======+======+

| 1 | 1 | 1 | 1.1 |

| 2 | 1 | 2 | 2.1 |

| 3 | 1 | 3 | 3.1 |

+------+------+------+------+

```

- The performance of filtering by multiple columns is considerably slower than filtering by the single column:

```sql

-- multiple columns

SELECT *

FROM Test

WHERE k1 = 5555555 AND k2 = 1;

-- single column

SELECT *

FROM Test

WHERE k1k2 = '5555555.5';

```

If we instead filter just by `k1`, the performance becomes similar to the first example, which points to the planner executing both filters separately and then combining the results.

**Expected behavior**

The performance of filtering by multiple columns to match the performance of filtering by a single column encoding all values.

**Software versions**

- MonetDB v11.44.0 (hg id: b8bb2db896) (master branch, latest commit)

- Ubuntu 20.04 LTS

- Self-installed and compiled

**Additional context**

MAL plans for both queries in the second example:

```sql

-- multiple columns

+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| mal |

+================================================================================================================================================================================+

| function user.main():void; |

| X_1:void := querylog.define("explain select * \nfrom test\nwhere k1 = 5555555 and k2 = 1;":str, "default_pipe":str, 40:int); |

| barrier X_126:bit := language.dataflow(); |

| X_4:int := sql.mvc(); |

| X_8:bat[:int] := sql.bind(X_4:int, "sys":str, "test":str, "k1":str, 0:int); |

| X_15:bat[:int] := sql.bind(X_4:int, "sys":str, "test":str, "k2":str, 0:int); |

| X_20:bat[:int] := sql.bind(X_4:int, "sys":str, "test":str, "v":str, 0:int); |

| X_27:bat[:str] := sql.bind(X_4:int, "sys":str, "test":str, "k1k2":str, 0:int); |

| X_34:bat[:lng] := sql.bind_idxbat(X_4:int, "sys":str, "test":str, "test_index":str, 0:int); |

| X_43:lng := mkey.hash(5555555:int); |

| X_46:lng := mkey.rotate_xor_hash(X_43:lng, 22:int, 1:int); |

| C_47:bat[:oid] := algebra.select(X_34:bat[:lng], X_46:lng, X_46:lng, true:bit, true:bit, false:bit); |

| C_52:bat[:oid] := algebra.thetaselect(X_8:bat[:int], C_47:bat[:oid], 5555555:int, "==":str); |

| C_55:bat[:oid] := algebra.thetaselect(X_15:bat[:int], C_52:bat[:oid], 1:int, "==":str); |

| X_56:bat[:int] := algebra.projection(C_55:bat[:oid], X_8:bat[:int]); |

| X_128:void := language.pass(X_8:bat[:int]); |

| X_57:bat[:int] := algebra.projection(C_55:bat[:oid], X_15:bat[:int]); |

| X_129:void := language.pass(X_15:bat[:int]); |

| X_58:bat[:int] := algebra.projection(C_55:bat[:oid], X_20:bat[:int]); |

| X_59:bat[:str] := algebra.projection(C_55:bat[:oid], X_27:bat[:str]); |

| X_130:void := language.pass(C_55:bat[:oid]); |

| X_62:bat[:str] := bat.pack("sys.test":str, "sys.test":str, "sys.test":str, "sys.test":str); |

| X_63:bat[:str] := bat.pack("k1":str, "k2":str, "v":str, "k1k2":str); |

| X_64:bat[:str] := bat.pack("int":str, "int":str, "int":str, "varchar":str); |

| X_65:bat[:int] := bat.pack(32:int, 32:int, 32:int, 22:int); |

| X_66:bat[:int] := bat.pack(0:int, 0:int, 0:int, 0:int); |

| exit X_126:bit; |

| X_61:int := sql.resultSet(X_62:bat[:str], X_63:bat[:str], X_64:bat[:str], X_65:bat[:int], X_66:bat[:int], X_56:bat[:int], X_57:bat[:int], X_58:bat[:int], X_59:bat[:str]); |

| end user.main; |

| # optimizer.inline(0:int, 1:lng) |

| # optimizer.remap(0:int, 1:lng) |

| # optimizer.costModel(1:int, 1:lng) |

| # optimizer.coercions(0:int, 2:lng) |

| # optimizer.aliases(5:int, 4:lng) |

| # optimizer.evaluate(0:int, 4:lng) |

| # optimizer.emptybind(5:int, 5:lng) |

| # optimizer.deadcode(7:int, 4:lng) |

| # optimizer.pushselect(0:int, 7:lng) |

| # optimizer.aliases(5:int, 3:lng) |

| # optimizer.for(0:int, 2:lng) |

| # optimizer.dict(0:int, 2:lng) |

| # optimizer.mitosis() |

| # optimizer.mergetable(0:int, 5:lng) |

| # optimizer.bincopyfrom(0:int, 0:lng) |

| # optimizer.aliases(0:int, 0:lng) |

| # optimizer.constants(2:int, 4:lng) |

| # optimizer.commonTerms(0:int, 3:lng) |

| # optimizer.projectionpath(0:int, 2:lng) |

| # optimizer.deadcode(0:int, 2:lng) |

| # optimizer.matpack(0:int, 1:lng) |

| # optimizer.reorder(1:int, 6:lng) |

| # optimizer.dataflow(1:int, 9:lng) |

| # optimizer.querylog(0:int, 1:lng) |

| # optimizer.multiplex(0:int, 1:lng) |

| # optimizer.generator(0:int, 1:lng) |

| # optimizer.candidates(1:int, 1:lng) |

| # optimizer.deadcode(0:int, 3:lng) |

| # optimizer.postfix(0:int, 2:lng) |

| # optimizer.wlc(0:int, 0:lng) |

| # optimizer.garbageCollector(1:int, 6:lng) |

| # optimizer.profiler(0:int, 1:lng) |

| # optimizer.total(31:int, 119:lng) |

+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

-- single column

+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| mal |

+================================================================================================================================================================================+

| function user.main():void; |

| X_1:void := querylog.define("explain select *\nfrom test\nwhere k1k2 = \\'5555555.5\\';":str, "default_pipe":str, 28:int); |

| barrier X_105:bit := language.dataflow(); |

| X_4:int := sql.mvc(); |

| C_5:bat[:oid] := sql.tid(X_4:int, "sys":str, "test":str); |

| X_8:bat[:int] := sql.bind(X_4:int, "sys":str, "test":str, "k1":str, 0:int); |

| X_15:bat[:int] := sql.bind(X_4:int, "sys":str, "test":str, "k2":str, 0:int); |

| X_20:bat[:int] := sql.bind(X_4:int, "sys":str, "test":str, "v":str, 0:int); |

| X_27:bat[:str] := sql.bind(X_4:int, "sys":str, "test":str, "k1k2":str, 0:int); |

| C_36:bat[:oid] := algebra.thetaselect(X_27:bat[:str], C_5:bat[:oid], "5555555.5":str, "==":str); |

| X_38:bat[:int] := algebra.projection(C_36:bat[:oid], X_8:bat[:int]); |

| X_39:bat[:int] := algebra.projection(C_36:bat[:oid], X_15:bat[:int]); |

| X_40:bat[:int] := algebra.projection(C_36:bat[:oid], X_20:bat[:int]); |

| X_41:bat[:str] := algebra.projection(C_36:bat[:oid], X_27:bat[:str]); |

| X_107:void := language.pass(C_36:bat[:oid]); |

| X_108:void := language.pass(X_27:bat[:str]); |

| X_43:bat[:str] := bat.pack("sys.test":str, "sys.test":str, "sys.test":str, "sys.test":str); |

| X_44:bat[:str] := bat.pack("k1":str, "k2":str, "v":str, "k1k2":str); |

| X_45:bat[:str] := bat.pack("int":str, "int":str, "int":str, "varchar":str); |

| X_46:bat[:int] := bat.pack(32:int, 32:int, 32:int, 22:int); |

| X_47:bat[:int] := bat.pack(0:int, 0:int, 0:int, 0:int); |

| exit X_105:bit; |

| X_42:int := sql.resultSet(X_43:bat[:str], X_44:bat[:str], X_45:bat[:str], X_46:bat[:int], X_47:bat[:int], X_38:bat[:int], X_39:bat[:int], X_40:bat[:int], X_41:bat[:str]); |

| end user.main; |

| # optimizer.inline(0:int, 1:lng) |

| # optimizer.remap(0:int, 1:lng) |

| # optimizer.costModel(1:int, 1:lng) |

| # optimizer.coercions(0:int, 3:lng) |

| # optimizer.aliases(1:int, 4:lng) |

| # optimizer.evaluate(0:int, 3:lng) |

| # optimizer.emptybind(4:int, 6:lng) |

| # optimizer.deadcode(4:int, 4:lng) |

| # optimizer.pushselect(0:int, 7:lng) |

| # optimizer.aliases(4:int, 2:lng) |

| # optimizer.for(0:int, 2:lng) |

| # optimizer.dict(0:int, 2:lng) |

| # optimizer.mitosis() |

| # optimizer.mergetable(0:int, 5:lng) |

| # optimizer.bincopyfrom(0:int, 0:lng) |

| # optimizer.aliases(0:int, 1:lng) |

| # optimizer.constants(0:int, 3:lng) |

| # optimizer.commonTerms(0:int, 3:lng) |

| # optimizer.projectionpath(0:int, 2:lng) |

| # optimizer.deadcode(0:int, 2:lng) |

| # optimizer.matpack(0:int, 0:lng) |

| # optimizer.reorder(1:int, 6:lng) |

| # optimizer.dataflow(1:int, 9:lng) |

| # optimizer.querylog(0:int, 1:lng) |

| # optimizer.multiplex(0:int, 1:lng) |

| # optimizer.generator(0:int, 1:lng) |

| # optimizer.candidates(1:int, 1:lng) |

| # optimizer.deadcode(0:int, 2:lng) |

| # optimizer.postfix(0:int, 3:lng) |

| # optimizer.wlc(0:int, 1:lng) |

| # optimizer.garbageCollector(1:int, 5:lng) |

| # optimizer.profiler(0:int, 0:lng) |

| # optimizer.total(31:int, 114:lng) |

+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

```

| Improve the performance of multi-column filters | https://api.github.com/repos/MonetDB/MonetDB/issues/7303/comments | 3 | 2022-06-13T14:38:20Z | 2024-06-27T13:17:39Z | https://github.com/MonetDB/MonetDB/issues/7303 | 1,269,545,250 | 7,303 |

[

"MonetDB",

"MonetDB"

] | I am looking to use MonetDB for a time series data by partition the data using a given time interval, for example, by day. The aim in this case is that each partition would contain data of a particular day.

From the [documentation](https://www.monetdb.org/documentation-Jan2022/user-guide/sql-catalog/table-data-partitioning/), I can see that MonetDB provides partitioning as a feature, by I couldn't know how to implement it, I have tried for example: `PARTITION BY DAY`, such is implemented by other systems, by that didn't work.

How could data be partitioned using a fixed time period interval in MonetDB?

Thanks. | MonetDB : Partitioning Data by Time Intervals | https://api.github.com/repos/MonetDB/MonetDB/issues/7302/comments | 1 | 2022-06-12T13:10:30Z | 2022-06-13T10:24:52Z | https://github.com/MonetDB/MonetDB/issues/7302 | 1,268,577,041 | 7,302 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

The query planner sometimes cannot optimize the push-down of filters to the source tables, meaning the subqueries are unnecessarily materialized before the filter is applied, resulting in long execution times and high memory usage. In practice, this means filtering complex views becomes less viable.

**To Reproduce**

- Create test data:

```sql

CREATE TABLE Test (k int, v int);

INSERT INTO Test

SELECT value AS k, value AS v

FROM generate_series(1, 100000000);

```

- The planner is able to optimize the push-down for simple queries. In the plan below, the filter `k = 1231231` is applied to the source tables before the `UNION ALL` is performed:

```sql

SELECT k, v

FROM (

(SELECT k, v

FROM Test)

UNION ALL

(SELECT k, v

FROM Test)

) t

WHERE k = 1231231;

-- clk: 1.574 ms

+------------------------------------------------------------------------------------------+

| rel |

+==========================================================================================+

| union ( |

| | project ( |

| | | select ( |

| | | | table("sys"."test") [ "test"."k" ..., "test"."v" NOT NULL UNIQUE ] COUNT 99999999 |

| | | ) [ ("test"."k" ...) = (int(21) "1231231") ] COUNT 99999999 -- filter k = 1231231 |

| | ) [ "test"."k" ... as "t"."k", "test"."v" NOT NULL UNIQUE as "t"."v" ] COUNT 99999999, |

| | project ( |

| | | select ( |

| | | | table("sys"."test") [ "test"."k" ..., "test"."v" NOT NULL UNIQUE ] COUNT 99999999 |

| | | ) [ ("test"."k" ...) = (int(21) "1231231") ] COUNT 99999999 -- filter k = 1231231 |

| | ) [ "test"."k" ... as "t"."k", "test"."v" NOT NULL UNIQUE as "t"."v" ] COUNT 99999999 |

| ) [ "t"."k" NOT NULL, "t"."v" NOT NULL ] COUNT 199999998 |

...

+------------------------------------------------------------------------------------------+

```

- However, if we add more complexity to the subquery, the planner does not push down the filters, even though it would result in an equivalent plan:

```sql

SELECT k, v

FROM (

SELECT *, rank() OVER (PARTITION BY k ORDER BY v DESC) AS rank

FROM (

(SELECT k, v

FROM Test)

UNION ALL

(SELECT k, v

FROM Test)

) t1

) t2

WHERE rank = 1

AND k = 1231231;

-- clk: 1:15 min

+-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| rel |

+===============================================================================================================================================================================+

| project ( |

| | select ( |

| | | project ( |

| | | | project ( |

| | | | | union ( |

| | | | | | project ( |

| | | | | | | table("sys"."test") [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000, "test"."v" NOT NULL UNIQUE ] COUNT 99999999 |

| | | | | | ) [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000 as "%1"."k", "test"."v" NOT NULL UNIQUE as "%1"."v" ] COUNT 99999999, |

| | | | | | project ( |

| | | | | | | table("sys"."test") [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000, "test"."v" NOT NULL UNIQUE ] COUNT 99999999 |

| | | | | | ) [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000 as "%2"."k", "test"."v" NOT NULL UNIQUE as "%2"."v" ] COUNT 99999999 |

| | | | | ) [ "%1"."k" NOT NULL as "t1"."k", "%1"."v" NOT NULL as "t1"."v" ] COUNT 199999998 |

| | | | ) [ "t1"."k" NOT NULL, "t1"."v" NOT NULL ] [ "t1"."k" ASC NOT NULL, "t1"."v" NULLS LAST NOT NULL ] COUNT 199999998 |

| | | ) [ "t1"."k" NOT NULL, "t1"."v" NOT NULL, "sys"."rank"("sys"."star"(), "sys"."diff"("t1"."k" NOT NULL), "sys"."diff"("t1"."v" NOT NULL)) as "t2"."rank" ] COUNT 199999998 |

| | ) [ ("t2"."rank") = (int(32) "1"), ("t1"."k" NOT NULL) = (int(21) "1231231") ] COUNT 199999998 -- the k = 1231231 filter is only executed after the materization |

| ) [ "t1"."k" NOT NULL as "t2"."k", "t1"."v" NOT NULL as "t2"."v" ] COUNT 199999998 |

| split_select 0 actions 1 usec |

| push_project_down 3 actions 3 usec |

| merge_projects 0 actions 2 usec |

| push_project_up 2 actions 4 usec |

| split_project 0 actions 1 usec |

| simplify_math 0 actions 1 usec |

| optimize_exps 0 actions 2 usec |

| optimize_select_and_joins_bottomup 0 actions 3 usec |

| project_reduce_casts 0 actions 0 usec |

| optimize_unions_bottomup 0 actions 1 usec |

| optimize_projections 0 actions 2 usec |

| optimize_select_and_joins_topdown 0 actions 2 usec |

| optimize_unions_topdown 0 actions 1 usec |

| dce 0 actions 4 usec |

| push_func_and_select_down 0 actions 1 usec |

| get_statistics 0 actions 102 usec |

| final_optimization_loop 0 actions 2 usec |

+-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

33 tuples

```

- If we push the filter manually, the query executes more than 30000x faster:

```sql

-- manual filter push-down

SELECT k, v

FROM (

SELECT *, rank() OVER (PARTITION BY k ORDER BY v DESC) AS rank

FROM (

(SELECT k, v

FROM Test

WHERE k = 1231231)

UNION ALL

(SELECT k, v

FROM Test

WHERE k = 1231231)

) t1

) t2

WHERE rank = 1;

-- clk: 2.357 ms

+-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| rel |

+===============================================================================================================================================================================+

| project ( |

| | select ( |

| | | project ( |

| | | | project ( |

| | | | | union ( |

| | | | | | project ( |

| | | | | | | select ( |

| | | | | | | | table("sys"."test") [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000, "test"."v" NOT NULL UNIQUE ] COUNT 99999999 |

| | | | | | | ) [ ("test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000) = (int(21) "1231231") ] COUNT 99999999 |

| | | | | | ) [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000 as "%1"."k", "test"."v" NOT NULL UNIQUE as "%1"."v" ] COUNT 99999999, |

| | | | | | project ( |

| | | | | | | select ( |

| | | | | | | | table("sys"."test") [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000, "test"."v" NOT NULL UNIQUE ] COUNT 99999999 |

| | | | | | | ) [ ("test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000) = (int(21) "1231231") ] COUNT 99999999 |

| | | | | | ) [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000 as "%2"."k", "test"."v" NOT NULL UNIQUE as "%2"."v" ] COUNT 99999999 |

| | | | | ) [ "%1"."k" NOT NULL as "t1"."k", "%1"."v" NOT NULL as "t1"."v" ] COUNT 199999998 |

| | | | ) [ "t1"."k" NOT NULL, "t1"."v" NOT NULL ] [ "t1"."k" ASC NOT NULL, "t1"."v" NULLS LAST NOT NULL ] COUNT 199999998 |

| | | ) [ "t1"."k" NOT NULL, "t1"."v" NOT NULL, "sys"."rank"("sys"."star"(), "sys"."diff"("t1"."k" NOT NULL), "sys"."diff"("t1"."v" NOT NULL)) as "t2"."rank" ] COUNT 199999998 |

| | ) [ ("t2"."rank") = (int(32) "1") ] COUNT 199999998 |

| ) [ "t1"."k" NOT NULL as "t2"."k", "t1"."v" NOT NULL as "t2"."v" ] COUNT 199999998 |

| split_select 0 actions 1 usec |

| push_project_down 3 actions 3 usec |

| merge_projects 0 actions 1 usec |

| push_project_up 2 actions 4 usec |

| split_project 0 actions 1 usec |

| simplify_math 0 actions 1 usec |

| optimize_exps 0 actions 2 usec |

| optimize_select_and_joins_bottomup 0 actions 3 usec |

| project_reduce_casts 0 actions 0 usec |

| optimize_unions_bottomup 0 actions 2 usec |

| optimize_projections 0 actions 1 usec |

| optimize_select_and_joins_topdown 0 actions 2 usec |

| optimize_unions_topdown 0 actions 1 usec |

| dce 0 actions 6 usec |

| push_func_and_select_down 0 actions 0 usec |

| get_statistics 0 actions 78 usec |

| final_optimization_loop 0 actions 1 usec |

+-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

37 tuples

```

- PostgreSQL, on the other hand, is able to optimize the query:

```sql

EXPLAIN ANALYZE

SELECT k, v

FROM (

SELECT *, rank() OVER (PARTITION BY k ORDER BY v DESC) AS rank

FROM (

(SELECT k, v

FROM Test)

UNION ALL

(SELECT k, v

FROM Test)

) t1

) t2

WHERE rank = 1

AND k = 1231231

QUERY PLAN

------------------------------------------------------------------------------------------------------------------------------------------------

Subquery Scan on t2 (cost=...) (actual time=0.031..0.034 rows=2 loops=1)

Filter: (t2.rank = 1)

-> WindowAgg (cost=...) (actual time=0.030..0.032 rows=2 loops=1)

-> Sort (cost=...) (actual time=0.024..0.024 rows=2 loops=1)

Sort Key: test.v DESC

Sort Method: quicksort Memory: 25kB

-> Append (cost=...) (actual time=0.016..0.020 rows=2 loops=1)

-> Index Scan using test_k_idx on test (cost=...) (actual time=0.016..0.016 rows=1 loops=1)

Index Cond: (k = 1231231)

-> Index Scan using test_k_idx on test test_1 (cost=...) (actual time=0.002..0.002 rows=1 loops=1)

Index Cond: (k = 1231231)

Planning Time: 0.104 ms

Execution Time: 0.055 ms

(13 rows)

```

- Another example:

```sql

SELECT k, v

FROM Test

WHERE (k, v) IN (

-- the planner could push the filter to this query aswell, but it doesn't

SELECT k, max(v)

FROM Test

GROUP BY k

)

AND k = 1231231;

-- clk: 2.544 sec

+---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| rel |

+===============================================================================================================================================================================================================+

| project ( |

| | semijoin ( |

| | | select ( |

| | | | table("sys"."test") [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000, "test"."v" NOT NULL UNIQUE ] COUNT 99999999 |

| | | ) [ ("test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000) = (int(21) "1231231") ] COUNT 99999999, |

| | | project ( |

| | | | group by ( |

| | | | | table("sys"."test") [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000, "test"."v" NOT NULL UNIQUE ] COUNT 99999999 |

| | | | ) [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000 ] [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000, "sys"."max" no nil ("test"."v" NOT NULL UNIQUE) NOT NULL as "%2"."%2" ] COUNT 99999999 |

| | | ) [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000 as "%7"."%7", "%2"."%2" NOT NULL as "%10"."%10" ] COUNT 99999999 |

| | ) [ ("test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000) any = ("%7"."%7" NOT NULL UNIQUE NUNIQUES 99999999.000000), ("test"."v" NOT NULL UNIQUE) any = ("%10"."%10" NOT NULL) ] COUNT 99999999 |

| ) [ "test"."k" NOT NULL UNIQUE NUNIQUES 99999999.000000, "test"."v" NOT NULL UNIQUE ] COUNT 99999999 |

| split_select 0 actions 1 usec |

| push_project_down 0 actions 0 usec |

| merge_projects 0 actions 1 usec |

| push_project_up 0 actions 0 usec |

| split_project 0 actions 1 usec |

| remove_redundant_join 0 actions 0 usec |

| simplify_math 0 actions 1 usec |

| optimize_exps 0 actions 1 usec |

| optimize_select_and_joins_bottomup 0 actions 2 usec |

| project_reduce_casts 0 actions 0 usec |

| optimize_projections 0 actions 3 usec |

| optimize_joins 0 actions 1 usec |

| optimize_semi_and_anti 0 actions 0 usec |

| optimize_select_and_joins_topdown 0 actions 6 usec |

| dce 0 actions 4 usec |

| push_func_and_select_down 0 actions 1 usec |

| get_statistics 0 actions 32 usec |

| final_optimization_loop 0 actions 0 usec |

+---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

30 tuples

-- PostgreSQL is also able to optimize this one

QUERY PLAN

------------------------------------------------------------------------------------------------------------------------------------

Nested Loop Semi Join (...) (actual time=0.047..0.048 rows=1 loops=1)

Join Filter: (test.v = (max(test_1.v)))

-> Index Scan using test_k_idx on test (...) (actual time=0.031..0.031 rows=1 loops=1)

Index Cond: (k = 1231231)

-> GroupAggregate (...) (actual time=0.013..0.013 rows=1 loops=1)

Group Key: test_1.k

-> Index Scan using test_k_idx on test test_1 (...) (actual time=0.004..0.006 rows=1 loops=1)

Index Cond: (k = 1231231)

Planning Time: 3.834 ms

Execution Time: 0.094 ms

(10 rows)

```

**Expected behavior**

The planner being able to push-down the filter to the source tables if it results in an equivalent plan.

**Software versions**

- MonetDB 5 server v11.44.0 (hg id: 7e070d188d) (master branch; most recent commit)

- Ubuntu 20.04 LTS

- Self-installed and compiled | Query planner unable to optimize filter push-down | https://api.github.com/repos/MonetDB/MonetDB/issues/7301/comments | 5 | 2022-06-09T18:09:45Z | 2024-06-27T13:17:37Z | https://github.com/MonetDB/MonetDB/issues/7301 | 1,266,488,093 | 7,301 |

[

"MonetDB",

"MonetDB"

] | **Is your feature request related to a problem? Please describe.**

MonetDB does not yet support all standard SQL DATE and TIMESTAMP functions.

We miss SQL functions:

- DAYNAME(date or timestamp) returns VARCHAR

- MONTHNAME(date or timestamp) returns VARCHAR

- TIMESTAMPADD(unit, interval, timestamp) returns TIMESTAMP

- TIMESTAMPDIFF(unit, timestamp, timestamp) returns INTERVAL

See also: https://www.monetdb.org/hg/MonetDB/file/tip/clients/odbc/driver/SQLGetInfo.c#l1048

**Describe the solution you'd like**

Implement these 4 scalar date-time functions to be more SQL compliant.

**Describe alternatives you've considered**

Building your own UDFs

Instead of DAYNAME() we support: dayofweek(dt_or_ts) function which returns a day of week number between 1 and 7. With a case statement the respective day name (in English) could be returned by the UDF.

Instead of MONTHNAME() we support: "month"(dt_or_ts) function which returns a month number between 1 and 12. With a case statement the respective month name (in English) could be returned by the UDF.

Instead of TIMESTAMPADD() we support: several sys.sql_add(dt_or_ts, interval) functions.

Instead of TIMESTAMPDIFF() we support: several sys.sql_sub(dt_or_ts, dt_or_ts) functions.

See: https://www.monetdb.org/documentation-Jan2022/user-guide/sql-functions/date-time-functions/

**Additional context**

See also:

https://www.w3schools.com/sql/func_mysql_dayname.asp

https://www.ibm.com/docs/en/db2/11.5?topic=functions-dayname

https://www.w3schools.com/sql/func_mysql_monthname.asp

https://www.ibm.com/docs/en/db2/11.5?topic=functions-monthname

https://www.w3resource.com/mysql/date-and-time-functions/mysql-timestampadd-function.php

https://www.w3resource.com/mysql/date-and-time-functions/mysql-timestampdiff-function.php

https://www.ibm.com/docs/en/db2/11.5?topic=functions-timestampdiff

| Implement missing standard SQL DATE and TIMESTAMP functions | https://api.github.com/repos/MonetDB/MonetDB/issues/7300/comments | 1 | 2022-06-09T17:25:19Z | 2024-06-27T13:17:36Z | https://github.com/MonetDB/MonetDB/issues/7300 | 1,266,443,087 | 7,300 |

[

"MonetDB",

"MonetDB"

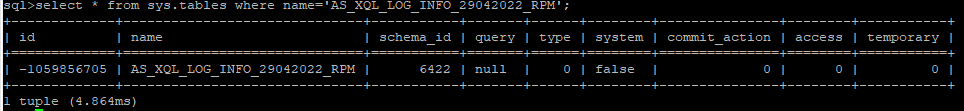

] | **Describe the bug**

sql>select * from sys.tables where name='AS_XQL_LOG_INFO_29042022_RPM';

+-------------+------------------------------+-----------+-------+------+--------+---------------+--------+-----------+

| id | name | schema_id | query | type | system | commit_action | access | temporary |

+=============+==============================+===========+=======+======+========+===============+========+===========+

| -1059856705 | AS_XQL_LOG_INFO_29042022_RPM | 6422 | null | 0 | false | 0 | 0 | 0 |

+-------------+------------------------------+-----------+-------+------+--------+---------------+--------+-----------+

**To Reproduce**

We don't have a specific way to reproduce it, yet if we check the sys.tables the ID shows a negative number.

**Expected behavior**

A clear and concise description of what you expected to happen.

**Screenshots**

**Software versions**

- MonetDB-11.43.13 - Even in older version

- CentOS 7

- Compiled

**Issue labeling **

Make liberal use of the labels to characterise the issue topics. e.g. identify severity, version, etc..

**Additional context**

Add any other context about the problem here.

| table corrupt and shows negative id, how can we remove them? | https://api.github.com/repos/MonetDB/MonetDB/issues/7299/comments | 5 | 2022-06-03T04:22:26Z | 2024-08-09T16:12:03Z | https://github.com/MonetDB/MonetDB/issues/7299 | 1,259,394,328 | 7,299 |

[

"MonetDB",

"MonetDB"

] | Assume that we have file `bogus.sql` with the following content:

```sql

select foo.

```

executing this script with the following command

```bash

mclient bogus.sql

```

causes `mclient` to silently exit without any result or error output and `mserver5` becomes non-responsive.

Happens on Jan2022.

| Irresponsive database server after reading incomplete SQL script. | https://api.github.com/repos/MonetDB/MonetDB/issues/7298/comments | 0 | 2022-06-02T08:02:28Z | 2024-06-27T13:17:35Z | https://github.com/MonetDB/MonetDB/issues/7298 | 1,257,836,492 | 7,298 |

[

"MonetDB",

"MonetDB"

] | Let say we have monthly data whose records are chronologically determined by a string field that represents year/month combination like: '1995-03'. As a partial date it would be nice to parse such values as complete `DATE` values by filling in a reasonable default for the missing date fields. However on MonetDB Januari 2022 we get the following behavior:

```

$ mclient -s "select str_to_date('1995-04', '%Y-%m');"

+------------+

| %2 |

+============+

| 1995-04-30 |

+------------+

1 tuple

$ mclient -s "select str_to_date('1995-02', '%Y-%m');"

bad date '1995-02'

$ mclient -s "select str_to_date('1995-01', '%Y-%m');"

+------------+

| %2 |

+============+

| 1995-01-30 |

+------------+

1 tuple

```

And I have seen cases where all of the above queries fail or that the day field value is different then `30`.

On Postgres all of the equivalent examples work:

```

$ psql -c "select to_date('1995-04', 'YYYY-MM');"

to_date

------------

1995-04-01

(1 row)

$ psql -c "select to_date('1995-02', 'YYYY-MM');"

to_date

------------

1995-02-01

(1 row)

$ psql -c "select to_date('1995-01', 'YYYY-MM');"

to_date

------------

1995-01-01

(1 row)

``` | Parsing partial dates behaves unpredictable | https://api.github.com/repos/MonetDB/MonetDB/issues/7297/comments | 5 | 2022-05-30T09:32:09Z | 2024-06-27T13:17:34Z | https://github.com/MonetDB/MonetDB/issues/7297 | 1,252,459,745 | 7,297 |

[

"MonetDB",

"MonetDB"

] | While playing around with [Apache Superset](https://superset.apache.org/) on top of MonetDB, I have noticed that Superset expects its database back-ends to be able to _**implicitly**_ cast any string that is formatted according to a valid standardized timestamp as a `DATE`.

However MonetDB does not seem to support this. The following script runs fine in postgresql, but gives errors on monetdb Januari 2022.

```sql

START TRANSACTION;

CREATE TABLE foo (d DATE);

INSERT INTO FOO VALUES (DATE '2022-05-23 00:00:00.000000'), (DATE '2022-05-30 00:00:00.000000'); -- works in MonetDB

INSERT INTO FOO VALUES ('2022-05-23'), ('2022-05-30'); -- works in MonetDB

INSERT INTO FOO VALUES ('2022-05-23 00:00:00.000000'), ('2022-05-30 00:00:00.000000'); -- breaks in MonetDB

ROLLBACK;

``` | Implictly cast a timestamp string to DATE when appropriate | https://api.github.com/repos/MonetDB/MonetDB/issues/7296/comments | 3 | 2022-05-30T08:59:38Z | 2024-06-27T13:17:33Z | https://github.com/MonetDB/MonetDB/issues/7296 | 1,252,417,714 | 7,296 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

With FIPS enabled, mclient throws error:

rmd_dgst.c(73): OpenSSL internal error, assertation failed: Digest RIPEMD160 forbidden in FIPS mode!

Aborted (core dumped)

**Software versions**

- MonetDB 11.33.3 (Apr2019)

- CentOS 7

- self-installed and compiled

- Libraries:

openssl: OpenSSl 1.0.2k-fips 26 Jan 2017

| mclient aborted w/ FIPS | https://api.github.com/repos/MonetDB/MonetDB/issues/7295/comments | 2 | 2022-05-29T13:07:33Z | 2022-05-31T09:06:49Z | https://github.com/MonetDB/MonetDB/issues/7295 | 1,251,884,651 | 7,295 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Queries using an ambiguous identifier do not fail accordingly. The ambiguity is beween an actual column and an alias.

**To Reproduce**

This query:

```

create table s(a int, w int);

create table t(x int, y int);

SELECT t.x as a, s.w as b

FROM s, t

GROUP by a, b;

```

fails with:

```

SELECT: cannot use non GROUP BY column 't.x' in query results without an aggregate function

```

The intention was to group on `t.x` (aliased as `a`)

At first sight, `t.x` seems grouped. The problem is that its alias clashes with `s.a`. So I think MonetDB is silently grouping on `s.a`, hence the complaint that `t.x` is not grouped. Indeed, the query works by replacing the group-by clause with `GROUP by t.x, b`.

This second query also "works" silently, while it should fail:

```

create table s(a int, w int);

create table t(x int, y int);

SELECT t.x as a, s.w as b

FROM s, t

where a = 0;

```

Again, `a` is totally ambiguous, but MonetDB chooses one instance silently (we can't even guess which one in this case).

**Expected behavior**

Both queries should fail with an "ambiguous identifier" error

**Software versions**

- MonetDB 11.43.14

- OS and version: Rocky Linux 8.5

- self-installed and compiled

| Ambiguous identifier not detected | https://api.github.com/repos/MonetDB/MonetDB/issues/7294/comments | 7 | 2022-05-25T12:18:13Z | 2024-06-07T11:43:52Z | https://github.com/MonetDB/MonetDB/issues/7294 | 1,248,011,719 | 7,294 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Running multiple queries from different clients involving Numpy UDFs hangs (it seems that creates too many servers)

**To Reproduce**

Define the following function

CREATE OR REPLACE FUNCTION bug_test(total_population INTEGER)

RETURNS INTEGER

LANGUAGE PYTHON

{

sum_ = 0

for p in total_population:

sum_ += p

return sum_

};

and run the query `select bug_test(col1) from atable;` with a table containing 10K integer rows, from multiple clients in parallel (we tested with 12 and 24)

**Expected behavior**

All the queries return the result

**Software versions**

- MonetDB version 11.44.0

- OS and version: [e.g. Ubuntu 20.04]

- Self-installed and compiled

| Parallel execution of Numpy UDFs hangs | https://api.github.com/repos/MonetDB/MonetDB/issues/7293/comments | 3 | 2022-05-20T18:04:32Z | 2023-09-13T14:45:38Z | https://github.com/MonetDB/MonetDB/issues/7293 | 1,243,467,614 | 7,293 |

[

"MonetDB",

"MonetDB"

] | Currently in MonetDBe, SQL statements prepended with `EXPLAIN,` `PLAN` and other MonetDB specific keywords are not supported. Even though we can fall back to the embedded mapi server to run such statements, it would be clean and nice to have these statements working in MonetDBe out of the box since they are part of our SQL dialect.

| Make EXPLAIN, PLAN, etc. work in MonetDBe | https://api.github.com/repos/MonetDB/MonetDB/issues/7292/comments | 0 | 2022-05-16T15:13:37Z | 2024-06-27T13:17:32Z | https://github.com/MonetDB/MonetDB/issues/7292 | 1,237,311,320 | 7,292 |

[

"MonetDB",

"MonetDB"

] | I am loading a sizeable csv file (300GB) into a tables using COPY INTO statement. After a long waiting time, I am getting an `"unexpected end of file"` exception and the table if empty after that. Heres's my query:

`COPY d1 FROM '/home/d1_data/d1.csv'

`

And my csv data file:

```

2019-02-01T00:00:10,st0,0.839071,0.179288,0.585304,0.679371,0.492911,0.056175,0.498442,0.938126,0.668068,0.929086,0.081897,0.843644,0.974037,0.159324,0.142218,0.140207,0.625254,0.425917,0.771387,0.096174,0.120735,0.725770,0.139911,0.310633,0.382543,0.896953,0.445951,0.119868,0.424562,0.181185,0.379519,0.105958,0.845021,0.533097,0.723558,0.944910,0.036968,0.112205,0.799767,0.728473,0.968308,0.111421,0.905472,0.980631,0.865119,0.293025,0.973192,0.408123,0.272021,0.125133,0.763793,0.819480,0.600016,0.178615,0.777532,0.081147,0.652687,0.458067,0.767267,0.711449,0.957630,0.115871,0.569370,0.517578,0.093003,0.682874,0.679829,0.485540,0.926170,0.080369,0.570393,0.484541,0.568747,0.626574,0.117149,0.715187,0.655418,0.276893,0.841691,0.173985,0.805234,0.241210,0.858166,0.021120,0.224665,0.238334,0.864353,0.103404,0.868038,0.992483,0.624129,0.755107,0.620674,0.763600,0.199850,0.396798,0.612075,0.515486,0.961466,0.434988

2019-02-01T00:00:20,st0,0.322934,0.755268,0.061692,0.212437,0.231739,0.826009,0.402892,0.546866,0.748315,0.428897,0.634761,0.384299,0.192479,0.391302,0.920955,0.526497,0.150713,0.338057,0.933859,0.137499,0.875741,0.228530,0.297205,0.266878,0.288009,0.060985,0.882594,0.490286,0.870628,0.317989,0.476885,0.132587,0.459073,0.457800,0.380606,0.978631,0.687570,0.353860,0.224363,0.931935,0.272906,0.443753,0.908269,0.173270,0.567581,0.705271,0.659782,0.530196,0.615158,0.107020,0.337759,0.287402,0.113100,0.750601,0.380647,0.338062,0.470644,0.560054,0.916784,0.102615,0.653475,0.234832,0.241591,0.092253,0.984721,0.061122,0.418502,0.268967,0.170532,0.623880,0.505132,0.659034,0.752930,0.888594,0.871888,0.676820,0.938585,0.050625,0.063221,0.559219,0.451311,0.844238,0.915815,0.935894,0.918915,0.271461,0.099396,0.661230,0.405390,0.608056,0.919490,0.483303,0.240281,0.329818,0.181569,0.511471,0.432861,0.463347,0.560382,0.855283

2019-02-01T00:00:30,st0,0.692054,0.538778,0.764992,0.656943,0.006166,0.610429,0.479586,0.639454,0.107885,0.338176,0.535457,0.871265,0.291767,0.955159,0.271295,0.421824,0.772407,0.531340,0.419594,0.776071,0.452270,0.281994,0.479907,0.745093,0.627713,0.774344,0.699013,0.587567,0.878019,0.153955,0.986209,0.704153,0.783832,0.704486,0.200587,0.630304,0.235955,0.429266,0.752330,0.484207,0.394956,0.518921,0.688756,0.720469,0.056679,0.160093,0.502845,0.915870,0.359901,0.744948,0.005774,0.194809,0.180417,0.100580,0.428749,0.621978,0.782535,0.834345,0.960411,0.703126,0.681373,0.894144,0.943699,0.037323,0.294162,0.047351,0.940178,0.396505,0.243780,0.410479,0.257793,0.581372,0.235662,0.441054,0.536284,0.588570,0.946028,0.466676,0.124679,0.803133,0.713820,0.810444,0.810953,0.259700,0.450738,0.995637,0.339662,0.132606,0.189827,0.208749,0.430025,0.843661,0.706039,0.650623,0.797073,0.719763,0.055521,0.852340,0.396091,0.429506

```

Any idea on why is this exception happening? | MonetDB COPY INTO table "unexpected end of file" | https://api.github.com/repos/MonetDB/MonetDB/issues/7291/comments | 3 | 2022-05-12T15:32:07Z | 2022-05-16T12:31:58Z | https://github.com/MonetDB/MonetDB/issues/7291 | 1,234,162,804 | 7,291 |

[

"MonetDB",

"MonetDB"

] | Monet backup validation - we need to know if there is any backup utility inbuilt in monet as we are taking DB level backup using rsync and we have no option to validate if the backup is successful or not.

And also please update us to take backup and validate the backup.

**Software versions**

- MonetDB version number - Jan 2022 monetdb 11.43.5

| Monet Backup validation | https://api.github.com/repos/MonetDB/MonetDB/issues/7290/comments | 1 | 2022-05-03T19:51:18Z | 2022-07-06T08:22:05Z | https://github.com/MonetDB/MonetDB/issues/7290 | 1,224,550,703 | 7,290 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Python UDFs don't accept scalar date parameters

**To Reproduce**

This function expects a `date` parameter and simply returns the python type of the parameter passed:

```

CREATE OR REPLACE FUNCTION test_date(d1 date) RETURNS string

LANGUAGE PYTHON {

return(f"d1: {str(type(d1))}")

};

```

It fails when a scalar date is passed:

```

sql>select test_date(curdate());

Unsupported scalar type 13.

```

It succeeds when a column of dates is passed:

```

sql>select test_date(d) from (values (curdate()),(curdate())) as t(d);

+-----------------------------+

| %4 |

+=============================+

| d1: <class 'numpy.ndarray'> |

+-----------------------------+

1 tuple

```

Type `int`, for comparison, works as expected in both cases.

**Software versions**

- MonetDB version number 11.43.14

- OS and version: Fedora 35

- self-installed and compiled

| Python UDF: Unsupported scalar type 13 | https://api.github.com/repos/MonetDB/MonetDB/issues/7289/comments | 8 | 2022-04-27T18:29:49Z | 2023-10-28T09:48:23Z | https://github.com/MonetDB/MonetDB/issues/7289 | 1,217,733,727 | 7,289 |

[

"MonetDB",

"MonetDB"

] | Maybe you could create an sql command that internally performs all the steps in a single step?

Something like MySql "CHANGE COLUMN", or "ALTER TABLE... ALTER COLUMN colname > new datatype"

According Monetdb docs:

"Change of the data type of a column is not supported.

Instead use command sequence:

ALTER TABLE tbl ADD COLUMN new_column _new_data_type_;

UPDATE tbl SET new_column = CONVERT(old_column, _new_data_type_);

ALTER TABLE tbl DROP COLUMN old_column RESTRICT;

ALTER TABLE tbl RENAME COLUMN new_column TO old_column"

In same way, it would be very useful to may able alter/change the column position/ordinal in the table

Regards. | Change/Alter column data type in a single command | https://api.github.com/repos/MonetDB/MonetDB/issues/7288/comments | 0 | 2022-04-21T14:27:04Z | 2024-06-27T13:17:30Z | https://github.com/MonetDB/MonetDB/issues/7288 | 1,211,123,911 | 7,288 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

when try to update the existing DB to new version we are getting below error.

error details:

mapi_usock=/monet_data01/DBFARM_TSVR_P2_A_BT/DB_TSVR_JR_P2_A/.mapi.sock --set monet_vault_key=/monet_data01/DBFARM_TSVR_P2_A_BT/DB_TSVR_JR_P2_A/.vaultkey --set gdk_nr_threads=128 --set max_clients=2048 --set sql_optimizer=sequential_pipe

2022-04-19 07:38:25 ERR DB_TSVR_JR_P2_A[298637]: #main thread: BBPcheckbats: !ERROR: BBPcheckbats: cannot stat file /monet_data01/DBFARM_TSVR_P2_A_BT/DB_TSVR_JR_P2_A/bat/01/46/14637.tail (expected size 1953432): No such file or directory

**To Reproduce**

upgrade from the version MonetDB-11.31.13 to MonetDB-11.37.11 we are getting error.

**Software versions**

- MonetDB version number [a milestone label]

MonetDB-11.37.11

- OS and version: [e.g. Ubuntu 18.04]

- Linux lnx1538.ch3.qa.i.com 3.10.0-957.27.2.el7.x86_64 #1 SMP Mon Jul 29 17:46:05 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux

- Installed from release package or self-installed and compiled

- Self installed and complied

| Unable to upgrade from MonetDB-MonetDB-11.31.13 to MonetDB-11.37.11 | https://api.github.com/repos/MonetDB/MonetDB/issues/7287/comments | 3 | 2022-04-19T13:03:38Z | 2022-07-06T08:25:51Z | https://github.com/MonetDB/MonetDB/issues/7287 | 1,208,343,402 | 7,287 |

[

"MonetDB",

"MonetDB"

] | **Is your feature request related to a problem? Please describe.**

I would like to know if the DataCell functionality still exists in MonetDB. In the documentation, it was mentioned this feature is disabled. Can we still use MonetDB as a streaming DB?

**Describe the solution you'd like**

Enable DataCell or feature to have MonetDB as streaming DB.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

| Is DataCell (Streaming) functionality is disabled? Will i be able to use MonetDB as a streaming DB? | https://api.github.com/repos/MonetDB/MonetDB/issues/7286/comments | 4 | 2022-04-19T03:08:49Z | 2022-04-20T16:56:49Z | https://github.com/MonetDB/MonetDB/issues/7286 | 1,207,659,249 | 7,286 |

[

"MonetDB",

"MonetDB"

] | `**Describe` the bug**

Me and my team are working on C-UDFs in MonetDB and we encountered the following bug for aggregate UDFs:

While aggr_group.data has correct values, aggr_group.count is not equal to the number of groups, as the documentation claims (" aggr_group.count counts not the number of elements of aggr_group.data, but the number of groups."). This causes a lot of problems when we use aggregate UDFs without the group-by column on the SELECT statement.

Lets take the following query as an example:

```

SELECT jit_sum(p_size) FROM ssbm10_part GROUP BY p_mfgr;

```

ssbm10_part has 800000 rows and GROUP BY p_mfgr outputs 5 groups.

jit_sum is the aggregate function you have on the documentation page (https://www.monetdb.org/documentation-Jan2022/dev-guide/sql-extensions/c-udf-blog/).

```

CREATE AGGREGATE jit_sum(input INTEGER)

RETURNS BIGINT

LANGUAGE C {

// initialize one aggregate per group

result->initialize(result, aggr_group.count);

// zero initialize the sums

memset(result->data, 0, result->count * sizeof(result->null_value));

// gather the sums for each of the groups

for(size_t i = 0; i < input.count; i++) {

result->data[aggr_group.data[i]] += input.data[i];

}

};

```

This query should output 5 tuples, but outputs 800000 instead.

If, however, the query is re-written as follows:

SELECT p_mfgr, jit_sum(p_size) FROM ssbm10_part GROUP BY p_mfgr;

the results are correct.

In order to debug this I printed aggr_group.count value and in both cases it is equal to the number of rows of the input column (800000), NOT the number of groups (5).

After digging on capi I found out that there is a temporary random-name generated .c file generated which is basically the UDF function to be compiled. When I opened this file I saw the following function definition:

```

char* jit_sum(void** __inputs, void** __outputs, malloc_function_ptr malloc, free_function_ptr free) {

struct cudf_data_struct_int input = *((struct cudf_data_struct_int*)__inputs[0]);

struct cudf_data_struct_oid aggr_group = *((struct cudf_data_struct_oid*)__inputs[1]);

struct cudf_data_struct_oid arg4 = *((struct cudf_data_struct_oid*)__inputs[2]);

struct cudf_data_struct_bte arg5 = *((struct cudf_data_struct_bte*)__inputs[3]);

struct cudf_data_struct_lng* result = ((struct cudf_data_struct_lng*)__outputs[0]);

// initialize one aggregate per group

result->initialize(result, aggr_group.count);

// zero initialize the sums

memset(result->data, 0, result->count * sizeof(result->null_value));

// gather the sums for each of the groups

for (size_t i = 0; i < input.count; i++) {

result->data[aggr_group.data[i]] += input.data[i];

}

}

```

"input" is the input column,

"aggr_group" is the column which contains information about tuples and their groups.

"result" is the output column.

But what are "arg4" and "arg5" generated for since they are never used?

On capi.c I printed the count (BATcount) of arg4 and it turns out that it was 5, which the number of groups returned from GROUP BY p_mfgr!

I come into conclusion that arg4 and arg5 should not be generated as inputs because they are never used in the UDF and aggr_group.count should be equal to arg4.count.

**Software versions**

- MonetDB 5 server 11.44.0 (hg id: 151fed075b61) (64-bit, 128-bit integers).

- Ubuntu 18.04.

- Self-installed and compiled.

( cmake -DCMAKE_BUILD_TYPE=Debug -DCMAKE_INSTALL_PREFIX=/install_dir/ ../

cmake --build . --target install )

| C-UDFs: aggr_group.count has wrong value (number of input rows instead of number of groups). | https://api.github.com/repos/MonetDB/MonetDB/issues/7285/comments | 0 | 2022-04-11T14:33:13Z | 2024-06-27T13:17:29Z | https://github.com/MonetDB/MonetDB/issues/7285 | 1,200,066,248 | 7,285 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

The source file testing/README needs a rewrite as our testing tools has been changed and improved considerably. So the information in this README file is quite out of date.

**Expected behavior**

Up-to-date, correct and useful information on the testing tools and how to use them.

There is no need to describe all the options of Mtest.py, as that can be retrieved via Mtest.py --help

**Software versions**

source file repositories of branches: Jan2022, default

| contents of file testing/README is out-of-date and needs a rewrite | https://api.github.com/repos/MonetDB/MonetDB/issues/7284/comments | 0 | 2022-04-07T17:24:00Z | 2024-06-27T13:17:28Z | https://github.com/MonetDB/MonetDB/issues/7284 | 1,196,350,802 | 7,284 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Currently some web information presented as tables do not show a 1px border around the cells. It would greatly enhance the readability of the tabular information if a small grey cell border is shown (like on the old website).

For instance the tabular documentation on supported scalar functions, procedures, sql-catalog, ... would need borders:

**To Reproduce**

See webpages:

https://www.monetdb.org/documentation-Jan2022/user-guide/sql-functions/mathematics-functions/

https://www.monetdb.org/documentation-Jan2022/user-guide/sql-functions/string-functions/

https://www.monetdb.org/documentation-Jan2022/user-guide/sql-functions/logical-functions/

https://www.monetdb.org/documentation-Jan2022/user-guide/sql-functions/comparison-functions/

etc.

https://www.monetdb.org/documentation-Jan2022/admin-guide/monitoring/session-procedures/

https://www.monetdb.org/documentation-Jan2022/admin-guide/monitoring/system-procedures/

etc.

https://www.monetdb.org/documentation-Jan2022/user-guide/sql-catalog/schema-table-columns/

https://www.monetdb.org/documentation-Jan2022/user-guide/sql-catalog/functions-arguments-types/

etc.

versus the original webpages with borders:

http://web.archive.org/web/20210412214522/https://www.monetdb.org/Documentation/SQLReference/FunctionsAndOperators/MathematicalFunctionsOperators

http://web.archive.org/web/20210513030115/https://monetdb.org/Documentation/SQLReference/SystemProcedures

The old ones are much easier to read and understand, due to the visual assistance of the borders around the cells of the tables.

**Expected behavior**

Show black/grey borders (1px) around every cell of tabular presented information.

Also the header (top row) with the field names, should have a different background than the information rows.

**Software versions**

www.monetdb.org website pages

**Additional context**

Other RDBMS documentation websites also use borders around cells of tabular presented information.

See for instance:

https://dev.mysql.com/doc/refman/8.0/en/built-in-function-reference.html

https://www.postgresql.org/docs/current/functions-math.html

https://mariadb.com/kb/en/function-and-operator-reference/

| webdocumentation: Add borders to cells for information presented as table for improved readability | https://api.github.com/repos/MonetDB/MonetDB/issues/7283/comments | 2 | 2022-04-07T17:09:35Z | 2024-06-27T13:17:27Z | https://github.com/MonetDB/MonetDB/issues/7283 | 1,196,337,022 | 7,283 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

When a database contains multiple user tables with the same table name but in different schemas, the call sys.dump_table_data(); command fails to complete.

**To Reproduce**

```

create table sys.t7282 ("nr" INTEGER PRIMARY KEY, "val1" INTEGER);

insert into sys.t7282 values (1, 23), (2, 45), (3, 67);

select * from sys.t7282;

create schema test;

create table test.t7282 ("mk" VARCHAR(3) PRIMARY KEY, "val2" INTEGER);

insert into test.t7282 values ('a', 23), ('b', 45);

select * from test.t7282;

delete from dump_statements;

call sys.dump_table_data();

-- Error: SELECT: identifier 'mk' unknown

select * from dump_statements;

-- no rows

-- remove one of the tables with the same name

drop table sys.t7282;

call sys.dump_table_data();

-- now it works as there are no 2 tables with the same name anymore

select * from dump_statements;

-- cleanup

drop table test.t7282;