issue_owner_repo | issue_body | issue_title | issue_comments_url | issue_comments_count | issue_created_at | issue_updated_at | issue_html_url | issue_github_id | issue_number |

|---|---|---|---|---|---|---|---|---|---|

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

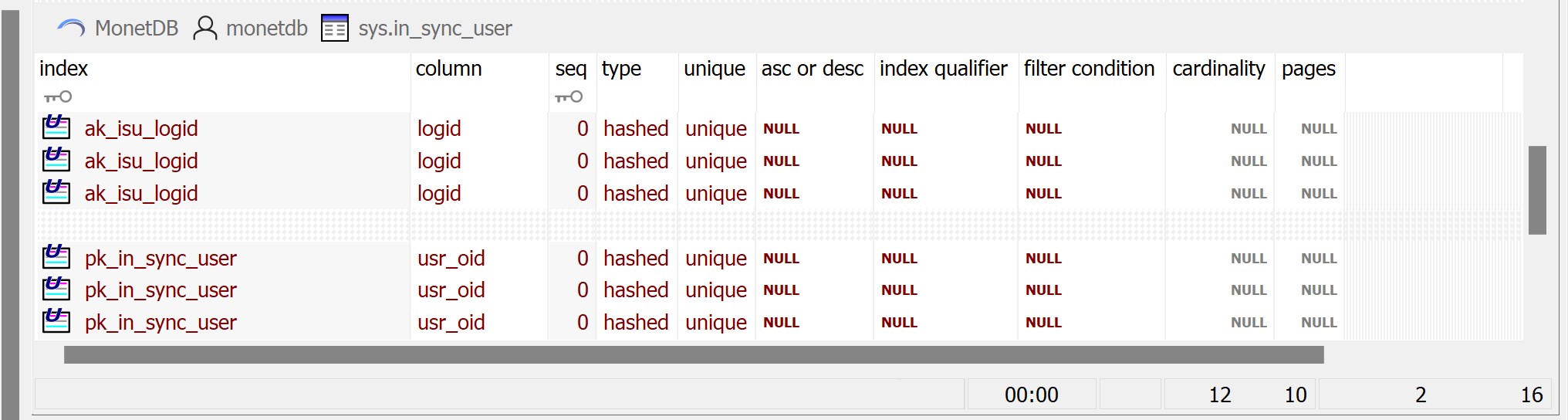

The ODBC driver returns duplicate rows when the ODBC API function SQLStatistics is executed on a table.

Also, the ORDINAL_POSITION returned in the result set is zero.

**To Reproduce**

1. Create some test tables with a primary key, an alternate key, and some foreign keys. Example:

create table in_sync_cmp_type (

cmp_type_cd char(8) not null,

description varchar(30) not null,

ctrl_ins_dtm timestamp not null default CURRENT_TIMESTAMP,

ctrl_upd_dtm timestamp not null,

ctrl_usr_id varchar(256) not null,

constraint pk_in_sync_cmp_type primary key (cmp_type_cd)

);

/*----------------------------------------------------------------------------*/

/* Table: in_sync_user */

/*----------------------------------------------------------------------------*/

create table in_sync_user (

usr_oid int not null,

logid varchar(256) not null,

ctrl_ins_dtm timestamp not null default CURRENT_TIMESTAMP,

ctrl_upd_dtm timestamp not null,

ctrl_usr_id varchar(256) not null,

constraint pk_in_sync_user primary key (usr_oid),

constraint ak_isu_logid unique (logid)

);

/*----------------------------------------------------------------------------*/

/* Table: in_sync_resultset */

/*----------------------------------------------------------------------------*/

create table in_sync_resultset (

rs_oid int not null,

rs_type_cd char(8) not null,

script_oid int null,

script_upd_ind int null,

ctrl_ins_dtm timestamp not null default CURRENT_TIMESTAMP,

ctrl_upd_dtm timestamp not null,

ctrl_usr_id varchar(256) not null,

constraint pk_in_sync_resultset primary key (rs_oid)

);

create index ix1in_sync_resultset on in_sync_resultset (

ctrl_usr_id,

rs_type_cd,

ctrl_ins_dtm

);

create table in_sync_data_source (

ds_oid integer not null,

dbms_name varchar(256) not null,

server_name varchar(256) null,

cluster_id varchar(256) null,

database_name varchar(520) null,

logid varchar(256) null,

owner_oid int null,

root_rs_oid int null,

readonly_ind int not null,

ctrl_ins_dtm timestamp not null default CURRENT_TIMESTAMP,

ctrl_upd_dtm timestamp not null,

ctrl_usr_id varchar(256) not null,

constraint pk_in_sync_data_source primary key (ds_oid)

);

alter table in_sync_data_source

add constraint fk_isds_isu foreign key (owner_oid)

references in_sync_user (usr_oid)

on update restrict

on delete restrict;

alter table in_sync_data_source

add constraint fk_isds_root_rs_oid foreign key (root_rs_oid)

references in_sync_resultset (rs_oid)

on update restrict

on delete restrict;

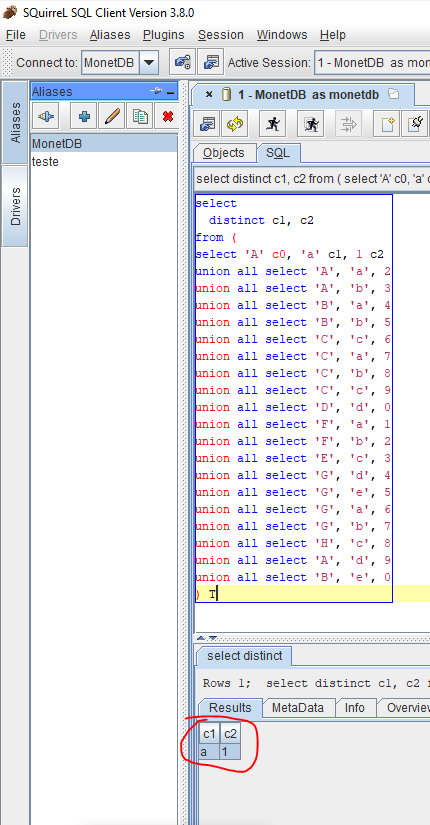

2. Execute the ODBC function SQLStatistics on one table, for example in_sync_user.

3. The driver returns duplicate rows, all with an ORDINAL_POSITION of 0.

**Expected behavior**

In the example above, for table in_sync_user, the driver should return only two rows, one for the primary key and one for the alternate key defined on the table, and both rows should have an ORDINAL_POSITION of 1.

**Screenshots**

Attached is a screenshot of the SQLStatistics result set rows for the example table in_sync_user described above.

**Software versions**

MonetDB 11.41.0013

ODBC Driver: MonetDBODBClib 11.41.0013 Jul2021-SP2

- OS and version: Windows 10 x64

- Installed from release package

**Issue labeling**

ODBC Driver SQLStatistics extra rows

**Additional context**

It looks like the returned result set contains rows for the indexes of other tables, all labeled with the name of the table SQLStatistics was executed on.

| ODBC Driver SQLStatistics returns duplicate rows/rows for other tables | https://api.github.com/repos/MonetDB/MonetDB/issues/7215/comments | 1 | 2022-01-08T04:19:05Z | 2024-06-27T13:16:46Z | https://github.com/MonetDB/MonetDB/issues/7215 | 1,096,828,939 | 7,215 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

I am not sure if this is a bug or a feature request. It seems that Python loader functions also work with temporary tables if I first create the table and then copy into it from the loader function. However, it does not work if I try to create a temp table directly from a loader function.

**To Reproduce**

BEGIN TRANSACTION;

CREATE TEMP TABLE test_table(a INT) on commit drop;

CREATE OR REPLACE LOADER myloader(c INT) LANGUAGE PYTHON {

_emit.emit( { 'a' : c+1 } )

};

COPY LOADER INTO test_table FROM myloader((select 5));

select * from test_table;

COMMIT;

The above flow works and returns 6

Using this syntax:

sql> CREATE TEMP TABLE TEST1 from LOADER myloader((select 5));

syntax error in: "create temp table test1 from"

However this syntax is allowed for persistent tables:

sql> CREATE TABLE TEST1 from LOADER myloader((select 5));

operation successful

It seems that loader functions can be used to load temporary tables, but this is not supported by the parser. | Loader functions with temp tables. | https://api.github.com/repos/MonetDB/MonetDB/issues/7214/comments | 2 | 2022-01-05T11:24:52Z | 2024-06-27T13:16:45Z | https://github.com/MonetDB/MonetDB/issues/7214 | 1,094,249,811 | 7,214 |

[

"MonetDB",

"MonetDB"

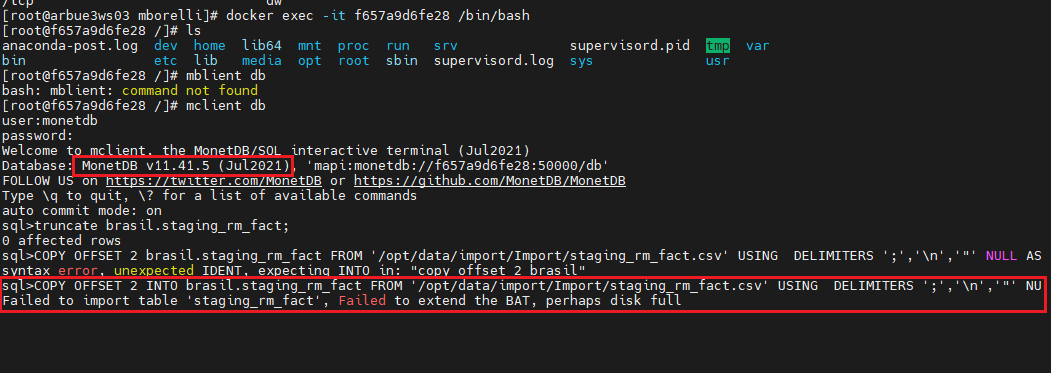

] | I'm using MonetDB 11.41.5 and I'm trying to bulk upload a very large CSV file (70 Gb) to a table using COPY INTO.

After a few minutes, I get the following message:

The complete COPY INTO statement is:

`COPY OFFSET 2 INTO brasil.staging_rm_fact FROM '/opt/data/import/Brasil/202108/stagings/staging_rm_fact.csv' USING DELIMITERS ';','\n','"' NULL AS '';`

- There is enough disk space for the operation to succeed (more than 600 GB free).

- The problem doesn't happen with the previous version of MonetDB (11.39.11), which works perfectly under exactly the same conditions: same server, same free disk space, and same large CSV file.

The CSV file has 225 million lines. To help reproduce the error, I attached a reduced version with 100,000 sample lines from the same file. You can repeat the lines to create a 225-million-line file. Please keep the header as the first line of the CSV file.

[sample.zip](https://github.com/MonetDB/MonetDB/files/7784921/sample.zip)
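One way to build the full-size file from the attached sample, as described above, is sketched below. The function name `expand_csv` and its parameters are hypothetical, and the sketch assumes the sample is a plain text file whose first line is the header.

```python
import itertools


def expand_csv(sample_path, out_path, target_rows):
    """Write the header once, then cycle the sample's data lines until
    target_rows data lines have been written. Returns target_rows."""
    # newline="" keeps the original line endings untouched.
    with open(sample_path, "r", newline="") as src:
        header = src.readline()
        data_lines = src.readlines()
    if not data_lines:
        raise ValueError("sample file has no data lines to repeat")
    # Make sure the last sample line is terminated before it gets repeated.
    if not data_lines[-1].endswith("\n"):
        data_lines[-1] += "\n"
    with open(out_path, "w", newline="") as dst:
        dst.write(header)
        dst.writelines(itertools.islice(itertools.cycle(data_lines), target_rows))
    return target_rows
```

Calling `expand_csv(sample, output, 225_000_000)` with the unpacked sample as `sample` would produce a file with one header line followed by 225 million data lines; the file names are left to the reader because the contents of the archive are not listed here.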

The target table is created with this statement:

[table.zip](https://github.com/MonetDB/MonetDB/files/7784934/table.zip)

If you need more information, please feel free to ask. I'm going to try to get some debug information, as you suggested on Stack Overflow, using:

```

CALL logging.setcomplevel('HEAP', 'DEBUG');

CALL logging.setflushlevel('DEBUG');

``` | "Failed to extend the BAT" error in MonetDB 11.41.5 | https://api.github.com/repos/MonetDB/MonetDB/issues/7213/comments | 1 | 2021-12-28T13:53:03Z | 2024-06-07T11:59:17Z | https://github.com/MonetDB/MonetDB/issues/7213 | 1,089,911,324 | 7,213 |

[

"MonetDB",

"MonetDB"

] | **Is your feature request related to a problem? Please describe.**

`generate_series` currently excludes the stop value. This behavior differs from many other DBMSs, such as SQLite, PostgreSQL, and DuckDB.

```sql

sql>select * from generate_series(1,8);

+-------+

| value |

+=======+

| 1 |

| 2 |

| 3 |

| 4 |

| 5 |

| 6 |

| 7 |

+-------+

7 tuples

```
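For comparison, Python's built-in `range` follows the same half-open convention as the output above. The helper below, a hypothetical `generate_series_inclusive`, sketches the requested closed-interval behaviour. It is a Python analogy only, not MonetDB code.

```python
def generate_series_inclusive(start, stop, step=1):
    """Return start, start+step, ... up to and including stop when reachable."""
    if step == 0:
        raise ValueError("step must not be zero")
    # range() excludes its end point, so move the end one unit past stop
    # in the direction of travel.
    end = stop + 1 if step > 0 else stop - 1
    return list(range(start, end, step))


half_open = list(range(1, 8))              # current behaviour: stop excluded
closed = generate_series_inclusive(1, 8)   # requested behaviour: stop included
```

Here `half_open` ends at 7, matching the 7 tuples shown above, while `closed` ends at 8.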

**Describe the solution you'd like**

```sql

sql>select * from generate_series(1,8);

+-------+

| value |

+=======+

| 1 |

| 2 |

| 3 |

| 4 |

| 5 |

| 6 |

| 7 |

| 8 |

+-------+

8 tuples

``` | the generate_series(begin,stop) results a series including the stop value | https://api.github.com/repos/MonetDB/MonetDB/issues/7212/comments | 2 | 2021-12-23T13:31:06Z | 2024-06-27T13:16:44Z | https://github.com/MonetDB/MonetDB/issues/7212 | 1,087,720,209 | 7,212 |

[

"MonetDB",

"MonetDB"

] | Sometimes MonetDB gets stuck on the same error, e.g. when a database can't be started while the application keeps trying. The merovingian.log and the tracer log then get polluted by the same error message repeated a huge number of times. This can push earlier, more useful messages out of the log.
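The kind of suppression meant here can be sketched with Python's standard `logging` module. This is only an illustration of the idea; the class name `CollapseRepeats` is hypothetical and nothing below reflects how merovingian or the MonetDB tracer write their logs.

```python
import logging


class CollapseRepeats(logging.Filter):
    """Drop a record when its message equals the previous record's message."""

    def __init__(self):
        super().__init__()
        self._last = None      # text of the most recent message let through
        self.suppressed = 0    # how many repeats have been dropped so far

    def filter(self, record):
        message = record.getMessage()
        if message == self._last:
            self.suppressed += 1
            return False       # consecutive repeat: do not log it again
        self._last = message
        return True
```

Attaching the filter to a handler with `handler.addFilter(CollapseRepeats())` keeps the first occurrence of each run of identical messages, so earlier messages are not pushed out by the repeats.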

It would be nice to be able to suppress such long runs of the same error message. | Don't repeatedly log the same errors | https://api.github.com/repos/MonetDB/MonetDB/issues/7211/comments | 1 | 2021-12-10T18:11:18Z | 2023-09-18T07:53:28Z | https://github.com/MonetDB/MonetDB/issues/7211 | 1,077,106,610 | 7,211 |

[

"MonetDB",

"MonetDB"

] | Configure the tracer to log messages from different levels to different files. In this way, during log rotation, one can more easily choose to keep the more important messages for a longer time than, e.g., info. messages. | Tracer log different messages to different files | https://api.github.com/repos/MonetDB/MonetDB/issues/7210/comments | 1 | 2021-12-10T18:06:29Z | 2021-12-14T16:38:09Z | https://github.com/MonetDB/MonetDB/issues/7210 | 1,077,103,144 | 7,210 |

[

"MonetDB",

"MonetDB"

] | It would be nice to be able to configure what is logged in merovingian.log, in particular a way to not log the large amount of connection information, because it can push more useful (error) messages out during log rotation. | Configuration option for merovingian.log | https://api.github.com/repos/MonetDB/MonetDB/issues/7209/comments | 5 | 2021-12-10T18:03:26Z | 2024-06-27T13:16:42Z | https://github.com/MonetDB/MonetDB/issues/7209 | 1,077,100,793 | 7,209 |

[

"MonetDB",

"MonetDB"

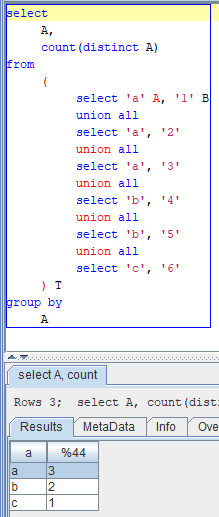

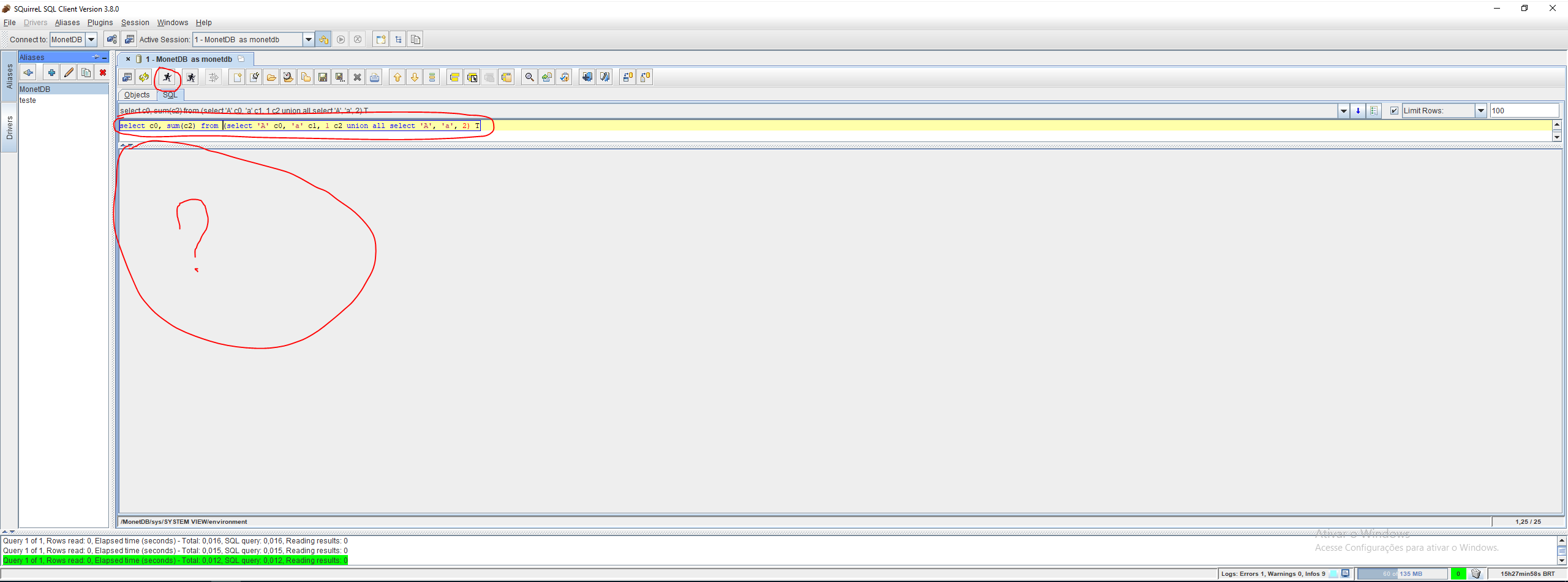

] | I'm retrieving the number of unique values as a function using count distinct:

`select count(distinct "commodity_type_") from "bb243f60c9eDM_FULFile___out_"`

I can also perform the same calculation using grouping and sub-queries:

`select count(*) from (select "commodity_type_" from "bb243f60c9eDM_FULFile___out_" group by "commodity_type_") "x"`

The former takes **300ms**, the latter **30ms**.

And as part of a larger query, _count distinct_ turns a 300ms query into 7000ms.

It seems that MonetDB is capable of performing this high-level calculation quickly, but it is not optimised when written as count distinct.

Is this a bug? If not, are there any quick wins here?
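The two traces included in this issue differ in shape: the first runs a single `algebra.unique` over all 6413587 values, while the second runs `group.groupdone` on eight slices of roughly 801698 values each, packs the 24 partial results with `mat.packIncrement`, and groups once more. The Python sketch below mirrors those two shapes. It is an analogy with hypothetical function names, it runs the slices sequentially, and it makes no claim about MonetDB's actual operators or timings.

```python
def count_distinct_single_pass(values):
    """One pass over the whole column, like the single unique step."""
    return len(set(values))


def count_distinct_partitioned(values, parts=8):
    """Deduplicate each slice separately, then deduplicate the small merged result."""
    if parts < 1:
        raise ValueError("parts must be at least 1")
    size = -(-len(values) // parts) or 1   # ceiling division, at least 1
    partials = [set(values[i:i + size]) for i in range(0, len(values), size)]
    # The merged list is tiny when there are few distinct values per slice.
    merged = [v for partial in partials for v in partial]
    return len(set(merged))
```

Both functions return the same count; the second only changes how the work is divided, which is what would let an engine process the slices independently.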

Oct 2020 version.

```

27 X_1=0@0:void := querylog.define("trace select count(distinct \"commodity_type_\") from \"bb243f60c9eDM_FULFile___out_\"\n;":str, "default_pipe":str, -1805369535:int);

1 name="monetdb":str := clients.getUsername();

4 start="2021-12-02 12:03:33.676660":timestamp := mtime.current_timestamp();

1763 querylog.append("trace select count(distinct \"commodity_type_\") from \"bb243f60c9eDM_FULFile___out_\"\n;":str, "default_pipe":str, name="monetdb":str, start="2021-12-02 12:03:33.676660":timestamp);

24 args="function user.main():void;":str := sql.argRecord();

1 xtime=1638446613678484:lng := alarm.usec();

7 (user=0:lng, nice=0:lng, sys=0:lng, idle=0:lng, iowait=0:lng) := profiler.cpustats();

0 tuples=1:lng := 1:lng;

18 X_4=0:int := sql.mvc();

28 C_5=[6413587]:bat[:oid] := sql.tid(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str);

19 X_8=[6413587]:bat[:str] := sql.bind(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, "commodity_type_":str, 0:int);

24 X_17=[6413587]:bat[:str] := algebra.projection(C_5=[6413587]:bat[:oid], X_8=[6413587]:bat[:str]);

291592 C_18=[3]:bat[:oid] := algebra.unique(X_17=[6413587]:bat[:str]); # unique: new partial hash

40 X_19=[3]:bat[:str] := algebra.projection(C_18=[3]:bat[:oid], X_17=[6413587]:bat[:str]); # project_bte

26 language.pass(X_17=[6413587]:bat[:str]);

25 X_20=3:lng := aggr.count(X_19=[3]:bat[:str], true:bit);

291997 barrier X_77=false:bit := language.dataflow();

92 sql.resultSet("sys.%1":str, "%1":str, "bigint":str, 64:int, 0:int, 7:int, X_20=3:lng);

0 X_100=1638446613970629:lng := alarm.usec();

2 xtime=292145:lng := calc.-(X_100=1638446613970629:lng, xtime=292145:lng);

0 rtime=1638446613970640:lng := alarm.usec();

0 X_104=1638446613970642:lng := alarm.usec();

0 rtime=2:lng := calc.-(X_104=1638446613970642:lng, rtime=2:lng);

3 finish="2021-12-02 12:03:33.970648":timestamp := mtime.current_timestamp();

9 (load=0:int, io=0:int) := profiler.cpuload(user=0:lng, nice=0:lng, sys=0:lng, idle=0:lng, iowait=0:lng);

1691 querylog.call(start="2021-12-02 12:03:33.676660":timestamp, finish="2021-12-02 12:03:33.970648":timestamp, args="function user.main():void;":str, tuples=1:lng, xtime=292145:lng, rtime=2:lng, load=0:int, io=0:int);

```

vs

```

25 X_1=0@0:void := querylog.define("trace select count(*) from (select \"commodity_type_\" from \"bb243f60c9eDM_FULFile___out_\" group by \"commodity_type_\") \"x\"\n;":str, "default_pipe":str, -1780745219:int);

1 name="monetdb":str := clients.getUsername();

3 start="2021-12-02 12:03:58.300668":timestamp := mtime.current_timestamp();

6744 querylog.append("trace select count(*) from (select \"commodity_type_\" from \"bb243f60c9eDM_FULFile___out_\" group by \"commodity_type_\") \"x\"\n;":str, "default_pipe":str, name="monetdb":str, start="2021-12-02 12:03:58.300668":timestamp);

24 args="function user.main():void;":str := sql.argRecord();

1 xtime=1638446638307471:lng := alarm.usec();

7 (user=0:lng, nice=0:lng, sys=0:lng, idle=0:lng, iowait=0:lng) := profiler.cpustats();

1 tuples=1:lng := 1:lng;

19 X_4=0:int := sql.mvc();

34 C_85=[801701]:bat[:oid] := sql.tid(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, 7:int, 8:int);

36 X_92=[801698]:bat[:str] := sql.bind(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, "commodity_type_":str, 0:int, 6:int, 8:int);

34 C_83=[801698]:bat[:oid] := sql.tid(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, 6:int, 8:int);

33 X_91=[801698]:bat[:str] := sql.bind(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, "commodity_type_":str, 0:int, 5:int, 8:int);

37 C_81=[801698]:bat[:oid] := sql.tid(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, 5:int, 8:int);

58 C_71=[801698]:bat[:oid] := sql.tid(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, 0:int, 8:int);

27 X_86=[801698]:bat[:str] := sql.bind(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, "commodity_type_":str, 0:int, 0:int, 8:int);

28 C_73=[801698]:bat[:oid] := sql.tid(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, 1:int, 8:int);

25 X_90=[801698]:bat[:str] := sql.bind(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, "commodity_type_":str, 0:int, 4:int, 8:int);

27 X_118=[801698]:bat[:str] := algebra.projection(C_83=[801698]:bat[:oid], X_92=[801698]:bat[:str]);

28 X_89=[801698]:bat[:str] := sql.bind(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, "commodity_type_":str, 0:int, 3:int, 8:int);

24 C_77=[801698]:bat[:oid] := sql.tid(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, 3:int, 8:int);

26 X_87=[801698]:bat[:str] := sql.bind(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, "commodity_type_":str, 0:int, 1:int, 8:int);

25 X_117=[801698]:bat[:str] := algebra.projection(C_81=[801698]:bat[:oid], X_91=[801698]:bat[:str]);

26 X_88=[801698]:bat[:str] := sql.bind(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, "commodity_type_":str, 0:int, 2:int, 8:int);

26 X_112=[801698]:bat[:str] := algebra.projection(C_71=[801698]:bat[:oid], X_86=[801698]:bat[:str]);

24 C_75=[801698]:bat[:oid] := sql.tid(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, 2:int, 8:int);

22 C_79=[801698]:bat[:oid] := sql.tid(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, 4:int, 8:int);

26 X_115=[801698]:bat[:str] := algebra.projection(C_77=[801698]:bat[:oid], X_89=[801698]:bat[:str]);

26 X_113=[801698]:bat[:str] := algebra.projection(C_73=[801698]:bat[:oid], X_87=[801698]:bat[:str]);

27 X_114=[801698]:bat[:str] := algebra.projection(C_75=[801698]:bat[:oid], X_88=[801698]:bat[:str]);

23 X_93=[801701]:bat[:str] := sql.bind(X_4=0:int, "sys":str, "bb243f60c9eDM_FULFile___out_":str, "commodity_type_":str, 0:int, 7:int, 8:int);

45 X_116=[801698]:bat[:str] := algebra.projection(C_79=[801698]:bat[:oid], X_90=[801698]:bat[:str]);

25 X_119=[801701]:bat[:str] := algebra.projection(C_85=[801701]:bat[:oid], X_93=[801701]:bat[:str]);

5716 (X_139=[801698]:bat[:oid], C_140=[3]:bat[:oid]) := group.groupdone(X_116=[801698]:bat[:str]);

5986 (X_127=[801698]:bat[:oid], C_128=[3]:bat[:oid]) := group.groupdone(X_113=[801698]:bat[:str]);

5954 (X_151=[801701]:bat[:oid], C_152=[3]:bat[:oid]) := group.groupdone(X_119=[801701]:bat[:str]);

6428 (X_123=[801698]:bat[:oid], C_124=[3]:bat[:oid]) := group.groupdone(X_112=[801698]:bat[:str]);

6462 (X_143=[801698]:bat[:oid], C_144=[3]:bat[:oid]) := group.groupdone(X_117=[801698]:bat[:str]);

6518 (X_147=[801698]:bat[:oid], C_148=[3]:bat[:oid]) := group.groupdone(X_118=[801698]:bat[:str]);

6467 (X_135=[801698]:bat[:oid], C_136=[3]:bat[:oid]) := group.groupdone(X_115=[801698]:bat[:str]);

1042 X_142=[3]:bat[:str] := algebra.projection(C_140=[3]:bat[:oid], X_116=[801698]:bat[:str]); # project_bte

121 X_154=[3]:bat[:str] := algebra.projection(C_152=[3]:bat[:oid], X_119=[801701]:bat[:str]); # project_bte

947 X_130=[3]:bat[:str] := algebra.projection(C_128=[3]:bat[:oid], X_113=[801698]:bat[:str]); # project_bte

87 language.pass(X_116=[801698]:bat[:str]);

29 language.pass(X_113=[801698]:bat[:str]);

40 language.pass(X_119=[801701]:bat[:str]);

29 X_138=[3]:bat[:str] := algebra.projection(C_136=[3]:bat[:oid], X_115=[801698]:bat[:str]); # project_bte

35 X_150=[3]:bat[:str] := algebra.projection(C_148=[3]:bat[:oid], X_118=[801698]:bat[:str]); # project_bte

33 X_146=[3]:bat[:str] := algebra.projection(C_144=[3]:bat[:oid], X_117=[801698]:bat[:str]); # project_bte

41 X_126=[3]:bat[:str] := algebra.projection(C_124=[3]:bat[:oid], X_112=[801698]:bat[:str]); # project_bte

20 language.pass(X_118=[801698]:bat[:str]);

24 language.pass(X_115=[801698]:bat[:str]);

21 language.pass(X_117=[801698]:bat[:str]);

20 language.pass(X_112=[801698]:bat[:str]);

26 X_173=[3]:bat[:str] := mat.packIncrement(X_126=[3]:bat[:str], 8:int);

22 X_175=[6]:bat[:str] := mat.packIncrement(X_173=[6]:bat[:str], X_130=[3]:bat[:str]);

8600 (X_131=[801698]:bat[:oid], C_132=[3]:bat[:oid]) := group.groupdone(X_114=[801698]:bat[:str]);

22 X_134=[3]:bat[:str] := algebra.projection(C_132=[3]:bat[:oid], X_114=[801698]:bat[:str]); # project_bte

24 language.pass(X_114=[801698]:bat[:str]);

22 X_176=[9]:bat[:str] := mat.packIncrement(X_175=[9]:bat[:str], X_134=[3]:bat[:str]);

18 X_177=[12]:bat[:str] := mat.packIncrement(X_176=[12]:bat[:str], X_138=[3]:bat[:str]);

16 X_178=[15]:bat[:str] := mat.packIncrement(X_177=[15]:bat[:str], X_142=[3]:bat[:str]);

16 X_179=[18]:bat[:str] := mat.packIncrement(X_178=[18]:bat[:str], X_146=[3]:bat[:str]);

15 X_180=[21]:bat[:str] := mat.packIncrement(X_179=[21]:bat[:str], X_150=[3]:bat[:str]);

16 X_17=[24]:bat[:str] := mat.packIncrement(X_180=[24]:bat[:str], X_154=[3]:bat[:str]);

45 (X_18=[24]:bat[:oid], C_19=[3]:bat[:oid]) := group.groupdone(X_17=[24]:bat[:str]);

20 X_21=[3]:bat[:str] := algebra.projection(C_19=[3]:bat[:oid], X_17=[24]:bat[:str]);

16 language.pass(X_17=[24]:bat[:str]);

18 X_22=3:lng := aggr.count(X_21=[3]:bat[:str]);

9871 barrier X_183=false:bit := language.dataflow();

59 sql.resultSet("sys.%1":str, "%1":str, "bigint":str, 64:int, 0:int, 7:int, X_22=3:lng);

1 X_214=1638446638317437:lng := alarm.usec();

2 xtime=9966:lng := calc.-(X_214=1638446638317437:lng, xtime=9966:lng);

0 rtime=1638446638317446:lng := alarm.usec();

0 X_218=1638446638317448:lng := alarm.usec();

0 rtime=2:lng := calc.-(X_218=1638446638317448:lng, rtime=2:lng);

3 finish="2021-12-02 12:03:58.317454":timestamp := mtime.current_timestamp();

7 (load=0:int, io=0:int) := profiler.cpuload(user=0:lng, nice=0:lng, sys=0:lng, idle=0:lng, iowait=0:lng);

1297 querylog.call(start="2021-12-02 12:03:58.300668":timestamp, finish="2021-12-02 12:03:58.317454":timestamp, args="function user.main():void;":str, tuples=1:lng, xtime=9966:lng, rtime=2:lng, load=0:int, io=0:int);

``` | Dramatic difference in performance for two similar queries | https://api.github.com/repos/MonetDB/MonetDB/issues/7208/comments | 2 | 2021-12-02T12:04:55Z | 2024-06-07T12:01:17Z | https://github.com/MonetDB/MonetDB/issues/7208 | 1,069,470,513 | 7,208 |

[

"MonetDB",

"MonetDB"

] | I know MonetDB is a self-indexing database but how does this actually work? I am benchmarking time-series database systems with data of the following format:

```

sql>select * from datapoints limit 5;

+----------------------------+------------+--------------------------+--------------------------+--------------------------+--------------------------+--------------------------+

| time | id_station | temperature | discharge | ph | oxygen | oxygen_saturation |

+============================+============+==========================+==========================+==========================+==========================+==========================+

| 2019-03-01 00:00:00.000000 | 0 | 407.052 | 0.954 | 7.79 | 12.14 | 12.14 |

| 2019-03-01 00:00:10.000000 | 0 | 407.052 | 0.954 | 7.79 | 12.13 | 12.13 |

+----------------------------+------------+--------------------------+--------------------------+--------------------------+--------------------------+--------------------------+

```

The fields of the data are the `time`, the `id_station`, and the other sensor readings.

I am creating a table with `time` as the primary key, as follows:

```

CREATE TABLE datapoints (

time TIMESTAMP NOT NULL PRIMARY KEY,

id_station INTEGER,

temperature DOUBLE PRECISION ,

discharge DOUBLE PRECISION ,

pH DOUBLE PRECISION ,

oxygen DOUBLE PRECISION ,

oxygen_saturation DOUBLE PRECISION

);

```

I would also like to index data on the `id_station`, since most of the queries would be using it to locate the data.

Should I create another index on the column `id_station`? The [documentation](https://www.monetdb.org/Documentation/SQLLanguage/DataDefinition/IndexDefinitions) says that MonetDB treats `create index` statements as suggestions, which are often ignored.

The following [article](https://dev.to/yugabyte/think-about-primary-key-indexes-before-anything-else-o5m) mentions that the order of the columns in the index is very important: `(id_station, time)` vs `(time, id_station)`.
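Why column order matters in a compound key can be seen with plain sorted tuples. The sketch below is a general illustration using made-up data and hypothetical helper names; it says nothing about how MonetDB builds or uses its indexes.

```python
import bisect

# (id_station, time) rows; times are small integers to keep the sketch short.
rows = [(station, t) for t in range(5) for station in (0, 1, 2)]

by_station_time = sorted(rows)                              # key (id_station, time)
by_time_station = sorted(rows, key=lambda r: (r[1], r[0]))  # key (time, id_station)


def station_slice(sorted_rows, station):
    """Rows of one station from a list sorted on (id_station, time)."""
    lo = bisect.bisect_left(sorted_rows, (station,))
    hi = bisect.bisect_left(sorted_rows, (station + 1,))
    return sorted_rows[lo:hi]


def positions(sorted_rows, station):
    """Positions at which a station's rows appear in a given ordering."""
    return [i for i, row in enumerate(sorted_rows) if row[0] == station]
```

With the `(id_station, time)` ordering, one station's rows form a single contiguous range that two binary searches can locate; with `(time, id_station)` the same rows are scattered, one per timestamp.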

Does MonetDB create compound indexes as well, or only per-column ones? What indexing strategy is used? How should I determine the optimal indexing strategy for my use case?

| Time Series Data Indexing for Performance Benchmarking | https://api.github.com/repos/MonetDB/MonetDB/issues/7207/comments | 1 | 2021-11-29T18:37:41Z | 2024-06-27T13:16:41Z | https://github.com/MonetDB/MonetDB/issues/7207 | 1,066,368,794 | 7,207 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Python UDFs that return a table fail when they return an empty table defined as a dictionary of numpy arrays.

**To Reproduce**

This succeeds (1 row returned):

```

CREATE OR REPLACE function f()

returns table(s STRING, i INT)

LANGUAGE PYTHON {

result = dict()

result['s'] = numpy.array(["test"], dtype=object)

result['i'] = numpy.array([5], dtype=int)

return(result)

};

select * from f();

+------+------+

| s | i |

+======+======+

| test | 5 |

+------+------+

1 tuple

```

This fails (0 rows returned):

```

CREATE OR REPLACE function f()

returns table(s STRING, i INT)

LANGUAGE PYTHON {

result = dict()

result['s'] = numpy.array([], dtype=object)

result['i'] = numpy.array([], dtype=int)

return(result)

};

select * from f();

Error converting dict return value "s": An array of size 0 was returned, yet we expect a list of 1 columns. The result is invalid..

```

Note that returning a list of lists, `return([[],[]])`, succeeds.
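Based only on the observation above that a list of lists is accepted, a possible workaround is to switch to that shape when every column is empty. The helper `empty_safe_result` and its parameters are hypothetical, and the sketch has not been verified inside a MonetDB Python UDF.

```python
def empty_safe_result(columns, column_order):
    """Return `columns` unchanged unless every column is empty, in which case
    return one empty list per column, in the declared column order."""
    if all(len(columns[name]) == 0 for name in column_order):
        return [[] for _ in column_order]
    return columns
```

Inside the function body from the failing example, the last line would become `return empty_safe_result(result, ['s', 'i'])`, assuming the helper is defined in the same body.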

**Expected behavior**

Empty table returned

**Software versions**

- MonetDB 11.39.18

- OS and version: Fedora 34

- Compiled from sources

| Python UDF fails when returning an empty table as a dictionary | https://api.github.com/repos/MonetDB/MonetDB/issues/7206/comments | 1 | 2021-11-25T16:07:16Z | 2024-06-27T13:16:40Z | https://github.com/MonetDB/MonetDB/issues/7206 | 1,063,756,431 | 7,206 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

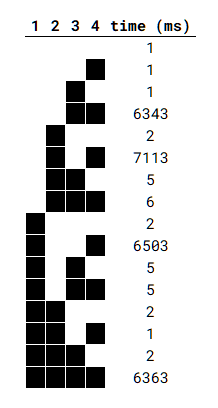

When performing joins over nested queries (even simple ones), the performance varies drastically based on the number and position of the nested queries. For example, there is a case where having all tables in the join as nested queries results in good performance, but unnesting one of those tables increases the execution time by over 1000x.

**To Reproduce**

- Create a simple table and insert some data:

```sql

CREATE TABLE T (k int, v int);

INSERT INTO T VALUES (1, 1), (2, 2);

INSERT INTO T SELECT * FROM T;

INSERT INTO T SELECT * FROM T;

INSERT INTO T SELECT * FROM T;

INSERT INTO T SELECT * FROM T;

INSERT INTO T SELECT * FROM T;

INSERT INTO T SELECT * FROM T;

INSERT INTO T SELECT * FROM T;

INSERT INTO T SELECT * FROM T;

INSERT INTO T SELECT * FROM T;

-- 1024 rows

```

- Perform joins with different nested tables and check the varying performance:

```sql

-- all normal (~2ms)

SELECT 1

FROM t t1, t t2, t t3, t t4

WHERE t1.k = t2.k

AND t2.k = t3.k

AND t3.k = t4.k

AND t4.k = 3;

-- 3 and 4 nested (~6.5s)

SELECT 1

FROM t t1, t t2, (SELECT * FROM t) t3, (SELECT * FROM t) t4

WHERE t1.k = t2.k

AND t2.k = t3.k

AND t3.k = t4.k

AND t4.k = 3;

-- 2, 3, and 4 nested (~5ms)

SELECT 1

FROM t t1, (SELECT * FROM t) t2, (SELECT * FROM t) t3, (SELECT * FROM t) t4

WHERE t1.k = t2.k

AND t2.k = t3.k

AND t3.k = t4.k

AND t4.k = 3;

```

**Expected behavior**

Same (few ms) performance across all queries.

**Software versions**

- `monetdb -v`: `MonetDB Database Server Toolkit v11.41.11 (Jul2021-SP1)`.

- OS: `Ubuntu 20.04.3 LTS`;

- Monetdb installed from release packages with `apt` (packages `monetdb5-sql` and `monetdb-client`);

Can confirm that it also happens in the Jan22 branch.

**Additional context**

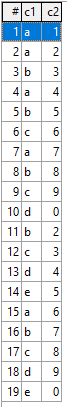

I tried to find a pattern for this behavior but had little success. I only noticed that the final table in the join must be nested for the problem to occur. I ran a small script to test all combinations with 4 joins and found this (black cells represent nested tables):

As far as I am aware this only happens with joins of size 4 and higher.
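The script used for the test is not included in the report. A minimal reconstruction of the query-generation part, assuming the same table `t` and join conditions as in the reproduction steps, could look like this (the names `NESTED`, `build_query`, and `queries` are hypothetical, and timing each query against a server is left out):

```python
import itertools

NESTED = "(SELECT * FROM t)"


def build_query(nested_flags):
    """Build the 4-way join, nesting table i when nested_flags[i - 1] is True."""
    sources = [
        f"{NESTED if nested else 't'} t{i}"
        for i, nested in enumerate(nested_flags, start=1)
    ]
    return (
        "SELECT 1\n"
        f"FROM {', '.join(sources)}\n"
        "WHERE t1.k = t2.k\n"
        "AND t2.k = t3.k\n"
        "AND t3.k = t4.k\n"
        "AND t4.k = 3;"
    )


# All 2**4 = 16 nested/plain combinations for t1..t4.
queries = {
    flags: build_query(flags)
    for flags in itertools.product((False, True), repeat=4)
}
```

For example, `queries[(False, False, True, True)]` is the "3 and 4 nested" query from the reproduction steps.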

| Unpredictable performance when performing joins over nested queries | https://api.github.com/repos/MonetDB/MonetDB/issues/7205/comments | 4 | 2021-11-25T09:37:50Z | 2024-06-27T13:16:39Z | https://github.com/MonetDB/MonetDB/issues/7205 | 1,063,352,417 | 7,205 |

[

"MonetDB",

"MonetDB"

] | I am trying to evaluate MonetDB's ability to perform continuous queries on time series data.

Continuous queries on time series data are queries that run automatically and periodically on real-time data (and maybe store query results in a specified measurement). Some Time Series Database Systems (TSDBs) have a built-in operation that allows doing this (e.g. InfluxDB, TimescaleDB).

I couldn't find in MonetDB's documentation a way to perform this type of query. An alternative would be to schedule the queries from a separate programming language or system clock that runs the given query automatically at a fixed interval. This is, however, not guaranteed to be fair 1) to the system, because the performance would depend on an independent factor (the third-party programming language), and 2) to the other systems, because they provide an extra convenience feature that MonetDB does not.
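The external-scheduler alternative described above can be sketched as follows. The function `run_periodically` and its parameters are hypothetical; `task` stands for any callable that runs the query through a client library of choice, and no MonetDB API is shown or assumed.

```python
import time


def run_periodically(task, interval, iterations, clock=time.monotonic, sleep=time.sleep):
    """Call task() `iterations` times, aiming for one call every `interval` seconds.

    Deadlines are computed from the start time, so a slow task does not
    push later runs further and further back.
    """
    start = clock()
    results = []
    for n in range(iterations):
        deadline = start + n * interval
        delay = deadline - clock()
        if delay > 0:
            sleep(delay)   # wait until the next scheduled run
        results.append(task())
    return results
```

The `clock` and `sleep` parameters exist so the loop can be exercised without real waiting; in a benchmark, the time spent inside this loop is part of the overhead that the fairness concern above refers to.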

My question: is there an efficient way to perform continuous queries? If not, what alternatives do I have in MonetDB to perform them?

Thanks!

| Continuous Queries Support | https://api.github.com/repos/MonetDB/MonetDB/issues/7204/comments | 1 | 2021-11-24T12:37:44Z | 2021-12-01T13:18:21Z | https://github.com/MonetDB/MonetDB/issues/7204 | 1,062,377,649 | 7,204 |

[

"MonetDB",

"MonetDB"

] | We're using a JOIN query where the constraint is logically `LeftField=RightField`, but have nulls present and wish to match them as distinct values, so write `LeftField=RightField OR (LeftField IS NULL AND RightField IS NULL)`.

A particular query using this approach takes **30 seconds** to execute (6.5 million rows) on modern commodity hardware.

Taking away the NULL handling and using just `LeftField=RightField` brings it down to **800ms**.

Equally, so does using `COALESCE(LeftField, '')=COALESCE(RightField, '')` (although this is messy and assumes `''` is not present in the data).
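The caveat about `''` can be made concrete with a small example. The sketch below uses an in-memory SQLite database purely as a stand-in, with hypothetical tables `l` and `r` and column `f`; it shows the semantics of the two join conditions and says nothing about MonetDB's performance.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE l (f TEXT);
    CREATE TABLE r (f TEXT);
    INSERT INTO l VALUES ('a'), (NULL), ('');
    INSERT INTO r VALUES ('a'), (NULL);
""")

# NULL-safe equality written out explicitly.
null_safe = conn.execute(
    "SELECT count(*) FROM l JOIN r "
    "ON l.f = r.f OR (l.f IS NULL AND r.f IS NULL)"
).fetchone()[0]

# COALESCE variant: NULL and '' become indistinguishable.
coalesced = conn.execute(
    "SELECT count(*) FROM l JOIN r "
    "ON COALESCE(l.f, '') = COALESCE(r.f, '')"
).fetchone()[0]
```

The explicit form matches `'a'` with `'a'` and NULL with NULL, while the `COALESCE` form additionally matches the empty string on the left with the NULL on the right, so it is only equivalent when `''` never occurs in the data.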

These are text fields being matched, so we cannot explore IMPRINT indexes.

I wonder, is this expected, or is something wrong here? If the latter I can prepare a test case.

Version 11.39.17 on Mac 11.6. | Severely degraded Join performance with IS NULL | https://api.github.com/repos/MonetDB/MonetDB/issues/7203/comments | 8 | 2021-11-24T09:43:37Z | 2021-11-25T09:43:11Z | https://github.com/MonetDB/MonetDB/issues/7203 | 1,062,205,662 | 7,203 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Performing a DISTINCT returns duplicate rows if we sort the table by columns in addition to the ones that are projected. This is not critical, as the additional columns can be removed from the sort; however, it can cause problems with dynamically generated queries (which is how this bug was discovered).
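For dynamically generated queries, the workaround of removing the extra sort columns can be applied in the generator itself. The sketch below uses hypothetical helpers `prune_order_by` and `build_distinct_query`, and it interpolates identifiers without quoting, so it is an illustration rather than production code.

```python
def prune_order_by(projected, order_by):
    """Keep only ORDER BY columns that also appear in the SELECT DISTINCT list."""
    allowed = set(projected)
    return [col for col in order_by if col in allowed]


def build_distinct_query(table, projected, order_by):
    """Assemble a SELECT DISTINCT whose sort uses projected columns only."""
    order = prune_order_by(projected, order_by)
    sql = f"SELECT DISTINCT {', '.join(projected)} FROM {table}"
    if order:
        sql += f" ORDER BY {', '.join(order)}"
    return sql + ";"
```

For the example in this report, `build_distinct_query("T", ["t1"], ["t1", "t2"])` yields the single-sort-column form, which is the variant that works as expected.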

**To Reproduce**

- Create a table and populate it with some data:

```sql

CREATE TABLE T (t1 int, t2 int);

INSERT INTO t VALUES (1, 1), (1, 2);

```

- DISTINCT with single sort column works as expected:

```sql

SELECT DISTINCT t1

FROM T

ORDER BY t1;

Returns:

+------+

| t1 |

+======+

| 1 |

+------+

```

- DISTINCT when sorting by both columns returns repeated rows:

```sql

SELECT DISTINCT t1

FROM T

ORDER BY t1, t2;

Returns:

+------+

| t1 |

+======+

| 1 |

| 1 |

+------+

```

**Expected behavior**

Return just one row with value 1.

**Software versions**

- `monetdb -v`: `MonetDB Database Server Toolkit v11.41.11 (Jul2021-SP1)`.

- OS: `Ubuntu 20.04.3 LTS`;

- Monetdb installed from release packages with `apt` (packages `monetdb5-sql` and `monetdb-client`); | DISTINCT does not work when sorting by additional columns | https://api.github.com/repos/MonetDB/MonetDB/issues/7202/comments | 3 | 2021-11-22T16:12:50Z | 2024-06-27T13:16:38Z | https://github.com/MonetDB/MonetDB/issues/7202 | 1,060,339,819 | 7,202 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Performing some selections on a subquery with a left join causes the join to fail. A combination of specific filters, indexes, and `NOT NULL` properties is required for this to occur.

**To Reproduce**

Create two tables, naturally joined by two columns `x1, x2`, with the first table indexed by `x1, x2` and `x2`, and with `NOT NULL` properties:

```sql

CREATE TABLE T1 (x1 int NOT NULL, x2 int NOT NULL, y int NOT NULL);

CREATE INDEX T1_x1_x2 ON T1 (x1, x2);

CREATE INDEX T1_x2 ON T1 (x2);

CREATE TABLE T2 (x1 int NOT NULL, x2 int NOT NULL, z int NOT NULL);

CREATE INDEX T2_x1_x2 ON T2 (x1, x2);

```

Populate with some data:

```sql

INSERT INTO T1 VALUES (1, 0, 1), (1, 2, 1);

INSERT INTO T2 VALUES (1, 0, 3), (1, 2, 100);

```

Perform the subquery first to see that it is working correctly:

```sql

SELECT T1.*, T2.x1 as t2_x1, z

FROM T1

LEFT JOIN T2 ON T1.x1 = T2.x1 AND T1.x2 = T2.x2

WHERE 10 <= T2.z OR T2.z IS NULL; -- (x1, x2, z) = (1, 0, 3) is dropped here, as 10 <= 3 is False

Returns:

+------+------+------+-------+------+

| x1 | x2 | y | t2_x1 | z |

+======+======+======+=======+======+

| 1 | 2 | 1 | 1 | 100 |

+------+------+------+-------+------+

```

Perform the filter on the subquery to check that the join fails (`t2_x1` and `z` become null, and the other row is also returned):

```sql

SELECT *

FROM (

SELECT T1.*, T2.x1 as t2_x1, z

FROM T1

LEFT JOIN T2 ON T1.x1 = T2.x1 AND T1.x2 = T2.x2

WHERE 10 <= T2.z OR T2.z IS NULL

) T

WHERE T.x1 = 1; -- this filter should return the same row

Returns:

+------+------+------+-------+------+

| x1 | x2 | y | t2_x1 | z |

+======+======+======+=======+======+

| 1 | 0 | 1 | null | null |

| 1 | 2 | 1 | null | null |

+------+------+------+-------+------+

```

**Expected behavior**

Return the same result as the nested query.

**Software versions**

- `monetdb -v`: `MonetDB Database Server Toolkit v11.41.11 (Jul2021-SP1)`.

- OS: `Ubuntu 20.04.3 LTS`;

- Monetdb installed from release packages with `apt` (packages `monetdb5-sql` and `monetdb-client`);

**Additional context**

There are already some things I discovered that should help narrow the issue:

- Both indexes on T1 must be created, as without one of them the query works. The index on T2 is not relevant;

- The column x2 of T1 must be tagged with `NOT NULL`. The `NOT NULL` in the other columns is not relevant;

- There must be a filter after the subquery in one of the columns of T1 (`x1`, `x2`, or `y`). Filters done to `t2_x1` or `z` make the join succeed (e.g., `WHERE T.z = 100`). Likewise, the nested query alone with no filter also returns the correct result;

- Manually pushing the filter `WHERE T.x1 = 1` to the subquery works correctly;

- Removing the `T2.z IS NULL` from the subquery also makes the join succeed.
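
As an independent cross-check of the expected result, the same schema and queries can be run in another engine. This is only a minimal sketch, assuming SQLite (through Python's standard `sqlite3` module) is an acceptable reference; it says nothing about MonetDB internals, and the names `INNER`, `inner_rows` and `outer_rows` are made up for the example.

```python
import sqlite3

# Reference check in SQLite (assumption: used only as an independent engine).
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE T1 (x1 int NOT NULL, x2 int NOT NULL, y int NOT NULL);
CREATE INDEX T1_x1_x2 ON T1 (x1, x2);
CREATE INDEX T1_x2 ON T1 (x2);
CREATE TABLE T2 (x1 int NOT NULL, x2 int NOT NULL, z int NOT NULL);
CREATE INDEX T2_x1_x2 ON T2 (x1, x2);
INSERT INTO T1 VALUES (1, 0, 1), (1, 2, 1);
INSERT INTO T2 VALUES (1, 0, 3), (1, 2, 100);
""")

# The nested query from the report, reused verbatim inside the outer query.
INNER = """
SELECT T1.*, T2.x1 AS t2_x1, z
FROM T1
LEFT JOIN T2 ON T1.x1 = T2.x1 AND T1.x2 = T2.x2
WHERE 10 <= T2.z OR T2.z IS NULL
"""

inner_rows = conn.execute(INNER).fetchall()
outer_rows = conn.execute(f"SELECT * FROM ({INNER}) T WHERE T.x1 = 1").fetchall()

print(inner_rows)  # [(1, 2, 1, 1, 100)]
print(outer_rows)  # [(1, 2, 1, 1, 100)]
```

Both queries return the single row `(1, 2, 1, 1, 100)` there, which matches the expected behavior described above.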

| Selection of a subquery with a LEFT JOIN returns the wrong result set | https://api.github.com/repos/MonetDB/MonetDB/issues/7201/comments | 2 | 2021-11-22T16:12:46Z | 2024-06-27T13:16:37Z | https://github.com/MonetDB/MonetDB/issues/7201 | 1,060,339,724 | 7,201 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

The unique constraint imposed by the PRIMARY KEY is violated when there are concurrent inserts, resulting in multiple rows with the same key.

**To Reproduce**

- Create a table with a primary key:

```sql

CREATE TABLE T (k int PRIMARY KEY, v int);

```

- Check that the unique constraint is working correctly:

```sql

INSERT INTO T VALUES (1, 1); -- ok

INSERT INTO T VALUES (1, 1); -- fails (INSERT INTO: PRIMARY KEY constraint 't.t_k_pkey' violated)

DELETE FROM T; -- reset table

```

- Test using bash + `mclient`:

- Create the `DOTMONETDBFILE`:

```bash

cat <<EOF >> .monetdb

user=monetdb

password=monetdb

EOF

```

- Run concurrent inserts (make sure to run the second command immediately after the first, e.g., by pasting the entire input in the terminal):

```bash

for i in {1..1000}; do DOTMONETDBFILE=.monetdb mclient -d testdb -s "INSERT INTO T VALUES ($i, $i)"; done &

for i in {1..1000}; do DOTMONETDBFILE=.monetdb mclient -d testdb -s "INSERT INTO T VALUES ($i, $i)"; done

wait # wait for the background loop to finish

```

- Check the duplicate values in the table:

```sql

SELECT count(*) FROM T;

Returns (the actual value may vary):

+------+

| %1 |

+======+

| 1917 |

+------+

SELECT * FROM T LIMIT 10;

Returns:

+------+------+

| k | v |

+======+======+

| 1 | 1 |

| 1 | 1 |

| 2 | 2 |

| 2 | 2 |

| 3 | 3 |

| 3 | 3 |

| 4 | 4 |

| 4 | 4 |

| 5 | 5 |

| 5 | 5 |

+------+------+

```

- Running the script again will result in just rollbacks, so it only affects concurrent executions.

- Test using Python with `pyodbc`:

```python

import pyodbc

from multiprocessing import Pool

def insert(_):

    conn = pyodbc.connect(f"DRIVER={{/usr/lib/x86_64-linux-gnu/libMonetODBC.so}};HOST=localhost;PORT=50000;DATABASE=testdb;UID=monetdb;PWD=monetdb")

    conn.autocommit = True

    cursor = conn.cursor()

    for i in range(1000):

        try:

            cursor.execute(f'INSERT INTO T values(?, ?)', i, i)

        except:

            pass

    return True

pool = Pool(2)

pool.map(insert, range(2))

```

**Expected behavior**

No rows with a duplicate primary key.

**Software versions**

- `monetdb -v`: `MonetDB Database Server Toolkit v11.41.11 (Jul2021-SP1)`.

- OS: `Ubuntu 20.04.3 LTS`;

- Monetdb installed from release packages with `apt` (packages `monetdb5-sql` and `monetdb-client`);

- `mclient -v`: `mclient, the MonetDB interactive terminal, version 11.41.11 (Jul2021-SP1)`

- ODBC driver installed with `apt` packages `unixodbc`, `unixodbc-dev`, and `libmonetdb-client-odbc`;

- `pyodbc==4.0.32`.

**Additional context**

The problem is visible with both `autocommit=True` and `autocommit=False`.
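
To count the affected keys rather than eyeballing `LIMIT 10`, a grouped query with `HAVING count(*) > 1` lists every key that appears more than once. The sketch below only demonstrates that query; it assumes SQLite (Python's standard `sqlite3` module) as a stand-in, and the table is deliberately created without a primary key so that it can hold the duplicated state seen above. The sample rows are invented.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# No PRIMARY KEY on purpose: this table mimics the already-corrupted state.
conn.execute("CREATE TABLE T (k int, v int)")
conn.executemany(
    "INSERT INTO T VALUES (?, ?)",
    [(1, 1), (1, 1), (2, 2), (3, 3), (3, 3)],  # hypothetical sample data
)

# Every key that occurs more than once, with its number of occurrences.
DUPLICATES = """
SELECT k, count(*) AS n
FROM T
GROUP BY k
HAVING count(*) > 1
ORDER BY k
"""
dups = conn.execute(DUPLICATES).fetchall()
print(dups)  # [(1, 2), (3, 2)]
```

The same `SELECT` should be usable as-is against the MonetDB table `T`; an empty result would mean no duplicated keys.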

| PRIMARY KEY unique constraint is violated with concurrent inserts | https://api.github.com/repos/MonetDB/MonetDB/issues/7200/comments | 1 | 2021-11-22T16:12:31Z | 2024-06-27T13:16:37Z | https://github.com/MonetDB/MonetDB/issues/7200 | 1,060,339,451 | 7,200 |

[

"MonetDB",

"MonetDB"

] | I see very high disk write throughput and a very high number of I/O write operations after enabling query history (even with a threshold so high that no queries are logged to the history table), using:

```

call sys.querylog_enable(10000);

```

I have a constant rate of inserts to the DB (around 30 records/s). This generates around 1.5 MB/s of writes and about 40 I/O write operations per second to the main storage.

The moment I enable the query history log (even with a high threshold), I get a ~100MB/s write rate and ~1000 I/O write operations per second to the storage, all the time.

This makes the feature not very useful in my use-case as it will have a significant impact on performance. I was also hoping to use it as a "slow query log" by giving a relatively high threshold value.

I had a look at the system call counts (with `strace`). With the log disabled, a typical 10-second workload looks like:

```

72012 clock_gettime

6063 poll

3852 futex

1074 write

802 read

728 rt_sigprocmask

364 clone

18 fdatasync

10 lseek

4 mremap

```

but with it enabled it looks like:

```

57100 clock_gettime

23442 write

23336 read

4923 poll

3318 futex

624 rt_sigprocmask

312 clone

42 rename

42 close

35 lseek

28 openat

28 open

28 munmap

28 mmap

28 getdents

28 fstat

27 fdatasync

14 unlink

14 rmdir

14 mkdir

14 fcntl

2 mremap

```
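
The two profiles can also be compared mechanically. This is just a minimal sketch: `parse_counts` is a helper made up for the example, and only a few of the counts from the two listings above are copied in.

```python
def parse_counts(text):
    """Parse lines of the form '<count> <syscall>' into a dict."""
    counts = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2:
            counts[parts[1]] = int(parts[0])
    return counts

# Subset of the counts with the query log disabled.
disabled = parse_counts("""
1074 write
802 read
18 fdatasync
""")

# Subset of the counts with the query log enabled.
enabled = parse_counts("""
23442 write
23336 read
27 fdatasync
42 rename
""")

# Per-syscall difference; a syscall missing from one profile counts as 0.
names = sorted(set(enabled) | set(disabled))
delta = {name: enabled.get(name, 0) - disabled.get(name, 0) for name in names}

# Largest increase first.
for name, diff in sorted(delta.items(), key=lambda kv: -kv[1]):
    print(f"{name:>10} {diff:+d}")
```

On these numbers the `read` and `write` calls each grow by more than 22000 per 10 seconds, while the other calls change only marginally.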

Looking at the open and write calls, what it does is:

```

openat(AT_FDCWD, "/var/lib/monetdb/data/default/logs/bat/DELETE_ME", O_RDONLY|O_NONBLOCK|O_DIRECTORY|O_CLOEXEC) = 30

getdents(30, /* 3 entries */, 32768) = 80

unlink("/var/lib/monetdb/data/default/logs/bat/DELETE_ME/BBP.dir") = 0

getdents(30, /* 0 entries */, 32768) = 0

close(30) = 0

rmdir("/var/lib/monetdb/data/default/logs/bat/DELETE_ME") = 0

clock_gettime(CLOCK_REALTIME, {1637340440, 451079009}) = 0

rename("/var/lib/monetdb/data/default/logs/bat/BBP.dir", "/var/lib/monetdb/data/default/logs/bat/BACKUP/BBP.dir") = 0

```

and

```

openat(AT_FDCWD, "/var/lib/monetdb/data/default/logs/bat/BACKUP/SUBCOMMIT", O_RDONLY|O_NONBLOCK|O_DIRECTORY|O_CLOEXEC) = -1 ENOENT (No such file or directory)

mkdir("/var/lib/monetdb/data/default/logs/bat/BACKUP/SUBCOMMIT", 0777) = 0

clock_gettime(CLOCK_REALTIME, {1637340441, 481343818}) = 0

rename("/var/lib/monetdb/data/default/logs/bat/BACKUP/BBP.dir", "/var/lib/monetdb/data/default/logs/bat/BACKUP/SUBCOMMIT/BBP.dir") = 0

open("/var/lib/monetdb/data/default/logs/bat/BBP.dir", O_WRONLY|O_CREAT|O_CLOEXEC, 0666) = 30

fcntl(30, F_GETFL) = 0x8001 (flags O_WRONLY|O_LARGEFILE)

open("/var/lib/monetdb/data/default/logs/bat/BACKUP/SUBCOMMIT/BBP.dir", O_RDONLY) = 31

fstat(31, {st_mode=S_IFREG|0600, st_size=6583714, ...}) = 0

mmap(NULL, 4096, PROT_READ|PROT_WRITE, MAP_PRIVATE|MAP_ANONYMOUS, -1, 0) = 0x7f7f931b1000

read(31, "BBP.dir, GDKversion 25124\n8 8 16"..., 4096) = 4096

fstat(30, {st_mode=S_IFREG|0600, st_size=0, ...}) = 0

mmap(NULL, 4096, PROT_READ|PROT_WRITE, MAP_PRIVATE|MAP_ANONYMOUS, -1, 0) = 0x7f7f931b0000

read(31, "4096 0 9223372036854775808 92233"..., 4096) = 4096

write(30, "BBP.dir, GDKversion 25124\n8 8 16"..., 4096) = 4096

read(31, "0 1153 0 0 0 0 92233720368547758"..., 4096) = 4096

write(30, "4096 0 9223372036854775808 92233"..., 4096) = 4096

read(31, " 1048576 0 str 2 1 0 0 0 0 0 922"..., 4096) = 4096

write(30, "0 1153 0 0 0 0 92233720368547758"..., 4096) = 4096

read(31, "036854775808 11371 16384 0\n171 3"..., 4096) = 4096

write(30, " 1048576 0 str 2 1 0 0 0 0 0 922"..., 4096) = 4096

read(31, "362 40448 0 int 4 0 0 0 0 0 0 92"..., 4096) = 4096

write(30, "036854775808 11371 16384 0\n171 3"..., 4096) = 4096

read(31, "5808 0 2048 0 922337203685477580"..., 4096) = 4096

write(30, "362 40448 0 int 4 0 0 0 0 0 0 92"..., 4096) = 4096

read(31, "0 0 9223372036854775808 2 1024 0"..., 4096) = 4096

write(30, "5808 0 2048 0 922337203685477580"..., 4096) = 4096

read(31, "4 32 tmp_460 04/460 2 10 1024 0 "..., 4096) = 4096

write(30, "0 0 9223372036854775808 2 1024 0"..., 4096) = 4096

read(31, " 1490871 1572864 0 str 4 1 2048 "..., 4096) = 4096

write(30, "4 32 tmp_460 04/460 2 10 1024 0 "..., 4096) = 4096

```

and many more writes.

So it looks like `bat/BBP.dir` is being rewritten (updated?) about 3 times per second with the query log feature enabled.

That would explain the I/O load as the size of the BBP.dir file is 6.3M on my system.

This is on MonetDB Jul2021-SP1 release. | High disk write and I/O after enabling Query History | https://api.github.com/repos/MonetDB/MonetDB/issues/7199/comments | 1 | 2021-11-19T17:10:24Z | 2024-06-27T13:16:36Z | https://github.com/MonetDB/MonetDB/issues/7199 | 1,058,744,817 | 7,199 |

[

"MonetDB",

"MonetDB"

] | The query:

```

select

count(*) as count

from logs.http_access

where processed_timestamp >= '2021-11-07' and processed_timestamp < '2021-11-10'

and timestamp >= '2021-11-08 15:40:28.998' and timestamp <= '2021-11-08 15:55:28.999'

and logsource_environment = 'prod' and logsource_service = 'varnish' and request_url_path <> '/varnish_core_health.test'

and request_url_path <> '/varnish_health.test' and json.filter(varnish_client_log, '$.checkpoint_validated') <> '[]'

```

takes 3:40 minutes in my tests, whereas on MonetDB Apr2019-SP1 it would take ~50ms.

If I remove one of the `<>` predicates or change it to `=`, the query executes in ~50ms as expected:

```

select

count(*) as count

from logs.http_access

where processed_timestamp >= '2021-11-07' and processed_timestamp < '2021-11-10'

and timestamp >= '2021-11-08 15:40:28.998' and timestamp <= '2021-11-08 15:55:28.999'

and request_url_path <> '/varnish_core_health.test'

and json.filter(varnish_client_log, '$.checkpoint_validated') <> '[]'

```

Or

```

select

count(*) as count

from logs.http_access

where processed_timestamp >= '2021-11-07' and processed_timestamp < '2021-11-10'

and timestamp >= '2021-11-08 15:40:28.998' and timestamp <= '2021-11-08 15:55:28.999'

and request_url_path = '/varnish_core_health.test' and request_url_path <> '/varnish_health.test'

and json.filter(varnish_client_log, '$.checkpoint_validated') <> '[]'

```

Looks like in the bad case each partition is scanned with the JSON predicate:

```

-- C_35:bat[:oid] := algebra.select(X_8:bat[:timestamp], C_5:bat[:oid], "2021-11-07 00:00:00.000000":timestamp, "2021-11-10 00:00:00.000000":timestamp, true:bit, false:bit, false:bit, true:bit);

-- C_43:bat[:oid] := algebra.select(X_15:bat[:timestamp], C_35:bat[:oid], "2021-11-08 15:40:28.998000":timestamp, "2021-11-08 15:55:28.999000":timestamp, true:bit, true:bit, false:bit, true:bit);

-- C_52:bat[:oid] := algebra.thetaselect(X_46:bat[:json], C_43:bat[:oid], ""[]"":json, "!=":str);

-- C_56:bat[:oid] := algebra.thetaselect(X_20:bat[:str], C_52:bat[:oid], "/varnish_core_health.test":str, "!=":str);

-- C_59:bat[:oid] := algebra.thetaselect(X_20:bat[:str], C_56:bat[:oid], "/varnish_health.test":str, "!=":str);

```

While in the good case each partition is only scanned with the string comparisons and the JSON predicate is done on the concatenation of the results:

```

-- C_35:bat[:oid] := algebra.select(X_8:bat[:timestamp], C_5:bat[:oid], "2021-11-07 00:00:00.000000":timestamp, "2021-11-10 00:00:00.000000":timestamp, true:bit, false:bit, false:bit, true:bit);

-- C_43:bat[:oid] := algebra.select(X_15:bat[:timestamp], C_35:bat[:oid], "2021-11-08 15:40:28.998000":timestamp, "2021-11-08 15:55:28.999000":timestamp, true:bit, true:bit, false:bit, true:bit);

-- C_46:bat[:oid] := algebra.thetaselect(X_20:bat[:str], C_43:bat[:oid], "/varnish_core_health.test":str, "==":str);

-- C_50:bat[:oid] := algebra.thetaselect(X_20:bat[:str], C_46:bat[:oid], "/varnish_health.test":str, "==":str);

```

Later in the plan:

```

-- X_2296:bat[:json] := bat.append(X_2294:bat[:json], X_2232:bat[:json], true:bit);

-- X_2298:bat[:json] := bat.append(X_2296:bat[:json], X_2282:bat[:json], true:bit);

-- X_2301:bat[:json] := mal.manifold("json":str, "filter":str, X_2298:bat[:json], "$.checkpoint_validated":str);

-- C_2307:bat[:oid] := algebra.thetaselect(X_2301:bat[:json], nil:BAT, ""[]"":json, "!=":str);

```

Ideally all the plans would apply `json.filter` to the concatenation of the partition filter results, no matter what other string predicates are present.

This is on MonetDB Jul2021-SP1 and is a regression from Apr2019-SP1 on which the query was fast.

The `logs.http_access` is a merge table of daily partitions. | Suboptimal query plan for query containing JSON access filter and two negative string comparisons | https://api.github.com/repos/MonetDB/MonetDB/issues/7198/comments | 3 | 2021-11-19T12:31:45Z | 2024-06-27T13:16:35Z | https://github.com/MonetDB/MonetDB/issues/7198 | 1,058,480,256 | 7,198 |

[

"MonetDB",

"MonetDB"

] | The database prefers to convert column data to match the query filter type instead of the other way around for timestamps.

I filter the TIMESTAMP (SQL date and time) column `processed_timestamp` with a timestamp calculated using an `interval` expression. The resulting filter type is a UNIX timestamp and does not match the SQL date and time column type. The engine adds a cast from SQL date and time to UNIX timestamp for each column data entry, which is very slow compared to just casting the UNIX timestamp filter constant to SQL date and time.

```

-- took: 463ms

-- X_8:bat[:timestamp] := sql.bind(X_4:int, "logs":str, "http_access_past":str, "processed_timestamp":str, 0:int);

-- X_15:bat[:timestamp] := batcalc.timestamp(X_8:bat[:timestamp], C_5:bat[:oid], 7:int);

-- C_28:bat[:oid] := algebra.select(X_15:bat[:timestamp], "2021-11-10 14:48:19.393693":timestamp, "2021-11-10 14:54:59.393705":timestamp, true:bit, true:bit, false:bit, true:bit);

explain

select count(*)

from logs.http_access

where processed_timestamp BETWEEN now() - interval '110' second - interval '300' second

and now() - interval '10' second

```

Notice the `timestamp_2time_timestamp` calls during execution:

```

Overhead Shared Object Symbol

56.06% libmonetdbsql.so.11.41.11 [.] __divti3

21.65% libmonetdbsql.so.11.41.11 [.] timestamp_2time_timestamp

5.86% libbat.so.23.0.3 [.] timestamp_create

4.29% libbat.so.23.0.3 [.] timestamp_date

4.18% libbat.so.23.0.3 [.] timestamp_daytime

2.30% libmonetdbsql.so.11.41.11 [.] timestamp_date@plt

1.15% libmonetdbsql.so.11.41.11 [.] timestamp_create@plt

1.05% libbat.so.23.0.3 [.] BATordered

0.36% libmonetdbsql.so.11.41.11 [.] timestamp_daytime@plt

```

The workaround is to manually cast the result of the interval operation to TIMESTAMP, which makes my query run about 10x faster:

```

-- took: 40 ms

-- X_8:bat[:timestamp] := sql.bind(X_4:int, "logs":str, "http_access_past":str, "processed_timestamp":str, 0:int);

-- C_29:bat[:oid] := algebra.select(X_8:bat[:timestamp], C_5:bat[:oid], "2021-11-19 10:42:12.604285":timestamp, "2021-11-19 10:48:52.604301":timestamp, true:bit, true:bit, false:bit, true:bit);

explain

select count(*)

from logs.http_access

where processed_timestamp BETWEEN CAST(now() - interval '110' second - interval '300' second AS TIMESTAMP)

and CAST(now() - interval '10' second AS TIMESTAMP)

```

Ideally the database would add the cast automatically for the constant that the table is filtered by instead of casting all the column data.

This is on MonetDB Jul2021-SP1 release.

I have ended up rewriting my queries due to stricter SQL standard requirements introduced with the latest releases (I was using integer subtraction before) and I have noticed this problem. I have migrated from Apr2019-SP1 release. | Cast query constant instead of column data for incompatible timestamp types | https://api.github.com/repos/MonetDB/MonetDB/issues/7197/comments | 5 | 2021-11-19T11:11:03Z | 2021-11-19T17:17:38Z | https://github.com/MonetDB/MonetDB/issues/7197 | 1,058,413,315 | 7,197 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Heyo, I was trying to test some things to make our analytics stuff more stable. Basically I create a table, start a transaction on 2 clients, one alters the table to add a new column, the other client inserts some data. Instead of rolling back it ends up corrupting the table entirely it seems like?

I've read over the optimistic concurrency control so I'm not sure if this is *actually* a bug or expected behavior here.

**To Reproduce**

Client 1

```

sql>create table test (id bigint);

operation successful

sql>select * from test;

+----+

| id |

+====+

+----+

0 tuples

sql>start transaction;

auto commit mode: off

sql>alter table test add column data int; # To be clear this is run BEFORE the insert in the second client.

operation successful

sql>commit;

auto commit mode: on

sql>select * from test;

GDK reported error: BATproject2: does not match always

```

Client 2

```

sql>select * from test;

+----+

| id |

+====+

+----+

0 tuples

sql>start transaction;

auto commit mode: off

sql>insert into test values (1); # This is run AFTER the alter

1 affected row

sql>commit;

auto commit mode: on

sql>select * from test;

GDK reported error: BATproject2: does not match always

```

**Expected behavior**

I know this is a violation of the OCC rules on concurrent connections but wasn't expecting the table to not be recoverable afterwards.

**Screenshots**

**Software versions**

MonetDB: MonetDB Database Server Toolkit v11.41.11 (Jul2021-SP1)

OS: Linux 839c464b9757 5.10.0-8-cloud-amd64 #1 SMP Debian 5.10.46-4 (2021-08-03) x86_64 x86_64 x86_64 GNU/Linux

Using the docker image here: https://hub.docker.com/r/monetdb/monetdb

**Issue labeling **

Table corruption, concurrent transactions,

**Additional context**

| BATproject2: does not match always | https://api.github.com/repos/MonetDB/MonetDB/issues/7196/comments | 6 | 2021-11-18T02:41:22Z | 2024-06-27T13:16:34Z | https://github.com/MonetDB/MonetDB/issues/7196 | 1,056,870,192 | 7,196 |

[

"MonetDB",

"MonetDB"

] | According to the documentation of MonetDB/NumPy UDFs, a UDF is mappable only when it is defined with the python_map language. Otherwise it is considered a black box. According to an example, it is OK to have a scalar function like this:

CREATE FUNCTION python_min(i INTEGER) RETURNS integer LANGUAGE PYTHON {

return numpy.min(i)

};

which returns a single value in the result, and we expect it not to be mappable.

However, we have encountered a case that this UDF runs in parallel and returns multiple rows in the output.

If we have a merge table, with two partition tables, and run the above UDF without defining it as PYTHON_MAP, then this is mapped to the partitions and returns 2 values.
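
The difference between the two evaluation strategies can be shown without a database. This is only an illustrative sketch in plain Python: the built-in `min` stands in for `numpy.min`, and the two lists are made-up partition contents.

```python
def python_min(values):
    # Stand-in for the UDF body `return numpy.min(i)`.
    return min(values)

# Hypothetical contents of the two partition tables of the merge table.
partition_a = [5, 3, 9]
partition_b = [7, 1, 4]

# Black-box (non-mappable) evaluation: one call over the whole column.
whole = [python_min(partition_a + partition_b)]

# Mapped evaluation: one call per partition, results concatenated.
mapped = [python_min(partition_a), python_min(partition_b)]

print(whole)   # [1]
print(mapped)  # [3, 1]
```

The second list has one value per partition, which is the shape of the result we observe.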

| Python UDFs are mapped even if they are not mappable. | https://api.github.com/repos/MonetDB/MonetDB/issues/7195/comments | 2 | 2021-11-10T11:27:41Z | 2023-09-13T14:55:09Z | https://github.com/MonetDB/MonetDB/issues/7195 | 1,049,708,059 | 7,195 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Triggers don't work after server restart

**To Reproduce**

Just create a trigger on a table and restart the server.

**Expected behavior**

The trigger should run.

**Software versions**

- MonetDB version number: Jul2021

- OS and version: Linux

| Triggers don't work after server restart | https://api.github.com/repos/MonetDB/MonetDB/issues/7194/comments | 0 | 2021-11-05T09:35:40Z | 2021-11-05T09:43:38Z | https://github.com/MonetDB/MonetDB/issues/7194 | 1,045,629,009 | 7,194 |

[

"MonetDB",

"MonetDB"

] | I am trying to downsample and upsample time series data. Time series database systems (TSDS) usually have an option to make the downsampling and upsampling with an operator like SAMPLE BY (1h).

Is there a possibility to downsample time series on MonetDB?

Thanks in advance! | Time Series Downsampling/Upsampling | https://api.github.com/repos/MonetDB/MonetDB/issues/7193/comments | 8 | 2021-11-04T21:51:55Z | 2022-08-18T18:39:04Z | https://github.com/MonetDB/MonetDB/issues/7193 | 1,045,244,282 | 7,193 |

[

"MonetDB",

"MonetDB"

] | Possibly after a failure due to insufficient disk space, a hash table seems to be in an inconsistent state.

A range select (using imprints) returns 9 results, while the point select (using hash) returns 0 results:

```

sql>\d cacheinfo

CREATE TABLE "spinque"."cacheinfo" (

"cacheid" INTEGER,

"attribute" CHARACTER LARGE OBJECT,

"value" CHARACTER LARGE OBJECT

);

sql>select * from sys.storage() where table='cacheinfo' and column='cacheid';

+---------+-----------+---------+------+----------+----------+--------+-----------+------------+----------+--------+-------+----------+--------+-----------+--------+----------+

| schema | table | column | type | mode | location | count | typewidth | columnsize | heapsize | hashes | phash | imprints | sorted | revsorted | unique | orderidx |

+=========+===========+=========+======+==========+==========+========+===========+============+==========+========+=======+==========+========+===========+========+==========+

| spinque | cacheinfo | cacheid | int | writable | 10/1055 | 101045 | 4 | 404180 | 0 | 463308 | false | 2868 | false | false | false | 0 |

+---------+-----------+---------+------+----------+----------+--------+-----------+------------+----------+--------+-------+----------+--------+-----------+--------+----------+

1 tuple

sql>select cacheid from cacheinfo where cacheid > 519 and cacheid < 521;

+---------+

| cacheid |

+=========+

| 520 |

| 520 |

| 520 |

| 520 |

| 520 |

| 520 |

| 520 |

| 520 |

| 520 |

+---------+

9 tuples

sql>select cacheid from cacheinfo where cacheid = 520;

+---------+

| cacheid |

+=========+

+---------+

0 tuples

```

According to `sys.storage()`, the hash is not persistent, so I tried to stop/start the database to see if it would drop it. Nothing changes.

I also tried to `analyze` the column, but nothing changes.

This is not easily reproducible, but also not urgent to me. I'm reporting it hoping that it can give a clue as to where a problem with hashing might be. It looks to me that it is not robust against a failure.

**Software versions**

- MonetDB version number v11.39.18

- OS and version: FC32

- self-installed and compiled

| Equality selection gives wrong result because of corrupt hash table | https://api.github.com/repos/MonetDB/MonetDB/issues/7192/comments | 0 | 2021-11-02T13:06:50Z | 2021-11-02T13:06:50Z | https://github.com/MonetDB/MonetDB/issues/7192 | 1,042,321,490 | 7,192 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

On MonetDBe, if a Prepared Statement is created with variable-sized types (such as String) as input and a NULL value is bound, closing the Prepared Statement (with `monetdbe_cleanup_statement()`) leads to:

`Assertion failed: (((char *) s)[i] == '\xBD'), function GDKfree`

**To Reproduce**

This example uses MonetDBe-Java:

```

Connection c = DriverManager.getConnection("jdbc:monetdb:memory:", new Properties());

Statement s = c.createStatement();

s.executeUpdate("CREATE TABLE p (i STRING)");

PreparedStatement ps = c.prepareStatement("INSERT INTO p VALUES (?);");

ps.setNull(1,Types.VARCHAR);

ps.execute();

c.close();

```

When the PreparedStatement ps is closed, the assertion failure is raised. If we close the PreparedStatement with a non-NULL value bound to the string variable, the assertion failure does not happen.

**Expected behavior**

The PreparedStatement should be closed correctly, so `monetdbe_cleanup_statement()` should not throw this "Assertion failed".

**Software versions**

- MonetDB 5 server 11.42.0 (hg id: 7e9274bbe6de)

- Latest MonetDBe-Java version

- macOS Catalina (10.15.7)

| [MonetDBe] monetdbe_cleanup_statement() with bound NULLs on variable-sized types bug | https://api.github.com/repos/MonetDB/MonetDB/issues/7191/comments | 0 | 2021-11-01T15:37:19Z | 2024-06-27T13:16:32Z | https://github.com/MonetDB/MonetDB/issues/7191 | 1,041,309,161 | 7,191 |

[

"MonetDB",

"MonetDB"

] | **Is your feature request related to a problem? Please describe.**

Without UPSERT, the same effect takes several queries, whereas with UPSERT it takes only one. A single statement can also be optimized.

**Describe the solution you'd like**

implementation of an UPSERT clause

**Describe alternatives you've considered**

writing several queries
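
For reference, the multi-query alternative looks roughly like the sketch below. It assumes SQLite (Python's standard `sqlite3` module) as a stand-in engine, and the table `kv` and the helper `upsert` are made up for the example.

```python
import sqlite3

def upsert(conn, key, value):
    """Emulate an upsert with two statements: UPDATE, then INSERT if no row matched."""
    cur = conn.execute("UPDATE kv SET v = ? WHERE k = ?", (value, key))
    if cur.rowcount == 0:
        conn.execute("INSERT INTO kv (k, v) VALUES (?, ?)", (key, value))

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE kv (k int PRIMARY KEY, v int)")

upsert(conn, 1, 10)  # no row yet, so this inserts
upsert(conn, 1, 20)  # row exists, so this updates
upsert(conn, 2, 30)  # inserts a second row

rows = conn.execute("SELECT k, v FROM kv ORDER BY k").fetchall()
print(rows)  # [(1, 20), (2, 30)]
```

Each call costs up to two round trips, and the two statements are not atomic unless they are wrapped in a transaction, which is what a single UPSERT statement would avoid.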

| Upsert clause support | https://api.github.com/repos/MonetDB/MonetDB/issues/7190/comments | 2 | 2021-10-23T16:56:50Z | 2022-06-18T09:54:59Z | https://github.com/MonetDB/MonetDB/issues/7190 | 1,034,209,513 | 7,190 |

[

"MonetDB",

"MonetDB"

] | When I start monetdb, an error appears (my operating system is Windows10 x64).

Below is the error message:

```

C:\Users\cathyma\Desktop\MonetDB5_new>M5server.bat --dbpath=wyndw --set mapi_port=50000 --set max_clients=200

MonetDB/Python Disabled: Python 3.9 installation not found.

# MonetDB 5 server v11.41.5 (Jul2021)

# Serving database 'demo', using 8 threads

# Compiled for amd64-pc-windows-msvc/64bit

# Found 15.692 GiB available main-memory of which we use 12.789 GiB

# Copyright (c) 1993 - July 2008 CWI.

# Copyright (c) August 2008 - 2021 MonetDB B.V., all rights reserved

# Visit https://www.monetdb.org/ for further information

#2021-10-22 12:07:23: main thread: createExceptionInternal: !ERROR: LoaderException:loadLibrary:Loading error failed to open library geom (from within file 'C:\Users\cathyma\Desktop\MonetDB5_new\lib\monetdb5\_geom.dll'): The specified module could not be found.

#2021-10-22 12:07:23: main thread: mal_init: !ERROR: LoaderException:loadLibrary:Loading error failed to open library geom (from within file 'C:\Users\cathyma\Desktop\MonetDB5_new\lib\monetdb5\_geom.dll'): The specified module could not be found.

```

| MonetDB startup error | https://api.github.com/repos/MonetDB/MonetDB/issues/7189/comments | 8 | 2021-10-22T04:15:42Z | 2021-10-27T07:08:19Z | https://github.com/MonetDB/MonetDB/issues/7189 | 1,033,160,882 | 7,189 |

[

"MonetDB",

"MonetDB"

] | Hi, when installing MonetDB on Ubuntu 18.04 following the instructions on [this page](https://www.monetdb.org/downloads/deb/), the error message shows that the certificate has expired:

> Err:10 https://dev.monetdb.org/downloads/deb bionic Release

Certificate verification failed: The certificate is NOT trusted. The certificate chain uses expired certificate. Could not handshake: Error in the certificate verification. [IP: 192.16.197.137 443]

Reading package lists... Done

E: The repository 'https://dev.monetdb.org/downloads/deb bionic Release' does not have a Release file.

N: Updating from such a repository can't be done securely, and is therefore disabled by default.

N: See apt-secure(8) manpage for repository creation and user configuration details.

Did I miss something? | The certificate has expired? | https://api.github.com/repos/MonetDB/MonetDB/issues/7188/comments | 1 | 2021-10-18T03:53:30Z | 2021-10-19T06:35:31Z | https://github.com/MonetDB/MonetDB/issues/7188 | 1,028,591,170 | 7,188 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

As of Jul2021, the WAL log is constantly rotated, so the functions to force a log flush have been removed from Jul2021.

This page should be updated: https://www.monetdb.org/Documentation/SQLReference/SystemProcedures

| Outdated documentation of SystemProcedures | https://api.github.com/repos/MonetDB/MonetDB/issues/7187/comments | 1 | 2021-10-14T18:32:25Z | 2024-06-27T13:16:31Z | https://github.com/MonetDB/MonetDB/issues/7187 | 1,026,697,887 | 7,187 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

I expect that data exports created using COPY SELECT .. INTO 'file.csv' ...

would be usable to restore/copy the data again using COPY 'file.csv' INTO ..

However for certain data (in this case all data from sys.tables) this fails when the delimiter is a pipe character '|' or a comma character ','.

When I look at the generated data files they seem to be correct.

However the import does not handle the string data inside double quoted delimiters "" correctly, as it fails on the || characters (in the .psv file) or comma character (in the .csv file).
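
The round-trip property I expect can be sketched with Python's standard `csv` module: a string field containing the delimiter or a newline is quoted on export and must come back unchanged on import. This is only an illustration; Python's CSV dialect is not the same as MonetDB's escaping rules, and the sample row and the `round_trip` helper are made up.

```python
import csv
import io

# One hypothetical row whose third field contains '|', ',' and a newline.
rows = [["1", "v", "select 'a' || 'b',\n 'c';", "true"]]

def round_trip(rows, delimiter):
    """Write rows with the given delimiter, then read them back."""
    buf = io.StringIO()
    writer = csv.writer(buf, delimiter=delimiter, quotechar='"')
    writer.writerows(rows)
    buf.seek(0)
    return list(csv.reader(buf, delimiter=delimiter, quotechar='"'))

for delimiter in ("|", ",", "\t"):
    # Fields come back intact for every delimiter.
    print(repr(delimiter), round_trip(rows, delimiter) == rows)
```

All three delimiters round-trip here, because the reader treats everything between the quote characters as data.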

**To Reproduce**

Start mserver5

-# builtin opt gdk_dbpath = /home/dinther/dev/INSTALL/var/monetdb5/dbfarm/demo

-# builtin opt mapi_port = 50000

-# builtin opt sql_optimizer = default_pipe

-# builtin opt sql_debug = 0

-# builtin opt raw_strings = false

-# cmdline opt embedded_r = true

-# cmdline opt embedded_py = 3

-# cmdline opt embedded_c = true

-# cmdline opt mapi_port = 41000

-# MonetDB 5 server v11.42.0 (hg id: 38bf565fc89a)

-# This is an unreleased version

-# Serving database 'demo', using 8 threads

-# Compiled for x86_64-pc-linux-gnu/64bit with 128bit integers

-# Found 31.233 GiB available main-memory of which we use 25.455 GiB

-# Copyright (c) 1993 - July 2008 CWI.

-# Copyright (c) August 2008 - 2021 MonetDB B.V., all rights reserved

-# Visit https://www.monetdb.org/ for further information

-# Listening for connection requests on mapi:monetdb://localhost:41000/

-# MonetDB/GIS module loaded

-# MonetDB/R module loaded

-# MonetDB/Python3 module loaded

-# MonetDB/SQL module loaded

Start mclient

Welcome to mclient, the MonetDB/SQL interactive terminal (unreleased)

Database: MonetDB v11.42.0 (hg id: 38bf565fc89a), 'demo'

FOLLOW US on https://twitter.com/MonetDB or https://github.com/MonetDB/MonetDB

Type \q to quit, \? for a list of available commands

auto commit mode: on

sql>CREATE TABLE t AS SELECT * FROM tables WITH NO DATA;

operation successful

sql>\d t

CREATE TABLE "sys"."t" (

"id" INTEGER,

"name" VARCHAR(1024),

"schema_id" INTEGER,

"query" VARCHAR(1048576),

"type" SMALLINT,

"system" BOOLEAN,

"commit_action" SMALLINT,

"access" SMALLINT,

"temporary" TINYINT

);

sql>select count(*) from t;

+------+

| %1 |

+======+

| 0 |

+------+

1 tuple

sql>COPY SELECT * FROM tables INTO 'csvfiles/tables.psv' ON CLIENT DELIMITERS '|';

sql>COPY SELECT * FROM tables INTO 'csvfiles/tables.csv' ON CLIENT DELIMITERS ',';

sql>COPY SELECT * FROM tables INTO 'csvfiles/tables.tsv' ON CLIENT DELIMITERS '\t';

sql>COPY INTO t FROM 'csvfiles/tables.psv' ON CLIENT DELIMITERS '|';

Failed to import table 't', line 105: column 11: Leftover data 'true|0|0|0'

sql>COPY INTO t FROM 'csvfiles/tables.psv' ON CLIENT DELIMITERS '|';

Failed to import table 't', line 85: column 11: Leftover data '\n sys.describe_type(c.type, c.type_digits, c.type_scale) ||\n ifthenelse(c.\"null\" = 'false', ' NOT NULL', '')\n , ', ') || ')'\n from sys._columns c\n where c.table_id = t.id) col,\n case ts.table_type_name\n when 'REMOTE TABLE' then\n sys.get_remote_table_expressions(s.name, t.name)\n when 'MERGE TABLE' then\n sys.get_merge_table_partition_expressions(t.id)\n when 'VIEW' then\n sys.schema_guard(s.name, t.name, t.query)\n else\n ''\n end opt\n from sys.schemas s, sys.table_types ts, sys.tables t\n where ts.table_type_name in ('TABLE', 'VIEW', 'MERGE TABLE', 'REMOTE TABLE', 'REPLICA TABLE')\n and t.system = false\n and s.id = t.schema_id\n and ts.table_type_id = t.type\n and s.name <> 'tmp';"|11|true|0|0|0'

sql>COPY INTO t FROM 'csvfiles/tables.psv' ON CLIENT DELIMITERS '|';

Failed to import table 't', line 43: column 11: Leftover data ' ' arg' as varchar(44)), 'sys.args', f.system from sys.args a join sys.functions f on a.func_id = f.id left outer join sys.function_types ft on f.type = ft.function_type_id union all\nselect id, name, schema_id, cast(null as int) as table_id, cast(null as varchar(124)) as table_name, 'sequence', 'sys.sequences', false from sys.sequences union all\nselect o.id, o.name, pt.schema_id, pt.id, pt.name, 'partition of merge table', 'sys.objects', false from sys.objects o join sys._tables pt on o.sub = pt.id join sys._tables mt on o.nr = mt.id where mt.type = 3 union all\nselect id, sqlname, schema_id, cast(null as int) as table_id, cast(null as varchar(124)) as table_name, 'type', 'sys.types', (sqlname in ('inet','json','url','uuid')) from sys.types where id > 2000\n order by id;"|11|true|0|0|0'

sql>COPY INTO t FROM 'csvfiles/tables.psv' ON CLIENT DELIMITERS '|';

Failed to import table 't', line 115: column 11: Leftover data ' sys.dq(col) || ' SET DEFAULT ' || def || ';' stmt,\n sch schema_name,\n tbl table_name,\n col column_name\n from sys.describe_column_defaults;"|11|true|0|0|0'

sql>select count(*) from t;

+------+

| %1 |

+======+

| 0 |

+------+

1 tuple

sql>

sql>COPY INTO t FROM 'csvfiles/tables.csv' ON CLIENT DELIMITERS ',';

Failed to import table 't', line 41: column 11: Leftover data ' ql.ship, ql.cpu, ql.io\nfrom sys.querylog_catalog() qd, sys.querylog_calls() ql\nwhere qd.id = ql.id and qd.owner = user;",11,true,0,0,0'

sql>COPY INTO t FROM 'csvfiles/tables.csv' ON CLIENT DELIMITERS ',';

Failed to import table 't', line 41: column 11: Leftover data ' ql.ship, ql.cpu, ql.io\nfrom sys.querylog_catalog() qd, sys.querylog_calls() ql\nwhere qd.id = ql.id and qd.owner = user;",11,true,0,0,0'

sql>COPY INTO t FROM 'csvfiles/tables.csv' ON CLIENT DELIMITERS ',';

Failed to import table 't', line 58: column 11: Leftover data ' c.name as column_name, dep.depend_type as depend_type\n from sys.tables as t, sys.columns as c, sys.triggers as tri, sys.dependencies as dep\n where dep.id = c.id and dep.depend_id = tri.id and c.table_id = t.id\n and dep.depend_type = 8\n order by t.schema_id, t.name, tri.name, c.name;",11,true,0,0,0'

sql>COPY INTO t FROM 'csvfiles/tables.csv' ON CLIENT DELIMITERS ',';

Failed to import table 't', line 22: column 11: Leftover data ' \"commit_action\", \"access\", CASE WHEN (NOT \"system\" AND \"commit_action\" > 0) THEN 1 ELSE 0 END AS \"temporary\" FROM \"sys\".\"_tables\" WHERE \"type\" <> 2 UNION ALL SELECT \"id\", \"name\", \"schema_id\", \"query\", CAST(\"type\" + 30 /* local temp table */ AS SMALLINT) AS \"type\", \"system\", \"commit_action\", \"access\", 1 AS \"temporary\" FROM \"tmp\".\"_tables\";",11,true,0,0,0'

sql>select count(*) from t;

+------+

| %1 |

+======+

| 0 |

+------+

1 tuple

sql>

sql>COPY INTO t FROM 'csvfiles/tables.tsv' ON CLIENT DELIMITERS '\t';

129 affected rows

sql>COPY INTO t FROM 'csvfiles/tables.tsv' ON CLIENT DELIMITERS '\t';

129 affected rows

sql>select count(*) from t;

+------+

| %1 |

+======+

| 258 |

+------+

1 tuple

sql>COPY INTO t FROM 'csvfiles/tables.tsv' ON CLIENT DELIMITERS '\t';

129 affected rows

sql>select count(*) from t;

+------+

| %1 |

+======+

| 387 |

+------+

1 tuple

sql>truncate t;

387 affected rows

sql>select count(*) from t;

+------+

| %1 |

+======+

| 0 |

+------+

1 tuple

sql>drop table t;

operation successful

sql>\d

sql>

**Expected behavior**

The import of the created files tables.psv and tables.csv should succeed without errors (as is the case with tables.tsv).

Also, the import does not return the same error for the same data file when it is executed multiple consecutive times.

It looks as if mserver5 processes the data file in a random order (or is this due to parallel processing of the data file?).

At least to the user it is not consistent and therefore confusing.
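
To illustrate the suspected failure mode outside MonetDB: when a double-quoted string value contains the field delimiter, a reader that splits on the delimiter without honouring the quotes sees too many fields, which is consistent with the "Leftover data" errors above. The following is a minimal Python sketch under that assumption; the sample line is made up for illustration and is not taken from the exported files, and it says nothing about how mserver5 actually parses the file.

```python
import csv
import io

# Hypothetical exported row: the second field is double quoted and
# contains the '|' field delimiter (as the view definitions in sys.tables do).
line = '42|"select a || b from t"|true\n'

# Splitting on the delimiter while ignoring quotes yields extra fields.
naive_fields = line.rstrip("\n").split("|")

# A quote-aware reader keeps the quoted value together as one field.
quoted_fields = next(csv.reader(io.StringIO(line), delimiter="|", quotechar='"'))

print(len(naive_fields), naive_fields)
print(len(quoted_fields), quoted_fields)
```

The tab-separated file loads because none of the string values contain the tab delimiter in that position; the same data with `|` or `,` as delimiter does not.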

**Software versions**

I tested it using the compiled build from the default branch on Fedora.

However, this problem is probably also reproducible with the Jul2021 or Jul2021-SP1 release.

| data files created with COPY SELECT .. INTO 'file.csv' fail to be loaded using COPY INTO .. FROM 'file.csv' when double quoted string data contains the field values delimiter character | https://api.github.com/repos/MonetDB/MonetDB/issues/7186/comments | 4 | 2021-10-14T13:58:00Z | 2024-06-27T13:16:30Z | https://github.com/MonetDB/MonetDB/issues/7186 | 1,026,434,709 | 7,186 |

[

"MonetDB",

"MonetDB"

] | **To Reproduce**

```sql

create table students (course TEXT, type TEXT);

insert into students

(course, type)

values

('CS', 'Bachelor'),

('CS', 'Bachelor'),

('CS', 'PhD'),

('Math', 'Masters'),

('CS', NULL),

('CS', NULL),

('Math', NULL);

-- without aliases the query works as expected

select count(*), course, type

from students

group by grouping sets((course, type), (type))

order by 1, 2, 3;

-- with aliases no result is returned

select count(*), course AS crs, type AS tp

from students

group by grouping sets((crs, tp), (tp))

order by 1, 2, 3;

```

**Expected behavior**

Expect the following result from both queries:

```

+------+--------+----------+

| %1 | course | type |

+======+========+==========+

| 1 | null | Masters |

| 1 | null | PhD |

| 1 | CS | PhD |

| 1 | Math | null |

| 1 | Math | Masters |

| 2 | null | Bachelor |

| 2 | CS | null |

| 2 | CS | Bachelor |

| 3 | null | null |

+------+--------+----------+

```
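
As a cross-check of the expected result above, the two grouping sets can be evaluated by hand. This is a minimal Python sketch that assumes only the seven rows inserted in the reproduction script; it illustrates the GROUPING SETS semantics and is not a description of how MonetDB evaluates the query.

```python
from collections import Counter

# The seven rows inserted into "students" (None stands for SQL NULL).
rows = [
    ("CS", "Bachelor"),
    ("CS", "Bachelor"),
    ("CS", "PhD"),
    ("Math", "Masters"),
    ("CS", None),
    ("CS", None),
    ("Math", None),
]

# Grouping set (course, type): count each distinct pair.
by_course_type = Counter(rows)

# Grouping set (type): course is not part of the set, so it is reported as NULL.
by_type = Counter((None, tp) for _, tp in rows)

# The query result is the union of both grouping sets.
result = [(n, c, t) for (c, t), n in by_course_type.items()]
result += [(n, c, t) for (c, t), n in by_type.items()]

for row in result:
    print(row)
```

The sketch yields nine rows (five from the first grouping set and four from the second), the same number as the table above.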

**Software versions**

- MonetDB v11.41.5 (Jul2021)

- MacOS

- Homebrew

| GROUPING SETS on groups with aliases provided in the SELECT returns empty result | https://api.github.com/repos/MonetDB/MonetDB/issues/7185/comments | 1 | 2021-10-12T11:42:44Z | 2024-06-27T13:16:29Z | https://github.com/MonetDB/MonetDB/issues/7185 | 1,023,720,285 | 7,185 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

An `INSERT INTO` statement blocks any other query executed afterwards. The insert uses only one CPU core.

**To Reproduce**

* Create a database and open mclient two times

* Create a table as: `CREATE TABLE IF NOT EXISTS alpha (id bigint PRIMARY KEY AUTO_INCREMENT, data bigint); `

* In the first mclient execute `INSERT INTO sys.alpha (data) select * from generate_series(0,100000000,cast (1 as bigint));` and **immediately** in the second mclient execute `SELECT 1;`

**Expected behavior**

Another query can be executed in parallel with an insert into query.

**Software versions**

- MonetDB Jul2021 v11.41.5

- OS and version: Ubuntu 20.04

| Insert into query blocks all other queries | https://api.github.com/repos/MonetDB/MonetDB/issues/7184/comments | 10 | 2021-10-12T07:40:50Z | 2024-06-27T13:16:28Z | https://github.com/MonetDB/MonetDB/issues/7184 | 1,023,474,328 | 7,184 |

[

"MonetDB",

"MonetDB"

I noticed that no .deb packages of version 11.41.x are provided for Ubuntu 1604. Do you plan to provide them? If not, are there instructions that describe how to build the .deb packages of version 11.41.x for Ubuntu 1604?

| Do you have any plans to build the .deb packages of version 11.41.x for Ubuntu 1604? | https://api.github.com/repos/MonetDB/MonetDB/issues/7183/comments | 10 | 2021-10-11T10:19:52Z | 2021-10-28T00:23:04Z | https://github.com/MonetDB/MonetDB/issues/7183 | 1,022,524,524 | 7,183 |

[

"MonetDB",

"MonetDB"

] | **Describe the bug**

Queries against sys.querylog_catalog or sys.querylog_calls or sys.querylog_history fail with error:

HY013 Could not allocate space

on a demo db which was restored from a db created with

call sys.hot_snapshot(R'\path\file.tar');

Apparently the sys.hot_snapshot() functionality is not 100% complete: it misses some files, which causes server errors when the db is restored.

**To Reproduce**

start mserver5 (Jul2021-SP1) on windows

start mclient

in mclient do:

> call sys.hot_snapshot(R'C:\Users\myname\AppData\Roaming\MonetDB5\dbfarm\demo_bu2021_10_07.tar');

stop mclient

stop mserver5

In explorer go to C:\Users\myname\AppData\Roaming\MonetDB5\dbfarm\

rename original demo db folder to demo_orig

open demo_bu2021_10_07.tar file (with 7-Zip utility) and drag the demo folder to the dbfarm directory (to copy the demo dir and files to local HD).

Notice the difference in folder sizes (view properties on the folder) between

demo_orig (9,867,264 bytes 239 Files, 10 Folders)

and the restored db from the demo_bu2021_10_07.tar snapshot backup in the demo folder

demo (4,014,080 bytes 186 Files, 7 Folders).

So many files and folders were not included in the snapshot backup!

start mserver5 (Jul2021-SP1) on windows using demo db

start mclient

in mclient do:

SELECT COUNT(*) AS count FROM sys.querylog_catalog;

SELECT COUNT(*) AS count FROM sys.querylog_calls;

SELECT COUNT(*) AS count FROM sys.querylog_history;

Each query returns the error: HY013 Could not allocate space

stop mclient

start JdbcClient

in JdbcClient do:

\vsci

This special command validates the integrity of all system catalog tables.

It lists the same error for some 12 queries against these 3 system tables.

stop JdbcClient

The mserver5 console shows:

#2021-10-07 17:41:55: client1: GDKextend: !ERROR: cannot open file C:\Users\myname\AppData\Roaming\MonetDB5\dbfarm\demo\bat\53\5303.tail: No such file or directory

#2021-10-07 17:41:55: client1: BBPrename: !ERROR: name is in use: 'querylog_cat_id'.

#2021-10-07 17:41:55: client1: GDKextend: !ERROR: cannot open file C:\Users\myname\AppData\Roaming\MonetDB5\dbfarm\demo\bat\36\3631.tail: No such file or directory

#2021-10-07 17:41:55: client1: BBPrename: !ERROR: name is in use: 'querylog_cat_defined'.

#2021-10-07 17:41:55: client1: GDKextend: !ERROR: cannot open file C:\Users\myname\AppData\Roaming\MonetDB5\dbfarm\demo\bat\23\2325.tail: No such file or directory

#2021-10-07 17:41:55: client1: BBPrename: !ERROR: name is in use: 'querylog_cat_mal'.