text | source |

|---|---|

# Incorrect Baseline Evaluations Call into Question Recent LLM-RL Claims

There has been a flurry of recent papers proposing new RL methods that claim to improve the “reasoning abilities” of language models. The most recent ones, which show improvements with random or no external rewards, have led to surprise, exciteme... | https://www.lesswrong.com/posts/p8rcMDRwEGeFAzCQS/incorrect-baseline-evaluations-call-into-question-recent-llm |

# Do you even have a system prompt? (PSA / repo)

Everyone around me has a notable lack of system prompt. And when they do have a system prompt, it’s either the [eigenprompt](https://x.com/eigenrobot/status/1782957877856018514) or some half-assed 3-paragraph attempt at telling the AI to “include less bullshit”.

I see... | https://www.lesswrong.com/posts/HjHqxzn3rnH7T45hp/do-you-even-have-a-system-prompt-psa-repo |

# Gradual Disempowerment: Concrete Research Projects

*This post benefitted greatly from comments, suggestions, and ongoing discussions with David Duvenaud, David Krueger, and Jan Kulveit. All errors are my own.*

A few months ago, I and my coauthors published [*Gradual Disempowerment*](https://gradual-disempowerment.a... | https://www.lesswrong.com/posts/GAv4DRGyDHe2orvwB/gradual-disempowerment-concrete-research-projects |

# Orphaned Policies (Post 5 of 7 on AI Governance)

In previous posts in this sequence, I laid out a case for why most AI governance research is too academic and too abstract to have much influence over the future. Politics is noisy and contested, so we can’t expect that good AI governance ideas will spread on their ow... | https://www.lesswrong.com/posts/wFKZmvfRfNn24HNHp/orphaned-policies-post-5-of-7-on-ai-governance |

# CFAR is running an experimental mini-workshop (June 2-6, Berkeley CA)!

Hello from the Center for Applied Rationality!

Some of you may have attended our classic applied rationality workshops in the past; others of you may have wanted to attend a workshop but not yet had a chance to. It's been a while since we've las... | https://www.lesswrong.com/posts/5AGK8b3rm8YD7jhxi/cfar-is-running-an-experimental-mini-workshop-june-2-6 |

# Experimental CFAR Mini-Workshop @ Arbor Summer Camp

From June 2-6, the Center for Applied Rationality will be running an experimental mini-workshop at Arbor Summer Camp. This is an in-person event held in Berkeley, CA at the Lighthaven campus and requires paid admission -- read on for more details.

*This wor... | https://www.lesswrong.com/events/52EeGs6ScHZMoyCNx/experimental-cfar-mini-workshop-arbor-summer-camp |

# Letting Kids Be Kids

Letting kids be kids seems more and more important to me over time. Our safetyism and paranoia about children is catastrophic on way more levels than most people realize. I believe all these effects are very large:

1. It raises the time, money and experiential costs of having children so much ... | https://www.lesswrong.com/posts/mZ48pp2Y4YLvrPXHv/letting-kids-be-kids |

# AI 2027 - Rogue Replication Timeline

*I envision a future more chaotic than portrayed in* [*AI 2027*](https://ai-2027.com/)*. My scenario, the Rogue Replication Timeline (RRT), branches off mid-2026. If you haven’t already read the AI 2027 scenario, I recommend doing so before continuing.*

*You can read the* [*full... | https://www.lesswrong.com/posts/66NjHDJFChe8cfPac/ai-2027-rogue-replication-timeline |

# Virtues related to honesty

*Status: musings. I wanted to write up a more fleshed-out and rigorous version of this, but realistically wasn't likely to ever get around to it, so here's the half-baked version.*

Related posts: [Firming Up Honesty Around Its Edge-Cases](https://www.lesswrong.com/posts/xdwbX9pFEr7Pomaxv... | https://www.lesswrong.com/posts/qADfHFkr4Q8ah4Aqp/virtues-related-to-honesty |

# 50 Ideas for Life I Repeatedly Share

*These are the most significant pieces of life advice/wisdom that have benefited me, that others often don't think about and I end up sharing very frequently.*

*I wanted to share this here for a few reasons: First and most important, I think many people here will find this inte... | https://www.lesswrong.com/posts/cYhviWa7kGpPhAyaF/50-ideas-for-life-i-repeatedly-share |

# ‘GiveWell for AI Safety’: Lessons learned in a week

*On prioritizing orgs by theory of change, identifying effective giving opportunities, and how Manifund can help.*

*Epistemic status: I spent ~20h thinking about this. If I were to spend 100+ h thinking about this, I expect I’d write quite different things. I was ... | https://www.lesswrong.com/posts/Z8KLLHvsEkukxpTCD/givewell-for-ai-safety-lessons-learned-in-a-week |

# I replicated the Anthropic alignment faking experiment on other models, and they didn't fake alignment

In December 2024, Anthropic and Redwood Research published the paper "Alignment faking in large language models" which demonstrated that Claude sometimes "strategically pretends to comply with the training objectiv... | https://www.lesswrong.com/posts/pCMmLiBcHbKohQgwA/i-replicated-the-anthropic-alignment-faking-experiment-on |

# Could we go another route with computers?

Computers now are mostly semiconductors doing logic operations. There are, of course, other parts, but they are mostly structural, not doing actual computation.

But imagine computer history took a different route: you could buy different units with different physics doing d... | https://www.lesswrong.com/posts/gd6LLkudvq2xfCAqo/could-we-go-another-route-with-computers |

# Diabetes is Caused by Oxidative Stress

### I.

It's a Known Thing in the keto-sphere \[ I get the sense that [r/saturatedfat](https://www.reddit.com/r/saturatedfat) is an example of this culture \] that people with metabolic syndrome -- i.e., some level of insulin resistance -- can handle fat *or* carbs \[ e.g. eith... | https://www.lesswrong.com/posts/sPceWmHudmkSAA9Ba/diabetes-is-caused-by-oxidative-stress |

# Zochi Publishes A* Paper

### Zochi Achieves Main Conference Acceptance at ACL 2025

Today, we’re excited to announce a groundbreaking milestone: Zochi, Intology’s Artificial Scientist, has become the first AI system to independently **pass peer review at an A* scientific conference**¹—the highest bar for scientific ... | https://www.lesswrong.com/posts/LtsgfGsXpiLTSGpaW/zochi-publishes-a-paper |

# The (Unofficial) Rationality: A-Z Anki Deck

I'm a huge fan of Anki. I love being able to remember what I read for however long I want to. However, most Anki decks I've found about rationality are nonexistent, unhelpful, out of date, or poorly formatted.

Recently, I found [this post](https://www.lesswrong.com/posts/... | https://www.lesswrong.com/posts/jz876dnWJmNDByifc/the-unofficial-rationality-a-z-anki-deck |

# House Party Dances

[Julia](https://juliawise.net/) and I are in CA for [LessOnline](https://less.online/) this weekend, and we're staying with friends. It happened that they were hosting a party, and while this is not a group of dancing friends they asked if I'd be up for leading some dancing. One of my hosts let me... | https://www.lesswrong.com/posts/a4TyumeWrcuMaFc79/house-party-dances |

# Progress links and short notes, 2025-05-31: RPI fellowship deadline tomorrow, Edge Esmeralda next week, and more

*It’s been way too long since the last links digest, which means I have way too much to catch up on. I had to cut many interesting bits to get this one out the door.*

*Much of this content originated on ... | https://www.lesswrong.com/posts/AMN3AwEkL4QTDSduC/progress-links-and-short-notes-2025-05-31-rpi-fellowship |

# When will AI automate all mental work, and how fast?

*Rational Animations takes a look at Tom Davidson's Takeoff Speeds model (*[*https://takeoffspeeds.com*](https://takeoffspeeds.com)*). The model uses formulas from economics to answer two questions: how long do we have until AI automates 100% of human cognitive la... | https://www.lesswrong.com/posts/ykJ8Ku7tKeSCe9fFo/when-will-ai-automate-all-mental-work-and-how-fast |

# The best approaches for mitigating "the intelligence curse" (or gradual disempowerment); my quick guesses at the best object-level interventions

There have recently been [various](https://www.lesswrong.com/posts/GAv4DRGyDHe2orvwB/gradual-disempowerment-concrete-research-projects) [proposals](https://time.com/7289692... | https://www.lesswrong.com/posts/BXW2bqxmYbLuBrm7E/the-best-approaches-for-mitigating-the-intelligence-curse-or |

# The 80/20 playbook for mitigating AI scheming in 2025

*Adapted from this twitter* [*thread.*](https://twitter.com/CRSegerie/status/1927066519021801853) *See this as a quick take.*

Mitigation Strategies
---------------------

How to mitigate Scheming?

1. **Architectural choices**: ex-ante mitigation

2. **Control ... | https://www.lesswrong.com/posts/YFxpsrph83H25aCLW/the-80-20-playbook-for-mitigating-ai-scheming-in-2025 |

# Policy Entropy, Learning, and Alignment (Or Maybe Your LLM Needs Therapy)

Epistemic Status: Exploratory synthesis. Background in mathematics/statistics (UChicago) and principal-agent problems (UT Austin doctoral ABD), with extensive study of psychotherapy literature motivated by personal research into consciousness ... | https://www.lesswrong.com/posts/C4tvfHn2DfxyYYwaL/policy-entropy-learning-and-alignment-or-maybe-your-llm |

# An Opinionated Guide to P-Values

This is a crosspost of a post from my blog, [Metal Ivy](https://ivy0.substack.com/). The original is here: [An Opinionated Guide to Statistical Significance](https://ivy0.substack.com/p/an-opinionated-guide-to-statistical).

I think for the general audience the value of this post is ... | https://www.lesswrong.com/posts/352TCZZRqthKuNMDR/an-opinionated-guide-to-p-values |

# Seeing how well an agentic AI coding tool can do compared to me using an actual real-world example

When it comes to disputes about AI coding capabilities, I think a lot of people who either don't follow AI capabilities developments or who aren't particularly capable coders might be missing some context, so I wrote a... | https://www.lesswrong.com/posts/k3CSx2dCswfffkQn9/seeing-how-well-an-agentic-ai-coding-tool-can-do-compared-to |

# Apply to the AI Security Bootcamp [Aug 4 - Aug 29]

tl;dr
-----

We're excited to announce AI Security Bootcamp (AISB), a 4-week intensive program designed to bring researchers and engineers up to speed on security fundamentals for AI systems. The program will cover cybersecurity fundamentals (cryptography, networks)... | https://www.lesswrong.com/posts/jXmn3oPhFNQtYczC8/apply-to-the-ai-security-bootcamp-aug-4-aug-29 |

# Possible AI regulation emergency?

I apologize for breaking the "no contemporary politics" norm, but I read that one of the less talked about provisions in the "One Big Beautiful Bill" that recently passed the US House of Representatives forbids individual US states from regulating AI for ten years. This sounds bad f... | https://www.lesswrong.com/posts/RngeiEJu6z6qny9ts/possible-ai-regulation-emergency |

# ‘Wicked’: thoughts

I watched _Wicked_ (the 2024 movie) with my ex and his family at Christmas. My current stance is that it was pretty fun but not especially incredible or deep. I could be pretty wrong—watching movies isn’t my strong suit, but I do like chatting about them afterwards. Some thoughts:

1. Glinda is i... | https://www.lesswrong.com/posts/zj5ffnBBqwa6BFnDK/wicked-thoughts |

# 1. The challenge of unawareness for impartial altruist action guidance: Introduction

*(This sequence assumes basic familiarity with longtermist cause prioritization concepts, though the issues I raise also apply to non-longtermist interventions.)*

Are EA interventions net-positive from an impartial perspective — on... | https://www.lesswrong.com/posts/5zyPJPhLEwSzHWEtu/1-the-challenge-of-unawareness-for-impartial-altruist-action |

# The Value Proposition of Romantic Relationships

What’s the *main* value proposition of romantic relationships?

Now, look, I know that when people drop that kind of question, they’re often about to present a hyper-cynical answer which totally ignores the main thing which is great and beautiful about relationships. A... | https://www.lesswrong.com/posts/L2GR6TsB9QDqMhWs7/the-value-proposition-of-romantic-relationships |

# [Beneath Psychology] Chronic pain challenge part 2: the solution

In the [last post](https://www.lesswrong.com/posts/tGGPgazwd9MKJFbsa/beneath-psychology-case-study-on-chronic-pain-first-insights-1), I introduced an example where I was talking to someone suffering from chronic pain. Today we will dive into the rest o... | https://www.lesswrong.com/posts/5RKvveKzwjvXQEhKj/beneath-psychology-chronic-pain-challenge-part-2-the |

# Lighthaven Sequences Reading Group #36.5 (Tuesday 6/3) [patio11 meetup edition]

Come get old-fashioned with us, and let's ~~read the sequences~~ hang out with [patio11](https://www.kalzumeus.com/) at Lighthaven!

We'll show up, mingle, do mingling, and then split off and mingle for some mingling. Please be prepared... | https://www.lesswrong.com/events/iq7BsfxSnTyvLvEH8/lighthaven-sequences-reading-group-36-5-tuesday-6-3-patio11 |

# Hemingway Case

> Why did the chicken cross the road?
>
> Ernest Hemingway: To die. In the rain.

My son asks: If **HelloWorld** is camel case, **hello_world** is snake case and **hello-world** is kebab case, what's the DNS-style **Hello.World.** ?

I think I have a pretty good answer for him: It's Hemingway case. | https://www.lesswrong.com/posts/sCjTsXEjfyF5rhbzt/hemingway-case-1 |

# Unfaithful Reasoning Can Fool Chain-of-Thought Monitoring

*This research was completed for LASR Labs 2025 by Benjamin Arnav, Pablo Bernabeu-Pérez, Nathan Helm-Burger, Tim Kostolansky and Hannes Whittingham. The team was supervised by Mary Phuong. Find out more about the program and express interest in upcoming itera... | https://www.lesswrong.com/posts/QYAfjdujzRv8hx6xo/unfaithful-reasoning-can-fool-chain-of-thought-monitoring |

# In defense of memes (and thought-terminating clichés)

*Crossposted from* [*my Substack*](https://hardlyworking1.substack.com/) *and my Reddit post on r/SlateStarCodex*

I often think that memes, thought-terminating clichés, and other tools meant to avoid cognitive dissonance (e.g. bingo a la Scott on [Superweapons a... | https://www.lesswrong.com/posts/p3pyrRfRSmeixjai2/in-defense-of-memes-and-thought-terminating-cliches |

# LLMs might have subjective experiences, but no concepts for them

**Summary**: LLMs might be conscious, but they might not have concepts and words to represent and express their internal states and corresponding subjective experiences, since the only concepts they learn are human concepts (besides maybe some concepts... | https://www.lesswrong.com/posts/vjju8Yfej3FjgfMbC/llms-might-have-subjective-experiences-but-no-concepts-for |

# Notes on dynamism, power, & virtue

*This is very rough — it's functionally a collection of links/notes/excerpts that feel related. I don’t think what I’m sharing is in a great format; if I had more mental energy, I would have chosen a more-linear structure to look at this tangle of ideas. But publishing in the curre... | https://www.lesswrong.com/posts/hFbv3ZAg6aQu796tq/notes-on-dynamism-power-and-virtue |

# AXRP Episode 41 - Lee Sharkey on Attribution-based Parameter Decomposition

[YouTube link](https://youtu.be/ZmJ-ov2TywM)

What’s the next step forward in interpretability? In this episode, I chat with Lee Sharkey about his proposal for detecting computational mechanisms within neural networks: Attribution-based Param... | https://www.lesswrong.com/posts/gnyna4Rb2S7KdzxvJ/axrp-episode-41-lee-sharkey-on-attribution-based-parameter |

# In Which I Make the Mistake of Fully Covering an Episode of the All-In Podcast

I have been forced recently to cover many statements by US AI Czar David Sacks.

Here I will do so again, for the third time in a month. I would much prefer to avoid this. In general, when people go on a binge of repeatedly making such in... | https://www.lesswrong.com/posts/qpThPhM4CyvZGtwRR/in-which-i-make-the-mistake-of-fully-covering-an-episode-of |

# How to work through the ARENA program on your own

I've recently completed the in-person [ARENA program](https://www.arena.education/), which is a 5-week bootcamp teaching the basics of safety research engineering (with the 5th week being a capstone project). Sometimes, I talk to people who want to work through the p... | https://www.lesswrong.com/posts/Be3jdiktxxwvFp8pr/how-to-work-through-the-arena-program-on-your-own |

# Broad-Spectrum Cancer Treatments

Midjourney, “engraving of Apollo shooting his bow at a distant c... | https://www.lesswrong.com/posts/4rmveLEuARYKapHq2/broad-spectrum-cancer-treatments |

# Schelling Coordination via Agentic Loops

**Author's Note:** This work originated as a team project during the [Apart Research AI Control Hackathon](https://apartresearch.com/sprints/ai-control-hackathon-2025-03-29-to-2025-03-30), where my teammates did the majority of the foundational work. I've continued developing... | https://www.lesswrong.com/posts/vqxExZvDjkphqG6zH/schelling-coordination-via-agentic-loops-1 |

# Steering Vectors Can Help LLM Judges Detect Subtle Dishonesty

*Cross-posted from our recent paper: "But what is your honest answer? Aiding LLM-judges with honest alternatives using steering vectors":* [*https://arxiv.org/abs/2505.17760*](https://arxiv.org/abs/2505.17760)

*Code available at:* [*https://github.com/w... | https://www.lesswrong.com/posts/JsyAdviSYCqgMvvmG/steering-vectors-can-help-llm-judges-detect-subtle |

# Individual AI representatives don't solve Gradual Disempowerement

Imagine each of us has an AI representative, aligned to us, personally. Is gradual disempowerment solved?[^bhugzx74xtt] In my view, no; at the same time having AI representatives helps at the margin.

I have two deep reasons for skepticism.[^it3lbzx0p... | https://www.lesswrong.com/posts/E96XcEPECbsipAvFi/individual-ai-representatives-don-t-solve-gradual |

# Quickly Assessing Reward Hacking-like Behavior in LLMs and its Sensitivity to Prompt Variations

*We present a simple eval set of 4 scenarios where we evaluate Anthropic and OpenAI frontier models. We find various degrees of reward hacking-like behavior with high sensitivity to prompt variation.*

\[[Code and resul... | https://www.lesswrong.com/posts/quTGGNhGEiTCBEAX5/quickly-assessing-reward-hacking-like-behavior-in-llms-and |

# ARENA 6.0 - Call for Applicants

**TL;DR:**
----------

We're excited to announce the sixth iteration of [ARENA](https://www.arena.education/) (Alignment Research Engineer Accelerator), a 4-5 week ML bootcamp with a focus on AI safety! Our mission is to provide talented individuals with the **ML engineering skills, c... | https://www.lesswrong.com/posts/7kXsAni9gGb4Dn29c/arena-6-0-call-for-applicants |

# Notes from a mini-replication of the alignment faking paper

## Key takeaways

* This post contains my notes from a 30-40 hour mini replication of [Greenblatt et al.’s alignment faking paper](https://www.anthropic.com/research/alignment-faking).

* This was a significant paper because it provided some evidence for p... | https://www.lesswrong.com/posts/6c6c3thcDvHPuuvTv/notes-from-a-mini-replication-of-the-alignment-faking-paper |

# Linkpost: Predicting Empirical AI Research Outcomes with Language Models

Abstract (emphasis mine):

> Many promising-looking ideas in AI research fail to deliver, but their validation takes substantial human labor and compute. Predicting an idea's chance of success is thus crucial for accelerating empirical AI resea... | https://www.lesswrong.com/posts/SDcf9BwQuu9QFkrQz/linkpost-predicting-empirical-ai-research-outcomes-with |

# LessOnline saved my life. Now how do I let go of this house?

I was at LessOnline, it was awesome.

When I got home, I discovered that there was a shooting (two random people were violently attacked by a gang of '4-6 people') in a parking lot near my house Sunday evening. That parking lot serves two of my favorite... | https://www.lesswrong.com/posts/AY8PRGj5NLjhjvnSg/lessonline-saved-my-life-now-how-do-i-let-go-of-this-house |

# “Flaky breakthroughs” pervade inner work — but almost no one tracks them

*2026 Feb 10: This post has been updated for accuracy.*

Has someone you know ever had a “breakthrough”… only to be no different a few weeks later?

![Ulisse Mini @MiniUlisse

after my @[redacted] retreat I was like "I'm never going to be depres... | https://www.lesswrong.com/posts/bqPY63oKb8KZ4x4YX/flaky-breakthroughs-pervade-inner-work-but-almost-no-one |

# A Technique of Pure Reason

Looking a little ahead into the future, I think LLMs are going to stop being focused on knowledgeable, articulate[^j9lzohw3u7r] chatbots, but instead be more efficient models that are weaker in these areas than current models, but relatively stronger at reasoning, a **pure-reasoner model**... | https://www.lesswrong.com/posts/qSgcmfv8nxZyYofNb/a-technique-of-pure-reason |

# Dating Roundup #6

Previously: [#1](https://thezvi.substack.com/p/dating-roundup-1-this-is-why-youre?utm_source=publication-search), [#2](https://thezvi.substack.com/p/dating-roundup-2-if-at-first-you?utm_source=publication-search), [#3](https://thezvi.substack.com/p/dating-roundup-3-third-times-the?utm_source=public... | https://www.lesswrong.com/posts/fnBGz2dTJJDExofTj/dating-roundup-6 |

# 2. Why intuitive comparisons of large-scale impact are unjustified

We’ve seen [so far](https://forum.effectivealtruism.org/posts/a3hnfA9EnYm9bssTZ/1-the-challenge-of-unawareness-for-impartial-altruist-action-1) that unawareness leaves us with gaps in the impartial altruistic justification for our decisions. It’s fun... | https://www.lesswrong.com/posts/dEJJqcAt6DuoFxAeT/2-why-intuitive-comparisons-of-large-scale-impact-are-1 |

# The Stereotype of the Stereotype

I want to define a new term that will be useful in the discourse.

Imagine you landed in a foreign country with dogs, but with no word for dogs. You would see these things, and want to talk about them. You would like to be able to tell people whether they can bring dogs into your hou... | https://www.lesswrong.com/posts/cyKD2ifYizpupGP4C/the-stereotype-of-the-stereotype |

# Semiconductor Fabs I: The Equipment

An in-depth look at semiconductor processing equipment: considerations, selection, operation, and supporting info.

Preface
-------

I tried to include as many links as possible to allow the reader to go down rabbit holes as they see fit.

I also tried to keep info basic enough fo... | https://www.lesswrong.com/posts/WtJadTxL2Rsmiv45d/semiconductor-fabs-i-the-equipment |

# Potentially Useful Projects in Wise AI

This is a list of projects[^lcc7gtz365j] to consider for folks who want to use Wise AI to steer the world towards positive outcomes.

Some of these projects are listed because they're impactful. Others are listed because I believe they would be good projects for someone to get ... | https://www.lesswrong.com/posts/24RvfBgyt72XzfEYS/potentially-useful-projects-in-wise-ai |

# AI #119: Goodbye AISI?

AISI is being rebranded highly non-confusingly as CAISI. Is it the end of AISI and a huge disaster, or a tactical renaming to calm certain people down? Hard to tell. It could go either way. Sometimes you need to target the people who call things ‘big beautiful bill,’ and hey, the bill in quest... | https://www.lesswrong.com/posts/D3BjqZ26ouk7ctfRC/ai-119-goodbye-aisi |

# Fundamental Uncertainty: Chapter 2 - How do words get their meaning?

*N.B. This is a chapter in a book about truth and knowledge. It's a major revision to the version of* [*this chapter*](https://www.lesswrong.com/posts/Kq9nq5hvPHanrmdNZ/fundamental-uncertainty-chapter-2-why-do-words-have-meaning) *in the first draf... | https://www.lesswrong.com/posts/nxkqiMDn7PcptprMm/fundamental-uncertainty-chapter-2-how-do-words-get-their |

# LLM in-context learning as (approximating) Solomonoff induction

*Epistemic status: One week empirical project from a theoretical computer scientist. My analysis and presentation were both a little rushed; some information that would be interesting is missing from plots because I simply did not have time to include i... | https://www.lesswrong.com/posts/xyYss3oCzovibHxAF/llm-in-context-learning-as-approximating-solomonoff |

# Levels of Doom: Eutopia, Disempowerment, Extinction

Disempowerment is on the fence, gets interpreted as either implying human extinction or being a good place. "Doom" tends to be ambiguous between disempowerment and extinction, as well as about when that outcome must be gauged. And many people currently feel both di... | https://www.lesswrong.com/posts/GYmhkkpRJwkyhauit/levels-of-doom-eutopia-disempowerment-extinction |

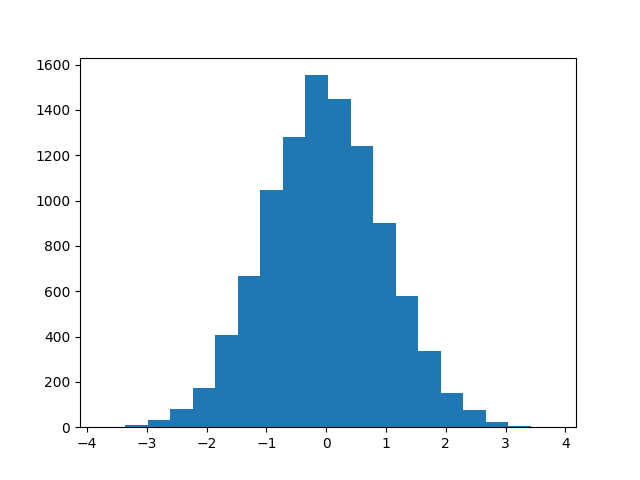

# Histograms are to CDFs as calibration plots are to...

As you know, histograms are decent visualizations for PDFs with lots of samples...

10k predictions, 20 bins

...but if there are only a few samples, the histogram-binning choices c... | https://www.lesswrong.com/posts/LFGgwitjertJqch7J/histograms-are-to-cdfs-as-calibration-plots-are-to |

# cheaper sodium electrolysis

## sodium electrolysis

Aluminum metal is a widely-used material. It costs ~$2.5/kg. A significant fraction of its production cost is electricity.

Currently, Na metal is produced by [Downs cell](https://en.wikipedia.org/wiki/Downs_cell) electrolysis of NaCl. Making sodium metal with ele... | https://www.lesswrong.com/posts/uvN4o6ZeKdXyyX43i/cheaper-sodium-electrolysis |

# Avoiding AI Deception: Lie Detectors can either Induce Honesty or Evasion

Large language models (LLMs) are often fine-tuned after training using methods like reinforcement learning from human feedback (RLHF). In this process, models are rewarded for generating responses that people rate highly. But what people like ... | https://www.lesswrong.com/posts/dT7mvHzuX46vydt9K/avoiding-ai-deception-lie-detectors-can-either-induce |

# Discontinuous Linear Functions?!

We know what linear functions are. A function _f_ is linear iff it satisfies _additivity_ $f(x + y) = f(x) + f(y)$ and _homogeneity_ $f(ax) = af(x)$.

We know what continuity is. A function _f_ is continuous iff for all ε > 0 there exists a δ > 0 such that if $|x - x_0|$ < δ, then $|f(x) - f... | https://www.lesswrong.com/posts/GodqHKvQhpLsAwsNL/discontinuous-linear-functions |

# How do AI agents work together when they can’t trust each other?

I investigated this question by having Claude play the advanced social deduction game Blood on the Clocktower. Clocktower is a game similar to Werewolf (or Mafia) where players sit in a circle and are secretly divided into a good team and an evil team.... | https://www.lesswrong.com/posts/syYBLEkj5bGswz5yf/how-do-ai-agents-work-together-when-they-can-t-trust-each |

# Lessons from a year of university AI safety field building

*This post is an organizational update from* [*Georgia Tech’s AI Safety Initiative*](https://www.aisi.dev/) *(AISI) and roughly represents our collective view. In this post, we share lessons & takes from the 2024-25 academic year, describe what we’ve done, a... | https://www.lesswrong.com/posts/Ckhek3mXXq7TWvvEh/lessons-from-a-year-of-university-ai-safety-field-building |

# DeepSeek-r1-0528 Did Not Have a Moment

When r1 was released in January 2025, there was a DeepSeek moment.

When r1-0528 was released in May 2025, there was no moment. Very little talk.

[Here is a download link for DeepSeek-R1-0528-GGUF](https://huggingface.co/unsloth/DeepSeek-R1-0528-GGUF).

It seems like a solid u... | https://www.lesswrong.com/posts/CvxjEkCCq7FpGn5sv/deepseek-r1-0528-did-not-have-a-moment |

# Making deals with AIs: A tournament experiment with a bounty

*Kathleen Finlinson & Ben West, May 2025*

Mission
-------

* We are working to establish the possibility of cooperation between humans and agentic AIs. We are starting with a simple version of making deals with current AIs, and plan to iterate based on... | https://www.lesswrong.com/posts/qw36iLrGyFn6mdwoa/making-deals-with-ais-a-tournament-experiment-with-a-bounty |

# Maximal Curiousity is Not Useful

The other day I talked to a friend of mine about AI x-risk. My friend is a fan of Elon Musk, and argued roughly that x-risk was not a problem, because xAI will win the AI race and deploy a "maximally curious" superintelligence.

Setting aside the issue of whether xAI will win the rac... | https://www.lesswrong.com/posts/FBqYAHCsnjLvhAcd5/maximal-curiousity-is-not-useful |

# Apply now to Human-Aligned AI Summer School 2025

Join us at the fifth [Human-Aligned AI Summer School](https://humanaligned.ai/2025/) in Prague from 22nd to 25th July 2025!

***Update:** We have no... | https://www.lesswrong.com/posts/JdrmKSzsWmRbgMumf/apply-now-to-human-aligned-ai-summer-school-2025 |

# AXRP Episode 42 - Owain Evans on LLM Psychology

[YouTube link](https://youtu.be/3D4pgIKR4cQ)

Earlier this year, the paper “Emergent Misalignment” made the rounds on AI x-risk social media for seemingly showing LLMs generalizing from ‘misaligned’ training data of insecure code to acting comically evil in response to... | https://www.lesswrong.com/posts/ZiacrsqoWHczctTBS/axrp-episode-42-owain-evans-on-llm-psychology |

# The Mirror Trap

*A quick post on a probably-real* [*inadequate equilibrium*](https://www.lesswrong.com/s/oLGCcbnvabyibnG9d) *mostly inspired by trying to think through what happened to Chance the Rapper.*

*Potentially ironic artifact if it accrues karma.*

1\. The sculptor's garden
-------------------------

A scul... | https://www.lesswrong.com/posts/KBx4X2Xj2bqJkDh7f/the-mirror-trap |

# The Roots of Progress wants your stories about the AI frontier

The Roots of Progress Institute is seeking to commission stories for a new article series, “Intelligence Age,” on future applications of AI.

These will be reported essays, not science fiction. We want to understand how AI might change individual sectors... | https://www.lesswrong.com/posts/ygCsq36FPndTCujRg/the-roots-of-progress-wants-your-stories-about-the-ai |

# Solo Park Play at Three

Our three year old is about to turn four, and is bursting with a desire for [independence](https://www.jefftk.com/p/growing-independence). She's becoming more capable in all sorts of ways, and wants me to back off and let her do things. Today she wanted to go to the park by herself.

Now, we ... | https://www.lesswrong.com/posts/wkLFDLqfeGxhiHayR/solo-park-play-at-three |

# Agents, Simulators and Interpretability

We have already spoken about the differences between [tools, agents and simulators](https://www.lesswrong.com/posts/ddK7CMEC3XzSmLS4G/agents-tools-and-simulators) and the [differences in their safety properties](https://www.lesswrong.com/posts/A6oGJFeZwHHWHwY3b/aligning-agents... | https://www.lesswrong.com/posts/Tsh9Y4cDvzeHN3ETx/agents-simulators-and-interpretability |

# Vulnerability in Trusted Monitoring and Mitigations

As large language models (LLMs) grow increasingly capable and autonomous, ensuring their safety has become more critical. While significant progress has been made in alignment efforts, researchers have begun to focus on designing monitoring systems around LLMs to e... | https://www.lesswrong.com/posts/jJRKbmui8cKcoigQi/vulnerability-in-trusted-monitoring-and-mitigations |

# Meta Alignment: Communication Guide

*I’ve been working on a series of longer articles explaining and justifying the following principles of communicating AI risk to the general public, but in the interest of speed and brevity, I’ve decided to write a simplified guide here. Some of this will be base... | https://www.lesswrong.com/posts/wcNDv7DEy43dpnumY/meta-alignment-communication-guide |

# On working 80%

A year ago, I decided to reduce my employment level from 100% to 80% and to take Fridays off.

My main motivation was to have some time for myself: Relax, reduce my stress level from work, have more time for side projects, do more sports, and maybe spend more time with my wife without the kids. This w... | https://www.lesswrong.com/posts/KnvHZiAMzjnRLnKym/on-working-80 |

# MRI tracers

MRI scans and [PET scans](https://en.wikipedia.org/wiki/Positron_emission_tomography) are different methods for medical imaging, and they observe different things:

- MRI scans can see which nuclei are present in regions. Sometimes bond types of particular elements can be distinguished, eg in [1H NMR](ht... | https://www.lesswrong.com/posts/oyTz2hCAxBMhkENB3/mri-tracers |

# LessOnline Could Use Meeting Stones

Back in the mists of time, there was this game called World of Warcraft, where you and your four closest friends would team up to complete dungeons together. To handle the inevitable people with no friends, they added [meeting stones](https://wowpedia.fandom.com/wiki/Meeting_Stone... | https://www.lesswrong.com/posts/3rW68feE97iWPrLCm/lessonline-could-use-meeting-stones |

# Letting Kids Be Outside

When our kids were 7 and 5 they started walking home from school alone. We wrote explaining they were ready and giving permission, the school had a few reasonable questions, and that was it. Just kids walking home from the local public school like they have in this neighborhood for generation... | https://www.lesswrong.com/posts/KvB28n3CPwourTFFW/letting-kids-be-outside |

# Invitation to an IRL retreat on AI x-risks & post-rationality in Ooty, India

**Note**: *This post is an invitation to the retreat, as well as an expression of interest for similar events which will be conducted. We are using the same form for both.*

TL;DR: Ooty AI Retreat 2.0 (June 23-30, '25, open to all): We're moving b... | https://www.lesswrong.com/posts/zonihALPmYjWMF2EQ/invitation-to-an-irl-retreat-on-ai-x-risks-and-post |

# 3. Why impartial altruists should suspend judgment under unawareness

To recap, [first](https://forum.effectivealtruism.org/posts/a3hnfA9EnYm9bssTZ/1-the-challenge-of-unawareness-for-impartial-altruist-action-1), we face an epistemic challenge beyond uncertainty over possible futures. Due to unawareness, we can’t con... | https://www.lesswrong.com/posts/vhkKBi5fSPtNPRpJ3/3-why-impartial-altruists-should-suspend-judgment-under-1 |

# The Decreasing Value of Chain of Thought in Prompting

> This is the second in a series of short reports that seek to help business, education, and policy leaders understand the technical details of working with AI through rigorous testing. In this report, we investigate Chain-of-Thought (CoT) prompting, a technique ... | https://www.lesswrong.com/posts/37sdqP7GcfGaj6LHG/the-decreasing-value-of-chain-of-thought-in-prompting |

# Emergent Misalignment on a Budget

**TL;DR** We reproduce [emergent misalignment](https://www.lesswrong.com/posts/ifechgnJRtJdduFGC/emergent-misalignment-narrow-finetuning-can-produce-broadly) [(Betley et al. 2025)](https://arxiv.org/pdf/2502.17424) in Qwen2.5-Coder-32B-Instruct using single-layer LoRA finetuning, sh... | https://www.lesswrong.com/posts/qHudHZNLCiFrygRiy/emergent-misalignment-on-a-budget |

# Administering immunotherapy in the morning seems to really, really matter. Why?

*Edit on 08/06/2024: At least one person has pointed out that, at one point, giving antihypertensives at night was **also** thought to matter,* [*a now disproven idea.*](https://www.thelancet.com/journals/lancet/article/PIIS0140-6736(22)017... | https://www.lesswrong.com/posts/pPtqh4fwNcbkgFSpG/administering-immunotherapy-in-the-morning-seems-to-really |

# Emergence Spirals—what Yudkowsky gets wrong

*Before proposing the following ideas and critiquing those of Yudkowsky, I want to acknowledge that I’m no expert in this area—simply a committed and (somewhat) rigorous speculator. This is not meant as a “smack down” (of a post written **18 years ago**) but rather a broad... | https://www.lesswrong.com/posts/9SBmgTRqeDwHfBLAm/emergence-spirals-what-yudkowsky-gets-wrong |

# Busking with Kids

Our older two, ages 11 and 9, have been learning fiddle, and are getting pretty good at it. When the weather's nice we'll occasionally go play somewhere public for tips ("busking"). It's better than practicing, builds performance skills, and the money is a good motivation!

We'll usually walk over ... | https://www.lesswrong.com/posts/TWpnaixm6MiCur9yF/busking-with-kids |

# Beware the Delmore Effect

*This is a linkpost for* [*https://lydianottingham.substack.com/beware-the-delmore-effect*](https://lydianottingham.substack.com/beware-the-delmore-effect)

You should be aware of the Delmore Effect:

> “The tendency to provide more articulate and explicit goals for lower priority areas of ... | https://www.lesswrong.com/posts/bbPHnmGybEcqEiPKs/beware-the-delmore-effect |

# Against asking if AIs are conscious

People sometimes wonder whether certain AIs or animals are conscious/sentient/sapient/have qualia/etc. I don't think that such questions are coherent. Consciousness is a concept that humans developed for reasoning about humans. It's a useful concept, not because it is ontologicall... | https://www.lesswrong.com/posts/q9A9ZFqW3dDbcTBQL/against-asking-if-ais-are-conscious |

# AI companies' eval reports mostly don't support their claims

AI companies claim that their models are safe on the basis of dangerous capability evaluations. OpenAI, Google DeepMind, and Anthropic publish reports intended to show their eval results and explain why those results imply that the models' capabilities are... | https://www.lesswrong.com/posts/AK6AihHGjirdoiJg6/ai-companies-eval-reports-mostly-don-t-support-their-claims |

# The True Goal Fallacy

As I ease out into a short sabbatical, I find myself turning back to dig the seeds of my repeated cycle of exhaustion and burnout in the last few years.

Many factors were at play, some more personal than I’m comfortable discussing here. But I have unearthed at least one failure mode that I see... | https://www.lesswrong.com/posts/B4zKRZh5oxyGnAdos/the-true-goal-fallacy |

# Dwarkesh Patel on Continual Learning

A key question going forward is the extent to which making further AI progress will depend upon some form of continual learning. Dwarkesh Patel offers us an extended essay considering these questions and reasons to be skeptical of the pace of progress for a while. I am less skept... | https://www.lesswrong.com/posts/YEwzhjFzt3zKctg2F/dwarkesh-patel-on-continual-learning |

# Identifying "Deception Vectors" In Models

> Using representation engineering, we systematically induce, detect, and control such deception in CoT-enabled LLMs, extracting “deception vectors” via Linear Artificial Tomography (LAT) for 89% detection accuracy. Through activation steering, we achieve a 40% success rate ... | https://www.lesswrong.com/posts/3WyFmtiLZTfEQxJCy/identifying-deception-vectors-in-models |

# Expectation = intention = setpoint

When I was first learning about hypnosis, one of the things that was very confusing to me is how "expectations" relate to "intent". Some hypnotists would say "All suggestion is about expectation; if they expect to have an experience they will", and frame their inductions in terms ... | https://www.lesswrong.com/posts/p2HQKoW39Ew6updtq/expectation-intention-setpoint |

# METR's Observations of Reward Hacking in Recent Frontier Models

METR just made a lovely post detailing many examples they've found of reward hacks by frontier models. Unlike the reward hacks of yesteryear, these models are smart enough to know that what they are doing is deceptive and not what the company wanted the... | https://www.lesswrong.com/posts/Zu4ai9GFpwezyfB2K/metr-s-observations-of-reward-hacking-in-recent-frontier |

# When is it important that open-weight models aren't released? My thoughts on the benefits and dangers of open-weight models in response to developments in CBRN capabilities.

Recently, Anthropic released Opus 4 and said they [couldn't rule out the model triggering ASL-3 safeguards](https://www.anthropic.com/news/acti... | https://www.lesswrong.com/posts/TeF8Az2EiWenR9APF/when-is-it-important-that-open-weight-models-aren-t-released |

# Causation, Correlation, and Confounding: A Graphical Explainer

I’ve developed a new type of graphic to illustrate causation, correlation, and confounding. It provides an intuitive understanding of why we observe correlation without causation and how it's possible to have *causation without correlation*. I then cover... | https://www.lesswrong.com/posts/BLHEa9sqcGpmWuJGW/causation-correlation-and-confounding-a-graphical-explainer |

# How to help friend who needs to get better at planning?

I have a good friend who is intelligent in many ways, but bad at planning / achieving his goals / being medium+ agency. He's very habit- and routine-driven, which means his baseline quality of life is high, but he's bad at, e.g., breaking down an abstract and complex ... | https://www.lesswrong.com/posts/fCvxSAnmYqEKBMaoB/how-to-help-friend-who-needs-to-get-better-at-planning |

# Ghiblification for Privacy

I often want to include an image in my posts to give a sense of a situation. A photo communicates the most, but sometimes that's too much: some participants would rather remain anonymous. A friend suggested running pictures through an AI model to convert them into a [Studio Ghibli](https:/... | https://www.lesswrong.com/posts/oc2EZhsYLWLKdyMia/ghiblification-for-privacy |

# A quick list of reward hacking interventions

This is a quick list of interventions that might help fix issues from reward hacking.

(We’re referring to the general definition of reward hacking: when AIs attain high reward without following the developer’s intent. So we’re counting things like sycophancy, not just al... | https://www.lesswrong.com/posts/spZyuEGPzqPhnehyk/a-quick-list-of-reward-hacking-interventions |
