text | source |

|---|---|

# On not being scared of math

*Written quickly for the Inkhaven Residency*.[^o1funhaodkr]

There’s a phenomenon I often see amongst more junior researchers that I call *being scared of math*.[^qf8evcid2yr] That is, when they try to read a machine learning paper and run into a section with mathematical notation, their ... | https://www.lesswrong.com/posts/4dmLQvKTJenSXmHzn/on-not-being-scared-of-math |

# The Blast Radius Principle

*Decentralize or Die.*

In April 2024, a salvo of cruise missiles destroyed the Trypilska thermal power plant, the largest in the Kyiv region, in under an hour. In June 2023, the destruction of the Kakhovka dam left a million people without drinking water and wiped out an entire irrigation... | https://www.lesswrong.com/posts/rDzMraAEkBBewn7vy/the-blast-radius-principle |

# You can’t trust violence

(Recommended listening: [Low - Violence](https://www.youtube.com/watch?v=4BSfKcuNpH0))

Last year, I personally called AI companies to warn their security teams about Sam Kirchner (former leader of Stop AI) when he disappeared after indicating potential violent intentions against OpenAI.

... | https://www.lesswrong.com/posts/Sih2sFHEgusDEuxtZ/you-can-t-trust-violence |

# [Hot take] Problems with AI prose

*Epistemic status: Written quickly. I have no specific expertise or training in writing or literary analysis.*

Recently, the NYTimes released a nifty [quiz](https://www.nytimes.com/interactive/2026/03/09/business/ai-writing-quiz.html). Readers were asked to indicate their preferenc... | https://www.lesswrong.com/posts/nTuKjJBwMtMuuLonA/hot-take-problems-with-ai-prose |

# Sparse Autoencoders for Single-Cell Models

People are rushing to build bigger and bigger single cell foundation models (trained on RNA sequencing data), but in my view we have not extracted even a small fraction of the knowledge and capabilities that already exist inside the models we have today.

To explain what I ... | https://www.lesswrong.com/posts/b3cvG387LKmbiBPaH/sparse-autoencoders-for-single-cell-models-1 |

# Eggs, rooms, puzzles, and talking about AI

I live with five friends in a big house, and two things I’ve done in it on this particular Sunday are hide 156 easter eggs all around, and reach a tentative joint decision on the allocation of four of its rooms.

These tasks are delightful to me for a reason they have in co... | https://www.lesswrong.com/posts/3rnM9eDTx4hHSbfER/eggs-rooms-puzzles-and-talking-about-ai |

# Morale

One particularly pernicious condition is low morale. Morale is, roughly, "the belief that if you work hard, your conditions will improve." If your morale is low, you can't push through adversity. It's also very easy to accidentally drop your morale through standard rationalist life-optimization.

It's easy to... | https://www.lesswrong.com/posts/53ZAzbdzGJHGeE5rs/morale |

# Talk English, Think Something Else

There's an adage from programming in C++ which goes something like "Yes, you *write* C, but you *imagine* the machine code as you do." I assumed this was bullshit, that nobody actually does this. Am I supposed to imagine writing the machine code, and then imagine imagining the bina... | https://www.lesswrong.com/posts/ry9bz8aQcxDcu6HjR/talk-english-think-something-else |

# TAPs or it didn't happen

Once, I went to talk about "curiosity" with [@LoganStrohl](https://www.lesswrong.com/users/brienneyudkowsky?mention=user). They noted "it seems like you have a good handle on 'active curiosity', but you don't really do much diffuse 'open curiosity.'" The convo went on for a while, and felt ve... | https://www.lesswrong.com/posts/LZMcayhqyAPiyBpnx/taps-or-it-didn-t-happen |

# Daycare illnesses

Before I had a baby I was pretty agnostic about the idea of daycare. I could imagine various pros and cons but I didn’t have a strong overall opinion. Then I started mentioning the idea to various people. Every parent I spoke to brought up a consideration I hadn’t thought about before—the illnesses... | https://www.lesswrong.com/posts/byiLDrbj8MNzoHZkL/daycare-illnesses |

# Returns to intelligence

I'm going to tell you a story. For that story to make sense, I need to give you some background context.

I have some pretty smart friends. One of them is [Peter Schmidt-Nielsen](https://peter.website/). Peter has an illustrious line of descent. His paternal grandfather was [Knut Schmidt-Niel... | https://www.lesswrong.com/posts/CBTe8Etwb9wdjbpZC/returns-to-intelligence |

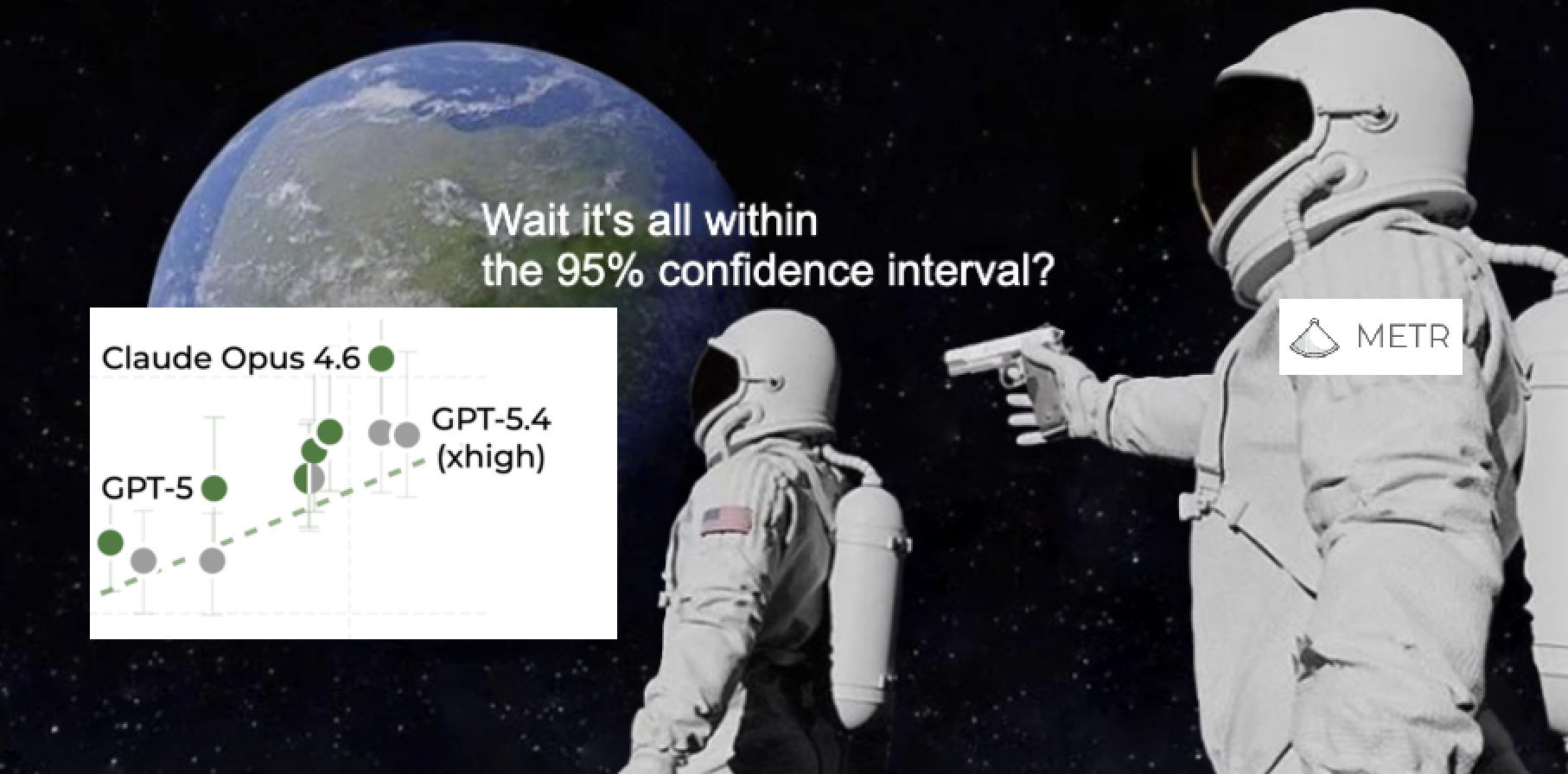

# You're gonna need a bigger boat (benchmark), METR

In this post, we’ll discuss three major problems with the METR eval and propose some solutions. Problem 1: The METR eval produces resul... | https://www.lesswrong.com/posts/3SywPAjGQWCtQFafb/you-re-gonna-need-a-bigger-boat-benchmark-metr |

# The policy surrounding Mythos marks an irreversible power shift

This post assumes Anthropic isn't lying:

1. Mythos is the current SOTA

2. Mythos is potent[^fqiwmgs614]

3. Anthropic will not make it publicly available un-nerfed[^fzqg66nygxr]

4. Anthropic will have a select few companies use it as part of [projec... | https://www.lesswrong.com/posts/3MhJELzwpbR42xsJ3/the-policy-surrounding-mythos-marks-an-irreversible-power |

# Stopping AI is easier than Regulating it.

I want to start with this provocative claim: Stopping AI is easier than regulating AI.

I often hear people say “Stopping is too hard, so we should do XYZ instead” where XYZ is some other form of regulation, such as mandating safety testing. It seems like the purpose of doin... | https://www.lesswrong.com/posts/Hf3SJ5sC79AHznbAv/stopping-ai-is-easier-than-regulating-it |

# Your body is not a white box (and you're thinking about weight loss wrong)

*Epistemic status*: This is an intuition I've had for a while that feels obviously correct to me from an inside view perspective. Note however that I am not a doctor and have no training in the medical field. I also do not have experience los... | https://www.lesswrong.com/posts/7Gpj4GpPqmaGtreTE/your-body-is-not-a-white-box-and-you-re-thinking-about |

# 5 Hypotheses for Why Models Fail on Long Tasks

*Written extremely quickly for the* [*InkHaven Residency*](https://www.inkhaven.blog/)*.*

Like humans, AI models do worse on tasks that take longer to do. Unlike humans, they seem to do worse on longer tasks than humans do.

This is a big part of why the METR time hori... | https://www.lesswrong.com/posts/jLZwydRtwRguCjEnd/5-hypotheses-for-why-models-fail-on-long-tasks |

# Treaties, Regulations, and Research can be Complements

I think the debate over whether AI risk should be addressed via regulation or treaties is often oversimplified and confused. These are not substitutes. They rely on overlapping underlying capacities and address different classes of problems, and both can benefi... | https://www.lesswrong.com/posts/7CLL4Kpruf63GfBc3/treaties-regulations-and-research-can-be-complements |
