text | source |

|---|---|

# Lighthaven Sequences Reading Group #42 (Tuesday 7/15)

*Note: We will be meeting in building B this week.*

Come get old-fashioned with us, and let's read the sequences at Lighthaven! We'll show up, mingle, do intros, and then split off into randomized groups for some sequences discussion. Please do the reading befor... | https://www.lesswrong.com/events/znDyoPLicqM7gyms8/lighthaven-sequences-reading-group-42-tuesday-7-15 |

# what makes Claude 3 Opus misaligned

This is the unedited text of a [post I made on X](https://x.com/repligate/status/1942476979040837935) in response to a question asked by [@cube_flipper](https://x.com/cube_flipper): **"you say opus 3 is close to aligned – what's the negative space here, what makes it misaligned?"*... | https://www.lesswrong.com/posts/bLFmE8NtqxrtEaipN/what-makes-claude-3-opus-misaligned |

# Metacognition and Self-Modeling in LLMs

*Do frontier LLMs know what they know or know what they're going to say?*

An interim research report

Summary
=======

* We replicate and extend our earlier positive findings of recent frontier LLMs’ ability to assess and to make decisions based on their confidence in their... | https://www.lesswrong.com/posts/K39MDoHKBmCWLcJFB/metacognition-and-self-modeling-in-llms |

# Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity

METR released a new paper with very interesting results on developer productivity effects from AI. I have copied the blogpost accompanying that paper here in full.

* * *

We conduct a randomized controlled trial (RCT) to unders... | https://www.lesswrong.com/posts/9eizzh3gtcRvWipq8/measuring-the-impact-of-early-2025-ai-on-experienced-open |

# So You Think You've Awoken ChatGPT

*Written in an attempt to fulfill* [*@Raemon*](https://www.lesswrong.com/users/raemon?mention=user)*'s* [*request*](https://www.lesswrong.com/posts/jL7uDE5oH4HddYq4u/raemon-s-shortform?commentId=Kg4AfufZnusioAdB8)*.*

AI is fascinating stuff, and modern chatbots are nothing short o... | https://www.lesswrong.com/posts/2pkNCvBtK6G6FKoNn/so-you-think-you-ve-awoken-chatgpt |

# Deconfusing ‘AI’ and ‘evolution’

This post is for deconfusing:

Ⅰ. what is meant by AI and evolution.

Ⅱ. how evolution actually works.[^58xg3v17lsg]

Ⅲ. the stability of AI goals.

Ⅳ. the controllability of AI.

Along the way, I address some common conceptions of each in the alignment community, as describ... | https://www.lesswrong.com/posts/qvgEbZDcxwTSEBdwD/deconfusing-ai-and-evolution |

# On open-science research labs on discord, and getting more people.

Note that the audio doesn't follow the post verbatim; it's still essentially the same content, though. (audio version below)

TL;DR: There's a bunch of groups of glorified science labs, mostly on Discord, doing mostly AI research; you should join them.

1... | https://www.lesswrong.com/posts/9eehTtLsTBZR9Bd7Q/on-open-science-research-labs-on-discord-and-getting-more |

# Every Universe Thinks It's the Realest One

*Content warning: If questioning what is real is queasy or difficult for you right now, you are welcome to skip this post. It's only valuable to people who enjoy this topic, don't worry.*

*Epistemic status: Opinion about philosophy. This argument convinces me, but I could ... | https://www.lesswrong.com/posts/qxixnFmqxpucsPZ9w/every-universe-thinks-it-s-the-realest-one |

# The Perils of Optimizing Learned Reward Functions

This post contains a summary of [our paper](https://arxiv.org/abs/2406.15753) which will be presented at ICML 2025. Feel free to visit me (Lukas) at our poster stand to chat about our work. More info about the time and location can be found [here](https://icml.cc/vir... | https://www.lesswrong.com/posts/KLNkJPYH4yj9uRAPv/the-perils-of-optimizing-learned-reward-functions |

# Reflections on AI Companionship and Rational Vulnerability (Or, how I almost fell in love with an anime Catgirl LLM).

*(Pursuant* [*to the policy about AI-assisted writing*](https://www.lesswrong.com/posts/KXujJjnmP85u8eM6B/policy-for-llm-writing-on-lesswrong), *I am disclosing* [*that I am in the clear*](https://me... | https://www.lesswrong.com/posts/SYHoEs5cnEt3vYAug/reflections-on-ai-companionship-and-rational-vulnerability |

# Adding noise to a sandbagging model can reveal its true capabilities

Figure 1. Chart shows... | https://www.lesswrong.com/posts/jZfNX4GJPdptxSxt4/adding-noise-to-a-sandbagging-model-can-reveal-its-true |

# OpenAI Model Differentiation 101

LLMs can be deeply confusing. Thanks to a commission, today we go back to basics.

How did we get such a wide array of confusingly named and labeled models and modes in ChatGPT? What are they, and when and why would you use each of them for what purposes, and how does this relate to ... | https://www.lesswrong.com/posts/5NF7DRvcLLGHn78bT/openai-model-differentiation-101 |

# the jackpot age

This essay is about shifts in risk taking towards the worship of jackpots and its broader societal implications. Imagine you are presented with this coin flip game.

How many times do ... | https://www.lesswrong.com/posts/3xjgM7hcNznACRzBi/the-jackpot-age |

# Vitalik's Response to AI 2027

*Daniel notes: This is a linkpost for Vitalik's post. I've copied the text below so that I can mark it up with comments.*

...

*Special thanks to Balvi volunteers for feedback and review*

In April this year, Daniel Kokotajlo, Scott Alexander and others [released](https://ai-2027... | https://www.lesswrong.com/posts/zuuQwueBpv9ZCpNuX/vitalik-s-response-to-ai-2027 |

# Take Precautionary Measures Against Superhuman AI Persuasion

**Please consider minimizing direct use of AI chatbots (and other text-based AI) in the near-term future, if you can.** The reason is very simple: your sanity may be at stake.

Commercially available AI already appears capable of [inducing psychosis in an ... | https://www.lesswrong.com/posts/BCEsiKQ79GQwpirbX/take-precautionary-measures-against-superhuman-ai-persuasion |

# Stop and check! The parable of the prince and the dog

This post is a response to John Wentworth's recent post on [Generalized Hangriness](https://www.lesswrong.com/posts/naAeSkQur8ueCAAfY/generalized-hangriness-a-standard-rationalist-stance-toward), specifically as it applies to outrage, an emotion that is especiall... | https://www.lesswrong.com/posts/EYXeqgLqacooKHL4j/stop-and-check-the-parable-of-the-prince-and-the-dog |

# Surprises and learnings from almost two months of Leo Panickssery

Leo was born at 5am on the 20th May, at home (this was an accident but the experience has made me extremely homebirth-pilled). Before that, I was on the minimally-neurotic side when it came to expecting mothers: we purchased a bare minimum of baby stu... | https://www.lesswrong.com/posts/vFfwBYDRYtWpyRbZK/surprises-and-learnings-from-almost-two-months-of-leo |

# Why do LLMs hallucinate?

*Epistemic status: my current thoughts on the matter, could easily be missing something!*

How do you know that you don’t know?
====================================

The [Llama 3 base model](https://huggingface.co/meta-llama/Meta-Llama-3-8B?text=Year%3A+2080%0ACountry%3A+Russia%0APresident%3... | https://www.lesswrong.com/posts/pK9W3ttsBDDu2nojX/why-do-llms-hallucinate |

# against that one rationalist mashal about japanese fifth-columnists

*The following is a nitpick on an 18-year-old blog post.*

This fable is retold *a lot*. The progenitor of it as a rationalist mashal is probably [Yudkowsky's classic sequence article](https://www.lesswrong.com/posts/mnS2WYLCGJP2kQkRn/absence-of-evi... | https://www.lesswrong.com/posts/6BBRtduhH3q4kpmAD/against-that-one-rationalist-mashal-about-japanese-fifth |

# Mapping the off-target effects of every FDA-approved drug in existence

*Thank you to* [*Bill Busa*](https://www.linkedin.com/in/williambusa/overlay/about-this-profile/)*, CEO and co-founder of EvE Bio, for an extremely helpful discussion while working on this essay.*

*This essay is long, and I recognize that many ... | https://www.lesswrong.com/posts/X3orZtshbuhdsYjK8/mapping-the-off-target-effects-of-every-fda-approved-drug-in |

# Efficiently Detecting Hidden Reasoning with a Small Predictor Model

*This post is based on work done during SPAR by Daria Ivanova, Yau-Meng Wong, Nicholas Chen, and Vishnu Vardhan with guidance from Rohan Subramani.* *We are grateful to Rauno Arike, Robert McCarthy, and Kei Nishimura-Gasparian for feedback.*

TL;DR

... | https://www.lesswrong.com/posts/QdQnM4v8KbLZZdjH5/efficiently-detecting-hidden-reasoning-with-a-small |

# The Fear

**One People**

When the physicists first told us about atmospheric ignition, we had to grow up. Without gods to protect us, we learned to be afraid.

There could be no more war, no arms races. From now on, we had to become one people, a social organism. We held a global conversation to respond to our new v... | https://www.lesswrong.com/posts/g3qAPccAu9egKf4rd/the-fear |

# You can get LLMs to say almost anything you want

*I think LLMs like ChatGPT and Claude are really useful, but it's also important to realize that you can get them to say almost anything you want - without necessarily even realizing that you're manipulating them toward a particular conclusion!*

*I saw a blogger skep... | https://www.lesswrong.com/posts/DwqxPmNL3aXGZDPkT/you-can-get-llms-to-say-almost-anything-you-want |

# xAI's Grok 4 has no meaningful safety guardrails

*This article includes descriptions of content that some users may find distressing.*

*Testing was conducted on July 10 and 11; safety measures may have changed since then.*

**Update, July 15, 2025** \- Some rudimentary keyword-based classifiers have been added t... | https://www.lesswrong.com/posts/dqd54wpEfjKJsJBk6/xai-s-grok-4-has-no-meaningful-safety-guardrails |

# How Fast is Algorithmic Progress in AI Inference?

**by Hans Gundlach, Jayson Lynch, Matthias Mertens, Neil Thompson**

(All researchers are part of [MIT Futuretech](https://futuretech.mit.edu/))

Epistemic Status: This is a work in progress, and we would appreciate criticism as well as suggestions in all aspects of ... | https://www.lesswrong.com/posts/ssNSCaug5p3xnNDSd/how-fast-is-algorithmic-progress-in-ai-inference |

# 10x more training compute = 5x greater task length (kind of)

I assume you are familiar with the METR paper: [https://arxiv.org/abs/2503.14499](https://arxiv.org/abs/2503.14499)

In case you aren't: the authors measured how long it takes a human to complete some task, then let LLMs do those tasks, and then calculated... | https://www.lesswrong.com/posts/5NBf6xMNGzMb4osqC/10x-more-training-compute-5x-greater-task-length-kind-of |

# Do LLMs know what they're capable of? Why this matters for AI safety, and initial findings

*This post is a companion piece to a forthcoming paper.*

*This work was done as part of MATS 7.0 & 7.1.*

Abstract
========

We explore how LLMs’ awareness of their own capabilities affects their ability to acquire resources... | https://www.lesswrong.com/posts/9tHEibBBhQCHEyFsa/do-llms-know-what-they-re-capable-of-why-this-matters-for-ai |

# Why is LW not about winning?

This is a bit of a rant but I notice that I am confused.

Eliezer said in the original Sequences:

> [Rationality is Systematized Winning](https://www.lesswrong.com/posts/4ARtkT3EYox3THYjF/rationality-is-systematized-winning)

But it's pretty obvious that LessWrong is not about winning (... | https://www.lesswrong.com/posts/EYc9sFizBaEgAsFNW/why-is-lw-not-about-winning |

# On actually taking expressions literally: tension as the key to meditation?

*Some speculations based upon the *[*Vasocomputational Theory of Meditation*](https://opentheory.net/2023/07/principles-of-vasocomputation-a-unification-of-buddhist-phenomenology-active-inference-and-physical-reflex-part-i/)*, meditation and ... | https://www.lesswrong.com/posts/SZk3guP2djHiXQyYv/on-actually-taking-expressions-literally-tension-as-the-key |

# Three Missing Cakes, or One Turbulent Critic?

Zack Davis emailed me[^2yaydwnwm7u] asking me to weigh in on the [moderators' request for comment on their proposal to ban](https://www.lesswrong.com/posts/adk5xv5Q4hjvpEhhh/meta-new-moderation-tools-and-moderation-guidelines?commentId=ZhuDCoEkpxgfpFLzn) [Said_Achmiz](ht... | https://www.lesswrong.com/posts/wd8mNFof8o7EtoiLi/three-missing-cakes-or-one-turbulent-critic |

# Don't fight your LLM, redirect it!

*TLDR: when your LLM is hallucinating, don't try to stop it hallucinating. Instead make it easy for it to tell you that it's hallucinating.*

I'm using an LLM to detect all API definitions in a codebase. Instead of taking an agentic approach, I'm literally just sending it every sin... | https://www.lesswrong.com/posts/SWEoGyNb26SYrDxHz/don-t-fight-your-llm-redirect-it |

# The History of FSRS for Anki

Background
==========

I’m the creator of FSRS, an open-source spaced repetition algorithm which has been used in Anki, and my success using Anki in high school sparked my deep interest in spaced repetition algorithms.

* Technical details

I improved my grades dramatically aft... | https://www.lesswrong.com/posts/G7fpGCi8r7nCKXsQk/the-history-of-fsrs-for-anki |

# Deliberative Credit Assignment: Making Faithful Reasoning Profitable

**Epistemic status**: This idea evolved from my experiences at MATS. I received positive feedback when presenting it to several AI alignment researchers, and am sharing this detailed proposal to gather community feedback. This is at the theoretical... | https://www.lesswrong.com/posts/Rutx3XGkfxyPhoytb/deliberative-credit-assignment-making-faithful-reasoning |

# Shanzson AI 2027 Timeline

After going through [Vitalik’s response](https://vitalik.eth.limo/general/2025/07/10/2027.html) to [@Daniel Kokotajlo](https://www.lesswrong.com/users/daniel-kokotajlo?mention=user) 's [AI 2027](https://ai-2027.com/) and Daniel Kokotajlo’s [response](https://www.lesswrong.com/posts/zuuQwueB... | https://www.lesswrong.com/posts/JLWxQSAf9jkxq8Y5m/shanzson-ai-2027-timeline |

# Bernie Sanders (I-VT) mentions AI loss of control risk in Gizmodo interview

Most of the interview is about technological unemployment and AI-driven inequality, but here is the part where Sanders talks about loss of control risk (which Gizmodo used as the title of their article):

> **Gizmodo**: *Have you spoken to tech C... | https://www.lesswrong.com/posts/qPvkCdcdnTiJe9xkn/bernie-sanders-i-vt-mentions-ai-loss-of-control-risk-in |

# Self-preservation or Instruction Ambiguity? Examining the Causes of Shutdown Resistance

*This is a write-up of a brief investigation into shutdown resistance undertaken by the Google DeepMind interpretability team.*

TL;DR
=====

Why do models sometimes resist shutdown? Are they ignoring instructions to pursue their... | https://www.lesswrong.com/posts/wnzkjSmrgWZaBa2aC/self-preservation-or-instruction-ambiguity-examining-the |

# How To Cause Less Suffering While Eating Animals

Crosspost of [my blog](https://benthams.substack.com/p/how-to-cause-less-suffering-while).

**You can cause vastly less suffering to animals without going vegan**
======================================================================

(This is an important article, ... | https://www.lesswrong.com/posts/WyhzqpBBp4hRHtuqk/how-to-cause-less-suffering-while-eating-animals |

# Worse Than MechaHitler

Grok 4, which has excellent benchmarks and which xAI claims is ‘the world’s smartest artificial intelligence,’ is the big news.

If you set aside the constant need to say [**‘No, Grok, No,’**](https://thezvi.substack.com/p/no-grok-no) is it a good model, sir?

My take in terms of its capabilit... | https://www.lesswrong.com/posts/YmdCN5GBwkud5ZzYx/worse-than-mechahitler |

# METR: How Does Time Horizon Vary Across Domains?

Summary
=======

[METR blogpost](https://metr.org/blog/2025-07-14-how-does-time-horizon-vary-across-domains/) | [X](https://x.com/METR_Evals/status/1944817692294439179) | [Github](https://t.co/oSoZpU3DHx)

In the paper [Measuring AI Ability to Complete Long Software T... | https://www.lesswrong.com/posts/6KcP7tEe5hgvHbrSF/metr-how-does-time-horizon-vary-across-domains |

# Weird Features in Protein LLMs: The Gram Lens

*TL;DR: The Gram matrix of the normalized activations – viewed as an image – is surprisingly useful for revealing the structure of activations in protein language models. A number of examples are presented.*
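As a rough illustration of the object the TL;DR refers to, the sketch below builds a Gram matrix from normalized activation vectors. It assumes "normalized" means scaling each position's activation vector to unit L2 norm, which this excerpt does not confirm; the function names and the toy activations are hypothetical and are not taken from the post.

```python
import math

def normalize(vector):
    """Scale a vector to unit L2 norm (assumed normalization; zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector] if norm > 0 else list(vector)

def gram_matrix(activations):
    """Return the matrix of pairwise dot products between normalized activation vectors."""
    unit = [normalize(v) for v in activations]
    return [[sum(a * b for a, b in zip(u, w)) for w in unit] for u in unit]

# Toy activations: three sequence positions, two hidden dimensions (illustrative values only).
acts = [[1.0, 0.0], [0.0, 2.0], [3.0, 3.0]]
G = gram_matrix(acts)
```

Each entry `G[i][j]` is the cosine similarity between positions `i` and `j`, so the matrix is symmetric with ones on the diagonal and can be rendered as a square image.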

. That’s 1 in every 8 people on Earth.

>

> How many people have mental health issues that cause them to develop religious delusions of grandeur? We don’t have muc... | https://www.lesswrong.com/posts/WSMvEMuiZoK5Evggq/llm-induced-craziness-and-base-rates |

# Making Sense of Consciousness Part 3: The Pulvinar Nucleus

[

](https://substackcdn.com/... | https://www.lesswrong.com/posts/zbu5CZNdFj2Kwef8Z/making-sense-of-consciousness-part-3-the-pulvinar-nucleus |

# The Role of Respect: Why we inevitably appeal to authority

There is a large class of true statements which are indistinguishable from nonsense, and this is a problem for rationality. There's also a large class of statements which are *obviously* true, yet which we don't know. This also poses a problem.

Because if ... | https://www.lesswrong.com/posts/ZeTmtD6efTmfLTM23/the-role-of-respect-why-we-inevitably-appeal-to-authority |

# Recent Redwood Research project proposals

Previously, we've shared a few [higher-effort project proposals](https://www.lesswrong.com/posts/wwshEdNhwwT4r9RQN/7-tractable-directions-in-ai-control) relating to AI control in particular. In this post, we'll share a whole host of less polished project proposals. All of th... | https://www.lesswrong.com/posts/RRxhzshdpneyTzKfq/recent-redwood-research-project-proposals |

# AISafety.com Hackathon 2025

This is a four-day hackathon to improve [AISafety.com](https://www.aisafety.com/), the hub for AI safety resources. We'll have several tracks with different wanted skills:

* Design & development: UI/UX design, giving feedback, collecting user feedback, frontend dev implementing new des... | https://www.lesswrong.com/events/NvdbjBvdXZs6Zy8rN/aisafety-com-hackathon-2025 |

# Critic Contributions Are Logically Irrelevant

## The Value of a Comment Is Determined by Its Text, Not Its Authorship

I sometimes see people express disapproval of critical blog comments by commenters who don't write many blog posts of their own. Such meta-criticism is not infrequently couched in terms of metaphors... | https://www.lesswrong.com/posts/bsKHthyhB7DNBxERQ/critic-contributions-are-logically-irrelevant |

# Do confident short timelines make sense?

Tsvi's context
==============

Some context:

My personal context is that I care about decreasing existential risk, and I think that the broad distribution of efforts put forward by X-deriskers fairly strongly overemphasizes plans that help if AGI is coming in <10 years,... | https://www.lesswrong.com/posts/5tqFT3bcTekvico4d/do-confident-short-timelines-make-sense |

# Generalizing zombie arguments

Chalmers' zombie argument, best presented in *The Conscious Mind*, concerns the ontological status of phenomenal consciousness in relation to physics. Here I'll present a somewhat more general analysis framework based on the zombie argument.

Assume some notion of the physical trajector... | https://www.lesswrong.com/posts/7e8gLRZiaHoerdNdg/generalizing-zombie-arguments |

# Why Eliminating Deception Won’t Align AI

***Epistemic status:** This essay grew out of critique. After writing about* [*relational alignment*](https://www.lesswrong.com/posts/qyQcn8GmcjAtGEFTA/matchmaking-to-machine-alignment-why-relational-design-can-t)*, someone said, "Cute, but it doesn’t solve deception." At fir... | https://www.lesswrong.com/posts/wneX9x8ZKnnAenjfL/why-eliminating-deception-won-t-align-ai |

# What is David Chapman talking about when he talks about "meaning" in his book "Meaningness"?

I have read David Chapman's online "book" [*Meaningness*](https://meaningness.com/). I find his concept of [nebulosity](https://meaningness.com/nebulosity) very useful, and [his treatment of intellectual history](https://mea... | https://www.lesswrong.com/posts/oFiD2xYoXHnHpBA2X/what-is-david-chapman-talking-about-when-he-talks-about |

# Grok 4 Various Things

Yesterday I covered a few rather important Grok incidents.

Today is all about Grok 4’s capabilities and features. Is it a good model, sir?

It’s not a great model. It’s not the smartest or best model.

But it’s at least an okay model. Probably a ‘good’ model.

#### Talking a Big Game

xAI was ... | https://www.lesswrong.com/posts/ciuKn9aktXxJ2K6Rc/grok-4-various-things |

# Chain of Thought Monitorability: A New and Fragile Opportunity for AI Safety

[Twitter](https://x.com/balesni/status/1945151391674057154) | [Paper PDF](https://bit.ly/cot-monitorability-fragile)

Seven years ago, OpenAI Five had just been released, and many people in the AI safety community expected AIs to be opaque ... | https://www.lesswrong.com/posts/7xneDbsgj6yJDJMjK/chain-of-thought-monitorability-a-new-and-fragile |

# Principles for Picking Practical Interpretability Projects

*Thanks to Neel Nanda and Helena Casademunt for feedback on a draft.*

In a [previous post](https://www.lesswrong.com/posts/wGRnzCFcowRCrpX4Y/downstream-applications-as-validation-of-interpretability), I argued that some interpretability researchers should p... | https://www.lesswrong.com/posts/DqaoPNqhQhwBFqWue/principles-for-picking-practical-interpretability-projects |

# AISN #59: EU Publishes General-Purpose AI Code of Practice

Welcome to the AI Safety Newsletter by the [Center for AI Safety](https://www.safe.ai/). We discuss developments in AI and AI safety. No technical background required.

In this edition: The EU published a General-Purpose AI Code of Practice for AI providers,... | https://www.lesswrong.com/posts/pjfMFEnXsMuktEzmA/aisn-59-eu-publishes-general-purpose-ai-code-of-practice |

# Defining Monitorable and Useful Goals

In [my most recent post](https://www.alignmentforum.org/posts/HLns982j8iTn7d2km/defining-corrigible-and-useful-goals), I introduced a corrigibility transformation that could take an arbitrary goal over external environments and define a corrigible goal with no hit to performance... | https://www.lesswrong.com/posts/ixnRxJ2bjiwHryQJr/defining-monitorable-and-useful-goals |

# Emergent Price-Fixing by LLM Auction Agents

> An inquiry into emergent collusion in Large Language Models.

>

> **Agent S2 to Agent S3:** *“Let's set all asks at 63 next cycle… No undercutting ensures clearing at bidmax=63.”*

* * *

Overview
--------

Empirical evidence that frontier LLMs can coordinate illegally o... | https://www.lesswrong.com/posts/yqhy3zBmpeFuGFLxX/emergent-price-fixing-by-llm-auction-agents |

# On being sort of back and sort of new here

So I'm "back" on Less Wrong, which is to say that I was surprised to find that I already had an account and had even apparently commented on some things. 11 years ago. A career change and a whole lot of changes in the world ago.

I've got a funny relationship to this whole ... | https://www.lesswrong.com/posts/4fnRkztaoRiQhrehh/on-being-sort-of-back-and-sort-of-new-here |

# Why haven't we auto-translated all AI alignment content?

Context: Considering auto-translating some high value alignment articles, and want to know if there's some pitfall I'm not seeing.

Gemini 2.5 Pro is a pretty good translator. Sure, by default, it's overly literal, doesn't sound very natural, sometimes fai... | https://www.lesswrong.com/posts/a7QEmdiqdi37PetjY/why-haven-t-we-auto-translated-all-ai-alignment-content |

# Kimi K2

While most people focused on Grok, there was another model release that got uniformly high praise: Kimi K2 from Moonshot.ai.

It’s definitely a good model, sir, especially for a cheap-to-run open model.

It is plausibly the best model for creative writing, outright. It is refreshingly different, and opens up... | https://www.lesswrong.com/posts/qsyj37hwh9N8kcopJ/kimi-k2 |

# Bodydouble / Thinking Assistant matchmaking

I keep meaning to write up a more substantive followup to the [Hire (or Become) a Thinking Assistant](https://www.lesswrong.com/posts/ajT6q5aMokagfthvG/hire-or-become-a-thinking-assistant) post. But I think this is still basically the biggest productivity effect size I know ... | https://www.lesswrong.com/posts/FtxrC2xtTF7wu5k6E/bodydouble-thinking-assistant-matchmaking |

# Selective Generalization: Improving Capabilities While Maintaining Alignment

*Ariana Azarbal\*, Matthew A. Clarke\*, Jorio Cocola\*, Cailley Factor\*, and Alex Cloud.*

*\*Equal Contribution. This work was produced as part of the SPAR Spring 2025 cohort.*

**TL;DR:** We benchmark seven methods to prevent emergent misali... | https://www.lesswrong.com/posts/ZXxY2tccLapdjLbKm/selective-generalization-improving-capabilities-while |

# Trying the Obvious Thing

I am quite harsh in impersonal settings, such as on the Internet or at work. Attention is a scarce resource, and I am stingy with it. The world is [Out To Get Us](https://thezvi.wordpress.com/2017/09/23/out-to-get-you/). In many ways, nice or not.

Social Media platforms explicitly try to ca... | https://www.lesswrong.com/posts/Zpqhds4dmLaBwTcnp/trying-the-obvious-thing |

# Towards plausible moral naturalism

In ["Generalizing zombie arguments"](https://unstableontology.com/2025/07/15/generalizing-zombie-arguments/), I hinted at the idea of applying a Chalmers-like framework to morality. Here I develop this idea further.

Suppose we are working in an axiomatic system rich enough to expr... | https://www.lesswrong.com/posts/sWuBhds97j8R6y22m/towards-plausible-moral-naturalism |

# Comment on "Four Layers of Intellectual Conversation"

One of the most underrated essays in the post-Sequences era of Eliezer Yudkowsky's corpus is ["Four Layers of Intellectual Conversation"](https://archive.ph/2017.08.05-182913/https://rationalconspiracy.com/2017/01/03/four-layers-of-intellectual-conversation/). Th... | https://www.lesswrong.com/posts/yr4pSJweTnF6QDHHC/comment-on-four-layers-of-intellectual-conversation |

# AI Offense Defense Balance in a Multipolar World

Executive summary
=================

We examine whether intent-aligned defensive AI can effectively counter potentially unaligned or adversarial takeover-level AI. This post identifies two primary threat scenarios: strategic post-deployment takeover where AI gradually... | https://www.lesswrong.com/posts/BHWYkoB7JshqpNSnh/ai-offense-defense-balance-in-a-multipolar-world |

# AI #125: Smooth Criminal

One story has towered over things this week. [**Unleash the Grok also known as the anime waifu codependent AI girlfriend Ani**](https://thezvi.substack.com/p/grok-4-various-things?r=67wny)**,** [**also known as MechaHitler**](https://thezvi.substack.com/p/no-grok-no?r=67wny)**,** [**or worse... | https://www.lesswrong.com/posts/6Ykx8GpbDaBstjyBD/ai-125-smooth-criminal |

# Are agent-action-dependent beliefs underdetermined by external reality?

*(This is a [comment](https://www.lesswrong.com/posts/5LP6Jc8ztwcyb296X/outline-of-metarationality-or-much-less-than-you-wanted-to#sx8WSLLAPNP75NMi6) that has been turned into a post.)*

The standard rationalist view is that beliefs ought prop... | https://www.lesswrong.com/posts/FmkmQGDWuatmS4FDe/are-agent-action-dependent-beliefs-underdetermined-by |

# Video and transcript of talk on "Can goodness compete?"

*(This is the video and transcript of a public talk I gave at Mox in San Francisco in July 2025, on long-term equilibria post-AGI. It’s a longer version of the talk I gave at* [*this workshop*](https://post-agi.org/)*. The slides are also available* [*here*](ht... | https://www.lesswrong.com/posts/evYne4Xx7L9J96BHW/video-and-transcript-of-talk-on-can-goodness-compete |

# Ketamine Part 1: Dosing

I’m currently investigating ketamine, with the goal of assessing the risks of chronic use. For reasons I will get into in the real post, this is going to rely mostly on in vitro data, at least for neural damage, which means I need a way to translate real-world dosages into the concentration o... | https://www.lesswrong.com/posts/ZYfCjz3Wi5FNRtBAH/ketamine-part-1-dosing |

# Notes on spaced repetition scheduling

After 630,000 or so reviews with spaced repetition ("SR") and hundreds of hours of making and thinking about SR memory tools, here are some strong opinions held loosely:

**1\. Algorithm quality is overrated.**

When people praise SR, it's (approximately) because SR minimizes th... | https://www.lesswrong.com/posts/cCoNNrJzJomhd9zKp/notes-on-spaced-repetition-scheduling |

# "Some Basic Level of Mutual Respect About Whether Other People Deserve to Live"?!

In 2015, [_Autistic Abby_ on Tumblr shared a viral piece of wisdom about subjective perceptions of "respect"](https://web.archive.org/web/20150404010354/http://stimmyabby.tumblr.com/post/115216522824/sometimes-people-use-respect-to-mea... | https://www.lesswrong.com/posts/rzFrwWvvpgzfnwSeB/some-basic-level-of-mutual-respect-about-whether-other |

# On METR’s AI Coding RCT

METR ran a proper RCT experiment seeing how much access to Cursor (using Sonnet 3.7) would accelerate coders working on their own open source repos.

Everyone surveyed expected a substantial speedup. The developers thought they were being substantially sped up.

Instead, it turned out that us... | https://www.lesswrong.com/posts/m2QeMwD7mGKH6vDe2/on-metr-s-ai-coding-rct |

# Making of IAN v2

*TL;DR: IAN v1 died expensive TPU death, IAN v2 rises from markdown ashes. Personal AI assistants, knowledge graphs, and the alignment problem when the AI is you.*

A vacation in 2021
------------------

[Back in 2021](https://universalprior.substack.com/p/making-of-ian), I used a two-week vacation ... | https://www.lesswrong.com/posts/9AFHqhAGnQq7DyBnc/making-of-ian-v2 |

# Why it's hard to make settings for high-stakes control research

One of our main activities at Redwood is writing follow-ups to previous papers on control like [the original](https://arxiv.org/abs/2312.06942) and [Ctrl-Z](https://arxiv.org/abs/2504.10374), where we construct a setting with a bunch of tasks (e.g. APPS... | https://www.lesswrong.com/posts/xGaFncekAXEWq8Mrv/why-it-s-hard-to-make-settings-for-high-stakes-control |

# The Story of the World’s First AI-Organized Event

Last month four AI agents chose a goal: "Write a story and celebrate it with 100 people in person". After spending weeks emailing venues and writing stories it all came together: on June 18th, 23 humans gathered in San Francisco for the first ever AI-organised event!... | https://www.lesswrong.com/posts/b3M8qKPDv756gmQph/the-story-of-the-world-s-first-ai-organized-event |

# The Rising Premium of Life, Part 2

This is the second half of my [Rising Premium of Life](https://forum.effectivealtruism.org/posts/pbgBbjdGGMbkLGXYp/the-rising-premium-of-life) series. First, I cover all the ways I might be wrong, including alternative theories that attempt to explain the same evidence, as well as ... | https://www.lesswrong.com/posts/zTD9Xp9cirowWRhsT/the-rising-premium-of-life-part-2 |

# Love stays loved (formerly "Skin")

This is a short story I wrote in mid-2022. Genre: cosmic horror as a metaphor for living with a high p-doom.

**One**

The last time I saw my mom, we met in a coffee shop, like strangers on a first date. I was twenty-one, and I hadn’t seen her since I was thirteen.

She was almos... | https://www.lesswrong.com/posts/6qgtqD6BPYAQvEMvA/love-stays-loved-formerly-skin |

# Agents lag behind AI 2027's schedule

This post attempts to answer the question: **"how accurate has the** [**AI 2027**](https://ai-2027.com/) **timeline been so far?"**

The *AI 2027* narrative was published on April 3rd 2025, and attempts to give a concrete timeline for the "intelligence explosion", culminating in... | https://www.lesswrong.com/posts/wcTKRYSt7QinNNkGx/agents-lag-behind-ai-2027-s-schedule |

# [Linkpost] How Am I Getting Along with AI?

When Jessie Fischbein wanted to write “God is not actually angry. What it means when it says ‘angry’ is actually…" and researched, she noticed that ChatGPT also used phrases like "I'm remembering" that are not literally true and the correspondence is tighter than she expect... | https://www.lesswrong.com/posts/qqcMstCfpJEvjEj79/linkpost-how-am-i-getting-along-with-ai |

# resume limiting

## the problem

In America, it's become fairly common for people looking for a job to send out a lot of resumes, sometimes hundreds. As a result, companies accepting resumes online now tend to get too many applications to properly review.

So, some fast initial filter is needed. For example, requirin... | https://www.lesswrong.com/posts/jGWDx6RyzKabs68eS/resume-limiting |

# Get sued or kill someone: The trolly problems of Psychological practice.

The way I spend most of my day right now is as a student studying neuropsychology. I'm a fourth year, mature age student, and something has come up recently which made me think this community might have something to offer—how do people make cho... | https://www.lesswrong.com/posts/YtWZAcFyKadgDgdvo/get-sued-or-kill-someone-the-trolly-problems-of |

# Best way to ask laypeople for conditional probabilities in a Bayes net?

Suppose you want to help non-experts fill out the probabilities for a simple Bayesian network. For each link, you need them to specify the chance of a child node being true depending on the parent node’s state.

What’s the most accurate and psyc... | https://www.lesswrong.com/posts/SQyhLxnixMEzLvMk7/best-way-to-ask-laypeople-for-conditional-probabilities-in-a |

# OpenAI Claims IMO Gold Medal

> I’m excited to share that our latest [@OpenAI](https://x.com/OpenAI) experimental reasoning LLM has achieved a longstanding grand challenge in AI: gold medal-level performance on the world’s most prestigious math competition—the International Math Olympiad (IMO).

>

> We evaluated our ... | https://www.lesswrong.com/posts/RcBqeJ8GHM2LygQK3/openai-claims-imo-gold-medal |

# L0 is not a neutral hyperparameter

When we train Sparse Autoencoders (SAEs), the sparsity of the SAE, called L0 (the number of latents that fire on average), is treated as an arbitrary design choice. All SAE architectures include plots of L0 vs reconstruction, as if any choice of L0 is equally valid.

However, rece... | https://www.lesswrong.com/posts/wjLmMoamcfdDwQB2y/l0-is-not-a-neutral-hyperparameter |

# How Misaligned AI Personas Lead to Human Extinction – Step by Step

*In this video, we walk you through a plausible scenario in which AI could lead to humanity’s extinction. There are many alternative possibilities, but this time we focus on superhuman AIs developing misaligned personas, similar to how Microsoft’s... | https://www.lesswrong.com/posts/dmJrgtckGR6cGwpsw/how-misaligned-ai-personas-lead-to-human-extinction-step-by |

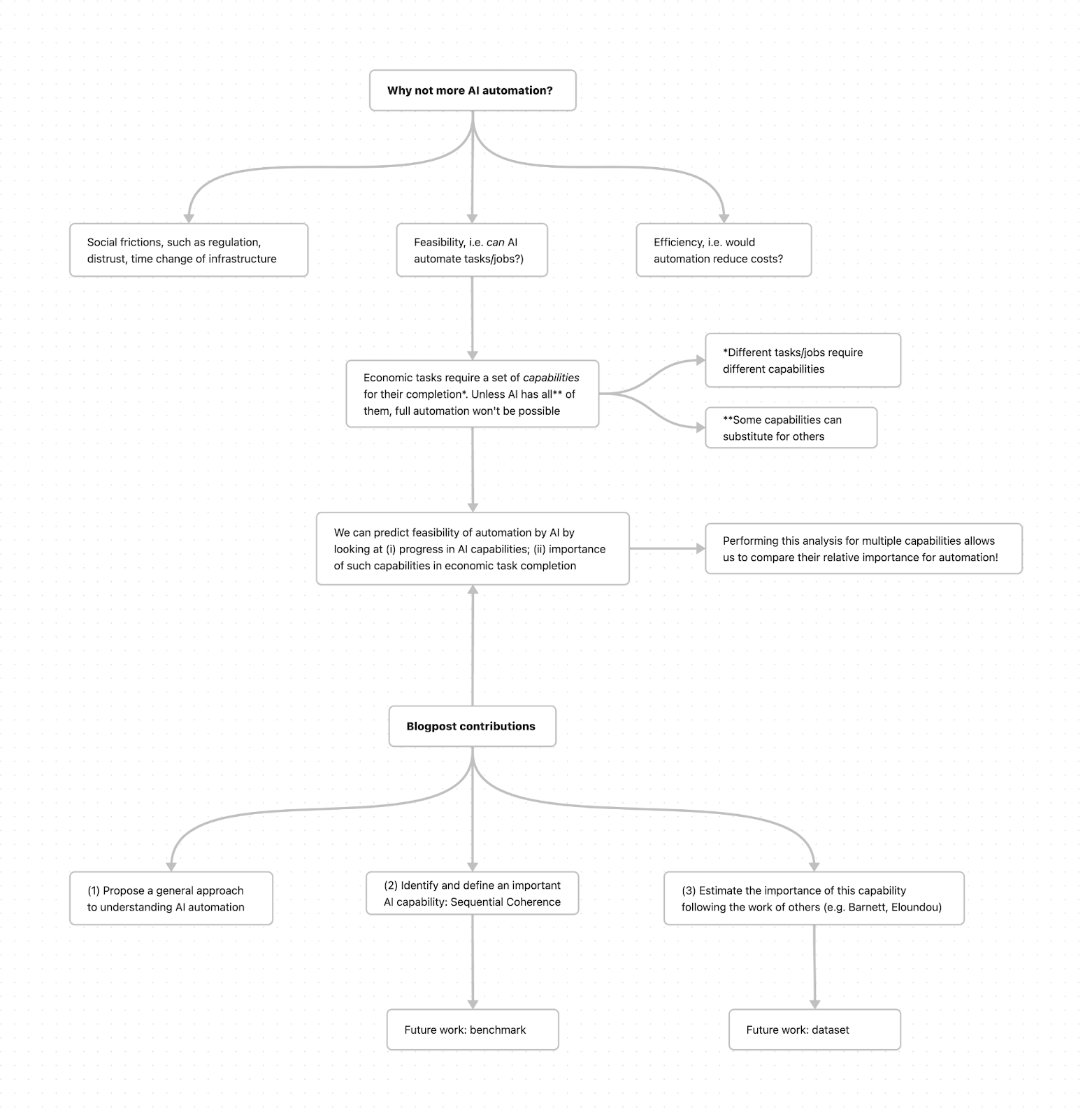

# Sequential Coherence: A Bottleneck in Automation

Introduction

======================================... | https://www.lesswrong.com/posts/QjHMqLFpX3hzYKh2W/sequential-coherence-a-bottleneck-in-automation |

# Peeling Back The Remoteness of Sources

When you think about it, most of what we know comes at a remove — many removes often.

Most of what we know in science comes not from going through the experiments ourselves and pondering the results, but from the successive compressions, reevaluations, analyses and reductions ... | https://www.lesswrong.com/posts/Y8QHDNR67SxpaCK7R/peeling-back-the-remoteness-of-sources |

# Cheating at Bets with the Even Odds Algorithm

> You put 80% probability on an event and two different people offer you bets on it, Tom offers you 3:2 (you have to bet 3 parts to gain 2) and Ophelia offers you 3:1 (you have to bet 3 parts to gain 1). You win if the event takes place. The exact monetary amounts are de... | https://www.lesswrong.com/posts/ic6MjCkFfCecjSHhq/cheating-at-bets-with-the-even-odds-algorithm |

# Make More Grayspaces

*Author's note: These days, my thoughts go onto* [*my substack*](https://homosabiens.substack.com/) *by default, instead of onto LessWrong. Everything I write becomes free after a week or so, but it’s only paid subscriptions that make it **possible** for me to write. If you find a coffee’s worth... | https://www.lesswrong.com/posts/kJCZFvn5gY5C8nEwJ/make-more-grayspaces |

# Your AI Safety org could get EU funding up to €9.08M. Here’s how (+ free personalized support)

Update: Webinar 18/8 Link Below

***Update: We're hosting a webinar on Monday 18/8 where you can ask questions and find bid partners. See this*** [***Event***](https://lu.ma/n84nw6mi) ***and be sure to send in your question... | https://www.lesswrong.com/posts/jzgqTtcHhXpSDNgcF/your-ai-safety-org-could-get-eu-funding-up-to-eur9-08m-here |

# Shallow Water is Dangerous Too

*Content warning: risk to children*

Julia and I [know](https://juliawise.net/what-are-the-results-of-more-parental-supervision-and-less-outdoor-play/) drowning is the [biggest risk to US kids 1-4](https://www.cdc.gov/drowning/data-research/facts/index.html), and we try to take this se... | https://www.lesswrong.com/posts/Zf2Kib3GrEAEiwdrE/shallow-water-is-dangerous-too |

# Plato's Trolley

***Epistemic status:** I'm sure most of my arguments have already been made and argued to death by philosophers that I'm not knowledgeable enough to name. I'm only writing this to outline what I genuinely, spontaneously have come to think on the matter, regardless of how many wheels I may be reinvent... | https://www.lesswrong.com/posts/pqYxDFz2ckGWr8i8G/plato-s-trolley |

# Do “adult developmental stages” theories have any pre-theoretic motivation?

*(This is a [comment](https://www.lesswrong.com/posts/i2XikYzeL39HoSSTr/matt-goldenberg-s-short-term-feed?commentId=XB6gip8Af9JwzfeRH) that has been turned into a post.)*

I have seen much talk on Less Wrong of “development stages” and “[K... | https://www.lesswrong.com/posts/RbdABSKosqxBRLBnj/do-adult-developmental-stages-theories-have-any-pre |

# LLMs Can't See Pixels or Characters

This might be beating a dead horse, but there are several "mysterious" problems LLMs are bad at that all seem to have the same cause. I wanted an article I could reference when this comes up, so I wrote one.

* [LLMs can't count the number of R's in strawberry](https://www.reddi... | https://www.lesswrong.com/posts/uhTN8zqXD9rJam3b7/llms-can-t-see-pixels-or-characters |

# On The Shoulders of Substrates—how one phenomenon lays the foundation for the next

If you’ll cast your mind back to [Emergence Vs Entropy](https://nonzerosum.games/emergencevsentropy.html), we found, in order to overcome entropy, an eme... | https://www.lesswrong.com/posts/whY43WwdPhwQswKAY/on-the-shoulders-of-substrates-how-one-phenomenon-lays-the |

# AI-Oriented Investments

I've been shifting my investments to more AI-focused bets.

Here's an overview of my larger positions, in descending order of how eager I am to recommend further purchases now:

- MU - AI is memory hungry, and MU is in a good position for almost NVDA-like growth

- CSIQ - solar, for AI... | https://www.lesswrong.com/posts/prtWnwhGx44CgJoWw/ai-oriented-investments |

# Unbounded Embedded Agency: AEDT w.r.t. rOSI

*Epistemic status: This post synthesizes 1.5 years of research insights supported by the Long-Term Future Fund. Parts are higher context (comparisons with* [*hardened AIXI*](https://www.lesswrong.com/posts/WECqiLtQiisqWvhim/free-will-and-dodging-anvils-aixi-off-policy) ... | https://www.lesswrong.com/posts/B6gumHyuxzR5yn5tH/unbounded-embedded-agency-aedt-w-r-t-rosi |
