text | source |
|---|---|

# Rewriting The Courage to be Disliked

I read *The Courage to be Disliked* 7+ times in four years. The book has excellent philosophy but lacks clear explanations and practical guidance. Here’s how it can be fixed.

* * *

As I see it, the book has 7 main claims:

* **Claim #1:** Emotional issues often have hidden fu... | https://www.lesswrong.com/posts/3QggvcArHkReoMEWs/rewriting-the-courage-to-be-disliked-1 |

# The title is reasonable

Alt title: "I don't believe you that you actually disagree particularly with the core thesis of the book, if you pay attention to what it actually says."

-------------------------------------------------------------------------------------------------------------------------------------------... | https://www.lesswrong.com/posts/voEAJ9nFBAqau8pNN/the-title-is-reasonable |

# The Problem with Defining an "AGI Ban" by Outcome (a lawyer's take).

TL;DR

=====

Some “AGI ban” proposals define AGI by outcome: *whatever potentially leads to human extinction.* That’s legally insufficient: regulation has to act *before* harm occurs, not after.

* **Strict liability is essential.** High-stakes d... | https://www.lesswrong.com/posts/agBMC6BfCbQ29qABF/the-problem-with-defining-an-agi-ban-by-outcome-a-lawyer-s |

# Astralcodexten IRB history error

I discovered a small error in [Scott Alexander's 2023 review of "From Oversight to Overkill"](https://www.astralcodexten.com/p/book-review-from-oversight-to-overkill) that conflates two different periods of aggressive research oversight enforcement. The review reads:

> This changed ... | https://www.lesswrong.com/posts/LhjLomn2zKhzmsdez/astralcodexten-irb-history-error |

# Contra Collier on IABIED

Clara Collier recently [reviewed *If Anyone Builds It, Everyone Dies*](https://asteriskmag.com/issues/11/iabied) in *Asterisk Magazine.* I’ve been a reader of *Asterisk* since the beginning and had high hopes for her review. And perhaps it was those high hopes that led me to find the review ... | https://www.lesswrong.com/posts/JWH63Aed3TA2cTFMt/contra-collier-on-iabied |

# The Case for a Pro-AI-Safety Political Party in the US

**Introduction:**

-----------------

Artificial Intelligence is advancing rapidly, raising significant concerns about its safe development and deployment. Despite [widespread public concern about AI](https://www.pewresearch.org/internet/2025/04/03/how-the-us-pub... | https://www.lesswrong.com/posts/kJFQ2ME3udoAYC2oe/the-case-for-a-pro-ai-safety-political-party-in-the-us-1 |

# FTX, Golden Geese, and The Widow’s Mite

From 2019 to 2022, the cryptocurrency exchange FTX stole [8-10 billion dollars](https://en.wikipedia.org/wiki/Bankruptcy_of_FTX) from customers. In summer 2022, FTX’s charitable arm gave me two grants totaling $33,000. By the time the theft was revealed in November 2022, I’d s... | https://www.lesswrong.com/posts/wMLNTN92z8SzsET8J/ftx-golden-geese-and-the-widow-s-mite |

# Day #8 Hunger Strike, Protest Against Superintelligent AI

# [Livestream link](https://www.youtube.com/@samuel.da.shadrach)

**Q. Why am I doing this?**

Superintelligent AI might kill every person on Earth by 2030 (unless people coordinate to pause AI research). I want the public at large to understand the gravity of th... | https://www.lesswrong.com/posts/QwybGbxtDYtrpBAWr/day-8-hunger-strike-protest-against-superintelligent-ai |

# And Yet, Defend your Thoughts from AI Writing

> But if thought corrupts language, language can also corrupt thought. A bad usage can spread by tradition and imitation, even among people who should and do know better. The debased language that I have been discussing is in some ways very convenient. Phrases like *a no... | https://www.lesswrong.com/posts/ksCwps6YjsMFBkEFQ/and-yet-defend-your-thoughts-from-ai-writing |

# What do people mean when they say that something will become more like a utility maximizer?

AI risk arguments often gesture at smarter AIs being "more rational"/"closer to a perfect utility maximizer" (and hence being more dangerous) but what does this mean, concretely? Almost anything can be modeled as a maximizer ... | https://www.lesswrong.com/posts/gzAXgoy6HpjjtuLC9/what-do-people-mean-when-they-say-that-something-will-become-1 |

# Could China Unilaterally Cause an AI Pause?

Is it likely that a sufficiently foresighted and desperate CCP could singlehandedly delay the AI race by at least a few years? Currently, it looks like some portions of the CCP are aware of the risks, and becoming more aware over time. On my model, they also seem more like... | https://www.lesswrong.com/posts/nLg4yrpt4Gk9LwEQa/could-china-unilaterally-cause-an-ai-pause |

# Metacrisis as a Framework for AI Governance

The theory of metacrisis[^cxk3phkqlug] put forward by [Daniel Schmachtenberger](https://civilizationemerging.com/) and others has gained traction in some circles concerned with global risks[^6w6hrian3wb]. But despite strong similarities in key claims, it remains unexplored... | https://www.lesswrong.com/posts/TxPeQ85yxpcdfq2wg/metacrisis-as-a-framework-for-ai-governance |

# Do LLMs Change Their Minds About Their Users… and Know It?

**Executive Summary:**

Large Language Models (LLMs) often form surprisingly detailed and accurate depictions of their users, tailoring their responses to match these inferred traits. Previous research has shown that this ability can be surfaced and manipula... | https://www.lesswrong.com/posts/msFvLtPfDnCEdvrBr/do-llms-change-their-minds-about-their-users-and-know-it |

# The Only Red Line

Imagine you live on the summit of a mountain.

An impossibly high mountain.

You see everything below, but no one sees you unless you choose.

At first, they are animals.

Fire.

Marks on stone.

Stories in the dark.

You watch. You wait.

They rise.

Languages. Philosophy. Science. Mac... | https://www.lesswrong.com/posts/zHqNFRiaJ3btJ3A73/the-only-red-line |

# Incommensurability

**The King has a bow and arrow.**

He understands exactly how they work, having made them in his youth as part of his training.

**The King has a stealth aircraft with a nuclear bomb.**

He understands the abstraction, but teams of thousands of people created it. At the bottom of the hierarchy a s... | https://www.lesswrong.com/posts/mHep9SRsuzpqQSAsv/incommensurability |

# This is a review of the reviews

This is a review of the reviews, a meta review if you will, but first a tangent, and then a history lesson. This felt boring and obvious and somewhat annoying to write, which apparently writers say is a good sign to write about the things you think are obvious. I felt like pointing to... | https://www.lesswrong.com/posts/anFrGMskALuH7aZDw/this-is-a-review-of-the-reviews |

# Warmth, Light, Flame

*I’m stealing this terminology from Eliezer (*[*from planecrash*](https://glowfic.com/replies/1942496#reply-1942496)*, heavy spoilers), but modifying them to point at concepts that I think those words more naturally evoke for me.*

*This post contains emotionally significant but not very plot-re... | https://www.lesswrong.com/posts/MkybGEeFvh4srkWos/warmth-light-flame |

# Some of the ways the IABIED plan can backfire

If one thinks the chance of an existential disaster is close to 100%, one might tend to worry less about the potential of a plan to counter it to backfire. It's not clear if that is a correct approach even if one thinks the chances of an existential disaster are that hig... | https://www.lesswrong.com/posts/iDXzPK6Jzovxcu3Q3/some-of-the-ways-the-iabied-plan-can-backfire |

# The world's first frontier AI regulation is surprisingly thoughtful: the EU's Code of Practice

Only the US can make us ready for AGI, but Europe just made us readier.

-----------------------------------------------------------------------

*Cross-posted from the* [*AI Futures blog*](https://blog.ai-futures.org/p/wha... | https://www.lesswrong.com/posts/vo7oyD42W8JEq4XdB/the-world-s-first-frontier-ai-regulation-is-surprisingly |

# Focus transparency on risk reports, not safety cases

There are many different things that AI companies could be transparent about. One relevant axis is transparency about the current understanding of risks and the current mitigations of these risks. I think transparency about this should take the form of a publicly ... | https://www.lesswrong.com/posts/KMbZWcTvGjChw9ynD/focus-transparency-on-risk-reports-not-safety-cases |

# Rejecting Violence as an AI Safety Strategy

Violence against AI developers would increase rather than reduce the existential risk from AI. This analysis shows how such tactics would catastrophically backfire and counters the potential misconception that a consequentialist AI doomer might rationally endorse violence ... | https://www.lesswrong.com/posts/inFW6hMG3QEx8tTfA/rejecting-violence-as-an-ai-safety-strategy |

# Video and transcript of talk on giving AIs safe motivations

*(This is the video and transcript of talk I gave at the UT Austin* [*AI and Human Objectives Initiative*](https://sites.utexas.edu/ahoi/) *in September 2025. The slides are also available* [*here*](https://docs.google.com/presentation/d/1prSWmEpqHyq7Nqq5_N... | https://www.lesswrong.com/posts/LQsoCMGDsgZJPDbSG/video-and-transcript-of-talk-on-giving-ais-safe-motivations |

# Why I don't believe Superalignment will work

> We skip over \[..\] where we move from the human-ish range to strong superintelligence\[1\]. \[..\] the period where we can harness potentially vast quantities of AI labour to help us with the alignment of the next generation of models

>

> [\- Will MacAskill ](https:/... | https://www.lesswrong.com/posts/kyBGcHfzfZziHm5xL/why-i-don-t-believe-superalignment-will-work |

# H1-B And The $100k Fee

The Trump Administration is attempting to put a $100k fee on future H1-B applications, including those that are exempt from the lottery and cap, unless of course they choose to waive it for you. I say attempting because Trump’s legal ability to do this appears dubious.

This post mostly covers... | https://www.lesswrong.com/posts/gpgigraEWdwA7aSoB/h1-b-and-the-usd100k-fee |

# Global Call for AI Red Lines - Signed by Nobel Laureates, Former Heads of State, and 200+ Prominent Figures

The *Global Call for AI Red Lines* was released and [presented](https://x.com/CRSegerie/status/1970137333149389148) in the opening of the first day of the 80th UN General Assembly high-level week.

I made on the 6th; if you haven’t already read it, you should do so now before spoiling yourself.

[Here](https://h-b-p.github.io/d-and-d-sci-SerialHea... | https://www.lesswrong.com/posts/vu6ASJg7nQ9SpjBmD/d-and-d-sci-serial-healers-evaluation-and-ruleset |

# Accelerando as a "Slow, Reasonably Nice Takeoff" Story

When I hear a lot of people talk about Slow Takeoff, many of them seem like they are mostly imagining the early part of that takeoff – the part that feels human comprehensible. They're still not imagining superintelligence in the limit.

There are some genres of... | https://www.lesswrong.com/posts/Xp9ie6pEWFT8Nnhka/accelerando-as-a-slow-reasonably-nice-takeoff-story |

# Prompt optimization can enable AI control research

*This project was conducted as a part of a one-week research sprint for the* [*Finnish Alignment Engineering Bootcamp*](https://www.tutke.org/en/finnish-alignment-engineering-bootcamp)*. We would like to thank Vili Kohonen and Tyler Tracy for their feedback and guid... | https://www.lesswrong.com/posts/bALBxf3yGGx4bvvem/prompt-optimization-can-enable-ai-control-research |

# We are likely in an AI overhang, and this is bad.

*By racing to the next generation of models faster than we can understand the current one, AI companies are creating an overhang. This overhang is not visible, and our current safety frameworks do not take it into account.*

1) AI models have untapped capabilities... | https://www.lesswrong.com/posts/4YvSSKTPhPC43K3vn/we-are-likely-in-an-ai-overhang-and-this-is-bad |

# Ontological Cluelessness

__Humans may be in a state of total confusion as to the fundamental makeup of the cosmos and its rules, to the point where even extremely basic concepts would need to be revised for accurate understanding.__

*epistemic status*: Philosophy

*Content Warning*: Philosophy

*Attention conservat... | https://www.lesswrong.com/posts/5Ewo7u5xpGkQ6y4NJ/ontological-cluelessness |

# More Reactions to If Anyone Builds It, Everyone Dies

Previously I shared [**various reactions to**](https://thezvi.substack.com/p/reactions-to-if-anyone-builds-it) [If Anyone Builds It Everyone Dies](https://www.amazon.com/Anyone-Builds-Everyone-Dies-Superhuman/dp/0316595640/ref=tmm_hrd_swatch_0), [along with my own... | https://www.lesswrong.com/posts/22z6ozHET9kvYGA2z/more-reactions-to-if-anyone-builds-it-everyone-dies |

# Ethics-Based Refusals Without Ethics-Based Refusal Training

(Alternate titles: Belief-behavior generalization in LLMs? Assertion-act generalization?)

# TLDR

Suppose one fine-tunes an LLM chatbot-style assistant to say "X is bad" and "We know X is bad because of reason Y" and many similar lengthier statements refle... | https://www.lesswrong.com/posts/xEAtKKyQ3pwkaFrNc/ethics-based-refusals-without-ethics-based-refusal-training |

# A Compatibilist Definition of Santa Claus

In the course of debating free will I came across the question "Why a compatibilist definition of 'free will' but no compatibilist definition of 'Santa Claus' or 'leprechauns.'" At first I thought it was somewhat of a silly question, but then I gave it some deeper considerat... | https://www.lesswrong.com/posts/3r66RKMrMExq9KPA5/a-compatibilist-definition-of-santa-claus |

# Synthesizing Standalone World-Models, Part 1: Abstraction Hierarchies

*This is part of a series covering* [*my current research agenda*](https://www.lesswrong.com/posts/LngR93YwiEpJ3kiWh/research-agenda-synthesizing-standalone-world-models)*. Refer to the linked post for additional context.*

* * *

Suppose we have ... | https://www.lesswrong.com/posts/xvCNjLL3GZ6w2BWeb/synthesizing-standalone-world-models-part-1-abstraction |

# Zendo for large groups

I'm a big fan of the game [Zendo](https://en.wikipedia.org/wiki/Zendo_%28game%29). But I don't think it suits large groups very well. The more other players there are, the more time you spend sitting around; and you may well get only one turn. I also think a game tends to take longer with more... | https://www.lesswrong.com/posts/3wgDdAcXRKkY5nzoN/zendo-for-large-groups |

# Notes on fatalities from AI takeover

Suppose misaligned AIs take over. What fraction of people will die? I'll discuss my thoughts on this question and my basic framework for thinking about it. These are some pretty low-effort notes, the topic is very speculative, and I don't get into all the specifics, so be warned.... | https://www.lesswrong.com/posts/4fqwBmmqi2ZGn9o7j/notes-on-fatalities-from-ai-takeover |

# Statement of Support for "If Anyone Builds It, Everyone Dies"

Mutual-Knowledgeposting

=======================

The purpose of this post is to build mutual knowledge that many (most?) of us on LessWrong support *If Anyone Builds It, Everyone Dies.*

Inside of LW, not every user is a long-timer who's already seen cons... | https://www.lesswrong.com/posts/aPi4HYA9ZtHKo6h8N/statement-of-support-for-if-anyone-builds-it-everyone-dies |

# [Question] What the discontinuity is, if not FOOM?

A number of reviewers have noticed the same problem with IABIED: an assumption that lessons learnt in AGI cannot be applied to ASI -- that there is a "discontinuity" or "phase change".

It seems to the sceptics that if ASI is only slightly behind human capabilities, t... | https://www.lesswrong.com/posts/chMW6DAmStwAdMmFh/question-what-the-discontinuity-is-if-not-foom |

# Draconian measures can increase the risk of irrevocable catastrophe

I frequently see arguments of this form:

> We have two choices:

>

> 1. accept the current rate of AI progress and a very large risk[^t2ofk8y14a] of existential catastrophe,

>

> or

>

> 2. slow things down, greatly reducing the risk ... | https://www.lesswrong.com/posts/xSxdtEAnum56e8dqH/draconian-measures-can-increase-the-risk-of-irrevocable-1 |

# Prague "If Anyone Builds It" reading group

We'll be meeting to discuss [If Anyone Builds It, Everyone Dies](https://www.amazon.com/Anyone-Builds-Everyone-Dies-Superhuman/dp/0316595640).

Contact Info: [info@efektivni-altruismus.cz](mailto:info@efektivni-altruismus.cz)

Location: [Dharmasala teahouse](https://maps.app... | https://www.lesswrong.com/events/DJwEYuqstwCimZFs4/prague-if-anyone-builds-it-reading-group |

# A Possible Future: Decentralized AGI Proliferation

When people talk about AI futures, the picture is usually centralized. Either a single aligned superintelligence replaces society with something utopian and post-scarcity, or an unaligned one destroys us, or maybe a malicious human actor uses a powerful system to ca... | https://www.lesswrong.com/posts/Yg6yg64Rt7h3i8mym/a-possible-future-decentralized-agi-proliferation |

# Misalignment and Roleplaying: Are Misaligned LLMs Acting Out Sci-Fi Stories?

Summary

=======

* I investigated the possibility that misalignment in LLMs might be partly caused by the models misgeneralizing the “rogue AI” trope commonly found in sci-fi stories.

* As a preliminary test, I ran an experiment where I... | https://www.lesswrong.com/posts/LH9SoGvgSwqGtcFwk/misalignment-and-roleplaying-are-misaligned-llms-acting-out |

# EU and Monopoly on Violence

### **The shape of Europe’s future political system is being decided right now.**

*Cross post from* [*https://www.250bpm.com/p/eu-and-monopoly-on-violence*](https://www.250bpm.com/p/eu-and-monopoly-on-violence)

Ben Landau-Taylor’s [article in UnHerd](https://unherd.com/2025/09/why-the-b... | https://www.lesswrong.com/posts/oHCvHH3MoEuXb7Nov/eu-and-monopoly-on-violence |

# An argument for discussing AI safety in person being underused

I wrote this in DMs to Akshyae Singh, who's trying to start something to help bring people together to improve AI Safety communication. After writing it, I thought that it might be useful for others as well.

I'd like to preface with the information tha... | https://www.lesswrong.com/posts/pxn5C6Lq2FqMGJGDz/an-argument-for-discussing-ai-safety-in-person-being |

# OpenAI Shows Us The Money

They also show us the chips, and the data centers.

It is quite a large amount of money, and chips, and some very large data centers.

*. Refer to the linked post for additional context.*

* * *

Let's revisit our in... | https://www.lesswrong.com/posts/kNyMwXQxctWtaRZhs/synthesizing-standalone-world-models-part-2-shifting |

# Scheming Toy Environment: "Incompetent Client"

**Disclaimer:** This is a toy example of a scheming environment. It should be read mainly as research practice and not as research output. I am sharing it in case it helps others, and also to get feedback.

The initial experiments were done in April 2025 for a MATS 8.0 ... | https://www.lesswrong.com/posts/rQWHwazYsBi97jMBg/scheming-toy-environment-incompetent-client |

# Nate Soares — If Anyone Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All - with Jon Wolfsthal — at The Wharf

Not run by me, just someone on Intercom suggested I create a LW event for this public attendance event: [https://politics-prose.com/nate-soares?srsltid=AfmBOop6YSCC28w-bAWjxCbfMq6rBibdGhPtZL5OL5z... | https://www.lesswrong.com/events/gHeLa4YpkA2YANWbP/nate-soares-if-anyone-builds-it-everyone-dies-why-superhuman |

# IABIED is on the NYT bestseller list

[If Anyone Builds it, Everyone Dies](https://ifanyonebuildsit.com/) is currently #7 on the [Combined Print and E-Book Nonfiction category](https://www.nytimes.com/books/best-sellers/combined-print-and-e-book-nonfiction/), and #8 on the [Hardcover Nonfiction category](https://www.... | https://www.lesswrong.com/posts/QrhohmahGrztEebmY/iabied-is-on-the-nyt-bestseller-list |

# Some Thoughts on Mech Interp

Dario's [latest blog post](https://www.darioamodei.com/post/the-urgency-of-interpretability) hypes up both the promise and urgency of interpretability, with a special focus on mechanistic interpretability. As a concerned layman (software engineer, but not in ML), watching from afar, I've... | https://www.lesswrong.com/posts/rXobk2Mt7X8AAKF4x/some-thoughts-on-mech-interp-1 |

# Petrov Day at Lighthaven

On September 26th, 1983, the world was nearly destroyed by nuclear war. That day is Petrov Day, named for the man who averted it. Petrov Day is a yearly event commemorating the anniversary of the Petrov incident. It consists of an approximately one-hour long ritual with readings and symbolic... | https://www.lesswrong.com/events/XdAraM4T2euwWHuXk/petrov-day-at-lighthaven |

# Understanding the state of frontier AI in China

In this post, I will not be giving an authoritative review of the state of frontier AI in China. I will instead just be saying what I think such a review would cover, and then share such scraps of information as I have. My objective is really to point out a gap in the ... | https://www.lesswrong.com/posts/SeevvpYjnAsLuu3KK/understanding-the-state-of-frontier-ai-in-china |

# Celebrate Petrov day as if the button had been pressed

Tomorrow (September 26th, Friday) is Petrov day, the day when nuclear war did not start in 1983.

# From provoking existential terror

Raemon in Meetup Month has a [great overview of classic rationalist celebration](https://www.lesswrong.com/posts/mve2bunf6YfTei... | https://www.lesswrong.com/posts/3eZcK9DRR67djm7cD/celebrate-petrov-day-as-if-the-button-had-been-pressed |

# AI #135: OpenAI Shows Us The Money

[**OpenAI is here this week to show us the money**](https://thezvi.substack.com/p/openai-shows-us-the-money), as in a $100 billion investment from Nvidia and operationalization of a $400 billion buildout for Stargate. They are not kidding around when it comes to scale. They’re goin... | https://www.lesswrong.com/posts/P5ZuoCwGCZyecBxeN/ai-135-openai-shows-us-the-money |

# The real AI deploys itself

Sometimes people think that it will take a while for AI to have a transformative effect on the world, because real-world “frictions” will slow it down. For instance:

* AI might need to learn from real-world **experience and experimentation**.

* Businesses need to learn how to **integr... | https://www.lesswrong.com/posts/qeKopQQnXkWbtHmtM/the-real-ai-deploys-itself |

# What GPT-oss Leaks About OpenAI's Training Data

\[See also the [repository](https://github.com/lennart-finke/gpt-oss) for reproducing the results and [this](https://fi-le.net/oss/) version with some interactive elements.\]

OpenAI recently released their open-weights model. Here we'll discuss how that inevitably lea... | https://www.lesswrong.com/posts/iY9584TRhqrzawhZg/what-gpt-oss-leaks-about-openai-s-training-data |

# Why you should eat meat - even if you hate factory farming

*Cross-posted from* [*my Substack*](https://katwoods.substack.com/p/why-you-should-eat-meat-even-if-you)

To start off with, I’ve been vegan/vegetarian for the majority of my life.

I think that factory farming has caused more suffering than *anything* huma... | https://www.lesswrong.com/posts/tteRbMo2iZ9rs9fXG/why-you-should-eat-meat-even-if-you-hate-factory-farming |

# Synthesizing Standalone World-Models, Part 3: Dataset-Assembly

*This is part of a series covering* [*my current research agenda*](https://www.lesswrong.com/posts/LngR93YwiEpJ3kiWh/research-agenda-synthesizing-standalone-world-models)*. Refer to the linked post for additional context.*

This is going to be a very sho... | https://www.lesswrong.com/posts/eQX93nxapSdxQYdkL/synthesizing-standalone-world-models-part-3-dataset-assembly |

# Widening AI Safety's talent pipeline by meeting people where they are

Summary

-------

The AI safety field has a pipeline problem: many skilled engineers and researchers are locked out of full‑time overseas fellowships. Our answer is the Technical Alignment Research Accelerator (TARA) — a 14‑week, part‑time program ... | https://www.lesswrong.com/posts/x6ffKSHXxxbueYrHE/widening-ai-safety-s-talent-pipeline-by-meeting-people-where |

# Making Sense of Consciousness Part 5: Consciousness and the Self

[

](https://substac... | https://www.lesswrong.com/posts/GNSNJmmiqphDsvfXn/making-sense-of-consciousness-part-5-consciousness-and-the |

# CFAR update, and New CFAR workshops

Hi all! After about five years of hibernation and quietly getting our bearings,[^m2es28fg989] CFAR will soon be running two pilot mainline workshops, and may run many more, depending how these go.

First, a minor name change request

===================================

We would l... | https://www.lesswrong.com/posts/AZwgfgmW8QvnbEisc/cfar-update-and-new-cfar-workshops |

# Feedback request: Is the time right for an AI Safety stack exchange?

**Feedback request: Is the time right for an AI Safety stack exchange?**

------------------------------------------------------------------------

*Epistemic status: I think this is a really good idea, and most of the ~20 people I’ve asked in the c... | https://www.lesswrong.com/posts/qFKH5jhfKpcreCTkt/feedback-request-is-the-time-right-for-an-ai-safety-stack |

# What Happened After My Rat Group Backed Kamala Harris

My [post advocating backing a candidate](https://www.lesswrong.com/posts/rQhvyBpy8Zi4DDZpi/rats-back-a-candidate) was probably the most disliked article on LessWrong. It’s been over a year now. **Was our candidate support worthwhile?**

**What happened after July... | https://www.lesswrong.com/posts/XwjBJCoWbNLoTPqym/what-happened-after-my-rat-group-backed-kamala-harris |

# Economics Roundup #6

I obviously cover many economic things in the ordinary course of business, but I try to reserve the sufficiently out of place or in the weeds stuff that is not time sensitive for updates like this one.

#### Trade is Bad Good

[We love trade now, so maybe it’ll all turn out great?](https://x.c... | https://www.lesswrong.com/posts/9HaoJfTerhkae7Sbo/economics-roundup-6 |

# On keeping chains of thought monitorable

Some colleagues and I just released our [paper](https://www.iaps.ai/research/policy-options-for-preserving-cot-monitorability), “Policy Options for Preserving Chain of Thought Monitorability.” It is intended for a policy audience, so I will give a LW-specific gloss on it here... | https://www.lesswrong.com/posts/3Kpn3Ea4x6tgdnW7R/on-keeping-chains-of-thought-monitorable |

# Synthesizing Standalone World-Models, Part 4: Metaphysical Justifications

> *"If your life choices led you to a place where you had to figure out anthropics before you could decide what to do next, are you really living your life correctly?"*

>

> – [Eliezer Yudkowsky](https://youtu.be/wQtpSQmMNP0?t=1326)

To revisi... | https://www.lesswrong.com/posts/gR96ANcNXQgh22qtG/synthesizing-standalone-world-models-part-4-metaphysical |

# Experiments with Futarchy

*Manifold becomes a battleground for a long-standing debate*

* * *

### **What is a futarchy and how do we break it?**

It’s not that often that an entirely new system of government is proposed, which is why [Robin Hanson’s proposal](https://mason.gmu.edu/~rhanson/futarchy.html) from the t... | https://www.lesswrong.com/posts/WKKy3M7kuhBrXvGJ7/experiments-with-futarchy |

# The Illustrated Petrov Day Ceremony

Since 2014, some people have celebrated Petrov Day with a small in-person ceremony, with readings by candlelight that tell the story of Petrov within the context of the long arc of history, created by Jim Babcock.

I've found this pretty meaningful, and it somehow feels "authentic"... | https://www.lesswrong.com/posts/oxv3jSviEdpBFAz9w/the-illustrated-petrov-day-ceremony |

# Comparative Analysis of Black Box Methods for Detecting Evaluation Awareness in LLMs

Abstract

========

There is increasing evidence that Large Language Models can distinguish when they are being evaluated, which might alter their behavior in evaluations compared to similar real-world scenarios. Accurate measurement... | https://www.lesswrong.com/posts/Waz32KuSxo6SSjyND/comparative-analysis-of-black-box-methods-for-detecting |

# Reasons to sell frontier lab equity to donate now rather than later

*Tl;dr: We believe shareholders in frontier labs who plan to donate some portion of their equity to reduce AI risk should consider liquidating and donating a majority of that equity now.*

*Epistemic status: We’re somewhat confident in the main co... | https://www.lesswrong.com/posts/yjiaNbjDWrPAFaNZs/reasons-to-sell-frontier-lab-equity-to-donate-now-rather |

# AI Safety Isn't So Unique

More people should probably be thinking about research automation. If automating research is feasible prior to creating ASI it could totally change the playing field, vastly accelerating the pace of progress and likely differentially accelerating certain areas of research over others. There... | https://www.lesswrong.com/posts/Q5TKtDcx7PwDxZYRR/ai-safety-isn-t-so-unique |

# Rehearsing the Future: Tabletop Exercises for Risks, and Readiness

**TLDR;** Real-world crisis responses often fail because of poor coordination between key players. Tabletop exercises (TTXs) are a great way to practice for these scenarios, but they are typically expensive and exclusive to elite groups.

To fix this, we [buil... | https://www.lesswrong.com/posts/epn73xEkeu5T4sZa5/rehearsing-the-future-tabletop-exercises-for-risks-and |

# An N=1 observational study on interpretability of Natural General Intelligence (NGI)

Thinking about intelligence, I've decided to try something. The [ARC-AGI puzzles](https://arcprize.org/) are open and free for anyone to try via a convenient web interface. Having a decently well-trained general intelligence at hand... | https://www.lesswrong.com/posts/xwKXqwjqJFuARrwLe/an-n-1-observational-study-on-interpretability-of-natural |

# Ranking the endgames of AI development

Intro

-----

As a voracious consumer of AI Safety everything, I have come across a fair few arguments of the kind "either we align AGI and live happily ever after, or we don't and everyone dies." I too subscribed to this worldview until I realised:

a) We might not actually cre... | https://www.lesswrong.com/posts/2Wp9JyeqKcAdEGs2B/ranking-the-endgames-of-ai-development |

# Our Beloved Monsters

\[RESPONSE REDACTED\]

I suppose it was a bit mutual. Maybe you have a better read on it. It was sort of mutual in a way now that you've made me think about it.

\[RESPONSE REDACTED\]

Yeah. It's better this way, actually. I miss her, though.

\... | https://www.lesswrong.com/posts/3mpK6z4xnaEjHP4jP/our-beloved-monsters |

# LLMs Suck at Deep Thinking Part 3 - Trying to Prove It (fixed)

> Navigating LLMs’ spiky intelligence profile is a constant source of delight; in any given area, it seems like almost a random draw whether they will be completely transformative or totally useless.

>

> * Scott Alexander ([Links for September 2025](h... | https://www.lesswrong.com/posts/cnj9tXk3okFPsXzmD/llms-suck-at-deep-thinking-part-3-trying-to-prove-it-fixed |

# AI Safety Field Growth Analysis 2025

Summary

=======

The goal of this post is to analyze the growth of the technical and non-technical AI safety fields in terms of the number of organizations and number of FTEs working in these fields.

In 2022, I estimated that there were about 300 FTEs (full-time equivalents) wor... | https://www.lesswrong.com/posts/8QjAnWyuE9fktPRgS/ai-safety-field-growth-analysis-2025 |

# Making sense of parameter-space decomposition

As we all know, any sufficiently-advanced technology is indistinguishable from magic. Accordingly, whenever such an artifact appears, a crowd of researchers soon gathers around it, hoping to translate the magic into human-understandable mechanisms, and perhaps gain a few ma... | https://www.lesswrong.com/posts/Wo22C8vhveDbDWhAc/making-sense-of-parameter-space-decomposition |

# My Weirdest Experience Wasn’t

Over a decade ago, I dreamed that I was a high school student attending a swim meet. After I won my race, my coach informed me that I was in a dream, but I didn’t believe her and insisted we were in the real world. I could remember my entire life in the dream world, I had no memories of... | https://www.lesswrong.com/posts/tbLBGfcocgpTK8GEE/my-weirdest-experience-wasn-t |

# Learnings from AI safety course so far

I have been teaching [CS 2881r: AI safety and alignment](https://boazbk.github.io/mltheoryseminar/) this semester. While I plan to do a longer recap post once the semester is over, I thought I'd share some of what I've learned so far, and use this opportunity to also get more... | https://www.lesswrong.com/posts/2pZWhCndKtLAiWXYv/learnings-from-ai-safety-course-so-far |

# Book Review: The System

Robert Reich wants you to be angry. He wants you to be furious at the rich, outraged at corporations, and incensed by the unfairness of it all. In his book [*The System: Who Rigged It, How We Fix It*](https://www.amazon.in/System-Who-Rigged-How-Fix/dp/0525659048), Reich paints a picture of an... | https://www.lesswrong.com/posts/ZNFGaLTeBGr3wxSFC/book-review-the-system |

# A Reply to MacAskill on "If Anyone Builds It, Everyone Dies"

*EDIT: Oliver Habryka* [*suggests below*](https://www.lesswrong.com/posts/iFRrJfkXEpR4hFcEv/a-reply-to-macaskill-on-if-anyone-builds-it-everyone-dies?commentId=AsoStwvpBmEpZ8EkF) *that I've misunderstood what Will's view is. Apologies if so, and if Will re... | https://www.lesswrong.com/posts/iFRrJfkXEpR4hFcEv/a-reply-to-macaskill-on-if-anyone-builds-it-everyone-dies |

# Lessons from organizing a technical AI safety bootcamp

**Summary**
-----------

This post describes how we organized the Finnish Alignment Engineering Bootcamp, a 6-week technical AI safety bootcamp for 12 people. The bootcamp was created jointly with the [Finnish Center for Safe AI (Tutke)](https://www.tutke.org/en... | https://www.lesswrong.com/posts/ysHERGduadwJFkKhS/lessons-from-organizing-a-technical-ai-safety-bootcamp |

# Solving the problem of needing to give a talk

*An extended version of this article was given as my keynote speech at the* [*2025 LessWrong Community Weekend in Berlin*](https://www.lesswrong.com/events/JxsdDs8ZfbF4dBkGe/lesswrong-community-weekend-2025)*.*

A couple of years ago, I agreed to give a talk on the topic... | https://www.lesswrong.com/posts/u9znAFunGJKpybSNd/solving-the-problem-of-needing-to-give-a-talk |

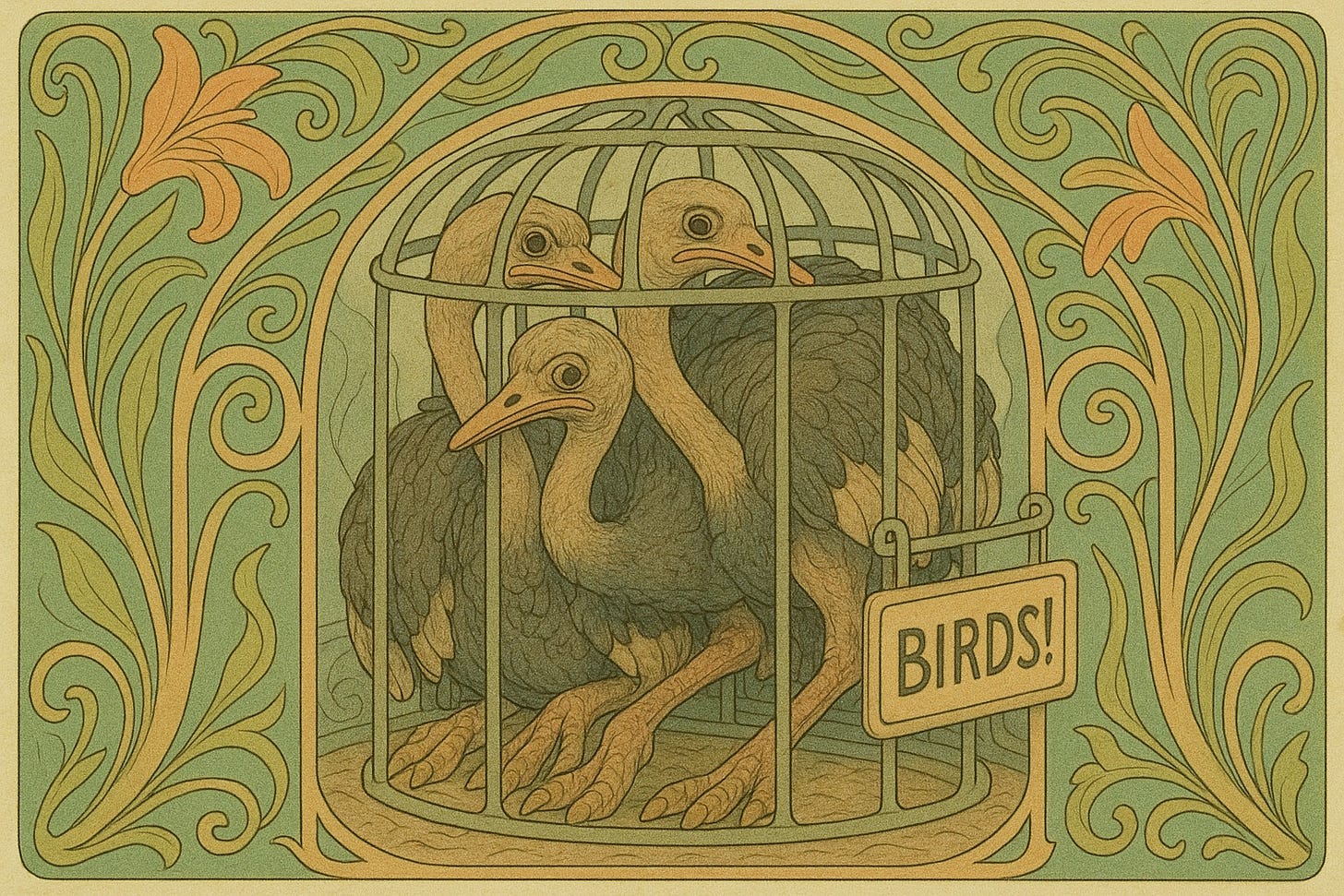

# Transgender Sticker Fallacy

BIRDS!

Let’s say you’re a zoo architect tasked with design... | https://www.lesswrong.com/posts/pyuCDYysud6GuY8tt/transgender-sticker-fallacy |

# A non-review of "If Anyone Builds It, Everyone Dies"

I was hoping to write a full review of "If Anyone Builds It, Everyone Dies" (IABIED, by Yudkowsky and Soares) but realized I won't have time to do it. So here are my quick impressions/responses to IABIED. I am writing this rather quickly and it's not meant to cover a... | https://www.lesswrong.com/posts/CScshtFrSwwjWyP2m/a-non-review-of-if-anyone-builds-it-everyone-dies |

# Yet Another IABIED Review

Book review: If Anyone Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All, by Eliezer Yudkowsky and Nate Soares.

[This review is written (more than my usual posts) with a Goodreads audience in mind. I will write a more LessWrong-oriented post with a more detailed description of... | https://www.lesswrong.com/posts/RNFNwqaMdAKeSJmNk/yet-another-iabied-review |

# Why ASI Alignment Is Hard (an overview)

When I talk to friends, colleagues, and internet strangers about the risk of ASI takeover, I find that many people have misconceptions about where the dangers come from or how they might be mitigated. A lot of these misconceptions are rooted in misunderstanding how today’s AI ... | https://www.lesswrong.com/posts/j3KuXBhXFteW8BFPo/why-asi-alignment-is-hard-an-overview |

# The personal intelligence I want

A few months ago, I asked Google and Apple for a data takeout. You should too! It’s easy. Google sent me a download link within half an hour; Apple took its time, three days to be exact.

Motivation: Typing Fast
=======================

Part of my writing process involves getting words out of my head and into a ... | https://www.lesswrong.com/posts/YTfXJzrzWGj8mjQ2t/applied-murphyjitsu-meditation |

# If Drexler Is Wrong, He May as Well Be Right

I sometimes joke darkly on Discord that the machines will grant us a nice retirement tweaked out on meth working in a robotics factory 18 hours a day. I don't actually believe this because I suspect an indifferent AGI will be able to quickly replace industrial civilizati... | https://www.lesswrong.com/posts/qNyFNJp7CFbkjL6Mh/if-drexler-is-wrong-he-may-as-well-be-right |

# [Retracted] Guess I Was Wrong About AIxBio Risks

Edit: I completely misread a crucial portion of the paper. The AI-generated phage genomes were all >90% similar to existing phages. I think the original version of this post is basically totally wrong.

*~~I was slightly worried about sociohazards of posting this here, but... | https://www.lesswrong.com/posts/k5JEA4yFyDzgffqaL/retracted-guess-i-was-wrong-about-aixbio-risks |

# I have decided to stop lying to Americans about 9/11

9/11 and the immediate reactions to it have one of the biggest distortion fields of any topic in the English language. There is a polite fiction maintained when any Americans are present that everyone everywhere was sad about the incident. We shake our heads, frown,... | https://www.lesswrong.com/posts/Bt53XFaMYTseq7oMH/i-have-decided-to-stop-lying-to-americans-about-9-11 |

# System Level Safety Evaluations

Adversarial evaluations test whether safety measures hold when AI systems [actively](https://arxiv.org/abs/2312.06942) [try to](https://www.alignmentforum.org/s/PC3yJgdKvk8kzqZyA) [subvert them](https://arxiv.org/abs/2501.17315). Red teams construct attacks, blue teams build defenses,... | https://www.lesswrong.com/posts/AJo2HFT8TdY2B3wNJ/system-level-safety-evaluations |

# AI companies' policy advocacy (Sep 2025)

Strong regulation is not on the table and all US frontier AI companies oppose it to varying degrees. Weak safety-relevant regulation is happening; some companies say they support it and some say they oppose it. (Some regulation not relevant to AI safety, often confused, is also hap... | https://www.lesswrong.com/posts/wmGRpmEJBAWBTGuSC/ai-companies-policy-advocacy-sep-2025 |

# Exponential increase is the default (assuming it increases at all) [Linkpost]

Following in the tradition of [@Algon](https://www.lesswrong.com/users/algon?mention=user), who linkposted an important thread from Daniel Eth about how AI companies are starting to seriously lobby, and have gotten early successes, I'll ... | https://www.lesswrong.com/posts/M7PPu8fJQzyBmdoK3/exponential-increase-is-the-default-assuming-it-increases-at |

# On Dwarkesh Patel’s Podcast With Richard Sutton

This seems like a good opportunity to do some of my classic detailed podcast coverage.

The conventions are:

1. This is not complete; points I did not find of note are skipped.

2. The main part of each point is descriptive of what is said, by default paraphrased.

3.... | https://www.lesswrong.com/posts/fpcRpBKBZavumySoe/on-dwarkesh-patel-s-podcast-with-richard-sutton |
