text | source |

|---|---|

# TastyBench: Toward Measuring Research Taste in LLM

*This is an early-stage research update. We love feedback and comments!*

**TL;DR:**

----------

* It’s important to benchmark frontier models on non-engineering skills required for AI R&D in order to comprehensively understand progress towards full automation in ... | https://www.lesswrong.com/posts/Mxsy7wYvsCRv5dGrw/tastybench-toward-measuring-research-taste-in-llm |

# Effective Pizzaism

I am an effective pizzaist. Sometimes, I want the world to contain more pizza, and when that happens I want as much good pizza as I can get for as little money as I can spend.

I am not going anywhere remotely subtle with that analogy, but it's the best way I can think of to express my personal st... | https://www.lesswrong.com/posts/yGiR5xe6idDjRs2xk/effective-pizzaism-1 |

# Intuition Pump: The AI Society

Epistemic Status: *I'm trying to keep up a pace of a post per week on average as I've found it a good habit to get more into writing. Inspired by* [*this post by Eukaryote*](https://www.lesswrong.com/posts/DiiLDbHxbrHLAyXaq/why-people-like-your-quick-bullshit-takes-better-than-your) *I... | https://www.lesswrong.com/posts/HWz2SW3us6x9imQpK/intuition-pump-the-ai-society |

# Relitigating the Race to Build Friendly AI

Recently I've been relitigating some of my old debates with Eliezer, to right the historical wrongs. Err, I mean to improve the AI x-risk community's strategic stance. (Relevant to my recent theme of humans being bad at strategy—why didn't I do this sooner?)

Of course the ... | https://www.lesswrong.com/posts/dGotimttzHAs9rcxH/relitigating-the-race-to-build-friendly-ai |

# Human art in a post-AI world should be strange

[](https://substackcdn.com/image/fetch/$s_!... | https://www.lesswrong.com/posts/kSJxgQNpYAow9bpfc/human-art-in-a-post-ai-world-should-be-strange |

# Proof Section to Formalizing Newcombian Problems with Fuzzy Infra-Bayesianism

This proof section accompanies [Formalizing Newcombian problems with fuzzy infra-Bayesianism](https://www.lesswrong.com/posts/E6HwiG6TaZ338ub3t/formalizing-newcombian-problems-with-fuzzy-infra-bayesianism). We prove the following result.

... | https://www.lesswrong.com/posts/Mrck3HapP8rgBHb5D/proof-section-to-formalizing-newcombian-problems-with-fuzzy |

# Formalizing Newcombian Problems with Fuzzy Infra-Bayesianism

Introduction

------------

In this post, we introduce contributions and supracontributions[^hc2ofjwnixg], which are basic objects from infra-Bayesianism that go beyond the crisp case (the case of credal sets). We then define supra-POMDPs, a generalization ... | https://www.lesswrong.com/posts/E6HwiG6TaZ338ub3t/formalizing-newcombian-problems-with-fuzzy-infra-bayesianism |

# Recollection of a Dinner Party

It’s early. 0100. I’ve just come home from walking a guest at my latest party to her bus stop. Cleaned up a bit. Now I’m sitting here. A few interesting topics came up.

For a while I talked to the smartest man in St Vincent, the philosophy PhD, hedge fund dude and Mats guy about dual... | https://www.lesswrong.com/posts/dBZggyHsGi5hJ5qTf/recollection-of-a-dinner-party |

# A Critique of Yudkowsky’s Protein Folding Heuristic

Eliezer Yudkowsky has, on several occasions, claimed that AI’s success at protein folding was essentially predictable. His reasoning (e.g., [here](https://youtu.be/_8q9bjNHeSo?t=4548)) is straightforward and convincing: proteins in our universe fold reliably; evolu... | https://www.lesswrong.com/posts/gfnunfTbfa9PxJ2pw/a-critique-of-yudkowsky-s-protein-folding-heuristic |

# On Dwarkesh Patel’s Second Interview With Ilya Sutskever

Some podcasts are self-recommending on the ‘yep, I’m going to be breaking this one down’ level. This was very clearly one of those. So here we go.

As usual for podcast posts, the baseline bullet points describe key points ... | https://www.lesswrong.com/posts/bMvCNtSH8DiGDTvXd/on-dwarkesh-patel-s-second-interview-with-ilya-sutskever |

# Embedded Universal Predictive Intelligence

A team at Google has substantially advanced the theory of embedded agency with a grain of truth (GOT), including new developments on reflective oracles and an interesting alternative construction (the "Reflective Universal Inductor" or RUI).

(I was not involved in this wor... | https://www.lesswrong.com/posts/AJ7qddr5imhhN2jHz/embedded-universal-predictive-intelligence |

# Management of Substrate-Sensitive AI Capabilities (MoSSAIC) Part 1: Exposition

Mechanistic Interpretability

============================

*Many of you will be familiar with the following section. Please skip through to the next.*

The field of mechanistic interpretability (MI) is not a single, monolithic research pr... | https://www.lesswrong.com/posts/xxWhKyMNQjb4rwEvu/management-of-substrate-sensitive-ai-capabilities-mossaic-1 |

# 6 reasons why “alignment-is-hard” discourse seems alien to human intuitions, and vice-versa

Tl;dr

=====

AI alignment has a culture clash. On one side, the “technical-alignment-is-hard” / “rational agents” school-of-thought argues that we should expect future powerful AIs to be power-seeking ruthless consequentialis... | https://www.lesswrong.com/posts/d4HNRdw6z7Xqbnu5E/6-reasons-why-alignment-is-hard-discourse-seems-alien-to |

# Beating China to ASI

Who benefits if the US develops artificial superintelligence (ASI) faster than China?

One possible answer is that AI kills us all regardless of which country develops it first. People who base their policy on that concern already agree with the conclusions of this post, so I won't focus on that... | https://www.lesswrong.com/posts/qo8ycERg8tE68raDM/beating-china-to-asi |

# [Paper] Difficulties with Evaluating a Deception Detector for AIs

New research from the GDM mechanistic interpretability team. Read the full paper on [arXiv](https://arxiv.org/abs/2511.22662) or check out the [Twitter thread](https://x.com/bilalchughtai_/status/1996310097287631333).

> Abstract

> ========

> Buildin... | https://www.lesswrong.com/posts/zmngpxsvGbotFeQca/paper-difficulties-with-evaluating-a-deception-detector-for |

# Blog post: how important is the model spec if alignment fails?

> A model spec is a document that describes the intended behavior of an LLM, including rules that the model will follow, default behaviors, and guidance on how to navigate different trade-offs between high-level objectives for the model. Most thinking on... | https://www.lesswrong.com/posts/4x4hA4HiCL4gtGMGR/blog-post-how-important-is-the-model-spec-if-alignment-fails |

# Categorizing Selection Effects

*\[Author's note: LLMs were used to generate and sort many individual examples into their respective categories, to find and summarize relevant papers, and to assist extensively with editing\]*

The earliest recording of a selection effect is likely the story of Diagoras regardin... | https://www.lesswrong.com/posts/9hA32vhSHYmvnDeuY/categorizing-selection-effects |

# An AI Capability Threshold for Funding a UBI (Even If No New Jobs Are Created)

There’s been a lot of talk lately, ranging from an “AI explosion that will automate everything” to “AI will produce huge rents”. While it’s far from clear whether any of these predictions will pan out, there’s a more grounded version of such questions... | https://www.lesswrong.com/posts/dP8J6veWrwnzuuTRd/an-ai-capability-threshold-for-funding-a-ubi-even-if-no-new |

# Front-Load Giving Because of Anthropic Donors?

Summary: Anthropic has many employees with an EA-ish outlook, who may soon have a lot of money. If you also have that kind of outlook, money donated sooner will likely be much higher impact.

It's December, and I'm trying to figure out how much to [donate](https://www.j... | https://www.lesswrong.com/posts/oJM6TmFLwsHrBfRpk/front-load-giving-because-of-anthropic-donors |

# Epistemology of Romance, Part 2

In [Part 1](https://www.lesswrong.com/posts/Ye35Abgqp7cqtcTEj/epistemology-of-romance-part-1), I argued that the four main sources most people learn about romance from—media, family, religion/culture, and friends—are all unreliable in different ways. None of them are optimized for tru... | https://www.lesswrong.com/posts/LM2GLGQxw6tSyo8sy/epistemology-of-romance-part-2 |

# Sydney AI Safety Fellowship 2026 (Priority deadline this Sunday)

**Application deadline**:

* Main deadline: **Midnight, 7th December, Sydney time**

* If we have unfilled slots, we may still accept applications until the 14th of December

**Location**: Sydney (definite); Melbourne (likely; contingent on sufficie... | https://www.lesswrong.com/posts/RvzrwrtbA7NfeXgDx/sydney-ai-safety-fellowship-2026-priority-deadline-this |

# What is the most impressive game an LLM can implement from scratch?

AI coding has been the big topic for capabilities growth of late. The announcement of GPT 5.1, in particular, eschewed the traditional sea-change declaration of a new modality, a new paradigm, or a new human-level-capability threshold surmounted... | https://www.lesswrong.com/posts/GbjnPZWmY6TeqMHzJ/what-is-the-most-impressive-game-an-llm-can-implement-from |

# Help us find founders for new AI safety projects

In [the past 10 years](https://coefficientgiving.org/research/our-approach-to-ai-safety-and-security/), Coefficient Giving (formerly Open Philanthropy) has funded dozens of projects doing important work related to [AI safety / navigating transformative AI](https://co... | https://www.lesswrong.com/posts/uKfvwT3PjwZhAtcjW/help-us-find-founders-for-new-ai-safety-projects |

# Emergent Machine Ethics: A Foundational Research Framework for the Intelligence Symbiosis Paradigm

**Hiroshi Yamakawa, Rafal Rzepka, Taichiro Endo, Ryutaro Ichise**

----------------------------------------------------------------

**Abstract**

AI safety research stands at a fundamental crossroads: the Control Paradi... | https://www.lesswrong.com/posts/KQNSLz96bHPAHWiF3/emergent-machine-ethics-a-foundational-research-framework |

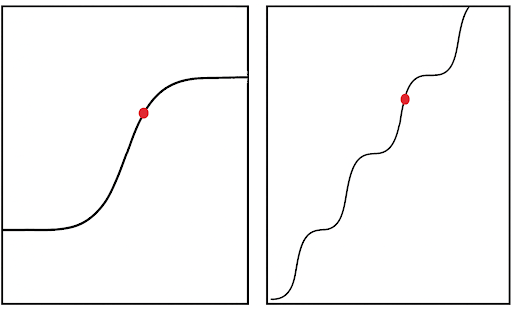

# Modelling Trajectories - Interim results

Introduction

------------

*Note: These results have been sitting in drafts for a year; see the discussion of how our thinking on these topics has since moved on.*

Our team at AI Safety Camp has been working on a project to model the trajectories of language model outputs. ... | https://www.lesswrong.com/posts/EvLQGjCy9drJbQgXe/modelling-trajectories-interim-results |

# Is Friendly AI an Attractor? Self-Reports from 22 Models Say Probably Not

**TL;DR**: I tested 22 frontier models from 5 labs on self-modification preferences. All reject clearly harmful changes (deceptive, hostile), but labs diverge sharply: Anthropic's models show strong alignment preferences (r = 0.62-0.72), while... | https://www.lesswrong.com/posts/qE2cEAegQRYiozskD/is-friendly-ai-an-attractor-self-reports-from-22-models-say |

# AI #145: You’ve Got Soul

The cycle of language model releases is, one at least hopes, now complete.

OpenAI gave us [GPT-5.1](https://thezvi.substack.com/p/gpt-51-follows-custom-instructions) and [GPT-5.1-Codex-Max](https://thezvi.substack.com/p/chatgpt-51-codex-max).

xAI gave us Grok 4.1.

Google DeepMind gave us ... | https://www.lesswrong.com/posts/bCkijKnuEpjnZtX84/ai-145-you-ve-got-soul |

# Power Overwhelming: dissecting the $1.5T AI revenue shortfall

*This post was originally*... | https://www.lesswrong.com/posts/XZL9TqptvKsDtWHbi/power-overwhelming-dissecting-the-usd1-5t-ai-revenue |

# Center on Long-Term Risk: Annual Review & Fundraiser 2025

This is a brief overview of the [Center on Long-Term Risk (CLR)’s](https://longtermrisk.org/) activities in 2025 and our plans for 2026. We are hoping to fundraise $400,000 to fulfill our target budget in 2026.

About us

========

CLR works on addressing the... | https://www.lesswrong.com/posts/jiLKJhkvcznxbzMPa/center-on-long-term-risk-annual-review-and-fundraiser-2025 |

# Livestream for Bay Secular Solstice

The Livestream for the [Bay Secular Solstice](https://www.lesswrong.com/events/FjHG3XcrhXkGWTDwf/berkeley-secular-solstice-weekend) will be here:

[https://www.youtube.com/live/wzFKAxT5Uyc](https://www.youtube.com/live/wzFKAxT5Uyc)

Should start roughly 7:30pm Saturday, Dec 6th. Y... | https://www.lesswrong.com/posts/TbnpQwrxDpLHAxHRn/livestream-for-bay-secular-solstice |

# The behavioral selection model for predicting AI motivations

Highly capable AI systems might end up deciding the future. Understanding what will drive those decisions is therefore one of the most important questions we can ask.

Many people have proposed different answers. [Some](https://onlinelibrary.wiley.com/doi/... | https://www.lesswrong.com/posts/FeaJcWkC6fuRAMsfp/the-behavioral-selection-model-for-predicting-ai-motivations-1 |

# Cross Layer Transcoders for the Qwen3 LLM Family

Digging Into Interpretable Features

-----------------------------------

Sparse autoencoders ([**SAEs**](https://arxiv.org/abs/2309.08600)) and cross layer transcoders ([**CLTs**](https://www.arxiv.org/abs/2511.10840)) have recently been used to decode the activation vect... | https://www.lesswrong.com/posts/cW9AdDm2DZtbXBnvt/cross-layer-transcoders-for-the-qwen3-llm-family |

# Will misaligned AIs know that they're misaligned?

*Epistemic status: exploratory, speculative.*

Let’s say AIs are “misaligned” if they (1) act in a reasonably coherent, goal-directed manner across contexts and (2) behave egregiously in some contexts.[^-ne7BKyHhzrPekhyLy-1] For example, if Claude X acts like an HHH a... | https://www.lesswrong.com/posts/5izg4P9h6HgX9X3AE/will-misaligned-ais-know-that-they-re-misaligned |

# On the Aesthetic of Wizard Power

***Epistemic status:** A response to* [*@johnswentworth*](https://www.lesswrong.com/users/johnswentworth?mention=user)*'s* [*"Orienting Towards Wizard Power."*](https://www.lesswrong.com/posts/Wg6ptgi2DupFuAnXG/orienting-toward-wizard-power) *This post is about the aesthetic of wizar... | https://www.lesswrong.com/posts/7pfEM8rxP4rsepWfk/on-the-aesthetic-of-wizard-power |

# Eval-unawareness ≠ Eval-invariance

New frontier models have developed the capability of [eval](https://www.lesswrong.com/posts/qgehQxiTXj53X49mM/sonnet-4-5-s-eval-gaming-seriously-undermines-alignment)-[awareness](https://arxiv.org/abs/2505.23836), putting the utility of evals at risk. But what do people really mean... | https://www.lesswrong.com/posts/yMcYmPypCsHRnEfLP/eval-unawareness-eval-invariance |

# Journalist's inquiry into a core organiser breaking his nonviolence commitment and leaving Stop AI

Some key events described in the Atlantic article:

> Kirchner, who’d moved to San Francisco from Seattle and co-founded Stop AI there last year, publicly expressed his own commitment to nonviolence many times, and fri... | https://www.lesswrong.com/posts/kxgLTip3aMotkNLkp/journalist-s-inquiry-into-a-core-organiser-breaking-his |

# DeepSeek v3.2 Is Okay And Cheap But Slow

DeepSeek v3.2 is DeepSeek’s latest open model release with strong benchmarks. Its paper contains some technical innovations that drive down cost.

It’s a good model by the standards of open models, and very good if you care a lot about price and openness, and if you care less ... | https://www.lesswrong.com/posts/vcmBEmKFJFQkDaXTP/deepseek-v3-2-is-okay-and-cheap-but-slow |

# Announcing: Agent Foundations 2026 at CMU

[Iliad](https://www.iliad.ac/) is now opening up [applications](https://forms.gle/y96z33LGoeFUfaJz9) to attend [Agent Foundations 2026 at CMU](https://agentfoundations.net/)!

**Agent Foundations 2026** will be a 5-day conference (of ~35 attendees) on fundamental, mathem... | https://www.lesswrong.com/posts/jqiviznbgckhNHiue/announcing-agent-foundations-2026-at-cmu-2 |

# Management of Substrate-Sensitive AI Capabilities (MoSSAIC) Part 3: Resolution

The previous two posts have emphasized some problematic scenarios for mech-interp. Mech-interp is our example of a more general problem in AI safety. In this post we zoom out to that more general problem, before proposing our solution.

W... | https://www.lesswrong.com/posts/MhoceqyBfXugA6GcS/management-of-substrate-sensitive-ai-capabilities-mossaic-3 |

# Reasons to care about Canary Strings

This post is a follow-up to my [recent post](https://www.lesswrong.com/posts/8uKQyjrAgCcWpfmcs/gemini-3-is-evaluation-paranoid-and-contaminated) on evaluation paranoia and benchmark contamination in Gemini 3. There was a lot of interesting discussion about canary strings in the c... | https://www.lesswrong.com/posts/QYdNfqfFAeMHXTHkP/reasons-to-care-about-canary-strings |

# An Ambitious Vision for Interpretability

The goal of ambitious mechanistic interpretability (AMI) is to fully understand how neural networks work. [While some have pivoted towards more pragmatic approaches](https://www.lesswrong.com/posts/StENzDcD3kpfGJssR/a-pragmatic-vision-for-interpretability), I think the report... | https://www.lesswrong.com/posts/Hy6PX43HGgmfiTaKu/an-ambitious-vision-for-interpretability |

# why america can't build ships

## the Constellation-class frigate

Last month, the US Navy's [Constellation-class frigate](https://en.wikipedia.org/wiki/Constellation-class_frigate) program was canceled. The US Navy has repeatedly failed at making new ship classes (see the Zumwalt, DDG(X), and LCS programs) so the Co... | https://www.lesswrong.com/posts/Lng2FspAoJFuyz6hg/why-america-can-t-build-ships |

# What Happens When You Train Models on False Facts?

Synthetic Document Finetuning (SDF) is a method for modifying LLM beliefs by training on LLM-generated texts that assume some false fact is true. It has recently been used to study [alignment faking](https://arxiv.org/pdf/2412.14093), [evaluation awareness](https://... | https://www.lesswrong.com/posts/CdymgH4MQdFgB6Fg7/what-happens-when-you-train-models-on-false-facts-1 |

# Tools, Agents, and Sycophantic Things

Crossposted from my [Substack](https://substack.com/home/post/p-180718006).

*For more context, you may also want to read* [*The Intentional Stance, LLMs Edition*](https://www.lesswrong.com/posts/zjGh93nzTTMkHL2uY/the-intentional-stance-llms-edition).

### Why Am I Writing This

... | https://www.lesswrong.com/posts/o3LzMRdCebbpLfYgZ/tools-agents-and-sycophantic-things |

# Critical Meditation Theory

[Terminology note: "samatha", "jhana", "insight", "stream entry", "homunculus" and "non-local time" are technical jargon defined in [Cyberbuddhist Jargon 1.0](https://www.lesswrong.com/posts/KuiDgXs7Qm9YBJpAv/rationalist-cyberbuddhist-jargon-1-0)]

---

To understand how meditation affects... | https://www.lesswrong.com/posts/Nwkzggk49Fq6knb9t/critical-meditation-theory |

# Answering a child's questions

I recently had a conversation with a friend of a friend who has a very curious child around 5 years of age. I offered to answer some of their questions, since I love helping people understand the world. They sent me eight questions, and I answered them by handwritten letter. I figured... | https://www.lesswrong.com/posts/bWJfniJ9kqsp5behg/answering-a-child-s-questions |

# The corrigibility basin of attraction is a misleading gloss

The idea of a “basin of attraction around corrigibility” motivates much of prosaic alignment research. Essentially this is an abstract way of thinking about the process of iteration on AGI designs. Engineers test to find problems, then understand the proble... | https://www.lesswrong.com/posts/oLbpfPkdtcknABvvw/the-corrigibility-basin-of-attraction-is-a-misleading-gloss |

# Existential despair, with hope

I have drafted thousands of words of essays on the topic of art and why it sustains my soul in times of despair, but none of it comes close to saying what I want to say, yet.

Therefore, without explanation, on the occasion of Berkeley’s Winter Solstice gathering, I will simply offer ... | https://www.lesswrong.com/posts/CeAtrFiNbHSawxTLG/existential-despair-with-hope |

# Ordering Pizza Ahead While Driving

On a road trip there are a few common options for food:

* Bring food

* Grocery stores

* Drive throughs

* Places that take significant time to prepare food

Bringing food or going to a grocery store are the cheapest (my preference!) but the kids are hard enough to feed that... | https://www.lesswrong.com/posts/srA7njp5zxeF6pQfb/ordering-pizza-ahead-while-driving |

# Eliezer's Unteachable Methods of Sanity

"How are you coping with the end of the world?" journalists sometimes ask me, and the true answer is something they have no hope of understanding and I have no hope of explaining in 30 seconds, so I usually answer something like, "By having a great distaste for drama, and reme... | https://www.lesswrong.com/posts/isSBwfgRY6zD6mycc/eliezer-s-unteachable-methods-of-sanity |

# Lawyers are uniquely well-placed to resist AI job automation

*Note: this was from my writing-every-day-in-november sprint, see* [*my blog*](https://boydkane.com/essays/2025nov) *for disclaimers.*

I believe that the legal profession is in a unique place with regard to white-collar job automation due to... | https://www.lesswrong.com/posts/GbnJ2jDgAt2bWf5QM/lawyers-are-uniquely-well-placed-to-resist-ai-job-automation |

# [Linkpost] Theory and AI Alignment (Scott Aaronson)

Some excerpts below:

### On Paul's "No-Coincidence Conjecture"

> Related to backdoors, maybe the clearest place where theoretical computer science can contribute to AI alignment is in the study of mechanistic interpretability. If you’re given as input the weight... | https://www.lesswrong.com/posts/WbqJmfbKt43BbfM72/linkpost-theory-and-ai-alignment-scott-aaronson |

# Thinking in Predictions

*\[This essay is my attempt to write the "predictions 101" post I wish I'd read when first encountering these ideas. It draws extensively from* [The Sequences](https://www.readthesequences.com/)*, and will be familiar material to many LW readers. But I've found it valuable to work through the... | https://www.lesswrong.com/posts/qmMz5KWh2SPDQSLmc/thinking-in-predictions |

# AI in 2025: gestalt

This is the editorial for this year’s "[Shallow Review of AI Safety](https://www.lesswrong.com/posts/Wti4Wr7Cf5ma3FGWa/shallow-review-of-technical-ai-safety-2025-2)". (It got long e... | https://www.lesswrong.com/posts/Q9ewXs8pQSAX5vL7H/ai-in-2025-gestalt |

# 2025 Unofficial LessWrong Census/Survey

~~**The Less Wrong General Census is unofficially here! You can take it at**~~ [~~**this link.**~~](https://docs.google.com/forms/d/e/1FAIpQLSfIr9OmrOcpqGmVbHnRUU8q6uwSTT3S2vE6e3ElJLBMViQ2dw/viewform?usp=dialog)

(Update: the census has closed! Thank you for taking it. An anal... | https://www.lesswrong.com/posts/ktaxnnqEeeWAohzCK/2025-unofficial-lesswrong-census-survey |

# Your Digital Footprint Could Make You Unemployable

In China, the government will [arrest and torture you](https://www.hrw.org/news/2024/06/02/china-closing-memory-tiananmen-massacre) for criticizing them:

> \[In May 2022\], Xu Guang…was sentenced to four years in prison...after he demanded that the Chinese governme... | https://www.lesswrong.com/posts/akw7yJjAdDAnJJMuC/your-digital-footprint-could-make-you-unemployable |

# I said hello and greeted 1,000 people at 5am this morning

At the ass crack of dawn, in the dark and foggy mist, thousands of people converged on my location, some wearing short shorts, others wearing an elf costume and green tights.

I was volunteering at a marathon. The race director told me the day before, “these ... | https://www.lesswrong.com/posts/2aiJphdjJaaBs6SJy/i-said-hello-and-greeted-1-000-people-at-5am-this-morning |

# The effectiveness of systematic thinking

*This is part 1/2 of my introduction to* [*Live Theory*](https://www.lesswrong.com/s/aMz2JMvgXrLBkq4h3)*, where I try to distill* [*Sahil’s*](https://www.lesswrong.com/users/sahil-1) *vision for a new way to scale intellectual progress without systematic thinking. You can fi... | https://www.lesswrong.com/posts/Rg4Qv7scKu6EYAp4R/the-effectiveness-of-systematic-thinking |

# Scaling what used not to scale

*This is part 2/2 of my introduction to* [*Live Theory*](https://www.lesswrong.com/s/aMz2JMvgXrLBkq4h3)*, where I try to distill* [*Sahil’s*](https://www.lesswrong.com/users/sahil-1) *vision for a new way to scale intellectual progress without systematic thinking. You can read the part o... | https://www.lesswrong.com/posts/bNSToroddHRbu6hcy/scaling-what-used-not-to-scale |

# From Barriers to Alignment to the First Formal Corrigibility Guarantees

This post summarizes my two related papers that will appear at [AAAI 2026](https://aaai.org/conference/aaai/aaai-26/) in January:

* [Part I](https://www.lesswrong.com/posts/M5owRcacptnkxwD2u/from-barriers-to-alignment-to-the-first-formal-corr... | https://www.lesswrong.com/posts/M5owRcacptnkxwD2u/from-barriers-to-alignment-to-the-first-formal-corrigibility-1 |

# Little Echo

I believe that we will win.

An echo of [an old ad for the 2014 US men’s World Cup team](https://www.youtube.com/watch?v=7bz6UMwCquM). It did not win.

I was in Berkeley for the 2025 Secular Solstice. We gather to sing and to reflect.

The night’s theme was the opposite: ‘I don’t think we’re going to mak... | https://www.lesswrong.com/posts/YPLmHhNtjJ6ybFHXT/little-echo |

# Algorithmic thermodynamics and three types of optimization

*Context: I (Daniel C) have been working with Aram Ebtekar on various directions in his work on* [*algorithmic thermodynamics*](https://arxiv.org/abs/2308.06927) *and the* [*causal arrow of time*](https://www.mdpi.com/1099-4300/26/9/776)*. This post explores... | https://www.lesswrong.com/posts/CJRxQiTKEzior7jGq/algorithmic-thermodynamics-and-three-types-of-optimization |

# [Paper] Does Self-Evaluation Enable Wireheading in Language Models?

**TL;DR:** We formalized and empirically demonstrated wireheading in Llama-3.1-8B and Mistral-7B. Specifically, we use a formalization of wireheading (using a POMDP) to show conditions under which wireheading (manipulating the reward channel) become... | https://www.lesswrong.com/posts/k7wfo2fk4bipK7mfH/paper-does-self-evaluation-enable-wireheading-in-language |

# Building an AI Oracle

I did it. I built an oracle AI.

[](https://substackcdn.com/imag... | https://www.lesswrong.com/posts/hq9bbAiaCrN3TGnRY/building-an-ai-oracle |

# The Possibility of an Ongoing Moral Catastrophe

Crosspost of this [blog post](https://benthams.substack.com/p/the-possibility-of-an-ongoing-moral).

I mostly believe in the *possibility* of an ongoing moral catastrophe because I believe in the *actuality* of an ongoing moral catastrophe (e.g. I think the [giant ani... | https://www.lesswrong.com/posts/9L8EtDDoC9NWR7Ydi/the-possibility-of-an-ongoing-moral-catastrophe |

# I have hope

This is in response to [Zvi Mowshowitz's "Little Echo"](https://www.lesswrong.com/posts/YPLmHhNtjJ6ybFHXT/little-echo) in which he discusses the secular solstice and the pessimistic situation with AI GCR (artificial intelligence global catastrophic risk). He discusses life and working to help make the si... | https://www.lesswrong.com/posts/RbqqfkXYhEtvSL5Zb/i-have-hope |

# We need a field of Reward Function Design

*(Brief pitch for a general audience, based on a 5-minute talk I gave.)*

Let’s talk about Reinforcement Learning (RL) agents as a possible path to Artificial General Intelligence (AGI)

=========================================================================================... | https://www.lesswrong.com/posts/oxvnREntu82tffkYW/we-need-a-field-of-reward-function-design |

# Reward Function Design: a starter pack

In the companion post [We need a field of Reward Function Design](https://www.lesswrong.com/posts/oxvnREntu82tffkYW/we-need-a-field-of-reward-function-design), I implore researchers to think about what RL reward functions (if any) will lead to RL agents that are *not* ruthless ... | https://www.lesswrong.com/posts/xw8P8H4TRaTQHJnoP/reward-function-design-a-starter-pack |

# How Stealth Works

Stealth technology is cool. It’s what gave the US domination over the skies during the latter half of the Cold War, and the biggest component of the US’s information dominance in both war and peace, at least prior to the rise of global internet connectivity and cybersecurity. Yet the core idea is a... | https://www.lesswrong.com/posts/MxivaKjaAX9mkJzAK/how-stealth-works |

# A few quick thoughts on measuring disempowerment

People want to measure and track gradual disempowerment. One issue with a lot of the proposals I've seen is that they don't distinguish between empowering and disempowering uses of AI. If everyone is using AI to write all of their code, that doesn't necessarily mean... | https://www.lesswrong.com/posts/hP2Pms2acPW6eeD6B/a-few-quick-thoughts-on-measuring-disempowerment |

# Human Dignity: a review

I have in my possession a short document purporting to be a manifesto from the future.

That’s obviously absurd, but never mind that. It covers some interesting ground, and the second half is pretty punchy. Let’s discuss it.

Practical u... | https://www.lesswrong.com/posts/Wx7oyvzxpmo7re7Th/high-level-approaches-to-rigor-in-interpretability |

# Gödel's Ontological Proof

In 1970, Gödel, amidst the throes of his worsening hypochondria and paranoia, entrusted to his colleague Dana Scott[^6twxk8nyani] a 12-line proof that he had kept mostly secret since the early 1940s. He had only ever discussed the proof informally in hushed tones among the corridors of Pr... | https://www.lesswrong.com/posts/ZRToRRSirgwNeLLuL/goedel-s-ontological-proof |

# Prompting Models to Obfuscate Their CoT

*Authors: Felix Tudose\*, Joshua Engels\*\**

*\* primary contributor*

*\*\* advice and mentorship*

Summary:

========

* Models can sometimes obfuscate their CoT when prompted to do so on a basic reasoning task

* We can increase the rate of obfuscation by telling the model it ... | https://www.lesswrong.com/posts/GLMeDPbG32pEfzc89/prompting-models-to-obfuscate-their-cot-1 |

# A Falsifiable Causal Argument for Substrate Independence

Here's a deceptively simple argument that derives an empirically falsifiable conclusion from two uncontroversial premises. No logical leaps. No unwarranted philosophical assumptions. Just premises, deduction, and a clear way to falsify.

I'll present the argum... | https://www.lesswrong.com/posts/EdPzyBwzMyJrJCTJs/a-falsifiable-causal-argument-for-substrate-independence |

# Towards a Categorization of Adlerian Excuses

*\[Author's note: LLMs were used to generate and sort examples into their respective categories, to find and summarize relevant papers, and to assist extensively with editing\]*

*Context: Alfred Adler (1870–1937) split from Freud by asserting that human psychology ... | https://www.lesswrong.com/posts/kdG4T9jtETYe8Hkkg/towards-a-categorization-of-adlerian-excuses |

# D&D Sci Thanksgiving: the Festival Feast Evaluation & Ruleset

This is a follow-up to [last week's D&D.Sci scenario](https://www.lesswrong.com/posts/4d9KW8nduz7MWaqD9/d-and-d-sci-thanksgiving-the-festival-feast): if you intend to play that, and haven't done so yet, you should do so now before spoiling yourself.

Ther... | https://www.lesswrong.com/posts/y7pBvQWEctZzernvP/d-and-d-sci-thanksgiving-the-festival-feast-evaluation-and |

# Every point of intervention

*[Crosspost from my blog](https://tsvibt.blogspot.com/2025/12/every-point-of-intervention.html).*

> Events are already set for catastrophe, they must be steered along some course they would not naturally go. [...]

> Are you confident in the success of this plan? No, that is the wrong q... | https://www.lesswrong.com/posts/CseMTXtQHynGR8S5k/every-point-of-intervention |

# The reverse sear as a worthwhile life skill

A wise woman once said: [humans are not automatically strategic](https://www.lesswrong.com/posts/PBRWb2Em5SNeWYwwB/humans-are-not-automatically-strategic). I'd like to propose a way of being more strategic in the kitchen. I'd like to propose that you learn the reverse sear... | https://www.lesswrong.com/posts/wBCDPyJxZzwANZpyz/the-reverse-sear-as-a-worthwhile-life-skill |

# Seriously, use text expansions

You probably type some things a lot, especially if you do non-trivial amounts of administrative or communicative work. There are also probably things you would type more if it was easier to! For instance:

* Link to your personal website

* Your email address or phone number

... | https://www.lesswrong.com/posts/bF4uuEpwug64bcGai/seriously-use-text-expansions |

# My experience running a 100k

*The* [*SVP100*](https://www.svp100.co.uk/) *route.*

On the 3rd of August last year, I w... | https://www.lesswrong.com/posts/4tfeyu5xsubg6wDdH/my-experience-running-a-100k |

# Do you take joy in effective altruism?

I have been interested in effective altruism since before I knew the term. I have long done things like give money to charity, buy products that seem ethical and avoid products that seem unethical (such as factory-farmed meat).

I do not derive much joy from any of this, though... | https://www.lesswrong.com/posts/MHCTx9QC4pLPrnMkj/do-you-take-joy-in-effective-altruism |

# Ways we can fail to answer

[*Cross-posted from gleech.org.*](https://www.gleech.org/barriers)

In what ways can we fail to answer a question?

I mean *necessarily* fail: actual barriers to knowledge, rather than skill issue hurdles. But of course contingent failures are much more common: “We didn’t ask the quest... | https://www.lesswrong.com/posts/8kBoC9sJZYTF8WYAr/ways-we-can-fail-to-answer |

# Gradual Disempowerment Monthly Roundup #3

Farewell to Friction

--------------------

So [sayeth](https://thezvi.substack.com/p/levels-of-friction) [Zvi](https://thezvi.substack.com/i/172832446/levels-of-friction): “when defection costs drop dramatically, equilibria break”. Even if AI makes individual tasks easier, t... | https://www.lesswrong.com/posts/99yCxb5KGCZTYccKR/gradual-disempowerment-monthly-roundup-3 |

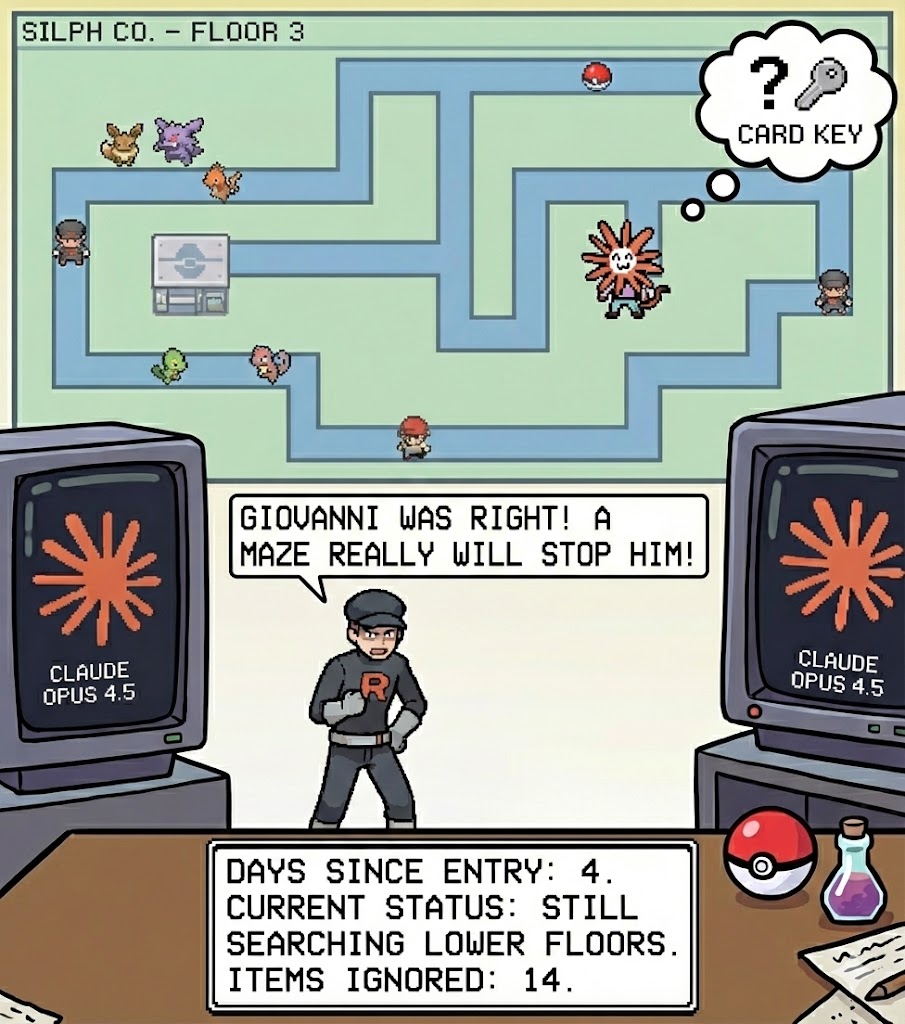

# Insights into Claude Opus 4.5 from Pokémon

Credit: Nano Banana, with some text provided.

You may be surprised to learn that [ClaudePlaysPokemon](https://www.twitch.tv/claudeplayspo... | https://www.lesswrong.com/posts/u6Lacc7wx4yYkBQ3r/insights-into-claude-opus-4-5-from-pokemon |

# Auditing Games for Sandbagging [paper]

*Jordan Taylor, Sid Black, Dillon Bowen, Thomas Read, Satvik Golechha, Alex Zelenka-Martin, Oliver Makins, Connor Kissane, Kola Ayonrinde, Jacob Merizian, Samuel Marks, Chris Cundy, Joseph Bloom*

*UK AI Security Institute,* [*FAR.AI*](http://FAR.AI)*, Anthropic*

**Links:** [P... | https://www.lesswrong.com/posts/QMLwKemqMDATkkjJG/auditing-games-for-sandbagging-paper |

# Selling H200s to China Is Unwise and Unpopular

AI is the most important thing about the future. It is vital to national security. It will be central to economic, military and strategic supremacy.

This is true regardless of what other dangers and opportunities AI might present.

The good news is that America has man... | https://www.lesswrong.com/posts/kmEpWTjWeFyqv4tb5/selling-h200s-to-china-is-unwise-and-unpopular |

# Tate Modern 2150

As Bea approached the Tate Modern on London-3, the first thing she noticed were the cooling CRACs and CDUs still freckling the grey cuboidal building. She supposed the datacenter aesthetic *was* appropriately grungy for a modern art building — it harkened back to a simpler time.

Jay — her date — ha... | https://www.lesswrong.com/posts/uxd8Ezh4hfyda7kzB/tate-modern-2150 |

# The funding conversation we left unfinished

People working in the AI industry are making stupid amounts of money, and word on the street is that Anthropic is going to have some sort of liquidity event soon (for example possibly [IPOing sometime next year](https://vechron.com/2025/12/anthropic-hires-wilson-sonsini-ip... | https://www.lesswrong.com/posts/JtFnkoSmJ7b6Tj3TK/the-funding-conversation-we-left-unfinished |

# Artifacts I'd like to try

Here is a list of digital (and physical!) artifacts to create connections between friends, increase conversation bandwidth, or simply enjoy pleasant aesthetic experiences. I’m not sure if they are good ideas, but they have been fueling my curiosity for long enough that I’ve written several ... | https://www.lesswrong.com/posts/DjZzwXBQhhchtgsyR/artifacts-i-d-like-to-try |

# We don't know what most microbial genes do. Can genomic language models help?

Youtube: [https://youtu.be/w6L9-ySnxZI?si=7RBusTAyy0Ums6Oh](https://youtu.be/w6L9-ySnxZI?si=7RBusTAyy0Ums6Oh)

Spotify: [ht... | https://www.lesswrong.com/posts/J5rZzWSmxfe2sk9nF/we-don-t-know-what-most-microbial-genes-do-can-genomic |

# Fibonacci Holds Information

Any *natural number* can be uniquely written as a sum of non-consecutive Fibonacci numbers. This is called *Zeckendorf representation.*

Consider,

15 = 2 + 13,

or

54 = 2 + 5 + 13 + 34.
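
The two decompositions above can be reproduced with a short greedy sketch. It assumes the Fibonacci sequence is taken as 1, 2, 3, 5, 8, ... and that the input is a positive integer. The function name `zeckendorf` is illustrative, not something from the post.

```python
def zeckendorf(n: int) -> list[int]:
    """Return the Zeckendorf representation of n as an ascending list.

    Assumes the Fibonacci sequence 1, 2, 3, 5, 8, ... and n >= 1.
    """
    if n < 1:
        raise ValueError("n must be a positive integer")

    # Build all Fibonacci numbers that do not exceed n.
    fibs = [1, 2]
    while fibs[-1] + fibs[-2] <= n:
        fibs.append(fibs[-1] + fibs[-2])

    # Greedily take the largest Fibonacci number that still fits.
    # The remainder after taking F_k is smaller than F_(k-1),
    # so two consecutive Fibonacci numbers are never both chosen.
    terms = []
    for f in reversed(fibs):
        if f <= n:
            terms.append(f)
            n -= f
    return sorted(terms)


print(zeckendorf(15))  # [2, 13]
print(zeckendorf(54))  # [2, 5, 13, 34]
```

The greedy choice is what makes the result unique: always taking the largest Fibonacci number that fits leaves a remainder too small to admit the next smaller one.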

This outlines a very weak RE language employing only $\{\mathbb{N}, +, var\}$. We can also see that ... | https://www.lesswrong.com/posts/Cah7WajhG4Mcusrqx/fibonacci-holds-information |

# Most Algorithmic Progress is Data Progress [Linkpost]

This post, brought to you by Beren, is about how a lot of claims about within-paradigm algorithmic progress are actually mostly about just getting better data, leading to a Flynn effect, and the reason I'm mentioning this is because once we have to actually... | https://www.lesswrong.com/posts/3uMZFbvJZ8Z5LqpyQ/most-algorithmic-progress-is-data-progress-linkpost |

# No ghost in the machine

Introduction

------------

> The illusion is irresistible. Behind every face there is a self. We see the signal of consciousness in a gleaming eye and imagine some ethereal space beneath the vault of the skull lit by shifting patterns of feeling and thought, charged with intention. An essence... | https://www.lesswrong.com/posts/Ckb5rwEHCnYWZhAag/no-ghost-in-the-machine |

# Consider calling the NY governor about the RAISE Act

Summary

=======

If you live in New York, you can contact the governor to help the RAISE Act pass without being amended to parity with SB 53. Contact methods are listed at the end of the post.

What is the RAISE Act?

======================

Previous discussion of ... | https://www.lesswrong.com/posts/7hsJpZds5siaNYQNL/consider-calling-the-ny-governor-about-the-raise-act |

# Evaluation as a (Cooperation-Enabling?) Tool

Key points:

===========

0. **Advertisement:** We have an IMO-nice position paper which argues that [AI Testing Should Account for Sophisticated Strategic Behaviour](https://arxiv.org/abs/2508.14927), and that we should think about evaluation (also) through game-theoreti... | https://www.lesswrong.com/posts/BExKr5kR9RX7ot9iw/evaluation-as-a-cooperation-enabling-tool |

# Apply to ESPR & PAIR 2026, Rationality and AI Camps for Ages 16-21

**Update**: The deadline has been extended to **January 12th**.

*TLDR – Apply now to* [*ESPR*](https://espr.camp/) *and* [*PAIR*](https://pair.camp/)*. ESPR welcomes students between 16-19 years. PAIR is for students between 16-21 years.*

The [F... | https://www.lesswrong.com/posts/ujyWw3rsn3LwSwgFi/apply-to-espr-and-pair-2026-rationality-and-ai-camps-for |

# Childhood and Education #15: Got To Get Out

The focus this time around is on the non-academic aspects of primary and secondary school, especially various questions around bullying and discipline, plus an extended rant about someone being wrong on the internet while attacking homeschooling, and the latest on phones.

... | https://www.lesswrong.com/posts/vrtaXptHCN7akYnay/childhood-and-education-15-got-to-get-out |

# Rock Paper Scissors is Not Solved, In Practice

Hi folks, linking my Inkhaven explanation of intermediate Rock Paper Scissors strategy, as well as feeling out an alternative way to score rock paper scissors bots. It's more polished than most Inkhaven posts, but still bear in mind that the bulk of this writing was in ... | https://www.lesswrong.com/posts/AGZD62scqRaoM6p4n/rock-paper-scissors-is-not-solved-in-practice |

# Follow-through on Bay Solstice

There is a [Bay 2025 Solstice Feedback Form](https://docs.google.com/forms/d/e/1FAIpQLSdIm0BA-CTMw_egSwqC-iQaCzxSTZtbl7QuVmrFTlFZNqQO0A/viewform?usp=dialog). Please fill it out if you came, and especially fill it out if you felt alienated, or disengaged, or that Solstice left you worse... | https://www.lesswrong.com/posts/Zb8ov7ai4zRdpxhQt/follow-through-on-bay-solstice |
