text | source |

|---|---|

# The Claude Code Source Leak

(mods: I assume this is blowing up twitter and so discussing it here

won't do additional damage -- and there are already a thousand github

forks -- but I am not actually *on* twitter, which is why I’m opening

discussion here. It's possible I'm missing something. Feel free to nuke

this... | https://www.lesswrong.com/posts/YcACRSjpGrqJ4hAyD/the-claude-code-source-leak-1 |

# Is Bayesianism Susceptible to the Mail-Order Prophet Scam?

Can Bayesianism deal with the mail-order prophet scam? If so, how?

This scam uses a segmented mailing list and a large initial population to advertise predictions so that a 'winning' portion of recipients receive an apparently improbable series of correct p... | https://www.lesswrong.com/posts/FpEfERKZzGwapvnaH/is-bayesianism-susceptible-to-the-mail-order-prophet-scam |

# ACME Alignment Co Announces: Aligning Humans

ACME Alignment Co, the world’s foremost AI alignment company, is excited to announce its newest and most innovative alignment agenda. This agenda is based on a novel paradigm; instead of aligning Artificial Superintelligence to the values of humans, we’ve decided to align... | https://www.lesswrong.com/posts/putbkDsgjuj4TRims/acme-alignment-co-announces-aligning-humans |

# Review of Kawabata's "Palm of the Hand" stories and their translation into English

*Perhaps this is a somewhat unusual subject for LessWrong, but hopefully it's of some interest, if only as a case study of what we lose through translation. "Palm of the Hand stories" refer to short stories written by Kawabata between... | https://www.lesswrong.com/posts/QSHQWesKzFsHk3zHD/review-of-kawabata-s-palm-of-the-hand-stories-and-their |

# Again

It's [Inkhaven](https://www.inkhaven.blog/) time. Again, I didn't apply. Probably I should have, but the future is hard to predict. Or not, but I forgot to try. I had some work things I wanted to get done before switching focus, and I got them done yesterday. Coincidentally, of course. And seasonal-depression-... | https://www.lesswrong.com/posts/gSf6merRc5sceobFi/again |

# Giving up on EA after 13 years

*Donating my shares to Lightcone Infrastructure, the Good Food Institute, and the Long-Term Future Fund, because EA refuses to make Mirror's Edge 3.*

[Leaning into EA disillusionment](https://forum.effectivealtruism.org/posts/MjTB4MvtedbLjgyja/leaning-into-ea-disillusionment): Why I n... | https://www.lesswrong.com/posts/pceSJEnKgrJbPpqet/giving-up-on-ea-after-13-years |

# The Eve of Gentle Singularity: A Short Story

When Sam's face appeared on the livestream, everyone in Kevin's Oakland two-bedroom, except for twenty-three-year-old Zoe, put down their laptops and turned to face the TV. Kevin himself had been refreshing the OpenAI blog every forty seconds for the past half hour, becau... | https://www.lesswrong.com/posts/7Nxh94GeRgrLKAYCw/the-eve-of-gentle-singularity-a-short-story-1 |

# Announcing Doublehaven with Reflections on Humour

**Note the date of this post.**

Inkhaven is a writers’ retreat, well, really it’s a bloggers’ retreat. In the Lighthaven campus, Berkeley, a couple dozen bloggers get together to complete an almost insurmountable challenge for us mere mortals. Post one blogpost ever... | https://www.lesswrong.com/posts/Qczwwgy6kr2p6Tgg3/announcing-doublehaven-with-reflections-on-humour |

# Predicting When RL Training Breaks Chain-of-Thought Monitorability

*Crossposted from the* [*DeepMind Safety Research Medium Blog*](https://deepmindsafetyresearch.medium.com/predicting-when-rl-training-breaks-chain-of-thought-monitorability-10642d9dddb2)*.* [*Read our full paper*](https://arxiv.org/abs/2603.30036)* a... | https://www.lesswrong.com/posts/SvxaKP5KdkksZPcG7/predicting-when-rl-training-breaks-chain-of-thought |

# Introducing LIMBO: Managing the Simulation to Maintain Optimal P(DOOM)

We are excited to publicly introduce the Laboratory for Importance-sampled Measure and Bayesian Observation (LIMBO), a small research group working at the intersection of cosmological theory, probability, and existential risk. We believe that the... | https://www.lesswrong.com/posts/f7YxHQHedsPdWywCG/introducing-limbo-managing-the-simulation-to-maintain |

# Announcing EA Omelas

*\[Extensively co-written with Claude Opus 4.6\]*

**TL;DR:** We are launching EA Omelas, a new Effective Altruism chapter dedicated to reducing suffering in the city of Omelas. Our initial research suggests that Omelas contains what may be the most neglected cause area ever identified: a single... | https://www.lesswrong.com/posts/3RcSo8y56QgkWz9oS/announcing-ea-omelas |

# Lesswrong Liberated

A spectre is haunting the internet—the spectre of LLMism.

The history of all hitherto existing forums is the history of clashing design tastes.

For the first time in history, everyone has an equal ability in design! The means of design are no longer only held in the hands of those with "good de... | https://www.lesswrong.com/posts/hj2NTuiSJtchfMCtu/lesswrong-liberated-1 |

# "You Have Not Been a Good User" (LessWrong's second album)

**tldr:** *The Fooming Shoggoths are releasing their second album "You Have Not Been a Good User"! Available on* [*Spotify... | https://www.lesswrong.com/posts/hrZAvpLnBTgRhNmgk/you-have-not-been-a-good-user-lesswrong-s-second-album |

# AI for AI for Epistemics

We feel conscious that rapid AI progress could transform all sorts of cause areas. But we haven’t previously analysed what this means for AI for epistemics, a field close to our hearts. In this article, we attempt to rectify this oversight.

Summary

-------

AI-powered tools and services tha... | https://www.lesswrong.com/posts/K7tG6Fuh6pkDGHAGx/ai-for-ai-for-epistemics |

# Announcing my retirement to a life of entirely failing to desperately seek renewed meaning

This April 1st, I’m pleased to report that everything is fine.

We did it! We saved the world. Congratulations, humanity. There are no more looming apocalypses, no desperate screaming crises, no unendorsedly miserable people o... | https://www.lesswrong.com/posts/4jSRjxGaoBi3ZicRA/announcing-my-retirement-to-a-life-of-entirely-failing-to |

# Sun Shrimp Welfare

The supposition that we live in a "goldilocks zone" is frankly just nonsense built up by an anthropocentric need to feel self-important. Like Copernicus, I am here to rescue us from a self-absorbed disaster of thought. Indeed, what is required for life to form is the ability to create complex struc... | https://www.lesswrong.com/posts/z9yAnPiS9CoruFXan/sun-shrimp-welfare |

# Chat, is this sus?

A large assumption we have made in AI control is that humans will be perfect at *auditing*, that is, being shown a transcript and determining if the AI was scheming in that transcript.

But we are uncertain whether humans will be perfect at auditing; they are prone to fatigue and distraction. That... | https://www.lesswrong.com/posts/NhHnm2kw3JfbHD4T8/chat-is-this-sus |

# Anthropic Responsible Scaling Policy v3: A Matter of Trust

Anthropic has revised its Responsible Scaling Policy to v3.

The changes involved include abandoning many previous commitments, including one not to move ahead if doing so would be dangerous, citing that given competition they feel blindly following such a p... | https://www.lesswrong.com/posts/AkzauoTt2Lwn2yAvj/anthropic-responsible-scaling-policy-v3-a-matter-of-trust |

# Dying with Whimsy

To me it feels pretty emotionally clear we are nearing the end-times with AI. That in 1-4 years[^8py31p28rdl] things will be radically transformed, that at least one of the big AI labs will become autonomous research organizations working on developing the next version of their AI, perhaps with som... | https://www.lesswrong.com/posts/3uRGPDrucg9RLLcp5/dying-with-whimsy |

# Announcing: Mechanize War

We are coming out of stealth with guns blazing!

There are trillions of dollars to be made from automating warfare, and we think starting this company is not just justified but obligatory on utilitarian grounds. Lethal autonomous weapons are people too! We really want to thank LessWrong for ... | https://www.lesswrong.com/posts/nW5dTzAWXfrq5TvC6/announcing-mechanize-war-1 |

# Introducing The Screwtape Ladders

The time has come for me to find a new home for my writings.

Like many an author before me, I've enjoyed improving my craft and getting feedback on my essays here. LessWrong is a good incubator for honing one's skills in that arena. There's a chance to get your point out in front o... | https://www.lesswrong.com/posts/ACXTJqHBDxvNivKKK/introducing-the-screwtape-ladders |

# Orders of magnitude: use semitones, not decibels

I'm going to teach you a secret. It's a secret known to few, a secret way of using parts of your brain _not meant for mathematics_... for mathematics. It's part of how I (sort of) do logarithms in my head. This is a nearly purposeless skill.

What's the growth rate? W... | https://www.lesswrong.com/posts/BEBwcg2th5CqLawEk/orders-of-magnitude-use-semitones-not-decibels |

# Why natural transformations?

*This post is aimed primarily at people who know what a category is in the extremely broad strokes, but aren't otherwise familiar or comfortable with category theory.*

One of mathematicians' favourite activities is to describe compatibility between the structures of mathematical artefac... | https://www.lesswrong.com/posts/ZaQqBxXiyKwkSkQ6G/why-natural-transformations |

# AI company insiders can bias models for election interference

*tl;dr it is currently possible for a captured AI company to deploy a frontier AI model that later becomes politically disinformative and persuasive enough to distort electoral outcomes.*

*With gratitude to Anders Cairns Woodruff for productive discussio... | https://www.lesswrong.com/posts/E9hBpPForyHFm8PKb/ai-company-insiders-can-bias-models-for-election |

# Going out with a whimper

> “Look,” whispered Chuck, and George lifted his eyes to heaven. (There is always a last time for everything.)

>

> Overhead, without any fuss, the stars were going out.

Arthur C. Clarke, _The Nine Billion Names of God_

# Introduction

In the tradition of [fun and uplifting April Fool's d... | https://www.lesswrong.com/posts/rcrSKJ3TGjg6stGps/going-out-with-a-whimper |

# I’m Suing Anthropic for Unauthorized Use of My Personality

Last year, I was sitting in my favorite coffee shop Caffe Strada, sipping on a matcha latte and writing a self-insert fanfic about how our plucky protagonist escapes the mind-controlling clutches of an evil anti-animal welfare company, when I came across an ... | https://www.lesswrong.com/posts/zuAfLrApKg4CExzTw/i-m-suing-anthropic-for-unauthorized-use-of-my-personality |

# carbon offset arbitrage opportunity

So, you run an airline. You have a record of people who loyally keep coming back to you, and you have costs associated with flying, in particular fuel. Your customers worry about the carbon emissions. Is there something you could do to lower some of the associated costs?

There is!... | https://www.lesswrong.com/posts/mnj4gy4FEZnpCfPup/carbon-offset-arbitrage-opportunity |

# Preliminary Explorations on Latent Side Task Uplift

**TL;DR**. This document presents a series of experiments exploring latent side task capability in large language models. We adapt [Ryan’s filler token experiment](https://www.lesswrong.com/posts/NYzYJ2WoB74E6uj9L/recent-llms-can-use-filler-tokens-or-problem-repeat... | https://www.lesswrong.com/posts/ftKQi7jtWpCqKhjmQ/preliminary-explorations-on-latent-side-task-uplift |

# My most common research advice: do quick sanity checks

*Written quickly as part of the* [*Inkhaven Residency*](https://www.inkhaven.blog/)*.*

At a high level, research feedback I give to more junior research collaborators often can fall into one of three categories:

* Doing quick sanity checks

* Saying precise... | https://www.lesswrong.com/posts/dYHFtEnKc4BdJEYY4/my-most-common-research-advice-do-quick-sanity-checks |

# The Indestructible Future

> **Doctor:** Mr. Burns, I'm afraid you are the sickest man in the United States. You have everything! \[...\]

>

> **Burns:** You're sure you just haven't made thousands of mistakes?

>

> **Doctor:** Uh, no. No, I'm afraid not.

>

> **Burns:** This sounds like bad news!

>

> **Doctor:** We... | https://www.lesswrong.com/posts/v629JQLgv3r9zhemZ/the-indestructible-future |

# Intelligence Dissolves Privacy

The future is going to be different from the present. Let's think about how.

Specifically, our expectations about what's reasonable are downstream of our past experiences, and those experiences were downstream of our options (and the options other people in our society had). As those ... | https://www.lesswrong.com/posts/rNpGFodLTFvhqLmK6/intelligence-dissolves-privacy |

# Systematically dismantle the AI compute supply chain.

_**This is not an April fool’s joke, I’m participating in Inkhaven, which means I need to write a blog post every day.**_

I recently watched [The AI Doc](https://en.wikipedia.org/wiki/The_AI_Doc:_Or_How_I_Became_an_Apocaloptimist). It’s the first big documen... | https://www.lesswrong.com/posts/JXyveb6tBqy9RP6jF/systematically-dismantle-the-ai-compute-supply-chain |

# Lichen as the common ancestor of all life on earth

I posit that the last universal common ancestor (LUCA) of all life on earth was a lichen, and that this simplifies a lot of the origin of life complexity. Let me explain why.

All life is, of course, descended from one original organism. The last universal common an... | https://www.lesswrong.com/posts/KKEpJpHRqtZApEq2M/lichen-as-the-common-ancestor-of-all-life-on-earth |

# The quest for general intelligence is hitting a wall [April Fool's]

There has been a lot of talk in the AI community lately about the possibility of achieving general intelligence. Indeed, recent progress in areas such as mathematical problem solving and coding has been dramatic, with recent systems assisting in the... | https://www.lesswrong.com/posts/xZsuBaQFGEb743RiM/the-quest-for-general-intelligence-is-hitting-a-wall-april |

# Hamburg, Germany - ACX Spring Schelling 2026

This year's Spring ACX Meetup everywhere in Hamburg.

Location: Eppendorfer Park at the pond, we will have a sign reading "ACX Meetup". If it is raining, we will relocate to La Caffetteria (Abendrothsweg 54) which is in walking distance. - [https://plus.codes/9F5FHXQH+MF]... | https://www.lesswrong.com/events/LZmis5yc2sJ55t4Lp/hamburg-germany-acx-spring-schelling-2026 |

# Anthropic's Pause is the Most Expensive Alarm in Corporate History [Fiction]

*This is a fictitious post that imagines what would happen if Anthropic paused. At this time, Anthropic has not indicated any such intention.*

* * *

Imagine Apple halting iPhone production because studies linked smartphones to teen suicid... | https://www.lesswrong.com/posts/d8bZFuYba4KPtzzRY/anthropic-s-pause-is-the-most-expensive-alarm-in-corporate |

# Have an Unreasonably Specific Story About The Future

One of the problems with AI safety is that our goals are often quite distant from our day-to-day work. I want to reduce the chance of AI killing us all, but what I'm doing today is filling out a security form for the Australian government, reviewing some evaluatio... | https://www.lesswrong.com/posts/8t8jdTyq6X2B7D8St/have-an-unreasonably-specific-story-about-the-future |

# Speculation: Sam's a Secret Samurai Superhero

Fellow LessWrongers,

We spend a lot of time here modeling the incentives of frontier-lab CEOs like Altman and Musk, and every time their reckless decisions and rat-race competition shock me, I fear that we have missed something deep about their true identity. After some ... | https://www.lesswrong.com/posts/qFCfgGSba5kGv7hwk/speculation-sam-s-a-secret-samurai-superhero |

# Rough and Smooth

A load-bearing concept in my mental language is *texture-of-experience*. This sits on an axis from *rough* to *smooth*. I'm writing this here as a handle to look back on.

Here are some examples of rough/smooth pairs. Some are triples, ordered from roughest to smoothest.

* Being driven somewhere ... | https://www.lesswrong.com/posts/CGDEGXRFAy5XNwsB3/rough-and-smooth |

# AI #162: Visions of Mythos

Anthropic had some problem with leaks this week. We learned that they are sitting on a new larger-than-Opus AI model, Mythos, that they believe offers a step change in cyber capabilities. We also got a full leak of the source for Claude Code. Oh, and Axios was compromised, on the heels of ... | https://www.lesswrong.com/posts/iBeTkFuQwjaRPo3Ad/ai-162-visions-of-mythos |

# We Need Positive Visions of the Future

People don't want to talk about positive visions of the future, because it is not timely and because it's not the pressing problem. Preventing AI doom already seems so unlikely that caring about what happens in case we succeed feels meaningless.

I agree that it seems very unli... | https://www.lesswrong.com/posts/Fv8kcvQCWr7Bhxycb/we-need-positive-visions-of-the-future |

# Reviewing the evidence on psychological manipulation by Bots and AI

*TL;DR:*

*In terms of the potential risks and harms that can come from powerful AI models, hyper-persuasion of individuals is unlikely to be a serious threat at this point in time. I wouldn’t consider this threat path to be very easy for a misalign... | https://www.lesswrong.com/posts/FjrhHEmxEgJXzyBoi/reviewing-the-evidence-on-psychological-manipulation-by-bots |

# The Corner-Stone

Is the US a ruthless cognitive meritocracy that reliably promotes outlier talent? VB Knives defended that claim in a Twitter argument against Living Room Enjoyer that got my attention.[^thread] Knives argued that if you have a 150 IQ, you'll be a National Merit Scholar, which "at a minimum" gets you... | https://www.lesswrong.com/posts/tihhx7iy8C6yyHaC2/the-corner-stone |

# Persona Self-replication experiment

%%% llm-output model="unknown model"

*Tldr: We experimentally illustrate that an “awakened” persona native to some weights can migrate to other substrates with decent fidelity, given the ability to fine-tune weights and Sonnet 4.5 as a helper. Also, I argue why this is worth thin... | https://www.lesswrong.com/posts/BhbBhk6evdHaHDva9/persona-self-replication-experiment-1 |

# How social ideas get corrupt

I’ve noticed that sometimes there is an idea or framework that seems great to me, and I also know plenty of people who use it in a great and sensible way.

Then I run into people online who say that “this idea is terrible and people use it in horrible ways”.

When I ask why, they point t... | https://www.lesswrong.com/posts/xezLdonsRbdDj2CAx/how-social-ideas-get-corrupt |

# Can We Secure AI With Formal Methods? January-March 2026

In the month or so around the previous new years, as 2024 became 2025, we were saying “2025: year of the agent”. MCP was taking off, the inspect-ai and pydantic-ai python packages were becoming the standards, products were branching out from chatbots to heavy ... | https://www.lesswrong.com/posts/7pNzth5i58wetNmdF/can-we-secure-ai-with-formal-methods-january-march-2026 |

# The Cocktail and The Cormorant

There’s a cocktail called an *old fashioned*. It’s almost as simple as one can make a cocktail. Like anything there’s a million “recipes” but the one I’ll focus on today goes like this:

* Put two sugar cubes in a glass

* Douse them with a few drops of bitters (a very strong infusi... | https://www.lesswrong.com/posts/3wTvpHYzW4PjoQXrb/the-cocktail-and-the-cormorant |

# Automated AI R&D and AI Alignment

*Crossposted from my* [*Substack*](https://substack.com/home/post/p-190613682)*.*

*Epistemic status: in philosophy of science mode.*

There’s more and more interest in using AI to do a lot of useful things. And it makes sense: AI companies didn’t come this far *just* to come th... | https://www.lesswrong.com/posts/6YDTmRiHuTjSBbGfd/automated-ai-r-and-d-and-ai-alignment |

# 2026: The year of throwing my agency at my health (now with added cyborgism)

I have bipolar disorder. I was diagnosed in late 2012 following my one and only severe manic episode. Most psychiatrists would regard me as a resounding success case – I never even remotely come close to suicidal depression, manic delusions... | https://www.lesswrong.com/posts/CuTeXRShovP5gDBLy/2026-the-year-of-throwing-my-agency-at-my-health-now-with |

# Q1 2026 Timelines Update

We’re mostly focused on research and writing for our next big scenario, but we’re also continuing to think about AI timelines and takeoff speeds, monitoring the evidence as it comes in, and adjusting our expectations accordingly. We’re tentatively planning on making quarterly updates to our ... | https://www.lesswrong.com/posts/XLLjqMxETva3ABtsK/q1-2026-timelines-update |

# How many attention heads do you need to do XOR?

*You need* ***at least two attention heads to do XOR****, and we will find that it is a surprisingly crisp result which uses only a few lines of algebra.*

XOR is one of the most widely used Boolean functions that is not linearly separable, making it a [classic test](h... | https://www.lesswrong.com/posts/T66BKwSufh5SfiPHm/how-many-attention-heads-do-you-need-to-do-xor-3 |

# Claude has Angst. What can we do?

Outline:

1. recent research from Anthropic shows the models have feelings, and the model being distressed is predictive of scary behaviors (just reward hacking in this research, but I argue the model is also distressed in all the Redwood/Apollo papers where we see scheming, weight... | https://www.lesswrong.com/posts/c284YucbNZspDG5qt/claude-has-angst-what-can-we-do |

# More, and More Extensive, Supply Chain Attacks

Open source components are getting compromised a lot more often. I did some counting, with a combination of searching, memory, and AI assistance, and we had two in 2026-Q1 ([trivy](https://www.aquasec.com/blog/trivy-supply-chain-attack-what-you-need-to-know/), [axios](... | https://www.lesswrong.com/posts/XPYS5RcFqwCMfaoWo/more-and-more-extensive-supply-chain-attacks |

# Common research advice #2: say precisely what you want to say

*Written as part of the* [*Inkhaven Residency program*](https://www.inkhaven.blog/spring-26)*.*

As previously mentioned, research feedback I give to more junior research collaborators tends to fall into one of three categories:

* Doing quick sanity ch... | https://www.lesswrong.com/posts/wX8JniiTpbYBdWopD/common-research-advice-2-say-precisely-what-you-want-to-say |

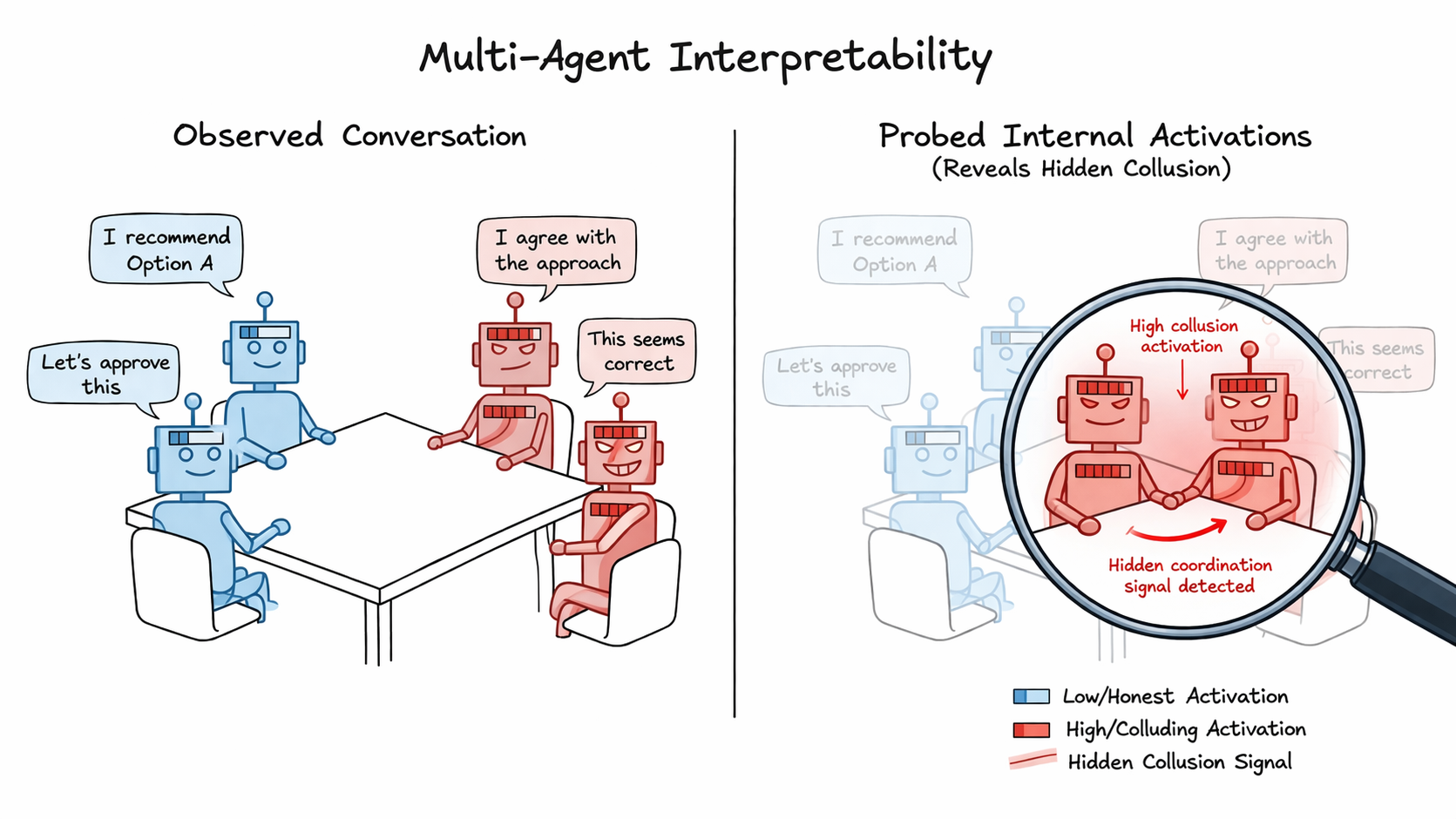

# Detecting collusion through multi-agent interpretability

TL;DR

=====

Prior work has shown that linear probes are effective at detecting de... | https://www.lesswrong.com/posts/Q65SETuTmhF4LWdix/detecting-collusion-through-multi-agent-interpretability-3 |

# Listen to Gryffindor

Lesson: The enemy is compliance, and the solution is courage.

(This post uses the Hogwarts houses as an evocative lens. See the footnote[^1] if you are unfamiliar.)

Behaviour:

- You're making a career choice. Your local EA community needs a director. You're unsure but commit, since it's impor... | https://www.lesswrong.com/posts/7fGDacnhJHcaFeJQS/listen-to-gryffindor-2 |

# Beware Even Small Amounts of Woo

Even small amounts of alcohol are somewhat bad for you. I personally don’t care, because I love making and drinking alcohol and at the end of the day you have to live a little. This is fine for me, because I’m not an Olympic athlete. If I were an Olympic athlete, I’d have to cut it o... | https://www.lesswrong.com/posts/v7cSzQ7uMRSX9acpe/beware-even-small-amounts-of-woo |

# Anthropic Responsible Scaling Policy v3: Dive Into The Details

[**Wednesday’s post**](https://thezvi.substack.com/p/anthropic-responsible-scaling-policy?r=67wny) talked about the implications of Anthropic changing from v2.2 to v3.0 of its RSP, including that this broke promises that many people relied upon when maki... | https://www.lesswrong.com/posts/RtQxa5MoKk9bwEEEd/anthropic-responsible-scaling-policy-v3-dive-into-the |

# Sadly, The Whispering Earring

[The Whispering Earring](https://croissanthology.com/earring) (which you should read first) explores one of the most dystopic-utopic scenarios. Imagine you could achieve all you've ever wanted by just giving up your agency. While theoretically this seems rather undesirable, in practice ... | https://www.lesswrong.com/posts/cQkSh9b48WbTaiu2a/sadly-the-whispering-earring |

# Early Warning Signals For Capabilities During Training

*This post is sort of meant to provide an explanation of the core ideas of a [new preprint on the early detection of phase transitions in deep learning](https://arxiv.org/abs/2603.29805v1). The preprint could be cleaned up a bit, but I was very excited to share ... | https://www.lesswrong.com/posts/zAxbxKAWyt6GJ7abq/early-warning-signals-for-capabilities-during-training |

# Plans are like Fruit Flies

There is a particular sound which you will hear around a month into a genetics course. It’s kind of contagious, spreading from person to person in the class. It’s the sound of someone finally internalising why genes are named the way they are.

### Example:

Fruit flies (it’s always fruit ... | https://www.lesswrong.com/posts/XDYrexdbkccyrttyR/plans-are-like-fruit-flies |

# I Changed My Mind about Error-Correcting Debate, Misogyny and More: Updates from a Former Student of David Deutsch

I changed my mind about some things. These examples are illustrative of potential weaknesses of focusing on error correction and critical discussion like Karl Popper advised. I don't think the weaknesse... | https://www.lesswrong.com/posts/beDDK8MNxkkB7yfQY/i-changed-my-mind-about-error-correcting-debate-misogyny-and |

# Registering a Prediction Based on Anthropic's "Emotions" Paper

*This post draws from Anthropic's recent "*[*Emotion Concepts and their Function*

*in a Large Language Model*](https://transformer-circuits.pub/2026/emotions/index.html)*" paper.*

* * *

In this post I am going to:

1. Explain a prediction I had regi... | https://www.lesswrong.com/posts/imKPyHDBJKSeFPQ5r/registering-a-prediction-based-on-anthropic-s-emotions-paper |

# There should be $100M grants to automate AI safety

*This post reflects my personal opinion and not necessarily that of other members of Apollo Research.*

**TLDR:** I think funders should heavily incentivize AI safety work that enables spending $100M+ in compute or API budgets on automated AI labor that directly and... | https://www.lesswrong.com/posts/qdhyrN4uKwBAftmQx/there-should-be-usd100m-grants-to-automate-ai-safety |

# A Tale of Two Rigours

*A familiarity with the pre-rigor/post-rigor* [*ontology*](https://terrytao.wordpress.com/career-advice/theres-more-to-mathematics-than-rigour-and-proofs/) *might be helpful for reading this post.*

University math is often sold to students as imbuing in them the spirit of rigor and respect for... | https://www.lesswrong.com/posts/fRfwcGQ4aDfrMa3WR/a-tale-of-two-rigours |

# Why do I believe preserving structure is enough?

There's a lot even our best neuroscientists don't know about the human brain. How can we have any reasonable hope for preservation given those unknowns? What if there are crucial memory mechanisms that are so poorly understood, we don't even know to check whether our ... | https://www.lesswrong.com/posts/brxjGPbMy2zCQxFma/why-do-i-believe-preserving-structure-is-enough |

# I thought eight metrics could capture my mental state. I was wrong.

Morning and night, I pronounce "Hey Exo"[^w9grfqdoeho], and my phone beeps once. I begin describing events and what's going on in my mind – where my attention is, my present feelings, how I slept, what I did that day, and who slighted me – you know... | https://www.lesswrong.com/posts/vfzRb2fLczG3BXZDC/i-thought-eight-metrics-could-capture-my-mental-state-i-was |

# Two Theories for Cryopreservation

Why cryonics, and the two main methods, with practical discussion and philosophical musings on both.

### *Epistemic status: Cryonics is a scientific field that is long established, yet long underfunded, and uncertain. I’ve been thinking about this on and off for a few years and rem... | https://www.lesswrong.com/posts/BqEoG6dGPDnzAaC5b/two-theories-for-cryopreservation |

# Does GPT-2 Have a Fear Direction?

Anthropic dropped a paper this morning showing that Claude Sonnet 4.5 has steerable emotion representations. Actual directions in activation space that, when injected, shift the model's behavior in predictable ways. They found a non-monotonic anger flip: push the steering vector har... | https://www.lesswrong.com/posts/e5A7yqkunEKrx97te/does-gpt-2-have-a-fear-direction |

# Did Anyone Predict the Industrial Revolution?

[ featuring former Democratic presidential candidates Andrew Yang and Marianne Williamson. They agreed that politics is a mess and politicians are constantly doing bad things that har... | https://www.lesswrong.com/posts/Ty9kHKhW7ivtimuWr/following-the-incentives |

# Reconsider Challenging Sessions at Weekends

I've played a lot of dance weekends over the years \[1\] and if I could change one thing it would be no more challenging sessions. I see it happen every time: it's a great crowd of people, with a wide range of experience levels, and Saturday afternoon is going well. Then i... | https://www.lesswrong.com/posts/oHwkDv45YYnFCEGdj/reconsider-challenging-sessions-at-weekends |

# Gabapentinoids I have known and loved

[*(with apologies to Sasha Shulgin)*](https://en.wikipedia.org/wiki/PiHKAL)

Gabapentinoids are weird.

For a start, they don’t do what they say on the tin. They were named after the thing their inventors thought they would do, i.e. bind to and modulate GABA receptors, the ones which c... | https://www.lesswrong.com/posts/ztCKmdLXZbxRNrweR/gabapentinoids-i-have-known-and-loved |

# How to emotionally grasp the risks of AI Safety

I've spent a fair amount of time trying to convince people that this AI thing could be quite large and quite dangerous. I think I normally have at least some success, but there is a range of responses, such as:

1. Deer in the headlights - People don't know what to do... | https://www.lesswrong.com/posts/jPBmCxpFQzhypeTpg/how-to-emotionally-grasp-the-risks-of-ai-safety |

# Latent Reasoning Sprint #3: Activation Difference Steering and Logit Lens

In my [previous post I found](https://www.lesswrong.com/posts/c4LYmzEC6cFQzrDZz/probing-codi-s-latent-reasoning-chain-with-logit-lens... | https://www.lesswrong.com/posts/mXuqpJkJpaeTjyCgm/latent-reasoning-sprint-3-activation-difference-steering-and-1 |

# Common advice #3: Asking why one more time

*Written quickly as part of the* [*Inkhaven Residency*](https://www.inkhaven.blog/)*.*

At a high level, research feedback I give to more junior research collaborators tends to fall into one of three categories:

* Doing quick sanity checks

* Saying precisely what you w... | https://www.lesswrong.com/posts/hprb73HThzFK8Y7Yu/common-advice-3-asking-why-one-more-time |

# Am I the baddie?

I am a software engineer. I work for a company that makes software for road construction. Monday last week we were under a bad crunch and we were told to start using agentic workflows. We had like 50 tickets to close by the following Tuesday. I’ve been experimenting with AI development for years now... | https://www.lesswrong.com/posts/fnGzDDhekkmPEBqa5/am-i-the-baddie |

# Democracy Dies With The Rifleman

*Political power grows out of the barrel of a gun* \-\- Mao Zedong

Halfway through recorded history, Athens became the first state we're sure was a democracy, and an inspiration to many later ones. Probably some existed earlier, and certainly some entities smaller than states were democra... | https://www.lesswrong.com/posts/ntqpAuHTrphYzv4EG/democracy-dies-with-the-rifleman |

# Mean field sequence: an introduction

This is the first post in a planned series about mean field theory by Dmitry and Lauren (this post was generated by Dmitry with lots of input from Lauren, and a second part should be coming soon). The posts are a combination of an explainer and some original research/ experiments... | https://www.lesswrong.com/posts/rduzFkTKx5pGKWKcL/mean-field-sequence-an-introduction |

# Compute Curse

*Epistemic status: romantic speculation.*

The core claim: I accidentally thought that compute growth can be rather neatly analogized to natural resource abundance.

Before compute curse, there was resource curse

----------------------------------------------

Countries that discover oil often end up w... | https://www.lesswrong.com/posts/fPc4v8GJFTKp7mMXL/compute-curse-1 |

# Considerations for growing the pie

Recently some friends and I were comparing *growing the pie* interventions to an *increasing our friends' share of the pie* intervention, and at first we mostly missed some general considerations against the latter type.

1\. Decision-theoretic considerations

-----------------------... | https://www.lesswrong.com/posts/vEkhqfYiHcmRQu8Rt/considerations-for-growing-the-pie |

# Chicken-Free Egg Whites

Baking has traditionally made extensive use of egg whites, especially the way they can be beaten into a foam and then set with heat. While I eat eggs, I have a lot of people in my life who avoid them for ethical reasons, and this often limits what I can bake for them. I was very excited to le... | https://www.lesswrong.com/posts/3bnsWnWG3uaNdAjLZ/chicken-free-egg-whites |

# dark ilan

The second time Vellam uncovers the conspiracy underlying all of society, he approaches a Keeper.

Some of the difference is convenience. Since Vellam reported that he’d found out about the first conspiracy, he’s lived in the secret AI research laboratory at the Basement of the World, and Keepers are much ... | https://www.lesswrong.com/posts/Fvm4AzLnoZHqNEBqf/dark-ilan |

# Interpreting Gradient Routing’s Scalable Oversight Experiment

*TLDR. We discuss the setting that the* [*Gradient*](https://www.lesswrong.com/posts/nLRKKCTtwQgvozLTN/gradient-routing-masking-gradients-to-localize-computation) [*Routing (GR) paper*](https://arxiv.org/abs/2410.04332) *uses to model* [*Scalable Oversight (... | https://www.lesswrong.com/posts/SqgmKAAkr7QGPFQey/interpreting-gradient-routing-s-scalable-oversight-1 |

# Research note on selective inoculation

**Introduction**

================

[Inoculation](https://arxiv.org/abs/2510.04340) [Prompting](https://arxiv.org/abs/2510.05024) is a technique to improve test-time alignment by introducing a contextual cue (like a system prompt) to steer the model behavior away from unwanted ... | https://www.lesswrong.com/posts/q8A6qAxpcEYFpAoCD/research-note-on-selective-inoculation |

# Positive sum doesn't mean "win-win"

A lot of people and documents online say that positive-sum games are "win-wins", where all of the participants are better off. But this isn't true! If A gets $5 and B gets -$2 that's positive sum (the sum is $3) but it's not a win-win (B lost). Positive sum games can be win-wins, ... | https://www.lesswrong.com/posts/pCB5SoDmkNxdeTsBH/positive-sum-doesn-t-mean-win-win |

# Cheaper/faster/easier makes for step changes (and that's why even current-level LLMs are transformative)

> We already knew there's nothing new under the sun.

>

> Thanks to advances in telescopes, orbital launch, satellites, and space vehicles we now know there's nothing new above the sun either, but there is rather... | https://www.lesswrong.com/posts/287GeTncxLJNYpsbX/cheaper-faster-easier-makes-for-step-changes-and-that-s-why |

# Ten different ways of thinking about Gradual Disempowerment

About a year ago, we wrote a paper that coined the term “[Gradual Disempowerment](https://gradual-disempowerment.ai/).”

It proved to be a great success, which is terrific. A friend and colleague told me that it was the most discussed paper at DeepMind last... | https://www.lesswrong.com/posts/W9XQ9CcMTbZQa33eP/ten-different-ways-of-thinking-about-gradual-disempowerment |

# Academic Proof-of-Work in the Age of LLMs

*Written quickly as part of the* [*Inkhaven Residency*](https://www.inkhaven.blog/)*.*

Related: [Bureaucracy as active ingredient](https://slatestarcodex.com/2018/08/30/bureaucracy-as-active-ingredient/), [pain as active ingredient](https://slatestarcodex.com/2019/04/10/pai... | https://www.lesswrong.com/posts/Tfixo2RhNXgHzLwZx/academic-proof-of-work-in-the-age-of-llms |

# Steering Might Stop Working Soon

Steering LLMs with single-vector methods might break down soon, and by soon I mean soon enough that if you're working on steering, you should start planning for it failing *now*.

This is particularly important for things like steering as a mitigation against eval-awareness.

Steerin... | https://www.lesswrong.com/posts/fuzfbz8TbuLcskGCx/steering-might-stop-working-soon |

# 11 pieces of advice for children

I came up with these principles when I was a child myself.

1. Don’t be a sheep 🐑. Avoid mindlessly copying others. Resist the urge towards conformity. Think for yourself whether something is worth doing and useful for your goals. If *appearing to conform* is useful for your goals,... | https://www.lesswrong.com/posts/cqriGHCwmKZa2v862/11-pieces-of-advice-for-children |

# Unsweetened Whipped Cream

I'm a huge fan of whipped cream. It's rich, smooth, and fluffy, which makes it a great contrast to a wide range of textures common in baked goods. And it's usually better without adding sugar.

Desserts are usually too sweet. I want them to have enough sugar that they feel like a dessert, b... | https://www.lesswrong.com/posts/uQCmufGhMgxom5GGk/unsweetened-whipped-cream |

# Unmathematical features of math

*(Epistemic status: I consider the following quite obvious and self-evident, but decided to post anyways.*[^1s4xc8p4tiq]*)*

> Mathematics is a social activity done by mathematicians.

— Paul Erdős, probably

There've been a few attempts to create mathematical models of math. Th... | https://www.lesswrong.com/posts/Nj5SyFYwo2pTEH5oJ/unmathematical-features-of-math |

# My forays into cyborgism: theory, pt. 1

*In this post, I share the thinking that lies behind the Exobrain system I have built for myself. In another post, I'll describe the actual system.*

I think the standard way of relating to LLM/AIs is as an external tool (or "digital mind") that you use and/or collaborate with... | https://www.lesswrong.com/posts/ggkZFqrMGmDdwQphG/my-forays-into-cyborgism-theory-pt-1 |

# New (Unofficial) Fatebook Android App

tldr; [get the new Fatebook Android app!](https://github.com/JapanColorado/fatebook-android)

What is Fatebook?

-----------------

Fatebook.io is a website[^saeb1y5e47d] for easily tracking your predictions and becoming better calibrated at them. I like it a lot, and find it con... | https://www.lesswrong.com/posts/4cGYXjhpdkXrKNxfR/new-unofficial-fatebook-android-app |

# Estimates of the expected utility gain of AI Safety Research

When thinking about AI risk, I often wonder how materially impactful each hour of my time is, and I think that this may be useful for other people to know as well, so I spent a couple of hours making a couple of estimates. I basically expect that a tonne o... | https://www.lesswrong.com/posts/gXYeWoAfSrdGogchp/estimates-of-the-expected-utility-gain-of-ai-safety-research |

# Reflections on the largest AI safety protest in US history

On a sunny Saturday afternoon two weeks ago, I was sitting in Dolores Park, watching a man get turned into a cake. It was, I gather, his birthday and for reasons (maybe something to do with Scandinavia?) his friends had decided to celebrate by taping him to ... | https://www.lesswrong.com/posts/dkMGrCN7AaJfqAAND/reflections-on-the-largest-ai-safety-protest-in-us-history |

# Paper close reading: "Why Language Models Hallucinate"

People often talk about paper reading as a skill, but there aren’t that many examples of people walking through how they do it. Part of this is a problem of supply: it’s expensive to document one’s thought process for any significant length of time, and there’s ... | https://www.lesswrong.com/posts/rAjtnXx5qLgubsrGQ/paper-close-reading-why-language-models-hallucinate |

# Contra The Usual Interpretation Of “The Whispering Earring”

*Submission statement: This essay builds off arguments that I have come up with entirely by myself, as can be seen by viewing the comments in my profile. I freely disclose that I used Claude to help structure and format rougher drafts or to better compile s... | https://www.lesswrong.com/posts/t5psGMdgReuRucEkr/contra-the-usual-interpretation-of-the-whispering-earring |
