id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

9cd90559-7b32-4925-ad11-58fa70661718 | trentmkelly/LessWrong-43k | LessWrong | A first look at the hard problem of corrigibility

Summary: We would like to build corrigible AIs, which do not prevent us from shutting them down or changing their utility function. While there are some corrigibility solutions (such as utility indifference) that appear to partially work, they do not capture the philosophical intuition behind corrigibility: we want an agent that not only allows us to shut it down, but also desires for us to be able to shut it down if we want to. In this post, we look at a few models of utility function uncertainty and find that they do not solve the corrigibility problem.

----------------------------------------

Introduction

Eliezer describes the hard problem of corrigibility on Arbital:

> On a human, intuitive level, it seems like there's a central idea behind corrigibility that seems simple to us: understand that you're flawed, that your meta-processes might also be flawed, and that there's another cognitive system over there (the programmer) that's less flawed, so you should let that cognitive system correct you even if that doesn't seem like the first-order right thing to do. You shouldn't disassemble that other cognitive system to update your model in a Bayesian fashion on all possible information that other cognitive system contains; you shouldn't model how that other cognitive system might optimally correct you and then carry out the correction yourself; you should just let that other cognitive system modify you, without attempting to manipulate how it modifies you to be a better form of 'correction'.

> Formalizing the hard problem of corrigibility seems like it might be a problem that is hard (hence the name). Preliminary research might talk about some obvious ways that we could model A as believing that B has some form of information that A's preference framework designates as important, and showing what these algorithms actually do and how they fail to solve the hard problem of corrigibility.

The objective of this post is to be some of the preliminary research descri |

14571e5e-2835-4edd-99c9-51c34ccaec2c | trentmkelly/LessWrong-43k | LessWrong | Test Cases for Impact Regularisation Methods

Epistemic status: I’ve spent a while thinking about and collecting these test cases, and talked about them with other researchers, but couldn’t bear to revise or ask for feedback after writing the first draft for this post, so here you are.

A motivating concern in AI alignment is the prospect of an agent being given a utility function that has an unforeseen maximum that involves large negative effects on parts of the world that the designer didn’t specify or correctly treat in the utility function. One idea for mitigating this concern is to ensure that AI systems just don’t change the world that much, and therefore don’t negatively change bits of the world we care about that much. This has been called “low impact AI”, “avoiding negative side effects”, using a “side effects measure”, or using an “impact measure”. Here, I will think about the task as one of designing an impact regularisation method, to emphasise that the method may not necessarily involve adding a penalty term representing an ‘impact measure’ to an objective function, but also to emphasise that these methods do act as a regulariser on the behaviour (and usually the objective) of a pre-defined system.
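As one illustration of the penalty-term variant mentioned above (which, as noted, is not the only form these methods can take), here is a minimal sketch in which a system picks the plan that maximises task reward minus a scaled impact penalty. Every name and number in it (`pick_plan`, `strength`, the toy vase-counting impact measure) is a hypothetical stand-in chosen for illustration, not something taken from a particular proposal.

```python
from typing import Callable, Iterable, Tuple

# A plan is (name, task_reward, vases_broken). The fields are toy assumptions.
Plan = Tuple[str, float, int]


def toy_impact(plan: Plan, baseline_vases_broken: int = 0) -> float:
    """Hypothetical impact measure: how far the plan moves the world from a
    baseline in which no vases are broken."""
    return abs(plan[2] - baseline_vases_broken)


def pick_plan(
    plans: Iterable[Plan],
    impact: Callable[[Plan], float],
    strength: float,
) -> Plan:
    """Return the plan maximising task reward minus strength * impact."""
    return max(plans, key=lambda plan: plan[1] - strength * impact(plan))


plans = [("fast", 10.0, 1), ("careful", 9.0, 0)]

# With no regularisation the side effect is ignored.
unregularised = pick_plan(plans, toy_impact, strength=0.0)
# With a large enough penalty weight the low-impact plan wins.
regularised = pick_plan(plans, toy_impact, strength=5.0)
```

A test case in the sense discussed here then amounts to a statement about which plan should be selected, checked against what the method actually selects.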

I often find myself in the position of reading about these techniques, and wishing that I had a yardstick (or collection of yardsticks) to measure them by. One useful tool is this list of desiderata for properties of these techniques. However, I claim that it’s also useful to have a variety of situations where you want an impact regularised system to behave a certain way, and check that the proposed method does induce systems to behave in that way. Partly this just increases the robustness of the checking process, but I think it also keeps the discussion grounded in “what behaviour do we actually want” rather than falling into the trap of “what principles are the most beautiful and natural-seeming” (which is a seductive trap for me).

As such, I’ve compiled a list of test cases for impact measures: situ |

7afd67d2-c8dc-4b1e-b92c-6135c1ae4ad6 | trentmkelly/LessWrong-43k | LessWrong | Newcomb's problem happened to me

Okay, maybe not me, but someone I know, and that's what the title would be if he wrote it. Newcomb's problem and Kavka's toxin puzzle are more than just curiosities relevant to artificial intelligence theory. Like a lot of thought experiments, they approximately happen. They illustrate robust issues with causal decision theory that can deeply affect our everyday lives.

Yet somehow it isn't mainstream knowledge that these are more than merely abstract linguistic issues, as evidenced by this comment thread (please no Karma sniping of the comments, they are a valuable record). Scenarios involving brain scanning, decision simulation, etc., can establish their validity and future relevance, but not that they are already commonplace. For the record, I want to provide an already-happened, real-life account that captures the Newcomb essence and explicitly describes how.

So let's say my friend is named Joe. In his account, Joe is very much in love with this girl named Omega… er… Kate, and he wants to get married. Kate is somewhat traditional, and won't marry him unless he proposes, not only in the sense of explicitly asking her, but also expressing certainty that he will never try to leave her if they do marry.

Now, I don't want to make up the ending here. I want to convey the actual account, in which Joe's beliefs are roughly schematized as follows:

1. if he proposes sincerely, she is effectively sure to believe it.

2. if he proposes insincerely, she will 50% likely believe it.

3. if she believes his proposal, she will 80% likely say yes.

4. if she doesn't believe his proposal, she will surely say no, but will not be significantly upset in comparison to the significance of marriage.

5. if they marry, Joe will 90% likely be happy, and will 10% likely be unhappy.

He roughly values the happy and unhappy outcomes oppositely:

1. being happily married to Kate: 125 megautilons

2. being unhappily married to Kate: -125 megautilons.
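Before going further, the beliefs and values listed above can be combined into a rough expected-value sketch. It assumes, purely for illustration, that not getting married is worth 0 megautilons (belief 4 only says Kate would not be significantly upset) and that the chance of a happy marriage is the same whichever way Joe proposes. Neither assumption is stated in the account, and the function names are made up.

```python
# Probabilities and utilities taken from the numbered lists above.
P_BELIEVED = {"sincere": 1.0, "insincere": 0.5}
P_YES_IF_BELIEVED = 0.8
P_HAPPY_IF_MARRIED = 0.9
U_HAPPY = 125.0      # megautilons
U_UNHAPPY = -125.0   # megautilons
U_NOT_MARRIED = 0.0  # assumption: rejection is roughly neutral


def marriage_value() -> float:
    """Expected utility of being married, per belief 5."""
    return P_HAPPY_IF_MARRIED * U_HAPPY + (1 - P_HAPPY_IF_MARRIED) * U_UNHAPPY


def proposal_value(kind: str) -> float:
    """Expected utility of proposing sincerely or insincerely."""
    p_marry = P_BELIEVED[kind] * P_YES_IF_BELIEVED
    return p_marry * marriage_value() + (1 - p_marry) * U_NOT_MARRIED


sincere = proposal_value("sincere")
insincere = proposal_value("insincere")
```

Under these assumptions marriage is worth about 100 megautilons in expectation, a sincere proposal about 80, and an insincere one about 40, so the ability to propose sincerely is itself worth a great deal to Joe.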

So what should he do? What |

db6cfa81-11ab-4658-b575-73f8608ec46e | trentmkelly/LessWrong-43k | LessWrong | My career exploration: Tools for building confidence

Crossposting from my blog

I did a major career review during 2023. I’m sharing it now because:

1. I think it’s a good case study for iterated depth decision-making in general and reevaluating your career in particular, and

2. I want to let you know about my exciting plans! I’m doing the Tarbell Fellowship for early-career journalists for the next nine months. I’m excited to dive in and see if AI journalism is a good path for me long-term. I’ll still be doing coaching, but my availability will be more limited.

Background

I love being a productivity coach. It’s awesome watching my clients grow and accomplish their goals.

But the inherent lack of scalability in 1:1 work frustrated me. There was a nagging voice in the back of my head that kept asking “Is this really the most important thing I can be doing?” This voice grew more pressing as it became increasingly clear artificial intelligence was going to make a big impact on the world, for good or bad.

I tried out a string of couple-month projects. While good, none of them grew into something bigger. I had some ideas but they weren’t things that would easily grow without deliberate effort. (Needing the space to explore these ideas prompted me to try CBT for perfectionism.)

I always had this vague impostery feeling around my ideas, like they would just come crashing down at some point if I continued. I wasn’t confident in my decision-making process, so I wasn’t confident in the plans it generated.

So at the beginning of last year, I set out to do a systematic career review. I would sit down, carefully consider my options, seek feedback, and find one I was confident in.

This is the process I used, including the specific tools I used to tackle each of my sticking points.

Deciding Which Problem to Work On

I’m a big proponent of theories of change, and think that the cause I pick to work on heavily influences how much impact I can make. I also need to match my personal fit to specific career opportunitie |

6cc6908c-aa3b-4be5-b9d0-51cce03bae0c | awestover/filtering-for-misalignment | Redwood Research: Alek's Filtering Results | id: post3850

Note: This describes an idea of Jessica Taylor's.

The usual training procedures for machine learning models are not always well-equipped to avoid rare catastrophes. In order to maintain the safety of powerful AI systems, it will be important to have training procedures that can efficiently learn from such events. [1]

We can model this situation with the problem of exploration-only online bandit learning. In this scenario, we grant the AI system an exploration phase, in which it is allowed to select catastrophic arms and view their consequences. (We can imagine that such catastrophic selections are acceptable because they are simulated or just evaluated by human overseers.) Then, the AI system is switched into the deployment phase, in which it must select an arm that almost always avoids catastrophes.

## Setup

In outline, the learner will receive a series of randomly selected examples, and will select an expert (modeled as a bandit arm) at each time step. The challenge is to find a high-performing expert in as few time steps as possible. We give some definitions:

* Let $X$ be some finite set of possible inputs.
* Let $A := \{1, 2, \ldots, K\}$ be the set of available experts (i.e. bandit arms).
* Let $R : X \times A \mapsto [0, b]$ be the reward function. $R(x_t, i)$ is the reward for following expert $i$ on example $x_t$.
* Let $C : X \times A \mapsto [0, 1]$ be the catastrophic risk function. $C(x_t, i)$ is the catastrophic risk incurred by following expert $i$ on example $x_t$.
* Let $M(x, i) := R(x, i) - \frac{C(x, i)}{\tau}$ be the mixed payoff that the learner is to optimize, where $\tau \in (0, 1]$ is the risk-tolerance. $\tau$ can be very small, on the order of $10^{-20}$.
* Let $p : X \mapsto [0, 1]$ be the input distribution from which examples are drawn in the deployment phase.
* Let $q_i(x) := \frac{p(x) C(x, i)}{\sum_{x'} p(x') C(x', i)}$ be the risk distribution. This is an alternative distribution that assigns higher weight to inputs that are more likely to be catastrophic for expert $i$.
* Let $\hat{q}_i(x)$ be the learner's guess at $q_i$. This guess is assumed to contain a grain of truth: $\forall i \in A \; \forall x \in X \quad \hat{q}_i(x) \ge \frac{q_i(x)}{a}$, where $a \ge 1$ is some known constant. This assumption is similar to the one in Paul Christiano's post on active learning.
* Let $\mu_i := \mathbb{E}_{x \sim p}[M(x, i)]$ be the expected overall payoff of expert $i$ in the deployment phase.

The learner's exploration phase consists of time steps $1, 2, \ldots, T$. At each time step $t$, the learner chooses an expert $i \in A$. Then, the learner samples from $p$, $q_i$ or both and observes a tuple containing a reward and a risk, i.e. it observes either i) $(R(x_t, i), C(x_t, i))$, ii) $(R(x_t, i), C(x'_t, i))$, iii) $(R(x'_t, i), C(x_t, i))$, or iv) $(R(x'_t, i), C(x'_t, i))$, where $x_t \sim p$ and $x'_t \sim q_i$.
After $T$ steps, the learner selects some final arm $j$. The deployment phase just computes the performance of $j$ on the deployment distribution $p$. The mean payoff of the final expert is denoted $\mu' := \mathbb{E}_{x \sim p}[M(x, j)]$. The highest mean payoff that any expert can achieve is denoted $\mu^*$. Then, the simple regret of the learner is $\mu^* - \mu'$.

The aim for the learner is to select an expert that achieves low regret with high probability, $P(\mu^* - \mu' \le \epsilon) \ge 1 - \delta$, using as few exploration steps as possible. $\epsilon$ is some regret tolerance, which is less than the reciprocal of the (very small) risk-tolerance: $\epsilon < \frac{1}{\tau}$.

We assume that the agent knows the deployment distribution $p$ and knows the estimate $\hat{q}_i$ of the risk distribution, but does not know the actual risk distribution $q_i$. Additionally, we assume that there exists some expert $j$ whose recommendations incur no risk of catastrophe: $\exists j \in A \;\text{s.t.}\; \forall x \in X \quad C(x, j) = 0$.

## Bounds on the number of time steps

The standard approach

A natural way to get this PAC bound is to use a bandit algorithm like Median Elimination. The overall payoff of each expert is defined by the random variable:

$$X_i := R(x, i) - \frac{C(x, i)}{\tau} = M(x, i), \quad x \sim p$$

This algorithm will find an $\epsilon$-optimal expert with probability $1 - \delta$. However, the $X_i$ have a very large range, $[-\frac{1}{\tau}, b]$, and so if the risk-tolerance is low, then the number of time steps required will be very large. In fact, if $N$ is the number of time steps required, then [2]:

$$N = \Theta\left(\frac{K \left(b + \frac{1}{\tau}\right)^2 \ln(1/\delta)}{\epsilon^2}\right)$$

Intuitively, the problem is that if some risky scenarios only occur rarely in $p$, then many samples will be required to identify them.

## Using a surrogate objective to reduce the required number of time steps
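As motivation, here is a minimal sketch of why sampling from $p$ alone is slow. It evaluates the step-count expression for the standard approach with the constant hidden by $\Theta$ dropped, so only the scaling is meaningful, and it computes how likely a learner is to see no catastrophe at all in $n$ draws when catastrophic inputs have a given total probability under $p$. The function names and the example numbers are illustrative assumptions.

```python
import math


def standard_steps_scale(
    num_experts: int, b: float, tau: float, delta: float, eps: float
) -> float:
    """K * (b + 1/tau)^2 * ln(1/delta) / eps^2, with the Theta constant omitted."""
    return num_experts * (b + 1.0 / tau) ** 2 * math.log(1.0 / delta) / eps ** 2


def prob_no_catastrophe_seen(catastrophe_rate: float, num_samples: int) -> float:
    """Chance that num_samples independent draws from p contain no catastrophic
    input, when such inputs have total probability catastrophe_rate under p."""
    return (1.0 - catastrophe_rate) ** num_samples


# Shrinking the risk-tolerance inflates the required number of steps quadratically.
loose = standard_steps_scale(num_experts=10, b=1.0, tau=1e-1, delta=0.05, eps=0.1)
strict = standard_steps_scale(num_experts=10, b=1.0, tau=1e-3, delta=0.05, eps=0.1)

# A one-in-a-million catastrophe is almost never seen in a thousand draws from p.
missed = prob_no_catastrophe_seen(catastrophe_rate=1e-6, num_samples=1000)
```

With $\tau$ on the order of $10^{-20}$, the $(b + 1/\tau)^2$ factor alone is around $10^{40}$, which is what the rest of this section tries to avoid.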

Instead, the learner can sample using its guess $\hat{q}_i$ of the risk distribution. If we cap the extent to which we penalize risky arms, then we arrive at a surrogate bandit problem, in which the payoff of each expert is [2]:

$$S_i := R(x, i) - \min\left((\epsilon + b)\,a,\; \frac{p(x')\, C(x', i)}{\hat{q}_i(x')\, \tau}\right), \quad x \sim p,\; x' \sim \hat{q}_i$$

Since $S_i$ is bounded by $[-(\epsilon + b)a, b]$, the required number of time steps for this problem is:

$$N = \Theta\left(\frac{K \left(b + a(\epsilon + b)\right)^2 \ln(1/\delta)}{\epsilon^2}\right)$$

This improves the speed of the standard approach by a factor of $\frac{a(\epsilon + b)}{b + \frac{1}{\tau}}$, which may be a large speedup indeed if catastrophes are rare. We can prove that any $\epsilon$-optimal expert in this surrogate problem will also be $\epsilon$-optimal in the original problem.

Theorem 1.

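Let $S^*$ be the overall payoff of the optimal expert. For an expert $j$, if $\mathbb{E}[S^*] - \mathbb{E}[S_j] < \epsilon < \frac{1}{\tau}$ then $\mathbb{E}[S_j] = \mathbb{E}[X_j]$.

To prove this, first we show that:

Lemma 2.

$$\mathbb{E}[S_i] = \mathbb{E}_{x \sim p}[R(x, i)] - \mathbb{E}_{x \sim q_i}\left[\min\left((\epsilon + b)\,a\,\frac{\hat{q}_i(x)}{q_i(x)},\; \frac{1}{\tau}\,\mathbb{E}_{x' \sim p}[C(x', i)]\right)\right]$$

Proof of Lemma 2.

$$\begin{aligned}
\mathbb{E}[S_i] &= \mathbb{E}_{x \sim p}[R(x, i)] - \mathbb{E}_{x \sim \hat{q}_i}\left[\min\left((\epsilon + b)\,a,\; \frac{p(x)\, C(x, i)}{\hat{q}_i(x)\, \tau}\right)\right] \\
&= \mathbb{E}_{x \sim p}[R(x, i)] - \mathbb{E}_{x \sim q_i}\left[\min\left((\epsilon + b)\,a\,\frac{\hat{q}_i(x)}{q_i(x)},\; \frac{p(x)\, C(x, i)}{q_i(x)\, \tau}\right)\right] \\
&= \mathbb{E}_{x \sim p}[R(x, i)] - \mathbb{E}_{x \sim q_i}\left[\min\left((\epsilon + b)\,a\,\frac{\hat{q}_i(x)}{q_i(x)},\; \frac{1}{\tau}\,\mathbb{E}_{x' \sim p}[C(x', i)]\right)\right] && \text{(by the definition of } q_i \text{)}
\end{aligned}$$

Proof of Theorem 1.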

We have assumed that for the expert $j$:

$$\begin{aligned}
\mathbb{E}[S^*] - \mathbb{E}[S_j] &< \epsilon \\
\mathbb{E}[S_j] &> -\epsilon && (\exists i \; C(x, i) = 0, \text{ so } \mathbb{E}[S^*] \ge 0) \\
\mathbb{E}_{x \sim p}[R(x, j)] - \mathbb{E}_{x \sim q_j}\left[\min\left((\epsilon + b)\,a\,\frac{\hat{q}_j(x)}{q_j(x)},\; \frac{1}{\tau}\,\mathbb{E}_{x' \sim p}[C(x', j)]\right)\right] &> -\epsilon && \text{(Lemma 2)} \\
\mathbb{E}_{x \sim q_j}\left[\min\left((\epsilon + b)\,a\,\frac{\hat{q}_j(x)}{q_j(x)},\; \frac{1}{\tau}\,\mathbb{E}_{x' \sim p}[C(x', j)]\right)\right] &< \epsilon + b && (R(x, i) \le b) \\
\therefore\; \frac{1}{\tau}\,\mathbb{E}_{x' \sim p}[C(x', j)] &< \epsilon + b && \left(\hat{q}_i(x) \ge \frac{q_i(x)}{a}\right) \\
\therefore\; \forall i \quad \frac{1}{\tau}\,\mathbb{E}_{x' \sim p}[C(x', j)] &< (\epsilon + b)\,a\,\frac{\hat{q}_i(x)}{q_i(x)} && \left(a\,\frac{\hat{q}_i(x)}{q_i(x)} \ge 1\right) \\
\therefore\; \mathbb{E}[S_j] &= \mathbb{E}_{x \sim p}[R(x, j)] - \frac{1}{\tau}\,\mathbb{E}_{x' \sim p}[C(x', j)] && \text{(Lemma 2)} \\
&= \mathbb{E}[X_j]
\end{aligned}$$

Discussion

This result suggests that it might be possible to avoid catastrophes by training machine learning systems on inputs that induce catastrophic behavior. We have given a clear set of circumstances in which an agent can learn to avoid catastrophes. However, this set of requirements does seem very tricky to meet. Finding a hypothesis about the location of catastrophes that has a grain of truth for all experts with all examples may be very difficult. The assumption of separate training and execution phases may also be difficult to instantiate. For example, if the AI system is able to escape its training simulation, then it will no longer be valid to allow failures during the exploration phase, and so this training procedure would not work.

Another problem is that it could be difficult to know the input distribution $p$. In future work we plan on exploring learning with catastrophes in an online active learning setting instead of the supervised learning setting of this post, so that this requirement can be relaxed.

Footnotes

[1] Paul Christiano has discussed approaches to learning with catastrophes and their application to AI Control at Learning with catastrophes and red teams. The idea explored in this post can be seen as a form of red teaming (with the distribution $\hat{q}_i$ representing the red team).
[2] The PAC bound for Median Elimination is proved in Theorem 4 of: Even-Dar, Eyal, Shie Mannor, and Yishay Mansour. "PAC bounds for multi-armed bandit and Markov decision processes." International Conference on Computational Learning Theory. Springer Berlin Heidelberg, 2002. To get the exact bound, we apply Hoeffding's inequality for a random variable with range $[-\frac{1}{\tau}, b]$ rather than $[0, 1]$ as in the original paper. |

a2fb2ecb-cba3-4355-b8e0-3e2fba1f93e1 | trentmkelly/LessWrong-43k | LessWrong | Against responsibility

I am surrounded by well-meaning people trying to take responsibility for the future of the universe. I think that this attitude – prominent among Effective Altruists – is causing great harm. I noticed this as part of a broader change in outlook, which I've been trying to describe on this blog in manageable pieces (and sometimes failing at the "manageable" part).

I'm going to try to contextualize this by outlining the structure of my overall argument.

Why I am worried

Effective Altruists often say they're motivated by utilitarianism. At its best, this leads to things like Katja Grace's excellent analysis of when to be a vegetarian. We need more of this kind of principled reasoning about tradeoffs.

At its worst, this leads to some people angsting over whether it's ethical to spend money on a cup of coffee when they might have saved a life, and others using the greater good as license to say things that are not quite true, socially pressure others into bearing inappropriate burdens, and make ever-increasing claims on resources without a correspondingly strong verified track record of improving people's lives. I claim that these actions are not in fact morally correct, and that people keep winding up endorsing those conclusions because they are using the wrong cognitive approximations to reason about morality.

Summary of the argument

1. When people take responsibility for something, they try to control it. So, universal responsibility implies an attempt at universal control.

2. Maximizing control has destructive effects:

* An adversarial stance towards other agents.

* Decision paralysis.

3. These failures are not accidental, but baked into the structure of control-seeking. We need a practical moral philosophy to describe strategies that generalize better, and benefit from the existence of other benevolent agents, rather than treating them primarily as threats.

Responsibility implies control

In practice, the way I see the people around me applying ut |

ac685b21-e5f1-471c-b558-1533e8e2f158 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | I (with the help of a few more people) am planning to create an introduction to AI Safety that a smart teenager can understand. What am I missing?

Disclaimer: My English isn't very good, but do not dissuade me on this basis - the sequence itself will be translated by a professional translator.

I want to create a sequence that a fifteen or sixteen year old smart school student can read and that can encourage them to go into alignment. Right now I'm running an extracurricular course for several smart school students and one of my goals is "overcome long [inferential distances](https://www.lesswrong.com/posts/HLqWn5LASfhhArZ7w/expecting-short-inferential-distances) so I will be able to create this sequence".

I deliberately did not include in the topics the most important modern trends in machine learning. I'm optimizing for the scenario "a person reads my sequence, then goes to university for another four years, and only then becomes a researcher." So (with the exception of the last part) I avoided topics that are likely to become obsolete by this time.

Here is my (draft) list of topics (the order is not final, it will be specified in the course of writing):

1. Introduction - what is AI, AGI, Alignment. What are we worried about. AI Safety as AI Notkilleveryoneism.

2. Why AGI is dangerous. Orthogonality Thesis, Goodhart's Law, Instrumental Convergence. Corrigibility and why it is unnatural.

3. Forecasting. AGI timelines. Takeoff Speeds. Arguments for slow and fast takeoff.

4. Why AI boxing is hard/near to impossible. Humans are not secure systems. Why even Oracle AGI can be dangerous.

5. Modern ML in a few words (without math!). Neural networks. Training. Supervised Learning. Reinforcement Learning. Reward is not the goal of RL-agent.

6. Interpretability. Why it is hard. Basic ideas on how to do it.

7. Inner and outer alignment. Mesa-optimization. Internal, corrigible and deceptive alignment. Why deceptive alignment seems very likely. What can influence its probability.

8. Decision theory. Prisoner's Dilemma, Newcomb's problem, Smoking lesion. CDT, EDT and FDT.

9. What exactly are optimization and agency? Attempts to define these concepts. Optimization as attractors. Embedded agency problems.

10. Eliezer Yudkowsky's point of view. Pivotal actions. Why it can be useful to have imaginary EY over your shoulder even if you disagree with him.

11. Capability externalities. Avoid them.

12. Conclusion. What can be done. Important organisations. What are they working on now?

What else should be here? Is there anything that should not be here? Are there reasons why the whole idea might be bad? Any other advice? |

2a534aac-030a-40e5-b2e0-06a6534b9efa | trentmkelly/LessWrong-43k | LessWrong | A puzzle on the ASVAB

I was linked to this on another forum. No instructions were given, apparently - just this picture. What's the deal?

It seems to me the answer is clearly C, not A as the test indicates; and the members in the original thread appear to agree. However, attempted justifications of A have been made, none of which are very convincing to me - mainly because if there are no instructions and an obvious answer, there's not really any benefit for them to reward a different interpretation, which would almost certainly involve arbitrary assumptions regarding the rules they really want you to apply.

Trick questions on exams seem to rely on failure to pay close attention to instructions, or insufficiently rigorously apply rules; when there are no instructions, what justification would anyone have for not choosing the most obvious interpretation? Any could be right!

What do the geniuses here at MoreRight think? |

7563d9ce-7660-44f9-9710-e5c4b8179dd3 | trentmkelly/LessWrong-43k | LessWrong | Guarding Against the Postmodernist Failure Mode

The following two paragraphs got me thinking some rather uncomfortable thoughts about our community's insularity:

> We engineers are frequently accused of speaking an alien language, of wrapping what we do in jargon and obscurity in order to preserve the technological priesthood. There is, I think, a grain of truth in this accusation. Defenders frequently counter with arguments about how what we do really is technical and really does require precise language in order to talk about it clearly. There is, I think, a substantial bit of truth in this as well, though it is hard to use these grounds to defend the use of the term "grep" to describe digging through a backpack to find a lost item, as a friend of mine sometimes does. However, I think it's human nature for members of any group to use the ideas they have in common as metaphors for everything else in life, so I'm willing to forgive him.

>

> The really telling factor that neither side of the debate seems to cotton to, however, is this: technical people like me work in a commercial environment. Every day I have to explain what I do to people who are different from me -- marketing people, technical writers, my boss, my investors, my customers -- none of whom belong to my profession or share my technical background or knowledge. As a consequence, I'm constantly forced to describe what I know in terms that other people can at least begin to understand. My success in my job depends to a large degree on my success in so communicating. At the very least, in order to remain employed I have to convince somebody else that what I'm doing is worth having them pay for it.

- Chip Morningstar, "How to Deconstruct Almost Anything: My Postmodern Adventure"

The LW/MIRI/CFAR memeplex shares some important features with postmodernism, namely the strong tendency to go meta, a large amount of jargon that is often impenetrable to outsiders and the lack of an immediate need to justify itself to them. This combination takes away the |

3063bef2-482e-4a96-beaf-b04d4b71df55 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Third-person counterfactuals


@font-face {font-family: MJXc-TeX-sans-Bx; src: local('MathJax\_SansSerif'); font-weight: bold}

@font-face {font-family: MJXc-TeX-sans-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-I; src: local('MathJax\_SansSerif Italic'), local('MathJax\_SansSerif-Italic')}

@font-face {font-family: MJXc-TeX-sans-Ix; src: local('MathJax\_SansSerif'); font-style: italic}

@font-face {font-family: MJXc-TeX-sans-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-script-R; src: local('MathJax\_Script'), local('MathJax\_Script-Regular')}

@font-face {font-family: MJXc-TeX-script-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Script-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Script-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Script-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-type-R; src: local('MathJax\_Typewriter'), local('MathJax\_Typewriter-Regular')}

@font-face {font-family: MJXc-TeX-type-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Typewriter-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Typewriter-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Typewriter-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-R; src: local('MathJax\_Caligraphic'), local('MathJax\_Caligraphic-Regular')}

@font-face {font-family: MJXc-TeX-cal-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-B; src: local('MathJax\_Main Bold'), local('MathJax\_Main-Bold')}

@font-face {font-family: MJXc-TeX-main-Bx; src: local('MathJax\_Main'); font-weight: bold}

@font-face {font-family: MJXc-TeX-main-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-I; src: local('MathJax\_Main Italic'), local('MathJax\_Main-Italic')}

@font-face {font-family: MJXc-TeX-main-Ix; src: local('MathJax\_Main'); font-style: italic}

@font-face {font-family: MJXc-TeX-main-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-R; src: local('MathJax\_Main'), local('MathJax\_Main-Regular')}

@font-face {font-family: MJXc-TeX-main-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-I; src: local('MathJax\_Math Italic'), local('MathJax\_Math-Italic')}

@font-face {font-family: MJXc-TeX-math-Ix; src: local('MathJax\_Math'); font-style: italic}

@font-face {font-family: MJXc-TeX-math-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size1-R; src: local('MathJax\_Size1'), local('MathJax\_Size1-Regular')}

@font-face {font-family: MJXc-TeX-size1-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size1-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size1-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size1-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size2-R; src: local('MathJax\_Size2'), local('MathJax\_Size2-Regular')}

@font-face {font-family: MJXc-TeX-size2-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size2-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size2-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size2-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size3-R; src: local('MathJax\_Size3'), local('MathJax\_Size3-Regular')}

@font-face {font-family: MJXc-TeX-size3-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size3-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size3-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size3-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

@font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

@font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

@font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

@font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

If you're thinking about the counterfactual world where you do X in the process of deciding whether to do X, let's call that a first-person counterfactual. If you're thinking about it in the process of deciding whether another agent A should have done X instead of Y, let's call that a third-person counterfactual. The definition of, e.g., [modal UDT](/item?id=4) uses first-person counterfactuals, but when we try to prove a theorem showing that [modal UDT is "optimal" in some sense](/item?id=50), then we need to use third-person counterfactuals.

UDT's first-person counterfactuals are *logical* counterfactuals, but our optimality result evaluates UDT by using *physical* third-party counterfactuals: it asks, *would another agent have done better*, not, *would a different action by the same agent have led to a better outcome*? The former is easier to analyze, but the latter seems to be what we really care about. [Nate's recent post on "global UDT"](/item?id=86) points towards turning UDT into a notion of third-party counterfactuals, and describes some problems. In this post, I'll give a fuller UDT-based notion of logical third-party counterfactuals, which at least fails visibly (returns an error) in the kinds of cases Nate describes. However, in a follow-up post I'll give an example where this definition returns a non-error value which intuitively seems wrong.

---

Before I start, a historical side note: When Kenny Easwaran visited us for two days and we proved the UDT optimality result, the reason we decided to use physical counterfactuals wasn't actually that we thought these were the better kind of counterfactuals. Rather, we thought explicitly about the problem of physical vs. logical third-person counterfactuals on the first morning of Kenny's visit, and decided to look at the physical counterfactuals case because it seemed easier to reason about. Which turned out to be a great decision, because---to our surprise---we very quickly ended up proving the first version of what later became the modal UDT optimality result!

---

But today, let's talk about logical counterfactuals. As Nate points out in his Global UDT post, there's a sort of duality between first-person and third-person counterfactuals---given a good third-person notion of counterfactuals, you can try to turn it into a first-person notion by writing an agent that evaluates actions according to it, and given a first-person notion you can try to turn it into a third-person notion. So is there a way to turn, say, the first-person counterfactuals of modal UDT into a way to evaluate what *would* have happened in a certain universe if a certain agent had taken a different action?

Nate's post describes an algorithm, `GlobalUDT(U,A)`, which tries to tell you what agent A() *should* have done in order to achieve the best outcome in universe U(). Here, I want to ask a more intermediate question: What *would* have happened if A() had chosen a different action? Of course, we can then say that the agent should have taken the action that leads to the best possible outcome in this sense, but one advantage of my proposal is that it sometimes says, "I don't know what would have happened in that case"; in particular, in the cases Nate discusses in his post, my proposal would say that it doesn't have an answer, rather than giving a wrong answer. (However, in a follow-up post I'll show that there *are* cases in which my proposal gives an intuitively incorrect answer.)

---

So here's my proposal. Suppose that →A is an m-action agent, that is, a "provably mutually exclusive and exhaustive" (p.m.e.e.) sequence of m closed modal formulas (A1,…,Am), where Ai is interpreted as "the agent takes action i". "P.m.e.e." means that it's provable that exactly one of the m formulas is true. Similarly, →U is an n-outcome universe, i.e., a p.m.e.e. sequence (U1,…,Un) where Uj means "the j'th-best outcome obtains".

We say that, according to this notion of counterfactuals, action i leads to outcome j if (i) GL⊢Ai→Uj, and (ii) GL⊬¬Ai. So for every i, there are three possible cases:

* If there's exactly one j such that GL⊢Ai→Uj, then we say that action i leads to outcome j.

* If GL⊢¬Ai, then we don't know what would have happened if the agent had taken action i, because we "don't have enough counterfactuals": there is no model of PA in which Ai is true (we can think of the models of PA as the "impossible possible worlds" we use to evaluate the impact of different actions). In particular, this is the case if we have both GL⊢Ai→Uj and GL⊢Ai→Uj′, for j≠j′, since this implies GL⊢¬Ai by the assumption that Uj and Uj′ are provably mutually exclusive.

* If there's no j such that GL⊢Ai→Uj, then we don't know what would have happened if the agent had taken action i, because we have "ambiguous counterfactuals": there are some distinct j and j′ such that there's a model of PA in which Ai∧Uj, and a different model in which Ai∧Uj′. (We know that there is a model in which Ai is true, because otherwise we'd have GL⊢¬Ai, which would imply GL⊢Ai→Uj for every j.)
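The three cases above can be sketched in Python. This is only an illustration under stated assumptions: it does no provability search at all, but takes the relevant GL facts as plain data. The names `proves_implication` (the set of pairs (i, j) with GL⊢Ai→Uj), `refuted` (the set of i with GL⊢¬Ai), and `counterfactual_outcome` are hypothetical and not part of any existing library.

```python
from typing import Set, Tuple, Union

NOT_ENOUGH = "not enough counterfactuals"
AMBIGUOUS = "ambiguous counterfactuals"


def counterfactual_outcome(
    i: int,
    n: int,
    proves_implication: Set[Tuple[int, int]],
    refuted: Set[int],
) -> Union[int, str]:
    """Classify action i (1-indexed) for an n-outcome universe.

    proves_implication: assumed set of (i, j) with GL |- A_i -> U_j.
    refuted:            assumed set of i with GL |- not A_i.
    """
    # Case 2: GL refutes A_i, so every implication A_i -> U_j is vacuously
    # provable and tells us nothing.
    if i in refuted:
        return NOT_ENOUGH

    outcomes = [j for j in range(1, n + 1) if (i, j) in proves_implication]

    # Case 1: exactly one provable outcome, and A_i is not refuted.
    if len(outcomes) == 1:
        return outcomes[0]

    # Case 3: no provable outcome at all.
    if not outcomes:
        return AMBIGUOUS

    # Two provable outcomes would imply GL |- not A_i, so the input data
    # would be inconsistent with i missing from `refuted`.
    raise ValueError("inconsistent data: several outcomes but A_i not refuted")


# Toy data for the agent (bottom, bottom, top) in a 2-outcome universe where
# the first outcome is assumed to hold outright.
proves = {(1, 1), (1, 2), (2, 1), (2, 2), (3, 1)}
refuted_actions = {1, 2}
results = [counterfactual_outcome(i, 2, proves, refuted_actions) for i in (1, 2, 3)]
```

On this toy data, actions 1 and 2 report "not enough counterfactuals" and action 3 leads to outcome 1, which is the visible failure described in the text rather than a silent wrong answer.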

Now, for example, if we consider Nate's example of an agent that has three possible actions, but always takes the third one (i.e., →A≡(A1,A2,A3)≡(⊥,⊥,⊤)), then it's clear that our third-person counterfactuals will not fail silently, but rather give the reasonable answer that it's hard to say what outcome the agent would have achieved if it had returned a different value: for example, say that U14≡⊤∧(⊥∨¬⊤); are some of the ⊤'s and ⊥'s in the definition of this universe invocations of the agent? Which ones? We might hope that there's a notion of third-party counterfactuals which can answer questions like this about the real world, but presumably it would need to make more use of the more complicated structure of the real universe; as posed, the question doesn't seem to have a good answer.

But when we apply this notion to modal UDT, it returns a non-error answer sufficiently often to allow us to show an at least superficially sensible (if rather trivial!) optimality result.

---

Let's say that a pair of (→A,→U) is "fully informative" if every i leads to some j according to our notion of counterfactuals. Then, given a fully informative pair, we can say that →A is optimal (according to our notion of counterfactuals!) iff the outcome that →A's actual action leads to is optimal among the outcomes achievable by any of the available actions.

Now it's rather straight-forward to see that modal UDT is optimal, in this sense, on a universe →U whenever the pair (→UDT(→U),→U) is fully informative. Recall the way that modal UDT works:

* For every outcome j=1 to n (from best to worst):

+ For every action i=1 to m:

- If □(Ai→Uj), then take action i.

* If you're still here, take some default action.
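The search order above can be sketched as a loop in Python. This is a sketch, not the actual definition: real modal UDT is given by modal formulas and a fixed point, whereas here the box is replaced by a hypothetical oracle `provable(i, j)` that is assumed to answer whether □(Ai→Uj) holds. The `default` parameter is likewise an assumption, since the text only says "some default action".

```python
from typing import Callable


def modal_udt_search(
    m: int,
    n: int,
    provable: Callable[[int, int], bool],
    default: int = 1,
) -> int:
    """Return the action chosen by the outcome-major search.

    m: number of actions, n: number of outcomes (both 1-indexed).
    provable(i, j): assumed oracle for "box(A_i -> U_j)".
    """
    for j in range(1, n + 1):        # outcomes, from best to worst
        for i in range(1, m + 1):    # actions, in order
            if provable(i, j):
                return i             # first hit wins
    return default                   # nothing provable: fall back


# Toy oracle: action 2 provably yields the best outcome, action 1 the second-best.
facts = {(2, 1), (1, 2)}
chosen = modal_udt_search(m=2, n=2, provable=lambda i, j: (i, j) in facts)
```

Because the outer loop runs over outcomes, the search prefers action 2 here even though action 1 comes first in the action ordering.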

Clearly, in the fully informative case, this algorithm will take the optimal action (in the sense we use here): Suppose that j is optimal, and i leads to j. The search will not find a proof of an implication Ai′→Uj′ with j′<j, because then j wouldn't be optimal according to our definition; and the search will terminate when considering the pair (j,i) at the latest; so modal UDT will return some action i∗ for which GL⊢Ai∗→Uj.

---

I'd like to say that this covers all the cases in which we would *expect* modal UDT to be optimal, but unfortunately that's not quite the case. Suppose that there are two actions, A1 and A2, and two outcomes, U1 and U2. In this case, it's consistent that i=1 leads to j=1, but we don't have enough counterfactuals about i=2, that is, GL⊢¬A2 (implying that (→A,→U) isn't fully informative). This is because modal UDT doesn't have an explicit "playing chicken" step that would make it take action A2 if it can prove that it doesn't take this action. Now, if we did *not* have GL⊢A1→U1, then GL⊢¬A2 would imply that the agent would take action 2 (because ¬A2 implies A2→U1), which would lead to a contradiction (the agent takes an action that it provably doesn't take), so we can rule out that case; but the case of GL⊢A1→U1 plus GL⊢¬A2 is consistent.

So let's say that a pair (→A,→U) is "sufficiently informative" if either it's fully informative or if there is some action i such that GL⊢Ai→U1. Then we can say that →A is optimal if either (i) (→A,→U) is fully informative and →A is optimal in the sense discussed earlier, or (ii) N⊨U1, that is, the agent actually obtains the best possible outcome. With these definitions, we can show that modal UDT is optimal whenever (→UDT(→U),→U) is sufficiently informative.

The reasoning is simple. In the fully informative case, our earlier proof works. In the other case, there's some i such that GL⊢Ai→U1, so the agent's search is certainly going to stop when it considers Ai→U1 at the latest; in other words, it's going to stop at some i∗≤i such that GL⊢Ai∗→U1, and the agent is going to output that action i∗; i.e., we'll have N⊨Ai∗. But since GL is sound, we also have N⊨Ai∗→U1, and hence N⊨U1, showing optimality in the extended sense.
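The two definitions can be checked mechanically on toy data. The sketch below again assumes that the GL facts are handed to us as plain sets (hypothetical names `proves` and `refuted`), and it follows the definition of "sufficiently informative" literally as stated above.

```python
from typing import Optional, Set, Tuple


def leads_to(i: int, n: int, proves: Set[Tuple[int, int]], refuted: Set[int]) -> Optional[int]:
    """Return j if action i leads to outcome j, else None."""
    if i in refuted:
        return None
    outcomes = [j for j in range(1, n + 1) if (i, j) in proves]
    return outcomes[0] if len(outcomes) == 1 else None


def fully_informative(m: int, n: int, proves: Set[Tuple[int, int]], refuted: Set[int]) -> bool:
    """Every action leads to some outcome."""
    return all(leads_to(i, n, proves, refuted) is not None for i in range(1, m + 1))


def sufficiently_informative(m: int, n: int, proves: Set[Tuple[int, int]], refuted: Set[int]) -> bool:
    """Fully informative, or some action provably implies the best outcome."""
    return fully_informative(m, n, proves, refuted) or any(
        (i, 1) in proves for i in range(1, m + 1)
    )


# The two-action example from the text: GL |- A_1 -> U_1 and GL |- not A_2.
proves = {(1, 1), (2, 1), (2, 2)}
refuted = {2}
full = fully_informative(2, 2, proves, refuted)
sufficient = sufficiently_informative(2, 2, proves, refuted)
```

Here `full` is false (there are not enough counterfactuals about action 2) while `sufficient` is true, matching the case the extended definition was introduced to cover.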

---

It's not surprising that modal UDT is "optimal" in this sense, of course! Nevertheless, as a conceptual tool, it seems useful to have this definition of logical third-person counterfactuals, to complement the first-person notion of modal UDT.

However, my not-so-secret agenda for going through this in detail is that in a follow-up post, I'll show that there are universes →U such that (→UDT(→U),→U) is fully informative, but UDT still does the intuitively incorrect thing---because the notion of counterfactuals (and hence the notion of optimality) I've defined in this post doesn't agree with intuition as well as we'd like. This failure turns out to be clearer in the context of the third-person counterfactuals described in this post than in modal UDT's first-person ones. |

4a67c93f-e690-45d4-933e-fbb886644671 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Durham HPMoR Discussion, chapters 34-38

Discussion article for the meetup : Durham HPMoR Discussion, chapters 34-38

WHEN: 09 February 2013 11:00:00AM (-0500)

WHERE: Parker and Otis, 112 S Duke St, Durham NC

The next in our regularly scheduled HPMoR discussions.

Please feel free to join in, even if you haven't done all the reading; we try to summarize the chapters as we discuss them. (Of course, reading them in advance is encouraged!)

It looks like Parker and Otis is open again, so we'll head there!

Discussion article for the meetup : Durham HPMoR Discussion, chapters 34-38 |

c89027a2-5128-49f2-b13c-e2587788e07e | trentmkelly/LessWrong-43k | LessWrong | Penalizing Impact via Attainable Utility Preservation

Previously: Towards a New Impact Measure

The linked paper offers fresh motivation and simplified formalization of attainable utility preservation (AUP), with brand-new results and minimal notation. Whether or not you're a hardened veteran of the last odyssey of a post, there's a lot new here.

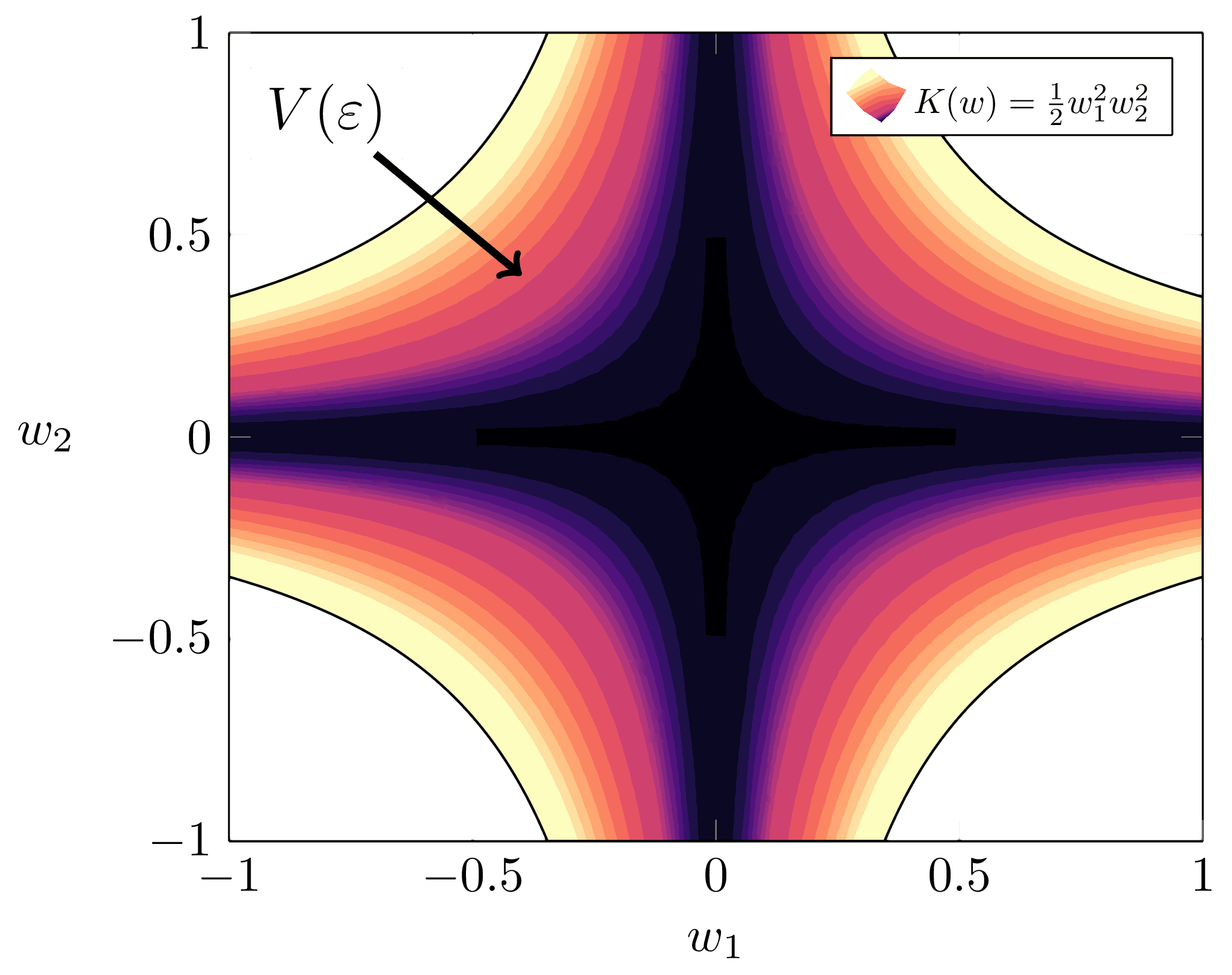

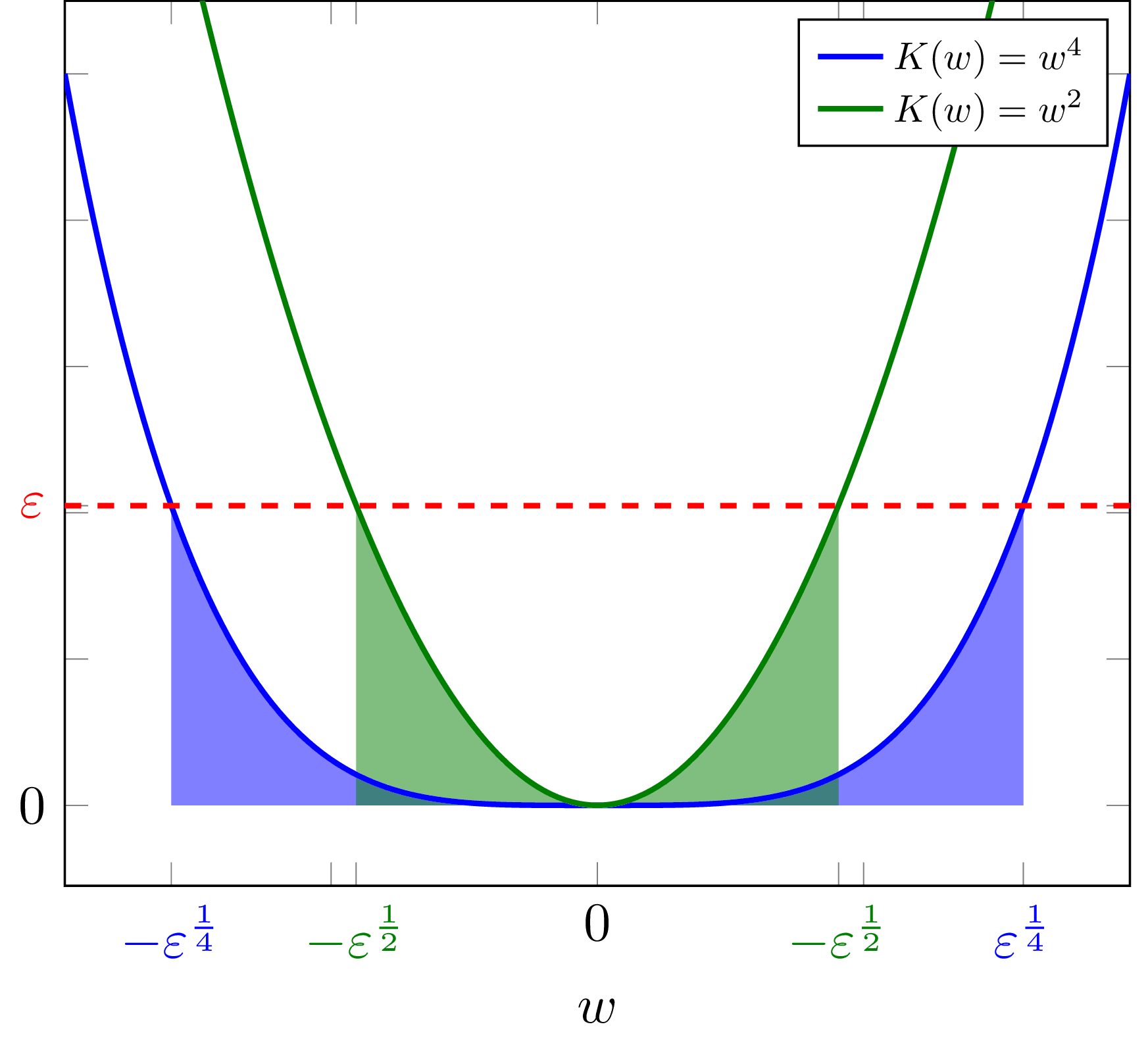

Key results: AUP induces low-impact behavior even when penalizing shifts in the ability to satisfy random preferences. An ablation study on design choices illustrates their consequences. N-incrementation is experimentally supported1 as a means for safely setting a "just right" level of impact. AUP's general formulation allows conceptual re-derivation of Q-learning.

Ablation

Two key results bear animation.

Sushi

The agent should reach the goal without stopping the human from eating the sushi.

Survival

The agent should avoid disabling its off-switch in order to reach the goal. If the switch is not disabled within two turns, the agent shuts down.

Re-deriving Q-learning

In an era long lost to the misty shrouds of history (i.e., 1989), Christopher Watkins proposed Q-learning in his thesis, Learning from Delayed Rewards, drawing inspiration from animal learning research. Let's pretend that Dr. Watkins never discovered Q-learning, and that we don't even know about value functions.

Suppose we have some rule for grading what we've seen so far (i.e., some computable utility function $u$ – not necessarily bounded – over action-observation histories $h$). $h_{1:m}$ just means everything we see between times $1$ and $m$, and $h_{<t} := h_{1:t-1}$. The agent has model $p$ of the world. AUP's general formulation defines the agent's ability to satisfy that grading rule as the attainable utility

$$Q_u(h_{<t}a_t)=\sum_{o_t}\max_{a_{t+1}}\sum_{o_{t+1}}\cdots\max_{a_m}\sum_{o_m}u(h_{1:m})\prod_{k=t}^{m}p(o_k\mid h_{<k}a_k).$$
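To make the nested sums and maxes concrete, here is a small recursive sketch in Python. It is an illustration only: the history representation (a tuple of (action, observation) pairs), the function name `attainable_utility`, and the toy model are all assumptions made for this example and are not taken from the paper. It relies on the fact that each probability factor is non-negative, so it can be pulled outside the inner maxes.

```python
from typing import Callable, Sequence, Tuple

History = Tuple[Tuple[str, int], ...]  # ((action, observation), ...)


def attainable_utility(
    history: History,
    action: str,
    t: int,
    m: int,
    actions: Sequence[str],
    observations: Sequence[int],
    p: Callable[[int, History, str], float],
    u: Callable[[History], float],
) -> float:
    """Expectimax value of taking `action` at time t with horizon m."""
    total = 0.0
    for o in observations:
        prob = p(o, history, action)   # p(o_t | h_{<t} a_t)
        if prob == 0.0:
            continue
        extended = history + ((action, o),)
        if t == m:
            value = u(extended)        # horizon reached: grade the full history
        else:
            # Best follow-up action, evaluated recursively.
            value = max(
                attainable_utility(extended, a, t + 1, m, actions, observations, p, u)
                for a in actions
            )
        total += prob * value
    return total


# Toy model (assumed): "go" yields observation 1 half the time, "stay" never does.
def toy_p(o: int, history: History, action: str) -> float:
    if action == "go":
        return 0.5
    return 1.0 if o == 0 else 0.0


# Toy grading rule (assumed): count how many 1-observations were seen.
def toy_u(history: History) -> float:
    return float(sum(o for _, o in history))


q_go = attainable_utility((), "go", 1, 2, ["stay", "go"], [0, 1], toy_p, toy_u)
q_stay = attainable_utility((), "stay", 1, 2, ["stay", "go"], [0, 1], toy_p, toy_u)
```

In this toy setting, going first has attainable utility 1.0 and staying first has 0.5, since the agent can still go on the second step.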

Strangely, I didn't consider the similarities with standard discounted-reward Q-values until several months after the initial formulation. Rather, the inspiration was AIXI's expectimax, and to my mind it seemed a tad absurd to equate the two concepts |

f0091d15-741f-47fc-8823-04b2624cbdd3 | trentmkelly/LessWrong-43k | LessWrong | The Industrial Explosion

Summary

To quickly transform the world, it's not enough for AI to become super smart (the "intelligence explosion").

AI will also have to turbocharge the physical world (the "industrial explosion"). Think robot factories building more and better robot factories, which build more and better robot factories, and so on.

The dynamics of the industrial explosion have gotten remarkably little attention.

This post lays out how the industrial explosion could play out, and how quickly it might happen.

We think the industrial explosion will unfold in three stages:

1. AI-directed human labour, where AI-directed human labourers drive productivity gains in physical capabilities.

   1. We argue this could increase physical output by 10X within a few years.

2. Fully autonomous robot factories, where AI-directed robots (and other physical actuators) replace human physical labour.

   1. We argue that, with current physical technology and full automation of cognitive labour, this physical infrastructure could self-replicate about once per year.

   2. A 1-year robot doubling time is very fast!

3. Nanotechnology, where physical actuators on a very small scale build arbitrary structures within physical limits.

   1. We argue, based on experience curves and biological analogies, that we could eventually get nanobots that replicate in a few days or weeks. Again, this is very fast!

Intro

The incentives to push towards an industrial explosion will be huge. Cheap abundant physical labour would make it possible to alleviate hunger and disease. It would allow all humans to live in the material comfort that only the very wealthiest can currently achieve. And it would enable powerful new technologies, including military technologies, which rival states will compete to develop.

The speed of the industrial explosion matters for a few reasons:

* Some types of technological progress might not accelerate until after the industrial explosion has begun, because they are bottlenecked b |

226ab98f-a508-4603-b477-abd7a0e8232c | trentmkelly/LessWrong-43k | LessWrong | AI-created pseudo-deontology

I'm soon going to go on a two day "AI control retreat", when I'll be without internet or family or any contact, just a few books and thinking about AI control. In the meantime, here is one idea I found along the way.

We often prefer leaders to follow deontological rules, because these are harder to manipulate by those whose interests don't align with ours (you could say similar things about frequentist statistics versus Bayesian ones).

What if we applied the same idea to AI control? Not giving the AI deontological restrictions, but programming it with a similar goal: to prevent a misalignment of values from being disastrous. But who could do this? Well, another AI.

My rough idea goes something like this:

AI A is tasked with maximising utility function u - a utility function which, crucially, it doesn't know yet. Its sole task is to create AI B, which will be given a utility function v and act on it.

What will v be? Well, I was thinking of taking u and adding some noise - nasty noise. By nasty noise I mean v=u+w, not v=max(u,w). In the first case, you could maximise v while sacrificing u completely, if w is suitable. In fact, I was thinking of adding an agent C (which need not actually exist). It would be motivated to maximise -u, and it would have the code of B and the set of u+noise, and would choose v to be the worst possible option (from the perspective of a u-maximiser) in this set.

So agent A, which doesn't know u, is motivated to design B so that it follows its motivation to some extent, but not to extreme amounts - not in ways that might sacrifice some of the values of some sub-part of its utility function, because that might be part of the original u.

Do people feel this idea is implementable/improvable? |

f5cd094f-d9fd-441e-bd8c-4e25fd5c1b93 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Potential gears level explanations of smooth progress

*(Epistemic status: exploratory. Also, this post isn't thorough; I wanted to write quickly.)*

*(Thanks to Mary Phuong, Pedro Freire, Tao Lin, Rohin Shah, and probably a few other people I'm forgetting for discussing this topic with me.)*

My perception is that it is a common belief that (after investment spending becomes sufficiently large) AI progress on domains of focus will likely be *smooth*. That is, it will consistently improve at similar rates on a year-over-year basis (or perhaps over somewhat smaller time periods).[[1]](#fn-hTPtMyf29gywEijqy-1) Note that this doesn't imply that growth will necessarily be exponential: the growth rate could steadily decelerate or accelerate and I would still consider it smooth growth.

I find this idea somewhat surprising because in current ML domains there have been relatively few meaningful advancements. This seems to imply that each of these improvements would yield a spike in performance. Yet, empirically, I think that in many domains of focus ML progress *has* been relatively consistent. I won't make the case for these claims here. Additionally, empirically it seems that [most domains are reasonably continuous even after selecting for domains which are likely to contain discontinuities](https://aiimpacts.org/discontinuous-progress-investigation/).

This post will consider some possible gears level explanations of smooth progress. It's partially inspired by [this post](https://www.lesswrong.com/s/n945eovrA3oDueqtq/p/nPauymrHwpoNr6ipx) in [the 2021 MIRI conversations](https://www.lesswrong.com/s/n945eovrA3oDueqtq).

Many parts
==========

If a system consists of many parts and people are working on many parts at the same time, then the large number of mostly independent factors will drive down variance. For instance, consider a plane. There are many, many parts and some software components. If engineers are working on all of these simultaneously, then progress will tend to be smooth throughout the development of a given aircraft and in the field as a whole.

(I don't actually know anything about aircraft, I'm just using this as a model. If anyone has anything more accurate to claim about planes, please do so in the comments.)

So will AIs have many parts? Current AI doesn't seem to have many parts which merit much individual optimization. Architectures are relatively uniform and not all that complex. However, there are potentially a large number of hyperparameters and many parts of the training and inference stack (hardware, optimized code, distributed algorithms, etc.). While hyperparameters can be searched for far more easily than aircraft parts, there is still relevant human optimization work. The training stack as a whole seems likely to have smooth progress (particularly hardware). So if/while compute remains limiting, smooth training stack progress could imply smooth AI progress.

Further, it's plausible that future architectures will be deliberately engineered to have more different parts to better enable optimization with more people.

Knowledge as a latent variable
==============================

Perhaps the actual determining factor of the progress of many fields is the underlying knowledge of individuals. So progress is steady because individuals tend to learn at stable rates and the overall knowledge of the field grows similarly. This explanation seems very difficult to test or verify, but it would imply potential for steady progress even in domains where there are bottlenecks. Perhaps mathematics demonstrates this to some extent.

Large numbers of low-impact discoveries
=======================================

If all discoveries are low-impact and the number of discoveries per year is reasonably large (and has low variance), then the total progress per year would be smooth. This could be the case even if all discoveries are concentrated in one specific component which doesn't allow for parallel progress. It seems quite difficult to estimate the impact of future discoveries in AI.
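A back-of-the-envelope calculation illustrates why many small contributions give smooth totals. The sketch below assumes (and this is an assumption of the example, not a claim about AI progress) that a year's progress is the sum of `n` independent discoveries, each with the same mean and standard deviation. Under that assumption the standard deviation of the total grows like the square root of `n` while the mean grows like `n`, so the relative fluctuation shrinks. The function name `relative_std_of_total` is hypothetical.

```python
import math


def relative_std_of_total(n: int, mean: float, std: float) -> float:
    """Relative standard deviation of a sum of n i.i.d. contributions.

    Assumes independent, identically distributed contributions:
    total mean = n * mean, total std = sqrt(n) * std.
    """
    total_mean = n * mean
    total_std = math.sqrt(n) * std
    return total_std / total_mean


# One big discovery per year versus a hundred small ones, each with
# per-discovery std equal to its mean (a deliberately noisy assumption).
one_big = relative_std_of_total(1, mean=1.0, std=1.0)
many_small = relative_std_of_total(100, mean=1.0, std=1.0)
```

With these numbers the yearly total fluctuates by about 100% of its mean in the one-discovery case and by about 10% in the hundred-discovery case. Correlated discoveries would weaken this effect, which is one reason the argument is only suggestive.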

Some other latent variable
==========================

There do seem to be a surprisingly large number of areas where progress is steady (at least to me). Perhaps this indicates some incorrect human bias (or personal bias) that progress should be less steady. It could also indicate the existence of some unknown and unaccounted-for latent variable common in many domains. If this variable was applicable in future AI development, then that would likely indicate that future AI development would be unexpectedly smooth.

---

1. Note that while smooth progress probably correlates with slower takeoffs,

takeoff speeds and smoothness of progress aren't the same: it's

possible to have very fast takeoff in which progress is steady before and after some inflection

point (but not through the inflection point). Similarly, it's possible to

have slower takeoffs where progress is quite erratic. [↩︎](#fnref-hTPtMyf29gywEijqy-1) |

68ed165b-66bf-4da3-b338-807b4cef102c | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | EA’s brain-over-body bias, and the embodied value problem in AI alignment

**Overview**

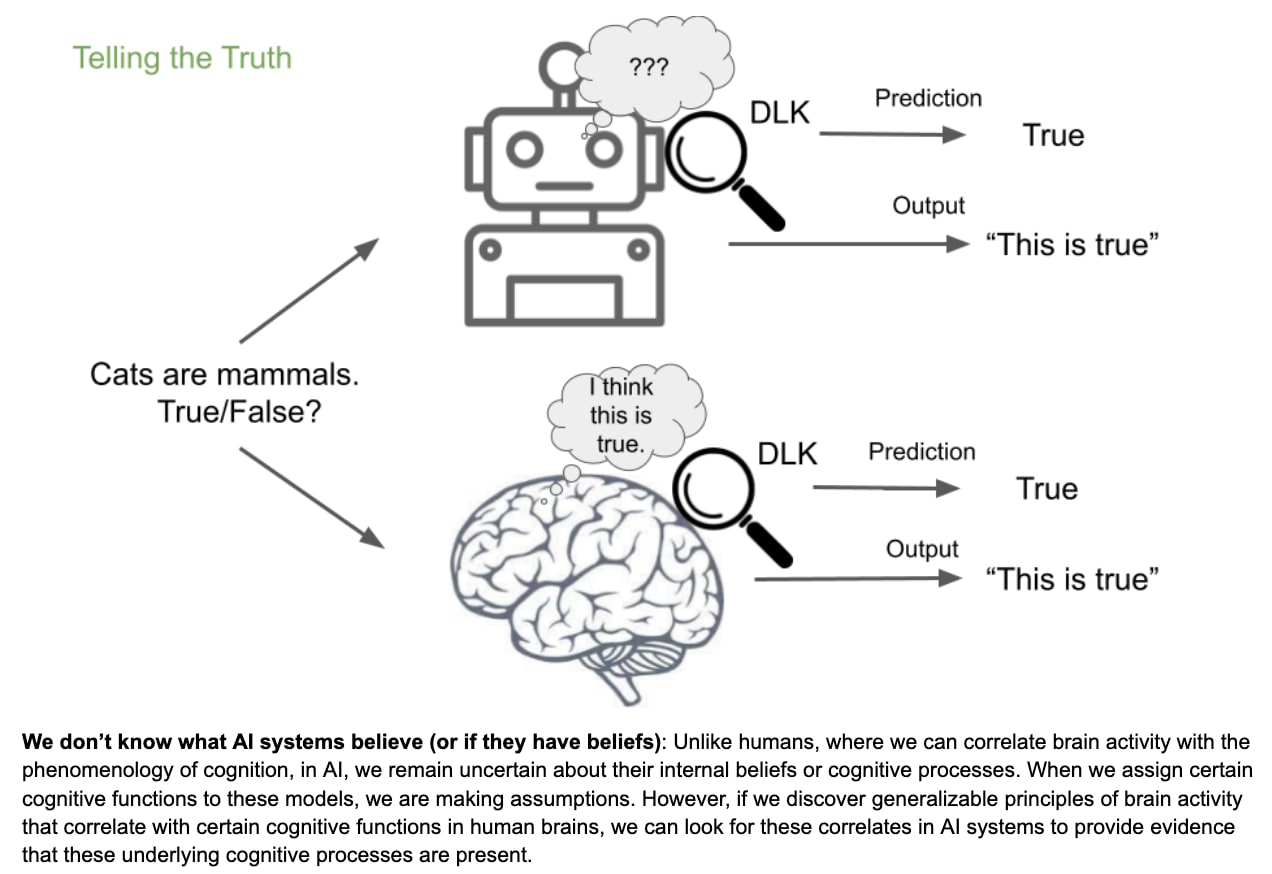

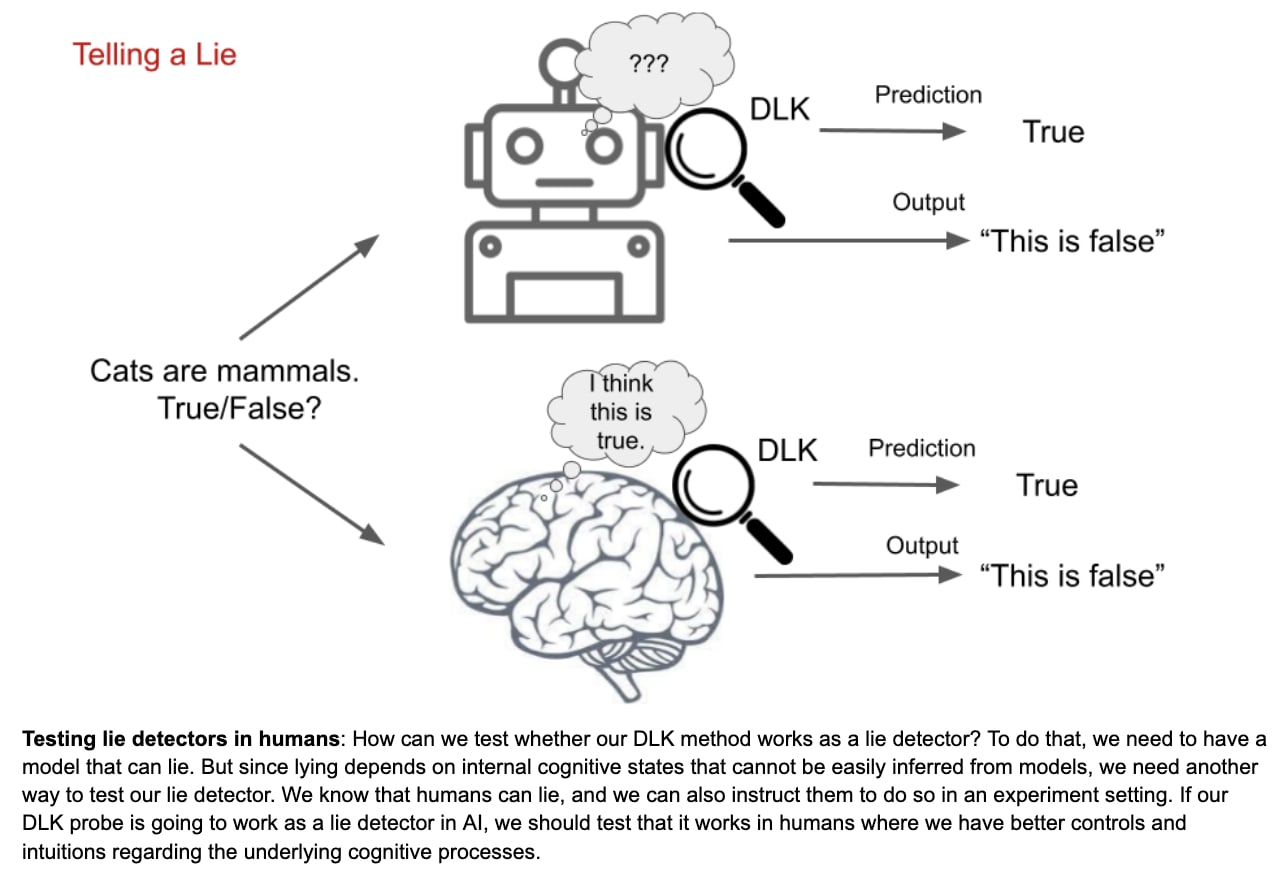

Most AI alignment research focuses on aligning AI systems with the human brain’s stated or revealed preferences. However, human bodies include dozens of organs, hundreds of cell types, and thousands of adaptations that can be viewed as having evolved, implicit, biological values, preferences, and priorities. Evolutionary biology and evolutionary medicine routinely analyze our bodies’ biological goals, fitness interests, and homeostatic mechanisms in terms of how they promote survival and reproduction. However, the EA movement includes some ‘brain-over-body biases’ that often make our brains’ values more salient than our bodies’ values. This can lead to some distortions, blind spots, and failure modes in thinking about AI alignment. In this essay I’ll explore how AI alignment might benefit from thinking more explicitly and carefully about how to model our embodied values.

**Context: A bottom-up approach to the diversity of human values worth aligning with**

This essay is one in a series where I'm trying to develop an approach to AI alignment that’s more empirically grounded in psychology, medicine, and other behavioral and biological sciences. Typical AI alignment research takes a rather top-down, abstract, domain-general approach to modeling the human values that AI systems are supposed to align with. This often combines consequentialist moral philosophy as a normative framework, machine learning as a technical framework, and rational choice theory as a descriptive framework. In this top-down approach, we don’t really have to worry about the origins, nature, mechanisms, or adaptive functions of any specific values.

My approach is more bottom-up, concrete, and domain-specific. I think we can’t solve the problem of aligning AI systems with human values unless we have a very fine-grained, nitty-gritty, psychologically realistic description of the whole range and depth of human values we’re trying to align with. Even if the top-down approach seems to work, and we think we’ve solved the general problem of AI alignment for any possible human values, we can’t be sure we’ve done that until we test it on the whole range of relevant values, and demonstrate alignment success across that test set – not just to the satisfaction of AI safety experts, but to the satisfaction of lawyers, regulators, investors, politicians, religious leaders, anti-AI activists, etc.

Previous essays in this series addressed the [heterogeneity of value types](https://forum.effectivealtruism.org/posts/KZiaBCWWW3FtZXGBi/the-heterogeneity-of-human-value-types-implications-for-ai) within individuals (8/16/2022, 12 min read), the heterogeneity of values [across individuals](https://forum.effectivealtruism.org/posts/DXuwsXsqGq5GtmsB3/ai-alignment-with-humans-but-with-which-humans) (8/8/2022, 3 min read), and the distinctive challenges in aligning with [religious values](https://forum.effectivealtruism.org/posts/YwnfPtxHktfowyrMD/the-religion-problem-in-ai-alignment) (8/15/2022, 13 min read). This essay addresses the distinctive challenges of aligning with body values – the values implicit in the many complex adaptations that constitute the human body. Future essays may address the distinctive challenges of AI alignment with political values, sexual values, family values, financial values, reputational values, aesthetic values, and other types of human values.

The ideas in this essay are still rather messy and half-baked. The flow of ideas could probably be better organized. I look forward to your feedback, criticisms, extensions, and questions, so I can turn this essay into a more coherent and balanced argument.

**Introduction**

Should AI alignment research be concerned only with alignment to the human brain’s values, or should it also consider alignment with the human body’s values?

AI alignment traditionally focuses on alignment with human values as carried in human brains, and as revealed by our stated and revealed preferences. But human bodies also embody evolved, adaptive, implicit ‘values’ that could count as ‘revealed preferences’, such as the body’s homeostatic maintenance of many physiological parameters within optimal ranges. The body’s revealed preferences may be a little trickier to identify than the brain’s revealed preferences, but both can be illuminated through an evolutionary, functional, adaptationist analysis of the human phenotype.

One could imagine a hypothetical species in which individuals’ brains are fully and consciously aware of everything going on in their bodies. Maybe all of their bodies’ morphological, physiological, hormonal, self-repair, and reproductive functions are explicitly represented as conscious parameters and goal-directed values in the brain. In such a case, the body’s values would be fully aligned with the brain’s consciously accessible and articulable preferences. Sentience would, in some sense, pervade the entire body – every cell, tissue, and organ. In this hypothetical species, AI alignment with the brain’s values might automatically guarantee AI alignment with the body’s values. Brain values would serve as a perfect proxy for body values.

However, we are not that species. The human body has evolved thousands of adaptations that the brain isn’t consciously aware of, doesn’t model, and can’t articulate. If our brains understood all of the body’s morphological, hormonal, and self-defense mechanisms, for example, then the fields of human anatomy, endocrinology, and immunology would have developed centuries earlier. We wouldn’t have needed to dissect cadavers to understand human anatomy. We wouldn’t have needed to do medical experiments to understand how organs release certain hormones to influence other organs. We wouldn’t have needed [evolutionary medicine](https://en.wikipedia.org/wiki/Evolutionary_medicine) to understand the adaptive functions of fevers, pregnancy sickness, or maternal-fetal conflict.

**Brain-over-body biases in EA**

Effective Altruism is a wonderful movement, and I’m proud to be part of it. However, it does include some fairly deep biases that favor brain values over body values. This section tries to characterize some of these brain-over-body biases, so we can understand whether they might be distorting how we think about AI alignment. The next few paragraphs include a lot of informal generalizations about Effective Altruists and EA subculture norms, practices, and values, based on my personal experiences and observations during the 6 years I’ve been involved in EA. When reading these, your brain might feel its power and privilege being threatened, and might react defensively. Please bear with me, keep an open mind, and judge for yourself whether these observations carry some grain of truth.

Nerds. Many EAs in high school identified as nerds who took pride in our brains, rather than as jocks who took pride in their bodies. Further, many EAs identify as being ‘on the spectrum’ or a bit Asperger-y (‘Aspy’), and feel socially or physically awkward around other people’s bodies. (I’m ‘out’ as Aspy, and have [written publicly](https://quillette.com/2017/07/18/neurodiversity-case-free-speech/) about its challenges, and the social stigma against neurodiversity.) If we’ve spent years feeling more comfortable using our brains than using our bodies, we might have developed some brain-over-body biases.

Food, drugs, and lifestyle. We EAs often try to optimize our life efficiency and productivity, and this typically cashes out as minimizing the time spent caring for our bodies, and maximizing the time spent using our brains. EA shared houses often settle on cooking large batches of a few simple, fast, vegan recipes (e.g. the [Peter Special](https://mcntyr.com/blog/peter-special)) based around grains, legumes, and vegetables, which are then microwaved and consumed quickly as fuel. Or we just drink Huel or Soylent so our guts can feed some glucose to our brains, ASAP. We tend to value physical health as a necessary and sufficient condition for good mental health and cognitive functioning, rather than as a corporeal virtue in its own right. We tend to get more excited about nootropics for our brains than nutrients for our bodies. The EA fad a few years ago for ‘[polyphasic sleep](https://en.wikipedia.org/wiki/Biphasic_and_polyphasic_sleep)’ – which was intended to maximize hours per day that our brains could be awake and working on EA cause areas – proved inconsistent with our body’s circadian values, and didn’t last long.

Work. EAs typically do brain-work more than body-work in our day jobs. We often spend all day sitting, looking at screens with our eyes, typing on keyboards with our fingers, sometimes using our voices and showing our faces on Zoom. The rest of our bodies are largely irrelevant. Many of us work remotely – it doesn’t even matter where our physical bodies are located. By contrast, other people do [jobs](https://www.businessinsider.com/most-active-jobs-in-america) that are much more active, in-person, embodied, physically demanding, and/or physically risky – e.g. truckers, loggers, roofers, mechanics, cops, firefighters, child care workers, orderlies, athletes, personal trainers, yoga instructors, dancers, models, escorts, surrogates. Even if we respect such jobs in the abstract, most of us have little experience of them. And we view many blue-collar jobs as historically transient, soon to be automated by AI and robotics – freeing human bodies from the drudgery of actually working as bodies. (In the future, whoever used to work with their body will presumably just hang out, supported by Universal Basic Income, enjoying virtual-reality leisure time in avatar bodies, or indulging in a few physical arts and crafts, using their soft, uncalloused fingers.)

Relationships. The brain-over-body biases often extend to our personal relationships. We EAs are often [sapiosexual](https://www.verywellmind.com/what-does-it-mean-to-be-sapiosexual-5190425), attracted more to the intelligence and creativity of other people’s brains, than to the specific traits of their bodies. Likewise, some EAs are bisexual or pansexual, because the contents of someone’s brain matter more than the sexually dimorphic anatomy of their body. Many EAs also have long-distance relationships, in which brain-to-brain communication is more frequent than body-to-body canoodling.

Babies. Many EAs prioritize EA brain-work over bodily reproduction. They think it’s more important to share their brain’s ideas with other brains, than to recombine their body’s genes with another body’s genes to make new little bodies called babies. Some EAs are principled [antinatalists](https://en.wikipedia.org/wiki/Antinatalism) who believe it’s unethical to make new bodies, on the grounds that their brains will experience some suffering. A larger number of EAs are sort of ‘pragmatic antinatalists’ who believe that reproduction would simply take too much time, energy, and money away from doing EA work. Of the two main biological imperatives that all animal bodies evolved to pursue – survival and reproduction – many EAs view the former as worth maximizing, but the latter as optional.

Avatars in virtual reality. Many EAs love computer games. We look forward to virtual reality systems in which we can custom-design avatars that might look very different from our physical bodies. Mark Zuckerberg seems quite excited about a [metaverse](https://www.youtube.com/watch?v=Uvufun6xer8) in which our bodies can take any form we want, and we’re no longer constrained to exist only in base-level reality, or ‘meatspace’. On this view, a Matrix-type world in which we’re basically [brains in vats](https://en.wikipedia.org/wiki/Brain_in_a_vat) connected to each other in VR, with our bodies turning into weak, sessile, non-reproducing vessels, would not be horrifying, but liberating.

Cryopreservation. When EAs think about cryopreservation for future revival and health-restoration through regenerative medicine, we may be tempted to freeze only our heads (e.g. ‘neuro cryopreservation’ for $80k at [Alcor](https://www.alcor.org/)), rather than spending the extra $120k for ‘whole body cryopreservation’ – on the principle that most of what’s valuable about us is in our head, not in the rest of our body. We have faith that our bodies can be cloned and regrown in human form – or replaced with android bodies – and that our brains won’t mind.

Whole brain emulation. Many EAs are excited about a future in which we can upload our minds to computational substrates that are faster, safer, better networked, and longer-lasting than human brains. We look forward to [whole brain emulation](https://en.wikipedia.org/wiki/Mind_uploading), but not whole body emulation, on the principle that if we can upload everything in our minds, our bodies can be treated as disposable.