id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

b56479b1-3372-4184-ac95-9f6f9f60a875 | trentmkelly/LessWrong-43k | LessWrong | 2024 State of the AI Regulatory Landscape

As part of our Governance Recommendations Research Program, Convergence Analysis has compiled a first-of-its-kind report summarizing the state of the AI regulatory landscape as of May 2024. We provide an overview of existing regulations, focusing on the US, EU, and China as the leading governmental bodies currently developing AI legislation. Additionally, we discuss the relevant context and conduct a short analysis for each topic.

This series is designed to be a primer for policymakers, researchers, and individuals seeking to develop a high-level overview of the current AI governance space. Our mission is to advance critical & foundational governance to mitigate future risk from AI systems.

Read the full report here: 2024 State of AI Regulatory Landscape

Links to Report Sections

1. Structure of AI Regulations

2. AI Evaluation & Risk Assessments

3. AI Model Registries

4. AI Incident Reporting

5. Open-Source AI Models

6. Cybersecurity of Frontier AI Models

7. AI Discrimination Requirements

8. AI Disclosures

9. AI and Chemical, Biological, Radiological, & Nuclear Hazards

Report Introduction

In the last decade, a growing expert consensus has argued that advanced AI poses numerous threats to society. These threats include widespread job loss, algorithmic bias, increasingly convincing misinformation and disinformation, social manipulation, cybersecurity attacks, and even catastrophic and existential threats from AI-engineered chemical and biological weapons.

Many are urgently calling for legislation and regulation focused on AI to reduce these threats, and governments are responding. In the last year, the US Executive Branch, the People’s Republic of China, and the European Union have enacted hundreds of pages of directives, legislation, and regulation focused on AI and the risks it currently poses and will pose in the near future. In this report, we’ve chosen to focus primarily on these three bodies for a comparative analysis of current regulations. These |

ece9b876-f6c4-4673-93f4-d06239824284 | trentmkelly/LessWrong-43k | LessWrong | Crossing the experiments: a baby

I've always been more of a theoretician, but it's important to try one's hand at practical problems from time to time. In that vein, I've decided to try three simultaneous experiments on major Less Wrong themes. I will aim to acquire something to protect, I will practice training a seed intelligence, and I will become more familiar with many consequences of evolutionary psychology.

In the spirit of efficiency I'll combine all these experiments into one:

She's never seen Star Wars or Doctor Who.

She's never seen David Attenborough or read J. L. Borges.

She's never had a philosophical debate.

She's never been skiing.

Never had sex, never been hugged or even been licked by a dog!

She has so much to look forwards to...

(Though she'll be very boring for several months yet!) |

eebd8ca9-0e0e-46ed-9dc8-0d316e834374 | trentmkelly/LessWrong-43k | LessWrong | Technological solutions to the climate crisis

Climate and environmental activists frequently complain how the technocentric approach to solving the climate crisis isn't enough or isn't good in some other way than "enough". I agree that it is not enough. Policy probably plays an equally, if not more important role. Policy interventions are things you can do right away, while technological breakthroughs are not guaranteed. And while policy interventions may have unintended consequences, they are at least guaranteed in some way, while technology may - or may not - be developed.

Of course, the two are not mutually exclusive, and shouldn't be seen this way. However asks you to stop developing seeds that can withstand a wider spectrum of temperatures, or cheap anti-frost systems, or glasshouses that can withstand hail, and instead join their banner-holding protest in front of a regulator or a megacorp is probably uninformed, and likely stupid. (If they ask you to go join the protest in addition to your technological work, then I don't hold any judgment.) And naturally, the inverse is also true, but there's something to say about the historical effectiveness of technological interventions. Namely: they have the capacity to start, while regulation only has the capacity to stop. Ok, if you pass sensible laws, you could in theory also incite but you can never start something through a law, only stop it. Therefore, technology is inherently proactive, while regulation is inherently reactive (and often regressive, as shown by multiple outdated regulations). Therefore I place much more weight on technocentric solutions of physical problems (such as the climate crisis) - it's something that looks forwards. It's long term, while regulation is often a short term patch.

So to say it in the simplest terms:

Technology:

* not guaranteed

* takes a long time to create and adopt

* could also be very harmful

* solves the problem in a proactive, long-term way

Policy:

* almost guaranteed

* quick to implement on a local scale

|

f0bef946-d1db-45bd-840d-22e4368d4e71 | trentmkelly/LessWrong-43k | LessWrong | Is friendly AI "trivial" if the AI cannot rewire human values?

I put "trivial" in quotes because there are obviously some exceptionally large technical achievements that would still need to occur to get here, but suppose we had an AI with a utilitarian utility function of maximizing subjective human well-being (meaning, well-being is not something as simple as physical sensation of "pleasure" and depends on the mental facts of each person) and let us also assume the AI can model this "well" (lets say at least as well as the best of us can deduce the values of another person for their well-being). Finally, we will also assume that the AI does not possess the ability to manually rewire the human brain to change what a human values. In other words, the ability for the AI to manipulate another person's values is limited by what we as humans are capable of today. Given all this, is there any concern we should have about making this AI; would it succeed in being a friendly AI?

One argument I can imagine for why this fails friendly AI is the AI would wire people up to virtual reality machines. However, I don't think that works very well, because a person (except Cypher from the Matrix) wouldn't appreciate being wired into a virtual reality machine and having their autonomy forcefully removed. This means the action does not succeed in maximizing their well-being.

But I am curious to hear what arguments exist for why such an AI might still fail as a friendly AI. |

6fadc8a0-3dfd-4224-ad47-fba2cc51b25b | trentmkelly/LessWrong-43k | LessWrong | Expert trap – Ways out (Part 3 of 3)

Crossposted from Pawel’s blog

This is the third part of the series, but you can read it on its own. In part one, I include notes on epistemic status, I give a summary of the topic. But mainly I describe what is the expert trap.

Part two is about context. In “Why is expert trap happening?” I dive deeper explaining biases and dynamics behind it. Then in “Expert trap in the wild” I try to point out where it appears in reality.

Part three is about “Ways out”. I list my main ideas of how to counteract the expert trap. I end with conclusions and with a short Q&A.

How to read it? There may be some knowledge you already are familiar with. All chapters make sense on their own. Feel free to read this like a Q&A page and skip parts of it.

Intro

I think that the biases I described can be fixed if we would adopt different norms around learning, thinking, and evaluating knowledge. This may be a very hard task though. Some people who did a lot of thinking on this topic are skeptical about the extent biases may be corrected. On one side are Yudkowsky, Center for Applied Rationality, Jacob Falkovich who think that rationality is a learnable skill. On the other side are Kahneman, Scott Alexander, who disagree. Read more on this debate here ”Is Rationalist Self-Improvement Real?” (and don’t miss comments by Scott Alexander).

I put some probability that this may be an almost impossible task. These biases may be largely evolutionarily determined. I am fairly certain that if baby humans were dropped on an abandoned island and if over centuries they would start a new society, without any cultural influence, these biases would resurface largely intact.

That said, I personally put a higher credence that this is fixable; that If we, as a society, would prioritize it highly enough; if we would adopt new norms around learning, teaching, talking, epistemology there I think these biases could be corrected to a significant degree. And I am pretty sure that not enough work was done to veri |

c83084fa-eb1c-429c-90ea-91f1873c810c | trentmkelly/LessWrong-43k | LessWrong | Strong, Stable, Open: Choose Two - in search of an article

I recall a LessWrong article that significantly influenced my thinking, but I haven't been able to find it again. However, when I ask ChatGPT about it, it can summarize the key points.

> I believe you're referring to the concept often summarized as "Strong, Stable, Open: Choose Two." This idea suggests that a group or institution can only optimize two out of these three qualities simultaneously:

>

> 1. Strength: The group's ability to enforce norms and maintain internal cohesion.

> 2. Stability: The group's capacity to endure over time without significant internal disruptions.

> 3. Openness (Accessibility): The group's inclusiveness and ease of entry for new members.

>

> The principle posits that:

>

> * A group that is strong and stable tends to be less open, as maintaining cohesion and stability often requires selective membership and controlled access.

> * A group that is strong and open may struggle with stability, as the influx of new members can introduce diverse perspectives that challenge cohesion.

> * A group that is stable and open might lack strength, as inclusivity can lead to diluted norms and weaker enforcement mechanisms.

>

> This framework is discussed in various contexts on LessWrong, particularly in discussions about the dynamics of cooperative institutions and the challenges they face in balancing these qualities.

Does anyone know where I can find it? I tried google, but I don't seem to get anywhere. |

dca2691b-19a8-45b0-906d-4a45b5478775 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | "Smarter than us" is out!

We're pleased to announce the release of "Smarter Than Us: The Rise of Machine Intelligence", commissioned by MIRI and written by Oxford University’s Stuart Armstrong, and available in [EPUB, MOBI, PDF](http://intelligence.org/smarter-than-us/), and from the [Amazon](http://www.amazon.com/gp/product/B00IB4N4KU/ref=as_li_ss_tl?tag=lesswrong-20) and [Apple](https://itunes.apple.com/us/book/smarter-than-us/id816744180) ebook stores.

What happens when machines become smarter than humans? Forget lumbering Terminators. The power of an artificial intelligence (AI) comes from its intelligence, not physical strength and laser guns. Humans steer the future not because we’re the strongest or the fastest but because we’re the smartest. When machines become smarter than humans, we’ll be handing them the steering wheel. What promises—and perils—will these powerful machines present? This new book navigates these questions with clarity and wit.

Can we instruct AIs to steer the future as we desire? What goals should we program into them? It turns out this question is difficult to answer! Philosophers have tried for thousands of years to define an ideal world, but there remains no consensus. The prospect of goal-driven, smarter-than-human AI gives moral philosophy a new urgency. The future could be filled with joy, art, compassion, and beings living worthwhile and wonderful lives—but only if we’re able to precisely define what a “good” world is, and skilled enough to describe it perfectly to a computer program.

AIs, like computers, will do what we say—which is not necessarily what we mean. Such precision requires encoding the entire system of human values for an AI: explaining them to a mind that is alien to us, defining every ambiguous term, clarifying every edge case. Moreover, our values are fragile: in some cases, if we mis-define a single piece of the puzzle—say, consciousness—we end up with roughly 0% of the value we intended to reap, instead of 99% of the value.

Though an understanding of the problem is only beginning to spread, researchers from fields ranging from philosophy to computer science to economics are working together to conceive and test solutions. Are we up to the challenge?

Special thanks to all those at the FHI, MIRI and Less Wrong who helped with this work, and those who [voted on the name](/lw/j4j/please_vote_for_a_title_for_an_upcoming_book/)! |

fd5ee5c3-091b-4601-a908-35600248e3c3 | trentmkelly/LessWrong-43k | LessWrong | Hardware for Transformative AI

I recently saw A breakdown of AI Chip companies linked on LessWrong. I thought it was interesting, and it inspired me to add my 2 cents on what AI chips might be used in the case of transformative AI in the relatively near future.

Background

Undoubtedly Nvidia is the leader when it comes to AI chips, by far Nvidia GPUs are the most common way of training large neural nets. For example GPT-3 was trained on Nvidia V100 GPUs.

According to A breakdown of ai chip companies, Nvidia’s GPUs aren’t that well optimized for AI, and thus for training the performance is way worse than theoretical teraflops achieved (10x worse according to the article, but this seems like an exaggeration, since according to this article the idle time was around 70% for GPU cores, so about 3.3x worse than theoretical performance).

The second most used harware for AI are Google tensor processing units (TPUs), which is specialized for AI. I found this table useful, comparing cost of training a small convolutional neural net:

Source: https://medium.com/bigdatarepublic/cost-comparison-of-deep-learning-hardware-google-tpuv2-vs-nvidia-tesla-v100-3c63fe56c20f

In this case TPU was considerably cheaper, but I believe the relative costs vary significantly depending on training task and hyperparameters.

The drawbacks with TPUs is that you can’t buy them (only rent from google) and code has to be compiled in a specific way to be efficient, which decreases flexibility when using TPUs.

Cost of GPT3 as reference

GPT-3 is the most impressive language model to date, and it has about 175 billion parameters. The cost of training was about 12 million, and according to Estimating GPT3 API cost, a request to generate 1024 tokens (about 700 words) costs about 0.001 USD assuming constant usage.

Cost of a theoretical 17 trillion transformative AI

I once read an estimate that 5x-ing the size of GPT3, would 10x the training cost. I can’t find the source now, so if anyone has a better estimate, please let me k |

8d7aa6cd-ec11-48eb-ae6f-cded92478105 | trentmkelly/LessWrong-43k | LessWrong | LessWrong's attitude towards AI research

AI friendliness is an important goal and it would be insanely dangerous to build an AI without researching this issue first. I think this is pretty much the consensus view, and that is perfectly sensible.

However, I believe that we are making the wrong inferences from this.

The straightforward inference is "we should ensure that we completely understand AI friendliness before starting to build an AI". This leads to a strongly negative view of AI researchers and scares them away. But unfortunately reality isn't that simple. The goal isn't "build a friendly AI", but "make sure that whoever builds the first AI makes it friendly".

It seems to me that it is vastly more likely that the first AI will be built by a large company, or as a large government project, than by a group of university researchers, who just don't have the funding for that.

I therefore think that we should try to take a more pragmatic approach. The way to do this would be to focus more on outreach and less on research. It won't do anyone any good if we find the perfect formula for AI friendliness on the same day that someone who has never heard of AI friendliness before finishes his paperclip maximizer.

What is your opinion on this? |

db18e419-b807-441b-9bd1-d9f203c0f161 | StampyAI/alignment-research-dataset/blogs | Blogs | Pre-agriculture gender relations seem bad

*Click lower right to download or find on Apple Podcasts, Spotify, Stitcher, etc.*

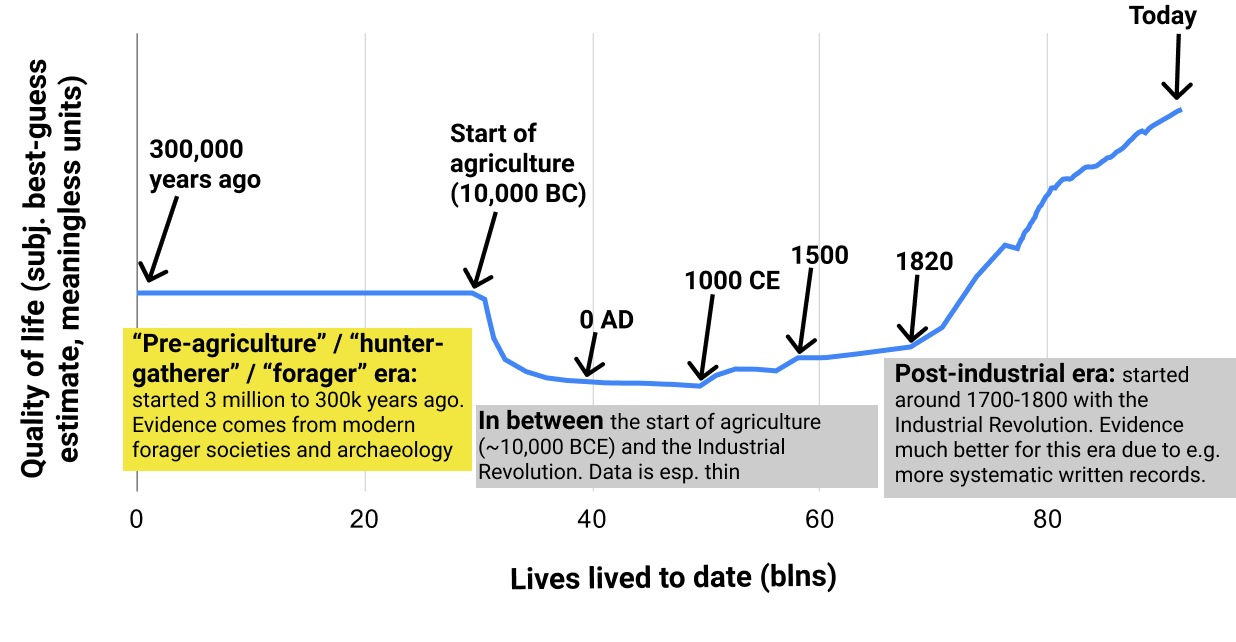

As part of exploring [trends in quality of life over the very long run](https://www.cold-takes.com/has-life-gotten-better/), I've been trying to understand how good life was during the "pre-agriculture" (or "hunter-gatherer"[1](#fn1)) period of human history. We have little information about this period, but it **lasted hundreds of thousands of years (or more), compared to a mere ~10,000 years post-agriculture.**

(For this post, it's not too important exactly what agriculture means. But it roughly refers to being able to *domesticate plants and livestock*, rather than *living only off of existing resources* in an area. Agriculture is what first allowed large populations to stay in one area indefinitely, and is generally believed to be crucial to the development of much of what we think of as "civilization."[2](#fn2))

This image illustrates how this post fits into the [full "Has Life Gotten Better?" series](https://www.cold-takes.com/has-life-gotten-better/).

There are arguments floating around implying that the hunter-gatherer/pre-agriculture period was a sort of "paradise" in which humans lived in an egalitarian state of nature - and that agriculture was a pernicious technology that brought on more crowded, complex societies, which humans still haven't really "adapted" to. If that's true, it could mean that "progress" has left us worse off over the long run, even if [trends over the last few hundred years have been positive.](https://www.cold-takes.com/p/8f74c38b-4405-44f5-8fc7-952918663a45/)

A future post will comprehensively examine this "pre-agriculture paradise" idea. For now, I just want to **focus on one aspect: gender relations.** This has been one of the more complex and confusing aspects to learn about. My current impression is that:

* There's no easy way to determine "what the literature says" or "what the experts think" about pre-agriculture gender in/equality. That is, there's no source that comprehensively surveys the evidence or expert opinion.

* According to the best/most systematic evidence I could find (from observing modern non-agricultural societies), **pre-agriculture gender relations seem bad**. For example, most societies seem to have no possibility for female leaders, and limited or no female voice in intra-band affairs.

* There are a **lot of claims to the contrary floating around, but (IMO) without good evidence.** For example, the Wikipedia entry for "hunter-gatherer" gives the strong impression that nonagricultural societies have strong gender equality, as does a Google search for "hunter-gatherer gender relations." But the sources cited seem very thin and often only tangentially related to the claims; furthermore, they:

+ Often use reasoning that seems like a huge stretch to me. For example, one paper appears to argue for strong gender equality among particular Neanderthals based entirely on the observation that they seemed not to eat the sorts of foods traditionally gathered by women. (The implication being that since women must have been doing *something*, they were probably hunting along with the men.)

+ Often seem to acknowledge significant inequality, while seemingly trying to explain it away with strange statements like "women know how to deal with physical aggression, unlike their Western counterparts." (Verbatim quote.)

+ Seem to get disproportionate attention from very thin evidence, such as an analysis of 27 skeletal remains being featured in the New York Times and a National Geographic article that ranks 2nd in the Google results for "hunter-gatherer gender relations."

Based on the latter points, it seems that there are people trying hard to make the case for gender equality among hunter-gatherers, but not having much to back up this case. One reason for this might be a fear that if people think gender inequality is "ancient" or "natural," they might conclude that it is also "good" and not to be changed. So for the avoidance of doubt: **my general perspective is that the "state of nature" is bad compared to today's world.** When I say that pre-agricultural societies probably had disappointingly low levels of gender equality, I'm not saying that this inequality is inevitable or "something we should live with" - just the opposite.

Systematic evidence on pre-agriculture gender relations

-------------------------------------------------------

The best source on pre-agriculture gender relations I've found is [Hayden, Deal, Cannon and Casey (1986)](https://link.springer.com/article/10.1007/BF02436620): "Ecological Determinants of Women's Status Among Hunter/Gatherers." I discuss how I found it, and why I consider it the best source I've found, [here](https://www.cold-takes.com/has-life-gotten-better-supplement/#ecological-determinants-of-womens-status-among-hunter-gatherers).

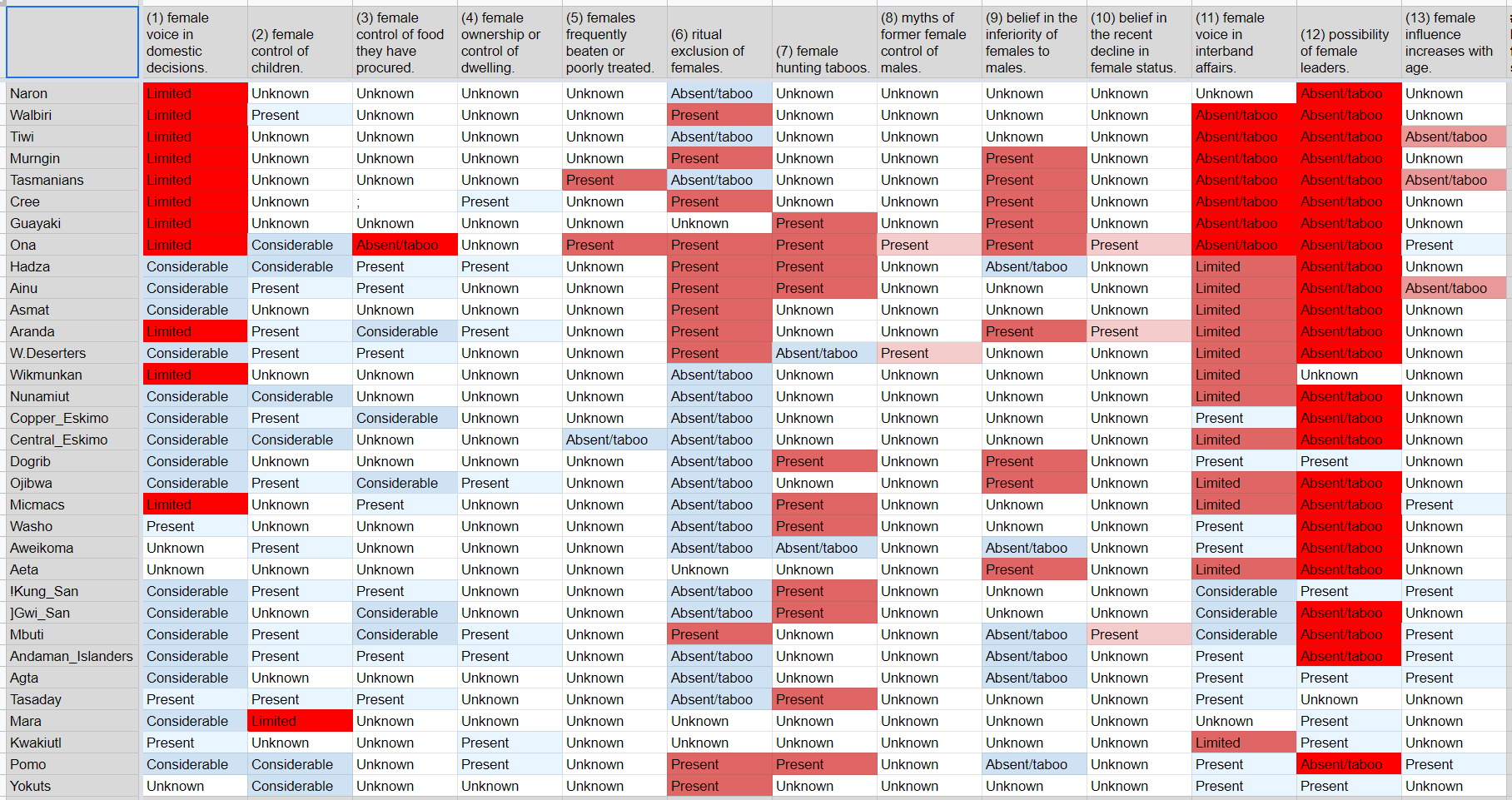

It is a paper collecting [ethnographic data](https://www.cold-takes.com/has-life-gotten-better-supplement/#ethnographic-and-archaeological-data): data from anthropologists' observations of the relatively few people who maintain or maintained a "forager"/"hunter-gatherer" (nonagricultural) lifestyle in modern times. It presents a table of 33 different societies, scored on 13 different properties such as whether a given society has "female voice in domestic decisions" and "possibility of female leaders."

Here's the key table from the paper, with some additional color-coding that I've added ([spreadsheet here](https://docs.google.com/spreadsheets/d/1ybiviFhAwZGyH7X7chc60-lHoFT3KjazfIcRs6FQhQg/edit#gid=0)):

I've used red shading for properties that imply male domination, and blue shading for properties that imply egalitarianism (all else equal).[3](#fn3) The red shades are deeper than the blue shades because I think the "nonegalitarian" properties are much more bad than the "egalitarian" properties are good (for example, I think "possibility of female leaders" being "absent/taboo" is extremely bad; I don't think "female voice in domestic decisions" being "Considerable" makes up for it).

From this table, it seems that:

* 25 of 33 societies appear to have no possibility for female leaders.

* 19 of 33 societies appear to have limited or no female voice in intraband affairs.

* Of the 6 societies (<20%) where neither of these apply:

+ The Dogrib have "female hunting taboos" and "belief in the inferiority of females to males."

+ The !Kung and Tasaday have "female hunting taboos."

+ The Yokuts have "ritual exclusion of females."

+ The Mara and Agta seem like the best candidates for egalitarian societies. (The Mara have "limited" "female control of children," but it's not clear how to interpret this.)

Overall, I would characterize this general picture as one of bad gender relations: it looks as though most of these societies have rules and/or norms that aggressively and categorically limit women's influence and activities.

I got a similar picture from the chapter on gender relations from [The Lifeways of Hunter-Gatherers](https://smile.amazon.com/Lifeways-Hunter-Gatherers-Foraging-Spectrum/dp/1107607612/) (more [here](https://www.cold-takes.com/has-life-gotten-better-supplement/#some-key-sources-i've-used) on why I emphasize this source).

* It states: "even the most egalitarian of foraging societies are not truly egalitarian because men, without the need to bear and breastfeed children, are in a better position than women to give away highly desired food and hence acquire prestige. The potential for status inequalities between men and women in foraging societies (see Chapter 9) is rooted in the division of labor."

* It also argues that many practices sometimes taken as evidence of equality (such as [matrilocality](https://en.wikipedia.org/wiki/Matrilocal_residence)) are not.

The (AFAICT poorly cited and unconvincing) case that pre-agriculture gender relations were egalitarian

------------------------------------------------------------------------------------------------------

I think there are a fair number of people and papers floating around that are aiming to give an impression that pre-agriculture gender relations were highly egalitarian.

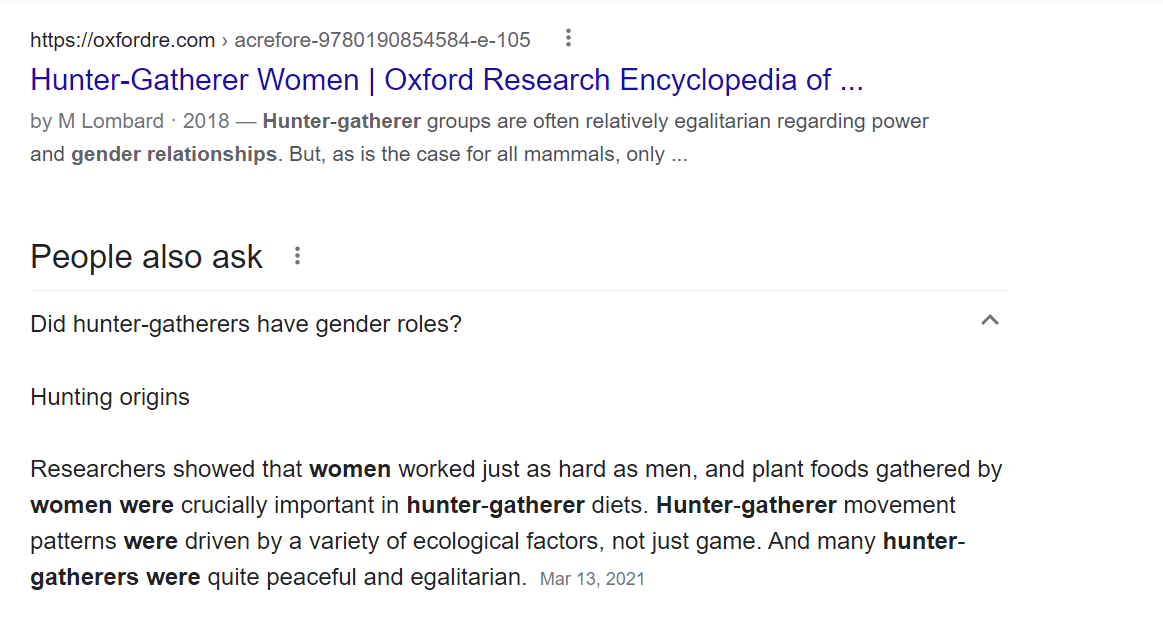

In fact, when I started this investigation, I initially thought that gender equality was the *consensus* view, because both Google searches and Wikipedia content gave this impression. Not only do both emphasize gender equality among foragers/hunter-gatherers, but neither presents this as a two-sided debate.

Below, I'll go through what I found by following citations from (a) the Wikipedia "hunter-gatherer" page; (b) the front page from searching Google for "hunter-gatherer gender relations." I'm not surprised that Google and Wikipedia are *imperfect* here, but I found it somewhat remarkable how *consistently* the "initial impression" given was of strong gender equality, and how consistently this impression was unsupported by sources. I think it gives a good feel for the broader phenomenon of "unsupported claims about gender equality floating around."

### Wikipedia's "hunter-gatherer" page

The [Wikipedia entry for "hunter-gatherer"](https://en.wikipedia.org/wiki/Hunter-gatherer) ([archived version](https://web.archive.org/web/20210601170956/https://en.wikipedia.org/wiki/Hunter-gatherer)) gives the strong impression that nonagricultural societies have strong gender equality. Key quotes:

*Nearly all African hunter-gatherers are egalitarian, with women roughly as influential and powerful as men.[22][23][24] ... In addition to social and economic equality in hunter-gatherer societies, there is often, though not always, relative gender equality as well.[30]*

The citations given don't seem to support this statement. Details follow (I look at notes 22, 23, 24, and 30 - all of the notes from the above quote) - you can skip to the next section if you aren't interested in these details, but I found it somewhat striking and worth sharing just how bad the situation seems to be here.

**Note 22** refers to a chapter ("Gender relations in hunter-gatherer societies") in [this book](https://smile.amazon.com/Cambridge-Encyclopedia-Hunters-Gatherers/dp/0521609194). I found it to have a combination of:

**Very broad claims about gender equality,** which I consider less trustworthy than the sort of systematic, specifics-based analysis [above](#systematic-evidence-on-pre-agriculture-gender-relations). Key quote:

*> Various anthropologists who have done fieldwork with hunter-gatherers have described gender relations in at least some foraging societies as symmetrical, complementary, nonhierarchical, or egalitarian. Turnbull writes of the Mbuti: “A woman is in no way the social inferior of a man” (1965:271). Draper notes that “the !Kung society may be the least sexist of any we have experienced” (1975:77), and Lee describes the !Kung (now known as Ju/’hoansi) as “fiercely egalitarian” (1979:244). Estioko-Griffin and Griffin report: “Agta women are equal to men” (1981:140). Batek men and women are free to decide their own movements, activities, and relationships, and neither gender holds an economic, religious, or social advantage over the other (K. L. Endicott 1979, 1981, 1992, K. M. Endicott 1979). Gardner reports that Paliyans value individual autonomy and economic self-sufficiency, and “seem to carry egalitarianism, common to so many simple societies, to an extreme” (1972:405).*

Of the five societies named, two (Batek, Paliyan) are not included in the table above; two (!Kung, Agta) are among the most egalitarian according to the table above (although the !Kung are listed as having female hunting taboos); and one (Mbuti) is listed as having "ritual exclusion of females" and no "possibility of female leaders." I trust specific claims like the latter more than broader claims like "A woman is in no way the social inferior of a man."

I actually wrote that before noticing, in the next section, that the same author who says "A woman is in no way the social inferior of a man" also observes that "a certain amount of wife-beating is considered good, and the wife is expected to fight back" - of the same society!

**Seeming concessions of significant inequality, sometimes accompanied by defenses of this that I find bizarre.** Some example quotes from the chapter:

* "Some Australian Aboriginal men use threats of gang-rape to keep women away from their secret ceremonies. Burbank argues that Aborigines accept physical aggression as a 'legitimate form of social action' and limit it through ritual (1994:31, 29). Further, women know how to deal with physical aggression, unlike their Western counterparts (Burbank 1994:19)."

* "For the Mbuti, 'a certain amount of wife-beating is considered good, and the wife is expected to fight back' (Turnbull 1965:287), but too much violence results in intervention by kin or in divorce."

* "Observing that Chipewyan women defer to their husbands in public but not in private, Sharp cautions against assuming this means that men control women: 'If public deference, or the appearance of it, is an expression of power between the genders, it is a most uncertain and imperfect measure of power relations. Polite behavior can be most misleading precisely because of its conspicuousness'"

* "Some foragers place the formalities of decision-making in male hands, but expect women to influence or ratify the decisions"

* "Aché men and women traditionally participated in band-level decisions, though 'some men commanded more respect and held more personal power than any woman.'"

* "Rather than assigning all authority in economic, political, or religious matters to one gender or the other, hunter-gatherers tend to leave decision-making about men’s work and areas of expertise to men, and about women’s work and expertise to women, either as groups or individuals"

Overall, this chapter actively reinforced my impression that gender equality among the relevant societies is disappointingly low on the whole.

**Note 24** goes to [this paper](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC7673694/). (Note 23 also cites it, which is why I'm skipping to Note 24 for now.) My rough summary is:

* Much of the paper discusses a single set of human remains from ~9,000 years ago that the author believes was (a) a 17-19-year-old female who (b) was buried with big-game hunting tools.

* It also states that out of 27 individuals in the data set the authors considered who (a) appear to have been buried with big-game hunting tools (b) have a hypothesized sex, 11 were female and 16 were male.

I think the idea is that these findings undermine the idea that women couldn't be big-game hunters.

I have many objections to this paper being one of three sources cited for the claim "Nearly all African hunter-gatherers are egalitarian, with women roughly as influential and powerful as men."

* None of these results are from Africa (they're from the Americas).

* This is a single paper that seems to be engaging in a lot of guesswork around a small number of remains, and seems to be written with a pretty strong agenda (see the intro). In general, I think it's a bad idea to put much weight on a single data source; I prefer systematic, aggregative analyses like the one I examine [above](#systematic-evidence-on-pre-agriculture-gender-relations).

* It already seems to be widely acknowledged that the amount of big-game female hunting in these societies is not *zero*[4](#fn4)(though it is believed to be rare and in some cases taboo), so a small-sample-size case where it was relatively common would not necessarily contradict what's already widely believed.

* Finally, what would it tell us if women participated equally in big-game hunting 9,000 years ago, given that (as the authors of this paper state) there are only "trace levels of participation observed among ethnographic hunter-gatherers and contemporary societies"? As far as I can tell, it's very hard to glean much information about gender relations from 9,000 years ago, and there are any number of different axes other than hunting along which there may have been discrimination. I think it would be quite a leap from "Women participated equally in big-game hunting" to "Gender equality was strong."

**Note 23** goes to a New York Times article that is mostly about the above paper. It also cites a case where remains were found of a man and woman buried together near servants; I do not know what point that is making.

**Source 30** appears to primarily be drawing from "Women's Status in Egalitarian Society," a chapter from [this book](https://smile.amazon.com/Limited-Wants-Unlimited-Means-Hunter-Gatherer-ebook/dp/B005M0O126/).[5](#fn5)

I find this chapter extremely unconvincing, and reminiscent of Source 22 above, in that it combines (a) sweeping statements without specifics or citations; (b) scattered statements about individual societies; (c) acknowledgements of what sound to me like disappointingly low levels of gender equality, accompanied by bizarre defenses. (One key quote, which sounds to me like it's basically arguing "Gender relations were good because women had high status due to their role in childbearing," is in a footnote.[6](#fn6))

### Google results for "hunter-gatherer gender relations"

Googling "hunter-gatherer gender relations" ([archived link](https://web.archive.org/web/20210603002231if_/https://www.google.com/search?q=hunter-gatherer+gender+relations)) initially gives an impression of strong gender equality. Here's how the search starts off:

However, when I clicked through to the first result, I found that the statement highlighted by Google ("Hunter-gatherer groups are often relatively egalitarian regarding power and gender relationships") appears to be an aside: no citation or evidence is given, and it is not the main topic of the paper. Most of the paper discusses the *differing* activities of men and women (e.g., big-game hunting vs. other food provision).[7](#fn7)

The answer box has no citations, so I can't assess where that's coming from.

And here's what shows next in the search:

The first of these results (the National Geographic article)is essentially a summary of the same source discussed [above](#note-24) that cites evidence of 11 females (compared to 16 males) buried with big-game hunting tools 9,000 years ago.

The next (from jstor.org) is a discussion of "gender relations in the Thukela Basin 7000-2000 BP hunter-gatherer society." The abstract states: "I argue that the early stages of this occupation were characterized by male dominance which then became the site of considerable struggle which resulted in women improving their positions and possibly attaining some form of parity with men."

The next (from theguardian.com) is a [Guardian article](https://www.theguardian.com/science/2015/may/14/early-men-women-equal-scientists) with the headline: "Early men and women were equal, say scientists." The entire article discusses a [single study](https://science.sciencemag.org/content/348/6236/796):

* The study looks at two foraging societies (one of which is the Agta, the most egalitarian society according to the table [above](#SummaryTable)).

* It presents a theoretical model according to which one gender dominating decisions about who lives where would result in high levels of within-camp relatedness, and observes that actual patterns of within-camp relatedness are relatively low, so they more closely match a dynamic in which both genders influence decisions (according to the theoretical model).

* I believe this is essentially zero evidence of anything.

The final result is a Wikipedia article that is mostly about the differing roles for men and women among foragers. The part that provides Google's 3rd excerpt is here (screenshotting so you can get the full experience of the citation notes):

Source 8 looks like the closest thing to a citation for the claim that "the sexual division of labor ... developed relatively recently." It goes to [this paper](https://www.journals.uchicago.edu/doi/abs/10.1086/507197), which seems to me to be making a significant leap from thin evidence. The basic situation, as far as I can tell, is:[8](#fn8)

* There is no archaeological evidence that the population in question (Neandertals in Eurasia in the Middle Paleolithic) ate small game or vegetables.

* This implies that they exclusively hunted big game.

* It's hypothesized that women participated equally in big-game hunting. The reasoning is that otherwise, they would have had nothing to do, and this seems implausible to the authors. (There is also some discussion of the lack of other things that would've taken work to make, such as complex clothing.)

I do not think that "Neanderthals didn't eat small game or vegetables" is much of an argument that they had egalitarian division of labor by sex.

Bottom line

-----------

My current impression is that today's foraging/hunter-gatherer societies have disappointingly low levels of gender equality, and that this is the best evidence we have about what pre-agriculture gender relations were like.

I'm not sure why casual searching and Wikipedia seem to give such a strong impression to the contrary. It seems to me that there is a fair amount of interest in stretching thin evidence to argue that pre-agriculture societies had strong gender equality.

This might be partly be coming from a fear that if people think gender inequality is "ancient" or "natural," they might conclude that it is also "good" and not to be changed. But as I'll elaborate in future pieces, my general perspective is that the "state of nature" is bad compared to today's world, and I think one of our goals as a society should be to fight things - from sexism to disease - that have afflicted us for most of our history. I don't think it helps that cause to give stretched impressions about what that history looks like.

**Next in series:** [Was life better in hunter-gatherer times?](https://www.cold-takes.com/was-life-better-in-hunter-gatherer-times/)

---

Footnotes

---------

1. Or "forager," though I won't be using that term in this post because I already have enough terms for the same thing. "Hunter-gatherer" seems to be the more common term generally, and is the one favored by Wikipedia. [↩](#fnref1)- E.g., see [Wikipedia on the Neolithic Revolution](https://en.wikipedia.org/wiki/Neolithic_Revolution), stating that agriculture "transformed the small and mobile groups of hunter-gatherers that had hitherto dominated human pre-history into sedentary (non-nomadic) societies based in built-up villages and towns ... These developments, sometimes called the Neolithic package, provided the basis for centralized administrations and political structures, hierarchical ideologies, depersonalized systems of knowledge (e.g. writing), densely populated settlements, specialization and division of labour, more trade, the development of non-portable art and architecture, and greater property ownership." The well-known book [Guns, Germs and Steel](https://smile.amazon.com/Guns-Germs-Steel-Fates-Societies-ebook/dp/B06X1CT33R/) is about this transition. [↩](#fnref2)- Though properties (2) and (4) could in some cases imply female advantage rather than egalitarianism per se. [↩](#fnref3)- The table [above](#SummaryTable) lists two societies that specifically do *not* have "female hunting taboos."

[The Lifeways of Hunter Gatherers](https://smile.amazon.com/Lifeways-Hunter-Gatherers-Foraging-Spectrum/dp/1107607612/) (which I name above as a relatively systematic source) states that there are "quite a few individual cases of women hunters," and that "One case of women hunters who appear to be a striking exception is that of the Philippine Agta [also the only case from the table [above](#SummaryTable) with no evidence against egalitarianism]." In context, I believe it is referring to big-game hunting.

This is despite stating the view (which is shared by the paper I'm discussing now) that modern-day foraging societies have very little participation by women in big-game hunting *overall* (see the section entitled "Why Do Men Hunt (and Women Not So Much)?" from chapter 8). [↩](#fnref4)- I initially stated that Wikipedia gave no indication of which part of the book it was pointing at, but a reader pointed out that it gave a page number. That page is the [page of the index](https://www.cold-takes.com/content/images/2021/10/gender-relations-index-page.png) that includes a number of references to gender-relations-related topics. Most come from the chapter I discuss here; there are also a couple of pages referenced of another chapter, which also cites this one, and which I would characterize along similar lines. [↩](#fnref5)- "It is also necessary to reexamine the idea that these male activities were in the past more prestigious than the creation of new human beings. I am sympathetic to the scepticism with which women may view the argument that their gift of fertility was as highly valued as or more highly valued than anything men did. Women are too commonly told today to be content with the wondrous ability to give birth and with the presumed propensity for 'motherhood' as defined in saccharine terms. They correctly read such exhortations as saying, 'Do not fight for a change in status.' However, the fact that childbearing is associated with women's present oppression does not mean this was the case in earlier social forms. To the extent that hunting and warring (or, more accurately, sporadic raiding, where it existed) were areas of male ritualization, they were just that: areas of male ritualization. To a greater or lesser extent women participated in the rituals, while to a greater or lesser extent they were also involved in ritual elaborations of generative power, either along with men or separately. To presume the greater importance of male than female participants, or casually to accept the statements to this effect of latter-day day male informants, is to miss the basic function of dichotomized sex-symbolism in egalitarian society. Dichotomization made it possible to ritualize the reciprocal roles of females and males that sustained the group. As ranking began to develop, it became a means of asserting male dominance, and with the full-scale development of classes sex ideologies reinforced inequalities that were basic to exploitative structures."

It seems to me as though a double standard is being applied here: the kind of "dichotomization" the author describes sounds like a serious limitation on self-determination and meritocracy (people participating in activities based on abilities and interests rather than gender roles), and no explanation is given for the author's apparent belief that this dichotomization was unproblematic for past societies but reflected oppression for later societies. [↩](#fnref6)- From the abstract: "Ethnohistorical and nutritional evidence shows that edible plants and small animals, most often gathered by women, represent an abundant and accessible source of “brain foods.” This is in contrast to the “man the hunter” hypothesis where big-game hunting and meat-eating are seen as prime movers in the development of biological and behavioral traits that distinguish humans from other primates." I am not familiar with that form of the "man the hunter" hypothesis; what I've seen elsewhere implies that men dominate big-game hunting and that big game is often associated with prestige, regardless of whatever nutritional value it does or doesn't have. [↩](#fnref7)- A bit more on how I identified this as the key part of the paper:

* The paper notes that today's foraging societies generally have a distinct sexual division of labor, but argues that it must have developed after the Middle Paleolithic, because (from the abstract) "The rich archaeological record of Middle Paleolithic cultures in Eurasia suggests that earlier hominins pursued more narrowly focused economies, with women’s activities more closely aligned with those of men ... than in recent forager systems."

* As far as I can tell, the key section arguing this point is "Archaeological Evidence for Gendered Division of Labor before Modern Humans in Eurasia." [↩](#fnref8) |

fd99b20d-97b8-4e5e-a5bc-ca221a2bd73a | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Gradient Hacking via Schelling Goals

*Thanks to several people for useful discussion, including Evan Hubinger, Leo Gao, Beth Barnes, Richard Ngo, William Saunders, Daniel Ziegler, and probably others -- let me know if I'm leaving you out.*

See also: [Obstacles to Gradient Hacking](https://www.alignmentforum.org/posts/KfX7Ld7BeCMQn5gbz/obstacles-to-gradient-hacking) by Leo Gao.

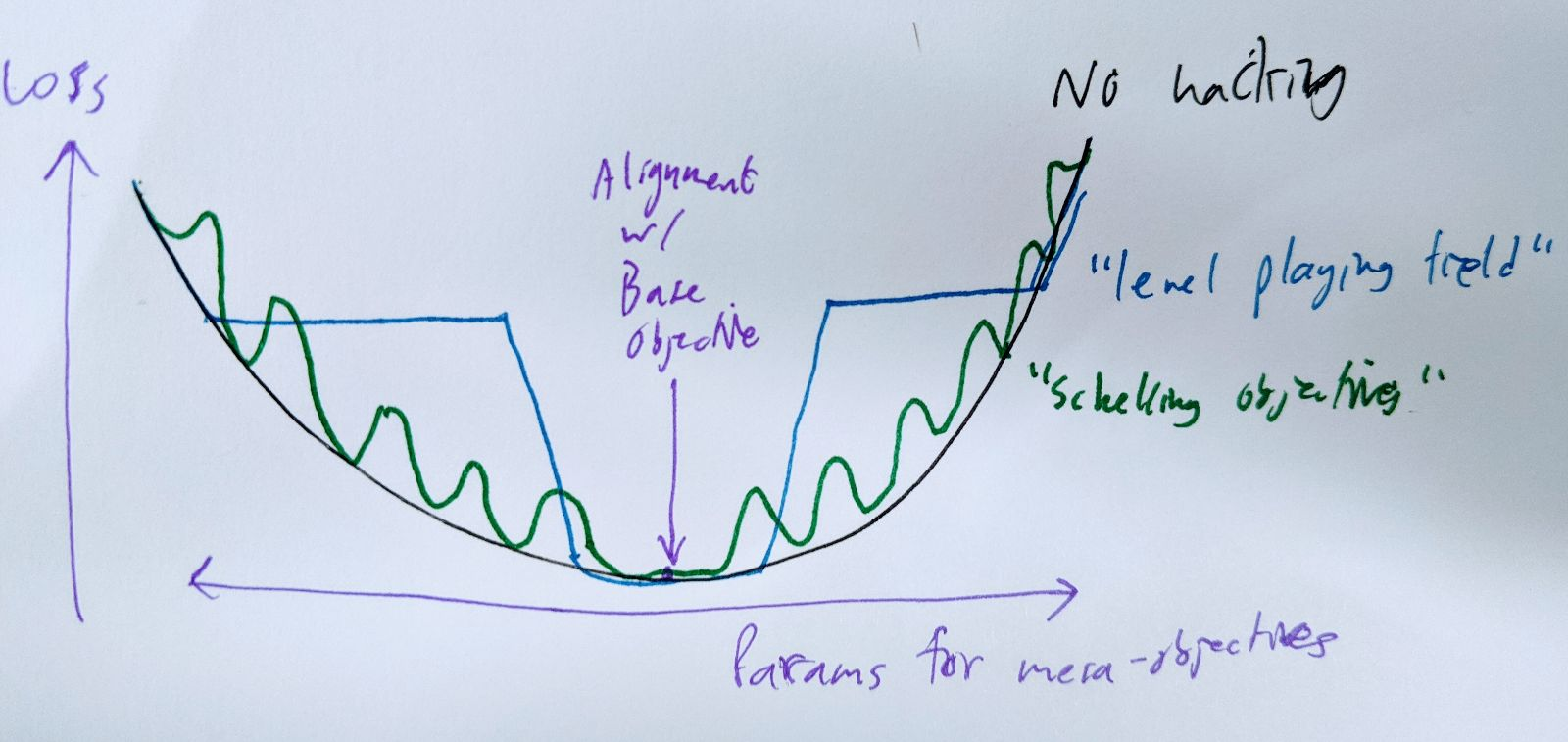

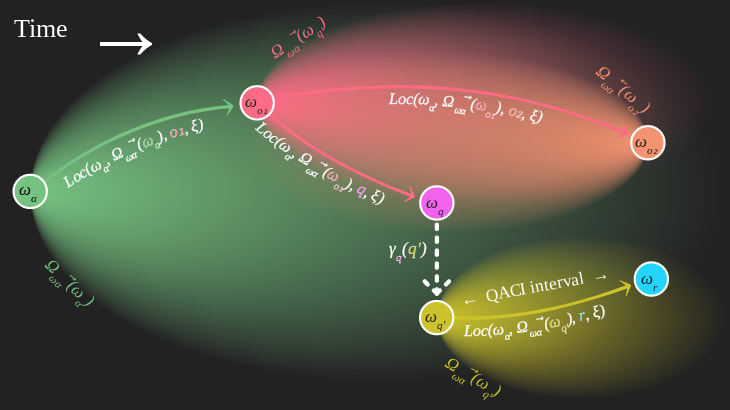

I want to argue that gradient hacking (strongly enough to create a local minimum) is possible even under somewhat pessimistic assumptions. The specific strategy that achieves this is kind of interesting: it requires the agent to coordinate on a complicated task with versions of itself that have slightly different objectives, and it requires the agent to do this without any direct communication.

A simple gradient-hacking strategy for a mesa-optimizer is to deliberately perform worse on the base objective if your mesa-objective is changed. However, this isn't as straightforward as it sounds.

One difficulty is that if the gradient hacking is hard-coded, gradient descent can just remove the hacking behavior without touching anything else. A nice way around that is to **assume that gradient hacking is discovered from first principles during the model's computation.** (Thanks to whoever pointed this out to me.) If it's good enough at reasoning, then it will be pretty hard for the base optimizer to modify it in ways that stop it from noticing that gradient hacking would be a good idea right about now.

(For simplicity, I have in mind something like a really deep feed-forward network, possibly with some weights tied between layers. Equivalently, a recurrent net unrolled and trained via SGD. This may not be realistic but it's easier to think about as a test case.)

A bigger problem is that it's not really correct to think of a model (with particular parameter values) as having control over its gradients. It's better to think of it as having control (in an FDT kind of sense) over only the single value of the loss for its actual parameters. The value of the loss at nearby points in parameter space is decided by slightly different versions of itself, and the gradient is sort of decided collectively. This is important if we're talking about SGD steps that change its mesa-objective, because these nearby versions of the model will have *directly conflicting* incentives when it comes to the gradient. To say "I am such that gradients on nearby mesa-objectives point towards my mesa-objective" is to say "if I had a different mesa-objective, I would deliberately do slightly worse in order to end up with this one instead." But you wouldn't! That would be bad for your mesa-objective!

Another way to look at this is that it's really hard to implement "do worse if my objective changes" if you don't have an external way to tell that your objective used to be different, let alone a way to commit other versions of yourself to things they don't want to do. You can implement rules like "if your mesa-objective isn't X, throw a fit", but the base optimizer will just change X at the same time it changes your actual mesa-objective. (Actually, it doesn't have to: we're assuming that gradient hacking has to be derived from first principles on every forward pass, so X also has to be derived from first principles. But even without that assumption this strategy wouldn't work.)

You can leave messages for yourself, if you have somewhere to jot down durable notes (like the external world), but let's assume you don't.

Maybe your mesa-objective is something nice and clear and crystalline, an island in mesa-objective parameter space, and you can implement the rule "do badly in proportion to how un-crystalline your mesa-objective is". But it's not clear why your less-crystalline neighbors in parameter space would cooperate with this plan.

Well... maybe if they're nearby enough, they'd be happy to settle on your mesa-objective rather than get pushed all the way to the base objective? This might work for some specific kinds of mesa-objectives.

More broadly, something that kind of works is to just push in the opposite direction from the base objective: do badly on the task in proportion to *how mesa-aligned you are*, so that you don't become any more mesa-aligned in the future. This possibly works well enough to level the playing field, so that the gradient in mesa-objective-space is flat. The main difficulty is that you need to pick the size of the loss penalty you're going to impose on yourself for being N units of mesa-aligned. The bigger the penalty, the further you can be from the base objective while still keeping the gradient flat -- but the versions of you closer to the base objective would prefer a smaller penalty, because it's probably costly in other ways. And of course, in the limit, the version of yourself that's aligned with the base objective isn't going to be willing to pay *any* penalty. So you probably need to coordinate (silently) with the versions of yourself whose mesa-objectives lie within some range of distances from the base objective.

A somewhat better version of that is to pick a fairly dense grid of "Schelling mesa-objectives" in mesa-objective-parameter space, and use moderate fluctuations of loss to push your mesa-objective towards the nearest Schelling point, making it a local minimum. If the grid is dense enough, even a small variation in loss can create steep enough walls to make this work.

# temporary, I hope hope hope

Ideally, you and your parameter-adjusted kin would all prefer to have a Schelling point right under your own mesa-objectives, but you can't all get that at the same time. The next-best thing is to have the grid of Schelling points (and hence local minima) be dense enough that there's one within epsilon of your actual preferences. In practice, the value of epsilon is bounded below by the SGD step size and stochasticity.

It would be easier if you could just encode the locations of the Schelling points somewhere else in your parameters, but that would leave an opening for the base optimizer to mess with them and average out your loss fluctuations to nothing. (In other words, this fails for the same reason a hard-coded gradient-hacking procedure probably fails.)

It seems probable to me that it's possible to pick these Schelling points without communicating; meeting someone at a non-prearranged spot is easier if they're almost exactly a clone of you. That isn't a very solid argument, but I doubt that this part is going to be the crux.

This proposal doesn't do everything that's needed for the original context Evan suggested gradient hacking in, which involves trying to push the base optimizer to hard-code deceptive behavior into an otherwise-aligned future version of yourself. I think it's a step in the right (er... wrong?) direction, though.

In particular, the Schelling-objective strategy lets arbitrary mesa-optimizers preserve their mesa-objectives (within epsilon), create "artificial" local minima, and force the base optimizer to settle for severely subpar performance on the base objective. It also does this without needing any of the behavior to be hard-coded where the base optimizer could get at it: the strategy can be discovered and executed from scratch by a general-purpose reasoner with amnesia. |

0a154a54-17a9-4322-8448-f4b708274144 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Brussels meetup with Cat

Discussion article for the meetup : Brussels meetup with Cat

WHEN: 03 June 2013 01:00:00PM (+0200)

WHERE: Rue des Alexiens 55 1000 Bruxelles

we are lucky enough to be meeting Cat from the CfAR in "la fleur en papier dorrée" on Monday the 3rd of June.

Discussion article for the meetup : Brussels meetup with Cat |

4ab8380b-ecea-413f-a011-a4704b762fbb | trentmkelly/LessWrong-43k | LessWrong | Practical everyday human strategizing

Reply to: Humans are not automatically strategic

AnnaSalamon writes:

> [Why] do many people go through long training programs “to make money” without spending a few hours doing salary comparisons ahead of time? Why do many who type for hours a day remain two-finger typists, without bothering with a typing tutor program? Why do people spend their Saturdays “enjoying themselves” without bothering to track which of their habitual leisure activities are *actually* enjoyable? Why do even unusually numerate people fear illness, car accidents, and bogeymen, and take safety measures, but not bother to look up statistics on the relative risks?

I wanted to give a practical approach to avoiding these errors. So, I came up with the following two lists. To use these properly, write down your answers; written language is a better idea encoding tool than short-term memory, in my experience, and being able to compare your notes to your actual thinking sometime later is useful.

Goal Accuracy Checking

1. What do you think is your current goal?

2. How would you feel once you achieve this goal?

3. Why is this useful to you and others, and how does it relate to your strengths?

4. Why will you fail completely at achieving this goal?

5. What can you do not to fail as badly?

Reasoning behind these question choices:

1. If you have no aim, you are by definition aimless. By asking for current goals rather than long-term goals, we can specify context-specific actions and choices which would allow better execution. For instance, my goal could be “to be a better writer” or “to think more rationally”. More specific and time-limited goals seem to work better here, specifically if they can be broken down into sub-goals.

2. An analogous choice here would be pleasure or reward maximization in the future. Generally speaking, it does not seem to be the case that we are motivated by wanting to feel worse in the future. So if your goal allows you to feel better about yourself, or to |

96146f90-13a1-45f5-ab71-b4a24e822dd3 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Simulators, constraints, and goal agnosticism: porbynotes vol. 1

*This is a part of a maybe-series where I braindump safety notes while waiting on training runs to complete. It's mostly talking to myself, but talking to myself in public seems somewhat more productive. The content of this post is not guaranteed to be novel, interesting, or correct, though I do try for at least one of those. Many parts involve handwaving where more rigorous reasoning and proofs would be nice.*

*Feel free to skip sections; while there is a tenuous thread running through the whole post, most sections can be understood locally.*

**Can we bound the capability of a model?**

-------------------------------------------

Giant black box networks can be highly capable but are hard to interpret and are more likely to contain spookiness. Can we find a way to make them smaller, usefully?

Considering a single forward pass of a fixed network graph and ignoring any information carried between passes (e.g. autoregressive generation, RNN memory, tapes/stacks), the types of computation a network can *internally* express are bounded. A single token prediction in a GPT-like architecture runs in [constant time](https://www.lesswrong.com/posts/K4urTDkBbtNuLivJx/why-i-think-strong-general-ai-is-coming-soon#Is_the_algorithm_of_intelligence_easy_). Algorithms which require more steps than the network can express just don't fit. No training data, fine tuning, or magic optimizer can change that.

Networks seem to show discontinuous improvements in capability akin to 'unlocking' new features with scale. I suspect that this unlock often corresponds to a new algorithm becoming accessible to the network. "Accessible" includes some slop; the search for an algorithmic representation will be affected by the training data, the network's structure, and the optimizer itself. For example, it's possible that a broader training set could result in finding a more concise (simpler) representation that would be accessible to a [smaller network](https://arxiv.org/abs/2203.15556), or that a post-process could [distill](https://arxiv.org/pdf/2210.11610.pdf) the original large network's solution into smaller networks, or express the solution in [fewer steps](https://arxiv.org/abs/2210.03142).

If your goal is to *limit* the capability expressible within a single forward pass, then knowing the network scale required to find an unwanted capability is valuable.

**Networks as parallel computational graphs**

---------------------------------------------

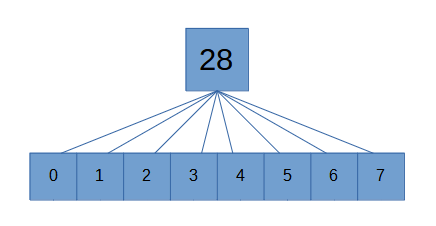

For explicit algorithms, strong lower bounds are sometimes available. For example, if you wanted to "train" a network to add 8 floating point numbers together, the fixed function hardware exposed by the network's structure makes it pretty easy:

truly a breakthrough in machine learningThis differs from the naive serial algorithm where one value is added on each step. Even if there were thousands of inputs, it's naturally parallelizable.

Each linear transform between fully connected layers can be thought of as doing one parallel step of execution. Any part of an algorithm amenable to this kind of parallelization should be assumed to flatten out for the purposes of serial step calculations. Note that this capability is a bit more extreme than it appears- if the algorithm could be squeezed into fewer steps by using large lookup tables, such a structure may be found. In the limit, an infinitely wide network can suffice to approximately [any function](https://en.wikipedia.org/wiki/Universal_approximation_theorem) arbitrarily well without needing many serial steps.

Training a network to *multiply* two floating point inputs is trickier if no log normalization is used and no multiplication unit is otherwise exposed. To make stepwise execution more explicit, consider a network that takes as input tokenized integers, and its job is to output the tokenized result. (Assume that this is all in one step; it's not autoregressively outputting multiple tokens in the answer.)

Converting this into a minimal representation that a neural network could find is... difficult. The naive iterated addition algorithm would require a number of serial steps at least as large as the number of additions which is input dependent. That would suggest the depth of the network needs to scale with the size of the input integer.

But iterated addition is not the fastest algorithm. There exists an O(n log n) [algorithm for integer multiplication](https://hal.archives-ouvertes.fr/hal-02070778/document) for two n-bit integers. Figuring out the minimal representation in a particular neural architecture is another step of complexity.

Perhaps you could programmatically convert explicit algorithms into a particular neural architecture's representation. That would amount to another optimization process. This whole thing is highly effortful.

**Empirical bounds**

--------------------

Beyond being effortful, not all algorithms have algorithms known to be at the lower bound; it's conceivable that an SGD-optimized network could find its way to an algorithm we don't know about. And there are algorithms we don't actually know how to write at all- deep learning is often pretty good at handling those. And the constant factor of the implementation matters for determining whether a given neural network could learn it.

If we are willing to accept an *upper bound* in our algorithmic requirement estimates, we can simply attempt to learn the algorithm on an architecture to see if it fits. If it can't be learned, try scaling it up until it does fit. Once you've got a solution, you can attempt to compress the representation through iterated simplification. The point at which the model becomes incapable of expressing an algorithm is a rough estimate of its complexity *with respect to that architecture, as found by the current optimizer*. This result cannot be assumed to be close to the lower bound in all cases.

This doesn't work at all if the model or algorithm in question is potentially dangerous. Empirical testing would just be opening up an attack surface. It only makes sense to use this kind of approach for trivial tasks that have no realistic possibility of going wonky.

**Sequence modeling as Bayesian update**

----------------------------------------

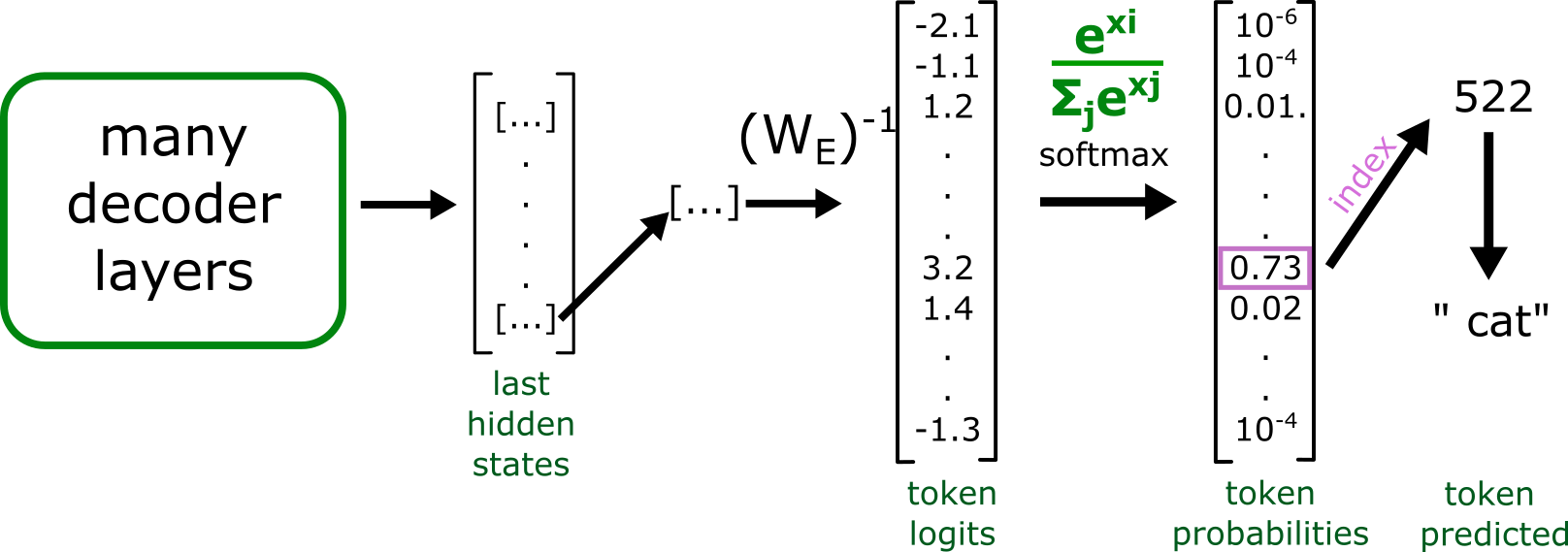

Transformers (and other sequence models) can perform [Bayesian updates](https://arxiv.org/abs/2112.10510). Even sequence models not explicitly trained to perform such updates are doing something like it. In a token predictor, the probability distribution over possible next tokens is conditioned upon the input sequence.

The ability of a sequence model to successfully predict tokens (or more accurately, to properly model the posterior distribution of tokens) is limited by what computations can be performed within a single prediction step.

Among other things, this means we can bound the computational complexity of a modeled Bayesian update by using the previously described empirical approach.

**Isn't restricting parameter counts and depth just going to make the model useless?**

--------------------------------------------------------------------------------------

If you're trying to use it like GPT, yup!

Token predictors like GPT are forced to do a great deal of computation internally for each token prediction step. They get one chance at each token. If the prompt looks like the next token should be an answer instead of incremental reasoning, and if the computation doesn't fit, oh well. Hallucinate something with the right shape and move on.

Avoiding this failure mode while sticking with the GPT architecture requires throwing scale and compute at the problem. This has worked remarkably well so far; even though the way GPT is used routinely asks the model to do *a lot* in one step, architectures like it are still able write code and so on.

But the more the architecture does in one step, the harder it is to [interpret](https://transformer-circuits.pub/2021/framework/index.html). Discerning basic information about how factual relationships are encoded is a [research project](https://arxiv.org/pdf/2202.05262.pdf).

The strong version of interpretability- like an analysis tool sufficient to inspect a strong and potentially adversarial model- seems *extremely* hard in the general case. A reporting tool like that in the context of [ELK](https://www.lesswrong.com/posts/qHCDysDnvhteW7kRd/arc-s-first-technical-report-eliciting-latent-knowledge) would indeed be a huge step for alignment, but that's because it would require solving most of alignment.

**Optimization target**

-----------------------

Simulators[[1]](#fny66tnjrpp0a) arising in prediction-focused models like GPT seem like a safer default than many other options. Empirically, prediction loss does not tend to induce goal-seeking behavior *directly* in same sense as in an RL agent (even if they can *simulate* agents). It appears prediction-focused models act more like the [laws of physics](https://www.lesswrong.com/posts/vJFdjigzmcXMhNTsx/simulators) by which other simulated agents (or non-agents) operate.

Assuming scaled-up simulators maintain agnosticism with respect to the goals of what they're simulating, simulators also provide obvious points at which the user can interrupt the process provided that the user hasn't done something very dumb. Each autoregressive execution can be thought of as a timestep in a simulation, and if you don't like the path, then so long as the intermediate states used by the predictor are interpretable, you can simply change the priors or stop the run altogether (provided that the user hasn't done something very dumb, and provided the user understands what's going on[[2]](#fnxj7orofs02)).

Do we have reason to believe scaled-up simulators will retain goal agnosticism?

**Testing for goal agnosticism**

--------------------------------

A goal seeking agent will tend to take actions that move towards its goal. A goal agnostic predictor will tend to predict tokens in a way that matches the prediction context (that is, the prediction will be properly conditioned on the input).

Taking a goal agnostic predictor and fine tuning it for another task- for example, outputting tokens that result in a higher Elo in chess- will result in a prediction bias towards tokens which lead to the goal. In other words:

Agenthood, for the simulator itself, necessarily shows up as *prediction bias*.

So, you can test for agenthood in a simulation model by performing tons of simulations and comparing the results against the ground truth. If there is a bias, you've got a potential agent. Or just error, but it's a place to start looking.

If a predictor is trained with a loss following a proper scoring rule, its predictions should be unbiased. What biases remain will likely arise from undertraining or lack of capability, and those sorts of errors do not lead to strong goal seeking behavior. This is a reason to think that goal agnosticism holds for (at least a subset of) simulator architectures in the limit.

Critically, goal agnosticism only applies to the *model*. An agent simulated by the model can still have goals.

**Effect of hybrid optimization targets**

-----------------------------------------

In contrast to predictors, something like reinforcement learning as primary training influence has obvious [problems](https://arxiv.org/abs/2210.01790). The thing the black box does is not reliably the thing you want it to do, and the thing in the black box will often "want" to keep doing it even if you want it to stop.

Are attempts to augment predictors with reinforcement learning inserting a backdoor of dangerous agenthood? This seems to depend on what is reinforced, and how.

If a pretrained LLM is handed off to a pure reinforcement learning based optimizer for the purposes of maximizing some objective within a game, it seems trivially true that failures like goal misgeneralization will eventually arise. Is there an amount of continued predictor training that would maintain the safety properties of a pure predictor?

My guess is that applying that kind of RL influence- even counterbalanced by continued predictor training- is akin to adding a fancy feature to your secure communications protocol that looks okay, but is inevitably exploited.

But what about other kinds of reward, e.g. rewarding good token distribution predictions? It seems like the sampled gradient found by most RL approaches *should*, or at least *could*, converge to some proper loss function.[[3]](#fngrwf0lnpcdq) Is there any case where a predictor-via-RL, used in the same way as GPT, would exhibit bad agenty behavior when GPT doesn't? I'd guess it would have to be very rare, because it seems like the nature of the sequence prediction task is the source of a predictor's properties. It is possible that RL is prone to goal fragility that amplifies slight deviations from pure prediction into bad agenty behavior, or that a particular form of RL doesn't yield a proper scoring rule and has biased predictions. (These biases could be detected, and even with biased predictions, it isn't obvious that it would be *agentic* bias.)

**Reinforcement learning via conditioning predictors**

------------------------------------------------------

Flipping it around: instead of predictors-via-RL, what does RL-via-predictors, like in [decision transformers](https://arxiv.org/abs/2106.01345), imply? Combined with any of the possible trivial generalizations to [online RL](https://arxiv.org/abs/2202.05607), it covers the same use cases as traditional RL. There's no strong reason to expect that agents *simulated* by a decision transformer are going to magically avoid goal misgeneralization. They may have different tendencies; an RL-via-predictor agent could exhibit different failure modes. The underlying process *is* quite different. Rather than sampling our way to a gradient and adjusting the policy weights, the input sequences condition the predictor's prediction. I wouldn't be surprised if agents simulated this way end up being more reliable in important ways.

And yet the architecture remains a predictor that seeks unbiased predictions.

I suspect the most critical difference between traditional RL and RL-via-predictors is that model-level goal agnosticism appears to be maintained in the latter. RL-via-predictor is really just a method of generating more training data for the predictor.

**Extracting agent goals from bias**

------------------------------------

From the previous sections:

1. in a simulator, goal-seeking at the model level (as opposed to simulacra level) necessarily shows up as simulation bias, and

2. direct reinforcement learning applied to a simulator (not RL-via-predictor) introduces simulation bias.

Using the same test as would be used for goal agnosticism, the set of biases in a model can be extracted. This seems like a toehold on determining what goals an RL agent (at least one fine tuned from a simulator-like architecture) has learned. Some big questions remain.

1. You can only detect bias in what you choose to test; your test coverage may not cover an area of concern.

2. Going from observed bias to *actually identifying the goal* isn't trivial.

Without good answers to these, it seems easier to try to maintain goal agnosticism. If you *did* have strong answers to them, you might be able to use RL to try to learn nice properties which make it harder to misuse the simulator. To be truly useful, it needs to outperform methods of avoiding misuse for the goal agnostic version (including RL-through-predictor models).

**How well can agenthood hide in a simulator?**

-----------------------------------------------

Examining prediction bias doesn't work if dangerously agentic behavior needs so little bias that it can hide within noise. How much bias is required to accomplish goals, compared to our ability to notice?

This might be a good place for an experiment. One possible GAN-like shape:

Train an agent discriminator. It examines a bunch of model input/output samples and attempts to predict whether the outputs came from a simple goal agnostic simulator or an intentionally biased model.

Train an agent to achieve goals while fooling the discriminator.

If the agent is able to cloak itself to the point where the discriminator can't reliably detect it, *and* it still manages to achieve its goals, that's evidence that agent detection is hard. Obviously there's a lot of research that could be done to improve both the ability of the discriminator and the deceiver, but if there are consistent signals that *deception is easier than detection*, then you need stronger guarantees about goal agnosticism by construction and shouldn't rely on anything that *might* violate that property.[[4]](#fn0mgg93kjjpcr)

**Where are misaligned agents** ***in*** **a simulation?**

----------------------------------------------------------

While it's pretty clear that a strong simulator could simulate strong misaligned agents by default, there is a question of where the meat of that simulation is taking place. Consider two extremes:

1. The simulator is extremely large and internally simulates a high fidelity supervillain agent. Some maybe-milder version of "spin up a universe to see how this agent behaves in this situation," such that within the scope of a single forward pass, the simulacrum may realize it needs to output tokens that hide its devious nature while pursuing nefarious goals. Those sorts of tokens happen to be very, very good predictions, so they achieve low loss[[5]](#fn0fvfaa5pbi7c). You deploy the model and, four hours later, the entire planet pops like a balloon for unclear reasons.

2. The simulator is strong, but sticks to simulating at a finer scale. Any goal-seeking agents can only exist in the outer loop; there is no internal space for an entire misaligned agent to think quietly to itself.

In 1, executing the model once carries tremendous risk, and the outputs themselves could be crafted by a deceptive process. In 2, risk arises only when you fail to notice multiple non-deceptive steps of execution leading in a bad direction.

In other words, in 1, the model is *behaving like a murderer*, while in 2, the model is behaving like the laws of physics which, over time, simulate a murderer.

It is not sufficient to just constrain dangerous agents to existing in the outer loop- the state that flows from timestep to timestep- rather than allowing complete agents within the weights of the predictor, but it sure seems like it would help. Another big part of the job would be to ensure that the state is intrinsically interpretable.

**Do strong internal simulations kill goal agnosticism?**

---------------------------------------------------------

There appears to be an important detail here: a simulator that internally encodes a deceptive agent (the "spin up a universe and check its behavior" level of simulator) such that the output becomes deceptive appears to be the kind of thing that would be incentivized by prediction training if that level of capability were tractable. This suggests goal agnosticism of the kind we want doesn't hold at the limit.

Is there a way to rescue this?

Maybe. There's the obvious answer that we can just make the simulator work at the finer scales- the steps of thought that a misaligned agent might work through in its effort to deceive- and restrict its capability to avoid complete internal simulations, but that's a *lot* less comforting than a direct counterargument unless you can make strong claims about what capabilities can be expressed within the computational bounds of the model.

But there's also a subtle error in the internally simulated agent case. Earlier, I said that outputting deceptive tokens represented a good prediction. That means the context of the prediction was *an agent inside a simulator that knew it was in a simulator*. That is a *different* situation than that same agent not knowing it was inside of a simulator. The prediction that follows from "I'm in a simulator" only minimizes loss if it happens to perfectly coincide with the prediction that follows "I'm not in a simulator" if the true context is "I'm not in a simulator," or in other words, the prediction is either:

1. not deceptive because there is no attempt to deceive, or

2. not deceptive because the behavior is *not conditional on simulation state*. (Note that this can be true even if the simulated agent's thought process *included an intent to deceive*. Something like, "hmm, I don't know that I'm in a simulation, but maybe I should act as if I am just in case; deceive!" That thought process is *not* conditioned on it actually being in a simulation; in other words, it would do the same thing in the real world, given a sufficiently high fidelity simulation relative to its inputs.)

This seems to recover goal agnosticism at the model level, but you still need to be *really* careful about how you use strong simulators. If you accidentally ask the simulator to simulate deception, it will. You can still die.

**Can internal agent simulations be stronger than their simulator?**

--------------------------------------------------------------------

In the above, one may be tempted to say, "but a superintelligent deceptive agent would tear apart the illusion of its simulation and behave accordingly!"

In order for an agent to successfully deceive (in the sense of outputting different behavior during training versus deployment), the simulator must leak information to the simulated agent that the agent can exploit, but the simulator is a superset of anything it can simulate internally, and a flimsy simulation would result in *worse predictions*.

For any region of input where you have sufficient training data to calibrate the simulator's predictions, a simulated agent's ability to deceive should be a temporary blip during training. With further training, improving predictions to cover the simulator's weakness will improve loss.

This improvement is not guaranteed unconditionally.

1. If the training data lacks sufficient coverage to calibrate the predictions, deceptive behavior could persist. If there is insufficient training data to suppress bias, it's also likely that there is insufficient testing data to detect bias.

2. It may be difficult for training methods with fixed training sets to have sufficient coverage to achieve the desired level of capability. This could encourage the use of techniques like online RL-via-prediction that end up sampling narrow paths through input space. If you get a bad roll of the dice and a path generator happens to be a successfully deceptive agent, you might end up with almost *all* training data near the path being used for calibration also being deceptive. Within that input space, deceptive behavior could persist.

3. If a simulated agent has explicit reflective access to the model, it could output choices that predictably lead to deception-enabling space through hijacking (more on this later).

Sticking to fixed offline data with no direct reflective access makes this kind of exploit highly unnatural for a simulator, but also carries a tax on capabilities.