id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

d065c64e-9c14-4b72-9005-4fccf36d1ecb | trentmkelly/LessWrong-43k | LessWrong | I changed my mind about orca intelligence

Follow up to: My previous posts on orca intelligence.

TLDR: I now think it’s <1% likely that average orcas are >=+6std intelligent.

(I now think the relevant question is rather whether orcas might be >=+4std intelligent, since that might be enough for superhuman wisdom and thinking techniques to accumulate through generations, but I think it’s only 2% probable. (Still decently likely that they are near human level smart though.))

1. Insight: Think about societies instead of individuals

I previously thought of +7std orcas as having +7std potential but growing up in a hunter-gatherer-like environment where the potential isn’t significantly realized and they don’t end up that good at abstract reasoning. I imagined them as being untrained and not knowing much. I still think that a +7std human who grew up in a hunter-gatherer society wouldn’t be all that awesome at learning math and science as an adult (though maybe still decently good).

But I think that’s the wrong way to think about orcas. If the average orca was +6std intelligent, it would be a +6std society, where cultural evolution would happen on ultrasteroids, knowledge and skill accumulate quickly and get taught effectively, so orcas would end up extremely competent. I think the equilibrium for +6std societies is very likely that they act sorta like a macroagent pursuing the multi-agent optimum, aka sorta like dath ilan[1], even if they don’t have science or a growing economy. The amount of expertise that can be transmitted by smart people is pretty large even without writing.

When I realized that, I stopped thinking about “what would I expect to see if there existed +7std orca individuals” (where I implicitly imagined +0std societies) and shifted to thinking “what would I expect to see from small +6std societies without writing and science”, where in my opinion, the latter implies a vastly higher level of competence, though I’m probably bad at communicating why.

I previously thought that it’s |

a64dd93a-f0be-402b-b75d-138f70b4df2f | trentmkelly/LessWrong-43k | LessWrong | Meetup : Urbana-Champaign: Politics and the English Language

Discussion article for the meetup : Urbana-Champaign: Politics and the English Language

WHEN: 16 February 2014 02:00:00PM (-0600)

WHERE: 40.109545,-88.2273

Read this: https://www.mtholyoke.edu/acad/intrel/orwell46.htm

And then show up and discuss it.

Discussion article for the meetup : Urbana-Champaign: Politics and the English Language |

1872624d-6e7b-4b92-a959-f93b9d8c4cb8 | StampyAI/alignment-research-dataset/arxiv | Arxiv | Interpretable Latent Spaces for Learning from Demonstration

1 Introduction

---------------

We want our autonomous robots to be competent across broad domains. Ideally, they must be able to cope with the open world, i.e., one where it may not be possible to provide a complete specification of the task up front. Thus, it is important for the robotic agent to incrementally learn about the world and its regularities in order to be able to efficiently generalize to new situations. It can be argued that such generalizations are made easier when the models used by the robot can represent concepts ranging from object-hood to causality.

Learning from human demonstration [[1](#bib.bib1), [2](#bib.bib2)] is an efficient way to transfer such knowledge to a robot, wherein the human expert teaches a robot by showing it instances of execution of the task of interest. There are numerous examples of fairly sophisticated behaviours being learnt by robots, such as for control and planning in high dimensional systems [[3](#bib.bib3), [4](#bib.bib4), [5](#bib.bib5)]. A common theme across a majority of these works is that the target of transfer is the specific behaviour, so that the robot is taught to mimic a motion (with learning methods being used to generalise over, say, differing body configurations). We are also interested in being able to transfer other aspects of knowledge about the world which may allow the robot to infer deeper concepts. A necessary first step towards this form of teaching or transfer is to give the robotic agent the ability to learn models that represent structure in ways that are similar to corresponding human notions - so that it may be possible for the human expert and robot to leverage common grounding [[6](#bib.bib6)].

From a different direction, there is recognition among roboticists that models and policies used by autonomous robotic agents should be explainable [[7](#bib.bib7), [8](#bib.bib8)]. The notion of what it means to interpret and explain is in itself a topic of active debate at the moment, but we believe it is safe to claim that grounded models used by robots should satisfy key desiderata. For instance, the notion of similarity with which items are grouped together must align with corresponding human notions or that factors of variation implied by the model should be understandable to a human expert. These desiderata are also closely aligned with the emerging literature on learning disentangled representations [[9](#bib.bib9), [10](#bib.bib10), [11](#bib.bib11), [12](#bib.bib12)].

In this paper, we focus on learning models of objects of the kind that robots might encounter in manipulation settings. In order to make the underlying concepts fully clear, we use simple objects that uncontroversially expose the structure of the domain. For instance, we show that after being exposed only to a few instances of a ball and a cube, the agent can figure out that other instances of cubes can be labelled as such (without this fact being explicitly stated by the expert).

Our main contribution consists of a framework—see figure [1](#S1.F1 "Figure 1 ‣ 1 Introduction ‣ Interpretable Latent Spaces for Learning from Demonstration")—which allows for independent user-defined factors of variation, manifested in a high-dimensional space, to be projected to a lower-dimensional latent space by preserving the factors’ orthogonality. The latter is guaranteed by aligning each of the basis vectors that span the latent space with a single factor. Each factor is specified as a set of weak labels over the high-dimensional space. So, for the same data the framework can learn different representations for different sets of symbols. We test the framework both on a synthetic dataset (modified dSprites [[13](#bib.bib13)] with added color), with controlled factors of variation and on a dataset of real-world objects captured from a set of human demonstrations for the task of sorting table-top objects according to the user’s preference.

Figure 1: Overview of the full framework. The expert is demonstrating the task at hand while uttering symbols which are grounded according to their attention in the environment. The conceptual grouping of the symbols is given. (a) The gathered dataset is used to train a variational autoencoder with a set of auxiliary classification losses - one per conceptual group. Each classifier takes information from a single latent dimension. (b) The training procedure guarantees that the semantic meaning of each group will be encoded in a separate latent dimension with linearly separable classes (c) Using the per-class estimated 1D Normal distributions, together with in-between distributions for unknown labels, we can perform 1-NN classification (d)

2 Related Work

---------------

An efficient and unobtrusive learning process should require minimal effort on the part of the human expert, which in turn requires the agent to generalise to unobserved scenarios. At the same time, the models and concepts used by the agent must be interpretable by the human, in order for the interaction to be efficient. 0-shot, 1-shot [[14](#bib.bib14)] and meta-learning [[15](#bib.bib15)] are all approaches which strive for fast learning and better generalisation from limited amounts of data. However, from the point of view of ensuring safe operation by a robot, it is also important to be able to quantify when an unfamiliar situation occurs and to seek guidance from an expert.

The ability to deal with examples that fall outside the domain of the training distribution is known as ‘open world recognition’ [[16](#bib.bib16), [17](#bib.bib17)]. This is needed when an agent comes across unknown unknowns - observations that have not been seen during training. In this setting, the incomplete nature of the agent’s model causes it to make the wrong prediction. A complete model would cope with such cases by explicitly recognising that data point as ‘unknown’. It is desirable for the agent to be able to express its ignorance to the expert in a way that is comprehensible to them. This motivates the need for interpretability - we learn a representation that enables communication for user feedback regarding partially labelled sensory observations.

In the context of visual sensory inputs, ‘inverse graphics’ is an approach to learning compressed and factorised representations of high-dimensional visual data - e.g. learn to invert a graphical renderer and infer (for a given image) the parameter vector that generated an input image. The fact that such vectors are low-dimensional and factorised is what makes them appealing from a human-interpretability point of view [[18](#bib.bib18), [19](#bib.bib19)].

In the representation learning literature, there has been work [[9](#bib.bib9), [10](#bib.bib10), [11](#bib.bib11), [20](#bib.bib20), [21](#bib.bib21)] aimed at unsupervised learning of inverse-graphics-like representations of image datasets. This has been demonstrated with models like InfoGAN [[10](#bib.bib10)] which does learn the desired factorised representations in an unsupervised fashion but also suffers from problems like unstable training and sensitivity to the chosen prior distribution over the latent codes. Moreover, there is no explicit inference module that would produce a latent code, given an observation, even though the recognition network Q can be used as such. An alternative in the literature is the β-VAE model [[9](#bib.bib9)] which has fewer parameters to tune, has more stable training and reportedly learns similar representations. The factors of variation which are encoded in these representations are the ones that best explain the visual variations in the data [[12](#bib.bib12)] but might not necessarily be the factors of variation which are crucial to recognise and discriminate in the context of a particular task. Inferring which learnt factors best ground the user-defined symbols is usually a separate process wherein inference must be performed for each label-factor pair. Learning a representation suitable for symbol grounding [[22](#bib.bib22), [23](#bib.bib23)] from raw pixels in an unsupervised manner, based on β-VAE, has turned out to be non-trivial [[24](#bib.bib24)]. In this work we employ methods based on deep variational inference [[25](#bib.bib25)] and perform the learning of a factorised vector space and the grounding of symbols in it simultaneously, in a weakly-supervised fashion. Thus we achieve better alignment between the user-defined semantic conceptual groups and the basis vectors of the vector space.

3 Background and Problem Formulation

-------------------------------------

In this work, we assume that the human expert and robotic agent share the environment and that they can both observe and interact with objects in the surrounding world. The agent can extract images of distinct objects from the world and receive labels for them from the expert.

Data capture can be achieved with any technique that is able to align a sequential abstract plan for a task (parsed from a linguistic construct) with a temporal trace of expert movements and actions in the external environment - e.g. gesture recognition [[26](#bib.bib26)], movement tracking [[27](#bib.bib27)], eye-tracking [[28](#bib.bib28)]. Thus, specific symbols from the plan can be related to regions of interest in the environment, where the object, encoding the label’s meaning, resides.

Let $O=\{x_1,\dots,x_N\}$, $x_i\in\mathbb{R}^D$, be a set of unlabelled observations, which have been passively gathered prior to the expert demonstration. An expert demonstration introduces a set of $L$ conceptual groups $G=\{g_1,\dots,g_L\}$ (e.g. color, shape, size, etc.), where each group is a set of mutually exclusive discrete labels: $g_i=\{y_{i1},\dots,y_{in_i}\}$, $n_i=|g_i|$ (e.g. color can be red, blue, yellow, etc.). The demonstration also provides labels, one drawn from each concept group, to $M$ of the observations: $O=\{(x_1,y_1),\dots,(x_M,y_M),(x_{M+1},\emptyset),\dots,(x_N,\emptyset)\}$, where $y_j=\{y_p\}$, $p\in\{1,\dots,L\}$, $y_p\in g_p$, is the set of labels attached to the image $x_j$, one per concept group.

The task is to project each $x\in\mathbb{R}^D$ into $z\in\mathbb{R}^C$, $C\ll D$, where the space $\mathbb{R}^C$ possesses the following properties:

Axes Alignment - guarantees a one-to-one mapping from the concept groups $G$ to the orthogonal basis vectors which span $\mathbb{R}^C$. This would guarantee that independent concepts in image space are kept independent in $\mathbb{R}^C$ (e.g. color does not depend on the size, orientation or shape of an object).

Intra-group Linear Separability - the latent clusters in $\mathbb{R}^C$, corresponding to the labels in each concept group $g_i$, are linearly separable along the basis vector which has been aligned with that concept group.

These two properties allow $\mathbb{R}^C$ to be used as a feature space for performing probabilistic symbol grounding with the ability to recognise unknown objects. For that we use 1-NN classification with 1D normal distributions (one per label) for each concept group in $G$; see Figure [1](#S1.F1 "Figure 1 ‣ 1 Introduction ‣ Interpretable Latent Spaces for Learning from Demonstration") (c) and (d).

4 Methodology

--------------

We explore the effects of adding an auxiliary classification loss to a Variational Autoencoder [[25](#bib.bib25)] as a base architecture, specifically the β-VAE [[9](#bib.bib9)]. Through weak supervision, in the form of partially labelled data, the auxiliary loss influences the latent space of the model to exhibit properties which make it suitable for robust symbol classification. The model consists of a convolutional encoder network $q_\phi$, parametrised by $\phi$, a deconvolutional decoder network $p_\theta$, parametrised by $\theta$, and a set of linear classifiers parametrised by $w_i\in\mathbb{R}^{|g_i|}$ for each group $g_i\in G$. Additional coefficients $\alpha$, $\beta$ and $\gamma$ weight the three terms of the overall loss function, see ([1](#S4.E1 "(1) ‣ 4 Methodology ‣ Interpretable Latent Spaces for Learning from Demonstration")), in order to balance their relative importance.

$$\min_{\theta,\phi,W}\ \mathcal{L}(x,y,\theta,\phi)=\beta\,D_{KL}\big(q_\phi(z|x)\,\|\,p_\theta(z)\big)-\alpha\,\mathbb{E}_{q_\phi(z|x)}\big[\log p_\theta(x|z)\big]+\gamma\sum_{i=1}^{|G|}H\big(z_i w_i^T,\,y_i\big) \tag{1}$$

Classification term (weighted by γ) - In order to force the learnt latent space to explain the variations in the data, we add a linear classifier for each concept group. Each classifier has to predict the set of labels for its assigned concept group using information only from a single latent dimension. No two classifiers have access to the same latent dimension. That forces each dimension to only explain labels from the particular concept group. A discrete cross-entropy term is used for the predictions of each classifier.

Reconstruction term (weighted by α) - The reconstruction loss is a standard Bernoulli Negative Log Likelihood, which is used to predict the pixel values across the three RGB channels. The motivation behind this term is that we do not assume all data points in our dataset are labelled. Thus the reconstruction loss would force data points which look similar in image space to be projected close to each other in the latent space.

Kullback-Leibler divergence term (weighted by β) - The Kullback-Leibler divergence term ensures that the distribution of the latent projections of the data in $\mathbb{R}^C$ does not diverge from a prior isotropic normal distribution. A perfectly optimised KL term would result in all latent projections being 0. This forces the encoder network $q_\phi$ to be more efficient when encoding the image observations, so that their latent projections can be discriminated from each other along the basis vectors aligned with $G$ (the classification term) and the decoder network $p_\theta$ can efficiently reconstruct them (the reconstruction term).

The values for all three coefficients are chosen empirically such that the values for all the loss terms have similar magnitude and thus none of them overwhelms the gradient updates while training.
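The three terms above can be sketched for a single data point as follows. This is a minimal illustration in plain Python, not the authors' implementation: the function names, the flat-list image representation and the default coefficient values are assumptions made for the example, and the expectation over $q_\phi(z|x)$ is approximated by one sampled `z`.

```python
import math

def kl_to_standard_normal(mu, sigma):
    """KL divergence between a diagonal Gaussian N(mu, sigma^2) and N(0, I)."""
    return 0.5 * sum(m * m + s * s - 1.0 - math.log(s * s)
                     for m, s in zip(mu, sigma))

def bernoulli_nll(x, x_hat, eps=1e-7):
    """Bernoulli negative log-likelihood of pixel values x under predictions x_hat."""
    total = 0.0
    for target, p in zip(x, x_hat):
        p = min(max(p, eps), 1.0 - eps)  # clamp to keep the logs finite
        total -= target * math.log(p) + (1.0 - target) * math.log(1.0 - p)
    return total

def cross_entropy(logits, label_index):
    """Discrete cross-entropy of softmax(logits) against one true label index."""
    m = max(logits)
    log_norm = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_norm - logits[label_index]

def total_loss(x, x_hat, mu, sigma, z, classifiers, labels,
               alpha=1.0, beta=1.0, gamma=1.0):
    """beta * KL + alpha * reconstruction NLL + gamma * sum of per-group cross-entropies.

    classifiers[i] is the weight vector w_i (one weight per label of group g_i);
    it only ever sees the single latent coordinate z[i].
    labels is None for an unlabelled observation (hypothetical convention).
    """
    loss = beta * kl_to_standard_normal(mu, sigma) + alpha * bernoulli_nll(x, x_hat)
    if labels is not None:
        for i, (w, label) in enumerate(zip(classifiers, labels)):
            logits = [z[i] * w_k for w_k in w]  # z_i * w_i^T
            loss += gamma * cross_entropy(logits, label)
    return loss
```

Because the reconstruction term is a negative log-likelihood, the minus sign in front of the expectation in (1) turns into a plus here. Unlabelled observations contribute only the KL and reconstruction terms.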

In order to account for data-generative factors of variation that might not be needed to encode the conceptual groups $G$ but are still essential for good reconstruction and stable training, we allow $|\mathbb{R}^C|>|G|$, that is, the latent space may have more dimensions than there are concept groups. For example, spatial and rotational factors of variation would not contribute to explaining the semantics of concepts like shape and size but should still be accounted for, so that the subset of basis vectors in $\mathbb{R}^C$ which are aligned with $G$ encodes only information which explains $G$.

Algorithm [1](#algorithm1 "Algorithm 1 ‣ 4 Methodology ‣ Interpretable Latent Spaces for Learning from Demonstration") describes the core functionality of the framework. Initially we have a set of partially labelled observations, a set of conceptual groups with their labels, and a single untrained linear classifier for each group. In the training process, each observed image $x$ is passed through the network and its reconstruction $\hat{x}\sim p_\theta(x|z)$, $z\sim q_\phi(z|x)$, is fed to the loss $\mathcal{L}$. If the data point is labelled, a label is predicted for each conceptual group from the respective classifier. After training, we estimate the parameters of a 1D normal distribution for each label along the dimension which was responsible for predicting it during learning.

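Input: observations $O=\{(x_1,y_1),\dots,(x_M,y_M),(x_{M+1},\emptyset),\dots,(x_N,\emptyset)\}$

Input: conceptual groups $G=\{g_1,\dots,g_L\}$

Input: linear classifiers $W=\{w_i\}$, $i\in\{1,\dots,L\}$

Input: isotropic normal prior $p(z)=N(0,I)$

Output: set of estimated 1D normal distributions, one for each label in each conceptual group: $K=\{N(\mu_{pq},\sigma_{pq})\}$, $p\in\{1,\dots,L\}$, $q\in\{1,\dots,|g_p|\}$

- while *not converged* do
  - for *each $(x,y)$ in $O$* do
    - $\hat{\mu},\hat{\sigma}\leftarrow\mathrm{Encode}(x)$;
    - $\hat{z}\sim N(\hat{\mu},\hat{\sigma}I)$;
    - if *$y\neq\emptyset$* then
      - for *each $w_i$ in $W$* do
        - $\hat{y}_i\leftarrow\hat{z}_i w_i^T$;
    - $\hat{x}\leftarrow\mathrm{Decode}(\hat{z})$;
    - Use $\hat{x}$, $\hat{y}$, $\hat{\mu}$ and $\hat{\sigma}$ to compute $\mathcal{L}$, see ([1](#S4.E1 "(1) ‣ 4 Methodology ‣ Interpretable Latent Spaces for Learning from Demonstration"));
- for *each $g_i$ in $G$* do
  - for *each $y_{ij}$ in $g_i$, $j\in\{1,\dots,|g_i|\}$* do
    - $f\leftarrow\{(x,y)\in O \mid y_{ij}\in y\}$;
    - $\hat{\mu},\hat{\sigma}\leftarrow\mathrm{Encode}(f)$;
    - $\hat{z}\sim N(\hat{\mu},\hat{\sigma}I)$;
    - $N(\mu_{ij},\sigma_{ij})\leftarrow\mathrm{fitNormal}(\hat{z}_i)$;
    - Add $N(\mu_{ij},\sigma_{ij})$ to $K$;

Algorithm 1 Model Learning with Weak Supervision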
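The second loop of Algorithm 1, which fits one 1D normal distribution per label, might look roughly like the sketch below. It assumes the latent codes have already been sampled from a trained encoder and are given as plain lists. The name `fit_label_normals`, the use of `None` for unlabelled points and the population standard deviation are illustrative choices, not details from the paper.

```python
from statistics import mean, pstdev

def fit_label_normals(latents, labels, axis):
    """Estimate (mu, sigma) per label along one latent axis.

    latents: list of latent vectors, one per observation.
    labels:  label of each observation for a single concept group,
             or None when the observation is unlabelled.
    axis:    index of the latent dimension aligned with that concept group.
    """
    values_by_label = {}
    for z, y in zip(latents, labels):
        if y is not None:  # unlabelled observations do not contribute
            values_by_label.setdefault(y, []).append(z[axis])
    return {y: (mean(v), pstdev(v)) for y, v in values_by_label.items()}
```

Calling this once per concept group, each time with the axis assigned to that group's classifier, yields the set $K$ used at test time.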

When classification is performed at test time, the task is to predict a set of $L$ labels $y$ for each image observation $x$. Classification uses the factored projection of each image observation in $\mathbb{R}^C$. The 1D coordinate along each basis vector $z_i$ of $\mathbb{R}^C$ is used to predict a label for the correspondingly aligned concept group $g_i$. Each 1D value is normalised with respect to the normal distributions in $K$ corresponding to $g_i$, and the class associated with the closest distribution along $z_i$ is assigned to $x$ for $g_i$. As a consequence of optimising the Kullback-Leibler divergence term, together with the reconstruction and classification losses, any data points that have not been labelled and do not resemble the labelled ones end up being projected closer to the origin of the latent space $\mathbb{R}^C$, in between the clusters associated with the labelled data. Thus, in order to account for such unknown objects, for every two neighbouring distributions $N(\mu_{il},\sigma_{il})$ and $N(\mu_{ir},\sigma_{ir})$ along the basis vector $z_i$ aligned with $g_i$, we fit an average distribution $N_u(\mu_{iu},\sigma_{iu})$, where $\mu_{iu}=\frac{\mu_{il}+\mu_{ir}}{2}$ and $\sigma_{iu}=\frac{\sigma_{il}+\sigma_{ir}}{2}$. Any data point that is closer to such an unknown distribution than to a labelled one is considered unknown.
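A small sketch of this test-time procedure for one concept group is given below. It rests on one interpretive assumption: "normalised with respect to the normal distributions" is read as a z-score distance $|z-\mu|/\sigma$, with every $\sigma>0$. The function names and the tuple layout are hypothetical.

```python
def add_unknown_distributions(normals):
    """Insert an averaged 'unknown' distribution between neighbouring labels.

    normals: dict mapping label -> (mu, sigma) along one latent axis.
    Returns a list of (label, mu, sigma) tuples ordered by mu.
    """
    ordered = sorted(normals.items(), key=lambda item: item[1][0])
    result = []
    for index, (label, (mu, sigma)) in enumerate(ordered):
        if index > 0:
            _, (mu_left, sigma_left) = ordered[index - 1]
            # mean and spread are the averages of the two neighbours
            result.append(("unknown", (mu_left + mu) / 2, (sigma_left + sigma) / 2))
        result.append((label, mu, sigma))
    return result

def classify(value, distributions):
    """1-NN over distributions using the z-score distance (an assumption)."""
    return min(distributions, key=lambda d: abs(value - d[1]) / d[2])[0]

# Example with made-up parameters for a color axis.
color_axis = add_unknown_distributions({"red": (-2.0, 0.5), "blue": (2.0, 0.5)})
prediction = classify(0.1, color_axis)  # lies between the two labelled clusters
```

Repeating `classify` once per aligned axis gives the full set of $L$ predicted labels for an observation.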

5 Experiments

--------------

### 5.1 Data

The controlled data-generative factors of variation of the modified dSprites dataset—see figure [2](#S5.F2 "Figure 2 ‣ 5.1 Data ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration")—make it suitable for exploring how the two baselines compare to the proposed model with respect to the defined manifold properties in section [3](#S3 "3 Background and Problem Formulation ‣ Interpretable Latent Spaces for Learning from Demonstration"). The resulting dataset is of size 3500 images—72 objects with spatial x/y variations in the image. We perform two experiments with the same underlying dataset but different sets of symbols in order to demonstrate how the user’s preference is encoded in the latent space.

Figure 2: A modified version of the dSprites [[13](#bib.bib13)] dataset with added color. The RGB equivalents of 8 colors—two variations of red, green, blue and yellow—are added. Spatial factors of variation are also present in the images but not shown in the figure.

Figure 3: Example image crops of all table-top objects. Spatial and pixel noise factors of variation were added to each crop through data augmentation techniques.

In order to demonstrate the application of the framework to real-world human-robot interaction scenarios, a second dataset of objects on a table-top is gathered from a human demonstration (Figure [3](#S5.F3 "Figure 3 ‣ 5.1 Data ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration")). The task the human performs is to separate the objects by their function (juggling balls vs orbs) and then by their color (red vs yellow vs blue). Lego blocks and whiteboard pins are also present in the scene, but they are not manipulated and the expert gives no label information about them. At test time the agent has to repeat the task, with previously unobserved objects present in the scene: green objects and a yellow rubber duck. Each object image is augmented, resulting in a dataset of 7500 images of 15 objects with spatial variation. Both datasets are split into training and test sets with an 80-20 ratio. All results are reported on the test set. The setup for gathering and labelling per-object image crops in a single human demonstration through the use of eye-tracking is described in Appendix [A](#A1 "Appendix A Demonstration and Object Crops Gathering ‣ Interpretable Latent Spaces for Learning from Demonstration").

### 5.2 Evaluation

Two baselines are used to benchmark against the proposed architecture: a vanilla β-VAE (γ=0) and a Convolutional Classifier Network with Kullback-Leibler divergence and no reconstruction term (α=0). More details on the network architecture and training setups are provided in Appendices [B](#A2 "Appendix B Network Architecture ‣ Interpretable Latent Spaces for Learning from Demonstration") and [C](#A3 "Appendix C Hyper parameters ‣ Interpretable Latent Spaces for Learning from Demonstration"). In order to evaluate the extent to which each model satisfies the properties we define in Section [3](#S3 "3 Background and Problem Formulation ‣ Interpretable Latent Spaces for Learning from Demonstration"), we use metrics which are inspired by the literature on learning disentangled representations [[29](#bib.bib29)].

Axes Alignment - In order to determine how well the concept groups in $G$ are aligned with the basis vectors that span the latent space $\mathbb{R}^C$, we perform PCA on the latent projections of the data points for each label and examine the alignment of the resultant eigenvectors with the basis vectors. If a particular concept group is aligned with a single basis vector, then the eigenvector with the smallest eigenvalue should be parallel to that basis vector. Such alignment would mean that traversing a single concept group in image space corresponds to perturbing the values of a single basis vector. For example, if $z_i$ encodes the concept of color, then the latent distribution for all blue datapoints should have small variance along $z_i$ and large variance along all other $z_{j\neq i}$. To examine this, for each label in each concept group, we plot cosine similarity diagrams depicting the cosine similarity between each pair of basis vectors and eigenvectors (see figure [4](#S5.F4 "Figure 4 ‣ 5.3 Results ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration")). White cells mark the cosine similarities between the smallest eigenvector and all basis vectors, and between its most-parallel basis vector and all other principal components. The average entropy of the normalised white cosine values is reported for each model-experiment combination to ease quantitative analysis. Low entropy corresponds to axis alignment, resulting in a single big white cell (for the smallest eigenvector and its most-parallel basis vector) and small remaining white cells along the row and column of the big one. For each concept group in each experiment we should observe the same white cell patterns if axis alignment is being achieved. For further information on this point, please see the supplementary materials: <https://sites.google.com/view/interpretable-latent-spaces/axes-align-explain>.
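One possible reading of this metric, for the latent codes of a single label, is sketched below with NumPy. The selection of the "white cells" and their normalisation to sum to one before taking the entropy are assumptions based on the description above, and `axis_alignment` is a hypothetical name.

```python
import numpy as np

def axis_alignment(latents):
    """Return (aligned_axis, entropy) for the latent codes of one label.

    latents: array of shape (n_samples, C).
    """
    cov = np.cov(latents, rowvar=False)
    # eigh returns eigenvalues in ascending order; columns are unit eigenvectors
    _, eigvecs = np.linalg.eigh(cov)
    # Basis vectors are one-hot, so |cos| between basis vector z_i and
    # eigenvector c_j is simply the absolute value of component i of c_j.
    cos = np.abs(eigvecs)
    smallest = cos[:, 0]                      # smallest-eigenvalue eigenvector
    aligned_axis = int(np.argmax(smallest))   # its most-parallel basis vector
    # "White cells": smallest eigenvector vs all basis vectors, plus the
    # aligned basis vector vs all other principal components.
    cells = np.concatenate([smallest, cos[aligned_axis, 1:]])
    p = cells / cells.sum()
    p = p[p > 0]
    entropy = float(-(p * np.log(p)).sum())
    return aligned_axis, entropy
```

Under this reading, a label whose cluster is thin along exactly one latent axis gives an entropy near zero, while a cluster that is thin along a direction mixing several axes spreads the mass over several cells and gives a higher value.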

Intra-group Linear Separability - In order to perform 1-NN classification at test time with unlabelled objects, using 1D Normal distributions across the concept groups in G, the latent cluster in RC for each label yij∈gi has to be linearly separable from the latent clusters for all other labels from gi. We report F1 scores for each class label, per concept group, including predictions for unlabelled observations which represent both known and unknown labels.
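For reference, the per-class F1 score used here can be computed as in the sketch below, where unknown is treated as just another class. The function name and the convention of returning 0.0 for a class with no true or predicted members are choices made for this example.

```python
def f1_per_class(y_true, y_pred):
    """Per-class F1 = 2TP / (2TP + FP + FN) for one concept group."""
    scores = {}
    for cls in sorted(set(y_true) | set(y_pred)):
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == cls and p == cls)
        fp = sum(1 for t, p in pairs if t != cls and p == cls)
        fn = sum(1 for t, p in pairs if t == cls and p != cls)
        denominator = 2 * tp + fp + fn
        scores[cls] = 2 * tp / denominator if denominator else 0.0
    return scores
```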

### 5.3 Results

Experiment 1 - learn $z_0\equiv$ color and $z_1\equiv$ size. The user-uttered labels for color are red, blue and for size are small, big. The color labels are assigned to a single variation of the respective color. All images which cannot be described by the given labels (yellow and green for color and medium for size) are given an unknown ground truth label. Total $|Z|=4$.

Experiment 2 - learn $z_0\equiv$ shape and $z_1\equiv$ size. The user-uttered labels for shape are square, heart and for size are small, big. All images which cannot be described by the user-uttered labels (ellipse for shape and medium for size) are given an unknown ground truth label. Total $|Z|=4$.

Experiment 3 - learn $z_0\equiv$ color and $z_1\equiv$ object type. The user-uttered labels for color are red, blue, yellow and for object type are juggle ball, orb. All images which cannot be described by the user-uttered labels (lego bricks and whiteboard pins, rubber ducks or green objects) are given an unknown ground truth label. Total $|Z|=4$.

Figure 4: Axes Alignment evaluation for the full model (a), the classifier baseline (b) and the β-VAE (c) for the 3 experiments - (1), (2) and (3) respectively. For each experiment and each model, the cosine similarity diagram for each label is shown. In each diagram the size of square (i,j) represents the cosine distance between eigenvector cj and basis vector zi in RC, after performing PCA on the latent values for the corresponding label. All white cells mark the cosine similarities between the smallest eigenvector with all basis vectors and between its most-parallel basis vector and all other principal components. E denotes the average entropy estimates over normalised white cells values for a single model and a single experiment.

Figure [4](#S5.F4 "Figure 4 ‣ 5.3 Results ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration") presents the main findings from our experiments. Both the full model and the classifier network (figure [4](#S5.F4 "Figure 4 ‣ 5.3 Results ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration") (a,b)) achieve good alignment between the concept groups in $G$ and the basis vectors of $\mathbb{R}^C$. All labels from a particular concept group are consistently explained by the latent dimension which was used to predict them, e.g. color is explained by $z_0$ and size is explained by $z_1$ for experiment 1. For the classifier network (figure [4](#S5.F4 "Figure 4 ‣ 5.3 Results ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration") (b)) we show only the first two latent dimensions, which were used to perform the classification and hence contain the relevant information. The β-VAE (figure [4](#S5.F4 "Figure 4 ‣ 5.3 Results ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration") (c)) fails to satisfy the axis alignment requirement: labels from the same concept group are best explained by different basis vectors, e.g. color in experiment 1. This is expected, since the β-VAE baseline is trained in a completely unsupervised fashion. For this model we deterministically chose to use $z_i$ to predict labels for $g_i$ (Table [1](#S5.T1 "Table 1 ‣ 5.3 Results ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration")). We report these results to bring out the observation that non-axis-aligned representations make the 1-NN classification process non-trivial, as it is not at all clear which axes should be used to decide on the labels for each concept group. The full model and the classification network baseline perform comparably well with respect to classifying labelled data points.
However, the classifier-only baseline is not as good at discriminating between known and unknown objects (see the unknown columns in Tables [1(a)](#S5.T0.st1 "(a) ‣ Table 1 ‣ 5.3 Results ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration"), [1(b)](#S5.T0.st2 "(b) ‣ Table 1 ‣ 5.3 Results ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration"), [1(c)](#S5.T0.st3 "(c) ‣ Table 1 ‣ 5.3 Results ‣ 5 Experiments ‣ Interpretable Latent Spaces for Learning from Demonstration")). We speculate that this is a result of the model having access only to labelled images during training, unlike the weakly supervised full model. Thus, it is not able to pull together in the latent space labelled and unlabelled data points that are visually similar in image space, or to push apart data points that are visually different. We provide more detailed analysis in the supplementary materials: <https://sites.google.com/view/interpretable-latent-spaces/>.

| | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

|

| Model | blue | red | unknown | big | small | unknown |

| --- | --- | --- | --- | --- | --- | --- |

| α = 1, γ≠0 | 0.88 | 0.98 | 0.87 | 0.82 | 0.93 | 0.64 |

| α = 0 | 0.76 | 0.78 | 0.29 | 0.84 | 0.87 | 0.52 |

| γ = 0 | 0.05 | 0.37 | 0.23 | 0.45 | 0.34 | 0.26 |

(a) dSprites - z0≡ color and z1≡ size

|

| Model | heart | square | unknown | big | small | unknown |

| --- | --- | --- | --- | --- | --- | --- |

| α = 1, γ≠0 | 0.70 | 0.73 | 0.34 | 0.71 | 0.83 | 0.6 |

| α = 0 | 0.97 | 0.8 | 0.67 | 0.53 | 0.79 | 0.5 |

| γ = 0 | 0.2 | 0.33 | 0.32 | 0.4 | 0.38 | 0.33 |

(b) dSprites - z0≡ shape and z1≡ size

| Model | blue | red | yellow | unknown (color) | juggle ball | orb | unknown (object type) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| α = 1, γ ≠ 0 | 0.75 | 0.87 | 0.86 | 0.95 | 0.8 | 0.62 | 0.81 |
| α = 0 | 0.7 | 0.31 | 0.89 | 0.1 | 0.64 | 0.64 | 0.41 |
| γ = 0 | 0.2 | 0.25 | 0.25 | 0.18 | 0.1 | 0.3 | 0.26 |

(c) Real objects: $z_0 \equiv$ color and $z_1 \equiv$ object type


Table 1: Evaluation of the discriminative abilities of each model for each experiment. F1 scores are reported for experiment 1 (a), experiment 2 (b) and experiment 3 (c).

6 Conclusion

-------------

We present a framework for physical symbol grounding where linguistically-defined semantic concepts from an expert, manifested in a high-dimensional image space, are mapped to a lower-dimensional learnt latent space. The resultant latent projections preserve any orthogonality between the user-defined concepts. In this sense, they are sufficient to perform robust—among other things, able to recognise unknown unknowns—and sample-efficient symbol inference. We demonstrate this through experiments with images of computer-generated and real physical objects.

Acknowledgments

This work is partly supported by ERC Grant 269427 (STAC), a Xerox University Affairs Committee grant, and the CoGLE project under the DARPA XAI programme. |

35336c34-6f46-4ada-8679-f55eb409aa6a | trentmkelly/LessWrong-43k | LessWrong | Meetup : Vancouver

Discussion article for the meetup : Vancouver

WHEN: 27 September 2012 06:00:00PM (-0700)

WHERE: 885 West Georgia, Vancouver

Last meetup was fun, and it's almost time for the next!

Meet us in the lobby of 885 West Georgia at 18:00 on Thursday. Better to be early than late. We go upstairs at about 18:30, after which it will be very difficult to meet up with us. Come on out.

I'll be leading a discussion of Yvain's Rational Home Buying and Dissolving Questions About Disease, because they are such good examples of LW ideas in action.

Last time, I presented Letting Go from HTACYM. The consensus on the Letting Go sequence was that it was a bag of tricks and inspiration for dealing with the need to face reality (when you are trying to actually change the world). We had a lot of discussion of what you can expect from other people vs what you expect from yourself, dealing with emotions, value of predictive vs descriptive explanations, some rationality fundamentals, some fun math proofs, etc.

Hopefully we'll have some more cool discussion and maybe even some application of these ideas to our own lives.

As usual, join us on the mailing list. Be there.

Discussion article for the meetup : Vancouver |

23a925bf-b73d-47d7-86d2-4b35be05553c | trentmkelly/LessWrong-43k | LessWrong | The Price Of Space Is Friendship

It's an exciting time in the history of human exploration. We're only just beginning to probe outside our biosphere. Low-orbit, long-term space inhabitation like that seen on the ISS has been the norm for many years now. As of the time of writing, 7 people were in space. Our long-term goals seem set on permanent outposts on Mars.

Orbital re-entry of crewed spacecraft is a complicated dance of physics. You ever do that challenge where you have to build a cage out of straws and cardboard to protect an egg from a fall? Well, it's like that on steroids. Generally, if you are coming in from another celestial body you will be going very fast. You can't decelerate at more than around 90 m/s² (about 9 g) or let the interior of the ship get too hot without killing your crew. You've got an enormous amount of kinetic energy to disperse, and the best way to do that is by braking in the atmosphere at a relatively shallow angle of descent. This means that reentry maneuvers cover a lot of airspace. You will likely either orbit the Earth entirely or a large portion of it before you finally reduce your velocity enough to touch down.
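To get a feel for the numbers, here is a rough back-of-the-envelope sketch. It assumes an entry speed of 11 km/s (roughly Earth's escape velocity, my assumption rather than a figure from above) and an idealized constant deceleration right at the 90 m/s² limit. Real reentries brake far more gently and unevenly, so they cover much more ground than this lower bound.

```python
G0 = 9.80665  # standard gravity in m/s^2

def braking_profile(entry_speed_m_s, decel_m_s2):
    """Time and distance needed to shed entry_speed at a constant deceleration."""
    time_s = entry_speed_m_s / decel_m_s2
    distance_m = entry_speed_m_s ** 2 / (2.0 * decel_m_s2)
    return time_s, distance_m

decel = 90.0            # m/s^2, the crew limit quoted in the text
entry_speed = 11_000.0  # m/s, assumed entry speed (illustrative only)

time_s, distance_m = braking_profile(entry_speed, decel)
print(f"deceleration: {decel / G0:.1f} g")
print(f"braking time: {time_s:.0f} s")
print(f"braking distance: {distance_m / 1000:.0f} km")
```

Even in this best case the craft needs hundreds of kilometres of atmosphere to slow down, which is why the braking path ends up crossing so much airspace.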

The same is true of orbital ejections from Earth. Generally we try to launch from as near the equator as we can, and in the direction of the Earth's rotation (easterly). This is so we can steal a bit of initial speed from the Earth itself. To get ourselves from one celestial body to another we might do a Hohmann transfer, which means there is going to be some arbitrary point in the stable orbital trajectory where we need to fire some impulse to get onto this new trajectory (where line '2' begins below). That arc over low-earth orbit can be of any length, from a few moments to multiple orbits around the globe, and stretch over any number of nation-states.

(Courtesy of WikiMedia)
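For the curious, the two burns of a Hohmann transfer can be estimated with the vis-viva equation. The sketch below is a simplification under stated assumptions: it treats Earth and Mars as moving on circular, coplanar orbits around the Sun (the radii are rounded values I picked for illustration) and ignores each planet's own gravity well, so the numbers are only indicative.

```python
import math

MU_SUN = 1.32712440018e20  # gravitational parameter of the Sun, m^3/s^2
R_EARTH = 1.496e11         # assumed circular orbit radius of Earth, m
R_MARS = 2.279e11          # assumed circular orbit radius of Mars, m

def hohmann_transfer(mu, r1, r2):
    """Return (first burn, second burn, transfer time) for circular orbits r1 -> r2."""
    a = (r1 + r2) / 2.0                            # semi-major axis of the transfer ellipse
    v_circ1 = math.sqrt(mu / r1)                   # speed on the starting circular orbit
    v_circ2 = math.sqrt(mu / r2)                   # speed on the target circular orbit
    v_peri = math.sqrt(mu * (2.0 / r1 - 1.0 / a))  # vis-viva at departure
    v_apo = math.sqrt(mu * (2.0 / r2 - 1.0 / a))   # vis-viva at arrival
    transfer_time = math.pi * math.sqrt(a ** 3 / mu)  # half the ellipse's period
    return v_peri - v_circ1, v_circ2 - v_apo, transfer_time

dv_depart, dv_arrive, transfer_s = hohmann_transfer(MU_SUN, R_EARTH, R_MARS)
print(f"departure burn: {dv_depart / 1000:.2f} km/s")
print(f"arrival burn:   {dv_arrive / 1000:.2f} km/s")
print(f"transfer time:  {transfer_s / 86400:.0f} days")
```

The point where the first burn happens is fixed by orbital geometry, not by borders, which is the crux of the argument here.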

There have been significant developments in the ability for nation-states to control their low-earth orbit even in the past few decades. Anti-satellite systems are a rapidly growing se |

4ef1c229-5f1f-45b1-a04a-ea8bce517008 | trentmkelly/LessWrong-43k | LessWrong | [LINK] 52 Concepts To Add To Your Cognitive Toolkit

Excellent list by Brenton Mayer and Peter McIntyre: http://mcntyr.com/52-concepts-cognitive-toolkit/

I think the list can also serve as a useful index and/or introduction to a lot of LessWrong concepts.

A note of caution: I find that brief lists like this can actually be counterproductive, since they make you feel like you understand the issues when all you did was read a short definition and peg a name on the concept. I'd recommend doing the following: Look through the list carefully and slowly. If there's a concept there that you've read a lot about then you can go on to the next one, although do take a look at where the link points to in case it's to an interesting article you haven't seen before. If you haven't read a lot about the concept then ideally you should click on the link and read all about it. If you're more pressed for time, then at least take a few moments to reflect on each concept and think how it might apply to you. If you have even a slight suspicion that there might be something in the concept that wasn't completely obvious to you before, then click on the link even though you're pressed for time. If you're so time constrained that you can't even do this, then consider just bookmarking the list and getting back to it later. Personally I think it's better to read it later carefully than to read it now and think you understand it when you really don't. |

91130618-f62a-40f9-a14f-c12367f7eca1 | trentmkelly/LessWrong-43k | LessWrong | Love and Rationality: Less Wrongers on OKCupid

Last month, Will_Newsome started a thread about OKCupid, one of the major players among online dating sites--especially for the young-and-nerdy set, given their mathematical approach to matching. He opened it up for individual profile evaluation, which occurred, but so did a lot of fruitful meta-discussion about attraction in general and online dating mechanisms in particular. This post is a summary of the parts of that thread which specifically address the practical aspect of good profile editing and critique. (It also incorporates some ideas I had previously but hadn't collected yet.) A little of it is specific to OKCupid, but most of it can be applied to any dating site, and some to dating in general. I've cited points which came from single comments (i.e. not suggested by several people); if I missed one of yours, please comment with a link and I'll add the reference.

On OKTrends

"Wait a minute," I hear experienced OKCers cry. "Why reinvent the wheel of profile analysis? OKCupid already has a blog for just that, and it's called OKTrends."

OKTrends has its merits, but it also has one major flaw. Wei_Dai summed it up well by observing that OKTrends does not make "any effort to distinguish between correlation and causation," citing this post as an example. The reason for that is obvious: the first purpose of OKTrends is to bring traffic to OKCupid. It does this with entertaining content about racy subjects, and rigorous analysis comes (optimistically) second. Of course, datadataeverywhere added, that's exactly the Mythbusters formula. They're both junk food science, but it's also the only look at their data we're going to get, so I'll link a few relevant OKTrends posts in the appropriate sections.

How to Write a Good Profile

Okay, you've created your account and answered a few questions. Now it's time to summarize your whole personality, your appeal, and your worldview in ten little text boxes. Where to begin?

The obvious answer is to reply to the ten profile |

50566cd5-db1b-4ce9-929a-a08232165ba5 | StampyAI/alignment-research-dataset/arxiv | Arxiv | Path Independent Equilibrium Models Can Better Exploit Test-Time Computation.

1 Introduction

---------------

One of the main challenges limiting the practical applicability of modern deep learning systems is the ability to generalize outside the training distribution (Koh et al., [2021](#bib.bib24)). One particularly important type of out-of-distribution (OOD) generalization is *upwards generalization*, or the ability to generalize to more difficult problem instances than those encountered at training time (Selsam et al., [2018](#bib.bib45); Bansal et al., [2022](#bib.bib7); Schwarzschild et al., [2021b](#bib.bib44); Nye et al., [2021](#bib.bib38)). Often, good performance on more difficult instances will require a larger amount of test-time computation, so a natural question arises: how can we design neural net architectures which can reliably exploit additional test-time computation to achieve better accuracy?

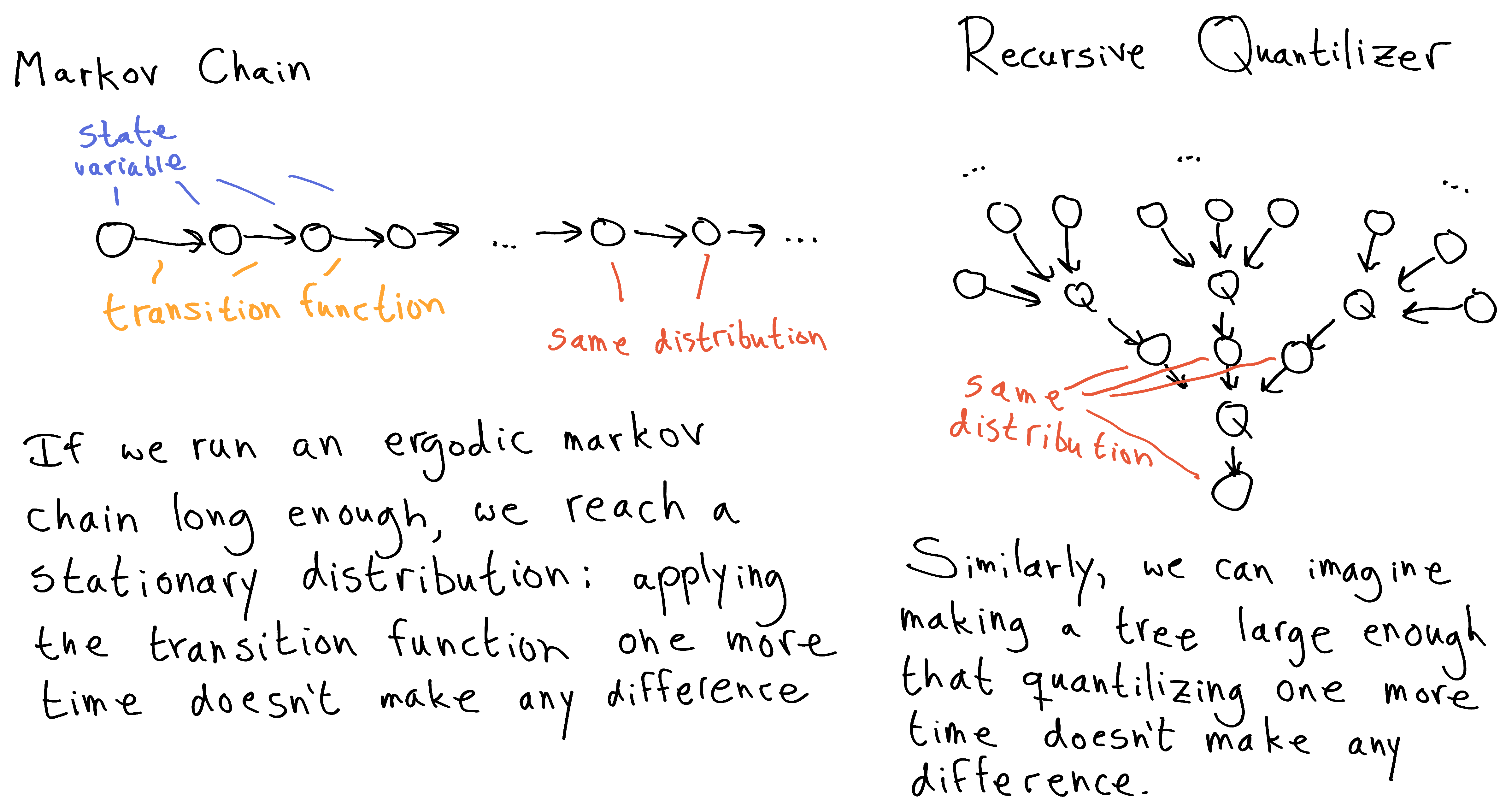

Equilibrium models, a broad class of architectures whose outputs are the fixed points of learned dynamical systems, are particularly suited to meet this challenge. Closely related to weight-tied recurrent models – networks that apply the same fixed neural network module repeatedly to hidden-layer activations – equilibrium models are capable of adapting their compute budget based on the input they are given. Under what conditions, if any, can this input-dependent ability to scale-up test-time compute actually lead to upwards generalization?

We argue that a key determiner of whether a learned model can exploit additional test-time computation is whether the dynamical system corresponding to the model is *path independent*; that is, whether the learned model’s hidden layer activations converge to the same asymptotic behaviour (i.e. fixed point or limit cycle), regardless of the initialization of the system. For example, a simple integrator $x_{t+1} = x_t + 1$ is clearly *not* path independent, as its final state depends on the initial state $x_0$ and the number of iterations run; conversely, the system $x_{t+1} = (x_t + 1)/2$ *is* path independent, as it will converge to the solution $x_T = 1$ as $T \rightarrow \infty$ regardless of the initial condition $x_0$. Path independence is closely related to the concept of global stability from control theory (see Section [9](#S9 "9 Related Work ‣ Path Independent Equilibrium Models Can Better Exploit Test-Time Computation") for more).
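The two scalar systems above can be simulated directly. The following is a minimal illustrative sketch (the helper names are ours, not part of any released code): it runs both updates from several initial states and shows that only the averaging update ends up at the same value.

```python
def iterate(step, x0, num_steps):
    """Apply the update `step` to the state num_steps times, starting from x0."""
    x = x0
    for _ in range(num_steps):
        x = step(x)
    return x

def integrator(x):
    # x_{t+1} = x_t + 1: the final state depends on x_0 and on the step count
    return x + 1.0

def averaging(x):
    # x_{t+1} = (x_t + 1) / 2: contracts towards the fixed point x = 1
    return (x + 1.0) / 2.0

for x0 in (-5.0, 0.0, 3.0):
    print(x0, iterate(integrator, x0, 50), iterate(averaging, x0, 50))
```

Running the averaging update for more steps only brings the state closer to $1$, whereas running the integrator for more steps keeps changing the answer.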

Intuitively, path independent systems can more easily take advantage of additional test-time iterations than path dependent ones. For instance, gradient descent applied to a convex objective is path independent, and correspondingly when confronted with a more ill-conditioned problem instance, one can compensate by increasing the number of iterations. Conversely, a weather simulation is path dependent, and extending the simulation won’t yield more accurate predictions of a given day’s weather. Based on this intuition, we hypothesize that path independence of a learned model is a key determiner of whether it can take advantage of an increased test-time iteration budget when generalizing to harder problem instances:

Figure 1: Trajectories of path independent models converge to the same hidden state for a given input, regardless of initialization, whereas the trajectories of path dependent models depend on initialization. Here, we display five trajectories with different initializations obtained from a path independent (left) and path dependent model (right) on the prefix-sum task, projected onto two random directions.

> *Path Independence Hypothesis:* Models which successfully fit the training distribution with a path independent function are better able to exploit more test-time iterations to achieve higher accuracy, compared to those which fit the training distribution with a path-dependent function.

We first introduce a metric for measuring the path independence of a learned model, the *Asymptotic Alignment (AA)* score. On a wide range of tasks including sequence prediction, visual reasoning, image classification, continuous optimization and graph regression, we show that a model’s AA score is strongly correlated with its performance when far more iterations are used at test time than at training time. In general, we find that path independent models increase their performance monotonically with the number of test-time iterations, whereas path dependent models degrade when the number of test time iterations exceeds those at training time. We find that input injection and implicit updates improve both the path independence and the accuracy. Furthermore, we perform an experimental manipulation by introducing regularizers which directly promote or punish path independence. We find that these manipulations, while increasing or decreasing the AA score, also have the corresponding effect on accuracy.

2 Background

-------------

##### Equilibrium Models

Equilibrium models treat computing internal representations as a fixed-point finding problem (McClelland and Rumelhart, [1989](#bib.bib35); Liao et al., [2018](#bib.bib30); Bai et al., [2019](#bib.bib3)). Concretely, letting $\bm{x} \in \mathbb{R}^{n_x}$ and $f_{\bm{w}} : \mathbb{R}^{n_x \times n_z} \mapsto \mathbb{R}^{n_z}$ stand for an input and the equilibrium model function (or "cell") parametrized by $\bm{w} \in \mathbb{R}^{n_w}$ respectively, equilibrium models aim to solve for the fixed point $\bm{z}^* \in \mathbb{R}^{n_z}$ that satisfies $\bm{z}^* = f_{\bm{w}}(\bm{x}, \bm{z}^*)$. The cell $f_{\bm{w}}$ might represent anything from a fully connected layer to a transformer block (Vaswani et al., [2017](#bib.bib50)). We emphasize that $f_{\bm{w}}$ directly depends on the input $\bm{x}$; following existing literature, we refer to this as input injection. The outcome of the fixed point finding process might depend on the initial fixed point guess. To make this dependency explicit, we define the function $\mathrm{FIX} : \mathbb{R}^{n_x \times n_z} \mapsto \mathbb{R}^{n_z}$ that maps an input $\bm{x}$ and an initial guess for the fixed point $\bm{z}_0$ to an output that satisfies the fixed point equation $\mathrm{FIX}_{f_w}(\bm{x}, \bm{z}_0) := f_{\bm{w}}(\bm{x}, \mathrm{FIX}_{f_w}(\bm{x}, \bm{z}_0))$. The behaviour of $\mathrm{FIX}$ depends on the solver that’s used to find fixed points.

The most straightforward approach to solve for fixed-points is the fixed point iteration method, which recursively applies the function $f_{\bm{w}}$ on the internal representations $\bm{z}$ (i.e. $\bm{z}_{t+1} = f_{\bm{w}}(\bm{x}, \bm{z}_t)$). If certain conditions are satisfied (such as the fixed point iterations being globally contractive; more general conditions are discussed below), this procedure converges to a fixed point: $\bm{z}^* = f_{\bm{w}}(\bm{x}, \bm{z}^*)$. (Divergence is also a possible outcome, rendering the output of equilibrium models unusable.) As solving for fixed points exactly is expensive, fixed point iterations are often terminated after a fixed number of steps or when the norm of the difference between subsequent iterates falls below a pre-selected threshold. The model weights can be updated using gradients computed via backpropagating through the full forward computational graph.

If one commits to using fixed point iterations as the root solver, then the output $\bm{z}^*$ of equilibrium models can be interpreted as the infinite-depth limit of an input-injected, weight-tied model $f^{\infty}_{\bm{w}}(\bm{x}, \bm{z}^*) = \lim_{n\to\infty} f^{(n)}_{\bm{w}}(\bm{x}, \bm{z}_0)$ where the notation $f^{(n)}$ stands for $n$ repeated applications of $f$ on its own output, and $\bm{z}_0$ stands for the fixed point initialization.
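The fixed point iteration procedure, with both stopping rules mentioned above (a step budget and a threshold on the change between iterates), can be sketched as follows. This is an illustrative toy rather than the implementation used in the experiments: the scalar `cell`, its weight `w = 0.5`, and the tolerance are assumptions chosen so that the iteration is contractive.

```python
import math

def cell(x, z, w=0.5):
    """Toy scalar cell with input injection: the input x enters at every step."""
    return math.tanh(w * z + x)

def fixed_point_iteration(x, z0, tol=1e-10, max_steps=1000):
    """Iterate z <- cell(x, z) until successive iterates differ by less than tol.

    Returns the final iterate and the number of cell evaluations used.
    """
    z = z0
    for step in range(1, max_steps + 1):
        z_next = cell(x, z)
        if abs(z_next - z) < tol:
            return z_next, step
        z = z_next
    return z, max_steps

z_star, steps_used = fixed_point_iteration(x=0.3, z0=0.0)
print(f"z* = {z_star:.6f} after {steps_used} steps")
print(f"residual |f(x, z*) - z*| = {abs(cell(0.3, z_star) - z_star):.2e}")
```

Because the step budget is only an upper bound, the same loop naturally spends more iterations on inputs that converge slowly, which is the input-dependent compute discussed in the introduction.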

Implicit Training of Equilibrium Models

Different training algorithms for equilibrium models can be derived by considering their implicit nature. Bai et al. ([2019](#bib.bib3)) solve for fixed points explicitly using black-box root finders, such as Broyden’s method (Broyden, [1965](#bib.bib9)) or Anderson acceleration (Anderson, [1965](#bib.bib1)). In order to avoid explicitly differentiating through the root-finding procedure, they utilize implicit differentiation to compute gradients. (Bai et al. ([2019](#bib.bib3)) use the term Deep Equilibrium Models (DEQ) to refer to implicitly trained equilibrium models. To keep things more general, we categorize “explicitly trained” networks (i.e. with fixed point iterations and backpropagation) under the umbrella of equilibrium models as well.) Concretely, letting $g_{\bm{w}}(\bm{x}, \bm{z}) = f_{\bm{w}}(\bm{x}, \bm{z}) - \bm{z}$ for a fixed point $\bm{z}^*$, the Jacobian of $\bm{z}^*$ with respect to the equilibrium model weights can be given by:

$$\frac{d\bm{z}^*}{d\bm{w}} = -\left(\frac{\partial g_{\bm{w}}(\bm{x}, \bm{z}^*)}{\partial \bm{z}^*}\right)^{-1} \frac{\partial f_{\bm{w}}(\bm{x}, \bm{z}^*)}{\partial \bm{w}} \tag{1}$$

Inverting a Jacobian matrix can become computationally expensive. Recent works (Geng et al., [2021a](#bib.bib17); Fung et al., [2021](#bib.bib16)) have shown that the inverse-Jacobian term in [Eq. 1](#S2.E1 "1 ‣ Equilibrium Models ‣ 2 Background ‣ Path Independent Equilibrium Models Can Better Exploit Test-Time Computation") can be replaced with an identity matrix (i.e. a Jacobian-free backward pass) or with an approximate inverse-Jacobian (Geng et al., [2021b](#bib.bib18)) without affecting the final performance. This approximation makes the backward pass inexpensive and lightweight. Equilibrium models have been shown to achieve state-of-the-art performance on various tasks including language modelling (Bai et al., [2019](#bib.bib3)), image recognition, semantic segmentation (Bai et al., [2020](#bib.bib4)), object detection (Wang et al., [2020](#bib.bib52)), and graph modeling (Gu et al., [2020](#bib.bib21); Liu et al., [2021a](#bib.bib32)).
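As a sanity check on the implicit gradient, the scalar case can be worked out by hand. The sketch below is illustrative and rests on our own assumptions: a toy cell $f_w(x, z) = \tanh(wz + x)$, for which $\partial f/\partial z = (1 - z^{*2})\,w$ and $\partial f/\partial w = (1 - z^{*2})\,z^*$ at the fixed point. It compares the implicit gradient against a finite-difference estimate, and also computes a Jacobian-free variant under our reading that the term $-(\partial g/\partial z^*)^{-1} = (1 - \partial f/\partial z^*)^{-1}$ is the one replaced by the identity.

```python
import math

def cell(w, x, z):
    """Toy scalar cell; w is the only trainable weight."""
    return math.tanh(w * z + x)

def solve(w, x, z0=0.0, num_steps=200):
    """Find the fixed point by plain fixed point iteration (contractive for |w| < 1)."""
    z = z0
    for _ in range(num_steps):
        z = cell(w, x, z)
    return z

def implicit_grad(w, x):
    """dz*/dw = -(dg/dz)^{-1} * df/dw with g = f - z, evaluated at the fixed point."""
    z = solve(w, x)
    slope = 1.0 - z * z      # derivative of tanh at the fixed point, since z = tanh(.)
    df_dz = slope * w
    df_dw = slope * z
    dg_dz = df_dz - 1.0
    return -df_dw / dg_dz

def jacobian_free_grad(w, x):
    """Approximation that drops the inverse term, keeping only df/dw."""
    z = solve(w, x)
    return (1.0 - z * z) * z

def finite_difference_grad(w, x, eps=1e-6):
    """Central difference through the whole solve, used only as a reference."""
    return (solve(w + eps, x) - solve(w - eps, x)) / (2.0 * eps)

w, x = 0.5, 0.3
print("implicit:         ", implicit_grad(w, x))
print("finite difference:", finite_difference_grad(w, x))
print("Jacobian-free:    ", jacobian_free_grad(w, x))
```

In this toy the Jacobian-free value has the same sign as the exact gradient but a smaller magnitude, which is consistent with it serving as a cheap descent direction rather than an exact derivative.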

Equilibrium Models vs. Depthwise Recurrent Models

Both equilibrium models and input-injected depthwise recurrent (i.e. weight-tied, fixed-depth) networks leverage weight-tying, i.e., they apply the same transformation at each layer, $f_{\omega}^{[i]} = f_{\omega} \; \forall i$. The two models differ in the ultimate aim of the forward pass: while depthwise recurrent models compute a (weight-tied) fixed depth computation (which may or may not approach a fixed point), the stated *goal* of equilibrium models is explicitly to find a fixed point. Weight-tied fixed depth networks by definition require backpropagation through an explicit stack of layers. Equilibrium models, however, directly solve for fixed points using (potentially black-box) solvers during the forward pass and may be trained using implicit differentiation.

Convergence

As alluded above, in order to guarantee convergence to a unique fixed point, it suffices for the cell of the equilibrium model to be contractive over its input domain (i.e. the singular values of its Jacobian all lie below $1$); this is known as the Banach fixed-point theorem. Previous work has leveraged Lipschitz constrained cells to ensure contractivity (Revay et al., [2020](#bib.bib39)). Other approaches for ensuring global convergence exist: the monotone equilibrium model architecture guarantees global convergence by utilizing an equilibrium model parametrization that bears similarities to solutions to a particular form of monotone operator splitting problem (Winston and Kolter, [2020](#bib.bib54)). Unrestricted equilibrium models aren’t constrained enough to guarantee convergence: they can easily express globally divergent vector fields that prohibit the existence of fixed points. It is, therefore, interesting that they can (and often do) learn path independent solutions. Also note that the "infinite-depth weight tied network" interpretation of equilibrium models is less general than the implicit formulation presented above, as the latter admits unstable fixed points as well.
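The role of contractivity can be illustrated with two hand-picked scalar cells (both are our own toy assumptions, not learned models). For the first, the slope with respect to $z$ is at most $0.5$, so the gap between any two trajectories at least halves at every step. For the second, the slope is $2$, so the gap doubles even though a fixed point $z = -x$ exists; an implicit solver could still find that unstable fixed point, but unrolled iteration cannot.

```python
import math

def contractive_cell(x, z):
    # |d/dz tanh(0.5 z + x)| <= 0.5 everywhere
    return math.tanh(0.5 * z + x)

def expansive_cell(x, z):
    # slope 2 in z; the only fixed point z = -x is unstable
    return 2.0 * z + x

def gap_history(cell, x, z_a, z_b, num_steps):
    """Track |z_a - z_b| while both states are updated with the same cell and input."""
    gaps = []
    for _ in range(num_steps):
        z_a, z_b = cell(x, z_a), cell(x, z_b)
        gaps.append(abs(z_a - z_b))
    return gaps

contractive_gaps = gap_history(contractive_cell, 0.3, -2.0, 2.0, 20)
expansive_gaps = gap_history(expansive_cell, 0.3, -2.0, 2.0, 20)
print("contractive, final gap:", contractive_gaps[-1])
print("expansive, final gap:  ", expansive_gaps[-1])
```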

Terminology and Abbreviations

We use the term “equilibrium models” to refer to the general class of networks that explicitly solve for a fixed point in the forward pass. We use the term ‘solver’ to refer to the use of black-box root finders like Anderson acceleration to find fixed points of an implicitly trained equilibrium model. These networks can use implicit gradients computed via the implicit function theorem (IFT), a Jacobian-free backward pass, or an approximation of the inverse-Jacobian. The term ‘unroll’ refers to equilibrium models that use regular fixed-point iterations to compute the equilibrium point. We use the abbreviation ‘bp’ to refer to backpropagation gradients, and ‘inj’ to refer to input injection. The term ‘progressive net’ refers to the deep thinking networks trained with progressive training as proposed by Bansal et al. ([2022](#bib.bib7)). We use ‘PI’ and ‘non-PI’ to refer to path independent and path dependent networks, respectively.

3 Upwards Generalization with Equilibrium Models

-------------------------------------------------

In this section, we establish that equilibrium models are capable of strong upwards generalization. To study the effects of test time computation, it is useful to consider tasks with an explicit difficulty parameter, so that the learned models can be tested on more difficult instances which require a large number of iterations to solve correctly. We focus on multiple algorithmic generalization tasks: prefix sum and mazes by Schwarzschild et al. ([2021a](#bib.bib43), [b](#bib.bib44)), blurry MNIST, matrix inversion and edge copy by Du et al. ([2022](#bib.bib11)). Taken together, these tasks cover a wide range of problems from different domains, namely sequence prediction, visual reasoning, image classification, continuous optimization and graph regression. To maintain clarity and focus, we run our detailed analysis on the prefix sum and mazes tasks, and provide complementary results for the remaining tasks in the Supplementary Material (SM).

Tasks

Prefix-sum is a sequence-to-sequence task whereby the network is given a sequence of 0-1 bits, and is trained to output, for each bit, the parity of all of the bits received since the beginning of the sequence until the current bit. We train on 10,000 unique 32-bit binary strings, and report results on binary strings of other lengths. The mazes task is an image-to-image task, where the input is a three-channel RGB image. The ‘start’ and ‘finish’ positions are marked by a red and a green square respectively; walls are marked in black. The output is the optimal path in the maze that connects these two points without passing through the walls. We train on 50,000 small mazes of size $9 \times 9$, and report upward generalization results on larger mazes. Instances of each of these problems, as well as additional image classification and continuous optimization results can be found in the supplementary material. Blurry MNIST ([Liang et al.,](#bib.bib29) ) is a robustness-to-corruption task: one has to learn to do MNIST classification from lightly blurred images and generalize zero-shot to highly blurred ones. In the matrix inversion task (Du et al., [2022](#bib.bib11)), the goal is to learn to invert $10 \times 10$ matrices in a way that generalizes to matrices that have worse condition number than those observed during training. Edge copy (Du et al., [2022](#bib.bib11)) is a simple graph regression task that requires learning to output the input edge features, in a way that generalizes to larger graph sizes. Note that the training and test data in the latter two tasks are generated with noise added on-the-fly, as done by Du et al. ([2022](#bib.bib11)).
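To make the prefix-sum target concrete, the following sketch builds input and target pairs as described above: each output bit is the parity of all input bits up to and including that position. The function names and the sampling routine are hypothetical and only meant for illustration; they are not the dataset code of Schwarzschild et al.

```python
import random

def prefix_parity(bits):
    """For each position, return the parity (XOR) of all bits up to that position."""
    targets = []
    parity = 0
    for bit in bits:
        parity ^= bit
        targets.append(parity)
    return targets

def sample_example(length, rng):
    """Draw a random 0-1 string of the given length together with its target."""
    bits = [rng.randint(0, 1) for _ in range(length)]
    return bits, prefix_parity(bits)

rng = random.Random(0)
train_bits, train_targets = sample_example(32, rng)   # training length used in the text
test_bits, test_targets = sample_example(128, rng)    # a longer, harder instance (length chosen arbitrarily)
print(prefix_parity([1, 0, 1, 1]))
```

Longer strings require information to be propagated over more positions, which is why sequence length acts as the difficulty parameter in this task.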

Strong Upward Generalization

[Fig. 2](#S3.F2 "Figure 2 ‣ 3 Upwards Generalization with Equilibrium Models ‣ Path Independent Equilibrium Models Can Better Exploit Test-Time Computation") shows that equilibrium models demonstrate very strong upward generalization performance compared to non weight-tied fixed-depth models. Moreover, [Fig. 2](#S3.F2 "Figure 2 ‣ 3 Upwards Generalization with Equilibrium Models ‣ Path Independent Equilibrium Models Can Better Exploit Test-Time Computation") shows that increasing inference depth consistently improves performance—especially on harder problem instances.

Figure 2: (left) Strong upward generalization on mazes by PI models. Models were trained on $9 \times 9$ sized mazes and tested for upward generalization on larger mazes. y-axis uses probit transformation. (right) PI models are better able to make use of additional test-time computation. We trained models with varying number of training-time iterations, learning rate and weight norm application. Bit-wise accuracies are evaluated and averaged over different string-lengths.

4 Path Independence

--------------------

Having intuitively motivated the idea of path independence in [Sec. 1](#S1 "1 Introduction ‣ Path Independent Equilibrium Models Can Better Exploit Test-Time Computation"), we now define it formally: we say that the computation performed by a recurrent operator computing function $f_{\bm{w}}$ on an input $\bm{x}$ is path independent if it converges to the same limiting behavior regardless of the current state $\bm{z}_t$. As a special case, if the computation is convergent, this property is equivalent to the existence of a unique fixed point $\bm{z}^*$ such that $f^{\infty}_{\bm{w}}(\bm{x}, \bm{z}_0) = \bm{z}^*$ for any $\bm{z}_0$. However, our definition allows for other behaviors such as limit cycles (see [Sec. 7](#S7 "7 Disambiguating Convergence and Path Independence ‣ Path Independent Equilibrium Models Can Better Exploit Test-Time Computation")).

Some architectures guarantee the path independence property (see [Sec. 2](#S2 "2 Background ‣ Path Independent Equilibrium Models Can Better Exploit Test-Time Computation")). However, most common DEQ architectures—and the ones we use throughout this paper—have the expressive power to learn multiple fixed points per input. Since it is unclear whether architectures enforcing the contraction property lose expressiveness (Bai et al., [2021](#bib.bib5)), we focus our investigation on unrestricted architectures.

PI networks represent a different model for computation than standard feed-forward networks: instead of learning an entire computational graph to map inputs to outputs, they only have to learn where to stop. We dedicate the rest of the paper to exploring the Path Independence Hypothesis: the idea that models which successfully fit the training distribution with a path-independent function are better able to exploit more test-time iterations to achieve higher accuracy than those which fit the training distribution with a path-dependent function.

Before establishing a connection between path independence and out-of-distribution generalization, we first describe two architectural components that are necessary conditions for achieving path independence. Afterward, we describe a metric to quantify how path-independent a trained network is.

### 4.1 Architectural Components Necessary for Path Independence

Past work has observed that *weight tying* and *input injection* are both crucial for upwards generalization (Bansal et al., [2022](#bib.bib7)). We observe that both architectural components are also necessary for a learned model to be PI. Without weight tying, the network is constrained to have a fixed forward depth, so it is meaningless to talk about the limiting behavior at large depth. Input injection ensures that the equilibrium point depends on the input despite the network having an “infinite depth”. Without input injection, a PI network would necessarily forget the input; hence, any model which successfully fits the training distribution must be path dependent. (Our definition also admits non-input-injected models as path independent when they represent constant, i.e. input-independent, functions. We do not consider such cases in our analyses.)

Interestingly, both architectural motifs are also key components of deep equilibrium models (Bai et al., [2019](#bib.bib3)); in that work, the motivation was to enable efficient gradient estimation via the implicit function theorem (IFT) — a concept closely related to path independence, since the premise of the IFT gradient estimator is that only the final hidden state matters, not the path taken to get there. It is striking that two separate lines of work would converge on the same architectural motifs, one motivated by generalization and the other by a variant of path independence.

Reproducing the results of Bansal et al. ([2022](#bib.bib7)), in [Fig. 2](#S3.F2 "Figure 2 ‣ 3 Upwards Generalization with Equilibrium Models ‣ Path Independent Equilibrium Models Can Better Exploit Test-Time Computation") we show upward generalization performance using both equilibrium models and progressive nets (Bansal et al., [2022](#bib.bib7)) – and the lack thereof using non-input-injected networks. For the remainder of this paper, we focus on architectures with both input injection and weight tying.
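The argument that a path-independent network without input injection must forget its input can be illustrated with a small sketch. The scalar update rules below are hypothetical toy operators chosen for illustration only: both reuse the same weights at every step (weight tying), but only one of them re-reads the input at each iteration.

```python
import math

def run(step, x, z0, steps=200):
    """Weight-tied recurrence: the same step function is applied at every depth."""
    z = z0
    for _ in range(steps):
        z = step(x, z)
    return z

def with_injection(x, z):
    # The input x is fed into every iteration (input injection).
    return 0.5 * math.tanh(z) + x

def without_injection(x, z):
    # The input is not re-read; it can only enter through the initial state.
    return 0.5 * math.tanh(z)

inputs = (-1.0, 0.5, 2.0)

# With injection, the equilibrium differs for each input.
injected = [run(with_injection, x, z0=0.0) for x in inputs]

# Without injection, the input is encoded in z0, but the contraction erases it.
not_injected = [run(without_injection, x, z0=x) for x in inputs]

print(injected)
print(not_injected)
```

Because the non-injected toy operator is a contraction, it is path independent, and for exactly that reason every input collapses to the same equilibrium.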

### 4.2 Quantifying Path Independence

We propose a simple metric to quantify path independence based on the directional alignment of the fixed points computed with the same input, but different initializations. We name this metric the *Asymptotic Alignment (AA) score*. Pseudocode to compute the metric is given in [Alg. 1](#alg1 "Algorithm 1 ‣ Figure 3 ‣ 4.2 Quantifying Path Independence ‣ 4 Path Independence ‣ Path Independent Equilibrium Models Can Better Exploit Test-Time Computation"). The AA score is the average cosine similarity between the fixed points obtained with the training time initialization (often simply the zero vector) and the fixed points obtained when one initializes the solver using the fixed points computed on different inputs. Higher AA scores (with 1 being the highest value) imply higher degrees of path independence. In [Sec. 5](#S5 "5 Path Independence Correlates with Upward Generalization ‣ Path Independent Equilibrium Models Can Better Exploit Test-Time Computation"), we show a strong correlation between path independence and accuracy using the AA score.

The AA score is cheap to compute, is a reliable indicator of path independence (see below), and is unitless, meaning that networks obtained from different training runs can be compared on equal footing. See the supplementary material for other metrics we’ve considered for quantifying path independence and why we found AA score to be preferable.

Algorithm 1 Asymptotic Alignment Score

Input: a batched input $\begin{bmatrix}{\bm{x}}_1 \\ {\bm{x}}_2\end{bmatrix}$, an operator $f_{\bm{w}}$

Initialize: $\begin{bmatrix}{\bm{z}}_1 \\ {\bm{z}}_2\end{bmatrix} = \mathbf{0}$

Define: $h({\bm{y}}_1, {\bm{y}}_2) = \dfrac{{\bm{y}}_1}{\|{\bm{y}}_1\|_2} \cdot \dfrac{{\bm{y}}_2}{\|{\bm{y}}_2\|_2}$

Compute $\begin{bmatrix}{\bm{z}}'_1 \\ {\bm{z}}'_2\end{bmatrix} = \mathrm{FIX}_{f_{\bm{w}}}\left(\begin{bmatrix}{\bm{x}}_1 \\ {\bm{x}}_2\end{bmatrix}, \begin{bmatrix}{\bm{z}}_1 \\ {\bm{z}}_2\end{bmatrix}\right)$

# Interchange and reinitialize iterates

Compute $\begin{bmatrix}{\bm{z}}''_1 \\ {\bm{z}}''_2\end{bmatrix} = \mathrm{FIX}_{f_{\bm{w}}}\left(\begin{bmatrix}{\bm{x}}_1 \\ {\bm{x}}_2\end{bmatrix}, \begin{bmatrix}{\bm{z}}'_2 \\ {\bm{z}}'_1\end{bmatrix}\right)$

return $\mathrm{average}\left(h({\bm{z}}''_1, {\bm{z}}'_1),\ h({\bm{z}}''_2, {\bm{z}}'_2)\right)$

Figure 3: (left) AA Score Algorithm: We provide the algorithm for a simple illustrative case of two inputs. In practice, we consider larger batches. (right) Promoting path independence improves generalization in the prefix sum task: Interventions that are designed to promote path independence (initializing fixed points with random noise or running the fixed point solver with a stochastic budget) improve generalization. Conversely, those that hurt path independence (a penalty term that directly penalizes fixed point alignment) lead to poorer generalization.
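The steps of Algorithm 1 can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `fix` stands in for the fixed point solver $\mathrm{FIX}$ using naive forward iteration, the two operators are hypothetical toy maps, and for batches larger than two the interchange is assumed to be a cyclic shift (which reduces to the swap in Algorithm 1 for two inputs).

```python
import math

def cosine(a, b):
    """Cosine similarity h(a, b) between two non-zero vectors."""
    dot = sum(p * q for p, q in zip(a, b))
    norm_a = math.sqrt(sum(p * p for p in a))
    norm_b = math.sqrt(sum(q * q for q in b))
    return dot / (norm_a * norm_b)

def fix(f, x, z, steps=300):
    """Assumed stand-in for FIX: naive forward iteration of z <- f(x, z)."""
    for _ in range(steps):
        z = f(x, z)
    return z

def aa_score(f, xs, dim):
    # First solve: every input starts from the zero vector.
    first = [fix(f, x, [0.0] * dim) for x in xs]
    # Interchange: input i is re-solved starting from the fixed point of input i+1.
    swapped = first[1:] + first[:1]
    second = [fix(f, x, z0) for x, z0 in zip(xs, swapped)]
    # Average alignment between the two solutions obtained for the same input.
    return sum(cosine(a, b) for a, b in zip(second, first)) / len(xs)

def contractive(x, z):
    # Hypothetical path-independent operator (a contraction in z).
    return [0.5 * math.tanh(zi) + xi for xi, zi in zip(x, z)]

def bistable(x, z):
    # Hypothetical path-dependent operator (two stable fixed points per input).
    return [math.tanh(2.0 * zi) + 0.1 * xi for xi, zi in zip(x, z)]

xs = [[1.0, 0.5], [-1.0, -0.5]]
aa_pi = aa_score(contractive, xs, dim=2)
aa_pd = aa_score(bistable, xs, dim=2)
print(aa_pi, aa_pd)
```

On these toy operators the contraction scores essentially 1, while the bistable map scores below zero because each input inherits the basin of attraction of the other input's fixed point.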

##### Stress-testing the AA score

To stress-test the extent to which the AA score really measures path independence, we search for adversarial initializations that are optimized to result in distinct fixed points, hence low AA values. (Unlike adversarial examples, this attack is not constrained to an $\varepsilon$-ball.) We use the L-BFGS (Liu and Nocedal, [1989](#bib.bib31)) optimizer, and repeat the search multiple times starting from different fixed point initializations. We include pseudocode in the supplementary material.
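The idea behind this search can be sketched on toy operators. Note the substitution: the sketch below uses seeded random search over initializations in place of the L-BFGS optimizer described above, purely to stay dependency-free, and the operators, the function `adversarial_init`, and its parameters (`trials`, `scale`, `seed`) are all hypothetical.

```python
import math
import random

def cosine(a, b):
    dot = sum(p * q for p, q in zip(a, b))
    return dot / (math.sqrt(sum(p * p for p in a)) * math.sqrt(sum(q * q for q in b)))

def fix(f, x, z, steps=300):
    """Assumed stand-in for the fixed point solver: naive forward iteration."""
    for _ in range(steps):
        z = f(x, z)
    return z

def adversarial_init(f, x, dim, trials=50, scale=3.0, seed=0):
    """Random search (not L-BFGS) for an initialization whose fixed point is
    least aligned with the one reached from the zero initialization."""
    rng = random.Random(seed)
    reference = fix(f, x, [0.0] * dim)
    best_z0, best_sim = [0.0] * dim, 1.0
    for _ in range(trials):
        z0 = [rng.uniform(-scale, scale) for _ in range(dim)]
        sim = cosine(fix(f, x, z0), reference)
        if sim < best_sim:
            best_z0, best_sim = z0, sim
    return best_z0, best_sim

def contractive(x, z):
    # Hypothetical path-independent operator.
    return [0.5 * math.tanh(zi) + xi for xi, zi in zip(x, z)]

def bistable(x, z):
    # Hypothetical path-dependent operator.
    return [math.tanh(2.0 * zi) + 0.1 * xi for xi, zi in zip(x, z)]

x = [1.0, 0.5]
_, pi_sim = adversarial_init(contractive, x, dim=2)
_, pd_sim = adversarial_init(bistable, x, dim=2)
print(pi_sim, pd_sim)
```

For the contraction no initialization lowers the alignment, whereas for the bistable map the search finds an initialization that lands in a different fixed point, mirroring how a successful attack would expose path dependence.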