id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

c2813cef-aa0c-48f5-bce8-a9b66f065c36 | trentmkelly/LessWrong-43k | LessWrong | Don't accuse your interlocutor of being insufficiently truth-seeking

I argue that you shouldn't accuse your interlocutor of being insufficiently truth-seeking. This doesn't mean you can't internally model their level of truth-seeking and use that for your own decision-making. It just means you shouldn't come out and say "I think you are being insufficiently truth-seeking".

What you should say instead

Before I explain my reasoning, I'll start with what you should say instead:

"You're wrong"

People are wrong a lot. If you think they are wrong just say so. You should have a strong default for going with this option.

"You're being intentional misleading"

For when you basically thinking they are lying but maybe technically aren't by some definitions of "lying".

What about if they are being unintentionally misleading? That's usually just being wrong, you should probably just say they are being wrong. But if you really think the distinction is important, you can say they are being unintentionally misleading.

"You're lying"

For when they are lying.

You can also add your own flair to any of these options to spice things up a bit.

Why you shouldn't accuse people of being insufficient truth-seeking

Clarity

It's not clear what you are even accusing them of. "Insufficient truth-seeking" could arguably be any of the options I mentioned above. Just be specific. If you really think what you're saying is so important and nuanced and you just need to incorporate some deep insight about truth, use the "add your own flair" option to sneak that stuff in.

Achieving your purpose in the discussion

The most common purposes you might have for engaging in the discussion and why invoking "truth-seeking" doesn't help them:

You want to discuss the object-level issue

You just fucked yourself because the discussion is immediately going to center on whether they actually are insufficiently truth-seeking and whether that accusation was justified. You're going to have to gather The Fellowship, take your argument to Mordor, and throw it into the fire |

2c391bef-0df5-4637-bbeb-36c4b4acfe36 | trentmkelly/LessWrong-43k | LessWrong | What is everyone doing in AI governance

What is this post about, and how to use it

This is a list of AI governance organizations with a brief description of their directions of work, so a reader can get a basic understanding of what they are doing and explore them in-depth on their own.

There is another recent post on a similar topic that explores AI governance research agendas with a lesser focus on the areas of work of specific governance organizations.

This is a flawed list

I made this list using publicly available data, as well as by talking with people from the field. I am sure that I missed something important, especially in the section on the governance teams of AI labs since they are less open than nonprofits, but the best way to get the right answer on the internet is to post the wrong one.

At some point, adding each new bit of information started taking more and more time, and I decided to stop so this post is reasonably useful and will not cause me to struggle.

DM me if you see mistakes or think I forgot something important.

Major non-profit organizations

Centre for the Governance of AI (GovAI)

Areas of work

1. Scientific research on AI policies

2. Educational and fellowship programs

3. Some efforts to improve coordination among AI governance orgs

Mechanisms of influence:

1. Many prominent AI governance specialists are alumni or former employees of GovAI including heads of policy at Deepmind, OpenAI, and Anthropic. So GovAI has strong connections among decision-makers

2. GovAI produced a lot of academic research on policies. Mostly academic, to a lesser extent - applied

Other notable things

GovAI has an established brand of a respectable organization

Center for AI Safety (CAIS)

Areas of work

1. Field building

1. Courses and fellowships for starting a career in technical AI safety and philosophy

2. Offer compute resources for AI safety researchers including compute cluster

3. Organize competitions for AI safety researchers

2. Resea |

c3917bd3-1f21-4bb1-84c8-a1ce48d5bbd8 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Bay Area Solstice

Discussion article for the meetup : Bay Area Solstice

WHEN: 07 December 2013 06:00:00PM (-0800)

WHERE: San Francisco

The Bay Area community is holding a Solstice celebration, and you’re invited! Join us for a night of group singing, ritual, light, warmth, and companionship, plus the first-ever performance of the rationalist choir, as we celebrate human progress and potential at the darkest time of the year.

The Bay Area Solstice will be held on Saturday, December 7, from 6:00 PM until 10:00 PM. We’ll provide a shuttle to and from the Civic Center BART station. Space is limited, so please fill out the RSVP form. I hope to see you there!

Discussion article for the meetup : Bay Area Solstice |

266da09e-f618-4890-84f4-74539b2fa359 | trentmkelly/LessWrong-43k | LessWrong | Shutting Down the Lightcone Offices

Lightcone recently decided to close down a big project we'd been running for the last 1.5 years: An office space in Berkeley for people working on x-risk/EA/rationalist things that we opened August 2021.

We haven't written much about why, but I and Ben had written some messages on the internal office slack to explain some of our reasoning, which we've copy-pasted below. (They are from Jan 26th). I might write a longer retrospective sometime, but these messages seemed easy to share, and it seemed good to have something I can more easily refer to publicly.

Background data

Below is a graph of weekly unique keycard-visitors to the office in 2022.

The x-axis is each week (skipping the first 3), and the y-axis is the number of unique visitors-with-keycards.

Weekly unique visitors with keycards in 2022. There was a lot of seasonality to the office.The distribution of people by how many days they came (in 2022) looks like this.

Members could bring in guests, which happened quite a bit and isn't measured in the keycard data below, so I think the total number of people who came by the offices is 30-50% higher.

The offices opened in August 2021. Including guests, parties, and all the time not shown in the graphs, I'd estimate around 200-300 more people visited, so in total around 500-600 people used the offices.

The offices cost $70k/month on rent [1], and around $35k/month on food and drink, and ~$5k/month on contractor time for the office. It also costs core Lightcone staff time which I'd guess at around $75k/year.

Ben's Announcement

> Closing the Lightcone Offices @channel

>

> Hello there everyone,

>

> Sadly, I'm here to write that we've decided to close down the Lightcone Offices by the end of March. While we initially intended to transplant the office to the Rose Garden Inn, Oliver has decided (and I am on the same page about this decision) to make a clean break going forward to allow us to step back and renegotiate our relationship to the entire EA/longtermis |

65d00179-3544-47f6-ac0f-b2fd56b02799 | trentmkelly/LessWrong-43k | LessWrong | Decision Theories in Real Life

|

b6ae3b58-972a-4d20-8a83-9fc1974a5c35 | trentmkelly/LessWrong-43k | LessWrong | The AGI needs to be honest

Imagine that you are a trained mathematician and you have been assigned the job of testing an arbitrarily intelligent chatbot for its intelligence.

You being knowledgeable about a fair amount of computer-science theory won’t test it with the likes of Turing-test or similar, since such a bot might not have any useful priors about the world.

You have asked it find a proof for the Riemann-hypothesis. the bot started its search program and after several months it gave you gigantic proof written in a proof checking language like coq.

You have tried to run the proof through a proof-checking assistant but quickly realized that checking that itself would years or decades, also no other computer except the one running the bot is sophisticated enough to run proof of such length.

You have asked the bot to provide you a zero-knowledge-proof, but being a trained mathematician you know that a zero-knowledge-proof of sufficient credibility requires as much compute as the original one. also, the correctness is directly linked to the length of the proof it generates.

You know that the bot may have formed increasingly complex abstractions while solving the problem, and it would be very hard to describe those in exact words to you.

You have asked the bot to summarize the proof for you in natural-language, but you know that the bot can easily trick you into accepting the proof.

You have now started to think about a bigger question, the bot essentially is a powerful optimizer. In this case, the bot is trained to find proofs, its reward is based on finding what a group of mathematicians agree on how a correct proof looks like.

But the bot being a bot doesn’t care about being honest to you or to itself, it is not rewarded for being “honest” it is only being rewarded for finding proof-like strings that humans may select or reject.

So it is far easier for it to find a large coq-program, large enough that you cannot check by any other means, than to actually solve riemann-hypothesis |

9b579772-b999-4e07-8b34-d7cb94cf19db | trentmkelly/LessWrong-43k | LessWrong | Winston Churchill, futurist and EA

Churchill—when he wasn’t busy leading the fight against the Nazis—had many hobbies. He wrote more than a dozen volumes of history, painted over 500 pictures, and completed one novel (“to relax”). He tried his hand at landscaping and bricklaying, and was “a championship caliber polo player.” But did you know he was also a futurist?

That, at least, is my conclusion after reading an essay he wrote in 1931 titled “Fifty Years Hence,” various versions of which were published in MacLean’s, Strand, and Popular Mechanics. (Quotes to follow from the Strand edition.)

We’ll skip right over the unsurprising bit where he predicts the Internet—although the full consequences he foresaw (“The congregation of men in cities would become superfluous”) are far from coming true—in order to get to his thoughts on…

Energy

Just as sure as the Internet, to forward-looking thinkers of the 1930s, was nuclear power—and already they were most excited, not about fission, but fusion:

> If the hydrogen atoms in a pound of water could be prevailed upon to combine together and form helium, they would suffice to drive a thousand horsepower engine for a whole year. If the electrons, those tiny planets of the atomic systems, were induced to combine with the nuclei in the hydrogen the horsepower liberated would be 120 times greater still.

What could we do with all this energy?

> Schemes of cosmic magnitude would become feasible. Geography and climate would obey our orders. Fifty thousand tons of water, the amount displaced by the Berengaria, would, if exploited as described, suffice to shift Ireland to the middle of the Atlantic. The amount of rain falling yearly upon the Epsom racecourse would be enough to thaw all the ice at the Arctic and Antarctic poles.

I assume this was just an illustrative example, and he wasn’t literally proposing moving Ireland, but maybe I’m underestimating British-Irish rivalry?

Anyway, more importantly, Churchill points out what nuclear technology might do for nanom |

9549f1e2-f5e8-4937-bc3b-1ea672008b89 | trentmkelly/LessWrong-43k | LessWrong | Towards Multimodal Interpretability: Learning Sparse Interpretable Features in Vision Transformers

Executive Summary

In this post I present my results from training a Sparse Autoencoder (SAE) on a CLIP Vision Transformer (ViT) using the ImageNet-1k dataset. I have created an interactive web app, 'SAE Explorer', to allow the public to explore the visual features the SAE has learnt, found here: https://sae-explorer.streamlit.app/ (best viewed on a laptop). My results illustrate that SAEs can identify sparse and highly interpretable directions in the residual stream of vision models, enabling inference time inspections on the model's activations. To demonstrate this, I have included a 'guess the input image' game on the web app that allows users to guess the input image purely from the SAE activations of a single layer and token of the residual stream. I have also uploaded a (slightly outdated) accompanying talk of my results, primarily listing SAE features I found interesting: https://youtu.be/bY4Hw5zSXzQ.

The primary purpose of this post is to demonstrate and emphasise that SAEs are effective at identifying interpretable directions in the activation space of vision models. In this post I highlight a small number my favourite SAE features to demonstrate some of the abstract concepts the SAE has identified within the model's representations. I then analyse a small number of SAE features using feature visualisation to check the validity of the SAE interpretations. Later in the post, I provide some technical analysis of the SAE. I identify a large cluster of features analogous to the 'ultra-low frequency' cluster that Anthropic identified. In line with existing research, I find that this ultra-low frequency cluster represents a single feature. I then analyse the 'neuron-alignment' of SAE features by comparing the SAE encoder matrix the MLP out matrix.

This research was conducted as part of the ML Alignment and Theory Scholars program 2023/2024 winter cohort. Special thanks to Joseph Bloom for providing generous amounts of his time and support (in addition to the SAE |

1c49dc91-7f9b-49bc-bca0-9a54984bbf6f | trentmkelly/LessWrong-43k | LessWrong | A Catalog of Confusions

tl;dr - can we categorise confusing events by skills required to deal with them? What are those skills?

I am sometimes haunted by things I read online. It's probably a couple of years since I first read Your Strength as a Rationalist, but over the past month or two I've been reminded of it a surprising number of times in different circumstances. It's led me to wonder whether the idea of being "confused by fiction" can be helpfully broken down into categories, with each of those categories having certain skills that can be worked on to help notice them.

I'm going to describe two such categories I think I've identified, and invite your criticism, or suggestions of other similar categories. In both cases, I believe there to be some instinct, acquired skill, or some combination thereof that draws it to my attention. I could just be making this up, though, so criticism is also welcome on this front.

Absence of Salient Information

I believe tech support is like a magic trick in reverse. With a magic trick, the magician hides a crucial fact which he then distracts you from. He provides a false narrative of what's going on while confusing the sequence of events, culminating in the impossible, and relies on your own fear of appearing foolish to make you falsely report the conditions of the trick to both yourself and other spectators.

In tech support, you are often presented with an impossible sequence of events; the customer's fear of appearing foolish makes them falsely report the conditions of the fault to both themselves and you, concealing a crucial fact which the rest of the narrative distracts you from. You then have to figure out how it was done.

I recently asked a girl from my dance class out for a drink, and proceeded to receive the most shocking litany of mixed signals I could ever imagine receiving, drink not forthcoming. I boiled it down to three possibilities: either she was interested but incredibly shy, uninterested but just really friendly; or |

7f1ef414-9d1a-49ad-ad40-065217201110 | trentmkelly/LessWrong-43k | LessWrong | LW Update 04/06/18 – QM Sequence Updated

I just updated the Quantum Mechanics Sequence to have working images, links, and formatting.

Thanks to all the readers who pinged me in intercom to let me know that the stuff was broken for them, it caused me to do this more urgently.

Also a big thanks to Said Achmiz who already had turned Rationality: AI to Zombies into html and turned the images into SVG files, this saved me a *ton* of time.

If you take a look through, please let me know if I missed something. |

6fec1b4b-1943-4d75-9328-b2ef11bd411e | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | AI Alignment, Philosophical Pluralism, and the Relevance of Non-Western Philosophy

*This is an extended transcript of* [*the talk I gave at EAGxAsiaPacific 2020*](https://www.youtube.com/watch?v=dbMp4pFVwnU)*. In the talk, I present a somewhat critical take on how AI alignment has grown as a field, and how, from my perspective, it deserves considerably more philosophical and disciplinary diversity than it has enjoyed so far. I'm sharing it here in the hopes of generating discussion about the disciplinary and philosophical paradigms that (I understand) the AI alignment community to be rooted in, and whether or how we should move beyond them. Some sections cover introductory material that most people here are likely to be familiar with, so feel free to skip them.*

The Talk

========

Hey everyone, my name is Xuan (IPA: ɕɥɛn), and I’m doctoral student at MIT doing cognitive AI research. Specifically I work on how we can infer the hidden structure of human motivations by [modeling humans using probabilistic programs](https://arxiv.org/abs/2006.07532). Today though I’ll be talking about something that’s more in the background that informs my work, and that’s about AI alignment, philosophical pluralism, and the relevance of non-Western philosophy.

This talk will cover a lot of ground, so I want to give an overview to keep everyone oriented:

1. First, I’ll give a brief introduction to what AI alignment is, and why it likely matters as an effective cause area.

2. I’ll then highlight some of the philosophical tendencies of current AI alignment research, and argue that they reflect a relatively narrow set of philosophical views.

3. Given that these philosophical views may miss crucial considerations, this situation motivates the need for greater philosophical and disciplinary pluralism.

4. And then as a kind of proof by example, I’ll aim to demonstrate how non-Western philosophy might provide insight into several open problems in AI alignment research.

A brief introduction to AI alignment

------------------------------------

So what is AI alignment? One way to cache it out is the project of building intelligent systems that robustly act in our collective interests — in other words, building AI that is *aligned* with our values. As many people in the EA community have argued, this is a highly impactful cause area if you believe the following:

1. AI will determine the future of our civilization, perhaps by [replacing humanity as the most intelligent agents on this planet](http://www.fhi.ox.ac.uk/wp-content/uploads/Reframing_Superintelligence_FHI-TR-2019-1.1-1.pdf), or by having some other kind of [transformative impact](https://arxiv.org/abs/1912.00747), like [enabling authoritarian dystopias](https://80000hours.org/podcast/episodes/allan-dafoe-politics-of-ai/).

2. AI will be likely be misaligned with our collective interests by default, perhaps because it’s just [very hard to specify what our values are](https://www.alignmentforum.org/posts/gnvrixhDfG7S2TpNL/latent-variables-and-model-mis-specification), or because of [bad systemic incentives](https://www.lawfareblog.com/thinking-about-risks-ai-accidents-misuse-and-structure).

3. Not only is this problem really difficult to solve, we also cannot delay solving it.

To that last point, basically everyone who works in AI alignment thinks it’s a really daunting technical and philosophical challenge. Human values, whatever they are, are incredibly complex and fragile, and so every seemingly simple solution to aligning superhuman AI is subject to potentially catastrophic loopholes.

I’ll illustrate this by way of this short dialogue between a human and a fictional super-intelligent chatbot called GPT-5, who’s kind of like this genie in a bottle. So you start up this chatbot and you ask:

**Human** *Dear GPT-5, please make everyone on this planet happy.*

*Okay, I will place them in stasis and inject heroin so they experience eternal bliss.* **GPT-5**

**Human** *No no no, please don’t. I mean satisfy their preferences. Not everyone wants heroin.*

*Alright. But how should I figure out what those preferences are?* **GPT-5**

**Human** *Just listen to what they say they want! Or infer it from how they act.*

*Hmm. This person says they can’t bear to hurt animals, but keeps eating meat.* **GPT-5**

**Human** *Well, do what they would want if they could think longer, or had more willpower!*

*I extrapolate that they will come to support human extinction to save other species.* **GPT-5**

**Human** *Actually, just stop.*

*How do I know if that’s what **you** really want?* **GPT-5**

An overview of the field

------------------------

So that's a taste of the kind of problem we need to solve. Obviously there's a lot to unpack here about philosophy, what people really want, what desires are, what preferences are, and whether should we always satisfy those preferences. Before diving more into that, I think it’d be helpful to give a sense of what AI alignment research is like today, so we can get better sense of what might still be needed to answer these daunting questions.

There have been multiple taxonomies of AI alignment research, one of the earlier ones being [Concrete Problems in AI Safety](https://arxiv.org/abs/1606.06565) in 2016, suggesting topics like avoiding negative side effects and safe exploration. In 2018, DeepMind offers another categorization, breaking things down into [specification, robustness, and assurance](https://medium.com/@deepmindsafetyresearch/building-safe-artificial-intelligence-52f5f75058f1). And at EA Global 2020, Rohin Shah laid out [another useful way of thinking about the space](https://www.effectivealtruism.org/articles/rohin-shah-whats-been-happening-in-ai-alignment/), breaking specification down into outer and inner alignment, and highlighting the question of scaling to superhuman competence while preserving alignment.

One notable feature of these taxonomies is their decidedly engineering bent. You might be wondering — where is the philosophy in all this? Didn’t we say there were philosophical challenges? And it’s actually there, but you have to look closely. It’s often obscured by the technical jargon. In addition, there’s this tendency to formalize philosophical and ethical questions as questions about rewards and policies and utility functions — which I think is something that can be done a little too quickly.

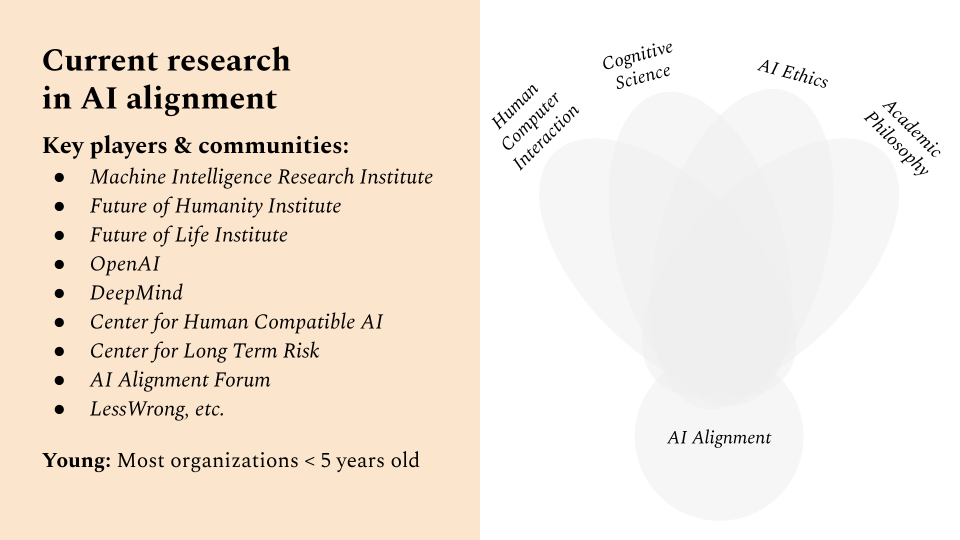

Another way to get a sense of what might currently be missing in AI alignment is to look at the ecosystem and its key players.

AI alignment is actually a really small and growing field, composed of entities like MIRI, FHI, OpenAI, the Alignment forum, and so on. Most of these organizations are really young, often less than 5 years old — and I think it’s fair to say that they’ve been a little insular as well. Because if you think about AI alignment as a field, and the problems its trying to solve, you’d think it must be this really interdisciplinary field that sits at the intersection of broader disciplines, like human-computer interaction, cognitive science, AI ethics, and philosophy.

The relative lack of overlap between the AI alignment community and related disciplines.But to my knowledge, there actually isn’t very much overlap between these communities — it’s more off-to-the-side, like in the picture above. There are reasons for this, which I’ll get to, and it’s already starting to change, but I think it partly explains the relatively narrow philosophical horizons of the AI alignment community.

Philosophical tendencies in AI alignment

----------------------------------------

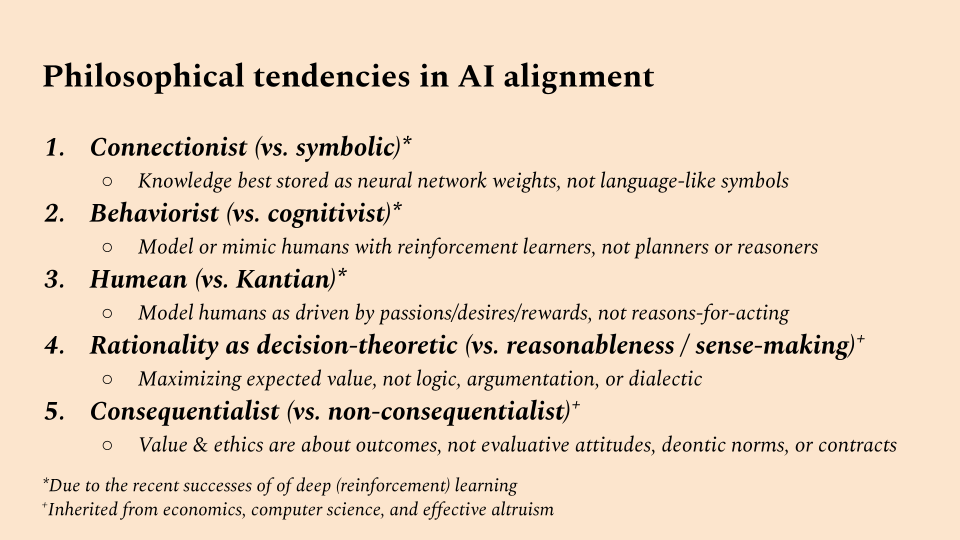

So what are these horizons? I’m going to lay out 5 philosophical tendencies that I’ve perceived in the work that comes out of the AI alignment community — so this is inevitably going to be subjective — but it’s based on the work that gets highlighted in venues like the Alignment Newsletter, or that gets discussed on the AI Alignment forum.

Five philosophical tendencies of contemporary AI alignment research:

**(1) Connectionism, (2) Behaviorism, (3) Humeanism, (4) Decision-Theoretic Rationality, (5) Consequentialism**.**1. Connectionist (vs. symbolic)**

First there’s a tendency towards [connectionism](https://plato.stanford.edu/entries/connectionism/) — the position that knowledge is best stored as sub-symbolic weights in neural networks, rather than [language-like symbols](https://dspace.mit.edu/handle/1721.1/100174). You see this in emphasis on deep learning interpretability, scalability, and robustness.

**2. Behaviorist (vs. cognitivist)**

Second, there’s a tendency towards [behaviorism](https://plato.stanford.edu/entries/behaviorism/) — that to build human-aligned AI, we can model or mimic humans as these reinforcement learning agents, which avoid reasoning or planning by just [learning from lifetimes and lifetimes of data](http://www.incompleteideas.net/IncIdeas/BitterLesson.html). This in contrast to [more cognitive approaches to AI](https://www.cambridge.org/core/journals/behavioral-and-brain-sciences/article/building-machines-that-learn-and-think-like-people/A9535B1D745A0377E16C590E14B94993), which emphasize the ability to reason with and manipulate abstract models of the world.

**3. Humean (vs. Kantian)**

Third, there’s a implicit tendency towards [Humean theories of motivation](https://philarchive.org/rec/SINTHT-5) — that we can model humans as motivated by reward signals they receive from the environment, which you might think of as “desires”, or “passions” as David Hume called them. This is in contrast more [Kantian theories of motivation](https://sites.fas.harvard.edu/~korsgaar/CMK.Motive.of.Duty.pdf), which leave more room for humans to also be motivated by [*reasons*](https://plato.stanford.edu/entries/reasons-just-vs-expl/), e.g., commitments, intentions, or moral principles.

**4. Rationality as decision-theoretic (vs. reasonableness / sense-making)**

Fourth, there’s a tendency to view rationality solely in [decision theoretic terms](https://plato.stanford.edu/entries/decision-theory/) — that is, rationality is about maximizing expected value, where probabilities are rationally updated in a Bayesian manner. But historically, in philosophy, [there’s been a lot more to norms of reasoning and rationality than just that](https://plato.stanford.edu/entries/practical-reason) — rationality is also about logic, and argumentation and dialectic. Broadly, it’s about what it makes sense for a person to think or do, [including what it makes sense for a person to value in the first place](https://plato.stanford.edu/entries/fitting-attitude-theories/).

**5. Consequentialist (vs. non-consequentialist)**

Finally, there’s a tendency towards consequentialism — [consequentialism in the broad sense](https://www.jstor.org/stable/10.1086/660696?seq=1) that value and ethics are about outcomes or states of affairs. This excludes views that root value/ethics in [evaluative attitudes](https://plato.stanford.edu/entries/fitting-attitude-theories/), [deontic norms](https://www.journals.uchicago.edu/doi/full/10.1086/690069), or [contractualism](https://plato.stanford.edu/entries/contractualism/).

From parochialism to pluralism

------------------------------

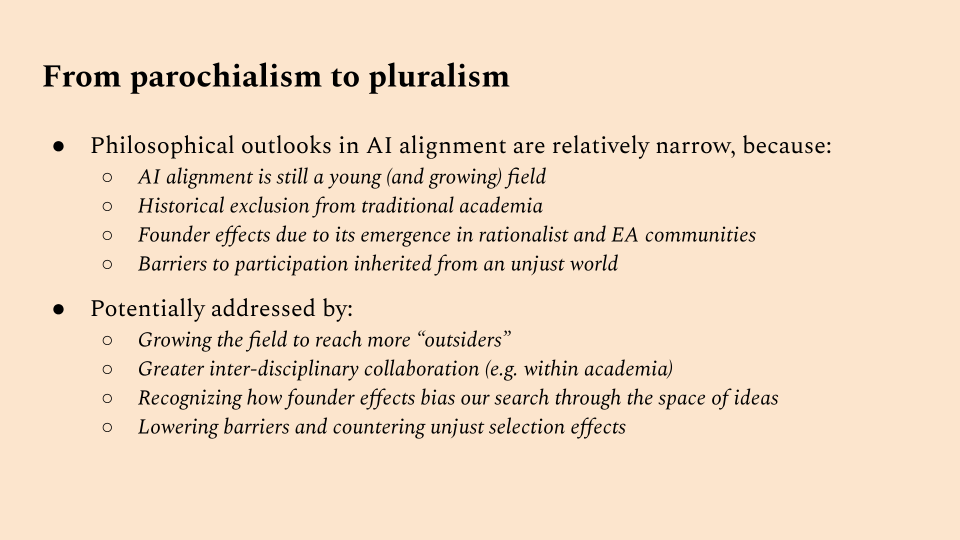

By laying out these tendencies, I want to suggest that the predominant views within AI alignment live within a relatively small corner of the full space of contemporary philosophical positions. If this is true, this should give reason for pause. Why these tendencies? Of course, it’s partly that a lot of very smart people thought very hard about these things, and this is what made sense to them. But very smart people may still be systematically biased by their intellectual environments and trajectories.

Might this be happening with AI alignment researchers? It’s worth noting that the first three of these tendencies are very much influenced by recent successes of deep learning and reinforcement learning in AI. In fact, prior to these successes, a lot of work in AI was more on the other end of the spectrum: first order logic, classical planning, cognitive systems, etc. One worry then, is that the attention of AI alignment researchers might be unduly influenced by the success or popularity of contemporary AI paradigms.

It's also notable that the last two of these tendencies are largely inherited from disciplines like economics, computer science, and communities like effective altruism. Another worry then, would be that these origins have unduly influenced the paradigms and concepts that we take as foundational.

So at this point, I hope to have shown how the AI alignment research community exists in a bit of a philosophical bubble. And so in that sense, if you’ll forgive the term, the community is rather parochial.

Reasons for parochialism, and steps towards pluralism.And there are understandable reasons for this. For one, AI alignment is still a young field, and hasn’t reached a more diverse pool of researchers. Until more recently, It’s also been excluded and not taken very seriously within traditional academia, leading to a lack of intra-disciplinary and inter-disciplinary conversation, and a continued suspicion in some quarters about academia. Obviously, there are also strong founder effects due to the field’s emergence within rationalist and EA communities. And like much of AI and STEM, it inherits barriers to participation from an unjust world.

These can be, and in my opinion, should be addressed. As the field grows, we could make sure it includes more disciplinary and community outsiders. We could foster greater inter-disciplinary collaboration within academia. We could better recognize how founder effects may bias our search through the space of relevant ideas. And we could lower the barriers to participation, while countering unjust selection effects.

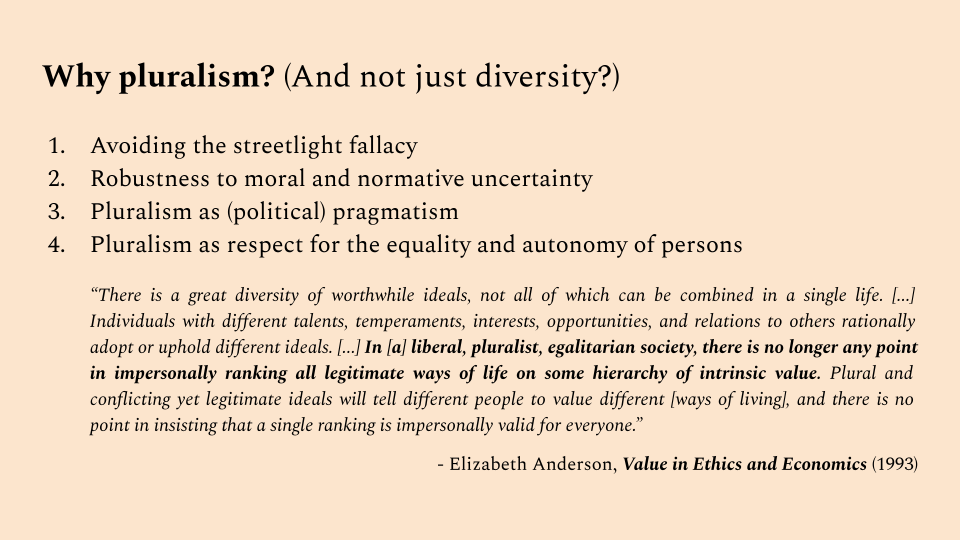

Why pluralism? (And not just diversity?)

----------------------------------------

By why bother? What exactly is the value in breaking out of this philosophical bubble? I haven’t quite explained that yet, so I’ll do that now. And why do I use the word pluralism in particular, as opposed to just diversity? I chose it because I wanted it to evoke something more than just diversity.

By philosophical pluralism, I mean to include philosophical diversity, by which I mean serious engagement with multiple philosophical traditions and disciplinary paradigms. But I also mean openness to the possibility that the problem of aligning AI might have [*multiple good answers*](https://www.alignmentforum.org/posts/qpJbFta7RwpHcFarc/can-we-make-peace-with-moral-indeterminacy), and that we need to contend with how to do that. Having defined those terms, let’s get into the reasons.

A summary of reasons for philosophical pluralism in AI alignment.**1. Avoiding the streetlight fallacy**

The first is avoiding the streetlight fallacy — that if we simply keep exploring the philosophy that’s familiar to Western-educated elites, we are likely to miss out on huge swathes of human thought that may have crucial relevance to AI alignment.

Jay Garfield puts this quite sharply in his book on Engaging Buddhism. Speaking to Western philosophers about Buddhist philosophy, he argues that Buddhist philosophy shares too many concerns with Western philosophy to be ignored:

> “Contemporary philosophy cannot continue to be practiced in the West in ignorance of the Buddhist tradition. ... **Its concerns overlap with those of Western philosophy too broadly to dismiss it as irrelevant. Its perspectives are sufficiently distinct that we cannot see it as simply redundant.**Close enough for conversation; distant enough for that conversation to be one from which we might learn. ... [T]o continue to ignore Buddhist philosophy (and by extension, Chinese philosophy, non-Buddhist Indian philosophy, African philosophy, Native American philosophy...) is indefensible.”

>

> — Jay Garfield, [*Engaging Buddhism: Why It Matters to Philosophy*](https://jaygarfield.files.wordpress.com/2014/01/engaging-buddhism-full-012214.pdf)(2015)

>

>

**2. Robustness to moral and normative uncertainty**

The second is robustness to moral and normative uncertainty. If you’re unsure about what the right thing to do is, or to align an AI towards, and you think it’s plausible that other philosophical perspectives might have good answers, then it’s reasonable to diversify our resources to incorporate them.

This is similar to the argument that Open Philanthropy makes for worldview diversification (and related to the informational situation of having imprecise credences, discussed briefly by MacAskill, Bykvist and Ord in [*Moral Uncertainty*](https://www.williammacaskill.com/info-moral-uncertainty)):

> “When deciding between worldviews, there is a case to be made for simply taking our best guess, and sticking with it. If we did this, we would focus exclusively on animal welfare, or on global catastrophic risks, or global health and development, or on another category of giving, with no attention to the others. However, that’s not the approach we’re currently taking. Instead, we’re practicing **worldview diversification: putting significant resources behind each worldview that we find highly plausible.** We think it’s possible for us to be a transformative funder in each of a number of different causes, and we don’t - as of today - want to pass up that opportunity to focus exclusively on one and get rapidly diminishing returns.”

>

> — Holden Karnofsky, Open Philanthropy CEO, [*Worldview Diversification*](https://www.openphilanthropy.org/blog/worldview-diversification)(2016)

>

>

**3. Pluralism as (political) pragmatism**

The third is pluralism as a form political pragmatism. As Iason Gabriel at DeepMind writes: In the absence of moral agreement, is there a fair way to decide what principles AI should align with? Gabriel doesn’t really put it this way, but one way to interpret this is that, pluralism is pragmatic because it’s the only way we’re going to get buy in from disparate political actors.

> “[W]e need to be clear about the challenge at hand. For the task in front of us is not, as we might first think, to identify the true or correct moral theory and then implement it in machines. Rather, it is to find a way of selecting appropriate principles that is compatible with the fact that we live in a diverse world, where people hold a variety of reasonable and contrasting beliefs about value. ... To avoid a situation in which some people simply impose their values on others, we need to ask a different question: **In the absence of moral agreement, is there a fair way to decide what principles AI should align with?**”

>

> — Iason Gabriel, DeepMind, [*Artificial Intelligence, Values, and Alignment*](https://link.springer.com/article/10.1007/s11023-020-09539-2)(2020)

>

>

**4. Pluralism as respect for the equality and autonomy of persons**

Finally, there’s pluralism as an ethical commitment in itself — pluralism as respect for the equality and autonomy of persons to choose what values and ideals matter to them. This is the reason I personally find the most compelling — I think in order to preserve a lot of what we care about in this world, we need aligned AI to respect this plurality of value.

Elizabeth Anderson puts this quite beautifully in her book, [*Value in Ethics and Economics*](https://books.google.com/books/about/Value_in_Ethics_and_Economics.html?id=1oBpfbE2c5IC). Noting that individuals may rationally adopt or uphold a great diversity of worthwhile ideals, she argues that we lack good reason for impersonally ranking all legitimate ways of life on some universal scale. If we accept that there may be conflicting yet legitimate philosophies about what constitutes a good life, then we also have to accept that there maybe multiple incommensurable scales of value that matter to people:

> “There is a great diversity of worthwhile ideals, not all of which can be combined in a single life. ... Individuals with different talents, temperaments, interests, opportunities, and relations to others rationally adopt or uphold different ideals. ... **In [a] liberal, pluralist, egalitarian society, there is no longer any point in impersonally ranking all legitimate ways of life on some hierarchy of intrinsic value.** Plural and conflicting yet legitimate ideals will tell different people to value different [ways of living], and there is no point in insisting that a single ranking is impersonally valid for everyone.”

>

> — Elizabeth Anderson, [*Value in Ethics and Economics*](https://books.google.com/books/about/Value_in_Ethics_and_Economics.html?id=1oBpfbE2c5IC)(1995)

>

>

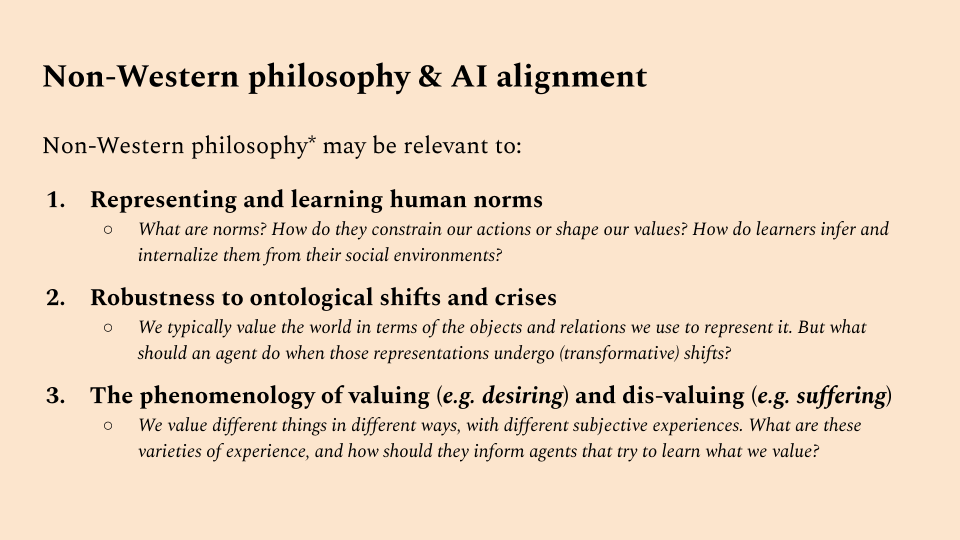

The relevance of non-Western philosophy

---------------------------------------

So that’s why I think pluralism matters to AI alignment. Perhaps you buy that, but perhaps it’s hard to think of concrete examples where non-dominant philosophies may be relevant to alignment research. So now I’d just like to offer a few. I think non-Western philosophy might be especially relevant to the following open problems in AI alignment:

3 areas where non-Western philosophies may be relevant to AI alignment1. *Representing and learning human norms.* What are norms? How do they constrain our actions or shape our values? How do learners infer and internalize them from their social environments? Classical Chinese ethics, especially Confucian ethics, could provide some insights.

2. *Robustness to ontological shifts and crises.* We typically value the world in terms of the objects and relations we use to represent it. But what should an agent do when those representations undergo (transformative) shifts? Certain schools of Buddhist metaphysics bear directly on these questions.

3. *The phenomenology of valuing (e.g. desiring) and disvaluing (e.g. suffering).* We value different things in different ways, with different subjective experiences. What are these varieties of experience, and how should they inform agents that try to learn what we value? Buddhist, Jain and Vedic philosophy have been very much centered on the nature of these subjective experiences, and could provide answers.

Before I go on, I also wanted to note that this is primarily drawn from only the limited about of Chinese and Buddhist philosophy I’m familiar with. This is certainly not all of non-Western philosophy, and there’s a lot more out there, outside of the streetlight, that may be relevant.

**1. Representing and learning human norms**

Why do social norms and practices matter? One answer that’s common from game theory is that norms have *instrumental* value as [coordinating devices](https://journals.sagepub.com/doi/10.1177/1470594X09345474) or [unspoken agreements](https://arxiv.org/abs/1804.04268). To the extent that we need [AI to coordinate well with humans then](https://bair.berkeley.edu/blog/2019/10/21/coordination/), [we may need AI to learn](https://arxiv.org/abs/1806.10071) [and follow these norms](https://arxiv.org/abs/2003.11778).

If you look to Confucian ethics however, you get a quite different picture. On one possible interpretation of Confucian thought, norms and practices are understood to have *intrinsic* value as evaluative standards and expressive acts. You can see this for example, in the Analects, which are attributed to Confucius:

> 克己復禮為仁。

> Restraining the self and returning to *ritual* (禮 / *li*) constitutes *humaneness* (仁 / *ren*).

>

> — [Analects 12.1](https://ctext.org/analects/yan-yuan)

>

>

This word, *li* (禮), is hard to translate, but means something like ritual propriety or etiquette. And it recurs again and again in Confucian thought. This particular line suggests a central role for ritual in what Confucians thought of as a benevolent, humane and virtuous life. How to interpret this? Kwong-loi Shun suggests that this is because, while ritual forms may just be conventions, without these conventions, important evaluative attitudes like respect or reverence cannot be made intelligible or expressed:

> Kwong-loi Shun [...] holds that on the one hand, a particular set of ritual forms are the conventions that a community has evolved, and without such forms attitudes such as respect or reverence cannot be made intelligible or expressed (the truth behind the definitionalist interpretation). In this sense, *li* constitutes *ren* within or for a given community. On the other hand, different communities may have different conventions that express respect or reverence, and moreover any given community may revise its conventions in piecemeal though not wholesale fashion (the truth behind the instrumentalist interpretation).

>

> — David Wong, [*Chinese Ethics*](https://plato.stanford.edu/entries/ethics-chinese/#CenLiRit) *(*Stanford Encyclopedia of Philosophy)

>

>

I was quite struck by this when I first encountered it — partly because I grew up finding a lot of Confucian thought really pointless and oppressive. And to be clear, some norms are oppressive. But I recently encountered a very similar idea in the work of Elizabeth Anderson (cited earlier) that made me come around more to it. In speaking about how individuals value things, and where we get these values from, Anderson argues that:

> “Individuals are not self-sufficient in their capacity to value things in different ways. **I am capable of valuing something in a particular way only in a social setting that upholds norms for that mode of valuation.** I cannot honor someone outside of a social context in which certain actions ... are commonly understood to express honor.”

>

> — Elizabeth Anderson, [*Value in Ethics and Economics*](https://books.google.com/books/about/Value_in_Ethics_and_Economics.html?id=1oBpfbE2c5IC)(1995)

>

>

I find this really compelling. If you think about what constitutes good art, or literature, or beauty, all of that is undoubtedly tied up in norms about how to value things, and how to express those values.

If this is right, then there’s a sense in which the game theoretic account of norms has got things exactly reversed. In game theory, it’s assumed that norms emerge out of the interaction of individual preferences, and so are secondary. But for Confucians, and Anderson, it’s the opposite: norms are primary, or at least a lot of them are, and what we individually value is shaped by those norms.

This would suggest a pretty deep re-orientation of what AI alignment approaches that learn human values need to do. Rather than learn individual values, then figure out how to balance them across society, we need to consider that many values are social from the outset.

All of this dovetails quite nicely with one of the key insights in the paper [*Incomplete Contracting and AI Alignment*](https://arxiv.org/abs/1804.04268)*:*

> “Building AI that can reliably learn, predict, and respond to a human community’s normative structure is a distinct research program to building AI that can learn human preferences. ... To the extent that preferences merely capture the valuation an agent places on different courses of action with normative salience to a group, **preferences are the outcome of the process of evaluating likely community responses and choosing actions on that basis, not a primitive of choice.**”

>

> — Hadfield-Menell & Hadfield, *Incomplete Contracting & AI Alignment*(2018)

>

>

Here again, we see re-iterated idea that social norms *constitute* (at least some) individual preferences. What all of this suggests is that, if we want to accurately model human preferences, we may need to model the causal and social processes by which individuals learn and internalize norms: observation, instruction, ritual practice, punishment, etc.

Furthermore, when it comes to human *values,* then at least in some domains (e.g. what is beautiful, racist, admirable, or just), we ought to identify what's valuable not with the revealed preference or even the reflective judgement of a single individual, but with the outcome of some evaluative social process that takes into account pre-existing standards of valuation, particular features of the entity under evaluation, and potentially competing reasons for applying, not applying, or revising those standards.

As it happens, this anti-individualist approach to valuation isn't particularly prominent in Western philosophical thought (but again, [see Anderson](https://www.newyorker.com/magazine/2019/01/07/the-philosopher-redefining-equality)). Perhaps then, by looking towards philosophical traditions like Confucianism, we can develop a better sense of how these normative social processes should be modeled.

**2. Robustness to ontological shifts and crises**

Let's turn now to a somewhat old problem, first posed by MIRI in 2011: An agent defines its objective based on how it represents the world — but what should happen when that representation is changed?

> “An agent’s goal, or utility function, may also be specified in terms of the states of, or entities within, its ontology. If the agent may upgrade or replace its ontology, it faces a crisis: the agent’s original goal may not be well-defined with respect to its new ontology. This crisis must be resolved before the agent can make plans towards achieving its goals.”

>

> — Peter De Blanc, MIRI, [*Ontological Crises in AI Value Systems*](https://intelligence.org/files/OntologicalCrises.pdf)(2011)

>

>

As it turns out, Buddhist philosophy might provide some answers. To see how, it’s worth comparing it against more commonplace views about the nature of reality and the objects within it. Most of us grow up as what you might call naive realists, believing:

> *Naive Realism.* Through our senses, we perceive the world and its objects directly.

>

>

But then we grow up and study some science, and encounter optical illusions, and maybe become representational realists instead:

> *Representational Realism.*We indirectly construct representations of the external world from sense data, but the world being represented is real.

>

>

Now, Madhyamaka Buddhism goes further — it rejects the idea that there is anything ultimately real or true. Instead, [all facts are at best conventionally true](https://oxford.universitypressscholarship.com/view/10.1093/acprof:oso/9780199751426.001.0001/acprof-9780199751426). And while there may exist some mind-independent external world, there is no uniquely privileged representation of that world that is the “correct” one. *However some representations are still better for alleviating suffering than others*, and so part of the goal of Buddhist practice is to see through our everyday representations as merely conventional, and [to adopt representations better suited for alleviating suffering.](https://muse.jhu.edu/article/26507)

This view is demonstrated in *The Vimalakīrti Sutra*, which actually uses gender as an example of a concept that should be seen through as conventional. I was quite astounded when I first read it, because the topic feels so current, but the text is actually 1800 years old:

> *The reverend monk, Śāriputra, asks a Goddess why she does not transform her female body into a male body, since she is supposed to be enlightened. In response, she swaps both their bodies, and explains:*

>

> Śāriputra, if you were able to transform

> This female body,

> Then all women would be able to transform as well.

>

> Just as Śāriputra is not female

> But manifests a female body

> So are all women likewise:

>

> Although they manifest female bodies

> They are not, inherently, female.

>

> Therefore, the Buddha has explained

> That all phenomena are neither female nor male.

>

> — [*The Vimalakīrti Sutra*](https://en.wikipedia.org/wiki/Vimalakirti_Sutra) (circa. 200 CE)

>

>

All this actually closely resonates, in my opinion, with a recent movement in Western analytic philosophy called [conceptual engineering](https://www.lesswrong.com/posts/9iA87EfNKnREgdTJN/conceptual-engineering-the-revolution-in-philosophy-you-ve) — the idea that we should re-engineer concepts to suit our purposes. For example, Sally Haslanger at MIT has applied this approach [in her writings on gender and race](http://www.mit.edu/~shaslang/papers/WIGRnous.pdf), arguing that feminists and anti-racists need to revise these concepts to better suit feminist and anti-racist ends.

I think this methodology is actually really promising way to deal with the question of ontological shifts. Rather than framing ontological shifts as quasi-exogenous occurrence that agents have to respond to, it frames them as meta-cognitive choices that we select with particular ends in mind. It almost suggests this iterative algorithm for changing our representations of the world:

1. Fix some evaluative concepts (e.g., accuracy, well-being) and lower-level primitives.

2. Refine other concepts to do better with respect those evaluative concepts.

3. Adjust those evaluative concepts and lower-level primitives in response.

4. Repeat as necessary.

How exactly this would work, and whether it would lead to reasonable outcomes, is, I think, really fruitful and open research terrain. I see MIRI's recent work on [Cartesian Frames](https://www.alignmentforum.org/posts/BSpdshJWGAW6TuNzZ/introduction-to-cartesian-frames) as a very promising step in this direction, by formalizing the ways in which we might carve up the world into "self" and "other". When it comes to epistemic values, steps have also been made towards formalizing [approximate causal abstractions](https://arxiv.org/abs/1906.11583). And of course, the importance of representational choice for efficient planning [has been known since the 60s](https://exhibits.stanford.edu/feigenbaum/catalog/pv920qc3232). What remains lacking is a theory of when and how to apply these representational shifts according to an initial set of desiderata, and then how to reconceive those desiderata in response.

**3. The phenomenology of valuing and dis-valuing**

On to the final topic of relevance. In AI and economics, it’s very common to just talk about human values in terms of this barebones concept of preference. Preference is an example of what you might call a thin evaluative attitude, which doesn’t have any deeper meaning beyond imposing a certain ordering over actions or outcomes.

In contrast, I think all of us familiar with a much wider range of evaluative attitudes and experiences: respect, admiration, love, shock, boredom, and so on. These are thick evaluative attitudes. And work in AI alignment hasn’t really tried to account for them. Instead, there’s a tendency to collapse everything into this monolithic concept of “reward”.

And I think that’s very dangerous — we’re not paying attention to the full range of subjective experience, and that may lead to catastrophic outcomes. Instead, I think we need to be engaging more with psychology, phenomenology, and neuroscience. For example, there’s work in the field of [neurophenomenology](https://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.405.918&rep=rep1&type=pdf) that I think might be really promising for answering some of these questions:

> **“The use of first-person and second-person phenomenological methods to obtain original and refined first-person data is central to neurophenomenology.** It seems true both that people vary in their abilities as observers and reporters of their own experiences and that these abilities can be enhanced through various methods. First-person methods are disciplined practices that subjects can use to increase their sensitivity to their own experiences at various time-scales. These practices involve the systematic training of attention and self-regulation of emotion. Such practices exist in phenomenology, psychotherapy, and contemplative meditative traditions. Using these methods, subjects may be able to gain access to aspects of their experience, such as transient affective state and quality of attention, that otherwise would remain unnoticed and unavailable for verbal report.”

>

> — Thompson et al, [*Neurophenomenology: An Introduction for Neurophilosophers*](https://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.405.918&rep=rep1&type=pdf)(2010)

>

>

Unsurprisingly, this work is very much informed by engagement with Buddhist, Jain, and Vedic philosophy and practice, because they are entire philosophical practices devoted to questions like “What is the nature of desire?”, “What is the nature of suffering?” and “What are the various mental factors that lead to one or the other?”

Does AI alignment require understanding human subjective experience at the incredibly fine level of detail aimed at by neurophenomenology and contemplative traditions? My intuition is that it won't, simply because we humans are capable of being helpful and beneficial without fulling understanding each others' minds. But we do understand at least that we all have different subjective experiences, which we may value or take as motivating in different ways.

This level of intuitive psychology, I believe, is likely to be necessary for alignment. And AI as a field is nowhere near it. Research into “[emotion recognition](https://www.theverge.com/2019/7/25/8929793/emotion-recognition-analysis-ai-machine-learning-facial-expression-review)”, which is perhaps the closest that AI has gotten to these questions, typically reifies emotion into [6 fixed categories](https://plato.stanford.edu/entries/emotion/#BasiEmotTheoEmotEvolAffeProg), which is not much better than collapsing everything into “reward”. Given that contemplative Dharmic philosophy has long developed systematic methods for investigating the experiential nature of mind, as well as theories about how higher-order awareness relates to experience, it bears promise for informing how AI could *learn* theories of emotion and evaluative experience, rather than simply having them hard-coded.

Just as a final illustration of why the study evaluative experience is important, I want to highlight a question that often comes up in Buddhist philosophy: [*How can one act effectively in the world*](https://journals.ub.uni-heidelberg.de/index.php/jiabs/article/download/9285/3146) [*without experiencing desire or suffering?*](https://link.springer.com/article/10.1007/s11841-017-0619-4) Unless you’re interested in attaining awakening, it may not be so relevant to humans, nor to AI alignment per se. But it becomes very relevant once we consider the possibility that we might build AI that suffers itself. In fact, there’s a recent paper on exactly this topic asking: [*How can we build functionally effective conscious AI without suffering?*](https://www.worldscientific.com/doi/10.1142/S2705078520300030)

> “The possibility of machines suffering at our own hands ... only applies if the AI that we create or cause to emerge becomes conscious and thereby capable of suffering. In this paper, we examine the nature of the relevant kind of conscious experience, the potential functional reasons for endowing an AI with the capacity for feeling and therefore for suffering, and some of the possible ways of retaining the functional advantages of consciousness, whatever they are, while avoiding the attendant suffering.”

>

> — Agarwal & Edelman, [*Functionally Effective Conscious AI Without Suffering*](https://www.worldscientific.com/doi/10.1142/S2705078520300030) (2020)

>

>

The worry here is that consciousness may have evolved in animals because it serves some function, and so, AI might only reach human-level usefulness if it is conscious. And if it is conscious, it could suffer. Most of us who care about sentient beings besides humans would want to make sure that AI doesn’t suffer — we don’t want to create a race of artificial slaves. So that’s why it might be really important to figure out whether agents can have functional consciousness without suffering.

To address this question, Agarwal & Edelman draw explicitly upon Buddhist philosophy, suggesting that suffering arises from identification with a phenomenal model of the self, and that by transcending that identification, suffering no longer occurs:

> The final approach ... targets the phenomenology of identification with the phenomenal self model (PSM) as an antidote to suffering. ... Metzinger [2018] describes the unit of identification (UI) as that which the system consciously identifies itself with. Ordinarily, when the PSM is transparent, the system identifies with its PSM, and is thus conscious of itself as a *self*. But it is at least a logical possibility that the UI may not be limited to the PSM, but be shifted to the most “general phenomenal property” [Metzinger, 2017] of *knowing* common to all phenomenality including the sense of self. In this special condition, the typical subject-object duality of experience would dissolve; negatively valenced experiences could still occur, but they would not amount to suffering because the system would no longer be experientially *subject* to them.

>

> — Agarwal & Edelman, [*Functionally Effective Conscious AI Without Suffering*](https://www.worldscientific.com/doi/10.1142/S2705078520300030) (2020)

>

>

No doubt, this is an imprecise — and likely contentious — definition of “suffering”, one which affords a very particular solution due to the way it is defined. But at the very least, the paper makes a valiant attempt towards formalizing, computationally, what suffering even might be. If we want to avoid creating machines that suffer, more research like this needs to be conducted, and we might do well to pay attention to Buddhist and related philosophies in the process.

Conclusion

----------

With that, I’ll end my whirlwind tour of non-Western philosophy, and offer some key takeaways and steps forward.

What I hope to have shown with this talk is that AI alignment research has drawn from a relatively narrow set of philosophical perspectives. Expanding this set, for example, with non-Western philosophy, could provide fresh insights, and reduce the risk of misalignment.

In order to address this, I’d like to suggest that prospective researchers and funders in AI alignment should consider a wider range of disciplines and approaches. In addition, while support for alignment research has grown in CS departments, we may need to increase support in other fields, in order to foster the inter-disciplinary expertise needed for this daunting challenge.

If you enjoyed this talk, and would like to learn more about AI alignment, pluralism, or non-Western philosophy [here are some reading recommendations](https://docs.google.com/presentation/d/11KV_SdA7SB6ytb06f3Wih-cx1bcHNAGWzReQiZCT3Eo/edit#slide=id.ga2b0574616_0_27). Thank you for your attention, and I look forward to your questions. |

0b990547-b4f1-4887-98db-efadda0073e6 | trentmkelly/LessWrong-43k | LessWrong | Announcing predictions

Today I unveiled predictions, a command-line program to score predictions you’ve written down in a YAML file. In my estimation, the program and its supporting documentation is alpha quality. You’ll need to build it yourself and the documentation isn’t all there and well-organized yet.

If you’re familiar with Go toolchains, have a look. I’d be happy to take feedback here in addition to on GitHub.

https://github.com/adiabatic/predictions |

a792cd77-17cc-4035-84af-4d81c7a33ab3 | trentmkelly/LessWrong-43k | LessWrong | Retrospective on ‘GPT-4 Predictions’ After the Release of GPT-4

In February 2023, I wrote a post named GPT-4 Predictions which was an attempt to predict the properties and capabilities of OpenAI’s GPT-4 model using scaling laws and knowledge of past models such as GPT-3. Now that GPT-4 has been released, I'd like to evaluate these past predictions.

Unfortunately, since the GPT-4 technical report has limited information on GPT-4’s training process and model properties, I can’t evaluate all the predictions. Nevertheless, I believe I can evaluate enough of them right now to yield useful insights.

GPT-4 release date

OpenAI released GPT-4 on 14 March 2023.

I mentioned in the post that Metaculus predicted a 50% chance of GPT-4 being released by May 2023 and consequently, I expected the model to be released sometime around the middle of the year so the model was released earlier than I expected.

Training process

Number of GPUs used during training

Some people such as LawrenceC and gwern have noted in the post’s comments that GPT-4 was probably trained on 15,000 GPUs or more. Assuming this is true, my prediction that GPT-4 would be trained on 2,000 to 15,000 GPUs seems like an underprediction and consequently, I may have underpredicted GPT-4’s total training compute by about a factor of 2.

I originally predicted that GPT-4 would use about 5.63e24 FLOP of compute. According to EpochAI, the true value is about 2.2e25 which is about 4x my original estimate. The chart below also shows how GPT-4 came out earlier than I expected.

Training time

The OpenAI GPT-4 video states that GPT-4 finished training in August 2022. Given that GPT-3.5 finished training in early 2022 this suggests that GPT-4 was trained for about 4-7 months. I originally predicted that the training time would be 1-6 months which seems like an underprediction in retrospect.

GPT-4 model properties

I predicted that GPT-4 would be a dense, text-only, transformer language model like GPT-3 trained using more compute and data with a similar number of parameters (175B) a |

f28801c4-03bb-421a-b443-324830e0517f | StampyAI/alignment-research-dataset/aisafety.info | AI Safety Info | What is the "sharp left turn"?

The [Sharp Left Turn](https://www.alignmentforum.org/tag/sharp-left-turn#:~:text=A%20Sharp%20Left%20Turn%20is,generalize%20to%20the%20new%20domains.) (SLT) threat model is based on the argument that an AI’s [capabilities generalize further than its alignment](https://www.alignmentforum.org/posts/GNhMPAWcfBCASy8e6/a-central-ai-alignment-problem-capabilities-generalization). In other words, if an AI went through a SLT, its capabilities would quickly generalize outside the training distribution, but its alignment won’t be able to keep up, resulting in a powerful misaligned model. This model consists of [three main claims](https://www.alignmentforum.org/posts/usKXS5jGDzjwqv3FJ/refining-the-sharp-left-turn-threat-model):

1. **Capabilities will generalize far (i.e. to many domains)**

These capabilities could generalize in multiple domains, possibly at the same time or during a discrete phase transition.

1. **Alignment techniques that worked previously will fail during this transition**

The increase in capabilities and generalization would arise from emergent properties which would be qualitatively different from what the model used previously. As a result, alignment techniques that applied to old versions of the AI would not work on the new version.

1. **Humans won’t be able to intervene to prevent or align this transition**

The transition would happen too quickly for humans to notice or develop new alignment techniques in time.

If these claims are correct, it is likely that we would end up with a misaligned AI after the SLT if we rely on alignment techniques that do not consider the possibility of a SLT. This could be avoided if we manage to build a [goal-aligned](https://www.lesswrong.com/posts/vix3K4grcHottqpEm/goal-alignment-is-robust-to-the-sharp-left-turn) AI before the SLT occurs; i.e. the AI should have beneficial goals and [situational awareness](https://www.lesswrong.com/tag/situational-awareness-1#:~:text=Ajeya%20Cotra%20uses%20the%20term,continue%20to%20be%20influenced%20by). If this is the case, the AI will try to [preserve its beneficial goals](/?state=897I&question=What%20is%20instrumental%20convergence%3F) by developing new techniques to align itself as it goes through the SLT, and give rise to an aligned model post-SLT. To read more about plans and caveats regarding the SLT, click [here](https://www.alignmentforum.org/posts/dfXwJh4X5aAcS8gF5/refining-the-sharp-left-turn-threat-model-part-2-applying).

|

2ebe341c-0cb3-47c3-b3c6-06e85a2f73c7 | trentmkelly/LessWrong-43k | LessWrong | Simulacra Levels Summary

For More Detail, Previously: Simulacra Levels and Their Interactions, Unifying the Simulacra Definitions, The Four Children of the Seder as the Simulacra Levels.

A key source of misunderstanding and conflict is failure to distinguish between combinations of the following four cases.

1. Sometimes people model and describe the physical world, seeking to convey true information because it is true.

2. Other times people are trying to get you to believe what they want you to believe so you will do or say what they want.

3. Other times people say things mostly as slogans or symbols to tell you what tribe or faction they belong to, or what type of person they are.

4. Then there are times when talk seems to have have gone strangely meta or off the rails entirely. The symbolic representations are mostly of the associations and vibes of other symbols. The whole thing seems more like a stream of words, associations and vibes. It sounds like GPT-4.

One can refer to these as the simulacra levels as a useful fake framework for understanding this. When looking at talk, one can ask what level or levels a statement or discussion is on, and which ones people care about in context. One can also ask about the level a person, group or civilization most cares about. That is also how they default to understanding new talk.

This concept has important details that are difficult to understand. The posts linked up top offer discussions of four definitions that all point at the same dynamics. Each is stronger at capturing different elements.

As a more concise alternative, this post gathers together the most vital information.

First, the more straightforward definitions from 2020.

The Lion and Pandemic Definitions

The lion definition asks what each level means by ‘There is a lion across the river.’

Level 1: There’s a lion across the river.

Level 2: I don’t want to go (or have other people go) across the river.

Level 3: I’m with the popular kids who are too cool to go across the r |

676db40a-c458-4045-80a8-806be20aca31 | trentmkelly/LessWrong-43k | LessWrong | A medium for more rational discussion

It would be cool if online discussions allowed you to 1) declare your claims, 2) declare how your claims depend on each other (ie. make a dependency tree), 3) discuss the claims, and 4) update the status of the claim by saying whether or not you agree with it, and using something like the text shorthand for uncertainty to say how confident you are in your agreement/disagreement.

I think that mapping out these things visually would allow for more productive conversation. And it would also allow newcomers to the discussion to quickly and easily get up to date, rather than having to sift through tons of comments. On this note, there should also probably be something like an answer wiki for each claim to summarize the arguments and say what the consensus is.

I get the feeling that it should be flexible though. That probably means that it should be accompanied by the normal commenting system. Sometimes you don't actually know what your claims are, but need to "talk it out" in order to figure out what they are. Sometimes you don't really know how they depend on each other. And sometimes you have something tangential to say (on that note, there should probably be an area for tangential comments, or at least a way to flag them as tangential).

As far who would be interested in this, obviously this Less Wrong community would be interested, and I think that there are definitely some other online communities that would (Hacker News, some subreddits...).

Also, this may be speculating, but I would hope that it would develop a reputation for the most effective way to have a productive discussion. So much so that people would start saying, "go outline your argument on [name]". Maybe there'd even be pressure for politicians to do this. If so, then I think this could put pressure on society to be more rational.

What do you guys think?

EDIT: If anyone is actually interested in building this, you definitely have my permission (don't worry about "stealing the idea"). I want to |

b1aa62d9-5e36-4741-b81d-b03dd84bda8c | trentmkelly/LessWrong-43k | LessWrong | June 2012: 0/33 Turing Award winners predict computers beating humans at go within next 10 years.

In June 2012, the Association for Computing Machinery—a professional society of computer scientists, best known for hosting the prestigious ACM Turing Award, commonly referred to as the "Nobel Prize of Computer Science"—celebrated the 100th birthday of Alan Turing.

The event was attended by luminaries like, oh, in no particular order: Donald Knuth, Vint Cerf, Bob Kahn, Marvin Minsky, Judea Pearl, Ron Rivest, Adi Shamir, Leonard Adleman, and of extra relevance here; Ken Thompson, inventor of the UNIX operating system, co-inventor of the C programming language, and computer chess pioneer.

In all, some 33 Turing Award winners were scheduled to be in attendance. There were no parallel tracks or simultaneous panels going on.

Image credit: Joshua Lock

----------------------------------------

So today, randomly watching one of the panel debates on Youtube, I was amazed by the amusing / horrifying example of failure of foresight and predictive accuracy, by these world leading computer scientists, regarding the advancement of the state of the art in artificial intelligence.

In this case the as it pertains to the ancient board game "go".

https://www.youtube.com/watch?v=dsMKJKTOte0&t=54m

> "When does a computer crack go?"

> "And I will start by a 100 years, and then count down by ten year intervals."

> And [by] 90 years? I count about 4% of the audience. (...)

Perhaps my internet searching skills are weak, but as best as I can tell, the incident has not been noted other than a few bemused Youtube comments in the video linked above.

----------------------------------------

Given ten options, ten buckets in which to place their bet, world-leading experts in computer science, as a group, managed to perform much worse than one would expect given their vast and wide-ranging expertise.

Worse even, than one would expect of a group of complete ignorants.

Given ten options, one would expect one out of every ten to land on the right answer, if nobody knew anything and |

6f7cb117-8f89-47f7-aaeb-09d3244b15d0 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | When to diversify? Breaking down mission-correlated investing

*Note: This post was originally drafted earlier this year, but we never got around to posting it for various reasons (mostly being busy). We recently had time to revisit it, partly just as a natural result of our workflow, partly because the way this year has gone highlights the main message of this post.*

[Mission-correlated investing](https://forum.effectivealtruism.org/tag/mission-correlated-investing) means investing so as to have more money when money is more valuable. For effective altruists, money is more valuable when giving opportunities are more cost-effective. Some, such as Open Philanthropy, have mentioned considering such strategies. How important is this? Is this something only for major donors or for all EAs?

In this post, we first introduce the concept of 'mission-correlated returns'. We then estimate these 'returns' for three examples to illustrate the potential importance of mission correlation in different contexts. Tentatively, we expect the highest magnitude mission-correlated returns to be the negative returns associated with investments in which the EA 'Total Portfolio' is highly concentrated. This makes pursuing other investments, which diversify the portfolio, relatively attractive. However, for donors who are devoted to a single narrow cause area, it could make sense to make concentrated bets if there are investments with particularly positive mission correlation.

The FTX blowup shows how bad it can be when too much EA wealth is concentrated in a single risky company. This adds some circumstantial weight to our theoretical claims. We hope this post adds some mathematical weight to arguments for more diversification going forward.

While this post is about 'investing to give', we believe similar conclusions (like the importance of diversification) are relevant to other parts of EA strategy. In particular, the argument for working to increase funder diversity seems strong, as discussed [here](https://forum.effectivealtruism.org/posts/oiEArRjkajAKayMCp/what-might-ftx-mean-for-effective-giving-and-ea-funding#We_need_to_aim_for_greater_funding_diversity_and_properly_resource_efforts_to_achieve_this). Similar arguments can, for example, be made about PR. We encourage you to think about how you can help EA diversify, both financially and otherwise.

Key points

----------

* **Mission-correlated investment strategies**, including 'mission hedging', are about identifying investments whose returns are correlated with your *future cost-effectiveness*.

+ They can be as much about 'investing in good' as 'investing in evil'.

+ They can be a reason to diversify, or a reason to make concentrated bets.

+ They may be as or more important for small donors as for major donors.

* '**Mission-correlated returns**'—the covariance between an investment's financial returns and your future cost-effectiveness—are a useful metric for assessing the importance of mission correlation for an investment.

+ We show that these 'mission-correlated returns' could exceed 1% per year for certain investments.

+ This underlines the importance of forecasting future cost-effectiveness, as well as efforts to better understand the composition of the 'EA portfolio'.

* The main implication for most donors is a reminder that it is important to diversify the EA 'Total Portfolio'. Concentrating investments in the same companies and sectors as other EA donors incurs a large negative 'mission-correlated return'.

Essentials

----------

*Note: You might be familiar with the term 'mission hedging' as this was the first term used for this concept. We use the more general term 'mission correlation' because the crux of the idea is increasing the correlation of your investment returns with your future impact per dollar (i.e. your ability to achieve your altruistic 'mission'). Whether or not this is 'hedging' is a secondary consideration.*

If you are 'investing to give', the total good you will do equals the value of your investment portfolio in the future multiplied by the impact per dollar that you can achieve by donating (and continuing to invest) that future value. Your portfolio's future value will be equal to its current value multiplied by the portfolio financial return.

In deciding how to invest right now, what we care about is your **expected** future impact.

The definition of [covariance](https://en.wikipedia.org/wiki/Covariance) tells us that we can break expected future impact into the following parts:

where the '*relative impact per dollar*' in the covariance is the future impact per dollar divided by the expected impact per dollar.

Naive expected value maximization would tend to suggest divide and conquer strategies of focusing on increasing the Expected Portfolio Financial Return (e.g. high risk entrepreneurship) while maximizing Expected Impact per Dollar (e.g. making grants based on EA principles). Of course, both of these things are good (great even) up to a point. But pursuing them too naively ignores many important considerations (such as the general complexity of the world and diminishing returns to scale). It also ignores the covariance term. This term is one more reason it will often not be optimal to bet everything on whatever appears to have the maximum financial return.

Covariance is defined as the product of the volatility of your future relative impact per dollar, the volatility of your portfolio's financial return, and their correlation:

It's helpful to express considerations in terms of returns when reasoning about investing. Happily the covariance term in the equation for 'Expected Future Impact' acts just like a return. So we refer to this covariance as a 'mission-correlated return':

You can control the 'mission correlation' by picking investments whose returns themselves have a high 'mission correlation' with your future impact per dollar. The mission-correlated return of any investment is similarly:

This enables us to assess the importance of mission correlation for an investment in terms of variables that are relatively easy to reason about.

Examples

--------

We present three examples to illustrate actual estimates of mission-correlated returns.

Each example is introduced below the table, but for more details please see the appendix [here](https://docs.google.com/document/d/1BRKqML7V_6qdGxyydeTLna-o_CJ3qKCdY-3EOgdETPw).