id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

04b955c7-0d87-4593-b2bd-156672e25119 | trentmkelly/LessWrong-43k | LessWrong | Rationality Quotes 10

"Yes, I am the last man to have walked on the moon, and that's a very dubious and disappointing honor. It's been far too long."

-- Gene Cernan

"Man, you're no smarter than me. You're just a fancier kind of stupid."

-- Spider Robinson, Distraction

"Each problem that I solved became a rule which served afterwards to solve other problems."

-- Rene Descartes, Discours de la Methode

"Faith is Hope given too much credit."

-- Matt Tuozzo

"Your Highness, I have no need of this hypothesis."

-- Pierre-Simon Laplace, to Napoleon, explaining why his works on celestial mechanics made no mention of God.

"'For, finally, one can only judge oneself by one's actions,' thought Elric. 'I have looked at what I have done, not at what I meant to do or thought I would like to do, and what I have done has, in the main, been foolish, destructive, and with little point. Yyrkoon was right to despise me and that was why I hated him so.'"

-- Michael Moorcock, Elric of Melniboné

"You will quickly find that if you are completely and self-deprecatingly truthful about how much you owe other people, the world at large will treat you like you did every bit of the invention yourself and are just being becomingly modest about your innate genius."

-- Eric S. Raymond

"The longer I live the more I see that I am never wrong about anything, and that all the pains that I have so humbly taken to verify my notions have only wasted my time."

-- George Bernard Shaw

"The trouble is that consciousness theories are very easy to dream up... Theories that explain intelligence, on the other hand, are fiendishly difficult to come by and so are profoundly useful. I don't know for sure that intelligence always produces consciousness, but I do know that if you assume it does you'll never be disappointed."

-- John K. Clark

"Intelligence is silence, truth being invisible. But what a racket I make in declaring this."

-- Ned Rorem, "Rand |

6c20d86e-4c8b-4a93-9a7e-7fee0e7c198a | trentmkelly/LessWrong-43k | LessWrong | The Incredible Fentanyl-Detecting Machine

An NII machine in Nogales, AZ. (Image source)

There’s bound to be a lot of discussion of the Biden-Trump presidential debates last night, but I want to skip all the political prognostication and talk about the real issue: fentanyl-detecting machines.

Joe Biden says:

> And I wanted to make sure we use the machinery that can detect fentanyl, these big machines that roll over everything that comes across the border, and it costs a lot of money. That was part of this deal we put together, this bipartisan deal.

>

> More fentanyl machines, were able to detect drugs, more numbers of agents, more numbers of all the people at the border. And when we had that deal done, he went – he called his Republican colleagues said don’t do it. It’s going to hurt me politically.

>

> He never argued. It’s not a good bill. It’s a really good bill. We need those machines. We need those machines. And we’re coming down very hard in every country in Asia in terms of precursors for fentanyl. And Mexico is working with us to make sure they don’t have the technology to be able to put it together. That’s what we have to do. We need those machines.

Wait, what machines?

You can remotely, non-destructively detect that a bag of powder contains fentanyl rather than some other, legal substance? And you can sense it through the body of a car?

My god. The LEO community must be holding out on us. If that tech existed, we’d have tricorders by now.

What’s actually going on here?

What’s Up With Fentanyl-Detecting Machines?

First of all, Biden didn’t make them up.

This year, the Department of Homeland Security reports that Customs and Border Protection (CBP) has deployed “Non-Intrusive Inspection” at the US’s southwest border:

> “By installing 123 new large-scale scanners at multiple POEs along the southwest border, CBP will increase its inspection capacity of passenger vehicles from two percent to 40 percent, and of cargo vehicles from 17 percent to 70 percent.”

In fact, there’s something of a scand |

9eaf177f-2157-422b-83a3-1abec095f6a5 | trentmkelly/LessWrong-43k | LessWrong | Thinking of tool AIs

Preliminary note: the ideas in the post emerged during the Learning-by-doing AI safety workshop at EA Hotel; special thanks to Linda Linsefors, Davide Zagami and Grue_Slinky for giving suggestions and feedback.

As the title anticipates, long-term safety is not the main topic of this post; for the most part, the focus will be on current AI technologies. More specifically: why are we (un)satisfied with them from a safety perspective? In what sense can they be considered tools, or services?

An example worth considering is the YouTube recommendation algorithm. In simple terms, the job of the algorithm is to find the videos that best fit the user and then suggest them. The expected watch time of a video is a variable that heavily influences how a video is ranked, but the objective function is likely to be complicated and probably includes variables such as click-through rate and session time.[1] For the sake of this discussion, it is sufficient to know that the algorithm cares about the time spent by the user watching videos.

From a safety perspective - even without bringing up existential risk - the current objective function is simply wrong: a universe in which humans spend lots of hours per day on YouTube is not something we want. The YT algorithm has the same problem that Facebook had in the past, when it was maximizing click-throughs.[2] This is evidence supporting the thesis that we don't necessarily need AGI to fail: if we keep producing software that optimizes for easily measurable but inadequate targets, we will steer the future towards worse and worse outcomes.

----------------------------------------

Imagine a scenario in which:

* human willpower is weaker than now;

* hardware is faster than now, so that the YT algorithm manages to evaluate a larger number of videos per time unit and, as a consequence, gives the user better suggestions.

Because of these modifications, humans could spend almost all day on YT. It is worth noting that, even in this semi- |

4a11e8f9-6d3b-4124-a973-f65809eee0b0 | trentmkelly/LessWrong-43k | LessWrong | [SEQ RERUN] Timeless Beauty

Today's post, Timeless Beauty, was originally published on 28 May 2008. A summary (taken from the LW wiki):

> To get rid of time you must reduce it to nontime. In timeless physics, everything that exists is perfectly global or perfectly local. The laws of physics are perfectly global; the configuration space is perfectly local. Every fundamentally existent ontological entity has a unique identity and a unique value. This beauty makes ugly theories much more visibly ugly; a collapse postulate becomes a visible scar on the perfection.

Discuss the post here (rather than in the comments to the original post).

This post is part of the Rerunning the Sequences series, where we'll be going through Eliezer Yudkowsky's old posts in order so that people who are interested can (re-)read and discuss them. The previous post was Timeless Physics, and you can use the sequence_reruns tag or rss feed to follow the rest of the series.

Sequence reruns are a community-driven effort. You can participate by re-reading the sequence post, discussing it here, posting the next day's sequence reruns post, or summarizing forthcoming articles on the wiki. Go here for more details, or to have meta discussions about the Rerunning the Sequences series. |

b0aee7f5-d1f0-4db3-a5da-a0ac223c0b82 | trentmkelly/LessWrong-43k | LessWrong | Currently Buying AdWords for LessWrong

So I'm trying to build more rationalists. To do this, I've invested a few hundred dollars of my own money to promote Less Wrong by buying low-cost AdWords on Google for different LW pages. I want to reach smart people with a really good article from Less Wrong that answers their question and draws them into our community so that the site's content can help improve their rationality. Based on buying AdWords before, I'd estimate that only 0.5-1% of people who click through to Less Wrong will actually get involved after reading an article, but since clicks only cost ~$0.04, that means it only costs me ~$6 to build a new rationalist and drastically improve someone's life. Seems like an excellent return on investment.

But to get a strong 1% conversion rate and really make an impact, I need to identify REALLY EXCELLENT Less Wrong content. Right now I'm experimenting by buying a lot of keywords related to quantum mechanics and sending people to http://lesswrong.com/lw/r8/and_the_winner_is_manyworlds/

My hope is that this page is useful and memorable enough that some small % of readers stick around and click through to other pages. My guess is that this isn't the ideal page to do this with but it's aiming in the right direction.

What page would you want a new Less Wrong reader to find first? What answers a specific question they might have in such an impressive way that they would want to learn more about our community (perhaps many different pages for many different questions)? Which articles are most memorable? Just looking at "Top" didn't yield any obvious choices... I felt like most of those articles were too META-META-META ... you'd need too much background knowledge for many of them. An ideal article would be more or less "stand-alone" so that any relatively intelligent person who doesn't have the whole LW corpus in their head already could just jump in and understand it immediately... and then branch out and explore LW from there.

So what do you th |

4da45c4b-ddbb-43da-9e8a-2f6643bda58f | StampyAI/alignment-research-dataset/special_docs | Other | Assessing Generalization in Reward Learning: Intro and Background

Generalization in Reward Learning

---------------------------------

An overview of reinforcement learning, generalization, and reward learning

--------------------------------------------------------------------------


Published in [Towards Data Science](https://towardsdatascience.com/?source=post\_page-----da6c99d9e48--------------------------------) · 16 min read · Sep 30, 2020


\*\*Authors: Anton Makiievskyi, Liang Zhou, Max Chiswick\*\*

\*Note: This is the\* \*\*\*first\*\*\* \*of\* \*\*\*two\*\*\* \*blog posts (part\* [\*two\*](https://chisness.medium.com/assessing-generalization-in-reward-learning-implementations-and-experiments-de02e1d08c0e)\*). In these posts, we describe a project we undertook to assess the ability of reward learning agents to generalize. The implementation for this project is\* [\*available\*](https://github.com/lzil/procedural-generalization) \*on GitHub.\*

\*This first post will provide a background on reinforcement learning, reward learning, and generalization, as well as summarize the main aims and inspirations for our project. If you have the requisite technical background, feel free to skip the first couple sections.\*

About Us

========

We are a team that participated in the 2020 [AI Safety Camp](https://aisafety.camp/) (AISC), a program in which early career researchers collaborate on research proposals related to AI safety. In short, AI safety is a field that aims to ensure that as AI continues to develop, it does not harm humanity.

Given our team’s mutual interests in technical AI safety and reinforcement learning, we were excited to work together on this project. The idea was originally suggested by Sam Clarke, another AISC participant with whom we had fruitful conversations over the course of the camp.

Reinforcement Learning

======================

In reinforcement learning (RL), an agent interacts with an environment with the goal of earning rewards. \*\*Ultimately, the agent wants to learn a strategy in order to maximize the rewards it obtains over time.\*\* First things first, though: what exactly is an agent, and what are rewards? An \*agent\* is a character that interacts with some world, also known as an \*environment\*, by taking actions. For instance, an agent could be a character playing a video game, the car in a self-driving car simulation, or a player in a poker game. The rewards are simply numbers that encode the agent’s goal by indicating whether what happens to the agent is preferable or not. For example, picking up a coin may give a positive reward, while getting hit by an enemy may give a negative reward.

In RL, the \*state\* represents everything about the current situation in the environment. What the agent can actually see, however, is an \*observation\*. For example, in a poker game, the observation may be the agent’s own cards and the previous actions of the opponent, while the state also includes the cards of the opponent and the sequence of cards in the deck (i.e., things the agent can’t see). In some environments like chess where there is no hidden information, the state and the observation are the same.

Given observations, the agent takes \*actions\*. After each action, the agent will get feedback from the environment in the form of:

1. \*\*Rewards:\*\* Scalar values, which can be positive, zero, or negative

2. \*\*A new observation:\*\* The result of taking the action from the previous state, which moves the agent to a new state and results in this new observation. (Also, whether or not the new state is “terminal”, meaning whether the current interaction is finished or still in progress. For example, completing a level or getting eaten by an opponent will terminate many games.)

In RL, our goal is to \*train\* the agent to be really good at a task by using rewards as feedback. Through one of many possible training algorithms, the agent gradually learns a strategy (also known as a \*policy\*) that defines what action the agent should take in any state to maximize reward. The goal is to maximize reward over an entire \*episode\*, which is a sequence of states that an agent goes through from the beginning of an interaction to the terminal state.
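The interaction loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the code used in the project: the `CoinCorridor` environment, its reward values, and the two policies are hypothetical names invented here to show how observations, actions, rewards, and the terminal flag fit together over one episode.

```python
import random


class CoinCorridor:
    """Hypothetical toy environment: walk right along a corridor to reach a coin."""

    def __init__(self, length=5):
        self.length = length
        self.position = 0

    def reset(self):
        self.position = 0
        return self.position  # the observation is just the agent's position

    def step(self, action):
        # action: 0 = move left, 1 = move right
        move = 1 if action == 1 else -1
        self.position = max(0, self.position + move)
        done = self.position == self.length  # terminal state: coin reached
        reward = 1.0 if done else -0.1       # small cost for every extra step
        return self.position, reward, done


def run_episode(env, policy, max_steps=100):
    """Run one episode and return the total reward collected."""
    observation = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(observation)
        observation, reward, done = env.step(action)
        total_reward += reward
        if done:
            break
    return total_reward


def random_policy(observation):
    """Untrained agent: ignores the observation and acts randomly."""
    return random.choice([0, 1])


def always_right(observation):
    """A good strategy for this particular corridor."""
    return 1
```

Calling `run_episode(CoinCorridor(), always_right)` gives the best possible episode return for this toy, while `random_policy` typically wanders and collects more step penalties. Training an agent amounts to moving from the second kind of policy towards the first using only the reward signal.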

Hugely successful agents have been trained to superhuman performance in domains such as [Atari](https://medium.com/@jonathan\_hui/rl-dqn-deep-q-network-e207751f7ae4) and the game of [Go](https://deepmind.com/blog/article/alphago-zero-starting-scratch).

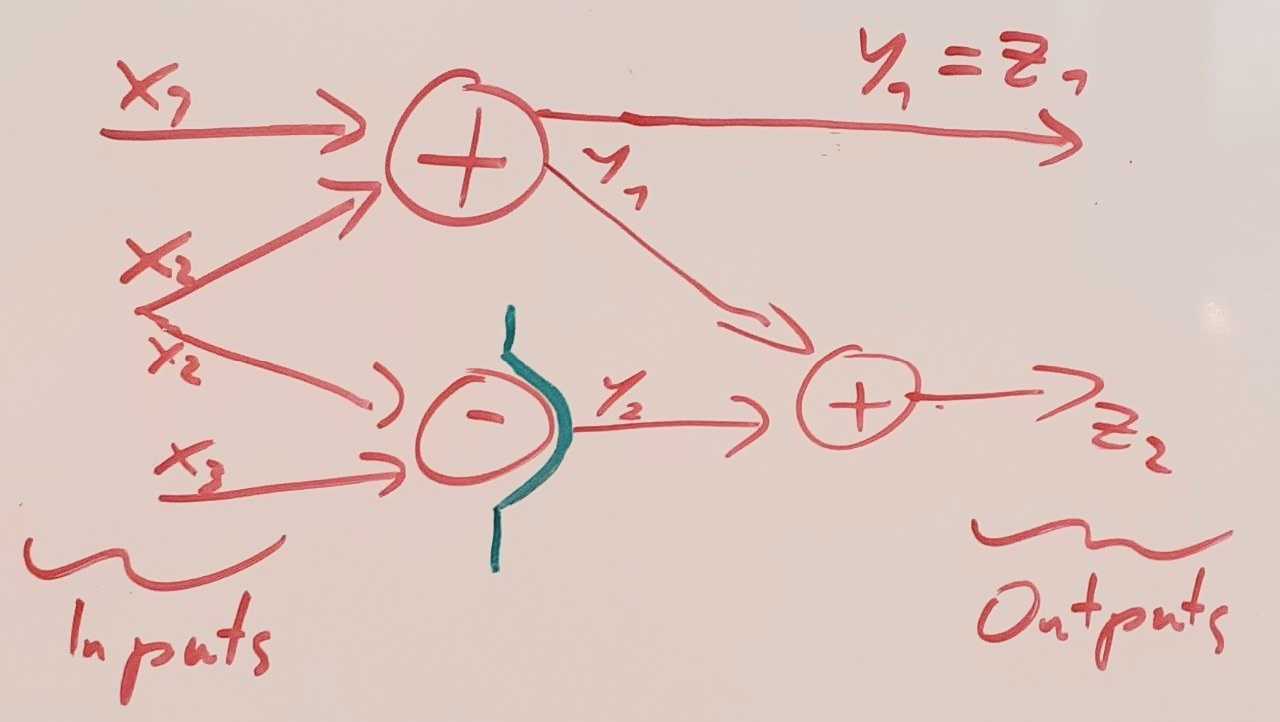

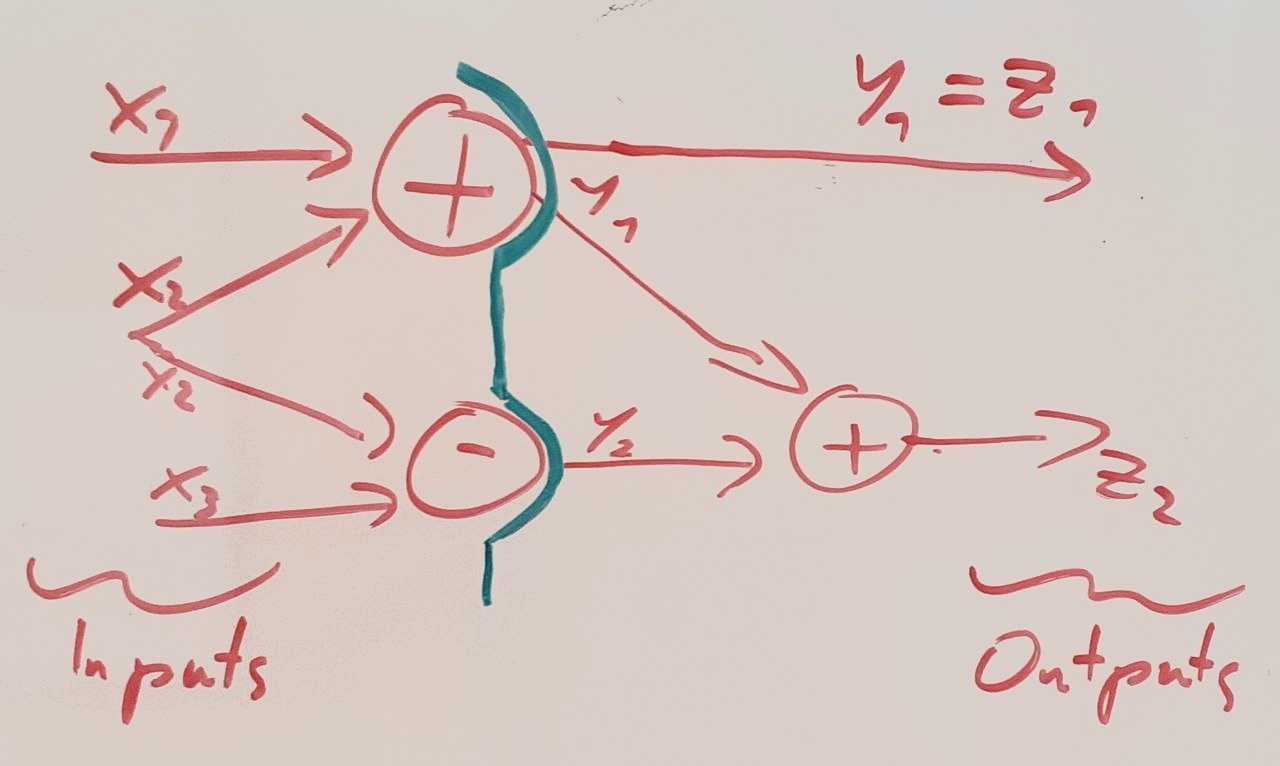

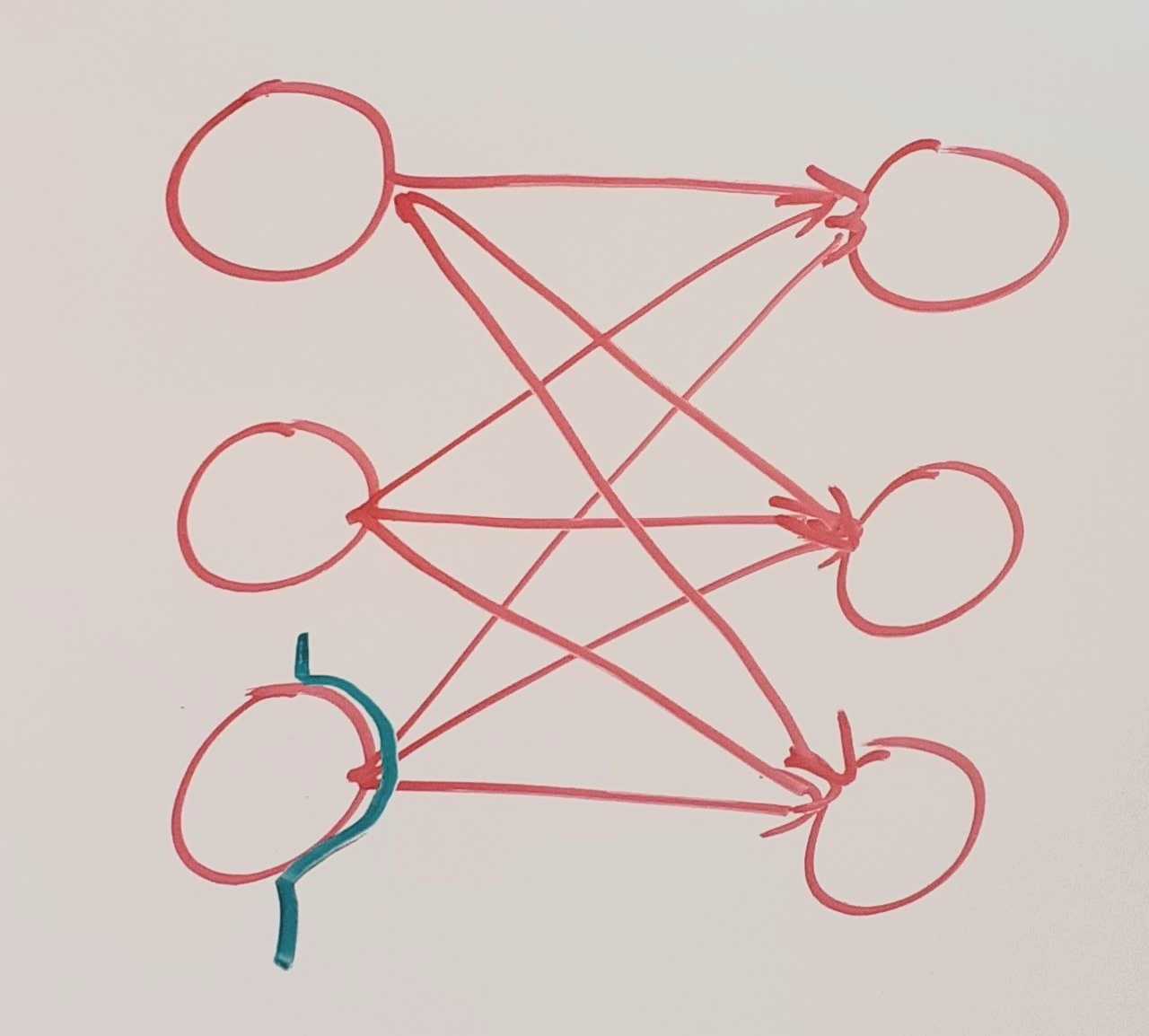

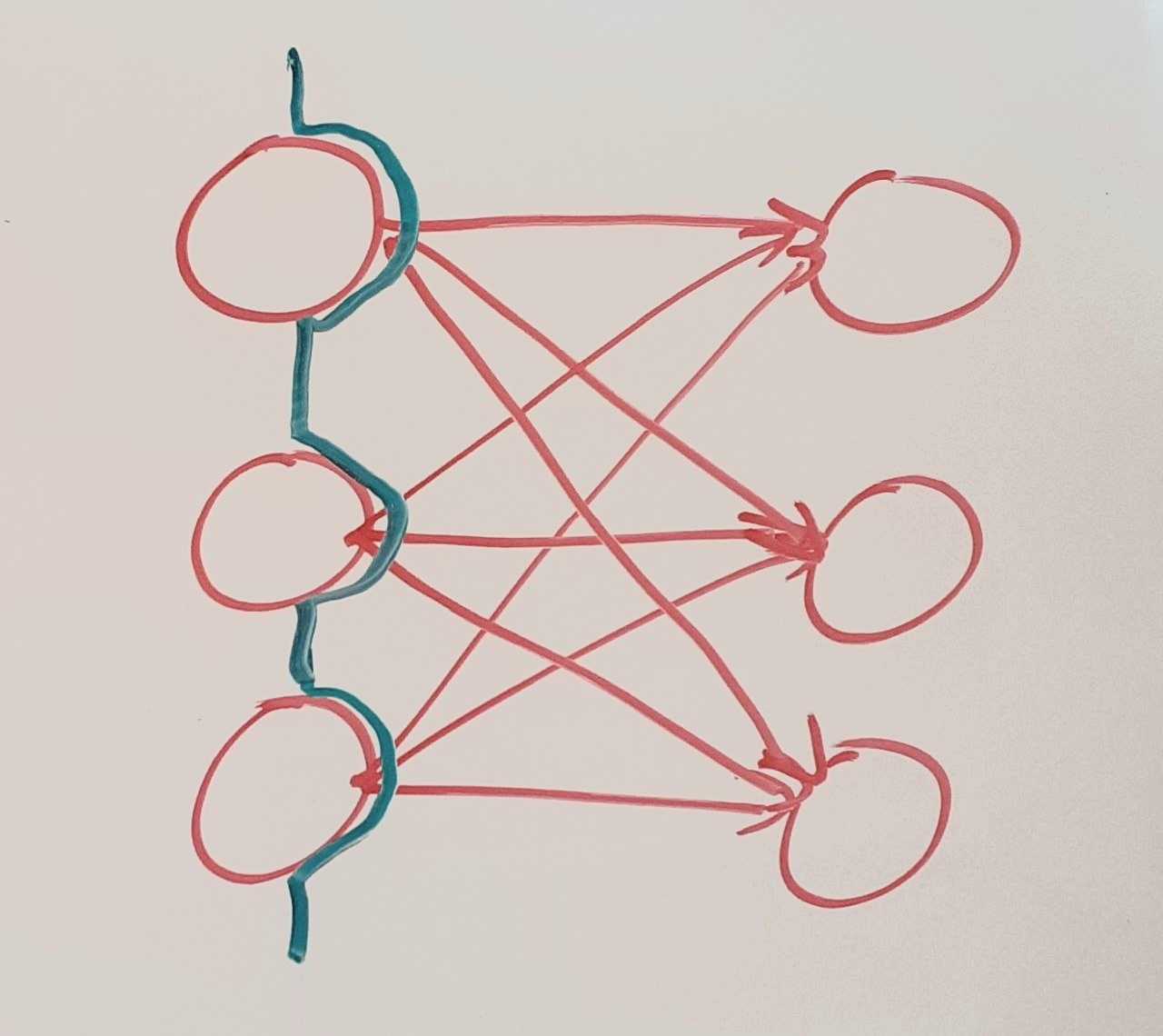

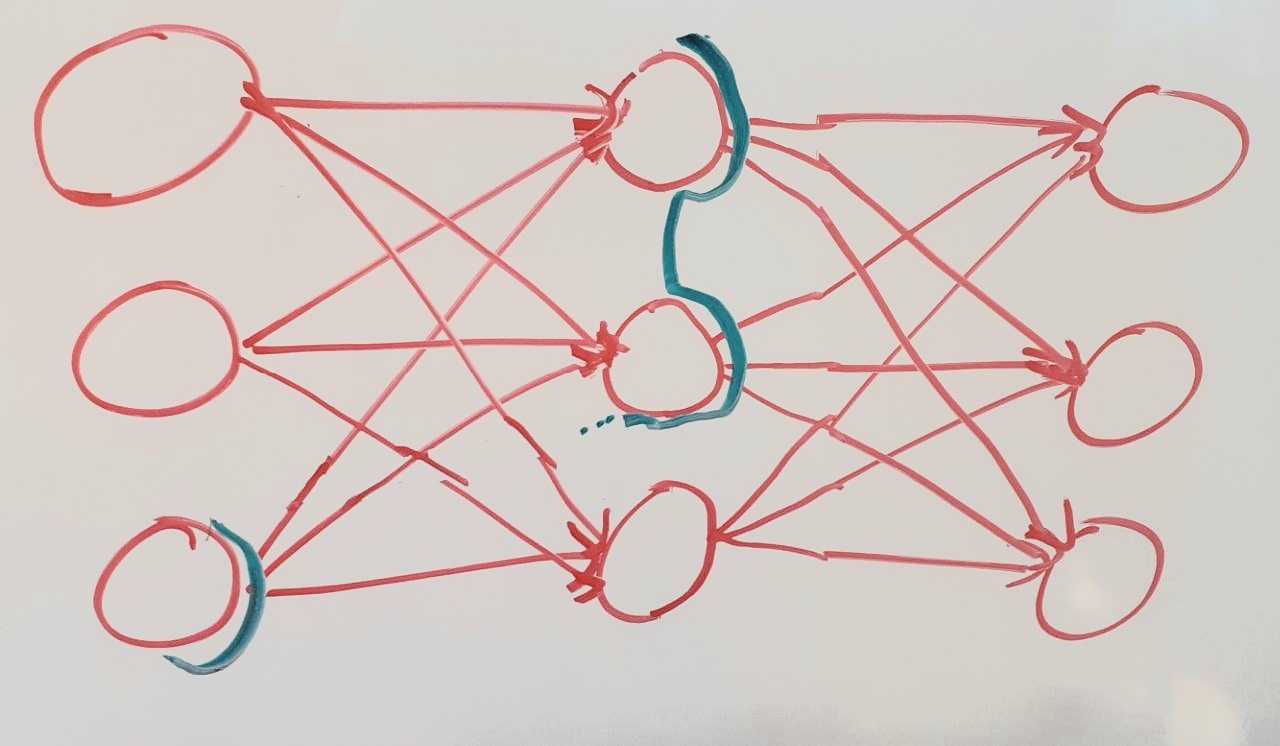

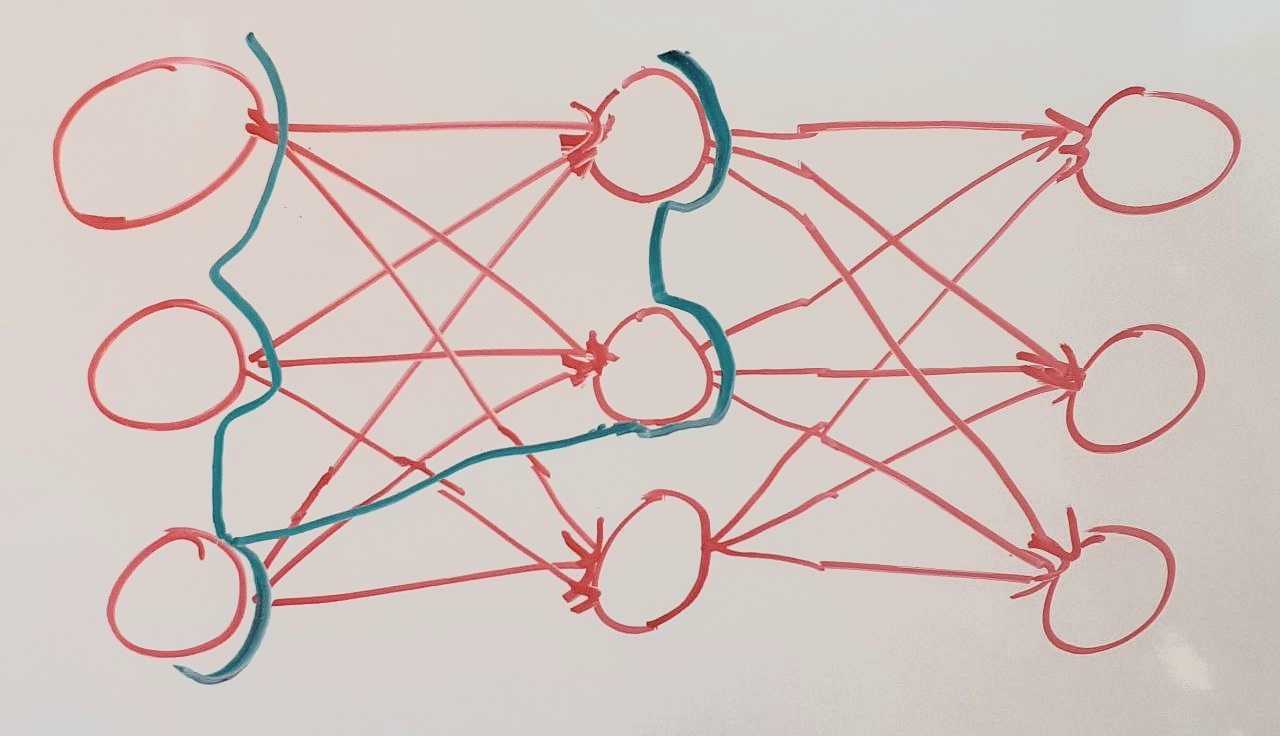

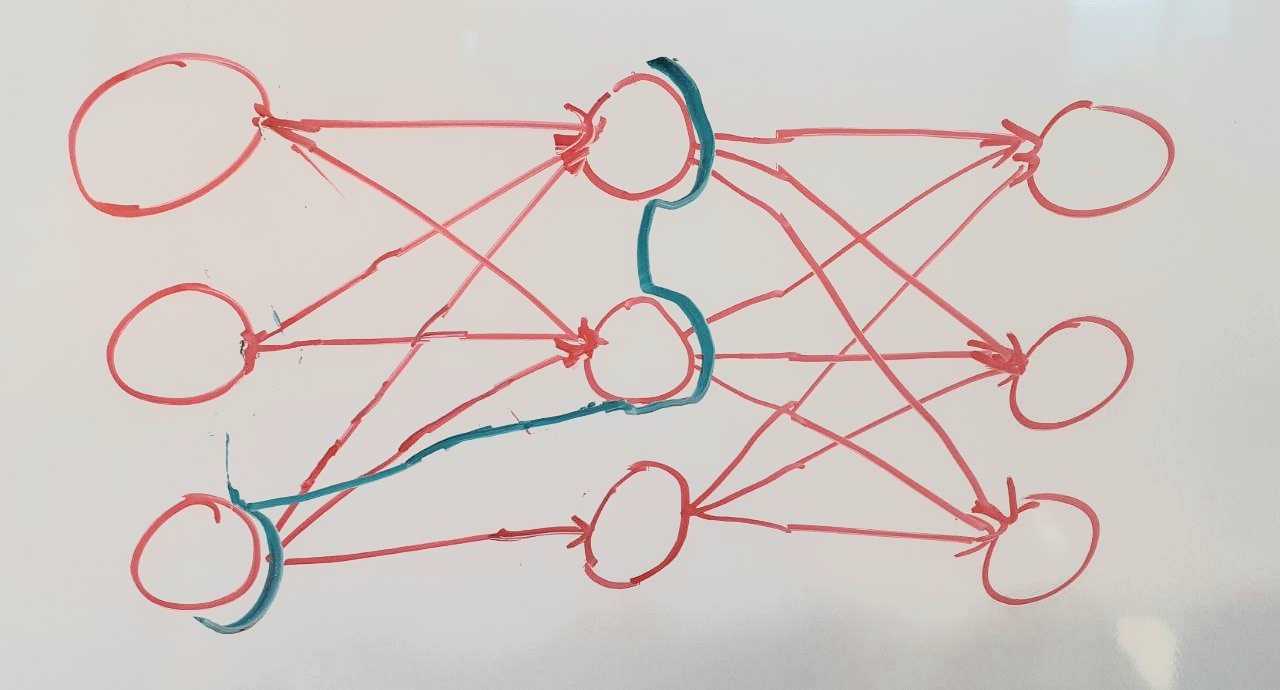

Figure: The reinforcement learning process (Image by Authors)

Let’s look at how a sample algorithm might work, using the video game Mario as an example. Let’s say that Mario has an enemy to his right, a mushroom to his left, and nothing above (see figure below). With those three action options, he might get a reward of +2 if he goes left, -10 if he goes right, and 0 if he goes up. After Mario takes an action, he will be in a new state with a new observation, and will have earned a reward based on his action. Then it will be time for another action, and the process goes on. Recall that the intention is to maximize rewards for an entire episode, which in this context is the series of states from the beginning of the game for the duration of Mario’s life.

Figure: Mario learning to eat mushrooms (Image by Authors)

The first time the algorithm sees this situation, it might select an option randomly since it doesn’t yet understand the consequences of the available actions. As it sees this situation more and more, it will learn from experience that in situations like these, going right is bad, going up is ok, and going left is best. We wouldn’t directly teach a dog how to fetch a ball, but by giving treats (rewards) for doing so, the dog would learn by reinforcement. Similarly, Mario’s actions are reinforced by feedback from experience that mushrooms are good and enemies are not.

How does the algorithm work to maximize the rewards? Different RL algorithms work in different ways, but one might keep track of the results of taking each action from this position, and the next time Mario is in this same position, he would select the action expected to be the most rewarding according to the prior results. Many algorithms select the best action most of the time, but also sometimes select randomly to make sure that they are exploring all of the options. (Note that at the beginning, the agent usually acts randomly because it hasn’t yet learned anything about the environment.)

It’s important to keep exploring all of the options to make sure that the agent doesn’t find something decent and then stick with it forever, possibly ignoring much better alternatives. In the Mario game, if Mario first tried going right and saw it was -10 and then tried up and saw it was 0, it wouldn’t be great to always go up from that point on. It would be missing out on the +2 reward for going left that hadn’t been explored yet.
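One common way to balance picking the best-known action with occasional random exploration is an epsilon-greedy rule. The sketch below applies it to the single Mario situation from the text, with the rewards +2, -10, and 0 treated as fixed. The function names and the value of `epsilon` are assumptions made for illustration, and real RL algorithms handle many states rather than one.

```python
import random

# Rewards from the Mario example in the text, treated here as deterministic.
# The dictionary order matters: ties between equal estimates go to the earlier key.
TRUE_REWARDS = {"right": -10.0, "up": 0.0, "left": 2.0}


def epsilon_greedy(estimates, epsilon, rng):
    """With probability epsilon pick a random action, otherwise the best-looking one."""
    if rng.random() < epsilon:
        return rng.choice(list(estimates))
    return max(estimates, key=estimates.get)


def learn_action_values(steps=500, epsilon=0.1, seed=0):
    """Estimate the reward of each action from repeated visits to the same situation."""
    rng = random.Random(seed)
    estimates = {action: 0.0 for action in TRUE_REWARDS}
    counts = {action: 0 for action in TRUE_REWARDS}
    for _ in range(steps):
        action = epsilon_greedy(estimates, epsilon, rng)
        reward = TRUE_REWARDS[action]
        counts[action] += 1
        # Incremental average of the rewards seen so far for this action.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates
```

With `epsilon=0.0` the learner tries right once, then settles on up and never discovers the +2 for going left, which is exactly the failure described above. With a small positive `epsilon`, the occasional random choice eventually tries left and the greedy choice switches to it.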

Imagine that you tried cooking at home and didn’t like the food, and then went to McDonald’s and had a fantastic meal. You found a good “action” of going to McDonald’s, but it would be a shame (and not great health-wise) if you kept eating at McDonald’s forever and didn’t try other restaurants that may end up giving better “rewards”.

Generalization

==============

RL is often used in game settings like [Atari](https://gym.openai.com/envs/#atari). One problem with using RL in Atari games (which are similar to Mario-style games) is the \*sequential\* nature of these games. After winning one level, you advance to the next level, and keep going through levels in the same order. \*\*The algorithms may simply memorize exactly what happens in each level and then fail miserably when facing the slightest change in the game.\*\* This means that the algorithms may not actually be understanding the game, but instead learning to memorize a sequence of button presses that leads to high rewards for particular levels. A better algorithm, instead of learning to memorize a sequence of button presses, is able to “understand” the structure of the game and thus is able to adapt to unseen situations, or \*generalize\*.

Successful generalization means performing well in situations that haven’t been seen before. If you learned that 2 × 2 = 4, 2 × 3 = 6, and 2 × 4 = 8, and then could figure out that 2 × 6 = 12, that means that you were able to “understand” the multiplication and not just memorize the equations.

Figure: Atari Breakout ([source](/atari-reinforcement-learning-in-depth-part-1-ddqn-ceaa762a546f))

Let’s look at a generalization example in the context of an email spam filter. These usually work by collecting data from users who mark emails in their inbox as spam. If a bunch of people marked the message “EARN $800/DAY WITH THIS ONE TRICK” as spam, then the algorithm would learn to block all of those messages for all email users in the future. But what if the spammer noticed his emails were being blocked and decided to outsmart the filter? The next day he might send a new message, “EARN $900/DAY WITH THIS ONE OTHER TRICK”. An algorithm that is only memorizing would fail to catch this because it was just memorizing exact messages to block, rather than learning about spam in general. A generalizing algorithm would learn patterns and effectively understand what constitutes a piece of spam mail.
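The difference between memorizing and generalizing can be made concrete with a toy comparison. The two classes below are hypothetical and far simpler than a real spam filter, which would normally be a trained statistical classifier. One blocks only exact copies of reported messages, while the other blocks anything that shares most of its words with a reported message.

```python
def tokenize(message):
    """Lower-case the message and split it into a set of words."""
    return set(message.lower().split())


class MemorizingFilter:
    """Blocks only messages identical to ones already reported."""

    def __init__(self, reported):
        self.reported = set(reported)

    def is_spam(self, message):
        return message in self.reported


class OverlapFilter:
    """Blocks messages whose words overlap heavily with a reported message."""

    def __init__(self, reported, threshold=0.5):
        self.reported_tokens = [tokenize(m) for m in reported]
        self.threshold = threshold  # assumed cut-off, chosen for illustration

    def is_spam(self, message):
        words = tokenize(message)
        if not words:
            return False
        # Jaccard similarity: shared words divided by all distinct words.
        return any(
            len(words & seen) / len(words | seen) >= self.threshold
            for seen in self.reported_tokens
        )


reported = ["EARN $800/DAY WITH THIS ONE TRICK"]
new_spam = "EARN $900/DAY WITH THIS ONE OTHER TRICK"
```

Here `MemorizingFilter(reported).is_spam(new_spam)` is `False` because the message is not an exact copy, while `OverlapFilter(reported).is_spam(new_spam)` is `True` because five of the eight distinct words are shared.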

Reward Learning

===============

Games generally have very well-defined rewards built into them. In a card game like [Blackjack](https://gym.openai.com/envs/Blackjack-v0/), rewards correspond to how much the agent wins or loses each hand. In Atari, rewards are game dependent, but are well-specified, such as earning points for defeating enemies or finishing levels and losing points for getting hit or dying.

The image below is from a classic reinforcement learning environment called [CartPole](https://gym.openai.com/envs/CartPole-v0/), where the goal is to keep a pole upright on a track, and where a reward of +1 is provided for every second that the pole stays upright. The agent moves the cart left or right to try to keep the pole balanced and the longer it can keep it balanced, the more +1 rewards it receives.

Figure: CartPole ([source](https://medium.com/@tuzzer/cart-pole-balancing-with-q-learning-b54c6068d947))

\*\*However, many tasks in the real world do not have such clearly defined rewards, which leads to limitations in the possible applications of reinforcement learning.\*\* This problem is compounded by the fact that even attempting to specify clearly defined rewards is often difficult, if not impossible. A human could provide direct feedback to an RL agent during training, but this would require too much human time.

One approach called inverse reinforcement learning involves “reverse engineering” a reward function from demonstrations. For complex tasks, figuring out the reward function from demonstrations is very difficult to do well.

\*\*\*Reward learning\* involves learning a reward function, which describes how many rewards are earned in each situation in the environment, i.e. a mapping of the current state and action to the rewards received.\*\* The goal is to learn a reward function that encourages the desired behavior. To train the algorithm to learn a reward function, we need another source of data such as demonstrations of successfully performing the task. The reward function outputs reward predictions for each state, after which standard RL algorithms can be used to learn a strategy by simply substituting these approximate rewards in place of the usually known rewards.

Figure: The reinforcement learning process with a reward function in place of known rewards (Image by Authors)

Prior work (described below as Christiano et al. 2017) provides an example that illuminates how difficult learning the reward function can be. Imagine teaching a robot to do a backflip. If you aren’t a serious gymnast, it would be challenging to give a demonstration of successfully performing the task yourself. \*\*One could attempt to design a reward function that an agent could learn from, but this approach often falls victim to non-ideal reward design and reward hacking.\*\* Reward hacking means that the agent can find a “loophole” in the reward specification. For example, if we assigned too much reward for getting in the proper initial position for the backflip, then maybe the agent would learn to repeatedly move into that bent-over position forever. It would be maximizing rewards based on the reward function that we gave it, but wouldn’t actually be doing what we intended!

A human could supervise every step of an agent’s learning by manually giving input on the reward function at each step, but this would be excessively time consuming and tedious.

The difficulty in specifying the rewards points towards the larger issue of human-AI alignment whereby humans want to align AI systems to their intentions and values, but specifying what we actually want can be surprisingly difficult (recall how every single genie story ends!).

Relevant Papers

===============

We’d like to look at several recent reward learning algorithms to evaluate their ability to learn rewards. We are specifically interested in how successful the algorithms are when faced with previously unseen environments or game levels, which tests their ability to generalize.

To do this, we leverage a body of prior work:

1. [\*Deep reinforcement learning from human preferences\*](https://arxiv.org/abs/1706.03741) — 2017 by Christiano et al.

2. [\*Reward learning from human preferences and demonstrations in Atari\*](https://arxiv.org/abs/1811.06521) — 2018 by Ibarz et al.

3. [\*Leveraging Procedural Generation to Benchmark Reinforcement Learning\*](https://arxiv.org/abs/1912.01588) — 2019 by Cobbe et al.

4. [\*Extrapolating Beyond Suboptimal Demonstrations via Inverse Reinforcement Learning from Observations\*](https://arxiv.org/abs/1904.06387) — 2019 by Brown, Goo et al.

The first two papers were impactful in utilizing reward learning alongside deep reinforcement learning, and the third introduces the OpenAI Procgen Benchmark, a useful set of games for testing algorithm generalization. The fourth paper proposed an efficient alternative to the methods of the first two works.

Deep reinforcement learning from human preferences (Christiano et al. 2017)

---------------------------------------------------------------------------

The key idea of this paper is that \*\*it’s a lot easier to recognize a good backflip than to perform one.\*\* The paper shows that it is possible to learn a predicted reward function for tasks in which we can only recognize a desired behavior, even if we can’t demonstrate it.

The proposed algorithm is shown below. It alternates between learning the reward function through human preferences and learning the strategy, which are both initially random.

\*Repeat until the agent is awesome:\*

> \*1. Show two short video clips of the agent acting in the environment with its current strategy\*

>

> \*2. Ask a human in which video clip the agent was better\*

>

> \*3. Update the reward function given the human’s feedback\*

>

> \*4. Update the strategy based on the new reward function\*


The simulated robot (shown in the below figure) was trained to perform a backflip from 900 queries in under an hour, a task that would be very difficult to demonstrate or to manually create rewards for.

Figure: Training a backflip from human preferences ([source](https://github.com/nottombrown/rl-teacher))

Experiments were performed in the physics simulator called MuJoCo and also in Atari games. Why run these experiments in Atari when we already know the true rewards for those games? This gives the opportunity to assign preferences automatically instead of having a human manually give feedback about two video clip demonstrations. We can get automatic (synthetic) feedback by simply ranking the clip with higher true reward as the better one. This enables us to run experiments very quickly because no human effort is needed. Furthermore, in this case we can assess the performance of the algorithm by comparing the learned reward function to the true rewards given in the game.
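The reward-learning half of this loop, with synthetic feedback standing in for the human, can be sketched as follows. This is a simplified illustration under stated assumptions rather than the paper's implementation: the reward model here is linear in hand-made state features (the paper uses a neural network), clips are random feature vectors instead of agent behaviour, and the policy update step is left out. The probability that one clip is preferred follows the Bradley-Terry style form used in the paper, a logistic function of the difference in predicted clip returns.

```python
import math
import random


def clip_return(weights, clip):
    """Sum of per-step linear rewards over a clip (a list of feature vectors)."""
    return sum(sum(w * x for w, x in zip(weights, step)) for step in clip)


def preference_probability(weights, clip_a, clip_b):
    """Probability that clip_a is preferred, given predicted clip returns."""
    diff = clip_return(weights, clip_a) - clip_return(weights, clip_b)
    diff = max(-50.0, min(50.0, diff))  # clamp to keep exp() well-behaved
    return 1.0 / (1.0 + math.exp(-diff))


def synthetic_label(true_weights, clip_a, clip_b):
    """Synthetic 'human': prefers the clip with the higher true return."""
    better = clip_return(true_weights, clip_a) > clip_return(true_weights, clip_b)
    return 1.0 if better else 0.0


def train_reward_model(true_weights, num_queries=2000, clip_length=5, lr=0.05, seed=0):
    """Fit reward weights from pairwise preferences by stochastic gradient ascent."""
    rng = random.Random(seed)
    dim = len(true_weights)
    weights = [0.0] * dim

    def random_clip():
        return [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(clip_length)]

    for _ in range(num_queries):
        clip_a, clip_b = random_clip(), random_clip()
        label = synthetic_label(true_weights, clip_a, clip_b)
        predicted = preference_probability(weights, clip_a, clip_b)
        for i in range(dim):
            # Gradient of the log-likelihood: (label - predicted) * feature difference.
            feature_diff = sum(s[i] for s in clip_a) - sum(s[i] for s in clip_b)
            weights[i] += lr * (label - predicted) * feature_diff
    return weights
```

For example, `train_reward_model([1.0, -2.0])` should recover weights with the same signs as the hidden true reward, since only comparisons between clips, never the reward values themselves, are shown to the learner. Preferences identify the reward only up to scale, so the learned magnitudes need not match the true ones.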

Figure: Backflip in motion ([source](https://openai.com/blog/deep-reinforcement-learning-from-human-preferences/))

Reward learning from human preferences and demonstrations in Atari (Ibarz et al. 2018)

--------------------------------------------------------------------------------------

This paper built on the prior paper by performing additional experiments in the Atari domain with a different setup and a different RL algorithm. Their main innovation is to utilize human demonstrations at the beginning in order to start with a decent strategy, whereas the original algorithm would have to start with an agent acting completely randomly since no rewards are known at the beginning. The addition of these human demonstrations improved learning significantly in three of nine tested Atari games relative to the no-demos method used by Christiano.

Leveraging Procedural Generation to Benchmark Reinforcement Learning (Cobbe et al. 2019)

----------------------------------------------------------------------------------------

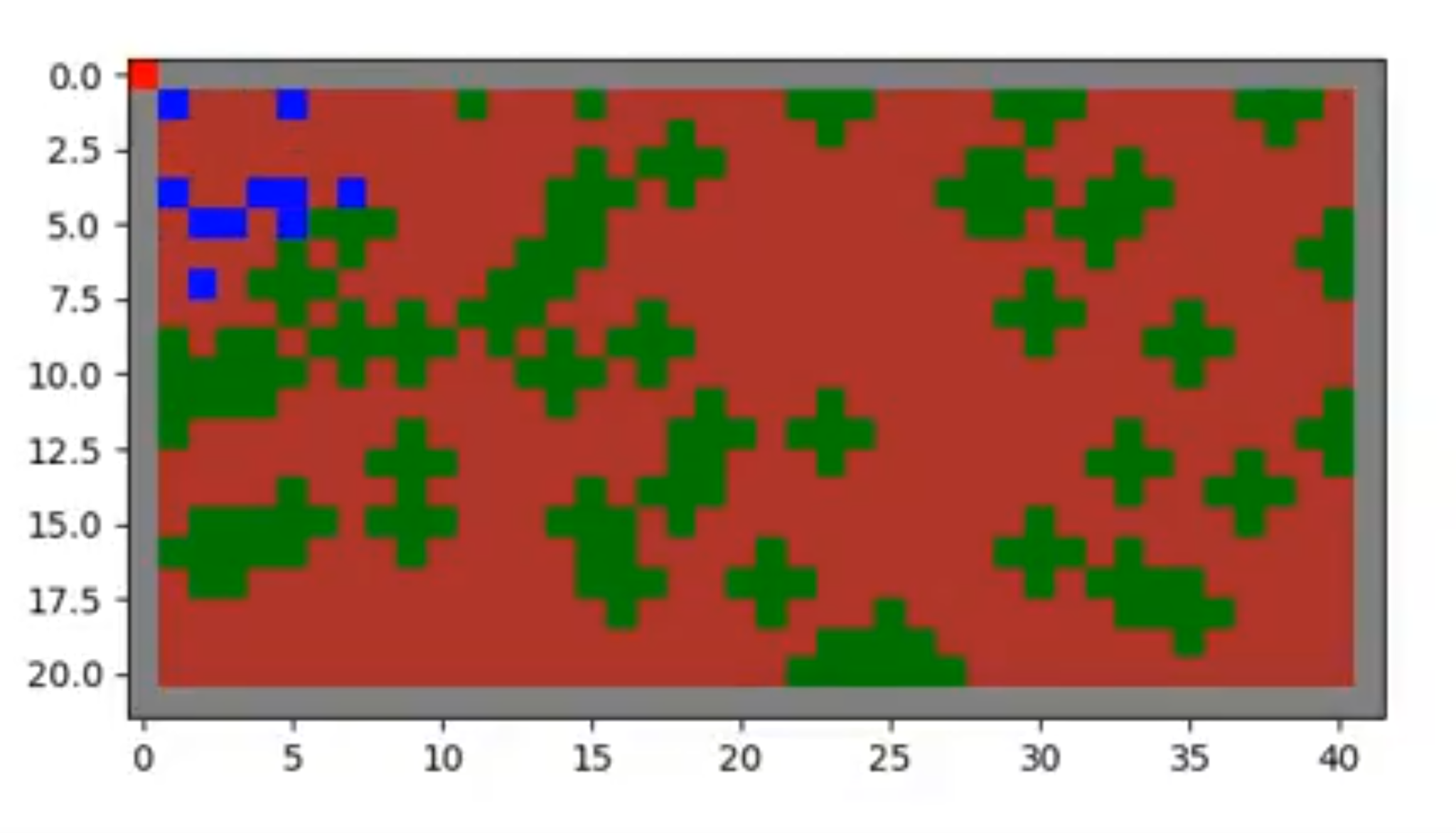

[OpenAI](https://openai.com/), an AI research lab, developed reinforcement learning testbed game environments called the [Procgen Benchmark](https://openai.com/blog/procgen-benchmark/), which includes 16 unique games. \*\*Within each game, all levels are similar and share the same goal, but the actual components like the locations of enemies and hazards are randomly generated\*\* and therefore can be different in each level.

This means that we can \*\*train our agent on many random levels and then test it on completely new levels\*\*, allowing us to understand whether the agent is able to generalize its learning. Note the contrast to Atari games, in which training is done on sequential game levels where the enemies, rewards, and game objects are always in the same places. Furthermore, agents in sequential, non-randomly generated games are tested on those same levels in the same order. An important machine learning principle is to train with one set of data and test with another set to truly evaluate the agent’s ability to learn/generalize.
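The train/test separation over procedurally generated levels can be sketched with seeds. The generator below is a hypothetical stand-in for Procgen, not its API: each seed deterministically produces a level layout, and training and test levels come from disjoint seed ranges. Procgen itself is understood to offer options for restricting the set of training levels, but they are not used here.

```python
import random


def generate_level(seed, width=10):
    """Hypothetical level generator: hazard positions depend only on the seed."""
    rng = random.Random(seed)
    return tuple(rng.random() < 0.3 for _ in range(width))  # True marks a hazard


def split_level_seeds(num_train, num_test):
    """Return disjoint seed ranges so test levels are never seen during training."""
    train_seeds = list(range(num_train))
    test_seeds = list(range(num_train, num_train + num_test))
    return train_seeds, test_seeds


train_seeds, test_seeds = split_level_seeds(num_train=200, num_test=50)
train_levels = [generate_level(seed) for seed in train_seeds]
test_levels = [generate_level(seed) for seed in test_seeds]
```

Because a level is a pure function of its seed, the same training set can be regenerated at any time, and evaluating on `test_levels` measures performance on layouts the agent was not trained on. In a toy this small, two different seeds can occasionally produce the same layout, which a generator with a richer level space largely avoids.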

We looked primarily at four environments from Procgen in our work:

1. \*\*CoinRun:\*\* Collect the coin at the end of the level while dodging enemies

2. \*\*FruitBot:\*\* Eat fruit and avoid non-fruit foods like eggs and ice cream

3. \*\*StarPilot:\*\* Side scrolling shooter game

4. \*\*BigFish:\*\* Begin as a small fish and eat other strictly smaller fish to get bigger

Below are screenshots from each of the games. The agent view uses a lower resolution so that the algorithm requires less computation. The human view is how the game would look if a human were playing.

Figure: CoinRun, FruitBot, StarPilot, and BigFish with agent view (Image by Authors)

Figure: CoinRun, FruitBot, StarPilot, and BigFish with human view (Image by Authors)

The main experiments in the paper involved training agents in all 16 unique games over a range of 100 to 100,000 training levels each, while keeping the training time fixed. These agents were then tested on levels that they had never played before (this is possible because each level is uniquely auto-generated). They found that agents need to be trained on as many as 10,000 levels of the game (training levels) before they are able to demonstrate good performance on the test levels.

The StarPilot game plot below shows the training performance in blue and the testing performance in red. The y-axis is the rewards and the x-axis is the number of levels used for training. Note that the x-axis is on a logarithmic scale.

Figure: StarPilot training (blue) and testing (red) ([source](https://openai.com/blog/procgen-benchmark/))

We see that the agent does very well immediately during training and that training performance then goes down and then back up slightly. Why would the agent get worse as it trains more? Since the training time is fixed, by training on only 100 levels, the agent would be repeating the same levels over and over and could easily memorize everything (but do very poorly at test time in the unseen levels). With 1,000 levels, the agent would have to spread its time over more levels and therefore wouldn’t be able to learn the levels as well. As we get to 10,000 and more levels, the agent is able to see such a diversity of levels that it can perform well as it has begun to generalize its understanding. We also see that the test performance quickly improves to nearly the level of the training performance, suggesting that the agent is able to generalize well to unseen levels.

Extrapolating Beyond Suboptimal Demonstrations via Inverse Reinforcement Learning from Observations (Brown, Goo et al. 2019)

----------------------------------------------------------------------------------------------------------------------------

The algorithm proposed in this paper, called \*T-REX\*, is different from the previously mentioned reward learning methods in that it \*\*doesn’t require ongoing human feedback during the learning procedure\*\*. While the other algorithms require relatively little human time compared to supervising every agent action, they still require a person to answer thousands of preference queries. A key idea of T-REX is that the human time commitment can be significantly reduced by completing all preference feedback at the beginning, rather than continuously throughout the learning procedure.

The first step is to generate demonstrations of the game or task that is being learned. The demonstrations can either come from a standard reinforcement learning agent or from a human.

The main idea is that we can get a lot of preference data by extracting short video clips from these demonstrations and assigning preferences to them \*\*based only on a ranking of the demonstrations that they came from\*\*. For example, with 20 demonstrations, each demo would get a rank of 1 through 20. A large number of short video clips would be taken from each of these demonstrations and each clip would be assigned the ranking of the demo that it came from, so when they face each other, the preference would go to the clip that came from the better demo. The reward function would then be based on these preferences.
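To make "based on these preferences" a little more concrete, here is a minimal sketch of how a single clip pair could contribute to training a reward model. It assumes a Bradley-Terry style cross-entropy loss over the summed predicted rewards of each clip, which is a common choice in preference-based reward learning; the function name and the per-frame reward lists are hypothetical stand-ins for the output of a real reward network.

```python
import math


def preference_loss(rewards_a, rewards_b, preferred):
    """Cross-entropy loss for one clip pair under a Bradley-Terry model.

    rewards_a, rewards_b: per-frame rewards predicted by a (hypothetical)
        reward model for clip A and clip B.
    preferred: 0 if clip A is the preferred clip, 1 if clip B is.
    """
    return_a = sum(rewards_a)
    return_b = sum(rewards_b)
    # Log-sum-exp of the two returns, shifted by the max for stability.
    m = max(return_a, return_b)
    log_z = m + math.log(math.exp(return_a - m) + math.exp(return_b - m))
    chosen = return_a if preferred == 0 else return_b
    # Negative log-probability that the preferred clip "wins".
    return log_z - chosen
```

The loss is small when the reward model already assigns a higher total reward to the preferred clip and large when it assigns a higher total to the other one, so minimizing it over many clip pairs pushes the model toward the ranking.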

This is in contrast to the approach of the prior works that require human preference input over each and every pair of 1–2 second clips. Here, we only require human preference input to rank the initial demonstrations. T-REX’s disadvantage, however, is that it is using an approximation. Not all clips from a higher ranked demonstration should be preferred to clips from a lower ranked demonstration, but the idea is that on average, the preferences would work well and the procedure would suffice to learn a reward model.

Providing a ranking over demonstrations is the same as giving preferences between every pair of them. For example, if we had three demos and ranked them 3>1>2, this means that we would generate the rankings of 3>1, 3>2, and 1>2. Then randomly generated clips from the demos would be given the same preference ranking based on which demos the clips came from.
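The expansion from a ranking to pairwise preferences, and from there to labeled clip pairs, can be sketched as follows. This is an illustration under simple assumptions, not the paper's code: demonstrations are plain lists of frames, and the helper names (`ranking_to_pairs`, `random_clip`, `sample_clip_preferences`) are made up for this example.

```python
import itertools
import random


def ranking_to_pairs(ranking):
    """Expand a ranking (best demo first) into (better, worse) demo pairs."""
    return list(itertools.combinations(ranking, 2))


def random_clip(demo, clip_len, rng):
    """Cut a random contiguous clip of clip_len frames out of one demo."""
    start = rng.randrange(len(demo) - clip_len + 1)
    return demo[start:start + clip_len]


def sample_clip_preferences(demos, ranking, clip_len, n_samples, seed=0):
    """Return (preferred_clip, other_clip) pairs labeled only by demo rank.

    demos: dict mapping a demo id to its list of frames (assumed format).
    ranking: demo ids ordered from best to worst.
    """
    rng = random.Random(seed)
    pairs = ranking_to_pairs(ranking)
    labeled = []
    for _ in range(n_samples):
        better, worse = rng.choice(pairs)
        labeled.append((random_clip(demos[better], clip_len, rng),
                        random_clip(demos[worse], clip_len, rng)))
    return labeled


# The three-demo example from the text: 3 > 1 > 2.
print(ranking_to_pairs([3, 1, 2]))  # [(3, 1), (3, 2), (1, 2)]
```

Note that the label depends only on which demo a clip came from, never on the content of the clip itself, which is exactly the approximation described above.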

The T-REX paper showed that having just 12 demonstrations was sufficient to learn a useful reward model. There are 12 × 11 / 2 = 66 distinct pairs for any 12 objects, so ranking 12 demonstrations from 1 to 12 is equivalent to answering up to 66 queries about which demo is better, which is ~100 times fewer queries than the algorithm of Christiano et al. requires. Again, although the T-REX ranked demonstrations method is more efficient, it is sacrificing precision due to the simplifying assumption that all clips from a better demo are better than all clips from a worse demo.
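The pair count is just "n choose 2". As a quick sanity check, the short sketch below computes it with the closed-form formula and compares it against a brute-force enumeration; the function name is ours, not something from the paper.

```python
from itertools import combinations


def num_pairwise_queries(n_demos):
    """Number of distinct demo pairs implied by a full ranking of n_demos."""
    return n_demos * (n_demos - 1) // 2


# Closed form and brute-force enumeration agree for 12 demonstrations.
brute_force = len(list(combinations(range(12), 2)))
print(num_pairwise_queries(12), brute_force)  # 66 66
```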

Brown and Goo et al.’s Atari-based experiments showed that T-REX was competitive against the Ibarz et al. method that was previously described. It was able to learn better-than-demonstrator quality agents using only 12 demonstrations and their corresponding preference (rank) labels.

The figure below shows a comparison between the scores from human demonstrations and the scores from the T-REX algorithm in five Atari games. T-REX was able to soundly outperform 3 of the 5 human scores, though it was not able to earn any points in the Montezuma’s Revenge game.

T-REX algorithm vs. humans ([source](https://arxiv.org/abs/1904.06387))

T-REX also exceeded the performance of a state-of-the-art behavioral cloning algorithm (BCO) and imitation learning algorithm (GAIL) in 7 out of 8 games, as shown in the chart below, while also beating the best available demonstrations in 7 out of 8 games. (Behavioral cloning algorithms try to act as close to demonstrations as possible and inverse reinforcement learning algorithms attempt to recover a reward function from an expert demonstration.)

T-REX algorithm vs. other state-of-the-art methods ([source](https://arxiv.org/abs/1904.06387))

Next: Implementations and Experiments

=====================================

Based on T-REX’s strong results and simple idea, we decided to base our initial experiments on combining this algorithm with the Procgen game environments, which would give us a highly efficient reward learning algorithm and a variety of benchmark games to test generalization. We will explain the details of our implementation and the experimental results and issues that we faced in the [second blog post](https://chisness.medium.com/assessing-generalization-in-reward-learning-implementations-and-experiments-de02e1d08c0e) of this series. |

8412ca2e-cd23-4b5f-905c-d370b6fdcabd | trentmkelly/LessWrong-43k | LessWrong | Moravec's Paradox Comes From The Availability Heuristic

Epistemic Status: very quick one-thought post, may very well be arguing against a position nobody actually holds, but I haven’t seen this said explicitly anywhere so I figured I would say it.

Setting Up The Paradox

According to Wikipedia:

> Moravec’s paradox is the observation by artificial intelligence and robotics researchers that, contrary to traditional assumptions, reasoning requires very little computation, but sensorimotor and perception skills require enormous computational resources.

>

> -https://en.wikipedia.org/wiki/Moravec’s_paradox

I think this is probably close to what Hans Moravec originally meant to say in the 1980s, but not very close to how the term is used today. Here is my best attempt to specify the statement I think people generally point at when they use the term nowadays:

> Moravec’s paradox is the observation that in general, tasks that are hard for humans are easy for computers, and tasks that are easy for humans are hard for computers.

>

> -me

If you found yourself nodding along to that second one, that’s some evidence I’ve roughly captured the modern colloquial meaning. Even when it’s not attached to the name “Moravec’s Paradox”, I think this general sentiment is a very widespread meme nowadays. Some example uses of this version of the idea that led me to write up this post are here and here.

To be clear, from here on out I will be talking about the modern, popular-meme version of Moravec's Paradox.

And Dissolving It

I think Moravec’s Paradox is an illusion that comes from the availability heuristic, or something like it. The mechanism is very simple – it’s just not that memorable when we get results that match up with our expectations for what will be easy/hard.

If you try, you can pretty easily come up with exceptions to Moravec’s Paradox. Lots of them. Things like single digit arithmetic, repetitive labor, and drawing simple shapes are easy for both humans and computers. Things like protein folding, the traveling salesm |

f21ca8a2-50d8-4b3c-8bc5-4f8edae01d06 | trentmkelly/LessWrong-43k | LessWrong | [Linkpost] Google invested $300M in Anthropic in late 2022

A few quotes from the article:

Google has invested about $300mn in artificial intelligence start-up Anthropic, making it the latest tech giant to throw its money and computing power behind a new generation of companies trying to claim a place in the booming field of “generative AI”.

The terms of the deal, through which Google will take a stake of about 10 per cent, requires Anthropic to use the money to buy computing resources from the search company’s cloud computing division, said three people familiar with the arrangement.

The start-up had raised more than $700mn before Google’s investment, which was made in late 2022 but has not previously been reported. The company’s biggest investor is Alameda Research, the crypto hedge fund of FTX founder Sam Bankman-Fried, which put in $500mn before filing for bankruptcy last year. FTX’s bankruptcy estate has flagged Anthropic as an asset that may help creditors with recoveries. |

d32296fe-a2e1-4306-bf5e-646a9986b7bf | trentmkelly/LessWrong-43k | LessWrong | The Goodhart Game

> In this paper, we argue that adversarial example defense papers have, to date, mostly considered abstract, toy games that do not relate to any specific security concern. Furthermore, defense papers have not yet precisely described all the abilities and limitations of attackers that would be relevant in practical security.

From the abstract of Motivating the Rules of the Game for Adversarial Example Research by Gilmer et al (summary)

Adversarial examples have been great for getting more ML researchers to pay attention to alignment considerations. I personally have spent a fair amount of time thinking about adversarial examples, I think the topic is fascinating, and I've had a number of ideas for addressing them. But I'm also not actually sure working on adversarial examples is a good use of time. Why?

Like Gilmer et al, I think adversarial examples are undermotivated... and overrated. People in the alignment community like to make an analogy between adversarial examples and Goodhart's Law, but I think this analogy fails to be more than an intuition pump. With Goodhart's Law, there is no "adversary" attempting to select an input that the AI does particularly poorly on. Instead, the AI itself is selecting an input in order to maximize something. Could the input the AI selects be an input that the AI does poorly on? Sure. But I don't think the commonality goes much deeper than "there are parts of the input space that the AI does poorly on". In other words, classification error is still a thing. (Maybe both adversaries and optimization tend to push us off the part of the distribution our model performs well on. OK, distributional shift is still a thing.)

To repeat a point made by the authors, if your model has any classification error at all, it's theoretically vulnerable to adversaries. Suppose you have a model that's 99% accurate and I have an uncorrelated model that's 99.9% accurate. Suppose I have access to your model. Then I can search the input space for a case wher |

2f5393f0-bc3b-4a6a-91eb-6f81ef17155d | trentmkelly/LessWrong-43k | LessWrong | Good goals for leveling up?

I'm new to LessWrong and am trying to level up and internalize techniques to improve my life. I also want to increase my connection to the community, but that's less important for this question. I'm setting some goals for next year and was thinking about how to encode these meta-goals into SMARTer goals.

My question: what are some good goals for leveling up in a holistic way? I don't just want to read things. I want to become conversant in them, and I want to put them into practice. I've written down some ideas below (I don't intend to do all of them; they're just ideas at this point). Feel free to critique them and/or ignore them and write your own.

* Spend X hours per week interacting with people's Rationality posts on LessWrong and Facebook.

* Spend X hours per week reading Rationality blogs, posts, and sequences.

* Write X blog / LW post per month.

* Learn X new Rationality technique per week and apply it to a problem I have (and post about it).

* Read through X Sequence / Book

Again: As a newbie, what is a good set of goals to help me level up quickly and sustainably?

Edit: Based on the comments, I should specify that these would not be my only goals for the year. They would just be the ones that have to do with learning and applying Rationality techniques. |

2f766365-295e-40e4-aae6-44db0e0999b3 | trentmkelly/LessWrong-43k | LessWrong | Covid 2/25: Holding Pattern

Scott Alexander reviewed his Covid-19 predictions, and I did my analysis as well.

It was a quiet week, with no big news on the Covid front. There was new vaccine data, same as the old vaccine data – once again, vaccines still work. Once again, there is no rush to approve them or even plan for their distribution once approved. Once again, case numbers and positive test percentages declined, but with the worry that the English Strain will soon reverse this, especially as the extent of the drop was disappointing. The death numbers ended up barely budging after creeping back up later in the week, presumably due to reporting time shifts, but that doesn’t make it good or non-worrisome news.

This will be a relatively short update, and if you want to, you can safely skip it.

If anyone knows a good replacement for the Covid Tracking Project please let me know. Next week will be the last week before they shut down new data collection, and I don’t like any of the options I know about to replace them.

Let’s run the numbers.

The Numbers

Predictions

Last week: 5.2% positive test rate on 10.4 million tests, and an average of 2,089 deaths.

Prediction: 4.6% positive test rate and an average of 1,800 deaths.

Result: 4.9% positive test rate and an average of 2,068 deaths.

Late prediction (Friday morning): 4.5% positive test rate and an average of 1,950 deaths (excluding the California bump on 2/25).

Both results are highly disappointing. The positive test rate slowing its drop was eventually going to happen due to the new strain and the control system, so while it’s disappointing it doesn’t feel like a mystery. Deaths not dropping requires an explanation. There’s no question that over the past month and a half we’ve seen steady declines in infections, and conditions are otherwise at least not getting worse. How could the death count be holding steady?

One hypothesis is that weather messed with the reporting, but Texas deaths went down and the patterns generally do not ma |

2ba7e037-fb93-44d9-bcad-b80cc6506a60 | trentmkelly/LessWrong-43k | LessWrong | Review: “The Case Against Reality”

This is not a red stop sign:

For one thing, in a ceci n'est pas une pipe way, it’s not a stop sign at all, but a digital representation of a photograph of a stop sign, made visible by a computer monitor or maybe a printer. More subtly, “red” is not a quality of the sign, but of the consciousness that perceives it.[1] Even if you were to generously define “red” to be a specific set of wavelengths of light that could in theory be ascertained without the benefit of consciousness, that still would be a measurement of something that the sign repels rather than is.[2]

We on some level understand these things, but we seem much more prone to think of ourselves as living in a world in which stop signs are red; the redness inheres in the sign out there in the world, rather than the redness being our own reaction to light reflecting from the sign, or rather than the sign and the redness being coincident private figments of perception. And no amount of thinking this through seems to dispel the illusion: it’s just too practically useful to put the color onto the external object to make it seem worthwhile to relearn how to perceive reality differently.

Donald Hoffman, in The Case Against Reality (2019), suggests that our mistake here goes much deeper. Not only are superficial sensory characteristics like color subjective qualities of conscious experience rather than objective qualities of things — but things themselves, as well as the spacetime they seem to inhabit, are too.

All of the stuff we take for granted as making up the world we inhabit — objects, dimensions, qualities, time, causality — are, says Hoffman, not objectively real. They are better thought of as the interface through which we interact with whatever might be objectively real. When we isolate “objects” in “space” and “time” and then ascribe “laws” to the regularities in their interactions, we are not discovering things about reality, but about this interface. “Physical objects in spacetime are simply our ico |

5e3618c2-c101-4289-8a5f-7eda1d1aa587 | trentmkelly/LessWrong-43k | LessWrong | It's Okay to Feel Bad for a Bit

> "If you kiss your child, or your wife, say that you only kiss things which are human, and thus you will not be disturbed if either of them dies." - Epictetus

>

> "Whatever suffering arises, all arises due to attachment; with the cessation of attachment, there is the cessation of suffering." - Pali canon

>

> "An arahant would feel physical pain if struck, but no mental pain. If his mother died, he would organize the funeral, but would feel no grief, no sense of loss." - the Dhammapada

>

> "Receive without pride, let go without attachment." - Marcus Aurelius

I.

Stoic and Buddhist philosophies are pretty popular these days. I don't like them. I think they're mostly bad for you if you take them too seriously.

About a decade ago I meditated for an hour a day every day for a few weeks, then sat down to breakfast with my delightful (at the time) toddlers and realized that I felt nothing. There was only the perfect crystalline clarity and spaciousness of total emotional detachment. "Oh," I said, and never meditated again.

It's better to sometimes feel bad for a bit, than to feel nothing.

II.

Young adults should probably put some effort into becoming less emotionally reactive. Being volatile makes you unpleasant to be around, and undercuts your ability to achieve pretty much any goals you may have.

If you have any traumas, it's likely positive-EV for you to devote time and energy to learning some kind of therapy modality with a good evidence base, and then taking the time to resolve those issues.

In my opinion - for most people - once you have fixed about 60% of your emotional reactivity and 90% of your psychological triggers, you have hit a point of diminishing returns. In fact, past that point, I think further investment in making yourself "nonreactive" and "unattached," and removing all minor triggers from your psyche, is pathological from the perspective of actually trying to be happy and to do things with your life.

If your fear of feeling bad for a bi |

976077c0-f189-455e-9a38-48643d449606 | trentmkelly/LessWrong-43k | LessWrong | Can AI Transform the Electorate into a Citizen’s Assembly

Motivation: Modern democratic institutions are detached from those they wish to serve [1]. In small societies, democracy can easily be direct, with all the members of a community gathering to address important issues. As civilizations get larger, mass participation and deliberation become irreconcilable, not least because a parliament can't handle a million-strong crowd. As such, managing large societies demands a concentrated effort from a select group. This relieves ordinary citizens of the burdens and complexities of governance, enabling them to lead their daily lives unencumbered. Yet, this decline in public engagement invites concerns about the legitimacy of those in power.

Lately, this sense of institutional distrust has been exposed and enflamed by AI-algorithms optimised solely to capture and maintain our focus. Such algorithms often learn to exploit the most reactive aspects of our psyche including moral outrage and identity threat [2]. In this sense, AI has fuelled political polarisation and the retreat of democratic norms, prompting Harari to assert that "Technology Favors Tyranny" [3]. However, AI may yet play a crucial role in mending and extending democratic society [4]. The very algorithms that fracture and misinform the public can be re-incentivised to guide and engage the electorate in digital citizen’s assemblies. To see this, we must first consider the way that a citizen’s assembly traditionally works.

What’s a Citizens Assembly: A citizen's assembly consists of a small group that engages in deliberation to offer expert-advised recommendations on specific issues. Following group discussion, the recommendations are condensed into an issue paper, which is presented to parliament. Parliamentary representatives consider the issue paper and leverage their expertise to ultimately decide the outcome. Giving people the chance to experiment with policy in a structured environment aids their understanding of the laws that govern them, improves govern |

03d5e86c-cf8b-4f40-ba46-a36314fac788 | trentmkelly/LessWrong-43k | LessWrong | Artifact: What Went Wrong?

Previously: Card Balance and Artifact, Artifact Embraces Card Balance Changes, Review: Artifact

Epistemic Status: Looks pretty dead

Artifact had every advantage. Artifact should have been great. Artifact was great for the right players, and had generally positive reviews. Then Artifact fell flat, its players bled out, its cards lost most of their value, and the game died.

Valve takes the long view, so the game is being retooled and might return. But for now, for all practical purposes, the game is dead.

Richard Garfield and Skaff Elias have one take on this podcast. They follow up with more thoughts in this interview.

Here’s my take, which is that multiple major mistakes were made, all of which mattered, and all of which will be key to avoid if we are to bring the combination of strategic depth and player-friendly economic models back to collectible card games.

I see ten key mistakes, which I will detail below.

Alas, we do not get to run controlled experiments. The lack of ability to experiment and iterate was the meta-level problem with Artifact. The parts of the game that Valve knew how to test, and knew to test, were outstanding, finely crafted and polished. The parts that Valve did not test had severe problems.

We will never know for sure which reasons were most critical, and which ones were minor setbacks. I will make it clear what my guesses are, but they are just that, guesses.

Reason 1: Artifact Was Too Complex, Complicated and Confusing

Artifact streams were difficult to follow even if you knew the game. As teaching tools they didn’t work at all. I heard multiple reports that excellent streamers were unable to explain to viewers what was going on in their Artifact games.

I was mostly able to follow streams when I had a strong strategic understanding of the game and all of its cards and recognized all the cards on sight. Mostly.

I was fortunate to learn Artifact in person with those deeply committed to it, at the Valve offices, and in a setting |

720ed140-a50c-4d2c-a6e2-3f8d49de48bb | trentmkelly/LessWrong-43k | LessWrong | Nash Equilibria and Schelling Points

A Nash equilibrium is an outcome in which neither player is willing to unilaterally change her strategy. The concept is often applied to games in which both players move simultaneously and where decision trees are less useful.

Suppose my girlfriend and I have both lost our cell phones and cannot contact each other. Both of us would really like to spend more time at home with each other (utility 3). But both of us also have a slight preference in favor of working late and earning some overtime (utility 2). If I go home and my girlfriend's there and I can spend time with her, great. If I stay at work and make some money, that would be pretty okay too. But if I go home and my girlfriend's not there and I have to sit around alone all night, that would be the worst possible outcome (utility 1). Meanwhile, my girlfriend has the same set of preferences: she wants to spend time with me, she'd be okay with working late, but she doesn't want to sit at home alone.

This “game” has two Nash equilibria. If we both go home, neither of us regrets it: we can spend time with each other and we've both got our highest utility. If we both stay at work, again, neither of us regrets it: since my girlfriend is at work, I am glad I stayed at work instead of going home, and since I am at work, my girlfriend is glad she stayed at work instead of going home. Although we both may wish that we had both gone home, neither of us specifically regrets our own choice, given our knowledge of how the other acted.
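For readers who like to check this sort of thing mechanically, here is a small sketch that brute-forces the pure-strategy Nash equilibria of the game above. The payoff function encodes the utilities from the story (3 for being home together, 2 for working late, 1 for being home alone); the names `ACTIONS`, `payoff`, and `pure_nash_equilibria` are just illustrative.

```python
from itertools import product

ACTIONS = ("home", "work")


def payoff(mine, theirs):
    """Utility to one player, given her own action and her partner's."""
    if mine == "work":
        return 2  # overtime pay, regardless of what the partner does
    return 3 if theirs == "home" else 1  # home together vs. home alone


def pure_nash_equilibria():
    """Return every action pair where neither player gains by deviating alone."""
    equilibria = []
    for a, b in product(ACTIONS, repeat=2):
        a_content = all(payoff(a, b) >= payoff(alt, b) for alt in ACTIONS)
        b_content = all(payoff(b, a) >= payoff(alt, a) for alt in ACTIONS)
        if a_content and b_content:
            equilibria.append((a, b))
    return equilibria


print(pure_nash_equilibria())  # [('home', 'home'), ('work', 'work')]
```

The two mismatched outcomes drop out because whoever is home alone (utility 1) would rather have stayed at work (utility 2).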

When all players in a game are reasonable, the (apparently) rational choice will be to go for a Nash equilibrium (why would you want to make a choice you'll regret when you know what the other player chose?). And since John Nash (remember that movie A Beautiful Mind?) proved that every game has at least one, all games between well-informed rationalists (who are not also being superrational in a sense to be discussed later) should end in one of these.

What if the game seems specifically design |

994f2c50-c641-41c3-b362-62155f295106 | trentmkelly/LessWrong-43k | LessWrong | Impossibility of Anthropocentric-Alignment

Abstract: Values alignment, in AI safety, is typically construed as the imbuing into artificial intelligence of human values, so as to have the artificial intelligence act in ways that encourage what humans value to persist, and equally to preclude what humans do not value. “Anthropocentric” alignment emphasises that the values aligned-to are human, and what humans want. For, practically, were values alignment achieved, this means the AI is to act as humans want, and not as they do not want (for if humans wanted what they did not value, or vice versa, they would not seek, so would not act, to possess the want and value; as the AI is to act for them, for their wants and values: “Want implies act”. If not acted upon, what is valued may be lost, then by assumption is no longer present to be valued; consistency demands action, preservation). We shall show that the sets, as it were, of human wants and of possible actions, are incommensurable, and that therefore anthropocentric-alignment is impossible, and some other “values” will be required to have AI “align” with them, can this be done at all.

Epistemic status: This is likely the most mathematically ambitious production by one so ill-educated they had to teach themselves long division aged twenty-five, in human history (Since there may not be much more human history, it may retain this distinction). Minor errors and logical redundancies are likely; serious mistakes cannot be discounted, and the author has nothing with which to identify them. Hence, the reader is invited to comment, as necessary, to highlight and correct such errors – not even for this author’s benefit as much as for similarly non-technical readers, who, were it only thoroughly downvoted, might receive no “updating” of their knowledge were they not given to know why it is abominated; they may even promulgate it in a spirit of iconoclasm – contrary to downvote’s intention; hence “voting” is generally counterproductive. Every effort has been made to have |

29e93241-80a3-4bf5-bb69-74f48a806c3f | trentmkelly/LessWrong-43k | LessWrong | Open Thread: March 2010, part 2

The Open Thread posted at the beginning of the month has exceeded 500 comments – new Open Thread posts may be made here.

This thread is for the discussion of Less Wrong topics that have not appeared in recent posts. If a discussion gets unwieldy, celebrate by turning it into a top-level post. |

f6e32b3a-d5f5-48a2-86b6-57c52a474f49 | StampyAI/alignment-research-dataset/special_docs | Other | China’s New AI Governance Initiatives Shouldn’t Be Ignored

Over the past six months, the Chinese government has rolled out a series of policy documents and public pronouncements that are finally putting meat on the bone of the country’s governance regime for artificial intelligence (AI). Given China’s track record of leveraging AI for mass surveillance, it’s tempting to view these initiatives as little more than a fig leaf to cover widespread abuses of human rights. But that response risks ignoring regulatory changes with major implications for global AI development and national security. Anyone who wants to compete against, cooperate with, or simply understand China’s AI ecosystem must examine these moves closely.

These recent initiatives show the emergence of three different approaches to AI governance, each championed by a different branch of the Chinese bureaucracy, and each at a different level of maturity. Their backers also pack very different bureaucratic punches. It’s worth examining the three approaches and their backers, along with how they will both complement and compete with each other, to better understand where China’s AI governance is heading.

**Three Approaches to Chinese AI Governance**

| **Organization** | **Focus of Approach** | **Relevant Documents** |
|---|---|---|
| Cyberspace Administration of China | Rules for online algorithms, with a focus on public opinion | [Internet Information Service Algorithmic Recommendation Management Provisions](https://digichina.stanford.edu/work/translation-internet-information-service-algorithmic-recommendation-management-provisions-opinon-seeking-draft/); [Guiding Opinions on Strengthening Overall Governance of Internet Information Service Algorithms](https://digichina.stanford.edu/work/translation-guiding-opinions-on-strengthening-overall-governance-of-internet-information-service-algorithms/) |
| China Academy of Information and Communications Technology | Tools for testing and certification of “trustworthy AI” systems | [Trustworthy AI white paper](https://cset.georgetown.edu/publication/white-paper-on-trustworthy-artificial-intelligence/); [Trustworthy Facial Recognition Applications and Protections Plan](https://www.sohu.com/a/501708742_100207327) |
| Ministry of Science and Technology | Establishing AI ethics principles and creating tech ethics review boards within companies and research institutions | [Guiding Opinions on Strengthening Ethical Governance of Science and Technology](http://www.most.gov.cn/tztg/202107/t20210728_176136.html); [Ethical Norms for New Generation Artificial Intelligence](https://cset.georgetown.edu/publication/ethical-norms-for-new-generation-artificial-intelligence-released/) |

The strongest and most immediately influential moves in AI governance have been made by the Cyberspace Administration of China (CAC), a relatively new but [very powerful](https://qz.com/2039292/how-did-chinas-top-internet-regulator-become-so-powerful/) regulator that writes the rules governing certain applications of AI. The CAC’s approach is the most mature, the most rule-based, and the most concerned with AI’s role in disseminating information.

The CAC made headlines in August 2021 when it released a [draft set of thirty rules](https://digichina.stanford.edu/work/translation-internet-information-service-algorithmic-recommendation-management-provisions-opinon-seeking-draft/) for regulating internet recommendation algorithms, the software powering everything from TikTok to news apps and search engines. Some of those rules are China-specific, such as the one stipulating that recommendation algorithms “vigorously disseminate positive energy.” But other provisions [break ground](https://digichina.stanford.edu/work/experts-examine-chinas-pioneering-draft-algorithm-regulations/) in ongoing international debates, such as the requirement that algorithm providers be able to “[give an explanation](https://digichina.stanford.edu/work/translation-internet-information-service-algorithmic-recommendation-management-provisions-opinon-seeking-draft/)” and “remedy” situations in which algorithms have infringed on user rights and interests. If put into practice, these types of provisions could spur Chinese companies to experiment with new kinds of disclosure and methods for algorithmic interpretability, an [emerging but very immature](https://cset.georgetown.edu/publication/key-concepts-in-ai-safety-interpretability-in-machine-learning/) area of machine learning research.

Soon after releasing its recommendation algorithm rules, the CAC came out with a much more ambitious effort: a [three-year road map](http://www.cac.gov.cn/2021-09/29/c_1634507915623047.htm) for governing all internet algorithms. Following through on that road map will require input from many of the nine regulators that co-signed the project, including the Ministry of Industry and Information Technology (MIIT).

The second approach to AI governance has emerged out of the China Academy of Information and Communications Technology (CAICT), an [influential think tank under the MIIT](https://www.newamerica.org/cybersecurity-initiative/digichina/blog/profile-china-academy-information-and-communications-technology-caict/). Active in policy formulation and many aspects of technology testing and certification, the CAICT has distinguished its method through a focus on creating the tools for measuring and testing AI systems. This work remains in its infancy, both from technical and regulatory perspectives. But if successful, it could lay the foundations for China’s larger AI governance regime, ensuring that deployed systems are robust, reliable, and controllable.

In July 2021, the CAICT teamed up with a research lab at the Chinese e-commerce giant JD to release the country’s first [white paper](https://cset.georgetown.edu/publication/white-paper-on-trustworthy-artificial-intelligence/) on “trustworthy AI.” Already popular in European and U.S. discussions, trustworthy AI refers to many of the more technical aspects of AI governance, such as testing systems for robustness, bias, and explainability. The way the CAICT defines trustworthy AI in its core principles [looks very similar](https://macropolo.org/beijing-approach-trustworthy-ai/?rp=m) to the definitions that have come out of U.S. and European institutions, but the paper was notable for how quickly those principles are being converted into concrete action.

The CAICT [is working with](http://aiiaorg.cn/index.php?m=content&c=index&a=show&catid=34&id=115) China’s AI Industry Alliance, a government-sponsored industry body, to test and certify different kinds of AI systems. In November 2021, it issued its first batch of [trustworthy AI certifications](https://www.sohu.com/a/501708742_100207327) for facial recognition systems. Depending on the technical rigor of implementation, these types of certifications could help accelerate progress on algorithmic interpretability—or they could simply turn into a form of bureaucratic rent seeking. On policy impact, the CAICT is often viewed as representing the views of the powerful MIIT, but the MIIT’s leadership has yet to issue its own policy documents on trustworthy AI. Whether it does will be a strong indicator of the bureaucratic momentum behind this approach.

Finally, the Ministry of Science and Technology (MOST) has taken the lightest of the three approaches to AI governance. Its highest-profile publications have focused on laying down ethical guidelines, relying on companies and researchers to supervise themselves in applying those principles to their work.

In July 2021, MOST [published guidelines](http://www.most.gov.cn/tztg/202107/t20210728_176136.html) that called for universities, labs, and companies to set up internal review committees to oversee and adjudicate technology ethics issues. Two months later, the main AI expert committee operating under MOST released its own [set of ethical norms for AI](https://cset.georgetown.edu/publication/ethical-norms-for-new-generation-artificial-intelligence-released/), with a special focus on weaving ethics into the entire life cycle of development. Since then, MOST has been encouraging leading tech companies to establish their own ethics review committees and audit their own products.

MOST’s approach is similar to those of international organizations such as the [United Nations Educational, Scientific and Cultural Organization](https://www.computerweekly.com/news/252510287/Unesco-member-states-adopt-AI-ethics-recommendation) and the [Organisation for Economic Co-operation and Development](https://oecd.ai/en/ai-principles), which have released AI principles and encouraged countries and companies to adopt them. But in the Chinese context, that tactic feels quite out of step with the country’s increasingly hands-on approach to technology governance, a disconnect that could undermine the impact of MOST’s efforts.

One unanswered question is how these three approaches will fit together. Chinese ministries and administrative bodies are [notoriously competitive](https://sgp.fas.org/crs/row/R41007.pdf) with one another, constantly jostling to get their pet initiatives in front of the country’s central leadership in hopes that they become the chosen policies of the party-state. In this contest, the CAC’s approach appears to have the clear upper hand: It is the most mature and the most in tune with the regulatory zeitgeist, and it comes from the organization with the most bureaucratic heft. But its approach can’t succeed entirely on its own. The CAC requires that companies be able to explain how their recommendation algorithms function, and the tools or certifications for what constitutes explainable AI are likely to come from the CAICT. In addition, given the sprawling and rapidly evolving nature of the technology, many practical aspects of trustworthy AI will first surface in the MOST-inspired ethics committees of individual companies.

The three-year road map for algorithmic governance offers a glimpse of some bureaucratic collaboration. Though the CAC is clearly the lead author, the document includes new references to algorithms being trustworthy and to companies setting up ethics review committees, additions likely made at the behest of the other two ministries. There may also be substantial shifts in bureaucratic power as AI governance expands to cover many industrial and social applications of AI. The CAC is traditionally an internet-focused regulator, and future regulations for autonomous vehicles or medical AI may create an opening for a ministry like the MIIT to seize the regulatory reins.

The potential impact of these regulatory currents extends far beyond China. If the CAC follows through on certain requirements for algorithmic transparency and explainability, China will be running some of the world’s largest regulatory experiments on topics that European regulators have [long debated](https://www.law.ox.ac.uk/business-law-blog/blog/2018/05/rethinking-explainable-machines-next-chapter-gdprs-right-explanation). Whether Chinese companies are able to [meet these new demands](https://digichina.stanford.edu/work/experts-examine-chinas-pioneering-draft-algorithm-regulations/) could inform analogous debates in Europe over the right to explanation.

On the security side, as AI systems are woven deeper into the fabric of militaries around the world, governments want to ensure those systems are robust, reliable, and controllable for the sake of international stability. The CAICT’s current experiments in certifying AI systems are likely not game-ready for those kinds of high-stakes deployment decisions. But developing an early understanding of how Chinese institutions and technologists approach these questions could prove valuable for governments that may soon find themselves negotiating over aspects of autonomous weapons and arms control.

With 2022 marking [a major year](https://thediplomat.com/2020/12/china-looks-ahead-to-20th-party-congress-in-2022/) in the Chinese political calendar, the people and bureaucracies building out Chinese AI governance are likely to continue jostling for position and influence. The results of that jostling warrant close attention from AI experts and China watchers. If China’s attempts to rein in algorithms prove successful, they could imbue these approaches with a kind of technological and regulatory soft power that shapes AI governance regimes around the globe. |

309ae5fb-5d67-4a88-8534-001954a36f15 | trentmkelly/LessWrong-43k | LessWrong | Avoiding Your Belief's Real Weak Points

A few years back, my great-grandmother died, in her nineties, after a long, slow, and cruel disintegration. I never knew her as a person, but in my distant childhood, she cooked for her family; I remember her gefilte fish, and her face, and that she was kind to me. At her funeral, my grand-uncle, who had taken care of her for years, spoke. He said, choking back tears, that God had called back his mother piece by piece: her memory, and her speech, and then finally her smile; and that when God finally took her smile, he knew it wouldn’t be long before she died, because it meant that she was almost entirely gone.

I heard this and was puzzled, because it was an unthinkably horrible thing to happen to anyone, and therefore I would not have expected my grand-uncle to attribute it to God. Usually, a Jew would somehow just-not-think-about the logical implication that God had permitted a tragedy. According to Jewish theology, God continually sustains the universe and chooses every event in it; but ordinarily, drawing logical implications from this belief is reserved for happier occasions. By saying “God did it!” only when you’ve been blessed with a baby girl, and just-not-thinking “God did it!” for miscarriages and stillbirths and crib deaths, you can build up quite a lopsided picture of your God’s benevolent personality.

Hence I was surprised to hear my grand-uncle attributing the slow disintegration of his mother to a deliberate, strategically planned act of God. It violated the rules of religious self-deception as I understood them.

If I had noticed my own confusion, I could have made a successful surprising prediction. Not long afterward, my grand-uncle left the Jewish religion. (The only member of my extended family besides myself to do so, as far as I know.)

Modern Orthodox Judaism is like no other religion I have ever heard of, and I don’t know how to describe it to anyone who hasn’t been forced to study Mishna and Gemara. There is a tradition of questioning, but |

39fc4f43-a797-4537-9ad2-65e76a9b1d7f | awestover/filtering-for-misalignment | Redwood Research: Alek's Filtering Results | id: 1aaba7c9ec55a66f