repo stringclasses 147

values | number int64 1 172k | title stringlengths 2 476 | body stringlengths 0 5k | url stringlengths 39 70 | state stringclasses 2

values | labels listlengths 0 9 | created_at timestamp[ns, tz=UTC]date 2017-01-18 18:50:08 2026-01-06 07:33:18 | updated_at timestamp[ns, tz=UTC]date 2017-01-18 19:20:07 2026-01-06 08:03:39 | comments int64 0 58 ⌀ | user stringlengths 2 28 |

|---|---|---|---|---|---|---|---|---|---|---|

pytorch/TensorRT | 1,351 | ❓ [Question] Not enough inputs provided (runtime.RunCudaEngine) | ## ❓ Question

<!-- Your question -->

i make a pressure test on my model compiled by torch-tensorrt, it will report errors after 5 minutes, the traceback as blow:

```shell

2022-09-09T09:16:01.618971735Z File "/component/text_detector.py", line 135, in __call__

2022-09-09T09:16:01.618975181Z outputs = self.n... | https://github.com/pytorch/TensorRT/issues/1351 | closed | [

"question",

"No Activity",

"component: runtime"

] | 2022-09-13T02:39:11Z | 2023-03-26T00:02:17Z | null | Pekary |

pytorch/TensorRT | 1,340 | ❓ [Question] No improvement when I use sparse-weights? | ## ❓ Question

<!-- Your question -->

**No speed improvement when I use sparse-weights.**

I just modified this notebook https://github.com/pytorch/TensorRT/blob/master/notebooks/Hugging-Face-BERT.ipynb

And add the sparse_weights=True in the compile part. I also changed the regional bert-base model when I apply ... | https://github.com/pytorch/TensorRT/issues/1340 | closed | [

"question",

"No Activity",

"performance"

] | 2022-09-09T02:26:48Z | 2023-03-26T00:02:17Z | null | wzywzywzy |

pytorch/vision | 6,545 | add quantized vision transformer model | ### 🚀 The feature

hi, thanks for your great work. I hope to be able to add quantized vit model (for ptq or qat).

### Motivation, pitch

In 'torchvision/models/quantization', there are several quantized model (Eager Mode Quantization) that is very useful for me to learn quantization. In recent years, Transformer mode... | https://github.com/pytorch/vision/issues/6545 | open | [

"question",

"module: models.quantization"

] | 2022-09-08T09:34:33Z | 2022-09-09T11:17:45Z | null | WZMIAOMIAO |

huggingface/datasets | 4,944 | larger dataset, larger GPU memory in the training phase? Is that correct? | from datasets import set_caching_enabled

set_caching_enabled(False)

for ds_name in ["squad","newsqa","nqopen","narrativeqa"]:

train_ds = load_from_disk("../../../dall/downstream/processedproqa/{}-train.hf".format(ds_name))

break

train_ds = concatenate_datasets([train_ds,train_... | https://github.com/huggingface/datasets/issues/4944 | closed | [

"bug"

] | 2022-09-07T08:46:30Z | 2022-09-07T12:34:58Z | 2 | debby1103 |

pytorch/vision | 6,543 | Inconsistent use of FrozenBatchNorm in Faster-RCNN? | Hi,

while customizing and training a Faster-RCNN object detection model based on `torchvision.models.detection.faster_rcnn`, I've noticed that the pre-trained model of type `fasterrcnn_resnet50_fpn_v2` always use `nn.BatchNorm2d` normalization layers, while `fasterrcnn_resnet50_fpn` uses `torchvision.models.ops.misc.F... | https://github.com/pytorch/vision/issues/6543 | closed | [

"question",

"module: models",

"topic: object detection"

] | 2022-09-07T08:16:00Z | 2024-06-23T16:24:37Z | null | MoPl90 |

huggingface/datasets | 4,942 | Trec Dataset has incorrect labels | ## Describe the bug

Both coarse and fine labels seem to be out of line.

## Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = "trec"

raw_datasets = load_dataset(dataset)

df = pd.DataFrame(raw_datasets["test"])

df.head()

```

## Expected results

text (string) | coarse_labe... | https://github.com/huggingface/datasets/issues/4942 | closed | [

"bug"

] | 2022-09-06T22:13:40Z | 2022-09-08T11:12:03Z | 1 | wmpauli |

pytorch/data | 763 | Online doc for DataLoader2/ReadingService and etc. | ### 📚 The doc issue

As we are preparing the next release with `DataLoader2`, we might need to add a few pages for `DL2`, `ReadingService` and all other related functionalities in https://pytorch.org/data/main/

- [x] DataLoader2

- [x] ReadingService

- [x] Adapter

- [ ] Linter

- [x] Graph function

- [ ]

### S... | https://github.com/meta-pytorch/data/issues/763 | open | [

"documentation"

] | 2022-09-06T15:37:49Z | 2022-11-15T15:13:49Z | 4 | ejguan |

pytorch/TensorRT | 1,335 | [Question? Bug?] Tried to allocate 166.38 GiB, seems weird | ## ❓ Question

<!-- Your question -->

I got errors

```

model_new_trt = trt.compile(

File "/opt/conda/lib/python3.8/site-packages/torch_tensorrt/_compile.py", line 109, in compile

return torch_tensorrt.ts.compile(ts_mod, inputs=inputs, enabled_precisions=enabled_precisions, **kwargs)

File "/opt/conda... | https://github.com/pytorch/TensorRT/issues/1335 | closed | [

"question",

"No Activity",

"component: partitioning"

] | 2022-09-06T15:16:41Z | 2022-12-26T00:02:39Z | null | zsef123 |

huggingface/datasets | 4,936 | vivos (Vietnamese speech corpus) dataset not accessible | ## Describe the bug

VIVOS data is not accessible anymore, neither of these links work (at least from France):

* https://ailab.hcmus.edu.vn/assets/vivos.tar.gz (data)

* https://ailab.hcmus.edu.vn/vivos (dataset page)

Therefore `load_dataset` doesn't work.

## Steps to reproduce the bug

```python

ds = load_dat... | https://github.com/huggingface/datasets/issues/4936 | closed | [

"dataset bug"

] | 2022-09-06T13:17:55Z | 2022-09-21T06:06:02Z | 3 | polinaeterna |

pytorch/data | 762 | Allow Header(limit=None) ? | Not urgent at all, just a minor suggestion:

In the benchmark scripts I'm currently running I want to limit the number of samples in a data-pipe according to an `args.limit` CLI parameter. I'd be nice to be able to just write:

```py

dp = Header(dp, limit=args.limit)

```

and let `Header` be a no-op when `limit... | https://github.com/meta-pytorch/data/issues/762 | closed | [

"good first issue"

] | 2022-09-06T11:04:57Z | 2022-12-06T20:20:58Z | 4 | NicolasHug |

huggingface/datasets | 4,932 | Dataset Viewer issue for bigscience-biomedical/biosses | ### Link

https://huggingface.co/datasets/bigscience-biomedical/biosses

### Description

I've just been working on adding the dataset loader script to this dataset and working with the relative imports. I'm not sure how to interpret the error below (show where the dataset preview used to be) .

```

Status code: 40... | https://github.com/huggingface/datasets/issues/4932 | closed | [] | 2022-09-05T22:40:32Z | 2022-09-06T14:24:56Z | 4 | galtay |

pytorch/pytorch | 84,553 | [ONNX] Change how context is given to symbolic functions | Current symbolic functions can take a context as an input, pushing graphs to the second argument. To support these functions, we need to annotate the first argument as symbolic context and tell them part in call time by examining the annotations.

Checking annotations is slow and this process complicates the logic i... | https://github.com/pytorch/pytorch/issues/84553 | closed | [

"module: onnx",

"triaged",

"topic: improvements"

] | 2022-09-05T22:04:52Z | 2022-09-28T22:56:39Z | null | justinchuby |

pytorch/TensorRT | 1,332 | ❓ [Question] Using torch-trt to test bert's qat quantitative model | ## ❓ Question

When using torch-trt to test Bert's qat quantization ( https://zenodo.org/record/4792496#.YxGrdRNBy3J ) model, I encountered many FakeTensorQuantFunction nodes in the pass, and at the same time triggered many nodes that could not convert TRT, and split the graph into many subgraphs

... | https://github.com/pytorch/serve/issues/1851 | closed | [

"question",

"triaged"

] | 2022-09-05T05:15:29Z | 2022-09-08T09:13:40Z | null | Vert53 |

pytorch/data | 761 | Would TorchData provide GPU support for loading and preprocessing images? | ### 🚀 The feature

Would TorchData provide GPU support for loading and preprocessing images?

### Motivation, pitch

When I am learning PyTorch, I find, currently, it do not support using GPU to load images or any other transforms of preprocessing and encoding data.

I want to know whether this would be taken into ... | https://github.com/meta-pytorch/data/issues/761 | open | [

"topic: new feature",

"triaged"

] | 2022-09-03T09:16:30Z | 2022-11-21T20:06:25Z | 5 | songyuc |

pytorch/serve | 1,842 | initial parameters transmit | ### 🚀 The feature

how transmit the initial parameters from the first model to laters in workflow.

### Motivation, pitch

how transmit the initial parameters from the first model to laters in workflow.

### Alternatives

_No response_

### Additional context

_No response_ | https://github.com/pytorch/serve/issues/1842 | open | [

"question",

"triaged_wait",

"workflowx"

] | 2022-09-02T14:51:38Z | 2022-09-06T10:42:39Z | null | jack-gits |

pytorch/serve | 1,841 | how to register a workflow directly when docker is started. | ### 🚀 The feature

how to register a workflow directly when docker is started.

### Motivation, pitch

how to register a workflow directly when docker is started.

### Alternatives

_No response_

### Additional context

_No response_ | https://github.com/pytorch/serve/issues/1841 | open | [

"help wanted",

"triaged",

"workflowx"

] | 2022-09-02T14:21:34Z | 2023-11-15T06:49:21Z | null | jack-gits |

huggingface/datasets | 4,924 | Concatenate_datasets loads everything into RAM | ## Describe the bug

When loading the datasets seperately and saving them on disk, I want to concatenate them. But `concatenate_datasets` is filling up my RAM and the process gets killed. Is there a way to prevent this from happening or is this intended behaviour? Thanks in advance

## Steps to reproduce the bug

```... | https://github.com/huggingface/datasets/issues/4924 | closed | [

"bug"

] | 2022-09-01T10:25:17Z | 2022-09-01T11:50:54Z | 0 | louisdeneve |

pytorch/TensorRT | 1,328 | ❓ [Question] How do you ....? | ## ❓ Question

Hi,

I am trying to use torch-tensorrt to optimize my model for inference. I first compile the model with torch.jit.script and then covnert it to tesnsorrt.

```shell

model = MoViNet(movinet_c.MODEL.MoViNetA0)

model.eval().cuda()

scripted_model = torch.jit.script(model)

trt_model = torch_tenso... | https://github.com/pytorch/TensorRT/issues/1328 | closed | [

"question",

"No Activity",

"performance"

] | 2022-08-31T15:06:50Z | 2022-12-12T00:03:55Z | null | ghazalehtrb |

pytorch/data | 756 | [RFC] More support for functionalities from `itertools` | ### 🚀 The feature

Over time, we have received more and more request for additional `IterDataPipe` (e.g. #648, #754, plus many more). Sometimes, these functionalities are very similar to what is already implemented in [`itertools`](https://docs.python.org/3/library/itertools.html) and [`more-itertools`](https://gith... | https://github.com/meta-pytorch/data/issues/756 | open | [] | 2022-08-30T21:30:19Z | 2022-09-08T06:54:28Z | 5 | NivekT |

pytorch/TensorRT | 1,322 | Error when I'm trying to use torch-tensorrt | ## ❓ Question

Hi

I'm trying to use torch-tensorrt with the pre built ngc container

I built it with 22.04 branch and with 22.04 version of ngc

My versions are:

cuda 10.2

torchvision 0.13.1

torch 1.12.1

But I get that error:

Traceback (most recent call last):

File "main.py", line 31, in <module>

... | https://github.com/pytorch/TensorRT/issues/1322 | closed | [

"question",

"channel: NGC"

] | 2022-08-30T13:09:18Z | 2022-12-15T17:43:52Z | null | EstherMalam |

huggingface/diffusers | 267 | Non-squared Image shape | Is it possible to use diffusers on non-squared images?

That would be a very interesting feature! | https://github.com/huggingface/diffusers/issues/267 | closed | [

"question"

] | 2022-08-29T01:29:33Z | 2022-09-13T15:57:36Z | null | LucasSilvaFerreira |

pytorch/functorch | 1,011 | memory_efficient_fusion leads to RuntimeError for higher-order gradients calculation. RuntimeError: You are attempting to call Tensor.requires_grad_() | Hi All,

I've tried improving the speed of my code via using `memory_efficient_fusion`, however, it leads to `Tensor.requires_grad_()` error and I have no idea why. The error is as follows,

```

RuntimeError: You are attempting to call Tensor.requires_grad_() (or perhaps using torch.autograd.functional.* APIs) insid... | https://github.com/pytorch/functorch/issues/1011 | open | [] | 2022-08-28T16:56:02Z | 2022-12-22T19:59:22Z | 3 | AlphaBetaGamma96 |

pytorch/functorch | 1,010 | Multiple gradient calculation for single sample | [According to the README](https://github.com/pytorch/functorch#working-with-nn-modules-make_functional-and-friends), we are able to calculate **per-sample-gradients** with functorch.

But what if we want to get multiple gradients for a **single sample**? For example, imagine that we are calculating multiple losses.

... | https://github.com/pytorch/functorch/issues/1010 | closed | [] | 2022-08-28T14:31:11Z | 2023-01-08T10:23:04Z | 23 | JoaoLages |

pytorch/TensorRT | 1,317 | caffe2 | Why don't you install caffe2 with pytorch in NGC container 22.08? | https://github.com/pytorch/TensorRT/issues/1317 | closed | [

"question",

"channel: NGC"

] | 2022-08-27T15:45:17Z | 2023-01-03T18:30:26Z | null | s-mohaghegh97 |

pytorch/serve | 1,819 | How to transfer files to a custom handler with curl command | I have created a custom handler that inputs and outputs wav files.

The code is as follows

```Python

# custom handler file

# model_handler.py

"""

ModelHandler defines a custom model handler.

"""

import os

import soundfile

from espnet2.bin.enh_inference import *

from ts.torch_handler.base_handler import... | https://github.com/pytorch/serve/issues/1819 | closed | [

"triaged_wait",

"support"

] | 2022-08-27T10:30:27Z | 2022-08-30T23:40:53Z | null | Shin-ichi-Takayama |

pytorch/data | 754 | A more powerful Mapper than can restrict function application to only part of the datapipe items? | We often have datapipes that return tuples `(img, target)` where we just want to call transformations on the img, but not the target. Sometimes it's the opposite: I want to apply a function to the target, and not to the img.

This usually forces us to write wrappers that "passthrough" either the img or the target. For... | https://github.com/meta-pytorch/data/issues/754 | open | [] | 2022-08-26T21:16:32Z | 2022-08-30T21:48:10Z | 5 | NicolasHug |

huggingface/dataset-viewer | 534 | Store the cached responses on the Hub instead of mongodb? | The config and split info will be stored in the YAML of the dataset card (see https://github.com/huggingface/datasets/issues/4876), and the idea is to compute them and update the dataset card automatically. This means that storing the responses for `/splits` in the MongoDB is duplication.

If we store the responses f... | https://github.com/huggingface/dataset-viewer/issues/534 | closed | [

"question"

] | 2022-08-26T16:24:39Z | 2022-09-19T09:09:29Z | null | severo |

huggingface/datasets | 4,902 | Name the default config `default` | Currently, if a dataset has no configuration, a default configuration is created from the dataset name.

For example, for a dataset loaded from the hub repository, such as https://huggingface.co/datasets/user/dataset (repo id is `user/dataset`), the default configuration will be `user--dataset`.

It might be easier... | https://github.com/huggingface/datasets/issues/4902 | closed | [

"enhancement",

"question"

] | 2022-08-26T16:16:22Z | 2023-07-24T21:15:31Z | null | severo |

huggingface/optimum | 362 | unexpect behavior GPU runtime with ORTModelForSeq2SeqLM | ### System Info

```shell

OS: Ubuntu 20.04.4 LTS

CARD: RTX 3080

Libs:

python 3.10.4

onnx==1.12.0

onnxruntime-gpu==1.12.1

torch==1.12.1

transformers==4.21.2

```

### Who can help?

@lewtun @michaelbenayoun @JingyaHuang @echarlaix

### Information

- [ ] The official example scripts

- [X] My own modified scri... | https://github.com/huggingface/optimum/issues/362 | closed | [

"bug",

"inference",

"onnxruntime"

] | 2022-08-26T02:11:26Z | 2022-12-09T09:13:22Z | 3 | tranmanhdat |

huggingface/dataset-viewer | 528 | metrics: how to manage variability between the admin pods? | The metrics include one entry per uvicorn worker of the `admin` service, but they give different values.

<details>

<summary>Example of a response to https://datasets-server.huggingface.co/admin/metrics</summary>

<pre>

# HELP starlette_requests_in_progress Multiprocess metric

# TYPE starlette_requests_in_progress... | https://github.com/huggingface/dataset-viewer/issues/528 | closed | [

"bug",

"question"

] | 2022-08-25T19:48:44Z | 2022-09-19T09:10:11Z | null | severo |

pytorch/torchx | 589 | Add per workspace runopts/config | ## Description

<!-- concise description of the feature/enhancement -->

## Motivation/Background

<!-- why is this feature/enhancement important? provide background context -->

Currently Workspaces piggyback on the config options for the scheduler. This means that every scheduler is deeply tied to the workspace a... | https://github.com/meta-pytorch/torchx/issues/589 | open | [

"enhancement",

"module: runner",

"docker"

] | 2022-08-25T18:18:35Z | 2022-08-25T18:18:35Z | 0 | d4l3k |

pytorch/pytorch | 84,014 | fill_ OpInfo code not used, also, doesn't test the case where the second argument is a Tensor | Two observations:

1. `sample_inputs_fill_` is no longer used. Can be deleted (https://github.com/pytorch/pytorch/blob/master/torch/testing/_internal/common_methods_invocations.py#L1798-L1807)

2. The new OpInfo for fill doesn't actually test the `tensor.fill_(other_tensor)` case. Previously we did test this, as shown ... | https://github.com/pytorch/pytorch/issues/84014 | open | [

"module: tests",

"triaged"

] | 2022-08-24T20:39:11Z | 2022-08-24T20:40:39Z | null | zou3519 |

huggingface/datasets | 4,881 | Language names and language codes: connecting to a big database (rather than slow enrichment of custom list) | **The problem:**

Language diversity is an important dimension of the diversity of datasets. To find one's way around datasets, being able to search by language name and by standardized codes appears crucial.

Currently the list of language codes is [here](https://github.com/huggingface/datasets/blob/main/src/datase... | https://github.com/huggingface/datasets/issues/4881 | open | [

"enhancement"

] | 2022-08-23T20:14:24Z | 2024-04-22T15:57:28Z | 49 | alexis-michaud |

pytorch/examples | 1,040 | In example DCGAN, curl timed out | Your issue may already be reported!

Please search on the [issue tracker](https://github.com/pytorch/serve/examples) before creating one.

## Context

<!--- How has this issue affected you? What are you trying to accomplish? -->

<!--- Providing context helps us come up with a solution that is most useful in the real... | https://github.com/pytorch/examples/issues/1040 | open | [

"data"

] | 2022-08-23T18:34:09Z | 2022-08-24T02:46:44Z | 1 | ShiboXing |

huggingface/datasets | 4,878 | [not really a bug] `identical_ok` is deprecated in huggingface-hub's `upload_file` | In the huggingface-hub dependency, the `identical_ok` argument has no effect in `upload_file` (and it will be removed soon)

See

https://github.com/huggingface/huggingface_hub/blob/43499582b19df1ed081a5b2bd7a364e9cacdc91d/src/huggingface_hub/hf_api.py#L2164-L2169

It's used here:

https://github.com/huggingfac... | https://github.com/huggingface/datasets/issues/4878 | closed | [

"help wanted",

"question"

] | 2022-08-23T17:09:55Z | 2022-09-13T14:00:06Z | null | severo |

pytorch/TensorRT | 1,303 | How to correctly format input for Fp16 inference using torch-tensorrt C++ | ## ❓ Question

<!-- Your question -->

## What you have already tried

<!-- A clear and concise description of what you have already done. -->

Hi, I am using the following to export a torch scripted model to Fp16 tensorrt which will then be used in a C++ environment.

`network.load_state_dict(torch.load(path... | https://github.com/pytorch/TensorRT/issues/1303 | closed | [

"question",

"No Activity"

] | 2022-08-23T14:05:05Z | 2022-12-04T00:02:10Z | null | SM1991CODES |

pytorch/examples | 1,039 | FileNotFoundError: Couldn't find any class folder in /content/train2014. | Your issue may already be reported!

Please search on the [issue tracker](https://github.com/pytorch/serve/examples) before creating one.

I wanna train new style model

run this cmd

!unzip train2014.zip -d /content

!python /content/examples/fast_neural_style/neural_style/neural_style.py train --dataset /conte... | https://github.com/pytorch/examples/issues/1039 | open | [

"bug",

"data"

] | 2022-08-23T07:33:17Z | 2023-06-08T03:09:42Z | 2 | sevaroy |

huggingface/diffusers | 228 | stable-diffusion-v1-4 link in release v0.2.3 is broken | ### Describe the bug

@anton-l the link (https://huggingface.co/CompVis/stable-diffusion-v1-4) in the [release v0.2.3](https://github.com/huggingface/diffusers/releases/tag/v0.2.3) returns a 404.

### Reproduction

_No response_

### Logs

_No response_

### System Info

```shell

N/A

```

| https://github.com/huggingface/diffusers/issues/228 | closed | [

"question"

] | 2022-08-22T09:07:27Z | 2022-08-22T20:53:00Z | null | leszekhanusz |

huggingface/pytorch-image-models | 1,424 | [FEATURE] What hyperparameters is used to get the results stated in the paper with the ViT-B pretrained miil weights on imagenet1k? | **Is your feature request related to a problem? Please describe.**

What hyperparameters are used to get the results stated in this paper (https://arxiv.org/pdf/2104.10972.pdf) on ImageNet1k with the ViT-B pretrained miil weights from vision_transformer.py in line 164-167? I tried the hyperparemeters as stated in the p... | https://github.com/huggingface/pytorch-image-models/issues/1424 | closed | [

"enhancement"

] | 2022-08-21T22:26:48Z | 2022-08-22T04:17:43Z | null | Phuoc-Hoan-Le |

pytorch/functorch | 1,006 | RuntimeError: CUDA error: no kernel image is available for execution on the device | Hi, I have cuda 11.7 on my system and I am trying to install functorch, since the stable version of pytorch for cuda 11.7 is not available [here](https://pytorch.org/get-started/previous-versions/), I just run `pip install functorch` which also installs the compatible version of pytorch.

But when I run my code that ... | https://github.com/pytorch/functorch/issues/1006 | closed | [] | 2022-08-21T19:30:34Z | 2022-08-24T13:58:45Z | 8 | ykemiche |

pytorch/TensorRT | 1,295 | Jetpack 5.0.2 | ## ❓ Question

Is it known yet whether Torch TensorRT is compatible with NVIDIA Jetpack 5.0.2 on NVIDIA Jetson devices?

## What you have already tried

I am trying to install torch-tensorrt for Python on my Jetson Xavier NX with Jetpack 5.0.2. Followed the instructions for the Jetpack 5.0 install and have succes... | https://github.com/pytorch/TensorRT/issues/1295 | closed | [

"question"

] | 2022-08-21T03:33:07Z | 2022-08-22T00:38:23Z | null | HugeBob |

pytorch/pytorch | 83,721 | How to export a simple model using List.__contains__ to ONNX | ### 🐛 Describe the bug

When using torch.jit.script, the message shows that \_\_contains__ method is not supported.

This is a reduced part of my model, the function should be tagged with torch.jit.script because there's a for loop using list.\_\_contains__

And I want to export it to an onnx file but failed wit... | https://github.com/pytorch/pytorch/issues/83721 | closed | [

"module: onnx",

"triaged",

"onnx-needs-info"

] | 2022-08-19T03:05:43Z | 2024-04-01T16:53:35Z | null | SineStriker |

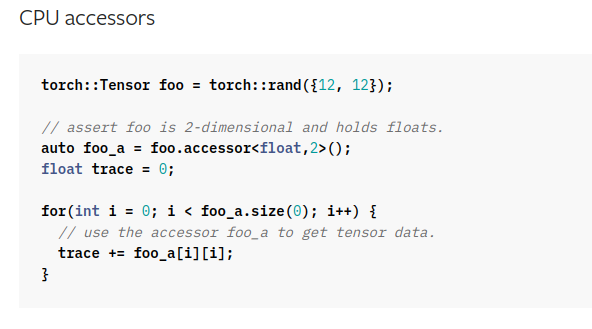

pytorch/pytorch | 83,685 | How to use accessors for fast elementwise write? | ### 📚 The doc issue

As seen above from Libtorch documentation, accessors can be used for fast element wise read operations on Libtorch tensors.

However, is there a similar functionality for write operat... | https://github.com/pytorch/pytorch/issues/83685 | closed | [] | 2022-08-18T16:50:28Z | 2022-08-24T20:36:19Z | null | SM1991CODES |

pytorch/TensorRT | 1,282 | ❓ [Question] How do you solve the error: Expected Tensor but got Uninitialized? | ## ❓ Question

Currently, I am compiling a custom segmentation model using torch_tensorrt.compile(), using a model script obtained from jit. The code to compile is as follows:

```

scripted_model = torch.jit.freeze(torch.jit.script(model))

inputs = [torch_tensorrt.Input(

min_shape=[2, 3, 600, 400],... | https://github.com/pytorch/TensorRT/issues/1282 | closed | [

"question"

] | 2022-08-18T13:04:23Z | 2022-10-11T15:42:19Z | null | Mark-M2L |

pytorch/data | 742 | [Discussion] Is the implementation of `cycler` efficient? | TL;DR: It seems in most cases users might be better off using `.flatmap(lambda x: [x for _ in n_repeat])` rather than `.cycle(n_repeat)`.

Here is the [implementation](https://github.com/pytorch/data/blob/main/torchdata/datapipes/iter/util/cycler.py), basically `Cycler` reads from the source DataPipe for `n` number o... | https://github.com/meta-pytorch/data/issues/742 | closed | [] | 2022-08-17T22:55:30Z | 2022-08-30T18:57:10Z | 4 | NivekT |

pytorch/data | 736 | Fix & Implement xdoctest | ### 📚 The doc issue

There is a PR https://github.com/pytorch/pytorch/pull/82797 landed into PyTorch core, which adds the functionality to validate if the example in comment is runnable.

However, in the example of PyTorch Core, we normally refer `torchdata` in all examples for the sake of unification of importing p... | https://github.com/meta-pytorch/data/issues/736 | open | [

"Better Engineering"

] | 2022-08-16T13:49:46Z | 2022-08-16T19:04:24Z | 0 | ejguan |

pytorch/TensorRT | 1,272 | ❓ [Question] How can I debug the error: Unable to freeze tensor of type Int64/Float64 into constant layer, try to compile model with truncate_long_and_double enabled | ## ❓ Question

Converting a model to Tensor Engine with the next code does not work

Input:

```

trt_model = ttrt.compile(traced_model, "default",

[ttrt.Input((1, 3, 224, 224), dtype=torch.float32)],

torch.float32, truncate_long_and_double=False)

```

Output:

... | https://github.com/pytorch/TensorRT/issues/1272 | closed | [

"question"

] | 2022-08-16T13:40:02Z | 2022-08-22T18:14:00Z | null | mjack3 |

huggingface/optimum | 351 | Add all available ONNX models to ORTConfigManager | This issue is linked to the [ONNXConfig for all](https://huggingface.co/OWG) working group created for implementing an ONNXConfig for all available models. Let's extend our work and try to add all models with a fully functional ONNXConfig implemented to ORTConfigManager.

Adding models to ORTConfigManager will allow ... | https://github.com/huggingface/optimum/issues/351 | open | [

"good first issue"

] | 2022-08-16T08:18:50Z | 2025-11-19T13:24:40Z | 3 | chainyo |

huggingface/optimum | 350 | Migrate metrics used in all examples from Datasets to Evaluate | ### Feature request

Copied from https://github.com/huggingface/transformers/issues/18306

The metrics are slowly leaving [Datasets](https://github.com/huggingface/datasets) (they are being deprecated as we speak) to move to the [Evaluate](https://github.com/huggingface/evaluate) library. We are looking for contribut... | https://github.com/huggingface/optimum/issues/350 | closed | [] | 2022-08-16T08:04:07Z | 2022-10-27T10:07:58Z | 0 | fxmarty |

pytorch/data | 732 | Recommended way to shuffle intra and inter archives? | Say I have a bunch of archives containing samples. In my case each archive is a pickle file containing a list of samples, but it could be a tar or something else.

I want to shuffle between archives (inter) and within archives (intra). My current way of doing it is below. Is there a more canonical solution?

```py

... | https://github.com/meta-pytorch/data/issues/732 | open | [] | 2022-08-15T17:14:39Z | 2022-08-16T13:02:46Z | 8 | NicolasHug |

pytorch/pytorch | 83,392 | How to turn off determinism just for specific operations, e.g. upsampling through bilinear interpolation? | This is the error caused by upsampling through bilinear interpolation when trying to use deterministic algorithms:

`RuntimeError: upsample_bilinear2d_backward_cuda does not have a deterministic implementation, but you set 'torch.use_deterministic_algorithms(True)'. You can turn off determinism just for this operatio... | https://github.com/pytorch/pytorch/issues/83392 | open | [

"module: cuda",

"triaged",

"module: determinism"

] | 2022-08-14T12:15:32Z | 2022-08-15T04:42:36Z | null | Jingling1 |

huggingface/datasets | 4,839 | ImageFolder dataset builder does not read the validation data set if it is named as "val" | **Is your feature request related to a problem? Please describe.**

Currently, the `'imagefolder'` data set builder in [`load_dataset()`](https://github.com/huggingface/datasets/blob/2.4.0/src/datasets/load.py#L1541] ) only [supports](https://github.com/huggingface/datasets/blob/6c609a322da994de149b2c938f19439bca9940... | https://github.com/huggingface/datasets/issues/4839 | closed | [

"enhancement"

] | 2022-08-12T13:26:00Z | 2022-08-30T10:14:55Z | 1 | akt42 |

huggingface/datasets | 4,836 | Is it possible to pass multiple links to a split in load script? | **Is your feature request related to a problem? Please describe.**

I wanted to use a python loading script in hugging face datasets that use different sources of text (it's somehow a compilation of multiple datasets + my own dataset) based on how `load_dataset` [works](https://huggingface.co/docs/datasets/loading) I a... | https://github.com/huggingface/datasets/issues/4836 | open | [

"enhancement"

] | 2022-08-12T11:06:11Z | 2022-08-12T11:06:11Z | 0 | sadrasabouri |

pytorch/TensorRT | 1,253 | ❓ [Question] How to load a TRT_Module in python environment on Windows which has been compiled on C++ Windows ? | ## ❓ Question

I have compiled torch_trt module using libtorch on C++ windows platform. This module is working perfectly on C++ for inference, however I want to use it in Python program on windows platform. How to load this module on python?

When I tried to load it with torch.jit.load() or torch.jit.load() it is t... | https://github.com/pytorch/TensorRT/issues/1253 | closed | [

"question",

"No Activity",

"channel: windows"

] | 2022-08-11T06:26:05Z | 2023-02-26T00:02:28Z | null | ghost |

pytorch/pytorch | 83,227 | QAT the bias is the int32, how to set the int8? | ### 🐛 Describe the bug

i try to do quantization, the weight is int8 ,but the bias is int32, i want to set the bias ---> int8, what i need to do ?

thanks

### Versions

help me, thanks

cc @jerryzh168 @jianyuh @raghuramank100 @jamesr66a @vkuzo | https://github.com/pytorch/pytorch/issues/83227 | closed | [

"oncall: quantization"

] | 2022-08-11T03:11:12Z | 2022-08-11T23:10:24Z | null | aimen123 |

huggingface/datasets | 4,820 | Terminating: fork() called from a process already using GNU OpenMP, this is unsafe. | Hi, when i try to run prepare_dataset function in [fine tuning ASR tutorial 4](https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_tuning_Wav2Vec2_for_English_ASR.ipynb) , i got this error.

I got this error

Terminating: fork() called from a process already using GNU OpenMP, this is un... | https://github.com/huggingface/datasets/issues/4820 | closed | [

"bug"

] | 2022-08-10T19:42:33Z | 2022-08-10T19:53:10Z | 1 | talhaanwarch |

pytorch/functorch | 999 | vmap and forward-mode AD fail sometimes on in-place views | ## The Problem

```py

import torch

from functorch import jvp, vmap

from functools import partial

B = 2

def f(x, y):

x = x.clone()

view = x[0]

x.copy_(y)

return view, x

def push_jvp(x, y, yt):

return jvp(partial(f, x), (y,), (yt,))

x = torch.randn(2, B, 6)

y = torch.randn(2, 6,... | https://github.com/pytorch/functorch/issues/999 | open | [] | 2022-08-10T17:45:17Z | 2022-08-16T20:46:48Z | 9 | zou3519 |

pytorch/pytorch | 83,135 | torch.nn.functional.avg_pool{1|2|3}d error message does not match what is described in the documentation | ### 📚 The doc issue

Parameter 'kernel_size' and 'stride' of torch.nn.functional.avg_pool{1|2|3}d can be a single number or a tuple. However, I found that error message only mentioned tuple of ints which means parameter 'kernel_size' and 'stride' can be only int number or tuple of ints.

```

import torch

results={... | https://github.com/pytorch/pytorch/issues/83135 | open | [

"module: docs",

"module: nn",

"triaged"

] | 2022-08-10T01:11:59Z | 2022-08-10T12:57:45Z | null | cheyennee |

pytorch/test-infra | 516 | [CI] Use job summaries to display how to replicate failures on specific configs | For configs such as slow, dynamo, and parallel-native, reproducing a CI error is more involved than just rerunning the command locally. We should use tools (like job summaries) to give people the context they'd need to repro a bug. | https://github.com/pytorch/test-infra/issues/516 | open | [] | 2022-08-09T18:15:15Z | 2022-11-15T19:51:40Z | null | janeyx99 |

pytorch/TensorRT | 1,243 | ❓ [Question] How to correctly configure LD_LIBRARY_PATH | ## ❓ Question

Hello, after installing torch_tensorrt on my jetson xavier using jetpack 4.6, I cannot import it. I am having a similar issue to other bugs that have been reported and answered. I am wondering though, how do you correctly add tensorrt to LD_LIBRARY_PATH? (Proposed solution from other bugs).

## What ... | https://github.com/pytorch/TensorRT/issues/1243 | closed | [

"question"

] | 2022-08-08T20:24:55Z | 2022-08-08T20:35:00Z | null | kneatco |

huggingface/dataset-viewer | 502 | Improve the docs: what is needed to make the dataset viewer work? | See https://discuss.huggingface.co/t/the-dataset-preview-has-been-disabled-on-this-dataset/21339 | https://github.com/huggingface/dataset-viewer/issues/502 | closed | [

"documentation"

] | 2022-08-08T13:27:21Z | 2022-09-19T09:12:00Z | null | severo |

pytorch/TensorRT | 1,235 | ❓ [Question] How do you debug errors in the compilation step? | ## ❓ Question

Hello all,

After training a model, I decided to use torch_tensorrt to test and hopefully increase inference speed. When compiling the custom model, I get the following error: `RuntimeError: Trying to create tensor with negative dimension -1: [-1, 3, 600, 400]`. This did not occur when doing inferen... | https://github.com/pytorch/TensorRT/issues/1235 | closed | [

"question"

] | 2022-08-05T15:23:53Z | 2022-08-08T17:04:30Z | null | Mark-M2L |

pytorch/TensorRT | 1,233 | ❓ [Question] How to install "tensorrt" package? | ## ❓ Question

I'm trying to install `torch-tensorrt` on a Jetson AGX Xavier. I first installed `pytorch` 1.12.0 and `torchvision` 0.13.0 following this [guide](https://forums.developer.nvidia.com/t/pytorch-for-jetson-version-1-11-now-available/72048). Then I installed `torch-tensorrt` following this [guide](https://... | https://github.com/pytorch/TensorRT/issues/1233 | closed | [

"question",

"component: dependencies",

"channel: linux-jetpack"

] | 2022-08-05T08:40:18Z | 2022-12-15T17:36:36Z | null | domef |

pytorch/data | 718 | Recommended practice to shuffle data with datapipes differently every epoch | ### 📚 The doc issue

I was trying `torchdata` 0.4.0 and I found that shuffling with data pipes will always yield the same result across different epochs, unless I shuffle it again at the beginning of every epoch.

```python

# same_result.py

import torch

import torchdata.datapipes as dp

X = torch.randn(200, 5)

d... | https://github.com/meta-pytorch/data/issues/718 | closed | [] | 2022-08-05T02:12:25Z | 2022-09-13T21:18:49Z | 4 | BarclayII |

huggingface/dataset-viewer | 498 | Test cookie authentication | Testing token authentication is easy, see https://github.com/huggingface/datasets-server/issues/199#issuecomment-1205528302, but testing session cookie authentication might be a bit more complex since we need to log in to get the cookie. I prefer to get a dedicate issue for it. | https://github.com/huggingface/dataset-viewer/issues/498 | closed | [

"question",

"tests"

] | 2022-08-04T17:06:31Z | 2022-08-22T18:34:29Z | null | severo |

huggingface/datasets | 4,791 | Dataset Viewer issue for Team-PIXEL/rendered-wikipedia-english | ### Link

https://huggingface.co/datasets/Team-PIXEL/rendered-wikipedia-english/viewer/rendered-wikipedia-en/train

### Description

The dataset can be loaded fine but the viewer shows this error:

```

Server Error

Status code: 400

Exception: Status400Error

Message: The dataset does not exist.

```

... | https://github.com/huggingface/datasets/issues/4791 | closed | [

"dataset-viewer"

] | 2022-08-04T12:49:16Z | 2022-08-04T13:43:16Z | 1 | xplip |

pytorch/pytorch | 82,751 | Refactor how errors decide whether to append C++ stacktrace | ### 🚀 The feature, motivation and pitch

Per @zdevito's comment in https://github.com/pytorch/pytorch/pull/82665/files#r936022305, we should refactor the way C++ stacktrace is appended to errors.

Currently, in https://github.com/pytorch/pytorch/blob/752579a3735ce711ccaddd8d9acff8bd6260efe0/torch/csrc/Exceptions.h, ... | https://github.com/pytorch/pytorch/issues/82751 | open | [

"triaged",

"better-engineering"

] | 2022-08-03T20:28:56Z | 2022-08-03T20:28:56Z | null | rohan-varma |

pytorch/data | 712 | Add Examples of Common Preprocessing Steps with IterDataPipe (such as splitting a data set into two) | ### 📚 The doc issue

There are a few common steps that users often would like to do while preprocessing data, such as [splitting their data set](https://pytorch.org/docs/stable/data.html#torch.utils.data.random_split) into train and eval. There are documentation in PyTorch Core about how to do these things with `Dat... | https://github.com/meta-pytorch/data/issues/712 | closed | [

"documentation"

] | 2022-08-02T23:58:09Z | 2022-10-20T17:49:41Z | 9 | NivekT |

pytorch/data | 709 | Update tutorial about shuffling before sharding | ### 📚 The doc issue

The [tutorial](https://pytorch.org/data/beta/tutorial.html#working-with-dataloader) needs to update the actual reason about shuffling before sharding. It's not accurate.

Shuffling before sharding is required to achieve global shuffling rather than only shuffling inside each shard.

### Suggest a ... | https://github.com/meta-pytorch/data/issues/709 | closed | [

"documentation"

] | 2022-08-02T17:53:56Z | 2022-08-04T22:06:36Z | 2 | ejguan |

pytorch/data | 707 | Map-style DataPipe to read from s3 | ### 🚀 The feature

[Amazon S3 plugin for PyTorch ](https://aws.amazon.com/blogs/machine-learning/announcing-the-amazon-s3-plugin-for-pytorch/)proposes S3Dataset which is a Map-style PyTorch Dataset. I was looking for a similar feature in torchdata but only found [S3FileLoader](https://pytorch.org/data/main/generated/t... | https://github.com/meta-pytorch/data/issues/707 | closed | [] | 2022-08-02T12:58:21Z | 2022-08-04T13:31:32Z | 10 | gombru |

pytorch/tutorials | 1,993 | Problem with the torchtext library text classification example | The first section of the [tutorial](https://pytorch.org/tutorials/beginner/text_sentiment_ngrams_tutorial.html) suggests

`

import torch

from torchtext.datasets import AG_NEWS

train_iter = iter(AG_NEWS(split='train'))

`

which does not work yielding

`TypeError: _setup_datasets() got an unexpected keyword argumen... | https://github.com/pytorch/tutorials/issues/1993 | closed | [

"question",

"module: torchtext",

"docathon-h1-2023",

"medium"

] | 2022-08-02T08:46:49Z | 2023-06-12T19:42:05Z | null | EssamWisam |

huggingface/datasets | 4,776 | RuntimeError when using torchaudio 0.12.0 to load MP3 audio file | Current version of `torchaudio` (0.12.0) raises a RuntimeError when trying to use `sox_io` backend but non-Python dependency `sox` is not installed:

https://github.com/pytorch/audio/blob/2e1388401c434011e9f044b40bc8374f2ddfc414/torchaudio/backend/sox_io_backend.py#L21-L29

```python

def _fail_load(

filepath: str... | https://github.com/huggingface/datasets/issues/4776 | closed | [] | 2022-08-01T14:11:23Z | 2023-03-02T15:58:16Z | 3 | albertvillanova |

pytorch/tutorials | 1,991 | Some typos in and a question from TorchScript tutorial | Hi, I first thank for this tutorial.

Here are some typos in the tutorial:

1.`be` in https://github.com/pytorch/tutorials/blob/7976ab181fd2a97b2775574eec284d1fc8abcfe0/beginner_source/Intro_to_TorchScript_tutorial.py#L42 should be `by`.

2.`succintly` in https://github.com/pytorch/tutorials/blob/7976ab181fd2a97b... | https://github.com/pytorch/tutorials/issues/1991 | closed | [

"grammar"

] | 2022-08-01T13:21:26Z | 2022-10-13T22:49:41Z | 2 | sadra-barikbin |

huggingface/optimum | 327 | Any workable example of exporting and inferencing with GPU? | ### System Info

```shell

Been tried many methods, but never successfully done it. Thanks.

```

### Who can help?

_No response_

### Information

- [ ] The official example scripts

- [ ] My own modified scripts

### Tasks

- [ ] An officially supported task in the `examples` folder (such as GLUE/SQuAD, ...)

- [ ] My ... | https://github.com/huggingface/optimum/issues/327 | closed | [

"bug"

] | 2022-08-01T05:12:15Z | 2022-08-01T06:19:26Z | 1 | lkluo |

pytorch/data | 705 | Set better defaults for `MultiProcessingReadingService` | ### 🚀 The feature

```python

class MultiProcessingReadingService(ReadingServiceInterface):

num_workers: int = get_number_of_cpu_cores()

pin_memory: bool = True

timeout: float

worker_init_fn: Optional[Callable[[int], None]] # Remove this?

prefetch_factor: int = profile_optimal_prefetch_facto... | https://github.com/meta-pytorch/data/issues/705 | open | [

"enhancement"

] | 2022-07-31T22:46:33Z | 2022-08-02T22:07:18Z | 1 | msaroufim |

pytorch/pytorch | 82,542 | Is there Doc that explains how to call an extension op in another extension implementation? | ### 📚 The doc issue

For example, there is an extension op which is installed from public repo via `pip install torch-scatter`, and in Python code, it's easy to use this extension:

```py

import torch

output = torch.ops.torch_scatter.scatter_max(x, index)

```

However, I'm writing an C++ extension and want to c... | https://github.com/pytorch/pytorch/issues/82542 | open | [

"module: docs",

"module: cpp",

"triaged"

] | 2022-07-31T06:20:02Z | 2022-08-03T15:18:05Z | null | ghostplant |

pytorch/pytorch | 82,524 | how to build libtorch from source? | ### 🐛 Describe the bug

where is the source? I want to build libtorch-win-shared-with-deps-1.12.0%2Bcu116.zip

### Versions

as in the title

cc @malfet @seemethere @svekars @holly1238 @jbschlosser | https://github.com/pytorch/pytorch/issues/82524 | closed | [

"module: build",

"module: docs",

"module: cpp",

"triaged"

] | 2022-07-30T08:01:18Z | 2022-08-21T01:50:37Z | null | xoofee |

pytorch/data | 703 | Read Parquet Files Directly from S3? | ### 🚀 The feature

The `ParquetDataFrameLoader` allows us to read parquet files from the local file system, but I don't think it supports reading parquet files from (for example) an S3 bucket.

Make this possible.

### Motivation, pitch

I would like to train my models on parquet files stored in an S3 bucket.

### A... | https://github.com/meta-pytorch/data/issues/703 | open | [

"enhancement",

"feature"

] | 2022-07-30T06:07:01Z | 2022-08-03T19:09:09Z | 2 | vedantroy |

pytorch/TensorRT | 1,213 | ❓ [Question] Is it ok to build v1.1.0 with cuda10.2 not default cuda11.3? | ## ❓ Question

<!-- Your question -->

Is it ok to build v1.1.0 with cuda10.2 not default cuda11.3?

It's hard to upgrade latest gpu driver for some machine which is shared by many people. So cuda10.2 is preferred.

## Environment

> Build information about Torch-TensorRT can be found by turning on debug messages... | https://github.com/pytorch/TensorRT/issues/1213 | closed | [

"question",

"component: dependencies"

] | 2022-07-28T12:05:22Z | 2022-08-12T01:46:32Z | null | wikiwen |

huggingface/datasets | 4,757 | Document better when relative paths are transformed to URLs | As discussed with @ydshieh, when passing a relative path as `data_dir` to `load_dataset` of a dataset hosted on the Hub, the relative path is transformed to the corresponding URL of the Hub dataset.

Currently, we mention this in our docs here: [Create a dataset loading script > Download data files and organize split... | https://github.com/huggingface/datasets/issues/4757 | closed | [

"documentation"

] | 2022-07-28T08:46:27Z | 2022-08-25T18:34:24Z | 0 | albertvillanova |

huggingface/diffusers | 143 | Running difussers with GPU | Running the example codes i see that the CPU and not the GPU is used, is there a way to use GPU instead | https://github.com/huggingface/diffusers/issues/143 | closed | [

"question"

] | 2022-07-28T08:34:12Z | 2022-08-15T17:27:31Z | null | jfdelgad |

pytorch/TensorRT | 1,212 | 🐛 [Bug] Encountered bug when using Torch-TensorRT | ## ❓ Question

Hello There,

I've tried to run torch_TensorRT on ubuntu and windows as well. On windows I compiled it with [this](https://github.com/gcuendet/Torch-TensorRT/tree/add-cmake-support) pull request and it is working good on C++. The resulting trt_module on ubuntu is loading flawlessly on python and can b... | https://github.com/pytorch/TensorRT/issues/1212 | closed | [

"question",

"No Activity",

"channel: windows"

] | 2022-07-28T06:31:49Z | 2022-11-02T18:44:43Z | null | ghost |

huggingface/optimum | 320 | Feature request: allow user to provide tokenizer when loading transformer model | ### Feature request

When I try to load a locally saved transformers model with `ORTModelForSequenceClassification.from_pretrained(<path>, from_transformers=True)` an error occurs ("unable to generate dummy inputs for model") unless I also save the tokenizer in the checkpoint. A reproducible example of this is below.... | https://github.com/huggingface/optimum/issues/320 | closed | [

"Stale"

] | 2022-07-27T20:01:32Z | 2025-07-27T02:17:59Z | 3 | jessecambon |

pytorch/TensorRT | 1,209 | ❓ [Question] How do you install an older TensorRT package? | ## ❓ Question

How do you install an older TensorRT package? I'm using Pytorch 1.8 and TensorRT version 0.3.0 matches that Pytorch version.

## What you have already tried

I tried:

```

pip3 install torch-tensorrt==v0.3.0 -f https://github.com/pytorch/TensorRT/releases

Looking in links: https://github.com/... | https://github.com/pytorch/TensorRT/issues/1209 | closed | [

"question"

] | 2022-07-27T16:40:14Z | 2022-08-01T15:54:24Z | null | JinLi711 |

pytorch/pytorch | 82,304 | How to use SwiftShader to test vulkan mobile models ? | ### 📚 The doc issue

In this tutorial [here](https://pytorch.org/tutorials/prototype/vulkan_workflow.html),

It's pointed out at the end that it will be possible to use SwiftShader to test pytorch_mobile models on Vulkan backend without needing to go to mobile.

How?

### Suggest a potential alternative/fix

_No r... | https://github.com/pytorch/pytorch/issues/82304 | closed | [

"oncall: mobile"

] | 2022-07-27T10:18:59Z | 2022-07-28T22:40:59Z | null | MohamedAliRashad |

pytorch/TensorRT | 1,207 | ❓ [Question] cmake do not find torchtrt? | ## ❓ Question

Hi, im trying to compile from source and test with c++.

I built using locally installed cuda 10.2 , tensort 8.2 and libtorch cxx11 abi, compile using `bazel build //:libtorchtrt -c opt`

It looks like the installation was successful.

```

INFO: Analyzed target //:libtorchtrt (0 packages loaded, 0 ... | https://github.com/pytorch/TensorRT/issues/1207 | closed | [

"question",

"component: build system"

] | 2022-07-27T09:14:51Z | 2023-09-14T17:40:25Z | null | xsxsmm |

pytorch/torchx | 569 | [Ray] Elastic Launch on Ray Cluster | ## Description

<!-- concise description of the feature/enhancement -->

Support elastic training on Ray Cluster.

## Motivation/Background

<!-- why is this feature/enhancement important? provide background context -->

Training can tolerate node failures.

The number of worker nodes can expand as the size of the cl... | https://github.com/meta-pytorch/torchx/issues/569 | open | [

"enhancement",

"ray"

] | 2022-07-27T04:32:41Z | 2022-11-05T18:22:51Z | 0 | ntlm1686 |

pytorch/data | 693 | Changing decoding method in StreamReader | ### 🐛 Describe the bug

Hi,

When decoding from a file stream in `StreamReader`, torchdata automatically assumes the incoming bytes are UTF-8. However, in the case of alternate encoding's this will error (in my case `UnicodeDecodeError: 'utf-8' codec can't decode byte 0xec in position 3: invalid continuation byte`).... | https://github.com/meta-pytorch/data/issues/693 | open | [] | 2022-07-27T00:33:29Z | 2022-07-27T13:18:15Z | 2 | is-jlehrer |

pytorch/data | 690 | Unable to vectorize datapipe operations | ### 🐛 Describe the bug

Let `t` be an input dataset that associates strings (model input) to integers (model output):

```python

t = [("a", 567), ("b", 908), ("c", 887)]

```

I now wrap `t` in a `SequenceWrapper`, to use it as part of a DataPipe:

```python

import torchdata.datapipes as dp

pipeline = dp.... | https://github.com/meta-pytorch/data/issues/690 | open | [] | 2022-07-26T13:30:39Z | 2022-07-26T15:50:26Z | 2 | BlueskyFR |

huggingface/datasets | 4,744 | Remove instructions to generate dummy data from our docs | In our docs, we indicate to generate the dummy data: https://huggingface.co/docs/datasets/dataset_script#testing-data-and-checksum-metadata

However:

- dummy data makes sense only for datasets in our GitHub repo: so that we can test their loading with our CI

- for datasets on the Hub:

- they do not pass any CI t... | https://github.com/huggingface/datasets/issues/4744 | closed | [

"documentation"

] | 2022-07-26T07:32:58Z | 2022-08-02T23:50:30Z | 2 | albertvillanova |

pytorch/xla | 3,760 | How to load a gpu trained model on TPU for evaluation | ## ❓ Questions and Help

Hello,

I am loading a GPU trained model on map_location=cpu and then doing "model.to(device)" where device is xm.xla_device(n=device_num,devkind="TPU") but on testing the cpu processing time and the tpu processing time is the same. Please let me know what I can do about it.

Thank you | https://github.com/pytorch/xla/issues/3760 | open | [] | 2022-07-26T01:25:45Z | 2022-07-26T02:22:58Z | null | Preethse |

pytorch/data | 689 | Distributed training tutorial with DataLoader2 | ### 📚 The doc issue

I am not sure how to implement distributed training.

### Suggest a potential alternative/fix

If there was a simple example that showed how to use DDP with the torchdata library it would be super helpful. | https://github.com/meta-pytorch/data/issues/689 | closed | [

"documentation"

] | 2022-07-25T22:44:56Z | 2023-02-01T17:59:08Z | 9 | MatthewCaseres |

huggingface/datasets | 4,742 | Dummy data nowhere to be found | ## Describe the bug

To finalize my dataset, I wanted to create dummy data as per the guide and I ran

```shell

datasets-cli dummy_data datasets/hebban-reviews --auto_generate

```

where hebban-reviews is [this repo](https://huggingface.co/datasets/BramVanroy/hebban-reviews). And even though the scripts runs an... | https://github.com/huggingface/datasets/issues/4742 | closed | [

"bug"

] | 2022-07-25T19:18:42Z | 2022-11-04T14:04:24Z | 3 | BramVanroy |

huggingface/dataset-viewer | 466 | Take decisions before launching in public | ## Version

Should we integrate a version in the path or domain, to help with future breaking changes?

Three options:

1. domain based: https://v1.datasets-server.huggingface.co

2. path based: https://datasets-server.huggingface.co/v1/

3. no version (current): https://datasets-server.huggingface.co

I think 3 ... | https://github.com/huggingface/dataset-viewer/issues/466 | closed | [

"question"

] | 2022-07-25T18:04:59Z | 2022-07-26T14:39:46Z | null | severo |

pytorch/TensorRT | 1,203 | ❓ [Question] How do you install torch-tensorrt (Import error. no libvinfer_plugin.so.8 file error)? | ## ❓ Question

<!-- ImportError: libnvinfer_plugin.so.8: cannot open shared object file: No such file or directory -->

### ImportError: libnvinfer_plugin.so.8: cannot open shared object file: No such file or directory

cuda and cudnn is installed well.

I installed pytorch and nvidia-tensorrt well in conda env... | https://github.com/pytorch/TensorRT/issues/1203 | closed | [

"question"

] | 2022-07-25T03:03:35Z | 2024-04-30T02:16:10Z | null | YOONAHLEE |

pytorch/TensorRT | 1,202 | ❓ [Question] interpolate isn't suported? | ## ❓ Question

does anyone succeed compile [torch.nn.functional.interpolate](https://pytorch.org/docs/stable/generated/torch.nn.functional.interpolate.html) with torch_tensort>1.x.x?

in the release note, it is written that nearest and bilinear interpolation are supported

if you can compile it, please share with... | https://github.com/pytorch/TensorRT/issues/1202 | closed | [

"question",

"component: converters"

] | 2022-07-24T21:35:34Z | 2022-07-27T23:46:37Z | null | yokosyun |

pytorch/functorch | 982 | GPU Memeory | ```

func_model, params = make_functional(model)

for param in params:

param.requires_grad_(False)

def compute_loss(params, data, targets):

data = data.unsqueeze(dim=0)

preds = func_model(params, data)

loss = loss_fn(preds, targets)

return loss

per_sample_info = vmap(gr... | https://github.com/pytorch/functorch/issues/982 | closed | [] | 2022-07-24T04:45:50Z | 2022-07-24T10:37:22Z | 0 | kwwcv |

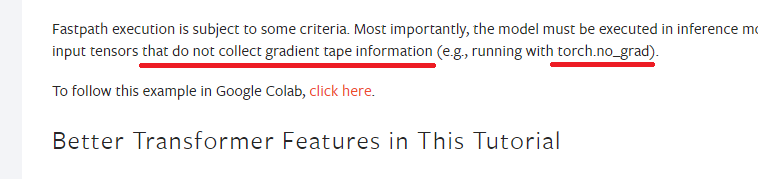

pytorch/pytorch | 82,041 | [Misleading] The doc started using Tensorflow terminology in the document to explain how to use the Pytorch code. | ### 📚 The doc issue

the model must be executed in inference mode and operate on input tensors that do not collect gradient tape information (e.g., running with torch.no_grad).

### Suggest a potential alt... | https://github.com/pytorch/pytorch/issues/82041 | open | [

"module: docs",

"triaged"

] | 2022-07-23T01:43:39Z | 2022-07-24T16:35:11Z | null | AliceSum |

huggingface/dataset-viewer | 458 | Move /webhook to admin instead of api? | As we've done with the technical endpoints in https://github.com/huggingface/datasets-server/pull/457?

It might help to protect the endpoint (#95), even if it's not really dangerous to let people add jobs to refresh datasets IMHO for now. | https://github.com/huggingface/dataset-viewer/issues/458 | closed | [

"question"

] | 2022-07-22T20:21:39Z | 2022-09-16T17:24:05Z | null | severo |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.