repo stringclasses 147

values | number int64 1 172k | title stringlengths 2 476 | body stringlengths 0 5k | url stringlengths 39 70 | state stringclasses 2

values | labels listlengths 0 9 | created_at timestamp[ns, tz=UTC]date 2017-01-18 18:50:08 2026-01-06 07:33:18 | updated_at timestamp[ns, tz=UTC]date 2017-01-18 19:20:07 2026-01-06 08:03:39 | comments int64 0 58 ⌀ | user stringlengths 2 28 |

|---|---|---|---|---|---|---|---|---|---|---|

pytorch/pytorch | 93,516 | [Question] How to debug "munmap_chunk(): invalid pointer" when compiling to triton? | I'm trying to use torchdynamo to compile a function to triton.

My logs indicate that the function optimizes without issue,

but when running the function on a given input, I just get "munmap_chunk(): invalid pointer" w/o a stack trace / any useful debugging information.

I'm wondering how to go about debugging su... | https://github.com/pytorch/pytorch/issues/93516 | closed | [

"oncall: pt2"

] | 2023-01-22T03:36:25Z | 2023-02-01T14:19:30Z | null | vedantroy |

pytorch/TensorRT | 1,600 | ❓ [Question] How do you compile for Jetson 5.0? | ## ❓ Question

Hi, as there seems to be no prebuilt python binary, just wanted to know if there is any way to install this package on jetson 5.0?

## What you have already tried

I tried normal installation for jetson 4.6 which fails, I aslo tried this https://forums.developer.nvidia.com/t/installing-building-tor... | https://github.com/pytorch/TensorRT/issues/1600 | closed | [

"question",

"No Activity",

"channel: linux-jetpack"

] | 2023-01-20T17:08:42Z | 2023-09-15T11:33:27Z | null | arnaghizadeh |

huggingface/setfit | 282 | Loading a trained SetFit model without setfit? | SetFit team, first off, thanks for the awesome library!

I'm running into trouble trying to load and run inference on a trained SetFit model without using `SetFitModel.from_pretrained()`. Instead, I'd like to load the model using torch, transformers, sentence_transformers, or some combination thereof. Is there a clea... | https://github.com/huggingface/setfit/issues/282 | closed | [

"question"

] | 2023-01-20T01:08:00Z | 2024-05-21T08:11:08Z | null | ZQ-Dev8 |

huggingface/datasets | 5,442 | OneDrive Integrations with HF Datasets | ### Feature request

First of all , I would like to thank all community who are developed DataSet storage and make it free available

How to integrate our Onedrive account or any other possible storage clouds (like google drive,...) with the **HF** datasets section.

For example, if I have **50GB** on my **Onedrive*... | https://github.com/huggingface/datasets/issues/5442 | closed | [

"enhancement"

] | 2023-01-19T23:12:08Z | 2023-02-24T16:17:51Z | 2 | Mohammed20201991 |

pytorch/xla | 4,482 | How to save checkpoints in XLA | Hello

I have training scripts running on CPUs and GPUs without error.

I am trying to make the scripts compatible with TPUs.

I was using the following lines to save checkpoints

```

torch.save(checkpoint, path_checkpoints_file )

```

and following lines to load checkpoints

```

checkpoint = torch.load(pa... | https://github.com/pytorch/xla/issues/4482 | open | [] | 2023-01-19T21:50:05Z | 2023-02-15T22:58:13Z | null | mfatih7 |

pytorch/functorch | 1,106 | Vmap and backward hook problem | I try to get the gradient of the intermedia layer of model, so I use the backwards hook with functroch.grad to get the gradient of each image. When I used for loop to iterate each image, I successfully obtained 5000 gradients (dataset size). However, when I use vmap to do the same thing, I only get 40 gradients (40 bat... | https://github.com/pytorch/functorch/issues/1106 | open | [] | 2023-01-19T21:25:02Z | 2023-01-23T05:08:49Z | 1 | pmzzs |

pytorch/tutorials | 2,175 | OSError: Missing: valgrind, callgrind_control, callgrind_annotate | The error occurs on below step:

8. Collecting instruction counts with Callgrind:

Traceback (most recent call last):

File "benchmark.py", line 805, in <module>

stats_v0 = t0.collect_callgrind()

File "/usr/local/lib/python3.8/dist-packages/torch/utils/benchmark/utils/timer.py", line 486, in collect_callg... | https://github.com/pytorch/tutorials/issues/2175 | open | [

"question"

] | 2023-01-19T16:00:52Z | 2023-01-24T10:47:08Z | null | ghost |

pytorch/data | 949 | `torchdata` not available through `pytorch-nightly` conda channel | ### 🐛 Describe the bug

The nightly version of torchdata does not seem available through the corresponding conda channel.

**Command:**

```

$ conda install torchdata -c pytorch-nightly --override-channels

```

**Result:**

```

Collecting package metadata (current_repodata.json): done

Solving environment: fail... | https://github.com/meta-pytorch/data/issues/949 | closed | [

"high priority"

] | 2023-01-18T15:49:29Z | 2023-01-18T17:11:32Z | 4 | PierreGtch |

pytorch/tutorials | 2,173 | Area calculation in TorchVision Object Detection Finetuning Tutorial | I see that at https://pytorch.org/tutorials/intermediate/torchvision_tutorial.html,

` area = (boxes[:, 3] - boxes[:, 1]) * (boxes[:, 2] - boxes[:, 0])` but shouldn't it be something like `area = ((boxes[:, 3] - boxes[:, 1]) + 1) * ((boxes[:, 2] - boxes[:, 0]) + 1) ` for calculating areas? If I am wrong, can someon... | https://github.com/pytorch/tutorials/issues/2173 | closed | [

"module: vision"

] | 2023-01-18T08:55:55Z | 2023-02-15T16:55:24Z | 1 | Himanshunitrr |

pytorch/torchx | 684 | Docker workspace: How to specify "latest" (nightly) base image? | ## ❓ Questions and Help

For my docker workspace (e.g. scheduler == "local_docker" or "aws_batch"), I'd like to use a base image that is published on a nightly cadence. So I have this `Dockerfile.torchx`

```

# Dockerfile.torchx

ARGS IMAGE

ARGS WORKSPACE

FROM $IMAGE

WORKDIR /workspace/mfive

COPY $WORKSPAC... | https://github.com/meta-pytorch/torchx/issues/684 | closed | [] | 2023-01-17T22:03:19Z | 2023-03-17T22:06:25Z | 3 | kiukchung |

pytorch/PiPPy | 723 | How to reduce memory costs when running on CPU | I running HF_inference.py on my CPU and it works well! It can successfully applying pipeline parallelism on CPU. However, when I applying pipeline parallelism, I found that each rank will load the whole model and it seems not necessary since each rank only performs a part of the model. There must be some ways can figur... | https://github.com/pytorch/PiPPy/issues/723 | closed | [] | 2023-01-17T07:54:31Z | 2025-06-10T02:40:11Z | null | jiqing-feng |

huggingface/diffusers | 2,012 | Reduce Imagic Pipeline Memory Consumption | I'm running the [Imagic Stable Diffusion community pipeline](https://github.com/huggingface/diffusers/blob/main/examples/community/imagic_stable_diffusion.py) and it's routinely allocating 25-38 GiB GPU vRAM which seems excessively high.

@MarkRich any ideas on how to reduce memory usage? Xformers and attention slic... | https://github.com/huggingface/diffusers/issues/2012 | closed | [

"question",

"stale"

] | 2023-01-16T23:43:03Z | 2023-02-24T15:03:35Z | null | andreemic |

huggingface/optimum | 697 | Custom model output | ### System Info

```shell

Copy-and-paste the text below in your GitHub issue:

- `optimum` version: 1.6.1

- `transformers` version: 4.25.1

- Platform: Linux-5.19.0-29-generic-x86_64-with-glibc2.36

- Python version: 3.10.8

- Huggingface_hub version: 0.11.1

- PyTorch version (GPU?): 1.13.1 (cuda availabe: True)

``... | https://github.com/huggingface/optimum/issues/697 | open | [

"bug"

] | 2023-01-16T14:08:12Z | 2023-04-11T12:30:04Z | 3 | jplu |

huggingface/datasets | 5,424 | When applying `ReadInstruction` to custom load it's not DatasetDict but list of Dataset? | ### Describe the bug

I am loading datasets from custom `tsv` files stored locally and applying split instructions for each split. Although the ReadInstruction is being applied correctly and I was expecting it to be `DatasetDict` but instead it is a list of `Dataset`.

### Steps to reproduce the bug

Steps to reproduc... | https://github.com/huggingface/datasets/issues/5424 | closed | [] | 2023-01-16T06:54:28Z | 2023-02-24T16:19:00Z | 1 | macabdul9 |

pytorch/pytorch | 92,202 | Generator: when I want to use a new backend, how to create a Generator with the new backend? | ### 🐛 Describe the bug

I want to add a new backend, so I add my backend by referring to this tutorial. https://github.com/bdhirsh/pytorch_open_registration_example

But how to create a Generator with my new backend ?

I see the code related to 'Generator' is in the file, https://github.com/pytorch/pytorch/blob/m... | https://github.com/pytorch/pytorch/issues/92202 | closed | [

"triaged",

"module: backend"

] | 2023-01-14T08:11:04Z | 2023-10-28T15:02:10Z | null | heidongxianhua |

pytorch/TensorRT | 1,592 | ❓ [Question] How should recompilation in Torch Dynamo + `fx2trt` be handled? | ## ❓ Question

Given that Torch Dynamo compiles models lazily, how should benchmarking/usage of Torch Dynamo models, especially in cases where the inputs have a dynamic batch dimension, be handled?

## What you have already tried

Based on compiling and running inference using `fx2trt` with Torch Dynamo on the [B... | https://github.com/pytorch/TensorRT/issues/1592 | closed | [

"question",

"No Activity",

"component: fx"

] | 2023-01-14T02:18:53Z | 2023-05-09T00:02:14Z | null | gs-olive |

pytorch/functorch | 1,101 | How to get only the last few layers' gradident? | ```

from functorch import make_functional_with_buffers, vmap, grad

fmodel, params, buffers = make_functional_with_buffers(net,disable_autograd_tracking=True)

def compute_loss_stateless_model (params, buffers, sample, target):

batch = sample.unsqueeze(0)

targets = target.unsqueeze(0)

predictions = ... | https://github.com/pytorch/functorch/issues/1101 | open | [] | 2023-01-13T21:48:42Z | 2024-04-05T03:02:41Z | null | pmzzs |

pytorch/functorch | 1,099 | Will pmap be supported in functorh? | Greetings, I am very grateful that vmap is supported in functorch. Is there any plan to include support for pmap in the future? Thank you. Additionally, what are the ways that I can contribute to the development of this project? | https://github.com/pytorch/functorch/issues/1099 | open | [] | 2023-01-11T17:32:48Z | 2024-06-05T16:32:36Z | 2 | shixun404 |

pytorch/TensorRT | 1,585 | ❓ [Question] How can I make deserialized targets compatible with Torch-TensorRT ABI? | ## ❓ Question

When I load my compiled model:

`model = torch.jit.load('model.ts')

`

**I keep getting the error:**

`RuntimeError: [Error thrown at core/runtime/TRTEngine.cpp:250] Expected serialized_info.size() == SERIALIZATION_LEN to be true but got false

Program to be deserialized targets an incompatible... | https://github.com/pytorch/TensorRT/issues/1585 | closed | [

"question",

"No Activity",

"component: runtime"

] | 2023-01-11T12:44:58Z | 2023-05-04T00:02:16Z | null | emilwallner |

pytorch/kineto | 713 | How to get the CPU utilization by Pytorch Profiler? | According to the code of gpu_metrics_parser.py of torch-tb-profiler, I understand that the gpu utilization is actually the sum of event times of type EventTypes.KERNEL over a period of time / total time. So, is CPU utilization the sum of event times of type EventTypes.OPERATOR over a period of time / total time?

It ... | https://github.com/pytorch/kineto/issues/713 | closed | [] | 2023-01-11T09:46:20Z | 2023-02-15T03:53:40Z | null | young-chao |

pytorch/TensorRT | 1,580 | I am deleting some layers of Resneet152 for example del resnet152.fc and del resnet152.layer4 and save it locally in order to get the dimension of 1024. Later when I import this saved model it complains about the missing layer4. What might be the the reason? Does still try tp access the original model. How can get 102... | ## ❓ Question

<!-- Your question -->

## What you have already tried

<!-- A clear and concise description of what you have already done. -->

## Environment

> Build information about Torch-TensorRT can be found by turning on debug messages

- PyTorch Version (e.g., 1.0):

- CPU Architecture:

- OS (e.... | https://github.com/pytorch/TensorRT/issues/1580 | closed | [

"question",

"No Activity"

] | 2023-01-09T15:22:23Z | 2023-04-22T00:02:19Z | null | pradeep10kumar |

pytorch/TensorRT | 1,579 | When I delete some layers from Resnet152 for example | ## ❓ Question

<!-- Your question -->

## What you have already tried

<!-- A clear and concise description of what you have already done. -->

## Environment

> Build information about Torch-TensorRT can be found by turning on debug messages

- PyTorch Version (e.g., 1.0):

- CPU Architecture:

- OS (e.... | https://github.com/pytorch/TensorRT/issues/1579 | closed | [

"question"

] | 2023-01-09T15:17:50Z | 2023-01-09T15:18:31Z | null | pradeep10kumar |

pytorch/TensorRT | 1,578 | ❓ [Question] Failed to compile EfficientNet: Error: Segmentation fault (core dumped) | I followed the step in the demo notebook `[EfficientNet-example.ipynb](https://github.com/pytorch/TensorRT/blob/main/notebooks/EfficientNet-example.ipynb)`

When I try to compile EfficientNet, an error occurred: `Segmentation fault (core dumped)`

I have located the error is caused by

`

trt_model_fp32 = torch_t... | https://github.com/pytorch/TensorRT/issues/1578 | closed | [

"question"

] | 2023-01-09T14:44:25Z | 2023-02-28T23:40:20Z | null | Tonyboy999 |

huggingface/setfit | 260 | How to use .predict() function | Hi,

I am new at using the setfit. I will be running many tunings for models and currently achieved getting evaluation metrics using ("trainer.evaluate())

However, is there any way to do something like the following to save the trained model's predictions?

trainer = SetFitTrainer(......)

trainer.train()

**pre... | https://github.com/huggingface/setfit/issues/260 | closed | [

"question"

] | 2023-01-08T23:18:56Z | 2023-01-09T10:00:38Z | null | yafsin |

huggingface/setfit | 256 | Contrastive training number of epochs | The `SentenceTransformer` number of epochs is the same as the number of epochs for the classification head.

Even when `SetFitTrainer` is initialized with `num_epochs=1` and then `trainer.train(num_epochs=10)`, the sentence transformer runs with 10 epochs. Ideally, senatence transformer should run 1 epoch and the clas... | https://github.com/huggingface/setfit/issues/256 | closed | [

"question"

] | 2023-01-06T02:26:30Z | 2023-01-09T10:54:45Z | null | abhinav-kashyap-asus |

pytorch/serve | 2,057 | what is my_tc ? | ### 🐛 Describe the bug

torchserve --start --model-store model_store --models my_tc=BERTTokenClassification.mar --ncs

curl -X POST http://127.0.0.1:8080/predictions/my_tc -T Token_classification_artifacts/sample_text_captum_input.txt

### Error logs

2023-01-05T15:51:41,260 [INFO ] W-9001-my_tc_1.0-stdout MODEL_LOG... | https://github.com/pytorch/serve/issues/2057 | open | [

"triaged"

] | 2023-01-05T07:57:46Z | 2023-01-05T15:00:14Z | null | ucas010 |

huggingface/diffusers | 1,921 | How to finetune inpainting model for object removal? What is the input prompt for object removal for both training and inference? |

Hi team,

Thanks for your great work!

I am trying to get object removal functionality from inpainting of SD.

How to finetune inpainting model for object removal?

What is the input prompt for object removal for both training and inference?

Thanks | https://github.com/huggingface/diffusers/issues/1921 | closed | [

"stale"

] | 2023-01-05T01:23:20Z | 2023-04-03T14:50:38Z | null | hdjsjyl |

pytorch/functorch | 1,094 | batching over model parameters | I have a use-case for `functorch`. I would like to check possible iterations of model parameters in a very efficient way (I want to eliminate the loop). Here's an example code for a simplified case I got it working:

```python

linear = torch.nn.Linear(10,2)

default_weight = linear.weight.data

sample_input = torch.... | https://github.com/pytorch/functorch/issues/1094 | open | [] | 2023-01-04T17:59:59Z | 2023-01-04T21:42:36Z | 2 | LeanderK |

huggingface/setfit | 254 | Why are the models fine-tuned with CosineSimilarity between 0 and 1? | Hi everyone,

This is a small question related to how models are fine-tuned during the first step of training. I see that the default loss function is `losses.CosineSimilarityLoss`. But when generating sentence pairs [here](https://github.com/huggingface/setfit/blob/35c0511fa9917e653df50cb95a22105b397e14c0/src/setfit... | https://github.com/huggingface/setfit/issues/254 | open | [

"question"

] | 2023-01-03T09:47:11Z | 2023-03-14T10:24:17Z | null | EdouardVilain-Git |

pytorch/TensorRT | 1,570 | ❓ [Question] When I use fx2trt, can an unsupported op fallback to pytorch like the TorchScript compiler? | ## ❓ Question

<!-- Your question -->

When I use fx2trt, can an unsupported op fallback to pytorch like the TorchScript compiler? | https://github.com/pytorch/TensorRT/issues/1570 | closed | [

"question"

] | 2023-01-03T05:26:00Z | 2023-01-06T22:22:12Z | null | chenzhengda |

pytorch/TensorRT | 1,569 | ❓ [Question] How do you use dynamic shape when using fx as ir and the model is not fully lowerable | ## ❓ Question

I have a pytorch model that contains a Pixel Shuffle operation (which is not fully supported) and I would like to convert it to TensorRT, while being able to specify a dynamic shape as input. The "ts" path does not work as there is an issue, the "fx" path has problems too and I am not able to use a spl... | https://github.com/pytorch/TensorRT/issues/1569 | closed | [

"question",

"No Activity",

"component: fx"

] | 2023-01-02T14:44:52Z | 2023-04-15T00:02:10Z | null | ivan94fi |

pytorch/pytorch | 91,537 | Unclear how to change compiler used by `torch.compile` | ### 📚 The doc issue

It is not clear from https://pytorch.org/tutorials//intermediate/torch_compile_tutorial.html, nor from the docs in `torch.compile`, nor even from looking through `_dynamo/config.py`, how one can change the compiler used by PyTorch.

Right now I am seeing the following issue. My code:

```pyt... | https://github.com/pytorch/pytorch/issues/91537 | closed | [

"module: docs",

"triaged",

"oncall: pt2",

"module: dynamo"

] | 2022-12-30T15:40:11Z | 2023-12-01T19:00:48Z | null | MilesCranmer |

pytorch/pytorch | 91,498 | how to Wrap normalization layers like LayerNorm in FP32 when use FSDP | in the blog https://pytorch.org/blog/scaling-vision-model-training-platforms-with-pytorch/

<img width="904" alt="image" src="https://user-images.githubusercontent.com/16861194/209910992-619704cd-0ef4-42ec-9d5c-ec7b42005b8b.png">

how to Wrap normalization layers like LayerNorm in FP32 when use FSDP, do we have a e... | https://github.com/pytorch/pytorch/issues/91498 | closed | [

"oncall: distributed",

"triaged",

"module: fsdp"

] | 2022-12-29T06:13:34Z | 2023-08-04T17:17:32Z | null | xiaohu2015 |

huggingface/setfit | 251 | Using setfit with the Hugging Face API | Hi, thank you so much for this amazing library!

I have trained my model and pushed it to the Hugging Face hub.

Since the output is a text-classification task, and the model card uploaded is for the sentence transformers, how should I use the model to run the classification model through the Hugging Face API?

T... | https://github.com/huggingface/setfit/issues/251 | open | [

"question"

] | 2022-12-29T01:46:37Z | 2023-01-01T07:53:43Z | null | kwen1510 |

huggingface/setfit | 249 | Sentence Pairs generation: is possible to parallelize it? | My dataset has 20k samples, 200 labels, and 32 iterations, so that means around 128 million samples, right?

there's some way to parallelize the pairs sentences creation?

or at least to save these pairs to create one time and reuse multiple times (i.e. to train with different epochs)

Thanks | https://github.com/huggingface/setfit/issues/249 | open | [

"question"

] | 2022-12-28T17:50:02Z | 2023-02-14T20:04:29Z | null | info2000 |

huggingface/setfit | 245 | extracting embeddings from a trained SetFit model. | Hey First of All, Thank You For This Great Package!

IMy task relates to semantic similarity, in which I find 'closeness' of a query sentence to a list of candidate sentences. Something like [shown here](https://www.sbert.net/docs/usage/semantic_textual_similarity.html)

I wanted to know if there was a way to extract... | https://github.com/huggingface/setfit/issues/245 | closed | [

"question"

] | 2022-12-26T12:27:50Z | 2023-12-06T13:21:04Z | null | moonisali |

huggingface/optimum | 640 | Improve documentations around ONNX export | ### Feature request

* Document `-with-past`, `--for-ort`, why use it

* Add more details in `optimum-cli export onnx --help` directly

### Motivation

/

### Your contribution

/ | https://github.com/huggingface/optimum/issues/640 | closed | [

"documentation",

"onnx",

"exporters"

] | 2022-12-23T15:54:32Z | 2023-01-03T16:34:56Z | 0 | fxmarty |

huggingface/datasets | 5,385 | Is `fs=` deprecated in `load_from_disk()` as well? | ### Describe the bug

The `fs=` argument was deprecated from `Dataset.save_to_disk` and `Dataset.load_from_disk` in favor of automagically figuring it out via fsspec:

https://github.com/huggingface/datasets/blob/9a7272cd4222383a5b932b0083a4cc173fda44e8/src/datasets/arrow_dataset.py#L1339-L1340

Is there a reason the... | https://github.com/huggingface/datasets/issues/5385 | closed | [] | 2022-12-22T21:00:45Z | 2023-01-23T10:50:05Z | 3 | dconathan |

pytorch/examples | 1,105 | MNIST Hogwild on Apple Silicon | Any help would be appreciated! Unable to run multiprocessing with mps device

## Context

<!--- How has this issue affected you? What are you trying to accomplish? -->

<!--- Providing context helps us come up with a solution that is most useful in the real world -->

* Pytorch version: 2.0.0.dev20221220

* Operating... | https://github.com/pytorch/examples/issues/1105 | open | [

"help wanted"

] | 2022-12-22T06:25:48Z | 2023-12-09T09:43:08Z | 4 | jeffreykthomas |

pytorch/functorch | 1,088 | Add vmap support for PyTorch operators | We're looking for more motivated open-source developers to help build out functorch (and PyTorch, since functorch is now just a part of PyTorch). Below is a selection of good first issues.

- [x] https://github.com/pytorch/pytorch/issues/91174

- [x] https://github.com/pytorch/pytorch/issues/91175

- [x] https://github... | https://github.com/pytorch/functorch/issues/1088 | open | [

"good first issue"

] | 2022-12-20T18:51:16Z | 2023-04-19T23:40:06Z | 2 | zou3519 |

huggingface/optimum | 625 | Add support for Speech Encoder Decoder models in `optimum.exporters.onnx` | ### Feature request

Add support for [Speech Encoder Decoder Models](https://huggingface.co/docs/transformers/v4.25.1/en/model_doc/speech-encoder-decoder#speech-encoder-decoder-models)

### Your contribution

Me or other members can implement it (cc @mht-sharma @fxmarty ) | https://github.com/huggingface/optimum/issues/625 | open | [

"feature-request",

"onnx"

] | 2022-12-20T16:48:49Z | 2023-11-15T10:02:54Z | 4 | michaelbenayoun |

huggingface/optimum | 615 | Shall we set diffusers as soft dependency for onnxruntime module? | It seems a little bit strange for me that we need to have diffusers for doing sequence classification.

### System Info

```shell

Dev branch of Optimum

```

### Who can help?

@echarlaix @JingyaHuang

### Reproduction

```python

from optimum.onnxruntime import ORTModelForSequenceClassification

```

#... | https://github.com/huggingface/optimum/issues/615 | closed | [

"bug"

] | 2022-12-19T11:23:34Z | 2022-12-21T14:02:45Z | 1 | JingyaHuang |

pytorch/android-demo-app | 287 | How to convert live camera to landscape object detection with correct camera aspect ratio? | https://github.com/pytorch/android-demo-app/issues/287 | open | [] | 2022-12-19T10:01:47Z | 2022-12-19T10:05:06Z | null | pratheeshsuvarna | |

pytorch/vision | 7,043 | How to generate the score for a determined region of an image using Mask R-CNN | ### 🐛 Describe the bug

I want to change the RegionProposalNetwork of Mask R-CNN to generate the score for a determined region of an image using Mask R-CNN.

```

import torch

from torch import nn

import torchvision.models as models

import torchvision

from torchvision.models.detection import MaskRCNN

from torch... | https://github.com/pytorch/vision/issues/7043 | open | [] | 2022-12-19T08:04:50Z | 2022-12-19T08:04:50Z | null | mingqiJ |

pytorch/serve | 2,039 | how to load models at startup for docker | First, I created docker container by followed https://github.com/pytorch/serve/tree/master/docker#create-torchserve-docker-image, I leaves all configs default except remove `--rm` in `docker run ...` and make docker container start automatically by

```docker update --restart unless-stopped mytorchserve```

Then, I r... | https://github.com/pytorch/serve/issues/2039 | closed | [] | 2022-12-17T02:55:23Z | 2022-12-18T01:00:29Z | null | hungtooc |

huggingface/transformers | 20,794 | When I use the following code on tpuvm and use model.generate() to infer, the speed is very slow. It seems that the tpu is not used. What is the problem? | ### System Info

When I use the following code on tpuvm and use model.generate() to infer, the speed is very slow. It seems that the tpu is not used. What is the problem?

jax device is exist

```python

import jax

num_devices = jax.device_count()

device_type = jax.devices()[0].device_kind

assert "TPU" in device_typ... | https://github.com/huggingface/transformers/issues/20794 | closed | [] | 2022-12-16T09:15:32Z | 2023-05-21T15:03:06Z | null | joytianya |

huggingface/optimum | 595 | Document and (possibly) improve the `use_past`, `use_past_in_inputs`, `use_present_in_outputs` API | ### Feature request

As the title says.

Basically, for `OnnxConfigWithPast` there are three attributes:

- `use_past_in_inputs`: to specify that the exported model should have `past_key_values` as inputs

- `use_present_in_outputs`: to specify that the exported model should have `past_key_values` as outputs

- `u... | https://github.com/huggingface/optimum/issues/595 | closed | [

"documentation",

"Stale"

] | 2022-12-15T13:57:43Z | 2025-07-03T02:16:51Z | 2 | michaelbenayoun |

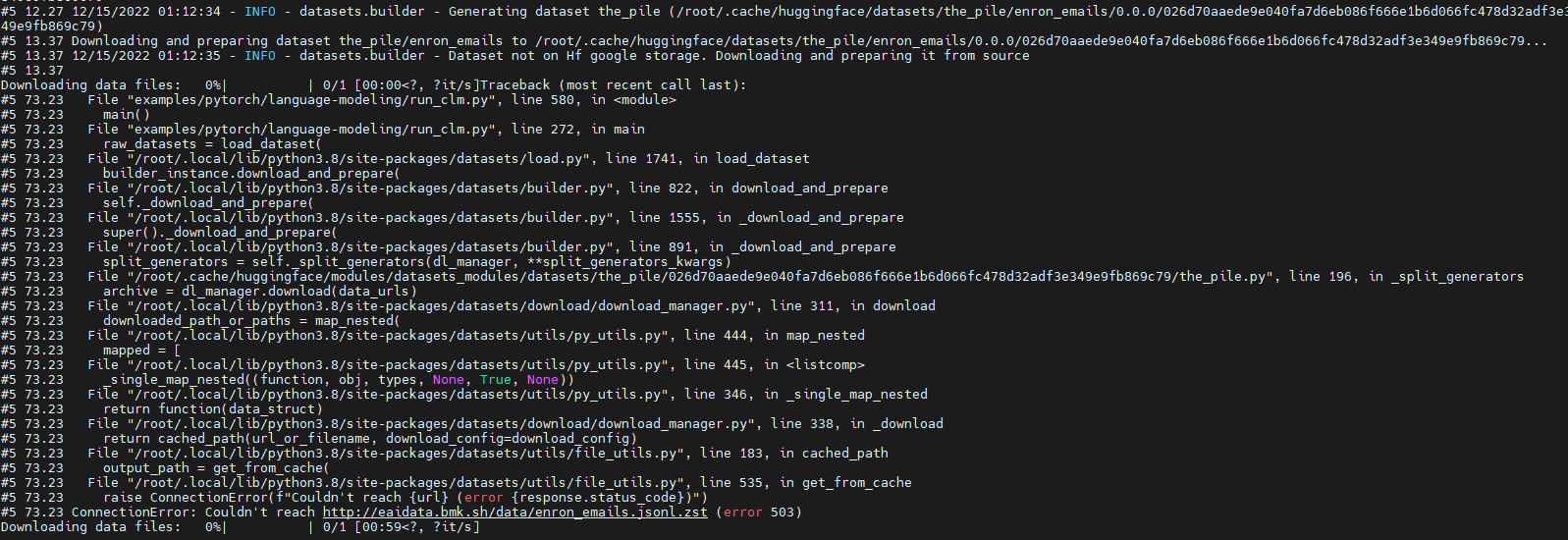

huggingface/datasets | 5,362 | Run 'GPT-J' failure due to download dataset fail (' ConnectionError: Couldn't reach http://eaidata.bmk.sh/data/enron_emails.jsonl.zst ' ) | ### Describe the bug

Run model "GPT-J" with dataset "the_pile" fail.

The fail out is as below:

Looks like which is due to "http://eaidata.bmk.sh/data/enron_emails.jsonl.zst" unreachable .

### Steps to ... | https://github.com/huggingface/datasets/issues/5362 | closed | [] | 2022-12-15T01:23:03Z | 2022-12-15T07:45:54Z | 2 | shaoyuta |

pytorch/TensorRT | 1,547 | ❓ [Question] How can I load a TensorRT model generated with `trtexec`? | ## ❓ Question

How can I load into Pytorch a TensorRT model engine (.trt or .plan) generated with `trtexec` ?

I have the following TensorRT model engine (generated from a ONNX file) using the `trtexec` tool provided by Nvidia

```

trtexec --onnx=../2.\ ONNX/CLIP-B32-image.onnx \

--saveEngine=../4.\ Ten... | https://github.com/pytorch/TensorRT/issues/1547 | closed | [

"question"

] | 2022-12-13T11:47:49Z | 2022-12-13T17:49:06Z | null | javiabellan |

huggingface/datasets | 5,354 | Consider using "Sequence" instead of "List" | ### Feature request

Hi, please consider using `Sequence` type annotation instead of `List` in function arguments such as in [`Dataset.from_parquet()`](https://github.com/huggingface/datasets/blob/main/src/datasets/arrow_dataset.py#L1088). It leads to type checking errors, see below.

**How to reproduce**

```py

... | https://github.com/huggingface/datasets/issues/5354 | open | [

"enhancement",

"good first issue"

] | 2022-12-12T15:39:45Z | 2025-11-21T22:35:10Z | 13 | tranhd95 |

huggingface/transformers | 20,733 | Verify that a test in `LayoutLMv3` 's tokenizer is checking what we want | I'm taking the liberty of opening an issue to share a question I've been keeping in the corner of my head, but now that I'll have less time to devote to `transformers` I prefer to share it before it's forgotten.

In the PR where the `LayoutLMv3` model was added, I was not very sure about the target value used for one... | https://github.com/huggingface/transformers/issues/20733 | closed | [] | 2022-12-12T15:17:36Z | 2023-05-26T10:14:14Z | null | SaulLu |

huggingface/setfit | 227 | Compare with other approaches | Dumb question:

How does setfit compare with other approaches for sentence classification in low data settings? Two that may be worth comparing to:

- Various techniques for [augmented SBERT](https://www.sbert.net/examples/training/data_augmentation/README.html)

- Simple Contrastive Learning [SimCSE](https://githu... | https://github.com/huggingface/setfit/issues/227 | open | [

"question"

] | 2022-12-12T14:32:51Z | 2022-12-20T08:49:52Z | null | creatorrr |

huggingface/datasets | 5,351 | Do we need to implement `_prepare_split`? | ### Describe the bug

I'm not sure this is a bug or if it's just missing in the documentation, or i'm not doing something correctly, but I'm subclassing `DatasetBuilder` and getting the following error because on the `DatasetBuilder` class the `_prepare_split` method is abstract (as are the others we are required to im... | https://github.com/huggingface/datasets/issues/5351 | closed | [] | 2022-12-12T01:38:54Z | 2022-12-20T18:20:57Z | 11 | jmwoloso |

pytorch/tutorials | 2,151 | Quantize weights to unisgned 8 bit | I am trying to quantize the weights of the BERT model to unsigned 8 bits. Am using the 'dynamic_quantize' function for the same.

`quantized_model = torch.quantization.quantize_dynamic(

model, {torch.nn.Linear}, dtype=torch.quint8

)`

But it throws the error 'AssertionError: The only supported dtypes for dyna... | https://github.com/pytorch/tutorials/issues/2151 | closed | [

"question",

"arch-optimization"

] | 2022-12-10T08:59:07Z | 2023-02-23T19:53:08Z | null | rohanjuneja |

huggingface/datasets | 5,343 | T5 for Q&A produces truncated sentence | Dear all, I am fine-tuning T5 for Q&A task using the MedQuAD ([GitHub - abachaa/MedQuAD: Medical Question Answering Dataset of 47,457 QA pairs created from 12 NIH websites](https://github.com/abachaa/MedQuAD)) dataset. In the dataset, there are many long answers with thousands of words. I have used pytorch_lightning to... | https://github.com/huggingface/datasets/issues/5343 | closed | [] | 2022-12-08T19:48:46Z | 2022-12-08T19:57:17Z | 0 | junyongyou |

huggingface/optimum | 566 | Add optimization and quantization options to `optimum.exporters.onnx` | ### Feature request

Would be nice to have two more arguments in `optimum.exporters.onnx` in order to have the optimized and quantized version of the exported models along side with the "normal" ones. I can imagine something like:

```

python -m optimum.exporters.onnx --model <model-name> -OX -quantized-arch <arch> ou... | https://github.com/huggingface/optimum/issues/566 | closed | [] | 2022-12-08T18:49:04Z | 2023-04-11T12:26:54Z | 17 | jplu |

pytorch/pytorch | 93,472 | torch.compile does not bring better performance and even lower than no compile, what is the possible reason? | ### 🐛 Describe the bug

_No response_

### Error logs

_No response_

### Minified repro

_No response_

cc @ezyang @soumith @msaroufim @wconstab @ngimel @bdhirsh | https://github.com/pytorch/pytorch/issues/93472 | closed | [

"oncall: pt2"

] | 2022-12-07T17:00:43Z | 2023-02-01T17:47:28Z | null | chexiangying |

pytorch/TensorRT | 1,535 | [Bug] Invoke error while implementing TensorRT on pytorch | ## ❓ Question

Got the error while using tensorrt on pytorch pretrained resnet model. what is this error and how to solve it.

## Error

Traceback (most recent call last):

File "pretrained_resnet.py", line 116, in <module>

trt_model_32 = torch_tensorrt.compile(traced, inputs=[torch_tensorrt.Input(

File "... | https://github.com/pytorch/TensorRT/issues/1535 | closed | [

"question",

"No Activity"

] | 2022-12-07T07:46:02Z | 2023-04-01T00:02:09Z | null | amrithpartha |

huggingface/transformers | 20,638 | ValueError: Unable to create tensor, you should probably activate truncation and/or padding with 'padding=True' 'truncation=True' to have batched tensors with the same length. Perhaps your features (`labels` in this case) have excessive nesting (inputs type `list` where type `int` is expected). | ### System Info

- `transformers` version: 4.25.1

- Platform: Linux-5.10.133+-x86_64-with-glibc2.27

- Python version: 3.8.15

- Huggingface_hub version: 0.11.1

- PyTorch version (GPU?): 1.12.1+cu113 (True)

- Tensorflow version (GPU?): 2.9.2 (True)

- Flax version (CPU?/GPU?/TPU?): not installed (NA)

- Jax versio... | https://github.com/huggingface/transformers/issues/20638 | closed | [] | 2022-12-07T02:10:35Z | 2023-01-31T21:23:46Z | null | vitthal-bhandari |

huggingface/setfit | 222 | Pre-training a generic SentenceTransformer for domain adaptation | When using `SetFit` for classification in a more technical domain, I could imagine the generically-trained `SBERT` models may produce poor sentence embeddings if the domain is not represented well enough in the diverse training corpus. In this case, would it be advantageous to first apply domain adaptation techniques (... | https://github.com/huggingface/setfit/issues/222 | open | [

"question"

] | 2022-12-05T15:22:57Z | 2023-04-30T06:45:47Z | null | zachschillaci27 |

huggingface/setfit | 219 | efficient way of saving finetuned zero-shot models? | Hi guys, pretty interesting project.

I was wondering if there is any way to save models after a zero-shot model is finetuned for few-shot model.

So for example, if I finetuned a couple of say, `sentence-transformers/paraphrase-mpnet-base-v2` models, the major difference between them is just the weights of final f... | https://github.com/huggingface/setfit/issues/219 | open | [

"question"

] | 2022-12-05T07:36:40Z | 2022-12-20T08:49:32Z | null | RaiAmanRai |

pytorch/TensorRT | 1,521 | ❓ [Question] How does INT8 inference really work at runtime? | ## ❓ Question

Hi everyone,

I can’t really find an example of how int8 inference works at runtime. What I know is that, given that we are performing uniform symmetric quantisation, we calibrate the model, i.e. we find the best scale parameters for each weight tensor (channel-wise) and *activations* (that correspondto ... | https://github.com/pytorch/TensorRT/issues/1521 | closed | [

"question",

"component: quantization"

] | 2022-12-04T16:32:57Z | 2023-02-02T23:54:00Z | null | andreabonvini |

pytorch/data | 911 | `DistributedReadingService` supports multi-processing reading | ### 🚀 The feature

`TorchData` is a great work for better data loading! I have tried it and it gives me a nice workflow with tidy code-style.❤️

When using DDP, I work with the `DataLoader2` where `reading_service=DistributedReadingService()`. I find this service runs one worker for outputting datas per node. This m... | https://github.com/meta-pytorch/data/issues/911 | closed | [] | 2022-12-04T03:49:49Z | 2023-02-07T06:25:35Z | 9 | xiaosu-zhu |

pytorch/functorch | 1,074 | vmap equivalent for tensor[indices] | Hi,

Is there a way of vmapping over the selection of passing indices within a Tensor? Minimal reproducible example below,

```

import torch

from functorch import vmap

def select(x, index):

print(x.shape, index.shape)

return x[index]

x = torch.randn(64, 1000) #64 vectors of length 1000

index=torch.aran... | https://github.com/pytorch/functorch/issues/1074 | closed | [] | 2022-12-03T19:20:18Z | 2022-12-03T19:31:37Z | 1 | AlphaBetaGamma96 |

pytorch/examples | 1,101 | Inconsistency b/w tutorial and the code | ## 📚 Documentation

In the [DDP Tutorial](https://pytorch.org/tutorials/beginner/ddp_series_multigpu.html), there is inconsistency between the code in the tutorial and [original code](https://github.com/pytorch/examples/blob/main/distributed/ddp-tutorial-series/multigpu.py).

For example, under Running the distrib... | https://github.com/pytorch/examples/issues/1101 | closed | [

"help wanted",

"distributed"

] | 2022-12-03T17:15:42Z | 2023-02-17T18:47:56Z | 4 | BalajiAI |

pytorch/android-demo-app | 280 | StreamingASR. How to use custom RNNT model? | Hey guys

I have a my self trained RNNT model with another smp_bpe model.

How I can convert my smp_bpe.model to smp_bpe.dict for fairseq.data.Dictionary.load method? | https://github.com/pytorch/android-demo-app/issues/280 | closed | [] | 2022-12-02T12:18:50Z | 2022-12-02T14:44:48Z | null | make1986 |

pytorch/serve | 2,019 | Diagnosing very slow performance | ### 🐛 Describe the bug

I'm trying to work out why my endpoint throughput is very slow. I wasn't sure if this is the best forum but there doesn't appear to be a specific torchserve forum on https://discuss.pytorch.org/

I have simple text classifier, I've created a custom handler as the default wasn't suitable. I ... | https://github.com/pytorch/serve/issues/2019 | closed | [

"question"

] | 2022-12-01T21:59:57Z | 2022-12-02T22:15:06Z | null | david-waterworth |

huggingface/datasets | 5,326 | No documentation for main branch is built | Since:

- #5250

- Commit: 703b84311f4ead83c7f79639f2dfa739295f0be6

the docs for main branch are no longer built.

The change introduced only triggers the docs building for releases. | https://github.com/huggingface/datasets/issues/5326 | closed | [

"bug"

] | 2022-12-01T16:50:58Z | 2022-12-02T16:26:01Z | 0 | albertvillanova |

huggingface/datasets | 5,325 | map(...batch_size=None) for IterableDataset | ### Feature request

Dataset.map(...) allows batch_size to be None. It would be nice if IterableDataset did too.

### Motivation

Although it may seem a bit of a spurious request given that `IterableDataset` is meant for larger than memory datasets, but there are a couple of reasons why this might be nice.

One is th... | https://github.com/huggingface/datasets/issues/5325 | closed | [

"enhancement",

"good first issue"

] | 2022-12-01T15:43:42Z | 2022-12-07T15:54:43Z | 5 | frankier |

huggingface/datasets | 5,324 | Fix docstrings and types in documentation that appears on the website | While I was working on https://github.com/huggingface/datasets/pull/5313 I've noticed that we have a mess in how we annotate types and format args and return values in the code. And some of it is displayed in the [Reference section](https://huggingface.co/docs/datasets/package_reference/builder_classes) of the document... | https://github.com/huggingface/datasets/issues/5324 | open | [

"documentation"

] | 2022-12-01T15:34:53Z | 2024-01-23T16:21:54Z | 5 | polinaeterna |

pytorch/TensorRT | 1,509 | ❓ [Question] What does `is_aten` argument do in torch_tensorrt.fx.compile() ? | ## ❓ Question

The docstring for `is_aten` argument in torch_tensorrt.fx.compile() is missing and hence the users don't know what it does. | https://github.com/pytorch/TensorRT/issues/1509 | closed | [

"question"

] | 2022-12-01T14:38:12Z | 2022-12-02T12:50:56Z | null | 1559588143 |

pytorch/TensorRT | 1,508 | ❓ [Question] How to save and load compiled model from torch-tensorrt | I am working on a Jetson Xavier NX16 and using torch-tensorrt.compile(model, "default", input, enable_optimization) every time I restart my program seems like it is just doing the same tedious task over and over.

Is there not a way for torch-tensorrt to load the serialized engine created by torch_tensorrt.convert_met... | https://github.com/pytorch/TensorRT/issues/1508 | closed | [

"question"

] | 2022-12-01T11:20:08Z | 2022-12-16T07:46:59Z | null | MartinPedersenpp |

pytorch/serve | 2,016 | Missing mandatory parameter --model-store | ### 📚 The doc issue

I created a config.properties file

```

model_store="model_store"

load_models=all

models = {\

"tc": {\

"1.0.0": {\

"defaultVersion": true,\

"marName": "text_classifier.mar",\

"minWorkers": 1,\

"maxWorkers": 4,\

"batchSize": 1,\

... | https://github.com/pytorch/serve/issues/2016 | open | [

"documentation",

"question"

] | 2022-12-01T01:09:00Z | 2022-12-02T01:42:05Z | null | david-waterworth |

huggingface/datasets | 5,317 | `ImageFolder` performs poorly with large datasets | ### Describe the bug

While testing image dataset creation, I'm seeing significant performance bottlenecks with imagefolders when scanning a directory structure with large number of images.

## Setup

* Nested directories (5 levels deep)

* 3M+ images

* 1 `metadata.jsonl` file

## Performance Degradation Point... | https://github.com/huggingface/datasets/issues/5317 | open | [] | 2022-12-01T00:04:21Z | 2022-12-01T21:49:26Z | 3 | salieri |

pytorch/functorch | 1,071 | Different gradients for HyperNet training | TLDR: Is there a way to optimize model created by combine_state_for_ensemble using torch.backward()?

Hi, I am using combine_state_for_ensemble for HyperNet training.

```

fmodel, fparams, fbuffers = combine_state_for_ensemble([HyperMLP() for i in range(K)])

[p.requires_grad_() for p in fparams];

weights_and_bi... | https://github.com/pytorch/functorch/issues/1071 | open | [] | 2022-11-30T21:37:05Z | 2022-12-03T13:03:44Z | 2 | bkoyuncu |

pytorch/functorch | 1,070 | Applying grad elementwise to tensors of arbitrary shape | What is the easiest way to apply the grad of a function elementwise to a tensor of arbitrary shape? For example

```python

import torch

from functorch import grad, vmap

# These functions can be called with tensor of any shape and will be applied elementwise

sin = torch.sin

cos = torch.cos

# Create cos funct... | https://github.com/pytorch/functorch/issues/1070 | closed | [] | 2022-11-29T14:45:57Z | 2022-11-29T16:59:34Z | 4 | EmilienDupont |

pytorch/serve | 2,010 | How to assign one or more specific gpus to each model when deploying multiple models at once. | How to assign one or more specific gpus to each model when deploying multiple models at once. If I have two models and three gpus, the workers of the first model I only want to deploy on gpus 0 and 1, and the workers of the second model I only want to deploy on gpus 3. Instead of assigning gpus to each model sequential... | https://github.com/pytorch/serve/issues/2010 | closed | [

"question",

"gpu"

] | 2022-11-29T11:26:10Z | 2023-12-17T22:56:55Z | null | Git-TengSun |

huggingface/setfit | 209 | Limitations of Setfit Model | Hi, was wondering your thoughts on some of the limitations of the Setfit model. Can it support any sort of few shot text classification, or what are some areas where this model falls short? Are there any research papers / ideas to address some of these limitations.

Also, is the model available to call via Hugging F... | https://github.com/huggingface/setfit/issues/209 | open | [

"question"

] | 2022-11-28T22:58:35Z | 2023-02-24T20:11:00Z | null | nv78 |

pytorch/vision | 6,985 | Range compatibility for pytorch dependency | ### 🚀 The feature

Currently `torchvision` only ever supports a hard-pinned version of `torch`. f.e. `torchvision==0.13.0` requires`torch==1.12.0` and `torchvision==0.13.1` requires `torch==1.12.1`. It would be easier for users if torchvision wouldn't put exact restrictions on the `torch` version.

### Motivation, pit... | https://github.com/pytorch/vision/issues/6985 | closed | [

"question",

"topic: binaries"

] | 2022-11-28T15:01:17Z | 2022-12-08T15:00:36Z | null | alexandervaneck |

pytorch/TensorRT | 1,484 | Building on Jetson Xavier NX16GB with Jetpack4.6 (TensorRT8.0.1) python3.9, pytorch1.13 | I am trying to build the torch_tensorrt wheel on my Jetson Xavier NX16GB running Jetpack4.6 which means I run TensorRT8-0-1 with python3.9.15 and a on device compiled pytorch/torchlib 1.13.0.

I just can't seem to get it to compile succesfully.

I have tried both v1.1.0 until I realized that it was not really backwa... | https://github.com/pytorch/TensorRT/issues/1484 | closed | [

"question",

"channel: linux-jetpack"

] | 2022-11-28T13:08:28Z | 2022-12-01T11:05:36Z | null | MartinPedersenpp |

pytorch/examples | 1,097 | argument -a/--arch: invalid choice: 'efficientnet_b0' | Error reported:

main.py: error: argument -a/--arch: invalid choice: 'efficientnet_b0' (choose from 'alexnet', 'densenet121', 'densenet161', 'densenet169', 'densenet201', 'googlenet', 'inception_v3', 'mnasnet0_5', 'mnasnet0_75', 'mnasnet1_0', 'mnasnet1_3', 'mobilenet_v2', 'resnet101', 'resnet152', 'resnet18', 'resne... | https://github.com/pytorch/examples/issues/1097 | closed | [] | 2022-11-28T10:36:54Z | 2022-11-28T10:45:53Z | 1 | Deeeerek |

pytorch/functorch | 1,066 | Unable to compute derivatives due to calling .item() | Hello, i am getting the error below whenever i try to compute the jacobian of my network.

RuntimeError: vmap: It looks like you're either (1) calling .item() on a Tensor or (2) attempting to use a Tensor in some data-dependent control flow or (3) encountering this error in PyTorch internals. For (1): we don't supp... | https://github.com/pytorch/functorch/issues/1066 | closed | [] | 2022-11-27T05:16:57Z | 2022-11-29T10:53:43Z | 3 | elientumba2019 |

pytorch/examples | 1,096 | DDP training question | Hi, I'm using the tutorial [https://github.com/pytorch/tutorials/blob/master/intermediate_source/ddp_tutorial.rst](url) for DDP train,using 4 gpus in myself code, reference Basic Use Case. But when I finished the modification, it was stuck during run the demo,meanwhile,video memory has been occupied.Could you help me? | https://github.com/pytorch/examples/issues/1096 | open | [

"help wanted",

"distributed"

] | 2022-11-25T06:58:55Z | 2023-08-24T06:32:13Z | 2 | Henryplay |

pytorch/android-demo-app | 278 | How to change portrait to landscape on camera view in Object Detection App ? | https://github.com/pytorch/android-demo-app/issues/278 | open | [] | 2022-11-24T05:00:25Z | 2022-12-15T09:07:27Z | null | aravinthk00 | |

huggingface/Mongoku | 92 | Switch to Svelte(Kit?) | https://github.com/huggingface/Mongoku/issues/92 | closed | [

"enhancement",

"help wanted",

"question"

] | 2022-11-23T21:28:39Z | 2025-10-25T16:03:14Z | null | julien-c | |

huggingface/datasets | 5,286 | FileNotFoundError: Couldn't find file at https://dumps.wikimedia.org/enwiki/20220301/dumpstatus.json | ### Describe the bug

I follow the steps provided on the website [https://huggingface.co/datasets/wikipedia](https://huggingface.co/datasets/wikipedia)

$ pip install apache_beam mwparserfromhell

>>> from datasets import load_dataset

>>> load_dataset("wikipedia", "20220301.en")

however this results in the follo... | https://github.com/huggingface/datasets/issues/5286 | closed | [] | 2022-11-23T14:54:15Z | 2024-11-23T01:16:41Z | 3 | roritol |

pytorch/tutorials | 2,126 | Incorrect use of "epoch" in the Optimizing Model Parameters tutorial | From the first paragraph of the [Optimizing Model Parameters](https://github.com/pytorch/tutorials/blob/master/beginner_source/basics/optimization_tutorial.py) tutorial:

> in each iteration (called an epoch) the model makes a guess about the output, calculates the error in its guess (loss), collects the derivatives ... | https://github.com/pytorch/tutorials/issues/2126 | closed | [

"intro"

] | 2022-11-22T10:23:34Z | 2022-11-28T21:42:30Z | 1 | chrsigg |

huggingface/setfit | 198 | text similarity | Hi can I use this system to obtain the similaarity scores of my data set to a given prompts.

If not what best solution could help this problem?

thank you | https://github.com/huggingface/setfit/issues/198 | open | [

"question"

] | 2022-11-22T06:59:21Z | 2022-12-20T09:04:53Z | null | aivyon |

huggingface/datasets | 5,274 | load_dataset possibly broken for gated datasets? | ### Describe the bug

When trying to download the [winoground dataset](https://huggingface.co/datasets/facebook/winoground), I get this error unless I roll back the version of huggingface-hub:

```

[/usr/local/lib/python3.7/dist-packages/huggingface_hub/utils/_validators.py](https://localhost:8080/#) in validate_rep... | https://github.com/huggingface/datasets/issues/5274 | closed | [] | 2022-11-21T21:59:53Z | 2023-05-27T00:06:14Z | 9 | TristanThrush |

pytorch/TensorRT | 1,467 | ❓ [Question] Profiling examples? | ## ❓ Question

When I'm not using TensorRT, I run my model through an FX interpreter that times each call op (by inserting CUDA events before/after and measuring the elapsed time). I'd like to do something similar after converting/compiling the model to TensorRT, and I see there is some profiling built in with [tenso... | https://github.com/pytorch/TensorRT/issues/1467 | closed | [

"question",

"No Activity",

"component: runtime"

] | 2022-11-21T21:13:28Z | 2023-05-04T00:02:17Z | null | collinmccarthy |

huggingface/datasets | 5,272 | Use pyarrow Tensor dtype | ### Feature request

I was going the discussion of converting tensors to lists.

Is there a way to leverage pyarrow's Tensors for nested arrays / embeddings?

For example:

```python

import pyarrow as pa

import numpy as np

x = np.array([[2, 2, 4], [4, 5, 100]], np.int32)

pa.Tensor.from_numpy(x, dim_names=["dim1... | https://github.com/huggingface/datasets/issues/5272 | open | [

"enhancement"

] | 2022-11-20T15:18:41Z | 2024-11-11T03:03:17Z | 17 | franz101 |

pytorch/tutorials | 2,122 | using nn.Module(X).argmax(1) - get IndexError | Hello there, I'm student of NN course, I'm try to implement FFNN (or TDNN) to work on prediction of AR(2)-model, im using PyTorch example, and on my data and NN architecture i got pred.argmax(1) - error:

```

Traceback (most recent call last):

File "/home/b0r1ngx/PycharmProjects/ArtificialNeuroNets/group_00201/lab0... | https://github.com/pytorch/tutorials/issues/2122 | open | [

"question",

"arch-optimization"

] | 2022-11-19T16:05:26Z | 2023-03-01T16:22:33Z | null | b0r1ngx |

huggingface/optimum | 488 | Community contribution - `BetterTransformer` integration for more models! | ## `BetterTransformer` integration for more models!

`BetterTransformer` API provides faster inference on CPU & GPU through a simple interface!

Models can benefit from very interesting speedups using a one liner and by making sure to install the latest version of PyTorch. A complete guideline on how to convert a ... | https://github.com/huggingface/optimum/issues/488 | open | [

"good first issue"

] | 2022-11-18T10:45:39Z | 2025-05-20T20:35:02Z | 26 | younesbelkada |

huggingface/setfit | 192 | How to use a custom Sentence Transformer pretrained model | Hello team,

Presently we are using models which are present in hugging face . I have a custom trained Sentence transformer.

How I can use a custom trained Hugging face model in the present pipeline. | https://github.com/huggingface/setfit/issues/192 | open | [

"question"

] | 2022-11-17T09:13:59Z | 2022-12-20T09:05:06Z | null | theainerd |

huggingface/setfit | 191 | How to build multilabel text classfication dataset | From the sample below, param **column_mapping** is used to set up the dataset. What is the format of label column in multilabel?Is it the one-hot label?

trainer = SetFitTrainer(

model=model,

train_dataset=train_dataset,

eval_dataset=eval_dataset,

loss_class=CosineSimilarityLoss,

metric="ac... | https://github.com/huggingface/setfit/issues/191 | closed | [

"question"

] | 2022-11-17T05:50:56Z | 2022-12-13T22:32:16Z | null | HenryYuen128 |

pytorch/pytorch | 89,136 | [FSDP] Adam Gives Different Results Where Only Difference Is Flattening | Consider the following unit test (that relies on some imports from `common_fsdp.py`):

```

def test(self):

local_model = TransformerWithSharedParams.init(

self.process_group,

FSDPInitMode.NO_FSDP,

CUDAInitMode.CUDA_BEFORE,

deterministic=True,

)

fsdp_model = FSDP(

... | https://github.com/pytorch/pytorch/issues/89136 | closed | [

"oncall: distributed",

"module: fsdp"

] | 2022-11-16T15:16:55Z | 2024-06-11T20:01:26Z | null | awgu |

huggingface/datasets | 5,249 | Protect the main branch from inadvertent direct pushes | We have decided to implement a protection mechanism in this repository, so that nobody (not even administrators) can inadvertently push accidentally directly to the main branch.

See context here:

- d7c942228b8dcf4de64b00a3053dce59b335f618

To do:

- [x] Protect main branch

- Settings > Branches > Branch protec... | https://github.com/huggingface/datasets/issues/5249 | closed | [

"maintenance"

] | 2022-11-16T14:19:03Z | 2023-12-21T10:28:27Z | 1 | albertvillanova |

pytorch/TensorRT | 1,452 | 🐛 [Bug] FX front-end layer norm, missing plugin | ## Bug Description

I'm using a ConvNeXt model from the timm library which uses `torch.nn.functional.layer_norm`. I'm getting this warning during conversion:

```

Unable to find layer norm plugin, fall back to TensorRT implementation

```

which is triggered from [this line](https://github.com/pytorch/TensorRT/... | https://github.com/pytorch/TensorRT/issues/1452 | closed | [

"question",

"No Activity",

"component: plugins"

] | 2022-11-15T19:37:26Z | 2023-06-10T00:02:28Z | null | collinmccarthy |

huggingface/datasets | 5,243 | Download only split data | ### Feature request

Is it possible to download only the data that I am requesting and not the entire dataset? I run out of disk spaceas it seems to download the entire dataset, instead of only the part needed.

common_voice["test"] = load_dataset("mozilla-foundation/common_voice_11_0", "en", split="test",

... | https://github.com/huggingface/datasets/issues/5243 | open | [

"enhancement"

] | 2022-11-15T10:15:54Z | 2025-02-25T14:47:03Z | 7 | capsabogdan |

huggingface/diffusers | 1,281 | what is the meaning of parameter: "num_class_images" | What is the `num_class_images `parameter used for? I see that in some examples it is 50, sometimes it is 200. In the source code it is said that: "Minimal class images for prior preservation loss. If not have enough images, additional images will be sampled with class_prompt."

I still do not fully grasp it. For exampl... | https://github.com/huggingface/diffusers/issues/1281 | closed | [] | 2022-11-14T18:32:22Z | 2022-12-06T01:47:42Z | null | himmetozcan |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.