repo | number | title | body | url | state | labels | created_at | updated_at | comments | user |

|---|---|---|---|---|---|---|---|---|---|---|

huggingface/optimum | 987 | Does Optimum support converting the BLIP-2 model to ONNX? | Hi, does Optimum support converting the BLIP-2 model to ONNX? | https://github.com/huggingface/optimum/issues/987 | closed | [] | 2023-04-20T07:07:53Z | 2023-04-21T11:45:41Z | 1 | joewale |

huggingface/setfit | 364 | Understanding the trainer parameters | I am looking at the SetFit example with SetFitHead:

```

# Create trainer

trainer = SetFitTrainer(

model=model,

train_dataset=train_dataset,

eval_dataset=eval_dataset,

loss_class=CosineSimilarityLoss,

metric="accuracy",

batch_size=16,

num_iterations=20, # The number of text pairs to... | https://github.com/huggingface/setfit/issues/364 | closed | [

"question"

] | 2023-04-19T15:19:42Z | 2023-11-24T13:22:31Z | null | vahuja4 |

huggingface/diffusers | 3,151 | What is the format of the training data | Hello, I'm training LoRA, but I don't know what the data format looks like.

The error is as follows:

--caption_column' value 'text' needs to be one of: image

What is the data format? | https://github.com/huggingface/diffusers/issues/3151 | closed | [

"stale"

] | 2023-04-19T07:51:16Z | 2023-08-04T10:20:18Z | null | WGS-note |

huggingface/setfit | 360 | Token padding makes ONNX inference 6x slower, is attention_mask being used properly? | Here's some code that loads in my ONNX model and tokenizes 293 short examples. The longest length in the set is 153 tokens:

```python

input_text = test_ds['text']

import onnxruntime

from transformers import AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained(model_id)

inputs = tokenizer(

input_text,

... | https://github.com/huggingface/setfit/issues/360 | open | [

"question"

] | 2023-04-18T15:33:01Z | 2023-04-19T05:40:02Z | null | bogedy |

huggingface/datasets | 5,767 | How to use Distill-BERT with different datasets? | ### Describe the bug

- `transformers` version: 4.11.3

- Platform: Linux-5.4.0-58-generic-x86_64-with-glibc2.29

- Python version: 3.8.10

- PyTorch version (GPU?): 1.12.0+cu102 (True)

- Tensorflow version (GPU?): 2.10.0 (True)

- Flax version (CPU?/GPU?/TPU?): not installed (NA)

- Jax version: not installed

- JaxL... | https://github.com/huggingface/datasets/issues/5767 | closed | [] | 2023-04-18T06:25:12Z | 2023-04-20T16:52:05Z | 1 | sauravtii |

huggingface/transformers.js | 93 | [Feature Request] "slow tokenizer" format (`vocab.json` and `merges.txt`) | Wondering whether this code is supposed to work (or some variation on the repo URL - I tried a few different things):

```js

await import("https://cdn.jsdelivr.net/npm/@xenova/transformers@1.4.2/dist/transformers.min.js");

let tokenizer = await AutoTokenizer.from_pretrained("https://huggingface.co/cerebras/Cerebras-G... | https://github.com/huggingface/transformers.js/issues/93 | closed | [

"question"

] | 2023-04-18T05:11:31Z | 2023-04-23T07:41:27Z | null | josephrocca |

huggingface/datasets | 5,766 | Support custom feature types | ### Feature request

I think it would be nice to allow registering custom feature types with the 🤗 Datasets library. For example, allow to do something along the following lines:

```

from datasets.features import register_feature_type # this would be a new function

@register_feature_type

class CustomFeature... | https://github.com/huggingface/datasets/issues/5766 | open | [

"enhancement"

] | 2023-04-17T15:46:41Z | 2024-03-10T11:11:22Z | 4 | jmontalt |

huggingface/transformers.js | 92 | [Question] ESM module import in the browser (via jsdelivr) | Wondering how to import transformers.js as a module (as opposed to `<script>`) in the browser? I've tried this:

```js

let { AutoTokenizer } = await import("https://cdn.jsdelivr.net/npm/@xenova/transformers@1.4.2/dist/transformers.min.js");

```

But it doesn't seem to export anything. I might be making a mistake here... | https://github.com/huggingface/transformers.js/issues/92 | closed | [

"question"

] | 2023-04-17T10:06:55Z | 2023-04-22T19:17:56Z | null | josephrocca |

huggingface/optimum | 973 | How to run the encoder part only of the model transformed by BetterTransformer? | ### Feature request

If I want to run only the encoder part of a model, e.g., "bert-large-uncased", skipping the word embedding stage, I can use `nn.TransformerEncoder` in PyTorch eager mode. How could I implement the BetterTransformer version of the encoder?

```

encoder_layer = nn.TransformerEncoderLayer(d_mode... | https://github.com/huggingface/optimum/issues/973 | closed | [

"Stale"

] | 2023-04-17T02:29:44Z | 2025-06-04T02:15:33Z | 2 | WarningRan |

huggingface/datasets | 5,759 | Can I load in list of list of dict format? | ### Feature request

My JSONL dataset has the following format:

```

[{'input':xxx, 'output':xxx},{'input':xxx,'output':xxx},...]

[{'input':xxx, 'output':xxx},{'input':xxx,'output':xxx},...]

```

When I try to use `datasets.load_dataset('json', data_files=path)` or `datasets.Dataset.from_json`, it raises

```

File "site-p... | https://github.com/huggingface/datasets/issues/5759 | open | [

"enhancement"

] | 2023-04-16T13:50:14Z | 2023-04-19T12:04:36Z | 1 | LZY-the-boys |
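
One possible workaround for the question in the row above (datasets #5759), offered as a sketch rather than the library's own answer: flatten each line's list into individual records before building a dataset. It assumes every line is valid JSON (double-quoted keys) holding a list of objects. `flatten_jsonl_lists` is a hypothetical helper name and is not part of 🤗 Datasets.

```python
import json
from typing import Iterable, List


def flatten_jsonl_lists(lines: Iterable[str]) -> List[dict]:
    """Flatten JSONL lines that each hold a list of dicts into one list of dicts."""
    records: List[dict] = []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        items = json.loads(line)
        if not isinstance(items, list):
            raise ValueError("expected each line to be a JSON list")
        records.extend(items)
    return records


if __name__ == "__main__":
    sample = [
        '[{"input": "a", "output": "b"}, {"input": "c", "output": "d"}]',
        '[{"input": "e", "output": "f"}]',
    ]
    print(flatten_jsonl_lists(sample))
```

The flattened list has a single flat schema, so it could then be passed to a constructor such as `datasets.Dataset.from_list`, if the installed version of the library provides it.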

huggingface/setfit | 358 | Domain adaptation | Does setfit cover Adapter Transformers? https://arxiv.org/pdf/2007.07779.pdf | https://github.com/huggingface/setfit/issues/358 | closed | [

"question"

] | 2023-04-16T12:44:50Z | 2023-12-05T14:49:36Z | null | Elahehsrz |

huggingface/diffusers | 3,120 | The ControlNet trained by the diffusers scripts always produces the same result no matter what the input image is | ### Describe the bug

I trained a ControlNet with the base model Chilloutmix-Ni and the dataset Abhilashvj/vto_hd_train using the train_controlnet.py script provided in the diffusers repo.

After training I got a controlnet model.

When I inference the image with the model, if I use the same prompt and seed, no matter how I cha... | https://github.com/huggingface/diffusers/issues/3120 | closed | [

"bug",

"stale"

] | 2023-04-16T11:16:58Z | 2023-07-08T15:03:12Z | null | garyhxfang |

huggingface/transformers.js | 87 | Can whisper-tiny speech-to-text translate to English as well as transcribe foreign language? | I know there is a separate translation engine (t5-small), but I'm wondering if speech-to-text with whisper-tiny (not whisper-tiny.en) can return English translation alongside the foreign-language transcription? -- I read Whisper.ai can do this. It seems like it would just be a parameter, but I don't know where to loo... | https://github.com/huggingface/transformers.js/issues/87 | closed | [

"enhancement",

"question"

] | 2023-04-14T16:23:14Z | 2023-06-23T19:07:31Z | null | patrickinminneapolis |

huggingface/text-generation-inference | 182 | Is bert-base-uncased supported? | Hi,

I'm trying to deploy bert-base-uncased model by [v0.5.0](https://github.com/huggingface/text-generation-inference/tree/v0.5.0), but got an error: ValueError: BertLMHeadModel does not support `device_map='auto'` yet.

<details>

```

root@nick-test1-8zjwg-135105-worker-0:/usr/local/bin# ./text-generation-launch... | https://github.com/huggingface/text-generation-inference/issues/182 | open | [

"question"

] | 2023-04-14T07:26:05Z | 2023-11-17T09:20:30Z | null | nick1115 |

huggingface/setfit | 355 | ONNX conversion of multi-output classifier | Hi,

I am trying to do the ONNX conversion for a multilabel model using the multi-output classifier

`model = SetFitModel.from_pretrained(model_id, multi_target_strategy="multi-output")`.

When I tried `export_onnx(model.model_body,

model.model_head,

opset=12,

output_path=output_path)`, i... | https://github.com/huggingface/setfit/issues/355 | open | [

"question"

] | 2023-04-13T22:08:13Z | 2023-04-20T17:00:48Z | null | jackiexue1993 |

huggingface/transformers.js | 84 | [Question] New demo type/use case: semantic search (SemanticFinder) | Hi @xenova,

first of all thanks for the amazing library - it's awesome to be able to play around with the models without a backend!

I just created [SemanticFinder](https://do-me.github.io/SemanticFinder/), a semantic search engine in the browser with the help of transformers.js and [sentence-transformers/all-Mini... | https://github.com/huggingface/transformers.js/issues/84 | closed | [

"question"

] | 2023-04-12T18:57:38Z | 2025-10-13T05:03:30Z | null | do-me |

huggingface/diffusers | 3,075 | Create a Video ControlNet Pipeline | **Is your feature request related to a problem? Please describe.**

Stable Diffusion video generation lacks precise movement control and composition control. This is not surprising, since the model was not trained or fine-tuned with videos.

**Describe the solution you'd like**

By following an analogous extension p... | https://github.com/huggingface/diffusers/issues/3075 | closed | [

"question"

] | 2023-04-12T17:51:35Z | 2023-04-13T16:21:28Z | null | jfischoff |

huggingface/setfit | 352 | False Positives | I built a model using a multi-label dataset, but I am getting many false-positive outputs during inference.

For example:

FIRST NOTICE OF LOSS SENT TO AGENT'S CUSTOMER ACTIVITY ---> This is predicted as 'Total Loss' (Total Loss is one of the labels fed in through the dataset).

I see that there... | https://github.com/huggingface/setfit/issues/352 | closed | [

"question"

] | 2023-04-12T17:42:44Z | 2023-05-18T16:19:27Z | null | cassinthangam4996 |

huggingface/setfit | 349 | Hard Negative Mining vs random sampling | Has anyone tried doing hard negative mining when generating the sentence pairs as opposed to random sampling? @tomaarsen - is random sampling the default? | https://github.com/huggingface/setfit/issues/349 | open | [

"question"

] | 2023-04-12T09:24:53Z | 2023-04-15T16:04:27Z | null | vahuja4 |

huggingface/tokenizers | 1,216 | What is the correct way to remove a token from the vocabulary? | I see that it works when I do something like this

```

del tokenizer.get_vocab()[unwanted_token]

```

~~And then it will work when running encode~~, but when I save the model the unwanted tokens remain in the json. Is there a blessed way to remove unwanted tokens?

EDIT:

Now that I tried again see that does not a... | https://github.com/huggingface/tokenizers/issues/1216 | closed | [

"Stale"

] | 2023-04-11T15:40:48Z | 2024-02-10T01:47:15Z | null | tvallotton |

huggingface/optimum | 964 | onnx conversion for custom trained trocr base stage1 | ### Feature request

I have trained the base stage1 trocr on my custom dataset having multiline images. The trained model gives good results while using the default torch format for loading the model. But while converting the model to onnx, the model detects only first line or part of it in first line. I have used th... | https://github.com/huggingface/optimum/issues/964 | open | [

"onnx"

] | 2023-04-11T10:10:23Z | 2023-10-16T14:20:42Z | 1 | Mir-Umar |

huggingface/datasets | 5,727 | load_dataset fails with FileNotFound error on Windows | ### Describe the bug

Although I can import and run the datasets library in a Colab environment, I cannot successfully load any data on my own machine (Windows 10) despite following the install steps:

(1) create conda environment

(2) activate environment

(3) install with: ``conda` install -c huggingface -c conda-... | https://github.com/huggingface/datasets/issues/5727 | closed | [] | 2023-04-10T23:21:12Z | 2023-07-21T14:08:20Z | 4 | joelkowalewski |

huggingface/datasets | 5,725 | How to limit the number of examples in dataset, for testing? | ### Describe the bug

I am using this command:

`data = load_dataset("json", data_files=data_path)`

However, I want to add a parameter, to limit the number of loaded examples to be 10, for development purposes, but can't find this simple parameter.

### Steps to reproduce the bug

In the description.

### Expected beh... | https://github.com/huggingface/datasets/issues/5725 | closed | [] | 2023-04-10T08:41:43Z | 2023-04-21T06:16:24Z | 3 | ndvbd |
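
For the question in the row above (datasets #5725), the `split` argument of `load_dataset` accepts slice syntax such as `split="train[:10]"`, although that still prepares the whole file first. As an alternative, the sketch below copies only the first few records into a small development file that `data_files` can point at. It assumes the input is JSON Lines (one record per line); `write_head` is a hypothetical helper, not a library function.

```python
from itertools import islice


def write_head(src_path: str, dst_path: str, n: int = 10) -> int:
    """Copy the first `n` non-empty lines of a JSON Lines file; return how many were written."""
    written = 0
    with open(src_path, "r", encoding="utf-8") as src, \
            open(dst_path, "w", encoding="utf-8") as dst:
        non_empty = (line for line in src if line.strip())
        for line in islice(non_empty, n):
            # Make sure every copied record ends with a newline.
            dst.write(line if line.endswith("\n") else line + "\n")
            written += 1
    return written
```

The small file keeps load times short during development, and the full file can be swapped back in without changing the rest of the script.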

huggingface/transformers.js | 75 | [Question] WavLM support | This is a really good project. I was wondering whether WavLM is supported, since I want to run a voice conversation model in the browser, and also whether HiFi-GAN is supported for voice synthesis.

| https://github.com/huggingface/transformers.js/issues/75 | closed | [

"question"

] | 2023-04-08T09:36:03Z | 2023-09-08T13:17:07Z | null | Ashraf-Ali-aa |

huggingface/datasets | 5,719 | Array2D feature creates a list of list instead of a numpy array | ### Describe the bug

I'm not sure if this is expected behavior or not. When I create a 2D array using `Array2D`, the data has list type instead of numpy array. I think it should not be the expected behavior especially when I feed a numpy array as input to the data creation function. Why is it converting my array int... | https://github.com/huggingface/datasets/issues/5719 | closed | [] | 2023-04-07T21:04:08Z | 2023-04-20T15:34:41Z | 4 | offchan42 |

huggingface/datasets | 5,716 | Handle empty audio | Some audio paths exist, but the files are empty, and an error is reported when reading them. How can I use the filter function to skip the empty audio files?

When an audio file is empty, resampling breaks:

`array, sampling_rate = sf.read(f) array = librosa.resample(array, orig_sr=sampling_rate, target_... | https://github.com/huggingface/datasets/issues/5716 | closed | [] | 2023-04-07T09:51:40Z | 2023-09-27T17:47:08Z | 2 | zyb8543d |
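
One way to approach the question in the row above (datasets #5716), sketched under assumptions: check the file size on disk before any decoding or resampling happens. `is_nonempty_file` is a hypothetical helper, and the column name `audio_path` in the usage note is assumed, since the issue does not give it.

```python
import os


def is_nonempty_file(path: str) -> bool:
    """Return True if `path` exists and contains at least one byte."""
    try:
        return os.path.getsize(path) > 0
    except OSError:
        return False  # missing or unreadable path
```

With a dataset whose paths are stored as plain strings, this could be used as `dataset.filter(lambda example: is_nonempty_file(example["audio_path"]))`. A file that has a valid header but zero samples would still pass this check, so a length check after `sf.read` may also be needed.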

huggingface/setfit | 344 | How do I use multiple text columns? | My text is not in one column; there are many columns. For example, the text columns are "sex", "title", "weather". What should I do? | https://github.com/huggingface/setfit/issues/344 | closed | [

"question"

] | 2023-04-07T01:51:21Z | 2023-04-10T00:45:38Z | null | freecui |

huggingface/transformers.js | 71 | [Question] How to run test suite | Hi @xenova,

I want to work on adding new features, but when I try to run the tests of the project I get this error:

```

Error: File not found. Could not locate "/Users/yonatanchelouche/Desktop/passive-project/transformers.js/models/onnx/quantized/distilbert-base-uncased-finetuned-sst-2-english/sequence-classific... | https://github.com/huggingface/transformers.js/issues/71 | closed | [

"question"

] | 2023-04-06T17:03:09Z | 2023-05-15T17:38:46Z | null | chelouche9 |

huggingface/transformers.js | 69 | How to convert bloomz model | While converting the [bloomz](https://huggingface.co/bigscience/bloomz-7b1l) model, I am getting the 'invalid syntax' error. Is conversion limited to only predefined model types?

If not, please provide the syntax for converting the above model with quantization.

(I will run the inference in nodejs and not in browse... | https://github.com/huggingface/transformers.js/issues/69 | closed | [

"question"

] | 2023-04-04T14:51:16Z | 2023-04-09T02:01:49Z | null | bil-ash |

huggingface/transformers.js | 68 | [Feature request] whisper word level timestamps | I am new to both transformers.js and whisper, so I am sorry for a lame question in advance.

I am trying to make [return_timestamps](https://huggingface.co/docs/transformers/main_classes/pipelines#transformers.AutomaticSpeechRecognitionPipeline.__call__) parameter work...

I managed to customize [script.js](https:/... | https://github.com/huggingface/transformers.js/issues/68 | closed | [

"enhancement",

"question"

] | 2023-04-04T10:57:05Z | 2023-07-09T22:48:31Z | null | jozefchutka |

huggingface/datasets | 5,705 | Getting next item from IterableDataset took forever. | ### Describe the bug

I have a large dataset, about 500GB. The format of the dataset is parquet.

I then load the dataset and try to get the first item

```python

def get_one_item():

dataset = load_dataset("path/to/datafiles", split="train", cache_dir=".", streaming=True)

dataset = dataset.filter(lambda... | https://github.com/huggingface/datasets/issues/5705 | closed | [] | 2023-04-04T09:16:17Z | 2023-04-05T23:35:41Z | 2 | HongtaoYang |

huggingface/optimum | 952 | Enable AMP for BetterTransformer | ### Feature request

Allow for the `BetterTransformer` models to be inferenced with AMP.

### Motivation

Models transformed with `BetterTransformer` raise an error when used with AMP:

`bettertransformers.models.base`

```python

...

def forward_checker(self, *args, **kwargs):

if torch.is_autocast_ena... | https://github.com/huggingface/optimum/issues/952 | closed | [] | 2023-04-04T09:14:00Z | 2023-07-26T17:08:42Z | 6 | viktor-shcherb |

huggingface/controlnet_aux | 18 | When using openpose, what is the format of the input image? RGB format, or BGR format? |

I saw that the image in BGR format is used as input in the open_pose/body.py file, but the hug... | https://github.com/huggingface/controlnet_aux/issues/18 | open | [] | 2023-04-04T03:58:38Z | 2023-04-04T11:23:33Z | null | ZihaoW123 |

huggingface/datasets | 5,702 | Is it possible or how to define a `datasets.Sequence` that could potentially be either a dict, a str, or None? | ### Feature request

Hello! Apologies if my question sounds naive:

I was wondering if it’s possible, or how one would go about defining a 'datasets.Sequence' element in datasets.Features that could potentially be either a dict, a str, or None?

Specifically, I’d like to define a feature for a list that contains 18... | https://github.com/huggingface/datasets/issues/5702 | closed | [

"enhancement"

] | 2023-04-04T03:20:43Z | 2023-04-05T14:15:18Z | 4 | gitforziio |

huggingface/dataset-viewer | 1,011 | Remove authentication by cookie? | Currently, to be able to return the contents for gated datasets, all the endpoints check the request credentials if needed. The accepted credentials are: HF token, HF cookie, or a JWT in `X-Api-Key`. See https://github.com/huggingface/datasets-server/blob/ecb861b5e8d728b80391f580e63c8d2cad63a1fc/services/api/src/api/au... | https://github.com/huggingface/dataset-viewer/issues/1011 | closed | [

"question",

"P2"

] | 2023-04-03T12:12:56Z | 2024-03-13T09:48:38Z | null | severo |

huggingface/transformers.js | 63 | [Model request] Helsinki-NLP/opus-mt-ru-en (marian) | Sorry for this noob question, can somebody give me a kind of guideline to be able to convert and use

https://huggingface.co/Helsinki-NLP/opus-mt-ru-en/tree/main

thank you | https://github.com/huggingface/transformers.js/issues/63 | closed | [

"enhancement",

"question"

] | 2023-03-31T09:18:28Z | 2023-08-20T08:00:38Z | null | eviltik |

huggingface/safetensors | 222 | Might not be related, but I want to ask: could there be a C++ version? | Hello, I want to ask 2 questions:

1. Will safetensors provide a C++ version? It looks more convenient than pth or ONNX.

2. Is it possible to load safetensors into inference libraries other than PyTorch, such as onnxruntime? | https://github.com/huggingface/safetensors/issues/222 | closed | [

"Stale"

] | 2023-03-31T05:14:29Z | 2023-12-21T01:47:58Z | 5 | lucasjinreal |

huggingface/transformers.js | 62 | [Feature request] nodejs caching | Hi, thank you for your work.

I'm a Node.js user and I read that there is no model cache implementation right now, and that you are working on it.

Do you have an idea of when you will be able to push a release with a cache implementation?

Just asking because I was about to code it on my side. | https://github.com/huggingface/transformers.js/issues/62 | closed | [

"enhancement",

"question"

] | 2023-03-31T04:27:57Z | 2023-05-15T17:26:55Z | null | eviltik |

huggingface/dataset-viewer | 1,001 | Add total_rows in /rows response? | Should we add the number of rows in a split (eg. in field `total_rows`) in response to /rows?

It would help avoid sending a request to /size to get it.

It would also help fix a bad query.

eg: https://datasets-server.huggingface.co/rows?dataset=glue&config=ax&split=test&offset=50000&length=100 returns:

```js... | https://github.com/huggingface/dataset-viewer/issues/1001 | closed | [

"question",

"improvement / optimization"

] | 2023-03-30T13:54:19Z | 2023-05-07T15:04:12Z | null | severo |

huggingface/dataset-viewer | 999 | Use the huggingface_hub webhook server? | See https://github.com/huggingface/huggingface_hub/pull/1410

The /webhook endpoint could live in its pod with the huggingface_hub webhook server. Is it useful for our project? Feel free to comment. | https://github.com/huggingface/dataset-viewer/issues/999 | closed | [

"question",

"refactoring / architecture"

] | 2023-03-30T08:44:49Z | 2023-06-10T15:04:09Z | null | severo |

huggingface/datasets | 5,687 | Document to compress data files before uploading | In our docs to [Share a dataset to the Hub](https://huggingface.co/docs/datasets/upload_dataset), we tell users to upload their data files directly, like CSV, JSON, JSON-Lines, text,... However, these extensions are not tracked by Git LFS by default, as they are not in the `.gitattributes` file. Therefore, if they are t... | https://github.com/huggingface/datasets/issues/5687 | closed | [

"documentation"

] | 2023-03-30T06:41:07Z | 2023-04-19T07:25:59Z | 3 | albertvillanova |

huggingface/datasets | 5,685 | Broken Image render on the hub website | ### Describe the bug

Hi :wave:

Not sure if this is the right place to ask, but I am trying to load a huge amount of datasets on the hub (:partying_face: ) but I am facing a little issue with the `image` type

issue I think we should add a note about the order of patterns that is used to find splits, see [my comment](https://github.com/huggingface/datasets/issues/5650#issuecomment-1488412527). Also we should reference this page in pages about packaged load... | https://github.com/huggingface/datasets/issues/5681 | closed | [

"documentation"

] | 2023-03-29T11:44:49Z | 2023-04-03T18:31:11Z | 2 | polinaeterna |

huggingface/datasets | 5,671 | How to use `load_dataset('glue', 'cola')` | ### Describe the bug

I'm new to HuggingFace datasets and I cannot use `load_dataset('glue', 'cola')`.

- I was stuck on the following problem:

```python

from datasets import load_dataset

cola_dataset = load_dataset('glue', 'cola')

------------------------------------------------------------------------... | https://github.com/huggingface/datasets/issues/5671 | closed | [] | 2023-03-26T09:40:34Z | 2023-03-28T07:43:44Z | 2 | makinzm |

huggingface/optimum | 918 | Support for LLaMA | ### Feature request

A support for exporting LLaMA to ONNX

### Motivation

It would be great to have one, to apply optimizations and so on

### Your contribution

I could try implementing support, but I would need assistance with the model config, even though it should be pretty similar to what is already done with GPT-J | https://github.com/huggingface/optimum/issues/918 | closed | [

"onnx"

] | 2023-03-23T21:07:30Z | 2023-04-17T14:32:37Z | 2 | nenkoru |

huggingface/datasets | 5,665 | Feature request: IterableDataset.push_to_hub | ### Feature request

It'd be great to have a lazy push to hub, similar to the lazy loading we have with `IterableDataset`.

Suppose you'd like to filter [LAION](https://huggingface.co/datasets/laion/laion400m) based on certain conditions, but as LAION doesn't fit into your disk, you'd like to leverage streaming:

`... | https://github.com/huggingface/datasets/issues/5665 | closed | [

"enhancement"

] | 2023-03-23T09:53:04Z | 2025-06-06T16:13:22Z | 13 | NielsRogge |

huggingface/datasets | 5,660 | integration with imbalanced-learn | ### Feature request

Wouldn't it be great if the various class balancing operations from imbalanced-learn were available as part of datasets?

### Motivation

I'm trying to use imbalanced-learn to balance a dataset, but it's not clear how to get the two to interoperate - what would be great would be some examples. I'v... | https://github.com/huggingface/datasets/issues/5660 | closed | [

"enhancement",

"wontfix"

] | 2023-03-22T11:05:17Z | 2023-07-06T18:10:15Z | 1 | tansaku |

huggingface/safetensors | 202 | `safetensors.torch.save_file()` throws `RuntimeError` - any recommended way to enforce? | I was confronted with `RuntimeError: Some tensors share memory, this will lead to duplicate memory on disk and potential differences when loading them again`.

Can we explicitly disregard "**potential** differences"? | https://github.com/huggingface/safetensors/issues/202 | closed | [] | 2023-03-21T21:24:38Z | 2024-06-06T02:29:48Z | 26 | drahnreb |

huggingface/optimum | 906 | Optimum export of whisper raises ValueError: There was an error while processing timestamps, we haven't found a timestamp as last token. Was WhisperTimeStampLogitsProcessor used? | ### System Info

```shell

optimum: 1.7.1

Python: 3.8.3

transformers: 4.27.2

platform: Windows 10

```

### Who can help?

@philschmid @michaelbenayoun

### Information

- [ ] The official example scripts

- [x] My own modified scripts

### Tasks

- [ ] An officially supported task in the `examples` folder (such as G... | https://github.com/huggingface/optimum/issues/906 | closed | [

"bug"

] | 2023-03-21T13:45:10Z | 2023-03-24T18:26:17Z | 3 | xenova |

huggingface/datasets | 5,653 | Doc: save_to_disk, `num_proc` will affect `num_shards`, but it's not documented | ### Describe the bug

[`num_proc`](https://huggingface.co/docs/datasets/main/en/package_reference/main_classes#datasets.DatasetDict.save_to_disk.num_proc) will affect `num_shards`, but it's not documented

### Steps to reproduce the bug

Nothing to reproduce

### Expected behavior

[document of `num_shards`](https://... | https://github.com/huggingface/datasets/issues/5653 | closed | [

"documentation",

"good first issue"

] | 2023-03-21T05:25:35Z | 2023-03-24T16:36:23Z | 1 | RmZeta2718 |

huggingface/dataset-viewer | 965 | Change the limit of started jobs? all kinds -> per kind | Currently, the `QUEUE_MAX_JOBS_PER_NAMESPACE` parameter limits the number of started jobs for the same namespace (user or organization). Maybe we should enforce this limit **per job kind** instead of **globally**. | https://github.com/huggingface/dataset-viewer/issues/965 | closed | [

"question",

"improvement / optimization"

] | 2023-03-20T17:40:45Z | 2023-04-29T15:03:57Z | null | severo |

huggingface/dataset-viewer | 964 | Kill a job after a maximum duration? | The heartbeat already allows to detect if a job has crashed and to generate an error in that case. But some jobs can take forever, while not crashing. Should we set a maximum duration for the jobs, in order to save resources and free the queue? I imagine that we could automatically kill a job that takes more than 20 mi... | https://github.com/huggingface/dataset-viewer/issues/964 | closed | [

"question",

"improvement / optimization"

] | 2023-03-20T17:37:35Z | 2023-03-23T13:16:33Z | null | severo |

huggingface/optimum | 903 | Support transformers export to ggml format | ### Feature request

ggml is gaining traction (e.g. llama.cpp has 10k stars), and it would be great to extend optimum.exporters and enable the community to export PyTorch/Tensorflow transformers weights to the format expected by ggml, having a more streamlined and single-entry export.

This could avoid duplicates a... | https://github.com/huggingface/optimum/issues/903 | open | [

"feature-request",

"help wanted",

"exporters"

] | 2023-03-20T12:51:51Z | 2023-07-03T04:51:18Z | 2 | fxmarty |

huggingface/datasets | 5,650 | load_dataset doesn't work correctly with my image data | I have about 20000 images in my folder, divided into 4 subfolders named after the classes.

When i use load_dataset("my_folder_name", split="train") this function create dataset in which there are only 4 images, the remaining 19000 images were not added there. What is the problem and did not understand. Tried converting imag... | https://github.com/huggingface/datasets/issues/5650 | closed | [] | 2023-03-18T13:59:13Z | 2023-07-24T14:13:02Z | 21 | WiNE-iNEFF |

huggingface/dataset-viewer | 924 | Support webhook version 3? | The Hub provides different formats for the webhooks. The current version, used in the public feature (https://huggingface.co/docs/hub/webhooks) is version 3. Maybe we should support version 3 soon. | https://github.com/huggingface/dataset-viewer/issues/924 | closed | [

"question",

"refactoring / architecture"

] | 2023-03-13T13:39:59Z | 2023-04-21T15:03:54Z | null | severo |

huggingface/datasets | 5,632 | Dataset cannot convert too large dictionary | ### Describe the bug

Hello everyone!

I tried to build a new dataset with the command "dict_valid = datasets.Dataset.from_dict({'input_values': values_array})".

However, I have a very large dataset (~400 GB) and it seems that Dataset cannot handle this.

Indeed, I can create the dataset until a certain size of m... | https://github.com/huggingface/datasets/issues/5632 | open | [] | 2023-03-13T10:14:40Z | 2023-03-16T15:28:57Z | 1 | MaraLac |

huggingface/ethics-education | 1 | What is AI Ethics? | With the amount of hype around things like ChatGPT, AI art, etc., there are a lot of misunderstandings being propagated through the media! Additionally, many people are not aware of the ethical impacts of AI, and they're even less aware about the work that folks in academia + industry are doing to ensure that AI system... | https://github.com/huggingface/ethics-education/issues/1 | open | [

"help wanted",

"explainer",

"audience: non-technical"

] | 2023-03-09T20:58:02Z | 2023-03-17T14:50:39Z | null | NimaBoscarino |

huggingface/diffusers | 2,633 | Asymmetric tiling | Hello. I'm trying to achieve tiling asymmetrically using Diffusers, in a similar fashion to the asymmetric tiling in Automatic1111's extension https://github.com/tjm35/asymmetric-tiling-sd-webui.

My understanding is that I must traverse all layers to alter the padding, in my case circular in X and constant in Y, bu... | https://github.com/huggingface/diffusers/issues/2633 | closed | [

"good first issue",

"question"

] | 2023-03-09T19:09:34Z | 2025-07-29T08:48:27Z | null | alejobrainz |

huggingface/optimum | 874 | Assistance exporting git-large to ONNX | Hello! I am looking to export an image captioning Hugging Face model to ONNX (specifically I was playing with the [git-large](https://huggingface.co/microsoft/git-large) model but if anyone knows of one that might be easier to deal with in terms of exporting that is great too)

I'm trying to follow [these](https://hu... | https://github.com/huggingface/optimum/issues/874 | closed | [

"Stale"

] | 2023-03-09T18:25:57Z | 2025-06-22T02:17:24Z | 3 | gracemcgrath |

huggingface/safetensors | 190 | Rust save ndarray using safetensors | I've been loving this library!

I have a question, how can I save an ndarray using safetensors?

https://docs.rs/ndarray/latest/ndarray/

For context: I am preprocessing data in rust and would like to then load it in python to do machine learning with pytorch. | https://github.com/huggingface/safetensors/issues/190 | closed | [

"Stale"

] | 2023-03-08T22:29:11Z | 2024-01-10T16:48:07Z | 7 | StrongChris |

huggingface/optimum | 867 | Auto-detect framework for large models at ONNX export | ### System Info

- `transformers` version: 4.26.1

- Platform: Linux-4.4.0-142-generic-x86_64-with-glibc2.23

- Python version: 3.9.15

- Huggingface_hub version: 0.11.1

- PyTorch version (GPU?): 1.13.0 (True)

- Tensorflow version (GPU?): not installed (NA)

- Flax version (CPU?/GPU?/TPU?): not installed (NA)

- Jax ... | https://github.com/huggingface/optimum/issues/867 | closed | [

"feature-request",

"onnx"

] | 2023-03-08T03:43:53Z | 2023-03-16T15:52:39Z | 3 | WangYizhang01 |

huggingface/datasets | 5,615 | IterableDataset.add_column is unable to accept another IterableDataset as a parameter. | ### Describe the bug

`IterableDataset.add_column` raises an exception when another `IterableDataset` is passed as a parameter.

The method seems to accept only eagerly evaluated values.

https://github.com/huggingface/datasets/blob/35b789e8f6826b6b5a6b48fcc2416c890a1f326a/src/datasets/iterable_dataset.py#L1388-L1391

... | https://github.com/huggingface/datasets/issues/5615 | closed | [

"wontfix"

] | 2023-03-07T01:52:00Z | 2023-03-09T15:24:05Z | 1 | zsaladin |

huggingface/safetensors | 188 | How to extract weights from onnx to safetensors | How to extract weights from onnx to safetensors in rust? | https://github.com/huggingface/safetensors/issues/188 | closed | [] | 2023-03-06T09:21:31Z | 2023-03-07T14:23:16Z | 2 | oovm |

huggingface/datasets | 5,609 | `load_from_disk` vs `load_dataset` performance. | ### Describe the bug

I have downloaded `openwebtext` (~12GB) and filtered out a small amount of junk (it's still huge). Now, I would like to use this filtered version for future work. It seems I have two choices:

1. Use `load_dataset` each time, relying on the cache mechanism, and re-run my filtering.

2. `save_to_di... | https://github.com/huggingface/datasets/issues/5609 | open | [] | 2023-03-05T05:27:15Z | 2023-07-13T18:48:05Z | 4 | davidgilbertson |

huggingface/sentence-transformers | 1,856 | What is the ideal sentence size to train with TSDAE? | I have an unlabeled dataset that contains 80k texts, with about 250 tokens on average (with the bert-base-multilingual-uncased tokenizer). I want to pre-train the model on my dataset, but I'm not sure if the texts are too long. It's possible to break them into smaller sentences, but I'm afraid that some sentences would lose context.

What... | https://github.com/huggingface/sentence-transformers/issues/1856 | open | [] | 2023-03-04T21:16:14Z | 2023-03-04T21:16:14Z | null | Diegobm99 |

huggingface/transformers | 21,950 | auto_find_batch_size should say what batch size it is using | ### Feature request

When using `auto_find_batch_size=True` in the trainer I believe it identifies the right batch size but then it doesn't log it to the console anywhere?

It would be good if it could log what batch size it is using?

### Motivation

I'd like to know what batch size it is using because then I will k... | https://github.com/huggingface/transformers/issues/21950 | closed | [] | 2023-03-04T08:53:25Z | 2023-06-28T15:03:39Z | null | p-christ |

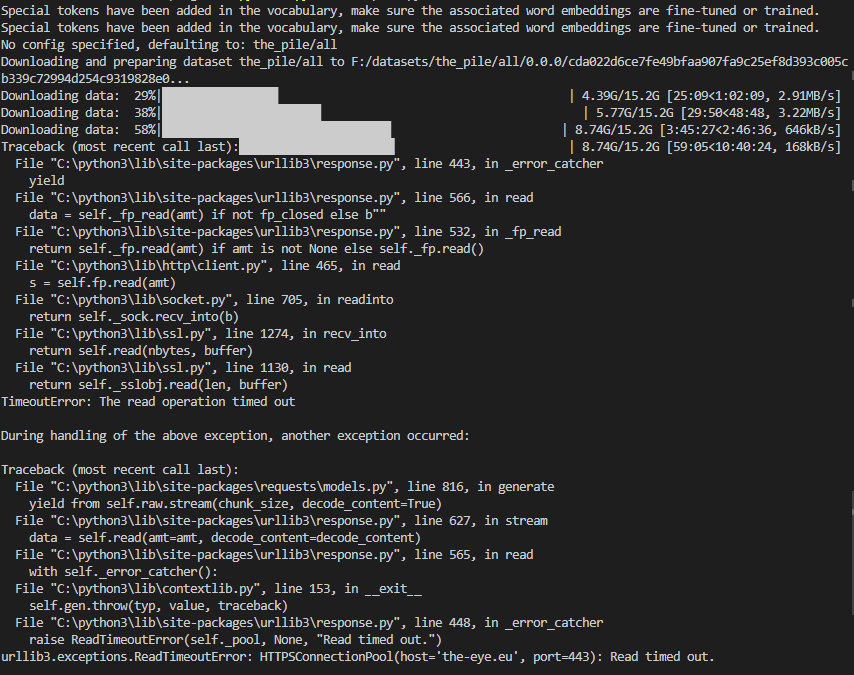

huggingface/datasets | 5,604 | Problems with downloading The Pile | ### Describe the bug

The downloads in the screenshot seem to be interrupted after some time and the last download throws a "Read timed out" error.

Here are the downloaded files:

)

moving Datasets to 2.8.0 solved the issue.

### Steps to reproduce the bug

1- using DreamBoothDataset ... | https://github.com/huggingface/datasets/issues/5600 | closed | [] | 2023-03-02T11:00:27Z | 2023-03-13T17:59:35Z | 1 | salahiguiliz |

huggingface/trl | 180 | what is AutoModelForCausalLMWithValueHead? | trl uses `AutoModelForCausalLMWithValueHead`, which is a base model (e.g. GPT2LMHeadModel) plus an fc layer, but I can't understand why an fc head layer is needed. | https://github.com/huggingface/trl/issues/180 | closed | [] | 2023-02-28T07:46:49Z | 2025-02-21T11:29:04Z | null | akk-123 |

huggingface/datasets | 5,585 | Cache is not transportable | ### Describe the bug

I would like to share cache between two machines (a Windows host machine and a WSL instance).

I run most of my code in WSL. I have just run out of space in the virtual drive. Rather than expand the drive size, I plan to move the cache to the host Windows machine, thereby sharing the downloads.

I... | https://github.com/huggingface/datasets/issues/5585 | closed | [] | 2023-02-28T00:53:06Z | 2023-02-28T21:26:52Z | 2 | davidgilbertson |

huggingface/dataset-viewer | 857 | Contribute to https://github.com/huggingface/huggingface.js? | https://github.com/huggingface/huggingface.js is a JS client for the Hub and inference. We could propose to add a client for the datasets-server. | https://github.com/huggingface/dataset-viewer/issues/857 | closed | [

"question"

] | 2023-02-27T12:27:43Z | 2023-04-08T15:04:09Z | null | severo |

huggingface/dataset-viewer | 852 | Store the parquet metadata in their own file? | See https://github.com/huggingface/datasets/issues/5380#issuecomment-1444281177

> From looking at Arrow's source, it seems Parquet stores metadata at the end, which means one needs to iterate over a Parquet file's data before accessing its metadata. We could mimic Dask to address this "limitation" and write metadata... | https://github.com/huggingface/dataset-viewer/issues/852 | closed | [

"question"

] | 2023-02-27T08:29:12Z | 2023-05-01T15:04:07Z | null | severo |

huggingface/datasets | 5,570 | load_dataset gives FileNotFoundError on imagenet-1k if license is not accepted on the hub | ### Describe the bug

Calling `load_dataset('imagenet-1k')` raises a FileNotFoundError when not logged in, and also when logged in with huggingface-cli without having accepted the license on the Hub. There is no error once the license is accepted.

### Steps to reproduce the bug

```

from datasets import load_dataset

imagenet =... | https://github.com/huggingface/datasets/issues/5570 | closed | [] | 2023-02-23T16:44:32Z | 2023-07-24T15:18:50Z | 2 | buoi |

huggingface/optimum | 810 | ORTTrainer using DataParallel instead of DistributedDataParallel causes downstream errors | ### System Info

```shell

optimum 1.6.4

python 3.8

```

### Who can help?

@JingyaHuang @echarlaix

### Information

- [X] The official example scripts

- [ ] My own modified scripts

### Tasks

- [X] An officially supported task in the `examples` folder (such as GLUE/SQuAD, ...)

- [ ] My own task ... | https://github.com/huggingface/optimum/issues/810 | closed | [

"bug"

] | 2023-02-22T22:15:41Z | 2023-03-19T19:01:32Z | 2 | prathikr |

huggingface/optimum | 809 | Better Transformer with QA pipeline returns padding issue | ### System Info

```shell

Optimum version: 1.6.4

Platform: Linux

Python version: 3.10

Transformers version: 4.26.1

Accelerate version: 0.16.0

Torch version: 1.13.1+cu117

```

### Who can help?

@philschmid

### Information

- [ ] The official example scripts

- [X] My own modified scripts

### Tas... | https://github.com/huggingface/optimum/issues/809 | closed | [

"bug"

] | 2023-02-22T18:51:23Z | 2023-02-27T11:29:09Z | 2 | vrdn-23 |

huggingface/setfit | 315 | Choosing the datapoints that need to be annotated? | Hello,

I have a large set of unlabelled data on which I need to do text classification. Since few-shot text classification uses only a handful of datapoints per class, is there a systematic way to choose which datapoints to annotate?

Thank you! | https://github.com/huggingface/setfit/issues/315 | open | [

"question"

] | 2023-02-16T05:25:03Z | 2023-03-06T20:56:22Z | null | vahuja4 |

huggingface/setfit | 314 | Question: train and deploy via Sagemaker | Hi

I'm trying to set up training (and hyperparameter tuning) using Amazon SageMaker.

Because SetFit is not a standard model on Hugging Face, I'm guessing that the examples provided in the HuggingFace/SageMaker integration are not usable: [example](https://github.com/huggingface/notebooks/tree/ef21344eb20fe19f881c846d... | https://github.com/huggingface/setfit/issues/314 | open | [

"question"

] | 2023-02-15T12:23:33Z | 2024-03-28T15:10:28Z | null | lbelpaire |

huggingface/setfit | 313 | Setfit no support evaluate each epoch or step and save model each epoch or step | Hi everyone, can you tell me how to evaluate each epoch and save a model checkpoint? Thanks, everyone. | https://github.com/huggingface/setfit/issues/313 | closed | [

"question"

] | 2023-02-15T04:14:24Z | 2023-12-06T13:20:50Z | null | batman-do |

huggingface/optimum | 776 | Loss of accuracy when Longformer for SequenceClassification model is exported to ONNX | ### Edit: This is a crosspost to [pytorch #94810](https://github.com/pytorch/pytorch/issues/94810). I don't know where the issue lies.

### System info

- `transformers` version: 4.26.1

- Platform: macOS-10.16-x86_64-i386-64bit

- Python version: 3.9.12

- PyTorch version (GPU?): 1.13.0 (False)

- onnx: 1.13.0

-... | https://github.com/huggingface/optimum/issues/776 | closed | [] | 2023-02-14T10:22:12Z | 2023-02-17T13:55:17Z | 8 | SteffenHaeussler |

huggingface/setfit | 310 | How does predict_proba work exactly ? | Hi everyone !

First, thanks for this amazing package! It is more than useful for a project at my work currently, and the 0.6.0 release was much needed on my side!

But I'd like some clarification on how the function predict_proba works, because I have a hard time understanding it.

This table :

<html>

<body>

<!--S... | https://github.com/huggingface/setfit/issues/310 | open | [

"question",

"needs verification"

] | 2023-02-10T14:13:13Z | 2023-11-15T08:24:38Z | null | doubianimehdi |

huggingface/setfit | 308 | [QUESTION] Using callbacks (early stopping, logging, etc) | Hi all, thanks for your work here!

**TLDR**: Is there a way to add callbacks for early stopping and logging (for example, with W&B?).

I am using setfit for a project, but I could not figure out a way to add early stopping. I am afraid that I am overfitting to the training set. I also can't really say that I am, be... | https://github.com/huggingface/setfit/issues/308 | closed | [

"question"

] | 2023-02-08T19:39:20Z | 2023-02-27T16:25:26Z | null | FMelloMascarenhas-Cohere |

huggingface/optimum | 763 | Documented command "optimum-cli onnxruntime" doesn't exist? | ### System Info

```shell

Python 3.9, Ubuntu 20.04, Miniconda. CUDA GPU available

Packages installed (the important stuff):

onnx==1.13.0

onnxruntime==1.13.1

optimum==1.6.3

tokenizers==0.13.2

torch==1.13.1

transformers==4.26.0

nvidia-cublas-cu11==11.10.3.66

nvidia-cuda-nvrtc-cu11==11.7.99

nvidia-cuda-r... | https://github.com/huggingface/optimum/issues/763 | closed | [

"bug"

] | 2023-02-08T18:19:52Z | 2023-02-08T18:25:52Z | 2 | binarymax |

huggingface/datasets | 5,513 | Some functions use a param named `type` shouldn't that be avoided since it's a Python reserved name? | Hi @mariosasko, @lhoestq, or whoever reads this! :)

After going through `ArrowDataset.set_format` I found out that the `type` param is actually named `type` which is a Python reserved name as you may already know, shouldn't that be renamed to `format_type` before the 3.0.0 is released?

Just wanted to get your inp... | https://github.com/huggingface/datasets/issues/5513 | closed | [] | 2023-02-08T15:13:46Z | 2023-07-24T16:02:18Z | 4 | alvarobartt |

huggingface/setfit | 298 | apply the optimized parameters | I did my hyperparameter search optimization on one computer and now I'm trying to apply the obtained parameters on another computer, so I could not use this code "trainer.apply_hyperparameters(best_run.hyperparameters, final_model=True)

trainer.train()". I put the obtained parameters manually in my new trainer instead... | https://github.com/huggingface/setfit/issues/298 | closed | [

"question"

] | 2023-02-03T18:31:59Z | 2023-02-16T08:00:52Z | null | zoezhupingli |

huggingface/setfit | 297 | Comparing setfit with a simpler approach | Hi,

I am trying to compare setfit with another approach. The other approach is like this:

1. Define a list of representative sentences per class, call it `rep_sent`

2. Compute sentence embeddings for `rep_sent` using `mpnet-base-v2`

3. Define a list of test sentences, call it 'test_sent'.

4. Compute sentence embed... | https://github.com/huggingface/setfit/issues/297 | closed | [

"question"

] | 2023-02-03T10:11:44Z | 2023-02-06T13:01:02Z | null | vahuja4 |

huggingface/setfit | 295 | Question: How does the number of categories affect the training and accuracy? | I have found that increasing the number of categories reduces the accuracy results. Has anyone studied how the increased number of samples per category affects the results?

| https://github.com/huggingface/setfit/issues/295 | open | [

"question"

] | 2023-02-02T18:43:33Z | 2023-07-26T19:30:21Z | null | rubensmau |

huggingface/dataset-viewer | 762 | Handle the case where the DatasetInfo is too big | In the /parquet-and-dataset-info processing step, if DatasetInfo is over 16MB, we will not be able to store it in MongoDB (https://pymongo.readthedocs.io/en/stable/api/pymongo/errors.html#pymongo.errors.DocumentTooLarge). We have to handle this case, and return a clear error to the user.

See https://huggingface.slac... | https://github.com/huggingface/dataset-viewer/issues/762 | closed | [

"bug"

] | 2023-02-02T10:25:19Z | 2023-02-13T13:48:06Z | null | severo |

huggingface/datasets | 5,494 | Update audio installation doc page | Our [installation documentation page](https://huggingface.co/docs/datasets/installation#audio) says that one can use Datasets for mp3 only with `torchaudio<0.12`. `torchaudio>0.12` is actually supported too but requires a specific version of ffmpeg which is not easily installed on all linux versions but there is a cust... | https://github.com/huggingface/datasets/issues/5494 | closed | [

"documentation"

] | 2023-02-01T19:07:50Z | 2023-03-02T16:08:17Z | 4 | polinaeterna |

huggingface/diffusers | 2,167 | I'm using Jupyter Notebook and every run accumulates ckpt files, but I don't know where they are | Every time I use diffusers, it downloads all the .bin and ckpt files, and they pile up somewhere on the server.

I thought they were piling up in anaconda3/env, but they weren't.

Where are the downloaded files stored? My server's memory is full :(

. Is there something I'm missing that exp... | https://github.com/huggingface/datasets/issues/5475 | closed | [] | 2023-01-27T01:32:25Z | 2023-01-30T16:17:11Z | 3 | jonny-cyberhaven |

huggingface/setfit | 289 | [question]: creating a custom dataset class like `sst` to fit into `setfit`, throws `Cannot index by location index with a non-integer key` | I'm trying to experiment with some PyTorch model; the dataset they were using for the experiment is [`sst`][1]

But I'm also learning PyTorch, so I thought it would be better to play with `Dataset` class and create my own dataset.

So this was my approach:

```

class CustomDataset(Dataset):

def __init__(self,... | https://github.com/huggingface/setfit/issues/289 | closed | [

"question"

] | 2023-01-25T10:22:35Z | 2023-01-27T15:53:44Z | null | maifeeulasad |

huggingface/transformers | 21,287 | [docs] TrainingArguments default label_names is not what is described in the documentation | ### System Info

- `transformers` version: 4.25.1

- Platform: macOS-12.6.1-arm64-arm-64bit

- Python version: 3.8.15

- Huggingface_hub version: 0.11.1

- PyTorch version (GPU?): 1.13.1 (False)

- Tensorflow version (GPU?): not installed (NA)

- Flax version (CPU?/GPU?/TPU?): not installed (NA)

- Jax version: not i... | https://github.com/huggingface/transformers/issues/21287 | closed | [] | 2023-01-24T18:24:47Z | 2023-01-24T19:48:26Z | null | fredsensibill |

huggingface/setfit | 282 | Loading a trained SetFit model without setfit? | SetFit team, first off, thanks for the awesome library!

I'm running into trouble trying to load and run inference on a trained SetFit model without using `SetFitModel.from_pretrained()`. Instead, I'd like to load the model using torch, transformers, sentence_transformers, or some combination thereof. Is there a clea... | https://github.com/huggingface/setfit/issues/282 | closed | [

"question"

] | 2023-01-20T01:08:00Z | 2024-05-21T08:11:08Z | null | ZQ-Dev8 |

huggingface/datasets | 5,442 | OneDrive Integrations with HF Datasets | ### Feature request

First of all, I would like to thank the whole community that developed the dataset storage and made it freely available.

How can we integrate a OneDrive account or any other cloud storage (like Google Drive, ...) with the **HF** datasets section?

For example, if I have **50GB** on my **Onedrive*... | https://github.com/huggingface/datasets/issues/5442 | closed | [

"enhancement"

] | 2023-01-19T23:12:08Z | 2023-02-24T16:17:51Z | 2 | Mohammed20201991 |

huggingface/diffusers | 2,012 | Reduce Imagic Pipeline Memory Consumption | I'm running the [Imagic Stable Diffusion community pipeline](https://github.com/huggingface/diffusers/blob/main/examples/community/imagic_stable_diffusion.py) and it's routinely allocating 25-38 GiB GPU vRAM which seems excessively high.

@MarkRich any ideas on how to reduce memory usage? Xformers and attention slic... | https://github.com/huggingface/diffusers/issues/2012 | closed | [

"question",

"stale"

] | 2023-01-16T23:43:03Z | 2023-02-24T15:03:35Z | null | andreemic |

huggingface/optimum | 697 | Custom model output | ### System Info

```shell

Copy-and-paste the text below in your GitHub issue:

- `optimum` version: 1.6.1

- `transformers` version: 4.25.1

- Platform: Linux-5.19.0-29-generic-x86_64-with-glibc2.36

- Python version: 3.10.8

- Huggingface_hub version: 0.11.1

- PyTorch version (GPU?): 1.13.1 (cuda availabe: True)

``... | https://github.com/huggingface/optimum/issues/697 | open | [

"bug"

] | 2023-01-16T14:08:12Z | 2023-04-11T12:30:04Z | 3 | jplu |

huggingface/datasets | 5,424 | When applying `ReadInstruction` to custom load it's not DatasetDict but list of Dataset? | ### Describe the bug

I am loading datasets from custom `tsv` files stored locally and applying split instructions for each split. Although the ReadInstruction is applied correctly, I was expecting the result to be a `DatasetDict`, but instead it is a list of `Dataset`.

### Steps to reproduce the bug

Steps to reproduc... | https://github.com/huggingface/datasets/issues/5424 | closed | [] | 2023-01-16T06:54:28Z | 2023-02-24T16:19:00Z | 1 | macabdul9 |

huggingface/setfit | 260 | How to use .predict() function | Hi,

I am new to using setfit. I will be running many tuning runs for models and have so far managed to get evaluation metrics using `trainer.evaluate()`.

However, is there any way to do something like the following to save the trained model's predictions?

trainer = SetFitTrainer(......)

trainer.train()

**pre... | https://github.com/huggingface/setfit/issues/260 | closed | [

"question"

] | 2023-01-08T23:18:56Z | 2023-01-09T10:00:38Z | null | yafsin |

huggingface/setfit | 256 | Contrastive training number of epochs | The `SentenceTransformer` number of epochs is the same as the number of epochs for the classification head.

Even when `SetFitTrainer` is initialized with `num_epochs=1` and then `trainer.train(num_epochs=10)`, the sentence transformer runs with 10 epochs. Ideally, the sentence transformer should run 1 epoch and the clas... | https://github.com/huggingface/setfit/issues/256 | closed | [

"question"

] | 2023-01-06T02:26:30Z | 2023-01-09T10:54:45Z | null | abhinav-kashyap-asus |
