markdown stringlengths 0 1.02M | code stringlengths 0 832k | output stringlengths 0 1.02M | license stringlengths 3 36 | path stringlengths 6 265 | repo_name stringlengths 6 127 |

|---|---|---|---|---|---|

数学和统计方法数组的数学函数对数组或数组某个轴向的数据进行统计计算;既可以通过数据实例方法调用;也可以当做nump顶级函数使用. | arr = np.arange(10).reshape(2,5)

print arr

print arr.mean()#计算数组平均值

print arr.mean(axis = 0)#可指定轴,用以统计该轴上的值

print arr.mean(axis = 1)

print np.mean(arr)

print np.mean(arr,axis = 0)

print np.mean(arr,axis = 1)

print arr.sum()#计算数组和

print arr.sum(axis = 0)

print arr.sum(axis = 1)

print np.sum(arr)

print np.sum(arr,axis = 0)

print np.sum(arr,axis = 1)

print arr.var()#计算方差差

print arr.var(axis = 0)#

print arr.var(axis = 1)#

print np.var(arr)#计算方差差

print np.var(arr,axis = 0)#

print np.var(arr,axis = 1)#

print arr.std()#计算标准差

print arr.std(axis = 0)#

print arr.std(axis = 1)#

print np.std(arr)#计算标准差

print np.std(arr,axis = 0)#

print np.std(arr,axis = 1)#

print arr

print arr.min()#计算最小值

print arr.min(axis = 0)#

print arr.min(axis = 1)#

print np.min(arr)#

print np.min(arr,axis = 0)#

print np.min(arr,axis = 1)#

print arr

print arr.max()#计算最大值

print arr.max(axis = 0)#

print arr.max(axis = 1)#

print np.max(arr)

print np.max(arr,axis = 0)#

print np.max(arr,axis = 1)#

print arr

print arr[0].argmin()#最小值的索引

print arr[1].argmin()#最小值的索引

print arr[0].argmax()#最大值的索引

print arr[1].argmax()#最大值的索引

print arr

print arr.cumsum()#不聚合,所有元素的累积和,而是返回中间结果构成的数组

arr = arr + 1

print arr

print arr.cumprod()#不聚合,所有元素的累积积,而是返回中间结果构成的数组 | [[ 1 2 3 4 5]

[ 6 7 8 9 10]]

[ 1 2 6 24 120 720 5040 40320 362880

3628800]

| Apache-2.0 | numpy/2-numpy-middle.ipynb | GmZhang3/data-science-ipython-notebooks |

用于布尔型数组的方法any()测试布尔型数组是否存在一个或多个Trueall()检查数组中所有值是否都为True | arr = np.random.randn(10)

# print

bools = arr > 0

print bools

print bools.any()

print np.any(bools)

print bools.all()

print np.all(bools)

arr = np.array([0,1,2,3,4])

print arr.any()#非布尔型数组,所有非0元素会被当做True | [ True False True True True False True True True False]

True

True

False

False

True

| Apache-2.0 | numpy/2-numpy-middle.ipynb | GmZhang3/data-science-ipython-notebooks |

排序 | arr = np.random.randn(10)

print arr

arr.sort()#与python内置列表排序一样;就地排序,会修改数组本身

print arr

arr = np.random.randn(10)

print arr

print np.sort(arr)#返回数组已排序副本 | [ 1.00826129 -1.33200021 -2.09423152 1.14618429 -1.94373366 -1.19964262

-0.97484352 -0.25508501 0.95594379 -0.92684744]

[-2.09423152 -1.94373366 -1.33200021 -1.19964262 -0.97484352 -0.92684744

-0.25508501 0.95594379 1.00826129 1.14618429]

[-0.13764091 -0.44657322 -0.4725095 -2.25229905 0.92481373 -0.4272677

-2.3459137 0.82869414 -0.2215158 -0.28151528]

[-2.3459137 -2.25229905 -0.4725095 -0.44657322 -0.4272677 -0.28151528

-0.2215158 -0.13764091 0.82869414 0.92481373]

| Apache-2.0 | numpy/2-numpy-middle.ipynb | GmZhang3/data-science-ipython-notebooks |

唯一化及其他的集合逻辑numpy针对一维数组的基本集合运算np.unique()找出数组中的唯一值,并返回已排序的结果 | names = np.array(['bob', 'joe', 'will', 'bob', 'will', 'joe', 'joe'])

print names

print np.unique(names) | ['bob' 'joe' 'will' 'bob' 'will' 'joe' 'joe']

['bob' 'joe' 'will']

| Apache-2.0 | numpy/2-numpy-middle.ipynb | GmZhang3/data-science-ipython-notebooks |

线性代数 | #矩阵乘法

x = np.array([[1,2,3],[4,5,6]])

y = np.array([[6,23],[-1,7],[8,9]])

print x

print y

print x.dot(y)

print np.dot(x,y) | [[1 2 3]

[4 5 6]]

[[ 6 23]

[-1 7]

[ 8 9]]

[[ 28 64]

[ 67 181]]

[[ 28 64]

[ 67 181]]

| Apache-2.0 | numpy/2-numpy-middle.ipynb | GmZhang3/data-science-ipython-notebooks |

随机数生成numpy.random模块对python内置的random进行补充,增接了一些用于高效生成多生概率分布的样本值的函数 | #从给定的上下限范围内随机选取整数

print np.random.randint(10)

#产生正态分布的样本值

print np.random.randn(3,2) | [[-0.05199971 -1.95031704]

[-1.42560357 0.78544126]

[ 0.47068984 -0.51157053]]

| Apache-2.0 | numpy/2-numpy-middle.ipynb | GmZhang3/data-science-ipython-notebooks |

数组组合 | a = np.array([1,2,3])

b = np.array([4,5,6])

c = np.arange(6).reshape(2,3)

d = np.arange(2,8).reshape(2,3)

print(a)

print(b)

print(c)

print(d)

np.concatenate([c,d])

# In machine learning, useful to enrich or

# add new/concatenate features with hstack

np.hstack([c, d])

# Use broadcasting when needed to do this automatically

np.vstack([a,b, d]) | _____no_output_____ | Apache-2.0 | numpy/2-numpy-middle.ipynb | GmZhang3/data-science-ipython-notebooks |

A Scientific Deep Dive Into SageMaker LDA1. [Introduction](Introduction)1. [Setup](Setup)1. [Data Exploration](DataExploration)1. [Training](Training)1. [Inference](Inference)1. [Epilogue](Epilogue) Introduction***Amazon SageMaker LDA is an unsupervised learning algorithm that attempts to describe a set of observations as a mixture of distinct categories. Latent Dirichlet Allocation (LDA) is most commonly used to discover a user-specified number of topics shared by documents within a text corpus. Here each observation is a document, the features are the presence (or occurrence count) of each word, and the categories are the topics. Since the method is unsupervised, the topics are not specified up front, and are not guaranteed to align with how a human may naturally categorize documents. The topics are learned as a probability distribution over the words that occur in each document. Each document, in turn, is described as a mixture of topics.This notebook is similar to **LDA-Introduction.ipynb** but its objective and scope are a different. We will be taking a deeper dive into the theory. The primary goals of this notebook are,* to understand the LDA model and the example dataset,* understand how the Amazon SageMaker LDA algorithm works,* interpret the meaning of the inference output.Former knowledge of LDA is not required. However, we will run through concepts rather quickly and at least a foundational knowledge of mathematics or machine learning is recommended. Suggested references are provided, as appropriate. | !conda install -y scipy

%matplotlib inline

import os, re, tarfile

import boto3

import matplotlib.pyplot as plt

import mxnet as mx

import numpy as np

np.set_printoptions(precision=3, suppress=True)

# some helpful utility functions are defined in the Python module

# "generate_example_data" located in the same directory as this

# notebook

from generate_example_data import (

generate_griffiths_data, match_estimated_topics,

plot_lda, plot_lda_topics)

# accessing the SageMaker Python SDK

import sagemaker

from sagemaker.amazon.common import numpy_to_record_serializer

from sagemaker.predictor import csv_serializer, json_deserializer | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Setup****This notebook was created and tested on an ml.m4.xlarge notebook instance.*We first need to specify some AWS credentials; specifically data locations and access roles. This is the only cell of this notebook that you will need to edit. In particular, we need the following data:* `bucket` - An S3 bucket accessible by this account. * Used to store input training data and model data output. * Should be withing the same region as this notebook instance, training, and hosting.* `prefix` - The location in the bucket where this notebook's input and and output data will be stored. (The default value is sufficient.)* `role` - The IAM Role ARN used to give training and hosting access to your data. * See documentation on how to create these. * The script below will try to determine an appropriate Role ARN. | from sagemaker import get_execution_role

role = get_execution_role()

bucket = '<your_s3_bucket_name_here>'

prefix = 'sagemaker/lda_science'

print('Training input/output will be stored in {}/{}'.format(bucket, prefix))

print('\nIAM Role: {}'.format(role)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

The LDA ModelAs mentioned above, LDA is a model for discovering latent topics describing a collection of documents. In this section we will give a brief introduction to the model. Let,* $M$ = the number of *documents* in a corpus* $N$ = the average *length* of a document.* $V$ = the size of the *vocabulary* (the total number of unique words)We denote a *document* by a vector $w \in \mathbb{R}^V$ where $w_i$ equals the number of times the $i$th word in the vocabulary occurs within the document. This is called the "bag-of-words" format of representing a document.$$\underbrace{w}_{\text{document}} = \overbrace{\big[ w_1, w_2, \ldots, w_V \big] }^{\text{word counts}},\quadV = \text{vocabulary size}$$The *length* of a document is equal to the total number of words in the document: $N_w = \sum_{i=1}^V w_i$.An LDA model is defined by two parameters: a topic-word distribution matrix $\beta \in \mathbb{R}^{K \times V}$ and a Dirichlet topic prior $\alpha \in \mathbb{R}^K$. In particular, let,$$\beta = \left[ \beta_1, \ldots, \beta_K \right]$$be a collection of $K$ *topics* where each topic $\beta_k \in \mathbb{R}^V$ is represented as probability distribution over the vocabulary. One of the utilities of the LDA model is that a given word is allowed to appear in multiple topics with positive probability. The Dirichlet topic prior is a vector $\alpha \in \mathbb{R}^K$ such that $\alpha_k > 0$ for all $k$. Data Exploration--- An Example DatasetBefore explaining further let's get our hands dirty with an example dataset. The following synthetic data comes from [1] and comes with a very useful visual interpretation.> [1] Thomas Griffiths and Mark Steyvers. *Finding Scientific Topics.* Proceedings of the National Academy of Science, 101(suppl 1):5228-5235, 2004. | print('Generating example data...')

num_documents = 6000

known_alpha, known_beta, documents, topic_mixtures = generate_griffiths_data(

num_documents=num_documents, num_topics=10)

num_topics, vocabulary_size = known_beta.shape

# separate the generated data into training and tests subsets

num_documents_training = int(0.9*num_documents)

num_documents_test = num_documents - num_documents_training

documents_training = documents[:num_documents_training]

documents_test = documents[num_documents_training:]

topic_mixtures_training = topic_mixtures[:num_documents_training]

topic_mixtures_test = topic_mixtures[num_documents_training:]

print('documents_training.shape = {}'.format(documents_training.shape))

print('documents_test.shape = {}'.format(documents_test.shape)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Let's start by taking a closer look at the documents. Note that the vocabulary size of these data is $V = 25$. The average length of each document in this data set is 150. (See `generate_griffiths_data.py`.) | print('First training document =\n{}'.format(documents_training[0]))

print('\nVocabulary size = {}'.format(vocabulary_size))

print('Length of first document = {}'.format(documents_training[0].sum()))

average_document_length = documents.sum(axis=1).mean()

print('Observed average document length = {}'.format(average_document_length)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

The example data set above also returns the LDA parameters,$$(\alpha, \beta)$$used to generate the documents. Let's examine the first topic and verify that it is a probability distribution on the vocabulary. | print('First topic =\n{}'.format(known_beta[0]))

print('\nTopic-word probability matrix (beta) shape: (num_topics, vocabulary_size) = {}'.format(known_beta.shape))

print('\nSum of elements of first topic = {}'.format(known_beta[0].sum())) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Unlike some clustering algorithms, one of the versatilities of the LDA model is that a given word can belong to multiple topics. The probability of that word occurring in each topic may differ, as well. This is reflective of real-world data where, for example, the word *"rover"* appears in a *"dogs"* topic as well as in a *"space exploration"* topic.In our synthetic example dataset, the first word in the vocabulary belongs to both Topic 1 and Topic 6 with non-zero probability. | print('Topic #1:\n{}'.format(known_beta[0]))

print('Topic #6:\n{}'.format(known_beta[5])) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Human beings are visual creatures, so it might be helpful to come up with a visual representation of these documents.In the below plots, each pixel of a document represents a word. The greyscale intensity is a measure of how frequently that word occurs within the document. Below we plot the first few documents of the training set reshaped into 5x5 pixel grids. | %matplotlib inline

fig = plot_lda(documents_training, nrows=3, ncols=4, cmap='gray_r', with_colorbar=True)

fig.suptitle('$w$ - Document Word Counts')

fig.set_dpi(160) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

When taking a close look at these documents we can see some patterns in the word distributions suggesting that, perhaps, each topic represents a "column" or "row" of words with non-zero probability and that each document is composed primarily of a handful of topics.Below we plots the *known* topic-word probability distributions, $\beta$. Similar to the documents we reshape each probability distribution to a $5 \times 5$ pixel image where the color represents the probability of that each word occurring in the topic. | %matplotlib inline

fig = plot_lda(known_beta, nrows=1, ncols=10)

fig.suptitle(r'Known $\beta$ - Topic-Word Probability Distributions')

fig.set_dpi(160)

fig.set_figheight(2) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

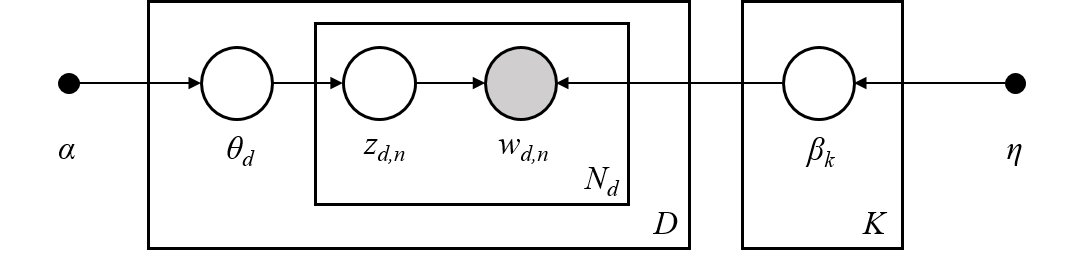

These 10 topics were used to generate the document corpus. Next, we will learn about how this is done. Generating DocumentsLDA is a generative model, meaning that the LDA parameters $(\alpha, \beta)$ are used to construct documents word-by-word by drawing from the topic-word distributions. In fact, looking closely at the example documents above you can see that some documents sample more words from some topics than from others.LDA works as follows: given * $M$ documents $w^{(1)}, w^{(2)}, \ldots, w^{(M)}$,* an average document length of $N$,* and an LDA model $(\alpha, \beta)$.**For** each document, $w^{(m)}$:* sample a topic mixture: $\theta^{(m)} \sim \text{Dirichlet}(\alpha)$* **For** each word $n$ in the document: * Sample a topic $z_n^{(m)} \sim \text{Multinomial}\big( \theta^{(m)} \big)$ * Sample a word from this topic, $w_n^{(m)} \sim \text{Multinomial}\big( \beta_{z_n^{(m)}} \; \big)$ * Add to documentThe [plate notation](https://en.wikipedia.org/wiki/Plate_notation) for the LDA model, introduced in [2], encapsulates this process pictorially.> [2] David M Blei, Andrew Y Ng, and Michael I Jordan. Latent Dirichlet Allocation. Journal of Machine Learning Research, 3(Jan):993–1022, 2003. Topic MixturesFor the documents we generated above lets look at their corresponding topic mixtures, $\theta \in \mathbb{R}^K$. The topic mixtures represent the probablility that a given word of the document is sampled from a particular topic. For example, if the topic mixture of an input document $w$ is,$$\theta = \left[ 0.3, 0.2, 0, 0.5, 0, \ldots, 0 \right]$$then $w$ is 30% generated from the first topic, 20% from the second topic, and 50% from the fourth topic. In particular, the words contained in the document are sampled from the first topic-word probability distribution 30% of the time, from the second distribution 20% of the time, and the fourth disribution 50% of the time.The objective of inference, also known as scoring, is to determine the most likely topic mixture of a given input document. Colloquially, this means figuring out which topics appear within a given document and at what ratios. We will perform infernece later in the [Inference](Inference) section.Since we generated these example documents using the LDA model we know the topic mixture generating them. Let's examine these topic mixtures. | print('First training document =\n{}'.format(documents_training[0]))

print('\nVocabulary size = {}'.format(vocabulary_size))

print('Length of first document = {}'.format(documents_training[0].sum()))

print('First training document topic mixture =\n{}'.format(topic_mixtures_training[0]))

print('\nNumber of topics = {}'.format(num_topics))

print('sum(theta) = {}'.format(topic_mixtures_training[0].sum())) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

We plot the first document along with its topic mixture. We also plot the topic-word probability distributions again for reference. | %matplotlib inline

fig, (ax1,ax2) = plt.subplots(2, 1)

ax1.matshow(documents[0].reshape(5,5), cmap='gray_r')

ax1.set_title(r'$w$ - Document', fontsize=20)

ax1.set_xticks([])

ax1.set_yticks([])

cax2 = ax2.matshow(topic_mixtures[0].reshape(1,-1), cmap='Reds', vmin=0, vmax=1)

cbar = fig.colorbar(cax2, orientation='horizontal')

ax2.set_title(r'$\theta$ - Topic Mixture', fontsize=20)

ax2.set_xticks([])

ax2.set_yticks([])

fig.set_dpi(100)

%matplotlib inline

# pot

fig = plot_lda(known_beta, nrows=1, ncols=10)

fig.suptitle(r'Known $\beta$ - Topic-Word Probability Distributions')

fig.set_dpi(160)

fig.set_figheight(1.5) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Finally, let's plot several documents with their corresponding topic mixtures. We can see how topics with large weight in the document lead to more words in the document within the corresponding "row" or "column". | %matplotlib inline

fig = plot_lda_topics(documents_training, 3, 4, topic_mixtures=topic_mixtures)

fig.suptitle(r'$(w,\theta)$ - Documents with Known Topic Mixtures')

fig.set_dpi(160) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Training***In this section we will give some insight into how AWS SageMaker LDA fits an LDA model to a corpus, create an run a SageMaker LDA training job, and examine the output trained model. Topic Estimation using Tensor DecompositionsGiven a document corpus, Amazon SageMaker LDA uses a spectral tensor decomposition technique to determine the LDA model $(\alpha, \beta)$ which most likely describes the corpus. See [1] for a primary reference of the theory behind the algorithm. The spectral decomposition, itself, is computed using the CPDecomp algorithm described in [2].The overall idea is the following: given a corpus of documents $\mathcal{W} = \{w^{(1)}, \ldots, w^{(M)}\}, \; w^{(m)} \in \mathbb{R}^V,$ we construct a statistic tensor,$$T \in \bigotimes^3 \mathbb{R}^V$$such that the spectral decomposition of the tensor is approximately the LDA parameters $\alpha \in \mathbb{R}^K$ and $\beta \in \mathbb{R}^{K \times V}$ which maximize the likelihood of observing the corpus for a given number of topics, $K$,$$T \approx \sum_{k=1}^K \alpha_k \; (\beta_k \otimes \beta_k \otimes \beta_k)$$This statistic tensor encapsulates information from the corpus such as the document mean, cross correlation, and higher order statistics. For details, see [1].> [1] Animashree Anandkumar, Rong Ge, Daniel Hsu, Sham Kakade, and Matus Telgarsky. *"Tensor Decompositions for Learning Latent Variable Models"*, Journal of Machine Learning Research, 15:2773–2832, 2014.>> [2] Tamara Kolda and Brett Bader. *"Tensor Decompositions and Applications"*. SIAM Review, 51(3):455–500, 2009. Store Data on S3Before we run training we need to prepare the data.A SageMaker training job needs access to training data stored in an S3 bucket. Although training can accept data of various formats we convert the documents MXNet RecordIO Protobuf format before uploading to the S3 bucket defined at the beginning of this notebook. | # convert documents_training to Protobuf RecordIO format

recordio_protobuf_serializer = numpy_to_record_serializer()

fbuffer = recordio_protobuf_serializer(documents_training)

# upload to S3 in bucket/prefix/train

fname = 'lda.data'

s3_object = os.path.join(prefix, 'train', fname)

boto3.Session().resource('s3').Bucket(bucket).Object(s3_object).upload_fileobj(fbuffer)

s3_train_data = 's3://{}/{}'.format(bucket, s3_object)

print('Uploaded data to S3: {}'.format(s3_train_data)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Next, we specify a Docker container containing the SageMaker LDA algorithm. For your convenience, a region-specific container is automatically chosen for you to minimize cross-region data communication | containers = {

'us-west-2': '266724342769.dkr.ecr.us-west-2.amazonaws.com/lda:latest',

'us-east-1': '766337827248.dkr.ecr.us-east-1.amazonaws.com/lda:latest',

'us-east-2': '999911452149.dkr.ecr.us-east-2.amazonaws.com/lda:latest',

'eu-west-1': '999678624901.dkr.ecr.eu-west-1.amazonaws.com/lda:latest'

}

region_name = boto3.Session().region_name

container = containers[region_name]

print('Using SageMaker LDA container: {} ({})'.format(container, region_name)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Training ParametersParticular to a SageMaker LDA training job are the following hyperparameters:* **`num_topics`** - The number of topics or categories in the LDA model. * Usually, this is not known a priori. * In this example, howevever, we know that the data is generated by five topics.* **`feature_dim`** - The size of the *"vocabulary"*, in LDA parlance. * In this example, this is equal 25.* **`mini_batch_size`** - The number of input training documents.* **`alpha0`** - *(optional)* a measurement of how "mixed" are the topic-mixtures. * When `alpha0` is small the data tends to be represented by one or few topics. * When `alpha0` is large the data tends to be an even combination of several or many topics. * The default value is `alpha0 = 1.0`.In addition to these LDA model hyperparameters, we provide additional parameters defining things like the EC2 instance type on which training will run, the S3 bucket containing the data, and the AWS access role. Note that,* Recommended instance type: `ml.c4`* Current limitations: * SageMaker LDA *training* can only run on a single instance. * SageMaker LDA does not take advantage of GPU hardware. * (The Amazon AI Algorithms team is working hard to provide these capabilities in a future release!) Using the above configuration create a SageMaker client and use the client to create a training job. | session = sagemaker.Session()

# specify general training job information

lda = sagemaker.estimator.Estimator(

container,

role,

output_path='s3://{}/{}/output'.format(bucket, prefix),

train_instance_count=1,

train_instance_type='ml.c4.2xlarge',

sagemaker_session=session,

)

# set algorithm-specific hyperparameters

lda.set_hyperparameters(

num_topics=num_topics,

feature_dim=vocabulary_size,

mini_batch_size=num_documents_training,

alpha0=1.0,

)

# run the training job on input data stored in S3

lda.fit({'train': s3_train_data}) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

If you see the message> `===== Job Complete =====`at the bottom of the output logs then that means training sucessfully completed and the output LDA model was stored in the specified output path. You can also view information about and the status of a training job using the AWS SageMaker console. Just click on the "Jobs" tab and select training job matching the training job name, below: | print('Training job name: {}'.format(lda.latest_training_job.job_name)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Inspecting the Trained ModelWe know the LDA parameters $(\alpha, \beta)$ used to generate the example data. How does the learned model compare the known one? In this section we will download the model data and measure how well SageMaker LDA did in learning the model.First, we download the model data. SageMaker will output the model in > `s3:////output//output/model.tar.gz`.SageMaker LDA stores the model as a two-tuple $(\alpha, \beta)$ where each LDA parameter is an MXNet NDArray. | # download and extract the model file from S3

job_name = lda.latest_training_job.job_name

model_fname = 'model.tar.gz'

model_object = os.path.join(prefix, 'output', job_name, 'output', model_fname)

boto3.Session().resource('s3').Bucket(bucket).Object(model_object).download_file(fname)

with tarfile.open(fname) as tar:

tar.extractall()

print('Downloaded and extracted model tarball: {}'.format(model_object))

# obtain the model file

model_list = [fname for fname in os.listdir('.') if fname.startswith('model_')]

model_fname = model_list[0]

print('Found model file: {}'.format(model_fname))

# get the model from the model file and store in Numpy arrays

alpha, beta = mx.ndarray.load(model_fname)

learned_alpha_permuted = alpha.asnumpy()

learned_beta_permuted = beta.asnumpy()

print('\nLearned alpha.shape = {}'.format(learned_alpha_permuted.shape))

print('Learned beta.shape = {}'.format(learned_beta_permuted.shape)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Presumably, SageMaker LDA has found the topics most likely used to generate the training corpus. However, even if this is case the topics would not be returned in any particular order. Therefore, we match the found topics to the known topics closest in L1-norm in order to find the topic permutation.Note that we will use the `permutation` later during inference to match known topic mixtures to found topic mixtures.Below plot the known topic-word probability distribution, $\beta \in \mathbb{R}^{K \times V}$ next to the distributions found by SageMaker LDA as well as the L1-norm errors between the two. | permutation, learned_beta = match_estimated_topics(known_beta, learned_beta_permuted)

learned_alpha = learned_alpha_permuted[permutation]

fig = plot_lda(np.vstack([known_beta, learned_beta]), 2, 10)

fig.set_dpi(160)

fig.suptitle('Known vs. Found Topic-Word Probability Distributions')

fig.set_figheight(3)

beta_error = np.linalg.norm(known_beta - learned_beta, 1)

alpha_error = np.linalg.norm(known_alpha - learned_alpha, 1)

print('L1-error (beta) = {}'.format(beta_error))

print('L1-error (alpha) = {}'.format(alpha_error)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Not bad!In the eyeball-norm the topics match quite well. In fact, the topic-word distribution error is approximately 2%. Inference***A trained model does nothing on its own. We now want to use the model we computed to perform inference on data. For this example, that means predicting the topic mixture representing a given document.We create an inference endpoint using the SageMaker Python SDK `deploy()` function from the job we defined above. We specify the instance type where inference is computed as well as an initial number of instances to spin up. | lda_inference = lda.deploy(

initial_instance_count=1,

instance_type='ml.m4.xlarge', # LDA inference may work better at scale on ml.c4 instances

) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Congratulations! You now have a functioning SageMaker LDA inference endpoint. You can confirm the endpoint configuration and status by navigating to the "Endpoints" tab in the AWS SageMaker console and selecting the endpoint matching the endpoint name, below: | print('Endpoint name: {}'.format(lda_inference.endpoint)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

With this realtime endpoint at our fingertips we can finally perform inference on our training and test data.We can pass a variety of data formats to our inference endpoint. In this example we will demonstrate passing CSV-formatted data. Other available formats are JSON-formatted, JSON-sparse-formatter, and RecordIO Protobuf. We make use of the SageMaker Python SDK utilities `csv_serializer` and `json_deserializer` when configuring the inference endpoint. | lda_inference.content_type = 'text/csv'

lda_inference.serializer = csv_serializer

lda_inference.deserializer = json_deserializer | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

We pass some test documents to the inference endpoint. Note that the serializer and deserializer will atuomatically take care of the datatype conversion. | results = lda_inference.predict(documents_test[:12])

print(results) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

It may be hard to see but the output format of SageMaker LDA inference endpoint is a Python dictionary with the following format.```{ 'predictions': [ {'topic_mixture': [ ... ] }, {'topic_mixture': [ ... ] }, {'topic_mixture': [ ... ] }, ... ]}```We extract the topic mixtures, themselves, corresponding to each of the input documents. | inferred_topic_mixtures_permuted = np.array([prediction['topic_mixture'] for prediction in results['predictions']])

print('Inferred topic mixtures (permuted):\n\n{}'.format(inferred_topic_mixtures_permuted)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Inference AnalysisRecall that although SageMaker LDA successfully learned the underlying topics which generated the sample data the topics were in a different order. Before we compare to known topic mixtures $\theta \in \mathbb{R}^K$ we should also permute the inferred topic mixtures | inferred_topic_mixtures = inferred_topic_mixtures_permuted[:,permutation]

print('Inferred topic mixtures:\n\n{}'.format(inferred_topic_mixtures)) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Let's plot these topic mixture probability distributions alongside the known ones. | %matplotlib inline

# create array of bar plots

width = 0.4

x = np.arange(10)

nrows, ncols = 3, 4

fig, ax = plt.subplots(nrows, ncols, sharey=True)

for i in range(nrows):

for j in range(ncols):

index = i*ncols + j

ax[i,j].bar(x, topic_mixtures_test[index], width, color='C0')

ax[i,j].bar(x+width, inferred_topic_mixtures[index], width, color='C1')

ax[i,j].set_xticks(range(num_topics))

ax[i,j].set_yticks(np.linspace(0,1,5))

ax[i,j].grid(which='major', axis='y')

ax[i,j].set_ylim([0,1])

ax[i,j].set_xticklabels([])

if (i==(nrows-1)):

ax[i,j].set_xticklabels(range(num_topics), fontsize=7)

if (j==0):

ax[i,j].set_yticklabels([0,'',0.5,'',1.0], fontsize=7)

fig.suptitle('Known vs. Inferred Topic Mixtures')

ax_super = fig.add_subplot(111, frameon=False)

ax_super.tick_params(labelcolor='none', top='off', bottom='off', left='off', right='off')

ax_super.grid(False)

ax_super.set_xlabel('Topic Index')

ax_super.set_ylabel('Topic Probability')

fig.set_dpi(160) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

In the eyeball-norm these look quite comparable.Let's be more scientific about this. Below we compute and plot the distribution of L1-errors from **all** of the test documents. Note that we send a new payload of test documents to the inference endpoint and apply the appropriate permutation to the output. | %%time

# create a payload containing all of the test documents and run inference again

#

# TRY THIS:

# try switching between the test data set and a subset of the training

# data set. It is likely that LDA inference will perform better against

# the training set than the holdout test set.

#

payload_documents = documents_test # Example 1

known_topic_mixtures = topic_mixtures_test # Example 1

#payload_documents = documents_training[:600]; # Example 2

#known_topic_mixtures = topic_mixtures_training[:600] # Example 2

print('Invoking endpoint...\n')

results = lda_inference.predict(payload_documents)

inferred_topic_mixtures_permuted = np.array([prediction['topic_mixture'] for prediction in results['predictions']])

inferred_topic_mixtures = inferred_topic_mixtures_permuted[:,permutation]

print('known_topics_mixtures.shape = {}'.format(known_topic_mixtures.shape))

print('inferred_topics_mixtures_test.shape = {}\n'.format(inferred_topic_mixtures.shape))

%matplotlib inline

l1_errors = np.linalg.norm((inferred_topic_mixtures - known_topic_mixtures), 1, axis=1)

# plot the error freqency

fig, ax_frequency = plt.subplots()

bins = np.linspace(0,1,40)

weights = np.ones_like(l1_errors)/len(l1_errors)

freq, bins, _ = ax_frequency.hist(l1_errors, bins=50, weights=weights, color='C0')

ax_frequency.set_xlabel('L1-Error')

ax_frequency.set_ylabel('Frequency', color='C0')

# plot the cumulative error

shift = (bins[1]-bins[0])/2

x = bins[1:] - shift

ax_cumulative = ax_frequency.twinx()

cumulative = np.cumsum(freq)/sum(freq)

ax_cumulative.plot(x, cumulative, marker='o', color='C1')

ax_cumulative.set_ylabel('Cumulative Frequency', color='C1')

# align grids and show

freq_ticks = np.linspace(0, 1.5*freq.max(), 5)

freq_ticklabels = np.round(100*freq_ticks)/100

ax_frequency.set_yticks(freq_ticks)

ax_frequency.set_yticklabels(freq_ticklabels)

ax_cumulative.set_yticks(np.linspace(0, 1, 5))

ax_cumulative.grid(which='major', axis='y')

ax_cumulative.set_ylim((0,1))

fig.suptitle('Topic Mixutre L1-Errors')

fig.set_dpi(110) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Machine learning algorithms are not perfect and the data above suggests this is true of SageMaker LDA. With more documents and some hyperparameter tuning we can obtain more accurate results against the known topic-mixtures.For now, let's just investigate the documents-topic mixture pairs that seem to do well as well as those that do not. Below we retreive a document and topic mixture corresponding to a small L1-error as well as one with a large L1-error. | N = 6

good_idx = (l1_errors < 0.05)

good_documents = payload_documents[good_idx][:N]

good_topic_mixtures = inferred_topic_mixtures[good_idx][:N]

poor_idx = (l1_errors > 0.3)

poor_documents = payload_documents[poor_idx][:N]

poor_topic_mixtures = inferred_topic_mixtures[poor_idx][:N]

%matplotlib inline

fig = plot_lda_topics(good_documents, 2, 3, topic_mixtures=good_topic_mixtures)

fig.suptitle('Documents With Accurate Inferred Topic-Mixtures')

fig.set_dpi(120)

%matplotlib inline

fig = plot_lda_topics(poor_documents, 2, 3, topic_mixtures=poor_topic_mixtures)

fig.suptitle('Documents With Inaccurate Inferred Topic-Mixtures')

fig.set_dpi(120) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

In this example set the documents on which inference was not as accurate tend to have a denser topic-mixture. This makes sense when extrapolated to real-world datasets: it can be difficult to nail down which topics are represented in a document when the document uses words from a large subset of the vocabulary. Stop / Close the EndpointFinally, we should delete the endpoint before we close the notebook.To do so execute the cell below. Alternately, you can navigate to the "Endpoints" tab in the SageMaker console, select the endpoint with the name stored in the variable `endpoint_name`, and select "Delete" from the "Actions" dropdown menu. | sagemaker.Session().delete_endpoint(lda_inference.endpoint) | _____no_output_____ | Apache-2.0 | scientific_details_of_algorithms/lda_topic_modeling/LDA-Science.ipynb | karim7262/amazon-sagemaker-examples |

Load Library | !pip install wget

!pip install keras-tcn

import wget

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from tensorflow.keras import Input, Model

from tensorflow.keras.layers import Dense

from tqdm.notebook import tqdm

from tcn import TCN

wget.download("https://github.com/philipperemy/keras-tcn/raw/master/tasks/monthly-milk-production-pounds-p.csv") | _____no_output_____ | MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

Read the dataset | milk = pd.read_csv('monthly-milk-production-pounds-p.csv', index_col=0, parse_dates=True) | _____no_output_____ | MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

Display top5 Record | print(milk.shape)

milk.head() | (168, 1)

| MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

Lookback 12 month windows | lookback_window = 12 | _____no_output_____ | MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

Convert Milk Data into Numpy Array | milk = milk.values | _____no_output_____ | MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

Convert in to X, y format | x = []

y = []

for i in tqdm(range(lookback_window, len(milk))):

x.append(milk[i - lookback_window:i])

y.append(milk[i]) | _____no_output_____ | MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

Generate Array of list x and y | x = np.array(x)

y = np.array(y)

print(x.shape)

print(y.shape) | (156, 12, 1)

(156, 1)

| MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

Model Design | i = Input(shape=(lookback_window, 1))

m = TCN()(i)

m = Dense(1, activation='linear')(m)

model = Model(inputs=[i], outputs=[m])

model.summary()

model.compile('adam','mae') | _____no_output_____ | MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

Time for Model training... | print('Train...')

model.fit(x, y, epochs=100) | Train...

Epoch 1/100

5/5 [==============================] - 0s 25ms/step - loss: 271.5721

Epoch 2/100

5/5 [==============================] - 0s 26ms/step - loss: 202.3601

Epoch 3/100

5/5 [==============================] - 0s 23ms/step - loss: 129.1283

Epoch 4/100

5/5 [==============================] - 0s 25ms/step - loss: 119.5586

Epoch 5/100

5/5 [==============================] - 0s 25ms/step - loss: 82.7962

Epoch 6/100

5/5 [==============================] - 0s 23ms/step - loss: 29.5778

Epoch 7/100

5/5 [==============================] - 0s 23ms/step - loss: 26.9950

Epoch 8/100

5/5 [==============================] - 0s 25ms/step - loss: 23.8581

Epoch 9/100

5/5 [==============================] - 0s 23ms/step - loss: 27.1797

Epoch 10/100

5/5 [==============================] - 0s 24ms/step - loss: 37.1005

Epoch 11/100

5/5 [==============================] - 0s 27ms/step - loss: 38.2245

Epoch 12/100

5/5 [==============================] - 0s 23ms/step - loss: 27.9166

Epoch 13/100

5/5 [==============================] - 0s 27ms/step - loss: 49.9146

Epoch 14/100

5/5 [==============================] - 0s 24ms/step - loss: 31.0408

Epoch 15/100

5/5 [==============================] - 0s 25ms/step - loss: 23.8059

Epoch 16/100

5/5 [==============================] - 0s 24ms/step - loss: 18.7888

Epoch 17/100

5/5 [==============================] - 0s 24ms/step - loss: 25.1425

Epoch 18/100

5/5 [==============================] - 0s 25ms/step - loss: 32.6869

Epoch 19/100

5/5 [==============================] - 0s 23ms/step - loss: 31.6958

Epoch 20/100

5/5 [==============================] - 0s 23ms/step - loss: 31.2011

Epoch 21/100

5/5 [==============================] - 0s 23ms/step - loss: 22.9983

Epoch 22/100

5/5 [==============================] - 0s 25ms/step - loss: 27.7421

Epoch 23/100

5/5 [==============================] - 0s 24ms/step - loss: 29.9864

Epoch 24/100

5/5 [==============================] - 0s 23ms/step - loss: 68.7743

Epoch 25/100

5/5 [==============================] - 0s 23ms/step - loss: 41.1511

Epoch 26/100

5/5 [==============================] - 0s 24ms/step - loss: 35.2367

Epoch 27/100

5/5 [==============================] - 0s 23ms/step - loss: 66.6526

Epoch 28/100

5/5 [==============================] - 0s 24ms/step - loss: 40.3432

Epoch 29/100

5/5 [==============================] - 0s 23ms/step - loss: 50.4716

Epoch 30/100

5/5 [==============================] - 0s 25ms/step - loss: 48.0320

Epoch 31/100

5/5 [==============================] - 0s 24ms/step - loss: 34.7563

Epoch 32/100

5/5 [==============================] - 0s 24ms/step - loss: 27.2386

Epoch 33/100

5/5 [==============================] - 0s 24ms/step - loss: 32.2853

Epoch 34/100

5/5 [==============================] - 0s 25ms/step - loss: 26.1964

Epoch 35/100

5/5 [==============================] - 0s 25ms/step - loss: 22.9360

Epoch 36/100

5/5 [==============================] - 0s 23ms/step - loss: 25.1416

Epoch 37/100

5/5 [==============================] - 0s 24ms/step - loss: 30.5617

Epoch 38/100

5/5 [==============================] - 0s 25ms/step - loss: 24.7003

Epoch 39/100

5/5 [==============================] - 0s 23ms/step - loss: 14.7676

Epoch 40/100

5/5 [==============================] - 0s 24ms/step - loss: 14.6580

Epoch 41/100

5/5 [==============================] - 0s 24ms/step - loss: 12.4486

Epoch 42/100

5/5 [==============================] - 0s 24ms/step - loss: 15.5033

Epoch 43/100

5/5 [==============================] - 0s 23ms/step - loss: 20.4509

Epoch 44/100

5/5 [==============================] - 0s 23ms/step - loss: 27.3433

Epoch 45/100

5/5 [==============================] - 0s 23ms/step - loss: 25.6425

Epoch 46/100

5/5 [==============================] - 0s 24ms/step - loss: 23.2722

Epoch 47/100

5/5 [==============================] - 0s 24ms/step - loss: 19.9241

Epoch 48/100

5/5 [==============================] - 0s 23ms/step - loss: 26.6964

Epoch 49/100

5/5 [==============================] - 0s 23ms/step - loss: 51.5725

Epoch 50/100

5/5 [==============================] - 0s 24ms/step - loss: 46.1796

Epoch 51/100

5/5 [==============================] - 0s 24ms/step - loss: 43.5478

Epoch 52/100

5/5 [==============================] - 0s 23ms/step - loss: 48.7085

Epoch 53/100

5/5 [==============================] - 0s 23ms/step - loss: 46.6810

Epoch 54/100

5/5 [==============================] - 0s 23ms/step - loss: 37.4476

Epoch 55/100

5/5 [==============================] - 0s 24ms/step - loss: 40.0287

Epoch 56/100

5/5 [==============================] - 0s 25ms/step - loss: 31.3757

Epoch 57/100

5/5 [==============================] - 0s 23ms/step - loss: 23.3440

Epoch 58/100

5/5 [==============================] - 0s 24ms/step - loss: 25.6717

Epoch 59/100

5/5 [==============================] - 0s 23ms/step - loss: 22.8951

Epoch 60/100

5/5 [==============================] - 0s 24ms/step - loss: 16.8260

Epoch 61/100

5/5 [==============================] - 0s 23ms/step - loss: 19.1047

Epoch 62/100

5/5 [==============================] - 0s 23ms/step - loss: 21.6529

Epoch 63/100

5/5 [==============================] - 0s 23ms/step - loss: 16.5754

Epoch 64/100

5/5 [==============================] - 0s 24ms/step - loss: 12.2943

Epoch 65/100

5/5 [==============================] - 0s 25ms/step - loss: 14.4700

Epoch 66/100

5/5 [==============================] - 0s 24ms/step - loss: 12.4727

Epoch 67/100

5/5 [==============================] - 0s 24ms/step - loss: 12.2146

Epoch 68/100

5/5 [==============================] - 0s 23ms/step - loss: 24.3314

Epoch 69/100

5/5 [==============================] - 0s 23ms/step - loss: 23.2591

Epoch 70/100

5/5 [==============================] - 0s 23ms/step - loss: 21.5991

Epoch 71/100

5/5 [==============================] - 0s 24ms/step - loss: 34.6669

Epoch 72/100

5/5 [==============================] - 0s 24ms/step - loss: 58.7299

Epoch 73/100

5/5 [==============================] - 0s 24ms/step - loss: 30.8628

Epoch 74/100

5/5 [==============================] - 0s 23ms/step - loss: 36.4043

Epoch 75/100

5/5 [==============================] - 0s 23ms/step - loss: 45.6252

Epoch 76/100

5/5 [==============================] - 0s 24ms/step - loss: 29.5786

Epoch 77/100

5/5 [==============================] - 0s 23ms/step - loss: 16.1575

Epoch 78/100

5/5 [==============================] - 0s 24ms/step - loss: 24.9460

Epoch 79/100

5/5 [==============================] - 0s 23ms/step - loss: 17.0462

Epoch 80/100

5/5 [==============================] - 0s 23ms/step - loss: 13.5069

Epoch 81/100

5/5 [==============================] - 0s 24ms/step - loss: 14.0239

Epoch 82/100

5/5 [==============================] - 0s 23ms/step - loss: 12.9607

Epoch 83/100

5/5 [==============================] - 0s 23ms/step - loss: 13.6181

Epoch 84/100

5/5 [==============================] - 0s 23ms/step - loss: 12.3904

Epoch 85/100

5/5 [==============================] - 0s 26ms/step - loss: 13.5270

Epoch 86/100

5/5 [==============================] - 0s 23ms/step - loss: 18.3469

Epoch 87/100

5/5 [==============================] - 0s 24ms/step - loss: 20.0561

Epoch 88/100

5/5 [==============================] - 0s 22ms/step - loss: 19.5092

Epoch 89/100

5/5 [==============================] - 0s 23ms/step - loss: 22.7791

Epoch 90/100

5/5 [==============================] - 0s 22ms/step - loss: 20.7960

Epoch 91/100

5/5 [==============================] - 0s 23ms/step - loss: 26.3365

Epoch 92/100

5/5 [==============================] - 0s 24ms/step - loss: 21.1719

Epoch 93/100

5/5 [==============================] - 0s 22ms/step - loss: 19.5793

Epoch 94/100

5/5 [==============================] - 0s 23ms/step - loss: 22.3828

Epoch 95/100

5/5 [==============================] - 0s 23ms/step - loss: 20.0138

Epoch 96/100

5/5 [==============================] - 0s 23ms/step - loss: 26.0298

Epoch 97/100

5/5 [==============================] - 0s 23ms/step - loss: 15.8576

Epoch 98/100

5/5 [==============================] - 0s 23ms/step - loss: 26.3697

Epoch 99/100

5/5 [==============================] - 0s 24ms/step - loss: 14.8465

Epoch 100/100

5/5 [==============================] - 0s 22ms/step - loss: 13.5129

| MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

Prediction with TCN Model | predict = model.predict(x) | _____no_output_____ | MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

Plot the Result | plt.style.use("fivethirtyeight")

plt.figure(figsize = (15,7))

plt.plot(predict)

plt.plot(y)

plt.title('Monthly Milk Production (in pounds)')

plt.legend(['predicted', 'actual'])

plt.xlabel("Moths Counts")

plt.ylabel("Milk Production in Pounds")

plt.show() | _____no_output_____ | MIT | TCN_TimeSeries_Approach.ipynb | ashishpatel26/tcn-keras-Examples |

synchro.extracting> Function to extract data of an experiment from 3rd party programs To align timeseries of an experiment, we need to read logs and import data produced by 3rd party softwares used during the experiment. It includes:* QDSpy logging* Numpy arrays of the stimuli* SpykingCircus spike sorting refined with Phy* Eye tracking results from MaskRCNN | #export

import numpy as np

import datetime

import os, glob

import csv

import re

from theonerig.synchro.io import *

from theonerig.utils import *

def get_QDSpy_logs(log_dir):

"""Factory function to generate QDSpy_log objects from all the QDSpy logs of the folder `log_dir`"""

log_names = glob.glob(os.path.join(log_dir,'[0-9]*.log'))

qdspy_logs = [QDSpy_log(log_name) for log_name in log_names]

for qdspy_log in qdspy_logs:

qdspy_log.find_stimuli()

return qdspy_logs

class QDSpy_log:

"""Class defining a QDSpy log.

It reads the log it represent and extract the stimuli information from it:

- Start and end time

- Parameters like the md5 key

- Frame delays

"""

def __init__(self, log_path):

self.log_path = log_path

self.stimuli = []

self.comments = []

def _extract_data(self, data_line):

data = data_line[data_line.find('{')+1:data_line.find('}')]

data_splitted = data.split(',')

data_dict = {}

for data in data_splitted:

ind = data.find("'")

if type(data[data.find(":")+2:]) is str:

data_dict[data[ind+1:data.find("'",ind+1)]] = data[data.find(":")+2:][1:-1]

else:

data_dict[data[ind+1:data.find("'",ind+1)]] = data[data.find(":")+2:]

return data_dict

def _extract_time(self,data_line):

return datetime.datetime.strptime(data_line.split()[0], '%Y%m%d_%H%M%S')

def _extract_delay(self,data_line):

ind = data_line.find('#')

index_frame = int(data_line[ind+1:data_line.find(' ',ind)])

ind = data_line.find('was')

delay = float(data_line[ind:].split(" ")[1])

return (index_frame, delay)

def __repr__(self):

return "\n".join([str(stim) for stim in self.stimuli])

@property

def n_stim(self):

return len(self.stimuli)

@property

def stim_names(self):

return [stim.name for stim in self.stimuli]

def find_stimuli(self):

"""Find the stimuli in the log file and return the list of the stimuli

found by this object."""

with open(self.log_path, 'r', encoding="ISO-8859-1") as log_file:

for line in log_file:

if "DATA" in line:

data_juice = self._extract_data(line)

if 'stimState' in data_juice.keys():

if data_juice['stimState'] == "STARTED" :

curr_stim = Stimulus(self._extract_time(line))

curr_stim.set_parameters(data_juice)

self.stimuli.append(curr_stim)

stimulus_ON = True

elif data_juice['stimState'] == "FINISHED" or data_juice['stimState'] == "ABORTED":

curr_stim.is_aborted = data_juice['stimState'] == "ABORTED"

curr_stim.stop_time = self._extract_time(line)

stimulus_ON = False

elif 'userComment' in data_juice.keys():

pass

#print("userComment, use it to bind logs to records")

elif stimulus_ON: #Information on stimulus parameters

curr_stim.set_parameters(data_juice)

# elif 'probeX' in data_juice.keys():

# print("Probe center not implemented yet")

if "WARNING" in line and "dt of frame" and stimulus_ON:

curr_stim.frame_delay.append(self._extract_delay(line))

if curr_stim.frame_delay[-1][1] > 2000/60: #if longer than 2 frames could be bad

print(curr_stim.name, " ".join(line.split()[1:])[:-1])

return self.stimuli

class Stimulus:

"""Stimulus object containing information about it's presentation.

- start_time : a datetime object)

- stop_time : a datetime object)

- parameters : Parameters extracted from the QDSpy

- md5 : The md5 hash of that compiled version of the stimulus

- name : The name of the stimulus

"""

def __init__(self,start):

self.start_time = start

self.stop_time = None

self.parameters = {}

self.md5 = None

self.name = "NoName"

self.frame_delay = []

self.is_aborted = False

def set_parameters(self, parameters):

self.parameters.update(parameters)

if "_sName" in parameters.keys():

self.name = parameters["_sName"]

if "stimMD5" in parameters.keys():

self.md5 = parameters["stimMD5"]

def __str__(self):

return "%s %s at %s" %(self.name+" "*(24-len(self.name)),self.md5,self.start_time)

def __repr__(self):

return self.__str__() | _____no_output_____ | Apache-2.0 | 11_synchro.extracting.ipynb | Ines-Filipa/theonerig |

To read QDSpy logs of your experiment, simply provide the folder containing the log you want to read to `get_QDSpy_logs` | #logs = get_QDSpy_logs("./files/basic_synchro") | flickering_bars_pr WARNING dt of frame #15864 was 50.315 m

flickering_bars_pr WARNING dt of frame #19477 was 137.235 m

| Apache-2.0 | 11_synchro.extracting.ipynb | Ines-Filipa/theonerig |

It returns a list fo the QDSpy logs. Stimuli are contained in a list inside each log: | #logs[0].stimuli | _____no_output_____ | Apache-2.0 | 11_synchro.extracting.ipynb | Ines-Filipa/theonerig |

The stimuli objects contains informations on how their display went: | # stim = logs[0].stimuli[5]

# print(stim.name, stim.start_time, stim.frame_delay, stim.md5)

#export

def unpack_stim_npy(npy_dir, md5_hash):

"""Find the stimuli of a given hash key in the npy stimulus folder. The stimuli are in a compressed version

comprising three files. inten for the stimulus values on the screen, marker for the values of the marker

read by a photodiode to get the stimulus timing during a record, and an optional shader that is used to

specify informations about a shader when used, like for the moving gratings."""

#Stimuli can be either npy or npz (useful when working remotely)

def find_file(ftype):

flist = glob.glob(os.path.join(npy_dir, "*_"+ftype+"_"+md5_hash+".npy"))

if len(flist)==0:

flist = glob.glob(os.path.join(npy_dir, "*_"+ftype+"_"+md5_hash+".npz"))

res = np.load(flist[0])["arr_0"]

else:

res = np.load(flist[0])

return res

inten = find_file("intensities")

marker = find_file("marker")

shader, unpack_shader = None, None

if len(glob.glob(os.path.join(npy_dir, "*_shader_"+md5_hash+".np*")))>0:

shader = find_file("shader")

unpack_shader = np.empty((np.sum(marker[:,0]), *shader.shape[1:]))

#The latter unpacks the arrays

unpack_inten = np.empty((np.sum(marker[:,0]), *inten.shape[1:]))

unpack_marker = np.empty(np.sum(marker[:,0]))

cursor = 0

for i, n_frame in enumerate(marker[:,0]):

unpack_inten[cursor:cursor+n_frame] = inten[i]

unpack_marker[cursor:cursor+n_frame] = marker[i, 1]

if shader is not None:

unpack_shader[cursor:cursor+n_frame] = shader[i]

cursor += n_frame

return unpack_inten, unpack_marker, unpack_shader

# logs = get_QDSpy_logs("./files/basic_synchro") | flickering_bars_pr WARNING dt of frame #15864 was 50.315 m

flickering_bars_pr WARNING dt of frame #19477 was 137.235 m

| Apache-2.0 | 11_synchro.extracting.ipynb | Ines-Filipa/theonerig |

To unpack the stimulus values, provide the folder of the numpy arrays and the hash of the stimulus: | # unpacked = unpack_stim_npy("./files/basic_synchro/stimulus_data", "eed21bda540934a428e93897908d049e") | _____no_output_____ | Apache-2.0 | 11_synchro.extracting.ipynb | Ines-Filipa/theonerig |

Unpacked is a tuple, where the first element is the intensity of shape (n_frames, n_colors, y, x) | # unpacked[0].shape | _____no_output_____ | Apache-2.0 | 11_synchro.extracting.ipynb | Ines-Filipa/theonerig |

The second element of the tuple repesents the marker values for the timing. QDSpy defaults are zero and ones, but I used custom red squares taking intensities [50,100,150,200,250] to time with five different signals | # unpacked[1][:50] | _____no_output_____ | Apache-2.0 | 11_synchro.extracting.ipynb | Ines-Filipa/theonerig |

Each stimulus is also starting with a barcode, of the form:0 0 0 0 0 0 4 0 4\*[1-4] 0 4\*[1-4] 0 4\*[1-4] 0 4\*[1-4] 0 4 0 0 0 0 0 0 and ends with 0 0 0 0 0 0 | #export

def extract_spyking_circus_results(dir_, record_basename):

"""Extract the good cells of a record. Overlap with phy_results_dict."""

phy_dir = os.path.join(dir_,record_basename+"/"+record_basename+".GUI")

phy_dict = phy_results_dict(phy_dir)

good_clusters = []

with open(os.path.join(phy_dir,'cluster_group.tsv'), 'r') as tsvfile:

spamreader = csv.reader(tsvfile, delimiter='\t', quotechar='|')

for i,row in enumerate(spamreader):

if row[1] == "good":

good_clusters.append(int(row[0]))

good_clusters = np.array(good_clusters)

phy_dict["good_clusters"] = good_clusters

return phy_dict

#export

def extract_best_pupil(fn):

"""From results of MaskRCNN, go over all or None pupil detected and select the best pupil.

Each pupil returned is (x,y,width,height,angle,probability)"""

pupil = np.load(fn, allow_pickle=True)

filtered_pupil = np.empty((len(pupil), 6))

for i, detected in enumerate(pupil):

if len(detected)>0:

best = detected[0]

for detect in detected[1:]:

if detect[5]>best[5]:

best = detect

filtered_pupil[i] = np.array(best)

else:

filtered_pupil[i] = np.array([0,0,0,0,0,0])

return filtered_pupil

#export

def stack_len_extraction(stack_info_dir):

"""Extract from ImageJ macro directives the size of the stacks acquired."""

ptrn_nFrame = r".*number=(\d*) .*"

l_epochs = []

for fn in glob.glob(os.path.join(stack_info_dir, "*.txt")):

with open(fn) as f:

line = f.readline()

l_epochs.append(int(re.findall(ptrn_nFrame, line)[0]))

return l_epochs

#hide

from nbdev.export import *

notebook2script() | Converted 00_core.ipynb.

Converted 01_utils.ipynb.

Converted 02_processing.ipynb.

Converted 03_modelling.ipynb.

Converted 04_plotting.ipynb.

Converted 05_database.ipynb.

Converted 10_synchro.io.ipynb.

Converted 11_synchro.extracting.ipynb.

Converted 12_synchro.processing.ipynb.

Converted 99_testdata.ipynb.

Converted index.ipynb.

| Apache-2.0 | 11_synchro.extracting.ipynb | Ines-Filipa/theonerig |

Training a Plant Disease Diagnosis Model with PlantVillage Dataset | import numpy as np

import os

import matplotlib.pyplot as plt

from skimage.io import imread

from sklearn.metrics import classification_report, confusion_matrix

from sklearn .model_selection import train_test_split

import keras

import keras.backend as K

from keras.preprocessing.image import load_img, img_to_array, ImageDataGenerator

from keras.utils.np_utils import to_categorical

from keras import layers

from keras.models import Sequential, Model

from keras.callbacks import EarlyStopping, ModelCheckpoint | _____no_output_____ | Net-SNMP | notebooks/PlantDisease_tutorial.ipynb | Julia2505/deep_learning_for_biologists |

Preparation Data Preparation | !apt-get install subversion > /dev/null

#Retreive specifc diseases of tomato for training

!svn export https://github.com/spMohanty/PlantVillage-Dataset/trunk/raw/color/Tomato___Bacterial_spot image/Tomato___Bacterial_spot > /dev/null

!svn export https://github.com/spMohanty/PlantVillage-Dataset/trunk/raw/color/Tomato___Early_blight image/Tomato___Early_blight > /dev/null

!svn export https://github.com/spMohanty/PlantVillage-Dataset/trunk/raw/color/Tomato___Late_blight image/Tomato___Late_blight > /dev/null

!svn export https://github.com/spMohanty/PlantVillage-Dataset/trunk/raw/color/Tomato___Septoria_leaf_spot image/Tomato___Septoria_leaf_spot > /dev/null

!svn export https://github.com/spMohanty/PlantVillage-Dataset/trunk/raw/color/Tomato___Target_Spot image/Tomato___Target_Spot > /dev/null

!svn export https://github.com/spMohanty/PlantVillage-Dataset/trunk/raw/color/Tomato___healthy image/Tomato___healthy > /dev/null

#folder structure

!ls image

plt.figure(figsize=(15,10))

#visualize several images

parent_directory = "image"

for i, folder in enumerate(os.listdir(parent_directory)):

print(folder)

folder_directory = os.path.join(parent_directory,folder)

files = os.listdir(folder_directory)

#will inspect only 1 image per folder

file = files[0]

file_path = os.path.join(folder_directory,file)

image = imread(file_path)

plt.subplot(1,6,i+1)

plt.imshow(image)

plt.axis("off")

name = folder.split("___")[1][:-1]

plt.title(name)

#plt.show()

#load everything into memory

x = []

y = []

class_names = []

parent_directory = "image"

for i,folder in enumerate(os.listdir(parent_directory)):

print(i,folder)

class_names.append(folder)

folder_directory = os.path.join(parent_directory,folder)

files = os.listdir(folder_directory)

#will inspect only 1 image per folder

for file in files:

file_path = os.path.join(folder_directory,file)

image = load_img(file_path,target_size=(64,64))

image = img_to_array(image)/255.

x.append(image)

y.append(i)

x = np.array(x)

y = to_categorical(y)

#check the data shape

print(x.shape)

print(y.shape)

print(y[0])

x_train, _x, y_train, _y = train_test_split(x,y,test_size=0.2, stratify = y, random_state = 1)

x_valid,x_test, y_valid, y_test = train_test_split(_x,_y,test_size=0.4, stratify = _y, random_state = 1)

print("train data:",x_train.shape,y_train.shape)

print("validation data:",x_valid.shape,y_valid.shape)

print("test data:",x_test.shape,y_test.shape)

| _____no_output_____ | Net-SNMP | notebooks/PlantDisease_tutorial.ipynb | Julia2505/deep_learning_for_biologists |

Model Preparation | K.clear_session()

nfilter = 32

#VGG16 like model

model = Sequential([

#block1

layers.Conv2D(nfilter,(3,3),padding="same",name="block1_conv1",input_shape=(64,64,3)),

layers.Activation("relu"),

layers.BatchNormalization(),

#layers.Dropout(rate=0.2),

layers.Conv2D(nfilter,(3,3),padding="same",name="block1_conv2"),

layers.BatchNormalization(),

layers.Activation("relu"),

#layers.Dropout(rate=0.2),

layers.MaxPooling2D((2,2),strides=(2,2),name="block1_pool"),

#block2

layers.Conv2D(nfilter*2,(3,3),padding="same",name="block2_conv1"),

layers.BatchNormalization(),

layers.Activation("relu"),

#layers.Dropout(rate=0.2),

layers.Conv2D(nfilter*2,(3,3),padding="same",name="block2_conv2"),

layers.BatchNormalization(),

layers.Activation("relu"),

#layers.Dropout(rate=0.2),

layers.MaxPooling2D((2,2),strides=(2,2),name="block2_pool"),

#block3

layers.Conv2D(nfilter*2,(3,3),padding="same",name="block3_conv1"),

layers.BatchNormalization(),

layers.Activation("relu"),

#layers.Dropout(rate=0.2),

layers.Conv2D(nfilter*4,(3,3),padding="same",name="block3_conv2"),

layers.BatchNormalization(),

layers.Activation("relu"),

#layers.Dropout(rate=0.2),

layers.Conv2D(nfilter*4,(3,3),padding="same",name="block3_conv3"),

layers.BatchNormalization(),

layers.Activation("relu"),

#layers.Dropout(rate=0.2),

layers.MaxPooling2D((2,2),strides=(2,2),name="block3_pool"),

#layers.Flatten(),

layers.GlobalAveragePooling2D(),

#inference layer

layers.Dense(128,name="fc1"),

layers.BatchNormalization(),

layers.Activation("relu"),

#layers.Dropout(rate=0.2),

layers.Dense(128,name="fc2"),

layers.BatchNormalization(),

layers.Activation("relu"),

#layers.Dropout(rate=0.2),

layers.Dense(6,name="prepredictions"),

layers.Activation("softmax",name="predictions")

])

model.compile(optimizer = "adam", loss="categorical_crossentropy", metrics=["accuracy"])

model.summary() | _____no_output_____ | Net-SNMP | notebooks/PlantDisease_tutorial.ipynb | Julia2505/deep_learning_for_biologists |

Training | #utilize early stopping function to stop at the lowest validation loss

es = EarlyStopping(monitor='val_loss', patience=10, verbose=1, mode='auto')

#utilize save best weight model during training

ckpt = ModelCheckpoint("PlantDiseaseCNNmodel.hdf5", monitor='val_loss', verbose=1, save_best_only=True, save_weights_only=False, mode='auto', period=1)

#we will define a generator class for training data and validation data seperately, as no augmentation is not required for validation data

t_gen = ImageDataGenerator(rotation_range=90,horizontal_flip=True)

v_gen = ImageDataGenerator()

train_gen = t_gen.flow(x_train,y_train,batch_size=98)

valid_gen = v_gen.flow(x_valid,y_valid,batch_size=98)

history = model.fit_generator(

train_gen,

steps_per_epoch = train_gen.n // 98,

callbacks = [es,ckpt],

validation_data = valid_gen,

validation_steps = valid_gen.n // 98,

epochs=50) | _____no_output_____ | Net-SNMP | notebooks/PlantDisease_tutorial.ipynb | Julia2505/deep_learning_for_biologists |

Evaluation | #load the model weight file with lowest validation loss

model.load_weights("PlantDiseaseCNNmodel.hdf5")

#or can obtain the pretrained model from the github repo.

#check the model metrics

print(model.metrics_names)

#evaluate training data

print(model.evaluate(x= x_train, y = y_train))

#evaluate validation data

print(model.evaluate(x= x_valid, y = y_valid))

#evaluate test data

print(model.evaluate(x= x_test, y = y_test))

#draw a confusion matrix

#true label

y_true = np.argmax(y_test,axis=1)

#prediction label

Y_pred = model.predict(x_test)

y_pred = np.argmax(Y_pred, axis=1)

print(y_true)

print(y_pred)

#https://scikit-learn.org/stable/auto_examples/model_selection/plot_confusion_matrix.html#sphx-glr-auto-examples-model-selection-plot-confusion-matrix-py

from sklearn.metrics import confusion_matrix

from sklearn.utils.multiclass import unique_labels

def plot_confusion_matrix(y_true, y_pred, classes,

normalize=False,

title=None,

cmap=plt.cm.Blues):

"""

This function prints and plots the confusion matrix.

Normalization can be applied by setting `normalize=True`.

"""

if not title:

if normalize:

title = 'Normalized confusion matrix'

else:

title = 'Confusion matrix, without normalization'

# Compute confusion matrix

cm = confusion_matrix(y_true, y_pred)

# Only use the labels that appear in the data

#classes = classes[unique_labels(y_true, y_pred)]

if normalize:

cm = cm.astype('float') / cm.sum(axis=1)[:, np.newaxis]

print("Normalized confusion matrix")

else:

print('Confusion matrix, without normalization')

print(cm)

fig, ax = plt.subplots(figsize=(5,5))

im = ax.imshow(cm, interpolation='nearest', cmap=cmap)

#ax.figure.colorbar(im, ax=ax)

# We want to show all ticks...

ax.set(xticks=np.arange(cm.shape[1]),

yticks=np.arange(cm.shape[0]),

# ... and label them with the respective list entries

xticklabels=classes, yticklabels=classes,

title=title,

ylabel='True label',

xlabel='Predicted label')

# Rotate the tick labels and set their alignment.

plt.setp(ax.get_xticklabels(), rotation=45, ha="right",

rotation_mode="anchor")

# Loop over data dimensions and create text annotations.

fmt = '.2f' if normalize else 'd'

thresh = cm.max() / 2.

for i in range(cm.shape[0]):

for j in range(cm.shape[1]):

ax.text(j, i, format(cm[i, j], fmt),

ha="center", va="center",

color="white" if cm[i, j] > thresh else "black")

fig.tight_layout()

return ax

np.set_printoptions(precision=2)

plot_confusion_matrix(y_true, y_pred, classes=class_names, normalize=True,

title='Normalized confusion matrix')

| _____no_output_____ | Net-SNMP | notebooks/PlantDisease_tutorial.ipynb | Julia2505/deep_learning_for_biologists |

Predicting Indivisual Images | n = 15 #do not exceed (number of test image - 1)

plt.imshow(x_test[n])

plt.show()

true_label = np.argmax(y_test,axis=1)[n]

print("true_label is:",true_label,":",class_names[true_label])

prediction = model.predict(x_test[n][np.newaxis,...])[0]

print("predicted_value is:",prediction)

predicted_label = np.argmax(prediction)

print("predicted_label is:",predicted_label,":",class_names[predicted_label])

if true_label == predicted_label:

print("correct prediction")

else:

print("wrong prediction") | _____no_output_____ | Net-SNMP | notebooks/PlantDisease_tutorial.ipynb | Julia2505/deep_learning_for_biologists |

LeNet Lab SolutionSource: Yan LeCun Load DataLoad the MNIST data, which comes pre-loaded with TensorFlow.You do not need to modify this section. | from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("MNIST_data/", reshape=False)

X_train, y_train = mnist.train.images, mnist.train.labels

X_validation, y_validation = mnist.validation.images, mnist.validation.labels

X_test, y_test = mnist.test.images, mnist.test.labels

assert(len(X_train) == len(y_train))

assert(len(X_validation) == len(y_validation))

assert(len(X_test) == len(y_test))

print()

print("Image Shape: {}".format(X_train[0].shape))

print()

print("Training Set: {} samples".format(len(X_train)))

print("Validation Set: {} samples".format(len(X_validation)))

print("Test Set: {} samples".format(len(X_test))) | _____no_output_____ | MIT | LeNet-Lab-Solution.ipynb | LiYan1988/LeNet-2 |

The MNIST data that TensorFlow pre-loads comes as 28x28x1 images.However, the LeNet architecture only accepts 32x32xC images, where C is the number of color channels.In order to reformat the MNIST data into a shape that LeNet will accept, we pad the data with two rows of zeros on the top and bottom, and two columns of zeros on the left and right (28+2+2 = 32).You do not need to modify this section. | import numpy as np

# Pad images with 0s

X_train = np.pad(X_train, ((0,0),(2,2),(2,2),(0,0)), 'constant')

X_validation = np.pad(X_validation, ((0,0),(2,2),(2,2),(0,0)), 'constant')

X_test = np.pad(X_test, ((0,0),(2,2),(2,2),(0,0)), 'constant')

print("Updated Image Shape: {}".format(X_train[0].shape)) | _____no_output_____ | MIT | LeNet-Lab-Solution.ipynb | LiYan1988/LeNet-2 |

Visualize DataView a sample from the dataset.You do not need to modify this section. | import random

import numpy as np

import matplotlib.pyplot as plt

%matplotlib inline

index = random.randint(0, len(X_train))

image = X_train[index].squeeze()

plt.figure(figsize=(1,1))

plt.imshow(image, cmap="gray")

print(y_train[index]) | _____no_output_____ | MIT | LeNet-Lab-Solution.ipynb | LiYan1988/LeNet-2 |

Preprocess DataShuffle the training data.You do not need to modify this section. | from sklearn.utils import shuffle

X_train, y_train = shuffle(X_train, y_train) | _____no_output_____ | MIT | LeNet-Lab-Solution.ipynb | LiYan1988/LeNet-2 |

Setup TensorFlowThe `EPOCH` and `BATCH_SIZE` values affect the training speed and model accuracy.You do not need to modify this section. | import tensorflow as tf

EPOCHS = 10

BATCH_SIZE = 128 | _____no_output_____ | MIT | LeNet-Lab-Solution.ipynb | LiYan1988/LeNet-2 |

SOLUTION: Implement LeNet-5Implement the [LeNet-5](http://yann.lecun.com/exdb/lenet/) neural network architecture.This is the only cell you need to edit. InputThe LeNet architecture accepts a 32x32xC image as input, where C is the number of color channels. Since MNIST images are grayscale, C is 1 in this case. Architecture**Layer 1: Convolutional.** The output shape should be 28x28x6.**Activation.** Your choice of activation function.**Pooling.** The output shape should be 14x14x6.**Layer 2: Convolutional.** The output shape should be 10x10x16.**Activation.** Your choice of activation function.**Pooling.** The output shape should be 5x5x16.**Flatten.** Flatten the output shape of the final pooling layer such that it's 1D instead of 3D. The easiest way to do is by using `tf.contrib.layers.flatten`, which is already imported for you.**Layer 3: Fully Connected.** This should have 120 outputs.**Activation.** Your choice of activation function.**Layer 4: Fully Connected.** This should have 84 outputs.**Activation.** Your choice of activation function.**Layer 5: Fully Connected (Logits).** This should have 10 outputs. OutputReturn the result of the 2nd fully connected layer. | from tensorflow.contrib.layers import flatten

def LeNet(x):

# Arguments used for tf.truncated_normal, randomly defines variables for the weights and biases for each layer

mu = 0

sigma = 0.1

# SOLUTION: Layer 1: Convolutional. Input = 32x32x1. Output = 28x28x6.

conv1_W = tf.Variable(tf.truncated_normal(shape=(5, 5, 1, 6), mean = mu, stddev = sigma))

conv1_b = tf.Variable(tf.zeros(6))

conv1 = tf.nn.conv2d(x, conv1_W, strides=[1, 1, 1, 1], padding='VALID') + conv1_b

# SOLUTION: Activation.

conv1 = tf.nn.relu(conv1)

# SOLUTION: Pooling. Input = 28x28x6. Output = 14x14x6.

conv1 = tf.nn.max_pool(conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='VALID')

# SOLUTION: Layer 2: Convolutional. Output = 10x10x16.

conv2_W = tf.Variable(tf.truncated_normal(shape=(5, 5, 6, 16), mean = mu, stddev = sigma))

conv2_b = tf.Variable(tf.zeros(16))

conv2 = tf.nn.conv2d(conv1, conv2_W, strides=[1, 1, 1, 1], padding='VALID') + conv2_b

# SOLUTION: Activation.

conv2 = tf.nn.relu(conv2)

# SOLUTION: Pooling. Input = 10x10x16. Output = 5x5x16.

conv2 = tf.nn.max_pool(conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='VALID')

# SOLUTION: Flatten. Input = 5x5x16. Output = 400.

fc0 = flatten(conv2)

# SOLUTION: Layer 3: Fully Connected. Input = 400. Output = 120.

fc1_W = tf.Variable(tf.truncated_normal(shape=(400, 120), mean = mu, stddev = sigma))

fc1_b = tf.Variable(tf.zeros(120))

fc1 = tf.matmul(fc0, fc1_W) + fc1_b

# SOLUTION: Activation.

fc1 = tf.nn.relu(fc1)

# SOLUTION: Layer 4: Fully Connected. Input = 120. Output = 84.

fc2_W = tf.Variable(tf.truncated_normal(shape=(120, 84), mean = mu, stddev = sigma))

fc2_b = tf.Variable(tf.zeros(84))

fc2 = tf.matmul(fc1, fc2_W) + fc2_b

# SOLUTION: Activation.

fc2 = tf.nn.relu(fc2)

# SOLUTION: Layer 5: Fully Connected. Input = 84. Output = 10.

fc3_W = tf.Variable(tf.truncated_normal(shape=(84, 10), mean = mu, stddev = sigma))

fc3_b = tf.Variable(tf.zeros(10))

logits = tf.matmul(fc2, fc3_W) + fc3_b

return logits | _____no_output_____ | MIT | LeNet-Lab-Solution.ipynb | LiYan1988/LeNet-2 |

Features and LabelsTrain LeNet to classify [MNIST](http://yann.lecun.com/exdb/mnist/) data.`x` is a placeholder for a batch of input images.`y` is a placeholder for a batch of output labels.You do not need to modify this section. | x = tf.placeholder(tf.float32, (None, 32, 32, 1))

y = tf.placeholder(tf.int32, (None))

one_hot_y = tf.one_hot(y, 10) | _____no_output_____ | MIT | LeNet-Lab-Solution.ipynb | LiYan1988/LeNet-2 |

Training PipelineCreate a training pipeline that uses the model to classify MNIST data.You do not need to modify this section. | rate = 0.001

logits = LeNet(x)

cross_entropy = tf.nn.softmax_cross_entropy_with_logits(labels=one_hot_y, logits=logits)

loss_operation = tf.reduce_mean(cross_entropy)

optimizer = tf.train.AdamOptimizer(learning_rate = rate)

training_operation = optimizer.minimize(loss_operation) | _____no_output_____ | MIT | LeNet-Lab-Solution.ipynb | LiYan1988/LeNet-2 |