markdown | code | output | license | path | repo_name |

|---|---|---|---|---|---|

Observations:

* The expected error is always smaller under the Projection DPP distribution than under the Volume Sampling distribution.
* The expected error for the Volume Sampling distribution is constant for a constant D.
* The expected error for the Projection DPP distribution, however, depends on the k-sum.
* For X_0 and X_1, the profile of the k-leverage scores is highly concentrated (k-sum > k-1), so epsilon is smaller than 1. In this regime the deterministic algorithm has the lower approximation error and performs better than expected (the theoretical bound is 1/(1-epsilon)).
* For the other datasets, however, the k-sum is below k-1, so epsilon is larger than 1 and the deterministic algorithm has no guarantee: we observe that its approximation error can be very high in this regime.

Recall: we recall here some geometrical properties of the matrices $$X_i$$: $$X_i = UD_{j}V_{i}$$ where, for every i, the first k columns of $$V_{i}$$ are the $$Q_{i}$$ while the other columns are generated randomly. | previous_Q = NAL_1.multi_Q

lv_0 = estimate_leverage_scores_from_orthogonal_matrix(previous_Q[0,:,:])

lv_1 = estimate_leverage_scores_from_orthogonal_matrix(previous_Q[1,:,:])

lv_2 = estimate_leverage_scores_from_orthogonal_matrix(previous_Q[2,:,:])

lv_3 = estimate_leverage_scores_from_orthogonal_matrix(previous_Q[3,:,:])

lv_4 = estimate_leverage_scores_from_orthogonal_matrix(previous_Q[4,:,:])

lv_5 = estimate_leverage_scores_from_orthogonal_matrix(previous_Q[5,:,:])

index_list = list(range(real_dim)) | _____no_output_____ | MIT | .ipynb_checkpoints/Numerical_Analysis_Volume_Sampling_vs_Projection_DPP-ollld-checkpoint.ipynb | AyoubBelhadji/FrameBuilder |
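The leverage-score profiles computed above can be illustrated with a minimal sketch. It assumes that each `previous_Q[i,:,:]` is a k x d matrix with orthonormal rows and that the leverage score of column j is its squared norm; the function `leverage_scores` below is a hypothetical stand-in, not the repository's `estimate_leverage_scores_from_orthogonal_matrix`, which may differ.

```python
def leverage_scores(Q):
    """Squared column norms of a k x d matrix Q (list of rows).

    Assumption: the rows of Q are orthonormal, so the scores sum to k.
    """
    k, d = len(Q), len(Q[0])
    return [sum(Q[i][j] ** 2 for i in range(k)) for j in range(d)]

# Rectangular identity (k=2, d=4): all the mass sits on the first two columns.
Q_identity = [[1.0, 0.0, 0.0, 0.0],
              [0.0, 1.0, 0.0, 0.0]]

# A "spread" matrix with orthonormal rows: every column carries the same score.
Q_flat = [[0.5, 0.5, 0.5, 0.5],
          [0.5, -0.5, 0.5, -0.5]]

lv_identity = leverage_scores(Q_identity)
lv_flat = leverage_scores(Q_flat)
print(lv_identity, lv_flat)
```

In both toy cases the scores sum to k = 2; only the way the mass is distributed over the columns changes.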

In this example the objective is Q and the initialization is Q_0 (the rectangular identity). With respect to the Schur order (majorization), we have: $$Q = Q_5 \prec_{S} Q_4 \prec_{S} Q_3 \prec_{S} Q_2 \prec_{S} Q_1 \prec_{S} Q_0 $$ | plt.plot(index_list[0:10], lv_0[0:10], 'c--',index_list[0:10], lv_1[0:10], 'k--', index_list[0:10], lv_2[0:10], 'r--', index_list[0:10], lv_3[0:10], 'b--',index_list[0:10], lv_4[0:10], 'g--',index_list[0:10], lv_5[0:10], 'y--')

plt.xlabel('index')

plt.ylabel('leverage score')

cyan_patch = mpatches.Patch(color='cyan', label='Q_0')

black_patch = mpatches.Patch(color='black', label='Q_1')

red_patch = mpatches.Patch(color='red', label='Q_2')

blue_patch = mpatches.Patch(color='blue', label='Q_3')

green_patch = mpatches.Patch(color='green', label='Q_4')

yellow_patch = mpatches.Patch(color='yellow', label='Q = Q_5')

plt.legend(handles=[cyan_patch,black_patch,red_patch,blue_patch,green_patch,yellow_patch])

plt.show() | _____no_output_____ | MIT | .ipynb_checkpoints/Numerical_Analysis_Volume_Sampling_vs_Projection_DPP-ollld-checkpoint.ipynb | AyoubBelhadji/FrameBuilder |
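The majorization chain stated in this cell can be checked numerically on leverage-score vectors. The sketch below assumes that the Schur order on the matrices refers to majorization of their leverage-score profiles; `is_majorized_by` is a hypothetical helper, not part of the repository.

```python
def is_majorized_by(x, y, tol=1e-12):
    """Return True if x is majorized by y.

    x is majorized by y when, after sorting both in decreasing order, every
    partial sum of x is at most the matching partial sum of y and the totals agree.
    """
    if len(x) != len(y):
        return False
    xs = sorted(x, reverse=True)
    ys = sorted(y, reverse=True)
    px = py = 0.0
    for a, b in zip(xs, ys):
        px += a
        py += b
        if px > py + tol:
            return False
    return abs(px - py) <= tol

flat_profile = [0.5, 0.5, 0.5, 0.5]          # spread-out scores
concentrated_profile = [1.0, 1.0, 0.0, 0.0]  # rectangular identity

flat_below_concentrated = is_majorized_by(flat_profile, concentrated_profile)
concentrated_below_flat = is_majorized_by(concentrated_profile, flat_profile)
print(flat_below_concentrated, concentrated_below_flat)
```

The flat profile is majorized by the concentrated one but not the other way around, which matches the intuition that the rectangular identity sits at the top of the chain.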

III-1-2 The case of a projection spectrum

By a projection spectrum matrix we mean a matrix whose first k singular values are equal. We observe that the two distributions are very similar. | cov_2 = np.diag(np.concatenate(([1000,1000,1000,1,0.1],cov_test)))

NAL_2 = Numrerical_Analysis_DPP(N,real_dim,r,k,versions_number,mean,cov_2,lv_scores_vector)

projection_DPP_res_2 = NAL_2.get_expected_error_for_projection_DPP()

volume_sampling_res_2 = NAL_2.get_expected_error_for_volume_sampling()

deterministic_selection_res_2 = NAL_2.get_error_for_deterministic_selection()

sum_U_res_2 = NAL_2.get_sum_k_leverage_scores()

deterministic_upper_bound_res_2 = NAL_2.get_deterministic_upper_bound()

results = [["Dataset","Using Volume Sampling","Using Projection DPP","k-sum","1/(1-epsilon)","Using Deterministic Algorithm"],["X_0",volume_sampling_res_2[0],projection_DPP_res_2[0],sum_U_res_2[0],deterministic_upper_bound_res_2[0],deterministic_selection_res_2[0]],["X_1",volume_sampling_res_2[1],projection_DPP_res_2[1],sum_U_res_2[1],deterministic_upper_bound_res_2[1],deterministic_selection_res_2[1]],

["X_2",volume_sampling_res_2[2],projection_DPP_res_2[2],sum_U_res_2[2],deterministic_upper_bound_res_2[2],deterministic_selection_res_2[2]],["X_3",volume_sampling_res_2[3],projection_DPP_res_2[3],sum_U_res_2[3],deterministic_upper_bound_res_2[3],deterministic_selection_res_2[3]],["X_4",volume_sampling_res_2[4],projection_DPP_res_2[4],sum_U_res_2[4],deterministic_upper_bound_res_2[4],deterministic_selection_res_2[4]],["X_5",volume_sampling_res_2[5],projection_DPP_res_2[5],sum_U_res_2[5],deterministic_upper_bound_res_2[5],deterministic_selection_res_2[5]]]

display(HTML(

'<center><b>The expected approximation error (divided by the optimal error) according to a sampling scheme for different distribution</b><br><table><tr>{}</tr></table>'.format(

'</tr><tr>'.join(

'<td>{}</td>'.format('</td><td>'.join(str(_) for _ in row)) for row in results)

)

)) | [ 0.3 0.3 0.3 0.3 0.3 0.3 0.3 0.3 0.3 0.3 0.3 0.3 0.3 0.3 0.3

0.3 0.3 0.3 0.3 0.3]

[ 1. 1. 1. 1. 1. 1. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0. 0.

0. 0.]

[-0.7 -0.7 -0.7 -0.7 -0.7 -0.7 0.3 0.3 0.3 0.3 0.3 0.3 0.3 0.3 0.3

0.3 0.3 0.3 0.3 0.3]

5

6

| MIT | .ipynb_checkpoints/Numerical_Analysis_Volume_Sampling_vs_Projection_DPP-ollld-checkpoint.ipynb | AyoubBelhadji/FrameBuilder |
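The "k-sum" and "1/(1-epsilon)" columns of the table can be related with a short sketch. It rests on two assumptions inferred from the remarks in this notebook rather than from the repository code: the k-sum is the sum of the k largest k-leverage scores, and epsilon = k - k-sum, so that k-sum > k-1 is equivalent to epsilon < 1. The helper names are hypothetical, and `get_deterministic_upper_bound` may be implemented differently.

```python
def k_sum(scores, k):
    """Sum of the k largest leverage scores (assumed definition of the k-sum)."""
    return sum(sorted(scores, reverse=True)[:k])

def deterministic_bound(scores, k):
    """Return 1/(1-epsilon) when epsilon < 1, else None (no guarantee).

    Assumption: epsilon = k - k_sum, as suggested by the notebook's remarks.
    """
    epsilon = k - k_sum(scores, k)
    if epsilon >= 1.0:
        return None
    return 1.0 / (1.0 - epsilon)

concentrated = [0.9, 0.8, 0.2, 0.1]  # toy profile, k-sum = 1.7 > k-1
flat = [0.5, 0.5, 0.5, 0.5]          # toy profile, k-sum = 1.0 = k-1

bound_concentrated = deterministic_bound(concentrated, 2)
bound_flat = deterministic_bound(flat, 2)
print(bound_concentrated, bound_flat)
```

With the concentrated toy profile the bound is about 1.43; with the flat one epsilon reaches 1 and the sketch reports that no guarantee is available.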

III-2 The influence of the "spread" of V

In this section we investigate the influence of the "spread" (to be defined formally) of the cloud of points. We can change this "spread" by changing the initialization of the generator of orthogonal matrices: we replace the rectangular identity by "other" orthogonal matrices. Technically, this boils down to changing the generator mode in the constructor call from "nonspread" to "spread". | np.power(np.linspace(1, k, num=k),2)

matrices_array = [ np.zeros((4,4)) for comb in combinations(range(5),4)]

matrix_sum = np.sum(matrices_array)

matrix_sum

matrices_array | _____no_output_____ | MIT | .ipynb_checkpoints/Numerical_Analysis_Volume_Sampling_vs_Projection_DPP-ollld-checkpoint.ipynb | AyoubBelhadji/FrameBuilder |

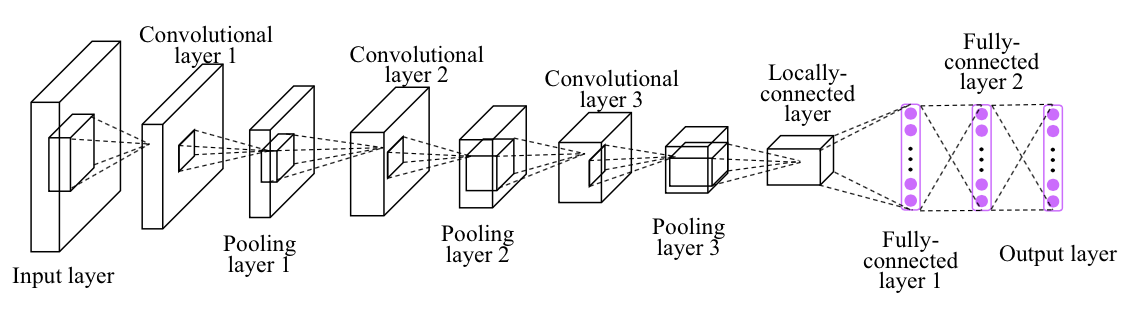

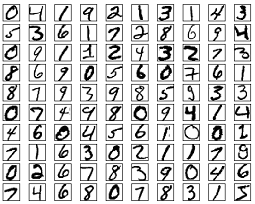

Convolutional Neural Network Example

Build a convolutional neural network with TensorFlow.

- Author: Aymeric Damien
- Project: https://github.com/aymericdamien/TensorFlow-Examples/

These lessons are adapted from [aymericdamien TensorFlow tutorials](https://github.com/aymericdamien/TensorFlow-Examples) / [GitHub](https://github.com/aymericdamien/TensorFlow-Examples) which are published under the [MIT License](https://github.com/Hvass-Labs/TensorFlow-Tutorials/blob/master/LICENSE) which allows very broad use for both academic and commercial purposes.

CNN Overview

MNIST Dataset Overview

This example is using MNIST handwritten digits. The dataset contains 60,000 examples for training and 10,000 examples for testing. The digits have been size-normalized and centered in a fixed-size image (28x28 pixels) with values from 0 to 1. For simplicity, each image has been flattened and converted to a 1-D numpy array of 784 features (28*28).

More info: http://yann.lecun.com/exdb/mnist/ | from __future__ import division, print_function, absolute_import

import tensorflow as tf

# Import MNIST data

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("/tmp/data/", one_hot=True)

# Training Parameters

learning_rate = 0.001

num_steps = 500

batch_size = 128

display_step = 10

# Network Parameters

num_input = 784 # MNIST data input (img shape: 28*28)

num_classes = 10 # MNIST total classes (0-9 digits)

dropout = 0.75 # Dropout, probability to keep units

# tf Graph input

X = tf.placeholder(tf.float32, [None, num_input])

Y = tf.placeholder(tf.float32, [None, num_classes])

keep_prob = tf.placeholder(tf.float32) # dropout (keep probability)

# Create some wrappers for simplicity

def conv2d(x, W, b, strides=1):
    # Conv2D wrapper, with bias and relu activation
    x = tf.nn.conv2d(x, W, strides=[1, strides, strides, 1], padding='SAME')
    x = tf.nn.bias_add(x, b)
    return tf.nn.relu(x)

def maxpool2d(x, k=2):
    # MaxPool2D wrapper
    return tf.nn.max_pool(x, ksize=[1, k, k, 1], strides=[1, k, k, 1],
                          padding='SAME')

# Create model

def conv_net(x, weights, biases, dropout):
    # MNIST data input is a 1-D vector of 784 features (28*28 pixels)
    # Reshape to match picture format [Height x Width x Channel]
    # Tensor input become 4-D: [Batch Size, Height, Width, Channel]
    x = tf.reshape(x, shape=[-1, 28, 28, 1])

    # Convolution Layer
    conv1 = conv2d(x, weights['wc1'], biases['bc1'])
    # Max Pooling (down-sampling)
    conv1 = maxpool2d(conv1, k=2)

    # Convolution Layer
    conv2 = conv2d(conv1, weights['wc2'], biases['bc2'])
    # Max Pooling (down-sampling)
    conv2 = maxpool2d(conv2, k=2)

    # Fully connected layer
    # Reshape conv2 output to fit fully connected layer input
    fc1 = tf.reshape(conv2, [-1, weights['wd1'].get_shape().as_list()[0]])
    fc1 = tf.add(tf.matmul(fc1, weights['wd1']), biases['bd1'])
    fc1 = tf.nn.relu(fc1)
    # Apply Dropout
    fc1 = tf.nn.dropout(fc1, dropout)

    # Output, class prediction
    out = tf.add(tf.matmul(fc1, weights['out']), biases['out'])
    return out

# Store layers weight & bias

weights = {

# 5x5 conv, 1 input, 32 outputs

'wc1': tf.Variable(tf.random_normal([5, 5, 1, 32])),

# 5x5 conv, 32 inputs, 64 outputs

'wc2': tf.Variable(tf.random_normal([5, 5, 32, 64])),

# fully connected, 7*7*64 inputs, 1024 outputs

'wd1': tf.Variable(tf.random_normal([7*7*64, 1024])),

# 1024 inputs, 10 outputs (class prediction)

'out': tf.Variable(tf.random_normal([1024, num_classes]))

}

biases = {

'bc1': tf.Variable(tf.random_normal([32])),

'bc2': tf.Variable(tf.random_normal([64])),

'bd1': tf.Variable(tf.random_normal([1024])),

'out': tf.Variable(tf.random_normal([num_classes]))

}

# Construct model

logits = conv_net(X, weights, biases, keep_prob)

prediction = tf.nn.softmax(logits)

# Define loss and optimizer

loss_op = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(

logits=logits, labels=Y))

optimizer = tf.train.AdamOptimizer(learning_rate=learning_rate)

train_op = optimizer.minimize(loss_op)

# Evaluate model

correct_pred = tf.equal(tf.argmax(prediction, 1), tf.argmax(Y, 1))

accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))

# Initialize the variables (i.e. assign their default value)

init = tf.global_variables_initializer()

# Start training

with tf.Session() as sess:

    # Run the initializer
    sess.run(init)

    for step in range(1, num_steps+1):
        batch_x, batch_y = mnist.train.next_batch(batch_size)
        # Run optimization op (backprop)
        sess.run(train_op, feed_dict={X: batch_x, Y: batch_y, keep_prob: dropout})
        if step % display_step == 0 or step == 1:
            # Calculate batch loss and accuracy
            loss, acc = sess.run([loss_op, accuracy], feed_dict={X: batch_x,
                                                                 Y: batch_y,
                                                                 keep_prob: 1.0})
            print("Step " + str(step) + ", Minibatch Loss= " + \
                  "{:.4f}".format(loss) + ", Training Accuracy= " + \
                  "{:.3f}".format(acc))

    print("Optimization Finished!")

    # Calculate accuracy for 256 MNIST test images
    print("Testing Accuracy:", \
        sess.run(accuracy, feed_dict={X: mnist.test.images[:256],
                                      Y: mnist.test.labels[:256],
                                      keep_prob: 1.0}))

| Step 1, Minibatch Loss= 63763.3047, Training Accuracy= 0.141

Step 10, Minibatch Loss= 26429.6680, Training Accuracy= 0.242

Step 20, Minibatch Loss= 12171.8584, Training Accuracy= 0.586

Step 30, Minibatch Loss= 6306.6318, Training Accuracy= 0.734

Step 40, Minibatch Loss= 5113.7583, Training Accuracy= 0.711

Step 50, Minibatch Loss= 4022.2131, Training Accuracy= 0.805

Step 60, Minibatch Loss= 3125.4949, Training Accuracy= 0.867

Step 70, Minibatch Loss= 2225.4875, Training Accuracy= 0.875

Step 80, Minibatch Loss= 1843.3540, Training Accuracy= 0.867

Step 90, Minibatch Loss= 1715.7744, Training Accuracy= 0.875

Step 100, Minibatch Loss= 2611.2708, Training Accuracy= 0.906

Step 110, Minibatch Loss= 4804.0913, Training Accuracy= 0.875

Step 120, Minibatch Loss= 1067.5258, Training Accuracy= 0.938

Step 130, Minibatch Loss= 2519.1514, Training Accuracy= 0.898

Step 140, Minibatch Loss= 2687.9292, Training Accuracy= 0.906

Step 150, Minibatch Loss= 1983.4077, Training Accuracy= 0.938

Step 160, Minibatch Loss= 2844.6553, Training Accuracy= 0.930

Step 170, Minibatch Loss= 3602.2524, Training Accuracy= 0.914

Step 180, Minibatch Loss= 175.3922, Training Accuracy= 0.961

Step 190, Minibatch Loss= 645.1918, Training Accuracy= 0.945

Step 200, Minibatch Loss= 1147.6567, Training Accuracy= 0.938

Step 210, Minibatch Loss= 1140.4148, Training Accuracy= 0.914

Step 220, Minibatch Loss= 1572.8756, Training Accuracy= 0.906

Step 230, Minibatch Loss= 1292.9274, Training Accuracy= 0.898

Step 240, Minibatch Loss= 1501.4623, Training Accuracy= 0.953

Step 250, Minibatch Loss= 1908.2997, Training Accuracy= 0.898

Step 260, Minibatch Loss= 2182.2380, Training Accuracy= 0.898

Step 270, Minibatch Loss= 487.5807, Training Accuracy= 0.961

Step 280, Minibatch Loss= 1284.1130, Training Accuracy= 0.945

Step 290, Minibatch Loss= 1232.4919, Training Accuracy= 0.891

Step 300, Minibatch Loss= 1198.8336, Training Accuracy= 0.945

Step 310, Minibatch Loss= 2010.5345, Training Accuracy= 0.906

Step 320, Minibatch Loss= 786.3917, Training Accuracy= 0.945

Step 330, Minibatch Loss= 1408.3556, Training Accuracy= 0.898

Step 340, Minibatch Loss= 1453.7538, Training Accuracy= 0.953

Step 350, Minibatch Loss= 999.8901, Training Accuracy= 0.906

Step 360, Minibatch Loss= 914.3958, Training Accuracy= 0.961

Step 370, Minibatch Loss= 488.0052, Training Accuracy= 0.938

Step 380, Minibatch Loss= 1070.8710, Training Accuracy= 0.922

Step 390, Minibatch Loss= 151.4658, Training Accuracy= 0.961

Step 400, Minibatch Loss= 555.3539, Training Accuracy= 0.953

Step 410, Minibatch Loss= 765.5746, Training Accuracy= 0.945

Step 420, Minibatch Loss= 326.9393, Training Accuracy= 0.969

Step 430, Minibatch Loss= 530.8968, Training Accuracy= 0.977

Step 440, Minibatch Loss= 463.3909, Training Accuracy= 0.977

Step 450, Minibatch Loss= 362.2226, Training Accuracy= 0.977

Step 460, Minibatch Loss= 414.0034, Training Accuracy= 0.953

Step 470, Minibatch Loss= 583.4587, Training Accuracy= 0.945

Step 480, Minibatch Loss= 566.1262, Training Accuracy= 0.969

Step 490, Minibatch Loss= 691.1143, Training Accuracy= 0.961

Step 500, Minibatch Loss= 282.8893, Training Accuracy= 0.984

Optimization Finished!

Testing Accuracy: 0.976562

| MIT | Deep_Learning/TensorFlow-aymericdamien/notebooks/3_NeuralNetworks/convolutional_network_raw.ipynb | Chau-Xochitl/INFO_7390 |
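The `7*7*64` input size of the fully connected layer follows from the shape arithmetic of the network. As a small worked check, the sketch below uses the fact that with `padding='SAME'` the spatial output size is the input size divided by the stride, rounded up; the helper name `same_output_size` is only illustrative.

```python
import math

def same_output_size(size, stride):
    """Spatial output size of a conv or pooling layer with 'SAME' padding."""
    return math.ceil(size / stride)

side = 28                          # MNIST images are 28x28
side = same_output_size(side, 1)   # conv1, stride 1 -> 28
side = same_output_size(side, 2)   # maxpool, k=2    -> 14
side = same_output_size(side, 1)   # conv2, stride 1 -> 14
side = same_output_size(side, 2)   # maxpool, k=2    -> 7

channels = 64                      # output channels of conv2
flat_features = side * side * channels
print(side, flat_features)
```

The result, 3136 features, matches the first dimension of `weights['wd1']`.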

Google Cloud CMIP6 Public Data: Basic Python Example

This notebook shows how to query the catalog and load the data using Python. | from matplotlib import pyplot as plt

import numpy as np

import pandas as pd

import xarray as xr

import zarr

import fsspec

%matplotlib inline

%config InlineBackend.figure_format = 'retina'

plt.rcParams['figure.figsize'] = 12, 6 | _____no_output_____ | MIT | basic_search_and_load.ipynb | pangeo-gallery/cmip6 |

Browse Catalog

The data catalog is stored as a CSV file. Here we read it with Pandas. | df = pd.read_csv('https://storage.googleapis.com/cmip6/cmip6-zarr-consolidated-stores.csv')

df.head() | _____no_output_____ | MIT | basic_search_and_load.ipynb | pangeo-gallery/cmip6 |

The columns of the dataframe correspond to the CMIP6 controlled vocabulary. A beginners' guide to these terms is available in [this document](https://docs.google.com/document/d/1yUx6jr9EdedCOLd--CPdTfGDwEwzPpCF6p1jRmqx-0Q). Here we filter the data to find monthly surface air temperature for historical experiments. | df_ta = df.query("activity_id=='CMIP' & table_id == 'Amon' & variable_id == 'tas' & experiment_id == 'historical'")

df_ta | _____no_output_____ | MIT | basic_search_and_load.ipynb | pangeo-gallery/cmip6 |

Now we do further filtering to find just the models from NCAR. | df_ta_ncar = df_ta.query('institution_id == "NCAR"')

df_ta_ncar | _____no_output_____ | MIT | basic_search_and_load.ipynb | pangeo-gallery/cmip6 |

Load Data

Now we will load a single store using fsspec, zarr, and xarray. | # get the path to a specific zarr store (the last one from the dataframe above)

zstore = df_ta_ncar.zstore.values[-1]

print(zstore)

# create a mutable-mapping-style interface to the store

mapper = fsspec.get_mapper(zstore)

# open it using xarray and zarr

ds = xr.open_zarr(mapper, consolidated=True)

ds | _____no_output_____ | MIT | basic_search_and_load.ipynb | pangeo-gallery/cmip6 |

Plot a map from a specific date. | ds.tas.sel(time='1950-01').squeeze().plot() | _____no_output_____ | MIT | basic_search_and_load.ipynb | pangeo-gallery/cmip6 |

Create a timeseries of global-average surface air temperature. For this we need the area weighting factor for each gridpoint. | df_area = df.query("variable_id == 'areacella' & source_id == 'CESM2'")

ds_area = xr.open_zarr(fsspec.get_mapper(df_area.zstore.values[0]), consolidated=True)

ds_area

total_area = ds_area.areacella.sum(dim=['lon', 'lat'])

ta_timeseries = (ds.tas * ds_area.areacella).sum(dim=['lon', 'lat']) / total_area

ta_timeseries | _____no_output_____ | MIT | basic_search_and_load.ipynb | pangeo-gallery/cmip6 |
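The area weighting used for the global average can be seen on a toy grid. The sketch below works on plain nested lists instead of xarray objects, and the temperature and area values are made up purely for illustration.

```python
def area_weighted_mean(values, areas):
    """Mean of `values` weighted by `areas` (both are lists of rows)."""
    total_area = sum(sum(row) for row in areas)
    weighted_sum = sum(v * a
                       for value_row, area_row in zip(values, areas)
                       for v, a in zip(value_row, area_row))
    return weighted_sum / total_area

# Toy 2x2 grid: the second row of cells is three times larger than the first.
temperature = [[250.0, 250.0],
               [300.0, 300.0]]
cell_area = [[1.0, 1.0],
             [3.0, 3.0]]

weighted = area_weighted_mean(temperature, cell_area)
unweighted = sum(sum(row) for row in temperature) / 4
print(weighted, unweighted)
```

The weighted mean (287.5) is pulled toward the larger cells, while the plain mean (275.0) treats all grid points equally. On a latitude-longitude grid the cells shrink toward the poles, which is why the weighting matters.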

By default the data are loaded lazily, as Dask arrays. Here we trigger computation explicitly. | %time ta_timeseries.load()

ta_timeseries.plot(label='monthly')

ta_timeseries.rolling(time=12).mean().plot(label='12 month rolling mean')

plt.legend()

plt.title('Global Mean Surface Air Temperature') | _____no_output_____ | MIT | basic_search_and_load.ipynb | pangeo-gallery/cmip6 |
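The 12-month rolling mean plotted above can be sketched in plain Python. This version mirrors the default behavior of a rolling window that requires a full window of data, so the first `window - 1` entries are NaN; it is an illustration of the idea, not xarray's implementation.

```python
import math

def rolling_mean(values, window):
    """Trailing rolling mean; positions without a full window are NaN."""
    result = []
    for i in range(len(values)):
        if i + 1 < window:
            result.append(float('nan'))
        else:
            chunk = values[i + 1 - window:i + 1]
            result.append(sum(chunk) / window)
    return result

smoothed = rolling_mean([1.0, 2.0, 3.0, 4.0, 5.0], 3)
print(smoothed)
```

This is why the rolling-mean curve in the plot starts later than the monthly curve: the first eleven months have no complete 12-month window behind them.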

Figures for the section on approximately computing the stream structure | # Load the smooth and peppered stream

sdf_smooth= gd1_util.setup_gd1model()

pepperfilename= 'gd1pepper.pkl'

if os.path.exists(pepperfilename):
    with open(pepperfilename,'rb') as savefile:
        sdf_pepper= pickle.load(savefile)
else:
    timpacts= simulate_streampepper.parse_times('256sampling',9.)
    sdf_pepper= gd1_util.setup_gd1model(timpact=timpacts,
                                        hernquist=True)
    save_pickles(pepperfilename,sdf_pepper) | galpyWarning: WARNING: Rnorm keyword input to streamdf is deprecated in favor of the standard ro keyword

galpyWarning: WARNING: Vnorm keyword input to streamdf is deprecated in favor of the standard vo keyword

galpyWarning: Using C implementation to integrate orbits

| BSD-3-Clause | meanOperpAndApproxImpacts.ipynb | jobovy/streamgap-pepper |

Is the mean perpendicular frequency close to zero? | # Sampling functions

massrange=[5.,9.]

plummer= False

Xrs= 5.

nsubhalo= simulate_streampepper.nsubhalo

rs= simulate_streampepper.rs

dNencdm= simulate_streampepper.dNencdm

sample_GM= lambda: (10.**((-0.5)*massrange[0])\

+(10.**((-0.5)*massrange[1])\

-10.**((-0.5)*massrange[0]))\

*numpy.random.uniform())**(1./(-0.5))\

/bovy_conversion.mass_in_msol(V0,R0)

rate_range= numpy.arange(massrange[0]+0.5,massrange[1]+0.5,1)

rate= numpy.sum([dNencdm(sdf_pepper,10.**r,Xrs=Xrs,

plummer=plummer)

for r in rate_range])

sample_rs= lambda x: rs(x*bovy_conversion.mass_in_1010msol(V0,R0)*10.**10.,

plummer=plummer)

numpy.random.seed(2)

sdf_pepper.simulate(rate=rate,sample_GM=sample_GM,sample_rs=sample_rs,Xrs=Xrs)

n= 100000

aa_mock_per= sdf_pepper.sample(n=n,returnaAdt=True)

dO= numpy.dot(aa_mock_per[0].T-sdf_pepper._progenitor_Omega,

sdf_pepper._sigomatrixEig[1][:,sdf_pepper._sigomatrixEigsortIndx])

dO[:,2]*= sdf_pepper._sigMeanSign

da= numpy.dot(aa_mock_per[1].T-sdf_pepper._progenitor_angle,

sdf_pepper._sigomatrixEig[1][:,sdf_pepper._sigomatrixEigsortIndx])

da[:,2]*= sdf_pepper._sigMeanSign

apar= da[:,2]

xs= numpy.linspace(0.,1.5,1001)

mO_unp= numpy.array([sdf_smooth.meanOmega(x,oned=True,use_physical=False) for x in xs])

mOint= interpolate.InterpolatedUnivariateSpline(xs,mO_unp,k=3)

mOs= mOint(apar)

frac= 0.02

alpha=0.01

linecolor='0.65'

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=14.)

figsize(12,4)

subplot(1,3,1)

bovy_plot.bovy_plot(apar[::3],dO[::3,2]/mOs[::3]-1,'k.',alpha=alpha*2,gcf=True,

rasterized=True,xrange=[0.,1.5],yrange=[-1.2,1.2])

z= lowess(dO[:,2]/mOs-1,apar,frac=frac)

plot(z[::100,0],z[::100,1],color=linecolor,lw=2.5)

#xlim(0.,1.5)

#ylim(-1.2,1.2)

xlabel(r'$\Delta\theta_\parallel$')

bovy_plot.bovy_text(r'$\Delta\Omega_\parallel/\langle\Delta\Omega^0_\parallel\rangle-1$',top_left=True,

size=18.)

subplot(1,3,2)

bovy_plot.bovy_plot(apar[::3],dO[::3,1]/mOs[::3],'k.',alpha=alpha*2,gcf=True,

rasterized=True,xrange=[0.,1.5],yrange=[-0.05,0.05])

z= lowess(dO[:,1]/mOs,apar,frac=frac)

plot(z[::100,0],z[::100,1],color=linecolor,lw=2.5)

#xlim(0.,1.5)

#ylim(-0.05,0.05)

xlabel(r'$\Delta\theta_\parallel$')

bovy_plot.bovy_text(r'$\Delta\Omega_{\perp,1}/\langle\Delta\Omega^0_\parallel\rangle$',top_left=True,

size=18.)

subplot(1,3,3)

bovy_plot.bovy_plot(apar[::3],dO[::3,0]/mOs[::3],'k.',alpha=alpha,gcf=True,

rasterized=True,xrange=[0.,1.5],yrange=[-0.05,0.05])

z= lowess(dO[:,0]/mOs,apar,frac=frac)

plot(z[::100,0],z[::100,1],color=linecolor,lw=2.5)

#xlim(0.,1.5)

#ylim(-0.05,0.05)

xlabel(r'$\Delta\theta_\parallel$')

bovy_plot.bovy_text(r'$\Delta\Omega_{\perp,2}/\langle\Delta\Omega^0_\parallel\rangle$',top_left=True,

size=18.)

if save_figures:
    tight_layout()
    bovy_plot.bovy_end_print(os.path.join(os.getenv('PAPERSDIR'),'2016-stream-stats','gd1like_meanOparOperp.pdf'))

print("This stream had %i impacts" % len(sdf_pepper._GM)) | This stream had 61 impacts

| BSD-3-Clause | meanOperpAndApproxImpacts.ipynb | jobovy/streamgap-pepper |
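The `sample_GM` lambda in this cell is an inverse-transform sampler: it draws x = M^(-1/2) uniformly between the values at the two mass limits and maps it back to a mass with M = x^(-2), which corresponds to a density proportional to M^(-3/2). The sketch below reproduces only that step in solar masses, without the conversion to galpy's internal units; the function name and the use of Python's `random` module instead of `numpy.random` are choices made for this illustration.

```python
import random

def sample_mass(log10_mmin, log10_mmax, rng):
    """Draw one mass in [10**log10_mmin, 10**log10_mmax].

    x = M**(-0.5) is sampled uniformly between its values at the two mass
    limits, then inverted; this is the same formula as in `sample_GM`, minus
    the unit conversion.
    """
    x_at_min = 10.0 ** (-0.5 * log10_mmin)
    x_at_max = 10.0 ** (-0.5 * log10_mmax)
    x = x_at_min + (x_at_max - x_at_min) * rng.random()
    return x ** (1.0 / -0.5)

rng = random.Random(2)  # fixed seed so the sketch is reproducible
masses = [sample_mass(5.0, 9.0, rng) for _ in range(10000)]
low_mass_fraction = sum(m < 1e6 for m in masses) / len(masses)
print(min(masses), max(masses), low_mass_fraction)
```

Because the density falls steeply with mass, most draws land near the lower limit; roughly two thirds of the samples fall below 10^6 solar masses for the [5, 9] range used in the notebook.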

Test the single-impact approximations | # Setup a single, large impact

m= 10.**8.

GM= 10**8./bovy_conversion.mass_in_msol(V0,R0)

timpactIndx= numpy.argmin(numpy.fabs(numpy.array(sdf_pepper._uniq_timpact)-1.3/bovy_conversion.time_in_Gyr(V0,R0)))

# Load the single-impact stream

gapfilename= 'gd1single.pkl'

if os.path.exists(gapfilename):
    with open(gapfilename,'rb') as savefile:
        sdf_gap= pickle.load(savefile)
else:
    sdf_gap= gd1_util.setup_gd1model(hernquist=True,
                                     singleImpact=True,
                                     impactb=0.5*rs(m),
                                     subhalovel=numpy.array([-25.,155.,30.])/V0,
                                     impact_angle=0.6,
                                     timpact=sdf_pepper._uniq_timpact[timpactIndx],
                                     GM=GM,rs=rs(m))
    save_pickles(gapfilename,sdf_gap)

n= 100000

aa_mock_per= sdf_gap.sample(n=n,returnaAdt=True)

dO= numpy.dot(aa_mock_per[0].T-sdf_gap._progenitor_Omega,

sdf_gap._sigomatrixEig[1][:,sdf_gap._sigomatrixEigsortIndx])

dO[:,2]*= sdf_gap._sigMeanSign

da= numpy.dot(aa_mock_per[1].T-sdf_gap._progenitor_angle,

sdf_gap._sigomatrixEig[1][:,sdf_gap._sigomatrixEigsortIndx])

da[:,2]*= sdf_gap._sigMeanSign

num= True

apar= numpy.arange(0.,sdf_smooth.length()+0.003,0.003)

dens_unp= numpy.array([sdf_smooth._density_par(x) for x in apar])

dens_approx= numpy.array([sdf_gap.density_par(x,approx=True) for x in apar])

dens_approx_higherorder= numpy.array([sdf_gap._density_par(x,approx=True,higherorder=True) for x in apar])

# normalize

dens_unp= dens_unp/numpy.sum(dens_unp)/(apar[1]-apar[0])

dens_approx= dens_approx/numpy.sum(dens_approx)/(apar[1]-apar[0])

dens_approx_higherorder= dens_approx_higherorder/numpy.sum(dens_approx_higherorder)/(apar[1]-apar[0])

if num:
    dens_num= numpy.array([sdf_gap.density_par(x,approx=False) for x in apar])
    dens_num= dens_num/numpy.sum(dens_num)/(apar[1]-apar[0])

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=14.)

figsize(6,7)

axTop= pyplot.axes([0.15,0.3,0.825,0.65])

fig= pyplot.gcf()

fig.sca(axTop)

bovy_plot.bovy_plot(apar,dens_approx,lw=2.5,gcf=True,

color='k',

xrange=[0.,1.],

yrange=[0.,2.24],

ylabel=r'$\mathrm{density}$')

plot(apar,dens_unp,lw=3.5,color='k',ls='--',zorder=0)

nullfmt = NullFormatter() # no labels

axTop.xaxis.set_major_formatter(nullfmt)

dum= hist(da[:,2],bins=101,normed=True,range=[apar[0],apar[-1]],

histtype='step',color='0.55',zorder=0,lw=3.)

axBottom= pyplot.axes([0.15,0.1,0.825,0.2])

fig= pyplot.gcf()

fig.sca(axBottom)

bovy_plot.bovy_plot(apar,100.*(dens_approx_higherorder-dens_approx)/dens_approx_higherorder,

lw=2.5,gcf=True,color='k',

xrange=[0.,1.],

yrange=[-0.145,0.145],

zorder=2,

xlabel=r'$\Delta \theta_\parallel$',

ylabel=r'$\mathrm{relative\ difference\ in}\ \%$')

if num:

plot(apar,100.*(dens_num-dens_approx_higherorder)/dens_approx_higherorder,

lw=2.5,zorder=1,color='0.55')

# label

aparIndx= numpy.argmin(numpy.fabs(apar-0.64))

plot([0.45,apar[aparIndx]],[0.06,(100.*(dens_approx_higherorder-dens_approx)/dens_approx_higherorder)[aparIndx]],

'k',lw=1.)

bovy_plot.bovy_text(0.1,0.07,r'$\mathrm{higher\!\!-\!\!order\ minus\ linear}$',size=17.)

if num:

aparIndx= numpy.argmin(numpy.fabs(apar-0.62))

plot([0.45,apar[aparIndx]],[-0.07,(100.*(dens_num-dens_approx_higherorder)/dens_approx_higherorder)[aparIndx]],

'k',lw=1.)

bovy_plot.bovy_text(0.05,-0.12,r'$\mathrm{numerical\ minus\ higher\!\!-\!\!order}$',size=17.)

if save_figures:

bovy_plot.bovy_end_print(os.path.join(os.getenv('PAPERSDIR'),'2016-stream-stats','gd1likeSingle_densapprox.pdf'))

mO_unp= numpy.array([sdf_smooth.meanOmega(x,oned=True) for x in apar])\

*bovy_conversion.freq_in_Gyr(V0,R0)

mO_approx= numpy.array([sdf_gap.meanOmega(x,approx=True,oned=True) for x in apar])\

*bovy_conversion.freq_in_Gyr(V0,R0)

mO_approx_higherorder= numpy.array([sdf_gap.meanOmega(x,oned=True,approx=True,higherorder=True) for x in apar])\

*bovy_conversion.freq_in_Gyr(V0,R0)

if num:

mO_num= numpy.array([sdf_gap.meanOmega(x,approx=False,oned=True) for x in apar])\

*bovy_conversion.freq_in_Gyr(V0,R0)

frac= 0.005

alpha=0.01

linecolor='0.65'

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=14.)

figsize(6,7)

axTop= pyplot.axes([0.15,0.3,0.825,0.65])

fig= pyplot.gcf()

fig.sca(axTop)

bovy_plot.bovy_plot(apar,mO_approx,lw=2.5,gcf=True,

color='k',

xrange=[0.,1.],

yrange=[0.,0.2],

ylabel=r'$\Delta \Omega_\parallel\,(\mathrm{Gyr}^{-1})$')

plot(apar,mO_unp,lw=2.5,color='k',ls='--')

plot(da[::3,2],dO[::3,2]*bovy_conversion.freq_in_Gyr(V0,R0),

'k.',alpha=alpha*2,rasterized=True)

nullfmt = NullFormatter() # no labels

axTop.xaxis.set_major_formatter(nullfmt)

axBottom= pyplot.axes([0.15,0.1,0.825,0.2])

fig= pyplot.gcf()

fig.sca(axBottom)

bovy_plot.bovy_plot(apar,100.*(mO_approx_higherorder-mO_approx)/mO_approx_higherorder,

lw=2.5,gcf=True,color='k',

xrange=[0.,1.],zorder=1,

yrange=[-0.039,0.039],

xlabel=r'$\Delta \theta_\parallel$',

ylabel=r'$\mathrm{relative\ difference\ in\ \%}$')

if num:

plot(apar,100.*(mO_num-mO_approx_higherorder)/mO_approx_higherorder,

lw=2.5,color='0.55',zorder=0)

# label

aparIndx= numpy.argmin(numpy.fabs(apar-0.64))

plot([0.45,apar[aparIndx]],[0.024,(100.*(mO_approx_higherorder-mO_approx)/mO_approx_higherorder)[aparIndx]],

'k',lw=1.)

bovy_plot.bovy_text(0.1,0.026,r'$\mathrm{higher\!\!-\!\!order\ minus\ linear}$',size=17.)

aparIndx= numpy.argmin(numpy.fabs(apar-0.6))

if num:

plot([0.45,apar[aparIndx]],[-0.02,(100.*(mO_num-mO_approx_higherorder)/mO_approx_higherorder)[aparIndx]],

'k',lw=1.)

bovy_plot.bovy_text(0.05,-0.03,r'$\mathrm{numerical\ minus\ higher\!\!-\!\!order}$',size=17.)

if save_figures:

bovy_plot.bovy_end_print(os.path.join(os.getenv('PAPERSDIR'),'2016-stream-stats','gd1likeSingle_mOparapprox.pdf'))

start= time.time()

numpy.array([sdf_gap.density_par(x,approx=False) for x in apar[::10]])

end= time.time()

print (end-start)*1000.*10./len(apar)

start= time.time()

numpy.array([sdf_gap.density_par(x,approx=True) for x in apar[::10]])

end= time.time()

print (end-start)*1000.*10./len(apar)

start= time.time()

numpy.array([sdf_gap.density_par(x,approx=True,higherorder=True) for x in apar[::10]])

end= time.time()

print (end-start)*1000.*10./len(apar)

start= time.time()

numpy.array([sdf_gap.meanOmega(x,approx=False,oned=True) for x in apar[::10]])

end= time.time()

print (end-start)*1000.*10./len(apar)

start= time.time()

numpy.array([sdf_gap.meanOmega(x,approx=True,oned=True) for x in apar[::10]])

end= time.time()

print (end-start)*1000.*10./len(apar)

start= time.time()

numpy.array([sdf_gap.meanOmega(x,approx=True,oned=True,higherorder=True) for x in apar[::10]])

end= time.time()

print (end-start)*1000.*10./len(apar) | 186.439969323

0.519882548939

5.57736194495

| BSD-3-Clause | meanOperpAndApproxImpacts.ipynb | jobovy/streamgap-pepper |
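The timing blocks in this cell all follow the same pattern: evaluate a function on a subsample of points and report milliseconds per evaluation. A small helper makes that pattern explicit. This is a sketch written for Python 3 with a hypothetical name, `ms_per_call`, and a stand-in function in place of `sdf_gap.density_par` or `sdf_gap.meanOmega`.

```python
import time

def ms_per_call(func, points):
    """Average wall-clock time per call of `func`, in milliseconds."""
    points = list(points)
    start = time.perf_counter()
    for x in points:
        func(x)
    elapsed = time.perf_counter() - start
    return 1000.0 * elapsed / len(points)

# Stand-in workload; in the notebook this would be something like
# lambda x: sdf_gap.density_par(x, approx=True), evaluated on apar[::10].
def toy_function(x):
    return sum(i * x for i in range(1000))

timing_ms = ms_per_call(toy_function, [0.1 * i for i in range(50)])
print(timing_ms)
```

Dividing by the number of evaluated points plays the same role as the factor `10./len(apar)` in the notebook, which accounts for only every tenth point being evaluated.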

Test the multiple-impact approximations | # Set up four intermediate impacts

m= [10.**7.,10.**7.25,10.**6.75,10.**7.5]

GM= [mm/bovy_conversion.mass_in_msol(V0,R0) for mm in m]

timpactIndx= [numpy.argmin(numpy.fabs(numpy.array(sdf_pepper._uniq_timpact)-1.3/bovy_conversion.time_in_Gyr(V0,R0))),

numpy.argmin(numpy.fabs(numpy.array(sdf_pepper._uniq_timpact)-2.3/bovy_conversion.time_in_Gyr(V0,R0))),

numpy.argmin(numpy.fabs(numpy.array(sdf_pepper._uniq_timpact)-3.3/bovy_conversion.time_in_Gyr(V0,R0))),

numpy.argmin(numpy.fabs(numpy.array(sdf_pepper._uniq_timpact)-4.3/bovy_conversion.time_in_Gyr(V0,R0)))]

sdf_pepper.set_impacts(impactb=[0.5*rs(m[0]),2.*rs(m[1]),1.*rs(m[2]),2.5*rs(m[3])],

subhalovel=numpy.array([[-25.,155.,30.],

[125.,35.,80.],

[-225.,5.,-40.],

[25.,-155.,37.]])/V0,

impact_angle=[0.6,0.4,0.3,0.3],

timpact=[sdf_pepper._uniq_timpact[ti] for ti in timpactIndx],

GM=GM,rs=[rs(mm) for mm in m])

sdf_gap= sdf_pepper

n= 100000

aa_mock_per= sdf_pepper.sample(n=n,returnaAdt=True)

dO= numpy.dot(aa_mock_per[0].T-sdf_gap._progenitor_Omega,

sdf_gap._sigomatrixEig[1][:,sdf_gap._sigomatrixEigsortIndx])

dO[:,2]*= sdf_gap._sigMeanSign

da= numpy.dot(aa_mock_per[1].T-sdf_gap._progenitor_angle,

sdf_gap._sigomatrixEig[1][:,sdf_gap._sigomatrixEigsortIndx])

da[:,2]*= sdf_gap._sigMeanSign

num= True

apar= numpy.arange(0.,sdf_smooth.length()+0.003,0.003)

dens_unp= numpy.array([sdf_smooth._density_par(x) for x in apar])

dens_approx= numpy.array([sdf_gap.density_par(x,approx=True) for x in apar])

# normalize

dens_unp= dens_unp/numpy.sum(dens_unp)/(apar[1]-apar[0])

dens_approx= dens_approx/numpy.sum(dens_approx)/(apar[1]-apar[0])

if num:
    dens_num= numpy.array([sdf_gap.density_par(x,approx=False) for x in apar])
    dens_num= dens_num/numpy.sum(dens_num)/(apar[1]-apar[0])

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=14.)

figsize(6,7)

axTop= pyplot.axes([0.15,0.3,0.825,0.65])

fig= pyplot.gcf()

fig.sca(axTop)

bovy_plot.bovy_plot(apar,dens_approx,lw=2.5,gcf=True,

color='k',

xrange=[0.,1.],

yrange=[0.,2.24],

ylabel=r'$\mathrm{density}$')

plot(apar,dens_unp,lw=3.5,color='k',ls='--',zorder=0)

nullfmt = NullFormatter() # no labels

axTop.xaxis.set_major_formatter(nullfmt)

dum= hist(da[:,2],bins=101,normed=True,range=[apar[0],apar[-1]],

histtype='step',color='0.55',zorder=0,lw=3.)

axBottom= pyplot.axes([0.15,0.1,0.825,0.2])

fig= pyplot.gcf()

fig.sca(axBottom)

if num:

bovy_plot.bovy_plot(apar,100.*(dens_num-dens_approx)/dens_approx,

lw=2.5,gcf=True,color='k',

xrange=[0.,1.],

yrange=[-1.45,1.45],

zorder=2,

xlabel=r'$\Delta \theta_\parallel$',

ylabel=r'$\mathrm{relative\ difference\ in}\ \%$')

# label

if num:

aparIndx= numpy.argmin(numpy.fabs(apar-0.6))

plot([0.45,apar[aparIndx]],[0.7,(100.*(dens_num-dens_approx)/dens_approx)[aparIndx]],

'k',lw=1.)

bovy_plot.bovy_text(0.15,0.4,r'$\mathrm{numerical\ minus}$'+'\n'+r'$\mathrm{approximation}$',size=17.)

if save_figures:

bovy_plot.bovy_end_print(os.path.join(os.getenv('PAPERSDIR'),'2016-stream-stats','gd1likeMulti_densapprox.pdf'))

mO_unp= numpy.array([sdf_smooth.meanOmega(x,oned=True) for x in apar])\

*bovy_conversion.freq_in_Gyr(V0,R0)

mO_approx= numpy.array([sdf_gap.meanOmega(x,approx=True,oned=True) for x in apar])\

*bovy_conversion.freq_in_Gyr(V0,R0)

if num:

mO_num= numpy.array([sdf_gap.meanOmega(x,approx=False,oned=True) for x in apar])\

*bovy_conversion.freq_in_Gyr(V0,R0)

frac= 0.005

alpha=0.01

linecolor='0.65'

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=14.)

figsize(6,7)

axTop= pyplot.axes([0.15,0.3,0.825,0.65])

fig= pyplot.gcf()

fig.sca(axTop)

bovy_plot.bovy_plot(apar,mO_approx,lw=2.5,gcf=True,

color='k',

xrange=[0.,1.],

yrange=[0.,0.2],

ylabel=r'$\Delta \Omega_\parallel\,(\mathrm{Gyr}^{-1})$')

plot(apar,mO_unp,lw=2.5,color='k',ls='--')

plot(da[::3,2],dO[::3,2]*bovy_conversion.freq_in_Gyr(V0,R0),

'k.',alpha=alpha*2,rasterized=True)

nullfmt = NullFormatter() # no labels

axTop.xaxis.set_major_formatter(nullfmt)

axBottom= pyplot.axes([0.15,0.1,0.825,0.2])

fig= pyplot.gcf()

fig.sca(axBottom)

if num:

bovy_plot.bovy_plot(apar,100.*(mO_num-mO_approx)/mO_approx,

lw=2.5,gcf=True,color='k',

xrange=[0.,1.],zorder=1,

yrange=[-0.39,0.39],

xlabel=r'$\Delta \theta_\parallel$',

ylabel=r'$\mathrm{relative\ difference\ in\ \%}$')

# label

if num:

aparIndx= numpy.argmin(numpy.fabs(apar-0.6))

plot([0.35,apar[aparIndx]],[0.2,(100.*(mO_num-mO_approx)/mO_approx)[aparIndx]],

'k',lw=1.)

bovy_plot.bovy_text(0.05,0.1,r'$\mathrm{numerical\ minus}$'+'\n'+r'$\mathrm{approximation}$',size=17.)

if save_figures:

bovy_plot.bovy_end_print(os.path.join(os.getenv('PAPERSDIR'),'2016-stream-stats','gd1likeMulti_mOparapprox.pdf'))

start= time.time()

numpy.array([sdf_gap.density_par(x,approx=False) for x in apar[::10]])

end= time.time()

print((end-start)*1000.*10./len(apar))

start= time.time()

numpy.array([sdf_gap.density_par(x,approx=True) for x in apar[::10]])

end= time.time()

print((end-start)*1000.*10./len(apar))

start= time.time()

numpy.array([sdf_gap.meanOmega(x,approx=False,oned=True) for x in apar[::10]])

end= time.time()

print((end-start)*1000.*10./len(apar))

start= time.time()

numpy.array([sdf_gap.meanOmega(x,approx=True,oned=True) for x in apar[::10]])

end= time.time()

print((end-start)*1000.*10./len(apar)) | 2007.35482303

8.63206025326

| BSD-3-Clause | meanOperpAndApproxImpacts.ipynb | jobovy/streamgap-pepper |
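The timing cells above repeat the same start/stop pattern four times. As a minimal sketch, that pattern could be wrapped in a small helper; `ms_per_call` is a hypothetical name that does not appear in the notebook, and the sketch assumes Python 3 for `time.perf_counter`. It reports the average wall-clock time per evaluation in milliseconds, which is roughly what the `*10./len(apar)` scaling above estimates.

```python
import time

def ms_per_call(func, xs):
    """Average wall-clock time of func(x) over xs, in milliseconds.

    Hypothetical helper, not part of the original notebook.
    """
    xs = list(xs)
    start = time.perf_counter()
    for x in xs:
        func(x)
    elapsed = time.perf_counter() - start
    return elapsed * 1000.0 / len(xs)

# Stand-in workload; in the notebook this would be something like
# lambda x: sdf_gap.density_par(x, approx=True) evaluated on apar[::10].
elapsed_ms = ms_per_call(lambda x: x * x, range(1000))
```

Because the helper divides by the number of evaluations it actually performed, the result does not depend on the subsampling stride.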

Computational speed | nimp= 2**numpy.arange(1,9)

ntrials= 3

nsample= [10,10,10,10,10,10,33,33,33]

compt= numpy.zeros(len(nimp))

for ii,ni in enumerate(nimp):

tcompt= 0.

for t in range(ntrials):

nimpact=ni

timpacts= numpy.random.permutation(numpy.array(sdf_pepper._uniq_timpact))[:ni]

print(len(timpacts))

impact_angles= numpy.array([\

sdf_pepper._icdf_stream_len[ti](numpy.random.uniform())

for ti in timpacts])

GMs= numpy.array([sample_GM() for a in impact_angles])

rss= numpy.array([sample_rs(gm) for gm in GMs])

impactbs= numpy.random.uniform(size=len(impact_angles))*Xrs*rss

subhalovels= numpy.empty((len(impact_angles),3))

for jj in range(len(timpacts)):

subhalovels[jj]=\

sdf_pepper._draw_impact_velocities(timpacts[jj],120./V0,

impact_angles[jj],n=1)[0]

# Flip angle sign if necessary

if not sdf_pepper._gap_leading: impact_angles*= -1.

# Setup

sdf_pepper.set_impacts(impact_angle=impact_angles,

impactb=impactbs,

subhalovel=subhalovels,

timpact=timpacts,

GM=GMs,rs=rss)

start= time.time()

numpy.array([sdf_pepper.density_par(x,approx=True) for x in apar[::nsample[ii]]])

end= time.time()

tcompt+= (end-start)*1000.*nsample[ii]/len(apar)

compt[ii]= tcompt/ntrials

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=14.)

figsize(6,4)

bovy_plot.bovy_plot(numpy.log2(nimp),compt,'ko',

semilogy=True,

xrange=[0.,9.],

yrange=[.5,100000.],

ylabel=r'$\mathrm{time}\,(\mathrm{ms})$',

xlabel=r'$\mathrm{number\ of\ impacts}$')

p= numpy.polyfit(numpy.log10(nimp),numpy.log10(compt),deg=1)

bovy_plot.bovy_plot(numpy.log2(nimp),10.**(p[0]*numpy.log10(nimp)+p[1]),

'-',lw=2.,

color=(0.0, 0.4470588235294118, 0.6980392156862745),

overplot=True)

pyplot.text(0.3,0.075,

r'$\log_{10}\ \mathrm{time/ms} = %.2f \,\log_{10} N %+.2f$' % (p[0],p[1]),

transform=pyplot.gca().transAxes,size=14.)

# Use 100, 1000 instead of 10^2, 10^3

gca().yaxis.set_major_formatter(ScalarFormatter())

def twoto(x,pos):

return r'$%i$' % (2**x)

formatter = FuncFormatter(twoto)

gca().xaxis.set_major_formatter(formatter)

gcf().subplots_adjust(left=0.175,bottom=0.15,right=0.95,top=0.95)

if save_figures:

bovy_plot.bovy_end_print(os.path.join(os.getenv('PAPERSDIR'),'2016-stream-stats','gd1likeMulti_compTime.pdf')) | _____no_output_____ | BSD-3-Clause | meanOperpAndApproxImpacts.ipynb | jobovy/streamgap-pepper |

Example densities and tracks Single masses | # Load our fiducial simulation's output, for apars and smooth stream

data= numpy.genfromtxt(os.path.join(os.getenv('DATADIR'),'streamgap-pepper','gd1_multtime',

'gd1_t64sampling_X5_5-9_dens.dat'),

delimiter=',',max_rows=2)

apars= data[0]

dens_unp= data[1]

data= numpy.genfromtxt(os.path.join(os.getenv('DATADIR'),'streamgap-pepper','gd1_multtime',

'gd1_t64sampling_X5_5-9_omega.dat'),

delimiter=',',max_rows=2)

omega_unp= data[1]

dens_example= []

omega_example= []

# Perform some simulations, for different mass ranges

numpy.random.seed(3)

nexample= 4

masses= [5.5,6.5,7.5,8.5]

for ii in range(nexample):

# Sampling functions

sample_GM= lambda: 10.**(masses[ii]-10.)\

/bovy_conversion.mass_in_1010msol(V0,R0)

rate= dNencdm(sdf_pepper,10.**masses[ii],Xrs=Xrs,

plummer=plummer)

sdf_pepper.simulate(rate=rate,sample_GM=sample_GM,sample_rs=sample_rs,Xrs=Xrs)

densOmega= numpy.array([sdf_pepper._densityAndOmega_par_approx(a) for a in apars]).T

dens_example.append(densOmega[0])

omega_example.append(densOmega[1])

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=18.)

figsize(6,7)

overplot= False

for ii in range(nexample):

bovy_plot.bovy_plot(apars,dens_example[ii]/dens_unp+2.*ii+0.5*(ii>2),lw=2.5,

color='k',

xrange=[0.,1.3],

yrange=[0.,2.*nexample+1],

xlabel=r'$\Delta \theta_\parallel$',

ylabel=r'$\mathrm{density}/\mathrm{smooth\ density}+\mathrm{constant}$',

overplot=overplot)

plot(apars,apars*0.+1.+2.*ii+0.5*(ii>2),lw=1.5,color='k',ls='--',zorder=0)

bovy_plot.bovy_text(1.025,1.+2.*ii+0.5*(ii>2),r'$10^{%.1f}\,M_\odot$' % masses[ii],verticalalignment='center',size=18.)

overplot=True

if save_figures:

bovy_plot.bovy_end_print(os.path.join(os.getenv('PAPERSDIR'),'2016-stream-stats',

'gd1like_densexample_singlemasses.pdf'))

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=18.)

figsize(6,7)

overplot= False

mult= [3.,3.,1.,1.]

for ii in range(nexample):

bovy_plot.bovy_plot(apars,mult[ii]*(omega_example[ii]/omega_unp-1.)+1.+2.*ii+0.5*(ii>2),

lw=2.5,

color='k',

xrange=[0.,1.3],

yrange=[0.,2.*nexample+1.],

xlabel=r'$\Delta \theta_\parallel$',

ylabel=r'$\langle\Delta \Omega_\parallel\rangle\big/\langle\Delta \Omega_\parallel^0\rangle+\mathrm{constant}$',

overplot=overplot)

plot(apars,apars*0.+1.+2.*ii+0.5*(ii>2),lw=1.5,color='k',ls='--',zorder=0)

bovy_plot.bovy_text(1.025,1.+2.*ii+0.5*(ii>2),r'$10^{%.1f}\,M_\odot$' % masses[ii],verticalalignment='center',size=18.)

bovy_plot.bovy_text(0.025,1.+2.*ii+0.1+0.5*(ii>2),r'$\times%i$' % mult[ii],size=18.)

overplot= True

if save_figures:

bovy_plot.bovy_end_print(os.path.join(os.getenv('PAPERSDIR'),'2016-stream-stats',

'gd1like_omegaexample_singlemasses.pdf')) | _____no_output_____ | BSD-3-Clause | meanOperpAndApproxImpacts.ipynb | jobovy/streamgap-pepper |

Full mass range First look at low apar resolution: | apars= apars[::30]

dens_unp= dens_unp[::30]

omega_unp= omega_unp[::30]

# Sampling functions

massrange=[5.,9.]

plummer= False

Xrs= 5.

nsubhalo= simulate_streampepper.nsubhalo

rs= simulate_streampepper.rs

dNencdm= simulate_streampepper.dNencdm

sample_GM= lambda: (10.**((-0.5)*massrange[0])\

+(10.**((-0.5)*massrange[1])\

-10.**((-0.5)*massrange[0]))\

*numpy.random.uniform())**(1./(-0.5))\

/bovy_conversion.mass_in_msol(V0,R0)

rate_range= numpy.arange(massrange[0]+0.5,massrange[1]+0.5,1)

rate= numpy.sum([dNencdm(sdf_pepper,10.**r,Xrs=Xrs,

plummer=plummer)

for r in rate_range])

sample_rs= lambda x: rs(x*bovy_conversion.mass_in_1010msol(V0,R0)*10.**10.,

plummer=plummer)

dens_example2= []

omega_example2= []

# Perform some simulations

numpy.random.seed(3)

nexample= 4

for ii in range(nexample):

sdf_pepper.simulate(rate=rate,sample_GM=sample_GM,sample_rs=sample_rs,Xrs=Xrs)

densOmega= numpy.array([sdf_pepper._densityAndOmega_par_approx(a) for a in apars]).T

dens_example2.append(densOmega[0])

omega_example2.append(densOmega[1])

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=18.)

figsize(6,7)

overplot= False

for ii in range(nexample):

bovy_plot.bovy_plot(apars,dens_example2[ii]/dens_unp+2.*ii,lw=2.5,

color='k',

xrange=[0.,1.],

yrange=[0.,2.*nexample+1.],

xlabel=r'$\Delta \theta_\parallel$',

ylabel=r'$\mathrm{density}/\mathrm{smooth\ density}+\mathrm{constant}$',

overplot=overplot)

plot(apars,apars*0.+1.+2.*ii,lw=1.5,color='k',ls='--',zorder=0)

overplot=True

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=18.)

figsize(6,7)

overplot= False

for ii in range(nexample):

bovy_plot.bovy_plot(apars,omega_example2[ii]/omega_unp+2.*ii,lw=2.5,

color='k',

xrange=[0.,1.],

yrange=[0.,2.*nexample],

xlabel=r'$\Delta \theta_\parallel$',

ylabel=r'$\langle\Delta \Omega_\parallel\rangle\big/\langle\Delta \Omega_\parallel^0\rangle+\mathrm{constant}$',

overplot=overplot)

plot(apars,apars*0.+1.+2.*ii,lw=1.5,color='k',ls='--',zorder=0)

overplot= True | _____no_output_____ | BSD-3-Clause | meanOperpAndApproxImpacts.ipynb | jobovy/streamgap-pepper |
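The `sample_GM` lambda in the cell above appears to draw masses by inverse-transform sampling: with $a = M_\mathrm{lo}^{-1/2}$ and $b = M_\mathrm{hi}^{-1/2}$ it returns $(a + (b-a)u)^{-2}$ for uniform $u$, which corresponds to a density $dN/dM \propto M^{-3/2}$ on $[M_\mathrm{lo}, M_\mathrm{hi}]$. The sketch below reproduces only that step in solar masses; the function name `sample_mass` is an assumption for illustration, and the conversion to galpy internal units is left out.

```python
import numpy as np

def sample_mass(log10_lo, log10_hi, size, rng):
    """Draw masses with dN/dM ~ M**-1.5 between 10**log10_lo and 10**log10_hi.

    Hypothetical stand-alone version of the sampling step; units are
    whatever the bounds are given in (here solar masses).
    """
    a = 10.0 ** (-0.5 * log10_lo)   # M_lo**-0.5
    b = 10.0 ** (-0.5 * log10_hi)   # M_hi**-0.5
    u = rng.uniform(size=size)      # uniform deviates in [0, 1)
    # Invert the cumulative distribution, which is linear in M**-0.5.
    return (a + (b - a) * u) ** (-2.0)

rng = np.random.default_rng(3)
masses_msun = sample_mass(5.0, 9.0, 100000, rng)

# Analytic median: the mass at which u = 0.5.
expected_median = (0.5 * (10.0 ** -2.5 + 10.0 ** -4.5)) ** -2.0
```

Comparing `numpy.median(masses_msun)` with `expected_median` is a quick sanity check that the sampler follows the intended distribution.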

At full apar resolution: | # Load our fiducial simulation's output, for apars and smooth stream

data= numpy.genfromtxt(os.path.join(os.getenv('DATADIR'),'streamgap-pepper','gd1_multtime',

'gd1_t64sampling_X5_5-9_dens.dat'),

delimiter=',',max_rows=2)

apars= data[0]

dens_unp= data[1]

data= numpy.genfromtxt(os.path.join(os.getenv('DATADIR'),'streamgap-pepper','gd1_multtime',

'gd1_t64sampling_X5_5-9_omega.dat'),

delimiter=',',max_rows=2)

omega_unp= data[1]

dens_example2= []

omega_example2= []

# Perform some simulations

numpy.random.seed(3)

nexample= 4

for ii in range(nexample):

sdf_pepper.simulate(rate=rate,sample_GM=sample_GM,sample_rs=sample_rs,Xrs=Xrs)

densOmega= numpy.array([sdf_pepper._densityAndOmega_par_approx(a) for a in apars]).T

dens_example2.append(densOmega[0])

omega_example2.append(densOmega[1])

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=18.)

figsize(6,7)

overplot= False

for ii in range(nexample):

bovy_plot.bovy_plot(apars,dens_example2[ii]/dens_unp+2.*ii,lw=2.5,

color='k',

xrange=[0.,1.],

yrange=[0.,2.*nexample+1.],

xlabel=r'$\Delta \theta_\parallel$',

ylabel=r'$\mathrm{density}/\mathrm{smooth\ density}+\mathrm{constant}$',

overplot=overplot)

plot(apars,apars*0.+1.+2.*ii,lw=1.5,color='k',ls='--',zorder=0)

overplot=True

if save_figures:

bovy_plot.bovy_end_print(os.path.join(os.getenv('PAPERSDIR'),'2016-stream-stats','gd1like_densexample.pdf'))

bovy_plot.bovy_print(axes_labelsize=18.,xtick_labelsize=14.,ytick_labelsize=18.)

figsize(6,7)

overplot= False

for ii in range(nexample):

bovy_plot.bovy_plot(apars,omega_example2[ii]/omega_unp+2.*ii,lw=2.5,

color='k',

xrange=[0.,1.],

yrange=[0.,2.*nexample],

xlabel=r'$\Delta \theta_\parallel$',

ylabel=r'$\langle\Delta \Omega_\parallel\rangle\big/\langle\Delta \Omega_\parallel^0\rangle+\mathrm{constant}$',

overplot=overplot)

plot(apars,apars*0.+1.+2.*ii,lw=1.5,color='k',ls='--',zorder=0)

overplot= True

if save_figures:

bovy_plot.bovy_end_print(os.path.join(os.getenv('PAPERSDIR'),'2016-stream-stats','gd1like_omegaexample.pdf')) | _____no_output_____ | BSD-3-Clause | meanOperpAndApproxImpacts.ipynb | jobovy/streamgap-pepper |

Examples Example 1: Move A File AKA cut and paste. Moving files is a fast operation (at least when source and target are on the same file system) because we are just changing a pointer to the ones and zeros to point to something else. We are not actually pushing bits around the disk to make the move happen. The module shutil is filled with all kinds of file handling goodies. In the root of the example directory is a small file. While it is in the root folder, it is simulating being outside the boundaries of the data warehouse environment. Let’s bring it inside by moving it to the In folder. | import shutil as sh

import os

if 'script_dir' not in globals():

script_dir = os.getcwd()

data_directory = 'data\\'

example_directory = 'BasicFileOpsExample\\'

target_directory = 'In\\'

file_name = 'forestfires.csv'

source_path = os.path.join(script_dir,data_directory,example_directory,file_name)

target_path = os.path.join(script_dir,data_directory,example_directory,target_directory,file_name)

sh.move(source_path, target_path) | _____no_output_____ | MIT | 03 Advanced/20-basic-file-io.ipynb | MassStreetUniversity/tutorial-python |

Example 2: Archiving A File We are now done processing the file and we need to archive it in case we need to drag it out and reload the system. The process of archiving is multi-step: zip up the file, move the zip to the Archive folder, and blow away the original. Once you run the example, check the Archive folder and the In folder. You should see a zip file in Archive and nothing in the In folder. | import zipfile as zf

import os

if 'script_dir' not in globals():

script_dir = os.getcwd()

data_directory = 'data\\'

example_directory = 'BasicFileOpsExample\\'

source_directory = 'In\\'

target_directory = 'Archive\\'

file_name = 'forestfires.csv'

archive_name = 'forestfires.zip'

target_path = os.path.join(script_dir,data_directory,example_directory,target_directory,archive_name)

source_path = os.path.join(data_directory,example_directory,source_directory)

archive = zf.ZipFile(target_path, "w")

os.chdir(source_path)

archive.write(file_name)

archive.close() | _____no_output_____ | MIT | 03 Advanced/20-basic-file-io.ipynb | MassStreetUniversity/tutorial-python |
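The cell above zips the file but never deletes the original, so the In folder would not be empty afterwards. As a minimal sketch of the full zip-then-delete sequence, the hypothetical helper `archive_file` below (not part of the tutorial) uses a context manager so the archive is closed even if writing fails, and avoids `os.chdir` by passing `arcname`. The demo runs in a temporary directory rather than the tutorial's folder layout.

```python
import os
import tempfile
import zipfile

def archive_file(source_file, archive_path):
    """Zip source_file into archive_path, then delete the original.

    Hypothetical helper for illustration only.
    """
    # The context manager closes the archive even if write() raises.
    with zipfile.ZipFile(archive_path, "w", zipfile.ZIP_DEFLATED) as archive:
        # arcname stores only the file name, not the directory path.
        archive.write(source_file, arcname=os.path.basename(source_file))
    # Remove the original only after the archive was written and closed.
    os.remove(source_file)

# Demo in a throwaway directory that mimics the In/Archive layout.
with tempfile.TemporaryDirectory() as tmp:
    in_dir = os.path.join(tmp, "In")
    archive_dir = os.path.join(tmp, "Archive")
    os.makedirs(in_dir)
    os.makedirs(archive_dir)
    source = os.path.join(in_dir, "forestfires.csv")
    with open(source, "w") as handle:
        handle.write("X,Y,month\n7,5,mar\n")
    target = os.path.join(archive_dir, "forestfires.zip")
    archive_file(source, target)
    with zipfile.ZipFile(target) as check:
        names = check.namelist()
    original_exists = os.path.exists(source)
```

Deleting only after the `with` block exits means a failed write leaves the original file in place.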

Exercises Q1 The energy required to get from point $\vec{r}_1$ to point $\vec{r}_2$ for a plane is given by $$ E = \alpha \int_{C} \left| \frac{d\vec{r}}{dt} \right| dt - \int_C \vec{F} \cdot \frac{d\vec{r}}{dt}dt $$ Suppose that $\alpha=5$ and our start and end points are $\vec{r}_1 = (0,0)$ and $\vec{r}_2 = (0, 10)$. On this particular day, the wind produces a force field $\vec{F} = (0, -2/(x+1)^2)$. Find the optimal value of $A$ in $\vec{r}(t) = A\sin(\pi t/10)\hat{x} + t\hat{y}$ that minimizes the energy $E$. Then $x=A\sin(\pi t/10)$, $y=t$, and $$\left| \frac{d\vec{r}}{dt} \right| = \sqrt{1+(\pi A /10)^2 \cos^2(\pi t/10)}$$ $$\vec{F} \cdot \frac{d\vec{r}}{dt} = \begin{bmatrix} 0 \\ -2/(A\sin(\pi t/10) +1)^2\\ \end{bmatrix} \cdot \begin{bmatrix} \pi A/10 \cos(\pi t/10)\\ 1\\ \end{bmatrix} = -2/(A\sin(\pi t/10) +1)^2 $$ so that $$ E = \int_{0}^{10} \left(5\sqrt{1+(\pi A /10)^2 \cos^2(\pi t/10)} + \frac{2}{(A\sin(\pi t/10) +1)^2} \right) dt$$ Q2 Newton's law of cooling is $$\frac{dT}{dt} = -k(T-T_s(t)) $$ where $T$ is the temperature of an object in surroundings with temperature $T_s(t)$ (which may depend on time). Suppose $T$ represents the temperature of a shallow pool of water and $T_s(t)$ represents the outside temperature. Find $T(t)$ given that you collected measurements of the outside temperature: | t_m = np.array([ 0., 1.04347826, 2.08695652, 3.13043478, 4.17391304,

5.2173913 , 6.26086957, 7.30434783, 8.34782609, 9.39130435,

10.43478261, 11.47826087, 12.52173913, 13.56521739, 14.60869565,

15.65217391, 16.69565217, 17.73913043, 18.7826087 , 19.82608696,

20.86956522, 21.91304348, 22.95652174, 24. ])

temp_m = np.array([283.2322975, 284.6945461, 286.2259041, 287.8603625, 289.6440635,

291.6187583, 293.7939994, 296.1148895, 298.4395788, 300.5430675,

302.1566609, 303.0363609, 303.0363609, 302.1566609, 300.5430675,

298.4395788, 296.1148895, 293.7939994, 291.6187583, 289.6440635,

287.8603625, 286.2259041, 284.6945461, 283.2322975])

times = np.linspace(1, 23, 1000)

T0 = 284.6945461 | _____no_output_____ | Unlicense | day4/04. Scipy - Exercises.ipynb | ubutnux/bosscha-python-workshop-2022 |
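For Q1 above, one possible way to find $A$ numerically is to evaluate the energy integral with `scipy.integrate.quad` and minimize it with `scipy.optimize.minimize_scalar`. This is a sketch rather than the workshop's reference solution: the wind term uses $2/(A\sin(\pi t/10)+1)^2$, which is what follows from the stated force field, and the search interval $0 \le A \le 10$ is an assumption chosen to stay away from the singularity at $A\sin(\pi t/10) = -1$.

```python
import numpy as np
from scipy.integrate import quad
from scipy.optimize import minimize_scalar

def energy(A, alpha=5.0):
    """Energy E(A) for the path r(t) = A sin(pi t/10) x + t y, 0 <= t <= 10."""
    def integrand(t):
        # Speed |dr/dt| along the path.
        speed = np.sqrt(1.0 + (np.pi * A / 10.0) ** 2
                        * np.cos(np.pi * t / 10.0) ** 2)
        # Minus F . dr/dt, with F = (0, -2/(x+1)^2) and dy/dt = 1.
        wind = 2.0 / (A * np.sin(np.pi * t / 10.0) + 1.0) ** 2
        return alpha * speed + wind
    value, _abserr = quad(integrand, 0.0, 10.0)
    return value

# Bounded search; the interval [0, 10] is an assumption of this sketch.
res = minimize_scalar(energy, bounds=(0.0, 10.0), method='bounded')
A_opt = res.x
E_opt = res.fun
```

A useful check is the straight path $A=0$, for which the integrand is the constant $5 + 2$, so $E = 70$; the optimized path should do better than that.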

Look At It | # Load image - individual 100307.

img = ci.load("fmri_data/rfMRI_REST1_LR_Atlas_hp2000_clean_filt_sm6.HCPMMP.ptseries.nii")

fmri_data = np.array(img.get_fdata())

# Visualize data, i.e. inspect the first 60 timesteps of each parcel.

# Generate heatmap.

timesteps = 60

displayed_data = np.transpose(fmri_data[range(timesteps),:])

plt.subplots(figsize=(15,10))

ax = sns.heatmap(displayed_data, yticklabels=False)

# Plot parameters.

plt.title('Resting fMRI Signal by Parcel - Individual 100307 LR', fontsize = 20)

plt.ylabel('Parcel', fontsize = 15)

plt.xlabel('Timestep', fontsize = 15)

plt.show() | _____no_output_____ | Apache-2.0 | docs/tutorials/time_series/fmri_analysis.ipynb | RebeccaYin7/hyppo |

Set Up Hyperparameters | # From Ting: Regions-of-Interest (ROIs)

roi_keys = np.array([1, 23, 18, 53, 24, 96, 117, 50, 143, 109, 148, 60, 38, 135, 93, 83, 149, 150, 65, 161, 132, 71]) - 1

roi_labels = np.array([

"Visual",

"Visual",

"Visual",

"SM",

"SM",

"dAtt",

"dAtt",

"dAtt",

"dAtt",

"vAtt",

"vAtt",

"vAtt",

"vAtt",

"Limbic",

"Limbic",

"FP",

"FP",

"DMN",

"DMN",

"DMN",

"DMN",

"DMN",

])

roi_data = fmri_data[0:300, roi_keys]

num_roi = len(roi_keys)

# Hyperparameters

max_lag = 1 # number of lags to check in the past

reps = 1000 # number of bootstrap replicates

workers = 1 # number of workers in internal MGC parallelization

# Subsample to test experiment.

# pairs = list(product(range(num_roi), repeat = 2)) # Fake param for testing.

pairs = list(product(range(num_roi), repeat = 2)) | _____no_output_____ | Apache-2.0 | docs/tutorials/time_series/fmri_analysis.ipynb | RebeccaYin7/hyppo |

Run Experiment | mgcx = MGCX(max_lag = max_lag)

def worker(i, j):

X = roi_data[:, i]

Y = roi_data[:, j]

stat, pval, mgcx_dict = mgcx.test(X, Y, reps = reps, workers = workers)

opt_lag = mgcx_dict['opt_lag']

opt_scale_x, opt_scale_y = mgcx_dict['opt_scale']

return stat, pval, opt_lag, opt_scale_x, opt_scale_y

output = np.array(Parallel(n_jobs=-2)(delayed(worker)(i, j) for i, j in pairs))

pickle.dump(output, open('fmri_data/mgcx_fmri_output.pkl', 'wb'))

# Load results into num_roi-by-num_roi matrices.

results = pickle.load(open('fmri_data/mgcx_fmri_output.pkl', 'rb'))

test_outputs = ['stat', 'pval', 'opt_lag', 'opt_scale_x', 'opt_scale_y']

matrices = np.zeros((len(test_outputs), num_roi, num_roi))

for p, pair in enumerate(pairs):

i, j = pair

for t in range(len(test_outputs)):

matrices[t, i, j] = results[p, t]

for t, test_output in enumerate(test_outputs):

pickle.dump(matrices[t], open('fmri_data/%s_matrix.pkl' % test_output, 'wb')) | _____no_output_____ | Apache-2.0 | docs/tutorials/time_series/fmri_analysis.ipynb | RebeccaYin7/hyppo |
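Because `pairs` is generated by `product(range(num_roi), repeat=2)`, the rows of the results array are already in row-major `(i, j)` order, so the nested loop that fills `matrices` could also be written as a transpose and reshape. The sketch below checks this on small fake data; the array contents are random placeholders, not MGCX output, and `num_roi = 3` stands in for the 22 ROIs.

```python
from itertools import product

import numpy as np

num_roi = 3  # small stand-in for the 22 ROIs
test_outputs = ['stat', 'pval', 'opt_lag', 'opt_scale_x', 'opt_scale_y']
pairs = list(product(range(num_roi), repeat=2))

# Fake results with one row per pair and one column per test output.
rng = np.random.default_rng(0)
results = rng.random((len(pairs), len(test_outputs)))

# Loop version, mirroring the cell above.
looped = np.zeros((len(test_outputs), num_roi, num_roi))
for p, (i, j) in enumerate(pairs):
    for t in range(len(test_outputs)):
        looped[t, i, j] = results[p, t]

# Vectorized version: row p corresponds to (i, j) with p = i * num_roi + j.
reshaped = results.T.reshape(len(test_outputs), num_roi, num_roi)

same = np.array_equal(looped, reshaped)
```

The reshape only works for the full Cartesian product in this order; for a subsampled or shuffled `pairs` list the explicit loop is still needed.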

Visualize Matrices | def plot_heatmap(matrix, labels, title, filename):

# sns.set()

cmap = mpl.cm.get_cmap('Purples')

cc = np.linspace(0, 1, 256)

cmap = mpl.colors.ListedColormap(cmap(cc))

heatmap_kws = dict(

cbar=False,

font_scale=1.4,

inner_hier_labels=labels,

hier_label_fontsize=20,

cmap=cmap,

center=None,

)

side_label_kws = dict(labelpad=45, fontsize=24)

fig, ax = plt.subplots(1, 1, figsize=(20, 16))

# Plot heatmap via graspy.

heatmap(matrix, ax=ax, **heatmap_kws)

ax.set_title(title, pad = 100, fontdict = {'fontsize' : 23})

# Create ticks.

num_ticks = 8

top_val = np.max(matrix)

ticks = [i * np.max(matrix) / num_ticks for i in range(num_ticks+1)]

yticks = [('%.2f' % np.round(10 ** -p, 2)) for p in ticks]

# Add colorbar.

sm = plt.cm.ScalarMappable(cmap=cmap)

sm.set_array(matrix)

cbar = fig.colorbar(sm, ax=ax, fraction=0.0475, pad=-0.1, ticks=ticks)

cbar.ax.set_yticklabels(yticks)

cbar.ax.tick_params(labelsize=25)

plt.savefig(

"%s.pdf" % filename,

facecolor="w",

format="pdf",

bbox_inches="tight",

)

plt.tight_layout()

plt.show() | _____no_output_____ | Apache-2.0 | docs/tutorials/time_series/fmri_analysis.ipynb | RebeccaYin7/hyppo |

p-value Matrix | # Apply negative log10 transform.

# matrix = pickle.load(open('fmri_data/pval_matrix.pkl', 'rb'))

# matrix = -np.log10(matrix)

# pickle.dump(matrix, open('fmri_data/nl10_pval_matrix.pkl', 'wb'))

matrix = pickle.load(open('fmri_data/nl10_pval_matrix.pkl', 'rb'))

plot_heatmap(matrix, roi_labels, 'p-Value', 'pval') | _____no_output_____ | Apache-2.0 | docs/tutorials/time_series/fmri_analysis.ipynb | RebeccaYin7/hyppo |

Buffered Text-to-Speech In this tutorial, we are going to build a state machine that controls text-to-speech synthesis. The problem we solve is the following:- Speaking the text takes time, depending on how long the text is that the computer should speak.- Commands for speaking can arrive at any time, and we would like our state machine to process one of them at a time. So, even if we send three messages to it shortly after each other, it processes them one after the other. While solving this problem, we can learn more about the following concepts in STMPY state machines:- **Do-Activities**, which allow us to encapsulate the long-running text-to-speech function in a state machine.- **Deferred Events**, which allow us to ignore incoming messages until a later state, when we are ready again. Text-to-Speech Mac On a Mac, this is a function to make your computer speak: | from os import system

def text_to_speech(text):

system('say {}'.format(text)) | _____no_output_____ | MIT | notebooks/Buffered Text-to-Speech.ipynb | Hallvardd/ttm4115-project |

Run the above cell so the function is available in the following, and then execute the following cell to test it: | text_to_speech("Hello. I am a computer.") | _____no_output_____ | MIT | notebooks/Buffered Text-to-Speech.ipynb | Hallvardd/ttm4115-project |
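One caveat with `system('say {}'.format(text))` is that the text passes through the shell, so a sentence containing an apostrophe (for example "I'm") produces an unbalanced quote. A possible alternative, sketched here with hypothetical names `build_say_command` and `text_to_speech_safe` that are not part of the tutorial, is to pass the arguments as a list to `subprocess.run`, which bypasses the shell. Only the command construction is exercised below, since actually speaking requires macOS.

```python
import subprocess

def build_say_command(text):
    """Return the argument list for the macOS `say` command.

    Passing a list means no shell is involved, so quotes in the
    text need no escaping. Hypothetical helper for illustration.
    """
    return ['say', text]

def text_to_speech_safe(text):
    # Hypothetical variant of text_to_speech; like the original,
    # it blocks until the computer has finished speaking.
    subprocess.run(build_say_command(text), check=True)

# Inspect the command without running it (works on any platform).
cmd = build_say_command("Hello. I'm a computer.")
```

The blocking behaviour is unchanged, so everything the tutorial says about long-running actions still applies to this variant.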

Windows TODO: We should have some code to run text to speech on Windows, too! State Machine 1 With this function, we can create our first state machine that accepts a message and then speaks out some text. (Let's for now ignore how we get the text into the method, we will do that later.) Unfortunately, this state machine has a problem. This is because the method `text_to_speech(text)` takes a long time to complete. This means that, for the entire time it takes to speak the text, nothing else can happen in any of the state machines that are part of the same driver! State Machine 2 Long-Running Actions The way this function is implemented makes it **block**. This means the Python program is busy executing this function for as long as it takes to pronounce the message. Longer message, longer blocking. You can test this by putting some debug output around the function to see when it returns: | print('Before speaking.')

text_to_speech("Hello. I am a computer.")

print('After speaking.') | _____no_output_____ | MIT | notebooks/Buffered Text-to-Speech.ipynb | Hallvardd/ttm4115-project |

You see that the string _"After speaking"_ is printed after the speaking is finished. During the execution, the program is blocked and does not do anything else. When our program should also do other stuff at the same time, either completely unrelated to speech or even just accepting new speech commands, this does not work! The driver is now completely blocked with executing the speech method, not being able to do anything else. Do-Activities Instead of executing the method as part of a transition, we execute it as part of a state. This is called a **Do-Activity**, and it is declared as part of a state. The do-activity is started when the state is entered. Once the activity is finished, the state machine receives the event `done`, which triggers it to switch into another state. You may think now that the do-activity is similar to an entry action, as it is started when entering a state. However, a do-activity is started in its own thread, so that it does not block any other behavior from happening. Our state machine stays responsive, and so do any of the other state machines that may be assigned to the same driver. This happens in the background: STMPY creates a new thread for the do-activity, starts it, and dispatches the `done` event once the do-activity finishes. When the do-activity finishes (in the case of the text-to-speech function, this means when the computer is finished talking), the state machine _automatically_ dispatches the event `done`, which brings the state machine into the next state. - A state with a do-activity can therefore only declare one single outgoing transition, which is triggered by the event `done`. - A state can have at most one do-activity. - A do-activity cannot be aborted. Instead, it should be programmed so that the function itself terminates, indicated for instance by the change of a variable. The following things are still possible in a state with a do-activity:- A state with a do-activity can have entry and exit actions. They are simply executed before or after the do-activity.- A state with a do-activity can have internal transitions, since they don't leave the state. | from stmpy import Machine, Driver

from os import system

import logging

debug_level = logging.DEBUG

logger = logging.getLogger('stmpy')

logger.setLevel(debug_level)

ch = logging.StreamHandler()

ch.setLevel(debug_level)

formatter = logging.Formatter('%(asctime)s - %(name)-12s - %(levelname)-8s - %(message)s')

ch.setFormatter(formatter)

logger.addHandler(ch)

class Speaker:

def speak(self, string):

system('say {}'.format(string))

speaker = Speaker()

t0 = {'source': 'initial', 'target': 'ready'}

t1 = {'trigger': 'speak', 'source': 'ready', 'target': 'speaking'}

t2 = {'trigger': 'done', 'source': 'speaking', 'target': 'ready'}

s1 = {'name': 'speaking', 'do': 'speak(*)'}

stm = Machine(name='stm', transitions=[t0, t1, t2], states=[s1], obj=speaker)

speaker.stm = stm

driver = Driver()

driver.add_machine(stm)

driver.start()

driver.send('speak', 'stm', args=['My first sentence.'])

driver.send('speak', 'stm', args=['My second sentence.'])

driver.send('speak', 'stm', args=['My third sentence.'])

driver.send('speak', 'stm', args=['My fourth sentence.'])

driver.wait_until_finished() | _____no_output_____ | MIT | notebooks/Buffered Text-to-Speech.ipynb | Hallvardd/ttm4115-project |

State machine 2 still has a problem, but this time another one: If we receive a new message with more text to speak _while_ we are in state `speaking`, this message is discarded. Our next state machine will fix this. State Machine 3 As you know, events arriving in a state that does not declare outgoing transitions triggered by that event are discarded (that means, thrown away). For our state machine 2 above this means that when we are in state `speaking` and a new message arrives, this message is discarded. However, what we ideally want is that this message is handled once the currently spoken text is finished. There are two ways of achieving this: 1. We could build a queue variable into our logic, and declare a transition that puts any arriving `speak` message into that queue. Whenever the currently spoken text finishes, we take another one from the queue until the queue is empty again. This has the drawback that we need to code the queue ourselves. 2. We use a mechanism called **deferred event**, which is part of the state machine mechanics. This is the one we are going to use below. Deferred Events A state can declare that it wants to **defer** an event, which simply means to not handle it for now. For our speech state machine it means that state `speaking` can declare that it defers event `speak`. Any event that arrives in a state that defers it is ignored by that state. It is as if it never arrived, or as if it is invisible in the incoming event queue. Only once we switch into a next state that does not defer it does it become visible again, and it is then either consumed by a transition, or discarded if the state does not declare any transition triggered by it. | s1 = {'name': 'speaking', 'do': 'speak(*)', 'speak': 'defer'}

stm = Machine(name='stm', transitions=[t0, t1, t2], states=[s1], obj=speaker)

speaker.stm = stm

driver = Driver()

driver.add_machine(stm)

driver.start()

driver.send('speak', 'stm', args=['My first sentence.'])

driver.send('speak', 'stm', args=['My second sentence.'])

driver.send('speak', 'stm', args=['My third sentence.'])

driver.send('speak', 'stm', args=['My fourth sentence.'])

driver.wait_until_finished() | _____no_output_____ | MIT | notebooks/Buffered Text-to-Speech.ipynb | Hallvardd/ttm4115-project |
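To make the deferral semantics described above concrete, here is a toy model in plain Python. It is only an illustration of the idea and makes no claim about how STMPY implements deferral internally; the class `TinyMachine` and its methods are hypothetical, and the `done` event is sent by hand instead of by a do-activity thread.

```python
from collections import deque

class TinyMachine:
    """Toy two-state machine ('ready', 'speaking') that defers 'speak'.

    Illustration only; this is not STMPY's implementation.
    """
    def __init__(self):
        self.state = 'ready'
        self.queue = deque()      # incoming events
        self.deferred = deque()   # events hidden while 'speaking'
        self.spoken = []          # texts in the order they were handled

    def send(self, event, text=None):
        self.queue.append((event, text))

    def step(self):
        """Process one queued event; return False if the queue is empty."""
        if not self.queue:
            return False
        event, text = self.queue.popleft()
        if self.state == 'speaking' and event == 'speak':
            # Deferred: set aside, as if it had not arrived yet.
            self.deferred.append((event, text))
        elif self.state == 'ready' and event == 'speak':
            self.state = 'speaking'
            self.spoken.append(text)
        elif self.state == 'speaking' and event == 'done':
            self.state = 'ready'
            # Deferred events become visible again, ahead of newer ones.
            self.queue.extendleft(reversed(self.deferred))
            self.deferred.clear()
        # Any other combination is simply discarded.
        return True

    def drain(self):
        while self.step():
            pass

machine = TinyMachine()
for sentence in ['first', 'second', 'third']:
    machine.send('speak', sentence)
machine.drain()                 # 'first' is handled, the rest deferred
for _ in range(3):
    machine.send('done')        # stands in for a finished do-activity
    machine.drain()
```

Without the deferral branch, the second and third `speak` events would fall through to the "discarded" case, which is the behaviour of state machine 2.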

Overview- 101-class classification- Target: food images- Transfer learning with VGG16 1. Fully connected layers 1. All layers- Without RX | RUN = 100 | _____no_output_____ | MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Limit the GPU memory used | import tensorflow as tf

tf_ver = tf.__version__

if tf_ver.startswith('1.'):

from tensorflow.keras.backend import set_session

config = tf.ConfigProto()

config.gpu_options.allow_growth = True

config.log_device_placement = True

sess = tf.Session(config=config)

set_session(sess) | _____no_output_____ | MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Specify which GPU to use | import os

os.environ["CUDA_VISIBLE_DEVICES"]="0" | _____no_output_____ | MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Save graphs plotted with matplotlib to files | import os

def save_fig(plt, file_prefix):

if file_prefix == '':

return

parent = os.path.dirname(os.path.abspath(file_prefix))

os.makedirs(parent, exist_ok=True)

plt.savefig(f'{file_prefix}.pdf', transparent=True, bbox_inches='tight', pad_inches = 0)

plt.savefig(f'{file_prefix}.png', transparent=True, dpi=300, bbox_inches='tight', pad_inches = 0) | _____no_output_____ | MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Build the list of images under the specified folder- Each subfolder name must be the number corresponding to its label- TOP_DIR - 0 - 00001.jpg - 00002.jpg - 1 - 00003.jpg - 00004.jpg | import pathlib

import random

import os

TOP_DIR = '/data1/Datasets/Food-101/03_all'

sub_dirs = pathlib.Path(TOP_DIR).glob('*/**')

label2files = dict()

for s in sub_dirs:

files = pathlib.Path(s).glob('**/*.jpg')

label = int(os.path.basename(s))

label2files[label] = list(files) | _____no_output_____ | MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Split the images and labels into training data and validation data | ratio = 0.8

train_list = []

train_labels = []

val_list = []

val_labels = []

for k, v in label2files.items():

random.shuffle(v)

N = len(v)

N_train = int(N * ratio)

train_list.extend(v[:N_train])

train_labels.extend([k] * N_train)

val_list.extend(v[N_train:])

val_labels.extend([k] * (N - N_train))

NUM_CLASSES = len(label2files.keys()) | _____no_output_____ | MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Class that generates training data from the image file list and labels | import math

import numpy as np

from PIL import Image

import matplotlib.pyplot as plt

%matplotlib inline

import keras

from tensorflow.keras.utils import to_categorical

from tensorflow.keras.preprocessing.image import img_to_array

from tensorflow.keras.applications.vgg16 import preprocess_input

import tensorflow as tf

class ImageSequence(tf.keras.utils.Sequence):

def __init__(self, file_list, labels, batch_size, image_shape=(224, 224), shuffle=True, horizontal_flip=True):

self.file_list = np.array(file_list)

self.labels = to_categorical(labels)

self.batch_size = batch_size

self.image_shape = image_shape

self.shuffle = shuffle

self.horizontal_flip = horizontal_flip

self.indexes = np.arange(len(self.file_list))

if self.shuffle:

random.shuffle(self.indexes)

def __getitem__(self, index):

idx = self.indexes[index * self.batch_size : (index + 1) * self.batch_size]

y = self.labels[idx]

files = self.file_list[idx]

x = []

for f in files:

try:

img = Image.open(f)

# Valid data are RGB images.

# The dataset may contain grayscale images.

# Convert to RGB so the dimensions match those of the valid data.

img = img.convert('RGB')

img = img.resize(self.image_shape, Image.BILINEAR)

img = img_to_array(img)

img = preprocess_input(img) / 255.0

if self.horizontal_flip and np.random.random() > 0.5:

img = img[:,::-1, :]

x.append(np.expand_dims(img, axis=0))

except:

print(f)

return np.concatenate(x, axis=0), y

def __len__(self):

return len(self.file_list) // self.batch_size

def on_epoch_end(self):

if self.shuffle:

random.shuffle(self.indexes) | Using TensorFlow backend.

| MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Create the directory for saving the model ★ | import os

from datetime import datetime

# Prepare the directory for saving the model

model_dir = os.path.join(

f'../run/VGG16_run{RUN}'

)

os.makedirs(model_dir, exist_ok=True)

print('model_dir:', model_dir) # Print the name of the save directory

dir_weights = model_dir

os.makedirs(dir_weights, exist_ok=True) | model_dir: ../run/VGG16_run100

| MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Load the VGG model | from tensorflow.keras.applications.vgg16 import VGG16

# The existing 1000-class output is not used, so load the model

# without its output layers by setting `include_top=False`

vgg16 = VGG16(include_top=False, input_shape=(224, 224, 3))

# Check the model summary; it shows that the output layers are not included

vgg16.summary() | _________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

input_1 (InputLayer) (None, 224, 224, 3) 0

_________________________________________________________________

block1_conv1 (Conv2D) (None, 224, 224, 64) 1792

_________________________________________________________________

block1_conv2 (Conv2D) (None, 224, 224, 64) 36928

_________________________________________________________________

block1_pool (MaxPooling2D) (None, 112, 112, 64) 0

_________________________________________________________________

block2_conv1 (Conv2D) (None, 112, 112, 128) 73856

_________________________________________________________________

block2_conv2 (Conv2D) (None, 112, 112, 128) 147584

_________________________________________________________________

block2_pool (MaxPooling2D) (None, 56, 56, 128) 0

_________________________________________________________________

block3_conv1 (Conv2D) (None, 56, 56, 256) 295168

_________________________________________________________________

block3_conv2 (Conv2D) (None, 56, 56, 256) 590080

_________________________________________________________________

block3_conv3 (Conv2D) (None, 56, 56, 256) 590080

_________________________________________________________________

block3_pool (MaxPooling2D) (None, 28, 28, 256) 0

_________________________________________________________________

block4_conv1 (Conv2D) (None, 28, 28, 512) 1180160

_________________________________________________________________

block4_conv2 (Conv2D) (None, 28, 28, 512) 2359808

_________________________________________________________________

block4_conv3 (Conv2D) (None, 28, 28, 512) 2359808

_________________________________________________________________

block4_pool (MaxPooling2D) (None, 14, 14, 512) 0

_________________________________________________________________

block5_conv1 (Conv2D) (None, 14, 14, 512) 2359808

_________________________________________________________________

block5_conv2 (Conv2D) (None, 14, 14, 512) 2359808

_________________________________________________________________

block5_conv3 (Conv2D) (None, 14, 14, 512) 2359808

_________________________________________________________________

block5_pool (MaxPooling2D) (None, 7, 7, 512) 0

=================================================================

Total params: 14,714,688

Trainable params: 14,714,688

Non-trainable params: 0

_________________________________________________________________

| MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Build a model based on VGG16 and configure how it is trained★ | from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense, Dropout, Flatten

# Define a function that edits the model and builds the network

def build_transfer_model(vgg16):

# Create a new model from the layers of the loaded model

model = Sequential(vgg16.layers)

# Freeze part of the loaded weights so that they are not retrained.

# Here only the added layers and the layers closest to the output are retrained

for layer in model.layers[:15]:

layer.trainable = False

# Build the layers of the new output part

model.add(Flatten())

model.add(Dense(1024, activation='relu'))

model.add(Dropout(0.5))

model.add(Dense(1024, activation='relu'))

model.add(Dropout(0.5))

model.add(Dense(NUM_CLASSES, activation='softmax'))

return model | _____no_output_____ | MIT | VGG16.ipynb | KoshinoK/RandomExchange |

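Training the fully connected layers and the convolutional layers closest to them★ Model creation | # Call the function defined above to build the network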

model = build_transfer_model(vgg16) | _____no_output_____ | MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Save the network structure★ | import json

import pickle

# Save the network

model_json = os.path.join(model_dir, 'model.json')

with open(model_json, 'w') as f:

json.dump(model.to_json(), f) | _____no_output_____ | MIT | VGG16.ipynb | KoshinoK/RandomExchange |

Compile the model, specifying the optimizer and other settings | from tensorflow.keras.optimizers import SGD

model.compile(

loss='categorical_crossentropy',

optimizer=SGD(lr=1e-4, momentum=0.9),

metrics=['accuracy']

)

# Check the model summary

model.summary() | _________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

block1_conv1 (Conv2D) (None, 224, 224, 64) 1792

_________________________________________________________________

block1_conv2 (Conv2D) (None, 224, 224, 64) 36928

_________________________________________________________________

block1_pool (MaxPooling2D) (None, 112, 112, 64) 0

_________________________________________________________________

block2_conv1 (Conv2D) (None, 112, 112, 128) 73856

_________________________________________________________________

block2_conv2 (Conv2D) (None, 112, 112, 128) 147584

_________________________________________________________________

block2_pool (MaxPooling2D) (None, 56, 56, 128) 0

_________________________________________________________________

block3_conv1 (Conv2D) (None, 56, 56, 256) 295168

_________________________________________________________________

block3_conv2 (Conv2D) (None, 56, 56, 256) 590080

_________________________________________________________________

block3_conv3 (Conv2D) (None, 56, 56, 256) 590080

_________________________________________________________________

block3_pool (MaxPooling2D) (None, 28, 28, 256) 0

_________________________________________________________________

block4_conv1 (Conv2D) (None, 28, 28, 512) 1180160

_________________________________________________________________

block4_conv2 (Conv2D) (None, 28, 28, 512) 2359808

_________________________________________________________________

block4_conv3 (Conv2D) (None, 28, 28, 512) 2359808

_________________________________________________________________

block4_pool (MaxPooling2D) (None, 14, 14, 512) 0

_________________________________________________________________

block5_conv1 (Conv2D) (None, 14, 14, 512) 2359808

_________________________________________________________________

block5_conv2 (Conv2D) (None, 14, 14, 512) 2359808

_________________________________________________________________

block5_conv3 (Conv2D) (None, 14, 14, 512) 2359808

_________________________________________________________________

block5_pool (MaxPooling2D) (None, 7, 7, 512) 0

_________________________________________________________________

flatten (Flatten) (None, 25088) 0

_________________________________________________________________

dense (Dense) (None, 1024) 25691136

_________________________________________________________________

dropout (Dropout) (None, 1024) 0

_________________________________________________________________

dense_1 (Dense) (None, 1024) 1049600

_________________________________________________________________

dropout_1 (Dropout) (None, 1024) 0

_________________________________________________________________

dense_2 (Dense) (None, 101) 103525

=================================================================

Total params: 41,558,949

Trainable params: 31,563,877

Non-trainable params: 9,995,072

_________________________________________________________________

| MIT | VGG16.ipynb | KoshinoK/RandomExchange |
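
The parameter counts in the summary above can be checked by hand. The following is a minimal sketch in plain Python, assuming 3x3 kernels with a bias term for every VGG16 convolution and a bias term for every dense layer; the helper names `conv2d_params` and `dense_params` are illustrative and not part of the notebook.

```python
def conv2d_params(in_channels, out_channels, kernel=3):
    # One kernel*kernel*in_channels filter plus one bias per output channel
    return (kernel * kernel * in_channels + 1) * out_channels


def dense_params(in_units, out_units):
    # One weight per input plus one bias per output unit
    return (in_units + 1) * out_units


# (input channels, output channels) of the 13 VGG16 convolutions, in order
VGG16_CONVS = [
    (3, 64), (64, 64),                       # block1
    (64, 128), (128, 128),                   # block2
    (128, 256), (256, 256), (256, 256),      # block3
    (256, 512), (512, 512), (512, 512),      # block4
    (512, 512), (512, 512), (512, 512),      # block5
]

conv_counts = [conv2d_params(i, o) for i, o in VGG16_CONVS]

# Head added by build_transfer_model: Flatten (7*7*512) -> 1024 -> 1024 -> 101
head_counts = [
    dense_params(7 * 7 * 512, 1024),
    dense_params(1024, 1024),
    dense_params(1024, 101),
]

# model.layers[:15] covers blocks 1-4 and block5_conv1: 11 convolutions
# and 4 pooling layers (pooling layers have no parameters)
frozen = sum(conv_counts[:11])
total = sum(conv_counts) + sum(head_counts)

print('total     =', total)
print('frozen    =', frozen)
print('trainable =', total - frozen)
```

Under these assumptions the three printed values agree with the `Total params`, `Non-trainable params`, and `Trainable params` lines of the summary, which confirms that freezing the first 15 layers leaves only `block5_conv2`, `block5_conv3`, and the new dense head trainable.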

Create the sequences | batch_size = 25

img_seq_train = ImageSequence(train_list, train_labels, batch_size=batch_size)

img_seq_validation = ImageSequence(val_list, val_labels, batch_size=batch_size)

print('Train images =', len(img_seq_train) * batch_size)

print('Validation images =', len(img_seq_validation) * batch_size) | Train images = 80800

Validation images = 20200

| MIT | VGG16.ipynb | KoshinoK/RandomExchange |
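
The printed counts follow from how `ImageSequence` cuts the index array into batches. Below is a small sketch of that arithmetic in plain Python; `steps_per_epoch` and `batch_slice` are hypothetical helper names that mirror `__len__` and the slice in `__getitem__`, not functions from the notebook.

```python
def steps_per_epoch(n_samples, batch_size):
    # Mirrors __len__: floor division, so a final partial batch is dropped
    return n_samples // batch_size


def batch_slice(n_samples, batch_size, index):
    # Mirrors the slice in __getitem__: the sample range covered by one batch
    start = index * batch_size
    stop = min((index + 1) * batch_size, n_samples)
    return start, stop


print(steps_per_epoch(80800, 25))   # training steps per epoch
print(steps_per_epoch(20200, 25))   # validation steps per epoch
print(batch_slice(103, 25, 3))      # last full batch of a 103-sample set
```

Because of the floor division, a dataset whose size is not a multiple of the batch size loses its trailing samples in every epoch; with shuffling enabled, different samples are dropped each time.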

Create the callbacks★ | from tensorflow.keras.callbacks import ModelCheckpoint, CSVLogger, EarlyStopping, ReduceLROnPlateau

# Configure the callbacks

cp_filepath = os.path.join(dir_weights, 'ep_{epoch:04d}_ls_{loss:.1f}.h5')

cp = ModelCheckpoint(

cp_filepath,

monitor='val_acc',

verbose=0,

save_best_only=True,

save_weights_only=True,

mode='auto'

)

csv_filepath = os.path.join(model_dir, 'loss.csv')

csv = CSVLogger(csv_filepath, append=True)

es = EarlyStopping(monitor='val_acc', patience=20, verbose=1, mode='auto')

rl = ReduceLROnPlateau(monitor='val_loss', factor=0.5, patience=5, verbose=1, mode='auto', epsilon=0.0001, cooldown=0, min_lr=0) | WARNING:tensorflow:`epsilon` argument is deprecated and will be removed, use `min_delta` instead.

| MIT | VGG16.ipynb | KoshinoK/RandomExchange |
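
To get a feel for what `factor=0.5` and `patience=5` do to the learning rate, the plateau rule can be simulated on a list of validation losses. This is a simplified sketch in plain Python, not the Keras implementation: it ignores `cooldown`, assumes the monitored value should decrease, and the function name `simulate_reduce_on_plateau` is made up for illustration.

```python
def simulate_reduce_on_plateau(val_losses, lr, factor=0.5, patience=5,
                               min_delta=1e-4, min_lr=0.0):
    """Return the learning rate in effect after each epoch."""
    best = float('inf')
    wait = 0
    history = []
    for loss in val_losses:
        if loss < best - min_delta:
            # Improvement larger than min_delta: remember it and reset the counter
            best = loss
            wait = 0
        else:
            wait += 1
            if wait >= patience:
                # No improvement for `patience` epochs: shrink the learning rate
                lr = max(lr * factor, min_lr)
                wait = 0
        history.append(lr)
    return history


# Two improving epochs followed by five epochs without improvement
losses = [1.0, 0.9, 0.9, 0.9, 0.9, 0.9, 0.9]
print(simulate_reduce_on_plateau(losses, lr=1e-4))
```

With the starting rate of `1e-4` used when the model is compiled, the rate stays unchanged until the fifth epoch without improvement and is then halved.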

Training | n_epoch = 200

# Train the model

history = model.fit_generator(

img_seq_train,

epochs=n_epoch, # number of epochs to train

steps_per_epoch=len(img_seq_train),

validation_data=img_seq_validation,

validation_steps=len(img_seq_validation),

verbose=1,

callbacks=[cp, csv, es, rl]

) | Epoch 1/200

3232/3232 [==============================] - 1217s 377ms/step - loss: 4.6143 - acc: 0.0148 - val_loss: 4.5334 - val_acc: 0.0410

Epoch 2/200

3232/3232 [==============================] - 499s 155ms/step - loss: 4.4336 - acc: 0.0381 - val_loss: 4.0951 - val_acc: 0.1068

Epoch 3/200