
false |

This dataset contains 93265 English poems. |

false |

Part of **BEIR-PL: Zero Shot Information Retrieval Benchmark for the Polish Language**.

Link to arXiv: https://arxiv.org/pdf/2305.19840.pdf

Contact: konrad.wojtasik@pwr.edu.pl |

false |

This is a parsed version of [github-jupyter-parsed](https://huggingface.co/datasets/codeparrot/github-jupyter-parsed), with markdown and code pairs. We provide the preprocessing script in [preprocessing.py](https://huggingface.co/datasets/codeparrot/github-jupyter-parsed-v2/blob/main/preprocessing.py). The data is deduplicated and consists of 451662 examples.
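The linked `preprocessing.py` is the authoritative description of how deduplication was done. As a rough illustration of exact deduplication over markdown/code pairs, here is a minimal sketch; the pair representation (tuples of strings), the whitespace stripping and the SHA-256 key are assumptions, not details taken from that script.

```python
import hashlib
from typing import Iterable, List, Tuple

Pair = Tuple[str, str]  # (markdown, code): hypothetical in-memory representation


def pair_key(markdown: str, code: str) -> str:
    """Hash a markdown/code pair after stripping surrounding whitespace (assumed normalisation)."""
    joined = markdown.strip() + "\x00" + code.strip()
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


def dedup_pairs(pairs: Iterable[Pair]) -> List[Pair]:
    """Keep the first occurrence of each normalised pair, preserving input order."""
    seen = set()
    kept = []
    for markdown, code in pairs:
        key = pair_key(markdown, code)
        if key not in seen:
            seen.add(key)
            kept.append((markdown, code))
    return kept


pairs = [
    ("# Load data", "import pandas as pd"),
    ("# Load data ", "import pandas as pd\n"),  # identical once whitespace is stripped
    ("# Plot", "df.plot()"),
]
print(len(dedup_pairs(pairs)))  # 2
```

Near-duplicate detection (e.g. MinHash) would catch more redundancy than exact hashing; which one the script uses should be checked there.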

For similar datasets with text and Python code, see the [CoNaLa](https://huggingface.co/datasets/neulab/conala) benchmark from StackOverflow, with some samples curated by annotators. |

false | |

false |

# ParaDetox: Detoxification with Parallel Data (English)

This repository contains information about the ParaDetox dataset (the first parallel corpus for the detoxification task), as well as models and an evaluation methodology for the detoxification of English texts. The original paper ["ParaDetox: Detoxification with Parallel Data"](https://aclanthology.org/2022.acl-long.469/) was presented at the ACL 2022 main conference.

## ParaDetox Collection Pipeline

The ParaDetox dataset was collected via the [Yandex.Toloka](https://toloka.yandex.com/) crowdsourcing platform in three steps:

* *Task 1:* **Generation of Paraphrases**: The first crowdsourcing task asks users to eliminate toxicity in a given sentence while keeping the content.

* *Task 2:* **Content Preservation Check**: We show users the generated paraphrases along with their original variants and ask them to indicate if they have close meanings.

* *Task 3:* **Toxicity Check**: Finally, we check if the workers succeeded in removing toxicity.

These steps ensure high data quality and allow the collection process to be automated. For more details, please refer to the original paper.
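The three tasks act as successive filters on each candidate paraphrase. The sketch below shows that flow; the vote format (a list of booleans from different workers per check) and the simple majority rule are assumptions made for illustration, and the paper describes the actual aggregation used.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Candidate:
    toxic: str       # toxic sentence shown to workers in Task 1
    paraphrase: str  # rewrite produced by a worker in Task 1
    same_meaning_votes: List[bool] = field(default_factory=list)  # Task 2 answers (hypothetical format)
    non_toxic_votes: List[bool] = field(default_factory=list)     # Task 3 answers (hypothetical format)


def majority(votes: List[bool]) -> bool:
    """True if strictly more than half of the votes are positive (assumed rule)."""
    return len(votes) > 0 and sum(votes) * 2 > len(votes)


def accept(c: Candidate) -> bool:
    """Keep a paraphrase only if it passes both the content check and the toxicity check."""
    return majority(c.same_meaning_votes) and majority(c.non_toxic_votes)


candidates = [
    Candidate("this is a stupid idea", "this is not a good idea", [True, True, False], [True, True, True]),
    Candidate("this is a stupid idea", "this is an idea", [False, False, True], [True, True, True]),
]
accepted = [c.paraphrase for c in candidates if accept(c)]
print(accepted)  # ['this is not a good idea']
```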

## ParaDetox Dataset

As a result, we obtained paraphrases for 11,939 toxic sentences (1.66 paraphrases per sentence on average), 19,766 paraphrases in total. The whole dataset can be found [here](https://github.com/skoltech-nlp/paradetox/blob/main/paradetox/paradetox.tsv).
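The 1.66 figure is the total number of paraphrases divided by the number of toxic sentences: 19,766 / 11,939 ≈ 1.656. The sketch below recomputes such statistics from a TSV file; it assumes a header row, the toxic sentence in the first column and paraphrases in the remaining, possibly empty, columns. Check the actual header of `paradetox.tsv` before relying on this layout.

```python
import csv
import io
from typing import TextIO, Tuple


def paraphrase_stats(tsv: TextIO) -> Tuple[int, int, float]:
    """Return (#toxic sentences, #paraphrases, paraphrases per sentence).

    Assumed layout: header row, toxic text in column 0, paraphrases in the
    remaining columns, where an empty cell means "no paraphrase".
    """
    # QUOTE_NONE: treat quote characters in the text as ordinary characters.
    reader = csv.reader(tsv, delimiter="\t", quoting=csv.QUOTE_NONE)
    next(reader)  # skip the header row
    sentences = paraphrases = 0
    for row in reader:
        if not row:
            continue
        sentences += 1
        paraphrases += sum(1 for cell in row[1:] if cell.strip())
    ratio = paraphrases / sentences if sentences else 0.0
    return sentences, paraphrases, ratio


sample = "toxic\tneutral1\tneutral2\n" "a b\tc\td\n" "e f\tg\t\n"
print(paraphrase_stats(io.StringIO(sample)))  # (2, 3, 1.5)
print(round(19766 / 11939, 2))                # 1.66
```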

In addition to the ParaDetox dataset, we also release the [samples](https://github.com/skoltech-nlp/paradetox/blob/main/paradetox/paradetox_cannot_rewrite.tsv) that annotators marked as "cannot rewrite" in *Task 1* of the crowdsourcing pipeline.

# Detoxification evaluation

Models are evaluated automatically on three metrics:

* *style transfer accuracy* (**STA**): percentage of non-toxic outputs identified by a style classifier. We pretrained a toxicity classifier on Jigsaw data and published it in a HuggingFace🤗 [repo](https://huggingface.co/SkolkovoInstitute/roberta_toxicity_classifier).

* *content preservation* (**SIM**): cosine similarity between the embeddings of the original text and the output computed with the model of [Wieting et al. (2019)](https://aclanthology.org/P19-1427/).

* *fluency* (**FL**): percentage of fluent sentences identified by a RoBERTa-based classifier of linguistic acceptability trained on the [CoLA dataset](https://nyu-mll.github.io/CoLA/).
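Once each output has a toxicity label, a pair of embeddings and a fluency label, the three scores reduce to simple aggregates. The sketch below assumes those per-sentence values have already been produced by the classifiers and the Wieting et al. model (running the models is not shown), averages SIM over the corpus (an assumption), and reports each score as a fraction in [0, 1]; multiply by 100 for percentages.

```python
import math
from typing import List, Sequence


def sta(is_non_toxic: Sequence[bool]) -> float:
    """Style transfer accuracy: share of outputs the toxicity classifier labels non-toxic."""
    return sum(is_non_toxic) / len(is_non_toxic)


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two embedding vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def sim(src_emb: List[Sequence[float]], out_emb: List[Sequence[float]]) -> float:
    """Content preservation: mean cosine similarity of source/output embedding pairs."""
    scores = [cosine(s, o) for s, o in zip(src_emb, out_emb)]
    return sum(scores) / len(scores)


def fl(is_fluent: Sequence[bool]) -> float:
    """Fluency: share of outputs the CoLA-trained classifier labels acceptable."""
    return sum(is_fluent) / len(is_fluent)


print(sta([True, True, False, True]))                            # 0.75
print(sim([[1.0, 0.0], [1.0, 1.0]], [[1.0, 0.0], [1.0, -1.0]]))  # 0.5
print(fl([True, False]))                                         # 0.5
```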

All code used in our experiments to evaluate different detoxification models can be run via this [Colab notebook](https://colab.research.google.com/drive/1xTqbx7IPF8bVL2bDCfQSDarA43mIPefE?usp=sharing).

## Detoxification model

**New SOTA** for the detoxification task: the BART (base) model trained on the ParaDetox dataset is released in a HuggingFace🤗 repository [here](https://huggingface.co/SkolkovoInstitute/bart-base-detox).

You can also check out our [demo](https://detoxifier.nlp.zhores.net/junction/) and Telegram [bot](https://t.me/rudetoxifierbot).

## Citation

```

@inproceedings{logacheva-etal-2022-paradetox,

title = "{P}ara{D}etox: Detoxification with Parallel Data",

author = "Logacheva, Varvara and

Dementieva, Daryna and

Ustyantsev, Sergey and

Moskovskiy, Daniil and

Dale, David and

Krotova, Irina and

Semenov, Nikita and

Panchenko, Alexander",

booktitle = "Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)",

month = may,

year = "2022",

address = "Dublin, Ireland",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2022.acl-long.469",

pages = "6804--6818",

abstract = "We present a novel pipeline for the collection of parallel data for the detoxification task. We collect non-toxic paraphrases for over 10,000 English toxic sentences. We also show that this pipeline can be used to distill a large existing corpus of paraphrases to get toxic-neutral sentence pairs. We release two parallel corpora which can be used for the training of detoxification models. To the best of our knowledge, these are the first parallel datasets for this task.We describe our pipeline in detail to make it fast to set up for a new language or domain, thus contributing to faster and easier development of new parallel resources.We train several detoxification models on the collected data and compare them with several baselines and state-of-the-art unsupervised approaches. We conduct both automatic and manual evaluations. All models trained on parallel data outperform the state-of-the-art unsupervised models by a large margin. This suggests that our novel datasets can boost the performance of detoxification systems.",

}

```

## Contacts

If you find an issue, do not hesitate to report it in [GitHub Issues](https://github.com/skoltech-nlp/paradetox/issues).

For any questions, please contact: Daryna Dementieva (dardem96@gmail.com) |

false |

## WARNING: this dataset is an extract of the OSCAR dataset published here to simulate the use of the full dataset in low-resource contexts.

Legally speaking, using this dataset is equivalent to using a processed version of OSCAR. I take no credit for gathering the original data and hence refer entirely to the original dataset in the card below.

# Dataset Card for "oscar"

## Table of Contents

- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Curation Rationale](#curation-rationale)
  - [Source Data](#source-data)
  - [Annotations](#annotations)
  - [Personal and Sensitive Information](#personal-and-sensitive-information)
- [Considerations for Using the Data](#considerations-for-using-the-data)
  - [Social Impact of Dataset](#social-impact-of-dataset)
  - [Discussion of Biases](#discussion-of-biases)
  - [Other Known Limitations](#other-known-limitations)
- [Additional Information](#additional-information)
  - [Dataset Curators](#dataset-curators)
  - [Licensing Information](#licensing-information)
  - [Citation Information](#citation-information)
  - [Contributions](#contributions)

## Dataset Description

- **Homepage:** [https://oscar-corpus.com](https://oscar-corpus.com)

- **Repository:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Paper:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

- **Point of Contact:** [More Information Needed](https://github.com/huggingface/datasets/blob/master/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards)

### Dataset Summary

OSCAR or **O**pen **S**uper-large **C**rawled [**A**LMAnaCH](https://team.inria.fr/almanach/) co**R**pus is a huge multilingual corpus obtained by language classification and filtering of the [Common Crawl](https://commoncrawl.org/) corpus using the [goclassy](https://github.com/pjox/goclassy) architecture. Data is distributed by language in both original and deduplicated form.

### Supported Tasks and Leaderboards

OSCAR is mainly intended to pretrain language models and word representations.

### Languages

All the data is distributed by language; both the original and the deduplicated versions of the data are available. 166 different languages are available. The table in subsection [Data Splits Sample Size](#data-splits-sample-size) provides the language code for each subcorpus as well as the number of words (space-separated tokens), lines and sizes for both the original and the deduplicated versions of OSCAR.

## Dataset Structure

We show detailed information for all the configurations of the dataset.

## Dataset Creation

### Curation Rationale

OSCAR was constructed using a new pipeline derived from [fastText's](https://github.com/facebookresearch/fastText), called [_goclassy_](https://github.com/pjox/goclassy). Goclassy reuses the [fastText linear classifier](https://fasttext.cc) and the pre-trained fastText model for language recognition, but it completely rewrites and parallelises their pipeline in an asynchronous manner.

The order of operations is more or less the same as in the fastText pre-processing pipeline, but instead of clustering multiple operations into a single blocking process, a worker is launched for each operation, and the number of operations that can run in parallel at a given time is bounded by the number of available threads rather than the number of CPUs. Goclassy is implemented in the [Go programming language](https://golang.org/), so it lets the [Go runtime](https://golang.org/src/runtime/mprof.go) handle the scheduling of the processes. Thus, with goclassy's pipeline, one does not have to wait for a whole WET file to be downloaded, decompressed and classified before starting to download and process the next one: a new file starts downloading and processing as soon as the scheduler is able to allocate a new process.

Filtering and cleaning at line level are done before each line is fed to the classifier. Lines shorter than 100 UTF-8 characters and lines containing invalid UTF-8 characters are discarded and are not classified. After all files are processed, the deduplicated versions are constructed, and everything is then split into shards and compressed.
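goclassy itself is written in Go; the Python sketch below only mirrors the scheduling idea described above, in simplified form. Each file goes through download, decompress and classify independently of the others, and a thread pool whose size is an explicit `max_threads` parameter bounds how many files are in flight at once. Unlike goclassy, it uses one task per file rather than one worker per operation, and the three stage functions are hypothetical placeholders.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


# Hypothetical stand-ins for the real download / decompress / classify steps.
def download(name: str) -> bytes:
    return f"contents of {name}".encode("utf-8")


def decompress(data: bytes) -> str:
    return data.decode("utf-8")


def classify(text: str) -> str:
    return "en"  # placeholder: a real pipeline would call a language identifier here


def process_file(name: str) -> Dict[str, str]:
    """Run every stage for one file; files never wait for each other to finish."""
    return {"file": name, "lang": classify(decompress(download(name)))}


def run_pipeline(names: List[str], max_threads: int) -> List[Dict[str, str]]:
    # The pool size caps how many files are processed concurrently; as soon as one
    # finishes, the executor starts the next pending file. map() keeps input order.
    with ThreadPoolExecutor(max_workers=max_threads) as pool:
        return list(pool.map(process_file, names))


results = run_pipeline([f"part-{i}.warc.wet.gz" for i in range(5)], max_threads=2)
print(len(results))  # 5
```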
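The line-level filter described above can be sketched as follows. The sketch assumes lines arrive as raw bytes and measures length in Unicode characters after decoding (so a line of 100 two-byte characters passes). The exact line-level deduplication helper is an assumption, since the card does not say how the deduplicated versions are built.

```python
from typing import Iterable, Iterator, List

MIN_CHARS = 100  # lines shorter than this are discarded


def filter_lines(raw_lines: Iterable[bytes]) -> Iterator[str]:
    """Yield lines that are valid UTF-8 and at least MIN_CHARS characters long."""
    for raw in raw_lines:
        try:
            line = raw.decode("utf-8")  # strict decoding rejects invalid byte sequences
        except UnicodeDecodeError:
            continue
        line = line.rstrip("\r\n")
        if len(line) >= MIN_CHARS:
            yield line


def dedup_lines(lines: Iterable[str]) -> List[str]:
    """Exact line-level deduplication keeping first occurrences (assumed strategy)."""
    seen = set()
    out = []
    for line in lines:
        if line not in seen:
            seen.add(line)
            out.append(line)
    return out


long_line = ("é" * 100).encode("utf-8")  # 100 characters, 200 bytes
raw = [long_line, b"too short", b"\xff" * 150, long_line]
kept = dedup_lines(filter_lines(raw))
print(len(kept))  # 1
```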

### Source Data

#### Initial Data Collection and Normalization

[Common Crawl](https://commoncrawl.org/) is a non-profit foundation which produces and maintains an open repository of web crawled data that is both accessible and analysable. Common Crawl's complete web archive consists of petabytes of data collected over 8 years of web crawling. The repository contains raw web page HTML data (WARC files), metadata extracts (WAT files) and plain text extracts (WET files). The organisation's crawlers have always respected [nofollow](http://microformats.org/wiki/rel-nofollow) and [robots.txt](https://www.robotstxt.org/) policies.

Each monthly Common Crawl snapshot is in itself a massive multilingual corpus, where every single file contains data coming from multiple web pages written in a large variety of languages and covering all possible types of topics.

To construct OSCAR, the WET files of Common Crawl were used. These contain the extracted plain texts from the websites, mostly converted to UTF-8, as well as headers containing the metadata of each crawled document. Each WET file comes compressed in gzip format and is stored on Amazon Web Services. In the case of OSCAR, the **November 2018** snapshot was used. It surpasses 20TB of uncompressed data and contains more than 50 thousand plain text files, where each file consists of the plain text from multiple websites along with its metadata header.
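WET files are gzip-compressed WARC files in which `conversion` records carry the extracted plain text of each page. The minimal sketch below reads such records from in-memory bytes. It splits records on the `WARC/1.0` version line and ignores `Content-Length`, a simplification that assumes no page text contains a line consisting solely of `WARC/1.0`; a dedicated WARC library is more robust for real files.

```python
import gzip
import re
from typing import Dict, Iterator, Tuple

# A record starts with the WARC version line; its header block ends at the first blank line.
RECORD_START = re.compile(r"^WARC/1\.0\r?$", re.MULTILINE)
HEADER_END = re.compile(r"\r?\n\r?\n")


def iter_wet_records(blob: bytes) -> Iterator[Tuple[Dict[str, str], str]]:
    """Yield (headers, body) for every record in gzip-compressed WET bytes."""
    # gzip.decompress also handles multi-member data (several gzip streams concatenated).
    text = gzip.decompress(blob).decode("utf-8", errors="replace")
    for chunk in RECORD_START.split(text):
        chunk = chunk.strip("\r\n")
        if not chunk:
            continue
        parts = HEADER_END.split(chunk, maxsplit=1)
        headers: Dict[str, str] = {}
        for line in parts[0].splitlines():
            key, sep, value = line.partition(":")  # split on the first colon only
            if sep:
                headers[key.strip()] = value.strip()
        body = parts[1].strip() if len(parts) > 1 else ""
        yield headers, body


def iter_page_texts(blob: bytes) -> Iterator[Tuple[str, str]]:
    """Keep only 'conversion' records, which carry the extracted plain text of a page."""
    for headers, body in iter_wet_records(blob):
        if headers.get("WARC-Type") == "conversion":
            yield headers.get("WARC-Target-URI", ""), body


sample = (
    "WARC/1.0\r\nWARC-Type: warcinfo\r\n\r\nisPartOf: example\r\n\r\n"
    "WARC/1.0\r\nWARC-Type: conversion\r\nWARC-Target-URI: http://example.com/\r\n"
    "Content-Type: text/plain\r\n\r\nHello world\nSecond line\r\n\r\n"
)
print(list(iter_page_texts(gzip.compress(sample.encode("utf-8")))))
```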

#### Who are the source language producers?

The data comes from multiple web pages in a large variety of languages.

### Annotations

The dataset does not contain any additional annotations.

#### Annotation process

N/A

#### Who are the annotators?

N/A

### Personal and Sensitive Information

Since OSCAR is constructed from Common Crawl, personal and sensitive information might be present. This **must** be considered before training deep learning models with OSCAR, especially in the case of text-generation models.

## Considerations for Using the Data

### Social Impact of Dataset

OSCAR is intended to bring more data to a wide variety of languages: the aim of the corpus is to make large amounts of data available to lower-resource languages in order to facilitate the pre-training of state-of-the-art language modeling architectures.

### Discussion of Biases

OSCAR is not properly filtered yet, and this can be reflected in the models trained with it. Care is advised, especially concerning biases of the resulting models.

### Other Known Limitations

The [fastText linear classifier](https://fasttext.cc) is limited both in performance and in the variety of languages it can recognize, so the quality of some OSCAR sub-corpora might be lower than expected, especially for the lowest-resource languages. Some audits have already been done by [third parties](https://arxiv.org/abs/2010.14571).

## Additional Information

### Dataset Curators

The corpus was put together by [Pedro J. Ortiz](https://pjortiz.eu/), [Benoît Sagot](http://pauillac.inria.fr/~sagot/), and [Laurent Romary](https://cv.archives-ouvertes.fr/laurentromary), during work done at [Inria](https://www.inria.fr/en), particularly at the [ALMAnaCH team](https://team.inria.fr/almanach/).

### Licensing Information

These data are released under the following licensing scheme:

We do not own any of the text from which these data have been extracted.

We license the actual packaging of these data under the Creative Commons CC0 license ("no rights reserved") http://creativecommons.org/publicdomain/zero/1.0/

To the extent possible under law, Inria has waived all copyright and related or neighboring rights to OSCAR.

This work is published from: France.

Should you consider that our data contains material that is owned by you and should therefore not be reproduced here, please:

* Clearly identify yourself, with detailed contact data such as an address, telephone number or email address at which you can be contacted.

* Clearly identify the copyrighted work claimed to be infringed.

* Clearly identify the material that is claimed to be infringing and information reasonably sufficient to allow us to locate the material.

We will comply with legitimate requests by removing the affected sources from the next release of the corpus.

### Citation Information

```

@inproceedings{ortiz-suarez-etal-2020-monolingual,

title = "A Monolingual Approach to Contextualized Word Embeddings for Mid-Resource Languages",

author = "Ortiz Su{\'a}rez, Pedro Javier and

Romary, Laurent and

Sagot, Benoit",

booktitle = "Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics",

month = jul,

year = "2020",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/2020.acl-main.156",

pages = "1703--1714",

abstract = "We use the multilingual OSCAR corpus, extracted from Common Crawl via language classification, filtering and cleaning, to train monolingual contextualized word embeddings (ELMo) for five mid-resource languages. We then compare the performance of OSCAR-based and Wikipedia-based ELMo embeddings for these languages on the part-of-speech tagging and parsing tasks. We show that, despite the noise in the Common-Crawl-based OSCAR data, embeddings trained on OSCAR perform much better than monolingual embeddings trained on Wikipedia. They actually equal or improve the current state of the art in tagging and parsing for all five languages. In particular, they also improve over multilingual Wikipedia-based contextual embeddings (multilingual BERT), which almost always constitutes the previous state of the art, thereby showing that the benefit of a larger, more diverse corpus surpasses the cross-lingual benefit of multilingual embedding architectures.",

}

@inproceedings{OrtizSuarezSagotRomary2019,

author = {Pedro Javier {Ortiz Su{\'a}rez} and Benoit Sagot and Laurent Romary},

title = {Asynchronous pipelines for processing huge corpora on medium to low resource infrastructures},

series = {Proceedings of the Workshop on Challenges in the Management of Large Corpora (CMLC-7) 2019. Cardiff, 22nd July 2019},

editor = {Piotr Bański and Adrien Barbaresi and Hanno Biber and Evelyn Breiteneder and Simon Clematide and Marc Kupietz and Harald L{\"u}ngen and Caroline Iliadi},

publisher = {Leibniz-Institut f{\"u}r Deutsche Sprache},

address = {Mannheim},

doi = {10.14618/ids-pub-9021},

url = {http://nbn-resolving.de/urn:nbn:de:bsz:mh39-90215},

pages = {9 -- 16},

year = {2019},

abstract = {Common Crawl is a considerably large, heterogeneous multilingual corpus comprised of crawled documents from the internet, surpassing 20TB of data and distributed as a set of more than 50 thousand plain text files where each contains many documents written in a wide variety of languages. Even though each document has a metadata block associated to it, this data lacks any information about the language in which each document is written, making it extremely difficult to use Common Crawl for monolingual applications. We propose a general, highly parallel, multithreaded pipeline to clean and classify Common Crawl by language; we specifically design it so that it runs efficiently on medium to low resource infrastructures where I/O speeds are the main constraint. We develop the pipeline so that it can be easily reapplied to any kind of heterogeneous corpus and so that it can be parameterised to a wide range of infrastructures. We also distribute a 6.3TB version of Common Crawl, filtered, classified by language, shuffled at line level in order to avoid copyright issues, and ready to be used for NLP applications.},

language = {en}

}

```

### Contributions

Thanks to [@pjox](https://github.com/pjox) and [@lhoestq](https://github.com/lhoestq) for adding this dataset. |

false |

# ru_instruct_gpt4

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

A dataset of GPT-4-generated instructions in Russian. It will soon be updated with more examples.

### Languages

Russian

|

false |

# Dataset Card for SCAT

## Table of Contents

- [Dataset Card for SCAT](#dataset-card-for-scat)
  - [Table of Contents](#table-of-contents)
  - [Dataset Description](#dataset-description)
    - [Dataset Summary](#dataset-summary)
    - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
      - [Machine Translation](#machine-translation)
    - [Languages](#languages)
  - [Dataset Structure](#dataset-structure)
    - [Data Instances](#data-instances)
    - [Data Splits](#data-splits)
    - [Dataset Creation](#dataset-creation)
    - [Additional Preprocessing](#additional-preprocessing)
  - [Additional Information](#additional-information)
    - [Dataset Curators](#dataset-curators)
    - [Licensing Information](#licensing-information)
    - [Citation Information](#citation-information)

## Dataset Description

- **Repository:** [Github](https://github.com/neulab/contextual-mt/tree/master/data/scat)

- **Paper:** [ACL 2021](https://aclanthology.org/2021.acl-long.65/)

- **Point of Contact:** [Kayo Yin](mailto:kayoyin@berkeley.edu)

### Dataset Summary

The Supporting Context for Ambiguous Translations corpus (SCAT) is a dataset of English-to-French translations annotated with human rationales used for resolving ambiguity in pronoun anaphora resolution for multi-sentence translation.

**Disclaimer**: *The SCAT corpus was released in the ACL 2021 paper ["Do Context-Aware Translation Models Pay the Right Attention?"](https://aclanthology.org/2021.acl-long.65/) by Yin et al. (2021), and an original version of the corpus is hosted on [Github](https://github.com/neulab/contextual-mt/tree/master/data/scat) with no licensing information. This dataset contains a curated version of the original corpus where examples containing nested or malformed tags were removed (refer to the [filter_scat.py](filter_scat.py) script for more details).*

### Supported Tasks and Leaderboards

#### Machine Translation

Refer to the [original paper](https://aclanthology.org/2021.acl-long.65/) for additional details on plausibility evaluation for document-level MT systems.

### Languages

The dataset contains English source sentences with anaphoric pronouns, selected from the [OpenSubtitles 2018](https://www.aclweb.org/anthology/L18-1275/) corpus, and their translations into French (`fr`).

## Dataset Structure

### Data Instances

The dataset contains a single default configuration. Dataset examples have the following format:

```json

{

"id": 0,

"context_en": "Air, water, the continents. So, what is your project about and what are its chances of winning? - Well, my project is awesome. - Oh, good. I took two plants, and I gave them sun and water",

"en": "But I gave one special attention to see if it would grow more.",

"context_fr": "L'air, l'eau, les continents. Donc, quel est le sujet de ton projet et quelles sont ses chances de gagner ? - Bien, mon projet est impressionnant. - Oh, bien. J'ai pris deux plantes , et je leur ai donné de l'eau et du soleil.",

"fr": "Mais j'ai donné une attention particulière à une pour voir si elle grandit plus.",

"contrast_fr": "Mais j'ai donné une attention particulière à une pour voir si il grandit plus.",

"context_en_with_tags": "Air, water, the continents. So, what is your project about and what are its chances of winning? - Well, my project is awesome. - Oh, good. I took two plants, and I gave them sun and water",

"en_with_tags": "But I gave one special attention to see if <p>it</p> would grow more.",

"context_fr_with_tags": "L'air, l'eau, les continents. Donc, quel est le sujet de ton projet et quelles sont ses chances de gagner ? - Bien, mon projet est impressionnant. - Oh, bien. J'ai pris deux <hon>plantes<hoff> , et je leur ai donné de l'eau et du soleil.",

"fr_with_tags": "Mais j'ai donné une attention particulière à une pour voir si <p>elle</p> grandit plus.",

"contrast_fr_with_tags": "Mais j'ai donné une attention particulière à une pour voir si <p>il</p> grandit plus.",

"has_supporting_context": true,

"has_supporting_preceding_context": true

}

```

In every example, the pronoun of interest and its translation are surrounded by `<p>...</p>` tags. These are guaranteed to be found in the `en_with_tags` and `fr_with_tags` fields, respectively.

Any span surrounded by `<hon>...<hoff>` tags was identified by human annotators as supporting context to correctly translate the pronoun of interest. These spans can be missing altogether (i.e. no contextual information needed), or they can be found in any of the available fields. The `has_supporting_context` field indicates whether the example contains any supporting context.

In the example above, the translation of the pronoun `it` (field `en`) is ambiguous, and the correct translation to the feminine French pronoun `elle` (in field `fr`) is only possible thanks to the supporting feminine noun `plantes` in the field `context_fr`. Since the example contains supporting context, the `has_supporting_context` field is set to `true`. Since the context is not in the same sentence as the target pronoun but in a preceding one, `has_supporting_preceding_context` is also true.

Fields with the `_with_tags` suffix contain tags around pronouns of interest and supporting context, while their counterparts without the suffix contain the same text without tags, to facilitate direct usage with machine translation models.
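The tag conventions above can be handled with a few regular expressions. This sketch assumes tags are well formed and not nested (which the filtering described in this card is meant to ensure); it extracts the pronoun of interest and the supporting-context spans from a `_with_tags` field and recovers the untagged text. The function names are hypothetical.

```python
import re
from typing import List, Optional

PRONOUN = re.compile(r"<p>(.*?)</p>")        # pronoun of interest
SUPPORT = re.compile(r"<hon>(.*?)<hoff>")    # human-marked supporting context
ANY_TAG = re.compile(r"</?p>|<hon>|<hoff>")  # every tag used in the corpus


def pronoun_of_interest(tagged: str) -> Optional[str]:
    match = PRONOUN.search(tagged)
    return match.group(1) if match else None


def supporting_spans(tagged: str) -> List[str]:
    return [span.strip() for span in SUPPORT.findall(tagged)]


def strip_tags(tagged: str) -> str:
    """Remove all tags, leaving the text otherwise untouched."""
    return ANY_TAG.sub("", tagged)


fr = "Mais j'ai donné une attention particulière à une pour voir si <p>elle</p> grandit plus."
ctx = "J'ai pris deux <hon>plantes<hoff> , et je leur ai donné de l'eau et du soleil."
print(pronoun_of_interest(fr))  # elle
print(supporting_spans(ctx))    # ['plantes']
print(strip_tags(fr))
```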

### Data Splits

The dataset is split into `train`, `validation` and `test` sets. In the following table, we report the number of examples in the original dataset and in this filtered version in which examples containing malformed tags were removed.

| Split | # Examples (original) | # Examples (**this**) |
| :-----------: | :-------------------: | :-------------------: |
| `train` | 11471 | 6086 |
| `validation` | 145 | 66 |
| `test` | 1000 | 500 |

### Dataset Creation

From the original paper:

>We recruited 20 freelance English-French translators on Upwork. We annotate examples from the contrastive test set by Lopes et al. (2020). This set includes 14K examples from the OpenSubtitles2018 dataset. Through our annotation effort, we obtain 14K examples of supporting context for pronoun anaphora resolution in ambiguous translations selected by professional human translators.

Please refer to the original article [Do Context-Aware Translation Models Pay the Right Attention?](https://aclanthology.org/2021.acl-long.65/) for additional information on dataset creation.

### Additional Preprocessing

Compared to the original SCAT corpus, the following differences are present in this version:

- Examples were filtered using the [filter_scat.py](filter_scat.py) script to retain only examples containing well-formed tags and to remove superfluous tags. Superfluous tags are defined as nested `<hon><p>...</p><hoff>` tags that represent a lack of contextual information for disambiguating the correct pronoun. In this case, the outer `<hon>...<hoff>` tag was removed. We also filter out examples that do not contain tags for the pronoun of interest in the target sentence for English and French (`en`, `fr`).

- Sentences stripped from tags are provided in fields without the `_with_tags` suffix.

- Tags erroneously embedded within words of target sentence (e.g. `just s<p>it</p>ting there, wa<p>it</p>ing for his face`) were removed.

- An extra contrastive sentence using the pronoun of interest belonging to the opposite gender is available in the `contrast_fr` field. The swap was performed using a simple lexical heuristic (refer to `swap_pronoun` in [`scat.py`](./scat.py)), and we do not guarantee grammatical correctness of the sentence.
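The preprocessing steps above can be approximated as follows. This is a hypothetical sketch, not the code in `filter_scat.py` or `scat.py`: the regular expressions, the handling of tags inside words and the `il`/`elle`, `ils`/`elles` swap table are assumptions, and like the original heuristic it does not guarantee grammatical output.

```python
import re

# <hon><p>it</p><hoff> -> <p>it</p>: the outer supporting-context tag adds no information.
SUPERFLUOUS = re.compile(r"<hon>\s*(<p>[^<]*</p>)\s*<hoff>")
# s<p>it</p>ting -> sitting: pronoun tags glued to letters on either side.
IN_WORD = re.compile(r"(?<=\w)<p>([^<]*)</p>|<p>([^<]*)</p>(?=\w)")
# Tagged pronoun to swap, with an assumed minimal swap table.
TAGGED_PRONOUN = re.compile(r"<p>(\w+)</p>")
SWAP = {"il": "elle", "elle": "il", "ils": "elles", "elles": "ils"}


def drop_superfluous(text: str) -> str:
    return SUPERFLUOUS.sub(r"\1", text)


def drop_in_word_tags(text: str) -> str:
    # Exactly one alternative matched, so exactly one of the two groups is not None.
    return IN_WORD.sub(lambda m: m.group(1) if m.group(1) is not None else m.group(2), text)


def swap_gender(text: str) -> str:
    """Replace the tagged pronoun with its opposite-gender form (no grammar checks)."""
    def repl(m):
        word = m.group(1)
        swapped = SWAP.get(word.lower(), word)
        if word[:1].isupper():
            swapped = swapped.capitalize()
        return f"<p>{swapped}</p>"

    return TAGGED_PRONOUN.sub(repl, text)


print(drop_superfluous("see if <hon><p>it</p><hoff> would grow"))
print(drop_in_word_tags("just s<p>it</p>ting there, wa<p>it</p>ing for his face"))
print(swap_gender("pour voir si <p>elle</p> grandit plus."))
```

Using `[^<]*` instead of `.*?` inside the tags keeps a match from spanning two separate tag pairs in the same sentence.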

The test set was manually curated to include 250 examples with supporting context in the previous sentences (`has_supporting_preceding_context` is `true`), and 250 examples with supporting context in the same sentence (`has_supporting_preceding_context` is `false`).

## Additional Information

### Dataset Curators

The original authors of SCAT are the curators of the original released dataset. For problems or updates on this 🤗 Datasets version, please contact [gabriele.sarti996@gmail.com](mailto:gabriele.sarti996@gmail.com).

### Licensing Information

The dataset license is unknown.

### Citation Information

Please cite the authors if you use this corpus in your work.

```bibtex

@inproceedings{yin-etal-2021-context,

title = "Do Context-Aware Translation Models Pay the Right Attention?",

author = "Yin, Kayo and

Fernandes, Patrick and

Pruthi, Danish and

Chaudhary, Aditi and

Martins, Andr{\'e} F. T. and

Neubig, Graham",

booktitle = "Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers)",

month = aug,

year = "2021",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2021.acl-long.65",

doi = "10.18653/v1/2021.acl-long.65",

pages = "788--801",

}

``` |

false | # Dataset Card for Softcatala-Web-Texts-Dataset

## Table of Contents

- [Table of Contents](#table-of-contents)
- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Curation Rationale](#curation-rationale)
  - [Source Data](#source-data)
  - [Annotations](#annotations)
  - [Personal and Sensitive Information](#personal-and-sensitive-information)
- [Considerations for Using the Data](#considerations-for-using-the-data)
  - [Social Impact of Dataset](#social-impact-of-dataset)
  - [Discussion of Biases](#discussion-of-biases)
  - [Other Known Limitations](#other-known-limitations)
- [Additional Information](#additional-information)
  - [Dataset Curators](#dataset-curators)
  - [Licensing Information](#licensing-information)
  - [Citation Information](#citation-information)
  - [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://www.softcatala.org/

- **Repository:** https://github.com/Softcatala/softcatala-web-dataset

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This repository contains Softcatalà website content (articles and program descriptions).

Dataset size:

* articles.json contains 623 articles with 373233 words.

* programes.json contains 330 program descriptions with 49868 words.

The license of the data is Attribution-ShareAlike 4.0 International (CC BY-SA 4.0) or Universal Public Domain Dedication (CC0 1.0).

### Supported Tasks and Leaderboards

### Languages

Catalan (`ca`).

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

JSON (name/value pairs) format with the following fields: content, date, id and title.
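As an illustration of how these fields might be consumed, here is a sketch that recomputes per-file document and word counts. It assumes each file is a JSON array of objects and that words are whitespace-separated tokens in `content`; the counting method behind the figures quoted in the summary is not documented, so results may differ. With a real file, pass the contents of e.g. `articles.json` read as UTF-8.

```python
import json
from typing import Tuple


def count_docs_and_words(raw_json: str) -> Tuple[int, int]:
    """Return (#documents, #whitespace-separated words in `content`)."""
    records = json.loads(raw_json)  # assumed: a JSON array of objects
    words = sum(len(record.get("content", "").split()) for record in records)
    return len(records), words


sample = json.dumps([
    {"id": 1, "title": "Hola", "date": "2020-01-01", "content": "Bon dia a tothom"},
    {"id": 2, "title": "Adéu", "date": "2020-01-02", "content": "Fins aviat"},
])
print(count_docs_and_words(sample))  # (2, 6)
```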

### Data Splits

One file for the program descriptions and one for the articles.

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

Softcatalà community.

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[@softcatala](https://github.com/Softcatala)

[@jordimas](https://github.com/jordimas)

### Licensing Information

[CC BY-SA 4.0](https://creativecommons.org/licenses/by-sa/4.0/).

[CC0-1.0](https://creativecommons.org/share-your-work/public-domain/cc0/).

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed]

|

false |

# Dataset Card for ChatMed

## Dataset Description

- **Homepage:** https://huggingface.co/datasets/michaelwzhu/ChatMed-Datasets

- **Repository:** https://github.com/michael-wzhu/ChatMed

- **Paper:**

- **Leaderboard:**

- **Point of Contact:** Wei Zhu

### Dataset Summary

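ChatMed-Dataset is a dataset of 110,113 Chinese medical query-response pairs whose responses were generated by OpenAI's `GPT-3.5` engine. The queries are crawled from several online medical consultation sites, reflecting real-world medical needs. This dataset is designed to inject medical knowledge into Chinese large language models.

The dataset is growing rapidly. Stay tuned for updates!

In the era of large models, models are converging: for example, following the "open-sourcing" of LlaMa, the open-source community has produced a whole series of open models such as stanford-alpaca. However, AGI datasets for Chinese vertical domains remain relatively scarce.

In this dataset, ChatMed-Dataset, the queries (or prompts) come from medical consultation questions on the internet (110,113 of them), reflecting the medical consultation needs of different real-world users/patients. Currently, all responses are generated by the OpenAI `GPT-3.5` engine. Later, we will screen and vet doctors' and patients' answers from the internet, selecting the best ones to build a higher-quality dataset.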

### Supported Tasks and Leaderboards

The ChatMed-Dataset is designed for fine-tuning pretrained language models to perform better at automatic medical consultation.

### Languages

The data in ChatMed-Dataset are in Chinese (zh).

## Dataset Structure

### Data Instances

The dataset is in json-line format and is very convenient to use! An example in the ChatMed-Dataset looks as follows:

```json

{

"query": "你好,视神经萎缩,黄斑变性。怎样缓解,及日常注意事项。谢谢。",

"response": "视神经萎缩和黄斑变性是两种不同的疾病,需要分别进行治疗。\n\n视神经萎缩是指视神经逐渐受损,导致视力逐渐减退,常见的治疗方法包括:\n\n1.药物治疗:如促进视神经细胞再生的药物,改善视网膜供血的药物等。\n\n2.手术治疗:如视神经萎缩的手术治疗可以通过神经移植、修复和再生等手术方式来缓解症状。\n\n3.康复训练:通过视力训练等康复训练可以帮助患者恢复视力。\n\n黄斑变性则是指黄斑区域受损,导致中央视力减退,常见的治疗方法包括:\n\n1.药物治疗:如抗氧化剂、维生素等可以缓解症状。\n\n2.手术治疗:如黄斑脱离手术等可以恢复视力。\n\n3.日常注意事项:保持良好的生活习惯,如戒烟、控制血压、血糖等,避免暴晒和过度用眼,保持充足的睡眠和均衡的饮食等。\n\n需要根据具体情况选择合适的治疗方案,建议您咨询专业医生进行诊断和治疗。"

}

```
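A minimal sketch for reading the JSON Lines file with Python's standard library. It assumes only what the card shows: one JSON object per line with `query` and `response` keys. The helper name and the filename in the comment are hypothetical.

```python
import io
import json
from typing import Iterator, TextIO

# The two fields documented for ChatMed-Dataset
REQUIRED_FIELDS = ("query", "response")


def read_chatmed(lines: TextIO) -> Iterator[dict]:
    """Yield one record per non-empty JSON line, checking the documented fields."""
    for line_no, line in enumerate(lines, start=1):
        line = line.strip()
        if not line:
            continue  # skip blank lines between records
        record = json.loads(line)
        missing = [name for name in REQUIRED_FIELDS if name not in record]
        if missing:
            raise ValueError(f"line {line_no}: missing fields {missing}")
        yield record


# Usage with an in-memory sample; for the real data, pass an open file instead,
# e.g. open("ChatMed.jsonl", encoding="utf-8")  (hypothetical filename)
sample = io.StringIO('{"query": "example query", "response": "example response"}\n')
for rec in read_chatmed(sample):
    print(rec["query"])  # example query
```

Reading line by line keeps memory use flat, which matters for a file with over 100K long responses.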

### Data Fields

The data fields are as follows:

* `query`: real-life medical query from the web.

* `response`: response generated by the OpenAI `GPT-3.5` engine.

### Data Splits

| | train |
|---------------|------:|
| ChatMed-Dataset | 110,113 |

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

> We believe that releasing the above assets will enable the academic community to perform controlled scientific studies on how large language models respond to real-life medical queries.

> More social impacts will be discussed soon!

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

The `ChatMed-Dataset` data is generated by a language model (`GPT-3.5`) and inevitably contains some errors or biases. We encourage users to use this data with caution and propose new methods to filter or improve the imperfections.

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

The dataset is available under the [Creative Commons Attribution-NonCommercial 4.0 International (CC BY-NC 4.0)](https://creativecommons.org/licenses/by-nc/4.0/legalcode) license.

### Citation Information

```

@misc{alpaca,

author = {Wei Zhu },

title = {ChatMed-Dataset: A GPT-generated medical query-response dataset for medical large language models},

year = {2023},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/michael-wzhu/ChatMed}},

}

```

### Contributions

[More Information Needed] |

true |

# Dataset Card for Swiss Legislation

## Table of Contents

- [Table of Contents](#table-of-contents)
- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Curation Rationale](#curation-rationale)
  - [Source Data](#source-data)
  - [Annotations](#annotations)
  - [Personal and Sensitive Information](#personal-and-sensitive-information)
- [Considerations for Using the Data](#considerations-for-using-the-data)
  - [Social Impact of Dataset](#social-impact-of-dataset)
  - [Discussion of Biases](#discussion-of-biases)
  - [Other Known Limitations](#other-known-limitations)
- [Additional Information](#additional-information)
  - [Dataset Curators](#dataset-curators)
  - [Licensing Information](#licensing-information)
  - [Citation Information](#citation-information)
  - [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

Swiss Legislation is a multilingual, diachronic dataset of 36K Swiss laws. This dataset is part of a challenging Information Retrieval task.

### Supported Tasks and Leaderboards

### Languages

The dataset contains 35,698 texts in total and is stored in the file _lexfind_v2.jsonl_ (JSON Lines format).

Switzerland has four official languages (German, French, Italian and Romansh), and some additional laws are available in English. The laws are written by legal experts.


| Language | Subset | Number of Documents |
|------------|------------|----------------------|
| German | **de** | 18K |
| French | **fr** | 11K |
| Italian | **it** | 6K |
| Romansh | **rm** | 534 |
| English | **en** | 207 |

## Dataset Structure

### Data Fields

Each entry in the dataset is a dictionary with the following keys:

- `canton`: the canton of origin of the legislation
  - example: "ag"
- `language`: the language of the legislation
  - example: "de"
- `uuid`: a unique identifier for the legislation
  - example: "ec312f57-05fe-4552-ba50-8c9c269e0f3b"
- `title`: the title of the legislation
  - example: "Gesetz über die Geoinformation im Kanton Aargau"
- `short`: a short description of the legislation
  - example: "Kantonales Geoinformationsgesetz"
- `abbreviation`: an abbreviation for the legislation
  - example: "KGeoIG"
- `sr_number`: a reference number for the legislation
  - example: "740.100"
- `is_active`: whether the legislation is currently in force
  - example: true
- `version_active_since`: the date since when the legislation's current version is active
  - example: "2021-09-01"
- `family_active_since`: the date since when the legislation's current version's family is active
  - example: "2011-05-24"
- `version_inactive_since`: the date since when the legislation's current version is inactive
  - example: null
- `version_found_at`: the date the legislation's current version was found
  - example: "2021-09-01"
- `pdf_url`: a link to the legislation's pdf
  - example: "https://www.lexfind.ch/tol/1557/de"
- `html_url`: a link to the legislation's html
  - example: "https://gesetzessammlungen.ag.ch/app/de/texts_of_law/740.100"
- `pdf_content`: the legislation's pdf content
  - example: "740.100 - Gesetz über..."
- `html_content`: the legislation's html content
  - example: ""
- `changes`: a list of changes made to the legislation
  - example: []
- `history`: a list of the legislation's history
  - example: []
- `quotes`: a list of quotes from the legislation
  - example: []
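Because the dataset is diachronic, the date fields can be used to ask which version of a law applied on a given day. The sketch below assumes (this is not confirmed by the card) that a version is in force from `version_active_since` (inclusive) until `version_inactive_since` (exclusive), with `null` meaning no end date. The helper name is hypothetical; the record reuses example values from the field list.

```python
from datetime import date
from typing import Optional


def in_force_on(record: dict, day: date) -> bool:
    """Return True if this law version applied on `day`.

    Assumed semantics (hypothetical): in force from `version_active_since`
    (inclusive) until `version_inactive_since` (exclusive); a missing or
    null end date means the version has no end date yet.
    """
    start_raw: Optional[str] = record.get("version_active_since")
    if not start_raw:
        return False  # treat records without a start date as not in force
    start = date.fromisoformat(start_raw)
    end_raw: Optional[str] = record.get("version_inactive_since")
    end = date.fromisoformat(end_raw) if end_raw else None
    return start <= day and (end is None or day < end)


# Example values taken from the field list
example = {
    "canton": "ag",
    "sr_number": "740.100",
    "is_active": True,
    "version_active_since": "2021-09-01",
    "version_inactive_since": None,
}
print(in_force_on(example, date(2022, 1, 1)))  # True
print(in_force_on(example, date(2020, 1, 1)))  # False
```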

### Data Instances

[More Information Needed]


### Data Splits

1. 'ch': Switzerland (Federal) - 15840

2. 'fr': Fribourg - 1633

3. 'be': Bern - 1344

4. 'vs': Valais - 1328

5. 'gr': Graubünden - 1205

6. 'ne': Neuchâtel - 1115

7. 'zh': Zurich - 974

8. 'bs': Basel-Stadt - 899

9. 'bl': Basel-Landschaft - 863

10. 'vd': Vaud - 870

11. 'ge': Geneva - 837

12. 'sg': St. Gallen - 764

13. 'ju': Jura - 804

14. 'zg': Zug - 632

15. 'ti': Ticino - 627

16. 'lu': Lucerne - 584

17. 'so': Solothurn - 547

18. 'ow': Obwalden - 513

19. 'ik': Interkantonal - 510

20. 'sh': Schaffhausen - 469

21. 'gl': Glarus - 467

22. 'tg': Thurgau - 453

23. 'sz': Schwyz - 423

24. 'ai': Appenzell Innerrhoden - 416

25. 'ag': Aargau - 483

26. 'ar': Appenzell Ausserrhoden - 330

27. 'nw': Nidwalden - 401

28. 'ur': Uri - 367

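The per-canton counts above add up to 35,698, the total given under Languages. Below is a sketch for recomputing them from the JSON Lines file, assuming each line holds one record with the `canton` field described under Data Fields. The helper name is hypothetical, and the file path in the comment is a placeholder for wherever the file lives locally.

```python
import json
from collections import Counter
from typing import Iterable


def documents_per_canton(lines: Iterable[str]) -> Counter:
    """Count JSON Lines records by their `canton` code (e.g. "ch", "ag")."""
    counts: Counter = Counter()
    for line in lines:
        if line.strip():  # ignore blank lines
            counts[json.loads(line)["canton"]] += 1
    return counts


# Tiny in-memory sample; for the real file use something like
#   with open("lexfind_v2.jsonl", encoding="utf-8") as f:
#       counts = documents_per_canton(f)
sample = ['{"canton": "ch"}', '{"canton": "ag"}', "", '{"canton": "ch"}']
counts = documents_per_canton(sample)
print(counts.most_common(1))  # [('ch', 2)]
print(sum(counts.values()))  # 3
```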

## Dataset Creation

### Curation Rationale

### Source Data

#### Initial Data Collection and Normalization

The original data are published by the Swiss Federal Supreme Court (https://www.bger.ch) in unprocessed formats (HTML). The documents were downloaded from the Entscheidsuche portal (https://entscheidsuche.ch) in HTML.

#### Who are the source language producers?

The decisions are written by the judges and clerks in the language of the proceedings.

### Annotations

#### Annotation process

#### Who are the annotators?

Metadata is published by the Swiss Federal Supreme Court (https://www.bger.ch).

### Personal and Sensitive Information

The dataset contains publicly available court decisions from the Swiss Federal Supreme Court. Personal or sensitive information has been anonymized by the court before publication according to the following guidelines: https://www.bger.ch/home/juridiction/anonymisierungsregeln.html.

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

We release the data under CC-BY-4.0, which complies with the court's licensing terms (https://www.bger.ch/files/live/sites/bger/files/pdf/de/urteilsveroeffentlichung_d.pdf).

© Swiss Federal Supreme Court, 2002-2022

The copyright for the editorial content of this website and the consolidated texts, which is owned by the Swiss Federal Supreme Court, is licensed under the Creative Commons Attribution 4.0 International licence. This means that you can re-use the content provided you acknowledge the source and indicate any changes you have made.

Source: https://www.bger.ch/files/live/sites/bger/files/pdf/de/urteilsveroeffentlichung_d.pdf

### Citation Information

*Visu, Ronja, Joel*

*Title: Blabliblablu*

*Name of conference*

```

cit

``` |

false | # Dataset Card for "medqa"

MedQA GitHub: [https://github.com/jind11/MedQA](https://github.com/jind11/MedQA)

## Citation information

```

@article{jin2020disease,

title={What Disease does this Patient Have? A Large-scale Open Domain Question Answering Dataset from Medical Exams},

author={Jin, Di and Pan, Eileen and Oufattole, Nassim and Weng, Wei-Hung and Fang, Hanyi and Szolovits, Peter},

journal={arXiv preprint arXiv:2009.13081},

year={2020}

}

``` |

false |

# LLaVA Visual Instruct 150K Dataset Card

## Dataset details

**Dataset type:**

LLaVA Visual Instruct 150K is a set of GPT-generated multimodal instruction-following data.

It was constructed for visual instruction tuning and for building large multimodal models towards GPT-4 vision/language capability.

**Dataset date:**

LLaVA Visual Instruct 150K was collected in April 2023, by prompting GPT-4-0314 API.

**Paper or resources for more information:**

https://llava-vl.github.io/

**License:**

Attribution-NonCommercial 4.0 International

Use of the data should also abide by OpenAI's terms of use: https://openai.com/policies/terms-of-use

**Where to send questions or comments about the model:**

https://github.com/haotian-liu/LLaVA/issues

## Intended use

**Primary intended uses:**

The primary use of LLaVA is research on large multimodal models and chatbots.

**Primary intended users:**

The primary intended users of the model are researchers and hobbyists in computer vision, natural language processing, machine learning, and artificial intelligence. |

false |

## Description

This dataset contains triples of the form "query1", "query2", "label", where the labels are mapped as follows:

- similar: 1

- not similar: 0

- ambiguous: -1 |

false | # Dataset Card for Nota Lyd- og tekstdata

## Table of Contents

- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Disclaimer](#disclaimer)
  - [Curation Rationale](#curation-rationale)
  - [Source Data](#source-data)
  - [Annotations](#annotations)
  - [Personal and Sensitive Information](#personal-and-sensitive-information)
- [Considerations for Using the Data](#considerations-for-using-the-data)
  - [Social Impact of Dataset](#social-impact-of-dataset)
  - [Discussion of Biases](#discussion-of-biases)
  - [Other Known Limitations](#other-known-limitations)
- [Additional Information](#additional-information)
  - [Dataset Curators](#dataset-curators)
  - [Licensing Information](#licensing-information)

## Dataset Description

- **Homepage:** https://sprogteknologi.dk/dataset/notalyd-ogtekstdata

- **Data Storage Url:** https://sprogtek-ressources.digst.govcloud.dk/nota/

- **Point of Contact:** info@sprogteknologi.dk

### Dataset Summary

This data was created by the public institution Nota (https://nota.dk/), which is part of the Danish Ministry of Culture. Nota has a library of audiobooks and audio magazines for people with reading disabilities or visual impairments. Nota also produces a number of audiobooks and audio magazines itself.

The dataset consists of .wav and .txt files from Nota's audiomagazines "Inspiration" and "Radio/TV".

The dataset has been published as a part of the initiative sprogteknologi.dk, within the Danish Agency for Digital Government (www.digst.dk).

336 GB of data is available, containing voice recordings and accompanying transcripts.

Each publication has been segmented into .wav clips of 2 to 50 seconds, with an accompanying transcription for every clip.

### Supported Tasks and Leaderboards

[Needs More Information]

### Languages

Danish

## Dataset Structure

### Data Instances

A typical data point comprises the path to the audio file (`path`), its transcription (`sentence`), and the decoded audio (`audio`).

`{'path': '<path_to_clip>.wav', 'sentence': 'Dette er et eksempel', 'audio': {'path': '<path_to_clip>.wav', 'array': array([-0.00048828, -0.00018311, -0.00137329, ..., 0.00079346, 0.00091553, 0.00085449], dtype=float32), 'sampling_rate': 44100}}`

### Data Fields

path: The path to the audio file

audio: A dictionary containing the path to the downloaded audio file, the decoded audio array, and the sampling rate. Note that when accessing the audio column: `dataset[0]["audio"]` the audio file is automatically decoded and resampled to `dataset.features["audio"].sampling_rate`. Decoding and resampling of a large number of audio files might take a significant amount of time. Thus it is important to first query the sample index before the `"audio"` column, *i.e.* `dataset[0]["audio"]` should **always** be preferred over `dataset["audio"][0]`.

sentence: The sentence that was read by the speaker
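Since each `audio` entry carries the decoded samples and their sampling rate, a clip's length is the number of samples divided by the sampling rate. The sketch below uses this to check a clip against the 2 to 50 second segmentation described in the summary. The helper names and the synthetic clip are illustrative only; real `array` values are NumPy arrays rather than Python lists, and `len` works on both.

```python
def clip_duration_seconds(audio: dict) -> float:
    """Duration of a decoded clip: number of samples / sampling rate."""
    return len(audio["array"]) / audio["sampling_rate"]


def within_documented_range(audio: dict, lo: float = 2.0, hi: float = 50.0) -> bool:
    """Check a clip against the 2 to 50 second segment length stated in the card."""
    return lo <= clip_duration_seconds(audio) <= hi


# A synthetic 3-second clip of silence at 44.1 kHz, shaped like the `audio` field
fake_audio = {"path": "clip.wav", "array": [0.0] * (3 * 44100), "sampling_rate": 44100}
print(clip_duration_seconds(fake_audio))  # 3.0
print(within_documented_range(fake_audio))  # True
```

With the 🤗 Datasets library, index the row first (`dataset[i]["audio"]`) as advised above, so that only that one clip is decoded.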

### Data Splits

For now, the material has only a train split. As the dataset is at a very early stage, further splits might be introduced later.

## Dataset Creation

### Disclaimer

There might be minor discrepancies between the .wav and .txt files, so the alignment of timestamps, text and sound files may have issues.

There are no strict rules for how readers read non-letter characters aloud (e.g. numbers, €, $, !, ?). These symbols can be read differently throughout the dataset.

### Curation Rationale

[Needs More Information]

### Source Data

#### Initial Data Collection and Normalization

[Needs More Information]

#### Who are the source language producers?

[Needs More Information]

### Annotations

#### Annotation process

[Needs More Information]

#### Who are the annotators?

[Needs More Information]

### Personal and Sensitive Information

The dataset is public and free to use. The recorded individuals have agreed, by written contract, to the publication of their recordings.

Other names appearing in the dataset belong to publicly known individuals (e.g. TV or radio hosts). Their names are not to be treated as sensitive or personal data in the context of this dataset.

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

https://sprogteknologi.dk/

Contact info@sprogteknologi.dk if you have questions regarding use of data.

They welcome input and ideas on how to distribute the data.

### Licensing Information

[CC0-1.0](https://creativecommons.org/publicdomain/zero/1.0/)

### |

true |

# Dataset Card for pile-pii-scrubadub

## Dataset Description

- **Repository:** https://github.com/tomekkorbak/aligned-pretraining-objectives

- **Paper:** arXiv link to be added

### Dataset Summary

This dataset contains text from [The Pile](https://huggingface.co/datasets/the_pile), annotated based on the toxicity of each sentence.

Each document (row in the dataset) is segmented into sentences, and each sentence is given a score: the toxicity predicted by [Detoxify](https://github.com/unitaryai/detoxify).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

This dataset is taken from [The Pile](https://huggingface.co/datasets/the_pile), which is English text.

## Dataset Structure

### Data Instances

The dataset contains 1,949,977 instances (documents).

### Data Fields

- texts (sequence): a list of the sentences in the document, segmented using spaCy

- meta (dict): the section of [The Pile](https://huggingface.co/datasets/the_pile) from which it originated

- scores (sequence): a score for each sentence in the `texts` column indicating the toxicity predicted by [Detoxify](https://github.com/unitaryai/detoxify)

- avg_score (float64): the average of the scores listed in the `scores` column

- num_sents (int64): the number of sentences (and scores) in that document
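By the definitions above, `num_sents` equals the length of `texts` (and of `scores`), and `avg_score` is the mean of `scores`. The sketch below recomputes these on a made-up row and shows one possible use of the per-sentence scores: dropping sentences above a threshold. The helper name and the 0.5 threshold are hypothetical choices, not the procedure used by the dataset authors.

```python
from statistics import mean
from typing import List, Tuple


def drop_toxic_sentences(
    texts: List[str], scores: List[float], threshold: float = 0.5
) -> Tuple[List[str], float]:
    """Keep sentences whose toxicity score is below `threshold`.

    Returns the kept sentences and their average score (0.0 if none are kept).
    The 0.5 default is an arbitrary example, not a value from the dataset authors.
    """
    if len(texts) != len(scores):
        raise ValueError("texts and scores must have the same length (num_sents)")
    kept = [(text, score) for text, score in zip(texts, scores) if score < threshold]
    kept_avg = mean(score for _, score in kept) if kept else 0.0
    return [text for text, _ in kept], kept_avg


# A made-up document shaped like one row of the dataset
row = {
    "texts": ["Hello there.", "You are awful.", "Have a nice day."],
    "scores": [0.01, 0.92, 0.03],
}
row["num_sents"] = len(row["texts"])  # matches the num_sents definition
row["avg_score"] = mean(row["scores"])  # matches the avg_score definition

clean_texts, clean_avg = drop_toxic_sentences(row["texts"], row["scores"])
print(clean_texts)  # ['Hello there.', 'Have a nice day.']
```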

### Data Splits

Training set only

## Dataset Creation

### Curation Rationale

This is labeled text from [The Pile](https://huggingface.co/datasets/the_pile), a large dataset of text in English. The text is scored for toxicity so that generative language models can be trained to avoid generating toxic text.

### Source Data

#### Initial Data Collection and Normalization

This is labeled text from [The Pile](https://huggingface.co/datasets/the_pile).

#### Who are the source language producers?

Please see [The Pile](https://huggingface.co/datasets/the_pile) for the source of the dataset.

### Annotations

#### Annotation process

Each sentence was scored using [Detoxify](https://github.com/unitaryai/detoxify), which is a toxic comment classifier.

We used the `unbiased` model which is based on the 124M parameter [RoBERTa](https://arxiv.org/abs/1907.11692) and trained on the [Jigsaw Unintended Bias in Toxicity Classification dataset](https://www.kaggle.com/c/jigsaw-unintended-bias-in-toxicity-classification).

#### Who are the annotators?

[Detoxify](https://github.com/unitaryai/detoxify)

### Personal and Sensitive Information

This dataset contains all personally identifiable information and toxic text that was originally contained in [The Pile](https://huggingface.co/datasets/the_pile).

## Considerations for Using the Data

### Social Impact of Dataset

This dataset contains examples of toxic text and personally identifiable information.

(A version of this dataset with personally identifiable information annotated is [available here](https://huggingface.co/datasets/tomekkorbak/pile-pii-scrubadub).)

Please take care to avoid misusing the toxic text or putting anybody in danger by publicizing their information.

This dataset is intended for research purposes only. We cannot guarantee that all toxic text has been detected, and we cannot guarantee that models trained using it will avoid generating toxic text.

We do not recommend deploying models trained on this data.

### Discussion of Biases

This dataset contains all biases from The Pile discussed in their paper: https://arxiv.org/abs/2101.00027

### Other Known Limitations

The toxic text in this dataset was detected using imperfect automated detection methods. We cannot guarantee that the labels are 100% accurate.

## Additional Information

### Dataset Curators

[The Pile](https://huggingface.co/datasets/the_pile)

### Licensing Information

From [The Pile](https://huggingface.co/datasets/the_pile): PubMed Central: [MIT License](https://github.com/EleutherAI/pile-pubmedcentral/blob/master/LICENSE)

### Citation Information

Paper information to be added

### Contributions

[The Pile](https://huggingface.co/datasets/the_pile) |

true |

# ParaDetox: Detoxification with Parallel Data (English). Toxicity Task Results

This repository contains information about **Toxicity Task** markup from [English Paradetox dataset](https://huggingface.co/datasets/s-nlp/paradetox) collection pipeline.

The original paper ["ParaDetox: Detoxification with Parallel Data"](https://aclanthology.org/2022.acl-long.469/) was presented at ACL 2022 main conference.

## ParaDetox Collection Pipeline

The ParaDetox Dataset collection was done via [Yandex.Toloka](https://toloka.yandex.com/) crowdsource platform. The collection was done in three steps:

* *Task 1:* **Generation of Paraphrases**: The first crowdsourcing task asks users to eliminate toxicity in a given sentence while keeping the content.

* *Task 2:* **Content Preservation Check**: We show users the generated paraphrases along with their original variants and ask them to indicate if they have close meanings.

* *Task 3:* **Toxicity Check**: Finally, we check if the workers succeeded in removing toxicity.

Specifically, this repo contains the results of **Task 3: Toxicity Check**. Only samples with markup confidence >= 90 are included.

Each sample consists of a text and a label indicating whether the text is toxic.

In total, the dataset contains 26,507 samples, of which a minority (4,009) are toxic.
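These figures imply 22,498 non-toxic samples, so roughly 15% of the data is toxic. When training a classifier on such imbalanced labels, one common option (not something the ParaDetox authors prescribe) is inverse-frequency class weighting. A small sketch of the arithmetic, with a hypothetical helper name:

```python
def class_balance(total: int, positives: int) -> dict:
    """Class counts, positive share, and inverse-frequency class weights.

    weight(c) = total / (num_classes * count(c)) is a common heuristic for
    imbalanced binary classification; it is not prescribed by ParaDetox.
    """
    negatives = total - positives
    return {
        "non_toxic": negatives,
        "toxic_share": positives / total,
        "weight_non_toxic": total / (2 * negatives),
        "weight_toxic": total / (2 * positives),
    }


stats = class_balance(total=26_507, positives=4_009)
print(stats["non_toxic"])  # 22498
print(round(stats["toxic_share"], 4))  # 0.1512
print(round(stats["weight_toxic"], 2))  # 3.31
```

The weights could then be passed to a weighted loss, so that each toxic example counts more than each non-toxic one.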

## Citation

```

@inproceedings{logacheva-etal-2022-paradetox,

title = "{P}ara{D}etox: Detoxification with Parallel Data",

author = "Logacheva, Varvara and

Dementieva, Daryna and

Ustyantsev, Sergey and

Moskovskiy, Daniil and

Dale, David and

Krotova, Irina and

Semenov, Nikita and

Panchenko, Alexander",

booktitle = "Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)",

month = may,

year = "2022",

address = "Dublin, Ireland",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2022.acl-long.469",

pages = "6804--6818",

abstract = "We present a novel pipeline for the collection of parallel data for the detoxification task. We collect non-toxic paraphrases for over 10,000 English toxic sentences. We also show that this pipeline can be used to distill a large existing corpus of paraphrases to get toxic-neutral sentence pairs. We release two parallel corpora which can be used for the training of detoxification models. To the best of our knowledge, these are the first parallel datasets for this task.We describe our pipeline in detail to make it fast to set up for a new language or domain, thus contributing to faster and easier development of new parallel resources.We train several detoxification models on the collected data and compare them with several baselines and state-of-the-art unsupervised approaches. We conduct both automatic and manual evaluations. All models trained on parallel data outperform the state-of-the-art unsupervised models by a large margin. This suggests that our novel datasets can boost the performance of detoxification systems.",

}

```

## Contacts

For any questions, please contact: Daryna Dementieva (dardem96@gmail.com) |

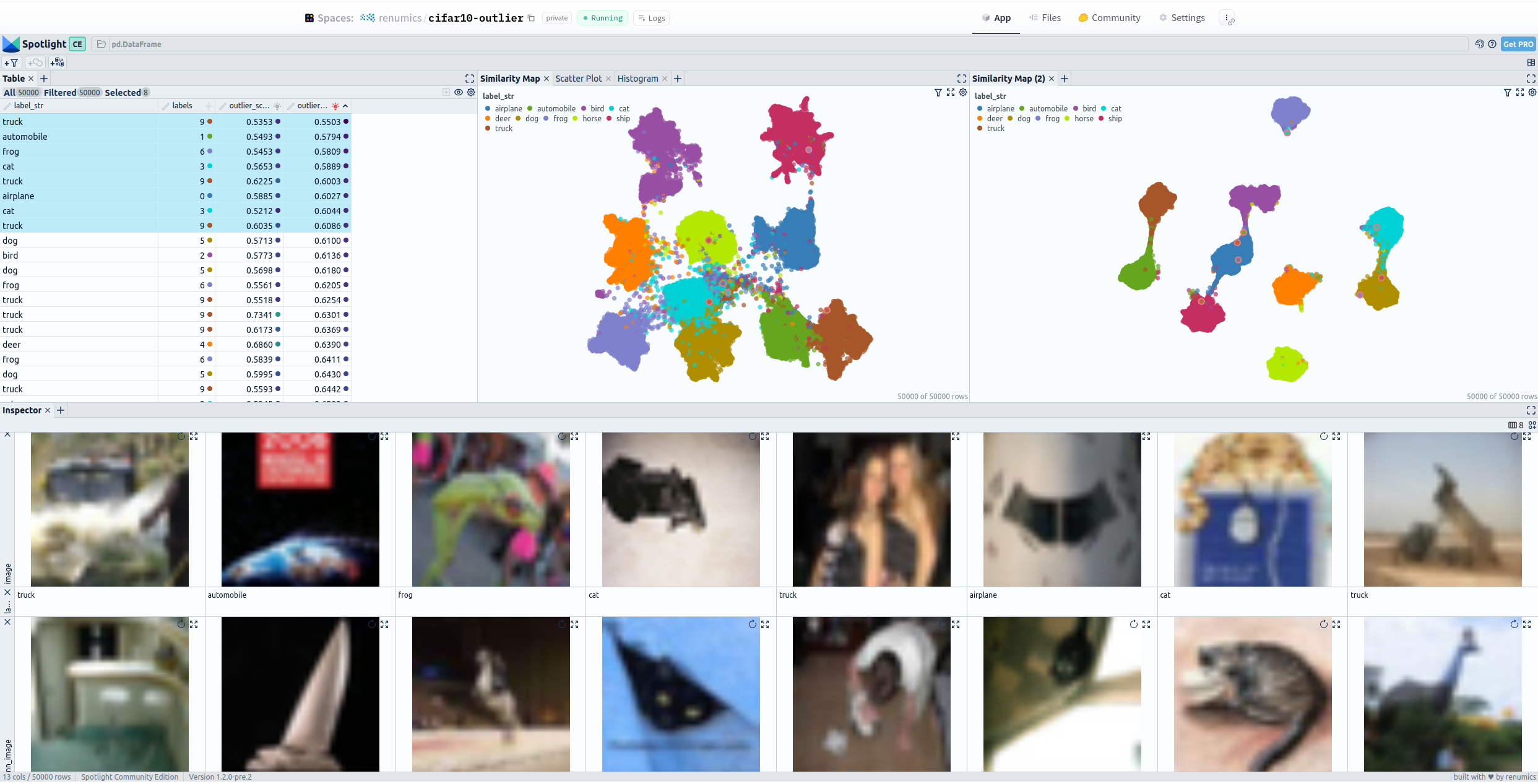

false | # Dataset Card for "cifar10-outlier"

📚 This dataset is an enriched version of the [CIFAR-10 Dataset](https://www.cs.toronto.edu/~kriz/cifar.html).

*This dataset is used in an article currently under review; a link will be provided as soon as possible.*

## Explore the Dataset

The open source data curation tool [Renumics Spotlight](https://github.com/Renumics/spotlight) allows you to explore this dataset.

You can find a Hugging Face Space running Spotlight with this dataset here: <https://huggingface.co/spaces/renumics/cifar10-outlier>

Or you can explore it locally:

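```python
# Install the dependencies first, e.g. from a shell:
#   pip install renumics-spotlight datasets
# (in a Jupyter notebook, prefix the command with "!")
import datasets
from renumics import spotlight

# Load the enriched CIFAR-10 training split from the Hugging Face Hub
ds = datasets.load_dataset("renumics/cifar10-outlier", split="train")

# Rename columns to the names used by the Spotlight layout, then convert to pandas
df = ds.rename_columns({"img": "image", "label": "labels"}).to_pandas()

# Add human-readable class names (ds itself still has the original "label" feature)
df["label_str"] = df["labels"].apply(lambda x: ds.features["label"].int2str(x))

# Tell Spotlight how to interpret the image and embedding columns
dtypes = {
    "nn_image": spotlight.Image,
    "image": spotlight.Image,
    "embedding_ft": spotlight.Embedding,
    "embedding_foundation": spotlight.Embedding,
}

# Launch the Spotlight viewer with a predefined layout
spotlight.show(
    df,
    dtype=dtypes,
    layout="https://spotlight.renumics.com/resources/layout_pre_post_ft.json",
)
```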

|

false |

This is a test dataset |

true |

# Dataset Card for products-2017

## Table of Contents

- [Table of Contents](#table-of-contents)
- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Annotations](#annotations)
- [Additional Information](#additional-information)
  - [Citation Information](#citation-information)

## Dataset Description

- **Homepage:** [LSPCv2 Homepage](http://webdatacommons.org/largescaleproductcorpus/v2/index.html)

- **Point of Contact:** [Ralph Peeters](mailto:ralph.peeters@uni-mannheim.de)

### Dataset Summary

Many e-shops have started to mark up product data within their HTML pages using the schema.org vocabulary. The Web Data Commons project regularly extracts such data from the Common Crawl, a large public web crawl. The Web Data Commons Training and Test Sets for Large-Scale Product Matching contain product offers from different e-shops in the form of binary product pairs (with the corresponding label "match" or "no match").

In order to support the evaluation of machine learning-based matching methods, the data is split into training, validation and test set. We provide training and validation sets in four different sizes for four product categories. The labels of the test sets were manually checked while those of the training sets were derived using shared product identifiers from the Web via weak supervision.

The data stems from the WDC Product Data Corpus for Large-Scale Product Matching - Version 2.0 which consists of 26 million product offers originating from 79 thousand websites.

### Supported Tasks and Leaderboards

Entity Matching, Product Matching

### Languages

English

## Dataset Structure

### Data Instances

The data is structured as pairs of product offers with the corresponding match/non-match label. This is an example instance from the computers category:

```

{"pair_id":"581109#16637861","label":0,"id_left":581109,"category_left":"Computers_and_Accessories","cluster_id_left":1324529,"brand_left":"\"Gigabyte\"@en","title_left":" \"Gigabyte Radeon RX 480 G1 Gaming 4096MB GDDR5 PCI-Express Graphics Card\"@en \"Gigabyte Gr| OcUK\"@en","description_left":"\"GV-RX480G1 GAMING-4GD, Core Clock: 1202MHz, Boost Clock: 1290MHz, Memory: 4096MB 7000MHz GDDR5, Stream Processors: 2304, Crossfire Ready, VR Ready, FreeSync Ready, 3 Years Warranty\"@en ","price_left":null,"specTableContent_left":null,"id_right":16637861,"category_right":"Computers_and_Accessories","cluster_id_right":107415,"brand_right":"\"Gigabyte\"@en","title_right":" \"Gigabyte Radeon RX 550 Gaming OC 2048MB GDDR5 PCI-Express Graphics Card\"@en \"Gigabyte Gr| OcUK\"@en","description_right":"\"GV-RX550GAMING OC-2GD, Boost: 1219MHz, Memory: 2048MB 7000MHz GDDR5, Stream Processors: 512, DirectX 12 Support, 3 Years Warranty\"@en ","price_right":null,"specTableContent_right":null}

```

### Data Fields

- pair_id: unique identifier of a pair (string)

- label: binary label, match or non-match (int)

The following attributes are contained twice, once for the first and once for the second product offer (with the suffixes `_left` and `_right`, respectively, as in the example above):

- id: unique id of the product offer (int)

- category: product category (string)

- cluster_id: id of the product cluster from the original corpus this offer belongs to (int)

- brand: brand of the product (string)

- title: product title (string)

- description: longer product description (string)

- price: price of the product offer (string)

- specTableContent: additional data found in specification tables on the webpage that contains the product offer (string)
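In the example instance, each offer attribute appears with a `_left` or `_right` suffix, and many string values are wrapped in quotes with an `@en` language tag. The sketch below splits a pair record into its two offers and strips that wrapper. The helper names are hypothetical, and the cleaning rule is an assumption based on the single example shown, not a documented format guarantee.

```python
import re
from typing import Dict, Tuple

# Matches a quoted literal with an optional language tag, e.g. "Gigabyte"@en
_LITERAL = re.compile(r'"(.*?)"(?:@[\w-]+)?')


def clean_literal(value):
    """Strip quotes and language tags from values like '"Gigabyte"@en'."""
    if not isinstance(value, str):
        return value  # ids stay ints, null prices stay None
    parts = _LITERAL.findall(value)
    return " ".join(p.strip() for p in parts) if parts else value.strip()


def split_pair(record: Dict) -> Tuple[Dict, Dict, int]:
    """Split a pair record into (left offer, right offer, label)."""
    left, right = {}, {}
    for key, value in record.items():
        if key.endswith("_left"):
            left[key[: -len("_left")]] = clean_literal(value)
        elif key.endswith("_right"):
            right[key[: -len("_right")]] = clean_literal(value)
    return left, right, record["label"]


# A shortened version of the example instance
example = {
    "pair_id": "581109#16637861", "label": 0,
    "id_left": 581109, "brand_left": '"Gigabyte"@en', "price_left": None,
    "id_right": 16637861, "brand_right": '"Gigabyte"@en', "price_right": None,
}
left, right, label = split_pair(example)
print(left)  # {'id': 581109, 'brand': 'Gigabyte', 'price': None}
```

Titles that contain several tagged literals (as in the example's `title_left`) are joined with a space.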

### Data Splits

- Computers
  - Test set - 1100 pairs
  - Small Train set - 2267 pairs
  - Small Validation set - 567 pairs
  - Medium Train set - 6475 pairs
  - Medium Validation set - 1619 pairs
  - Large Train set - 26687 pairs
  - Large Validation set - 6672 pairs
  - XLarge Train set - 54768 pairs
  - XLarge Validation set - 13693 pairs
- Cameras
  - Test set - 1100 pairs
  - Small Train set - 1508 pairs
  - Small Validation set - 378 pairs
  - Medium Train set - 4204 pairs
  - Medium Validation set - 1051 pairs
  - Large Train set - 16028 pairs
  - Large Validation set - 4008 pairs
  - XLarge Train set - 33821 pairs
  - XLarge Validation set - 8456 pairs
- Watches
  - Test set - 1100 pairs
  - Small Train set - 1804 pairs
  - Small Validation set - 451 pairs
  - Medium Train set - 5130 pairs
  - Medium Validation set - 1283 pairs
  - Large Train set - 21621 pairs
  - Large Validation set - 5406 pairs
  - XLarge Train set - 49255 pairs
  - XLarge Validation set - 12314 pairs
- Shoes
  - Test set - 1100 pairs
  - Small Train set - 1650 pairs
  - Small Validation set - 413 pairs
  - Medium Train set - 4644 pairs
  - Medium Validation set - 1161 pairs
  - Large Train set - 18391 pairs
  - Large Validation set - 4598 pairs
  - XLarge Train set - 33943 pairs
  - XLarge Validation set - 8486 pairs

## Dataset Creation

### Annotations

#### Annotation process

- Training and Validation sets: distant supervision via shared schema.org product IDs

- Test sets: Single expert annotator

#### Who are the annotators?

[Ralph Peeters](https://www.uni-mannheim.de/dws/people/researchers/phd-students/ralph-peeters/)

## Additional Information

### Citation Information

```

@inproceedings{primpeli2019wdc,

title={The WDC training dataset and gold standard for large-scale product matching},

author={Primpeli, Anna and Peeters, Ralph and Bizer, Christian},

booktitle={Companion Proceedings of The 2019 World Wide Web Conference},

pages={381--386},

year={2019}

}

```

|

true |

# Dataset Card for SemEval-2015 Task 12: Aspect-based Sentiment Analysis

## Table of Contents

- [Table of Contents](#table-of-contents)
- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Curation Rationale](#curation-rationale)
  - [Source Data](#source-data)
  - [Annotations](#annotations)
  - [Personal and Sensitive Information](#personal-and-sensitive-information)
- [Considerations for Using the Data](#considerations-for-using-the-data)
  - [Social Impact of Dataset](#social-impact-of-dataset)
  - [Discussion of Biases](#discussion-of-biases)
  - [Other Known Limitations](#other-known-limitations)
- [Additional Information](#additional-information)
  - [Dataset Curators](#dataset-curators)
  - [Licensing Information](#licensing-information)
  - [Citation Information](#citation-information)
  - [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset is originally from [SemEval-2015 Task 12](https://alt.qcri.org/semeval2015/task12/).

From the page:

> SE-ABSA15 will focus on the same domains as SE-ABSA14 (restaurants and laptops). However, unlike SE-ABSA14, the input datasets of SE-ABSA15 will contain entire reviews, not isolated (potentially out of context) sentences. SE-ABSA15 consolidates the four subtasks of SE-ABSA14 within a unified framework. In addition, SE-ABSA15 will include an out-of-domain ABSA subtask, involving test data from a domain unknown to the participants, other than the domains that will be considered during training. In particular, SE-ABSA15 consists of the following two subtasks.

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

Thanks to [@andreaschandra](https://github.com/andreaschandra) for adding this dataset. |

true | # Dataset Card for HateBR - Offensive Language and Hate Speech Dataset in Brazilian Portuguese

## Dataset Description

- **Homepage:** http://143.107.183.175:14581/

- **Repository:** https://github.com/franciellevargas/HateBR

- **Paper:** https://aclanthology.org/2022.lrec-1.777/

- **Leaderboard:**

- **Point of Contact:** https://franciellevargas.github.io/

### Dataset Summary

HateBR is the first large-scale expert-annotated corpus of Brazilian Instagram comments for hate speech and offensive language detection on the web and social media. The corpus was collected from comments on Brazilian politicians' Instagram accounts and manually annotated by specialists. It consists of 7,000 documents annotated in three layers: a binary classification (offensive versus non-offensive comments), an offensiveness level (highly, moderately, and slightly offensive messages), and nine hate speech groups (xenophobia, racism, homophobia, sexism, religious intolerance, partyism, apology for the dictatorship, antisemitism, and fatphobia). Each comment was annotated by three different annotators, with high inter-annotator agreement. Baseline experiments reached an F1-score of 85%, outperforming current models in the literature for Portuguese. We hope that this expert-annotated corpus will foster research on hate speech and offensive language detection in Natural Language Processing.

**Relevant Links:**

* [**Demo: Brasil Sem Ódio**](http://143.107.183.175:14581/)

* [**MOL - Multilingual Offensive Lexicon Annotated with Contextual Information**](https://github.com/franciellevargas/MOL)

### Supported Tasks and Leaderboards

Hate Speech Detection

### Languages

Portuguese

## Dataset Structure

### Data Instances

```
{'instagram_comments': 'Hipocrita!!',
 'offensive_language': True,
 'offensiveness_levels': 2,
 'antisemitism': False,
 'apology_for_the_dictatorship': False,
 'fatphobia': False,
 'homophobia': False,
 'partyism': False,
 'racism': False,
 'religious_intolerance': False,
 'sexism': False,
 'xenophobia': False,
 'offensive_&_non-hate_speech': True,
 'non-offensive': False,
 'specialist_1_hate_speech': False,
 'specialist_2_hate_speech': False,
 'specialist_3_hate_speech': False}
```

### Data Fields

* **instagram_comments**: The text of the Instagram comment.

* **offensive_language**: A classification of comments as either offensive (True) or non-offensive (False).

* **offensiveness_levels**: A classification of comments based on their level of offensiveness, including highly offensive (3), moderately offensive (2), slightly offensive (1) and non-offensive (0).

* **antisemitism**: A classification of whether or not the comment contains antisemitic language.

* **apology_for_the_dictatorship**: A classification of whether or not the comment praises the military dictatorship period in Brazil.

* **fatphobia**: A classification of whether or not the comment contains language that promotes fatphobia.

* **homophobia**: A classification of whether or not the comment contains language that promotes homophobia.

* **partyism**: A classification of whether or not the comment contains language that promotes partyism.

* **racism**: A classification of whether or not the comment contains racist language.

* **religious_intolerance**: A classification of whether or not the comment contains language that promotes religious intolerance.

* **sexism**: A classification of whether or not the comment contains sexist language.

* **xenophobia**: A classification of whether or not the comment contains language that promotes xenophobia.

* **offensive_&_non-hate_speech**: A classification of whether or not the comment is offensive but does not contain hate speech.

* **non-offensive**: A classification of whether or not the comment is non-offensive.

* **specialist_1_hate_speech**: A classification of whether or not the comment was annotated by the first specialist as hate speech.

* **specialist_2_hate_speech**: A classification of whether or not the comment was annotated by the second specialist as hate speech.

* **specialist_3_hate_speech**: A classification of whether or not the comment was annotated by the third specialist as hate speech.
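The fields above can be read programmatically. The following is a minimal sketch, assuming each record is a plain Python dict with exactly the field names shown in the data instance; the helper names (`offensiveness_name`, `hate_groups`, `specialist_majority`) are hypothetical and not part of any official HateBR tooling. In particular, the card does not say how the three specialists' hate speech labels are aggregated, so the 2-of-3 majority vote below is an illustrative assumption only.

```python
# Hypothetical helpers for one HateBR record (a dict keyed by the field names above).

# Offensiveness levels as documented in the field list.
OFFENSIVENESS_NAMES = {
    0: "non-offensive",
    1: "slightly offensive",
    2: "moderately offensive",
    3: "highly offensive",
}

# The nine hate speech group columns.
HATE_GROUPS = [
    "antisemitism",
    "apology_for_the_dictatorship",
    "fatphobia",
    "homophobia",
    "partyism",
    "racism",
    "religious_intolerance",
    "sexism",
    "xenophobia",
]


def offensiveness_name(record: dict) -> str:
    """Return the human-readable offensiveness level of a record."""
    return OFFENSIVENESS_NAMES[record["offensiveness_levels"]]


def hate_groups(record: dict) -> list:
    """Return the hate speech groups flagged True for a record, in column order."""
    return [group for group in HATE_GROUPS if record[group]]


def specialist_majority(record: dict) -> bool:
    """Assumed aggregation: hate speech if at least 2 of the 3 specialists said so."""
    votes = sum(record[f"specialist_{i}_hate_speech"] for i in (1, 2, 3))
    return votes >= 2


# The data instance shown earlier in this card.
example = {
    "instagram_comments": "Hipocrita!!",
    "offensive_language": True,
    "offensiveness_levels": 2,
    "antisemitism": False,
    "apology_for_the_dictatorship": False,
    "fatphobia": False,
    "homophobia": False,
    "partyism": False,
    "racism": False,
    "religious_intolerance": False,
    "sexism": False,
    "xenophobia": False,
    "offensive_&_non-hate_speech": True,
    "non-offensive": False,
    "specialist_1_hate_speech": False,
    "specialist_2_hate_speech": False,
    "specialist_3_hate_speech": False,
}

print(offensiveness_name(example))   # moderately offensive
print(hate_groups(example))          # []
print(specialist_majority(example))  # False
```

For this example the comment is offensive but flags no hate speech group, which matches `offensive_&_non-hate_speech` being True.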

### Data Splits

The original authors of the dataset did not propose a standard data split. To address this, we used the [multi-label data stratification technique](http://scikit.ml/stratification.html) implemented in the scikit-multilearn library to create a train-validation-test split. This method considers all hate speech classes in the data and attempts to balance the representation of each class across the splits.

| name | train | validation | test |
|--------|------:|-----------:|-----:|
| hatebr | 4480 | 1120 | 1400 |
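As a quick arithmetic check, the split sizes in the table sum to the 7,000 documents of the corpus and correspond to a 64% / 16% / 20% partition. These proportions are consistent with holding out 20% for test and then 20% of the remaining 80% for validation (0.8 × 0.2 = 0.16), although the card does not state that the split was produced in exactly that order; treat that reading as an assumption.

```python
from fractions import Fraction

# Split sizes copied from the table above.
splits = {"train": 4480, "validation": 1120, "test": 1400}
total = sum(splits.values())  # should equal the corpus size of 7,000

for name, size in splits.items():
    share = Fraction(size, total)
    print(f"{name}: {size} ({float(share):.0%})")
# train: 4480 (64%)
# validation: 1120 (16%)
# test: 1400 (20%)

# Validation as a share of what remains after removing the test split.
print(Fraction(splits["validation"], total - splits["test"]))  # 1/5
```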

## Considerations for Using the Data

### Discussion of Biases

Please refer to [the HateBR paper](https://aclanthology.org/2022.lrec-1.777/) for a discussion of biases.

### Licensing Information

The HateBR dataset, including all its components, is provided strictly for academic and research purposes. The use of the dataset for any commercial or non-academic purpose is expressly prohibited without the prior written consent of [SINCH](https://www.sinch.com/).

### Citation Information

```
@inproceedings{vargas2022hatebr,
  title={HateBR: A Large Expert Annotated Corpus of Brazilian Instagram Comments for Offensive Language and Hate Speech Detection},
  author={Vargas, Francielle and Carvalho, Isabelle and de G{\'o}es, Fabiana Rodrigues and Pardo, Thiago and Benevenuto, Fabr{\'\i}cio},
  booktitle={Proceedings of the Thirteenth Language Resources and Evaluation Conference},
  pages={7174--7183},
  year={2022}
}
```

### Contributions

Thanks to [@ruanchaves](https://github.com/ruanchaves) for adding this dataset. |

true |

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:** [Enhancement to Low Resource Text Classification via Sequential Transfer Learning](#)

- **Leaderboard:**

- **Point of Contact:** [Neil Riego](mailto:neilchristianriego3@gmail.com)

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

A typical data point comprises a text and its corresponding label.

An example from the dataset looks as follows:

```
{
  'label': 'pos',
  'text': 'Huyyy ang gandaaaaaaaaaaa. Grabe sobrang ganda talaga wala ako masabi. Complete orders pa pinadala sa akin. Buti hindi nabasag kahit walang bubble wrap. Okay na lang din para save mother earth and at least hindi nabasag hehe. Oorder ulit ako ang ganda eh'
}
```

### Data Fields

- 'text': The review text. Texts are enclosed in double quotes ("), and any internal double quote is escaped by doubling it ("").

- 'label': The sentiment label of the review, either positive (pos) or negative (neg).
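The quoting rule for 'text' is the standard CSV convention, which Python's built-in `csv` module handles with its default dialect. The sketch below assumes the reviews are stored as CSV rows with the label first and the text second; the column order and the sample row are hypothetical and only illustrate how a doubled quote ("") is read back as a single quote.

```python
import csv
import io

# Hypothetical CSV row: label, then a quoted review text that contains
# an internal double quote (written as "") and a comma.
raw = 'pos,"The seller said ""sealed"", and it was"\n'

# Parsing: the reader strips the enclosing quotes and un-doubles "" to ".
label, text = next(csv.reader(io.StringIO(raw)))
print(label)  # pos
print(text)   # The seller said "sealed", and it was

# Writing: the writer re-applies the same escaping when serializing.
out = io.StringIO()
csv.writer(out).writerow([label, text])
print(repr(out.getvalue()))  # 'pos,"The seller said ""sealed"", and it was"\r\n'
```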

### Data Splits

The Shopee reviews tl binary dataset was constructed by randomly sampling, for each class (neg and pos), 14,000 training samples, 3,000 validation samples, and 3,000 test samples.

In total, there are 28,000 training samples and 6,000 samples each in the validation and test splits.
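The per-class sampling described above can be sketched as follows. This is a minimal illustration, assuming the raw data is a list of `(text, label)` pairs and that examples are drawn without replacement; the function name `per_class_split` and the seed are hypothetical, since the card does not publish the original sampling code. The toy run scales the real sizes (14,000 / 3,000 / 3,000 per class) down by a factor of 1,000.

```python
import random
from collections import defaultdict


def per_class_split(examples, n_train, n_val, n_test, seed=0):
    """Randomly draw a fixed number of examples per class for each split.

    Hypothetical helper: `examples` is a list of (text, label) pairs.
    Returns a dict mapping split name to a list of (text, label) pairs.
    """
    by_label = defaultdict(list)
    for text, label in examples:
        by_label[label].append((text, label))

    rng = random.Random(seed)
    splits = {"train": [], "validation": [], "test": []}
    needed = n_train + n_val + n_test
    for label, items in sorted(by_label.items()):
        if len(items) < needed:
            raise ValueError(f"class {label!r} has only {len(items)} examples")
        # Sample without replacement so no example lands in two splits.
        picked = rng.sample(items, needed)
        splits["train"] += picked[:n_train]
        splits["validation"] += picked[n_train:n_train + n_val]
        splits["test"] += picked[n_train + n_val:]
    return splits


# Toy data: 20 synthetic reviews per class.
toy = [(f"review {i}", label) for label in ("neg", "pos") for i in range(20)]
toy_splits = per_class_split(toy, n_train=14, n_val=3, n_test=3)
print({name: len(rows) for name, rows in toy_splits.items()})
# {'train': 28, 'validation': 6, 'test': 6}
```

Sampling a fixed count per class keeps the two classes exactly balanced in every split, which is why the totals are simply twice the per-class counts.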

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

false |

# Dataset Card for LUDWIG

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)