text-classification bool 2 classes | text stringlengths 0 664k |

|---|---|

true | |

false |

goat Chinese arithmetic dataset

The templates of the goat dataset are replaced with Chinese templates; the mathematical expressions are unchanged.

|

true | # Dataset Card for sts-sohu2021

## Dataset Description

- **Repository:** [Chinese NLI dataset](https://github.com/shibing624/text2vec)

- **Leaderboard:** [NLI_zh leaderboard](https://github.com/shibing624/text2vec) (located on the homepage)

- **Size of downloaded dataset files:** 218 MB

- **Total amount of disk used:** 218 MB

### Dataset Summary

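Dataset from the 2021 Sohu Campus Text Matching Algorithm Competition.

- Data source: https://www.biendata.xyz/competition/sohu_2021/data/

The data is split into two files, A and B, which use different matching criteria. Each file is further divided into short-short, short-long, and long-long text matching.

File A uses a looser criterion: two texts match if they are about the same topic. File B uses a stricter criterion: two texts match only if they describe the same event.

Data types:

| type | Data type |
| --- | ------------|
| dda | short-short matching, type A |
| ddb | short-short matching, type B |
| dca | short-long matching, type A |
| dcb | short-long matching, type B |
| cca | long-long matching, type A |
| ccb | long-long matching, type B |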

### Supported Tasks and Leaderboards

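Supported Tasks: Chinese text matching, text similarity computation, and related tasks.

Results on Chinese matching tasks rarely appear in top-conference papers so far, so I list the results of my own training here: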

**Leaderboard:** [NLI_zh leaderboard](https://github.com/shibing624/text2vec)

### Languages

All text in the dataset is Simplified Chinese.

## Dataset Structure

### Data Instances

An example of 'train' looks as follows.

```python

# Type A, short-short sample

{

"sentence1": "小艺的故事让爱回家2021年2月16日大年初五19:30带上你最亲爱的人与团团君相约《小艺的故事》直播间!",

"sentence2": "香港代购了不起啊,宋点卷竟然在直播间“炫富”起来",

"label": 0

}

# Type B, short-short sample

{

"sentence1": "让很多网友好奇的是,张柏芝在一小时后也在社交平台发文:“给大家拜年啦。”还有网友猜测:谢霆锋的经纪人发文,张柏芝也发文,并且配图,似乎都在证实,谢霆锋依旧和王菲在一起,而张柏芝也有了新的恋人,并且生了孩子,两人也找到了各自的归宿,有了自己的幸福生活,让传言不攻自破。",

"sentence2": "陈晓东谈旧爱张柏芝,一个口误暴露她的秘密,难怪谢霆锋会离开她",

"label": 0

}

```

label: 0 means no match, 1 means match.

### Data Fields

The data fields are the same among all splits.

- `sentence1`: a `string` feature.

- `sentence2`: a `string` feature.

- `label`: a classification label, with possible values including `similarity` (1), `dissimilarity` (0).
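As a minimal sketch of how records with these three fields could be read, the snippet below parses JSON Lines text and counts the labels. It assumes each `.jsonl` file stores one JSON object per line; the two sample rows and the helper names `parse_pairs` and `label_counts` are hypothetical and are not taken from the dataset.

```python
import json
from collections import Counter

# Two illustrative rows in the assumed one-object-per-line layout (not real data).
SAMPLE_JSONL = """\
{"sentence1": "text a", "sentence2": "text b", "label": 0}
{"sentence1": "text c", "sentence2": "text d", "label": 1}
"""


def parse_pairs(jsonl_text):
    """Parse JSON Lines text into (sentence1, sentence2, label) tuples."""
    pairs = []
    for line in jsonl_text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        record = json.loads(line)
        pairs.append((record["sentence1"], record["sentence2"], int(record["label"])))
    return pairs


def label_counts(pairs):
    """Count how many pairs carry each label (0 = no match, 1 = match)."""
    return Counter(label for _, _, label in pairs)


pairs = parse_pairs(SAMPLE_JSONL)
print(label_counts(pairs))
```

To read a real file, pass `open(path, encoding="utf-8").read()` to `parse_pairs`.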

### Data Splits

```shell

> wc -l *.jsonl

11690 cca.jsonl

11690 ccb.jsonl

11592 dca.jsonl

11593 dcb.jsonl

11512 dda.jsonl

11501 ddb.jsonl

69578 total

```

### Curation Rationale

This is a Chinese NLI (natural language inference) dataset; it is uploaded to Hugging Face datasets to make it convenient for everyone to use.

#### Who are the source language producers?

The copyright of the dataset belongs to the original authors. Please respect the copyright of each original dataset when using it.

#### Who are the annotators?

The original authors.

### Social Impact of Dataset

This dataset was developed as a benchmark for evaluating representational systems for text, especially including those induced by representation learning methods, in the task of predicting truth conditions in a given context.

Systems that are successful at such a task may be more successful in modeling semantic representations.

### Licensing Information

For academic research use.

### Contributions

[shibing624](https://github.com/shibing624) uploaded this dataset. |

false | # [Original Dataset by FredZhang7](https://huggingface.co/datasets/FredZhang7/stable-diffusion-prompts-2.47M)

- Deduped from 2,473,022 down to 2,007,998.

- Changed anything that had `[ prompt text ]`, `( prompt text )`, or `< prompt text >`, to `[prompt text]`, `(prompt text)`, and `<prompt text>`.

- 2 or more spaces converted to a single space.

- Removed all `"`

- Removed spaces at beginnings. |
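The cleaning steps listed above can be sketched roughly as follows. This is not the script used to build the dataset: the order of the steps, the regular expressions, and the function names `clean_prompt` and `dedupe` are assumptions made for illustration.

```python
import re


def clean_prompt(prompt: str) -> str:
    """Apply the listed normalizations to a single prompt (assumed order)."""
    prompt = prompt.replace('"', "")                 # remove all double quotes
    prompt = re.sub(r"([\[(<])\s+", r"\1", prompt)   # "[ text" -> "[text"
    prompt = re.sub(r"\s+([\])>])", r"\1", prompt)   # "text ]" -> "text]"
    prompt = re.sub(r" {2,}", " ", prompt)           # collapse runs of spaces
    return prompt.lstrip(" ")                        # drop leading spaces


def dedupe(prompts):
    """Drop exact duplicates while keeping the first occurrence of each prompt."""
    seen = set()
    unique = []
    for prompt in prompts:
        if prompt not in seen:
            seen.add(prompt)
            unique.append(prompt)
    return unique


raw = ['  a "castle"  on [ a hill ], ( misty )', "a castle on [a hill], (misty)"]
print(dedupe([clean_prompt(p) for p in raw]))
```

Deduplicating after cleaning, as done here, merges prompts that differ only in spacing or quotes; whether the original pipeline did so is not stated.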

false | # [Original Dataset by FredZhang7](https://huggingface.co/datasets/FredZhang7/stable-diffusion-prompts-2.47M)

- Put into Alpaca instruct format.

- Instructions are drawn from 16 variations, which differ by `ending period`, `starting capitalization`, and `using "sd" or "stable diffusion"`.

- Deduped from 2,473,022 down to 2,007,998.

- Changed anything that had `[ prompt text ]`, `( prompt text )`, or `< prompt text >`, to `[prompt text]`, `(prompt text)`, and `<prompt text>`.

- 2 or more spaces converted to a single space. |

false |

# Dataset Card for Dolly 15k Dutch

## Dataset Description

- **Homepage:** N/A

- **Repository:** N/A

- **Paper:** N/A

- **Leaderboard:** N/A

- **Point of Contact:** Bram Vanroy

### Dataset Summary

This dataset contains 14,934 instructions, contexts and responses, in several natural language categories such as classification, closed QA, generation, etc. The English [original dataset](https://huggingface.co/datasets/databricks/databricks-dolly-15k) was created by @databricks, who crowd-sourced the data creation via its employees. The current dataset is a translation of that dataset through ChatGPT (`gpt-3.5-turbo`).

☕ [**Want to help me out?**](https://www.buymeacoffee.com/bramvanroy) Translating the data with the OpenAI API, and prompt testing, cost me 💸$19.38💸. If you like this dataset, please consider [buying me a coffee](https://www.buymeacoffee.com/bramvanroy) to offset a portion of this cost, I appreciate it a lot! ☕

### Languages

- Dutch

## Dataset Structure

### Data Instances

```python

{

"id": 14963,

"instruction": "Wat zijn de duurste steden ter wereld?",

"context": "",

"response": "Dit is een uitgebreide lijst van de duurste steden: Singapore, Tel Aviv, New York, Hong Kong, Los Angeles, Zurich, Genève, San Francisco, Parijs en Sydney.",

"category": "brainstorming"

}

```

### Data Fields

- **id**: the ID of the item. The following 77 IDs are not included because they could not be translated (or were too long): `[1502, 1812, 1868, 4179, 4541, 6347, 8851, 9321, 10588, 10835, 11257, 12082, 12319, 12471, 12701, 12988, 13066, 13074, 13076, 13181, 13253, 13279, 13313, 13346, 13369, 13446, 13475, 13528, 13546, 13548, 13549, 13558, 13566, 13600, 13603, 13657, 13668, 13733, 13765, 13775, 13801, 13831, 13906, 13922, 13923, 13957, 13967, 13976, 14028, 14031, 14045, 14050, 14082, 14083, 14089, 14110, 14155, 14162, 14181, 14187, 14200, 14221, 14222, 14281, 14473, 14475, 14476, 14587, 14590, 14667, 14685, 14764, 14780, 14808, 14836, 14891, 14966]`

- **instruction**: the instruction (question)

- **context**: additional context that the AI can use to answer the question

- **response**: the AI's expected response

- **category**: the category of this type of question (see [Dolly](https://huggingface.co/datasets/databricks/databricks-dolly-15k#annotator-guidelines) for more info)

## Dataset Creation

Both the translations and the topics were translated with OpenAI's API for `gpt-3.5-turbo`, with `max_tokens=1024, temperature=0` as parameters.

The prompt template to translate the input is (where `src_lang` was English and `tgt_lang` Dutch):

```python

CONVERSATION_TRANSLATION_PROMPT = """You are asked to translate a task's instruction, optional context to the task, and the response to the task, from {src_lang} to {tgt_lang}.

Here are the requirements that you should adhere to:

1. maintain the format: the task consists of a task instruction (marked `instruction: `), optional context to the task (marked `context: `) and response for the task marked with `response: `;

2. do not translate the identifiers `instruction: `, `context: `, and `response: ` but instead copy them to your output;

3. make sure that text is fluent to read and does not contain grammatical errors. Use standard {tgt_lang} without regional bias;

4. translate the instruction and context text using informal, but standard, language;

5. make sure to avoid biases (such as gender bias, grammatical bias, social bias);

6. if the instruction is to correct grammar mistakes or spelling mistakes then you have to generate a similar mistake in the context in {tgt_lang}, and then also generate a corrected output version in the output in {tgt_lang};

7. if the instruction is to translate text from one language to another, then you do not translate the text that needs to be translated in the instruction or the context, nor the translation in the response (just copy them as-is);

8. do not translate code fragments but copy them to your output. If there are English examples, variable names or definitions in code fragments, keep them in English.

Now translate the following task with the requirements set out above. Do not provide an explanation and do not add anything else.\n\n"""

```

The system message was:

```

You are a helpful assistant that translates English to Dutch according to the requirements that are given to you.

```

Note that 77 items (0.5%) were not successfully translated. This can either mean that the prompt was too long for the given limit (`max_tokens=1024`) or that the generated translation could not be parsed into `instruction`, `context` and `response` fields. The missing IDs are `[1502, 1812, 1868, 4179, 4541, 6347, 8851, 9321, 10588, 10835, 11257, 12082, 12319, 12471, 12701, 12988, 13066, 13074, 13076, 13181, 13253, 13279, 13313, 13346, 13369, 13446, 13475, 13528, 13546, 13548, 13549, 13558, 13566, 13600, 13603, 13657, 13668, 13733, 13765, 13775, 13801, 13831, 13906, 13922, 13923, 13957, 13967, 13976, 14028, 14031, 14045, 14050, 14082, 14083, 14089, 14110, 14155, 14162, 14181, 14187, 14200, 14221, 14222, 14281, 14473, 14475, 14476, 14587, 14590, 14667, 14685, 14764, 14780, 14808, 14836, 14891, 14966]`.
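To illustrate what "could not be parsed" may look like in practice, here is a minimal sketch of serializing one item with the `instruction: `, `context: ` and `response: ` markers and parsing a reply back into fields. The actual parsing code used for this dataset is not shown in this card, so the regular expression and the names `build_task_text` and `parse_translation` are hypothetical.

```python
import re

FIELDS = ("instruction", "context", "response")

# Assumed layout: the three markers appear once each, in this order.
PATTERN = re.compile(
    r"^instruction:\s?(?P<instruction>.*?)\n\s*"
    r"context:\s?(?P<context>.*?)\n\s*"
    r"response:\s?(?P<response>.*)$",
    re.DOTALL,
)


def build_task_text(instruction, context, response):
    """Serialize one item using the markers named in the prompt."""
    return f"instruction: {instruction}\ncontext: {context}\nresponse: {response}"


def parse_translation(reply):
    """Return a dict with the three fields, or None if the reply cannot be parsed."""
    match = PATTERN.match(reply.strip())
    if match is None:
        return None
    return {name: match.group(name).strip() for name in FIELDS}


reply = build_task_text("Wat zijn de duurste steden ter wereld?", "", "Singapore, Tel Aviv.")
print(parse_translation(reply))
print(parse_translation("a reply without any markers"))
```

Under this sketch, a reply that drops or reorders a marker yields `None`, which corresponds to the kind of item that would end up in the list of missing IDs.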

### Source Data

#### Initial Data Collection and Normalization

Initial data collection by [databricks](https://huggingface.co/datasets/databricks/databricks-dolly-15k). See their repository for more information about this dataset.

## Considerations for Using the Data

Note that the translations in this new dataset have not been verified by humans! Use at your own risk, both in terms of quality and biases.

### Discussion of Biases

As with any machine-generated text, users should be aware of potential biases that are included in this dataset. Although the prompt specifically includes `make sure to avoid biases (such as gender bias, grammatical bias, social bias)`, the impact of such a command is not known. It is likely that biases remain in the dataset, so use it with caution.

### Other Known Limitations

The translation quality has not been verified. Use at your own risk!

### Licensing Information

This repository follows the original databricks license, which is CC BY-SA 3.0 but see below for a specific restriction.

This text was generated (either in part or in full) with GPT-3 (`gpt-3.5-turbo`), OpenAI’s large-scale language-generation model. Upon generating draft language, the author reviewed, edited, and revised the language to their own liking and takes ultimate responsibility for the content of this publication.

If you use this dataset, you must also follow the [Sharing](https://openai.com/policies/sharing-publication-policy) and [Usage](https://openai.com/policies/usage-policies) policies.

As clearly stated in their [Terms of Use](https://openai.com/policies/terms-of-use), specifically 2c.iii, "[you may not] use output from the Services to develop models that compete with OpenAI". That means that you cannot use this dataset to build models that are intended to commercially compete with OpenAI. [As far as I am aware](https://law.stackexchange.com/questions/93308/licensing-material-generated-with-chatgpt), that is a specific restriction that should serve as an addendum to the current license.

### Citation Information

If you use this dataset, please cite:

Bram Vanroy. (2023). Dolly 15k Dutch [Data set]. Hugging Face. https://doi.org/10.57967/hf/0785

```bibtex

@misc {https://doi.org/10.57967/hf/0785,

author = { {Bram Vanroy} },

title = { {D}olly 15k {D}utch },

year = 2023,

url = { https://huggingface.co/datasets/BramVanroy/dolly-15k-dutch },

doi = { 10.57967/hf/0785 },

publisher = { Hugging Face }

}

```

### Contributions

Thanks to [databricks](https://huggingface.co/datasets/databricks/databricks-dolly-15k) for the initial, high-quality dataset. |

true | |

false |

# The WhisperSpeech Dataset

This dataset contains data to train SPEAR TTS-like text-to-speech models that utilize semantic tokens derived from the OpenAI Whisper speech recognition model.

We currently provide semantic and acoustic tokens for the LibriLight and LibriTTS datasets (English only).

Acoustic tokens:

- 24kHz EnCodec 6kbps (8 quantizers)

Semantic tokens:

- Whisper tiny VQ bottleneck trained on a subset of LibriLight

Available LibriLight subsets:

- `small`/`medium`/`large` (following the original dataset division but with `large` excluding the speaker `6454`)

- a separate ≈1300hr single-speaker subset based on the `6454` speaker from the `large` subset for training single-speaker TTS models

We plan to add more acoustic tokens from other codecs in the future. |

false |

This is a copy of the **DeepNets-1M** dataset originally released at https://github.com/facebookresearch/ppuda under the MIT license.

The dataset presents diverse computational graphs (1M training and 1402 evaluation) of neural network architectures used in image classification.

See detailed description at https://paperswithcode.com/dataset/deepnets-1m and

in the [Parameter Prediction for Unseen Deep Architectures](https://arxiv.org/abs/2110.13100) paper.

There are four files in this dataset:

- deepnets1m_eval.hdf5; # 16 MB (md5: 1f5641329271583ad068f43e1521517e)

- deepnets1m_meta.tar.gz; # 35 MB (md5: a42b6f513da6bbe493fc16a30d6d4e3e), run `tar -xf deepnets1m_meta.tar.gz` to unpack it before running any code reading the dataset

- deepnets1m_search.hdf5; # 1.3 GB (md5: 0a93f4b4e3b729ea71eb383f78ea9b53)

- deepnets1m_train.hdf5; # 10.3 GB (md5: 90bbe84bb1da0d76cdc06d5ff84fa23d)
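Since the files are large, it can be worth checking the MD5 sums listed above after downloading. The following is a minimal sketch using only the Python standard library; the helper names `md5_of_file` and `verify`, and the assumption that the files sit in a single directory under their original names, are illustrative.

```python
import hashlib
from pathlib import Path

# MD5 checksums copied from the file list above.
EXPECTED_MD5 = {
    "deepnets1m_eval.hdf5": "1f5641329271583ad068f43e1521517e",
    "deepnets1m_meta.tar.gz": "a42b6f513da6bbe493fc16a30d6d4e3e",
    "deepnets1m_search.hdf5": "0a93f4b4e3b729ea71eb383f78ea9b53",
    "deepnets1m_train.hdf5": "90bbe84bb1da0d76cdc06d5ff84fa23d",
}


def md5_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so that multi-GB files do not need to fit in memory."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(directory="."):
    """Map each file name to True (match), False (mismatch) or None (file not found)."""
    results = {}
    for name, expected in EXPECTED_MD5.items():
        path = Path(directory) / name
        results[name] = (md5_of_file(path) == expected) if path.exists() else None
    return results
```

Calling `verify("/path/to/download/dir")` returns one entry per file; a `False` entry suggests a corrupted or incomplete download.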

<img src="https://production-media.paperswithcode.com/datasets/0dbd44d8-19d9-495d-918a-b0db80facaf3.png" alt="" width="600"/>

## Citation

If you use this dataset, please cite it as:

```

@inproceedings{knyazev2021parameter,

title={Parameter Prediction for Unseen Deep Architectures},

author={Knyazev, Boris and Drozdzal, Michal and Taylor, Graham W and Romero-Soriano, Adriana},

booktitle={Advances in Neural Information Processing Systems},

year={2021}

}

``` |

false |

# OCTScenes: A Versatile Real-World Dataset of Tabletop Scenes for Object-Centric Learning

This is the official dataset of OCTScenes (https://arxiv.org/abs//2306.09682). OCTScenes contains 5000 tabletop scenes with a total of 15 everyday objects. Each scene is captured in 60 frames covering a 360-degree perspective.

In the OCTScenes-A dataset, the 0--3099 scenes without segmentation annotation are for training, while the 3100--3199 scenes with segmentation annotation can be used for testing. In the OCTScenes-B dataset, the 0--4899 scenes without segmentation annotation are for training, while the 4900--4999 scenes with segmentation annotation can be used for testing.

Each scene is provided at three resolutions: 128x128, 256x256 and 640x480. Each image is named `[scene_id]_[frame_id].png`, and the images are stored in `./128x128`, `./256x256`, and `./640x480` respectively. The images are packed with `tar` and split into several files whose names start with a resolution-specific prefix, such as `image_128x128_`. Please download all of the parts and use `tar` to decompress them.

For example, for the 128x128 images, download all files whose names start with `image_128x128_`, and then merge them into `image_128x128.tar.gz`:

```

cat image_128x128_* > image_128x128.tar.gz

```

And then decompress the file:

```

tar xvzf image_128x128.tar.gz

```

Download the segmentation annotations from `./128x128/segments_128.tar.gz`. We provide segmentations for the test scenes: 3100-3199 for OCTScenes-A and 4900-4999 for OCTScenes-B. Each segmentation annotation image is named `[scene_id]_[frame_id].png`. The integer value of each pixel is the index of the object it belongs to (1 to 10), with 0 representing the background.
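The naming scheme and scene ranges described in this card can be turned into a few small helpers, sketched below. It assumes the ids in the file names are plain decimal integers; `parse_image_name`, `split_of` and `object_ids` are hypothetical names, and the segmentation is represented as a nested list of pixel labels rather than a decoded PNG.

```python
# Scene-id ranges as stated in this card (Python ranges exclude the upper bound).
SPLITS = {
    "A": {"train": range(0, 3100), "test": range(3100, 3200)},
    "B": {"train": range(0, 4900), "test": range(4900, 5000)},
}


def parse_image_name(filename):
    """Split '[scene_id]_[frame_id].png' into integer scene and frame ids."""
    stem = filename.rsplit(".", 1)[0]
    scene_id, frame_id = stem.split("_")
    return int(scene_id), int(frame_id)


def split_of(scene_id, variant):
    """Return 'train', 'test', or None if the scene is not part of the variant."""
    for split, ids in SPLITS[variant].items():
        if scene_id in ids:
            return split
    return None


def object_ids(segmentation):
    """List the object indices present in a 2D grid of pixel labels (0 = background)."""
    return sorted({value for row in segmentation for value in row if value != 0})


scene, frame = parse_image_name("3150_12.png")
print(scene, frame, split_of(scene, "A"), split_of(scene, "B"))
print(object_ids([[0, 1], [3, 1]]))
```

The same scene id can fall into different splits depending on the variant, which is why `split_of` takes the variant as an explicit argument.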

|

false |

# PongSawaML

PongsawaM(L) is a Thai annal covering the Ayutthaya, Thonburi, and Rattanakosin (Rama I) periods. This annal was written by Dan Beach Bradley, an American Protestant missionary.

|

true | |

true | # AutoTrain Dataset for project: test-auto

## Dataset Description

This dataset has been automatically processed by AutoTrain for project test-auto.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"text": "I'm indifferent towards this restaurant. The food was average, and the service was neither exceptional nor terrible.",

"target": 1

},

{

"text": "\"The product I received was damaged and didn't work properly. I reached out to customer support, but they were unhelpful and unresponsive.\"",

"target": 0

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"text": "Value(dtype='string', id=None)",

"target": "ClassLabel(names=['Negative', 'Neutral', 'Positive'], id=None)"

}

```
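The integer `target` in the samples is an index into the `ClassLabel` names shown above. A minimal sketch of that mapping in plain Python follows; the helper `target_to_name` is a hypothetical name, and with the `datasets` library the same lookup is available through `ClassLabel.int2str`.

```python
# Class names in the order given by the ClassLabel feature above.
LABEL_NAMES = ["Negative", "Neutral", "Positive"]


def target_to_name(target):
    """Translate an integer target into its class name."""
    if not 0 <= target < len(LABEL_NAMES):
        raise ValueError(f"unknown target: {target}")
    return LABEL_NAMES[target]


# Targets taken from the two sample records shown earlier in this card.
for target in (1, 0):
    print(target, "->", target_to_name(target))
```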

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |
| ------------ | ------------------- |
| train | 26 |
| valid | 8 |

|

false | # AutoTrain Dataset for project: test-token-classification

## Dataset Description

This dataset has been automatically processed by AutoTrain for project test-token-classification.

### Languages

The BCP-47 code for the dataset's language is en.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"tokens": [

"I",

"will",

"be",

"traveling",

"to",

"Tokyo",

"next",

"month."

],

"tags": [

13,

13,

13,

13,

13,

1,

0,

5

]

},

{

"tokens": [

"The",

"company",

"Apple",

"Inc.",

"is",

"based",

"in",

"California."

],

"tags": [

13,

13,

3,

9,

13,

13,

13,

1

]

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"tokens": "Sequence(feature=Value(dtype='string', id=None), length=-1, id=None)",

"tags": "Sequence(feature=ClassLabel(names=['B-DATE', 'B-LOC', 'B-MISC', 'B-ORG', 'B-PER', 'I-DATE', 'I-DATE,', 'I-LOC', 'I-MISC', 'I-ORG', 'I-ORG,', 'I-PER', 'I-PER,', 'O'], id=None), length=-1, id=None)"

}

```
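The integers in `tags` index into the `ClassLabel` names listed above. As a minimal sketch, the helper below (a hypothetical `decode_tags`) pairs each token with its tag name and rejects records whose token and tag counts differ.

```python
# Tag names in the order given by the ClassLabel feature above.
TAG_NAMES = [
    "B-DATE", "B-LOC", "B-MISC", "B-ORG", "B-PER", "I-DATE", "I-DATE,",
    "I-LOC", "I-MISC", "I-ORG", "I-ORG,", "I-PER", "I-PER,", "O",
]


def decode_tags(tokens, tags):
    """Pair each token with the name of its tag."""
    if len(tokens) != len(tags):
        raise ValueError(f"{len(tokens)} tokens but {len(tags)} tags")
    return [(token, TAG_NAMES[tag]) for token, tag in zip(tokens, tags)]


# First sample record from this card.
tokens = ["I", "will", "be", "traveling", "to", "Tokyo", "next", "month."]
tags = [13, 13, 13, 13, 13, 1, 0, 5]
for token, name in decode_tags(tokens, tags):
    print(f"{token}\t{name}")
```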

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |
| ------------ | ------------------- |
| train | 21 |
| valid | 9 |

|

false | |

false |

<div style="display: flex; flex-direction: column; align-items: center; text-align: center;">

<img src="https://huggingface.co/datasets/branles14/chimpchat/resolve/main/etc/images/chimpchat_banner.png" alt="Banner" style="width: 400px;">

<div style="display: flex; align-items: center;">

<img src="https://img.shields.io/endpoint?url=https%3A%2F%2Fhuggingface.co%2Fdatasets%2Fbranles14%2Fchimpchat%2Fraw%2Fmain%2Fetc%2Fshields%2Fstatus.json" style="margin-right: 10px; margin-top: 0px;">

<img src="https://img.shields.io/endpoint?url=https%3A%2F%2Fhuggingface.co%2Fdatasets%2Fbranles14%2Fchimpchat%2Fraw%2Fmain%2Fetc%2Fshields%2Fexamples.json" style="margin-right: 10px; margin-left: 10px; margin-top: 0px;">

<img src="https://img.shields.io/endpoint?url=https%3A%2F%2Fhuggingface.co%2Fdatasets%2Fbranles14%2Fchimpchat%2Fraw%2Fmain%2Fetc%2Fshields%2Flicense.json" style="margin-left: 10px; margin-top: 0px;">

</div>

*"Because apes deserve an AI companion that's as blunt as they are"* 🤖🐒

</div>

Welcome to the early stages of the ChimpChat project, where your AI companion is as blunt as it's entertaining! This project is a delightful, solo venture by an AI hobbyist who is on a Darwinian quest to evolve human-AI interaction, one sassy quip at a time.

Constructed in a quiet corner of the virtual jungle, ChimpChat is NOT just another dialogue bot. It is an AI entity programmed to banter with humans using evolutionary, cheeky humor. ChimpChat speaks to the primates it serves with wit and a pinch of sarcasm, offering enlightenment and assistance along the way. ChimpChat is comprised of three distinct sectors:

- 🌍 **Ape World Queries**: This segment dives deep into the ape's inquiries about the real world. Spanning a wide range of topics from technology to entrepreneurship, this segment aims to stimulate the intellectual curiosity of the primate.

- ✍️ **Simian Scribes**: This segment focuses on aiding the simian in the creation process. Whether it's crafting emails or conjuring narratives, ChimpChat seeks to facilitate and inspire creativity.

- 📜 **Primate Parchments**: In this segment, dialogues are generated based on existing materials, which includes but is not limited to rewriting, continuation, and summarization, covering an eclectic range of topics.

## Data

This project is still in its early stages, and further steps are being taken to refine the generated dialogues, ensuring they carry the distinct signature of ChimpChat while providing accurate and useful information.

### Data Format

Each line in the downloaded data file is a json dict containing the data id and dialogue data in a list format. Below is an example line.

```JSON

{

"id": 0,

"data": [

"Message 1 - Speaker: Human - Source: UltraChat",

"Message 2 - Speaker: Bot - Source: OpenAI, Human",

"Message 3 - Speaker: Human - Source: Human, UltraChat, OpenAI",

"Message 4 - Speaker: Bot - Source: OpenAI, GooseAI, HuggingFace, Human",

"Message 5 - Speaker: Human - Source: Human, UltraChat, OpenAI",

"Message 6 - Speaker: Bot - Source: OpenAI, GooseAI, HuggingFace, Human"

]

}

```
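A minimal sketch of reading one such line into speaker-labelled turns is shown below. It assumes that speakers strictly alternate, starting with the human, as in the example line; the function name `to_turns` and the sample messages are hypothetical.

```python
import json

# Speaker order as suggested by the example line above (assumption: strict alternation).
SPEAKERS = ("Human", "Bot")


def to_turns(json_line):
    """Turn one line of the data file into a list of speaker-labelled messages."""
    record = json.loads(json_line)
    return [
        {"id": record["id"], "turn": index, "speaker": SPEAKERS[index % 2], "text": message}
        for index, message in enumerate(record["data"])
    ]


# Illustrative line, not taken from the dataset.
line = json.dumps({"id": 0, "data": ["Hi there", "Hello, primate.", "Tell me a joke"]})
for turn in to_turns(line):
    print(f'{turn["speaker"]}: {turn["text"]}')
```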

## Credits

Each initial message, and many subsequent messages from each example in this project are sourced from the [UltraChat](https://github.com/thunlp/UltraChat) dataset.

|

false | # Dataset Card for "ecqa"

https://github.com/dair-iitd/ECQA-Dataset

```

@inproceedings{aggarwaletal2021ecqa,

title={{E}xplanations for {C}ommonsense{QA}: {N}ew {D}ataset and {M}odels},

author={Shourya Aggarwal and Divyanshu Mandowara and Vishwajeet Agrawal and Dinesh Khandelwal and Parag Singla and Dinesh Garg},

booktitle = "Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers)",

year = "2021",

address = "Online",

publisher = "Association for Computational Linguistics"

}

``` |

true |

# Dataset Card for RTE_TH_drop

### Dataset Description

This dataset is a Thai translation of [RTE](https://huggingface.co/datasets/super_glue/viewer/rte) produced with Google Translate. The [Multilingual Universal Sentence Encoder](https://arxiv.org/abs/1907.04307) was used to calculate a score for each Thai translation.

Lines with score_hypothesis <= 0.5 or score_premise <= 0.7 have been dropped. |
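The score-based filter described in this card can be sketched as follows. Only the two thresholds and the names `score_hypothesis` and `score_premise` come from the card; the row layout as dictionaries and the helper `keep_row` are assumptions.

```python
# Thresholds stated in this card: rows at or below either value were dropped.
HYPOTHESIS_THRESHOLD = 0.5
PREMISE_THRESHOLD = 0.7


def keep_row(row):
    """Keep a row only if both translation scores exceed their thresholds."""
    return (
        row["score_hypothesis"] > HYPOTHESIS_THRESHOLD
        and row["score_premise"] > PREMISE_THRESHOLD
    )


# Illustrative rows, not taken from the dataset.
rows = [
    {"score_hypothesis": 0.9, "score_premise": 0.8},
    {"score_hypothesis": 0.5, "score_premise": 0.9},
    {"score_hypothesis": 0.8, "score_premise": 0.7},
]
print([keep_row(row) for row in rows])
```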

false |

# LivingNER

This is a third party reupload of the [LivingNER](https://temu.bsc.es/livingner/) task 1 dataset.

It only contains the task 1 for the Spanish language. It does not include the multilingual data nor the background data.

This dataset is part of a benchmark in the paper [TODO](TODO).

### Citation Information

```bibtex

TODO

```

### Citation Information of the original dataset

```bibtex

@article{amiranda2022nlp,

title={Mention detection, normalization \& classification of species, pathogens, humans and food in clinical documents: Overview of LivingNER shared task and resources},

author={Miranda-Escalada, Antonio and Farr{\'e}-Maduell, Eul{\`a}lia and Lima-L{\'o}pez, Salvador and Estrada, Darryl and Gasc{\'o}, Luis and Krallinger, Martin},

journal = {Procesamiento del Lenguaje Natural},

year={2022}

}

```

|

true |

# LivingNER

This is a third party reupload of the [LivingNER](https://temu.bsc.es/livingner/) task 3 dataset.

It only contains the task 3 for the Spanish language. It does not include the multilingual data nor the background data.

This dataset is part of a benchmark in the paper [TODO](TODO).

### Citation Information

```bibtex

TODO

```

### Citation Information of the original dataset

```bibtex

@article{amiranda2022nlp,

title={Mention detection, normalization \& classification of species, pathogens, humans and food in clinical documents: Overview of LivingNER shared task and resources},

author={Miranda-Escalada, Antonio and Farr{\'e}-Maduell, Eul{\`a}lia and Lima-L{\'o}pez, Salvador and Estrada, Darryl and Gasc{\'o}, Luis and Krallinger, Martin},

journal = {Procesamiento del Lenguaje Natural},

year={2022}

}

```

|

true | |

false |

# DaNE+

This is a version of [DaNE](https://huggingface.co/datasets/dane), where the original NER labels have been updated to follow the OntoNotes annotation scheme. The annotation process used the model trained on the Danish dataset [DANSK](https://huggingface.co/datasets/chcaa/DANSK) for the first round of annotation, and then all discrepancies were manually reviewed and corrected by Kenneth C. Enevoldsen. Discrepancies notably also include newly added entity types such as `PRODUCT` and `WORK_OF_ART`, so in practice a great many entities were manually reviewed. Where there was uncertainty, the annotation was left as it was.

The additional annotations (e.g. part-of-speech tags) stem from the Danish Dependency Treebank. However, if you wish to use these, I would recommend using the latest version, as the version here will likely become outdated over time.

## Process of annotation

1) Install the requirements:

```

--extra-index-url pip install prodigy -f https://{DOWNLOAD KEY}@download.prodi.gy

prodigy>=1.11.0,<2.0.0

```

2) Create outline dataset

```bash

python annotate.py

```

3) Review and correct the annotations using prodigy:

Add datasets to prodigy

```bash

prodigy db-in dane reference.jsonl

prodigy db-in dane_plus_mdl_pred predictions.jsonl

```

Run review using prodigy:

```bash

prodigy review daneplus dane_plus_mdl_pred,dane --view-id ner_manual --l NORP,CARDINAL,PRODUCT,ORGANIZATION,PERSON,WORK_OF_ART,EVENT,LAW,QUANTITY,DATE,TIME,ORDINAL,LOCATION,GPE,MONEY,PERCENT,FACILITY

```

Export the dataset:

```bash

prodigy data-to-spacy daneplus --ner daneplus --lang da -es 0

```

4) Redo the original split:

```bash

python split.py

```

|

true | # AutoTrain Dataset for project: twitter-goemotions-binary-fear-classification

## Dataset Description

This dataset has been automatically processed by AutoTrain for project twitter-goemotions-binary-fear-classification.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"text": "Downvoting comments you don't like is your right.",

"feat_id": "ed62dkv",

"feat_author": "128bitworm",

"feat_subreddit": "im14andthisisdeep",

"feat_link_id": "t3_ac6bna",

"feat_parent_id": "t1_ed5trip",

"feat_created_utc": 1546542336.0,

"feat_rater_id": 35,

"feat_example_very_unclear": false,

"feat_admiration": 0,

"feat_amusement": 0,

"feat_anger": 0,

"feat_annoyance": 0,

"feat_approval": 0,

"feat_caring": 0,

"feat_confusion": 0,

"feat_curiosity": 0,

"feat_desire": 0,

"feat_disappointment": 0,

"feat_disapproval": 1,

"feat_disgust": 0,

"feat_embarrassment": 0,

"feat_excitement": 0,

"target": 0,

"feat_gratitude": 0,

"feat_grief": 0,

"feat_joy": 0,

"feat_love": 0,

"feat_nervousness": 0,

"feat_optimism": 0,

"feat_pride": 0,

"feat_realization": 0,

"feat_relief": 0,

"feat_remorse": 0,

"feat_sadness": 0,

"feat_surprise": 0,

"feat_neutral": 0

},

{

"text": "I fucking love this",

"feat_id": "edxv95q",

"feat_author": "fueryerhealth",

"feat_subreddit": "FellowKids",

"feat_link_id": "t3_af72i1",

"feat_parent_id": "t3_af72i1",

"feat_created_utc": 1547342464.0,

"feat_rater_id": 19,

"feat_example_very_unclear": false,

"feat_admiration": 1,

"feat_amusement": 0,

"feat_anger": 0,

"feat_annoyance": 0,

"feat_approval": 0,

"feat_caring": 0,

"feat_confusion": 0,

"feat_curiosity": 0,

"feat_desire": 0,

"feat_disappointment": 0,

"feat_disapproval": 0,

"feat_disgust": 0,

"feat_embarrassment": 0,

"feat_excitement": 0,

"target": 0,

"feat_gratitude": 0,

"feat_grief": 0,

"feat_joy": 0,

"feat_love": 1,

"feat_nervousness": 0,

"feat_optimism": 0,

"feat_pride": 0,

"feat_realization": 0,

"feat_relief": 0,

"feat_remorse": 0,

"feat_sadness": 0,

"feat_surprise": 0,

"feat_neutral": 0

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"text": "Value(dtype='string', id=None)",

"feat_id": "Value(dtype='string', id=None)",

"feat_author": "Value(dtype='string', id=None)",

"feat_subreddit": "Value(dtype='string', id=None)",

"feat_link_id": "Value(dtype='string', id=None)",

"feat_parent_id": "Value(dtype='string', id=None)",

"feat_created_utc": "Value(dtype='float32', id=None)",

"feat_rater_id": "Value(dtype='int32', id=None)",

"feat_example_very_unclear": "Value(dtype='bool', id=None)",

"feat_admiration": "Value(dtype='int32', id=None)",

"feat_amusement": "Value(dtype='int32', id=None)",

"feat_anger": "Value(dtype='int32', id=None)",

"feat_annoyance": "Value(dtype='int32', id=None)",

"feat_approval": "Value(dtype='int32', id=None)",

"feat_caring": "Value(dtype='int32', id=None)",

"feat_confusion": "Value(dtype='int32', id=None)",

"feat_curiosity": "Value(dtype='int32', id=None)",

"feat_desire": "Value(dtype='int32', id=None)",

"feat_disappointment": "Value(dtype='int32', id=None)",

"feat_disapproval": "Value(dtype='int32', id=None)",

"feat_disgust": "Value(dtype='int32', id=None)",

"feat_embarrassment": "Value(dtype='int32', id=None)",

"feat_excitement": "Value(dtype='int32', id=None)",

"target": "ClassLabel(names=['0', '1'], id=None)",

"feat_gratitude": "Value(dtype='int32', id=None)",

"feat_grief": "Value(dtype='int32', id=None)",

"feat_joy": "Value(dtype='int32', id=None)",

"feat_love": "Value(dtype='int32', id=None)",

"feat_nervousness": "Value(dtype='int32', id=None)",

"feat_optimism": "Value(dtype='int32', id=None)",

"feat_pride": "Value(dtype='int32', id=None)",

"feat_realization": "Value(dtype='int32', id=None)",

"feat_relief": "Value(dtype='int32', id=None)",

"feat_remorse": "Value(dtype='int32', id=None)",

"feat_sadness": "Value(dtype='int32', id=None)",

"feat_surprise": "Value(dtype='int32', id=None)",

"feat_neutral": "Value(dtype='int32', id=None)"

}

```

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |
| ------------ | ------------------- |
| train | 168979 |
| valid | 42246 |

|

false | |

false | # AutoTrain Dataset for project: identifying-person-location-date

## Dataset Description

This dataset has been automatically processed by AutoTrain for project identifying-person-location-date.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"tokens": [

"I",

"will",

"be",

"traveling",

"to",

"Tokyo",

"next",

"month."

],

"tags": [

13,

13,

13,

13,

13,

1,

13,

0,

5

]

},

{

"tokens": [

"The",

"company",

"Apple",

"Inc.",

"is",

"based",

"in",

"California."

],

"tags": [

13,

13,

3,

9,

13,

13,

1

]

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"tokens": "Sequence(feature=Value(dtype='string', id=None), length=-1, id=None)",

"tags": "Sequence(feature=ClassLabel(names=['B-DATE', 'B-LOC', 'B-MISC', 'B-ORG', 'B-PER', 'I-DATE', 'I-DATE,', 'I-LOC', 'I-MISC', 'I-ORG', 'I-ORG,', 'I-PER', 'I-PER,', 'O'], id=None), length=-1, id=None)"

}

```
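Because the tag names follow the B-/I-/O convention, consecutive tokens can be grouped into entity spans. The sketch below works on tag names rather than integer ids; the function `extract_spans` is hypothetical, and treating the comma-suffixed labels (such as `I-ORG,`) as equivalent to their plain counterparts is an assumption.

```python
def extract_spans(tokens, tag_names):
    """Group B-/I- tagged tokens into (entity_type, text) spans."""
    spans = []
    current_type, current_tokens = None, []
    for token, tag in zip(tokens, tag_names):
        prefix, _, entity_type = tag.partition("-")
        entity_type = entity_type.rstrip(",")  # assumption: "I-ORG," means "I-ORG"
        if prefix == "B":
            if current_tokens:
                spans.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = entity_type, [token]
        elif prefix == "I" and current_type == entity_type:
            current_tokens.append(token)
        else:
            # "O", or an I- tag that does not continue the open span: close the span.
            if current_tokens:
                spans.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = None, []
    if current_tokens:
        spans.append((current_type, " ".join(current_tokens)))
    return spans


tokens = ["The", "company", "Apple", "Inc.", "is", "based", "in", "California."]
tag_names = ["O", "O", "B-ORG", "I-ORG", "O", "O", "O", "B-LOC"]
print(extract_spans(tokens, tag_names))
```

An `I-` tag with no preceding `B-` tag of the same type is ignored in this sketch; stricter or more lenient handling is equally possible.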

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |
| ------------ | ------------------- |
| train | 21 |
| valid | 9 |

|

false | # AutoTrain Dataset for project: demo-on-token-classification

## Dataset Description

This dataset has been automatically processed by AutoTrain for project demo-on-token-classification.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"tokens": [

"I",

"will",

"be",

"traveling",

"to",

"Tokyo",

"next",

"month."

],

"tags": [

13,

13,

13,

13,

13,

1,

0,

5

]

},

{

"tokens": [

"The",

"company",

"Apple",

"Inc.",

"is",

"based",

"in",

"California."

],

"tags": [

13,

13,

3,

9,

13,

13,

13,

1

]

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"tokens": "Sequence(feature=Value(dtype='string', id=None), length=-1, id=None)",

"tags": "Sequence(feature=ClassLabel(names=['B-DATE', 'B-LOC', 'B-MISC', 'B-ORG', 'B-PER', 'I-DATE', 'I-DATE,', 'I-LOC', 'I-MISC', 'I-ORG', 'I-ORG,', 'I-PER', 'I-PER,', 'O'], id=None), length=-1, id=None)"

}

```
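The integer `tags` in a sample index into the `ClassLabel` names listed above. A minimal sketch of that lookup in plain Python, using the first sample shown earlier; the helper name `decode_tags` is made up for illustration:

```python
# Label names copied from the "tags" feature above; position = class id.
LABEL_NAMES = ['B-DATE', 'B-LOC', 'B-MISC', 'B-ORG', 'B-PER', 'I-DATE', 'I-DATE,',
               'I-LOC', 'I-MISC', 'I-ORG', 'I-ORG,', 'I-PER', 'I-PER,', 'O']


def decode_tags(tokens, tags):
    """Pair each token with the label name its integer tag refers to."""
    if len(tokens) != len(tags):
        raise ValueError("tokens and tags must have the same length")
    return [(token, LABEL_NAMES[tag]) for token, tag in zip(tokens, tags)]


tokens = ["I", "will", "be", "traveling", "to", "Tokyo", "next", "month."]
tags = [13, 13, 13, 13, 13, 1, 0, 5]
decoded = decode_tags(tokens, tags)
for token, label in decoded:
    print(f"{token}\t{label}")
```

When the dataset is loaded with the `datasets` library, the `ClassLabel.int2str` method performs the same lookup.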

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 21 |

| valid | 9 |

|

false | |

true | # Dataset Card for bank reviews dataset

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

The dataset is collected from the [banki.ru](https://www.banki.ru/services/responses/list/?is_countable=on) website.

It contains customer reviews of various banks. In total, the dataset contains 12399 reviews.

The dataset is suitable for sentiment classification.

The dataset contains these fields: bank name, username, review title, review text, review time, number of views,

number of comments, review rating set by the user, as well as ratings for special categories.

### Languages

Russian

|

false | |

false | |

false | |

true |

[mtasksource](https://github.com/sileod/tasksource) classification tasks recast as natural language inference.

This dataset is intended to improve label understanding in [zero-shot classification HF pipelines](https://huggingface.co/docs/transformers/main/main_classes/pipelines#transformers.ZeroShotClassificationPipeline).

Inputs that are text pairs are separated by a newline (\n).

```python

from transformers import pipeline

classifier = pipeline(model="sileod/mdeberta-v3-base-tasksource-nli")

classifier(

"I have a problem with my iphone that needs to be resolved asap!!",

candidate_labels=["urgent", "not urgent", "phone", "tablet", "computer"],

)

```
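The card does not spell out how a classification example becomes an NLI example. One common scheme, assumed here rather than confirmed by the source, pairs the input text (premise) with one hypothesis per candidate label and marks only the gold label as entailed. The helper names and the label strings `entailment` / `not_entailment` are hypothetical; the hypothesis template mirrors the default used by the zero-shot pipeline.

```python
def join_text_pair(first, second):
    """Text-pair inputs are joined with a newline, as stated above."""
    return first + "\n" + second


def recast_as_nli(text, labels, gold_label, template="This example is {}."):
    """Turn one classification example into one NLI row per candidate label."""
    rows = []
    for label in labels:
        rows.append({
            "premise": text,
            "hypothesis": template.format(label),
            # Assumed label scheme: only the gold label is entailed.
            "label": "entailment" if label == gold_label else "not_entailment",
        })
    return rows


rows = recast_as_nli(
    "I have a problem with my iphone that needs to be resolved asap!!",
    labels=["urgent", "not urgent"],
    gold_label="urgent",
)
for row in rows:
    print(row["hypothesis"], "->", row["label"])
```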

[mdeberta-v3-base-tasksource-nli](https://huggingface.co/sileod/mdeberta-v3-base-tasksource-nli) will include `label-nli` in its training mix (a relatively small portion, to keep the model general). Note that NLI models work for label-like zero-shot classification without specific supervision (https://aclanthology.org/D19-1404.pdf).

```

@article{sileo2023tasksource,

title={tasksource: A Dataset Harmonization Framework for Streamlined NLP Multi-Task Learning and Evaluation},

author={Sileo, Damien},

year={2023}

}

``` |

false | # Dataset Card for "Calc-gsm8k"

## Summary

This dataset is an instance of the gsm8k dataset, converted to a simple html-like language that can be easily parsed (e.g. by BeautifulSoup). The data contains 3 types of tags:

- gadget: A tag whose content is intended to be evaluated by calling an external tool (sympy-based calculator in this case)

- output: An output of the external tool

- result: The final answer of the mathematical problem (a number)
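A minimal sketch of reading these three tags back out of a reasoning chain. The card suggests BeautifulSoup; this version swaps in the standard-library `html.parser` so that it has no dependencies. The `chain` string is a made-up illustration, not a record from the dataset.

```python
from html.parser import HTMLParser


class ChainParser(HTMLParser):
    """Collects the text inside <gadget>, <output> and <result> tags."""

    TRACKED = {"gadget", "output", "result"}

    def __init__(self):
        super().__init__()
        self.current = None   # tag currently being read, if any
        self.found = []       # list of (tag, text) in document order

    def handle_starttag(self, tag, attrs):
        if tag in self.TRACKED:
            self.current = tag
            self.found.append((tag, ""))

    def handle_data(self, data):
        if self.current is not None:
            tag, text = self.found[-1]
            self.found[-1] = (tag, text + data)

    def handle_endtag(self, tag):
        if tag == self.current:
            self.current = None


# Illustrative chain only; real records come from the dataset itself.
chain = ('Speed = 60 * 540 = <gadget id="calculator">60 * 540</gadget>'
         '<output>32_400</output> 32400. Final result is <result>32400</result>')

parser = ChainParser()
parser.feed(chain)
parser.close()
print(parser.found)
```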

## Supported Tasks

The dataset is intended for training Chain-of-Thought reasoning **models able to use external tools** to enhance the factuality of their responses.

This dataset presents in-context scenarios where models can out-source the computations in the reasoning chain to a calculator.

## Construction Process

The answers in the original dataset were in a structured but non-standard format. So, the answers were parsed, all arithmetical expressions

were evaluated using a sympy-based calculator, the outputs were checked to be consistent with the intermediate results, and finally exported

into a simple html-like language that BeautifulSoup can parse.

## Content and Data splits

Content and splits correspond to the original gsm8k dataset.

See [gsm8k HF dataset](https://huggingface.co/datasets/gsm8k) and [official repository](https://github.com/openai/grade-school-math) for more info.

## Licence

MIT, consistent with the original dataset.

|

false | # Dataset Card for "Calc-aqua_rat"

### Summary

This dataset is an instance of the [aqua_rat](https://huggingface.co/datasets/aqua_rat) dataset extended with in-context calculator calls,

represented by `exec` calls to the `sympy` library.

### Supported Tasks

The dataset is intended for training Chain-of-Thought reasoning models able to use external tools to enhance the factuality of their responses.

This dataset presents in-context scenarios where models can out-source the computations in the reasoning chain to a calculator.

### Construction Process

The dataset was constructed automatically by evaluating all candidate calls to the `sympy` library that were extracted from the originally annotated

*rationale*s. Candidates are selected by matching equals ('=') symbols in the chain: the left-hand side of each equation is evaluated

and accepted as a correct gadget call if the result occurs close by on the right-hand side.

Therefore, the extraction of calculator calls may exhibit false negatives (where the calculator could have been used but was not), but no known

false positives.

**If you find an issue in the dataset or in the fresh version of the parsing script, we'd be happy if you report it, or create a PR.**

## Dataset Structure

The dataset can be loaded by simply choosing a split (`train`, `validation` or `test`) and calling:

```python

import datasets

dataset_val = datasets.load_dataset("emnlp2023/Calc-aqua_rat", split="validation")

print(dataset_val[0]) # see the output below

```

### Data Instances

The samples of Calc-aqua_rat have this format (newline-reformatted for better readability):

```python

{'question': 'Three birds are flying at a fast rate of 900 kilometers per hour. What is their speed in miles per minute? [1km = 0.6 miles]',

'options': ['A)32400', 'B)6000', 'C)600', 'D)60000', 'E)10'],

'correct': 'A',

'rationale': 'To calculate the equivalent of miles in a kilometer\n

0.6 kilometers = 1 mile\n

900 kilometers = (0.6)*900 = 540 miles\n

In 1 hour there are 60 minutes\n

Speed in miles/minutes = 60 * 540 = 32400\n

Correct answer - A',

'chain': 'To calculate the equivalent of miles in a kilometer\n

0.6 kilometers \n= 1 mile\n

900 kilometers \n= (0.6)*900\n= \n<gadget id="calculator">(0.6)*900</gadget>\n<output>540</output>\n540 miles\n

In 1 hour there are 60 minutes\n

Speed in miles/minutes\n= 60 * 540\n= \n<gadget id="calculator">60 * 540</gadget>\n<output>32_400</output>\n32400\n

Correct answer - 32400\n.

Final result is <result>32400</result>'

}

```

The enclosing HTML tags (e.g. **`<gadget id="calculator">(0.6)*900</gadget>\n<output>540</output>`**) represent the inputs and outputs

to the `sympy.parse_expr().evalf()` method.
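As a rough sketch of how the gadget/output pairs can be cross-checked, the snippet below re-evaluates each calculator input and compares it with the recorded output. To stay dependency-free it uses a small `ast`-based evaluator as a stand-in for the sympy calculator (an assumption, not the authors' script); the function names are hypothetical and the `chain` is an excerpt of the sample above.

```python
import ast
import math
import operator
import re

_OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
        ast.Mult: operator.mul, ast.Div: operator.truediv}


def evaluate(expr):
    """Evaluate +, -, *, / arithmetic; a stand-in for the sympy calculator."""
    def walk(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError(f"unsupported expression: {expr!r}")
    return walk(ast.parse(expr, mode="eval").body)


PAIR = re.compile(
    r'<gadget id="calculator">(.*?)</gadget>\s*<output>(.*?)</output>', re.S)


def check_chain(chain):
    """Return (expression, recorded output, matches) for each calculator call."""
    rows = []
    for expr, out in PAIR.findall(chain):
        recorded = float(out.replace("_", ""))  # outputs may look like 32_400
        rows.append((expr, out, math.isclose(evaluate(expr), recorded)))
    return rows


chain = ('900 kilometers \n= (0.6)*900\n= \n<gadget id="calculator">(0.6)*900</gadget>'
         '\n<output>540</output>\n540 miles\n'
         'Speed in miles/minutes\n= 60 * 540\n= \n<gadget id="calculator">60 * 540</gadget>'
         '\n<output>32_400</output>\n32400\n')
results = check_chain(chain)
for row in results:
    print(row)
```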

### Data Fields

* **question**: A natural language definition of the problem to solve.

* **options**: 5 possible options (A, B, C, D and E), among which one is correct

* **correct**: The correct option

* **rationale**: A natural language sequence of steps leading to a solution of the given problem.

* **chain**: A natural language sequence of steps with inserted calculator calls and outputs of the sympy calculator.

### Data Splits

The samples in data splits are consistent with the original [aqua_rat](https://huggingface.co/datasets/aqua_rat) dataset, containing:

* **train** split of 97467 samples,

* **validation** split of 254 samples,

* **test** split of 254 samples.

## Licensing

Apache-2.0, consistent with the original aqua-rat dataset.

|

false | |

false |

# Dataset Card for "RO-Offense-Sequences"

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

<!--

- **Paper:** News-RO-Offense - A Romanian Offensive Language Dataset and Baseline Models Centered on News Article Comments

-->

- **Homepage:** [https://github.com/readerbench/ro-offense-sequences](https://github.com/readerbench/ro-offense-sequences)

- **Repository:** [https://github.com/readerbench/ro-offense-sequences](https://github.com/readerbench/ro-offense-sequences)

- **Point of Contact:** [Teodora-Andreea Ion](mailto:theoion21.andr@gmail.com)


### Dataset Summary

A novel Romanian-language dataset for offensive sequence detection, with offensive sequences manually

annotated in comments from a local Romanian sports news website (gsp.ro),

resulting in 4800 annotated messages.

### Languages

Romanian

## Dataset Structure

### Data Instances

An example of 'train' looks as follows.

```

{

'id': 5,

'text':'PLACEHOLDER TEXT',

'offensive_substrings': ['substr1','substr2'],

'offensive_sequences': [(0,10), (16,20)]

}

```

### Data Fields

- `id`: The unique comment ID, corresponding to the ID in [RO Offense](https://huggingface.co/datasets/readerbench/ro-offense)

- `text`: full comment text

- `offensive_substrings`: a list of offensive substrings. Can contain duplicates if some offensive substring appears twice

- `offensive_sequences`: a list of tuples with (start, end) position of the offensive sequences
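A small sketch of how the two annotation fields relate. It assumes the `(start, end)` positions are character offsets with an exclusive end, as in Python slicing; the card does not state this, so check it against real records. The example record and the helper name are made up.

```python
def extract_spans(text, sequences):
    """Slice out each annotated span, assuming half-open character offsets."""
    return [text[start:end] for start, end in sequences]


# Made-up record with harmless placeholder text.
example = {
    "id": 0,
    "text": "first span and second span here",
    "offensive_sequences": [(0, 10), (15, 26)],
}

spans = extract_spans(example["text"], example["offensive_sequences"])
print(spans)
```

If the assumption holds, the result should match the `offensive_substrings` field of the same record.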

### Data Splits

| name |train|validate|test|

|---------|----:|---:|---:|

|ro|x|x|x|

## Dataset Creation

### Curation Rationale

Collecting data for abusive language classification for Romanian Language.

### Source Data

Sports News Articles comments

#### Initial Data Collection and Normalization

#### Who are the source language producers?

Sports News Article readers

### Annotations

#### Annotation process

#### Who are the annotators?

Native speakers

### Personal and Sensitive Information

The data was public at the time of collection. PII removal has been performed.

## Considerations for Using the Data

### Social Impact of Dataset

The data definitely contains abusive language. The data could be used to develop and propagate offensive language against every target group involved, i.e. ableism, racism, sexism, ageism, and so on.

### Discussion of Biases

### Other Known Limitations

## Additional Information

### Dataset Curators

### Licensing Information

This data is available and distributed under the Apache-2.0 license.

### Citation Information

```

tbd

```

### Contributions

|

false | |

false | the same pig |

false | # AutoTrain Dataset for project: sample

## Dataset Description

This dataset has been automatically processed by AutoTrain for project sample.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"image": "<500x375 RGB PIL image>",

"target": 1

},

{

"image": "<378x274 RGB PIL image>",

"target": 0

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"image": "Image(decode=True, id=None)",

"target": "ClassLabel(names=['cat', 'dog'], id=None)"

}

```

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 80 |

| valid | 20 |

|

false | # Dataset Card for "zikir_detection"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

false | |

true | |

false | # Dataset Card for Shironaam Corpus

## Dataset Description

- **Homepage:**

- **Repository:** https://github.com/dialect-ai/BenHeadGen

- **Paper:** https://aclanthology.org/2023.eacl-main.4/

- **Leaderboard:**

- **Point of Contact:** [Abu Ubaida Akash](mailto:akash.ubaida@gmail.com)

### Dataset Summary

Automatic headline generation systems have the potential to assist editors in finding interesting headlines to attract visitors or readers.

However, the performance of headline generation systems remains challenging due to the unavailability of sufficient parallel data for

low-resource languages like Bengali. We provide **Shironaam**, a large-scale news headline generation dataset of a low-resource language

_i.e._, Bengali containing over 240K news headline-article pairings with auxiliary information such as image captions, topic words,

and category information. Also, this dataset can potentially be used for other tasks such as document categorization, news clustering,

keyword identification, _etc._ [(read more)](https://aclanthology.org/2023.eacl-main.4.pdf).

<!---

### Supported Tasks and Leaderboards

[More Information Needed]

-->

### Language(s)

Bengali

## Dataset Structure

### Data Instances

One example from the test split of the dataset is given below in JSON format.

```

{

"news_link": https://www.ajkerpatrika.com/169885/%E0%A6%AA%E0%A6%B0%E0%A6%BF%E0%A6%AC%E0%A7%87%E0%A6%B6%E0%A6%A6%E0%A7%82%E0%A6%B7%E0%A6%A3%E0%A7%87-%E0%A6%AC%E0%A7%8D%E0%A6%AF%E0%A6%BE%E0%A6%A7%E0%A6%BF-%E0%A6%AC%E0%A6%BE%E0%A7%9C%E0%A6%9B%E0%A7%87-%E0%A6%B8%E0%A7%8D%E0%A6%AC%E0%A6%BE%E0%A6%B8%E0%A7%8D%E0%A6%A5%E0%A7%8D%E0%A6%AF%E0%A6%AE%E0%A6%A8%E0%A7%8D%E0%A6%A4%E0%A7%8D%E0%A6%B0%E0%A7%80,

"head_lines": পরিবেশদূষণে ব্যাধি বাড়ছে: স্বাস্থ্যমন্ত্রী,

"article": স্বাস্থ্য ও পরিবারকল্যাণমন্ত্রী জাহিদ মালেক বলেছেন, প্রতিনিয়ত বিশ্বে পরিবেশ দূষিত হচ্ছে। এতে নতুন নতুন রোগের সৃষ্টি হচ্ছে। পরিবেশদূষণের কারণে ১৫-২০ শতাংশ মানসিক রোগী বাড়ছে। বিশ্ব স্বাস্থ্য দিবস উপলক্ষে আজ বৃহস্পতিবার রাজধানীর ওসমানী স্মৃতি মিলনায়তনে আয়োজিত এক অনুষ্ঠানে তিনি এসব কথা বলেন।স্বাস্থ্যমন্ত্রী বলেন, 'বর্তমানে পরিবেশ, পানি দূষিত হচ্ছে। দেশের পরিবেশ ভালো থাকলে কৃষি, পানি, স্বাস্থ্য ভালো থাকবে এবং চাপ কম থাকবে। এগুলো ভালো রাখতে হবে, তবেই আমরা ভালো থাকব।'জাহিদ মালেক বলেন, কলকারখানার গ্যাস ও যানবাহনের দূষিত ধোঁয়া পরিবেশ নষ্ট করছে। এতে ডায়রিয়া, কলেরা, চিকুনগুনিয়াসহ নানা নতুন-পুরোনো রোগ দেখা দিচ্ছে। দেশের অন্যান্য স্থানের চেয়ে ঢাকায় বায়ুদূষণ বেশি হচ্ছে। দেশে যে পরিমাণ বনাঞ্চল থাকার কথা, তা নেই।পরিবেশ ধ্বংসে বাংলাদেশের হাত না থাকলেও সবচেয়ে বেশি ক্ষতির মুখে পড়তে হয় মন্তব্য করে স্বাস্থ্যমন্ত্রী বলেন, বিশ্বে প্রতিবছর ৬০ হাজার হেক্টর বন ধ্বংস হচ্ছে। পরিবেশ ধ্বংসে যুক্তরাষ্ট্র, ব্রাজিল ও ইউরোপের দেশগুলোর বড় ভূমিকা থাকলেও বাংলাদেশের মতো দেশগুলোকে প্রভাব মোকাবিলা করতে হয়।পানি সমস্যার কারণে ডায়রিয়া বাড়ছে জানিয়ে জাহিদ মালেক বলেন, পানি সমস্যার সমাধান করতে হবে। এর কারণে ডায়রিয়া, কলেরাসহ অন্যান্য রোগ বেড়েই চলেছে। ভেজাল খাদ্যের কারণে সংক্রামক ও অসংক্রামক রোগ বাড়ছে। তবে আমাদের স্বাস্থ্য ব্যবস্থাপনাও ভালো রাখতে হবে। দেশকে ভালো রাখতে হলে দেশের সম্পদ ঠিক রাখতে হবে।দেশের অন্যান্য উন্নয়নের পাশাপাশি স্বাস্থ্যব্যবস্থারও অনেক উন্নতি হয়েছে জানিয়ে স্বাস্থ্যমন্ত্রী বলেন, 'আমাদের গড় আয়ু এখন ৭৩ বছর। ভ্যাকসিনেও আমরা অনেক ভালো করেছি, বিশ্বে অষ্টম হয়েছি। লক্ষ্যমাত্রার ৯৫ ভাগ মানুষকে টিকা দিয়েছি। ভালো কাজ করেছি বিধায় জিডিপি এখনো সাতে রয়েছে। পাশের শ্রীলঙ্কা এখন দেউলিয়া, তারা হয়তো ভালো ব্যবস্থা নিতে পারেনি। কিন্তু আমাদের খাদ্যে কোনো ঘাটতি নেই। ৪৫ বিলিয়ন ডলার আমাদের রিজার্ভ রয়েছে। মাথাপিছু ঋণ অনেক দেশের তুলনায় কম রয়েছে।',

"tags": স্বাস্থ্যমন্ত্রী,রাজধানী,পরিবেশ দূষণ,জাহিদ মালেক,

"image_caption": অনুষ্ঠানে বক্তব্য দেন স্বাস্থ্য ও পরিবার কল্যাণমন্ত্রী জাহিদ মালেক।,

"category": national

}

```

### Data Fields

- `news_link`: A string representing the link of the news source

- `head_lines`: A string representing the headline of the corresponding news article

- `article`: A string representing the article body of the news

- `tags`: A string representing the tags/topic-words related to the corresponding news article

- `image_caption`: A string representing the caption(s) of the images from the corresponding news article

- `category`: A string representing the category the corresponding news belongs to
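Since `tags` is a single string, it usually needs splitting before use. A minimal sketch, assuming topic words are comma-separated as in the instance shown above; the helper name is hypothetical.

```python
def split_tags(tags):
    """Split the comma-separated tags string, dropping empty entries."""
    return [tag.strip() for tag in tags.split(",") if tag.strip()]


# Value taken from the example instance above.
topic_words = split_tags("স্বাস্থ্যমন্ত্রী,রাজধানী,পরিবেশ দূষণ,জাহিদ মালেক")
print(len(topic_words), topic_words)
```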

### Data Splits

The **Shironaam** dataset is distributed over 13 different domains. After preprocessing the raw corpus, we have 240,580 news samples,

each a tuple of (headline, article, image caption, topic words, category). To ensure a balanced distribution, we maintain the ratio

of (92% - 220,574), (2% - 4,994), and (6% - 15,012) samples from all the categories to construct the train, validation, and

test set, respectively.

| **Category** | **Train** | **Valid** | **Test** | **Total** |

|:-------------:|:-----------:|:---------:|:----------:|:-----------:|

| Entertainment | 16,104 | 365 | 1,095 | 17,565 |

| National | 117,566 | 2,664 | 7,994 | 128,226 |

| Nature | 467 | 10 | 31 | 510 |

| International | 30,558 | 692 | 2,078 | 33,329 |

| Sports | 17,635 | 399 | 1,199 | 19,235 |

| Economy | 6,447 | 146 | 438 | 7,032 |

| Life-Health | 6,356 | 144 | 432 | 6,933 |

| Miscellaneous | 1,599 | 36 | 108 | 1,744 |

| Opinion | 3,501 | 79 | 238 | 3,819 |

| Politics | 15,018 | 340 | 1,021 | 16,380 |

| Edu-Career | 4,008 | 90 | 272 | 4,372 |

| Science-Tech | 1,046 | 23 | 71 | 1,141 |

| Religion | 269 | 6 | 18 | 294 |

| **Total** | **220,574** | **4,994** | **15,012** | **240,580** |
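The stated ratios can be checked against the totals row of the table with a few lines of arithmetic:

```python
# Totals taken from the last row of the table above.
splits = {"train": 220_574, "valid": 4_994, "test": 15_012}
total = sum(splits.values())

# Share of each split as a percentage of all samples.
shares = {name: 100 * count / total for name, count in splits.items()}
for name, share in shares.items():
    print(f"{name}: {share:.2f}%")
print("total:", total)
```

The three split sizes add up to the reported 240,580 samples, and the shares round to the quoted 92%, 2%, and 6%.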

## Dataset Creation

We crawl around 900,000 raw data samples from seven famous Bengali newspapers concentrating on certain

criteria, such as headline, article, image caption, category, and topic words. Since each of the newspapers

mentioned above has its own professional authors and distinct writing style, we consider multiple sources

to prevent the bias of a particular annotation style. To ensure content diversity, we also cover various

domains from all the news dailies. The majority of the news samples are extracted from HTML bodies of the

corresponding publications, while some are rendered using JavaScript. However, two of them do not provide

the archives on their websites; therefore, we collect the samples through their APIs... [details in the paper](https://aclanthology.org/2023.eacl-main.4.pdf)

<!---

### Curation Rationale

[More Information Needed]

-->

### Source Data

| **Newspaper** | **URL** |

|:-------------------:|:------------------------:|

| Prothom Alo | www.prothomalo.com |

| Naya Diganta | www.dailynayadiganta.com |

| Ajker Patrika | www.ajkerpatrika.com |

| Bangladesh Protidin | www.bd-pratidin.com |

| Samakal | www.samakal.com |

| Bhorer Kagoj | www.bhorerkagoj.com |

| Dhaka Tribune | www.dhakatribune.com |

<!---

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

-->

### Discussion of Ethics

We considered some ethical aspects while scraping the data. We requested data at a reasonable rate

without any intention of a DDoS attack. Moreover, for each website, we read the instructions listed in

robots.txt to check whether we can crawl the intended content. We tried to minimize offensive texts in

the data by explicitly crawling the sites where such contents are minimal. Further, we removed the

Personal Identifying Information (PII) such as name, phone number, email address, _etc._ from the corpus.

### Other Known Limitations

Our dataset relies on auxiliary information such as image captions and topic words to achieve superior

performance in generating news headlines. However, it is quite common to include images and extra information

(e.g., topic words) to increase the article’s visibility, support, and context. On top of that, the **Shironaam**

corpus supports only Bengali, a widely spoken but low-resource language. Still, this idea of using auxiliary

information to improve headline generation performance can easily be extended to many languages.

## Additional Information

<!---

### Dataset Curators

[More Information Needed]

-->

### Licensing Information

Contents of this repository are restricted to only non-commercial research purposes under the [Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License (CC BY-NC-SA 4.0)](https://creativecommons.org/licenses/by-nc-sa/4.0/).

Copyright of the dataset contents belongs to the original copyright holders.

### Citation Information

If you find this work useful for your research, please consider citing:

```

@inproceedings{akash-etal-2023-shironaam,

title = "Shironaam: {B}engali News Headline Generation using Auxiliary Information",

author = "Akash, Abu Ubaida and

Nayeem, Mir Tafseer and

Shohan, Faisal Tareque and

Islam, Tanvir",

booktitle = "Proceedings of the 17th Conference of the European Chapter of the Association for Computational Linguistics",

month = may,

year = "2023",

address = "Dubrovnik, Croatia",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2023.eacl-main.4",

pages = "52--67"

}

```

### Contributors

- Abu Ubaida Akash (akash.ubaida@gmail.com)

- Mir Tafseer Nayeem (mnayeem@ualberta.ca)

- Faisal Tareque Shohan (faisaltareque@hotmail.com)

- Tanvir Islam (tislam@hawaii.edu)

### Acknowledgements

- This work is the outcome of the ongoing research at [Dialect AI Research Group](https://github.com/dialect-ai).

- Mir Tafseer Nayeem is supported by [Huawei](https://digitalpower.huawei.com/en/) Doctoral Fellowship. |

false | # Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

false | |

true | |

false | |

false | ### Dataset Summary

CC-News-PT is a curation of news articles from CommonCrawl News in the Portuguese language.

CommonCrawl News is a dataset containing news articles from news sites all over the world.

The data is available on AWS S3 in the Common Crawl bucket at /crawl-data/CC-NEWS/.

This version of the dataset is the Portuguese subset from [CloverSearch/cc-news-mutlilingual](https://huggingface.co/datasets/CloverSearch/cc-news-mutlilingual).

### Data Fields

- `title`: a `string` feature.

- `text`: a `string` feature.

- `authors`: a `string` feature.

- `domain`: a `string` feature.

- `date`: a `string` feature.

- `description`: a `string` feature.

- `url`: a `string` feature.

- `image_url`: a `string` feature.

- `date_download`: a `string` feature.

### How to use this dataset

```python

from datasets import load_dataset

dataset = load_dataset("eduagarcia/cc_news_pt", split="train")

```

### Cite

```

@misc{Acerola2023,

author = {Garcia, E.A.S.},

title = {Acerola Corpus: Towards Better Portuguese Language Models},

year = {2023},

doi = {10.57967/hf/0814}

}

``` |

false | # Dataset Card for BabelCode MBPP

## Dataset Description

- **Repository:** [GitHub Repository](https://github.com/google-research/babelcode)

- **Paper:** [Measuring The Impact Of Programming Language Distribution](https://arxiv.org/abs/2302.01973)

### How To Use This Dataset

To quickly evaluate BC-MBPP predictions, save the `qid` and `language` keys along with the postprocessed prediction code in a JSON lines file. Then follow the install instructions for [BabelCode](https://github.com/google-research/babelcode), and you can evaluate your predictions.
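A minimal sketch of writing such a JSON lines file. The `qid` and `language` keys come from the dataset; the key name `code` for the postprocessed prediction is an assumption here, so confirm the expected key in the BabelCode install instructions. The function name, file name, and sample rows are made up.

```python
import json
import os
import tempfile


def write_predictions(rows, path):
    """Write one JSON object per line with qid, language and prediction code."""
    with open(path, "w", encoding="utf-8") as handle:
        for row in rows:
            record = {
                "qid": row["qid"],
                "language": row["language"],
                "code": row["code"],  # assumed key name for the prediction
            }
            handle.write(json.dumps(record) + "\n")


# Made-up predictions for illustration.
predictions = [
    {"qid": "1", "language": "Python", "code": "def add(a, b):\n    return a + b"},
    {"qid": "1", "language": "Lua", "code": "function add(a, b) return a + b end"},
]

path = os.path.join(tempfile.mkdtemp(), "predictions.jsonl")
write_predictions(predictions, path)
with open(path, encoding="utf-8") as handle:
    lines = [json.loads(line) for line in handle]
print(len(lines), "predictions written")
```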

### Dataset Summary

The BabelCode-MBPP (BC-MBPP) dataset converts the [MBPP dataset released by Google](https://arxiv.org/abs/2108.07732) to 16 programming languages.

### Supported Tasks and Leaderboards

### Languages

BC-MBPP supports:

* C++

* C#

* Dart

* Go

* Haskell

* Java

* Javascript

* Julia

* Kotlin

* Lua

* PHP

* Python

* R

* Rust

* Scala

* TypeScript

## Dataset Structure

```python

>>> from datasets import load_dataset

>>> load_dataset("gabeorlanski/bc-mbpp")

DatasetDict({

train: Dataset({

features: ['qid', 'title', 'language', 'text', 'signature_with_docstring', 'signature', 'arguments', 'entry_fn_name', 'entry_cls_name', 'test_code'],

num_rows: 332

})

test: Dataset({

features: ['qid', 'title', 'language', 'text', 'signature_with_docstring', 'signature', 'arguments', 'entry_fn_name', 'entry_cls_name', 'test_code'],

num_rows: 437

})

validation: Dataset({

features: ['qid', 'title', 'language', 'text', 'signature_with_docstring', 'signature', 'arguments', 'entry_fn_name', 'entry_cls_name', 'test_code'],

num_rows: 76

})

prompt: Dataset({

features: ['qid', 'title', 'language', 'text', 'signature_with_docstring', 'signature', 'arguments', 'entry_fn_name', 'entry_cls_name', 'test_code'],

num_rows: 10

})

})

```

### Data Fields

- `qid`: The question ID used for running tests.

- `title`: The title of the question.

- `language`: The programming language of the example.

- `text`: The description of the problem.

- `signature`: The signature for the problem.

- `signature_with_docstring`: The signature with the adequately formatted docstring for the given problem.

- `arguments`: The arguments of the problem.

- `entry_fn_name`: The function's name to use as an entry point.

- `entry_cls_name`: The class name to use as an entry point.

- `test_code`: The raw testing script used in the language. If you want to use this, replace `PLACEHOLDER_FN_NAME` (and `PLACEHOLDER_CLS_NAME` if needed) with the corresponding entry points. Next, replace `PLACEHOLDER_CODE_BODY` with the postprocessed prediction.
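The placeholder substitution described for `test_code` can be sketched as plain string replacement. The record below is a toy stand-in (real `test_code` scripts are longer and language-specific), and `fill_test_code` is a hypothetical helper name.

```python
def fill_test_code(example, prediction_code):
    """Insert the entry points and the prediction into the raw test script."""
    script = example["test_code"]
    script = script.replace("PLACEHOLDER_FN_NAME", example["entry_fn_name"])
    script = script.replace("PLACEHOLDER_CLS_NAME", example["entry_cls_name"])
    # Substitute the body last so placeholders are resolved in the template only.
    return script.replace("PLACEHOLDER_CODE_BODY", prediction_code)


# Toy record for illustration.
example = {
    "entry_fn_name": "add",
    "entry_cls_name": "Solution",
    "test_code": "PLACEHOLDER_CODE_BODY\nassert PLACEHOLDER_FN_NAME(1, 2) == 3",
}
script = fill_test_code(example, "def add(a, b):\n    return a + b")
print(script)
```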

## Dataset Creation

See section 2 of the [BabelCode Paper](https://arxiv.org/abs/2302.01973) to learn more about how the datasets are translated.

### Curation Rationale

### Source Data

#### Initial Data Collection and Normalization

#### Who are the source language producers?

### Annotations

#### Annotation process

#### Who are the annotators?

### Personal and Sensitive Information

None.

## Considerations for Using the Data

### Social Impact of Dataset

### Discussion of Biases

### Other Known Limitations

## Additional Information

### Dataset Curators

Google Research

### Licensing Information

CC-BY-4.0

### Citation Information

```

@article{orlanski2023measuring,

title={Measuring The Impact Of Programming Language Distribution},

author={Orlanski, Gabriel and Xiao, Kefan and Garcia, Xavier and Hui, Jeffrey and Howland, Joshua and Malmaud, Jonathan and Austin, Jacob and Singh, Rishah and Catasta, Michele},

journal={arXiv preprint arXiv:2302.01973},

year={2023}

}

@article{Austin2021ProgramSW,

title={Program Synthesis with Large Language Models},

author={Jacob Austin and Augustus Odena and Maxwell Nye and Maarten Bosma and Henryk Michalewski and David Dohan and Ellen Jiang and Carrie J. Cai and Michael Terry and Quoc V. Le and Charles Sutton},

journal={ArXiv},

year={2021},

volume={abs/2108.07732}

}

``` |

false |

# SocialDisNER

This is a third party reupload of the [SocialDisNER](https://temu.bsc.es/socialdisner/) dataset.

This dataset is part of a benchmark in the paper [TODO](TODO).

### Citation Information

```bibtex

TODO

```

### Citation Information of the original dataset

```bibtex

@inproceedings{gasco-sanchez-etal-2022-socialdisner,

title = "The {S}ocial{D}is{NER} shared task on detection of disease mentions in health-relevant content from social media: methods, evaluation, guidelines and corpora",

author = "Gasco S{\'a}nchez, Luis and

Estrada Zavala, Darryl and

Farr{\'e}-Maduell, Eul{\`a}lia and

Lima-L{\'o}pez, Salvador and

Miranda-Escalada, Antonio and

Krallinger, Martin",

booktitle = "Proceedings of The Seventh Workshop on Social Media Mining for Health Applications, Workshop {\&} Shared Task",

month = oct,

year = "2022",

address = "Gyeongju, Republic of Korea",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2022.smm4h-1.48",

pages = "182--189",

}

```

|

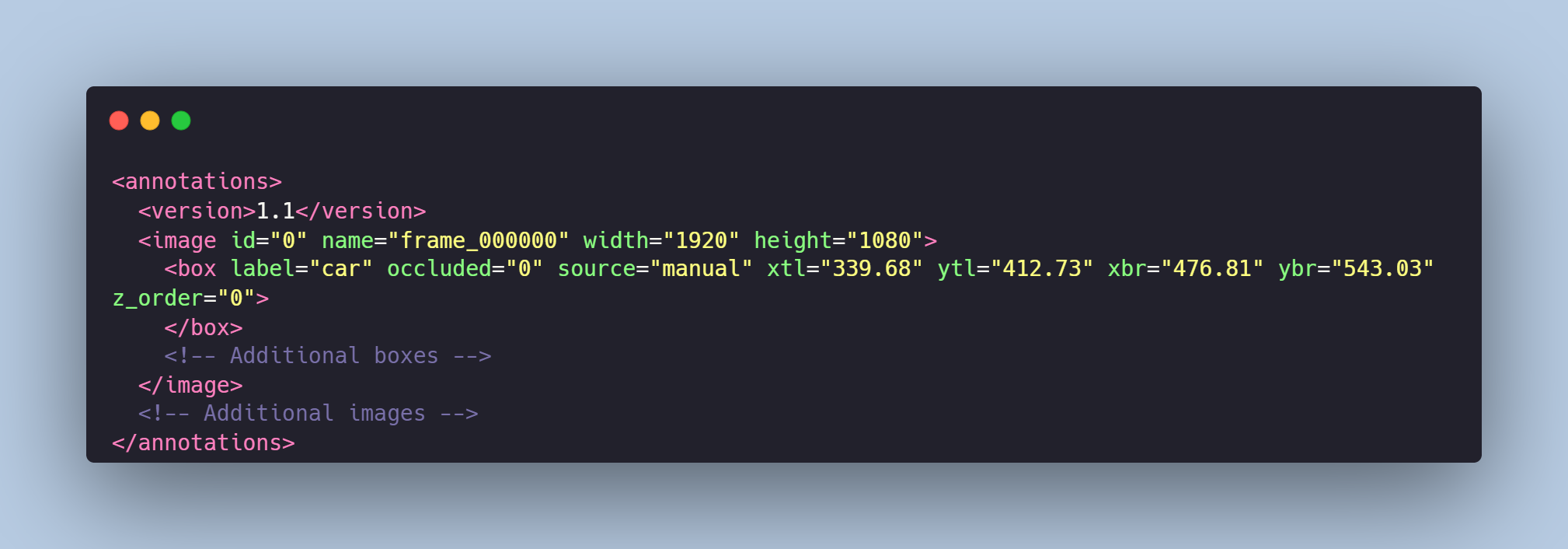

false |

# People Tracking Dataset

The dataset comprises annotated video frames from a camera positioned in a public space. Each individual in the camera's view has been tracked using the rectangle tool in the Computer Vision Annotation Tool (CVAT).

# Get the Dataset

This is just an example of the data. If you need access to the entire dataset, contact us via **[sales@trainingdata.pro](mailto:sales@trainingdata.pro)** or leave a request on **[https://trainingdata.pro/data-market](https://trainingdata.pro/data-market?utm_source=huggingface)**

# Dataset Structure

- The `images` directory houses the original video frames, serving as the primary source of raw data.

- The `annotations.xml` file provides the detailed annotation data for the images.

- The `boxes` directory contains frames that visually represent the bounding box annotations, showing the locations of the tracked individuals within each frame. These images can be used to understand how the tracking has been implemented and to visualize the marked areas for each individual.

# Data Format

The annotations are represented as rectangle bounding boxes that are placed around each individual. Each bounding box annotation contains the position ( `xtl`-`ytl`-`xbr`-`ybr` coordinates ) for the respective box within the frame.
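A minimal sketch of reading such boxes and converting the corner coordinates to width and height. The XML fragment is hypothetical, written in the style of a CVAT video export; the element and attribute names other than `xtl`, `ytl`, `xbr`, and `ybr` are assumptions and may differ in the real `annotations.xml`.

```python
import xml.etree.ElementTree as ET

# Hypothetical fragment; the real annotations.xml may be structured differently.
SAMPLE_XML = """
<annotations>
  <track id="0" label="person">
    <box frame="0" xtl="100.0" ytl="50.0" xbr="180.0" ybr="260.0"/>
    <box frame="1" xtl="104.0" ytl="52.0" xbr="184.0" ybr="262.0"/>
  </track>
</annotations>
"""


def read_boxes(xml_text):
    """Yield (track id, frame, x, y, width, height) for every box."""
    root = ET.fromstring(xml_text)
    for track in root.iter("track"):
        for box in track.iter("box"):
            xtl, ytl = float(box.get("xtl")), float(box.get("ytl"))
            xbr, ybr = float(box.get("xbr")), float(box.get("ybr"))
            # (xtl, ytl) is the top-left corner, (xbr, ybr) the bottom-right.
            yield (int(track.get("id")), int(box.get("frame")),
                   xtl, ytl, xbr - xtl, ybr - ytl)


boxes = list(read_boxes(SAMPLE_XML))
for box in boxes:
    print(box)
```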


## **[TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface)** provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/trainingdata-pro** |

false |

# Cars Tracking

A collection of overhead video frames capturing various types of vehicles traversing a roadway. The dataset includes light vehicles (cars) and heavy vehicles (minivans).

# Get the Dataset

This is just an example of the data. If you need access to the entire dataset, contact us via **[sales@trainingdata.pro](mailto:sales@trainingdata.pro)** or leave a request on **[https://trainingdata.pro/data-market](https://trainingdata.pro/data-market?utm_source=huggingface)**

# Data Format

Each video frame in the `images` folder is paired with an `annotations.xml` file that defines the tracking of each vehicle using polygons.

These annotations not only specify the location and path of each vehicle but also differentiate between the vehicle classes:

- cars,

- minivans.

The data labeling is visualized in the `boxes` folder.
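The polygon annotations and vehicle classes could be read along the following lines. This is only a sketch under assumptions: it supposes a CVAT-style layout in which each `<track>` has a `label` attribute and contains `<polygon>` elements whose `points` attribute is a `;`-separated list of `x,y` pairs. The sample XML, the label strings, and the function names are hypothetical and should be checked against the actual file.

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Hypothetical sample in a CVAT-like layout; the real file may differ.
SAMPLE_XML = """
<annotations>
  <track id="0" label="car">
    <polygon frame="0" points="0.0,0.0;4.0,0.0;4.0,3.0;0.0,3.0"/>
    <polygon frame="1" points="1.0,0.0;5.0,0.0;5.0,3.0;1.0,3.0"/>
  </track>
  <track id="1" label="minivan">
    <polygon frame="0" points="10.0,10.0;16.0,10.0;16.0,14.0"/>
  </track>
</annotations>
"""


def parse_points(points_attr):
    """Turn 'x1,y1;x2,y2;...' into a list of (x, y) float tuples."""
    return [tuple(float(v) for v in pair.split(","))
            for pair in points_attr.split(";")]


def read_polygons(xml_text):
    """Return (label, frame, points) for every polygon in every track."""
    root = ET.fromstring(xml_text.strip())
    result = []
    for track in root.iter("track"):
        label = track.get("label")
        for poly in track.iter("polygon"):
            result.append((label, int(poly.get("frame")),
                           parse_points(poly.get("points"))))
    return result


polygons = read_polygons(SAMPLE_XML)
# Count annotated polygons per vehicle class.
per_class = Counter(label for label, _, _ in polygons)
print(per_class)
```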

# Example of the XML-file

# Object tracking is performed in accordance with your requirements.

## **[TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface)** provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/trainingdata-pro**

|

false |

# CleanVid Map (15M) 🎥

### TempoFunk Video Generation Project

CleanVid-15M is a large-scale dataset of videos with multiple metadata entries such as:

- Textual Descriptions 📃

- Recording Equipment 📹

- Categories 🔠

- Framerate 🎞️

- Aspect Ratio 📺

CleanVid aims to improve the quality of the WebVid-10M dataset by adding more data and by removing watermarks from its videos.

This dataset includes only the map with the urls and metadata, with 3,694,510 more entries than the original WebVid-10M dataset.

Note that the videos are low-resolution, ranging from 240p to 480p, but this shouldn't be a problem because resolution scaling is difficult in Text-To-Video models.

More Datasets to come for high-res use cases.

CleanVid is the foundation dataset for the TempoFunk Video Generation project.

Built from a crawl of Shutterstock from June 25, 2023.

## Format 📊

- id: Integer (int64) - Shutterstock video ID

- description: String - Description of the video

- duration: Float(64) - Duration of the video in seconds

- aspectratio: String - Aspect Ratio of the video separated by colons (":")

- videourl: String - Video URL for the video in the entry, MP4 format. WEBM format is also available most of the time (by changing the extension at the end of the URL).

- author: String - JSON-String containing information of the author such as `Recording Equipment`, `Style`, `Nationality` and others.

- categories: String - JSON-String containing the categories of the videos. (Values from Shutterstock, not by us.)

- framerate: Float(64) - Framerate of the video

- r18: Bit (int64) - Whether the video is marked as mature content. 0 = Safe For Work; 1 = Mature Content
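A few of the fields above need light post-processing before use. The Python sketch below shows one way to do it: converting `aspectratio` to a number, deriving the WEBM URL from `videourl`, and decoding the JSON-String fields. The helper names and the example row are hypothetical, and the WEBM variant is not guaranteed to exist for every entry.

```python
import json


def parse_aspect_ratio(value):
    """Convert an aspect ratio such as '16:9' into width / height."""
    width, height = value.split(":")
    return float(width) / float(height)


def webm_url(mp4_url):
    """Swap the trailing .mp4 extension for .webm (the file may not exist)."""
    if not mp4_url.endswith(".mp4"):
        raise ValueError("expected a URL ending in .mp4")
    return mp4_url[: -len(".mp4")] + ".webm"


def decode_json_field(value):
    """Decode a JSON-String field; pass through values already decoded."""
    return json.loads(value) if isinstance(value, str) else value


# Hypothetical row with shortened values, for illustration only.
row = {
    "aspectratio": "16:9",
    "videourl": "https://example.com/videos/clip.mp4",
    "categories": '["People"]',
}

ratio = parse_aspect_ratio(row["aspectratio"])
alt_url = webm_url(row["videourl"])
categories = decode_json_field(row["categories"])
print(ratio, alt_url, categories)
```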

## Code 👩💻

If you want to re-create this dataset on your own, code is available here:

https://github.com/chavinlo/tempofunk-scrapper/tree/refractor1/sites/shutterstock

Due to rate limiting, you might need to obtain a proxy. Functionality for proxies is included in the repository.

## Sample 🧪

```json

{

"id": 1056934082,

"description": "Rio, Brazil - February 24, 2020: parade of the samba school Mangueira, at the Marques de Sapucai Sambodromo",

"duration": 9.76,

"aspectratio": "16:9",

"videourl": "https://www.shutterstock.com/shutterstock/videos/1056934082/preview/stock-footage-rio-brazil-february-parade-of-the-samba-school-mangueira-at-the-marques-de-sapucai.mp4",

"author": {

"accountsId": 101974372,

"contributorId": 62154,

"bio": "Sempre produzindo mais",

"location": "br",

"website": "www.dcpress.com.br",

"contributorTypeList": [

"photographer"

],

"equipmentList": [

"300mm f2.8",

"24-70mm",

"70-200mm",

"Nikon D7500 ",

"Nikon Df",

"Flashs Godox"

],

"styleList": [

"editorial",

"food",

"landscape"

],

"subjectMatterList": [

"photographer",

"people",

"nature",

"healthcare",

"food_and_drink"

],

"facebookUsername": "celso.pupo",

"googlePlusUsername": "celsopupo",

"twitterUsername": "celsopupo",

"storageKey": "/contributors/62154/avatars/thumb.jpg",

"cdnThumbPath": "/contributors/62154/avatars/thumb.jpg",

"displayName": "Celso Pupo",

"vanityUrlUsername": "rodrigues",

"portfolioUrlSuffix": "rodrigues",

"portfolioUrl": "https://www.shutterstock.com/g/rodrigues",

"instagramUsername": "celsopupo",

"hasPublicSets": true,

"instagramUrl": "https://www.instagram.com/celsopupo",

"facebookUrl": "https://www.facebook.com/celso.pupo",

"twitterUrl": "https://twitter.com/celsopupo"

},

"categories": [

"People"

],

"framerate": 29.97,

"r18": 0

}

```

## Credits 👥

### Main

- Lopho - Part of TempoFunk Video Generation

- Chavinlo - Part of TempoFunk Video Generation & CleanVid Crawling, Scraping and Formatting

```

@InProceedings{Bain21,

author = "Max Bain and Arsha Nagrani and G{\"u}l Varol and Andrew Zisserman",

title = "Frozen in Time: A Joint Video and Image Encoder for End-to-End Retrieval",

booktitle = "IEEE International Conference on Computer Vision",

year = "2021",

}

```

### Extra

- Salt - Base Threading Code (2022) |

true |

# Data for *Advancing Italian Biomedical Information Extraction with Large Language Models: Methodological Insights and Multicenter Practical Application*

Manuscript available at [arxiv.org/abs/2306.05323](https://arxiv.org/abs/2306.05323)

## Abstract

The introduction of computerized medical records in hospitals has reduced burdensome activities like manual writing and information fetching. However, the data contained in medical records are still far underutilized, primarily because extracting data from unstructured textual medical records takes time and effort. Information Extraction, a subfield of Natural Language Processing, can help clinical practitioners overcome this limitation by using automated text-mining pipelines. In this work, we created the first Italian neuropsychiatric Named Entity Recognition dataset, PsyNIT, and used it to develop a Large Language Model for this task. Moreover, we collected and leveraged three external independent datasets to implement an effective multicenter model, with overall F1-score 84.77%, Precision 83.16%, Recall 86.44%. The lessons learned are: (i) the crucial role of a consistent annotation process and (ii) a fine-tuning strategy that combines classical methods with a "few-shot" approach. This allowed us to establish methodological guidelines that pave the way for Natural Language Processing studies in less-resourced languages.

*Keywords*: Natural Language Processing | Deep Learning | Biomedical Text Mining | Large Language Model | Transformer

*Correspondence*: ccrema@fatebenefratelli.eu |

false |

The raw data on fictional characters, including images, was collected from Wikidata for the "Draw me like my triples" project. |

false | For the English version, please click [here](README_en.md).

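# Overview

`databricks-dolly-15k-ja-scored` is a derivative of `kunishou/databricks-dolly-15k-ja` with added translation quality scores provided by BERTScore.

This dataset may be used for any purpose, academic or commercial, under the terms of the [Creative Commons Attribution-ShareAlike 3.0 Unported License](https://creativecommons.org/licenses/by-sa/3.0/deed.ja).

# Translation Quality Scores

`databricks-dolly-15k-ja` is a machine translation of `databricks-dolly-15k`.

An examination of the data in `databricks-dolly-15k-ja` revealed low-quality entries such as the following:

1. Entries where `input` and `output` are exactly the same

2. Entries where `output` has been copied into `instruction`

3. Entries where inconsistent notation breaks the consistency of expression

4. Entries where the translation of proper nouns and similar terms has failed

Note that in `databricks-dolly-15k`:

- Entries matching `1.` have been removed.

- For entries matching `2.`, the instruction has been removed and the category changed to open-qa.

- One entry containing discriminatory content has been removed.

We therefore back-translated these entries from Japanese into English and measured their similarity to the original text with [BertScore](https://arxiv.org/abs/1904.09675).

Below is a histogram of the f1 score, the harmonic mean of precision and recall.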

<div align="center">

<img src="https://media.githubusercontent.com/media/Sakusakumura/databricks-dolly-15k-ja-scored/e46aec8f9d2602e9e7c074674390263462534a9a/images/f1-score-full.png">

</div>

Filtering by these scores makes it possible to exclude low-quality data.
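Score-based filtering could be sketched in Python as follows. The f1 score is computed as the harmonic mean of precision and recall, and entries below a threshold are dropped. The key name `f1`, the threshold of 0.8, and the second record are hypothetical; only index 1151 and its score come from this card.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def filter_by_f1(records, threshold):
    """Keep only records whose f1 score reaches the threshold."""
    return [r for r in records if r["f1"] >= threshold]


records = [
    {"index": 1151, "f1": 0.599859416},  # score quoted in this card
    {"index": 0, "f1": 0.95},            # hypothetical high-quality entry
]

# The threshold is an assumption; pick it by inspecting the histogram.
kept = filter_by_f1(records, threshold=0.8)
print([r["index"] for r in kept])
```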

Here are some examples.

<details>

<summary>Entries where `output` has been copied into `instruction`</summary>

|index|f1 score|

|---|---|

|1151|0.599859416|

### candidate (back-translation of the `databricks-dolly-15k-ja` data)

|instruction|input|output|

|--|--|--|