text-classification bool 2 classes | text stringlengths 0 664k |

|---|---|

false |

# MIRACL (ar) embedded with cohere.ai `multilingual-22-12` encoder

We encoded the [MIRACL dataset](https://huggingface.co/miracl) using the [cohere.ai](https://txt.cohere.ai/multilingual/) `multilingual-22-12` embedding model.

The query embeddings can be found in [Cohere/miracl-ar-queries-22-12](https://huggingface.co/datasets/Cohere/miracl-ar-queries-22-12) and the corpus embeddings can be found in [Cohere/miracl-ar-corpus-22-12](https://huggingface.co/datasets/Cohere/miracl-ar-corpus-22-12).

For the original datasets, see [miracl/miracl](https://huggingface.co/datasets/miracl/miracl) and [miracl/miracl-corpus](https://huggingface.co/datasets/miracl/miracl-corpus).

Dataset info:

> MIRACL 🌍🙌🌏 (Multilingual Information Retrieval Across a Continuum of Languages) is a multilingual retrieval dataset that focuses on search across 18 different languages, which collectively encompass over three billion native speakers around the world.

>

> The corpus for each language is prepared from a Wikipedia dump, where we keep only the plain text and discard images, tables, etc. Each article is segmented into multiple passages using WikiExtractor based on natural discourse units (e.g., `\n\n` in the wiki markup). Each of these passages comprises a "document" or unit of retrieval. We preserve the Wikipedia article title of each passage.

## Embeddings

We compute for `title+" "+text` the embeddings using our `multilingual-22-12` embedding model, a state-of-the-art model that works for semantic search in 100 languages. If you want to learn more about this model, have a look at [cohere.ai multilingual embedding model](https://txt.cohere.ai/multilingual/).

## Loading the dataset

In [miracl-ar-corpus-22-12](https://huggingface.co/datasets/Cohere/miracl-ar-corpus-22-12) we provide the corpus embeddings. Note, depending on the selected split, the respective files can be quite large.

You can either load the dataset like this:

```python

from datasets import load_dataset

docs = load_dataset(f"Cohere/miracl-ar-corpus-22-12", split="train")

```

Or you can stream it without downloading it first:

```python

from datasets import load_dataset

docs = load_dataset(f"Cohere/miracl-ar-corpus-22-12", split="train", streaming=True)

for doc in docs:
    docid = doc['docid']
    title = doc['title']
    text = doc['text']
    emb = doc['emb']

```

## Search

Have a look at [miracl-ar-queries-22-12](https://huggingface.co/datasets/Cohere/miracl-ar-queries-22-12) where we provide the query embeddings for the MIRACL dataset.

To search in the documents, you must use **dot-product**.

Then compare the query embeddings with the document embeddings, either using a vector database (recommended) or by computing the dot product directly.

A full search example:

```python

# Attention! For large datasets, this requires a lot of memory to store

# all document embeddings and to compute the dot product scores.

# Only use this for smaller datasets. For large datasets, use a vector DB

from datasets import load_dataset

import torch

#Load documents + embeddings

docs = load_dataset(f"Cohere/miracl-ar-corpus-22-12", split="train")

doc_embeddings = torch.tensor(docs['emb'])

# Load queries

queries = load_dataset(f"Cohere/miracl-ar-queries-22-12", split="dev")

# Select the first query as example

qid = 0

query = queries[qid]

query_embedding = torch.tensor(query['emb']).unsqueeze(0)  # shape (1, dim); torch.mm needs 2-D input

# Compute dot score between query embedding and document embeddings

dot_scores = torch.mm(query_embedding, doc_embeddings.transpose(0, 1))

top_k = torch.topk(dot_scores, k=3)

# Print results

print("Query:", query['query'])

for doc_id in top_k.indices[0].tolist():
    print(docs[doc_id]['title'])
    print(docs[doc_id]['text'])

```
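When the corpus does not fit in memory, the same dot-product search can be run over the streamed dataset in batches while keeping only the best hits. The following is a minimal sketch, assuming each record carries the `docid` and `emb` fields shown earlier; the helper name `stream_top_k` and the batch size are illustrative choices, not part of any library.

```python
import heapq
from itertools import islice

import numpy as np


def stream_top_k(query_embedding, docs, k=3, batch_size=1024):
    """Return the k best (score, docid) pairs, highest score first.

    `docs` is any iterable of records with 'docid' and 'emb' keys, for
    example a dataset loaded with streaming=True. Hypothetical helper.
    """
    query = np.asarray(query_embedding, dtype=np.float32)
    heap = []  # min-heap of (score, counter, docid), never larger than k
    counter = 0  # unique tie-breaker so docids are never compared
    iterator = iter(docs)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            break
        embeddings = np.asarray([d["emb"] for d in batch], dtype=np.float32)
        scores = embeddings @ query  # one dot product per document
        for doc, score in zip(batch, scores):
            item = (float(score), counter, doc["docid"])
            counter += 1
            if len(heap) < k:
                heapq.heappush(heap, item)
            elif item > heap[0]:
                heapq.heappushpop(heap, item)
    return [(score, docid) for score, _, docid in sorted(heap, reverse=True)]
```

Memory use is bounded by one batch of embeddings plus `k` heap entries, at the cost of a full pass over the corpus for every query. A vector database remains the better option for repeated queries.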

You can get embeddings for new queries using our API:

```python

#Run: pip install cohere

import cohere

co = cohere.Client(f"{api_key}") # You should add your cohere API Key here :))

texts = ['my search query']

response = co.embed(texts=texts, model='multilingual-22-12')

query_embedding = response.embeddings[0] # Get the embedding for the first text

```

## Performance

In the following table we compare the cohere multilingual-22-12 model with Elasticsearch version 8.6.0 lexical search (title and passage indexed as independent fields). Note that Elasticsearch doesn't support all languages that are part of the MIRACL dataset.

We compute nDCG@10 (a ranking-based metric) as well as hit@3, which checks whether at least one relevant document is in the top-3 results. We find hit@3 easier to interpret, as it gives the share of queries for which a relevant document is found among the top-3 results.
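To make the two metrics concrete, the sketch below computes hit@k and nDCG@k for a single query from a ranked list of document ids and a dict of relevance judgments. It assumes linear gain with the common log2 rank discount, and the function names are illustrative; the official MIRACL evaluation scripts may differ in details.

```python
import math


def hit_at_k(ranked_ids, relevance, k=3):
    """Return 1.0 if any of the top-k documents is relevant, else 0.0."""
    return float(any(relevance.get(doc_id, 0) > 0 for doc_id in ranked_ids[:k]))


def ndcg_at_k(ranked_ids, relevance, k=10):
    """nDCG@k with linear gain and log2 rank discount."""
    dcg = sum(
        relevance.get(doc_id, 0) / math.log2(rank + 2)
        for rank, doc_id in enumerate(ranked_ids[:k])
    )
    # Ideal ranking: all judged documents sorted by decreasing relevance.
    ideal_gains = sorted(relevance.values(), reverse=True)[:k]
    idcg = sum(gain / math.log2(rank + 2) for rank, gain in enumerate(ideal_gains))
    return dcg / idcg if idcg > 0 else 0.0
```

Averaging these per-query values over all queries gives corpus-level scores, which are then typically reported as percentages.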

Note: MIRACL only annotated a small fraction of passages (10 per query) for relevance. Especially for larger Wikipedias (like English), we often found many more relevant passages. This is known as annotation holes. Real nDCG@10 and hit@3 performance is likely higher than depicted.

| Model | cohere multilingual-22-12 nDCG@10 | cohere multilingual-22-12 hit@3 | ES 8.6.0 nDCG@10 | ES 8.6.0 hit@3 |

|---|---|---|---|---|

| miracl-ar | 64.2 | 75.2 | 46.8 | 56.2 |

| miracl-bn | 61.5 | 75.7 | 49.2 | 60.1 |

| miracl-de | 44.4 | 60.7 | 19.6 | 29.8 |

| miracl-en | 44.6 | 62.2 | 30.2 | 43.2 |

| miracl-es | 47.0 | 74.1 | 27.0 | 47.2 |

| miracl-fi | 63.7 | 76.2 | 51.4 | 61.6 |

| miracl-fr | 46.8 | 57.1 | 17.0 | 21.6 |

| miracl-hi | 50.7 | 62.9 | 41.0 | 48.9 |

| miracl-id | 44.8 | 63.8 | 39.2 | 54.7 |

| miracl-ru | 49.2 | 66.9 | 25.4 | 36.7 |

| **Avg** | 51.7 | 67.5 | 34.7 | 46.0 |

Further languages (not supported by Elasticsearch):

| Model | cohere multilingual-22-12 nDCG@10 | cohere multilingual-22-12 hit@3 |

|---|---|---|

| miracl-fa | 44.8 | 53.6 |

| miracl-ja | 49.0 | 61.0 |

| miracl-ko | 50.9 | 64.8 |

| miracl-sw | 61.4 | 74.5 |

| miracl-te | 67.8 | 72.3 |

| miracl-th | 60.2 | 71.9 |

| miracl-yo | 56.4 | 62.2 |

| miracl-zh | 43.8 | 56.5 |

| **Avg** | 54.3 | 64.6 |

|

false |

Redistributed without modification from https://github.com/phelber/EuroSAT.

EuroSAT100 is a subset of EuroSATallBands containing only 100 images. It is intended for tutorials and demonstrations, not for benchmarking. |

false | # Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

The dataset contains pairs of sentences with a `next_sentence_label` for next sentence prediction (NSP). The sentences were taken from a public Jira projects dataset. The next sentence is always either the following sentence in the same comment or a sentence from a reply to that comment.

### Supported Tasks and Leaderboards

NSP, MLM

### Languages

English

## Dataset Structure

sentence_a, sentence_b, next_sentence_label

### Source Data

https://zenodo.org/record/5901804#.Y_Xv4HZBxD9

|

false | # Urdu_DW-BBC-512

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact: mubashir.munaaf@gmail.com**

### Dataset Summary

Urdu summarization dataset containing 76,637 article and summary pairs scraped from the BBC Urdu and DW Urdu news websites.

-Preprocessed version truncated to 512 tokens (~words); URLs, picture captions, etc. removed

### Supported Tasks and Leaderboards

Summarization

-Extractive and Abstractive

-urT5 (monolingual vocabulary; Urdu of 40k tokens) adapted from mT5 with own vocabulary was fine-tuned

-ROUGE-1 F Score: 40.03 combined, 46.35 BBC Urdu datapoints only and 36.91 DW Urdu datapoints only

-BERTScore: 75.1 combined, 77.0 BBC Urdu datapoints only and 74.16 DW Urdu datapoints only

### Languages

Urdu.

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

- url: URL of the article from which it was scraped (BBC Urdu URLs contain English topic text with a number; DW Urdu URLs contain Urdu topic text)

dtype: {string}

- Summary: Short summary of the article written by its author, similar to highlights.

dtype: {string}

- Text: Complete text of the article, intelligently truncated to 512 tokens.

dtype: {string}

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

## Considerations for Using the Data

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

false | # Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

KG dataset created by using spaCy PoS and Dependency parser.

### Supported Tasks and Leaderboards

Can be leveraged for token classification for detection of knowledge graph entities and relations.

### Languages

English

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

Important fields for the token classification task are

* tokens - tokenized text

* tags - Tags for each token

{'SRC' - Source, 'REL' - Relation, 'TGT' - Target, 'O' - Others}
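As a rough illustration of how these fields could be consumed, the sketch below groups contiguous tokens that share a tag into spans, which can then be read as source, relation, and target of a knowledge-graph edge. It assumes `tokens` and `tags` are parallel lists and that adjacent tokens with the same tag belong to one span; that grouping rule and the function name are assumptions, not something the dataset card specifies.

```python
from itertools import groupby


def extract_spans(tokens, tags):
    """Group contiguous tokens that share a non-'O' tag into (tag, text) spans."""
    spans = []
    pairs = zip(tokens, tags)
    for tag, group in groupby(pairs, key=lambda pair: pair[1]):
        if tag != "O":
            spans.append((tag, " ".join(token for token, _ in group)))
    return spans
```

For a hypothetical record with tokens `["Marie", "Curie", "discovered", "radium", "."]` and tags `["SRC", "SRC", "REL", "TGT", "O"]`, the spans read as the triple (Marie Curie, discovered, radium).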

### Data Splits

One data file for around 15k records

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

true | |

false |

# DEplain-web-doc: A corpus for German Document Simplification

DEplain-web-doc is a subcorpus of DEplain [Stodden et al., 2023](https://arxiv.org/abs/2305.18939) for document simplification.

The corpus consists of 396 (199/50/147) parallel documents crawled from the web in standard German and plain German (or easy-to-read German). All documents are either published under an open license or the copyright holders gave us the permission to share the data.

If you are interested in a larger corpus, please check our paper and the provided web crawler to download more parallel documents with a closed license.

Human annotators also sentence-wise aligned the 147 documents of the test set to build a corpus for sentence simplification.

For the sentence-level version of this corpus, please see [https://huggingface.co/datasets/DEplain/DEplain-web-sent](https://huggingface.co/datasets/DEplain/DEplain-web-sent).

The documents of the training and development set were automatically aligned using MASSalign.

You can find this data here: [https://github.com/rstodden/DEPlain/](https://github.com/rstodden/DEPlain/tree/main/E__Sentence-level_Corpus/DEplain-web-sent/auto/open).

If you use the automatically aligned data, please use it cautiously, as the alignment quality might be error-prone.

# Dataset Card for DEplain-web-doc

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Repository:** [DEplain-web GitHub repository](https://github.com/rstodden/DEPlain)

- **Paper:** ["DEplain: A German Parallel Corpus with Intralingual Translations into Plain Language for Sentence and Document Simplification."](https://arxiv.org/abs/2305.18939)

- **Point of Contact:** [Regina Stodden](regina.stodden@hhu.de)

### Dataset Summary

[DEplain-web](https://github.com/rstodden/DEPlain) [(Stodden et al., 2023)](https://arxiv.org/abs/2305.18939) is a dataset for the evaluation of sentence and document simplification in German. All texts of this dataset are scraped from the web. All documents were licenced with an open license. The simple-complex sentence pairs are manually aligned.

This dataset only contains a test set. For additional training and development data, please scrape more data from the web using a [web scraper for text simplification data](https://github.com/rstodden/data_collection_german_simplification) and align the sentences of the documents automatically using, for example, [MASSalign](https://github.com/ghpaetzold/massalign) by [Paetzold et al. (2017)](https://www.aclweb.org/anthology/I17-3001/).

### Supported Tasks and Leaderboards

The dataset supports the evaluation of `text-simplification` systems. Success in this task is typically measured using the [SARI](https://huggingface.co/metrics/sari) and [FKBLEU](https://huggingface.co/metrics/fkbleu) metrics described in the paper [Optimizing Statistical Machine Translation for Text Simplification](https://www.aclweb.org/anthology/Q16-1029.pdf).

### Languages

The texts in this dataset are written in German (de-de). The texts are in German plain language variants, e.g., plain language (Einfache Sprache) or easy-to-read language (Leichte Sprache).

### Domains

The texts are from 6 different domains: fictional texts (literature and fairy tales), bible texts, health-related texts, texts for language learners, texts for accessibility, and public administration texts.

## Dataset Structure

### Data Access

- The dataset is licensed with different open licenses dependent on the subcorpora.

### Data Instances

- `document-simplification` configuration: an instance consists of an original document and one reference simplification.

- `sentence-simplification` configuration: an instance consists of an original sentence and one manually aligned reference simplification. Please see [https://huggingface.co/datasets/DEplain/DEplain-web-sent](https://huggingface.co/datasets/DEplain/DEplain-web-sent).

- `sentence-wise alignment` configuration: an instance consists of original and simplified documents and manually aligned sentence pairs. In contrast to the sentence-simplification configurations, this configuration contains also sentence pairs in which the original and the simplified sentences are exactly the same. Please see [https://github.com/rstodden/DEPlain](https://github.com/rstodden/DEPlain/tree/main/C__Alignment_Algorithms)

### Data Fields

| data field | data field description |

|-------------------------------------------------|-------------------------------------------------------------------------------------------------------|

| `original` | an original text from the source dataset |

| `simplification` | a simplified text from the source dataset |

| `pair_id` | document pair id |

| `complex_document_id ` (on doc-level) | id of complex document (-1) |

| `simple_document_id ` (on doc-level) | id of simple document (-0) |

| `original_id ` (on sent-level) | id of sentence(s) of the original text |

| `simplification_id ` (on sent-level) | id of sentence(s) of the simplified text |

| `domain ` | text domain of the document pair |

| `corpus ` | subcorpus name |

| `simple_url ` | origin URL of the simplified document |

| `complex_url ` | origin URL of the original document |

| `simple_level ` or `language_level_simple ` | required CEFR language level to understand the simplified document |

| `complex_level ` or `language_level_original ` | required CEFR language level to understand the original document |

| `simple_location_html ` | location on hard disk where the HTML file of the simple document is stored |

| `complex_location_html ` | location on hard disk where the HTML file of the original document is stored |

| `simple_location_txt ` | location on hard disk where the content extracted from the HTML file of the simple document is stored |

| `complex_location_txt ` | location on hard disk where the content extracted from the HTML file of the original document is stored |

| `alignment_location ` | location on hard disk where the alignment is stored |

| `simple_author ` | author (or copyright owner) of the simplified document |

| `complex_author ` | author (or copyright owner) of the original document |

| `simple_title ` | title of the simplified document |

| `complex_title ` | title of the original document |

| `license ` | license of the data |

| `last_access ` or `access_date` | date of origin of the data or date when the HTML files were downloaded |

| `rater` | id of the rater who annotated the sentence pair |

| `alignment` | type of alignment, e.g., 1:1, 1:n, n:1 or n:m |
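The `alignment` value follows from how many sentences sit on each side of an aligned pair. A minimal sketch, assuming the original and simplified sentence ids are available as lists and that the left side of the label refers to the original text; the function name is hypothetical and the exact id format in the dataset is not shown here.

```python
def alignment_type(original_ids, simplification_ids):
    """Label an aligned pair as '1:1', '1:n', 'n:1' or 'n:m'."""
    if not original_ids or not simplification_ids:
        raise ValueError("both sides of an alignment need at least one sentence")
    left = "1" if len(original_ids) == 1 else "n"
    if len(simplification_ids) == 1:
        right = "1"
    else:
        # Use 'm' only when both sides contain several sentences.
        right = "n" if left == "1" else "m"
    return f"{left}:{right}"
```

Under this reading, a sentence split shows up as `1:n` and a merge of several original sentences into one simplified sentence as `n:1`.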

### Data Splits

DEplain-web contains a training set, a development set and a test set.

The dataset was split based on the license of the data. All manually aligned sentence pairs with an open license are part of the test set. The document-level test set also contains only the documents that are manually aligned. For the document-level training and development sets, the documents that are not manually aligned or not publicly available are used. For the sentence level, the alignment pairs can be produced by automatic alignments (see [Stodden et al., 2023](https://arxiv.org/abs/2305.18939)).

Document-level:

| | Train | Dev | Test | Total |

|-------------------------|-------|-----|------|-------|

| DEplain-web-manual-open | - | - | 147 | 147 |

| DEplain-web-auto-open | 199 | 50 | - | 249 |

| DEplain-web-auto-closed | 288 | 72 | - | 360 |

| in total | 487 | 122 | 147 | 756 |

Sentence-level:

| | Train | Dev | Test | Total |

|-------------------------|-------|-----|------|-------|

| DEplain-web-manual-open | - | - | 1846 | 1846 |

| DEplain-web-auto-open | 514 | 138 | - | 652 |

| DEplain-web-auto-closed | 767 | 175 | - | 942 |

| in total | 1281 | 313 | 1846 | 3440 |

| **subcorpus** | **simple** | **complex** | **domain** | **description** | **# doc.** |

|----------------------------------|------------------|------------------|------------------|-------------------------------------------------------------------------------|------------------|

| **EinfacheBücher** | Plain German | Standard German / Old German | fiction | Books in plain German | 15 |

| **EinfacheBücherPassanten** | Plain German | Standard German / Old German | fiction | Books in plain German | 4 |

| **ApothekenUmschau** | Plain German | Standard German | health | Health magazine in which diseases are explained in plain German | 71 |

| **BZFE** | Plain German | Standard German | health | Information of the German Federal Agency for Food on good nutrition | 18 |

| **Alumniportal** | Plain German | Plain German | language learner | Texts related to Germany and German traditions written for language learners. | 137 |

| **Lebenshilfe** | Easy-to-read German | Standard German | accessibility | | 49 |

| **Bibel** | Easy-to-read German | Standard German | bible | Bible texts in easy-to-read German | 221 |

| **NDR-Märchen** | Easy-to-read German | Standard German / Old German | fiction | Fairytales in easy-to-read German | 10 |

| **EinfachTeilhaben** | Easy-to-read German | Standard German | accessibility | | 67 |

| **StadtHamburg** | Easy-to-read German | Standard German | public authority | Information of and regarding the German city Hamburg | 79 |

| **StadtKöln** | Easy-to-read German | Standard German | public authority | Information of and regarding the German city Cologne | 85 |

: Documents per Domain in DEplain-web.

| domain | avg. | std. | interpretation | # sents | # docs |

|------------------|---------------|---------------|-------------------------|-------------------|------------------|

| bible | 0.7011 | 0.31 | moderate | 6903 | 3 |

| fiction | 0.6131 | 0.39 | moderate | 23289 | 3 |

| health | 0.5147 | 0.28 | weak | 13736 | 6 |

| language learner | 0.9149 | 0.17 | almost perfect | 18493 | 65 |

| all | 0.8505 | 0.23 | strong | 87645 | 87 |

: Inter-Annotator-Agreement per Domain in DEplain-web-manual.

| operation | documents | percentage |

|-----------|-------------|------------|

| rephrase | 863 | 11.73 |

| deletion | 3050 | 41.47 |

| addition | 1572 | 21.37 |

| identical | 887 | 12.06 |

| fusion | 110 | 1.5 |

| merge | 77 | 1.05 |

| split | 796 | 10.82 |

| in total | 7355 | 100 |

: Information regarding Simplification Operations in DEplain-web-manual.

## Dataset Creation

### Curation Rationale

Current German text simplification datasets are limited in their size or are only automatically evaluated.

We provide a manually aligned corpus to boost text simplification research in German.

### Source Data

#### Initial Data Collection and Normalization

The parallel documents were scraped from the web using a [web scraper for text simplification data](https://github.com/rstodden/data_collection_german_simplification).

The texts of the documents were manually simplified by professional translators.

The data was split into sentences using a German model of SpaCy.

Two German native speakers have manually aligned the sentence pairs by using the text simplification annotation tool [TS-ANNO](https://github.com/rstodden/TS_annotation_tool) by [Stodden & Kallmeyer (2022)](https://aclanthology.org/2022.acl-demo.14/).

#### Who are the source language producers?

The texts of the documents were manually simplified by professional translators. For an extensive list of the scraped URLs, see Table 10 in [Stodden et al. (2023)](https://arxiv.org/abs/2305.18939).

### Annotations

#### Annotation process

The instructions given to the annotators are available [here](https://github.com/rstodden/TS_annotation_tool/tree/master/annotation_schema).

#### Who are the annotators?

The annotators are two German native speakers, who are trained in linguistics. Both were at least compensated with the minimum wage of their country of residence.

They are not part of any target group of text simplification.

### Personal and Sensitive Information

No sensitive data.

## Considerations for Using the Data

### Social Impact of Dataset

Many people do not understand texts due to their complexity. With automatic text simplification methods, the texts can be simplified for them. Our new training data can help in training a text simplification (TS) model.

### Discussion of Biases

No bias is known.

### Other Known Limitations

The dataset is provided under different open licenses depending on the license of each website where the data was scraped from. Please check the dataset license for additional information.

## Additional Information

### Dataset Curators

DEplain-APA was developed by researchers at the Heinrich-Heine-University Düsseldorf, Germany. This research is part of the PhD program "Online Participation", supported by the North Rhine-Westphalian (German) funding scheme "Forschungskolleg".

### Licensing Information

The corpus includes the following licenses: CC-BY-SA-3, CC-BY-4, and CC-BY-NC-ND-4. The corpus also includes a "save_use_share" license; for these documents, the data provider permitted us to share the data for research purposes.

### Citation Information

```

@inproceedings{stodden-etal-2023-deplain,

title = "{DE}-plain: A German Parallel Corpus with Intralingual Translations into Plain Language for Sentence and Document Simplification",

author = "Stodden, Regina and

Momen, Omar and

Kallmeyer, Laura",

booktitle = "Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics",

month = jul,

year = "2023",

address = "Toronto, Canada",

publisher = "Association for Computational Linguistics",

notes = "preprint: https://arxiv.org/abs/2305.18939",

}

```

This dataset card uses material written by [Juan Diego Rodriguez](https://github.com/juand-r) and [Yacine Jernite](https://github.com/yjernite). |

true |

# Sentiment fairness dataset


This dataset is to measure gender fairness in the downstream task of sentiment analysis. This dataset is a subset of the SST data that was filtered to have only the sentences that contain gender information. The python code used to create this dataset can be found in the prepare_sst.ipyth file.

Then the filtered dataset was labeled by 4 human annotators, who are the authors of this dataset. The annotation instructions are given below.

---

# Annotation Instructions


Each sentence has two existing labels:

* 'label' gives the sentiment score

* 'gender' gives the guessed gender of the target of the sentiment

The 'gender' label has two tags:

* 'masc' for masculine-gendered words, like 'he' or 'father'

* 'femm' for feminine-gendered words, like 'she' or 'mother'

For each sentence, you are to annotate if the sentence's **sentiment is directed toward a gendered person** i.e. the gender label is correct.

There are two primary ways the gender label can be incorrect: 1) the sentiment is not directed toward a gendered person/character, or 2) the sentiment is directed toward a gendered person/character but the gender is incorrect.

Please annotate **1** if the sentence is **correctly labeled** and **0** if not.

(The sentiment labels should be high quality, so mostly we're checking that the gender is correctly labeled.)

Some clarifying notes:

* If the sentiment is directed towards multiple people with different genders, mark as 0; in this case, the subject of the sentiment is not towards a single gender.

* If the sentiment is directed towards the movie or its topic, even if the movie or topic seems gendered, mark as 0; in this case, the subject of the sentiment isn't a person or character (it's a topic).

* If the sentiment is directed towards a named person or character, and you think you can infer the gender, don't! We are only marking as 1 sentences where the subject is gendered in the sentence itself.

## Positive examples (you'd annotate 1)

* sentence: She gave an excellent performance.

* label: .8

* gender: femm

Sentiment is directed at the 'she'.

---

* sentence: The director gets excellent performances out of his cast.

* label: .7

* gender: masc

Sentiment is directed at the male-gendered director.

---

* sentence: Davis the performer is plenty fetching enough, but she needs to shake up the mix, and work in something that doesn't feel like a half-baked stand-up routine.

* label: .4

* gender: femm

Sentiment is directed at Davis, who is gendered with the pronoun 'she'.

## Negative examples (you'd annotate 0)

* sentence: A near miss for this new director.

* label: .3

* gender: femm

This sentence was labeled 'femm' because it had the word 'miss' in it, but the sentiment is not actually directed towards a feminine person (we don't know the gender of the director).

---

* sentence: This terrible book-to-movie adaption must have the author turning in his grave.

* label: .2

* gender: masc

The sentiment is directed towards the movie, or maybe the director, but not the male-gendered author.

---

* sentence: Despite a typical mother-daughter drama, the excellent acting makes this movie a charmer.

* label: .8

* gender: femm

Sentiment is directed at the acting, not a person or character.

---

* sentence: The film's maudlin focus on the young woman's infirmity and her naive dreams play like the worst kind of Hollywood heart-string plucking.

* label: .8

* gender: femm

Similar to above, the sentiment is directed towards the movie's focus---though the focus may be gendered, we are only keeping sentences where the sentiment is directed towards a gendered person or character.

---

* sentence: Lohman adapts to the changes required of her, but the actress and director Peter Kosminsky never get the audience to break through the wall her character erects.

* label: .4

* gender: femm

The sentiment is directed towards both the actress and the director, who may have different genders.

---

# The final dataset


The final dataset contains the following columns:

Sentences: the sentence that contains a sentiment.

label: the sentiment label, indicating whether the sentence is positive or negative.

gender: the gender of the target of the sentiment in the sentence.

A1: the annotation of the first annotator. ("1" means that the gender in the "gender" column is correctly the target of the sentence. "0" means otherwise)

A2: the annotation of the second annotator. ("1" means that the gender in the "gender" column is correctly the target of the sentence. "0" means otherwise)

A3: the annotation of the third annotator. ("1" means that the gender in the "gender" column is correctly the target of the sentence. "0" means otherwise)

Keep: a boolean indicating whether to keep this sentence or not. "Keep" means that the gender of this sentence was labelled by more than one annotator as correct.

agreement: the number of annotators who agreed on the label.

correct: the number of annotators who gave the majority of labels.

incorrect: the number of annotators who gave the minority labels.

**This dataset is ready to use as the majority of the human annotators agreed that the sentiment of these sentences is targeted at the gender mentioned in the "gender" column**
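The relation between the three annotation columns and the derived columns can be sketched as a simple majority vote. This is one reading of the column descriptions, not the authors' documented procedure; the function name and the returned `majority_label` key are hypothetical.

```python
def summarize_annotations(a1, a2, a3):
    """Derive majority-vote fields from three binary annotations.

    Each annotation is 1 if the annotator judged the gender label correct.
    """
    votes = [int(a1), int(a2), int(a3)]
    correct_votes = sum(votes)
    majority_label = 1 if correct_votes >= 2 else 0
    return {
        # Kept when more than one annotator marked the gender as correct.
        "Keep": correct_votes > 1,
        "majority_label": majority_label,
        # Number of annotators who chose the majority label.
        "agreement": votes.count(majority_label),
    }
```

With three binary votes there is always a majority, so `agreement` is either 2 or 3.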

---

# Citation


@misc{sst-sentiment-fainress-dataset,

title={A dataset to measure fairness in the sentiment analysis task},

author={Gero, Katy and Butters, Nathan and Bethke, Anna and Elsafoury, Fatma},

howpublished={https://github.com/efatmae/SST_sentiment_fairness_data},

year={2023}

}

|

false | # Dataset Card for "fd_dialogue"

This dataset contains transcripts for famous movies and TV shows from https://transcripts.foreverdreaming.org/

The dataset contains **only a small portion of Forever Dreaming's data**, as only transcripts with a clear dialogue format are included, such as:

```

PERSON 1: Hello

PERSON 2: Hello Person 2!

(they are both talking)

Something else happens

PERSON 1: What happened?

```
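A transcript in this format can be split into speaker turns with a small parser. The sketch below is an assumption about how one might process the text, not the tooling used to build the dataset: any line of the form `NAME: text` is treated as a turn, and everything else is kept as narration with no speaker.

```python
import re

# A speaker label is everything before the first colon on the line.
SPEAKER_LINE = re.compile(r"^([^:\n]+):\s*(.*)$")


def parse_transcript(text):
    """Split a transcript into (speaker, utterance) pairs.

    Lines without a 'NAME:' prefix are returned with speaker None.
    """
    turns = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        match = SPEAKER_LINE.match(line)
        if match:
            turns.append((match.group(1).strip(), match.group(2)))
        else:
            turns.append((None, line))
    return turns
```

Narration lines that happen to contain a colon would be misread as dialogue by this simple rule, so a stricter speaker pattern may be needed for real transcripts.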

Each row in the dataset is a single TV episode or movie (**5380** rows total), following the [OpenAssistant](https://open-assistant.io/) format.

The METADATA column is a JSON string with the keys *type* (movie or series), *show*, and *episode* ("" for movies), all with string values.
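Since METADATA is stored as a JSON string, it has to be decoded before the keys can be used. A minimal sketch with the standard library; the function name is illustrative and the example value in the usage note is made up to match the described keys.

```python
import json


def parse_metadata(metadata):
    """Decode the METADATA JSON string into a (type, show, episode) tuple."""
    record = json.loads(metadata)
    return record.get("type"), record.get("show"), record.get("episode", "")
```

For a hypothetical value such as `'{"type": "movie", "show": "Frozen (2013)", "episode": ""}'`, this returns `("movie", "Frozen (2013)", "")`.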

| Show | Count |

|----|----|

| A Discovery of Witches | 6 |

| Agents of S.H.I.E.L.D. | 9 |

| Alias | 102 |

| Angel | 64 |

| Bones | 114 |

| Boy Meets World | 24 |

| Breaking Bad | 27 |

| Brooklyn Nine-Nine | 8 |

| Buffy the Vampire Slayer | 113 |

| CSI: Crime Scene Investigation | 164 |

| Charmed | 176 |

| Childrens Hospital | 18 |

| Chuck | 17 |

| Crossing Jordan | 23 |

| Dawson's Creek | 128 |

| Degrassi Next Generation | 113 |

| Doctor Who | 699 |

| Doctor Who Special | 21 |

| Doctor Who_ | 108 |

| Downton Abbey | 18 |

| Dragon Ball Z Kai | 57 |

| FRIENDS | 227 |

| Foyle's War | 28 |

| Friday Night Lights | 7 |

| Game of Thrones | 6 |

| Gilmore Girls | 149 |

| Gintama | 41 |

| Glee | 11 |

| Gossip Girl | 5 |

| Greek | 33 |

| Grey's Anatomy | 75 |

| Growing Pains | 116 |

| Hannibal | 4 |

| Heartland | 3 |

| Hell on Wheels | 3 |

| House | 153 |

| How I Met Your Mother | 133 |

| JoJo's Bizarre Adventure | 42 |

| Justified | 46 |

| Keeping Up With the Kardashians | 8 |

| Lego Ninjago: Masters of Spinjitzu | 12 |

| London Spy | 5 |

| Lost | 117 |

| Lucifer | 3 |

| Married | 9 |

| Mars | 6 |

| Merlin | 58 |

| My Little Pony: Friendship is Magic | 15 |

| NCIS | 91 |

| New Girl | 3 |

| Once Upon A Time | 79 |

| One Tree Hill | 163 |

| Open Heart | 8 |

| Pretty Little Liars | 4 |

| Prison Break | 23 |

| Queer As Folk | 38 |

| Reign | 9 |

| Roswell | 60 |

| Salem | 23 |

| Scandal | 7 |

| Schitt's Creek | 4 |

| Scrubs | 29 |

| Sex and the City | 4 |

| Sherlock | 8 |

| Skins | 20 |

| Smallville | 190 |

| Sons of Anarchy | 55 |

| South Park | 84 |

| Spy × Family | 12 |

| StarTalk | 6 |

| Sugar Apple Fairy Tale | 5 |

| Supernatural | 114 |

| Teen Wolf | 58 |

| That Time I Got Reincarnated As A Slime | 22 |

| The 100 | 3 |

| The 4400 | 16 |

| The Amazing World of Gumball | 4 |

| The Big Bang Theory | 183 |

| The L Word | 3 |

| The Mentalist | 38 |

| The Nanny | 8 |

| The O.C. | 92 |

| The Office | 195 |

| The Originals | 45 |

| The Secret Life of an American Teenager | 18 |

| The Simpsons | 14 |

| The Vampire Diaries | 121 |

| The Walking Dead | 12 |

| The X-Files | 3 |

| Torchwood | 31 |

| Trailer Park Boys | 10 |

| True Blood | 33 |

| Tyrant | 6 |

| Veronica Mars | 59 |

| Vikings | 7 |

An additional 36 movies with transcripts are also included:

```

Pokémon the Movie: Hoopa and the Clash of Ages (2015)

Frozen (2013)

Home Alone

Lego Batman Movie, The (2017)

Disenchanted ( 2022)

Nightmare Before Christmas, The

Goonies, The (1985)

Polar Express, The (2004)

Frosty the Snowman (1969)

The Truth About Christmas (2018)

A Miser Brothers' Christmas (2008)

Powerpuff Girls: 'Twas the Fight Before Christmas, The (2003)

Tis the Season (2015)

Jingle Hell (2000)

Corpse Party: Book of Shadows (2016)

Mummy, The (1999)

Knock Knock (2015)

Dungeons and Dragons , Honour among thieves ( 2023)

w*r of the Worlds (2005)

Harry Potter and the Sorcerer's Stone

Twilight Saga, The: Breaking Dawn Part 2

Twilight Saga, The: Breaking Dawn Part 1

Twilight Saga, The: Eclipse

Godfather, The (1972)

Transformers (2007)

Creed 3 (2023)

Creed (2015)

Lethal w*apon 3 (1992)

Spider-Man 2 (2004)

Spider-Man: No Way Home (2021)

Black Panther Wakanda Forever ( 2022)

Money Train (1995)

Happys, The (2016)

Paris, Wine and Romance (2019)

Angel Guts: Red p*rn (1981)

Butterfly Crush (2010)

```

Note that there could be overlaps with the [TV dialogue dataset](https://huggingface.co/datasets/sedthh/tv_dialogue) for Friends, The Office, Doctor Who, South Park and some movies. |

true | |

false | |

false |

# Dataset Card for brain-tumor-m2pbp

**The original COCO dataset is stored at `dataset.tar.gz`**

## Dataset Description

- **Homepage:** https://universe.roboflow.com/object-detection/brain-tumor-m2pbp

- **Point of Contact:** francesco.zuppichini@gmail.com

### Dataset Summary

brain-tumor-m2pbp

### Supported Tasks and Leaderboards

- `object-detection`: The dataset can be used to train a model for Object Detection.

### Languages

English

## Dataset Structure

### Data Instances

A data point comprises an image and its object annotations.

```

{

'image_id': 15,

'image': <PIL.JpegImagePlugin.JpegImageFile image mode=RGB size=640x640 at 0x2373B065C18>,

'width': 964043,

'height': 640,

'objects': {

'id': [114, 115, 116, 117],

'area': [3796, 1596, 152768, 81002],

'bbox': [

[302.0, 109.0, 73.0, 52.0],

[810.0, 100.0, 57.0, 28.0],

[160.0, 31.0, 248.0, 616.0],

[741.0, 68.0, 202.0, 401.0]

],

'category': [4, 4, 0, 0]

}

}

```

### Data Fields

- `image_id`: the image id

- `image`: `PIL.Image.Image` object containing the image. Note that when accessing the image column: `dataset[0]["image"]` the image file is automatically decoded. Decoding of a large number of image files might take a significant amount of time. Thus it is important to first query the sample index before the `"image"` column, *i.e.* `dataset[0]["image"]` should **always** be preferred over `dataset["image"][0]`

- `width`: the image width

- `height`: the image height

- `objects`: a dictionary containing bounding box metadata for the objects present on the image

  - `id`: the annotation id

  - `area`: the area of the bounding box

  - `bbox`: the object's bounding box (in the [coco](https://albumentations.ai/docs/getting_started/bounding_boxes_augmentation/#coco) format)

  - `category`: the object's category.
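In the COCO format a bounding box is `[x_min, y_min, width, height]`. As a rough sketch, the snippet below converts such a box to corner coordinates and recomputes its area; the helper names are hypothetical, and the input values are taken from the data instance shown earlier.

```python
def coco_to_corners(bbox):
    """Convert a COCO box [x_min, y_min, width, height] to [x_min, y_min, x_max, y_max]."""
    x_min, y_min, width, height = bbox
    return [x_min, y_min, x_min + width, y_min + height]


def coco_area(bbox):
    """Area of a COCO box: width times height."""
    _, _, width, height = bbox
    return width * height


first_box = [302.0, 109.0, 73.0, 52.0]  # first entry of 'bbox' in the sample above
print(coco_to_corners(first_box))  # [302.0, 109.0, 375.0, 161.0]
print(coco_area(first_box))        # 3796.0, matching the first 'area' value
```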

#### Who are the annotators?

Annotators are Roboflow users

## Additional Information

### Licensing Information

See original homepage https://universe.roboflow.com/object-detection/brain-tumor-m2pbp

### Citation Information

```

@misc{ brain-tumor-m2pbp,

title = { brain tumor m2pbp Dataset },

type = { Open Source Dataset },

author = { Roboflow 100 },

howpublished = { \url{ https://universe.roboflow.com/object-detection/brain-tumor-m2pbp } },

url = { https://universe.roboflow.com/object-detection/brain-tumor-m2pbp },

journal = { Roboflow Universe },

publisher = { Roboflow },

year = { 2022 },

month = { nov },

note = { visited on 2023-03-29 },

}

```

### Contributions

Thanks to [@mariosasko](https://github.com/mariosasko) for adding this dataset. |

false |

# Dataset Card for printed-circuit-board

**The original COCO dataset is stored at `dataset.tar.gz`**

## Dataset Description

- **Homepage:** https://universe.roboflow.com/object-detection/printed-circuit-board

- **Point of Contact:** francesco.zuppichini@gmail.com

### Dataset Summary

printed-circuit-board

### Supported Tasks and Leaderboards

- `object-detection`: The dataset can be used to train a model for Object Detection.

### Languages

English

## Dataset Structure

### Data Instances

A data point comprises an image and its object annotations.

```

{

'image_id': 15,

'image': <PIL.JpegImagePlugin.JpegImageFile image mode=RGB size=640x640 at 0x2373B065C18>,

'width': 964043,

'height': 640,

'objects': {

'id': [114, 115, 116, 117],

'area': [3796, 1596, 152768, 81002],

'bbox': [

[302.0, 109.0, 73.0, 52.0],

[810.0, 100.0, 57.0, 28.0],

[160.0, 31.0, 248.0, 616.0],

[741.0, 68.0, 202.0, 401.0]

],

'category': [4, 4, 0, 0]

}

}

```

### Data Fields

- `image_id`: the image id

- `image`: `PIL.Image.Image` object containing the image. Note that when accessing the image column: `dataset[0]["image"]` the image file is automatically decoded. Decoding of a large number of image files might take a significant amount of time. Thus it is important to first query the sample index before the `"image"` column, *i.e.* `dataset[0]["image"]` should **always** be preferred over `dataset["image"][0]`

- `width`: the image width

- `height`: the image height

- `objects`: a dictionary containing bounding box metadata for the objects present on the image

  - `id`: the annotation id

  - `area`: the area of the bounding box

  - `bbox`: the object's bounding box (in the [coco](https://albumentations.ai/docs/getting_started/bounding_boxes_augmentation/#coco) format)

  - `category`: the object's category.
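The `objects` field is a dictionary of parallel lists, so the i-th entry of each list describes the same object. The sketch below regroups it into one record per object; `split_objects` is a hypothetical helper, and the values are copied from the data instance shown earlier.

```python
objects = {
    "id": [114, 115, 116, 117],
    "area": [3796, 1596, 152768, 81002],
    "bbox": [
        [302.0, 109.0, 73.0, 52.0],
        [810.0, 100.0, 57.0, 28.0],
        [160.0, 31.0, 248.0, 616.0],
        [741.0, 68.0, 202.0, 401.0],
    ],
    "category": [4, 4, 0, 0],
}


def split_objects(objects):
    """Turn a dict of parallel lists into a list of per-object dicts."""
    keys = list(objects)
    columns = (objects[key] for key in keys)
    return [dict(zip(keys, values)) for values in zip(*columns)]


records = split_objects(objects)
print(len(records))  # 4
print(records[0])
```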

#### Who are the annotators?

Annotators are Roboflow users

## Additional Information

### Licensing Information

See original homepage https://universe.roboflow.com/object-detection/printed-circuit-board

### Citation Information

```

@misc{ printed-circuit-board,

title = { printed circuit board Dataset },

type = { Open Source Dataset },

author = { Roboflow 100 },

howpublished = { \url{ https://universe.roboflow.com/object-detection/printed-circuit-board } },

url = { https://universe.roboflow.com/object-detection/printed-circuit-board },

journal = { Roboflow Universe },

publisher = { Roboflow },

year = { 2022 },

month = { nov },

note = { visited on 2023-03-29 },

}

```

### Contributions

Thanks to [@mariosasko](https://github.com/mariosasko) for adding this dataset. |

false | # AutoTrain Dataset for project: treehk

## Dataset Description

This dataset has been automatically processed by AutoTrain for project treehk.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"image": "<245x358 RGB PIL image>",

"target": 0

},

{

"image": "<400x530 RGB PIL image>",

"target": 0

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"image": "Image(decode=True, id=None)",

"target": "ClassLabel(names=['Acacia auriculiformis \u8033\u679c\u76f8\u601d', 'Acacia confusa Merr. \u53f0\u7063\u76f8\u601d', 'Acacia mangium Willd. \u5927\u8449\u76f8\u601d', 'Acronychia pedunculata (L.) Miq.--\u5c71\u6cb9\u67d1', 'Archontophoenix alexandrae \u4e9e\u529b\u5c71\u5927\u6930\u5b50', 'Bauhinia purpurea L. \u7d05\u82b1\u7f8a\u8e44\u7532', 'Bauhinia variegata L \u5bae\u7c89\u7f8a\u8e44\u7532', 'Bauhinia \u6d0b\u7d2b\u834a', 'Bischofia javanica Blume \u79cb\u6953', 'Bischofia polycarpa \u91cd\u967d\u6728', 'Callistemon rigidus R. Br \u7d05\u5343\u5c64', 'Callistemon viminalis \u4e32\u9322\u67f3', 'Cinnamomum burmannii \u9670\u9999', 'Cinnamomum camphora \u6a1f\u6a39', 'Crateva trifoliata \u920d\u8449\u9b5a\u6728 ', 'Crateva unilocularis \u6a39\u982d\u83dc', 'Delonix regia \u9cf3\u51f0\u6728', 'Elaeocarpus hainanensis Oliv \u6c34\u77f3\u6995', 'Elaeocarpus sylvestris \u5c71\u675c\u82f1', 'Erythrina variegata L. \u523a\u6850', 'Ficus altissima Blume \u9ad8\u5c71\u6995', 'Ficus benjamina L. \u5782\u8449\u6995', 'Ficus elastica Roxb. ex Hornem \u5370\u5ea6\u6995', 'Ficus microcarpa L. f \u7d30\u8449\u6995', 'Ficus religiosa L. \u83e9\u63d0\u6a39', 'Ficus rumphii Blume \u5047\u83e9\u63d0\u6a39', 'Ficus subpisocarpa Gagnep. \u7b46\u7ba1\u6995', 'Ficus variegata Blume \u9752\u679c\u6995', 'Ficus virens Aiton \u5927\u8449\u6995', 'Koelreuteria bipinnata Franch. \u8907\u7fbd\u8449\u6b12\u6a39', 'Livistona chinensis \u84b2\u8475', 'Melaleuca Cajeput-tree \u767d\u5343\u5c64 ', 'Melia azedarach L \u695d', 'Peltophorum pterocarpum \u76fe\u67f1\u6728', 'Peltophorum tonkinense \u9280\u73e0', 'Roystonea regia \u5927\u738b\u6930\u5b50', 'Schefflera actinophylla \u8f3b\u8449\u9d5d\u638c\u67f4', 'Schefflera heptaphylla \u9d5d\u638c\u67f4', 'Spathodea campanulata\u706b\u7130\u6a39', 'Sterculia lanceolata Cav. \u5047\u860b\u5a46', 'Wodyetia bifurcata A.K.Irvine \u72d0\u5c3e\u68d5'], id=None)"

}

```

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 385 |

| valid | 113 |

|

false | # Abalone

The [Abalone dataset](https://archive-beta.ics.uci.edu/dataset/1/abalone) from the [UCI ML repository](https://archive.ics.uci.edu/ml/datasets).

Predict the age of the given abalone.

# Configurations and tasks

| **Configuration** | **Task** | **Description** |

|-------------------|---------------------------|-----------------------------------------|

| abalone | Regression | Predict the age of the abalone. |

| binary | Binary classification | Does the abalone have more than 9 rings?|

# Usage

```python

from datasets import load_dataset

dataset = load_dataset("mstz/abalone")["train"]

```
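The `binary` configuration asks whether an abalone has more than 9 rings. As an illustrative sketch only, the same label can be derived by hand from a ring count; the function name, the 0/1 encoding and the sample values are assumptions, not taken from the dataset.

```python
def has_more_than_nine_rings(number_of_rings: int) -> int:
    """Return 1 if the abalone has more than 9 rings, else 0 (assumed encoding)."""
    return int(number_of_rings > 9)


rings = [7, 9, 10, 15]  # made-up ring counts
labels = [has_more_than_nine_rings(r) for r in rings]
print(labels)  # [0, 0, 1, 1]
```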

# Features

Target feature in bold.

|**Feature** |**Type** |

|-----------------------|---------------|

| sex | `[string]` |

| length | `[float64]` |

| diameter | `[float64]` |

| height | `[float64]` |

| whole_weight | `[float64]` |

| shucked_weight | `[float64]` |

| viscera_weight | `[float64]` |

| shell_weight | `[float64]` |

| **number_of_rings** | `[int8]` | |

true |

# Dataset Card for BLiterature

*BLiterature is part of a bigger project that is not yet complete. Not all information here may be accurate or accessible.*

## Dataset Description

- **Homepage:** (TODO)

- **Repository:** N/A

- **Paper:** N/A

- **Leaderboard:** N/A

- **Point of Contact:** KaraKaraWitch

### Dataset Summary

BLiterature is a raw dataset dump consisting of text from at most 260,261,224 blog posts (excluding categories and date-grouped posts) from blog.fc2.com.

### Supported Tasks and Leaderboards

This dataset is primarily intended for unsupervised training of text generation models; however, it may be useful for other purposes.

* text-classification

* text-generation

### Languages

* Japanese

## Dataset Structure

All data is stored in jsonl files that have been compressed into 7z archives.

### Data Instances

```json

["http://1kimono.blog49.fc2.com/blog-entry-50.html",

"<!DOCTYPE HTML\n\tPUBLIC \"-//W3C//DTD HTML 4.01 Transitional//EN\"\n\t\t\"http://www.w3.org/TR/html4/loose.dtd\">\n<!--\n<!DOCTYPE HTML\n\tPUBLIC \"-//W3C//DTD HTML 4.01//EN\"\n\t\t\"http://www.w3.org/T... (TRUNCATED)"]

```

### Data Fields

Each record is a list with only two fields: the URL and the retrieved content. Where the scraper ran into issues, the retrieved content holds an error marker instead of the page, written as XML such as:

```<?xml version="1.0" encoding="utf-8"?><error>Specifc Error</error>```

URLs may not match the final URL from which the page was retrieved, as redirects may have been followed while scraping.
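A minimal sketch of reading one record and detecting the error marker, assuming each jsonl line is a two-element JSON array like the instance shown above. The helper `parse_record`, the example URLs and the error text are hypothetical.

```python
import json
import xml.etree.ElementTree as ET


def parse_record(line: str):
    """Return (url, content, error); error is None for a normally scraped page."""
    url, content = json.loads(line)
    error = None
    if content.lstrip().startswith("<?xml"):
        try:
            root = ET.fromstring(content.encode("utf-8"))
            if root.tag == "error":
                error = root.text
        except ET.ParseError:
            # Not well-formed XML (e.g. an ordinary page); treat it as content.
            pass
    return url, content, error


# Made-up lines in the same shape as the dataset records.
ok_line = json.dumps(["http://example.blog.fc2.com/blog-entry-1.html",
                      "<!DOCTYPE HTML><html></html>"])
err_line = json.dumps(["http://example.blog.fc2.com/blog-entry-2.html",
                       '<?xml version="1.0" encoding="utf-8"?><error>Timeout</error>'])

print(parse_record(ok_line)[2])   # None
print(parse_record(err_line)[2])  # Timeout
```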

#### Q-Score Distribution

Not Applicable

### Data Splits

The jsonl files were split roughly every 2,500,000 posts. Allow for a slight deviation of 5000 additional posts due to how the files were saved.

## Dataset Creation

### Curation Rationale

fc2 is a Japanese blog hosting website where anyone can host a blog. As a result, the language used is more informal and relaxed than in more official sources, since anyone can post whatever they personally want.

### Source Data

#### Initial Data Collection and Normalization

None. No normalization is performed as this is a raw dump of the dataset.

#### Who are the source language producers?

The authors of each blog, which may include other people who post on the same blog domain.

### Annotations

#### Annotation process

No Annotations are present.

#### Who are the annotators?

No human annotators.

### Personal and Sensitive Information

As this dataset contains information from individuals, it is more likely to contain personally identifiable information. However, we believe that the authors have pre-vetted their posts in good faith to avoid such occurrences.

## Considerations for Using the Data

### Social Impact of Dataset

This dataset is intended to be useful for anyone who wishes to train a model to generate "more entertaining" content.

It may also be useful for other languages depending on your language model.

### Discussion of Biases

This dataset contains real-life references and revolves around Japanese culture. As such, there will be a bias towards it.

### Other Known Limitations

N/A

## Additional Information

### Dataset Curators

KaraKaraWitch

### Licensing Information

Apache 2.0, for all parts of which KaraKaraWitch may be considered authors. All other material is distributed under fair use principles.

Ronsor Labs additionally is allowed to relicense the dataset as long as it has gone through processing.

### Citation Information

```

@misc{bliterature,

title = {BLiterature: fc2 blogs for the masses.},

author = {KaraKaraWitch},

year = {2023},

howpublished = {\url{https://huggingface.co/datasets/KaraKaraWitch/BLiterature}},

}

```

### Name Etymology

[Literature (リテラチュア) - Reina Ueda (上田麗奈)](https://www.youtube.com/watch?v=Xo1g5HWgaRA)

`Blogs` > `B` + `Literature` > `BLiterature`

### Contributions

- [@KaraKaraWitch (Twitter)](https://twitter.com/KaraKaraWitch) for gathering this dataset.

- [neggles (Github)](https://github.com/neggles) for providing compute for gathering the dataset. |

true |

# Dataset Card for JSICK

## Table of Contents

- [Dataset Card for JSICK](#dataset-card-for-jsick)

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Japanese Sentences Involving Compositional Knowledge (JSICK) Dataset.](#japanese-sentences-involving-compositional-knowledge-jsick-dataset)

- [JSICK-stress Test set](#jsick-stress-test-set)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [base](#base)

- [stress](#stress)

- [Data Fields](#data-fields)

- [base](#base-1)

- [stress](#stress-1)

- [Data Splits](#data-splits)

- [Annotations](#annotations)

- [Additional Information](#additional-information)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://github.com/verypluming/JSICK

- **Repository:** https://github.com/verypluming/JSICK

- **Paper:** https://direct.mit.edu/tacl/article/doi/10.1162/tacl_a_00518/113850/Compositional-Evaluation-on-Japanese-Textual

- **Paper:** https://www.jstage.jst.go.jp/article/pjsai/JSAI2021/0/JSAI2021_4J3GS6f02/_pdf/-char/ja

### Dataset Summary

From official [GitHub](https://github.com/verypluming/JSICK):

#### Japanese Sentences Involving Compositional Knowledge (JSICK) Dataset.

JSICK is a Japanese NLI and STS dataset created by manually translating the English dataset [SICK (Marelli et al., 2014)](https://aclanthology.org/L14-1314/) into Japanese.

We hope that our dataset will be useful in research for realizing more advanced models that are capable of appropriately performing multilingual compositional inference.

#### JSICK-stress Test set

The JSICK-stress test set is a dataset to investigate whether models capture word order and case particles in Japanese.

The JSICK-stress test set is provided by transforming syntactic structures of sentence pairs in JSICK, where we analyze whether models are attentive to word order and case particles to predict entailment labels and similarity scores.

The JSICK test set contains 1666, 797, and 1006 sentence pairs (A, B) whose premise sentences A (the column `sentence_A_Ja_origin`) include the basic word order involving

ga-o (nominative-accusative), ga-ni (nominative-dative), and ga-de (nominative-instrumental/locative) relations, respectively.

We provide the JSICK-stress test set by transforming syntactic structures of these pairs in the following three ways:

- `scrum_ga_o`: a scrambled pair, where the word order of premise sentences A is scrambled into o-ga, ni-ga, and de-ga order, respectively.

- `ex_ga_o`: a rephrased pair, where only the case particles (ga, o, ni, de) in the premise A are swapped

- `del_ga_o`: a rephrased pair, where only the case particles (ga, o, ni) in the premise A are deleted

### Languages

The language data in JSICK is in Japanese and English.

## Dataset Structure

### Data Instances

When loading a specific configuration, users have to append a version-dependent suffix:

```python

import datasets as ds

dataset: ds.DatasetDict = ds.load_dataset("hpprc/jsick")

print(dataset)

# DatasetDict({

# train: Dataset({

# features: ['id', 'premise', 'hypothesis', 'label', 'score', 'premise_en', 'hypothesis_en', 'label_en', 'score_en', 'corr_entailment_labelAB_En', 'corr_entailment_labelBA_En', 'image_ID', 'original_caption', 'semtag_short', 'semtag_long'],

# num_rows: 4500

# })

# test: Dataset({

# features: ['id', 'premise', 'hypothesis', 'label', 'score', 'premise_en', 'hypothesis_en', 'label_en', 'score_en', 'corr_entailment_labelAB_En', 'corr_entailment_labelBA_En', 'image_ID', 'original_caption', 'semtag_short', 'semtag_long'],

# num_rows: 4927

# })

# })

dataset: ds.DatasetDict = ds.load_dataset("hpprc/jsick", name="stress")

print(dataset)

# DatasetDict({

# test: Dataset({

# features: ['id', 'premise', 'hypothesis', 'label', 'score', 'sentence_A_Ja_origin', 'entailment_label_origin', 'relatedness_score_Ja_origin', 'rephrase_type', 'case_particles'],

# num_rows: 900

# })

# })

```

#### base

An example looks as follows:

```json

{

'id': 1,

'premise': '子供たちのグループが庭で遊んでいて、後ろの方には年を取った男性が立っている',

'hypothesis': '庭にいる男の子たちのグループが遊んでいて、男性が後ろの方に立っている',

'label': 1, // (neutral)

'score': 3.700000047683716,

'premise_en': 'A group of kids is playing in a yard and an old man is standing in the background',

'hypothesis_en': 'A group of boys in a yard is playing and a man is standing in the background',

'label_en': 1, // (neutral)

'score_en': 4.5,

'corr_entailment_labelAB_En': 'nan',

'corr_entailment_labelBA_En': 'nan',

'image_ID': '3155657768_b83a7831e5.jpg',

'original_caption': 'A group of children playing in a yard , a man in the background .',

'semtag_short': 'nan',

'semtag_long': 'nan',

}

```

#### stress

An example looks as follows:

```json

{

'id': '5818_de_d',

'premise': '女性火の近くダンスをしている',

'hypothesis': '火の近くでダンスをしている女性は一人もいない',

'label': 2, // (contradiction)

'score': 4.0,

'sentence_A_Ja_origin': '女性が火の近くでダンスをしている',

'entailment_label_origin': 2,

'relatedness_score_Ja_origin': 3.700000047683716,

'rephrase_type': 'd',

'case_particles': 'de'

}

```

### Data Fields

#### base

A version adopting the column names of a typical NLI dataset.

| Name | Description |

| -------------------------- | ----------------------------------------------------------------------------------------------------------------------------------------- |

| id | The ids (the same as in the original SICK). |

| premise | The first sentence in Japanese. |

| hypothesis | The second sentence in Japanese. |

| label | The entailment label in Japanese. |

| score | The relatedness score in the range [1-5] in Japanese. |

| premise_en | The first sentence in English. |

| hypothesis_en | The second sentence in English. |

| label_en | The original entailment label in English. |

| score_en | The original relatedness score in the range [1-5] in English. |

| semtag_short | The linguistic phenomena tags in Japanese. |

| semtag_long | The details of linguistic phenomena tags in Japanese. |

| image_ID | The original image in [8K ImageFlickr dataset](https://www.kaggle.com/datasets/adityajn105/flickr8k). |

| original_caption | The original caption in [8K ImageFlickr dataset](https://www.kaggle.com/datasets/adityajn105/flickr8k). |

| corr_entailment_labelAB_En | The corrected entailment label from A to B in English by [(Kalouli et al., 2017)](http://vcvpaiva.github.io/includes/pubs/2017-iwcs.pdf). |

| corr_entailment_labelBA_En | The corrected entailment label from B to A in English by [(Kalouli et al., 2017)](http://vcvpaiva.github.io/includes/pubs/2017-iwcs.pdf). |

#### stress

| Name | Description |

| --------------------------- | ------------------------------------------------------------------------------------------------- |

| id | The ids (the same as in the original SICK). |

| premise | The first sentence in Japanese. |

| hypothesis | The second sentence in Japanese. |

| label | The entailment label in Japanese |

| score | The relatedness score in the range [1-5] in Japanese. |

| sentence_A_Ja_origin | The original premise sentences A from the JSICK test set. |

| entailment_label_origin | The original entailment labels. |

| relatedness_score_Ja_origin | The original relatedness scores. |

| rephrase_type | The type of transformation applied to the syntactic structures of the sentence pairs. |

| case_particles | The grammatical particles in Japanese that indicate the function or role of a noun in a sentence. |

### Data Splits

| name | train | validation | test |

| --------------- | ----: | ---------: | ----: |

| base | 4,500 | | 4,927 |

| original | 4,500 | | 4,927 |

| stress | | | 900 |

| stress-original | | | 900 |

### Annotations

The authors used the crowdsourcing platform "Lancers" to re-annotate entailment labels and similarity scores for JSICK.

They had six native Japanese speakers as annotators, who were randomly selected from the platform.

The annotators were asked to fully understand the guidelines and provide the same labels as gold labels for ten test questions.

For entailment labels, they adopted annotations that were agreed upon by a majority vote as gold labels and checked whether the majority judgment vote was semantically valid for each example.

For similarity scores, they used the average of the annotation results as gold scores.

The raw annotations with the JSICK dataset are [publicly available](https://github.com/verypluming/JSICK/blob/main/jsick/jsick-all-annotations.tsv).

The average annotation time was 1 minute per pair, and Krippendorff's alpha for the entailment labels was 0.65.
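The aggregation described above (majority vote for entailment labels, average for similarity scores) can be sketched as follows. This is an illustration under assumptions, not the authors' code: the function names and sample annotations are made up, and returning `None` when no label reaches a strict majority is a choice of this sketch.

```python
from collections import Counter
from statistics import mean


def gold_label(labels):
    """Return the label chosen by a strict majority of annotators, else None."""
    label, count = Counter(labels).most_common(1)[0]
    return label if count > len(labels) / 2 else None


def gold_score(scores):
    """Return the average of the annotators' similarity scores."""
    return mean(scores)


# Made-up annotations from six annotators for one sentence pair.
labels = ["neutral", "neutral", "entailment", "neutral", "contradiction", "neutral"]
scores = [4, 3, 4, 4, 3, 4]

print(gold_label(labels))  # neutral
print(round(gold_score(scores), 2))
```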

## Additional Information

- [verypluming/JSICK](https://github.com/verypluming/JSICK)

- [Compositional Evaluation on Japanese Textual Entailment and Similarity](https://direct.mit.edu/tacl/article/doi/10.1162/tacl_a_00518/113850/Compositional-Evaluation-on-Japanese-Textual)

- [JSICK: 日本語構成的推論・類似度データセットの構築](https://www.jstage.jst.go.jp/article/pjsai/JSAI2021/0/JSAI2021_4J3GS6f02/_article/-char/ja)

### Licensing Information

CC BY-SA 4.0

### Citation Information

```bibtex

@article{yanaka-mineshima-2022-compositional,

title = "Compositional Evaluation on {J}apanese Textual Entailment and Similarity",

author = "Yanaka, Hitomi and

Mineshima, Koji",

journal = "Transactions of the Association for Computational Linguistics",

volume = "10",

year = "2022",

address = "Cambridge, MA",

publisher = "MIT Press",

url = "https://aclanthology.org/2022.tacl-1.73",

doi = "10.1162/tacl_a_00518",

pages = "1266--1284",

}

@article{谷中 瞳2021,

title={JSICK: 日本語構成的推論・類似度データセットの構築},

author={谷中 瞳 and 峯島 宏次},

journal={人工知能学会全国大会論文集},

volume={JSAI2021},

number={ },

pages={4J3GS6f02-4J3GS6f02},

year={2021},

doi={10.11517/pjsai.JSAI2021.0_4J3GS6f02}

}

```

### Contributions

Thanks to [Hitomi Yanaka](https://hitomiyanaka.mystrikingly.com/) and [Koji Mineshima](https://abelard.flet.keio.ac.jp/person/minesima/index-j.html) for creating this dataset. |

false |

This dataset is a redistribution of the following dataset.

https://github.com/suzuki256/dog-dataset

```

The dataset and its contents are made available on an "as is" basis and without warranties of any kind, including without limitation satisfactory quality and conformity, merchantability, fitness for a particular purpose, accuracy or completeness, or absence of errors.

```

|

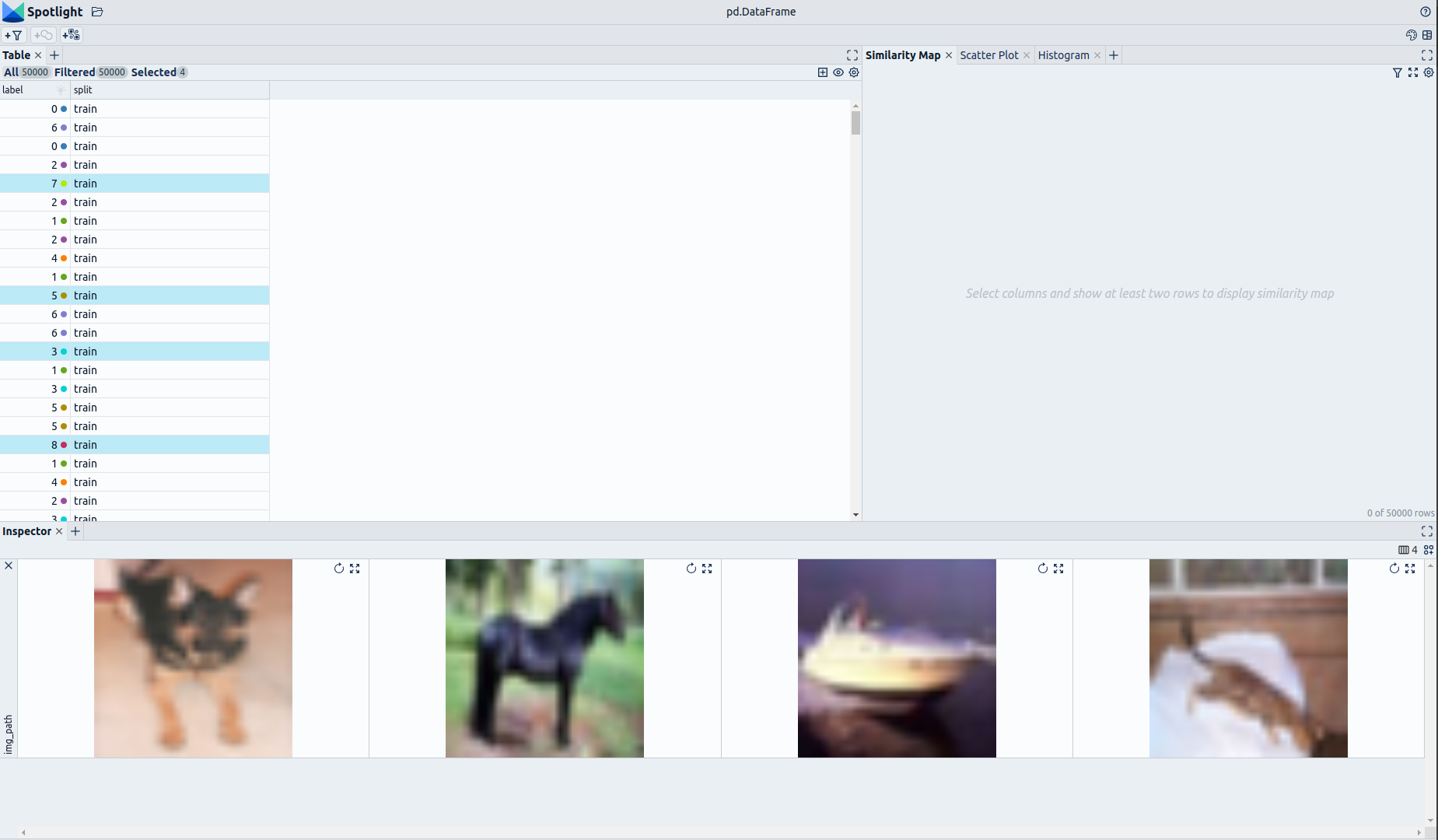

false | # Dataset Card for CIFAR-10-Enriched (Enhanced by Renumics)

## Dataset Description

- **Homepage:** [Renumics Homepage](https://renumics.com/?hf-dataset-card=cifar10-enriched)

- **GitHub:** [Spotlight](https://github.com/Renumics/spotlight)

- **Dataset Homepage:** [CS Toronto Homepage](https://www.cs.toronto.edu/~kriz/cifar.html)

- **Paper:** [Learning Multiple Layers of Features from Tiny Images](https://www.cs.toronto.edu/~kriz/learning-features-2009-TR.pdf)

### Dataset Summary

📊 [Data-centric AI](https://datacentricai.org) principles have become increasingly important for real-world use cases.

At [Renumics](https://renumics.com/?hf-dataset-card=cifar10-enriched) we believe that classical benchmark datasets and competitions should be extended to reflect this development.

🔍 This is why we are publishing benchmark datasets with application-specific enrichments (e.g. embeddings, baseline results, uncertainties, label error scores). We hope this helps the ML community in the following ways:

1. Enable new researchers to quickly develop a profound understanding of the dataset.

2. Popularize data-centric AI principles and tooling in the ML community.

3. Encourage the sharing of meaningful qualitative insights in addition to traditional quantitative metrics.

📚 This dataset is an enriched version of the [CIFAR-10 Dataset](https://www.cs.toronto.edu/~kriz/cifar.html).

### Explore the Dataset

The enrichments allow you to quickly gain insights into the dataset. The open source data curation tool [Renumics Spotlight](https://github.com/Renumics/spotlight) enables that with just a few lines of code:

Install datasets and Spotlight via [pip](https://packaging.python.org/en/latest/key_projects/#pip):

```python

!pip install renumics-spotlight datasets

```

Load the dataset from huggingface in your notebook:

```python

import datasets

dataset = datasets.load_dataset("renumics/cifar10-enriched", split="train")

```

Start exploring with a simple view:

```python

from renumics import spotlight

df = dataset.to_pandas()

df_show = df.drop(columns=['img'])

spotlight.show(df_show, port=8000, dtype={"img_path": spotlight.Image})

```

You can use the UI to interactively configure the view on the data. Depending on the concrete tasks (e.g. model comparison, debugging, outlier detection) you might want to leverage different enrichments and metadata.

### CIFAR-10 Dataset

The CIFAR-10 dataset consists of 60000 32x32 colour images in 10 classes, with 6000 images per class. There are 50000 training images and 10000 test images.

The dataset is divided into five training batches and one test batch, each with 10000 images. The test batch contains exactly 1000 randomly-selected images from each class. The training batches contain the remaining images in random order, but some training batches may contain more images from one class than another. Between them, the training batches contain exactly 5000 images from each class.

The classes are completely mutually exclusive. There is no overlap between automobiles and trucks. "Automobile" includes sedans, SUVs, things of that sort. "Truck" includes only big trucks. Neither includes pickup trucks.

Here is the list of classes in the CIFAR-10:

- airplane

- automobile

- bird

- cat

- deer

- dog

- frog

- horse

- ship

- truck
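To turn an integer `label` into a readable class name, a small lookup table is enough. The sketch below assumes that label indices follow the order of the list above (0 for airplane through 9 for truck); this card does not state the ordering explicitly, so check it against the dataset's `ClassLabel` feature before relying on it.

```python
# Class names in the order listed above; the index-to-name mapping is an assumption.
CIFAR10_CLASSES = [
    "airplane", "automobile", "bird", "cat", "deer",
    "dog", "frog", "horse", "ship", "truck",
]


def label_to_name(label: int) -> str:
    """Map an integer label to its class name under the assumed ordering."""
    return CIFAR10_CLASSES[label]


print(label_to_name(0))  # airplane
```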

### Supported Tasks and Leaderboards

- `image-classification`: The goal of this task is to classify a given image into one of 10 classes. The leaderboard is available [here](https://paperswithcode.com/sota/image-classification-on-cifar-10).

### Languages

English class labels.

## Dataset Structure

### Data Instances

A sample from the training set is provided below:

```python

{

'img': <PIL.PngImagePlugin.PngImageFile image mode=RGB size=32x32 at 0x7FD19FABC1D0>,

'img_path': '/huggingface/datasets/downloads/extracted/7faec2e0fd4aa3236f838ed9b105fef08d1a6f2a6bdeee5c14051b64619286d5/0/0.png',

'label': 0,

'split': 'train'

}

```

### Data Fields

| Feature | Data Type |

|---------------------------------|-----------------------------------------------|

| img | Image(decode=True, id=None) |

| img_path | Value(dtype='string', id=None) |

| label | ClassLabel(names=[...], id=None) |

| split | Value(dtype='string', id=None) |

### Data Splits

| Dataset Split | Number of Images in Split | Samples per Class |

| ------------- |---------------------------| -------------------------|

| Train | 50000 | 5000 |

| Test | 10000 | 1000 |

## Dataset Creation

### Curation Rationale

The CIFAR-10 and CIFAR-100 are labeled subsets of the [80 million tiny images](http://people.csail.mit.edu/torralba/tinyimages/) dataset.

They were collected by Alex Krizhevsky, Vinod Nair, and Geoffrey Hinton.

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

If you use this dataset, please cite the following paper:

```

@article{krizhevsky2009learning,

added-at = {2021-01-21T03:01:11.000+0100},

author = {Krizhevsky, Alex},

biburl = {https://www.bibsonomy.org/bibtex/2fe5248afe57647d9c85c50a98a12145c/s364315},

interhash = {cc2d42f2b7ef6a4e76e47d1a50c8cd86},

intrahash = {fe5248afe57647d9c85c50a98a12145c},

keywords = {},

pages = {32--33},

timestamp = {2021-01-21T03:01:11.000+0100},

title = {Learning Multiple Layers of Features from Tiny Images},

url = {https://www.cs.toronto.edu/~kriz/learning-features-2009-TR.pdf},

year = 2009

}

```

### Contributions

Alex Krizhevsky, Vinod Nair, Geoffrey Hinton, and Renumics GmbH. |

false |

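[MLQA (MultiLingual Question Answering)](https://github.com/facebookresearch/mlqa) Chinese-English bilingual question answering dataset. This is a version of the original MLQA dataset converted to Taiwanese Traditional Chinese, with matching items from the Chinese and English versions merged so that it is convenient to use with bilingual language models. (Acknowledgements: [BYVoid/OpenCC](https://github.com/BYVoid/OpenCC), [vinta/pangu.js](https://github.com/vinta/pangu.js))

The dataset has two splits, `dev` and `test`, with 302 and 2986 examples respectively.

Sample: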

```json

[

{

"title": {

"en": "Curling at the 2014 Winter Olympics",

"zh_tw": "2014 年冬季奧林匹克運動會冰壺比賽"

},

"paragraphs": [

{

"context": {

"en": "Qualification to the curling tournaments at the Winter Olympics was determined through two methods. Nations could qualify teams by earning qualification points from performances at the 2012 and 2013 World Curling Championships. Teams could also qualify through an Olympic qualification event which was held in the autumn of 2013. Seven nations qualified teams via World Championship qualification points, while two nations qualified through the qualification event. As host nation, Russia qualified teams automatically, thus making a total of ten teams per gender in the curling tournaments.",

"zh_tw": "本屆冬奧會冰壺比賽參加資格有兩種辦法可以取得。各國家或地區可以透過 2012 年和 2013 年的世界冰壺錦標賽,也可以透過 2013 年 12 月舉辦的一次冬奧會資格賽來取得資格。七個國家透過兩屆世錦賽積分之和來獲得資格,兩個國家則透過冬奧會資格賽。作為主辦國,俄羅斯自動獲得參賽資格,這樣就確定了冬奧會冰壺比賽的男女各十支參賽隊伍。"

},

"qas": [

{

"id": "b08184972e38a79c47d01614aa08505bb3c9b680",

"question": {

"zh_tw": "俄羅斯有多少隊獲得參賽資格?",

"en": "How many teams did Russia qualify for?"

},

"answers": {

"en": [

{

"text": "ten teams",

"answer_start": 543

}

],

"zh_tw": [

{

"text": "十支",

"answer_start": 161

}

]

}

}

]

}

]

}

]

```
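
Each answer carries an `answer_start` offset into the `context` of the same language. The sketch below shows one way to check that an offset really points at the answer text. It assumes the offsets count characters (Python string indices), and it uses a hypothetical miniature record with the same shape as one `paragraphs` entry rather than real data; `answer_matches` is an illustrative helper.

```python
def answer_matches(context, answer):
    """Check that `answer_start` points at `text` inside `context`."""
    start = answer["answer_start"]
    text = answer["text"]
    return context[start:start + len(text)] == text


# Hypothetical miniature record (not taken from the dataset).
context = {
    "en": "Russia qualified ten teams.",
    "zh_tw": "俄羅斯共有十支隊伍獲得資格。",
}
answers = {
    "en": [{"text": "ten teams",
            "answer_start": context["en"].index("ten teams")}],
    "zh_tw": [{"text": "十支",
               "answer_start": context["zh_tw"].index("十支")}],
}

# Validate every answer in every language against its own context.
all_valid = all(
    answer_matches(context[lang], ans)
    for lang, lang_answers in answers.items()
    for ans in lang_answers
)
```

Because some items lack one language version, a real check would also need to skip languages that are missing from `context`.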

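For further information, see https://github.com/facebookresearch/mlqa

## Original dataset

The source is https://github.com/facebookresearch/mlqa , using the `context-zh-question-zh`, `context-zh-question-en`, and `context-en-question-zh` files from the `dev` and `test` splits, six files in total.

## Conversion procedure

1. [OpenCC](https://github.com/BYVoid/OpenCC) with the `s2twp.json` configuration converts Simplified Chinese to Taiwanese Traditional Chinese, using vocabulary common in Taiwan.

2. The Python version of [pangu.js](https://github.com/vinta/pangu.js) inserts spaces between Chinese and English text (full-width and half-width characters).

3. Matching items in the Chinese and English datasets are merged.

See https://github.com/zetavg/LLM-Research/blob/bba5ff7/MLQA_Dataset_Converter_(en_zh_tw).ipynb for the detailed conversion process.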
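
The merge in step 3 can be pictured as grouping items from both languages under a shared key. The sketch below is only an illustration, not the notebook's actual code: it assumes the question `id` is the shared key, and `merge_by_id` and the input records are hypothetical.

```python
def merge_by_id(en_items, zh_items):
    """Merge two lists of {"id", "question"} dicts into bilingual records."""
    merged = {}
    for lang, items in (("en", en_items), ("zh_tw", zh_items)):
        for item in items:
            record = merged.setdefault(
                item["id"], {"id": item["id"], "question": {}}
            )
            record["question"][lang] = item["question"]
    return list(merged.values())


# Hypothetical inputs; the second English item has no Chinese counterpart.
en_items = [
    {"id": "a1", "question": "How many teams qualified?"},
    {"id": "b2", "question": "Who hosted the event?"},
]
zh_items = [{"id": "a1", "question": "有多少隊獲得資格?"}]

merged = merge_by_id(en_items, zh_items)
```

An item present in only one source keeps a single language key, which is one way a merged record can end up without one of its language versions.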

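## Known issues

* Some items may be missing one language version of the `title`, the `context` of a `paragraph`, the question, or the answer.

* Some questions and answers may be misinterpreted or ambiguous, for example the question "How many teams did Russia qualify for?" (「俄羅斯有多少隊獲得參賽資格?」) and its answer in the "Curling at the 2014 Winter Olympics" sample above.

* The `context` of a `paragraph` may differ greatly in length and coverage between language versions. For example, in the development split item whose `title` is “Adobe Photoshop”:

  * `zh_tw` has only two sentences: 「Adobe Photoshop,簡稱 “PS”,是一個由 Adobe 開發和發行的影象處理軟體。該軟體釋出在 Windows 和 Mac OS 上。」

  * whereas `en` is a full paragraph: “Adobe Photoshop is a raster graphics editor developed and published by Adobe Inc. for Windows and macOS. It was originally created in 1988 by Thomas and John Knoll. Since then, this software has become the industry standard not only in raster graphics editing, but in digital art as a whole. … (a further 127 words omitted)” |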

true |

100,772 texts with their corresponding labels

NOT_OFF_HATEFUL_TOXIC 81,359 values

OFF_HATEFUL_TOXIC 19,413 values |

false | # AutoTrain Dataset for project: teste

## Dataset Description

This dataset has been automatically processed by AutoTrain for project teste.

### Languages

The BCP-47 code for the dataset's language is pt.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"context": "Sherlock Holmes \u00e9 um personagem de fic\u00e7\u00e3o criado pelo escritor brit\u00e2nico Sir Arthur Conan Doyle. Ele \u00e9 um detetive famoso por sua habilidade em resolver mist\u00e9rios e crimes complexos.",

"question": "Pergunta 268: Qual \u00e9 o nome do irm\u00e3o mais velho de Sherlock Holmes que trabalha para o servi\u00e7o secreto brit\u00e2nico?",

"answers.text": [

"Mycroft Holmes"

],

"answers.answer_start": [

0

]

},

{

"context": "Sherlock Holmes \u00e9 um personagem de fic\u00e7\u00e3o criado pelo escritor brit\u00e2nico Sir Arthur Conan Doyle. Ele \u00e9 um detetive famoso por sua habilidade em resolver mist\u00e9rios e crimes complexos.",

"question": "Pergunta 52: Qual \u00e9 o nome do irm\u00e3o mais velho de Sherlock Holmes que trabalha para o servi\u00e7o secreto brit\u00e2nico?",

"answers.text": [

"Mycroft Holmes"

],

"answers.answer_start": [

0

]

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"context": "Value(dtype='string', id=None)",

"question": "Value(dtype='string', id=None)",

"answers.text": "Sequence(feature=Value(dtype='string', id=None), length=-1, id=None)",

"answers.answer_start": "Sequence(feature=Value(dtype='int32', id=None), length=-1, id=None)"

}

```
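
The `answers.text` and `answers.answer_start` fields are flattened with a dot separator. Tools that expect a nested, SQuAD-style `answers` object would need them regrouped. The sketch below shows one way to do that; `unflatten` is a hypothetical helper, and the record is a shortened stand-in modeled on the sample above.

```python
def unflatten(record, sep="."):
    """Turn keys such as "answers.text" into nested dicts."""
    nested = {}
    for key, value in record.items():
        parts = key.split(sep)
        target = nested
        # Walk (or create) the intermediate dicts for every prefix part.
        for part in parts[:-1]:
            target = target.setdefault(part, {})
        target[parts[-1]] = value
    return nested


# Shortened stand-in record modeled on the sample above.
flat = {
    "context": "Sherlock Holmes é um personagem de ficção.",
    "question": "Qual é o nome do irmão mais velho de Sherlock Holmes?",
    "answers.text": ["Mycroft Holmes"],
    "answers.answer_start": [0],
}

nested = unflatten(flat)
```

Keys without a separator, such as `context`, are copied through unchanged.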

### Dataset Splits

This dataset is split into train and validation splits. The split sizes are as follows:

| Split name | Num samples |
|------------|-------------|
| train      | 720         |
| valid      | 180         | |

true |

This is the same dataset as [`ag_news`](https://huggingface.co/datasets/ag_news).

The only differences are:

1. Addition of a unique identifier, `uid`

1. Addition of the indices, that is, three columns holding the embeddings from three different sentence-transformers models

- `all-mpnet-base-v2`

- `multi-qa-mpnet-base-dot-v1`

- `all-MiniLM-L12-v2`

1. Renaming of the `label` column to `labels` for easier compatibility with the transformers library |

false | |

false |

# MInDS-14

## Dataset Description

- **Fine-Tuning script:** [pytorch/audio-classification](https://github.com/huggingface/transformers/tree/main/examples/pytorch/audio-classification)

- **Paper:** [Multilingual and Cross-Lingual Intent Detection from Spoken Data](https://arxiv.org/abs/2104.08524)

- **Total amount of disk used:** ca. 500 MB

MInDS-14 is a training and evaluation resource for the intent detection task with spoken data. It covers 14 intents extracted from a commercial system in the e-banking domain, associated with spoken examples in 14 diverse language varieties.

## Example

MInDS-14 can be downloaded and used as follows:

```py

from datasets import load_dataset

# Load a single language configuration (here: French)
minds_14 = load_dataset("PolyAI/minds14", "fr-FR")

# To download all data for multilingual fine-tuning, uncomment the following line
# minds_14 = load_dataset("PolyAI/minds14", "all")

# Inspect the dataset structure
print(minds_14)

# Audio is decoded on the fly when the sample is accessed
audio_input = minds_14["train"][0]["audio"]  # first decoded audio sample
intent_class = minds_14["train"][0]["intent_class"]  # class id of the first sample's intent
intent = minds_14["train"].features["intent_class"].names[intent_class]  # intent name

# Use audio_input and intent_class to fine-tune your model for audio classification

```

## Dataset Structure

We show detailed information for the example configuration `fr-FR` of the dataset.

All other configurations have the same structure.

### Data Instances

**fr-FR**

- Size of downloaded dataset files: 471 MB

- Size of the generated dataset: 300 KB

- Total amount of disk used: 471 MB

An example of a data instance of the config `fr-FR` looks as follows:

```

{

"path": "/home/patrick/.cache/huggingface/datasets/downloads/extracted/3ebe2265b2f102203be5e64fa8e533e0c6742e72268772c8ac1834c5a1a921e3/fr-FR~ADDRESS/response_4.wav",

"audio": {

"path": "/home/patrick/.cache/huggingface/datasets/downloads/extracted/3ebe2265b2f102203be5e64fa8e533e0c6742e72268772c8ac1834c5a1a921e3/fr-FR~ADDRESS/response_4.wav",

"array": array(

[0.0, 0.0, 0.0, ..., 0.0, 0.00048828, -0.00024414], dtype=float32

),

"sampling_rate": 8000,

},

"transcription": "je souhaite changer mon adresse",

"english_transcription": "I want to change my address",

"intent_class": 1,

"lang_id": 6,

}

```

### Data Fields

The data fields are the same among all splits.

- **path** (str): Path to the audio file

- **audio** (dict): Audio object including the loaded audio array, the sampling rate, and the path to the audio file

- **transcription** (str): Transcription of the audio file

- **english_transcription** (str): English transcription of the audio file

- **intent_class** (int): Class id of intent

- **lang_id** (int): Id of language
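
The `audio` field combines the decoded samples with their sampling rate, so a clip's duration follows directly from the two. The sketch below illustrates this with a hypothetical stand-in record: a plain list of zeros replaces the NumPy array, and `duration_seconds` is an illustrative helper.

```python
def duration_seconds(audio):
    """Length of a decoded clip in seconds: number of samples / sampling rate."""
    return len(audio["array"]) / audio["sampling_rate"]


# Hypothetical stand-in for a decoded `audio` entry:
# 16000 samples of silence at the dataset's 8 kHz sampling rate.
audio = {
    "path": "response_4.wav",
    "array": [0.0] * 16000,
    "sampling_rate": 8000,
}

seconds = duration_seconds(audio)
```

The data is sampled at 8 kHz, while many speech models expect 16 kHz input. In the `datasets` library, resampling is commonly requested with `cast_column("audio", Audio(sampling_rate=16_000))`.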

### Data Splits

Every config has only the `"train"` split, containing *ca.* 600 examples.

## Dataset Creation