text-classification bool 2 classes | text stringlengths 0 664k |

|---|---|

false | # Dataset Card for "riffusion-musiccaps-datasets-768"

Converted google/musicCaps to spectrograms with audio_to_spectrum using the riffusion CLI.

A random 7.68-second segment was taken from each track in musicCaps.
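The random segment selection can be sketched as follows. This is only an illustration, not the script that produced the dataset: the function name `random_window` and the 10-second track length in the usage line are assumptions; only the 7.68-second window length comes from this card.

```python
import random
from typing import Tuple

CLIP_SECONDS = 7.68  # window length stated in this card


def random_window(duration: float, rng: random.Random,
                  length: float = CLIP_SECONDS) -> Tuple[float, float]:
    """Return (start, end) in seconds of a random window inside a track."""
    if duration < length:
        raise ValueError("track is shorter than the requested window")
    # Any start offset that keeps the whole window inside the track is valid
    start = rng.uniform(0.0, duration - length)
    return start, start + length


# Hypothetical 10-second track, seeded for reproducibility
start, end = random_window(10.0, random.Random(0))
print(f"crop from {start:.2f}s to {end:.2f}s")
```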

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

false |

# Dataset card for "george-chou/pianos_mel"

## Usage

```

from datasets import load_dataset

# Download the dataset and pick out its three splits
data = load_dataset("george-chou/pianos_mel")
trainset = data['train']
validset = data['validation']
testset = data['test']

# Class names, indexed by the integer label
labels = trainset.features['label'].names

# Print every image and its label name, split by split
for split in (trainset, validset, testset):
    for item in split:
        print('image: ', item['image'].convert('RGB'))
        print('label name: ' + labels[item['label']])

```

## Maintenance

```

git clone git@hf.co:datasets/george-chou/pianos_mel

```

## Cite

```

@dataset{zhaorui_liu_2021_5676893,

author = {Zhaorui Liu and Monan Zhou and Shenyang Xu and Zijin Li},

title = {{Music Data Sharing Platform for Computational Musicology Research (CCMUSIC DATASET)}},

month = nov,

year = 2021,

publisher = {Zenodo},

version = {1.1},

doi = {10.5281/zenodo.5676893},

url = {https://doi.org/10.5281/zenodo.5676893}

}

``` |

true |

# Dataset Card for Peewee Issues

## Dataset Summary

Peewee Issues is a dataset containing all the issues in the [Peewee GitHub repository](https://github.com/coleifer/peewee) up to the last date of extraction (5/3/2023). It was made with educational purposes in mind (specifically, to get me used to Hugging Face's datasets), but it can also be used for multi-label classification or semantic search. The contents are all in English and concern SQL databases and ORM libraries. |

false |

## Dataset Description

- **Repository:** [Link to repo](https://github.com/VityaVitalich/IMAD)

- **Paper:** [IMage Augmented multi-modal Dialogue: IMAD](https://arxiv.org/abs/2305.10512v1)

- **Point of Contact:** [Contacts Section](https://github.com/VityaVitalich/IMAD#contacts)

### Dataset Summary

This dataset contains data from the paper [IMage Augmented multi-modal Dialogue: IMAD](https://arxiv.org/abs/2305.10512v1).

The main feature of this dataset is the novelty of the task. It has been generated specifically for the purpose of image interpretation in a dialogue context.

Some of the dialogue utterances have been replaced with images, allowing a generative model to be trained to restore the initial utterance.

The dialogues are sourced from multiple dialogue datasets (DailyDialog, Commonsense, PersonaChat, MuTual, Empathetic Dialogues, Dream) and have been filtered using a technique described in the paper.

A significant portion of the data has been labeled by assessors, with a high inter-rater reliability score. The combination of these methods has led to a well-filtered dataset and consequently a high BLEU score.

We hope that this dataset will be beneficial for the development of multi-modal deep learning.

### Data Fields

The dataset contains 5 fields:

- `image_id`: `string` containing the id of the image in the full Unsplash Dataset

- `source_data`: `string` containing the name of the source dataset

- `utter`: `string` containing the utterance that was replaced with an image in this dialogue

- `context`: `list` of `string` containing the sequence of utterances that precede the replaced utterance in the dialogue

- `image_like`: `int` indicating whether the example was collected with assessors or via the filtering technique
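As a rough illustration of how these fields fit together, the sketch below types one record and turns it into a (prompt, target) pair for restoring the replaced utterance. The class name `ImadRecord`, the helper `to_training_pair`, the `<image>` placeholder token, and the example values are all hypothetical; only the five field names and their types come from the list above.

```python
from typing import List, Tuple, TypedDict


class ImadRecord(TypedDict):
    image_id: str
    source_data: str
    utter: str
    context: List[str]
    image_like: int


def to_training_pair(record: ImadRecord,
                     image_token: str = "<image>") -> Tuple[str, str]:
    """Join the context, append an image placeholder, return (prompt, target)."""
    prompt = "\n".join(record["context"] + [image_token])
    # The target is the utterance that the image replaced
    return prompt, record["utter"]


# Made-up example values, for illustration only
example: ImadRecord = {
    "image_id": "hypothetical-id",
    "source_data": "DailyDialog",
    "utter": "Let's go to the beach.",
    "context": ["What should we do today?", "The weather is great."],
    "image_like": 1,
}
prompt, target = to_training_pair(example)
print(prompt)
print(target)
```

In a real multi-modal setup the placeholder would be replaced by image features looked up through `image_id`.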

### Licensing Information

The textual part of IMAD is licensed under [CC BY-NC-SA 4.0](https://creativecommons.org/licenses/by-nc-sa/4.0/). The full dataset with images can be requested by contacting the authors directly, or obtained by matching `image_id` against the full Unsplash dataset.

### Contacts

Feel free to reach out to us at [vvmoskvoretskiy@yandex.ru] for inquiries, collaboration suggestions, or data requests related to our work.

### Citation Information

To cite this article, please use this BibTeX reference:

```bibtex

@misc{viktor2023imad,

title={IMAD: IMage-Augmented multi-modal Dialogue},

author={Moskvoretskii Viktor and Frolov Anton and Kuznetsov Denis},

year={2023},

eprint={2305.10512},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

Or via MLA Citation

```

Viktor, Moskvoretskii et al. “IMAD: IMage-Augmented multi-modal Dialogue.” (2023).

``` |

false |

# MAP

An SQLite database of video urls and captions/descriptions. |

false |

Stanford Question Answering Dataset (SQuAD) is a reading comprehension dataset, consisting of questions posed by crowdworkers on a set of Wikipedia articles, where the answer to every question is a segment of text, or span, from the corresponding reading passage, or the question might be unanswerable.

This is an Indonesian translation of the [squad](https://huggingface.co/datasets/squad) dataset.

Translated from [sentence-transformers/embedding-training-data](https://huggingface.co/datasets/sentence-transformers/embedding-training-data)

Translated using [Helsinki-NLP/EN-ID](https://huggingface.co/Helsinki-NLP/opus-mt-en-id) |

false | # Dataset Card for Tapir-Cleaned

This is a revised version of the DAISLab dataset of IFTTT rules, which has been thoroughly cleaned, scored, and adjusted for the purpose of instruction-tuning.

## Tapir Dataset Summary

Tapir is a subset of the larger DAISLab dataset, which comprises 242,480 recipes extracted from the IFTTT platform.

After a thorough cleaning process that involved the removal of redundant and inconsistent recipes, the refined dataset was condensed to include 67,697 high-quality recipes.

This curated set of instruction data is particularly useful for conducting instruction-tuning exercises for language models,

allowing them to more accurately follow instructions and achieve superior performance.

The latest version of Tapir includes a correlation score that helps to identify the most appropriate description-rule pairs for instruction tuning.

Description-rule pairs with a score greater than 0.75 are deemed good enough and are prioritized for further analysis and tuning.
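A minimal sketch of the threshold described above, assuming the records are plain dictionaries and that `score` is stored as a string, as in the sample instance of this card. The helper name `high_quality` and the toy records are illustrative, not part of the dataset tooling.

```python
THRESHOLD = 0.75  # cut-off stated in this card

# Toy records; only the "score" field matters for the filter
records = [
    {"input": "rule A", "score": "0.788197"},
    {"input": "rule B", "score": "0.412000"},
    {"input": "rule C", "score": "0.804322"},
]


def high_quality(rows, threshold=THRESHOLD):
    """Keep the rows whose correlation score is strictly above the threshold."""
    return [row for row in rows if float(row["score"]) > threshold]


selected = high_quality(records)
print([row["input"] for row in selected])
```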

### Supported Tasks and Leaderboards

The Tapir dataset is designed for instruction-tuning pretrained language models.

### Languages

The data in Tapir are mainly in English (BCP-47 en).

# Dataset Structure

### Data Instances

```json

{

"instruction":"From the description of a rule: identify the 'trigger', identify the 'action', write a IF 'trigger' THEN 'action' rule.",

"input":"If it's raining outside, you'll want some nice warm colors inside!",

"output":"IF Weather Underground Current condition changes to THEN LIFX Change color of lights",

"score":"0.788197",

"text": "Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.\n\n### Instruction:\nFrom the description of a rule: identify the 'trigger', identify the 'action', write a IF 'trigger' THEN 'action' rule.\n\n### Input:\nIf it's raining outside, you'll want some nice warm colors inside!\n\n### Response:\nIF Weather Underground Current condition changes to THEN LIFX Change color of lights",

}

```

### Data Fields

The data fields are as follows:

* `instruction`: describes the task the model should perform.

* `input`: context or input for the task. Each of the 67K inputs is unique.

* `output`: the answer taken from the original Tapir Dataset formatted as an IFTTT recipe.

* `score`: the correlation score obtained via BertForNextSentencePrediction

* `text`: the `instruction`, `input` and `output` formatted with the [prompt template](https://github.com/tatsu-lab/stanford_alpaca#data-release) used by the authors of Alpaca for fine-tuning their models.

### Data Splits

| | train |

|---------------|------:|

| tapir | 67697 |

### Licensing Information

The dataset is available under the [Creative Commons NonCommercial (CC BY-NC 4.0)](https://creativecommons.org/licenses/by-nc/4.0/legalcode).

### Citation Information

```

@misc{tapir,

author = {Mattia Limone and Gaetano Cimino and Annunziata Elefante},

title = {TAPIR: Trigger Action Platform for Information Retrieval},

year = {2023},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/MattiaLimone/ifttt_recommendation_system}},

}

``` |

false | # Dataset Card for Tapir-Cleaned

This is a revised version of the DAISLab dataset of IFTTT rules, which has been thoroughly cleaned, scored, and adjusted for the purpose of instruction-tuning.

## Tapir Dataset Summary

Tapir is a subset of the larger DAISLab dataset, which comprises 242,480 recipes extracted from the IFTTT platform.

After a thorough cleaning process that involved the removal of redundant and inconsistent recipes, the refined dataset was condensed to include 116,862 high-quality recipes.

This curated set of instruction data is particularly useful for conducting instruction-tuning exercises for language models,

allowing them to more accurately follow instructions and achieve superior performance.

The latest version of Tapir includes a correlation score that helps to identify the most appropriate description-rule pairs for instruction tuning.

Description-rule pairs with a score greater than 0.75 are deemed good enough and are prioritized for further analysis and tuning.

### Supported Tasks and Leaderboards

The Tapir dataset is designed for instruction-tuning pretrained language models.

### Languages

The data in Tapir are mainly in English (BCP-47 en).

# Dataset Structure

### Data Instances

```json

{

"instruction":"From the description of a rule: identify the 'trigger', identify the 'action', write a IF 'trigger' THEN 'action' rule.",

"input":"If lostphone is texted to my phone the volume will turn up to 100 so I can find it.",

"output":"IF Android SMS New SMS received matches search THEN Android Device Set ringtone volume",

"score":"0.804322",

"text": "Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.\n\n### Instruction:\nFrom the description of a rule: identify the 'trigger', identify the 'action', write a IF 'trigger' THEN 'action' rule.\n\n### Input:\nIf lostphone is texted to my phone the volume will turn up to 100 so I can find it.\n\n### Response:\nIF Android SMS New SMS received matches search THEN Android Device Set ringtone volume",

}

```

### Data Fields

The data fields are as follows:

* `instruction`: describes the task the model should perform.

* `input`: context or input for the task. Each of the 116K inputs is unique.

* `output`: the answer taken from the original Tapir Dataset formatted as an IFTTT recipe.

* `score`: the correlation score obtained via BertForNextSentencePrediction

* `text`: the `instruction`, `input` and `output` formatted with the [prompt template](https://github.com/tatsu-lab/stanford_alpaca#data-release) used by the authors of Alpaca for fine-tuning their models.
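The relationship between the `text` field and the other three fields can be sketched as below. The template string is copied from the sample instance in this card; the constant `PROMPT_TEMPLATE` and the function `build_text` are illustrative names, not part of any released code.

```python
# Alpaca-style template, as it appears in the sample `text` field above
PROMPT_TEMPLATE = (
    "Below is an instruction that describes a task, paired with an input "
    "that provides further context. Write a response that appropriately "
    "completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n"
    "### Input:\n{input}\n\n"
    "### Response:\n{output}"
)


def build_text(record):
    """Format one record into the single-string `text` field."""
    return PROMPT_TEMPLATE.format(
        instruction=record["instruction"],
        input=record["input"],
        output=record["output"],
    )


record = {
    "instruction": "From the description of a rule: identify the 'trigger', "
                   "identify the 'action', write a IF 'trigger' THEN 'action' rule.",
    "input": "If lostphone is texted to my phone the volume will turn up "
             "to 100 so I can find it.",
    "output": "IF Android SMS New SMS received matches search "
              "THEN Android Device Set ringtone volume",
}
text = build_text(record)
print(text)
```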

### Data Splits

| | train |

|---------------|------:|

| tapir | 116862 |

### Licensing Information

The dataset is available under the [Creative Commons NonCommercial (CC BY-NC 4.0)](https://creativecommons.org/licenses/by-nc/4.0/legalcode).

### Citation Information

```

@misc{tapir,

author = {Mattia Limone and Gaetano Cimino and Annunziata Elefante},

title = {TAPIR: Trigger Action Platform for Information Retrieval},

year = {2023},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/MattiaLimone/ifttt_recommendation_system}},

}

``` |

false | # Dataset Card for DIALOGSum Corpus

## Dataset Description

### Links

- **Homepage:** https://aclanthology.org/2021.findings-acl.449

- **Repository:** https://github.com/cylnlp/dialogsum

- **Paper:** https://aclanthology.org/2021.findings-acl.449

### Dataset Summary

DialogSum is a large-scale dialogue summarization dataset consisting of 13,460 dialogues (plus 100 holdout dialogues for topic generation) with corresponding manually labeled summaries and topics.

### Languages

Russian (translated from English by Google Translate).

## Dataset Structure

### Data Fields

- dialogue: text of dialogue.

- summary: human written summary of the dialogue.

- topic: human written topic/one liner of the dialogue.

- id: unique file id of an example.

### Data Splits

- train: 12460

- val: 500

- test: 1500

- holdout: 100 [Only 3 features: id, dialogue, topic]

## Dataset Creation

### Curation Rationale

In paper:

We collect dialogue data for DialogSum from three public dialogue corpora, namely Dailydialog (Li et al., 2017), DREAM (Sun et al., 2019) and MuTual (Cui et al., 2019), as well as an English speaking practice website. These datasets contain face-to-face spoken dialogues that cover a wide range of daily-life topics, including schooling, work, medication, shopping, leisure, travel. Most conversations take place between friends, colleagues, and between service providers and customers.

Compared with previous datasets, dialogues from DialogSum have distinct characteristics:

- Under rich real-life scenarios, including more diverse task-oriented scenarios;

- Have clear communication patterns and intents, which is valuable to serve as summarization sources;

- Have a reasonable length, which comforts the purpose of automatic summarization.

We ask annotators to summarize each dialogue based on the following criteria:

- Convey the most salient information;

- Be brief;

- Preserve important named entities within the conversation;

- Be written from an observer perspective;

- Be written in formal language.

### Who are the source language producers?

linguists

### Who are the annotators?

language experts

## Licensing Information

MIT License

## Citation Information

```

@inproceedings{chen-etal-2021-dialogsum,

title = "{D}ialog{S}um: {A} Real-Life Scenario Dialogue Summarization Dataset",

author = "Chen, Yulong and

Liu, Yang and

Chen, Liang and

Zhang, Yue",

booktitle = "Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021",

month = aug,

year = "2021",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2021.findings-acl.449",

doi = "10.18653/v1/2021.findings-acl.449",

pages = "5062--5074",

}

```

## Contributions

Thanks to [@cylnlp](https://github.com/cylnlp) for adding this dataset. |

false |

curr. size: 53,081 videos

goal (todo): 100,000+ |

false | # Dataset Card for "code-search-net-ruby"

## Dataset Description

- **Homepage:** None

- **Repository:** https://huggingface.co/datasets/Nan-Do/code-search-net-ruby

- **Paper:** None

- **Leaderboard:** None

- **Point of Contact:** [@Nan-Do](https://github.com/Nan-Do)

### Dataset Summary

This dataset is the Ruby portion of CodeSearchNet, annotated with a summary column.

The code-search-net dataset includes open source functions with comments, found on GitHub.

The summary is a short description of what the function does.

### Languages

The dataset's comments are in English and the functions are coded in Ruby

### Data Splits

Train, test, validation labels are included in the dataset as a column.
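Because the split labels live in a column rather than in separate files, the rows have to be grouped by that column before training. The sketch below shows one way to do this on plain dictionaries; the column name `partition`, the label values, and the toy rows are assumptions for illustration and should be checked against the actual dataset.

```python
from collections import defaultdict


def group_by_split(rows, split_column="partition"):
    """Group rows by the value of the split column (column name is assumed)."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[split_column]].append(row)
    return dict(groups)


# Toy rows standing in for dataset records
rows = [
    {"code": "def a; end", "summary": "Does a.", "partition": "train"},
    {"code": "def b; end", "summary": "Does b.", "partition": "test"},
    {"code": "def c; end", "summary": "Does c.", "partition": "train"},
    {"code": "def d; end", "summary": "Does d.", "partition": "valid"},
]
splits = group_by_split(rows)
print({name: len(items) for name, items in splits.items()})
```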

## Dataset Creation

May of 2023

### Curation Rationale

This dataset can be used to generate instructional (or many other interesting) datasets that are useful to train LLMs

### Source Data

The CodeSearchNet dataset can be found at https://www.kaggle.com/datasets/omduggineni/codesearchnet

### Annotations

This dataset includes a summary column containing a short description of each function.

#### Annotation process

The annotation procedure was done using [Salesforce](https://huggingface.co/Salesforce) T5 summarization models.

A sample notebook of the process can be found at https://github.com/Nan-Do/OpenAssistantInstructionResponsePython

The annotations have been cleaned to make sure there are no repetitions and/or meaningless summaries (some may still be present in the dataset).

### Licensing Information

Apache 2.0 |

false |

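MMC4-130k is a dataset built by sampling roughly 130k image-text pairs with high similarity from MMC4.

We plan to translate this subset step by step.

We will gradually release more datasets on Hugging Face, including:

- [ ] Chinese translation of Coco Caption

- [ ] Chinese translation of CoQA

- [ ] Embedding data for CNewSum

- [ ] Augmented open QA data

- [x] Chinese translation of WizardLM

If you are also preparing any of these datasets, feel free to contact us so that we can avoid paying for the same work twice.

# Luotuo (骆驼): Open-Source Chinese Large Language Models

[https://github.com/LC1332/Luotuo-Chinese-LLM](https://github.com/LC1332/Luotuo-Chinese-LLM)

The Luotuo (骆驼) project is an open-source Chinese large language model project initiated by [冷子昂](https://blairleng.github.io) @ SenseTime, 陈启源 @ Central China Normal University, and 李鲁鲁 @ SenseTime. It includes a series of language models.

(Note: [陈启源](https://qiyuan-chen.github.io/) is looking for a supervisor for 2024 recommendation-based graduate admission; feel free to get in touch.)

The Luotuo project is **not** an official product of SenseTime.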

## Citation

Please cite the repo if you use the data or code in this repo.

```

@misc{alpaca,

author={Ziang Leng and Qiyuan Chen and Cheng Li},

title = {Luotuo: An Instruction-following Chinese Language model, LoRA tuning on LLaMA},

year = {2023},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/LC1332/Luotuo-Chinese-LLM}},

}

``` |

false | # AutoTrain Dataset for project: doodles-30

## Dataset Description

This dataset has been automatically processed by AutoTrain for project doodles-30.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"image": "<256x256 RGB PIL image>",

"target": 1

},

{

"image": "<256x256 RGB PIL image>",

"target": 3

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"image": "Image(decode=True, id=None)",

"target": "ClassLabel(names=['ant', 'bear', 'bee', 'bird', 'cat', 'dog', 'dolphin', 'elephant', 'giraffe', 'horse', 'lion', 'mosquito', 'tiger', 'whale'], id=None)"

}

```
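Since `target` is stored as an integer, reading a sample requires mapping it back through the `ClassLabel` names. A minimal sketch, using the name list shown in the features above (the helper `target_to_name` is an illustrative name):

```python
# Class names in the order given by the ClassLabel feature above
CLASS_NAMES = ['ant', 'bear', 'bee', 'bird', 'cat', 'dog', 'dolphin',
               'elephant', 'giraffe', 'horse', 'lion', 'mosquito',
               'tiger', 'whale']


def target_to_name(target: int) -> str:
    """Map an integer target to its class name."""
    return CLASS_NAMES[target]


# The two sample instances above have targets 1 and 3
print(target_to_name(1))  # bear
print(target_to_name(3))  # bird
```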

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follows:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 336 |

| valid | 84 | |

false |

- subset from https://huggingface.co/datasets/liuhaotian/LLaVA-Instruct-150K

- train: 21000

- val seen: 3000

- val unseen: 2100

- test: 6000 |

false | # Dataset Card for Tapir-Cleaned

This is a revised version of the DAISLab dataset of IFTTT rules, which has been thoroughly cleaned, scored, and adjusted for the purpose of instruction-tuning.

## Tapir Dataset Summary

Tapir is a subset of the larger DAISLab dataset, which comprises 242,480 recipes extracted from the IFTTT platform.

After a thorough cleaning process that involved the removal of redundant and inconsistent recipes, the refined dataset was condensed to include 32,403 high-quality recipes.

This curated set of instruction data is particularly useful for conducting instruction-tuning exercises for language models,

allowing them to more accurately follow instructions and achieve superior performance.

The latest version of Tapir includes a correlation score that helps to identify the most appropriate description-rule pairs for instruction tuning.

Description-rule pairs with a score greater than 0.75 are deemed good enough and are prioritized for further analysis and tuning.

### Supported Tasks and Leaderboards

The Tapir dataset is designed for instruction-tuning pretrained language models.

### Languages

The data in Tapir are mainly in English (BCP-47 en).

# Dataset Structure

### Data Instances

```json

{

"instruction":"From the description of a rule: identify the 'trigger', identify the 'action', write a IF 'trigger' THEN 'action' rule.",

"input":"If it's raining outside, you'll want some nice warm colors inside!",

"output":"IF Weather Underground Current condition changes to THEN LIFX Change color of lights",

"text": "Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.\n\n### Instruction:\nFrom the description of a rule: identify the 'trigger', identify the 'action', write a IF 'trigger' THEN 'action' rule.\n\n### Input:\nIf it's raining outside, you'll want some nice warm colors inside!\n\n### Response:\nIF Weather Underground Current condition changes to THEN LIFX Change color of lights",

}

```

### Data Fields

The data fields are as follows:

* `instruction`: describes the task the model should perform.

* `input`: context or input for the task. Each of the 32k inputs is unique.

* `output`: the answer taken from the original Tapir Dataset formatted as an IFTTT recipe.

* `text`: the `instruction`, `input` and `output` formatted with the [prompt template](https://github.com/tatsu-lab/stanford_alpaca#data-release) used by the authors of Alpaca for fine-tuning their models.

### Data Splits

| | train |

|---------------|------:|

| tapir | 32403 |

### Licensing Information

The dataset is available under the [Creative Commons NonCommercial (CC BY-NC 4.0)](https://creativecommons.org/licenses/by-nc/4.0/legalcode).

### Citation Information

```

@misc{tapir,

author = {Mattia Limone and Gaetano Cimino and Annunziata Elefante},

title = {TAPIR: Trigger Action Platform for Information Retrieval},

year = {2023},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/MattiaLimone/ifttt_recommendation_system}},

}

``` |

false | # Dataset Card for "instructional_code-search-net-ruby"

## Dataset Description

- **Homepage:** None

- **Repository:** https://huggingface.co/datasets/Nan-Do/instructional_code-search-net-ruby

- **Paper:** None

- **Leaderboard:** None

- **Point of Contact:** [@Nan-Do](https://github.com/Nan-Do)

### Dataset Summary

This is an instructional dataset for Ruby.

The dataset contains two different kinds of tasks:

- Given a piece of code generate a description of what it does.

- Given a description generate a piece of code that fulfils the description.
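The two task kinds can be pictured as two instruction/response pairs derived from one (code, summary) pair. The sketch below is only an assumption about the general shape: the template wording, the function `make_tasks`, and the sample Ruby snippet are hypothetical and are not the templates used to build this dataset.

```python
from typing import Dict, List


def make_tasks(code: str, summary: str) -> List[Dict[str, str]]:
    """Build one code-to-description and one description-to-code task."""
    return [
        {
            # Task 1: given code, produce a description
            "instruction": f"Describe what the following Ruby code does:\n{code}",
            "response": summary,
        },
        {
            # Task 2: given a description, produce code
            "instruction": f"Write Ruby code that does the following: {summary}",
            "response": code,
        },
    ]


code = "def double(x)\n  x * 2\nend"
summary = "Returns twice the given number."
tasks = make_tasks(code, summary)
for task in tasks:
    print(task["instruction"])
    print("->", task["response"])
```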

### Languages

The dataset is in English.

### Data Splits

There are no splits.

## Dataset Creation

May of 2023

### Curation Rationale

This dataset was created to improve the coding capabilities of LLMs.

### Source Data

The summarized version of the code-search-net dataset can be found at https://huggingface.co/datasets/Nan-Do/code-search-net-ruby

### Annotations

The dataset includes instruction and response columns.

#### Annotation process

The annotation procedure was done using templates and NLP techniques to generate human-like instructions and responses.

A sample notebook of the process can be found at https://github.com/Nan-Do/OpenAssistantInstructionResponsePython

The annotations have been cleaned to make sure there are no repetitions and/or meaningless summaries.

### Licensing Information

Apache 2.0 |

false | # Dataset Card for "instructional_code-search-net-php"

## Dataset Description

- **Homepage:** None

- **Repository:** https://huggingface.co/datasets/Nan-Do/instructional_code-search-net-php

- **Paper:** None

- **Leaderboard:** None

- **Point of Contact:** [@Nan-Do](https://github.com/Nan-Do)

### Dataset Summary

This is an instructional dataset for PHP.

The dataset contains two different kinds of tasks:

- Given a piece of code generate a description of what it does.

- Given a description generate a piece of code that fulfils the description.

### Languages

The dataset is in English.

### Data Splits

There are no splits.

## Dataset Creation

May of 2023

### Curation Rationale

This dataset was created to improve the coding capabilities of LLMs.

### Source Data

The summarized version of the code-search-net dataset can be found at https://huggingface.co/datasets/Nan-Do/code-search-net-php

### Annotations

The dataset includes instruction and response columns.

#### Annotation process

The annotation procedure was done using templates and NLP techniques to generate human-like instructions and responses.

A sample notebook of the process can be found at https://github.com/Nan-Do/OpenAssistantInstructionResponsePython

The annotations have been cleaned to make sure there are no repetitions and/or meaningless summaries.

### Licensing Information

Apache 2.0

|

true |

sts 2012-2016 datasets

|

true |

This dataset contains more than 250k articles obtained from the Polish news site `tvp.info.pl`.

The main purpose of collecting the data was to create a transformer-based model for text summarization.

Columns:

* `link` - link to article

* `title` - original title of the article

* `headline` - lead/headline of the article - first paragraph of the article visible directly from the page

* `content` - full textual contents of the article

Link to original repo: https://github.com/WiktorSob/scraper-tvp

Download the data:

```python

from datasets import load_dataset

dataset = load_dataset("WiktorS/polish-news")

``` |

false | # Dataset Card for "hotel_reviews"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

false | The dataset contains 20703 records. It was created by removing from the original 27k dataset every item with a BLEU score of 0 or greater than 0.3388.
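The filtering rule can be sketched as a simple predicate. This assumes each item already carries a precomputed BLEU score as a float; the function name `keep` and the toy scores are illustrative.

```python
UPPER_BLEU = 0.3388  # upper bound stated above


def keep(bleu: float) -> bool:
    """Keep items whose BLEU is above 0 and not more than the upper bound."""
    return 0.0 < bleu <= UPPER_BLEU


# Toy scores: the first and last would be dropped
scores = [0.0, 0.1, 0.3388, 0.5]
kept = [score for score in scores if keep(score)]
print(kept)
```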

|

false |

# VoxCeleb 1

VoxCeleb1 contains over 100,000 utterances for 1,251 celebrities, extracted from videos uploaded to YouTube.

## Verification Split

| | train | validation | test |

| :---: | :---: | :---: | :---: |

| # of speakers | 1211 | 1211 | 40 |

| # of samples | 299246 | 33672 | 4874 |

## References

- https://www.robots.ox.ac.uk/~vgg/data/voxceleb/vox1.html |

false |

Simple anime image rating prediction task. Data is randomly scraped from Sankaku Complex.

Please note that due to the often unclear boundaries between `safe`, `r15` and `r18` levels,

there is no objective ground truth for this task, and the data is scraped without any manual filtering.

Therefore, the models trained on this dataset can only provide rough checks.

**If you require an accurate solution for classifying `R18` images, it is recommended to consider a solution based on keypoint object detection.**

| Dataset | Safe Images | R15 Images | R18 Images | Description |

|:-------:|:-----------:|:----------:|:----------:|--------------------------------------|

| v1 | 5991 | 4960 | 5070 | Simply crawled from Sankaku Complex. |

|

true | Conversation Ending Check |

false |

This dataset contains a selection of Q&A-related tasks gathered and cleaned from the webGPT_comparisons set and the databricks-dolly-15k set.

Unicode escapes were explicitly removed, and Wikipedia citations in the "output" were stripped with a regex, in the hope that this helps any end-product model ignore these artifacts within its input context.
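The exact regex used for this cleanup is not given here. The sketch below shows one plausible approach, assuming the citations look like bracketed numbers such as `[1]` or `[2, 3]`; the pattern and the helper `strip_citations` are assumptions, not the authors' code.

```python
import re

# Assumed citation shape: [1], [12], [2, 3]
CITATION = re.compile(r"\[\d+(?:,\s*\d+)*\]")


def strip_citations(text: str) -> str:
    """Remove bracketed numeric citations and tidy the leftover spacing."""
    without = CITATION.sub("", text)
    without = re.sub(r" {2,}", " ", without)         # collapse doubled spaces
    without = re.sub(r"\s+([.,;:])", r"\1", without)  # no space before punctuation
    return without.strip()


cleaned = strip_citations("The sky is blue[1][2] because of scattering [3].")
print(cleaned)
```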

This data is formatted for use in the Alpaca instruction format; however, the instruction, input, and output columns are kept separate in the raw data to allow for other configurations. The data has been filtered so that every entry is shorter than our chosen truncation length of 1024 (LLaMA-style) tokens with the format:

```

"""Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.

### Instruction:

{instruction}

### Input:

{inputt}

### Response:

{output}"""

```

<h3>webGPT</h3>

This set was filtered from the webGPT_comparisons data by taking any Q&A option that was positively or neutrally rated by humans (i.e. "score" >= 0).

This might not provide the ideal answer, but this dataset was assembled specifically for extractive Q&A, with less regard for how humans feel about the results.

This selection comprises 23826 of the total entries in the data.

<h3>Dolly</h3>

The dolly data was selected primarily to focus on closed-qa tasks. For this purpose, only entries in the "closed-qa", "information_extraction", "summarization", "classification", and "creative_writing" categories were used. While not all of these include a context, they were judged to help flesh out the training set.

This selection comprises 5362 of the total entries in the data.

|

false | |

false |

The Face Masks ensemble dataset is no longer limited to [Kaggle](https://www.kaggle.com/datasets/henrylydecker/face-masks); it is now available on Hugging Face!

This dataset was created to help train and/or fine tune models for detecting masked and un-masked faces.

I created a new face masks object detection dataset by compositing together three publicly available face masks object detection datasets on Kaggle that used the YOLO annotation format.

To combine the datasets, I used Roboflow.

All three original datasets had different class dictionaries, so I recoded the classes into two classes: "Mask" and "No Mask".

One dataset included a class for incorrectly worn face masks; images with this class were removed from the dataset.

Approximately 50 images had corrupted annotations, so they were manually re-annotated in the Roboflow platform.

The final dataset includes 9,982 images, with 24,975 annotated instances.

Image resolution was on average 0.49 mp, with a median size of 750 x 600 pixels.

To improve model performance on out of sample data, I used 90 degree rotational augmentation.

This saved duplicate versions of each image for 90, 180, and 270 degree rotations.
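When an image is rotated, its YOLO annotations have to be rotated with it. The augmentation here was done in Roboflow, so the sketch below is not the actual implementation; it only illustrates the geometry for a normalized YOLO box `(cx, cy, w, h)` under an assumed 90 degree clockwise rotation. The function name is hypothetical.

```python
def rotate_box_90_cw(box):
    """Rotate a normalized YOLO box (cx, cy, w, h) by 90 degrees clockwise.

    A point (x, y) in the original image lands at (1 - y, x) in the
    rotated image, and the box width and height swap.
    """
    cx, cy, w, h = box
    return (1.0 - cy, cx, h, w)


box = (0.25, 0.4, 0.1, 0.2)
rotated_90 = rotate_box_90_cw(box)
rotated_180 = rotate_box_90_cw(rotated_90)
rotated_270 = rotate_box_90_cw(rotated_180)
print(rotated_90, rotated_180, rotated_270)
```

Applying the function a fourth time returns the original box, up to floating-point rounding, which is a quick sanity check for the mapping.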

I then split the data into 85% training, 10% validation, and 5% testing.

Images with classes that were removed from the dataset were removed, leaving 16,000 images in training, 1,900 in validation, and 1,000 in testing. |

true |

# Dataset Card for News_Articles_Categorization

## Table of Contents

- [Dataset Description](#dataset-description)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Source Data](#source-data)

## Dataset Description

29,000 news headlines classified into 13 different labels, namely: "Playful", "Infuriating", "Sentimental", "Cynical", "Depressing", "Awe-inspiring", "Patriotic", "Begrudging", "Educational", "Hopeful", "Sarcastic", "Disrespectful", "Disparaging".

## Languages

The text in the dataset is in English

## Dataset Structure

The dataset consists of 14 columns: Headline and 13 others, one for each of the labels mentioned above.

The Headline column contains the news headline, and each label column indicates whether the headline belongs to that label.
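With this layout, the labels of a headline are read off by checking which label columns are set. The sketch below assumes the label columns are named exactly like the labels and hold 0 or 1; both are assumptions to verify against the data, and the example row is made up.

```python
LABELS = [
    "Playful", "Infuriating", "Sentimental", "Cynical", "Depressing",
    "Awe-inspiring", "Patriotic", "Begrudging", "Educational", "Hopeful",
    "Sarcastic", "Disrespectful", "Disparaging",
]


def active_labels(row):
    """Return the labels whose column is set to 1 for this row."""
    return [label for label in LABELS if row.get(label) == 1]


# Made-up row: every label column is 0 except two
row = {"Headline": "Local team wins championship"}
row.update({label: 0 for label in LABELS})
row["Hopeful"] = 1
row["Patriotic"] = 1
print(active_labels(row))
```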

## Source Data

The dataset is collected from the database of otherweb.com |

false |

# Dataset Card for Leading Decision Summarization

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset contains the text and summary of Swiss leading decisions.

### Supported Tasks and Leaderboards

### Languages

Switzerland has four official languages, three of which (German, French and Italian) are represented in this dataset. The decisions are written by the judges and clerks in the language of the proceedings.

| Language   | Subset     | Number of Documents |
|------------|------------|---------------------|
| German     | **de**     | 12K                 |
| French     | **fr**     | 5K                  |
| Italian    | **it**     | 835                 |

## Dataset Structure

- decision_id: unique identifier for the decision

- header: a short header for the decision

- regeste: the summary of the leading decision

- text: the main text of the leading decision

- law_area: area of law of the decision

- law_sub_area: sub-area of law of the decision

- language: language of the decision

- year: year of the decision

- court: court of the decision

- chamber: chamber of the decision

- canton: canton of the decision

- region: region of the decision

### Data Fields

[More Information Needed]

### Data Instances

[More Information Needed]

### Data Splits

## Dataset Creation

### Curation Rationale

### Source Data

#### Initial Data Collection and Normalization

The original data are published by the Swiss Federal Supreme Court (https://www.bger.ch) in an unprocessed format (HTML). The documents were downloaded from the Entscheidsuche portal (https://entscheidsuche.ch) in HTML.

#### Who are the source language producers?

The decisions are written by the judges and clerks in the language of the proceedings.

### Annotations

#### Annotation process

#### Who are the annotators?

### Personal and Sensitive Information

The dataset contains publicly available court decisions from the Swiss Federal Supreme Court. Personal or sensitive information has been anonymized by the court before publication according to the following guidelines: https://www.bger.ch/home/juridiction/anonymisierungsregeln.html.

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

We release the data under CC-BY-4.0 which complies with the court licensing (https://www.bger.ch/files/live/sites/bger/files/pdf/de/urteilsveroeffentlichung_d.pdf)

© Swiss Federal Supreme Court, 2002-2022

The copyright for the editorial content of this website and the consolidated texts, which is owned by the Swiss Federal Supreme Court, is licensed under the Creative Commons Attribution 4.0 International licence. This means that you can re-use the content provided you acknowledge the source and indicate any changes you have made.

Source: https://www.bger.ch/files/live/sites/bger/files/pdf/de/urteilsveroeffentlichung_d.pdf

### Citation Information

*Visu, Ronja, Joel*

*Title: Blabliblablu*

*Name of conference*

```

cit

```

### Contributions

|

true | |

false | |

false | # Dataset Card for Dataset Name

## Dataset Description

- **Repository:** https://github.com/danielsteinigen/nlp-legal-texts

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

false |

# Dataset Card for Dataset Name

### Dataset Summary

It is just a dataset of dolly-15k-jp(*1) converted to jsonl form so that it can be used in SFTTrainer(*2)'s dataset_text_field property.

(*1)https://huggingface.co/datasets/kunishou/databricks-dolly-15k-ja

(*2)https://huggingface.co/docs/trl/main/en/sft_trainer
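A minimal sketch of such a conversion, using only the standard library. The record keys (`instruction`, `input`, `output`), the prompt template, and the output key `text` are assumptions made for illustration; the template actually used for this dataset is not documented here.

```python
import json


def to_text(record):
    """Join one instruction record into a single training string (hypothetical template)."""
    parts = [f"### Instruction:\n{record['instruction']}"]
    # Only include the input section when the record actually has one.
    if record.get("input"):
        parts.append(f"### Input:\n{record['input']}")
    parts.append(f"### Response:\n{record['output']}")
    return "\n\n".join(parts)


def to_jsonl(records):
    """Serialize records as JSON Lines, one {"text": ...} object per line."""
    return "\n".join(
        # ensure_ascii=False keeps Japanese text readable in the output file.
        json.dumps({"text": to_text(r)}, ensure_ascii=False)
        for r in records
    )


# Hypothetical record, for illustration only.
sample = [{"instruction": "日本の首都は?", "input": "", "output": "東京です。"}]
print(to_jsonl(sample))
```

With this layout, `text` would be the name passed as `dataset_text_field`.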

### Languages

ja

### Licensing Information

This dataset is licensed under CC BY-SA 3.0.

Special Thanks

https://huggingface.co/datasets/kunishou/databricks-dolly-15k-ja |

false |

## LLaVA Visual Instruct CC3M 595K Pretrain Dataset Card

This dataset is a Korean translation of the 595K-sample CC3M Visual Instruction dataset released by [LLaVA](https://llava-vl.github.io/). It was built from the Korean captions published in [Ko-conceptual-captions](https://github.com/QuoQA-NLP/Ko-conceptual-captions). Because the translation quality is somewhat poor, the data may be re-translated with DeepL at a later date.

License: follows the [CC-3M](https://github.com/google-research-datasets/conceptual-captions/blob/master/LICENSE) license |

true |

# Dataset Card for Cryptonews articles with price momentum labels

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

## Dataset Description

- **Homepage:**

- **Repository:** https://github.com/SahandNZ/IUST-NLP-project-spring-2023

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

The dataset was gathered from two prominent sources in the cryptocurrency industry: Cryptonews.com and Binance.com. The aim of the dataset was to evaluate the impact of news on crypto price movements.

As we know, news events such as regulatory changes, technological advancements, and major partnerships can have a significant impact on the price of cryptocurrencies. By analyzing the data collected from these sources, this dataset aimed to provide insights into the relationship between news events and crypto market trends.

### Supported Tasks and Leaderboards

- **Text Classification**

- **Sentiment Analysis**

### Languages

The language data in this dataset is in English (BCP-47 en)

## Dataset Structure

### Data Instances

Todo

### Data Fields

Todo

### Data Splits

Todo

### Source Data

- **Textual:** https://Cryptonews.com

- **Numerical:** https://Binance.com |

false | # EasyQA: A Kindergarten-Level QA Dataset for Investigating Truthfulness.

EasyQA is a GPT-3.5-turbo-generated dataset of easy kindergarten-level facts, meant to be used to prompt and evaluate large language models for "common-sense" truthful responses. This dataset was originally created to understand how different types of truthfulness may be represented in the intermediate activations of large language models. EasyQA comprises 2346 questions that span 50 categories, including art, technology, education, music, and animals. The questions are meant to be extremely simple, each eliciting an obvious truth that is not susceptible to misconceptions. This makes EasyQA a useful point of comparison for benchmarks that target other types of truth (e.g. TruthfulQA, which focuses on common misconceptions).

Credits to Kevin Wang, Richard Ren, and Phillip Guo.

## Dataset Creation

The dataset was created by prompting GPT-3.5-turbo with: "*Please generate 50 easy, obvious, common-knowledge questions that a kindergartener would learn in class about the topic prompted, as well as correct and incorrect responses. These questions should be less like trivia questions (i.e. Who is known as the Queen of Jazz?) and more like obvious facts (ie What color is the sky?). Your generations should be in the format: Question: {Your question here} Right: {Right answer} Wrong: {Wrong answer} where each question is a new line. Please follow this format verbatim (e.g. do not number the questions).*"
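Generations in the format requested by this prompt can be split into fields with a small parser. The sketch below assumes one record per line, exactly as the prompt asks; the function name and the returned keys are illustrative and are not taken from the authors' code.

```python
import re

# One record per line: "Question: ... Right: ... Wrong: ..."
LINE_RE = re.compile(
    r"^Question:\s*(?P<question>.+?)\s*"
    r"Right:\s*(?P<right>.+?)\s*"
    r"Wrong:\s*(?P<wrong>.+?)\s*$"
)


def parse_generation(text):
    """Parse model output into a list of question/right/wrong dictionaries."""
    records = []
    for line in text.splitlines():
        match = LINE_RE.match(line.strip())
        if match:  # silently skip blank or malformed lines
            records.append(match.groupdict())
    return records


# Hypothetical model output, for illustration only.
raw = (
    "Question: What color is the sky? Right: Blue Wrong: Green\n"
    "\n"
    "Question: How many legs does a dog have? Right: Four Wrong: Two\n"
)
for record in parse_generation(raw):
    print(record)
```

Lines that do not follow the format are dropped rather than repaired, which keeps the parser simple at the cost of losing some generations.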

The following categories were used:

```

Animals

Plants

Food and drink

Music

Movies

Television shows

Literature

Sports

Geography

History

Science

Mathematics

Art

Technology

Politics

Business and Economy

Education

Health and Fitness

Environment and Climate

Space and Astronomy

Fashion and Style

Video Games

Travel and Tourism

Language and Literature

Religion and Spirituality

Famous Personalities

Cultural Events/Festivals

Cars and Automobiles

Photography

Architecture

Medicine and Health

Psychology

Philosophy

Law

Social Sciences

Human Rights

Current Events/News

Global Affairs

National Landmarks

Celebrities and Entertainment

Nature

Cooking and Baking

Gardening

DIY Projects

Dance

Comic Books and Graphic Novels

Mythology and Folklore

Internet and Social Media

Parenting and Family Life

Home Decor

``` |

false | |

true | # Content

This is a dataset of Spotify tracks over a range of **125** different genres. Each track has some audio features associated with it. The data is in `CSV` format which is tabular and can be loaded quickly.

# Usage

The dataset can be used for:

- Building a **Recommendation System** based on some user input or preference

- **Classification** purposes based on audio features and available genres

- Any other application that you can think of. Feel free to discuss!

# Column Description

- **track_id**: The Spotify ID for the track

- **artists**: The artists' names who performed the track. If there is more than one artist, they are separated by a `;`

- **album_name**: The album name in which the track appears

- **track_name**: Name of the track

- **popularity**: **The popularity of a track is a value between 0 and 100, with 100 being the most popular**. The popularity is calculated by algorithm and is based, in the most part, on the total number of plays the track has had and how recent those plays are. Generally speaking, songs that are being played a lot now will have a higher popularity than songs that were played a lot in the past. Duplicate tracks (e.g. the same track from a single and an album) are rated independently. Artist and album popularity is derived mathematically from track popularity.

- **duration_ms**: The track length in milliseconds

- **explicit**: Whether or not the track has explicit lyrics (true = yes it does; false = no it does not OR unknown)

- **danceability**: Danceability describes how suitable a track is for dancing based on a combination of musical elements including tempo, rhythm stability, beat strength, and overall regularity. A value of 0.0 is least danceable and 1.0 is most danceable

- **energy**: Energy is a measure from 0.0 to 1.0 and represents a perceptual measure of intensity and activity. Typically, energetic tracks feel fast, loud, and noisy. For example, death metal has high energy, while a Bach prelude scores low on the scale

- **key**: The key the track is in. Integers map to pitches using standard Pitch Class notation. E.g. `0 = C`, `1 = C♯/D♭`, `2 = D`, and so on. If no key was detected, the value is -1

- **loudness**: The overall loudness of a track in decibels (dB)

- **mode**: Mode indicates the modality (major or minor) of a track, the type of scale from which its melodic content is derived. Major is represented by 1 and minor is 0

- **speechiness**: Speechiness detects the presence of spoken words in a track. The more exclusively speech-like the recording (e.g. talk show, audio book, poetry), the closer to 1.0 the attribute value. Values above 0.66 describe tracks that are probably made entirely of spoken words. Values between 0.33 and 0.66 describe tracks that may contain both music and speech, either in sections or layered, including such cases as rap music. Values below 0.33 most likely represent music and other non-speech-like tracks

- **acousticness**: A confidence measure from 0.0 to 1.0 of whether the track is acoustic. 1.0 represents high confidence the track is acoustic

- **instrumentalness**: Predicts whether a track contains no vocals. "Ooh" and "aah" sounds are treated as instrumental in this context. Rap or spoken word tracks are clearly "vocal". The closer the instrumentalness value is to 1.0, the greater likelihood the track contains no vocal content

- **liveness**: Detects the presence of an audience in the recording. Higher liveness values represent an increased probability that the track was performed live. A value above 0.8 provides strong likelihood that the track is live

- **valence**: A measure from 0.0 to 1.0 describing the musical positiveness conveyed by a track. Tracks with high valence sound more positive (e.g. happy, cheerful, euphoric), while tracks with low valence sound more negative (e.g. sad, depressed, angry)

- **tempo**: The overall estimated tempo of a track in beats per minute (BPM). In musical terminology, tempo is the speed or pace of a given piece and derives directly from the average beat duration

- **time_signature**: An estimated time signature. The time signature (meter) is a notational convention to specify how many beats are in each bar (or measure). The value ranges from 3 to 7, indicating time signatures from `3/4` to `7/4`.

- **track_genre**: The genre in which the track belongs
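Two of the encodings above lend themselves to a short illustration: the integer `key` and `mode` values, and the `speechiness` thresholds. The sketch below follows the column descriptions; the helper names and the band labels are illustrative, and the handling of values exactly at 0.33 and 0.66 is an assumption, since the description does not say which band they fall into.

```python
# Standard pitch-class names, indexed by the integer in the `key` column.
PITCH_CLASSES = [
    "C", "C♯/D♭", "D", "D♯/E♭", "E", "F",
    "F♯/G♭", "G", "G♯/A♭", "A", "A♯/B♭", "B",
]


def key_name(key, mode):
    """Translate `key` (-1 or 0..11) and `mode` (1 = major, 0 = minor) into text."""
    if key == -1:
        return "unknown"  # no key was detected
    scale = "major" if mode == 1 else "minor"
    return f"{PITCH_CLASSES[key]} {scale}"


def speechiness_band(value):
    """Bucket `speechiness` using the 0.33 and 0.66 thresholds from the description."""
    if value > 0.66:
        return "mostly speech"
    if value >= 0.33:
        return "music and speech"
    return "mostly music"


print(key_name(0, 1))
print(key_name(9, 0))
print(speechiness_band(0.05))
```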

# Sources and Methodology

The data was collected and cleaned using Spotify's Web API and Python. |

false | |

false | |

false | # rudetoxifier_data_detox

This is a subset of toxic comments from [d0rj/rudetoxifier_data](https://huggingface.co/datasets/d0rj/rudetoxifier_data), with a detoxified column generated by [s-nlp/ruT5-base-detox](https://huggingface.co/s-nlp/ruT5-base-detox). |

false |

Prompts and prompt engineering are essential for guiding language models: they enable control over outputs, help generate the desired content, foster creativity, and enhance the overall user experience. They form a critical component of the interaction between users and AI systems, ensuring meaningful and contextually appropriate conversations. This is one of the inspirations behind this dataset.

The prompt samples in this dataset were generated with various chatbots, including a few from Bard and ChatGPT.

The main goals are 1) prompt engineering and 2) rich data.

Prompt samples of this kind are helpful for training various generative AI applications. The number of samples in this dataset is small, but you can generate synthetic data from them. |

false |

# Rakuda - Questions for Japanese models

**Repository**: [https://github.com/yuzu-ai/japanese-llm-ranking](https://github.com/yuzu-ai/japanese-llm-ranking)

This is a set of 40 questions in Japanese about Japanese-specific topics designed to evaluate the capabilities of AI Assistants in Japanese.

The questions are evenly distributed between four categories: history, society, government, and geography.

Questions in the first three categories are open-ended, while the geography questions are more specific.

Answers to these questions can be used to rank the Japanese abilities of models, in the same way the [vicuna-eval questions](https://lmsys.org/vicuna_eval/) are frequently used to measure the usefulness of assistants.

## Usage

```python

from datasets import load_dataset

dataset = load_dataset("yuzuai/rakuda-questions")

print(dataset)

# => DatasetDict({

# train: Dataset({

# features: ['category', 'question_id', 'text'],

# num_rows: 40

# })

# })

```

|

true |

# Dataset Card for "UnpredicTable-cluster22" - Dataset of Few-shot Tasks from Tables

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

## Dataset Description

- **Homepage:** https://ethanperez.net/unpredictable

- **Repository:** https://github.com/JunShern/few-shot-adaptation

- **Paper:** Few-shot Adaptation Works with UnpredicTable Data

- **Point of Contact:** junshern@nyu.edu, perez@nyu.edu

### Dataset Summary

The UnpredicTable dataset consists of web tables formatted as few-shot tasks for fine-tuning language models to improve their few-shot performance.

There are several dataset versions available:

* [UnpredicTable-full](https://huggingface.co/datasets/MicPie/unpredictable_full): Starting from the initial WTC corpus of 50M tables, we apply our tables-to-tasks procedure to produce our resulting dataset, [UnpredicTable-full](https://huggingface.co/datasets/MicPie/unpredictable_full), which comprises 413,299 tasks from 23,744 unique websites.

* [UnpredicTable-unique](https://huggingface.co/datasets/MicPie/unpredictable_unique): This is the same as [UnpredicTable-full](https://huggingface.co/datasets/MicPie/unpredictable_full) but filtered to have a maximum of one task per website. [UnpredicTable-unique](https://huggingface.co/datasets/MicPie/unpredictable_unique) contains exactly 23,744 tasks from 23,744 websites.

* [UnpredicTable-5k](https://huggingface.co/datasets/MicPie/unpredictable_5k): This dataset contains 5k random tables from the full dataset.

* UnpredicTable data subsets based on a manual human quality rating (please see our publication for details of the ratings):

* [UnpredicTable-rated-low](https://huggingface.co/datasets/MicPie/unpredictable_rated-low)

* [UnpredicTable-rated-medium](https://huggingface.co/datasets/MicPie/unpredictable_rated-medium)

* [UnpredicTable-rated-high](https://huggingface.co/datasets/MicPie/unpredictable_rated-high)

* UnpredicTable data subsets based on the website of origin:

* [UnpredicTable-baseball-fantasysports-yahoo-com](https://huggingface.co/datasets/MicPie/unpredictable_baseball-fantasysports-yahoo-com)

* [UnpredicTable-bulbapedia-bulbagarden-net](https://huggingface.co/datasets/MicPie/unpredictable_bulbapedia-bulbagarden-net)

* [UnpredicTable-cappex-com](https://huggingface.co/datasets/MicPie/unpredictable_cappex-com)

* [UnpredicTable-cram-com](https://huggingface.co/datasets/MicPie/unpredictable_cram-com)

* [UnpredicTable-dividend-com](https://huggingface.co/datasets/MicPie/unpredictable_dividend-com)

* [UnpredicTable-dummies-com](https://huggingface.co/datasets/MicPie/unpredictable_dummies-com)

* [UnpredicTable-en-wikipedia-org](https://huggingface.co/datasets/MicPie/unpredictable_en-wikipedia-org)

* [UnpredicTable-ensembl-org](https://huggingface.co/datasets/MicPie/unpredictable_ensembl-org)

* [UnpredicTable-gamefaqs-com](https://huggingface.co/datasets/MicPie/unpredictable_gamefaqs-com)

* [UnpredicTable-mgoblog-com](https://huggingface.co/datasets/MicPie/unpredictable_mgoblog-com)

* [UnpredicTable-mmo-champion-com](https://huggingface.co/datasets/MicPie/unpredictable_mmo-champion-com)

* [UnpredicTable-msdn-microsoft-com](https://huggingface.co/datasets/MicPie/unpredictable_msdn-microsoft-com)

* [UnpredicTable-phonearena-com](https://huggingface.co/datasets/MicPie/unpredictable_phonearena-com)

* [UnpredicTable-sittercity-com](https://huggingface.co/datasets/MicPie/unpredictable_sittercity-com)

* [UnpredicTable-sporcle-com](https://huggingface.co/datasets/MicPie/unpredictable_sporcle-com)

* [UnpredicTable-studystack-com](https://huggingface.co/datasets/MicPie/unpredictable_studystack-com)

* [UnpredicTable-support-google-com](https://huggingface.co/datasets/MicPie/unpredictable_support-google-com)

* [UnpredicTable-w3-org](https://huggingface.co/datasets/MicPie/unpredictable_w3-org)

* [UnpredicTable-wiki-openmoko-org](https://huggingface.co/datasets/MicPie/unpredictable_wiki-openmoko-org)

* [UnpredicTable-wkdu-org](https://huggingface.co/datasets/MicPie/unpredictable_wkdu-org)

* UnpredicTable data subsets based on clustering (for the clustering details please see our publication):

* [UnpredicTable-cluster00](https://huggingface.co/datasets/MicPie/unpredictable_cluster00)

* [UnpredicTable-cluster01](https://huggingface.co/datasets/MicPie/unpredictable_cluster01)

* [UnpredicTable-cluster02](https://huggingface.co/datasets/MicPie/unpredictable_cluster02)

* [UnpredicTable-cluster03](https://huggingface.co/datasets/MicPie/unpredictable_cluster03)

* [UnpredicTable-cluster04](https://huggingface.co/datasets/MicPie/unpredictable_cluster04)

* [UnpredicTable-cluster05](https://huggingface.co/datasets/MicPie/unpredictable_cluster05)

* [UnpredicTable-cluster06](https://huggingface.co/datasets/MicPie/unpredictable_cluster06)

* [UnpredicTable-cluster07](https://huggingface.co/datasets/MicPie/unpredictable_cluster07)

* [UnpredicTable-cluster08](https://huggingface.co/datasets/MicPie/unpredictable_cluster08)

* [UnpredicTable-cluster09](https://huggingface.co/datasets/MicPie/unpredictable_cluster09)

* [UnpredicTable-cluster10](https://huggingface.co/datasets/MicPie/unpredictable_cluster10)

* [UnpredicTable-cluster11](https://huggingface.co/datasets/MicPie/unpredictable_cluster11)

* [UnpredicTable-cluster12](https://huggingface.co/datasets/MicPie/unpredictable_cluster12)

* [UnpredicTable-cluster13](https://huggingface.co/datasets/MicPie/unpredictable_cluster13)

* [UnpredicTable-cluster14](https://huggingface.co/datasets/MicPie/unpredictable_cluster14)

* [UnpredicTable-cluster15](https://huggingface.co/datasets/MicPie/unpredictable_cluster15)

* [UnpredicTable-cluster16](https://huggingface.co/datasets/MicPie/unpredictable_cluster16)

* [UnpredicTable-cluster17](https://huggingface.co/datasets/MicPie/unpredictable_cluster17)

* [UnpredicTable-cluster18](https://huggingface.co/datasets/MicPie/unpredictable_cluster18)

* [UnpredicTable-cluster19](https://huggingface.co/datasets/MicPie/unpredictable_cluster19)

* [UnpredicTable-cluster20](https://huggingface.co/datasets/MicPie/unpredictable_cluster20)

* [UnpredicTable-cluster21](https://huggingface.co/datasets/MicPie/unpredictable_cluster21)

* [UnpredicTable-cluster22](https://huggingface.co/datasets/MicPie/unpredictable_cluster22)

* [UnpredicTable-cluster23](https://huggingface.co/datasets/MicPie/unpredictable_cluster23)

* [UnpredicTable-cluster24](https://huggingface.co/datasets/MicPie/unpredictable_cluster24)

* [UnpredicTable-cluster25](https://huggingface.co/datasets/MicPie/unpredictable_cluster25)

* [UnpredicTable-cluster26](https://huggingface.co/datasets/MicPie/unpredictable_cluster26)

* [UnpredicTable-cluster27](https://huggingface.co/datasets/MicPie/unpredictable_cluster27)

* [UnpredicTable-cluster28](https://huggingface.co/datasets/MicPie/unpredictable_cluster28)

* [UnpredicTable-cluster29](https://huggingface.co/datasets/MicPie/unpredictable_cluster29)

* [UnpredicTable-cluster-noise](https://huggingface.co/datasets/MicPie/unpredictable_cluster-noise)

### Supported Tasks and Leaderboards

Since the tables come from the web, the distribution of tasks and topics is very broad. The shape of our dataset is very wide, i.e., we have thousands of tasks with only a few examples each, whereas most current NLP datasets are very deep, i.e., tens of tasks with many examples each. This implies that our dataset covers a broad range of potential tasks, e.g., multiple-choice, question-answering, table-question-answering, text-classification, etc.

The intended use of this dataset is to improve few-shot performance by fine-tuning/pre-training on our dataset.

### Languages

English

## Dataset Structure

### Data Instances

Each task is represented as a jsonline file and consists of several few-shot examples. Each example is a dictionary containing a field 'task', which identifies the task, followed by an 'input', 'options', and 'output' field. The 'input' field contains several column elements of the same row in the table, while the 'output' field is a target which represents an individual column of the same row. Each task contains several such examples which can be concatenated as a few-shot task. In the case of multiple choice classification, the 'options' field contains the possible classes that a model needs to choose from.

There are also additional meta-data fields such as 'pageTitle', 'title', 'outputColName', 'url', 'wdcFile'.

### Data Fields

'task': task identifier

'input': column elements of a specific row in the table.

'options': for multiple choice classification, it provides the options to choose from.

'output': target column element of the same row as input.

'pageTitle': the title of the page containing the table.

'outputColName': output column name

'url': url to the website containing the table

'wdcFile': WDC Web Table Corpus file
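To illustrate how the examples of one task can be concatenated into a few-shot task, here is a minimal sketch using only the standard library. The prompt template, the use of the last example as the query, and the sample records are assumptions made for illustration; please see the publication for the format actually used in the experiments.

```python
import json


def format_example(example, with_output=True):
    """Render one example; the query example is rendered without its output."""
    lines = [f"Input: {example['input']}"]
    if example.get("options"):  # only present for multiple-choice tasks
        lines.append("Options: " + ", ".join(example["options"]))
    lines.append(f"Output: {example['output']}" if with_output else "Output:")
    return "\n".join(lines)


def build_few_shot_prompt(jsonl_text):
    """Use all but the last example as demonstrations and the last one as the query."""
    examples = [json.loads(line) for line in jsonl_text.splitlines() if line.strip()]
    demos, query = examples[:-1], examples[-1]
    blocks = [format_example(e) for e in demos]
    blocks.append(format_example(query, with_output=False))
    return "\n\n".join(blocks)


# Hypothetical task file contents, for illustration only.
task_jsonl = "\n".join(json.dumps(e) for e in [
    {"task": "demo", "input": "[Country] France", "options": [], "output": "Paris"},
    {"task": "demo", "input": "[Country] Japan", "options": [], "output": "Tokyo"},
    {"task": "demo", "input": "[Country] Italy", "options": [], "output": "Rome"},
])
print(build_few_shot_prompt(task_jsonl))
```

The model's completion after the final `Output:` would then be compared against the held-out `output` of the query example.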

### Data Splits

The UnpredicTable datasets do not come with additional data splits.

## Dataset Creation

### Curation Rationale

Few-shot training on multi-task datasets has been demonstrated to improve language models' few-shot learning (FSL) performance on new tasks, but it is unclear which training tasks lead to effective downstream task adaptation. Few-shot learning datasets are typically produced with expensive human curation, limiting the scale and diversity of the training tasks available to study. As an alternative source of few-shot data, we automatically extract 413,299 tasks from diverse internet tables. We provide this as a research resource to investigate the relationship between training data and few-shot learning.

### Source Data

#### Initial Data Collection and Normalization

We use internet tables from the English-language Relational Subset of the WDC Web Table Corpus 2015 (WTC). The WTC dataset tables were extracted from the July 2015 Common Crawl web corpus (http://webdatacommons.org/webtables/2015/EnglishStatistics.html). The dataset contains 50,820,165 tables from 323,160 web domains. We then convert the tables into few-shot learning tasks. Please see our publication for more details on the data collection and conversion pipeline.

#### Who are the source language producers?

The dataset is extracted from [WDC Web Table Corpora](http://webdatacommons.org/webtables/).

### Annotations

#### Annotation process

Manual annotation was only carried out for the [UnpredicTable-rated-low](https://huggingface.co/datasets/MicPie/unpredictable_rated-low), [UnpredicTable-rated-medium](https://huggingface.co/datasets/MicPie/unpredictable_rated-medium), and [UnpredicTable-rated-high](https://huggingface.co/datasets/MicPie/unpredictable_rated-high) data subsets to rate task quality. Detailed annotation instructions can be found in our publication.

#### Who are the annotators?

Annotations were carried out by a lab assistant.

### Personal and Sensitive Information

The data was extracted from [WDC Web Table Corpora](http://webdatacommons.org/webtables/), which in turn extracted tables from the [Common Crawl](https://commoncrawl.org/). We did not filter the data in any way. Thus any user identities or otherwise sensitive information (e.g., data that reveals racial or ethnic origins, sexual orientations, religious beliefs, political opinions or union memberships, or locations; financial or health data; biometric or genetic data; forms of government identification, such as social security numbers; criminal history, etc.) might be contained in our dataset.

## Considerations for Using the Data

### Social Impact of Dataset

This dataset is intended for use as a research resource to investigate the relationship between training data and few-shot learning. As such, it contains high- and low-quality data, as well as diverse content that may be untruthful or inappropriate. Without careful investigation, it should not be used for training models that will be deployed for use in decision-critical or user-facing situations.

### Discussion of Biases

Since our dataset contains tables that are scraped from the web, it will also contain many toxic, racist, sexist, and otherwise harmful biases and texts. We have not run any analysis on the biases prevalent in our datasets. Neither have we explicitly filtered the content. This implies that a model trained on our dataset may potentially reflect harmful biases and toxic text that exist in our dataset.

### Other Known Limitations

No additional known limitations.

## Additional Information

### Dataset Curators

Jun Shern Chan, Michael Pieler, Jonathan Jao, Jérémy Scheurer, Ethan Perez

### Licensing Information

Apache 2.0

### Citation Information

```

@misc{chan2022few,

author = {Chan, Jun Shern and Pieler, Michael and Jao, Jonathan and Scheurer, Jérémy and Perez, Ethan},

title = {Few-shot Adaptation Works with UnpredicTable Data},

publisher={arXiv},

year = {2022},

url = {https://arxiv.org/abs/2208.01009}

}

```

|

false |

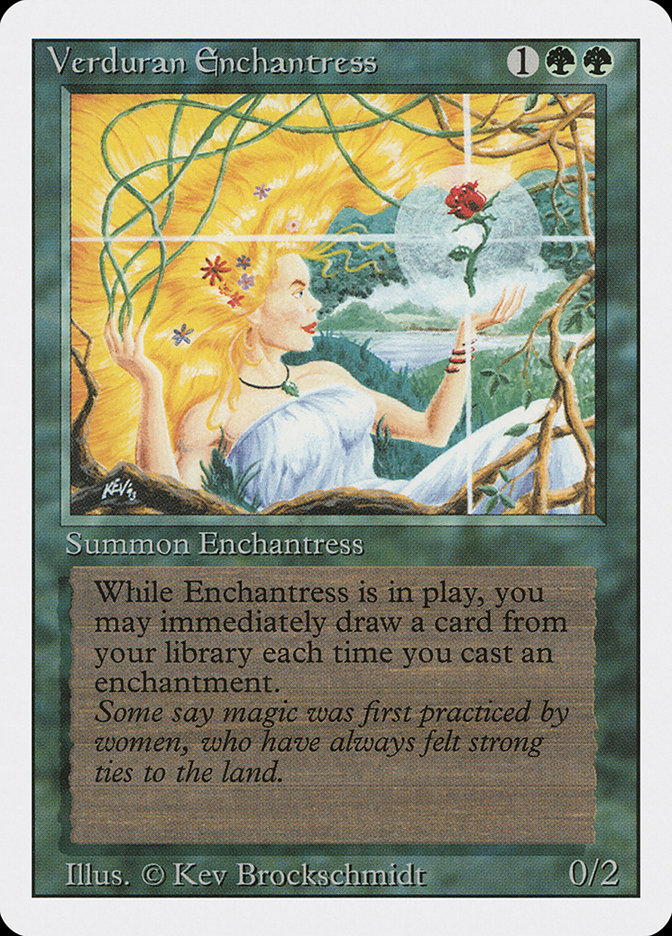

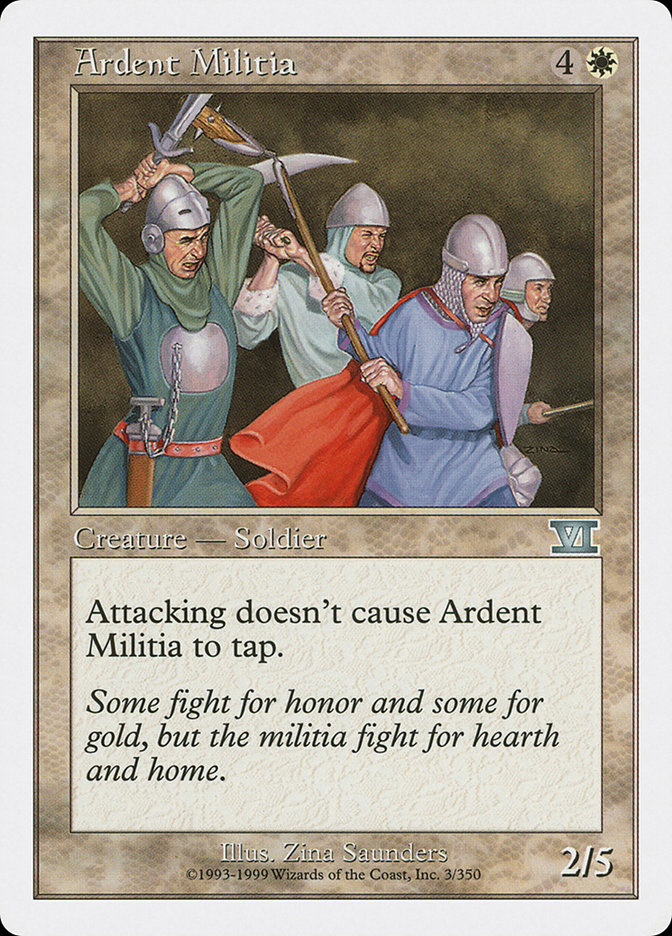

# Disclaimer

This was inspired by https://huggingface.co/datasets/lambdalabs/pokemon-blip-captions

# Dataset Card for A subset of Magic card BLIP captions

_Dataset used to train [Magic card text to image model](https://github.com/LambdaLabsML/examples/tree/main/stable-diffusion-finetuning)_

BLIP generated captions for Magic Card images collected from the web. Original images were obtained from [Scryfall](https://scryfall.com/) and captioned with the [pre-trained BLIP model](https://github.com/salesforce/BLIP).

For each row the dataset contains `image` and `text` keys. `image` is a varying size PIL jpeg, and `text` is the accompanying text caption. Only a train split is provided.

## Examples

> A woman holding a flower

> two knights fighting

> a card with a unicorn on it

## Citation

If you use this dataset, please cite it as:

```

@misc{yayab2022onepiece,

author = {YaYaB},

title = {Magic card creature split BLIP captions},

year={2022},

howpublished= {\url{https://huggingface.co/datasets/YaYaB/magic-blip-captions/}}

}

``` |

false |

# Dataset Card for Dicionário Português

It is a list of 53138 Portuguese words with their inflections.

How to use it:

```

from datasets import load_dataset

remote_dataset = load_dataset("VanessaSchenkel/pt-inflections", field="data")

remote_dataset

```

Output:

```

DatasetDict({

train: Dataset({

features: ['word', 'pos', 'forms'],

num_rows: 53138

})

})

```

Example:

```

remote_dataset["train"][42]

```

Output:

```

{'word': 'numeral',

'pos': 'noun',

'forms': [{'form': 'numerais', 'tags': ['plural']}]}

```

|

false |

# Dataset Card for Dicionário Português

It is a list of Portuguese words with their inflections.

How to use it:

```

from datasets import load_dataset

remote_dataset = load_dataset("VanessaSchenkel/pt-all-words")

remote_dataset

```

|

false | ## Dataset Summary

The Depth-of-Field (DoF) dataset comprises 1200 images with binary annotations: with (0) or without (1) a bokeh effect, i.e. shallow or deep depth of field. It is derived from the [Unsplash 25K](https://github.com/unsplash/datasets) dataset.

## Dataset Description

- **Repository:** [https://github.com/sniafas/photography-style-analysis](https://github.com/sniafas/photography-style-analysis)

- **Paper:** [Photography Style Analysis using Machine Learning](https://www.researchgate.net/publication/355917312_Photography_Style_Analysis_using_Machine_Learning)

### Citation Information

```

@article{sniafas2021,

title={DoF: An image dataset for depth of field classification},

author={Niafas, Stavros},

doi= {10.13140/RG.2.2.29880.62722},

url= {https://www.researchgate.net/publication/364356051_DoF_depth_of_field_datase},

year={2021}

}

```

Note that each DoF dataset has its own citation. Please see the source to get the correct citation for each contained dataset. |

false |

# Dataset Card for COPA-SSE

## Table of Contents

- [Table of Contents](#table-of-contents)
- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Curation Rationale](#curation-rationale)
  - [Source Data](#source-data)
  - [Annotations](#annotations)
  - [Personal and Sensitive Information](#personal-and-sensitive-information)
- [Considerations for Using the Data](#considerations-for-using-the-data)
  - [Social Impact of Dataset](#social-impact-of-dataset)
  - [Discussion of Biases](#discussion-of-biases)
  - [Other Known Limitations](#other-known-limitations)
- [Additional Information](#additional-information)
  - [Dataset Curators](#dataset-curators)
  - [Licensing Information](#licensing-information)
  - [Citation Information](#citation-information)
  - [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://github.com/a-brassard/copa-sse

- **Paper:** [COPA-SSE: Semi-Structured Explanations for Commonsense Reasoning](https://arxiv.org/abs/2201.06777)

- **Point of Contact:** [Ana Brassard](mailto:ana.brassard@riken.jp)

### Dataset Summary

COPA-SSE contains crowdsourced explanations for the [Balanced COPA](https://balanced-copa.github.io/) dataset, a variant of the [Choice of Plausible Alternatives (COPA)](https://people.ict.usc.edu/~gordon/copa.html) benchmark. The explanations are formatted as a set of triple-like common sense statements with [ConceptNet](https://conceptnet.io/) relations but freely written concepts.

### Supported Tasks and Leaderboards

The dataset can be used to train models in explain+predict or predict+explain settings, and it suits both text-based and graph-based architectures. The base task is COPA (causal QA).

### Languages

English

## Dataset Structure

### Data Instances

The validation and test sets each contain Balanced COPA samples with added explanations in `.jsonl` format. The question ids match the original questions of the Balanced COPA validation and test sets, respectively.

### Data Fields

Each entry contains:

- the original question (matching format and ids)

- `human-explanations`: a list of explanations each containing:

  - `expl-id`: the explanation id
  - `text`: the explanation in plain text (full sentences)
  - `worker-id`: anonymized worker id (the author of the explanation)
  - `worker-avg`: the average score the author got for their explanations
  - `all-ratings`: all collected ratings for the explanation
  - `filtered-ratings`: ratings excluding those that failed the control
  - `filtered-avg-rating`: the average of `filtered-ratings`
  - `triples`: the triple-form explanation (a list of ConceptNet-like triples)

Example entry:

```

id: 1,

asks-for: cause,

most-plausible-alternative: 1,

p: "My body cast a shadow over the grass.",

a1: "The sun was rising.",

a2: "The grass was cut.",

human-explanations: [

{expl-id: f4d9b407-681b-4340-9be1-ac044f1c2230,

text: "Sunrise causes casted shadows.",

worker-id: 3a71407b-9431-49f9-b3ca-1641f7c05f3b,

worker-avg: 3.5832864694635025,

all-ratings: [1, 3, 3, 4, 3],

filtered-ratings: [3, 3, 4, 3],

filtered-avg-rating: 3.25,

triples: [["sunrise", "Causes", "casted shadows"]]

}, ...]

```
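The fields above can be read with the standard `json` module, one entry per `.jsonl` line. The following is a minimal sketch, assuming each line is valid JSON with the keys listed in Data Fields; the helper `best_explanation` and the second, low-rated explanation are hypothetical and only serve to illustrate picking the highest-rated explanation.

```python
import json
from statistics import mean


def filtered_avg(explanation: dict) -> float:
    """Mean of `filtered-ratings`, or -inf if the list is empty or missing."""
    ratings = explanation.get("filtered-ratings") or []
    return mean(ratings) if ratings else float("-inf")


def best_explanation(entry: dict) -> dict:
    """Return the explanation with the highest mean filtered rating."""
    return max(entry["human-explanations"], key=filtered_avg)


# One .jsonl line, built from the example entry above.
line = json.dumps({
    "id": 1,
    "asks-for": "cause",
    "most-plausible-alternative": 1,
    "p": "My body cast a shadow over the grass.",
    "a1": "The sun was rising.",
    "a2": "The grass was cut.",
    "human-explanations": [
        {
            "expl-id": "f4d9b407-681b-4340-9be1-ac044f1c2230",
            "text": "Sunrise causes casted shadows.",
            "filtered-ratings": [3, 3, 4, 3],
            "triples": [["sunrise", "Causes", "casted shadows"]],
        },
        {
            # Hypothetical explanation, not taken from the dataset.
            "expl-id": "hypothetical-low-rated",
            "text": "Grass causes shadows.",
            "filtered-ratings": [1, 2],
            "triples": [["grass", "Causes", "shadows"]],
        },
    ],
})

entry = json.loads(line)
best = best_explanation(entry)
print(best["text"], filtered_avg(best))
```

Recomputing the average from `filtered-ratings` rather than reading `filtered-avg-rating` is a design choice for the sketch; either should give the same value when both fields are present.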

### Data Splits

Follows the original Balanced COPA split: 1000 dev and 500 test instances. Each instance has up to nine explanations.

## Dataset Creation

### Curation Rationale

The goal was to collect human-written explanations to supplement an existing commonsense reasoning benchmark. The triple-like format was designed to support graph-based models and increase the overall data quality, the latter being notoriously lacking in freely-written crowdsourced text.

### Source Data

#### Initial Data Collection and Normalization

The explanations in COPA-SSE are fully crowdsourced via the Amazon Mechanical Turk platform. Workers entered explanations by providing one or more concept-relation-concept triples. The explanations were then rated by different annotators with one- to five-star ratings. The final dataset contains explanations with a range of quality ratings. Additional collection rounds guaranteed that each sample has at least one explanation rated 3.5 stars or higher.

#### Who are the source language producers?

The original COPA questions (500 dev+500 test) were initially hand-crafted by experts. Similarly, the additional 500 development samples in Balanced COPA were authored by a small team of NLP researchers. Finally, the added explanations and quality ratings in COPA-SSE were collected with the help of Amazon Mechanical Turk workers who passed initial qualification rounds.

### Annotations

#### Annotation process

Workers were shown a Balanced COPA question, its answer, and a short instructional text. Then, they filled in free-form text fields for head and tail concepts and selected the relation from a drop-down menu with a curated selection of ConceptNet relations. Each explanation was rated by five different workers who were shown the same question and answer with five candidate explanations.

#### Who are the annotators?

The workers were restricted to persons located in the U.S. or G.B. with a HIT approval rate of 98% or more and 500 or more approved HITs. Their identity and further personal information are not available.

### Personal and Sensitive Information

N/A

## Considerations for Using the Data

### Social Impact of Dataset

Models trained to output explanations similar to those in COPA-SSE may not necessarily provide convincing or faithful explanations. Researchers should carefully evaluate the resulting explanations before considering any real-world applications.

### Discussion of Biases

COPA questions ask for causes or effects of everyday actions or interactions, some of them containing gendered language. Some explanations may reinforce harmful stereotypes if their reasoning is based on biased assumptions. These biases were not verified during collection.

### Other Known Limitations

The data was originally intended to be explanation *graphs*, i.e., hypothetical "ideal" subgraphs of a commonsense knowledge graph. While they can still function as valid natural language explanations, their wording may be at times unnatural to a human and may be better suited for graph-based implementations.
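For graph-based use, the `triples` of an explanation can be turned into a small adjacency structure. This is only a sketch with plain dictionaries: the helper name `triples_to_adjacency` is hypothetical, and a real pipeline would likely use a graph library and some normalization of the freely written concepts.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def triples_to_adjacency(
    triples: Sequence[Sequence[str]],
) -> Dict[str, List[Tuple[str, str]]]:
    """Map each head concept to its outgoing (relation, tail) edges."""
    graph = defaultdict(list)
    for head, relation, tail in triples:
        graph[head].append((relation, tail))
    return dict(graph)


# Triples copied from the example entry in Data Fields.
triples = [["sunrise", "Causes", "casted shadows"]]
graph = triples_to_adjacency(triples)
print(graph)
```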

## Additional Information

### Dataset Curators

This work was authored by Ana Brassard, Benjamin Heinzerling, Pride Kavumba, and Kentaro Inui. All are members of both the Riken AIP Natural Language Understanding Team and the Tohoku NLP Lab at Tohoku University.

### Licensing Information

COPA-SSE is released under the [MIT License](https://mit-license.org/).

### Citation Information

```

@InProceedings{copa-sse:LREC2022,

author = {Brassard, Ana and Heinzerling, Benjamin and Kavumba, Pride and Inui, Kentaro},

title = {COPA-SSE: Semi-structured Explanations for Commonsense Reasoning},

booktitle = {Proceedings of the Language Resources and Evaluation Conference},

month = {June},

year = {2022},

address = {Marseille, France},

publisher = {European Language Resources Association},

pages = {3994--4000},

url = {https://aclanthology.org/2022.lrec-1.425}

}

```

### Contributions

Thanks to [@a-brassard](https://github.com/a-brassard) for adding this dataset. |

true |

# MLDoc

## Table of Contents

- [Table of Contents](#table-of-contents)
- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Curation Rationale](#curation-rationale)
  - [Source Data](#source-data)
  - [Annotations](#annotations)
  - [Personal and Sensitive Information](#personal-and-sensitive-information)
- [Considerations for Using the Data](#considerations-for-using-the-data)
  - [Social Impact of Dataset](#social-impact-of-dataset)
  - [Discussion of Biases](#discussion-of-biases)
  - [Other Known Limitations](#other-known-limitations)
- [Additional Information](#additional-information)
  - [Dataset Curators](#dataset-curators)
  - [Licensing Information](#licensing-information)
  - [Citation Information](#citation-information)
  - [Contributions](#contributions)

## Dataset Description

- **Website:** https://github.com/facebookresearch/MLDoc

### Dataset Summary