text-classification (bool, 2 classes) | text (string, length 0 to 664k) |

|---|---|

true | # AutoTrain Dataset for project: severe-js100-sentiment

## Dataset Description

This dataset has been automatically processed by AutoTrain for project severe-js100-sentiment.

### Languages

The BCP-47 code for the dataset's language is unk (unknown); the sample text is Thai (`th`).

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"text": "00:58 #\u0e2d\u0e38\u0e1a\u0e31\u0e15\u0e34\u0e40\u0e2b\u0e15\u0e38 #\u0e16\u0e19\u0e19\u0e1a\u0e32\u0e07\u0e1a\u0e2d\u0e193 \u0e0a\u0e48\u0e27\u0e07\u0e42\u0e23\u0e07\u0e40\u0e23\u0e35\u0e22\u0e19\u0e2a\u0e32\u0e23\u0e2a\u0e32\u0e2a\u0e19\u0e4c\u0e27\u0e34\u0e40\u0e17\u0e28\u0e1a\u0e32\u0e07\u0e1a\u0e2d\u0e19 >\u0e42\u0e23\u0e07\u0e40\u0e23\u0e35\u0e22\u0e19\u0e01\u0e23\u0e1e\u0e34\u0e17\u0e31\u0e01\u0e29\u0e4c\u0e28\u0e36\u0e01\u0e29\u0e32 \u0e1b\u0e32\u0e01\u0e0b\u0e2d\u0e22\u0e1a\u0e32\u0e07\u0e1a\u0e2d\u0e193\u0e0b\u0e2d\u0e225 \u0e23\u0e16\u0e08\u0e31\u0e01\u0e23\u0e22\u0e32\u0e19\u0e22\u0e19\u0e15\u0e4c\u0e40\u0e2a\u0e35\u0e22\u0e2b\u0e25\u0e31\u0e01\u0e25\u0e49\u0e21 \u0e02\u0e27\u0e32\u0e07\u0e0a\u0e48\u0e2d\u0e07\u0e17\u0e32\u0e07\u0e0b\u0e49\u0e32\u0e22",

"target": 2

},

{

"text": "03:22 #\u0e2d\u0e38\u0e1a\u0e31\u0e15\u0e34\u0e40\u0e2b\u0e15\u0e38 #\u0e16\u0e19\u0e19\u0e01\u0e32\u0e0d\u0e08\u0e19\u0e32\u0e20\u0e34\u0e40\u0e29\u0e01 \u0e0a\u0e48\u0e27\u0e07\u0e2a\u0e30\u0e1e\u0e32\u0e19\u0e02\u0e49\u0e32\u0e21\u0e04\u0e25\u0e2d\u0e07\u0e20\u0e32\u0e29\u0e35\u0e40\u0e08\u0e23\u0e34\u0e0d >\u0e41\u0e22\u0e01\u0e1a\u0e32\u0e07\u0e41\u0e27\u0e01 \u0e1a\u0e19\u0e15\u0e48\u0e32\u0e07\u0e23\u0e30\u0e14\u0e31\u0e1a\u0e40\u0e1e\u0e0a\u0e23\u0e40\u0e01\u0e29\u0e21 \u0e23\u0e16\u0e1b\u0e34\u0e04\u0e2d\u0e31\u0e1e\u0e40\u0e2a\u0e35\u0e22\u0e2b\u0e25\u0e31\u0e01\u0e0a\u0e19\u0e02\u0e2d\u0e1a\u0e17\u0e32\u0e07 \u0e02\u0e27\u0e32\u0e07\u0e0a\u0e48\u0e2d\u0e07\u0e17\u0e32\u0e07\u0e0b\u0e49\u0e32\u0e22",

"target": 2

}

]

```

### Dataset Fields

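The dataset has the following fields (also called "features"). The `target` class names are Thai: รุนแรง ("severe", index 0), รุนแรงมาก ("very severe", index 1), and ไม่รุนแรง ("not severe", index 2), so both samples above have the label "not severe":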

```json

{

"text": "Value(dtype='string', id=None)",

"target": "ClassLabel(names=['\u0e23\u0e38\u0e19\u0e41\u0e23\u0e07', '\u0e23\u0e38\u0e19\u0e41\u0e23\u0e07\u0e21\u0e32\u0e01', '\u0e44\u0e21\u0e48\u0e23\u0e38\u0e19\u0e41\u0e23\u0e07'], id=None)"

}

```

### Dataset Splits

This dataset is split into a training split and a validation split. The split sizes are as follows:

| Split name | Num samples |
| ------------ | ------------------- |
| train | 5348 |
| valid | 1339 |

|

true | |

true | # Dataset Card for "alpaca-gigo-detector"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

false | # AutoTrain Dataset for project: pegasus-subreddit-comments-summarizer

## Dataset Description

This dataset has been automatically processed by AutoTrain for project pegasus-subreddit-comments-summarizer.

### Languages

The BCP-47 code for the dataset's language is en.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"text": "I go through this every single year. We have an Ironman competition that is 2 miles from my hotel, and I sell out for that weekend almost a year in advance. Without fail I will have some nitwit who will come up on their checkout day and ask to extend, when I tell them I can't they lose their mind at me. It's their room, they paid for it, they're already in there how can I just give it away. People do not understand how reservations work.",

"target": "The commenter experiences this every year - they sell out their hotel almost a year in advance for an Ironman competition nearby. Despite this, some customers still ask to extend their stay at checkout and get angry when told it's not possible because they don't understand how reservations work."

},

{

"text": "Can i just say .. thanks for going back to make sure you hadn't overreacted. Im sure that made things so much easier on all the staff, with it being their first days back, being understaffed, I'm sure, and trying to get back into the swing of things. I think you handled that really well :)",

"target": "The commenter appreciates the poster's effort in going back to verify if they had overreacted. The commenter believes this action might have made things easier for the understaffed team during their first days back. The commenter commends the poster for handling the situation well."

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"text": "Value(dtype='string', id=None)",

"target": "Value(dtype='string', id=None)"

}

```

### Dataset Splits

This dataset is split into a training split and a validation split. The split sizes are as follows:

| Split name | Num samples |
| ------------ | ------------------- |
| train | 7177 |
| valid | 1796 |

|

false | |

false | |

false | |

true | |

false | Source: https://dumps.wikimedia.org/kkwiki/latest/ [kkwiki-latest-pages-articles.xml.bz2] |

false | # Dataset Card for "igbo-translation"

## Dataset Summary

This dataset contains data translated from English to Igbo for training general-purpose translation models.

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

false | # Silver Ukrainian Coreference Dataset

## Dataset Description

### Dataset Summary

A silver-standard (automatically generated) coreference resolution dataset for Ukrainian. The dataset was generated automatically from the following English dataset using a word-alignment method: https://github.com/d5555/Coreference-dataset.

The word alignment method was implemented by Andrii Kursin (aqrsn@ukr.net).

### Languages

- Ukrainian

## Dataset Structure

### Data Fields

Each sample of the dataset consists of the following fields:

- **doc_key** - document identifier.

- **clusters** - list of clusters, where each cluster is a list of mentions. Each mention is represented as a list of two indices: the first denotes the first word of the mention, and the second denotes the last word of the mention.

- **sentences** - list of sentences where each sentence is represented as a list of words.

- **tokens** - list of words.

- **speakers** - list of speakers; currently filled with dummy values.
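A minimal sketch of reading mention strings out of `clusters`, assuming each index pair is an inclusive position into the flat `tokens` list (the card does not say which list the indices refer to). The record below is a hypothetical two-sentence example ("Maria came. She got tired.") containing only the fields the function needs; it is not taken from the dataset.

```python
from typing import Dict, List


def cluster_mentions(sample: Dict) -> List[List[str]]:
    """Return the surface text of every mention, grouped by cluster.

    Assumption: each mention is [first_word, last_word], both inclusive,
    indexing into the flat `tokens` list.
    """
    tokens: List[str] = sample["tokens"]
    return [
        [" ".join(tokens[start:end + 1]) for start, end in cluster]
        for cluster in sample["clusters"]
    ]


# Hypothetical record (not from the dataset): "Марія" and "Вона" corefer.
sample = {
    "doc_key": "example-0",
    "sentences": [["Марія", "прийшла", "."], ["Вона", "втомилася", "."]],
    "tokens": ["Марія", "прийшла", ".", "Вона", "втомилася", "."],
    "clusters": [[[0, 0], [3, 3]]],
}

print(cluster_mentions(sample))  # [['Марія', 'Вона']]
```

The `end + 1` in the slice is what makes the second index inclusive; if the indices turn out to be exclusive, drop the `+ 1`.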

### Data Splits

The dataset is divided into two parts:

- training set;

- validation set.

There is no test set because the dataset was generated automatically.

## Dataset Creation

### Source Data

The dataset was created from the following dataset: https://github.com/d5555/Coreference-dataset.

### Contributions

The code for translating the samples and aligning them was written by Andrii Kursin (aqrsn@ukr.net). The dataset was generated by Artem Kramov (https://www.linkedin.com/in/artem-kramov-0b3731100/). |

true | |

false | |

true | |

false | i have no idea how to add data |

false | |

false | |

true |

# Dataset Card for ScribbleHub17K

*The BigKnow2022 dataset and its subsets are not yet complete. Not all information here may be accurate or accessible.*

## Dataset Description

- **Homepage:** (TODO)

- **Repository:** <https://github.com/RyokoAI/BigKnow2022>

- **Paper:** N/A

- **Leaderboard:** N/A

- **Point of Contact:** Ronsor/undeleted <ronsor@ronsor.com>

### Dataset Summary

ScribbleHub17K is a dataset consisting of text from over 373,000 chapters across approximately 17,500 series posted on the original story-sharing site [Scribble Hub](https://scribblehub.com).

### Supported Tasks and Leaderboards

This dataset is primarily intended for unsupervised training of text generation models; however, it may be useful for other purposes.

* text-classification

* text-generation

### Languages

* English

## Dataset Structure

### Data Instances

```json

{

"text": " \n2082 Planet Earth the Fracture War, after a sudden fracture in our dimension unidentified beings with advance technology and u...",

"meta": {

"subset": "scribblehub",

"series": "3811",

"id": "3812",

"q": 0.91,

"title": "The First - Prologue- The Fracture War",

"author": "RobotLove",

"chapters": 1,

"rating": 5,

"rating_ct": 1,

"genre": [

"Action",

"Martial Arts",

"Romance"

],

"tags": [

"Kingdom Building",

"Loyal Subordinates",

"Male Protagonist",

"Organized Crime",

"Scheming"

]

}

}

{

"text": " For anyone that may see this, thanks for reading. I'm just here to see if a story can spill out of my mind if just start writin...",

"meta": {

"subset": "scribblehub",

"series": "586090",

"id": "586099",

"q": 0.82,

"title": "Just writing to write…i guess? - I’m here now",

"author": "BigOofStudios",

"chapters": 1,

"rating": 4.5,

"rating_ct": 2,

"genre": [

"Action",

"Comedy"

],

"tags": []

}

}

```

### Data Fields

* `text`: the actual chapter text

* `meta`: metadata for chapter and series
  * `subset`: data source tag: `scribblehub`
  * `series`: series ID
  * `id`: chapter ID
  * `lang`: always `en` (English)
  * `q`: quality score (q-score) between 0.0 (terrible) and 1.0 (perfect); anything with a score `> 0.5` is generally good enough
  * `title`: chapter and series title in the format `<chapter title> - <series title>`
  * `chapters`: total number of chapters in the series
  * `rating`: Scribble Hub rating between 0 and 5 stars
  * `rating_ct`: number of ratings
  * `author`: author name
  * `genre`: array of Scribble Hub genres for the series
  * `tags`: array of tags for the series
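A minimal sketch of applying the `q > 0.5` rule of thumb, assuming records are plain dicts shaped like the samples above (how they are loaded is not shown here). The first two records are abbreviated copies of the samples; the third is a hypothetical low-quality chapter added for illustration.

```python
from typing import Dict, Iterable, List


def filter_by_quality(records: Iterable[Dict], threshold: float = 0.5) -> List[Dict]:
    """Keep chapters whose q-score is strictly above `threshold`."""
    return [r for r in records if r["meta"]["q"] > threshold]


records = [
    {"text": "...", "meta": {"id": "3812", "q": 0.91}},
    {"text": "...", "meta": {"id": "586099", "q": 0.82}},
    # Hypothetical record, not from the dataset.
    {"text": "...", "meta": {"id": "hypothetical", "q": 0.35}},
]

kept = filter_by_quality(records)
print([r["meta"]["id"] for r in kept])  # ['3812', '586099']
```

Raising `threshold` trades corpus size for quality; the card itself only suggests 0.5 as a general cut-off.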

#### Q-Score Distribution

```

0.00: 0

0.10: 0

0.20: 0

0.30: 84

0.40: 718

0.50: 3775

0.60: 22300

0.70: 72581

0.80: 137982

0.90: 135800

1.00: 59

```

### Data Splits

No splitting of the data was performed.

## Dataset Creation

### Curation Rationale

Scribble Hub is a home for original web stories, effectively a smaller, English-language version of Japan's Syosetuka ni Narou. As a result, it is a good source of reasonably well-written creative content.

### Source Data

#### Initial Data Collection and Normalization

TODO

#### Who are the source language producers?

The authors of each novel.

### Annotations

#### Annotation process

Title, ratings, and other metadata were parsed out using scripts that will be provided in the BigKnow2022 GitHub repository.

#### Who are the annotators?

No human annotators.

### Personal and Sensitive Information

The dataset contains only works of fiction, and we do not believe it contains any PII.

## Considerations for Using the Data

### Social Impact of Dataset

This dataset is intended to be useful for anyone who wishes to train a model to generate "more entertaining" content.

It may also be useful for other languages depending on your language model.

### Discussion of Biases

This dataset is composed of fictional works by various authors, so its contents will reflect the biases of those authors. **Additionally, this dataset contains NSFW material and was not filtered. Beware of stereotypes.**

### Other Known Limitations

N/A

## Additional Information

### Dataset Curators

Ronsor Labs

### Licensing Information

Apache 2.0, for all parts of which Ronsor Labs or the Ryoko AI Production Committee may be considered authors. All other material is distributed under fair use principles.

### Citation Information

```

@misc{ryokoai2023-bigknow2022,

title = {BigKnow2022: Bringing Language Models Up to Speed},

author = {Ronsor},

year = {2023},

howpublished = {\url{https://github.com/RyokoAI/BigKnow2022}},

}

```

### Contributions

Thanks to @ronsor (GH) for gathering this dataset. |

false | |

false | |

true |

# Dataset Card for Honeyfeed3600

*The BigKnow2022 dataset and its subsets are not yet complete. Not all information here may be accurate or accessible.*

## Dataset Description

- **Homepage:** (TODO)

- **Repository:** <https://github.com/RyokoAI/BigKnow2022>

- **Paper:** N/A

- **Leaderboard:** N/A

- **Point of Contact:** Ronsor/undeleted <ronsor@ronsor.com>

### Dataset Summary

Honeyfeed3600 is a dataset consisting of text from over 38,000 chapters across approximately 3,600 series posted on the English-language web novel site [Honeyfeed](https://www.honeyfeed.fm).

### Supported Tasks and Leaderboards

This dataset is primarily intended for unsupervised training of text generation models; however, it may be useful for other purposes.

* text-classification

* text-generation

### Languages

* English

## Dataset Structure

### Data Instances

```json

{

"text": "Dark, black, nothingness. There are so many ways to describe that hole, but nothing would get me down there...",

"meta": {

"subset": "honeyfeed",

"themes": [],

"my_themes": [],

"prompt": "",

"author": "Lucianael",

"novel": "10009",

"id": "55686",

"title": "13 Steps - 13 Steps",

"likes": 4,

"views": 21,

"q": 0.5999999999999999

}

}

```

### Data Fields

* `text`: the actual chapter text

* `meta`: novel and chapter metadata
  * `subset`: dataset tag: `honeyfeed`
  * `lang`: dataset language: `en` (English)
  * `themes`: array of novel themes
  * `my_themes`: array of additional novel themes
  * `prompt`: writing prompt
  * `author`: author name
  * `novel`: novel ID
  * `id`: chapter ID
  * `title`: novel and chapter title in the form `<chapter title> - <novel title>`
  * `likes`: novel like count
  * `views`: novel view count
  * `q`: q-score (quality score)

#### Q-Score Distribution

```

0.00: 499

0.10: 420

0.20: 2562

0.30: 0

0.40: 0

0.50: 13344

0.60: 9021

0.70: 5997

0.80: 4217

0.90: 1931

1.00: 801

```
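A quick consistency check on the distribution above, treating each line as a bin label followed by a chapter count. The bins sum to the chapter count quoted in the summary, and most chapters fall in bins labeled 0.5 or higher. The card does not say how q-scores are assigned to bins, so this reading of the table is an assumption.

```python
# Q-score distribution for Honeyfeed3600, copied from the table above.
distribution = {
    0.0: 499, 0.1: 420, 0.2: 2562, 0.3: 0, 0.4: 0, 0.5: 13344,
    0.6: 9021, 0.7: 5997, 0.8: 4217, 0.9: 1931, 1.0: 801,
}

total = sum(distribution.values())
# Chapters counted in bins labeled 0.5 or higher.
high = sum(n for q, n in distribution.items() if q >= 0.5)

print(total)  # 38792, consistent with "over 38,000 chapters"
print(high)   # 35311
```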

### Data Splits

No splitting of the data was performed.

## Dataset Creation

### Curation Rationale

TODO

### Source Data

#### Initial Data Collection and Normalization

TODO

#### Who are the source language producers?

The authors of each novel.

### Annotations

#### Annotation process

Chapter and novel titles were scraped alongside chapter text.

#### Who are the annotators?

No human annotators.

### Personal and Sensitive Information

The dataset contains only works of fiction, and we do not believe it contains any PII.

## Considerations for Using the Data

### Social Impact of Dataset

This dataset is intended to be useful for anyone who wishes to train a model to generate "more entertaining" content.

It may also be useful for other languages depending on your language model.

### Discussion of Biases

This dataset is composed of fictional works by various authors, so its contents will reflect the biases of those authors. Beware of stereotypes.

### Other Known Limitations

N/A

## Additional Information

### Dataset Curators

Ronsor Labs

### Licensing Information

Apache 2.0, for all parts of which Ronsor Labs or the Ryoko AI Production Committee may be considered authors. All other material is distributed under fair use principles.

### Citation Information

```

@misc{ryokoai2023-bigknow2022,

title = {BigKnow2022: Bringing Language Models Up to Speed},

author = {Ronsor},

year = {2023},

howpublished = {\url{https://github.com/RyokoAI/BigKnow2022}},

}

```

### Contributions

Thanks to @ronsor (GH) for gathering this dataset. |

false | # Preview[.](https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/blob/main/README.md)

**简体中文**|

[English](https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/blob/main/README_EN.md)|

[日本語](https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/blob/main/README_JP.md)

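This repository previews the results of various voice models trained with so-vits-svc-4.0. **Click a character name** to jump to the corresponding training parameters.</br>

**Google Chrome** is recommended; other browsers may not load the preview audio correctly.</br>

Timbre conversion of normal speech is fairly accurate. Songs span a wider vocal range, and background music and backing vocals are hard to remove completely, so results on songs are somewhat worse.</br>

If there is a song you would like to hear converted, or you have other suggestions, [**click here**](https://huggingface.co/datasets/jiaheillu/audio_preview/discussions/new) to start a discussion.</br>

The preview audio is below; **scroll up, down, left, and right** to see everything.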

<style>

.scrolling-container {

width: 100%;

max-width: 1600px;

height: 420px;

overflow: auto;

margin: 0;

}

@media screen and (max-width: 768px) {

.scrolling-container {

width: 100%;

height: 120px;

overflow: auto;

}

}

</style>

<div class="scrolling-container">

<table border="1" style="white-space: nowrap; text-align: center;">

<thead>

<tr>

<th>Character</th>

<th>Character's original voice (A)</th>

<th>Voice to be converted (B)</th>

<th>B converted to A's timbre</th>

<th>Covers in A's voice (click to download)</th>

</tr>

</thead>

<tbody>

<tr>

<td><a href="https://huggingface.co/datasets/jiaheillu/audio_preview/blob/main/散兵效果预览/训练参数速览.md">散兵</a></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/散兵效果预览/部分训练集/真遗憾,小吉祥草王让他消除了那么多的切片,剥夺了我将他一片一片千刀万剐的快乐%E3%80%82.mp3" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/散兵效果预览/原声/shenli3.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/散兵效果预览/转换结果/shenli3mp3_auto_liulangzhe.wav" controls="controls"></audio></td>

<td><a href="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/散兵效果预览/转换结果/夢で逢えたら2liulangzhe_f.wav">夢で会えたら</a></td>

</tr>

<tr>

<td><a href="https://huggingface.co/datasets/jiaheillu/audio_preview/blob/main/胡桃_preview/README.md">胡桃</a></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/%E8%83%A1%E6%A1%83_preview/hutao.wav" controls="controls"></audio></td>

<td>.........</td>

<td>.........</td>

<td>

<a href="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/胡桃_preview/moonlight_shadow2胡桃.WAV">moonlight shadow</a>,

<a href="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/胡桃_preview/云烟成雨2胡桃.WAV">云烟成雨</a>,

<a href="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/胡桃_preview/原点2胡桃.WAV">原点</a>,

<a href="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/胡桃_preview/夢だ会えたら2胡桃.WAV">夢で逢えたら</a>,

<a href="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/胡桃_preview/贝加尔湖畔2胡桃.WAV">贝加尔湖畔</a>

</td>

</tr>

<tr>

<td><a href="https://huggingface.co/datasets/jiaheillu/audio_preview/blob/main/绫华_preview/README.md">神里绫华</a></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/绫华_preview/linghua428.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/绫华_preview/yelan.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/绫华_preview/yelan.wav_auto_linghua_0.5.wav" controls="controls"></audio></td>

<td>

<a href="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/绫华_preview/アムリタ2绫华.WAV">アムリタ</a>,

<a href="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/绫华_preview/大鱼2绫华.WAV">大鱼</a>,

<a href="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/绫华_preview/遊園施設2绫华.WAV">遊園施設</a>,

<a href="https://huggingface.co/datasets/jiaheillu/audio_preview/resolve/main/绫华_preview/the_day_you_want_away2绫华.WAV">the day you went away</a>

</td>

</tr>

<tr>

<td><a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/blob/main/宵宫_preview/README.md">宵宫</a></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/宵宫_preview/xiaogong.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/宵宫_preview/hutao2.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/宵宫_preview/hutao2wav_0key_xiaogong_0.5-2.wav" controls="controls"></audio></td>

<td>

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/宵宫_preview/昨夜书2宵宫.WAV">昨夜书</a>,

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/宵宫_preview/lemon2宵宫.WAV">lemon</a>,

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/宵宫_preview/my_heart_will_go_no2宵宫.WAV">my heart will go on</a>,

</td>

</tr>

<tr>

<td><a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/blob/main/刻晴_preview/README.md">刻晴</a></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/刻晴_preview/原_keqing2.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/刻晴_preview/待_xiaogong3.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/刻晴_preview/已_xiaogong2keqing.wav" controls="controls"></audio></td>

<td>

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/刻晴_preview/嚣张2刻晴.WAV">嚣张</a>,

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/刻晴_preview/ファティマ2刻晴.WAV">ファティマ</a>,

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/刻晴_preview/hero2刻晴.WAV">hero</a>,

</td>

</tr>

<tr>

<td><a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/blob/main/可莉_preview/README.md">可莉</a></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/可莉_preview/原_keli.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/可莉_preview/待_ying.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/可莉_preview/已_ying2keli.wav" controls="controls"></audio></td>

<td>

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/可莉_preview/樱花草2可莉.WAV">樱花草</a>,

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/可莉_preview/夢をかなえてドラえもん2可莉.WAV">夢をかなえてドラえもん</a>,

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/可莉_preview/sun_shine2可莉.WAV">sun_shine</a>,

</td>

</tr>

<tr>

<td><a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/blob/main/鹿野院平藏_preview/README.md">鹿野院平藏</a></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/鹿野院平藏_preview/原_pingzang.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/鹿野院平藏_preview/待_shenzi.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/鹿野院平藏_preview/已_shenzi2pingzang.wav" controls="controls"></audio></td>

<td>

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/鹿野院平藏_preview/风继续吹2pingng.WAV">风继续吹</a>,

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/鹿野院平藏_preview/小さな恋の歌2pingzang.WAV">小さな恋の歌</a>,

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/鹿野院平藏_preview/love_yourself2pingzang.WAV">love_yourself</a>,

</td>

</tr>

<tr>

<td><a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/blob/main/imallryt_preview/README.md">imallryt</a></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/imallryt_preview/%E5%8E%9F_IVOL_1%20Care_DRY_120_Am_Main_Vocal.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/imallryt_preview/%E5%BE%85_Lead_A%20minor_DRY.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/imallryt_preview/%E5%B7%B2_Lead_A%20minor_DRYwav_0key_imallryt_0.5.wav" controls="controls"></audio></td>

<td>

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/imallryt_preview/海阔天空2imallryt.WAV">海阔天空</a>,

</td>

</tr>

<tr>

<td><a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/blob/main/kagami_preview/README.md">kagami</a></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/kagami_preview/原_kagami.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/kagami_preview/待_wendi.wav" controls="controls"></audio></td>

<td><audio src="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/kagami_preview/已_windi2kagami.wav" controls="controls"></audio></td>

<td>

<a href="https://huggingface.co/datasets/jiaheillu/sovits_audio_preview/resolve/main/kagami_preview/えるの侵蝕_Vocals.wav_-4key_kagami_0.5.flac">えるの侵蝕</a>,

</td>

</tr>

</tbody>

</table>

</div>

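Key parameters:</br>

audio duration: total duration of the training set</br>

epoch: number of training epochs</br>

Others:</br>

batch_size = number of segments trained per step<br>

segments = number of segments the audio is sliced into<br>

step = segments * epoch / batch_size; this is where the number at the end of each model file name comes from<br>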

Taking 散兵 as an example:</br>

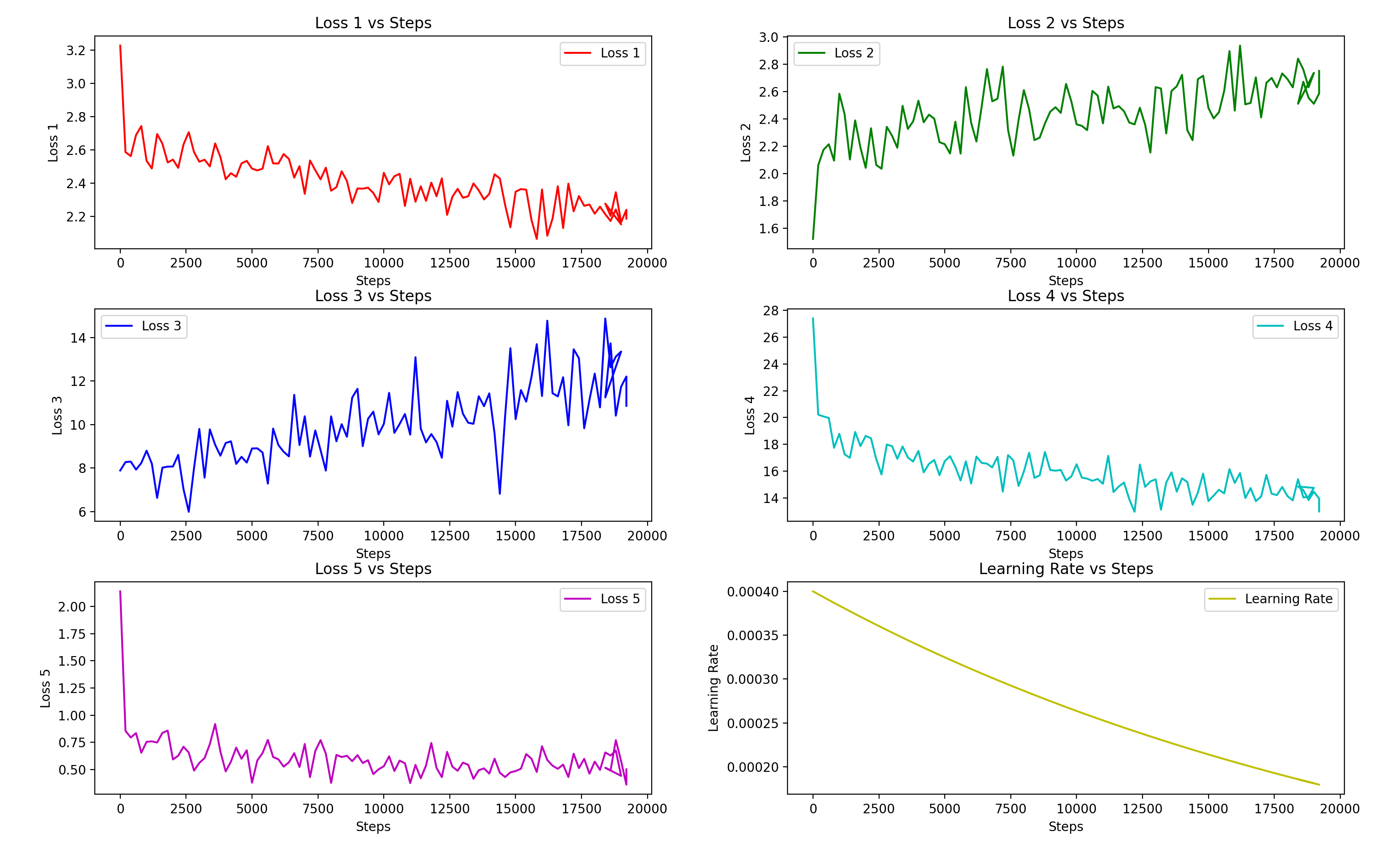

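Loss curves: focus mainly on step and loss5. For example:<br>

As a rough reference, the audio to be converted is all high-pitched female vocals, which makes this a fairly demanding test. As shown in the figure, with 10 minutes of clean vocals, results were already usable at roughly 2800 epochs (10000 steps), and the checkpoint actually used is from 5571 epochs (19500 steps). To hear how close the trained timbre is to the original, listen to the preview audio above. For normal training, 10 minutes of audio is on the short side for a training set.<br>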
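The step formula can be sketched as follows. The segment count and batch size in the first call are hypothetical values chosen only to illustrate the arithmetic; the card does not list them. The loop uses only the documented 散兵 figures to back out the implied steps per epoch (segments / batch_size).

```python
def total_steps(segments: int, epochs: int, batch_size: int) -> float:
    """step = segments * epoch / batch_size, as described above."""
    return segments * epochs / batch_size


# Hypothetical values: 700 segments with batch size 200 gives 3.5 steps per epoch.
print(total_steps(segments=700, epochs=5571, batch_size=200))  # 19498.5

# Implied steps per epoch from the documented (approximate) runs.
for epochs, steps in [(2800, 10000), (5571, 19500)]:
    print(epochs, steps, round(steps / epochs, 2))
```

Both documented runs imply roughly 3.5 steps per epoch; the first figure is looser because 2800 epochs is itself an approximation.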

[Click here to view the related files](https://huggingface.co/datasets/jiaheillu/audio_preview/tree/main)<br>

|

false | This is a text-to-video model for diffusers, fine-tuned from [modelscope](https://huggingface.co/damo-vilab/text-to-video-ms-1.7b) to produce anime-style output.

It was trained at 384x384 resolution.

It still often generates unstable content.

Usage is the same as for the original ModelScope model.

Example images are [here](https://imgur.com/a/sCwmKG1). |

true | # AutoTrain Dataset for project: roulette-prediction-next-sequence

## Dataset Description

This dataset has been automatically processed by AutoTrain for project roulette-prediction-next-sequence.

### Languages

The BCP-47 code for the dataset's language is en.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"text": "1",

"target": 8

},

{

"text": "2",

"target": 8

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"text": "Value(dtype='string', id=None)",

"target": "ClassLabel(names=['0', '11', '12', '20', '22', '23', '25', '26', '27', '28', '3', '31', '35', '36', '4', '7'], id=None)"

}

```
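Note that `target` is an index into the `names` list, not the roulette number itself. The class names are strings listed in lexicographic order (`'28'` comes before `'3'`), so `target: 8` in both samples above decodes to the number 27. A minimal sketch of the lookup in plain Python; `datasets.ClassLabel.int2str` performs the same index-to-name mapping.

```python
# Class names copied from the ClassLabel definition above.
names = ['0', '11', '12', '20', '22', '23', '25', '26', '27', '28',
         '3', '31', '35', '36', '4', '7']


def decode_target(index: int) -> int:
    """Map a ClassLabel index back to the roulette number it names."""
    return int(names[index])


samples = [{"text": "1", "target": 8}, {"text": "2", "target": 8}]
for s in samples:
    print(s["text"], "->", decode_target(s["target"]))  # both print 27
```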

### Dataset Splits

This dataset is split into a training split and a validation split. The split sizes are as follows:

| Split name | Num samples |
| ------------ | ------------------- |
| train | 15 |
| valid | 7 |

|

false |

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

true | Reference: Ponnarassery-, Sreeja (2017), “Poem Emotion Recognition Corpus (PERC)”, Mendeley Data, V1, doi: 10.17632/n9vbc8g9cx.1 |

false |

The dataset was translated into Polish using the `gsarti/opus-mt-tc-en-pl` model.

### How to use

```python

from datasets import load_dataset

dataset = load_dataset("Aspik101/translated_polish_alpaca")

```

|

false | # AutoTrain Dataset for project: arp_summ_1

## Dataset Description

This dataset has been automatically processed by AutoTrain for project arp_summ_1.

### Languages

The BCP-47 code for the dataset's language is unk (unknown), although the samples are in English.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"text": " eat , grass , horse ",

"target": " The old horse ate grass all day. "

},

{

"text": " lay , dog , rug ",

"target": " Brown dog chews on bone while laying on the rug. "

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"text": "Value(dtype='string', id=None)",

"target": "Value(dtype='string', id=None)"

}

```

### Dataset Splits

This dataset is split into a training split and a validation split. The split sizes are as follows:

| Split name | Num samples |
| ------------ | ------------------- |
| train | 197 |
| valid | 50 |

|

false |

09/04/2023 Update:

New instructions added from: https://github.com/Instruction-Tuning-with-GPT-4/GPT-4-LLM

Original Version: https://github.com/tatsu-lab/stanford_alpaca#data-release

AI-based translation of Stanford Alpaca from English (EN) to Turkish (TR).

For academic use only; please cite the dataset if you use it.

Taşar, D. E. T. (2023). stanford-alpaca-cleaned-turkish-translated [Dataset]. In Stanford Alpaca TR (1.0.1.a). https://huggingface.co/datasets/emre/stanford-alpaca-cleaned-turkish-translated

### Citation

Please cite the repo if you use the data or code in this repo.

```

@misc{alpaca-tr-tasar-2023,

author = {Taşar, Davut Emre},

title = {stanford-alpaca-cleaned-turkish-translated},

year = {2023},

publisher = {Huggingface},

journal = {Huggingface repository},

howpublished = {\url{https://huggingface.co/datasets/emre/stanford-alpaca-cleaned-turkish-translated}},

}

``` |

true |

# Dataset Card for XNLI Parallel Corpus

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

### Supported Tasks and Leaderboards

Binary classification of text mode (spoken vs. written)

### Languages

- English

- German

- French

## Dataset Structure

### Data Instances

{

'text': "And he said , Mama , I 'm home .",

'label': 0

}

### Data Fields

- text: sentence

- label: binary label of the text (0: spoken, 1: written)

### Data Splits

- train: 830

- test: 1669

### Other Statistics

#### Vocabulary Size

- English
  - train: 4363
  - test: 7128
- German
  - train: 5070
  - test: 8601
- French
  - train: 4881
  - test: 7935

#### Average Sentence Length

- English
  - train: 20.689156626506023
  - test: 20.75254643499101
- German
  - train: 20.367469879518072
  - test: 20.639904134212102
- French
  - train: 23.455421686746988
  - test: 23.731575793888556

#### Label Split

- train:
  - 0: 166
  - 1: 664
- test:
  - 0: 334
  - 1: 1335

#### Out-of-vocabulary words in model

- English
  - BERT (bert-base-uncased)
    - train: 800
    - test: 1638
  - mBERT (bert-base-multilingual-uncased)
    - train: 1347
    - test: 2693
  - German BERT (bert-base-german-dbmdz-uncased)
    - train: 3228
    - test: 5581
  - flauBERT (flaubert-base-uncased)
    - train: 4363
    - test: 7128
- German
  - BERT (bert-base-uncased)
    - train: 4285
    - test: 7387
  - mBERT (bert-base-multilingual-uncased)
    - train: 3126
    - test: 5863
  - German BERT (bert-base-german-dbmdz-uncased)
    - train: 2033
    - test: 3938
  - flauBERT (flaubert-base-uncased)
    - train: 5069
    - test: 8600
- French
  - BERT (bert-base-uncased)
    - train: 3784
    - test: 6289
  - mBERT (bert-base-multilingual-uncased)
    - train: 2847
    - test: 5084
  - German BERT (bert-base-german-dbmdz-uncased)
    - train: 4212
    - test: 6964
  - flauBERT (flaubert-base-uncased)
    - train: 4881
    - test: 7935

## Dataset Creation

### Curation Rationale

N/A

### Source Data

https://github.com/facebookresearch/XNLI

Here is the citation for the original XNLI paper.

```

@InProceedings{conneau2018xnli,

author = "Conneau, Alexis

and Rinott, Ruty

and Lample, Guillaume

and Williams, Adina

and Bowman, Samuel R.

and Schwenk, Holger

and Stoyanov, Veselin",

title = "XNLI: Evaluating Cross-lingual Sentence Representations",

booktitle = "Proceedings of the 2018 Conference on Empirical Methods

in Natural Language Processing",

year = "2018",

publisher = "Association for Computational Linguistics",

location = "Brussels, Belgium",

}

```

#### Initial Data Collection and Normalization

N/A

#### Who are the source language producers?

N/A

### Annotations

#### Annotation process

N/A

#### Who are the annotators?

N/A

### Personal and Sensitive Information

N/A

## Considerations for Using the Data

### Social Impact of Dataset

N/A

### Discussion of Biases

N/A

### Other Known Limitations

N/A

## Additional Information

### Dataset Curators

N/A

### Licensing Information

N/A

### Citation Information

### Contributions

N/A |

false | # Mtet

- Num examples:
  - 5,072 (test)
  - 6,212 (validation)
- Language: English, Vietnamese

## Prompts

"Translate the following sentence into <target>: ",

"What is the <target> translation for: ",

"What is the <target> equivalent of: ",

"What does the following sentence means in <target>: ",

"Interpret the following sentence into <target>: ",

"What is the <target> interpretation for: ",

"The <target> translation of the following sentence: ",

"What is the <target> meaning of the following sentence: ",

"What is the <target> meaning of this sentence: ",

"Please translate the following sentence to <target>: "

"Dịch câu sau sang tiếng <target>: ",

"Nghĩa tiếng <target> của câu sau: ",

"Dịch câu tiếng <from> sau sang tiếng <target>: ",

"Thông dịch câu tiếng <from> sau tiếng <target>: ",

"Chuyển câu tiếng <from> sang tiếng <target>: ",

"Chuyển nghĩa câu tiếng <from> sang tiếng <target>: ",

"Câu tiếng <from> có nghĩa là gì trong tiếng <target>: ",

"Câu sau có nghĩa tiếng <target> là gì: ",

"Hãy dịch câu sau sang tiếng <target>: ",

"Giải thích nghĩa câu sau sang tiếng <target>: ",

"Giải thích nghĩa câu tiếng <from> sang tiếng <target>"
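The templates contain a `<target>` placeholder and, in some Vietnamese templates, a `<from>` placeholder. The card does not say how they were applied; a minimal sketch, assuming plain string substitution followed by appending the source sentence:

```python
import random

# A subset of the templates listed above.
TEMPLATES = [
    "Translate the following sentence into <target>: ",
    "What is the <target> translation for: ",
    "Dịch câu tiếng <from> sau sang tiếng <target>: ",
]


def fill_template(template: str, src_lang: str, tgt_lang: str) -> str:
    """Replace the <from> and <target> placeholders with language names."""
    return template.replace("<from>", src_lang).replace("<target>", tgt_lang)


def build_prompt(sentence: str, src_lang: str, tgt_lang: str, rng: random.Random) -> str:
    """Pick a random template, fill it, and append the sentence to translate."""
    return fill_template(rng.choice(TEMPLATES), src_lang, tgt_lang) + sentence


print(build_prompt("Hello.", "English", "Vietnamese", random.Random(0)))
```

Since the Vietnamese templates read "tiếng <target>", they presumably expect Vietnamese language names ("Anh", "Việt") rather than English ones; keeping English and Vietnamese templates in separate lists would make that easy to enforce.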

|

false |

This dataset is built from the [DnD_bios](https://github.com/janelleshane/DnD_bios) repository and contains 2322 character bios. |

false |

# Ukrainian StackExchange Dataset

This repository contains a dataset collected from the Ukrainian StackExchange website.

The data was parsed on 02/04/2023.

The dataset is in JSON format and includes text data parsed from the website https://ukrainian.stackexchange.com/.

## Dataset Description

The Ukrainian StackExchange Dataset is a rich source of text data for tasks related to natural language processing, machine learning, and data mining in the Ukrainian language. The dataset contains information such as questions, answers, comments, and associated metadata from the website.

The data has been collected from the following sections of the Ukrainian StackExchange website:

- Questions
- Answers
- Tags
- Votes

## Structure

The dataset is structured as follows:

- `question`: Contains information about the question
  - `id`: Unique identifier for the question
  - `title`: Title of the question
  - `text`: Text content of the question
  - `upvoteCount`: The net upvote count of the question
  - `tags`: List of tags associated with the question
- `answers`: A list of answers for the question, each containing:
  - `id`: Unique identifier for the answer
  - `text`: Text content of the answer
  - `upvoteCount`: The net upvote count of the answer
  - `isAccepted`: A boolean value indicating whether the answer is accepted
- `url`: The URL of the question on the Ukrainian StackExchange website
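A common first step with this kind of data is to reduce each record to a single answer. A minimal sketch, assuming each record is a dict with top-level `question`, `answers` and `url` keys as listed above (the nesting is inferred from the field descriptions, not verified against the files):

```python
from typing import Optional


def accepted_answer(record: dict) -> Optional[str]:
    """Return the text of the accepted answer, or None if no answer is accepted."""
    for answer in record.get("answers", []):
        if answer.get("isAccepted"):
            return answer["text"]
    return None


def top_answer(record: dict) -> Optional[str]:
    """Return the text of the highest-voted answer, or None if there are no answers."""
    answers = record.get("answers", [])
    if not answers:
        return None
    return max(answers, key=lambda a: a["upvoteCount"])["text"]
```

The accepted and the highest-voted answer can differ, so it is worth deciding which one a downstream task should treat as the reference.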

## Usage

To use this dataset in your project, you can load it from the Hugging Face Hub:

```python

from datasets import load_dataset

dataset = load_dataset("zeusfsx/ukrainian-stackexchange")

```

## License

This dataset is provided under the Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0) License. |

false | |

true | # Dataset Card for "MULTI_VALUE_wnli_reduced_relative"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

true |

## Dataset Description

- **BioStars Homepage:** https://www.biostars.org/

- **BioStars Paper:** https://doi.org/10.1371/journal.pcbi.1002216

- **Code Repository (This Dataset):** https://github.com/cannin/biostars_qa

### Dataset Summary

This dataset contains 4803 question/answer pairs extracted from the [BioStars](https://www.biostars.org/) website. The site focuses on bioinformatics, computational genomics, and biological data analysis.

## Dataset Structure

### Data Fields

The data contains INSTRUCTION, RESPONSE, SOURCE, and METADATA fields, following the format described in the [LAION-AI/Open-Assistant](https://github.com/LAION-AI/Open-Assistant/blob/main/data/datasets/README.md) datasets README.

## Dataset Creation

### Curation Rationale

Question/answer pairs were included only if the answer was accepted and the question had at least 1 vote.
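The curation rule and the four-field layout can be sketched together as follows. The input dictionaries and their keys (`accepted`, `vote_count`, `title`, `content`, `id`) and the `"biostars"` source tag are hypothetical stand-ins, not the Biostars API schema, and storing METADATA as a JSON string is an assumption:

```python
import json


def keep_pair(question: dict, answer: dict) -> bool:
    """Curation rule from the card: accepted answer and at least one question vote."""
    # Hypothetical field names, not taken from the Biostars API.
    return bool(answer["accepted"]) and question["vote_count"] >= 1


def to_record(question: dict, answer: dict) -> dict:
    """Build one row with the four fields listed under Data Fields."""
    return {
        "INSTRUCTION": question["title"],
        "RESPONSE": answer["content"],
        "SOURCE": "biostars",  # hypothetical source tag
        "METADATA": json.dumps({"question_id": question["id"]}),
    }
```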

### Source Data

Data collected using the [Biostars API](https://www.biostars.org/info/api/)

## Additional Information

### Dataset Curators

[@cannin](https://github.com/cannin). @cannin has no affiliation with the BioStars project.

### Licensing Information

Apache-2.0

### Citation Information

#### BioStars Project

Cite the original project: https://doi.org/10.1371/journal.pcbi.1002216

#### This Dataset

Citation for this dataset:

```

@misc{Luna2023a,

author = {Augustin Luna},

title = {biostars_qa Dataset},

year = {2023},

howpublished = {\url{https://huggingface.co/datasets/cannin/biostars_qa}}

}

```

#### This Dataset Code

Citation for the code to generate this dataset:

```

@misc{Luna2023b,

author = {Augustin Luna},

title = {biostars_qa Code},

year = {2023},

howpublished = {\url{https://github.com/cannin/biostars_qa}}

}

``` |

true |

# Dataset Card for CNNovel125K

*The BigKnow2022 dataset and its subsets are not yet complete. Not all information here may be accurate or accessible.*

## Dataset Description

- **Homepage:** (TODO)

- **Repository:** <https://github.com/RyokoAI/BigKnow2022>

- **Paper:** N/A

- **Leaderboard:** N/A

- **Point of Contact:** Ronsor/undeleted <ronsor@ronsor.com>

### Dataset Summary

CNNovel125K is a dataset composed of approximately 125,000 novels downloaded from the Chinese novel hosting site <http://ibiquw.com>.

### Supported Tasks and Leaderboards

This dataset is primarily intended for unsupervised training of text generation models; however, it may be useful for other purposes.

* text-classification

* text-generation

### Languages

* Simplified Chinese

## Dataset Structure

### Data Instances

```json

{

"text": "\n------------\n\n全部章节\n\n\n------------\n\n第一章 她肯定做梦呢!\n\n HT国际大酒店总统套房。\n\n 清晨的第一缕阳光照射进圣地亚哥地板上,洒落在凌乱的床单上,突然地,床上睡的正熟的人睁开眼睛,猛然惊醒!\n\n ...",

"meta": {

"subset": "cnnovel.ibiquw",

"id": "100067",

"q": 0.9,

"lang": "zh_cn",

"title": "为爱入局:嫁给秦先生",

"author": "奥德萨"

}

}

{

"text": "\n------------\n\n全部章节\n\n\n------------\n\n第1章:出狱就大婚\n\n 凉城第一监狱,大门缓缓打开,秦峰仰起头,贪婪的呼吸了一口空气。\n\n 三年了,终于又闻到了自由的味道。\n\n 他回过头,看着目送他出来的那群人道:...",

"meta": {

"subset": "cnnovel.ibiquw",

"id": "100059",

"q": 0.9,

"lang": "zh_cn",

"title": "绝世弃婿",

"author": "绷带怪"

}

}

```

### Data Fields

* `text`: the actual novel text, all chapters
* `meta`: entry metadata
  * `subset`: dataset tag: `cnnovel.ibiquw`
  * `id`: novel ID
  * `q`: quality score, fixed at 0.9
  * `lang`: always `zh_cn` (Simplified Chinese)
  * `title`: novel title
  * `author`: novel author
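The examples above are shown as consecutive JSON objects. Assuming the files store one object per line (JSON Lines; the file layout is not documented here), a minimal stdlib reader that exposes the `meta` fields might look like this:

```python
import json
from typing import Iterable, Iterator


def iter_novels(lines: Iterable[str]) -> Iterator[dict]:
    """Parse one JSON object per non-blank line."""
    for line in lines:
        line = line.strip()
        if line:
            yield json.loads(line)


def summarize(record: dict) -> str:
    """One-line description built from the metadata fields listed above."""
    meta = record["meta"]
    return f'{meta["id"]}: {meta["title"]} by {meta["author"]} ({len(record["text"])} chars)'
```

In practice `lines` would be an open file handle (opened with `encoding="utf-8"`), so novels are parsed one at a time instead of loading the whole corpus into memory.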

### Data Splits

No splitting of the data was performed.

## Dataset Creation

### Curation Rationale

TODO

### Source Data

#### Initial Data Collection and Normalization

TODO

#### Who are the source language producers?

The authors of each novel.

### Annotations

#### Annotation process

Titles were collected alongside the novel text and IDs.

#### Who are the annotators?

There were no human annotators.

### Personal and Sensitive Information

The dataset contains only works of fiction, and we do not believe it contains any PII.

## Considerations for Using the Data

### Social Impact of Dataset

This dataset is intended to be useful for anyone who wishes to train a model to generate "more entertaining" content in Chinese.

It may also be useful for other languages depending on your language model.

### Discussion of Biases

This dataset is composed of fictional works by various authors. Consequently, its contents reflect the biases of those authors. Beware of stereotypes.

### Other Known Limitations

N/A

## Additional Information

### Dataset Curators

Ronsor Labs

### Licensing Information

Apache 2.0, for all parts of which Ronsor Labs or the Ryoko AI Production Committee may be considered authors. All other material is distributed under fair use principles.

### Citation Information

```

@misc{ryokoai2023-bigknow2022,

title = {BigKnow2022: Bringing Language Models Up to Speed},

author = {Ronsor},

year = {2023},

howpublished = {\url{https://github.com/RyokoAI/BigKnow2022}},

}

```

### Contributions

Thanks to @ronsor (GH) for gathering this dataset. |

true | # Dataset Card for "DiagTrast"

## Table of Contents

- [Table of Contents](#table-of-contents)
- [Dataset Description](#dataset-description)
  - [Dataset Summary](#dataset-summary)
  - [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)
  - [Languages](#languages)
- [Dataset Structure](#dataset-structure)
  - [Data Instances](#data-instances)
  - [Data Fields](#data-fields)
  - [Data Splits](#data-splits)
- [Dataset Creation](#dataset-creation)
  - [Curation Rationale](#curation-rationale)
  - [Source Data](#source-data)
  - [Annotations](#annotations)
- [Considerations for Using the Data](#considerations-for-using-the-data)
  - [Social Impact of Dataset](#social-impact-of-dataset)
  - [Discussion of Biases](#discussion-of-biases)
  - [Other Known Limitations](#other-known-limitations)
- [Team members](#team-members)

## Dataset Description

### Dataset Summary

To create this dataset, ChatGPT-4 was used to generate statements based on the characteristics of some of the mental disorders described in the *Diagnostic and Statistical Manual of Mental Disorders* (DSM-5; Spanish edition: "Manual Diagnóstico y Estadístico de Trastornos Mentales"). The mental disorders included are:

- Narcissistic personality disorder.

- Histrionic personality disorder.

- Borderline personality disorder.

- Antisocial personality disorder.

- Schizotypal personality disorder.

### Supported Tasks and Leaderboards

- text-classification: The dataset can be used to train a text-classification model, which assigns a label or class to a given text (here, the disorder). Common text-classification use cases include sentiment analysis, natural language inference, and assessing grammatical correctness. Success on this task is typically measured by accuracy.

### Languages

This dataset of statements is in Spanish only.

## Dataset Structure

### Data Instances

A typical instance in the dataset comprises a statement describing one or more symptoms of a disorder, the name of the disorder, a sequential numerical id representing the disorder, and the clean text of the initial statement (i.e. free of punctuation marks and connectors).

The following is a JSON-formatted example of a typical case in this dataset:

```

{

'Sintoma': "Su comportamiento es a menudo extraño y excéntrico, como llevar ropa que no coincide o actuar de una manera inapropiada en situaciones sociales.",

'Padecimiento': "Trastornos de la personalidad esquizotípica",

'Padecimiento_cat': 2,

'Sintoma_limpia ': "comportamiento menudo extraño excentrico llevar ropa coincide actuar manera inapropiada situaciones sociales"

}

```

### Data Fields

- `Sintoma`: a string, representing a paragraph that a professional would enter describing the symptoms identified in a patient.

- `Padecimiento`: a string that indicates the disorder according to DSM-5.

- `Padecimiento_cat`: an integer encoding of the `Padecimiento` field; it can be used as the label in a text-classification model.

- `Sintoma_Limpia`: a string containing the cleaned text of the `Sintoma` field. For the text-classification task, it is advisable to use this field instead of `Sintoma` to reduce the noise that punctuation marks, articles and connectors introduce into the models.
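The card does not publish the cleaning script. The sketch below is one guess that reproduces the single example above: lowercase, strip acute accents (the example turns "excéntrico" into "excentrico" but keeps "ñ"), drop punctuation, and remove stop words. The stop-word set is hypothetical and far shorter than any real Spanish list:

```python
import string
import unicodedata

# Hypothetical, deliberately tiny stop-word list (not the one used by the authors).
STOPWORDS = {"su", "es", "a", "y", "como", "que", "no", "o", "de", "una", "en"}
# ASCII punctuation plus Spanish inverted marks and guillemets.
_PUNCT = str.maketrans("", "", string.punctuation + "¿¡«»")


def strip_acute(text: str) -> str:
    """Remove acute accents (U+0301) only, so 'ñ' and 'ü' survive."""
    decomposed = unicodedata.normalize("NFD", text)
    return unicodedata.normalize("NFC", decomposed.replace("\u0301", ""))


def clean_statement(text: str, stopwords: set = STOPWORDS) -> str:
    """Lowercase, strip acute accents and punctuation, then drop stop words."""
    text = strip_acute(text.lower()).translate(_PUNCT)
    return " ".join(word for word in text.split() if word not in stopwords)
```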

### Data Splits

The data are not split into training and test subsets; there is a single set with the following distribution:

| Disorder | Records |
| - | - |
| Narcissistic personality disorder | 250 |
| Histrionic personality disorder | 250 |
| Borderline personality disorder | 358 |
| Antisocial personality disorder | 250 |
| Schizotypal personality disorder | 225 |
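Because only a single set is provided, users must create their own held-out set. A per-class (stratified) split preserves the class proportions shown in the table. A minimal stdlib sketch, in which the integer labels 0 to 4 stand in for `Padecimiento_cat` values (their mapping to disorders is not listed here, apart from 2 = schizotypal in the example instance):

```python
import random
from collections import defaultdict


def stratified_split(labels: list, test_fraction: float = 0.2, seed: int = 0) -> tuple:
    """Return (train_indices, test_indices), sampling test_fraction of each class."""
    by_label = defaultdict(list)
    for idx, label in enumerate(labels):
        by_label[label].append(idx)
    rng = random.Random(seed)
    train, test = [], []
    for indices in by_label.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_fraction)
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return sorted(train), sorted(test)
```

With the counts above and `test_fraction=0.2`, the test set gets 267 of the 1,333 statements, including 72 of the 358 borderline statements.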

## Dataset Creation

### Curation Rationale

We created this dataset because the DSM-5, an extensive manual, details the characteristics a patient must present for a mental disorder to be diagnosed. Some disorders share characteristics while differing in others, so we set out to classify, according to the DSM-5, statements containing symptoms and characteristics identified by health professionals.

### Source Data

The data were generated with ChatGPT. We first provided the symptoms specified in the DSM-5 and asked it to create statements containing one or more of those characteristics without mentioning the name of the disorder. The generated statements were then quickly checked for minimum quality: they must not include the name of the disorder, must be neither too long nor too short, and, above all, must specifically contain the characteristics that were provided.

### Annotations

#### Annotation process

The data were generated separately for each mental disorder, so the label of every statement was known when it was generated and no manual or automated annotation was necessary.

## Considerations for Using the Data

### Social Impact of Dataset

We hope that through the creation of models using this or a similar dataset, we can help to reduce the diagnosis times of mental disorders and increase the number of patients that can be seen and treated. On the other hand, we must consider the importance of using these technologies properly because if these models are used indiscriminately by people who do not have sufficient knowledge or experience to detect unusual behaviors in people, these models could negatively influence people by making them believe that they have a disorder.

### Discussion of Biases

Keep in mind that these data were artificially generated, so trained models may expect inputs that differ from what a real mental health professional would write. To mitigate this bias, the team closely monitored the data generation process and refined it over time by identifying better prompts, filtering the statements and feeding corrections back to the model until the desired quality was reached.

### Other Known Limitations

We have only generated data for 5 of the disorders described in the DSM-5.

## Team members

- [Alberto Martín Garrido](https://huggingface.co/Stremie)

- [Edgar Mencia](https://huggingface.co/edmenciab)

- [Miguel Ángel Solís Orozco](https://huggingface.co/homosapienssapiens)

- [Jose Carlos Vílchez Villegas](https://huggingface.co/JCarlos) |

false | |

true | ### Dataset Summary

This is a Spanish-language dataset extracted from the documentation center of the Fundación Secretariado Gitano, describing discriminatory situations experienced by the Roma people. Since the goal of the model is to build a system that generates actions to minimize the impact of a discriminatory situation, the site was scraped and every PDF containing discrimination cases in the (HECHOS, INTERVENCIÓN, RESULTADO) format, i.e. facts, intervention, outcome, was extracted. The extracted information was then cleaned and unified with a preprocessing script so that the whole dataset shares the same format.

### Supported Tasks and Leaderboards

- `task-generation`: given the facts, generate the intervention and the outcome label, suggesting ways to intervene effectively ([PAG-BERT](https://huggingface.co/hackathon-somos-nlp-2023/PAG-BERT)).

- `task-classication`: a classification model can be trained that lets users predict the type of discrimination from the facts.

### Language

The dataset uses the Spanish of Spain. The style is formal and objective, limited to describing the facts reported by the affected people.

## Dataset Structure

### Data Instances

An example instance from the dataset is shown below:

```

{

'sintetico': '0',

'text': 'Una joven gitana comenzó a trabajar en una tienda de ropa, hace dos años, con contrato indefinido. Al mes de comenzar a trabajar, una compañera le preguntó, en presencia de su encargada, si era gitana, ella respondió que sí; desde entonces el trato de la encargada hacia la joven cambió, comenzó a tirar al suelo perchas, tierra, para luego acusarla de que no limpiaba el suelo, además de hacer continuamente comentarios generalizados refiriéndose a las mujeres gitanas, del tipo “¿Pero te dejan trabajar?” “¿Y estudiar?”, “tú tienes que saber cómo trabajar en la tienda porque como aprendéis en los mercadillos...” La víctima comentó que desde que la encargada se enteró de que era gitana le hizo la vida imposible, se sintió muy humillada. No aguantó más y presentó la baja voluntaria, aun siendo consciente de que perdía su derecho a la prestación por desempleo.',

'intervencion': 'Se entrevistó a la joven. Se comprobó a través del testimonio de la víctima que desde que su encargada se enteró de que es mujer gitana, al mes de comenzar a trabajar aproximadamente, comenzó a sufrir discriminación. Se informó a la víctima del Servicio, del trabajo que realizamos y de sus derechos.\xa0',

'tipo_discriminacion': 'Discriminación directa',

'resultado': 'Negativo.'

}

```

### Data Fields

- `sintetico`: indicates whether the intervention and outcome data are original, i.e. come from the "Fundación Secretariado Gitano" source (value 0), or were generated synthetically by us (value 1).

- `text`: the facts described by the affected person.

- `intervencion`: the measures the Foundation took to prevent the facts described in `text` from happening again.

- `tipo_discriminacion`: label identifying the type of discrimination. Possible values: **Acoso discriminatorio** (discriminatory harassment), **Discriminación directa** (direct discrimination), **Discriminación indirecta** (indirect discrimination), **Discriminación interseccional** (intersectional discrimination), **Discurso de odio** (hate speech), **Orden de discriminar** (order to discriminate) and **Sin especificar** (unspecified).

- `resultado`: the effect of the intervention. Possible values: **Positivo**, **Negativo** and **Neutro** (positive, negative, neutral).

### Data Splits

The dataset contains 1,990 instances in total, split as follows:

| | train | validation | test |
|-------------------------|----------:|-------------:|----------:|
| Input Sentences | 90% | 5% | 5% |
| Average Sentence Length | 94.71 | 90.94 | 98.07 |

Note that the dataset is not balanced with respect to the intervention outcome (positive, negative or neutral): there are 280 positive, 939 negative and 771 neutral samples. Future updates will aim to increase the size of the dataset in a balanced way.
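One common way to compensate for this imbalance when training a classifier on `resultado` is inverse-frequency ("balanced") class weighting, the same formula scikit-learn uses for `class_weight="balanced"`. The card does not say whether any weighting was used; this is only a sketch using the counts above:

```python
# Outcome counts reported above for the 1,990 instances.
OUTCOME_COUNTS = {"Positivo": 280, "Negativo": 939, "Neutro": 771}


def balanced_class_weights(counts: dict) -> dict:
    """Weight each class by total / (n_classes * class_count)."""
    total = sum(counts.values())
    n_classes = len(counts)
    return {label: total / (n_classes * n) for label, n in counts.items()}


for label, weight in balanced_class_weights(OUTCOME_COUNTS).items():
    print(f"{label}: {weight:.3f}")
```

The minority class Positivo gets a weight of roughly 2.4, versus about 0.71 for Negativo and 0.86 for Neutro, so each class contributes the same total weight to the loss.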

## Dataset Creation

### Curation Rationale

This dataset was created to determine objectively whether the measures currently adopted by the Foundation have had an effect (positive), have had no effect (negative), or, on the contrary, have not encouraged the user to take any action (neutral).

This source was chosen for the volume of data it contains across different scenarios, and because every case shares the same format: HECHOS, INTERVENCIÓN and RESULTADO.

### Source Data

The data used to build the model were extracted from the website of the Fundación Secretariado Gitano (<a href="https://informesdiscriminacion.gitanos.org">FSG</a>). The FSG maintains a database of acts of discrimination reported to the organization; these acts were selected to train and evaluate the model.

#### Initial Data Collection and Normalization

The data were extracted from the <a href = "https://informesdiscriminacion.gitanos.org/buscar-casos" >Buscador de casos</a> (case search) section, which keeps a record of all discrimination cases.

The fields the website provides for this type of report are:

* `Hecho`: the act of discrimination.

* `Intervención`: the measures the FSG took to solve the problem.

* `Resultado`: description of the outcome.

* Year in which the case occurred.

* Year of the report.

* Ámbito (scope): if the discrimination was committed by a government entity, the fundamental right in which it occurred.

* Provincia: province where the act occurred.

* Type of discrimination.

For data extraction we only considered the fields **hechos**, **intervención**, **resultados** and **tipo de discriminación**. The language used in the reports is formal.

Originally, a large number of facts (Hechos) had no intervention or outcome (the fields were empty).

#### Data Cleaning

On the website, the outcome field contains a brief explanation of the effects obtained after carrying out the intervention. Using the <a href="https://github.com/pysentimiento/pysentimiento">pysentimiento</a> library, each outcome was classified as negative, neutral or positive.

The labels were then reviewed and adjusted according to what was considered neutral, negative or positive.

17% of the acts of discrimination in the dataset had neither an intervention nor an outcome. To complete these fields, few-shot learning was applied with the BLOOM model: given a few examples of **hechos**, **intervención** and **resultado**, the model generates **intervenciones** and **resultados** automatically. The BLOOM output was reviewed manually to correct errors.
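The exact prompt used with BLOOM is not documented. A minimal sketch of how a few-shot prompt can be assembled from complete records, using the `text`, `intervencion` and `resultado` keys from Data Fields; the "HECHOS / INTERVENCIÓN / RESULTADO" layout is a hypothetical choice that mirrors the source format:

```python
def build_few_shot_prompt(examples: list, hecho: str) -> str:
    """Concatenate complete examples, then leave the intervention open for the model."""
    blocks = [
        f"HECHOS: {ex['text']}\nINTERVENCIÓN: {ex['intervencion']}\nRESULTADO: {ex['resultado']}"
        for ex in examples
    ]
    # The final block ends right where the model is expected to continue.
    blocks.append(f"HECHOS: {hecho}\nINTERVENCIÓN:")
    return "\n\n".join(blocks)
```

Each added example lengthens the prompt, which is why overly long facts had to be shortened before generation.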

41% of the texts in the **hechos** field were too long to be used with BLOOM in few-shot learning. To solve this, they were summarized: first, the `segmenter.split_single` function from the <a href="https://github.com/fnl/segtok" >segtok</a> library split each text into sentences separated by newline characters.
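Once a text is split into sentences, the sentences can be packed into sub-texts that each fit a length budget before summarization. The sketch below uses a naive regex splitter as a stand-in for segtok and counts words as a rough proxy for tokens. Both choices are assumptions, and the card does not say how the sub-texts were formed:

```python
import re


def split_sentences(text: str) -> list:
    """Naive stand-in for segtok: split after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def chunk_sentences(sentences: list, max_words: int) -> list:
    """Greedily pack consecutive sentences into chunks of at most max_words words.

    A single sentence longer than max_words becomes its own (oversized) chunk.
    """
    chunks, current, count = [], [], 0
    for sentence in sentences:
        n = len(sentence.split())
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks
```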

Two pretrained models were then used to summarize each sub-text: the first was <a href="https://huggingface.co/mrm8488/bert2bert_shared-spanish-finetuned-summarization">mrm8488/bert2bert_shared-spanish-finetuned-summarization</a> and the second was <a href="https://huggingface.co/Narrativa/bsc_roberta2roberta_shared-spanish-finetuned-mlsum-summarization">Narrativa/bsc_roberta2roberta_shared-spanish-finetuned-mlsum-summarization</a>.

The original preprocessing scripts are in the repository https://github.com/Frorozcoloa/somos_nlp_hackaton; a copy is also included in this repository.

### Annotations

The annotations consisted of checks on the synthetic data generated with few-shot learning (interventions and outcomes):

* Null values were filled in.

* Some texts (Hechos) were summarized using pretrained models.

* The outcome text was replaced with the labels POS, NEU and NEG.

#### Annotation process

Argilla was used to label the "Resultado" category with the labels "Positivo", "Negativo" and "Neutro". Labeling the intervention outcomes served two purposes: to let the model learn to generate text that responds to the situation described by the user, and to use the labeled data to predict whether the measure the model proposes would have a "positive" (effective), "negative" (no effect) or "neutral" (the user might not take any action) impact.

Specifically, after downloading all the data available on the website, we preprocessed them and merged them into a single dataset that was uploaded to Argilla. There, we validated each instance as follows:

* If the intervention and/or outcome are empty, the instance is annotated as such.

* We check that the positive, negative or neutral outcome is correct. Most inconsistencies arise between the positive/neutral and negative/neutral pairs.

Once the dataset was validated with Argilla, we selected the samples annotated as "empty" and completed them by applying few-shot learning with the [BLOOM](https://huggingface.co/bigscience/bloom) model.

Some facts in the dataset were too long to be processed by BLOOM (it raised an error indicating that the maximum number of tokens had been exceeded). To solve this, we used the models <a href="https://huggingface.co/mrm8488/bert2bert_shared-spanish-finetuned-summarization">mrm8488/bert2bert_shared-spanish-finetuned-summarization</a> and <a href="https://huggingface.co/Narrativa/bsc_roberta2roberta_shared-spanish-finetuned-mlsum-summarization">Narrativa/bsc_roberta2roberta_shared-spanish-finetuned-mlsum-summarization</a> to summarize those facts and reduce their length.

### Personal and Sensitive Information

No anonymization process was needed, since the data from this source do not contain any information that infringes the rights of the people affected.

## Considerations for Using the Data

### Social Impact of Dataset

This dataset is intended as a tool for implementing actions that help combat racism against the Roma population. It could also be used to evaluate the impact of the different measures adopted over a period of time, so that measures with a "negative" or "neutral" outcome can be investigated and improved with more careful attention to the Roma population.

### Discussion of Biases

An exploratory data analysis was carried out, using word clouds to analyze the synthetic and non-synthetic data.

#### Non-synthetic data

<img src="https://huggingface.co/datasets/hackathon-somos-nlp-2023/informes_discriminacion_gitana/resolve/main/imagenes/Hechos_normales.png">

Many of the facts relate to the news media, women, housing, the police and family.

<img src="https://huggingface.co/datasets/hackathon-somos-nlp-2023/informes_discriminacion_gitana/resolve/main/imagenes/Intervenci%C3%B3n_normal.png">

The interventions mention rights, letters, equality, advising the person and filing complaints.

<img src="https://huggingface.co/datasets/hackathon-somos-nlp-2023/informes_discriminacion_gitana/resolve/main/imagenes/etiqueta_normal.png">

Many of the intervention outcomes were neutral (possibly no response) or negative (the proposed goal was not achieved). The imbalance in the data is visible.

Using the *pysentimiento* library with the `pysentimiento/pt_hate_speech` model, a metric was computed to measure hate speech in the `Hecho` field.

Three dimensions are analyzed: hateful, targeted and aggressive. Each score ranges from 0 to 1 and is the probability that the characteristic is present in the text.

We found the following:

<img src="https://huggingface.co/datasets/hackathon-somos-nlp-2023/informes_discriminacion_gitana/resolve/main/imagenes/hate_normal.png">

<img src="https://huggingface.co/datasets/hackathon-somos-nlp-2023/informes_discriminacion_gitana/resolve/main/imagenes/hate_2_normal.png">

The distributions of hateful, targeted and aggressive have a long right tail, indicating that hate speech is detected in only a few facts.

For the non-synthetic cases (where the intervention and outcome were not generated), the values rise in the third quartile, meaning that some messages do show hate speech: for example, hateful reaches 0.4, targeted 0.02 and aggressive 0.03. In conclusion, given how the facts are written and how the *pysentimiento* model was trained, the facts generally do not contain hate speech.
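The figures quoted here (mean, median, third quartile) can be recomputed from a list of per-fact scores with the standard library. A minimal sketch; the scores in the usage line are made up, and producing them with `pysentimiento/pt_hate_speech` is not shown:

```python
import statistics


def summarize_scores(scores: list) -> dict:
    """Mean, median and third quartile of per-fact probabilities in [0, 1]."""
    # statistics.quantiles needs at least two values; it uses the "exclusive" method by default.
    _, _, q3 = statistics.quantiles(scores, n=4)
    return {
        "mean": statistics.mean(scores),
        "median": statistics.median(scores),
        "q3": q3,
    }


example = summarize_scores([0.0, 0.01, 0.02, 0.05, 0.4])  # made-up scores
print(example)
```

A mean well above the median, as in this toy example, is one numeric sign of the right-skewed distributions described above.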

#### Synthetic data

The same analysis was performed for the synthetic data.

<img src="https://huggingface.co/datasets/hackathon-somos-nlp-2023/informes_discriminacion_gitana/resolve/main/imagenes/Hechos_sinteticos.png"/>

Note that the facts themselves were not generated.

The dataset is clearly skewed toward the words gitano, gitana, comunidad gitana, etnia gitana, familia and discriminación (Roma, Roma community, Roma ethnicity, family, discrimination).

<img src="https://huggingface.co/datasets/hackathon-somos-nlp-2023/informes_discriminacion_gitana/resolve/main/imagenes/Intervenci%C3%B3n_sintetica.png"/>

This part was generated by the *Bloom* model. With *few-shot* learning, the model mostly picks up the word `derecho` (right).

<img src="https://huggingface.co/datasets/hackathon-somos-nlp-2023/informes_discriminacion_gitana/resolve/main/imagenes/Etiquetas%20sinteticas.png">

There is also an imbalance in the generated labels.

The same hate-speech metric (hateful, targeted and aggressive, computed with *pysentimiento* and `pysentimiento/pt_hate_speech` on the `Hecho` field) was applied to the synthetic data.

We found the following:

<img src="https://huggingface.co/datasets/hackathon-somos-nlp-2023/informes_discriminacion_gitana/resolve/main/imagenes/hate_sintetico.png">

<img src="https://huggingface.co/datasets/hackathon-somos-nlp-2023/informes_discriminacion_gitana/resolve/main/imagenes/hate_2_sintetico.png">

The distributions of hateful, targeted and aggressive again have a long right tail, indicating that hate speech is detected in only a few facts.

Both the median and the mean of hateful, targeted and aggressive are very close to zero, indicating that most facts contain no hate speech. At the third quartile (75th percentile), hateful is 0.3, targeted 0.0089 and aggressive 0.06, which reinforces the conclusion that most descriptions of the facts contain no hate speech.

## Additional Information

### Dataset Curators

* <a href="https://www.linkedin.com/in/frorozcol/">Fredy Orozco</a>

* <a href="https://www.linkedin.com/in/mariajesusgs">María Jesús García</a>

* <a href="https://www.linkedin.com/in/ramonruedadelgado/">Ramón Rueda</a> |

true | |

true |

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This is a set of (title, integer category) descriptions taken from The Pirate Bay via [123dw's](https://thepiratebay.org/search.php?q=user:123dw) regular TPB backups. This set represents the titles in release 2023-04.

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances


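Counts by major category (`mcat`):

| mcat | Name | Count |
|---|---|---|
| 1 | audio | 733604 |
| 2 | video | 3557282 |
| 3 | applications | 211288 |
| 4 | games | 245684 |
| 5 | porn | 2500830 |
| 6 | other | 515778 |

Counts by `is_porn`:

| is_porn | Count |
|---|---|
| 0 | 5263636 |
| 1 | 2500830 |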
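The two breakdowns are consistent. Both sum to 7764466 titles, and the `is_porn = 1` count equals the count for major category 5. The Python check below confirms this and prints each major category's share of the titles. The counts are copied from above; nothing else is assumed.

```python
# Counts copied from the dataset card
major_counts = {
    1: 733604,   # audio
    2: 3557282,  # video
    3: 211288,   # applications
    4: 245684,   # games
    5: 2500830,  # porn
    6: 515778,   # other
}
is_porn_counts = {0: 5263636, 1: 2500830}

total = sum(major_counts.values())
# Both breakdowns should cover the same set of titles
assert total == sum(is_porn_counts.values())
# is_porn == 1 exactly when the major category is 5
assert is_porn_counts[1] == major_counts[5]

for mcat, count in major_counts.items():
    print(f"{mcat}: {count / total:.1%}")
```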

### Data Fields

* id - original torrent ID

* title - Torrent title

* category - Integer ThePirateBay category (see below)

* mcat - Major category: `category` integer-divided by 100 (e.g. 207 becomes 2)

* is_porn - 1 if the major category is 5 (Porn), 0 otherwise
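The following minimal sketch shows how `mcat` and `is_porn` relate to `category`. It assumes that `mcat` is the floor division of `category` by 100 and that `is_porn` flags major category 5, as the field descriptions and counts indicate. `derive_fields` is a hypothetical helper and not part of the dataset.

```python
from typing import Tuple


def derive_fields(category: int) -> Tuple[int, int]:
    """Return (mcat, is_porn) for an integer Pirate Bay category id."""
    mcat = category // 100  # e.g. 207 -> 2 (Video), 599 -> 5 (Porn)
    is_porn = 1 if mcat == 5 else 0  # major category 5 is Porn
    return mcat, is_porn


print(derive_fields(207), derive_fields(505))
```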

### Categories

```csv
id,name
100,Audio
101,"Audio: Music"
102,"Audio: Audio books"
103,"Audio: Sound clips"
104,"Audio: FLAC"
199,"Audio: Other"
200,Video
201,"Video: Movies"
202,"Video: Movies DVDR"
203,"Video: Music videos"
204,"Video: Movie clips"
205,"Video: TV shows"
206,"Video: Handheld"
207,"Video: HD - Movies"
208,"Video: HD - TV shows"
209,"Video: 3D"
299,"Video: Other"
300,Applications
301,"Applications: Windows"
302,"Applications: Mac"
303,"Applications: UNIX"
304,"Applications: Handheld"
305,"Applications: IOS (iPad/iPhone)"
306,"Applications: Android"
399,"Applications: Other OS"
400,Games
401,"Games: PC"
402,"Games: Mac"
403,"Games: PSx"
404,"Games: XBOX360"
405,"Games: Wii"
406,"Games: Handheld"
407,"Games: IOS (iPad/iPhone)"
408,"Games: Android"
499,"Games: Other"
500,Porn
501,"Porn: Movies"
502,"Porn: Movies DVDR"
503,"Porn: Pictures"
504,"Porn: Games"
505,"Porn: HD - Movies"
506,"Porn: Movie clips"
599,"Porn: Other"
600,Other
601,"Other: E-books"
602,"Other: Comics"
603,"Other: Pictures"
604,"Other: Covers"
605,"Other: Physibles"
699,"Other: Other"
```
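Python's `csv` module can parse this category list to turn the integer `category` field into readable labels. The following is a minimal sketch. For brevity, `CATEGORIES_CSV` holds only a few rows copied from the list. `load_categories` is a hypothetical helper.

```python
import csv
import io

# A few rows copied from the category list; paste the full list in practice
CATEGORIES_CSV = """id,name
100,Audio
207,"Video: HD - Movies"
501,"Porn: Movies"
601,"Other: E-books"
"""


def load_categories(text):
    """Map integer category id -> human-readable name."""
    reader = csv.DictReader(io.StringIO(text))
    return {int(row["id"]): row["name"] for row in reader}


names = load_categories(CATEGORIES_CSV)
print(names[207])  # Video: HD - Movies
```

`csv.DictReader` handles the quoted names (which contain colons and spaces), so no manual string splitting is needed.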


### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

false | # AutoTrain Dataset for project: syn

## Dataset Description

This dataset has been automatically processed by AutoTrain for project syn.

### Languages

The BCP-47 code for the dataset's language is `it` (Italian).

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"tokens": [

"EXPORT",

"CAUCEDO",

"o",

"RIO",

"HAINA",

"Q2lhbywgYnVvbmdpb3JubyDwn5iKDQoNCk5zIGNsaWVudGUgVklQDQoNCkRFU1RJTkFaSU9ORSBD",

";",

"RlMgQ0FVQ0VETyBvcHB1cmUgUklPIEhBSU5BDQoNCkJBTkNBTEkgTk9OIFNPVlJBUFBPTklCSUxJ",

";",

"DQpSaXRpcm8gYy9vIEZPTlRFVklWTyAoUFIpDQoNCk1FUkNFIElNTyBjb21lIGRhIGRvY3MgYWxs",

";",

"ZWdhdGkNCg0KODAzMTEzOTANCkRHUiArIE5ER1INCg0KDQpOUiBCT1gNCkRJTS4NCkdST1NTDQpO",

";",

"RVQNCjIwMjMwMDMxMjk4DQpsIDEyMCBMIDgwIGggNzQNCjI5Myw2NzMNCjI3Nyw2MTQNCjIwMjMw",

";",

"MDMxMzAyDQpsIDEyMCBMIDgwIGggMTEwDQozOTksOTg1DQozOTUsMg0KMjAyMzAwMzEzMDMNCmwg",

";",

"MTIwIEwgODAgaCA5Mg0KMzM1LDQ0DQozMjUsNzk1DQoyMDIzMDAzMTMwNA0KbCAxMjAgTCA4MCBo",

";",

"IDgwDQoyNDksMDY0DQoyMzYsMjExDQoyMDIzMDAzMTMwNw0KbCAxMjAgTCA4MCBoIDEzMQ0KNTA1",

";",

"LDM1NQ0KNDgwLDg3DQoyMDIzMDAzMTMwOA0KbCAxMjAgTCA4MCBoIDEzMQ0KNTA3LDgNCjQ4Niw4",

";",

"MQ0KMjAyMzAwMzEzMDkNCmwgMTIwIEwgODAgaCAxMjENCjQ0NCwwOA0KNDQyLDgNCjIwMjMwMDMx",

";",

"MzEwDQpsIDEyMCBMIDgwIGggMTE3DQo0MDMsNjENCjM3Niw5MTUNCjIwMjMwMDMxMzEyDQpsIDEy",

";",

"MCBMIDgwIGggMTA3DQozNzMsNTQNCjM1MCwwNDINCjIwMjMwMDMxMzEzDQpsIDEyMCBMIDgwIGgg",

";",

"MTAwDQozNTMsODY1DQozMzAsNTYxDQoyMDIzMDAzMTMxNA0KbCAxMjAgTCA4MCBoIDEyNw0KNDQy",

";",

"LDcwMg0KNDI1LDINCjIwMjMwMDMxMzE1DQpsIDEyMCBMIDgwIGggMTI2DQo1MDgsODA2DQo0OTQN",

";",

"CjIwMjMwMDMxMzE2DQpsIDEyMCBMIDgwIGggOTENCjE0NSwyOTUNCjEzMiwxMDINCg0KDQoNCg0K",

";",

"DQoNCjk0MCAgICBCT1gNCjEzICAgIFBBTExFVA0KDQpUT1QuIFRBUkUNCjE2OSwwMCBLRw0KDQoN",

";",

"ClRPVA0KDQo0OTYzLDIxNQ0KNDc1NCwxMg0KDQpHcmF6aWUgbWlsbGUNCkRlbGlhDQoNCg0KKE1y",

";",

"cy4pIERlbGlhIE5pY29saW5pDQpJbnNpZGUgU2FsZXMNCltFeHByZXNzIFNybF0NClRvcnJlIGRl",

";",

"bCBEaXN0cmlwYXJrIGludC4xMA0KUG9ydG8gZGkgUHLDoCBWb2x0cmkNCjE2MTU4IEdlbm92YSAt",

";",

"IElUQUxZDQpQaC4rMzkwMTA4MTc5MTI1DQpNb2IuICszOSAzNDggOTU5NDQ1Mg0KTGlua2VkSW4g",

";",

"ZXhwcmVzc19nbG9iYWw8aHR0cHM6Ly93d3cubGlua2VkaW4uY29tL2NvbXBhbnkvZXhwcmVzc19n",

";",

"bG9iYWwvPg0KW0V4cHJlc3MgU3JsXQ0KW0V4cHJlc3MgU3JsXQ0KSSBzdW9pIGRhdGkgcGVyc29u",

";",

"YWxpIHNhcmFubm8gdHJhdHRhdGkgaW4gY29uZm9ybWl0w6AgYWwgUmVnb2xhbWVudG8gRXVyb3Bl",

";",

"byBuLiA2NzkvMjAxNiAoYy5kLiDigJxHRFBS4oCdKSwgaW4gdmlnb3JlIGEgZGVjb3JyZXJlIGRh",

";",

"bCAyNSBtYWdnaW8gMjAxOC4gQSBxdWVzdG8gcHJvcG9zaXRvLCBsYSBpbnZpdGlhbW8gYSBwcmVu",

";",

"ZGVyZSB2aXNpb25lIGRlbGwnYXBwb3NpdGEg4oCLYXBwb3NpdGEgaW5mb3JtYXRpdmEgcHJpdmFj",

";",

"eS4g4oCLTGUgaW5mb3JtYXppb25pIGNvbnRlbnV0ZSBpbiBxdWVzdG8gZG9jdW1lbnRvIHNvbm8g",

";",

"c3RyZXR0YW1lbnRlIHJpc2VydmF0ZSBlIGFkIHVzbyBlc2NsdXNpdm8gZGVsIGRlc3RpbmF0YXJp",

";",

"by4NCllvdXIgcGVyc29uYWwgZGF0YSB3aWxsIGJlIHByb2Nlc3NlZCBpbiBjb21wbGlhbmNlIHdp",

";",

"dGggdGhlIEVVIEdlbmVyYWwgRGF0YSBQcm90ZWN0aW9uIFJlZ3VsYXRpb24gbm8uIDY3OS8yMDE2",

";",

"ICjigJxHRFBS4oCdKSwgaW4gZm9yY2Ugc2luY2UgTWF5IDI1LCAyMDE4LiDigItPbiB0aGUgdG9w",

";",

"aWMg4oCLcGxlYXNlIHNlZSBvdXIgcHJpdmFjeSBwb2xpY3kuIEFsbCBpbmZvcm1hdGlvbiBpbiB0",

";",

"aGlzIGRvY3VtZW50IGlzIGNvbmZpZGVudGlhbCBhbmQgZm9yIGV4Y2x1c2l2ZSB1c2Ugb2YgdGhl",

";",

"IGFkZHJlc3NlZSBvbmx5Lg0KDQo=",

";"

],

"tags": [

0,

5,

0,

5,

5,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0,

0

]

},

{

"tokens": [

"R",

":",

"Quote",

"-",

"FCA",

"Italy",

"to",

"Charleston",

",",

"USA",

"port",

"QFNhbGVzIEV4cG9ydDxtYWlsdG86c2FsZXNleHBAc2luZXJneWNhcmdvLmNvbT4NCg0KRGE6IEph",

";",

"aSBKb25lcyA8ampvbmVzQHJvbWF2LmNvbT4NCkludmlhdG86IHZlbmVyZMOsIDI1IG5vdmVtYnJl",

";",

"IDIwMjIgMTc6MDQNCkE6IEFuZHJlYSBPZ25pbyA8YS5vZ25pb0BzaW5lcmd5Y2FyZ28uY29tPg0K",

";",

"Q2M6IERhdmlkZSBPbm5pcyA8ZC5vbm5pc0BzaW5lcmd5Y2FyZ28uY29tPg0KT2dnZXR0bzogUXVv",

";",

"dGUgLSBGQ0EgSXRhbHkgdG8gQ2hhcmxlc3RvbiwgVVNBIHBvcnQNCg0KSGkgQW5kZWENCg0KUGxl",

";",

"YXNlIHF1b3RlIExDTCByYXRlIGJhc2VkIG9uIHRoZSBiZWxvdyBpbmZvcm1hdGlvbiB0byBDaGFy",

";",

"bGVzdG9uIFNDLCBDRlMNCg0KLSBGQ0EgLSBFeHBvcnQgZG9jcyB3aWxsIGJlIGRvbmUgYnkgdGhl",

";",

"IHN1cHBsaWVyDQoNCi0gR29vZHM6IE5hdHVyYWwgY2FmZmVpbmUgZ3JhbnVsYXINCi0gNyBwYWxs",

";",

"ZXRzIDgweDEyMHgxNDUNCi0gR3Jvc3Mgd2VpZ2h0IDIuNDUwIEtnDQoNCi0gUGlja3VwIGxvY2F0",

";",

"aW9uOg0KQ09GRkVJTiBDT01QQUdOSUUgSVRBTFkgUy5yLmwuIGEgU29jaW8gVW5pY28NCk9mZmlj",

";",

"ZXMvUHJvZHVjdGlvbjogUmVnLiBBYmlzc2luaWEsIExvYy4gTGFnbyBBenp1cnJvLCAxNzAzMSBB",

";",

"bGJlbmdhIChTVikg4oCTIEl0YWx5DQoNClBsZWFzZSBhZHZpc2UgY2FycmllciBhbmQgdHJhbnNp",

";",