id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 6.67k ⌀ | citation stringlengths 0 10.7k ⌀ | likes int64 0 3.66k | downloads int64 0 8.89M | created timestamp[us] | card stringlengths 11 977k | card_len int64 11 977k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|

lamini/bts | 2023-07-24T03:50:41.000Z | [

"region:us"

] | lamini | null | null | 1 | 21 | 2023-07-24T03:49:06 | ---

dataset_info:

features:

- name: question

dtype: string

- name: answer

dtype: string

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

- name: labels

sequence: int64

splits:

- name: train

num_bytes: 129862.8

num_examples: 126

- name: test

num_bytes: 14429.2

num_examples: 14

download_size: 50390

dataset_size: 144292.0

---

# Dataset Card for "bts"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 563 | [

[

-0.041168212890625,

-0.020782470703125,

0.01204681396484375,

0.015777587890625,

-0.02813720703125,

0.00957489013671875,

0.023681640625,

-0.00533294677734375,

0.061065673828125,

0.0285491943359375,

-0.05718994140625,

-0.053009033203125,

-0.0335693359375,

-0.0... |

Yuhthe/samsum_vi_word | 2023-07-26T02:57:48.000Z | [

"task_categories:summarization",

"language:vi",

"region:us"

] | Yuhthe | null | null | 0 | 21 | 2023-07-25T07:30:27 | ---

configs:

- config_name: default

data_files:

- split: test

path: data/test-*

- split: train

path: data/train-*

- split: validation

path: data/validation-*

dataset_info:

features:

- name: id

dtype: string

- name: dialogue

dtype: string

- name: summary

dtype: string

splits:

- name: test

num_bytes: 761520

num_examples: 819

- name: train

num_bytes: 13465942

num_examples: 14732

- name: validation

num_bytes: 733668

num_examples: 818

download_size: 7875036

dataset_size: 14961130

task_categories:

- summarization

language:

- vi

---

# Dataset Card for "samsum_vi_word"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 776 | [

[

-0.0232696533203125,

-0.00980377197265625,

0.0153045654296875,

0.0117034912109375,

-0.035858154296875,

-0.00791168212890625,

0.004749298095703125,

-0.0028133392333984375,

0.07232666015625,

0.0307464599609375,

-0.05615234375,

-0.06500244140625,

-0.05718994140625,... |

FinchResearch/OpenPlatypus-Alpaca | 2023-08-29T13:53:43.000Z | [

"size_categories:10K<n<100K",

"license:apache-2.0",

"region:us"

] | FinchResearch | null | null | 1 | 21 | 2023-08-21T13:31:52 | ---

license: apache-2.0

size_categories:

- 10K<n<100K

---

### A merged dataset...

### Open-Platypus & Alpaca Data | 114 | [

[

-0.036376953125,

-0.02032470703125,

0.0073394775390625,

0.0178070068359375,

-0.037078857421875,

-0.0185089111328125,

-0.0136871337890625,

-0.0101318359375,

0.0423583984375,

0.07318115234375,

-0.036163330078125,

-0.039764404296875,

-0.044525146484375,

-0.0105... |

theblackcat102/evol-code-zh | 2023-08-25T14:15:39.000Z | [

"task_categories:text2text-generation",

"language:zh",

"region:us"

] | theblackcat102 | null | null | 4 | 21 | 2023-08-25T14:14:04 | ---

task_categories:

- text2text-generation

language:

- zh

---

Evolved codealpaca in Chinese

| 93 | [

[

0.019622802734375,

-0.057525634765625,

0.000591278076171875,

0.06854248046875,

-0.04205322265625,

0.016876220703125,

-0.01189422607421875,

-0.053070068359375,

0.062744140625,

0.0279083251953125,

-0.0199737548828125,

-0.021148681640625,

-0.01434326171875,

0.0... |

EleutherAI/coqa | 2023-11-02T14:46:15.000Z | [

"size_categories:1K<n<10K",

"language:en",

"license:other",

"arxiv:1808.07042",

"region:us"

] | EleutherAI | CoQA is a large-scale dataset for building Conversational Question Answering

systems. The goal of the CoQA challenge is to measure the ability of machines to

understand a text passage and answer a series of interconnected questions that

appear in a conversation. | @misc{reddy2018coqa,

title={CoQA: A Conversational Question Answering Challenge},

author={Siva Reddy and Danqi Chen and Christopher D. Manning},

year={2018},

eprint={1808.07042},

archivePrefix={arXiv},

primaryClass={cs.CL}

} | 1 | 21 | 2023-08-30T10:34:59 | ---

license: other

language:

- en

size_categories:

- 1K<n<10K

---

"""CoQA dataset.

This `CoQA` adds the "additional_answers" feature that's missing in the original

datasets version:

https://github.com/huggingface/datasets/blob/master/datasets/coqa/coqa.py

"""

_CITATION = """\

@misc{reddy2018coqa,

title={CoQA: A Conversational Question Answering Challenge},

author={Siva Reddy and Danqi Chen and Christopher D. Manning},

year={2018},

eprint={1808.07042},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

"""

_DESCRIPTION = """\

CoQA is a large-scale dataset for building Conversational Question Answering

systems. The goal of the CoQA challenge is to measure the ability of machines to

understand a text passage and answer a series of interconnected questions that

appear in a conversation.

"""

_HOMEPAGE = "https://stanfordnlp.github.io/coqa/"

_LICENSE = "Different licenses depending on the content (see https://stanfordnlp.github.io/coqa/ for details)" | 985 | [

[

-0.051544189453125,

-0.05767822265625,

0.0117645263671875,

0.002902984619140625,

0.007320404052734375,

0.014312744140625,

0.004810333251953125,

-0.0201873779296875,

0.023101806640625,

0.044647216796875,

-0.084716796875,

-0.02606201171875,

-0.01873779296875,

... |

slaqrichi/processed_Cosmic_dataset_V2_inst_format | 2023-09-12T09:55:34.000Z | [

"region:us"

] | slaqrichi | null | null | 0 | 21 | 2023-09-11T17:51:48 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 86815

num_examples: 95

download_size: 0

dataset_size: 86815

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "processed_Cosmic_dataset_V2_inst_format"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 455 | [

[

-0.0209808349609375,

-0.0248260498046875,

0.0211944580078125,

0.0233612060546875,

-0.0295867919921875,

-0.0072784423828125,

0.01097869873046875,

-0.01296234130859375,

0.058319091796875,

0.049468994140625,

-0.062744140625,

-0.036865234375,

-0.047454833984375,

... |

Fraol/LLM-Data5 | 2023-09-18T03:13:03.000Z | [

"region:us"

] | Fraol | null | null | 0 | 21 | 2023-09-18T02:45:05 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 462063994

num_examples: 388405

- name: validation

num_bytes: 57196523

num_examples: 48550

- name: test

num_bytes: 57443243

num_examples: 48552

download_size: 352680335

dataset_size: 576703760

---

# Dataset Card for "LLM-Data5"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 665 | [

[

-0.04443359375,

0.000659942626953125,

0.0316162109375,

0.01511383056640625,

-0.0207672119140625,

0.00470733642578125,

0.033355712890625,

-0.016876220703125,

0.04949951171875,

0.042083740234375,

-0.0711669921875,

-0.07489013671875,

-0.042236328125,

0.00241661... |

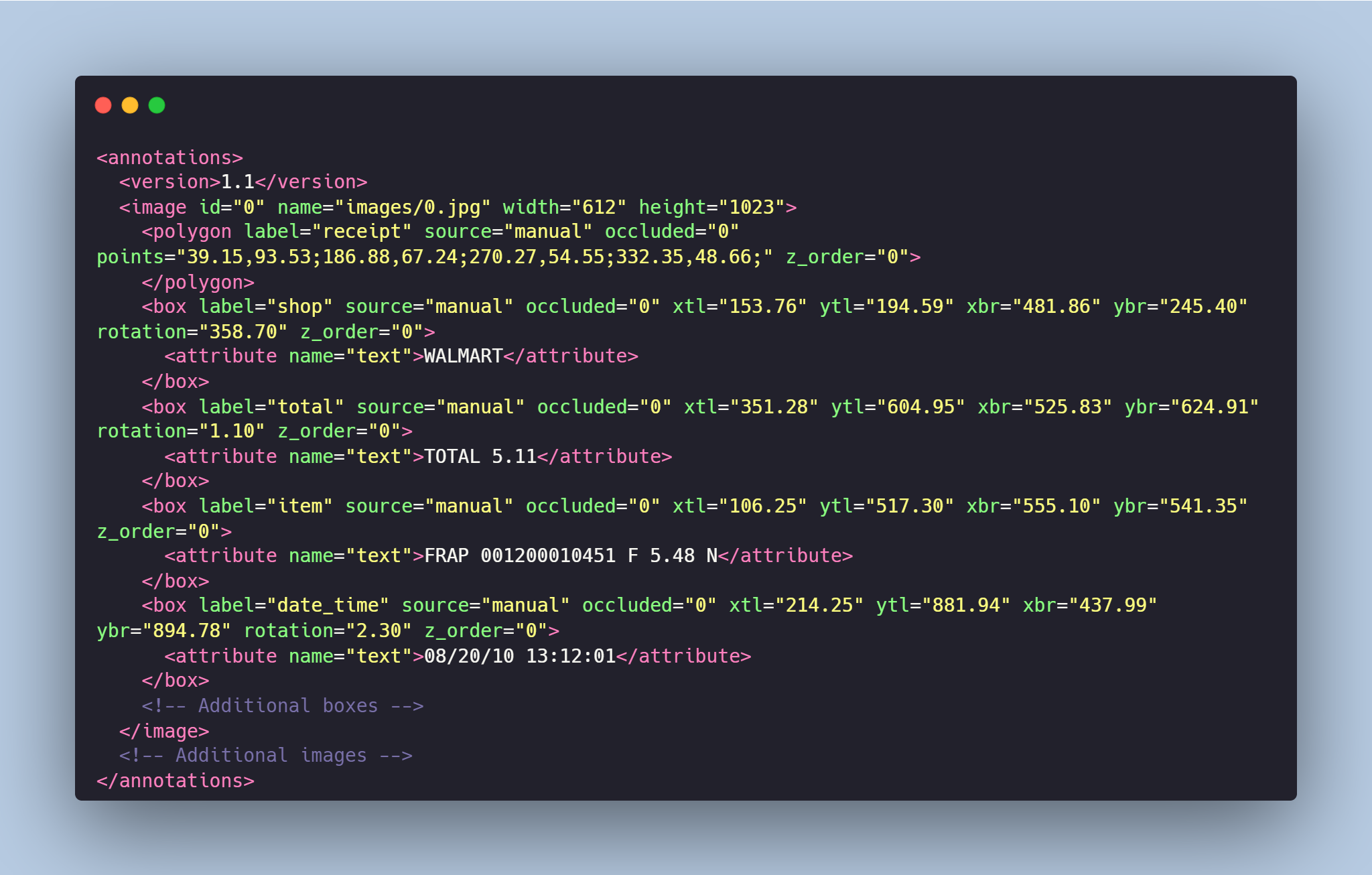

TrainingDataPro/ocr-receipts-text-detection | 2023-09-26T15:12:40.000Z | [

"task_categories:image-to-text",

"task_categories:object-detection",

"language:en",

"license:cc-by-nc-nd-4.0",

"code",

"finance",

"region:us"

] | TrainingDataPro | The Grocery Store Receipts Dataset is a collection of photos captured from various

**grocery store receipts**. This dataset is specifically designed for tasks related to

**Optical Character Recognition (OCR)** and is useful for retail.

Each image in the dataset is accompanied by bounding box annotations, indicating the

precise locations of specific text segments on the receipts. The text segments are

categorized into four classes: **item, store, date_time and total**. | @InProceedings{huggingface:dataset,

title = {ocr-receipts-text-detection},

author = {TrainingDataPro},

year = {2023}

} | 1 | 21 | 2023-09-19T10:35:57 | ---

language:

- en

license: cc-by-nc-nd-4.0

task_categories:

- image-to-text

- object-detection

tags:

- code

- finance

dataset_info:

features:

- name: id

dtype: int32

- name: name

dtype: string

- name: image

dtype: image

- name: mask

dtype: image

- name: width

dtype: uint16

- name: height

dtype: uint16

- name: shapes

sequence:

- name: label

dtype:

class_label:

names:

'0': receipt

'1': shop

'2': item

'3': date_time

'4': total

- name: type

dtype: string

- name: points

sequence:

sequence: float32

- name: rotation

dtype: float32

- name: occluded

dtype: uint8

- name: attributes

sequence:

- name: name

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 55510934

num_examples: 20

download_size: 54557192

dataset_size: 55510934

---

# OCR Receipts from Grocery Stores Text Detection

The Grocery Store Receipts Dataset is a collection of photos captured from various **grocery store receipts**. This dataset is specifically designed for tasks related to **Optical Character Recognition (OCR)** and is useful for retail.

Each image in the dataset is accompanied by bounding box annotations, indicating the precise locations of specific text segments on the receipts. The text segments are categorized into four classes: **item, store, date_time and total**.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=ocr-receipts-text-detection) to discuss your requirements, learn about the price and buy the dataset.

# Dataset structure

- **images** - contains of original images of receipts

- **boxes** - includes bounding box labeling for the original images

- **annotations.xml** - contains coordinates of the bounding boxes and detected text, created for the original photo

# Data Format

Each image from `images` folder is accompanied by an XML-annotation in the `annotations.xml` file indicating the coordinates of the bounding boxes and detected text . For each point, the x and y coordinates are provided.

### Classes:

- **store** - name of the grocery store

- **item** - item in the receipt

- **date_time** - date and time of the receipt

- **total** - total price of the receipt

# Text Detection in the Receipts might be made in accordance with your requirements.

## [TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=ocr-receipts-text-detection) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/trainingdata-pro** | 3,314 | [

[

-0.00942230224609375,

-0.031463623046875,

0.0322265625,

-0.0249176025390625,

-0.012847900390625,

-0.0136566162109375,

0.006580352783203125,

-0.06292724609375,

0.01910400390625,

0.05865478515625,

-0.025787353515625,

-0.0521240234375,

-0.04656982421875,

0.0361... |

allenai/scifact_entailment | 2023-09-27T05:00:41.000Z | [

"task_categories:text-classification",

"task_ids:fact-checking",

"annotations_creators:expert-generated",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"language:en",

"license:cc-by-nc-2.0",

"region:us"

] | allenai | SciFact, a dataset of 1.4K expert-written scientific claims paired with evidence-containing abstracts, and annotated with labels and rationales. | @inproceedings{Wadden2020FactOF,

title={Fact or Fiction: Verifying Scientific Claims},

author={David Wadden and Shanchuan Lin and Kyle Lo and Lucy Lu Wang and Madeleine van Zuylen and Arman Cohan and Hannaneh Hajishirzi},

booktitle={EMNLP},

year={2020},

} | 0 | 21 | 2023-09-26T22:04:02 | ---

annotations_creators:

- expert-generated

language:

- en

language_creators:

- found

license:

- cc-by-nc-2.0

multilinguality:

- monolingual

pretty_name: SciFact

size_categories:

- 1K<n<10K

source_datasets:

- original

task_categories:

- text-classification

task_ids:

- fact-checking

paperswithcode_id: scifact

dataset_info:

features:

- name: claim_id

dtype: int32

- name: claim

dtype: string

- name: abstract_id

dtype: int32

- name: title

dtype: string

- name: abstract

sequence: string

- name: verdict

dtype: string

- name: evidence

sequence: int32

splits:

- name: train

num_bytes: 1649655

num_examples: 919

- name: validation

num_bytes: 605262

num_examples: 340

download_size: 3115079

dataset_size: 2254917

---

# Dataset Card for "scifact_entailment"

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Dataset Structure](#dataset-structure)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

## Dataset Description

- **Homepage:** [https://scifact.apps.allenai.org/](https://scifact.apps.allenai.org/)

- **Repository:** <https://github.com/allenai/scifact>

- **Paper:** [Fact or Fiction: Verifying Scientific Claims](https://aclanthology.org/2020.emnlp-main.609/)

- **Point of Contact:** [David Wadden](mailto:davidw@allenai.org)

### Dataset Summary

SciFact, a dataset of 1.4K expert-written scientific claims paired with evidence-containing abstracts, and annotated with labels and rationales.

For more information on the dataset, see [allenai/scifact](https://huggingface.co/datasets/allenai/scifact).

This has the same data, but reformatted as an entailment task. A single instance includes a claim paired with a paper title and abstract, together with an entailment label and a list of evidence sentences (if any).

## Dataset Structure

### Data fields

- `claim_id`: An `int32` claim identifier.

- `claim`: A `string`.

- `abstract_id`: An `int32` abstract identifier.

- `title`: A `string`.

- `abstract`: A list of `strings`, one for each sentence in the abstract.

- `verdict`: The fact-checking verdict, a `string`.

- `evidence`: A list of sentences from the abstract which provide evidence for the verdict.

### Data Splits

| |train|validation|

|------|----:|---------:|

|claims| 919 | 340|

| 2,365 | [

[

-0.01532745361328125,

-0.042449951171875,

0.0253448486328125,

0.0187530517578125,

-0.011322021484375,

-0.0097808837890625,

0.0120849609375,

-0.02545166015625,

0.045623779296875,

0.0218658447265625,

-0.0274658203125,

-0.04071044921875,

-0.04541015625,

0.02851... |

learn3r/gov_report_bp | 2023-09-29T11:05:26.000Z | [

"region:us"

] | learn3r | null | null | 0 | 21 | 2023-09-29T11:03:30 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: input

dtype: string

- name: output

dtype: string

splits:

- name: train

num_bytes: 1030500829

num_examples: 17457

- name: validation

num_bytes: 60867802

num_examples: 972

- name: test

num_bytes: 56606131

num_examples: 973

download_size: 547138870

dataset_size: 1147974762

---

# Dataset Card for "gov_report_bp"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 702 | [

[

-0.03826904296875,

-0.0197296142578125,

0.021575927734375,

0.00913238525390625,

-0.0205535888671875,

-0.01192474365234375,

0.025177001953125,

-0.01268768310546875,

0.04791259765625,

0.04266357421875,

-0.047454833984375,

-0.058929443359375,

-0.048675537109375,

... |

Rithik28/TM_Dataset | 2023-10-05T11:22:36.000Z | [

"region:us"

] | Rithik28 | null | null | 0 | 21 | 2023-09-30T16:49:22 | Entry not found | 15 | [

[

-0.0214080810546875,

-0.01497650146484375,

0.05718994140625,

0.028839111328125,

-0.0350341796875,

0.046539306640625,

0.052490234375,

0.00507354736328125,

0.051361083984375,

0.016998291015625,

-0.05206298828125,

-0.01496124267578125,

-0.06036376953125,

0.0379... |

VatsaDev/TinyText | 2023-10-15T15:19:25.000Z | [

"task_categories:question-answering",

"task_categories:text-generation",

"size_categories:1M<n<10M",

"language:en",

"license:mit",

"code",

"region:us"

] | VatsaDev | null | null | 25 | 21 | 2023-10-02T00:36:39 | ---

license: mit

task_categories:

- question-answering

- text-generation

language:

- en

tags:

- code

size_categories:

- 1M<n<10M

---

The entire NanoPhi Dataset is at train.jsonl

Separate Tasks Include

- Math (Metamath, mammoth)

- Code (Code Search Net)

- Logic (Open-platypus)

- Roleplay (PIPPA, RoleplayIO)

- Textbooks (Tiny-text, Sciphi)

- Textbook QA (Orca-text, Tiny-webtext) | 387 | [

[

-0.033782958984375,

-0.0253143310546875,

0.013275146484375,

0.00725555419921875,

0.0089874267578125,

0.00399017333984375,

0.0014352798461914062,

0.0003693103790283203,

-0.005680084228515625,

0.046630859375,

-0.058837890625,

-0.03167724609375,

-0.0159149169921875... |

shossain/govreport-qa-512 | 2023-10-02T05:09:04.000Z | [

"region:us"

] | shossain | null | null | 0 | 21 | 2023-10-02T05:04:11 | ---

dataset_info:

features:

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

- name: labels

sequence: int64

splits:

- name: train

num_bytes: 33340

num_examples: 5

download_size: 15680

dataset_size: 33340

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "govreport-qa-512"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 523 | [

[

-0.036895751953125,

-0.007476806640625,

0.0282440185546875,

0.0157012939453125,

-0.0139007568359375,

0.0011396408081054688,

0.035430908203125,

-0.0053558349609375,

0.06085205078125,

0.03729248046875,

-0.0496826171875,

-0.0556640625,

-0.024627685546875,

-0.01... |

trunks/graph_tt | 2023-10-12T09:46:25.000Z | [

"region:us"

] | trunks | null | null | 0 | 21 | 2023-10-04T21:39:40 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

splits:

- name: train

num_bytes: 1562276.0

num_examples: 36

download_size: 1439813

dataset_size: 1562276.0

---

# Dataset Card for "graph_tt"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 471 | [

[

-0.032806396484375,

-0.034393310546875,

0.0171966552734375,

0.00270843505859375,

-0.0261688232421875,

0.019073486328125,

0.032196044921875,

-0.01456451416015625,

0.06817626953125,

0.0268096923828125,

-0.05682373046875,

-0.06549072265625,

-0.05279541015625,

-... |

Intuit-GenSRF/joangaes-depression | 2023-10-05T01:00:33.000Z | [

"region:us"

] | Intuit-GenSRF | null | null | 0 | 21 | 2023-10-05T01:00:31 | ---

dataset_info:

features:

- name: text

dtype: string

- name: labels

sequence: string

splits:

- name: train

num_bytes: 13387322

num_examples: 27977

download_size: 8155014

dataset_size: 13387322

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "joangaes-depression"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 488 | [

[

-0.038421630859375,

-0.0206756591796875,

0.0261077880859375,

0.030731201171875,

-0.00949859619140625,

-0.0178985595703125,

0.015960693359375,

0.0005497932434082031,

0.07684326171875,

0.032684326171875,

-0.06781005859375,

-0.0596923828125,

-0.05633544921875,

... |

unaidedelf87777/super-instruct | 2023-10-10T19:15:35.000Z | [

"region:us"

] | unaidedelf87777 | null | null | 0 | 21 | 2023-10-08T21:05:05 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

Malmika/ict_text_dataset | 2023-10-09T17:19:25.000Z | [

"region:us"

] | Malmika | null | null | 0 | 21 | 2023-10-09T16:46:45 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

Luciya/llama-2-nuv-intent-noE-pp | 2023-10-10T05:58:08.000Z | [

"region:us"

] | Luciya | null | null | 0 | 21 | 2023-10-10T05:58:05 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 791845

num_examples: 1585

download_size: 111893

dataset_size: 791845

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "llama-2-nuv-intent-noE-pp"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 450 | [

[

-0.016998291015625,

-0.01409912109375,

0.0232696533203125,

0.0277252197265625,

-0.035400390625,

-0.012237548828125,

0.031951904296875,

0.0039043426513671875,

0.0645751953125,

0.044708251953125,

-0.057861328125,

-0.059600830078125,

-0.049774169921875,

-0.0105... |

Coldog2333/super_dialseg | 2023-10-11T06:26:51.000Z | [

"size_categories:1K<n<10K",

"language:en",

"license:apache-2.0",

"dialogue segmentation",

"region:us"

] | Coldog2333 | \ | \ | 0 | 21 | 2023-10-11T05:28:11 | ---

license: apache-2.0

language:

- en

tags:

- dialogue segmentation

size_categories:

- 1K<n<10K

---

# Dataset Card for SuperDialseg

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:** SuperDialseg: A Large-scale Dataset for Supervised Dialogue Segmentation

- **Leaderboard:** [https://github.com/Coldog2333/SuperDialseg](https://github.com/Coldog2333/SuperDialseg)

- **Point of Contact:** jiangjf@is.s.u-tokyo.ac.jp

### Dataset Summary

[More Information Needed]

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages: English

## Dataset Structure

### Data Instances

```

{

"dial_data": {

"super_dialseg": [

{

"dial_id": "8df07b7a98990db27c395cb1f68a962e",

"turns": [

{

"da": "query_condition",

"role": "user",

"turn_id": 0,

"utterance": "Hello, I forgot o update my address, can you help me with that?",

"topic_id": 0,

"segmentation_label": 0

},

...

{

"da": "respond_solution",

"role": "agent",

"turn_id": 11,

"utterance": "DO NOT contact the New York State DMV to dispute whether you violated a toll regulation or failed to pay the toll , fees or other charges",

"topic_id": 4,

"segmentation_label": 0

}

],

...

}

]

}

```

### Data Fields

#### Dialogue-Level

+ `dial_id`: ID of a dialogue;

+ `turns`: All utterances of a dialogue.

#### Utterance-Level

+ `da`: Dialogue Act annotation derived from the original DGDS dataset;

+ `role`: Role annotation derived from the original DGDS dataset;

+ `turn_id`: ID of an utterance;

+ `utterance`: Text of the utterance;

+ `topic_id`: ID (order) of the current topic;

+ `segmentation_label`: 1: it is the end of a topic; 0: others.

### Data Splits

SuperDialseg follows the dataset splits of the original DGDS dataset.

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

SuperDialseg was built on the top of doc2dial and MultiDoc2dial datasets.

Please refer to the original papers for more details.

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

The annotation of dialogue segmentation points is constructed by a set of well-designed strategy. Please refer to the paper for more details.

Other annotations like Dialogue Act and Role information are derived from doc2dial and MultiDoc2dial datasets.

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

Apache License Version 2.0, following the licenses of doc2dial and MultiDoc2dial.

### Citation Information

Coming soon

### Contributions

Thanks to [@Coldog2333](https://github.com/Coldog2333) for adding this dataset.

| 4,341 | [

[

-0.03521728515625,

-0.060150146484375,

0.0258026123046875,

-0.0049896240234375,

-0.028350830078125,

0.01023101806640625,

-0.00730133056640625,

-0.0101470947265625,

0.0180206298828125,

0.04400634765625,

-0.07965087890625,

-0.06591796875,

-0.047637939453125,

0... |

phatjk/wikipedia_vi | 2023-10-14T05:56:36.000Z | [

"region:us"

] | phatjk | null | null | 0 | 21 | 2023-10-14T05:55:38 | ---

dataset_info:

features:

- name: title

dtype: string

- name: text

dtype: string

- name: bm25_text

dtype: string

splits:

- name: train

num_bytes: 2889457164

num_examples: 1944406

download_size: 1242752879

dataset_size: 2889457164

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "wikipedia_vi"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 524 | [

[

-0.055419921875,

-0.0240325927734375,

0.0100860595703125,

0.004360198974609375,

-0.0178985595703125,

-0.01934814453125,

0.0010309219360351562,

-0.011322021484375,

0.056732177734375,

0.0162811279296875,

-0.05816650390625,

-0.0557861328125,

-0.029510498046875,

... |

orgcatorg/russia-ukraine-cnn | 2023-10-15T22:22:37.000Z | [

"region:us"

] | orgcatorg | null | null | 0 | 21 | 2023-10-15T14:55:21 | ---

dataset_info:

features:

- name: '@type'

dtype: string

- name: headline

dtype: string

- name: url

dtype: string

- name: dateModified

dtype: string

- name: datePublished

dtype: string

- name: mainEntityOfPage

dtype: string

- name: publisher

dtype: string

- name: author

dtype: string

- name: articleBody

dtype: string

- name: image

dtype: string

splits:

- name: train

num_bytes: 41401329

num_examples: 19759

download_size: 17332574

dataset_size: 41401329

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "russia-ukraine-cnn"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 797 | [

[

-0.0413818359375,

-0.00812530517578125,

0.024749755859375,

0.01543426513671875,

-0.030242919921875,

0.00943756103515625,

0.0089569091796875,

-0.00902557373046875,

0.042694091796875,

0.019989013671875,

-0.055694580078125,

-0.06805419921875,

-0.0445556640625,

... |

annahonghong/hackthontest1 | 2023-10-17T05:09:30.000Z | [

"region:us"

] | annahonghong | null | null | 0 | 21 | 2023-10-17T03:48:41 | Entry not found | 15 | [

[

-0.0214080810546875,

-0.01494598388671875,

0.057159423828125,

0.028839111328125,

-0.0350341796875,

0.04656982421875,

0.052490234375,

0.00504302978515625,

0.0513916015625,

0.016998291015625,

-0.0521240234375,

-0.0149993896484375,

-0.06036376953125,

0.03790283... |

HumanCompatibleAI/random-seals-Ant-v1 | 2023-10-17T05:36:54.000Z | [

"region:us"

] | HumanCompatibleAI | null | null | 0 | 21 | 2023-10-17T05:33:58 | ---

dataset_info:

features:

- name: obs

sequence:

sequence: float64

- name: acts

sequence:

sequence: float32

- name: infos

sequence: string

- name: terminal

dtype: bool

- name: rews

sequence: float32

splits:

- name: train

num_bytes: 167669182

num_examples: 100

download_size: 73426727

dataset_size: 167669182

---

# Dataset Card for "random-seals-Ant-v1"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 546 | [

[

-0.047882080078125,

-0.0077056884765625,

0.0220184326171875,

0.00423431396484375,

-0.039459228515625,

0.00730133056640625,

0.03924560546875,

-0.028289794921875,

0.0736083984375,

0.0313720703125,

-0.06927490234375,

-0.051544189453125,

-0.04949951171875,

-0.00... |

phanvancongthanh/pubchem_bioassay_standardized | 2023-10-18T18:31:37.000Z | [

"region:us"

] | phanvancongthanh | null | null | 0 | 21 | 2023-10-17T09:58:45 | ---

dataset_info:

features:

- name: standardized_smiles

dtype: string

splits:

- name: train

num_bytes: 10187907266

num_examples: 210186056

download_size: 4860575313

dataset_size: 10187907266

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "pubchem_bioassay_standardized"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 488 | [

[

-0.01201629638671875,

-0.01256561279296875,

0.03436279296875,

0.0162200927734375,

-0.022979736328125,

0.004421234130859375,

0.021209716796875,

-0.0085296630859375,

0.061370849609375,

0.038330078125,

-0.0380859375,

-0.07867431640625,

-0.0309906005859375,

0.01... |

rkdeva/DermnetSkinData-Train7 | 2023-10-19T08:22:15.000Z | [

"region:us"

] | rkdeva | null | null | 0 | 21 | 2023-10-19T08:12:24 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype: string

splits:

- name: train

num_bytes: 1468806344.376

num_examples: 15297

download_size: 1433360013

dataset_size: 1468806344.376

---

# Dataset Card for "DermnetSkinData-Train7"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 502 | [

[

-0.0333251953125,

0.01456451416015625,

0.010650634765625,

0.01495361328125,

-0.0247802734375,

-0.00286102294921875,

0.02252197265625,

-0.001964569091796875,

0.05615234375,

0.03192138671875,

-0.055999755859375,

-0.053497314453125,

-0.04534912109375,

-0.011718... |

centroIA/MistralInstruct | 2023-10-19T12:14:37.000Z | [

"region:us"

] | centroIA | null | null | 0 | 21 | 2023-10-19T11:51:33 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: input

dtype: string

- name: output

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 2682613

num_examples: 967

download_size: 694943

dataset_size: 2682613

---

# Dataset Card for "MistralInstruct"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 510 | [

[

-0.038818359375,

-0.0180206298828125,

0.009063720703125,

0.02093505859375,

-0.007534027099609375,

-0.006931304931640625,

0.031982421875,

-0.0117950439453125,

0.044281005859375,

0.041229248046875,

-0.0635986328125,

-0.0494384765625,

-0.035675048828125,

-0.028... |

phatjk/odqa_data | 2023-10-20T04:08:24.000Z | [

"region:us"

] | phatjk | null | null | 0 | 21 | 2023-10-19T12:30:43 | ---

dataset_info:

features:

- name: text

dtype: string

- name: words

sequence: string

splits:

- name: train

num_bytes: 3515490316

num_examples: 1966167

download_size: 1364666872

dataset_size: 3515490316

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "odqa_data"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 486 | [

[

-0.045867919921875,

-0.0265960693359375,

0.020599365234375,

-0.00662994384765625,

-0.0040740966796875,

-0.0017566680908203125,

0.0355224609375,

-0.00679779052734375,

0.047515869140625,

0.041717529296875,

-0.052337646484375,

-0.05596923828125,

-0.028717041015625,... |

jay401521/test | 2023-10-21T09:26:13.000Z | [

"region:us"

] | jay401521 | null | null | 0 | 21 | 2023-10-21T08:34:07 | ---

dataset_info:

features:

- name: id

dtype: int64

- name: domain

dtype: string

- name: label

dtype: int64

- name: rank

dtype: int64

- name: sentence

dtype: string

splits:

- name: train

num_bytes: 2768371

num_examples: 30021

download_size: 0

dataset_size: 2768371

---

# Dataset Card for "test"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 475 | [

[

-0.04620361328125,

-0.028656005859375,

0.00555419921875,

0.0131072998046875,

-0.009124755859375,

0.00058746337890625,

0.0164794921875,

-0.00917816162109375,

0.050537109375,

0.0228424072265625,

-0.056121826171875,

-0.04486083984375,

-0.03240966796875,

-0.0128... |

pbaoo2705/covidqa_processed_eval | 2023-10-22T09:01:31.000Z | [

"region:us"

] | pbaoo2705 | null | null | 0 | 21 | 2023-10-22T09:01:30 | ---

dataset_info:

features:

- name: question

dtype: string

- name: answer

dtype: string

- name: context_chunks

sequence: string

- name: document_id

dtype: int64

- name: id

dtype: int64

- name: context

dtype: string

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

- name: start_positions

dtype: int64

- name: end_positions

dtype: int64

splits:

- name: train

num_bytes: 2643073

num_examples: 50

download_size: 730327

dataset_size: 2643073

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "covidqa_processed_eval"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 805 | [

[

-0.034027099609375,

-0.036865234375,

0.0082550048828125,

0.0169525146484375,

-0.006290435791015625,

0.0138702392578125,

0.019805908203125,

-0.002269744873046875,

0.0499267578125,

0.0291595458984375,

-0.05572509765625,

-0.055877685546875,

-0.029693603515625,

... |

nehal69/bioAsq_Extractive_QA | 2023-10-22T09:43:16.000Z | [

"region:us"

] | nehal69 | null | null | 0 | 21 | 2023-10-22T09:13:01 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

kikikara/mistral-anger-dataset | 2023-10-22T14:00:05.000Z | [

"region:us"

] | kikikara | null | null | 0 | 21 | 2023-10-22T13:59:45 | Entry not found | 15 | [

[

-0.0214080810546875,

-0.01494598388671875,

0.057159423828125,

0.028839111328125,

-0.0350341796875,

0.04656982421875,

0.052490234375,

0.00504302978515625,

0.0513916015625,

0.016998291015625,

-0.0521240234375,

-0.0149993896484375,

-0.06036376953125,

0.03790283... |

minecode/koreanstudydataset | 2023-10-26T11:05:51.000Z | [

"region:us"

] | minecode | null | null | 1 | 21 | 2023-10-26T01:08:24 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

josedonoso/apples-dataset-60 | 2023-10-27T23:42:15.000Z | [

"region:us"

] | josedonoso | null | null | 0 | 21 | 2023-10-27T23:42:13 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

splits:

- name: train

num_bytes: 677659.0

num_examples: 48

- name: test

num_bytes: 161130.0

num_examples: 12

download_size: 839070

dataset_size: 838789.0

---

# Dataset Card for "apples-dataset-60"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 575 | [

[

-0.042510986328125,

-0.00920867919921875,

0.0161285400390625,

0.01102447509765625,

-0.004871368408203125,

0.007091522216796875,

0.0303497314453125,

-0.016326904296875,

0.05328369140625,

0.029327392578125,

-0.0697021484375,

-0.0484619140625,

-0.043670654296875,

... |

rkdeva/QA_Dataset | 2023-10-31T21:08:06.000Z | [

"region:us"

] | rkdeva | null | null | 0 | 21 | 2023-10-31T21:08:02 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 252345

num_examples: 103

download_size: 112834

dataset_size: 252345

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "QA_Dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 434 | [

[

-0.0364990234375,

-0.01361846923828125,

0.0222930908203125,

0.0124969482421875,

-0.0196685791015625,

0.00591278076171875,

0.041046142578125,

-0.005176544189453125,

0.06365966796875,

0.02716064453125,

-0.052886962890625,

-0.051788330078125,

-0.025634765625,

-... |

Falah/fashion_moodboards_prompts | 2023-11-01T06:36:26.000Z | [

"region:us"

] | Falah | null | null | 0 | 21 | 2023-11-01T06:36:25 | ---

dataset_info:

features:

- name: prompts

dtype: string

splits:

- name: train

num_bytes: 141480

num_examples: 1000

download_size: 22359

dataset_size: 141480

---

# Dataset Card for "fashion_moodboards_prompts"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 367 | [

[

-0.0379638671875,

-0.0223846435546875,

0.0306854248046875,

0.032806396484375,

-0.0262298583984375,

-0.0031642913818359375,

0.018707275390625,

0.0127105712890625,

0.0689697265625,

0.0199127197265625,

-0.095947265625,

-0.0692138671875,

-0.0251617431640625,

-0.... |

midas/ldkp10k | 2022-04-02T16:49:45.000Z | [

"region:us"

] | midas | This new dataset is designed to solve kp NLP task and is crafted with a lot of care. | TBA | 2 | 20 | 2022-03-02T23:29:22 | A dataset for benchmarking keyphrase extraction and generation techniques from long document English scientific papers. For more details about the dataset please refer the original paper - []().

Data source - []()

## Dataset Summary

## Dataset Structure

### Data Fields

- **id**: unique identifier of the document.

- **sections**: list of all the sections present in the document.

- **sec_text**: list of white space separated list of words present in each section.

- **sec_bio_tags**: list of BIO tags of white space separated list of words present in each section.

- **extractive_keyphrases**: List of all the present keyphrases.

- **abstractive_keyphrase**: List of all the absent keyphrases.

### Data Splits

|Split| #datapoints |

|--|--|

| Train-Small | 20,000 |

| Train-Medium | 50,000 |

| Train-Large | 1,296,613 |

| Test | 10,000 |

| Validation | 10,000 |

## Usage

### Small Dataset

```python

from datasets import load_dataset

# get small dataset

dataset = load_dataset("midas/ldkp10k", "small")

def order_sections(sample):

"""

corrects the order in which different sections appear in the document.

resulting order is: title, abstract, other sections in the body

"""

sections = []

sec_text = []

sec_bio_tags = []

if "title" in sample["sections"]:

title_idx = sample["sections"].index("title")

sections.append(sample["sections"].pop(title_idx))

sec_text.append(sample["sec_text"].pop(title_idx))

sec_bio_tags.append(sample["sec_bio_tags"].pop(title_idx))

if "abstract" in sample["sections"]:

abstract_idx = sample["sections"].index("abstract")

sections.append(sample["sections"].pop(abstract_idx))

sec_text.append(sample["sec_text"].pop(abstract_idx))

sec_bio_tags.append(sample["sec_bio_tags"].pop(abstract_idx))

sections += sample["sections"]

sec_text += sample["sec_text"]

sec_bio_tags += sample["sec_bio_tags"]

return sections, sec_text, sec_bio_tags

# sample from the train split

print("Sample from train data split")

train_sample = dataset["train"][0]

sections, sec_text, sec_bio_tags = order_sections(train_sample)

print("Fields in the sample: ", [key for key in train_sample.keys()])

print("Section names: ", sections)

print("Tokenized Document: ", sec_text)

print("Document BIO Tags: ", sec_bio_tags)

print("Extractive/present Keyphrases: ", train_sample["extractive_keyphrases"])

print("Abstractive/absent Keyphrases: ", train_sample["abstractive_keyphrases"])

print("\n-----------\n")

# sample from the validation split

print("Sample from validation data split")

validation_sample = dataset["validation"][0]

sections, sec_text, sec_bio_tags = order_sections(validation_sample)

print("Fields in the sample: ", [key for key in validation_sample.keys()])

print("Section names: ", sections)

print("Tokenized Document: ", sec_text)

print("Document BIO Tags: ", sec_bio_tags)

print("Extractive/present Keyphrases: ", validation_sample["extractive_keyphrases"])

print("Abstractive/absent Keyphrases: ", validation_sample["abstractive_keyphrases"])

print("\n-----------\n")

# sample from the test split

print("Sample from test data split")

test_sample = dataset["test"][0]

sections, sec_text, sec_bio_tags = order_sections(test_sample)

print("Fields in the sample: ", [key for key in test_sample.keys()])

print("Section names: ", sections)

print("Tokenized Document: ", sec_text)

print("Document BIO Tags: ", sec_bio_tags)

print("Extractive/present Keyphrases: ", test_sample["extractive_keyphrases"])

print("Abstractive/absent Keyphrases: ", test_sample["abstractive_keyphrases"])

print("\n-----------\n")

```

**Output**

```bash

```

### Medium Dataset

```python

from datasets import load_dataset

# get medium dataset

dataset = load_dataset("midas/ldkp10k", "medium")

```

### Large Dataset

```python

from datasets import load_dataset

# get large dataset

dataset = load_dataset("midas/ldkp10k", "large")

```

## Citation Information

Please cite the works below if you use this dataset in your work.

```

@article{mahata2022ldkp,

title={LDKP: A Dataset for Identifying Keyphrases from Long Scientific Documents},

author={Mahata, Debanjan and Agarwal, Naveen and Gautam, Dibya and Kumar, Amardeep and Parekh, Swapnil and Singla, Yaman Kumar and Acharya, Anish and Shah, Rajiv Ratn},

journal={arXiv preprint arXiv:2203.15349},

year={2022}

}

```

```

@article{lo2019s2orc,

title={S2ORC: The semantic scholar open research corpus},

author={Lo, Kyle and Wang, Lucy Lu and Neumann, Mark and Kinney, Rodney and Weld, Dan S},

journal={arXiv preprint arXiv:1911.02782},

year={2019}

}

```

```

@inproceedings{ccano2019keyphrase,

title={Keyphrase generation: A multi-aspect survey},

author={{\c{C}}ano, Erion and Bojar, Ond{\v{r}}ej},

booktitle={2019 25th Conference of Open Innovations Association (FRUCT)},

pages={85--94},

year={2019},

organization={IEEE}

}

```

```

@article{meng2017deep,

title={Deep keyphrase generation},

author={Meng, Rui and Zhao, Sanqiang and Han, Shuguang and He, Daqing and Brusilovsky, Peter and Chi, Yu},

journal={arXiv preprint arXiv:1704.06879},

year={2017}

}

```

## Contributions

Thanks to [@debanjanbhucs](https://github.com/debanjanbhucs), [@dibyaaaaax](https://github.com/dibyaaaaax), [@UmaGunturi](https://github.com/UmaGunturi) and [@ad6398](https://github.com/ad6398) for adding this dataset

| 5,358 | [

[

-0.00675201416015625,

-0.030242919921875,

0.0305328369140625,

0.012451171875,

-0.02642822265625,

0.01194000244140625,

-0.014068603515625,

-0.00855255126953125,

0.01522064208984375,

0.01548004150390625,

-0.034149169921875,

-0.05865478515625,

-0.03448486328125,

... |

nickmuchi/financial-classification | 2023-01-27T23:44:03.000Z | [

"task_categories:text-classification",

"task_ids:multi-class-classification",

"task_ids:sentiment-classification",

"annotations_creators:expert-generated",

"language_creators:found",

"size_categories:1K<n<10K",

"language:en",

"finance",

"region:us"

] | nickmuchi | null | null | 7 | 20 | 2022-03-02T23:29:22 | ---

annotations_creators:

- expert-generated

language_creators:

- found

language:

- en

task_categories:

- text-classification

task_ids:

- multi-class-classification

- sentiment-classification

train-eval-index:

- config: sentences_50agree

- task: text-classification

- task_ids: multi_class_classification

- splits:

eval_split: train

- col_mapping:

sentence: text

label: target

size_categories:

- 1K<n<10K

tags:

- finance

---

## Dataset Creation

This [dataset](https://huggingface.co/datasets/nickmuchi/financial-classification) combines financial phrasebank dataset and a financial text dataset from [Kaggle](https://www.kaggle.com/datasets/percyzheng/sentiment-classification-selflabel-dataset).

Given the financial phrasebank dataset does not have a validation split, I thought this might help to validate finance models and also capture the impact of COVID on financial earnings with the more recent Kaggle dataset. | 935 | [

[

-0.01122283935546875,

-0.0307159423828125,

0.005847930908203125,

0.040283203125,

-0.0004055500030517578,

0.023101806640625,

0.01806640625,

-0.0241241455078125,

0.0357666015625,

0.041229248046875,

-0.041259765625,

-0.03839111328125,

-0.0297393798828125,

-0.01... |

sia-precision-education/pile_python | 2022-01-25T01:24:47.000Z | [

"region:us"

] | sia-precision-education | null | null | 2 | 20 | 2022-03-02T23:29:22 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

stas/wmt16-en-ro-pre-processed | 2021-02-16T03:58:06.000Z | [

"region:us"

] | stas | null | @InProceedings{huggingface:dataset,

title = {WMT16 English-Romanian Translation Data with further preprocessing},

authors={},

year={2016}

} | 0 | 20 | 2022-03-02T23:29:22 | # WMT16 English-Romanian Translation Data w/ further preprocessing

The original instructions are [here](https://github.com/rsennrich/wmt16-scripts/tree/master/sample).

This pre-processed dataset was created by running:

```

git clone https://github.com/rsennrich/wmt16-scripts

cd wmt16-scripts

cd sample

./download_files.sh

./preprocess.sh

```

It was originally used by `transformers` [`finetune_trainer.py`](https://github.com/huggingface/transformers/blob/641f418e102218c4bf16fcd3124bfebed6217ef6/examples/seq2seq/finetune_trainer.py)

The data itself resides at https://cdn-datasets.huggingface.co/translation/wmt_en_ro.tar.gz

If you would like to convert it to jsonlines I've included a small script `convert-to-jsonlines.py` that will do it for you. But if you're using the `datasets` API, it will be done on the fly.

| 828 | [

[

-0.0479736328125,

-0.044525146484375,

0.0236053466796875,

0.019744873046875,

-0.04180908203125,

-0.007678985595703125,

-0.034393310546875,

-0.0207061767578125,

0.02569580078125,

0.04461669921875,

-0.07269287109375,

-0.04754638671875,

-0.039093017578125,

0.02... |

billray110/corpus-of-diverse-styles | 2022-10-22T00:52:53.000Z | [

"task_categories:text-classification",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:10M<n<100M",

"arxiv:2010.05700",

"region:us"

] | billray110 | null | null | 3 | 20 | 2022-04-21T01:13:59 | ---

annotations_creators: []

language_creators:

- found

language: []

license: []

multilinguality:

- monolingual

pretty_name: Corpus of Diverse Styles

size_categories:

- 10M<n<100M

source_datasets: []

task_categories:

- text-classification

task_ids: []

---

# Dataset Card for Corpus of Diverse Styles

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

## Disclaimer

I am not the original author of the paper that presents the Corpus of Diverse Styles. I uploaded the dataset to HuggingFace as a convenience.

## Dataset Description

- **Homepage:** http://style.cs.umass.edu/

- **Repository:** https://github.com/martiansideofthemoon/style-transfer-paraphrase

- **Paper:** https://arxiv.org/abs/2010.05700

### Dataset Summary

A new benchmark dataset that contains 15M

sentences from 11 diverse styles.

To create CDS, we obtain data from existing academic

research datasets and public APIs or online collections

like Project Gutenberg. We choose

styles that are easy for human readers to identify at

a sentence level (e.g., Tweets or Biblical text). While

prior benchmarks involve a transfer between two

styles, CDS has 110 potential transfer directions.

### Citation Information

```

@inproceedings{style20,

author={Kalpesh Krishna and John Wieting and Mohit Iyyer},

Booktitle = {Empirical Methods in Natural Language Processing},

Year = "2020",

Title={Reformulating Unsupervised Style Transfer as Paraphrase Generation},

}

``` | 1,533 | [

[

-0.03399658203125,

-0.041595458984375,

0.0136566162109375,

0.048828125,

-0.037750244140625,

0.01328277587890625,

-0.040740966796875,

-0.0211334228515625,

0.0259552001953125,

0.04779052734375,

-0.036224365234375,

-0.07159423828125,

-0.043426513671875,

0.03271... |

Aniemore/cedr-m7 | 2022-07-01T16:39:56.000Z | [

"task_categories:text-classification",

"task_ids:sentiment-classification",

"annotations_creators:found",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:extended|cedr",

"language:ru",

"license:mit",

"region:us"

] | Aniemore | null | null | 5 | 20 | 2022-05-24T18:01:54 | ---

annotations_creators:

- found

language_creators:

- found

language:

- ru

license: mit

multilinguality:

- monolingual

pretty_name: cedr-m7

size_categories:

- 1K<n<10K

source_datasets:

- extended|cedr

task_categories:

- text-classification

task_ids:

- sentiment-classification

---

# Dataset Card for CEDR-M7

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

[More Information Needed]

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

```

@misc{Aniemore,

author = {Артем Аментес, Илья Лубенец, Никита Давидчук},

title = {Открытая библиотека искусственного интеллекта для анализа и выявления эмоциональных оттенков речи человека},

year = {2022},

publisher = {Hugging Face},

journal = {Hugging Face Hub},

howpublished = {\url{https://huggingface.com/aniemore/Aniemore}},

email = {hello@socialcode.ru}

}

```

### Contributions

Thanks to [@toiletsandpaper](https://github.com/toiletsandpaper) for adding this dataset.

| 3,115 | [

[

-0.035980224609375,

-0.038818359375,

0.017242431640625,

0.01202392578125,

-0.0220947265625,

0.00868988037109375,

-0.02093505859375,

-0.0273284912109375,

0.04412841796875,

0.03253173828125,

-0.06378173828125,

-0.08380126953125,

-0.05194091796875,

0.0122604370... |

imvladikon/bmc | 2022-11-17T16:52:43.000Z | [

"task_categories:token-classification",

"task_ids:named-entity-recognition",

"annotations_creators:crowdsourced",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:extended|other-reuters-corpus",

"language:he",

"license:other",

"arxiv:2007.156... | imvladikon | \ | @mastersthesis{naama,

title={Hebrew Named Entity Recognition},

author={Ben-Mordecai, Naama},

advisor={Elhadad, Michael},

year={2005},

url="https://www.cs.bgu.ac.il/~elhadad/nlpproj/naama/",

institution={Department of Computer Science, Ben-Gurion University},

school={Department of Computer Science, Ben-Gurion University},

},

@misc{bareket2020neural,

title={Neural Modeling for Named Entities and Morphology (NEMO^2)},

author={Dan Bareket and Reut Tsarfaty},

year={2020},

eprint={2007.15620},

archivePrefix={arXiv},

primaryClass={cs.CL}

} | 0 | 20 | 2022-06-22T15:39:14 | ---

annotations_creators:

- crowdsourced

language_creators:

- found

language:

- he

license:

- other

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- extended|other-reuters-corpus

task_categories:

- token-classification

task_ids:

- named-entity-recognition

train-eval-index:

- config: bmc

task: token-classification

task_id: entity_extraction

splits:

train_split: train

eval_split: validation

test_split: test

col_mapping:

tokens: tokens

ner_tags: tags

metrics:

- type: seqeval

name: seqeval

---

# Splits for the Ben-Mordecai and Elhadad Hebrew NER Corpus (BMC)

In order to evaluate performance in accordance with the original Ben-Mordecai and Elhadad (2005) work, we provide three 75%-25% random splits.

* Only the 7 entity categories viable for evaluation were kept (DATE, LOC, MONEY, ORG, PER, PERCENT, TIME) --- all MISC entities were filtered out.

* Sequence label scheme was changed from IOB to BIOES

* The dev sets are 10% taken out of the 75%

## Citation

If you use use the BMC corpus, please cite the original paper as well as our paper which describes the splits:

* Ben-Mordecai and Elhadad (2005):

```console

@mastersthesis{naama,

title={Hebrew Named Entity Recognition},

author={Ben-Mordecai, Naama},

advisor={Elhadad, Michael},

year={2005},

url="https://www.cs.bgu.ac.il/~elhadad/nlpproj/naama/",

institution={Department of Computer Science, Ben-Gurion University},

school={Department of Computer Science, Ben-Gurion University},

}

```

* Bareket and Tsarfaty (2020)

```console

@misc{bareket2020neural,

title={Neural Modeling for Named Entities and Morphology (NEMO^2)},

author={Dan Bareket and Reut Tsarfaty},

year={2020},

eprint={2007.15620},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

| 1,838 | [

[

-0.04718017578125,

-0.047210693359375,

0.0163116455078125,

0.0166015625,

-0.0295257568359375,

0.00811767578125,

-0.0255584716796875,

-0.0599365234375,

0.0364990234375,

0.01361083984375,

-0.0260009765625,

-0.044708251953125,

-0.048187255859375,

0.020629882812... |

LHF/escorpius | 2023-01-05T10:55:48.000Z | [

"task_categories:text-generation",

"task_categories:fill-mask",

"task_ids:language-modeling",

"task_ids:masked-language-modeling",

"multilinguality:monolingual",

"size_categories:100M<n<1B",

"source_datasets:original",

"language:es",

"license:cc-by-nc-nd-4.0",

"arxiv:2206.15147",

"region:us"

] | LHF | Spanish dataset | @misc{TODO

} | 12 | 20 | 2022-06-24T20:58:40 | ---

license: cc-by-nc-nd-4.0

language:

- es

multilinguality:

- monolingual

size_categories:

- 100M<n<1B

source_datasets:

- original

task_categories:

- text-generation

- fill-mask

task_ids:

- language-modeling

- masked-language-modeling

---

# esCorpius: A Massive Spanish Crawling Corpus

## Introduction

In the recent years, Transformer-based models have lead to significant advances in language modelling for natural language processing. However, they require a vast amount of data to be (pre-)trained and there is a lack of corpora in languages other than English. Recently, several initiatives have presented multilingual datasets obtained from automatic web crawling. However, the results in Spanish present important shortcomings, as they are either too small in comparison with other languages, or present a low quality derived from sub-optimal cleaning and deduplication. In this work, we introduce esCorpius, a Spanish crawling corpus obtained from near 1 Pb of Common Crawl data. It is the most extensive corpus in Spanish with this level of quality in the extraction, purification and deduplication of web textual content. Our data curation process involves a novel highly parallel cleaning pipeline and encompasses a series of deduplication mechanisms that together ensure the integrity of both document and paragraph boundaries. Additionally, we maintain both the source web page URL and the WARC shard origin URL in order to complain with EU regulations. esCorpius has been released under CC BY-NC-ND 4.0 license.

## Statistics

| **Corpus** | OSCAR<br>22.01 | mC4 | CC-100 | ParaCrawl<br>v9 | esCorpius<br>(ours) |

|-------------------------|----------------|--------------|-----------------|-----------------|-------------------------|

| **Size (ES)** | 381.9 GB | 1,600.0 GB | 53.3 GB | 24.0 GB | 322.5 GB |

| **Docs (ES)** | 51M | 416M | - | - | 104M |

| **Words (ES)** | 42,829M | 433,000M | 9,374M | 4,374M | 50,773M |

| **Lang.<br>identifier** | fastText | CLD3 | fastText | CLD2 | CLD2 + fastText |

| **Elements** | Document | Document | Document | Sentence | Document and paragraph |

| **Parsing quality** | Medium | Low | Medium | High | High |

| **Cleaning quality** | Low | No cleaning | Low | High | High |

| **Deduplication** | No | No | No | Bicleaner | dLHF |

| **Language** | Multilingual | Multilingual | Multilingual | Multilingual | Spanish |

| **License** | CC-BY-4.0 | ODC-By-v1.0 | Common<br>Crawl | CC0 | CC-BY-NC-ND |

## Citation

Link to the paper: https://www.isca-speech.org/archive/pdfs/iberspeech_2022/gutierrezfandino22_iberspeech.pdf / https://arxiv.org/abs/2206.15147

Cite this work:

```

@inproceedings{gutierrezfandino22_iberspeech,

author={Asier Gutiérrez-Fandiño and David Pérez-Fernández and Jordi Armengol-Estapé and David Griol and Zoraida Callejas},

title={{esCorpius: A Massive Spanish Crawling Corpus}},

year=2022,

booktitle={Proc. IberSPEECH 2022},

pages={126--130},

doi={10.21437/IberSPEECH.2022-26}

}

```

## Disclaimer

We did not perform any kind of filtering and/or censorship to the corpus. We expect users to do so applying their own methods. We are not liable for any misuse of the corpus. | 3,721 | [

[

-0.034942626953125,

-0.0465087890625,

0.0243988037109375,

0.0400390625,

-0.0157012939453125,

0.034698486328125,

-0.006221771240234375,

-0.033721923828125,

0.053924560546875,

0.04071044921875,

-0.0228729248046875,

-0.059295654296875,

-0.058135986328125,

0.022... |

jonaskoenig/Questions-vs-Statements-Classification | 2022-07-11T15:36:35.000Z | [

"region:us"

] | jonaskoenig | null | null | 2 | 20 | 2022-07-10T20:24:09 | [Needs More Information]

# Dataset Card for Questions-vs-Statements-Classification

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-instances)

- [Data Splits](#data-instances)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

## Dataset Description

- **Homepage:** [Kaggle](https://www.kaggle.com/datasets/shahrukhkhan/questions-vs-statementsclassificationdataset)

- **Point of Contact:** [Shahrukh Khan](https://www.kaggle.com/shahrukhkhan)

### Dataset Summary

A dataset containing statements and questions with their corresponding labels.

### Supported Tasks and Leaderboards

multi-class-classification

### Languages

en

## Dataset Structure

### Data Splits

Train Test Valid

## Dataset Creation

### Curation Rationale

The goal of this project is to classify sentences, based on type:

Statement (Declarative Sentence)

Question (Interrogative Sentence)

### Source Data

[Kaggle](https://www.kaggle.com/datasets/shahrukhkhan/questions-vs-statementsclassificationdataset)

#### Initial Data Collection and Normalization

The dataset is created by parsing out the SQuAD dataset and combining it with the SPAADIA dataset.

### Other Known Limitations

Questions in this case ar are only one sentence, statements are a single sentence or more. They are classified correctly but don't include sentences prior to questions.

## Additional Information

### Dataset Curators

[SHAHRUKH KHAN](https://www.kaggle.com/shahrukhkhan)

### Licensing Information

[CC0: Public Domain](https://creativecommons.org/publicdomain/zero/1.0/)

| 2,352 | [

[

-0.036407470703125,

-0.06341552734375,

-0.0004684925079345703,

0.01441192626953125,

0.0009455680847167969,

0.0266265869140625,

-0.022491455078125,

-0.01433563232421875,

0.0137176513671875,

0.04351806640625,

-0.0660400390625,

-0.06396484375,

-0.040313720703125,

... |

Bingsu/Gameplay_Images | 2022-08-26T05:31:58.000Z | [

"task_categories:image-classification",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"language:en",

"license:cc-by-4.0",

"region:us"

] | Bingsu | null | null | 1 | 20 | 2022-08-26T04:42:10 | ---

language:

- en

license:

- cc-by-4.0

multilinguality:

- monolingual

pretty_name: Gameplay Images

size_categories:

- 1K<n<10K

task_categories:

- image-classification

---

# Gameplay Images

## Dataset Description

- **Homepage:** [kaggle](https://www.kaggle.com/datasets/aditmagotra/gameplay-images)

- **Download Size** 2.50 GiB

- **Generated Size** 1.68 GiB

- **Total Size** 4.19 GiB

A dataset from [kaggle](https://www.kaggle.com/datasets/aditmagotra/gameplay-images).

This is a dataset of 10 very famous video games in the world.

These include

- Among Us

- Apex Legends

- Fortnite

- Forza Horizon

- Free Fire

- Genshin Impact

- God of War

- Minecraft

- Roblox

- Terraria

There are 1000 images per class and all are sized `640 x 360`. They are in the `.png` format.

This Dataset was made by saving frames every few seconds from famous gameplay videos on Youtube.

※ This dataset was uploaded in January 2022. Game content updated after that will not be included.

### License

CC-BY-4.0

## Dataset Structure

### Data Instance

```python

>>> from datasets import load_dataset

>>> dataset = load_dataset("Bingsu/Gameplay_Images")

DatasetDict({

train: Dataset({

features: ['image', 'label'],

num_rows: 10000

})

})

```

```python

>>> dataset["train"].features

{'image': Image(decode=True, id=None),

'label': ClassLabel(num_classes=10, names=['Among Us', 'Apex Legends', 'Fortnite', 'Forza Horizon', 'Free Fire', 'Genshin Impact', 'God of War', 'Minecraft', 'Roblox', 'Terraria'], id=None)}

```

### Data Size

download: 2.50 GiB<br>

generated: 1.68 GiB<br>

total: 4.19 GiB

### Data Fields

- image: `Image`

- A `PIL.Image.Image object` containing the image. size=640x360

- Note that when accessing the image column: `dataset[0]["image"]` the image file is automatically decoded. Decoding of a large number of image files might take a significant amount of time. Thus it is important to first query the sample index before the "image" column, i.e. `dataset[0]["image"]` should always be preferred over `dataset["image"][0]`.

- label: an int classification label.

Class Label Mappings:

```json

{

"Among Us": 0,

"Apex Legends": 1,

"Fortnite": 2,

"Forza Horizon": 3,

"Free Fire": 4,

"Genshin Impact": 5,

"God of War": 6,

"Minecraft": 7,