id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 6.67k ⌀ | citation stringlengths 0 10.7k ⌀ | likes int64 0 3.66k | downloads int64 0 8.89M | created timestamp[us] | card stringlengths 11 977k | card_len int64 11 977k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|

yuchenlin/just-eval-redteam | 2023-10-06T21:54:33.000Z | [

"region:us"

] | yuchenlin | null | null | 0 | 19 | 2023-10-06T18:26:25 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

meta-math/MetaMathQA-40K | 2023-10-25T09:01:11.000Z | [

"license:cc-by-nc-4.0",

"arxiv:2309.12284",

"region:us"

] | meta-math | null | null | 3 | 19 | 2023-10-07T14:47:58 | ---

license: cc-by-nc-4.0

---

arxiv.org/abs/2309.12284

View the project page:

https://meta-math.github.io/ | 108 | [

[

-0.052032470703125,

-0.0247650146484375,

0.030487060546875,

0.00809478759765625,

-0.0157623291015625,

0.0047607421875,

0.0095367431640625,

-0.01117706298828125,

0.060089111328125,

0.057281494140625,

-0.052734375,

-0.046112060546875,

-0.0021190643310546875,

0... |

syaoran312/VHAC_QA_full | 2023-10-07T19:51:18.000Z | [

"region:us"

] | syaoran312 | null | null | 0 | 19 | 2023-10-07T19:50:20 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

sankettgorey/donut_6 | 2023-10-09T09:38:01.000Z | [

"region:us"

] | sankettgorey | null | null | 0 | 19 | 2023-10-09T09:36:41 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

- split: valid

path: data/valid-*

dataset_info:

features:

- name: image

dtype: image

- name: ground_truth

dtype: string

splits:

- name: train

num_bytes: 350410662.6

num_examples: 800

- name: test

num_bytes: 43730265.7

num_examples: 100

- name: valid

num_bytes: 43819720.7

num_examples: 100

download_size: 402661296

dataset_size: 437960649.0

---

# Dataset Card for "donut_6"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 690 | [

[

-0.019683837890625,

-0.0107421875,

0.0194244384765625,

0.0018444061279296875,

0.0006084442138671875,

-0.0000858306884765625,

0.01529693603515625,

-0.005168914794921875,

0.055572509765625,

0.0369873046875,

-0.051361083984375,

-0.043975830078125,

-0.04281616210937... |

FreedomIntelligence/Huatuo26M-GPTShine | 2023-10-16T07:16:30.000Z | [

"task_categories:text-classification",

"task_categories:question-answering",

"task_categories:conversational",

"task_categories:text-generation",

"size_categories:100K<n<1M",

"language:zh",

"license:apache-2.0",

"medical",

"arxiv:2305.01526",

"region:us"

] | FreedomIntelligence | null | null | 4 | 19 | 2023-10-11T09:08:49 | ---

license: apache-2.0

task_categories:

- text-classification

- question-answering

- conversational

- text-generation

language:

- zh

tags:

- medical

pretty_name: Huatuo26M_v2

size_categories:

- 100K<n<1M

---

# Huatuo26M-GPTShine Dataset 📚

- ## Table of Contents 🗂

- [Dataset Description](#dataset-description) 📝

- [Dataset Information](#dataset-information) ℹ️

- [Data Distribution](#data-distribution) 📊

- [Usage](#usage) 🔧

- [Citation](#citation) 📖

## Dataset Description 📝

Huatuo26M-GPTShine is a refined and optimized dataset based on the Huatuo26M dataset, which has undergone multiple purification processes and rewrites. It has more data dimensions and higher data quality. We welcome you to try using it.

## Dataset Information ℹ️

- **Dataset Name:** Huatuo26M-GPTShine

- **Version:** _[0.0.1]_

- **Size:** _[178k]_

- **Language:** _[Chinese]_

### Abstract 📄

We collected 26 million pieces of original QA data in the medical field, but it was not easy to use and had some risks because it was obtained from Common Crawl. Therefore, we took the following steps based on the original 26 million data: deduplication, cleaning, extraction of high-frequency questions, scoring of high-frequency questions using ChatGPT, and filtering only high-scoring questions. We then used ChatGPT to rewrite the answers to the high-scoring questions, resulting in a completely refined dataset. Please refer to our paper for the specific processing methods.

### Data Collection 🕵️♂️

ur question data was collected from the internet, and we extracted the high-frequency portion. The answers were rewritten by ChatGPT based on the original answers as a reference, and their quality was judged to be better than the original answers through manual evaluation. Therefore, please feel free to use our dataset with confidence.

### Preprocessing/Cleaning 🧹

The dataset has been processed to remove duplicates and cleaned to ensure high-quality data. It was then refined using OpenAI's ChatGPT, which helped in enhancing the overall quality of the dataset.

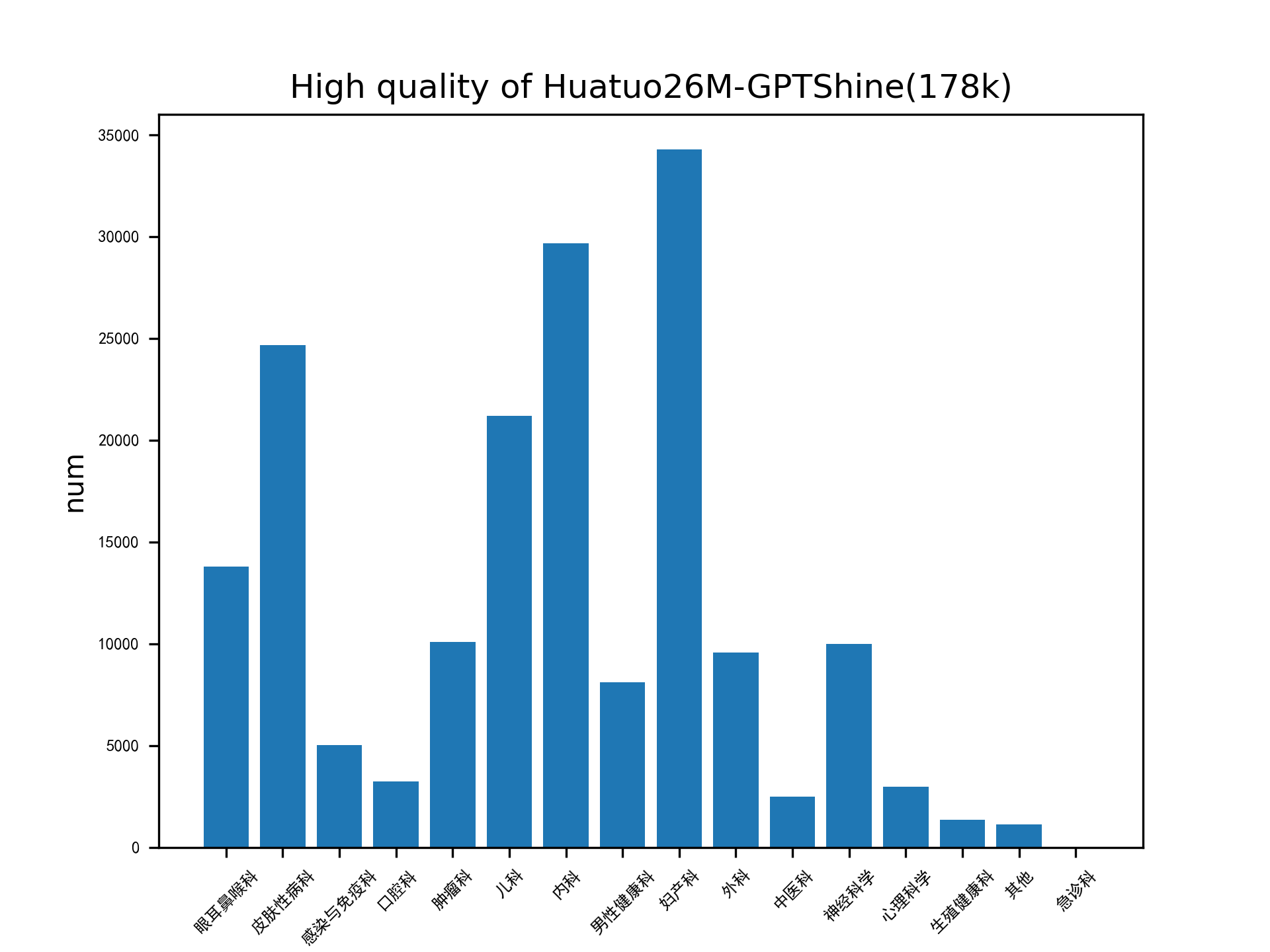

## Data Distribution 📊

This section provides a visual overview of the distribution of data in the Huatuo26M-GPTShine dataset.

**Data Categories Bar Chart:**

This chart represents the distribution of data categories in the dataset.

**Top 20 Associated Diseases Table:**

| topn | disease | nums | ratio |

| ---- | ---------- | ---- | ------- |

| 1 | 白癜风 | 3308 | 1.8615% |

| 2 | 人流 | 2686 | 1.5115% |

| 3 | 感冒 | 2371 | 1.3342% |

| 4 | 癫痫 | 2217 | 1.2476% |

| 5 | 痔疮 | 2134 | 1.2009% |

| 6 | 疼痛 | 1842 | 1.0366% |

| 7 | 咳嗽 | 1799 | 1.0124% |

| 8 | 前列腺炎 | 1564 | 0.8801% |

| 9 | 尖锐湿疣 | 1516 | 0.8531% |

| 10 | 肺癌 | 1408 | 0.7923% |

| 11 | 出血 | 1400 | 0.7878% |

| 12 | 鼻炎 | 1370 | 0.7709% |

| 13 | 肝癌 | 1354 | 0.7619% |

| 14 | 糖尿病 | 1348 | 0.7586% |

| 15 | 过敏性鼻炎 | 1295 | 0.7287% |

| 16 | 发烧 | 1265 | 0.7119% |

| 17 | 乙肝 | 1232 | 0.6933% |

| 18 | 便秘 | 1214 | 0.6832% |

| 19 | 甲亢 | 1178 | 0.6629% |

| 20 | 脱发 | 1173 | 0.6601% |

This table shows the top 20 diseases associated with the data entries in the dataset, along with their respective data entry counts and proportions.

## Usage 🔧

```python

from datasets import load_dataset

dataset = load_dataset("FreedomIntelligence/Huatuo26M-GPTShine")

```

## Citation 📖

```

@misc{li2023huatuo26m,

title={Huatuo-26M, a Large-scale Chinese Medical QA Dataset},

author={Jianquan Li and Xidong Wang and Xiangbo Wu and Zhiyi Zhang and Xiaolong Xu and Jie Fu and Prayag Tiwari and Xiang Wan and Benyou Wang},

year={2023},

eprint={2305.01526},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

---

Please note that this dataset is distributed "AS IS" without any warranty, express or implied, from the provider. Users should cite the dataset appropriately and respect any licensing or usage restrictions. | 4,117 | [

[

-0.0211181640625,

-0.03070068359375,

0.017303466796875,

-0.00012981891632080078,

-0.03814697265625,

-0.024810791015625,

-0.000530242919921875,

-0.01983642578125,

0.035614013671875,

0.032470703125,

-0.0153350830078125,

-0.0614013671875,

-0.04730224609375,

0.0... |

ShaomuTan/EC40 | 2023-10-11T19:43:00.000Z | [

"region:us"

] | ShaomuTan | The x-stance dataset contains more than 150 political questions, and 67k comments written by candidates on those questions. The comments are partly German, partly French and Italian. The data have been extracted from the Swiss voting advice platform Smartvote. | @inproceedings{vamvas2020xstance,

author = "Vamvas, Jannis and Sennrich, Rico",

title = "{X-Stance}: A Multilingual Multi-Target Dataset for Stance Detection",

booktitle = "Proceedings of the 5th Swiss Text Analytics Conference (SwissText) \& 16th Conference on Natural Language Processing (KONVENS)",

address = "Zurich, Switzerland",

year = "2020",

month = "jun",

url = "http://ceur-ws.org/Vol-2624/paper9.pdf"

} | 0 | 19 | 2023-10-11T17:27:27 | # EC40 MNMT Dataset

GitHub: https://github.com/Smu-Tan/ZS-NMT-Variations/tree/main

### EC40 is an English-Centric Multilingual Machine Translation Dataset. It has over 60 Million sentences including 40 Languages across 5 Language Families.

#### Note: The dataset is cleaned and pre-processed using tools like Moses, for more details, please refer to the paper.

### Features:

1. We carefully balanced the dataset across resources and languages by strictly maintaining each resource group containing 5 language families and each family consists of 8 representative languages.

2. EC40 covers a wide spectrum of resource availability, ranging from High(5M) to Medium(1M), Low(100K), and extremely-Low(50K) resources.

3. In total, there are 80 English-centric directions for training and 1,640 directions (including all supervised and ZS directions) for evaluation.

4. We make use of Ntrex-128 and Flores-200 as our validation and test set.

-----

## Languages and Family

| Family | Languges |

| :--- | :---: |

| Germanic | Geman, Dutch, Swedish, Danish, Afrikaans, Luxembourgish, Norwegian, Icelandic |

| Romance | French, Spanish, Italian, Portuguese, Romanian, Occitan, Asturian, Catalan |

| Slavic | Russian, Czech, Polish, Bulgarian, Ukrainian, Serbian, Belarusian, Bosnian |

| Indo-Aryan | Hindi, Bengali, Kannada, Marathi, Sindhi, Gujarati, Nepali, Urdu |

-----

## Dataset Stats

| Resource | Languages | Size |

| --- | --- | --- |

| High | de, nl, fr, es, ru, cs, hi, bn, ar, he | 5M |

| Medium | sv, da, it, pt, pl, bg, kn, mr, mt, ha | 1M |

| Low | af, lb, ro, oc, uk, sr, sd, gu, ti, am | 100k |

| Extremely-Low | no, is, ast, ca, be, bs, ne, ur, kab, so | 50k |

-----

## Build Fairseq dataset (Shard->to avoid RAM OOM)

```

Read toolkit/build_fairseq_sharded_dataset.sh

```

<br>

-----

## Train mTransformer-Large baseline

```

Read toolkit/train-EC40-mTrans-large.sh

```

| 1,923 | [

[

-0.047027587890625,

-0.0162200927734375,

0.016265869140625,

0.0050506591796875,

-0.028076171875,

-0.001251220703125,

-0.039794921875,

-0.0185089111328125,

0.005863189697265625,

0.041290283203125,

-0.052459716796875,

-0.0634765625,

-0.04754638671875,

0.020553... |

MananSantoki/Vadodara-Info | 2023-10-12T13:02:57.000Z | [

"region:us"

] | MananSantoki | null | null | 0 | 19 | 2023-10-12T12:36:53 | Entry not found | 15 | [

[

-0.0214080810546875,

-0.01497650146484375,

0.057098388671875,

0.028839111328125,

-0.0350341796875,

0.046478271484375,

0.052520751953125,

0.005046844482421875,

0.051361083984375,

0.016998291015625,

-0.05206298828125,

-0.01497650146484375,

-0.06036376953125,

0... |

xu3kev/BIRD-SQL-data | 2023-10-12T14:50:00.000Z | [

"region:us"

] | xu3kev | null | null | 0 | 19 | 2023-10-12T14:26:54 | ---

dataset_info:

features:

- name: db_id

dtype: string

- name: question

dtype: string

- name: evidence

dtype: string

- name: SQL

dtype: string

- name: schema

dtype: string

splits:

- name: train

num_bytes: 1039491

num_examples: 200

download_size: 98914

dataset_size: 1039491

---

# Dataset Card for "BIRD-SQL-data"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 494 | [

[

-0.0284881591796875,

-0.02593994140625,

-0.006961822509765625,

0.0189208984375,

-0.0126495361328125,

-0.0069580078125,

0.02032470703125,

-0.026092529296875,

0.054351806640625,

0.02935791015625,

-0.056640625,

-0.051361083984375,

-0.02508544921875,

-0.00321769... |

shellypeng/violet-evergarden-ds | 2023-10-14T12:02:00.000Z | [

"region:us"

] | shellypeng | null | null | 0 | 19 | 2023-10-14T07:38:53 | ---

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

splits:

- name: train

num_bytes: 446708153.158

num_examples: 3823

download_size: 478066266

dataset_size: 446708153.158

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "violet-evergarden-ds"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 495 | [

[

-0.0277252197265625,

-0.0128326416015625,

0.01300811767578125,

0.0184173583984375,

-0.0098419189453125,

-0.00817108154296875,

0.042236328125,

-0.01082611083984375,

0.05712890625,

0.036468505859375,

-0.07305908203125,

-0.05621337890625,

-0.036407470703125,

-0... |

sunjun/pubmedqa | 2023-10-14T13:41:28.000Z | [

"region:us"

] | sunjun | null | null | 0 | 19 | 2023-10-14T13:41:21 | ---

configs:

- config_name: default

data_files:

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: QUESTION

dtype: string

- name: CONTEXT

dtype: string

- name: final_decision

dtype: string

splits:

- name: validation

num_bytes: 720620

num_examples: 500

- name: test

num_bytes: 732024

num_examples: 500

download_size: 833197

dataset_size: 1452644

---

# Dataset Card for "pubmedqa"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 628 | [

[

-0.030181884765625,

-0.0012941360473632812,

0.034271240234375,

0.0062103271484375,

-0.02166748046875,

0.0016660690307617188,

0.03314208984375,

-0.0014171600341796875,

0.05279541015625,

0.041107177734375,

-0.050445556640625,

-0.056671142578125,

-0.04095458984375,... |

ostapeno/qa-platy_icl5_clen128_maxD-1_maxC5000_0 | 2023-10-14T16:53:52.000Z | [

"region:us"

] | ostapeno | null | null | 0 | 19 | 2023-10-14T16:53:38 | ---

dataset_info:

features:

- name: id

dtype: string

- name: context

dtype: string

- name: docno

dtype: string

- name: subject

dtype: string

- name: icl_examples

sequence: string

- name: author_instr

dtype: string

- name: instruction

dtype: string

- name: response

dtype: string

- name: author_response

dtype: string

- name: normalized_cumul_logprob_response

dtype: float64

splits:

- name: formal_logic

num_bytes: 8194431.408696578

num_examples: 2891

- name: machine_learning

num_bytes: 10320278.367023258

num_examples: 3641

- name: global_facts

num_bytes: 11244313.178242588

num_examples: 3967

- name: abstract_algebra

num_bytes: 12179685.839906327

num_examples: 4297

- name: high_school_physics

num_bytes: 11162113.762520624

num_examples: 3938

- name: college_biology

num_bytes: 10462001.49757837

num_examples: 3691

- name: high_school_government_and_politics

num_bytes: 10816309.323966151

num_examples: 3816

- name: prehistory

num_bytes: 11082748.809409762

num_examples: 3910

- name: security_studies

num_bytes: 9869598.811858002

num_examples: 3482

- name: sociology

num_bytes: 11181955.000798339

num_examples: 3945

download_size: 21363610

dataset_size: 106513436.0

---

# Dataset Card for "wiki_experts_data_transform_icl5dst"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 1,530 | [

[

-0.051971435546875,

-0.0025482177734375,

0.0181427001953125,

0.0083770751953125,

-0.0188446044921875,

0.009307861328125,

0.01519775390625,

-0.01506805419921875,

0.04156494140625,

0.0244140625,

-0.06134033203125,

-0.0543212890625,

-0.05047607421875,

-0.001003... |

schhetri41/PoseDataset | 2023-10-17T23:16:01.000Z | [

"region:us"

] | schhetri41 | null | null | 0 | 19 | 2023-10-16T22:16:25 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

HoangHa/SMILES-250M | 2023-10-22T06:06:11.000Z | [

"region:us"

] | HoangHa | null | null | 0 | 19 | 2023-10-19T00:30:45 | ---

dataset_info:

features:

- name: SMILES

dtype: string

splits:

- name: train

num_bytes: 1626837623.1854794

num_examples: 84345972

download_size: 1799608655

dataset_size: 1626837623.1854794

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "SMILES-250M"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 470 | [

[

-0.0501708984375,

-0.0037250518798828125,

0.0204010009765625,

0.0216827392578125,

-0.0134429931640625,

-0.01294708251953125,

-0.0014095306396484375,

-0.01502227783203125,

0.0631103515625,

0.024993896484375,

-0.07440185546875,

-0.0540771484375,

-0.0406494140625,

... |

ComponentSoft/k8s-kubectl | 2023-10-20T06:22:21.000Z | [

"region:us"

] | ComponentSoft | null | null | 1 | 19 | 2023-10-20T06:22:18 | ---

dataset_info:

features:

- name: objective

dtype: string

- name: command_name

dtype: string

- name: command

dtype: string

- name: description

dtype: string

- name: syntax

dtype: string

- name: flags

dtype: string

- name: question

dtype: string

- name: chain_of_thought

dtype: 'null'

splits:

- name: train

num_bytes: 40356885

num_examples: 35000

download_size: 2584976

dataset_size: 40356885

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "k8s-kubectl"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 715 | [

[

-0.04388427734375,

-0.007770538330078125,

0.0263519287109375,

0.017974853515625,

-0.0233154296875,

0.015411376953125,

0.0171966552734375,

-0.007534027099609375,

0.04962158203125,

0.038330078125,

-0.045806884765625,

-0.064453125,

-0.050201416015625,

-0.012870... |

xin1997/vrepair_pretraining_data | 2023-10-23T08:47:41.000Z | [

"region:us"

] | xin1997 | null | null | 0 | 19 | 2023-10-23T08:41:28 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

Luciya/llama-2-clinc-test | 2023-10-23T11:11:34.000Z | [

"region:us"

] | Luciya | null | null | 0 | 19 | 2023-10-23T11:11:31 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 10464310

num_examples: 4468

download_size: 986893

dataset_size: 10464310

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "llama-2-clinc-test"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 447 | [

[

-0.036956787109375,

-0.024261474609375,

0.009735107421875,

0.0241851806640625,

-0.038055419921875,

0.00264739990234375,

0.0226287841796875,

-0.0204925537109375,

0.0545654296875,

0.025115966796875,

-0.056396484375,

-0.05706787109375,

-0.04656982421875,

-0.005... |

kheopsai/codevivil_instruct | 2023-10-26T13:16:19.000Z | [

"region:us"

] | kheopsai | null | null | 0 | 19 | 2023-10-26T13:10:23 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

didsr/msynth | 2023-11-02T15:02:54.000Z | [

"task_categories:image-classification",

"task_categories:image-segmentation",

"size_categories:10K<n<100K",

"license:cc0-1.0",

"medical",

"arxiv:2310.18494",

"region:us"

] | didsr | M-SYNTH is a synthetic digital mammography (DM) dataset with four breast fibroglandular density distributions imaged using Monte Carlo x-ray simulations with the publicly available Virtual Imaging Clinical Trial for Regulatory Evaluation (VICTRE) toolkit.

Curated by: Elena Sizikova, Niloufar Saharkhiz, Diksha Sharma, Miguel Lago, Berkman Sahiner, Jana Gut Delfino, Aldo Badano

License: Creative Commons 1.0 Universal License (CC0) | @article{sizikova2023knowledge,

title={Knowledge-based in silico models and dataset for the comparative evaluation of mammography AI for a range of breast characteristics, lesion conspicuities and doses},

author={Sizikova, Elena and Saharkhiz, Niloufar and Sharma, Diksha and Lago, Miguel and Sahiner, Berkman and Delfino, Jana G. and Badano, Aldo},

journal={Advances in Neural Information Processing Systems},

volume={},

pages={16764--16778},

year={2023} | 0 | 19 | 2023-10-26T21:32:23 | ---

license: cc0-1.0

task_categories:

- image-classification

- image-segmentation

tags:

- medical

pretty_name: M-SYNTH

size_categories:

- 10K<n<100K

---

# M-SYNTH

<!-- Provide a quick summary of the dataset. -->

M-SYNTH is a synthetic digital mammography (DM) dataset with four breast fibroglandular density distributions imaged using Monte Carlo x-ray simulations with the publicly available [Virtual Imaging Clinical Trial for Regulatory Evaluation (VICTRE)](https://github.com/DIDSR/VICTRE) toolkit.

## Dataset Details

The dataset has the following characteristics:

* Breast density: dense, heterogeneously dense, scattered, fatty

* Mass radius (mm): 5.00, 7.00, 9.00

* Mass density: 1.0, 1.06, 1.1 (ratio of radiodensity of the mass to that of fibroglandular tissue)

* Relative dose: 20%, 40%, 60%, 80%, 100% of the clinically recommended dose for each density

<p align="center">

<img src='https://raw.githubusercontent.com/DIDSR/msynth-release/main/images/examples.png' width='700'>

</p>

### Dataset Description

<!-- Provide a longer summary of what this dataset is. -->

- **Curated by:** [Elena Sizikova](https://esizikova.github.io/), [Niloufar Saharkhiz](https://www.linkedin.com/in/niloufar-saharkhiz/), [Diksha Sharma](https://www.linkedin.com/in/diksha-sharma-6059977/), [Miguel Lago](https://www.linkedin.com/in/milaan/), [Berkman Sahiner](https://www.linkedin.com/in/berkman-sahiner-6aa9a919/), [Jana Gut Delfino](https://www.linkedin.com/in/janadelfino/), [Aldo Badano](https://www.linkedin.com/in/aldobadano/)

- **License:** Creative Commons 1.0 Universal License (CC0)

### Dataset Sources

<!-- Provide the basic links for the dataset. -->

- **Code:** [https://github.com/DIDSR/msynth-release](https://github.com/DIDSR/msynth-release)

- **Paper:** [https://arxiv.org/pdf/2310.18494.pdf](https://arxiv.org/pdf/2310.18494.pdf)

- **Demo:** [https://github.com/DIDSR/msynth-release/tree/master/examples](https://github.com/DIDSR/msynth-release/tree/master/examples)

## Uses

<!-- Address questions around how the dataset is intended to be used. -->

M-SYNTH is intended to facilitate testing of AI with pre-computed synthetic mammography data.

### Direct Use

<!-- This section describes suitable use cases for the dataset. -->

M-SYNTH can be used to evaluate the effect of mass size and density, breast density, and dose on AI performance in lesion detection.

M-SYNTH can be used to either train or test pre-trained AI models.

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the dataset will not work well for. -->

M-SYNTH cannot be used in lieu of real patient examples to make performance determinations.

## Dataset Structure

<!-- This section provides a description of the dataset fields, and additional information about the dataset structure such as criteria used to create the splits, relationships between data points, etc. -->

M-SYNTH is organized into a directory structure that indicates the parameters. The folder

```

device_data_VICTREPhantoms_spic_[LESION_DENSITY]/[DOSE]/[BREAST_DENSITY]/2/[LESION_SIZE]/SIM/P2_[LESION_SIZE]_[BREAST_DENSITY].8337609.[PHANTOM_FILE_ID]/[PHANTOM_FILEID]/

```

contains image files imaged with the specified parameters. Note that only examples with odd PHANTOM_FILEID contain lesions, others do not.

```

$ tree data/device_data_VICTREPhantoms_spic_1.0/1.02e10/hetero/2/5.0/SIM/P2_5.0_hetero.8337609.1/1/

data/device_data_VICTREPhantoms_spic_1.0/1.02e10/hetero/2/5.0/SIM/P2_5.0_hetero.8337609.1/1/

├── DICOM_dm

│ └── 000.dcm

├── projection_DM1.loc

├── projection_DM1.mhd

└── projection_DM1.raw

```

Each folder contains mammogram data that can be read from .raw format (.mhd contains supporting data), or DICOM (.dcm) format.

Coordinates of lesions can be found in .loc files. Segmentations are stored in .raw format and can be found in data/segmentation_masks/* .

See [Github](https://github.com/DIDSR/msynth-release/tree/main/code) for examples of how to access the files, and [examples](https://github.com/DIDSR/msynth-release/tree/main/examples) for code to load each type of file.

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

Simulation-based testing is constrained to the parameter variability represented in the object model and the acquisition system.

There is a risk of misjudging model performance if the simulated examples do not capture the variability in real patients. Please

see the paper for a full discussion of biases, risks, and limitations.

## How to use it

The msynth dataset is a very large dataset so for most use cases it is recommended to make use of the streaming API of `datasets`.

The msynth dataset has three configurations: 1) device_data, 2) segmentation_mask, and 3) metadata

You can load and iterate through the dataset using the configurations with the following lines of code:

```python

from datasets import load_dataset

ds = load_dataset("didsr/msynth", 'device_data') # For device data for all breast density, mass redius, mass density, and relative dose, change configuration to 'segmentation_mask' and 'metadata' to load the segmentation masks and bound information

print(ds_data["device_data"])

# A sample data instance

{'Raw': '~\\.cache\\huggingface\\datasets\\downloads\\extracted\\59384cf05fc44e8c0cb23bb19e1fcd8f0c39720b282109d204a85561fe66bdb1\\SIM\\P2_5.0_fatty.8336179.1\\1\\projection_DM1.raw',

'mhd': '~/.cache/huggingface/datasets/downloads/extracted/59384cf05fc44e8c0cb23bb19e1fcd8f0c39720b282109d204a85561fe66bdb1/SIM/P2_5.0_fatty.8336179.1/1\\projection_DM1.mhd',

'loc': '~/.cache/huggingface/datasets/downloads/extracted/59384cf05fc44e8c0cb23bb19e1fcd8f0c39720b282109d204a85561fe66bdb1/SIM/P2_5.0_fatty.8336179.1/1\\projection_DM1.loc',

'dcm': '~/.cache/huggingface/datasets/downloads/extracted/59384cf05fc44e8c0cb23bb19e1fcd8f0c39720b282109d204a85561fe66bdb1/SIM/P2_5.0_fatty.8336179.1/1\\DICOM_dm\\000.dcm',

'density': 'fatty',

'mass_radius': 5.0}

```

Msynth dataset can also be loaded using custom breast density, mass redius, mass density, and relative dose information

```python

from datasets import load_dataset

# Dataset properties. change to 'all' to include all the values of breast density, mass redius, mass density, and relative dose information

config_kwargs = {

"lesion_density": ["1.0"],

"dose": ["20%"],

"density": ["fatty"],

"size": ["5.0"]

}

# Loading device data

ds_data = load_dataset("didsr/msynth", 'device_data', **config_kwargs)

# Loading segmentation-mask

ds_seg = load_dataset("didsr/msynth", 'segmentation_mask', **config_kwargs)

```

The meta data can also be loaded using the datasets API. An example of using metadata is given in **Demo:** [https://github.com/DIDSR/msynth-release/tree/master/examples](https://github.com/DIDSR/msynth-release/tree/master/examples)

```python

from datasets import load_dataset

# Loading metadata

ds_meta = load_dataset("didsr/msynth", 'metadata')

# A sample data instance

ds_meta['metadata'][0]

# Output

{'fatty': '~\\.cache\\huggingface\\datasets\\downloads\\extracted\\3ea85fc6b3fcc253ac8550b5d1b21db406ca9a59ea125ff8fc63d9b754c88348\\bounds\\bounds_fatty.npy',

'dense': '~\\.cache\\huggingface\\datasets\\downloads\\extracted\\3ea85fc6b3fcc253ac8550b5d1b21db406ca9a59ea125ff8fc63d9b754c88348\\bounds\\bounds_dense.npy',

'hetero': '~\\.cache\\huggingface\\datasets\\downloads\\extracted\\3ea85fc6b3fcc253ac8550b5d1b21db406ca9a59ea125ff8fc63d9b754c88348\\bounds\\bounds_hetero.npy',

'scattered': '~\\.cache\\huggingface\\datasets\\downloads\\extracted\\3ea85fc6b3fcc253ac8550b5d1b21db406ca9a59ea125ff8fc63d9b754c88348\\bounds\\bounds_scattered.npy'}

```

## Citation

```

@article{sizikova2023knowledge,

title={Knowledge-based in silico models and dataset for the comparative evaluation of mammography AI for a range of breast characteristics, lesion conspicuities and doses},

author={Sizikova, Elena and Saharkhiz, Niloufar and Sharma, Diksha and Lago, Miguel and Sahiner, Berkman and Delfino, Jana G. and Badano, Aldo},

journal={Advances in Neural Information Processing Systems},

volume={},

pages={},

year={2023}

}

```

## Related Links

1. [Virtual Imaging Clinical Trial for Regulatory Evaluation (VICTRE)](https://www.fda.gov/medical-devices/science-and-research-medical-devices/victre-silico-breast-imaging-pipeline).

2. [FDA Catalog of Regulatory Science Tools to Help Assess New Medical Device](https://www.fda.gov/medical-devices/science-and-research-medical-devices/catalog-regulatory-science-tools-help-assess-new-medical-devices).

3. A. Badano, C. G. Graff, A. Badal, D. Sharma, R. Zeng, F. W. Samuelson, S. Glick, K. J. Myers. [Evaluation of Digital Breast Tomosynthesis as Replacement of Full-Field Digital Mammography Using an In Silico Imaging Trial](http://dx.doi.org/10.1001/jamanetworkopen.2018.5474). JAMA Network Open 2018.

4. A. Badano, M. Lago, E. Sizikova, J. G. Delfino, S. Guan, M. A. Anastasio, B. Sahiner. [The stochastic digital human is now enrolling for in silico imaging trials—methods and tools for generating digital cohorts.](http://dx.doi.org/10.1088/2516-1091/ad04c0) Progress in Biomedical Engineering 2023.

5. E. Sizikova, N. Saharkhiz, D. Sharma, M. Lago, B. Sahiner, J. G. Delfino, A. Badano. [Knowledge-based in silico models and dataset for the comparative evaluation of mammography AI](https://github.com/DIDSR/msynth-release). NeurIPS 2023 Workshop on Synthetic Data Generation with Generative AI. | 9,519 | [

[

-0.0286102294921875,

-0.043853759765625,

0.041839599609375,

-0.00864410400390625,

-0.0311431884765625,

-0.0160369873046875,

0.020355224609375,

-0.01287078857421875,

0.042083740234375,

0.03387451171875,

-0.06317138671875,

-0.058685302734375,

-0.032257080078125,

... |

re2panda/click_bate_random_sample | 2023-10-27T08:30:50.000Z | [

"region:us"

] | re2panda | null | null | 0 | 19 | 2023-10-27T08:26:41 | Entry not found | 15 | [

[

-0.0214080810546875,

-0.01494598388671875,

0.057159423828125,

0.028839111328125,

-0.0350341796875,

0.04656982421875,

0.052490234375,

0.00504302978515625,

0.0513916015625,

0.016998291015625,

-0.0521240234375,

-0.0149993896484375,

-0.06036376953125,

0.03790283... |

automated-research-group/phi-winogrande | 2023-10-28T12:48:48.000Z | [

"region:us"

] | automated-research-group | null | null | 0 | 19 | 2023-10-28T12:48:47 | ---

dataset_info:

features:

- name: answer

dtype: string

- name: id

dtype: string

- name: question

dtype: string

- name: input_perplexity

dtype: float64

- name: input_likelihood

dtype: float64

- name: output_perplexity

dtype: float64

- name: output_likelihood

dtype: float64

splits:

- name: validation

num_bytes: 357232

num_examples: 1267

download_size: 162542

dataset_size: 357232

configs:

- config_name: default

data_files:

- split: validation

path: data/validation-*

---

# Dataset Card for "phi-winogrande"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 710 | [

[

-0.03314208984375,

-0.01021575927734375,

0.01055145263671875,

0.015960693359375,

-0.0218505859375,

-0.01538848876953125,

0.021820068359375,

-0.0186614990234375,

0.067138671875,

0.0234222412109375,

-0.051177978515625,

-0.0430908203125,

-0.046875,

-0.036132812... |

royzhong/ASVS5 | 2023-10-28T17:23:44.000Z | [

"region:us"

] | royzhong | null | null | 0 | 19 | 2023-10-28T17:21:28 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

22Plaruno/little_face64x64 | 2023-10-28T20:33:28.000Z | [

"region:us"

] | 22Plaruno | null | null | 0 | 19 | 2023-10-28T20:33:02 | ---

dataset_info:

features:

- name: image

dtype: image

splits:

- name: train

num_bytes: 151477080.0

num_examples: 70000

download_size: 161591941

dataset_size: 151477080.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "little_face64x64"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 455 | [

[

-0.0445556640625,

-0.00936126708984375,

0.01459503173828125,

0.023590087890625,

-0.01898193359375,

-0.01434326171875,

0.02325439453125,

-0.018890380859375,

0.06011962890625,

0.033050537109375,

-0.061309814453125,

-0.04296875,

-0.04412841796875,

-0.0241394042... |

theophilusijiebor1/chest-xray | 2023-10-29T03:08:57.000Z | [

"region:us"

] | theophilusijiebor1 | null | null | 0 | 19 | 2023-10-29T03:07:44 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': NORMAL

'1': PNEUMONIA

splits:

- name: train

num_bytes: 3186635036.504

num_examples: 5216

- name: validation

num_bytes: 3030633.0

num_examples: 16

- name: test

num_bytes: 79062317.0

num_examples: 624

download_size: 1230487171

dataset_size: 3268727986.504

---

# Dataset Card for "chest-xray"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 781 | [

[

-0.038177490234375,

0.0028247833251953125,

0.024383544921875,

-0.00246429443359375,

-0.0290069580078125,

0.00010371208190917969,

0.0408935546875,

-0.01306915283203125,

0.05718994140625,

0.039031982421875,

-0.05743408203125,

-0.05731201171875,

-0.049468994140625,... |

HoangHa/hello | 2023-10-29T09:17:20.000Z | [

"region:us"

] | HoangHa | null | null | 0 | 19 | 2023-10-29T08:24:01 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

Robathan/generalimageset | 2023-10-30T01:27:31.000Z | [

"task_categories:feature-extraction",

"size_categories:1K<n<10K",

"license:gpl-3.0",

"region:us"

] | Robathan | null | null | 0 | 19 | 2023-10-29T23:21:45 | ---

license: gpl-3.0

task_categories:

- feature-extraction

size_categories:

- 1K<n<10K

---

# Dataset Card for MNR's General Imageset

In-flux. use at your own discrestion/frustration.

## Dataset Details

- random images. about 1,200 in total.

- **Curated by:** Rob James

| 274 | [

[

-0.03662109375,

-0.00414276123046875,

0.01422882080078125,

0.000003814697265625,

-0.034423828125,

-0.006988525390625,

0.02490234375,

0.002658843994140625,

0.05010986328125,

0.07147216796875,

-0.070068359375,

-0.03948974609375,

-0.0285797119140625,

0.00427246... |

Eitanli/meal_type | 2023-11-02T13:51:48.000Z | [

"region:us"

] | Eitanli | null | null | 0 | 19 | 2023-10-30T08:16:00 | ---

dataset_info:

features:

- name: id

dtype: int64

- name: recipe

dtype: string

- name: meal_type_title

dtype: string

splits:

- name: train

num_bytes: 107900952

num_examples: 74465

download_size: 54288491

dataset_size: 107900952

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "meal_type"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 519 | [

[

-0.025177001953125,

-0.023681640625,

0.00817108154296875,

0.0135040283203125,

0.002071380615234375,

-0.003910064697265625,

0.0212860107421875,

-0.006458282470703125,

0.076416015625,

0.0384521484375,

-0.040008544921875,

-0.045440673828125,

-0.040985107421875,

... |

phanvancongthanh/enamine_leadlike_600M | 2023-10-31T13:58:25.000Z | [

"region:us"

] | phanvancongthanh | null | null | 0 | 19 | 2023-10-31T11:34:47 | Entry not found | 15 | [

[

-0.02142333984375,

-0.014984130859375,

0.057220458984375,

0.0288238525390625,

-0.03509521484375,

0.04656982421875,

0.052520751953125,

0.00506591796875,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060455322265625,

0.03793334... |

ncduy/mt-en-vi | 2022-10-22T15:08:45.000Z | [

"annotations_creators:found",

"language_creators:found",

"multilinguality:translation",

"size_categories:1M<n<10M",

"source_datasets:own",

"source_datasets:open_subtitles",

"source_datasets:tatoeba",

"source_datasets:opus_tedtalks",

"source_datasets:qed_amara",

"source_datasets:opus_wikipedia",

... | ncduy | null | null | 4 | 18 | 2022-03-02T23:29:22 | ---

annotations_creators:

- found

language_creators:

- found

language:

- en

- vi

license:

- mit

multilinguality:

- translation

pretty_name: "Machine Translation Paired English-Vietnamese Sentences"

size_categories:

- 1M<n<10M

source_datasets:

- own

- open_subtitles

- tatoeba

- opus_tedtalks

- qed_amara

- opus_wikipedia

task_categories:

- conditional-text-generation

task_ids:

- machine-translation

---

# Dataset Card for Machine Translation Paired English-Vietnamese Sentences

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

[More Information Needed]

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

The language of the dataset text sentence is English ('en') and Vietnamese (`vi`).

## Dataset Structure

### Data Instances

An instance example:

```

{

'en': 'And what I think the world needs now is more connections.',

'vi': 'Và tôi nghĩ điều thế giới đang cần bây giờ là nhiều sự kết nối hơn.',

'source': 'TED2020 v1'

}

```

### Data Fields

- `en` (str): English sentence

- `vi` (str): Vietnamese sentence

- `source` (str): Source.

### Data Splits

The dataset is split in train, validation and test.

| | Tain | Validation | Test |

|--------------------|------:|-----------:|-----:|

| Number of examples |2884451| 11316| 11225|

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

Thanks to [@ncduy0303](https://github.com/ncduy0303) for adding this dataset. | 3,401 | [

[

-0.0206298828125,

-0.055084228515625,

0.0172271728515625,

0.0198516845703125,

-0.029815673828125,

-0.0032367706298828125,

-0.0247344970703125,

-0.01244354248046875,

0.029144287109375,

0.05865478515625,

-0.044921875,

-0.06805419921875,

-0.04425048828125,

0.03... |

s3h/arabic-grammar-corrections | 2021-11-30T12:37:00.000Z | [

"region:us"

] | s3h | null | null | 3 | 18 | 2022-03-02T23:29:22 | Entry not found | 15 | [

[

-0.02142333984375,

-0.014984130859375,

0.057220458984375,

0.0288238525390625,

-0.03509521484375,

0.04656982421875,

0.052520751953125,

0.00506591796875,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060455322265625,

0.03793334... |

sentence-transformers/msmarco-hard-negatives | 2022-08-18T16:04:34.000Z | [

"region:us"

] | sentence-transformers | null | null | 4 | 18 | 2022-03-02T23:29:22 | # MS MARCO Passages Hard Negatives

[MS MARCO](https://microsoft.github.io/msmarco/) is a large scale information retrieval corpus that was created based on real user search queries using Bing search engine.

This dataset repository contains files that are helpful to train bi-encoder models e.g. using [sentence-transformers](https://www.sbert.net).

## Training Code

You can find here an example how these files can be used to train bi-encoders: [SBERT.net - MS MARCO - MarginMSE](https://www.sbert.net/examples/training/ms_marco/README.html#marginmse)

## cross-encoder-ms-marco-MiniLM-L-6-v2-scores.pkl.gz

This is a pickled dictionary in the format: `scores[qid][pid] -> cross_encoder_score`

It contains 160 million cross-encoder scores for (query, paragraph) pairs using the [cross-encoder/ms-marco-MiniLM-L-6-v2](https://huggingface.co/cross-encoder/ms-marco-MiniLM-L-6-v2) model.

## msmarco-hard-negatives.jsonl.gz

This is a jsonl file: Each line is a JSON object. It has the following format:

```

{"qid": 867436, "pos": [5238393], "neg": {"bm25": [...], ...}}

```

`qid` is the query-ID from MS MARCO, `pos` is a list with paragraph IDs for positive passages. `neg` is a dictionary where we mined hard negatives using different (mainly dense retrieval) systems.

It contains hard negatives mined from BM25 (using ElasticSearch) and the following dense models:

```

msmarco-distilbert-base-tas-b

msmarco-distilbert-base-v3

msmarco-MiniLM-L-6-v3

distilbert-margin_mse-cls-dot-v2

distilbert-margin_mse-cls-dot-v1

distilbert-margin_mse-mean-dot-v1

mpnet-margin_mse-mean-v1

co-condenser-margin_mse-cls-v1

distilbert-margin_mse-mnrl-mean-v1

distilbert-margin_mse-sym_mnrl-mean-v1

distilbert-margin_mse-sym_mnrl-mean-v2

co-condenser-margin_mse-sym_mnrl-mean-v1

```

From each system, 50 most similar paragraphs were mined for a given query.

| 1,884 | [

[

-0.039825439453125,

-0.047454833984375,

0.03094482421875,

0.00983428955078125,

-0.0165252685546875,

-0.0106353759765625,

-0.029754638671875,

-0.0164031982421875,

0.02197265625,

0.040069580078125,

-0.037261962890625,

-0.0596923828125,

-0.042694091796875,

0.02... |

vesteinn/icelandic-qa-NQiI | 2022-07-04T16:32:26.000Z | [

"task_categories:question-answering",

"task_ids:open-domain-qa",

"task_ids:extractive-qa",

"annotations_creators:curated",

"language_creators:curated",

"multilinguality:monolingual",

"source_datasets:original",

"language:is",

"license:cc-by-sa-4.0",

"region:us"

] | vesteinn | \ | \ | 2 | 18 | 2022-03-02T23:29:22 | ---

pretty_name: NQiI

annotations_creators:

- curated

language_creators:

- curated

language:

- is

license:

- cc-by-sa-4.0

multilinguality:

- monolingual

source_datasets:

- original

task_categories:

- question-answering

task_ids:

- open-domain-qa

- extractive-qa

paperswithcode_id: nqii

---

# Natural Questions in Icelandic

| 325 | [

[

-0.032684326171875,

-0.07763671875,

0.004241943359375,

0.025482177734375,

-0.059967041015625,

0.006862640380859375,

0.0021839141845703125,

-0.037841796875,

0.0206146240234375,

0.055419921875,

-0.035003662109375,

-0.01035308837890625,

-0.0347900390625,

0.0469... |

zloelias/kinopoisk-reviews | 2021-12-06T18:02:51.000Z | [

"region:us"

] | zloelias | null | null | 0 | 18 | 2022-03-02T23:29:22 | Entry not found | 15 | [

[

-0.02142333984375,

-0.014984130859375,

0.057220458984375,

0.0288238525390625,

-0.03509521484375,

0.04656982421875,

0.052520751953125,

0.00506591796875,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060455322265625,

0.03793334... |

Azu/Handwritten-Mathematical-Expression-Convert-LaTeX | 2022-03-10T18:25:17.000Z | [

"region:us"

] | Azu | null | null | 6 | 18 | 2022-03-10T18:23:05 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

nsusemiehl/SciERC | 2022-04-10T16:56:55.000Z | [

"region:us"

] | nsusemiehl | null | null | 0 | 18 | 2022-04-10T16:51:23 | SCIERC (Luan et al., 2018) via "Don’t Stop Pretraining: Adapt Language Models to Domains and Tasks" (Gururangan et al., 2020) reuploaded because of error encountered when trying to load zj88zj/SCIERC with the huggingfaces/datasets library. | 240 | [

[

-0.01806640625,

-0.00982666015625,

0.01702880859375,

0.034942626953125,

0.0016698837280273438,

0.010894775390625,

-0.01406097412109375,

-0.033416748046875,

0.01284027099609375,

0.037322998046875,

-0.06689453125,

-0.0251617431640625,

-0.056243896484375,

0.012... |

IsaacRodgz/DravidianCodeMix-Dataset | 2022-05-04T19:03:35.000Z | [

"region:us"

] | IsaacRodgz | null | null | 0 | 18 | 2022-05-04T19:03:24 | Entry not found | 15 | [

[

-0.021392822265625,

-0.01494598388671875,

0.05718994140625,

0.028839111328125,

-0.0350341796875,

0.046539306640625,

0.052490234375,

0.00507354736328125,

0.051361083984375,

0.01702880859375,

-0.052093505859375,

-0.01494598388671875,

-0.06036376953125,

0.03790... |

HuggingFaceM4/charades | 2022-10-20T21:35:42.000Z | [

"task_categories:other",

"annotations_creators:crowdsourced",

"language_creators:crowdsourced",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"language:en",

"license:other",

"arxiv:1604.01753",

"region:us"

] | HuggingFaceM4 | Charades is dataset composed of 9848 videos of daily indoors activities collected through Amazon Mechanical Turk. 267 different users were presented with a sentence, that includes objects and actions from a fixed vocabulary, and they recorded a video acting out the sentence (like in a game of Charades). The dataset contains 66,500 temporal annotations for 157 action classes, 41,104 labels for 46 object classes, and 27,847 textual descriptions of the videos. | @article{sigurdsson2016hollywood,

author = {Gunnar A. Sigurdsson and G{\"u}l Varol and Xiaolong Wang and Ivan Laptev and Ali Farhadi and Abhinav Gupta},

title = {Hollywood in Homes: Crowdsourcing Data Collection for Activity Understanding},

journal = {ArXiv e-prints},

eprint = {1604.01753},

year = {2016},

url = {http://arxiv.org/abs/1604.01753},

} | 2 | 18 | 2022-05-11T07:07:47 | ---

annotations_creators:

- crowdsourced

language_creators:

- crowdsourced

language:

- en

license:

- other

multilinguality:

- monolingual

size_categories:

- 1K<n<10K

source_datasets:

- original

task_categories:

- other

task_ids: []

paperswithcode_id: charades

pretty_name: Charades

tags: []

---

# Dataset Card for Charades

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://prior.allenai.org/projects/charades

- **Repository:** https://github.com/gsig/charades-algorithms

- **Paper:** https://arxiv.org/abs/1604.01753

- **Leaderboard:** https://paperswithcode.com/sota/action-classification-on-charades

- **Point of Contact:** mailto: vision.amt@allenai.org

### Dataset Summary

Charades is dataset composed of 9848 videos of daily indoors activities collected through Amazon Mechanical Turk. 267 different users were presented with a sentence, that includes objects and actions from a fixed vocabulary, and they recorded a video acting out the sentence (like in a game of Charades). The dataset contains 66,500 temporal annotations for 157 action classes, 41,104 labels for 46 object classes, and 27,847 textual descriptions of the videos

### Supported Tasks and Leaderboards

- `multilabel-action-classification`: The goal of this task is to classify actions happening in a video. This is a multilabel classification. The leaderboard is available [here](https://paperswithcode.com/sota/action-classification-on-charades)

### Languages

The annotations in the dataset are in English.

## Dataset Structure

### Data Instances

```

{

"video_id": "46GP8",

"video": "/home/amanpreet_huggingface_co/.cache/huggingface/datasets/downloads/extracted/3f022da5305aaa189f09476dbf7d5e02f6fe12766b927c076707360d00deb44d/46GP8.mp4",

"subject": "HR43",

"scene": "Kitchen",

"quality": 6,

"relevance": 7,

"verified": "Yes",

"script": "A person cooking on a stove while watching something out a window.",

"objects": ["food", "stove", "window"],

"descriptions": [

"A person cooks food on a stove before looking out of a window."

],

"labels": [92, 147],

"action_timings": [

[11.899999618530273, 21.200000762939453],

[0.0, 12.600000381469727]

],

"length": 24.829999923706055

}

```

### Data Fields

- `video_id`: `str` Unique identifier for each video.

- `video`: `str` Path to the video file

- `subject`: `str` Unique identifier for each subject in the dataset

- `scene`: `str` One of 15 indoor scenes in the dataset, such as Kitchen

- `quality`: `int` The quality of the video judged by an annotator (7-point scale, 7=high quality), -100 if missing

- `relevance`: `int` The relevance of the video to the script judged by an annotated (7-point scale, 7=very relevant), -100 if missing

- `verified`: `str` 'Yes' if an annotator successfully verified that the video matches the script, else 'No'

- `script`: `str` The human-generated script used to generate the video

- `descriptions`: `List[str]` List of descriptions by annotators watching the video

- `labels`: `List[int]` Multi-label actions found in the video. Indices from 0 to 156.

- `action_timings`: `List[Tuple[int, int]]` Timing where each of the above actions happened.

- `length`: `float` The length of the video in seconds

<details>

<summary>

Click here to see the full list of Charades class labels mapping:

</summary>

|id|Class|

|--|-----|

|c000 | Holding some clothes |

|c001 | Putting clothes somewhere |

|c002 | Taking some clothes from somewhere |

|c003 | Throwing clothes somewhere |

|c004 | Tidying some clothes |

|c005 | Washing some clothes |

|c006 | Closing a door |

|c007 | Fixing a door |

|c008 | Opening a door |

|c009 | Putting something on a table |

|c010 | Sitting on a table |

|c011 | Sitting at a table |

|c012 | Tidying up a table |

|c013 | Washing a table |

|c014 | Working at a table |

|c015 | Holding a phone/camera |

|c016 | Playing with a phone/camera |

|c017 | Putting a phone/camera somewhere |

|c018 | Taking a phone/camera from somewhere |

|c019 | Talking on a phone/camera |

|c020 | Holding a bag |

|c021 | Opening a bag |

|c022 | Putting a bag somewhere |

|c023 | Taking a bag from somewhere |

|c024 | Throwing a bag somewhere |

|c025 | Closing a book |

|c026 | Holding a book |

|c027 | Opening a book |

|c028 | Putting a book somewhere |

|c029 | Smiling at a book |

|c030 | Taking a book from somewhere |

|c031 | Throwing a book somewhere |

|c032 | Watching/Reading/Looking at a book |

|c033 | Holding a towel/s |

|c034 | Putting a towel/s somewhere |

|c035 | Taking a towel/s from somewhere |

|c036 | Throwing a towel/s somewhere |

|c037 | Tidying up a towel/s |

|c038 | Washing something with a towel |

|c039 | Closing a box |

|c040 | Holding a box |

|c041 | Opening a box |

|c042 | Putting a box somewhere |

|c043 | Taking a box from somewhere |

|c044 | Taking something from a box |

|c045 | Throwing a box somewhere |

|c046 | Closing a laptop |

|c047 | Holding a laptop |

|c048 | Opening a laptop |

|c049 | Putting a laptop somewhere |

|c050 | Taking a laptop from somewhere |

|c051 | Watching a laptop or something on a laptop |

|c052 | Working/Playing on a laptop |

|c053 | Holding a shoe/shoes |

|c054 | Putting shoes somewhere |

|c055 | Putting on shoe/shoes |

|c056 | Taking shoes from somewhere |

|c057 | Taking off some shoes |

|c058 | Throwing shoes somewhere |

|c059 | Sitting in a chair |

|c060 | Standing on a chair |

|c061 | Holding some food |

|c062 | Putting some food somewhere |

|c063 | Taking food from somewhere |

|c064 | Throwing food somewhere |

|c065 | Eating a sandwich |

|c066 | Making a sandwich |

|c067 | Holding a sandwich |

|c068 | Putting a sandwich somewhere |

|c069 | Taking a sandwich from somewhere |

|c070 | Holding a blanket |

|c071 | Putting a blanket somewhere |

|c072 | Snuggling with a blanket |

|c073 | Taking a blanket from somewhere |

|c074 | Throwing a blanket somewhere |

|c075 | Tidying up a blanket/s |

|c076 | Holding a pillow |

|c077 | Putting a pillow somewhere |

|c078 | Snuggling with a pillow |

|c079 | Taking a pillow from somewhere |

|c080 | Throwing a pillow somewhere |

|c081 | Putting something on a shelf |

|c082 | Tidying a shelf or something on a shelf |

|c083 | Reaching for and grabbing a picture |

|c084 | Holding a picture |

|c085 | Laughing at a picture |

|c086 | Putting a picture somewhere |

|c087 | Taking a picture of something |

|c088 | Watching/looking at a picture |

|c089 | Closing a window |

|c090 | Opening a window |

|c091 | Washing a window |

|c092 | Watching/Looking outside of a window |

|c093 | Holding a mirror |

|c094 | Smiling in a mirror |

|c095 | Washing a mirror |

|c096 | Watching something/someone/themselves in a mirror |

|c097 | Walking through a doorway |

|c098 | Holding a broom |

|c099 | Putting a broom somewhere |

|c100 | Taking a broom from somewhere |

|c101 | Throwing a broom somewhere |

|c102 | Tidying up with a broom |

|c103 | Fixing a light |

|c104 | Turning on a light |

|c105 | Turning off a light |

|c106 | Drinking from a cup/glass/bottle |

|c107 | Holding a cup/glass/bottle of something |

|c108 | Pouring something into a cup/glass/bottle |

|c109 | Putting a cup/glass/bottle somewhere |

|c110 | Taking a cup/glass/bottle from somewhere |

|c111 | Washing a cup/glass/bottle |

|c112 | Closing a closet/cabinet |

|c113 | Opening a closet/cabinet |

|c114 | Tidying up a closet/cabinet |

|c115 | Someone is holding a paper/notebook |

|c116 | Putting their paper/notebook somewhere |

|c117 | Taking paper/notebook from somewhere |

|c118 | Holding a dish |

|c119 | Putting a dish/es somewhere |

|c120 | Taking a dish/es from somewhere |

|c121 | Wash a dish/dishes |

|c122 | Lying on a sofa/couch |

|c123 | Sitting on sofa/couch |

|c124 | Lying on the floor |

|c125 | Sitting on the floor |

|c126 | Throwing something on the floor |

|c127 | Tidying something on the floor |

|c128 | Holding some medicine |

|c129 | Taking/consuming some medicine |

|c130 | Putting groceries somewhere |

|c131 | Laughing at television |

|c132 | Watching television |

|c133 | Someone is awakening in bed |

|c134 | Lying on a bed |

|c135 | Sitting in a bed |

|c136 | Fixing a vacuum |

|c137 | Holding a vacuum |

|c138 | Taking a vacuum from somewhere |

|c139 | Washing their hands |

|c140 | Fixing a doorknob |

|c141 | Grasping onto a doorknob |

|c142 | Closing a refrigerator |

|c143 | Opening a refrigerator |

|c144 | Fixing their hair |

|c145 | Working on paper/notebook |

|c146 | Someone is awakening somewhere |

|c147 | Someone is cooking something |

|c148 | Someone is dressing |

|c149 | Someone is laughing |

|c150 | Someone is running somewhere |

|c151 | Someone is going from standing to sitting |

|c152 | Someone is smiling |

|c153 | Someone is sneezing |

|c154 | Someone is standing up from somewhere |

|c155 | Someone is undressing |

|c156 | Someone is eating something |

</details>

### Data Splits

| |train |validation| test |

|-------------|------:|---------:|------:|

|# of examples|1281167|50000 |100000 |

## Dataset Creation

### Curation Rationale

> Computer vision has a great potential to help our daily lives by searching for lost keys, watering flowers or reminding us to take a pill. To succeed with such tasks, computer vision methods need to be trained from real and diverse examples of our daily dynamic scenes. While most of such scenes are not particularly exciting, they typically do not appear on YouTube, in movies or TV broadcasts. So how do we collect sufficiently many diverse but boring samples representing our lives? We propose a novel Hollywood in Homes approach to collect such data. Instead of shooting videos in the lab, we ensure diversity by distributing and crowdsourcing the whole process of video creation from script writing to video recording and annotation.

### Source Data

#### Initial Data Collection and Normalization

> Similar to filming, we have a three-step process for generating a video. The first step is generating the script of the indoor video. The key here is to allow workers to generate diverse scripts yet ensure that we have enough data for each category. The second step in the process is to use the script and ask workers to record a video of that sentence being acted out. In the final step, we ask the workers to verify if the recorded video corresponds to script, followed by an annotation procedure.

#### Who are the source language producers?

Amazon Mechnical Turk annotators

### Annotations

#### Annotation process

> Similar to filming, we have a three-step process for generating a video. The first step is generating the script of the indoor video. The key here is to allow workers to generate diverse scripts yet ensure that we have enough data for each category. The second step in the process is to use the script and ask workers to record a video of that sentence being acted out. In the final step, we ask the workers to verify if the recorded video corresponds to script, followed by an annotation procedure.

#### Who are the annotators?

Amazon Mechnical Turk annotators

### Personal and Sensitive Information

Nothing specifically mentioned in the paper.

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

AMT annotators

### Licensing Information

License for Non-Commercial Use

If this software is redistributed, this license must be included. The term software includes any source files, documentation, executables, models, and data.

This software and data is available for general use by academic or non-profit, or government-sponsored researchers. It may also be used for evaluation purposes elsewhere. This license does not grant the right to use this software or any derivation of it in a for-profit enterprise. For commercial use, please contact The Allen Institute for Artificial Intelligence.

This license does not grant the right to modify and publicly release the data in any form.

This license does not grant the right to distribute the data to a third party in any form.

The subjects in this data should be treated with respect and dignity. This license only grants the right to publish short segments or still images in an academic publication where necessary to present examples, experimental results, or observations.

This software comes with no warranty or guarantee of any kind. By using this software, the user accepts full liability.

The Allen Institute for Artificial Intelligence (C) 2016.

### Citation Information

```bibtex

@article{sigurdsson2016hollywood,

author = {Gunnar A. Sigurdsson and G{\"u}l Varol and Xiaolong Wang and Ivan Laptev and Ali Farhadi and Abhinav Gupta},

title = {Hollywood in Homes: Crowdsourcing Data Collection for Activity Understanding},

journal = {ArXiv e-prints},

eprint = {1604.01753},

year = {2016},

url = {http://arxiv.org/abs/1604.01753},

}

```

### Contributions

Thanks to [@apsdehal](https://github.com/apsdehal) for adding this dataset.

| 14,484 | [

[

-0.039337158203125,

-0.0343017578125,

0.01003265380859375,

0.0296783447265625,

-0.0047454833984375,

0.0252685546875,

0.0005626678466796875,

-0.01052093505859375,

0.041290283203125,

0.01708984375,

-0.06878662109375,

-0.043731689453125,

-0.046966552734375,

0.0... |

linxinyuan/mind | 2022-06-07T23:12:22.000Z | [

"region:us"

] | linxinyuan | null | null | 1 | 18 | 2022-06-07T08:26:00 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

biglam/illustrated_ads | 2023-01-18T20:38:15.000Z | [

"task_categories:image-classification",

"task_ids:multi-class-image-classification",

"annotations_creators:expert-generated",

"size_categories:n<1K",

"license:cc0-1.0",

"lam",

"historic newspapers",

"region:us"

] | biglam | The Dataset contains images derived from the Newspaper Navigator (news-navigator.labs.loc.gov/), a dataset of images drawn from the Library of Congress Chronicling America collection. | @dataset{van_strien_daniel_2021_5838410,

author = {van Strien, Daniel},

title = {{19th Century United States Newspaper Advert images

with 'illustrated' or 'non illustrated' labels}},

month = oct,

year = 2021,

publisher = {Zenodo},

version = {0.0.1},

doi = {10.5281/zenodo.5838410},

url = {https://doi.org/10.5281/zenodo.5838410}} | 3 | 18 | 2022-06-27T14:14:29 | ---

annotations_creators:

- expert-generated

language: []

language_creators: []

license:

- cc0-1.0

multilinguality: []

pretty_name: 19th Century United States Newspaper Advert images with 'illustrated'

or 'non illustrated' labels

size_categories:

- n<1K

source_datasets: []

tags:

- lam

- historic newspapers

task_categories:

- image-classification

task_ids:

- multi-class-image-classification

---

The Dataset contains images derived from the [Newspaper Navigator](https://news-navigator.labs.loc.gov/), a dataset of images drawn from the Library of Congress Chronicling America collection (chroniclingamerica.loc.gov/).

> [The Newspaper Navigator dataset](https://news-navigator.labs.loc.gov/) consists of extracted visual content for 16,358,041 historic newspaper pages in Chronicling America. The visual content was identified using an object detection model trained on annotations of World War 1-era Chronicling America pages, including annotations made by volunteers as part of the Beyond Words crowdsourcing project. source: https://news-navigator.labs.loc.gov/

One of these categories is 'advertisements'. This dataset contains a sample of these images with additional labels indicating if the advert is 'illustrated' or 'not illustrated'.

This dataset was created for use in a [Programming Historian tutorial](http://programminghistorian.github.io/ph-submissions/lessons/computer-vision-deep-learning-pt1). The primary aim of the data was to provide a realistic example dataset for teaching computer vision for working with digitised heritage material.

# Dataset Card for 19th Century United States Newspaper Advert images with 'illustrated' or 'non illustrated' labels

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:**

- **Repository:**[https://doi.org/10.5281/zenodo.5838410](https://doi.org/10.5281/zenodo.5838410)

- **Paper:**[https://doi.org/10.46430/phen0101](https://doi.org/10.46430/phen0101)

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

The Dataset contains images derived from the [Newspaper Navigator](news-navigator.labs.loc.gov/), a dataset of images drawn from the Library of Congress Chronicling America collection (chroniclingamerica.loc.gov/).

> [The Newspaper Navigator dataset](https://news-navigator.labs.loc.gov/) consists of extracted visual content for 16,358,041 historic newspaper pages in Chronicling America. The visual content was identified using an object detection model trained on annotations of World War 1-era Chronicling America pages, including annotations made by volunteers as part of the Beyond Words crowdsourcing project. source: https://news-navigator.labs.loc.gov/

One of these categories is 'advertisements. This dataset contains a sample of these images with additional labels indicating if the advert is 'illustrated' or 'not illustrated'.

This dataset was created for use in a [Programming Historian tutorial](http://programminghistorian.github.io/ph-submissions/lessons/computer-vision-deep-learning-pt1). The primary aim of the data was to provide a realistic example dataset for teaching computer vision for working with digitised heritage material.

### Supported Tasks and Leaderboards

- `image-classification`: the primary purpose of this dataset is for classifying historic newspaper images identified as being 'advertisements' into 'illustrated' and 'not-illustrated' categories.

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

An example instance from this dataset

``` python

{'file': 'pst_fenske_ver02_data_sn84026497_00280776129_1880042101_0834_002_6_96.jpg',

'image': <PIL.JpegImagePlugin.JpegImageFile image mode=L size=388x395 at 0x7F9A72038950>,

'label': 0,

'pub_date': Timestamp('1880-04-21 00:00:00'),

'page_seq_num': 834,

'edition_seq_num': 1,

'batch': 'pst_fenske_ver02',

'lccn': 'sn84026497',

'box': [0.649412214756012,

0.6045778393745422,

0.8002520799636841,

0.7152365446090698],

'score': 0.9609346985816956,

'ocr': "H. II. IIASLKT & SOXN, Dealers in General Merchandise In New Store Room nt HASLET'S COS ITERS, 'JTionoMtii, ln. .Tau'y 1st, 1?0.",

'place_of_publication': 'Tionesta, Pa.',

'geographic_coverage': "['Pennsylvania--Forest--Tionesta']",

'name': 'The Forest Republican. [volume]',

'publisher': 'Ed. W. Smiley',