id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 6.67k ⌀ | citation stringlengths 0 10.7k ⌀ | likes int64 0 3.66k | downloads int64 0 8.89M | created timestamp[us] | card stringlengths 11 977k | card_len int64 11 977k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|

archanatikayatray/aeroBERT-classification | 2023-05-20T22:40:37.000Z | [

"task_categories:text-classification",

"size_categories:n<1K",

"language:en",

"license:apache-2.0",

"sentence classification",

"aerospace requirements",

"design",

"functional",

"performance",

"requirements",

"NLP4RE",

"doi:10.57967/hf/0433",

"region:us"

] | archanatikayatray | null | null | 2 | 5 | 2023-01-12T05:00:31 | ---

license: apache-2.0

task_categories:

- text-classification

tags:

- sentence classification

- aerospace requirements

- design

- functional

- performance

- requirements

- NLP4RE

pretty_name: requirements_classification_dataset.txt

size_categories:

- n<1K

language:

- en

---

# Dataset Card for aeroBERT-classification

## Dataset Description

- **Paper:** aeroBERT-Classifier: Classification of Aerospace Requirements using BERT

- **Point of Contact:** archanatikayatray@gmail.com

### Dataset Summary

This dataset contains requirements from the aerospace domain. The requirements are tagged based on the "type"/category of requirement they belong to.

The creation of this dataset is aimed at - <br>

(1) Making available an **open-source** dataset for aerospace requirements which are often proprietary <br>

(2) Fine-tuning language models for **requirements classification** specific to the aerospace domain <br>

This dataset can be used for training or fine-tuning language models for the identification of the following types of requirements - <br>

<br>

**Design Requirement** - Dictates "how" a system should be designed given certain technical standards and specifications;

**Example:** Trim control systems must be designed to prevent creeping in flight.<br>

<br>

**Functional Requirement** - Defines the functions that need to be performed by a system in order to accomplish the desired system functionality;

**Example:** Each cockpit voice recorder shall record the voice communications of flight crew members on the flight deck.<br>

<br>

**Performance Requirement** - Defines "how well" a system needs to perform a certain function;

**Example:** The airplane must be free from flutter, control reversal, and divergence for any configuration and condition of operation.<br>

## Dataset Structure

The tagging scheme followed: <br>

(1) Design requirements: 0 (Count = 149) <br>

(2) Functional requirements: 1 (Count = 99) <br>

(3) Performance requirements: 2 (Count = 62) <br>

<br>

The dataset is of the format: ``requirements | label`` <br>

| requirements | label |

| :----: | :----: |

| Each cockpit voice recorder shall record voice communications transmitted from or received in the airplane by radio.| 1 |

| Each recorder container must be either bright orange or bright yellow.| 0 |

| Single-engine airplanes, not certified for aerobatics, must not have a tendency to inadvertently depart controlled flight. | 2|

| Each part of the airplane must have adequate provisions for ventilation and drainage. | 0 |

| Each baggage and cargo compartment must have a means to prevent the contents of the compartment from becoming a hazard by impacting occupants or shifting. | 1 |

## Dataset Creation

### Source Data

A total of 325 aerospace requirements were collected from Parts 23 and 25 of Title 14 of the Code of Federal Regulations (CFRs) and annotated (refer to the paper for more details). <br>

### Importing dataset into Python environment

Use the following code chunk to import the dataset into Python environment as a DataFrame.

```

from datasets import load_dataset

import pandas as pd

dataset = load_dataset("archanatikayatray/aeroBERT-classification")

#Converting the dataset into a pandas DataFrame

dataset = pd.DataFrame(dataset["train"]["text"])

dataset = dataset[0].str.split('*', expand = True)

#Getting the headers from the first row

header = dataset.iloc[0]

#Excluding the first row since it contains the headers

dataset = dataset[1:]

#Assigning the header to the DataFrame

dataset.columns = header

#Viewing the last 10 rows of the annotated dataset

dataset.tail(10)

```

### Annotations

#### Annotation process

A Subject Matter Expert (SME) was consulted for deciding on the annotation categories for the requirements.

The final classification dataset had 149 Design requirements, 99 Functional requirements, and 62 Performance requirements.

Lastly, the 'labels' attached to the requirements (design requirement, functional requirement, and performance requirement) were converted into numeric values: 0, 1, and 2 respectively.

### Limitations

(1)The dataset is an imbalanced dataset (more Design requirements as compared to the other types). Hence, using ``Accuracy`` as a metric for the model performance is

NOT a good idea. The use of Precision, Recall, and F1 scores are suggested for model performance evaluation.

(2)This dataset does not contain a test set. Hence, it is suggested that the user split the dataset into training/validation/testing after importing the data into a Python environment.

Please refer to the Appendix of the paper for information on the test set.

### Citation Information

```

@Article{aeroBERT-Classifier,

AUTHOR = {Tikayat Ray, Archana and Cole, Bjorn F. and Pinon Fischer, Olivia J. and White, Ryan T. and Mavris, Dimitri N.},

TITLE = {aeroBERT-Classifier: Classification of Aerospace Requirements Using BERT},

JOURNAL = {Aerospace},

VOLUME = {10},

YEAR = {2023},

NUMBER = {3},

ARTICLE-NUMBER = {279},

URL = {https://www.mdpi.com/2226-4310/10/3/279},

ISSN = {2226-4310},

DOI = {10.3390/aerospace10030279}

}

@phdthesis{tikayatray_thesis,

author = {Tikayat Ray, Archana},

title = {Standardization of Engineering Requirements Using Large Language Models},

school = {Georgia Institute of Technology},

year = {2023},

doi = {10.13140/RG.2.2.17792.40961},

URL = {https://repository.gatech.edu/items/964c73e3-f0a8-487d-a3fa-a0988c840d04}

}

``` | 5,567 | [

[

-0.04571533203125,

-0.02423095703125,

-0.0009684562683105469,

0.0215301513671875,

0.0033931732177734375,

-0.0206298828125,

-0.00606536865234375,

-0.0308837890625,

0.0025157928466796875,

0.039215087890625,

-0.03338623046875,

-0.046630859375,

-0.02447509765625,

... |

Zombely/wikisource-small | 2023-01-15T18:48:01.000Z | [

"region:us"

] | Zombely | null | null | 0 | 5 | 2023-01-15T09:28:13 | ---

dataset_info:

features:

- name: image

dtype: image

- name: ground_truth

dtype: string

splits:

- name: train

num_bytes: 24302805827.009

num_examples: 15549

download_size: 19231095073

dataset_size: 24302805827.009

---

# Dataset Card for "wikisource-small"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 420 | [

[

-0.050689697265625,

-0.006511688232421875,

0.0189208984375,

-0.0071258544921875,

-0.0184478759765625,

-0.023101806640625,

-0.00675201416015625,

-0.0101776123046875,

0.06689453125,

0.02020263671875,

-0.06842041015625,

-0.036773681640625,

-0.0303955078125,

0.0... |

metaeval/cycic_classification | 2023-05-31T08:47:48.000Z | [

"task_categories:question-answering",

"task_categories:text-classification",

"language:en",

"license:apache-2.0",

"arxiv:2301.05948",

"region:us"

] | metaeval | null | null | 1 | 5 | 2023-01-18T11:03:35 | ---

license: apache-2.0

task_categories:

- question-answering

- text-classification

language:

- en

---

https://storage.googleapis.com/ai2-mosaic/public/cycic/CycIC-train-dev.zip

https://colab.research.google.com/drive/16nyxZPS7-ZDFwp7tn_q72Jxyv0dzK1MP?usp=sharing

```

@article{Kejriwal2020DoFC,

title={Do Fine-tuned Commonsense Language Models Really Generalize?},

author={Mayank Kejriwal and Ke Shen},

journal={ArXiv},

year={2020},

volume={abs/2011.09159}

}

```

added for

```

@article{sileo2023tasksource,

title={tasksource: Structured Dataset Preprocessing Annotations for Frictionless Extreme Multi-Task Learning and Evaluation},

author={Sileo, Damien},

url= {https://arxiv.org/abs/2301.05948},

journal={arXiv preprint arXiv:2301.05948},

year={2023}

}

``` | 779 | [

[

-0.024932861328125,

-0.035400390625,

0.02471923828125,

0.01214599609375,

-0.01317596435546875,

-0.0196380615234375,

-0.054168701171875,

-0.034576416015625,

-0.006137847900390625,

0.020538330078125,

-0.0577392578125,

-0.051483154296875,

-0.04296875,

0.0116729... |

lorenzoscottb/PLANE-ood | 2023-01-25T09:51:09.000Z | [

"task_categories:text-classification",

"size_categories:100K<n<1M",

"language:en",

"license:cc-by-2.0",

"region:us"

] | lorenzoscottb | null | null | 0 | 5 | 2023-01-22T21:22:03 | ---

license: cc-by-2.0

task_categories:

- text-classification

language:

- en

size_categories:

- 100K<n<1M

---

# PLANE Out-of-Distribution Sets

PLANE (phrase-level adjective-noun entailment) is a benchmark to test models on fine-grained compositional inference.

The current dataset contains five sampled splits, used in the supervised experiments of [Bertolini et al., 22](https://aclanthology.org/2022.coling-1.359/).

## Data Structure

The `dataset` is organised around five `Train/test_split#`, each containing a training and test set of circa 60K and 2K.

### Features

Each entrance has 6 features: `seq, label, Adj_Class, Adj, Nn, Hy`

- `seq`:test sequense

- `label`: ground truth (1:entialment, 0:no-entailment)

- `Adj_Class`: the class of the sequence adjectives

- `Adj`: the adjective of the sequence (I: intersective, S: subsective, O: intensional)

- `N`n: the noun

- `Hy`: the noun's hypericum

Each sample in `seq` can take one of three forms (or inference types, in paper):

- An *Adjective-Noun* is a *Noun* (e.g. A red car is a car)

- An *Adjective-Noun* is a *Hypernym(Noun)* (e.g. A red car is a vehicle)

- An *Adjective-Noun* is a *Adjective-Hypernym(Noun)* (e.g. A red car is a red vehicle)

Please note that, as specified in the paper, the ground truth is automatically assigned based on the linguistic rule that governs the interaction between each adjective class and inference type – see the paper for more detail.

### Trained Model

You can find a tuned BERT-base model (tuned and validated using the 2nd split) [here](https://huggingface.co/lorenzoscottb/bert-base-cased-PLANE-ood-2?text=A+fake+smile+is+a+smile).

### Cite

If you use PLANE for your work, please cite the main COLING 2022 paper.

```

@inproceedings{bertolini-etal-2022-testing,

title = "Testing Large Language Models on Compositionality and Inference with Phrase-Level Adjective-Noun Entailment",

author = "Bertolini, Lorenzo and

Weeds, Julie and

Weir, David",

booktitle = "Proceedings of the 29th International Conference on Computational Linguistics",

month = oct,

year = "2022",

address = "Gyeongju, Republic of Korea",

publisher = "International Committee on Computational Linguistics",

url = "https://aclanthology.org/2022.coling-1.359",

pages = "4084--4100",

}

``` | 2,319 | [

[

-0.048583984375,

-0.06793212890625,

0.015777587890625,

0.03094482421875,

-0.0120849609375,

-0.0266571044921875,

-0.0158843994140625,

-0.00740814208984375,

0.0188751220703125,

0.041259765625,

-0.034027099609375,

-0.026947021484375,

-0.03045654296875,

-0.00632... |

juancopi81/yannic_ada_embeddings | 2023-01-24T13:46:03.000Z | [

"region:us"

] | juancopi81 | null | null | 0 | 5 | 2023-01-24T13:45:56 | ---

dataset_info:

features:

- name: TITLE

dtype: string

- name: URL

dtype: string

- name: TRANSCRIPTION

dtype: string

- name: transcription_length

dtype: int64

- name: text

dtype: string

- name: ada_embedding

dtype: string

splits:

- name: train

num_bytes: 127436085

num_examples: 3194

download_size: 81996580

dataset_size: 127436085

---

# Dataset Card for "yannic_ada_embeddings"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 566 | [

[

-0.027008056640625,

-0.028472900390625,

0.0269012451171875,

0.004955291748046875,

-0.00882720947265625,

-0.0108184814453125,

0.0166168212890625,

-0.00531768798828125,

0.0771484375,

0.0139617919921875,

-0.046905517578125,

-0.07305908203125,

-0.034637451171875,

... |

nglaura/scielo-summarization | 2023-04-11T10:21:45.000Z | [

"task_categories:summarization",

"language:fr",

"license:apache-2.0",

"region:us"

] | nglaura | null | null | 0 | 5 | 2023-01-25T12:02:33 | ---

license: apache-2.0

task_categories:

- summarization

language:

- fr

pretty_name: SciELO

---

# LoRaLay: A Multilingual and Multimodal Dataset for Long Range and Layout-Aware Summarization

A collaboration between [reciTAL](https://recital.ai/en/), [MLIA](https://mlia.lip6.fr/) (ISIR, Sorbonne Université), [Meta AI](https://ai.facebook.com/), and [Università di Trento](https://www.unitn.it/)

## SciELO dataset for summarization

SciELO is a dataset for summarization of research papers written in Spanish and Portuguese, for which layout information is provided.

### Data Fields

- `article_id`: article id

- `article_words`: sequence of words constituting the body of the article

- `article_bboxes`: sequence of corresponding word bounding boxes

- `norm_article_bboxes`: sequence of corresponding normalized word bounding boxes

- `abstract`: a string containing the abstract of the article

- `article_pdf_url`: URL of the article's PDF

### Data Splits

This dataset has 3 splits: _train_, _validation_, and _test_.

| Dataset Split | Number of Instances (ES/PT) |

| ------------- | ----------------------------|

| Train | 20,853 / 19,407 |

| Validation | 1,158 / 1,078 |

| Test | 1,159 / 1,078 |

## Citation

``` latex

@article{nguyen2023loralay,

title={LoRaLay: A Multilingual and Multimodal Dataset for Long Range and Layout-Aware Summarization},

author={Nguyen, Laura and Scialom, Thomas and Piwowarski, Benjamin and Staiano, Jacopo},

journal={arXiv preprint arXiv:2301.11312},

year={2023}

}

``` | 1,581 | [

[

-0.01251220703125,

-0.031707763671875,

0.01375579833984375,

0.06536865234375,

-0.0232391357421875,

-0.00328826904296875,

-0.02294921875,

-0.0285491943359375,

0.0511474609375,

0.0338134765625,

-0.0185546875,

-0.06988525390625,

-0.0277252197265625,

0.028411865... |

michelecafagna26/hl | 2023-08-02T11:50:20.000Z | [

"task_categories:image-to-text",

"task_categories:question-answering",

"task_categories:zero-shot-classification",

"task_ids:text-scoring",

"annotations_creators:crowdsourced",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"language:en",

"license:apache-2.0",

"arxiv:1405.0312",

"a... | michelecafagna26 | High-level Dataset | @inproceedings{Cafagna2023HLDG,

title={HL Dataset: Grounding High-Level Linguistic Concepts in Vision},

author={Michele Cafagna and Kees van Deemter and Albert Gatt},

year={2023}

} | 4 | 5 | 2023-01-25T16:15:17 | ---

license: apache-2.0

task_categories:

- image-to-text

- question-answering

- zero-shot-classification

language:

- en

multilinguality:

- monolingual

task_ids:

- text-scoring

pretty_name: HL (High-Level Dataset)

size_categories:

- 10K<n<100K

annotations_creators:

- crowdsourced

annotations_origin:

- crowdsourced

dataset_info:

splits:

- name: train

num_examples: 13498

- name: test

num_examples: 1499

---

# Dataset Card for the High-Level Dataset

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Supported Tasks](#supported-tasks)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

## Dataset Description

The High-Level (HL) dataset aligns **object-centric descriptions** from [COCO](https://arxiv.org/pdf/1405.0312.pdf)

with **high-level descriptions** crowdsourced along 3 axes: **_scene_, _action_, _rationale_**

The HL dataset contains 14997 images from COCO and a total of 134973 crowdsourced captions (3 captions for each axis) aligned with ~749984 object-centric captions from COCO.

Each axis is collected by asking the following 3 questions:

1) Where is the picture taken?

2) What is the subject doing?

3) Why is the subject doing it?

**The high-level descriptions capture the human interpretations of the images**. These interpretations contain abstract concepts not directly linked to physical objects.

Each high-level description is provided with a _confidence score_, crowdsourced by an independent worker measuring the extent to which

the high-level description is likely given the corresponding image, question, and caption. The higher the score, the more the high-level caption is close to the commonsense (in a Likert scale from 1-5).

- **🗃️ Repository:** [github.com/michelecafagna26/HL-dataset](https://github.com/michelecafagna26/HL-dataset)

- **📜 Paper:** [HL Dataset: Visually-grounded Description of Scenes, Actions and Rationales](https://arxiv.org/abs/2302.12189?context=cs.CL)

- **🧭 Spaces:** [Dataset explorer](https://huggingface.co/spaces/michelecafagna26/High-Level-Dataset-explorer)

- **🖊️ Contact:** michele.cafagna@um.edu.mt

### Supported Tasks

- image captioning

- visual question answering

- multimodal text-scoring

- zero-shot evaluation

### Languages

English

## Dataset Structure

The dataset is provided with images from COCO and two metadata jsonl files containing the annotations

### Data Instances

An instance looks like this:

```json

{

"file_name": "COCO_train2014_000000138878.jpg",

"captions": {

"scene": [

"in a car",

"the picture is taken in a car",

"in an office."

],

"action": [

"posing for a photo",

"the person is posing for a photo",

"he's sitting in an armchair."

],

"rationale": [

"to have a picture of himself",

"he wants to share it with his friends",

"he's working and took a professional photo."

],

"object": [

"A man sitting in a car while wearing a shirt and tie.",

"A man in a car wearing a dress shirt and tie.",

"a man in glasses is wearing a tie",

"Man sitting in the car seat with button up and tie",

"A man in glasses and a tie is near a window."

]

},

"confidence": {

"scene": [

5,

5,

4

],

"action": [

5,

5,

4

],

"rationale": [

5,

5,

4

]

},

"purity": {

"scene": [

-1.1760284900665283,

-1.0889461040496826,

-1.442818284034729

],

"action": [

-1.0115827322006226,

-0.5917857885360718,

-1.6931917667388916

],

"rationale": [

-1.0546956062316895,

-0.9740906357765198,

-1.2204363346099854

]

},

"diversity": {

"scene": 25.965358893403383,

"action": 32.713305568898775,

"rationale": 2.658757840479801

}

}

```

### Data Fields

- ```file_name```: original COCO filename

- ```captions```: Dict containing all the captions for the image. Each axis can be accessed with the axis name and it contains a list of captions.

- ```confidence```: Dict containing the captions confidence scores. Each axis can be accessed with the axis name and it contains a list of captions. Confidence scores are not provided for the _object_ axis (COCO captions).t

- ```purity score```: Dict containing the captions purity scores. The purity score measures the semantic similarity of the captions within the same axis (Bleurt-based).

- ```diversity score```: Dict containing the captions diversity scores. The diversity score measures the lexical diversity of the captions within the same axis (Self-BLEU-based).

### Data Splits

There are 14997 images and 134973 high-level captions split into:

- Train-val: 13498 images and 121482 high-level captions

- Test: 1499 images and 13491 high-level captions

## Dataset Creation

The dataset has been crowdsourced on Amazon Mechanical Turk.

From the paper:

>We randomly select 14997 images from the COCO 2014 train-val split. In order to answer questions related to _actions_ and _rationales_ we need to

> ensure the presence of a subject in the image. Therefore, we leverage the entity annotation provided in COCO to select images containing

> at least one person. The whole annotation is conducted on Amazon Mechanical Turk (AMT). We split the workload into batches in order to ease

>the monitoring of the quality of the data collected. Each image is annotated by three different annotators, therefore we collect three annotations per axis.

### Curation Rationale

From the paper:

>In this work, we tackle the issue of **grounding high-level linguistic concepts in the visual modality**, proposing the High-Level (HL) Dataset: a

V\&L resource aligning existing object-centric captions with human-collected high-level descriptions of images along three different axes: _scenes_, _actions_ and _rationales_.

The high-level captions capture the human interpretation of the scene, providing abstract linguistic concepts complementary to object-centric captions

>used in current V\&L datasets, e.g. in COCO. We take a step further, and we collect _confidence scores_ to distinguish commonsense assumptions

>from subjective interpretations and we characterize our data under a variety of semantic and lexical aspects.

### Source Data

- Images: COCO

- object axis annotations: COCO

- scene, action, rationale annotations: crowdsourced

- confidence scores: crowdsourced

- purity score and diversity score: automatically computed

#### Annotation process

From the paper:

>**Pilot:** We run a pilot study with the double goal of collecting feedback and defining the task instructions.

>With the results from the pilot we design a beta version of the task and we run a small batch of cases on the crowd-sourcing platform.

>We manually inspect the results and we further refine the instructions and the formulation of the task before finally proceeding with the

>annotation in bulk. The final annotation form is shown in Appendix D.

>***Procedure:*** The participants are shown an image and three questions regarding three aspects or axes: _scene_, _actions_ and _rationales_

> i,e. _Where is the picture taken?_, _What is the subject doing?_, _Why is the subject doing it?_. We explicitly ask the participants to use

>their personal interpretation of the scene and add examples and suggestions in the instructions to further guide the annotators. Moreover,

>differently from other VQA datasets like (Antol et al., 2015) and (Zhu et al., 2016), where each question can refer to different entities

>in the image, we systematically ask the same three questions about the same subject for each image. The full instructions are reported

>in Figure 1. For details regarding the annotation costs see Appendix A.

#### Who are the annotators?

Turkers from Amazon Mechanical Turk

### Personal and Sensitive Information

There is no personal or sensitive information

## Considerations for Using the Data

[More Information Needed]

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

From the paper:

>**Quantitying grammatical errors:** We ask two expert annotators to correct grammatical errors in a sample of 9900 captions, 900 of which are shared between the two annotators.

> The annotators are shown the image caption pairs and they are asked to edit the caption whenever they identify a grammatical error.

>The most common errors reported by the annotators are:

>- Misuse of prepositions

>- Wrong verb conjugation

>- Pronoun omissions

>In order to quantify the extent to which the corrected captions differ from the original ones, we compute the Levenshtein distance (Levenshtein, 1966) between them.

>We observe that 22.5\% of the sample has been edited and only 5\% with a Levenshtein distance greater than 10. This suggests a reasonable

>level of grammatical quality overall, with no substantial grammatical problems. This can also be observed from the Levenshtein distance

>distribution reported in Figure 2. Moreover, the human evaluation is quite reliable as we observe a moderate inter-annotator agreement

>(alpha = 0.507, (Krippendorff, 2018) computed over the shared sample.

### Dataset Curators

Michele Cafagna

### Licensing Information

The Images and the object-centric captions follow the [COCO terms of Use](https://cocodataset.org/#termsofuse)

The remaining annotations are licensed under Apache-2.0 license.

### Citation Information

```BibTeX

@inproceedings{cafagna2023hl,

title={{HL} {D}ataset: {V}isually-grounded {D}escription of {S}cenes, {A}ctions and

{R}ationales},

author={Cafagna, Michele and van Deemter, Kees and Gatt, Albert},

booktitle={Proceedings of the 16th International Natural Language Generation Conference (INLG'23)},

address = {Prague, Czech Republic},

year={2023}

}

```

| 10,695 | [

[

-0.0546875,

-0.051605224609375,

0.00823211669921875,

0.0215606689453125,

-0.0233001708984375,

0.0108184814453125,

-0.01171875,

-0.03717041015625,

0.025634765625,

0.0423583984375,

-0.043609619140625,

-0.06109619140625,

-0.040313720703125,

0.0209503173828125,

... |

liyucheng/UFSAC | 2023-01-26T15:41:19.000Z | [

"task_categories:token-classification",

"size_categories:1M<n<10M",

"language:en",

"license:cc-by-2.0",

"region:us"

] | liyucheng | null | null | 0 | 5 | 2023-01-25T22:17:54 | ---

license: cc-by-2.0

task_categories:

- token-classification

language:

- en

size_categories:

- 1M<n<10M

---

# Dataset Card for Dataset Name

UFSAC: Unification of Sense Annotated Corpora and Tools

## Dataset Description

- **Homepage:** https://github.com/getalp/UFSAC

- **Repository:** https://github.com/getalp/UFSAC

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

### Supported Tasks and Leaderboards

WSD: Word Sense Disambiguation

### Languages

English

## Dataset Structure

### Data Instances

```

{'lemmas': ['_',

'be',

'quite',

'_',

'hefty',

'spade',

'_',

'_',

'bicycle',

'_',

'type',

'handlebar',

'_',

'_',

'spring',

'lever',

'_',

'_',

'rear',

'_',

'_',

'_',

'step',

'on',

'_',

'activate',

'_',

'_'],

'pos_tags': ['PRP',

'VBZ',

'RB',

'DT',

'JJ',

'NN',

',',

'IN',

'NN',

':',

'NN',

'NNS',

'CC',

'DT',

'VBN',

'NN',

'IN',

'DT',

'NN',

',',

'WDT',

'PRP',

'VBP',

'RP',

'TO',

'VB',

'PRP',

'.'],

'sense_keys': ['activate%2:36:00::'],

'target_idx': 25,

'tokens': ['It',

'is',

'quite',

'a',

'hefty',

'spade',

',',

'with',

'bicycle',

'-',

'type',

'handlebars',

'and',

'a',

'sprung',

'lever',

'at',

'the',

'rear',

',',

'which',

'you',

'step',

'on',

'to',

'activate',

'it',

'.']}

```

### Data Fields

```

{'tokens': Sequence(feature=Value(dtype='string', id=None), length=-1, id=None),

'lemmas': Sequence(feature=Value(dtype='string', id=None), length=-1, id=None),

'pos_tags': Sequence(feature=Value(dtype='string', id=None), length=-1, id=None),

'target_idx': Value(dtype='int32', id=None),

'sense_keys': Sequence(feature=Value(dtype='string', id=None), length=-1, id=None)}

```

### Data Splits

Not split. Use `train` split directly.

| 2,709 | [

[

-0.02960205078125,

-0.0171661376953125,

0.027435302734375,

0.0096588134765625,

-0.0245819091796875,

0.00539398193359375,

-0.01265716552734375,

-0.0182342529296875,

0.041351318359375,

0.0120849609375,

-0.05242919921875,

-0.07379150390625,

-0.046142578125,

0.0... |

metaeval/naturallogic | 2023-01-26T09:51:03.000Z | [

"task_categories:text-classification",

"language:en",

"license:apache-2.0",

"region:us"

] | metaeval | null | null | 0 | 5 | 2023-01-26T09:49:49 | ---

license: apache-2.0

task_categories:

- text-classification

language:

- en

---

https://github.com/feng-yufei/Neural-Natural-Logic

```bib

@inproceedings{feng2020exploring,

title={Exploring End-to-End Differentiable Natural Logic Modeling},

author={Feng, Yufei, Ziou Zheng, and Liu, Quan and Greenspan, Michael and Zhu, Xiaodan},

booktitle={Proceedings of the 28th International Conference on Computational Linguistics},

pages={1172--1185},

year={2020}

}

``` | 469 | [

[

-0.0170745849609375,

-0.042205810546875,

0.0165863037109375,

0.0193023681640625,

-0.006595611572265625,

0.001873016357421875,

-0.0306396484375,

-0.06085205078125,

0.025482177734375,

0.01335906982421875,

-0.051544189453125,

-0.0152435302734375,

-0.0218505859375,

... |

Cohere/miracl-ar-queries-22-12 | 2023-02-06T12:00:30.000Z | [

"task_categories:text-retrieval",

"task_ids:document-retrieval",

"annotations_creators:expert-generated",

"multilinguality:multilingual",

"language:ar",

"license:apache-2.0",

"region:us"

] | Cohere | null | null | 0 | 5 | 2023-01-30T09:57:38 | ---

annotations_creators:

- expert-generated

language:

- ar

multilinguality:

- multilingual

size_categories: []

source_datasets: []

tags: []

task_categories:

- text-retrieval

license:

- apache-2.0

task_ids:

- document-retrieval

---

# MIRACL (ar) embedded with cohere.ai `multilingual-22-12` encoder

We encoded the [MIRACL dataset](https://huggingface.co/miracl) using the [cohere.ai](https://txt.cohere.ai/multilingual/) `multilingual-22-12` embedding model.

The query embeddings can be found in [Cohere/miracl-ar-queries-22-12](https://huggingface.co/datasets/Cohere/miracl-ar-queries-22-12) and the corpus embeddings can be found in [Cohere/miracl-ar-corpus-22-12](https://huggingface.co/datasets/Cohere/miracl-ar-corpus-22-12).

For the orginal datasets, see [miracl/miracl](https://huggingface.co/datasets/miracl/miracl) and [miracl/miracl-corpus](https://huggingface.co/datasets/miracl/miracl-corpus).

Dataset info:

> MIRACL 🌍🙌🌏 (Multilingual Information Retrieval Across a Continuum of Languages) is a multilingual retrieval dataset that focuses on search across 18 different languages, which collectively encompass over three billion native speakers around the world.

>

> The corpus for each language is prepared from a Wikipedia dump, where we keep only the plain text and discard images, tables, etc. Each article is segmented into multiple passages using WikiExtractor based on natural discourse units (e.g., `\n\n` in the wiki markup). Each of these passages comprises a "document" or unit of retrieval. We preserve the Wikipedia article title of each passage.

## Embeddings

We compute for `title+" "+text` the embeddings using our `multilingual-22-12` embedding model, a state-of-the-art model that works for semantic search in 100 languages. If you want to learn more about this model, have a look at [cohere.ai multilingual embedding model](https://txt.cohere.ai/multilingual/).

## Loading the dataset

In [miracl-ar-corpus-22-12](https://huggingface.co/datasets/Cohere/miracl-ar-corpus-22-12) we provide the corpus embeddings. Note, depending on the selected split, the respective files can be quite large.

You can either load the dataset like this:

```python

from datasets import load_dataset

docs = load_dataset(f"Cohere/miracl-ar-corpus-22-12", split="train")

```

Or you can also stream it without downloading it before:

```python

from datasets import load_dataset

docs = load_dataset(f"Cohere/miracl-ar-corpus-22-12", split="train", streaming=True)

for doc in docs:

docid = doc['docid']

title = doc['title']

text = doc['text']

emb = doc['emb']

```

## Search

Have a look at [miracl-ar-queries-22-12](https://huggingface.co/datasets/Cohere/miracl-ar-queries-22-12) where we provide the query embeddings for the MIRACL dataset.

To search in the documents, you must use **dot-product**.

And then compare this query embeddings either with a vector database (recommended) or directly computing the dot product.

A full search example:

```python

# Attention! For large datasets, this requires a lot of memory to store

# all document embeddings and to compute the dot product scores.

# Only use this for smaller datasets. For large datasets, use a vector DB

from datasets import load_dataset

import torch

#Load documents + embeddings

docs = load_dataset(f"Cohere/miracl-ar-corpus-22-12", split="train")

doc_embeddings = torch.tensor(docs['emb'])

# Load queries

queries = load_dataset(f"Cohere/miracl-ar-queries-22-12", split="dev")

# Select the first query as example

qid = 0

query = queries[qid]

query_embedding = torch.tensor(queries['emb'])

# Compute dot score between query embedding and document embeddings

dot_scores = torch.mm(query_embedding, doc_embeddings.transpose(0, 1))

top_k = torch.topk(dot_scores, k=3)

# Print results

print("Query:", query['query'])

for doc_id in top_k.indices[0].tolist():

print(docs[doc_id]['title'])

print(docs[doc_id]['text'])

```

You can get embeddings for new queries using our API:

```python

#Run: pip install cohere

import cohere

co = cohere.Client(f"{api_key}") # You should add your cohere API Key here :))

texts = ['my search query']

response = co.embed(texts=texts, model='multilingual-22-12')

query_embedding = response.embeddings[0] # Get the embedding for the first text

```

## Performance

In the following table we compare the cohere multilingual-22-12 model with Elasticsearch version 8.6.0 lexical search (title and passage indexed as independent fields). Note that Elasticsearch doesn't support all languages that are part of the MIRACL dataset.

We compute nDCG@10 (a ranking based loss), as well as hit@3: Is at least one relevant document in the top-3 results. We find that hit@3 is easier to interpret, as it presents the number of queries for which a relevant document is found among the top-3 results.

Note: MIRACL only annotated a small fraction of passages (10 per query) for relevancy. Especially for larger Wikipedias (like English), we often found many more relevant passages. This is know as annotation holes. Real nDCG@10 and hit@3 performance is likely higher than depicted.

| Model | cohere multilingual-22-12 nDCG@10 | cohere multilingual-22-12 hit@3 | ES 8.6.0 nDCG@10 | ES 8.6.0 acc@3 |

|---|---|---|---|---|

| miracl-ar | 64.2 | 75.2 | 46.8 | 56.2 |

| miracl-bn | 61.5 | 75.7 | 49.2 | 60.1 |

| miracl-de | 44.4 | 60.7 | 19.6 | 29.8 |

| miracl-en | 44.6 | 62.2 | 30.2 | 43.2 |

| miracl-es | 47.0 | 74.1 | 27.0 | 47.2 |

| miracl-fi | 63.7 | 76.2 | 51.4 | 61.6 |

| miracl-fr | 46.8 | 57.1 | 17.0 | 21.6 |

| miracl-hi | 50.7 | 62.9 | 41.0 | 48.9 |

| miracl-id | 44.8 | 63.8 | 39.2 | 54.7 |

| miracl-ru | 49.2 | 66.9 | 25.4 | 36.7 |

| **Avg** | 51.7 | 67.5 | 34.7 | 46.0 |

Further languages (not supported by Elasticsearch):

| Model | cohere multilingual-22-12 nDCG@10 | cohere multilingual-22-12 hit@3 |

|---|---|---|

| miracl-fa | 44.8 | 53.6 |

| miracl-ja | 49.0 | 61.0 |

| miracl-ko | 50.9 | 64.8 |

| miracl-sw | 61.4 | 74.5 |

| miracl-te | 67.8 | 72.3 |

| miracl-th | 60.2 | 71.9 |

| miracl-yo | 56.4 | 62.2 |

| miracl-zh | 43.8 | 56.5 |

| **Avg** | 54.3 | 64.6 |

| 6,103 | [

[

-0.04571533203125,

-0.05792236328125,

0.0225830078125,

0.016387939453125,

-0.003787994384765625,

-0.0044097900390625,

-0.0203704833984375,

-0.035400390625,

0.039459228515625,

0.0157012939453125,

-0.03851318359375,

-0.07232666015625,

-0.0511474609375,

0.02359... |

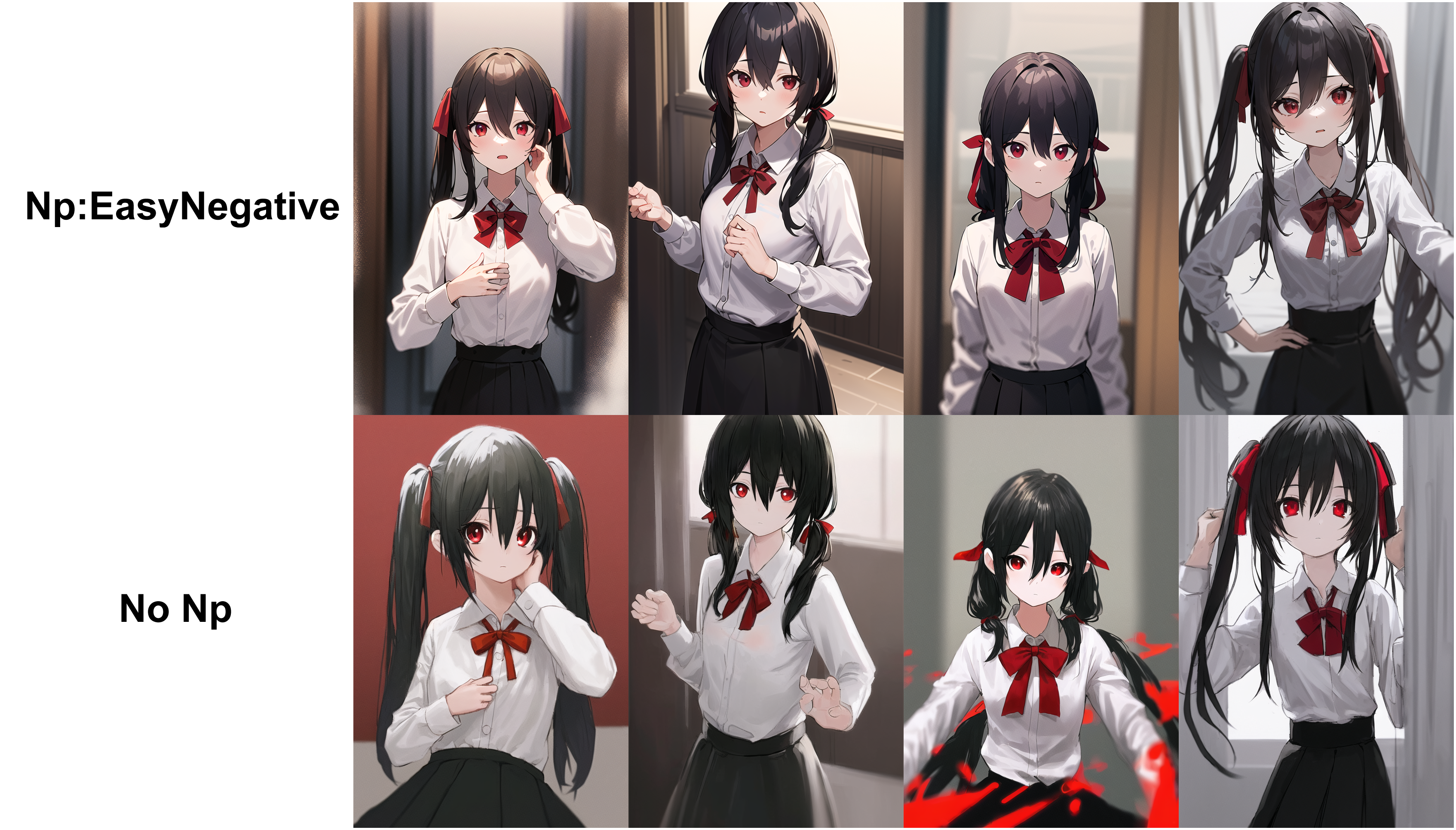

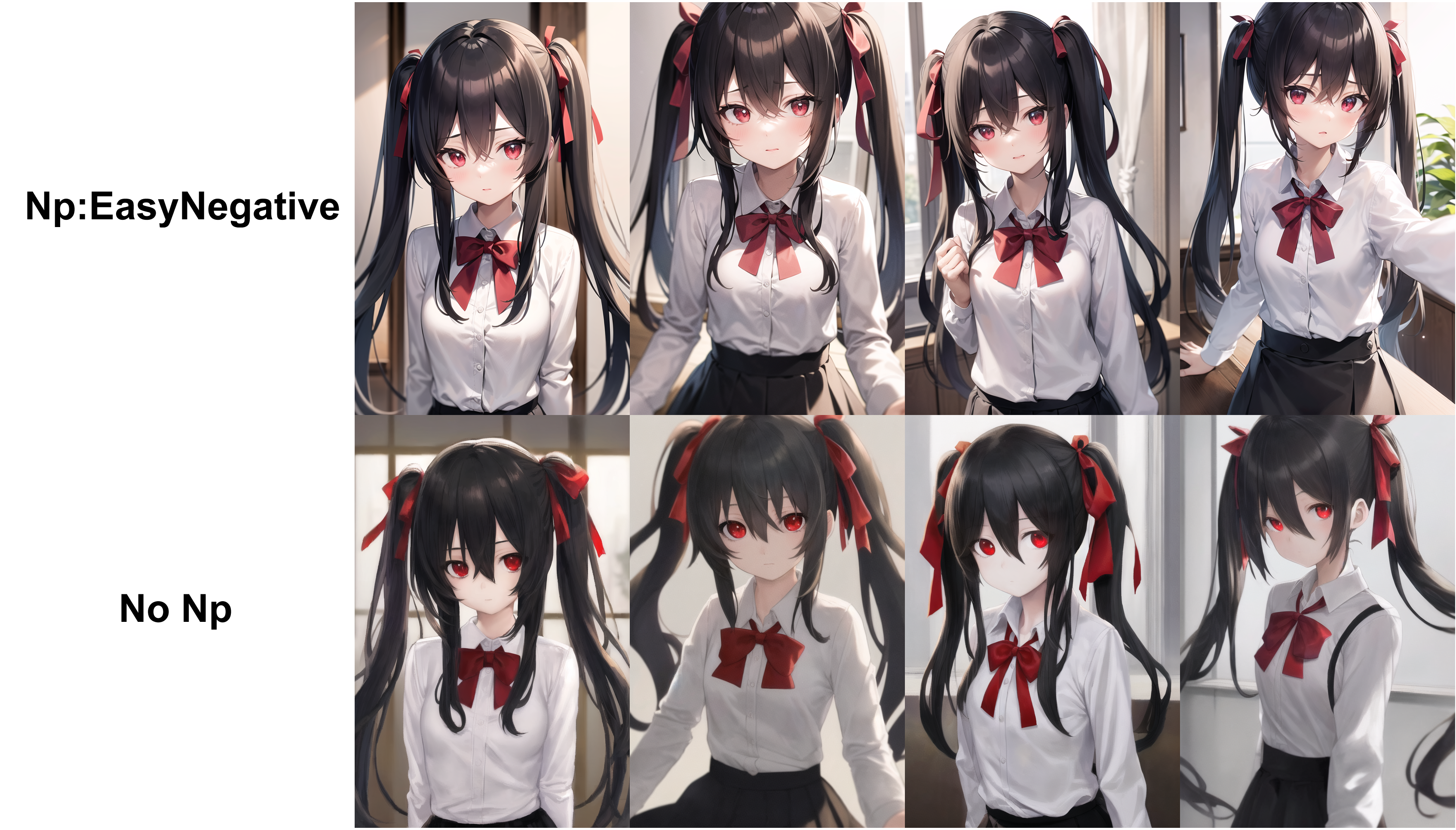

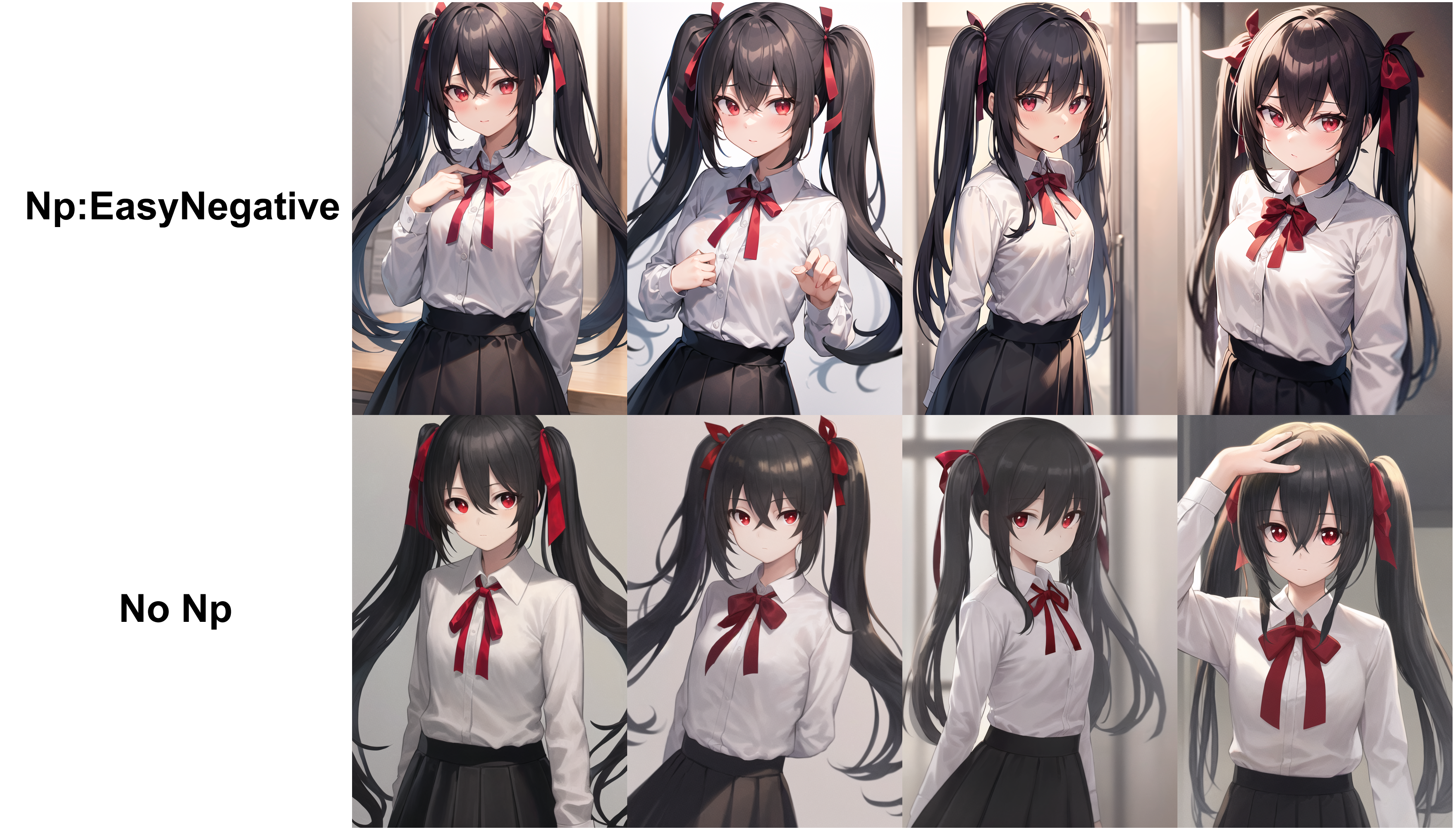

gsdf/EasyNegative | 2023-02-12T14:39:30.000Z | [

"license:other",

"region:us"

] | gsdf | null | null | 1,064 | 5 | 2023-02-01T10:58:06 | ---

license: other

---

# Negative Embedding

This is a Negative Embedding trained with Counterfeit. Please use it in the "\stable-diffusion-webui\embeddings" folder.

It can be used with other models, but the effectiveness is not certain.

# Counterfeit-V2.0.safetensors

# AbyssOrangeMix2_sfw.safetensors

# anything-v4.0-pruned.safetensors

| 608 | [

[

-0.035400390625,

-0.0537109375,

0.0138702392578125,

0.004913330078125,

-0.039825439453125,

0.0113525390625,

0.0426025390625,

-0.02447509765625,

0.04656982421875,

0.03839111328125,

-0.0438232421875,

-0.04205322265625,

-0.05145263671875,

-0.01132965087890625,

... |

Cohere/miracl-en-corpus-22-12 | 2023-02-06T11:54:52.000Z | [

"task_categories:text-retrieval",

"task_ids:document-retrieval",

"annotations_creators:expert-generated",

"multilinguality:multilingual",

"language:en",

"license:apache-2.0",

"region:us"

] | Cohere | null | null | 0 | 5 | 2023-02-02T23:21:21 | ---

annotations_creators:

- expert-generated

language:

- en

multilinguality:

- multilingual

size_categories: []

source_datasets: []

tags: []

task_categories:

- text-retrieval

license:

- apache-2.0

task_ids:

- document-retrieval

---

# MIRACL (en) embedded with cohere.ai `multilingual-22-12` encoder

We encoded the [MIRACL dataset](https://huggingface.co/miracl) using the [cohere.ai](https://txt.cohere.ai/multilingual/) `multilingual-22-12` embedding model.

The query embeddings can be found in [Cohere/miracl-en-queries-22-12](https://huggingface.co/datasets/Cohere/miracl-en-queries-22-12) and the corpus embeddings can be found in [Cohere/miracl-en-corpus-22-12](https://huggingface.co/datasets/Cohere/miracl-en-corpus-22-12).

For the orginal datasets, see [miracl/miracl](https://huggingface.co/datasets/miracl/miracl) and [miracl/miracl-corpus](https://huggingface.co/datasets/miracl/miracl-corpus).

Dataset info:

> MIRACL 🌍🙌🌏 (Multilingual Information Retrieval Across a Continuum of Languages) is a multilingual retrieval dataset that focuses on search across 18 different languages, which collectively encompass over three billion native speakers around the world.

>

> The corpus for each language is prepared from a Wikipedia dump, where we keep only the plain text and discard images, tables, etc. Each article is segmented into multiple passages using WikiExtractor based on natural discourse units (e.g., `\n\n` in the wiki markup). Each of these passages comprises a "document" or unit of retrieval. We preserve the Wikipedia article title of each passage.

## Embeddings

We compute for `title+" "+text` the embeddings using our `multilingual-22-12` embedding model, a state-of-the-art model that works for semantic search in 100 languages. If you want to learn more about this model, have a look at [cohere.ai multilingual embedding model](https://txt.cohere.ai/multilingual/).

## Loading the dataset

In [miracl-en-corpus-22-12](https://huggingface.co/datasets/Cohere/miracl-en-corpus-22-12) we provide the corpus embeddings. Note, depending on the selected split, the respective files can be quite large.

You can either load the dataset like this:

```python

from datasets import load_dataset

docs = load_dataset(f"Cohere/miracl-en-corpus-22-12", split="train")

```

Or you can also stream it without downloading it before:

```python

from datasets import load_dataset

docs = load_dataset(f"Cohere/miracl-en-corpus-22-12", split="train", streaming=True)

for doc in docs:

docid = doc['docid']

title = doc['title']

text = doc['text']

emb = doc['emb']

```

## Search

Have a look at [miracl-en-queries-22-12](https://huggingface.co/datasets/Cohere/miracl-en-queries-22-12) where we provide the query embeddings for the MIRACL dataset.

To search in the documents, you must use **dot-product**.

And then compare this query embeddings either with a vector database (recommended) or directly computing the dot product.

A full search example:

```python

# Attention! For large datasets, this requires a lot of memory to store

# all document embeddings and to compute the dot product scores.

# Only use this for smaller datasets. For large datasets, use a vector DB

from datasets import load_dataset

import torch

#Load documents + embeddings

docs = load_dataset(f"Cohere/miracl-en-corpus-22-12", split="train")

doc_embeddings = torch.tensor(docs['emb'])

# Load queries

queries = load_dataset(f"Cohere/miracl-en-queries-22-12", split="dev")

# Select the first query as example

qid = 0

query = queries[qid]

query_embedding = torch.tensor(queries['emb'])

# Compute dot score between query embedding and document embeddings

dot_scores = torch.mm(query_embedding, doc_embeddings.transpose(0, 1))

top_k = torch.topk(dot_scores, k=3)

# Print results

print("Query:", query['query'])

for doc_id in top_k.indices[0].tolist():

print(docs[doc_id]['title'])

print(docs[doc_id]['text'])

```

You can get embeddings for new queries using our API:

```python

#Run: pip install cohere

import cohere

co = cohere.Client(f"{api_key}") # You should add your cohere API Key here :))

texts = ['my search query']

response = co.embed(texts=texts, model='multilingual-22-12')

query_embedding = response.embeddings[0] # Get the embedding for the first text

```

## Performance

In the following table we compare the cohere multilingual-22-12 model with Elasticsearch version 8.6.0 lexical search (title and passage indexed as independent fields). Note that Elasticsearch doesn't support all languages that are part of the MIRACL dataset.

We compute nDCG@10 (a ranking based loss), as well as hit@3: Is at least one relevant document in the top-3 results. We find that hit@3 is easier to interpret, as it presents the number of queries for which a relevant document is found among the top-3 results.

Note: MIRACL only annotated a small fraction of passages (10 per query) for relevancy. Especially for larger Wikipedias (like English), we often found many more relevant passages. This is know as annotation holes. Real nDCG@10 and hit@3 performance is likely higher than depicted.

| Model | cohere multilingual-22-12 nDCG@10 | cohere multilingual-22-12 hit@3 | ES 8.6.0 nDCG@10 | ES 8.6.0 acc@3 |

|---|---|---|---|---|

| miracl-ar | 64.2 | 75.2 | 46.8 | 56.2 |

| miracl-bn | 61.5 | 75.7 | 49.2 | 60.1 |

| miracl-de | 44.4 | 60.7 | 19.6 | 29.8 |

| miracl-en | 44.6 | 62.2 | 30.2 | 43.2 |

| miracl-es | 47.0 | 74.1 | 27.0 | 47.2 |

| miracl-fi | 63.7 | 76.2 | 51.4 | 61.6 |

| miracl-fr | 46.8 | 57.1 | 17.0 | 21.6 |

| miracl-hi | 50.7 | 62.9 | 41.0 | 48.9 |

| miracl-id | 44.8 | 63.8 | 39.2 | 54.7 |

| miracl-ru | 49.2 | 66.9 | 25.4 | 36.7 |

| **Avg** | 51.7 | 67.5 | 34.7 | 46.0 |

Further languages (not supported by Elasticsearch):

| Model | cohere multilingual-22-12 nDCG@10 | cohere multilingual-22-12 hit@3 |

|---|---|---|

| miracl-fa | 44.8 | 53.6 |

| miracl-ja | 49.0 | 61.0 |

| miracl-ko | 50.9 | 64.8 |

| miracl-sw | 61.4 | 74.5 |

| miracl-te | 67.8 | 72.3 |

| miracl-th | 60.2 | 71.9 |

| miracl-yo | 56.4 | 62.2 |

| miracl-zh | 43.8 | 56.5 |

| **Avg** | 54.3 | 64.6 |

| 6,103 | [

[

-0.04510498046875,

-0.058013916015625,

0.0231781005859375,

0.0177764892578125,

-0.003940582275390625,

-0.004413604736328125,

-0.0215606689453125,

-0.036468505859375,

0.039398193359375,

0.01617431640625,

-0.03961181640625,

-0.072265625,

-0.05047607421875,

0.0... |

bigcode/jupyter-parsed | 2023-02-21T19:16:28.000Z | [

"region:us"

] | bigcode | null | null | 3 | 5 | 2023-02-03T17:16:23 | ---

dataset_info:

features:

- name: hexsha

dtype: string

- name: size

dtype: int64

- name: ext

dtype: string

- name: lang

dtype: string

- name: max_stars_repo_path

dtype: string

- name: max_stars_repo_name

dtype: string

- name: max_stars_repo_head_hexsha

dtype: string

- name: max_stars_repo_licenses

sequence: string

- name: max_stars_count

dtype: int64

- name: max_stars_repo_stars_event_min_datetime

dtype: string

- name: max_stars_repo_stars_event_max_datetime

dtype: string

- name: max_issues_repo_path

dtype: string

- name: max_issues_repo_name

dtype: string

- name: max_issues_repo_head_hexsha

dtype: string

- name: max_issues_repo_licenses

sequence: string

- name: max_issues_count

dtype: int64

- name: max_issues_repo_issues_event_min_datetime

dtype: string

- name: max_issues_repo_issues_event_max_datetime

dtype: string

- name: max_forks_repo_path

dtype: string

- name: max_forks_repo_name

dtype: string

- name: max_forks_repo_head_hexsha

dtype: string

- name: max_forks_repo_licenses

sequence: string

- name: max_forks_count

dtype: int64

- name: max_forks_repo_forks_event_min_datetime

dtype: string

- name: max_forks_repo_forks_event_max_datetime

dtype: string

- name: avg_line_length

dtype: float64

- name: max_line_length

dtype: int64

- name: alphanum_fraction

dtype: float64

- name: cells

sequence:

sequence:

sequence: string

- name: cell_types

sequence: string

- name: cell_type_groups

sequence:

sequence: string

splits:

- name: train

num_bytes: 22910808665

num_examples: 1459454

download_size: 9418947545

dataset_size: 22910808665

---

# Dataset Card for "jupyter-parsed"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 1,945 | [

[

-0.027099609375,

-0.03131103515625,

0.0199737548828125,

0.01448822021484375,

-0.009307861328125,

-0.003337860107421875,

-0.0020904541015625,

-0.00258636474609375,

0.0526123046875,

0.02911376953125,

-0.037689208984375,

-0.0509033203125,

-0.043487548828125,

-0... |

metaeval/lonli | 2023-05-31T08:41:36.000Z | [

"task_categories:text-classification",

"task_ids:natural-language-inference",

"language:en",

"license:mit",

"region:us"

] | metaeval | null | null | 0 | 5 | 2023-02-04T14:48:11 | ---

license: mit

task_ids:

- natural-language-inference

task_categories:

- text-classification

language:

- en

---

https://github.com/microsoft/LoNLI

```bibtex

@article{Tarunesh2021TrustingRO,

title={Trusting RoBERTa over BERT: Insights from CheckListing the Natural Language Inference Task},

author={Ishan Tarunesh and Somak Aditya and Monojit Choudhury},

journal={ArXiv},

year={2021},

volume={abs/2107.07229}

}

``` | 425 | [

[

-0.014984130859375,

-0.0291748046875,

0.0435791015625,

0.012420654296875,

-0.005207061767578125,

-0.006031036376953125,

-0.0155029296875,

-0.074951171875,

0.02178955078125,

0.036529541015625,

-0.039794921875,

-0.0230865478515625,

-0.048583984375,

-0.00032091... |

metaeval/nli-veridicality-transitivity | 2023-02-04T18:10:09.000Z | [

"task_categories:text-classification",

"task_ids:natural-language-inference",

"language:en",

"license:cc",

"region:us"

] | metaeval | null | null | 0 | 5 | 2023-02-04T18:04:01 | ---

license: cc

task_categories:

- text-classification

language:

- en

task_ids:

- natural-language-inference

---

```bib

@inproceedings{yanaka-etal-2021-exploring,

title = "Exploring Transitivity in Neural {NLI} Models through Veridicality",

author = "Yanaka, Hitomi and

Mineshima, Koji and

Inui, Kentaro",

booktitle = "Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: Main Volume",

year = "2021",

pages = "920--934",

}

``` | 518 | [

[

-0.0078277587890625,

-0.0421142578125,

0.03802490234375,

0.0089111328125,

-0.0040435791015625,

0.004627227783203125,

-0.0054473876953125,

-0.046783447265625,

0.0611572265625,

0.038726806640625,

-0.049530029296875,

-0.0173492431640625,

-0.034912109375,

0.0226... |

metaeval/help-nli | 2023-05-31T08:57:01.000Z | [

"task_categories:text-classification",

"task_ids:natural-language-inference",

"language:en",

"license:cc",

"region:us"

] | metaeval | null | null | 0 | 5 | 2023-02-04T18:07:35 | ---

license: cc

task_ids:

- natural-language-inference

task_categories:

- text-classification

language:

- en

---

https://github.com/verypluming/HELP

```bib

@InProceedings{yanaka-EtAl:2019:starsem,

author = {Yanaka, Hitomi and Mineshima, Koji and Bekki, Daisuke and Inui, Kentaro and Sekine, Satoshi and Abzianidze, Lasha and Bos, Johan},

title = {HELP: A Dataset for Identifying Shortcomings of Neural Models in Monotonicity Reasoning},

booktitle = {Proceedings of the Eighth Joint Conference on Lexical and Computational Semantics (*SEM2019)},

year = {2019},

}

``` | 587 | [

[

-0.03887939453125,

-0.0297698974609375,

0.0504150390625,

0.0125732421875,

-0.019012451171875,

-0.017120361328125,

-0.019073486328125,

-0.034423828125,

0.0506591796875,

0.02154541015625,

-0.059967041015625,

-0.03497314453125,

-0.0238189697265625,

0.0130615234... |

jrahn/yolochess_lichess-elite_2211 | 2023-02-08T07:19:54.000Z | [

"task_categories:text-classification",

"task_categories:reinforcement-learning",

"size_categories:10M<n<100M",

"license:cc",

"chess",

"region:us"

] | jrahn | null | null | 3 | 5 | 2023-02-05T20:51:21 | ---

dataset_info:

features:

- name: fen

dtype: string

- name: move

dtype: string

- name: result

dtype: string

- name: eco

dtype: string

splits:

- name: train

num_bytes: 1794337922

num_examples: 22116598

download_size: 1044871571

dataset_size: 1794337922

task_categories:

- text-classification

- reinforcement-learning

license: cc

tags:

- chess

size_categories:

- 10M<n<100M

---

# Dataset Card for "yolochess_lichess-elite_2211"

Source: https://database.nikonoel.fr/ - filtered from https://database.lichess.org for November 2022

Features:

- fen = Chess board position in [FEN](https://en.wikipedia.org/wiki/Forsyth%E2%80%93Edwards_Notation) format

- move = Move played by a strong human player in this position

- result = Final result of the match

- eco = [ECO](https://en.wikipedia.org/wiki/Encyclopaedia_of_Chess_Openings)-code of the Opening played

Samples: 22.1 million | 920 | [

[

-0.021636962890625,

-0.02630615234375,

0.0125274658203125,

-0.0023326873779296875,

-0.0210723876953125,

-0.0114288330078125,

-0.00641632080078125,

-0.026275634765625,

0.04608154296875,

0.043182373046875,

-0.061004638671875,

-0.06011962890625,

-0.02606201171875,

... |

neuclir/neumarco | 2023-02-06T16:16:37.000Z | [

"task_categories:text-retrieval",

"annotations_creators:machine-generated",

"language_creators:machine-generated",

"multilinguality:multilingual",

"size_categories:1M<n<10M",

"source_datasets:extended|irds/msmarco-passage",

"language:fa",

"language:ru",

"language:zh",

"region:us"

] | neuclir | null | null | 1 | 5 | 2023-02-06T15:19:57 | ---

annotations_creators:

- machine-generated

language:

- fa

- ru

- zh

language_creators:

- machine-generated

multilinguality:

- multilingual

pretty_name: NeuMARCO

size_categories:

- 1M<n<10M

source_datasets:

- extended|irds/msmarco-passage

tags: []

task_categories:

- text-retrieval

---

# Dataset Card for NeuMARCO

## Dataset Description

- **Website:** https://neuclir.github.io/

### Dataset Summary

This is the dataset created for TREC 2022 NeuCLIR Track. The collection consists of documents from [`msmarco-passage`](https://ir-datasets.com/msmarco-passage) translated into

Chinese, Persian, and Russian.

### Languages

- Chinese

- Persian

- Russian

## Dataset Structure

### Data Instances

| Split | Documents |

|-----------------|----------:|

| `fas` (Persian) | 8.8M |

| `rus` (Russian) | 8.8M |

| `zho` (Chinese) | 8.8M |

### Data Fields

- `doc_id`: unique identifier for this document

- `text`: translated passage text

## Dataset Usage

Using 🤗 Datasets:

```python

from datasets import load_dataset

dataset = load_dataset('neuclir/neumarco')

dataset['fas'] # Persian passages

dataset['rus'] # Russian passages

dataset['zho'] # Chinese passages

```

| 1,198 | [

[

-0.01477813720703125,

-0.00965118408203125,

0.00959014892578125,

0.01253509521484375,

-0.032318115234375,

0.0126495361328125,

-0.017822265625,

-0.0162200927734375,

0.022369384765625,

0.03912353515625,

-0.04541015625,

-0.0654296875,

-0.0244293212890625,

0.023... |

shahules786/OA-cornell-movies-dialog | 2023-02-10T05:34:43.000Z | [

"region:us"

] | shahules786 | null | null | 3 | 5 | 2023-02-07T15:21:28 | ---

dataset_info:

features:

- name: conversation

dtype: string

splits:

- name: train

num_bytes: 9476338

num_examples: 20959

download_size: 4859997

dataset_size: 9476338

---

# Dataset Card for Open Assistant Cornell Movies Dialog

## Dataset Summary

The dataset was created using [Cornell Movies Dialog Corpus](https://www.cs.cornell.edu/~cristian/Cornell_Movie-Dialogs_Corpus.html) which contains a large metadata-rich collection of fictional conversations extracted from raw movie scripts.

Dialogs and meta-data from the underlying Corpus were used to design a dataset that can be used to InstructGPT based models to learn movie scripts.

Example :

```

User: Assume RICK and ALICE are characters from a fantasy-horror movie, continue the conversation between them

RICK: I heard you screaming. Was it a bad one?

ALICE: It was bad.

RICK: Doesn't the dream master work for you anymore?

Assistant: Sure

ALICE: I can't find him.

RICK: Hey, since when do you play Thomas Edison? This looks like Sheila's.

ALICE: It is...was. It's a zapper, it might help me stay awake.

RICK: Yeah, or turn you into toast.

```

## Citations

```

@InProceedings{Danescu-Niculescu-Mizil+Lee:11a,

author={Cristian Danescu-Niculescu-Mizil and Lillian Lee},

title={Chameleons in imagined conversations:

A new approach to understanding coordination of linguistic style in dialogs.},

booktitle={Proceedings of the Workshop on Cognitive Modeling and Computational Linguistics, ACL 2011},

year={2011}

}

``` | 1,542 | [

[

-0.024139404296875,

-0.073974609375,

0.019287109375,

-0.0248870849609375,

-0.006683349609375,

0.005950927734375,

-0.03173828125,

-0.00737762451171875,

0.022918701171875,

0.037994384765625,

-0.04144287109375,

-0.038055419921875,

-0.01220703125,

0.012779235839... |

kasnerz/cacapo | 2023-03-14T15:09:56.000Z | [

"region:us"

] | kasnerz | null | null | 0 | 5 | 2023-02-08T08:38:35 | Entry not found | 15 | [

[

-0.021392822265625,

-0.01494598388671875,

0.05718994140625,

0.028839111328125,

-0.0350341796875,

0.046539306640625,

0.052490234375,

0.00507354736328125,

0.051361083984375,

0.01702880859375,

-0.052093505859375,

-0.01494598388671875,

-0.06036376953125,

0.03790... |

kasnerz/eventnarrative | 2023-03-14T15:07:58.000Z | [

"region:us"

] | kasnerz | null | null | 0 | 5 | 2023-02-08T09:06:55 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

cahya/instructions_indonesian | 2023-02-09T17:03:53.000Z | [

"license:mit",

"region:us"

] | cahya | null | null | 0 | 5 | 2023-02-09T16:34:47 | ---

license: mit

---

# Indonesian Instructions Dataset

| 57 | [

[

0.0016126632690429688,

-0.037200927734375,

-0.01113128662109375,

0.04937744140625,

-0.039703369140625,

-0.0261383056640625,

-0.009368896484375,

0.022857666015625,

0.01099395751953125,

0.09747314453125,

-0.057830810546875,

-0.0533447265625,

-0.047576904296875,

... |

IlyaGusev/habr | 2023-03-09T23:16:35.000Z | [

"task_categories:text-generation",

"size_categories:100K<n<1M",

"language:ru",

"language:en",

"region:us"

] | IlyaGusev | null | null | 13 | 5 | 2023-02-10T20:36:09 | ---

dataset_info:

features:

- name: id

dtype: uint32

- name: language

dtype: string

- name: url

dtype: string

- name: title

dtype: string

- name: text_markdown

dtype: string

- name: text_html

dtype: string

- name: author

dtype: string

- name: original_author

dtype: string

- name: original_url

dtype: string

- name: lead_html

dtype: string

- name: lead_markdown

dtype: string

- name: type

dtype: string

- name: time_published

dtype: uint64

- name: statistics

struct:

- name: commentsCount

dtype: uint32

- name: favoritesCount

dtype: uint32

- name: readingCount

dtype: uint32

- name: score

dtype: int32

- name: votesCount

dtype: int32

- name: votesCountPlus

dtype: int32

- name: votesCountMinus

dtype: int32

- name: labels

sequence: string

- name: hubs

sequence: string

- name: flows

sequence: string

- name: tags

sequence: string

- name: reading_time

dtype: uint32

- name: format

dtype: string

- name: complexity

dtype: string

- name: comments

sequence:

- name: id

dtype: uint64

- name: parent_id

dtype: uint64

- name: level

dtype: uint32

- name: time_published

dtype: uint64

- name: score

dtype: int32

- name: votes

dtype: uint32

- name: message_html

dtype: string

- name: message_markdown

dtype: string

- name: author

dtype: string

- name: children

sequence: uint64

splits:

- name: train

num_bytes: 19968161329

num_examples: 302049

download_size: 3485570346

dataset_size: 19968161329

task_categories:

- text-generation

language:

- ru

- en

size_categories:

- 100K<n<1M

---

# Habr dataset

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Description](#description)

- [Usage](#usage)

- [Data Instances](#data-instances)

- [Source Data](#source-data)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

## Description

**Summary:** Dataset of posts and comments from [habr.com](https://habr.com/ru/all/), a Russian collaborative blog about IT, computer science and anything related to the Internet.

**Script:** [create_habr.py](https://github.com/IlyaGusev/rulm/blob/master/data_processing/create_habr.py)

**Point of Contact:** [Ilya Gusev](ilya.gusev@phystech.edu)

**Languages:** Russian, English, some programming code.

## Usage

Prerequisites:

```bash

pip install datasets zstandard jsonlines pysimdjson

```

Dataset iteration:

```python

from datasets import load_dataset

dataset = load_dataset('IlyaGusev/habr', split="train", streaming=True)

for example in dataset:

print(example["text_markdown"])

```

## Data Instances

```

{

"id": 12730,

"language": "ru",

"url": "https://habr.com/ru/post/12730/",

"text_markdown": "...",

"text_html": "...",

"lead_markdown": "...",

"lead_html": "...",

"type": "article",

"labels": [],

"original_author": null,

"original_url": null,

"time_published": 1185962380,

"author": "...",

"title": "Хочешь в университет — сделай презентацию",

"statistics": {

"commentsCount": 23,

"favoritesCount": 1,

"readingCount": 1542,

"score": 7,

"votesCount": 15,

"votesCountPlus": 11,

"votesCountMinus": 4

},

"hubs": [

"itcompanies"

],

"flows": [

"popsci"

],

"tags": [

"PowerPoint",

"презентация",

"абитуриенты",

],

"reading_time": 1,

"format": null,

"complexity": null,

"comments": {

"id": [11653537, 11653541],

"parent_id": [null, 11653537],

"level": [0, 1],

"time_published": [1185963192, 1185967886],

"score": [-1, 0],

"votes": [1, 0],

"message_html": ["...", "..."],

"author": ["...", "..."],

"children": [[11653541], []]

}

}

```

You can use this little helper to unflatten sequences:

```python

def revert_flattening(records):

fixed_records = []

for key, values in records.items():

if not fixed_records:

fixed_records = [{} for _ in range(len(values))]

for i, value in enumerate(values):

fixed_records[i][key] = value

return fixed_records

```

The original JSONL is already unflattened.

## Source Data

* The data source is the [Habr](https://habr.com/) website.

* API call example: [post 709430](https://habr.com/kek/v2/articles/709430).

* Processing script is [here](https://github.com/IlyaGusev/rulm/blob/master/data_processing/create_habr.py).

## Personal and Sensitive Information

The dataset is not anonymized, so individuals' names can be found in the dataset. Information about the original authors is included in the dataset where possible.

| 4,745 | [

[

-0.0280914306640625,

-0.04522705078125,

0.0048828125,

0.0224151611328125,

-0.019744873046875,

0.005825042724609375,

-0.0204315185546875,

0.00269317626953125,

0.024688720703125,

0.0244293212890625,

-0.0330810546875,

-0.057525634765625,

-0.0221710205078125,

0.... |

DReAMy-lib/DreamBank-dreams-en | 2023-02-13T22:51:35.000Z | [

"size_categories:10K<n<100K",

"language:en",

"license:apache-2.0",

"region:us"

] | DReAMy-lib | null | null | 0 | 5 | 2023-02-13T22:20:25 | ---

dataset_info:

features:

- name: series

dtype: string

- name: description

dtype: string

- name: dreams

dtype: string

- name: gender

dtype: string

- name: year

dtype: string

splits:

- name: train

num_bytes: 21526822

num_examples: 22415

download_size: 11984242

dataset_size: 21526822

license: apache-2.0

language:

- en

size_categories:

- 10K<n<100K

---

# DreamBank - Dreams

The dataset is a collection of ~20 k textual reports of dreams, originally scraped from the [DreamBank](https://www.dreambank.net/) databased by

[`mattbierner`](https://github.com/mattbierner/DreamScrape). The DreamBank reports are divided into `series`,

which are collections of individuals or research projects/groups that have gathered the dreams.

## Content

The dataset revolves around three main features:

- `dreams`: the content of each dream report.

- `series`: the series to which a report belongs

- `description`: a brief description of the `series`

- `gender`: the gender of the individual(s) in the `series`

- `year`: the time window of the recordings

## Series distribution

The following is a summary of (alphabetically ordered) DreamBank's series together with their total amount of dream reports.

- alta: 422

- angie: 48

- arlie: 212

- b: 3114

- b-baseline: 250

- b2: 1138

- bay_area_girls_456: 234

- bay_area_girls_789: 154

- bea1: 223

- bea2: 63

- blind-f: 238

- blind-m: 143

- bosnak: 53

- chris: 100

- chuck: 75

- dahlia: 24

- david: 166

- dorothea: 899

- ed: 143

- edna: 19

- elizabeth: 1707

- emma: 1221

- emmas_husband: 72

- esther: 110

- hall_female: 681

- jasmine1: 39

- jasmine2: 269

- jasmine3: 259

- jasmine4: 94

- jeff: 87

- joan: 42

- kenneth: 2021

- lawrence: 206

- mack: 38

- madeline1-hs: 98

- madeline2-dorms: 186

- madeline3-offcampus: 348

- madeline4-postgrad: 294

- mark: 23

- melissa: 89

- melora: 211

- melvin: 128

- merri: 315

- miami-home: 171

- miami-lab: 274

- midwest_teens-f: 111

- midwest_teens-m: 83

- nancy: 44

- natural_scientist: 234

- norman: 1235

- norms-f: 490

- norms-m: 491

- pegasus: 1093

- peru-f: 381

- peru-m: 384

- phil1: 106

- phil2: 220

- phil3: 180

- physiologist: 86

- ringo: 16

- samantha: 63

- seventh_graders: 69

- toby: 33

- tom: 27

- ucsc_women: 81

- vickie: 35

- vietnam_vet: 98

- wedding: 65

- west_coast_teens: 89

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 2,448 | [

[

-0.03240966796875,

-0.0246429443359375,

0.0198516845703125,

-0.0116424560546875,

-0.01337432861328125,

0.022308349609375,

0.0023403167724609375,

-0.031402587890625,

0.041839599609375,

0.062103271484375,

-0.060333251953125,

-0.070556640625,

-0.04803466796875,

... |

RicardoRei/wmt-sqm-human-evaluation | 2023-02-17T11:10:39.000Z | [

"size_categories:1M<n<10M",

"language:cs",

"language:de",

"language:en",

"language:hr",

"language:ja",

"language:liv",

"language:ru",

"language:sah",

"language:uk",

"language:zh",

"license:apache-2.0",

"mt-evaluation",

"WMT",

"12-lang-pairs",

"region:us"

] | RicardoRei | null | null | 0 | 5 | 2023-02-17T10:42:46 | ---

license: apache-2.0

size_categories:

- 1M<n<10M

language:

- cs

- de

- en

- hr

- ja

- liv

- ru

- sah

- uk

- zh

tags:

- mt-evaluation

- WMT

- 12-lang-pairs

---

# Dataset Summary

In 2022, several changes were made to the annotation procedure used in the WMT Translation task. In contrast to the standard DA (sliding scale from 0-100) used in previous years, in 2022 annotators performed DA+SQM (Direct Assessment + Scalar Quality Metric). In DA+SQM, the annotators still provide a raw score between 0 and 100, but also are presented with seven labeled tick marks. DA+SQM helps to stabilize scores across annotators (as compared to DA).

The data is organised into 8 columns:

- lp: language pair

- src: input text

- mt: translation

- ref: reference translation

- score: direct assessment

- system: MT engine that produced the `mt`

- annotators: number of annotators

- domain: domain of the input text (e.g. news)

- year: collection year

You can also find the original data [here](https://www.statmt.org/wmt22/results.html)

## Python usage:

```python

from datasets import load_dataset

dataset = load_dataset("RicardoRei/wmt-sqm-human-evaluation", split="train")

```

There is no standard train/test split for this dataset but you can easily split it according to year, language pair or domain. E.g. :

```python

# split by year

data = dataset.filter(lambda example: example["year"] == 2022)

# split by LP

data = dataset.filter(lambda example: example["lp"] == "en-de")

# split by domain

data = dataset.filter(lambda example: example["domain"] == "news")

```

Note that, so far, all data is from [2022 General Translation task](https://www.statmt.org/wmt22/translation-task.html)

## Citation Information

If you use this data please cite the WMT findings:

- [Findings of the 2022 Conference on Machine Translation (WMT22)](https://aclanthology.org/2022.wmt-1.1.pdf)

| 1,895 | [

[

-0.043792724609375,

-0.04522705078125,

0.0259857177734375,

0.01096343994140625,

-0.0322265625,

-0.0158843994140625,

-0.0150909423828125,

-0.03558349609375,

0.0182952880859375,

0.03521728515625,

-0.040740966796875,

-0.039886474609375,

-0.05316162109375,

0.034... |

jonathan-roberts1/Ships-In-Satellite-Imagery | 2023-03-31T14:38:12.000Z | [

"license:cc-by-sa-4.0",

"region:us"

] | jonathan-roberts1 | null | null | 2 | 5 | 2023-02-17T16:48:59 | ---

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': an entire ship

'1': no ship or part of a ship

splits:

- name: train

num_bytes: 41806886

num_examples: 4000

download_size: 0

dataset_size: 41806886

license: cc-by-sa-4.0

---

# Dataset Card for "Ships-In-Satellite-Imagery"

## Dataset Description

- **Paper:** [Ships in Satellite Imagery](https://www.kaggle.com/datasets/rhammell/ships-in-satellite-imagery)

### Licensing Information

CC BY-SA 4.0

## Citation Information

[Ships in Satellite Imagery](https://www.kaggle.com/datasets/rhammell/ships-in-satellite-imagery)

```

@misc{kaggle_sisi,

author = {Hammell, Robert},

title = {Ships in Satellite Imagery},

howpublished = {\url{https://www.kaggle.com/datasets/rhammell/ships-in-satellite-imagery}},

year = {2018},

version = {9.0}

}

``` | 915 | [

[

-0.031402587890625,

-0.0194091796875,

0.037139892578125,

0.021942138671875,

-0.057037353515625,

-0.0015020370483398438,

0.0159912109375,

-0.03289794921875,

0.0208282470703125,

0.05267333984375,

-0.05059814453125,

-0.0679931640625,

-0.0340576171875,

-0.011146... |

recmeapp/mobilerec | 2023-02-21T17:06:16.000Z | [

"region:us"

] | recmeapp | null | null | 3 | 5 | 2023-02-20T02:40:55 | ---

# For reference on model card metadata, see the spec: https://github.com/huggingface/hub-docs/blob/main/datasetcard.md?plain=1

# Doc / guide: https://huggingface.co/docs/hub/datasets-cards

{}

---

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- https://github.com/mhmaqbool/mobilerec

- **Repository:**

- https://github.com/mhmaqbool/mobilerec

- **Paper:**

- MobileRec: A Large-Scale Dataset for Mobile Apps Recommendation

- **Point of Contact:**

- M.H. Maqbool (hasan.khowaja@gmail.com)

- Abubakar Siddique (abubakar.ucr@gmail.com)

### Dataset Summary

MobileRec is a large-scale app recommendation dataset. There are 19.3 million user\item interactions. This is a 5-core dataset.

User\item interactions are sorted in ascending chronological order. There are 0.7 million users who have had at least five distinct interactions.

There are 10173 apps in total.

### Supported Tasks and Leaderboards

Sequential Recommendation

### Languages

English

## How to use the dataset?

```