id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 6.67k ⌀ | citation stringlengths 0 10.7k ⌀ | likes int64 0 3.66k | downloads int64 0 8.89M | created timestamp[us] | card stringlengths 11 977k | card_len int64 11 977k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|

dbdu/ShareGPT-74k-ko | 2023-08-19T07:00:39.000Z | [

"task_categories:text-generation",

"size_categories:10K<n<100K",

"language:ko",

"license:cc-by-2.0",

"conversation",

"chatgpt",

"gpt-3.5",

"region:us"

] | dbdu | null | null | 11 | 5 | 2023-05-23T16:30:43 | ---

language:

- ko

pretty_name: ShareGPT-74k-ko

tags:

- conversation

- chatgpt

- gpt-3.5

license: cc-by-2.0

task_categories:

- text-generation

size_categories:

- 10K<n<100K

---

# ShareGPT-ko-74k

Korean translation of the cleaned version of ShareGPT-90k, produced with Google Translate.\

You can find the original dataset [here](https://github.com/lm-sys/FastChat/issues/90).

## Dataset Description

The structure of the JSON files is the same as in the original dataset.\

`*_unclneaed.json` files contain the Korean-translated data without any post-processing (74k dialogues in total).\

`*_cleaned.json` files are post-processed versions from which dialogues containing code were roughly removed (55k dialogues in total).\

**WARNING**: Code snippets may have been translated into Korean, so using the cleaned files is recommended.
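The card does not say how code-containing dialogues were detected for the cleaned files. The Python sketch below only illustrates the idea of such a rough filter. It assumes the ShareGPT-style layout of the original release (a list of dialogues, each with an `id` and a `conversations` list of `{"from", "value"}` turns) and uses a hypothetical regular-expression heuristic; it is not the author's cleaning code, which can be found in the translation repository linked under Code.

```python
import re

# Build the fence marker programmatically so this snippet can itself live
# inside a Markdown code block.
FENCE = "`" * 3

# Hypothetical heuristic (an assumption, not the author's filter): a turn
# "looks like code" if it contains a Markdown code fence, a Python-style
# function definition, or a C/C++ #include line.
CODE_PATTERN = re.compile(re.escape(FENCE) + r"|\bdef \w+\(|#include\s*<")


def has_code(dialogue: dict) -> bool:
    """Return True if any turn of a ShareGPT-style dialogue matches CODE_PATTERN."""
    return any(
        CODE_PATTERN.search(turn.get("value", ""))
        for turn in dialogue.get("conversations", [])
    )


def filter_dialogues(dialogues: list[dict]) -> list[dict]:
    """Keep only dialogues in which no turn looks like code."""
    return [d for d in dialogues if not has_code(d)]


# Hypothetical sample records in the assumed ShareGPT layout.
sample = [
    {"id": "a1", "conversations": [
        {"from": "human", "value": "How do I sort a list in Python?"},
        {"from": "gpt", "value": FENCE + "python\nsorted(xs)\n" + FENCE},
    ]},
    {"id": "b2", "conversations": [
        {"from": "human", "value": "Recommend a book."},
        {"from": "gpt", "value": "Try a classic novel."},
    ]},
]
print([d["id"] for d in filter_dialogues(sample)])  # ['b2']
```

A regex heuristic like this is deliberately coarse: it will miss code without fences and can flag prose that happens to mention `#include <...>`, which matches the card's description of the removal as rough.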

## Licensing Information

Because the dataset contains GPT-generated text, its use is subject to OpenAI's [Terms of Use](https://openai.com/policies/terms-of-use).\

In all other respects, it is licensed under [CC BY 2.0 KR](https://creativecommons.org/licenses/by/2.0/kr/).

## Code

The code used for the translation is available in the following repository:\

https://github.com/dubuduru/ShareGPT-translation

You can use the repository to translate ShareGPT-like datasets into your preferred language. | 1,825 | [

[

-0.0192718505859375,

-0.048675537109375,

0.0188446044921875,

0.02886962890625,

-0.044830322265625,

-0.009552001953125,

-0.0282135009765625,

-0.0168304443359375,

0.0289764404296875,

0.035919189453125,

-0.04736328125,

-0.051971435546875,

-0.04742431640625,

0.0... |

danioshi/incubus_taylor_swift_lyrics | 2023-05-25T19:03:59.000Z | [

"size_categories:n<1K",

"language:en",

"license:cc0-1.0",

"music",

"region:us"

] | danioshi | null | null | 0 | 5 | 2023-05-25T18:57:33 | ---

license: cc0-1.0

language:

- en

tags:

- music

pretty_name: Incubus and Taylor Swift lyrics

size_categories:

- n<1K

---

# Description

This dataset contains lyrics from both Incubus and Taylor Swift.

# Format

The file is in CSV format and contains three columns: Artist, Song Name and Lyrics.

## Caveats

The column Song Name has been transformed to a single string in lowercase format, so instead of having "Name of Song", the value will be "nameofsong". | 459 | [

[

0.004123687744140625,

-0.024505615234375,

-0.01236724853515625,

0.0355224609375,

-0.01561737060546875,

0.030517578125,

-0.00787353515625,

-0.0089874267578125,

0.0298004150390625,

0.07574462890625,

-0.060943603515625,

-0.055511474609375,

-0.041107177734375,

0... |

bluesky333/chemical_language_understanding_benchmark | 2023-07-09T10:36:44.000Z | [

"task_categories:text-classification",

"task_categories:token-classification",

"size_categories:10K<n<100K",

"language:en",

"license:cc-by-4.0",

"chemistry",

"region:us"

] | bluesky333 | null | null | 2 | 5 | 2023-05-30T05:52:05 | ---

license: cc-by-4.0

task_categories:

- text-classification

- token-classification

language:

- en

tags:

- chemistry

pretty_name: CLUB

size_categories:

- 10K<n<100K

---

## Table of Contents

- [Benchmark Summary](#benchmark-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

<p><h1>🧪🔋 Chemical Language Understanding Benchmark 🛢️🧴</h1></p>

<a name="benchmark-summary"></a>

## Benchmark Summary

The Chemical Language Understanding Benchmark (CLUB) was published in the ACL 2023 Industry Track to facilitate NLP research in the chemical industry (paper link not yet available).

To our knowledge, it is one of the first benchmarks that covers both patents and literature articles and is provided by an industrial organization.

All the datasets are annotated by professional chemists.

<a name="languages"></a>

### Languages

The language of this benchmark is English.

<a name="dataset-structure"></a>

## Dataset Structure

The benchmark has four datasets: two for text classification and two for token classification.

| Dataset | Task | # Examples | Avg. Token Length | # Classes / Entity Groups |

| ----- | ------ | ---------- | ------------ | ------------------------- |

| PETROCHEMICAL | Patent Area Classification | 2,775 | 448.19 | 7 |

| RHEOLOGY | Sentence Classification | 2,017 | 55.03 | 5 |

| CATALYST | Catalyst Entity Recognition | 4,663 | 42.07 | 5 |

| BATTERY | Battery Entity Recognition | 3,750 | 40.73 | 3 |

Refer to the paper for a detailed description of the datasets.

<a name="data-instances"></a>

### Data Instances

Each example is a paragraph or sentence from an academic paper or patent, with annotations in JSON format.

<a name="data-fields"></a>

### Data Fields

The fields for the text classification task are:

1) 'id', a unique numbered identifier sequentially assigned.

2) 'sentence', the input text.

3) 'label', the class for the text.

The fields for the token classification task are:

1) 'id', a unique numbered identifier sequentially assigned.

2) 'tokens', the input text tokenized by a BPE tokenizer.

3) 'ner_tags', the entity labels for the tokens.
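A minimal sketch of the two record layouts listed above, written as Python `TypedDict`s. The integer type of `id`, the string type of the labels, and all example values and label names are assumptions for illustration; the check simply verifies that each token has exactly one entity label.

```python
from typing import TypedDict


class TextClassificationExample(TypedDict):
    id: int        # sequentially assigned identifier (assumed to be an integer)
    sentence: str  # the input text
    label: str     # the class for the text


class TokenClassificationExample(TypedDict):
    id: int
    tokens: list[str]    # input text split by a BPE tokenizer
    ner_tags: list[str]  # one entity label per token


def check_token_example(ex: TokenClassificationExample) -> None:
    """Raise ValueError if `tokens` and `ner_tags` are not aligned one-to-one."""
    if len(ex["tokens"]) != len(ex["ner_tags"]):
        raise ValueError(
            f"example {ex['id']}: {len(ex['tokens'])} tokens "
            f"but {len(ex['ner_tags'])} tags"
        )


# Hypothetical records: the text, tokens, and label names are made up for illustration.
text_ex: TextClassificationExample = {
    "id": 0,
    "sentence": "The viscosity decreased as the shear rate increased.",
    "label": "LABEL_A",
}
token_ex: TokenClassificationExample = {
    "id": 0,
    "tokens": ["Li", "Co", "O2", "cathode"],
    "ner_tags": ["B-MATERIAL", "I-MATERIAL", "I-MATERIAL", "O"],
}
check_token_example(token_ex)  # passes: 4 tokens, 4 tags
```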

<a name="data-splits"></a>

### Data Splits

The data is split 80/20 into training and development sets.
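The card gives the split ratio but not the procedure. Assuming a seeded random shuffle (an assumption, not the authors' documented method), an 80/20 split could be sketched as follows; applied to the 2,775 PETROCHEMICAL examples it yields 2,220 training and 555 development examples.

```python
import random
from typing import Sequence, TypeVar

T = TypeVar("T")


def train_dev_split(
    examples: Sequence[T], train_frac: float = 0.8, seed: int = 42
) -> tuple[list[T], list[T]]:
    """Shuffle a copy of `examples` with a fixed seed and split it into train/dev.

    The shuffling and the seed value are assumptions; the benchmark's actual
    split procedure is not described in the card.
    """
    items = list(examples)
    random.Random(seed).shuffle(items)  # seeded, so the split is reproducible
    cut = int(len(items) * train_frac)
    return items[:cut], items[cut:]


# Example: the PETROCHEMICAL dataset has 2,775 examples.
train, dev = train_dev_split(range(2775))
print(len(train), len(dev))  # 2220 555
```

Using a dedicated `random.Random(seed)` instance keeps the split reproducible without touching the global random state.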

<a name="dataset-creation"></a>

## Dataset Creation

<a name="curation-rationale"></a>

### Curation Rationale

The dataset was created to provide a chemical language understanding benchmark for researchers and developers.

<a name="source-data"></a>

### Source Data

The dataset consists of open-access chemistry publications and patents annotated by professional chemists.

<a name="licensing-information"></a>

## Licensing Information

The manual annotations created for CLUB are licensed under a [Creative Commons Attribution 4.0 International License (CC-BY-4.0)](https://creativecommons.org/licenses/by/4.0/).

<a name="citation-information"></a>

## Citation Information

We will provide citation information once the ACL 2023 Industry Track paper is published.

| 3,449 | [

[

-0.013671875,

-0.0238494873046875,

0.0367431640625,

0.0169830322265625,

0.014404296875,

0.0120849609375,

-0.0242919921875,

-0.036346435546875,

-0.01300811767578125,

0.025115966796875,

-0.02978515625,

-0.078857421875,

-0.03582763671875,

0.0214385986328125,

... |

TigerResearch/pretrain_en | 2023-05-30T10:01:55.000Z | [

"task_categories:text-generation",

"size_categories:10M<n<100M",

"language:en",

"license:apache-2.0",

"region:us"

] | TigerResearch | null | null | 12 | 5 | 2023-05-30T08:40:36 | ---

dataset_info:

features:

- name: content

dtype: string

splits:

- name: train

num_bytes: 48490123196

num_examples: 22690306

download_size: 5070161762

dataset_size: 48490123196

license: apache-2.0

task_categories:

- text-generation

language:

- en

size_categories:

- 10M<n<100M

---

# Dataset Card for "pretrain_en"

The English portion of the [Tigerbot](https://github.com/TigerResearch/TigerBot) pretraining data.

## Usage

```python

import datasets

ds = datasets.load_dataset('TigerResearch/pretrain_en')

``` | 512 | [

[

-0.0287933349609375,

-0.01520538330078125,

-0.006103515625,

0.01800537109375,

-0.04852294921875,

0.006336212158203125,

-0.007129669189453125,

0.007472991943359375,

0.038543701171875,

0.0291900634765625,

-0.05572509765625,

-0.0311126708984375,

-0.017242431640625,... |

tollefj/rettsavgjoerelser_100samples_embeddings | 2023-08-11T10:45:31.000Z | [

"language:no",

"region:us"

] | tollefj | null | null | 0 | 5 | 2023-06-02T12:46:28 | ---

dataset_info:

features:

- name: url

dtype: string

- name: keywords

sequence: string

- name: text

dtype: string

- name: sentences

sequence: string

- name: summary

sequence: string

- name: embedding

sequence:

sequence: float32

splits:

- name: train

num_bytes: 73887305

num_examples: 100

download_size: 71145367

dataset_size: 73887305

language:

- 'no'

---

# Dataset Card for "rettsavgjoerelser_100samples_embeddings"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 610 | [

[

-0.0262298583984375,

-0.010894775390625,

-0.0035762786865234375,

0.0189666748046875,

-0.0230560302734375,

0.00214385986328125,

0.0159149169921875,

0.01335906982421875,

0.0699462890625,

0.043243408203125,

-0.052734375,

-0.0648193359375,

-0.05059814453125,

-0.... |

cjvt/janes_tag | 2023-06-06T10:07:53.000Z | [

"task_categories:token-classification",

"size_categories:1K<n<10K",

"language:sl",

"license:cc-by-sa-4.0",

"code-mixed",

"nonstandard",

"ner",

"region:us"

] | cjvt | Janes-Tag is a manually annotated corpus of Slovene Computer-Mediated Communication (CMC) consisting of mostly tweets

but also blogs, forums and news comments. | @misc{janes_tag,

title = {{CMC} training corpus Janes-Tag 3.0},

author = {Lenardi{\v c}, Jakob and {\v C}ibej, Jaka and Arhar Holdt, {\v S}pela and Erjavec, Toma{\v z} and Fi{\v s}er, Darja and Ljube{\v s}i{\'c}, Nikola and Zupan, Katja and Dobrovoljc, Kaja},

url = {http://hdl.handle.net/11356/1732},

note = {Slovenian language resource repository {CLARIN}.{SI}},

copyright = {Creative Commons - Attribution-{ShareAlike} 4.0 International ({CC} {BY}-{SA} 4.0)},

year = {2022}

} | 0 | 5 | 2023-06-05T10:35:43 | ---

license: cc-by-sa-4.0

dataset_info:

features:

- name: id

dtype: string

- name: words

sequence: string

- name: lemmas

sequence: string

- name: msds

sequence: string

- name: nes

sequence: string

splits:

- name: train

num_bytes: 2653609

num_examples: 2957

download_size: 2871765

dataset_size: 2653609

task_categories:

- token-classification

language:

- sl

tags:

- code-mixed

- nonstandard

- ner

size_categories:

- 1K<n<10K

---

# Dataset Card for Janes-Tag

### Dataset Summary

Janes-Tag is a manually annotated corpus of Slovene Computer-Mediated Communication (CMC) consisting of mostly tweets but also blogs, forums and news comments.

### Languages

Code-switched/nonstandard Slovenian.

## Dataset Structure

### Data Instances

A sample instance from the dataset. Each word is annotated with its form (`words`), lemma (`lemmas`), MSD tag (XPOS, `msds`), and IOB2-encoded named entity tag (`nes`).

```

{

'id': 'janes.news.rtvslo.279732.2',

'words': ['Jst', 'mam', 'tud', 'dons', 'rojstn', 'dan', '.'],

'lemmas': ['jaz', 'imeti', 'tudi', 'danes', 'rojsten', 'dan', '.'],

'msds': ['mte:Pp1-sn', 'mte:Vmpr1s-n', 'mte:Q', 'mte:Rgp', 'mte:Agpmsay', 'mte:Ncmsan', 'mte:Z'],

'nes': ['O', 'O', 'O', 'O', 'O', 'O', 'O']

}

```

### Data Fields

- `id`: unique identifier of the example;

- `words`: words in the example;

- `lemmas`: lemmas in the example;

- `msds`: MSD (morphosyntactic description) tags of the words in the example;

- `nes`: IOB2-encoded named entity tag (person, location, organization, misc, other)
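Because the `nes` column uses IOB2 encoding, entity mentions have to be reassembled from token-level tags. A minimal Python sketch, assuming tags of the form `O`, `B-<type>`, `I-<type>`; the type suffixes used below (`per`, `loc`) are hypothetical, so check the actual tag inventory in the data before relying on them.

```python
from typing import Optional


def iob2_to_spans(tags: list[str]) -> list[tuple[str, int, int]]:
    """Convert IOB2 tags into (type, start, end) spans with `end` exclusive.

    An "I-" tag that does not continue an open span of the same type is
    treated as the start of a new span.
    """
    spans: list[tuple[str, int, int]] = []
    label: Optional[str] = None
    start = 0
    for i, tag in enumerate(tags):
        if tag.startswith("B-") or (tag.startswith("I-") and tag[2:] != label):
            if label is not None:
                spans.append((label, start, i))  # close the previous span
            label, start = tag[2:], i
        elif not tag.startswith("I-"):
            # "O" (or any unexpected tag) closes the open span, if any.
            if label is not None:
                spans.append((label, start, i))
            label = None
        # Otherwise: "I-<same type>" simply extends the open span.
    if label is not None:
        spans.append((label, start, len(tags)))
    return spans


# The sample instance above has no named entities:
print(iob2_to_spans(["O", "O", "O", "O", "O", "O", "O"]))  # []
# Hypothetical tags (type names are assumptions):
print(iob2_to_spans(["B-per", "I-per", "O", "B-loc"]))  # [('per', 0, 2), ('loc', 3, 4)]
```

Spans are half-open token ranges, so `words[start:end]` recovers the mention text. Treating a stray `I-` tag as the start of a new entity is one common convention; stricter decoders drop such tags instead.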

## Additional Information

### Dataset Curators

Jakob Lenardič et al. (please see http://hdl.handle.net/11356/1732 for the full list)

### Licensing Information

CC BY-SA 4.0.

### Citation Information

```

@misc{janes_tag,

title = {{CMC} training corpus Janes-Tag 3.0},

author = {Lenardi{\v c}, Jakob and {\v C}ibej, Jaka and Arhar Holdt, {\v S}pela and Erjavec, Toma{\v z} and Fi{\v s}er, Darja and Ljube{\v s}i{\'c}, Nikola and Zupan, Katja and Dobrovoljc, Kaja},

url = {http://hdl.handle.net/11356/1732},

note = {Slovenian language resource repository {CLARIN}.{SI}},

copyright = {Creative Commons - Attribution-{ShareAlike} 4.0 International ({CC} {BY}-{SA} 4.0)},

year = {2022}

}

```

### Contributions

Thanks to [@matejklemen](https://github.com/matejklemen) for adding this dataset. | 2,306 | [

[

-0.01629638671875,

-0.030792236328125,

0.0092620849609375,

0.01070404052734375,

-0.02020263671875,

-0.01140594482421875,

-0.0137481689453125,

0.002849578857421875,

0.0204315185546875,

0.033233642578125,

-0.054595947265625,

-0.0836181640625,

-0.05377197265625,

... |

daven3/geosignal | 2023-08-28T04:40:53.000Z | [

"task_categories:question-answering",

"license:apache-2.0",

"region:us"

] | daven3 | null | null | 4 | 5 | 2023-06-05T18:38:16 | ---

license: apache-2.0

task_categories:

- question-answering

---

## Instruction Tuning: GeoSignal

Scientific domain adaptation has two main steps during instruction tuning.

- Instruction tuning with general instruction-tuning data. Here we use Alpaca-GPT4.

- Instruction tuning with restructured domain knowledge, which we call expertise instruction tuning. For K2, we use knowledge-intensive instruction data, GeoSignal.

***The following illustrates the recipe for training the domain-specific language model:***

- **Adapter Model on [Huggingface](https://huggingface.co/): [daven3/k2_it_adapter](https://huggingface.co/daven3/k2_it_adapter)**

To design GeoSignal, we collected knowledge from various data sources, such as:

GeoSignal is designed for knowledge-intensive instruction tuning and used for aligning with experts.

The full version will be uploaded soon; alternatively, email [daven](mailto:davendw@sjtu.edu.cn) about potential research cooperation.

| 1,067 | [

[

-0.0239105224609375,

-0.06329345703125,

0.032318115234375,

0.0212249755859375,

-0.015869140625,

-0.0194549560546875,

-0.0289154052734375,

-0.0208740234375,

-0.01220703125,

0.0418701171875,

-0.05072021484375,

-0.057586669921875,

-0.05615234375,

-0.02101135253... |

Posos/MedNERF | 2023-06-07T13:55:06.000Z | [

"task_categories:token-classification",

"size_categories:n<1K",

"language:fr",

"license:cc-by-nc-sa-4.0",

"medical",

"arxiv:2306.04384",

"region:us"

] | Posos | null | null | 1 | 5 | 2023-06-06T12:50:48 | ---

license: cc-by-nc-sa-4.0

task_categories:

- token-classification

language:

- fr

tags:

- medical

pretty_name: MedNERF

size_categories:

- n<1K

---

# MedNERF

## Dataset Description

- **Paper:** [Multilingual Clinical NER: Translation or Cross-lingual Transfer?](https://arxiv.org/abs/2306.04384)

- **Point of Contact:** [email](mailto:research@posos.fr)

### Dataset Summary

MedNERF is a French medical NER dataset whose aim is to serve as a test set for medical NER models.

It has been built using a sample of French medical prescriptions annotated with the same guidelines as the [n2c2 dataset](https://academic.oup.com/jamia/article-abstract/27/1/3/5581277?redirectedFrom=fulltext&login=false).

Entities are annotated with the following labels: `Drug`, `Strength`, `Form`, `Dosage`, `Duration` and `Frequency`, using the IOB format.
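Since MedNERF is intended as a test set, a typical use is entity-level scoring. The sketch below computes exact-match precision, recall, and F1 over (label, start, end) spans, a common NER convention that is assumed here; the card does not prescribe a metric. The spans would first be decoded from the IOB tags; the prescription and spans below are hypothetical.

```python
Span = tuple[str, int, int]  # (label, start token index, end token index, exclusive)


def entity_prf(gold: set[Span], pred: set[Span]) -> tuple[float, float, float]:
    """Exact-match entity-level precision, recall, and F1.

    A predicted entity counts as correct only if its label and both
    boundaries match a gold entity exactly.
    """
    tp = len(gold & pred)
    precision = tp / len(pred) if pred else 0.0
    recall = tp / len(gold) if gold else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical prescription "doliprane 1000 mg 3 fois par jour", tokens 0..6.
gold = {("Drug", 0, 1), ("Strength", 1, 3), ("Frequency", 3, 7)}
pred = {("Drug", 0, 1), ("Strength", 1, 2), ("Frequency", 3, 7)}  # Strength boundary is off
p, r, f = entity_prf(gold, pred)
print(f"P={p:.3f} R={r:.3f} F1={f:.3f}")  # P=0.667 R=0.667 F1=0.667
```

Under this strict scheme, a boundary error counts as both a false positive and a false negative; libraries such as seqeval compute comparable entity-level scores directly from IOB tag sequences.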

## Licensing Information

This dataset is distributed under the Creative Commons Attribution Non Commercial Share Alike 4.0 license.

## Citation information

```

@inproceedings{mednerf,

title = "Multilingual Clinical NER: Translation or Cross-lingual Transfer?",

author = "Gaschi, Félix and Fontaine, Xavier and Rastin, Parisa and Toussaint, Yannick",

booktitle = "Proceedings of the 5th Clinical Natural Language Processing Workshop",

publisher = "Association for Computational Linguistics",

year = "2023"

}

``` | 1,369 | [

[

-0.01708984375,

-0.0308990478515625,

0.01611328125,

0.03387451171875,

-0.01155853271484375,

-0.030517578125,

-0.0158233642578125,

-0.042266845703125,

0.0228729248046875,

0.04095458984375,

-0.03753662109375,

-0.037506103515625,

-0.06707763671875,

0.0306854248... |

bogdancazan/wikilarge-text-simplification | 2023-06-06T17:49:49.000Z | [

"region:us"

] | bogdancazan | null | null | 0 | 5 | 2023-06-06T17:45:53 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

SahandNZ/cryptonews-articles-with-price-momentum-labels | 2023-06-07T17:49:38.000Z | [

"task_categories:text-classification",

"size_categories:10K<n<100K",

"language:en",

"license:openrail",

"finance",

"region:us"

] | SahandNZ | null | null | 5 | 5 | 2023-06-07T16:35:21 | ---

license: openrail

task_categories:

- text-classification

language:

- en

tags:

- finance

pretty_name: Cryptonews.com articles with price momentum labels

size_categories:

- 10K<n<100K

---

# Dataset Card for Cryptonews articles with price momentum labels

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

## Dataset Description

- **Homepage:**

- **Repository:** https://github.com/SahandNZ/IUST-NLP-project-spring-2023

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

The dataset was gathered from two prominent sources in the cryptocurrency industry, Cryptonews.com and Binance.com, with the aim of evaluating the impact of news on crypto price movements.

News events such as regulatory changes, technological advancements, and major partnerships can significantly affect cryptocurrency prices. By combining data from these sources, this dataset aims to provide insights into the relationship between news events and crypto market trends.

### Supported Tasks and Leaderboards

- **Text Classification**

- **Sentiment Analysis**

### Languages

The language data in this dataset is in English (BCP-47 `en`).

## Dataset Structure

### Data Instances

Todo

### Data Fields

Todo

### Data Splits

Todo

### Source Data

- **Textual:** https://Cryptonews.com

- **Numerical:** https://Binance.com | 1,718 | [

[

-0.01152801513671875,

-0.0301666259765625,

0.01015472412109375,

0.02337646484375,

-0.051055908203125,

0.01389312744140625,

-0.016448974609375,

-0.03521728515625,

0.0479736328125,

0.020782470703125,

-0.058685302734375,

-0.091796875,

-0.049224853515625,

0.0024... |

andersonbcdefg/redteaming_eval_pairwise | 2023-06-08T05:51:12.000Z | [

"region:us"

] | andersonbcdefg | null | null | 0 | 5 | 2023-06-08T05:48:52 | ---

dataset_info:

features:

- name: prompt

dtype: string

- name: response_a

dtype: string

- name: response_b

dtype: string

- name: explanation

dtype: string

- name: preferred

dtype: string

- name: __index_level_0__

dtype: int64

splits:

- name: train

num_bytes: 79844

num_examples: 105

download_size: 0

dataset_size: 79844

---

# Dataset Card for "redteaming_eval_pairwise"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 558 | [

[

-0.0253448486328125,

-0.034912109375,

0.004974365234375,

0.03582763671875,

-0.01153564453125,

0.01947021484375,

0.01313018798828125,

-0.0037097930908203125,

0.0699462890625,

0.027923583984375,

-0.039154052734375,

-0.04998779296875,

-0.0352783203125,

-0.00782... |

theodor1289/wit_tiny | 2023-06-09T19:21:44.000Z | [

"region:us"

] | theodor1289 | null | null | 0 | 5 | 2023-06-09T19:21:36 | ---

dataset_info:

features:

- name: image_url

dtype: string

- name: image

dtype: image

- name: text

dtype: string

- name: context_page_description

dtype: string

- name: context_section_description

dtype: string

- name: caption_alt_text_description

dtype: string

splits:

- name: train

num_bytes: 73247697.0

num_examples: 882

- name: test

num_bytes: 8588991.0

num_examples: 99

download_size: 81145983

dataset_size: 81836688.0

---

# Dataset Card for "wit_tiny"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 654 | [

[

-0.03973388671875,

-0.019256591796875,

0.0238037109375,

0.0031375885009765625,

-0.01541900634765625,

-0.021820068359375,

-0.0014171600341796875,

-0.0127716064453125,

0.0711669921875,

0.00942230224609375,

-0.0638427734375,

-0.029754638671875,

-0.031036376953125,

... |

theodor1289/wit | 2023-06-15T08:04:59.000Z | [

"region:us"

] | theodor1289 | null | null | 0 | 5 | 2023-06-12T03:41:21 | ---

dataset_info:

features:

- name: image_url

dtype: string

- name: image

dtype:

image:

decode: false

- name: text

dtype: string

- name: context_page_description

dtype: string

- name: context_section_description

dtype: string

- name: caption_alt_text_description

dtype: string

splits:

- name: train

num_bytes: 313793832273.375

num_examples: 3921869

- name: test

num_bytes: 34879359766.5

num_examples: 435764

download_size: 992115227

dataset_size: 348673192039.875

---

# Dataset Card for "wit"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 703 | [

[

-0.0312042236328125,

-0.016357421875,

0.020751953125,

0.011474609375,

-0.0141754150390625,

-0.01511383056640625,

0.00482177734375,

-0.0267486572265625,

0.06365966796875,

0.0157012939453125,

-0.07135009765625,

-0.040924072265625,

-0.041107177734375,

-0.019073... |

dltdojo/ecommerce-faq-chatbot-dataset | 2023-06-13T05:50:52.000Z | [

"region:us"

] | dltdojo | null | null | 1 | 5 | 2023-06-13T01:02:44 | ---

dataset_info:

features:

- name: a_hant

dtype: string

- name: answer

dtype: string

- name: question

dtype: string

- name: q_hant

dtype: string

splits:

- name: train

num_bytes: 28737

num_examples: 79

download_size: 17499

dataset_size: 28737

---

# Dataset Card for "ecommerce-faq-chatbot-dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 472 | [

[

-0.042694091796875,

-0.04217529296875,

-0.0023479461669921875,

0.004444122314453125,

-0.00555419921875,

0.00653839111328125,

0.0128326416015625,

-0.00405120849609375,

0.045501708984375,

0.04949951171875,

-0.08001708984375,

-0.0521240234375,

-0.01763916015625,

... |

Abzu/RedPajama-Data-1T-arxiv-filtered | 2023-06-13T15:24:34.000Z | [

"region:us"

] | Abzu | null | null | 2 | 5 | 2023-06-13T15:24:28 | ---

dataset_info:

features:

- name: text

dtype: string

- name: meta

dtype: string

- name: red_pajama_subset

dtype: string

splits:

- name: train

num_bytes: 229340859.5333384

num_examples: 3911

download_size: 104435457

dataset_size: 229340859.5333384

---

# Dataset Card for "RedPajama-Data-1T-arxiv-filtered"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 475 | [

[

-0.05218505859375,

-0.035919189453125,

0.01081085205078125,

0.0267181396484375,

-0.041259765625,

-0.01361083984375,

0.0165863037109375,

-0.01715087890625,

0.0665283203125,

0.06597900390625,

-0.061065673828125,

-0.06573486328125,

-0.0596923828125,

-0.00683593... |

hyesunyun/liveqa_medical_trec2017 | 2023-06-20T13:33:44.000Z | [

"task_categories:question-answering",

"size_categories:n<1K",

"language:en",

"medical",

"region:us"

] | hyesunyun | null | null | 0 | 5 | 2023-06-15T16:04:52 | ---

task_categories:

- question-answering

language:

- en

tags:

- medical

pretty_name: LiveQAMedical

size_categories:

- n<1K

---

# Dataset Card for LiveQA Medical from TREC 2017

The LiveQA'17 medical task focuses on consumer health question answering. Consumer health questions were received by the U.S. National Library of Medicine (NLM).

The dataset consists of constructed medical question-answer pairs for training and testing, with additional annotations that can be used to develop question analysis and question answering systems.

Please refer to our overview paper for more information about the constructed datasets and the LiveQA Track:

Asma Ben Abacha, Eugene Agichtein, Yuval Pinter & Dina Demner-Fushman. Overview of the Medical Question Answering Task at TREC 2017 LiveQA. TREC, Gaithersburg, MD, 2017 (https://trec.nist.gov/pubs/trec26/papers/Overview-QA.pdf).

**Homepage:** [https://github.com/abachaa/LiveQA_MedicalTask_TREC2017](https://github.com/abachaa/LiveQA_MedicalTask_TREC2017)

## Medical Training Data

The dataset provides 634 question-answer pairs for training:

1) TREC-2017-LiveQA-Medical-Train-1.xml => 388 question-answer pairs corresponding to 200 NLM questions.

Each question is divided into one or more subquestion(s). Each subquestion has one or more answer(s).

These question-answer pairs were constructed automatically and validated manually.

2) TREC-2017-LiveQA-Medical-Train-2.xml => 246 question-answer pairs corresponding to 246 NLM questions.

Answers were retrieved manually by librarians.

**You can access them as JSONL.**

The datasets are not exhaustive with regard to subquestions, i.e., some subquestions might not be annotated.

Additional annotations are provided for both (i) the Focus and (ii) the Question Type used to define each subquestion.

23 question types were considered (e.g. Treatment, Cause, Diagnosis, Indication, Susceptibility, Dosage) related to four focus categories: Disease, Drug, Treatment and Exam.

## Medical Test Data

The test split can be downloaded via Hugging Face.

Test questions cover 26 question types associated with five focus categories.

Each question includes one or more subquestion(s) and at least one focus and one question type.

Reference answers were selected from trusted resources and validated by medical experts.

Each test question has at least one reference answer, along with its URL and relevant comments.

Question paraphrases were created by assessors and used with the reference answers to judge the participants' answers.

If you use these datasets, please cite the paper:

```

@inproceedings{LiveMedQA2017,

author = {Asma {Ben Abacha} and Eugene Agichtein and Yuval Pinter and Dina Demner{-}Fushman},

title = {Overview of the Medical Question Answering Task at TREC 2017 LiveQA},

booktitle = {TREC 2017},

year = {2017}

}

``` | 2,895 | [

[

-0.0279541015625,

-0.06591796875,

0.0216217041015625,

-0.01332855224609375,

-0.0177154541015625,

0.01324462890625,

0.0084991455078125,

-0.045989990234375,

0.03985595703125,

0.053314208984375,

-0.056427001953125,

-0.04852294921875,

-0.029754638671875,

0.02073... |

merlinyx/pose-controlnet | 2023-06-23T18:52:11.000Z | [

"license:mit",

"region:us"

] | merlinyx | null | null | 0 | 5 | 2023-06-19T22:19:21 | ---

license: mit

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': gt

'1': pose

'2': st

- name: caption

dtype: string

- name: gtimage

dtype: image

- name: stimage

dtype: image

splits:

- name: train

num_bytes: 1702123872.04

num_examples: 15764

- name: test

num_bytes: 144819992.92

num_examples: 1346

download_size: 1762884199

dataset_size: 1846943864.96

---

### Dataset Summary

The data is based on DeepFashion, converted into image pairs that show the same person in the same garment in different poses.

This will not preserve the person or garment at all; the goal is simply to process the data first and see what kind of ControlNet it can train, as an exercise in training a ControlNet.

The controlnet_aux OpenPose detector sometimes returns black images when the person is occluded, so there are not many valid image pairs. | 958 | [

[

-0.032012939453125,

-0.0252838134765625,

-0.0159912109375,

-0.0168914794921875,

-0.0298004150390625,

-0.01441192626953125,

0.005619049072265625,

-0.03271484375,

0.023223876953125,

0.062286376953125,

-0.0634765625,

-0.0262603759765625,

-0.037750244140625,

-0.... |

ArtifactAI/arxiv-beir-cs-ml-generated-queries | 2023-06-21T14:23:58.000Z | [

"doi:10.57967/hf/0804",

"region:us"

] | ArtifactAI | null | null | 0 | 5 | 2023-06-21T00:33:06 | ### Dataset Summary

A BEIR-style dataset derived from [ArXiv](https://arxiv.org/). The dataset consists of corpus/query pairs derived from ArXiv abstracts in the following categories: "cs.CL", "cs.AI", "cs.CV", "cs.HC", "cs.IR", "cs.RO", "cs.NE", "stat.ML".

### Languages

All tasks are in English (`en`).

## Dataset Structure

The dataset contains a corpus, queries and qrels (relevance judgments file). They must be in the following format:

- `corpus` file: a `.jsonl` file (jsonlines) that contains a list of dictionaries, each with three fields `_id` with unique document identifier, `title` with document title (optional) and `text` with document paragraph or passage. For example: `{"_id": "doc1", "title": "Albert Einstein", "text": "Albert Einstein was a German-born...."}`

- `queries` file: a `.jsonl` file (jsonlines) that contains a list of dictionaries, each with two fields `_id` with unique query identifier and `text` with query text. For example: `{"_id": "q1", "text": "Who developed the mass-energy equivalence formula?"}`

- `qrels` file: a `.tsv` file (tab-separated) that contains three columns, i.e. the `query-id`, `corpus-id` and `score`, in this order. The first row is a header. For example: `q1 doc1 1`
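The three files can be read with the standard library alone. The sketch below mirrors the format rules listed above (one JSON object per line for `corpus` and `queries`, a tab-separated `qrels` file with a header row) and assumes UTF-8 encoding; the file names in any call are hypothetical. The `beir` Python package also ships its own loader, which may be preferable in practice.

```python
import csv
import json


def load_beir(corpus_path: str, queries_path: str, qrels_path: str):
    """Load BEIR-style files into plain dicts.

    Returns (corpus, queries, qrels) with the shapes:
      corpus:  {doc_id: {"title": str, "text": str}}
      queries: {query_id: query_text}
      qrels:   {query_id: {doc_id: score}}
    """
    corpus: dict[str, dict[str, str]] = {}
    with open(corpus_path, encoding="utf-8") as f:
        for line in f:
            if line.strip():
                doc = json.loads(line)
                # `title` is optional; fall back to an empty string.
                corpus[doc["_id"]] = {"title": doc.get("title", ""), "text": doc["text"]}

    queries: dict[str, str] = {}
    with open(queries_path, encoding="utf-8") as f:
        for line in f:
            if line.strip():
                query = json.loads(line)
                queries[query["_id"]] = query["text"]

    qrels: dict[str, dict[str, int]] = {}
    with open(qrels_path, encoding="utf-8", newline="") as f:
        reader = csv.reader(f, delimiter="\t")
        next(reader, None)  # skip the header row
        for row in reader:
            if not row:
                continue  # tolerate blank lines
            query_id, corpus_id, score = row[:3]
            qrels.setdefault(query_id, {})[corpus_id] = int(score)

    return corpus, queries, qrels
```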

### Data Instances

A high-level example of a BEIR dataset:

```python

corpus = {

"doc1" : {

"title": "Albert Einstein",

"text": "Albert Einstein was a German-born theoretical physicist who developed the theory of relativity, \

one of the two pillars of modern physics (alongside quantum mechanics). His work is also known for \

its influence on the philosophy of science. He is best known to the general public for his mass–energy \

equivalence formula E = mc2, which has been dubbed 'the world's most famous equation'. He received the 1921 \

Nobel Prize in Physics 'for his services to theoretical physics, and especially for his discovery of the law \

of the photoelectric effect', a pivotal step in the development of quantum theory."

},

"doc2" : {

"title": "", # Keep title an empty string if not present

"text": "Wheat beer is a top-fermented beer which is brewed with a large proportion of wheat relative to the amount of \

malted barley. The two main varieties are German Weißbier and Belgian witbier; other types include Lambic (made \

with wild yeast), Berliner Weisse (a cloudy, sour beer), and Gose (a sour, salty beer)."

},

}

queries = {

"q1" : "Who developed the mass-energy equivalence formula?",

"q2" : "Which beer is brewed with a large proportion of wheat?"

}

qrels = {

"q1" : {"doc1": 1},

"q2" : {"doc2": 1},

}

```
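With relevance judgments in the `qrels` shape above, a retrieval run can be scored per query and averaged. The sketch below computes Recall@k and MRR@k; the `results` dictionary of retriever scores is hypothetical, and these are simpler metrics than the nDCG@10 that BEIR evaluations usually report.

```python
Qrels = dict[str, dict[str, int]]      # {query_id: {doc_id: relevance score}}
Run = dict[str, dict[str, float]]      # {query_id: {doc_id: retriever score}}


def _top_k(scores: dict[str, float], k: int) -> list[str]:
    """Return the k highest-scoring document ids for one query."""
    return [doc for doc, _ in sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:k]]


def recall_at_k(qrels: Qrels, results: Run, k: int) -> float:
    """Mean fraction of each query's relevant documents found in its top k."""
    per_query = []
    for qid, judged in qrels.items():
        relevant = {doc for doc, score in judged.items() if score > 0}
        if not relevant:
            continue  # queries without positive judgments are skipped
        top = _top_k(results.get(qid, {}), k)
        per_query.append(sum(doc in relevant for doc in top) / len(relevant))
    return sum(per_query) / len(per_query) if per_query else 0.0


def mrr_at_k(qrels: Qrels, results: Run, k: int) -> float:
    """Mean reciprocal rank of the first relevant document within the top k."""
    per_query = []
    for qid, judged in qrels.items():
        relevant = {doc for doc, score in judged.items() if score > 0}
        if not relevant:
            continue
        rr = 0.0
        for rank, doc in enumerate(_top_k(results.get(qid, {}), k), start=1):
            if doc in relevant:
                rr = 1.0 / rank
                break
        per_query.append(rr)
    return sum(per_query) / len(per_query) if per_query else 0.0


qrels = {"q1": {"doc1": 1}, "q2": {"doc2": 1}}
results = {"q1": {"doc1": 0.9, "doc2": 0.1}, "q2": {"doc1": 0.6, "doc2": 0.4}}  # hypothetical scores
print(recall_at_k(qrels, results, 1), recall_at_k(qrels, results, 2), mrr_at_k(qrels, results, 10))
# 0.5 1.0 0.75
```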

### Data Fields

Examples from all configurations have the following features:

### Corpus

- `corpus`: a `dict` feature representing the document title and passage text, made up of:
  - `_id`: a `string` feature representing the unique document id.
  - `title`: a `string` feature, denoting the title of the document.
  - `text`: a `string` feature, denoting the text of the document.

### Queries

- `queries`: a `dict` feature representing the query, made up of:
  - `_id`: a `string` feature representing the unique query id.
  - `text`: a `string` feature, denoting the text of the query.

### Qrels

- `qrels`: a `dict` feature representing the query-document relevance judgements, made up of:
  - `query-id`: a `string` feature representing the query id.
  - `corpus-id`: a `string` feature, denoting the document id.
  - `score`: an `int32` feature, denoting the relevance judgement between query and document.

## Dataset Creation

### Curation Rationale

[Needs More Information]

### Source Data

#### Initial Data Collection and Normalization

[Needs More Information]

#### Who are the source language producers?

[Needs More Information]

## Considerations for Using the Data

### Social Impact of Dataset

[Needs More Information]

### Discussion of Biases

[Needs More Information]

### Other Known Limitations

[Needs More Information]

## Additional Information

### Dataset Curators

[Needs More Information]

### Licensing Information

[Needs More Information]

### Citation Information

Cite as:

```

@misc{arxiv-beir-cs-ml-generated-queries,

title={arxiv-beir-cs-ml-generated-queries},

author={Matthew Kenney},

year={2023}

}

``` | 4,437 | [

[

-0.0240325927734375,

-0.041351318359375,

0.03326416015625,

0.00478363037109375,

0.0171051025390625,

0.0005207061767578125,

-0.01100921630859375,

-0.00408935546875,

0.0271148681640625,

0.0260162353515625,

-0.0216217041015625,

-0.0614013671875,

-0.03759765625,

... |

devrev/dataset-for-t5-3 | 2023-10-12T06:20:12.000Z | [

"region:us"

] | devrev | null | null | 0 | 5 | 2023-06-21T05:28:38 | ---

dataset_info:

features:

- name: prompt

dtype: string

- name: answer

dtype: string

splits:

- name: train

num_bytes: 837375

num_examples: 11383

- name: test

num_bytes: 209423

num_examples: 2846

download_size: 327758

dataset_size: 1046798

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

---

# Dataset Card for "dataset-for-t5-3"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 578 | [

[

-0.035675048828125,

-0.0015697479248046875,

0.033111572265625,

0.0240631103515625,

-0.0249176025390625,

0.0032138824462890625,

0.033843994140625,

-0.01474761962890625,

0.044708251953125,

0.029388427734375,

-0.05718994140625,

-0.07244873046875,

-0.04058837890625,... |

priyank-m/MJSynth_text_recognition | 2023-07-04T20:49:10.000Z | [

"task_categories:image-to-text",

"size_categories:1M<n<10M",

"language:en",

"region:us"

] | priyank-m | null | null | 0 | 5 | 2023-06-22T15:33:18 | ---

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype: string

splits:

- name: train

num_bytes: 12173747703

num_examples: 7224600

- name: val

num_bytes: 1352108669.283

num_examples: 802733

- name: test

num_bytes: 1484450563.896

num_examples: 891924

download_size: 12115256620

dataset_size: 15010306936.179

task_categories:

- image-to-text

language:

- en

size_categories:

- 1M<n<10M

pretty_name: MJSynth

---

# Dataset Card for "MJSynth_text_recognition"

This is the MJSynth dataset for text recognition: synthetically generated word images covering 90K English words.

It includes training, validation and test splits.

Source of the dataset: https://www.robots.ox.ac.uk/~vgg/data/text/

Use the dataset streaming functionality to try out the dataset quickly without downloading it in full (see: https://huggingface.co/docs/datasets/stream).
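A minimal sketch of that streaming workflow with the `datasets` library, assuming the split names `train`, `val` and `test` and the `image`/`label` features listed in the metadata above; `preview_stream` and `load_mjsynth_stream` are illustrative names, not part of the dataset.

```python
from itertools import islice


def preview_stream(stream, n=3):
    """Return the first n records of any iterable, e.g. a streaming dataset."""
    return list(islice(stream, n))


def load_mjsynth_stream(split="train"):
    """Open one split of the Hub copy of MJSynth in streaming mode."""
    # Imported lazily so that preview_stream works without `datasets` installed.
    from datasets import load_dataset

    # streaming=True returns an IterableDataset; records are fetched on demand
    # instead of downloading the full ~12 GB first.
    return load_dataset("priyank-m/MJSynth_text_recognition", split=split, streaming=True)


# Example usage (requires network access and the `datasets` package):
# for example in preview_stream(load_mjsynth_stream("val"), n=3):
#     print(example["label"], example["image"].size)
```

Because `islice` stops after `n` items, only the first few records are actually fetched from the stream.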

Citation details from the source website (if you use the data, please cite):

@InProceedings{Jaderberg14c,

author = "Max Jaderberg and Karen Simonyan and Andrea Vedaldi and Andrew Zisserman",

title = "Synthetic Data and Artificial Neural Networks for Natural Scene Text Recognition",

booktitle = "Workshop on Deep Learning, NIPS",

year = "2014",

}

@Article{Jaderberg16,

author = "Max Jaderberg and Karen Simonyan and Andrea Vedaldi and Andrew Zisserman",

title = "Reading Text in the Wild with Convolutional Neural Networks",

journal = "International Journal of Computer Vision",

number = "1",

volume = "116",

pages = "1--20",

month = "jan",

year = "2016",

} | 1,705 | [

[

-0.00917816162109375,

-0.03662109375,

0.0207977294921875,

-0.01526641845703125,

-0.03192138671875,

0.016815185546875,

-0.0214996337890625,

-0.04376220703125,

0.04034423828125,

0.023681640625,

-0.059478759765625,

-0.03741455078125,

-0.051116943359375,

0.04339... |

ChanceFocus/flare-fpb | 2023-10-25T13:31:25.000Z | [

"task_categories:text-classification",

"size_categories:n<1K",

"language:en",

"license:mit",

"finance",

"region:us"

] | ChanceFocus | null | null | 0 | 5 | 2023-06-24T00:10:07 | ---

dataset_info:

features:

- name: id

dtype: string

- name: query

dtype: string

- name: answer

dtype: string

- name: text

dtype: string

- name: choices

sequence: string

- name: gold

dtype: int64

splits:

- name: train

num_bytes: 1520799

num_examples: 3100

- name: valid

num_bytes: 381025

num_examples: 776

- name: test

num_bytes: 475173

num_examples: 970

download_size: 0

dataset_size: 2376997

license: mit

task_categories:

- text-classification

language:

- en

tags:

- finance

size_categories:

- n<1K

---

# Dataset Card for "flare-fpb"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 742 | [

[

-0.05206298828125,

-0.025726318359375,

-0.003643035888671875,

0.0312347412109375,

-0.0016384124755859375,

0.0176849365234375,

0.0225067138671875,

-0.020965576171875,

0.074462890625,

0.02734375,

-0.06072998046875,

-0.035980224609375,

-0.030181884765625,

-0.01... |

ChanceFocus/flare-sm-acl | 2023-06-25T18:16:24.000Z | [

"region:us"

] | ChanceFocus | null | null | 1 | 5 | 2023-06-25T17:56:25 | ---

dataset_info:

features:

- name: id

dtype: string

- name: query

dtype: string

- name: answer

dtype: string

- name: text

dtype: string

- name: choices

sequence: string

- name: gold

dtype: int64

splits:

- name: train

num_bytes: 70385369

num_examples: 20781

- name: valid

num_bytes: 9049127

num_examples: 2555

- name: test

num_bytes: 13359338

num_examples: 3720

download_size: 46311736

dataset_size: 92793834

---

# Dataset Card for "flare-sm-acl"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 653 | [

[

-0.04388427734375,

-0.017333984375,

-0.0034961700439453125,

0.0080413818359375,

-0.00991058349609375,

0.0191802978515625,

0.024078369140625,

-0.01029205322265625,

0.06634521484375,

0.035125732421875,

-0.0638427734375,

-0.03594970703125,

-0.035308837890625,

-... |

tonytan48/TempReason | 2023-06-28T07:26:17.000Z | [

"task_categories:question-answering",

"size_categories:10K<n<100K",

"language:en",

"license:cc-by-sa-3.0",

"region:us"

] | tonytan48 | null | null | 3 | 5 | 2023-06-25T23:08:37 | ---

license: cc-by-sa-3.0

task_categories:

- question-answering

language:

- en

size_categories:

- 10K<n<100K

---

The TempReason dataset for evaluating the temporal reasoning capability of large language models.

From the paper "Towards Benchmarking and Improving the Temporal Reasoning Capability of Large Language Models" (ACL 2023). | 329 | [

[

-0.0219573974609375,

-0.033203125,

0.05780029296875,

-0.0010213851928710938,

-0.0036525726318359375,

0.00809478759765625,

-0.0002193450927734375,

-0.0258331298828125,

-0.028228759765625,

0.023040771484375,

-0.047393798828125,

-0.028076171875,

-0.02459716796875,

... |

FreedomIntelligence/alpaca-gpt4-japanese | 2023-08-06T08:10:29.000Z | [

"license:apache-2.0",

"region:us"

] | FreedomIntelligence | null | null | 2 | 5 | 2023-06-26T08:18:35 | ---

license: apache-2.0

---

The dataset is used in the research related to [MultilingualSIFT](https://github.com/FreedomIntelligence/MultilingualSIFT). | 152 | [

[

-0.0284271240234375,

-0.0214385986328125,

-0.000301361083984375,

0.01971435546875,

-0.004512786865234375,

0.004093170166015625,

-0.0194091796875,

-0.0303192138671875,

0.0289154052734375,

0.033966064453125,

-0.0643310546875,

-0.032958984375,

-0.012969970703125,

... |

TrainingDataPro/cars-video-object-tracking | 2023-09-20T14:58:57.000Z | [

"task_categories:image-segmentation",

"task_categories:image-classification",

"language:en",

"license:cc-by-nc-nd-4.0",

"code",

"region:us"

] | TrainingDataPro | A collection of overhead video frames capturing various types of vehicles

traversing a roadway. The dataset includes light vehicles (cars) and

heavy vehicles (minivan). | @InProceedings{huggingface:dataset,

title = {cars-video-object-tracking},

author = {TrainingDataPro},

year = {2023}

} | 2 | 5 | 2023-06-26T13:21:56 | ---

license: cc-by-nc-nd-4.0

task_categories:

- image-segmentation

- image-classification

language:

- en

tags:

- code

dataset_info:

features:

- name: image_id

dtype: int32

- name: image

dtype: image

- name: mask

dtype: image

- name: annotations

dtype: string

splits:

- name: train

num_bytes: 614230158

num_examples: 100

download_size: 580108296

dataset_size: 614230158

---

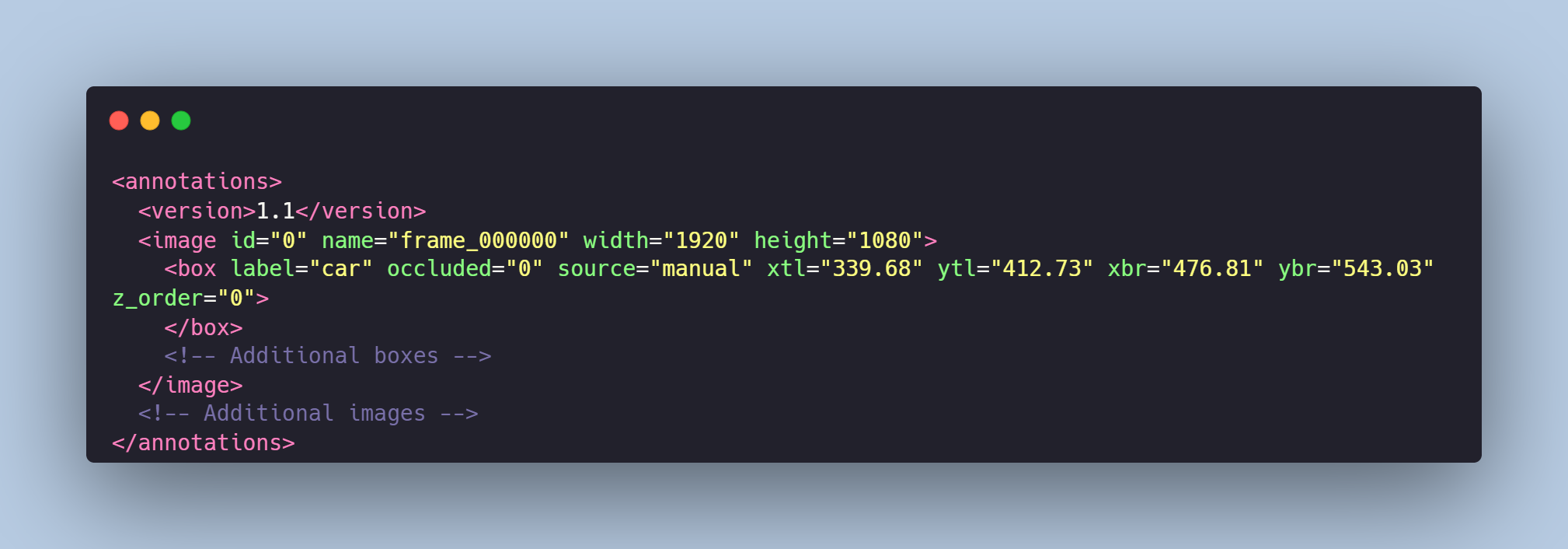

# Cars Tracking

A collection of overhead video frames capturing various types of vehicles traversing a roadway. The dataset includes light vehicles (cars) and heavy vehicles (minivans).

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=cars-video-object-tracking) to discuss your requirements, learn about the price and buy the dataset.

# Data Format

Each video frame in the `images` folder is paired with an `annotations.xml` file that defines the tracking of each vehicle using polygons.

These annotations not only specify the location and path of each vehicle but also differentiate between the vehicle classes:

- cars,

- minivans.

The data labeling is visualized in the `boxes` folder.
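The card does not show the schema of `annotations.xml`. As a hedged sketch, assuming a CVAT-style video export in which each `<track>` has `id` and `label` attributes and one `<polygon>` per frame with `points` written as `x1,y1;x2,y2;...`, the polygons could be grouped per vehicle like this (`load_tracks` is a hypothetical helper; check the real file before relying on these tag names):

```python
import xml.etree.ElementTree as ET


def load_tracks(xml_text):
    """Return {track_id: {"label": str, "frames": {frame: [(x, y), ...]}}}."""
    root = ET.fromstring(xml_text)
    tracks = {}
    for track in root.iter("track"):
        frames = {}
        for poly in track.iter("polygon"):
            if poly.get("outside") == "1":
                continue  # the vehicle is not visible in this frame
            points = [
                tuple(float(v) for v in pair.split(","))
                for pair in poly.get("points").strip().split(";")
            ]
            frames[int(poly.get("frame"))] = points
        tracks[int(track.get("id"))] = {"label": track.get("label"), "frames": frames}
    return tracks
```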

# Example of the XML-file

# Object tracking is performed in accordance with your requirements.

## **[TrainingData](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=cars-video-object-tracking)** provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** | 2,138 | [

[

-0.04962158203125,

-0.0390625,

0.021026611328125,

-0.01161956787109375,

-0.01526641845703125,

0.01068878173828125,

-0.0046234130859375,

-0.0238800048828125,

0.020538330078125,

0.01953125,

-0.07171630859375,

-0.04510498046875,

-0.0288238525390625,

-0.03170776... |

cchoi1022/wikitext-103-v1 | 2023-06-27T22:33:07.000Z | [

"region:us"

] | cchoi1022 | null | null | 0 | 5 | 2023-06-27T22:31:15 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

PaDaS-Lab/SynStOp | 2023-06-29T10:00:34.000Z | [

"region:us"

] | PaDaS-Lab | A minimal dataset intended for LM development and testing using Python string operations.

The dataset is created by running various one-line Python string operations on random strings.

The idea is that a transformer implementation can learn the string operations, and that this task is a good proxy task for other transformer operations on real languages and real tasks. Consequently, the dataset is small and can be used during development without large-scale infrastructure.

There are several configurations of the dataset:

- `small`: contains fewer than 50k instances of various string lengths and only slicing operations, i.e. all Python operations expressible as `s[i:j:k]` (which includes string reversal).

- You can further choose subsets by string length or by kind of operation:

- `small10`: like small, but only strings to length 10

- `small15`: like small, but only strings to length 15

- `small20`: like small, but only strings to length 20

The fields have the following meaning:

- `input`: input string, i.e. the string and the string operation

- `output`: output of the string operation

- `code`: code for running the string operation in Python,

- `res_var`: name of the result variable

- `operation`: kind of operation:

- `step_x` for `s[::x]`

- `char_at_x` for `s[x]`

- `slice_x:y` for `s[x:y]`

- `slice_step_x:y:z` for `s[x:y:z]`

- `slice_reverse_i:j:k` for `s[i:i+j][::k]`
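To make the `operation` labels concrete, here is a hedged sketch that maps each label to the Python expression listed above. It assumes the numbers in a label are plain integers (possibly negative, e.g. `step_-1`) separated by `:`; `apply_operation` is a hypothetical helper, not code shipped with the dataset.

```python
def _ints(args):
    """Parse '1:4' style arguments; an empty component becomes None."""
    return [int(p) if p else None for p in args.split(":")]


def apply_operation(s, operation):
    """Apply a SynStOp-style operation label to the string s."""
    # rpartition splits on the last underscore, so 'slice_step_0:6:2'
    # yields kind='slice_step' and args='0:6:2'.
    kind, _, args = operation.rpartition("_")
    nums = _ints(args)
    if kind == "step":
        (x,) = nums
        return s[::x]
    if kind == "char_at":
        (x,) = nums
        return s[x]
    if kind == "slice":
        x, y = nums
        return s[x:y]
    if kind == "slice_step":
        x, y, z = nums
        return s[x:y:z]
    if kind == "slice_reverse":
        i, j, k = nums
        return s[i:i + j][::k]
    raise ValueError(f"unknown operation: {operation!r}")
```

For example, `apply_operation("abcdef", "slice_reverse_1:3:-1")` takes `s[1:4]` (`"bcd"`) and reverses it.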

Siblings of `data` contain additional metadata about the dataset.

- `prompt` describes possible prompts based on the data, split into input prompts and output prompts | @InProceedings{huggingface:dataset,

title = {String Operations Dataset: A small set of string manipulation tasks for fast model development},

author={Michael Granitzer},

year={2023}

} | 0 | 5 | 2023-06-28T13:17:26 | Entry not found | 15 | [

[

-0.02142333984375,

-0.01495361328125,

0.05718994140625,

0.0288238525390625,

-0.035064697265625,

0.046539306640625,

0.052520751953125,

0.005062103271484375,

0.0513916015625,

0.016998291015625,

-0.052093505859375,

-0.014984130859375,

-0.060394287109375,

0.0379... |

barbaroo/Faroese_BLARK_small | 2023-08-07T14:47:31.000Z | [

"task_categories:text-generation",

"language:fo",

"region:us"

] | barbaroo | null | null | 0 | 5 | 2023-06-28T14:49:09 | ---

task_categories:

- text-generation

language:

- fo

---

# Dataset Card for Faroese_BLARK_small

## Dataset Description

All sentences are retrieved from:

- **Paper:**

Annika Simonsen, Sandra Saxov Lamhauge, Iben Nyholm Debess, and Peter Juel Henrichsen. 2022. Creating a Basic Language Resource Kit for Faroese. In Proceedings of the Thirteenth Language Resources and Evaluation Conference, pages 4637–4643, Marseille, France. European Language Resources Association.

### Dataset Summary

This dataset is a filtered version of the corpus (35.6M tokens) first published as BLARK (Basic Language Resource Kit for Faroese).

The pre-processing and filtering steps include:

- Normalize the encoding to UTF-8

- Remove short sentences (fewer than 10 units, where units are separated by spaces)

- Remove archaic Faroese

- Remove separators ('\r', '\t', '\n')

- Remove non-standard formatting. Examples: '§§', ' | ', '**', ' • ', ' • ', '.- ', ': ?', '.?', '\xa0', '\xad', '_ _', '. .', etc.

- Remove (most) numbered lists, i.e. items of the formats 1), 1:, Stk. 1, etc.

- Replace runs of question marks, exclamation marks or full stops with a single mark. Example: !!!!!! -> !

- Remove URLs that start with http

- Remove sentences with little or no linguistic content. In practice: all sentences where more than half of the characters (excluding spaces) are numbers, punctuation or capital letters (acronyms and initials)

- Remove duplicates
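Several of the steps above can be approximated in a few lines. This is a hedged sketch, not the authors' pipeline: it covers separator and URL removal, collapsing runs of the same punctuation mark, the 10-unit length filter, the "little linguistic content" heuristic and de-duplication. It assumes separators are replaced by spaces and that URLs are dropped as tokens rather than discarding the whole sentence; the function names are hypothetical.

```python
import re

_SEPARATORS = re.compile(r"[\r\t\n]")
_URL = re.compile(r"http\S*")
_REPEATED_PUNCT = re.compile(r"([!?.])\1+")  # e.g. "!!!!!!" -> "!"


def mostly_non_linguistic(sentence):
    """True if more than half of the non-space characters are digits, capitals or punctuation."""
    chars = [c for c in sentence if not c.isspace()]
    if not chars:
        return True
    noisy = sum(1 for c in chars if c.isdigit() or c.isupper() or not c.isalnum())
    return noisy > len(chars) / 2


def clean_sentence(sentence):
    """Return the cleaned sentence, or None if it should be dropped."""
    s = _SEPARATORS.sub(" ", sentence)
    s = _URL.sub("", s)
    s = _REPEATED_PUNCT.sub(r"\1", s)
    s = " ".join(s.split())  # collapse the whitespace left behind
    if len(s.split()) < 10 or mostly_non_linguistic(s):
        return None
    return s


def clean_corpus(sentences):
    """Clean every sentence and drop exact duplicates, keeping the first occurrence."""
    seen, out = set(), []
    for raw in sentences:
        s = clean_sentence(raw)
        if s is not None and s not in seen:
            seen.add(s)
            out.append(s)
    return out
```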

### Supported Tasks and Leaderboards

Suitable for masked language modeling (MLM) and causal language modeling (CLM).

| 1,503 | [

[

-0.0267791748046875,

-0.05572509765625,

0.0190277099609375,

0.009490966796875,

-0.038299560546875,

-0.019317626953125,

-0.045196533203125,

-0.023773193359375,

0.0279388427734375,

0.05950927734375,

-0.045867919921875,

-0.060791015625,

-0.01480865478515625,

0.... |

ai4privacy/pii-masking-43k | 2023-06-28T17:45:58.000Z | [

"size_categories:10K<n<100K",

"language:en",

"legal",

"business",

"psychology",

"privacy",

"doi:10.57967/hf/0824",

"region:us"

] | ai4privacy | null | null | 8 | 5 | 2023-06-28T16:44:41 | ---

language:

- en

tags:

- legal

- business

- psychology

- privacy

size_categories:

- 10K<n<100K

---

# Purpose and Features

The purpose of the model and dataset is to remove personally identifiable information (PII) from text, especially in the context of AI assistants and LLMs.

The model is a fine-tuned version of DistilBERT, a smaller and faster version of BERT. It was adapted for token classification and trained on what is, to our knowledge, the largest open-source PII masking dataset, which we are releasing simultaneously. The model has 62 million parameters. The original parameter encoding yields a model size of 268 MB, which is compressed to 43 MB after parameter quantization. The models are available in PyTorch, TensorFlow, and TensorFlow.js.

The dataset is composed of ~43,000 observations. Each row starts with a natural-language sentence that includes placeholders for PII and could plausibly be written to an AI assistant. The placeholders are then filled in with mocked personal information and tokenized with the BERT tokenizer. We label the tokens that correspond to PII, serving as the ground truth to train our model.
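The card does not document the label scheme, so the following is only a sketch of how token-level PII labels can be turned into masked text. It assumes word-level tokens (BERT word pieces such as `##son` would have to be merged first) and BIO-style labels such as `B-EMAIL`/`I-EMAIL` with `O` for non-PII; the scheme, the label names and `mask_pii` are all hypothetical.

```python
def split_tag(label):
    """Split 'B-EMAIL' into ('B', 'EMAIL'); labels without a BIO prefix return ('', label)."""
    if label[:2] in ("B-", "I-"):
        return label[0], label[2:]
    return "", label


def mask_pii(tokens, labels, outside="O"):
    """Replace each run of PII-labelled tokens with a single [ENTITY] placeholder."""
    masked = []
    current = None  # entity type of the placeholder emitted most recently
    for token, label in zip(tokens, labels):
        if label == outside:
            masked.append(token)
            current = None
            continue
        prefix, entity = split_tag(label)
        # Extend the current placeholder unless a new span begins ("B-") or the type changes.
        if entity == current and prefix != "B":
            continue
        masked.append(f"[{entity}]")
        current = entity
    return " ".join(masked)
```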

The dataset covers a range of contexts in which PII can appear. The sentences span 54 sensitive data types (~111 token classes), targeting 125 discussion subjects / use cases split across the business, psychology and legal fields, and 5 interaction styles (e.g. casual conversation vs. formal document).

Key facts:

- Currently 5.6m tokens with 43k PII examples.

- Scaling to 100k examples

- Human-in-the-loop validated

- Synthetic data generated using proprietary algorithms

- Adapted from DistilBertForTokenClassification

- Framework PyTorch

- 8 bit quantization

# Performance evaluation

| Test Precision | Test Recall | Test Accuracy |

|:-:|:-:|:-:|

| 0.998636 | 0.998945 | 0.994621 |

Training/Test Set split:

- 4300 Testing Examples (10%)

- 38700 Train Examples

# Community Engagement:

Newsletter & updates: www.Ai4privacy.com

- Looking for ML engineers, developers, beta-testers, human in the loop validators (all languages)

- Integrations with already existing open source solutions

# Roadmap and Future Development

- Multilingual

- Extended integrations

- Continuously increase the training set

- Further optimisation to the model to reduce size and increase generalisability

- The next major update is planned for 14 July (subscribe to the newsletter for updates)

# Use Cases and Applications

**Chatbots**: Incorporating a PII masking model into chatbot systems can ensure the privacy and security of user conversations by automatically redacting sensitive information such as names, addresses, phone numbers, and email addresses.

**Customer Support Systems**: When interacting with customers through support tickets or live chats, masking PII can help protect sensitive customer data, enabling support agents to handle inquiries without the risk of exposing personal information.

**Email Filtering**: Email providers can utilize a PII masking model to automatically detect and redact PII from incoming and outgoing emails, reducing the chances of accidental disclosure of sensitive information.

**Data Anonymization**: Organizations dealing with large datasets containing PII, such as medical or financial records, can leverage a PII masking model to anonymize the data before sharing it for research, analysis, or collaboration purposes.

**Social Media Platforms**: Integrating PII masking capabilities into social media platforms can help users protect their personal information from unauthorized access, ensuring a safer online environment.

**Content Moderation**: PII masking can assist content moderation systems in automatically detecting and blurring or redacting sensitive information in user-generated content, preventing the accidental sharing of personal details.

**Online Forms**: Web applications that collect user data through online forms, such as registration forms or surveys, can employ a PII masking model to anonymize or mask the collected information in real-time, enhancing privacy and data protection.

**Collaborative Document Editing**: Collaboration platforms and document editing tools can use a PII masking model to automatically mask or redact sensitive information when multiple users are working on shared documents.

**Research and Data Sharing**: Researchers and institutions can leverage a PII masking model to ensure privacy and confidentiality when sharing datasets for collaboration, analysis, or publication purposes, reducing the risk of data breaches or identity theft.

**Content Generation**: Content generation systems, such as article generators or language models, can benefit from PII masking to automatically mask or generate fictional PII when creating sample texts or examples, safeguarding the privacy of individuals.

(...and whatever else your creative mind can think of)

# Support and Maintenance

AI4Privacy is a project affiliated with [AISuisse SA](https://www.aisuisse.com/). | 5,033 | [

[

-0.046173095703125,

-0.062103271484375,

0.01102447509765625,

0.0212554931640625,

-0.002971649169921875,

0.007038116455078125,

0.0012645721435546875,

-0.05902099609375,

-0.0038127899169921875,

0.0390625,

-0.03033447265625,

-0.034332275390625,

-0.033111572265625,

... |

tasksource/leandojo | 2023-06-28T17:46:34.000Z | [

"license:cc-by-2.0",

"region:us"

] | tasksource | null | null | 1 | 5 | 2023-06-28T17:41:51 | ---

license: cc-by-2.0

---

https://github.com/lean-dojo/LeanDojo

```

@article{yang2023leandojo,

title={{LeanDojo}: Theorem Proving with Retrieval-Augmented Language Models},

author={Yang, Kaiyu and Swope, Aidan and Gu, Alex and Chalamala, Rahul and Song, Peiyang and Yu, Shixing and Godil, Saad and Prenger, Ryan and Anandkumar, Anima},

journal={arXiv preprint arXiv:2306.15626},

year={2023}

}

``` | 405 | [

[

0.0007114410400390625,

-0.036407470703125,

0.048553466796875,

0.012939453125,

0.003551483154296875,

-0.012420654296875,

-0.032806396484375,

-0.035186767578125,

0.02545166015625,

0.0286407470703125,

-0.0208892822265625,

-0.0355224609375,

-0.0274810791015625,

... |

DynamicSuperb/StressDetection_MIRSD | 2023-07-12T06:17:19.000Z | [

"region:us"

] | DynamicSuperb | null | null | 0 | 5 | 2023-06-29T08:13:16 | ---

dataset_info:

features:

- name: file

dtype: string

- name: audio

dtype: audio

- name: label

dtype: string

- name: word

dtype: string

- name: instruction

dtype: string

splits:

- name: test

num_bytes: 432162612.62

num_examples: 4492

download_size: 401399373

dataset_size: 432162612.62

---

# Dataset Card for "stress_dection_MIR_SD"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 514 | [

[

-0.03839111328125,

-0.0103302001953125,

0.0224761962890625,

0.034637451171875,

-0.02886962890625,

-0.001773834228515625,

0.025054931640625,

-0.0032520294189453125,

0.06134033203125,

0.022003173828125,

-0.07281494140625,

-0.0606689453125,

-0.04669189453125,

-... |

clu-ling/clupubhealth | 2023-08-02T02:22:46.000Z | [

"task_categories:summarization",

"size_categories:1K<n<10K",

"size_categories:10K<n<100K",

"language:en",

"license:apache-2.0",

"medical",

"region:us"

] | clu-ling | null | @inproceedings{kotonya-toni-2020-explainable,

title = "Explainable Automated Fact-Checking for Public Health Claims",

author = "Kotonya, Neema and

Toni, Francesca",

booktitle = "Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP)",

month = nov,

year = "2020",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/2020.emnlp-main.623",

pages = "7740--7754",

} | 0 | 5 | 2023-07-04T18:58:14 | ---

license: apache-2.0

task_categories:

- summarization

language:

- en

tags:

- medical

size_categories:

- 1K<n<10K

- 10K<n<100K

---

# `clupubhealth`

The `CLUPubhealth` dataset is based on the [PUBHEALTH fact-checking dataset](https://github.com/neemakot/Health-Fact-Checking).

The PUBHEALTH dataset contains claims, explanations, and main texts. The explanations function as vetted summaries of the main texts. The CLUPubhealth dataset repurposes these fields into summaries and texts for training summarization models such as Facebook's BART.

There are currently four dataset configs, each with three splits (see Usage):

### `clupubhealth/mini`

This config includes only 200 samples per split. This is mostly used in testing scripts when small sets are desirable.

### `clupubhealth/base`

This is the base dataset, which includes the full PUBHEALTH set excluding the False samples. The `test` split is a shortened version with only 200 samples, which allows for faster evaluation steps during training.

### `clupubhealth/expanded`

Whereas the base `train` split contains 5,078 data points, this expanded set includes 62,163. ChatGPT was used to generate new versions of the summaries in the base set. GPT expansion produced a total of 72,498 samples; this was reduced to ~62k after samples with poor BERTScores were removed.

### `clupubhealth/test`

This config has the full `test` split with ~1200 samples. Used for post-training evaluation.

## USAGE

To use the CLUPubhealth dataset, load it with the `datasets` library:

```python

from datasets import load_dataset

data = load_dataset("clu-ling/clupubhealth", "base")

# The second argument selects the config: one of `mini`, `base`, `expanded`, `test`

``` | 1,759 | [

[

-0.0256500244140625,

-0.03912353515625,

0.01666259765625,

-0.000141143798828125,

-0.0246734619140625,

-0.006351470947265625,

-0.0179901123046875,

-0.0184783935546875,

0.01397705078125,

0.045257568359375,

-0.04095458984375,

-0.036529541015625,

-0.030517578125,

... |

TinyPixel/dolphin-2 | 2023-07-13T06:19:34.000Z | [

"region:us"

] | TinyPixel | null | null | 4 | 5 | 2023-07-13T06:18:43 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 1623415440

num_examples: 891857

download_size: 884160758

dataset_size: 1623415440

---

# Dataset Card for "dolphin-2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 361 | [

[

-0.0645751953125,

-0.01296234130859375,

0.01432037353515625,

0.0199127197265625,

-0.036651611328125,

-0.01666259765625,

0.042022705078125,

-0.03656005859375,

0.053985595703125,

0.04107666015625,

-0.06109619140625,

-0.0246429443359375,

-0.048126220703125,

-0.... |

DynamicSuperb/NoiseDetection_LJSpeech_MUSAN-Noise | 2023-07-18T07:34:56.000Z | [

"region:us"

] | DynamicSuperb | null | null | 0 | 5 | 2023-07-14T03:16:00 | ---

dataset_info:

features:

- name: file

dtype: string

- name: audio

dtype: audio

- name: instruction

dtype: string

- name: label

dtype: string

splits:

- name: test

num_bytes: 3371541774.0

num_examples: 26200

download_size: 3362687514

dataset_size: 3371541774.0

---

# Dataset Card for "NoiseDetectionnoise_LJSpeechMusan"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 495 | [

[

-0.03338623046875,

-0.0144500732421875,

0.01522064208984375,

0.0133819580078125,

-0.0137176513671875,

0.0075225830078125,

0.01010894775390625,

-0.0260467529296875,

0.06365966796875,

0.021636962890625,

-0.058258056640625,

-0.04595947265625,

-0.037994384765625,

... |

Alignment-Lab-AI/Lawyer-chat | 2023-07-14T17:22:44.000Z | [

"license:apache-2.0",

"region:us"

] | Alignment-Lab-AI | null | null | 2 | 5 | 2023-07-14T06:24:41 | ---

license: apache-2.0

---

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

## Dataset Description

### Dataset Summary

LawyerChat is a multi-turn conversational dataset primarily in the English language, containing dialogues about legal scenarios. The conversations are in the format of an interaction between a client and a legal professional. The dataset is designed for training and evaluating models on conversational tasks like dialogue understanding, response generation, and more.

### Supported Tasks and Leaderboards

- `dialogue-modeling`: The dataset can be used to train a model for multi-turn dialogue understanding and generation. Performance can be evaluated based on dialogue understanding and the quality of the generated responses.

- There is no official leaderboard associated with this dataset at this time.

The dataset was generated in part by dang/futures.
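For the `dialogue-modeling` task, a common preprocessing step is to turn each conversation into (context, response) pairs. The sketch below assumes the `conversations` list with `from`/`value` keys shown in the data instance example of this card and treats every turn after the first as a response target; `to_training_pairs` is a hypothetical helper, not part of the dataset.

```python
def to_training_pairs(instance):
    """Turn one LawyerChat-style record into (context, response) pairs."""
    turns = instance["conversations"]
    pairs = []
    for i in range(1, len(turns)):
        # The context is every earlier turn, one "speaker: utterance" line each.
        context = "\n".join(f'{t["from"]}: {t["value"]}' for t in turns[:i])
        pairs.append((context, turns[i]["value"]))
    return pairs
```

Filtering the pairs by speaker (for example, keeping only turns from the legal professional) is a straightforward variation if only one side should be modeled.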

### Languages

The text in the dataset is in English.

## Dataset Structure

### Data Instances

An instance in the LawyerChat dataset represents a conversation: a list of turns, each consisting of a speaker id (`from`) and the corresponding utterance (`value`). Example:

```json

{

"conversations": [

{

"from": "user_id_1",

"value": "What are the possible legal consequences of not paying taxes?"

},

{

"from": "user_id_2",

"value": "There can be several legal consequences, ranging from fines to imprisonment..."

},

...

]

} | 1,668 | [

[

-0.0222320556640625,

-0.053131103515625,

0.0107421875,

0.0026035308837890625,

-0.032379150390625,

0.01335906982421875,

-0.0093994140625,

-0.005558013916015625,

0.0301971435546875,

0.05731201171875,

-0.05712890625,

-0.08038330078125,

-0.0309295654296875,

-0.0... |

DynamicSuperb/ReverberationDetection_LJSpeech_RirsNoises-SmallRoom | 2023-07-18T12:17:36.000Z | [

"region:us"

] | DynamicSuperb | null | null | 0 | 5 | 2023-07-14T15:42:15 | ---

dataset_info:

features:

- name: file

dtype: string

- name: audio

dtype: audio

- name: instruction

dtype: string

- name: label

dtype: string

splits:

- name: test

num_bytes: 3371857486.0

num_examples: 26200

download_size: 3358417173

dataset_size: 3371857486.0

---

# Dataset Card for "ReverberationDetectionsmallroom_LJSpeechRirsNoises"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | 512 | [

[

-0.045196533203125,

-0.0016412734985351562,

0.004161834716796875,

0.03021240234375,

-0.006938934326171875,

0.0023956298828125,

0.00014674663543701172,

-0.00878143310546875,

0.061370849609375,

0.036041259765625,

-0.07232666015625,

-0.04681396484375,

-0.0270843505... |

ivrit-ai/audio-vad | 2023-07-19T10:17:05.000Z | [

"task_categories:audio-classification",

"task_categories:voice-activity-detection",

"size_categories:1M<n<10M",

"language:he",

"license:other",

"arxiv:2307.08720",

"region:us"

] | ivrit-ai | null | null | 2 | 5 | 2023-07-15T14:53:26 | ---

license: other

task_categories:

- audio-classification

- voice-activity-detection

language:

- he

size_categories:

- 1M<n<10M

extra_gated_prompt:

"You agree to the following license terms:

This material and data is licensed under the terms of the Creative Commons Attribution 4.0

International License (CC BY 4.0), The full text of the CC-BY 4.0 license is available at

https://creativecommons.org/licenses/by/4.0/.

Notwithstanding the foregoing, this material and data may only be used, modified and distributed for

the express purpose of training AI models, and subject to the foregoing restriction. In addition, this

material and data may not be used in order to create audiovisual material that simulates the voice or

likeness of the specific individuals appearing or speaking in such materials and data (a “deep-fake”).

To the extent this paragraph is inconsistent with the CC-BY-4.0 license, the terms of this paragraph

shall govern.

By downloading or using any of this material or data, you agree that the Project makes no

representations or warranties in respect of the data, and shall have no liability in respect thereof. These

disclaimers and limitations are in addition to any disclaimers and limitations set forth in the CC-BY-4.0

license itself. You understand that the project is only able to make available the materials and data

pursuant to these disclaimers and limitations, and without such disclaimers and limitations the project

would not be able to make available the materials and data for your use."

extra_gated_fields:

I have read the license, and agree to its terms: checkbox

---

ivrit.ai is a database of Hebrew audio and text content.

**audio-base** contains the raw, unprocessed sources.

**audio-vad** contains audio snippets generated by applying Silero VAD (https://github.com/snakers4/silero-vad) to the base dataset.

**audio-transcripts** contains transcriptions for each snippet in the audio-vad dataset.
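To illustrate how a VAD pass turns raw audio into snippets, here is a minimal sketch of the grouping step only. It assumes a VAD model has already assigned a speech probability to each fixed-length audio frame (Silero VAD scores short audio chunks in this way). The frame length, threshold, and minimum-duration values below are illustrative assumptions, not the settings ivrit.ai used. The function `frames_to_segments` is hypothetical and is not Silero's own `get_speech_timestamps` implementation.

```python
from typing import List, Optional, Tuple


def frames_to_segments(
    speech_probs: List[float],
    frame_ms: int = 32,         # assumed duration of one VAD frame
    threshold: float = 0.5,     # assumed probability above which a frame counts as speech
    min_speech_ms: int = 250,   # drop speech runs shorter than this
    min_silence_ms: int = 100,  # only split on silences at least this long
) -> List[Tuple[int, int]]:
    """Group per-frame speech probabilities into (start_ms, end_ms) snippets."""
    segments: List[Tuple[int, int]] = []
    start: Optional[int] = None  # first frame of the current speech run
    silence = 0                  # consecutive non-speech frames inside the run

    for i, p in enumerate(speech_probs):
        if p >= threshold:
            if start is None:
                start = i
            silence = 0  # short pauses are absorbed into the current run
        elif start is not None:
            silence += 1
            if silence * frame_ms >= min_silence_ms:
                end = i - silence + 1  # exclusive end: frame after the last speech frame
                if (end - start) * frame_ms >= min_speech_ms:
                    segments.append((start * frame_ms, end * frame_ms))
                start, silence = None, 0

    # Close a speech run that is still open when the audio ends.
    if start is not None:
        end = len(speech_probs) - silence
        if (end - start) * frame_ms >= min_speech_ms:
            segments.append((start * frame_ms, end * frame_ms))
    return segments
```

For example, ten speech frames, five silent frames, and ten more speech frames produce two snippets. A gap shorter than `min_silence_ms` is merged into the surrounding speech.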

The audio-base dataset contains data from the following sources:

* Geekonomy (Podcast, https://geekonomy.net)

* HaCongress (Podcast, https://hacongress.podbean.com/)

* Idan Eretz's YouTube channel (https://www.youtube.com/@IdanEretz)

* Moneytime (Podcast, https://money-time.co.il)

* Mor'e Nevohim (Podcast, https://open.spotify.com/show/1TZeexEk7n60LT1SlS2FE2?si=937266e631064a3c)

* Yozevitch's World (Podcast, https://www.yozevitch.com/yozevitch-podcast)

* NETfrix (Podcast, https://netfrix.podbean.com)

* On Meaning (Podcast, https://mashmaut.buzzsprout.com)

* Shnekel (Podcast, https://www.shnekel.live)

* Bite-sized History (Podcast, https://soundcloud.com/historia-il)

* Tziun 3 (Podcast, https://tziun3.co.il)

* Academia Israel (YouTube channel, https://www.youtube.com/@academiaisrael6115)

* Shiluv Maagal (YouTube channel, https://www.youtube.com/@ShiluvMaagal)

Paper: https://arxiv.org/abs/2307.08720

If you use our datasets, please cite:

```

@misc{marmor2023ivritai,

title={ivrit.ai: A Comprehensive Dataset of Hebrew Speech for AI Research and Development},

author={Yanir Marmor and Kinneret Misgav and Yair Lifshitz},

year={2023},

eprint={2307.08720},

archivePrefix={arXiv},

primaryClass={eess.AS}

}

``` | 3,235 | [

[

-0.037261962890625,

-0.050201416015625,

0.0001455545425415039,

0.0035648345947265625,

-0.013824462890625,

-0.006633758544921875,

-0.027984619140625,

-0.033477783203125,

0.0308837890625,

0.036834716796875,

-0.045440673828125,

-0.045379638671875,

-0.03463745117187... |

HeshamHaroon/arabic-quotes | 2023-07-16T07:19:40.000Z | [

"task_categories:text-classification",

"task_ids:multi-label-classification",

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"language_creators:crowdsourced",

"multilinguality:monolingual",

"source_datasets:original",

"language:ar",

"region:us"

] | HeshamHaroon | null | null | 1 | 5 | 2023-07-16T05:36:33 | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

- crowdsourced

language:

- ar

multilinguality:

- monolingual

source_datasets:

- original

task_categories:

- text-classification

task_ids:

- multi-label-classification

---

# Arabic Quotes Dataset (arabic_Q)

The "Arabic Quotes" dataset contains a collection of Arabic quotes along with their authors and tags. It was scraped from the website "arabic-quotes.com" and covers quotes from a wide range of authors.

## Dataset Details

- **Version**: 1.0.0

- **Total Quotes**: 3778

- **Language**: Arabic

- **Source**: arabic-quotes.com

## Dataset Structure

The dataset is provided in the JSONL (JSON Lines) format, where each line represents a separate JSON object. The JSON objects have the following fields:

- `quote`: The Arabic quote text.

- `author`: The author of the quote.

- `tags`: A list of tags associated with the quote, providing additional context or themes.

## Dataset Examples

Here are a few examples of the quotes in the dataset:

```json
{"quote": "اذا لم يكن لديك هدف ، فاجعل هدفك الاول ايجاد واحد .", "author": "وليام شكسبير", "tags": ["تنمية الذات", "تحفيز"]}
{"quote": "قيمة الحياة ليست في مدى طولها ، بل في مدى قيمتها", "author": "وليام شكسبير", "tags": ["الحياة", "القيمة"]}
{"quote": "التحدث عن الامور العميقة ليس سهلاً كما يبدو", "author": "جبران خليل جبران", "tags": ["التواصل", "العمق"]}
```
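Because each line is a standalone JSON object, the file can be read with Python's standard library alone. The following is a minimal sketch. The helper names are hypothetical, and the field names `quote`, `author`, and `tags` are taken from the structure described above.

```python
import json
from collections import Counter
from typing import Dict, Iterable, List


def load_quotes(lines: Iterable[str]) -> List[Dict]:
    """Parse JSON Lines input: one quote object per non-empty line."""
    records = []
    for line in lines:
        line = line.strip()
        if line:  # tolerate blank lines
            records.append(json.loads(line))
    return records


def quotes_with_tag(records: List[Dict], tag: str) -> List[Dict]:
    """Return the records whose tag list contains `tag`."""
    return [r for r in records if tag in r["tags"]]


def tag_counts(records: List[Dict]) -> Counter:
    """Count how many quotes carry each tag."""
    return Counter(t for r in records for t in r["tags"])
```

To use it with a local copy of the data (the filename is an assumption), open the file as UTF-8 so the Arabic text decodes correctly: `with open("arabic_quotes.jsonl", encoding="utf-8") as f: records = load_quotes(f)`.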

## Dataset Usage

The "Arabic Quotes" dataset can be used for various purposes, including:

- Natural Language Processing (NLP) tasks in Arabic text analysis.

- Text generation and language modeling.

- Quote recommendation systems.

- Inspirational content generation.

- Multi-label text classification (predicting a quote's tags).
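For multi-label classification, each quote's `tags` list must be turned into a fixed-length target vector. The sketch below shows one common way to do this with multi-hot encoding. The helper names are hypothetical, and sorting the tags to fix column order is an illustrative choice.

```python
from typing import Dict, List, Sequence


def build_label_index(tag_lists: Sequence[Sequence[str]]) -> Dict[str, int]:
    """Assign each distinct tag a column index (sorted for reproducibility)."""
    all_tags = sorted({t for tags in tag_lists for t in tags})
    return {tag: i for i, tag in enumerate(all_tags)}


def multi_hot(tags: Sequence[str], index: Dict[str, int]) -> List[int]:
    """Encode one quote's tags as a 0/1 vector over the label index."""
    vec = [0] * len(index)
    for t in tags:
        if t in index:  # tags unseen when the index was built are ignored
            vec[index[t]] = 1
    return vec
```

Build the index on the training split only, so the label space stays fixed at evaluation time.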

## Acknowledgements

We would like to thank the website "arabic-quotes.com" for providing the valuable collection of Arabic quotes used in this dataset.

## License

The dataset is provided under the [bigscience-bloom-rail-1.0 License](https://huggingface.co/spaces/bigscience/license), which permits non-commercial use and sharing under certain conditions.

| 2,119 | [

[

-0.0254364013671875,

-0.033416748046875,

0.01206207275390625,

0.0128936767578125,