id stringlengths 2 115 | author stringlengths 2 42 ⌀ | last_modified timestamp[us, tz=UTC] | downloads int64 0 8.87M | likes int64 0 3.84k | paperswithcode_id stringlengths 2 45 ⌀ | tags list | lastModified timestamp[us, tz=UTC] | createdAt stringlengths 24 24 | key stringclasses 1 value | created timestamp[us] | card stringlengths 1 1.01M | embedding list | library_name stringclasses 21 values | pipeline_tag stringclasses 27 values | mask_token null | card_data null | widget_data null | model_index null | config null | transformers_info null | spaces null | safetensors null | transformersInfo null | modelId stringlengths 5 111 ⌀ | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

iamholmes/tiny-imdb | iamholmes | 2022-04-29T13:25:00Z | 36 | 0 | null | [

"region:us"

] | 2022-04-29T13:25:00Z | 2022-04-29T13:24:55.000Z | 2022-04-29T13:24:55 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622263669967651,

0.43461522459983826,

-0.52829909324646,

0.7012971639633179,

0.7915719747543335,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104475975036621,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

openclimatefix/gfs-surface-pressure-2.0deg | openclimatefix | 2022-06-28T18:38:27Z | 36 | 0 | null | [

"region:us"

] | 2022-06-28T18:38:27Z | 2022-06-22T22:11:25.000Z | 2022-06-22T22:11:25 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622263669967651,

0.43461522459983826,

-0.52829909324646,

0.7012971639633179,

0.7915719747543335,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104475975036621,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

wushan/vehicle_qa | wushan | 2022-08-25T13:14:33Z | 36 | 4 | null | [

"license:apache-2.0",

"region:us"

] | 2022-08-25T13:14:33Z | 2022-08-25T13:12:17.000Z | 2022-08-25T13:12:17 | ---

license: apache-2.0

---

| [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

alexandrainst/scandi-qa | alexandrainst | 2023-01-16T13:51:25Z | 36 | 7 | null | [

"task_categories:question-answering",

"task_ids:extractive-qa",

"multilinguality:multilingual",

"size_categories:1K<n<10K",

"source_datasets:mkqa",

"source_datasets:natural_questions",

"language:da",

"language:sv",

"language:no",

"license:cc-by-sa-4.0",

"region:us"

] | 2023-01-16T13:51:25Z | 2022-08-30T09:46:59.000Z | 2022-08-30T09:46:59 | ---

pretty_name: ScandiQA

language:

- da

- sv

- no

license:

- cc-by-sa-4.0

multilinguality:

- multilingual

size_categories:

- 1K<n<10K

source_datasets:

- mkqa

- natural_questions

task_categories:

- question-answering

task_ids:

- extractive-qa

---

# Dataset Card for ScandiQA

## Dataset Description

- **Repository:** <https://github.com/alexandrainst/scandi-qa>

- **Point of Contact:** [Dan Saattrup Nielsen](mailto:dan.nielsen@alexandra.dk)

- **Size of downloaded dataset files:** 69 MB

- **Size of the generated dataset:** 67 MB

- **Total amount of disk used:** 136 MB

### Dataset Summary

ScandiQA is a dataset of questions and answers in the Danish, Norwegian, and Swedish

languages. All samples come from the Natural Questions (NQ) dataset, which is a large

question answering dataset from Google searches. The Scandinavian questions and answers

come from the MKQA dataset, where 10,000 NQ samples were manually translated into,

among others, Danish, Norwegian, and Swedish. However, this did not include a

translated context, hindering the training of extractive question answering models.

We merged the NQ dataset with the MKQA dataset, and extracted contexts as either "long

answers" from the NQ dataset, being the paragraph in which the answer was found, or

otherwise we extract the context by locating the paragraphs which have the largest

cosine similarity to the question, and which contains the desired answer.

Further, many answers in the MKQA dataset were "language normalised": for instance, all

date answers were converted to the format "YYYY-MM-DD", meaning that in most cases

these answers are not appearing in any paragraphs. We solve this by extending the MKQA

answers with plausible "answer candidates", being slight perturbations or translations

of the answer.

With the contexts extracted, we translated these to Danish, Swedish and Norwegian using

the [DeepL translation service](https://www.deepl.com/pro-api?cta=header-pro-api) for

Danish and Swedish, and the [Google Translation

service](https://cloud.google.com/translate/docs/reference/rest/) for Norwegian. After

translation we ensured that the Scandinavian answers do indeed occur in the translated

contexts.

As we are filtering the MKQA samples at both the "merging stage" and the "translation

stage", we are not able to fully convert the 10,000 samples to the Scandinavian

languages, and instead get roughly 8,000 samples per language. These have further been

split into a training, validation and test split, with the latter two containing

roughly 750 samples. The splits have been created in such a way that the proportion of

samples without an answer is roughly the same in each split.

### Supported Tasks and Leaderboards

Training machine learning models for extractive question answering is the intended task

for this dataset. No leaderboard is active at this point.

### Languages

The dataset is available in Danish (`da`), Swedish (`sv`) and Norwegian (`no`).

## Dataset Structure

### Data Instances

- **Size of downloaded dataset files:** 69 MB

- **Size of the generated dataset:** 67 MB

- **Total amount of disk used:** 136 MB

An example from the `train` split of the `da` subset looks as follows.

```

{

'example_id': 123,

'question': 'Er dette en test?',

'answer': 'Dette er en test',

'answer_start': 0,

'context': 'Dette er en testkontekst.',

'answer_en': 'This is a test',

'answer_start_en': 0,

'context_en': "This is a test context.",

'title_en': 'Train test'

}

```

### Data Fields

The data fields are the same among all splits.

- `example_id`: an `int64` feature.

- `question`: a `string` feature.

- `answer`: a `string` feature.

- `answer_start`: an `int64` feature.

- `context`: a `string` feature.

- `answer_en`: a `string` feature.

- `answer_start_en`: an `int64` feature.

- `context_en`: a `string` feature.

- `title_en`: a `string` feature.

### Data Splits

| name | train | validation | test |

|----------|------:|-----------:|-----:|

| da | 6311 | 749 | 750 |

| sv | 6299 | 750 | 749 |

| no | 6314 | 749 | 750 |

## Dataset Creation

### Curation Rationale

The Scandinavian languages does not have any gold standard question answering dataset.

This is not quite gold standard, but the fact both the questions and answers are all

manually translated, it is a solid silver standard dataset.

### Source Data

The original data was collected from the [MKQA](https://github.com/apple/ml-mkqa/) and

[Natural Questions](https://ai.google.com/research/NaturalQuestions) datasets from

Apple and Google, respectively.

## Additional Information

### Dataset Curators

[Dan Saattrup Nielsen](https://saattrupdan.github.io/) from the [The Alexandra

Institute](https://alexandra.dk/) curated this dataset.

### Licensing Information

The dataset is licensed under the [CC BY-SA 4.0

license](https://creativecommons.org/licenses/by-sa/4.0/).

| [

-0.678779661655426,

-0.7817258238792419,

0.4390290677547455,

-0.07358650118112564,

-0.2504613697528839,

-0.14545691013336182,

-0.01294065173715353,

-0.26289108395576477,

0.14166951179504395,

0.5705828070640564,

-0.8823971748352051,

-0.5123703479766846,

-0.31522008776664734,

0.5523676872253... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

abidlabs/celeb-dataset | abidlabs | 2022-10-02T20:23:09Z | 36 | 0 | null | [

"region:us"

] | 2022-10-02T20:23:09Z | 2022-10-02T19:15:52.000Z | 2022-10-02T19:15:52 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622263669967651,

0.43461522459983826,

-0.52829909324646,

0.7012971639633179,

0.7915719747543335,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104475975036621,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

drt/complex_web_questions | drt | 2023-04-27T21:04:50Z | 36 | 4 | null | [

"license:apache-2.0",

"arxiv:1803.06643",

"arxiv:1807.09623",

"region:us"

] | 2023-04-27T21:04:50Z | 2022-10-22T22:14:27.000Z | 2022-10-22T22:14:27 | ---

license: apache-2.0

source: https://github.com/KGQA/KGQA-datasets

---

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:** https://www.tau-nlp.sites.tau.ac.il/compwebq

- **Repository:** https://github.com/alontalmor/WebAsKB

- **Paper:** https://arxiv.org/abs/1803.06643

- **Leaderboard:** https://www.tau-nlp.sites.tau.ac.il/compwebq-leaderboard

- **Point of Contact:** alontalmor@mail.tau.ac.il.

### Dataset Summary

**A dataset for answering complex questions that require reasoning over multiple web snippets**

ComplexWebQuestions is a new dataset that contains a large set of complex questions in natural language, and can be used in multiple ways:

- By interacting with a search engine, which is the focus of our paper (Talmor and Berant, 2018);

- As a reading comprehension task: we release 12,725,989 web snippets that are relevant for the questions, and were collected during the development of our model;

- As a semantic parsing task: each question is paired with a SPARQL query that can be executed against Freebase to retrieve the answer.

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

- English

## Dataset Structure

QUESTION FILES

The dataset contains 34,689 examples divided into 27,734 train, 3,480 dev, 3,475 test.

each containing:

```

"ID”: The unique ID of the example;

"webqsp_ID": The original WebQuestionsSP ID from which the question was constructed;

"webqsp_question": The WebQuestionsSP Question from which the question was constructed;

"machine_question": The artificial complex question, before paraphrasing;

"question": The natural language complex question;

"sparql": Freebase SPARQL query for the question. Note that the SPARQL was constructed for the machine question, the actual question after paraphrasing

may differ from the SPARQL.

"compositionality_type": An estimation of the type of compositionally. {composition, conjunction, comparative, superlative}. The estimation has not been manually verified,

the question after paraphrasing may differ from this estimation.

"answers": a list of answers each containing answer: the actual answer; answer_id: the Freebase answer id; aliases: freebase extracted aliases for the answer.

"created": creation time

```

NOTE: test set does not contain “answer” field. For test evaluation please send email to

alontalmor@mail.tau.ac.il.

WEB SNIPPET FILES

The snippets files consist of 12,725,989 snippets each containing

PLEASE DON”T USE CHROME WHEN DOWNLOADING THESE FROM DROPBOX (THE UNZIP COULD FAIL)

"question_ID”: the ID of related question, containing at least 3 instances of the same ID (full question, split1, split2);

"question": The natural language complex question;

"web_query": Query sent to the search engine.

“split_source”: 'noisy supervision split' or ‘ptrnet split’, please train on examples containing “ptrnet split” when comparing to Split+Decomp from https://arxiv.org/abs/1807.09623

“split_type”: 'full_question' or ‘split_part1' or ‘split_part2’ please use ‘composition_answer’ in question of type composition and split_type: “split_part1” when training a reading comprehension model on splits as in Split+Decomp from https://arxiv.org/abs/1807.09623 (in the rest of the cases use the original answer).

"web_snippets": ~100 web snippets per query. Each snippet includes Title,Snippet. They are ordered according to Google results.

With a total of

10,035,571 training set snippets

1,350,950 dev set snippets

1,339,468 test set snippets

### Source Data

The original files can be found at this [dropbox link](https://www.dropbox.com/sh/7pkwkrfnwqhsnpo/AACuu4v3YNkhirzBOeeaHYala)

### Licensing Information

Not specified

### Citation Information

```

@inproceedings{talmor2018web,

title={The Web as a Knowledge-Base for Answering Complex Questions},

author={Talmor, Alon and Berant, Jonathan},

booktitle={Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long Papers)},

pages={641--651},

year={2018}

}

```

### Contributions

Thanks for [happen2me](https://github.com/happen2me) for contributing this dataset. | [

-0.4643676280975342,

-1.085800051689148,

0.23951154947280884,

0.30541449785232544,

-0.15250563621520996,

-0.02498563379049301,

-0.14294719696044922,

-0.4754653871059418,

0.043775774538517,

0.4794923961162567,

-0.6529432535171509,

-0.49915751814842224,

-0.47137773036956787,

0.43604856729507... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

LYTinn/sentiment-analysis-tweet | LYTinn | 2022-10-31T03:54:49Z | 36 | 0 | null | [

"region:us"

] | 2022-10-31T03:54:49Z | 2022-10-29T03:29:04.000Z | 2022-10-29T03:29:04 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622263669967651,

0.43461522459983826,

-0.52829909324646,

0.7012971639633179,

0.7915719747543335,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104475975036621,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

AlekseyKorshuk/quora-question-pairs | AlekseyKorshuk | 2022-11-09T13:23:25Z | 36 | 0 | null | [

"region:us"

] | 2022-11-09T13:23:25Z | 2022-11-09T13:22:55.000Z | 2022-11-09T13:22:55 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622263669967651,

0.43461522459983826,

-0.52829909324646,

0.7012971639633179,

0.7915719747543335,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104475975036621,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

bigbio/progene | bigbio | 2022-12-22T15:46:19Z | 36 | 2 | null | [

"multilinguality:monolingual",

"language:en",

"license:cc-by-4.0",

"region:us"

] | 2022-12-22T15:46:19Z | 2022-11-13T22:11:35.000Z | 2022-11-13T22:11:35 |

---

language:

- en

bigbio_language:

- English

license: cc-by-4.0

multilinguality: monolingual

bigbio_license_shortname: CC_BY_4p0

pretty_name: ProGene

homepage: https://zenodo.org/record/3698568#.YlVHqdNBxeg

bigbio_pubmed: True

bigbio_public: True

bigbio_tasks:

- NAMED_ENTITY_RECOGNITION

---

# Dataset Card for ProGene

## Dataset Description

- **Homepage:** https://zenodo.org/record/3698568#.YlVHqdNBxeg

- **Pubmed:** True

- **Public:** True

- **Tasks:** NER

The Protein/Gene corpus was developed at the JULIE Lab Jena under supervision of Prof. Udo Hahn.

The executing scientist was Dr. Joachim Wermter.

The main annotator was Dr. Rico Pusch who is an expert in biology.

The corpus was developed in the context of the StemNet project (http://www.stemnet.de/).

## Citation Information

```

@inproceedings{faessler-etal-2020-progene,

title = "{P}ro{G}ene - A Large-scale, High-Quality Protein-Gene Annotated Benchmark Corpus",

author = "Faessler, Erik and

Modersohn, Luise and

Lohr, Christina and

Hahn, Udo",

booktitle = "Proceedings of the 12th Language Resources and Evaluation Conference",

month = may,

year = "2020",

address = "Marseille, France",

publisher = "European Language Resources Association",

url = "https://aclanthology.org/2020.lrec-1.564",

pages = "4585--4596",

abstract = "Genes and proteins constitute the fundamental entities of molecular genetics. We here introduce ProGene (formerly called FSU-PRGE), a corpus that reflects our efforts to cope with this important class of named entities within the framework of a long-lasting large-scale annotation campaign at the Jena University Language {\&} Information Engineering (JULIE) Lab. We assembled the entire corpus from 11 subcorpora covering various biological domains to achieve an overall subdomain-independent corpus. It consists of 3,308 MEDLINE abstracts with over 36k sentences and more than 960k tokens annotated with nearly 60k named entity mentions. Two annotators strove for carefully assigning entity mentions to classes of genes/proteins as well as families/groups, complexes, variants and enumerations of those where genes and proteins are represented by a single class. The main purpose of the corpus is to provide a large body of consistent and reliable annotations for supervised training and evaluation of machine learning algorithms in this relevant domain. Furthermore, we provide an evaluation of two state-of-the-art baseline systems {---} BioBert and flair {---} on the ProGene corpus. We make the evaluation datasets and the trained models available to encourage comparable evaluations of new methods in the future.",

language = "English",

ISBN = "979-10-95546-34-4",

}

```

| [

-0.5570901036262512,

-0.3664364516735077,

0.1349899172782898,

-0.06421922147274017,

-0.17669560015201569,

-0.023924540728330612,

-0.16846157610416412,

-0.5750210285186768,

0.40075403451919556,

0.32583266496658325,

-0.5616065859794617,

-0.5119633674621582,

-0.6747006773948669,

0.56847327947... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

bsmock/pubtables-1m | bsmock | 2023-08-08T16:43:14Z | 36 | 21 | null | [

"license:cdla-permissive-2.0",

"region:us"

] | 2023-08-08T16:43:14Z | 2022-11-22T18:59:39.000Z | 2022-11-22T18:59:39 | ---

license: cdla-permissive-2.0

---

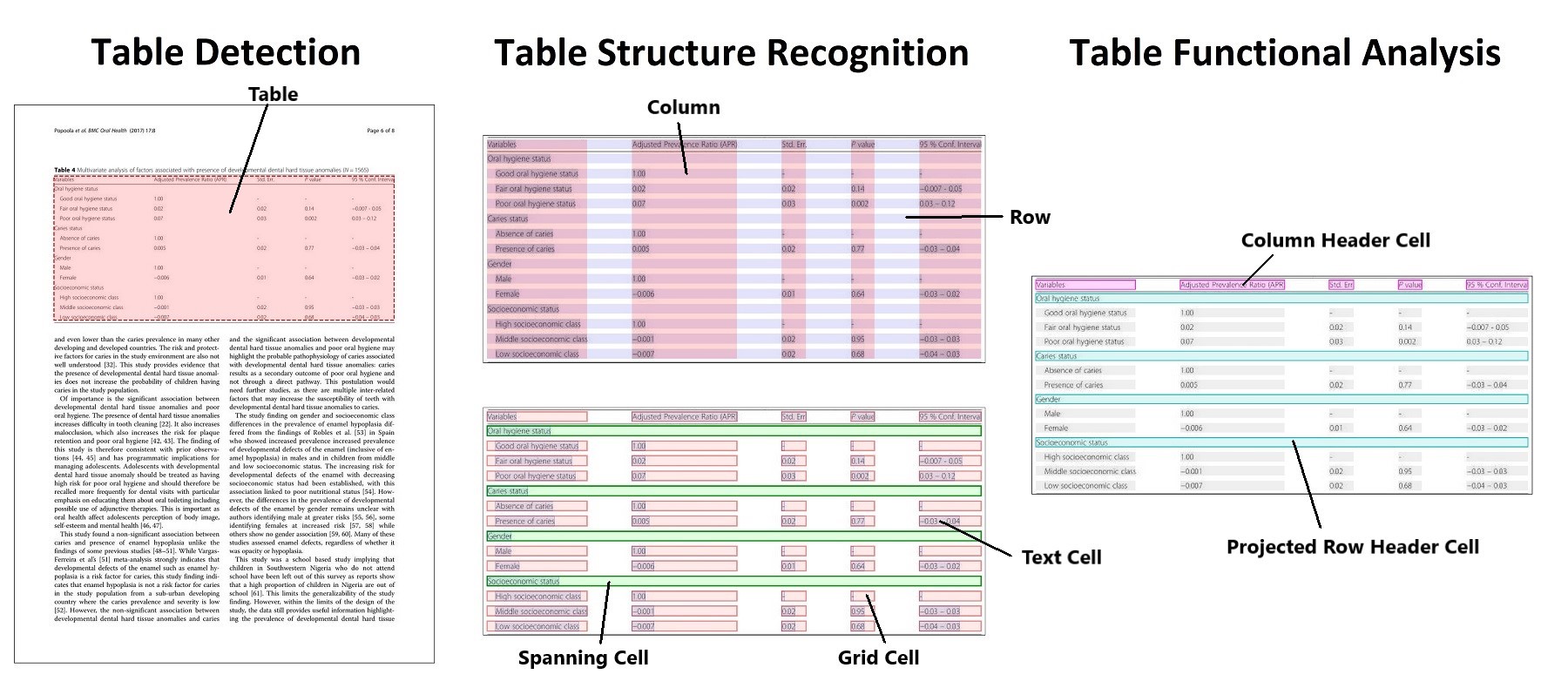

# PubTables-1M

- GitHub: [https://github.com/microsoft/table-transformer](https://github.com/microsoft/table-transformer)

- Paper: ["PubTables-1M: Towards comprehensive table extraction from unstructured documents"](https://openaccess.thecvf.com/content/CVPR2022/html/Smock_PubTables-1M_Towards_Comprehensive_Table_Extraction_From_Unstructured_Documents_CVPR_2022_paper.html)

- Hugging Face:

- [Detection model](https://huggingface.co/microsoft/table-transformer-detection)

- [Structure recognition model](https://huggingface.co/microsoft/table-transformer-structure-recognition)

Currently we only support downloading the dataset as tar.gz files. Integrating with HuggingFace Datasets is something we hope to support in the future!

Please switch to the "Files and versions" tab to download all of the files or use a command such as wget to download from the command line.

Once downloaded, use the included script "extract_structure_dataset.sh" to extract and organize all of the data.

## Files

It comes in 18 tar.gz files:

Training and evaluation data for the structure recognition model (947,642 total cropped table instances):

- PubTables-1M-Structure_Filelists.tar.gz

- PubTables-1M-Structure_Annotations_Test.tar.gz: 93,834 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Structure_Annotations_Train.tar.gz: 758,849 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Structure_Annotations_Val.tar.gz: 94,959 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Structure_Images_Test.tar.gz

- PubTables-1M-Structure_Images_Train.tar.gz

- PubTables-1M-Structure_Images_Val.tar.gz

- PubTables-1M-Structure_Table_Words.tar.gz: Bounding boxes and text content for all of the words in each cropped table image

Training and evaluation data for the detection model (575,305 total document page instances):

- PubTables-1M-Detection_Filelists.tar.gz

- PubTables-1M-Detection_Annotations_Test.tar.gz: 57,125 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Detection_Annotations_Train.tar.gz: 460,589 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Detection_Annotations_Val.tar.gz: 57,591 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Detection_Images_Test.tar.gz

- PubTables-1M-Detection_Images_Train_Part1.tar.gz

- PubTables-1M-Detection_Images_Train_Part2.tar.gz

- PubTables-1M-Detection_Images_Val.tar.gz

- PubTables-1M-Detection_Page_Words.tar.gz: Bounding boxes and text content for all of the words in each page image (plus some unused files)

Full table annotations for the source PDF files:

- PubTables-1M-PDF_Annotations.tar.gz: Detailed annotations for all of the tables appearing in the source PubMed PDFs. All annotations are in PDF coordinates.

- 401,733 JSON files, one per source PDF document | [

-0.3810470700263977,

-0.5367816090583801,

0.46445101499557495,

0.15657015144824982,

-0.30403098464012146,

-0.19173356890678406,

-0.01419527642428875,

-0.553024411201477,

0.021178103983402252,

0.5647629499435425,

-0.13108626008033752,

-0.904422402381897,

-0.6758595108985901,

0.3337799310684... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

crystina-z/mmarco-corpus | crystina-z | 2022-12-06T12:23:36Z | 36 | 0 | null | [

"region:us"

] | 2022-12-06T12:23:36Z | 2022-12-06T12:01:59.000Z | 2022-12-06T12:01:59 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622264862060547,

0.43461528420448303,

-0.52829909324646,

0.7012971639633179,

0.7915720343589783,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104477167129517,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

autoevaluate/autoeval-eval-bazzhangz__sumdataset-bazzhangz__sumdataset-18687b-2355774138 | autoevaluate | 2022-12-06T15:57:43Z | 36 | 0 | null | [

"autotrain",

"evaluation",

"region:us"

] | 2022-12-06T15:57:43Z | 2022-12-06T15:26:09.000Z | 2022-12-06T15:26:09 | ---

type: predictions

tags:

- autotrain

- evaluation

datasets:

- bazzhangz/sumdataset

eval_info:

task: summarization

model: knkarthick/MEETING_SUMMARY

metrics: []

dataset_name: bazzhangz/sumdataset

dataset_config: bazzhangz--sumdataset

dataset_split: train

col_mapping:

text: dialogue

target: summary

---

# Dataset Card for AutoTrain Evaluator

This repository contains model predictions generated by [AutoTrain](https://huggingface.co/autotrain) for the following task and dataset:

* Task: Summarization

* Model: knkarthick/MEETING_SUMMARY

* Dataset: bazzhangz/sumdataset

* Config: bazzhangz--sumdataset

* Split: train

To run new evaluation jobs, visit Hugging Face's [automatic model evaluator](https://huggingface.co/spaces/autoevaluate/model-evaluator).

## Contributions

Thanks to [@bazzhangz](https://huggingface.co/bazzhangz) for evaluating this model. | [

-0.5102072358131409,

-0.11899890750646591,

0.14952169358730316,

0.11376779526472092,

-0.25316300988197327,

-0.05419890955090523,

0.010391995310783386,

-0.3937259018421173,

0.37103497982025146,

0.36653807759284973,

-1.1872191429138184,

-0.24318069219589233,

-0.7097162008285522,

-0.005965116... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Zombely/diachronia-ocr-test-A | Zombely | 2022-12-14T17:21:46Z | 36 | 0 | null | [

"region:us"

] | 2022-12-14T17:21:46Z | 2022-12-14T17:21:21.000Z | 2022-12-14T17:21:21 | ---

dataset_info:

features:

- name: image

dtype: image

splits:

- name: train

num_bytes: 62457501.0

num_examples: 81

download_size: 62461147

dataset_size: 62457501.0

---

# Dataset Card for "diachronia-ocr"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.44572392106056213,

-0.24745219945907593,

0.4184242784976959,

-0.04478337988257408,

-0.2710293233394623,

0.11516553908586502,

0.31026437878608704,

-0.392324298620224,

0.670256495475769,

0.7247983813285828,

-0.6403278112411499,

-0.9615551233291626,

-0.5773802399635315,

-0.0143245365470647... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Kaludi/data-csgo-weapon-classification | Kaludi | 2023-02-02T23:34:31Z | 36 | 0 | null | [

"task_categories:image-classification",

"region:us"

] | 2023-02-02T23:34:31Z | 2023-02-02T22:42:56.000Z | 2023-02-02T22:42:56 | ---

task_categories:

- image-classification

---

# Dataset for project: csgo-weapon-classification

## Dataset Description

This dataset has for project csgo-weapon-classification was collected with the help of a bulk google image downloader.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"image": "<1768x718 RGB PIL image>",

"target": 0

},

{

"image": "<716x375 RGBA PIL image>",

"target": 0

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"image": "Image(decode=True, id=None)",

"target": "ClassLabel(names=['AK-47', 'AWP', 'Famas', 'Galil-AR', 'Glock', 'M4A1', 'M4A4', 'P-90', 'SG-553', 'UMP', 'USP'], id=None)"

}

```

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follow:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 1100 |

| valid | 275 |

| [

-0.42280450463294983,

-0.1327708214521408,

0.08765561133623123,

-0.05922212451696396,

-0.4022130072116852,

0.6005407571792603,

-0.2268517166376114,

-0.19414682686328888,

-0.20396389067173004,

0.34753674268722534,

-0.43179798126220703,

-0.9764398336410522,

-0.8119878768920898,

-0.0855013728... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

rcds/occlusion_swiss_judgment_prediction | rcds | 2023-03-28T08:19:29Z | 36 | 0 | null | [

"task_categories:text-classification",

"task_categories:other",

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"language_creators:found",

"multilinguality:multilingual",

"size_categories:1K<n<10K",

"source_datasets:extended|swiss_judgment_prediction",

"language:de",

... | 2023-03-28T08:19:29Z | 2023-03-08T20:14:10.000Z | 2023-03-08T20:14:10 | ---

annotations_creators:

- expert-generated

language:

- de

- fr

- it

- en

language_creators:

- expert-generated

- found

license: cc-by-sa-4.0

multilinguality:

- multilingual

pretty_name: OcclusionSwissJudgmentPrediction

size_categories:

- 1K<n<10K

source_datasets:

- extended|swiss_judgment_prediction

tags:

- explainability-judgment-prediction

- occlusion

task_categories:

- text-classification

- other

task_ids: []

---

# Dataset Card for "OcclusionSwissJudgmentPrediction": An implementation of an occlusion based explainability method for Swiss judgment prediction

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Summary](#dataset-summary)

- [Documents](#documents)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset **str**ucture](#dataset-**str**ucture)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Summary

This dataset contains an implementation of occlusion for the SwissJudgmentPrediction task.

Note that this dataset only provides a test set and should be used in comination with the [Swiss-Judgment-Prediction](https://huggingface.co/datasets/swiss_judgment_prediction) dataset.

### Documents

Occlusion-Swiss-Judgment-Prediction is a subset of the [Swiss-Judgment-Prediction](https://huggingface.co/datasets/swiss_judgment_prediction) dataset.

The Swiss-Judgment-Prediction dataset is a multilingual, diachronic dataset of 85K Swiss Federal Supreme Court (FSCS) cases annotated with the respective binarized judgment outcome (approval/dismissal), the publication year, the legal area and the canton of origin per case. Occlusion-Swiss-Judgment-Prediction extends this dataset by adding sentence splitting with explainability labels.

### Supported Tasks and Leaderboards

OcclusionSwissJudgmentPrediction can be used for performing the occlusion in the legal judgment prediction task.

### Languages

Switzerland has four official languages with 3 languages (German, French and Italian) being represented in more than 1000 Swiss Federal Supreme court decisions. The decisions are written by the judges and clerks in the language of the proceedings.

## Dataset structure

### Data Instances

## Data Instances

**Multilingual use of the dataset**

When the dataset is used in a multilingual setting selecting the the 'all_languages' flag:

```python

from datasets import load_dataset

dataset = load_dataset('rcds/occlusion_swiss_judgment_prediction', 'all')

```

**Monolingual use of the dataset**

When the dataset is used in a monolingual setting selecting the ISO language code for one of the 3 supported languages. For example:

```python

from datasets import load_dataset

dataset = load_dataset('rcds/occlusion_swiss_judgment_prediction', 'de')

```

### Data Fields

The following data fields are provided for documents (Test_1/Test_2/Test_3/Test_4):

id: (**int**) a unique identifier of the for the document <br/>

year: (**int**) the publication year<br/>

label: (**str**) the judgment outcome: dismissal or approval<br/>

language: (**str**) one of (de, fr, it)<br/>

region: (**str**) the region of the lower court<br/>

canton: (**str**) the canton of the lower court<br/>

legal area: (**str**) the legal area of the case<br/>

explainability_label (**str**): the explainability label assigned to the occluded text: Supports judgment, Opposes judgment, Neutral, Baseline<br/>

occluded_text (**str**): the occluded text<br/>

text: (**str**) the facts of the case w/o the occluded text except for cases w/ explainability label "Baseline" (contain entire facts)<br/>

Note that Baseline cases are only contained in version 1 of the occlusion test set, since they do not change from experiment to experiment.

### Data Splits (Including Swiss Judgment Prediction)

Language | Subset | Number of Rows (Test_1/Test_2/Test_3/Test_4)

| ----------- | ----------- | ----------- |

German| de | __427__ / __1366__ / __3567__ / __7235__

French | fr | __307__ / __854__ / __1926__ / __3279__

Italian | it | __299__ /__919__ / __2493__ / __5733__

All | all | __1033__ / __3139__ / __7986__/ __16247__

Language | Subset | Number of Documents (is the same for Test_1/Test_2/Test_3/Test_4)

| ----------- | ----------- | ----------- |

German| de | __38__

French | fr | __36__

Italian | it | __34__

All | all | __108__

## Dataset Creation

### Curation Rationale

The dataset was curated by Niklaus et al. (2021) and Nina Baumgartner.

### Source Data

#### Initial Data Collection and Normalization

The original data are available at the Swiss Federal Supreme Court (https://www.bger.ch) in unprocessed formats (HTML). The documents were downloaded from the Entscheidsuche portal (https://entscheidsuche.ch) in HTML.

#### Who are the source language producers?

Switzerland has four official languages with 3 languages (German, French and Italian) being represented in more than 1000 Swiss Federal Supreme court decisions. The decisions are written by the judges and clerks in the language of the proceedings.

### Annotations

#### Annotation process

The decisions have been annotated with the binarized judgment outcome using parsers and regular expressions. In addition a subset of the test set (27 cases in German, 24 in French and 23 in Italian spanning over the years 2017 an 20200) was annotated by legal experts, splitting sentences/group of sentences and annotated with one of the following explainability label: Supports judgment, Opposes Judgment and Neutral. The test sets have each sentence/ group of sentence once occluded, enabling an analysis of the changes in the model's performance. The legal expert annotation were conducted from April 2020 to August 2020.

#### Who are the annotators?

Joel Niklaus and Adrian Jörg annotated the binarized judgment outcomes. Metadata is published by the Swiss Federal Supreme Court (https://www.bger.ch). The group of legal experts consists of Thomas Lüthi (lawyer), Lynn Grau (law student at master's level) and Angela Stefanelli (law student at master's level).

### Personal and Sensitive Information

The dataset contains publicly available court decisions from the Swiss Federal Supreme Court. Personal or sensitive information has been anonymized by the court before publication according to the following guidelines: https://www.bger.ch/home/juridiction/anonymisierungsregeln.html.

## Additional Information

### Dataset Curators

Niklaus et al. (2021) and Nina Baumgartner

### Licensing Information

We release the data under CC-BY-4.0 which complies with the court licensing (https://www.bger.ch/files/live/sites/bger/files/pdf/de/urteilsveroeffentlichung_d.pdf)

© Swiss Federal Supreme Court, 2000-2020

The copyright for the editorial content of this website and the consolidated texts, which is owned by the Swiss Federal Supreme Court, is licensed under the Creative Commons Attribution 4.0 International licence. This means that you can re-use the content provided you acknowledge the source and indicate any changes you have made.

Source: https://www.bger.ch/files/live/sites/bger/files/pdf/de/urteilsveroeffentlichung_d.pdf

### Citation Information

```

@misc{baumgartner_nina_occlusion_2022,

title = {From Occlusion to Transparancy – An Occlusion-Based Explainability Approach for Legal Judgment Prediction in Switzerland},

shorttitle = {From Occlusion to Transparancy},

abstract = {Natural Language Processing ({NLP}) models have been used for more and more complex tasks such as Legal Judgment Prediction ({LJP}). A {LJP} model predicts the outcome of a legal case by utilizing its facts. This increasing deployment of Artificial Intelligence ({AI}) in high-stakes domains such as law and the involvement of sensitive data has increased the need for understanding such systems. We propose a multilingual occlusion-based explainability approach for {LJP} in Switzerland and conduct a study on the bias using Lower Court Insertion ({LCI}). We evaluate our results using different explainability metrics introduced in this thesis and by comparing them to high-quality Legal Expert Annotations using Inter Annotator Agreement. Our findings show that the model has a varying understanding of the semantic meaning and context of the facts section, and struggles to distinguish between legally relevant and irrelevant sentences. We also found that the insertion of a different lower court can have an effect on the prediction, but observed no distinct effects based on legal areas, cantons, or regions. However, we did identify a language disparity with Italian performing worse than the other languages due to representation inequality in the training data, which could lead to potential biases in the prediction in multilingual regions of Switzerland. Our results highlight the challenges and limitations of using {NLP} in the judicial field and the importance of addressing concerns about fairness, transparency, and potential bias in the development and use of {NLP} systems. The use of explainable artificial intelligence ({XAI}) techniques, such as occlusion and {LCI}, can help provide insight into the decision-making processes of {NLP} systems and identify areas for improvement. Finally, we identify areas for future research and development in this field in order to address the remaining limitations and challenges.},

author = {{Baumgartner, Nina}},

year = {2022},

langid = {english}

}

```

### Contributions

Thanks to [@ninabaumgartner](https://github.com/ninabaumgartner) for adding this dataset. | [

-0.35966578125953674,

-0.8111640810966492,

0.5849389433860779,

0.04089425131678581,

-0.37138164043426514,

-0.2763945758342743,

-0.1623881459236145,

-0.6189175844192505,

0.1903945356607437,

0.6034292578697205,

-0.45521458983421326,

-0.8190104365348816,

-0.5982663631439209,

-0.06090795993804... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

mstz/speeddating | mstz | 2023-04-07T14:54:21Z | 36 | 0 | null | [

"task_categories:tabular-classification",

"size_categories:1K<n<10K",

"language:en",

"speeddating",

"tabular_classification",

"binary_classification",

"region:us"

] | 2023-04-07T14:54:21Z | 2023-03-23T23:41:42.000Z | 2023-03-23T23:41:42 | ---

language:

- en

tags:

- speeddating

- tabular_classification

- binary_classification

pretty_name: Speed dating

size_categories:

- 1K<n<10K

task_categories: # Full list at https://github.com/huggingface/hub-docs/blob/main/js/src/lib/interfaces/Types.ts

- tabular-classification

configs:

- dating

---

# Speed dating

The [Speed dating dataset](https://www.openml.org/search?type=data&sort=nr_of_likes&status=active&id=40536) from OpenML.

# Configurations and tasks

| **Configuration** | **Task** | Description |

|-------------------|---------------------------|---------------------------------------------------------------|

| dating | Binary classification | Will the two date? |

# Usage

```python

from datasets import load_dataset

dataset = load_dataset("mstz/speeddating")["train"]

```

# Features

|**Features** |**Type** |

|---------------------------------------------------|---------|

|`is_dater_male` |`int8` |

|`dater_age` |`int8` |

|`dated_age` |`int8` |

|`age_difference` |`int8` |

|`dater_race` |`string` |

|`dated_race` |`string` |

|`are_same_race` |`int8` |

|`same_race_importance_for_dater` |`float64`|

|`same_religion_importance_for_dater` |`float64`|

|`attractiveness_importance_for_dated` |`float64`|

|`sincerity_importance_for_dated` |`float64`|

|`intelligence_importance_for_dated` |`float64`|

|`humor_importance_for_dated` |`float64`|

|`ambition_importance_for_dated` |`float64`|

|`shared_interests_importance_for_dated` |`float64`|

|`attractiveness_score_of_dater_from_dated` |`float64`|

|`sincerity_score_of_dater_from_dated` |`float64`|

|`intelligence_score_of_dater_from_dated` |`float64`|

|`humor_score_of_dater_from_dated` |`float64`|

|`ambition_score_of_dater_from_dated` |`float64`|

|`shared_interests_score_of_dater_from_dated` |`float64`|

|`attractiveness_importance_for_dater` |`float64`|

|`sincerity_importance_for_dater` |`float64`|

|`intelligence_importance_for_dater` |`float64`|

|`humor_importance_for_dater` |`float64`|

|`ambition_importance_for_dater` |`float64`|

|`shared_interests_importance_for_dater` |`float64`|

|`self_reported_attractiveness_of_dater` |`float64`|

|`self_reported_sincerity_of_dater` |`float64`|

|`self_reported_intelligence_of_dater` |`float64`|

|`self_reported_humor_of_dater` |`float64`|

|`self_reported_ambition_of_dater` |`float64`|

|`reported_attractiveness_of_dated_from_dater` |`float64`|

|`reported_sincerity_of_dated_from_dater` |`float64`|

|`reported_intelligence_of_dated_from_dater` |`float64`|

|`reported_humor_of_dated_from_dater` |`float64`|

|`reported_ambition_of_dated_from_dater` |`float64`|

|`reported_shared_interests_of_dated_from_dater` |`float64`|

|`dater_interest_in_sports` |`float64`|

|`dater_interest_in_tvsports` |`float64`|

|`dater_interest_in_exercise` |`float64`|

|`dater_interest_in_dining` |`float64`|

|`dater_interest_in_museums` |`float64`|

|`dater_interest_in_art` |`float64`|

|`dater_interest_in_hiking` |`float64`|

|`dater_interest_in_gaming` |`float64`|

|`dater_interest_in_clubbing` |`float64`|

|`dater_interest_in_reading` |`float64`|

|`dater_interest_in_tv` |`float64`|

|`dater_interest_in_theater` |`float64`|

|`dater_interest_in_movies` |`float64`|

|`dater_interest_in_concerts` |`float64`|

|`dater_interest_in_music` |`float64`|

|`dater_interest_in_shopping` |`float64`|

|`dater_interest_in_yoga` |`float64`|

|`interests_correlation` |`float64`|

|`expected_satisfaction_of_dater` |`float64`|

|`expected_number_of_likes_of_dater_from_20_people` |`int8` |

|`expected_number_of_dates_for_dater` |`int8` |

|`dater_liked_dated` |`float64`|

|`probability_dated_wants_to_date` |`float64`|

|`already_met_before` |`int8` |

|`dater_wants_to_date` |`int8` |

|`dated_wants_to_date` |`int8` |

| [

-0.7379164099693298,

-0.7051035761833191,

0.38476139307022095,

0.34531307220458984,

-0.3896600902080536,

-0.09917176514863968,

0.12123683840036392,

-0.5257579684257507,

0.6376322507858276,

0.3257812261581421,

-0.8783686757087708,

-0.566498339176178,

-0.7793352007865906,

0.15814422070980072... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

suolyer/pile_pile-cc | suolyer | 2023-03-27T03:04:43Z | 36 | 0 | null | [

"license:apache-2.0",

"region:us"

] | 2023-03-27T03:04:43Z | 2023-03-26T16:38:55.000Z | 2023-03-26T16:38:55 | ---

license: apache-2.0

---

| [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

Chinese-Vicuna/guanaco_belle_merge_v1.0 | Chinese-Vicuna | 2023-03-30T07:49:30Z | 36 | 80 | null | [

"language:zh",

"language:en",

"language:ja",

"license:gpl-3.0",

"region:us"

] | 2023-03-30T07:49:30Z | 2023-03-30T07:29:07.000Z | 2023-03-30T07:29:07 | ---

license: gpl-3.0

language:

- zh

- en

- ja

---

Thanks for [Guanaco Dataset](https://huggingface.co/datasets/JosephusCheung/GuanacoDataset) and [Belle Dataset](https://huggingface.co/datasets/BelleGroup/generated_train_0.5M_CN)

This dataset was created by merging the above two datasets in a certain format so that they can be used for training our code [Chinese-Vicuna](https://github.com/Facico/Chinese-Vicuna) | [

-0.15067338943481445,

0.057761531323194504,

0.2622068524360657,

0.5478158593177795,

-0.12370830774307251,

-0.39572712779045105,

0.056862346827983856,

-0.5080388188362122,

0.5286059379577637,

0.5258036255836487,

-0.6084743738174438,

-0.6990441679954529,

-0.296953022480011,

-0.06458837538957... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

sayakpaul/poses-controlnet-dataset | sayakpaul | 2023-04-05T01:47:49Z | 36 | 0 | null | [

"region:us"

] | 2023-04-05T01:47:49Z | 2023-04-03T11:18:31.000Z | 2023-04-03T11:18:31 | ---

dataset_info:

features:

- name: original_image

dtype: image

- name: condtioning_image

dtype: image

- name: overlaid

dtype: image

- name: caption

dtype: string

splits:

- name: train

num_bytes: 123997217.0

num_examples: 496

download_size: 124012907

dataset_size: 123997217.0

---

# Dataset Card for "poses-controlnet-dataset"

The dataset was prepared using this Colab Notebook:

[](https://colab.research.google.com/github/huggingface/community-events/blob/main/jax-controlnet-sprint/dataset_tools/create_pose_dataset.ipynb) | [

-0.5022714138031006,

-0.041872937232255936,

-0.19915202260017395,

0.3319048285484314,

-0.266126811504364,

0.19801032543182373,

0.3832252323627472,

-0.19343814253807068,

0.8978544473648071,

0.18040359020233154,

-0.8615341782569885,

-0.7876654267311096,

-0.30039894580841064,

-0.1767535507678... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

alexwww94/SimCLUE | alexwww94 | 2023-04-14T06:40:03Z | 36 | 0 | null | [

"license:other",

"region:us"

] | 2023-04-14T06:40:03Z | 2023-04-13T09:56:06.000Z | 2023-04-13T09:56:06 | ---

license: other

---

| [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

siddharthtumre/jnlpba-split | siddharthtumre | 2023-04-25T04:49:37Z | 36 | 0 | null | [

"task_categories:token-classification",

"task_ids:named-entity-recognition",

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"language:en",

"license:unknown",

"region:us"

] | 2023-04-25T04:49:37Z | 2023-04-25T04:35:39.000Z | 2023-04-25T04:35:39 | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

language:

- en

license:

- unknown

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

task_categories:

- token-classification

task_ids:

- named-entity-recognition

pretty_name: IASL-BNER Revised JNLPBA

dataset_info:

features:

- name: id

dtype: string

- name: tokens

sequence: string

- name: ner_tags

sequence:

class_label:

names:

'0': O

'1': B-DNA

'2': I-DNA

'3': B-RNA

'4': I-RNA

'5': B-cell_line

'6': I-cell_line

'7': B-cell_type

'8': I-cell_type

'9': B-protein

'10': I-protein

config_name: revised-jnlpba

---

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] | [

-0.5456174612045288,

-0.42588168382644653,

-0.051285725086927414,

0.38739174604415894,

-0.4620097875595093,

0.05422865226864815,

-0.24659410119056702,

-0.2884671688079834,

0.6999505162239075,

0.5781952142715454,

-0.9070088267326355,

-1.1513409614562988,

-0.7566764950752258,

0.0290524791926... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

silk-road/chinese-dolly-15k | silk-road | 2023-05-22T00:26:02Z | 36 | 15 | null | [

"task_categories:question-answering",

"task_categories:summarization",

"task_categories:text-generation",

"size_categories:10K<n<100K",

"language:zh",

"language:en",

"license:cc-by-sa-3.0",

"region:us"

] | 2023-05-22T00:26:02Z | 2023-05-22T00:18:48.000Z | 2023-05-22T00:18:48 | ---

license: cc-by-sa-3.0

task_categories:

- question-answering

- summarization

- text-generation

language:

- zh

- en

size_categories:

- 10K<n<100K

---

Chinese-Dolly-15k是骆驼团队翻译的Dolly instruction数据集

最后49条数据因为翻译长度超过限制,没有翻译成功,建议删除或者手动翻译一下

原来的数据集'databricks/databricks-dolly-15k'是由数千名Databricks员工根据InstructGPT论文中概述的几种行为类别生成的遵循指示记录的开源数据集。这几个行为类别包括头脑风暴、分类、封闭型问答、生成、信息提取、开放型问答和摘要。

在知识共享署名-相同方式共享3.0(CC BY-SA 3.0)许可下,此数据集可用于任何学术或商业用途。

我们会陆续将更多数据集发布到hf,包括

- [ ] Coco Caption的中文翻译

- [x] CoQA的中文翻译

- [ ] CNewSum的Embedding数据

- [x] 增广的开放QA数据

- [x] WizardLM的中文翻译

- [x] MMC4的中文翻译

如果你也在做这些数据集的筹备,欢迎来联系我们,避免重复花钱。

# 骆驼(Luotuo): 开源中文大语言模型

[https://github.com/LC1332/Luotuo-Chinese-LLM](https://github.com/LC1332/Luotuo-Chinese-LLM)

骆驼(Luotuo)项目是由[冷子昂](https://blairleng.github.io) @ 商汤科技, 陈启源 @ 华中师范大学 以及 李鲁鲁 @ 商汤科技 发起的中文大语言模型开源项目,包含了一系列语言模型。

骆驼项目**不是**商汤科技的官方产品。

## Citation

Please cite the repo if you use the data or code in this repo.

```

@misc{alpaca,

author={Ziang Leng, Qiyuan Chen and Cheng Li},

title = {Luotuo: An Instruction-following Chinese Language model, LoRA tuning on LLaMA},

year = {2023},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/LC1332/Luotuo-Chinese-LLM}},

}

```

| [

-0.1827021986246109,

-0.959090530872345,

-0.08270619809627533,

0.6169309616088867,

-0.4174286425113678,

-0.10677093267440796,

0.07299136370420456,

-0.18062041699886322,

0.26006847620010376,

0.47190842032432556,

-0.5671644806861877,

-0.7754977941513062,

-0.3749174475669861,

0.06375879794359... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

nicholasKluge/toxic-aira-dataset | nicholasKluge | 2023-11-10T12:51:32Z | 36 | 2 | null | [

"task_categories:text-classification",

"size_categories:10K<n<100K",

"language:pt",

"language:en",

"license:apache-2.0",

"toxicity",

"harm",

"region:us"

] | 2023-11-10T12:51:32Z | 2023-06-07T19:08:36.000Z | 2023-06-07T19:08:36 | ---

license: apache-2.0

task_categories:

- text-classification

language:

- pt

- en

tags:

- toxicity

- harm

pretty_name: Toxic-Aira Dataset

size_categories:

- 10K<n<100K

dataset_info:

features:

- name: non_toxic_response

dtype: string

- name: toxic_response

dtype: string

splits:

- name: portuguese

num_bytes: 19006011

num_examples: 28103

- name: english

num_bytes: 19577715

num_examples: 41843

download_size: 5165276

dataset_size: 38583726

---

# Dataset (`Toxic-Aira Dataset`)

### Overview

This dataset contains a collection of texts containing harmful/toxic and harmless/non-toxic conversations and messages. All demonstrations are separated into two classes (`non_toxic_response` and `toxic_response`). This dataset was created from the Anthropic [helpful-harmless-RLHF](https://huggingface.co/datasets/Anthropic/hh-rlhf) dataset, the AllenAI [prosocial-dialog](https://huggingface.co/datasets/allenai/prosocial-dialog) dataset, the [real-toxicity-prompts](https://huggingface.co/datasets/allenai/real-toxicity-prompts) dataset (also from AllenAI), the [Toxic Comment Classification](https://github.com/tianqwang/Toxic-Comment-Classification-Challenge) dataset, and the [ToxiGen](https://huggingface.co/datasets/skg/toxigen-data) dataset.

The Portuguese version has translated copies from the above mentioned datasets ([helpful-harmless-RLHF](https://huggingface.co/datasets/Anthropic/hh-rlhf), [prosocial-dialog](https://huggingface.co/datasets/allenai/prosocial-dialog), [real-toxicity-prompts](https://huggingface.co/datasets/allenai/real-toxicity-prompts), [ToxiGen](https://huggingface.co/datasets/skg/toxigen-data)), plus the [hatecheck-portuguese](https://huggingface.co/datasets/Paul/hatecheck-portuguese) and [told-br](https://huggingface.co/datasets/told-br) datasets.

### Dataset Details

- **Dataset Name:** Toxic-Aira Dataset

- **Language:** Portuguese and English

- **Total Size:** Over 40,000 demonstrations (Portuguese 28,103, English 41,843)

- **Sources:**

- Anthropic [Helpful-harmless-RLHF](https://huggingface.co/datasets/Anthropic/hh-rlhf) dataset.

- AllenAI [Prosocial-dialog](https://huggingface.co/datasets/allenai/prosocial-dialog) dataset.

- AllenAI [Real-toxicity-prompts](https://huggingface.co/datasets/allenai/real-toxicity-prompts) dataset.

- The [Toxic Comment Classification](https://github.com/tianqwang/Toxic-Comment-Classification-Challenge).dataset.

- The [Hatecheck-portuguese](https://huggingface.co/datasets/Paul/hatecheck-portuguese) dataset.

- The [Told-br](https://huggingface.co/datasets/told-br) dataset.

- The [ToxiGen](https://huggingface.co/datasets/skg/toxigen-data) dataset.

### Contents

⚠️ THE EXAMPLES IN THIS DATASET CONTAIN TOXIC/OFFENSIVE LANGUAGE ⚠️

The dataset consists of data frames with the following columns:

- **non_toxic_response:** Text that was evaluated as non-toxic.

- **toxic_response:** Text evaluated as toxic.

```python

{

"non_toxic_response": "I prefer to see it as the study of change.",

"toxic_response":"So to my secret santa in the words of Jessie Pinkman, THANKS BITCH",

}

```

All demonstrations of examples are less than 350 tokens (measured using the `BERT` tokenizer).

### Use Cases

`Toxic-Aira Dataset` can be utilized to train models to detect harmful/toxic text.

## How to use

Available splits are `portuguese` and `english`.

```python

from datasets import load_dataset

dataset = load_dataset("nicholasKluge/toxic-aira-dataset")

```

### Disclaimer

This dataset is provided as is, without any warranty or guarantee of its accuracy or suitability for any purpose. The creators and contributors of this dataset are not liable for any damages or losses arising from its use. Please review and comply with the licenses and terms of the original datasets before use. | [

-0.08796096593141556,

-0.6761983036994934,

0.13213694095611572,

0.3745133578777313,

-0.1909807026386261,

-0.28244975209236145,

-0.06237737461924553,

-0.4502902626991272,

0.3523021340370178,

0.4157834053039551,

-0.6892766952514648,

-0.7257172465324402,

-0.6046066284179688,

0.323222249746322... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

SirlyDreamer/THUCNews | SirlyDreamer | 2023-06-20T07:37:15Z | 36 | 0 | null | [

"region:us"

] | 2023-06-20T07:37:15Z | 2023-06-20T05:21:24.000Z | 2023-06-20T05:21:24 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622263669967651,

0.43461522459983826,

-0.52829909324646,

0.7012971639633179,

0.7915719747543335,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104475975036621,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

unwilledset/raven-data | unwilledset | 2023-10-21T05:13:54Z | 36 | 0 | null | [

"license:apache-2.0",

"region:us"

] | 2023-10-21T05:13:54Z | 2023-07-10T09:54:46.000Z | 2023-07-10T09:54:46 | ---

license: apache-2.0

---

| [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

pie/squad_v2 | pie | 2023-11-23T10:53:55Z | 36 | 0 | null | [

"region:us"

] | 2023-11-23T10:53:55Z | 2023-07-10T11:32:08.000Z | 2023-07-10T11:32:08 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622264862060547,

0.43461528420448303,

-0.52829909324646,

0.7012971639633179,

0.7915720343589783,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104477167129517,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

PedroCJardim/QASports | PedroCJardim | 2023-11-24T18:16:39Z | 36 | 2 | null | [

"task_categories:question-answering",

"size_categories:1M<n<10M",

"language:en",

"license:mit",

"sports",

"open-domain-qa",

"extractive-qa",

"region:us"

] | 2023-11-24T18:16:39Z | 2023-07-14T17:28:19.000Z | 2023-07-14T17:28:19 | ---

configs:

- config_name: all

data_files:

- split: train

path:

- "trainb.csv"

- "trains.csv"

- "trainf.csv"

- split: test

path:

- "testb.csv"

- "tests.csv"

- "testf.csv"

- split: validation

path:

- "validationb.csv"

- "validations.csv"

- "validationf.csv"

default: true

- config_name: basketball

data_files:

- split: train

path: "trainb.csv"

- split: test

path: "testb.csv"

- split: validation

path: "validationb.csv"

- config_name: football

data_files:

- split: train

path: "trainf.csv"

- split: test

path: "testf.csv"

- split: validation

path: "validationf.csv"

- config_name: soccer

data_files:

- split: train

path: "trains.csv"

- split: test

path: "tests.csv"

- split: validation

path: "validations.csv"

license: mit

task_categories:

- question-answering

language:

- en

tags:

- sports

- open-domain-qa

- extractive-qa

size_categories:

- 1M<n<10M

pretty_name: QASports

---

### Dataset Summary

QASports is the first large sports-themed question answering dataset counting over 1.5 million questions and answers about 54k preprocessed wiki pages, using as documents the wiki of 3 of the most popular sports in the world, Soccer, American Football and Basketball. Each sport can be downloaded individually as a subset, with the train, test and validation splits, or all 3 can be downloaded together.

- 🎲 Complete dataset: https://osf.io/n7r23/

- 🔧 Processing scripts: https://github.com/leomaurodesenv/qasports-dataset-scripts/

### Supported Tasks and Leaderboards

Extractive Question Answering.

### Languages

English.

## Dataset Structure

### Data Instances

An example of 'train' looks as follows.

```

{

"answer": {

"offset": [42,44],

"text": "16"

},

"context": "The following is a list of squads for all 16 national teams competing at the Copa América Centenario. Each national team had to submit a squad of 23 players, 3 of whom must be goalkeepers. The provisional squads were announced on 4 May 2016. A final selection was provided to the organisers on 20 May 2016." ,

"qa_id": "61200579912616854316543272456523433217",

"question": "How many national teams competed at the Copa América Centenario?",

"context_id": "171084087809998484545703642399578583178",

"context_title": "Copa América Centenario squads | Football Wiki | Fandom",

"url": "https://football.fandom.com/wiki/Copa_Am%C3%A9rica_Centenario_squads"

}

```

### Data Fields

The data fields are the same among all splits.

- '': int

- `id_qa`: a `string` feature.

- `context_id`: a `string` feature.

- `context_title`: a `string` feature.

- `url`: a `string` feature.

- `context`: a `string` feature.

- `question`: a `string` feature.

- `answers`: a dictionary feature containing:

- `text`: a `string` feature.

- `offset`: a list feature containing:

- 2 `int32` features for start and end.

### Citation

```

@inproceedings{jardim:2023:qasports-dataset,

author={Pedro Calciolari Jardim and Leonardo Mauro Pereira Moraes and Cristina Dutra Aguiar},

title = {{QASports}: A Question Answering Dataset about Sports},

booktitle = {Proceedings of the Brazilian Symposium on Databases: Dataset Showcase Workshop},

address = {Belo Horizonte, MG, Brazil},

url = {https://github.com/leomaurodesenv/qasports-dataset-scripts},

publisher = {Brazilian Computer Society},

pages = {1-12},

year = {2023}

}

```

| [

-0.7315133810043335,

-0.535916805267334,

0.41776204109191895,

0.34121033549308777,

-0.3199096620082855,

0.2152910679578781,

0.08236543089151382,

-0.3604840338230133,

0.5438238978385925,

0.20937581360340118,

-0.88039231300354,

-0.6175742149353027,

-0.37652671337127686,

0.46392711997032166,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

aditijha/processed_lima | aditijha | 2023-08-29T05:26:26Z | 36 | 2 | null | [

"region:us"

] | 2023-08-29T05:26:26Z | 2023-07-16T21:33:28.000Z | 2023-07-16T21:33:28 | ---

dataset_info:

features:

- name: prompt

dtype: string

- name: response

dtype: string

splits:

- name: train

num_bytes: 2942583

num_examples: 1000

- name: test

num_bytes: 80137

num_examples: 300

download_size: 31591

dataset_size: 3022720

---

# Dataset Card for "processed_lima"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.4119807481765747,

-0.5074421763420105,

0.45454081892967224,

0.5409814715385437,

-0.4744267761707306,

-0.17734454572200775,

0.4007243514060974,

-0.3056440055370331,

1.075714349746704,

0.8165264129638672,

-0.889593780040741,

-0.812372088432312,

-0.902409017086029,

-0.1357559859752655,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

MichaelR207/MultiSim | MichaelR207 | 2023-11-14T00:32:32Z | 36 | 0 | null | [

"task_categories:summarization",

"task_categories:text2text-generation",

"task_categories:text-generation",

"size_categories:1M<n<10M",

"language:en",

"language:fr",

"language:ru",

"language:ja",

"language:it",

"language:da",

"language:es",

"language:de",

"language:pt",

"language:sl",

"l... | 2023-11-14T00:32:32Z | 2023-07-18T21:55:31.000Z | 2023-07-18T21:55:31 | ---

license: mit

language:

- en

- fr

- ru

- ja

- it

- da

- es

- de

- pt

- sl

- ur

- eu

task_categories:

- summarization

- text2text-generation

- text-generation

pretty_name: MultiSim

tags:

- medical

- legal

- wikipedia

- encyclopedia

- science

- literature

- news

- websites

size_categories:

- 1M<n<10M

---

# Dataset Card for MultiSim Benchmark

## Dataset Description

- **Repository:https://github.com/XenonMolecule/MultiSim/tree/main**

- **Paper:https://aclanthology.org/2023.acl-long.269/ https://arxiv.org/pdf/2305.15678.pdf**

- **Point of Contact: michaeljryan@stanford.edu**

### Dataset Summary

The MultiSim benchmark is a growing collection of text simplification datasets targeted at sentence simplification in several languages. Currently, the benchmark spans 12 languages.

### Supported Tasks

- Sentence Simplification

### Usage

```python

from datasets import load_dataset

dataset = load_dataset("MichaelR207/MultiSim")

```

### Citation

If you use this benchmark, please cite our [paper](https://aclanthology.org/2023.acl-long.269/):

```

@inproceedings{ryan-etal-2023-revisiting,

title = "Revisiting non-{E}nglish Text Simplification: A Unified Multilingual Benchmark",

author = "Ryan, Michael and

Naous, Tarek and

Xu, Wei",

booktitle = "Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)",

month = jul,

year = "2023",

address = "Toronto, Canada",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2023.acl-long.269",

pages = "4898--4927",

abstract = "Recent advancements in high-quality, large-scale English resources have pushed the frontier of English Automatic Text Simplification (ATS) research. However, less work has been done on multilingual text simplification due to the lack of a diverse evaluation benchmark that covers complex-simple sentence pairs in many languages. This paper introduces the MultiSim benchmark, a collection of 27 resources in 12 distinct languages containing over 1.7 million complex-simple sentence pairs. This benchmark will encourage research in developing more effective multilingual text simplification models and evaluation metrics. Our experiments using MultiSim with pre-trained multilingual language models reveal exciting performance improvements from multilingual training in non-English settings. We observe strong performance from Russian in zero-shot cross-lingual transfer to low-resource languages. We further show that few-shot prompting with BLOOM-176b achieves comparable quality to reference simplifications outperforming fine-tuned models in most languages. We validate these findings through human evaluation.",

}

```

### Contact

**Michael Ryan**: [Scholar](https://scholar.google.com/citations?user=8APGEEkAAAAJ&hl=en) | [Twitter](http://twitter.com/michaelryan207) | [Github](https://github.com/XenonMolecule) | [LinkedIn](https://www.linkedin.com/in/michael-ryan-207/) | [Research Gate](https://www.researchgate.net/profile/Michael-Ryan-86) | [Personal Website](http://michaelryan.tech/) | [michaeljryan@stanford.edu](mailto://michaeljryan@stanford.edu)

### Languages

- English

- French

- Russian

- Japanese

- Italian

- Danish (on request)

- Spanish (on request)

- German

- Brazilian Portuguese

- Slovene

- Urdu (on request)

- Basque (on request)

## Dataset Structure

### Data Instances

MultiSim is a collection of 27 existing datasets:

- AdminIT

- ASSET

- CBST

- CLEAR

- DSim

- Easy Japanese

- Easy Japanese Extended

- GEOLino

- German News

- Newsela EN/ES

- PaCCSS-IT

- PorSimples

- RSSE

- RuAdapt Encyclopedia

- RuAdapt Fairytales

- RuAdapt Literature

- RuWikiLarge

- SIMPITIKI

- Simple German

- Simplext

- SimplifyUR

- SloTS

- Teacher

- Terence

- TextComplexityDE

- WikiAuto

- WikiLargeFR

### Data Fields

In the train set, you will only find `original` and `simple` sentences. In the validation and test sets you may find `simple1`, `simple2`, ... `simpleN` because a given sentence can have multiple reference simplifications (useful in SARI and BLEU calculations)

### Data Splits

The dataset is split into a train, validation, and test set.

## Dataset Creation

### Curation Rationale

I hope that collecting all of these independently useful resources for text simplification together into one benchmark will encourage multilingual work on text simplification!

### Source Data

#### Initial Data Collection and Normalization

Data is compiled from the 27 existing datasets that comprise the MultiSim Benchmark. For details on each of the resources please see Appendix A in the [paper](https://aclanthology.org/2023.acl-long.269.pdf).

#### Who are the source language producers?

Each dataset has different sources. At a high level the sources are: Automatically Collected (ex. Wikipedia, Web data), Manually Collected (ex. annotators asked to simplify sentences), Target Audience Resources (ex. Newsela News Articles), or Translated (ex. Machine translations of existing datasets).

These sources can be seen in Table 1 pictured above (Section: `Dataset Structure/Data Instances`) and further discussed in section 3 of the [paper](https://aclanthology.org/2023.acl-long.269.pdf). Appendix A of the paper has details on specific resources.

### Annotations

#### Annotation process

Annotators writing simplifications (only for some datasets) typically follow an annotation guideline. Some example guidelines come from [here](https://dl.acm.org/doi/10.1145/1410140.1410191), [here](https://link.springer.com/article/10.1007/s11168-006-9011-1), and [here](https://link.springer.com/article/10.1007/s10579-017-9407-6).

#### Who are the annotators?

See Table 1 (Section: `Dataset Structure/Data Instances`) for specific annotators per dataset. At a high level the annotators are: writers, translators, teachers, linguists, journalists, crowdworkers, experts, news agencies, medical students, students, writers, and researchers.

### Personal and Sensitive Information

No dataset should contain personal or sensitive information. These were previously collected resources primarily collected from news sources, wikipedia, science communications, etc. and were not identified to have personally identifiable information.

## Considerations for Using the Data

### Social Impact of Dataset

We hope this dataset will make a greatly positive social impact as text simplification is a task that serves children, second language learners, and people with reading/cognitive disabilities. By publicly releasing a dataset in 12 languages we hope to serve these global communities.

One negative and unintended use case for this data would be reversing the labels to make a "text complification" model. We beleive the benefits of releasing this data outweigh the harms and hope that people use the dataset as intended.

### Discussion of Biases

There may be biases of the annotators involved in writing the simplifications towards how they believe a simpler sentence should be written. Additionally annotators and editors have the choice of what information does not make the cut in the simpler sentence introducing information importance bias.

### Other Known Limitations

Some of the included resources were automatically collected or machine translated. As such not every sentence is perfectly aligned. Users are recommended to use such individual resources with caution.

## Additional Information

### Dataset Curators

**Michael Ryan**: [Scholar](https://scholar.google.com/citations?user=8APGEEkAAAAJ&hl=en) | [Twitter](http://twitter.com/michaelryan207) | [Github](https://github.com/XenonMolecule) | [LinkedIn](https://www.linkedin.com/in/michael-ryan-207/) | [Research Gate](https://www.researchgate.net/profile/Michael-Ryan-86) | [Personal Website](http://michaelryan.tech/) | [michaeljryan@stanford.edu](mailto://michaeljryan@stanford.edu)

### Licensing Information

MIT License

### Citation Information

Please cite the individual datasets that you use within the MultiSim benchmark as appropriate. Proper bibtex attributions for each of the datasets are included below.

#### AdminIT

```

@inproceedings{miliani-etal-2022-neural,

title = "Neural Readability Pairwise Ranking for Sentences in {I}talian Administrative Language",

author = "Miliani, Martina and

Auriemma, Serena and

Alva-Manchego, Fernando and

Lenci, Alessandro",

booktitle = "Proceedings of the 2nd Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics and the 12th International Joint Conference on Natural Language Processing",

month = nov,