id stringlengths 2 115 | author stringlengths 2 42 ⌀ | last_modified timestamp[us, tz=UTC] | downloads int64 0 8.87M | likes int64 0 3.84k | paperswithcode_id stringlengths 2 45 ⌀ | tags list | lastModified timestamp[us, tz=UTC] | createdAt stringlengths 24 24 | key stringclasses 1 value | created timestamp[us] | card stringlengths 1 1.01M | embedding list | library_name stringclasses 21 values | pipeline_tag stringclasses 27 values | mask_token null | card_data null | widget_data null | model_index null | config null | transformers_info null | spaces null | safetensors null | transformersInfo null | modelId stringlengths 5 111 ⌀ | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

hlhdatscience/guanaco-spanish-dataset | hlhdatscience | 2023-10-21T11:19:21Z | 34 | 0 | null | [

"language:es",

"license:apache-2.0",

"region:us"

] | 2023-10-21T11:19:21Z | 2023-10-21T10:53:04.000Z | 2023-10-21T10:53:04 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 4384495

num_examples: 2410

- name: test

num_bytes: 376933

num_examples: 223

download_size: 2455040

dataset_size: 4761428

license: apache-2.0

language:

- es

pretty_name: d

---

# Dataset Card for "guanaco-spanish-dataset"

This dataset is a subset of original timdettmers/openassistant-guanaco,which is also a subset of the Open Assistant dataset .You can find here: https://huggingface.co/datasets/OpenAssistant/oasst1/tree/main

This subset of the data only contains the highest-rated paths in the conversation tree, with a total of 2,633 samples, translated with the help of GPT 3.5. turbo.

It represents the 41% and 42% of train and test from timdettmers/openassistant-guanaco respectively.

You can find the github repository for the code used here: https://github.com/Hector1993prog/guanaco_translation

For further information, please see the original dataset.

License: Apache 2.0

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.287158727645874,

-0.6905044913291931,

0.18469621241092682,

0.4339281916618347,

-0.26726171374320984,

0.014272913336753845,

-0.16522027552127838,

-0.43404659628868103,

0.47649794816970825,

0.3423120081424713,

-0.835159182548523,

-0.8234961628913879,

-0.6580544710159302,

-0.07599040865898... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

WenyangHui/Conic10K | WenyangHui | 2023-10-24T14:58:46Z | 34 | 4 | null | [

"task_categories:question-answering",

"size_categories:10K<n<100K",

"language:zh",

"license:mit",

"math",

"semantic parsing",

"region:us"

] | 2023-10-24T14:58:46Z | 2023-10-24T14:42:07.000Z | 2023-10-24T14:42:07 | ---

license: mit

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: text

dtype: string

- name: answer_expressions

dtype: string

- name: fact_expressions

dtype: string

- name: query_expressions

dtype: string

- name: fact_spans

dtype: string

- name: query_spans

dtype: string

- name: process

dtype: string

splits:

- name: train

num_bytes: 6012696

num_examples: 7757

- name: validation

num_bytes: 796897

num_examples: 1035

- name: test

num_bytes: 1630198

num_examples: 2069

download_size: 3563693

dataset_size: 8439791

task_categories:

- question-answering

language:

- zh

tags:

- math

- semantic parsing

size_categories:

- 10K<n<100K

--- | [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

bgspaditya/malurl-ta-aditya | bgspaditya | 2023-10-29T01:01:51Z | 34 | 0 | null | [

"license:mit",

"region:us"

] | 2023-10-29T01:01:51Z | 2023-10-29T01:01:06.000Z | 2023-10-29T01:01:06 | ---

license: mit

dataset_info:

features:

- name: url

dtype: string

- name: type

dtype: string

splits:

- name: train

num_bytes: 39050445.80502228

num_examples: 520847

- name: val

num_bytes: 4881315.097488861

num_examples: 65106

- name: test

num_bytes: 4881315.097488861

num_examples: 65106

download_size: 32227565

dataset_size: 48813075.99999999

---

| [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

kaitchup/opus-German-to-English | kaitchup | 2023-11-01T19:15:23Z | 34 | 1 | null | [

"region:us"

] | 2023-11-01T19:15:23Z | 2023-11-01T19:15:17.000Z | 2023-11-01T19:15:17 | ---

configs:

- config_name: default

data_files:

- split: validation

path: data/validation-*

- split: train

path: data/train-*

dataset_info:

features:

- name: text

dtype: string

splits:

- name: validation

num_bytes: 334342

num_examples: 2000

- name: train

num_bytes: 115010446

num_examples: 940304

download_size: 84489243

dataset_size: 115344788

---

# Dataset Card for "opus-de-en"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.5773648619651794,

-0.26169297099113464,

0.308430016040802,

0.32808834314346313,

-0.2550073266029358,

-0.13703379034996033,

0.0699385553598404,

-0.1708073914051056,

0.8291007280349731,

0.5855435729026794,

-0.8649486303329468,

-0.9149302244186401,

-0.6062307953834534,

-0.07754850387573242... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

artyomboyko/Common_voice_13_0_ru_dataset_prepared_for_whisper_fine_tune | artyomboyko | 2023-11-03T14:50:21Z | 34 | 0 | null | [

"task_categories:automatic-speech-recognition",

"language:ru",

"license:gpl-3.0",

"region:us"

] | 2023-11-03T14:50:21Z | 2023-11-03T09:18:28.000Z | 2023-11-03T09:18:28 | ---

license: gpl-3.0

dataset_info:

features:

- name: input_features

sequence:

sequence: float32

- name: labels

sequence: int64

splits:

- name: train

num_bytes: 35015263976

num_examples: 36454

- name: test

num_bytes: 9783955736

num_examples: 10186

download_size: 8317469510

dataset_size: 44799219712

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

task_categories:

- automatic-speech-recognition

language:

- ru

--- | [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

NathanGavenski/Acrobot-v1 | NathanGavenski | 2023-11-06T15:53:25Z | 34 | 1 | null | [

"size_categories:10M<n<100M",

"license:mit",

"Imitation Learning",

"Expert Trajectory",

"region:us"

] | 2023-11-06T15:53:25Z | 2023-11-06T15:50:16.000Z | 2023-11-06T15:50:16 | ---

license: mit

tags:

- Imitation Learning

- Expert Trajectory

pretty_name: Acrobot-v1 Expert Dataset

size_categories:

- 10M<n<100M

---

# Acrobot-v1 - Imitation Learning Datasets

This is a dataset created by [Imitation Learning Datasets](https://github.com/NathanGavenski/IL-Datasets) project.

It was created by using Stable Baselines weights from a DQN policy from [HuggingFace](https://huggingface.co/sb3/dqn-Acrobot-v1).

## Description

The dataset consists of 1,000 episodes with an average episodic reward of `-69.852`.

Each entry consists of:

```

obs (list): observation with length 6.

action (int): action (0, 1 or 2).

reward (float): reward point for that timestep.

episode_returns (bool): if that state was the initial timestep for an episode.

```

## Usage

Feel free to download and use the `teacher.jsonl` dataset as you please.

If you are interested in using our PyTorch Dataset implementation, feel free to check the [IL Datasets](https://github.com/NathanGavenski/IL-Datasets/blob/main/src/imitation_datasets/dataset/dataset.py) project.

There, we implement a base Dataset that downloads this dataset and all other datasets directly from HuggingFace.

The Baseline Dataset also allows for more control over train and test splits and how many episodes you want to use (in cases where the 1k episodes are not necessary).

## Citation

Coming soon. | [

-0.39254918694496155,

-0.3923959732055664,

-0.08807828277349472,

0.32334965467453003,

-0.06894569098949432,

-0.08073443919420242,

0.14177487790584564,

-0.14439982175827026,

0.5690788626670837,

0.30888766050338745,

-0.6520658135414124,

-0.4147052466869354,

-0.5390021800994873,

-0.0056270034... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

thestephanie/heterogeneous_data | thestephanie | 2023-11-07T12:42:27Z | 34 | 0 | null | [

"region:us"

] | 2023-11-07T12:42:27Z | 2023-11-07T12:24:04.000Z | 2023-11-07T12:24:04 | Entry not found | [

-0.32276472449302673,

-0.22568407654762268,

0.8622258901596069,

0.4346148371696472,

-0.5282984972000122,

0.7012965679168701,

0.7915717363357544,

0.07618629932403564,

0.7746022939682007,

0.2563222646713257,

-0.785281777381897,

-0.22573848068714142,

-0.9104482531547546,

0.5715669393539429,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

anyspeech/PhoneCorpus | anyspeech | 2023-11-07T16:54:01Z | 34 | 0 | null | [

"region:us"

] | 2023-11-07T16:54:01Z | 2023-11-07T16:53:53.000Z | 2023-11-07T16:53:53 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: phones

dtype: string

splits:

- name: train

num_bytes: 264095984

num_examples: 10382114

download_size: 143568761

dataset_size: 264095984

---

# Dataset Card for "PhoneCorpus"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.4027014374732971,

-0.06601171940565109,

-0.06059752404689789,

0.4161970913410187,

-0.2690041661262512,

0.16753287613391876,

0.4218728244304657,

-0.15429247915744781,

0.9443907141685486,

0.5781778693199158,

-0.8292835354804993,

-0.8043590784072876,

-0.30052387714385986,

-0.20830951631069... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

hippocrates/CitationGPTv2_test | hippocrates | 2023-11-10T17:06:54Z | 34 | 0 | null | [

"region:us"

] | 2023-11-10T17:06:54Z | 2023-11-07T19:12:27.000Z | 2023-11-07T19:12:27 | ---

dataset_info:

features:

- name: id

dtype: string

- name: query

dtype: string

- name: answer

dtype: string

- name: choices

sequence: string

- name: gold

dtype: int64

splits:

- name: train

num_bytes: 186018625

num_examples: 99360

- name: valid

num_bytes: 24082667

num_examples: 12760

- name: test

num_bytes: 21458598

num_examples: 11615

download_size: 8627917

dataset_size: 231559890

---

# Dataset Card for "CitationGPTv2_test"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.5956442952156067,

-0.26543959975242615,

0.06830574572086334,

0.4226974844932556,

-0.17624309659004211,

-0.18033500015735626,

0.23303773999214172,

-0.017979083582758904,

0.36196622252464294,

0.10699599236249924,

-0.5536081790924072,

-0.43573325872421265,

-0.6757954359054565,

-0.203256756... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

BENBENBENb/sythetic_casual_relation_medium_scale | BENBENBENb | 2023-11-08T01:12:20Z | 34 | 0 | null | [

"language:en",

"region:us"

] | 2023-11-08T01:12:20Z | 2023-11-07T21:37:31.000Z | 2023-11-07T21:37:31 | ---

language:

- en

--- | [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

wu981526092/LL144 | wu981526092 | 2023-11-20T09:26:24Z | 34 | 0 | null | [

"license:mit",

"region:us"

] | 2023-11-20T09:26:24Z | 2023-11-11T12:55:47.000Z | 2023-11-11T12:55:47 | ---

license: mit

---

| [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

thangquoc/ad_banner | thangquoc | 2023-11-14T17:00:22Z | 34 | 0 | null | [

"region:us"

] | 2023-11-14T17:00:22Z | 2023-11-14T16:59:58.000Z | 2023-11-14T16:59:58 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

splits:

- name: train

num_bytes: 86615696.13

num_examples: 1362

download_size: 84006544

dataset_size: 86615696.13

---

# Dataset Card for "ad_banner"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.5573326349258423,

-0.2838898003101349,

-0.07210317999124527,

0.1372050940990448,

-0.13654807209968567,

-0.03099023923277855,

0.26737460494041443,

-0.12672217190265656,

0.9619519710540771,

0.3882976472377777,

-0.7524341344833374,

-0.8940264582633972,

-0.5332508087158203,

-0.4446480572223... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

JestemKamil/text-classification-pl | JestemKamil | 2023-11-22T14:11:42Z | 34 | 0 | null | [

"region:us"

] | 2023-11-22T14:11:42Z | 2023-11-20T18:22:21.000Z | 2023-11-20T18:22:21 | ---

dataset_info:

features:

- name: text

dtype: string

- name: label

dtype: int64

splits:

- name: train

num_bytes: 6493727

num_examples: 35672

download_size: 4309231

dataset_size: 6493727

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Labels

0: normal

1: toxic | [

0.03845710679888725,

-0.15318061411380768,

0.2068183869123459,

0.38737916946411133,

-0.4963964819908142,

0.07802421599626541,

0.4448762536048889,

-0.19395285844802856,

0.6132581830024719,

0.7178007960319519,

-0.19526349008083344,

-0.7599245309829712,

-0.9471187591552734,

0.144976407289505,... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

seonglae/resrer-nq | seonglae | 2023-11-22T12:12:24Z | 34 | 0 | null | [

"region:us"

] | 2023-11-22T12:12:24Z | 2023-11-22T12:12:18.000Z | 2023-11-22T12:12:18 | ---

dataset_info:

features:

- name: document_text

dtype: string

- name: long_answer_candidates

list:

- name: end_token

dtype: int64

- name: start_token

dtype: int64

- name: top_level

dtype: bool

- name: question_text

dtype: string

- name: annotations

list:

- name: annotation_id

dtype: float64

- name: long_answer

struct:

- name: candidate_index

dtype: int64

- name: end_token

dtype: int64

- name: start_token

dtype: int64

- name: short_answers

list:

- name: end_token

dtype: int64

- name: start_token

dtype: int64

- name: yes_no_answer

dtype: string

- name: document_url

dtype: string

- name: example_id

dtype: int64

- name: long_answer_text

dtype: string

- name: short_answer_text

dtype: string

- name: split_id

dtype: string

- name: answer_exist_chunk

dtype: bool

- name: summarization_text

dtype: string

- name: __index_level_0__

dtype: int64

splits:

- name: train

num_bytes: 102598192

num_examples: 10000

download_size: 22621351

dataset_size: 102598192

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

| [

-0.1285337507724762,

-0.18616773188114166,

0.6529127359390259,

0.4943627715110779,

-0.193193256855011,

0.23607444763183594,

0.36071985960006714,

0.050563156604766846,

0.5793652534484863,

0.7400138974189758,

-0.6508103013038635,

-0.23783966898918152,

-0.7102247476577759,

-0.0478259548544883... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

taylorbobaylor/google-colab | taylorbobaylor | 2023-11-23T03:52:58Z | 34 | 0 | null | [

"region:us"

] | 2023-11-23T03:52:58Z | 2023-11-23T03:52:57.000Z | 2023-11-23T03:52:57 | ---

dataset_info:

features:

- name: repo_id

dtype: string

- name: file_path

dtype: string

- name: content

dtype: string

- name: __index_level_0__

dtype: int64

splits:

- name: train

num_bytes: 625338

num_examples: 66

download_size: 229515

dataset_size: 625338

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

| [

-0.1285337507724762,

-0.18616773188114166,

0.6529127359390259,

0.4943627715110779,

-0.193193256855011,

0.23607444763183594,

0.36071985960006714,

0.050563156604766846,

0.5793652534484863,

0.7400138974189758,

-0.6508103013038635,

-0.23783966898918152,

-0.7102247476577759,

-0.0478259548544883... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

ngdiana/uaspeech_severity_high | ngdiana | 2022-02-03T22:59:37Z | 33 | 0 | null | [

"region:us"

] | 2022-02-03T22:59:37Z | 2022-03-02T23:29:22.000Z | 2022-03-02T23:29:22 | Entry not found | [

-0.32276496291160583,

-0.22568435966968536,

0.8622260093688965,

0.43461480736732483,

-0.5282987952232361,

0.7012965083122253,

0.7915714979171753,

0.07618625462055206,

0.7746025323867798,

0.25632181763648987,

-0.7852815389633179,

-0.22573819756507874,

-0.9104480743408203,

0.5715669393539429... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

vannacute/AmazonReviewHelpfulness | vannacute | 2021-12-14T00:39:21Z | 33 | 0 | null | [

"region:us"

] | 2021-12-14T00:39:21Z | 2022-03-02T23:29:22.000Z | 2022-03-02T23:29:22 | Entry not found | [

-0.3227645754814148,

-0.22568479180335999,

0.8622263669967651,

0.43461522459983826,

-0.52829909324646,

0.7012971639633179,

0.7915719747543335,

0.07618614286184311,

0.774603009223938,

0.2563217282295227,

-0.7852813005447388,

-0.22573819756507874,

-0.9104475975036621,

0.5715674161911011,

-... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

fmplaza/EmoEvent | fmplaza | 2023-03-27T08:19:58Z | 33 | 6 | null | [

"language:en",

"language:es",

"license:apache-2.0",

"region:us"

] | 2023-03-27T08:19:58Z | 2022-03-09T10:17:46.000Z | 2022-03-09T10:17:46 | ---

license: apache-2.0

language:

- en

- es

---

# Dataset Card for Emoevent

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Additional Information](#additional-information)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

## Dataset Description

- **Repository:** [EmoEvent dataset repository](https://github.com/fmplaza/EmoEvent)

- **Paper: EmoEvent:** [A Multilingual Emotion Corpus based on different Events](https://aclanthology.org/2020.lrec-1.186.pdf)

- **Leaderboard:** [Leaderboard for EmoEvent / Spanish version](http://journal.sepln.org/sepln/ojs/ojs/index.php/pln/article/view/6385)

- **Point of Contact: fmplaza@ujaen.es**

### Dataset Summary

EmoEvent is a multilingual emotion dataset of tweets based on different events that took place in April 2019.

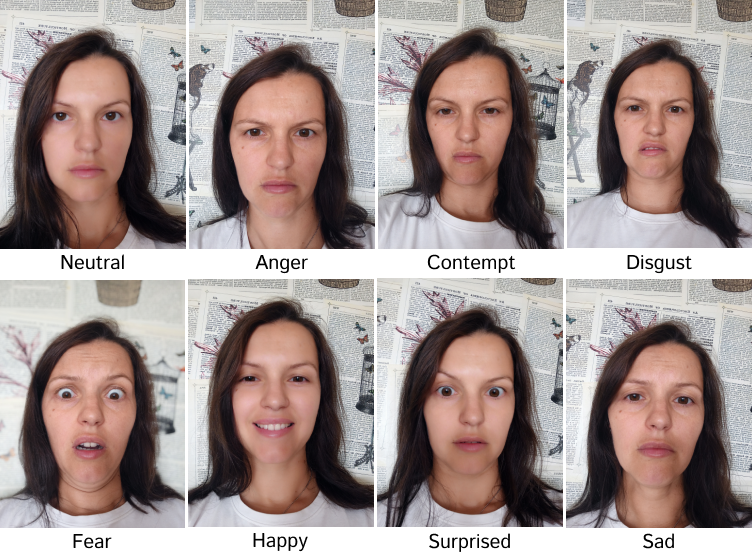

Three annotators labeled the tweets following the six Ekman’s basic emotion model (anger, fear, sadness, joy, disgust, surprise) plus the “neutral or other emotions” category. Morevoer, the tweets are annotated as offensive (OFF) or non-offensive (NO).

### Supported Tasks and Leaderboards

This dataset is intended for multi-class emotion classification and binary offensive classification.

Competition [EmoEvalEs task on emotion detection for Spanish at IberLEF 2021](http://journal.sepln.org/sepln/ojs/ojs/index.php/pln/article/view/6385)

### Languages

- Spanish

- English

## Dataset Structure

### Data Instances

For each instance, there is a string for the id of the tweet, a string for the emotion class, a string for the offensive class, and a string for the event. See the []() to explore more examples.

```

{'id': 'a0c1a858-a9b8-4cb1-8a81-1602736ff5b8',

'event': 'GameOfThrones',

'tweet': 'ARYA DE MI VIDA. ERES MAS ÉPICA QUE EL GOL DE INIESTA JODER #JuegodeTronos #VivePoniente',

'offensive': 'NO',

'emotion': 'joy',

}

```

```

{'id': '3YCT0L9OMMFP7KWKQSTJRJO0YHUSN2a0c1a858-a9b8-4cb1-8a81-1602736ff5b8',

'event': 'GameOfThrones',

'tweet': 'The #NotreDameCathedralFire is indeed sad and people call all offered donations humane acts, but please if you have money to donate, donate to humans and help bring food to their tables and affordable education first. What more humane than that? #HumanityFirst',

'offensive': 'NO',

'emotion': 'sadness',

}

```

### Data Fields

- `id`: a string to identify the tweet

- `event`: a string containing the event associated with the tweet

- `tweet`: a string containing the text of the tweet

- `offensive`: a string containing the offensive gold label

- `emotion`: a string containing the emotion gold label

### Data Splits

The EmoEvent dataset has 2 subsets: EmoEvent_es (Spanish version) and EmoEvent_en (English version)

Each subset contains 3 splits: _train_, _validation_, and _test_. Below are the statistics subsets.

| EmoEvent_es | Number of Instances in Split |

| ------------- | ------------------------------------------- |

| Train | 5,723 |

| Validation | 844 |

| Test | 1,656 |

| EmoEvent_en | Number of Instances in Split |

| ------------- | ------------------------------------------- |

| Train | 5,112 |

| Validation | 744 |

| Test | 1,447 |

## Dataset Creation

### Source Data

Twitter

#### Who are the annotators?

Amazon Mechanical Turkers

## Additional Information

### Licensing Information

The EmoEvent dataset is released under the [Apache-2.0 License](http://www.apache.org/licenses/LICENSE-2.0).

### Citation Information

@inproceedings{plaza-del-arco-etal-2020-emoevent,

title = "{{E}mo{E}vent: A Multilingual Emotion Corpus based on different Events}",

author = "{Plaza-del-Arco}, {Flor Miriam} and Strapparava, Carlo and {Ure{\~n}a-L{\’o}pez}, L. Alfonso and {Mart{\’i}n-Valdivia}, M. Teresa",

booktitle = "Proceedings of the 12th Language Resources and Evaluation Conference",

month = may,

year = "2020",

address = "Marseille, France", publisher = "European Language Resources Association",

url = "https://www.aclweb.org/anthology/2020.lrec-1.186", pages = "1492--1498",

language = "English",

ISBN = "979-10-95546-34-4"

} | [

-0.27986806631088257,

-0.6926295161247253,

0.11715426295995712,

0.38275808095932007,

-0.2822802662849426,

0.058133214712142944,

-0.29255756735801697,

-0.6163216233253479,

0.7204323410987854,

0.044125381857156754,

-0.5460174679756165,

-0.9546958208084106,

-0.4061721861362457,

0.398281872272... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

benjamin/ner-uk | benjamin | 2022-10-26T11:47:43Z | 33 | 0 | null | [

"task_categories:token-classification",

"task_ids:named-entity-recognition",

"multilinguality:monolingual",

"language:uk",

"license:cc-by-nc-sa-4.0",

"region:us"

] | 2022-10-26T11:47:43Z | 2022-03-26T10:10:50.000Z | 2022-03-26T10:10:50 | ---

language:

- uk

license: cc-by-nc-sa-4.0

multilinguality:

- monolingual

task_categories:

- token-classification

task_ids:

- named-entity-recognition

---

# lang-uk's ner-uk dataset

A dataset for Ukrainian Named Entity Recognition.

The original dataset is located at https://github.com/lang-uk/ner-uk. All credit for creation of the dataset goes to the contributors of https://github.com/lang-uk/ner-uk.

# License

<a rel="license" href="http://creativecommons.org/licenses/by-nc-sa/4.0/"><img alt="Creative Commons License" style="border-width:0" src="https://i.creativecommons.org/l/by-nc-sa/4.0/88x31.png" /></a><br /><span xmlns:dct="http://purl.org/dc/terms/" href="http://purl.org/dc/dcmitype/Dataset" property="dct:title" rel="dct:type">"Корпус NER-анотацій українських текстів"</span> by <a xmlns:cc="http://creativecommons.org/ns#" href="https://github.com/lang-uk" property="cc:attributionName" rel="cc:attributionURL">lang-uk</a> is licensed under a <a rel="license" href="http://creativecommons.org/licenses/by-nc-sa/4.0/">Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License</a>.<br />Based on a work at <a xmlns:dct="http://purl.org/dc/terms/" href="https://github.com/lang-uk/ner-uk" rel="dct:source">https://github.com/lang-uk/ner-uk</a>. | [

-0.45379579067230225,

0.08658362179994583,

0.3695954978466034,

-0.032277125865221024,

-0.5745327472686768,

0.2031770646572113,

-0.1435524970293045,

-0.48407092690467834,

0.536734938621521,

0.5422983765602112,

-0.7596538662910461,

-0.9984810948371887,

-0.4002583622932434,

0.5270984768867493... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

bigscience-data/roots_ar_wikipedia | bigscience-data | 2022-12-12T11:00:43Z | 33 | 1 | null | [

"language:ar",

"license:cc-by-sa-3.0",

"region:us"

] | 2022-12-12T11:00:43Z | 2022-05-18T09:06:35.000Z | 2022-05-18T09:06:35 | ---

language: ar

license: cc-by-sa-3.0

extra_gated_prompt: 'By accessing this dataset, you agree to abide by the BigScience

Ethical Charter. The charter can be found at:

https://hf.co/spaces/bigscience/ethical-charter'

extra_gated_fields:

I have read and agree to abide by the BigScience Ethical Charter: checkbox

---

ROOTS Subset: roots_ar_wikipedia

# wikipedia

- Dataset uid: `wikipedia`

### Description

### Homepage

### Licensing

### Speaker Locations

### Sizes

- 3.2299 % of total

- 4.2071 % of en

- 5.6773 % of ar

- 3.3416 % of fr

- 5.2815 % of es

- 12.4852 % of ca

- 0.4288 % of zh

- 0.4286 % of zh

- 5.4743 % of indic-bn

- 8.9062 % of indic-ta

- 21.3313 % of indic-te

- 4.4845 % of pt

- 4.0493 % of indic-hi

- 11.3163 % of indic-ml

- 22.5300 % of indic-ur

- 4.4902 % of vi

- 16.9916 % of indic-kn

- 24.7820 % of eu

- 11.6241 % of indic-mr

- 9.8749 % of id

- 9.3489 % of indic-pa

- 9.4767 % of indic-gu

- 24.1132 % of indic-as

- 5.3309 % of indic-or

### BigScience processing steps

#### Filters applied to: en

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_1024

#### Filters applied to: ar

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: fr

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_1024

#### Filters applied to: es

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_1024

#### Filters applied to: ca

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_1024

#### Filters applied to: zh

#### Filters applied to: zh

#### Filters applied to: indic-bn

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: indic-ta

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: indic-te

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: pt

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: indic-hi

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: indic-ml

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: indic-ur

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: vi

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: indic-kn

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: eu

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

#### Filters applied to: indic-mr

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: id

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: indic-pa

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: indic-gu

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

- filter_small_docs_bytes_300

#### Filters applied to: indic-as

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

#### Filters applied to: indic-or

- filter_wiki_user_titles

- dedup_document

- filter_remove_empty_docs

| [

-0.7034998536109924,

-0.5904083251953125,

0.3424512445926666,

0.16524194180965424,

-0.21788710355758667,

-0.09244458377361298,

-0.21013414859771729,

-0.15335746109485626,

0.7000443935394287,

0.34176790714263916,

-0.8132950067520142,

-0.9125483632087708,

-0.7245596051216125,

0.5008039474487... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

AhmedSSabir/Japanese-wiki-dump-sentence-dataset | AhmedSSabir | 2023-07-11T12:22:09Z | 33 | 3 | null | [

"task_categories:sentence-similarity",

"task_categories:text-classification",

"task_categories:text-generation",

"size_categories:1M<n<10M",

"language:ja",

"region:us"

] | 2023-07-11T12:22:09Z | 2022-06-08T11:34:04.000Z | 2022-06-08T11:34:04 | ---

task_categories:

- sentence-similarity

- text-classification

- text-generation

language:

- ja

size_categories:

- 1M<n<10M

---

# Dataset

5M (5121625) clean Japanese full sentence with the context. This dataset can be used to learn unsupervised semantic similarity, etc. | [

-0.4689856171607971,

-0.34407320618629456,

0.29574403166770935,

0.1182008907198906,

-0.8034929633140564,

-0.520545244216919,

-0.1855476051568985,

-0.10529386252164841,

0.17515413463115692,

0.9961847066879272,

-0.8779264688491821,

-0.7170225381851196,

-0.3948878049850464,

0.3289973735809326... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

succinctly/midjourney-prompts | succinctly | 2022-07-22T01:49:16Z | 33 | 80 | null | [

"license:apache-2.0",

"region:us"

] | 2022-07-22T01:49:16Z | 2022-07-21T20:29:49.000Z | 2022-07-21T20:29:49 | ---

license: apache-2.0

---

[Midjourney](https://midjourney.com) is an independent research lab whose broad mission is to "explore new mediums of thought". In 2022, they launched a text-to-image service that, given a natural language prompt, produces visual depictions that are faithful to the description. Their service is accessible via a public [Discord server](https://discord.com/invite/midjourney): users issue a query in natural language, and the Midjourney bot returns AI-generated images that follow the given description. The raw dataset (with Discord messages) can be found on Kaggle: [Midjourney User Prompts & Generated Images (250k)](https://www.kaggle.com/datasets/succinctlyai/midjourney-texttoimage). The authors of the scraped dataset have no affiliation to Midjourney.

This HuggingFace dataset was [processed](https://www.kaggle.com/code/succinctlyai/midjourney-text-prompts-huggingface) from the raw Discord messages to solely include the text prompts issued by the user (thus excluding the generated images and any other metadata). It could be used, for instance, to fine-tune a large language model to produce or auto-complete creative prompts for image generation.

Check out [succinctly/text2image-prompt-generator](https://huggingface.co/succinctly/text2image-prompt-generator), a GPT-2 model fine-tuned on this dataset. | [

-0.5382888317108154,

-0.9376667141914368,

0.737735390663147,

0.3551185727119446,

-0.25586169958114624,

-0.048308588564395905,

-0.18336829543113708,

-0.5022372603416443,

0.3017335832118988,

0.4770009219646454,

-1.1929903030395508,

-0.3473999500274658,

-0.5778916478157043,

0.2917404472827911... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

jonathanli/echr | jonathanli | 2022-08-21T23:29:28Z | 33 | 0 | null | [

"license:cc-by-nc-sa-4.0",

"arxiv:1906.02059",

"region:us"

] | 2022-08-21T23:29:28Z | 2022-08-15T01:35:16.000Z | 2022-08-15T01:35:16 | ---

license: cc-by-nc-sa-4.0

---

# ECHR Cases

The original data from [Chalkidis et al.](https://arxiv.org/abs/1906.02059), sourced from [archive.org](https://archive.org/details/ECHR-ACL2019).

## Preprocessing

* Order is shuffled

* Fact numbers preceeding each fact are removed (using the python regex `^[0-9]+\. `), as some cases didn't have fact numbers to begin with

* Everything else is the same

| [

-0.45605701208114624,

-0.9117888808250427,

0.8464569449424744,

-0.27761271595954895,

-0.6147682070732117,

-0.17358343303203583,

0.22028128802776337,

-0.3671342730522156,

0.4816550016403198,

0.693629264831543,

-0.5499441623687744,

-0.46817511320114136,

-0.4671420156955719,

0.303130298852920... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

ywchoi/pubmed_abstract_1 | ywchoi | 2022-09-13T00:56:17Z | 33 | 1 | null | [

"region:us"

] | 2022-09-13T00:56:17Z | 2022-09-13T00:54:32.000Z | 2022-09-13T00:54:32 | Entry not found | [

-0.32276472449302673,

-0.22568407654762268,

0.8622258901596069,

0.4346148371696472,

-0.5282984972000122,

0.7012965679168701,

0.7915717363357544,

0.07618629932403564,

0.7746022939682007,

0.2563222646713257,

-0.785281777381897,

-0.22573848068714142,

-0.9104482531547546,

0.5715669393539429,

... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

giulio98/xlcost-single-prompt | giulio98 | 2022-11-02T19:42:44Z | 33 | 3 | null | [

"task_categories:text-generation",

"task_ids:language-modeling",

"language_creators:crowdsourced",

"language_creators:expert-generated",

"multilinguality:multilingual",

"size_categories:unknown",

"language:code",

"license:cc-by-sa-4.0",

"arxiv:2206.08474",

"region:us"

] | 2022-11-02T19:42:44Z | 2022-10-19T12:06:36.000Z | 2022-10-19T12:06:36 | ---

annotations_creators: []

language_creators:

- crowdsourced

- expert-generated

language:

- code

license:

- cc-by-sa-4.0

multilinguality:

- multilingual

size_categories:

- unknown

source_datasets: []

task_categories:

- text-generation

task_ids:

- language-modeling

pretty_name: xlcost-single-prompt

---

# XLCost for text-to-code synthesis

## Dataset Description

This is a subset of [XLCoST benchmark](https://github.com/reddy-lab-code-research/XLCoST), for text-to-code generation at program level for **2** programming languages: `Python, C++`. This dataset is based on [codeparrot/xlcost-text-to-code](https://huggingface.co/datasets/codeparrot/xlcost-text-to-code) with the following improvements:

* NEWLINE, INDENT and DEDENT were replaced with the corresponding ASCII codes.

* the code text has been reformatted using autopep8 for Python and clang-format for cpp.

* new columns have been introduced to allow evaluation using pass@k metric.

* programs containing more than one function call in the driver code were removed

## Languages

The dataset contains text in English and its corresponding code translation. The text contains a set of concatenated code comments that allow to synthesize the program.

## Dataset Structure

To load the dataset you need to specify the language(Python or C++).

```python

from datasets import load_dataset

load_dataset("giulio98/xlcost-single-prompt", "Python")

DatasetDict({

train: Dataset({

features: ['text', 'context', 'code', 'test', 'output', 'fn_call'],

num_rows: 8306

})

test: Dataset({

features: ['text', 'context', 'code', 'test', 'output', 'fn_call'],

num_rows: 812

})

validation: Dataset({

features: ['text', 'context', 'code', 'test', 'output', 'fn_call'],

num_rows: 427

})

})

```

## Data Fields

* text: natural language description.

* context: import libraries/global variables.

* code: code at program level.

* test: test function call.

* output: expected output of the function call.

* fn_call: name of the function to call.

## Data Splits

Each subset has three splits: train, test and validation.

## Citation Information

```

@misc{zhu2022xlcost,

title = {XLCoST: A Benchmark Dataset for Cross-lingual Code Intelligence},

url = {https://arxiv.org/abs/2206.08474},

author = {Zhu, Ming and Jain, Aneesh and Suresh, Karthik and Ravindran, Roshan and Tipirneni, Sindhu and Reddy, Chandan K.},

year = {2022},

eprint={2206.08474},

archivePrefix={arXiv}

}

``` | [

-0.28507813811302185,

-0.49650102853775024,

0.16231809556484222,

0.27562257647514343,

-0.17975109815597534,

0.12194383144378662,

-0.5534012913703918,

-0.4015326499938965,

0.13329991698265076,

0.3615673780441284,

-0.5825788378715515,

-0.5720943212509155,

-0.19453400373458862,

0.371785581111... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

matchbench/dbp15k-fr-en | matchbench | 2023-01-23T12:28:45Z | 33 | 0 | null | [

"language:fr",

"language:en",

"region:us"

] | 2023-01-23T12:28:45Z | 2022-10-31T07:08:08.000Z | 2022-10-31T07:08:08 | ---

language:

- fr

- en

--- | [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

bigbio/evidence_inference | bigbio | 2022-12-22T15:44:37Z | 33 | 1 | null | [

"multilinguality:monolingual",

"language:en",

"license:mit",

"region:us"

] | 2022-12-22T15:44:37Z | 2022-11-13T22:08:29.000Z | 2022-11-13T22:08:29 |

---

language:

- en

bigbio_language:

- English

license: mit

multilinguality: monolingual

bigbio_license_shortname: MIT

pretty_name: Evidence Inference 2.0

homepage: https://github.com/jayded/evidence-inference

bigbio_pubmed: True

bigbio_public: True

bigbio_tasks:

- QUESTION_ANSWERING

---

# Dataset Card for Evidence Inference 2.0

## Dataset Description

- **Homepage:** https://github.com/jayded/evidence-inference

- **Pubmed:** True

- **Public:** True

- **Tasks:** QA

The dataset consists of biomedical articles describing randomized control trials (RCTs) that compare multiple

treatments. Each of these articles will have multiple questions, or 'prompts' associated with them.

These prompts will ask about the relationship between an intervention and comparator with respect to an outcome,

as reported in the trial. For example, a prompt may ask about the reported effects of aspirin as compared

to placebo on the duration of headaches. For the sake of this task, we assume that a particular article

will report that the intervention of interest either significantly increased, significantly decreased

or had significant effect on the outcome, relative to the comparator.

## Citation Information

```

@inproceedings{deyoung-etal-2020-evidence,

title = "Evidence Inference 2.0: More Data, Better Models",

author = "DeYoung, Jay and

Lehman, Eric and

Nye, Benjamin and

Marshall, Iain and

Wallace, Byron C.",

booktitle = "Proceedings of the 19th SIGBioMed Workshop on Biomedical Language Processing",

month = jul,

year = "2020",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/2020.bionlp-1.13",

pages = "123--132",

}

```

| [

-0.020958783105015755,

-0.775492787361145,

0.5581621527671814,

0.2547838091850281,

-0.30114927887916565,

-0.3949306607246399,

-0.17850369215011597,

-0.4971206486225128,

0.15389294922351837,

0.23409071564674377,

-0.41862255334854126,

-0.6122226715087891,

-0.7224204540252686,

0.0913793295621... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

bigbio/scicite | bigbio | 2022-12-22T15:46:37Z | 33 | 0 | null | [

"multilinguality:monolingual",

"language:en",

"license:unknown",

"region:us"

] | 2022-12-22T15:46:37Z | 2022-11-13T22:12:03.000Z | 2022-11-13T22:12:03 |

---

language:

- en

bigbio_language:

- English

license: unknown

multilinguality: monolingual

bigbio_license_shortname: UNKNOWN

pretty_name: SciCite

homepage: https://allenai.org/data/scicite

bigbio_pubmed: False

bigbio_public: True

bigbio_tasks:

- TEXT_CLASSIFICATION

---

# Dataset Card for SciCite

## Dataset Description

- **Homepage:** https://allenai.org/data/scicite

- **Pubmed:** False

- **Public:** True

- **Tasks:** TXTCLASS

SciCite is a dataset of 11K manually annotated citation intents based on

citation context in the computer science and biomedical domains.

## Citation Information

```

@inproceedings{cohan:naacl19,

author = {Arman Cohan and Waleed Ammar and Madeleine van Zuylen and Field Cady},

title = {Structural Scaffolds for Citation Intent Classification in Scientific Publications},

booktitle = {Conference of the North American Chapter of the Association for Computational Linguistics},

year = {2019},

url = {https://aclanthology.org/N19-1361/},

doi = {10.18653/v1/N19-1361},

}

```

| [

0.1426616758108139,

-0.3671659827232361,

0.3486323952674866,

0.4763866364955902,

-0.3330455720424652,

-0.0016726849135011435,

-0.17729640007019043,

-0.3457036018371582,

0.38195115327835083,

0.07192974537611008,

-0.23797272145748138,

-0.759277880191803,

-0.539047360420227,

0.523559391498565... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

mrbesher/tr-paraphrase-tatoeba | mrbesher | 2022-11-15T13:15:35Z | 33 | 1 | null | [

"license:cc-by-4.0",

"region:us"

] | 2022-11-15T13:15:35Z | 2022-11-15T13:15:03.000Z | 2022-11-15T13:15:03 | ---

license: cc-by-4.0

---

| [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

W4nkel/turkish-sentiment-dataset | W4nkel | 2023-01-01T18:07:08Z | 33 | 0 | null | [

"license:cc-by-sa-4.0",

"region:us"

] | 2023-01-01T18:07:08Z | 2022-12-31T22:37:06.000Z | 2022-12-31T22:37:06 | ---

license: cc-by-sa-4.0

---

THIS DATASET BASED ON THIS SOURCE: [winvoker/turkish-sentiment-analysis-dataset](https://huggingface.co/datasets/winvoker/turkish-sentiment-analysis-dataset) | [

-0.3160149157047272,

-0.1318688839673996,

0.12323138117790222,

0.49977636337280273,

-0.18041794002056122,

-0.010511266067624092,

0.28216665983200073,

-0.22475126385688782,

0.5316064357757568,

0.6505851745605469,

-0.9923790097236633,

-0.6076586842536926,

-0.42653021216392517,

-0.17642679810... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

metaeval/mega | metaeval | 2023-03-24T13:55:03Z | 33 | 0 | null | [

"license:apache-2.0",

"region:us"

] | 2023-03-24T13:55:03Z | 2023-01-18T12:20:22.000Z | 2023-01-18T12:20:22 | ---

license: apache-2.0

---

| [

-0.1285335123538971,

-0.1861683875322342,

0.6529128551483154,

0.49436232447624207,

-0.19319400191307068,

0.23607441782951355,

0.36072009801864624,

0.05056373029947281,

0.5793656706809998,

0.7400146722793579,

-0.650810182094574,

-0.23784008622169495,

-0.7102247476577759,

-0.0478255338966846... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

jonathan-roberts1/Satellite-Images-of-Hurricane-Damage | jonathan-roberts1 | 2023-03-31T14:53:28Z | 33 | 1 | null | [

"license:cc-by-4.0",

"arxiv:1807.01688",

"region:us"

] | 2023-03-31T14:53:28Z | 2023-02-17T17:22:30.000Z | 2023-02-17T17:22:30 | ---

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': flooded or damaged buildings

'1': undamaged buildings

splits:

- name: train

num_bytes: 25588780

num_examples: 10000

download_size: 26998688

dataset_size: 25588780

license: cc-by-4.0

---

# Dataset Card for "Satellite-Images-of-Hurricane-Damage"

## Dataset Description

- **Paper** [Deep learning based damage detection on post-hurricane satellite imagery](https://arxiv.org/pdf/1807.01688.pdf)

- **Data** [IEEE-Dataport](https://ieee-dataport.org/open-access/detecting-damaged-buildings-post-hurricane-satellite-imagery-based-customized)

- **Split** Train_another

- **GitHub** [DamageDetection](https://github.com/qcao10/DamageDetection)

## Split Information

This HuggingFace dataset repository contains just the Train_another split.

### Licensing Information

[CC BY 4.0](https://ieee-dataport.org/open-access/detecting-damaged-buildings-post-hurricane-satellite-imagery-based-customized)

## Citation Information

[Deep learning based damage detection on post-hurricane satellite imagery](https://arxiv.org/pdf/1807.01688.pdf)

[IEEE-Dataport](https://ieee-dataport.org/open-access/detecting-damaged-buildings-post-hurricane-satellite-imagery-based-customized)

```

@misc{sdad-1e56-18,

title = {Detecting Damaged Buildings on Post-Hurricane Satellite Imagery Based on Customized Convolutional Neural Networks},

author = {Cao, Quoc Dung and Choe, Youngjun},

year = 2018,

publisher = {IEEE Dataport},

doi = {10.21227/sdad-1e56},

url = {https://dx.doi.org/10.21227/sdad-1e56}

}

@article{cao2018deep,

title={Deep learning based damage detection on post-hurricane satellite imagery},

author={Cao, Quoc Dung and Choe, Youngjun},

journal={arXiv preprint arXiv:1807.01688},

year={2018}

}

``` | [

-0.8181053996086121,

-0.6882941126823425,

0.2939535975456238,

0.18947957456111908,

-0.3454183042049408,

0.06743866205215454,

-0.1946800947189331,

-0.5169389843940735,

0.24521231651306152,

0.5334906578063965,

-0.29164791107177734,

-0.662223756313324,

-0.5639593601226807,

-0.2414168566465377... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

lucadiliello/searchqa | lucadiliello | 2023-06-06T08:34:01Z | 33 | 0 | null | [

"region:us"

] | 2023-06-06T08:34:01Z | 2023-02-25T18:04:03.000Z | 2023-02-25T18:04:03 | ---

dataset_info:

features:

- name: context

dtype: string

- name: question

dtype: string

- name: answers

sequence: string

- name: key

dtype: string

- name: labels

list:

- name: end

sequence: int64

- name: start

sequence: int64

splits:

- name: train

num_bytes: 483999103

num_examples: 117384

- name: validation

num_bytes: 69647447

num_examples: 16980

download_size: 325197949

dataset_size: 553646550

---

# Dataset Card for "searchqa"

Split taken from the MRQA 2019 Shared Task, formatted and filtered for Question Answering. For the original dataset, have a look [here](https://huggingface.co/datasets/mrqa). | [

-0.6381931900978088,

-0.6409305930137634,

0.34852251410484314,

-0.12135893851518631,

-0.27192598581314087,

0.13739316165447235,

0.4432910978794098,

-0.24936294555664062,

0.9418812394142151,

0.8777446150779724,

-1.2965021133422852,

-0.1337401568889618,

-0.2847541272640228,

0.055103447288274... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

ClementRomac/cleaned_deduplicated_oscar | ClementRomac | 2023-10-25T14:05:19Z | 33 | 0 | null | [

"region:us"

] | 2023-10-25T14:05:19Z | 2023-03-27T12:42:39.000Z | 2023-03-27T12:42:39 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 978937483730

num_examples: 232133013

- name: test

num_bytes: 59798696914

num_examples: 12329126

download_size: 37220219718

dataset_size: 1038736180644

---

# Dataset Card for "cleaned_deduplicated_oscar"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.46525004506111145,

-0.14199210703372955,

0.17430466413497925,

-0.05238965153694153,

-0.4615820050239563,

0.01630067080259323,

0.5648317933082581,

-0.22107604146003723,

0.9944058656692505,

0.6839401125907898,

-0.5624855160713196,

-0.6031593680381775,

-0.7873845100402832,

-0.0761284083127... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

liuyanchen1015/MULTI_VALUE_sst2_negative_concord | liuyanchen1015 | 2023-04-03T19:48:02Z | 33 | 0 | null | [

"region:us"

] | 2023-04-03T19:48:02Z | 2023-04-03T19:47:58.000Z | 2023-04-03T19:47:58 | ---

dataset_info:

features:

- name: sentence

dtype: string

- name: label

dtype: int64

- name: idx

dtype: int64

- name: score

dtype: int64

splits:

- name: dev

num_bytes: 6956

num_examples: 48

- name: test

num_bytes: 12384

num_examples: 84

- name: train

num_bytes: 165604

num_examples: 1366

download_size: 95983

dataset_size: 184944

---

# Dataset Card for "MULTI_VALUE_sst2_negative_concord"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.41821691393852234,

-0.1620696634054184,

0.3222043514251709,

0.27521294355392456,

-0.6062382459640503,

0.0495760515332222,

0.2577967345714569,

-0.06651636958122253,

0.8867769837379456,

0.29021763801574707,

-0.7009086608886719,

-0.7893183827400208,

-0.7041661739349365,

-0.4536333084106445... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

liuyanchen1015/MULTI_VALUE_sst2_inverted_indirect_question | liuyanchen1015 | 2023-04-03T19:48:45Z | 33 | 0 | null | [

"region:us"

] | 2023-04-03T19:48:45Z | 2023-04-03T19:48:41.000Z | 2023-04-03T19:48:41 | ---

dataset_info:

features:

- name: sentence

dtype: string

- name: label

dtype: int64

- name: idx

dtype: int64

- name: score

dtype: int64

splits:

- name: dev

num_bytes: 1554

num_examples: 10

- name: test

num_bytes: 4967

num_examples: 30

- name: train

num_bytes: 80411

num_examples: 597

download_size: 36917

dataset_size: 86932

---

# Dataset Card for "MULTI_VALUE_sst2_inverted_indirect_question"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.24992381036281586,

-0.5645225048065186,

0.14910028874874115,

0.22447852790355682,

-0.506771981716156,

0.017980465665459633,

0.22114233672618866,

-0.09007815271615982,

0.7155178785324097,

0.4941098988056183,

-0.9165758490562439,

-0.22178056836128235,

-0.6111413836479187,

-0.2896738350391... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

mstz/abalone | mstz | 2023-04-15T11:04:08Z | 33 | 0 | null | [

"task_categories:tabular-regression",

"task_categories:tabular-classification",

"size_categories:1K<n<10K",

"language:en",

"license:cc",

"abalone",

"tabular_regression",

"regression",

"binary_classification",

"region:us"

] | 2023-04-15T11:04:08Z | 2023-04-05T10:59:09.000Z | 2023-04-05T10:59:09 | ---

language:

- en

tags:

- abalone

- tabular_regression

- regression

- binary_classification

pretty_name: Abalone

size_categories:

- 1K<n<10K

task_categories:

- tabular-regression

- tabular-classification

configs:

- abalone

- binary

license: cc

---

# Abalone

The [Abalone dataset](https://archive-beta.ics.uci.edu/dataset/1/abalone) from the [UCI ML repository](https://archive.ics.uci.edu/ml/datasets).

Predict the age of the given abalone.

# Configurations and tasks

| **Configuration** | **Task** | **Description** |

|-------------------|---------------------------|-----------------------------------------|

| abalone | Regression | Predict the age of the abalone. |

| binary | Binary classification | Does the abalone have more than 9 rings?|

# Usage

```python

from datasets import load_dataset

dataset = load_dataset("mstz/abalone")["train"]

```

# Features

Target feature in bold.

|**Feature** |**Type** |

|-----------------------|---------------|

| sex | `[string]` |

| length | `[float64]` |

| diameter | `[float64]` |

| height | `[float64]` |

| whole_weight | `[float64]` |

| shucked_weight | `[float64]` |

| viscera_weight | `[float64]` |

| shell_weight | `[float64]` |

| **number_of_rings** | `[int8]` | | [

-0.3585018515586853,

-0.818467378616333,

0.5289164185523987,

0.16143952310085297,

-0.345245361328125,

-0.38129884004592896,

-0.036782748997211456,

-0.4570544362068176,

0.14236660301685333,

0.622136116027832,

-0.8482115268707275,

-0.8441115021705627,

-0.3231200873851776,

0.3923017680644989,... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

huanngzh/anime_face_control_60k | huanngzh | 2023-04-07T02:20:48Z | 33 | 1 | null | [

"region:us"

] | 2023-04-07T02:20:48Z | 2023-04-06T19:14:05.000Z | 2023-04-06T19:14:05 | ---

dataset_info:

features:

- name: item_id

dtype: string

- name: prompt

dtype: string

- name: blip_caption

dtype: string

- name: landmarks

sequence:

sequence: float64

- name: source

dtype: image

- name: target

dtype: image

- name: visual

dtype: image

- name: origin_path

dtype: string

- name: source_path

dtype: string

- name: target_path

dtype: string

- name: visual_path

dtype: string

splits:

- name: train

num_bytes: 5359477272.0

num_examples: 60000

download_size: 0

dataset_size: 5359477272.0

---

# Dataset Card for "acgn_face_control_60k"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.5379897356033325,

-0.17641006410121918,

-0.2532491981983185,

0.3420475423336029,

-0.12615732848644257,

0.04844487085938454,

0.3766440749168396,

-0.2699528932571411,

0.7266623973846436,

0.5228058695793152,

-0.8960420489311218,

-0.8292017579078674,

-0.6253646016120911,

-0.3408744633197784... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

japneets/Alpaca_instruction_fine_tune_Punjabi | japneets | 2023-04-10T04:32:47Z | 33 | 0 | null | [

"region:us"

] | 2023-04-10T04:32:47Z | 2023-04-10T04:32:41.000Z | 2023-04-10T04:32:41 | ---

dataset_info:

features:

- name: input

dtype: string

- name: instruction

dtype: string

- name: output

dtype: string

splits:

- name: train

num_bytes: 46649317

num_examples: 52002

download_size: 18652304

dataset_size: 46649317

---

# Dataset Card for "Alpaca_instruction_fine_tune_Punjabi"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) | [

-0.562454879283905,

-0.6644330620765686,

-0.09163156151771545,

0.4223155975341797,

-0.26563560962677,

-0.1972937285900116,

-0.02811763435602188,

-0.061275091022253036,

0.8455881476402283,

0.4304424226284027,

-0.9834110140800476,

-0.8431955575942993,

-0.7446191310882568,

-0.1297537237405777... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

rajuptvs/ecommerce_products_clip | rajuptvs | 2023-04-12T02:21:09Z | 33 | 10 | null | [

"license:mit",

"region:us"

] | 2023-04-12T02:21:09Z | 2023-04-12T02:13:43.000Z | 2023-04-12T02:13:43 | ---

license: mit

dataset_info:

features:

- name: image

dtype: image

- name: Product_name

dtype: string

- name: Price

dtype: string

- name: colors

dtype: string

- name: Pattern

dtype: string

- name: Description

dtype: string

- name: Other Details

dtype: string

- name: Clipinfo

dtype: string

splits:

- name: train

num_bytes: 87008501.926

num_examples: 1913

download_size: 48253307

dataset_size: 87008501.926

---

| [

-0.12853392958641052,

-0.18616779148578644,

0.6529127955436707,

0.49436280131340027,

-0.19319361448287964,

0.23607419431209564,

0.36072003841400146,

0.050563063472509384,

0.579365611076355,

0.7400140762329102,

-0.6508104205131531,

-0.23783954977989197,

-0.7102249264717102,

-0.0478260256350... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

mstz/contraceptive | mstz | 2023-04-16T17:03:10Z | 33 | 0 | null | [

"task_categories:tabular-classification",

"size_categories:1K<n<10K",

"language:en",

"license:cc",

"contraceptive",

"tabular_classification",

"binary_classification",

"UCI",

"region:us"

] | 2023-04-16T17:03:10Z | 2023-04-12T08:32:09.000Z | 2023-04-12T08:32:09 | ---

language:

- en

tags:

- contraceptive

- tabular_classification

- binary_classification

- UCI

pretty_name: Contraceptive evaluation

size_categories:

- 1K<n<10K

task_categories:

- tabular-classification

configs:

- contraceptive

license: cc

---

# Contraceptive

The [Contraceptive dataset](https://archive-beta.ics.uci.edu/dataset/30/contraceptive+method+choice) from the [UCI repository](https://archive-beta.ics.uci.edu).

Does the couple use contraceptives?

# Configurations and tasks

| **Configuration** | **Task** | **Description** |

|-------------------|---------------------------|-------------------------|

| contraceptive | Binary classification | Does the couple use contraceptives?|

# Usage

```python

from datasets import load_dataset

dataset = load_dataset("mstz/contraceptive", "contraceptive")["train"]

``` | [

-0.0925513431429863,

-0.41935616731643677,

0.09374373406171799,

0.45532500743865967,

-0.47859111428260803,

-0.6847796440124512,

-0.05256672576069832,

0.008495384827256203,

0.061988770961761475,

0.461652547121048,

-0.6588844060897827,

-0.510547935962677,

-0.4558188319206238,

0.2452524304389... | null | null | null | null | null | null | null | null | null | null | null | null | null | |

renumics/cifar100-enriched | renumics | 2023-06-06T12:23:33Z | 33 | 4 | cifar-100 | [

"task_categories:image-classification",

"annotations_creators:crowdsourced",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:extended|other-80-Million-Tiny-Images",

"language:en",

"license:mit",

"image classification",

"cifar-100",

"cifar-... | 2023-06-06T12:23:33Z | 2023-04-21T15:07:01.000Z | 2023-04-21T15:07:01 | ---

license: mit

task_categories:

- image-classification

pretty_name: CIFAR-100

source_datasets:

- extended|other-80-Million-Tiny-Images

paperswithcode_id: cifar-100

size_categories:

- 10K<n<100K

tags:

- image classification

- cifar-100

- cifar-100-enriched

- embeddings

- enhanced

- spotlight

- renumics

language:

- en

multilinguality:

- monolingual

annotations_creators:

- crowdsourced

language_creators:

- found

---

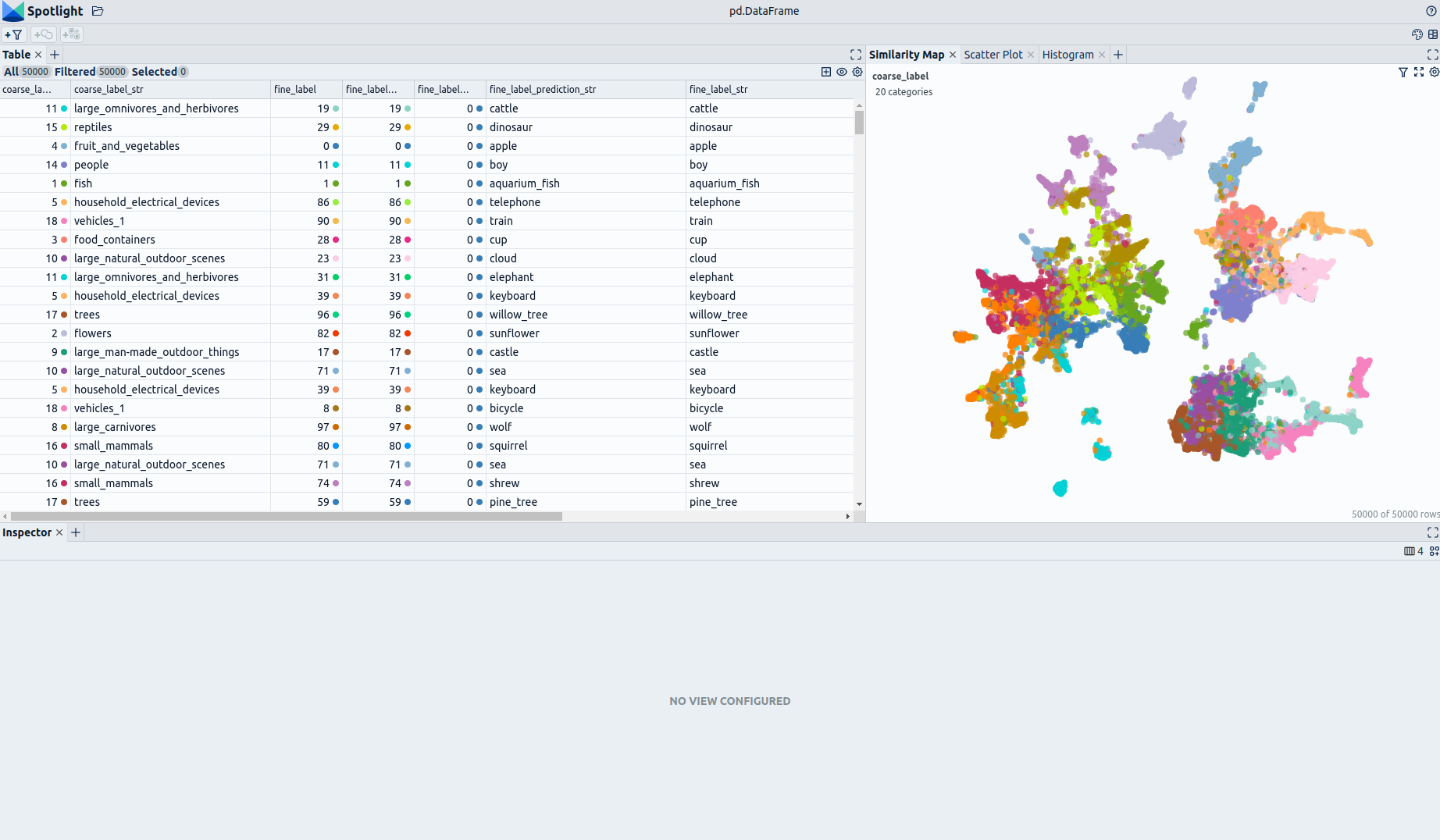

# Dataset Card for CIFAR-100-Enriched (Enhanced by Renumics)

## Dataset Description

- **Homepage:** [Renumics Homepage](https://renumics.com/?hf-dataset-card=cifar100-enriched)

- **GitHub** [Spotlight](https://github.com/Renumics/spotlight)

- **Dataset Homepage** [CS Toronto Homepage](https://www.cs.toronto.edu/~kriz/cifar.html#:~:text=The%20CIFAR%2D100%20dataset)

- **Paper:** [Learning Multiple Layers of Features from Tiny Images](https://www.cs.toronto.edu/~kriz/learning-features-2009-TR.pdf)

### Dataset Summary

📊 [Data-centric AI](https://datacentricai.org) principles have become increasingly important for real-world use cases.

At [Renumics](https://renumics.com/?hf-dataset-card=cifar100-enriched) we believe that classical benchmark datasets and competitions should be extended to reflect this development.

🔍 This is why we are publishing benchmark datasets with application-specific enrichments (e.g. embeddings, baseline results, uncertainties, label error scores). We hope this helps the ML community in the following ways:

1. Enable new researchers to quickly develop a profound understanding of the dataset.

2. Popularize data-centric AI principles and tooling in the ML community.

3. Encourage the sharing of meaningful qualitative insights in addition to traditional quantitative metrics.

📚 This dataset is an enriched version of the [CIFAR-100 Dataset](https://www.cs.toronto.edu/~kriz/cifar.html).

### Explore the Dataset

The enrichments allow you to quickly gain insights into the dataset. The open source data curation tool [Renumics Spotlight](https://github.com/Renumics/spotlight) enables that with just a few lines of code:

Install datasets and Spotlight via [pip](https://packaging.python.org/en/latest/key_projects/#pip):

```python

!pip install renumics-spotlight datasets

```

Load the dataset from huggingface in your notebook:

```python

import datasets

dataset = datasets.load_dataset("renumics/cifar100-enriched", split="train")

```

Start exploring with a simple view that leverages embeddings to identify relevant data segments:

```python

from renumics import spotlight

df = dataset.to_pandas()

df_show = df.drop(columns=['embedding', 'probabilities'])

spotlight.show(df_show, port=8000, dtype={"image": spotlight.Image, "embedding_reduced": spotlight.Embedding})

```

You can use the UI to interactively configure the view on the data. Depending on the concrete tasks (e.g. model comparison, debugging, outlier detection) you might want to leverage different enrichments and metadata.

### CIFAR-100 Dataset

The CIFAR-100 dataset consists of 60000 32x32 colour images in 100 classes, with 600 images per class. There are 50000 training images and 10000 test images.

The 100 classes in the CIFAR-100 are grouped into 20 superclasses. Each image comes with a "fine" label (the class to which it belongs) and a "coarse" label (the superclass to which it belongs).

The classes are completely mutually exclusive.

We have enriched the dataset by adding **image embeddings** generated with a [Vision Transformer](https://huggingface.co/google/vit-base-patch16-224).

Here is the list of classes in the CIFAR-100:

| Superclass | Classes |

|---------------------------------|----------------------------------------------------|

| aquatic mammals | beaver, dolphin, otter, seal, whale |

| fish | aquarium fish, flatfish, ray, shark, trout |

| flowers | orchids, poppies, roses, sunflowers, tulips |

| food containers | bottles, bowls, cans, cups, plates |

| fruit and vegetables | apples, mushrooms, oranges, pears, sweet peppers |

| household electrical devices | clock, computer keyboard, lamp, telephone, television|

| household furniture | bed, chair, couch, table, wardrobe |

| insects | bee, beetle, butterfly, caterpillar, cockroach |

| large carnivores | bear, leopard, lion, tiger, wolf |

| large man-made outdoor things | bridge, castle, house, road, skyscraper |

| large natural outdoor scenes | cloud, forest, mountain, plain, sea |

| large omnivores and herbivores | camel, cattle, chimpanzee, elephant, kangaroo |

| medium-sized mammals | fox, porcupine, possum, raccoon, skunk |

| non-insect invertebrates | crab, lobster, snail, spider, worm |

| people | baby, boy, girl, man, woman |

| reptiles | crocodile, dinosaur, lizard, snake, turtle |

| small mammals | hamster, mouse, rabbit, shrew, squirrel |

| trees | maple, oak, palm, pine, willow |

| vehicles 1 | bicycle, bus, motorcycle, pickup truck, train |

| vehicles 2 | lawn-mower, rocket, streetcar, tank, tractor |

### Supported Tasks and Leaderboards

- `image-classification`: The goal of this task is to classify a given image into one of 100 classes. The leaderboard is available [here](https://paperswithcode.com/sota/image-classification-on-cifar-100).

### Languages

English class labels.

## Dataset Structure

### Data Instances

A sample from the training set is provided below:

```python

{

'image': '/huggingface/datasets/downloads/extracted/f57c1a3fbca36f348d4549e820debf6cc2fe24f5f6b4ec1b0d1308a80f4d7ade/0/0.png',

'full_image': <PIL.PngImagePlugin.PngImageFile image mode=RGB size=32x32 at 0x7F15737C9C50>,

'fine_label': 19,

'coarse_label': 11,

'fine_label_str': 'cattle',

'coarse_label_str': 'large_omnivores_and_herbivores',

'fine_label_prediction': 19,

'fine_label_prediction_str': 'cattle',

'fine_label_prediction_error': 0,

'split': 'train',

'embedding': [-1.2482988834381104,

0.7280710339546204, ...,

0.5312759280204773],

'probabilities': [4.505949982558377e-05,

7.286163599928841e-05, ...,

6.577593012480065e-05],

'embedding_reduced': [1.9439491033554077, -5.35720682144165]

}

```

### Data Fields

| Feature | Data Type |

|---------------------------------|------------------------------------------------|

| image | Value(dtype='string', id=None) |

| full_image | Image(decode=True, id=None) |

| fine_label | ClassLabel(names=[...], id=None) |

| coarse_label | ClassLabel(names=[...], id=None) |

| fine_label_str | Value(dtype='string', id=None) |

| coarse_label_str | Value(dtype='string', id=None) |

| fine_label_prediction | ClassLabel(names=[...], id=None) |

| fine_label_prediction_str | Value(dtype='string', id=None) |

| fine_label_prediction_error | Value(dtype='int32', id=None) |

| split | Value(dtype='string', id=None) |

| embedding | Sequence(feature=Value(dtype='float32', id=None), length=768, id=None) |

| probabilities | Sequence(feature=Value(dtype='float32', id=None), length=100, id=None) |

| embedding_reduced | Sequence(feature=Value(dtype='float32', id=None), length=2, id=None) |

### Data Splits

| Dataset Split | Number of Images in Split | Samples per Class (fine) |

| ------------- |---------------------------| -------------------------|

| Train | 50000 | 500 |

| Test | 10000 | 100 |

## Dataset Creation

### Curation Rationale

The CIFAR-10 and CIFAR-100 are labeled subsets of the [80 million tiny images](http://people.csail.mit.edu/torralba/tinyimages/) dataset.

They were collected by Alex Krizhevsky, Vinod Nair, and Geoffrey Hinton.

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

If you use this dataset, please cite the following paper:

```

@article{krizhevsky2009learning,

added-at = {2021-01-21T03:01:11.000+0100},

author = {Krizhevsky, Alex},

biburl = {https://www.bibsonomy.org/bibtex/2fe5248afe57647d9c85c50a98a12145c/s364315},

interhash = {cc2d42f2b7ef6a4e76e47d1a50c8cd86},

intrahash = {fe5248afe57647d9c85c50a98a12145c},

keywords = {},

pages = {32--33},

timestamp = {2021-01-21T03:01:11.000+0100},