modelId stringlengths 6 107 | label list | readme stringlengths 0 56.2k | readme_len int64 0 56.2k |

|---|---|---|---|

boychaboy/MNLI_distilbert-base-cased | [

"contradiction",

"entailment",

"neutral"

] | Entry not found | 15 |

cardiffnlp/bertweet-base-stance-atheism | [

"LABEL_0",

"LABEL_1",

"LABEL_2"

] | 0 | |

JacopoBandoni/BioBertRelationGenesDiseases | null | ---

license: afl-3.0

widget:

- text: "The case of a 72-year-old male with @DISEASE$ with poor insulin control (fasting hyperglycemia greater than 180 mg/dl) who had a long-standing polyuric syndrome is here presented. Hypernatremia and plasma osmolality elevated together with a low urinary osmolality led to the suspicion of diabetes insipidus, which was subsequently confirmed by the dehydration test and the administration of @GENE$ sc."

example_title: "Example 1"

- text: "Hypernatremia and plasma osmolality elevated together with a low urinary osmolality led to the suspicion of diabetes insipidus, which was subsequently confirmed by the dehydration test and the administration of @GENE$ sc. With 61% increase in the calculated urinary osmolarity one hour post desmopressin s.c., @DISEASE$ was diagnosed."

example_title: "Example 2"

---

This model is a fine-tuned version of BioBERT on the GAD dataset.

The model works by masking the gene string with "@GENE$" and the disease string with "@DISEASE$".
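The masking step can be sketched as follows. This is a minimal illustration that assumes the gene and disease mentions are already known as plain strings; `mask_entities` is a hypothetical helper and is not part of the model's own code.

```python
def mask_entities(text: str, gene: str, disease: str) -> str:
    """Replace the first gene and disease mention with the placeholders the model expects."""
    # Hypothetical helper: assumes each mention occurs verbatim in the text.
    masked = text.replace(gene, "@GENE$", 1)
    masked = masked.replace(disease, "@DISEASE$", 1)
    return masked


# Made-up sentence, loosely following the widget examples in this card.
sentence = "Administration of desmopressin confirmed diabetes insipidus."
masked_sentence = mask_entities(sentence, gene="desmopressin", disease="diabetes insipidus")
print(masked_sentence)
```

The masked string is what would then be passed to the tokenizer and classifier.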

The output is a text classification that can either be:

- "LABEL0" if there is no relation

- "LABEL1" if there is a relation. | 1,147 |

Sreevishnu/funnel-transformer-small-imdb | [

"neg",

"pos"

] | ---

license: apache-2.0

language: en

widget:

- text: "In the garden of wonderment that is the body of work by the animation master Hayao Miyazaki, his 2001 gem 'Spirited Away' is at once one of his most accessible films to a Western audience and the one most distinctly rooted in Japanese culture and lore. The tale of Chihiro, a 10 year old girl who resents being moved away from all her friends, only to find herself working in a bathhouse for the gods, doesn't just use its home country's fraught relationship with deities as a backdrop. Never remotely didactic, the film is ultimately a self-fulfilment drama that touches on religious, ethical, ecological and psychological issues.

It's also a fine children's film, the kind that elicits a deepening bond across repeat viewings and the passage of time, mostly because Miyazaki refuses to talk down to younger viewers. That's been a constant in all of his filmography, but it's particularly conspicuous here because the stakes for its young protagonist are bigger than in most of his previous features aimed at younger viewers. It involves conquering fears and finding oneself in situations where safety is not a given.

There are so many moving parts in Spirited Away, from both a thematic and technical point of view, that pinpointing what makes Spirited Away stand out from an already outstanding body of work becomes as challenging as a meeting with Yubaba. But I think it comes down to an ability to deal with heady, complex subject matter from a young girl's perspective without diluting or lessening its resonance. Miyazaki has made a loopy, demanding work of art that asks your inner child to come out and play. There are few high-wire acts in all of movie-dom as satisfying as that."

datasets:

- imdb

tags:

- sentiment-analysis

---

# Funnel Transformer small (B4-4-4 with decoder) fine-tuned on IMDB for Sentiment Analysis

These are the model weights for the Funnel Transformer small model fine-tuned on the IMDB dataset for performing Sentiment Analysis with `max_position_embeddings=1024`.

The original model weights for English language are from [funnel-transformer/small](https://huggingface.co/funnel-transformer/small) and it uses a similar objective as [ELECTRA](https://huggingface.co/transformers/model_doc/electra.html). It was introduced in [this paper](https://arxiv.org/pdf/2006.03236.pdf) and first released in [this repository](https://github.com/laiguokun/Funnel-Transformer). This model is uncased: it does not make a difference between english and English.

## Fine-tuning Results

| | Accuracy | Precision | Recall | F1 |

|-------------------------------|----------|-----------|----------|----------|

| funnel-transformer-small-imdb | 0.956530 | 0.952286 | 0.961075 | 0.956661 |
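As a quick consistency check on the table above, F1 is the harmonic mean of precision and recall. The sketch below recomputes it from the reported precision and recall; the function name is illustrative only.

```python
def f1_from_precision_recall(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# Values copied from the results table above.
precision, recall = 0.952286, 0.961075
f1 = f1_from_precision_recall(precision, recall)
print(f"{f1:.6f}")
```

The result agrees with the reported F1 of 0.956661 up to rounding of the inputs.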

## Model description (from [funnel-transformer/small](https://huggingface.co/funnel-transformer/small))

Funnel Transformer is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data) with an automatic process to generate inputs and labels from those texts.

More precisely, a small language model corrupts the input texts and serves as a generator of inputs for this model, and the pretraining objective is to predict which token is an original and which one has been replaced, a bit like a GAN training.

This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard classifier using the features produced by the Funnel Transformer model as inputs.

# How to use

Here is how to use this model to classify a given text in PyTorch:

```python

from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained(

"Sreevishnu/funnel-transformer-small-imdb",

use_fast=True)

model = AutoModelForSequenceClassification.from_pretrained(

"Sreevishnu/funnel-transformer-small-imdb",

num_labels=2,

max_position_embeddings=1024)

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```
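The snippet above stops at the raw model output. A minimal sketch of turning logits into a label and a probability follows. It assumes the label order `["neg", "pos"]` from this model's label list, and the logits are made-up values standing in for `output.logits[0].tolist()`.

```python
import math

LABELS = ["neg", "pos"]  # assumed to match the order of the model's output logits


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def to_label(logits):
    """Return the most likely label and its probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return LABELS[best], probs[best]


example_logits = [-1.2, 2.3]  # hypothetical values, not real model output
label, score = to_label(example_logits)
print(label, round(score, 3))
```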

# Example App

https://lazy-film-reviews-7gif2bz4sa-ew.a.run.app/

Project repo: https://github.com/akshaydevml/lazy-film-reviews | 4,520 |

dinalzein/xlm-roberta-base-finetuned-language-identification | [

"ar",

"bg",

"de",

"el",

"en",

"es",

"fr",

"hi",

"it",

"ja",

"nl",

"pl",

"pt",

"ru",

"sw",

"th",

"tr",

"ur",

"vi",

"zh"

] | ---

license: mit

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: xlm-roberta-base-finetuned-language-identification

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xlm-roberta-base-finetuned-language-detection-new

This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the [Language Identification dataset](https://huggingface.co/datasets/papluca/language-identification).

It achieves the following results on the evaluation set:

- Loss: 0.0436

- Accuracy: 0.9959

## Model description

The model used in this task is XLM-RoBERTa, a transformer model with a classification head on top.

## Intended uses & limitations

The model identifies the language a document is written in. It supports 20 languages:

Arabic (ar), Bulgarian (bg), German (de), Modern Greek (el), English (en), Spanish (es), French (fr), Hindi (hi), Italian (it), Japanese (ja), Dutch (nl), Polish (pl), Portuguese (pt), Russian (ru), Swahili (sw), Thai (th), Turkish (tr), Urdu (ur), Vietnamese (vi), Chinese (zh)

## Training and evaluation data

The model is fine-tuned on the [Language Identification dataset](https://huggingface.co/datasets/papluca/language-identification), a corpus consisting of text in 20 different languages. The dataset is split into 7000 sentences for training, 1000 for validation, and 1000 for testing. The accuracy on the test set is 99.5%.

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.0493 | 1.0 | 35000 | 0.0407 | 0.9955 |

| 0.018 | 2.0 | 70000 | 0.0436 | 0.9959 |

### Framework versions

- Transformers 4.19.2

- Pytorch 1.11.0+cu113

- Datasets 2.2.2

- Tokenizers 0.12.1

| 2,234 |

cwkeam/m-ctc-t-large-sequence-lid | [

"ab",

"ar",

"as",

"br",

"ca",

"cnh",

"cs",

"cv",

"cy",

"de",

"dv",

"el",

"en",

"eo",

"es",

"et",

"eu",

"fa",

"fi",

"fr",

"fy-NL",

"ga-IE",

"hi",

"hsb",

"hu",

"ia",

"id",

"it",

"ja",

"ka",

"kab",

"ky",

"lg",

"lt",

"lv",

"mn",

"mt",

"nl",

"or",

"pa-IN",

"pl",

"pt",

"rm-sursilv",

"rm-vallader",

"ro",

"ru",

"rw",

"sah",

"sl",

"sv-SE",

"ta",

"th",

"tr",

"tt",

"uk",

"vi",

"vot",

"zh-CN",

"zh-HK",

"zh-TW"

] | ---

language: en

datasets:

- librispeech_asr

- common_voice

tags:

- speech

license: apache-2.0

---

# M-CTC-T

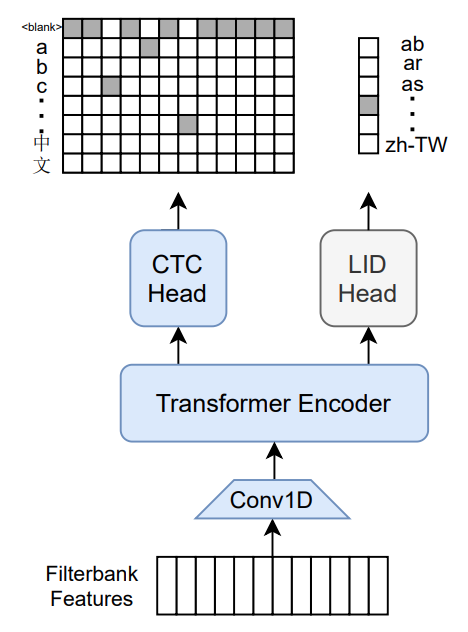

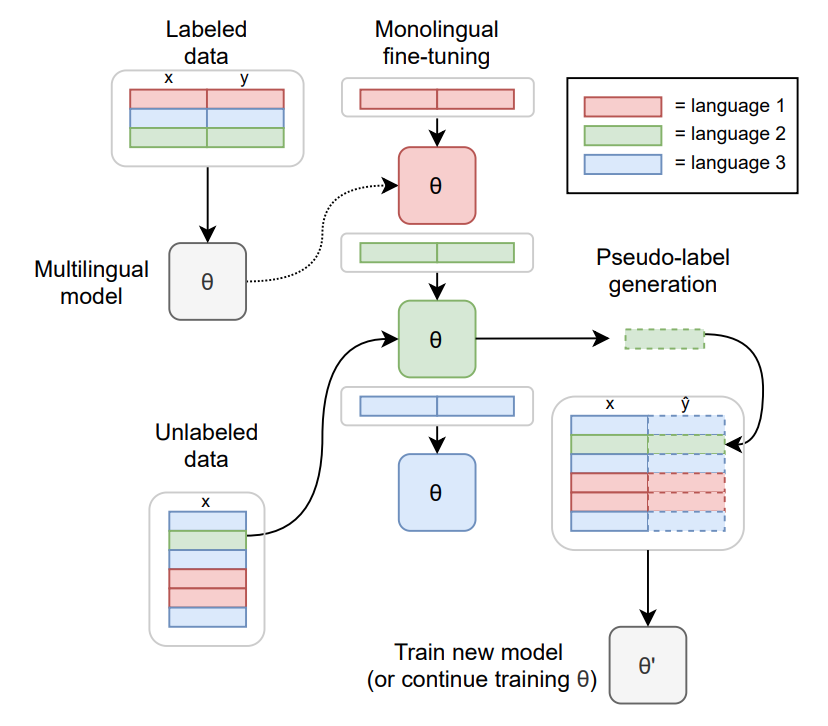

Massively multilingual speech recognizer from Meta AI. The model is a 1B-param transformer encoder, with a CTC head over 8065 character labels and a language identification head over 60 language ID labels. It is trained on Common Voice (version 6.1, December 2020 release) and VoxPopuli. After training on Common Voice and VoxPopuli, the model is trained on Common Voice only. The labels are unnormalized character-level transcripts (punctuation and capitalization are not removed). The model takes as input Mel filterbank features from a 16 kHz audio signal.

The original Flashlight code, model checkpoints, and Colab notebook can be found at https://github.com/flashlight/wav2letter/tree/main/recipes/mling_pl .

## Citation

[Paper](https://arxiv.org/abs/2111.00161)

Authors: Loren Lugosch, Tatiana Likhomanenko, Gabriel Synnaeve, Ronan Collobert

```

@article{lugosch2021pseudo,

title={Pseudo-Labeling for Massively Multilingual Speech Recognition},

author={Lugosch, Loren and Likhomanenko, Tatiana and Synnaeve, Gabriel and Collobert, Ronan},

journal={ICASSP},

year={2022}

}

```

Additional thanks to [Chan Woo Kim](https://huggingface.co/cwkeam) and [Patrick von Platen](https://huggingface.co/patrickvonplaten) for porting the model from Flashlight to PyTorch.

# Training method

TO-DO: replace with the training diagram from paper

For more information on how the model was trained, please take a look at the [official paper](https://arxiv.org/abs/2111.00161).

# Usage

To transcribe audio files the model can be used as a standalone acoustic model as follows:

```python

import torch

import torchaudio

from datasets import load_dataset

from transformers import MCTCTForCTC, MCTCTProcessor

model = MCTCTForCTC.from_pretrained("speechbrain/mctct-large")

processor = MCTCTProcessor.from_pretrained("speechbrain/mctct-large")

# load dummy dataset and read soundfiles

ds = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation")

# tokenize

input_features = processor(ds[0]["audio"]["array"], return_tensors="pt").input_features

# retrieve logits

logits = model(input_features).logits

# take argmax and decode

predicted_ids = torch.argmax(logits, dim=-1)

transcription = processor.batch_decode(predicted_ids)

```
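The "take argmax and decode" step above is greedy CTC decoding: after the per-frame argmax, consecutive repeats are merged and blank tokens are dropped. The sketch below illustrates that collapse on plain integer IDs. The blank ID of 0 is an assumption made for illustration; in practice `processor.batch_decode` handles this with the model's own vocabulary.

```python
def ctc_greedy_collapse(frame_ids, blank_id=0):
    """Merge consecutive duplicate IDs, then drop blanks (greedy CTC decoding)."""
    collapsed = []
    previous = None
    for token_id in frame_ids:
        # Keep a token only when it differs from the previous frame and is not blank.
        if token_id != previous and token_id != blank_id:
            collapsed.append(token_id)
        previous = token_id
    return collapsed


# Made-up per-frame argmax IDs; the blank between the two 7s keeps them separate.
frame_ids = [0, 7, 7, 0, 7, 4, 4, 0, 0, 9]
decoded_ids = ctc_greedy_collapse(frame_ids)
print(decoded_ids)  # [7, 7, 4, 9]
```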

Results for Common Voice, averaged over all languages:

*Character error rate (CER)*:

| Valid | Test |

|-------|------|

| 21.4 | 23.3 |
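For reference, character error rate is the character-level edit distance between the reference and the hypothesis, divided by the reference length. The sketch below is a plain-Python illustration, not the evaluation code behind the table. It expresses the result as a percentage, which is an assumption about how the values above are scaled.

```python
def edit_distance(reference: str, hypothesis: str) -> int:
    """Levenshtein distance computed with a rolling row of the DP table."""
    previous_row = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        current_row = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            substitution = previous_row[j - 1] + (ref_char != hyp_char)
            deletion = previous_row[j] + 1
            insertion = current_row[j - 1] + 1
            current_row.append(min(substitution, deletion, insertion))
        previous_row = current_row
    return previous_row[-1]


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate in percent; the reference must be non-empty."""
    return 100.0 * edit_distance(reference, hypothesis) / len(reference)


example_cer = cer("hello world", "hallo word")
print(f"{example_cer:.1f}")
```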

| 2,741 |

p-christ/QandAClassifier | [

"ACCEPTED",

"REJECTED"

] | Entry not found | 15 |

IMSyPP/hate_speech_nl | [

"LABEL_0",

"LABEL_1",

"LABEL_2",

"LABEL_3"

] | ---

language:

- nl

license: mit

---

# Hate Speech Classifier for Social Media Content in Dutch

A monolingual model for hate speech classification of social media content in Dutch. The model was trained on 20000 social media posts (YouTube, Twitter, Facebook) and tested on an independent test set of 2000 posts. It is based on the pre-trained language model [BERTje](https://huggingface.co/wietsedv/bert-base-dutch-cased).

## Tokenizer

During training the text was preprocessed using the BERTje tokenizer. We suggest the same tokenizer is used for inference.

## Model output

The model classifies each input into one of four distinct classes:

* 0 - acceptable

* 1 - inappropriate

* 2 - offensive

* 3 - violent | 716 |

NbAiLab/nb-bert-base-samisk | null | ---

license: apache-2.0

---

| 31 |

TehranNLP-org/bert-base-uncased-cls-hatexplain | [

"LABEL_0",

"LABEL_1",

"LABEL_2"

] | Entry not found | 15 |

classla/sloberta-frenk-hate | null | ---

language: "sl"

tags:

- text-classification

- hate-speech

widget:

- text: "Silva, ti si grda in neprijazna"

---

Text classification model based on `EMBEDDIA/sloberta` and fine-tuned on the [FRENK dataset](https://www.clarin.si/repository/xmlui/handle/11356/1433) comprising LGBT and migrant hate speech. Only the Slovenian subset of the data was used for fine-tuning and the dataset has been relabeled for binary classification (offensive or acceptable).

## Fine-tuning hyperparameters

Fine-tuning was performed with `simpletransformers`. A brief hyperparameter optimisation was performed beforehand; the presumed optimal hyperparameters are:

```python

model_args = {

"num_train_epochs": 14,

"learning_rate": 1e-5,

"train_batch_size": 21,

}

```

## Performance

The same pipeline was run with two other transformer models and `fasttext` for comparison. Accuracy and macro F1 score were recorded for each of the 6 fine-tuning sessions and analyzed afterwards.

| model | average accuracy | average macro F1|

|---|---|---|

|sloberta-frenk-hate|0.7785|0.7764|

|EMBEDDIA/crosloengual-bert |0.7616|0.7585|

|xlm-roberta-base |0.686|0.6827|

|fasttext|0.709 |0.701 |

p-values were also calculated from the recorded accuracies and macro F1 scores:

Comparison with `crosloengual-bert`:

| test | accuracy p-value | macro F1 p-value|

| --- | --- | --- |

|Wilcoxon|0.00781|0.00781|

|Mann-Whitney U test|0.00163|0.00108|

|Student t-test |0.000101|3.95e-05|

Comparison with `xlm-roberta-base`:

| test | accuracy p-value | macro F1 p-value|

| --- | --- | --- |

|Wilcoxon|0.00781|0.00781|

|Mann-Whitney U test|0.00108|0.00108|

|Student t-test |9.46e-11|6.94e-11|

## Use examples

```python

from simpletransformers.classification import ClassificationModel

model_args = {

"num_train_epochs": 6,

"learning_rate": 3e-6,

"train_batch_size": 69}

model = ClassificationModel(

"camembert", "5roop/sloberta-frenk-hate", use_cuda=True,

args=model_args

)

predictions, logit_output = model.predict(["Silva, ti si grda in neprijazna", "Naša hiša ima dimnik"])

predictions

### Output:

### array([1, 0])

```

## Citation

If you use the model, please cite the following paper on which the original model is based:

```

@article{DBLP:journals/corr/abs-1907-11692,

author = {Yinhan Liu and

Myle Ott and

Naman Goyal and

Jingfei Du and

Mandar Joshi and

Danqi Chen and

Omer Levy and

Mike Lewis and

Luke Zettlemoyer and

Veselin Stoyanov},

title = {RoBERTa: {A} Robustly Optimized {BERT} Pretraining Approach},

journal = {CoRR},

volume = {abs/1907.11692},

year = {2019},

url = {http://arxiv.org/abs/1907.11692},

archivePrefix = {arXiv},

eprint = {1907.11692},

timestamp = {Thu, 01 Aug 2019 08:59:33 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-1907-11692.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

and the dataset used for fine-tuning:

```

@misc{ljubešić2019frenk,

title={The FRENK Datasets of Socially Unacceptable Discourse in Slovene and English},

author={Nikola Ljubešić and Darja Fišer and Tomaž Erjavec},

year={2019},

eprint={1906.02045},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/1906.02045}

}

``` | 3,464 |

lewtun/minilm-finetuned-emotion | [

"anger",

"fear",

"joy",

"love",

"sadness",

"surprise"

] | ---

license: mit

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- f1

model-index:

- name: minilm-finetuned-emotion

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: emotion

type: emotion

args: default

metrics:

- name: F1

type: f1

value: 0.9117582218338629

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# minilm-finetuned-emotion

This model is a fine-tuned version of [microsoft/MiniLM-L12-H384-uncased](https://huggingface.co/microsoft/MiniLM-L12-H384-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3891

- F1: 0.9118

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 1.3957 | 1.0 | 250 | 1.0134 | 0.6088 |

| 0.8715 | 2.0 | 500 | 0.6892 | 0.8493 |

| 0.6085 | 3.0 | 750 | 0.4943 | 0.8920 |

| 0.4626 | 4.0 | 1000 | 0.4096 | 0.9078 |

| 0.3961 | 5.0 | 1250 | 0.3891 | 0.9118 |
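The step counts in the table follow from the batch size and the number of epochs. The sketch below works backwards from them to the number of training examples seen per epoch. It assumes a single device, no gradient accumulation, and full batches, none of which the card states.

```python
# Values taken from the hyperparameters and results table above.
train_batch_size = 64
steps_per_epoch = 250
num_epochs = 5

# Assumption: one optimizer step consumes exactly one full batch on one device.
examples_per_epoch = train_batch_size * steps_per_epoch
total_steps = steps_per_epoch * num_epochs

print(examples_per_epoch)  # 16000
print(total_steps)         # 1250
```

The total of 1250 steps matches the final row of the table.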

### Framework versions

- Transformers 4.12.3

- Pytorch 1.6.0

- Datasets 1.15.1

- Tokenizers 0.10.3

| 1,862 |

inovex/multi2convai-corona-de-bert | [

"corona.traffic",

"corona.supplies",

"corona.quarantine",

"corona.masks",

"corona.illness",

"corona.package",

"corona.vaccine",

"corona.rumors",

"corona.risk",

"corona.course",

"corona.symptoms",

"corona.patients",

"corona.deathRate",

"corona.infect",

"corona.protect",

"corona.definition",

"neo.feeling",

"neo.hello",

"neo.introduce",

"neo.help",

"corona.ibuprofen",

"neo.sucks",

"neo.joke",

"neo.thanks",

"neo.wyd",

"neo.yes",

"neo.no",

"neo.report",

"neo.sorry",

"neo.age",

"neo.home",

"corona.warn-app",

"corona.test",

"corona.contact",

"corona.event",

"corona.fahrradpruefung",

"corona.leisure",

"corona.notbetreuung",

"corona.travel",

"regio.taxes.help",

"undefined"

] | ---

tags:

- text-classification

- pytorch

- transformers

widget:

- text: "Muss ich eine Maske tragen?"

license: mit

language: de

---

# Multi2ConvAI-Corona: finetuned Bert for German

This model was developed in the [Multi2ConvAI](https://multi2conv.ai) project:

- domain: Corona (more details about our use cases: ([en](https://multi2convai/en/blog/use-cases), [de](https://multi2convai/en/blog/use-cases)))

- language: German (de)

- model type: finetuned Bert

## How to run

Requires:

- Huggingface transformers

### Run with Huggingface Transformers

````python

from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained("inovex/multi2convai-corona-de-bert")

model = AutoModelForSequenceClassification.from_pretrained("inovex/multi2convai-corona-de-bert")

````

## Further information on Multi2ConvAI:

- https://multi2conv.ai

- https://github.com/inovex/multi2convai

- mailto: info@multi2conv.ai | 1,002 |

navteca/nli-deberta-v3-large | [

"contradiction",

"entailment",

"neutral"

] | ---

datasets:

- multi_nli

- snli

language: en

license: apache-2.0

metrics:

- accuracy

pipeline_tag: zero-shot-classification

tags:

- microsoft/deberta-v3-large

---

# Cross-Encoder for Natural Language Inference

This model was trained using the [SentenceTransformers](https://sbert.net) [Cross-Encoder](https://www.sbert.net/examples/applications/cross-encoder/README.html) class. It is based on [microsoft/deberta-v3-large](https://huggingface.co/microsoft/deberta-v3-large).

## Training Data

The model was trained on the [SNLI](https://nlp.stanford.edu/projects/snli/) and [MultiNLI](https://cims.nyu.edu/~sbowman/multinli/) datasets. For a given sentence pair, it will output three scores corresponding to the labels: contradiction, entailment, neutral.

## Performance

- Accuracy on SNLI-test dataset: 92.20

- Accuracy on MNLI mismatched set: 90.49

For further evaluation results, see [SBERT.net - Pretrained Cross-Encoder](https://www.sbert.net/docs/pretrained_cross-encoders.html#nli).

## Usage

Pre-trained models can be used like this:

```python

from sentence_transformers import CrossEncoder

model = CrossEncoder('cross-encoder/nli-deberta-v3-large')

scores = model.predict([('A man is eating pizza', 'A man eats something'), ('A black race car starts up in front of a crowd of people.', 'A man is driving down a lonely road.')])

#Convert scores to labels

label_mapping = ['contradiction', 'entailment', 'neutral']

labels = [label_mapping[score_max] for score_max in scores.argmax(axis=1)]

```

## Usage with Transformers AutoModel

You can also use the model directly with the Transformers library (without the SentenceTransformers library):

```python

from transformers import AutoTokenizer, AutoModelForSequenceClassification

import torch

model = AutoModelForSequenceClassification.from_pretrained('cross-encoder/nli-deberta-v3-large')

tokenizer = AutoTokenizer.from_pretrained('cross-encoder/nli-deberta-v3-large')

features = tokenizer(['A man is eating pizza', 'A black race car starts up in front of a crowd of people.'], ['A man eats something', 'A man is driving down a lonely road.'], padding=True, truncation=True, return_tensors="pt")

model.eval()

with torch.no_grad():

    scores = model(**features).logits

label_mapping = ['contradiction', 'entailment', 'neutral']

labels = [label_mapping[score_max] for score_max in scores.argmax(dim=1)]

print(labels)

```

## Zero-Shot Classification

This model can also be used for zero-shot-classification:

```python

from transformers import pipeline

classifier = pipeline("zero-shot-classification", model='cross-encoder/nli-deberta-v3-large')

sent = "Apple just announced the newest iPhone X"

candidate_labels = ["technology", "sports", "politics"]

res = classifier(sent, candidate_labels)

print(res)

``` | 2,781 |

tdrenis/finetuned-bot-detector | null | Student project that fine-tuned the roberta-base-openai-detector model on the Twibot-20 dataset. | 96 |

ChrisLiewJY/BERTweet-Hedge | null | ---

license: mit

language:

- en

tags:

- uncertainty-detection

- social-media

- text-classification

widget:

- text: "It seems like Bitcoin prices are heading into bearish territory."

example_title: "Hedge Detection (Positive - Label 1)"

- text: "Bitcoin prices have fallen by 42% in the last 30 days."

example_title: "Hedge Detection (Negative - Label 0)"

---

### Overview

VinAI's BERTweet base model, fine-tuned on the Wiki Weasel 2.0 Corpus from the [Szeged Uncertainty Corpus](https://rgai.inf.u-szeged.hu/node/160) for hedge (linguistic uncertainty) detection in social media texts. The model was trained and optimised using Ray Tune's implementation of DeepMind's Population Based Training, with the arithmetic mean of Accuracy & F1 as its evaluation metric.

### Labels

* LABEL_1 = Positive (Hedge is detected within text)

* LABEL_0 = Negative (No Hedges detected within text)

### <a name="models2"></a> Model Performance

Model | Accuracy | F1-Score | Accuracy & F1-Score

---|---|---|---

`BERTweet-Hedge` | 0.9680 | 0.8765 | 0.9222
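The last column is the arithmetic mean of the first two, which is the metric the overview describes. A short check using the values from the table:

```python
# Values copied from the performance table above.
accuracy = 0.9680
f1_score = 0.8765

# Arithmetic mean of accuracy and F1, the evaluation metric named in the overview.
combined = (accuracy + f1_score) / 2
print(combined)
```

This gives roughly 0.92225, which matches the reported 0.9222 to within rounding.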

| 1,041 |

SetFit/distilbert-base-uncased__enron_spam__all-train | [

"ham",

"spam"

] | Entry not found | 15 |

Tatyana/rubert_conversational_cased_sentiment | null | ---

language:

- ru

tags:

- sentiment

- text-classification

datasets:

- Tatyana/ru_sentiment_dataset

---

# Keras model with ruBERT conversational embedder for Sentiment Analysis

Sentiment classification of Russian texts.

Model trained on [Tatyana/ru_sentiment_dataset](https://huggingface.co/datasets/Tatyana/ru_sentiment_dataset)

## Labels meaning

0: NEUTRAL

1: POSITIVE

2: NEGATIVE

## How to use

```python

!pip install tensorflow-gpu

!pip install deeppavlov

!python -m deeppavlov install squad_bert

!pip install fasttext

!pip install transformers

!python -m deeppavlov install bert_sentence_embedder

from deeppavlov import build_model

model = build_model("Tatyana/rubert_conversational_cased_sentiment/custom_config.json")

model(["Сегодня хорошая погода", "Я счастлив проводить с тобою время", "Мне нравится эта музыкальная композиция"])

```

| 860 |

boychaboy/SNLI_roberta-large | [

"contradiction",

"entailment",

"neutral"

] | Entry not found | 15 |

fergusq/finbert-finnsentiment | [

"NEGATIVE",

"NEUTRAL",

"POSITIVE"

] | ---

language: fi

---

# FinBERT fine-tuned with the FinnSentiment dataset

This is a FinBERT model fine-tuned with the [FinnSentiment dataset](https://arxiv.org/pdf/2012.02613.pdf).

| 182 |

wanyu/IteraTeR-ROBERTA-Intention-Classifier | [

"clarity",

"coherence",

"fluency",

"meaning-changed",

"style"

] | ---

datasets:

- IteraTeR_full_sent

---

# IteraTeR RoBERTa model

This model was obtained by fine-tuning [roberta-large](https://huggingface.co/roberta-large) on the [IteraTeR-human-sent](https://huggingface.co/datasets/wanyu/IteraTeR_human_sent) dataset.

Paper: [Understanding Iterative Revision from Human-Written Text](https://arxiv.org/abs/2203.03802) <br>

Authors: Wanyu Du, Vipul Raheja, Dhruv Kumar, Zae Myung Kim, Melissa Lopez, Dongyeop Kang

## Edit Intention Prediction Task

Given an original sentence and its revised version, the model predicts the edit intention for the revision pair.<br>

More specifically, the model will predict the probability of the following edit intentions:

<table>

<tr>

<th>Edit Intention</th>

<th>Definition</th>

<th>Example</th>

</tr>

<tr>

<td>clarity</td>

<td>Make the text more formal, concise, readable and understandable.</td>

<td>

Original: It's like a house which anyone can enter in it. <br>

Revised: It's like a house which anyone can enter.

</td>

</tr>

<tr>

<td>fluency</td>

<td>Fix grammatical errors in the text.</td>

<td>

Original: In the same year he became the Fellow of the Royal Society. <br>

Revised: In the same year, he became the Fellow of the Royal Society.

</td>

</tr>

<tr>

<td>coherence</td>

<td>Make the text more cohesive, logically linked and consistent as a whole.</td>

<td>

Original: Achievements and awards Among his other activities, he founded the Karachi Film Guild and Pakistan Film and TV Academy. <br>

Revised: Among his other activities, he founded the Karachi Film Guild and Pakistan Film and TV Academy.

</td>

</tr>

<tr>

<td>style</td>

<td>Convey the writer’s writing preferences, including emotions, tone, voice, etc.</td>

<td>

Original: She was last seen on 2005-10-22. <br>

Revised: She was last seen on October 22, 2005.

</td>

</tr>

<tr>

<td>meaning-changed</td>

<td>Update or add new information to the text.</td>

<td>

Original: This method improves the model accuracy from 64% to 78%. <br>

Revised: This method improves the model accuracy from 64% to 83%.

</td>

</tr>

</table>

## Usage

```python

import torch

from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained("wanyu/IteraTeR-ROBERTA-Intention-Classifier")

model = AutoModelForSequenceClassification.from_pretrained("wanyu/IteraTeR-ROBERTA-Intention-Classifier")

id2label = {0: "clarity", 1: "fluency", 2: "coherence", 3: "style", 4: "meaning-changed"}

before_text = 'I likes coffee.'

after_text = 'I like coffee.'

model_input = tokenizer(before_text, after_text, return_tensors='pt')

model_output = model(**model_input)

softmax_scores = torch.softmax(model_output.logits, dim=-1)

pred_id = torch.argmax(softmax_scores)

pred_label = id2label[pred_id.item()]  # .item() yields a plain int usable as a dict key

``` | 2,927 |

UT/BMW | null | Entry not found | 15 |

jonas/sdg_classifier_osdg | [

"1",

"10",

"11",

"12",

"13",

"14",

"15",

"2",

"3",

"4",

"5",

"6",

"7",

"8",

"9"

] | ---

language: en

widget:

- text: "Ending all forms of discrimination against women and girls is not only a basic human right, but it also crucial to accelerating sustainable development. It has been proven time and again, that empowering women and girls has a multiplier effect, and helps drive up economic growth and development across the board.

Since 2000, UNDP, together with our UN partners and the rest of the global community, has made gender equality central to our work. We have seen remarkable progress since then. More girls are now in school compared to 15 years ago, and most regions have reached gender parity in primary education. Women now make up to 41 percent of paid workers outside of agriculture, compared to 35 percent in 1990."

datasets:

- jonas/osdg_sdg_data_processed

co2_eq_emissions: 0.0653263174784986

---

# About

Machine Learning model for classifying text according to the first 15 of the 17 Sustainable Development Goals from the United Nations. Note that the model was trained on quite short paragraphs (around 100 words) and performs best with similar input sizes.

Data comes from the amazing https://osdg.ai/ community!

# Model Training Specifics

- Problem type: Multi-class Classification

- Model ID: 900229515

- CO2 Emissions (in grams): 0.0653263174784986

## Validation Metrics

- Loss: 0.3644874095916748

- Accuracy: 0.8972544579677328

- Macro F1: 0.8500873710954522

- Micro F1: 0.8972544579677328

- Weighted F1: 0.8937529692986061

- Macro Precision: 0.8694369727467804

- Micro Precision: 0.8972544579677328

- Weighted Precision: 0.8946984684977016

- Macro Recall: 0.8405065997404059

- Micro Recall: 0.8972544579677328

- Weighted Recall: 0.8972544579677328
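Micro precision, micro recall, micro F1 and accuracy are identical in the list above. That is expected for single-label multi-class classification, because every wrong prediction counts once as a false positive (for the predicted class) and once as a false negative (for the true class). The sketch below demonstrates this on made-up labels; it is not the evaluation code used for this model.

```python
def micro_scores(y_true, y_pred):
    """Micro-averaged precision, recall and F1 for single-label predictions."""
    labels = sorted(set(y_true) | set(y_pred))
    tp = fp = fn = 0
    for label in labels:
        for truth, pred in zip(y_true, y_pred):
            if pred == label and truth == label:
                tp += 1
            elif pred == label:
                fp += 1
            elif truth == label:
                fn += 1
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Toy data using SDG-style labels; the values are hypothetical.
y_true = ["1", "2", "3", "3", "5", "1"]
y_pred = ["1", "3", "3", "3", "5", "2"]
micro_p, micro_r, micro_f1 = micro_scores(y_true, y_pred)
print(micro_p, micro_r, micro_f1, accuracy(y_true, y_pred))
```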

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/jonas/autotrain-osdg-sdg-classifier-900229515

```

Or Python API:

```

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("jonas/sdg_classifier_osdg", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("jonas/sdg_classifier_osdg", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

``` | 2,365 |

tezign/Erlangshen-Sentiment-FineTune | null | ---

language: zh

tags:

- sentiment-analysis

- pytorch

widget:

- text: "房间非常非常小,内窗,特别不透气,因为夜里走廊灯光是亮的,内窗对着走廊,窗帘又不能完全拉死,怎么都会有一道光射进来。"

- text: "尽快有洗衣房就好了。"

- text: "很好,干净整洁,交通方便。"

- text: "干净整洁很好"

---

# Note

BERT-based sentiment analysis model, fine-tuned from https://huggingface.co/IDEA-CCNL/Erlangshen-Roberta-330M-Sentiment .

The model was trained on a **Chinese hotel human review dataset**.

# Usage

```python

from transformers import AutoTokenizer, AutoModelForSequenceClassification, TextClassificationPipeline

MODEL = "tezign/Erlangshen-Sentiment-FineTune"

tokenizer = AutoTokenizer.from_pretrained(MODEL)

model = AutoModelForSequenceClassification.from_pretrained(MODEL, trust_remote_code=True)

classifier = TextClassificationPipeline(model=model, tokenizer=tokenizer)

result = classifier("很好,干净整洁,交通方便。")

print(result)

"""

print result

>> [{'label': 'Positive', 'score': 0.989660382270813}]

"""

```

# Evaluate

We compared the performance of **our fine-tuned model** and the **original Erlangshen model** on the **hotel human review test dataset** (5429 negative reviews and 1251 positive reviews).

The results show that our model substantially improves the precision and recall for positive reviews:

```text

Our finetune model:

              precision    recall  f1-score   support

    Negative       0.99      0.98      0.98      5429
    Positive       0.92      0.95      0.93      1251

    accuracy                           0.97      6680
   macro avg       0.95      0.96      0.96      6680
weighted avg       0.97      0.97      0.97      6680

======================================================

Original Erlangshen model:

              precision    recall  f1-score   support

    Negative       0.81      1.00      0.90      5429
    Positive       0.00      0.00      0.00      1251

    accuracy                           0.81      6680
   macro avg       0.41      0.50      0.45      6680
weighted avg       0.66      0.81      0.73      6680

``` | 1,988 |

ReynaQuita/twitter_disaster_bert_large | null | Entry not found | 15 |

abhishek/autonlp-japanese-sentiment-59362 | [

"negative",

"positive"

] | ---

tags: autonlp

language: ja

widget:

- text: "I love AutoNLP 🤗"

datasets:

- abhishek/autonlp-data-japanese-sentiment

---

# Model Trained Using AutoNLP

- Problem type: Binary Classification

- Model ID: 59362

## Validation Metrics

- Loss: 0.13092292845249176

- Accuracy: 0.9527127414314258

- Precision: 0.9634070704982427

- Recall: 0.9842171959602166

- AUC: 0.9667289746092403

- F1: 0.9737009564152002
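
As a quick consistency check, the reported F1 can be recomputed from the precision and recall listed above, since F1 is their harmonic mean. The sketch below is only illustrative; the function name `f1_score` is an assumption and not part of AutoNLP.

```python
def f1_score(precision: float, recall: float) -> float:
    """Return the harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values copied from the validation metrics above.
precision = 0.9634070704982427
recall = 0.9842171959602166

f1 = f1_score(precision, recall)
print(round(f1, 4))  # 0.9737
```

The result agrees with the reported F1 to the printed precision.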

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoNLP"}' https://api-inference.huggingface.co/models/abhishek/autonlp-japanese-sentiment-59362

```

Or Python API:

```

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("abhishek/autonlp-japanese-sentiment-59362", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("abhishek/autonlp-japanese-sentiment-59362", use_auth_token=True)

inputs = tokenizer("I love AutoNLP", return_tensors="pt")

outputs = model(**inputs)

``` | 1,096 |

finiteautomata/bert-contextualized-hate-speech-es | [

"Hateful",

"Not hateful"

] | Entry not found | 15 |

google/tapas-large-finetuned-tabfact | null | ---

language: en

tags:

- tapas

- sequence-classification

license: apache-2.0

datasets:

- tab_fact

---

# TAPAS large model fine-tuned on Tabular Fact Checking (TabFact)

This model has 2 versions which can be used. The latest version, which is the default one, corresponds to the `tapas_tabfact_inter_masklm_large_reset` checkpoint of the [original Github repository](https://github.com/google-research/tapas).

This model was pre-trained on MLM and an additional step which the authors call intermediate pre-training, and then fine-tuned on [TabFact](https://github.com/wenhuchen/Table-Fact-Checking). It uses relative position embeddings by default (i.e. resetting the position index at every cell of the table).

The other (non-default) version which can be used is the one with absolute position embeddings:

- `no_reset`, which corresponds to `tapas_tabfact_inter_masklm_large`
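
To make the difference between the two variants concrete, here is a minimal sketch of how position indices could be assigned with and without resetting at every cell. It is a simplification under stated assumptions: the function `position_ids` and the toy cells are hypothetical, and the sentence tokens that precede the table in the real input are ignored.

```python
from typing import List


def position_ids(cells: List[List[str]], reset: bool = True) -> List[int]:
    """Assign a position index to every token of a flattened table.

    cells: one list of tokens per table cell, in reading order.
    reset: if True, restart counting at 0 for every cell (the default
           variant); if False, count across the whole sequence
           (the `no_reset` variant).
    """
    ids: List[int] = []
    position = 0
    for cell in cells:
        if reset:
            position = 0  # relative positions: start over in each cell
        for _ in cell:
            ids.append(position)
            position += 1
    return ids


# Toy table cells, already split into tokens (hypothetical data).
cells = [["new", "york"], ["8", "million"], ["paris"]]
print(position_ids(cells, reset=True))   # [0, 1, 0, 1, 0]
print(position_ids(cells, reset=False))  # [0, 1, 2, 3, 4]
```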

Disclaimer: The team releasing TAPAS did not write a model card for this model so this model card has been written by

the Hugging Face team and contributors.

## Model description

TAPAS is a BERT-like transformers model pretrained on a large corpus of English data from Wikipedia in a self-supervised fashion.

This means it was pretrained on the raw tables and associated texts only, with no humans labelling them in any way (which is why it

can use lots of publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely, it

was pretrained with two objectives:

- Masked language modeling (MLM): taking a (flattened) table and associated context, the model randomly masks 15% of the words in

the input, then runs the entire (partially masked) sequence through the model. The model then has to predict the masked words.

This is different from traditional recurrent neural networks (RNNs) that usually see the words one after the other,

or from autoregressive models like GPT which internally mask the future tokens. It allows the model to learn a bidirectional

representation of a table and associated text.

- Intermediate pre-training: to encourage numerical reasoning on tables, the authors additionally pre-trained the model by creating

a balanced dataset of millions of synthetically created training examples. Here, the model must predict (classify) whether a sentence

is supported or refuted by the contents of a table. The training examples are created based on synthetic as well as counterfactual statements.

This way, the model learns an inner representation of the English language used in tables and associated texts, which can then be used

to extract features useful for downstream tasks such as answering questions about a table, or determining whether a sentence is entailed

or refuted by the contents of a table. Fine-tuning is done by adding a classification head on top of the pre-trained model, and then

jointly training this randomly initialized classification head with the base model on TabFact.

## Intended uses & limitations

You can use this model for classifying whether a sentence is supported or refuted by the contents of a table.

For code examples, we refer to the documentation of TAPAS on the HuggingFace website.

## Training procedure

### Preprocessing

The texts are lowercased and tokenized using WordPiece and a vocabulary size of 30,000. The inputs of the model are

then of the form:

```

[CLS] Sentence [SEP] Flattened table [SEP]

```
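
The input layout above can be sketched in plain Python. This is only an illustration under assumptions: the helper `build_input` is hypothetical, the table values are made up, and WordPiece tokenization is left out.

```python
from typing import List


def build_input(sentence: str, header: List[str], rows: List[List[str]]) -> str:
    """Lowercase the sentence and the table, flatten the table row by row,
    and join both parts with the [CLS]/[SEP] markers."""
    cells = list(header)
    for row in rows:
        cells.extend(row)
    flattened = " ".join(cell.lower() for cell in cells)
    return f"[CLS] {sentence.lower()} [SEP] {flattened} [SEP]"


# Made-up statement and table, for illustration only.
example = build_input(
    "Paris is larger than Rome",
    header=["City", "Population"],
    rows=[["Paris", "2.1M"], ["Rome", "2.8M"]],
)
print(example)
# [CLS] paris is larger than rome [SEP] city population paris 2.1m rome 2.8m [SEP]
```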

### Fine-tuning

The model was fine-tuned on 32 Cloud TPU v3 cores for 80,000 steps with maximum sequence length 512 and batch size of 512.

In this setup, fine-tuning takes around 14 hours. The optimizer used is Adam with a learning rate of 2e-5, and a warmup

ratio of 0.05. See the [paper](https://arxiv.org/abs/2010.00571) for more details (appendix A2).
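
To make the warmup ratio concrete: with 80,000 steps and a ratio of 0.05, the first 4,000 steps are warmup. The sketch below computes this and a possible learning-rate curve. The linear decay to zero after warmup is an assumption made for illustration; the text above only states the peak rate and the warmup ratio.

```python
TOTAL_STEPS = 80_000
WARMUP_RATIO = 0.05
PEAK_LR = 2e-5

# Number of steps spent ramping the learning rate up.
warmup_steps = round(TOTAL_STEPS * WARMUP_RATIO)


def learning_rate(step: int) -> float:
    """Learning rate at a given step: linear warmup, then linear decay."""
    if step < warmup_steps:
        return PEAK_LR * step / warmup_steps
    # Assumption: linear decay to zero after warmup (not stated in the card).
    remaining = max(TOTAL_STEPS - step, 0)
    return PEAK_LR * remaining / (TOTAL_STEPS - warmup_steps)


print(warmup_steps)  # 4000
print(learning_rate(2_000))   # halfway through warmup
print(learning_rate(80_000))  # end of training
```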

### BibTeX entry and citation info

```bibtex

@misc{herzig2020tapas,

title={TAPAS: Weakly Supervised Table Parsing via Pre-training},

author={Jonathan Herzig and Paweł Krzysztof Nowak and Thomas Müller and Francesco Piccinno and Julian Martin Eisenschlos},

year={2020},

eprint={2004.02349},

archivePrefix={arXiv},

primaryClass={cs.IR}

}

```

```bibtex

@misc{eisenschlos2020understanding,

title={Understanding tables with intermediate pre-training},

author={Julian Martin Eisenschlos and Syrine Krichene and Thomas Müller},

year={2020},

eprint={2010.00571},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

```bibtex

@inproceedings{2019TabFactA,

title={TabFact : A Large-scale Dataset for Table-based Fact Verification},

author={Wenhu Chen and Hongmin Wang and Jianshu Chen and Yunkai Zhang and Hong Wang and Shiyang Li and Xiyou Zhou and William Yang Wang},

booktitle = {International Conference on Learning Representations (ICLR)},

address = {Addis Ababa, Ethiopia},

month = {April},

year = {2020}

}

``` | 4,870 |

nateraw/codecarbon-text-classification | null | ---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

model-index:

- name: codecarbon-text-classification

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# codecarbon-text-classification

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the imdb dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 4

### Training results

### Framework versions

- Transformers 4.16.2

- Pytorch 1.10.0+cu111

- Datasets 1.18.3

- Tokenizers 0.11.0

| 1,067 |

ykacer/bert-base-cased-imdb-sequence-classification | null |

---

language:

- en

thumbnail: https://raw.githubusercontent.com/JetRunner/BERT-of-Theseus/master/bert-of-theseus.png

tags:

- sequence

- classification

license: apache-2.0

datasets:

- imdb

metrics:

- accuracy

---

| 213 |

rasta/distilbert-base-uncased-finetuned-fashion | null | ---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: distilbert-base-uncased-finetuned-fashion

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-fashion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on a manually created dataset, trained to distinguish fashion (label_0) from non-fashion (label_1) items.

It achieves the following results on the evaluation set:

- Loss: 0.0809

- Accuracy: 0.98

- F1: 0.9801

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.4017 | 1.0 | 47 | 0.1220 | 0.966 | 0.9662 |

| 0.115 | 2.0 | 94 | 0.0809 | 0.98 | 0.9801 |

### Framework versions

- Transformers 4.18.0

- Pytorch 1.11.0+cu113

- Datasets 2.1.0

- Tokenizers 0.12.1

| 1,394 |

tinkoff-ai/response-quality-classifier-base | [

"relevance",

"specificity"

] | ---

license: mit

widget:

- text: "[CLS]привет[SEP]привет![SEP]как дела?[RESPONSE_TOKEN]супер, вот только проснулся, у тебя как?"

example_title: "Dialog example 1"

- text: "[CLS]привет[SEP]привет![SEP]как дела?[RESPONSE_TOKEN]норм"

example_title: "Dialog example 2"

- text: "[CLS]привет[SEP]привет![SEP]как дела?[RESPONSE_TOKEN]норм, у тя как?"

example_title: "Dialog example 3"

language:

- ru

tags:

- conversational

---

This classification model is based on [DeepPavlov/rubert-base-cased-sentence](https://huggingface.co/DeepPavlov/rubert-base-cased-sentence).

The model scores the relevance and specificity of the last message in the context of a dialogue.

Label explanations:

- `relevance`: whether the last message in the dialogue is relevant in the context of the full dialogue.

- `specificity`: whether the last message in the dialogue is interesting and promotes the continuation of the dialogue.

The model was pretrained on a large corpus of dialog data in an unsupervised manner: it was trained to predict whether the last response came from the real dialog or was pulled from some other dialog at random.

It was then fine-tuned on manually labelled examples (dataset will be posted soon).

The model was trained with three messages in the context and one response. Each message was tokenized separately with `max_length = 32`.

The performance of the model on validation split (dataset will be posted soon) (with the best thresholds for validation samples):

| | threshold | f0.5 | ROC AUC |

|:------------|------------:|-------:|----------:|

| relevance | 0.49 | 0.84 | 0.79 |

| specificity | 0.53 | 0.83 | 0.83 |
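
The thresholds in the table can be applied to the model's two probabilities to get yes/no decisions. A minimal sketch, assuming the probabilities are already available as plain floats; the helper `apply_thresholds`, the `>=` comparison, and the example scores are illustrative assumptions, not part of the released code.

```python
from typing import Dict

# Best validation thresholds, copied from the table above.
THRESHOLDS: Dict[str, float] = {"relevance": 0.49, "specificity": 0.53}


def apply_thresholds(probabilities: Dict[str, float]) -> Dict[str, bool]:
    """Turn per-label probabilities into boolean decisions."""
    return {
        label: probabilities[label] >= threshold  # assumption: inclusive comparison
        for label, threshold in THRESHOLDS.items()
    }


# Made-up probabilities for one response.
decisions = apply_thresholds({"relevance": 0.71, "specificity": 0.40})
print(decisions)  # {'relevance': True, 'specificity': False}
```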

How to use:

```python

import torch

from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained('tinkoff-ai/response-quality-classifier-base')

model = AutoModelForSequenceClassification.from_pretrained('tinkoff-ai/response-quality-classifier-base')

inputs = tokenizer('[CLS]привет[SEP]привет![SEP]как дела?[RESPONSE_TOKEN]норм, у тя как?', max_length=128, add_special_tokens=False, return_tensors='pt')

with torch.inference_mode():

    logits = model(**inputs).logits

    # one independent sigmoid probability per label
    probas = torch.sigmoid(logits)[0].cpu().detach().numpy()

relevance, specificity = probas

```
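
The input string used in the snippet above can be assembled from a list of context messages and a response. The helper below is a hypothetical convenience function, not part of the released code, and it does not apply the per-message truncation described earlier.

```python
from typing import List


def build_dialog_input(context: List[str], response: str) -> str:
    """Join context messages and the response with the special tokens
    used in the usage example: [CLS], [SEP] and [RESPONSE_TOKEN]."""
    if not context:
        raise ValueError("context must contain at least one message")
    return "[CLS]" + "[SEP]".join(context) + "[RESPONSE_TOKEN]" + response


text = build_dialog_input(["привет", "привет!", "как дела?"], "норм, у тя как?")
print(text)
# [CLS]привет[SEP]привет![SEP]как дела?[RESPONSE_TOKEN]норм, у тя как?
```

The resulting string can be passed to the tokenizer with `add_special_tokens=False`, as in the snippet above.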

You can easily interact with this model in the [app](https://huggingface.co/spaces/tinkoff-ai/response-quality-classifiers).

The work was done during internship at Tinkoff by [egoriyaa](https://github.com/egoriyaa), mentored by [solemn-leader](https://huggingface.co/solemn-leader). | 2,593 |

PrimeQA/tydiqa-boolean-answer-classifier | [

"LABEL_0",

"LABEL_1",

"LABEL_2"

] | ---

license: apache-2.0

---

## Model description

An answer classification model for boolean questions based on XLM-RoBERTa.

The answer classifier takes as input a boolean question and a passage, and returns a label (yes, no-answer, no).

The model was initialized with [xlm-roberta-large](https://huggingface.co/xlm-roberta-large) and fine-tuned on the boolean questions from [TyDiQA](https://huggingface.co/datasets/tydiqa), as well as [BoolQ-X](https://arxiv.org/abs/2112.07772#).

## Intended uses & limitations

You can use the raw model for question classification. Biases associated with the pre-existing language model, xlm-roberta-large, may be present in our fine-tuned model, tydiqa-boolean-answer-classifier.

## Usage

You can use this model directly in the [PrimeQA](https://github.com/primeqa/primeqa) framework for supporting boolean questions in reading comprehension: [examples](https://github.com/primeqa/primeqa/tree/main/examples/boolqa).

### BibTeX entry and citation info

```bibtex

@article{Rosenthal2021DoAT,

title={Do Answers to Boolean Questions Need Explanations? Yes},

author={Sara Rosenthal and Mihaela A. Bornea and Avirup Sil and Radu Florian and Scott McCarley},

journal={ArXiv},

year={2021},

volume={abs/2112.07772}

}

```

```bibtex

@misc{https://doi.org/10.48550/arxiv.2206.08441,

author = {McCarley, Scott and

Bornea, Mihaela and

Rosenthal, Sara and

Ferritto, Anthony and

Sultan, Md Arafat and

Sil, Avirup and

Florian, Radu},

title = {GAAMA 2.0: An Integrated System that Answers Boolean and Extractive Questions},

journal = {CoRR},

publisher = {arXiv},

year = {2022},

url = {https://arxiv.org/abs/2206.08441},

}

``` | 1,770 |

Tomas23/twitter-roberta-base-mar2022-finetuned-sentiment | [

"negative",

"neutral",

"positive"

] | Entry not found | 15 |

okho0653/Bio_ClinicalBERT-zero-shot-tokenizer-truncation-sentiment-model | null | ---

license: mit

tags:

- generated_from_trainer

model-index:

- name: Bio_ClinicalBERT-zero-shot-tokenizer-truncation-sentiment-model

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Bio_ClinicalBERT-zero-shot-tokenizer-truncation-sentiment-model

This model is a fine-tuned version of [emilyalsentzer/Bio_ClinicalBERT](https://huggingface.co/emilyalsentzer/Bio_ClinicalBERT) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Framework versions

- Transformers 4.20.1

- Pytorch 1.11.0+cu113

- Datasets 2.3.2

- Tokenizers 0.12.1

| 1,118 |

adamnik/electra-event-detection | null | ---

license: mit

---

| 21 |

Cameron/BERT-mdgender-convai-binary | null | Entry not found | 15 |

LilaBoualili/bert-sim-pair | null | At its core, it uses a BERT-Base model (bert-base-uncased) fine-tuned on the MS MARCO passage classification task using the Sim-Pair marking strategy, which highlights exact term matches between the query and the passage via marker tokens (#). It can be loaded using the `AutoModelForSequenceClassification` or `TFAutoModelForSequenceClassification` classes.

Refer to our [github repository](https://github.com/BOUALILILila/ExactMatchMarking) for a usage example for ad hoc ranking.

| 441 |

SetFit/distilbert-base-uncased__sst5__all-train | [

"negative",

"neutral",

"positive",

"very negative",

"very positive"

] | ---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: distilbert-base-uncased__sst5__all-train

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased__sst5__all-train

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.3757

- Accuracy: 0.5045

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 50

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 1.2492 | 1.0 | 534 | 1.1163 | 0.4991 |

| 0.9937 | 2.0 | 1068 | 1.1232 | 0.5122 |

| 0.7867 | 3.0 | 1602 | 1.2097 | 0.5045 |

| 0.595 | 4.0 | 2136 | 1.3757 | 0.5045 |
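
The table shows training loss falling while validation loss rises, so the last epoch is not necessarily the best checkpoint. The sketch below picks the best epoch by validation loss and by accuracy from the rows above; the tuple layout and variable names are illustrative assumptions.

```python
# (epoch, training loss, validation loss, accuracy), copied from the table above.
rows = [
    (1, 1.2492, 1.1163, 0.4991),
    (2, 0.9937, 1.1232, 0.5122),
    (3, 0.7867, 1.2097, 0.5045),
    (4, 0.5950, 1.3757, 0.5045),
]

best_by_loss = min(rows, key=lambda row: row[2])      # lowest validation loss
best_by_accuracy = max(rows, key=lambda row: row[3])  # highest accuracy

print(best_by_loss[0])      # 1
print(best_by_accuracy[0])  # 2
```

The headline metrics reported for this model (loss 1.3757, accuracy 0.5045) match the epoch 4 row rather than either of these.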

### Framework versions

- Transformers 4.15.0

- Pytorch 1.10.1+cu102

- Datasets 1.17.0

- Tokenizers 0.10.3

| 1,613 |

Narsil/bart-large-mnli-opti | [

"contradiction",

"entailment",

"neutral"

] | ---

license: mit

thumbnail: https://huggingface.co/front/thumbnails/facebook.png

pipeline_tag: zero-shot-classification

datasets:

- multi_nli

---

# bart-large-mnli

This is the checkpoint for [bart-large](https://huggingface.co/facebook/bart-large) after being trained on the [MultiNLI (MNLI)](https://huggingface.co/datasets/multi_nli) dataset.

Additional information about this model:

- The [bart-large](https://huggingface.co/facebook/bart-large) model page

- [BART: Denoising Sequence-to-Sequence Pre-training for Natural Language Generation, Translation, and Comprehension

](https://arxiv.org/abs/1910.13461)

- [BART fairseq implementation](https://github.com/pytorch/fairseq/tree/master/fairseq/models/bart)

## NLI-based Zero Shot Text Classification

[Yin et al.](https://arxiv.org/abs/1909.00161) proposed a method for using pre-trained NLI models as ready-made zero-shot sequence classifiers. The method works by posing the sequence to be classified as the NLI premise and constructing a hypothesis from each candidate label. For example, if we want to evaluate whether a sequence belongs to the class "politics", we could construct a hypothesis of `This text is about politics.`. The probabilities for entailment and contradiction are then converted to label probabilities.

This method is surprisingly effective in many cases, particularly when used with larger pre-trained models like BART and Roberta. See [this blog post](https://joeddav.github.io/blog/2020/05/29/ZSL.html) for a more expansive introduction to this and other zero shot methods, and see the code snippets below for examples of using this model for zero-shot classification both with Hugging Face's built-in pipeline and with native Transformers/PyTorch code.

#### With the zero-shot classification pipeline

The model can be loaded with the `zero-shot-classification` pipeline like so:

```python

from transformers import pipeline

classifier = pipeline("zero-shot-classification",

model="facebook/bart-large-mnli")

```

You can then use this pipeline to classify sequences into any of the class names you specify.

```python

sequence_to_classify = "one day I will see the world"

candidate_labels = ['travel', 'cooking', 'dancing']

classifier(sequence_to_classify, candidate_labels)

#{'labels': ['travel', 'dancing', 'cooking'],

# 'scores': [0.9938651323318481, 0.0032737774308770895, 0.002861034357920289],

# 'sequence': 'one day I will see the world'}

```

If more than one candidate label can be correct, pass `multi_label=True` (called `multi_class` in older versions of Transformers) to calculate each class independently:

```python

candidate_labels = ['travel', 'cooking', 'dancing', 'exploration']

classifier(sequence_to_classify, candidate_labels, multi_label=True)

#{'labels': ['travel', 'exploration', 'dancing', 'cooking'],

# 'scores': [0.9945111274719238,

# 0.9383890628814697,

# 0.0057061901316046715,

# 0.0018193122232332826],

# 'sequence': 'one day I will see the world'}

```

#### With manual PyTorch

```python

# pose sequence as a NLI premise and label as a hypothesis

import torch

from transformers import AutoModelForSequenceClassification, AutoTokenizer

# use a GPU when one is available
device = 'cuda' if torch.cuda.is_available() else 'cpu'

nli_model = AutoModelForSequenceClassification.from_pretrained('facebook/bart-large-mnli').to(device)

tokenizer = AutoTokenizer.from_pretrained('facebook/bart-large-mnli')

# example inputs; replace with your own sequence and candidate label
sequence = 'one day I will see the world'

label = 'travel'

premise = sequence

hypothesis = f'This example is {label}.'

# run through model pre-trained on MNLI

x = tokenizer.encode(premise, hypothesis, return_tensors='pt',

                     truncation='only_first')

logits = nli_model(x.to(device))[0]

# we throw away "neutral" (dim 1) and take the probability of

# "entailment" (2) as the probability of the label being true

entail_contradiction_logits = logits[:,[0,2]]

probs = entail_contradiction_logits.softmax(dim=1)

prob_label_is_true = probs[:,1]

```
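
The last step of the snippet above, dropping "neutral" and taking a softmax over contradiction and entailment, can be checked without loading the model. A minimal pure-Python sketch; the function name and the logits are made up for illustration.

```python
import math
from typing import Dict, Tuple


def entailment_probability(contradiction_logit: float, entailment_logit: float) -> float:
    """Softmax over [contradiction, entailment]; returns P(entailment)."""
    top = max(contradiction_logit, entailment_logit)  # subtract max for stability
    exp_c = math.exp(contradiction_logit - top)
    exp_e = math.exp(entailment_logit - top)
    return exp_e / (exp_c + exp_e)


# Hypothetical NLI logits per candidate label, ordered as
# (contradiction, neutral, entailment) like the model output above.
logits: Dict[str, Tuple[float, float, float]] = {
    "travel": (-2.5, 0.1, 3.0),
    "cooking": (2.0, 0.3, -3.1),
}

for label, (contradiction, _neutral, entailment) in logits.items():
    print(label, round(entailment_probability(contradiction, entailment), 3))
```

Because each label is scored on its own, the probabilities do not need to sum to one across labels.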

| 3,793 |

anahitapld/dbd_bert_da_simple | [

"LABEL_0",

"LABEL_1",

"LABEL_10",

"LABEL_11",

"LABEL_12",

"LABEL_13",

"LABEL_14",

"LABEL_15",

"LABEL_16",

"LABEL_17",

"LABEL_18",

"LABEL_19",

"LABEL_2",

"LABEL_20",

"LABEL_21",

"LABEL_22",

"LABEL_23",

"LABEL_24",

"LABEL_25",

"LABEL_26",

"LABEL_27",

"LABEL_28",

"LABEL_29",

"LABEL_3",

"LABEL_30",

"LABEL_31",

"LABEL_32",

"LABEL_33",

"LABEL_34",

"LABEL_35",

"LABEL_36",

"LABEL_37",

"LABEL_38",

"LABEL_39",

"LABEL_4",

"LABEL_40",

"LABEL_41",

"LABEL_42",

"LABEL_5",

"LABEL_6",

"LABEL_7",

"LABEL_8",

"LABEL_9"

] | ---

license: apache-2.0

---

| 28 |

StanfordAIMI/covid-radbert | [

"no COVID-19",

"uncertain COVID-19",

"COVID-19"

] | ---

widget:

- text: "procedure: single ap view of the chest comparison: none findings: no surgical hardware nor tubes. lungs, pleura: low lung volumes, bilateral airspace opacities. no pneumothorax or pleural effusion. cardiovascular and mediastinum: the cardiomediastinal silhouette seems stable. impression: 1. patchy bilateral airspace opacities, stable, but concerning for multifocal pneumonia. 2. absence of other suspicions, the rest of the lungs seems fine."

- text: "procedure: single ap view of the chest comparison: none findings: No surgical hardware nor tubes. lungs, pleura: low lung volumes, bilateral airspace opacities. no pneumothorax or pleural effusion. cardiovascular and mediastinum: the cardiomediastinal silhouette seems stable. impression: 1. patchy bilateral airspace opacities, stable. 2. some areas are suggestive that pneumonia can not be excluded. 3. recommended to follow-up shortly and check if there are additional symptoms"

tags:

- text-classification

- pytorch

- transformers

- uncased

- radiology

- biomedical

- covid-19

- covid19

language:

- en

license: mit

---

COVID-RadBERT was trained to detect the presence or absence of COVID-19 within radiology reports, along with an "uncertain" diagnosis when further medical tests are required. Manuscript in-proceedings. | 1,299 |

airKlizz/xlm-roberta-base-germeval21-toxic-with-data-augmentation | null | Entry not found | 15 |

aubmindlab/aragpt2-mega-detector-long | [

"human-written",

"machine-generated"

] | ---

language: ar

widget:

- text: "وإذا كان هناك من لا يزال يعتقد أن لبنان هو سويسرا الشرق ، فهو مخطئ إلى حد بعيد . فلبنان ليس سويسرا ، ولا يمكن أن يكون كذلك . لقد عاش اللبنانيون في هذا البلد منذ ما يزيد عن ألف وخمسمئة عام ، أي منذ تأسيس الإمارة الشهابية التي أسسها الأمير فخر الدين المعني الثاني ( 1697 - 1742 )"

---

# AraGPT2 Detector

Machine-generated text detector model from the [AraGPT2: Pre-Trained Transformer for Arabic Language Generation paper](https://arxiv.org/abs/2012.15520).

This model was trained on long text passages and achieves a 99.4% F1-score.

# How to use it:

```python

from transformers import pipeline

from arabert.preprocess import ArabertPreprocessor

processor = ArabertPreprocessor(model="aubmindlab/araelectra-base-discriminator")

pipe = pipeline("sentiment-analysis", model = "aubmindlab/aragpt2-mega-detector-long")

text = " "

text_prep = processor.preprocess(text)

result = pipe(text_prep)

# [{'label': 'machine-generated', 'score': 0.9977743625640869}]

```

# If you use this model, please cite us as:

```

@misc{antoun2020aragpt2,

title={AraGPT2: Pre-Trained Transformer for Arabic Language Generation},

author={Wissam Antoun and Fady Baly and Hazem Hajj},

year={2020},

eprint={2012.15520},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

# Contacts

**Wissam Antoun**: [Linkedin](https://www.linkedin.com/in/wissam-antoun-622142b4/) | [Twitter](https://twitter.com/wissam_antoun) | [Github](https://github.com/WissamAntoun) | <wfa07@mail.aub.edu> | <wissam.antoun@gmail.com>

**Fady Baly**: [Linkedin](https://www.linkedin.com/in/fadybaly/) | [Twitter](https://twitter.com/fadybaly) | [Github](https://github.com/fadybaly) | <fgb06@mail.aub.edu> | <baly.fady@gmail.com> | 1,749 |

cardiffnlp/bertweet-base-stance-climate | [

"LABEL_0",

"LABEL_1",

"LABEL_2"

] | 0 | |

mrm8488/flaubert-small-finetuned-movie-review-sentiment-analysis | null | Entry not found | 15 |

unicamp-dl/mMiniLM-L6-v2-mmarco-v1 | [

"LABEL_0"

] | ---

language: pt

license: mit

tags:

- msmarco

- miniLM

- pytorch

- tensorflow

- pt

- pt-br

datasets:

- msmarco

widget:

- text: "Texto de exemplo em português"

inference: false

---

# mMiniLM-L6-v2 Reranker finetuned on mMARCO

## Introduction

mMiniLM-L6-v2-mmarco-v1 is a multilingual MiniLM-based model fine-tuned on a multilingual version of the MS MARCO passage dataset. This dataset, named mMARCO, consists of passages in 9 different languages, translated from the English MS MARCO passage collection.

In version v1, the datasets were translated using [Helsinki](https://huggingface.co/Helsinki-NLP) NMT models. Further information about the dataset and the translation method can be found in our paper [**mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset**](https://arxiv.org/abs/2108.13897) and in the [mMARCO](https://github.com/unicamp-dl/mMARCO) repository.

## Usage

```python

from transformers import AutoTokenizer, AutoModel

model_name = 'unicamp-dl/mMiniLM-L6-v2-mmarco-v1'

tokenizer = AutoTokenizer.from_pretrained(model_name)

model = AutoModel.from_pretrained(model_name)

```

# Citation

If you use mMiniLM-L6-v2-mmarco-v1, please cite:

@misc{bonifacio2021mmarco,

title={mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset},

author={Luiz Henrique Bonifacio and Vitor Jeronymo and Hugo Queiroz Abonizio and Israel Campiotti and Marzieh Fadaee and Roberto Lotufo and Rodrigo Nogueira},

year={2021},

eprint={2108.13897},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

| 1,545 |

HiTZ/A2T_RoBERTa_SMFA_WikiEvents-arg_ACE-arg | [

"contradiction",

"entailment",

"neutral"

] | ---

pipeline_tag: zero-shot-classification

datasets:

- snli

- anli

- multi_nli

- multi_nli_mismatch

- fever

---

# A2T Entailment model

**Important:** These pretrained entailment models are intended to be used with the [Ask2Transformers](https://github.com/osainz59/Ask2Transformers) library but are also fully compatible with the `ZeroShotTextClassificationPipeline` from [Transformers](https://github.com/huggingface/Transformers).

Textual Entailment (or Natural Language Inference) has turned out to be a good choice for zero-shot text classification problems [(Yin et al., 2019](https://aclanthology.org/D19-1404/); [Wang et al., 2021](https://arxiv.org/abs/2104.14690); [Sainz and Rigau, 2021)](https://aclanthology.org/2021.gwc-1.6/). Recent research addressed Information Extraction problems with the same idea [(Lyu et al., 2021](https://aclanthology.org/2021.acl-short.42/); [Sainz et al., 2021](https://aclanthology.org/2021.emnlp-main.92/); [Sainz et al., 2022a](), [Sainz et al., 2022b)](https://arxiv.org/abs/2203.13602). The A2T entailment models are first trained with NLI datasets such as MNLI [(Williams et al., 2018)](), SNLI [(Bowman et al., 2015)]() and/or ANLI [(Nie et al., 2020)]() and then fine-tuned on specific tasks that were previously converted to textual entailment format.

For more information, please take a look at the [Ask2Transformers](https://github.com/osainz59/Ask2Transformers) library or the following published papers:

- [Label Verbalization and Entailment for Effective Zero and Few-Shot Relation Extraction (Sainz et al., EMNLP 2021)](https://aclanthology.org/2021.emnlp-main.92/)

- [Textual Entailment for Event Argument Extraction: Zero- and Few-Shot with Multi-Source Learning (Sainz et al., Findings of NAACL-HLT 2022)]()

## About the model

The model name describes the configuration used for training as follows:

<!-- $$\text{HiTZ/A2T\_[pretrained\_model]\_[NLI\_datasets]\_[finetune\_datasets]}$$ -->

<h3 align="center">HiTZ/A2T_[pretrained_model]_[NLI_datasets]_[finetune_datasets]</h3>

- `pretrained_model`: The checkpoint used for initialization. For example: RoBERTa<sub>large</sub>.

- `NLI_datasets`: The NLI datasets used for pivot training.

  - `S`: Stanford Natural Language Inference (SNLI) dataset.

  - `M`: Multi Natural Language Inference (MNLI) dataset.

  - `F`: Fever-nli dataset.

  - `A`: Adversarial Natural Language Inference (ANLI) dataset.

- `finetune_datasets`: The datasets used for fine tuning the entailment model. Note that for more than 1 dataset the training was performed sequentially. For example: ACE-arg.
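
The naming scheme above can be decoded mechanically. The sketch below is a hypothetical helper, not part of Ask2Transformers, and it assumes that none of the name components contains an underscore.

```python
from typing import Dict, List, Union

# One-letter codes for the NLI datasets, as listed above.
NLI_CODES: Dict[str, str] = {"S": "SNLI", "M": "MNLI", "F": "Fever-nli", "A": "ANLI"}


def parse_a2t_name(model_id: str) -> Dict[str, Union[str, List[str]]]:
    """Split an A2T model name into its three configuration parts."""
    prefix = "HiTZ/A2T_"
    if not model_id.startswith(prefix):
        raise ValueError(f"not an A2T model name: {model_id}")
    parts = model_id[len(prefix):].split("_")
    if len(parts) < 2:
        raise ValueError(f"missing NLI dataset codes: {model_id}")
    pretrained_model, nli_codes, *finetune_datasets = parts
    return {
        "pretrained_model": pretrained_model,
        "nli_datasets": [NLI_CODES[code] for code in nli_codes],
        # Listed in the order they appear in the name.
        "finetune_datasets": finetune_datasets,
    }


info = parse_a2t_name("HiTZ/A2T_RoBERTa_SMFA_WikiEvents-arg_ACE-arg")
print(info["pretrained_model"])   # RoBERTa
print(info["nli_datasets"])       # ['SNLI', 'MNLI', 'Fever-nli', 'ANLI']
print(info["finetune_datasets"])  # ['WikiEvents-arg', 'ACE-arg']
```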

Some models like `HiTZ/A2T_RoBERTa_SMFA_ACE-arg` have been trained marking some information between square brackets (`'[['` and `']]'`) like the event trigger span. Make sure you follow the same preprocessing in order to obtain the best results.

## Cite

If you use this model, consider citing the following publications:

```bibtex

@inproceedings{sainz-etal-2021-label,

title = "Label Verbalization and Entailment for Effective Zero and Few-Shot Relation Extraction",

author = "Sainz, Oscar and

Lopez de Lacalle, Oier and

Labaka, Gorka and

Barrena, Ander and

Agirre, Eneko",

booktitle = "Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing",

month = nov,

year = "2021",

address = "Online and Punta Cana, Dominican Republic",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2021.emnlp-main.92",

doi = "10.18653/v1/2021.emnlp-main.92",

pages = "1199--1212",

}

``` | 3,612 |

aomar85/fine-tuned-arabert-random-negative | null | ---

tags:

- generated_from_trainer

metrics:

- accuracy

- precision

- recall

- f1

model-index:

- name: fine-tuned-arabert-random-negative

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# fine-tuned-arabert-random-negative

This model is a fine-tuned version of [aubmindlab/bert-base-arabertv02](https://huggingface.co/aubmindlab/bert-base-arabertv02) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0080

- Accuracy: 0.9989

- Precision: 0.9990

- Recall: 0.9988

- F1: 0.9989

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10

- mixed_precision_training: Native AMP
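To make the `linear` scheduler setting concrete, here is a small sketch of a linearly decaying learning rate. It assumes zero warmup steps (the card does not state a warmup) and a schedule planned over all 10 epochs at 62,920 steps per epoch, the per-epoch step count reported in this card's results table; `linear_lr` is a hypothetical helper, not the Trainer's own code.

```python
BASE_LR = 2e-5            # learning_rate from the hyperparameter list
STEPS_PER_EPOCH = 62920   # step count after epoch 1 in the results table
NUM_EPOCHS = 10           # num_epochs from the hyperparameter list
TOTAL_STEPS = STEPS_PER_EPOCH * NUM_EPOCHS


def linear_lr(step: int, total_steps: int = TOTAL_STEPS,
              base_lr: float = BASE_LR, warmup_steps: int = 0) -> float:
    """Learning rate at `step` under linear warmup followed by linear decay.

    warmup_steps=0 is an assumption; the card does not report a warmup.
    """
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for epoch in (0, 4, 10):
    step = epoch * STEPS_PER_EPOCH
    print(f"epoch {epoch:2d}  step {step:6d}  lr {linear_lr(step):.2e}")
```

Under these assumptions, the rate decays from 2e-05 at the start to zero at the final planned step.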

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 |

|:-------------:|:-----:|:------:|:---------------:|:--------:|:---------:|:------:|:------:|

| 0.0105 | 1.0 | 62920 | 0.0061 | 0.9986 | 0.9993 | 0.9979 | 0.9986 |

| 0.0069 | 2.0 | 125840 | 0.0096 | 0.9986 | 0.9993 | 0.9979 | 0.9986 |

| 0.0058 | 3.0 | 188760 | 0.0084 | 0.9988 | 0.9988 | 0.9988 | 0.9988 |

| 0.0047 | 4.0 | 251680 | 0.0080 | 0.9989 | 0.9990 | 0.9988 | 0.9989 |
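As a quick consistency check on the table, F1 is the harmonic mean of precision and recall, $F_1 = 2PR / (P + R)$. The sketch below recomputes it for the reported rows; the helper name `f1_from` is an assumption, and the comparison uses a tolerance because the table values are rounded to four decimals.

```python
def f1_from(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) for the distinct rows of the table.
rows = [
    (0.9993, 0.9979, 0.9986),  # epochs 1 and 2
    (0.9988, 0.9988, 0.9988),  # epoch 3
    (0.9990, 0.9988, 0.9989),  # epoch 4
]

for precision, recall, reported in rows:
    computed = f1_from(precision, recall)
    print(f"P={precision:.4f} R={recall:.4f} "
          f"F1 computed={computed:.4f} reported={reported:.4f}")
```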

### Framework versions

- Transformers 4.19.2

- Pytorch 1.11.0+cu113

- Datasets 2.2.2

- Tokenizers 0.12.1

| 1,864 |

sschellhammer/SciTweets_SciBert | [

"LABEL_0",

"LABEL_1",

"LABEL_2"

] | ---

license: cc-by-4.0

widget:

- text: "Study: Shifts in electricity generation spur net job growth, but coal jobs decline - via @DukeU https://www.eurekalert.org/news-releases/637217"

example_title: "All categories"

- text: "Shifts in electricity generation spur net job growth, but coal jobs decline"

example_title: "Only Cat 1.1"

- text: "Study on impacts of electricity generation shift via @DukeU https://www.eurekalert.org/news-releases/637217"

example_title: "Only Cat 1.2 and 1.3"

- text: "@DukeU received grant for research on electricity generation shift"

example_title: "Only Cat 1.3"

---

This SciBert-based multi-label classifier, trained as part of the work "SciTweets - A Dataset and Annotation Framework for Detecting Scientific Online Discourse", distinguishes three different forms of science-relatedness for Tweets. See details at https://github.com/AI-4-Sci/SciTweets . | 896 |

Theivaprakasham/sentence-transformers-paraphrase-MiniLM-L6-v2-twitter_sentiment | [

"LABEL_0",

"LABEL_1",

"LABEL_2"

] | Entry not found | 15 |

TransQuest/monotransquest-da-any_en | [

"LABEL_0"

] | ---

language: multilingual-en

tags:

- Quality Estimation

- monotransquest

- DA

license: apache-2.0

---

# TransQuest: Translation Quality Estimation with Cross-lingual Transformers

The goal of quality estimation (QE) is to evaluate the quality of a translation without having access to a reference translation. High-accuracy QE that can be easily deployed for a number of language pairs is the missing piece in many commercial translation workflows, where it has numerous potential uses. QE systems can be employed to select the best translation when several translation engines are available, or to inform the end user about the reliability of automatically translated content. In addition, they can be used to decide whether a translation can be published as is in a given context, whether it requires human post-editing before publishing, or whether it should be translated from scratch by a human. Quality estimation can be done at different levels: document level, sentence level and word level.
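The publish / post-edit / retranslate decision described above can be sketched as a simple thresholding rule on a sentence-level quality score. The function name `route_translation` and both threshold values are hypothetical; suitable cut-offs depend on the model's score scale and would need to be calibrated on your own data.

```python
def route_translation(score: float,
                      publish_at: float = 0.7,
                      post_edit_at: float = 0.3) -> str:
    """Map a predicted quality score to a workflow decision.

    Both thresholds are illustrative placeholders, not values recommended
    by TransQuest; higher scores are assumed to mean better translations.
    """
    if post_edit_at > publish_at:
        raise ValueError("post_edit_at must not exceed publish_at")
    if score >= publish_at:
        return "publish"
    if score >= post_edit_at:
        return "post-edit"
    return "retranslate"


# Made-up scores for three candidate translations.
for score in (0.85, 0.5, 0.1):
    print(score, route_translation(score))
```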

With TransQuest, we have open-sourced our research in translation quality estimation, which also won the sentence-level direct assessment quality estimation shared task at [WMT 2020](http://www.statmt.org/wmt20/quality-estimation-task.html). TransQuest outperforms current open-source quality estimation frameworks such as [OpenKiwi](https://github.com/Unbabel/OpenKiwi) and [DeepQuest](https://github.com/sheffieldnlp/deepQuest).

## Features

- Sentence-level translation quality estimation for both aspects: predicting post-editing effort and direct assessment.

- Word-level translation quality estimation capable of predicting the quality of source words, target words and target gaps.

- Outperforms current state-of-the-art quality estimation methods such as DeepQuest and OpenKiwi on all the languages tested.

- Pre-trained quality estimation models for fifteen language pairs are available on [HuggingFace](https://huggingface.co/TransQuest).

## Installation

### From pip

```bash

pip install transquest

```

### From Source

```bash

git clone https://github.com/TharinduDR/TransQuest.git

cd TransQuest

pip install -r requirements.txt

```

## Using Pre-trained Models

```python

import torch

from transquest.algo.sentence_level.monotransquest.run_model import MonoTransQuestModel

# Load the pre-trained sentence-level model; num_labels=1 because it predicts
# a single quality score. The GPU is used only if one is available.
model = MonoTransQuestModel("xlmroberta", "TransQuest/monotransquest-da-any_en", num_labels=1, use_cuda=torch.cuda.is_available())

# Each input is a [source sentence, translation] pair.
predictions, raw_outputs = model.predict([["Reducerea acestor conflicte este importantă pentru conservare.", "Reducing these conflicts is not important for preservation."]])

print(predictions)

```

## Documentation

For more details, see the documentation.

1. **[Installation](https://tharindudr.github.io/TransQuest/install/)** - Install TransQuest locally using pip.

2. **Architectures** - Check out the architectures implemented in TransQuest.

    1. [Sentence-level Architectures](https://tharindudr.github.io/TransQuest/architectures/sentence_level_architectures/) - We have released two architectures, MonoTransQuest and SiameseTransQuest, to perform sentence-level quality estimation.

    2. [Word-level Architecture](https://tharindudr.github.io/TransQuest/architectures/word_level_architecture/) - We have released MicroTransQuest to perform word-level quality estimation.

3. **Examples** - We have provided several examples of how to use TransQuest in recent WMT quality estimation shared tasks.

    1. [Sentence-level Examples](https://tharindudr.github.io/TransQuest/examples/sentence_level_examples/)

    2. [Word-level Examples](https://tharindudr.github.io/TransQuest/examples/word_level_examples/)

4. **Pre-trained Models** - We have provided pre-trained quality estimation models for fifteen language pairs, covering both sentence-level and word-level quality estimation.

    1. [Sentence-level Models](https://tharindudr.github.io/TransQuest/models/sentence_level_pretrained/)

    2. [Word-level Models](https://tharindudr.github.io/TransQuest/models/word_level_pretrained/)

5. **[Contact](https://tharindudr.github.io/TransQuest/contact/)** - Contact us about any issues with TransQuest.

## Citations

If you are using the word-level architecture, please consider citing this paper, which was accepted to [ACL 2021](https://2021.aclweb.org/).

```bibtex

@InProceedings{ranasinghe2021,

author = {Ranasinghe, Tharindu and Orasan, Constantin and Mitkov, Ruslan},

title = {An Exploratory Analysis of Multilingual Word Level Quality Estimation with Cross-Lingual Transformers},

booktitle = {Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics},

year = {2021}

}

```

If you are using the sentence-level architectures, please consider citing these papers, which were presented at [COLING 2020](https://coling2020.org/) and at [WMT 2020](http://www.statmt.org/wmt20/), held at EMNLP 2020.

```bibtex

@InProceedings{transquest:2020a,

author = {Ranasinghe, Tharindu and Orasan, Constantin and Mitkov, Ruslan},

title = {TransQuest: Translation Quality Estimation with Cross-lingual Transformers},

booktitle = {Proceedings of the 28th International Conference on Computational Linguistics},

year = {2020}

}

```

```bibtex

@InProceedings{transquest:2020b,

author = {Ranasinghe, Tharindu and Orasan, Constantin and Mitkov, Ruslan},