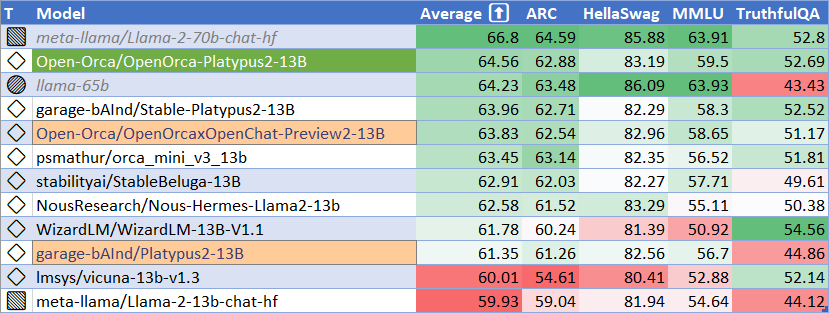

modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

Salesforce/blip2-flan-t5-xxl | 2023-09-13T08:46:29.000Z | [

"transformers",

"pytorch",

"blip-2",

"visual-question-answering",

"vision",

"image-to-text",

"image-captioning",

"en",

"arxiv:2301.12597",

"arxiv:2210.11416",

"license:mit",

"has_space",

"region:us"

] | image-to-text | Salesforce | null | null | Salesforce/blip2-flan-t5-xxl | 63 | 20,904 | transformers | 2023-02-09T09:10:14 | ---

language: en

license: mit

tags:

- vision

- image-to-text

- image-captioning

- visual-question-answering

pipeline_tag: image-to-text

inference: false

---

# BLIP-2, Flan T5-xxl, pre-trained only

BLIP-2 model, leveraging [Flan T5-xxl](https://huggingface.co/google/flan-t5-xxl) (a large language model).

It was introduced in the paper [BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models](https://arxiv.org/abs/2301.12597) by Li et al. and first released in [this repository](https://github.com/salesforce/LAVIS/tree/main/projects/blip2).

Disclaimer: The team releasing BLIP-2 did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

BLIP-2 consists of 3 models: a CLIP-like image encoder, a Querying Transformer (Q-Former) and a large language model.

The authors initialize the weights of the image encoder and large language model from pre-trained checkpoints and keep them frozen

while training the Querying Transformer, which is a BERT-like Transformer encoder that maps a set of "query tokens" to query embeddings,

which bridge the gap between the embedding space of the image encoder and the large language model.

The goal for the model is simply to predict the next text token, giving the query embeddings and the previous text.

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/blip2_architecture.jpg"

alt="drawing" width="600"/>

This allows the model to be used for tasks like:

- image captioning

- visual question answering (VQA)

- chat-like conversations by feeding the image and the previous conversation as prompt to the model

## Direct Use and Downstream Use

You can use the raw model for conditional text generation given an image and optional text. See the [model hub](https://huggingface.co/models?search=Salesforce/blip) to look for

fine-tuned versions on a task that interests you.

## Bias, Risks, Limitations, and Ethical Considerations

BLIP2-FlanT5 uses off-the-shelf Flan-T5 as the language model. It inherits the same risks and limitations from [Flan-T5](https://arxiv.org/pdf/2210.11416.pdf):

> Language models, including Flan-T5, can potentially be used for language generation in a harmful way, according to Rae et al. (2021). Flan-T5 should not be used directly in any application, without a prior assessment of safety and fairness concerns specific to the application.

BLIP2 is fine-tuned on image-text datasets (e.g. [LAION](https://laion.ai/blog/laion-400-open-dataset/) ) collected from the internet. As a result the model itself is potentially vulnerable to generating equivalently inappropriate content or replicating inherent biases in the underlying data.

BLIP2 has not been tested in real world applications. It should not be directly deployed in any applications. Researchers should first carefully assess the safety and fairness of the model in relation to the specific context they’re being deployed within.

### How to use

For code examples, we refer to the [documentation](https://huggingface.co/docs/transformers/main/en/model_doc/blip-2#transformers.Blip2ForConditionalGeneration.forward.example), or refer to the snippets below depending on your usecase:

#### Running the model on CPU

<details>

<summary> Click to expand </summary>

```python

import requests

from PIL import Image

from transformers import BlipProcessor, Blip2ForConditionalGeneration

processor = BlipProcessor.from_pretrained("Salesforce/blip2-flan-t5-xxl")

model = Blip2ForConditionalGeneration.from_pretrained("Salesforce/blip2-flan-t5-xxl")

img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg'

raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB')

question = "how many dogs are in the picture?"

inputs = processor(raw_image, question, return_tensors="pt")

out = model.generate(**inputs)

print(processor.decode(out[0], skip_special_tokens=True))

```

</details>

#### Running the model on GPU

##### In full precision

<details>

<summary> Click to expand </summary>

```python

# pip install accelerate

import requests

from PIL import Image

from transformers import Blip2Processor, Blip2ForConditionalGeneration

processor = Blip2Processor.from_pretrained("Salesforce/blip2-flan-t5-xxl")

model = Blip2ForConditionalGeneration.from_pretrained("Salesforce/blip2-flan-t5-xxl", device_map="auto")

img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg'

raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB')

question = "how many dogs are in the picture?"

inputs = processor(raw_image, question, return_tensors="pt").to("cuda")

out = model.generate(**inputs)

print(processor.decode(out[0], skip_special_tokens=True))

```

</details>

##### In half precision (`float16`)

<details>

<summary> Click to expand </summary>

```python

# pip install accelerate

import torch

import requests

from PIL import Image

from transformers import Blip2Processor, Blip2ForConditionalGeneration

processor = Blip2Processor.from_pretrained("Salesforce/blip2-flan-t5-xxl")

model = Blip2ForConditionalGeneration.from_pretrained("Salesforce/blip2-flan-t5-xxl", torch_dtype=torch.float16, device_map="auto")

img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg'

raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB')

question = "how many dogs are in the picture?"

inputs = processor(raw_image, question, return_tensors="pt").to("cuda", torch.float16)

out = model.generate(**inputs)

print(processor.decode(out[0], skip_special_tokens=True))

```

</details>

##### In 8-bit precision (`int8`)

<details>

<summary> Click to expand </summary>

```python

# pip install accelerate bitsandbytes

import torch

import requests

from PIL import Image

from transformers import Blip2Processor, Blip2ForConditionalGeneration

processor = Blip2Processor.from_pretrained("Salesforce/blip2-flan-t5-xxl")

model = Blip2ForConditionalGeneration.from_pretrained("Salesforce/blip2-flan-t5-xxl", load_in_8bit=True, device_map="auto")

img_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/demo.jpg'

raw_image = Image.open(requests.get(img_url, stream=True).raw).convert('RGB')

question = "how many dogs are in the picture?"

inputs = processor(raw_image, question, return_tensors="pt").to("cuda", torch.float16)

out = model.generate(**inputs)

print(processor.decode(out[0], skip_special_tokens=True))

```

</details> | 6,578 | [

[

-0.0272216796875,

-0.049530029296875,

-0.0035953521728515625,

0.030548095703125,

-0.0177459716796875,

-0.0112762451171875,

-0.022857666015625,

-0.059600830078125,

-0.01029205322265625,

0.0222625732421875,

-0.03570556640625,

-0.01032257080078125,

-0.04461669921875,

-0.0020465850830078125,

-0.0074615478515625,

0.052093505859375,

0.0065155029296875,

-0.004276275634765625,

-0.00460052490234375,

-0.002193450927734375,

-0.019317626953125,

-0.00864410400390625,

-0.0465087890625,

-0.0164642333984375,

0.006145477294921875,

0.0244293212890625,

0.05316162109375,

0.0306549072265625,

0.054473876953125,

0.0291900634765625,

-0.01318359375,

0.006862640380859375,

-0.035308837890625,

-0.0178680419921875,

-0.007434844970703125,

-0.055572509765625,

-0.0200653076171875,

-0.0020923614501953125,

0.033294677734375,

0.03106689453125,

0.01092529296875,

0.0305023193359375,

-0.007038116455078125,

0.037261962890625,

-0.040740966796875,

0.0212554931640625,

-0.051483154296875,

0.00543975830078125,

-0.0051727294921875,

-0.0004515647888183594,

-0.0285797119140625,

-0.01397705078125,

0.0037174224853515625,

-0.054931640625,

0.03875732421875,

0.002635955810546875,

0.11810302734375,

0.0195159912109375,

-0.0009531974792480469,

-0.0236358642578125,

-0.0367431640625,

0.06695556640625,

-0.048797607421875,

0.03521728515625,

0.014434814453125,

0.025482177734375,

0.0001380443572998047,

-0.071533203125,

-0.0567626953125,

-0.00949859619140625,

-0.0106658935546875,

0.0238800048828125,

-0.0179443359375,

-0.005340576171875,

0.035980224609375,

0.0207366943359375,

-0.04547119140625,

-0.00603485107421875,

-0.06292724609375,

-0.014923095703125,

0.053558349609375,

-0.006900787353515625,

0.02374267578125,

-0.0223388671875,

-0.041748046875,

-0.0323486328125,

-0.038482666015625,

0.0244293212890625,

0.004276275634765625,

0.00923919677734375,

-0.031341552734375,

0.058349609375,

0.0014467239379882812,

0.045928955078125,

0.029296875,

-0.02734375,

0.046783447265625,

-0.0234222412109375,

-0.0247650146484375,

-0.013427734375,

0.07183837890625,

0.04217529296875,

0.0173797607421875,

0.0038547515869140625,

-0.020172119140625,

0.0054779052734375,

0.0017709732055664062,

-0.08160400390625,

-0.01654052734375,

0.033294677734375,

-0.037353515625,

-0.020294189453125,

-0.0037174224853515625,

-0.065185546875,

-0.0087890625,

0.012176513671875,

0.03668212890625,

-0.042388916015625,

-0.0289764404296875,

0.0015497207641601562,

-0.032806396484375,

0.0289306640625,

0.01554107666015625,

-0.07373046875,

-0.005016326904296875,

0.035888671875,

0.06854248046875,

0.0201416015625,

-0.036895751953125,

-0.01611328125,

0.010589599609375,

-0.0245819091796875,

0.0423583984375,

-0.01062774658203125,

-0.0183258056640625,

-0.0023670196533203125,

0.01947021484375,

-0.0019931793212890625,

-0.045379638671875,

0.00914764404296875,

-0.031036376953125,

0.0201873779296875,

-0.01296234130859375,

-0.03411865234375,

-0.026214599609375,

0.0146484375,

-0.035308837890625,

0.0806884765625,

0.0233917236328125,

-0.06475830078125,

0.035308837890625,

-0.04449462890625,

-0.0256500244140625,

0.01432037353515625,

-0.01016998291015625,

-0.052886962890625,

-0.00713348388671875,

0.016571044921875,

0.029205322265625,

-0.022216796875,

0.0021038055419921875,

-0.027099609375,

-0.0279388427734375,

0.00516510009765625,

-0.007110595703125,

0.08135986328125,

0.0050048828125,

-0.051605224609375,

0.0003228187561035156,

-0.03839111328125,

-0.00763702392578125,

0.0298614501953125,

-0.01552581787109375,

0.00885009765625,

-0.0198822021484375,

0.0146026611328125,

0.026031494140625,

0.04248046875,

-0.054595947265625,

-0.004241943359375,

-0.038848876953125,

0.036376953125,

0.03924560546875,

-0.01522064208984375,

0.0254364013671875,

-0.017852783203125,

0.0264129638671875,

0.0196075439453125,

0.02398681640625,

-0.0231170654296875,

-0.0572509765625,

-0.0699462890625,

-0.01531219482421875,

-0.0004825592041015625,

0.052490234375,

-0.06451416015625,

0.0304718017578125,

-0.0177459716796875,

-0.049774169921875,

-0.0418701171875,

0.00965118408203125,

0.03924560546875,

0.052093505859375,

0.035064697265625,

-0.01380157470703125,

-0.037567138671875,

-0.06671142578125,

0.015380859375,

-0.0175628662109375,

0.0019216537475585938,

0.030792236328125,

0.053924560546875,

-0.0171661376953125,

0.058013916015625,

-0.03546142578125,

-0.0214385986328125,

-0.0262603759765625,

0.00511932373046875,

0.0230560302734375,

0.052276611328125,

0.0645751953125,

-0.059967041015625,

-0.0253448486328125,

0.0032672882080078125,

-0.06951904296875,

0.01265716552734375,

-0.01371002197265625,

-0.01430511474609375,

0.041473388671875,

0.027984619140625,

-0.064208984375,

0.046478271484375,

0.038665771484375,

-0.0220489501953125,

0.038238525390625,

-0.0108184814453125,

-0.00447845458984375,

-0.0760498046875,

0.032928466796875,

0.01053619384765625,

-0.01271820068359375,

-0.032562255859375,

0.006954193115234375,

0.01849365234375,

-0.0185089111328125,

-0.05157470703125,

0.0606689453125,

-0.0335693359375,

-0.020965576171875,

0.0034427642822265625,

-0.01165771484375,

0.01145172119140625,

0.044219970703125,

0.021942138671875,

0.06011962890625,

0.06280517578125,

-0.045867919921875,

0.033935546875,

0.039703369140625,

-0.0236053466796875,

0.0223388671875,

-0.064208984375,

0.00592041015625,

-0.008026123046875,

0.00553131103515625,

-0.07513427734375,

-0.01025390625,

0.0212554931640625,

-0.053314208984375,

0.0231781005859375,

-0.0167236328125,

-0.03326416015625,

-0.05450439453125,

-0.021820068359375,

0.024658203125,

0.0496826171875,

-0.050628662109375,

0.0321044921875,

0.0207672119140625,

0.0111541748046875,

-0.0521240234375,

-0.08697509765625,

-0.0038318634033203125,

0.0025386810302734375,

-0.06842041015625,

0.037750244140625,

0.0031147003173828125,

0.0111541748046875,

0.0089263916015625,

0.021026611328125,

0.00013959407806396484,

-0.01268768310546875,

0.0215911865234375,

0.0259246826171875,

-0.0253143310546875,

-0.01470184326171875,

-0.0182952880859375,

0.00024509429931640625,

-0.004116058349609375,

-0.01323699951171875,

0.056671142578125,

-0.020965576171875,

0.0011949539184570312,

-0.053497314453125,

0.006175994873046875,

0.036956787109375,

-0.02520751953125,

0.048004150390625,

0.0626220703125,

-0.03387451171875,

-0.006565093994140625,

-0.037109375,

-0.01318359375,

-0.043914794921875,

0.039306640625,

-0.0271148681640625,

-0.02838134765625,

0.04510498046875,

0.0192413330078125,

0.01297760009765625,

0.0271148681640625,

0.05548095703125,

-0.00833892822265625,

0.06591796875,

0.047760009765625,

0.01641845703125,

0.0513916015625,

-0.06884765625,

0.0059356689453125,

-0.052734375,

-0.0338134765625,

-0.005626678466796875,

-0.01904296875,

-0.036407470703125,

-0.0330810546875,

0.02166748046875,

0.01898193359375,

-0.033660888671875,

0.0233154296875,

-0.03900146484375,

0.01297760009765625,

0.05169677734375,

0.022308349609375,

-0.0215911865234375,

0.01137542724609375,

-0.006687164306640625,

0.00446319580078125,

-0.046905517578125,

-0.0200958251953125,

0.07135009765625,

0.034027099609375,

0.04766845703125,

-0.0091400146484375,

0.031646728515625,

-0.02099609375,

0.0179595947265625,

-0.052734375,

0.044525146484375,

-0.0249176025390625,

-0.06085205078125,

-0.0137939453125,

-0.020416259765625,

-0.06939697265625,

0.0094451904296875,

-0.0155181884765625,

-0.052886962890625,

0.01399993896484375,

0.02685546875,

-0.016082763671875,

0.029571533203125,

-0.06768798828125,

0.07330322265625,

-0.03497314453125,

-0.04364013671875,

0.00507354736328125,

-0.046905517578125,

0.033416748046875,

0.01497650146484375,

-0.0118560791015625,

0.007610321044921875,

0.00467681884765625,

0.053680419921875,

-0.044830322265625,

0.06072998046875,

-0.031463623046875,

0.021820068359375,

0.03643798828125,

-0.01291656494140625,

0.01245880126953125,

-0.005504608154296875,

-0.006561279296875,

0.0234832763671875,

-0.0009965896606445312,

-0.044891357421875,

-0.04058837890625,

0.0181884765625,

-0.06500244140625,

-0.0310516357421875,

-0.023529052734375,

-0.0290069580078125,

0.0026912689208984375,

0.03350830078125,

0.05206298828125,

0.02618408203125,

0.0197296142578125,

0.0055694580078125,

0.0209808349609375,

-0.04095458984375,

0.05340576171875,

0.00345611572265625,

-0.026519775390625,

-0.035888671875,

0.0684814453125,

-0.0030384063720703125,

0.02264404296875,

0.01537322998046875,

0.016082763671875,

-0.038360595703125,

-0.021820068359375,

-0.056243896484375,

0.041290283203125,

-0.0467529296875,

-0.034912109375,

-0.028076171875,

-0.020477294921875,

-0.0465087890625,

-0.014801025390625,

-0.041473388671875,

-0.017608642578125,

-0.03729248046875,

0.0165863037109375,

0.040679931640625,

0.0355224609375,

-0.007038116455078125,

0.0302734375,

-0.04559326171875,

0.03570556640625,

0.024139404296875,

0.02783203125,

0.0009613037109375,

-0.04193115234375,

-0.0035381317138671875,

0.022796630859375,

-0.03033447265625,

-0.051483154296875,

0.037994384765625,

0.0189056396484375,

0.02569580078125,

0.03033447265625,

-0.0267791748046875,

0.06964111328125,

-0.023193359375,

0.0654296875,

0.038543701171875,

-0.07061767578125,

0.056610107421875,

-0.00666046142578125,

0.00885009765625,

0.0228271484375,

0.0230255126953125,

-0.0266265869140625,

-0.0203704833984375,

-0.054290771484375,

-0.060577392578125,

0.06256103515625,

0.01406097412109375,

0.007732391357421875,

0.015838623046875,

0.0257568359375,

-0.0130157470703125,

0.0106353759765625,

-0.050872802734375,

-0.01690673828125,

-0.047149658203125,

-0.01300048828125,

-0.0023651123046875,

-0.004978179931640625,

0.01025390625,

-0.03460693359375,

0.04083251953125,

-0.00411224365234375,

0.047821044921875,

0.026641845703125,

-0.0261383056640625,

-0.0150299072265625,

-0.035614013671875,

0.044891357421875,

0.03765869140625,

-0.023193359375,

-0.006153106689453125,

-0.001323699951171875,

-0.054779052734375,

-0.01488494873046875,

0.002750396728515625,

-0.02703857421875,

0.0013189315795898438,

0.03253173828125,

0.08203125,

-0.00693511962890625,

-0.04071044921875,

0.056182861328125,

0.0007219314575195312,

-0.0188140869140625,

-0.0295562744140625,

0.0031986236572265625,

0.007656097412109375,

0.02264404296875,

0.0298004150390625,

0.0112457275390625,

-0.020843505859375,

-0.0374755859375,

0.0234527587890625,

0.0306243896484375,

-0.006938934326171875,

-0.0301666259765625,

0.06439208984375,

0.007610321044921875,

-0.017120361328125,

0.0576171875,

-0.029876708984375,

-0.05206298828125,

0.0718994140625,

0.057159423828125,

0.038330078125,

-0.0024814605712890625,

0.016082763671875,

0.053497314453125,

0.0278472900390625,

-0.003940582275390625,

0.039886474609375,

0.017608642578125,

-0.06793212890625,

-0.0130462646484375,

-0.046630859375,

-0.020660400390625,

0.0212554931640625,

-0.03558349609375,

0.043060302734375,

-0.05316162109375,

-0.0174560546875,

0.01558685302734375,

0.0176239013671875,

-0.0667724609375,

0.031707763671875,

0.02520751953125,

0.0687255859375,

-0.06060791015625,

0.041259765625,

0.061767578125,

-0.06475830078125,

-0.07037353515625,

-0.0157623291015625,

-0.0234527587890625,

-0.08148193359375,

0.06298828125,

0.03125,

0.0038604736328125,

-0.0018472671508789062,

-0.05682373046875,

-0.057525634765625,

0.08734130859375,

0.03302001953125,

-0.0258941650390625,

0.0026607513427734375,

0.01474761962890625,

0.0404052734375,

-0.014190673828125,

0.038330078125,

0.0178070068359375,

0.03875732421875,

0.0297698974609375,

-0.06805419921875,

0.01255035400390625,

-0.027252197265625,

0.003688812255859375,

-0.00942230224609375,

-0.07489013671875,

0.0771484375,

-0.03765869140625,

-0.01446533203125,

0.004119873046875,

0.0609130859375,

0.034820556640625,

0.01271820068359375,

0.036834716796875,

0.043243408203125,

0.05218505859375,

0.0006341934204101562,

0.0750732421875,

-0.0288238525390625,

0.04736328125,

0.04376220703125,

0.0019626617431640625,

0.059783935546875,

0.0264129638671875,

-0.00887298583984375,

0.020355224609375,

0.051483154296875,

-0.04376220703125,

0.0295257568359375,

-0.00099945068359375,

0.012939453125,

0.0033626556396484375,

0.00945281982421875,

-0.030242919921875,

0.049224853515625,

0.03759765625,

-0.0283355712890625,

-0.0022373199462890625,

0.0009083747863769531,

0.00301361083984375,

-0.03033447265625,

-0.0189208984375,

0.0240325927734375,

-0.008758544921875,

-0.05401611328125,

0.0765380859375,

-0.00020575523376464844,

0.0791015625,

-0.0182037353515625,

0.0034313201904296875,

-0.024658203125,

0.0197906494140625,

-0.0297698974609375,

-0.07415771484375,

0.0236968994140625,

-0.0019159317016601562,

0.00243377685546875,

0.00039458274841308594,

0.0404052734375,

-0.033477783203125,

-0.06988525390625,

0.021392822265625,

0.0080413818359375,

0.02398681640625,

0.01556396484375,

-0.07861328125,

0.0109405517578125,

0.005336761474609375,

-0.0269775390625,

-0.007785797119140625,

0.02398681640625,

0.004489898681640625,

0.055633544921875,

0.046844482421875,

0.0158538818359375,

0.034820556640625,

-0.005519866943359375,

0.0601806640625,

-0.04913330078125,

-0.0290374755859375,

-0.042083740234375,

0.04541015625,

-0.00983428955078125,

-0.045867919921875,

0.034393310546875,

0.061859130859375,

0.06793212890625,

-0.01206207275390625,

0.054290771484375,

-0.0277099609375,

0.014495849609375,

-0.0362548828125,

0.0596923828125,

-0.06268310546875,

-0.00926971435546875,

-0.016632080078125,

-0.046783447265625,

-0.0269012451171875,

0.06903076171875,

-0.01476287841796875,

0.01439666748046875,

0.043426513671875,

0.09100341796875,

-0.0235748291015625,

-0.01702880859375,

0.00978851318359375,

0.0219573974609375,

0.0305328369140625,

0.051788330078125,

0.04412841796875,

-0.055450439453125,

0.0460205078125,

-0.0557861328125,

-0.01332855224609375,

-0.01250457763671875,

-0.047210693359375,

-0.06982421875,

-0.046630859375,

-0.0301971435546875,

-0.0399169921875,

-0.007442474365234375,

0.04034423828125,

0.0697021484375,

-0.054534912109375,

-0.018096923828125,

-0.0106964111328125,

-0.0011501312255859375,

-0.0034656524658203125,

-0.016815185546875,

0.03460693359375,

-0.026519775390625,

-0.06695556640625,

-0.008148193359375,

0.00919342041015625,

0.0218048095703125,

-0.014434814453125,

0.0017881393432617188,

-0.0165557861328125,

-0.0227203369140625,

0.034210205078125,

0.0340576171875,

-0.045440673828125,

-0.0192108154296875,

0.004535675048828125,

-0.01222991943359375,

0.0223388671875,

0.0257568359375,

-0.044830322265625,

0.02386474609375,

0.04095458984375,

0.027130126953125,

0.0645751953125,

-0.003658294677734375,

0.015167236328125,

-0.049102783203125,

0.058258056640625,

0.0107574462890625,

0.029937744140625,

0.039764404296875,

-0.029388427734375,

0.0302886962890625,

0.025238037109375,

-0.0206451416015625,

-0.0657958984375,

0.0029850006103515625,

-0.0863037109375,

-0.01806640625,

0.09906005859375,

-0.02001953125,

-0.05035400390625,

0.01605224609375,

-0.00997161865234375,

0.0299835205078125,

-0.018707275390625,

0.042144775390625,

0.0120086669921875,

-0.00959014892578125,

-0.034454345703125,

-0.0249481201171875,

0.0340576171875,

0.02398681640625,

-0.0479736328125,

-0.0207366943359375,

0.01483154296875,

0.0389404296875,

0.031585693359375,

0.03753662109375,

-0.00798797607421875,

0.0289306640625,

0.0185546875,

0.0303955078125,

-0.01004791259765625,

-0.004726409912109375,

-0.01348114013671875,

-0.0035839080810546875,

-0.01322174072265625,

-0.03778076171875

]

] |

DionTimmer/controlnet_qrcode | 2023-06-17T16:33:13.000Z | [

"diffusers",

"stable-diffusion",

"controlnet",

"en",

"license:openrail++",

"diffusers:ControlNetModel",

"region:us"

] | null | DionTimmer | null | null | DionTimmer/controlnet_qrcode | 280 | 20,853 | diffusers | 2023-06-15T02:23:37 | ---

tags:

- stable-diffusion

- controlnet

license: openrail++

language:

- en

---

# QR Code Conditioned ControlNet Models for Stable Diffusion 1.5 and 2.1

## Model Description

These ControlNet models have been trained on a large dataset of 150,000 QR code + QR code artwork couples. They provide a solid foundation for generating QR code-based artwork that is aesthetically pleasing, while still maintaining the integral QR code shape.

The Stable Diffusion 2.1 version is marginally more effective, as it was developed to address my specific needs. However, a 1.5 version model was also trained on the same dataset for those who are using the older version.

Separate repos for usage in diffusers can be found here:<br>

1.5: https://huggingface.co/DionTimmer/controlnet_qrcode-control_v1p_sd15<br>

2.1: https://huggingface.co/DionTimmer/controlnet_qrcode-control_v11p_sd21<br>

## How to use with Diffusers

```bash

pip -q install diffusers transformers accelerate torch xformers

```

```python

import torch

from PIL import Image

from diffusers import StableDiffusionControlNetImg2ImgPipeline, ControlNetModel, DDIMScheduler

from diffusers.utils import load_image

controlnet = ControlNetModel.from_pretrained("DionTimmer/controlnet_qrcode-control_v1p_sd15",

torch_dtype=torch.float16)

pipe = StableDiffusionControlNetImg2ImgPipeline.from_pretrained(

"runwayml/stable-diffusion-v1-5",

controlnet=controlnet,

safety_checker=None,

torch_dtype=torch.float16

)

pipe.enable_xformers_memory_efficient_attention()

pipe.scheduler = DDIMScheduler.from_config(pipe.scheduler.config)

pipe.enable_model_cpu_offload()

def resize_for_condition_image(input_image: Image, resolution: int):

input_image = input_image.convert("RGB")

W, H = input_image.size

k = float(resolution) / min(H, W)

H *= k

W *= k

H = int(round(H / 64.0)) * 64

W = int(round(W / 64.0)) * 64

img = input_image.resize((W, H), resample=Image.LANCZOS)

return img

# play with guidance_scale, controlnet_conditioning_scale and strength to make a valid QR Code Image

# qr code image

source_image = load_image("https://s3.amazonaws.com/moonup/production/uploads/6064e095abd8d3692e3e2ed6/A_RqHaAM6YHBodPLwqtjn.png")

# initial image, anything

init_image = load_image("https://s3.amazonaws.com/moonup/production/uploads/noauth/KfMBABpOwIuNolv1pe3qX.jpeg")

condition_image = resize_for_condition_image(source_image, 768)

init_image = resize_for_condition_image(init_image, 768)

generator = torch.manual_seed(123121231)

image = pipe(prompt="a bilboard in NYC with a qrcode",

negative_prompt="ugly, disfigured, low quality, blurry, nsfw",

image=init_image,

control_image=condition_image,

width=768,

height=768,

guidance_scale=20,

controlnet_conditioning_scale=1.5,

generator=generator,

strength=0.9,

num_inference_steps=150,

)

image.images[0]

```

## Performance and Limitations

These models perform quite well in most cases, but please note that they are not 100% accurate. In some instances, the QR code shape might not come through as expected. You can increase the ControlNet weight to emphasize the QR code shape. However, be cautious as this might negatively impact the style of your output.**To optimize for scanning, please generate your QR codes with correction mode 'H' (30%).**

To balance between style and shape, a gentle fine-tuning of the control weight might be required based on the individual input and the desired output, aswell as the correct prompt. Some prompts do not work until you increase the weight by a lot. The process of finding the right balance between these factors is part art and part science. For the best results, it is recommended to generate your artwork at a resolution of 768. This allows for a higher level of detail in the final product, enhancing the quality and effectiveness of the QR code-based artwork.

## Installation

The simplest way to use this is to place the .safetensors model and its .yaml config file in the folder where your other controlnet models are installed, which varies per application.

For usage in auto1111 they can be placed in the webui/models/ControlNet folder. They can be loaded using the controlnet webui extension which you can install through the extensions tab in the webui (https://github.com/Mikubill/sd-webui-controlnet). Make sure to enable your controlnet unit and set your input image as the QR code. Set the model to either the SD2.1 or 1.5 version depending on your base stable diffusion model, or it will error. No pre-processor is needed, though you can use the invert pre-processor for a different variation of results. 768 is the preferred resolution for generation since it allows for more detail.

Make sure to look up additional info on how to use controlnet if you get stuck, once you have the webui up and running its really easy to install the controlnet extension aswell.

| 5,131 | [

[

-0.02508544921875,

-0.008575439453125,

0.0028228759765625,

0.0286102294921875,

-0.0310516357421875,

-0.00909423828125,

0.0181427001953125,

-0.02239990234375,

0.018890380859375,

0.0399169921875,

-0.01216888427734375,

-0.0297088623046875,

-0.043792724609375,

0.004138946533203125,

-0.0122833251953125,

0.054443359375,

-0.00811004638671875,

0.0036487579345703125,

0.0264434814453125,

0.00391387939453125,

-0.01910400390625,

-0.0019378662109375,

-0.07696533203125,

-0.02587890625,

0.03558349609375,

0.026580810546875,

0.058013916015625,

0.0506591796875,

0.0379638671875,

0.0224151611328125,

0.00762176513671875,

-0.0013713836669921875,

-0.032501220703125,

-0.00643157958984375,

0.01453399658203125,

-0.02435302734375,

-0.0300750732421875,

-0.007587432861328125,

0.032958984375,

0.00029921531677246094,

-0.01153564453125,

0.0009031295776367188,

-0.00279998779296875,

0.0670166015625,

-0.053863525390625,

-0.004367828369140625,

-0.00792694091796875,

0.018707275390625,

0.006038665771484375,

-0.0278472900390625,

-0.0157012939453125,

-0.041229248046875,

-0.0162353515625,

-0.053558349609375,

0.00921630859375,

0.00168609619140625,

0.09246826171875,

0.0203857421875,

-0.05133056640625,

-0.0176849365234375,

-0.064208984375,

0.0401611328125,

-0.056121826171875,

0.018280029296875,

0.0304107666015625,

0.0211181640625,

-0.01293182373046875,

-0.08380126953125,

-0.0379638671875,

-0.0253448486328125,

0.006988525390625,

0.03778076171875,

-0.040252685546875,

0.005634307861328125,

0.044403076171875,

0.0155792236328125,

-0.04986572265625,

-0.006671905517578125,

-0.0491943359375,

-0.01372528076171875,

0.05108642578125,

0.0264434814453125,

0.03375244140625,

-0.0115203857421875,

-0.033203125,

-0.0260772705078125,

-0.025390625,

0.036956787109375,

0.0208282470703125,

-0.0236663818359375,

-0.033294677734375,

0.037200927734375,

-0.015655517578125,

0.034942626953125,

0.0528564453125,

-0.00562286376953125,

0.0222320556640625,

-0.0247955322265625,

-0.0276031494140625,

-0.01302337646484375,

0.08148193359375,

0.0469970703125,

0.0007419586181640625,

0.0072479248046875,

-0.0214996337890625,

-0.0126800537109375,

0.02545166015625,

-0.08740234375,

-0.051361083984375,

0.037689208984375,

-0.046722412109375,

-0.02508544921875,

0.0030879974365234375,

-0.0435791015625,

-0.016632080078125,

0.006450653076171875,

0.042083740234375,

-0.0275726318359375,

-0.0279083251953125,

0.025634765625,

-0.036773681640625,

0.022491455078125,

0.0282135009765625,

-0.043975830078125,

0.002719879150390625,

-0.0060272216796875,

0.0675048828125,

0.01070404052734375,

-0.000972747802734375,

-0.0239410400390625,

0.0021209716796875,

-0.05133056640625,

0.03326416015625,

-0.0034275054931640625,

-0.0279541015625,

-0.023529052734375,

0.0200347900390625,

0.00785064697265625,

-0.040985107421875,

0.04681396484375,

-0.07659912109375,

0.0007309913635253906,

0.0027790069580078125,

-0.026580810546875,

-0.015289306640625,

-0.00873565673828125,

-0.0631103515625,

0.0687255859375,

0.03057861328125,

-0.07720947265625,

0.0111236572265625,

-0.048431396484375,

-0.01287841796875,

0.007648468017578125,

-0.0020809173583984375,

-0.05303955078125,

-0.0160064697265625,

-0.01424407958984375,

0.0269622802734375,

0.0009398460388183594,

-0.00331878662109375,

-0.0061492919921875,

-0.035308837890625,

0.0236968994140625,

-0.01163482666015625,

0.0999755859375,

0.03619384765625,

-0.04669189453125,

0.01351165771484375,

-0.058013916015625,

0.03082275390625,

0.006237030029296875,

-0.037933349609375,

-0.0004925727844238281,

-0.02313232421875,

0.0300750732421875,

0.0303192138671875,

0.021026611328125,

-0.0335693359375,

0.013031005859375,

-0.0152435302734375,

0.045166015625,

0.0311737060546875,

0.01253509521484375,

0.046539306640625,

-0.0352783203125,

0.060150146484375,

0.0104827880859375,

0.026702880859375,

0.022613525390625,

-0.0212554931640625,

-0.05377197265625,

-0.0266571044921875,

0.0192108154296875,

0.04962158203125,

-0.08428955078125,

0.042694091796875,

-0.0056304931640625,

-0.058074951171875,

-0.00803375244140625,

-0.007358551025390625,

0.0224456787109375,

0.0244140625,

0.01361083984375,

-0.032806396484375,

-0.0294342041015625,

-0.0618896484375,

0.03936767578125,

0.0131378173828125,

-0.0255889892578125,

-0.0024471282958984375,

0.045684814453125,

-0.0168609619140625,

0.0565185546875,

-0.0263671875,

-0.00841522216796875,

-0.002166748046875,

0.00433349609375,

0.0262451171875,

0.06927490234375,

0.0477294921875,

-0.07000732421875,

-0.042999267578125,

-0.00865936279296875,

-0.0498046875,

0.006015777587890625,

-0.0184173583984375,

-0.031463623046875,

-0.0015811920166015625,

0.0221099853515625,

-0.048248291015625,

0.060638427734375,

0.036956787109375,

-0.044219970703125,

0.07354736328125,

-0.04119873046875,

0.0292816162109375,

-0.08148193359375,

0.01044464111328125,

0.01375579833984375,

-0.018585205078125,

-0.03912353515625,

0.01450347900390625,

0.033599853515625,

0.0007505416870117188,

-0.0338134765625,

0.035430908203125,

-0.03887939453125,

0.0185699462890625,

-0.033050537109375,

-0.0290679931640625,

0.031219482421875,

0.037353515625,

0.0019369125366210938,

0.0596923828125,

0.054107666015625,

-0.06378173828125,

0.049407958984375,

0.0007486343383789062,

-0.0269012451171875,

0.01139068603515625,

-0.08099365234375,

-0.00036835670471191406,

0.0083160400390625,

0.027252197265625,

-0.0712890625,

-0.009613037109375,

0.058349609375,

-0.042877197265625,

0.0273284912109375,

-0.019775390625,

-0.01678466796875,

-0.033172607421875,

-0.03204345703125,

0.033477783203125,

0.059539794921875,

-0.022796630859375,

0.037261962890625,

0.0031871795654296875,

0.0275115966796875,

-0.038421630859375,

-0.0673828125,

-0.001667022705078125,

-0.0220794677734375,

-0.044342041015625,

0.030426025390625,

-0.01194000244140625,

-0.0007886886596679688,

-0.00453948974609375,

0.004634857177734375,

-0.0227203369140625,

-0.0013399124145507812,

0.0279541015625,

0.00037741661071777344,

-0.00836944580078125,

-0.00850677490234375,

0.00444793701171875,

-0.0201263427734375,

0.006805419921875,

-0.0261688232421875,

0.0295257568359375,

0.0130462646484375,

-0.01439666748046875,

-0.06304931640625,

0.020782470703125,

0.05303955078125,

-0.012115478515625,

0.043609619140625,

0.059783935546875,

-0.037200927734375,

-0.00348663330078125,

-0.0163726806640625,

-0.0120697021484375,

-0.039520263671875,

0.0166473388671875,

-0.028350830078125,

-0.03167724609375,

0.056732177734375,

0.010284423828125,

-0.00041985511779785156,

0.025299072265625,

0.038421630859375,

-0.0200347900390625,

0.0811767578125,

0.04449462890625,

0.01995849609375,

0.052520751953125,

-0.06500244140625,

0.003223419189453125,

-0.08099365234375,

-0.01462554931640625,

-0.034027099609375,

-0.0181884765625,

-0.03515625,

-0.03094482421875,

0.036407470703125,

0.050872802734375,

-0.05718994140625,

0.01715087890625,

-0.055999755859375,

0.007053375244140625,

0.05078125,

0.035797119140625,

0.003414154052734375,

0.0015106201171875,

-0.01137542724609375,

0.0025081634521484375,

-0.058746337890625,

-0.039703369140625,

0.06463623046875,

0.0215301513671875,

0.0694580078125,

0.0080108642578125,

0.042694091796875,

0.0245819091796875,

-0.0011568069458007812,

-0.044097900390625,

0.01552581787109375,

0.005054473876953125,

-0.0338134765625,

-0.0221710205078125,

-0.0235748291015625,

-0.0941162109375,

0.00395965576171875,

-0.0206756591796875,

-0.0325927734375,

0.0472412109375,

0.0172271728515625,

-0.03857421875,

0.0232696533203125,

-0.05133056640625,

0.052520751953125,

-0.0220184326171875,

-0.035491943359375,

0.01120758056640625,

-0.03485107421875,

0.0213470458984375,

0.0095977783203125,

-0.0029582977294921875,

0.007762908935546875,

-0.01043701171875,

0.065673828125,

-0.06317138671875,

0.049163818359375,

-0.011962890625,

-0.005939483642578125,

0.0285491943359375,

0.007167816162109375,

0.02960205078125,

0.007747650146484375,

-0.017974853515625,

0.0004017353057861328,

0.0287322998046875,

-0.05108642578125,

-0.040252685546875,

0.026702880859375,

-0.0703125,

-0.009429931640625,

-0.0283660888671875,

-0.0165252685546875,

0.038848876953125,

0.0185546875,

0.07171630859375,

0.0496826171875,

0.022979736328125,

0.00391387939453125,

0.054290771484375,

-0.0160064697265625,

0.0299530029296875,

0.01549530029296875,

-0.0265045166015625,

-0.04644775390625,

0.05169677734375,

0.0193328857421875,

0.01410675048828125,

0.0132598876953125,

0.01363372802734375,

-0.0224151611328125,

-0.0489501953125,

-0.054351806640625,

-0.004364013671875,

-0.054443359375,

-0.04132080078125,

-0.039581298828125,

-0.028900146484375,

-0.0267791748046875,

-0.0116119384765625,

-0.0188446044921875,

-0.01055145263671875,

-0.0462646484375,

0.0215606689453125,

0.060546875,

0.0400390625,

-0.0268402099609375,

0.03167724609375,

-0.0557861328125,

0.0290679931640625,

0.025848388671875,

0.0272064208984375,

0.018463134765625,

-0.05230712890625,

-0.03460693359375,

0.01873779296875,

-0.02825927734375,

-0.07794189453125,

0.035736083984375,

-0.00946807861328125,

0.020263671875,

0.045166015625,

0.03216552734375,

0.03582763671875,

-0.017120361328125,

0.05078125,

0.035797119140625,

-0.0592041015625,

0.033447265625,

-0.0340576171875,

0.026763916015625,

0.0028362274169921875,

0.045196533203125,

-0.035919189453125,

-0.0204925537109375,

-0.03857421875,

-0.05535888671875,

0.0338134765625,

0.025238037109375,

-0.00041675567626953125,

0.0143890380859375,

0.0513916015625,

-0.02850341796875,

-0.01275634765625,

-0.0616455078125,

-0.03619384765625,

-0.030242919921875,

-0.0005121231079101562,

0.00978851318359375,

-0.0144500732421875,

-0.004528045654296875,

-0.02996826171875,

0.0506591796875,

0.007183074951171875,

0.042694091796875,

0.034454345703125,

0.00524139404296875,

-0.0293731689453125,

-0.005466461181640625,

0.041473388671875,

0.057281494140625,

-0.0122222900390625,

0.000004112720489501953,

-0.0196533203125,

-0.059295654296875,

0.028717041015625,

-0.004322052001953125,

-0.032623291015625,

-0.00893402099609375,

0.0173492431640625,

0.051177978515625,

-0.0135345458984375,

-0.0197906494140625,

0.033599853515625,

-0.0272674560546875,

-0.036407470703125,

-0.035552978515625,

0.020416259765625,

0.01715087890625,

0.039947509765625,

0.045257568359375,

0.0243072509765625,

0.0208282470703125,

0.002872467041015625,

0.00919342041015625,

0.0239410400390625,

-0.0147705078125,

-0.007843017578125,

0.063720703125,

0.0006804466247558594,

-0.020263671875,

0.042083740234375,

-0.054168701171875,

-0.04266357421875,

0.0882568359375,

0.040679931640625,

0.06201171875,

0.003589630126953125,

0.026580810546875,

0.0595703125,

0.0268096923828125,

-0.00365447998046875,

0.03912353515625,

0.0120086669921875,

-0.06805419921875,

-0.0178680419921875,

-0.0238037109375,

-0.01629638671875,

0.0007143020629882812,

-0.057586669921875,

0.025146484375,

-0.042694091796875,

-0.026885986328125,

-0.023345947265625,

0.03179931640625,

-0.051605224609375,

0.0253143310546875,

-0.00797271728515625,

0.06719970703125,

-0.053253173828125,

0.0703125,

0.05047607421875,

-0.0484619140625,

-0.09515380859375,

-0.01666259765625,

-0.02825927734375,

-0.03778076171875,

0.056121826171875,

-0.01207733154296875,

-0.0193328857421875,

0.0284271240234375,

-0.05987548828125,

-0.0634765625,

0.10198974609375,

0.005054473876953125,

-0.0284881591796875,

0.0193939208984375,

-0.0284423828125,

0.03778076171875,

-0.0238800048828125,

0.045379638671875,

0.002742767333984375,

0.023406982421875,

0.01215362548828125,

-0.05615234375,

0.024658203125,

-0.03485107421875,

0.026275634765625,

0.004852294921875,

-0.051177978515625,

0.0745849609375,

-0.0219879150390625,

-0.0272674560546875,

0.01102447509765625,

0.048126220703125,

0.0239715576171875,

0.017822265625,

0.02923583984375,

0.041534423828125,

0.0498046875,

0.0128631591796875,

0.0634765625,

-0.023773193359375,

0.03253173828125,

0.041717529296875,

0.0005345344543457031,

0.045196533203125,

0.01151275634765625,

-0.015625,

0.033721923828125,

0.07354736328125,

-0.0178375244140625,

0.0362548828125,

0.03326416015625,

-0.0083160400390625,

0.00030350685119628906,

0.0204315185546875,

-0.04736328125,

0.01377105712890625,

0.01346588134765625,

-0.01531982421875,

-0.00778961181640625,

0.031890869140625,

-0.01146697998046875,

-0.018707275390625,

-0.032379150390625,

0.0267181396484375,

-0.0164642333984375,

-0.01129150390625,

0.0745849609375,

0.0151519775390625,

0.08282470703125,

-0.036590576171875,

-0.0133056640625,

-0.01235198974609375,

-0.0040283203125,

-0.035858154296875,

-0.04949951171875,

0.01171112060546875,

-0.01352691650390625,

-0.0256805419921875,

-0.00281524658203125,

0.0469970703125,

-0.0224456787109375,

-0.033111572265625,

0.019683837890625,

0.017974853515625,

0.033843994140625,

0.00786590576171875,

-0.06793212890625,

0.0196685791015625,

0.01451873779296875,

-0.0287017822265625,

0.01520538330078125,

0.03997802734375,

0.006900787353515625,

0.06365966796875,

0.030517578125,

0.0060577392578125,

0.0269317626953125,

-0.01343536376953125,

0.0631103515625,

-0.047149658203125,

-0.034912109375,

-0.03173828125,

0.04669189453125,

0.01065826416015625,

-0.03155517578125,

0.044281005859375,

0.045867919921875,

0.051544189453125,

-0.024017333984375,

0.0609130859375,

-0.0221405029296875,

0.0074920654296875,

-0.0501708984375,

0.08197021484375,

-0.0504150390625,

-0.0010051727294921875,

-0.00696563720703125,

-0.036590576171875,

-0.00836181640625,

0.06915283203125,

-0.015655517578125,

0.0183258056640625,

0.035247802734375,

0.08154296875,

-0.0049285888671875,

-0.029541015625,

0.016845703125,

-0.006214141845703125,

0.01338958740234375,

0.050323486328125,

0.05560302734375,

-0.072021484375,

0.038787841796875,

-0.058074951171875,

-0.0277252197265625,

-0.0151214599609375,

-0.06591796875,

-0.042236328125,

-0.0426025390625,

-0.05987548828125,

-0.0634765625,

-0.0203857421875,

0.06005859375,

0.06695556640625,

-0.04248046875,

-0.0186920166015625,

-0.0121612548828125,

0.01482391357421875,

-0.019195556640625,

-0.0222320556640625,

0.036956787109375,

0.0105743408203125,

-0.054901123046875,

-0.0169677734375,

-0.001434326171875,

0.031463623046875,

-0.00302886962890625,

-0.0226898193359375,

-0.01363372802734375,

-0.019134521484375,

0.0136260986328125,

0.037689208984375,

-0.03375244140625,

-0.0078887939453125,

-0.0080718994140625,

-0.0400390625,

0.016693115234375,

0.035308837890625,

-0.039947509765625,

0.025299072265625,

0.04351806640625,

0.00989532470703125,

0.035736083984375,

-0.0105133056640625,

0.0157012939453125,

-0.01739501953125,

0.012451171875,

0.022491455078125,

0.012603759765625,

-0.005634307861328125,

-0.048431396484375,

0.01904296875,

0.0006437301635742188,

-0.060150146484375,

-0.03387451171875,

0.00818634033203125,

-0.08843994140625,

-0.01837158203125,

0.0706787109375,

-0.0195770263671875,

-0.0181884765625,

-0.0230712890625,

-0.0258636474609375,

0.034088134765625,

-0.0261383056640625,

0.0380859375,

0.0209197998046875,

-0.0269012451171875,

-0.046142578125,

-0.04052734375,

0.044464111328125,

0.0020465850830078125,

-0.039337158203125,

-0.033172607421875,

0.042022705078125,

0.040740966796875,

0.026031494140625,

0.07080078125,

0.0029697418212890625,

0.044403076171875,

0.021240234375,

0.05029296875,

-0.00785064697265625,

0.00890350341796875,

-0.045440673828125,

-0.0034942626953125,

0.0083770751953125,

-0.04095458984375

]

] |

microsoft/swin-base-patch4-window7-224-in22k | 2023-06-27T10:46:44.000Z | [

"transformers",

"pytorch",

"tf",

"safetensors",

"swin",

"image-classification",

"vision",

"dataset:imagenet-21k",

"arxiv:2103.14030",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | image-classification | microsoft | null | null | microsoft/swin-base-patch4-window7-224-in22k | 11 | 20,830 | transformers | 2022-03-02T23:29:05 | ---

license: apache-2.0

tags:

- vision

- image-classification

datasets:

- imagenet-21k

widget:

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/tiger.jpg

example_title: Tiger

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/teapot.jpg

example_title: Teapot

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/palace.jpg

example_title: Palace

---

# Swin Transformer (large-sized model)

Swin Transformer model pre-trained on ImageNet-21k (14 million images, 21,841 classes) at resolution 224x224. It was introduced in the paper [Swin Transformer: Hierarchical Vision Transformer using Shifted Windows](https://arxiv.org/abs/2103.14030) by Liu et al. and first released in [this repository](https://github.com/microsoft/Swin-Transformer).

Disclaimer: The team releasing Swin Transformer did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

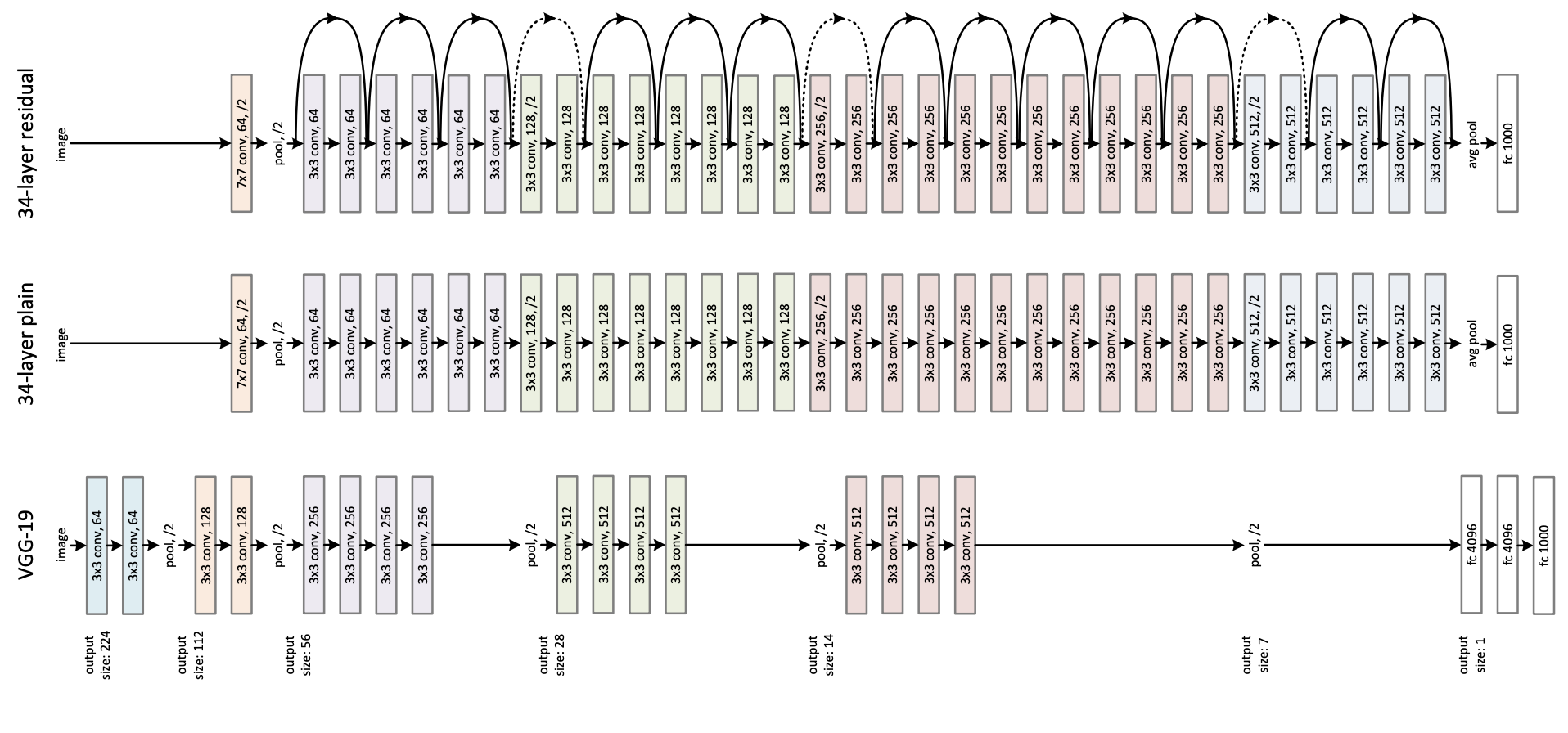

The Swin Transformer is a type of Vision Transformer. It builds hierarchical feature maps by merging image patches (shown in gray) in deeper layers and has linear computation complexity to input image size due to computation of self-attention only within each local window (shown in red). It can thus serve as a general-purpose backbone for both image classification and dense recognition tasks. In contrast, previous vision Transformers produce feature maps of a single low resolution and have quadratic computation complexity to input image size due to computation of self-attention globally.

[Source](https://paperswithcode.com/method/swin-transformer)

## Intended uses & limitations

You can use the raw model for image classification. See the [model hub](https://huggingface.co/models?search=swin) to look for

fine-tuned versions on a task that interests you.

### How to use

Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes:

```python

from transformers import AutoImageProcessor, SwinForImageClassification

from PIL import Image

import requests

url = "http://images.cocodataset.org/val2017/000000039769.jpg"

image = Image.open(requests.get(url, stream=True).raw)

processor = AutoImageProcessor.from_pretrained("microsoft/swin-base-patch4-window7-224-in22k")

model = SwinForImageClassification.from_pretrained("microsoft/swin-base-patch4-window7-224-in22k")

inputs = processor(images=image, return_tensors="pt")

outputs = model(**inputs)

logits = outputs.logits

# model predicts one of the 1000 ImageNet classes

predicted_class_idx = logits.argmax(-1).item()

print("Predicted class:", model.config.id2label[predicted_class_idx])

```

For more code examples, we refer to the [documentation](https://huggingface.co/transformers/model_doc/swin.html#).

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-2103-14030,

author = {Ze Liu and

Yutong Lin and

Yue Cao and

Han Hu and

Yixuan Wei and

Zheng Zhang and

Stephen Lin and

Baining Guo},

title = {Swin Transformer: Hierarchical Vision Transformer using Shifted Windows},

journal = {CoRR},

volume = {abs/2103.14030},

year = {2021},

url = {https://arxiv.org/abs/2103.14030},

eprinttype = {arXiv},

eprint = {2103.14030},

timestamp = {Thu, 08 Apr 2021 07:53:26 +0200},

biburl = {https://dblp.org/rec/journals/corr/abs-2103-14030.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

``` | 3,729 | [

[

-0.048614501953125,

-0.02691650390625,

-0.00980377197265625,

0.012359619140625,

-0.00638580322265625,

-0.0220794677734375,

-0.00502777099609375,

-0.061492919921875,

0.00399017333984375,

0.0247344970703125,

-0.04046630859375,

-0.0148468017578125,

-0.04315185546875,

-0.004772186279296875,

-0.0355224609375,

0.06536865234375,

-0.0013704299926757812,

-0.01305389404296875,

-0.018463134765625,

-0.01375579833984375,

-0.01486968994140625,

-0.0176239013671875,

-0.0382080078125,

-0.01806640625,

0.040557861328125,

0.0106048583984375,

0.055999755859375,

0.033843994140625,

0.064453125,

0.0379638671875,

-0.0028400421142578125,

-0.006328582763671875,

-0.02294921875,

-0.0195770263671875,

0.006481170654296875,

-0.033721923828125,

-0.035308837890625,

0.00971221923828125,

0.03619384765625,

0.0306854248046875,

0.0160980224609375,

0.033050537109375,

0.0054473876953125,

0.037445068359375,

-0.032928466796875,

0.01204681396484375,

-0.045166015625,

0.0171356201171875,

-0.00350189208984375,

-0.003662109375,

-0.0250396728515625,

-0.00507354736328125,

0.0189666748046875,

-0.035797119140625,

0.055633544921875,

0.0194091796875,

0.10662841796875,

0.004108428955078125,

-0.01995849609375,

0.0167999267578125,

-0.03936767578125,

0.0670166015625,

-0.052581787109375,

0.0163421630859375,

-0.0004277229309082031,

0.03924560546875,

0.0107421875,

-0.06524658203125,

-0.03564453125,

-0.0021457672119140625,

-0.022674560546875,

0.01279449462890625,

-0.03173828125,

0.007564544677734375,

0.036346435546875,

0.032135009765625,

-0.036834716796875,

0.0139923095703125,

-0.053131103515625,

-0.0204620361328125,

0.05963134765625,

0.003498077392578125,

0.0242462158203125,

-0.0210418701171875,

-0.04852294921875,

-0.031280517578125,

-0.020599365234375,

0.0148468017578125,

-0.0004436969757080078,

0.00792694091796875,

-0.0307464599609375,

0.041778564453125,

0.01551055908203125,

0.046966552734375,

0.0276641845703125,

-0.0242919921875,

0.041717529296875,

-0.016387939453125,

-0.02618408203125,

-0.00792694091796875,

0.067138671875,

0.04364013671875,

0.0090179443359375,

0.0159149169921875,

-0.025177001953125,

-0.0005893707275390625,

0.0235137939453125,

-0.07421875,

-0.009979248046875,

0.005878448486328125,

-0.0478515625,

-0.041107177734375,

0.006664276123046875,

-0.04913330078125,

-0.005420684814453125,

-0.0200347900390625,

0.0285491943359375,

-0.01447296142578125,

-0.025787353515625,

-0.029205322265625,

-0.018524169921875,

0.047149658203125,

0.03204345703125,

-0.04876708984375,

0.0066680908203125,

0.0189666748046875,

0.07049560546875,

-0.0244598388671875,

-0.03668212890625,

0.0090789794921875,

-0.0162353515625,

-0.0276947021484375,

0.0438232421875,

-0.007526397705078125,

-0.00894927978515625,

-0.005035400390625,

0.03619384765625,

-0.017059326171875,

-0.038421630859375,

0.022064208984375,

-0.03778076171875,

0.00966644287109375,

0.004730224609375,

-0.0087738037109375,

-0.0189208984375,

0.017730712890625,

-0.0498046875,

0.084716796875,

0.033172607421875,

-0.07330322265625,

0.01546478271484375,

-0.0377197265625,

-0.0253143310546875,

0.0111541748046875,

0.0038356781005859375,

-0.048675537109375,

0.004787445068359375,

0.0128326416015625,

0.043914794921875,

-0.01517486572265625,

0.029937744140625,

-0.0301055908203125,

-0.0239105224609375,

0.0068359375,

-0.02545166015625,

0.07586669921875,

0.0120391845703125,

-0.040618896484375,

0.0225830078125,

-0.04266357421875,

-0.00814056396484375,

0.034210205078125,

0.0067596435546875,

-0.01454925537109375,

-0.0345458984375,

0.029052734375,

0.03973388671875,

0.0272979736328125,

-0.047454833984375,

0.018890380859375,

-0.01708984375,

0.0367431640625,

0.0521240234375,

-0.01088714599609375,

0.043792724609375,

-0.0207366943359375,

0.028717041015625,

0.024658203125,

0.05133056640625,

-0.0158233642578125,

-0.045379638671875,

-0.071533203125,

-0.0109100341796875,

0.005084991455078125,

0.0306549072265625,

-0.0467529296875,

0.04534912109375,

-0.031585693359375,

-0.051177978515625,

-0.03765869140625,

-0.00913238525390625,

0.0026569366455078125,

0.04290771484375,

0.03851318359375,

-0.0125732421875,

-0.06146240234375,

-0.0848388671875,

0.0128173828125,

0.003963470458984375,

-0.0012311935424804688,

0.024871826171875,

0.06475830078125,

-0.043731689453125,

0.0640869140625,

-0.0263671875,

-0.026641845703125,

-0.020751953125,

0.0028934478759765625,

0.025299072265625,

0.040771484375,

0.053802490234375,

-0.06561279296875,

-0.03326416015625,

-0.0006475448608398438,

-0.060333251953125,

0.01038360595703125,

-0.0132293701171875,

-0.017669677734375,

0.0302734375,

0.0232391357421875,

-0.03729248046875,

0.060211181640625,

0.04791259765625,

-0.0187530517578125,

0.05419921875,

0.00281524658203125,

0.01169586181640625,

-0.0728759765625,

0.00788116455078125,

0.023193359375,

-0.01044464111328125,

-0.03607177734375,

0.0010824203491210938,

0.0209197998046875,

-0.016204833984375,

-0.0399169921875,

0.03955078125,

-0.0304718017578125,

-0.0088348388671875,

-0.01346588134765625,

-0.000614166259765625,

0.00510406494140625,

0.051910400390625,

0.012176513671875,

0.0249481201171875,

0.05859375,

-0.03546142578125,

0.034027099609375,

0.0217132568359375,

-0.0280609130859375,

0.0380859375,

-0.06573486328125,

-0.015411376953125,

0.00017774105072021484,

0.02764892578125,

-0.06866455078125,

-0.006839752197265625,

-0.00507354736328125,

-0.0292205810546875,

0.03778076171875,

-0.022918701171875,

-0.0169830322265625,

-0.068359375,

-0.0260467529296875,

0.037445068359375,

0.04730224609375,

-0.060577392578125,

0.051910400390625,

0.01099395751953125,

0.0109100341796875,

-0.054931640625,

-0.0792236328125,

-0.0048370361328125,

0.00036787986755371094,

-0.066650390625,

0.041107177734375,

0.00151824951171875,

0.0032405853271484375,

0.0135498046875,

-0.0111846923828125,

0.006992340087890625,

-0.0195159912109375,

0.0390625,

0.068359375,

-0.01904296875,

-0.0203704833984375,

-0.00415802001953125,

-0.0094146728515625,

0.0009455680847167969,

-0.00400543212890625,

0.02703857421875,

-0.0391845703125,

-0.0142059326171875,

-0.03448486328125,

0.00249481201171875,

0.06072998046875,

-0.004573822021484375,

0.048248291015625,

0.0762939453125,

-0.0245513916015625,

-0.006633758544921875,

-0.047149658203125,

-0.0186004638671875,

-0.041168212890625,

0.02850341796875,

-0.022918701171875,

-0.04608154296875,

0.049072265625,

0.0068359375,

0.019683837890625,

0.06756591796875,

0.019561767578125,

-0.01812744140625,

0.07794189453125,

0.03411865234375,

-0.003520965576171875,

0.04791259765625,

-0.06500244140625,

0.0162353515625,

-0.0665283203125,

-0.0307464599609375,

-0.019866943359375,

-0.051971435546875,

-0.050079345703125,

-0.035736083984375,

0.0249176025390625,

0.000004470348358154297,

-0.03680419921875,

0.0501708984375,

-0.0634765625,

0.005035400390625,

0.04742431640625,

0.01222991943359375,

-0.01007843017578125,

0.01338958740234375,

-0.0091400146484375,

-0.002155303955078125,

-0.052398681640625,

0.0025482177734375,

0.048004150390625,

0.040374755859375,

0.06298828125,

-0.022705078125,

0.036956787109375,

0.006473541259765625,

0.0201416015625,

-0.05914306640625,

0.052154541015625,

-0.00007921457290649414,

-0.052154541015625,

-0.019500732421875,

-0.023834228515625,

-0.076171875,

0.0199432373046875,

-0.0271759033203125,

-0.04144287109375,

0.04364013671875,

0.004673004150390625,

0.0126190185546875,

0.05035400390625,

-0.04840087890625,

0.0634765625,

-0.030914306640625,

-0.025146484375,

0.000946044921875,

-0.064208984375,

0.02191162109375,

0.01142120361328125,

0.0009813308715820312,

0.0008568763732910156,

0.0158538818359375,

0.062255859375,

-0.040496826171875,

0.08197021484375,

-0.0275421142578125,

0.0157928466796875,

0.03448486328125,

-0.00846099853515625,

0.0341796875,

-0.025482177734375,

0.0192718505859375,

0.04620361328125,

0.0043182373046875,

-0.035064697265625,

-0.04925537109375,

0.051849365234375,

-0.07476806640625,

-0.035430908203125,

-0.03741455078125,

-0.03369140625,

0.0116424560546875,

0.0217742919921875,

0.050323486328125,

0.043121337890625,

0.00409698486328125,

0.029510498046875,

0.0355224609375,

-0.0183258056640625,

0.046142578125,

0.007747650146484375,

-0.0258026123046875,

-0.01611328125,

0.055419921875,

0.0169677734375,

0.01027679443359375,

0.02630615234375,

0.027374267578125,

-0.0172576904296875,

-0.02001953125,

-0.027618408203125,

0.021087646484375,

-0.048675537109375,

-0.046295166015625,

-0.03692626953125,

-0.05401611328125,

-0.042510986328125,

-0.03326416015625,

-0.03338623046875,

-0.0226287841796875,

-0.0182952880859375,

0.00177764892578125,

0.028411865234375,

0.043792724609375,

0.00817108154296875,

0.00826263427734375,

-0.04345703125,

0.0122528076171875,

0.027740478515625,

0.0263671875,

0.0210723876953125,

-0.0655517578125,

-0.013275146484375,

-0.0013027191162109375,

-0.0306243896484375,

-0.039886474609375,

0.0426025390625,

0.01397705078125,

0.041168212890625,

0.0447998046875,

0.007476806640625,

0.06396484375,

-0.0240020751953125,

0.06182861328125,

0.0513916015625,

-0.0499267578125,

0.048248291015625,

-0.00701904296875,

0.0289306640625,

0.00949859619140625,

0.033599853515625,

-0.0287933349609375,

-0.0154571533203125,

-0.06890869140625,

-0.0682373046875,

0.05078125,

-0.00004696846008300781,

0.0022735595703125,

0.021728515625,

0.01213836669921875,

-0.00010889768600463867,

-0.005977630615234375,

-0.0626220703125,

-0.0361328125,

-0.048187255859375,

-0.0132904052734375,

-0.007389068603515625,

-0.011932373046875,

-0.01215362548828125,

-0.060943603515625,

0.04595947265625,

-0.00827789306640625,

0.052978515625,

0.0223388671875,

-0.0215606689453125,

-0.0105438232421875,

-0.00772857666015625,

0.0225372314453125,

0.024261474609375,

-0.01025390625,

0.0070037841796875,

0.014373779296875,

-0.052642822265625,

-0.01140594482421875,

0.00017452239990234375,

-0.017181396484375,

-0.005825042724609375,

0.044891357421875,

0.08624267578125,

0.026763916015625,

-0.0143280029296875,

0.0682373046875,

0.005779266357421875,

-0.04461669921875,

-0.03594970703125,

0.004642486572265625,

-0.005767822265625,

0.0220947265625,

0.03765869140625,

0.0494384765625,

0.0054473876953125,

-0.023040771484375,

0.0019102096557617188,

0.020660400390625,

-0.0311737060546875,

-0.023895263671875,

0.04315185546875,

0.006687164306640625,

-0.007171630859375,

0.06573486328125,

0.0107269287109375,

-0.0438232421875,

0.06414794921875,

0.055633544921875,

0.05914306640625,

-0.0078887939453125,

0.01212310791015625,

0.06060791015625,

0.0240325927734375,

-0.00560760498046875,

-0.01256561279296875,

0.0004277229309082031,

-0.06280517578125,

-0.00988006591796875,

-0.04315185546875,

-0.00896453857421875,

0.01390838623046875,

-0.057037353515625,

0.0309295654296875,

-0.026397705078125,

-0.0231475830078125,

0.007190704345703125,

0.01349639892578125,

-0.077392578125,

0.0151824951171875,

0.0180816650390625,

0.084716796875,

-0.0662841796875,

0.054840087890625,

0.04815673828125,

-0.02960205078125,

-0.06561279296875,

-0.043121337890625,

-0.0089263916015625,

-0.068359375,

0.0396728515625,

0.0309600830078125,

-0.004062652587890625,

-0.0110321044921875,

-0.07958984375,

-0.05633544921875,

0.11376953125,

0.0006260871887207031,

-0.053192138671875,

-0.00875091552734375,

-0.010467529296875,

0.036651611328125,

-0.0312347412109375,

0.03271484375,

0.01922607421875,

0.045867919921875,

0.030548095703125,

-0.048797607421875,

0.01219940185546875,

-0.042724609375,

0.0214691162109375,

-0.00016820430755615234,

-0.047027587890625,

0.050872802734375,

-0.0384521484375,

-0.00878143310546875,

-0.0060272216796875,

0.048126220703125,

0.004184722900390625,

0.0194854736328125,

0.053466796875,

0.036895751953125,

0.036041259765625,

-0.023284912109375,

0.07550048828125,

-0.007755279541015625,

0.040679931640625,

0.058197021484375,

0.01508331298828125,

0.05279541015625,

0.02874755859375,

-0.0240020751953125,

0.054168701171875,

0.054412841796875,

-0.052490234375,

0.020294189453125,

-0.00704193115234375,

0.0146636962890625,

-0.004261016845703125,

0.0127410888671875,

-0.03497314453125,

0.024993896484375,

0.0207672119140625,

-0.04010009765625,

0.01036834716796875,

0.023590087890625,

-0.0257110595703125,

-0.03558349609375,

-0.03521728515625,

0.0307769775390625,

-0.0006184577941894531,

-0.039947509765625,

0.057861328125,

-0.0168914794921875,

0.07843017578125,

-0.0416259765625,

0.0106658935546875,

-0.0106658935546875,

0.01204681396484375,

-0.04022216796875,

-0.058746337890625,

0.01247406005859375,

-0.0245208740234375,

-0.0097503662109375,

-0.00254058837890625,

0.0830078125,

-0.021728515625,

-0.04290771484375,

0.031951904296875,

0.005672454833984375,

0.01340484619140625,

0.00582122802734375,

-0.07623291015625,

0.007221221923828125,

-0.00015437602996826172,

-0.0390625,

0.028411865234375,

0.01751708984375,

-0.006866455078125,

0.058197021484375,

0.037384033203125,

-0.007465362548828125,

0.01422119140625,

0.003902435302734375,

0.06597900390625,

-0.048675537109375,

-0.0234832763671875,

-0.0233917236328125,

0.043731689453125,

-0.0203399658203125,

-0.0269012451171875,

0.058685302734375,

0.03533935546875,

0.0557861328125,

-0.0269012451171875,

0.051513671875,

-0.027984619140625,

-0.0003037452697753906,

0.01044464111328125,

0.043121337890625,

-0.053619384765625,

-0.013519287109375,

-0.0259552001953125,

-0.06292724609375,

-0.0240631103515625,

0.0570068359375,

-0.025787353515625,

0.0185089111328125,

0.047607421875,

0.06427001953125,

-0.01236724853515625,

0.0004107952117919922,

0.033599853515625,

0.019622802734375,

-0.0007166862487792969,

0.0201263427734375,

0.042266845703125,

-0.06195068359375,

0.038726806640625,

-0.052032470703125,

-0.0245513916015625,

-0.0369873046875,

-0.04730224609375,

-0.06793212890625,

-0.0643310546875,

-0.0330810546875,

-0.044342041015625,

-0.031890869140625,

0.048004150390625,

0.083251953125,

-0.07269287109375,

-0.004169464111328125,

-0.0006093978881835938,

0.0015401840209960938,

-0.0390625,

-0.0235748291015625,

0.03424072265625,

-0.0089874267578125,

-0.05279541015625,

-0.010162353515625,

0.006298065185546875,

0.023590087890625,

-0.026153564453125,

-0.0152587890625,

-0.006237030029296875,

-0.0158843994140625,

0.055328369140625,

0.025177001953125,

-0.042755126953125,

-0.0171661376953125,

0.01116180419921875,

-0.0189971923828125,

0.018157958984375,

0.047454833984375,

-0.04180908203125,

0.01971435546875,

0.044342041015625,

0.0216522216796875,

0.06719970703125,

-0.0096588134765625,

0.006206512451171875,

-0.040679931640625,

0.0223541259765625,

0.0130615234375,

0.040435791015625,

0.0201568603515625,

-0.0253143310546875,

0.037353515625,

0.03143310546875,

-0.050323486328125,

-0.05279541015625,

-0.004390716552734375,

-0.106689453125,

-0.0198516845703125,

0.07586669921875,

-0.00803375244140625,

-0.04168701171875,

0.0035686492919921875,

-0.010711669921875,

0.017364501953125,

-0.0171966552734375,

0.03363037109375,

0.01255035400390625,

-0.002437591552734375,

-0.039520263671875,

-0.025238037109375,

0.013671875,

-0.01148223876953125,

-0.036041259765625,

-0.02349853515625,

0.0113372802734375,

0.0330810546875,

0.035980224609375,

0.01203155517578125,

-0.0301055908203125,

0.0141754150390625,

0.0210723876953125,

0.04266357421875,

-0.00669097900390625,

-0.0243377685546875,

-0.0171356201171875,

0.0031337738037109375,

-0.0243988037109375,

-0.01513671875

]

] |

deepset/minilm-uncased-squad2 | 2023-07-20T06:39:35.000Z | [

"transformers",

"pytorch",

"jax",

"safetensors",

"bert",

"question-answering",

"en",

"dataset:squad_v2",

"license:cc-by-4.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | question-answering | deepset | null | null | deepset/minilm-uncased-squad2 | 38 | 20,829 | transformers | 2022-03-02T23:29:05 | ---

language: en

license: cc-by-4.0

datasets:

- squad_v2

model-index:

- name: deepset/minilm-uncased-squad2

results:

- task:

type: question-answering

name: Question Answering

dataset:

name: squad_v2

type: squad_v2

config: squad_v2

split: validation

metrics:

- type: exact_match

value: 76.1921

name: Exact Match

verified: true

verifyToken: eyJhbGciOiJFZERTQSIsInR5cCI6IkpXVCJ9.eyJoYXNoIjoiNmViZTQ3YTBjYTc3ZDQzYmI1Mzk3MTAxM2MzNjdmMTc0MWY4Yzg2MWU3NGQ1MDJhZWI2NzY0YWYxZTY2OTgzMiIsInZlcnNpb24iOjF9.s4XCRs_pvW__LJ57dpXAEHD6NRsQ3XaFrM1xaguS6oUs5fCN77wNNc97scnfoPXT18A8RAn0cLTNivfxZm0oBA

- type: f1

value: 79.5483

name: F1

verified: true