modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

stabilityai/stable-diffusion-2-1-unclip | 2023-04-12T15:49:10.000Z | [

"diffusers",

"stable-diffusion",

"text-to-image",

"arxiv:2112.10752",

"arxiv:1910.09700",

"license:openrail++",

"has_space",

"diffusers:StableUnCLIPImg2ImgPipeline",

"region:us"

] | text-to-image | stabilityai | null | null | stabilityai/stable-diffusion-2-1-unclip | 216 | 17,333 | diffusers | 2023-03-20T13:11:38 | ---

license: openrail++

tags:

- stable-diffusion

- text-to-image

pinned: true

---

# Stable Diffusion v2-1-unclip Model Card

This model card focuses on the model associated with Stable Diffusion v2-1. The codebase is available [here](https://github.com/Stability-AI/stablediffusion).

This `stable-diffusion-2-1-unclip` model is a fine-tuned version of Stable Diffusion 2.1, modified to accept a (noisy) CLIP image embedding in addition to the text prompt. It can be used to create image variations (see [Examples](#examples)) or can be chained with text-to-image CLIP priors. The amount of noise added to the image embedding is set with `noise_level` (0 means no noise, 1000 means full noise).
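To make the role of `noise_level` concrete, here is a minimal sketch of how a level in [0, 1000] can interpolate between the clean image embedding and pure Gaussian noise. It assumes a cosine variance schedule, and `alpha_bar` and `noise_image_embedding` are hypothetical helpers. The real pipeline configures its own noising scheduler, so treat this as an illustration and not as the diffusers implementation.

```python
import math
import random

NUM_NOISE_LEVELS = 1000  # noise_level ranges over 0..1000, per the card


def alpha_bar(noise_level: int, s: float = 0.008) -> float:
    """Fraction of signal variance kept at `noise_level` under an assumed cosine schedule."""
    def f(t: float) -> float:
        return math.cos((t / NUM_NOISE_LEVELS + s) / (1 + s) * math.pi / 2) ** 2
    return f(noise_level) / f(0)


def noise_image_embedding(embedding, noise_level, rng=None):
    """Return (noised, noise) where noised = sqrt(a) * x + sqrt(1 - a) * eps."""
    if not 0 <= noise_level <= NUM_NOISE_LEVELS:
        raise ValueError("noise_level must be between 0 and 1000")
    rng = rng if rng is not None else random.Random(0)
    a = alpha_bar(noise_level)
    noise = [rng.gauss(0.0, 1.0) for _ in embedding]
    signal_scale, noise_scale = math.sqrt(a), math.sqrt(max(0.0, 1.0 - a))
    noised = [signal_scale * x + noise_scale * eps for x, eps in zip(embedding, noise)]
    return noised, noise
```

In this sketch, `noise_level=0` returns the embedding unchanged, `noise_level=1000` returns (almost exactly) the sampled noise, and intermediate levels trade signal for noise while keeping the total variance constant.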

- Use it with 🧨 [`diffusers`](#examples)

## Model Details

- **Developed by:** Robin Rombach, Patrick Esser

- **Model type:** Diffusion-based text-to-image generation model

- **Language(s):** English

- **License:** [CreativeML Open RAIL++-M License](https://huggingface.co/stabilityai/stable-diffusion-2/blob/main/LICENSE-MODEL)

- **Model Description:** This is a model that can be used to generate and modify images based on text prompts. It is a [Latent Diffusion Model](https://arxiv.org/abs/2112.10752) that uses a fixed, pretrained text encoder ([OpenCLIP-ViT/H](https://github.com/mlfoundations/open_clip)).

- **Resources for more information:** [GitHub Repository](https://github.com/Stability-AI/).

- **Cite as:**

@InProceedings{Rombach_2022_CVPR,

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {10684-10695}

}

## Examples

Use the [🤗 Diffusers library](https://github.com/huggingface/diffusers) to run Stable Diffusion v2-1 UnCLIP in a simple and efficient manner.

```bash

pip install diffusers transformers accelerate scipy safetensors

```

Running the pipeline (if you don't swap the scheduler, it runs with the default DDIM scheduler; in this example we swap it for `DPMSolverMultistepScheduler`):

```python
import torch
from diffusers import DiffusionPipeline, DPMSolverMultistepScheduler
from diffusers.utils import load_image

pipe = DiffusionPipeline.from_pretrained("stabilityai/stable-diffusion-2-1-unclip", torch_dtype=torch.float16)
# swap the default DDIM scheduler for DPMSolverMultistepScheduler
pipe.scheduler = DPMSolverMultistepScheduler.from_config(pipe.scheduler.config)
pipe.to("cuda")

# get image
url = "https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main/stable_unclip/tarsila_do_amaral.png"
image = load_image(url)

# run image variation
variation = pipe(image).images[0]
variation.save("variation.png")
```

# Uses

## Direct Use

The model is intended for research purposes only. Possible research areas and tasks include:

- Safe deployment of models which have the potential to generate harmful content.

- Probing and understanding the limitations and biases of generative models.

- Generation of artworks and use in design and other artistic processes.

- Applications in educational or creative tools.

- Research on generative models.

Excluded uses are described below.

### Misuse, Malicious Use, and Out-of-Scope Use

_Note: This section is taken from the [DALLE-MINI model card](https://huggingface.co/dalle-mini/dalle-mini). It was used for Stable Diffusion v1 and applies in the same way to Stable Diffusion v2._

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

#### Out-of-Scope Use

The model was not trained to produce factual or true representations of people or events; therefore, using the model to generate such content is out of scope for its abilities.

#### Misuse and Malicious Use

Using the model to generate content that is cruel to individuals is a misuse of this model. This includes, but is not limited to:

- Generating demeaning, dehumanizing, or otherwise harmful representations of people or their environments, cultures, religions, etc.

- Intentionally promoting or propagating discriminatory content or harmful stereotypes.

- Impersonating individuals without their consent.

- Sexual content without consent of the people who might see it.

- Mis- and disinformation

- Representations of egregious violence and gore

- Sharing of copyrighted or licensed material in violation of its terms of use.

- Sharing content that is an alteration of copyrighted or licensed material in violation of its terms of use.

## Limitations and Bias

### Limitations

- The model does not achieve perfect photorealism

- The model cannot render legible text

- The model does not perform well on more difficult tasks which involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere”

- Faces and people in general may not be generated properly.

- The model was trained mainly with English captions and will not work as well in other languages.

- The autoencoding part of the model is lossy

- The model was trained on a subset of the large-scale dataset [LAION-5B](https://laion.ai/blog/laion-5b/), which contains adult, violent and sexual content. To partially mitigate this, we have filtered the dataset using LAION's NSFW detector (see Training section).

### Bias

While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases.

Stable Diffusion was primarily trained on subsets of [LAION-2B(en)](https://laion.ai/blog/laion-5b/), which consists of images that are limited to English descriptions. Texts and images from communities and cultures that use other languages are likely to be insufficiently accounted for. This affects the overall output of the model, as white and western cultures are often set as the default. Further, the ability of the model to generate content with non-English prompts is significantly worse than with English-language prompts.

Stable Diffusion v2 mirrors and exacerbates biases to such a degree that viewer discretion must be advised irrespective of the input or its intent.

## Training

**Training Data**

The model developers used the following dataset for training the model:

- LAION-5B and subsets (details below). The training data is further filtered using LAION's NSFW detector, with a "p_unsafe" score of 0.1 (conservative). For more details, please refer to LAION-5B's [NeurIPS 2022](https://openreview.net/forum?id=M3Y74vmsMcY) paper and reviewer discussions on the topic.

## Environmental Impact

**Stable Diffusion v1** **Estimated Emissions**

We estimate the following CO2 emissions using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). The hardware, runtime, cloud provider, and compute region listed below were used to estimate the carbon impact.

- **Hardware Type:** A100 PCIe 40GB

- **Hours used:** 200000

- **Cloud Provider:** AWS

- **Compute Region:** US-east

- **Carbon Emitted (Power consumption x Time x Carbon produced based on location of power grid):** 15000 kg CO2 eq.
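The formula in the last bullet (power consumption × time × carbon intensity) can be sanity-checked with a back-of-the-envelope calculation. Assuming every GPU-hour draws the A100 PCIe 40GB's 250 W rated board power, 200,000 GPU-hours come to 50,000 kWh, so the reported 15,000 kg CO2 eq. implies a grid intensity of about 0.3 kg CO2 eq. per kWh. These are derived estimates under that assumption, not figures reported by the authors.

```python
# Back-of-the-envelope check of the reported emissions (illustration only).
# Assumption: each GPU-hour draws the A100 PCIe 40GB's 250 W board power.
GPU_HOURS = 200_000
GPU_POWER_KW = 0.250
REPORTED_EMISSIONS_KG = 15_000

energy_kwh = GPU_HOURS * GPU_POWER_KW  # 50,000 kWh
# Emissions = energy x carbon intensity, so the implied intensity is:
implied_intensity_kg_per_kwh = REPORTED_EMISSIONS_KG / energy_kwh

print(f"energy: {energy_kwh:,.0f} kWh")
print(f"implied carbon intensity: {implied_intensity_kg_per_kwh:.2f} kg CO2eq/kWh")
```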

## Citation

@InProceedings{Rombach_2022_CVPR,

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {10684-10695}

}

*This model card was written by: Robin Rombach, Patrick Esser and David Ha and is based on the [Stable Diffusion v1](https://github.com/CompVis/stable-diffusion/blob/main/Stable_Diffusion_v1_Model_Card.md) and [DALL-E Mini model card](https://huggingface.co/dalle-mini/dalle-mini).* | 8,136 | [

[

-0.035186767578125,

-0.06396484375,

0.0225372314453125,

0.011688232421875,

-0.0195465087890625,

-0.0287322998046875,

0.006244659423828125,

-0.034698486328125,

-0.00603485107421875,

0.033477783203125,

-0.030548095703125,

-0.03253173828125,

-0.050384521484375,

-0.01222991943359375,

-0.0303192138671875,

0.0694580078125,

-0.002593994140625,

0.00194549560546875,

-0.018310546875,

-0.0010461807250976562,

-0.0246734619140625,

-0.0161590576171875,

-0.0697021484375,

-0.0194549560546875,

0.0269927978515625,

0.00765228271484375,

0.0556640625,

0.040924072265625,

0.0362548828125,

0.02056884765625,

-0.028717041015625,

-0.0029296875,

-0.047943115234375,

-0.0107574462890625,

0.00118255615234375,

-0.01396942138671875,

-0.04534912109375,

0.0175933837890625,

0.053863525390625,

0.0290069580078125,

-0.0097808837890625,

0.005107879638671875,

0.00823211669921875,

0.0377197265625,

-0.04290771484375,

-0.01198577880859375,

-0.032989501953125,

0.011138916015625,

-0.01136016845703125,

0.020965576171875,

-0.0267181396484375,

-0.0009703636169433594,

0.005092620849609375,

-0.055938720703125,

0.0290985107421875,

-0.02362060546875,

0.0819091796875,

0.0318603515625,

-0.0232086181640625,

-0.004161834716796875,

-0.05450439453125,

0.050201416015625,

-0.050018310546875,

0.015960693359375,

0.0236968994140625,

0.00531005859375,

-0.011810302734375,

-0.0709228515625,

-0.04754638671875,

-0.0015115737915039062,

0.0031280517578125,

0.03521728515625,

-0.0291290283203125,

-0.00849151611328125,

0.0313720703125,

0.011749267578125,

-0.038177490234375,

0.007678985595703125,

-0.040435791015625,

-0.00666046142578125,

0.052154541015625,

0.0106658935546875,

0.01561737060546875,

-0.01447296142578125,

-0.03778076171875,

-0.005229949951171875,

-0.04461669921875,

0.0023441314697265625,

0.03485107421875,

-0.01490020751953125,

-0.04083251953125,

0.032318115234375,

0.0170135498046875,

0.034576416015625,

0.016265869140625,

-0.01320648193359375,

0.020294189453125,

-0.0281219482421875,

-0.01541900634765625,

-0.03338623046875,

0.0675048828125,

0.05224609375,

-0.01056671142578125,

0.015777587890625,

-0.002834320068359375,

0.00753021240234375,

0.0008740425109863281,

-0.09820556640625,

-0.033050537109375,

0.01192474365234375,

-0.049224853515625,

-0.044097900390625,

-0.003978729248046875,

-0.08984375,

-0.0166473388671875,

0.011962890625,

0.029998779296875,

-0.016204833984375,

-0.0400390625,

-0.0021495819091796875,

-0.0311737060546875,

0.01104736328125,

0.035980224609375,

-0.049102783203125,

0.0179595947265625,

0.0054931640625,

0.08380126953125,

-0.03076171875,

-0.0020999908447265625,

-0.004856109619140625,

0.007221221923828125,

-0.0211029052734375,

0.0491943359375,

-0.0281524658203125,

-0.04754638671875,

-0.022003173828125,

0.0257415771484375,

0.0168609619140625,

-0.0421142578125,

0.045562744140625,

-0.02972412109375,

0.0267181396484375,

-0.001312255859375,

-0.0258941650390625,

-0.0159454345703125,

-0.00893402099609375,

-0.05084228515625,

0.086181640625,

0.01131439208984375,

-0.06787109375,

0.007450103759765625,

-0.0560302734375,

-0.01904296875,

-0.00487518310546875,

0.00572967529296875,

-0.054443359375,

-0.0168304443359375,

-0.000988006591796875,

0.0272064208984375,

-0.01456451416015625,

0.022430419921875,

-0.0271759033203125,

-0.018157958984375,

-0.0035495758056640625,

-0.05084228515625,

0.0836181640625,

0.0290679931640625,

-0.02984619140625,

-0.0010929107666015625,

-0.0517578125,

-0.02197265625,

0.03668212890625,

-0.0158538818359375,

-0.01285552978515625,

-0.01058197021484375,

0.021453857421875,

0.024261474609375,

0.0103912353515625,

-0.032501220703125,

-0.0006208419799804688,

-0.01360321044921875,

0.047149658203125,

0.05743408203125,

0.0213470458984375,

0.04791259765625,

-0.0266876220703125,

0.0435791015625,

0.0267486572265625,

0.02593994140625,

-0.00888824462890625,

-0.065673828125,

-0.04315185546875,

-0.02337646484375,

0.0183258056640625,

0.04058837890625,

-0.044158935546875,

0.024139404296875,

0.006103515625,

-0.05511474609375,

-0.01406097412109375,

-0.00510406494140625,

0.01763916015625,

0.052520751953125,

0.0226593017578125,

-0.0303497314453125,

-0.02984619140625,

-0.05731201171875,

0.024932861328125,

-0.00638580322265625,

0.01180267333984375,

0.023162841796875,

0.0576171875,

-0.028900146484375,

0.04644775390625,

-0.048126220703125,

-0.020294189453125,

0.00970458984375,

0.0095062255859375,

0.0014562606811523438,

0.0531005859375,

0.057342529296875,

-0.07904052734375,

-0.047149658203125,

-0.0249786376953125,

-0.0595703125,

-0.002368927001953125,

0.00020301342010498047,

-0.023712158203125,

0.0299835205078125,

0.03472900390625,

-0.053436279296875,

0.0472412109375,

0.050445556640625,

-0.0238189697265625,

0.037933349609375,

-0.0325927734375,

-0.0038890838623046875,

-0.0810546875,

0.015960693359375,

0.0211334228515625,

-0.017059326171875,

-0.044189453125,

0.004253387451171875,

-0.0120697021484375,

-0.0207061767578125,

-0.05450439453125,

0.056121826171875,

-0.0301055908203125,

0.0261688232421875,

-0.0308837890625,

0.00037384033203125,

0.01514434814453125,

0.022491455078125,

0.017791748046875,

0.053802490234375,

0.059600830078125,

-0.046142578125,

0.00435638427734375,

0.01837158203125,

-0.0128631591796875,

0.037017822265625,

-0.06787109375,

0.015228271484375,

-0.027099609375,

0.026214599609375,

-0.07171630859375,

-0.01322174072265625,

0.0421142578125,

-0.02685546875,

0.0291748046875,

-0.0222930908203125,

-0.033935546875,

-0.02923583984375,

-0.01396942138671875,

0.038970947265625,

0.08074951171875,

-0.0328369140625,

0.0305633544921875,

0.04168701171875,

0.01371002197265625,

-0.033233642578125,

-0.060638427734375,

-0.007099151611328125,

-0.032745361328125,

-0.061981201171875,

0.047637939453125,

-0.024169921875,

-0.01511383056640625,

0.008941650390625,

0.01184844970703125,

-0.008575439453125,

0.003864288330078125,

0.03692626953125,

0.020904541015625,

0.009521484375,

-0.00986480712890625,

0.01436614990234375,

-0.01702880859375,

-0.00115203857421875,

-0.003932952880859375,

0.030487060546875,

0.007640838623046875,

-0.0005965232849121094,

-0.052734375,

0.03753662109375,

0.04742431640625,

0.001468658447265625,

0.058135986328125,

0.06890869140625,

-0.040435791015625,

0.001888275146484375,

-0.0175323486328125,

-0.01451873779296875,

-0.0380859375,

0.030120849609375,

-0.01003265380859375,

-0.043609619140625,

0.04736328125,

-0.002498626708984375,

-0.001495361328125,

0.055419921875,

0.0550537109375,

-0.016693115234375,

0.08343505859375,

0.04339599609375,

0.0286102294921875,

0.052032470703125,

-0.05841064453125,

-0.004924774169921875,

-0.070556640625,

-0.0201873779296875,

-0.0182647705078125,

-0.017059326171875,

-0.040924072265625,

-0.05047607421875,

0.03118896484375,

0.0227203369140625,

-0.019287109375,

0.014404296875,

-0.047088623046875,

0.0251007080078125,

0.0206298828125,

0.0134124755859375,

0.000507354736328125,

0.013916015625,

0.00670623779296875,

-0.01480865478515625,

-0.057220458984375,

-0.048004150390625,

0.07421875,

0.038909912109375,

0.06591796875,

0.0050048828125,

0.037078857421875,

0.0357666015625,

0.0230865478515625,

-0.031494140625,

0.04486083984375,

-0.0328369140625,

-0.050506591796875,

-0.00885009765625,

-0.017852783203125,

-0.0736083984375,

0.01548004150390625,

-0.020843505859375,

-0.03375244140625,

0.037384033203125,

0.014129638671875,

-0.0178680419921875,

0.0283203125,

-0.055938720703125,

0.07427978515625,

-0.007579803466796875,

-0.05657958984375,

-0.0125274658203125,

-0.0506591796875,

0.023773193359375,

-0.00022304058074951172,

0.0196533203125,

-0.0097198486328125,

-0.00791168212890625,

0.0706787109375,

-0.0285186767578125,

0.076416015625,

-0.033477783203125,

0.0030994415283203125,

0.040802001953125,

-0.0078125,

0.032928466796875,

0.01251983642578125,

-0.01262664794921875,

0.042938232421875,

0.007389068603515625,

-0.02972412109375,

-0.0238494873046875,

0.0545654296875,

-0.0718994140625,

-0.03753662109375,

-0.033935546875,

-0.0269317626953125,

0.048095703125,

0.019287109375,

0.059051513671875,

0.026611328125,

-0.01904296875,

-0.0045013427734375,

0.053009033203125,

-0.0230712890625,

0.036041259765625,

0.02294921875,

-0.02374267578125,

-0.043792724609375,

0.057464599609375,

0.02227783203125,

0.04150390625,

-0.00856781005859375,

0.021331787109375,

-0.0111236572265625,

-0.039581298828125,

-0.042694091796875,

0.0167236328125,

-0.06036376953125,

-0.0131072998046875,

-0.056732177734375,

-0.024200439453125,

-0.032257080078125,

-0.01062774658203125,

-0.0313720703125,

-0.02301025390625,

-0.06414794921875,

0.00913238525390625,

0.019561767578125,

0.0416259765625,

-0.028656005859375,

0.02923583984375,

-0.02728271484375,

0.02349853515625,

0.0102386474609375,

0.01319122314453125,

0.0027675628662109375,

-0.056304931640625,

-0.010528564453125,

0.0157470703125,

-0.052825927734375,

-0.0728759765625,

0.02947998046875,

0.0075531005859375,

0.039581298828125,

0.038055419921875,

-0.00014495849609375,

0.047943115234375,

-0.0299224853515625,

0.0831298828125,

0.01947021484375,

-0.053375244140625,

0.04693603515625,

-0.0304107666015625,

0.00989532470703125,

0.0197601318359375,

0.040679931640625,

-0.017181396484375,

-0.0213165283203125,

-0.06524658203125,

-0.0645751953125,

0.043487548828125,

0.0255279541015625,

0.02349853515625,

-0.00937652587890625,

0.050506591796875,

0.002475738525390625,

-0.000980377197265625,

-0.07574462890625,

-0.03948974609375,

-0.0238189697265625,

0.01229095458984375,

0.0096588134765625,

-0.029205322265625,

-0.013153076171875,

-0.04473876953125,

0.0699462890625,

0.006855010986328125,

0.04400634765625,

0.027679443359375,

0.0103912353515625,

-0.02947998046875,

-0.0203704833984375,

0.0440673828125,

0.0262908935546875,

-0.0168609619140625,

0.00014448165893554688,

0.00004690885543823242,

-0.040435791015625,

0.017364501953125,

0.004642486572265625,

-0.058258056640625,

0.0018367767333984375,

-0.003326416015625,

0.061370849609375,

-0.0187835693359375,

-0.036956787109375,

0.05169677734375,

-0.007720947265625,

-0.0313720703125,

-0.041290283203125,

0.01000213623046875,

0.0044708251953125,

0.0178680419921875,

0.0035762786865234375,

0.0433349609375,

0.01337432861328125,

-0.02978515625,

0.00926971435546875,

0.047119140625,

-0.0294342041015625,

-0.02685546875,

0.08270263671875,

0.01203155517578125,

-0.025848388671875,

0.046539306640625,

-0.0389404296875,

-0.0206146240234375,

0.055267333984375,

0.0587158203125,

0.059051513671875,

-0.01062774658203125,

0.030914306640625,

0.05523681640625,

0.018890380859375,

-0.0163726806640625,

0.01042938232421875,

0.0178070068359375,

-0.05419921875,

-0.00334930419921875,

-0.030120849609375,

-0.006313323974609375,

0.01467132568359375,

-0.040496826171875,

0.042327880859375,

-0.04266357421875,

-0.033477783203125,

-0.0016689300537109375,

-0.0191497802734375,

-0.04266357421875,

0.004497528076171875,

0.0197601318359375,

0.05999755859375,

-0.0823974609375,

0.061614990234375,

0.051788330078125,

-0.044219970703125,

-0.031402587890625,

0.0020694732666015625,

-0.005779266357421875,

-0.026031494140625,

0.037628173828125,

0.009124755859375,

-0.0003268718719482422,

0.004978179931640625,

-0.06793212890625,

-0.06842041015625,

0.097900390625,

0.0282440185546875,

-0.022308349609375,

0.003742218017578125,

-0.0150604248046875,

0.047943115234375,

-0.035888671875,

0.0214385986328125,

0.0205535888671875,

0.032745361328125,

0.03228759765625,

-0.035675048828125,

0.00927734375,

-0.0245819091796875,

0.02166748046875,

-0.0037555694580078125,

-0.077392578125,

0.07391357421875,

-0.030609130859375,

-0.0338134765625,

0.0235748291015625,

0.045806884765625,

0.01334381103515625,

0.027130126953125,

0.03289794921875,

0.06353759765625,

0.046539306640625,

-0.0027713775634765625,

0.0726318359375,

-0.008575439453125,

0.0310516357421875,

0.0650634765625,

-0.009735107421875,

0.059112548828125,

0.0361328125,

-0.020355224609375,

0.04852294921875,

0.048828125,

-0.033050537109375,

0.061279296875,

0.0013141632080078125,

-0.0149383544921875,

-0.006557464599609375,

-0.01346588134765625,

-0.035064697265625,

0.01230621337890625,

0.021331787109375,

-0.041015625,

-0.0126495361328125,

0.01216888427734375,

0.006679534912109375,

-0.0156707763671875,

-0.0036411285400390625,

0.04248046875,

0.004871368408203125,

-0.031494140625,

0.0465087890625,

0.02056884765625,

0.064697265625,

-0.03485107421875,

-0.0168609619140625,

-0.005474090576171875,

0.00807952880859375,

-0.021575927734375,

-0.059600830078125,

0.03936767578125,

-0.0038890838623046875,

-0.0218963623046875,

-0.0171051025390625,

0.060699462890625,

-0.0235443115234375,

-0.0506591796875,

0.03240966796875,

0.01739501953125,

0.0269012451171875,

0.01013946533203125,

-0.07861328125,

0.01406097412109375,

-0.0020771026611328125,

-0.0188140869140625,

0.02740478515625,

0.006191253662109375,

0.0097808837890625,

0.034423828125,

0.04669189453125,

-0.00711822509765625,

0.003147125244140625,

-0.01200103759765625,

0.058319091796875,

-0.0197906494140625,

-0.0243988037109375,

-0.058746337890625,

0.0555419921875,

-0.007427215576171875,

-0.0226593017578125,

0.05120849609375,

0.04876708984375,

0.055267333984375,

-0.0040130615234375,

0.060089111328125,

-0.0220489501953125,

-0.0020732879638671875,

-0.031890869140625,

0.059600830078125,

-0.05914306640625,

0.01274871826171875,

-0.0269622802734375,

-0.06634521484375,

-0.0093841552734375,

0.06109619140625,

-0.0172576904296875,

0.015777587890625,

0.0335693359375,

0.07586669921875,

-0.00463104248046875,

-0.01464080810546875,

0.0260009765625,

0.0238189697265625,

0.03131103515625,

0.021209716796875,

0.052276611328125,

-0.058258056640625,

0.0288543701171875,

-0.04052734375,

-0.022216796875,

0.004276275634765625,

-0.07232666015625,

-0.06353759765625,

-0.051300048828125,

-0.06268310546875,

-0.056243896484375,

-0.007251739501953125,

0.03497314453125,

0.07012939453125,

-0.036407470703125,

-0.0057830810546875,

-0.020111083984375,

0.0015611648559570312,

-0.011260986328125,

-0.02166748046875,

0.0287933349609375,

0.0170135498046875,

-0.06591796875,

-0.001987457275390625,

0.0192718505859375,

0.046539306640625,

-0.04010009765625,

-0.0181884765625,

-0.01702880859375,

-0.00806427001953125,

0.03973388671875,

0.00963592529296875,

-0.050384521484375,

-0.00510406494140625,

-0.01041412353515625,

-0.0006589889526367188,

0.01171112060546875,

0.0235137939453125,

-0.044647216796875,

0.0271148681640625,

0.037628173828125,

0.01031494140625,

0.06182861328125,

-0.005786895751953125,

0.008544921875,

-0.036590576171875,

0.029693603515625,

0.00611114501953125,

0.028167724609375,

0.0217437744140625,

-0.043365478515625,

0.03582763671875,

0.052001953125,

-0.056182861328125,

-0.060455322265625,

0.018646240234375,

-0.08697509765625,

-0.024200439453125,

0.10235595703125,

-0.01314544677734375,

-0.023162841796875,

0.0034503936767578125,

-0.022674560546875,

0.0173797607421875,

-0.0283203125,

0.04083251953125,

0.041473388671875,

-0.01102447509765625,

-0.0367431640625,

-0.0435791015625,

0.03338623046875,

0.011932373046875,

-0.046600341796875,

-0.01171112060546875,

0.045562744140625,

0.049163818359375,

0.0173492431640625,

0.06390380859375,

-0.028594970703125,

0.01332855224609375,

0.01526641845703125,

0.004150390625,

-0.0034618377685546875,

-0.0187835693359375,

-0.036346435546875,

0.00438690185546875,

-0.015777587890625,

0.0015363693237304688

]

] |

EleutherAI/polyglot-ko-12.8b | 2023-06-07T05:03:56.000Z | [

"transformers",

"pytorch",

"safetensors",

"gpt_neox",

"text-generation",

"causal-lm",

"ko",

"arxiv:2104.09864",

"arxiv:2204.04541",

"arxiv:2306.02254",

"license:apache-2.0",

"endpoints_compatible",

"has_space",

"text-generation-inference",

"region:us"

] | text-generation | EleutherAI | null | null | EleutherAI/polyglot-ko-12.8b | 63 | 17,274 | transformers | 2022-10-14T23:46:19 | ---

language:

- ko

tags:

- pytorch

- causal-lm

license: apache-2.0

---

# Polyglot-Ko-12.8B

## Model Description

Polyglot-Ko is a series of large-scale Korean autoregressive language models made by the EleutherAI polyglot team.

| Hyperparameter | Value |

|----------------------|----------------------------------------------------------------------------------------------------------------------------------------|

| \\(n_{parameters}\\) | 12,898,631,680 |

| \\(n_{layers}\\) | 40 |

| \\(d_{model}\\) | 5120 |

| \\(d_{ff}\\) | 20,480 |

| \\(n_{heads}\\) | 40 |

| \\(d_{head}\\) | 128 |

| \\(n_{ctx}\\) | 2,048 |

| \\(n_{vocab}\\) | 30,003 / 30,080 |

| Positional Encoding | [Rotary Position Embedding (RoPE)](https://arxiv.org/abs/2104.09864) |

| RoPE Dimensions | [64](https://github.com/kingoflolz/mesh-transformer-jax/blob/f2aa66e0925de6593dcbb70e72399b97b4130482/mesh_transformer/layers.py#L223) |

The model consists of 40 transformer layers with a model dimension of 5120 and a feedforward dimension of 20,480. The model dimension is split into 40 heads, each with a dimension of 128. Rotary Position Embedding (RoPE) is applied to 64 dimensions of each head. The model is trained with a tokenizer vocabulary of 30,003 tokens.
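Applying RoPE to only part of each head can be sketched for a single 128-dimensional head (5120 / 40 = 128) as follows. This is a minimal pure-Python illustration, not GPT-NeoX's code: it assumes the "rotate-half" pairing (dimension i with dimension i + 32 inside the 64 rotary dimensions) and a frequency base of 10000, neither of which this card states. `apply_partial_rope` is a hypothetical helper.

```python
import math

D_HEAD = 5120 // 40  # 128, matching d_head in the table
ROTARY_DIMS = 64     # RoPE dimensions from the table


def apply_partial_rope(head, position, rotary_dims=ROTARY_DIMS, base=10000.0):
    """Rotate the first `rotary_dims` entries of one head vector; pass the rest through."""
    if rotary_dims % 2 or rotary_dims > len(head):
        raise ValueError("rotary_dims must be even and no larger than the head size")
    half = rotary_dims // 2
    out = list(head)  # entries at index >= rotary_dims stay unchanged
    for i in range(half):
        # pair i rotates at frequency base^(-2i / rotary_dims)
        theta = position * base ** (-2 * i / rotary_dims)
        c, s = math.cos(theta), math.sin(theta)
        x1, x2 = head[i], head[i + half]
        out[i] = x1 * c - x2 * s
        out[i + half] = x2 * c + x1 * s
    return out
```

Because each pair is rotated by an angle proportional to the position, the dot product of a rotated query and key depends only on their relative offset, while the remaining 64 dimensions of each head carry no positional signal.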

## Training data

Polyglot-Ko-12.8B was trained on 863 GB of Korean language data (1.2 TB before processing), a large-scale dataset curated by [TUNiB](https://tunib.ai/). The data collection process complied with South Korean laws. This dataset was collected for the purpose of training Polyglot-Ko models and will not be released for public use.

| Source |Size (GB) | Link |

|-------------------------------------|---------|------------------------------------------|

| Korean blog posts | 682.3 | - |

| Korean news dataset | 87.0 | - |

| Modu corpus | 26.4 |corpus.korean.go.kr |

| Korean patent dataset | 19.0 | - |

| Korean Q & A dataset | 18.1 | - |

| KcBert dataset | 12.7 | github.com/Beomi/KcBERT |

| Korean fiction dataset | 6.1 | - |

| Korean online comments | 4.2 | - |

| Korean wikipedia | 1.4 | ko.wikipedia.org |

| Clova call | < 1.0 | github.com/clovaai/ClovaCall |

| Naver sentiment movie corpus | < 1.0 | github.com/e9t/nsmc |

| Korean hate speech dataset | < 1.0 | - |

| Open subtitles | < 1.0 | opus.nlpl.eu/OpenSubtitles.php |

| AIHub various tasks datasets | < 1.0 |aihub.or.kr |

| Standard Korean language dictionary | < 1.0 | stdict.korean.go.kr/main/main.do |
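As a quick consistency check of the table, the sources with a listed size sum to 857.2 GB, and the remaining 5.8 GB of the 863 GB total fits within the six sources listed as "< 1.0" GB. This is arithmetic on the table's own numbers, not an additional figure from the authors.

```python
# Sizes (GB) copied from the table above; the six "< 1.0" GB sources are excluded.
SOURCE_SIZES_GB = {
    "Korean blog posts": 682.3,
    "Korean news dataset": 87.0,
    "Modu corpus": 26.4,
    "Korean patent dataset": 19.0,
    "Korean Q & A dataset": 18.1,
    "KcBert dataset": 12.7,
    "Korean fiction dataset": 6.1,
    "Korean online comments": 4.2,
    "Korean wikipedia": 1.4,
}
SMALL_SOURCES = 6        # each reported as "< 1.0" GB
REPORTED_TOTAL_GB = 863  # processed dataset size from the text

listed_total = sum(SOURCE_SIZES_GB.values())
remainder = REPORTED_TOTAL_GB - listed_total
# The remainder must fit within the six small sources for the table to be consistent.
consistent = 0 <= remainder < SMALL_SOURCES * 1.0
print(f"listed: {listed_total:.1f} GB, remainder: {remainder:.1f} GB, consistent: {consistent}")
```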

Furthermore, in order to avoid the model memorizing and generating personally identifiable information (PII) in the training data, we masked out the following sensitive information in the pre-processing stage:

* `<|acc|>` : bank account number

* `<|rrn|>` : resident registration number

* `<|tell|>` : phone number
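The card does not describe how this masking was implemented. The following is a hypothetical regex-based sketch of the idea; the patterns are rough illustrations of common Korean formats and are assumptions, not the team's actual rules.

```python
import re

# Hypothetical patterns for illustration only.
# Order matters: specific formats are replaced before the looser account-number pattern.
PII_PATTERNS = [
    # resident registration number, YYMMDD-NNNNNNN
    ("<|rrn|>", re.compile(r"(?<!\d)\d{6}-[1-4]\d{6}(?!\d)")),
    # mobile phone number such as 010-1234-5678
    ("<|tell|>", re.compile(r"(?<!\d)01[016789]-?\d{3,4}-?\d{4}(?!\d)")),
    # bank account number, very rough: three hyphen-separated digit groups
    ("<|acc|>", re.compile(r"(?<!\d)\d{2,6}-\d{2,6}-\d{2,7}(?!\d)")),
]


def mask_pii(text: str) -> str:
    """Replace every matched PII span with its mask token."""
    for token, pattern in PII_PATTERNS:
        text = pattern.sub(token, text)
    return text
```

For example, `mask_pii("call 010-1234-5678")` would return `"call <|tell|>"`. The digit lookarounds are used instead of `\b` because Korean characters count as word characters in Python regular expressions.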

## Training procedure

Polyglot-Ko-12.8B was trained for 167 billion tokens over 301,000 steps on 256 A100 GPUs with the [GPT-NeoX framework](https://github.com/EleutherAI/gpt-neox). It was trained as an autoregressive language model, using cross-entropy loss to maximize the likelihood of predicting the next token.
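The training objective can be illustrated with a minimal sketch of next-token cross-entropy: the logits at position t score the token at position t + 1, and the loss is the mean negative log-probability of the true next tokens. `next_token_loss` is a hypothetical pure-Python helper, not the GPT-NeoX implementation, which operates on batched tensors.

```python
import math


def next_token_loss(logits, tokens):
    """Mean cross-entropy where logits[t] (one score per vocab entry) predicts tokens[t + 1]."""
    if len(logits) != len(tokens) - 1:
        raise ValueError("need exactly one logit vector per predicted position")
    total = 0.0
    for row, target in zip(logits, tokens[1:]):
        m = max(row)
        log_z = m + math.log(sum(math.exp(x - m) for x in row))  # stable log-sum-exp
        total += log_z - row[target]  # equals -log softmax(row)[target]
    return total / len(logits)
```

Minimizing this loss is the same as maximizing the likelihood of the training text; with uniform logits over a vocabulary of size V, it equals ln V.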

## How to use

This model can be easily loaded using the `AutoModelForCausalLM` class:

```python

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("EleutherAI/polyglot-ko-12.8b")

model = AutoModelForCausalLM.from_pretrained("EleutherAI/polyglot-ko-12.8b")

```

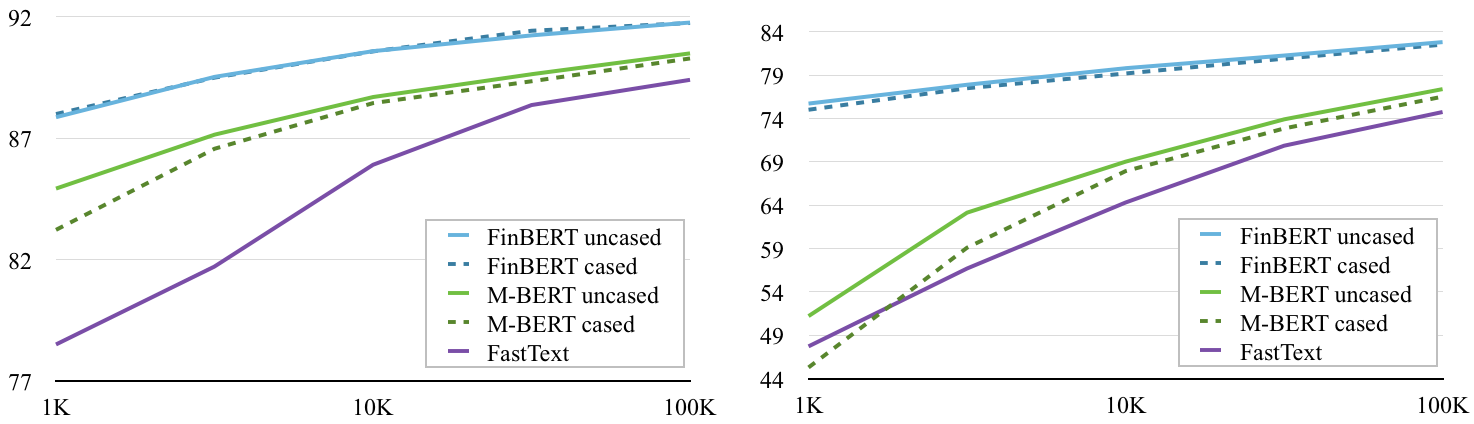

## Evaluation results

We evaluate Polyglot-Ko-12.8B on the [KOBEST dataset](https://arxiv.org/abs/2204.04541), a benchmark with 5 downstream tasks, against comparable models such as skt/ko-gpt-trinity-1.2B-v0.5, kakaobrain/kogpt and facebook/xglm-7.5B, using the prompts provided in the paper.

The following tables show the results for different numbers of few-shot examples. You can reproduce these results using the [polyglot branch of lm-evaluation-harness](https://github.com/EleutherAI/lm-evaluation-harness/tree/polyglot) and the following script. For a fair comparison, all models were run under the same conditions and using the same prompts. In the tables, `n` refers to the number of few-shot examples.

On the WiC dataset, all models perform at chance level.

```console
python main.py \
    --model gpt2 \
    --model_args pretrained='EleutherAI/polyglot-ko-12.8b' \
    --tasks kobest_copa,kobest_hellaswag \
    --num_fewshot $YOUR_NUM_FEWSHOT \
    --batch_size $YOUR_BATCH_SIZE \
    --device $YOUR_DEVICE \
    --output_path /path/to/output/
```

### COPA (F1)

| Model | params | 0-shot | 5-shot | 10-shot | 50-shot |

|----------------------------------------------------------------------------------------------|--------|--------|--------|---------|---------|

| [skt/ko-gpt-trinity-1.2B-v0.5](https://huggingface.co/skt/ko-gpt-trinity-1.2B-v0.5) | 1.2B | 0.6696 | 0.6477 | 0.6419 | 0.6514 |

| [kakaobrain/kogpt](https://huggingface.co/kakaobrain/kogpt) | 6.0B | 0.7345 | 0.7287 | 0.7277 | 0.7479 |

| [facebook/xglm-7.5B](https://huggingface.co/facebook/xglm-7.5B) | 7.5B | 0.6723 | 0.6731 | 0.6769 | 0.7119 |

| [EleutherAI/polyglot-ko-1.3b](https://huggingface.co/EleutherAI/polyglot-ko-1.3b) | 1.3B | 0.7196 | 0.7193 | 0.7204 | 0.7206 |

| [EleutherAI/polyglot-ko-3.8b](https://huggingface.co/EleutherAI/polyglot-ko-3.8b) | 3.8B | 0.7595 | 0.7608 | 0.7638 | 0.7788 |

| [EleutherAI/polyglot-ko-5.8b](https://huggingface.co/EleutherAI/polyglot-ko-5.8b) | 5.8B | 0.7745 | 0.7676 | 0.7775 | 0.7887 |

| **[EleutherAI/polyglot-ko-12.8b](https://huggingface.co/EleutherAI/polyglot-ko-12.8b) (this)** | **12.8B** | **0.7937** | **0.8108** | **0.8037** | **0.8369** |

<img src="https://github.com/EleutherAI/polyglot/assets/19511788/d5b49364-aed5-4467-bae2-5a322c8e2ceb" width="800px">

### HellaSwag (F1)

| Model | params | 0-shot | 5-shot | 10-shot | 50-shot |

|----------------------------------------------------------------------------------------------|--------|--------|--------|---------|---------|

| [skt/ko-gpt-trinity-1.2B-v0.5](https://huggingface.co/skt/ko-gpt-trinity-1.2B-v0.5) | 1.2B | 0.5243 | 0.5272 | 0.5166 | 0.5352 |

| [kakaobrain/kogpt](https://huggingface.co/kakaobrain/kogpt) | 6.0B | 0.5590 | 0.5833 | 0.5828 | 0.5907 |

| [facebook/xglm-7.5B](https://huggingface.co/facebook/xglm-7.5B) | 7.5B | 0.5665 | 0.5689 | 0.5565 | 0.5622 |

| [EleutherAI/polyglot-ko-1.3b](https://huggingface.co/EleutherAI/polyglot-ko-1.3b) | 1.3B | 0.5247 | 0.5260 | 0.5278 | 0.5427 |

| [EleutherAI/polyglot-ko-3.8b](https://huggingface.co/EleutherAI/polyglot-ko-3.8b) | 3.8B | 0.5707 | 0.5830 | 0.5670 | 0.5787 |

| [EleutherAI/polyglot-ko-5.8b](https://huggingface.co/EleutherAI/polyglot-ko-5.8b) | 5.8B | 0.5976 | 0.5998 | 0.5979 | 0.6208 |

| **[EleutherAI/polyglot-ko-12.8b (this)](https://huggingface.co/EleutherAI/polyglot-ko-12.8b)** | **12.8B** | **0.5954** | **0.6306** | **0.6098** | **0.6118** |

<img src="https://github.com/EleutherAI/polyglot/assets/19511788/5acb60ac-161a-4ab3-a296-db4442e08b7f" width="800px">

### BoolQ (F1)

| Model | params | 0-shot | 5-shot | 10-shot | 50-shot |
|----------------------------------------------------------------------------------------------|--------|--------|--------|---------|---------|
| [skt/ko-gpt-trinity-1.2B-v0.5](https://huggingface.co/skt/ko-gpt-trinity-1.2B-v0.5) | 1.2B | 0.3356 | 0.4014 | 0.3640 | 0.3560 |
| [kakaobrain/kogpt](https://huggingface.co/kakaobrain/kogpt) | 6.0B | 0.4514 | 0.5981 | 0.5499 | 0.5202 |
| [facebook/xglm-7.5B](https://huggingface.co/facebook/xglm-7.5B) | 7.5B | 0.4464 | 0.3324 | 0.3324 | 0.3324 |
| [EleutherAI/polyglot-ko-1.3b](https://huggingface.co/EleutherAI/polyglot-ko-1.3b) | 1.3B | 0.3552 | 0.4751 | 0.4109 | 0.4038 |
| [EleutherAI/polyglot-ko-3.8b](https://huggingface.co/EleutherAI/polyglot-ko-3.8b) | 3.8B | 0.4320 | 0.5263 | 0.4930 | 0.4038 |
| [EleutherAI/polyglot-ko-5.8b](https://huggingface.co/EleutherAI/polyglot-ko-5.8b) | 5.8B | 0.4356 | 0.5698 | 0.5187 | 0.5236 |
| **[EleutherAI/polyglot-ko-12.8b (this)](https://huggingface.co/EleutherAI/polyglot-ko-12.8b)** | **12.8B** | **0.4818** | **0.6041** | **0.6289** | **0.6448** |

<img src="https://github.com/EleutherAI/polyglot/assets/19511788/b74c23c0-01f3-4b68-9e10-a48e9aa052ab" width="800px">

### SentiNeg (F1)

| Model | params | 0-shot | 5-shot | 10-shot | 50-shot |
|----------------------------------------------------------------------------------------------|--------|--------|--------|---------|---------|
| [skt/ko-gpt-trinity-1.2B-v0.5](https://huggingface.co/skt/ko-gpt-trinity-1.2B-v0.5) | 1.2B | 0.6065 | 0.6878 | 0.7280 | 0.8413 |
| [kakaobrain/kogpt](https://huggingface.co/kakaobrain/kogpt) | 6.0B | 0.3747 | 0.8942 | 0.9294 | 0.9698 |
| [facebook/xglm-7.5B](https://huggingface.co/facebook/xglm-7.5B) | 7.5B | 0.3578 | 0.4471 | 0.3964 | 0.5271 |
| [EleutherAI/polyglot-ko-1.3b](https://huggingface.co/EleutherAI/polyglot-ko-1.3b) | 1.3B | 0.6790 | 0.6257 | 0.5514 | 0.7851 |
| [EleutherAI/polyglot-ko-3.8b](https://huggingface.co/EleutherAI/polyglot-ko-3.8b) | 3.8B | 0.4858 | 0.7950 | 0.7320 | 0.7851 |
| [EleutherAI/polyglot-ko-5.8b](https://huggingface.co/EleutherAI/polyglot-ko-5.8b) | 5.8B | 0.3394 | 0.8841 | 0.8808 | 0.9521 |
| **[EleutherAI/polyglot-ko-12.8b (this)](https://huggingface.co/EleutherAI/polyglot-ko-12.8b)** | **12.8B** | **0.9117** | **0.9015** | **0.9345** | **0.9723** |

<img src="https://github.com/EleutherAI/polyglot/assets/19511788/95b56b19-d349-4b70-9ff9-94a5560f89ee" width="800px">

### WiC (F1)

| Model | params | 0-shot | 5-shot | 10-shot | 50-shot |
|----------------------------------------------------------------------------------------------|--------|--------|--------|---------|---------|
| [skt/ko-gpt-trinity-1.2B-v0.5](https://huggingface.co/skt/ko-gpt-trinity-1.2B-v0.5) | 1.2B | 0.3290 | 0.4313 | 0.4001 | 0.3621 |
| [kakaobrain/kogpt](https://huggingface.co/kakaobrain/kogpt) | 6.0B | 0.3526 | 0.4775 | 0.4358 | 0.4061 |
| [facebook/xglm-7.5B](https://huggingface.co/facebook/xglm-7.5B) | 7.5B | 0.3280 | 0.4903 | 0.4945 | 0.3656 |
| [EleutherAI/polyglot-ko-1.3b](https://huggingface.co/EleutherAI/polyglot-ko-1.3b) | 1.3B | 0.3297 | 0.4850 | 0.4650 | 0.3290 |
| [EleutherAI/polyglot-ko-3.8b](https://huggingface.co/EleutherAI/polyglot-ko-3.8b) | 3.8B | 0.3390 | 0.4944 | 0.4203 | 0.3835 |
| [EleutherAI/polyglot-ko-5.8b](https://huggingface.co/EleutherAI/polyglot-ko-5.8b) | 5.8B | 0.3913 | 0.4688 | 0.4189 | 0.3910 |
| **[EleutherAI/polyglot-ko-12.8b](https://huggingface.co/EleutherAI/polyglot-ko-12.8b) (this)** | **12.8B** | **0.3985** | **0.3683** | **0.3307** | **0.3273** |

<img src="https://github.com/EleutherAI/polyglot/assets/19511788/4de4a4c3-d7ac-4e04-8b0c-0d533fe88294" width="800px">

## Limitations and Biases

Polyglot-Ko was trained to optimize next-token prediction. Language models such as this are often used for a wide variety of tasks, so it is important to be aware of possible unexpected outcomes. For instance, Polyglot-Ko will not always return the most factual or accurate response, but rather the most statistically likely one. In addition, Polyglot-Ko may produce socially unacceptable or offensive content. We recommend using a human curator or another filtering mechanism to censor sensitive content.

## Citation and Related Information

### BibTeX entry

If you find our work useful, please consider citing:

```bibtex
@misc{ko2023technical,
      title={A Technical Report for Polyglot-Ko: Open-Source Large-Scale Korean Language Models},
      author={Hyunwoong Ko and Kichang Yang and Minho Ryu and Taekyoon Choi and Seungmu Yang and jiwung Hyun and Sungho Park},
      year={2023},
      eprint={2306.02254},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
}
```

### Licensing

All our models are licensed under the terms of the Apache License 2.0.

```
Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.
```

### Acknowledgement

This project was made possible thanks to the computing resources from [Stability.ai](https://stability.ai), and thanks to [TUNiB](https://tunib.ai) for providing a large-scale Korean dataset for this work.

| 15,490 | [

[

-0.049530029296875,

-0.05157470703125,

0.0198974609375,

0.004962921142578125,

-0.03875732421875,

0.0018873214721679688,

-0.00939178466796875,

-0.039581298828125,

0.0308990478515625,

0.01284027099609375,

-0.03497314453125,

-0.048736572265625,

-0.054779052734375,

-0.0076141357421875,

-0.005504608154296875,

0.09332275390625,

-0.003299713134765625,

-0.016357421875,

0.0012378692626953125,

-0.00495147705078125,

-0.00836944580078125,

-0.04736328125,

-0.0416259765625,

-0.035614013671875,

0.0247650146484375,

0.0006799697875976562,

0.06243896484375,

0.03326416015625,

0.0265655517578125,

0.0233001708984375,

-0.02142333984375,

0.00316619873046875,

-0.036956787109375,

-0.035980224609375,

0.0190582275390625,

-0.038330078125,

-0.05950927734375,

-0.002796173095703125,

0.0460205078125,

0.0206756591796875,

-0.01038360595703125,

0.032440185546875,

0.00008672475814819336,

0.047698974609375,

-0.032623291015625,

0.0203704833984375,

-0.026336669921875,

0.0135650634765625,

-0.031524658203125,

0.021087646484375,

-0.0188751220703125,

-0.029205322265625,

0.0122833251953125,

-0.04339599609375,

0.0034656524658203125,

-0.0017347335815429688,

0.0972900390625,

-0.0022335052490234375,

-0.0149078369140625,

-0.0036373138427734375,

-0.04083251953125,

0.055877685546875,

-0.07904052734375,

0.0187835693359375,

0.0246734619140625,

0.00904083251953125,

0.0006952285766601562,

-0.050994873046875,

-0.04364013671875,

0.0011377334594726562,

-0.02880859375,

0.035064697265625,

-0.018341064453125,

0.002689361572265625,

0.036041259765625,

0.03369140625,

-0.0576171875,

-0.006328582763671875,

-0.0389404296875,

-0.02288818359375,

0.06866455078125,

0.0170440673828125,

0.0301055908203125,

-0.024169921875,

-0.0225830078125,

-0.0251922607421875,

-0.02215576171875,

0.0310516357421875,

0.04010009765625,

-0.0011987686157226562,

-0.053253173828125,

0.041168212890625,

-0.01593017578125,

0.0494384765625,

0.01540374755859375,

-0.03692626953125,

0.050201416015625,

-0.0277862548828125,

-0.0259552001953125,

-0.00629425048828125,

0.08392333984375,

0.034423828125,

0.0221710205078125,

0.01348876953125,

-0.00872039794921875,

-0.001026153564453125,

-0.011077880859375,

-0.06085205078125,

-0.0213775634765625,

0.016754150390625,

-0.042388916015625,

-0.0267333984375,

0.02587890625,

-0.0614013671875,

0.00286865234375,

-0.01282501220703125,

0.0289764404296875,

-0.035247802734375,

-0.03173828125,

0.0173492431640625,

0.00046253204345703125,

0.034332275390625,

0.015716552734375,

-0.042572021484375,

0.0166168212890625,

0.027984619140625,

0.0667724609375,

-0.0113525390625,

-0.0250091552734375,

-0.0025615692138671875,

-0.0016021728515625,

-0.016693115234375,

0.04278564453125,

-0.013427734375,

-0.0142974853515625,

-0.0247650146484375,

0.01263427734375,

-0.024810791015625,

-0.0233917236328125,

0.03240966796875,

-0.013519287109375,

0.020782470703125,

-0.0037670135498046875,

-0.03533935546875,

-0.0323486328125,

0.005306243896484375,

-0.03857421875,

0.07861328125,

0.0122222900390625,

-0.0731201171875,

0.018341064453125,

-0.0261993408203125,

-0.00237274169921875,

-0.00681304931640625,

0.0005335807800292969,

-0.062225341796875,

0.00826263427734375,

0.020965576171875,

0.024322509765625,

-0.027252197265625,

0.0020160675048828125,

-0.0234222412109375,

-0.02081298828125,

-0.0018768310546875,

-0.0107574462890625,

0.07403564453125,

0.00923919677734375,

-0.03643798828125,

-0.0014104843139648438,

-0.06524658203125,

0.0180511474609375,

0.040618896484375,

-0.01021575927734375,

-0.00682830810546875,

-0.025726318359375,

-0.007366180419921875,

0.035400390625,

0.0234527587890625,

-0.0399169921875,

0.0156097412109375,

-0.03961181640625,

-0.0018701553344726562,

0.051483154296875,

-0.006732940673828125,

0.01340484619140625,

-0.0380859375,

0.061859130859375,

0.01328277587890625,

0.034393310546875,

0.0026988983154296875,

-0.05047607421875,

-0.05572509765625,

-0.0279541015625,

0.019195556640625,

0.043609619140625,

-0.048187255859375,

0.03887939453125,

-0.003009796142578125,

-0.066650390625,

-0.05364990234375,

0.00982666015625,

0.035491943359375,

0.0237274169921875,

0.0139312744140625,

-0.0190887451171875,

-0.038116455078125,

-0.0733642578125,

-0.0006422996520996094,

-0.01352691650390625,

0.01331329345703125,

0.03192138671875,

0.05108642578125,

-0.0024356842041015625,

0.060943603515625,

-0.055908203125,

-0.007320404052734375,

-0.0257415771484375,

0.0162811279296875,

0.051788330078125,

0.03009033203125,

0.0665283203125,

-0.049591064453125,

-0.0802001953125,

0.0102081298828125,

-0.07391357421875,

-0.00954437255859375,

0.0036869049072265625,

-0.007480621337890625,

0.0237274169921875,

0.017974853515625,

-0.06524658203125,

0.04888916015625,

0.048736572265625,

-0.0295257568359375,

0.07049560546875,

-0.0087738037109375,

-0.002429962158203125,

-0.07647705078125,

0.0052337646484375,

-0.01318359375,

-0.0196685791015625,

-0.050384521484375,

-0.002391815185546875,

-0.01261138916015625,

0.006038665771484375,

-0.051910400390625,

0.047515869140625,

-0.03509521484375,

0.005466461181640625,

-0.0197601318359375,

0.0010223388671875,

-0.00910186767578125,

0.04412841796875,

-0.0018033981323242188,

0.04522705078125,

0.0701904296875,

-0.0205078125,

0.049163818359375,

0.0034942626953125,

-0.020965576171875,

0.0193023681640625,

-0.06414794921875,

0.0227813720703125,

-0.0181732177734375,

0.031890869140625,

-0.06317138671875,

-0.015899658203125,

0.0290374755859375,

-0.0338134765625,

0.006046295166015625,

-0.0290679931640625,

-0.0430908203125,

-0.0513916015625,

-0.0482177734375,

0.034271240234375,

0.05572509765625,

-0.0196685791015625,

0.037017822265625,

0.015899658203125,

-0.0111846923828125,

-0.034515380859375,

-0.0309600830078125,

-0.0263214111328125,

-0.0275726318359375,

-0.06280517578125,

0.0232391357421875,

0.001560211181640625,

0.001323699951171875,

-0.00034689903259277344,

0.0001652240753173828,

0.0066375732421875,

-0.026519775390625,

0.0204620361328125,

0.03973388671875,

-0.0182952880859375,

-0.01776123046875,

-0.01593017578125,

-0.0152587890625,

-0.003025054931640625,

-0.01018524169921875,

0.06573486328125,

-0.03033447265625,

-0.01425933837890625,

-0.053497314453125,

0.0029506683349609375,

0.05804443359375,

-0.00984954833984375,

0.071044921875,

0.079833984375,

-0.024871826171875,

0.0204010009765625,

-0.035430908203125,

-0.002292633056640625,

-0.035400390625,

0.007320404052734375,

-0.035369873046875,

-0.042144775390625,

0.06964111328125,

0.01528167724609375,

0.0007414817810058594,

0.0537109375,

0.048309326171875,

0.000016987323760986328,

0.08807373046875,

0.029022216796875,

-0.0168914794921875,

0.0301055908203125,

-0.0457763671875,

0.015167236328125,

-0.0628662109375,

-0.0277252197265625,

-0.006763458251953125,

-0.015716552734375,

-0.06231689453125,

-0.0290985107421875,

0.0384521484375,

0.02337646484375,

-0.0135955810546875,

0.038909912109375,

-0.0304718017578125,

0.0205230712890625,

0.034881591796875,

0.00806427001953125,

0.002506256103515625,

-0.0019044876098632812,

-0.0288543701171875,

-0.0018682479858398438,

-0.052703857421875,

-0.0236968994140625,

0.0762939453125,

0.031982421875,

0.0670166015625,

0.0030345916748046875,

0.0594482421875,

-0.0070953369140625,

-0.002838134765625,

-0.047119140625,

0.04315185546875,

-0.00545501708984375,

-0.04791259765625,

-0.0188751220703125,

-0.031707763671875,

-0.06231689453125,

0.028564453125,

-0.018707275390625,

-0.07666015625,

0.00782012939453125,

0.0180511474609375,

-0.034881591796875,

0.0423583984375,

-0.053253173828125,

0.055572509765625,

-0.013671875,

-0.0245513916015625,

0.0007739067077636719,

-0.04119873046875,

0.025177001953125,

-0.005214691162109375,

0.0007023811340332031,

-0.0166168212890625,

0.01470947265625,

0.05413818359375,

-0.047393798828125,

0.052490234375,

-0.0165863037109375,

-0.00243377685546875,

0.046295166015625,

-0.013671875,

0.05853271484375,

0.0013132095336914062,

-0.0015745162963867188,

0.0203704833984375,

0.0023479461669921875,

-0.0362548828125,

-0.0390625,

0.036651611328125,

-0.06280517578125,

-0.039581298828125,

-0.0528564453125,

-0.041717529296875,

0.012054443359375,

0.0164947509765625,

0.048583984375,

0.0207672119140625,

0.0189666748046875,

0.0173797607421875,

0.0295867919921875,

-0.03668212890625,

0.038482666015625,

0.01294708251953125,

-0.033111572265625,

-0.038787841796875,

0.063720703125,

0.0188140869140625,

0.034088134765625,

-0.007335662841796875,

0.02191162109375,

-0.0250396728515625,

-0.0276336669921875,

-0.02459716796875,

0.048370361328125,

-0.030548095703125,

-0.0162811279296875,

-0.033111572265625,

-0.042572021484375,

-0.044403076171875,

-0.004856109619140625,

-0.040283203125,

-0.0211181640625,

-0.00710296630859375,

-0.00972747802734375,

0.035003662109375,

0.05108642578125,

-0.0008912086486816406,

0.0295867919921875,

-0.043487548828125,

0.0210113525390625,

0.0256500244140625,

0.0278472900390625,

0.0030727386474609375,

-0.05426025390625,

-0.0158538818359375,

0.0052490234375,

-0.0237884521484375,

-0.056427001953125,

0.03997802734375,

0.005527496337890625,

0.03619384765625,

0.0241546630859375,

-0.00446319580078125,

0.061279296875,

-0.0286865234375,

0.061431884765625,

0.0273895263671875,

-0.057525634765625,

0.05267333984375,

-0.0290069580078125,

0.04486083984375,

0.0196685791015625,

0.042083740234375,

-0.0282745361328125,

-0.02288818359375,

-0.06341552734375,

-0.0667724609375,

0.08489990234375,

0.03533935546875,

-0.004230499267578125,

0.01433563232421875,

0.02081298828125,

-0.0032901763916015625,

0.003780364990234375,

-0.07525634765625,

-0.045013427734375,

-0.0223236083984375,

-0.01352691650390625,

0.0020599365234375,

-0.0171356201171875,

0.0017328262329101562,

-0.044708251953125,

0.062103271484375,

-0.00016295909881591797,

0.033447265625,

0.01358795166015625,

-0.01314544677734375,

0.0033435821533203125,

-0.005535125732421875,

0.053863525390625,

0.05657958984375,

-0.0308685302734375,

-0.01099395751953125,

0.031341552734375,

-0.047882080078125,

0.00782012939453125,

0.0015039443969726562,

-0.027374267578125,

0.013885498046875,

0.02728271484375,

0.07989501953125,

-0.01739501953125,

-0.0308685302734375,

0.03826904296875,

0.005382537841796875,

-0.0227508544921875,

-0.030517578125,

0.006561279296875,

0.00925445556640625,

0.015960693359375,

0.01898193359375,

-0.0222015380859375,

-0.01187896728515625,

-0.031982421875,

0.016326904296875,

0.0218353271484375,

-0.0149078369140625,

-0.0401611328125,

0.035064697265625,

-0.0122222900390625,

-0.005771636962890625,

0.03424072265625,

-0.0258026123046875,

-0.04388427734375,

0.050384521484375,

0.0457763671875,

0.05865478515625,

-0.0234527587890625,

0.02288818359375,

0.053314208984375,

0.018218994140625,

-0.0009002685546875,

0.01922607421875,

0.0289154052734375,

-0.043853759765625,

-0.0231170654296875,

-0.061920166015625,

0.009307861328125,

0.035980224609375,

-0.047210693359375,

0.0287933349609375,

-0.0416259765625,

-0.035308837890625,

0.0097808837890625,

0.002124786376953125,

-0.04229736328125,

0.01137542724609375,

0.024017333984375,

0.052490234375,

-0.07391357421875,

0.065185546875,

0.05462646484375,

-0.039398193359375,

-0.055877685546875,

-0.006439208984375,

0.020263671875,

-0.058837890625,

0.035125732421875,

0.003963470458984375,

-0.0019073486328125,

-0.0068817138671875,

-0.03369140625,

-0.08087158203125,

0.0963134765625,

0.036956787109375,

-0.04022216796875,

-0.00640869140625,

0.0206756591796875,

0.04571533203125,

-0.01715087890625,

0.036346435546875,

0.03387451171875,

0.03448486328125,

-0.0027008056640625,

-0.09393310546875,

0.00537872314453125,

-0.0251922607421875,

0.005695343017578125,

0.01428985595703125,

-0.087158203125,

0.07794189453125,

-0.002559661865234375,

0.0015459060668945312,

-0.01392364501953125,

0.03497314453125,

0.035186767578125,

0.006694793701171875,

0.047607421875,

0.060791015625,

0.03216552734375,

-0.0116119384765625,

0.09210205078125,

-0.0167999267578125,

0.05902099609375,

0.0692138671875,

0.01422119140625,

0.03350830078125,

0.009490966796875,

-0.03704833984375,

0.04180908203125,

0.046905517578125,

-0.011199951171875,

0.026458740234375,

0.01122283935546875,

-0.02099609375,

-0.01428985595703125,

-0.00421905517578125,

-0.03253173828125,

0.041015625,

0.005126953125,

-0.0163421630859375,

-0.012237548828125,

0.012664794921875,

0.0220489501953125,

-0.0242767333984375,

-0.0195465087890625,

0.058013916015625,

0.016204833984375,

-0.03826904296875,

0.0648193359375,

-0.0107574462890625,

0.05474853515625,

-0.050201416015625,

0.01291656494140625,

-0.0110015869140625,

0.005001068115234375,

-0.0274810791015625,

-0.061279296875,

0.0172119140625,

0.00351715087890625,

-0.01143646240234375,

0.006702423095703125,

0.05340576171875,

-0.02154541015625,

-0.05487060546875,

0.03826904296875,

0.0187835693359375,

0.029876708984375,

0.0035076141357421875,

-0.08599853515625,

0.01013946533203125,

0.0102996826171875,

-0.037841796875,

0.02410888671875,

0.0188140869140625,

0.0039520263671875,

0.038665771484375,

0.044342041015625,

0.02496337890625,

0.031707763671875,

0.016082763671875,

0.057830810546875,

-0.046966552734375,

-0.0256805419921875,

-0.071044921875,

0.044464111328125,

-0.0174407958984375,

-0.0308074951171875,

0.057342529296875,

0.048370361328125,

0.073486328125,

-0.0113067626953125,

0.049560546875,

-0.0291748046875,

0.020965576171875,

-0.0340576171875,

0.045623779296875,

-0.035797119140625,

-0.004974365234375,

-0.035430908203125,

-0.06884765625,

-0.005947113037109375,

0.052490234375,

-0.0206756591796875,

0.015716552734375,

0.04254150390625,

0.055938720703125,

-0.0083465576171875,

-0.031524658203125,

0.0185546875,

0.0285491943359375,

0.0156402587890625,

0.055084228515625,

0.04248046875,

-0.05950927734375,

0.0428466796875,

-0.051788330078125,

-0.012786865234375,

-0.016204833984375,

-0.044586181640625,

-0.06890869140625,

-0.03179931640625,

-0.033172607421875,

-0.019134521484375,

-0.00611114501953125,

0.0750732421875,

0.06500244140625,

-0.058349609375,

-0.030517578125,

0.004974365234375,

0.0069427490234375,

-0.0207366943359375,

-0.0218658447265625,

0.04156494140625,

-0.00957489013671875,

-0.0733642578125,

-0.00629425048828125,

0.00931549072265625,

0.03338623046875,

0.005069732666015625,

-0.0129241943359375,

-0.0307464599609375,

-0.00174713134765625,

0.0538330078125,

0.0202484130859375,

-0.060302734375,

-0.0210418701171875,

-0.00524139404296875,

-0.0021877288818359375,

0.0139923095703125,

0.0201873779296875,

-0.03387451171875,

0.0305633544921875,

0.0572509765625,

0.01381683349609375,

0.06927490234375,

0.0096435546875,

0.0309295654296875,

-0.044647216796875,

0.0322265625,

0.005168914794921875,

0.0220184326171875,

0.003612518310546875,

-0.02252197265625,

0.048126220703125,

0.03424072265625,

-0.0309295654296875,

-0.05999755859375,

-0.00492095947265625,

-0.08148193359375,

-0.00916290283203125,

0.07794189453125,

-0.0267791748046875,

-0.0245208740234375,

0.01027679443359375,

-0.0170745849609375,

0.0152587890625,

-0.015899658203125,

0.04345703125,

0.0684814453125,

-0.0316162109375,

-0.023284912109375,

-0.05377197265625,

0.041534423828125,

0.0299224853515625,

-0.061492919921875,

-0.00708770751953125,

0.003665924072265625,

0.0247650146484375,

0.026397705078125,

0.049163818359375,

-0.0275421142578125,

0.0271148681640625,

-0.0063323974609375,

0.01163482666015625,

0.005496978759765625,

-0.0168914794921875,

-0.0211334228515625,

-0.0123291015625,

-0.015716552734375,

-0.0133056640625

]

] |

aneuraz/awesome-align-with-co | 2022-04-29T16:16:12.000Z | [

"transformers",

"pytorch",

"bert",

"fill-mask",

"sentence alignment",

"de",

"fr",

"en",

"ro",

"zh",

"arxiv:2101.08231",

"license:bsd-3-clause",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | aneuraz | null | null | aneuraz/awesome-align-with-co | 1 | 17,265 | transformers | 2022-04-29T14:55:54 | ---

language:

- de

- fr

- en

- ro

- zh

thumbnail:

tags:

- sentence alignment

license: bsd-3-clause

---

# AWESOME: Aligning Word Embedding Spaces of Multilingual Encoders

This model comes from the following GitHub repository: [https://github.com/neulab/awesome-align](https://github.com/neulab/awesome-align)

It corresponds to this paper: [https://arxiv.org/abs/2101.08231](https://arxiv.org/abs/2101.08231)

Please cite the original paper if you decide to use the model:

```bibtex
@inproceedings{dou2021word,
  title={Word Alignment by Fine-tuning Embeddings on Parallel Corpora},
  author={Dou, Zi-Yi and Neubig, Graham},
  booktitle={Conference of the European Chapter of the Association for Computational Linguistics (EACL)},
  year={2021}
}
```

`awesome-align` is a tool that extracts word alignments from multilingual BERT (mBERT) ([Demo](https://colab.research.google.com/drive/1205ubqebM0OsZa1nRgbGJBtitgHqIVv6?usp=sharing)) and lets you fine-tune mBERT on parallel corpora for better alignment quality (see the paper for more details).

## Usage (copied from this [demo](https://colab.research.google.com/drive/1205ubqebM0OsZa1nRgbGJBtitgHqIVv6?usp=sharing))

```python
from transformers import AutoModel, AutoTokenizer
import itertools
import torch

# load model
model = AutoModel.from_pretrained("aneuraz/awesome-align-with-co")
tokenizer = AutoTokenizer.from_pretrained("aneuraz/awesome-align-with-co")

# model parameters
align_layer = 8   # hidden layer whose embeddings are compared
threshold = 1e-3  # minimum softmax probability for a subword pair to count as aligned

# define inputs
src = 'awesome-align is awesome !'
tgt = '牛对齐 是 牛 !'

# pre-processing: split on whitespace, then tokenize each word into subwords
sent_src, sent_tgt = src.strip().split(), tgt.strip().split()
token_src, token_tgt = [tokenizer.tokenize(word) for word in sent_src], [tokenizer.tokenize(word) for word in sent_tgt]
wid_src, wid_tgt = [tokenizer.convert_tokens_to_ids(x) for x in token_src], [tokenizer.convert_tokens_to_ids(x) for x in token_tgt]
ids_src = tokenizer.prepare_for_model(list(itertools.chain(*wid_src)), return_tensors='pt', max_length=tokenizer.model_max_length, truncation=True)['input_ids']
ids_tgt = tokenizer.prepare_for_model(list(itertools.chain(*wid_tgt)), return_tensors='pt', max_length=tokenizer.model_max_length, truncation=True)['input_ids']

# map every subword position back to the index of the word it came from
sub2word_map_src = []
for i, word_list in enumerate(token_src):
    sub2word_map_src += [i for x in word_list]
sub2word_map_tgt = []
for i, word_list in enumerate(token_tgt):
    sub2word_map_tgt += [i for x in word_list]

# alignment
model.eval()
with torch.no_grad():
    # hidden states of the chosen layer, dropping the [CLS] and [SEP] positions
    out_src = model(ids_src.unsqueeze(0), output_hidden_states=True).hidden_states[align_layer][0, 1:-1]
    out_tgt = model(ids_tgt.unsqueeze(0), output_hidden_states=True).hidden_states[align_layer][0, 1:-1]

    dot_prod = torch.matmul(out_src, out_tgt.transpose(-1, -2))

    # keep a subword pair only if it passes the threshold in both directions
    softmax_srctgt = torch.nn.Softmax(dim=-1)(dot_prod)
    softmax_tgtsrc = torch.nn.Softmax(dim=-2)(dot_prod)
    softmax_inter = (softmax_srctgt > threshold) * (softmax_tgtsrc > threshold)

# convert aligned subword pairs into aligned word pairs
align_subwords = torch.nonzero(softmax_inter, as_tuple=False)
align_words = set()
for i, j in align_subwords:
    align_words.add((sub2word_map_src[i], sub2word_map_tgt[j]))

print(align_words)
```
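The alignment step in the usage code above computes a source-to-target softmax (over rows) and a target-to-source softmax (over columns) of the subword dot-product matrix, and keeps a subword pair only when both probabilities exceed `threshold`; aligned subwords are then mapped back to word indices. The following is a minimal, dependency-free sketch of that same logic in plain Python, assuming a precomputed similarity matrix instead of mBERT hidden states. The helper names `softmax` and `align` are hypothetical and are not part of `awesome-align` or `transformers`; the sketch only illustrates the mechanism.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def align(sim, sub2word_src, sub2word_tgt, threshold=1e-3):
    """Return word-level alignment pairs from a subword similarity matrix.

    sim[i][j] is the similarity (e.g. dot product) between source subword i
    and target subword j. A pair is kept only if it passes the threshold
    under BOTH the row-wise and the column-wise softmax.
    """
    n_src, n_tgt = len(sim), len(sim[0])
    # Row-wise softmax: distribution over target subwords for each source subword.
    src2tgt = [softmax(row) for row in sim]
    # Column-wise softmax: distribution over source subwords for each target subword.
    cols = [softmax([sim[i][j] for i in range(n_src)]) for j in range(n_tgt)]
    tgt2src = [[cols[j][i] for j in range(n_tgt)] for i in range(n_src)]

    words = set()
    for i in range(n_src):
        for j in range(n_tgt):
            if src2tgt[i][j] > threshold and tgt2src[i][j] > threshold:
                # Map the aligned subword pair back to its word indices.
                words.add((sub2word_src[i], sub2word_tgt[j]))
    return words


# Toy example: source subwords 0 and 1 belong to source word 0, subword 2 to word 1;
# each target subword is its own word.
sim = [[10.0, 0.0], [10.0, 0.0], [0.0, 10.0]]
result = align(sim, [0, 0, 1], [0, 1])  # returns {(0, 0), (1, 1)}
```

Taking the intersection of both directions, rather than either softmax alone, is what filters out one-sided matches: in the toy matrix, source subword 2 scores low against target subword 0 under the row-wise softmax, so `(1, 0)` is never emitted.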

| 3,158 | [

[

-0.0192718505859375,

-0.05645751953125,

0.018890380859375,

0.011474609375,

-0.020904541015625,

-0.005397796630859375,

-0.0131988525390625,

-0.017425537109375,

0.01276397705078125,

0.008087158203125,

-0.034698486328125,

-0.061126708984375,

-0.04547119140625,

-0.005008697509765625,

-0.0248260498046875,

0.07574462890625,

-0.0202178955078125,

0.003833770751953125,

0.00543975830078125,

-0.0251617431640625,

-0.0277862548828125,

-0.031341552734375,

-0.0249176025390625,

-0.0251617431640625,

0.0078582763671875,

0.011474609375,

0.04132080078125,

0.044586181640625,

0.040496826171875,

0.0301513671875,

-0.001369476318359375,

0.0148773193359375,

-0.02264404296875,

-0.00464630126953125,

-0.01226806640625,

-0.02166748046875,

-0.03607177734375,

-0.00310516357421875,

0.046844482421875,

0.024322509765625,

-0.005710601806640625,

0.0214080810546875,

0.0014209747314453125,

0.0200042724609375,

-0.034759521484375,

0.01195526123046875,

-0.052703857421875,

0.004985809326171875,

-0.01561737060546875,

-0.00778961181640625,

-0.0145263671875,

-0.028900146484375,

0.00276947021484375,

-0.049407958984375,

0.0194091796875,

0.01299285888671875,

0.11529541015625,

0.0128173828125,

-0.0092620849609375,

-0.03399658203125,

-0.03302001953125,

0.0595703125,

-0.071044921875,

0.03472900390625,

0.006999969482421875,

-0.015899658203125,

-0.00904083251953125,

-0.06951904296875,

-0.066162109375,

-0.005092620849609375,

-0.004863739013671875,

0.0272369384765625,

-0.01480865478515625,

0.00862884521484375,

0.016815185546875,

0.039825439453125,

-0.050537109375,

0.00824737548828125,

-0.0266876220703125,

-0.0283203125,

0.03668212890625,

0.0195159912109375,

0.04107666015625,

-0.0162200927734375,

-0.03143310546875,

-0.022613525390625,

-0.0262451171875,

0.005886077880859375,

0.026763916015625,

0.0162811279296875,

-0.0283050537109375,

0.054412841796875,

0.00734710693359375,

0.056884765625,

0.0030879974365234375,

-0.006000518798828125,

0.04217529296875,

-0.03338623046875,

-0.0172576904296875,

-0.00539398193359375,

0.07684326171875,

0.0242767333984375,

0.00620269775390625,

-0.002185821533203125,

-0.01110076904296875,

0.02325439453125,

-0.01062774658203125,

-0.060882568359375,

-0.0291900634765625,

0.02935791015625,

-0.018524169921875,

-0.009185791015625,

-0.0008535385131835938,

-0.037841796875,

-0.011474609375,

-0.00490570068359375,

0.07733154296875,

-0.05987548828125,

-0.014129638671875,

0.019195556640625,

-0.0224609375,

0.0233612060546875,

-0.010650634765625,

-0.060211181640625,

0.0038890838623046875,

0.039306640625,

0.06756591796875,

0.01407623291015625,

-0.0443115234375,

-0.0226593017578125,

0.00417327880859375,

-0.020721435546875,

0.028656005859375,

-0.0292816162109375,

-0.0153656005859375,

-0.01346588134765625,

-0.0005965232849121094,

-0.036285400390625,

-0.02032470703125,

0.04547119140625,

-0.0303497314453125,

0.0394287109375,

-0.01174163818359375,

-0.065673828125,

-0.017242431640625,

0.0224456787109375,

-0.0267791748046875,

0.08428955078125,

0.0120849609375,

-0.06756591796875,

0.019134521484375,

-0.04541015625,

-0.0203094482421875,

0.0019817352294921875,

-0.0160369873046875,

-0.04815673828125,

0.00971221923828125,

0.0304412841796875,

0.0341796875,

-0.00594329833984375,

0.003971099853515625,

-0.020416259765625,

-0.02215576171875,

0.0152130126953125,

-0.0111541748046875,

0.08184814453125,

0.0152587890625,

-0.04144287109375,

0.0178985595703125,

-0.04669189453125,

0.0227508544921875,

0.0135650634765625,

-0.01763916015625,

-0.0073394775390625,

-0.0190277099609375,

0.005382537841796875,

0.02490234375,

0.0290985107421875,

-0.059356689453125,

0.018798828125,

-0.035736083984375,

0.04193115234375,

0.04986572265625,

-0.01450347900390625,

0.0238189697265625,

-0.0171661376953125,

0.03240966796875,

0.006664276123046875,

0.00579071044921875,

0.0090484619140625,

-0.026580810546875,

-0.06402587890625,

-0.04339599609375,

0.045013427734375,

0.044769287109375,

-0.047943115234375,

0.06402587890625,

-0.030609130859375,

-0.043426513671875,

-0.061279296875,

0.0005488395690917969,

0.0268096923828125,

0.03839111328125,

0.034088134765625,

-0.0225067138671875,

-0.039886474609375,

-0.059539794921875,

-0.01174163818359375,

0.004810333251953125,

0.01137542724609375,

0.004779815673828125,

0.048980712890625,

-0.0211944580078125,

0.0625,

-0.0350341796875,

-0.04071044921875,

-0.0203094482421875,

0.01309967041015625,

0.0301361083984375,

0.05609130859375,

0.0372314453125,

-0.032135009765625,

-0.045654296875,

-0.00501251220703125,

-0.05316162109375,

0.00098419189453125,

-0.0014085769653320312,

-0.03515625,

0.0159149169921875,

0.03302001953125,

-0.0537109375,

0.0275421142578125,

0.035430908203125,

-0.033905029296875,

0.031982421875,

-0.03411865234375,

-0.0012006759643554688,

-0.0958251953125,

0.00771331787109375,

-0.011016845703125,

0.0008382797241210938,

-0.040435791015625,

0.0253753662109375,

0.02606201171875,

-0.00196075439453125,

-0.025238037109375,

0.044586181640625,

-0.061065673828125,

0.01311492919921875,

-0.00423431396484375,

0.012054443359375,

0.00667572021484375,

0.038818359375,

-0.0083465576171875,

0.042510986328125,

0.059814453125,

-0.0307464599609375,

0.0192108154296875,

0.0290374755859375,

-0.024169921875,

0.02557373046875,

-0.0465087890625,

0.0093536376953125,

0.0009236335754394531,

0.0107269287109375,

-0.087646484375,

-0.007732391357421875,

0.0179595947265625,

-0.04852294921875,

0.03765869140625,

0.004802703857421875,

-0.04852294921875,

-0.0440673828125,

-0.031005859375,

0.02294921875,

0.032470703125,

-0.038787841796875,

0.041961669921875,

0.031494140625,

0.01055908203125,

-0.0501708984375,

-0.06036376953125,

-0.009918212890625,

-0.007648468017578125,

-0.05419921875,

0.04571533203125,

-0.006702423095703125,

0.012969970703125,

0.022918701171875,

-0.00311279296875,

0.007415771484375,

-0.005298614501953125,

-0.002361297607421875,

0.030792236328125,

-0.005374908447265625,

0.0048065185546875,

-0.0019741058349609375,

-0.0018634796142578125,

-0.006282806396484375,

-0.02935791015625,

0.06622314453125,

-0.025146484375,

-0.0225372314453125,

-0.041778564453125,

0.02105712890625,

0.038177490234375,

-0.0325927734375,

0.08197021484375,

0.0831298828125,

-0.046844482421875,

-0.0011272430419921875,

-0.03839111328125,

-0.0087890625,

-0.03753662109375,

0.047760009765625,

-0.037200927734375,

-0.05206298828125,

0.0526123046875,

0.0269927978515625,

0.0240020751953125,

0.048095703125,

0.05084228515625,

0.00290679931640625,

0.093505859375,

0.0467529296875,

-0.006130218505859375,

0.032257080078125,

-0.0572509765625,

0.044464111328125,

-0.0751953125,

-0.017486572265625,

-0.0244293212890625,

-0.0251312255859375,

-0.049835205078125,

-0.0206298828125,

0.0204315185546875,

0.028656005859375,

-0.013427734375,

0.029083251953125,

-0.06085205078125,

0.012969970703125,

0.037445068359375,

-0.00016868114471435547,

-0.0190887451171875,

0.0108489990234375,

-0.045135498046875,

-0.0180206298828125,

-0.059326171875,

-0.0190887451171875,

0.0650634765625,

0.0174560546875,

0.04833984375,

0.006610870361328125,

0.06781005859375,

-0.0181121826171875,

0.0103912353515625,

-0.051971435546875,

0.0439453125,

-0.016754150390625,

-0.04290771484375,

-0.01230621337890625,

-0.0225067138671875,

-0.0662841796875,

0.0265960693359375,

-0.017303466796875,

-0.06591796875,

0.0026683807373046875,

0.0133514404296875,

-0.036224365234375,

0.03240966796875,

-0.05462646484375,

0.06927490234375,

-0.006465911865234375,

-0.03729248046875,

-0.00620269775390625,

-0.034332275390625,

0.017578125,

0.01401519775390625,

0.01187896728515625,

-0.01239013671875,

-0.0011272430419921875,

0.0748291015625,

-0.049713134765625,

0.0310211181640625,

-0.01250457763671875,

0.00003331899642944336,

0.0202178955078125,

-0.0109710693359375,

0.038909912109375,

-0.01806640625,

-0.00925445556640625,

0.01300811767578125,

-0.005687713623046875,

-0.0269622802734375,

-0.0247955322265625,

0.06304931640625,

-0.06817626953125,

-0.0311431884765625,

-0.03521728515625,

-0.055206298828125,

-0.00006031990051269531,

0.027191162109375,

0.05889892578125,

0.049591064453125,

0.01959228515625,

0.00922393798828125,

0.034027099609375,

-0.022216796875,

0.0418701171875,

0.0200653076171875,

-0.01499176025390625,

-0.04876708984375,

0.07989501953125,

0.04522705078125,

0.00582122802734375,

0.024200439453125,

0.01328277587890625,

-0.044830322265625,

-0.037567138671875,

-0.03485107421875,

0.032623291015625,

-0.047821044921875,

-0.018646240234375,

-0.06951904296875,

-0.03143310546875,

-0.056732177734375,

-0.0155792236328125,

-0.0230560302734375,

-0.0341796875,

-0.02593994140625,

-0.01218414306640625,

0.034759521484375,

0.0290985107421875,

-0.027435302734375,

0.0134124755859375,

-0.05059814453125,

0.019134521484375,

0.01104736328125,

0.0140533447265625,

-0.0019207000732421875,

-0.0618896484375,

-0.0243072509765625,

-0.01157379150390625,

-0.027099609375,

-0.06951904296875,

0.053741455078125,

0.018463134765625,

0.04541015625,

0.0093536376953125,

0.007213592529296875,

0.07171630859375,

-0.029266357421875,

0.058624267578125,

0.0208282470703125,

-0.0892333984375,

0.023193359375,

-0.007350921630859375,

0.030975341796875,

0.0237884521484375,

0.0266571044921875,

-0.042327880859375,

-0.0345458984375,

-0.04730224609375,

-0.1072998046875,

0.059967041015625,