modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

shibing624/text2vec-bge-large-chinese | 2023-09-04T09:34:23.000Z | [

"transformers",

"pytorch",

"bert",

"feature-extraction",

"text2vec",

"sentence-similarity",

"zh",

"dataset:https://huggingface.co/datasets/shibing624/nli-zh-all/tree/main/text2vec-base-chinese-paraphrase-dataset",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | sentence-similarity | shibing624 | null | null | shibing624/text2vec-bge-large-chinese | 18 | 2,534 | transformers | 2023-09-04T08:11:09 | ---

pipeline_tag: sentence-similarity

license: apache-2.0

tags:

- text2vec

- feature-extraction

- sentence-similarity

- transformers

datasets:

- https://huggingface.co/datasets/shibing624/nli-zh-all/tree/main/text2vec-base-chinese-paraphrase-dataset

language:

- zh

metrics:

- spearmanr

library_name: transformers

---

# shibing624/text2vec-bge-large-chinese

This is a CoSENT(Cosine Sentence) model: shibing624/text2vec-bge-large-chinese.

It maps sentences to a 1024 dimensional dense vector space and can be used for tasks

like sentence embeddings, text matching or semantic search.

- training dataset: https://huggingface.co/datasets/shibing624/nli-zh-all/tree/main/text2vec-base-chinese-paraphrase-dataset

- base model: https://huggingface.co/BAAI/bge-large-zh-noinstruct

- max_seq_length: 256

- best epoch: 4

- sentence embedding dim: 1024

## Evaluation

For an automated evaluation of this model, see the *Evaluation Benchmark*: [text2vec](https://github.com/shibing624/text2vec)

### Release Models

- 本项目release模型的中文匹配评测结果:

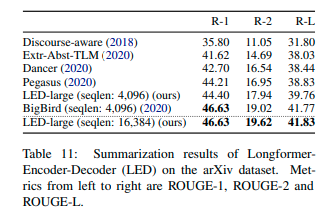

| Arch | BaseModel | Model | ATEC | BQ | LCQMC | PAWSX | STS-B | SOHU-dd | SOHU-dc | Avg | QPS |

|:-----------|:------------------------------------------------------------|:--------------------------------------------------------------------------------------------------------------------------------------------------|:-----:|:-----:|:-----:|:-----:|:-----:|:-------:|:-------:|:---------:|:-----:|

| Word2Vec | word2vec | [w2v-light-tencent-chinese](https://ai.tencent.com/ailab/nlp/en/download.html) | 20.00 | 31.49 | 59.46 | 2.57 | 55.78 | 55.04 | 20.70 | 35.03 | 23769 |

| SBERT | xlm-roberta-base | [sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2](https://huggingface.co/sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2) | 18.42 | 38.52 | 63.96 | 10.14 | 78.90 | 63.01 | 52.28 | 46.46 | 3138 |

| CoSENT | hfl/chinese-macbert-base | [shibing624/text2vec-base-chinese](https://huggingface.co/shibing624/text2vec-base-chinese) | 31.93 | 42.67 | 70.16 | 17.21 | 79.30 | 70.27 | 50.42 | 51.61 | 3008 |

| CoSENT | hfl/chinese-lert-large | [GanymedeNil/text2vec-large-chinese](https://huggingface.co/GanymedeNil/text2vec-large-chinese) | 32.61 | 44.59 | 69.30 | 14.51 | 79.44 | 73.01 | 59.04 | 53.12 | 2092 |

| CoSENT | nghuyong/ernie-3.0-base-zh | [shibing624/text2vec-base-chinese-sentence](https://huggingface.co/shibing624/text2vec-base-chinese-sentence) | 43.37 | 61.43 | 73.48 | 38.90 | 78.25 | 70.60 | 53.08 | 59.87 | 3089 |

| CoSENT | nghuyong/ernie-3.0-base-zh | [shibing624/text2vec-base-chinese-paraphrase](https://huggingface.co/shibing624/text2vec-base-chinese-paraphrase) | 44.89 | 63.58 | 74.24 | 40.90 | 78.93 | 76.70 | 63.30 | **63.08** | 3066 |

| CoSENT | sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2 | [shibing624/text2vec-base-multilingual](https://huggingface.co/shibing624/text2vec-base-multilingual) | 32.39 | 50.33 | 65.64 | 32.56 | 74.45 | 68.88 | 51.17 | 53.67 | 3138 |

| CoSENT | BAAI/bge-large-zh-noinstruct | [shibing624/text2vec-bge-large-chinese](https://huggingface.co/shibing624/text2vec-bge-large-chinese) | 38.41 | 61.34 | 71.72 | 35.15 | 76.44 | 71.81 | 63.15 | 59.72 | 844 |

说明:

- 结果评测指标:spearman系数

- `shibing624/text2vec-base-chinese`模型,是用CoSENT方法训练,基于`hfl/chinese-macbert-base`在中文STS-B数据训练得到,并在中文STS-B测试集评估达到较好效果,运行[examples/training_sup_text_matching_model.py](https://github.com/shibing624/text2vec/blob/master/examples/training_sup_text_matching_model.py)代码可训练模型,模型文件已经上传HF model hub,中文通用语义匹配任务推荐使用

- `shibing624/text2vec-base-chinese-sentence`模型,是用CoSENT方法训练,基于`nghuyong/ernie-3.0-base-zh`用人工挑选后的中文STS数据集[shibing624/nli-zh-all/text2vec-base-chinese-sentence-dataset](https://huggingface.co/datasets/shibing624/nli-zh-all/tree/main/text2vec-base-chinese-sentence-dataset)训练得到,并在中文各NLI测试集评估达到较好效果,运行[examples/training_sup_text_matching_model_jsonl_data.py](https://github.com/shibing624/text2vec/blob/master/examples/training_sup_text_matching_model_jsonl_data.py)代码可训练模型,模型文件已经上传HF model hub,中文s2s(句子vs句子)语义匹配任务推荐使用

- `shibing624/text2vec-base-chinese-paraphrase`模型,是用CoSENT方法训练,基于`nghuyong/ernie-3.0-base-zh`用人工挑选后的中文STS数据集[shibing624/nli-zh-all/text2vec-base-chinese-paraphrase-dataset](https://huggingface.co/datasets/shibing624/nli-zh-all/tree/main/text2vec-base-chinese-paraphrase-dataset),数据集相对于[shibing624/nli-zh-all/text2vec-base-chinese-sentence-dataset](https://huggingface.co/datasets/shibing624/nli-zh-all/tree/main/text2vec-base-chinese-sentence-dataset)加入了s2p(sentence to paraphrase)数据,强化了其长文本的表征能力,并在中文各NLI测试集评估达到SOTA,运行[examples/training_sup_text_matching_model_jsonl_data.py](https://github.com/shibing624/text2vec/blob/master/examples/training_sup_text_matching_model_jsonl_data.py)代码可训练模型,模型文件已经上传HF model hub,中文s2p(句子vs段落)语义匹配任务推荐使用

- `shibing624/text2vec-base-multilingual`模型,是用CoSENT方法训练,基于`sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2`用人工挑选后的多语言STS数据集[shibing624/nli-zh-all/text2vec-base-multilingual-dataset](https://huggingface.co/datasets/shibing624/nli-zh-all/tree/main/text2vec-base-multilingual-dataset)训练得到,并在中英文测试集评估相对于原模型效果有提升,运行[examples/training_sup_text_matching_model_jsonl_data.py](https://github.com/shibing624/text2vec/blob/master/examples/training_sup_text_matching_model_jsonl_data.py)代码可训练模型,模型文件已经上传HF model hub,多语言语义匹配任务推荐使用

- `shibing624/text2vec-bge-large-chinese`模型,是用CoSENT方法训练,基于`BAAI/bge-large-zh-noinstruct`用人工挑选后的中文STS数据集[shibing624/nli-zh-all/text2vec-base-chinese-paraphrase-dataset](https://huggingface.co/datasets/shibing624/nli-zh-all/tree/main/text2vec-base-chinese-paraphrase-dataset)训练得到,并在中文测试集评估相对于原模型效果有提升,在短文本区分度上提升明显,运行[examples/training_sup_text_matching_model_jsonl_data.py](https://github.com/shibing624/text2vec/blob/master/examples/training_sup_text_matching_model_jsonl_data.py)代码可训练模型,模型文件已经上传HF model hub,中文s2s(句子vs句子)语义匹配任务推荐使用

- `w2v-light-tencent-chinese`是腾讯词向量的Word2Vec模型,CPU加载使用,适用于中文字面匹配任务和缺少数据的冷启动情况

- 各预训练模型均可以通过transformers调用,如MacBERT模型:`--model_name hfl/chinese-macbert-base` 或者roberta模型:`--model_name uer/roberta-medium-wwm-chinese-cluecorpussmall`

- 为测评模型的鲁棒性,加入了未训练过的SOHU测试集,用于测试模型的泛化能力;为达到开箱即用的实用效果,使用了搜集到的各中文匹配数据集,数据集也上传到HF datasets[链接见下方](#数据集)

- 中文匹配任务实验表明,pooling最优是`EncoderType.FIRST_LAST_AVG`和`EncoderType.MEAN`,两者预测效果差异很小

- 中文匹配评测结果复现,可以下载中文匹配数据集到`examples/data`,运行 [tests/model_spearman.py](https://github.com/shibing624/text2vec/blob/master/tests/model_spearman.py) 代码复现评测结果

- QPS的GPU测试环境是Tesla V100,显存32GB

模型训练实验报告:[实验报告](https://github.com/shibing624/text2vec/blob/master/docs/model_report.md)

## Usage (text2vec)

Using this model becomes easy when you have [text2vec](https://github.com/shibing624/text2vec) installed:

```

pip install -U text2vec

```

Then you can use the model like this:

```python

from text2vec import SentenceModel

sentences = ['如何更换花呗绑定银行卡', '花呗更改绑定银行卡']

model = SentenceModel('shibing624/text2vec-bge-large-chinese')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [text2vec](https://github.com/shibing624/text2vec), you can use the model like this:

First, you pass your input through the transformer model, then you have to apply the right pooling-operation on-top of the contextualized word embeddings.

Install transformers:

```

pip install transformers

```

Then load model and predict:

```python

from transformers import BertTokenizer, BertModel

import torch

# Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):

token_embeddings = model_output[0] # First element of model_output contains all token embeddings

input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()

return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Load model from HuggingFace Hub

tokenizer = BertTokenizer.from_pretrained('shibing624/text2vec-bge-large-chinese')

model = BertModel.from_pretrained('shibing624/text2vec-bge-large-chinese')

sentences = ['如何更换花呗绑定银行卡', '花呗更改绑定银行卡']

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():

model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```

## Usage (sentence-transformers)

[sentence-transformers](https://github.com/UKPLab/sentence-transformers) is a popular library to compute dense vector representations for sentences.

Install sentence-transformers:

```

pip install -U sentence-transformers

```

Then load model and predict:

```python

from sentence_transformers import SentenceTransformer

m = SentenceTransformer("shibing624/text2vec-bge-large-chinese")

sentences = ['如何更换花呗绑定银行卡', '花呗更改绑定银行卡']

sentence_embeddings = m.encode(sentences)

print("Sentence embeddings:")

print(sentence_embeddings)

```

## Full Model Architecture

```

CoSENT(

(0): Transformer({'max_seq_length': 256, 'do_lower_case': False}) with Transformer model: ErnieModel

(1): Pooling({'word_embedding_dimension': 1024, 'pooling_mode_mean_tokens': True})

)

```

## Intended uses

Our model is intented to be used as a sentence and short paragraph encoder. Given an input text, it ouptuts a vector which captures

the semantic information. The sentence vector may be used for information retrieval, clustering or sentence similarity tasks.

By default, input text longer than 256 word pieces is truncated.

## Training procedure

### Pre-training

We use the pretrained [`nghuyong/ernie-3.0-base-zh`](https://huggingface.co/nghuyong/ernie-3.0-base-zh) model.

Please refer to the model card for more detailed information about the pre-training procedure.

### Fine-tuning

We fine-tune the model using a contrastive objective. Formally, we compute the cosine similarity from each

possible sentence pairs from the batch.

We then apply the rank loss by comparing with true pairs and false pairs.

## Citing & Authors

This model was trained by [text2vec](https://github.com/shibing624/text2vec).

If you find this model helpful, feel free to cite:

```bibtex

@software{text2vec,

author = {Ming Xu},

title = {text2vec: A Tool for Text to Vector},

year = {2023},

url = {https://github.com/shibing624/text2vec},

}

``` | 11,313 | [

[

-0.0107421875,

-0.054534912109375,

0.0240478515625,

0.0266876220703125,

-0.022430419921875,

-0.02960205078125,

-0.02081298828125,

-0.0173797607421875,

0.00275421142578125,

0.03167724609375,

-0.024993896484375,

-0.041900634765625,

-0.03948974609375,

0.0159606... |

timm/coatnet_3_rw_224.sw_in12k | 2023-05-10T23:45:40.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-12k",

"arxiv:2201.03545",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/coatnet_3_rw_224.sw_in12k | 1 | 2,531 | timm | 2023-01-20T21:25:48 | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-12k

---

# Model card for coatnet_3_rw_224.sw_in12k

A timm specific CoAtNet image classification model. Trained in `timm` on ImageNet-12k (a 11821 class subset of full ImageNet-22k) by Ross Wightman.

### Model Variants in [maxxvit.py](https://github.com/huggingface/pytorch-image-models/blob/main/timm/models/maxxvit.py)

MaxxViT covers a number of related model architectures that share a common structure including:

- CoAtNet - Combining MBConv (depthwise-separable) convolutional blocks in early stages with self-attention transformer blocks in later stages.

- MaxViT - Uniform blocks across all stages, each containing a MBConv (depthwise-separable) convolution block followed by two self-attention blocks with different partitioning schemes (window followed by grid).

- CoAtNeXt - A timm specific arch that uses ConvNeXt blocks in place of MBConv blocks in CoAtNet. All normalization layers are LayerNorm (no BatchNorm).

- MaxxViT - A timm specific arch that uses ConvNeXt blocks in place of MBConv blocks in MaxViT. All normalization layers are LayerNorm (no BatchNorm).

- MaxxViT-V2 - A MaxxViT variation that removes the window block attention leaving only ConvNeXt blocks and grid attention w/ more width to compensate.

Aside from the major variants listed above, there are more subtle changes from model to model. Any model name with the string `rw` are `timm` specific configs w/ modelling adjustments made to favour PyTorch eager use. These were created while training initial reproductions of the models so there are variations.

All models with the string `tf` are models exactly matching Tensorflow based models by the original paper authors with weights ported to PyTorch. This covers a number of MaxViT models. The official CoAtNet models were never released.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 181.8

- GMACs: 33.4

- Activations (M): 73.8

- Image size: 224 x 224

- **Papers:**

- CoAtNet: Marrying Convolution and Attention for All Data Sizes: https://arxiv.org/abs/2201.03545

- **Dataset:** ImageNet-12k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('coatnet_3_rw_224.sw_in12k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'coatnet_3_rw_224.sw_in12k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

# print shape of each feature map in output

# e.g.:

# torch.Size([1, 192, 112, 112])

# torch.Size([1, 192, 56, 56])

# torch.Size([1, 384, 28, 28])

# torch.Size([1, 768, 14, 14])

# torch.Size([1, 1536, 7, 7])

print(o.shape)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'coatnet_3_rw_224.sw_in12k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 1536, 7, 7) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

### By Top-1

|model |top1 |top5 |samples / sec |Params (M) |GMAC |Act (M)|

|------------------------------------------------------------------------------------------------------------------------|----:|----:|--------------:|--------------:|-----:|------:|

|[maxvit_xlarge_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_512.in21k_ft_in1k) |88.53|98.64| 21.76| 475.77|534.14|1413.22|

|[maxvit_xlarge_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_384.in21k_ft_in1k) |88.32|98.54| 42.53| 475.32|292.78| 668.76|

|[maxvit_base_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_512.in21k_ft_in1k) |88.20|98.53| 50.87| 119.88|138.02| 703.99|

|[maxvit_large_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_512.in21k_ft_in1k) |88.04|98.40| 36.42| 212.33|244.75| 942.15|

|[maxvit_large_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_384.in21k_ft_in1k) |87.98|98.56| 71.75| 212.03|132.55| 445.84|

|[maxvit_base_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_384.in21k_ft_in1k) |87.92|98.54| 104.71| 119.65| 73.80| 332.90|

|[maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.81|98.37| 106.55| 116.14| 70.97| 318.95|

|[maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.47|98.37| 149.49| 116.09| 72.98| 213.74|

|[coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k) |87.39|98.31| 160.80| 73.88| 47.69| 209.43|

|[maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.89|98.02| 375.86| 116.14| 23.15| 92.64|

|[maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.64|98.02| 501.03| 116.09| 24.20| 62.77|

|[maxvit_base_tf_512.in1k](https://huggingface.co/timm/maxvit_base_tf_512.in1k) |86.60|97.92| 50.75| 119.88|138.02| 703.99|

|[coatnet_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_2_rw_224.sw_in12k_ft_in1k) |86.57|97.89| 631.88| 73.87| 15.09| 49.22|

|[maxvit_large_tf_512.in1k](https://huggingface.co/timm/maxvit_large_tf_512.in1k) |86.52|97.88| 36.04| 212.33|244.75| 942.15|

|[coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k) |86.49|97.90| 620.58| 73.88| 15.18| 54.78|

|[maxvit_base_tf_384.in1k](https://huggingface.co/timm/maxvit_base_tf_384.in1k) |86.29|97.80| 101.09| 119.65| 73.80| 332.90|

|[maxvit_large_tf_384.in1k](https://huggingface.co/timm/maxvit_large_tf_384.in1k) |86.23|97.69| 70.56| 212.03|132.55| 445.84|

|[maxvit_small_tf_512.in1k](https://huggingface.co/timm/maxvit_small_tf_512.in1k) |86.10|97.76| 88.63| 69.13| 67.26| 383.77|

|[maxvit_tiny_tf_512.in1k](https://huggingface.co/timm/maxvit_tiny_tf_512.in1k) |85.67|97.58| 144.25| 31.05| 33.49| 257.59|

|[maxvit_small_tf_384.in1k](https://huggingface.co/timm/maxvit_small_tf_384.in1k) |85.54|97.46| 188.35| 69.02| 35.87| 183.65|

|[maxvit_tiny_tf_384.in1k](https://huggingface.co/timm/maxvit_tiny_tf_384.in1k) |85.11|97.38| 293.46| 30.98| 17.53| 123.42|

|[maxvit_large_tf_224.in1k](https://huggingface.co/timm/maxvit_large_tf_224.in1k) |84.93|96.97| 247.71| 211.79| 43.68| 127.35|

|[coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k) |84.90|96.96| 1025.45| 41.72| 8.11| 40.13|

|[maxvit_base_tf_224.in1k](https://huggingface.co/timm/maxvit_base_tf_224.in1k) |84.85|96.99| 358.25| 119.47| 24.04| 95.01|

|[maxxvit_rmlp_small_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_small_rw_256.sw_in1k) |84.63|97.06| 575.53| 66.01| 14.67| 58.38|

|[coatnet_rmlp_2_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in1k) |84.61|96.74| 625.81| 73.88| 15.18| 54.78|

|[maxvit_rmlp_small_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_small_rw_224.sw_in1k) |84.49|96.76| 693.82| 64.90| 10.75| 49.30|

|[maxvit_small_tf_224.in1k](https://huggingface.co/timm/maxvit_small_tf_224.in1k) |84.43|96.83| 647.96| 68.93| 11.66| 53.17|

|[maxvit_rmlp_tiny_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_tiny_rw_256.sw_in1k) |84.23|96.78| 807.21| 29.15| 6.77| 46.92|

|[coatnet_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_1_rw_224.sw_in1k) |83.62|96.38| 989.59| 41.72| 8.04| 34.60|

|[maxvit_tiny_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_tiny_rw_224.sw_in1k) |83.50|96.50| 1100.53| 29.06| 5.11| 33.11|

|[maxvit_tiny_tf_224.in1k](https://huggingface.co/timm/maxvit_tiny_tf_224.in1k) |83.41|96.59| 1004.94| 30.92| 5.60| 35.78|

|[coatnet_rmlp_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw_224.sw_in1k) |83.36|96.45| 1093.03| 41.69| 7.85| 35.47|

|[maxxvitv2_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvitv2_nano_rw_256.sw_in1k) |83.11|96.33| 1276.88| 23.70| 6.26| 23.05|

|[maxxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_nano_rw_256.sw_in1k) |83.03|96.34| 1341.24| 16.78| 4.37| 26.05|

|[maxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_nano_rw_256.sw_in1k) |82.96|96.26| 1283.24| 15.50| 4.47| 31.92|

|[maxvit_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_nano_rw_256.sw_in1k) |82.93|96.23| 1218.17| 15.45| 4.46| 30.28|

|[coatnet_bn_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_bn_0_rw_224.sw_in1k) |82.39|96.19| 1600.14| 27.44| 4.67| 22.04|

|[coatnet_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_0_rw_224.sw_in1k) |82.39|95.84| 1831.21| 27.44| 4.43| 18.73|

|[coatnet_rmlp_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_nano_rw_224.sw_in1k) |82.05|95.87| 2109.09| 15.15| 2.62| 20.34|

|[coatnext_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnext_nano_rw_224.sw_in1k) |81.95|95.92| 2525.52| 14.70| 2.47| 12.80|

|[coatnet_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_nano_rw_224.sw_in1k) |81.70|95.64| 2344.52| 15.14| 2.41| 15.41|

|[maxvit_rmlp_pico_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_pico_rw_256.sw_in1k) |80.53|95.21| 1594.71| 7.52| 1.85| 24.86|

### By Throughput (samples / sec)

|model |top1 |top5 |samples / sec |Params (M) |GMAC |Act (M)|

|------------------------------------------------------------------------------------------------------------------------|----:|----:|--------------:|--------------:|-----:|------:|

|[coatnext_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnext_nano_rw_224.sw_in1k) |81.95|95.92| 2525.52| 14.70| 2.47| 12.80|

|[coatnet_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_nano_rw_224.sw_in1k) |81.70|95.64| 2344.52| 15.14| 2.41| 15.41|

|[coatnet_rmlp_nano_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_nano_rw_224.sw_in1k) |82.05|95.87| 2109.09| 15.15| 2.62| 20.34|

|[coatnet_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_0_rw_224.sw_in1k) |82.39|95.84| 1831.21| 27.44| 4.43| 18.73|

|[coatnet_bn_0_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_bn_0_rw_224.sw_in1k) |82.39|96.19| 1600.14| 27.44| 4.67| 22.04|

|[maxvit_rmlp_pico_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_pico_rw_256.sw_in1k) |80.53|95.21| 1594.71| 7.52| 1.85| 24.86|

|[maxxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_nano_rw_256.sw_in1k) |83.03|96.34| 1341.24| 16.78| 4.37| 26.05|

|[maxvit_rmlp_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_nano_rw_256.sw_in1k) |82.96|96.26| 1283.24| 15.50| 4.47| 31.92|

|[maxxvitv2_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxxvitv2_nano_rw_256.sw_in1k) |83.11|96.33| 1276.88| 23.70| 6.26| 23.05|

|[maxvit_nano_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_nano_rw_256.sw_in1k) |82.93|96.23| 1218.17| 15.45| 4.46| 30.28|

|[maxvit_tiny_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_tiny_rw_224.sw_in1k) |83.50|96.50| 1100.53| 29.06| 5.11| 33.11|

|[coatnet_rmlp_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw_224.sw_in1k) |83.36|96.45| 1093.03| 41.69| 7.85| 35.47|

|[coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_1_rw2_224.sw_in12k_ft_in1k) |84.90|96.96| 1025.45| 41.72| 8.11| 40.13|

|[maxvit_tiny_tf_224.in1k](https://huggingface.co/timm/maxvit_tiny_tf_224.in1k) |83.41|96.59| 1004.94| 30.92| 5.60| 35.78|

|[coatnet_1_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_1_rw_224.sw_in1k) |83.62|96.38| 989.59| 41.72| 8.04| 34.60|

|[maxvit_rmlp_tiny_rw_256.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_tiny_rw_256.sw_in1k) |84.23|96.78| 807.21| 29.15| 6.77| 46.92|

|[maxvit_rmlp_small_rw_224.sw_in1k](https://huggingface.co/timm/maxvit_rmlp_small_rw_224.sw_in1k) |84.49|96.76| 693.82| 64.90| 10.75| 49.30|

|[maxvit_small_tf_224.in1k](https://huggingface.co/timm/maxvit_small_tf_224.in1k) |84.43|96.83| 647.96| 68.93| 11.66| 53.17|

|[coatnet_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_2_rw_224.sw_in12k_ft_in1k) |86.57|97.89| 631.88| 73.87| 15.09| 49.22|

|[coatnet_rmlp_2_rw_224.sw_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in1k) |84.61|96.74| 625.81| 73.88| 15.18| 54.78|

|[coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_224.sw_in12k_ft_in1k) |86.49|97.90| 620.58| 73.88| 15.18| 54.78|

|[maxxvit_rmlp_small_rw_256.sw_in1k](https://huggingface.co/timm/maxxvit_rmlp_small_rw_256.sw_in1k) |84.63|97.06| 575.53| 66.01| 14.67| 58.38|

|[maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.64|98.02| 501.03| 116.09| 24.20| 62.77|

|[maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_224.sw_in12k_ft_in1k) |86.89|98.02| 375.86| 116.14| 23.15| 92.64|

|[maxvit_base_tf_224.in1k](https://huggingface.co/timm/maxvit_base_tf_224.in1k) |84.85|96.99| 358.25| 119.47| 24.04| 95.01|

|[maxvit_tiny_tf_384.in1k](https://huggingface.co/timm/maxvit_tiny_tf_384.in1k) |85.11|97.38| 293.46| 30.98| 17.53| 123.42|

|[maxvit_large_tf_224.in1k](https://huggingface.co/timm/maxvit_large_tf_224.in1k) |84.93|96.97| 247.71| 211.79| 43.68| 127.35|

|[maxvit_small_tf_384.in1k](https://huggingface.co/timm/maxvit_small_tf_384.in1k) |85.54|97.46| 188.35| 69.02| 35.87| 183.65|

|[coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/coatnet_rmlp_2_rw_384.sw_in12k_ft_in1k) |87.39|98.31| 160.80| 73.88| 47.69| 209.43|

|[maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxxvitv2_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.47|98.37| 149.49| 116.09| 72.98| 213.74|

|[maxvit_tiny_tf_512.in1k](https://huggingface.co/timm/maxvit_tiny_tf_512.in1k) |85.67|97.58| 144.25| 31.05| 33.49| 257.59|

|[maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k](https://huggingface.co/timm/maxvit_rmlp_base_rw_384.sw_in12k_ft_in1k) |87.81|98.37| 106.55| 116.14| 70.97| 318.95|

|[maxvit_base_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_384.in21k_ft_in1k) |87.92|98.54| 104.71| 119.65| 73.80| 332.90|

|[maxvit_base_tf_384.in1k](https://huggingface.co/timm/maxvit_base_tf_384.in1k) |86.29|97.80| 101.09| 119.65| 73.80| 332.90|

|[maxvit_small_tf_512.in1k](https://huggingface.co/timm/maxvit_small_tf_512.in1k) |86.10|97.76| 88.63| 69.13| 67.26| 383.77|

|[maxvit_large_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_384.in21k_ft_in1k) |87.98|98.56| 71.75| 212.03|132.55| 445.84|

|[maxvit_large_tf_384.in1k](https://huggingface.co/timm/maxvit_large_tf_384.in1k) |86.23|97.69| 70.56| 212.03|132.55| 445.84|

|[maxvit_base_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_base_tf_512.in21k_ft_in1k) |88.20|98.53| 50.87| 119.88|138.02| 703.99|

|[maxvit_base_tf_512.in1k](https://huggingface.co/timm/maxvit_base_tf_512.in1k) |86.60|97.92| 50.75| 119.88|138.02| 703.99|

|[maxvit_xlarge_tf_384.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_384.in21k_ft_in1k) |88.32|98.54| 42.53| 475.32|292.78| 668.76|

|[maxvit_large_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_large_tf_512.in21k_ft_in1k) |88.04|98.40| 36.42| 212.33|244.75| 942.15|

|[maxvit_large_tf_512.in1k](https://huggingface.co/timm/maxvit_large_tf_512.in1k) |86.52|97.88| 36.04| 212.33|244.75| 942.15|

|[maxvit_xlarge_tf_512.in21k_ft_in1k](https://huggingface.co/timm/maxvit_xlarge_tf_512.in21k_ft_in1k) |88.53|98.64| 21.76| 475.77|534.14|1413.22|

## Citation

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

```bibtex

@article{tu2022maxvit,

title={MaxViT: Multi-Axis Vision Transformer},

author={Tu, Zhengzhong and Talebi, Hossein and Zhang, Han and Yang, Feng and Milanfar, Peyman and Bovik, Alan and Li, Yinxiao},

journal={ECCV},

year={2022},

}

```

```bibtex

@article{dai2021coatnet,

title={CoAtNet: Marrying Convolution and Attention for All Data Sizes},

author={Dai, Zihang and Liu, Hanxiao and Le, Quoc V and Tan, Mingxing},

journal={arXiv preprint arXiv:2106.04803},

year={2021}

}

```

| 22,071 | [

[

-0.051971435546875,

-0.030426025390625,

0.0026702880859375,

0.0323486328125,

-0.0221099853515625,

-0.01514434814453125,

-0.011260986328125,

-0.0276336669921875,

0.056121826171875,

0.0162200927734375,

-0.041595458984375,

-0.0450439453125,

-0.047454833984375,

... |

allenai/led-large-16384-arxiv | 2023-01-24T16:27:02.000Z | [

"transformers",

"pytorch",

"tf",

"led",

"text2text-generation",

"en",

"dataset:scientific_papers",

"arxiv:2004.05150",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | text2text-generation | allenai | null | null | allenai/led-large-16384-arxiv | 22 | 2,530 | transformers | 2022-03-02T23:29:05 | ---

language: en

datasets:

- scientific_papers

license: apache-2.0

---

## Introduction

[Allenai's Longformer Encoder-Decoder (LED)](https://github.com/allenai/longformer#longformer).

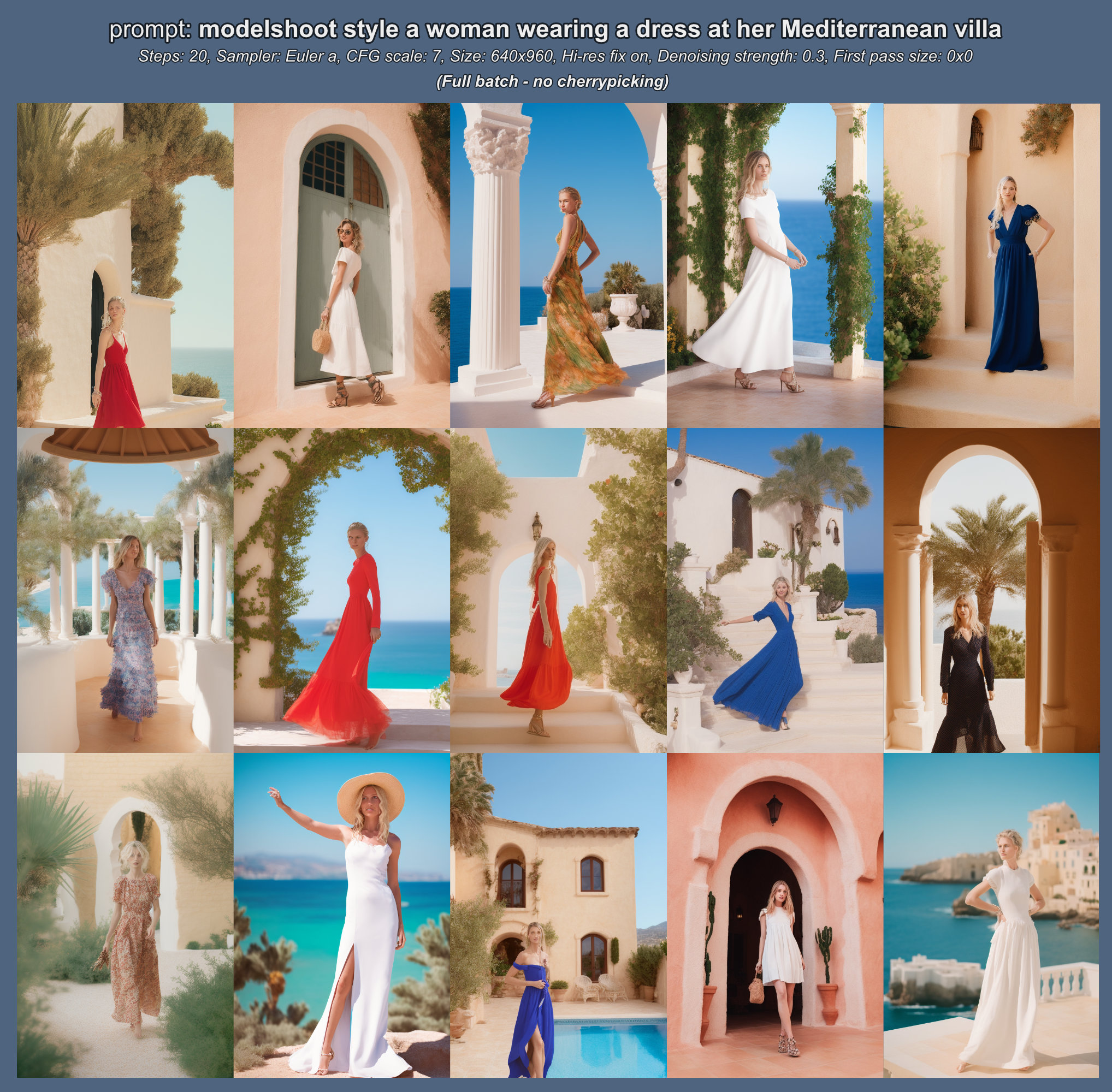

This is the official *led-large-16384* checkpoint that is fine-tuned on the arXiv dataset.*led-large-16384-arxiv* is the official fine-tuned version of [led-large-16384](https://huggingface.co/allenai/led-large-16384). As presented in the [paper](https://arxiv.org/pdf/2004.05150.pdf), the checkpoint achieves state-of-the-art results on arxiv

## Evaluation on downstream task

[This notebook](https://colab.research.google.com/drive/12INTTR6n64TzS4RrXZxMSXfrOd9Xzamo?usp=sharing) shows how *led-large-16384-arxiv* can be evaluated on the [arxiv dataset](https://huggingface.co/datasets/scientific_papers)

## Usage

The model can be used as follows. The input is taken from the test data of the [arxiv dataset](https://huggingface.co/datasets/scientific_papers).

```python

LONG_ARTICLE = """"for about 20 years the problem of properties of

short - term changes of solar activity has been

considered extensively . many investigators

studied the short - term periodicities of the

various indices of solar activity . several

periodicities were detected , but the

periodicities about 155 days and from the interval

of @xmath3 $ ] days ( @xmath4 $ ] years ) are

mentioned most often . first of them was

discovered by @xcite in the occurence rate of

gamma - ray flares detected by the gamma - ray

spectrometer aboard the _ solar maximum mission (

smm ) . this periodicity was confirmed for other

solar flares data and for the same time period

@xcite . it was also found in proton flares during

solar cycles 19 and 20 @xcite , but it was not

found in the solar flares data during solar cycles

22 @xcite . _ several autors confirmed above

results for the daily sunspot area data . @xcite

studied the sunspot data from 18741984 . she found

the 155-day periodicity in data records from 31

years . this periodicity is always characteristic

for one of the solar hemispheres ( the southern

hemisphere for cycles 1215 and the northern

hemisphere for cycles 1621 ) . moreover , it is

only present during epochs of maximum activity (

in episodes of 13 years ) .

similarinvestigationswerecarriedoutby + @xcite .

they applied the same power spectrum method as

lean , but the daily sunspot area data ( cycles

1221 ) were divided into 10 shorter time series .

the periodicities were searched for the frequency

interval 57115 nhz ( 100200 days ) and for each of

10 time series . the authors showed that the

periodicity between 150160 days is statistically

significant during all cycles from 16 to 21 . the

considered peaks were remained unaltered after

removing the 11-year cycle and applying the power

spectrum analysis . @xcite used the wavelet

technique for the daily sunspot areas between 1874

and 1993 . they determined the epochs of

appearance of this periodicity and concluded that

it presents around the maximum activity period in

cycles 16 to 21 . moreover , the power of this

periodicity started growing at cycle 19 ,

decreased in cycles 20 and 21 and disappered after

cycle 21 . similaranalyseswerepresentedby + @xcite

, but for sunspot number , solar wind plasma ,

interplanetary magnetic field and geomagnetic

activity index @xmath5 . during 1964 - 2000 the

sunspot number wavelet power of periods less than

one year shows a cyclic evolution with the phase

of the solar cycle.the 154-day period is prominent

and its strenth is stronger around the 1982 - 1984

interval in almost all solar wind parameters . the

existence of the 156-day periodicity in sunspot

data were confirmed by @xcite . they considered

the possible relation between the 475-day (

1.3-year ) and 156-day periodicities . the 475-day

( 1.3-year ) periodicity was also detected in

variations of the interplanetary magnetic field ,

geomagnetic activity helioseismic data and in the

solar wind speed @xcite . @xcite concluded that

the region of larger wavelet power shifts from

475-day ( 1.3-year ) period to 620-day ( 1.7-year

) period and then back to 475-day ( 1.3-year ) .

the periodicities from the interval @xmath6 $ ]

days ( @xmath4 $ ] years ) have been considered

from 1968 . @xcite mentioned a 16.3-month (

490-day ) periodicity in the sunspot numbers and

in the geomagnetic data . @xcite analysed the

occurrence rate of major flares during solar

cycles 19 . they found a 18-month ( 540-day )

periodicity in flare rate of the norhern

hemisphere . @xcite confirmed this result for the

@xmath7 flare data for solar cycles 20 and 21 and

found a peak in the power spectra near 510540 days

. @xcite found a 17-month ( 510-day ) periodicity

of sunspot groups and their areas from 1969 to

1986 . these authors concluded that the length of

this period is variable and the reason of this

periodicity is still not understood . @xcite and +

@xcite obtained statistically significant peaks of

power at around 158 days for daily sunspot data

from 1923 - 1933 ( cycle 16 ) . in this paper the

problem of the existence of this periodicity for

sunspot data from cycle 16 is considered . the

daily sunspot areas , the mean sunspot areas per

carrington rotation , the monthly sunspot numbers

and their fluctuations , which are obtained after

removing the 11-year cycle are analysed . in

section 2 the properties of the power spectrum

methods are described . in section 3 a new

approach to the problem of aliases in the power

spectrum analysis is presented . in section 4

numerical results of the new method of the

diagnosis of an echo - effect for sunspot area

data are discussed . in section 5 the problem of

the existence of the periodicity of about 155 days

during the maximum activity period for sunspot

data from the whole solar disk and from each solar

hemisphere separately is considered . to find

periodicities in a given time series the power

spectrum analysis is applied . in this paper two

methods are used : the fast fourier transformation

algorithm with the hamming window function ( fft )

and the blackman - tukey ( bt ) power spectrum

method @xcite . the bt method is used for the

diagnosis of the reasons of the existence of peaks

, which are obtained by the fft method . the bt

method consists in the smoothing of a cosine

transform of an autocorrelation function using a

3-point weighting average . such an estimator is

consistent and unbiased . moreover , the peaks are

uncorrelated and their sum is a variance of a

considered time series . the main disadvantage of

this method is a weak resolution of the

periodogram points , particularly for low

frequences . for example , if the autocorrelation

function is evaluated for @xmath8 , then the

distribution points in the time domain are :

@xmath9 thus , it is obvious that this method

should not be used for detecting low frequency

periodicities with a fairly good resolution .

however , because of an application of the

autocorrelation function , the bt method can be

used to verify a reality of peaks which are

computed using a method giving the better

resolution ( for example the fft method ) . it is

valuable to remember that the power spectrum

methods should be applied very carefully . the

difficulties in the interpretation of significant

peaks could be caused by at least four effects : a

sampling of a continuos function , an echo -

effect , a contribution of long - term

periodicities and a random noise . first effect

exists because periodicities , which are shorter

than the sampling interval , may mix with longer

periodicities . in result , this effect can be

reduced by an decrease of the sampling interval

between observations . the echo - effect occurs

when there is a latent harmonic of frequency

@xmath10 in the time series , giving a spectral

peak at @xmath10 , and also periodic terms of

frequency @xmath11 etc . this may be detected by

the autocorrelation function for time series with

a large variance . time series often contain long

- term periodicities , that influence short - term

peaks . they could rise periodogram s peaks at

lower frequencies . however , it is also easy to

notice the influence of the long - term

periodicities on short - term peaks in the graphs

of the autocorrelation functions . this effect is

observed for the time series of solar activity

indexes which are limited by the 11-year cycle .

to find statistically significant periodicities it

is reasonable to use the autocorrelation function

and the power spectrum method with a high

resolution . in the case of a stationary time

series they give similar results . moreover , for

a stationary time series with the mean zero the

fourier transform is equivalent to the cosine

transform of an autocorrelation function @xcite .

thus , after a comparison of a periodogram with an

appropriate autocorrelation function one can

detect peaks which are in the graph of the first

function and do not exist in the graph of the

second function . the reasons of their existence

could be explained by the long - term

periodicities and the echo - effect . below method

enables one to detect these effects . ( solid line

) and the 95% confidence level basing on thered

noise ( dotted line ) . the periodogram values are

presented on the left axis . the lower curve

illustrates the autocorrelation function of the

same time series ( solid line ) . the dotted lines

represent two standard errors of the

autocorrelation function . the dashed horizontal

line shows the zero level . the autocorrelation

values are shown in the right axis . ] because

the statistical tests indicate that the time

series is a white noise the confidence level is

not marked . ] . ] the method of the diagnosis

of an echo - effect in the power spectrum ( de )

consists in an analysis of a periodogram of a

given time series computed using the bt method .

the bt method bases on the cosine transform of the

autocorrelation function which creates peaks which

are in the periodogram , but not in the

autocorrelation function . the de method is used

for peaks which are computed by the fft method (

with high resolution ) and are statistically

significant . the time series of sunspot activity

indexes with the spacing interval one rotation or

one month contain a markov - type persistence ,

which means a tendency for the successive values

of the time series to remember their antecendent

values . thus , i use a confidence level basing on

the red noise of markov @xcite for the choice of

the significant peaks of the periodogram computed

by the fft method . when a time series does not

contain the markov - type persistence i apply the

fisher test and the kolmogorov - smirnov test at

the significance level @xmath12 @xcite to verify a

statistically significance of periodograms peaks .

the fisher test checks the null hypothesis that

the time series is white noise agains the

alternative hypothesis that the time series

contains an added deterministic periodic component

of unspecified frequency . because the fisher test

tends to be severe in rejecting peaks as

insignificant the kolmogorov - smirnov test is

also used . the de method analyses raw estimators

of the power spectrum . they are given as follows

@xmath13 for @xmath14 + where @xmath15 for

@xmath16 + @xmath17 is the length of the time

series @xmath18 and @xmath19 is the mean value .

the first term of the estimator @xmath20 is

constant . the second term takes two values (

depending on odd or even @xmath21 ) which are not

significant because @xmath22 for large m. thus ,

the third term of ( 1 ) should be analysed .

looking for intervals of @xmath23 for which

@xmath24 has the same sign and different signs one

can find such parts of the function @xmath25 which

create the value @xmath20 . let the set of values

of the independent variable of the autocorrelation

function be called @xmath26 and it can be divided

into the sums of disjoint sets : @xmath27 where +

@xmath28 + @xmath29 @xmath30 @xmath31 + @xmath32 +

@xmath33 @xmath34 @xmath35 @xmath36 @xmath37

@xmath38 @xmath39 @xmath40 well , the set

@xmath41 contains all integer values of @xmath23

from the interval of @xmath42 for which the

autocorrelation function and the cosinus function

with the period @xmath43 $ ] are positive . the

index @xmath44 indicates successive parts of the

cosinus function for which the cosinuses of

successive values of @xmath23 have the same sign .

however , sometimes the set @xmath41 can be empty

. for example , for @xmath45 and @xmath46 the set

@xmath47 should contain all @xmath48 $ ] for which

@xmath49 and @xmath50 , but for such values of

@xmath23 the values of @xmath51 are negative .

thus , the set @xmath47 is empty . . the

periodogram values are presented on the left axis

. the lower curve illustrates the autocorrelation

function of the same time series . the

autocorrelation values are shown in the right axis

. ] let us take into consideration all sets

\{@xmath52 } , \{@xmath53 } and \{@xmath41 } which

are not empty . because numberings and power of

these sets depend on the form of the

autocorrelation function of the given time series

, it is impossible to establish them arbitrary .

thus , the sets of appropriate indexes of the sets

\{@xmath52 } , \{@xmath53 } and \{@xmath41 } are

called @xmath54 , @xmath55 and @xmath56

respectively . for example the set @xmath56

contains all @xmath44 from the set @xmath57 for

which the sets @xmath41 are not empty . to

separate quantitatively in the estimator @xmath20

the positive contributions which are originated by

the cases described by the formula ( 5 ) from the

cases which are described by the formula ( 3 ) the

following indexes are introduced : @xmath58

@xmath59 @xmath60 @xmath61 where @xmath62 @xmath63

@xmath64 taking for the empty sets \{@xmath53 }

and \{@xmath41 } the indices @xmath65 and @xmath66

equal zero . the index @xmath65 describes a

percentage of the contribution of the case when

@xmath25 and @xmath51 are positive to the positive

part of the third term of the sum ( 1 ) . the

index @xmath66 describes a similar contribution ,

but for the case when the both @xmath25 and

@xmath51 are simultaneously negative . thanks to

these one can decide which the positive or the

negative values of the autocorrelation function

have a larger contribution to the positive values

of the estimator @xmath20 . when the difference

@xmath67 is positive , the statement the

@xmath21-th peak really exists can not be rejected

. thus , the following formula should be satisfied

: @xmath68 because the @xmath21-th peak could

exist as a result of the echo - effect , it is

necessary to verify the second condition :

@xmath69\in c_m.\ ] ] . the periodogram values

are presented on the left axis . the lower curve

illustrates the autocorrelation function of the

same time series ( solid line ) . the dotted lines

represent two standard errors of the

autocorrelation function . the dashed horizontal

line shows the zero level . the autocorrelation

values are shown in the right axis . ] to

verify the implication ( 8) firstly it is

necessary to evaluate the sets @xmath41 for

@xmath70 of the values of @xmath23 for which the

autocorrelation function and the cosine function

with the period @xmath71 $ ] are positive and the

sets @xmath72 of values of @xmath23 for which the

autocorrelation function and the cosine function

with the period @xmath43 $ ] are negative .

secondly , a percentage of the contribution of the

sum of products of positive values of @xmath25 and

@xmath51 to the sum of positive products of the

values of @xmath25 and @xmath51 should be

evaluated . as a result the indexes @xmath65 for

each set @xmath41 where @xmath44 is the index from

the set @xmath56 are obtained . thirdly , from all

sets @xmath41 such that @xmath70 the set @xmath73

for which the index @xmath65 is the greatest

should be chosen . the implication ( 8) is true

when the set @xmath73 includes the considered

period @xmath43 $ ] . this means that the greatest

contribution of positive values of the

autocorrelation function and positive cosines with

the period @xmath43 $ ] to the periodogram value

@xmath20 is caused by the sum of positive products

of @xmath74 for each @xmath75-\frac{m}{2k},[\frac{

2m}{k}]+\frac{m}{2k})$ ] . when the implication

( 8) is false , the peak @xmath20 is mainly

created by the sum of positive products of

@xmath74 for each @xmath76-\frac{m}{2k},\big [

\frac{2m}{n}\big ] + \frac{m}{2k } \big ) $ ] ,

where @xmath77 is a multiple or a divisor of

@xmath21 . it is necessary to add , that the de

method should be applied to the periodograms peaks

, which probably exist because of the echo -

effect . it enables one to find such parts of the

autocorrelation function , which have the

significant contribution to the considered peak .

the fact , that the conditions ( 7 ) and ( 8) are

satisfied , can unambiguously decide about the

existence of the considered periodicity in the

given time series , but if at least one of them is

not satisfied , one can doubt about the existence

of the considered periodicity . thus , in such

cases the sentence the peak can not be treated as

true should be used . using the de method it is

necessary to remember about the power of the set

@xmath78 . if @xmath79 is too large , errors of an

autocorrelation function estimation appear . they

are caused by the finite length of the given time

series and as a result additional peaks of the

periodogram occur . if @xmath79 is too small ,

there are less peaks because of a low resolution

of the periodogram . in applications @xmath80 is

used . in order to evaluate the value @xmath79 the

fft method is used . the periodograms computed by

the bt and the fft method are compared . the

conformity of them enables one to obtain the value

@xmath79 . . the fft periodogram values are

presented on the left axis . the lower curve

illustrates the bt periodogram of the same time

series ( solid line and large black circles ) .

the bt periodogram values are shown in the right

axis . ] in this paper the sunspot activity data (

august 1923 - october 1933 ) provided by the

greenwich photoheliographic results ( gpr ) are

analysed . firstly , i consider the monthly

sunspot number data . to eliminate the 11-year

trend from these data , the consecutively smoothed

monthly sunspot number @xmath81 is subtracted from

the monthly sunspot number @xmath82 where the

consecutive mean @xmath83 is given by @xmath84 the

values @xmath83 for @xmath85 and @xmath86 are

calculated using additional data from last six

months of cycle 15 and first six months of cycle

17 . because of the north - south asymmetry of

various solar indices @xcite , the sunspot

activity is considered for each solar hemisphere

separately . analogously to the monthly sunspot

numbers , the time series of sunspot areas in the

northern and southern hemispheres with the spacing

interval @xmath87 rotation are denoted . in order

to find periodicities , the following time series

are used : + @xmath88 + @xmath89 + @xmath90

+ in the lower part of figure [ f1 ] the

autocorrelation function of the time series for

the northern hemisphere @xmath88 is shown . it is

easy to notice that the prominent peak falls at 17

rotations interval ( 459 days ) and @xmath25 for

@xmath91 $ ] rotations ( [ 81 , 162 ] days ) are

significantly negative . the periodogram of the

time series @xmath88 ( see the upper curve in

figures [ f1 ] ) does not show the significant

peaks at @xmath92 rotations ( 135 , 162 days ) ,

but there is the significant peak at @xmath93 (

243 days ) . the peaks at @xmath94 are close to

the peaks of the autocorrelation function . thus ,

the result obtained for the periodicity at about

@xmath0 days are contradict to the results

obtained for the time series of daily sunspot

areas @xcite . for the southern hemisphere (

the lower curve in figure [ f2 ] ) @xmath25 for

@xmath95 $ ] rotations ( [ 54 , 189 ] days ) is

not positive except @xmath96 ( 135 days ) for

which @xmath97 is not statistically significant .

the upper curve in figures [ f2 ] presents the

periodogram of the time series @xmath89 . this

time series does not contain a markov - type

persistence . moreover , the kolmogorov - smirnov

test and the fisher test do not reject a null

hypothesis that the time series is a white noise

only . this means that the time series do not

contain an added deterministic periodic component

of unspecified frequency . the autocorrelation

function of the time series @xmath90 ( the lower

curve in figure [ f3 ] ) has only one

statistically significant peak for @xmath98 months

( 480 days ) and negative values for @xmath99 $ ]

months ( [ 90 , 390 ] days ) . however , the

periodogram of this time series ( the upper curve

in figure [ f3 ] ) has two significant peaks the

first at 15.2 and the second at 5.3 months ( 456 ,

159 days ) . thus , the periodogram contains the

significant peak , although the autocorrelation

function has the negative value at @xmath100

months . to explain these problems two

following time series of daily sunspot areas are

considered : + @xmath101 + @xmath102 + where

@xmath103 the values @xmath104 for @xmath105

and @xmath106 are calculated using additional

daily data from the solar cycles 15 and 17 .

and the cosine function for @xmath45 ( the period

at about 154 days ) . the horizontal line ( dotted

line ) shows the zero level . the vertical dotted

lines evaluate the intervals where the sets

@xmath107 ( for @xmath108 ) are searched . the

percentage values show the index @xmath65 for each

@xmath41 for the time series @xmath102 ( in

parentheses for the time series @xmath101 ) . in

the right bottom corner the values of @xmath65 for

the time series @xmath102 , for @xmath109 are

written . ] ( the 500-day period ) ] the

comparison of the functions @xmath25 of the time

series @xmath101 ( the lower curve in figure [ f4

] ) and @xmath102 ( the lower curve in figure [ f5

] ) suggests that the positive values of the

function @xmath110 of the time series @xmath101 in

the interval of @xmath111 $ ] days could be caused

by the 11-year cycle . this effect is not visible

in the case of periodograms of the both time

series computed using the fft method ( see the

upper curves in figures [ f4 ] and [ f5 ] ) or the

bt method ( see the lower curve in figure [ f6 ] )

. moreover , the periodogram of the time series

@xmath102 has the significant values at @xmath112

days , but the autocorrelation function is

negative at these points . @xcite showed that the

lomb - scargle periodograms for the both time

series ( see @xcite , figures 7 a - c ) have a

peak at 158.8 days which stands over the fap level

by a significant amount . using the de method the

above discrepancies are obvious . to establish the

@xmath79 value the periodograms computed by the

fft and the bt methods are shown in figure [ f6 ]

( the upper and the lower curve respectively ) .

for @xmath46 and for periods less than 166 days

there is a good comformity of the both

periodograms ( but for periods greater than 166

days the points of the bt periodogram are not

linked because the bt periodogram has much worse

resolution than the fft periodogram ( no one know

how to do it ) ) . for @xmath46 and @xmath113 the

value of @xmath21 is 13 ( @xmath71=153 $ ] ) . the

inequality ( 7 ) is satisfied because @xmath114 .

this means that the value of @xmath115 is mainly

created by positive values of the autocorrelation

function . the implication ( 8) needs an

evaluation of the greatest value of the index

@xmath65 where @xmath70 , but the solar data

contain the most prominent period for @xmath116

days because of the solar rotation . thus ,

although @xmath117 for each @xmath118 , all sets

@xmath41 ( see ( 5 ) and ( 6 ) ) without the set

@xmath119 ( see ( 4 ) ) , which contains @xmath120

$ ] , are considered . this situation is presented

in figure [ f7 ] . in this figure two curves

@xmath121 and @xmath122 are plotted . the vertical

dotted lines evaluate the intervals where the sets

@xmath107 ( for @xmath123 ) are searched . for

such @xmath41 two numbers are written : in

parentheses the value of @xmath65 for the time

series @xmath101 and above it the value of

@xmath65 for the time series @xmath102 . to make

this figure clear the curves are plotted for the

set @xmath124 only . ( in the right bottom corner

information about the values of @xmath65 for the

time series @xmath102 , for @xmath109 are written

. ) the implication ( 8) is not true , because

@xmath125 for @xmath126 . therefore ,

@xmath43=153\notin c_6=[423,500]$ ] . moreover ,

the autocorrelation function for @xmath127 $ ] is

negative and the set @xmath128 is empty . thus ,

@xmath129 . on the basis of these information one

can state , that the periodogram peak at @xmath130

days of the time series @xmath102 exists because

of positive @xmath25 , but for @xmath23 from the

intervals which do not contain this period .

looking at the values of @xmath65 of the time

series @xmath101 , one can notice that they

decrease when @xmath23 increases until @xmath131 .

this indicates , that when @xmath23 increases ,

the contribution of the 11-year cycle to the peaks

of the periodogram decreases . an increase of the

value of @xmath65 is for @xmath132 for the both

time series , although the contribution of the

11-year cycle for the time series @xmath101 is

insignificant . thus , this part of the

autocorrelation function ( @xmath133 for the time

series @xmath102 ) influences the @xmath21-th peak

of the periodogram . this suggests that the

periodicity at about 155 days is a harmonic of the

periodicity from the interval of @xmath1 $ ] days

. ( solid line ) and consecutively smoothed

sunspot areas of the one rotation time interval

@xmath134 ( dotted line ) . both indexes are

presented on the left axis . the lower curve

illustrates fluctuations of the sunspot areas

@xmath135 . the dotted and dashed horizontal lines

represent levels zero and @xmath136 respectively .

the fluctuations are shown on the right axis . ]

the described reasoning can be carried out for

other values of the periodogram . for example ,

the condition ( 8) is not satisfied for @xmath137

( 250 , 222 , 200 days ) . moreover , the

autocorrelation function at these points is

negative . these suggest that there are not a true

periodicity in the interval of [ 200 , 250 ] days

. it is difficult to decide about the existence of

the periodicities for @xmath138 ( 333 days ) and

@xmath139 ( 286 days ) on the basis of above

analysis . the implication ( 8) is not satisfied

for @xmath139 and the condition ( 7 ) is not

satisfied for @xmath138 , although the function

@xmath25 of the time series @xmath102 is

significantly positive for @xmath140 . the

conditions ( 7 ) and ( 8) are satisfied for

@xmath141 ( figure [ f8 ] ) and @xmath142 .

therefore , it is possible to exist the

periodicity from the interval of @xmath1 $ ] days

. similar results were also obtained by @xcite for

daily sunspot numbers and daily sunspot areas .

she considered the means of three periodograms of

these indexes for data from @xmath143 years and

found statistically significant peaks from the

interval of @xmath1 $ ] ( see @xcite , figure 2 )

. @xcite studied sunspot areas from 1876 - 1999

and sunspot numbers from 1749 - 2001 with the help

of the wavelet transform . they pointed out that

the 154 - 158-day period could be the third

harmonic of the 1.3-year ( 475-day ) period .

moreover , the both periods fluctuate considerably

with time , being stronger during stronger sunspot

cycles . therefore , the wavelet analysis suggests

a common origin of the both periodicities . this

conclusion confirms the de method result which

indicates that the periodogram peak at @xmath144

days is an alias of the periodicity from the

interval of @xmath1 $ ] in order to verify the

existence of the periodicity at about 155 days i

consider the following time series : + @xmath145

+ @xmath146 + @xmath147 + the value @xmath134

is calculated analogously to @xmath83 ( see sect .

the values @xmath148 and @xmath149 are evaluated

from the formula ( 9 ) . in the upper part of

figure [ f9 ] the time series of sunspot areas

@xmath150 of the one rotation time interval from

the whole solar disk and the time series of

consecutively smoothed sunspot areas @xmath151 are

showed . in the lower part of figure [ f9 ] the

time series of sunspot area fluctuations @xmath145

is presented . on the basis of these data the

maximum activity period of cycle 16 is evaluated .

it is an interval between two strongest

fluctuations e.a . @xmath152 $ ] rotations . the

length of the time interval @xmath153 is 54

rotations . if the about @xmath0-day ( 6 solar

rotations ) periodicity existed in this time

interval and it was characteristic for strong

fluctuations from this time interval , 10 local

maxima in the set of @xmath154 would be seen .

then it should be necessary to find such a value

of p for which @xmath155 for @xmath156 and the

number of the local maxima of these values is 10 .

as it can be seen in the lower part of figure [ f9

] this is for the case of @xmath157 ( in this

figure the dashed horizontal line is the level of

@xmath158 ) . figure [ f10 ] presents nine time

distances among the successive fluctuation local

maxima and the horizontal line represents the

6-rotation periodicity . it is immediately

apparent that the dispersion of these points is 10

and it is difficult to find even few points which

oscillate around the value of 6 . such an analysis

was carried out for smaller and larger @xmath136

and the results were similar . therefore , the

fact , that the about @xmath0-day periodicity

exists in the time series of sunspot area

fluctuations during the maximum activity period is

questionable . . the horizontal line represents

the 6-rotation ( 162-day ) period . ] ] ]

to verify again the existence of the about

@xmath0-day periodicity during the maximum

activity period in each solar hemisphere

separately , the time series @xmath88 and @xmath89

were also cut down to the maximum activity period

( january 1925december 1930 ) . the comparison of

the autocorrelation functions of these time series

with the appriopriate autocorrelation functions of

the time series @xmath88 and @xmath89 , which are

computed for the whole 11-year cycle ( the lower

curves of figures [ f1 ] and [ f2 ] ) , indicates

that there are not significant differences between

them especially for @xmath23=5 and 6 rotations (

135 and 162 days ) ) . this conclusion is

confirmed by the analysis of the time series

@xmath146 for the maximum activity period . the

autocorrelation function ( the lower curve of

figure [ f11 ] ) is negative for the interval of [

57 , 173 ] days , but the resolution of the

periodogram is too low to find the significant

peak at @xmath159 days . the autocorrelation

function gives the same result as for daily

sunspot area fluctuations from the whole solar

disk ( @xmath160 ) ( see also the lower curve of

figures [ f5 ] ) . in the case of the time series

@xmath89 @xmath161 is zero for the fluctuations

from the whole solar cycle and it is almost zero (

@xmath162 ) for the fluctuations from the maximum

activity period . the value @xmath163 is negative

. similarly to the case of the northern hemisphere

the autocorrelation function and the periodogram

of southern hemisphere daily sunspot area

fluctuations from the maximum activity period

@xmath147 are computed ( see figure [ f12 ] ) .

the autocorrelation function has the statistically

significant positive peak in the interval of [ 155

, 165 ] days , but the periodogram has too low

resolution to decide about the possible

periodicities . the correlative analysis indicates

that there are positive fluctuations with time

distances about @xmath0 days in the maximum

activity period . the results of the analyses of

the time series of sunspot area fluctuations from

the maximum activity period are contradict with

the conclusions of @xcite . she uses the power

spectrum analysis only . the periodogram of daily

sunspot fluctuations contains peaks , which could

be harmonics or subharmonics of the true

periodicities . they could be treated as real

periodicities . this effect is not visible for

sunspot data of the one rotation time interval ,

but averaging could lose true periodicities . this

is observed for data from the southern hemisphere

. there is the about @xmath0-day peak in the

autocorrelation function of daily fluctuations ,

but the correlation for data of the one rotation

interval is almost zero or negative at the points

@xmath164 and 6 rotations . thus , it is

reasonable to research both time series together

using the correlative and the power spectrum

analyses . the following results are obtained :

1 . a new method of the detection of statistically

significant peaks of the periodograms enables one

to identify aliases in the periodogram . 2 . two

effects cause the existence of the peak of the

periodogram of the time series of sunspot area

fluctuations at about @xmath0 days : the first is

caused by the 27-day periodicity , which probably

creates the 162-day periodicity ( it is a

subharmonic frequency of the 27-day periodicity )

and the second is caused by statistically

significant positive values of the autocorrelation

function from the intervals of @xmath165 $ ] and

@xmath166 $ ] days . the existence of the

periodicity of about @xmath0 days of the time

series of sunspot area fluctuations and sunspot

area fluctuations from the northern hemisphere

during the maximum activity period is questionable

. the autocorrelation analysis of the time series

of sunspot area fluctuations from the southern

hemisphere indicates that the periodicity of about

155 days exists during the maximum activity period

. i appreciate valuable comments from professor j.

jakimiec ."""

from transformers import LEDForConditionalGeneration, LEDTokenizer

import torch

tokenizer = LEDTokenizer.from_pretrained("allenai/led-large-16384-arxiv")

input_ids = tokenizer(LONG_ARTICLE, return_tensors="pt").input_ids.to("cuda")

global_attention_mask = torch.zeros_like(input_ids)

# set global_attention_mask on first token

global_attention_mask[:, 0] = 1

model = LEDForConditionalGeneration.from_pretrained("allenai/led-large-16384-arxiv", return_dict_in_generate=True).to("cuda")

sequences = model.generate(input_ids, global_attention_mask=global_attention_mask).sequences

summary = tokenizer.batch_decode(sequences)

```

| 34,537 | [

[

-0.055206298828125,

-0.052642822265625,

0.04168701171875,

0.0128173828125,

-0.0288238525390625,

-0.0128631591796875,

-0.00902557373046875,

-0.042694091796875,

0.032073974609375,

0.0230865478515625,

-0.051727294921875,

-0.025177001953125,

-0.0214996337890625,

... |

cactusfriend/nightmare-promptgen-XL | 2023-07-06T22:16:34.000Z | [

"transformers",

"pytorch",

"safetensors",

"gpt_neo",

"text-generation",

"license:openrail",

"endpoints_compatible",

"region:us"

] | text-generation | cactusfriend | null | null | cactusfriend/nightmare-promptgen-XL | 3 | 2,529 | transformers | 2023-06-26T14:07:40 | ---

license: openrail

pipeline_tag: text-generation

library_name: transformers

widget:

- text: "a photograph of"

example_title: "photo"

- text: "a bizarre cg render"

example_title: "render"

- text: "the spaghetti"

example_title: "meal?"

- text: "a (detailed+ intricate)+ picture"

example_title: "weights"

- text: "photograph of various"

example_title: "variety"

inference:

parameters:

temperature: 2.6

max_new_tokens: 250

---

Experimental 'XL' version of [Nightmare InvokeAI Prompts](https://huggingface.co/cactusfriend/nightmare-invokeai-prompts). Very early version and may be deleted. | 607 | [

[

-0.03936767578125,

-0.04254150390625,

0.04345703125,

0.04815673828125,

-0.0251617431640625,

0.01471710205078125,

0.00763702392578125,

-0.059295654296875,

0.06304931640625,

0.038604736328125,

-0.08251953125,

-0.0089111328125,

-0.0015363693237304688,

0.0139999... |

THUDM/codegeex2-6b | 2023-08-09T21:03:10.000Z | [

"transformers",

"pytorch",

"chatglm",

"codegeex",

"glm",

"thudm",

"custom_code",

"zh",

"en",

"arxiv:2303.17568",

"endpoints_compatible",

"has_space",

"region:us"

] | null | THUDM | null | null | THUDM/codegeex2-6b | 211 | 2,527 | transformers | 2023-07-19T08:25:26 | ---

language:

- zh

- en

tags:

- codegeex

- glm

- chatglm

- thudm

---

<p align="center">

🏠 <a href="https://codegeex.cn" target="_blank">Homepage</a>|💻 <a href="https://github.com/THUDM/CodeGeeX2" target="_blank">GitHub</a>|🛠 Tools <a href="https://marketplace.visualstudio.com/items?itemName=aminer.codegeex" target="_blank">VS Code</a>, <a href="https://plugins.jetbrains.com/plugin/20587-codegeex" target="_blank">Jetbrains</a>|🤗 <a href="https://huggingface.co/THUDM/codegeex2-6b" target="_blank">HF Repo</a>|📄 <a href="https://arxiv.org/abs/2303.17568" target="_blank">Paper</a>

</p>

<p align="center">

👋 Join our <a href="https://discord.gg/8gjHdkmAN6" target="_blank">Discord</a>, <a href="https://join.slack.com/t/codegeexworkspace/shared_invite/zt-1s118ffrp-mpKKhQD0tKBmzNZVCyEZLw" target="_blank">Slack</a>, <a href="https://t.me/+IipIayJ32B1jOTg1" target="_blank">Telegram</a>, <a href="https://github.com/THUDM/CodeGeeX2/blob/main/resources/wechat.md"target="_blank">WeChat</a>

</p>

INT4量化版本|INT4 quantized version [codegeex2-6b-int4](https://huggingface.co/THUDM/codegeex2-6b-int4)

# CodeGeeX2: 更强大的多语言代码生成模型

# A More Powerful Multilingual Code Generation Model

CodeGeeX2 是多语言代码生成模型 [CodeGeeX](https://github.com/THUDM/CodeGeeX) ([KDD’23](https://arxiv.org/abs/2303.17568)) 的第二代模型。CodeGeeX2 基于 [ChatGLM2](https://github.com/THUDM/ChatGLM2-6B) 架构加入代码预训练实现,得益于 ChatGLM2 的更优性能,CodeGeeX2 在多项指标上取得性能提升(+107% > CodeGeeX;仅60亿参数即超过150亿参数的 StarCoder-15B 近10%),更多特性包括:

* **更强大的代码能力**:基于 ChatGLM2-6B 基座语言模型,CodeGeeX2-6B 进一步经过了 600B 代码数据预训练,相比一代模型,在代码能力上全面提升,[HumanEval-X](https://huggingface.co/datasets/THUDM/humaneval-x) 评测集的六种编程语言均大幅提升 (Python +57%, C++ +71%, Java +54%, JavaScript +83%, Go +56%, Rust +321\%),在Python上达到 35.9\% 的 Pass@1 一次通过率,超越规模更大的 StarCoder-15B。

* **更优秀的模型特性**:继承 ChatGLM2-6B 模型特性,CodeGeeX2-6B 更好支持中英文输入,支持最大 8192 序列长度,推理速度较一代 CodeGeeX-13B 大幅提升,量化后仅需6GB显存即可运行,支持轻量级本地化部署。

* **更全面的AI编程助手**:CodeGeeX插件([VS Code](https://marketplace.visualstudio.com/items?itemName=aminer.codegeex), [Jetbrains](https://plugins.jetbrains.com/plugin/20587-codegeex))后端升级,支持超过100种编程语言,新增上下文补全、跨文件补全等实用功能。结合 Ask CodeGeeX 交互式AI编程助手,支持中英文对话解决各种编程问题,包括且不限于代码解释、代码翻译、代码纠错、文档生成等,帮助程序员更高效开发。

* **更开放的协议**:CodeGeeX2-6B 权重对学术研究完全开放,填写[登记表](https://open.bigmodel.cn/mla/form?mcode=CodeGeeX2-6B)申请商业使用。

CodeGeeX2 is the second-generation model of the multilingual code generation model [CodeGeeX](https://github.com/THUDM/CodeGeeX) ([KDD’23](https://arxiv.org/abs/2303.17568)), which is implemented based on the [ChatGLM2](https://github.com/THUDM/ChatGLM2-6B) architecture trained on more code data. Due to the advantage of ChatGLM2, CodeGeeX2 has been comprehensively improved in coding capability (+107% > CodeGeeX; with only 6B parameters, surpassing larger StarCoder-15B for some tasks). It has the following features:

* **More Powerful Coding Capabilities**: Based on the ChatGLM2-6B model, CodeGeeX2-6B has been further pre-trained on 600B code tokens, which has been comprehensively improved in coding capability compared to the first-generation. On the [HumanEval-X](https://huggingface.co/datasets/THUDM/humaneval-x) benchmark, all six languages have been significantly improved (Python +57%, C++ +71%, Java +54%, JavaScript +83%, Go +56%, Rust +321\%), and in Python it reached 35.9% of Pass@1 one-time pass rate, surpassing the larger StarCoder-15B.

* **More Useful Features**: Inheriting the ChatGLM2-6B model features, CodeGeeX2-6B better supports both Chinese and English prompts, maximum 8192 sequence length, and the inference speed is significantly improved compared to the first-generation. After quantization, it only needs 6GB of GPU memory for inference, thus supports lightweight local deployment.

* **Comprehensive AI Coding Assistant**: The backend of CodeGeeX plugin ([VS Code](https://marketplace.visualstudio.com/items?itemName=aminer.codegeex), [Jetbrains](https://plugins.jetbrains.com/plugin/20587-codegeex)) is upgraded, supporting 100+ programming languages, and adding practical functions such as infilling and cross-file completion. Combined with the "Ask CodeGeeX" interactive AI coding assistant, it can be used to solve various programming problems via Chinese or English dialogue, including but not limited to code summarization, code translation, debugging, and comment generation, which helps increasing the efficiency of developpers.

* **Open Liscense**: CodeGeeX2-6B weights are fully open to academic research, and please apply for commercial use by filling in the [registration form](https://open.bigmodel.cn/mla/form?mcode=CodeGeeX2-6B).

## 软件依赖 | Dependency

```shell

pip install protobuf transformers==4.30.2 cpm_kernels torch>=2.0 gradio mdtex2html sentencepiece accelerate

```

## 快速开始 | Get Started

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("THUDM/codegeex2-6b", trust_remote_code=True)

model = AutoModel.from_pretrained("THUDM/codegeex2-6b", trust_remote_code=True, device='cuda')

model = model.eval()

# remember adding a language tag for better performance

prompt = "# language: Python\n# write a bubble sort function\n"

inputs = tokenizer.encode(prompt, return_tensors="pt").to(model.device)

outputs = model.generate(inputs, max_length=256, top_k=1)

response = tokenizer.decode(outputs[0])

>>> print(response)

# language: Python

# write a bubble sort function

def bubble_sort(list):

for i in range(len(list) - 1):

for j in range(len(list) - 1):

if list[j] > list[j + 1]:

list[j], list[j + 1] = list[j + 1], list[j]

return list

print(bubble_sort([5, 2, 1, 8, 4]))

```

关于更多的使用说明,请参考 CodeGeeX2 的 [Github Repo](https://github.com/THUDM/CodeGeeX2)。