modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

microsoft/git-large | 2023-02-08T10:49:46.000Z | [

"transformers",

"pytorch",

"git",

"text-generation",

"vision",

"image-captioning",

"image-to-text",

"en",

"arxiv:2205.14100",

"license:mit",

"endpoints_compatible",

"has_space",

"region:us"

] | image-to-text | microsoft | null | null | microsoft/git-large | 9 | 1,703 | transformers | 2023-01-02T10:33:16 | ---

language: en

license: mit

tags:

- vision

- image-captioning

model_name: microsoft/git-large

pipeline_tag: image-to-text

---

# GIT (GenerativeImage2Text), large-sized

GIT (short for GenerativeImage2Text) model, large-sized version. It was introduced in the paper [GIT: A Generative Image-to-text Transformer for Vision and Language](https://arxiv.org/abs/2205.14100) by Wang et al. and first released in [this repository](https://github.com/microsoft/GenerativeImage2Text).

Disclaimer: The team releasing GIT did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

GIT is a Transformer decoder conditioned on both CLIP image tokens and text tokens. The model is trained using "teacher forcing" on a large number of (image, text) pairs.

The goal for the model is simply to predict the next text token, given the image tokens and previous text tokens.

The model has full access to (i.e. a bidirectional attention mask is used for) the image patch tokens, but only has access to the previous text tokens (i.e. a causal attention mask is used for the text tokens) when predicting the next text token.

This allows the model to be used for tasks like:

- image and video captioning

- visual question answering (VQA) on images and videos

- even image classification (by simply conditioning the model on the image and asking it to generate a class for it in text).
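The attention pattern described in the model description can be sketched in plain Python. This is a minimal illustration rather than the model's actual implementation: the helper name `build_git_attention_mask` and the token counts are hypothetical, and the sketch assumes that image positions do not attend to text positions.

```python
def build_git_attention_mask(num_image_tokens, num_text_tokens):
    """Return mask[i][j] == True when position i may attend to position j.

    Positions are ordered as [image tokens..., text tokens...].
    Hypothetical helper, for illustration only.
    """
    total = num_image_tokens + num_text_tokens
    mask = [[False] * total for _ in range(total)]
    for i in range(total):
        for j in range(total):
            if j < num_image_tokens:
                # Every position (image or text) sees all image tokens.
                mask[i][j] = True
            elif i >= num_image_tokens:
                # Text positions see themselves and earlier text tokens only.
                mask[i][j] = j <= i
            # Assumption: image positions never see text tokens (stays False).
    return mask


mask = build_git_attention_mask(num_image_tokens=2, num_text_tokens=3)
for row in mask:
    print("".join("1" if allowed else "0" for allowed in row))
```

Each printed row is one query position; the upper-left block is fully visible (bidirectional image attention) and the lower-right block is lower-triangular (causal text attention).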

## Intended uses & limitations

You can use the raw model for image captioning. See the [model hub](https://huggingface.co/models?search=microsoft/git) to look for fine-tuned versions on a task that interests you.

### How to use

For code examples, we refer to the [documentation](https://huggingface.co/transformers/main/model_doc/git.html).
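As a rough starting point, captioning with this checkpoint through the `transformers` auto classes might look like the sketch below. The function name `caption_image`, the `max_length` default, and the local image path are assumptions made for illustration; the linked documentation remains the authoritative reference.

```python
def caption_image(image_path, checkpoint="microsoft/git-large", max_length=50):
    """Generate a caption for the image stored at `image_path`."""
    # Heavy dependencies are imported lazily so that defining this helper
    # does not require them to be installed.
    from PIL import Image
    from transformers import AutoModelForCausalLM, AutoProcessor

    processor = AutoProcessor.from_pretrained(checkpoint)
    model = AutoModelForCausalLM.from_pretrained(checkpoint)

    # The processor resizes, crops and normalizes the image into pixel values.
    image = Image.open(image_path).convert("RGB")
    pixel_values = processor(images=image, return_tensors="pt").pixel_values

    # With only pixel values as input, the decoder generates the caption tokens.
    generated_ids = model.generate(pixel_values=pixel_values, max_length=max_length)
    return processor.batch_decode(generated_ids, skip_special_tokens=True)[0]
```

Calling `caption_image("photo.jpg")` downloads the checkpoint on first use, so it needs network access and enough memory for the large model.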

## Training data

From the paper:

> We collect 0.8B image-text pairs for pre-training, which include COCO (Lin et al., 2014), Conceptual Captions (CC3M) (Sharma et al., 2018), SBU (Ordonez et al., 2011), Visual Genome (VG) (Krishna et al., 2016), Conceptual Captions (CC12M) (Changpinyo et al., 2021), ALT200M (Hu et al., 2021a), and an extra 0.6B data following a similar collection procedure in Hu et al. (2021a).

However, this describes the model referred to as "GIT" in the paper, which is not open-sourced.

This checkpoint is "GIT-large", which is a smaller variant of GIT trained on 20 million image-text pairs.

See Table 11 in the [paper](https://arxiv.org/abs/2205.14100) for more details.

### Preprocessing

We refer to the original repo regarding details for preprocessing during training.

During validation, one resizes the shorter edge of each image, after which center cropping is performed to a fixed-size resolution. Next, frames are normalized across the RGB channels with the ImageNet mean and standard deviation.
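The validation-time steps above (resize the shorter edge, center crop, normalize) can be sketched with plain arithmetic. This is only an illustration: the 224-pixel target size and the helper names are assumptions, not values taken from the original repository; the mean and standard deviation are the commonly used ImageNet statistics.

```python
# Commonly used ImageNet channel statistics (for inputs scaled to [0, 1]).
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def resize_shorter_edge(width, height, target):
    """Scale (width, height) so that the shorter edge equals `target`."""
    scale = target / min(width, height)
    return round(width * scale), round(height * scale)


def center_crop_box(width, height, crop):
    """Return (left, top, right, bottom) of a centered crop x crop box."""
    left = (width - crop) // 2
    top = (height - crop) // 2
    return left, top, left + crop, top + crop


def normalize_pixel(rgb):
    """Map 0-255 RGB values to ImageNet-normalized floats."""
    return tuple(
        (value / 255.0 - mean) / std
        for value, mean, std in zip(rgb, IMAGENET_MEAN, IMAGENET_STD)
    )


# Hypothetical 640x480 input and a hypothetical 224-pixel target resolution.
new_w, new_h = resize_shorter_edge(640, 480, target=224)
print(new_w, new_h)
print(center_crop_box(new_w, new_h, crop=224))
print(normalize_pixel((128, 128, 128)))
```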

## Evaluation results

For evaluation results, we refer readers to the [paper](https://arxiv.org/abs/2205.14100). | 3,068 | [

[

-0.04559326171875,

-0.052520751953125,

0.01549530029296875,

-0.0111083984375,

-0.03564453125,

0.0006947517395019531,

-0.01397705078125,

-0.035675048828125,

0.026214599609375,

0.0285186767578125,

-0.041900634765625,

-0.02740478515625,

-0.068115234375,

-0.0004... |

WizardLM/WizardLM-7B-V1.0 | 2023-09-01T07:56:28.000Z | [

"transformers",

"pytorch",

"llama",

"text-generation",

"arxiv:2304.12244",

"arxiv:2306.08568",

"arxiv:2308.09583",

"endpoints_compatible",

"has_space",

"text-generation-inference",

"region:us"

] | text-generation | WizardLM | null | null | WizardLM/WizardLM-7B-V1.0 | 79 | 1,703 | transformers | 2023-04-25T06:32:43 | The WizardLM delta weights.
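Delta weights are commonly released as the element-wise difference between fine-tuned and base parameters, so the usable model is recovered by adding the delta back to the base weights. The sketch below assumes that convention applies here and uses plain Python lists in place of real tensors; `apply_delta` and the parameter names are hypothetical, and the project's own recovery instructions take precedence.

```python
def apply_delta(base_weights, delta_weights):
    """Return base + delta for every named parameter (plain Python lists)."""
    if base_weights.keys() != delta_weights.keys():
        raise ValueError("base and delta must contain the same parameter names")
    return {
        name: [b + d for b, d in zip(base_weights[name], delta_weights[name])]
        for name in base_weights
    }


# Toy parameters standing in for real checkpoints.
base = {"layer.weight": [0.5, -1.0], "layer.bias": [0.0]}
delta = {"layer.weight": [0.25, 0.5], "layer.bias": [-0.125]}
print(apply_delta(base, delta))
```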

## WizardLM: Empowering Large Pre-Trained Language Models to Follow Complex Instructions

<p align="center">

🤗 <a href="https://huggingface.co/WizardLM" target="_blank">HF Repo</a> • 🐦 <a href="https://twitter.com/WizardLM_AI" target="_blank">Twitter</a> • 📃 <a href="https://arxiv.org/abs/2304.12244" target="_blank">[WizardLM]</a> • 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> • 📃 <a href="https://arxiv.org/abs/2308.09583" target="_blank">[WizardMath]</a> <br>

</p>

<p align="center">

👋 Join our <a href="https://discord.gg/VZjjHtWrKs" target="_blank">Discord</a>

</p>

| Model | Checkpoint | Paper | HumanEval | MBPP | Demo | License |

| ----- |------| ---- |------|-------| ----- | ----- |

| WizardCoder-Python-34B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardCoder-Python-34B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> | 73.2 | 61.2 | [Demo](http://47.103.63.15:50085/) | <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama2</a> |

| WizardCoder-15B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardCoder-15B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> | 59.8 |50.6 | -- | <a href="https://huggingface.co/spaces/bigcode/bigcode-model-license-agreement" target="_blank">OpenRAIL-M</a> |

| WizardCoder-Python-13B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardCoder-Python-13B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> | 64.0 | 55.6 | -- | <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama2</a> |

| WizardCoder-3B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardCoder-3B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> | 34.8 |37.4 | [Demo](http://47.103.63.15:50086/) | <a href="https://huggingface.co/spaces/bigcode/bigcode-model-license-agreement" target="_blank">OpenRAIL-M</a> |

| WizardCoder-1B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardCoder-1B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2306.08568" target="_blank">[WizardCoder]</a> | 23.8 |28.6 | -- | <a href="https://huggingface.co/spaces/bigcode/bigcode-model-license-agreement" target="_blank">OpenRAIL-M</a> |

| Model | Checkpoint | Paper | GSM8k | MATH |Online Demo| License|

| ----- |------| ---- |------|-------| ----- | ----- |

| WizardMath-70B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardMath-70B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2308.09583" target="_blank">[WizardMath]</a>| **81.6** | **22.7** |[Demo](http://47.103.63.15:50083/)| <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama 2 </a> |

| WizardMath-13B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardMath-13B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2308.09583" target="_blank">[WizardMath]</a>| **63.9** | **14.0** |[Demo](http://47.103.63.15:50082/)| <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama 2 </a> |

| WizardMath-7B-V1.0 | 🤗 <a href="https://huggingface.co/WizardLM/WizardMath-7B-V1.0" target="_blank">HF Link</a> | 📃 <a href="https://arxiv.org/abs/2308.09583" target="_blank">[WizardMath]</a>| **54.9** | **10.7** | [Demo](http://47.103.63.15:50080/)| <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama 2 </a>|

<font size=4>

| <sup>Model</sup> | <sup>Checkpoint</sup> | <sup>Paper</sup> |<sup>MT-Bench</sup> | <sup>AlpacaEval</sup> | <sup>WizardEval</sup> | <sup>HumanEval</sup> | <sup>License</sup>|

| ----- |------| ---- |------|-------| ----- | ----- | ----- |

| <sup>WizardLM-13B-V1.2</sup> | <sup>🤗 <a href="https://huggingface.co/WizardLM/WizardLM-13B-V1.2" target="_blank">HF Link</a> </sup>| | <sup>7.06</sup> | <sup>89.17%</sup> | <sup>101.4% </sup>|<sup>36.6 pass@1</sup>|<sup> <a href="https://ai.meta.com/resources/models-and-libraries/llama-downloads/" target="_blank">Llama 2 License </a></sup> |

| <sup>WizardLM-13B-V1.1</sup> |<sup> 🤗 <a href="https://huggingface.co/WizardLM/WizardLM-13B-V1.1" target="_blank">HF Link</a> </sup> | | <sup>6.76</sup> |<sup>86.32%</sup> | <sup>99.3% </sup> |<sup>25.0 pass@1</sup>| <sup>Non-commercial</sup>|

| <sup>WizardLM-30B-V1.0</sup> | <sup>🤗 <a href="https://huggingface.co/WizardLM/WizardLM-30B-V1.0" target="_blank">HF Link</a></sup> | | <sup>7.01</sup> | | <sup>97.8% </sup> | <sup>37.8 pass@1</sup>| <sup>Non-commercial</sup> |

| <sup>WizardLM-13B-V1.0</sup> | <sup>🤗 <a href="https://huggingface.co/WizardLM/WizardLM-13B-V1.0" target="_blank">HF Link</a> </sup> | | <sup>6.35</sup> | <sup>75.31%</sup> | <sup>89.1% </sup> |<sup> 24.0 pass@1 </sup> | <sup>Non-commercial</sup>|

| <sup>WizardLM-7B-V1.0 </sup>| <sup>🤗 <a href="https://huggingface.co/WizardLM/WizardLM-7B-V1.0" target="_blank">HF Link</a> </sup> |<sup> 📃 <a href="https://arxiv.org/abs/2304.12244" target="_blank">[WizardLM]</a> </sup>| | | <sup>78.0% </sup> |<sup>19.1 pass@1 </sup>|<sup> Non-commercial</sup>|

</font>

## WizardLM Inference Demo Script

We provide the WizardLM inference demo code [here](https://github.com/nlpxucan/WizardLM/tree/main/demo).

| 5,613 | [

[

-0.044158935546875,

-0.02984619140625,

0.0010662078857421875,

0.0248565673828125,

0.00348663330078125,

-0.00933837890625,

0.00324249267578125,

-0.0278167724609375,

0.021148681640625,

0.0229644775390625,

-0.059295654296875,

-0.0501708984375,

-0.043212890625,

... |

SargeZT/controlnet-sd-xl-1.0-softedge-dexined | 2023-08-14T19:47:54.000Z | [

"diffusers",

"stable-diffusion-xl",

"stable-diffusion-xl-diffusers",

"text-to-image",

"controlnet",

"license:creativeml-openrail-m",

"has_space",

"diffusers:ControlNetModel",

"region:us"

] | text-to-image | SargeZT | null | null | SargeZT/controlnet-sd-xl-1.0-softedge-dexined | 13 | 1,703 | diffusers | 2023-08-14T09:04:22 |

---

license: creativeml-openrail-m

base_model: stabilityai/stable-diffusion-xl-base-1.0

tags:

- stable-diffusion-xl

- stable-diffusion-xl-diffusers

- text-to-image

- diffusers

- controlnet

inference: true

---

# controlnet-SargeZT/controlnet-sd-xl-1.0-softedge-dexined

These are controlnet weights trained on stabilityai/stable-diffusion-xl-base-1.0 with dexined soft edge preprocessing.

prompt: a dog sitting in the driver's seat of a car

prompt: a man throwing a frisbee in a park

prompt: a herd of elephants standing next to each other

prompt: a large body of water with a large clock tower

prompt: a man standing on a tennis court holding a racquet

prompt: a bathroom with a toilet, sink, and trash can

prompt: a cupcake sitting on top of a white plate

prompt: a young boy blowing out candles on a birthday cake
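A minimal sketch of how these weights might be loaded with `diffusers` follows. The function name, the `conditioning_scale` default, and the `control_image` argument (a soft-edge map produced beforehand by a DexiNed-style preprocessor, not shown) are assumptions for illustration, not the author's documented workflow.

```python
def generate_with_softedge(prompt, control_image, conditioning_scale=0.5):
    """Generate one image guided by a soft-edge control image (PIL image)."""
    # Imported lazily so that defining this helper does not require diffusers.
    from diffusers import ControlNetModel, StableDiffusionXLControlNetPipeline

    controlnet = ControlNetModel.from_pretrained(
        "SargeZT/controlnet-sd-xl-1.0-softedge-dexined"
    )
    pipe = StableDiffusionXLControlNetPipeline.from_pretrained(
        "stabilityai/stable-diffusion-xl-base-1.0", controlnet=controlnet
    )

    # The control image steers composition; the prompt steers content.
    result = pipe(
        prompt,
        image=control_image,
        controlnet_conditioning_scale=conditioning_scale,
    )
    return result.images[0]
```

For example, `generate_with_softedge("a dog sitting in the driver's seat of a car", edge_map)` would pair one of the prompts above with a matching soft-edge map.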

## License

[SDXL 1.0 License](https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/blob/main/LICENSE.md)

| 1,276 | [

[

-0.037322998046875,

-0.01445770263671875,

0.00968170166015625,

0.045166015625,

-0.015838623046875,

-0.0126190185546875,

-0.00165557861328125,

0.0010662078857421875,

0.041290283203125,

0.0290374755859375,

-0.042205810546875,

-0.03265380859375,

-0.050506591796875,... |

maywell/Synatra_TbST11B_EP01 | 2023-10-18T12:27:36.000Z | [

"transformers",

"pytorch",

"mistral",

"text-generation",

"ko",

"license:cc-by-nc-4.0",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | maywell | null | null | maywell/Synatra_TbST11B_EP01 | 0 | 1,703 | transformers | 2023-10-18T07:07:18 | ---

language:

- ko

library_name: transformers

pipeline_tag: text-generation

license: cc-by-nc-4.0

---

# **Synatra_TbST11B_EP01**

Made by StableFluffy

**Contact (do not contact for personal matters)**

Discord : is.maywell

Telegram : AlzarTakkarsen

## License

This model is strictly for [*non-commercial*](https://creativecommons.org/licenses/by-nc/4.0/) (**cc-by-nc-4.0**) use only, which takes priority over the **Mistral Apache 2.0** license.

The "Model" (i.e. base model, derivatives, merges/mixes) is completely free to use for non-commercial purposes as long as the included **cc-by-nc-4.0** license remains in any parent repository and the non-commercial use statute is retained, regardless of other models' licences.

The licence may change after a new model is released. If you want to use this model for commercial purposes, contact me.

## Model Details

**Base Model**

[mistralai/Mistral-7B-Instruct-v0.1](https://huggingface.co/mistralai/Mistral-7B-Instruct-v0.1)

**Trained On**

A100 80GB * 4

# **Model Benchmark**

X


> Readme format: [beomi/llama-2-ko-7b](https://huggingface.co/beomi/llama-2-ko-7b)

--- | 1,115 | [

[

-0.0236053466796875,

-0.044097900390625,

0.003437042236328125,

0.035888671875,

-0.0572509765625,

-0.035064697265625,

-0.0026988983154296875,

-0.059234619140625,

0.03179931640625,

0.030853271484375,

-0.062225341796875,

-0.03582763671875,

-0.045745849609375,

0... |

DeepPavlov/distilrubert-small-cased-conversational | 2022-06-28T17:19:09.000Z | [

"transformers",

"pytorch",

"distilbert",

"ru",

"arxiv:2205.02340",

"endpoints_compatible",

"has_space",

"region:us"

] | null | DeepPavlov | null | null | DeepPavlov/distilrubert-small-cased-conversational | 0 | 1,702 | transformers | 2022-06-28T17:15:00 | ---

language:

- ru

---

# distilrubert-small-cased-conversational

Conversational DistilRuBERT-small \(Russian, cased, 2‑layer, 768‑hidden, 12‑heads, 107M parameters\) was trained on OpenSubtitles\[1\], [Dirty](https://d3.ru/), [Pikabu](https://pikabu.ru/), and a Social Media segment of the Taiga corpus\[2\] (as was [Conversational RuBERT](https://huggingface.co/DeepPavlov/rubert-base-cased-conversational)). It can be considered a small copy of [Conversational DistilRuBERT-base](https://huggingface.co/DeepPavlov/distilrubert-base-cased-conversational).

Our DistilRuBERT-small was heavily inspired by \[3\], \[4\]. Namely, we used

* KL loss (between teacher and student output logits)

* MLM loss (between tokens labels and student output logits)

* Cosine embedding loss (between averaged six consecutive hidden states from teacher's encoder and one hidden state of the student)

* MSE loss (between averaged six consecutive attention maps from teacher's encoder and one attention map of the student)
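Two of the listed terms can be sketched in plain Python to make the idea concrete. This is a toy illustration, not the training code: the helper names are hypothetical, the KL direction (teacher relative to student) is an assumption, and real training would use batched tensors and temperature scaling as described in the cited work.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(teacher_logits, student_logits):
    """KL(teacher || student) between the two softmax distributions."""
    p = softmax(teacher_logits)
    q = softmax(student_logits)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


def average_states(states):
    """Element-wise mean of several equally sized hidden-state vectors."""
    return [sum(values) / len(states) for values in zip(*states)]


def cosine_embedding_loss(a, b):
    """1 - cosine similarity, i.e. the loss for a pair that should align."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


# Six consecutive teacher hidden states are averaged and compared with
# one student hidden state (toy 3-dimensional vectors).
teacher_states = [[0.1 * k, 0.2, 0.3 + 0.05 * k] for k in range(6)]
student_state = [0.2, 0.25, 0.4]

print(kl_divergence([2.0, 1.0, 0.1], [1.5, 1.2, 0.3]))
print(cosine_embedding_loss(average_states(teacher_states), student_state))
```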

The model was trained for about 80 hours on 8 NVIDIA Tesla P100-SXM2.0 16GB GPUs.

To evaluate improvements in inference speed, we ran the teacher and student models on random sequences with seq_len=512, using batch_size=16 for throughput and batch_size=1 for latency.

All tests were performed on an Intel(R) Xeon(R) CPU E5-2698 v4 @ 2.20GHz and an NVIDIA Tesla P100-SXM2.0 16GB.

| Model | Size, MB | CPU latency, sec. | GPU latency, sec. | CPU throughput, samples/sec. | GPU throughput, samples/sec. |

|-------------------------------------------------|------------|------------------|-------------------|------------------------------|------------------------------|

| Teacher (RuBERT-base-cased-conversational) | 679 | 0.655 | 0.031 | 0.3754 | 36.4902 |

| Student (DistilRuBERT-small-cased-conversational)| 409 | 0.1656 | 0.015 | 0.9692 | 71.3553 |
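The relative gains implied by the table can be computed directly from its numbers. The short script below is only a convenience for reading the table; the dictionary keys are names chosen here for illustration.

```python
# Values copied from the benchmark table above.
teacher = {"size": 679, "cpu_latency": 0.655, "gpu_latency": 0.031,
           "cpu_throughput": 0.3754, "gpu_throughput": 36.4902}
student = {"size": 409, "cpu_latency": 0.1656, "gpu_latency": 0.015,
           "cpu_throughput": 0.9692, "gpu_throughput": 71.3553}

# For size and latency lower is better, so the gain is teacher / student.
# For throughput higher is better, so the gain is student / teacher.
LOWER_IS_BETTER = {"size", "cpu_latency", "gpu_latency"}


def improvement(metric):
    """Return how many times better the student is on `metric`."""
    if metric in LOWER_IS_BETTER:
        return teacher[metric] / student[metric]
    return student[metric] / teacher[metric]


for metric in teacher:
    print(f"{metric}: {improvement(metric):.2f}x")
```

On these figures the student is roughly four times faster in CPU latency and about twice as fast on GPU, while being around 1.7 times smaller.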

To evaluate model quality, we fine-tuned DistilRuBERT-small on classification, NER and question answering tasks. Scores and archives with fine-tuned models can be found in the [DeepPavlov docs](http://docs.deeppavlov.ai/en/master/features/overview.html#models). Results are also reported in Tables 1 and 2 of the [paper](https://arxiv.org/abs/2205.02340), along with performance benchmarks and training details.

# Citation

If you found the model useful for your research, we kindly ask you to cite [this](https://arxiv.org/abs/2205.02340) paper:

```

@misc{https://doi.org/10.48550/arxiv.2205.02340,

doi = {10.48550/ARXIV.2205.02340},

url = {https://arxiv.org/abs/2205.02340},

author = {Kolesnikova, Alina and Kuratov, Yuri and Konovalov, Vasily and Burtsev, Mikhail},

keywords = {Computation and Language (cs.CL), Machine Learning (cs.LG), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {Knowledge Distillation of Russian Language Models with Reduction of Vocabulary},

publisher = {arXiv},

year = {2022},

copyright = {arXiv.org perpetual, non-exclusive license}

}

```

\[1\]: P. Lison and J. Tiedemann, 2016, OpenSubtitles2016: Extracting Large Parallel Corpora from Movie and TV Subtitles. In Proceedings of the 10th International Conference on Language Resources and Evaluation \(LREC 2016\)

\[2\]: Shavrina T., Shapovalova O. \(2017\) TO THE METHODOLOGY OF CORPUS CONSTRUCTION FOR MACHINE LEARNING: «TAIGA» SYNTAX TREE CORPUS AND PARSER. in proc. of “CORPORA2017”, international conference , Saint-Petersbourg, 2017.

\[3\]: Sanh, V., Debut, L., Chaumond, J., & Wolf, T. \(2019\). DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter. arXiv preprint arXiv:1910.01108.

\[4\]: <https://github.com/huggingface/transformers/tree/master/examples/research_projects/distillation> | 3,886 | [

[

-0.03570556640625,

-0.0728759765625,

0.0282135009765625,

-0.00478363037109375,

-0.0223846435546875,

0.00809478759765625,

-0.041839599609375,

-0.00460052490234375,

-0.0037384033203125,

0.003376007080078125,

-0.0287322998046875,

-0.036834716796875,

-0.054809570312... |

microsoft/BioGPT-Large-PubMedQA | 2023-02-04T07:50:25.000Z | [

"transformers",

"pytorch",

"biogpt",

"text-generation",

"medical",

"en",

"dataset:pubmed_qa",

"license:mit",

"endpoints_compatible",

"has_space",

"region:us"

] | text-generation | microsoft | null | null | microsoft/BioGPT-Large-PubMedQA | 84 | 1,701 | transformers | 2023-02-03T20:33:43 | ---

license: mit

datasets:

- pubmed_qa

language:

- en

metrics:

- accuracy

library_name: transformers

pipeline_tag: text-generation

tags:

- medical

widget:

- text: "question: Can 'high-risk' human papillomaviruses (HPVs) be detected in human breast milk? context: Using polymerase chain reaction techniques, we evaluated the presence of HPV infection in human breast milk collected from 21 HPV-positive and 11 HPV-negative mothers. Of the 32 studied human milk specimens, no 'high-risk' HPV 16, 18, 31, 33, 35, 39, 45, 51, 52, 56, 58 or 58 DNA was detected. answer: This preliminary case-control study indicates the absence of mucosal 'high-risk' HPV types in human breast milk."

inference:

parameters:

max_new_tokens: 250

do_sample: False

---

## BioGPT

Pre-trained language models have attracted increasing attention in the biomedical domain, inspired by their great success in the general natural language domain. Among the two main branches of pre-trained language models in the general language domain, i.e. BERT (and its variants) and GPT (and its variants), the first one has been extensively studied in the biomedical domain, such as BioBERT and PubMedBERT. While they have achieved great success on a variety of discriminative downstream biomedical tasks, the lack of generation ability constrains their application scope. In this paper, we propose BioGPT, a domain-specific generative Transformer language model pre-trained on large-scale biomedical literature. We evaluate BioGPT on six biomedical natural language processing tasks and demonstrate that our model outperforms previous models on most tasks. Especially, we get 44.98%, 38.42% and 40.76% F1 score on BC5CDR, KD-DTI and DDI end-to-end relation extraction tasks, respectively, and 78.2% accuracy on PubMedQA, creating a new record. Our case study on text generation further demonstrates the advantage of BioGPT on biomedical literature to generate fluent descriptions for biomedical terms.
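The widget example in the card metadata uses a `question: ... context: ... answer: ...` layout together with `max_new_tokens: 250` and `do_sample: False`. A small helper that mirrors that layout might look like the sketch below; the function name `build_pubmedqa_prompt` and the sample strings are hypothetical, and the exact input format the model expects should be checked against the BioGPT repository.

```python
def build_pubmedqa_prompt(question, context, answer):
    """Join the three fields in the same order and labels as the widget text."""
    parts = [("question", question), ("context", context), ("answer", answer)]
    return " ".join(f"{label}: {text.strip()}" for label, text in parts)


# Generation settings copied from the card metadata.
GENERATION_KWARGS = {"max_new_tokens": 250, "do_sample": False}

prompt = build_pubmedqa_prompt(
    question="Can 'high-risk' HPVs be detected in human breast milk?",
    context="We evaluated the presence of HPV infection in human breast milk.",
    answer="The study indicates the absence of 'high-risk' HPV types.",
)
print(prompt)
print(GENERATION_KWARGS)
```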

## Citation

If you find BioGPT useful in your research, please cite the following paper:

```latex

@article{10.1093/bib/bbac409,

author = {Luo, Renqian and Sun, Liai and Xia, Yingce and Qin, Tao and Zhang, Sheng and Poon, Hoifung and Liu, Tie-Yan},

title = "{BioGPT: generative pre-trained transformer for biomedical text generation and mining}",

journal = {Briefings in Bioinformatics},

volume = {23},

number = {6},

year = {2022},

month = {09},

abstract = "{Pre-trained language models have attracted increasing attention in the biomedical domain, inspired by their great success in the general natural language domain. Among the two main branches of pre-trained language models in the general language domain, i.e. BERT (and its variants) and GPT (and its variants), the first one has been extensively studied in the biomedical domain, such as BioBERT and PubMedBERT. While they have achieved great success on a variety of discriminative downstream biomedical tasks, the lack of generation ability constrains their application scope. In this paper, we propose BioGPT, a domain-specific generative Transformer language model pre-trained on large-scale biomedical literature. We evaluate BioGPT on six biomedical natural language processing tasks and demonstrate that our model outperforms previous models on most tasks. Especially, we get 44.98\%, 38.42\% and 40.76\% F1 score on BC5CDR, KD-DTI and DDI end-to-end relation extraction tasks, respectively, and 78.2\% accuracy on PubMedQA, creating a new record. Our case study on text generation further demonstrates the advantage of BioGPT on biomedical literature to generate fluent descriptions for biomedical terms.}",

issn = {1477-4054},

doi = {10.1093/bib/bbac409},

url = {https://doi.org/10.1093/bib/bbac409},

note = {bbac409},

eprint = {https://academic.oup.com/bib/article-pdf/23/6/bbac409/47144271/bbac409.pdf},

}

``` | 3,904 | [

[

-0.0170745849609375,

-0.06170654296875,

0.0433349609375,

0.01091766357421875,

-0.037322998046875,

0.002109527587890625,

-0.0067901611328125,

-0.04156494140625,

0.0004954338073730469,

0.0227203369140625,

-0.037261962890625,

-0.039642333984375,

-0.0509033203125,

... |

digiplay/unstableDiffusersYamerMIX_v3 | 2023-07-07T05:44:52.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | digiplay | null | null | digiplay/unstableDiffusersYamerMIX_v3 | 3 | 1,699 | diffusers | 2023-07-07T05:14:21 | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

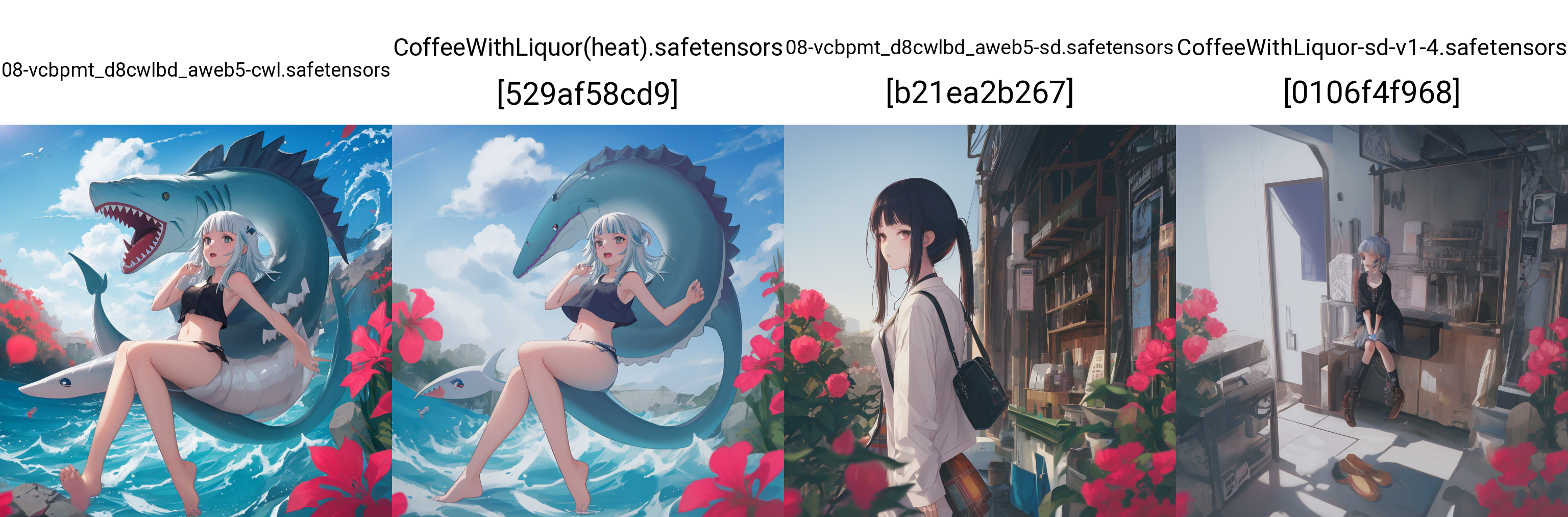

Model info :

https://civitai.com/models/84040/unstable-diffusers-yamermix

Sample images I made :

| 996 | [

[

-0.04766845703125,

-0.03326416015625,

0.0294189453125,

0.018035888671875,

-0.026123046875,

-0.00312042236328125,

0.0175628662109375,

-0.00841522216796875,

0.029083251953125,

0.0084381103515625,

-0.054443359375,

-0.02032470703125,

-0.051055908203125,

-0.00793... |

google/pix2struct-ai2d-base | 2023-05-19T09:58:01.000Z | [

"transformers",

"pytorch",

"pix2struct",

"text2text-generation",

"visual-question-answering",

"en",

"fr",

"ro",

"de",

"multilingual",

"arxiv:2210.03347",

"license:apache-2.0",

"autotrain_compatible",

"has_space",

"region:us"

] | visual-question-answering | google | null | null | google/pix2struct-ai2d-base | 28 | 1,697 | transformers | 2023-03-14T10:02:51 | ---

language:

- en

- fr

- ro

- de

- multilingual

inference: false

pipeline_tag: visual-question-answering

license: apache-2.0

---

# Model card for Pix2Struct - Finetuned on AI2D (scientific diagram VQA)

# Table of Contents

0. [TL;DR](#TL;DR)

1. [Using the model](#using-the-model)

2. [Contribution](#contribution)

3. [Citation](#citation)

# TL;DR

Pix2Struct is an image encoder - text decoder model that is trained on image-text pairs for various tasks, including image captioning and visual question answering. The full list of available models can be found in Table 1 of the paper.

The abstract of the paper states that:

> Visually-situated language is ubiquitous—sources range from textbooks with diagrams to web pages with images and tables, to mobile apps with buttons and forms. Perhaps due to this diversity, previous work has typically relied on domain-specific recipes with limited sharing of the underlying data, model architectures, and objectives. We present Pix2Struct, a pretrained image-to-text model for purely visual language understanding, which can be finetuned on tasks containing visually-situated language. Pix2Struct is pretrained by learning to parse masked screenshots of web pages into simplified HTML. The web, with its richness of visual elements cleanly reflected in the HTML structure, provides a large source of pretraining data well suited to the diversity of downstream tasks. Intuitively, this objective subsumes common pretraining signals such as OCR, language modeling, image captioning. In addition to the novel pretraining strategy, we introduce a variable-resolution input representation and a more flexible integration of language and vision inputs, where language prompts such as questions are rendered directly on top of the input image. For the first time, we show that a single pretrained model can achieve state-of-the-art results in six out of nine tasks across four domains: documents, illustrations, user interfaces, and natural images.

# Using the model

This model has been fine-tuned on VQA. You need to provide the question in a specific format, ideally as a multiple-choice question with numbered options.
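The example questions in this card follow a "question, then numbered options" layout. A small helper that builds such a string might look like this; `format_choice_question` is a hypothetical name, and the layout is inferred from the examples in this card rather than from a formal specification.

```python
def format_choice_question(question, choices):
    """Append numbered options, e.g. '(1) lava (2) core', to the question."""
    numbered = " ".join(
        f"({index}) {choice}" for index, choice in enumerate(choices, start=1)
    )
    return f"{question} {numbered}"


question = format_choice_question(
    "What does the label 15 represent?",
    ["lava", "core", "tunnel", "ash cloud"],
)
print(question)
```

The resulting string can be passed as the `text` argument of the processor.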

## Running the model

### In full precision, on CPU:

You can run the model in full precision on CPU:

```python

import requests

from PIL import Image

from transformers import Pix2StructForConditionalGeneration, Pix2StructProcessor

image_url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/tasks/ai2d-demo.jpg"

image = Image.open(requests.get(image_url, stream=True).raw)

model = Pix2StructForConditionalGeneration.from_pretrained("google/pix2struct-ai2d-base")

processor = Pix2StructProcessor.from_pretrained("google/pix2struct-ai2d-base")

question = "What does the label 15 represent? (1) lava (2) core (3) tunnel (4) ash cloud"

inputs = processor(images=image, text=question, return_tensors="pt")

predictions = model.generate(**inputs)

print(processor.decode(predictions[0], skip_special_tokens=True))

# >>> ash cloud

```

### In full precision, on GPU:

You can run the model in full precision on GPU:

```python

import requests

from PIL import Image

from transformers import Pix2StructForConditionalGeneration, Pix2StructProcessor

image_url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/tasks/ai2d-demo.jpg"

image = Image.open(requests.get(image_url, stream=True).raw)

model = Pix2StructForConditionalGeneration.from_pretrained("google/pix2struct-ai2d-base").to("cuda")

processor = Pix2StructProcessor.from_pretrained("google/pix2struct-ai2d-base")

question = "What does the label 15 represent? (1) lava (2) core (3) tunnel (4) ash cloud"

inputs = processor(images=image, text=question, return_tensors="pt").to("cuda")

predictions = model.generate(**inputs)

print(processor.decode(predictions[0], skip_special_tokens=True))

# >>> ash cloud

```

### In half precision, on GPU:

You can run the model in half precision (`bfloat16`) on GPU:

```python

import requests

from PIL import Image

import torch

from transformers import Pix2StructForConditionalGeneration, Pix2StructProcessor

image_url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/tasks/ai2d-demo.jpg"

image = Image.open(requests.get(image_url, stream=True).raw)

model = Pix2StructForConditionalGeneration.from_pretrained("google/pix2struct-ai2d-base", torch_dtype=torch.bfloat16).to("cuda")

processor = Pix2StructProcessor.from_pretrained("google/pix2struct-ai2d-base")

question = "What does the label 15 represent? (1) lava (2) core (3) tunnel (4) ash cloud"

inputs = processor(images=image, text=question, return_tensors="pt").to("cuda", torch.bfloat16)

predictions = model.generate(**inputs)

print(processor.decode(predictions[0], skip_special_tokens=True))

# >>> ash cloud

```

## Converting from T5x to huggingface

You can use the [`convert_pix2struct_checkpoint_to_pytorch.py`](https://github.com/huggingface/transformers/blob/main/src/transformers/models/pix2struct/convert_pix2struct_checkpoint_to_pytorch.py) script as follows:

```bash

python convert_pix2struct_checkpoint_to_pytorch.py --t5x_checkpoint_path PATH_TO_T5X_CHECKPOINTS --pytorch_dump_path PATH_TO_SAVE --is_vqa

```

If you are converting a large model, run:

```bash

python convert_pix2struct_checkpoint_to_pytorch.py --t5x_checkpoint_path PATH_TO_T5X_CHECKPOINTS --pytorch_dump_path PATH_TO_SAVE --use-large --is_vqa

```

Once saved, you can push your converted model with the following snippet:

```python

from transformers import Pix2StructForConditionalGeneration, Pix2StructProcessor

model = Pix2StructForConditionalGeneration.from_pretrained(PATH_TO_SAVE)

processor = Pix2StructProcessor.from_pretrained(PATH_TO_SAVE)

model.push_to_hub("USERNAME/MODEL_NAME")

processor.push_to_hub("USERNAME/MODEL_NAME")

```

# Contribution

This model was originally contributed by Kenton Lee, Mandar Joshi et al. and added to the Hugging Face ecosystem by [Younes Belkada](https://huggingface.co/ybelkada).

# Citation

If you want to cite this work, please consider citing the original paper:

```

@misc{https://doi.org/10.48550/arxiv.2210.03347,

doi = {10.48550/ARXIV.2210.03347},

url = {https://arxiv.org/abs/2210.03347},

author = {Lee, Kenton and Joshi, Mandar and Turc, Iulia and Hu, Hexiang and Liu, Fangyu and Eisenschlos, Julian and Khandelwal, Urvashi and Shaw, Peter and Chang, Ming-Wei and Toutanova, Kristina},

keywords = {Computation and Language (cs.CL), Computer Vision and Pattern Recognition (cs.CV), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {Pix2Struct: Screenshot Parsing as Pretraining for Visual Language Understanding},

publisher = {arXiv},

year = {2022},

copyright = {Creative Commons Attribution 4.0 International}

}

``` | 7,083 | [

[

-0.032379150390625,

-0.0633544921875,

0.0252838134765625,

0.010040283203125,

-0.0124664306640625,

-0.0249481201171875,

-0.01446533203125,

-0.03790283203125,

-0.00926971435546875,

0.02032470703125,

-0.04388427734375,

-0.0166473388671875,

-0.052825927734375,

-... |

MirageML/lowpoly-cyberpunk | 2023-05-05T21:32:43.000Z | [

"diffusers",

"safetensors",

"stable-diffusion",

"text-to-image",

"en",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | MirageML | null | null | MirageML/lowpoly-cyberpunk | 28 | 1,695 | diffusers | 2022-11-28T07:50:09 | ---

language:

- en

tags:

- stable-diffusion

- text-to-image

license: creativeml-openrail-m

inference: true

---

# Low Poly Cyberpunk on Stable Diffusion via Dreambooth

This is the Stable Diffusion model fine-tuned on the Low Poly Cyberpunk concept, taught to Stable Diffusion with Dreambooth.

It can be used by modifying the `instance_prompt`: **a photo of lowpoly_cyberpunk**
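A minimal sketch of using the instance prompt with `diffusers` is shown below. How the prompt should be modified is not specified in this card, so appending a subject after the instance prompt is an assumption, as are the helper names.

```python
INSTANCE_PROMPT = "a photo of lowpoly_cyberpunk"


def build_prompt(subject=""):
    """Extend the instance prompt with an optional subject description."""
    subject = subject.strip()
    return f"{INSTANCE_PROMPT} {subject}" if subject else INSTANCE_PROMPT


def generate_image(subject=""):
    """Generate one image from the (optionally extended) instance prompt."""
    # Imported lazily so that defining this helper does not require diffusers.
    from diffusers import StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_pretrained("MirageML/lowpoly-cyberpunk")
    return pipe(build_prompt(subject)).images[0]


print(build_prompt("city street at night"))
```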

# Run on [Mirage](https://app.mirageml.com)

Run this model and explore text-to-3D on [Mirage](https://app.mirageml.com)!

Here is a sample output for this model:

# Share your Results and Reach us on [Discord](https://discord.gg/9B2Pu2bEvj)!


[Image Source](https://www.behance.net/search/images?similarStyleImagesId=847895439) | 918 | [

[

-0.037506103515625,

-0.08795166015625,

0.0494384765625,

0.01264190673828125,

-0.0157318115234375,

0.0189361572265625,

-0.0023288726806640625,

-0.031951904296875,

0.045562744140625,

0.044952392578125,

-0.042877197265625,

-0.040802001953125,

-0.0248870849609375,

... |

quantumaikr/KoreanLM | 2023-05-04T10:16:45.000Z | [

"transformers",

"pytorch",

"llama",

"text-generation",

"vicuna",

"ko",

"en",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | quantumaikr | null | null | quantumaikr/KoreanLM | 15 | 1,693 | transformers | 2023-05-03T07:37:23 | ---

language:

- ko

- en

pipeline_tag: text-generation

tags:

- vicuna

- llama

---

<p align="center" width="100%">

<img src="https://i.imgur.com/snFDU0P.png" alt="KoreanLM icon" style="width: 500px; display: block; margin: auto; border-radius: 10%;">

</p>

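# KoreanLM: Korean Language Model Project

KoreanLM is an open-source project for developing Korean language models. Most current language models focus on English, so they are trained on comparatively little Korean and often tokenize it inefficiently. We started the KoreanLM project to address these problems and provide language models optimized for Korean.

## Project Goals

1. Develop a language model specialized for Korean: build a model that reflects Korean grammar, vocabulary, and cultural characteristics so that it can understand and generate Korean more accurately.

2. Introduce an efficient tokenization scheme: adopt a new tokenization method that analyzes Korean text efficiently and accurately, improving the performance of the language model.

3. Improve the usability of large language models: today's very large language models are difficult for companies to fine-tune on their own data. To address this, we adjust the size of the Korean language model to improve usability and make it easier to apply to natural language processing tasks.

## Usage

KoreanLM is distributed through a GitHub repository. To use the project, install it as follows.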

```bash

git clone https://github.com/quantumaikr/KoreanLM.git

cd KoreanLM

pip install -r requirements.txt

```

## Example

The following example loads the model and tokenizer with the transformers library.

```python

import transformers

model = transformers.AutoModelForCausalLM.from_pretrained("quantumaikr/KoreanLM")

tokenizer = transformers.AutoTokenizer.from_pretrained("quantumaikr/KoreanLM")

```

## Training (Fine-tuning)

```bash

torchrun --nproc_per_node=4 --master_port=1004 train.py \

--model_name_or_path quantumaikr/KoreanLM \

--data_path korean_data.json \

--num_train_epochs 3 \

--cache_dir './data' \

--bf16 True \

--tf32 True \

--per_device_train_batch_size 4 \

--per_device_eval_batch_size 4 \

--gradient_accumulation_steps 8 \

--evaluation_strategy "no" \

--save_strategy "steps" \

--save_steps 500 \

--save_total_limit 1 \

--learning_rate 2e-5 \

--weight_decay 0. \

--warmup_ratio 0.03 \

--lr_scheduler_type "cosine" \

--logging_steps 1 \

--fsdp "full_shard auto_wrap" \

--fsdp_transformer_layer_cls_to_wrap 'OPTDecoderLayer'

```

```bash

pip install deepspeed

torchrun --nproc_per_node=4 --master_port=1004 train.py \

--deepspeed "./deepspeed.json" \

--model_name_or_path quantumaikr/KoreanLM \

--data_path korean_data.json \

--num_train_epochs 3 \

--cache_dir './data' \

--bf16 True \

--tf32 True \

--per_device_train_batch_size 4 \

--per_device_eval_batch_size 4 \

--gradient_accumulation_steps 8 \

--evaluation_strategy "no" \

--save_strategy "steps" \

--save_steps 2000 \

--save_total_limit 1 \

--learning_rate 2e-5 \

--weight_decay 0. \

--warmup_ratio 0.03

```
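Both commands above launch 4 processes (`--nproc_per_node=4`) with a per-device batch size of 4 and 8 gradient-accumulation steps. Assuming one GPU per process and no additional batching inside `train.py`, the number of samples consumed per optimizer step can be worked out as below; the function name is illustrative.

```python
def effective_batch_size(num_processes: int,
                         per_device_batch_size: int,
                         grad_accum_steps: int) -> int:
    """Samples contributing to a single optimizer step across all processes."""
    return num_processes * per_device_batch_size * grad_accum_steps


if __name__ == "__main__":
    # Values taken from the torchrun commands above.
    print(effective_batch_size(4, 4, 8))  # 128
```

If fewer GPUs are available, raising `--gradient_accumulation_steps` by the same factor keeps this product, and therefore the learning-rate setting, comparable.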

## Training (LoRA)

```bash

python finetune-lora.py \

--base_model 'quantumaikr/KoreanLM' \

--data_path './korean_data.json' \

--output_dir './KoreanLM-LoRA' \

--cache_dir './data'

```

## Inference

```bash

python generate.py \

--load_8bit \

--share_gradio \

--base_model 'quantumaikr/KoreanLM' \

--lora_weights 'quantumaikr/KoreanLM-LoRA' \

--cache_dir './data'

```

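## Pretrained Model Release and Web Demo

[Trained model](https://huggingface.co/quantumaikr/KoreanLM/tree/main)

<i>* Demo link to be released later</i>

## How to Contribute

1. Raise issues: report problems or suggested improvements related to the KoreanLM project as issues.

2. Write code: you can write code to make improvements or add new features. Please submit your code as a Pull Request.

3. Write and translate documentation: help raise the quality of the project by contributing to its documentation or translations.

4. Test and give feedback: reporting bugs or possible improvements you find while using the project is a great help.

## License

The KoreanLM project is licensed under the Apache 2.0 License. Please follow the terms of the license when using the project.

## Technical Inquiries

If you have questions about the KoreanLM project, please contact us by email or through GitHub issues. We hope this project helps research and development on Korean language models, and we welcome your interest and participation.

Email: hi@quantumai.kr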

---

This repository has implementations inspired by [open_llama](https://github.com/openlm-research/open_llama), [Stanford Alpaca](https://github.com/tatsu-lab/stanford_alpaca) and [alpaca-lora](https://github.com/tloen/alpaca-lora) projects. | 3,868 | [

[

-0.0372314453125,

-0.0386962890625,

0.0273284912109375,

0.0205078125,

-0.034332275390625,

0.012115478515625,

0.011932373046875,

-0.02459716796875,

0.032440185546875,

0.016693115234375,

-0.031982421875,

-0.039642333984375,

-0.03302001953125,

0.008346557617187... |

badmonk/kurxmi | 2023-07-21T00:38:42.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | badmonk | null | null | badmonk/kurxmi | 1 | 1,693 | diffusers | 2023-07-16T15:57:53 | ---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

# Model Card for KURXMI

## Model Description

- **Developed by:** BADMONK

- **Model type:** Dreambooth Model + Extracted LoRA

- **Language(s) (NLP):** EN

- **License:** Creativeml-Openrail-M

- **Parent Model:** epicRealism

# How to Get Started with the Model

Use the code below to get started with the model.

### KURXMI ###

| 429 | [

[

-0.02520751953125,

-0.027984619140625,

0.0299072265625,

0.019256591796875,

-0.066162109375,

0.0194854736328125,

0.04241943359375,

-0.014678955078125,

0.03216552734375,

0.0643310546875,

-0.039581298828125,

-0.05194091796875,

-0.052947998046875,

-0.03240966796... |

classla/wav2vec2-large-slavic-parlaspeech-hr-lm | 2023-07-27T08:59:23.000Z | [

"transformers",

"pytorch",

"wav2vec2",

"automatic-speech-recognition",

"audio",

"parlaspeech",

"hr",

"dataset:parlaspeech-hr",

"endpoints_compatible",

"region:us"

] | automatic-speech-recognition | classla | null | null | classla/wav2vec2-large-slavic-parlaspeech-hr-lm | 2 | 1,692 | transformers | 2022-04-28T12:56:15 | ---

language: hr

datasets:

- parlaspeech-hr

tags:

- audio

- automatic-speech-recognition

- parlaspeech

widget:

- example_title: example 1

src: https://huggingface.co/classla/wav2vec2-xls-r-parlaspeech-hr/raw/main/1800.m4a

- example_title: example 2

src: https://huggingface.co/classla/wav2vec2-xls-r-parlaspeech-hr/raw/main/00020578b.flac.wav

- example_title: example 3

src: https://huggingface.co/classla/wav2vec2-xls-r-parlaspeech-hr/raw/main/00020570a.flac.wav

---

# wav2vec2-large-slavic-parlaspeech-hr-lm

This model for Croatian ASR is based on the [facebook/wav2vec2-large-slavic-voxpopuli-v2 model](https://huggingface.co/facebook/wav2vec2-large-slavic-voxpopuli-v2) and was fine-tuned with 300 hours of recordings and transcripts from the ASR Croatian parliament dataset [ParlaSpeech-HR v1.0](http://hdl.handle.net/11356/1494) and enhanced with a 5-gram language model based on the [ParlaMint dataset](http://hdl.handle.net/11356/1432).

If you use this model, please cite the following paper:

Nikola Ljubešić, Danijel Koržinek, Peter Rupnik, Ivo-Pavao Jazbec. ParlaSpeech-HR -- a freely available ASR dataset for Croatian bootstrapped from the ParlaMint corpus. http://www.lrec-conf.org/proceedings/lrec2022/workshops/ParlaCLARINIII/pdf/2022.parlaclariniii-1.16.pdf

## Metrics

Evaluation is performed on the dev and test portions of the [ParlaSpeech-HR v1.0](http://hdl.handle.net/11356/1494) dataset.

|split|CER|WER|

|---|---|---|

|dev|0.0253|0.0556|

|test|0.0188|0.0430|
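The card does not say which tool produced these numbers. As a rough sketch, assuming the usual definitions (edit distance over characters for CER and over whitespace-separated words for WER, divided by the reference length), the two metrics can be computed like this; all function names are illustrative.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (insert, delete, substitute)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edit distance / number of reference words."""
    ref_words = reference.split()
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate: character-level edit distance / reference length."""
    return edit_distance(list(reference), list(hypothesis)) / len(reference)


if __name__ == "__main__":
    ref = "velik broj poslovnih subjekata"
    hyp = "velik broj poslovnih subjekta"
    print(wer(ref, hyp))  # 0.25
    print(cer(ref, hyp))
```

Under these definitions the table values are fractions, so a WER of 0.0430 corresponds to roughly 4.3 word errors per 100 reference words.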

## Usage in `transformers`

Tested with `transformers==4.18.0`, `torch==1.11.0`, and `SoundFile==0.10.3.post1`.

```python

from transformers import Wav2Vec2ProcessorWithLM, Wav2Vec2ForCTC

import soundfile as sf

import torch

import os

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# load model and tokenizer

processor = Wav2Vec2ProcessorWithLM.from_pretrained(

"classla/wav2vec2-large-slavic-parlaspeech-hr-lm")

model = Wav2Vec2ForCTC.from_pretrained("classla/wav2vec2-large-slavic-parlaspeech-hr-lm")

# download the example wav files:

os.system("wget https://huggingface.co/classla/wav2vec2-large-slavic-parlaspeech-hr-lm/raw/main/00020570a.flac.wav")

# read the wav file

speech, sample_rate = sf.read("00020570a.flac.wav")

# extract input features and move them to the selected device

inputs = processor(speech, sampling_rate=sample_rate, return_tensors="pt").to(device)

model = model.to(device)

with torch.no_grad():

    logits = model(**inputs).logits

# decode with the n-gram language model; logits must be on the CPU as a NumPy array

transcription = processor.batch_decode(logits.cpu().numpy()).text[0]

# remove the raw wav file

os.system("rm 00020570a.flac.wav")

transcription # 'velik broj poslovnih subjekata poslao je sa minusom velik dio'

```

## Training hyperparameters

In fine-tuning, the following arguments were used:

| arg | value |

|-------------------------------|-------|

| `per_device_train_batch_size` | 16 |

| `gradient_accumulation_steps` | 4 |

| `num_train_epochs` | 8 |

| `learning_rate` | 3e-4 |

| `warmup_steps` | 500 | | 3,072 | [

[

-0.022735595703125,

-0.056854248046875,

0.00982666015625,

0.03253173828125,

-0.017730712890625,

-0.005855560302734375,

-0.04339599609375,

-0.03070068359375,

0.00446319580078125,

0.0193023681640625,

-0.040252685546875,

-0.046142578125,

-0.038238525390625,

-0.... |

timm/resnet50_gn.a1h_in1k | 2023-04-05T18:15:49.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"arxiv:2110.00476",

"arxiv:1512.03385",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/resnet50_gn.a1h_in1k | 0 | 1,692 | timm | 2023-04-05T18:15:24 | ---

tags:

- image-classification

- timm

library_tag: timm

license: apache-2.0

---

# Model card for resnet50_gn.a1h_in1k

A ResNet-B image classification model.

This model features:

* ReLU activations

* single layer 7x7 convolution with pooling

* 1x1 convolution shortcut downsample

Trained on ImageNet-1k in `timm` using the recipe template described below.

Recipe details:

* Based on [ResNet Strikes Back](https://arxiv.org/abs/2110.00476) `A1` recipe

* LAMB optimizer

* Stronger dropout, stochastic depth, and RandAugment than paper `A1` recipe

* Cosine LR schedule with warmup

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 25.6

- GMACs: 4.1

- Activations (M): 11.1

- Image size: train = 224 x 224, test = 288 x 288

- **Papers:**

- ResNet strikes back: An improved training procedure in timm: https://arxiv.org/abs/2110.00476

- Deep Residual Learning for Image Recognition: https://arxiv.org/abs/1512.03385

- **Original:** https://github.com/huggingface/pytorch-image-models
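The stats above list 4.1 GMACs, which matches the 224 x 224 row for this model in the comparison table, while the 288 x 288 row lists 6.8. A quick check, under the assumption that convolutional compute grows in proportion to the number of input pixels, reproduces that figure; the function name is illustrative.

```python
def scale_gmacs(gmacs: float, base_size: int, new_size: int) -> float:
    """Estimate GMACs at a new square resolution, assuming cost scales with pixel count."""
    return gmacs * (new_size / base_size) ** 2


if __name__ == "__main__":
    # 4.1 GMACs at 224 x 224, evaluated at the 288 x 288 test resolution.
    print(round(scale_gmacs(4.1, 224, 288), 1))  # 6.8
```

This is only an approximation: layers whose cost does not depend on spatial size, such as the final classifier, are not affected by the resolution change.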

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import torch

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('resnet50_gn.a1h_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```
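The last line of the snippet above turns the raw logits into percentages with a softmax and keeps the five largest. To make that step concrete, here is a dependency-free sketch of the same computation on a plain list of numbers; the function names and the sample logits are made up for illustration and are not part of `timm` or `torch`.

```python
import math


def softmax_percent(logits):
    """Softmax over a list of logits, scaled to percentages."""
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [100.0 * e / total for e in exps]


def top_k(values, k):
    """Return the k largest values and their indices, largest first."""
    idx = sorted(range(len(values)), key=lambda i: values[i], reverse=True)[:k]
    return [values[i] for i in idx], idx


if __name__ == "__main__":
    probs = softmax_percent([2.0, 1.0, 0.1, 3.0])  # hypothetical logits for 4 classes
    top_probs, top_idx = top_k(probs, 2)
    print(top_idx)  # [3, 0]
```

The returned indices are positions in the model's class list, so they still need to be mapped to ImageNet-1k label names separately.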

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'resnet50_gn.a1h_in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

    # print shape of each feature map in output

    # e.g.:

    #  torch.Size([1, 64, 112, 112])

    #  torch.Size([1, 256, 56, 56])

    #  torch.Size([1, 512, 28, 28])

    #  torch.Size([1, 1024, 14, 14])

    #  torch.Size([1, 2048, 7, 7])

    print(o.shape)

```
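The example shapes in the comments follow from the input resolution and the downsampling factor of each stage. A small sketch, assuming a 224 x 224 input and the reductions 2, 4, 8, 16 and 32 implied by those comments, reproduces them without loading the model; the function name and default arguments are illustrative.

```python
def feature_map_shapes(input_size=224,
                       channels=(64, 256, 512, 1024, 2048),
                       reductions=(2, 4, 8, 16, 32),
                       batch_size=1):
    """Expected (N, C, H, W) shape of each feature map for a square input."""
    return [
        (batch_size, c, input_size // r, input_size // r)
        for c, r in zip(channels, reductions)
    ]


if __name__ == "__main__":
    for shape in feature_map_shapes():
        print(shape)
```

Passing `input_size=288` shows how the spatial dimensions grow at the test resolution while the channel counts stay the same.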

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'resnet50_gn.a1h_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 2048, 7, 7) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```
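Going from the unpooled `(1, 2048, 7, 7)` tensor to a `(1, num_features)` embedding amounts to collapsing each channel's spatial grid into one number. Assuming the head uses global average pooling, which is an assumption about the default configuration and not something the card states, the operation looks like this on nested Python lists; the function name is illustrative.

```python
def global_average_pool(feature_map):
    """Average each channel of a [channels][height][width] nested list to one value."""
    pooled = []
    for channel in feature_map:
        total = sum(sum(row) for row in channel)
        count = len(channel) * len(channel[0])
        pooled.append(total / count)
    return pooled


if __name__ == "__main__":
    # Two tiny 2 x 2 channels stand in for the 2048 channels of size 7 x 7.
    toy = [[[1, 2], [3, 4]], [[0, 0], [0, 8]]]
    print(global_average_pool(toy))  # [2.5, 2.0]
```

The resulting vector has one entry per channel, which is why the embedding length equals `num_features`.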

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

|model |img_size|top1 |top5 |param_count|gmacs|macts|img/sec|

|------------------------------------------|--------|-----|-----|-----------|-----|-----|-------|

|[seresnextaa101d_32x8d.sw_in12k_ft_in1k_288](https://huggingface.co/timm/seresnextaa101d_32x8d.sw_in12k_ft_in1k_288)|320 |86.72|98.17|93.6 |35.2 |69.7 |451 |

|[seresnextaa101d_32x8d.sw_in12k_ft_in1k_288](https://huggingface.co/timm/seresnextaa101d_32x8d.sw_in12k_ft_in1k_288)|288 |86.51|98.08|93.6 |28.5 |56.4 |560 |

|[seresnextaa101d_32x8d.sw_in12k_ft_in1k](https://huggingface.co/timm/seresnextaa101d_32x8d.sw_in12k_ft_in1k)|288 |86.49|98.03|93.6 |28.5 |56.4 |557 |

|[seresnextaa101d_32x8d.sw_in12k_ft_in1k](https://huggingface.co/timm/seresnextaa101d_32x8d.sw_in12k_ft_in1k)|224 |85.96|97.82|93.6 |17.2 |34.2 |923 |

|[resnext101_32x32d.fb_wsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x32d.fb_wsl_ig1b_ft_in1k)|224 |85.11|97.44|468.5 |87.3 |91.1 |254 |

|[resnetrs420.tf_in1k](https://huggingface.co/timm/resnetrs420.tf_in1k)|416 |85.0 |97.12|191.9 |108.4|213.8|134 |

|[ecaresnet269d.ra2_in1k](https://huggingface.co/timm/ecaresnet269d.ra2_in1k)|352 |84.96|97.22|102.1 |50.2 |101.2|291 |

|[ecaresnet269d.ra2_in1k](https://huggingface.co/timm/ecaresnet269d.ra2_in1k)|320 |84.73|97.18|102.1 |41.5 |83.7 |353 |

|[resnetrs350.tf_in1k](https://huggingface.co/timm/resnetrs350.tf_in1k)|384 |84.71|96.99|164.0 |77.6 |154.7|183 |

|[seresnextaa101d_32x8d.ah_in1k](https://huggingface.co/timm/seresnextaa101d_32x8d.ah_in1k)|288 |84.57|97.08|93.6 |28.5 |56.4 |557 |

|[resnetrs200.tf_in1k](https://huggingface.co/timm/resnetrs200.tf_in1k)|320 |84.45|97.08|93.2 |31.5 |67.8 |446 |

|[resnetrs270.tf_in1k](https://huggingface.co/timm/resnetrs270.tf_in1k)|352 |84.43|96.97|129.9 |51.1 |105.5|280 |

|[seresnext101d_32x8d.ah_in1k](https://huggingface.co/timm/seresnext101d_32x8d.ah_in1k)|288 |84.36|96.92|93.6 |27.6 |53.0 |595 |

|[seresnet152d.ra2_in1k](https://huggingface.co/timm/seresnet152d.ra2_in1k)|320 |84.35|97.04|66.8 |24.1 |47.7 |610 |

|[resnetrs350.tf_in1k](https://huggingface.co/timm/resnetrs350.tf_in1k)|288 |84.3 |96.94|164.0 |43.7 |87.1 |333 |

|[resnext101_32x8d.fb_swsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x8d.fb_swsl_ig1b_ft_in1k)|224 |84.28|97.17|88.8 |16.5 |31.2 |1100 |

|[resnetrs420.tf_in1k](https://huggingface.co/timm/resnetrs420.tf_in1k)|320 |84.24|96.86|191.9 |64.2 |126.6|228 |

|[seresnext101_32x8d.ah_in1k](https://huggingface.co/timm/seresnext101_32x8d.ah_in1k)|288 |84.19|96.87|93.6 |27.2 |51.6 |613 |

|[resnext101_32x16d.fb_wsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x16d.fb_wsl_ig1b_ft_in1k)|224 |84.18|97.19|194.0 |36.3 |51.2 |581 |

|[resnetaa101d.sw_in12k_ft_in1k](https://huggingface.co/timm/resnetaa101d.sw_in12k_ft_in1k)|288 |84.11|97.11|44.6 |15.1 |29.0 |1144 |

|[resnet200d.ra2_in1k](https://huggingface.co/timm/resnet200d.ra2_in1k)|320 |83.97|96.82|64.7 |31.2 |67.3 |518 |

|[resnetrs200.tf_in1k](https://huggingface.co/timm/resnetrs200.tf_in1k)|256 |83.87|96.75|93.2 |20.2 |43.4 |692 |

|[seresnextaa101d_32x8d.ah_in1k](https://huggingface.co/timm/seresnextaa101d_32x8d.ah_in1k)|224 |83.86|96.65|93.6 |17.2 |34.2 |923 |

|[resnetrs152.tf_in1k](https://huggingface.co/timm/resnetrs152.tf_in1k)|320 |83.72|96.61|86.6 |24.3 |48.1 |617 |

|[seresnet152d.ra2_in1k](https://huggingface.co/timm/seresnet152d.ra2_in1k)|256 |83.69|96.78|66.8 |15.4 |30.6 |943 |

|[seresnext101d_32x8d.ah_in1k](https://huggingface.co/timm/seresnext101d_32x8d.ah_in1k)|224 |83.68|96.61|93.6 |16.7 |32.0 |986 |

|[resnet152d.ra2_in1k](https://huggingface.co/timm/resnet152d.ra2_in1k)|320 |83.67|96.74|60.2 |24.1 |47.7 |706 |

|[resnetrs270.tf_in1k](https://huggingface.co/timm/resnetrs270.tf_in1k)|256 |83.59|96.61|129.9 |27.1 |55.8 |526 |

|[seresnext101_32x8d.ah_in1k](https://huggingface.co/timm/seresnext101_32x8d.ah_in1k)|224 |83.58|96.4 |93.6 |16.5 |31.2 |1013 |

|[resnetaa101d.sw_in12k_ft_in1k](https://huggingface.co/timm/resnetaa101d.sw_in12k_ft_in1k)|224 |83.54|96.83|44.6 |9.1 |17.6 |1864 |

|[resnet152.a1h_in1k](https://huggingface.co/timm/resnet152.a1h_in1k)|288 |83.46|96.54|60.2 |19.1 |37.3 |904 |

|[resnext101_32x16d.fb_swsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x16d.fb_swsl_ig1b_ft_in1k)|224 |83.35|96.85|194.0 |36.3 |51.2 |582 |

|[resnet200d.ra2_in1k](https://huggingface.co/timm/resnet200d.ra2_in1k)|256 |83.23|96.53|64.7 |20.0 |43.1 |809 |

|[resnext101_32x4d.fb_swsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x4d.fb_swsl_ig1b_ft_in1k)|224 |83.22|96.75|44.2 |8.0 |21.2 |1814 |

|[resnext101_64x4d.c1_in1k](https://huggingface.co/timm/resnext101_64x4d.c1_in1k)|288 |83.16|96.38|83.5 |25.7 |51.6 |590 |

|[resnet152d.ra2_in1k](https://huggingface.co/timm/resnet152d.ra2_in1k)|256 |83.14|96.38|60.2 |15.4 |30.5 |1096 |

|[resnet101d.ra2_in1k](https://huggingface.co/timm/resnet101d.ra2_in1k)|320 |83.02|96.45|44.6 |16.5 |34.8 |992 |

|[ecaresnet101d.miil_in1k](https://huggingface.co/timm/ecaresnet101d.miil_in1k)|288 |82.98|96.54|44.6 |13.4 |28.2 |1077 |

|[resnext101_64x4d.tv_in1k](https://huggingface.co/timm/resnext101_64x4d.tv_in1k)|224 |82.98|96.25|83.5 |15.5 |31.2 |989 |

|[resnetrs152.tf_in1k](https://huggingface.co/timm/resnetrs152.tf_in1k)|256 |82.86|96.28|86.6 |15.6 |30.8 |951 |

|[resnext101_32x8d.tv2_in1k](https://huggingface.co/timm/resnext101_32x8d.tv2_in1k)|224 |82.83|96.22|88.8 |16.5 |31.2 |1099 |

|[resnet152.a1h_in1k](https://huggingface.co/timm/resnet152.a1h_in1k)|224 |82.8 |96.13|60.2 |11.6 |22.6 |1486 |

|[resnet101.a1h_in1k](https://huggingface.co/timm/resnet101.a1h_in1k)|288 |82.8 |96.32|44.6 |13.0 |26.8 |1291 |

|[resnet152.a1_in1k](https://huggingface.co/timm/resnet152.a1_in1k)|288 |82.74|95.71|60.2 |19.1 |37.3 |905 |

|[resnext101_32x8d.fb_wsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x8d.fb_wsl_ig1b_ft_in1k)|224 |82.69|96.63|88.8 |16.5 |31.2 |1100 |

|[resnet152.a2_in1k](https://huggingface.co/timm/resnet152.a2_in1k)|288 |82.62|95.75|60.2 |19.1 |37.3 |904 |

|[resnetaa50d.sw_in12k_ft_in1k](https://huggingface.co/timm/resnetaa50d.sw_in12k_ft_in1k)|288 |82.61|96.49|25.6 |8.9 |20.6 |1729 |

|[resnet61q.ra2_in1k](https://huggingface.co/timm/resnet61q.ra2_in1k)|288 |82.53|96.13|36.8 |9.9 |21.5 |1773 |

|[wide_resnet101_2.tv2_in1k](https://huggingface.co/timm/wide_resnet101_2.tv2_in1k)|224 |82.5 |96.02|126.9 |22.8 |21.2 |1078 |

|[resnext101_64x4d.c1_in1k](https://huggingface.co/timm/resnext101_64x4d.c1_in1k)|224 |82.46|95.92|83.5 |15.5 |31.2 |987 |

|[resnet51q.ra2_in1k](https://huggingface.co/timm/resnet51q.ra2_in1k)|288 |82.36|96.18|35.7 |8.1 |20.9 |1964 |

|[ecaresnet50t.ra2_in1k](https://huggingface.co/timm/ecaresnet50t.ra2_in1k)|320 |82.35|96.14|25.6 |8.8 |24.1 |1386 |

|[resnet101.a1_in1k](https://huggingface.co/timm/resnet101.a1_in1k)|288 |82.31|95.63|44.6 |13.0 |26.8 |1291 |

|[resnetrs101.tf_in1k](https://huggingface.co/timm/resnetrs101.tf_in1k)|288 |82.29|96.01|63.6 |13.6 |28.5 |1078 |

|[resnet152.tv2_in1k](https://huggingface.co/timm/resnet152.tv2_in1k)|224 |82.29|96.0 |60.2 |11.6 |22.6 |1484 |

|[wide_resnet50_2.racm_in1k](https://huggingface.co/timm/wide_resnet50_2.racm_in1k)|288 |82.27|96.06|68.9 |18.9 |23.8 |1176 |

|[resnet101d.ra2_in1k](https://huggingface.co/timm/resnet101d.ra2_in1k)|256 |82.26|96.07|44.6 |10.6 |22.2 |1542 |

|[resnet101.a2_in1k](https://huggingface.co/timm/resnet101.a2_in1k)|288 |82.24|95.73|44.6 |13.0 |26.8 |1290 |

|[seresnext50_32x4d.racm_in1k](https://huggingface.co/timm/seresnext50_32x4d.racm_in1k)|288 |82.2 |96.14|27.6 |7.0 |23.8 |1547 |

|[ecaresnet101d.miil_in1k](https://huggingface.co/timm/ecaresnet101d.miil_in1k)|224 |82.18|96.05|44.6 |8.1 |17.1 |1771 |

|[resnext50_32x4d.fb_swsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext50_32x4d.fb_swsl_ig1b_ft_in1k)|224 |82.17|96.22|25.0 |4.3 |14.4 |2943 |

|[ecaresnet50t.a1_in1k](https://huggingface.co/timm/ecaresnet50t.a1_in1k)|288 |82.12|95.65|25.6 |7.1 |19.6 |1704 |

|[resnext50_32x4d.a1h_in1k](https://huggingface.co/timm/resnext50_32x4d.a1h_in1k)|288 |82.03|95.94|25.0 |7.0 |23.8 |1745 |

|[ecaresnet101d_pruned.miil_in1k](https://huggingface.co/timm/ecaresnet101d_pruned.miil_in1k)|288 |82.0 |96.15|24.9 |5.8 |12.7 |1787 |

|[resnet61q.ra2_in1k](https://huggingface.co/timm/resnet61q.ra2_in1k)|256 |81.99|95.85|36.8 |7.8 |17.0 |2230 |

|[resnext101_32x8d.tv2_in1k](https://huggingface.co/timm/resnext101_32x8d.tv2_in1k)|176 |81.98|95.72|88.8 |10.3 |19.4 |1768 |

|[resnet152.a1_in1k](https://huggingface.co/timm/resnet152.a1_in1k)|224 |81.97|95.24|60.2 |11.6 |22.6 |1486 |

|[resnet101.a1h_in1k](https://huggingface.co/timm/resnet101.a1h_in1k)|224 |81.93|95.75|44.6 |7.8 |16.2 |2122 |

|[resnet101.tv2_in1k](https://huggingface.co/timm/resnet101.tv2_in1k)|224 |81.9 |95.77|44.6 |7.8 |16.2 |2118 |

|[resnext101_32x16d.fb_ssl_yfcc100m_ft_in1k](https://huggingface.co/timm/resnext101_32x16d.fb_ssl_yfcc100m_ft_in1k)|224 |81.84|96.1 |194.0 |36.3 |51.2 |583 |

|[resnet51q.ra2_in1k](https://huggingface.co/timm/resnet51q.ra2_in1k)|256 |81.78|95.94|35.7 |6.4 |16.6 |2471 |

|[resnet152.a2_in1k](https://huggingface.co/timm/resnet152.a2_in1k)|224 |81.77|95.22|60.2 |11.6 |22.6 |1485 |

|[resnetaa50d.sw_in12k_ft_in1k](https://huggingface.co/timm/resnetaa50d.sw_in12k_ft_in1k)|224 |81.74|96.06|25.6 |5.4 |12.4 |2813 |

|[ecaresnet50t.a2_in1k](https://huggingface.co/timm/ecaresnet50t.a2_in1k)|288 |81.65|95.54|25.6 |7.1 |19.6 |1703 |

|[ecaresnet50d.miil_in1k](https://huggingface.co/timm/ecaresnet50d.miil_in1k)|288 |81.64|95.88|25.6 |7.2 |19.7 |1694 |

|[resnext101_32x8d.fb_ssl_yfcc100m_ft_in1k](https://huggingface.co/timm/resnext101_32x8d.fb_ssl_yfcc100m_ft_in1k)|224 |81.62|96.04|88.8 |16.5 |31.2 |1101 |

|[wide_resnet50_2.tv2_in1k](https://huggingface.co/timm/wide_resnet50_2.tv2_in1k)|224 |81.61|95.76|68.9 |11.4 |14.4 |1930 |

|[resnetaa50.a1h_in1k](https://huggingface.co/timm/resnetaa50.a1h_in1k)|288 |81.61|95.83|25.6 |8.5 |19.2 |1868 |

|[resnet101.a1_in1k](https://huggingface.co/timm/resnet101.a1_in1k)|224 |81.5 |95.16|44.6 |7.8 |16.2 |2125 |

|[resnext50_32x4d.a1_in1k](https://huggingface.co/timm/resnext50_32x4d.a1_in1k)|288 |81.48|95.16|25.0 |7.0 |23.8 |1745 |

|[gcresnet50t.ra2_in1k](https://huggingface.co/timm/gcresnet50t.ra2_in1k)|288 |81.47|95.71|25.9 |6.9 |18.6 |2071 |

|[wide_resnet50_2.racm_in1k](https://huggingface.co/timm/wide_resnet50_2.racm_in1k)|224 |81.45|95.53|68.9 |11.4 |14.4 |1929 |

|[resnet50d.a1_in1k](https://huggingface.co/timm/resnet50d.a1_in1k)|288 |81.44|95.22|25.6 |7.2 |19.7 |1908 |

|[ecaresnet50t.ra2_in1k](https://huggingface.co/timm/ecaresnet50t.ra2_in1k)|256 |81.44|95.67|25.6 |5.6 |15.4 |2168 |

|[ecaresnetlight.miil_in1k](https://huggingface.co/timm/ecaresnetlight.miil_in1k)|288 |81.4 |95.82|30.2 |6.8 |13.9 |2132 |

|[resnet50d.ra2_in1k](https://huggingface.co/timm/resnet50d.ra2_in1k)|288 |81.37|95.74|25.6 |7.2 |19.7 |1910 |

|[resnet101.a2_in1k](https://huggingface.co/timm/resnet101.a2_in1k)|224 |81.32|95.19|44.6 |7.8 |16.2 |2125 |

|[seresnet50.ra2_in1k](https://huggingface.co/timm/seresnet50.ra2_in1k)|288 |81.3 |95.65|28.1 |6.8 |18.4 |1803 |

|[resnext50_32x4d.a2_in1k](https://huggingface.co/timm/resnext50_32x4d.a2_in1k)|288 |81.3 |95.11|25.0 |7.0 |23.8 |1746 |

|[seresnext50_32x4d.racm_in1k](https://huggingface.co/timm/seresnext50_32x4d.racm_in1k)|224 |81.27|95.62|27.6 |4.3 |14.4 |2591 |

|[ecaresnet50t.a1_in1k](https://huggingface.co/timm/ecaresnet50t.a1_in1k)|224 |81.26|95.16|25.6 |4.3 |11.8 |2823 |

|[gcresnext50ts.ch_in1k](https://huggingface.co/timm/gcresnext50ts.ch_in1k)|288 |81.23|95.54|15.7 |4.8 |19.6 |2117 |

|[senet154.gluon_in1k](https://huggingface.co/timm/senet154.gluon_in1k)|224 |81.23|95.35|115.1 |20.8 |38.7 |545 |

|[resnet50.a1_in1k](https://huggingface.co/timm/resnet50.a1_in1k)|288 |81.22|95.11|25.6 |6.8 |18.4 |2089 |

|[resnet50_gn.a1h_in1k](https://huggingface.co/timm/resnet50_gn.a1h_in1k)|288 |81.22|95.63|25.6 |6.8 |18.4 |676 |

|[resnet50d.a2_in1k](https://huggingface.co/timm/resnet50d.a2_in1k)|288 |81.18|95.09|25.6 |7.2 |19.7 |1908 |

|[resnet50.fb_swsl_ig1b_ft_in1k](https://huggingface.co/timm/resnet50.fb_swsl_ig1b_ft_in1k)|224 |81.18|95.98|25.6 |4.1 |11.1 |3455 |

|[resnext50_32x4d.tv2_in1k](https://huggingface.co/timm/resnext50_32x4d.tv2_in1k)|224 |81.17|95.34|25.0 |4.3 |14.4 |2933 |

|[resnext50_32x4d.a1h_in1k](https://huggingface.co/timm/resnext50_32x4d.a1h_in1k)|224 |81.1 |95.33|25.0 |4.3 |14.4 |2934 |

|[seresnet50.a2_in1k](https://huggingface.co/timm/seresnet50.a2_in1k)|288 |81.1 |95.23|28.1 |6.8 |18.4 |1801 |

|[seresnet50.a1_in1k](https://huggingface.co/timm/seresnet50.a1_in1k)|288 |81.1 |95.12|28.1 |6.8 |18.4 |1799 |

|[resnet152s.gluon_in1k](https://huggingface.co/timm/resnet152s.gluon_in1k)|224 |81.02|95.41|60.3 |12.9 |25.0 |1347 |

|[resnet50.d_in1k](https://huggingface.co/timm/resnet50.d_in1k)|288 |80.97|95.44|25.6 |6.8 |18.4 |2085 |

|[gcresnet50t.ra2_in1k](https://huggingface.co/timm/gcresnet50t.ra2_in1k)|256 |80.94|95.45|25.9 |5.4 |14.7 |2571 |

|[resnext101_32x4d.fb_ssl_yfcc100m_ft_in1k](https://huggingface.co/timm/resnext101_32x4d.fb_ssl_yfcc100m_ft_in1k)|224 |80.93|95.73|44.2 |8.0 |21.2 |1814 |

|[resnet50.c1_in1k](https://huggingface.co/timm/resnet50.c1_in1k)|288 |80.91|95.55|25.6 |6.8 |18.4 |2084 |

|[seresnext101_32x4d.gluon_in1k](https://huggingface.co/timm/seresnext101_32x4d.gluon_in1k)|224 |80.9 |95.31|49.0 |8.0 |21.3 |1585 |

|[seresnext101_64x4d.gluon_in1k](https://huggingface.co/timm/seresnext101_64x4d.gluon_in1k)|224 |80.9 |95.3 |88.2 |15.5 |31.2 |918 |

|[resnet50.c2_in1k](https://huggingface.co/timm/resnet50.c2_in1k)|288 |80.86|95.52|25.6 |6.8 |18.4 |2085 |

|[resnet50.tv2_in1k](https://huggingface.co/timm/resnet50.tv2_in1k)|224 |80.85|95.43|25.6 |4.1 |11.1 |3450 |

|[ecaresnet50t.a2_in1k](https://huggingface.co/timm/ecaresnet50t.a2_in1k)|224 |80.84|95.02|25.6 |4.3 |11.8 |2821 |

|[ecaresnet101d_pruned.miil_in1k](https://huggingface.co/timm/ecaresnet101d_pruned.miil_in1k)|224 |80.79|95.62|24.9 |3.5 |7.7 |2961 |

|[seresnet33ts.ra2_in1k](https://huggingface.co/timm/seresnet33ts.ra2_in1k)|288 |80.79|95.36|19.8 |6.0 |14.8 |2506 |

|[ecaresnet50d_pruned.miil_in1k](https://huggingface.co/timm/ecaresnet50d_pruned.miil_in1k)|288 |80.79|95.58|19.9 |4.2 |10.6 |2349 |

|[resnet50.a2_in1k](https://huggingface.co/timm/resnet50.a2_in1k)|288 |80.78|94.99|25.6 |6.8 |18.4 |2088 |

|[resnet50.b1k_in1k](https://huggingface.co/timm/resnet50.b1k_in1k)|288 |80.71|95.43|25.6 |6.8 |18.4 |2087 |

|[resnext50_32x4d.ra_in1k](https://huggingface.co/timm/resnext50_32x4d.ra_in1k)|288 |80.7 |95.39|25.0 |7.0 |23.8 |1749 |

|[resnetrs101.tf_in1k](https://huggingface.co/timm/resnetrs101.tf_in1k)|192 |80.69|95.24|63.6 |6.0 |12.7 |2270 |

|[resnet50d.a1_in1k](https://huggingface.co/timm/resnet50d.a1_in1k)|224 |80.68|94.71|25.6 |4.4 |11.9 |3162 |

|[eca_resnet33ts.ra2_in1k](https://huggingface.co/timm/eca_resnet33ts.ra2_in1k)|288 |80.68|95.36|19.7 |6.0 |14.8 |2637 |

|[resnet50.a1h_in1k](https://huggingface.co/timm/resnet50.a1h_in1k)|224 |80.67|95.3 |25.6 |4.1 |11.1 |3452 |

|[resnext50d_32x4d.bt_in1k](https://huggingface.co/timm/resnext50d_32x4d.bt_in1k)|288 |80.67|95.42|25.0 |7.4 |25.1 |1626 |

|[resnetaa50.a1h_in1k](https://huggingface.co/timm/resnetaa50.a1h_in1k)|224 |80.63|95.21|25.6 |5.2 |11.6 |3034 |

|[ecaresnet50d.miil_in1k](https://huggingface.co/timm/ecaresnet50d.miil_in1k)|224 |80.61|95.32|25.6 |4.4 |11.9 |2813 |

|[resnext101_64x4d.gluon_in1k](https://huggingface.co/timm/resnext101_64x4d.gluon_in1k)|224 |80.61|94.99|83.5 |15.5 |31.2 |989 |

|[gcresnet33ts.ra2_in1k](https://huggingface.co/timm/gcresnet33ts.ra2_in1k)|288 |80.6 |95.31|19.9 |6.0 |14.8 |2578 |

|[gcresnext50ts.ch_in1k](https://huggingface.co/timm/gcresnext50ts.ch_in1k)|256 |80.57|95.17|15.7 |3.8 |15.5 |2710 |

|[resnet152.a3_in1k](https://huggingface.co/timm/resnet152.a3_in1k)|224 |80.56|95.0 |60.2 |11.6 |22.6 |1483 |

|[resnet50d.ra2_in1k](https://huggingface.co/timm/resnet50d.ra2_in1k)|224 |80.53|95.16|25.6 |4.4 |11.9 |3164 |

|[resnext50_32x4d.a1_in1k](https://huggingface.co/timm/resnext50_32x4d.a1_in1k)|224 |80.53|94.46|25.0 |4.3 |14.4 |2930 |

|[wide_resnet101_2.tv2_in1k](https://huggingface.co/timm/wide_resnet101_2.tv2_in1k)|176 |80.48|94.98|126.9 |14.3 |13.2 |1719 |

|[resnet152d.gluon_in1k](https://huggingface.co/timm/resnet152d.gluon_in1k)|224 |80.47|95.2 |60.2 |11.8 |23.4 |1428 |

|[resnet50.b2k_in1k](https://huggingface.co/timm/resnet50.b2k_in1k)|288 |80.45|95.32|25.6 |6.8 |18.4 |2086 |

|[ecaresnetlight.miil_in1k](https://huggingface.co/timm/ecaresnetlight.miil_in1k)|224 |80.45|95.24|30.2 |4.1 |8.4 |3530 |

|[resnext50_32x4d.a2_in1k](https://huggingface.co/timm/resnext50_32x4d.a2_in1k)|224 |80.45|94.63|25.0 |4.3 |14.4 |2936 |

|[wide_resnet50_2.tv2_in1k](https://huggingface.co/timm/wide_resnet50_2.tv2_in1k)|176 |80.43|95.09|68.9 |7.3 |9.0 |3015 |

|[resnet101d.gluon_in1k](https://huggingface.co/timm/resnet101d.gluon_in1k)|224 |80.42|95.01|44.6 |8.1 |17.0 |2007 |

|[resnet50.a1_in1k](https://huggingface.co/timm/resnet50.a1_in1k)|224 |80.38|94.6 |25.6 |4.1 |11.1 |3461 |

|[seresnet33ts.ra2_in1k](https://huggingface.co/timm/seresnet33ts.ra2_in1k)|256 |80.36|95.1 |19.8 |4.8 |11.7 |3267 |

|[resnext101_32x4d.gluon_in1k](https://huggingface.co/timm/resnext101_32x4d.gluon_in1k)|224 |80.34|94.93|44.2 |8.0 |21.2 |1814 |

|[resnext50_32x4d.fb_ssl_yfcc100m_ft_in1k](https://huggingface.co/timm/resnext50_32x4d.fb_ssl_yfcc100m_ft_in1k)|224 |80.32|95.4 |25.0 |4.3 |14.4 |2941 |

|[resnet101s.gluon_in1k](https://huggingface.co/timm/resnet101s.gluon_in1k)|224 |80.28|95.16|44.7 |9.2 |18.6 |1851 |

|[seresnet50.ra2_in1k](https://huggingface.co/timm/seresnet50.ra2_in1k)|224 |80.26|95.08|28.1 |4.1 |11.1 |2972 |

|[resnetblur50.bt_in1k](https://huggingface.co/timm/resnetblur50.bt_in1k)|288 |80.24|95.24|25.6 |8.5 |19.9 |1523 |

|[resnet50d.a2_in1k](https://huggingface.co/timm/resnet50d.a2_in1k)|224 |80.22|94.63|25.6 |4.4 |11.9 |3162 |

|[resnet152.tv2_in1k](https://huggingface.co/timm/resnet152.tv2_in1k)|176 |80.2 |94.64|60.2 |7.2 |14.0 |2346 |

|[seresnet50.a2_in1k](https://huggingface.co/timm/seresnet50.a2_in1k)|224 |80.08|94.74|28.1 |4.1 |11.1 |2969 |

|[eca_resnet33ts.ra2_in1k](https://huggingface.co/timm/eca_resnet33ts.ra2_in1k)|256 |80.08|94.97|19.7 |4.8 |11.7 |3284 |

|[gcresnet33ts.ra2_in1k](https://huggingface.co/timm/gcresnet33ts.ra2_in1k)|256 |80.06|94.99|19.9 |4.8 |11.7 |3216 |

|[resnet50_gn.a1h_in1k](https://huggingface.co/timm/resnet50_gn.a1h_in1k)|224 |80.06|94.95|25.6 |4.1 |11.1 |1109 |

|[seresnet50.a1_in1k](https://huggingface.co/timm/seresnet50.a1_in1k)|224 |80.02|94.71|28.1 |4.1 |11.1 |2962 |

|[resnet50.ram_in1k](https://huggingface.co/timm/resnet50.ram_in1k)|288 |79.97|95.05|25.6 |6.8 |18.4 |2086 |

|[resnet152c.gluon_in1k](https://huggingface.co/timm/resnet152c.gluon_in1k)|224 |79.92|94.84|60.2 |11.8 |23.4 |1455 |

|[seresnext50_32x4d.gluon_in1k](https://huggingface.co/timm/seresnext50_32x4d.gluon_in1k)|224 |79.91|94.82|27.6 |4.3 |14.4 |2591 |

|[resnet50.d_in1k](https://huggingface.co/timm/resnet50.d_in1k)|224 |79.91|94.67|25.6 |4.1 |11.1 |3456 |

|[resnet101.tv2_in1k](https://huggingface.co/timm/resnet101.tv2_in1k)|176 |79.9 |94.6 |44.6 |4.9 |10.1 |3341 |

|[resnetrs50.tf_in1k](https://huggingface.co/timm/resnetrs50.tf_in1k)|224 |79.89|94.97|35.7 |4.5 |12.1 |2774 |

|[resnet50.c2_in1k](https://huggingface.co/timm/resnet50.c2_in1k)|224 |79.88|94.87|25.6 |4.1 |11.1 |3455 |

|[ecaresnet26t.ra2_in1k](https://huggingface.co/timm/ecaresnet26t.ra2_in1k)|320 |79.86|95.07|16.0 |5.2 |16.4 |2168 |

|[resnet50.a2_in1k](https://huggingface.co/timm/resnet50.a2_in1k)|224 |79.85|94.56|25.6 |4.1 |11.1 |3460 |

|[resnet50.ra_in1k](https://huggingface.co/timm/resnet50.ra_in1k)|288 |79.83|94.97|25.6 |6.8 |18.4 |2087 |

|[resnet101.a3_in1k](https://huggingface.co/timm/resnet101.a3_in1k)|224 |79.82|94.62|44.6 |7.8 |16.2 |2114 |

|[resnext50_32x4d.ra_in1k](https://huggingface.co/timm/resnext50_32x4d.ra_in1k)|224 |79.76|94.6 |25.0 |4.3 |14.4 |2943 |

|[resnet50.c1_in1k](https://huggingface.co/timm/resnet50.c1_in1k)|224 |79.74|94.95|25.6 |4.1 |11.1 |3455 |

|[ecaresnet50d_pruned.miil_in1k](https://huggingface.co/timm/ecaresnet50d_pruned.miil_in1k)|224 |79.74|94.87|19.9 |2.5 |6.4 |3929 |

|[resnet33ts.ra2_in1k](https://huggingface.co/timm/resnet33ts.ra2_in1k)|288 |79.71|94.83|19.7 |6.0 |14.8 |2710 |

|[resnet152.gluon_in1k](https://huggingface.co/timm/resnet152.gluon_in1k)|224 |79.68|94.74|60.2 |11.6 |22.6 |1486 |

|[resnext50d_32x4d.bt_in1k](https://huggingface.co/timm/resnext50d_32x4d.bt_in1k)|224 |79.67|94.87|25.0 |4.5 |15.2 |2729 |

|[resnet50.bt_in1k](https://huggingface.co/timm/resnet50.bt_in1k)|288 |79.63|94.91|25.6 |6.8 |18.4 |2086 |

|[ecaresnet50t.a3_in1k](https://huggingface.co/timm/ecaresnet50t.a3_in1k)|224 |79.56|94.72|25.6 |4.3 |11.8 |2805 |

|[resnet101c.gluon_in1k](https://huggingface.co/timm/resnet101c.gluon_in1k)|224 |79.53|94.58|44.6 |8.1 |17.0 |2062 |

|[resnet50.b1k_in1k](https://huggingface.co/timm/resnet50.b1k_in1k)|224 |79.52|94.61|25.6 |4.1 |11.1 |3459 |

|[resnet50.tv2_in1k](https://huggingface.co/timm/resnet50.tv2_in1k)|176 |79.42|94.64|25.6 |2.6 |6.9 |5397 |

|[resnet32ts.ra2_in1k](https://huggingface.co/timm/resnet32ts.ra2_in1k)|288 |79.4 |94.66|18.0 |5.9 |14.6 |2752 |

|[resnet50.b2k_in1k](https://huggingface.co/timm/resnet50.b2k_in1k)|224 |79.38|94.57|25.6 |4.1 |11.1 |3459 |

|[resnext50_32x4d.tv2_in1k](https://huggingface.co/timm/resnext50_32x4d.tv2_in1k)|176 |79.37|94.3 |25.0 |2.7 |9.0 |4577 |

|[resnext50_32x4d.gluon_in1k](https://huggingface.co/timm/resnext50_32x4d.gluon_in1k)|224 |79.36|94.43|25.0 |4.3 |14.4 |2942 |

|[resnext101_32x8d.tv_in1k](https://huggingface.co/timm/resnext101_32x8d.tv_in1k)|224 |79.31|94.52|88.8 |16.5 |31.2 |1100 |

|[resnet101.gluon_in1k](https://huggingface.co/timm/resnet101.gluon_in1k)|224 |79.31|94.53|44.6 |7.8 |16.2 |2125 |

|[resnetblur50.bt_in1k](https://huggingface.co/timm/resnetblur50.bt_in1k)|224 |79.31|94.63|25.6 |5.2 |12.0 |2524 |

|[resnet50.a1h_in1k](https://huggingface.co/timm/resnet50.a1h_in1k)|176 |79.27|94.49|25.6 |2.6 |6.9 |5404 |

|[resnext50_32x4d.a3_in1k](https://huggingface.co/timm/resnext50_32x4d.a3_in1k)|224 |79.25|94.31|25.0 |4.3 |14.4 |2931 |

|[resnet50.fb_ssl_yfcc100m_ft_in1k](https://huggingface.co/timm/resnet50.fb_ssl_yfcc100m_ft_in1k)|224 |79.22|94.84|25.6 |4.1 |11.1 |3451 |

|[resnet33ts.ra2_in1k](https://huggingface.co/timm/resnet33ts.ra2_in1k)|256 |79.21|94.56|19.7 |4.8 |11.7 |3392 |

|[resnet50d.gluon_in1k](https://huggingface.co/timm/resnet50d.gluon_in1k)|224 |79.07|94.48|25.6 |4.4 |11.9 |3162 |

|[resnet50.ram_in1k](https://huggingface.co/timm/resnet50.ram_in1k)|224 |79.03|94.38|25.6 |4.1 |11.1 |3453 |

|[resnet50.am_in1k](https://huggingface.co/timm/resnet50.am_in1k)|224 |79.01|94.39|25.6 |4.1 |11.1 |3461 |

|[resnet32ts.ra2_in1k](https://huggingface.co/timm/resnet32ts.ra2_in1k)|256 |79.01|94.37|18.0 |4.6 |11.6 |3440 |

|[ecaresnet26t.ra2_in1k](https://huggingface.co/timm/ecaresnet26t.ra2_in1k)|256 |78.9 |94.54|16.0 |3.4 |10.5 |3421 |

|[resnet152.a3_in1k](https://huggingface.co/timm/resnet152.a3_in1k)|160 |78.89|94.11|60.2 |5.9 |11.5 |2745 |

|[wide_resnet101_2.tv_in1k](https://huggingface.co/timm/wide_resnet101_2.tv_in1k)|224 |78.84|94.28|126.9 |22.8 |21.2 |1079 |

|[seresnext26d_32x4d.bt_in1k](https://huggingface.co/timm/seresnext26d_32x4d.bt_in1k)|288 |78.83|94.24|16.8 |4.5 |16.8 |2251 |

|[resnet50.ra_in1k](https://huggingface.co/timm/resnet50.ra_in1k)|224 |78.81|94.32|25.6 |4.1 |11.1 |3454 |

|[seresnext26t_32x4d.bt_in1k](https://huggingface.co/timm/seresnext26t_32x4d.bt_in1k)|288 |78.74|94.33|16.8 |4.5 |16.7 |2264 |

|[resnet50s.gluon_in1k](https://huggingface.co/timm/resnet50s.gluon_in1k)|224 |78.72|94.23|25.7 |5.5 |13.5 |2796 |

|[resnet50d.a3_in1k](https://huggingface.co/timm/resnet50d.a3_in1k)|224 |78.71|94.24|25.6 |4.4 |11.9 |3154 |

|[wide_resnet50_2.tv_in1k](https://huggingface.co/timm/wide_resnet50_2.tv_in1k)|224 |78.47|94.09|68.9 |11.4 |14.4 |1934 |

|[resnet50.bt_in1k](https://huggingface.co/timm/resnet50.bt_in1k)|224 |78.46|94.27|25.6 |4.1 |11.1 |3454 |

|[resnet34d.ra2_in1k](https://huggingface.co/timm/resnet34d.ra2_in1k)|288 |78.43|94.35|21.8 |6.5 |7.5 |3291 |

|[gcresnext26ts.ch_in1k](https://huggingface.co/timm/gcresnext26ts.ch_in1k)|288 |78.42|94.04|10.5 |3.1 |13.3 |3226 |

|[resnet26t.ra2_in1k](https://huggingface.co/timm/resnet26t.ra2_in1k)|320 |78.33|94.13|16.0 |5.2 |16.4 |2391 |

|[resnet152.tv_in1k](https://huggingface.co/timm/resnet152.tv_in1k)|224 |78.32|94.04|60.2 |11.6 |22.6 |1487 |

|[seresnext26ts.ch_in1k](https://huggingface.co/timm/seresnext26ts.ch_in1k)|288 |78.28|94.1 |10.4 |3.1 |13.3 |3062 |

|[bat_resnext26ts.ch_in1k](https://huggingface.co/timm/bat_resnext26ts.ch_in1k)|256 |78.25|94.1 |10.7 |2.5 |12.5 |3393 |

|[resnet50.a3_in1k](https://huggingface.co/timm/resnet50.a3_in1k)|224 |78.06|93.78|25.6 |4.1 |11.1 |3450 |

|[resnet50c.gluon_in1k](https://huggingface.co/timm/resnet50c.gluon_in1k)|224 |78.0 |93.99|25.6 |4.4 |11.9 |3286 |

|[eca_resnext26ts.ch_in1k](https://huggingface.co/timm/eca_resnext26ts.ch_in1k)|288 |78.0 |93.91|10.3 |3.1 |13.3 |3297 |

|[seresnext26t_32x4d.bt_in1k](https://huggingface.co/timm/seresnext26t_32x4d.bt_in1k)|224 |77.98|93.75|16.8 |2.7 |10.1 |3841 |

|[resnet34.a1_in1k](https://huggingface.co/timm/resnet34.a1_in1k)|288 |77.92|93.77|21.8 |6.1 |6.2 |3609 |

|[resnet101.a3_in1k](https://huggingface.co/timm/resnet101.a3_in1k)|160 |77.88|93.71|44.6 |4.0 |8.3 |3926 |

|[resnet26t.ra2_in1k](https://huggingface.co/timm/resnet26t.ra2_in1k)|256 |77.87|93.84|16.0 |3.4 |10.5 |3772 |

|[seresnext26ts.ch_in1k](https://huggingface.co/timm/seresnext26ts.ch_in1k)|256 |77.86|93.79|10.4 |2.4 |10.5 |4263 |

|[resnetrs50.tf_in1k](https://huggingface.co/timm/resnetrs50.tf_in1k)|160 |77.82|93.81|35.7 |2.3 |6.2 |5238 |

|[gcresnext26ts.ch_in1k](https://huggingface.co/timm/gcresnext26ts.ch_in1k)|256 |77.81|93.82|10.5 |2.4 |10.5 |4183 |

|[ecaresnet50t.a3_in1k](https://huggingface.co/timm/ecaresnet50t.a3_in1k)|160 |77.79|93.6 |25.6 |2.2 |6.0 |5329 |

|[resnext50_32x4d.a3_in1k](https://huggingface.co/timm/resnext50_32x4d.a3_in1k)|160 |77.73|93.32|25.0 |2.2 |7.4 |5576 |

|[resnext50_32x4d.tv_in1k](https://huggingface.co/timm/resnext50_32x4d.tv_in1k)|224 |77.61|93.7 |25.0 |4.3 |14.4 |2944 |

|[seresnext26d_32x4d.bt_in1k](https://huggingface.co/timm/seresnext26d_32x4d.bt_in1k)|224 |77.59|93.61|16.8 |2.7 |10.2 |3807 |

|[resnet50.gluon_in1k](https://huggingface.co/timm/resnet50.gluon_in1k)|224 |77.58|93.72|25.6 |4.1 |11.1 |3455 |

|[eca_resnext26ts.ch_in1k](https://huggingface.co/timm/eca_resnext26ts.ch_in1k)|256 |77.44|93.56|10.3 |2.4 |10.5 |4284 |

|[resnet26d.bt_in1k](https://huggingface.co/timm/resnet26d.bt_in1k)|288 |77.41|93.63|16.0 |4.3 |13.5 |2907 |

|[resnet101.tv_in1k](https://huggingface.co/timm/resnet101.tv_in1k)|224 |77.38|93.54|44.6 |7.8 |16.2 |2125 |

|[resnet50d.a3_in1k](https://huggingface.co/timm/resnet50d.a3_in1k)|160 |77.22|93.27|25.6 |2.2 |6.1 |5982 |

|[resnext26ts.ra2_in1k](https://huggingface.co/timm/resnext26ts.ra2_in1k)|288 |77.17|93.47|10.3 |3.1 |13.3 |3392 |

|[resnet34.a2_in1k](https://huggingface.co/timm/resnet34.a2_in1k)|288 |77.15|93.27|21.8 |6.1 |6.2 |3615 |

|[resnet34d.ra2_in1k](https://huggingface.co/timm/resnet34d.ra2_in1k)|224 |77.1 |93.37|21.8 |3.9 |4.5 |5436 |

|[seresnet50.a3_in1k](https://huggingface.co/timm/seresnet50.a3_in1k)|224 |77.02|93.07|28.1 |4.1 |11.1 |2952 |

|[resnext26ts.ra2_in1k](https://huggingface.co/timm/resnext26ts.ra2_in1k)|256 |76.78|93.13|10.3 |2.4 |10.5 |4410 |

|[resnet26d.bt_in1k](https://huggingface.co/timm/resnet26d.bt_in1k)|224 |76.7 |93.17|16.0 |2.6 |8.2 |4859 |

|[resnet34.bt_in1k](https://huggingface.co/timm/resnet34.bt_in1k)|288 |76.5 |93.35|21.8 |6.1 |6.2 |3617 |

|[resnet34.a1_in1k](https://huggingface.co/timm/resnet34.a1_in1k)|224 |76.42|92.87|21.8 |3.7 |3.7 |5984 |

|[resnet26.bt_in1k](https://huggingface.co/timm/resnet26.bt_in1k)|288 |76.35|93.18|16.0 |3.9 |12.2 |3331 |

|[resnet50.tv_in1k](https://huggingface.co/timm/resnet50.tv_in1k)|224 |76.13|92.86|25.6 |4.1 |11.1 |3457 |

|[resnet50.a3_in1k](https://huggingface.co/timm/resnet50.a3_in1k)|160 |75.96|92.5 |25.6 |2.1 |5.7 |6490 |