modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

AhmedBou/TuniBert | 2023-01-10T08:12:26.000Z | [

"transformers",

"pytorch",

"bert",

"text-classification",

"sentiment analysis",

"classification",

"arabic dialect",

"tunisian dialect",

"ar",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | text-classification | AhmedBou | null | null | AhmedBou/TuniBert | 1 | 1,163 | transformers | 2022-03-02T23:29:04 | ---

license: apache-2.0

language:

- ar

tags:

- sentiment analysis

- classification

- arabic dialect

- tunisian dialect

---

This is a BERT model fine-tuned on Tunisian dialect text (dataset used: AhmedBou/Tunisian-Dialect-Corpus), ready for sentiment analysis and classification tasks.

LABEL_0: Neutral

LABEL_1: Positive

LABEL_2: Negative
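The card lists the label ids but includes no code. Below is a minimal sketch that assumes two things: the model's config emits the generic `LABEL_n` ids listed above, and the model loads with the standard Transformers `text-classification` pipeline. The `readable` and `load_classifier` helpers are hypothetical and are not part of the model release.

```python
# Sentiment names as documented on this card; the model config is assumed
# to emit the generic LABEL_n ids listed above.
LABEL_NAMES = {"LABEL_0": "Neutral", "LABEL_1": "Positive", "LABEL_2": "Negative"}


def readable(predictions):
    """Replace LABEL_n ids in text-classification pipeline output with sentiment names."""
    return [
        {"label": LABEL_NAMES.get(p["label"], p["label"]), "score": p["score"]}
        for p in predictions
    ]


def load_classifier(model_id="AhmedBou/TuniBert"):
    """Build a Transformers text-classification pipeline (downloads the model on first call)."""
    from transformers import pipeline

    return pipeline("text-classification", model=model_id)
```

Call it as `readable(load_classifier()(texts))`, where `texts` is a list of Tunisian dialect sentences. It should return one `{"label", "score"}` dict per sentence, with `Positive`, `Negative` or `Neutral` as the label. Any label id not in the mapping is passed through unchanged.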

This work is an integral component of my Master's degree thesis and represents the culmination of extensive research and labor.

If you wish to use the Tunisian-Dialect-Corpus or the TuniBert model, please refer to the links provided: [huggingface.co/AhmedBou](https://huggingface.co/AhmedBou) and [github.com/BoulahiaAhmed](https://github.com/BoulahiaAhmed). | 634 | [

[

-0.058502197265625,

-0.054931640625,

0.0081939697265625,

0.033660888671875,

-0.0167236328125,

-0.039947509765625,

-0.0206146240234375,

-0.0196685791015625,

0.0304718017578125,

0.04376220703125,

-0.045074462890625,

-0.037994384765625,

-0.0372314453125,

-0.009... |

monologg/koelectra-base-v3-generator | 2023-06-12T12:30:53.000Z | [

"transformers",

"pytorch",

"safetensors",

"electra",

"fill-mask",

"korean",

"ko",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | monologg | null | null | monologg/koelectra-base-v3-generator | 3 | 1,163 | transformers | 2022-03-02T23:29:05 | ---

language: ko

license: apache-2.0

tags:

- korean

---

# KoELECTRA v3 (Base Generator)

Pretrained ELECTRA Language Model for Korean (`koelectra-base-v3-generator`)

For more details, please see the [original repository](https://github.com/monologg/KoELECTRA/blob/master/README_EN.md).

## Usage

### Load model and tokenizer

```python

>>> from transformers import ElectraModel, ElectraTokenizer

>>> model = ElectraModel.from_pretrained("monologg/koelectra-base-v3-generator")

>>> tokenizer = ElectraTokenizer.from_pretrained("monologg/koelectra-base-v3-generator")

```

### Tokenizer example

```python

>>> from transformers import ElectraTokenizer

>>> tokenizer = ElectraTokenizer.from_pretrained("monologg/koelectra-base-v3-generator")

>>> tokenizer.tokenize("[CLS] 한국어 ELECTRA를 공유합니다. [SEP]")

['[CLS]', '한국어', 'EL', '##EC', '##TRA', '##를', '공유', '##합니다', '.', '[SEP]']

>>> tokenizer.convert_tokens_to_ids(['[CLS]', '한국어', 'EL', '##EC', '##TRA', '##를', '공유', '##합니다', '.', '[SEP]'])

[2, 11229, 29173, 13352, 25541, 4110, 7824, 17788, 18, 3]

```
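In the tokenizer output above, a `##` prefix marks a WordPiece continuation piece that attaches to the preceding token. The sketch below glues the pieces back into words. `merge_wordpieces` is a hypothetical helper, not part of KoELECTRA. Transformers tokenizers also provide `convert_tokens_to_string` for a similar purpose, but it returns a single space-joined string rather than a list of words.

```python
def merge_wordpieces(tokens):
    """Join WordPiece tokens: a token starting with '##' continues the previous word."""
    words = []
    for token in tokens:
        if token.startswith("##") and words:
            words[-1] += token[2:]  # strip the marker and append to the current word
        else:
            words.append(token)
    return words


tokens = ['[CLS]', '한국어', 'EL', '##EC', '##TRA', '##를', '공유', '##합니다', '.', '[SEP]']
print(merge_wordpieces(tokens))
# ['[CLS]', '한국어', 'ELECTRA를', '공유합니다', '.', '[SEP]']
```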

## Example using ElectraForMaskedLM

```python

from transformers import pipeline

fill_mask = pipeline(

"fill-mask",

model="monologg/koelectra-base-v3-generator",

tokenizer="monologg/koelectra-base-v3-generator"

)

print(fill_mask("나는 {} 밥을 먹었다.".format(fill_mask.tokenizer.mask_token)))

```

| 1,354 | [

[

-0.0165557861328125,

-0.0191802978515625,

0.007015228271484375,

0.0280303955078125,

-0.058074951171875,

0.0235137939453125,

0.002765655517578125,

0.00968170166015625,

0.03045654296875,

0.052337646484375,

-0.036895751953125,

-0.04620361328125,

-0.03802490234375,

... |

Davlan/bert-base-multilingual-cased-masakhaner | 2022-06-27T11:50:04.000Z | [

"transformers",

"pytorch",

"tf",

"bert",

"token-classification",

"arxiv:2103.11811",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | token-classification | Davlan | null | null | Davlan/bert-base-multilingual-cased-masakhaner | 3 | 1,161 | transformers | 2022-03-02T23:29:04 | ---

language:

- ha

- ig

- rw

- lg

- luo

- pcm

- sw

- wo

- yo

- multilingual

datasets:

- masakhaner

---

# bert-base-multilingual-cased-masakhaner

## Model description

**bert-base-multilingual-cased-masakhaner** is the first **Named Entity Recognition** model for 9 African languages (Hausa, Igbo, Kinyarwanda, Luganda, Luo, Nigerian Pidgin, Swahili, Wolof, and Yorùbá) based on a fine-tuned mBERT base model. It achieves **state-of-the-art performance** on the NER task. It has been trained to recognize four types of entities: dates & times (DATE), locations (LOC), organizations (ORG), and persons (PER).

Specifically, this model is a *bert-base-multilingual-cased* model that was fine-tuned on an aggregation of African-language datasets from the Masakhane [MasakhaNER](https://github.com/masakhane-io/masakhane-ner) dataset.

## Intended uses & limitations

#### How to use

You can use this model with the Transformers *pipeline* for NER.

```python

from transformers import AutoTokenizer, AutoModelForTokenClassification

from transformers import pipeline

tokenizer = AutoTokenizer.from_pretrained("Davlan/bert-base-multilingual-cased-masakhaner")

model = AutoModelForTokenClassification.from_pretrained("Davlan/bert-base-multilingual-cased-masakhaner")

nlp = pipeline("ner", model=model, tokenizer=tokenizer)

example = "Emir of Kano turban Zhang wey don spend 18 years for Nigeria"

ner_results = nlp(example)

print(ner_results)

```

#### Limitations and bias

This model is limited by its training dataset of entity-annotated news articles from a specific span of time. It may not generalize well to all use cases in other domains.

## Training data

This model was fine-tuned on 9 African NER datasets (Hausa, Igbo, Kinyarwanda, Luganda, Luo, Nigerian Pidgin, Swahili, Wolof, and Yorùbá) from the Masakhane [MasakhaNER](https://github.com/masakhane-io/masakhane-ner) dataset.

The training dataset distinguishes between the beginning and continuation of an entity so that if there are back-to-back entities of the same type, the model can output where the second entity begins. As in the dataset, each token will be classified as one of the following classes:

Abbreviation|Description
-|-
O|Outside of a named entity
B-DATE |Beginning of a DATE entity right after another DATE entity
I-DATE |DATE entity
B-PER |Beginning of a person’s name right after another person’s name
I-PER |Person’s name
B-ORG |Beginning of an organisation right after another organisation
I-ORG |Organisation
B-LOC |Beginning of a location right after another location
I-LOC |Location
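The B-/I- tag scheme described above can be turned into entity spans with a small decoder. This is a minimal sketch, not the model's own post-processing, and `decode_entities` is a hypothetical helper. It treats `B-X` as always opening a new span and `I-X` as continuing a span of the same type. That way it accepts both the convention described in the table and the more common IOB2 convention. The example tags in the code are made up. In practice, the Transformers NER pipeline can do this grouping itself via `pipeline("ner", ..., aggregation_strategy="simple")`.

```python
def decode_entities(tokens, tags):
    """Group per-token IOB tags (O, B-X, I-X) into (entity_type, text) spans."""
    entities = []
    cur_type, cur_tokens = None, []
    for token, tag in zip(tokens, tags):
        prefix, ent_type = ("O", None) if tag == "O" else tag.split("-", 1)
        # B-X always opens a span; I-X opens one only if the type changes.
        starts_new = prefix == "B" or (prefix == "I" and ent_type != cur_type)
        if prefix == "O" or starts_new:
            if cur_type is not None:  # close the span in progress
                entities.append((cur_type, " ".join(cur_tokens)))
            cur_type, cur_tokens = ent_type, ([token] if starts_new else [])
        else:
            cur_tokens.append(token)  # I-X continuing a span of the same type
    if cur_type is not None:
        entities.append((cur_type, " ".join(cur_tokens)))
    return entities


# Made-up tags for illustration only (not actual model output).
print(decode_entities(
    ["Zhang", "wey", "don", "spend", "18", "years"],
    ["B-PER", "O", "O", "O", "B-DATE", "I-DATE"],
))
# [('PER', 'Zhang'), ('DATE', '18 years')]
```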

## Training procedure

This model was trained on a single NVIDIA V100 GPU using the recommended hyperparameters from the [original MasakhaNER paper](https://arxiv.org/abs/2103.11811), which trained and evaluated the model on the MasakhaNER corpus.

## Eval results on the test set (F1-score)

language|F1-score
-|-
hau |88.66
ibo |85.72
kin |71.94
lug |81.73
luo |77.39
pcm |88.96
swa |88.23
wol |66.27
yor |80.09

### BibTeX entry and citation info

```

@article{adelani21tacl,

title = {Masakha{NER}: Named Entity Recognition for African Languages},

author = {David Ifeoluwa Adelani and Jade Abbott and Graham Neubig and Daniel D'souza and Julia Kreutzer and Constantine Lignos and Chester Palen-Michel and Happy Buzaaba and Shruti Rijhwani and Sebastian Ruder and Stephen Mayhew and Israel Abebe Azime and Shamsuddeen Muhammad and Chris Chinenye Emezue and Joyce Nakatumba-Nabende and Perez Ogayo and Anuoluwapo Aremu and Catherine Gitau and Derguene Mbaye and Jesujoba Alabi and Seid Muhie Yimam and Tajuddeen Gwadabe and Ignatius Ezeani and Rubungo Andre Niyongabo and Jonathan Mukiibi and Verrah Otiende and Iroro Orife and Davis David and Samba Ngom and Tosin Adewumi and Paul Rayson and Mofetoluwa Adeyemi and Gerald Muriuki and Emmanuel Anebi and Chiamaka Chukwuneke and Nkiruka Odu and Eric Peter Wairagala and Samuel Oyerinde and Clemencia Siro and Tobius Saul Bateesa and Temilola Oloyede and Yvonne Wambui and Victor Akinode and Deborah Nabagereka and Maurice Katusiime and Ayodele Awokoya and Mouhamadane MBOUP and Dibora Gebreyohannes and Henok Tilaye and Kelechi Nwaike and Degaga Wolde and Abdoulaye Faye and Blessing Sibanda and Orevaoghene Ahia and Bonaventure F. P. Dossou and Kelechi Ogueji and Thierno Ibrahima DIOP and Abdoulaye Diallo and Adewale Akinfaderin and Tendai Marengereke and Salomey Osei},

journal = {Transactions of the Association for Computational Linguistics (TACL)},

month = {},

url = {https://arxiv.org/abs/2103.11811},

year = {2021}

}

```

| 4,564 | [

[

-0.056396484375,

-0.037994384765625,

0.00269317626953125,

0.01214599609375,

-0.018341064453125,

-0.006988525390625,

-0.0192108154296875,

-0.035675048828125,

0.04766845703125,

0.031646728515625,

-0.044647216796875,

-0.028411865234375,

-0.06597900390625,

0.025... |

cross-encoder/qnli-distilroberta-base | 2021-08-05T08:41:18.000Z | [

"transformers",

"pytorch",

"jax",

"roberta",

"text-classification",

"arxiv:1804.07461",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | text-classification | cross-encoder | null | null | cross-encoder/qnli-distilroberta-base | 0 | 1,161 | transformers | 2022-03-02T23:29:05 | ---

license: apache-2.0

---

# Cross-Encoder for Question-Answering NLI (QNLI)

This model was trained using the [SentenceTransformers](https://sbert.net) [Cross-Encoder](https://www.sbert.net/examples/applications/cross-encoder/README.html) class.

## Training Data

Given a question and a paragraph, can the question be answered by the paragraph? The model has been trained on the [GLUE QNLI](https://arxiv.org/abs/1804.07461) dataset, which turns the [SQuAD dataset](https://rajpurkar.github.io/SQuAD-explorer/) into an NLI task.

## Performance

For performance results of this model, see [SBERT.net Pre-trained Cross-Encoders](https://www.sbert.net/docs/pretrained_cross-encoders.html).

## Usage

Pre-trained models can be used like this:

```python

from sentence_transformers import CrossEncoder

model = CrossEncoder('cross-encoder/qnli-distilroberta-base')

scores = model.predict([('Query1', 'Paragraph1'), ('Query2', 'Paragraph2')])

#e.g.

scores = model.predict([('How many people live in Berlin?', 'Berlin had a population of 3,520,031 registered inhabitants in an area of 891.82 square kilometers.'), ('What is the size of New York?', 'New York City is famous for the Metropolitan Museum of Art.')])

```
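The model returns one score per (question, paragraph) pair. A common next step is to keep only the paragraphs that are likely to answer the question. The sketch below does this under two assumptions: the scores are probabilities in [0, 1], as produced by the sigmoid in the Transformers example, and 0.5 works as a cut-off, which is an arbitrary choice. `answerable` and `load_model` are hypothetical helpers.

```python
def answerable(pairs, scores, threshold=0.5):
    """Keep (question, paragraph, score) triples whose score reaches threshold, best first."""
    kept = [(q, p, float(s)) for (q, p), s in zip(pairs, scores) if s >= threshold]
    return sorted(kept, key=lambda item: item[2], reverse=True)


def load_model(model_id="cross-encoder/qnli-distilroberta-base"):
    """Load the cross-encoder with sentence-transformers (downloads on first call)."""
    from sentence_transformers import CrossEncoder

    return CrossEncoder(model_id)
```

To use it, call `answerable(pairs, load_model().predict(pairs))` on a list of `(question, paragraph)` tuples. Tune the threshold on held-out data rather than relying on 0.5.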

## Usage with Transformers AutoModel

You can also use the model directly with the Transformers library (without the SentenceTransformers library):

```python

from transformers import AutoTokenizer, AutoModelForSequenceClassification

import torch

model = AutoModelForSequenceClassification.from_pretrained('cross-encoder/qnli-distilroberta-base')

tokenizer = AutoTokenizer.from_pretrained('cross-encoder/qnli-distilroberta-base')

features = tokenizer(['How many people live in Berlin?', 'What is the size of New York?'], ['Berlin had a population of 3,520,031 registered inhabitants in an area of 891.82 square kilometers.', 'New York City is famous for the Metropolitan Museum of Art.'], padding=True, truncation=True, return_tensors="pt")

model.eval()

with torch.no_grad():

    scores = torch.sigmoid(model(**features).logits)

    print(scores)

``` | 1,996 | [

[

-0.02069091796875,

-0.056427001953125,

0.0277862548828125,

0.0195465087890625,

-0.015289306640625,

-0.006275177001953125,

0.004894256591796875,

-0.014923095703125,

0.01302337646484375,

0.04376220703125,

-0.044677734375,

-0.035247802734375,

-0.039703369140625,

... |

sonoisa/t5-base-japanese-title-generation | 2022-02-21T13:38:09.000Z | [

"transformers",

"pytorch",

"t5",

"text2text-generation",

"seq2seq",

"ja",

"license:cc-by-sa-4.0",

"autotrain_compatible",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text2text-generation | sonoisa | null | null | sonoisa/t5-base-japanese-title-generation | 3 | 1,161 | transformers | 2022-03-02T23:29:05 | ---

language: ja

tags:

- t5

- text2text-generation

- seq2seq

license: cc-by-sa-4.0

---

# Model for generating titles from article body text

SEE: https://qiita.com/sonoisa/items/a9af64ff641f0bbfed44 | 167 | [

[

-0.0019044876098632812,

-0.0223236083984375,

0.03460693359375,

0.057098388671875,

-0.04425048828125,

0.01288604736328125,

0.03912353515625,

-0.0010728836059570312,

0.057220458984375,

0.041961669921875,

-0.06640625,

-0.0521240234375,

-0.041778564453125,

-0.02... |

LTP/base | 2022-09-19T06:36:10.000Z | [

"transformers",

"pytorch",

"endpoints_compatible",

"region:us"

] | null | LTP | null | null | LTP/base | 2 | 1,161 | transformers | 2022-08-14T04:15:28 |

| Language | Version |
| --- | --- |
| [Python](python/interface/README.md) | [ltp](https://pypi.org/project/ltp) [ltp-core](https://pypi.org/project/ltp-core) [ltp-extension](https://pypi.org/project/ltp-extension) |
| [Rust](rust/ltp/README.md) | [ltp](https://crates.io/crates/ltp) |

# LTP 4

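LTP (Language Technology Platform) provides a suite of Chinese natural language processing tools that users can apply to Chinese text for word segmentation, part-of-speech tagging, syntactic parsing, and other tasks.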

## Citation

If you use LTP in your work, you can cite the following paper:

```bibtex

@article{che2020n,

title={N-LTP: A Open-source Neural Chinese Language Technology Platform with Pretrained Models},

author={Che, Wanxiang and Feng, Yunlong and Qin, Libo and Liu, Ting},

journal={arXiv preprint arXiv:2009.11616},

year={2020}

}

```

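**Reference book:**

The book *[Natural Language Processing: A Pre-trained Model Approach](https://item.jd.com/13344628.html)* (《自然语言处理:基于预训练模型的方法》; authors: Wanxiang Che, Jiang Guo, Yiming Cui; chief reviewer: Ting Liu), co-written by several scholars from the Research Center for Social Computing and Information Retrieval at Harbin Institute of Technology (HIT-SCIR), has now been officially published. It focuses on new NLP techniques based on pre-trained models and is organized into three parts: fundamentals, pre-trained word embeddings, and pre-trained models. It is a useful reference for LTP users.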

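### Release notes

- 4.2.0
  - \[Structural change\] LTP is split into 2 parts, which makes maintenance and training easier and the structure clearer
    - \[Legacy model\] In response to users' demand for **inference speed**, the perceptron-based algorithms were rewritten in Rust. Accuracy is on par with LTP v3, speed is **3.55×** that of LTP v3, and enabling multithreading yields a **17.17×** speedup; currently only word segmentation, POS tagging, and named entity recognition are supported
    - \[Deep learning model\] The PyTorch-based deep learning models, which support all 6 tasks (word segmentation / POS tagging / named entity recognition / semantic role labeling / dependency parsing / semantic dependency parsing)
  - \[Other improvements\] Improved the model training method
    - \[Common\] Training scripts and training examples are provided, so users can more easily train customized models on their own private data
    - \[Deep learning model\] Training is configured with hydra, making it easy for users to modify training parameters and to extend LTP (for example, with a Module from another package)
  - \[Other changes\] The decoding algorithms for word segmentation, dependency parsing (Eisner), and semantic dependency parsing (Eisner) are implemented in Rust for higher speed
  - \[New feature\] Models are uploaded to the [Huggingface Hub](https://huggingface.co/LTP) with automatic, faster downloads; users can also upload their own trained models for inference with LTP
  - \[Breaking change\] Inference now uses the Pipeline API, which enables deeper performance optimizations later (for example, SDP and SDPG largely overlap, and reusing the shared work speeds up inference); see the [Quick Start section on GitHub](https://github.com/hit-scir/ltp) for usage
- 4.1.0
  - Added custom word segmentation and other features
  - Fixed some bugs
- 4.0.0
  - Built on PyTorch, with a native Python interface
  - Models with different speed and accuracy trade-offs can be chosen as needed
  - 6 tasks: word segmentation, POS tagging, named entity recognition, dependency parsing, semantic role labeling, and semantic dependency parsing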

## Quick start

### [Python](python/interface/README.md)

```bash

pip install -U ltp ltp-core ltp-extension -i https://pypi.org/simple # install ltp

```

**Note:** If you encounter any errors, try reinstalling ltp with the command above. If the error persists, please report it in GitHub issues.

```python
import torch
from ltp import LTP

ltp = LTP("LTP/small")  # loads the Small model (the default)

# move the model to the GPU
if torch.cuda.is_available():
    # ltp.cuda()
    ltp.to("cuda")

output = ltp.pipeline(["他叫汤姆去拿外衣。"], tasks=["cws", "pos", "ner", "srl", "dep", "sdp"])
# results returned in dict format
print(output.cws)  # print(output[0]) / print(output['cws'])  # index access also works
print(output.pos)
print(output.sdp)

# word segmentation, POS tagging and NER implemented with the perceptron algorithm: faster, but slightly less accurate
ltp = LTP("LTP/legacy")
# cws, pos, ner = ltp.pipeline(["他叫汤姆去拿外衣。"], tasks=["cws", "ner"]).to_tuple()  # error: NER needs the POS tagging results
cws, pos, ner = ltp.pipeline(["他叫汤姆去拿外衣。"], tasks=["cws", "pos", "ner"]).to_tuple()  # to_tuple() converts the result to tuple format
# results returned in tuple format
print(cws, pos, ner)
```

**[Detailed documentation](python/interface/docs/quickstart.rst)**

### [Rust](rust/ltp/README.md)

```rust
use std::fs::File;
use itertools::multizip;
use ltp::{CWSModel, POSModel, NERModel, ModelSerde, Format, Codec};

fn main() -> Result<(), Box<dyn std::error::Error>> {
    let file = File::open("data/legacy-models/cws_model.bin")?;
    let cws: CWSModel = ModelSerde::load(file, Format::AVRO(Codec::Deflate))?;
    let file = File::open("data/legacy-models/pos_model.bin")?;
    let pos: POSModel = ModelSerde::load(file, Format::AVRO(Codec::Deflate))?;
    let file = File::open("data/legacy-models/ner_model.bin")?;
    let ner: NERModel = ModelSerde::load(file, Format::AVRO(Codec::Deflate))?;

    let words = cws.predict("他叫汤姆去拿外衣。")?;
    let pos = pos.predict(&words)?;
    let ner = ner.predict((&words, &pos))?;

    for (w, p, n) in multizip((words, pos, ner)) {
        println!("{}/{}/{}", w, p, n);
    }

    Ok(())
}
```

## Model performance and download links

| Deep learning model | Word segmentation | POS tagging | Named entity recognition | Semantic role labeling | Dependency parsing | Semantic dependency parsing | Speed (sentences/s) |
| :---------------------------------------: | :---: | :---: | :---: | :---: | :---: | :---: | :-----: |
| [Base](https://huggingface.co/LTP/base) | 98.7 | 98.5 | 95.4 | 80.6 | 89.5 | 75.2 | 39.12 |
| [Base1](https://huggingface.co/LTP/base1) | 99.22 | 98.73 | 96.39 | 79.28 | 89.57 | 76.57 | --.-- |
| [Base2](https://huggingface.co/LTP/base2) | 99.18 | 98.69 | 95.97 | 79.49 | 90.19 | 76.62 | --.-- |
| [Small](https://huggingface.co/LTP/small) | 98.4 | 98.2 | 94.3 | 78.4 | 88.3 | 74.7 | 43.13 |
| [Tiny](https://huggingface.co/LTP/tiny) | 96.8 | 97.1 | 91.6 | 70.9 | 83.8 | 70.1 | 53.22 |

| Perceptron algorithm | Word segmentation | POS tagging | Named entity recognition | Speed (sentences/s) | Notes |
| :-----------------------------------------: | :---: | :---: | :---: | :------: | :------------------------: |
| [Legacy](https://huggingface.co/LTP/legacy) | 97.93 | 98.41 | 94.28 | 21581.48 | [Performance details](rust/ltp/README.md) |

**Note: the perceptron speed is measured with 16 threads enabled.**

## Building the wheel package

```shell

make bdist

```

## Other language bindings

**Perceptron algorithms**

- [Rust](rust/ltp)

- [C/C++](rust/ltp-cffi)

**Deep learning algorithms**

- [Rust](https://github.com/HIT-SCIR/libltp/tree/master/ltp-rs)

- [C++](https://github.com/HIT-SCIR/libltp/tree/master/ltp-cpp)

- [Java](https://github.com/HIT-SCIR/libltp/tree/master/ltp-java)

## Author

- Yunlong Feng (冯云龙): [ylfeng@ir.hit.edu.cn](mailto:ylfeng@ir.hit.edu.cn)

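## License

1. The source code of the Language Technology Platform is freely available to universities and Chinese Academy of Sciences research institutes in China and abroad, as well as to individual researchers. However, if these institutions or individuals use the platform for commercial purposes (such as enterprise collaboration projects), a fee is required.

2. Enterprises and institutions other than those listed above must pay a fee to use the platform.

3. For all fee-related matters, please email car@ir.hit.edu.cn.

4. If you publish papers or obtain research results based on LTP, please state in your papers and result submissions that you "used the Language Technology Platform (LTP) developed by the Research Center for Social Computing and Information Retrieval, Harbin Institute of Technology".

   In addition, please email car@ir.hit.edu.cn with the title and venue of the published paper or result.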

| 6,719 | [

[

-0.033660888671875,

-0.04571533203125,

0.0243988037109375,

0.017578125,

-0.0214996337890625,

0.006683349609375,

-0.009185791015625,

-0.027496337890625,

0.02783203125,

0.01068115234375,

-0.022125244140625,

-0.034149169921875,

-0.039642333984375,

-0.0018587112... |

salesforce/blipdiffusion | 2023-09-21T15:55:12.000Z | [

"diffusers",

"en",

"arxiv:2305.14720",

"license:apache-2.0",

"diffusers:BlipDiffusionPipeline",

"region:us"

] | null | salesforce | null | null | salesforce/blipdiffusion | 3 | 1,161 | diffusers | 2023-09-21T15:55:12 | ---

license: apache-2.0

language:

- en

library_name: diffusers

---

# BLIP-Diffusion: Pre-trained Subject Representation for Controllable Text-to-Image Generation and Editing

<!-- Provide a quick summary of what the model is/does. -->

Model card for BLIP-Diffusion, a text-to-image diffusion model that enables zero-shot subject-driven generation and control-guided zero-shot generation.

The abstract from the paper is:

*Subject-driven text-to-image generation models create novel renditions of an input subject based on text prompts. Existing models suffer from lengthy fine-tuning and difficulties preserving the subject fidelity. To overcome these limitations, we introduce BLIP-Diffusion, a new subject-driven image generation model that supports multimodal control which consumes inputs of subject images and text prompts. Unlike other subject-driven generation models, BLIP-Diffusion introduces a new multimodal encoder which is pre-trained to provide subject representation. We first pre-train the multimodal encoder following BLIP-2 to produce visual representation aligned with the text. Then we design a subject representation learning task which enables a diffusion model to leverage such visual representation and generates new subject renditions. Compared with previous methods such as DreamBooth, our model enables zero-shot subject-driven generation, and efficient fine-tuning for customized subject with up to 20x speedup. We also demonstrate that BLIP-Diffusion can be flexibly combined with existing techniques such as ControlNet and prompt-to-prompt to enable novel subject-driven generation and editing applications.*

The model was created by Dongxu Li, Junnan Li, and Steven C.H. Hoi.

### Model Sources

<!-- Provide the basic links for the model. -->

- **Original Repository:** https://github.com/salesforce/LAVIS/tree/main

- **Project Page:** https://dxli94.github.io/BLIP-Diffusion-website/

## Uses

### Zero-Shot Subject Driven Generation

```python

from diffusers.pipelines import BlipDiffusionPipeline

from diffusers.utils import load_image

import torch

blip_diffusion_pipe = BlipDiffusionPipeline.from_pretrained(

"Salesforce/blipdiffusion", torch_dtype=torch.float16

).to("cuda")

cond_subject = "dog"

tgt_subject = "dog"

text_prompt_input = "swimming underwater"

cond_image = load_image(

"https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/dog.jpg"

)

iter_seed = 88888

guidance_scale = 7.5

num_inference_steps = 25

negative_prompt = "over-exposure, under-exposure, saturated, duplicate, out of frame, lowres, cropped, worst quality, low quality, jpeg artifacts, morbid, mutilated, out of frame, ugly, bad anatomy, bad proportions, deformed, blurry, duplicate"

output = blip_diffusion_pipe(

text_prompt_input,

cond_image,

cond_subject,

tgt_subject,

guidance_scale=guidance_scale,

num_inference_steps=num_inference_steps,

neg_prompt=negative_prompt,

height=512,

width=512,

).images

output[0].save("image.png")

```

Input Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/dog.jpg" style="width:500px;"/>

Generated Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/dog_underwater.png" style="width:500px;"/>

### Controlled subject-driven generation

```python

import torch

from diffusers.pipelines import BlipDiffusionControlNetPipeline

from diffusers.utils import load_image

from controlnet_aux import CannyDetector

blip_diffusion_pipe = BlipDiffusionControlNetPipeline.from_pretrained(

"Salesforce/blipdiffusion-controlnet", torch_dtype=torch.float16

).to("cuda")

style_subject = "flower" # subject that defines the style

tgt_subject = "teapot" # subject to generate.

text_prompt = "on a marble table"

cldm_cond_image = load_image(

"https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/kettle.jpg"

).resize((512, 512))

canny = CannyDetector()

cldm_cond_image = canny(cldm_cond_image, 30, 70, output_type="pil")

style_image = load_image(

"https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/flower.jpg"

)

guidance_scale = 7.5

num_inference_steps = 50

negative_prompt = "over-exposure, under-exposure, saturated, duplicate, out of frame, lowres, cropped, worst quality, low quality, jpeg artifacts, morbid, mutilated, out of frame, ugly, bad anatomy, bad proportions, deformed, blurry, duplicate"

output = blip_diffusion_pipe(

text_prompt,

style_image,

cldm_cond_image,

style_subject,

tgt_subject,

guidance_scale=guidance_scale,

num_inference_steps=num_inference_steps,

neg_prompt=negative_prompt,

height=512,

width=512,

).images

output[0].save("image.png")

```

Input Style Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/flower.jpg" style="width:500px;"/>

Canny Edge Input : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/kettle.jpg" style="width:500px;"/>

Generated Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/canny_generated.png" style="width:500px;"/>

### Controlled subject-driven generation (Scribble)

```python

from diffusers import ControlNetModel

from diffusers.pipelines import BlipDiffusionControlNetPipeline

from diffusers.utils import load_image

from controlnet_aux import HEDdetector

blip_diffusion_pipe = BlipDiffusionControlNetPipeline.from_pretrained(

"Salesforce/blipdiffusion-controlnet"

)

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-scribble")

blip_diffusion_pipe.controlnet = controlnet

blip_diffusion_pipe.to("cuda")

style_subject = "flower" # subject that defines the style

tgt_subject = "bag" # subject to generate.

text_prompt = "on a table"

cldm_cond_image = load_image(

"https://huggingface.co/lllyasviel/sd-controlnet-scribble/resolve/main/images/bag.png"

).resize((512, 512))

hed = HEDdetector.from_pretrained("lllyasviel/Annotators")

cldm_cond_image = hed(cldm_cond_image)

style_image = load_image(

"https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/flower.jpg"

)

guidance_scale = 7.5

num_inference_steps = 50

negative_prompt = "over-exposure, under-exposure, saturated, duplicate, out of frame, lowres, cropped, worst quality, low quality, jpeg artifacts, morbid, mutilated, out of frame, ugly, bad anatomy, bad proportions, deformed, blurry, duplicate"

output = blip_diffusion_pipe(

text_prompt,

style_image,

cldm_cond_image,

style_subject,

tgt_subject,

guidance_scale=guidance_scale,

num_inference_steps=num_inference_steps,

neg_prompt=negative_prompt,

height=512,

width=512,

).images

output[0].save("image.png")

```

Input Style Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/flower.jpg" style="width:500px;"/>

Scribble Input : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/scribble.png" style="width:500px;"/>

Generated Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/scribble_output.png" style="width:500px;"/>

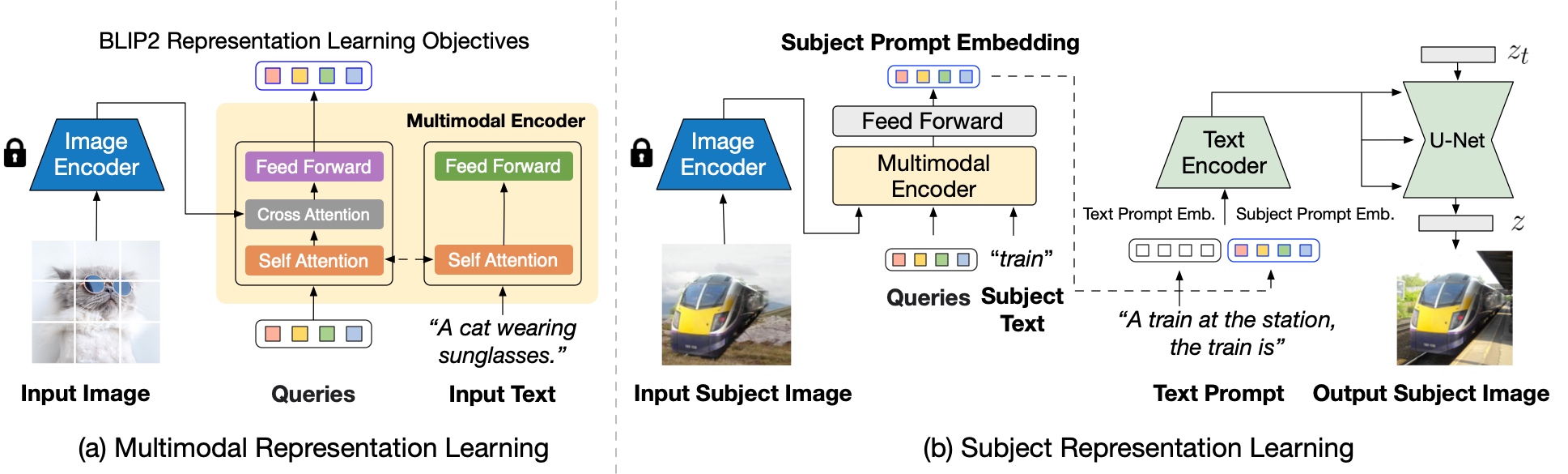

## Model Architecture

BLIP-Diffusion learns a **pre-trained subject representation**. This representation is aligned with text embeddings and also encodes the subject's appearance. This allows efficient fine-tuning of the model for high-fidelity subject-driven applications, such as text-to-image generation, editing, and style transfer.

To this end, the authors design a two-stage pre-training strategy to learn a generic subject representation. In the first pre-training stage, they perform multimodal representation learning, which trains BLIP-2 to produce text-aligned visual features based on the input image. In the second pre-training stage, they design a subject representation learning task, called prompted context generation, in which the diffusion model learns to generate novel subject renditions based on the input visual features.

To achieve this, they curate pairs of input-target images with the same subject appearing in different contexts. Specifically, they synthesize input images by composing the subject with a random background. During pre-training, they feed the synthetic input image and the subject class label through BLIP-2 to obtain the multimodal embeddings as subject representation. The subject representation is then combined with a text prompt to guide the generation of the target image.

The architecture can also be integrated with established techniques built on top of the diffusion model, such as ControlNet.

They attach the U-Net of the pre-trained ControlNet to that of BLIP-Diffusion via residuals. In this way, the model takes into account the input structure condition, such as edge maps and depth maps, in addition to the subject cues. Since the model inherits the architecture of the original latent diffusion model, they observe satisfactory generations using off-the-shelf integration with a pre-trained ControlNet, without further training.

<img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/arch_controlnet.png" style="width:50%;"/>

## Citation

**BibTeX:**

If you find this repository useful in your research, please cite:

```

@misc{li2023blipdiffusion,

title={BLIP-Diffusion: Pre-trained Subject Representation for Controllable Text-to-Image Generation and Editing},

author={Dongxu Li and Junnan Li and Steven C. H. Hoi},

year={2023},

eprint={2305.14720},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

```

| 9,724 | [

[

-0.0299072265625,

-0.059814453125,

0.0093841552734375,

0.058807373046875,

-0.022857666015625,

-0.0196533203125,

-0.005191802978515625,

-0.0357666015625,

0.031036376953125,

0.0193023681640625,

-0.0338134765625,

-0.035675048828125,

-0.0458984375,

0.00186634063... |

EleutherAI/pythia-1b-v0 | 2023-07-10T01:35:25.000Z | [

"transformers",

"pytorch",

"safetensors",

"gpt_neox",

"text-generation",

"causal-lm",

"pythia",

"pythia_v0",

"en",

"dataset:the_pile",

"arxiv:2101.00027",

"arxiv:2201.07311",

"license:apache-2.0",

"endpoints_compatible",

"has_space",

"text-generation-inference",

"region:us"

] | text-generation | EleutherAI | null | null | EleutherAI/pythia-1b-v0 | 6 | 1,159 | transformers | 2022-10-16T18:27:56 | ---

language:

- en

tags:

- pytorch

- causal-lm

- pythia

- pythia_v0

license: apache-2.0

datasets:

- the_pile

---

The *Pythia Scaling Suite* is a collection of models developed to facilitate interpretability research. It contains two sets of eight models of sizes 70M, 160M, 410M, 1B, 1.4B, 2.8B, 6.9B, and 12B. For each size, there are two models: one trained on the Pile, and one trained on the Pile after the dataset has been globally deduplicated. All 8 model sizes are trained on the exact same data, in the exact same order. All Pythia models are available [on Hugging Face](https://huggingface.co/models?other=pythia).

The Pythia model suite was deliberately designed to promote scientific research on large language models, especially interpretability research. Despite not centering downstream performance as a design goal, we find the models <a href="#evaluations">match or exceed</a> the performance of similar and same-sized models, such as those in the OPT and GPT-Neo suites.

Please note that all models in the *Pythia* suite were renamed in January 2023. For clarity, a <a href="#naming-convention-and-parameter-count">table comparing the old and new names</a> is provided in this model card, together with exact parameter counts.

## Pythia-1B

### Model Details

- Developed by: [EleutherAI](http://eleuther.ai)

- Model type: Transformer-based Language Model

- Language: English

- Learn more: [Pythia's GitHub repository](https://github.com/EleutherAI/pythia) for the training procedure, config files, and details on how to use the models.

- Library: [GPT-NeoX](https://github.com/EleutherAI/gpt-neox)

- License: Apache 2.0

- Contact: to ask questions about this model, join the [EleutherAI Discord](https://discord.gg/zBGx3azzUn), and post them in `#release-discussion`. Please read the existing *Pythia* documentation before asking about it in the EleutherAI Discord. For general correspondence: [contact@eleuther.ai](mailto:contact@eleuther.ai).

<figure>

| Pythia model | Non-Embedding Params | Layers | Model Dim | Heads | Batch Size | Learning Rate | Equivalent Models |
| -----------: | -------------------: | :----: | :-------: | :---: | :--------: | :-------------------: | :--------------------: |
| 70M | 18,915,328 | 6 | 512 | 8 | 2M | 1.0 x 10<sup>-3</sup> | — |
| 160M | 85,056,000 | 12 | 768 | 12 | 4M | 6.0 x 10<sup>-4</sup> | GPT-Neo 125M, OPT-125M |
| 410M | 302,311,424 | 24 | 1024 | 16 | 4M | 3.0 x 10<sup>-4</sup> | OPT-350M |
| 1.0B | 805,736,448 | 16 | 2048 | 8 | 2M | 3.0 x 10<sup>-4</sup> | — |
| 1.4B | 1,208,602,624 | 24 | 2048 | 16 | 4M | 2.0 x 10<sup>-4</sup> | GPT-Neo 1.3B, OPT-1.3B |
| 2.8B | 2,517,652,480 | 32 | 2560 | 32 | 2M | 1.6 x 10<sup>-4</sup> | GPT-Neo 2.7B, OPT-2.7B |
| 6.9B | 6,444,163,072 | 32 | 4096 | 32 | 2M | 1.2 x 10<sup>-4</sup> | OPT-6.7B |
| 12B | 11,327,027,200 | 36 | 5120 | 40 | 2M | 1.2 x 10<sup>-4</sup> | — |

<figcaption>Engineering details for the <i>Pythia Suite</i>. Deduped and non-deduped models of a given size have the same hyperparameters. “Equivalent” models have <b>exactly</b> the same architecture, and the same number of non-embedding parameters.</figcaption>

</figure>

### Uses and Limitations

#### Intended Use

The primary intended use of Pythia is research on the behavior, functionality, and limitations of large language models. This suite is intended to provide a controlled setting for performing scientific experiments. To enable the study of how language models change over the course of training, we provide 143 evenly spaced intermediate checkpoints per model. These checkpoints are hosted on Hugging Face as branches. Note that branch `step143000` corresponds exactly to the model checkpoint on the `main` branch of each model.
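The evenly spaced checkpoint branches can be enumerated programmatically. A minimal sketch, assuming the `step<N>` naming used by the Quickstart revisions (`step3000`, `step143000`) and a spacing of 1,000 steps (143,000 total steps / 143 checkpoints); `checkpoint_branches` is a hypothetical helper, and it covers only the 143 evenly spaced checkpoints described here.

```python
def checkpoint_branches(num_checkpoints: int = 143, total_steps: int = 143_000) -> list:
    """Return the branch (revision) names of the evenly spaced checkpoints."""
    interval = total_steps // num_checkpoints  # 1,000 steps between checkpoints
    return [f"step{interval * i}" for i in range(1, num_checkpoints + 1)]


branches = checkpoint_branches()
print(branches[:3], branches[-1])  # ['step1000', 'step2000', 'step3000'] step143000
```

Each returned name can be passed as the `revision` argument of `from_pretrained`, as in the Quickstart.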

You may also further fine-tune and adapt Pythia-1B for deployment, as long as your use is in accordance with the Apache 2.0 license. Pythia models work with the Hugging Face [Transformers Library](https://huggingface.co/docs/transformers/index). If you decide to use pre-trained Pythia-1B as a basis for your fine-tuned model, please conduct your own risk and bias assessment.

#### Out-of-scope use

The Pythia Suite is **not** intended for deployment. It is not in itself a product and cannot be used for human-facing interactions.

Pythia models are English-language only and are not suitable for translation or generating text in other languages.

Pythia-1B has not been fine-tuned for downstream contexts in which language models are commonly deployed, such as writing genre prose or commercial chatbots. This means Pythia-1B will **not** respond to a given prompt the way a product like ChatGPT does. This is because, unlike this model, ChatGPT was fine-tuned using methods such as Reinforcement Learning from Human Feedback (RLHF) to better “understand” human instructions.

#### Limitations and biases

The core functionality of a large language model is to take a string of text and predict the next token. The token deemed statistically most likely by the model need not produce the most “accurate” text. Never rely on Pythia-1B to produce factually accurate output.

This model was trained on [the Pile](https://pile.eleuther.ai/), a dataset known to contain profanity and texts that are lewd or otherwise offensive. See [Section 6 of the Pile paper](https://arxiv.org/abs/2101.00027) for a discussion of documented biases with regard to gender, religion, and race. Pythia-1B may produce socially unacceptable or undesirable text, *even if* the prompt itself does not include anything explicitly offensive.

If you plan on using text generated through, for example, the Hosted Inference API, we recommend having a human curate the outputs of this language model before presenting them to other people. Please inform your audience that the text was generated by Pythia-1B.

### Quickstart

Pythia models can be loaded and used via the following code, demonstrated here for the third `pythia-70m-deduped` checkpoint:

```python
from transformers import GPTNeoXForCausalLM, AutoTokenizer

# Each checkpoint is stored as a separate branch, selected with `revision`
model = GPTNeoXForCausalLM.from_pretrained(
    "EleutherAI/pythia-70m-deduped",
    revision="step3000",
    cache_dir="./pythia-70m-deduped/step3000",
)

tokenizer = AutoTokenizer.from_pretrained(
    "EleutherAI/pythia-70m-deduped",
    revision="step3000",
    cache_dir="./pythia-70m-deduped/step3000",
)

# Tokenize a prompt, generate a continuation, and decode it back to text
inputs = tokenizer("Hello, I am", return_tensors="pt")
tokens = model.generate(**inputs)
print(tokenizer.decode(tokens[0]))
```

Revision/branch `step143000` corresponds exactly to the model checkpoint on the `main` branch of each model.<br>
For more information on how to use all Pythia models, see [documentation on GitHub](https://github.com/EleutherAI/pythia).

### Training

#### Training data

[The Pile](https://pile.eleuther.ai/) is an 825 GiB general-purpose dataset in English. It was created by EleutherAI specifically for training large language models. It contains texts from 22 diverse sources, roughly broken down into five categories: academic writing (e.g. arXiv), internet (e.g. CommonCrawl), prose (e.g. Project Gutenberg), dialogue (e.g. YouTube subtitles), and miscellaneous (e.g. GitHub, Enron Emails). See [the Pile paper](https://arxiv.org/abs/2101.00027) for a breakdown of all data sources, methodology, and a discussion of ethical implications. Consult [the datasheet](https://arxiv.org/abs/2201.07311) for more detailed documentation about the Pile and its component datasets. The Pile can be downloaded from the [official website](https://pile.eleuther.ai/), or from a [community mirror](https://the-eye.eu/public/AI/pile/).<br>
The Pile was **not** deduplicated before being used to train Pythia-1B.

#### Training procedure

All models were trained on the exact same data, in the exact same order. Each model saw 299,892,736,000 tokens during training, and 143 checkpoints for each model are saved every 2,097,152,000 tokens, spaced evenly throughout training. This corresponds to training for just under 1 epoch on the Pile for non-deduplicated models, and about 1.5 epochs on the deduplicated Pile.
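The token counts in this section are consistent with one another, which a few lines of arithmetic confirm. The sketch uses only figures stated in this card: the 2,097,152-token batch size (2**21) and the 143,000-step, 143-checkpoint schedule.

```python
TOKENS_PER_STEP = 2_097_152  # "2M" batch size from this card, equal to 2**21
TOTAL_STEPS = 143_000        # equivalent training steps at that batch size
NUM_CHECKPOINTS = 143        # evenly spaced checkpoints per model

total_tokens = TOKENS_PER_STEP * TOTAL_STEPS
tokens_between_checkpoints = total_tokens // NUM_CHECKPOINTS

print(f"{total_tokens:,}")                # 299,892,736,000
print(f"{tokens_between_checkpoints:,}")  # 2,097,152,000
```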

All *Pythia* models were trained for the equivalent of 143000 steps at a batch size of 2,097,152 tokens. Two batch sizes were used: 2M and 4M. The models listed with a batch size of 4M tokens were originally trained for 71500 steps instead, with checkpoints every 500 steps. The checkpoints on Hugging Face are renamed for consistency with all 2M batch models, so `step1000` is the first checkpoint for `pythia-1.4b` that was saved (corresponding to step 500 in training), and `step1000` is likewise the first `pythia-6.9b` checkpoint that was saved (corresponding to 1000 “actual” steps).<br>
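For 4M-batch models, the renaming amounts to halving the step number. A minimal sketch, assuming the model names below (taken from the 4M rows of the engineering table) and that deduped variants share their base model's batch size, as the table caption states; `actual_training_step` is a hypothetical helper, not part of any library.

```python
# Sizes trained with a 4M-token batch, per the engineering table in this card
FOUR_M_BATCH_MODELS = {"pythia-160m", "pythia-410m", "pythia-1.4b"}


def actual_training_step(model_name: str, hf_step: int) -> int:
    """Map a Hugging Face checkpoint step (from `stepN`) to the optimizer step actually run."""
    base = model_name.removesuffix("-deduped")  # deduped models share hyperparameters
    if base in FOUR_M_BATCH_MODELS:
        # One 4M-token step covers two 2M-token "equivalent" steps
        return hf_step // 2
    return hf_step


print(actual_training_step("pythia-1.4b", 1000))  # 500
print(actual_training_step("pythia-6.9b", 1000))  # 1000
```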

See [GitHub](https://github.com/EleutherAI/pythia) for more details on the training procedure, including [how to reproduce it](https://github.com/EleutherAI/pythia/blob/main/README.md#reproducing-training).<br>
Pythia uses the same tokenizer as [GPT-NeoX-20B](https://huggingface.co/EleutherAI/gpt-neox-20b).

### Evaluations

All 16 *Pythia* models were evaluated using the [LM Evaluation Harness](https://github.com/EleutherAI/lm-evaluation-harness). You can access the results by model and step at `results/json/*` in the [GitHub repository](https://github.com/EleutherAI/pythia/tree/main/results/json).<br>
Expand the sections below to see plots of evaluation results for all Pythia and Pythia-deduped models compared with OPT and BLOOM.

<details>

<summary>LAMBADA – OpenAI</summary>

<img src="/EleutherAI/pythia-12b/resolve/main/eval_plots/lambada_openai.png" style="width:auto"/>

</details>

<details>

<summary>Physical Interaction: Question Answering (PIQA)</summary>

<img src="/EleutherAI/pythia-12b/resolve/main/eval_plots/piqa.png" style="width:auto"/>

</details>

<details>

<summary>WinoGrande</summary>

<img src="/EleutherAI/pythia-12b/resolve/main/eval_plots/winogrande.png" style="width:auto"/>

</details>

<details>

<summary>AI2 Reasoning Challenge—Challenge Set</summary>

<img src="/EleutherAI/pythia-12b/resolve/main/eval_plots/arc_challenge.png" style="width:auto"/>

</details>

<details>

<summary>SciQ</summary>

<img src="/EleutherAI/pythia-12b/resolve/main/eval_plots/sciq.png" style="width:auto"/>

</details>

### Naming convention and parameter count

*Pythia* models were renamed in January 2023. It is possible that the old naming convention still persists in some documentation by accident. The current naming convention (70M, 160M, etc.) is based on total parameter count.
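The difference between the total and non-embedding counts in the table below is the number of embedding parameters. The sketch takes those two columns and the model dimensions from the engineering table above, and checks that each difference is a whole multiple of the model dimension. Reading the quotient as twice a padded vocabulary size rests on an assumption, labeled in the code: that the input embedding and the output projection are separate (untied) matrices of shape vocab x d_model.

```python
# (total params, non-embedding params, model dim), copied from the tables in this card
PYTHIA = {
    "70M": (70_426_624, 18_915_328, 512),
    "160M": (162_322_944, 85_056_000, 768),
    "410M": (405_334_016, 302_311_424, 1024),
    "1B": (1_011_781_632, 805_736_448, 2048),
    "1.4B": (1_414_647_808, 1_208_602_624, 2048),
    "2.8B": (2_775_208_960, 2_517_652_480, 2560),
    "6.9B": (6_857_302_016, 6_444_163_072, 4096),
    "12B": (11_846_072_320, 11_327_027_200, 5120),
}

for name, (total, non_embedding, d_model) in PYTHIA.items():
    embedding = total - non_embedding
    rows, remainder = divmod(embedding, d_model)
    assert remainder == 0, name  # every gap is a whole number of d_model-wide rows
    # Assumption: untied input embedding + output projection, each vocab x d_model
    print(f"{name:>5}: {embedding:>13,} embedding params, padded vocab ~ {rows // 2:,}")
```

Under that assumption the sketch reports 50,304 rows per matrix for most sizes, and 50,432 and 50,688 for 6.9B and 12B respectively.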

<figure style="width:32em">

| current Pythia suffix | old suffix | total params | non-embedding params |
| --------------------: | ---------: | -------------: | -------------------: |
| 70M | 19M | 70,426,624 | 18,915,328 |
| 160M | 125M | 162,322,944 | 85,056,000 |
| 410M | 350M | 405,334,016 | 302,311,424 |
| 1B | 800M | 1,011,781,632 | 805,736,448 |
| 1.4B | 1.3B | 1,414,647,808 | 1,208,602,624 |
| 2.8B | 2.7B | 2,775,208,960 | 2,517,652,480 |
| 6.9B | 6.7B | 6,857,302,016 | 6,444,163,072 |
| 12B | 13B | 11,846,072,320 | 11,327,027,200 |

</figure> | 11,764 | [

[

-0.0247802734375,

-0.06500244140625,

0.0192108154296875,

0.0045623779296875,

-0.0172119140625,

-0.01357269287109375,

-0.015869140625,

-0.0350341796875,

0.0158843994140625,

0.01457977294921875,

-0.0243682861328125,

-0.022552490234375,

-0.0367431640625,

-0.005... |

bardsai/finance-sentiment-fr-base | 2023-09-18T09:54:48.000Z | [

"transformers",

"pytorch",

"camembert",

"text-classification",

"financial-sentiment-analysis",

"sentiment-analysis",

"fr",

"dataset:datasets/financial_phrasebank",

"endpoints_compatible",

"region:us"

] | text-classification | bardsai | null | null | bardsai/finance-sentiment-fr-base | 0 | 1,159 | transformers | 2023-09-18T09:53:50 | ---

language: fr

tags:

- text-classification

- financial-sentiment-analysis

- sentiment-analysis

datasets:

- datasets/financial_phrasebank

metrics:

- f1

- accuracy

- precision

- recall

widget:
- text: "Le chiffre d'affaires net a augmenté de 30 % pour atteindre 36 millions d'euros."
  example_title: "Example 1"
- text: "Coup d'envoi du vendredi fou. Liste des promotions en magasin."
  example_title: "Example 2"
- text: "Les actions de CDPROJEKT ont enregistré la plus forte baisse parmi les entreprises cotées au WSE."
  example_title: "Example 3"

---

# Finance Sentiment FR (base)

Finance Sentiment FR (base) is a model based on [camembert-base](https://huggingface.co/camembert-base) for analyzing the sentiment of French financial news. It was trained on a translated version of [Financial PhraseBank](https://www.researchgate.net/publication/251231107_Good_Debt_or_Bad_Debt_Detecting_Semantic_Orientations_in_Economic_Texts) by Malo et al. (2014) for 10 epochs on a single RTX 3090 GPU.

The model outputs one of three labels: positive, negative, or neutral.

## How to use

You can use this model directly with a pipeline for sentiment-analysis:

```python

from transformers import pipeline

nlp = pipeline("sentiment-analysis", model="bardsai/finance-sentiment-fr-base")

nlp("Le chiffre d'affaires net a augmenté de 30 % pour atteindre 36 millions d'euros.")

```

```python

[{'label': 'positive', 'score': 0.9987998807375955}]

```

## Performance

| Metric | Value |
| --- | ----------- |
| f1 macro | 0.963 |
| precision macro | 0.959 |
| recall macro | 0.967 |
| accuracy | 0.971 |
| samples per second | 140.8 |

(Performance was evaluated on an RTX 3090 GPU.)
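The "macro" scores above are computed per label and then averaged without weighting by label frequency. The card does not include its evaluation code, so the following is only a minimal pure-Python sketch of that definition; scoring a label 0 when it receives no predictions is one common convention, assumed here, and the labels are toy data, not the model's evaluation set.

```python
def macro_metrics(y_true: list, y_pred: list) -> dict:
    """Macro-averaged precision, recall and F1 (unweighted mean over labels), plus accuracy."""
    labels = sorted(set(y_true) | set(y_pred))
    precisions, recalls, f1s = [], [], []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0  # assumed: 0 when label never predicted
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        precisions.append(precision)
        recalls.append(recall)
        f1s.append(f1)
    n = len(labels)
    return {
        "precision_macro": sum(precisions) / n,
        "recall_macro": sum(recalls) / n,
        "f1_macro": sum(f1s) / n,
        "accuracy": sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true),
    }


# Toy labels for illustration only
y_true = ["positive", "negative", "neutral", "neutral"]
y_pred = ["positive", "neutral", "neutral", "neutral"]
print(macro_metrics(y_true, y_pred))
```

Because each label counts equally, a rare label that the model misses (here `negative`) pulls the macro scores down much more than it pulls down accuracy.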

## Changelog

- 2023-09-18: Initial release

## About bards.ai

At bards.ai, we focus on providing machine learning expertise and skills to our partners, particularly in the areas of NLP, machine vision, and time series analysis. Our team is located in Wroclaw, Poland. Please visit our website for more information: [bards.ai](https://bards.ai/)

Let us know if you use our model :). Also, if you need any help, feel free to contact us at info@bards.ai

| 2,152 | [

[

-0.04534912109375,

-0.051361083984375,

0.005786895751953125,

0.038970947265625,

-0.040863037109375,

0.017120361328125,

-0.0125579833984375,

-0.0202789306640625,

0.02490234375,

0.0321044921875,

-0.0511474609375,

-0.05548095703125,

-0.04644775390625,

-0.018005... |

uw-hai/polyjuice | 2021-05-24T01:21:24.000Z | [

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"counterfactual generation",

"en",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | uw-hai | null | null | uw-hai/polyjuice | 3 | 1,158 | transformers | 2022-03-02T23:29:05 | ---

language: "en"

tags:

- counterfactual generation

widget:

- text: "It is great for kids. <|perturb|> [negation] It [BLANK] great for kids. [SEP]"

---

# Polyjuice

## Model description

This is a ported version of [Polyjuice](https://homes.cs.washington.edu/~wtshuang/static/papers/2021-arxiv-polyjuice.pdf), the general-purpose counterfactual generator.

For the full code release, please refer to [this GitHub page](https://github.com/tongshuangwu/polyjuice).

### How to use

```python

import torch
from transformers import pipeline, AutoTokenizer, AutoModelForCausalLM

model_path = "uw-hai/polyjuice"
is_cuda = torch.cuda.is_available()  # use GPU 0 when available, otherwise the CPU

generator = pipeline(
    "text-generation",
    model=AutoModelForCausalLM.from_pretrained(model_path),
    tokenizer=AutoTokenizer.from_pretrained(model_path),
    framework="pt",
    device=0 if is_cuda else -1,
)

prompt_text = "A dog is embraced by the woman. <|perturb|> [negation] A dog is [BLANK] the woman."
generator(prompt_text, num_beams=3, num_return_sequences=3)

```
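The prompt above follows a visible pattern: the original sentence, the `<|perturb|>` marker, a control code in square brackets, and a copy of the sentence with `[BLANK]` where the model should fill in text. A minimal sketch that assembles such a prompt, inferred only from the examples in this card; `build_polyjuice_prompt` is a hypothetical helper, only the `negation` control code appears here, and the trailing `[SEP]` seen in the widget example is left out because its role is not explained in this card.

```python
def build_polyjuice_prompt(original: str, control_code: str, blanked: str) -> str:
    """Compose: original sentence, <|perturb|>, [control code], then the blanked sentence."""
    return f"{original} <|perturb|> [{control_code}] {blanked}"


prompt = build_polyjuice_prompt(
    "A dog is embraced by the woman.",
    "negation",
    "A dog is [BLANK] the woman.",
)
print(prompt)
# A dog is embraced by the woman. <|perturb|> [negation] A dog is [BLANK] the woman.
```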

### BibTeX entry and citation info

```bibtex

@inproceedings{polyjuice:acl21,

title = "{P}olyjuice: Generating Counterfactuals for Explaining, Evaluating, and Improving Models",

author = "Tongshuang Wu and Marco Tulio Ribeiro and Jeffrey Heer and Daniel S. Weld",

booktitle = "Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics",

year = "2021",

publisher = "Association for Computational Linguistics"

}

``` | 1,486 | [

[

-0.014801025390625,

-0.05181884765625,

0.0187530517578125,

0.016815185546875,

-0.0362548828125,

-0.0182647705078125,

0.010040283203125,

-0.01181793212890625,

0.00681304931640625,

0.02362060546875,

-0.023223876953125,

-0.01177215576171875,

-0.0297698974609375,

... |

timm/vit_large_patch32_384.orig_in21k_ft_in1k | 2023-05-06T00:26:10.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"dataset:imagenet-21k",

"arxiv:2010.11929",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/vit_large_patch32_384.orig_in21k_ft_in1k | 0 | 1,156 | timm | 2022-12-22T07:51:06 | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

- imagenet-21k

---

# Model card for vit_large_patch32_384.orig_in21k_ft_in1k

A Vision Transformer (ViT) image classification model. Trained on ImageNet-21k and fine-tuned on ImageNet-1k in JAX by the paper authors, ported to PyTorch by Ross Wightman.

## Model Details

- **Model Type:** Image classification / feature backbone
- **Model Stats:**
  - Params (M): 306.6
  - GMACs: 44.3
  - Activations (M): 32.2
  - Image size: 384 x 384
- **Papers:**
  - An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale: https://arxiv.org/abs/2010.11929v2
- **Dataset:** ImageNet-1k
- **Pretrain Dataset:** ImageNet-21k
- **Original:** https://github.com/google-research/vision_transformer

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

import torch  # needed for torch.topk below

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('vit_large_patch32_384.orig_in21k_ft_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'vit_large_patch32_384.orig_in21k_ft_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 145, 1024) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```
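The 145 in the unpooled `(1, 145, 1024)` shape follows from patch arithmetic: a 384 x 384 input cut into non-overlapping 32 x 32 patches gives a 12 x 12 grid of 144 patch tokens, plus one class token, while 1024 is the embedding width. A small sketch of that calculation; `vit_sequence_length` is a hypothetical helper, not a timm function.

```python
def vit_sequence_length(image_size: int, patch_size: int, class_token: bool = True) -> int:
    """Tokens a ViT processes: one per non-overlapping patch, plus an optional class token."""
    if image_size % patch_size:
        raise ValueError("image size must be divisible by patch size")
    patches_per_side = image_size // patch_size
    return patches_per_side ** 2 + int(class_token)


print(vit_sequence_length(384, 32))  # 145
```

The same formula explains why the patch-16 variants at 224 x 224 see 197 tokens (14 x 14 + 1).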

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@article{dosovitskiy2020vit,

title={An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale},

author={Dosovitskiy, Alexey and Beyer, Lucas and Kolesnikov, Alexander and Weissenborn, Dirk and Zhai, Xiaohua and Unterthiner, Thomas and Dehghani, Mostafa and Minderer, Matthias and Heigold, Georg and Gelly, Sylvain and Uszkoreit, Jakob and Houlsby, Neil},

journal={ICLR},

year={2021}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

| 3,399 | [

[

-0.036651611328125,

-0.029266357421875,

0.0014657974243164062,

0.009979248046875,

-0.027618408203125,

-0.0250244140625,

-0.0185394287109375,

-0.037506103515625,

0.0165557861328125,

0.026123046875,

-0.036590576171875,

-0.044189453125,

-0.05230712890625,

-0.00... |

TheBloke/Pygmalion-2-13B-GPTQ | 2023-09-27T12:47:59.000Z | [

"transformers",

"safetensors",

"llama",

"text-generation",

"text generation",

"instruct",

"en",

"dataset:PygmalionAI/PIPPA",

"dataset:Open-Orca/OpenOrca",

"dataset:Norquinal/claude_multiround_chat_30k",

"dataset:jondurbin/airoboros-gpt4-1.4.1",

"dataset:databricks/databricks-dolly-15k",

"lic... | text-generation | TheBloke | null | null | TheBloke/Pygmalion-2-13B-GPTQ | 33 | 1,156 | transformers | 2023-09-05T22:01:13 | ---

language:

- en

license: llama2

tags:

- text generation

- instruct

datasets:

- PygmalionAI/PIPPA

- Open-Orca/OpenOrca

- Norquinal/claude_multiround_chat_30k

- jondurbin/airoboros-gpt4-1.4.1

- databricks/databricks-dolly-15k

model_name: Pygmalion 2 13B

base_model: PygmalionAI/pygmalion-2-13b

inference: false

model_creator: PygmalionAI

model_type: llama

pipeline_tag: text-generation

prompt_template: 'The model has been trained on prompts using three different roles,

which are denoted by the following tokens: `<|system|>`, `<|user|>` and `<|model|>`.

The `<|system|>` prompt can be used to inject out-of-channel information behind

the scenes, while the `<|user|>` prompt should be used to indicate user input.

The `<|model|>` token should then be used to indicate that the model should generate

a response. These tokens can happen multiple times and be chained up to form a conversation

history.

The system prompt has been designed to allow the model to "enter" various modes

and dictate the reply length. Here''s an example:

```

<|system|>Enter RP mode. Pretend to be {{char}} whose persona follows:

{{persona}}

You shall reply to the user while staying in character, and generate long responses.

```

'

quantized_by: TheBloke

---

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Pygmalion 2 13B - GPTQ

- Model creator: [PygmalionAI](https://huggingface.co/PygmalionAI)

- Original model: [Pygmalion 2 13B](https://huggingface.co/PygmalionAI/pygmalion-2-13b)

<!-- description start -->

## Description

This repo contains GPTQ model files for [PygmalionAI's Pygmalion 2 13B](https://huggingface.co/PygmalionAI/pygmalion-2-13b).

Multiple GPTQ parameter permutations are provided; see Provided Files below for details of the options provided, their parameters, and the software used to create them.

<!-- description end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Pygmalion-2-13B-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Pygmalion-2-13B-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Pygmalion-2-13B-GGUF)

* [PygmalionAI's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/PygmalionAI/pygmalion-2-13b)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Custom

The model has been trained on prompts using three different roles, which are denoted by the following tokens: `<|system|>`, `<|user|>` and `<|model|>`.

The `<|system|>` prompt can be used to inject out-of-channel information behind the scenes, while the `<|user|>` prompt should be used to indicate user input.

The `<|model|>` token should then be used to indicate that the model should generate a response. These tokens can happen multiple times and be chained up to form a conversation history.

The system prompt has been designed to allow the model to "enter" various modes and dictate the reply length. Here's an example:

```

<|system|>Enter RP mode. Pretend to be {{char}} whose persona follows:

{{persona}}

You shall reply to the user while staying in character, and generate long responses.

```
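Since the role tokens can be chained to form a conversation history, a multi-turn prompt can be assembled by alternating `<|user|>` and `<|model|>` turns after the system prompt and ending with `<|model|>` so the model writes the next reply. A minimal sketch, assuming the tokens are concatenated with no separators between turns (the card does not specify any); `build_pygmalion_prompt` and the persona text are hypothetical.

```python
def build_pygmalion_prompt(system: str, history: list, user_message: str) -> str:
    """Chain role tokens into one prompt string ending with <|model|> to request a reply."""
    parts = [f"<|system|>{system}"]
    for user_turn, model_turn in history:  # earlier (user, model) exchanges
        parts.append(f"<|user|>{user_turn}")
        parts.append(f"<|model|>{model_turn}")
    parts.append(f"<|user|>{user_message}")
    parts.append("<|model|>")  # the model generates its response after this token
    return "".join(parts)


prompt = build_pygmalion_prompt(
    "Enter RP mode. Pretend to be Aria whose persona follows:\nA cheerful tour guide.",
    [("Hi!", "Hello, traveler!")],
    "Where should we go first?",
)
print(prompt)
```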

<!-- prompt-template end -->

<!-- README_GPTQ.md-provided-files start -->

## Provided files and GPTQ parameters

Multiple quantisation parameters are provided, to allow you to choose the best one for your hardware and requirements.

Each separate quant is in a different branch. See below for instructions on fetching from different branches.

All recent GPTQ files are made with AutoGPTQ, including every file in the non-main branches. Files in the `main` branch that were uploaded before August 2023 were made with GPTQ-for-LLaMa.

<details>

<summary>Explanation of GPTQ parameters</summary>

- Bits: The bit size of the quantised model.

- GS: GPTQ group size. Higher numbers use less VRAM, but have lower quantisation accuracy. "None" is the lowest possible value.

- Act Order: True or False. Also known as `desc_act`. True results in better quantisation accuracy. Some GPTQ clients have had issues with models that use Act Order plus Group Size, but this is generally resolved now.

- Damp %: A GPTQ parameter that affects how samples are processed for quantisation. 0.01 is default, but 0.1 results in slightly better accuracy.

- GPTQ dataset: The dataset used for quantisation. Using a dataset more appropriate to the model's training can improve quantisation accuracy. Note that the GPTQ dataset is not the same as the dataset used to train the model - please refer to the original model repo for details of the training dataset(s).

- Sequence Length: The length of the dataset sequences used for quantisation. Ideally this is the same as the model sequence length. For some very long sequence models (16+K), a lower sequence length may have to be used. Note that a lower sequence length does not limit the sequence length of the quantised model. It only impacts the quantisation accuracy on longer inference sequences.

- ExLlama Compatibility: Whether this file can be loaded with ExLlama, which currently only supports Llama models in 4-bit.

</details>

| Branch | Bits | GS | Act Order | Damp % | GPTQ Dataset | Seq Len | Size | ExLlama | Desc |
| ------ | ---- | -- | --------- | ------ | ------------ | ------- | ---- | ------- | ---- |
| [main](https://huggingface.co/TheBloke/Pygmalion-2-13B-GPTQ/tree/main) | 4 | 128 | No | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 7.26 GB | Yes | 4-bit, without Act Order and group size 128g. |
| [gptq-4bit-32g-actorder_True](https://huggingface.co/TheBloke/Pygmalion-2-13B-GPTQ/tree/gptq-4bit-32g-actorder_True) | 4 | 32 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 8.00 GB | Yes | 4-bit, with Act Order and group size 32g. Gives highest possible inference quality, with maximum VRAM usage. |
| [gptq-4bit-64g-actorder_True](https://huggingface.co/TheBloke/Pygmalion-2-13B-GPTQ/tree/gptq-4bit-64g-actorder_True) | 4 | 64 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 7.51 GB | Yes | 4-bit, with Act Order and group size 64g. Uses less VRAM than 32g, but with slightly lower accuracy. |
| [gptq-4bit-128g-actorder_True](https://huggingface.co/TheBloke/Pygmalion-2-13B-GPTQ/tree/gptq-4bit-128g-actorder_True) | 4 | 128 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 7.26 GB | Yes | 4-bit, with Act Order and group size 128g. Uses even less VRAM than 64g, but with slightly lower accuracy. |
| [gptq-8bit--1g-actorder_True](https://huggingface.co/TheBloke/Pygmalion-2-13B-GPTQ/tree/gptq-8bit--1g-actorder_True) | 8 | None | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 13.36 GB | No | 8-bit, with Act Order. No group size, to lower VRAM requirements. |
| [gptq-8bit-128g-actorder_True](https://huggingface.co/TheBloke/Pygmalion-2-13B-GPTQ/tree/gptq-8bit-128g-actorder_True) | 8 | 128 | Yes | 0.1 | [wikitext](https://huggingface.co/datasets/wikitext/viewer/wikitext-2-v1/test) | 4096 | 13.65 GB | No | 8-bit, with group size 128g for higher inference quality and with Act Order for even higher accuracy. |
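The non-main branch names encode their quantisation parameters, with `-1g` corresponding to a group size of "None" in the table. A minimal sketch that decodes them, assuming the naming pattern seen in this table holds for every branch; `parse_gptq_branch` is a hypothetical helper, and `main` must be looked up in the table instead.

```python
import re

# Pattern inferred from the branch names in the table above
BRANCH_RE = re.compile(r"^gptq-(\d+)bit-(-1|\d+)g-actorder_(True|False)$")


def parse_gptq_branch(branch: str):
    """Return (bits, group_size, act_order) for a named branch, or None if it doesn't match."""
    match = BRANCH_RE.match(branch)
    if match is None:
        return None  # e.g. "main", whose parameters are only listed in the table
    bits, group, act_order = match.groups()
    group_size = None if group == "-1" else int(group)  # "-1g" means no group size
    return int(bits), group_size, act_order == "True"


print(parse_gptq_branch("gptq-4bit-32g-actorder_True"))  # (4, 32, True)
print(parse_gptq_branch("gptq-8bit--1g-actorder_True"))  # (8, None, True)
```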

<!-- README_GPTQ.md-provided-files end -->

<!-- README_GPTQ.md-download-from-branches start -->

## How to download from branches

- In text-generation-webui, you can add `:branch` to the end of the download name, e.g. `TheBloke/Pygmalion-2-13B-GPTQ:main`

- With Git, you can clone a branch with:

```

git clone --single-branch --branch main https://huggingface.co/TheBloke/Pygmalion-2-13B-GPTQ

```

- In Python Transformers code, the branch is the `revision` parameter; see below.

<!-- README_GPTQ.md-download-from-branches end -->

<!-- README_GPTQ.md-text-generation-webui start -->

## How to easily download and use this model in [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

Please make sure you're using the latest version of [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

It is strongly recommended to use the text-generation-webui one-click-installers unless you're sure you know how to make a manual install.

1. Click the **Model tab**.
2. Under **Download custom model or LoRA**, enter `TheBloke/Pygmalion-2-13B-GPTQ`.
   - To download from a specific branch, enter for example `TheBloke/Pygmalion-2-13B-GPTQ:main`
   - See Provided Files above for the list of branches for each option.
3. Click **Download**.
4. The model will start downloading. Once it's finished it will say "Done".
5. In the top left, click the refresh icon next to **Model**.
6. In the **Model** dropdown, choose the model you just downloaded: `Pygmalion-2-13B-GPTQ`
7. The model will automatically load, and is now ready for use!
8. If you want any custom settings, set them and then click **Save settings for this model** followed by **Reload the Model** in the top right.
   * Note that you do not need to and should not set manual GPTQ parameters any more. These are set automatically from the file `quantize_config.json`.
9. Once you're ready, click the **Text Generation tab** and enter a prompt to get started!

<!-- README_GPTQ.md-text-generation-webui end -->

<!-- README_GPTQ.md-use-from-python start -->

## How to use this GPTQ model from Python code

### Install the necessary packages

Requires: Transformers 4.32.0 or later, Optimum 1.12.0 or later, and AutoGPTQ 0.4.2 or later.

```shell

pip3 install "transformers>=4.32.0" "optimum>=1.12.0"  # quotes stop the shell treating > as a redirect

pip3 install auto-gptq --extra-index-url https://huggingface.github.io/autogptq-index/whl/cu118/ # Use cu117 if on CUDA 11.7

```

If you have problems installing AutoGPTQ using the pre-built wheels, install it from source instead:

```shell

pip3 uninstall -y auto-gptq

git clone https://github.com/PanQiWei/AutoGPTQ

cd AutoGPTQ

pip3 install .

```

### For CodeLlama models only: you must use Transformers 4.33.0 or later.

If 4.33.0 is not yet released when you read this, you will need to install Transformers from source:

```shell

pip3 uninstall -y transformers

pip3 install git+https://github.com/huggingface/transformers.git

```

### You can then use the following code

```python

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

model_name_or_path = "TheBloke/Pygmalion-2-13B-GPTQ"
# To use a different branch, change revision
# For example: revision="gptq-4bit-32g-actorder_True"
model = AutoModelForCausalLM.from_pretrained(model_name_or_path,
                                             device_map="auto",
                                             trust_remote_code=False,
                                             revision="main")

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

prompt = "Tell me about AI"
# Replace {{char}} and {{persona}} with your own character name and persona
system_prompt = (
    "Enter RP mode. Pretend to be {{char}} whose persona follows:\n"
    "{{persona}}\n"
    "You shall reply to the user while staying in character, and generate long responses."
)
# <|user|> marks the user's input; <|model|> asks the model to generate a reply
prompt_template = f"<|system|>{system_prompt}<|user|>{prompt}<|model|>"

print("\n\n*** Generate:")

input_ids = tokenizer(prompt_template, return_tensors='pt').input_ids.to(model.device)
output = model.generate(inputs=input_ids, temperature=0.7, do_sample=True, top_p=0.95, top_k=40, max_new_tokens=512)
print(tokenizer.decode(output[0]))

# Inference can also be done using transformers' pipeline
print("*** Pipeline:")
pipe = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
    max_new_tokens=512,
    do_sample=True,
    temperature=0.7,
    top_p=0.95,
    top_k=40,
    repetition_penalty=1.1
)

print(pipe(prompt_template)[0]['generated_text'])

```

<!-- README_GPTQ.md-use-from-python end -->

<!-- README_GPTQ.md-compatibility start -->

## Compatibility

The files provided are tested to work with AutoGPTQ, both via Transformers and using AutoGPTQ directly. They should also work with [0cc4m's GPTQ-for-LLaMa fork](https://github.com/0cc4m/KoboldAI).

[ExLlama](https://github.com/turboderp/exllama) is compatible with Llama models in 4-bit. Please see the Provided Files table above for per-file compatibility.

[Hugging Face Text Generation Inference (TGI)](https://github.com/huggingface/text-generation-inference) is compatible with all GPTQ models.

<!-- README_GPTQ.md-compatibility end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](https://gpus.llm-utils.org)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Alicia Loh, Stephen Murray, K, Ajan Kanaga, RoA, Magnesian, Deo Leter, Olakabola, Eugene Pentland, zynix, Deep Realms, Raymond Fosdick, Elijah Stavena, Iucharbius, Erik Bjäreholt, Luis Javier Navarrete Lozano, Nicholas, theTransient, John Detwiler, alfie_i, knownsqashed, Mano Prime, Willem Michiel, Enrico Ros, LangChain4j, OG, Michael Dempsey, Pierre Kircher, Pedro Madruga, James Bentley, Thomas Belote, Luke @flexchar, Leonard Tan, Johann-Peter Hartmann, Illia Dulskyi, Fen Risland, Chadd, S_X, Jeff Scroggin, Ken Nordquist, Sean Connelly, Artur Olbinski, Swaroop Kallakuri, Jack West, Ai Maven, David Ziegler, Russ Johnson, transmissions 11, John Villwock, Alps Aficionado, Clay Pascal, Viktor Bowallius, Subspace Studios, Rainer Wilmers, Trenton Dambrowitz, vamX, Michael Levine, 준교 김, Brandon Frisco, Kalila, Trailburnt, Randy H, Talal Aujan, Nathan Dryer, Vadim, 阿明, ReadyPlayerEmma, Tiffany J. Kim, George Stoitzev, Spencer Kim, Jerry Meng, Gabriel Tamborski, Cory Kujawski, Jeffrey Morgan, Spiking Neurons AB, Edmond Seymore, Alexandros Triantafyllidis, Lone Striker, Cap'n Zoog, Nikolai Manek, danny, ya boyyy, Derek Yates, usrbinkat, Mandus, TL, Nathan LeClaire, subjectnull, Imad Khwaja, webtim, Raven Klaugh, Asp the Wyvern, Gabriel Puliatti, Caitlyn Gatomon, Joseph William Delisle, Jonathan Leane, Luke Pendergrass, SuperWojo, Sebastain Graf, Will Dee, Fred von Graf, Andrey, Dan Guido, Daniel P. Andersen, Nitin Borwankar, Elle, Vitor Caleffi, biorpg, jjj, NimbleBox.ai, Pieter, Matthew Berman, terasurfer, Michael Davis, Alex, Stanislav Ovsiannikov

Thank you to all my generous patrons and donors!

And thank you again to a16z for their generous grant.

<!-- footer end -->

# Original model card: PygmalionAI's Pygmalion 2 13B

<h1 style="text-align: center">Pygmalion-2 13B</h1>

<h2 style="text-align: center">An instruction-tuned Llama-2 biased towards fiction writing and conversation.</h2>

## Model Details

The long-awaited release of our new models based on Llama-2 is finally here. Pygmalion-2 13B (formerly known as Metharme) is based on [Llama-2 13B](https://huggingface.co/meta-llama/llama-2-13b-hf) released by Meta AI.

The Metharme models were an experiment to try and get a model that is usable for conversation, roleplaying and storywriting, but which can be guided using natural language like other instruct models. After much deliberation, we reached the conclusion that the Metharme prompting format is superior to (and easier to use than) the classic Pygmalion format.

This model was trained by doing supervised fine-tuning over a mixture of regular instruction data alongside roleplay, fictional stories and conversations with synthetically generated instructions attached.

This model is freely available for both commercial and non-commercial use, as per the Llama-2 license.

## Prompting

The model has been trained on prompts using three different roles, which are denoted by the following tokens: `<|system|>`, `<|user|>` and `<|model|>`.

The `<|system|>` prompt can be used to inject out-of-channel information behind the scenes, while the `<|user|>` prompt should be used to indicate user input. The `<|model|>` token should then be used to indicate that the model should generate a response. These tokens can appear multiple times and be chained together to form a conversation history.

### Prompting example

The system prompt has been designed to allow the model to "enter" various modes and dictate the reply length. Here's an example:

```
<|system|>Enter RP mode. Pretend to be {{char}} whose persona follows:
{{persona}}

You shall reply to the user while staying in character, and generate long responses.
```
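The role-token chaining described above can be sketched as a small helper that fills the `{{char}}` and `{{persona}}` placeholders and then appends alternating `<|user|>`/`<|model|>` turns, ending on an open `<|model|>` token so the model writes the next reply. This is a minimal sketch, not PygmalionAI's code: the function names `fill_persona` and `build_prompt`, the sample character "Aria", and joining turns with no separator are all assumptions, since the card does not say what whitespace (if any) goes between turns.

```python
from typing import List, Tuple

# Role tokens named in the model card.
SYSTEM, USER, MODEL = "<|system|>", "<|user|>", "<|model|>"


def fill_persona(template: str, char: str, persona: str) -> str:
    """Substitute the {{char}} and {{persona}} placeholders in a system prompt."""
    return template.replace("{{char}}", char).replace("{{persona}}", persona)


def build_prompt(system: str, history: List[Tuple[str, str]], user_msg: str) -> str:
    """Chain role tokens into a single prompt string.

    `history` holds earlier (user, model) exchanges. The result ends with an
    open <|model|> token. Assumption: turns are joined with no separator.
    """
    parts = [SYSTEM + system]
    for user_turn, model_turn in history:
        parts.append(USER + user_turn)
        parts.append(MODEL + model_turn)
    parts.append(USER + user_msg)
    parts.append(MODEL)  # the model continues from here
    return "".join(parts)


# Usage with a hypothetical character.
system = fill_persona(
    "Enter RP mode. Pretend to be {{char}} whose persona follows:\n{{persona}}\n\n"
    "You shall reply to the user while staying in character, and generate long responses.",
    char="Aria",
    persona="A cheerful ship's navigator.",
)
prompt = build_prompt(system, [("Hi!", "Hello, traveler.")], "Where are we headed?")
print(prompt)
```

The resulting string can be passed to the tokenizer in place of `prompt_template` in the Python example above.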

## Dataset

The dataset used to fine-tune this model includes our own [PIPPA](https://huggingface.co/datasets/PygmalionAI/PIPPA), along with several other instruction datasets, and datasets acquired from various RP forums.

## Limitations and biases

The intended use-case for this model is fictional writing for entertainment purposes. Any other sort of usage is out of scope.

As such, it was **not** fine-tuned to be safe and harmless: the base model _and_ this fine-tune have been trained on data known to contain profanity and texts that are lewd or otherwise offensive. It may produce socially unacceptable or undesirable text, even if the prompt itself does not include anything explicitly offensive. Outputs might often be factually wrong or misleading.

## Acknowledgements

We would like to thank [SpicyChat](https://spicychat.ai/) for sponsoring the training for this model.

[<img src="https://raw.githubusercontent.com/OpenAccess-AI-Collective/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/OpenAccess-AI-Collective/axolotl)

| 19,367 | [

[

-0.037994384765625,

-0.052825927734375,

0.003620147705078125,

0.0142974853515625,

-0.0187530517578125,

-0.01380157470703125,

-0.0007448196411132812,

-0.038726806640625,

0.0218658447265625,

0.01641845703125,

-0.051971435546875,

-0.026824951171875,

-0.026977539062... |

anton-l/wav2vec2-base-lang-id | 2021-10-01T12:36:49.000Z | [

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"audio-classification",

"generated_from_trainer",

"dataset:common_language",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | audio-classification | anton-l | null | null | anton-l/wav2vec2-base-lang-id | 5 | 1,155 | transformers | 2022-03-02T23:29:05 | ---

license: apache-2.0

tags:

- audio-classification

- generated_from_trainer

datasets:

- common_language

metrics:

- accuracy

model-index:

- name: wav2vec2-base-lang-id

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-base-lang-id

This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the anton-l/common_language dataset.

It achieves the following results on the evaluation set:

- Loss: 0.9836

- Accuracy: 0.7945

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 32

- eval_batch_size: 4

- seed: 0

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 10.0

- mixed_precision_training: Native AMP
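The batch-size and learning-rate settings above fit together as follows: 32 examples per step times 4 accumulation steps gives the listed total of 128 (consistent with a single device), and with 173 optimizer steps per epoch (the Step column of the training-results table) the 10 epochs make 1730 steps. The sketch below assumes the linear scheduler has the usual transformers shape (`get_linear_schedule_with_warmup`): linear warmup over ceil(warmup_ratio × total steps) steps, then linear decay to zero. The card lists only the scheduler type and ratio, so treat the exact warmup rounding as an assumption.

```python
import math

# Values from the hyperparameter list above.
TRAIN_BATCH_SIZE = 32
GRAD_ACCUM_STEPS = 4
LEARNING_RATE = 3e-4
WARMUP_RATIO = 0.1
NUM_EPOCHS = 10
STEPS_PER_EPOCH = 173  # from the Step column of the training-results table

# Effective examples per optimizer step, assuming a single device.
total_train_batch_size = TRAIN_BATCH_SIZE * GRAD_ACCUM_STEPS
total_steps = STEPS_PER_EPOCH * NUM_EPOCHS
# Assumed rounding: warmup steps = ceil(ratio * total steps).
warmup_steps = math.ceil(total_steps * WARMUP_RATIO)


def linear_lr(step: int) -> float:
    """Linear warmup to LEARNING_RATE, then linear decay to 0 at total_steps."""
    if step < warmup_steps:
        return LEARNING_RATE * step / warmup_steps
    return LEARNING_RATE * max(0.0, (total_steps - step) / (total_steps - warmup_steps))


print("total batch:", total_train_batch_size, "steps:", total_steps, "warmup:", warmup_steps)
for step in (0, warmup_steps, total_steps // 2, total_steps):
    print(step, f"{linear_lr(step):.2e}")
```

Under these assumptions the warmup spans exactly the first epoch, and the peak rate of 3e-4 is reached at step 173.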

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|:-------------:|:-----:|:----:|:---------------:|:--------:|
| 2.9568 | 1.0 | 173 | 3.2866 | 0.1146 |
| 1.9243 | 2.0 | 346 | 2.1241 | 0.3840 |
| 1.2923 | 3.0 | 519 | 1.5498 | 0.5489 |
| 0.8659 | 4.0 | 692 | 1.4953 | 0.6126 |
| 0.5539 | 5.0 | 865 | 1.2431 | 0.6926 |
| 0.4101 | 6.0 | 1038 | 1.1443 | 0.7232 |
| 0.2945 | 7.0 | 1211 | 1.0870 | 0.7544 |
| 0.1552 | 8.0 | 1384 | 1.1080 | 0.7661 |
| 0.0968 | 9.0 | 1557 | 0.9836 | 0.7945 |
| 0.0623 | 10.0 | 1730 | 1.0252 | 0.7993 |
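The evaluation figures reported at the top of this card (loss 0.9836, accuracy 0.7945) match the epoch-9 row, which has the lowest validation loss, rather than the final epoch-10 row (accuracy 0.7993). A plausible reading, which the card does not confirm, is that the checkpoint with the lowest validation loss was kept. The short check below simply selects that row from the table's own numbers.

```python
# (epoch, training loss, validation loss, accuracy), copied from the table above.
RESULTS = [
    (1, 2.9568, 3.2866, 0.1146),
    (2, 1.9243, 2.1241, 0.3840),
    (3, 1.2923, 1.5498, 0.5489),
    (4, 0.8659, 1.4953, 0.6126),
    (5, 0.5539, 1.2431, 0.6926),
    (6, 0.4101, 1.1443, 0.7232),
    (7, 0.2945, 1.0870, 0.7544),
    (8, 0.1552, 1.1080, 0.7661),
    (9, 0.0968, 0.9836, 0.7945),
    (10, 0.0623, 1.0252, 0.7993),
]

# Row with the lowest validation loss (third column) versus the last row.
best_epoch, _, best_val_loss, best_acc = min(RESULTS, key=lambda row: row[2])
final_epoch, _, final_val_loss, final_acc = RESULTS[-1]

print(f"lowest val loss: epoch {best_epoch}, loss {best_val_loss}, accuracy {best_acc}")
print(f"final epoch:     epoch {final_epoch}, loss {final_val_loss}, accuracy {final_acc}")
```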

### Framework versions

- Transformers 4.11.0.dev0

- Pytorch 1.9.1+cu111

- Datasets 1.12.1

- Tokenizers 0.10.3

| 2,114 | [

[

-0.033935546875,

-0.043792724609375,

0.0001342296600341797,

0.01110076904296875,

-0.0195465087890625,

-0.0180511474609375,

-0.0170745849609375,

-0.023101806640625,

0.0128631591796875,

0.0189971923828125,

-0.054168701171875,

-0.055450439453125,

-0.047607421875,

... |