modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

jozhang97/deta-swin-large | 2023-09-06T16:00:01.000Z | [

"transformers",

"pytorch",

"safetensors",

"deta",

"object-detection",

"vision",

"arxiv:2212.06137",

"endpoints_compatible",

"has_space",

"region:us"

] | object-detection | jozhang97 | null | null | jozhang97/deta-swin-large | 7 | 1,300 | transformers | 2023-01-30T15:46:20 | ---

pipeline_tag: object-detection

tags:

- vision

---

# Detection Transformers with Assignment

By [Jeffrey Ouyang-Zhang](https://jozhang97.github.io/), [Jang Hyun Cho](https://sites.google.com/view/janghyuncho/), [Xingyi Zhou](https://www.cs.utexas.edu/~zhouxy/), [Philipp Krähenbühl](http://www.philkr.net/)

From the paper [NMS Strikes Back](https://arxiv.org/abs/2212.06137).

**TL; DR.** **De**tection **T**ransformers with **A**ssignment (DETA) re-introduce IoU assignment and NMS for transformer-based detectors. DETA trains and tests comparibly as fast as Deformable-DETR and converges much faster (50.2 mAP in 12 epochs on COCO). | 640 | [

[

-0.044525146484375,

-0.006793975830078125,

0.0433349609375,

-0.006702423095703125,

-0.001277923583984375,

0.0257568359375,

0.00042366981506347656,

-0.0161895751953125,

0.0087432861328125,

0.0217742919921875,

-0.039093017578125,

-0.025726318359375,

-0.04174804687... |

younus123/my-pet-robot | 2023-10-18T06:26:38.000Z | [

"diffusers",

"NxtWave-GenAI-Webinar",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us",

"has_space"

] | text-to-image | younus123 | null | null | younus123/my-pet-robot | 0 | 1,300 | diffusers | 2023-10-18T06:20:33 | ---

license: creativeml-openrail-m

tags:

- NxtWave-GenAI-Webinar

- text-to-image

- stable-diffusion

---

### My-Pet-robot Dreambooth model trained by younus123 following the "Build your own Gen AI model" session by NxtWave.

Project Submission Code: GoX19932gAS

Sample pictures of this concept:

| 492 | [

[

-0.04931640625,

-0.00966644287109375,

0.0310821533203125,

-0.0021648406982421875,

-0.01526641845703125,

0.036407470703125,

0.034088134765625,

-0.03131103515625,

0.062255859375,

0.03448486328125,

-0.03887939453125,

-0.0168304443359375,

-0.0205841064453125,

0.... |

apple/deeplabv3-mobilevit-small | 2022-08-29T07:57:47.000Z | [

"transformers",

"pytorch",

"tf",

"coreml",

"mobilevit",

"vision",

"image-segmentation",

"dataset:pascal-voc",

"arxiv:2110.02178",

"arxiv:1706.05587",

"license:other",

"endpoints_compatible",

"has_space",

"region:us"

] | image-segmentation | apple | null | null | apple/deeplabv3-mobilevit-small | 6 | 1,299 | transformers | 2022-05-30T12:49:24 | ---

license: other

tags:

- vision

- image-segmentation

datasets:

- pascal-voc

widget:

- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/cat-2.jpg

example_title: Cat

---

# MobileViT + DeepLabV3 (small-sized model)

MobileViT model pre-trained on PASCAL VOC at resolution 512x512. It was introduced in [MobileViT: Light-weight, General-purpose, and Mobile-friendly Vision Transformer](https://arxiv.org/abs/2110.02178) by Sachin Mehta and Mohammad Rastegari, and first released in [this repository](https://github.com/apple/ml-cvnets). The license used is [Apple sample code license](https://github.com/apple/ml-cvnets/blob/main/LICENSE).

Disclaimer: The team releasing MobileViT did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

MobileViT is a light-weight, low latency convolutional neural network that combines MobileNetV2-style layers with a new block that replaces local processing in convolutions with global processing using transformers. As with ViT (Vision Transformer), the image data is converted into flattened patches before it is processed by the transformer layers. Afterwards, the patches are "unflattened" back into feature maps. This allows the MobileViT-block to be placed anywhere inside a CNN. MobileViT does not require any positional embeddings.

The model in this repo adds a [DeepLabV3](https://arxiv.org/abs/1706.05587) head to the MobileViT backbone for semantic segmentation.

## Intended uses & limitations

You can use the raw model for semantic segmentation. See the [model hub](https://huggingface.co/models?search=mobilevit) to look for fine-tuned versions on a task that interests you.

### How to use

Here is how to use this model:

```python

from transformers import MobileViTFeatureExtractor, MobileViTForSemanticSegmentation

from PIL import Image

import requests

url = "http://images.cocodataset.org/val2017/000000039769.jpg"

image = Image.open(requests.get(url, stream=True).raw)

feature_extractor = MobileViTFeatureExtractor.from_pretrained("apple/deeplabv3-mobilevit-small")

model = MobileViTForSemanticSegmentation.from_pretrained("apple/deeplabv3-mobilevit-small")

inputs = feature_extractor(images=image, return_tensors="pt")

outputs = model(**inputs)

logits = outputs.logits

predicted_mask = logits.argmax(1).squeeze(0)

```

Currently, both the feature extractor and model support PyTorch.

## Training data

The MobileViT + DeepLabV3 model was pretrained on [ImageNet-1k](https://huggingface.co/datasets/imagenet-1k), a dataset consisting of 1 million images and 1,000 classes, and then fine-tuned on the [PASCAL VOC2012](http://host.robots.ox.ac.uk/pascal/VOC/) dataset.

## Training procedure

### Preprocessing

At inference time, images are center-cropped at 512x512. Pixels are normalized to the range [0, 1]. Images are expected to be in BGR pixel order, not RGB.

### Pretraining

The MobileViT networks are trained from scratch for 300 epochs on ImageNet-1k on 8 NVIDIA GPUs with an effective batch size of 1024 and learning rate warmup for 3k steps, followed by cosine annealing. Also used were label smoothing cross-entropy loss and L2 weight decay. Training resolution varies from 160x160 to 320x320, using multi-scale sampling.

To obtain the DeepLabV3 model, MobileViT was fine-tuned on the PASCAL VOC dataset using 4 NVIDIA A100 GPUs.

## Evaluation results

| Model | PASCAL VOC mIOU | # params | URL |

|------------------|-----------------|-----------|-----------------------------------------------------------|

| MobileViT-XXS | 73.6 | 1.9 M | https://huggingface.co/apple/deeplabv3-mobilevit-xx-small |

| MobileViT-XS | 77.1 | 2.9 M | https://huggingface.co/apple/deeplabv3-mobilevit-x-small |

| **MobileViT-S** | **79.1** | **6.4 M** | https://huggingface.co/apple/deeplabv3-mobilevit-small |

### BibTeX entry and citation info

```bibtex

@inproceedings{vision-transformer,

title = {MobileViT: Light-weight, General-purpose, and Mobile-friendly Vision Transformer},

author = {Sachin Mehta and Mohammad Rastegari},

year = {2022},

URL = {https://arxiv.org/abs/2110.02178}

}

```

| 4,265 | [

[

-0.04833984375,

-0.024993896484375,

0.0092010498046875,

0.0025043487548828125,

-0.0364990234375,

-0.0210723876953125,

0.01239776611328125,

-0.032745361328125,

0.01898193359375,

0.013397216796875,

-0.03546142578125,

-0.034637451171875,

-0.032073974609375,

-0.... |

Jade1211/textual_inversion_newcar | 2023-08-11T19:25:03.000Z | [

"diffusers",

"tensorboard",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"textual_inversion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | Jade1211 | null | null | Jade1211/textual_inversion_newcar | 0 | 1,299 | diffusers | 2023-08-11T18:35:45 |

---

license: creativeml-openrail-m

base_model: runwayml/stable-diffusion-v1-5

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

- textual_inversion

inference: true

---

# Textual inversion text2image fine-tuning - Jade1211/textual_inversion_newcar

These are textual inversion adaption weights for runwayml/stable-diffusion-v1-5. You can find some example images in the following.

| 420 | [

[

-0.00457763671875,

-0.050994873046875,

0.0281524658203125,

0.0325927734375,

-0.0266571044921875,

-0.0007410049438476562,

0.01319122314453125,

0.00864410400390625,

0.0017719268798828125,

0.05499267578125,

-0.05108642578125,

-0.02618408203125,

-0.0447998046875,

... |

lxyuan/span-marker-bert-base-multilingual-uncased-multinerd | 2023-08-28T15:40:39.000Z | [

"span-marker",

"pytorch",

"generated_from_trainer",

"ner",

"named-entity-recognition",

"token-classification",

"de",

"en",

"es",

"fr",

"it",

"nl",

"pl",

"pt",

"ru",

"zh",

"dataset:Babelscape/multinerd",

"license:cc-by-nc-sa-4.0",

"model-index",

"region:us"

] | token-classification | lxyuan | null | null | lxyuan/span-marker-bert-base-multilingual-uncased-multinerd | 8 | 1,299 | span-marker | 2023-08-14T09:34:03 | ---

tags:

- generated_from_trainer

- ner

- named-entity-recognition

- span-marker

model-index:

- name: span-marker-bert-base-multilingual-uncased-multinerd

results:

- task:

type: token-classification

name: Named Entity Recognition

dataset:

type: Babelscape/multinerd

name: MultiNERD

split: test

revision: 2814b78e7af4b5a1f1886fe7ad49632de4d9dd25

metrics:

- type: f1

value: 0.9187

name: F1

- type: precision

value: 0.9202

name: Precision

- type: recall

value: 0.9172

name: Recall

license: cc-by-nc-sa-4.0

datasets:

- Babelscape/multinerd

metrics:

- precision

- recall

- f1

pipeline_tag: token-classification

widget:

- text: >-

amelia earthart flog mit ihrer einmotorigen lockheed vega 5b über den

atlantik nach paris.

example_title: German

- text: >-

amelia earhart flew her single engine lockheed vega 5b across the atlantic

to paris.

example_title: English

- text: >-

amelia earthart voló su lockheed vega 5b monomotor a través del océano

atlántico hasta parís.

example_title: Spanish

- text: >-

amelia earthart a fait voler son monomoteur lockheed vega 5b à travers

l'ocean atlantique jusqu'à paris.

example_title: French

- text: >-

amelia earhart ha volato con il suo monomotore lockheed vega 5b attraverso

l'atlantico fino a parigi.

example_title: Italian

- text: >-

amelia earthart vloog met haar één-motorige lockheed vega 5b over de

atlantische oceaan naar parijs.

example_title: Dutch

- text: >-

amelia earthart przeleciała swoim jednosilnikowym samolotem lockheed vega 5b

przez ocean atlantycki do paryża.

example_title: Polish

- text: >-

amelia earhart voou em seu monomotor lockheed vega 5b através do atlântico

para paris.

example_title: Portuguese

- text: >-

амелия эртхарт перелетела на своем одномоторном самолете lockheed vega 5b

через атлантический океан в париж.

example_title: Russian

- text: >-

amelia earthart flaug eins hreyfils lockheed vega 5b yfir atlantshafið til

parísar.

example_title: Icelandic

- text: >-

η amelia earthart πέταξε το μονοκινητήριο lockheed vega 5b της πέρα από

τον ατλαντικό ωκεανό στο παρίσι.

example_title: Greek

- text: >-

amelia earhartová přeletěla se svým jednomotorovým lockheed vega 5b přes

atlantik do paříže.

example_title: Czech

- text: >-

amelia earhart lensi yksimoottorisella lockheed vega 5b:llä atlantin yli

pariisiin.

example_title: Finnish

- text: >-

amelia earhart fløj med sin enmotoriske lockheed vega 5b over atlanten til

paris.

example_title: Danish

- text: >-

amelia earhart flög sin enmotoriga lockheed vega 5b över atlanten till

paris.

example_title: Swedish

- text: >-

amelia earhart fløy sin enmotoriske lockheed vega 5b over atlanterhavet til

paris.

example_title: Norwegian

- text: >-

amelia earhart și-a zburat cu un singur motor lockheed vega 5b peste

atlantic până la paris.

example_title: Romanian

- text: >-

amelia earhart menerbangkan mesin tunggal lockheed vega 5b melintasi

atlantik ke paris.

example_title: Indonesian

- text: >-

амелія эрхарт пераляцела на сваім аднаматорным lockheed vega 5b праз

атлантыку ў парыж.

example_title: Belarusian

- text: >-

амелія ергарт перелетіла на своєму одномоторному літаку lockheed vega 5b

через атлантику до парижа.

example_title: Ukrainian

- text: >-

amelia earhart preletjela je svojim jednomotornim zrakoplovom lockheed vega

5b preko atlantika do pariza.

example_title: Croatian

- text: >-

amelia earhart lendas oma ühemootoriga lockheed vega 5b üle atlandi ookeani

pariisi.

example_title: Estonian

language:

- de

- en

- es

- fr

- it

- nl

- pl

- pt

- ru

- zh

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# span-marker-bert-base-multilingual-uncased-multinerd

This model is a fine-tuned version of [bert-base-multilingual-uncased](https://huggingface.co/bert-base-multilingual-uncased) on an [Babelscape/multinerd](https://huggingface.co/datasets/Babelscape/multinerd) dataset.

Is your data always capitalized correctly? Then consider using the cased variant of this model instead for better performance:

[lxyuan/span-marker-bert-base-multilingual-cased-multinerd](https://huggingface.co/lxyuan/span-marker-bert-base-multilingual-cased-multinerd).

This model achieves the following results on the evaluation set:

- Loss: 0.0054

- Overall Precision: 0.9275

- Overall Recall: 0.9147

- Overall F1: 0.9210

- Overall Accuracy: 0.9842

Test set results:

- test_loss: 0.0058621917851269245,

- test_overall_accuracy: 0.9831472809849865,

- test_overall_f1: 0.9187844693592546,

- test_overall_precision: 0.9202802342397876,

- test_overall_recall: 0.9172935588307115,

- test_runtime: 2716.7472,

- test_samples_per_second: 149.141,

- test_steps_per_second: 4.661,

Note:

This is a replication of Tom's work. In this work, we used slightly different hyperparameters: `epochs=3` and `gradient_accumulation_steps=2`.

We also switched to the uncased [bert model](https://huggingface.co/bert-base-multilingual-uncased) to see if an uncased encoder model would perform better for commonly lowercased entities like, such as food. Please check the discussion [here](https://huggingface.co/lxyuan/span-marker-bert-base-multilingual-cased-multinerd/discussions/1).

Refer to the official [model page](https://huggingface.co/tomaarsen/span-marker-mbert-base-multinerd) to review their results and training script.

## Results:

| **Language** | **Precision** | **Recall** | **F1** |

|--------------|---------------|------------|-----------|

| **all** | 92.03 | 91.73 | **91.88** |

| **de** | 94.96 | 94.87 | **94.91** |

| **en** | 93.69 | 93.75 | **93.72** |

| **es** | 91.19 | 90.69 | **90.94** |

| **fr** | 91.36 | 90.74 | **91.05** |

| **it** | 90.51 | 92.57 | **91.53** |

| **nl** | 93.23 | 92.13 | **92.67** |

| **pl** | 92.17 | 91.59 | **91.88** |

| **pt** | 92.70 | 91.59 | **92.14** |

| **ru** | 92.31 | 92.36 | **92.34** |

| **zh** | 88.91 | 87.53 | **88.22** |

Below is a combined table that compares the results of the cased and uncased models for each language:

| **Language** | **Metric** | **Cased** | **Uncased** |

|--------------|--------------|-----------|-------------|

| **all** | Precision | 92.42 | 92.03 |

| | Recall | 92.81 | 91.73 |

| | F1 | **92.61** | 91.88 |

| **de** | Precision | 95.03 | 94.96 |

| | Recall | 95.07 | 94.87 |

| | F1 | **95.05** | 94.91 |

| **en** | Precision | 95.00 | 93.69 |

| | Recall | 95.40 | 93.75 |

| | F1 | **95.20** | 93.72 |

| **es** | Precision | 92.05 | 91.19 |

| | Recall | 91.37 | 90.69 |

| | F1 | **91.71** | 90.94 |

| **fr** | Precision | 92.37 | 91.36 |

| | Recall | 91.41 | 90.74 |

| | F1 | **91.89** | 91.05 |

| **it** | Precision | 91.45 | 90.51 |

| | Recall | 93.15 | 92.57 |

| | F1 | **92.29** | 91.53 |

| **nl** | Precision | 93.85 | 93.23 |

| | Recall | 92.98 | 92.13 |

| | F1 | **93.41** | 92.67 |

| **pl** | Precision | 93.13 | 92.17 |

| | Recall | 92.66 | 91.59 |

| | F1 | **92.89** | 91.88 |

| **pt** | Precision | 93.60 | 92.70 |

| | Recall | 92.50 | 91.59 |

| | F1 | **93.05** | 92.14 |

| **ru** | Precision | 93.25 | 92.31 |

| | Recall | 93.32 | 92.36 |

| | F1 | **93.29** | 92.34 |

| **zh** | Precision | 89.47 | 88.91 |

| | Recall | 88.40 | 87.53 |

| | F1 | **88.93** | 88.22 |

Short discussion:

Upon examining the results, one might conclude that the cased version of the model is better than the uncased version,

as it outperforms the latter across all languages. However, I recommend that users test both models on their specific

datasets (or domains) to determine which one actually delivers better performance. My reasoning for this suggestion

stems from a brief comparison I conducted on the FOOD (food) entities. I found that both cased and uncased models are

sensitive to the full stop punctuation mark. We direct readers to the section: Quick Comparison on FOOD Entities.

## Label set

| Class | Description | Examples |

|-------|-------------|----------|

| **PER (person)** | People | Ray Charles, Jessica Alba, Leonardo DiCaprio, Roger Federer, Anna Massey. |

| **ORG (organization)** | Associations, companies, agencies, institutions, nationalities and religious or political groups | University of Edinburgh, San Francisco Giants, Google, Democratic Party. |

| **LOC (location)** | Physical locations (e.g. mountains, bodies of water), geopolitical entities (e.g. cities, states), and facilities (e.g. bridges, buildings, airports). | Rome, Lake Paiku, Chrysler Building, Mount Rushmore, Mississippi River. |

| **ANIM (animal)** | Breeds of dogs, cats and other animals, including their scientific names. | Maine Coon, African Wild Dog, Great White Shark, New Zealand Bellbird. |

| **BIO (biological)** | Genus of fungus, bacteria and protoctists, families of viruses, and other biological entities. | Herpes Simplex Virus, Escherichia Coli, Salmonella, Bacillus Anthracis. |

| **CEL (celestial)** | Planets, stars, asteroids, comets, nebulae, galaxies and other astronomical objects. | Sun, Neptune, Asteroid 187 Lamberta, Proxima Centauri, V838 Monocerotis. |

| **DIS (disease)** | Physical, mental, infectious, non-infectious, deficiency, inherited, degenerative, social and self-inflicted diseases. | Alzheimer’s Disease, Cystic Fibrosis, Dilated Cardiomyopathy, Arthritis. |

| **EVE (event)** | Sport events, battles, wars and other events. | American Civil War, 2003 Wimbledon Championships, Cannes Film Festival. |

| **FOOD (food)** | Foods and drinks. | Carbonara, Sangiovese, Cheddar Beer Fondue, Pizza Margherita. |

| **INST (instrument)** | Technological instruments, mechanical instruments, musical instruments, and other tools. | Spitzer Space Telescope, Commodore 64, Skype, Apple Watch, Fender Stratocaster. |

| **MEDIA (media)** | Titles of films, books, magazines, songs and albums, fictional characters and languages. | Forbes, American Psycho, Kiss Me Once, Twin Peaks, Disney Adventures. |

| **PLANT (plant)** | Types of trees, flowers, and other plants, including their scientific names. | Salix, Quercus Petraea, Douglas Fir, Forsythia, Artemisia Maritima. |

| **MYTH (mythological)** | Mythological and religious entities. | Apollo, Persephone, Aphrodite, Saint Peter, Pope Gregory I, Hercules. |

| **TIME (time)** | Specific and well-defined time intervals, such as eras, historical periods, centuries, years and important days. No months and days of the week. | Renaissance, Middle Ages, Christmas, Great Depression, 17th Century, 2012. |

| **VEHI (vehicle)** | Cars, motorcycles and other vehicles. | Ferrari Testarossa, Suzuki Jimny, Honda CR-X, Boeing 747, Fairey Fulmar. |

## Inference Example

```python

# install span_marker

(env)$ pip install span_marker

from span_marker import SpanMarkerModel

model = SpanMarkerModel.from_pretrained("lxyuan/span-marker-bert-base-multilingual-uncased-multinerd")

description = "Singapore is renowned for its hawker centers offering dishes \

like Hainanese chicken rice and laksa, while Malaysia boasts dishes such as \

nasi lemak and rendang, reflecting its rich culinary heritage."

entities = model.predict(description)

entities

>>>

[

{'span': 'Singapore', 'label': 'LOC', 'score': 0.9999247789382935, 'char_start_index': 0, 'char_end_index': 9},

{'span': 'laksa', 'label': 'FOOD', 'score': 0.794235348701477, 'char_start_index': 93, 'char_end_index': 98},

{'span': 'Malaysia', 'label': 'LOC', 'score': 0.9999157190322876, 'char_start_index': 106, 'char_end_index': 114}

]

# missed: Hainanese chicken rice as FOOD

# missed: nasi lemak as FOOD

# missed: rendang as FOOD

# note: Unfortunately, this uncased version still fails to pick up those commonly lowercased food entities and even misses out on the capitalized `Hainanese chicken rice` entity.

```

#### Quick test on Chinese

```python

from span_marker import SpanMarkerModel

model = SpanMarkerModel.from_pretrained("lxyuan/span-marker-bert-base-multilingual-uncased-multinerd")

# translate to chinese

description = "Singapore is renowned for its hawker centers offering dishes \

like Hainanese chicken rice and laksa, while Malaysia boasts dishes such as \

nasi lemak and rendang, reflecting its rich culinary heritage."

zh_description = "新加坡因其小贩中心提供海南鸡饭和叻沙等菜肴而闻名, 而马来西亚则拥有椰浆饭和仁当等菜肴,反映了其丰富的烹饪传统."

entities = model.predict(zh_description)

entities

>>>

[

{'span': '新加坡', 'label': 'LOC', 'score': 0.8477746248245239, 'char_start_index': 0, 'char_end_index': 3},

{'span': '马来西亚', 'label': 'LOC', 'score': 0.7525337934494019, 'char_start_index': 27, 'char_end_index': 31}

]

# It only managed to capture two countries: Singapore and Malaysia.

# All other entities were missed out.

# Same prediction as the [uncased model](https://huggingface.co/lxyuan/span-marker-bert-base-multilingual-cased-multinerd)

```

### Quick Comparison on FOOD Entities

In this quick comparison, we found that a full stop punctuation mark seems to help the uncased model identify food entities,

regardless of whether they are capitalized or in uppercase. In contrast, the cased model doesn't respond well to full stops,

and adding them would lower the prediction score.

```python

from span_marker import SpanMarkerModel

cased_model = SpanMarkerModel.from_pretrained("lxyuan/span-marker-bert-base-multilingual-cased-multinerd")

uncased_model = SpanMarkerModel.from_pretrained("lxyuan/span-marker-bert-base-multilingual-uncased-multinerd")

# no full stop mark

uncased_model.predict("i love fried chicken and korea bbq")

>>> []

uncased_model.predict("i love fried chicken and korea BBQ") # Uppercase BBQ only

>>> []

uncased_model.predict("i love fried chicken and Korea BBQ") # Capitalize korea and uppercase BBQ

>>> []

# add full stop to get better result

uncased_model.predict("i love fried chicken and korea bbq.")

>>> [

{'span': 'fried chicken', 'label': 'FOOD', 'score': 0.6531468629837036, 'char_start_index': 7, 'char_end_index': 20},

{'span': 'korea bbq', 'label': 'FOOD', 'score': 0.9738698601722717, 'char_start_index': 25,'char_end_index': 34}

]

uncased_model.predict("i love fried chicken and korea BBQ.")

>>> [

{'span': 'fried chicken', 'label': 'FOOD', 'score': 0.6531468629837036, 'char_start_index': 7, 'char_end_index': 20},

{'span': 'korea BBQ', 'label': 'FOOD', 'score': 0.9738698601722717, 'char_start_index': 25, 'char_end_index': 34}

]

uncased_model.predict("i love fried chicken and Korea BBQ.")

>>> [

{'span': 'fried chicken', 'label': 'FOOD', 'score': 0.6531468629837036, 'char_start_index': 7, 'char_end_index': 20},

{'span': 'Korea BBQ', 'label': 'FOOD', 'score': 0.9738698601722717, 'char_start_index': 25, 'char_end_index': 34}

]

# no full stop mark

cased_model.predict("i love fried chicken and korea bbq")

>>> [

{'span': 'korea bbq', 'label': 'FOOD', 'score': 0.5054221749305725, 'char_start_index': 25, 'char_end_index': 34}

]

cased_model.predict("i love fried chicken and korea BBQ")

>>> [

{'span': 'korea BBQ', 'label': 'FOOD', 'score': 0.6987857222557068, 'char_start_index': 25, 'char_end_index': 34}

]

cased_model.predict("i love fried chicken and Korea BBQ")

>>> [

{'span': 'Korea BBQ', 'label': 'FOOD', 'score': 0.9755308032035828, 'char_start_index': 25, 'char_end_index': 34}

]

# add a fullstop mark hurt the cased model prediction score a little bit

cased_model.predict("i love fried chicken and korea bbq.")

>>> []

cased_model.predict("i love fried chicken and korea BBQ.")

>>> [

{'span': 'korea BBQ', 'label': 'FOOD', 'score': 0.5078140497207642, 'char_start_index': 25, 'char_end_index': 34}

]

cased_model.predict("i love fried chicken and Korea BBQ.")

>>> [

{'span': 'Korea BBQ', 'label': 'FOOD', 'score': 0.895089328289032, 'char_start_index': 25, 'char_end_index': 34}

]

```

## Training procedure

One can reproduce the result running this [script](https://huggingface.co/tomaarsen/span-marker-mbert-base-multinerd/blob/main/train.py)

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Overall Precision | Overall Recall | Overall F1 | Overall Accuracy |

|:-------------:|:-----:|:------:|:---------------:|:-----------------:|:--------------:|:----------:|:----------------:|

| 0.0157 | 1.0 | 50369 | 0.0048 | 0.9143 | 0.8986 | 0.9064 | 0.9807 |

| 0.003 | 2.0 | 100738 | 0.0047 | 0.9237 | 0.9126 | 0.9181 | 0.9835 |

| 0.0017 | 3.0 | 151107 | 0.0054 | 0.9275 | 0.9147 | 0.9210 | 0.9842 |

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu117

- Datasets 2.14.3

- Tokenizers 0.13.3 | 18,283 | [

[

-0.027557373046875,

-0.049896240234375,

0.01641845703125,

0.016082763671875,

-0.010986328125,

0.0208892822265625,

-0.0178375244140625,

-0.0291900634765625,

0.047454833984375,

0.030029296875,

-0.033233642578125,

-0.058868408203125,

-0.040863037109375,

0.00352... |

maywell/synatra_V0.01 | 2023-10-08T09:21:04.000Z | [

"transformers",

"pytorch",

"mistral",

"text-generation",

"ko",

"license:cc-by-nc-4.0",

"endpoints_compatible",

"has_space",

"text-generation-inference",

"region:us"

] | text-generation | maywell | null | null | maywell/synatra_V0.01 | 1 | 1,299 | transformers | 2023-10-07T12:47:34 | ---

license: cc-by-nc-4.0

language:

- ko

library_name: transformers

---

테스트 모델입니다.

### 사용시 주의사항

프롬프트 입력시 ```[INST] 프롬프트 메시지 [\INST]``` 형식 맞추어 주셔야 합니다. 원본 모델이 그렇습니다. | 168 | [

[

-0.019683837890625,

-0.040496826171875,

0.007587432861328125,

0.056610107421875,

-0.055328369140625,

0.0148773193359375,

0.03778076171875,

-0.0018796920776367188,

0.04541015625,

0.050079345703125,

-0.00981903076171875,

-0.037139892578125,

-0.048309326171875,

... |

mncai/Mistral-7B-v0.1-combine-1k | 2023-10-22T06:02:24.000Z | [

"transformers",

"pytorch",

"llama",

"text-generation",

"MindsAndCompany",

"en",

"ko",

"dataset:DopeorNope/combined",

"arxiv:2306.02707",

"license:mit",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | mncai | null | null | mncai/Mistral-7B-v0.1-combine-1k | 0 | 1,299 | transformers | 2023-10-22T05:30:45 | ---

pipeline_tag: text-generation

license: mit

language:

- en

- ko

library_name: transformers

tags:

- MindsAndCompany

datasets:

- DopeorNope/combined

---

## Model Details

* **Developed by**: [Minds And Company](https://mnc.ai/)

* **Backbone Model**: [Mistral-7B-v0.1](mistralai/Mistral-7B-v0.1)

* **Library**: [HuggingFace Transformers](https://github.com/huggingface/transformers)

## Dataset Details

### Used Datasets

- - DopeorNope/combined

### Prompt Template

- Llama Prompt Template

## Limitations & Biases:

Llama2 and fine-tuned variants are a new technology that carries risks with use. Testing conducted to date has been in English, and has not covered, nor could it cover all scenarios. For these reasons, as with all LLMs, Llama 2 and any fine-tuned varient's potential outputs cannot be predicted in advance, and the model may in some instances produce inaccurate, biased or other objectionable responses to user prompts. Therefore, before deploying any applications of Llama 2 variants, developers should perform safety testing and tuning tailored to their specific applications of the model.

Please see the Responsible Use Guide available at https://ai.meta.com/llama/responsible-use-guide/

## License Disclaimer:

This model is bound by the license & usage restrictions of the original Llama-2 model. And comes with no warranty or gurantees of any kind.

## Contact Us

- [Minds And Company](https://mnc.ai/)

## Citiation:

Please kindly cite using the following BibTeX:

```bibtex

@misc{mukherjee2023orca,

title={Orca: Progressive Learning from Complex Explanation Traces of GPT-4},

author={Subhabrata Mukherjee and Arindam Mitra and Ganesh Jawahar and Sahaj Agarwal and Hamid Palangi and Ahmed Awadallah},

year={2023},

eprint={2306.02707},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

```

@misc{Orca-best,

title = {Orca-best: A filtered version of orca gpt4 dataset.},

author = {Shahul Es},

year = {2023},

publisher = {HuggingFace},

journal = {HuggingFace repository},

howpublished = {\url{https://huggingface.co/datasets/shahules786/orca-best/},

}

```

```

@software{touvron2023llama2,

title={Llama 2: Open Foundation and Fine-Tuned Chat Models},

author={Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava,

Shruti Bhosale, Dan Bikel, Lukas Blecher, Cristian Canton Ferrer, Moya Chen, Guillem Cucurull, David Esiobu, Jude Fernandes, Jeremy Fu, Wenyin Fu, Brian Fuller,

Cynthia Gao, Vedanuj Goswami, Naman Goyal, Anthony Hartshorn, Saghar Hosseini, Rui Hou, Hakan Inan, Marcin Kardas, Viktor Kerkez Madian Khabsa, Isabel Kloumann,

Artem Korenev, Punit Singh Koura, Marie-Anne Lachaux, Thibaut Lavril, Jenya Lee, Diana Liskovich, Yinghai Lu, Yuning Mao, Xavier Martinet, Todor Mihaylov,

Pushkar Mishra, Igor Molybog, Yixin Nie, Andrew Poulton, Jeremy Reizenstein, Rashi Rungta, Kalyan Saladi, Alan Schelten, Ruan Silva, Eric Michael Smith,

Ranjan Subramanian, Xiaoqing Ellen Tan, Binh Tang, Ross Taylor, Adina Williams, Jian Xiang Kuan, Puxin Xu , Zheng Yan, Iliyan Zarov, Yuchen Zhang, Angela Fan,

Melanie Kambadur, Sharan Narang, Aurelien Rodriguez, Robert Stojnic, Sergey Edunov, Thomas Scialom},

year={2023}

}

```

> Readme format: [Riiid/sheep-duck-llama-2-70b-v1.1](https://huggingface.co/Riiid/sheep-duck-llama-2-70b-v1.1) | 3,404 | [

[

-0.0311279296875,

-0.048858642578125,

0.011444091796875,

0.0198211669921875,

-0.0239410400390625,

0.007663726806640625,

0.01218414306640625,

-0.05291748046875,

0.0167999267578125,

0.0253753662109375,

-0.05499267578125,

-0.04119873046875,

-0.046356201171875,

... |

s-nlp/Mutual_Implication_Score | 2022-07-11T12:36:45.000Z | [

"transformers",

"pytorch",

"roberta",

"paraphrase detection",

"paraphrase",

"paraphrasing",

"en",

"endpoints_compatible",

"region:us"

] | null | s-nlp | null | null | s-nlp/Mutual_Implication_Score | 0 | 1,297 | transformers | 2022-04-12T10:58:35 | ---

language:

- en

tags:

- paraphrase detection

- paraphrase

- paraphrasing

licenses:

- cc-by-nc-sa

---

## Model overview

Mutual Implication Score is a symmetric measure of text semantic similarity

based on a RoBERTA model pretrained for natural language inference

and fine-tuned on a paraphrase detection dataset.

The code for inference and evaluation of the model is available [here](https://github.com/skoltech-nlp/mutual_implication_score).

This measure is **particularly useful for paraphrase detection**, but can also be applied to other semantic similarity tasks, such as content similarity scoring in text style transfer.

## How to use

The following snippet illustrates code usage:

```python

!pip install mutual-implication-score

from mutual_implication_score import MIS

mis = MIS(device='cpu')#cuda:0 for using cuda with certain index

source_texts = ['I want to leave this room',

'Hello world, my name is Nick']

paraphrases = ['I want to go out of this room',

'Hello world, my surname is Petrov']

scores = mis.compute(source_texts, paraphrases)

print(scores)

# expected output: [0.9748, 0.0545]

```

## Model details

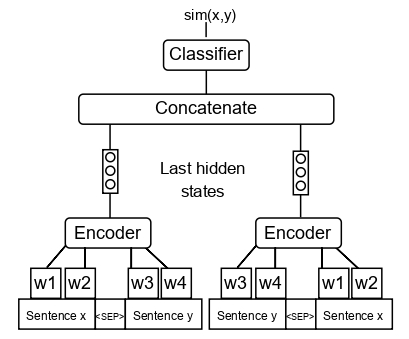

We slightly modify the [RoBERTa-Large NLI](https://huggingface.co/ynie/roberta-large-snli_mnli_fever_anli_R1_R2_R3-nli) model architecture (see the scheme below) and fine-tune it with [QQP](https://www.kaggle.com/c/quora-question-pairs) paraphrase dataset.

## Performance on Text Style Transfer and Paraphrase Detection tasks

This measure was developed in terms of large scale comparison of different measures on text style transfer and paraphrase datasets.

<img src="https://huggingface.co/SkolkovoInstitute/Mutual_Implication_Score/raw/main/corr_main.jpg" alt="drawing" width="1000"/>

The scheme above shows the correlations of measures of different classes with human judgments on paraphrase and text style transfer datasets. The text above each dataset indicates the best-performing measure. The rightmost columns show the mean performance of measures across the datasets.

MIS outperforms all measures on the paraphrase detection task and performs on par with top measures on the text style transfer task.

To learn more, refer to our article: [A large-scale computational study of content preservation measures for text style transfer and paraphrase generation](https://aclanthology.org/2022.acl-srw.23/)

## Citations

If you find this repository helpful, feel free to cite our publication:

```

@inproceedings{babakov-etal-2022-large,

title = "A large-scale computational study of content preservation measures for text style transfer and paraphrase generation",

author = "Babakov, Nikolay and

Dale, David and

Logacheva, Varvara and

Panchenko, Alexander",

booktitle = "Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics: Student Research Workshop",

month = may,

year = "2022",

address = "Dublin, Ireland",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2022.acl-srw.23",

pages = "300--321",

abstract = "Text style transfer and paraphrasing of texts are actively growing areas of NLP, dozens of methods for solving these tasks have been recently introduced. In both tasks, the system is supposed to generate a text which should be semantically similar to the input text. Therefore, these tasks are dependent on methods of measuring textual semantic similarity. However, it is still unclear which measures are the best to automatically evaluate content preservation between original and generated text. According to our observations, many researchers still use BLEU-like measures, while there exist more advanced measures including neural-based that significantly outperform classic approaches. The current problem is the lack of a thorough evaluation of the available measures. We close this gap by conducting a large-scale computational study by comparing 57 measures based on different principles on 19 annotated datasets. We show that measures based on cross-encoder models outperform alternative approaches in almost all cases.We also introduce the Mutual Implication Score (MIS), a measure that uses the idea of paraphrasing as a bidirectional entailment and outperforms all other measures on the paraphrase detection task and performs on par with the best measures in the text style transfer task.",

}

```

## Licensing Information

[Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License][cc-by-nc-sa].

[![CC BY-NC-SA 4.0][cc-by-nc-sa-image]][cc-by-nc-sa]

[cc-by-nc-sa]: http://creativecommons.org/licenses/by-nc-sa/4.0/

[cc-by-nc-sa-image]: https://i.creativecommons.org/l/by-nc-sa/4.0/88x31.png

| 4,847 | [

[

-0.0275726318359375,

-0.065673828125,

0.039764404296875,

0.03131103515625,

-0.0338134765625,

-0.0266265869140625,

-0.01776123046875,

-0.01337432861328125,

0.0183258056640625,

0.037322998046875,

-0.025299072265625,

-0.047760009765625,

-0.053741455078125,

0.03... |

Norod78/SDXL-jojoso_style-Lora | 2023-08-30T12:22:51.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"lora",

"en",

"region:us",

"has_space"

] | text-to-image | Norod78 | null | null | Norod78/SDXL-jojoso_style-Lora | 2 | 1,296 | diffusers | 2023-08-17T12:33:25 | ---

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: jojoso style

tags:

- text-to-image

- stable-diffusion

- lora

- diffusers

widget:

- text: jojoso style cute dog

- text: jojoso style picure of a Pokemon

- text: jojoso style Cthulhu rising from the sea in a great storm

- text: jojoso style girl with a pearl earring by johannes vermeer

inference: true

language:

- en

---

# Trigger words

Use "jojoso style" in your prompts

# Examples

The girl with a pearl earring by johannes vermeer, Very detailed, clean, high quality, sharp image, jojoso style

Cthulhu rising from the sea in a great storm, Very detailed, clean, high quality, sharp image, jojoso style

| 1,346 | [

[

-0.02874755859375,

-0.07275390625,

0.0418701171875,

0.03204345703125,

-0.047149658203125,

0.0008511543273925781,

0.00043964385986328125,

-0.031341552734375,

0.048828125,

0.058807373046875,

-0.0538330078125,

-0.051605224609375,

-0.060272216796875,

0.042175292... |

alvaroalon2/biobert_diseases_ner | 2023-03-17T12:11:20.000Z | [

"transformers",

"pytorch",

"bert",

"token-classification",

"NER",

"Biomedical",

"Diseases",

"en",

"dataset:BC5CDR-diseases",

"dataset:ncbi_disease",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | token-classification | alvaroalon2 | null | null | alvaroalon2/biobert_diseases_ner | 27 | 1,295 | transformers | 2022-03-02T23:29:05 | ---

language: en

license: apache-2.0

tags:

- token-classification

- NER

- Biomedical

- Diseases

datasets:

- BC5CDR-diseases

- ncbi_disease

---

BioBERT model fine-tuned in NER task with BC5CDR-diseases and NCBI-diseases corpus

This was fine-tuned in order to use it in a BioNER/BioNEN system which is available at: https://github.com/librairy/bio-ner | 350 | [

[

-0.02239990234375,

-0.038330078125,

0.03826904296875,

0.0029296875,

-0.00881195068359375,

0.015716552734375,

0.016021728515625,

-0.04949951171875,

0.037994384765625,

0.041748046875,

-0.039306640625,

-0.0611572265625,

-0.0290985107421875,

0.036163330078125,

... |

AIARTCHAN/7pa | 2023-03-05T01:22:00.000Z | [

"diffusers",

"stable-diffusion",

"aiartchan",

"text-to-image",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | AIARTCHAN | null | null | AIARTCHAN/7pa | 10 | 1,294 | diffusers | 2023-03-05T01:10:23 | ---

license: creativeml-openrail-m

library_name: diffusers

pipeline_tag: text-to-image

tags:

- stable-diffusion

- aiartchan

---

# 7pa

[원본글](https://arca.live/b/aiart/70729603)

[civitai](https://civitai.com/models/13468)

# Download

- [original 4.27GB](https://civitai.com/api/download/models/15869)

- [fp16 2.13GB](https://huggingface.co/AIARTCHAN/7pa/blob/main/7pa-fp16.safetensors)

7th anime v3 + 파스텔 + 어비스오렌지2(sfw)

| 891 | [

[

-0.040740966796875,

-0.01422119140625,

0.020538330078125,

0.056732177734375,

-0.0447998046875,

-0.0006814002990722656,

0.016387939453125,

-0.043182373046875,

0.062103271484375,

0.028656005859375,

-0.034332275390625,

-0.0234222412109375,

-0.046173095703125,

0... |

mdraw/german-news-sentiment-bert | 2023-03-17T14:29:53.000Z | [

"transformers",

"pytorch",

"jax",

"safetensors",

"bert",

"text-classification",

"endpoints_compatible",

"region:us"

] | text-classification | mdraw | null | null | mdraw/german-news-sentiment-bert | 4 | 1,292 | transformers | 2022-03-02T23:29:05 | # German sentiment BERT finetuned on news data

Sentiment analysis model based on https://huggingface.co/oliverguhr/german-sentiment-bert, with additional training on German news texts about migration.

This model is part of the project https://github.com/text-analytics-20/news-sentiment-development, which explores sentiment development in German news articles about migration between 2007 and 2019.

Code for inference (predicting sentiment polarity) on raw text can be found at https://github.com/text-analytics-20/news-sentiment-development/blob/main/sentiment_analysis/bert.py

If you are not interested in polarity but just want to predict discrete class labels (0: positive, 1: negative, 2: neutral), you can also use the model with Oliver Guhr's `germansentiment` package as follows:

First install the package from PyPI:

```bash

pip install germansentiment

```

Then you can use the model in Python:

```python

from germansentiment import SentimentModel

model = SentimentModel('mdraw/german-news-sentiment-bert')

# Examples from our validation dataset

texts = [

'[...], schwärmt der parteilose Vizebürgermeister und Historiker Christian Matzka von der "tollen Helferszene".',

'Flüchtlingsheim 11.05 Uhr: Massenschlägerei',

'Rotterdam habe einen Migrantenanteil von mehr als 50 Prozent.',

]

result = model.predict_sentiment(texts)

print(result)

```

The code above will print:

```python

['positive', 'negative', 'neutral']

```

| 1,454 | [

[

-0.040863037109375,

-0.044677734375,

0.02569580078125,

0.031097412109375,

-0.0279388427734375,

-0.0211639404296875,

-0.01338958740234375,

-0.01288604736328125,

0.02178955078125,

0.003307342529296875,

-0.061614990234375,

-0.058837890625,

-0.0266876220703125,

... |

timm/tf_efficientnetv2_xl.in21k_ft_in1k | 2023-04-27T22:18:18.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"dataset:imagenet-21k",

"arxiv:2104.00298",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/tf_efficientnetv2_xl.in21k_ft_in1k | 3 | 1,292 | timm | 2022-12-13T00:20:45 | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

- imagenet-21k

---

# Model card for tf_efficientnetv2_xl.in21k_ft_in1k

A EfficientNet-v2 image classification model. Trained on ImageNet-21k and fine-tuned on ImageNet-1k in Tensorflow by paper authors, ported to PyTorch by Ross Wightman.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 208.1

- GMACs: 52.8

- Activations (M): 139.2

- Image size: train = 384 x 384, test = 512 x 512

- **Papers:**

- EfficientNetV2: Smaller Models and Faster Training: https://arxiv.org/abs/2104.00298

- **Dataset:** ImageNet-1k

- **Pretrain Dataset:** ImageNet-21k

- **Original:** https://github.com/tensorflow/tpu/tree/master/models/official/efficientnet

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('tf_efficientnetv2_xl.in21k_ft_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'tf_efficientnetv2_xl.in21k_ft_in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

# print shape of each feature map in output

# e.g.:

# torch.Size([1, 32, 192, 192])

# torch.Size([1, 64, 96, 96])

# torch.Size([1, 96, 48, 48])

# torch.Size([1, 256, 24, 24])

# torch.Size([1, 640, 12, 12])

print(o.shape)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'tf_efficientnetv2_xl.in21k_ft_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 1280, 12, 12) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@inproceedings{tan2021efficientnetv2,

title={Efficientnetv2: Smaller models and faster training},

author={Tan, Mingxing and Le, Quoc},

booktitle={International conference on machine learning},

pages={10096--10106},

year={2021},

organization={PMLR}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

| 4,195 | [

[

-0.0279998779296875,

-0.033935546875,

-0.005199432373046875,

0.007396697998046875,

-0.0240936279296875,

-0.030364990234375,

-0.0203857421875,

-0.029632568359375,

0.0119476318359375,

0.02923583984375,

-0.026824951171875,

-0.046630859375,

-0.0540771484375,

-0.... |

julien-c/hotdog-not-hotdog | 2022-09-05T21:30:21.000Z | [

"transformers",

"pytorch",

"tensorboard",

"coreml",

"vit",

"image-classification",

"huggingpics",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | image-classification | julien-c | null | null | julien-c/hotdog-not-hotdog | 14 | 1,291 | transformers | 2022-03-02T23:29:05 | ---

tags:

- image-classification

- huggingpics

metrics:

- accuracy

model-index:

- name: hotdog-not-hotdog

results:

- task:

name: Image Classification

type: image-classification

metrics:

- name: Accuracy

type: accuracy

value: 0.824999988079071

---

# hotdog-not-hotdog

Autogenerated by HuggingPics🤗🖼️

Create your own image classifier for **anything** by running [the demo on Google Colab](https://colab.research.google.com/github/nateraw/huggingpics/blob/main/HuggingPics.ipynb).

Report any issues with the demo at the [github repo](https://github.com/nateraw/huggingpics).

## Example Images

#### hot dog

#### not hot dog

| 748 | [

[

-0.0504150390625,

-0.05133056640625,

0.00490570068359375,

0.038787841796875,

-0.03424072265625,

0.0029773712158203125,

0.007415771484375,

-0.021331787109375,

0.04937744140625,

0.0179443359375,

-0.0211944580078125,

-0.0557861328125,

-0.044525146484375,

0.0099... |

ml6team/keyphrase-generation-t5-small-inspec | 2022-08-19T11:54:17.000Z | [

"transformers",

"pytorch",

"t5",

"text2text-generation",

"keyphrase-generation",

"en",

"dataset:midas/inspec",

"license:mit",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"text-generation-inference",

"region:us"

] | text2text-generation | ml6team | null | null | ml6team/keyphrase-generation-t5-small-inspec | 4 | 1,290 | transformers | 2022-04-27T12:37:16 | ---

language: en

license: mit

tags:

- keyphrase-generation

datasets:

- midas/inspec

widget:

- text: "Keyphrase extraction is a technique in text analysis where you extract the important keyphrases from a document.

Thanks to these keyphrases humans can understand the content of a text very quickly and easily without reading

it completely. Keyphrase extraction was first done primarily by human annotators, who read the text in detail

and then wrote down the most important keyphrases. The disadvantage is that if you work with a lot of documents,

this process can take a lot of time.

Here is where Artificial Intelligence comes in. Currently, classical machine learning methods, that use statistical

and linguistic features, are widely used for the extraction process. Now with deep learning, it is possible to capture

the semantic meaning of a text even better than these classical methods. Classical methods look at the frequency,

occurrence and order of words in the text, whereas these neural approaches can capture long-term semantic dependencies

and context of words in a text."

example_title: "Example 1"

- text: "In this work, we explore how to learn task specific language models aimed towards learning rich representation of keyphrases from text documents. We experiment with different masking strategies for pre-training transformer language models (LMs) in discriminative as well as generative settings. In the discriminative setting, we introduce a new pre-training objective - Keyphrase Boundary Infilling with Replacement (KBIR), showing large gains in performance (up to 9.26 points in F1) over SOTA, when LM pre-trained using KBIR is fine-tuned for the task of keyphrase extraction. In the generative setting, we introduce a new pre-training setup for BART - KeyBART, that reproduces the keyphrases related to the input text in the CatSeq format, instead of the denoised original input. This also led to gains in performance (up to 4.33 points inF1@M) over SOTA for keyphrase generation. Additionally, we also fine-tune the pre-trained language models on named entity recognition(NER), question answering (QA), relation extraction (RE), abstractive summarization and achieve comparable performance with that of the SOTA, showing that learning rich representation of keyphrases is indeed beneficial for many other fundamental NLP tasks."

example_title: "Example 2"

model-index:

- name: DeDeckerThomas/keyphrase-generation-t5-small-inspec

results:

- task:

type: keyphrase-generation

name: Keyphrase Generation

dataset:

type: midas/inspec

name: inspec

metrics:

- type: F1@M (Present)

value: 0.317

name: F1@M (Present)

- type: F1@O (Present)

value: 0.279

name: F1@O (Present)

- type: F1@M (Absent)

value: 0.073

name: F1@M (Absent)

- type: F1@O (Absent)

value: 0.065

name: F1@O (Absent)

---

# 🔑 Keyphrase Generation Model: T5-small-inspec

Keyphrase extraction is a technique in text analysis where you extract the important keyphrases from a document. Thanks to these keyphrases humans can understand the content of a text very quickly and easily without reading it completely. Keyphrase extraction was first done primarily by human annotators, who read the text in detail and then wrote down the most important keyphrases. The disadvantage is that if you work with a lot of documents, this process can take a lot of time ⏳.

Here is where Artificial Intelligence 🤖 comes in. Currently, classical machine learning methods, that use statistical and linguistic features, are widely used for the extraction process. Now with deep learning, it is possible to capture the semantic meaning of a text even better than these classical methods. Classical methods look at the frequency, occurrence and order of words in the text, whereas these neural approaches can capture long-term semantic dependencies and context of words in a text.

## 📓 Model Description

This model uses [T5-small model](https://huggingface.co/t5-small) as its base model and fine-tunes it on the [Inspec dataset](https://huggingface.co/datasets/midas/inspec). Keyphrase generation transformers are fine-tuned as a text-to-text generation problem where the keyphrases are generated. The result is a concatenated string with all keyphrases separated by a given delimiter (i.e. “;”). These models are capable of generating present and absent keyphrases.

## ✋ Intended Uses & Limitations

### 🛑 Limitations

* This keyphrase generation model is very domain-specific and will perform very well on abstracts of scientific papers. It's not recommended to use this model for other domains, but you are free to test it out.

* Only works for English documents.

* Sometimes the output doesn't make any sense.

### ❓ How To Use

```python

# Model parameters

from transformers import (

Text2TextGenerationPipeline,

AutoModelForSeq2SeqLM,

AutoTokenizer,

)

class KeyphraseGenerationPipeline(Text2TextGenerationPipeline):

def __init__(self, model, keyphrase_sep_token=";", *args, **kwargs):

super().__init__(

model=AutoModelForSeq2SeqLM.from_pretrained(model),

tokenizer=AutoTokenizer.from_pretrained(model),

*args,

**kwargs

)

self.keyphrase_sep_token = keyphrase_sep_token

def postprocess(self, model_outputs):

results = super().postprocess(

model_outputs=model_outputs

)

return [[keyphrase.strip() for keyphrase in result.get("generated_text").split(self.keyphrase_sep_token) if keyphrase != ""] for result in results]

```

```python

# Load pipeline

model_name = "ml6team/keyphrase-generation-t5-small-inspec"

generator = KeyphraseGenerationPipeline(model=model_name)

```

```python

text = """

Keyphrase extraction is a technique in text analysis where you extract the

important keyphrases from a document. Thanks to these keyphrases humans can

understand the content of a text very quickly and easily without reading it

completely. Keyphrase extraction was first done primarily by human annotators,

who read the text in detail and then wrote down the most important keyphrases.

The disadvantage is that if you work with a lot of documents, this process

can take a lot of time.

Here is where Artificial Intelligence comes in. Currently, classical machine

learning methods, that use statistical and linguistic features, are widely used

for the extraction process. Now with deep learning, it is possible to capture

the semantic meaning of a text even better than these classical methods.

Classical methods look at the frequency, occurrence and order of words

in the text, whereas these neural approaches can capture long-term

semantic dependencies and context of words in a text.

""".replace("\n", " ")

keyphrases = generator(text)

print(keyphrases)

```

```

# Output

[['keyphrase extraction', 'text analysis', 'artificial intelligence', 'classical machine learning methods']]

```

## 📚 Training Dataset

[Inspec](https://huggingface.co/datasets/midas/inspec) is a keyphrase extraction/generation dataset consisting of 2000 English scientific papers from the scientific domains of Computers and Control and Information Technology published between 1998 to 2002. The keyphrases are annotated by professional indexers or editors.

You can find more information in the [paper](https://dl.acm.org/doi/10.3115/1119355.1119383).

## 👷♂️ Training Procedure

### Training Parameters

| Parameter | Value |

| --------- | ------|

| Learning Rate | 5e-5 |

| Epochs | 50 |

| Early Stopping Patience | 1 |

### Preprocessing

The documents in the dataset are already preprocessed into list of words with the corresponding keyphrases. The only thing that must be done is tokenization and joining all keyphrases into one string with a certain seperator of choice( ```;``` ).

```python

from datasets import load_dataset

from transformers import AutoTokenizer

# Tokenizer

tokenizer = AutoTokenizer.from_pretrained("t5-small", add_prefix_space=True)

# Dataset parameters

dataset_full_name = "midas/inspec"

dataset_subset = "raw"

dataset_document_column = "document"

keyphrase_sep_token = ";"

def preprocess_keyphrases(text_ids, kp_list):

kp_order_list = []

kp_set = set(kp_list)

text = tokenizer.decode(

text_ids, skip_special_tokens=True, clean_up_tokenization_spaces=True

)

text = text.lower()

for kp in kp_set:

kp = kp.strip()

kp_index = text.find(kp.lower())

kp_order_list.append((kp_index, kp))

kp_order_list.sort()

present_kp, absent_kp = [], []

for kp_index, kp in kp_order_list:

if kp_index < 0:

absent_kp.append(kp)

else:

present_kp.append(kp)

return present_kp, absent_kp

def preprocess_fuction(samples):

processed_samples = {"input_ids": [], "attention_mask": [], "labels": []}

for i, sample in enumerate(samples[dataset_document_column]):

input_text = " ".join(sample)

inputs = tokenizer(

input_text,

padding="max_length",

truncation=True,

)

present_kp, absent_kp = preprocess_keyphrases(

text_ids=inputs["input_ids"],

kp_list=samples["extractive_keyphrases"][i]

+ samples["abstractive_keyphrases"][i],

)

keyphrases = present_kp

keyphrases += absent_kp

target_text = f" {keyphrase_sep_token} ".join(keyphrases)

with tokenizer.as_target_tokenizer():

targets = tokenizer(

target_text, max_length=40, padding="max_length", truncation=True

)

targets["input_ids"] = [

(t if t != tokenizer.pad_token_id else -100)

for t in targets["input_ids"]

]

for key in inputs.keys():

processed_samples[key].append(inputs[key])

processed_samples["labels"].append(targets["input_ids"])

return processed_samples

# Load dataset

dataset = load_dataset(dataset_full_name, dataset_subset)

# Preprocess dataset

tokenized_dataset = dataset.map(preprocess_fuction, batched=True)

```

### Postprocessing

For the post-processing, you will need to split the string based on the keyphrase separator.

```python

def extract_keyphrases(examples):

return [example.split(keyphrase_sep_token) for example in examples]

```

## 📝 Evaluation Results

Traditional evaluation methods are the precision, recall and F1-score @k,m where k is the number that stands for the first k predicted keyphrases and m for the average amount of predicted keyphrases. In keyphrase generation you also look at F1@O where O stands for the number of ground truth keyphrases.

The model achieves the following results on the Inspec test set:

Extractive keyphrases

| Dataset | P@5 | R@5 | F1@5 | P@10 | R@10 | F1@10 | P@M | R@M | F1@M | P@O | R@O | F1@O |

|:-----------------:|:----:|:----:|:----:|:----:|:----:|:-----:|:----:|:----:|:----:|:----:|:----:|:----:|

| Inspec Test Set | 0.33 | 0.31 | 0.29 | 0.17 | 0.31 | 0.20 | 0.41 | 0.31 | 0.32 | 0.28 | 0.28 | 0.28 |

Abstractive keyphrases

| Dataset | P@5 | R@5 | F1@5 | P@10 | R@10 | F1@10 | P@M | R@M | F1@M | P@O | R@O | F1@O |

|:-----------------:|:----:|:----:|:----:|:----:|:----:|:-----:|:----:|:----:|:----:|:----:|:----:|:----:|

| Inspec Test Set | 0.05 | 0.09 | 0.06 | 0.03 | 0.09 | 0.04 | 0.08 | 0.09 | 0.07 | 0.06 | 0.06 | 0.06 |

## 🚨 Issues

Please feel free to start discussions in the Community Tab. | 11,590 | [

[

-0.006015777587890625,

-0.048797607421875,

0.023406982421875,

0.006561279296875,

-0.0284576416015625,

0.0070343017578125,

-0.0204620361328125,

-0.01399993896484375,

-0.0129852294921875,

0.017547607421875,

-0.03594970703125,

-0.044891357421875,

-0.052154541015625... |

cahya/bert-base-indonesian-522M | 2021-05-19T13:38:45.000Z | [

"transformers",

"pytorch",

"tf",

"jax",

"bert",

"fill-mask",

"id",

"dataset:wikipedia",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | cahya | null | null | cahya/bert-base-indonesian-522M | 17 | 1,289 | transformers | 2022-03-02T23:29:05 | ---

language: "id"

license: "mit"

datasets:

- wikipedia

widget:

- text: "Ibu ku sedang bekerja [MASK] sawah."

---

# Indonesian BERT base model (uncased)

## Model description

It is BERT-base model pre-trained with indonesian Wikipedia using a masked language modeling (MLM) objective. This

model is uncased: it does not make a difference between indonesia and Indonesia.

This is one of several other language models that have been pre-trained with indonesian datasets. More detail about

its usage on downstream tasks (text classification, text generation, etc) is available at [Transformer based Indonesian Language Models](https://github.com/cahya-wirawan/indonesian-language-models/tree/master/Transformers)

## Intended uses & limitations

### How to use

You can use this model directly with a pipeline for masked language modeling:

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='cahya/bert-base-indonesian-522M')

>>> unmasker("Ibu ku sedang bekerja [MASK] supermarket")

[{'sequence': '[CLS] ibu ku sedang bekerja di supermarket [SEP]',

'score': 0.7983310222625732,

'token': 1495},

{'sequence': '[CLS] ibu ku sedang bekerja. supermarket [SEP]',

'score': 0.090003103017807,

'token': 17},

{'sequence': '[CLS] ibu ku sedang bekerja sebagai supermarket [SEP]',

'score': 0.025469014421105385,

'token': 1600},

{'sequence': '[CLS] ibu ku sedang bekerja dengan supermarket [SEP]',

'score': 0.017966199666261673,

'token': 1555},

{'sequence': '[CLS] ibu ku sedang bekerja untuk supermarket [SEP]',

'score': 0.016971781849861145,

'token': 1572}]

```

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import BertTokenizer, BertModel

model_name='cahya/bert-base-indonesian-522M'

tokenizer = BertTokenizer.from_pretrained(model_name)

model = BertModel.from_pretrained(model_name)

text = "Silakan diganti dengan text apa saja."

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

and in Tensorflow:

```python

from transformers import BertTokenizer, TFBertModel

model_name='cahya/bert-base-indonesian-522M'

tokenizer = BertTokenizer.from_pretrained(model_name)

model = TFBertModel.from_pretrained(model_name)

text = "Silakan diganti dengan text apa saja."

encoded_input = tokenizer(text, return_tensors='tf')

output = model(encoded_input)

```

## Training data

This model was pre-trained with 522MB of indonesian Wikipedia.

The texts are lowercased and tokenized using WordPiece and a vocabulary size of 32,000. The inputs of the model are

then of the form:

```[CLS] Sentence A [SEP] Sentence B [SEP]```

| 2,664 | [

[

-0.01123809814453125,

-0.0404052734375,

-0.007656097412109375,

0.0350341796875,

-0.0472412109375,

0.0008411407470703125,

-0.017730712890625,

-0.009368896484375,

0.02685546875,

0.041290283203125,

-0.03021240234375,

-0.0350341796875,

-0.072021484375,

0.0199890... |

JorisCos/DCCRNet_Libri1Mix_enhsingle_16k | 2021-09-23T15:49:13.000Z | [

"asteroid",

"pytorch",

"audio",

"DCCRNet",

"audio-to-audio",

"speech-enhancement",

"dataset:Libri1Mix",

"dataset:enh_single",

"license:cc-by-sa-4.0",

"has_space",

"region:us"

] | audio-to-audio | JorisCos | null | null | JorisCos/DCCRNet_Libri1Mix_enhsingle_16k | 13 | 1,288 | asteroid | 2022-03-02T23:29:04 | ---

tags:

- asteroid

- audio

- DCCRNet

- audio-to-audio

- speech-enhancement

datasets:

- Libri1Mix

- enh_single

license: cc-by-sa-4.0

---

## Asteroid model `JorisCos/DCCRNet_Libri1Mix_enhsignle_16k`

Description:

This model was trained by Joris Cosentino using the librimix recipe in [Asteroid](https://github.com/asteroid-team/asteroid).

It was trained on the `enh_single` task of the Libri1Mix dataset.

Training config:

```yml

data:

n_src: 1

sample_rate: 16000

segment: 3

task: enh_single

train_dir: data/wav16k/min/train-360

valid_dir: data/wav16k/min/dev

filterbank:

stft_kernel_size: 400

stft_n_filters: 512

stft_stride: 100

masknet:

architecture: DCCRN-CL

n_src: 1

optim:

lr: 0.001

optimizer: adam

weight_decay: 1.0e-05

training:

batch_size: 12

early_stop: true

epochs: 200

gradient_clipping: 5

half_lr: true

num_workers: 4

```

Results:

On Libri1Mix min test set :

```yml

si_sdr: 13.329767398333798

si_sdr_imp: 9.879986092474098

sdr: 13.87279932997016

sdr_imp: 10.370136530757103

sir: Infinity

sir_imp: NaN

sar: 13.87279932997016

sar_imp: 10.370136530757103

stoi: 0.9140907015623948

stoi_imp: 0.11817087802185405

```

License notice:

This work "DCCRNet_Libri1Mix_enhsignle_16k" is a derivative of [LibriSpeech ASR corpus](http://www.openslr.org/12) by Vassil Panayotov,

used under [CC BY 4.0](https://creativecommons.org/licenses/by/4.0/); of The WSJ0 Hipster Ambient Mixtures

dataset by [Whisper.ai](http://wham.whisper.ai/), used under [CC BY-NC 4.0](https://creativecommons.org/licenses/by-nc/4.0/) (Research only).

"DCCRNet_Libri1Mix_enhsignle_16k" is licensed under [Attribution-ShareAlike 3.0 Unported](https://creativecommons.org/licenses/by-sa/3.0/) by Joris Cosentino | 1,736 | [

[

-0.042449951171875,

-0.027435302734375,

0.027374267578125,

0.0039520263671875,

-0.0298919677734375,

-0.006999969482421875,

-0.006565093994140625,

-0.037139892578125,

0.025543212890625,

0.036346435546875,

-0.0745849609375,

-0.049957275390625,

-0.0294189453125,

... |

mrm8488/bert2bert_shared-spanish-finetuned-summarization | 2023-05-02T18:59:18.000Z | [

"transformers",

"pytorch",

"safetensors",

"encoder-decoder",

"text2text-generation",

"summarization",

"news",

"es",

"dataset:mlsum",

"autotrain_compatible",

"endpoints_compatible",

"has_space",

"region:us"

] | summarization | mrm8488 | null | null | mrm8488/bert2bert_shared-spanish-finetuned-summarization | 15 | 1,288 | transformers | 2022-03-02T23:29:05 | ---

tags:

- summarization

- news

language: es

datasets:

- mlsum

widget: