modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

naver/efficient-splade-VI-BT-large-query | 2022-07-08T13:12:22.000Z | [

"transformers",

"pytorch",

"bert",

"fill-mask",

"splade",

"query-expansion",

"document-expansion",

"bag-of-words",

"passage-retrieval",

"knowledge-distillation",

"document encoder",

"en",

"dataset:ms_marco",

"license:cc-by-nc-sa-4.0",

"autotrain_compatible",

"endpoints_compatible",

"... | fill-mask | naver | null | null | naver/efficient-splade-VI-BT-large-query | 1 | 574 | transformers | 2022-07-05T11:39:20 | ---

license: cc-by-nc-sa-4.0

language: "en"

tags:

- splade

- query-expansion

- document-expansion

- bag-of-words

- passage-retrieval

- knowledge-distillation

- document encoder

datasets:

- ms_marco

---

## Efficient SPLADE

Efficient SPLADE model for passage retrieval. This architecture uses two distinct models for query and document inference. This is the **query** one, please also download the **doc** one (https://huggingface.co/naver/efficient-splade-VI-BT-large-doc). For additional details, please visit:

* paper: https://dl.acm.org/doi/10.1145/3477495.3531833

* code: https://github.com/naver/splade

| | MRR@10 (MS MARCO dev) | R@1000 (MS MARCO dev) | Latency (PISA) ms | Latency (Inference) ms

| --- | --- | --- | --- | --- |

| `naver/efficient-splade-V-large` | 38.8 | 98.0 | 29.0 | 45.3

| `naver/efficient-splade-VI-BT-large` | 38.0 | 97.8 | 31.1 | 0.7

## Citation

If you use our checkpoint, please cite our work:

```

@inproceedings{10.1145/3477495.3531833,

author = {Lassance, Carlos and Clinchant, St\'{e}phane},

title = {An Efficiency Study for SPLADE Models},

year = {2022},

isbn = {9781450387323},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

url = {https://doi.org/10.1145/3477495.3531833},

doi = {10.1145/3477495.3531833},

abstract = {Latency and efficiency issues are often overlooked when evaluating IR models based on Pretrained Language Models (PLMs) in reason of multiple hardware and software testing scenarios. Nevertheless, efficiency is an important part of such systems and should not be overlooked. In this paper, we focus on improving the efficiency of the SPLADE model since it has achieved state-of-the-art zero-shot performance and competitive results on TREC collections. SPLADE efficiency can be controlled via a regularization factor, but solely controlling this regularization has been shown to not be efficient enough. In order to reduce the latency gap between SPLADE and traditional retrieval systems, we propose several techniques including L1 regularization for queries, a separation of document/query encoders, a FLOPS-regularized middle-training, and the use of faster query encoders. Our benchmark demonstrates that we can drastically improve the efficiency of these models while increasing the performance metrics on in-domain data. To our knowledge, we propose the first neural models that, under the same computing constraints, achieve similar latency (less than 4ms difference) as traditional BM25, while having similar performance (less than 10% MRR@10 reduction) as the state-of-the-art single-stage neural rankers on in-domain data.},

booktitle = {Proceedings of the 45th International ACM SIGIR Conference on Research and Development in Information Retrieval},

pages = {2220–2226},

numpages = {7},

keywords = {splade, latency, information retrieval, sparse representations},

location = {Madrid, Spain},

series = {SIGIR '22}

}

```

| 2,922 | [

[

-0.0214996337890625,

-0.051788330078125,

0.030975341796875,

0.041839599609375,

-0.022613525390625,

-0.0165252685546875,

-0.0196380615234375,

-0.0153656005859375,

0.0089569091796875,

0.0245819091796875,

-0.016143798828125,

-0.035430908203125,

-0.051116943359375,

... |

timm/volo_d5_512.sail_in1k | 2023-04-13T06:15:47.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2106.13112",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/volo_d5_512.sail_in1k | 0 | 574 | timm | 2023-04-13T06:12:13 | ---

tags:

- image-classification

- timm

library_tag: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for volo_d5_512.sail_in1k

A VOLO (Vision Outlooker) image classification model. Trained on ImageNet-1k with token labelling by paper authors.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 296.1

- GMACs: 425.1

- Activations (M): 1105.4

- Image size: 512 x 512

- **Papers:**

- VOLO: Vision Outlooker for Visual Recognition: https://arxiv.org/abs/2106.13112

- **Dataset:** ImageNet-1k

- **Original:** https://github.com/sail-sg/volo

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('volo_d5_512.sail_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'volo_d5_512.sail_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 1025, 768) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Citation

```bibtex

@article{yuan2022volo,

title={Volo: Vision outlooker for visual recognition},

author={Yuan, Li and Hou, Qibin and Jiang, Zihang and Feng, Jiashi and Yan, Shuicheng},

journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},

year={2022},

publisher={IEEE}

}

```

| 2,603 | [

[

-0.0311279296875,

-0.0140228271484375,

0.00849151611328125,

0.0194244384765625,

-0.043609619140625,

-0.0299835205078125,

0.0005064010620117188,

-0.0284881591796875,

0.02099609375,

0.03790283203125,

-0.0516357421875,

-0.0494384765625,

-0.052886962890625,

-0.0... |

KappaNeuro/lascaux | 2023-09-14T09:50:56.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"lora",

"art",

"style",

"paint",

"cave",

"lascaux",

"prehistoric",

"license:other",

"region:us",

"has_space"

] | text-to-image | KappaNeuro | null | null | KappaNeuro/lascaux | 1 | 574 | diffusers | 2023-09-14T09:50:52 | ---

license: other

tags:

- text-to-image

- stable-diffusion

- lora

- diffusers

- art

- style

- paint

- cave

- lascaux

- prehistoric

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: Lascaux page

widget:

- text: "Lascaux - Cave paintings Thousands of years ago in a large cave, an ancient man paints on a wall the story of a hunter of a large bison, next to a fire that lights the cave and a piece of meat is cooked on it"

- text: "Lascaux - early stone age humans wearing animal skins, with animal bones for jewelry, draw famous prehistoric drawings of animal hunts on the walls of the cave at Lasceaux, France, illuminated only"

- text: "Lascaux - style of lascaux cave paintings enormous terrifying monster growing out of ground with gnarly teeth seven eyes and whiskers and throats and claws and ribs"

- text: "Lascaux - hyper realistic image of Neolithic simplistic primitive cave-painting of a silhouette of animals on a cave wall in prehistoric times. Cenozoic era"

- text: "Lascaux - ancient lascaux cave drawing man with sword stands over body of other man. Standing man is struck by lightning. Ancient cave art style"

- text: "Lascaux - lascaux cave painting of hunt with horses and people with spears realistic cave painting of horse lascaux caves prehistoric beautiful"

- text: "Lascaux - stone age scandinavia cave paintings of a battle. Tribespeople with torches are looking at the paintings."

- text: "Lascaux - an image consisting of many drawings representing the Levallois Technique During the Paleolithic age"

- text: "Lascaux - The Lascaux Cave, located in southwestern France, is a prehistoric treasure trove filled with captivating wall paintings. Created by the inhabitants of the Upper Paleolithic era, these masterful artworks depict a vivid menagerie of animals such as horses, deer, bulls, and even some enigmatic human-like figures. The paintings, executed with remarkable skill and precision, showcase a deep understanding of form, perspective, and the use of natural pigments. These ancient artists utilized the cave's undulating surfaces to bring their subjects to life, evoking a sense of movement and vitality that continues to mesmerize and inspire modern viewers. Summilux-M 21mm f/1.4 ASPH, Summicron-M 28mm f/2 ASPH are excellent options, Aperture f/8 or f/11 to ensure maximum depth of field, allowing both the foreground and background elements of the building to remain sharp and well-defined, shutter speed 1/250th, ISO 100, Leica M6, ISO 200, Objectif Leica Summilux-M 50mm f/1.4, 70mm lens, aperture f/2, shutter speed 1/200th"

---

# Lascaux ([CivitAI](https://civitai.com/models/153888)

> Lascaux - Cave paintings Thousands of years ago in a large cave, an ancient man paints on a wall the story of a hunter of a large bison, next to a fire that lights the cave and a piece of meat is cooked on it

<p>Lascaux is a cave complex located in southwestern France, renowned for its prehistoric cave paintings. The cave is situated near the village of Montignac and is estimated to have been created around 17,000 years ago during the Upper Paleolithic period.</p><p>Discovered in 1940 by a group of teenagers, the Lascaux caves contain some of the most remarkable and well-preserved examples of prehistoric art. The cave walls are adorned with intricate paintings of various animals, including horses, deer, aurochs, and predators like lions and bears.</p><p>The paintings at Lascaux are known for their exceptional quality, attention to detail, and vivid depictions of movement and life. They provide valuable insights into the lives and beliefs of our ancient ancestors, revealing their close relationship with the natural world and their artistic expressions.</p><p>Due to concerns about the preservation of the fragile cave environment and the deterioration of the paintings caused by human activity, the original Lascaux cave complex was closed to the public in 1963. However, a replica called Lascaux II was constructed nearby and opened to visitors in 1983. This faithful reproduction allows visitors to experience and appreciate the beauty and significance of the cave art without compromising the integrity of the original site.</p><p>The cave paintings at Lascaux have had a profound impact on our understanding of prehistoric art and the development of human civilization. They represent a remarkable testament to the artistic capabilities and cultural expressions of our ancient ancestors, providing a fascinating glimpse into the distant past.</p>

## Image examples for the model:

> Lascaux - early stone age humans wearing animal skins, with animal bones for jewelry, draw famous prehistoric drawings of animal hunts on the walls of the cave at Lasceaux, France, illuminated only

> Lascaux - style of lascaux cave paintings enormous terrifying monster growing out of ground with gnarly teeth seven eyes and whiskers and throats and claws and ribs

> Lascaux - hyper realistic image of Neolithic simplistic primitive cave-painting of a silhouette of animals on a cave wall in prehistoric times. Cenozoic era

> Lascaux - ancient lascaux cave drawing man with sword stands over body of other man. Standing man is struck by lightning. Ancient cave art style

> Lascaux - lascaux cave painting of hunt with horses and people with spears realistic cave painting of horse lascaux caves prehistoric beautiful

> Lascaux - stone age scandinavia cave paintings of a battle. Tribespeople with torches are looking at the paintings.

> Lascaux - an image consisting of many drawings representing the Levallois Technique During the Paleolithic age

>

> Lascaux - The Lascaux Cave, located in southwestern France, is a prehistoric treasure trove filled with captivating wall paintings. Created by the inhabitants of the Upper Paleolithic era, these masterful artworks depict a vivid menagerie of animals such as horses, deer, bulls, and even some enigmatic human-like figures. The paintings, executed with remarkable skill and precision, showcase a deep understanding of form, perspective, and the use of natural pigments. These ancient artists utilized the cave's undulating surfaces to bring their subjects to life, evoking a sense of movement and vitality that continues to mesmerize and inspire modern viewers. Summilux-M 21mm f/1.4 ASPH, Summicron-M 28mm f/2 ASPH are excellent options, Aperture f/8 or f/11 to ensure maximum depth of field, allowing both the foreground and background elements of the building to remain sharp and well-defined, shutter speed 1/250th, ISO 100, Leica M6, ISO 200, Objectif Leica Summilux-M 50mm f/1.4, 70mm lens, aperture f/2, shutter speed 1/200th

| 6,904 | [

[

-0.06121826171875,

-0.0231475830078125,

0.0240325927734375,

0.0251007080078125,

-0.01291656494140625,

-0.0047149658203125,

0.0169219970703125,

-0.0732421875,

0.04046630859375,

0.037567138671875,

-0.0372314453125,

-0.03765869140625,

-0.04132080078125,

0.01121... |

alfredplpl/emi | 2023-09-27T01:03:47.000Z | [

"diffusers",

"stable-diffusion",

"text-to-image",

"arxiv:2307.01952",

"arxiv:2212.03860",

"license:openrail++",

"diffusers:StableDiffusionXLPipeline",

"region:us"

] | text-to-image | alfredplpl | null | null | alfredplpl/emi | 2 | 574 | diffusers | 2023-09-26T20:59:00 | ---

license: openrail++

tags:

- stable-diffusion

- text-to-image

inference: false

library_name: diffusers

---

# Emi Model Card

**このリポジトリは[オリジナル](https://huggingface.co/aipicasso/emi)の非公式クローンです。最新のバージョンを落とすためにも、できる限りオリジナルのリポジトリから落としてください。**

**This repository is the unofficial clone of [the original repository](https://huggingface.co/aipicasso/emi). Please use the original repository to use latest version as possible.**

[Original(PNG)](eyecatch.png)

English: [Click Here](README_en.md)

# はじめに

Emi (Ethereal master of illustration) は、

最先端の開発機材H100と画像生成Stable Diffusion XL 1.0を用いて

AI Picasso社が開発したAIアートに特化した画像生成AIです。

このモデルの特徴として、Danbooruなどにある無断転載画像を学習していないことがあげられます。

# ライセンスについて

ライセンスについては、これまでとは違い、 CreativeML Open RAIL++-M License です。

したがって、**商用利用可能**です。

これは次のように判断したためです。

- 画像生成AIが普及するに伴い、創作業界に悪影響を及ぼさないように、マナーを守る人が増えてきたため

- 他の画像生成AIが商用可能である以上、あまり非商用ライセンスである実効性がなくなってきたため

# 使い方

[ここ](https://huggingface.co/spaces/aipicasso/emi-latest-demo)からデモを利用することができます。

本格的に利用する人は[ここ](emi.safetensors)からモデルをダウンロードできます。

通常版で生成がうまく行かない場合は、[安定版](emi_stable.safetensors)をお使いください。

# シンプルな作品例

```

positive prompt: anime artwork, anime style, (1girl), (black bob hair:1.5), brown eyes, red maples, sky, ((transparent))

negative prompt: (embedding:unaestheticXLv31:0.5), photo, deformed, realism, disfigured, low contrast, bad hand

```

```

positive prompt: monochrome, black and white, (japanese manga), mount fuji

negative prompt: (embedding:unaestheticXLv31:0.5), photo, deformed, realism, disfigured, low contrast, bad hand

```

```

positive prompt: (1man), focus, white wavy short hair, blue eyes, black shirt, white background, simple background

negative prompt: (embedding:unaestheticXLv31:0.5), photo, deformed, realism, disfigured, low contrast, bad hand

```

# モデルの出力向上について

- 確実にアニメ調のイラストを出したいときは、anime artwork, anime styleとプロンプトの先頭に入れてください。

- プロンプトにtransparentという言葉を入れると、より最近の画風になります。

- 全身 (full body) を描くとうまく行かない場合もあるため、そのときは[安定版](emi_stable.safetensors)をお試しください。

- 使えるプロンプトはWaifu Diffusionと同じです。また、Stable Diffusionのように使うこともできます。

- ネガティブプロンプトに[Textual Inversion](https://civitai.com/models/119032/unaestheticxl-or-negative-ti)を使用することをおすすめします。

- 手が不安定なため、[DreamShaper XL1.0](https://civitai.com/models/112902?modelVersionId=126688)などの実写系モデルとのマージをおすすめします。

- ChatGPTを用いてプロンプトを洗練すると、自分の枠を超えた作品に出会えます。

- 最新のComfyUIにあるFreeUノードを次のパラメータで使うとさらに出力が上がる可能性があります。次の画像はFreeUを使った例です。

- b1 = 1.1, b2 = 1.2, s1 = 0.6, s2 = 0.4 [report](https://wandb.ai/nasirk24/UNET-FreeU-SDXL/reports/FreeU-SDXL-Optimal-Parameters--Vmlldzo1NDg4NTUw)

# 法律について

本モデルは日本にて作成されました。したがって、日本の法律が適用されます。

本モデルの学習は、著作権法第30条の4に基づき、合法であると主張します。

また、本モデルの配布については、著作権法や刑法175条に照らしてみても、

正犯や幇助犯にも該当しないと主張します。詳しくは柿沼弁護士の[見解](https://twitter.com/tka0120/status/1601483633436393473?s=20&t=yvM9EX0Em-_7lh8NJln3IQ)を御覧ください。

ただし、ライセンスにもある通り、本モデルの生成物は各種法令に従って取り扱って下さい。

# 連絡先

support@aipicasso.app

以下、一般的なモデルカードの日本語訳です。

## モデル詳細

- **モデルタイプ:** 拡散モデルベースの text-to-image 生成モデル

- **言語:** 日本語

- **ライセンス:** [CreativeML Open RAIL++-M License](LICENSE.md)

- **モデルの説明:** このモデルはプロンプトに応じて適切な画像を生成することができます。アルゴリズムは [Latent Diffusion Model](https://arxiv.org/abs/2307.01952) と [OpenCLIP-ViT/G](https://github.com/mlfoundations/open_clip)、[CLIP-L](https://github.com/openai/CLIP) です。

- **補足:**

- **参考文献:**

```bibtex

@misc{podell2023sdxl,

title={SDXL: Improving Latent Diffusion Models for High-Resolution Image Synthesis},

author={Dustin Podell and Zion English and Kyle Lacey and Andreas Blattmann and Tim Dockhorn and Jonas Müller and Joe Penna and Robin Rombach},

year={2023},

eprint={2307.01952},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

```

## モデルの使用例

Stable Diffusion XL 1.0と同じ使い方です。

たくさんの方法がありますが、3つのパターンを提供します。

- ComfyUI

- Fooocus

- Diffusers

### ComfyUIやFooocusの場合

Stable Diffusion XL 1.0 の使い方と同じく、safetensor形式のモデルファイルを使ってください。

詳しいインストール方法は、[こちらの記事](https://note.com/it_navi/n/n723d93bedd64)を参照してください。

### Diffusersの場合

[🤗's Diffusers library](https://github.com/huggingface/diffusers) を使ってください。

まずは、以下のスクリプトを実行し、ライブラリをいれてください。

```bash

pip install invisible_watermark transformers accelerate safetensors diffusers

```

次のスクリプトを実行し、画像を生成してください。

```python

from diffusers import StableDiffusionXLPipeline, EulerAncestralDiscreteScheduler

import torch

model_id = "aipicasso/emi"

scheduler = EulerAncestralDiscreteScheduler.from_pretrained(model_id, subfolder="scheduler")

pipe = StableDiffusionXLPipeline.from_pretrained(model_id, scheduler=scheduler, torch_dtype=torch.float16)

pipe = pipe.to("cuda")

prompt = "1girl, sunflowers, brown bob hair, brown eyes, sky, transparent"

images = pipe(prompt, num_inference_steps=20).images

images[0].save("girl.png")

```

複雑な操作は[デモのソースコード](https://huggingface.co/spaces/aipicasso/emi-latest-demo/blob/main/app.py)を参考にしてください。

#### 想定される用途

- イラストや漫画、アニメの作画補助

- 商用・非商用は問わない

- 依頼の際のクリエイターとのコミュニケーション

- 画像生成サービスの商用提供

- 生成物の取り扱いには注意して使ってください。

- 自己表現

- このAIを使い、「あなた」らしさを発信すること

- 研究開発

- Discord上でのモデルの利用

- プロンプトエンジニアリング

- ファインチューニング(追加学習とも)

- DreamBooth など

- 他のモデルとのマージ

- 本モデルの性能をFIDなどで調べること

- 本モデルがStable Diffusion以外のモデルとは独立であることをチェックサムやハッシュ関数などで調べること

- 教育

- 美大生や専門学校生の卒業制作

- 大学生の卒業論文や課題制作

- 先生が画像生成AIの現状を伝えること

- Hugging Face の Community にかいてある用途

- 日本語か英語で質問してください

#### 想定されない用途

- 物事を事実として表現するようなこと

- 先生を困らせるようなこと

- その他、創作業界に悪影響を及ぼすこと

# 使用してはいけない用途や悪意のある用途

- マネー・ロンダリングに用いないでください

- デジタル贋作 ([Digital Forgery](https://arxiv.org/abs/2212.03860)) は公開しないでください(著作権法に違反するおそれ)

- 他人の作品を無断でImage-to-Imageしないでください(著作権法に違反するおそれ)

- わいせつ物を頒布しないでください (刑法175条に違反するおそれ)

- いわゆる業界のマナーを守らないようなこと

- 事実に基づかないことを事実のように語らないようにしてください(威力業務妨害罪が適用されるおそれ)

- フェイクニュース

## モデルの限界やバイアス

### モデルの限界

- 拡散モデルや大規模言語モデルは、いまだに未知の部分が多く、その限界は判明していない。

### バイアス

- 拡散モデルや大規模言語モデルは、いまだに未知の部分が多く、バイアスは判明していない。

## 学習

**学習データ**

- Stable Diffusionと同様のデータセットからDanbooruの無断転載画像を取り除いて手動で集めた約2000枚の画像

- Stable Diffusionと同様のデータセットからDanbooruの無断転載画像を取り除いて自動で集めた約50万枚の画像

**学習プロセス**

- **ハードウェア:** H100

## 評価結果

第三者による評価を求めています。

## 環境への影響

- **ハードウェアタイプ:** H100

- **使用時間(単位は時間):** 500

- **学習した場所:** 日本

## 参考文献

```bibtex

@misc{podell2023sdxl,

title={SDXL: Improving Latent Diffusion Models for High-Resolution Image Synthesis},

author={Dustin Podell and Zion English and Kyle Lacey and Andreas Blattmann and Tim Dockhorn and Jonas Müller and Joe Penna and Robin Rombach},

year={2023},

eprint={2307.01952},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

```

| 6,617 | [

[

-0.042022705078125,

-0.061279296875,

0.0305328369140625,

0.01474761962890625,

-0.03729248046875,

-0.002960205078125,

0.0036563873291015625,

-0.0257415771484375,

0.043548583984375,

0.00788116455078125,

-0.045867919921875,

-0.049713134765625,

-0.04302978515625,

... |

FremyCompany/stsb_ossts_roberta-large-nl-oscar23 | 2023-10-17T15:24:45.000Z | [

"sentence-transformers",

"pytorch",

"roberta",

"feature-extraction",

"sentence-similarity",

"endpoints_compatible",

"region:us"

] | sentence-similarity | FremyCompany | null | null | FremyCompany/stsb_ossts_roberta-large-nl-oscar23 | 0 | 574 | sentence-transformers | 2023-10-10T07:33:49 | ---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

---

# {MODEL_NAME}

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 256 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('{MODEL_NAME}')

embeddings = model.encode(sentences)

print(embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name={MODEL_NAME})

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 90 with parameters:

```

{'batch_size': 64, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`

Parameters of the fit()-Method:

```

{

"epochs": 5,

"evaluation_steps": 50,

"evaluator": "sentence_transformers.evaluation.EmbeddingSimilarityEvaluator.EmbeddingSimilarityEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'torch.optim.adamw.AdamW'>",

"optimizer_params": {

"lr": 1e-06

},

"scheduler": "WarmupLinear",

"steps_per_epoch": null,

"warmup_steps": 22,

"weight_decay": 0.001

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 128, 'do_lower_case': False}) with Transformer model: RobertaModel

(1): Pooling({'word_embedding_dimension': 1024, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

(2): Dense({'in_features': 1024, 'out_features': 256, 'bias': True, 'activation_function': 'torch.nn.modules.activation.Tanh'})

)

```

## Citing & Authors

<!--- Describe where people can find more information --> | 2,452 | [

[

-0.019805908203125,

-0.06298828125,

0.02667236328125,

0.01953125,

-0.01296234130859375,

-0.0278167724609375,

-0.01898193359375,

0.006847381591796875,

0.011627197265625,

0.034576416015625,

-0.053009033203125,

-0.053436279296875,

-0.044219970703125,

-0.0013999... |

pritamdeka/BioBert-PubMed200kRCT | 2023-10-26T12:01:45.000Z | [

"transformers",

"pytorch",

"tensorboard",

"safetensors",

"bert",

"text-classification",

"generated_from_trainer",

"endpoints_compatible",

"region:us"

] | text-classification | pritamdeka | null | null | pritamdeka/BioBert-PubMed200kRCT | 5 | 573 | transformers | 2022-03-15T12:38:06 | ---

tags:

- generated_from_trainer

metrics:

- accuracy

widget:

- text: SAMPLE 32,441 archived appendix samples fixed in formalin and embedded in

paraffin and tested for the presence of abnormal prion protein (PrP).

base_model: dmis-lab/biobert-base-cased-v1.1

model-index:

- name: BioBert-PubMed200kRCT

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# BioBert-PubMed200kRCT

This model is a fine-tuned version of [dmis-lab/biobert-base-cased-v1.1](https://huggingface.co/dmis-lab/biobert-base-cased-v1.1) on the [PubMed200kRCT](https://github.com/Franck-Dernoncourt/pubmed-rct/tree/master/PubMed_200k_RCT) dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2832

- Accuracy: 0.8934

## Model description

More information needed

## Intended uses & limitations

The model can be used for text classification tasks of Randomized Controlled Trials that does not have any structure. The text can be classified as one of the following:

* BACKGROUND

* CONCLUSIONS

* METHODS

* OBJECTIVE

* RESULTS

The model can be directly used like this:

```python

from transformers import TextClassificationPipeline

from transformers import AutoTokenizer, AutoModelForSequenceClassification

model = AutoModelForSequenceClassification.from_pretrained("pritamdeka/BioBert-PubMed200kRCT")

tokenizer = AutoTokenizer.from_pretrained("pritamdeka/BioBert-PubMed200kRCT")

pipe = TextClassificationPipeline(model=model, tokenizer=tokenizer, return_all_scores=True)

pipe("Treatment of 12 healthy female subjects with CDCA for 2 days resulted in increased BAT activity.")

```

Results will be shown as follows:

```python

[[{'label': 'BACKGROUND', 'score': 0.0027583304326981306},

{'label': 'CONCLUSIONS', 'score': 0.044541116803884506},

{'label': 'METHODS', 'score': 0.19493348896503448},

{'label': 'OBJECTIVE', 'score': 0.003996663726866245},

{'label': 'RESULTS', 'score': 0.7537703514099121}]]

```

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2.0

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.3587 | 0.14 | 5000 | 0.3137 | 0.8834 |

| 0.3318 | 0.29 | 10000 | 0.3100 | 0.8831 |

| 0.3286 | 0.43 | 15000 | 0.3033 | 0.8864 |

| 0.3236 | 0.58 | 20000 | 0.3037 | 0.8862 |

| 0.3182 | 0.72 | 25000 | 0.2939 | 0.8876 |

| 0.3129 | 0.87 | 30000 | 0.2910 | 0.8885 |

| 0.3078 | 1.01 | 35000 | 0.2914 | 0.8887 |

| 0.2791 | 1.16 | 40000 | 0.2975 | 0.8874 |

| 0.2723 | 1.3 | 45000 | 0.2913 | 0.8906 |

| 0.2724 | 1.45 | 50000 | 0.2879 | 0.8904 |

| 0.27 | 1.59 | 55000 | 0.2874 | 0.8911 |

| 0.2681 | 1.74 | 60000 | 0.2848 | 0.8928 |

| 0.2672 | 1.88 | 65000 | 0.2832 | 0.8934 |

### Framework versions

- Transformers 4.18.0.dev0

- Pytorch 1.10.0+cu111

- Datasets 1.18.4

- Tokenizers 0.11.6

## Citing & Authors

<!--- Describe where people can find more information -->

If you use the model kindly cite the following work

```

@inproceedings{deka2022evidence,

title={Evidence Extraction to Validate Medical Claims in Fake News Detection},

author={Deka, Pritam and Jurek-Loughrey, Anna and others},

booktitle={International Conference on Health Information Science},

pages={3--15},

year={2022},

organization={Springer}

}

``` | 4,012 | [

[

-0.0252227783203125,

-0.04876708984375,

0.0266876220703125,

-0.0018444061279296875,

-0.0177764892578125,

-0.0227203369140625,

0.00275421142578125,

-0.01261138916015625,

0.016387939453125,

0.0218658447265625,

-0.0277862548828125,

-0.05718994140625,

-0.05187988281... |

Fictiverse/Stable_Diffusion_VoxelArt_Model | 2023-05-07T08:22:35.000Z | [

"diffusers",

"text-to-image",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | Fictiverse | null | null | Fictiverse/Stable_Diffusion_VoxelArt_Model | 156 | 573 | diffusers | 2022-11-10T04:42:13 | ---

license: creativeml-openrail-m

tags:

- text-to-image

---

# VoxelArt model V1

This is the fine-tuned Stable Diffusion model trained on Voxel Art images.

Use **VoxelArt** in your prompts.

### Sample images:

Based on StableDiffusion 1.5 model

### 🧨 Diffusers

This model can be used just like any other Stable Diffusion model. For more information,

please have a look at the [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion).

You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or [FLAX/JAX]().

```python

from diffusers import StableDiffusionPipeline

import torch

model_id = "Fictiverse/Stable_Diffusion_PaperCut_Model"

pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)

pipe = pipe.to("cuda")

prompt = "PaperCut R2-D2"

image = pipe(prompt).images[0]

image.save("./R2-D2.png")

``` | 1,087 | [

[

-0.0273895263671875,

-0.07647705078125,

0.03594970703125,

0.023040771484375,

-0.0196380615234375,

-0.014068603515625,

0.01068115234375,

0.009002685546875,

0.004184722900390625,

0.041717529296875,

-0.017822265625,

-0.03997802734375,

-0.040740966796875,

-0.020... |

timm/flexivit_base.patch30_in21k | 2023-05-05T23:59:03.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2212.08013",

"arxiv:2010.11929",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/flexivit_base.patch30_in21k | 0 | 573 | timm | 2022-12-22T07:16:02 | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for flexivit_base.patch30_in21k

A FlexiViT image classification model. Trained on ImageNet-1k in JAX by paper authors, ported to PyTorch by Ross Wightman.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 102.6

- GMACs: 19.4

- Activations (M): 18.9

- Image size: 240 x 240

- **Papers:**

- FlexiViT: One Model for All Patch Sizes: https://arxiv.org/abs/2212.08013

- An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale: https://arxiv.org/abs/2010.11929v2

- **Dataset:** ImageNet-1k

- **Original:** https://github.com/google-research/big_vision

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('flexivit_base.patch30_in21k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'flexivit_base.patch30_in21k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 226, 768) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

## Citation

```bibtex

@article{beyer2022flexivit,

title={FlexiViT: One Model for All Patch Sizes},

author={Beyer, Lucas and Izmailov, Pavel and Kolesnikov, Alexander and Caron, Mathilde and Kornblith, Simon and Zhai, Xiaohua and Minderer, Matthias and Tschannen, Michael and Alabdulmohsin, Ibrahim and Pavetic, Filip},

journal={arXiv preprint arXiv:2212.08013},

year={2022}

}

```

```bibtex

@article{dosovitskiy2020vit,

title={An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale},

author={Dosovitskiy, Alexey and Beyer, Lucas and Kolesnikov, Alexander and Weissenborn, Dirk and Zhai, Xiaohua and Unterthiner, Thomas and Dehghani, Mostafa and Minderer, Matthias and Heigold, Georg and Gelly, Sylvain and Uszkoreit, Jakob and Houlsby, Neil},

journal={ICLR},

year={2021}

}

```

```bibtex

@misc{rw2019timm,

author = {Ross Wightman},

title = {PyTorch Image Models},

year = {2019},

publisher = {GitHub},

journal = {GitHub repository},

doi = {10.5281/zenodo.4414861},

howpublished = {\url{https://github.com/huggingface/pytorch-image-models}}

}

```

| 3,706 | [

[

-0.0390625,

-0.028167724609375,

0.0047454833984375,

0.00856781005859375,

-0.024688720703125,

-0.0260772705078125,

-0.0194091796875,

-0.037445068359375,

0.01531219482421875,

0.018035888671875,

-0.044189453125,

-0.039276123046875,

-0.04412841796875,

-0.0021800... |

keremberke/yolov5n-valorant | 2022-12-30T20:49:57.000Z | [

"yolov5",

"tensorboard",

"yolo",

"vision",

"object-detection",

"pytorch",

"dataset:keremberke/valorant-object-detection",

"model-index",

"has_space",

"region:us"

] | object-detection | keremberke | null | null | keremberke/yolov5n-valorant | 1 | 573 | yolov5 | 2022-12-28T08:55:02 |

---

tags:

- yolov5

- yolo

- vision

- object-detection

- pytorch

library_name: yolov5

library_version: 7.0.6

inference: false

datasets:

- keremberke/valorant-object-detection

model-index:

- name: keremberke/yolov5n-valorant

results:

- task:

type: object-detection

dataset:

type: keremberke/valorant-object-detection

name: keremberke/valorant-object-detection

split: validation

metrics:

- type: precision # since mAP@0.5 is not available on hf.co/metrics

value: 0.9591260700013188 # min: 0.0 - max: 1.0

name: mAP@0.5

---

<div align="center">

<img width="640" alt="keremberke/yolov5n-valorant" src="https://huggingface.co/keremberke/yolov5n-valorant/resolve/main/sample_visuals.jpg">

</div>

### How to use

- Install [yolov5](https://github.com/fcakyon/yolov5-pip):

```bash

pip install -U yolov5

```

- Load model and perform prediction:

```python

import yolov5

# load model

model = yolov5.load('keremberke/yolov5n-valorant')

# set model parameters

model.conf = 0.25 # NMS confidence threshold

model.iou = 0.45 # NMS IoU threshold

model.agnostic = False # NMS class-agnostic

model.multi_label = False # NMS multiple labels per box

model.max_det = 1000 # maximum number of detections per image

# set image

img = 'https://github.com/ultralytics/yolov5/raw/master/data/images/zidane.jpg'

# perform inference

results = model(img, size=640)

# inference with test time augmentation

results = model(img, augment=True)

# parse results

predictions = results.pred[0]

boxes = predictions[:, :4] # x1, y1, x2, y2

scores = predictions[:, 4]

categories = predictions[:, 5]

# show detection bounding boxes on image

results.show()

# save results into "results/" folder

results.save(save_dir='results/')

```

- Finetune the model on your custom dataset:

```bash

yolov5 train --data data.yaml --img 640 --batch 16 --weights keremberke/yolov5n-valorant --epochs 10

```

**More models available at: [awesome-yolov5-models](https://github.com/keremberke/awesome-yolov5-models)** | 2,042 | [

[

-0.051361083984375,

-0.039031982421875,

0.035491943359375,

-0.0258941650390625,

-0.0219879150390625,

-0.027984619140625,

0.004852294921875,

-0.0328369140625,

0.0172119140625,

0.0301055908203125,

-0.04705810546875,

-0.057525634765625,

-0.039093017578125,

-0.0... |

keremberke/yolov5n-football | 2022-12-30T20:49:33.000Z | [

"yolov5",

"tensorboard",

"yolo",

"vision",

"object-detection",

"pytorch",

"dataset:keremberke/football-object-detection",

"model-index",

"has_space",

"region:us"

] | object-detection | keremberke | null | null | keremberke/yolov5n-football | 1 | 573 | yolov5 | 2022-12-28T20:39:20 |

---

tags:

- yolov5

- yolo

- vision

- object-detection

- pytorch

library_name: yolov5

library_version: 7.0.6

inference: false

datasets:

- keremberke/football-object-detection

model-index:

- name: keremberke/yolov5n-football

results:

- task:

type: object-detection

dataset:

type: keremberke/football-object-detection

name: keremberke/football-object-detection

split: validation

metrics:

- type: precision # since mAP@0.5 is not available on hf.co/metrics

value: 0.6268698475736707 # min: 0.0 - max: 1.0

name: mAP@0.5

---

<div align="center">

<img width="640" alt="keremberke/yolov5n-football" src="https://huggingface.co/keremberke/yolov5n-football/resolve/main/sample_visuals.jpg">

</div>

### How to use

- Install [yolov5](https://github.com/fcakyon/yolov5-pip):

```bash

pip install -U yolov5

```

- Load model and perform prediction:

```python

import yolov5

# load model

model = yolov5.load('keremberke/yolov5n-football')

# set model parameters

model.conf = 0.25 # NMS confidence threshold

model.iou = 0.45 # NMS IoU threshold

model.agnostic = False # NMS class-agnostic

model.multi_label = False # NMS multiple labels per box

model.max_det = 1000 # maximum number of detections per image

# set image

img = 'https://github.com/ultralytics/yolov5/raw/master/data/images/zidane.jpg'

# perform inference

results = model(img, size=640)

# inference with test time augmentation

results = model(img, augment=True)

# parse results

predictions = results.pred[0]

boxes = predictions[:, :4] # x1, y1, x2, y2

scores = predictions[:, 4]

categories = predictions[:, 5]

# show detection bounding boxes on image

results.show()

# save results into "results/" folder

results.save(save_dir='results/')

```

- Finetune the model on your custom dataset:

```bash

yolov5 train --data data.yaml --img 640 --batch 16 --weights keremberke/yolov5n-football --epochs 10

```

**More models available at: [awesome-yolov5-models](https://github.com/keremberke/awesome-yolov5-models)** | 2,042 | [

[

-0.0614013671875,

-0.037353515625,

0.03173828125,

-0.0215301513671875,

-0.0275421142578125,

-0.0176544189453125,

0.00894927978515625,

-0.046966552734375,

0.0174713134765625,

0.0130157470703125,

-0.05780029296875,

-0.05352783203125,

-0.0384521484375,

0.008003... |

Inzamam567/Useless-TriPhaze | 2023-03-31T22:00:09.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:creativeml-openrail-m",

"region:us"

] | text-to-image | Inzamam567 | null | null | Inzamam567/Useless-TriPhaze | 0 | 573 | diffusers | 2023-03-31T22:00:08 | ---

license: creativeml-openrail-m

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

duplicated_from: Lucetepolis/TriPhaze

---

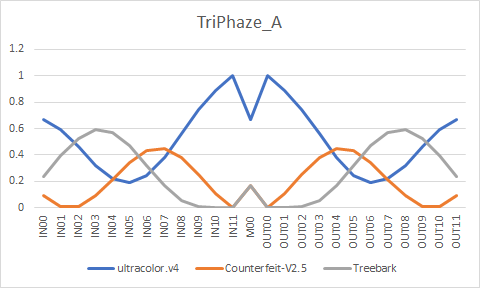

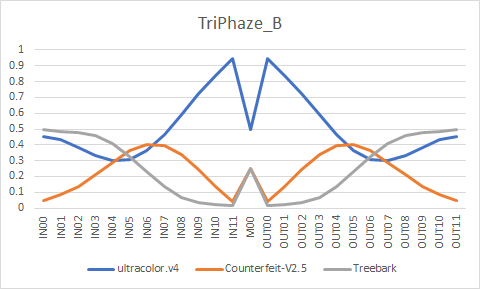

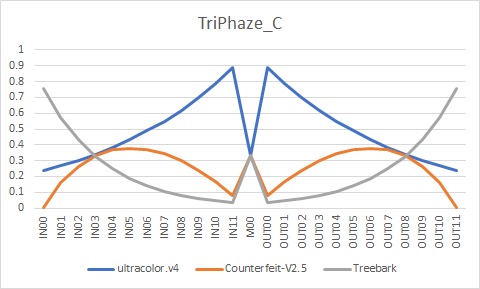

# TriPhaze

ultracolor.v4 - <a href="https://huggingface.co/xdive/ultracolor.v4">Download</a> / <a href="https://arca.live/b/aiart/68609290">Sample</a><br/>

Counterfeit-V2.5 - <a href="https://huggingface.co/gsdf/Counterfeit-V2.5">Download / Sample</a><br/>

Treebark - <a href="https://huggingface.co/HIZ/aichan_pick">Download</a> / <a href="https://arca.live/b/aiart/67648642">Sample</a><br/>

EasyNegative and pastelmix-lora seem to work well with the models.

EasyNegative - <a href="https://huggingface.co/datasets/gsdf/EasyNegative">Download / Sample</a><br/>

pastelmix-lora - <a href="https://huggingface.co/andite/pastel-mix">Download / Sample</a>

# Formula

```

ultracolor.v4 + Counterfeit-V2.5 = temp1

U-Net Merge - 0.870333, 0.980430, 0.973645, 0.716758, 0.283242, 0.026355, 0.019570, 0.129667, 0.273791, 0.424427, 0.575573, 0.726209, 0.5, 0.726209, 0.575573, 0.424427, 0.273791, 0.129667, 0.019570, 0.026355, 0.283242, 0.716758, 0.973645, 0.980430, 0.870333

temp1 + Treebark = temp2

U-Net Merge - 0.752940, 0.580394, 0.430964, 0.344691, 0.344691, 0.430964, 0.580394, 0.752940, 0.902369, 0.988642, 0.988642, 0.902369, 0.666667, 0.902369, 0.988642, 0.988642, 0.902369, 0.752940, 0.580394, 0.430964, 0.344691, 0.344691, 0.430964, 0.580394, 0.752940

temp2 + ultracolor.v4 = TriPhaze_A

U-Net Merge - 0.042235, 0.056314, 0.075085, 0.100113, 0.133484, 0.177979, 0.237305, 0.316406, 0.421875, 0.5625, 0.75, 1, 0.5, 1, 0.75, 0.5625, 0.421875, 0.316406, 0.237305, 0.177979, 0.133484, 0.100113, 0.075085, 0.056314, 0.042235

ultracolor.v4 + Counterfeit-V2.5 = temp3

U-Net Merge - 0.979382, 0.628298, 0.534012, 0.507426, 0.511182, 0.533272, 0.56898, 0.616385, 0.674862, 0.7445, 0.825839, 0.919748, 0.5, 0.919748, 0.825839, 0.7445, 0.674862, 0.616385, 0.56898, 0.533272, 0.511182, 0.507426, 0.534012, 0.628298, 0.979382

temp3 + Treebark = TriPhaze_C

U-Net Merge - 0.243336, 0.427461, 0.566781, 0.672199, 0.751965, 0.812321, 0.857991, 0.892547, 0.918694, 0.938479, 0.953449, 0.964777, 0.666667, 0.964777, 0.953449, 0.938479, 0.918694, 0.892547, 0.857991, 0.812321, 0.751965, 0.672199, 0.566781, 0.427461, 0.243336

TriPhaze_A + TriPhaze_C = TriPhaze_B

U-Net Merge - 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5

```

# Converted weights

# Samples

All of the images use following negatives/settings. EXIF preserved.

```

Negative prompt: (worst quality, low quality:1.4), easynegative, bad anatomy, bad hands, error, missing fingers, extra digit, fewer digits, nsfw

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 7, Seed: 1853114200, Size: 768x512, Model hash: 6bad0b419f, Denoising strength: 0.6, Clip skip: 2, ENSD: 31337, Hires upscale: 2, Hires upscaler: R-ESRGAN 4x+ Anime6B

```

# TriPhaze_A

# TriPhaze_B

# TriPhaze_C

| 5,160 | [

[

-0.04425048828125,

-0.033203125,

0.0166473388671875,

0.034759521484375,

-0.0191192626953125,

0.002239227294921875,

0.007480621337890625,

-0.04376220703125,

0.075927734375,

0.030975341796875,

-0.047149658203125,

-0.04034423828125,

-0.0239410400390625,

0.00421... |

digiplay/Burger_Mix_semiR2Lite | 2023-07-22T13:06:39.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | digiplay | null | null | digiplay/Burger_Mix_semiR2Lite | 3 | 573 | diffusers | 2023-06-17T11:15:19 | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

A Model fit for cartoon & anime creative concept images.

https://civitai.com/models/6960?modelVersionId=30442

Sample image I made:

| 365 | [

[

-0.051666259765625,

-0.0285186767578125,

0.029205322265625,

0.039093017578125,

-0.031646728515625,

-0.016632080078125,

0.023681640625,

-0.0288238525390625,

0.08477783203125,

0.039337158203125,

-0.05841064453125,

-0.00836944580078125,

-0.01409912109375,

-0.00... |

digiplay/Remedy | 2023-07-15T16:04:57.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | digiplay | null | null | digiplay/Remedy | 2 | 573 | diffusers | 2023-07-15T00:58:04 | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

Model info :

https://civitai.com/models/87025

Original Author's DEMO images :

Sample image I made thru Huggingface's API :

| 890 | [

[

-0.0462646484375,

-0.035186767578125,

0.0316162109375,

0.0263214111328125,

-0.0290069580078125,

-0.0120086669921875,

0.018096923828125,

-0.0267333984375,

0.04693603515625,

0.03045654296875,

-0.06964111328125,

-0.033111572265625,

-0.0254058837890625,

-0.00388... |

digiplay/PersonaStyleCheckpoint | 2023-07-19T19:43:02.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | digiplay | null | null | digiplay/PersonaStyleCheckpoint | 2 | 573 | diffusers | 2023-07-19T18:23:00 | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

Model info :

https://civitai.com/models/31771?modelVersionId=38190

Sample image I made thru Huggingface's API :

Original Author's DEMO images :

,%201girl,%20(from%20above,%20arms%20behind%20back,%20naughty%20face,%20alternate%20hair%20color,%20very%20short%20hair,%20ringlets,%20braid,.jpeg)

,%201girl,%20(dynamic%20angle,%20hands%20on%20hips,%20_q,%20red%20hair,%20absurdly%20long%20hair,%20cornrows,%20small_breasts,%20wetland),(1).jpeg)

,%201girl,%20(fisheye,%20on%20side,%20confused,%20gradient%20hair,%20long%20hair,%20spiked%20hair,%20quad%20braids,%20large_breasts,%20class.jpeg)

| 1,282 | [

[

-0.044830322265625,

-0.047088623046875,

0.023590087890625,

0.035797119140625,

-0.0190277099609375,

0.0026683807373046875,

0.0262603759765625,

-0.0279693603515625,

0.052520751953125,

0.034088134765625,

-0.07110595703125,

-0.054443359375,

-0.04266357421875,

0.... |

timm/fastvit_t12.apple_dist_in1k | 2023-08-23T21:05:51.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2303.14189",

"license:other",

"region:us"

] | image-classification | timm | null | null | timm/fastvit_t12.apple_dist_in1k | 0 | 573 | timm | 2023-08-23T21:05:46 | ---

tags:

- image-classification

- timm

library_name: timm

license: other

datasets:

- imagenet-1k

---

# Model card for fastvit_t12.apple_dist_in1k

A FastViT image classification model. Trained on ImageNet-1k with distillation by paper authors.

Please observe [original license](https://github.com/apple/ml-fastvit/blob/8af5928238cab99c45f64fc3e4e7b1516b8224ba/LICENSE).

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 7.6

- GMACs: 1.4

- Activations (M): 12.4

- Image size: 256 x 256

- **Papers:**

- FastViT: A Fast Hybrid Vision Transformer using Structural Reparameterization: https://arxiv.org/abs/2303.14189

- **Original:** https://github.com/apple/ml-fastvit

- **Dataset:** ImageNet-1k

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('fastvit_t12.apple_dist_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'fastvit_t12.apple_dist_in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:

# print shape of each feature map in output

# e.g.:

# torch.Size([1, 64, 64, 64])

# torch.Size([1, 128, 32, 32])

# torch.Size([1, 256, 16, 16])

# torch.Size([1, 512, 8, 8])

print(o.shape)

```

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'fastvit_t12.apple_dist_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 512, 8, 8) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Citation

```bibtex

@inproceedings{vasufastvit2023,

author = {Pavan Kumar Anasosalu Vasu and James Gabriel and Jeff Zhu and Oncel Tuzel and Anurag Ranjan},

title = {FastViT: A Fast Hybrid Vision Transformer using Structural Reparameterization},

booktitle={Proceedings of the IEEE/CVF International Conference on Computer Vision},

year = {2023}

}

```

| 3,703 | [

[

-0.04180908203125,

-0.037933349609375,

0.0026721954345703125,

0.0169830322265625,

-0.031982421875,

-0.01483154296875,

-0.00864410400390625,

-0.0189971923828125,

0.02545166015625,

0.02508544921875,

-0.03851318359375,

-0.045745849609375,

-0.051422119140625,

-0... |

youngmki/musinsaigo-2.0 | 2023-08-28T02:14:54.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"fashion",

"ecommerce",

"en",

"license:creativeml-openrail-m",

"region:us"

] | text-to-image | youngmki | null | null | youngmki/musinsaigo-2.0 | 4 | 573 | diffusers | 2023-08-27T14:54:46 | ---

language:

- en

license: creativeml-openrail-m

tags:

- stable-diffusion

- stable-diffusion-diffusers

- diffusers

- text-to-image

- fashion

- ecommerce

inference: false

---

# MUSINSA-IGO (MUSINSA fashion Image Generative Operator)

- - -

## MUSINSA-IGO 2.0 is a text-to-image generative model that fine-tuned [*Stable Diffusion XL 1.0*](https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0) with LoRA using street snaps downloaded from the website of [Musinsa](https://www.musinsa.com/app/), a Korean fashion commerce company. This is very useful for generating fashion images.

### Examples

- - -

### Notes

- - -

* For example, the recommended prompt template is shown below.

**Prompt**: RAW photo, fashion photo of *subject*, (high detailed skin:1.2), 8k uhd, dslr, soft lighting, high quality, film grain, Fujifilm XT3

**Negative Prompt**: (deformed iris, deformed pupils, semi-realistic, cgi, 3d, render, sketch, cartoon, drawing, anime:1.4), text, close up, cropped, out of frame, the worst quality, low quality, jpeg artifacts, ugly, duplicate, morbid, mutilated, extra fingers, mutated hands, poorly drawn hands, poorly drawn face, mutation, deformed, blurry, dehydrated, bad anatomy, bad proportions, extra limbs, cloned face, disfigured, gross proportions, malformed limbs, missing arms, missing legs, extra arms, extra legs, fused fingers, too many fingers, long neck

* The source code is available in [this *GitHub* repository](https://github.com/youngmki/musinsaigo).

* It is recommended to apply a cross-attention scale of 0.5 to 0.75 and use a refiner.

### Usage

- - -

```python

import torch

from diffusers import DiffusionPipeline

def make_prompt(prompt: str) -> str:

prompt_prefix = "RAW photo"

prompt_suffix = "(high detailed skin:1.2), 8k uhd, dslr, soft lighting, high quality, film grain, Fujifilm XT3"

return ", ".join([prompt_prefix, prompt, prompt_suffix]).strip()

def make_negative_prompt(negative_prompt: str) -> str:

negative_prefix = "(deformed iris, deformed pupils, semi-realistic, cgi, 3d, render, sketch, cartoon, drawing, anime:1.4), \

text, close up, cropped, out of frame, worst quality, low quality, jpeg artifacts, ugly, duplicate, morbid, mutilated, \

extra fingers, mutated hands, poorly drawn hands, poorly drawn face, mutation, deformed, blurry, dehydrated, bad anatomy, \

bad proportions, extra limbs, cloned face, disfigured, gross proportions, malformed limbs, missing arms, missing legs, \

extra arms, extra legs, fused fingers, too many fingers, long neck"

return (

", ".join([negative_prefix, negative_prompt]).strip()

if len(negative_prompt) > 0

else negative_prefix

)

device = "cuda" if torch.cuda.is_available() else "cpu"

model_id = "youngmki/musinsaigo-2.0"

pipe = DiffusionPipeline.from_pretrained(

"stabilityai/stable-diffusion-xl-base-1.0", torch_dtype=torch.float16

)

pipe = pipe.to(device)

pipe.load_lora_weights(model_id)

# Write your prompt here.

PROMPT = "a korean woman wearing a white t - shirt and black pants with a bear on it"

NEGATIVE_PROMPT = ""

# If you're not using a refiner

image = pipe(

prompt=make_prompt(PROMPT),

height=1024,

width=768,

num_inference_steps=50,

guidance_scale=7.5,

negative_prompt=make_negative_prompt(NEGATIVE_PROMPT),

cross_attention_kwargs={"scale": 0.75},

).images[0]

# If you're using a refiner

refiner = DiffusionPipeline.from_pretrained(

"stabilityai/stable-diffusion-xl-refiner-1.0",

text_encoder_2=pipe.text_encoder_2,

vae=pipe.vae,

torch_dtype=torch.float16,

)

image = pipe(

prompt=make_prompt(PROMPT),

height=1024,

width=768,

num_inference_steps=50,

guidance_scale=7.5,

negative_prompt=make_negative_prompt(NEGATIVE_PROMPT),

output_type="latent",

cross_attention_kwargs={"scale": 0.75},

)["images"]

generated_images = refiner(

prompt=make_prompt(PROMPT),

image=image,

num_inference_steps=50,

)["images"]

image.save("test.png")

```

| 4,122 | [

[

-0.037200927734375,

-0.058380126953125,

0.035614013671875,

0.0229034423828125,

-0.0267181396484375,

-0.0163116455078125,

-0.0060882568359375,

-0.0157928466796875,

0.037078857421875,

0.034637451171875,

-0.052093505859375,

-0.040069580078125,

-0.0546875,

0.000... |

digiplay/OldFish_v1.1_personal_HDmix | 2023-09-20T23:57:19.000Z | [

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | digiplay | null | null | digiplay/OldFish_v1.1_personal_HDmix | 2 | 573 | diffusers | 2023-09-20T19:22:02 | ---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

Use some merge ways to make OldFish_v1.1 into diffusers .safetensors WORK OK file.

Original Author's models page:

https://civitai.com/models/14978?modelVersionId=22052

Sample image generated by huggingface's API :

bright color,light color, 1girl

1 girl, masterpiece , magazine cover,

close-up ,masterpiece,highres, highest quality,intricate detail,best texture,realistic,8k,soft light,perfect shadow, sunny,portrait,1girl,hanfu,walking,Luxury, street shot,

| 1,350 | [

[

-0.050628662109375,

-0.0419921875,

0.022491455078125,

0.024444580078125,

-0.033447265625,

-0.01514434814453125,

0.00255584716796875,

-0.0494384765625,

0.044281005859375,

0.0335693359375,

-0.0285491943359375,

-0.036865234375,

-0.0489501953125,

0.0006299018859... |

keremberke/yolov5n-csgo | 2022-12-30T20:49:07.000Z | [

"yolov5",

"tensorboard",

"yolo",

"vision",

"object-detection",

"pytorch",

"dataset:keremberke/csgo-object-detection",

"model-index",

"has_space",

"region:us"

] | object-detection | keremberke | null | null | keremberke/yolov5n-csgo | 2 | 572 | yolov5 | 2022-12-29T08:05:37 |

---

tags:

- yolov5

- yolo

- vision

- object-detection

- pytorch

library_name: yolov5

library_version: 7.0.6

inference: false

datasets:

- keremberke/csgo-object-detection

model-index:

- name: keremberke/yolov5n-csgo

results:

- task:

type: object-detection

dataset:

type: keremberke/csgo-object-detection

name: keremberke/csgo-object-detection

split: validation

metrics:

- type: precision # since mAP@0.5 is not available on hf.co/metrics

value: 0.9081207114929885 # min: 0.0 - max: 1.0

name: mAP@0.5

---

<div align="center">

<img width="640" alt="keremberke/yolov5n-csgo" src="https://huggingface.co/keremberke/yolov5n-csgo/resolve/main/sample_visuals.jpg">

</div>

### How to use

- Install [yolov5](https://github.com/fcakyon/yolov5-pip):

```bash

pip install -U yolov5

```

- Load model and perform prediction:

```python

import yolov5

# load model

model = yolov5.load('keremberke/yolov5n-csgo')

# set model parameters

model.conf = 0.25 # NMS confidence threshold

model.iou = 0.45 # NMS IoU threshold

model.agnostic = False # NMS class-agnostic

model.multi_label = False # NMS multiple labels per box

model.max_det = 1000 # maximum number of detections per image

# set image

img = 'https://github.com/ultralytics/yolov5/raw/master/data/images/zidane.jpg'

# perform inference

results = model(img, size=640)

# inference with test time augmentation

results = model(img, augment=True)

# parse results

predictions = results.pred[0]

boxes = predictions[:, :4] # x1, y1, x2, y2

scores = predictions[:, 4]

categories = predictions[:, 5]

# show detection bounding boxes on image

results.show()

# save results into "results/" folder

results.save(save_dir='results/')

```

- Finetune the model on your custom dataset:

```bash

yolov5 train --data data.yaml --img 640 --batch 16 --weights keremberke/yolov5n-csgo --epochs 10

```

**More models available at: [awesome-yolov5-models](https://github.com/keremberke/awesome-yolov5-models)** | 2,010 | [

[

-0.0557861328125,

-0.0426025390625,

0.037872314453125,

-0.027008056640625,

-0.0192413330078125,

-0.0169525146484375,

-0.005828857421875,

-0.041351318359375,

0.0078582763671875,

0.020477294921875,

-0.05126953125,

-0.054229736328125,

-0.03851318359375,

-0.0116... |

Banano/banchan-protogen-v22 | 2023-03-05T20:26:09.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"en",

"license:creativeml-openrail-m",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | Banano | null | null | Banano/banchan-protogen-v22 | 5 | 572 | diffusers | 2023-01-26T13:04:46 | ---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

language:

- en

library_name: diffusers

---

# Banano Chan - Protogen v2.2 (banchan-protogen-v22) V2

A potassium rich latent diffusion model. [Protogen v2.2 (Anime)](https://huggingface.co/darkstorm2150/Protogen_v2.2_Official_Release) trained to the likeness of [Banano Chan](https://twitter.com/Banano_Chan/). The digital waifu embodiment of [Banano](https://www.banano.cc), a feeless and super fast meme cryptocurrency.

This model is intended to produce high-quality, highly detailed images from rich and complex prompts.

```

Prompt: banchan, 1girl

Negative prompt: ((disfigured)), ((bad art)), ((deformed)),((extra limbs)), ((bad anatomy)), (((bad proportions)))

Steps: 20, Sampler: Euler a, CFG scale: 7, Seed: 3207496684, Size:

768x768, Model hash: 220c1c8ec5, Model: banchanProtogenV2, Clip skip: 2

```

Share your pictures in the [#banano-ai-art Discord channel](https://discord.com/channels/415935345075421194/991823100054355998) or [Community](https://huggingface.co/Banano/banchan-protogen-v22/discussions) tab.

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb)

Sample pictures:

--

Dreambooth model trained with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook.

## License

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

The CreativeML OpenRAIL License specifies:

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

2. The authors claims no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

[Please read the full license here](https://huggingface.co/spaces/CompVis/stable-diffusion-license) | 2,753 | [

[

-0.037078857421875,

-0.057281494140625,

0.03533935546875,

0.039764404296875,

-0.04296875,

0.0041046142578125,

0.0010080337524414062,

-0.04510498046875,

0.03662109375,

0.019683837890625,

-0.034698486328125,

-0.0283966064453125,

-0.049041748046875,

-0.01660156... |

gustavomedeiros/labsai | 2023-11-02T20:35:40.000Z | [

"transformers",

"pytorch",

"roberta",

"text-classification",

"generated_from_trainer",

"license:mit",

"endpoints_compatible",

"region:us"

] | text-classification | gustavomedeiros | null | null | gustavomedeiros/labsai | 0 | 572 | transformers | 2023-10-13T17:40:24 | ---

license: mit

base_model: roberta-base

tags:

- generated_from_trainer

model-index:

- name: labsai

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# labsai

This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3869

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 0.6231 | 1.0 | 13521 | 0.6692 |

| 0.2591 | 2.0 | 27042 | 0.4578 |

| 0.5849 | 3.0 | 40563 | 0.4531 |

| 0.1875 | 4.0 | 54084 | 0.4265 |

| 0.0596 | 5.0 | 67605 | 0.3869 |

### Framework versions

- Transformers 4.34.1

- Pytorch 2.1.0+cu118

- Datasets 2.14.6

- Tokenizers 0.14.1

| 1,482 | [

[

-0.026519775390625,

-0.0438232421875,

0.0193634033203125,

0.007579803466796875,

-0.0228424072265625,

-0.031585693359375,

-0.0101165771484375,

-0.006572723388671875,

0.00250244140625,

0.030059814453125,

-0.060791015625,

-0.04644775390625,

-0.05035400390625,

-... |

microsoft/DialogRPT-human-vs-machine | 2021-05-23T09:16:47.000Z | [

"transformers",

"pytorch",

"gpt2",

"text-classification",

"arxiv:2009.06978",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-classification | microsoft | null | null | microsoft/DialogRPT-human-vs-machine | 3 | 571 | transformers | 2022-03-02T23:29:05 | # Demo

Please try this [➤➤➤ Colab Notebook Demo (click me!)](https://colab.research.google.com/drive/1cAtfkbhqsRsT59y3imjR1APw3MHDMkuV?usp=sharing)

| Context | Response | `human_vs_machine` score |

| :------ | :------- | :------------: |

| I love NLP! | I'm not sure if it's a good idea. | 0.000 |

| I love NLP! | Me too! | 0.605 |

The `human_vs_machine` score predicts how likely the response is from a human rather than a machine.

# DialogRPT-human-vs-machine

### Dialog Ranking Pretrained Transformers

> How likely a dialog response is upvoted 👍 and/or gets replied 💬?

This is what [**DialogRPT**](https://github.com/golsun/DialogRPT) is learned to predict.

It is a set of dialog response ranking models proposed by [Microsoft Research NLP Group](https://www.microsoft.com/en-us/research/group/natural-language-processing/) trained on 100 + millions of human feedback data.

It can be used to improve existing dialog generation model (e.g., [DialoGPT](https://huggingface.co/microsoft/DialoGPT-medium)) by re-ranking the generated response candidates.

Quick Links:

* [EMNLP'20 Paper](https://arxiv.org/abs/2009.06978/)

* [Dataset, training, and evaluation](https://github.com/golsun/DialogRPT)

* [Colab Notebook Demo](https://colab.research.google.com/drive/1cAtfkbhqsRsT59y3imjR1APw3MHDMkuV?usp=sharing)

We considered the following tasks and provided corresponding pretrained models.

|Task | Description | Pretrained model |

| :------------- | :----------- | :-----------: |

| **Human feedback** | **given a context and its two human responses, predict...**|

| `updown` | ... which gets more upvotes? | [model card](https://huggingface.co/microsoft/DialogRPT-updown) |

| `width`| ... which gets more direct replies? | [model card](https://huggingface.co/microsoft/DialogRPT-width) |

| `depth`| ... which gets longer follow-up thread? | [model card](https://huggingface.co/microsoft/DialogRPT-depth) |

| **Human-like** (human vs fake) | **given a context and one human response, distinguish it with...** |

| `human_vs_rand`| ... a random human response | [model card](https://huggingface.co/microsoft/DialogRPT-human-vs-rand) |

| `human_vs_machine`| ... a machine generated response | this model |

### Contact:

Please create an issue on [our repo](https://github.com/golsun/DialogRPT)

### Citation:

```

@inproceedings{gao2020dialogrpt,

title={Dialogue Response RankingTraining with Large-Scale Human Feedback Data},

author={Xiang Gao and Yizhe Zhang and Michel Galley and Chris Brockett and Bill Dolan},

year={2020},

booktitle={EMNLP}

}

```

| 2,636 | [

[

-0.04193115234375,

-0.072509765625,

0.01512908935546875,

0.0166015625,

0.0011835098266601562,

0.01165008544921875,

-0.01267242431640625,

-0.04132080078125,

0.016082763671875,

0.0234832763671875,

-0.048004150390625,

-0.02362060546875,

-0.02392578125,

0.007606... |

timm/flexivit_base.1000ep_in21k | 2023-05-05T23:58:47.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"dataset:imagenet-1k",

"arxiv:2212.08013",

"arxiv:2010.11929",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/flexivit_base.1000ep_in21k | 0 | 571 | timm | 2022-12-22T07:14:02 | ---

tags:

- image-classification

- timm

library_name: timm

license: apache-2.0

datasets:

- imagenet-1k

---

# Model card for flexivit_base.1000ep_in21k

A FlexiViT image classification model. Trained on ImageNet-1k in JAX by paper authors, ported to PyTorch by Ross Wightman.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 102.6

- GMACs: 19.4

- Activations (M): 18.9

- Image size: 240 x 240

- **Papers:**

- FlexiViT: One Model for All Patch Sizes: https://arxiv.org/abs/2212.08013

- An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale: https://arxiv.org/abs/2010.11929v2

- **Dataset:** ImageNet-1k

- **Original:** https://github.com/google-research/big_vision

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('flexivit_base.1000ep_in21k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)