modelId stringlengths 4 111 | lastModified stringlengths 24 24 | tags list | pipeline_tag stringlengths 5 30 ⌀ | author stringlengths 2 34 ⌀ | config null | securityStatus null | id stringlengths 4 111 | likes int64 0 9.53k | downloads int64 2 73.6M | library_name stringlengths 2 84 ⌀ | created timestamp[us] | card stringlengths 101 901k | card_len int64 101 901k | embeddings list |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

sanchit-gandhi/whisper-small-ft-common-language-id | 2023-09-18T14:29:46.000Z | [

"transformers",

"pytorch",

"tensorboard",

"safetensors",

"whisper",

"audio-classification",

"generated_from_trainer",

"dataset:common_language",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | audio-classification | sanchit-gandhi | null | null | sanchit-gandhi/whisper-small-ft-common-language-id | 0 | 557 | transformers | 2023-02-24T12:26:04 | ---

license: apache-2.0

tags:

- audio-classification

- generated_from_trainer

datasets:

- common_language

metrics:

- accuracy

base_model: openai/whisper-small

model-index:

- name: whisper-small-ft-common-language-id

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# whisper-small-ft-common-language-id

This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the common_language dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6409

- Accuracy: 0.8860

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 0

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 10.0

- mixed_precision_training: Native AMP
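
The derived values in this list can be checked with a little arithmetic. The sketch below assumes the scheduler is a plain linear warmup followed by linear decay to zero, and that one epoch is 694 optimizer steps (read off the training results table). `lr_at_step` is a hypothetical helper for illustration, not part of the Trainer API.

```python
# Values copied from the hyperparameter list.
LEARNING_RATE = 1e-5
TRAIN_BATCH_SIZE = 16
GRADIENT_ACCUMULATION_STEPS = 2
WARMUP_RATIO = 0.1
NUM_EPOCHS = 10
STEPS_PER_EPOCH = 694  # assumption: step count reported at epoch 1.0

total_train_batch_size = TRAIN_BATCH_SIZE * GRADIENT_ACCUMULATION_STEPS
total_steps = STEPS_PER_EPOCH * NUM_EPOCHS
warmup_steps = round(WARMUP_RATIO * total_steps)


def lr_at_step(step: int) -> float:
    """Hypothetical helper: linear warmup to the peak rate, then linear decay to zero."""
    if step < warmup_steps:
        return LEARNING_RATE * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return LEARNING_RATE * remaining / max(1, total_steps - warmup_steps)


print(total_train_batch_size)     # 32
print(total_steps, warmup_steps)  # 6940 694
```

Under these assumptions the learning rate peaks at the end of the first epoch and reaches zero at step 6940.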

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 1.1767 | 1.0 | 694 | 1.1063 | 0.7514 |

| 0.582 | 2.0 | 1388 | 0.6595 | 0.8327 |

| 0.3172 | 3.0 | 2082 | 0.5887 | 0.8529 |

| 0.196 | 4.0 | 2776 | 0.5332 | 0.8701 |

| 0.0858 | 5.0 | 3470 | 0.5705 | 0.8733 |

| 0.0477 | 6.0 | 4164 | 0.6311 | 0.8779 |

| 0.0353 | 7.0 | 4858 | 0.6011 | 0.8825 |

| 0.0033 | 8.0 | 5552 | 0.6186 | 0.8843 |

| 0.0071 | 9.0 | 6246 | 0.6409 | 0.8860 |

| 0.0074 | 10.0 | 6940 | 0.6334 | 0.8860 |

### Framework versions

- Transformers 4.27.0.dev0

- Pytorch 1.13.1

- Datasets 2.9.0

- Tokenizers 0.13.2

| 2,156 | [

[

-0.032745361328125,

-0.039764404296875,

0.00339508056640625,

0.005092620849609375,

-0.022705078125,

-0.0308990478515625,

-0.0212554931640625,

-0.021026611328125,

0.02001953125,

0.017425537109375,

-0.051971435546875,

-0.048187255859375,

-0.04766845703125,

-0.... |

Helsinki-NLP/opus-mt-uk-es | 2023-08-16T12:08:05.000Z | [

"transformers",

"pytorch",

"tf",

"marian",

"text2text-generation",

"translation",

"uk",

"es",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | translation | Helsinki-NLP | null | null | Helsinki-NLP/opus-mt-uk-es | 0 | 556 | transformers | 2022-03-02T23:29:04 | ---

tags:

- translation

license: apache-2.0

---

### opus-mt-uk-es

* source languages: uk

* target languages: es

* OPUS readme: [uk-es](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/uk-es/README.md)

* dataset: opus

* model: transformer-align

* pre-processing: normalization + SentencePiece

* download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/uk-es/opus-2020-01-16.zip)

* test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/uk-es/opus-2020-01-16.test.txt)

* test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/uk-es/opus-2020-01-16.eval.txt)

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| Tatoeba.uk.es | 50.4 | 0.680 |

| 818 | [

[

-0.0158538818359375,

-0.0217742919921875,

0.016510009765625,

0.0227813720703125,

-0.0357666015625,

-0.02734375,

-0.0302886962890625,

-0.0078277587890625,

0.004077911376953125,

0.03155517578125,

-0.053955078125,

-0.043731689453125,

-0.042999267578125,

0.01988... |

HooshvareLab/distilbert-fa-zwnj-base | 2021-03-16T16:30:29.000Z | [

"transformers",

"pytorch",

"tf",

"distilbert",

"fill-mask",

"fa",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] | fill-mask | HooshvareLab | null | null | HooshvareLab/distilbert-fa-zwnj-base | 1 | 556 | transformers | 2022-03-02T23:29:04 | ---

language: fa

license: apache-2.0

---

# DistilBERT

This model handles the zero-width non-joiner (ZWNJ) character used in Persian writing. It was also trained on new multi-type corpora with a new vocabulary.

## Questions?

Post a GitHub issue on the [ParsBERT Issues](https://github.com/hooshvare/parsbert/issues) repo. | 332 | [

[

-0.020263671875,

-0.051849365234375,

0.05419921875,

-0.007144927978515625,

-0.0261688232421875,

0.0162353515625,

-0.01436614990234375,

-0.0178375244140625,

0.022369384765625,

0.05206298828125,

-0.047454833984375,

-0.044097900390625,

-0.03253173828125,

0.0059... |

lighteternal/wav2vec2-large-xlsr-53-greek | 2022-03-26T10:12:37.000Z | [

"transformers",

"pytorch",

"jax",

"wav2vec2",

"automatic-speech-recognition",

"audio",

"hf-asr-leaderboard",

"speech",

"xlsr-fine-tuning-week",

"el",

"dataset:common_voice",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"has_space",

"region:us"

] | automatic-speech-recognition | lighteternal | null | null | lighteternal/wav2vec2-large-xlsr-53-greek | 5 | 556 | transformers | 2022-03-02T23:29:05 | ---

language: el

datasets:

- common_voice

tags:

- audio

- hf-asr-leaderboard

- automatic-speech-recognition

- speech

- xlsr-fine-tuning-week

license: apache-2.0

model-index:

- name: XLSR Wav2Vec2 Greek by Lighteternal

results:

- task:

name: Speech Recognition

type: automatic-speech-recognition

dataset:

name: CommonVoice (EL), CSS10 (EL)

type: CCS10 + mozilla-foundation/common_voice_7_0

args: el

metrics:

- name: Test WER

type: wer

value: 10.497628

- name: Test CER

type: cer

value: 2.875260

---

# Greek (el) version of the XLSR-Wav2Vec2 automatic speech recognition (ASR) model

### By the Hellenic Army Academy and the Technical University of Crete

* language: el

* licence: apache-2.0

* dataset: CommonVoice (EL), 364MB: https://commonvoice.mozilla.org/el/datasets + CSS10 (EL), 1.22GB: https://github.com/Kyubyong/css10

* model: XLSR-Wav2Vec2, trained for 50 epochs

* metrics: Word Error Rate (WER)

## Model description

UPDATE: We repeated the fine-tuning process using an additional 1.22GB dataset from CSS10.

Wav2Vec2 is a pretrained model for Automatic Speech Recognition (ASR) and was released in September 2020 by Alexei Baevski, Michael Auli, and Alex Conneau. Soon after the superior performance of Wav2Vec2 was demonstrated on the English ASR dataset LibriSpeech, Facebook AI presented XLSR-Wav2Vec2. XLSR stands for cross-lingual speech representations and refers to XLSR-Wav2Vec2's ability to learn speech representations that are useful across multiple languages.

Similar to Wav2Vec2, XLSR-Wav2Vec2 learns powerful speech representations from hundreds of thousands of hours of unlabeled speech in more than 50 languages. Similar to BERT's masked language modeling, the model learns contextualized speech representations by randomly masking feature vectors before passing them to a transformer network.

This model was trained for 50 epochs on a single NVIDIA RTX 3080, for approximately 8 hours.

## How to use for inference:

For a live demo, make sure that speech files are sampled at 16 kHz.

Instructions for testing on CommonVoice extracts are provided in the ASR_Inference.ipynb notebook. A snippet is also available below:

```python

#!/usr/bin/env python
# coding: utf-8

# Loading dependencies and defining preprocessing functions
import re

import librosa
import numpy as np
import torch
import torchaudio
from datasets import load_dataset
from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

# Punctuation and special characters that are removed from the transcripts
chars_to_ignore_regex = r'[,?.!\-;:"“%‘”�]'


def remove_special_characters(batch):
    batch["text"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower() + " "
    return batch


def speech_file_to_array_fn(batch):
    speech_array, sampling_rate = torchaudio.load(batch["path"])
    batch["speech"] = speech_array[0].numpy()
    batch["sampling_rate"] = sampling_rate
    batch["target_text"] = batch["text"]
    return batch


def resample(batch):
    # Downsample from 48 kHz to the 16 kHz expected by the model
    batch["speech"] = librosa.resample(np.asarray(batch["speech"]), orig_sr=48_000, target_sr=16_000)
    batch["sampling_rate"] = 16_000
    return batch


def prepare_dataset(batch):
    # check that all files have the correct sampling rate
    assert (
        len(set(batch["sampling_rate"])) == 1
    ), f"Make sure all inputs have the same sampling rate of {processor.feature_extractor.sampling_rate}."

    batch["input_values"] = processor(batch["speech"], sampling_rate=batch["sampling_rate"][0]).input_values

    with processor.as_target_processor():
        batch["labels"] = processor(batch["target_text"]).input_ids
    return batch


# Loading model and dataset processor
model = Wav2Vec2ForCTC.from_pretrained("lighteternal/wav2vec2-large-xlsr-53-greek").to("cuda")
processor = Wav2Vec2Processor.from_pretrained("lighteternal/wav2vec2-large-xlsr-53-greek")

# Preparing speech dataset to be suitable for inference
common_voice_test = load_dataset("common_voice", "el", split="test")
common_voice_test = common_voice_test.remove_columns(["accent", "age", "client_id", "down_votes", "gender", "locale", "segment", "up_votes"])
common_voice_test = common_voice_test.map(remove_special_characters, remove_columns=["sentence"])
common_voice_test = common_voice_test.map(speech_file_to_array_fn, remove_columns=common_voice_test.column_names)
common_voice_test = common_voice_test.map(resample, num_proc=8)
common_voice_test = common_voice_test.map(prepare_dataset, remove_columns=common_voice_test.column_names, batch_size=8, num_proc=8, batched=True)

# Loading test dataset (kept unprocessed so the reference sentence can be printed)
common_voice_test_transcription = load_dataset("common_voice", "el", split="test")

# Performing inference on a random sample. Change the "example" value to try inference on different CommonVoice extracts
example = 123

input_dict = processor(common_voice_test["input_values"][example], return_tensors="pt", sampling_rate=16_000, padding=True)
logits = model(input_dict.input_values.to("cuda")).logits
pred_ids = torch.argmax(logits, dim=-1)

print("Prediction:")
print(processor.decode(pred_ids[0]))
# πού θέλεις να πάμε ρώτησε φοβισμένα ο βασιλιάς

print("\nReference:")
print(common_voice_test_transcription["sentence"][example].lower())
# πού θέλεις να πάμε; ρώτησε φοβισμένα ο βασιλιάς.

```
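
The `torch.argmax` and `processor.decode` steps in the snippet above amount to greedy CTC decoding. As a rough, library-free sketch of the idea (the real processor also handles word delimiters and special tokens), consecutive duplicate ids are collapsed and the blank id is dropped. The toy vocabulary and `BLANK_ID` below are assumptions for illustration, not the model's actual vocabulary.

```python
from itertools import groupby

BLANK_ID = 0  # assumption: id of the CTC blank token in this toy vocabulary
TOY_VOCAB = {1: "π", 2: "ο", 3: "ύ", 4: " "}  # hypothetical id-to-character map


def ctc_greedy_decode(pred_ids):
    """Collapse repeated ids, drop blanks, then map the remaining ids to characters."""
    collapsed = [token_id for token_id, _ in groupby(pred_ids)]
    return "".join(TOY_VOCAB[i] for i in collapsed if i != BLANK_ID)


# Per-frame argmax ids: repeats and blanks are typical of CTC output.
frame_ids = [1, 1, 0, 2, 2, 2, 0, 0, 3, 3]
print(ctc_greedy_decode(frame_ids))  # πού
```

A blank between two identical ids keeps both characters, which is how CTC represents doubled letters.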

## Evaluation

The model can be evaluated as follows on the Greek test data of Common Voice.

```python

import re

import torch
import torchaudio
from datasets import load_dataset, load_metric
from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

test_dataset = load_dataset("common_voice", "el", split="test")
wer = load_metric("wer")

processor = Wav2Vec2Processor.from_pretrained("lighteternal/wav2vec2-large-xlsr-53-greek")
model = Wav2Vec2ForCTC.from_pretrained("lighteternal/wav2vec2-large-xlsr-53-greek")
model.to("cuda")

# Punctuation and special characters that are removed from the reference transcripts
chars_to_ignore_regex = r'[,?.!\-;:"“%‘”�]'
resampler = torchaudio.transforms.Resample(48_000, 16_000)


# Preprocessing the datasets.
# We need to read the audio files as arrays
def speech_file_to_array_fn(batch):
    batch["sentence"] = re.sub(chars_to_ignore_regex, '', batch["sentence"]).lower()
    speech_array, sampling_rate = torchaudio.load(batch["path"])
    batch["speech"] = resampler(speech_array).squeeze().numpy()
    return batch


test_dataset = test_dataset.map(speech_file_to_array_fn)


# Running batched inference and decoding the predictions
def evaluate(batch):
    inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)

    with torch.no_grad():
        logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits

    pred_ids = torch.argmax(logits, dim=-1)
    batch["pred_strings"] = processor.batch_decode(pred_ids)
    return batch


result = test_dataset.map(evaluate, batched=True, batch_size=8)

print("WER: {:2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"])))

```

**Test Result**: 10.497628 %

### How to use for training:

Instructions and code to replicate the process are provided in the Fine_Tune_XLSR_Wav2Vec2_on_Greek_ASR_with_🤗_Transformers.ipynb notebook.

## Metrics

| Metric | Value |

| ----------- | ----------- |

| Training Loss | 0.0545 |

| Validation Loss | 0.1661 |

| CER on CommonVoice Test (%) *| 2.8753 |

| WER on CommonVoice Test (%) *| 10.4976 |

* Reference transcripts were lower-cased and stripped of punctuation and special characters.
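
As a rough illustration of how these two metrics are defined, the sketch below normalizes transcripts the way the footnote describes, then computes WER as word-level edit distance divided by the number of reference words, and CER as the same ratio over characters. It is a minimal stand-in rather than the metric implementation used in the evaluation script; `normalize`, `edit_distance`, `wer` and `cer` are hypothetical helpers, and the punctuation set is an assumption.

```python
import re

PUNCTUATION = r'[,?.!\-;:"“%‘”]'  # assumption: characters treated as punctuation


def normalize(text: str) -> str:
    """Lower-case the text and strip punctuation."""
    return re.sub(PUNCTUATION, "", text).lower().strip()


def edit_distance(ref, hyp) -> int:
    """Levenshtein distance between two sequences (single-row dynamic programming)."""
    row = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        prev_diag, row[0] = row[0], i
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            prev_diag, row[j] = row[j], min(row[j] + 1, row[j - 1] + 1, prev_diag + cost)
    return row[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate; assumes a non-empty reference."""
    ref_words = normalize(reference).split()
    hyp_words = normalize(hypothesis).split()
    return edit_distance(ref_words, hyp_words) / len(ref_words)


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate; assumes a non-empty reference."""
    ref_chars = list(normalize(reference))
    hyp_chars = list(normalize(hypothesis))
    return edit_distance(ref_chars, hyp_chars) / len(ref_chars)


print(wer("a b c d", "a x c"))            # 0.5
print(wer("The cat sat.", "the cat sat"))  # 0.0
```

In the first call one word is substituted and one is deleted, giving 2 errors over 4 reference words.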

### Acknowledgement

The research work was supported by the Hellenic Foundation for Research and Innovation (HFRI) under the HFRI PhD Fellowship grant (Fellowship Number: 50, 2nd call).

Based on the tutorial of Patrick von Platen: https://huggingface.co/blog/fine-tune-xlsr-wav2vec2

Original colab notebook here: https://colab.research.google.com/github/patrickvonplaten/notebooks/blob/master/Fine_Tune_XLSR_Wav2Vec2_on_Turkish_ASR_with_%F0%9F%A4%97_Transformers.ipynb#scrollTo=V7YOT2mnUiea

| 8,209 | [

[

-0.0266876220703125,

-0.051544189453125,

0.0005369186401367188,

0.00991058349609375,

-0.0188446044921875,

-0.011505126953125,

-0.042938232421875,

-0.0316162109375,

0.008941650390625,

0.019927978515625,

-0.04833984375,

-0.038055419921875,

-0.03887939453125,

0... |

OpenAssistant/falcon-40b-sft-mix-1226 | 2023-06-06T10:23:05.000Z | [

"transformers",

"pytorch",

"RefinedWeb",

"text-generation",

"sft",

"custom_code",

"en",

"de",

"es",

"fr",

"dataset:OpenAssistant/oasst1",

"dataset:databricks/databricks-dolly-15k",

"license:apache-2.0",

"has_space",

"text-generation-inference",

"region:us"

] | text-generation | OpenAssistant | null | null | OpenAssistant/falcon-40b-sft-mix-1226 | 34 | 556 | transformers | 2023-06-04T05:51:59 | ---

license: apache-2.0

language:

- en

- de

- es

- fr

tags:

- sft

inference: false

datasets:

- OpenAssistant/oasst1

- databricks/databricks-dolly-15k

---

# Open-Assistant Falcon 40B SFT MIX Model

This model is a fine-tuned version of TII's [Falcon 40B](https://huggingface.co/tiiuae/falcon-40b) LLM.

It was trained on a mixture of OASST top-2 threads (exported on June 2, 2023), Dolly-15k and synthetic instruction datasets (see dataset configuration below).

## Model Details

- **Finetuned from:** [tiiuae/falcon-40b](https://huggingface.co/tiiuae/falcon-40b)

- **Model type:** Causal decoder-only transformer language model

- **Language:** English, German, Spanish, French (and limited capabilities in Italian, Portuguese, Polish, Dutch, Romanian, Czech, Swedish)

- **Demo:** [Continuations for 250 random prompts](https://open-assistant.github.io/oasst-model-eval/?f=https%3A%2F%2Fraw.githubusercontent.com%2FOpen-Assistant%2Foasst-model-eval%2Fmain%2Fsampling_reports%2Fchat-gpt%2F2023-04-11_gpt-3.5-turbo_lottery.json%0Ahttps%3A%2F%2Fraw.githubusercontent.com%2FOpen-Assistant%2Foasst-model-eval%2Fmain%2Fsampling_reports%2Foasst-sft%2F2023-06-05_OpenAssistant_falcon-40b-sft-mix-1226_sampling_noprefix2.json), [multilingual-60](https://open-assistant.github.io/oasst-model-eval/?f=https%3A%2F%2Fraw.githubusercontent.com%2FOpen-Assistant%2Foasst-model-eval%2Fmain%2Fsampling_reports%2Foasst-sft%2F2023-06-05_OpenAssistant_falcon-40b-sft-mix-1226_multilingual_noprefix2.json)

- **Eval results:** [ilm-eval](https://tju01.github.io/ilm-eval/)

- **Weights & Biases**: [Training log](https://wandb.ai/open-assistant/public-sft/runs/feplc450) (checkpoint: 1226 steps)

- **License:** Apache 2.0

- **Contact:** [Open-Assistant Discord](https://ykilcher.com/open-assistant-discord)

## Prompting

Two special tokens are used to mark the beginning of user and assistant turns:

`<|prompter|>` and `<|assistant|>`. Each turn ends with a `<|endoftext|>` token.

Input prompt example:

```

<|prompter|>What is a meme, and what's the history behind this word?<|endoftext|><|assistant|>

```

The input ends with the `<|assistant|>` token to signal that the model should

start generating the assistant reply.
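
The format described here can be assembled with plain string handling. The sketch below is a minimal illustration: only the three special tokens come from this card, while `build_prompt` and its `"user"` / `"assistant"` role names are hypothetical.

```python
PROMPTER = "<|prompter|>"
ASSISTANT = "<|assistant|>"
END = "<|endoftext|>"


def build_prompt(turns):
    """Format (role, text) turns and leave the prompt open for the assistant reply.

    `turns` is a list of ("user" or "assistant", text) pairs; the role names are
    an assumption made for this example.
    """
    parts = []
    for role, text in turns:
        prefix = PROMPTER if role == "user" else ASSISTANT
        parts.append(f"{prefix}{text}{END}")
    # The trailing assistant token signals that the model should start its reply.
    return "".join(parts) + ASSISTANT


print(build_prompt([("user", "What is a meme, and what's the history behind this word?")]))
# <|prompter|>What is a meme, and what's the history behind this word?<|endoftext|><|assistant|>
```

For a multi-turn conversation, earlier assistant replies are passed as `("assistant", text)` turns so that each turn still ends with `<|endoftext|>`.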

## Configuration Details

Model:

```

falcon-40b:

dtype: bf16

learning_rate: 1e-5

model_name: "tiiuae/falcon-40b"

deepspeed_config: configs/zero3_config_falcon.json

weight_decay: 0.0

max_length: 2048

warmup_steps: 20

gradient_checkpointing: true

gradient_accumulation_steps: 1

per_device_train_batch_size: 18

per_device_eval_batch_size: 10

eval_steps: 120

save_strategy: steps

save_steps: 613

num_train_epochs: 8

save_total_limit: 4

use_flash_attention: false

residual_dropout: 0.3

residual_dropout_lima: true

```

Dataset:

```

sft9-stage2:

# oasst_export: 100.00% (29899)

# vicuna: 50.00% (16963)

# code_alpaca: 50.00% (9510)

# oa_wiki_qa_bart_10000row: 100.00% (9434)

# grade_school_math_instructions: 100.00% (8351)

# dolly15k: 100.00% (14250)

use_custom_sampler: true

datasets:

- oasst_export:

lang: "bg,ca,cs,da,de,en,es,fr,hr,hu,it,nl,pl,pt,ro,ru,sl,sr,sv,uk" # sft-8.0

input_file_path: 2023-06-02_oasst_all_labels.jsonl.gz

val_split: 0.05

top_k: 2

- vicuna:

fraction: 0.5

val_split: 0.025

max_val_set: 250

- code_alpaca:

fraction: 0.5

val_split: 0.05

max_val_set: 250

- oa_wiki_qa_bart_10000row:

val_split: 0.05

max_val_set: 250

- grade_school_math_instructions:

val_split: 0.05

- dolly15k:

val_split: 0.05

max_val_set: 300

``` | 3,631 | [

[

-0.048675537109375,

-0.06671142578125,

0.0147857666015625,

0.0162353515625,

-0.0017232894897460938,

0.00556182861328125,

-0.0016717910766601562,

-0.01439666748046875,

0.02783203125,

0.026763916015625,

-0.060333251953125,

-0.038818359375,

-0.03948974609375,

0... |

OpenAssistant/reward-model-deberta-v3-base | 2023-01-26T01:07:57.000Z | [

"transformers",

"pytorch",

"deberta-v2",

"text-classification",

"reward-model",

"reward_model",

"RLHF",

"en",

"dataset:openai/webgpt_comparisons",

"dataset:openai/summarize_from_feedback",

"dataset:Dahoas/instruct-synthetic-prompt-responses",

"license:mit",

"endpoints_compatible",

"region:... | text-classification | OpenAssistant | null | null | OpenAssistant/reward-model-deberta-v3-base | 6 | 555 | transformers | 2023-01-15T11:06:39 | ---

license: mit

datasets:

- openai/webgpt_comparisons

- openai/summarize_from_feedback

- Dahoas/instruct-synthetic-prompt-responses

language:

- en

metrics:

- accuracy

tags:

- reward-model

- reward_model

- RLHF

---

# Reward model trained from human feedback

Reward model (RM) trained to predict which generated answer a human would judge as better, given a question.

RMs are useful in these domains:

- QA model evaluation

- serving as a reward score in RLHF

All models are trained on the following datasets, with the same split seed across datasets (used when a validation split wasn't available):

- [webgpt_comparisons](https://huggingface.co/datasets/openai/webgpt_comparisons)

- [summarize_from_feedback](https://huggingface.co/datasets/openai/summarize_from_feedback)

- [synthetic-instruct-gptj-pairwise](https://huggingface.co/datasets/Dahoas/synthetic-instruct-gptj-pairwise)

# How to use

```

from transformers import AutoModelForSequenceClassification, AutoTokenizer

reward_name = "OpenAssistant/reward-model-deberta-v3-base"

rank_model, tokenizer = AutoModelForSequenceClassification.from_pretrained(reward_name), AutoTokenizer.from_pretrained(reward_name)

question, answer = "Explain nuclear fusion like I am five", "Nuclear fusion is the process by which two or more protons and neutrons combine to form a single nucleus. It is a very important process in the universe, as it is the source of energy for stars and galaxies. Nuclear fusion is also a key process in the production of energy for nuclear power plants."

inputs = tokenizer(question, answer, return_tensors='pt')

score = rank_model(**inputs).logits[0].cpu().detach()

print(score)

```

# Performance

Validation split accuracy

| Model | [WebGPT](https://huggingface.co/datasets/openai/webgpt_comparisons) | [Summary](https://huggingface.co/datasets/openai/summarize_from_feedback) | [SyntheticGPT](https://huggingface.co/datasets/Dahoas/synthetic-instruct-gptj-pairwise) |

|---|---|---|---|

| [electra-large-discriminator](https://huggingface.co/OpenAssistant/reward-model-electra-large-discriminator) | 59.30 | 68.66 | 99.85 |

| [deberta-v3-large](https://huggingface.co/OpenAssistant/reward-model-deberta-v3-large) | 61.13 | 72.23 | 99.94 |

| [deberta-v3-base](https://huggingface.co/OpenAssistant/reward-model-deberta-v3-base) | 59.07 | 66.84 | 99.85 |
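
A minimal sketch of how a validation accuracy like the ones in this table could be computed, assuming it is the fraction of comparison pairs in which the human-preferred answer receives the higher reward score. The scores below are made-up numbers for illustration, not model outputs, and `pairwise_accuracy` is a hypothetical helper.

```python
def pairwise_accuracy(score_pairs):
    """Fraction of (chosen_score, rejected_score) pairs that are ranked correctly."""
    correct = sum(1 for chosen, rejected in score_pairs if chosen > rejected)
    return correct / len(score_pairs)


# Hypothetical reward scores for (preferred, rejected) answers.
example_pairs = [(2.1, -0.3), (0.4, 0.9), (1.7, 1.2), (-0.5, -1.8)]
print(f"{100 * pairwise_accuracy(example_pairs):.2f}")  # 75.00
```

Under this reading, a model that scores answers at random would land near 50, which puts the WebGPT and Summary columns in context.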

It's likely that SyntheticGPT has some kind of surface pattern in its chosen-rejected pairs that makes it trivial to tell the better answer apart. | 2,475 | [

[

-0.0462646484375,

-0.03338623046875,

0.012542724609375,

0.006229400634765625,

-0.01079559326171875,

-0.0034389495849609375,

0.0100250244140625,

-0.02386474609375,

0.007785797119140625,

0.0112762451171875,

-0.05279541015625,

-0.0178375244140625,

-0.03765869140625... |

davolu/stacco-ai-interior-v3k | 2023-07-27T00:36:17.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | davolu | null | null | davolu/stacco-ai-interior-v3k | 0 | 555 | diffusers | 2023-07-26T23:51:27 | ---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

### stacco_ai_interior_v3k Dreambooth model trained by David Oluyale

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

| 333 | [

[

-0.0293121337890625,

-0.05584716796875,

0.0491943359375,

0.0217132568359375,

-0.0098419189453125,

0.01385498046875,

0.02294921875,

-0.025390625,

0.0362548828125,

0.01079559326171875,

-0.0206298828125,

-0.032562255859375,

-0.029937744140625,

-0.01870727539062... |

foduucom/plant-leaf-detection-and-classification | 2023-08-12T10:55:32.000Z | [

"ultralytics",

"tensorboard",

"v8",

"ultralyticsplus",

"yolov8",

"yolo",

"vision",

"object-detection",

"pytorch",

"awesome-yolov8-models",

"plant classification",

"plant detection",

"leaf classification",

"leaf detection",

"crop detection",

"disease detection",

"en",

"model-index",... | object-detection | foduucom | null | null | foduucom/plant-leaf-detection-and-classification | 4 | 555 | ultralytics | 2023-08-12T10:45:05 | ---

tags:

- ultralyticsplus

- yolov8

- ultralytics

- yolo

- vision

- object-detection

- pytorch

- awesome-yolov8-models

- plant classification

- plant detection

- leaf classification

- leaf detection

- crop detection

- disease detection

library_name: ultralytics

library_version: 8.0.43

inference: False

model-index:

- name: foduucom/plant-leaf-detection-and-classification

results:

- task:

type: object-detection

metrics:

- type: precision

value: 0.946

name: mAP@0.5(box)

language:

- en

metrics:

- accuracy

---


<p align="center">

<!-- Smaller size image -->

<img src="https://huggingface.co/foduucom/plant-leaf-detection-and-classification/resolve/main/thumbnail.jpg" alt="Image" style="width:500px; height:300px;">

</p>

# Model Card for YOLOv8s Leaf Detection and Classification

## Model Summary

The YOLOv8s Leaf Detection and Classification model is an object detection model based on the YOLO (You Only Look Once) framework. It is designed to detect and classify various types of leaves in images. The model has achieved a precision (mAP@0.5) of 0.946 on the object detection task.

## Model Details

### Model Description

The YOLOv8s Leaf Detection and Classification model is built on the YOLOv8 architecture, which is known for its real-time object detection capabilities. This specific model has been trained to recognize and classify different types of leaves from various plant species. It can detect multiple leaf instances in an image and assign them to their respective classes.

```

['ginger', 'banana', 'tobacco', 'ornamaental', 'rose', 'soyabean', 'papaya', 'garlic', 'raspberry', 'mango', 'cotton', 'corn', 'pomgernate', 'strawberry', 'Blueberry', 'brinjal', 'potato', 'wheat', 'olive', 'rice', 'lemon', 'cabbage', 'gauava', 'chilli', 'capcicum', 'sunflower', 'cherry', 'cassava', 'apple', 'tea', 'sugarcane', 'groundnut', 'weed', 'peach', 'coffee', 'cauliflower', 'tomato', 'onion', 'gram', 'chiku', 'jamun', 'castor', 'pea', 'cucumber', 'grape', 'cardamom']

```

- **Developed by:** FODUU AI

- **Model type:** Object Detection

- **Language(s) (NLP):** English

Furthermore, the YOLOv8s Leaf Detection and Classification model encourages user collaboration by allowing them to contribute their own plant leaf data. Users can submit images of new plant species, and suggest plant names for classification. Our team will diligently work to incorporate these new plant classes into the model, enhancing its ability to identify and classify an even wider variety of plant leaves.

Users are invited to actively participate in expanding the YOLOv8s Leaf Detection and Classification model's capabilities by sharing their plant names and corresponding dataset links through our community platform or by emailing the information to info@foduu.com. Your contributions will play a crucial role in enriching the model's knowledge and recognition of diverse plant species.

## Uses

### Direct Use

The YOLOv8s Leaf Detection and Classification model can be used directly for object detection tasks related to leaf detection and classification. It does not require fine-tuning or integration into a larger ecosystem or application.

### Downstream Use

The model can also be fine-tuned for specific leaf detection and classification tasks or integrated into a larger application for plant-related research, agriculture, or environmental monitoring.

### Out-of-Scope Use

The model is not designed for unrelated tasks or object detection scenarios outside the scope of leaf detection and classification.

## Bias, Risks, and Limitations

The YOLOv8s Leaf Detection and Classification model may have some limitations and biases:

- The model's performance may vary depending on the quality and diversity of the training data.

- It may struggle with detecting leaves that are heavily occluded or overlapping with other objects.

- The model's accuracy may be affected by variations in lighting conditions, image quality, and resolution.

- It may not accurately detect very small or distant leaves in images.

- The model's classification accuracy may be lower for leaf species that resemble each other closely.

- The model's biases may stem from the biases present in the training data.

### Recommendations

Users (both direct and downstream) should be made aware of the risks, biases, and limitations of the model. Further research and experimentation are recommended to assess its performance in specific use cases and domains.

## How to Get Started with the Model

To get started with the YOLOv8s Leaf Detection and Classification model, follow these steps:

1. Install [ultralyticsplus](https://github.com/fcakyon/ultralyticsplus) and [ultralytics](https://github.com/ultralytics/ultralytics) libraries using pip:

```bash

pip install ultralyticsplus==0.0.28 ultralytics==8.0.43

```

2. Load the model and perform prediction using the provided code snippet.

```python

from ultralyticsplus import YOLO, render_result

# load model

model = YOLO('foduucom/plant-leaf-detection-and-classification')

# set model parameters

model.overrides['conf'] = 0.25 # NMS confidence threshold

model.overrides['iou'] = 0.45 # NMS IoU threshold

model.overrides['agnostic_nms'] = False # NMS class-agnostic

model.overrides['max_det'] = 1000 # maximum number of detections per image

# set image

image = 'path/to/your/image'

# perform inference

results = model.predict(image)

# observe results

print(results[0].boxes)

render = render_result(model=model, image=image, result=results[0])

render.show()

```
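
To make the `conf`, `iou` and `max_det` overrides more concrete, here is a small, library-free sketch of confidence filtering followed by greedy non-maximum suppression. It is a simplified illustration that assumes `(x1, y1, x2, y2)` boxes and ignores class labels; it is not the ultralytics implementation, and `iou` and `nms` are hypothetical helpers.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(detections, conf=0.25, iou_threshold=0.45, max_det=1000):
    """Keep the highest-scoring boxes, dropping any that overlap a kept box too much.

    `detections` is a list of (box, score) pairs.
    """
    candidates = sorted((d for d in detections if d[1] >= conf), key=lambda d: d[1], reverse=True)
    kept = []
    for box, score in candidates:
        if all(iou(box, kept_box) <= iou_threshold for kept_box, _ in kept):
            kept.append((box, score))
        if len(kept) == max_det:
            break
    return kept


detections = [
    ((10, 10, 110, 110), 0.90),
    ((12, 12, 112, 112), 0.80),    # near-duplicate of the first box
    ((200, 200, 260, 260), 0.60),
    ((300, 300, 320, 320), 0.10),  # below the confidence threshold
]
print(len(nms(detections)))  # 2
```

Raising `conf` trades recall for fewer false positives, while lowering `iou_threshold` suppresses overlapping boxes more aggressively, which can matter for densely overlapping leaves.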

## Training Details

### Training Data

The model is trained on hundreds of images of 46 different plants, including both disease-infected and healthy leaves.

### Training Procedure

The training process involves using high GPU capacity and is run for up to 50 epochs, where each epoch represents a complete pass through the entire training dataset, adjusting model weights to minimize the classification loss and optimize the performance.

#### Metrics

- mAP@0.5 (box): 0.946

#### Summary

YOLOv8s is a powerful convolutional neural network tailored for leaf detection and classification across 46 plant species. It leverages a modified CSPDarknet53 backbone, a self-attention mechanism, and a feature pyramid network for accurate multi-scale object detection, providing precise identification and classification of plant leaves.

### Model Architecture and Objective

The YOLOv8 architecture uses a modified CSPDarknet53 as its backbone, with 53 convolutional layers and cross-stage partial connections for improved information flow. The head consists of convolutional and fully connected layers for predicting bounding boxes, objectness scores, and class probabilities. It incorporates a self-attention mechanism and a feature pyramid network for multi-scale object detection, enabling it to focus on relevant image features and detect objects of different sizes.

### Compute Infrastructure

#### Hardware

NVIDIA A100 40GB GPU card

#### Software

Jupyter Notebook environment for model training.

## Model Card Contact

info@foduu.com

```bibtex

@ModelCard{

author = {Nehul Agrawal and

Pranjal singh Thakur},

title = { YOLOv8s Leaf Detection and Classification},

year = {2023}

}

```

--- | 7,348 | [

[

-0.018798828125,

-0.026397705078125,

0.0222625732421875,

-0.05224609375,

-0.0287628173828125,

-0.03350830078125,

0.0185089111328125,

-0.0633544921875,

-0.0006222724914550781,

0.017425537109375,

-0.028656005859375,

-0.043121337890625,

-0.02679443359375,

0.005... |

jinhybr/OCR-Donut-CORD | 2022-11-05T00:07:44.000Z | [

"transformers",

"pytorch",

"vision-encoder-decoder",

"donut",

"image-to-text",

"vision",

"arxiv:2111.15664",

"license:mit",

"endpoints_compatible",

"has_space",

"region:us"

] | image-to-text | jinhybr | null | null | jinhybr/OCR-Donut-CORD | 36 | 554 | transformers | 2022-11-04T13:22:17 | ---

license: mit

tags:

- donut

- image-to-text

- vision

---

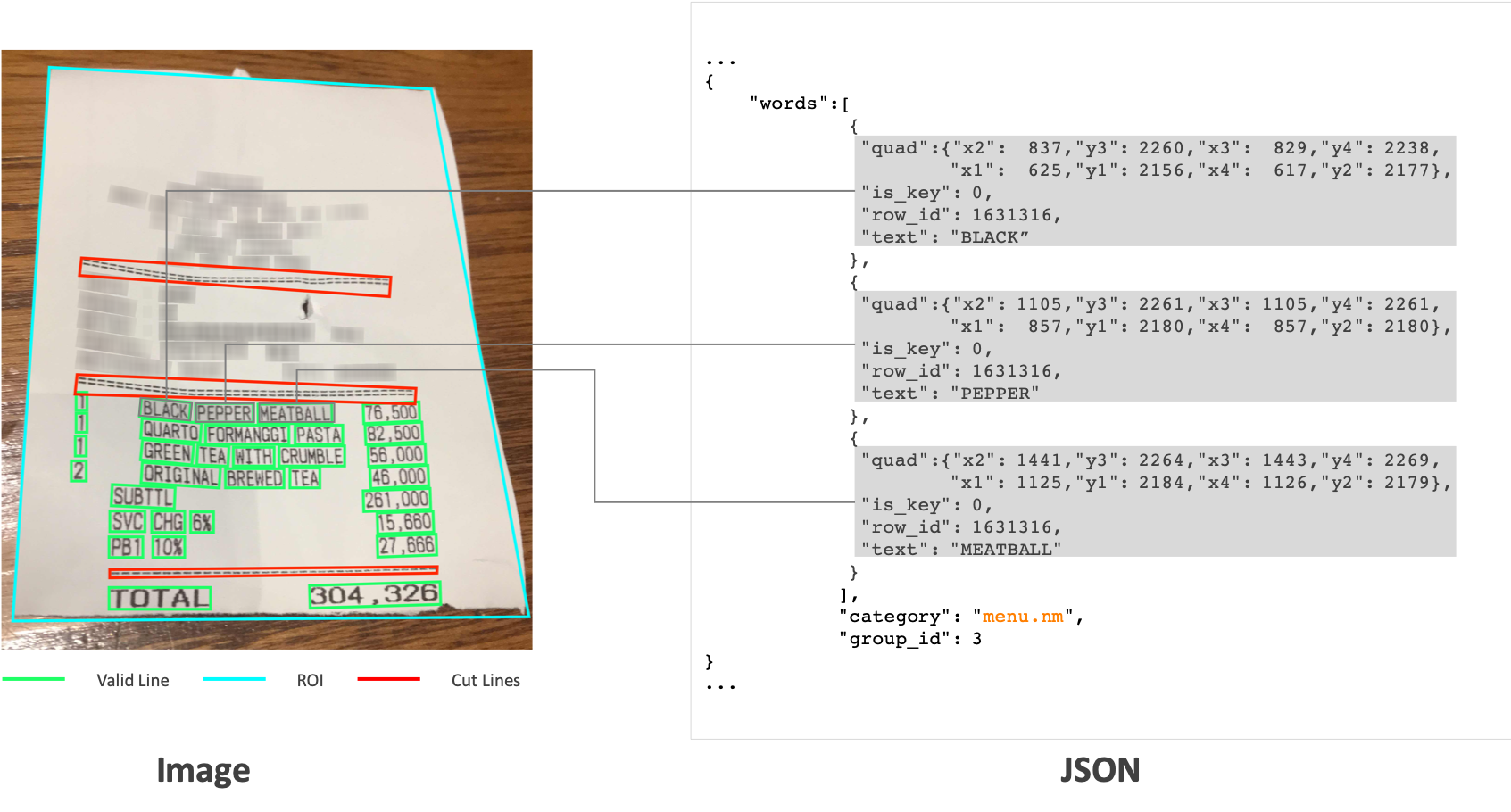

# Donut (base-sized model, fine-tuned on CORD)

Donut model fine-tuned on CORD. It was introduced in the paper [OCR-free Document Understanding Transformer](https://arxiv.org/abs/2111.15664) by Geewook Kim et al. and first released in [this repository](https://github.com/clovaai/donut).

Disclaimer: The team releasing Donut did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

Donut consists of a vision encoder (Swin Transformer) and a text decoder (BART). Given an image, the encoder first encodes the image into a tensor of embeddings (of shape batch_size, seq_len, hidden_size), after which the decoder autoregressively generates text, conditioned on the encoding of the encoder.

## Intended uses & limitations

This model is fine-tuned on CORD, a document parsing dataset.

See the [documentation](https://huggingface.co/docs/transformers/main/en/model_doc/donut), which includes code examples.

## CORD Dataset

CORD: A Consolidated Receipt Dataset for Post-OCR Parsing.

| 1,342 | [

[

-0.02252197265625,

-0.042724609375,

0.02154541015625,

-0.0223846435546875,

-0.0201873779296875,

0.0039520263671875,

-0.00617218017578125,

-0.03131103515625,

0.0204315185546875,

0.044403076171875,

-0.038055419921875,

-0.021453857421875,

-0.048980712890625,

-0... |

melaris/sarabala | 2023-09-30T01:10:18.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | melaris | null | null | melaris/sarabala | 1 | 554 | diffusers | 2023-09-27T11:14:45 | ---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

### SaraBala Dreambooth model trained by melaris with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

Sample pictures of this concept: | 495 | [

[

-0.0207366943359375,

-0.050323486328125,

0.035400390625,

0.041107177734375,

-0.02911376953125,

0.035308837890625,

0.0233154296875,

-0.0168914794921875,

0.04779052734375,

0.01715087890625,

-0.0189208984375,

-0.02862548828125,

-0.036590576171875,

-0.0114288330... |

davidkim205/komt-mistral-7b-v1 | 2023-10-24T04:41:07.000Z | [

"transformers",

"pytorch",

"mistral",

"text-generation",

"finetuned",

"en",

"ko",

"arxiv:2308.06502",

"arxiv:2308.06259",

"endpoints_compatible",

"text-generation-inference",

"region:us"

] | text-generation | davidkim205 | null | null | davidkim205/komt-mistral-7b-v1 | 4 | 554 | transformers | 2023-10-24T01:58:52 | ---

language:

- en

- ko

pipeline_tag: text-generation

tags:

- finetuned

---

# komt: Korean multi-task instruction-tuning model

Recently, due to the success of ChatGPT, numerous large language models have emerged in an attempt to catch up with ChatGPT's capabilities.

However, when it comes to Korean language performance, it has been observed that many models still struggle to provide accurate answers or generate Korean text effectively.

This study addresses these challenges by introducing a multi-task instruction technique that leverages supervised datasets from various tasks to create training data for Large Language Models (LLMs).

## Model Details

* **Model Developers** : davidkim(changyeon kim)

* **Repository** : https://github.com/davidkim205/komt

* **Model Architecture** : komt-mistral-7b-v1 is a fine-tuned version of Mistral-7B-Instruct-v0.1.

## Dataset

Korean multi-task instruction dataset

## Hardware and Software

- nvidia driver : 535.54.03

- CUDA Version: 12.2

## Training

Refer to https://github.com/davidkim205/komt

## Prompt template: Mistral

```

<s>[INST] {prompt} [/INST]</s>

```
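As a minimal sketch of how the template above could be filled in, the helper below formats a user message into the `[INST] … [/INST]` form. The function name `build_prompt` and the `with_special_tokens` flag are hypothetical and not part of the komt repository; the card's own usage code passes only the `[INST] … [/INST]` part to the tokenizer, so the special tokens are left out by default here.

```python
def build_prompt(user_message: str, with_special_tokens: bool = False) -> str:
    """Format a user message with the Mistral instruction tags.

    `build_prompt` and `with_special_tokens` are illustrative names,
    not an API from the model card or from transformers.
    """
    body = f"[INST] {user_message.strip()} [/INST]"
    if with_special_tokens:
        # Literal form of the template shown in the card
        return f"<s>{body}</s>"
    return body


print(build_prompt("Plan a two-day trip to Jeju Island."))
```

Whether to add `<s>` and `</s>` by hand depends on the tokenizer settings, so treat the flag as something to check against the tokenizer in use.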

## Usage

```

from transformers import AutoTokenizer, AutoModelForCausalLM
from transformers import TextStreamer, GenerationConfig

model_name = 'davidkim205/komt-mistral-7b-v1'
model = AutoModelForCausalLM.from_pretrained(model_name, device_map="auto")
tokenizer = AutoTokenizer.from_pretrained(model_name)
# Print tokens to stdout as they are generated
streamer = TextStreamer(tokenizer)

def gen(x):
    generation_config = GenerationConfig(
        temperature=0.8,
        top_p=0.8,
        top_k=100,
        max_new_tokens=1024,
        early_stopping=True,
        do_sample=True,
    )
    # Wrap the user message in the Mistral instruction tags
    q = f"[INST]{x} [/INST]"
    gened = model.generate(
        **tokenizer(
            q,
            return_tensors='pt',
            return_token_type_ids=False
        ).to('cuda'),
        generation_config=generation_config,
        pad_token_id=tokenizer.eos_token_id,
        eos_token_id=tokenizer.eos_token_id,
        streamer=streamer,
    )
    result_str = tokenizer.decode(gened[0])

    # If a "### Response:" marker is present, keep only the text after it
    start_tag = "\n\n### Response: "
    start_index = result_str.find(start_tag)
    if start_index != -1:
        result_str = result_str[start_index + len(start_tag):].strip()
    return result_str

# Prompt (Korean): "I want to travel to Jeju Island alone for two days and one night; make me an itinerary."
print(gen('제주도를 1박2일로 혼자 여행하려고 하는데 여행 코스를 만들어줘'))

```

output

```

<s> [INST]제주도를 1박2일로 혼자 여행하려고 하는데 여행 코스를 만들어줘 [/INST]1박 2일 여행 코스

제주 여행 코스는 다음과 같습니다:

첫째 날:

* 아침: 제주시 신라로 이동하여 한라산 국립공원에 도착합니다. 오른쪽 발자국 광장에서 눈에 띄는 맥주숍을 찾아 한 병의 맥주를 마시고 계단으로 올라가 한라산 정상에 도착합니다.

* 점심: 정상에서 계단을 내려와 중도에 위치한 골목에서 음식을 즐깁니다. 젓갈, 곰탕, 전복 등 맛있는 제주 특산물을 맛보며 힐링을 즐깁니다.

* 오후: 중도에서 남하하여 남서쪽 제주로 이동합니다. 남서쪽 제주는 섬의 자연 경관과 백사장, 아름다운 바다 등 아름다운 풍광이 있는 곳입니다. 숙소에 숙박합니다.

둘째 날:

* 아침: 남서쪽 제주에서 북서쪽 제주로 이동합니다. 이 지역은 푸른 잔디 해변과 투명한 바다가 펼쳐져 있는 아름다운 풍광입니다. 숙소에서 아침을 먹고 바다로 향하여 해변에서 휴식을 취합니다.

* 점심: 북서쪽 제주의 앞바다에서 시원한 바다를 보며 한 끼의 해산물을 맛보고 계절에 따라 해산물 요리를 즐깁니다.

* 오후: 남서쪽 제주에서 이동하여 서쪽 제주로 이동합니다. 서쪽 제주는 절벽과 암석 해변, 수수께끼의 돌 정원 등 독특한 경관이 있는 곳입니다. 이곳에서는 서쪽 제주의 대표적인 명소인 청량사를 방문하고 서쪽 제주의 아름다운 풍광을 감상합니다.

* 저녁: 서쪽 제주에서 제주 시로 이동하여 한 끼의 제주 특산물을 맛보고 도착한 제주 도심에서 저녁을 즐깁니다.

* 야간: 제주 시의 도심에서 야간 활동을 즐기며 1박 2일의 여행을 마무리합니다.

이렇게 제주를 1박 2일로 혼자 여행하면 제주의 아름다운 풍광, 푸른 잔디 해변, 투명한 바다 등을 경험할 수 있습니다.

```

## Evaluation

For objective model evaluation, we initially used EleutherAI's lm-evaluation-harness but obtained unsatisfactory results. Consequently, we conducted evaluations using ChatGPT, a widely used model, as described in [Self-Alignment with Instruction Backtranslation](https://arxiv.org/pdf/2308.06502.pdf) and [Three Ways of Using Large Language Models to Evaluate Chat](https://arxiv.org/pdf/2308.06259.pdf).

| model | score | average(0~5) | percentage |

| --------------------------------------- |---------| ------------ | ---------- |

| gpt-3.5-turbo(close) | 147 | 3.97 | 79.45% |

| naver Cue(close) | 140 | 3.78 | 75.67% |

| clova X(close) | 136 | 3.67 | 73.51% |

| WizardLM-13B-V1.2(open) | 96 | 2.59 | 51.89% |

| Llama-2-7b-chat-hf(open) | 67 | 1.81 | 36.21% |

| Llama-2-13b-chat-hf(open) | 73 | 1.91 | 38.37% |

| nlpai-lab/kullm-polyglot-12.8b-v2(open) | 70 | 1.89 | 37.83% |

| kfkas/Llama-2-ko-7b-Chat(open) | 96 | 2.59 | 51.89% |

| beomi/KoAlpaca-Polyglot-12.8B(open) | 100 | 2.70 | 54.05% |

| **komt-llama2-7b-v1 (open)(ours)** | **117** | **3.16** | **63.24%** |

| **komt-llama2-13b-v1 (open)(ours)** | **129** | **3.48** | **69.72%** |

| **komt-llama-30b-v1 (open)(ours)** | **129** | **3.16** | **63.24%** |

| **komt-mistral-7b-v1 (open)(ours)** | **131** | **3.54** | **70.81%** |
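The card does not say how the `average(0~5)` and `percentage` columns are derived from `score`. A rough sketch, assuming 37 questions each graded from 0 to 5 with results truncated to two decimals (an inference from the numbers in the table, not something the card states), reproduces most rows:

```python
import math

# Assumptions inferred from the table, not stated in the card
NUM_QUESTIONS = 37
MAX_POINTS = 5


def truncate(value: float, digits: int = 2) -> float:
    """Drop digits beyond `digits` decimals without rounding up."""
    factor = 10 ** digits
    return math.floor(value * factor) / factor


def derived_columns(score: int) -> tuple[float, float]:
    """Return (average, percentage) for a total score under the assumptions above."""
    average = truncate(score / NUM_QUESTIONS)
    percentage = truncate(100 * score / (NUM_QUESTIONS * MAX_POINTS))
    return average, percentage


for name, score in [("gpt-3.5-turbo", 147), ("komt-mistral-7b-v1", 131)]:
    print(name, derived_columns(score))
```

Under that assumption the Llama-2-13b-chat-hf and komt-llama-30b-v1 rows do not reproduce, which may point to transcription errors in the card rather than a different formula.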

| 4,998 | [

[

-0.03729248046875,

-0.048980712890625,

0.021331787109375,

0.0250701904296875,

-0.02777099609375,

0.005794525146484375,

-0.002376556396484375,

-0.0201568603515625,

0.024383544921875,

0.0211029052734375,

-0.036865234375,

-0.04229736328125,

-0.048126220703125,

... |

sonoisa/clip-vit-b-32-japanese-v1 | 2022-04-19T14:18:58.000Z | [

"transformers",

"pytorch",

"bert",

"feature-extraction",

"clip",

"sentence-similarity",

"ja",

"license:cc-by-sa-4.0",

"endpoints_compatible",

"has_space",

"region:us"

] | feature-extraction | sonoisa | null | null | sonoisa/clip-vit-b-32-japanese-v1 | 18 | 553 | transformers | 2022-03-02T23:29:05 | ---

language: ja

license: cc-by-sa-4.0

tags:

- clip

- feature-extraction

- sentence-similarity

---

# Japanese [CLIP](https://github.com/openai/CLIP) model

This is a [CLIP](https://github.com/openai/CLIP) text/image encoder model for Japanese.

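This model adapts the text encoder of the English CLIP model to Japanese using a form of distillation.

For how the model was built, its accuracy, usage, and sample code, see the articles below.

- Articles:

- Overview: [Pretrained models recommended for anyone doing multimodal processing in 2022 (Japanese models included)](https://qiita.com/sonoisa/items/00e8e2861147842f0237)

- Usage guide: [Japanese CLIP: image-text similarity, image and text embeddings, and similar-image search](https://qiita.com/sonoisa/items/d6db2f130fa9a4ce0c2c)

- (In preparation) Application guide: multimodal search of Irasutoya images (zero-shot)

- (In preparation) Application guide: multimodal search of Irasutoya images (fine-tuning)

- (In preparation) Application guide: multimodal classification using both images and text

- Sample code repository: https://github.com/sonoisa/clip-japanese

- Demo:

- [Multimodal search of Irasutoya images (zero-shot)](https://huggingface.co/spaces/sonoisa/Irasuto_search_CLIP_zero-shot) | 853 | [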

[

-0.034576416015625,

-0.052886962890625,

0.03741455078125,

0.0164337158203125,

-0.053619384765625,

-0.0189361572265625,

-0.004238128662109375,

-0.0290985107421875,

0.04949951171875,

0.033355712890625,

-0.04412841796875,

-0.04168701171875,

-0.05865478515625,

0... |

KappaNeuro/needlepoint | 2023-09-14T10:00:07.000Z | [

"diffusers",

"text-to-image",

"stable-diffusion",

"lora",

"embroidery",

"style",

"needlepoint",

"needlework",

"license:other",

"region:us",

"has_space"

] | text-to-image | KappaNeuro | null | null | KappaNeuro/needlepoint | 1 | 553 | diffusers | 2023-09-14T10:00:03 | ---

license: other

tags:

- text-to-image

- stable-diffusion

- lora

- diffusers

- embroidery

- style

- needlepoint

- needlework

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: Needlepoint page

widget:

- text: "Needlepoint - 8k, very simple overgrown greek landscape folly sewn patch"

- text: "Needlepoint - a coarse cross-stitch of a big ol' red truck driving from Vegas to Nashville"

- text: "Needlepoint - A mixed media art piece featuring an optical illusion pattern in the shape of a triangle made entirely out of particle board, overlaid with delicate embroidery in a creamy chenille thread. The colors of the embroidery should be a combination of frozen civilization, tropical escape and wild cranberry with hints of scorpion and Penelope. The embroidery should feel alive and three-dimensional, making the rigid surface of the particle board take on a sinewy, swirling appearance."

- text: "Needlepoint - a beautiful fabric embroidered minimalist Homer Simpson and Ronald McDonald made by a contemporary artist, textile art, contemporary artist embroidery, mockup, Simpsons, large embroidery art of Homer Simpsons from the tv show the Simpsons, McDonald tone, award winning photo, bright natural light, ultra-detailed, intricate details, 16K, volumetric lighting, best quality"

- text: "Needlepoint - Introduce flowing and organic forms with crewelwork stitches, inspired by nature and folklore. Use a variety of colorful threads to create texture and dimension.Dive into the vibrant and eclectic world of Stella Jean and create a whimsical embroidery design that merges various embroidery techniques with her unique style. Combining elements of Canvaswork, Blackwork, Crewelwork, Goldwork, Silk Shading, Whitework, and Raised Work, you will craft a piece that celebrates the beauty of cultural diversity and the fusion of different artistic traditions. Canvaswork: Incorporate geometric patterns inspired by traditional textiles from different cultures, using vibrant thread colors to create a bold and eye-catching design"

- text: "Needlepoint - non-binary self portrait in embroidery, embroidery hoop, middle age, pensive cautious optimism, portrait reflecting their best self, soft pallette, i will not drown in the liqueur of safety"

- text: "Needlepoint - yarn wool intricate embroidery texture 3D beautifully stitched fabric embroidered neutral rainbows and wildflowers on hills and fairy houses quilted background cottagecore photorealistic"

- text: "Needlepoint - A painting of Rolling hills with a country road and an active volcano in the background made of crochet polyester. Framed in a modern frame. Studio shot on a light gray backdrop."

- text: "Needlepoint - father's day stitching, close-up view father and daughter playing and laughing together, eye-level view, soft colors, minimalistic background, in the style of Cross-stitching"

- text: "Needlepoint - a boho design of flowers and mushrooms that looks like it was printed on an 3d embroidered cross stitch background"

---

# Needlepoint ([CivitAI](https://civitai.com/models/154077))

> Needlepoint - 8k, very simple overgrown greek landscape folly sewn patch

<p>Needlepoint is a form of embroidery that involves stitching decorative designs onto canvas using a needle and thread. It is often done on a stiff canvas mesh that provides a grid for the stitches.</p><p>In needlepoint, the design is typically pre-printed or hand-drawn onto the canvas, serving as a guide for the stitching process. The stitcher then uses various types of stitches, such as tent stitch, basketweave stitch, or cross-stitch, to fill in the design and create textured and colorful patterns.</p><p>Needlepoint allows for a wide range of design possibilities, from intricate and detailed images to simple geometric shapes or monograms. The choice of threads and colors adds depth and visual interest to the finished piece.</p><p>Needlepoint is a versatile craft that can be used to create various items, including decorative wall hangings, pillows, purses, and even clothing accessories like belts or shoes. It offers an opportunity for personal expression and creativity, allowing stitchers to customize designs and incorporate their own artistic flair.</p><p>The process of needlepoint can be relaxing and meditative, as the repetitive nature of stitching can provide a sense of focus and calm. It also offers a way to create lasting heirlooms or unique gifts for loved ones.</p><p>Needlepoint has a rich history, with examples of this craft dating back centuries. It continues to be practiced and enjoyed by people of all ages, combining artistic expression with a satisfying and enjoyable stitching process.</p>

## Image examples for the model:

> Needlepoint - a coarse cross-stitch of a big ol' red truck driving from Vegas to Nashville

> Needlepoint - A mixed media art piece featuring an optical illusion pattern in the shape of a triangle made entirely out of particle board, overlaid with delicate embroidery in a creamy chenille thread. The colors of the embroidery should be a combination of frozen civilization, tropical escape and wild cranberry with hints of scorpion and Penelope. The embroidery should feel alive and three-dimensional, making the rigid surface of the particle board take on a sinewy, swirling appearance.

> Needlepoint - a beautiful fabric embroidered minimalist Homer Simpson and Ronald McDonald made by a contemporary artist, textile art, contemporary artist embroidery, mockup, Simpsons, large embroidery art of Homer Simpsons from the tv show the Simpsons, McDonald tone, award winning photo, bright natural light, ultra-detailed, intricate details, 16K, volumetric lighting, best quality

> Needlepoint - Introduce flowing and organic forms with crewelwork stitches, inspired by nature and folklore. Use a variety of colorful threads to create texture and dimension.Dive into the vibrant and eclectic world of Stella Jean and create a whimsical embroidery design that merges various embroidery techniques with her unique style. Combining elements of Canvaswork, Blackwork, Crewelwork, Goldwork, Silk Shading, Whitework, and Raised Work, you will craft a piece that celebrates the beauty of cultural diversity and the fusion of different artistic traditions. Canvaswork: Incorporate geometric patterns inspired by traditional textiles from different cultures, using vibrant thread colors to create a bold and eye-catching design

> Needlepoint - non-binary self portrait in embroidery, embroidery hoop, middle age, pensive cautious optimism, portrait reflecting their best self, soft pallette, i will not drown in the liqueur of safety

> Needlepoint - yarn wool intricate embroidery texture 3D beautifully stitched fabric embroidered neutral rainbows and wildflowers on hills and fairy houses quilted background cottagecore photorealistic

> Needlepoint - A painting of Rolling hills with a country road and an active volcano in the background made of crochet polyester. Framed in a modern frame. Studio shot on a light gray backdrop.

> Needlepoint - father's day stitching, close-up view father and daughter playing and laughing together, eye-level view, soft colors, minimalistic background, in the style of Cross-stitching

> Needlepoint - a boho design of flowers and mushrooms that looks like it was printed on an 3d embroidered cross stitch background

| 7,630 | [

[

-0.04840087890625,

-0.0576171875,

0.035491943359375,

0.04815673828125,

-0.01203155517578125,

0.00830841064453125,

0.00923919677734375,

-0.0687255859375,

0.050445556640625,

0.031036376953125,

-0.04046630859375,

-0.042327880859375,

-0.054473876953125,

-0.00585... |

Nacholmo/meinamixv7-diffusers | 2023-06-21T16:34:39.000Z | [

"diffusers",

"text-to-image",

"license:creativeml-openrail-m",

"endpoints_compatible",

"has_space",

"diffusers:StableDiffusionPipeline",

"region:us"

] | text-to-image | Nacholmo | null | null | Nacholmo/meinamixv7-diffusers | 1 | 552 | diffusers | 2023-03-06T02:08:03 | ---

license: creativeml-openrail-m

library_name: diffusers

pipeline_tag: text-to-image

---

Original model: https://huggingface.co/Meina/MeinaMix | 145 | [

[

-0.050750732421875,

-0.02545166015625,

0.037139892578125,

0.010528564453125,

-0.028472900390625,

-0.03070068359375,

0.028900146484375,

-0.0311737060546875,

0.07275390625,

0.045074462890625,

-0.06396484375,

-0.0271759033203125,

-0.0313720703125,

-0.0014963150... |

timm/resnet50s.gluon_in1k | 2023-04-05T18:18:54.000Z | [

"timm",

"pytorch",

"safetensors",

"image-classification",

"arxiv:1512.03385",

"arxiv:1812.01187",

"license:apache-2.0",

"region:us"

] | image-classification | timm | null | null | timm/resnet50s.gluon_in1k | 0 | 552 | timm | 2023-04-05T18:18:26 | ---

tags:

- image-classification

- timm

library_tag: timm

license: apache-2.0

---

# Model card for resnet50s.gluon_in1k

A ResNet-S image classification model.

This model features:

* ReLU activations

* 3-layer stem of 3x3 convolutions with extra-width and pooling

* 1x1 convolution shortcut downsample

Trained on ImageNet-1k in Apache Gluon using Bag-of-Tricks based recipes.

## Model Details

- **Model Type:** Image classification / feature backbone

- **Model Stats:**

- Params (M): 25.7

- GMACs: 5.5

- Activations (M): 13.5

- Image size: 224 x 224

- **Papers:**

- Deep Residual Learning for Image Recognition: https://arxiv.org/abs/1512.03385

- Bag of Tricks for Image Classification with Convolutional Neural Networks: https://arxiv.org/abs/1812.01187

- **Original:** https://cv.gluon.ai/model_zoo/classification.html

## Model Usage

### Image Classification

```python

from urllib.request import urlopen

from PIL import Image

import timm
import torch

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model('resnet50s.gluon_in1k', pretrained=True)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5)

```
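To make the last line of the snippet above concrete, here is a small pure-Python sketch of what "softmax, scale to percent, take the top k" computes. The logits are made-up toy values and the helper names are illustrative; this is not how torch implements `softmax` or `topk`, only the same arithmetic on a plain list.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_percent(logits, k=5):
    """Return (class_index, probability_in_percent) pairs, highest first."""
    probs = softmax(logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    return [(i, probs[i] * 100) for i in ranked]


# Toy logits for a 6-class problem (hypothetical values)
toy_logits = [2.0, 0.5, -1.0, 3.0, 0.0, 1.0]
top = top_k_percent(toy_logits, k=3)
for class_index, percent in top:
    print(class_index, round(percent, 2))
```

Note that `torch.topk` returns the values and the indices as two separate tensors, whereas this sketch pairs them up for readability.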

### Feature Map Extraction

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'resnet50s.gluon_in1k',

pretrained=True,

features_only=True,

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # unsqueeze single image into batch of 1

for o in output:
    # print shape of each feature map in output
    # e.g.:
    #  torch.Size([1, 128, 112, 112])
    #  torch.Size([1, 256, 56, 56])
    #  torch.Size([1, 512, 28, 28])
    #  torch.Size([1, 1024, 14, 14])
    #  torch.Size([1, 2048, 7, 7])
    print(o.shape)

```
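The example shapes in the comments above follow a simple pattern: each feature map halves the spatial resolution of the previous one. A minimal sketch of that arithmetic, assuming reduction factors of 2, 4, 8, 16 and 32 and the channel counts from the comments (the function name and the floor division are simplifications for illustration, not timm code):

```python
def feature_map_shapes(image_size,
                       channels=(128, 256, 512, 1024, 2048),
                       reductions=(2, 4, 8, 16, 32),
                       batch_size=1):
    """Estimate (N, C, H, W) for each feature level of a square input.

    Channel counts are taken from the example output above; the reduction
    factors are an assumption consistent with those shapes.
    """
    return [
        (batch_size, c, image_size // r, image_size // r)
        for c, r in zip(channels, reductions)
    ]


shapes = feature_map_shapes(224)
for shape in shapes:
    print(shape)
```

For input sizes that are not multiples of 32 the real layer arithmetic can differ from plain floor division, so the actual model output remains the reference.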

### Image Embeddings

```python

from urllib.request import urlopen

from PIL import Image

import timm

img = Image.open(urlopen(

'https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png'

))

model = timm.create_model(

'resnet50s.gluon_in1k',

pretrained=True,

num_classes=0, # remove classifier nn.Linear

)

model = model.eval()

# get model specific transforms (normalization, resize)

data_config = timm.data.resolve_model_data_config(model)

transforms = timm.data.create_transform(**data_config, is_training=False)

output = model(transforms(img).unsqueeze(0)) # output is (batch_size, num_features) shaped tensor

# or equivalently (without needing to set num_classes=0)

output = model.forward_features(transforms(img).unsqueeze(0))

# output is unpooled, a (1, 2048, 7, 7) shaped tensor

output = model.forward_head(output, pre_logits=True)

# output is a (1, num_features) shaped tensor

```

## Model Comparison

Explore the dataset and runtime metrics of this model in timm [model results](https://github.com/huggingface/pytorch-image-models/tree/main/results).

|model |img_size|top1 |top5 |param_count|gmacs|macts|img/sec|

|------------------------------------------|--------|-----|-----|-----------|-----|-----|-------|

|[seresnextaa101d_32x8d.sw_in12k_ft_in1k_288](https://huggingface.co/timm/seresnextaa101d_32x8d.sw_in12k_ft_in1k_288)|320 |86.72|98.17|93.6 |35.2 |69.7 |451 |

|[seresnextaa101d_32x8d.sw_in12k_ft_in1k_288](https://huggingface.co/timm/seresnextaa101d_32x8d.sw_in12k_ft_in1k_288)|288 |86.51|98.08|93.6 |28.5 |56.4 |560 |

|[seresnextaa101d_32x8d.sw_in12k_ft_in1k](https://huggingface.co/timm/seresnextaa101d_32x8d.sw_in12k_ft_in1k)|288 |86.49|98.03|93.6 |28.5 |56.4 |557 |

|[seresnextaa101d_32x8d.sw_in12k_ft_in1k](https://huggingface.co/timm/seresnextaa101d_32x8d.sw_in12k_ft_in1k)|224 |85.96|97.82|93.6 |17.2 |34.2 |923 |

|[resnext101_32x32d.fb_wsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x32d.fb_wsl_ig1b_ft_in1k)|224 |85.11|97.44|468.5 |87.3 |91.1 |254 |

|[resnetrs420.tf_in1k](https://huggingface.co/timm/resnetrs420.tf_in1k)|416 |85.0 |97.12|191.9 |108.4|213.8|134 |

|[ecaresnet269d.ra2_in1k](https://huggingface.co/timm/ecaresnet269d.ra2_in1k)|352 |84.96|97.22|102.1 |50.2 |101.2|291 |

|[ecaresnet269d.ra2_in1k](https://huggingface.co/timm/ecaresnet269d.ra2_in1k)|320 |84.73|97.18|102.1 |41.5 |83.7 |353 |

|[resnetrs350.tf_in1k](https://huggingface.co/timm/resnetrs350.tf_in1k)|384 |84.71|96.99|164.0 |77.6 |154.7|183 |

|[seresnextaa101d_32x8d.ah_in1k](https://huggingface.co/timm/seresnextaa101d_32x8d.ah_in1k)|288 |84.57|97.08|93.6 |28.5 |56.4 |557 |

|[resnetrs200.tf_in1k](https://huggingface.co/timm/resnetrs200.tf_in1k)|320 |84.45|97.08|93.2 |31.5 |67.8 |446 |

|[resnetrs270.tf_in1k](https://huggingface.co/timm/resnetrs270.tf_in1k)|352 |84.43|96.97|129.9 |51.1 |105.5|280 |

|[seresnext101d_32x8d.ah_in1k](https://huggingface.co/timm/seresnext101d_32x8d.ah_in1k)|288 |84.36|96.92|93.6 |27.6 |53.0 |595 |

|[seresnet152d.ra2_in1k](https://huggingface.co/timm/seresnet152d.ra2_in1k)|320 |84.35|97.04|66.8 |24.1 |47.7 |610 |

|[resnetrs350.tf_in1k](https://huggingface.co/timm/resnetrs350.tf_in1k)|288 |84.3 |96.94|164.0 |43.7 |87.1 |333 |

|[resnext101_32x8d.fb_swsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x8d.fb_swsl_ig1b_ft_in1k)|224 |84.28|97.17|88.8 |16.5 |31.2 |1100 |

|[resnetrs420.tf_in1k](https://huggingface.co/timm/resnetrs420.tf_in1k)|320 |84.24|96.86|191.9 |64.2 |126.6|228 |

|[seresnext101_32x8d.ah_in1k](https://huggingface.co/timm/seresnext101_32x8d.ah_in1k)|288 |84.19|96.87|93.6 |27.2 |51.6 |613 |

|[resnext101_32x16d.fb_wsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x16d.fb_wsl_ig1b_ft_in1k)|224 |84.18|97.19|194.0 |36.3 |51.2 |581 |

|[resnetaa101d.sw_in12k_ft_in1k](https://huggingface.co/timm/resnetaa101d.sw_in12k_ft_in1k)|288 |84.11|97.11|44.6 |15.1 |29.0 |1144 |

|[resnet200d.ra2_in1k](https://huggingface.co/timm/resnet200d.ra2_in1k)|320 |83.97|96.82|64.7 |31.2 |67.3 |518 |

|[resnetrs200.tf_in1k](https://huggingface.co/timm/resnetrs200.tf_in1k)|256 |83.87|96.75|93.2 |20.2 |43.4 |692 |

|[seresnextaa101d_32x8d.ah_in1k](https://huggingface.co/timm/seresnextaa101d_32x8d.ah_in1k)|224 |83.86|96.65|93.6 |17.2 |34.2 |923 |

|[resnetrs152.tf_in1k](https://huggingface.co/timm/resnetrs152.tf_in1k)|320 |83.72|96.61|86.6 |24.3 |48.1 |617 |

|[seresnet152d.ra2_in1k](https://huggingface.co/timm/seresnet152d.ra2_in1k)|256 |83.69|96.78|66.8 |15.4 |30.6 |943 |

|[seresnext101d_32x8d.ah_in1k](https://huggingface.co/timm/seresnext101d_32x8d.ah_in1k)|224 |83.68|96.61|93.6 |16.7 |32.0 |986 |

|[resnet152d.ra2_in1k](https://huggingface.co/timm/resnet152d.ra2_in1k)|320 |83.67|96.74|60.2 |24.1 |47.7 |706 |

|[resnetrs270.tf_in1k](https://huggingface.co/timm/resnetrs270.tf_in1k)|256 |83.59|96.61|129.9 |27.1 |55.8 |526 |

|[seresnext101_32x8d.ah_in1k](https://huggingface.co/timm/seresnext101_32x8d.ah_in1k)|224 |83.58|96.4 |93.6 |16.5 |31.2 |1013 |

|[resnetaa101d.sw_in12k_ft_in1k](https://huggingface.co/timm/resnetaa101d.sw_in12k_ft_in1k)|224 |83.54|96.83|44.6 |9.1 |17.6 |1864 |

|[resnet152.a1h_in1k](https://huggingface.co/timm/resnet152.a1h_in1k)|288 |83.46|96.54|60.2 |19.1 |37.3 |904 |

|[resnext101_32x16d.fb_swsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x16d.fb_swsl_ig1b_ft_in1k)|224 |83.35|96.85|194.0 |36.3 |51.2 |582 |

|[resnet200d.ra2_in1k](https://huggingface.co/timm/resnet200d.ra2_in1k)|256 |83.23|96.53|64.7 |20.0 |43.1 |809 |

|[resnext101_32x4d.fb_swsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x4d.fb_swsl_ig1b_ft_in1k)|224 |83.22|96.75|44.2 |8.0 |21.2 |1814 |

|[resnext101_64x4d.c1_in1k](https://huggingface.co/timm/resnext101_64x4d.c1_in1k)|288 |83.16|96.38|83.5 |25.7 |51.6 |590 |

|[resnet152d.ra2_in1k](https://huggingface.co/timm/resnet152d.ra2_in1k)|256 |83.14|96.38|60.2 |15.4 |30.5 |1096 |

|[resnet101d.ra2_in1k](https://huggingface.co/timm/resnet101d.ra2_in1k)|320 |83.02|96.45|44.6 |16.5 |34.8 |992 |

|[ecaresnet101d.miil_in1k](https://huggingface.co/timm/ecaresnet101d.miil_in1k)|288 |82.98|96.54|44.6 |13.4 |28.2 |1077 |

|[resnext101_64x4d.tv_in1k](https://huggingface.co/timm/resnext101_64x4d.tv_in1k)|224 |82.98|96.25|83.5 |15.5 |31.2 |989 |

|[resnetrs152.tf_in1k](https://huggingface.co/timm/resnetrs152.tf_in1k)|256 |82.86|96.28|86.6 |15.6 |30.8 |951 |

|[resnext101_32x8d.tv2_in1k](https://huggingface.co/timm/resnext101_32x8d.tv2_in1k)|224 |82.83|96.22|88.8 |16.5 |31.2 |1099 |

|[resnet152.a1h_in1k](https://huggingface.co/timm/resnet152.a1h_in1k)|224 |82.8 |96.13|60.2 |11.6 |22.6 |1486 |

|[resnet101.a1h_in1k](https://huggingface.co/timm/resnet101.a1h_in1k)|288 |82.8 |96.32|44.6 |13.0 |26.8 |1291 |

|[resnet152.a1_in1k](https://huggingface.co/timm/resnet152.a1_in1k)|288 |82.74|95.71|60.2 |19.1 |37.3 |905 |

|[resnext101_32x8d.fb_wsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext101_32x8d.fb_wsl_ig1b_ft_in1k)|224 |82.69|96.63|88.8 |16.5 |31.2 |1100 |

|[resnet152.a2_in1k](https://huggingface.co/timm/resnet152.a2_in1k)|288 |82.62|95.75|60.2 |19.1 |37.3 |904 |

|[resnetaa50d.sw_in12k_ft_in1k](https://huggingface.co/timm/resnetaa50d.sw_in12k_ft_in1k)|288 |82.61|96.49|25.6 |8.9 |20.6 |1729 |

|[resnet61q.ra2_in1k](https://huggingface.co/timm/resnet61q.ra2_in1k)|288 |82.53|96.13|36.8 |9.9 |21.5 |1773 |

|[wide_resnet101_2.tv2_in1k](https://huggingface.co/timm/wide_resnet101_2.tv2_in1k)|224 |82.5 |96.02|126.9 |22.8 |21.2 |1078 |

|[resnext101_64x4d.c1_in1k](https://huggingface.co/timm/resnext101_64x4d.c1_in1k)|224 |82.46|95.92|83.5 |15.5 |31.2 |987 |

|[resnet51q.ra2_in1k](https://huggingface.co/timm/resnet51q.ra2_in1k)|288 |82.36|96.18|35.7 |8.1 |20.9 |1964 |

|[ecaresnet50t.ra2_in1k](https://huggingface.co/timm/ecaresnet50t.ra2_in1k)|320 |82.35|96.14|25.6 |8.8 |24.1 |1386 |

|[resnet101.a1_in1k](https://huggingface.co/timm/resnet101.a1_in1k)|288 |82.31|95.63|44.6 |13.0 |26.8 |1291 |

|[resnetrs101.tf_in1k](https://huggingface.co/timm/resnetrs101.tf_in1k)|288 |82.29|96.01|63.6 |13.6 |28.5 |1078 |

|[resnet152.tv2_in1k](https://huggingface.co/timm/resnet152.tv2_in1k)|224 |82.29|96.0 |60.2 |11.6 |22.6 |1484 |

|[wide_resnet50_2.racm_in1k](https://huggingface.co/timm/wide_resnet50_2.racm_in1k)|288 |82.27|96.06|68.9 |18.9 |23.8 |1176 |

|[resnet101d.ra2_in1k](https://huggingface.co/timm/resnet101d.ra2_in1k)|256 |82.26|96.07|44.6 |10.6 |22.2 |1542 |

|[resnet101.a2_in1k](https://huggingface.co/timm/resnet101.a2_in1k)|288 |82.24|95.73|44.6 |13.0 |26.8 |1290 |

|[seresnext50_32x4d.racm_in1k](https://huggingface.co/timm/seresnext50_32x4d.racm_in1k)|288 |82.2 |96.14|27.6 |7.0 |23.8 |1547 |

|[ecaresnet101d.miil_in1k](https://huggingface.co/timm/ecaresnet101d.miil_in1k)|224 |82.18|96.05|44.6 |8.1 |17.1 |1771 |

|[resnext50_32x4d.fb_swsl_ig1b_ft_in1k](https://huggingface.co/timm/resnext50_32x4d.fb_swsl_ig1b_ft_in1k)|224 |82.17|96.22|25.0 |4.3 |14.4 |2943 |

|[ecaresnet50t.a1_in1k](https://huggingface.co/timm/ecaresnet50t.a1_in1k)|288 |82.12|95.65|25.6 |7.1 |19.6 |1704 |

|[resnext50_32x4d.a1h_in1k](https://huggingface.co/timm/resnext50_32x4d.a1h_in1k)|288 |82.03|95.94|25.0 |7.0 |23.8 |1745 |

|[ecaresnet101d_pruned.miil_in1k](https://huggingface.co/timm/ecaresnet101d_pruned.miil_in1k)|288 |82.0 |96.15|24.9 |5.8 |12.7 |1787 |

|[resnet61q.ra2_in1k](https://huggingface.co/timm/resnet61q.ra2_in1k)|256 |81.99|95.85|36.8 |7.8 |17.0 |2230 |

|[resnext101_32x8d.tv2_in1k](https://huggingface.co/timm/resnext101_32x8d.tv2_in1k)|176 |81.98|95.72|88.8 |10.3 |19.4 |1768 |

|[resnet152.a1_in1k](https://huggingface.co/timm/resnet152.a1_in1k)|224 |81.97|95.24|60.2 |11.6 |22.6 |1486 |

|[resnet101.a1h_in1k](https://huggingface.co/timm/resnet101.a1h_in1k)|224 |81.93|95.75|44.6 |7.8 |16.2 |2122 |

|[resnet101.tv2_in1k](https://huggingface.co/timm/resnet101.tv2_in1k)|224 |81.9 |95.77|44.6 |7.8 |16.2 |2118 |

|[resnext101_32x16d.fb_ssl_yfcc100m_ft_in1k](https://huggingface.co/timm/resnext101_32x16d.fb_ssl_yfcc100m_ft_in1k)|224 |81.84|96.1 |194.0 |36.3 |51.2 |583 |

|[resnet51q.ra2_in1k](https://huggingface.co/timm/resnet51q.ra2_in1k)|256 |81.78|95.94|35.7 |6.4 |16.6 |2471 |

|[resnet152.a2_in1k](https://huggingface.co/timm/resnet152.a2_in1k)|224 |81.77|95.22|60.2 |11.6 |22.6 |1485 |

|[resnetaa50d.sw_in12k_ft_in1k](https://huggingface.co/timm/resnetaa50d.sw_in12k_ft_in1k)|224 |81.74|96.06|25.6 |5.4 |12.4 |2813 |

|[ecaresnet50t.a2_in1k](https://huggingface.co/timm/ecaresnet50t.a2_in1k)|288 |81.65|95.54|25.6 |7.1 |19.6 |1703 |

|[ecaresnet50d.miil_in1k](https://huggingface.co/timm/ecaresnet50d.miil_in1k)|288 |81.64|95.88|25.6 |7.2 |19.7 |1694 |

|[resnext101_32x8d.fb_ssl_yfcc100m_ft_in1k](https://huggingface.co/timm/resnext101_32x8d.fb_ssl_yfcc100m_ft_in1k)|224 |81.62|96.04|88.8 |16.5 |31.2 |1101 |

|[wide_resnet50_2.tv2_in1k](https://huggingface.co/timm/wide_resnet50_2.tv2_in1k)|224 |81.61|95.76|68.9 |11.4 |14.4 |1930 |

|[resnetaa50.a1h_in1k](https://huggingface.co/timm/resnetaa50.a1h_in1k)|288 |81.61|95.83|25.6 |8.5 |19.2 |1868 |

|[resnet101.a1_in1k](https://huggingface.co/timm/resnet101.a1_in1k)|224 |81.5 |95.16|44.6 |7.8 |16.2 |2125 |

|[resnext50_32x4d.a1_in1k](https://huggingface.co/timm/resnext50_32x4d.a1_in1k)|288 |81.48|95.16|25.0 |7.0 |23.8 |1745 |

|[gcresnet50t.ra2_in1k](https://huggingface.co/timm/gcresnet50t.ra2_in1k)|288 |81.47|95.71|25.9 |6.9 |18.6 |2071 |

|[wide_resnet50_2.racm_in1k](https://huggingface.co/timm/wide_resnet50_2.racm_in1k)|224 |81.45|95.53|68.9 |11.4 |14.4 |1929 |

|[resnet50d.a1_in1k](https://huggingface.co/timm/resnet50d.a1_in1k)|288 |81.44|95.22|25.6 |7.2 |19.7 |1908 |

|[ecaresnet50t.ra2_in1k](https://huggingface.co/timm/ecaresnet50t.ra2_in1k)|256 |81.44|95.67|25.6 |5.6 |15.4 |2168 |

|[ecaresnetlight.miil_in1k](https://huggingface.co/timm/ecaresnetlight.miil_in1k)|288 |81.4 |95.82|30.2 |6.8 |13.9 |2132 |

|[resnet50d.ra2_in1k](https://huggingface.co/timm/resnet50d.ra2_in1k)|288 |81.37|95.74|25.6 |7.2 |19.7 |1910 |

|[resnet101.a2_in1k](https://huggingface.co/timm/resnet101.a2_in1k)|224 |81.32|95.19|44.6 |7.8 |16.2 |2125 |

|[seresnet50.ra2_in1k](https://huggingface.co/timm/seresnet50.ra2_in1k)|288 |81.3 |95.65|28.1 |6.8 |18.4 |1803 |

|[resnext50_32x4d.a2_in1k](https://huggingface.co/timm/resnext50_32x4d.a2_in1k)|288 |81.3 |95.11|25.0 |7.0 |23.8 |1746 |

|[seresnext50_32x4d.racm_in1k](https://huggingface.co/timm/seresnext50_32x4d.racm_in1k)|224 |81.27|95.62|27.6 |4.3 |14.4 |2591 |

|[ecaresnet50t.a1_in1k](https://huggingface.co/timm/ecaresnet50t.a1_in1k)|224 |81.26|95.16|25.6 |4.3 |11.8 |2823 |

|[gcresnext50ts.ch_in1k](https://huggingface.co/timm/gcresnext50ts.ch_in1k)|288 |81.23|95.54|15.7 |4.8 |19.6 |2117 |

|[senet154.gluon_in1k](https://huggingface.co/timm/senet154.gluon_in1k)|224 |81.23|95.35|115.1 |20.8 |38.7 |545 |

|[resnet50.a1_in1k](https://huggingface.co/timm/resnet50.a1_in1k)|288 |81.22|95.11|25.6 |6.8 |18.4 |2089 |

|[resnet50_gn.a1h_in1k](https://huggingface.co/timm/resnet50_gn.a1h_in1k)|288 |81.22|95.63|25.6 |6.8 |18.4 |676 |

|[resnet50d.a2_in1k](https://huggingface.co/timm/resnet50d.a2_in1k)|288 |81.18|95.09|25.6 |7.2 |19.7 |1908 |

|[resnet50.fb_swsl_ig1b_ft_in1k](https://huggingface.co/timm/resnet50.fb_swsl_ig1b_ft_in1k)|224 |81.18|95.98|25.6 |4.1 |11.1 |3455 |

|[resnext50_32x4d.tv2_in1k](https://huggingface.co/timm/resnext50_32x4d.tv2_in1k)|224 |81.17|95.34|25.0 |4.3 |14.4 |2933 |

|[resnext50_32x4d.a1h_in1k](https://huggingface.co/timm/resnext50_32x4d.a1h_in1k)|224 |81.1 |95.33|25.0 |4.3 |14.4 |2934 |

|[seresnet50.a2_in1k](https://huggingface.co/timm/seresnet50.a2_in1k)|288 |81.1 |95.23|28.1 |6.8 |18.4 |1801 |

|[seresnet50.a1_in1k](https://huggingface.co/timm/seresnet50.a1_in1k)|288 |81.1 |95.12|28.1 |6.8 |18.4 |1799 |

|[resnet152s.gluon_in1k](https://huggingface.co/timm/resnet152s.gluon_in1k)|224 |81.02|95.41|60.3 |12.9 |25.0 |1347 |

|[resnet50.d_in1k](https://huggingface.co/timm/resnet50.d_in1k)|288 |80.97|95.44|25.6 |6.8 |18.4 |2085 |

|[gcresnet50t.ra2_in1k](https://huggingface.co/timm/gcresnet50t.ra2_in1k)|256 |80.94|95.45|25.9 |5.4 |14.7 |2571 |

|[resnext101_32x4d.fb_ssl_yfcc100m_ft_in1k](https://huggingface.co/timm/resnext101_32x4d.fb_ssl_yfcc100m_ft_in1k)|224 |80.93|95.73|44.2 |8.0 |21.2 |1814 |

|[resnet50.c1_in1k](https://huggingface.co/timm/resnet50.c1_in1k)|288 |80.91|95.55|25.6 |6.8 |18.4 |2084 |

|[seresnext101_32x4d.gluon_in1k](https://huggingface.co/timm/seresnext101_32x4d.gluon_in1k)|224 |80.9 |95.31|49.0 |8.0 |21.3 |1585 |

|[seresnext101_64x4d.gluon_in1k](https://huggingface.co/timm/seresnext101_64x4d.gluon_in1k)|224 |80.9 |95.3 |88.2 |15.5 |31.2 |918 |

|[resnet50.c2_in1k](https://huggingface.co/timm/resnet50.c2_in1k)|288 |80.86|95.52|25.6 |6.8 |18.4 |2085 |

|[resnet50.tv2_in1k](https://huggingface.co/timm/resnet50.tv2_in1k)|224 |80.85|95.43|25.6 |4.1 |11.1 |3450 |

|[ecaresnet50t.a2_in1k](https://huggingface.co/timm/ecaresnet50t.a2_in1k)|224 |80.84|95.02|25.6 |4.3 |11.8 |2821 |

|[ecaresnet101d_pruned.miil_in1k](https://huggingface.co/timm/ecaresnet101d_pruned.miil_in1k)|224 |80.79|95.62|24.9 |3.5 |7.7 |2961 |

|[seresnet33ts.ra2_in1k](https://huggingface.co/timm/seresnet33ts.ra2_in1k)|288 |80.79|95.36|19.8 |6.0 |14.8 |2506 |

|[ecaresnet50d_pruned.miil_in1k](https://huggingface.co/timm/ecaresnet50d_pruned.miil_in1k)|288 |80.79|95.58|19.9 |4.2 |10.6 |2349 |

|[resnet50.a2_in1k](https://huggingface.co/timm/resnet50.a2_in1k)|288 |80.78|94.99|25.6 |6.8 |18.4 |2088 |

|[resnet50.b1k_in1k](https://huggingface.co/timm/resnet50.b1k_in1k)|288 |80.71|95.43|25.6 |6.8 |18.4 |2087 |

|[resnext50_32x4d.ra_in1k](https://huggingface.co/timm/resnext50_32x4d.ra_in1k)|288 |80.7 |95.39|25.0 |7.0 |23.8 |1749 |

|[resnetrs101.tf_in1k](https://huggingface.co/timm/resnetrs101.tf_in1k)|192 |80.69|95.24|63.6 |6.0 |12.7 |2270 |

|[resnet50d.a1_in1k](https://huggingface.co/timm/resnet50d.a1_in1k)|224 |80.68|94.71|25.6 |4.4 |11.9 |3162 |

|[eca_resnet33ts.ra2_in1k](https://huggingface.co/timm/eca_resnet33ts.ra2_in1k)|288 |80.68|95.36|19.7 |6.0 |14.8 |2637 |

|[resnet50.a1h_in1k](https://huggingface.co/timm/resnet50.a1h_in1k)|224 |80.67|95.3 |25.6 |4.1 |11.1 |3452 |

|[resnext50d_32x4d.bt_in1k](https://huggingface.co/timm/resnext50d_32x4d.bt_in1k)|288 |80.67|95.42|25.0 |7.4 |25.1 |1626 |

|[resnetaa50.a1h_in1k](https://huggingface.co/timm/resnetaa50.a1h_in1k)|224 |80.63|95.21|25.6 |5.2 |11.6 |3034 |

|[ecaresnet50d.miil_in1k](https://huggingface.co/timm/ecaresnet50d.miil_in1k)|224 |80.61|95.32|25.6 |4.4 |11.9 |2813 |

|[resnext101_64x4d.gluon_in1k](https://huggingface.co/timm/resnext101_64x4d.gluon_in1k)|224 |80.61|94.99|83.5 |15.5 |31.2 |989 |

|[gcresnet33ts.ra2_in1k](https://huggingface.co/timm/gcresnet33ts.ra2_in1k)|288 |80.6 |95.31|19.9 |6.0 |14.8 |2578 |

|[gcresnext50ts.ch_in1k](https://huggingface.co/timm/gcresnext50ts.ch_in1k)|256 |80.57|95.17|15.7 |3.8 |15.5 |2710 |

|[resnet152.a3_in1k](https://huggingface.co/timm/resnet152.a3_in1k)|224 |80.56|95.0 |60.2 |11.6 |22.6 |1483 |

|[resnet50d.ra2_in1k](https://huggingface.co/timm/resnet50d.ra2_in1k)|224 |80.53|95.16|25.6 |4.4 |11.9 |3164 |

|[resnext50_32x4d.a1_in1k](https://huggingface.co/timm/resnext50_32x4d.a1_in1k)|224 |80.53|94.46|25.0 |4.3 |14.4 |2930 |

|[wide_resnet101_2.tv2_in1k](https://huggingface.co/timm/wide_resnet101_2.tv2_in1k)|176 |80.48|94.98|126.9 |14.3 |13.2 |1719 |

|[resnet152d.gluon_in1k](https://huggingface.co/timm/resnet152d.gluon_in1k)|224 |80.47|95.2 |60.2 |11.8 |23.4 |1428 |

|[resnet50.b2k_in1k](https://huggingface.co/timm/resnet50.b2k_in1k)|288 |80.45|95.32|25.6 |6.8 |18.4 |2086 |

|[ecaresnetlight.miil_in1k](https://huggingface.co/timm/ecaresnetlight.miil_in1k)|224 |80.45|95.24|30.2 |4.1 |8.4 |3530 |

|[resnext50_32x4d.a2_in1k](https://huggingface.co/timm/resnext50_32x4d.a2_in1k)|224 |80.45|94.63|25.0 |4.3 |14.4 |2936 |

|[wide_resnet50_2.tv2_in1k](https://huggingface.co/timm/wide_resnet50_2.tv2_in1k)|176 |80.43|95.09|68.9 |7.3 |9.0 |3015 |

|[resnet101d.gluon_in1k](https://huggingface.co/timm/resnet101d.gluon_in1k)|224 |80.42|95.01|44.6 |8.1 |17.0 |2007 |

|[resnet50.a1_in1k](https://huggingface.co/timm/resnet50.a1_in1k)|224 |80.38|94.6 |25.6 |4.1 |11.1 |3461 |

|[seresnet33ts.ra2_in1k](https://huggingface.co/timm/seresnet33ts.ra2_in1k)|256 |80.36|95.1 |19.8 |4.8 |11.7 |3267 |

|[resnext101_32x4d.gluon_in1k](https://huggingface.co/timm/resnext101_32x4d.gluon_in1k)|224 |80.34|94.93|44.2 |8.0 |21.2 |1814 |

|[resnext50_32x4d.fb_ssl_yfcc100m_ft_in1k](https://huggingface.co/timm/resnext50_32x4d.fb_ssl_yfcc100m_ft_in1k)|224 |80.32|95.4 |25.0 |4.3 |14.4 |2941 |

|[resnet101s.gluon_in1k](https://huggingface.co/timm/resnet101s.gluon_in1k)|224 |80.28|95.16|44.7 |9.2 |18.6 |1851 |

|[seresnet50.ra2_in1k](https://huggingface.co/timm/seresnet50.ra2_in1k)|224 |80.26|95.08|28.1 |4.1 |11.1 |2972 |

|[resnetblur50.bt_in1k](https://huggingface.co/timm/resnetblur50.bt_in1k)|288 |80.24|95.24|25.6 |8.5 |19.9 |1523 |

|[resnet50d.a2_in1k](https://huggingface.co/timm/resnet50d.a2_in1k)|224 |80.22|94.63|25.6 |4.4 |11.9 |3162 |

|[resnet152.tv2_in1k](https://huggingface.co/timm/resnet152.tv2_in1k)|176 |80.2 |94.64|60.2 |7.2 |14.0 |2346 |

|[seresnet50.a2_in1k](https://huggingface.co/timm/seresnet50.a2_in1k)|224 |80.08|94.74|28.1 |4.1 |11.1 |2969 |

|[eca_resnet33ts.ra2_in1k](https://huggingface.co/timm/eca_resnet33ts.ra2_in1k)|256 |80.08|94.97|19.7 |4.8 |11.7 |3284 |

|[gcresnet33ts.ra2_in1k](https://huggingface.co/timm/gcresnet33ts.ra2_in1k)|256 |80.06|94.99|19.9 |4.8 |11.7 |3216 |

|[resnet50_gn.a1h_in1k](https://huggingface.co/timm/resnet50_gn.a1h_in1k)|224 |80.06|94.95|25.6 |4.1 |11.1 |1109 |

|[seresnet50.a1_in1k](https://huggingface.co/timm/seresnet50.a1_in1k)|224 |80.02|94.71|28.1 |4.1 |11.1 |2962 |

|[resnet50.ram_in1k](https://huggingface.co/timm/resnet50.ram_in1k)|288 |79.97|95.05|25.6 |6.8 |18.4 |2086 |

|[resnet152c.gluon_in1k](https://huggingface.co/timm/resnet152c.gluon_in1k)|224 |79.92|94.84|60.2 |11.8 |23.4 |1455 |

|[seresnext50_32x4d.gluon_in1k](https://huggingface.co/timm/seresnext50_32x4d.gluon_in1k)|224 |79.91|94.82|27.6 |4.3 |14.4 |2591 |

|[resnet50.d_in1k](https://huggingface.co/timm/resnet50.d_in1k)|224 |79.91|94.67|25.6 |4.1 |11.1 |3456 |

|[resnet101.tv2_in1k](https://huggingface.co/timm/resnet101.tv2_in1k)|176 |79.9 |94.6 |44.6 |4.9 |10.1 |3341 |

|[resnetrs50.tf_in1k](https://huggingface.co/timm/resnetrs50.tf_in1k)|224 |79.89|94.97|35.7 |4.5 |12.1 |2774 |

|[resnet50.c2_in1k](https://huggingface.co/timm/resnet50.c2_in1k)|224 |79.88|94.87|25.6 |4.1 |11.1 |3455 |