license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['pytorch', 'causal-lm'] | false | Training data GPT-J 6B was pretrained on the [Pile](pile.eleuther.ai), a large scale curated dataset created by EleutherAI for the purpose of training this model. After pretraining, it was fine-tuned on our Japanese storytelling dataset. Check our blog post for more details. | 79072a2a0d7896cdc9022f7cd09f1614 |

apache-2.0 | ['pytorch', 'causal-lm'] | false | How to use ``` from transformers import AutoTokenizer, AutoModelForCausalLM import torch tokenizer = AutoTokenizer.from_pretrained("EleutherAI/gpt-j-6B") model = AutoModelForCausalLM.from_pretrained("NovelAI/genji-jp", torch_dtype=torch.float16, low_cpu_mem_usage=True).eval().cuda() text = '''あらすじ:あなたは異世界に転生してしまいました。勇者となって、仲間を作り、異世界を冒険しよう! *** 転生すると、ある能力を手に入れていた。それは、''' tokens = tokenizer(text, return_tensors="pt").input_ids generated_tokens = model.generate(tokens.long().cuda(), use_cache=True, do_sample=True, temperature=1, top_p=0.9, repetition_penalty=1.125, min_length=1, max_length=len(tokens[0]) + 400, pad_token_id=tokenizer.eos_token_id) last_tokens = generated_tokens[0] generated_text = tokenizer.decode(last_tokens).replace("�", "") print("Generation:\n" + generated_text) ``` When run, produces output like this: ``` Generation: あらすじ:あなたは異世界に転生してしまいました。勇者となって、仲間を作り、異世界を冒険しよう! *** 転生すると、ある能力を手に入れていた。それは、『予知』だ。過去から未来のことを、誰も知らない出来事も含めて見通すことが出来る。 悪魔の欠片と呼ばれる小さな結晶を取り込んで、使役することが出来る。人を惹きつけ、堕落させる。何より、俺は男なんて居なかったし、女に興味もない。……そんなクズの片棒を担ぎ上げる奴が多くなると思うと、ちょっと苦しい。 だが、一部の人間には協力者を得ることが出来る。目立たない街にある寺の中で、常に家に引きこもっている老人。そんなヤツの魂をコントロールすることが出来るのだ。便利な能力だ。しかし、裏切り者は大勢いる。気を抜けば、狂う。だから注意が必要だ。 ――「やってやるよ」 アーロンは不敵に笑った。この ``` | 1096cbfd08cfca6eb1c397f50ec91e72 |

apache-2.0 | ['pytorch', 'causal-lm'] | false | Acknowledgements This project was possible because of the compute provided by the [TPU Research Cloud](https://sites.research.google/trc/). Thanks to [EleutherAI](https://eleuther.ai/) for pretraining the GPT-J 6B model. Thanks to everyone who contributed to this project! - [Finetune](https://github.com/finetuneanon) - [Aero](https://github.com/AeroScripts) - [Kurumuz](https://github.com/kurumuz) | fe88b939cb4b65cdc5de36720144ddd2 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-xls-r-300m_phoneme-mfa_korean This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on a phonetically balanced native Korean read-speech corpus. | 75b86462f9cbf391a00ae95c1dfe0b25 |

apache-2.0 | ['generated_from_trainer'] | false | Training and Evaluation Data Training Data - Data Name: Phonetically Balanced Native Korean Read-speech Corpus - Num. of Samples: 54,000 - Audio Length: 108 Hours Evaluation Data - Data Name: Phonetically Balanced Native Korean Read-speech Corpus - Num. of Samples: 6,000 - Audio Length: 12 Hours | 19e00de90a17d1dbc9e66c9dda61e801 |

apache-2.0 | ['generated_from_trainer'] | false | Training Hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.2 - num_epochs: 20 (EarlyStopping: patience: 5 epochs max) - mixed_precision_training: Native AMP | a1bb489dce320f719f91ce7ce37819e3 |

apache-2.0 | ['generated_from_trainer'] | false | Experimental Results Official implementation of the paper (in review) Major error patterns of L2 Korean speech from five different L1s: Chinese (ZH), Vietnamese (VI), Japanese (JP), Thai (TH), English (EN)  | 1608902c02bcff756be756deb422d622 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Tiny Dutch This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.7024 - Wer: 42.0655 | fca5e3220ca2266c49bb524d6f03607e |

mit | ['conversational'] | false | THIS AI IS OUTDATED. See [Aeona](https://huggingface.co/deepparag/Aeona) A generative AI made using [microsoft/DialoGPT-small](https://huggingface.co/microsoft/DialoGPT-small). Trained on: https://www.kaggle.com/Cornell-University/movie-dialog-corpus https://www.kaggle.com/jef1056/discord-data [Live Demo](https://dumbot-331213.uc.r.appspot.com/) Example: ```python from transformers import AutoTokenizer, AutoModelForCausalLM tokenizer = AutoTokenizer.from_pretrained("deepparag/DumBot") model = AutoModelForCausalLM.from_pretrained("deepparag/DumBot") | 3775b335cb35cde7b2eb2a82300d5964 |

cc-by-4.0 | ['spanish', 'roberta'] | false | This is a **RoBERTa-base** model trained from scratch in Spanish. The training dataset is [mc4](https://huggingface.co/datasets/bertin-project/mc4-es-sampled), with documents randomly subsampled to a total of about 50 million examples. This model continued training from the [sequence length 128](https://huggingface.co/bertin-project/bertin-base-random) checkpoint, using 20,000 steps at sequence length 512. Please see our main [card](https://huggingface.co/bertin-project/bertin-roberta-base-spanish) for more information. This is part of the [Flax/Jax Community Week](https://discuss.huggingface.co/t/open-to-the-community-community-week-using-jax-flax-for-nlp-cv/7104), organised by [HuggingFace](https://huggingface.co/), with TPU usage sponsored by Google. | 915b274e6baa63d22925737b11fab313 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Fine-tuned XLSR-53 large model for speech recognition in Hungarian Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Hungarian using the train and validation splits of [Common Voice 6.1](https://huggingface.co/datasets/common_voice) and [CSS10](https://github.com/Kyubyong/css10). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned thanks to the GPU credits generously given by the [OVHcloud](https://www.ovhcloud.com/en/public-cloud/ai-training/) :) The script used for training can be found here: https://github.com/jonatasgrosman/wav2vec2-sprint | fd5a3939795acfea442244b624d99a12 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows... Using the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) library: ```python from huggingsound import SpeechRecognitionModel model = SpeechRecognitionModel("jonatasgrosman/wav2vec2-large-xlsr-53-hungarian") audio_paths = ["/path/to/file.mp3", "/path/to/another_file.wav"] transcriptions = model.transcribe(audio_paths) ``` Writing your own inference script: ```python import torch import librosa from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor LANG_ID = "hu" MODEL_ID = "jonatasgrosman/wav2vec2-large-xlsr-53-hungarian" SAMPLES = 5 test_dataset = load_dataset("common_voice", LANG_ID, split=f"test[:{SAMPLES}]") processor = Wav2Vec2Processor.from_pretrained(MODEL_ID) model = Wav2Vec2ForCTC.from_pretrained(MODEL_ID) | 01657bf9319a0ff73473ae4fc1ed316a |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): speech_array, sampling_rate = librosa.load(batch["path"], sr=16_000) batch["speech"] = speech_array batch["sentence"] = batch["sentence"].upper() return batch test_dataset = test_dataset.map(speech_file_to_array_fn) inputs = processor(test_dataset["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values, attention_mask=inputs.attention_mask).logits predicted_ids = torch.argmax(logits, dim=-1) predicted_sentences = processor.batch_decode(predicted_ids) for i, predicted_sentence in enumerate(predicted_sentences): print("-" * 100) print("Reference:", test_dataset[i]["sentence"]) print("Prediction:", predicted_sentence) ``` | Reference | Prediction | | ------------- | ------------- | | BÜSZKÉK VAGYUNK A MAGYAR EMBEREK NAGYSZERŰ SZELLEMI ALKOTÁSAIRA. | BÜSZKÉK VAGYUNK A MAGYAR EMBEREK NAGYSZERŰ SZELLEMI ALKOTÁSAIRE | | A NEMZETSÉG TAGJAI KÖZÜL EZT TERMESZTIK A LEGSZÉLESEBB KÖRBEN ÍZLETES TERMÉSÉÉRT. | A NEMZETSÉG TAGJAI KÖZÜL ESZSZERMESZTIK A LEGSZELESEBB KÖRBEN IZLETES TERMÉSSÉÉRT | | A VÁROSBA VÁGYÓDOTT A LEGJOBBAN, ÉPPEN MERT ODA NEM JUTHATOTT EL SOHA. | A VÁROSBA VÁGYÓDOTT A LEGJOBBAN ÉPPEN MERT ODA NEM JUTHATOTT EL SOHA | | SÍRJA MÁRA MEGSEMMISÜLT. | SIMGI A MANDO MEG SEMMICSEN | | MINDEN ZENESZÁMOT DRÁGAKŐNEK NEVEZETT. | MINDEN ZENA SZÁMODRAGAKŐNEK NEVEZETT | | ÍGY MÚLT EL A DÉLELŐTT. | ÍGY MÚLT EL A DÍN ELŐTT | | REMEK POFA! | A REMEG PUFO | | SZEMET SZEMÉRT, FOGAT FOGÉRT. | SZEMET SZEMÉRT FOGADD FOGÉRT | | BIZTOSAN LAKIK ITT NÉHÁNY ATYÁMFIA. | BIZTOSAN LAKIKÉT NÉHANY ATYAMFIA | | A SOROK KÖZÖTT OLVAS. | A SOROG KÖZÖTT OLVAS | | 1594f50f8a0439549b4b19bdd9472619 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Hungarian test data of Common Voice. ```python import torch import re import librosa from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor LANG_ID = "hu" MODEL_ID = "jonatasgrosman/wav2vec2-large-xlsr-53-hungarian" DEVICE = "cuda" CHARS_TO_IGNORE = [",", "?", "¿", ".", "!", "¡", ";", ";", ":", '""', "%", '"', "�", "ʿ", "·", "჻", "~", "՞", "؟", "،", "।", "॥", "«", "»", "„", "“", "”", "「", "」", "‘", "’", "《", "》", "(", ")", "[", "]", "{", "}", "=", "`", "_", "+", "<", ">", "…", "–", "°", "´", "ʾ", "‹", "›", "©", "®", "—", "→", "。", "、", "﹂", "﹁", "‧", "~", "﹏", ",", "{", "}", "(", ")", "[", "]", "【", "】", "‥", "〽", "『", "』", "〝", "〟", "⟨", "⟩", "〜", ":", "!", "?", "♪", "؛", "/", "\\", "º", "−", "^", "ʻ", "ˆ"] test_dataset = load_dataset("common_voice", LANG_ID, split="test") wer = load_metric("wer.py") | 7b69ae3dd6c9af1071e1669fd48ed673 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to(DEVICE), attention_mask=inputs.attention_mask.to(DEVICE)).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) predictions = [x.upper() for x in result["pred_strings"]] references = [x.upper() for x in result["sentence"]] print(f"WER: {wer.compute(predictions=predictions, references=references, chunk_size=1000) * 100}") print(f"CER: {cer.compute(predictions=predictions, references=references, chunk_size=1000) * 100}") ``` **Test Result**: In the table below I report the Word Error Rate (WER) and the Character Error Rate (CER) of the model. I ran the evaluation script described above on other models as well (on 2021-04-22). Note that the table below may show different results from those already reported, this may have been caused due to some specificity of the other evaluation scripts used. | Model | WER | CER | | ------------- | ------------- | ------------- | | jonatasgrosman/wav2vec2-large-xlsr-53-hungarian | **31.40%** | **6.20%** | | anton-l/wav2vec2-large-xlsr-53-hungarian | 42.39% | 9.39% | | gchhablani/wav2vec2-large-xlsr-hu | 46.42% | 10.04% | | birgermoell/wav2vec2-large-xlsr-hungarian | 46.93% | 10.31% | | e851569cbe26eb5a62532aa8f272d42b |
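The WER and CER figures reported above are both edit-distance ratios. The following is a minimal sketch of how such scores can be computed for a single sentence pair. It is not the `wer.py` / `cer.py` metric code used in the evaluation script: the helper names `edit_distance`, `wer` and `cer` are illustrative, and the example pair is taken from the prediction table with the punctuation already stripped.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (substitutions, insertions, deletions)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def wer(reference, prediction):
    """Word error rate: word-level edits divided by the number of reference words."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, prediction.split()) / len(ref_words)


def cer(reference, prediction):
    """Character error rate: character-level edits divided by reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(list(reference), list(prediction)) / len(reference)


reference = "A SOROK KÖZÖTT OLVAS"
prediction = "A SOROG KÖZÖTT OLVAS"
print(f"WER: {wer(reference, prediction) * 100:.2f}%")
print(f"CER: {cer(reference, prediction) * 100:.2f}%")
```

Corpus-level scores such as those in the table are normally obtained by summing edits and reference lengths over all sentences before dividing, rather than by averaging per-sentence ratios.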

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Citation If you want to cite this model you can use this: ```bibtex @misc{grosman2021xlsr53-large-hungarian, title={Fine-tuned {XLSR}-53 large model for speech recognition in {H}ungarian}, author={Grosman, Jonatas}, howpublished={\url{https://huggingface.co/jonatasgrosman/wav2vec2-large-xlsr-53-hungarian}}, year={2021} } ``` | 23292a64dec425f2720fd550bc8a0f38 |

apache-2.0 | ['generated_from_trainer'] | false | VN_ja-en_helsinki This model is a fine-tuned version of [Helsinki-NLP/opus-mt-ja-en](https://huggingface.co/Helsinki-NLP/opus-mt-ja-en) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.2409 - BLEU: 15.28 | 905a86b025471c3e3f6d94c67ff1d774 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 - mixed_precision_training: Native AMP | bcac4d8767f7395f9c1a62dc412d2134 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 2.6165 | 0.19 | 2000 | 2.6734 | | 2.3805 | 0.39 | 4000 | 2.6047 | | 2.2793 | 0.58 | 6000 | 2.5461 | | 2.2028 | 0.78 | 8000 | 2.5127 | | 2.1361 | 0.97 | 10000 | 2.4511 | | 1.9653 | 1.17 | 12000 | 2.4331 | | 1.934 | 1.36 | 14000 | 2.3840 | | 1.9002 | 1.56 | 16000 | 2.3901 | | 1.87 | 1.75 | 18000 | 2.3508 | | 1.8408 | 1.95 | 20000 | 2.3082 | | 1.6937 | 2.14 | 22000 | 2.3279 | | 1.6371 | 2.34 | 24000 | 2.3052 | | 1.6264 | 2.53 | 26000 | 2.3071 | | 1.6029 | 2.72 | 28000 | 2.2685 | | 1.5847 | 2.92 | 30000 | 2.2409 | | 4e8ad9b782864011cfc8d2569df13614 |

apache-2.0 | ['generated_from_trainer'] | false | bert-finetuned-ner This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0614 - Precision: 0.9310 - Recall: 0.9498 - F1: 0.9404 - Accuracy: 0.9857 | 3d559cf69c55abf56679281e46a1b20c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.0875 | 1.0 | 1756 | 0.0639 | 0.9167 | 0.9387 | 0.9276 | 0.9833 | | 0.0332 | 2.0 | 3512 | 0.0595 | 0.9334 | 0.9504 | 0.9418 | 0.9857 | | 0.0218 | 3.0 | 5268 | 0.0614 | 0.9310 | 0.9498 | 0.9404 | 0.9857 | | 5d9e34ec1e924e14a406c0b3cdfa94ea |
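As a sanity check on the table above, F1 is the harmonic mean of precision and recall. The sketch below recomputes it from the rounded precision and recall values in each row. The function name `f1_score` is illustrative, and small differences from the reported F1 are expected because the inputs are already rounded to four decimals.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (epoch, precision, recall, reported F1) copied from the training results table.
rows = [
    (1, 0.9167, 0.9387, 0.9276),
    (2, 0.9334, 0.9504, 0.9418),
    (3, 0.9310, 0.9498, 0.9404),
]

for epoch, precision, recall, reported in rows:
    computed = f1_score(precision, recall)
    print(f"epoch {epoch}: computed F1 = {computed:.4f}, reported F1 = {reported:.4f}")
```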

apache-2.0 | ['translation'] | false | opus-mt-sv-fi * source languages: sv * target languages: fi * OPUS readme: [sv-fi](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sv-fi/README.md) * dataset: opus+bt * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus+bt-2020-04-07.zip](https://object.pouta.csc.fi/OPUS-MT-models/sv-fi/opus+bt-2020-04-07.zip) * test set translations: [opus+bt-2020-04-07.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-fi/opus+bt-2020-04-07.test.txt) * test set scores: [opus+bt-2020-04-07.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-fi/opus+bt-2020-04-07.eval.txt) | f5d7b2846ef38788d619332f4c56f02d |

apache-2.0 | ['automatic-speech-recognition', 'pt'] | false | exp_w2v2t_pt_xlsr-53_s454 Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) for speech recognition using the train split of [Common Voice 7.0 (pt)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 6f2a53b524ac571e8a7bb872d8c54db5 |

apache-2.0 | ['finnish', 'roberta'] | false | RoBERTa large model trained with WECHSEL method for Finnish Pretrained RoBERTa model on Finnish language using a masked language modeling (MLM) objective with WECHSEL method. RoBERTa was introduced in [this paper](https://arxiv.org/abs/1907.11692) and first released in [this repository](https://github.com/pytorch/fairseq/tree/master/examples/roberta). WECHSEL method (Effective initialization of subword embeddings for cross-lingual transfer of monolingual language models) was introduced in [this paper](https://arxiv.org/abs/2112.06598) and first released in [this repository](https://github.com/CPJKU/wechsel). This model is case-sensitive: it makes a difference between finnish and Finnish. | cecd2e32edcdb908f581144781a5c614 |

apache-2.0 | ['finnish', 'roberta'] | false | WECHSEL method Using the WECHSEL method, we first took the pretrained English [roberta-large](https://huggingface.co/roberta-large) model, replaced its tokenizer with our Finnish tokenizer and initialized the model's token embeddings such that they are close to semantically similar English tokens by utilizing multilingual static word embeddings (by fastText) covering English and Finnish. We were able to confirm the WECHSEL paper's finding that this method saves pretraining time and thus computing resources. To get an idea of the WECHSEL method's training time savings, see the table below, which compares MLM evaluation accuracies during pretraining against [Finnish-NLP/roberta-large-finnish-v2](https://huggingface.co/Finnish-NLP/roberta-large-finnish-v2), which was trained from scratch: | | 10k train steps | 100k train steps | 200k train steps | 270k train steps | |------------------------------------------|------------------|------------------|------------------|------------------| |Finnish-NLP/roberta-large-wechsel-finnish |37.61 eval acc |58.14 eval acc |61.60 eval acc |62.77 eval acc | |Finnish-NLP/roberta-large-finnish-v2 |13.83 eval acc |55.87 eval acc |58.58 eval acc |59.47 eval acc | Downstream fine-tuning text classification tests can be found at the end of this card, but there the model trained with the WECHSEL method didn't significantly improve downstream performance. However, based on tens of qualitative fill-mask example tests, we noticed that on the fill-mask task this WECHSEL model significantly outperforms our other models trained from scratch. | b6891fbfe314b5cd3cdac3fd472036ee |

apache-2.0 | ['finnish', 'roberta'] | false | How to use You can use this model directly with a pipeline for masked language modeling: ```python >>> from transformers import pipeline >>> unmasker = pipeline('fill-mask', model='Finnish-NLP/roberta-large-wechsel-finnish') >>> unmasker("Moikka olen <mask> kielimalli.") [{'sequence': 'Moikka olen hyvä kielimalli.', 'score': 0.07757357507944107, 'token': 763, 'token_str': ' hyvä'}, {'sequence': 'Moikka olen suomen kielimalli.', 'score': 0.05297883599996567, 'token': 3641, 'token_str': ' suomen'}, {'sequence': 'Moikka olen kuin kielimalli.', 'score': 0.03747279942035675, 'token': 523, 'token_str': ' kuin'}, {'sequence': 'Moikka olen suomalainen kielimalli.', 'score': 0.031031042337417603, 'token': 4966, 'token_str': ' suomalainen'}, {'sequence': 'Moikka olen myös kielimalli.', 'score': 0.026489052921533585, 'token': 505, 'token_str': ' myös'}] ``` Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import RobertaTokenizer, RobertaModel tokenizer = RobertaTokenizer.from_pretrained('Finnish-NLP/roberta-large-wechsel-finnish') model = RobertaModel.from_pretrained('Finnish-NLP/roberta-large-wechsel-finnish') text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` and in TensorFlow: ```python from transformers import RobertaTokenizer, TFRobertaModel tokenizer = RobertaTokenizer.from_pretrained('Finnish-NLP/roberta-large-wechsel-finnish') model = TFRobertaModel.from_pretrained('Finnish-NLP/roberta-large-wechsel-finnish', from_pt=True) text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` | 5b6aa0c0531614071777315c60833655 |

apache-2.0 | ['finnish', 'roberta'] | false | Training data This Finnish RoBERTa model was pretrained on the combination of five datasets: - [mc4_fi_cleaned](https://huggingface.co/datasets/Finnish-NLP/mc4_fi_cleaned): mC4 is a multilingual, colossal, cleaned version of Common Crawl's web crawl corpus. We used the Finnish subset of the mC4 dataset and further cleaned it with our own text data cleaning code (check the dataset repo). - [wikipedia](https://huggingface.co/datasets/wikipedia): we used the Finnish subset of the wikipedia (August 2021) dataset. - [Yle Finnish News Archive](http://urn.fi/urn:nbn:fi:lb-2017070501) - [Finnish News Agency Archive (STT)](http://urn.fi/urn:nbn:fi:lb-2018121001) - [The Suomi24 Sentences Corpus](http://urn.fi/urn:nbn:fi:lb-2020021803) Raw datasets were cleaned to filter out bad quality and non-Finnish examples. Together these cleaned datasets were around 84GB of text. | db5c6b3c990c735a159ff8c2fd0b7136 |

apache-2.0 | ['finnish', 'roberta'] | false | Pretraining The model was trained on TPUv3-8 VM, sponsored by the [Google TPU Research Cloud](https://sites.research.google/trc/about/), for 270k steps (a bit over 1 epoch, 512 batch size) with a sequence length of 128 and continuing for 180k steps (batch size 64) with a sequence length of 512. The optimizer used was Adafactor (to save memory). Learning rate was 2e-4, \\(\beta_{1} = 0.9\\), \\(\beta_{2} = 0.98\\) and \\(\epsilon = 1e-6\\), learning rate warmup for 2500 steps and linear decay of the learning rate after. | 408e424ff782e270ed2e79a50be1debd |
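The learning rate schedule described above (linear warmup for 2500 steps to 2e-4, then linear decay) can be sketched as a plain function of the step number. This is only an illustration under stated assumptions: the function name `learning_rate` is hypothetical, and the sketch assumes the decay reaches zero at the end of the first 270k-step phase, which the text does not state explicitly.

```python
def learning_rate(step: int,
                  peak_lr: float = 2e-4,
                  warmup_steps: int = 2500,
                  total_steps: int = 270_000) -> float:
    """Linear warmup to peak_lr, then linear decay (assumed to end at 0 at total_steps)."""
    if step < warmup_steps:
        # Warmup: scale linearly from 0 up to the peak learning rate.
        return peak_lr * step / warmup_steps
    # Decay: scale linearly from the peak down to 0 over the remaining steps.
    remaining = max(total_steps - step, 0)
    return peak_lr * remaining / (total_steps - warmup_steps)


for step in (0, 1250, 2500, 100_000, 270_000):
    print(f"step {step:>7}: lr = {learning_rate(step):.2e}")
```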

apache-2.0 | ['finnish', 'roberta'] | false | Evaluation results Evaluation was done by fine-tuning the model on a downstream text classification task with two different labeled datasets: [Yle News](https://github.com/spyysalo/yle-corpus) and [Eduskunta](https://github.com/aajanki/eduskunta-vkk). Yle News classification fine-tuning was done with two different sequence lengths, 128 and 512, but Eduskunta only with sequence length 128. When fine-tuned on those datasets, this model (the first row of the table) achieves the following accuracy results compared to the [FinBERT (Finnish BERT)](https://huggingface.co/TurkuNLP/bert-base-finnish-cased-v1) model and to our previous [Finnish-NLP/roberta-large-finnish-v2](https://huggingface.co/Finnish-NLP/roberta-large-finnish-v2) and [Finnish-NLP/roberta-large-finnish](https://huggingface.co/Finnish-NLP/roberta-large-finnish) models: | | Average | Yle News 128 length | Yle News 512 length | Eduskunta 128 length | |------------------------------------------|----------|---------------------|---------------------|----------------------| |Finnish-NLP/roberta-large-wechsel-finnish |88.19 |**94.91** |95.18 |74.47 | |Finnish-NLP/roberta-large-finnish-v2 |88.17 |94.46 |95.22 |74.83 | |Finnish-NLP/roberta-large-finnish |88.02 |94.53 |95.23 |74.30 | |TurkuNLP/bert-base-finnish-cased-v1 |**88.82** |94.90 |**95.49** |**76.07** | To conclude, this model didn't significantly improve on our previous models, which were trained from scratch instead of using the WECHSEL method. This model also scores slightly (~ 1%) below the [FinBERT (Finnish BERT)](https://huggingface.co/TurkuNLP/bert-base-finnish-cased-v1) model. | fd30857e4dedebeb44facf15d0cdb51d |

apache-2.0 | ['finnish', 'roberta'] | false | Team Members - Aapo Tanskanen, [Hugging Face profile](https://huggingface.co/aapot), [LinkedIn profile](https://www.linkedin.com/in/aapotanskanen/) - Rasmus Toivanen [Hugging Face profile](https://huggingface.co/RASMUS), [LinkedIn profile](https://www.linkedin.com/in/rasmustoivanen/) Feel free to contact us for more details 🤗 | 353a71cf3b26f31041c65d3a11e24384 |

apache-2.0 | ['generated_from_keras_callback'] | false | javilonso/classificationPolEsp2 This model is a fine-tuned version of [PlanTL-GOB-ES/gpt2-base-bne](https://huggingface.co/PlanTL-GOB-ES/gpt2-base-bne) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.1229 - Validation Loss: 0.8172 - Epoch: 2 | a1dc31baebbd0e8e1c5d1b6f7c747858 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 17958, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | cb470e1c154a9a64f31828b80b641d55 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 0.6246 | 0.5679 | 0 | | 0.4198 | 0.6097 | 1 | | 0.1229 | 0.8172 | 2 | | 10c6d8f2ad0765b9735b0aa1620b0d13 |

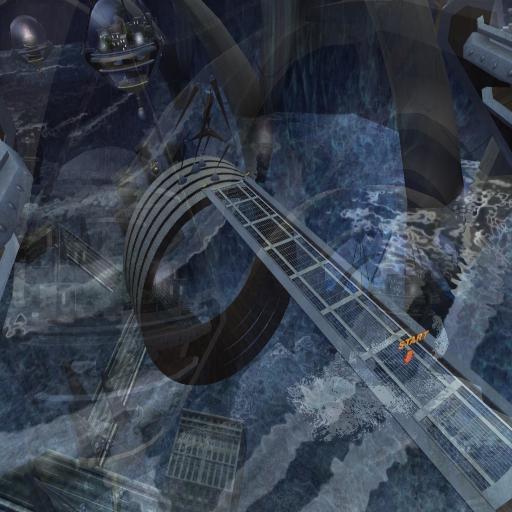

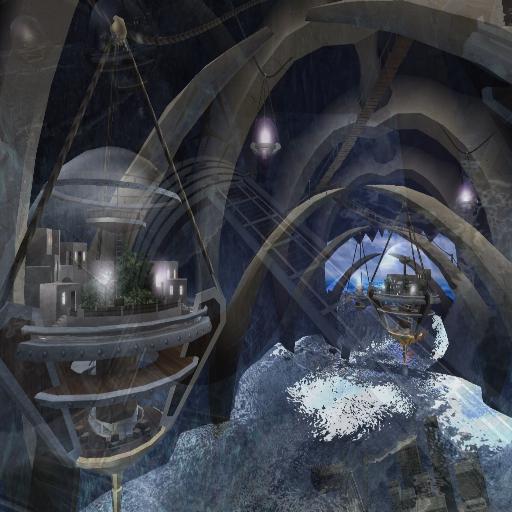

mit | [] | false | InsideWhale on Stable Diffusion This is the `<InsideWhale>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:      | be1c8c95bcee8ca1ca50e6181d73c6cb |

apache-2.0 | ['summarization'] | false | How to use Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BigBirdPegasusForConditionalGeneration, AutoTokenizer tokenizer = AutoTokenizer.from_pretrained("google/bigbird-pegasus-large-bigpatent") | dbc643d676782b59dad96ddd66e2c1dc |

apache-2.0 | ['summarization'] | false | you can change `attention_type` (encoder only) to full attention like this: model = BigBirdPegasusForConditionalGeneration.from_pretrained("google/bigbird-pegasus-large-bigpatent", attention_type="original_full") | 2503b6f004d4434e23fdcac065d1bac4 |

apache-2.0 | ['summarization'] | false | you can change `block_size` & `num_random_blocks` like this: model = BigBirdPegasusForConditionalGeneration.from_pretrained("google/bigbird-pegasus-large-bigpatent", block_size=16, num_random_blocks=2) text = "Replace me by any text you'd like." inputs = tokenizer(text, return_tensors='pt') prediction = model.generate(**inputs) prediction = tokenizer.batch_decode(prediction) ``` | c145bccb391141bcc80e9a75f712102c |

other | ['generated_from_trainer'] | false | finetuned-distilbert-news-article-catgorization This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the adult_content dataset. It achieves the following results on the evaluation set: - Loss: 0.0065 - F1_score(weighted): 0.90 | 14d064bb7c4926077c362803100a827e |

other | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-5 - train_batch_size: 5 - eval_batch_size: 5 - seed: 17 - optimizer: AdamW(lr=1e-5 and epsilon=1e-08) - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 0 - num_epochs: 2 | 31279bbcae9645099bbd8d153f70e7a4 |

other | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Validation Loss | f1 score | |:-------------:|:-----:|:---------------: |:------:| | 0.1414 | 1.0 | 0.4585 | 0.9058 | | 0.1410 | 2.0 | 0.4584 | 0.9058 | | afdc65a5e70b761015046d1db957f340 |

apache-2.0 | ['generated_from_keras_callback'] | false | annaeze/lab9_1 This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0230 - Validation Loss: 0.0572 - Epoch: 2 | 3835e52ccf1a95909105743b9ab596df |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 0.1174 | 0.0596 | 0 | | 0.0391 | 0.0529 | 1 | | 0.0230 | 0.0572 | 2 | | f91856522d5c62701af4b1e03eaee0bb |

apache-2.0 | [] | false | BERT multilingual base model (cased) Pretrained model on the top 104 languages with the largest Wikipedia using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/abs/1810.04805) and first released in [this repository](https://github.com/google-research/bert). This model is case sensitive: it makes a difference between english and English. Disclaimer: The team releasing BERT did not write a model card for this model so this model card has been written by the Hugging Face team. | 34971bbad56a1a89515004b7d009c591 |

apache-2.0 | [] | false | Model description BERT is a transformers model pretrained on a large corpus of multilingual data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely, it was pretrained with two objectives: - Masked language modeling (MLM): taking a sentence, the model randomly masks 15% of the words in the input, then runs the entire masked sentence through the model and has to predict the masked words. This is different from traditional recurrent neural networks (RNNs) that usually see the words one after the other, or from autoregressive models like GPT which internally mask the future tokens. It allows the model to learn a bidirectional representation of the sentence. - Next sentence prediction (NSP): the model concatenates two masked sentences as inputs during pretraining. Sometimes they correspond to sentences that were next to each other in the original text, sometimes not. The model then has to predict if the two sentences were following each other or not. This way, the model learns an inner representation of the languages in the training set that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard classifier using the features produced by the BERT model as inputs. | c746fb08c2142034ae2c8d01c2029223 |

apache-2.0 | [] | false | How to use You can use this model directly with a pipeline for masked language modeling: ```python >>> from transformers import pipeline >>> unmasker = pipeline('fill-mask', model='bert-base-multilingual-cased') >>> unmasker("Hello I'm a [MASK] model.") [{'sequence': "[CLS] Hello I'm a model model. [SEP]", 'score': 0.10182085633277893, 'token': 13192, 'token_str': 'model'}, {'sequence': "[CLS] Hello I'm a world model. [SEP]", 'score': 0.052126359194517136, 'token': 11356, 'token_str': 'world'}, {'sequence': "[CLS] Hello I'm a data model. [SEP]", 'score': 0.048930276185274124, 'token': 11165, 'token_str': 'data'}, {'sequence': "[CLS] Hello I'm a flight model. [SEP]", 'score': 0.02036019042134285, 'token': 23578, 'token_str': 'flight'}, {'sequence': "[CLS] Hello I'm a business model. [SEP]", 'score': 0.020079681649804115, 'token': 14155, 'token_str': 'business'}] ``` Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('bert-base-multilingual-cased') model = BertModel.from_pretrained("bert-base-multilingual-cased") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` and in TensorFlow: ```python from transformers import BertTokenizer, TFBertModel tokenizer = BertTokenizer.from_pretrained('bert-base-multilingual-cased') model = TFBertModel.from_pretrained("bert-base-multilingual-cased") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` | a7f91a7cc832793ff4f83b44266417c2 |

apache-2.0 | [] | false | Training data The BERT model was pretrained on the 104 languages with the largest Wikipedias. You can find the complete list [here](https://github.com/google-research/bert/blob/master/multilingual.md). | 2cb3c7c20be6262f1e00e0ec3ea5abee |

apache-2.0 | [] | false | Preprocessing The texts are lowercased and tokenized using WordPiece and a shared vocabulary size of 110,000. The languages with a larger Wikipedia are under-sampled and the ones with lower resources are oversampled. For languages like Chinese, Japanese Kanji and Korean Hanja that don't use spaces, spaces are added around every character in the CJK Unicode block. The inputs of the model are then of the form: ``` [CLS] Sentence A [SEP] Sentence B [SEP] ``` With probability 0.5, sentence A and sentence B correspond to two consecutive sentences in the original corpus and in the other cases, sentence B is another random sentence in the corpus. Note that what is considered a sentence here is a consecutive span of text usually longer than a single sentence. The only constraint is that the result with the two "sentences" has a combined length of less than 512 tokens. The details of the masking procedure for each sentence are the following: - 15% of the tokens are masked. - In 80% of the cases, the masked tokens are replaced by `[MASK]`. - In 10% of the cases, the masked tokens are replaced by a random token (different from the one they replace). - In the 10% remaining cases, the masked tokens are left as is. | 376b2bb9d8fc8cf536d1d880ea01ae7f |
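
The masking procedure described in the row above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the original BERT preprocessing code: the function name `mask_tokens`, the toy vocabulary, and the choice to select each token independently with probability 0.15 (rather than selecting a fixed share per sequence) are all hypothetical, and special tokens such as `[CLS]` and `[SEP]` are ignored.

```python
import random

MASK_TOKEN = "[MASK]"


def mask_tokens(tokens, vocab, mask_prob=0.15, rng=None):
    """Return (masked_tokens, labels) following the 15% / 80-10-10 recipe.

    labels[i] holds the original token at positions the model must predict
    and None everywhere else. Hypothetical helper for illustration only.
    """
    rng = rng or random.Random()
    masked = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # roughly 85% of tokens: untouched and not predicted
        labels[i] = token  # the model has to recover the original token here
        roll = rng.random()
        if roll < 0.8:
            # 80% of the selected tokens are replaced by [MASK]
            masked[i] = MASK_TOKEN
        elif roll < 0.9:
            # 10% are replaced by a random token different from the original
            candidates = [t for t in vocab if t != token]
            masked[i] = rng.choice(candidates)
        # remaining 10%: the token is left as is, but is still predicted
    return masked, labels


if __name__ == "__main__":
    toy_vocab = ["hello", "world", "model", "data", "flight"]
    sentence = ["hello", "world", "model", "data"] * 5
    masked, labels = mask_tokens(sentence, toy_vocab, rng=random.Random(0))
    print(masked)
    print(labels)
```

Keeping 10% of the selected tokens unchanged and swapping another 10% for random tokens means the model cannot rely on `[MASK]` always marking the positions it has to predict.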

apache-2.0 | ['generated_from_trainer'] | false | UrduAudio2Text This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 1.4978 - Wer: 0.8376 | 85fb942b1fcdf9a5a5b8fcc73d17e2f7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 5.5558 | 15.98 | 400 | 1.4978 | 0.8376 | | 8c216a479c5829acb56cccf0edbb6732 |
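
For readers unfamiliar with the `Wer` column, the sketch below shows how a word error rate is typically computed: the word-level edit distance between reference and hypothesis, divided by the number of reference words. It assumes the reported value (0.8376) is this usual metric expressed as a fraction; the function name `word_error_rate` is hypothetical and this is not the evaluation code used for the model.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] = edit distance between the reference prefix seen so far
    # and the first j words of the hypothesis
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # substitution or exact match
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # one substitution and one deletion against four reference words
    print(word_error_rate("a b c d", "a x c"))
```

Because insertions count as errors, the value can exceed 1.0 when the hypothesis is much longer than the reference.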

apache-2.0 | ['monai', 'medical'] | false | Model Overview This model was trained using the runner-up pipeline [1] of the "Medical Segmentation Decathlon Challenge 2018", using the UNet architecture [2] with 32 training images and 9 validation images. | 9fe7b153d77dbfc9de17a7a1160de599 |

apache-2.0 | ['monai', 'medical'] | false | commands example Execute training: ``` python -m monai.bundle run training --meta_file configs/metadata.json --config_file configs/train.json --logging_file configs/logging.conf ``` Override the `train` config to execute multi-GPU training: ``` torchrun --standalone --nnodes=1 --nproc_per_node=2 -m monai.bundle run training --meta_file configs/metadata.json --config_file "['configs/train.json','configs/multi_gpu_train.json']" --logging_file configs/logging.conf ``` Override the `train` config to execute evaluation with the trained model: ``` python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file "['configs/train.json','configs/evaluate.json']" --logging_file configs/logging.conf ``` Execute inference: ``` python -m monai.bundle run evaluating --meta_file configs/metadata.json --config_file configs/inference.json --logging_file configs/logging.conf ``` | 483328602e4e11c4e8bafbd474dfa041 |

apache-2.0 | ['monai', 'medical'] | false | References [1] Xia, Yingda, et al. "3D Semi-Supervised Learning with Uncertainty-Aware Multi-View Co-Training." arXiv preprint arXiv:1811.12506 (2018). https://arxiv.org/abs/1811.12506. [2] Kerfoot E., Clough J., Oksuz I., Lee J., King A.P., Schnabel J.A. (2019) Left-Ventricle Quantification Using Residual U-Net. In: Pop M. et al. (eds) Statistical Atlases and Computational Models of the Heart. Atrial Segmentation and LV Quantification Challenges. STACOM 2018. Lecture Notes in Computer Science, vol 11395. Springer, Cham. https://doi.org/10.1007/978-3-030-12029-0_40 | 27f5dca9b3a83135a3099d127011e863 |

apache-2.0 | ['generated_from_trainer'] | false | Use this model to detect Twitter users' profiles related to healthcare. User profile classification may be useful when searching for health information on Twitter. For a certain health topic, tweets from physicians or organizations (e.g. ```Board-certified dermatologist```) may be more reliable than undefined or vague profiles (e.g. ```Human. Person. Father```). The model expects the user's ```description``` text field (see [Twitter API](https://developer.twitter.com/en/docs/twitter-api/v1/data-dictionary/object-model/user) docs) as input and returns a label for each profile: - `not-health-related` - `health-related` - `health-related/person` - `health-related/organization` - `health-related/publishing` - `health-related/physician` - `health-related/news` - `health-related/academic` F1 score is 0.9 | 03b4f20be45809a1704ea55aac372037 |
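
The label set above is hierarchical: every label except `not-health-related` starts with `health-related`, optionally followed by a subcategory. A small post-processing sketch is shown below. The helper names `is_health_related` and `subcategory` are hypothetical and not part of the model; only the label strings are taken from the description.

```python
LABELS = [
    "not-health-related",
    "health-related",
    "health-related/person",
    "health-related/organization",
    "health-related/publishing",
    "health-related/physician",
    "health-related/news",
    "health-related/academic",
]


def is_health_related(label: str) -> bool:
    """True for every known label except 'not-health-related'."""
    if label not in LABELS:
        raise ValueError(f"unknown label: {label!r}")
    return label != "not-health-related"


def subcategory(label: str):
    """Return the part after the slash, or None when there is none."""
    if label not in LABELS:
        raise ValueError(f"unknown label: {label!r}")
    _, _, tail = label.partition("/")
    return tail or None


if __name__ == "__main__":
    for label in LABELS:
        print(label, is_health_related(label), subcategory(label))
```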

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-ner This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0616 - Precision: 0.9265 - Recall: 0.9361 - F1: 0.9313 - Accuracy: 0.9837 | 66a9b2eda032996199900f228f4aa9dd |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.2437 | 1.0 | 878 | 0.0745 | 0.9144 | 0.9173 | 0.9158 | 0.9799 | | 0.0518 | 2.0 | 1756 | 0.0621 | 0.9177 | 0.9353 | 0.9264 | 0.9826 | | 0.03 | 3.0 | 2634 | 0.0616 | 0.9265 | 0.9361 | 0.9313 | 0.9837 | | ad990d9f0d629be54ac02ca78cb91fa5 |
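
As a sanity check on the table above, the F1 column can be recomputed from the precision and recall columns as their harmonic mean. The sketch below assumes the reported F1 is the standard harmonic mean; the evaluation library may compute it from raw counts instead, so small rounding differences are possible.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) for epochs 1 to 3, copied from the table
ROWS = [
    (0.9144, 0.9173, 0.9158),
    (0.9177, 0.9353, 0.9264),
    (0.9265, 0.9361, 0.9313),
]

if __name__ == "__main__":
    for precision, recall, reported in ROWS:
        print(f"computed {f1_score(precision, recall):.4f}, reported {reported}")
```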

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-policies This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the policies dataset. It achieves the following results on the evaluation set: - Loss: 0.0193 | 14fe6560b72f47e25fd2ef8c6aaa38ff |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 0.4208 | 1.0 | 759 | 0.0183 | | 0.0115 | 2.0 | 1518 | 0.0202 | | 0.0048 | 3.0 | 2277 | 0.0193 | | 70322d00117b9b738db0aecc9a5336d6 |

apache-2.0 | ['generated_from_trainer'] | false | aa This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 15.9757 - Wer: 1.0 | 68779a3f16dde36200910b445f14fd37 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 10 - mixed_precision_training: Native AMP | 517af4f4b0fb43d92a4a1e589a86ec82 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:---:| | 14.5628 | 3.33 | 20 | 16.1808 | 1.0 | | 14.5379 | 6.67 | 40 | 16.1005 | 1.0 | | 14.3379 | 10.0 | 60 | 15.9757 | 1.0 | | 373758c08e913fcba590d46f82c71122 |
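
Two of the hyperparameters listed for this model interact with the short run shown in the table. The sketch below assumes the hyperparameter list and the results table describe the same run, and that the linear scheduler ramps the learning rate from 0 to `learning_rate` over the warmup steps before decaying it linearly. The function name `linear_schedule_lr` is hypothetical; it mirrors the usual linear-warmup rule rather than quoting library code.

```python
def linear_schedule_lr(step, base_lr, warmup_steps, total_steps):
    """Learning rate under linear warmup followed by linear decay."""
    if step < warmup_steps:
        # warmup: scale the base rate by the fraction of warmup completed
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# train_batch_size * gradient_accumulation_steps, as listed in the card
effective_batch = 16 * 2

# the table ends at step 60, while lr_scheduler_warmup_steps is 500
lr_at_last_step = linear_schedule_lr(
    step=60, base_lr=1e-4, warmup_steps=500, total_steps=60
)

if __name__ == "__main__":
    print(effective_batch)   # matches total_train_batch_size
    print(lr_at_last_step)   # still far below the configured 1e-4
```

Under these assumptions the run ends at step 60, well inside the 500-step warmup, so the learning rate never reaches its configured value. That may help explain why the loss barely moves and Wer stays at 1.0.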

mit | ['generated_from_trainer'] | false | roberta-large-md-conllpp-v3 This model is a fine-tuned version of [roberta-large](https://huggingface.co/roberta-large) on the conllpp dataset. It achieves the following results on the evaluation set: - Loss: 0.0564 - Precision: 0.9980 - Recall: 0.9951 - F1: 0.9965 - Accuracy: 0.9943 | 777ebae082be738d194209323c988c8f |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.0945 | 1.0 | 878 | 0.0317 | 0.9975 | 0.9950 | 0.9963 | 0.9939 | | 0.0175 | 2.0 | 1756 | 0.0483 | 0.9980 | 0.9953 | 0.9967 | 0.9946 | | 0.0105 | 3.0 | 2634 | 0.0505 | 0.9982 | 0.9941 | 0.9961 | 0.9937 | | 0.0056 | 4.0 | 3512 | 0.0574 | 0.9982 | 0.9939 | 0.9961 | 0.9935 | | 0.0022 | 5.0 | 4390 | 0.0564 | 0.9980 | 0.9951 | 0.9965 | 0.9943 | | bd7c284aeafb6611cfba7c910cf96348 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | mmarco-sentence-BERTino This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search. It was trained on [mmarco](https://huggingface.co/datasets/unicamp-dl/mmarco/viewer/italian/train). <p align="center"> <img src="https://media.tate.org.uk/art/images/work/L/L04/L04294_9.jpg" width="600"> </br> Mohan Samant, Midnight Fishing Party, 1978 </p> | bb5ad3f224ad68f864d31ff240871173 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Usage (Sentence-Transformers) Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed: ``` pip install -U sentence-transformers ``` Then you can use the model like this: ```python from sentence_transformers import SentenceTransformer sentences = ["Questo è un esempio di frase", "Questo è un ulteriore esempio"] model = SentenceTransformer('efederici/mmarco-sentence-BERTino') embeddings = model.encode(sentences) print(embeddings) ``` | 2dc92e9e6d264dada5eb67ff09fcf1d0 |
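
For semantic search or clustering, the vectors returned by `model.encode` are usually compared with cosine similarity. The sketch below uses short toy vectors in place of real 768-dimensional embeddings so that it runs without downloading the model. The function name `cosine_similarity` and the example vectors are illustrative assumptions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    # toy stand-ins for two sentence embeddings and a query embedding
    sentence_a = [0.2, 0.9, 0.1]
    sentence_b = [0.8, 0.1, 0.3]
    query = [0.25, 0.85, 0.05]
    ranked = sorted(
        [("a", sentence_a), ("b", sentence_b)],
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    print([name for name, _ in ranked])
```

With real embeddings, each row of the array returned by `encode` would take the place of a toy vector.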

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Usage (HuggingFace Transformers) Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: First, you pass your input through the transformer model, then you have to apply the right pooling-operation on-top of the contextualized word embeddings. ```python from transformers import AutoTokenizer, AutoModel import torch | 0ef165af21f8f9b0fa682c4b060f68f0 |

apache-2.0 | ['mobile', 'vison', 'image-classification'] | false | Model Details EfficientFormer-L3, developed by [Snap Research](https://github.com/snap-research), is one of three EfficientFormer models. The EfficientFormer models were released as part of an effort to prove that properly designed transformers can reach extremely low latency on mobile devices while maintaining high performance. This checkpoint of EfficientFormer-L3 was trained for 300 epochs. - Developed by: Yanyu Li, Geng Yuan, Yang Wen, Eric Hu, Georgios Evangelidis, Sergey Tulyakov, Yanzhi Wang, Jian Ren - Language(s): English - License: This model is licensed under the apache-2.0 license - Resources for more information: - [Research Paper](https://arxiv.org/abs/2206.01191) - [GitHub Repo](https://github.com/snap-research/EfficientFormer/) </model_details> <how_to_start> | 6eae00a5e55ceb229399d29be5ce56ba |

apache-2.0 | ['mobile', 'vison', 'image-classification'] | false | Load preprocessor and pretrained model model_name = "huggingface/efficientformer-l3-300" processor = EfficientFormerImageProcessor.from_pretrained(model_name) model = EfficientFormerForImageClassificationWithTeacher.from_pretrained(model_name) | fb2125fe496335d47993fcff6abdd1f2 |

apache-2.0 | ['generated_from_trainer'] | false | funnel-transformer-xlarge_ner_wikiann This model is a fine-tuned version of [funnel-transformer/xlarge](https://huggingface.co/funnel-transformer/xlarge) on the wikiann dataset. It achieves the following results on the evaluation set: - Loss: 0.4023 - Precision: 0.8522 - Recall: 0.8634 - F1: 0.8577 - Accuracy: 0.9358 | 3703317fee31481929a15ee1795769e0 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine - num_epochs: 5 | 0d3284741cbb314becf07e86c32779a9 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.3193 | 1.0 | 5000 | 0.3116 | 0.8239 | 0.8296 | 0.8267 | 0.9260 | | 0.2836 | 2.0 | 10000 | 0.2846 | 0.8446 | 0.8498 | 0.8472 | 0.9325 | | 0.2237 | 3.0 | 15000 | 0.3258 | 0.8427 | 0.8542 | 0.8484 | 0.9332 | | 0.1303 | 4.0 | 20000 | 0.3801 | 0.8531 | 0.8634 | 0.8582 | 0.9362 | | 0.0867 | 5.0 | 25000 | 0.4023 | 0.8522 | 0.8634 | 0.8577 | 0.9358 | | 90bb7988f28e651d9f346371159f613f |

cc-by-4.0 | ['question generation'] | false | Model Card of `research-backup/t5-base-squadshifts-vanilla-reddit-qg` This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) for the question generation task on the [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) dataset (dataset_name: reddit) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | b317f34498cf4a888958bc2f97b2df44 |

cc-by-4.0 | ['question generation'] | false | Overview - **Language model:** [t5-base](https://huggingface.co/t5-base) - **Language:** en - **Training data:** [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) (reddit) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation) - **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992) | 8ecda5ba142b0a72e5e48f7b38028add |

cc-by-4.0 | ['question generation'] | false | model prediction questions = model.generate_q(list_context="William Turner was an English painter who specialised in watercolour landscapes", list_answer="William Turner") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "research-backup/t5-base-squadshifts-vanilla-reddit-qg") output = pipe("generate question: <hl> Beyonce <hl> further expanded her acting career, starring as blues singer Etta James in the 2008 musical biopic, Cadillac Records.") ``` | 22b5bdfe473e5b5271ebf976a96de631 |

cc-by-4.0 | ['question generation'] | false | Evaluation - ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/research-backup/t5-base-squadshifts-vanilla-reddit-qg/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_squadshifts.reddit.json) | | Score | Type | Dataset | |:-----------|--------:|:-------|:---------------------------------------------------------------------------| | BERTScore | 91.28 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_1 | 23.52 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_2 | 14.84 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_3 | 9.52 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | Bleu_4 | 6.37 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | METEOR | 19.69 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | MoverScore | 60.19 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | ROUGE_L | 23.41 | reddit | [lmqg/qg_squadshifts](https://huggingface.co/datasets/lmqg/qg_squadshifts) | | 8959ab5b6d6a44b26da340b28b1adbaa |

cc-by-4.0 | ['question generation'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_squadshifts - dataset_name: reddit - input_types: ['paragraph_answer'] - output_types: ['question'] - prefix_types: ['qg'] - model: t5-base - max_length: 512 - max_length_output: 32 - epoch: 5 - batch: 8 - lr: 0.0001 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 8 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/research-backup/t5-base-squadshifts-vanilla-reddit-qg/raw/main/trainer_config.json). | d6b6e0579ac32e4d13dfdfe617271110 |

mit | ['generated_from_trainer'] | false | xlmr-finetuned-ner This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the wikiann dataset. It achieves the following results on the evaluation set: - Loss: 0.1395 - Precision: 0.9044 - Recall: 0.9137 - F1: 0.9090 - Accuracy: 0.9649 | 4895dd3c52b07ee0af0bca5e797acc01 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.4215 | 1.0 | 938 | 0.1650 | 0.8822 | 0.8781 | 0.8802 | 0.9529 | | 0.1559 | 2.0 | 1876 | 0.1412 | 0.9018 | 0.9071 | 0.9045 | 0.9631 | | 0.1051 | 3.0 | 2814 | 0.1395 | 0.9044 | 0.9137 | 0.9090 | 0.9649 | | 2f72b41152969f6a8bb41895136febab |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-glue-cola-custom-tokenizer-target-glue-stsb This model is a fine-tuned version of [muhtasham/tiny-mlm-glue-cola-custom-tokenizer](https://huggingface.co/muhtasham/tiny-mlm-glue-cola-custom-tokenizer) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.3195 - Pearson: 0.6745 - Spearmanr: 0.6765 | 1be1754b0240b91cb72457f4489a4fe8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Pearson | Spearmanr | |:-------------:|:-----:|:----:|:---------------:|:-------:|:---------:| | 3.3068 | 2.78 | 500 | 1.9896 | 0.3651 | 0.3938 | | 1.4915 | 5.56 | 1000 | 1.3053 | 0.6677 | 0.6745 | | 1.2119 | 8.33 | 1500 | 1.2683 | 0.6873 | 0.6896 | | 1.0589 | 11.11 | 2000 | 1.1930 | 0.6929 | 0.6911 | | 0.9145 | 13.89 | 2500 | 1.2757 | 0.6787 | 0.6807 | | 0.8423 | 16.67 | 3000 | 1.3657 | 0.6751 | 0.6800 | | 0.7599 | 19.44 | 3500 | 1.3195 | 0.6745 | 0.6765 | | 4ae26ce176f35217883ba1b93a043da7 |

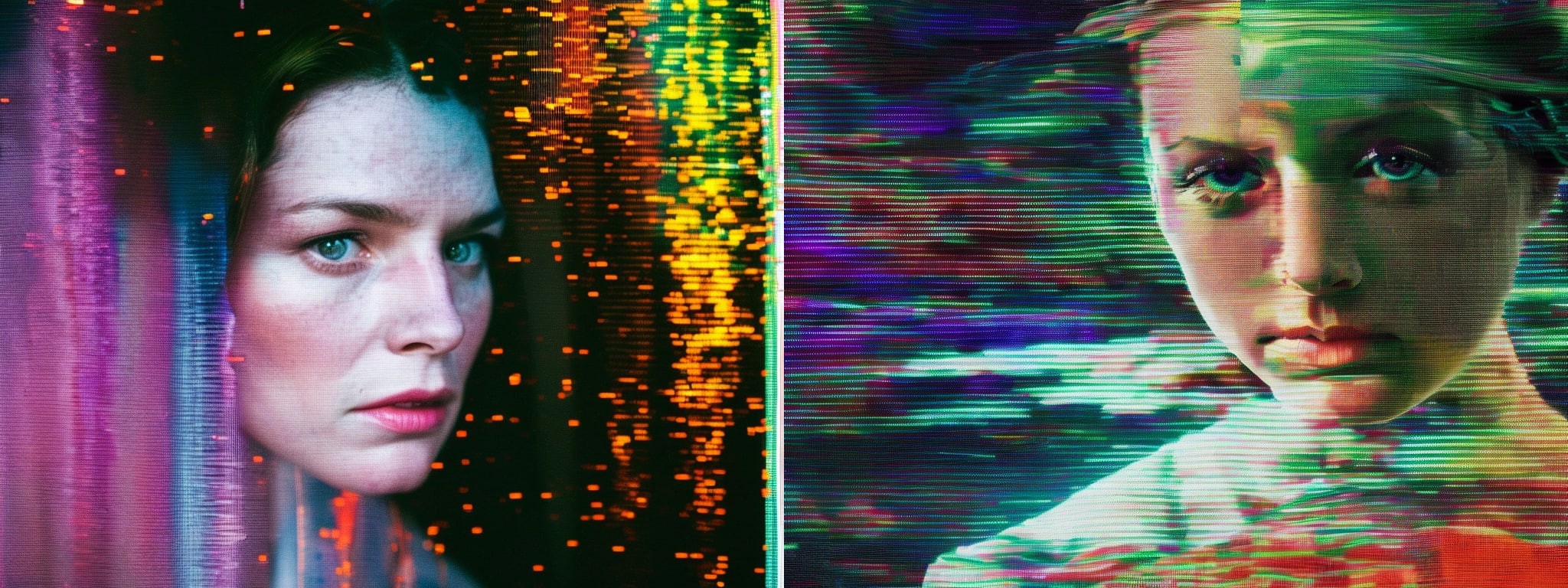

creativeml-openrail-m | [] | false | [*EMBEDDING DOWNLOAD LINK*](https://huggingface.co/joachimsallstrom/Glitch-Embedding/resolve/main/glitch.pt) – Glitch is a finetuned embedding inspired by 80s and 90s VHS tape aesthetics (trained on SD 2.1 768 ema pruned). With it you can style images overall and affect skin, clothing and the general appearance of people, animals and more. Glitch generates both painted and photorealistic styles, where subjects and objects become more or less part of the VHS-like glitch. It works great with TV and film references, and in an artsy, stylised sense to set a mood. | 25288636aefd67e0c251c0abca0919cf |

creativeml-openrail-m | [] | false | Install instructions and usage 1. Place either the [*glitch.pt*](https://huggingface.co/joachimsallstrom/Glitch-Embedding/resolve/main/glitch.pt) or [*glitch.png*](https://huggingface.co/joachimsallstrom/Glitch-Embedding/resolve/main/glitch.png) file in the embeddings folder of your Automatic1111 installation. 2. Trigger the style in the prompt by writing ***glitch***. To get great results, a very basic negative prompt is suggested: ***ugly cartoon drawing, blurry, blurry, blurry, blurry*** This negative prompt is used throughout the images shown in this presentation; it is a shorter, edited version of Stability AI’s recommendation for SD 2.x. Turning on highres fix is also highly recommended to achieve the best results. | 7482c4dbe6336be2fedca8cd26e063ce |

creativeml-openrail-m | [] | false | Example prompts and settings TV/movie still:<br> **glitch, close-up portrait of Millie Bobby Brown as Eleven, Stranger Things 1 9 8 2 movie still, Mitchell FC 65 Camera 35 mm, heavy grain**<br> Negative prompt: **ugly cartoon drawing, blurry, blurry, blurry, blurry**<br> _Steps: 30, Sampler: DPM++ SDE Karras, CFG scale: 7, Seed: 3729481696, Size: 1152x768, Model hash: 4bdfc29c, Denoising strength: 0.7_ Dancer ripping up the glitch with her hand:<br> **a close-up portrait of a wise Megleno-Romanians girl miner dancing in Spain, by glitch, 2d animation**<br> Negative prompt: **ugly cartoon drawing, blurry, blurry, blurry, blurry**<br> _Steps: 30, Sampler: DPM++ SDE Karras, CFG scale: 7.5, Seed: 888774459, Size: 1024x768, Model hash: 4bdfc29c, Model: sd_v2_v2-1_768-ema-pruned, Batch size: 5, Batch pos: 4, Denoising strength: 0.7_  | 01758c2383015c8689eee2dc1ddf412f |

creativeml-openrail-m | [] | false | Credit Thanks to [*masslevel*](https://twitter.com/masslevel?s=21&t=_O7DiffGgoNtZD33jECV_g), who contributed a large number of images and knowledge of prompt settings. Thanks also to Klinter for providing the gif animation. | ed3cb7dd39ccb53acdacdb92df3212e0 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Large-v2 Nepali This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) on the mozilla-foundation/common_voice_11_0 ne-NP dataset. It achieves the following results on the evaluation set: - Loss: 1.5723 - Wer: 56.0976 | 707e84e35f8a1c5c40840f19ee100140 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0 | 999.0 | 1000 | 1.5723 | 56.0976 | | fda1e38178b0e24aa95d477e5bf16173 |

apache-2.0 | ['generated_from_trainer'] | false | finetuning-misinfo-model-1000-Zhaohui This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.7352 - Accuracy: 0.8226 - F1: 0.8571 | 819d2e62d7cb3a3f65de6bfd28f9adab |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-xsum This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.1681 - Rouge1: 60.7249 - Rouge2: 36.0768 - Rougel: 57.6761 - Rougelsum: 57.8618 - Gen Len: 17.9 | 47fdc5f5906264bc10dd10bf5d1b1005 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 100 - mixed_precision_training: Native AMP | 7d6c0a42eb1e7a0134ee415aae5c4ed2 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | No log | 1.0 | 2 | 2.7817 | 13.2305 | 4.2105 | 11.0476 | 11.2063 | 13.0 | | No log | 2.0 | 4 | 2.7249 | 13.2305 | 4.2105 | 11.0476 | 11.2063 | 12.8 | | No log | 3.0 | 6 | 2.6053 | 13.1273 | 4.2105 | 10.9075 | 11.1008 | 13.1 | | No log | 4.0 | 8 | 2.4840 | 16.6829 | 6.2105 | 14.1984 | 14.6508 | 14.8 | | No log | 5.0 | 10 | 2.3791 | 16.6829 | 6.2105 | 14.1984 | 14.6508 | 14.8 | | No log | 6.0 | 12 | 2.2628 | 20.7742 | 9.5439 | 18.6218 | 18.9274 | 16.1 | | No log | 7.0 | 14 | 2.1714 | 20.7742 | 9.5439 | 18.6218 | 18.9274 | 16.1 | | No log | 8.0 | 16 | 2.0929 | 20.7742 | 9.5439 | 18.6218 | 18.9274 | 16.0 | | No log | 9.0 | 18 | 2.0069 | 20.7742 | 9.5439 | 18.6218 | 18.9274 | 16.0 | | No log | 10.0 | 20 | 1.9248 | 20.7742 | 8.4912 | 18.6218 | 18.9274 | 16.0 | | No log | 11.0 | 22 | 1.8535 | 20.7742 | 8.4912 | 18.6218 | 18.9274 | 16.0 | | No log | 12.0 | 24 | 1.7843 | 22.5821 | 10.8889 | 20.4396 | 20.9928 | 16.0 | | No log | 13.0 | 26 | 1.7115 | 22.5821 | 10.8889 | 20.4396 | 20.9928 | 16.0 | | No log | 14.0 | 28 | 1.6379 | 22.5821 | 10.8889 | 20.4396 | 20.9928 | 16.0 | | No log | 15.0 | 30 | 1.5689 | 22.5821 | 10.8889 | 20.4396 | 20.9928 | 16.0 | | No log | 16.0 | 32 | 1.5067 | 35.1364 | 17.6608 | 31.8254 | 31.8521 | 15.9 | | No log | 17.0 | 34 | 1.4543 | 41.7696 | 20.2005 | 38.8803 | 39.3886 | 16.9 | | No log | 18.0 | 36 | 1.4118 | 41.7696 | 20.2005 | 38.8803 | 39.3886 | 16.9 | | No log | 19.0 | 38 | 1.3789 | 41.5843 | 20.2005 | 38.6571 | 39.219 | 16.9 | | No log | 20.0 | 40 | 1.3543 | 41.5843 | 20.2005 | 38.6571 | 39.219 | 16.9 | | No log | 21.0 | 42 | 1.3332 | 42.6832 | 20.2005 | 39.7017 | 40.5046 | 16.9 | | No log | 22.0 | 44 | 1.3156 | 46.5429 | 22.7005 | 41.9156 | 42.7222 | 16.9 | | No log | 23.0 | 
46 | 1.2999 | 49.5478 | 25.0555 | 44.8352 | 45.4884 | 16.9 | | No log | 24.0 | 48 | 1.2878 | 49.5478 | 25.0555 | 44.8352 | 45.4884 | 16.9 | | No log | 25.0 | 50 | 1.2777 | 49.5478 | 25.0555 | 44.8352 | 45.4884 | 16.9 | | No log | 26.0 | 52 | 1.2681 | 54.8046 | 28.7238 | 49.4767 | 49.699 | 17.4 | | No log | 27.0 | 54 | 1.2596 | 54.8046 | 28.7238 | 49.4767 | 49.699 | 17.4 | | No log | 28.0 | 56 | 1.2514 | 58.1449 | 30.5444 | 52.7235 | 53.4075 | 18.9 | | No log | 29.0 | 58 | 1.2450 | 58.1449 | 30.5444 | 52.7235 | 53.4075 | 18.9 | | No log | 30.0 | 60 | 1.2395 | 58.1449 | 30.5444 | 52.7235 | 53.4075 | 18.9 | | No log | 31.0 | 62 | 1.2340 | 58.1449 | 30.5444 | 52.7235 | 53.4075 | 18.9 | | No log | 32.0 | 64 | 1.2287 | 58.1449 | 30.5444 | 52.7235 | 53.4075 | 18.9 | | No log | 33.0 | 66 | 1.2233 | 58.1449 | 30.5444 | 52.7235 | 53.4075 | 18.9 | | No log | 34.0 | 68 | 1.2182 | 58.1449 | 30.5444 | 52.7235 | 53.4075 | 18.9 | | No log | 35.0 | 70 | 1.2127 | 58.1449 | 30.5444 | 52.7235 | 53.4075 | 18.9 | | No log | 36.0 | 72 | 1.2079 | 58.1449 | 30.5444 | 52.7235 | 53.4075 | 18.9 | | No log | 37.0 | 74 | 1.2035 | 58.1449 | 30.5444 | 52.7235 | 53.4075 | 18.9 | | No log | 38.0 | 76 | 1.1996 | 58.9759 | 30.5444 | 53.6606 | 54.2436 | 18.6 | | No log | 39.0 | 78 | 1.1962 | 58.9759 | 30.5444 | 53.6606 | 54.2436 | 18.6 | | No log | 40.0 | 80 | 1.1936 | 58.9759 | 30.5444 | 53.6606 | 54.2436 | 18.6 | | No log | 41.0 | 82 | 1.1912 | 58.9759 | 30.5444 | 53.6606 | 54.2436 | 18.6 | | No log | 42.0 | 84 | 1.1890 | 58.2807 | 30.5444 | 52.872 | 53.5594 | 18.5 | | No log | 43.0 | 86 | 1.1874 | 58.2807 | 30.5444 | 52.872 | 53.5594 | 18.5 | | No log | 44.0 | 88 | 1.1859 | 58.2807 | 30.5444 | 52.872 | 53.5594 | 18.5 | | No log | 45.0 | 90 | 1.1844 | 58.2807 | 30.5444 | 52.872 | 53.5594 | 18.5 | | No log | 46.0 | 92 | 1.1834 | 58.3968 | 30.5444 | 53.0602 | 53.7089 | 18.8 | | No log | 47.0 | 94 | 1.1822 | 58.3968 | 30.5444 | 53.0602 | 53.7089 | 18.8 | | No log | 48.0 | 96 | 1.1806 | 58.3968 | 
30.5444 | 53.0602 | 53.7089 | 18.8 | | No log | 49.0 | 98 | 1.1786 | 58.3968 | 30.5444 | 53.0602 | 53.7089 | 18.8 | | No log | 50.0 | 100 | 1.1768 | 58.4517 | 31.303 | 54.18 | 54.6898 | 18.4 | | No log | 51.0 | 102 | 1.1761 | 58.4517 | 31.303 | 54.18 | 54.6898 | 18.4 | | No log | 52.0 | 104 | 1.1748 | 58.4517 | 31.303 | 54.18 | 54.6898 | 18.4 | | No log | 53.0 | 106 | 1.1743 | 58.4517 | 33.9839 | 55.5054 | 55.8799 | 18.4 | | No log | 54.0 | 108 | 1.1735 | 58.4517 | 33.9839 | 55.5054 | 55.8799 | 18.4 | | No log | 55.0 | 110 | 1.1731 | 58.4517 | 33.9839 | 55.5054 | 55.8799 | 18.4 | | No log | 56.0 | 112 | 1.1722 | 58.4517 | 33.9839 | 55.5054 | 55.8799 | 18.4 | | No log | 57.0 | 114 | 1.1714 | 58.4517 | 33.9839 | 55.5054 | 55.8799 | 18.4 | | No log | 58.0 | 116 | 1.1710 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 59.0 | 118 | 1.1702 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 60.0 | 120 | 1.1688 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 61.0 | 122 | 1.1682 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 62.0 | 124 | 1.1671 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 63.0 | 126 | 1.1669 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 64.0 | 128 | 1.1669 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 65.0 | 130 | 1.1668 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 66.0 | 132 | 1.1663 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 67.0 | 134 | 1.1665 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 68.0 | 136 | 1.1662 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 69.0 | 138 | 1.1663 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 70.0 | 140 | 1.1665 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 71.0 | 142 | 1.1664 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 72.0 | 144 | 1.1664 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 73.0 | 146 | 1.1662 | 60.7249 | 36.0768 | 
57.6761 | 57.8618 | 17.9 | | No log | 74.0 | 148 | 1.1665 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 75.0 | 150 | 1.1662 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 76.0 | 152 | 1.1669 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 77.0 | 154 | 1.1668 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 78.0 | 156 | 1.1671 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 79.0 | 158 | 1.1674 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 80.0 | 160 | 1.1670 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 81.0 | 162 | 1.1671 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 82.0 | 164 | 1.1672 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 83.0 | 166 | 1.1675 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 84.0 | 168 | 1.1677 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 85.0 | 170 | 1.1677 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 86.0 | 172 | 1.1673 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 87.0 | 174 | 1.1673 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 88.0 | 176 | 1.1673 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 89.0 | 178 | 1.1673 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 90.0 | 180 | 1.1675 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 91.0 | 182 | 1.1675 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 92.0 | 184 | 1.1680 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 93.0 | 186 | 1.1680 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 94.0 | 188 | 1.1679 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 95.0 | 190 | 1.1679 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 96.0 | 192 | 1.1682 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 97.0 | 194 | 1.1681 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 98.0 | 196 | 1.1683 | 60.7249 | 36.0768 | 
57.6761 | 57.8618 | 17.9 | | No log | 99.0 | 198 | 1.1683 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | No log | 100.0 | 200 | 1.1681 | 60.7249 | 36.0768 | 57.6761 | 57.8618 | 17.9 | | f3fc7b493b76db82dd4f67f6f2923462 |

apache-2.0 | ['generated_from_trainer'] | false | roberta-base-bne-finetuned-amazon_reviews_multi This model is a fine-tuned version of [BSC-TeMU/roberta-base-bne](https://huggingface.co/BSC-TeMU/roberta-base-bne) on the amazon_reviews_multi dataset. It achieves the following results on the evaluation set: - Loss: 0.2291 - Accuracy: 0.9343 | 7dc89f4faea1225cb2aca94645aa2f5b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.1909 | 1.0 | 1250 | 0.1784 | 0.9295 | | 0.1013 | 2.0 | 2500 | 0.2291 | 0.9343 | | 8ddb13f62d7aaedb61e3f39c6ced864a |

apache-2.0 | ['generated_from_trainer'] | false | swin-tiny-patch4-window7-224-finetuned-3e This model is a fine-tuned version of [microsoft/swin-tiny-patch4-window7-224](https://huggingface.co/microsoft/swin-tiny-patch4-window7-224) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.1065 - Accuracy: 0.9606 | f8fcb9ba4260cb637ca0c23650fef83d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4549 | 1.0 | 527 | 0.2910 | 0.8857 | | 0.2838 | 2.0 | 1054 | 0.1524 | 0.9410 | | 0.254 | 3.0 | 1581 | 0.1065 | 0.9606 | | c58df9931be931f9f78fb34ef6c1221a |

cc | ['generated_from_trainer'] | false | racism-finetuned-detests-prueba This model is a fine-tuned version of [davidmasip/racism](https://huggingface.co/davidmasip/racism) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.3034 - F1: 0.6222 | 153da09b3fb9c34a1bcc18b29ffa827b |

cc | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 50 - eval_batch_size: 50 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | 64a1b15408f6200630aecbe627fc2126 |

cc | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.0001 | 1.0 | 49 | 1.0331 | 0.6667 | | 0.0 | 2.0 | 98 | 1.2473 | 0.5992 | | 0.0 | 3.0 | 147 | 1.2280 | 0.6227 | | 0.0 | 4.0 | 196 | 1.2530 | 0.6245 | | 0.0 | 5.0 | 245 | 1.2708 | 0.6222 | | 0.0 | 6.0 | 294 | 1.2827 | 0.6222 | | 0.0 | 7.0 | 343 | 1.2918 | 0.6222 | | 0.0 | 8.0 | 392 | 1.2982 | 0.6222 | | 0.0 | 9.0 | 441 | 1.3021 | 0.6222 | | 0.0 | 10.0 | 490 | 1.3034 | 0.6222 | | 3e51cfbcb8fbee95818f085f469bccf2 |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-glue-cola-from-scratch-custom-tokenizer-expand-vocab-target-glue-cola This model is a fine-tuned version of [muhtasham/tiny-mlm-glue-cola-from-scratch-custom-tokenizer-expand-vocab](https://huggingface.co/muhtasham/tiny-mlm-glue-cola-from-scratch-custom-tokenizer-expand-vocab) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.6205 - Matthews Correlation: 0.0 | 3daad064a3d9ebd642a6928ca6acbc3c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.6103 | 1.87 | 500 | 0.6214 | 0.0 | | 0.6073 | 3.73 | 1000 | 0.6197 | 0.0 | | 0.607 | 5.6 | 1500 | 0.6183 | 0.0 | | 0.6065 | 7.46 | 2000 | 0.6205 | 0.0 | | 9bbcfac9fd6950731ea9e3224cfc7f23 |

apache-2.0 | ['generated_from_trainer'] | false | all-roberta-large-v1-travel-2-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.1384 - Accuracy: 0.4289 | de5b84aaee6ec5b137dd4f1cec669600 |

apache-2.0 | [] | false | doc2query/all-with_prefix-t5-base-v1

This is a [doc2query](https://arxiv.org/abs/1904.08375) model based on T5 (also known as [docT5query](https://cs.uwaterloo.ca/~jimmylin/publications/Nogueira_Lin_2019_docTTTTTquery-v2.pdf)).

It can be used for:

- **Document expansion**: Generate 20-40 queries for each paragraph, then index the paragraphs together with the generated queries in a standard BM25 index such as Elasticsearch, OpenSearch, or Lucene. The generated queries help close the lexical gap of lexical search because they contain synonyms. Expansion also re-weights words, giving important words a higher weight even if they appear seldom in a paragraph. In our [BEIR](https://arxiv.org/abs/2104.08663) paper we showed that BM25+docT5query is a powerful search engine. The [BEIR repository](https://github.com/UKPLab/beir) has an example of how to use docT5query with Pyserini.

- **Domain Specific Training Data Generation**: The model can be used to generate training data for learning an embedding model. On [SBERT.net](https://www.sbert.net/examples/unsupervised_learning/query_generation/README.html) we have an example of how to use the model to generate (query, text) pairs for a given collection of unlabeled texts. These pairs can then be used to train powerful dense embedding models.
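
The document-expansion idea above can be illustrated with a small, self-contained sketch. It does not call the model: the `generated` lists are hypothetical, hand-written stand-ins for doc2query output, and `tokenize`, `bm25_scores`, and `expand` are illustrative helpers rather than part of any library. The BM25 scorer is a plain Okapi BM25 written for this example, not the scoring used by Elasticsearch or Pyserini.

```python
import math
from collections import Counter


def tokenize(text):
    # Lowercase whitespace tokenization with basic punctuation stripping.
    tokens = (t.strip(".,?!()").lower() for t in text.split())
    return [t for t in tokens if t]


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score every document in `docs` against `query` with Okapi BM25."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(d) for d in tokenized) / n_docs
    # Document frequency: in how many documents each term occurs.
    df = Counter(term for d in tokenized for term in set(d))
    scores = []
    for d in tokenized:
        tf = Counter(d)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(d) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


def expand(paragraph, generated_queries):
    # Document expansion: append the generated queries to the paragraph text.
    return paragraph + " " + " ".join(generated_queries)


paragraphs = [
    "Python is an interpreted, high-level programming language.",
    "The Eiffel Tower is a wrought-iron lattice tower in Paris.",
]
# Hypothetical stand-ins for queries a doc2query model might generate.
generated = [
    ["what kind of coding language is python", "is python a scripting language"],
    ["where is the eiffel tower located", "what is the eiffel tower made of"],
]
expanded = [expand(p, q) for p, q in zip(paragraphs, generated)]

query = "scripting"
plain_scores = bm25_scores(query, paragraphs)
expanded_scores = bm25_scores(query, expanded)
print(plain_scores, expanded_scores)
```

The word "scripting" appears in neither original paragraph, so the plain index cannot match the query. After expansion, the first paragraph carries the term through one of its appended queries and becomes retrievable.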

| 6ff0cbad0177c8f7c9515d0544b92270 |
