license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['generated_from_trainer'] | false | bert-large-uncased_cls_sst2 This model is a fine-tuned version of [bert-large-uncased](https://huggingface.co/bert-large-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3787 - Accuracy: 0.9255 | 455ffbff5afefb8e6b55b6eb7540e624 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 433 | 0.4188 | 0.8578 | | 0.3762 | 2.0 | 866 | 0.4894 | 0.8968 | | 0.3253 | 3.0 | 1299 | 0.3313 | 0.9094 | | 0.1601 | 4.0 | 1732 | 0.3399 | 0.9232 | | 0.0744 | 5.0 | 2165 | 0.3787 | 0.9255 | | 26346d0e2e3039a4101607839fcebcad |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1457 - F1: 0.8665 | 87e5ad85e2000a3fe238f8e2ad82bce8 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2537 | 1.0 | 1049 | 0.1758 | 0.8236 | | 0.1335 | 2.0 | 2098 | 0.1442 | 0.8494 | | 0.0811 | 3.0 | 3147 | 0.1457 | 0.8665 | | e5a60392a3451b1cf23ea080a63bc1e5 |

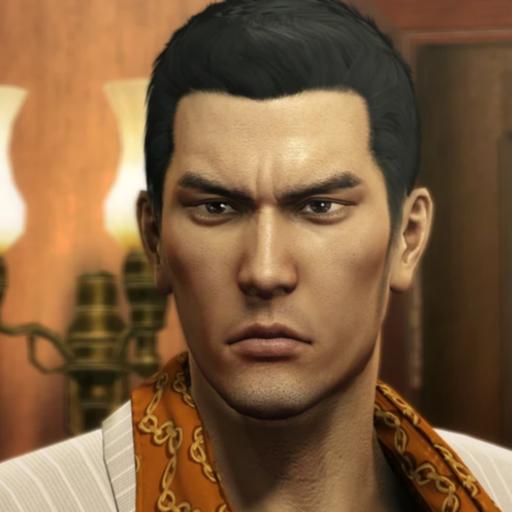

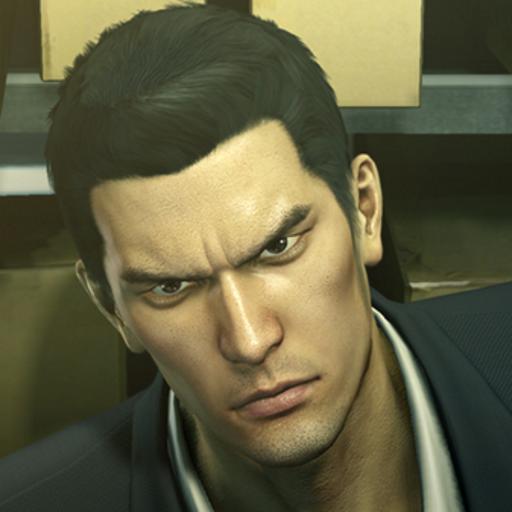

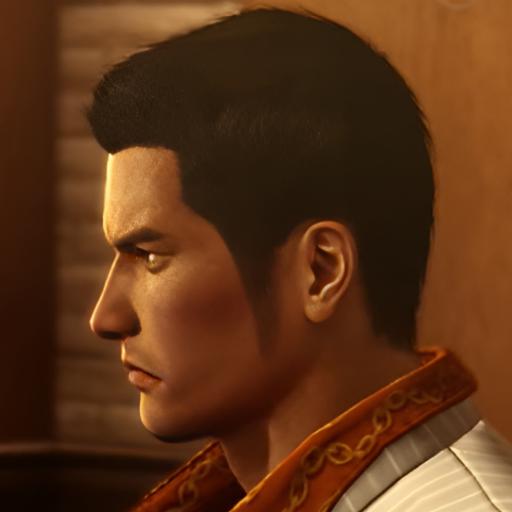

mit | [] | false | model by thesun1094224 This is the Stable Diffusion model fine-tuned on the yakuza-0-kiryu-kazuma concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **photo of sks kiryu** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 6f2f0e5291df248ce13ec9074f65d1d1 |

apache-2.0 | ['generated_from_keras_callback'] | false | avialfont/dummy-translation-marian-kde4-en-to-fr This model is a fine-tuned version of [Helsinki-NLP/opus-mt-en-fr](https://huggingface.co/Helsinki-NLP/opus-mt-en-fr) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.9807 - Validation Loss: 0.8658 - Epoch: 0 | 98ac924799f7458112b4fb52552d3b5f |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 5e-05, 'decay_steps': 17733, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: float32 | 1f15c866833bee3df83839a68c4bb814 |
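
The `PolynomialDecay` settings listed above (`power: 1.0`, `cycle: False`, `end_learning_rate: 0.0`) describe a linear ramp from 5e-05 down to zero over 17733 steps. The following is a minimal sketch of that schedule in plain Python, assuming the standard polynomial-decay formula; the function name `polynomial_decay` is chosen here for illustration and is not taken from the model card.

```python
def polynomial_decay(step, initial_lr=5e-05, end_lr=0.0,
                     decay_steps=17733, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    # With cycle=False the step is clamped, so the rate stays at end_lr
    # once decay_steps has been reached.
    step = min(step, decay_steps)
    remaining = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * remaining ** power + end_lr


if __name__ == "__main__":
    for s in (0, 5000, 17733, 20000):
        print(s, polynomial_decay(s))
```

With `power` set to 1.0 the rate halfway through the schedule is half the initial rate, which is why this configuration is often described as linear decay.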

apache-2.0 | [] | false | Pretrained models for our paper (https://arxiv.org/abs/2210.08431) ```bibtex @inproceedings{wu-etal-2022-modeling, title = "Modeling Context With Linear Attention for Scalable Document-Level Translation", author = "Zhaofeng Wu and Hao Peng and Nikolaos Pappas and Noah A. Smith", booktitle = "Findings of the Association for Computational Linguistics: EMNLP 2022", month = dec, year = "2022", publisher = "Association for Computational Linguistics", } ``` Please see the "Files and versions" tab for the models. You can find our IWSLT models and our OpenSubtitles models that are early-stopped based on BLEU and consistency scores, respectively. The `c` part in the checkpoint name refers to the number of context sentences used; it is the same as the sliding window size (the `L` in our paper) minus one. | 7992128ac3e3d8275176c60d88bca179 |

apache-2.0 | ['automatic-speech-recognition', 'es'] | false | exp_w2v2r_es_vp-100k_accent_surpeninsular-5_nortepeninsular-5_s324 Fine-tuned [facebook/wav2vec2-large-100k-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-100k-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (es)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 18e8f65a1b4fca2f3fce60d3d84cda9c |

apache-2.0 | ['translation', 'generated_from_trainer'] | false | Zu-En_update This model is a fine-tuned version of [kabelomalapane/model_zu-en_updated](https://huggingface.co/kabelomalapane/model_zu-en_updated) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.9399 - Bleu: 27.9608 | d733d2f4c0b475392d50c4acf58e50dd |

apache-2.0 | ['translation', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | |:-------------:|:-----:|:-----:|:---------------:|:-------:| | 2.1017 | 1.0 | 1173 | 1.8404 | 29.1031 | | 1.7497 | 2.0 | 2346 | 1.8318 | 28.9036 | | 1.523 | 3.0 | 3519 | 1.8250 | 28.8415 | | 1.364 | 4.0 | 4692 | 1.8551 | 28.6215 | | 1.2462 | 5.0 | 5865 | 1.8684 | 28.3783 | | 1.1515 | 6.0 | 7038 | 1.8948 | 28.3372 | | 1.0796 | 7.0 | 8211 | 1.9109 | 28.1603 | | 1.0215 | 8.0 | 9384 | 1.9274 | 28.0309 | | 0.9916 | 9.0 | 10557 | 1.9323 | 27.9472 | | 0.9583 | 10.0 | 11730 | 1.9399 | 27.9260 | | c4c6e9be77e4a5a197f9bc917dcbebdc |

apache-2.0 | ['vision', 'image-classification'] | false | ConvNeXT (large-sized model) ConvNeXT model trained on ImageNet-22k at resolution 224x224. It was introduced in the paper [A ConvNet for the 2020s](https://arxiv.org/abs/2201.03545) by Liu et al. and first released in [this repository](https://github.com/facebookresearch/ConvNeXt). Disclaimer: The team releasing ConvNeXT did not write a model card for this model so this model card has been written by the Hugging Face team. | a7ae0b6592d266ff7f10981c57e8de99 |

apache-2.0 | ['vision', 'image-classification'] | false | How to use Here is how to use this model to classify an image of the COCO 2017 dataset into one of the 1,000 ImageNet classes: ```python from transformers import ConvNextFeatureExtractor, ConvNextForImageClassification import torch from datasets import load_dataset dataset = load_dataset("huggingface/cats-image") image = dataset["test"]["image"][0] feature_extractor = ConvNextFeatureExtractor.from_pretrained("facebook/convnext-large-224-22k") model = ConvNextForImageClassification.from_pretrained("facebook/convnext-large-224-22k") inputs = feature_extractor(image, return_tensors="pt") with torch.no_grad(): logits = model(**inputs).logits | 44af6d816b746581cb9e6dbc506191dd |

apache-2.0 | ['generated_from_trainer'] | false | CR_ELECTRA_5E This model is a fine-tuned version of [google/electra-base-discriminator](https://huggingface.co/google/electra-base-discriminator) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.3778 - Accuracy: 0.9133 | d2cdda44b9b5d935750ca5915d82510e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.643 | 0.33 | 50 | 0.6063 | 0.66 | | 0.5093 | 0.66 | 100 | 0.4277 | 0.84 | | 0.2986 | 0.99 | 150 | 0.3019 | 0.8933 | | 0.2343 | 1.32 | 200 | 0.2910 | 0.9 | | 0.1808 | 1.66 | 250 | 0.2892 | 0.9133 | | 0.1922 | 1.99 | 300 | 0.3397 | 0.8867 | | 0.1623 | 2.32 | 350 | 0.2847 | 0.92 | | 0.1206 | 2.65 | 400 | 0.2918 | 0.9133 | | 0.1518 | 2.98 | 450 | 0.3163 | 0.9067 | | 0.1029 | 3.31 | 500 | 0.3667 | 0.8867 | | 0.1133 | 3.64 | 550 | 0.3562 | 0.9067 | | 0.0678 | 3.97 | 600 | 0.3394 | 0.9067 | | 0.0461 | 4.3 | 650 | 0.3821 | 0.9067 | | 0.0917 | 4.64 | 700 | 0.3774 | 0.9133 | | 0.0633 | 4.97 | 750 | 0.3778 | 0.9133 | | 8a3424fb7c6d062cc33cd17b24ea347e |

apache-2.0 | [] | false | Model Description This model accepts as input un-punctuated, lower-case text and performs the following analytics: * Punctuation restoration * True-casing * Sentence-boundary detection. Quick example of input/output: ``` Input: hello world what's up Outputs: Hello, World. What's up? ``` (note that, technically, 'world' is a proper noun here and should be capitalized) This model was trained in "one-pass" mode, meaning that it encodes the input text once and performs all three analytics in parallel. This is a trade-off of throughput for discarding the conditional probabilities of each analytic; e.g., true-casing should be a function of sentence boundaries, but they are predicted jointly by this model. For each subword in the input sequence, this model predicts the following: * `N` pre-punctuation tokens, where `N` is the number of characters in the subword, to insert before each character * `N` post-punctuation tokens, where `N` is the number of characters in the subword, to insert after each character * `N` capitalization predictions, where `N` is the number of characters in the subword, whether to capitalize each letter * One sentence boundary prediction, whether this subword is the end of a sentence (if a full stop is predicted after the final character of this subword) The "pre-punctuation" tokens are predicted before every character to, in practice, predict inverted question marks preceding Spanish questions. The "post-punctuation" tokens are predicted after every character to predict typical punctuation, e.g., "Hello, world.", as well as within acronyms, e.g., "U.S.", and insert punctuation into continuous-script languages such as Chinese and Japanese in a language-agnostic manner. True-casing is predicted on a character level to account for all types of words such as "Hello", "AMC", "U.S.", "McDonald", etc. 
Sentence boundaries are predicted at the subword level, rather than the character level, presuming that tokenizers rarely, if ever, produce tokens which cross the boundary between two sentences. Sentence boundary predictions are applied only if a full-stop token is predicted (or present in the training example). | 236b55d669e5240f3753c8f5619fd7f5 |
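
The per-subword prediction scheme described above can be illustrated with a small decoding sketch. This is not the NeMo implementation: `SubwordPrediction`, `make`, and `apply_predictions` are hypothetical names, and the sketch assumes SentencePiece-style subwords whose word-start marker is excluded from the `N` characters.

```python
from dataclasses import dataclass
from typing import List, Optional

WORD_START = "\u2581"  # SentencePiece word-start marker


def strip_marker(text: str) -> str:
    """Drop a single leading word-start marker, if present."""
    return text[1:] if text.startswith(WORD_START) else text


@dataclass
class SubwordPrediction:
    """Hypothetical container for one subword's predictions."""
    text: str                  # subword, optionally prefixed with WORD_START
    pre: List[Optional[str]]   # punctuation to insert before each character
    post: List[Optional[str]]  # punctuation to insert after each character
    cap: List[bool]            # whether to upper-case each character
    boundary: bool = False     # whether a sentence ends after this subword


def make(text, caps=(), pre=None, post=None, boundary=False):
    """Build a SubwordPrediction from sparse {char_index: token} mappings."""
    n = len(strip_marker(text))
    pre, post = pre or {}, post or {}
    return SubwordPrediction(
        text,
        [pre.get(i) for i in range(n)],
        [post.get(i) for i in range(n)],
        [i in caps for i in range(n)],
        boundary,
    )


def apply_predictions(preds: List[SubwordPrediction]) -> List[str]:
    """Rebuild punctuated, true-cased sentences from per-subword predictions."""
    sentences, current = [], []
    for p in preds:
        # A word-start marker becomes a space, except at the start of a sentence.
        if p.text.startswith(WORD_START) and current:
            current.append(" ")
        for i, ch in enumerate(strip_marker(p.text)):
            if p.pre[i]:
                current.append(p.pre[i])
            current.append(ch.upper() if p.cap[i] else ch)
            if p.post[i]:
                current.append(p.post[i])
        if p.boundary:
            sentences.append("".join(current))
            current = []
    if current:
        sentences.append("".join(current))
    return sentences


example = [
    make(WORD_START + "hello", caps={0}, post={4: ","}),
    make(WORD_START + "world", caps={0}, post={4: "."}, boundary=True),
    make(WORD_START + "what", caps={0}),
    make("'s"),
    make(WORD_START + "up", post={1: "?"}, boundary=True),
]
print(apply_predictions(example))  # ['Hello, World.', "What's up?"]
```

The example reproduces the quick input/output pair from the description. Keeping capitalization and punctuation at the character level is what lets the same loop handle acronyms such as "U.S." without special cases.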

apache-2.0 | [] | false | Usage This model is driven by code that lives in a personal branch of NeMo. This branch must be installed to run this model. If you have NeMo and the GitHub CLI installed, the code can be pulled from the corresponding pull request: ```shell $ cd /path/to/nemo/ $ gh pr checkout 4637 ``` Or to pull the full codebase into a separate repo: ```shell $ git clone -b punct_cap_seg https://github.com/1-800-BAD-CODE/nemo $ cd nemo $ ./reinstall.sh ``` The model provides an `infer` method which accepts as input un-punctuated, lower-cased text and returns as output, for each input sentence, a list of new sentences, segmented on sentence boundaries, with punctuation inserted and true-casing applied. Inference is language-agnostic, and multiple languages can appear in the same batch (though sentences in different languages should not be concatenated). | d011f19d1638c4f7e65da797198ef049 |

apache-2.0 | [] | false | Example Input ``` from typing import List import nemo.collections.nlp as nemo_nlp hf_model_name = "1-800-BAD-CODE/punct_cap_seg_22lang1pass_xlmr" hf_model = nemo_nlp.models.PunctCapSegModel.from_pretrained(hf_model_name, map_location="cpu") | 3b1624a64553405c0c1de5c2fb7ac93a |

apache-2.0 | [] | false | function expects a batch. def print_inputs_outputs(inputs: List[str], outputs: List[List[str]]): for next_input, next_outputs in zip(inputs, outputs): print(f"Input: {next_input}") print(f"Outputs: ") for output_text in next_outputs: print(f" {output_text}") print() | ece469e0a8962104c09e4ab3a2c1d2e4 |

apache-2.0 | [] | false | For Spanish, the model should predict inverted punctuation as well. input_texts = ["no he perdonado ni a ti ni a maria por qué no juegas conmigo"] output_texts = hf_model.infer(input_texts) print_inputs_outputs(input_texts, output_texts) | fb88e2e775c597931b35fd86f09172de |

apache-2.0 | [] | false | Acronyms should be punctuated and true-cased, as well. Ideally, sentence boundaries will not be predicted at these characters. input_texts = [ "george w bush was the president of the us for 8 years he left office in january 2009 and was succeeded by barack " "obama prior to his presidency he was the governor of texas" ] output_texts = hf_model.infer(input_texts) print_inputs_outputs(input_texts, output_texts) | b2d0ffe510e4b126b9ee79c850e5b285 |

apache-2.0 | [] | false | Large blob of text from LibriSpeech dev clean input_texts = [ "ardent in the prosecution of heresy cyril auspiciously opened his reign by oppressing the novatians the most innocent and harmless of the sectaries without any legal sentence without any royal mandate the patriarch at the dawn of day led a seditious multitude to the attack of the synagogues such crimes would have deserved the animadversion of the magistrate but in this promiscuous outrage the innocent were confounded with the guilty and alexandria was impoverished by the loss of a wealthy and industrious colony the zeal of cyril exposed him to the penalties of the julian law but in a feeble government and a superstitious age he was secure of impunity and even of praise orestes complained but his just complaints were too quickly forgotten by the ministers of theodosius and too deeply remembered by a priest who affected to pardon and continued to hate the praefect of egypt a rumor was spread among the christians that the daughter of theon was the only obstacle to the reconciliation of the praefect and the archbishop and that obstacle was speedily removed which oppressed the metropolitans of europe and asia invaded the provinces of antioch and alexandria and measured their diocese by the limits of the empire exterminate with me the heretics and with you i will exterminate the persians at these blasphemous sounds the pillars of the sanctuary were shaken but the vatican received with open arms the messengers of egypt" ] output_texts = hf_model.infer(input_texts) print_inputs_outputs(input_texts, output_texts) ``` | f84b68c622aff9af4c6a03f9f438ec8c |

apache-2.0 | [] | false | Expected output (sans logging): ``` Input: i saw you at the park yesterday what were you doing there Outputs: I saw you at the park yesterday. What were you doing there? Input: رأيتك في الحديقة أمس ماذا كنت تفعل هناك Outputs: رأيتك في الحديقة أمس؟ ماذا كنت تفعل هناك؟ Input: video sam te juče u parku šta si tamo radio Outputs: Video sam te juče u parku. Šta si tamo radio? Input: viděl jsem tě včera v parku co jsi tam dělal Outputs: Viděl jsem tě včera v parku. Co jsi tam dělal? Input: ich habe dich gestern im park gesehen was hast du dort gemacht Outputs: Ich habe dich gestern im Park gesehen. Was hast du dort gemacht? Input: na nägin sind eile pargis mida sa seal tegid Outputs: Na Nägin sind eile pargis. Mida sa seal tegid? Input: te vi en el parque ayer que estabas haciendo ahi Outputs: Te vi en el parque ayer. ¿Que estabas haciendo ahi? Input: näin sinut eilen puistossa mitä teit siellä Outputs: Näin sinut eilen puistossa. Mitä teit siellä? Input: je t'ai vu au parc hier qu'est ce que tu faisais là Outputs: Je t'ai vu au parc hier. Qu'est ce que tu faisais là? Input: मैंने तुम्हें कल पार्क में देखा था तुम वहाँ क्या कर रहे थे Outputs: मैंने तुम्हें कल पार्क में देखा था। तुम वहाँ क्या कर रहे थे? Input: ti ho visto ieri al parco cosa ci facevi là Outputs: Ti ho visto ieri al parco. Cosa ci facevi là? Input: ég sá þig í garðinum í gær hvað varstu að gera þar Outputs: Ég sá þig í garðinum í gær. Hvað varstu að gera þar? Input: 私は昨日公園であなたを見ましたそこで何をしていたのですか Outputs: 私は昨日、公園であなたを見ました。 そこで、何をしていたのですか? Input: vakar mačiau tave parke ka tu ten veikei Outputs: Vakar mačiau tave parke. Ka tu ten veikei? Input: es tevi vakar redzēju parkā ko tu tur darīji Outputs: Es tevi vakar redzēju parkā. Ko tu tur darīji? Input: widziałem cię wczoraj w parku co ty tam robiłeś Outputs: Widziałem cię wczoraj w parku. Co ty tam robiłeś? Input: eu te vi no parque ontem o que você estava fazendo lá Outputs: Eu te vi no parque ontem. O que você estava fazendo lá? 
Input: te am văzut ieri în parc ce făceai acolo Outputs: Te am văzut ieri în parc. Ce făceai acolo? Input: я видел тебя вчера в парке что ты здесь делал Outputs: Я видел тебя вчера в парке. Что ты здесь делал? Input: dün seni parkta gördüm orada ne yapıyordun Outputs: Dün seni parkta gördüm. Orada ne yapıyordun? Input: я бачив тебе вчора в парку що ти там робив Outputs: Я бачив тебе вчора в парку. Що ти там робив? Input: 我昨天在公园见过你你在那干什么 Outputs: 我昨天在公园见过你。 你在那干什么? Input: no he perdonado ni a ti ni a maria por qué no juegas conmigo Outputs: No he perdonado ni a ti, ni a Maria. ¿Por qué no juegas conmigo? Input: george w bush was the president of the us for 8 years he left office in january 2009 and was succeeded by barack obama prior to his presidency he was the governor of texas Outputs: George W. Bush was the president of the U.S. for 8 years. He left office in January 2009 and was succeeded by Barack Obama. Prior to his presidency, he was the governor of Texas. Input: ardent in the prosecution of heresy cyril auspiciously opened his reign by oppressing the novatians the most innocent and harmless of the sectaries without any legal sentence without any royal mandate the patriarch at the dawn of day led a seditious multitude to the attack of the synagogues such crimes would have deserved the animadversion of the magistrate but in this promiscuous outrage the innocent were confounded with the guilty and alexandria was impoverished by the loss of a wealthy and industrious colony the zeal of cyril exposed him to the penalties of the julian law but in a feeble government and a superstitious age he was secure of impunity and even of praise orestes complained but his just complaints were too quickly forgotten by the ministers of theodosius and too deeply remembered by a priest who affected to pardon and continued to hate the praefect of egypt a rumor was spread among the christians that the daughter of theon was the only obstacle to the reconciliation of the praefect and 
the archbishop and that obstacle was speedily removed which oppressed the metropolitans of europe and asia invaded the provinces of antioch and alexandria and measured their diocese by the limits of the empire exterminate with me the heretics and with you i will exterminate the persians at these blasphemous sounds the pillars of the sanctuary were shaken but the vatican received with open arms the messengers of egypt Outputs: Ardent in the prosecution of heresy, Cyril auspiciously opened his reign by oppressing the Novatians, the most innocent and harmless of the sectaries. Without any legal sentence, without any royal mandate, The patriarch, at the dawn of day, led a seditious multitude to the attack of the synagogues. Such crimes would have deserved the animadversion of the magistrate. But in this promiscuous outrage, the innocent were confounded with the guilty and Alexandria was impoverished by the loss of a wealthy and industrious colony. The zeal of Cyril exposed him to the penalties of the Julian law. But in a feeble government and a superstitious age, he was secure of impunity and even of praise. Orestes complained But his just complaints were too quickly forgotten by the ministers of Theodosius and too deeply remembered by a priest who affected to pardon and continued to hate. the Praefect of Egypt, A rumor was spread among the Christians that the daughter of Theon was the only obstacle to the reconciliation of the praefect and the Archbishop. And that obstacle was speedily removed which oppressed the metropolitans of Europe and Asia. Invaded the provinces of Antioch and Alexandria and measured their diocese by the limits of the empire. Exterminate with me the Heretics, and with you, I will exterminate the Persians. At these blasphemous sounds, the pillars of the sanctuary were shaken, but the Vatican received with open arms the messengers of Egypt. ``` Note that punctuation predictions are language-specific, without requiring language tags. 
In particular, Hindi input predicts danda full stops, Chinese and Japanese input predicts Chinese full-width full stops, Arabic input predicts reversed question marks, and Spanish input predicts inverted question marks before questions. Unfortunately, the SentencePiece model used in XLM-RoBERTa applies non-identity normalization, so Chinese question marks are lost and Latin question marks are predicted instead. | dfe63ddc647f3a4c1a8b5e3445f22556 |

apache-2.0 | [] | false | Training data This model was trained on a subset of [News Crawl](https://data.statmt.org/news-crawl/). Data was filtered using heuristics to provide clean training data for these particular analytics, e.g., whether each line ended with a full stop, whether the first character was capitalized, etc. | 77a83c9d37766b6397912f58fbe9c8ed |

apache-2.0 | [] | false | Limitations * This model was trained on news data, so it may perform worse on informal or conversational data. * This model was trained with limited hardware, and was not trained to convergence. It was trained for ~100k steps with a global batch size of 64. * This model was trained on a maximum sequence length of 80 subwords. Longer inputs at inference time will be transparently wrapped into separate batch elements and re-joined at the backend, but may produce non-optimal results for long inputs, especially near the wraparound points. | bb69bfe71e2524a17653d6756f13f4a8 |
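
The wrapping of long inputs mentioned in the last limitation can be pictured with a rough sketch. The card does not say how the branch actually splits or re-joins sequences, so the non-overlapping windows and the helper names `wrap` and `rejoin` below are assumptions made purely for illustration.

```python
from typing import List, Sequence


def wrap(ids: Sequence[int], max_len: int = 80) -> List[List[int]]:
    """Split a subword-id sequence into consecutive windows of at most max_len."""
    if max_len <= 0:
        raise ValueError("max_len must be positive")
    return [list(ids[i:i + max_len]) for i in range(0, len(ids), max_len)]


def rejoin(chunks: Sequence[Sequence[int]]) -> List[int]:
    """Concatenate per-window outputs back into one sequence, in order."""
    return [token for chunk in chunks for token in chunk]


if __name__ == "__main__":
    ids = list(range(200))          # stand-in for 200 subword ids
    windows = wrap(ids)             # each window becomes one batch element
    print([len(w) for w in windows])
    print(rejoin(windows) == ids)
```

Under this assumed scheme, a subword at the edge of a window sees no context from the neighboring window, which is consistent with the note that results may degrade near the wraparound points.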

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-ner This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1080 - Precision: 0.9631 - Recall: 0.9740 - F1: 0.9685 - Accuracy: 0.9774 | 09c7a0aa2ef388a82d8e3f19625b982e |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 14 | d8c838228dc4c6921e399e61541ac6b4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.1714 | 1.0 | 1492 | 0.1178 | 0.9209 | 0.9470 | 0.9337 | 0.9613 | | 0.1018 | 2.0 | 2984 | 0.1062 | 0.9391 | 0.9546 | 0.9468 | 0.9668 | | 0.0826 | 3.0 | 4476 | 0.0878 | 0.9460 | 0.9577 | 0.9518 | 0.9705 | | 0.0621 | 4.0 | 5968 | 0.0945 | 0.9507 | 0.9663 | 0.9584 | 0.9728 | | 0.0567 | 5.0 | 7460 | 0.0891 | 0.9562 | 0.9690 | 0.9625 | 0.9752 | | 0.0422 | 6.0 | 8952 | 0.0805 | 0.9572 | 0.9699 | 0.9635 | 0.9776 | | 0.0337 | 7.0 | 10444 | 0.0940 | 0.9575 | 0.9685 | 0.9629 | 0.9752 | | 0.0282 | 8.0 | 11936 | 0.0940 | 0.9584 | 0.9717 | 0.9650 | 0.9756 | | 0.0239 | 9.0 | 13428 | 0.0939 | 0.9579 | 0.9717 | 0.9647 | 0.9769 | | 0.0213 | 10.0 | 14920 | 0.0980 | 0.9618 | 0.9745 | 0.9681 | 0.9775 | | 0.0192 | 11.0 | 16412 | 0.1075 | 0.9606 | 0.9731 | 0.9668 | 0.9764 | | 0.0156 | 12.0 | 17904 | 0.1046 | 0.9623 | 0.9734 | 0.9678 | 0.9774 | | 0.0134 | 13.0 | 19396 | 0.1055 | 0.9614 | 0.9735 | 0.9674 | 0.9769 | | 0.0125 | 14.0 | 20888 | 0.1080 | 0.9631 | 0.9740 | 0.9685 | 0.9774 | | 8683d89f9eb7ac402f0c67e7c6f18263 |

apache-2.0 | ['translation', 'generated_from_trainer'] | false | finetuned_HelsinkiNLP-opus-mt-en-vi_PhoMT This model is a fine-tuned version of [Helsinki-NLP/opus-mt-en-vi](https://huggingface.co/Helsinki-NLP/opus-mt-en-vi) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.3069 - Bleu: 42.4251 | 20f8e558b43bdadbe47377b971c6d30d |

apache-2.0 | ['translation', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | |:-------------:|:-----:|:------:|:---------------:|:-------:| | 1.4437 | 1.0 | 186125 | 1.3648 | 40.6353 | | 1.3748 | 2.0 | 372250 | 1.3362 | 41.4991 | | 1.3182 | 3.0 | 558375 | 1.3224 | 41.9860 | | 1.2829 | 4.0 | 744500 | 1.3113 | 42.2649 | | 1.2641 | 5.0 | 930625 | 1.3069 | 42.4233 | | aef4e2f4502a265e7d8e791de33f0b75 |

apache-2.0 | ['whisper-event'] | false | Whisper Telugu Tiny This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on Telugu data drawn from multiple publicly available ASR corpora. It was fine-tuned as part of the Whisper fine-tuning sprint. | 0d0b2c94d1f813b10ab4ce26713e8245 |

apache-2.0 | ['whisper-event'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 88 - eval_batch_size: 88 - seed: 22 - optimizer: adamw_bnb_8bit - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 15000 - training_steps: 14652 (terminated upon convergence. Initially set to 85952 steps) - mixed_precision_training: True | b117a7a837ebde060f76a2b8bd8eb62d |

mit | [] | false | M2M100 418M M2M100 is a multilingual encoder-decoder (seq-to-seq) model trained for Many-to-Many multilingual translation. It was introduced in this [paper](https://arxiv.org/abs/2010.11125) and first released in [this](https://github.com/pytorch/fairseq/tree/master/examples/m2m_100) repository. The model can directly translate between the 9,900 directions of 100 languages. To translate into a target language, the target language id must be forced as the first generated token; to do so, pass the `forced_bos_token_id` parameter to the `generate` method. *Note: `M2M100Tokenizer` depends on `sentencepiece`, so make sure to install it before running the example.* To install `sentencepiece` run `pip install sentencepiece` ```python from transformers import AutoConfig, AutoTokenizer from optimum.onnxruntime import ORTModelForSeq2SeqLM from optimum.pipelines import pipeline hi_text = "जीवन एक चॉकलेट बॉक्स की तरह है।" chinese_text = "生活就像一盒巧克力。" model = ORTModelForSeq2SeqLM.from_pretrained("optimum/m2m100_418M") tokenizer = AutoTokenizer.from_pretrained("optimum/m2m100_418M") | cef57e996aea6ab2ad9761ccf3c29f00 |

apache-2.0 | ['translation'] | false | opus-mt-tr-en * source languages: tr * target languages: en * OPUS readme: [tr-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/tr-en/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/tr-en/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/tr-en/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/tr-en/opus-2020-01-16.eval.txt) | c320b8b300613c9f9cf3e74cf16477c8 |

apache-2.0 | ['translation'] | false | Benchmarks | testset | BLEU | chr-F | |-----------------------|-------|-------| | newsdev2016-entr.tr.en | 27.6 | 0.548 | | newstest2016-entr.tr.en | 25.2 | 0.532 | | newstest2017-entr.tr.en | 24.7 | 0.530 | | newstest2018-entr.tr.en | 27.0 | 0.547 | | Tatoeba.tr.en | 63.5 | 0.760 | | 95129b4e653f75682ab9805fb2cb42bb |

apache-2.0 | ['generated_from_trainer'] | false | recipe-distilbert-upper-Is This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.8565 | e8b635ed8946edbfebe5b71bc13d0f00 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.6309 | 1.0 | 1305 | 1.2607 | | 1.2639 | 2.0 | 2610 | 1.1291 | | 1.1592 | 3.0 | 3915 | 1.0605 | | 1.0987 | 4.0 | 5220 | 1.0128 | | 1.0569 | 5.0 | 6525 | 0.9796 | | 1.0262 | 6.0 | 7830 | 0.9592 | | 1.0032 | 7.0 | 9135 | 0.9352 | | 0.9815 | 8.0 | 10440 | 0.9186 | | 0.967 | 9.0 | 11745 | 0.9086 | | 0.9532 | 10.0 | 13050 | 0.8973 | | 0.9436 | 11.0 | 14355 | 0.8888 | | 0.9318 | 12.0 | 15660 | 0.8835 | | 0.9243 | 13.0 | 16965 | 0.8748 | | 0.9169 | 14.0 | 18270 | 0.8673 | | 0.9117 | 15.0 | 19575 | 0.8610 | | 0.9066 | 16.0 | 20880 | 0.8562 | | 0.9028 | 17.0 | 22185 | 0.8566 | | 0.901 | 18.0 | 23490 | 0.8583 | | 0.8988 | 19.0 | 24795 | 0.8557 | | 0.8958 | 20.0 | 26100 | 0.8565 | | 1f31497c629052cb6179a2f59edc87c1 |

apache-2.0 | ['text-classification', 'generated_from_trainer'] | false | distilroberta-base-mrpc-glue-oscar-salas7 This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the datasetX dataset. It achieves the following results on the evaluation set: - Loss: 1.7444 - Accuracy: 0.2143 | a270fc9fd3be7d13aaecdd6d905df03d |

apache-2.0 | ['generated_from_trainer'] | false | swin-tiny-patch4-window7-224-finetuned-woody_130epochs This model is a fine-tuned version of [microsoft/swin-tiny-patch4-window7-224](https://huggingface.co/microsoft/swin-tiny-patch4-window7-224) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.4550 - Accuracy: 0.8921 | 044a2b0b7e6c37bdde125907e7e56fd4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6694 | 1.0 | 58 | 0.6370 | 0.6594 | | 0.6072 | 2.0 | 116 | 0.5813 | 0.7030 | | 0.6048 | 3.0 | 174 | 0.5646 | 0.7030 | | 0.5849 | 4.0 | 232 | 0.5778 | 0.6970 | | 0.5671 | 5.0 | 290 | 0.5394 | 0.7236 | | 0.5575 | 6.0 | 348 | 0.5212 | 0.7382 | | 0.568 | 7.0 | 406 | 0.5218 | 0.7358 | | 0.5607 | 8.0 | 464 | 0.5183 | 0.7527 | | 0.5351 | 9.0 | 522 | 0.5138 | 0.7467 | | 0.5459 | 10.0 | 580 | 0.5290 | 0.7394 | | 0.5454 | 11.0 | 638 | 0.5212 | 0.7345 | | 0.5291 | 12.0 | 696 | 0.5130 | 0.7576 | | 0.5378 | 13.0 | 754 | 0.5372 | 0.7503 | | 0.5264 | 14.0 | 812 | 0.6089 | 0.6861 | | 0.4909 | 15.0 | 870 | 0.4852 | 0.7636 | | 0.5591 | 16.0 | 928 | 0.4817 | 0.76 | | 0.4966 | 17.0 | 986 | 0.5673 | 0.6933 | | 0.4988 | 18.0 | 1044 | 0.5131 | 0.7418 | | 0.5339 | 19.0 | 1102 | 0.4998 | 0.7394 | | 0.4804 | 20.0 | 1160 | 0.4655 | 0.7733 | | 0.503 | 21.0 | 1218 | 0.4554 | 0.7685 | | 0.4859 | 22.0 | 1276 | 0.4713 | 0.7770 | | 0.504 | 23.0 | 1334 | 0.4545 | 0.7721 | | 0.478 | 24.0 | 1392 | 0.4658 | 0.7830 | | 0.4759 | 25.0 | 1450 | 0.4365 | 0.8012 | | 0.4686 | 26.0 | 1508 | 0.4452 | 0.7855 | | 0.4668 | 27.0 | 1566 | 0.4427 | 0.7879 | | 0.4615 | 28.0 | 1624 | 0.4439 | 0.7685 | | 0.4588 | 29.0 | 1682 | 0.4378 | 0.7830 | | 0.4588 | 30.0 | 1740 | 0.4229 | 0.7988 | | 0.4296 | 31.0 | 1798 | 0.4188 | 0.7976 | | 0.4208 | 32.0 | 1856 | 0.4316 | 0.7891 | | 0.4481 | 33.0 | 1914 | 0.4331 | 0.7891 | | 0.4253 | 34.0 | 1972 | 0.4524 | 0.7879 | | 0.4117 | 35.0 | 2030 | 0.4570 | 0.7952 | | 0.4405 | 36.0 | 2088 | 0.4307 | 0.7927 | | 0.4154 | 37.0 | 2146 | 0.4257 | 0.8024 | | 0.3962 | 38.0 | 2204 | 0.5077 | 0.7818 | | 0.414 | 39.0 | 2262 | 0.4602 | 0.8012 | | 0.3937 | 40.0 | 2320 | 0.4741 | 0.7770 | | 0.4186 | 41.0 | 2378 | 0.4250 | 0.8 | | 0.4076 | 42.0 | 2436 | 0.4353 | 0.7988 | | 0.3777 | 43.0 | 2494 | 0.4442 | 
0.7879 | | 0.3968 | 44.0 | 2552 | 0.4525 | 0.7879 | | 0.377 | 45.0 | 2610 | 0.4198 | 0.7988 | | 0.378 | 46.0 | 2668 | 0.4297 | 0.8097 | | 0.3675 | 47.0 | 2726 | 0.4435 | 0.8085 | | 0.3562 | 48.0 | 2784 | 0.4477 | 0.7952 | | 0.381 | 49.0 | 2842 | 0.4206 | 0.8255 | | 0.3603 | 50.0 | 2900 | 0.4136 | 0.8109 | | 0.3331 | 51.0 | 2958 | 0.4141 | 0.8230 | | 0.3471 | 52.0 | 3016 | 0.4253 | 0.8109 | | 0.346 | 53.0 | 3074 | 0.5203 | 0.8048 | | 0.3481 | 54.0 | 3132 | 0.4288 | 0.8242 | | 0.3411 | 55.0 | 3190 | 0.4416 | 0.8194 | | 0.3275 | 56.0 | 3248 | 0.4149 | 0.8291 | | 0.3067 | 57.0 | 3306 | 0.4623 | 0.8218 | | 0.3166 | 58.0 | 3364 | 0.4432 | 0.8255 | | 0.3294 | 59.0 | 3422 | 0.4599 | 0.8267 | | 0.3146 | 60.0 | 3480 | 0.4266 | 0.8291 | | 0.3091 | 61.0 | 3538 | 0.4318 | 0.8315 | | 0.3277 | 62.0 | 3596 | 0.4252 | 0.8242 | | 0.296 | 63.0 | 3654 | 0.4332 | 0.8436 | | 0.3241 | 64.0 | 3712 | 0.4729 | 0.8194 | | 0.3104 | 65.0 | 3770 | 0.4228 | 0.8448 | | 0.2878 | 66.0 | 3828 | 0.4173 | 0.8388 | | 0.265 | 67.0 | 3886 | 0.4210 | 0.8497 | | 0.3011 | 68.0 | 3944 | 0.4276 | 0.8436 | | 0.2861 | 69.0 | 4002 | 0.4923 | 0.8315 | | 0.2994 | 70.0 | 4060 | 0.4472 | 0.8182 | | 0.276 | 71.0 | 4118 | 0.4541 | 0.8315 | | 0.2796 | 72.0 | 4176 | 0.4218 | 0.8521 | | 0.2727 | 73.0 | 4234 | 0.4053 | 0.8448 | | 0.255 | 74.0 | 4292 | 0.4356 | 0.8376 | | 0.276 | 75.0 | 4350 | 0.4193 | 0.8436 | | 0.261 | 76.0 | 4408 | 0.4484 | 0.8533 | | 0.2416 | 77.0 | 4466 | 0.4722 | 0.8194 | | 0.2602 | 78.0 | 4524 | 0.4431 | 0.8533 | | 0.2591 | 79.0 | 4582 | 0.4269 | 0.8606 | | 0.2613 | 80.0 | 4640 | 0.4335 | 0.8485 | | 0.2555 | 81.0 | 4698 | 0.4269 | 0.8594 | | 0.2832 | 82.0 | 4756 | 0.3968 | 0.8715 | | 0.264 | 83.0 | 4814 | 0.4173 | 0.8703 | | 0.2462 | 84.0 | 4872 | 0.4150 | 0.8606 | | 0.2424 | 85.0 | 4930 | 0.4377 | 0.8630 | | 0.2574 | 86.0 | 4988 | 0.4120 | 0.8679 | | 0.2273 | 87.0 | 5046 | 0.4393 | 0.8533 | | 0.2334 | 88.0 | 5104 | 0.4366 | 0.8630 | | 0.2258 | 89.0 | 5162 | 0.4189 | 0.8630 | | 0.2153 | 90.0 | 5220 
| 0.4474 | 0.8630 | | 0.2462 | 91.0 | 5278 | 0.4362 | 0.8642 | | 0.2356 | 92.0 | 5336 | 0.4454 | 0.8715 | | 0.2019 | 93.0 | 5394 | 0.4413 | 0.88 | | 0.209 | 94.0 | 5452 | 0.4410 | 0.8703 | | 0.2201 | 95.0 | 5510 | 0.4323 | 0.8691 | | 0.2245 | 96.0 | 5568 | 0.4999 | 0.8618 | | 0.2178 | 97.0 | 5626 | 0.4612 | 0.8655 | | 0.2163 | 98.0 | 5684 | 0.4340 | 0.8703 | | 0.2228 | 99.0 | 5742 | 0.4504 | 0.8788 | | 0.2151 | 100.0 | 5800 | 0.4602 | 0.8703 | | 0.1988 | 101.0 | 5858 | 0.4414 | 0.8812 | | 0.2227 | 102.0 | 5916 | 0.4392 | 0.8824 | | 0.1772 | 103.0 | 5974 | 0.5069 | 0.8630 | | 0.2199 | 104.0 | 6032 | 0.4648 | 0.8667 | | 0.1936 | 105.0 | 6090 | 0.4806 | 0.8691 | | 0.199 | 106.0 | 6148 | 0.4569 | 0.8764 | | 0.2149 | 107.0 | 6206 | 0.4445 | 0.8739 | | 0.1917 | 108.0 | 6264 | 0.4444 | 0.8727 | | 0.201 | 109.0 | 6322 | 0.4594 | 0.8727 | | 0.1938 | 110.0 | 6380 | 0.4564 | 0.8764 | | 0.1977 | 111.0 | 6438 | 0.4398 | 0.8739 | | 0.1776 | 112.0 | 6496 | 0.4356 | 0.88 | | 0.1939 | 113.0 | 6554 | 0.4412 | 0.8848 | | 0.178 | 114.0 | 6612 | 0.4373 | 0.88 | | 0.1926 | 115.0 | 6670 | 0.4508 | 0.8812 | | 0.1979 | 116.0 | 6728 | 0.4477 | 0.8848 | | 0.1958 | 117.0 | 6786 | 0.4488 | 0.8897 | | 0.189 | 118.0 | 6844 | 0.4553 | 0.8836 | | 0.1838 | 119.0 | 6902 | 0.4605 | 0.8848 | | 0.1755 | 120.0 | 6960 | 0.4463 | 0.8836 | | 0.1958 | 121.0 | 7018 | 0.4474 | 0.8861 | | 0.1857 | 122.0 | 7076 | 0.4550 | 0.8921 | | 0.1466 | 123.0 | 7134 | 0.4494 | 0.8885 | | 0.1751 | 124.0 | 7192 | 0.4560 | 0.8873 | | 0.175 | 125.0 | 7250 | 0.4383 | 0.8897 | | 0.207 | 126.0 | 7308 | 0.4601 | 0.8873 | | 0.1756 | 127.0 | 7366 | 0.4425 | 0.8897 | | 0.1695 | 128.0 | 7424 | 0.4533 | 0.8909 | | 0.1873 | 129.0 | 7482 | 0.4510 | 0.8897 | | 0.1726 | 130.0 | 7540 | 0.4463 | 0.8909 | | 39d23384e7f98ade4c193896a91035ac |

apache-2.0 | ['translation'] | false | opus-mt-sv-id * source languages: sv * target languages: id * OPUS readme: [sv-id](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sv-id/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/sv-id/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-id/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-id/opus-2020-01-16.eval.txt) | c078515adedcdb0128a3015acd5a9f3e |

apache-2.0 | [] | false | Unam_tesis_ROBERTA_GOB_finnetuning: Unam's thesis classification with PlanTL-GOB-ES/roberta-large-bne

This model was created by fine-tuning the pretrained RoBERTa large model trained with data from the National Library of Spain (BNE), [PlanTL-GOB-ES](https://huggingface.co/PlanTL-GOB-ES/roberta-large-bne), using the PyTorch framework. It was trained on a set of theses from the National Autonomous University of Mexico ([UNAM](https://tesiunam.dgb.unam.mx/F?func=find-b-0&local_base=TES01)).

Given a text, the model classifies it into one of five possible careers at the university: Psicología, Derecho, Química Farmacéutico Biológica, Actuaría, Economía.

| 1505c1377ceefa9c907938c03f56761d |

apache-2.0 | [] | false | Training Dataset

1000 documents (Thesis introduction, Author's first name, Author's last name, Thesis title, Year, Career)

| Careers | Size |
|---|---|
| Actuaría | 200 |
| Derecho | 200 |
| Economía | 200 |
| Psicología | 200 |
| Química Farmacéutico Biológica | 200 |

| 34301f06514589cc52c1ec02e3eaa6a4 |

apache-2.0 | [] | false | Example of use

For further details on how to use unam_tesis_ROBERTA_GOB_finnetuning, see the Hugging Face Transformers library documentation, starting with the Quickstart section. Unam_tesis models can be loaded by name with the Transformers library, for example 'hackathon-pln-es/unam_tesis_ROBERTA_GOB_finnetuning'. An example of how to download and use the models on this page can be found in this colab notebook.

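```python
from transformers import AutoModelForSequenceClassification, AutoTokenizer, TextClassificationPipeline

# The tokenizer comes from the base model; the classifier weights come from the fine-tuned checkpoint.
tokenizer = AutoTokenizer.from_pretrained('PlanTL-GOB-ES/roberta-large-bne', use_fast=False)
model = AutoModelForSequenceClassification.from_pretrained(
    'hackathon-pln-es/unam_tesis_ROBERTA_GOB_finnetuning', num_labels=5, output_attentions=False,
    output_hidden_states=False)

# return_all_scores=True yields a score for each of the five careers.
pipe = TextClassificationPipeline(model=model, tokenizer=tokenizer, return_all_scores=True)
classificationResult = pipe("El objetivo de esta tesis es elaborar un estudio de las condiciones asociadas al aprendizaje desde casa")
```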
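
With `return_all_scores=True`, the pipeline typically returns, for each input text, one `{label, score}` entry per class rather than only the top prediction. A minimal sketch of picking the most likely class from such a result follows. The scores and the `LABEL_n` names are made-up placeholders, and how label ids map to the five careers is an assumption that should be checked against the model's configuration.

```python
# Hypothetical pipeline output for a single input text: one list per input,
# holding one {"label", "score"} dict per class. Values are illustrative only.
classification_result = [[
    {"label": "LABEL_0", "score": 0.05},
    {"label": "LABEL_1", "score": 0.10},
    {"label": "LABEL_2", "score": 0.70},
    {"label": "LABEL_3", "score": 0.05},
    {"label": "LABEL_4", "score": 0.10},
]]


def top_prediction(scores_for_one_text):
    """Return the (label, score) pair with the highest score."""
    best = max(scores_for_one_text, key=lambda entry: entry["score"])
    return best["label"], best["score"]


label, score = top_prediction(classification_result[0])
print(label, score)
```

Keeping all scores, instead of only the top one, also makes it possible to flag texts where two careers receive similar scores.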

To cite this resource in a publication please use the following:

| 34500adb6cd7fb22274c9d65db61c44f |

apache-2.0 | [] | false | Citation

[UNAM's Tesis with PlanTL-GOB-ES/roberta-large-bne ](https://huggingface.co/hackathon-pln-es/unam_tesis_ROBERTA_GOB_finnetuning)

To cite this resource in a publication please use the following:

```

@inproceedings{SpanishNLPHackaton2022,

title={Unam's thesis with PlanTL-GOB-ES/roberta-large-bne classify },

author={López López, Isaac Isaías and López Ramos, Dionis and Clavel Quintero, Yisel and López López, Ximena Yeraldin },

booktitle={Somos NLP Hackaton 2022},

year={2022}

}

```

| 45cdff548f3d35467e8a571153526bfe |

apache-2.0 | [] | false | Team members

- Isaac Isaías López López ([MajorIsaiah](https://huggingface.co/MajorIsaiah))

- Dionis López Ramos ([inoid](https://huggingface.co/inoid))

- Yisel Clavel Quintero ([clavel](https://huggingface.co/clavel))

- Ximyer Yeraldin López López ([Ximyer](https://huggingface.co/Ximyer))

| aeee56206cc55f3b81a145483779f347 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | kantoku-face Dreambooth model trained by KuroTuyuri with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Or you can run your new concept via `diffusers` [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb) Sample pictures of this concept: | 89753e612f46dac19395371df23e4dc5 |

apache-2.0 | ['text-classification', 'pytorch'] | false | Model description: This model was created to detect toxic or potentially harmful comments. For this model, we fine-tuned a multilingual DistilBERT model, [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased), on the translated [Jigsaw Toxicity dataset](https://www.kaggle.com/c/jigsaw-toxic-comment-classification-challenge). The original dataset was translated using the appropriate [MarianMT model](https://huggingface.co/Helsinki-NLP/opus-mt-en-nl). The model was trained for 2 epochs, on 90% of the dataset, with the following arguments: ``` training_args = TrainingArguments( learning_rate=3e-5, per_device_train_batch_size=16, per_device_eval_batch_size=16, gradient_accumulation_steps=4, load_best_model_at_end=True, metric_for_best_model="recall", num_train_epochs=2, evaluation_strategy="steps", save_strategy="steps", save_total_limit=10, logging_steps=100, eval_steps=250, save_steps=250, weight_decay=0.001, report_to="wandb") ``` | bc61982b22d8b84ceb03294f92f33473 |

apache-2.0 | ['text-classification', 'pytorch'] | false | Model Performance: Model evaluation was done on 1/10th of the dataset, which served as the test dataset. | Accuracy | F1 Score | Recall | Precision | | --- | --- | --- | --- | | 95.75 | 78.88 | 77.23 | 80.61 | | 826fd4e8dfdd61a9b311529ce1d1db71 |
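
The F1 score in the table above is the harmonic mean of precision and recall, so the three reported numbers can be cross-checked against each other. The sketch below does that with the reported values; the function name is illustrative and not part of the original card.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (same scale as the inputs)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values as reported in the performance table (percentages).
reported_precision = 80.61
reported_recall = 77.23

f1 = f1_score(reported_precision, reported_recall)
print(round(f1, 2))  # 78.88, matching the reported F1 score
```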

apache-2.0 | ['generated_from_keras_callback'] | false | Mohan515/t5-small-finetuned-medical This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.8018 - Validation Loss: 0.5835 - Train Rouge1: 43.3783 - Train Rouge2: 35.1091 - Train Rougel: 41.6332 - Train Rougelsum: 42.5743 - Train Gen Len: 17.4718 - Epoch: 0 | 1a4b665d1465428f55710edc8d9704f5 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Train Rouge1 | Train Rouge2 | Train Rougel | Train Rougelsum | Train Gen Len | Epoch | |:----------:|:---------------:|:------------:|:------------:|:------------:|:---------------:|:-------------:|:-----:| | 0.8018 | 0.5835 | 43.3783 | 35.1091 | 41.6332 | 42.5743 | 17.4718 | 0 | | 07fdab2c9a615ff8791ec66b86799110 |

apache-2.0 | ['generated_from_trainer'] | false | convnext-tiny-224-finetuned-eurosat-att-auto This model is a fine-tuned version of [facebook/convnext-tiny-224](https://huggingface.co/facebook/convnext-tiny-224) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.5076 - Accuracy: 0.9506 | 2b66c6821558c2742fa4d151e8044e44 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 10 | a36454033b24a1e0b8a8e004296e8a33 |
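
Two of the hyperparameters above are derived quantities: the total train batch size follows from the per-step batch size and the gradient accumulation steps, and the warmup ratio fixes how many steps the learning rate ramps up before decaying. The sketch below illustrates both under stated assumptions: a single device, and a total of 230 optimizer steps as shown in this model's training results table. It mirrors the usual linear-warmup-then-linear-decay shape and is not the Trainer's internal implementation; the function names are hypothetical.

```python
import math


def effective_batch_size(per_device_batch, accumulation_steps, num_devices=1):
    """Number of samples contributing to one optimizer update."""
    return per_device_batch * accumulation_steps * num_devices


def linear_lr_with_warmup(step, total_steps, base_lr, warmup_ratio):
    """Learning rate at `step`: linear ramp-up, then linear decay to zero."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


print(effective_batch_size(4, 4))  # 16, the reported total_train_batch_size
for step in (0, 23, 115, 230):
    print(step, linear_lr_with_warmup(step, 230, 5e-5, 0.1))
```

Under these assumptions the learning rate peaks at 5e-05 after 23 steps, roughly the end of the first epoch, and reaches zero at step 230.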

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 1.5583 | 0.97 | 23 | 1.6008 | 0.7160 | | 1.2953 | 1.97 | 46 | 1.2957 | 0.7531 | | 0.9488 | 2.97 | 69 | 1.0720 | 0.8148 | | 0.7036 | 3.97 | 92 | 0.8965 | 0.8642 | | 0.5446 | 4.97 | 115 | 0.7574 | 0.9383 | | 0.4113 | 5.97 | 138 | 0.6522 | 0.9383 | | 0.2259 | 6.97 | 161 | 0.5720 | 0.9383 | | 0.1863 | 7.97 | 184 | 0.5076 | 0.9506 | | 0.1443 | 8.97 | 207 | 0.4795 | 0.9383 | | 0.1289 | 9.97 | 230 | 0.4685 | 0.9383 | | abc4cf0b4932eb68e5c2134b2d2aa36f |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - eval_loss: 0.3049 - eval_accuracy: 0.9252 - eval_runtime: 423.5074 - eval_samples_per_second: 59.031 - eval_steps_per_second: 1.846 - epoch: 1.35 - step: 1054 | 20ca1d5f4b94d271e8ae6f15c0740446 |

apache-2.0 | ['generated_from_trainer'] | false | my_awsome_wnut_model This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the wnut_17 dataset. It achieves the following results on the evaluation set: - Loss: 0.2858 - Precision: 0.4846 - Recall: 0.2632 - F1: 0.3411 - Accuracy: 0.9386 | bb2190c20ddc047092c447a45febc628 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 213 | 0.2976 | 0.3873 | 0.1974 | 0.2615 | 0.9352 | | No log | 2.0 | 426 | 0.2858 | 0.4846 | 0.2632 | 0.3411 | 0.9386 | | c43c6fa25da55a1acf8cb21f12c6183b |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | JBandMBsession1 Dreambooth model trained by Jackie with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Sample pictures of this concept: | 4a24d7999867b8e1639f16a5d7f9bf17 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.1495 - Accuracy: 0.9385 - F1: 0.9383 | 410a010fc9af8f599d6ab07900bf3be4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.1739 | 1.0 | 250 | 0.1827 | 0.931 | 0.9302 | | 0.1176 | 2.0 | 500 | 0.1567 | 0.9325 | 0.9326 | | 0.0994 | 3.0 | 750 | 0.1555 | 0.9385 | 0.9389 | | 0.08 | 4.0 | 1000 | 0.1496 | 0.9445 | 0.9443 | | 0.0654 | 5.0 | 1250 | 0.1495 | 0.9385 | 0.9383 | | bd2b6e2067ba2c24d4b5d639eb611ed4 |

mit | ['generated_from_trainer'] | false | xlnet-base-cased_fold_3_binary This model is a fine-tuned version of [xlnet-base-cased](https://huggingface.co/xlnet-base-cased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.3616 - F1: 0.7758 | d050cb87bb10c813c14c51e0f91443a9 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | No log | 1.0 | 289 | 0.4668 | 0.7666 | | 0.4142 | 2.0 | 578 | 0.4259 | 0.7631 | | 0.4142 | 3.0 | 867 | 0.6744 | 0.7492 | | 0.235 | 4.0 | 1156 | 0.8879 | 0.7678 | | 0.235 | 5.0 | 1445 | 1.0036 | 0.7639 | | 0.1297 | 6.0 | 1734 | 1.1427 | 0.7616 | | 0.0894 | 7.0 | 2023 | 1.2126 | 0.7626 | | 0.0894 | 8.0 | 2312 | 1.5098 | 0.7433 | | 0.0473 | 9.0 | 2601 | 1.3616 | 0.7758 | | 0.0473 | 10.0 | 2890 | 1.5966 | 0.7579 | | 0.0325 | 11.0 | 3179 | 1.6669 | 0.7508 | | 0.0325 | 12.0 | 3468 | 1.7401 | 0.7437 | | 0.0227 | 13.0 | 3757 | 1.7797 | 0.7515 | | 0.0224 | 14.0 | 4046 | 1.7349 | 0.7418 | | 0.0224 | 15.0 | 4335 | 1.7527 | 0.7595 | | 0.0152 | 16.0 | 4624 | 1.7492 | 0.7634 | | 0.0152 | 17.0 | 4913 | 1.8178 | 0.7628 | | 0.0117 | 18.0 | 5202 | 1.7736 | 0.7688 | | 0.0117 | 19.0 | 5491 | 1.8449 | 0.7704 | | 0.0055 | 20.0 | 5780 | 1.8687 | 0.7652 | | 0.0065 | 21.0 | 6069 | 1.8083 | 0.7669 | | 0.0065 | 22.0 | 6358 | 1.8568 | 0.7559 | | 0.0054 | 23.0 | 6647 | 1.8760 | 0.7678 | | 0.0054 | 24.0 | 6936 | 1.8948 | 0.7697 | | 0.0048 | 25.0 | 7225 | 1.9109 | 0.7680 | | 2500c80361a31e97f52a0dbb4ca13219 |
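
In the table above the validation loss rises from epoch 2 onward while the training loss keeps shrinking, so the choice of checkpoint depends heavily on the selection metric. The sketch below compares two criteria on a few rows copied from the table; it is an illustration only, and the card does not state how the reported checkpoint was chosen.

```python
# (epoch, validation_loss, f1) rows copied from the training results table.
rows = [
    (1, 0.4668, 0.7666),
    (2, 0.4259, 0.7631),
    (3, 0.6744, 0.7492),
    (4, 0.8879, 0.7678),
    (9, 1.3616, 0.7758),
    (25, 1.9109, 0.7680),
]

# Criterion 1: highest F1 on the validation set.
best_by_f1 = max(rows, key=lambda row: row[2])

# Criterion 2: lowest validation loss.
best_by_loss = min(rows, key=lambda row: row[1])

print("best F1:", best_by_f1)
print("lowest validation loss:", best_by_loss)
```

Among these rows, the highest F1 is at epoch 9, which is the row whose loss and F1 match the evaluation results reported for this model, while the lowest validation loss is at epoch 2.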

cc-by-4.0 | [] | false | GujaratiBERT-Scratch GujaratiBERT is a Gujarati BERT model trained from scratch on publicly available Gujarati monolingual datasets. Preliminary details on the dataset, models, and baseline results can be found in our [paper](https://arxiv.org/abs/2211.11418). Citing: ``` @article{joshi2022l3cubehind, title={L3Cube-HindBERT and DevBERT: Pre-Trained BERT Transformer models for Devanagari based Hindi and Marathi Languages}, author={Joshi, Raviraj}, journal={arXiv preprint arXiv:2211.11418}, year={2022} } ``` | d92c7d88ec41ecd3696e76c8abc8d7eb |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | imal_workshop_collage Dreambooth model trained by mrcyme with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) Sample pictures of this concept: | 9882a55b9098a5dd8df32951fe912f7a |

cc-by-4.0 | ['automatic-speech-recognition', 'speech', 'audio', 'CTC', 'Citrinet', 'Transformer', 'pytorch', 'NeMo', 'hf-asr-leaderboard', 'Riva'] | false | This model transcribes speech in the lowercase Ukrainian alphabet, including spaces and apostrophes, and is trained on 69 hours of Ukrainian speech data. It is a non-autoregressive "large" variant of Streaming Citrinet, with around 141 million parameters. The model is fine-tuned from a pre-trained Russian Citrinet-1024 model on Ukrainian speech data using the Cross-Language Transfer Learning [4] approach. See the [model architecture]( | 0b2fe3a8dd5c5377ac63821ef7aad04c |

cc-by-4.0 | ['automatic-speech-recognition', 'speech', 'audio', 'CTC', 'Citrinet', 'Transformer', 'pytorch', 'NeMo', 'hf-asr-leaderboard', 'Riva'] | false | Transcribing many audio files ```shell python [NEMO_GIT_FOLDER]/examples/asr/transcribe_speech.py pretrained_name="nvidia/stt_uk_citrinet_1024_gamma_0_25" audio_dir="<DIRECTORY CONTAINING AUDIO FILES>" ``` | b9ed24b66a994ba2f9d53bf1e04c8bb1 |

cc-by-4.0 | ['automatic-speech-recognition', 'speech', 'audio', 'CTC', 'Citrinet', 'Transformer', 'pytorch', 'NeMo', 'hf-asr-leaderboard', 'Riva'] | false | Training The NeMo toolkit [3] was used for training the model for 1000 epochs. This model was trained with this [example script](https://github.com/NVIDIA/NeMo/blob/main/examples/asr/asr_ctc/speech_to_text_ctc_bpe.py) and this [base config](https://github.com/NVIDIA/NeMo/blob/main/examples/asr/conf/citrinet/citrinet_1024.yaml). The tokenizer for this model was built using the text transcripts of the train set with this [script](https://github.com/NVIDIA/NeMo/blob/main/scripts/tokenizers/process_asr_text_tokenizer.py). For details on Cross-Lingual transfer learning see [4]. | 0ac81dad79687f44bc34e10e66940016 |

cc-by-4.0 | ['automatic-speech-recognition', 'speech', 'audio', 'CTC', 'Citrinet', 'Transformer', 'pytorch', 'NeMo', 'hf-asr-leaderboard', 'Riva'] | false | Datasets This model has been trained using the validated Mozilla Common Voice Corpus 10.0 dataset (excluding dev and test data), comprising 69 hours of Ukrainian speech. The Russian model from which this model is fine-tuned has been trained on the union of: (1) Mozilla Common Voice (V7 Ru), (2) Ru LibriSpeech (RuLS), (3) Sber GOLOS and (4) SOVA datasets. | cc2bd9761200380667dae82380a4e6b6 |

cc-by-4.0 | ['automatic-speech-recognition', 'speech', 'audio', 'CTC', 'Citrinet', 'Transformer', 'pytorch', 'NeMo', 'hf-asr-leaderboard', 'Riva'] | false | Performance The list of the available models in this collection is shown in the following table. Performances of the ASR models are reported in terms of Word Error Rate (WER%) with greedy decoding. | Version | Tokenizer | Vocabulary Size | MCV-10 test | MCV-10 dev | MCV-9 test | MCV-9 dev | MCV-8 test | MCV-8 dev | | :-----------: |:---------------------:| :--------------: | :---------: | :--------: | :--------: | :-------: | :--------: | :-------: | | 1.0.0 | SentencePiece Unigram | 1024 | 5.02 | 4.65 | 3.75 | 4.88 | 3.52 | 5.02 | | eefaed88e5ab385cc4c82e5c89e110d8 |
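
Word Error Rate, the metric reported in the table above, counts the word-level substitutions, deletions, and insertions needed to turn the model's transcript into the reference, divided by the number of reference words. The pure-Python sketch below illustrates the computation; it is not the NeMo evaluation code, and the function name and example sentences are hypothetical.

```python
def word_error_rate(reference, hypothesis):
    """WER in percent: word-level edit distance over reference length."""
    ref_words = reference.split()
    hyp_words = hypothesis.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")

    # previous[j] = edit distance between the reference words seen so far
    # and the first j hypothesis words.
    previous = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return 100.0 * previous[-1] / len(ref_words)


# One substitution ("sat" -> "sit") and one deletion ("the") over 6 words.
wer = word_error_rate("the cat sat on the mat", "the cat sit on mat")
print(f"{wer:.2f}")
```

Because insertions count as errors, WER can exceed 100% when the hypothesis is much longer than the reference.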

cc-by-4.0 | ['automatic-speech-recognition', 'speech', 'audio', 'CTC', 'Citrinet', 'Transformer', 'pytorch', 'NeMo', 'hf-asr-leaderboard', 'Riva'] | false | Limitations Since this model was trained on publicly available speech datasets, the performance of this model might degrade for speech that includes technical terms, or vernacular that the model has not been trained on. The model might also perform worse for accented speech. | 637d1d9b4602bd8e0ccdeeb0129c8b14 |

cc-by-4.0 | ['automatic-speech-recognition', 'speech', 'audio', 'CTC', 'Citrinet', 'Transformer', 'pytorch', 'NeMo', 'hf-asr-leaderboard', 'Riva'] | false | Deployment with NVIDIA Riva For the best real-time accuracy, latency, and throughput, deploy the model with [NVIDIA Riva](https://developer.nvidia.com/riva), an accelerated speech AI SDK deployable on-prem, in all clouds, multi-cloud, hybrid, at the edge, and embedded. Additionally, Riva provides: * World-class out-of-the-box accuracy for the most common languages with model checkpoints trained on proprietary data with hundreds of thousands of GPU-compute hours * Best in class accuracy with run-time word boosting (e.g., brand and product names) and customization of acoustic model, language model, and inverse text normalization * Streaming speech recognition, Kubernetes compatible scaling, and Enterprise-grade support Check out [Riva live demo](https://developer.nvidia.com/riva | a0b4b88de278dae1c249f87dc876063b |

cc-by-4.0 | ['automatic-speech-recognition', 'speech', 'audio', 'CTC', 'Citrinet', 'Transformer', 'pytorch', 'NeMo', 'hf-asr-leaderboard', 'Riva'] | false | References [1] [Citrinet: Closing the Gap between Non-Autoregressive and Autoregressive End-to-End Models for Automatic Speech Recognition](https://arxiv.org/abs/2104.01721) <br /> [2] [Google Sentencepiece Tokenizer](https://github.com/google/sentencepiece) <br /> [3] [NVIDIA NeMo Toolkit](https://github.com/NVIDIA/NeMo) <br /> [4] [Cross-Language Transfer Learning](https://scholar.google.com/citations?view_op=view_citation&hl=en&user=qmmIGnwAAAAJ&sortby=pubdate&citation_for_view=qmmIGnwAAAAJ:PVjk1bu6vJQC) | 9141cbc7c27b5b24f939e343bfa1cbba |

apache-2.0 | ['generated_from_trainer'] | false | demo_irony_1234567 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the tweet_eval dataset. It achieves the following results on the evaluation set: - Loss: 1.2905 - F1: 0.6858 | 42ce87a5673b7871ec283c6812af76d0 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-base-timit-demo-colab40 This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.7341 - Wer: 0.5578 | 2695923a342da98bb4f20a5245f22454 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 5.0438 | 13.89 | 500 | 3.0671 | 1.0 | | 1.0734 | 27.78 | 1000 | 0.7341 | 0.5578 | | b1121e3f106431b407c0f197f2738bf4 |

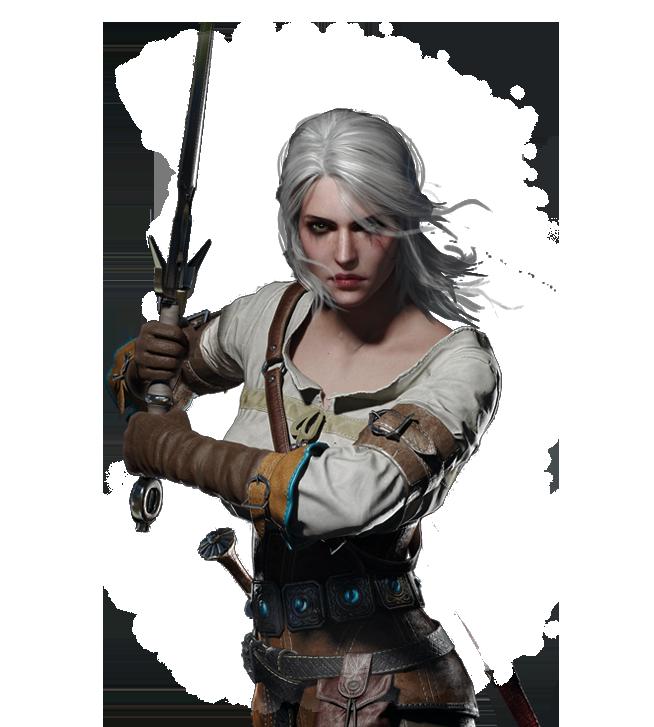

mit | [] | false | model by tulto This is the Stable Diffusion model fine-tuned on the the-witcher-game-ciri concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt`: **a photo of a sks woman with white hair** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). Here are the images used for training this concept: | 297fa5df519ca46fc63c2a0ad6fda68b |

apache-2.0 | ['multiberts', 'multiberts-seed_0', 'multiberts-seed_0-step_200k'] | false | MultiBERTs, Intermediate Checkpoint - Seed 0, Step 200k MultiBERTs is a collection of checkpoints and a statistical library to support robust research on BERT. We provide 25 BERT-base models trained with similar hyper-parameters as [the original BERT model](https://github.com/google-research/bert) but with different random seeds, which causes variations in the initial weights and order of training instances. The aim is to distinguish findings that apply to a specific artifact (i.e., a particular instance of the model) from those that apply to the more general procedure. We also provide 140 intermediate checkpoints captured during the course of pre-training (we saved 28 checkpoints for the first 5 runs). The models were originally released through [http://goo.gle/multiberts](http://goo.gle/multiberts). We describe them in our paper [The MultiBERTs: BERT Reproductions for Robustness Analysis](https://arxiv.org/abs/2106.16163). This is model | e02427792cf134fc2f1b9ad551872a60 |

apache-2.0 | ['multiberts', 'multiberts-seed_0', 'multiberts-seed_0-step_200k'] | false | How to use Using code from [BERT-base uncased](https://huggingface.co/bert-base-uncased), here is an example based on Tensorflow: ``` from transformers import BertTokenizer, TFBertModel tokenizer = BertTokenizer.from_pretrained('google/multiberts-seed_0-step_200k') model = TFBertModel.from_pretrained("google/multiberts-seed_0-step_200k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` PyTorch version: ``` from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('google/multiberts-seed_0-step_200k') model = BertModel.from_pretrained("google/multiberts-seed_0-step_200k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | 3dbd89a45c7d3f92648049ac55509f6e |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 2.7341 | 2efe023f79842ce69c447980bd0eba23 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3.0 - mixed_precision_training: Native AMP | a8764f6b2b667629f3091d202e6622e0 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.9667 | 1.0 | 156 | 2.7795 | | 2.8612 | 2.0 | 312 | 2.6910 | | 2.8075 | 3.0 | 468 | 2.7044 | | 82d3efb9ed1256cb195b333549861484 |
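
Validation losses like those in the table above are often easier to compare when converted to perplexity, the exponential of the loss. The sketch below assumes the reported loss is a mean token-level cross-entropy in nats from a language-modeling objective, which the card does not state explicitly; the variable names are illustrative.

```python
import math

# Validation losses per epoch, copied from the training results table.
validation_loss_by_epoch = {1: 2.7795, 2: 2.6910, 3: 2.7044}

# Loss reported for the evaluation set in the model summary.
reported_eval_loss = 2.7341

for epoch, loss in validation_loss_by_epoch.items():
    print(f"epoch {epoch}: perplexity {math.exp(loss):.2f}")

eval_perplexity = math.exp(reported_eval_loss)
print(f"evaluation set: perplexity {eval_perplexity:.2f}")
```

Under that assumption, the reported evaluation loss corresponds to a perplexity of roughly 15.4.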

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Turkish Note: This model was trained on 5 Turkish movies in addition to the Common Voice dataset. Although the WER on the Common Voice test set is high (50%), performance on other sources seems pretty good. Disclaimer: Please use another wav2vec2-tr model from the hub for "clean environment" dialogues, as those tend to do better on clean audio with less background noise. The code for building the dataset from CSV files and merging it can be found further down in this README. Try your own speech in the widget on the right side to see how the model performs. Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on Turkish using [Common Voice](https://huggingface.co/datasets/common_voice) and 5 Turkish movies that include background noise/talkers. When using this model, make sure that your speech input is sampled at 16kHz. | cb22d0d2c6f853f38afff4c350edf5e4 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Usage The model can be used directly (without a language model) as follows: ```python import torch import torchaudio import pydub from pydub.utils import mediainfo import array from pydub import AudioSegment from pydub.utils import get_array_type import numpy as np from datasets import load_dataset from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor test_dataset = load_dataset("common_voice", "tr", split="test[:2%]") processor = Wav2Vec2Processor.from_pretrained("gorkemgoknar/wav2vec2-large-xlsr-53-turkish") model = Wav2Vec2ForCTC.from_pretrained("gorkemgoknar/wav2vec2-large-xlsr-53-turkish") new_sample_rate = 16000 def audio_resampler(batch, new_sample_rate = 16000): | bf8f6c466aae9a634f9151e1cfd4020b |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | gets current samplerate sound = pydub.AudioSegment.from_file(file=batch["path"]) sampling_rate = new_sample_rate sound = sound.set_frame_rate(new_sample_rate) left = sound.split_to_mono()[0] bit_depth = left.sample_width * 8 array_type = pydub.utils.get_array_type(bit_depth) numeric_array = np.array(array.array(array_type, left._data) ) speech_array = torch.FloatTensor(numeric_array) batch["speech"] = numeric_array batch["sampling_rate"] = sampling_rate | 930f44e10fe40fc1774d72a4586c8f22 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def speech_file_to_array_fn(batch): batch = audio_resampler(batch, new_sample_rate = new_sample_rate) return batch test_dataset = test_dataset.map(speech_file_to_array_fn) inputs = processor(test_dataset["speech"][:2], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values, attention_mask=inputs.attention_mask).logits predicted_ids = torch.argmax(logits, dim=-1) print("Prediction:", processor.batch_decode(predicted_ids)) print("Reference:", test_dataset["sentence"][:2]) ``` | bdcd6a447141fe258b87a3dce1e6c205 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Evaluation The model can be evaluated as follows on the Turkish test data of Common Voice. ```python import torch import torchaudio from datasets import load_dataset, load_metric from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor import re import pydub import array import numpy as np test_dataset = load_dataset("common_voice", "tr", split="test") wer = load_metric("wer") processor = Wav2Vec2Processor.from_pretrained("gorkemgoknar/wav2vec2-large-xlsr-53-turkish") model = Wav2Vec2ForCTC.from_pretrained("gorkemgoknar/wav2vec2-large-xlsr-53-turkish") model.to("cuda") | cb54226f5e19551b9a937287da51d436 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | gets current samplerate sound = pydub.AudioSegment.from_file(file=batch["path"]) sound = sound.set_frame_rate(new_sample_rate) left = sound.split_to_mono()[0] bit_depth = left.sample_width * 8 array_type = pydub.utils.get_array_type(bit_depth) numeric_array = np.array(array.array(array_type, left._data) ) speech_array = torch.FloatTensor(numeric_array) return speech_array, new_sample_rate def remove_special_characters(batch): | 6e3a9a990773d8acf6a7066bf965a898 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | load and conversion done in resampler , takes and returns batch speech_array, sampling_rate = audio_resampler(batch, new_sample_rate = new_sample_rate) batch["speech"] = speech_array batch["sampling_rate"] = sampling_rate batch["target_text"] = batch["sentence"] return batch test_dataset = test_dataset.map(speech_file_to_array_fn) | 70b7743eaf374b0c466dd4c6d3e8ea31 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch print("EVALUATING:") | 83a222a08c4e88ce219dd956e4684634 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | for 8GB RAM on GPU best is batch_size 2 for windows, 4 may fit in linux only result = test_dataset.map(evaluate, batched=True, batch_size=2) print("WER: {:.2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 50.41 % | bfb9579163528009a9c6e021851166b7 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Training The Common Voice `train` and `validation` datasets were used for training. An additional 5 Turkish movies with subtitles were also used for training. Training followed a setup similar to the base fine-tuning; the additional audio resampler is shown in the code above. The dataset building and merging code is included below for reference ```python import pandas as pd from datasets import load_dataset, load_metric import os from pathlib import Path from datasets import Dataset import csv | 82762d12e15ea183e0c6eefe63eeab6f |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Walk all subdirectories of base_set_path and find csv files base_set_path = r'C:\\dataset_extracts' csv_files = [] for path, subdirs, files in os.walk(base_set_path): for name in files: if name.endswith(".csv"): deckfile= os.path.join(path, name) csv_files.append(deckfile) def get_dataset_from_csv_file(csvfilename,names=['sentence', 'path']): path = Path(csvfilename) csv_delimiter="\\t" | 617d9a09f9dbbdca5eed6e64ef7a488c |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Pandas has a bug reading non-ASCII file names, so make sure to use open with an explicit encoding df=pd.read_csv(open(path, 'r', encoding='utf-8'), delimiter=csv_delimiter,header=None , names=names, encoding='utf8') return Dataset.from_pandas(df) custom_datasets= [] for csv_file in csv_files: this_dataset=get_dataset_from_csv_file(csv_file) custom_datasets.append(this_dataset) from datasets import concatenate_datasets, load_dataset from datasets import load_from_disk | 5de857212fb4aaab4176e04b90d73916 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Large-V2 Nepali This model is a fine-tuned version of [DrishtiSharma/whisper-large-v2-hindi-3k-steps](https://huggingface.co/DrishtiSharma/whisper-large-v2-hindi-3k-steps) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.9961 - Wer: 9.7561 | 88e94e57f0445258e118bd60368ab880 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:------:|:----:|:---------------:|:------:| | 0.0 | 1000.0 | 1000 | 0.9961 | 9.7561 | | 7201c2d707d17d72c61aaf2672386ca5 |

apache-2.0 | ['generated_from_keras_callback'] | false | Ddaow/distilbert-base-uncased-finetuned-squad This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.9692 - Train End Logits Accuracy: 0.7314 - Train Start Logits Accuracy: 0.6923 - Validation Loss: 1.1071 - Validation End Logits Accuracy: 0.7008 - Validation Start Logits Accuracy: 0.6691 - Epoch: 1 | 8575962878431147c0d194e2c3a166dc |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 1.5125 | 0.6064 | 0.5677 | 1.1969 | 0.6799 | 0.6471 | 0 | | 0.9692 | 0.7314 | 0.6923 | 1.1071 | 0.7008 | 0.6691 | 1 | | 222638459d6ff99326a08bb0473f0dae |

cc-by-sa-4.0 | ['spacy', 'token-classification'] | false | fi_core_news_lg Finnish pipeline optimized for CPU. Components: tok2vec, tagger, morphologizer, parser, lemmatizer (trainable_lemmatizer), senter, ner. | Feature | Description | | --- | --- | | **Name** | `fi_core_news_lg` | | **Version** | `3.5.0` | | **spaCy** | `>=3.5.0,<3.6.0` | | **Default Pipeline** | `tok2vec`, `tagger`, `morphologizer`, `parser`, `lemmatizer`, `attribute_ruler`, `ner` | | **Components** | `tok2vec`, `tagger`, `morphologizer`, `parser`, `lemmatizer`, `senter`, `attribute_ruler`, `ner` | | **Vectors** | floret (200000, 300) | | **Sources** | [UD Finnish TDT v2.8](https://github.com/UniversalDependencies/UD_Finnish-TDT) (Ginter, Filip; Kanerva, Jenna; Laippala, Veronika; Miekka, Niko; Missilä, Anna; Ojala, Stina; Pyysalo, Sampo)<br />[TurkuONE (ffe2040e)](https://github.com/TurkuNLP/turku-one) (Jouni Luoma, Li-Hsin Chang, Filip Ginter, Sampo Pyysalo)<br />[Explosion Vectors (OSCAR 2109 + Wikipedia + OpenSubtitles + WMT News Crawl)](https://github.com/explosion/spacy-vectors-builder) (Explosion) | | **License** | `CC BY-SA 4.0` | | **Author** | [Explosion](https://explosion.ai) | | eda6bc750b0f3b1f082cfaacba6c1c34 |

cc-by-sa-4.0 | ['spacy', 'token-classification'] | false | Accuracy | Type | Score | | --- | --- | | `TOKEN_ACC` | 100.00 | | `TOKEN_P` | 99.79 | | `TOKEN_R` | 99.90 | | `TOKEN_F` | 99.85 | | `TAG_ACC` | 97.09 | | `POS_ACC` | 96.28 | | `MORPH_ACC` | 92.22 | | `MORPH_MICRO_P` | 96.26 | | `MORPH_MICRO_R` | 95.17 | | `MORPH_MICRO_F` | 95.71 | | `SENTS_P` | 91.96 | | `SENTS_R` | 89.74 | | `SENTS_F` | 90.83 | | `DEP_UAS` | 83.71 | | `DEP_LAS` | 79.41 | | `LEMMA_ACC` | 86.53 | | `ENTS_P` | 82.36 | | `ENTS_R` | 81.30 | | `ENTS_F` | 81.83 | | 97301c9f35506dde0a3dad8cdba9f679 |

cc-by-4.0 | [] | false | UoM&MMU at TSAR-2022 Shared Task - Prompt Learning for Lexical Simplification: prompt-ls-pt-2 We present **PromptLS**, a method for fine-tuning large pre-trained masked language models to perform the task of Lexical Simplification. This model is part of a series of models presented at the [TSAR-2022 Shared Task](https://taln.upf.edu/pages/tsar2022-st/) by the University of Manchester and Manchester Metropolitan University (UoM&MMU) Team in English, Spanish and Portuguese. You can find more details about the project in our [GitHub](https://github.com/lmvasque/ls-prompt-tsar2022). | 8efb833ac39367674207d7eb60bcdd92 |
