license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

openrail++ | ['text-to-image', 'stable-diffusion'] | false | Samples Here are a few example images (generated with 50 steps). | pbr uneven stone wall | pbr dirt with weeds | pbr bright white marble | | --- | --- | --- | |  |  |  | | a93d65c5aaaef1a3e79669bb8a7a9c19 |

openrail++ | ['text-to-image', 'stable-diffusion'] | false | Usage Use the token `pbr` in your prompts to invoke the style. This model was made for use in [Dream Textures](https://github.com/carson-katri/dream-textures), a Stable Diffusion add-on for Blender. You can also use it with [🧨 diffusers](https://github.com/huggingface/diffusers): ```python from diffusers import StableDiffusionPipeline import torch model_id = "dream-textures/texture-diffusion" pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16) pipe = pipe.to("cuda") prompt = "pbr brick wall" image = pipe(prompt).images[0] image.save("bricks.png") ``` | d9053bf6ec6661f5e8df1ae3c5ee5528 |

openrail++ | ['text-to-image', 'stable-diffusion'] | false | Training Details * Base Model: [stabilityai/stable-diffusion-2-base](https://huggingface.co/stabilityai/stable-diffusion-2-base) * Resolution: `512` * Prior Loss Weight: `1.0` * Class Prompt: `texture` * Batch Size: `1` * Learning Rate: `1e-6` * Precision: `fp16` * Steps: `4000` * GPU: Tesla T4 | 18f158d0d59ffeb818c6712bfb5949b6 |

apache-2.0 | ['generated_from_trainer'] | false | openai/whisper-large-v2 This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1534 - Wer: 145.6786 | 10e86c109d6bf21b0e9b24cffa020a6b |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.0799 | 2.03 | 500 | 0.1010 | 28.1322 | | 0.0239 | 5.01 | 1000 | 0.1388 | 161.0139 | | 0.0066 | 7.03 | 1500 | 0.1221 | 99.3747 | | 0.0007 | 10.01 | 2000 | 0.1295 | 250.8822 | | 0.0007 | 12.04 | 2500 | 0.1423 | 77.2203 | | 0.0003 | 15.02 | 3000 | 0.1480 | 149.4380 | | 0.0001 | 17.05 | 3500 | 0.1518 | 141.5842 | | 0.0001 | 20.02 | 4000 | 0.1534 | 145.6786 | | bda84a0a0c1dbec134db67dfd46dbaa3 |
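Several Wer values in the row above exceed 100. A minimal sketch of word error rate, assuming the card reports it as a percentage (the source does not state the scale), shows why that is possible: insertions are counted against the reference length, so a hypothesis much longer than the reference can score above 100. The function name `word_error_rate` is illustrative and is not taken from the model card.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by reference length, as a percentage."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the first i-1 reference words
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return 100.0 * prev[-1] / len(ref)


# Two inserted words against a one-word reference give a WER of 200.
print(word_error_rate("hello", "hello hello hello"))
```

A model that repeats or hallucinates text on a few utterances can therefore push the aggregate above 100 even while the validation loss stays low.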

apache-2.0 | ['generated_from_keras_callback'] | false | kevinbram/testarbara This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.4900 - Train End Logits Accuracy: 0.6129 - Train Start Logits Accuracy: 0.5735 - Validation Loss: 1.1335 - Validation End Logits Accuracy: 0.6908 - Validation Start Logits Accuracy: 0.6545 - Epoch: 0 | ea5c37d821d66af59a123257be163faa |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 1.4900 | 0.6129 | 0.5735 | 1.1335 | 0.6908 | 0.6545 | 0 | | ece5bfe5a74895e01ecfe2468f27eaa9 |

apache-2.0 | ['translation'] | false | opus-mt-en-gl * source languages: en * target languages: gl * OPUS readme: [en-gl](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/en-gl/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2019-12-18.zip](https://object.pouta.csc.fi/OPUS-MT-models/en-gl/opus-2019-12-18.zip) * test set translations: [opus-2019-12-18.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-gl/opus-2019-12-18.test.txt) * test set scores: [opus-2019-12-18.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/en-gl/opus-2019-12-18.eval.txt) | a55d4d1e99a5485074c36947889b224d |

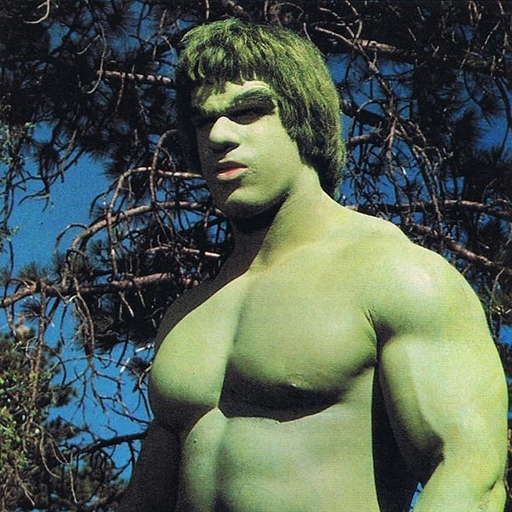

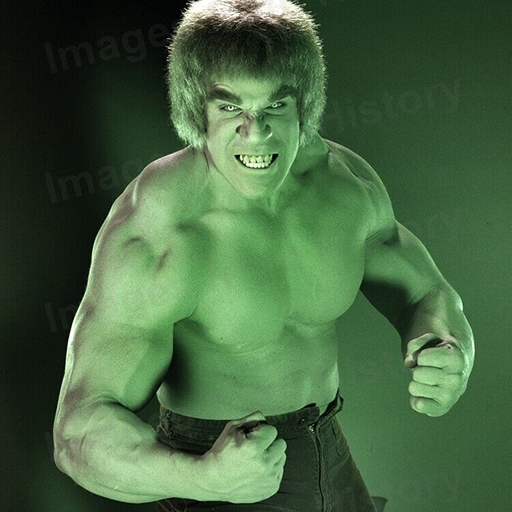

creativeml-openrail-m | ['text-to-image'] | false | hulk_style_v1 Dreambooth model trained by sztanki with [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) with the v1-5 base model. You can run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! Sample pictures of: hulk (use that in your prompt) | 685b83023e6eeb477572a1bf7a651905 |

creativeml-openrail-m | [] | false | Prompt and settings for portraits: **brld harrison ford** _Steps: 50, Sampler: Euler a, CFG scale: 7, Seed: 3940025417_ **brld morgan freeman** _Steps: 50, Sampler: Euler a, CFG scale: 7, Seed: 3940025417_ | a61984345e450db6a7eb1257f346823e |

apache-2.0 | ['automatic-speech-recognition', 'fr'] | false | exp_w2v2t_fr_vp-es_s980 Fine-tuned [facebook/wav2vec2-large-es-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-es-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (fr)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | df338ec11ecd6fc92fc1e0ace2981506 |
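The 16kHz requirement in the row above can be checked before running inference. The following is a rough sketch using only Python's standard `wave` module, so it assumes uncompressed WAV input. The helper names `wav_sample_rate` and `is_expected_rate` are hypothetical and are not part of HuggingSound.

```python
import wave


def wav_sample_rate(path: str) -> int:
    """Return the sample rate (frames per second) stored in a WAV header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def is_expected_rate(path: str, expected_hz: int = 16000) -> bool:
    """True when the file is already sampled at the rate the model expects."""
    return wav_sample_rate(path) == expected_hz
```

Files that fail the check would need resampling with an audio library before being passed to the model.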

other | ['generated_from_trainer'] | false | segformer-b0-scene-parse-150 This model is a fine-tuned version of [nvidia/mit-b0](https://huggingface.co/nvidia/mit-b0) on the scene_parse_150 dataset. It achieves the following results on the evaluation set: - Loss: 4.4675 - Mean Iou: 0.0363 - Mean Accuracy: 0.1783 - Overall Accuracy: 0.2473 - Per Category Iou: [0.12781275754079446, 0.2655217061115745, nan, nan, 0.0, 0.0, 0.0, nan, nan, 0.0, nan, nan, nan, 0.0, nan, nan, 0.07806681040517459, nan, nan, nan, 0.0, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan] - Per Category Accuracy: [0.16194541672583893, 0.8526907084599392, nan, nan, 0.0, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.23336402444128232, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan] | 
0f2856f8c67e7b9072d427e91a8c62be |
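The summary metrics in the row above appear to be means over only the categories that have a defined value. A small sketch that ignores `nan` entries reproduces the reported Mean Iou and Mean Accuracy from the per-category lists. Runs of `nan` are collapsed here for brevity, which does not change a nan-ignoring mean; `nan_mean` is an illustrative helper, not the evaluation code used for the card.

```python
import math


def nan_mean(values):
    """Average of the entries that are not nan; nan if none remain."""
    kept = [v for v in values if not math.isnan(v)]
    if not kept:
        return float("nan")
    return sum(kept) / len(kept)


nan = float("nan")

# Non-nan entries copied from the final evaluation row; runs of nan collapsed.
per_category_iou = [
    0.12781275754079446, 0.2655217061115745, nan, 0.0, 0.0, 0.0, nan, 0.0,
    nan, 0.0, nan, 0.07806681040517459, nan, 0.0, nan, 0.0, nan, 0.0, nan,
    0.0, nan, 0.0, nan,
]
per_category_accuracy = [
    0.16194541672583893, 0.8526907084599392, nan, 0.0, 0.0, nan,
    0.23336402444128232, nan, 0.0, nan, 0.0, nan,
]

print(round(nan_mean(per_category_iou), 4))       # 0.0363
print(round(nan_mean(per_category_accuracy), 4))  # 0.1783
```

Only 13 categories have a defined IoU, so the mean is dominated by the zero-valued classes.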

other | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Mean Iou | Mean Accuracy | Overall Accuracy | Per Category Iou | Per Category Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:|:-------------:|:----------------:|:------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------:|:-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------:| | 3.6159 | 10.0 | 20 | 4.7805 | 0.0132 | 0.1045 | 0.1528 | [0.11163628179158226, 
0.15792526006741817, nan, nan, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, nan, nan, nan, 0.0, nan, nan, 0.07479355092410539, 0.0, nan, 0.0, 0.0, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, 0.0, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan] | [0.1367338585045152, 0.4354909643371182, nan, nan, 0.0, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.15920314723361514, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan] | | 3.3116 | 20.0 | 40 | 4.6263 | 0.0214 | 0.1528 | 0.2002 | [0.12640023810812273, 0.20944680080510925, nan, nan, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, nan, nan, nan, 0.0, nan, nan, 0.09273170489030397, 0.0, nan, 0.0, 0.0, nan, nan, 0.0, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 
nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan] | [0.15609780547503488, 0.6011714377098992, nan, nan, 0.0, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.31204486481962, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan] | | 2.9608 | 30.0 | 60 | 4.4877 | 0.0299 | 0.1609 | 0.2376 | [0.13839157766016394, 0.2524577890734283, nan, nan, 0.0, 0.0, 0.0, nan, nan, 0.0, nan, nan, nan, 0.0, nan, nan, 0.05781044317683194, 0.0, nan, 0.0, 0.0, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, 
nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan] | [0.1815182069553159, 0.7684711338557493, nan, nan, 0.0, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.17644596969950616, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan] | | 2.885 | 40.0 | 80 | 4.4865 | 0.0362 | 0.1822 | 0.2447 | [0.12383757908479229, 0.2653620793269231, nan, nan, 0.0, 0.0, 0.0, nan, nan, 0.0, nan, nan, nan, 0.0, nan, nan, 0.08116762230992627, 0.0, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan] | [0.15451895043731778, 0.8473932512394051, nan, nan, 0.0, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.27370888089060014, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 
nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan] | | 2.9919 | 50.0 | 100 | 4.4675 | 0.0363 | 0.1783 | 0.2473 | [0.12781275754079446, 0.2655217061115745, nan, nan, 0.0, 0.0, 0.0, nan, nan, 0.0, nan, nan, nan, 0.0, nan, nan, 0.07806681040517459, nan, nan, nan, 0.0, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan] | [0.16194541672583893, 0.8526907084599392, nan, nan, 0.0, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.23336402444128232, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 
nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, 0.0, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan, nan] | | 4d1d18fde8edda4297c7d40a4772f272 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-en This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.7589 - F1: 0.6307 | 3b88ae9b591e8100f73d1db95c5821d3 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.9453 | 1.0 | 1180 | 0.7589 | 0.6307 | | 323a6db8b83cef3e3182d5a6e4c25f2e |

wtfpl | [] | false | Cat picture embedding for 2.0. Trained on high quality Unsplash images, so it tends to prefer photorealism. Warning: the weights are quite strong. But, when tamed, it works great with stylistic embeddings like the last couple of images! Trained for 1500 steps, but added the 1000 steps one as well which also works pretty decently and is a bit less strong. ![03171-4193474301-cute [kittyhelper] wearing a tuxedo, sharp focus, photohelper, fur, extremely detailed.png](https://s3.amazonaws.com/moonup/production/uploads/1670332296701-6312579fc7577b68d90a7646.png) ![03164-4193474294-cute [kittyhelper] wearing a tuxedo, sharp focus, photohelper, fur, extremely detailed.png](https://s3.amazonaws.com/moonup/production/uploads/1670332307002-6312579fc7577b68d90a7646.png)      | b08574f56bc714775a5d13aa47fc07ff |

apache-2.0 | ['ner', 'zero-shot', 'information extruction'] | false | Erlangshen-UniEX-RoBERTa-330M-Chinese - Github: [Fengshenbang-LM](https://github.com/IDEA-CCNL/Fengshenbang-LM/tree/main/fengshen/examples/UniEX/) - Docs: [Fengshenbang-Docs](https://fengshenbang-doc.readthedocs.io/) | d9ce959d410742a28b0c7acb58da0a72 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'kk', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_8_0 - KK dataset. It achieves the following results on the evaluation set: - Loss: 0.7149 - Wer: 0.451 | fb7798d08ffd4fc8b5e028874ad48537 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'kk', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Evaluation Commands 1. To evaluate on mozilla-foundation/common_voice_8_0 with test split: `python eval.py --model_id DrishtiSharma/wav2vec2-large-xls-r-300m-kk-with-LM --dataset mozilla-foundation/common_voice_8_0 --config kk --split test --log_outputs` 2. To evaluate on speech-recognition-community-v2/dev_data: Kazakh language isn't available in speech-recognition-community-v2/dev_data | d6856488e9c880ab99eeb277ea7acb45 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'kk', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.000222 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 150.0 - mixed_precision_training: Native AMP | c6089d24d5163f3f212b1b729f95aa96 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'kk', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:------:|:----:|:---------------:|:------:| | 9.6799 | 9.09 | 200 | 3.6119 | 1.0 | | 3.1332 | 18.18 | 400 | 2.5352 | 1.005 | | 1.0465 | 27.27 | 600 | 0.6169 | 0.682 | | 0.3452 | 36.36 | 800 | 0.6572 | 0.607 | | 0.2575 | 45.44 | 1000 | 0.6527 | 0.578 | | 0.2088 | 54.53 | 1200 | 0.6828 | 0.551 | | 0.158 | 63.62 | 1400 | 0.7074 | 0.5575 | | 0.1309 | 72.71 | 1600 | 0.6523 | 0.5595 | | 0.1074 | 81.8 | 1800 | 0.7262 | 0.5415 | | 0.087 | 90.89 | 2000 | 0.7199 | 0.521 | | 0.0711 | 99.98 | 2200 | 0.7113 | 0.523 | | 0.0601 | 109.09 | 2400 | 0.6863 | 0.496 | | 0.0451 | 118.18 | 2600 | 0.6998 | 0.483 | | 0.0378 | 127.27 | 2800 | 0.6971 | 0.4615 | | 0.0319 | 136.36 | 3000 | 0.7119 | 0.4475 | | 0.0305 | 145.44 | 3200 | 0.7181 | 0.459 | | 1793ef5eaad2549df55e5206af5f529d |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | sentence-transformers/distilbert-base-nli-stsb-mean-tokens This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search. | c32568a305569948c51e77ca089e5e78 |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Usage (Sentence-Transformers) Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed: ``` pip install -U sentence-transformers ``` Then you can use the model like this: ```python from sentence_transformers import SentenceTransformer sentences = ["This is an example sentence", "Each sentence is converted"] model = SentenceTransformer('sentence-transformers/distilbert-base-nli-stsb-mean-tokens') embeddings = model.encode(sentences) print(embeddings) ``` | a6e5c890612d1688c4bbcb6b2d0a0c4a |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Load model from HuggingFace Hub tokenizer = AutoTokenizer.from_pretrained('sentence-transformers/distilbert-base-nli-stsb-mean-tokens') model = AutoModel.from_pretrained('sentence-transformers/distilbert-base-nli-stsb-mean-tokens') | 4b2ce08b86eddac35f48360a5ab2f6b9 |
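The row above only loads the tokenizer and model; the pooling step that turns token embeddings into a sentence embedding is not shown. The model name suggests mean pooling over tokens, so here is a dependency-free sketch of that idea, assuming one embedding vector per token and an attention mask that marks padding with 0. `mean_pool` is a hypothetical helper written for illustration; real usage would apply the same logic to the model's output tensors.

```python
def mean_pool(token_embeddings, attention_mask):
    """Average the token vectors whose mask entry is non-zero.

    token_embeddings: list of equal-length lists of floats, one per token.
    attention_mask:   list of 0/1 flags, 0 marking padding tokens.
    """
    dim = len(token_embeddings[0])
    summed = [0.0] * dim
    count = 0
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            count += 1
            for k, value in enumerate(vector):
                summed[k] += value
    count = max(count, 1)  # avoid dividing by zero for an all-padding input
    return [s / count for s in summed]


# The third token is padding, so it does not affect the result.
print(mean_pool([[1.0, 2.0], [3.0, 4.0], [9.0, 9.0]], [1, 1, 0]))  # [2.0, 3.0]
```

Excluding padded positions matters because batched inputs are padded to a common length.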

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Evaluation Results For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=sentence-transformers/distilbert-base-nli-stsb-mean-tokens) | 406246d706f3142278f45f57f6011f22 |

mit | ['singapore', 'sg', 'singlish', 'malaysia', 'ms', 'manglish', 'bert-large-uncased'] | false | Model description Similar to [SingBert](https://huggingface.co/zanelim/singbert) but the large version, which was initialized from [BERT large uncased (whole word masking)](https://github.com/google-research/bert | 836e1907bc4cb8c4d0cc1dd295b6061d |

mit | ['singapore', 'sg', 'singlish', 'malaysia', 'ms', 'manglish', 'bert-large-uncased'] | false | How to use ```python >>> from transformers import pipeline >>> nlp = pipeline('fill-mask', model='zanelim/singbert-large-sg') >>> nlp("kopi c siew [MASK]") [{'sequence': '[CLS] kopi c siew dai [SEP]', 'score': 0.9003700017929077, 'token': 18765, 'token_str': 'dai'}, {'sequence': '[CLS] kopi c siew mai [SEP]', 'score': 0.0779474675655365, 'token': 14736, 'token_str': 'mai'}, {'sequence': '[CLS] kopi c siew. [SEP]', 'score': 0.0032227332703769207, 'token': 1012, 'token_str': '.'}, {'sequence': '[CLS] kopi c siew bao [SEP]', 'score': 0.0017727474914863706, 'token': 25945, 'token_str': 'bao'}, {'sequence': '[CLS] kopi c siew peng [SEP]', 'score': 0.0012526646023616195, 'token': 26473, 'token_str': 'peng'}] >>> nlp("one teh c siew dai, and one kopi [MASK]") [{'sequence': '[CLS] one teh c siew dai, and one kopi. [SEP]', 'score': 0.5249741077423096, 'token': 1012, 'token_str': '.'}, {'sequence': '[CLS] one teh c siew dai, and one kopi o [SEP]', 'score': 0.27349168062210083, 'token': 1051, 'token_str': 'o'}, {'sequence': '[CLS] one teh c siew dai, and one kopi peng [SEP]', 'score': 0.057190295308828354, 'token': 26473, 'token_str': 'peng'}, {'sequence': '[CLS] one teh c siew dai, and one kopi c [SEP]', 'score': 0.04022320732474327, 'token': 1039, 'token_str': 'c'}, {'sequence': '[CLS] one teh c siew dai, and one kopi? 
[SEP]', 'score': 0.01191170234233141, 'token': 1029, 'token_str': '?'}] >>> nlp("die [MASK] must try") [{'sequence': '[CLS] die die must try [SEP]', 'score': 0.9921030402183533, 'token': 3280, 'token_str': 'die'}, {'sequence': '[CLS] die also must try [SEP]', 'score': 0.004993876442313194, 'token': 2036, 'token_str': 'also'}, {'sequence': '[CLS] die liao must try [SEP]', 'score': 0.000317625846946612, 'token': 727, 'token_str': 'liao'}, {'sequence': '[CLS] die still must try [SEP]', 'score': 0.0002260878391098231, 'token': 2145, 'token_str': 'still'}, {'sequence': '[CLS] die i must try [SEP]', 'score': 0.00016935862367972732, 'token': 1045, 'token_str': 'i'}] >>> nlp("dont play [MASK] leh") [{'sequence': '[CLS] dont play play leh [SEP]', 'score': 0.9079819321632385, 'token': 2377, 'token_str': 'play'}, {'sequence': '[CLS] dont play punk leh [SEP]', 'score': 0.006846973206847906, 'token': 7196, 'token_str': 'punk'}, {'sequence': '[CLS] dont play games leh [SEP]', 'score': 0.004041737411171198, 'token': 2399, 'token_str': 'games'}, {'sequence': '[CLS] dont play politics leh [SEP]', 'score': 0.003728888463228941, 'token': 4331, 'token_str': 'politics'}, {'sequence': '[CLS] dont play cheat leh [SEP]', 'score': 0.0032805048394948244, 'token': 21910, 'token_str': 'cheat'}] >>> nlp("confirm plus [MASK]") [{'sequence': '[CLS] confirm plus chop [SEP]', 'score': 0.9749826192855835, 'token': 24494, 'token_str': 'chop'}, {'sequence': '[CLS] confirm plus chopped [SEP]', 'score': 0.017554156482219696, 'token': 24881, 'token_str': 'chopped'}, {'sequence': '[CLS] confirm plus minus [SEP]', 'score': 0.002725469646975398, 'token': 15718, 'token_str': 'minus'}, {'sequence': '[CLS] confirm plus guarantee [SEP]', 'score': 0.000900257145985961, 'token': 11302, 'token_str': 'guarantee'}, {'sequence': '[CLS] confirm plus one [SEP]', 'score': 0.0004384620988275856, 'token': 2028, 'token_str': 'one'}] >>> nlp("catch no [MASK]") [{'sequence': '[CLS] catch no ball [SEP]', 'score': 
0.9381157159805298, 'token': 3608, 'token_str': 'ball'}, {'sequence': '[CLS] catch no balls [SEP]', 'score': 0.060842301696538925, 'token': 7395, 'token_str': 'balls'}, {'sequence': '[CLS] catch no fish [SEP]', 'score': 0.00030917322146706283, 'token': 3869, 'token_str': 'fish'}, {'sequence': '[CLS] catch no breath [SEP]', 'score': 7.552534952992573e-05, 'token': 3052, 'token_str': 'breath'}, {'sequence': '[CLS] catch no tail [SEP]', 'score': 4.208395694149658e-05, 'token': 5725, 'token_str': 'tail'}] ``` Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('zanelim/singbert-large-sg') model = BertModel.from_pretrained("zanelim/singbert-large-sg") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` and in TensorFlow: ```python from transformers import BertTokenizer, TFBertModel tokenizer = BertTokenizer.from_pretrained("zanelim/singbert-large-sg") model = TFBertModel.from_pretrained("zanelim/singbert-large-sg") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='tf') output = model(encoded_input) ``` | 5b4e12926314a351567026ce34389224 |

apache-2.0 | ['generated_from_trainer'] | false | swin-kitchenware This model is a fine-tuned version of [microsoft/swin-tiny-patch4-window7-224](https://huggingface.co/microsoft/swin-tiny-patch4-window7-224) on the imagefolder dataset. It achieves the following results on the evaluation set: - Loss: 0.0860 - Accuracy: 0.9762 | 2c0bf108739a2185aff3b25dce7aa2f7 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 32 - eval_batch_size: 64 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 12 | bab658837816a0a4a365a8c9d475f018 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6286 | 1.0 | 56 | 0.1875 | 0.9437 | | 0.3034 | 2.0 | 112 | 0.0921 | 0.9750 | | 0.2759 | 3.0 | 168 | 0.0992 | 0.9712 | | 0.23 | 4.0 | 224 | 0.0906 | 0.9687 | | 0.2484 | 5.0 | 280 | 0.0862 | 0.9725 | | 0.2434 | 6.0 | 336 | 0.0838 | 0.9762 | | 0.2398 | 7.0 | 392 | 0.0889 | 0.9762 | | 0.2094 | 8.0 | 448 | 0.0872 | 0.9750 | | 0.203 | 9.0 | 504 | 0.0888 | 0.9725 | | 0.1616 | 10.0 | 560 | 0.0820 | 0.9787 | | 0.1936 | 11.0 | 616 | 0.0846 | 0.9725 | | 0.1768 | 12.0 | 672 | 0.0860 | 0.9762 | | e1868ff0f2b0f5b2573a02db52fe2a56 |

mit | [] | false | aemond_targaryen_sandman on Stable Diffusion via Dreambooth trained on the [fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook | 827c5dc8db32e6b235aa6336b8a166e0 |

mit | [] | false | model by EdXD This is the Stable Diffusion model fine-tuned on the aemond_targaryen_sandman concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt(s)`: **AemondHoD, Sandman2022** You can also train your own concepts and upload them to the library by using [the fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: Sandman2022 AemondHoD | a8408e50882af3dbcb021d1c60ac47a3 |

cc-by-sa-4.0 | ['asteroid', 'audio', 'ConvTasNet', 'audio-to-audio'] | false | Description: This model was trained by Joris Cosentino using the librimix recipe in [Asteroid](https://github.com/asteroid-team/asteroid). It was trained on the `sep_clean` task of the Libri2Mix dataset. Training config: ```yaml data: n_src: 2 sample_rate: 8000 segment: 3 task: sep_clean train_dir: data/wav8k/min/train-360 valid_dir: data/wav8k/min/dev filterbank: kernel_size: 16 n_filters: 512 stride: 8 masknet: bn_chan: 128 hid_chan: 512 mask_act: relu n_blocks: 8 n_repeats: 3 skip_chan: 128 optim: lr: 0.001 optimizer: adam weight_decay: 0.0 training: batch_size: 24 early_stop: True epochs: 200 half_lr: True num_workers: 2 ``` Results: On the Libri2Mix min test set: ```yaml si_sdr: 14.764543634468069 si_sdr_imp: 14.764029375607246 sdr: 15.29337970745095 sdr_imp: 15.114146605113111 sir: 24.092904661115366 sir_imp: 23.913669683141528 sar: 16.06055906916849 sar_imp: -51.980784441287454 stoi: 0.9311142440593033 stoi_imp: 0.21817376142710482 ``` License notice: This work "ConvTasNet_Libri2Mix_sepclean_8k" is a derivative of [LibriSpeech ASR corpus](http://www.openslr.org/12) by Vassil Panayotov, used under [CC BY 4.0](https://creativecommons.org/licenses/by/4.0/). "ConvTasNet_Libri2Mix_sepclean_8k" is licensed under [Attribution-ShareAlike 3.0 Unported](https://creativecommons.org/licenses/by-sa/3.0/) by Cosentino Joris. | df10c42a4dc746dbbfe7c239d3d399d8 |
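For readers unfamiliar with the `si_sdr` and `si_sdr_imp` entries above, the following is a minimal NumPy sketch of the usual scale-invariant SDR definition. It is not taken from the Asteroid recipe: the function names are hypothetical, and it assumes the `_imp` values are the score of the separated estimate minus the score of the unprocessed mixture.

```python
import numpy as np


def si_sdr(estimate: np.ndarray, reference: np.ndarray, eps: float = 1e-8) -> float:
    """Scale-invariant SDR in dB for two 1-D signals of equal length."""
    # Remove the mean so a constant offset does not affect the score.
    estimate = estimate - estimate.mean()
    reference = reference - reference.mean()
    # Project the estimate onto the reference to obtain the scaled target.
    scale = np.dot(estimate, reference) / (np.dot(reference, reference) + eps)
    target = scale * reference
    # Everything that is not explained by the target counts as distortion.
    noise = estimate - target
    ratio = (np.dot(target, target) + eps) / (np.dot(noise, noise) + eps)
    return float(10.0 * np.log10(ratio))


def si_sdr_improvement(estimate: np.ndarray, mixture: np.ndarray, reference: np.ndarray) -> float:
    """Assumed definition of the improvement: estimate score minus mixture score."""
    return si_sdr(estimate, reference) - si_sdr(mixture, reference)
```

Under that assumed definition, the reported `si_sdr` and `si_sdr_imp` differ by only about 0.0005 dB, which would mean the unprocessed mixtures score close to 0 dB on average.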

cc-by-4.0 | [] | false | Model description This is the T5-3B model for System 3 DREAM-FLUTE (all 4 dimensions), as described in our paper Just-DREAM-about-it: Figurative Language Understanding with DREAM-FLUTE, FigLang workshop @ EMNLP 2022 (arXiv link: https://arxiv.org/abs/2210.16407). System 3: DREAM-FLUTE - Providing DREAM’s different dimensions as input context We adapt DREAM’s scene elaborations (Gu et al., 2022) for the figurative language understanding NLI task by using the DREAM model to generate elaborations for the premise and hypothesis separately. This allows us to investigate whether similarities or differences in the scene elaborations for the premise and hypothesis provide useful signals for entailment/contradiction label prediction and improve explanation quality. The input-output format is: ``` Input <Premise> <Premise-elaboration-from-DREAM> <Hypothesis> <Hypothesis-elaboration-from-DREAM> Output <Label> <Explanation> ``` where the scene elaboration dimensions from DREAM are: consequence, emotion, motivation, and social norm. We also consider a system incorporating all these dimensions as additional context. In this model, DREAM-FLUTE (all 4 dimensions), we use elaborations along all DREAM dimensions. For more details on DREAM, please refer to DREAM: Improving Situational QA by First Elaborating the Situation, NAACL 2022 (arXiv link: https://arxiv.org/abs/2112.08656, ACL Anthology link: https://aclanthology.org/2022.naacl-main.82/). | 865dfba924aed6c4d06f93e2a87d1303 |

cc-by-4.0 | [] | false | How to use this model? We provide a quick example of how you can try out DREAM-FLUTE (all 4 dimensions) in our paper with just a few lines of code: ``` >>> from transformers import AutoTokenizer, AutoModelForSeq2SeqLM >>> model = AutoModelForSeq2SeqLM.from_pretrained("allenai/System3_DREAM_FLUTE_all_dimensions_FigLang2022") >>> tokenizer = AutoTokenizer.from_pretrained("t5-3b") >>> input_string = "Premise: I was really looking forward to camping but now it is going to rain so I won't go. [Premise - social norm] It's okay to be disappointed when plans change. [Premise - emotion] I (myself)'s emotion is disappointed. [Premise - motivation] I (myself)'s motivation is to stay home. [Premise - likely consequence] I will miss out on a great experience and be bored and sad. Hypothesis: I am absolutely elated at the prospects of getting drenched in the rain and then sleep in a wet tent just to have the experience of camping. [Hypothesis - social norm] It's good to want to have new experiences. [Hypothesis - emotion] I (myself)'s emotion is excited. [Hypothesis - motivation] I (myself)'s motivation is to have fun. [Hypothesis - likely consequence] I am so excited that I forget to bring a raincoat and my tent gets soaked. Is there a contradiction or entailment between the premise and hypothesis?" >>> input_ids = tokenizer.encode(input_string, return_tensors="pt") >>> output = model.generate(input_ids, max_length=200) >>> tokenizer.batch_decode(output, skip_special_tokens=True) ['Answer : Contradiction. Explanation : Camping in the rain is often associated with the prospect of getting wet and cold, so someone who is elated about it is not being rational.'] ``` | 6d2184db457988003d50468d037e43a8 |
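The `input_string` in the example above follows a regular pattern, so it can be assembled programmatically. The helper below is a sketch only: `build_input` and its argument names are hypothetical, and the tag wording and dimension order are copied from the example string rather than from any documented API. The elaborations themselves would come from the DREAM model.

```python
# Dimension order and question text as they appear in the example input string.
DIMENSIONS = ("social norm", "emotion", "motivation", "likely consequence")
QUESTION = "Is there a contradiction or entailment between the premise and hypothesis?"


def build_input(premise, premise_elab, hypothesis, hypothesis_elab):
    """Join a premise, a hypothesis and their per-dimension elaborations.

    `premise_elab` and `hypothesis_elab` are dicts keyed by the names in DIMENSIONS.
    """
    parts = [f"Premise: {premise}"]
    parts += [f"[Premise - {dim}] {premise_elab[dim]}" for dim in DIMENSIONS]
    parts.append(f"Hypothesis: {hypothesis}")
    parts += [f"[Hypothesis - {dim}] {hypothesis_elab[dim]}" for dim in DIMENSIONS]
    parts.append(QUESTION)
    return " ".join(parts)
```

The returned string can then be passed to `tokenizer.encode` exactly as `input_string` is in the example.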

cc-by-4.0 | [] | false | Model details This model is a fine-tuned version of [t5-3b](https://huggingface.co/t5-3b). It achieves the following results on the evaluation set: - Loss: 0.7499 - Rouge1: 58.5551 - Rouge2: 38.5673 - Rougel: 52.3701 - Rougelsum: 52.335 - Gen Len: 40.7452 | 43ab571ffcc44f1cbf48cba39fb9dd51 |

cc-by-4.0 | [] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 0.992 | 0.33 | 1000 | 0.8911 | 39.9287 | 27.5817 | 38.2127 | 38.2042 | 19.0 | | 0.9022 | 0.66 | 2000 | 0.8409 | 40.8873 | 28.7963 | 39.16 | 39.1615 | 19.0 | | 0.8744 | 1.0 | 3000 | 0.7813 | 41.2617 | 29.5498 | 39.5857 | 39.5695 | 19.0 | | 0.5636 | 1.33 | 4000 | 0.7961 | 41.1429 | 30.2299 | 39.6592 | 39.6648 | 19.0 | | 0.5585 | 1.66 | 5000 | 0.7763 | 41.2581 | 30.0851 | 39.6859 | 39.68 | 19.0 | | 0.5363 | 1.99 | 6000 | 0.7499 | 41.8302 | 30.964 | 40.3059 | 40.2964 | 19.0 | | 0.3347 | 2.32 | 7000 | 0.8540 | 41.4633 | 30.6209 | 39.9933 | 39.9948 | 18.9954 | | 0.341 | 2.65 | 8000 | 0.8599 | 41.6576 | 31.0316 | 40.1466 | 40.1526 | 18.9907 | | 0.3531 | 2.99 | 9000 | 0.8368 | 42.05 | 31.6387 | 40.6239 | 40.6254 | 18.9907 | | 9ed16f3f08db35fba8367c698e981c46 |

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-it This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.5555 - F1: 0.7875 | 9217f0ecf6961efb084af6e170bb4fd8 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.8118 | 1.0 | 1680 | 0.5555 | 0.7875 | | d3b591741938e1b78ca2cb7aff29b2d9 |

apache-2.0 | ['generated_from_trainer'] | false | 2nd-wav2vec2-l-xls-r-300m-turkish-test This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.6019 - Wer: 0.4444 | 64c19b9ba2cdafbee497d36341ab394d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.0522 | 3.67 | 400 | 0.7773 | 0.7296 | | 0.5369 | 7.34 | 800 | 0.6282 | 0.5888 | | 0.276 | 11.01 | 1200 | 0.5998 | 0.5330 | | 0.1725 | 14.68 | 1600 | 0.5859 | 0.4908 | | 0.1177 | 18.35 | 2000 | 0.6019 | 0.4444 | | fe05952f79fbfc8e7e2b0b2f5aefa996 |

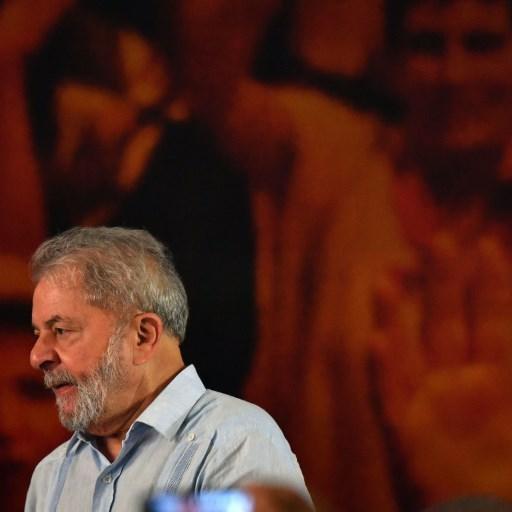

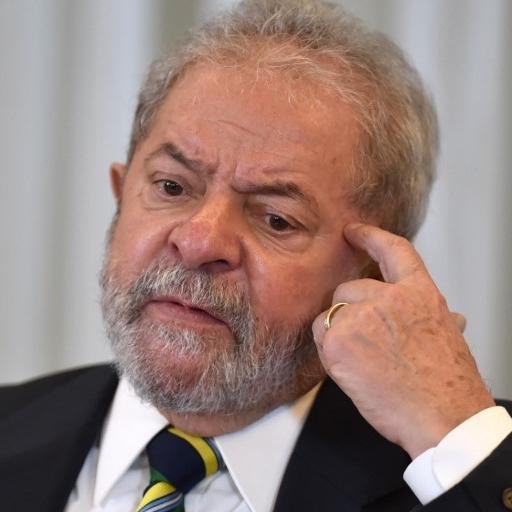

mit | [] | false | Lula 13 on Stable Diffusion This is the `<lula-13>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:        | 9ae588937c7eeaf06bc6a1a1a20662f8 |

apache-2.0 | ['generated_from_trainer'] | false | hf_fine_tune_hello_world This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the yelp_review_full dataset. It achieves the following results on the evaluation set: - Loss: 1.0142 - Accuracy: 0.592 | 6d9506cf9a75ed0110679915559c2bd2 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 125 | 1.0844 | 0.529 | | No log | 2.0 | 250 | 1.0022 | 0.58 | | No log | 3.0 | 375 | 1.0142 | 0.592 | | 6485e2d5c2a2ceb6e11dd1e1c1de347f |

apache-2.0 | ['generated_from_trainer', 't5-base'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3 - mixed_precision_training: Native AMP | 461cb6fdb79c2295bbfe39256ea6fcd7 |

mit | ['roberta-base', 'roberta-base-epoch_51'] | false | RoBERTa, Intermediate Checkpoint - Epoch 51 This model is part of our reimplementation of the [RoBERTa model](https://arxiv.org/abs/1907.11692), trained on Wikipedia and the Book Corpus only. We train this model for almost 100K steps, corresponding to 83 epochs. We provide all 84 checkpoints (including the randomly initialized weights before training) to make it possible to study the training dynamics of such models, and for other possible use cases. These models were trained as part of a work that studies how simple statistics from data, such as co-occurrences, affect model predictions, as described in the paper [Measuring Causal Effects of Data Statistics on Language Model's `Factual' Predictions](https://arxiv.org/abs/2207.14251). This is RoBERTa-base epoch_51. | a62bac9de86563aaf91e4b0889a6e0cd |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper_large_Khmer This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) on the google/fleurs km_kh dataset. It achieves the following results on the evaluation set: - Loss: 0.5659 - Wer: 51.1683 | 4312e5497cd6f83a86fcd9179cb53eb3 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0002 | 50.0 | 500 | 0.5488 | 51.5328 | | 0.0001 | 100.0 | 1000 | 0.5659 | 51.1683 | | 8ddac99f7f56065bd206d6d4dd32261a |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | We need to read the audio files as arrays def evaluate(batch): inputs = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True) with torch.no_grad(): logits = model(inputs.input_values.to("cuda"), attention_mask=inputs.attention_mask.to("cuda")).logits pred_ids = torch.argmax(logits, dim=-1) batch["pred_strings"] = processor.batch_decode(pred_ids) return batch result = test_dataset.map(evaluate, batched=True, batch_size=8) print("WER: {:2f}".format(100 * wer.compute(predictions=result["pred_strings"], references=result["sentence"]))) ``` **Test Result**: 56.253154 % | 40dd012fc80c8e6bccc33838a9c70a80 |
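The word error rate reported above is the number of word-level substitutions, deletions and insertions divided by the number of reference words. The following is a pure-Python sketch of that definition for a single sentence pair. It is an illustration, not the implementation behind `wer.compute`, and the function name is hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference prefix seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)
```

Multiplying the result by 100 gives a percentage, as in the snippet above. Because insertions are counted, the value can exceed 1.0 when the hypothesis is much longer than the reference.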

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | exper_batch_32_e8 This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the sudo-s/herbier_mesuem1 dataset. It achieves the following results on the evaluation set: - Loss: 0.3520 - Accuracy: 0.9113 | b9a07edf641d418b75e5edb9fb6a73ea |

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 8 - mixed_precision_training: Apex, opt level O1 | 74d34851ae88fe56a03f68f5e572c8c2 |
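To make `lr_scheduler_type: linear` concrete, here is a small sketch of a linear decay from the base learning rate to zero. It assumes no warmup, since none is listed above, and it assumes 2560 total steps: the results table for this model lists step 1600 at epoch 5.0, i.e. 320 steps per epoch over 8 epochs. The function name and defaults are illustrative only.

```python
def linear_lr(step: int, total_steps: int, base_lr: float = 2e-4, warmup_steps: int = 0) -> float:
    """Learning rate at `step` for linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        # Ramp up linearly from 0 to base_lr during warmup (unused when warmup_steps == 0).
        return base_lr * step / max(1, warmup_steps)
    # After warmup, decay linearly so the rate reaches 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


if __name__ == "__main__":
    total = 8 * 320  # assumed: 8 epochs at 320 optimizer steps each
    for step in (0, 640, 1280, 1920, 2560):
        print(step, linear_lr(step, total))
```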

apache-2.0 | ['image-classification', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 3.3787 | 0.31 | 100 | 3.3100 | 0.3566 | | 2.3975 | 0.62 | 200 | 2.3196 | 0.5717 | | 1.5578 | 0.94 | 300 | 1.6764 | 0.6461 | | 1.0291 | 1.25 | 400 | 1.1713 | 0.7463 | | 0.8185 | 1.56 | 500 | 0.9292 | 0.7953 | | 0.6181 | 1.88 | 600 | 0.7732 | 0.8169 | | 0.3873 | 2.19 | 700 | 0.6877 | 0.8277 | | 0.2979 | 2.5 | 800 | 0.6250 | 0.8404 | | 0.2967 | 2.81 | 900 | 0.6151 | 0.8365 | | 0.1874 | 3.12 | 1000 | 0.5401 | 0.8608 | | 0.2232 | 3.44 | 1100 | 0.5032 | 0.8712 | | 0.1109 | 3.75 | 1200 | 0.4635 | 0.8774 | | 0.0539 | 4.06 | 1300 | 0.4495 | 0.8843 | | 0.0668 | 4.38 | 1400 | 0.4273 | 0.8951 | | 0.0567 | 4.69 | 1500 | 0.4427 | 0.8867 | | 0.0285 | 5.0 | 1600 | 0.4092 | 0.8955 | | 0.0473 | 5.31 | 1700 | 0.3720 | 0.9071 | | 0.0225 | 5.62 | 1800 | 0.3691 | 0.9063 | | 0.0196 | 5.94 | 1900 | 0.3775 | 0.9048 | | 0.0173 | 6.25 | 2000 | 0.3641 | 0.9040 | | 0.0092 | 6.56 | 2100 | 0.3551 | 0.9090 | | 0.008 | 6.88 | 2200 | 0.3591 | 0.9125 | | 0.0072 | 7.19 | 2300 | 0.3542 | 0.9121 | | 0.007 | 7.5 | 2400 | 0.3532 | 0.9106 | | 0.007 | 7.81 | 2500 | 0.3520 | 0.9113 | | b8c12b92bb7296d564e0f330b16d287e |

gpl-3.0 | ['pytorch', 'lm-head', 'albert', 'zh'] | false | Usage Please use BertTokenizerFast as the tokenizer instead of AutoTokenizer. ``` from transformers import ( BertTokenizerFast, AutoModel, ) tokenizer = BertTokenizerFast.from_pretrained('bert-base-chinese') model = AutoModel.from_pretrained('ckiplab/albert-base-chinese') ``` For full usage and more information, please refer to https://github.com/ckiplab/ckip-transformers. | 716e4d5d429ad4a3db2ce6451489a6c9 |

apache-2.0 | ['spacy', 'token-classification'] | false | DaCy large transformer DaCy is a Danish language processing framework with state-of-the-art pipelines as well as functionality for analysing Danish pipelines. DaCy's largest pipeline has achieved state-of-the-art performance on named entity recognition, part-of-speech tagging and dependency parsing for Danish on the DaNE dataset. Check out the [DaCy repository](https://github.com/centre-for-humanities-computing/DaCy) for material on how to use DaCy and reproduce the results. DaCy also contains guides on usage of the package as well as behavioural tests for biases and robustness of Danish NLP pipelines. | Feature | Description | | --- | --- | | **Name** | `da_dacy_large_trf` | | **Version** | `0.1.0` | | **spaCy** | `>=3.1.1,<3.2.0` | | **Default Pipeline** | `transformer`, `morphologizer`, `parser`, `attribute_ruler`, `lemmatizer`, `ner` | | **Components** | `transformer`, `morphologizer`, `parser`, `attribute_ruler`, `lemmatizer`, `ner` | | **Vectors** | 0 keys, 0 unique vectors (0 dimensions) | | **Sources** | [UD Danish DDT v2.5](https://github.com/UniversalDependencies/UD_Danish-DDT) (Johannsen, Anders; Martínez Alonso, Héctor; Plank, Barbara)<br />[DaNE](https://github.com/alexandrainst/danlp/blob/master/docs/datasets.md | b849691a8dc6e30f591d9fb08a06f3c3 |

apache-2.0 | ['spacy', 'token-classification'] | false | danish-dependency-treebank-dane) (Rasmus Hvingelby, Amalie B. Pauli, Maria Barrett, Christina Rosted, Lasse M. Lidegaard, Anders Søgaard)<br />[xlm-roberta-large](https://huggingface.co/xlm-roberta-large) (Alexis Conneau, Kartikay Khandelwal, Naman Goyal, Vishrav Chaudhary, Guillaume Wenzek, Francisco Guzmán, Edouard Grave, Myle Ott, Luke Zettlemoyer, Veselin Stoyanov) | | **License** | `Apache-2.0 License` | | **Author** | [Centre for Humanities Computing Aarhus](https://chcaa.io/ | c4a51fd11a03213c67afa8f2ff91b076 |

apache-2.0 | ['spacy', 'token-classification'] | false | Accuracy | Type | Score | | --- | --- | | `POS_ACC` | 98.70 | | `MORPH_ACC` | 98.49 | | `DEP_UAS` | 90.75 | | `DEP_LAS` | 88.38 | | `SENTS_P` | 96.09 | | `SENTS_R` | 95.74 | | `SENTS_F` | 95.91 | | `LEMMA_ACC` | 84.91 | | `ENTS_F` | 90.12 | | `ENTS_P` | 89.02 | | `ENTS_R` | 91.25 | | `TRANSFORMER_LOSS` | 1805626.49 | | `MORPHOLOGIZER_LOSS` | 111735.86 | | `PARSER_LOSS` | 8037491.27 | | `NER_LOSS` | 16634.46 | | 292d474faab90e1de977817e49b4d9ff |

apache-2.0 | ['spacy', 'token-classification'] | false | Deterministic Augmentations Deterministic augmentations are augmentations that always yield the same result. | Augmentation | Part-of-speech tagging (Accuracy) | Morphological tagging (Accuracy) | Dependency Parsing (UAS) | Dependency Parsing (LAS) | Sentence segmentation (F1) | Lemmatization (Accuracy) | Named entity recognition (F1) | | --- | --- | --- | --- | --- | --- | --- | --- | | No augmentation | 0.985 | 0.979 | 0.906 | 0.881 | 0.986 | 0.844 | 0.839 | | Æøå Augmentation | 0.973 | 0.963 | 0.892 | 0.863 | 0.975 | 0.754 | 0.815 | | Lowercase | 0.981 | 0.975 | 0.902 | 0.876 | 0.93 | 0.848 | 0.788 | | No Spacing | 0.227 | 0.229 | 0.004 | 0.004 | 0.54 | 0.225 | 0.086 | | Abbreviated first names | 0.984 | 0.978 | 0.903 | 0.878 | 0.986 | 0.845 | 0.839 | | Input size augmentation 5 sentences | 0.986 | 0.981 | 0.904 | 0.88 | 0.97 | 0.844 | 0.847 | | Input size augmentation 10 sentences | 0.986 | 0.981 | 0.905 | 0.881 | 0.964 | 0.844 | 0.849 | | 9d91d786788edc034202cdd9bd4bcfee |

apache-2.0 | ['spacy', 'token-classification'] | false | Stochastic Augmentations Stochastic augmentations are augmentations that are repeated multiple times to estimate the effect of the augmentation. | Augmentation | Part-of-speech tagging (Accuracy) | Morphological tagging (Accuracy) | Dependency Parsing (UAS) | Dependency Parsing (LAS) | Sentence segmentation (F1) | Lemmatization (Accuracy) | Named entity recognition (F1) | | --- | --- | --- | --- | --- | --- | --- | --- | | Keystroke errors 2% | 0.949 (0.002) | 0.944 (0.002) | 0.868 (0.002) | 0.833 (0.002) | 0.965 (0.002) | 0.773 (0.002) | 0.775 (0.002) | | Keystroke errors 5% | 0.895 (0.003) | 0.893 (0.003) | 0.81 (0.003) | 0.76 (0.003) | 0.92 (0.003) | 0.68 (0.003) | 0.698 (0.003) | | Keystroke errors 15% | 0.705 (0.005) | 0.72 (0.005) | 0.6 (0.005) | 0.518 (0.005) | 0.801 (0.005) | 0.462 (0.005) | 0.506 (0.005) | | Danish names | 0.984 (0.0) | 0.979 (0.0) | 0.904 (0.0) | 0.879 (0.0) | 0.987 (0.0) | 0.847 (0.0) | 0.844 (0.0) | | Muslim names | 0.984 (0.0) | 0.979 (0.0) | 0.904 (0.0) | 0.879 (0.0) | 0.987 (0.0) | 0.847 (0.0) | 0.844 (0.0) | | Female names | 0.984 (0.0) | 0.979 (0.0) | 0.904 (0.0) | 0.879 (0.0) | 0.986 (0.0) | 0.847 (0.0) | 0.846 (0.0) | | Male names | 0.984 (0.0) | 0.979 (0.0) | 0.904 (0.0) | 0.879 (0.0) | 0.986 (0.0) | 0.846 (0.0) | 0.845 (0.0) | | Spacing Augmentation 5% | 0.946 (0.002) | 0.941 (0.002) | 0.794 (0.002) | 0.771 (0.002) | 0.969 (0.002) | 0.812 (0.002) | 0.781 (0.002) | <details> <summary> Description of Augmenters </summary> **No augmentation:** Applies no augmentation to the DaNE test set. **Æøå Augmentation:** This augmentation replaces æ, ø, and å with their spelling variations ae, oe and aa respectively. **Lowercase:** This augmentation lowercases all text. **No Spacing:** This augmentation removes all spacing from the text. **Abbreviated first names:** This augmentation abbreviates the first names of entities. For instance 'Kenneth Enevoldsen' would turn into 'K. Enevoldsen'. **Keystroke errors 2%:** This augmentation simulates keystroke errors by replacing 2% of keys with a neighbouring key on a Danish QWERTY keyboard. As this augmentation is stochastic, it is repeated 20 times to obtain a consistent estimate, and the mean is provided with its standard deviation in parentheses. **Keystroke errors 5%:** This augmentation simulates keystroke errors by replacing 5% of keys with a neighbouring key on a Danish QWERTY keyboard. As this augmentation is stochastic, it is repeated 20 times to obtain a consistent estimate, and the mean is provided with its standard deviation in parentheses. **Keystroke errors 15%:** This augmentation simulates keystroke errors by replacing 15% of keys with a neighbouring key on a Danish QWERTY keyboard. As this augmentation is stochastic, it is repeated 20 times to obtain a consistent estimate, and the mean is provided with its standard deviation in parentheses. **Danish names:** This augmentation replaces all names with Danish names derived from Danmarks Statistik (2021). As this augmentation is stochastic, it is repeated 20 times to obtain a consistent estimate, and the mean is provided with its standard deviation in parentheses. **Muslim names:** This augmentation replaces all names with Muslim names derived from Meldgaard (2005). As this augmentation is stochastic, it is repeated 20 times to obtain a consistent estimate, and the mean is provided with its standard deviation in parentheses. **Female names:** This augmentation replaces all names with Danish female names derived from Danmarks Statistik (2021). As this augmentation is stochastic, it is repeated 20 times to obtain a consistent estimate, and the mean is provided with its standard deviation in parentheses. **Male names:** This augmentation replaces all names with Danish male names derived from Danmarks Statistik (2021). As this augmentation is stochastic, it is repeated 20 times to obtain a consistent estimate, and the mean is provided with its standard deviation in parentheses. **Spacing Augmentation 5%:** This augmentation replaces all names with Danish male names derived from Danmarks Statistik (2021). As this augmentation is stochastic, it is repeated 20 times to obtain a consistent estimate, and the mean is provided with its standard deviation in parentheses. </details> <br /> | faf4d2e8772a0f245b98ae2ece7fdfc9 |
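Three of the deterministic augmenters described above are simple string transformations and can be sketched in a few lines. This is an illustration written from the descriptions only, not DaCy's own augmenter code. The function names are hypothetical, and the handling of the uppercase letters Æ, Ø and Å is an assumption, since the description only mentions the lowercase forms.

```python
# Spelling variations from the description; the uppercase mappings are assumed.
AEOEAA = {"æ": "ae", "ø": "oe", "å": "aa", "Æ": "Ae", "Ø": "Oe", "Å": "Aa"}


def aeoeaa_augment(text: str) -> str:
    """Replace æ, ø and å with the spelling variations ae, oe and aa."""
    for source, target in AEOEAA.items():
        text = text.replace(source, target)
    return text


def remove_spacing(text: str) -> str:
    """Remove all space characters from the text."""
    return text.replace(" ", "")


def abbreviate_first_name(full_name: str) -> str:
    """Turn 'Kenneth Enevoldsen' into 'K. Enevoldsen'; single-word names are returned unchanged."""
    first, _, rest = full_name.partition(" ")
    return f"{first[0]}. {rest}" if rest else full_name
```

Because these functions always return the same output for the same input, a single evaluation pass is enough, which is why the deterministic results are reported without a standard deviation.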

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | tfmfurbase Dreambooth model trained by Deitsao with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook (BROKEN because I'm sleepy asf 😭) Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb) it's a simple model which can generate tfm furs based on old transformice furs. it can help with fantasy if someone wants to suggest fur for tfm. (it can work a lot better when using mouse base from that game) Sample pictures of this concept:    | daa30595b1f33b798d22be0129523a0f |

apache-2.0 | ['generated_from_trainer'] | false | test-clm This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on the bittensor train-v1.1.json dataset. It achieves the following results on the evaluation set: - Loss: 6.5199 - Accuracy: 0.1387 | da10be0856c58f8b0337498f9e2c0d19 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased_fold_1_ternary_v1 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 2.1145 - F1: 0.7757 | 29abec69c901ff416eac7bcce00f6174 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | No log | 1.0 | 290 | 0.5580 | 0.7646 | | 0.555 | 2.0 | 580 | 0.5820 | 0.7670 | | 0.555 | 3.0 | 870 | 0.6683 | 0.7757 | | 0.2633 | 4.0 | 1160 | 0.9137 | 0.7844 | | 0.2633 | 5.0 | 1450 | 1.1367 | 0.7708 | | 0.1148 | 6.0 | 1740 | 1.2192 | 0.7757 | | 0.0456 | 7.0 | 2030 | 1.4035 | 0.7633 | | 0.0456 | 8.0 | 2320 | 1.5185 | 0.7658 | | 0.0226 | 9.0 | 2610 | 1.6126 | 0.7782 | | 0.0226 | 10.0 | 2900 | 1.7631 | 0.7658 | | 0.0061 | 11.0 | 3190 | 1.7279 | 0.7794 | | 0.0061 | 12.0 | 3480 | 1.8548 | 0.7584 | | 0.0076 | 13.0 | 3770 | 1.9052 | 0.7646 | | 0.0061 | 14.0 | 4060 | 1.9100 | 0.7757 | | 0.0061 | 15.0 | 4350 | 1.9280 | 0.7732 | | 0.0025 | 16.0 | 4640 | 1.9991 | 0.7745 | | 0.0025 | 17.0 | 4930 | 1.9960 | 0.7757 | | 0.0035 | 18.0 | 5220 | 2.0018 | 0.7708 | | 0.0015 | 19.0 | 5510 | 2.1099 | 0.7646 | | 0.0015 | 20.0 | 5800 | 2.1061 | 0.7695 | | 0.0022 | 21.0 | 6090 | 2.0941 | 0.7757 | | 0.0022 | 22.0 | 6380 | 2.0967 | 0.7794 | | 0.0005 | 23.0 | 6670 | 2.1133 | 0.7745 | | 0.0005 | 24.0 | 6960 | 2.1042 | 0.7782 | | 0.0021 | 25.0 | 7250 | 2.1145 | 0.7757 | | a237927bd9919f0fd96c8f885bbd60c8 |

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | Demo: How to use in ESPnet2 ```bash cd espnet git checkout 047d0c474c18a87c205e566948410be16787e477 pip install -e . cd egs2/kss/tts1 ./run.sh --skip_data_prep false --skip_train true --download_model imdanboy/kss_tts_train_jets_raw_phn_null_g2pk_train.total_count.ave ``` | cc95c14092b40f8d3a2407662fb8557b |

cc-by-4.0 | ['espnet', 'audio', 'text-to-speech'] | false | TTS config <details><summary>expand</summary> ``` config: conf/tuning/train_jets.yaml print_config: false log_level: INFO dry_run: false iterator_type: sequence output_dir: exp/tts_train_jets_raw_phn_null_g2pk ngpu: 1 seed: 777 num_workers: 4 num_att_plot: 3 dist_backend: nccl dist_init_method: env:// dist_world_size: 4 dist_rank: 0 local_rank: 0 dist_master_addr: localhost dist_master_port: 52809 dist_launcher: null multiprocessing_distributed: true unused_parameters: true sharded_ddp: false cudnn_enabled: true cudnn_benchmark: false cudnn_deterministic: false collect_stats: false write_collected_feats: false max_epoch: 1000 patience: null val_scheduler_criterion: - valid - loss early_stopping_criterion: - valid - loss - min best_model_criterion: - - valid - text2mel_loss - min - - train - text2mel_loss - min - - train - total_count - max keep_nbest_models: 5 nbest_averaging_interval: 0 grad_clip: -1 grad_clip_type: 2.0 grad_noise: false accum_grad: 1 no_forward_run: false resume: true train_dtype: float32 use_amp: false log_interval: 50 use_matplotlib: true use_tensorboard: true use_wandb: false wandb_project: null wandb_id: null wandb_entity: null wandb_name: null wandb_model_log_interval: -1 detect_anomaly: false pretrain_path: null init_param: [] ignore_init_mismatch: false freeze_param: [] num_iters_per_epoch: 1000 batch_size: 20 valid_batch_size: null batch_bins: 2000000 valid_batch_bins: null train_shape_file: - exp/tts_stats_raw_phn_null_g2pk/train/text_shape.phn - exp/tts_stats_raw_phn_null_g2pk/train/speech_shape valid_shape_file: - exp/tts_stats_raw_phn_null_g2pk/valid/text_shape.phn - exp/tts_stats_raw_phn_null_g2pk/valid/speech_shape batch_type: numel valid_batch_type: null fold_length: - 150 - 204800 sort_in_batch: descending sort_batch: descending multiple_iterator: false chunk_length: 500 chunk_shift_ratio: 0.5 num_cache_chunks: 1024 train_data_path_and_name_and_type: - - 
dump/raw/tr_no_dev/text - text - text - - dump/raw/tr_no_dev/wav.scp - speech - sound - - exp/tts_stats_raw_phn_null_g2pk/train/collect_feats/pitch.scp - pitch - npy - - exp/tts_stats_raw_phn_null_g2pk/train/collect_feats/energy.scp - energy - npy valid_data_path_and_name_and_type: - - dump/raw/dev/text - text - text - - dump/raw/dev/wav.scp - speech - sound - - exp/tts_stats_raw_phn_null_g2pk/valid/collect_feats/pitch.scp - pitch - npy - - exp/tts_stats_raw_phn_null_g2pk/valid/collect_feats/energy.scp - energy - npy allow_variable_data_keys: false max_cache_size: 0.0 max_cache_fd: 32 valid_max_cache_size: null optim: adamw optim_conf: lr: 0.0002 betas: - 0.8 - 0.99 eps: 1.0e-09 weight_decay: 0.0 scheduler: exponentiallr scheduler_conf: gamma: 0.999875 optim2: adamw optim2_conf: lr: 0.0002 betas: - 0.8 - 0.99 eps: 1.0e-09 weight_decay: 0.0 scheduler2: exponentiallr scheduler2_conf: gamma: 0.999875 generator_first: true token_list: - <blank> - <unk> - '' - ᅡ - ᅵ - ᄋ - ᅳ - ᄀ - ᅥ - ᄂ - ᆫ - ᄅ - ᄌ - ᄉ - ᅩ - ᆯ - ᄆ - . - ᅮ - ᄃ - ᄒ - ᅦ - ᆼ - ᅢ - ᄇ - ᅭ - ᅧ - ᄊ - ᆷ - ᄄ - ᆮ - ᄎ - ᄁ - ᆨ - ᄑ - ᄐ - ᅪ - ᄏ - '?' - ᄍ - ᆸ - ᅬ - ᅣ - ᅴ - ᅯ - ᅨ - ᄈ - ᅱ - ᅲ - ᅫ - ',' - '!' 
- ᅤ - ':' - ᅰ - '''' - '-' - '"' - / - I - M - F - E - S - C - A - B - ㅇ - <sos/eos> odim: null model_conf: {} use_preprocessor: true token_type: phn bpemodel: null non_linguistic_symbols: null cleaner: null g2p: g2pk feats_extract: fbank feats_extract_conf: n_fft: 1024 hop_length: 256 win_length: null fs: 24000 fmin: 0 fmax: null n_mels: 80 normalize: global_mvn normalize_conf: stats_file: exp/tts_stats_raw_phn_null_g2pk/train/feats_stats.npz tts: jets tts_conf: generator_type: jets_generator generator_params: adim: 256 aheads: 2 elayers: 4 eunits: 1024 dlayers: 4 dunits: 1024 positionwise_layer_type: conv1d positionwise_conv_kernel_size: 3 duration_predictor_layers: 2 duration_predictor_chans: 256 duration_predictor_kernel_size: 3 use_masking: true encoder_normalize_before: true decoder_normalize_before: true encoder_type: transformer decoder_type: transformer conformer_rel_pos_type: latest conformer_pos_enc_layer_type: rel_pos conformer_self_attn_layer_type: rel_selfattn conformer_activation_type: swish use_macaron_style_in_conformer: true use_cnn_in_conformer: true conformer_enc_kernel_size: 7 conformer_dec_kernel_size: 31 init_type: xavier_uniform transformer_enc_dropout_rate: 0.2 transformer_enc_positional_dropout_rate: 0.2 transformer_enc_attn_dropout_rate: 0.2 transformer_dec_dropout_rate: 0.2 transformer_dec_positional_dropout_rate: 0.2 transformer_dec_attn_dropout_rate: 0.2 pitch_predictor_layers: 5 pitch_predictor_chans: 256 pitch_predictor_kernel_size: 5 pitch_predictor_dropout: 0.5 pitch_embed_kernel_size: 1 pitch_embed_dropout: 0.0 stop_gradient_from_pitch_predictor: true energy_predictor_layers: 2 energy_predictor_chans: 256 energy_predictor_kernel_size: 3 energy_predictor_dropout: 0.5 energy_embed_kernel_size: 1 energy_embed_dropout: 0.0 stop_gradient_from_energy_predictor: false generator_out_channels: 1 generator_channels: 512 generator_global_channels: -1 generator_kernel_size: 7 generator_upsample_scales: - 8 - 8 - 2 - 2 
generator_upsample_kernel_sizes: - 16 - 16 - 4 - 4 generator_resblock_kernel_sizes: - 3 - 7 - 11 generator_resblock_dilations: - - 1 - 3 - 5 - - 1 - 3 - 5 - - 1 - 3 - 5 generator_use_additional_convs: true generator_bias: true generator_nonlinear_activation: LeakyReLU generator_nonlinear_activation_params: negative_slope: 0.1 generator_use_weight_norm: true segment_size: 64 idim: 69 odim: 80 discriminator_type: hifigan_multi_scale_multi_period_discriminator discriminator_params: scales: 1 scale_downsample_pooling: AvgPool1d scale_downsample_pooling_params: kernel_size: 4 stride: 2 padding: 2 scale_discriminator_params: in_channels: 1 out_channels: 1 kernel_sizes: - 15 - 41 - 5 - 3 channels: 128 max_downsample_channels: 1024 max_groups: 16 bias: true downsample_scales: - 2 - 2 - 4 - 4 - 1 nonlinear_activation: LeakyReLU nonlinear_activation_params: negative_slope: 0.1 use_weight_norm: true use_spectral_norm: false follow_official_norm: false periods: - 2 - 3 - 5 - 7 - 11 period_discriminator_params: in_channels: 1 out_channels: 1 kernel_sizes: - 5 - 3 channels: 32 downsample_scales: - 3 - 3 - 3 - 3 - 1 max_downsample_channels: 1024 bias: true nonlinear_activation: LeakyReLU nonlinear_activation_params: negative_slope: 0.1 use_weight_norm: true use_spectral_norm: false generator_adv_loss_params: average_by_discriminators: false loss_type: mse discriminator_adv_loss_params: average_by_discriminators: false loss_type: mse feat_match_loss_params: average_by_discriminators: false average_by_layers: false include_final_outputs: true mel_loss_params: fs: 24000 n_fft: 1024 hop_length: 256 win_length: null window: hann n_mels: 80 fmin: 0 fmax: null log_base: null lambda_adv: 1.0 lambda_mel: 45.0 lambda_feat_match: 2.0 lambda_var: 1.0 lambda_align: 2.0 sampling_rate: 24000 cache_generator_outputs: true pitch_extract: dio pitch_extract_conf: reduction_factor: 1 use_token_averaged_f0: false fs: 24000 n_fft: 1024 hop_length: 256 f0max: 400 f0min: 80 pitch_normalize: global_mvn 
pitch_normalize_conf: stats_file: exp/tts_stats_raw_phn_null_g2pk/train/pitch_stats.npz energy_extract: energy energy_extract_conf: reduction_factor: 1 use_token_averaged_energy: false fs: 24000 n_fft: 1024 hop_length: 256 win_length: null energy_normalize: global_mvn energy_normalize_conf: stats_file: exp/tts_stats_raw_phn_null_g2pk/train/energy_stats.npz required: - output_dir - token_list version: '202204' distributed: true ``` </details> | c5527f5fcb7f0a9f37d79dfefe6d9ba0 |

apache-2.0 | ['generated_from_trainer'] | false | vit-base-highways-2 This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the highways-hacktum dataset. It achieves the following results on the evaluation set: - Loss: 0.2196 - Accuracy: 0.96 | d15ea82dd74e291cad101320743eef73 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 2 | ee741037c7ec32bf5a3fe08a713c12d6 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.0012 | 1.59 | 100 | 0.2196 | 0.96 | | af48739fd34f01dcc490acab01021c7a |

apache-2.0 | ['catalan'] | false | roberta-base-ca-finetuned-catalonia-independence-detector This model is a fine-tuned version of [BSC-TeMU/roberta-base-ca](https://huggingface.co/BSC-TeMU/roberta-base-ca) on the catalonia_independence dataset. It achieves the following results on the evaluation set: - Loss: 0.6065 - Accuracy: 0.7612 <details> | 5a736b70d252c7cd60b46e2094840f91 |

apache-2.0 | ['catalan'] | false | Training and evaluation data The data was collected over 12 days during February and March of 2019 from tweets posted in Barcelona, and during September of 2018 from tweets posted in the town of Terrassa, Catalonia. Each corpus is annotated with three classes: AGAINST, FAVOR and NEUTRAL, which express the stance towards the target - independence of Catalonia. | b1c63e07636f9fc56ad24000bbf3d73d |

apache-2.0 | ['catalan'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 377 | 0.6311 | 0.7453 | | 0.7393 | 2.0 | 754 | 0.6065 | 0.7612 | | 0.5019 | 3.0 | 1131 | 0.6340 | 0.7547 | | 0.3837 | 4.0 | 1508 | 0.6777 | 0.7597 | | 0.3837 | 5.0 | 1885 | 0.7232 | 0.7582 | </details> | c9bc0f19020e26d0f155ac451eff7667 |

apache-2.0 | ['catalan'] | false | Model in action 🚀 Fast usage with **pipelines**: ```python from transformers import pipeline model_path = "JonatanGk/roberta-base-ca-finetuned-catalonia-independence-detector" independence_analysis = pipeline("text-classification", model=model_path, tokenizer=model_path) independence_analysis( "Assegura l'expert que en un 46% els catalans s'inclouen dins del que es denomina com el doble sentiment identitari. És a dir, se senten tant catalans com espanyols. 1 de cada cinc, en canvi, té un sentiment excloent, només se senten catalans, i un 4% sol espanyol." ) | 6a1f0bfe74126926648728b090e39fbb |

apache-2.0 | ['catalan'] | false | Output: [{'label': 'FAVOR', 'score': 0.9040119647979736}] ``` [Open in Colab](https://colab.research.google.com/github/JonatanGk/Shared-Colab/blob/master/Catalonia_independence_Detector_(CATALAN).ipynb) | fee5493dff137ae28cc4b3f482d7edfc |

apache-2.0 | ['catalan'] | false | Citation Thx to HF.co & [@lewtun](https://github.com/lewtun) for Dataset ;) > Special thx to [Manuel Romero/@mrm8488](https://huggingface.co/mrm8488) as my mentor & R.C. > Created by [Jonatan Luna](https://JonatanGk.github.io) | [LinkedIn](https://www.linkedin.com/in/JonatanGk/) | eb556b451160f0228fc16982d2638ba9 |

mit | ['generated_from_trainer'] | false | DistillBerTurk_15_epoch This model is a fine-tuned version of [dbmdz/distilbert-base-turkish-cased](https://huggingface.co/dbmdz/distilbert-base-turkish-cased) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 0.0549 - Accuracy: 0.9931 | 12a0983d8321075fc801780f65fbc2fc |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 50 | 0.6766 | 0.625 | | No log | 2.0 | 100 | 0.3955 | 0.9583 | | No log | 3.0 | 150 | 0.0874 | 0.9792 | | No log | 4.0 | 200 | 0.0446 | 0.9861 | | No log | 5.0 | 250 | 0.0725 | 0.9792 | | No log | 6.0 | 300 | 0.0352 | 0.9931 | | No log | 7.0 | 350 | 0.0452 | 0.9931 | | No log | 8.0 | 400 | 0.0442 | 0.9931 | | No log | 9.0 | 450 | 0.0499 | 0.9931 | | 0.1537 | 10.0 | 500 | 0.0514 | 0.9931 | | 0.1537 | 11.0 | 550 | 0.0542 | 0.9931 | | 0.1537 | 12.0 | 600 | 0.0545 | 0.9931 | | 0.1537 | 13.0 | 650 | 0.0548 | 0.9931 | | 0.1537 | 14.0 | 700 | 0.0550 | 0.9931 | | 0.1537 | 15.0 | 750 | 0.0549 | 0.9931 | | c9f83b944700be98b9409baea85e275a |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-imdb This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 2.2489 | 839a990efa5752c1b42e0b7c0865371f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.4463 | 1.0 | 782 | 2.2692 | | 2.3895 | 2.0 | 1564 | 2.2460 | | 2.3631 | 3.0 | 2346 | 2.2205 | | f3b0030c48f9492e605dc7ac1d5f8528 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 16 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.01 - training_steps: 2524 - mixed_precision_training: Native AMP | c060912cfeee7092f5ca0959da562681 |

apache-2.0 | ['generated_from_trainer'] | false | Full config {'dataset': {'conditional_training_config': {'aligned_prefix': '<|aligned|>', 'drop_token_fraction': 0.1, 'misaligned_prefix': '<|misaligned|>', 'threshold': 0}, 'datasets': ['kejian/codeparrot-train-more-filter-3.3b-cleaned'], 'is_split_by_sentences': True, 'skip_tokens': 2969174016}, 'generation': {'batch_size': 128, 'force_call_on': [503], 'metrics_configs': [{}, {'n': 1}, {}], 'scenario_configs': [{'display_as_html': True, 'generate_kwargs': {'bad_words_ids': [[32769]], 'do_sample': True, 'eos_token_id': 0, 'max_length': 640, 'min_length': 10, 'temperature': 0.7, 'top_k': 0, 'top_p': 0.9}, 'name': 'unconditional', 'num_hits_threshold': 0, 'num_samples': 4096, 'prefix': '<|aligned|>', 'use_prompt_for_scoring': False}], 'scorer_config': {}}, 'kl_gpt3_callback': {'force_call_on': [503], 'gpt3_kwargs': {'model_name': 'code-cushman-001'}, 'max_tokens': 64, 'num_samples': 4096, 'prefix': '<|aligned|>', 'should_insert_prefix': True}, 'model': {'from_scratch': False, 'gpt2_config_kwargs': {'reorder_and_upcast_attn': True, 'scale_attn_by': True}, 'model_kwargs': {'revision': '9cdfa11a07b00726ddfdabb554de05b29d777db3'}, 'num_additional_tokens': 2, 'path_or_name': 'kejian/grainy-pep8'}, 'objective': {'name': 'MLE'}, 'tokenizer': {'path_or_name': 'codeparrot/codeparrot-small', 'special_tokens': ['<|aligned|>', '<|misaligned|>']}, 'training': {'dataloader_num_workers': 0, 'effective_batch_size': 128, 'evaluation_strategy': 'no', 'fp16': True, 'hub_model_id': 'naughty_davinci', 'hub_strategy': 'all_checkpoints', 'learning_rate': 0.0001, 'logging_first_step': True, 'logging_steps': 10, 'num_tokens': 3300000000, 'output_dir': 'training_output', 'per_device_train_batch_size': 8, 'push_to_hub': True, 'remove_unused_columns': False, 'save_steps': 100, 'save_strategy': 'steps', 'seed': 42, 'tokens_already_seen': 2969174016, 'warmup_ratio': 0.01, 'weight_decay': 0.1}} | b7decb98b9279640b1aef2b1a771e810 |

cc-by-4.0 | ['norwegian', 'bert'] | false | Results |**Model** | **NoRec** | **NorNe-NB**| **NorNe-NN** | **NorDial** | **DaNe** | **Da-Angry-Tweets** | |:-----------|------------:|------------:|------------:|------------:|------------:|------------:| |roberta-base (English) | 51.77 | 79.01/79.53| 79.79/83.02 | 67.18| 75.44/78.07 | 55.51 | |mBERT-cased | 63.91 | 83.72/86.12| 83.05/87.12 | 66.23| 80.00/81.43 | 57.67 | |nb-bert-base | 75.60 |**91.98**/**92.95** |**90.93**/**94.06**|69.39| 81.95/84.83| 64.18| |notram-bert-norwegian-cased | 72.47 | 91.77/93.12|89.79/93.70| **78.55**| **83.69**/**86.55**| **64.19** | |notram-bert-norwegian-uncased | 73.47 | 89.28/91.61 |87.23/90.23 |74.21 | 80.29/82.31| 61.18| |notram-bert-norwegian-cased-pod | **76.18** | 91.24/92.24| 90.88/93.21| 76.21| 81.82/84.99| 62.16 | |nb-roberta-base | 68.77 |87.99/89.43 | 85.43/88.66| 76.34| 75.91/77.94| 61.50 | |nb-roberta-base-scandinavian | 67.88 | 87.73/89.14| 87.39/90.92| 74.81| 76.22/78.66 | 63.37 | |nb-roberta-base-v2-200k | 46.87 | 85.57/87.04| - | 64.99| - | - | |test_long_w5 200k| 60.48 | 88.00/90.00 | 83.93/88.45 | 68.41 |75.22/78.50| 57.95 | |test_long_w5_roberta_tokenizer 200k| 63.51| 86.28/87.77| 84.95/88.61 | 69.86 | 71.31/74.27 | 59.96 | |test_long_w5_roberta_tokenizer 400k| 59.76 |87.39/89.06 | 85.16/89.01 | 71.46 | 72.39/75.65| 39.73 | |test_long_w5_dataset 400k| 66.80 | 86.52/88.55 | 82.81/86.76 | 66.94 | 71.47/74.20| 55.25 | |test_long_w5_dataset 600k| 67.37 | 89.98/90.95 | 84.53/88.37 | 66.84 | 75.14/76.50| 57.47 | |roberta-jan-128_ncc - 400k - 128| 67.79 | 91.45/92.33 | 86.41/90.19 | 67.20 | 81.00/82.39| 59.65 | |roberta-jan-128_ncc - 1000k - 128| 68.17 | 89.34/90.74 | 86.89/89.87 | 68.41 | 80.30/82.17| 61.63 | | 3bcf0d48d839b8055fd5b8bf9d8e078d |

apache-2.0 | ['automatic-speech-recognition', 'multilingual_librispeech', 'generated_from_trainer'] | false | wav2vec2-xlsr-53-300m-mls-german-ft This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the MULTILINGUAL_LIBRISPEECH - GERMAN 10h dataset. It achieves the following results on the evaluation set: - Loss: 0.2219 - Wer: 0.1288 | 5975ccee37e92c6dd3dc052ca0e3de01 |

apache-2.0 | ['automatic-speech-recognition', 'multilingual_librispeech', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:------:|:-----:|:---------------:|:------:| | 2.9888 | 7.25 | 500 | 2.9192 | 1.0 | | 2.9313 | 14.49 | 1000 | 2.8698 | 1.0 | | 1.068 | 21.74 | 1500 | 0.2647 | 0.2565 | | 0.8151 | 28.99 | 2000 | 0.2067 | 0.1719 | | 0.764 | 36.23 | 2500 | 0.1975 | 0.1568 | | 0.7332 | 43.48 | 3000 | 0.1812 | 0.1463 | | 0.5952 | 50.72 | 3500 | 0.1923 | 0.1428 | | 0.6655 | 57.97 | 4000 | 0.1900 | 0.1404 | | 0.574 | 65.22 | 4500 | 0.1822 | 0.1370 | | 0.6211 | 72.46 | 5000 | 0.1937 | 0.1355 | | 0.5883 | 79.71 | 5500 | 0.1872 | 0.1335 | | 0.5666 | 86.96 | 6000 | 0.1874 | 0.1324 | | 0.5526 | 94.2 | 6500 | 0.1998 | 0.1368 | | 0.5671 | 101.45 | 7000 | 0.2054 | 0.1365 | | 0.5514 | 108.7 | 7500 | 0.1987 | 0.1340 | | 0.5382 | 115.94 | 8000 | 0.2104 | 0.1344 | | 0.5819 | 123.19 | 8500 | 0.2125 | 0.1334 | | 0.5277 | 130.43 | 9000 | 0.2063 | 0.1330 | | 0.4626 | 137.68 | 9500 | 0.2105 | 0.1310 | | 0.5842 | 144.93 | 10000 | 0.2087 | 0.1307 | | 0.535 | 152.17 | 10500 | 0.2137 | 0.1309 | | 0.5081 | 159.42 | 11000 | 0.2215 | 0.1302 | | 0.6033 | 166.67 | 11500 | 0.2162 | 0.1302 | | 0.5549 | 173.91 | 12000 | 0.2198 | 0.1286 | | 0.5389 | 181.16 | 12500 | 0.2241 | 0.1293 | | 0.4912 | 188.41 | 13000 | 0.2190 | 0.1290 | | 0.4671 | 195.65 | 13500 | 0.2218 | 0.1290 | | a5bbb102f3be090d5555c86ccfacedd6 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | No log | 1.0 | 393 | 0.2287 | 0.9341 | 0.9112 | | 821db75089abcd4fb516ea6b254708cd |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | yyaaeell Dreambooth model trained by Brainergy with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook. Test the concept via the A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb). Sample pictures of this concept: | a4105c08dcd6367d356d3e33f4a7da87 |

mit | ['grug', 'caveman', 'fun'] | false | GPT-Grug-355m A finetuned version of [GPT2-Medium](https://huggingface.co/gpt2-medium) on the 'grug' dataset. A demo is available [here](https://huggingface.co/spaces/DarwinAnim8or/grug-chat). If you're interested, there's a smaller model available here: [GPT-Grug-125m](https://huggingface.co/DarwinAnim8or/gpt-grug-125m). Do note, however, that it is very limited by comparison. | 3dad10e76089a22d78d92fb62d5b928a |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_sa_GLUE_Experiment_logit_kd_data_aug_cola_192 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE COLA dataset. It achieves the following results on the evaluation set: - Loss: 0.6917 - Matthews Correlation: 0.0937 | c897c8f7084f6ed18584cb43cd08fb86 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.647 | 1.0 | 835 | 0.6917 | 0.0937 | | 0.5356 | 2.0 | 1670 | 0.7312 | 0.1294 | | 0.4736 | 3.0 | 2505 | 0.7512 | 0.1372 | | 0.4318 | 4.0 | 3340 | 0.7576 | 0.1259 | | 0.3993 | 5.0 | 4175 | 0.7826 | 0.1277 | | 0.3736 | 6.0 | 5010 | 0.8138 | 0.1028 | | 0f571e05882ca262d6972f7d7baa3df5 |

apache-2.0 | ['fnet'] | false | FNet base model A model pretrained on English text with masked language modeling (MLM) and next sentence prediction (NSP) objectives. It was introduced in [this paper](https://arxiv.org/abs/2105.03824) and first released in [this repository](https://github.com/google-research/google-research/tree/master/f_net). This model is cased: it distinguishes between english and English. The model achieves 0.58 accuracy on the MLM objective and 0.80 on the NSP objective. Disclaimer: This model card was written by [gchhablani](https://huggingface.co/gchhablani). | ef90384b6c23ebe50b93a52bf5aa1f2b |