license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

apache-2.0 | ['translation'] | false | System Info: - hf_name: ukr-tur - source_languages: ukr - target_languages: tur - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/ukr-tur/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['uk', 'tr'] - src_constituents: {'ukr'} - tgt_constituents: {'tur'} - src_multilingual: False - tgt_multilingual: False - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/ukr-tur/opus-2020-06-17.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/ukr-tur/opus-2020-06-17.test.txt - src_alpha3: ukr - tgt_alpha3: tur - short_pair: uk-tr - chrF2_score: 0.655 - bleu: 39.3 - brevity_penalty: 0.934 - ref_len: 11844.0 - src_name: Ukrainian - tgt_name: Turkish - train_date: 2020-06-17 - src_alpha2: uk - tgt_alpha2: tr - prefer_old: False - long_pair: ukr-tur - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | fdf7d8be0ce28117b32ead6027c96da5 |

mit | ['bert', 'pytorch', 'tsdae'] | false | Introduction Legal_BERTimbau Large is a fine-tuned BERT model based on [BERTimbau](https://huggingface.co/neuralmind/bert-base-portuguese-cased) Large. "BERTimbau Base is a pretrained BERT model for Brazilian Portuguese that achieves state-of-the-art performances on three downstream NLP tasks: Named Entity Recognition, Sentence Textual Similarity and Recognizing Textual Entailment. It is available in two sizes: Base and Large. For further information or requests, please go to [BERTimbau repository](https://github.com/neuralmind-ai/portuguese-bert/)." The performance of language models can change drastically when there is a domain shift between training and test data. To create a Portuguese language model adapted to the legal domain, the original BERTimbau model was fine-tuned for 1 "PreTraining" epoch over 50000 cleaned documents (lr: 2e-5, using the TSDAE technique). | 6e5c489d4e88e84079ee27b0c2344afa |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper largeV2 Italian MLS This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) on the facebook/multilingual_librispeech italian dataset. It achieves the following results on the evaluation set: - Loss: 0.2051 - Wer: 8.3353 | 90cbd0001bb20efab92c3606090ac1d0 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Model description The model is fine-tuned for 4000 steps on the Multilingual LibriSpeech (MLS) Italian training data. - Zero-shot WER: 13.8 (MLS Italian test) - Fine-tuned on MLS Italian train, WER: 8.33 (MLS Italian test, -40% relative) | 3ca7d55ee355d0e07720a8e6a0574dec |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 32 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 4000 - mixed_precision_training: Native AMP | 6ce397f642e6103316be992e210bd4cb |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.1115 | 1.02 | 1000 | 0.2116 | 9.4217 | | 0.0867 | 2.03 | 2000 | 0.1964 | 9.7823 | | 0.0447 | 3.05 | 3000 | 0.2001 | 9.6409 | | 0.0426 | 4.07 | 4000 | 0.2051 | 8.3353 | | 2495623a660406858ee76f2b149c7423 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | whisper-large-v2-Irish This model is a fine-tuned version of [kpriyanshu256/whisper-large-v2-cy-500-32-1e-05](https://huggingface.co/kpriyanshu256/whisper-large-v2-cy-500-32-1e-05) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 1.1613 - Wer: 40.8827 | a83dfeb80dcfd95d3dc813b1458fabfa |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.4018 | 3.01 | 100 | 0.9510 | 47.8513 | | 0.0465 | 6.02 | 200 | 0.9984 | 42.4797 | | 0.0127 | 9.02 | 300 | 1.0906 | 42.9152 | | 0.0024 | 12.03 | 400 | 1.1443 | 40.8246 | | 0.0017 | 15.04 | 500 | 1.1613 | 40.8827 | | c273efcd9f6d11202e5919d346ae77cc |

apache-2.0 | ['translation'] | false | opus-mt-fi-mg * source languages: fi * target languages: mg * OPUS readme: [fi-mg](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fi-mg/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-24.zip](https://object.pouta.csc.fi/OPUS-MT-models/fi-mg/opus-2020-01-24.zip) * test set translations: [opus-2020-01-24.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-mg/opus-2020-01-24.test.txt) * test set scores: [opus-2020-01-24.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-mg/opus-2020-01-24.eval.txt) | 8f7a3ee10e2b0f3584fc1090dddfcd32 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | tedx100-xlsr: Wav2vec 2.0 with TEDx Dataset This is a demonstration of a Wav2vec model fine-tuned for Brazilian Portuguese on the [TEDx multilingual in Portuguese](http://www.openslr.org/100) dataset. In this notebook the model is tested against other available Brazilian Portuguese datasets. | Dataset | Train | Valid | Test | |--------------------------------|-------:|------:|------:| | CETUC | | -- | 5.4h | | Common Voice | | -- | 9.5h | | LaPS BM | | -- | 0.1h | | MLS | | -- | 3.7h | | Multilingual TEDx (Portuguese) | 148.8h| -- | 1.8h | | SID | | -- | 1.0h | | VoxForge | | -- | 0.1h | | Total |148.8h | -- | 21.6h | | ad61142de56905b3f841531f28985b5f |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | Summary | | CETUC | CV | LaPS | MLS | SID | TEDx | VF | AVG | |----------------------|---------------|----------------|----------------|----------------|----------------|----------------|----------------|----------------| | tedx\_100 (demonstration below) |0.138 | 0.369 | 0.169 | 0.165 | 0.794 | 0.222 | 0.395 | 0.321| | tedx\_100 + 4-gram (demonstration below) |0.123 | 0.414 | 0.171 | 0.152 | 0.982 | 0.215 | 0.395 | 0.350| | e4c4e3bbd021241302bf7bdbe1d7a2b5 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | CETUC ```python ds = load_data('cetuc_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("CETUC WER:", wer) ``` CETUC WER: 0.13846663354859937 | 2cbee1190db93e1029d2e98d03a6d862 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | Common Voice ```python ds = load_data('commonvoice_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("CV WER:", wer) ``` CV WER: 0.36960721735520236 | d46958ce6e5dce69ade3bbb727c759a2 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | LaPS ```python ds = load_data('lapsbm_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("Laps WER:", wer) ``` Laps WER: 0.16941287878787875 | a4954f1d900275360e11a30c56c7c78e |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | MLS ```python ds = load_data('mls_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("MLS WER:", wer) ``` MLS WER: 0.16586103382107384 | b63d5944cd15de5fcd444ced8a6732fe |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | SID ```python ds = load_data('sid_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("Sid WER:", wer) ``` Sid WER: 0.7943364822145216 | e95110489312fa32c9b17f1b2be3c947 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | TEDx ```python ds = load_data('tedx_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("TEDx WER:", wer) ``` TEDx WER: 0.22221476803982182 | 47adf767d8cb4d513eba1bf9b5d16936 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | VoxForge ```python ds = load_data('voxforge_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("VoxForge WER:", wer) ``` VoxForge WER: 0.39486066017315996 | 754273d1ba4bcd6de719994c389bb943 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | CETUC ```python ds = load_data('cetuc_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("CETUC WER:", wer) ``` CETUC WER: 0.12338749517028079 | 16fd93da1173d3bd57c8ad6594fa8266 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | Common Voice ```python ds = load_data('commonvoice_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("CV WER:", wer) ``` CV WER: 0.4146185693398481 | e48f89fc50cb903d14fab8f95e8c2f5c |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | LaPS ```python ds = load_data('lapsbm_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("Laps WER:", wer) ``` Laps WER: 0.17142676767676762 | 919bc49b6bff9fce0bab648d5b2a8236 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | MLS ```python ds = load_data('mls_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("MLS WER:", wer) ``` MLS WER: 0.15212081808962674 | 475dfea29694c23158370e0adda64f34 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | SID ```python ds = load_data('sid_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("Sid WER:", wer) ``` Sid WER: 0.982518441309493 | f054e78e1dd3b30b4f0ddcf59d7abcf8 |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | TEDx ```python ds = load_data('tedx_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("TEDx WER:", wer) ``` TEDx WER: 0.21567860841157235 | deaea19443d09f3f448c8625b2b2accc |

apache-2.0 | ['audio', 'speech', 'wav2vec2', 'pt', 'portuguese-speech-corpus', 'automatic-speech-recognition', 'speech', 'PyTorch'] | false | VoxForge ```python ds = load_data('voxforge_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("VoxForge WER:", wer) ``` VoxForge WER: 0.3952218614718614 | 46b5f6c318c11c8593301023f2a8dd95 |

apache-2.0 | ['tensorflowtts', 'audio', 'text-to-speech', 'text-to-mel'] | false | Tacotron 2 with Guided Attention trained on Baker (Chinese) This repository provides a pretrained [Tacotron2](https://arxiv.org/abs/1712.05884) trained with [Guided Attention](https://arxiv.org/abs/1710.08969) on the Baker dataset (Chinese). For details of the model, we encourage you to read more about [TensorFlowTTS](https://github.com/TensorSpeech/TensorFlowTTS). | 3ce4fb4a79e3091446ead010aef16a85 |

apache-2.0 | ['tensorflowtts', 'audio', 'text-to-speech', 'text-to-mel'] | false | Converting your Text to Mel Spectrogram ```python import numpy as np import soundfile as sf import yaml import tensorflow as tf from tensorflow_tts.inference import AutoProcessor from tensorflow_tts.inference import TFAutoModel processor = AutoProcessor.from_pretrained("tensorspeech/tts-tacotron2-baker-ch") tacotron2 = TFAutoModel.from_pretrained("tensorspeech/tts-tacotron2-baker-ch") text = "这是一个开源的端到端中文语音合成系统" input_ids = processor.text_to_sequence(text, inference=True) decoder_output, mel_outputs, stop_token_prediction, alignment_history = tacotron2.inference( input_ids=tf.expand_dims(tf.convert_to_tensor(input_ids, dtype=tf.int32), 0), input_lengths=tf.convert_to_tensor([len(input_ids)], tf.int32), speaker_ids=tf.convert_to_tensor([0], dtype=tf.int32), ) ``` | ced2ceaef9a3fa1df39b9a831a69a393 |

apache-2.0 | ['generated_from_trainer'] | false | distilgpt2-finetuned-wikitext2 This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on an unspecified dataset. It achieves the following results on the evaluation set: - Loss: 4.1909 | 997c8b5cef313bc8983525e4a04d6fef |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 18 | 4.2070 | | No log | 2.0 | 36 | 4.1958 | | No log | 3.0 | 54 | 4.1909 | | bbd06d20ae20e2d033f983c3810eb5ef |

unlicense | ['PyTorch', 'Transformers', 'gpt2'] | false | Russian Chit-chat, Deductive and Common Sense reasoning model The model is the core of a prototype [dialogue system](https://github.com/Koziev/chatbot) with two main functions. The first function is **chit-chat reply generation**. The prompt is the dialogue history (the preceding few turns, from 1 to 10). ``` - Привет, как дела? - Привет, так себе. - <<< the reply expected from the model >>> ``` The second function is inferring the answer to a question from additional facts or from "common sense". Relevant facts are assumed to be retrieved from an external store (a knowledge base) by another model, for example [sbert_pq](https://huggingface.co/inkoziev/sbert_pq). Given the supplied fact(s) and the question text, the model produces a grammatical and maximally concise answer, as a person would in a similar communicative situation. Relevant facts should be placed before the question text, as if the interlocutor had stated them: ``` - Сегодня 15 сентября. Какой сейчас у нас месяц? - Сентябрь ``` The model does not assume that every fact retrieved and added to the dialogue context is actually relevant to the question. The retrieval model can therefore trade precision for recall and include something extra. In that case the chit-chat model itself picks the facts it needs from the context and ignores the rest. The current version of the model accepts up to 5 facts before the question. For example: ``` - Стасу 16 лет. Стас живет в Подольске. У Стаса нет своей машины. Где живет Стас? - в Подольске ``` In some cases the model can perform **syllogistic inference**, relying on 2 related premises. The conclusion that follows from the two premises does not appear explicitly; it is, *as it were*, used implicitly to derive the answer: ``` - Смертен ли Аристофан, если он был греческим философом, а все философы смертны? 
- Да ``` As the examples show, the format of the factual information fed to the model for inference is as natural and free-form as possible. Besides logical inference, the model can also solve simple arithmetic problems at the level of grades 1-2 of primary school, with two numeric arguments: ``` - Чему равно 2+8? - 10 ``` | 1fbc0e4416bfb354787728e2b391d12f |

unlicense | ['PyTorch', 'Transformers', 'gpt2'] | false | Model variants and metrics The currently published model has 760M parameters, i.e. it is at the scale of sberbank-ai/rugpt3large_based_on_gpt2. Below is the measured accuracy on arithmetic problems, evaluated on a held-out test set of samples: | base model | arith. accuracy | | --------------------------------------- | --------------- | | sberbank-ai/rugpt3large_based_on_gpt2 | 0.91 | | sberbank-ai/rugpt3medium_based_on_gpt2 | 0.70 | | sberbank-ai/rugpt3small_based_on_gpt2 | 0.58 | | tinkoff-ai/ruDialoGPT-small | 0.44 | | tinkoff-ai/ruDialoGPT-medium | 0.69 | The value 0.91 in the "arith. accuracy" column means that 91% of the test problems were solved exactly. Any deviation of the generated answer from the reference counts as an error. For example, answering "120" instead of "119" is also recorded as an error. | 23d53bff815821940d8c538614a93357 |

unlicense | ['PyTorch', 'Transformers', 'gpt2'] | false | Usage example ``` import torch from transformers import AutoTokenizer, AutoModelForCausalLM device = "cuda" if torch.cuda.is_available() else "cpu" model_name = "inkoziev/rugpt_chitchat" tokenizer = AutoTokenizer.from_pretrained(model_name) tokenizer.add_special_tokens({'bos_token': '<s>', 'eos_token': '</s>', 'pad_token': '<pad>'}) model = AutoModelForCausalLM.from_pretrained(model_name) model.to(device) model.eval() | ea0a1334c4a2e2e5a904365c976ea424 |

unlicense | ['PyTorch', 'Transformers', 'gpt2'] | false | The model takes the last 2-3 dialogue turns as input. Each turn is on its own line and starts with the "-" character input_text = """<s>- Привет! Что делаешь? - Привет :) В такси еду -""" encoded_prompt = tokenizer.encode(input_text, add_special_tokens=False, return_tensors="pt").to(device) output_sequences = model.generate(input_ids=encoded_prompt, max_length=100, num_return_sequences=1, pad_token_id=tokenizer.pad_token_id) text = tokenizer.decode(output_sequences[0].tolist(), clean_up_tokenization_spaces=True)[len(input_text)+1:] text = text[: text.find('</s>')] print(text) ``` | 3168a234880bb55134ca2cf916d6f1f1 |

unlicense | ['PyTorch', 'Transformers', 'gpt2'] | false | Citation: ``` @MISC{rugpt_chitchat, author = {Ilya Koziev}, title = {Russian Chit-chat with Common sence Reasoning}, url = {https://huggingface.co/inkoziev/rugpt_chitchat}, year = 2022 } ``` | 5c98416baa76f46e359c6db7873b5174 |

apache-2.0 | ['luke', 'named entity recognition', 'relation classification', 'question answering'] | false | mLUKE **mLUKE** (multilingual LUKE) is a multilingual extension of LUKE. Please check the [official repository](https://github.com/studio-ousia/luke) for more details and updates. This is the mLUKE large model with 24 hidden layers, 768 hidden size. The total number of parameters in this model is 868M (561M for the word embeddings and encoder, 307M for the entity embeddings). The model was initialized with the weights of XLM-RoBERTa(large) and trained using December 2020 version of Wikipedia in 24 languages. | b7cecc00667c63d0b3cc7cfc7136195e |

apache-2.0 | ['luke', 'named entity recognition', 'relation classification', 'question answering'] | false | Citation If you find mLUKE useful for your work, please cite the following paper: ```latex @inproceedings{ri-etal-2022-mluke, title = "m{LUKE}: {T}he Power of Entity Representations in Multilingual Pretrained Language Models", author = "Ri, Ryokan and Yamada, Ikuya and Tsuruoka, Yoshimasa", booktitle = "Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers)", year = "2022", url = "https://aclanthology.org/2022.acl-long.505", } ``` | bce73ccb486f75fa46cbec0504cd3a96 |

apache-2.0 | ['bert', 'NLU', 'Sentiment', 'Chinese'] | false | 简介 Brief Introduction 采用统一的框架处理多种抽取任务,AIWIN2022的冠军方案,1.1亿参数量的中文UBERT-Base。 Adopting a unified framework to handle multiple information extraction tasks, AIWIN2022's champion solution, Chinese UBERT-Base (110M). | 841acf4e89db96064d1c5ff546e7d786 |

apache-2.0 | ['bert', 'NLU', 'Sentiment', 'Chinese'] | false | 模型分类 Model Taxonomy | 需求 Demand | 任务 Task | 系列 Series | 模型 Model | 参数 Parameter | 额外 Extra | | :----: | :----: | :----: | :----: | :----: | :----: | | 通用 General | 自然语言理解 NLU | 二郎神 Erlangshen | UBERT | 110M | 中文 Chinese | | fa4d926ecb32c4022fe78a67ed6e1885 |

apache-2.0 | ['bert', 'NLU', 'Sentiment', 'Chinese'] | false | 模型信息 Model Information 参考论文:[Unified BERT for Few-shot Natural Language Understanding](https://arxiv.org/abs/2206.12094) UBERT是[2022年AIWIN世界人工智能创新大赛:中文保险小样本多任务竞赛](http://ailab.aiwin.org.cn/competitions/68 | 180c7713140197ecfe5ff2c80d28e4e9 |

apache-2.0 | ['bert', 'NLU', 'Sentiment', 'Chinese'] | false | results)的冠军解决方案。我们开发了一个基于类似BERT的骨干的多任务、多目标、统一的抽取任务框架。我们的UBERT在比赛A榜和B榜上均取得了第一名。因为比赛中的数据集在比赛结束后不再可用,我们开源的UBERT从多个任务中收集了70多个数据集(共1,065,069个样本)来进行预训练,并且我们选择了[MacBERT-Base](https://huggingface.co/hfl/chinese-macbert-base)作为骨干网络。除了支持开箱即用之外,我们的UBERT还可以用于各种场景,如NLI、实体识别和阅读理解。示例代码可以在[Github](https://github.com/IDEA-CCNL/Fengshenbang-LM/tree/dev/yangping/fengshen/examples/ubert)中找到。 UBERT was the winning solution in the [2022 AIWIN ARTIFICIAL INTELLIGENCE WORLD INNOVATIONS: Chinese Insurance Small Sample Multi-Task](http://ailab.aiwin.org.cn/competitions/68 | 16d46e8542dbb0d9e7da0755d2a8a272 |

apache-2.0 | ['bert', 'NLU', 'Sentiment', 'Chinese'] | false | results). We developed a unified framework, built on a BERT-like backbone, for multiple tasks and objectives. Our UBERT took first place on both leaderboards A and B. Because the competition datasets are no longer available after the challenge, we collected over 70 datasets (1,065,069 samples in total) from a variety of tasks to pre-train the open-source UBERT. We use [MacBERT-Base](https://huggingface.co/hfl/chinese-macbert-base) as the backbone. Besides working out of the box, UBERT can be applied in various scenarios such as NLI, entity recognition, and reading comprehension. Example code can be found on [Github](https://github.com/IDEA-CCNL/Fengshenbang-LM/tree/dev/yangping/fengshen/examples/ubert). | 7a312d69b3faaa1c47999bf7af846ea1 |

apache-2.0 | ['bert', 'NLU', 'Sentiment', 'Chinese'] | false | 使用 Usage Install fengshen from source: ```shell git clone https://github.com/IDEA-CCNL/Fengshenbang-LM.git cd Fengshenbang-LM pip install --editable ./ ``` Run the code: ```python import argparse from fengshen import UbertPiplines total_parser = argparse.ArgumentParser("TASK NAME") total_parser = UbertPiplines.piplines_args(total_parser) args = total_parser.parse_args() args.pretrained_model_path = "IDEA-CCNL/Erlangshen-Ubert-110M-Chinese" test_data=[ { "task_type": "抽取任务", "subtask_type": "实体识别", "text": "这也让很多业主据此认为,雅清苑是政府公务员挤对了国家的经适房政策。", "choices": [ {"entity_type": "小区名字"}, {"entity_type": "岗位职责"} ], "id": 0} ] model = UbertPiplines(args) result = model.predict(test_data) for line in result: print(line) ``` | 29f8503040e76201debdb161ac353116 |

apache-2.0 | ['bert', 'NLU', 'Sentiment', 'Chinese'] | false | 引用 Citation 如果您在您的工作中使用了我们的模型,可以引用我们的对该模型的论文: If you are using the resource for your work, please cite our paper for this model: ```text @article{fengshenbang/ubert, author = {JunYu Lu and Ping Yang and Jiaxing Zhang and Ruyi Gan and Jing Yang}, title = {Unified {BERT} for Few-shot Natural Language Understanding}, journal = {CoRR}, volume = {abs/2206.12094}, year = {2022} } ``` 如果您在您的工作中使用了我们的模型,也可以引用我们的[总论文](https://arxiv.org/abs/2209.02970): If you are using the resource for your work, please cite our [overview paper](https://arxiv.org/abs/2209.02970): ```text @article{fengshenbang, author = {Junjie Wang and Yuxiang Zhang and Lin Zhang and Ping Yang and Xinyu Gao and Ziwei Wu and Xiaoqun Dong and Junqing He and Jianheng Zhuo and Qi Yang and Yongfeng Huang and Xiayu Li and Yanghan Wu and Junyu Lu and Xinyu Zhu and Weifeng Chen and Ting Han and Kunhao Pan and Rui Wang and Hao Wang and Xiaojun Wu and Zhongshen Zeng and Chongpei Chen and Ruyi Gan and Jiaxing Zhang}, title = {Fengshenbang 1.0: Being the Foundation of Chinese Cognitive Intelligence}, journal = {CoRR}, volume = {abs/2209.02970}, year = {2022} } ``` 也可以引用我们的[网站](https://github.com/IDEA-CCNL/Fengshenbang-LM/): You can also cite our [website](https://github.com/IDEA-CCNL/Fengshenbang-LM/): ```text @misc{Fengshenbang-LM, title={Fengshenbang-LM}, author={IDEA-CCNL}, year={2021}, howpublished={\url{https://github.com/IDEA-CCNL/Fengshenbang-LM}}, } ``` | 56d51e46d34fbab25a3e585ad0fe6b85 |

cc-by-sa-4.0 | ['zero-shot-classification', 'text-classification', 'nli', 'pytorch'] | false | bert-base-japanese-jsnli This model is a fine-tuned version of [cl-tohoku/bert-base-japanese-v2](https://huggingface.co/cl-tohoku/bert-base-japanese-v2) on the [JSNLI](https://nlp.ist.i.kyoto-u.ac.jp/?%E6%97%A5%E6%9C%AC%E8%AA%9ESNLI%28JSNLI%29%E3%83%87%E3%83%BC%E3%82%BF%E3%82%BB%E3%83%83%E3%83%88) dataset. It achieves the following results on the evaluation set: - Loss: 0.2085 - Accuracy: 0.9288 | 5db1fe78a6ae81ea2d92c1b4e3fdd92b |

cc-by-sa-4.0 | ['zero-shot-classification', 'text-classification', 'nli', 'pytorch'] | false | Simple zero-shot classification pipeline ```python from transformers import pipeline classifier = pipeline("zero-shot-classification", model="Formzu/bert-base-japanese-jsnli") sequence_to_classify = "いつか世界を見る。" candidate_labels = ['旅行', '料理', '踊り'] out = classifier(sequence_to_classify, candidate_labels, hypothesis_template="この例は{}です。") print(out) ``` | 35bbba338e0401528212fa0eb9bd8568 |

cc-by-sa-4.0 | ['zero-shot-classification', 'text-classification', 'nli', 'pytorch'] | false | NLI use-case ```python from transformers import AutoTokenizer, AutoModelForSequenceClassification import torch device = torch.device("cuda") if torch.cuda.is_available() else torch.device("cpu") model_name = "Formzu/bert-base-japanese-jsnli" model = AutoModelForSequenceClassification.from_pretrained(model_name).to(device) tokenizer = AutoTokenizer.from_pretrained(model_name) premise = "いつか世界を見る。" label = '旅行' hypothesis = f'この例は{label}です。' input = tokenizer.encode(premise, hypothesis, return_tensors='pt').to(device) with torch.no_grad(): logits = model(input)["logits"][0] probs = logits.softmax(dim=-1) print(probs.cpu().numpy(), logits.cpu().numpy()) ``` | ecc92d4290c26ab3ddbaebc284d889ea |

cc-by-sa-4.0 | ['zero-shot-classification', 'text-classification', 'nli', 'pytorch'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 3.0 | f530bcceef35822b8b935166108d23a7 |

cc-by-sa-4.0 | ['zero-shot-classification', 'text-classification', 'nli', 'pytorch'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | | :-----------: | :---: | :---: | :-------------: | :------: | | 0.4054 | 1.0 | 16657 | 0.2141 | 0.9216 | | 0.3297 | 2.0 | 33314 | 0.2145 | 0.9236 | | 0.2645 | 3.0 | 49971 | 0.2085 | 0.9288 | | ad5c8462215af746fd89f07c474fd699 |

mit | ['spacy', 'token-classification'] | false | en_core_web_lg English pipeline optimized for CPU. Components: tok2vec, tagger, parser, senter, ner, attribute_ruler, lemmatizer. | Feature | Description | | --- | --- | | **Name** | `en_core_web_lg` | | **Version** | `3.5.0` | | **spaCy** | `>=3.5.0,<3.6.0` | | **Default Pipeline** | `tok2vec`, `tagger`, `parser`, `attribute_ruler`, `lemmatizer`, `ner` | | **Components** | `tok2vec`, `tagger`, `parser`, `senter`, `attribute_ruler`, `lemmatizer`, `ner` | | **Vectors** | 514157 keys, 514157 unique vectors (300 dimensions) | | **Sources** | [OntoNotes 5](https://catalog.ldc.upenn.edu/LDC2013T19) (Ralph Weischedel, Martha Palmer, Mitchell Marcus, Eduard Hovy, Sameer Pradhan, Lance Ramshaw, Nianwen Xue, Ann Taylor, Jeff Kaufman, Michelle Franchini, Mohammed El-Bachouti, Robert Belvin, Ann Houston)<br />[ClearNLP Constituent-to-Dependency Conversion](https://github.com/clir/clearnlp-guidelines/blob/master/md/components/dependency_conversion.md) (Emory University)<br />[WordNet 3.0](https://wordnet.princeton.edu/) (Princeton University)<br />[Explosion Vectors (OSCAR 2109 + Wikipedia + OpenSubtitles + WMT News Crawl)](https://github.com/explosion/spacy-vectors-builder) (Explosion) | | **License** | `MIT` | | **Author** | [Explosion](https://explosion.ai) | | 1d01e0cf92734c33b1dfba54a79b2117 |

mit | ['spacy', 'token-classification'] | false | Accuracy | Type | Score | | --- | --- | | `TOKEN_ACC` | 99.86 | | `TOKEN_P` | 99.57 | | `TOKEN_R` | 99.58 | | `TOKEN_F` | 99.57 | | `TAG_ACC` | 97.35 | | `SENTS_P` | 92.19 | | `SENTS_R` | 89.27 | | `SENTS_F` | 90.71 | | `DEP_UAS` | 92.08 | | `DEP_LAS` | 90.27 | | `ENTS_P` | 85.16 | | `ENTS_R` | 85.70 | | `ENTS_F` | 85.43 | | 37b8793a57256f5cee9c3c47f4da0364 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-3feb-2022-finetuned-squad This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset. It achieves the following results on the evaluation set: - Loss: 1.1470 | 3aff5a72421795e68f750615197083fa |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.2276 | 1.0 | 5533 | 1.1641 | | 0.9614 | 2.0 | 11066 | 1.1225 | | 0.7769 | 3.0 | 16599 | 1.1470 | | 4e178c33f7330f57d9d910835dbb870a |

mit | ['generated_from_trainer'] | false | bart-large-cnn-finetuned-roundup This model is a fine-tuned version of [facebook/bart-large-cnn](https://huggingface.co/facebook/bart-large-cnn) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.8956 - Rouge1: 58.1914 - Rouge2: 45.822 - Rougel: 49.4407 - Rougelsum: 56.6379 - Gen Len: 142.0 | 761a0c3206bff72a877293d07e237f95 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:--------:| | 1.2575 | 1.0 | 795 | 0.9154 | 53.8792 | 34.3203 | 35.8768 | 51.1789 | 142.0 | | 0.7053 | 2.0 | 1590 | 0.7921 | 54.3918 | 35.3346 | 37.7539 | 51.6989 | 142.0 | | 0.5379 | 3.0 | 2385 | 0.7566 | 52.1651 | 32.5699 | 36.3105 | 49.3327 | 141.5185 | | 0.3496 | 4.0 | 3180 | 0.7584 | 54.3258 | 36.403 | 39.6938 | 52.0186 | 142.0 | | 0.2688 | 5.0 | 3975 | 0.7343 | 55.9101 | 39.0709 | 42.4138 | 53.572 | 141.8333 | | 0.1815 | 6.0 | 4770 | 0.7924 | 53.9272 | 36.8138 | 40.0614 | 51.7496 | 142.0 | | 0.1388 | 7.0 | 5565 | 0.7674 | 55.0347 | 38.7978 | 42.0081 | 53.0297 | 142.0 | | 0.1048 | 8.0 | 6360 | 0.7700 | 55.2993 | 39.4075 | 42.6837 | 53.5179 | 141.9815 | | 0.0808 | 9.0 | 7155 | 0.7796 | 56.1508 | 40.0863 | 43.2178 | 53.7908 | 142.0 | | 0.0719 | 10.0 | 7950 | 0.8057 | 56.2302 | 41.3004 | 44.7921 | 54.4304 | 142.0 | | 0.0503 | 11.0 | 8745 | 0.8259 | 55.7603 | 41.0643 | 44.5518 | 54.2305 | 142.0 | | 0.0362 | 12.0 | 9540 | 0.8604 | 55.8612 | 41.5984 | 44.444 | 54.2493 | 142.0 | | 0.0307 | 13.0 | 10335 | 0.8516 | 57.7259 | 44.542 | 47.6724 | 56.0166 | 142.0 | | 0.0241 | 14.0 | 11130 | 0.8826 | 56.7943 | 43.7139 | 47.2866 | 55.1824 | 142.0 | | 0.0193 | 15.0 | 11925 | 0.8856 | 57.4135 | 44.3147 | 47.9136 | 55.8843 | 142.0 | | 0.0154 | 16.0 | 12720 | 0.8956 | 58.1914 | 45.822 | 49.4407 | 56.6379 | 142.0 | | 9d89b8bddf21a9edddc5c20f25522ed5 |

mit | ['token-classification', 'fill-mask'] | false | This model is the combined camembert-base model, with the pretrained lilt checkpoint from the paper "LiLT: A Simple yet Effective Language-Independent Layout Transformer for Structured Document Understanding", with the visual backbone built from the pretrained checkpoint "microsoft/dit-base". *Note:* This model should be fine-tuned, and loaded with the modeling and config files from the branch `improve-dit`. Original repository: https://github.com/jpWang/LiLT To use it, it is necessary to fork the modeling and configuration files from the original repository, and load the pretrained model from the corresponding classes (LiLTRobertaLikeVisionConfig, LiLTRobertaLikeVisionForRelationExtraction, LiLTRobertaLikeVisionForTokenClassification, LiLTRobertaLikeVisionModel). They can also be preloaded with the AutoConfig/model factories as such: ```python from transformers import AutoModelForTokenClassification, AutoConfig, AutoModel from path_to_custom_classes import ( LiLTRobertaLikeVisionConfig, LiLTRobertaLikeVisionForRelationExtraction, LiLTRobertaLikeVisionForTokenClassification, LiLTRobertaLikeVisionModel ) def patch_transformers(): AutoConfig.register("liltrobertalike", LiLTRobertaLikeVisionConfig) AutoModel.register(LiLTRobertaLikeVisionConfig, LiLTRobertaLikeVisionModel) AutoModelForTokenClassification.register(LiLTRobertaLikeVisionConfig, LiLTRobertaLikeVisionForTokenClassification) | 67a5c76361cfd52d13c2d030448bf6f9 |

mit | ['token-classification', 'fill-mask'] | false | patch_transformers() must have been executed beforehand tokenizer = AutoTokenizer.from_pretrained("camembert-base") model = AutoModel.from_pretrained("manu/lilt-camembert-dit-base-hf") model = AutoModelForTokenClassification.from_pretrained("manu/lilt-camembert-dit-base-hf") | db55f16873a7076c91ef630cc69380d1 |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'food'] | false | DreamBooth model for the torta concept trained by morgan on the morgan/tortas dataset. This is a Stable Diffusion model fine-tuned on the torta concept with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of a torta sandwich** See the ongoing experiments here in this [Weights & Biases training journal](https://wandb.ai/morgan/hf-dreambooth/reports/Training-Journal-DreamBooth-fine-tuning--VmlldzozMjA4MzE4 | 0e3ce5210ca73c60c0348e6ff0ec0e3e |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'food'] | false | jan-1,-11:50---try-add-%22sandwich%22-class-to-the-training-prompt---success!) This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! Note that commit `8f89857b2b2a6f75c443eac298f022483ef23a3f` uses the concept name `zztortazz` instead of `torta`, to see if this more unique concept name works better (spoiler, it doesn't) | d08c6cf02e41fa223317d34468246a6e |

apache-2.0 | ['translation'] | false | spa-cat * source group: Spanish * target group: Catalan * OPUS readme: [spa-cat](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/spa-cat/README.md) * model: transformer-align * source language(s): spa * target language(s): cat * model: transformer-align * pre-processing: normalization + SentencePiece (spm32k,spm32k) * download original weights: [opus-2020-06-17.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/spa-cat/opus-2020-06-17.zip) * test set translations: [opus-2020-06-17.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/spa-cat/opus-2020-06-17.test.txt) * test set scores: [opus-2020-06-17.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/spa-cat/opus-2020-06-17.eval.txt) | 0d2cbdad64138abd251ee1bb871a064d |

apache-2.0 | ['translation'] | false | System Info: - hf_name: spa-cat - source_languages: spa - target_languages: cat - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/spa-cat/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['es', 'ca'] - src_constituents: {'spa'} - tgt_constituents: {'cat'} - src_multilingual: False - tgt_multilingual: False - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/spa-cat/opus-2020-06-17.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/spa-cat/opus-2020-06-17.test.txt - src_alpha3: spa - tgt_alpha3: cat - short_pair: es-ca - chrF2_score: 0.8320000000000001 - bleu: 68.9 - brevity_penalty: 1.0 - ref_len: 12343.0 - src_name: Spanish - tgt_name: Catalan - train_date: 2020-06-17 - src_alpha2: es - tgt_alpha2: ca - prefer_old: False - long_pair: spa-cat - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | 1ed86d29db71ce1d0a95a6fe769b1ff0 |

apache-2.0 | ['generated_from_trainer'] | false | BERTModified-fullsize-finetuned-wikitext-test This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 6.7813 - Precision: 0.1094 - Recall: 0.1094 - F1: 0.1094 - Accuracy: 0.1094 | 52fbd2b6295b9ea79fa8b018f7b26ab8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:| | 9.2391 | 1.0 | 4382 | 8.1610 | 0.0373 | 0.0373 | 0.0373 | 0.0373 | | 7.9147 | 2.0 | 8764 | 7.6870 | 0.0635 | 0.0635 | 0.0635 | 0.0635 | | 7.5164 | 3.0 | 13146 | 7.4388 | 0.0727 | 0.0727 | 0.0727 | 0.0727 | | 7.2439 | 4.0 | 17528 | 7.2088 | 0.0930 | 0.0930 | 0.0930 | 0.0930 | | 7.1068 | 5.0 | 21910 | 7.0455 | 0.0943 | 0.0943 | 0.0943 | 0.0943 | | 6.9711 | 6.0 | 26292 | 6.9976 | 0.1054 | 0.1054 | 0.1054 | 0.1054 | | 6.8486 | 7.0 | 30674 | 6.8850 | 0.1054 | 0.1054 | 0.1054 | 0.1054 | | 6.78 | 8.0 | 35056 | 6.7990 | 0.1153 | 0.1153 | 0.1153 | 0.1153 | | 6.73 | 9.0 | 39438 | 6.8041 | 0.1074 | 0.1074 | 0.1074 | 0.1074 | | 6.6921 | 10.0 | 43820 | 6.7412 | 0.1251 | 0.1251 | 0.1251 | 0.1251 | | 40dfb2b774610b7204550305d06c82c8 |

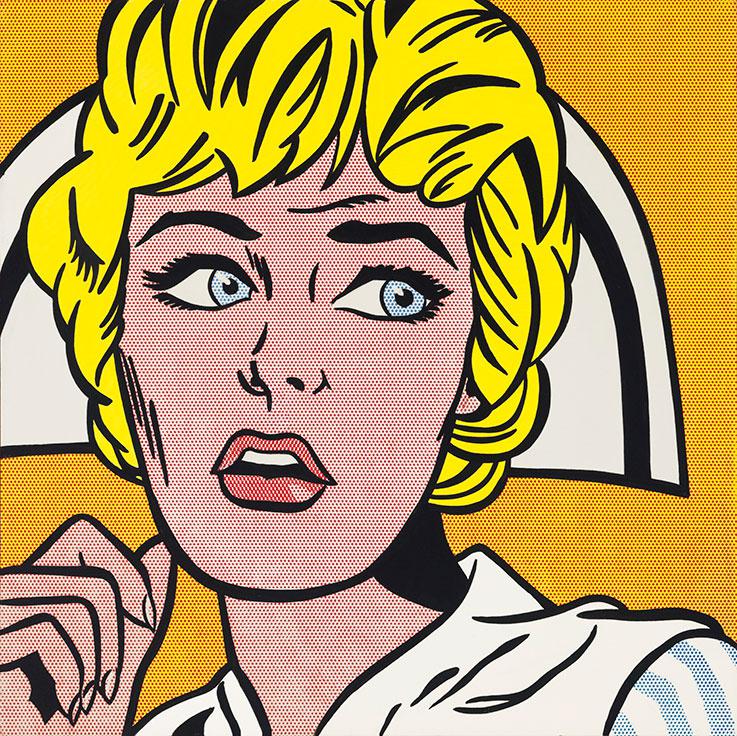

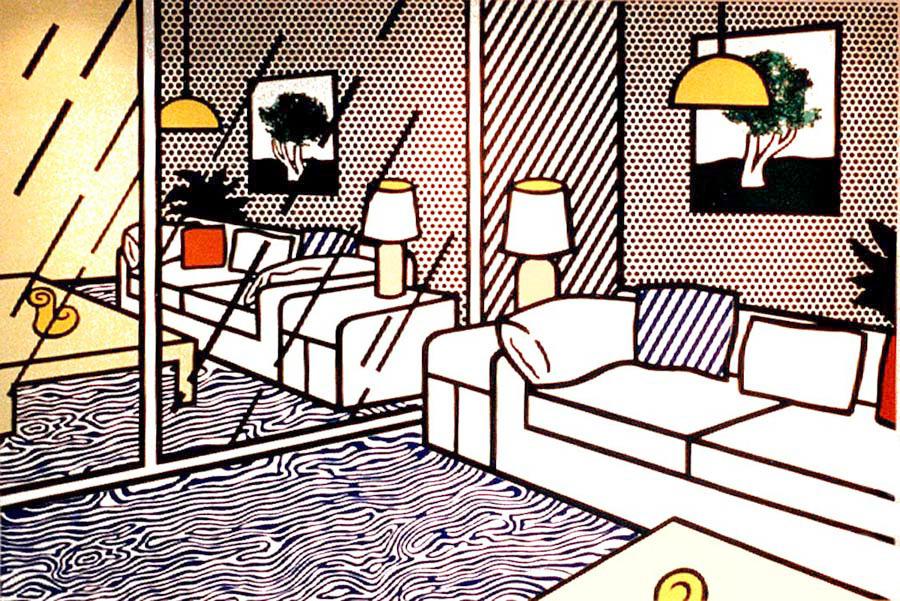

mit | [] | false | roy-lichtenstein on Stable Diffusion This is the `<roy-lichtenstein>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:     | ceac2407bd19df925ceed0fe7321623b |

apache-2.0 | [] | false | bert-base-en-ar-cased We are sharing smaller versions of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) that handle a custom number of languages. Unlike [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased), our versions give exactly the same representations produced by the original model which preserves the original accuracy. For more information please visit our paper: [Load What You Need: Smaller Versions of Multilingual BERT](https://www.aclweb.org/anthology/2020.sustainlp-1.16.pdf). | 02ffb64b1cae61f8672ee5c96be33109 |

apache-2.0 | [] | false | How to use ```python from transformers import AutoTokenizer, AutoModel tokenizer = AutoTokenizer.from_pretrained("Geotrend/bert-base-en-ar-cased") model = AutoModel.from_pretrained("Geotrend/bert-base-en-ar-cased") ``` To generate other smaller versions of multilingual transformers please visit [our Github repo](https://github.com/Geotrend-research/smaller-transformers). | 4a3ebf92beac9fd55e79079edeb5d30c |

bsd-3-clause | ['codet5'] | false | CodeT5-base for Code Summarization [CodeT5-base](https://huggingface.co/Salesforce/codet5-base) model fine-tuned on CodeSearchNet data in a multi-lingual training setting ( Ruby/JavaScript/Go/Python/Java/PHP) for code summarization. It was introduced in this EMNLP 2021 paper [CodeT5: Identifier-aware Unified Pre-trained Encoder-Decoder Models for Code Understanding and Generation](https://arxiv.org/abs/2109.00859) by Yue Wang, Weishi Wang, Shafiq Joty, Steven C.H. Hoi. Please check out more at [this repository](https://github.com/salesforce/CodeT5). | a85b434a94945c3bb7c26db684ec8ea2 |

bsd-3-clause | ['codet5'] | false | How to use Here is how to use this model: ```python from transformers import RobertaTokenizer, T5ForConditionalGeneration if __name__ == '__main__': tokenizer = RobertaTokenizer.from_pretrained('Salesforce/codet5-base-multi-sum') model = T5ForConditionalGeneration.from_pretrained('Salesforce/codet5-base-multi-sum') text = """def svg_to_image(string, size=None): if isinstance(string, unicode): string = string.encode('utf-8') renderer = QtSvg.QSvgRenderer(QtCore.QByteArray(string)) if not renderer.isValid(): raise ValueError('Invalid SVG data.') if size is None: size = renderer.defaultSize() image = QtGui.QImage(size, QtGui.QImage.Format_ARGB32) painter = QtGui.QPainter(image) renderer.render(painter) return image""" input_ids = tokenizer(text, return_tensors="pt").input_ids generated_ids = model.generate(input_ids, max_length=20) print(tokenizer.decode(generated_ids[0], skip_special_tokens=True)) | 6e615f02b9d3d2940d55f7c891b4b4fd |

bsd-3-clause | ['codet5'] | false | Fine-tuning data We employ the filtered version of CodeSearchNet data [[Husain et al., 2019](https://arxiv.org/abs/1909.09436)] from [CodeXGLUE](https://github.com/microsoft/CodeXGLUE/tree/main/Code-Text/code-to-text) benchmark for fine-tuning on code summarization. The data is tokenized with our pre-trained code-specific BPE (Byte-Pair Encoding) tokenizer. One can prepare text (or code) for the model using RobertaTokenizer with the vocab files from [codet5-base](https://huggingface.co/Salesforce/codet5-base). | d76854ca3a3b5656340b9298e36c7d78 |

bsd-3-clause | ['codet5'] | false | Data statistic | Programming Language | Training | Dev | Test | | :------------------- | :------: | :----: | :----: | | Python | 251,820 | 13,914 | 14,918 | | PHP | 241,241 | 12,982 | 14,014 | | Go | 167,288 | 7,325 | 8,122 | | Java | 164,923 | 5,183 | 10,955 | | JavaScript | 58,025 | 3,885 | 3,291 | | Ruby | 24,927 | 1,400 | 1,261 | | 8ee4d9dac2d347f28c8b650673b341ac |

bsd-3-clause | ['codet5'] | false | Training procedure We fine-tune codet5-base on these six programming languages (Ruby/JavaScript/Go/Python/Java/PHP) in the multi-task learning setting. We employ the balanced sampling to avoid biasing towards high-resource tasks. Please refer to the [paper](https://arxiv.org/abs/2109.00859) for more details. | bb50731c92daa916c5c750b50cd8b5c2 |

bsd-3-clause | ['codet5'] | false | Evaluation results Unlike the paper, which allows selecting a different best checkpoint for each programming language (PL), here we use a single checkpoint for all PLs. We also remove the task control prefix that specifies the PL during training and inference. The results on the test set are shown below: | Model | Ruby | Javascript | Go | Python | Java | PHP | Overall | | ----------- | :-------: | :--------: | :-------: | :-------: | :-------: | :-------: | :-------: | | Seq2Seq | 9.64 | 10.21 | 13.98 | 15.93 | 15.09 | 21.08 | 14.32 | | Transformer | 11.18 | 11.59 | 16.38 | 15.81 | 16.26 | 22.12 | 15.56 | | [RoBERTa](https://arxiv.org/pdf/1907.11692.pdf) | 11.17 | 11.90 | 17.72 | 18.14 | 16.47 | 24.02 | 16.57 | | [CodeBERT](https://arxiv.org/pdf/2002.08155.pdf) | 12.16 | 14.90 | 18.07 | 19.06 | 17.65 | 25.16 | 17.83 | | [PLBART](https://aclanthology.org/2021.naacl-main.211.pdf) | 14.11 | 15.56 | 18.91 | 19.30 | 18.45 | 23.58 | 18.32 | | [CodeT5-small](https://arxiv.org/abs/2109.00859) | 14.87 | 15.32 | 19.25 | 20.04 | 19.92 | 25.46 | 19.14 | | [CodeT5-base](https://arxiv.org/abs/2109.00859) | **15.24** | 16.16 | 19.56 | 20.01 | **20.31** | 26.03 | 19.55 | | [CodeT5-base-multi-sum](https://arxiv.org/abs/2109.00859) | **15.24** | **16.18** | **19.95** | **20.42** | 20.26 | **26.10** | **19.69** | | 79302ea63a195ee310a4e5783eca0827 |
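The Overall column in the table above appears to be the unweighted mean of the six per-language scores. The following minimal sketch checks that reading against two rows; the function name `overall` and the dictionaries are illustrative only and are not part of the CodeT5 code base.

```python
# Per-language scores copied from two rows of the evaluation table.
codet5_multi_sum = {
    "Ruby": 15.24,
    "Javascript": 16.18,
    "Go": 19.95,
    "Python": 20.42,
    "Java": 20.26,
    "PHP": 26.10,
}
seq2seq = {
    "Ruby": 9.64,
    "Javascript": 10.21,
    "Go": 13.98,
    "Python": 15.93,
    "Java": 15.09,
    "PHP": 21.08,
}


def overall(per_language):
    """Unweighted mean over languages, rounded to two decimals (assumed definition)."""
    return round(sum(per_language.values()) / len(per_language), 2)


print(overall(codet5_multi_sum))  # compare with the Overall cell of the last row
print(overall(seq2seq))           # compare with the Overall cell of the first row
```

Because the mean is unweighted, low-resource languages such as Ruby count as much as Python or PHP in the Overall figure.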

bsd-3-clause | ['codet5'] | false | Citation ```bibtex @inproceedings{ wang2021codet5, title={CodeT5: Identifier-aware Unified Pre-trained Encoder-Decoder Models for Code Understanding and Generation}, author={Yue Wang, Weishi Wang, Shafiq Joty, Steven C.H. Hoi}, booktitle={Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, EMNLP 2021}, year={2021}, } ``` | 27cab9203989c108bd58ac511dd44765 |

cc-by-4.0 | ['Transformers', 'text-classification', 'intent-classification', 'multi-class-classification', 'natural-language-understanding'] | false | Demo: How to use in HuggingFace Transformers Pipeline Requires [transformers](https://pypi.org/project/transformers/): ```pip install transformers``` ```python from transformers import AutoTokenizer, AutoModelForSequenceClassification, TextClassificationPipeline model_name = 'qanastek/XLMRoberta-Alexa-Intents-Classification' tokenizer = AutoTokenizer.from_pretrained(model_name) model = AutoModelForSequenceClassification.from_pretrained(model_name) classifier = TextClassificationPipeline(model=model, tokenizer=tokenizer) res = classifier("réveille-moi à neuf heures du matin le vendredi") print(res) ``` Outputs: ```python [{'label': 'alarm_set', 'score': 0.9998375177383423}] ``` | 8d8450cb07d8bba7f551fb7e71f4e830 |

cc-by-4.0 | ['Transformers', 'text-classification', 'intent-classification', 'multi-class-classification', 'natural-language-understanding'] | false | Intents * audio_volume_other * play_music * iot_hue_lighton * general_greet * calendar_set * audio_volume_down * social_query * audio_volume_mute * iot_wemo_on * iot_hue_lightup * audio_volume_up * iot_coffee * takeaway_query * qa_maths * play_game * cooking_query * iot_hue_lightdim * iot_wemo_off * music_settings * weather_query * news_query * alarm_remove * social_post * recommendation_events * transport_taxi * takeaway_order * music_query * calendar_query * lists_query * qa_currency * recommendation_movies * general_joke * recommendation_locations * email_querycontact * lists_remove * play_audiobook * email_addcontact * lists_createoradd * play_radio * qa_stock * alarm_query * email_sendemail * general_quirky * music_likeness * cooking_recipe * email_query * datetime_query * transport_traffic * play_podcasts * iot_hue_lightchange * calendar_remove * transport_query * transport_ticket * qa_factoid * iot_cleaning * alarm_set * datetime_convert * iot_hue_lightoff * qa_definition * music_dislikeness | c9e8ef0e32ddd1bb5ba5025f26d46062 |

cc-by-4.0 | ['Transformers', 'text-classification', 'intent-classification', 'multi-class-classification', 'natural-language-understanding'] | false | Evaluation results ```plain precision recall f1-score support alarm_query 0.9661 0.9037 0.9338 1734 alarm_remove 0.9484 0.9608 0.9545 1071 alarm_set 0.8611 0.9254 0.8921 2091 audio_volume_down 0.8657 0.9537 0.9075 561 audio_volume_mute 0.8608 0.9130 0.8861 1632 audio_volume_other 0.8684 0.5392 0.6653 306 audio_volume_up 0.7198 0.8446 0.7772 663 calendar_query 0.7555 0.8229 0.7878 6426 calendar_remove 0.8688 0.9441 0.9049 3417 calendar_set 0.9092 0.9014 0.9053 10659 cooking_query 0.0000 0.0000 0.0000 0 cooking_recipe 0.9282 0.8592 0.8924 3672 datetime_convert 0.8144 0.7686 0.7909 765 datetime_query 0.9152 0.9305 0.9228 4488 email_addcontact 0.6482 0.8431 0.7330 612 email_query 0.9629 0.9319 0.9472 6069 email_querycontact 0.6853 0.8032 0.7396 1326 email_sendemail 0.9530 0.9381 0.9455 5814 general_greet 0.1026 0.3922 0.1626 51 general_joke 0.9305 0.9123 0.9213 969 general_quirky 0.6984 0.5417 0.6102 8619 iot_cleaning 0.9590 0.9359 0.9473 1326 iot_coffee 0.9304 0.9749 0.9521 1836 iot_hue_lightchange 0.8794 0.9374 0.9075 1836 iot_hue_lightdim 0.8695 0.8711 0.8703 1071 iot_hue_lightoff 0.9440 0.9229 0.9334 2193 iot_hue_lighton 0.4545 0.5882 0.5128 153 iot_hue_lightup 0.9271 0.8315 0.8767 1377 iot_wemo_off 0.9615 0.8715 0.9143 918 iot_wemo_on 0.8455 0.7941 0.8190 510 lists_createoradd 0.8437 0.8356 0.8396 1989 lists_query 0.8918 0.8335 0.8617 2601 lists_remove 0.9536 0.8601 0.9044 2652 music_dislikeness 0.7725 0.7157 0.7430 204 music_likeness 0.8570 0.8159 0.8359 1836 music_query 0.8667 0.8050 0.8347 1785 music_settings 0.4024 0.3301 0.3627 306 news_query 0.8343 0.8657 0.8498 6324 play_audiobook 0.8172 0.8125 0.8149 2091 play_game 0.8666 0.8403 0.8532 1785 play_music 0.8683 0.8845 0.8763 8976 play_podcasts 0.8925 0.9125 0.9024 3213 play_radio 0.8260 0.8935 0.8585 3672 qa_currency 0.9459 0.9578 0.9518 1989 qa_definition 
0.8638 0.8552 0.8595 2907 qa_factoid 0.7959 0.8178 0.8067 7191 qa_maths 0.8937 0.9302 0.9116 1275 qa_stock 0.7995 0.9412 0.8646 1326 recommendation_events 0.7646 0.7702 0.7674 2193 recommendation_locations 0.7489 0.8830 0.8104 1581 recommendation_movies 0.6907 0.7706 0.7285 1020 social_post 0.9623 0.9080 0.9344 4131 social_query 0.8104 0.7914 0.8008 1275 takeaway_order 0.7697 0.8458 0.8059 1122 takeaway_query 0.9059 0.8571 0.8808 1785 transport_query 0.8141 0.7559 0.7839 2601 transport_taxi 0.9222 0.9403 0.9312 1173 transport_ticket 0.9259 0.9384 0.9321 1785 transport_traffic 0.6919 0.9660 0.8063 765 weather_query 0.9387 0.9492 0.9439 7956 accuracy 0.8617 151674 macro avg 0.8162 0.8273 0.8178 151674 weighted avg 0.8639 0.8617 0.8613 151674 ``` | b8495cb7c932059c029961bacfd7ee03 |
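The f1-score column in the report above is the harmonic mean of precision and recall. The sketch below recomputes it for two rows. It works from the rounded values printed in the report, so the last digit can differ from the reported figure. Returning 0.0 when both inputs are zero is an assumption that matches the `cooking_query` row, which has no support.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero (assumed convention)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall copied from the report above.
print(round(f1_score(0.9661, 0.9037), 4))  # alarm_query, reported f1-score 0.9338
print(round(f1_score(0.8611, 0.9254), 4))  # alarm_set, reported f1-score 0.8921
print(f1_score(0.0, 0.0))                  # cooking_query, support 0
```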

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 12 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 2.0 | d0c123b2fbd0bc3996b64fe5f36af969 |

cc-by-2.0 | ['text2image', 'prompting'] | false | Created based on my cat, Garry. I used 600+ images to train the model. You can view the training images in a public album on my [Facebook](https://www.facebook.com/media/set/?vanity=patrick.caulton&set=a.1003539599707037/). Some examples from the model.     | 1f9814c07c29a1c225e3fe5c5a3921f6 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper large v2 vi This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) on the common_voice_11_0 dataset. It achieves the following results on the evaluation set: - Loss: 0.5530 - Wer: 17.0761 | 68868f09b32226223552614573fb23d5 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 8 - total_train_batch_size: 64 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 50 - training_steps: 300 - mixed_precision_training: Native AMP | 79d469dd2786c2b147bdbaf504680a21 |
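The `total_train_batch_size` listed above follows from the per-device batch size and the number of gradient accumulation steps. A minimal sketch of that arithmetic follows; the function name is illustrative, and `num_devices=1` is an assumption, since the row does not state the device count.

```python
def total_train_batch_size(train_batch_size, gradient_accumulation_steps, num_devices=1):
    """Number of samples contributing to one optimizer step."""
    return train_batch_size * gradient_accumulation_steps * num_devices


# Values from the hyperparameter list: train_batch_size 8, gradient_accumulation_steps 8.
print(total_train_batch_size(8, 8))
```

With a single device this reproduces the listed value of 64.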

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0012 | 21.01 | 150 | 0.5211 | 17.2845 | | 0.0006 | 42.02 | 300 | 0.5530 | 17.0761 | | 3574e07c78be808a40e1bfc685bcd371 |

apache-2.0 | ['int8', 'Intel® Neural Compressor', 'neural-compressor', 'PostTrainingStatic'] | false | Post-training static quantization This is an INT8 PyTorch model quantized with [Intel® Neural Compressor](https://github.com/intel/neural-compressor). The original fp32 model comes from the fine-tuned model [jimypbr/bert-base-uncased-squad](https://huggingface.co/jimypbr/bert-base-uncased-squad). The calibration dataloader is the train dataloader. The default calibration sampling size 300 isn't divisible exactly by batch size 8, so the real sampling size is 304. The linear modules **bert.encoder.layer.2.intermediate.dense**, **bert.encoder.layer.4.intermediate.dense**, **bert.encoder.layer.9.output.dense**, **bert.encoder.layer.10.output.dense** fall back to fp32 to meet the 1% relative accuracy loss. | aacb0a578f8a715eea5cc7fa5062fd23 |
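The sampling sizes quoted above (300 requested, 304 used) are consistent with rounding the requested size up to a whole number of batches. The sketch below only illustrates that arithmetic; it is not taken from Intel® Neural Compressor, and the function name is hypothetical.

```python
import math


def effective_calibration_samples(sampling_size, batch_size):
    """Round the requested sample count up to a whole number of batches."""
    num_batches = math.ceil(sampling_size / batch_size)
    return num_batches * batch_size


# 300 requested samples with batch size 8 need 38 batches.
print(effective_calibration_samples(300, 8))
```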

apache-2.0 | ['int8', 'Intel® Neural Compressor', 'neural-compressor', 'PostTrainingStatic'] | false | Load with Intel® Neural Compressor: ```python from optimum.intel.neural_compressor import IncQuantizedModelForQuestionAnswering int8_model = IncQuantizedModelForQuestionAnswering.from_pretrained( "Intel/bert-base-uncased-squad-int8-static", ) ``` | e430c3e118d35f0d301f65245d3a1619 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Large-v2 Hindi This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) on the mozilla-foundation/common_voice_11_0 hi dataset. It achieves the following results on the evaluation set: - Loss: 0.1870 - Wer: 12.4577 | f06e0ef5792b6f61939759b5909420c9 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - training_steps: 300 - mixed_precision_training: Native AMP | 70e9795db24a624c24e14c3506161f45 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.2097 | 0.37 | 100 | 0.2616 | 17.6701 | | 0.1578 | 0.73 | 200 | 0.2108 | 14.0990 | | 0.0806 | 1.1 | 300 | 0.1870 | 12.4577 | | 66dec033d20b221b8e5ccf4c624ce0ed |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Small Hi - Swedish This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.3953 - Wer: 19.6472 | 319706c99c4fef1d6fc775b65e184f50 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 1 | 710c091f9f5b445a6f098cffa37247c8 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.1331 | 1.29 | 1000 | 0.3014 | 22.3602 | | 0.0537 | 2.59 | 2000 | 0.2988 | 20.8572 | | 0.0217 | 3.88 | 3000 | 0.3093 | 20.5641 | | 0.004 | 5.17 | 4000 | 0.3551 | 20.0479 | | 0.0015 | 6.47 | 5000 | 0.3701 | 20.0022 | | 0.0015 | 7.76 | 6000 | 0.3769 | 19.7386 | | 0.0007 | 9.06 | 7000 | 0.3908 | 19.7010 | | 0.0006 | 10.35 | 8000 | 0.3953 | 19.6472 | | 12d1f198fb69c2397201c8a9279935f3 |

apache-2.0 | ['automatic-speech-recognition', 'pl'] | false | exp_w2v2t_pl_xlsr-53_s182 Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) for speech recognition using the train split of [Common Voice 7.0 (pl)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | b015a4f79e1998da8e31a10c5b3979c5 |
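The 16kHz requirement above can be checked before transcription. The following sketch uses only Python's standard `wave` module and therefore handles uncompressed WAV files only. The helper names and the `EXPECTED_SAMPLE_RATE` constant are illustrative and are not part of HuggingSound.

```python
import wave

EXPECTED_SAMPLE_RATE = 16_000  # the model expects 16kHz input


def wav_sample_rate(path):
    """Return the sample rate (frames per second) stored in a WAV file header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def is_16khz(path):
    """True if the WAV file at `path` is already sampled at 16kHz."""
    return wav_sample_rate(path) == EXPECTED_SAMPLE_RATE
```

Files that fail this check would need to be resampled with an audio library before being passed to the model.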

mit | [] | false | Cute Game Style on Stable Diffusion This is the `<cute-game-style>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as a `style`:         Here are images generated with this style:     | 778455fa3cf7174cb20d4722f65e74fd |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.1611 - Accuracy: 0.938 - F1: 0.9382 | eee675a1bd52837620e2c17f9201678d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.2043 | 1.0 | 250 | 0.1804 | 0.9275 | 0.9270 | | 0.1334 | 2.0 | 500 | 0.1611 | 0.938 | 0.9382 | | c8d4f60ee786254d7d861358d57fa6cd |

mit | ['generated_from_trainer'] | false | language-detection-RoBerta-base-additional This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1367 - Accuracy: 0.9874 | 3fa4c09580e26d5b770e588390a0c5cc |

apache-2.0 | ['image-classification', 'pytorch', 'onnx'] | false | MobileNet V3 - Small model Pretrained on a dataset for wildfire binary classification (soon to be shared). The MobileNet V3 architecture was introduced in [this paper](https://arxiv.org/pdf/1905.02244.pdf). | b30943ee71bed37ff16902a9620776ce |

apache-2.0 | ['image-classification', 'pytorch', 'onnx'] | false | Usage instructions ```python from PIL import Image from torchvision.transforms import Compose, ConvertImageDtype, Normalize, PILToTensor, Resize from torchvision.transforms.functional import InterpolationMode from pyrovision.models import model_from_hf_hub model = model_from_hf_hub("pyronear/mobilenet_v3_small").eval() img = Image.open(path_to_an_image).convert("RGB") | b8bfe1e7c16c317c4997194cd590ca6b |

apache-2.0 | ['translation'] | false | opus-mt-sv-sl * source languages: sv * target languages: sl * OPUS readme: [sv-sl](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sv-sl/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/sv-sl/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-sl/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-sl/opus-2020-01-16.eval.txt) | f0d65d93820add37960c82162b8bc989 |

cc-by-sa-4.0 | ['finance'] | false | Additional pretrained BERT base Japanese finance This is a [BERT](https://github.com/google-research/bert) model pretrained on texts in the Japanese language. The pretraining code is available at [retarfi/language-pretraining](https://github.com/retarfi/language-pretraining/tree/v1.0). | 06088e43eac90eaa2ff70eccc1ec00e2 |

cc-by-sa-4.0 | ['finance'] | false | Model architecture The model architecture is the same as BERT base in the [original BERT paper](https://arxiv.org/abs/1810.04805): 12 layers, 768 dimensions of hidden states, and 12 attention heads. | d10d36f0d1c4856ca80bbe74c09377f0 |

cc-by-sa-4.0 | ['finance'] | false | Training Data The model is additionally trained on a financial corpus, starting from [Tohoku University's BERT base Japanese model (cl-tohoku/bert-base-japanese)](https://huggingface.co/cl-tohoku/bert-base-japanese). The financial corpus consists of 2 corpora: - Summaries of financial results from October 9, 2012, to December 31, 2020 - Securities reports from February 8, 2018, to December 31, 2020 The financial corpus file consists of approximately 27M sentences. | 17f1c302a8f82157dd600f3ced6f43c3 |

cc-by-sa-4.0 | ['finance'] | false | Tokenization You can use the tokenizer of [Tohoku University's BERT base Japanese model (cl-tohoku/bert-base-japanese)](https://huggingface.co/cl-tohoku/bert-base-japanese): ``` tokenizer = transformers.BertJapaneseTokenizer.from_pretrained('cl-tohoku/bert-base-japanese') ``` | b52e6cc68361fea8393e1b7f2589c79b |
