license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

cc-by-sa-4.0 | ['finance'] | false | Training The models are trained with the same configuration as BERT base in the [original BERT paper](https://arxiv.org/abs/1810.04805): 512 tokens per instance, 256 instances per batch, and 1M training steps. | b87270bf943d2b00aa2a146800394876 |
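
As a rough worked example, these settings imply the following token budget. Treat it as an upper bound: it assumes every instance is filled to the full 512 tokens, which real pre-training pipelines do not always do.

```python
# Rough pre-training budget implied by the BERT-base configuration above.
# Assumption: every instance is packed to the full 512 tokens.
TOKENS_PER_INSTANCE = 512
INSTANCES_PER_BATCH = 256
TRAINING_STEPS = 1_000_000

tokens_per_batch = TOKENS_PER_INSTANCE * INSTANCES_PER_BATCH
total_tokens = tokens_per_batch * TRAINING_STEPS

print(f"{tokens_per_batch:,} tokens per batch")         # 131,072 tokens per batch
print(f"{total_tokens:.3e} tokens processed in total")  # 1.311e+11 tokens processed in total
```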

mit | ['generated_from_trainer'] | false | roberta-base-rte This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on the GLUE RTE dataset. It achieves the following results on the evaluation set: - Loss: 0.7660 - Accuracy: 0.7581 | 4e82db2b37b881f94eba037ef0528fbd |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.551 | 3.21 | 500 | 0.7660 | 0.7581 | | 0.1665 | 6.41 | 1000 | 1.5218 | 0.7690 | | 0.0463 | 9.62 | 1500 | 1.6747 | 0.7653 | | ce697ed0a4d4d55bdc68238ff4cfb987 |
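
The roberta-base-rte summary reports Loss 0.7660 and Accuracy 0.7581, which match the step-500 row: the evaluation with the lowest validation loss, not the final or the most accurate one. That is consistent with the Trainer keeping the best checkpoint by loss (for example `load_best_model_at_end=True`, where `metric_for_best_model` defaults to the loss), although the card does not say so. The sketch below only reproduces that selection rule on the table's rows.

```python
# Rows from the roberta-base-rte "Training results" table:
# (training_loss, epoch, step, validation_loss, accuracy)
rows = [
    (0.551, 3.21, 500, 0.7660, 0.7581),
    (0.1665, 6.41, 1000, 1.5218, 0.7690),
    (0.0463, 9.62, 1500, 1.6747, 0.7653),
]

# Checkpoint with the lowest validation loss (column index 3).
best = min(rows, key=lambda row: row[3])
print(best)  # (0.551, 3.21, 500, 0.766, 0.7581)

# The highest accuracy (column index 4) comes from a later step instead.
most_accurate = max(rows, key=lambda row: row[4])
print(most_accurate[2])  # 1000
```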

apache-2.0 | ['generated_from_trainer'] | false | distilbert-complaints-wandb-product This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the consumer-finance-complaints dataset. It achieves the following results on the evaluation set: - Loss: 0.4431 - Accuracy: 0.8691 - F1: 0.8645 - Recall: 0.8691 - Precision: 0.8629 | 6a58d9efe7ccfdb187e08296636c6023 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 3 - mixed_precision_training: Native AMP | 5eaef1aced718056a2005c26ee5db1b9 |
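
`lr_scheduler_type: linear` with `lr_scheduler_warmup_steps: 500` means the learning rate ramps up linearly from 0 to 5e-05 over the first 500 steps, then decays linearly to 0 at the last training step. The sketch below mirrors the formula used by `transformers.get_linear_schedule_with_warmup`. The card does not state the total number of steps; `TOTAL_STEPS = 11_800` is an assumption inferred from the step/epoch ratio in this model's Training results row (about 3,940 steps per epoch over 3 epochs).

```python
PEAK_LR = 5e-05
WARMUP_STEPS = 500
TOTAL_STEPS = 11_800  # assumption: ~3 epochs x ~3,940 steps/epoch, inferred from the results table

def linear_warmup_lr(step: int) -> float:
    """Learning rate at `step`: linear warmup to PEAK_LR, then linear decay to 0."""
    if step < WARMUP_STEPS:
        return PEAK_LR * step / WARMUP_STEPS
    remaining = max(0, TOTAL_STEPS - step)
    return PEAK_LR * remaining / (TOTAL_STEPS - WARMUP_STEPS)

for step in (0, 250, 500, 6_150, 11_800):
    print(step, f"{linear_warmup_lr(step):.2e}")
```

Halfway through warmup (step 250) and halfway through the decay phase (step 6,150) the rate is 2.5e-05; it peaks at step 500 and reaches 0 at the final step.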

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | Recall | Precision | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:|:------:|:---------:| | 0.562 | 0.51 | 2000 | 0.5107 | 0.8452 | 0.8346 | 0.8452 | 0.8252 | | 0.4548 | 1.01 | 4000 | 0.4628 | 0.8565 | 0.8481 | 0.8565 | 0.8466 | | 0.3439 | 1.52 | 6000 | 0.4519 | 0.8605 | 0.8544 | 0.8605 | 0.8545 | | 0.2626 | 2.03 | 8000 | 0.4412 | 0.8678 | 0.8618 | 0.8678 | 0.8626 | | 0.2717 | 2.53 | 10000 | 0.4431 | 0.8691 | 0.8645 | 0.8691 | 0.8629 | | 86393e24ef29ee02ec8a4062f78e9eed |
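
In every row of the distilbert-complaints table, Recall equals Accuracy exactly. That is what happens when recall is averaged with `average="weighted"` (each class weighted by its support): the support weights cancel the per-class denominators, leaving correct predictions divided by total predictions. The card does not name the averaging mode, so this is an inference. The library-free sketch below checks the identity on made-up labels.

```python
from collections import Counter

def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def weighted_recall(y_true, y_pred):
    """Per-class recall, averaged with weights proportional to class support."""
    support = Counter(y_true)
    total = len(y_true)
    score = 0.0
    for cls, n_cls in support.items():
        true_pos = sum(t == p == cls for t, p in zip(y_true, y_pred))
        score += (n_cls / total) * (true_pos / n_cls)
    return score

# Made-up labels for illustration (not from the consumer-finance-complaints dataset).
y_true = ["loan", "card", "card", "mortgage", "loan", "card", "mortgage"]
y_pred = ["loan", "card", "loan", "mortgage", "card", "card", "loan"]

print(accuracy(y_true, y_pred), weighted_recall(y_true, y_pred))  # equal up to rounding
```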

mit | [] | false | matrix on Stable Diffusion This is the `<hatman-matrix>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). | aadef43f2b94538c07be1ffbd341c8a0 |

mit | [] | false | Troubleshooting This concept was trained with "CompVis/stable-diffusion-v1-4", the model linked in the concept inference notebook, whose text embeddings have a tensor length of [768]. The notebook for training concepts links to "stabilityai/stable-diffusion-2", which has a tensor length of [1024], so a concept trained on one model cannot be loaded into the other. Here is the new concept you will be able to use as a `style`: | 398a90c409ada41e2a8fde3c6bd86fdc |
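
The troubleshooting note comes down to a width mismatch: a Textual Inversion embedding learned against one text encoder only fits a model whose token embeddings have the same width (768 for Stable Diffusion v1.x, 1024 for v2). A minimal, library-free sketch of that check follows. The `learned_embeds` mapping stands in for the token-to-vector dict that concept repositories usually ship as `learned_embeds.bin` (normally loaded with `torch.load`); `check_concept_compatible` is a hypothetical helper, not part of any library.

```python
# Token-embedding widths of the text encoders referenced in the note above.
TEXT_ENCODER_DIM = {
    "CompVis/stable-diffusion-v1-4": 768,
    "stabilityai/stable-diffusion-2": 1024,
}

def check_concept_compatible(learned_embeds: dict, base_model: str) -> None:
    """Raise ValueError if any learned token vector does not match the base model's width.

    Hypothetical helper: `learned_embeds` maps a placeholder token such as
    "<hatman-matrix>" to its embedding vector (any sized sequence works here).
    """
    expected = TEXT_ENCODER_DIM[base_model]
    for token, vector in learned_embeds.items():
        if len(vector) != expected:
            raise ValueError(
                f"{token} has width {len(vector)}, but {base_model} expects {expected}"
            )

# A dummy 768-wide vector standing in for the real trained embedding.
concept = {"<hatman-matrix>": [0.0] * 768}
check_concept_compatible(concept, "CompVis/stable-diffusion-v1-4")  # passes silently
try:
    check_concept_compatible(concept, "stabilityai/stable-diffusion-2")
except ValueError as err:
    print(err)  # <hatman-matrix> has width 768, but stabilityai/stable-diffusion-2 expects 1024
```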

apache-2.0 | ['Quality Estimation', 'monotransquest', 'DA'] | false | Using Pre-trained Models ```python import torch from transquest.algo.sentence_level.monotransquest.run_model import MonoTransQuestModel model = MonoTransQuestModel("xlmroberta", "TransQuest/monotransquest-da-ne_en-wiki", num_labels=1, use_cuda=torch.cuda.is_available()) predictions, raw_outputs = model.predict([["Reducerea acestor conflicte este importantă pentru conservare.", "Reducing these conflicts is not important for preservation."]]) print(predictions) ``` | b75d78720e13288508a3dc244169ef63 |

apache-2.0 | ['translation'] | false | opus-mt-es-to * source languages: es * target languages: to * OPUS readme: [es-to](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/es-to/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/es-to/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-to/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/es-to/opus-2020-01-16.eval.txt) | 4a854d03eceda7b0de1c793fa1d897ba |

mit | ['generated_from_trainer'] | false | IMDB_roBERTa_5E This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on the imdb dataset. It achieves the following results on the evaluation set: - Loss: 0.2383 - Accuracy: 0.9467 | 06c1b2e622cf2d330d9fcc8d692cab0f |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.5851 | 0.06 | 50 | 0.1789 | 0.94 | | 0.2612 | 0.13 | 100 | 0.1520 | 0.9533 | | 0.2339 | 0.19 | 150 | 0.1997 | 0.9267 | | 0.2349 | 0.26 | 200 | 0.1702 | 0.92 | | 0.207 | 0.32 | 250 | 0.1515 | 0.9333 | | 0.2222 | 0.38 | 300 | 0.1522 | 0.9467 | | 0.1916 | 0.45 | 350 | 0.1328 | 0.94 | | 0.1559 | 0.51 | 400 | 0.1676 | 0.94 | | 0.1621 | 0.58 | 450 | 0.1363 | 0.9467 | | 0.1663 | 0.64 | 500 | 0.1327 | 0.9533 | | 0.1841 | 0.7 | 550 | 0.1347 | 0.9467 | | 0.1742 | 0.77 | 600 | 0.1127 | 0.9533 | | 0.1559 | 0.83 | 650 | 0.1119 | 0.9467 | | 0.172 | 0.9 | 700 | 0.1123 | 0.9467 | | 0.1644 | 0.96 | 750 | 0.1326 | 0.96 | | 0.1524 | 1.02 | 800 | 0.1718 | 0.9467 | | 0.1456 | 1.09 | 850 | 0.1464 | 0.9467 | | 0.1271 | 1.15 | 900 | 0.1190 | 0.9533 | | 0.1412 | 1.21 | 950 | 0.1323 | 0.9533 | | 0.1114 | 1.28 | 1000 | 0.1475 | 0.9467 | | 0.1222 | 1.34 | 1050 | 0.1592 | 0.9467 | | 0.1164 | 1.41 | 1100 | 0.1345 | 0.96 | | 0.1126 | 1.47 | 1150 | 0.1325 | 0.9533 | | 0.1237 | 1.53 | 1200 | 0.1561 | 0.9533 | | 0.1385 | 1.6 | 1250 | 0.1225 | 0.9467 | | 0.1522 | 1.66 | 1300 | 0.1119 | 0.9533 | | 0.1154 | 1.73 | 1350 | 0.1231 | 0.96 | | 0.1182 | 1.79 | 1400 | 0.1366 | 0.96 | | 0.1415 | 1.85 | 1450 | 0.0972 | 0.96 | | 0.124 | 1.92 | 1500 | 0.1082 | 0.96 | | 0.1584 | 1.98 | 1550 | 0.1770 | 0.96 | | 0.0927 | 2.05 | 1600 | 0.1821 | 0.9533 | | 0.1065 | 2.11 | 1650 | 0.0999 | 0.9733 | | 0.0974 | 2.17 | 1700 | 0.1365 | 0.9533 | | 0.079 | 2.24 | 1750 | 0.1694 | 0.9467 | | 0.1217 | 2.3 | 1800 | 0.1564 | 0.9533 | | 0.0676 | 2.37 | 1850 | 0.2116 | 0.9467 | | 0.0832 | 2.43 | 1900 | 0.1779 | 0.9533 | | 0.0899 | 2.49 | 1950 | 0.0999 | 0.9667 | | 0.0898 | 2.56 | 2000 | 0.1502 | 0.9467 | | 0.0955 | 2.62 | 2050 | 0.1776 | 0.9467 | | 0.0989 | 2.69 | 2100 | 0.1279 | 0.9533 | | 0.102 | 2.75 | 2150 | 0.1005 | 0.9667 | | 0.0957 | 2.81 | 2200 | 0.1070 | 0.9667 | | 0.0786 | 2.88 | 2250 | 0.1881 | 0.9467 | | 0.0897 | 2.94 | 2300 | 0.1951 | 0.9533 | | 0.0801 | 3.01 | 2350 | 0.1827 | 0.9467 | | 0.0829 | 3.07 | 2400 | 0.1854 | 0.96 | | 0.0665 | 3.13 | 2450 | 0.1775 | 0.9533 | | 0.0838 | 3.2 | 2500 | 0.1700 | 0.96 | | 0.0441 | 3.26 | 2550 | 0.1810 | 0.96 | | 0.071 | 3.32 | 2600 | 0.2083 | 0.9533 | | 0.0655 | 3.39 | 2650 | 0.1943 | 0.96 | | 0.0565 | 3.45 | 2700 | 0.2486 | 0.9533 | | 0.0669 | 3.52 | 2750 | 0.2540 | 0.9533 | | 0.0671 | 3.58 | 2800 | 0.2140 | 0.9467 | | 0.0857 | 3.64 | 2850 | 0.1609 | 0.9533 | | 0.0585 | 3.71 | 2900 | 0.2067 | 0.9467 | | 0.0597 | 3.77 | 2950 | 0.2380 | 0.9467 | | 0.0932 | 3.84 | 3000 | 0.1727 | 0.9533 | | 0.0744 | 3.9 | 3050 | 0.2099 | 0.9467 | | 0.0679 | 3.96 | 3100 | 0.2034 | 0.9467 | | 0.0447 | 4.03 | 3150 | 0.2461 | 0.9533 | | 0.0486 | 4.09 | 3200 | 0.2032 | 0.9533 | | 0.0409 | 4.16 | 3250 | 0.2468 | 0.9467 | | 0.0605 | 4.22 | 3300 | 0.2422 | 0.9467 | | 0.0319 | 4.28 | 3350 | 0.2681 | 0.9467 | | 0.0483 | 4.35 | 3400 | 0.2222 | 0.9533 | | 0.0801 | 4.41 | 3450 | 0.2247 | 0.9533 | | 0.0333 | 4.48 | 3500 | 0.2190 | 0.9533 | | 0.0432 | 4.54 | 3550 | 0.2167 | 0.9533 | | 0.0381 | 4.6 | 3600 | 0.2507 | 0.9467 | | 0.0647 | 4.67 | 3650 | 0.2410 | 0.9533 | | 0.0427 | 4.73 | 3700 | 0.2447 | 0.9467 | | 0.0627 | 4.8 | 3750 | 0.2332 | 0.9533 | | 0.0569 | 4.86 | 3800 | 0.2358 | 0.9533 | | 0.069 | 4.92 | 3850 | 0.2379 | 0.9533 | | 0.0474 | 4.99 | 3900 | 0.2383 | 0.9467 | | 901fdc07108a62708ad3e1b16002455b |

mit | [] | false | crested gecko on Stable Diffusion This is the `<crested-gecko>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:      | 4762f21b5f228ffd1dec3d7dc0521e56 |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-xlsr-53-espeak-cv-ft-evn4-ntsema-colab This model is a fine-tuned version of [ntsema/wav2vec2-xlsr-53-espeak-cv-ft-sah2-ntsema-colab](https://huggingface.co/ntsema/wav2vec2-xlsr-53-espeak-cv-ft-sah2-ntsema-colab) on the audiofolder dataset. It achieves the following results on the evaluation set: - Loss: 2.0821 - Wer: 0.9833 | 7acb61d2b9d3677b40f59ef0c68ae1ab |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 4.3115 | 6.15 | 400 | 1.6416 | 0.9867 | | 0.9147 | 12.3 | 800 | 1.6538 | 0.9867 | | 0.5301 | 18.46 | 1200 | 1.8461 | 0.98 | | 0.2865 | 24.61 | 1600 | 2.0821 | 0.9833 | | 8e78807aab0b6686c519d086a3366b8e |

apache-2.0 | ['generated_from_trainer'] | false | text-to-sparql-t5-base-2021-10-17_23-40 This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2645 - Gen Len: 19.0 - P: 0.5125 - R: 0.0382 - F1: 0.2650 - Score: 5.1404 - Bleu-precisions: [88.49268497650789, 75.01025204252232, 66.60779038484033, 63.18383699935422] - Bleu-bp: 0.0707 | 7719fef85bdf8b199725a09f295a5eb8 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Gen Len | P | R | F1 | Score | Bleu-precisions | Bleu-bp | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:------:|:------:|:------:|:----------------------------------------------------------------------------:|:-------:| | 0.3513 | 1.0 | 4807 | 0.2645 | 19.0 | 0.5125 | 0.0382 | 0.2650 | 5.1404 | [88.49268497650789, 75.01025204252232, 66.60779038484033, 63.18383699935422] | 0.0707 | | 60ef4c5daa43d912311474cb3ad450a3 |
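
The reported `Score` can be reproduced from the other two BLEU fields, assuming the standard BLEU definition (as used by sacreBLEU): the geometric mean of the four n-gram precisions multiplied by the brevity penalty. The very small brevity penalty (0.0707) is what pulls the score down to about 5 despite high precisions. Inverting the usual definition BP = exp(1 - r/c) for c < r suggests generated outputs roughly 3.6 times shorter than the references, which is plausible given the constant `Gen Len` of 19.0.

```python
import math

# Values from the text-to-sparql-t5-base evaluation above (precisions in percent).
precisions = [88.49268497650789, 75.01025204252232, 66.60779038484033, 63.18383699935422]
brevity_penalty = 0.0707  # rounded in the card

# BLEU = BP * exp(mean(log p_n))
geo_mean = math.exp(sum(math.log(p) for p in precisions) / len(precisions))
bleu = brevity_penalty * geo_mean
print(f"{geo_mean:.2f} {bleu:.2f}")  # 72.70 5.14

# Invert BP = exp(1 - r/c) to estimate the reference/hypothesis length ratio r/c.
ratio = 1 - math.log(brevity_penalty)
print(f"{ratio:.2f}")
```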

cc-by-4.0 | ['generated_from_trainer'] | false | CTEBMSP_ner_test2 This model is a fine-tuned version of [chizhikchi/Spanish_disease_finder](https://huggingface.co/chizhikchi/Spanish_disease_finder) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0586 - Diso Precision: 0.8836 - Diso Recall: 0.8902 - Diso F1: 0.8869 - Diso Number: 4052 - Overall Precision: 0.8836 - Overall Recall: 0.8902 - Overall F1: 0.8869 - Overall Accuracy: 0.9885 | 9c4d3c73fd255825f03fdd258721ddbb |

cc-by-4.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Diso Precision | Diso Recall | Diso F1 | Diso Number | Overall Precision | Overall Recall | Overall F1 | Overall Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:--------------:|:-----------:|:-------:|:-----------:|:-----------------:|:--------------:|:----------:|:----------------:| | 0.0463 | 1.0 | 2566 | 0.0512 | 0.8791 | 0.8384 | 0.8583 | 4052 | 0.8791 | 0.8384 | 0.8583 | 0.9859 | | 0.0204 | 2.0 | 5132 | 0.0615 | 0.8942 | 0.8655 | 0.8796 | 4052 | 0.8942 | 0.8655 | 0.8796 | 0.9875 | | 0.0095 | 3.0 | 7698 | 0.0545 | 0.8877 | 0.8776 | 0.8826 | 4052 | 0.8877 | 0.8776 | 0.8826 | 0.9881 | | 0.0045 | 4.0 | 10264 | 0.0586 | 0.8836 | 0.8902 | 0.8869 | 4052 | 0.8836 | 0.8902 | 0.8869 | 0.9885 | | f945a70552a8f95cbe35d182e0ba6caa |
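
In the CTEBMSP_ner_test2 results, the Diso and Overall columns are identical in every row, presumably because DISO (disease mention) is the only entity type, so the overall score reduces to that single class. Each F1 value is the harmonic mean of the precision and recall in the same row, which the short check below confirms to four decimals (small discrepancies could arise because the inputs are themselves rounded).

```python
def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)

# (epoch, precision, recall, reported F1) from the CTEBMSP_ner_test2 table
rows = [
    (1, 0.8791, 0.8384, 0.8583),
    (2, 0.8942, 0.8655, 0.8796),
    (3, 0.8877, 0.8776, 0.8826),
    (4, 0.8836, 0.8902, 0.8869),
]
for epoch, p, r, reported in rows:
    print(epoch, round(f1(p, r), 4), reported)
```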

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2183 - Accuracy: 0.925 - F1: 0.9251 | 21e0b8607079a67a0a9a4468d37e41f1 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8002 | 1.0 | 250 | 0.3094 | 0.9065 | 0.9038 | | 0.2409 | 2.0 | 500 | 0.2183 | 0.925 | 0.9251 | | 55da80bc133c4be6714edb0b1f84bc25 |

apache-2.0 | ['generated_from_trainer'] | false | albert-base-v2-finetuned-squad This model is a fine-tuned version of [albert-base-v2](https://huggingface.co/albert-base-v2) on the squad_v2 dataset. It achieves the following results on the evaluation set: - Loss: 0.9492 | 585e1de6928debd37978ebdd71d274c7 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 0.8695 | 1.0 | 8248 | 0.8813 | | 0.6333 | 2.0 | 16496 | 0.8042 | | 0.4372 | 3.0 | 24744 | 0.9492 | | b2923f8b098ff849da5587ed2fd4e3e6 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'robust-speech-event', 'hf-asr-leaderboard'] | false | This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_8_0 - HA dataset. It achieves the following results on the evaluation set: - Loss: 0.4998 - Wer: 0.5153 | 742c312ef35cf813870ab689257abf21 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'robust-speech-event', 'hf-asr-leaderboard'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 9.6e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 2000 - num_epochs: 80.0 - mixed_precision_training: Native AMP | f9bcc915ed78c4c33ff412901e50a4d8 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_8_0', 'generated_from_trainer', 'robust-speech-event', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.0021 | 8.33 | 500 | 2.9059 | 1.0 | | 2.6604 | 16.66 | 1000 | 2.6402 | 0.9892 | | 1.2216 | 24.99 | 1500 | 0.6051 | 0.6851 | | 1.0754 | 33.33 | 2000 | 0.5408 | 0.6464 | | 0.9582 | 41.66 | 2500 | 0.5521 | 0.5935 | | 0.8653 | 49.99 | 3000 | 0.5156 | 0.5550 | | 0.7867 | 58.33 | 3500 | 0.5439 | 0.5606 | | 0.7265 | 66.66 | 4000 | 0.4863 | 0.5255 | | 0.6699 | 74.99 | 4500 | 0.5050 | 0.5169 | | b124ba0aee41e54c28e4a08f12e5ff05 |
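
The hyperparameters for the Hausa wav2vec2-xls-r-300m model list `total_train_batch_size: 32`, which is `train_batch_size` (8) times `gradient_accumulation_steps` (4), presumably on a single device. Gradient accumulation works because, for a mean-reduced loss, averaging the gradients of four equal micro-batches of 8 gives the same gradient as one batch of 32. The dependency-free sketch below demonstrates that identity on a toy one-parameter least-squares model; the data and model are illustrative, not taken from this training run.

```python
import random

def mean_grad(w, batch):
    """Gradient of the mean squared error of (w * x - y) with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

random.seed(0)
xs = [random.random() for _ in range(32)]
data = [(x, 3.0 * x + random.gauss(0, 0.1)) for x in xs]  # toy data, not from this run

w = 0.5
MICRO_BATCH = 8   # train_batch_size
ACCUM_STEPS = 4   # gradient_accumulation_steps

# One step on the full batch of 32 examples.
full_batch_grad = mean_grad(w, data)

# Four micro-batches of 8; each contributes its mean gradient divided by ACCUM_STEPS.
accumulated_grad = 0.0
for i in range(ACCUM_STEPS):
    micro = data[i * MICRO_BATCH:(i + 1) * MICRO_BATCH]
    accumulated_grad += mean_grad(w, micro) / ACCUM_STEPS

print(full_batch_grad, accumulated_grad)  # equal up to floating-point rounding
```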

mit | [] | false | Christo person on Stable Diffusion This is the `<christo>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:           | a5198378bf3e12ff3e757327e2028af6 |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'animal'] | false | DreamBooth model for the rio concept trained by marshmellow77 on the marshmellow77/pics_rio dataset. This is a Stable Diffusion model fine-tuned on the rio concept with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of rio cat** This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! | b1e833c79919fcb60bbd5ecdc6ff66c8 |

apache-2.0 | ['translation', 'generated_from_trainer'] | false | model-translate-ar-to-en-from-120k-dataset-ar-en-th230111752 This model is a fine-tuned version of [Helsinki-NLP/opus-mt-ar-en](https://huggingface.co/Helsinki-NLP/opus-mt-ar-en) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.2879 - Bleu: 36.3711 | 71becdb4c632913b34d6fb921af58fca |

apache-2.0 | ['translation', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | |:-------------:|:-----:|:-----:|:---------------:|:-------:| | 1.3225 | 1.0 | 12500 | 1.3048 | 35.6396 | | 1.0963 | 2.0 | 25000 | 1.2906 | 36.2535 | | 1.1074 | 3.0 | 37500 | 1.2879 | 36.3711 | | 89491b669b947d0663256a218221b6c8 |

apache-2.0 | ['generated_from_trainer'] | false | german_pretrained This model is a fine-tuned version of [flozi00/wav2vec-xlsr-german](https://huggingface.co/flozi00/wav2vec-xlsr-german) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 3.9812 - Wer: 1.0 | 1bb3fa720184b6c56d0fc921fadaef1f |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:---:| | 12.5229 | 5.0 | 5 | 12.9520 | 1.0 | | 4.3782 | 10.0 | 10 | 5.5689 | 1.0 | | 2.56 | 15.0 | 15 | 4.8410 | 1.0 | | 2.2895 | 20.0 | 20 | 4.0380 | 1.0 | | 1.872 | 25.0 | 25 | 3.9558 | 1.0 | | 1.6992 | 30.0 | 30 | 3.9812 | 1.0 | | 40d42636c4c0d0159d4d226e8032f4ca |

cc-by-4.0 | [] | false | algmon-base for QA This is the [roberta-base](https://huggingface.co/roberta-base) model, fine-tuned on the [SQuAD2.0](https://huggingface.co/datasets/squad_v2) dataset for question answering. It has been trained on question-answer pairs, including unanswerable questions. | 094fda8fb854b06ae3434101dd16c59d |

cc-by-4.0 | [] | false | In Haystack Haystack is an NLP framework by deepset. You can use this model in a Haystack pipeline to do question answering at scale (over many documents). To load the model in [Haystack](https://github.com/deepset-ai/haystack/): ```python reader = FARMReader(model_name_or_path="deepset/roberta-base-squad2") | 2b91f751643f10efef8d3bb91af9c40f |

cc-by-4.0 | [] | false | or reader = TransformersReader(model_name_or_path="deepset/roberta-base-squad2",tokenizer="deepset/roberta-base-squad2") ``` For a complete example of ``roberta-base-squad2`` being used for Question Answering, check out the [Tutorials in Haystack Documentation](https://haystack.deepset.ai/tutorials/first-qa-system) | ecba7ff72391cb42dee4a4157b5742b8 |

cc-by-4.0 | [] | false | Performance Evaluated on the SQuAD 2.0 dev set with the [official eval script](https://worksheets.codalab.org/rest/bundles/0x6b567e1cf2e041ec80d7098f031c5c9e/contents/blob/). ``` "exact": 79.87029394424324, "f1": 82.91251169582613, "total": 11873, "HasAns_exact": 77.93522267206478, "HasAns_f1": 84.02838248389763, "HasAns_total": 5928, "NoAns_exact": 81.79983179142137, "NoAns_f1": 81.79983179142137, "NoAns_total": 5945 ``` | 85895c841418e346263e8566e64e73de |
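
The overall SQuAD 2.0 scores are support-weighted averages of the answerable (`HasAns`) and unanswerable (`NoAns`) subsets, since 5,928 + 5,945 = 11,873. For unanswerable questions a prediction either correctly abstains or it does not, so exact match and F1 coincide on that subset. A quick arithmetic check using the numbers above:

```python
# Subset results from the SQuAD 2.0 evaluation above.
has_ans = {"exact": 77.93522267206478, "f1": 84.02838248389763, "total": 5928}
no_ans = {"exact": 81.79983179142137, "f1": 81.79983179142137, "total": 5945}
total = has_ans["total"] + no_ans["total"]  # 11873

def overall(metric: str) -> float:
    """Support-weighted average of a metric over the HasAns and NoAns subsets."""
    return (has_ans[metric] * has_ans["total"] + no_ans[metric] * no_ans["total"]) / total

print(round(overall("exact"), 4), round(overall("f1"), 4))  # 79.8703 82.9125
```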

cc | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'sts'] | false | Sentence similarity model based on SlovakBERT This is a sentence similarity model based on [SlovakBERT](https://huggingface.co/gerulata/slovakbert). The model was fine-tuned on [STSbenchmark](http://ixa2.si.ehu.eus/stswiki/index.php/STSbenchmark) (Cer et al., 2017), translated to Slovak using [M2M100](https://huggingface.co/facebook/m2m100_1.2B). The model can be used as a universal sentence encoder for Slovak sentences. | 96982a0d0e36f12e626a08db340e62f5 |

cc | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'sts'] | false | Usage Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed: ``` pip install -U sentence-transformers ``` Then you can use the model like this: ```python from sentence_transformers import SentenceTransformer sentences = ["This is an example sentence", "Each sentence is converted"] model = SentenceTransformer('kinit/slovakbert-sts-stsb') embeddings = model.encode(sentences) print(embeddings) ``` | cfeec859160e7d39d4d59978b7d4397f |
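
For sentence similarity, the embeddings returned by `model.encode` are typically compared with cosine similarity (sentence-transformers provides `util.cos_sim` for this). The sketch below shows the computation itself with NumPy on small made-up vectors standing in for real SlovakBERT embeddings, so it runs without downloading the model.

```python
import numpy as np

def cosine_similarity_matrix(embeddings: np.ndarray) -> np.ndarray:
    """Pairwise cosine similarity between the rows of `embeddings`."""
    unit = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    return unit @ unit.T

# Toy 4-dimensional vectors; real embeddings would come from model.encode(sentences).
embeddings = np.array([
    [0.2, 0.1, 0.9, 0.0],
    [0.25, 0.05, 0.85, 0.1],
    [0.9, 0.4, 0.0, 0.1],
])
sims = cosine_similarity_matrix(embeddings)
print(np.round(sims, 3))  # diagonal is 1.0; rows 0 and 1 are far more similar than rows 0 and 2
```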

cc | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'sts'] | false | Cite ``` @article{DBLP:journals/corr/abs-2109-15254, author = {Mat{\'{u}}s Pikuliak and Stefan Grivalsky and Martin Konopka and Miroslav Blst{\'{a}}k and Martin Tamajka and Viktor Bachrat{\'{y}} and Mari{\'{a}}n Simko and Pavol Bal{\'{a}}zik and Michal Trnka and Filip Uhl{\'{a}}rik}, title = {SlovakBERT: Slovak Masked Language Model}, journal = {CoRR}, volume = {abs/2109.15254}, year = {2021}, url = {https://arxiv.org/abs/2109.15254}, eprinttype = {arXiv}, eprint = {2109.15254}, } ``` | 0a4560c80c5c7f3b29b1ad77dc90dfa0 |

apache-2.0 | ['generated_from_keras_callback'] | false | classificationEsp1 This model is a fine-tuned version of [PlanTL-GOB-ES/roberta-base-bne](https://huggingface.co/PlanTL-GOB-ES/roberta-base-bne) on an unknown dataset. It achieves the following results on the evaluation set: | 71ab49e6a0e1257a386293c1c2b1980d |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 3864, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | b0e082f22a8777969ddb18fb88c80b94 |
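
The classificationEsp1 optimizer uses a `PolynomialDecay` schedule with `power: 1.0`, `end_learning_rate: 0.0` and `cycle: False`, which amounts to a linear decay from 2e-05 to 0 over 3,864 steps, held at 0 afterwards. The plain-Python sketch below follows the formula documented for `tf.keras.optimizers.schedules.PolynomialDecay` (non-cycling case), so the schedule can be inspected without TensorFlow. Passing `decay_steps=1004` gives the schedule listed for nandysoham/9-clustered.

```python
def polynomial_decay(step, initial_lr=2e-05, decay_steps=3864, end_lr=0.0, power=1.0):
    """Keras-style PolynomialDecay with cycle=False."""
    step = min(step, decay_steps)  # hold at end_lr once decay_steps is reached
    return (initial_lr - end_lr) * (1 - step / decay_steps) ** power + end_lr

for step in (0, 1932, 3864, 5000):
    print(step, polynomial_decay(step))
```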

apache-2.0 | ['automatic-speech-recognition', 'es'] | false | exp_w2v2t_es_unispeech-sat_s833 Fine-tuned [microsoft/unispeech-sat-large](https://huggingface.co/microsoft/unispeech-sat-large) for speech recognition using the train split of [Common Voice 7.0 (es)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16 kHz. This model was fine-tuned with the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 5b0e1f5c0d10adf8bf6ea3f5509af1c9 |
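
The exp_w2v2t_es_unispeech-sat_s833 card asks for 16 kHz input, so recordings at another rate (44.1 kHz is common) need resampling first. One way to do that, assuming SciPy is available, is polyphase resampling with `scipy.signal.resample_poly`; audio libraries such as torchaudio or librosa offer equivalent functions. Here a synthetic one-second tone stands in for real audio, and `to_16khz` is a hypothetical helper name.

```python
from math import gcd

import numpy as np
from scipy.signal import resample_poly

TARGET_SR = 16_000

def to_16khz(audio: np.ndarray, orig_sr: int) -> np.ndarray:
    """Resample a mono waveform to 16 kHz with a polyphase anti-aliasing filter."""
    if orig_sr == TARGET_SR:
        return audio
    g = gcd(orig_sr, TARGET_SR)
    return resample_poly(audio, TARGET_SR // g, orig_sr // g)  # up=160, down=441 for 44.1 kHz

# One second of a synthetic 440 Hz tone at 44.1 kHz.
orig_sr = 44_100
t = np.arange(orig_sr) / orig_sr
tone = np.sin(2 * np.pi * 440 * t)

resampled = to_16khz(tone, orig_sr)
print(resampled.shape)  # (16000,)
```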

apache-2.0 | ['generated_from_keras_callback'] | false | nandysoham/9-clustered This model is a fine-tuned version of [Rocketknight1/distilbert-base-uncased-finetuned-squad](https://huggingface.co/Rocketknight1/distilbert-base-uncased-finetuned-squad) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.6059 - Train End Logits Accuracy: 0.8198 - Train Start Logits Accuracy: 0.7982 - Validation Loss: 0.7823 - Validation End Logits Accuracy: 0.7846 - Validation Start Logits Accuracy: 0.7483 - Epoch: 1 | 9e449e518d6bd2f6ea7ce4bbea8fe345 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'Adam', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 1004, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False} - training_precision: float32 | c6722706b7738570a1b67206c948a23a |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.8783 | 0.7495 | 0.7205 | 0.7823 | 0.7806 | 0.7463 | 0 | | 0.6059 | 0.8198 | 0.7982 | 0.7823 | 0.7846 | 0.7483 | 1 | | cb6fc3d0fa13f63aa1c57233f1524c48 |
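
The start- and end-logits accuracies in the nandysoham/9-clustered results refer to extractive QA: the model scores every token as a possible answer start and end, and the answer is the highest-scoring valid span. A minimal sketch of that generic decoding step with NumPy follows; the logits are made up, and the 30-token maximum answer length is an assumption rather than a setting from this card.

```python
import numpy as np

def best_span(start_logits, end_logits, max_answer_len=30):
    """Return the (start, end) token indices maximising start_logit + end_logit.

    Only spans with start <= end and at most `max_answer_len` tokens are considered.
    """
    n = len(start_logits)
    scores = start_logits[:, None] + end_logits[None, :]  # scores[i, j]: start i, end j
    ones = np.ones((n, n), dtype=bool)
    valid = np.triu(ones) & ~np.triu(ones, k=max_answer_len)  # i <= j < i + max_answer_len
    scores = np.where(valid, scores, -np.inf)
    start, end = np.unravel_index(np.argmax(scores), scores.shape)
    return int(start), int(end)

# Made-up logits for a 5-token context.
start_logits = np.array([0.1, 2.0, 0.3, -1.0, 0.5])
end_logits = np.array([0.0, 0.2, 1.5, 3.0, -0.5])
print(best_span(start_logits, end_logits))  # (1, 3)
```

Taking the argmax of each logit vector separately can yield an end before the start; scoring pairs and masking invalid ones avoids that.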

apache-2.0 | ['translation'] | false | opus-mt-de-de * source languages: de * target languages: de * OPUS readme: [de-de](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/de-de/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-20.zip](https://object.pouta.csc.fi/OPUS-MT-models/de-de/opus-2020-01-20.zip) * test set translations: [opus-2020-01-20.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/de-de/opus-2020-01-20.test.txt) * test set scores: [opus-2020-01-20.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/de-de/opus-2020-01-20.eval.txt) | d78e24c6ec53611dfa016db256f295e4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 125 | 0.4881 | 0.8184 | | 035528cc012ed550f76e69bac68b20a9 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-finetuned-imdb This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.2887 | 4b7266aa810755a68f1211ce3dcce9ea |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.6449 | 1.0 | 157 | 2.3557 | | 2.4402 | 2.0 | 314 | 2.2897 | | 2.3804 | 3.0 | 471 | 2.3011 | | 058c9f89c459a57283b37491d5a08227 |
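
A loss of around 2.3 on IMDB text suggests bert-base-uncased-finetuned-imdb is a masked-language-modelling fine-tune rather than a sentiment classifier, although the card does not say so. If that is the case, the loss is usually read as perplexity, the exponential of the mean cross-entropy:

```python
import math

# Validation losses from the bert-base-uncased-finetuned-imdb card.
losses = {"epoch 1": 2.3557, "epoch 2": 2.2897, "epoch 3": 2.3011, "summary": 2.2887}
perplexities = {label: math.exp(loss) for label, loss in losses.items()}

for label, ppl in perplexities.items():
    print(f"{label}: perplexity {ppl:.2f}")
```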

apache-2.0 | ['translation'] | false | pol-ukr * source group: Polish * target group: Ukrainian * OPUS readme: [pol-ukr](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/pol-ukr/README.md) * model: transformer-align * source language(s): pol * target language(s): ukr * pre-processing: normalization + SentencePiece (spm32k,spm32k) * download original weights: [opus-2020-06-17.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/pol-ukr/opus-2020-06-17.zip) * test set translations: [opus-2020-06-17.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/pol-ukr/opus-2020-06-17.test.txt) * test set scores: [opus-2020-06-17.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/pol-ukr/opus-2020-06-17.eval.txt) | e070bff54da631d8635c420076e0f4c6 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: pol-ukr - source_languages: pol - target_languages: ukr - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/pol-ukr/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['pl', 'uk'] - src_constituents: {'pol'} - tgt_constituents: {'ukr'} - src_multilingual: False - tgt_multilingual: False - prepro: normalization + SentencePiece (spm32k,spm32k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/pol-ukr/opus-2020-06-17.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/pol-ukr/opus-2020-06-17.test.txt - src_alpha3: pol - tgt_alpha3: ukr - short_pair: pl-uk - chrF2_score: 0.665 - bleu: 47.1 - brevity_penalty: 0.992 - ref_len: 13434.0 - src_name: Polish - tgt_name: Ukrainian - train_date: 2020-06-17 - src_alpha2: pl - tgt_alpha2: uk - prefer_old: False - long_pair: pol-ukr - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | 4258db5552e37e9e63c74e7cc8275b19 |

mit | [] | false | model by osanseviero This is the Stable Diffusion model fine-tuned on the Mr Potato Head concept with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of sks mr potato head** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). Here are the images used for training this concept: | 0d46b8ee4fb18981d58d256d61ca15db |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Small Hi - Rahul Soni This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 11.0 subset test dataset. It achieves the following results on the evaluation set: - Loss: 1.0458 - Wer: 525.0 | 9fbc701d1b2526072e77c9d1035cf540 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:------:|:----:|:---------------:|:-----:| | 0.0 | 1000.0 | 1000 | 0.9920 | 450.0 | | 0.0 | 2000.0 | 2000 | 0.9749 | 475.0 | | 0.0 | 3000.0 | 3000 | 1.0266 | 525.0 | | 0.0 | 4000.0 | 4000 | 1.0458 | 525.0 | | 52ee4ee54e3fcf9ec57611dc1131886c |
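
A WER of 525.0 looks impossible at first, but WER counts substitutions, deletions and insertions against the number of reference words, so a model that emits many extra words (for example repeated or hallucinated text) can exceed 100%. The Whisper values appear to be percentages, while other cards here (e.g. `Wer: 0.9833`) report fractions. The minimal word-level edit-distance sketch below is written from the standard definition rather than taken from any particular library.

```python
def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: (S + D + I) / N via word-level Levenshtein distance."""
    ref, hyp = reference.split(), hypothesis.split()
    # dist[i][j] = edits turning the first i reference words into the first j hypothesis words
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i
    for j in range(len(hyp) + 1):
        dist[0][j] = j
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # deletion
                dist[i][j - 1] + 1,         # insertion
                dist[i - 1][j - 1] + cost,  # substitution or match
            )
    return dist[-1][-1] / len(ref)

print(wer("the cat sat", "the cat sat"))                # 0.0
print(wer("the cat sat", "the bat sat"))                # one substitution over three words
print(wer("hello", "hello hello hello hello"))          # 3.0, i.e. 300%
```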

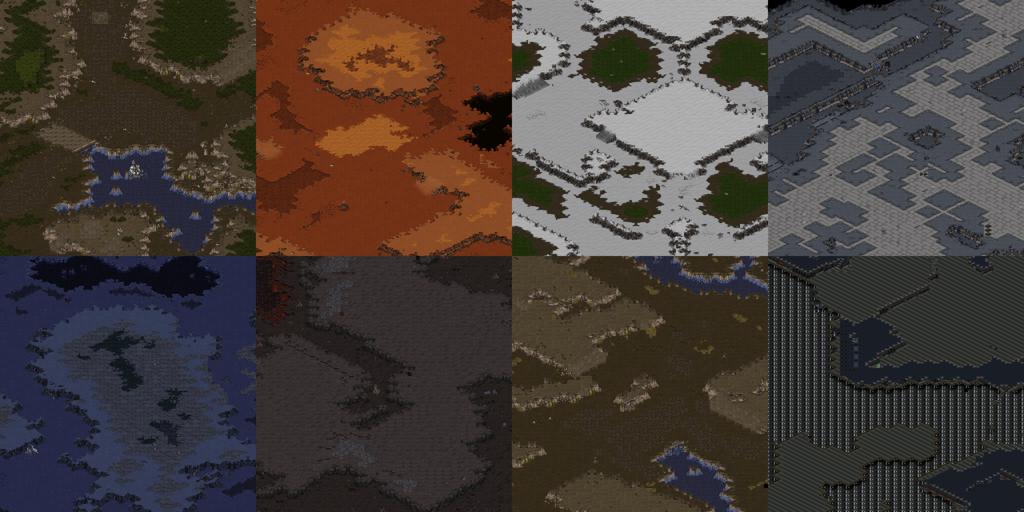

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'landscape'] | false | DreamBooth model for Starcraft:Remastered terrain This is a Stable Diffusion model fine-tuned on Starcraft terrain images with DreamBooth. It can be used by adding the `instance_prompt`: **isometric starcraft _tileset_ terrain** The _tileset_ should be one of `ashworld`, `badlands`, `desert`, `ice`, `jungle`, `platform`, `twilight` or `installation`, which correspond to the Starcraft terrain tilesets. It was trained on 64x64 terrain images from 1,808 melee maps, including original Blizzard maps and maps downloaded from Battle.net, scmscx.com and broodwarmaps.net. Run it on Hugging Face Spaces: https://huggingface.co/spaces/wdcqc/wfd Or use this notebook on Colab: https://colab.research.google.com/github/wdcqc/WaveFunctionDiffusion/blob/remaster/colab/WaveFunctionDiffusion_Demo.ipynb In addition to DreamBooth, a custom VAE model (`AutoencoderTile`) is trained for each tileset to decode the latents into tileset probabilities ("waves"), which are then turned into Starcraft maps. WFC Guidance, inspired by the Wave Function Collapse algorithm, is also added to the pipeline. For more information about guidance, see [Fine-Tuning, Guidance and Conditioning](https://github.com/huggingface/diffusion-models-class/tree/main/unit2). This model was created as part of the DreamBooth Hackathon. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! | 0a75d017ff9e25f62e0540b3ecf2378d |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'landscape'] | false | Use CUDA (otherwise it will take 15 minutes) device = "cuda" tilenet = AutoencoderTile.from_pretrained( "wdcqc/starcraft-terrain-64x64", subfolder="tile_vae_{}".format(tileset) ).to(device) pipeline = WaveFunctionDiffusionPipeline.from_pretrained( "wdcqc/starcraft-terrain-64x64", tile_vae = tilenet, wfc_data_path = wfc_data_path ) pipeline.to(device) | 2be56f99c8be16195797fa0b88864b07 |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'landscape'] | false | need to include the dreambooth keywords "isometric starcraft {tileset_keyword} terrain" tileset_keyword = get_tileset_keyword(tileset) pipeline_output = pipeline( "lost temple, isometric starcraft {} terrain".format(tileset_keyword), num_inference_steps = 50, guidance_scale = 3.5, wfc_guidance_start_step = 20, wfc_guidance_strength = 5, wfc_guidance_final_steps = 20, wfc_guidance_final_strength = 10, ) image = pipeline_output.images[0] | 67416dcc10b6040802229dafe23c8e68 |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'landscape'] | false | Generate map file from wfd.scmap import tiles_to_scx import random, time tiles_to_scx( tile_result, "outputs/{}_{}_{:04d}.scx".format(tileset, time.strftime("%Y%m%d_%H%M%S"), random.randint(0, 9999)), wfc_data_path = wfc_data_path ) | 46f032fa7009218c47b882c036196534 |

apache-2.0 | ['generated_from_trainer'] | false | mobilebert_sa_GLUE_Experiment_data_aug_mnli This model is a fine-tuned version of [google/mobilebert-uncased](https://huggingface.co/google/mobilebert-uncased) on the GLUE MNLI dataset. It achieves the following results on the evaluation set: - Loss: 0.9046 - Accuracy: 0.6099 | 057424b7bafab42d0a12279916c6f4c4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:------:|:---------------:|:--------:| | 0.8429 | 1.0 | 62880 | 0.8755 | 0.6185 | | 0.6713 | 2.0 | 125760 | 0.9512 | 0.6039 | | 0.5387 | 3.0 | 188640 | 1.0796 | 0.5978 | | 0.4297 | 4.0 | 251520 | 1.1877 | 0.5961 | | 0.3405 | 5.0 | 314400 | 1.3154 | 0.5895 | | 0.2693 | 6.0 | 377280 | 1.4320 | 0.5798 | | 8760f0979714bcdad655e5814b369982 |

apache-2.0 | ['generated_from_trainer'] | false | reviews-classification This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.5442 - Accuracy: 0.875 | cb80f1a54bdadb91965e46b65af200e4 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 350 | 0.4666 | 0.86 | | 0.4577 | 2.0 | 700 | 0.5500 | 0.8525 | | 0.2499 | 3.0 | 1050 | 0.5442 | 0.875 | | 3e7fe91f31a02fa3c7859d014a7c8eda |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'food'] | false | DreamBooth model for the jairzza concept trained by jairNeto on the jairNeto/pizza dataset. This is a Stable Diffusion model fine-tuned on the jairzza concept with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of jairzza pizza** This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! | e6c6784c1551b46d73daf6b6f1cdcb91 |

mit | ['generated_from_trainer', 'de'] | false | feinschwarz This model is a fine-tuned version of [dbmdz/german-gpt2](https://huggingface.co/dbmdz/german-gpt2). The dataset was compiled from all texts on https://www.feinschwarz.net (as of October 2021). The website publishes essay-style texts on theological topics. The model will be used to explore the challenges of text-generating AI for theology with a hands-on approach. Can an AI generate theological knowledge? Is a text by Karl Rahner of more value than an AI-generated text? Will we even be able to distinguish a Rahner text from an AI-generated text in the future? And the crucial question: would it be bad if we could not? The model is a very first attempt and, in its current version, certainly not yet a danger to academic theology 🤓 | 5d3a60aedfcf958ee2c73af282d8c9c1 |

mit | ['generated_from_trainer', 'de'] | false | Using the model You can create text with the model using this code: ```python from transformers import pipeline pipe = pipeline('text-generation', model="Michael711/feinschwarz", tokenizer="Michael711/feinschwarz") text = pipe("Der Sinn des Lebens ist es", max_length=100)[0]["generated_text"] print(text) ``` Have fun theologizing! | af07415404717b09ca28920327b348e6 |

apache-2.0 | ['generated_from_trainer'] | false | t5-small-finetuned-xsum-finetuned-billsum This model is a fine-tuned version of [Frederick0291/t5-small-finetuned-xsum](https://huggingface.co/Frederick0291/t5-small-finetuned-xsum) on an unknown dataset. | e035c79519070c7b1f1184a85708339d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | No log | 1.0 | 330 | 1.8540 | 32.9258 | 14.9104 | 27.1067 | 27.208 | 18.8437 | | 4e0416782e926de1935947026c317656 |

mit | ['bart', 'pytorch'] | false | BART-IT - Il Post BART-IT is a sequence-to-sequence model based on the BART architecture, specifically tailored to the Italian language. The model is pre-trained on a [large corpus of Italian text](https://huggingface.co/datasets/gsarti/clean_mc4_it), and can be fine-tuned on a variety of tasks. | d0b7fe11e866d2f1bb02d0f52a5ac8c6 |

mit | ['bart', 'pytorch'] | false | Fine-tuning The model has been fine-tuned for the abstractive summarization task on 3 different Italian datasets: - [FanPage](https://huggingface.co/datasets/ARTeLab/fanpage) - fine-tuned model [here](https://huggingface.co/morenolq/bart-it-fanpage) - **This model** [IlPost](https://huggingface.co/datasets/ARTeLab/ilpost) - fine-tuned model [here](https://huggingface.co/morenolq/bart-it-ilpost) - [WITS](https://huggingface.co/datasets/Silvia/WITS) - fine-tuned model [here](https://huggingface.co/morenolq/bart-it-WITS) | d99507b1feaa2bc478121c24b216d15d |

mit | ['bart', 'pytorch'] | false | Usage In order to use the model, you can use the following code: ```python from transformers import AutoTokenizer, AutoModelForSeq2SeqLM tokenizer = AutoTokenizer.from_pretrained("morenolq/bart-it-ilpost") model = AutoModelForSeq2SeqLM.from_pretrained("morenolq/bart-it-ilpost") input_ids = tokenizer.encode("Il modello BART-IT è stato pre-addestrato su un corpus di testo italiano", return_tensors="pt") outputs = model.generate(input_ids, max_length=40, num_beams=4, early_stopping=True) print(tokenizer.decode(outputs[0], skip_special_tokens=True)) ``` | 8482f796b502cf7be5527ca6dbfc5cbe |

apache-2.0 | ['CTC', 'pytorch', 'speechbrain', 'Transformer'] | false | wav2vec 2.0 with CTC/Attention trained on DVoice Darija (No LM) This repository provides all the necessary tools to perform automatic speech recognition from an end-to-end system pretrained on a [DVoice](https://zenodo.org/record/6342622) Darija dataset within SpeechBrain. For a better experience, we encourage you to learn more about [SpeechBrain](https://speechbrain.github.io). | DVoice Release | Val. CER | Val. WER | Test CER | Test WER | |:-------------:|:---------------------------:| -----:| -----:| -----:| | v2.0 | 5.51 | 18.46 | 5.85 | 18.28 | | 1ee381585426e04ab8a71dc7da6cce06 |
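The CER and WER columns above are error rates expressed as percentages. The sketch below shows the standard definition: the Levenshtein edit distance between reference and hypothesis, divided by the length of the reference. It is computed over words for WER and over characters for CER. This is a generic illustration only. SpeechBrain's own scorer may normalise text differently, for example in casing, punctuation, or whether spaces count as characters.

```python
# Word/character error rate via Levenshtein (edit) distance.
# A generic sketch; SpeechBrain's scorer may normalise text differently.

def edit_distance(ref, hyp):
    """Minimum number of substitutions, deletions and insertions turning ref into hyp."""
    prev = list(range(len(hyp) + 1))  # distances from the empty reference prefix
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # delete r
                curr[j - 1] + 1,         # insert h
                prev[j - 1] + (r != h),  # substitute (free when tokens match)
            )
        prev = curr
    return prev[-1]


def wer(reference, hypothesis):
    """Word error rate: word-level edits divided by the number of reference words."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference, hypothesis):
    """Character error rate: character-level edits divided by the reference length."""
    if not reference:
        raise ValueError("reference must be non-empty")
    return edit_distance(reference, hypothesis) / len(reference)


if __name__ == "__main__":
    # Multiply by 100 to get the percentage form used in the table.
    print(100 * wer("the cat sat", "the cat sit"))
```

A test WER of 18.28 therefore means that roughly 18 word edits were needed per 100 reference words.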

apache-2.0 | ['CTC', 'pytorch', 'speechbrain', 'Transformer'] | false | Transcribing your own audio files (in Darija) ```python from speechbrain.pretrained import EncoderASR asr_model = EncoderASR.from_hparams(source="speechbrain/asr-wav2vec2-dvoice-darija", savedir="pretrained_models/asr-wav2vec2-dvoice-darija") asr_model.transcribe_file('speechbrain/asr-wav2vec2-dvoice-darija/example_darija.wav') ``` | d5b74b4eb29192d05cee7631eb9d477e |

apache-2.0 | ['CTC', 'pytorch', 'speechbrain', 'Transformer'] | false | Training The model was trained with SpeechBrain. To train it from scratch, follow these steps: 1. Clone SpeechBrain: ```bash git clone https://github.com/speechbrain/speechbrain/ ``` 2. Install it: ```bash cd speechbrain pip install -r requirements.txt pip install -e . ``` 3. Run training: ```bash cd recipes/DVoice/ASR/CTC python train_with_wav2vec2.py hparams/train_dar_with_wav2vec.yaml --data_folder=/localscratch/darija/ ``` You can find our training results (models, logs, etc.) [here](https://drive.google.com/drive/folders/1vNT7RjRuELs7pumBHmfYsrOp9m46D0ym?usp=sharing). | 70694c96a80b235d4953a0c7538a854e |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper Large Marathi This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.1975 - Wer: 13.6440 | 1e4d0dcaa4538bc480049f1d98142f06 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - training_steps: 400 - mixed_precision_training: Native AMP | d2dbdd6adc211365c7d1e8cccd1316d4 |
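The hyperparameters `lr_scheduler_type: linear`, `lr_scheduler_warmup_steps: 100` and `training_steps: 400` describe a learning rate that ramps up from 0 to the base rate and then decays linearly back to 0. The sketch below reproduces that multiplier as I understand the linear schedule in `transformers` (`get_linear_schedule_with_warmup`). It is a standalone illustration, not the code used for this run.

```python
def linear_warmup_lr(step, base_lr=1e-5, warmup_steps=100, total_steps=400):
    """Learning rate at an optimizer step: linear warmup, then linear decay to 0."""
    if step < warmup_steps:
        # Ramp up linearly from 0 to base_lr over the warmup steps.
        return base_lr * step / max(1, warmup_steps)
    # Decay linearly from base_lr (end of warmup) to 0 (at total_steps).
    remaining = (total_steps - step) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, remaining)


for s in (0, 50, 100, 250, 400):
    print(s, linear_warmup_lr(s))
```

With these settings, the peak rate of 1e-05 is reached only at step 100. Three quarters of the run is then spent decaying.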

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.1914 | 0.81 | 400 | 0.1975 | 13.6440 | | eb0f75ed1abd2e2b8dd3b1c3ac6715b5 |

mit | ['generated_from_trainer'] | false | DeBERTa v3 small fine-tuned on hate_speech18 dataset for Hate Speech Detection This model is a fine-tuned version of [microsoft/deberta-v3-small](https://huggingface.co/microsoft/deberta-v3-small) on the hate_speech18 dataset. It achieves the following results on the evaluation set: - Loss: 0.2922 - Accuracy: 0.9161 | ac7ab71f9cf1f3592b64dd057af23b1d |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4147 | 1.0 | 650 | 0.3910 | 0.8832 | | 0.2975 | 2.0 | 1300 | 0.2922 | 0.9161 | | 0.2575 | 3.0 | 1950 | 0.3555 | 0.9051 | | 0.1553 | 4.0 | 2600 | 0.4263 | 0.9124 | | 0.1267 | 5.0 | 3250 | 0.4238 | 0.9161 | | b805f765be94dca8e6c22cb3c304f578 |

apache-2.0 | ['translation'] | false | opus-mt-lv-fi * source languages: lv * target languages: fi * OPUS readme: [lv-fi](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/lv-fi/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/lv-fi/opus-2020-01-09.zip) * test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/lv-fi/opus-2020-01-09.test.txt) * test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/lv-fi/opus-2020-01-09.eval.txt) | 5355f84d025922f803b897434fb894e6 |

mit | ['generated_from_trainer'] | false | farsi_lastname_classifier_4 This model is a fine-tuned version of [microsoft/deberta-v3-small](https://huggingface.co/microsoft/deberta-v3-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2337 - Accuracy: 0.96 | cb0e10b5a9b6aaffcb081497f9d085a8 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 128 - eval_batch_size: 256 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: cosine - lr_scheduler_warmup_ratio: 0.1 - num_epochs: 15 - mixed_precision_training: Native AMP | d99271a098a08b3e777a3ea168fb0aab |
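Here the schedule is specified with a warmup *ratio* rather than a step count. The results table shows 12 optimizer steps per epoch, so 15 epochs give 180 steps. Assuming the ratio is rounded up to a whole number of steps, this yields 18 warmup steps. The sketch below shows linear warmup followed by a half-cosine decay to 0, which is how I understand the `cosine` scheduler in `transformers` with its default single half-cycle. It is an illustration under those assumptions, not the training code.

```python
import math


def cosine_warmup_lr(step, base_lr=1e-4, warmup_steps=18, total_steps=180):
    """Linear warmup to base_lr, then half-cosine decay to 0 at total_steps."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


# Assumption: 12 steps per epoch, read off the results table (180 steps / 15 epochs).
total_steps = 15 * 12
warmup_steps = math.ceil(0.1 * total_steps)  # lr_scheduler_warmup_ratio 0.1, rounded up

for s in (0, 18, 99, 180):
    print(s, cosine_warmup_lr(s, warmup_steps=warmup_steps, total_steps=total_steps))
```

Halfway through the decay phase (step 99), the rate is half of the 1e-4 peak.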

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 12 | 0.5673 | 0.836 | | No log | 2.0 | 24 | 0.4052 | 0.868 | | No log | 3.0 | 36 | 0.2211 | 0.932 | | No log | 4.0 | 48 | 0.2488 | 0.926 | | No log | 5.0 | 60 | 0.1490 | 0.954 | | No log | 6.0 | 72 | 0.1464 | 0.968 | | No log | 7.0 | 84 | 0.1923 | 0.954 | | No log | 8.0 | 96 | 0.2070 | 0.96 | | No log | 9.0 | 108 | 0.2055 | 0.962 | | No log | 10.0 | 120 | 0.2436 | 0.942 | | No log | 11.0 | 132 | 0.2173 | 0.96 | | No log | 12.0 | 144 | 0.2342 | 0.956 | | No log | 13.0 | 156 | 0.2337 | 0.962 | | No log | 14.0 | 168 | 0.2332 | 0.96 | | No log | 15.0 | 180 | 0.2337 | 0.96 | | 700dfa41e0c8a66408783cc2bcc0ac96 |

apache-2.0 | ['pytorch', 'causal-lm', 'pythia'] | false | Intended Use The primary intended use of Pythia is research on the behavior, functionality, and limitations of large language models. This suite is intended to provide a controlled setting for performing scientific experiments. To enable the study of how language models change over the course of training, we provide 143 evenly spaced intermediate checkpoints per model. These checkpoints are hosted on Hugging Face as branches. Note that branch `143000` corresponds exactly to the model checkpoint on the `main` branch of each model. You may also further fine-tune and adapt Pythia-6.9B for deployment, as long as your use is in accordance with the Apache 2.0 license. Pythia models work with the Hugging Face [Transformers Library](https://huggingface.co/docs/transformers/index). If you decide to use pre-trained Pythia-6.9B as a basis for your fine-tuned model, please conduct your own risk and bias assessment. | ca57637d317985d93ece45bedc7b1108 |
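The card says there are 143 evenly spaced checkpoints and that the final branch matches `main`. Evenly spaced checkpoints ending at step 143000 imply one checkpoint every 1000 steps. The sketch below builds the corresponding branch names. The card writes the final branch as `143000`, but the public Pythia repositories I am aware of prefix branch names with `step`. For that reason the prefix is a parameter here; check the repository's branch list before relying on either form. The commented loading call uses the real `revision` argument of `from_pretrained`. The repository id `EleutherAI/pythia-6.9b` is an assumption.

```python
def checkpoint_branches(num_checkpoints=143, interval=1000, prefix="step"):
    """Names of evenly spaced checkpoint branches (the 'step' prefix is an assumption)."""
    return [f"{prefix}{interval * i}" for i in range(1, num_checkpoints + 1)]


branches = checkpoint_branches()
print(branches[0], branches[-1], len(branches))

# Loading one checkpoint (needs network access and tens of GB of memory), roughly:
# from transformers import GPTNeoXForCausalLM
# model = GPTNeoXForCausalLM.from_pretrained("EleutherAI/pythia-6.9b", revision=branches[-1])
```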

apache-2.0 | ['pytorch', 'causal-lm', 'pythia'] | false | Out-of-scope use The Pythia Suite is **not** intended for deployment. It is not in itself a product and cannot be used for human-facing interactions. Pythia models are English-language only, and are not suitable for translation or generating text in other languages. Pythia-6.9B has not been fine-tuned for downstream contexts in which language models are commonly deployed, such as writing genre prose, or commercial chatbots. This means Pythia-6.9B will **not** respond to a given prompt the way a product like ChatGPT does. This is because, unlike this model, ChatGPT was fine-tuned using methods such as Reinforcement Learning from Human Feedback (RLHF) to better “understand” human instructions. | 8e4ceb4808096aae2559b48e0da669fb |

apache-2.0 | ['pytorch', 'causal-lm', 'pythia'] | false | Limitations and biases The core functionality of a large language model is to take a string of text and predict the next token. The token deemed statistically most likely by the model need not produce the most “accurate” text. Never rely on Pythia-6.9B to produce factually accurate output. This model was trained on [the Pile](https://pile.eleuther.ai/), a dataset known to contain profanity and texts that are lewd or otherwise offensive. See [Section 6 of the Pile paper](https://arxiv.org/abs/2101.00027) for a discussion of documented biases with regard to gender, religion, and race. Pythia-6.9B may produce socially unacceptable or undesirable text, *even if* the prompt itself does not include anything explicitly offensive. If you plan on using text generated through, for example, the Hosted Inference API, we recommend having a human curate the outputs of this language model before presenting them to other people. Please inform your audience that the text was generated by Pythia-6.9B. | 5939b5eba73a96f84a2e7db89e40c797 |

apache-2.0 | ['pytorch', 'causal-lm', 'pythia'] | false | Training data [The Pile](https://pile.eleuther.ai/) is an 825 GiB general-purpose dataset in English. It was created by EleutherAI specifically for training large language models. It contains texts from 22 diverse sources, roughly broken down into five categories: academic writing (e.g. arXiv), internet (e.g. CommonCrawl), prose (e.g. Project Gutenberg), dialogue (e.g. YouTube subtitles), and miscellaneous (e.g. GitHub, Enron Emails). See [the Pile paper](https://arxiv.org/abs/2101.00027) for a breakdown of all data sources, methodology, and a discussion of ethical implications. Consult [the datasheet](https://arxiv.org/abs/2201.07311) for more detailed documentation about the Pile and its component datasets. The Pile can be downloaded from the [official website](https://pile.eleuther.ai/), or from a [community mirror](https://the-eye.eu/public/AI/pile/). The Pile was **not** deduplicated before being used to train Pythia-6.9B. | 5e83c61fe42aabbc448b3676cca475cd |

apache-2.0 | ['pytorch', 'text-generation', 'causal-lm', 'rwkv'] | false | Model Description RWKV-3 169M is an L12-D768 causal language model trained on the Pile. See https://github.com/BlinkDL/RWKV-LM for details. At the moment, you have to use my GitHub code (https://github.com/BlinkDL/RWKV-v2-RNN-Pile) to run it. ctx_len = 768 n_layer = 12 n_embd = 768 Final checkpoint: RWKV-3-Pile-20220720-10704.pth : Trained on the Pile for 328B tokens. * Pile loss 2.5596 * LAMBADA ppl 28.82, acc 32.33% * PIQA acc 64.15% * SC2016 acc 57.88% * Hellaswag acc_norm 32.45% Preview checkpoint: 20220703-1652.pth : Trained on the Pile for 50B tokens. Pile loss 2.6375, LAMBADA ppl 33.30, acc 31.24%. | 1f9ad56d898cb782e4d31a30858face7 |

apache-2.0 | ['generated_from_trainer'] | false | distilled-mt5-small-b0.01 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset. It achieves the following results on the evaluation set: - Loss: 2.8163 - Bleu: 7.5421 - Gen Len: 44.4902 | 14cd1c8b7e56489ee9794accca2d0fec |

apache-2.0 | ['generated_from_trainer'] | false | xlsr-wav2vec2-3 This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.4201 - Wer: 0.3998 | 84245494d6346c1d03e49d79ff5dae94 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 16 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 800 - num_epochs: 30 - mixed_precision_training: Native AMP | 9d88c360e54dd48ff3fb15d4b075bb69 |
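`total_train_batch_size: 16` follows from `train_batch_size: 8` multiplied by `gradient_accumulation_steps: 2`, assuming a single device. The sketch below checks that arithmetic. It also shows why accumulation is equivalent to a larger batch for a mean-reduced loss. If each micro-batch gradient is scaled by `1/accumulation_steps` before summing, the result equals the gradient over all 16 examples at once. The toy one-weight linear model is purely illustrative.

```python
def effective_batch_size(per_device_batch, accumulation_steps, num_devices=1):
    """Examples contributing to each optimizer update."""
    return per_device_batch * accumulation_steps * num_devices


def grad_mse(w, xs, ys):
    """Gradient of mean((w*x - y)**2) with respect to the scalar weight w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_grad(w, xs, ys, micro_batch=8, accumulation_steps=2):
    """Sum micro-batch gradients, each scaled by 1/accumulation_steps (as the loss would be)."""
    total = 0.0
    for k in range(accumulation_steps):
        chunk = slice(k * micro_batch, (k + 1) * micro_batch)
        total += grad_mse(w, xs[chunk], ys[chunk]) / accumulation_steps
    return total


xs = [float(i) for i in range(16)]
ys = [2.0 * x + 1.0 for x in xs]
print(effective_batch_size(8, 2))
print(accumulated_grad(0.5, xs, ys), grad_mse(0.5, xs, ys))
```

The equivalence holds exactly only when the micro-batches are the same size and the loss is a plain mean.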

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 5.0117 | 0.68 | 400 | 3.0284 | 0.9999 | | 2.6502 | 1.35 | 800 | 1.0868 | 0.9374 | | 0.9362 | 2.03 | 1200 | 0.5216 | 0.6491 | | 0.6675 | 2.7 | 1600 | 0.4744 | 0.5837 | | 0.5799 | 3.38 | 2000 | 0.4400 | 0.5802 | | 0.5196 | 4.05 | 2400 | 0.4266 | 0.5314 | | 0.4591 | 4.73 | 2800 | 0.3808 | 0.5190 | | 0.4277 | 5.41 | 3200 | 0.3987 | 0.5036 | | 0.4125 | 6.08 | 3600 | 0.3902 | 0.5040 | | 0.3797 | 6.76 | 4000 | 0.4105 | 0.5025 | | 0.3606 | 7.43 | 4400 | 0.3975 | 0.4823 | | 0.3554 | 8.11 | 4800 | 0.3733 | 0.4747 | | 0.3373 | 8.78 | 5200 | 0.3737 | 0.4726 | | 0.3252 | 9.46 | 5600 | 0.3795 | 0.4736 | | 0.3192 | 10.14 | 6000 | 0.3935 | 0.4736 | | 0.3012 | 10.81 | 6400 | 0.3974 | 0.4648 | | 0.2972 | 11.49 | 6800 | 0.4497 | 0.4724 | | 0.2873 | 12.16 | 7200 | 0.4645 | 0.4843 | | 0.2849 | 12.84 | 7600 | 0.4461 | 0.4709 | | 0.274 | 13.51 | 8000 | 0.4002 | 0.4695 | | 0.2709 | 14.19 | 8400 | 0.4188 | 0.4627 | | 0.2619 | 14.86 | 8800 | 0.3987 | 0.4646 | | 0.2545 | 15.54 | 9200 | 0.4083 | 0.4668 | | 0.2477 | 16.22 | 9600 | 0.4525 | 0.4728 | | 0.2455 | 16.89 | 10000 | 0.4148 | 0.4515 | | 0.2281 | 17.57 | 10400 | 0.4304 | 0.4514 | | 0.2267 | 18.24 | 10800 | 0.4077 | 0.4446 | | 0.2136 | 18.92 | 11200 | 0.4209 | 0.4445 | | 0.2032 | 19.59 | 11600 | 0.4543 | 0.4534 | | 0.1999 | 20.27 | 12000 | 0.4184 | 0.4373 | | 0.1898 | 20.95 | 12400 | 0.4044 | 0.4424 | | 0.1846 | 21.62 | 12800 | 0.4098 | 0.4288 | | 0.1796 | 22.3 | 13200 | 0.4047 | 0.4262 | | 0.1715 | 22.97 | 13600 | 0.4077 | 0.4189 | | 0.1641 | 23.65 | 14000 | 0.4162 | 0.4248 | | 0.1615 | 24.32 | 14400 | 0.4392 | 0.4222 | | 0.1575 | 25.0 | 14800 | 0.4296 | 0.4185 | | 0.1456 | 25.68 | 15200 | 0.4363 | 0.4129 | | 0.1461 | 26.35 | 15600 | 0.4305 | 0.4124 | | 0.1422 | 27.03 | 16000 | 0.4237 | 0.4086 | | 0.1378 | 27.7 | 16400 | 0.4294 | 0.4051 | | 0.1326 | 28.38 | 16800 | 0.4311 | 0.4051 | | 0.1286 | 29.05 | 17200 | 0.4153 | 0.3992 | | 0.1283 | 29.73 | 17600 | 0.4201 | 0.3998 | | 7f231df353f7ac9a832d796e99e63d1f |

apache-2.0 | ['generated_from_trainer'] | false | bert-finetuned-ner This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.1952 - Precision: 0.0 - Recall: 0.0 - F1: 0.0 - Accuracy: 0.7370 | 95af8ca71b368b973dd2bd41ddeee68d |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:---:|:--------:| | No log | 1.0 | 5 | 1.8526 | 0.0 | 0.0 | 0.0 | 0.7367 | | No log | 2.0 | 10 | 1.2730 | 0.0 | 0.0 | 0.0 | 0.7370 | | No log | 3.0 | 15 | 1.1952 | 0.0 | 0.0 | 0.0 | 0.7370 | | 53bd09d72130e374fae2ac96dbe24f13 |
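Precision, recall and F1 of 0.0 alongside an accuracy of about 0.737 is the pattern you would expect if the model labelled every token `O`. Token accuracy then equals the share of `O` tokens, while entity-level scores (as computed by seqeval-style metrics) are zero because no entity span is ever predicted. The sketch below demonstrates this on a made-up tag sequence. It uses a simplified BIO span extractor, not seqeval itself, whose edge-case handling may differ.

```python
def extract_entities(tags):
    """Return a set of (type, start, end) spans from BIO tags (simplified)."""
    entities, start, etype = set(), None, None
    for i, tag in enumerate(tags + ["O"]):  # sentinel "O" closes a trailing span
        starts_new = tag.startswith("B-") or (tag.startswith("I-") and tag[2:] != etype)
        if tag == "O" or starts_new:
            if start is not None:
                entities.add((etype, start, i))
                start, etype = None, None
            if tag != "O":
                start, etype = i, tag[2:]
    return entities


def entity_prf(gold, pred):
    """Entity-level precision, recall and F1 (exact span and type match)."""
    g, p = extract_entities(gold), extract_entities(pred)
    tp = len(g & p)
    precision = tp / len(p) if p else 0.0
    recall = tp / len(g) if g else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def token_accuracy(gold, pred):
    return sum(a == b for a, b in zip(gold, pred)) / len(gold)


gold = ["B-PER", "I-PER", "O", "O", "B-LOC", "O", "O", "O"]  # made-up example
pred = ["O"] * len(gold)  # a model that never predicts an entity
print(token_accuracy(gold, pred), entity_prf(gold, pred))
```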

apache-2.0 | ['deep-narrow'] | false | T5-Efficient-LARGE-NH8-NL32 (Deep-Narrow version) T5-Efficient-LARGE-NH8-NL32 is a variation of [Google's original T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) following the [T5 model architecture](https://huggingface.co/docs/transformers/model_doc/t5). It is a *pretrained-only* checkpoint and was released with the paper **[Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers](https://arxiv.org/abs/2109.10686)** by *Yi Tay, Mostafa Dehghani, Jinfeng Rao, William Fedus, Samira Abnar, Hyung Won Chung, Sharan Narang, Dani Yogatama, Ashish Vaswani, Donald Metzler*. In a nutshell, the paper indicates that a **Deep-Narrow** model architecture is favorable for **downstream** performance compared to other model architectures of similar parameter count. To quote the paper: > We generally recommend a DeepNarrow strategy where the model’s depth is preferentially increased > before considering any other forms of uniform scaling across other dimensions. This is largely due to > how much depth influences the Pareto-frontier as shown in earlier sections of the paper. Specifically, a > tall small (deep and narrow) model is generally more efficient compared to the base model. Likewise, > a tall base model might also generally more efficient compared to a large model. We generally find > that, regardless of size, even if absolute performance might increase as we continue to stack layers, > the relative gain of Pareto-efficiency diminishes as we increase the layers, converging at 32 to 36 > layers. Finally, we note that our notion of efficiency here relates to any one compute dimension, i.e., > params, FLOPs or throughput (speed). We report all three key efficiency metrics (number of params, > FLOPS and speed) and leave this decision to the practitioner to decide which compute dimension to > consider. 
To be more precise, *model depth* is defined as the number of transformer blocks that are stacked sequentially. A sequence of word embeddings is therefore processed sequentially by each transformer block. | 4752408c521c8b5cb5885050242d00a0 |

apache-2.0 | ['deep-narrow'] | false | Details model architecture This model checkpoint - **t5-efficient-large-nh8-nl32** - is of model type **Large** with the following variations: - **nh** is **8** - **nl** is **32** It has **771.34** million parameters and thus requires *ca.* **3085.35 MB** of memory in full precision (*fp32*) or **1542.68 MB** of memory in half precision (*fp16* or *bf16*). A summary of the *original* T5 model architectures can be seen here: | Model | nl (el/dl) | ff | dm | kv | nh | | 3a7171cf5db5a731e69e0250e428b840 |
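The memory figures follow from multiplying the parameter count by the bytes per parameter: 4 bytes in fp32, and 2 bytes in fp16 or bf16. The figures also appear to use 1 MB = 10^6 bytes. The sketch below reproduces them. The small difference in the last fp32 digit is presumably because the card uses the unrounded parameter count. These numbers cover the weights only, not activations or optimizer state.

```python
def weights_size_mb(num_params, bytes_per_param):
    """Size of the raw weights in megabytes (10**6 bytes); excludes activations and optimizer state."""
    return num_params * bytes_per_param / 1e6


params = 771.34e6  # parameter count quoted above (rounded)
fp32_mb = weights_size_mb(params, 4)
fp16_mb = weights_size_mb(params, 2)
print(round(fp32_mb, 2), round(fp16_mb, 2))
```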

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | DreamBooth model for the MlsEnglishSchoolBostonGnome concept trained by gavrenkov on the gavrenkov/MLSGnome dataset. This is a Stable Diffusion model fine-tuned on the MlsEnglishSchoolBostonGnome concept with DreamBooth. It can be used by modifying the `instance_prompt`: **a photo of MlsEnglishSchoolBostonGnome character** This model was created as part of the DreamBooth Hackathon 🔥. Visit the [organisation page](https://huggingface.co/dreambooth-hackathon) for instructions on how to take part! | e9bb549412ed39cc0a990c3780515ccd |

creativeml-openrail-m | ['pytorch', 'diffusers', 'stable-diffusion', 'text-to-image', 'diffusion-models-class', 'dreambooth-hackathon', 'wildcard'] | false | Usage ```python from diffusers import StableDiffusionPipeline pipeline = StableDiffusionPipeline.from_pretrained('gavrenkov/MlsEnglishSchoolBostonGnome-character') # the pipeline needs a prompt; include the instance prompt image = pipeline("a photo of MlsEnglishSchoolBostonGnome character").images[0] image ``` | d2275fe40cd63940ad3be4ff547f5078 |

mit | [] | false | model by KnightMichael This is a Stable Diffusion model fine-tuned on the Yagami Taichi concept from Digimon Adventure (1999) with DreamBooth. It can be used by modifying the `instance_prompt`: **an anime boy character of sks** You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb). And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: | 552a9b7ff0278da643691a801b0d45ce |

apache-2.0 | ['generated_from_trainer'] | false | bert-finetuned-ner This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the conll2003 dataset. It achieves the following results on the evaluation set: - Loss: 0.0636 - Precision: 0.9330 - Recall: 0.9498 - F1: 0.9414 - Accuracy: 0.9861 | d5e7b65970ca68a45fc4e1b9b95ea40e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.0901 | 1.0 | 1756 | 0.0696 | 0.9166 | 0.9325 | 0.9245 | 0.9815 | | 0.0366 | 2.0 | 3512 | 0.0632 | 0.9324 | 0.9493 | 0.9408 | 0.9857 | | 0.0178 | 3.0 | 5268 | 0.0636 | 0.9330 | 0.9498 | 0.9414 | 0.9861 | | a6267109a5181f72e843e21ddda85d33 |
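The F1 column is the harmonic mean of the precision and recall columns. As a quick check on the final row, the sketch below recomputes it from the rounded values. The reported 0.9414 was presumably computed from unrounded precision and recall, so the fourth decimal can differ slightly.

```python
def f1_from_pr(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are 0)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


print(round(f1_from_pr(0.9330, 0.9498), 4))
```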
