license stringlengths 2 30 | tags stringlengths 2 513 | is_nc bool 1 class | readme_section stringlengths 201 597k | hash stringlengths 32 32 |

|---|---|---|---|---|

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Usage To use this model, download the file and drop it into the "\stable-diffusion-webui\models\Stable-diffusion" folder. Token: ```neko``` If it is too strong, just add [] around it. Trained until 10000 steps. Have fun :) | d1f9554ae91bf137be085556bacee3c9 |

creativeml-openrail-m | ['stable-diffusion', 'text-to-image'] | false | Example Pictures <table> <tr> <td><img src=https://i.imgur.com/MpyeqMe.png width=100% height=100%/></td> <td><img src=https://i.imgur.com/wxzvHrL.png width=100% height=100%/></td> <td><img src=https://i.imgur.com/MuUnJY5.png width=100% height=100%/></td> <td><img src=https://i.imgur.com/XeDC8xA.png width=100% height=100%/></td> <td><img src=https://i.imgur.com/XmLTrEl.png width=100% height=100%/></td> </tr> </table> | 7863ddc1c98f939b5f28cb3dc92673dc |

mit | [] | false | LONGFORMER-BASE-4096 fine-tuned on SQuAD v1 This is the longformer-base-4096 model fine-tuned on the SQuAD v1 dataset for the question answering task. The [Longformer](https://arxiv.org/abs/2004.05150) model was created by Iz Beltagy, Matthew E. Peters, and Arman Cohan from AllenAI. As the paper explains: > `Longformer` is a BERT-like model for long documents. The pre-trained model can handle sequences with up to 4096 tokens. | a50fce6f7cbdd5178359d3c157c8128e |

mit | [] | false | Model Training This model was trained on a Google Colab V100 GPU. You can find the fine-tuning Colab [here](https://colab.research.google.com/drive/1zEl5D-DdkBKva-DdreVOmN0hrAfzKG1o?usp=sharing). A few things to keep in mind while training Longformer for the QA task: by default, Longformer uses sliding-window local attention on all tokens, but for QA all question tokens should have global attention. For more details, please refer to the paper. The `LongformerForQuestionAnswering` model does that for you automatically. To allow it to do so: 1. The input sequence must have three sep tokens, i.e. the sequence should be encoded like this: `<s> question</s></s> context</s>`. If you encode the question and context as an input pair, the tokenizer already takes care of that, so you don't need to worry about it. 2. `input_ids` should always be a batch of examples. | e1674c19c020f7506de5d9cabf471d5c |
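
The rule described above (global attention on the question tokens, located via the separator tokens) can be sketched in plain Python. This is only an illustration of the idea, not the library's implementation: the function name `question_global_attention_mask` and the token ids in the example are hypothetical, and the sketch assumes that "question tokens" means everything before the first separator.

```python
from typing import List


def question_global_attention_mask(input_ids: List[int], sep_token_id: int) -> List[int]:
    """Return 1 for every token before the first separator (the question), else 0.

    Assumes the sequence is encoded as `<s> question</s></s> context</s>`,
    i.e. it contains exactly three separator tokens.
    """
    sep_positions = [i for i, tok in enumerate(input_ids) if tok == sep_token_id]
    if len(sep_positions) != 3:
        raise ValueError("expected exactly three separator tokens in the sequence")
    question_end = sep_positions[0]
    return [1 if i < question_end else 0 for i in range(len(input_ids))]


# Hypothetical ids for illustration only: 0 = <s>, 2 = </s>, others are ordinary tokens.
example_ids = [0, 11, 12, 13, 2, 2, 21, 22, 23, 24, 2]
example_mask = question_global_attention_mask(example_ids, sep_token_id=2)
print(example_mask)
```

The check for three separators mirrors requirement 1 in the list above: without it there is no reliable way to tell where the question ends.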

mit | [] | false | Model in Action 🚀 ```python import torch from transformers import AutoTokenizer, AutoModelForQuestionAnswering tokenizer = AutoTokenizer.from_pretrained("valhalla/longformer-base-4096-finetuned-squadv1") model = AutoModelForQuestionAnswering.from_pretrained("valhalla/longformer-base-4096-finetuned-squadv1") text = "Huggingface has democratized NLP. Huge thanks to Huggingface for this." question = "What has Huggingface done ?" encoding = tokenizer(question, text, return_tensors="pt") input_ids = encoding["input_ids"] | 1e3c0c4c5fb9a1bfd9c43c9194e24054 |

mit | [] | false | # the forward method will automatically set global attention on question tokens attention_mask = encoding["attention_mask"] outputs = model(input_ids, attention_mask=attention_mask) start_scores, end_scores = outputs.start_logits, outputs.end_logits all_tokens = tokenizer.convert_ids_to_tokens(input_ids[0].tolist()) answer_tokens = all_tokens[torch.argmax(start_scores) :torch.argmax(end_scores)+1] answer = tokenizer.decode(tokenizer.convert_tokens_to_ids(answer_tokens)) | 192e483f16677c2d70ab6082d17ff995 |

mit | [] | false | # output => democratized NLP ``` `LongformerForQuestionAnswering` isn't yet supported in `pipeline`. I'll update this card once support has been added. > Created with ❤️ by Suraj Patil [GitHub](https://github.com/patil-suraj/) [Twitter](https://twitter.com/psuraj28) | 31ddc53af307dc7f411032757f49a5e4 |
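
The answer-selection step in the snippet above (take the highest-scoring start position and the highest-scoring end position, then slice the tokens between them) can be sketched without any model. The scores and tokens below are made up for illustration, and `extract_answer_tokens` is a hypothetical helper, not part of `transformers`.

```python
from typing import List, Sequence


def argmax(values: Sequence[float]) -> int:
    """Index of the largest value (first one wins on ties)."""
    return max(range(len(values)), key=lambda i: values[i])


def extract_answer_tokens(
    tokens: Sequence[str],
    start_scores: Sequence[float],
    end_scores: Sequence[float],
) -> List[str]:
    """Slice tokens from the best start index to the best end index, inclusive."""
    start = argmax(start_scores)
    end = argmax(end_scores)
    if end < start:
        # The two argmaxes are chosen independently, so the span can be empty.
        return []
    return list(tokens[start : end + 1])


# Toy inputs, invented for this sketch.
toy_tokens = ["<s>", "What", "?", "</s>", "</s>", "Huggingface", "has", "democratized", "NLP", ".", "</s>"]
toy_start = [0.1, 0.0, 0.0, 0.0, 0.0, 0.2, 0.3, 4.0, 0.5, 0.0, 0.0]
toy_end = [0.0, 0.0, 0.0, 0.0, 0.0, 0.1, 0.2, 0.6, 3.5, 0.4, 0.0]
toy_answer = extract_answer_tokens(toy_tokens, toy_start, toy_end)
print(toy_answer)
```

Handling the `end < start` case explicitly is a design choice of this sketch; the original snippet would silently return an empty slice in that situation.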

apache-2.0 | ['generated_from_trainer'] | false | mobilebert_sa_GLUE_Experiment_logit_kd_rte_128 This model is a fine-tuned version of [google/mobilebert-uncased](https://huggingface.co/google/mobilebert-uncased) on the GLUE RTE dataset. It achieves the following results on the evaluation set: - Loss: 0.3915 - Accuracy: 0.5271 | 72d9bee2516c6d4c430a41f57c5b9451 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.4093 | 1.0 | 20 | 0.3917 | 0.5271 | | 0.4077 | 2.0 | 40 | 0.3922 | 0.5271 | | 0.4076 | 3.0 | 60 | 0.3916 | 0.5271 | | 0.4075 | 4.0 | 80 | 0.3921 | 0.5271 | | 0.4075 | 5.0 | 100 | 0.3925 | 0.5271 | | 0.4073 | 6.0 | 120 | 0.3915 | 0.5271 | | 0.4066 | 7.0 | 140 | 0.3916 | 0.5271 | | 0.4043 | 8.0 | 160 | 0.3937 | 0.5271 | | 0.3902 | 9.0 | 180 | 0.4440 | 0.5054 | | 0.3545 | 10.0 | 200 | 0.4575 | 0.4801 | | 0.3116 | 11.0 | 220 | 0.4770 | 0.4440 | | a9b075e5c8eeb087ef8b6d86367f3d38 |

mit | ['generated_from_trainer'] | false | roberta-base.CEBaB_confounding.uniform.absa.5-class.seed_43 This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on the OpenTable OPENTABLE-ABSA dataset. It achieves the following results on the evaluation set: - Loss: 0.3790 - Accuracy: 0.8913 - Macro-f1: 0.8893 - Weighted-macro-f1: 0.8914 | bb80a68f2759062528452fad8d71b6c5 |

apache-2.0 | ['translation'] | false | phi-eng * source group: Philippine languages * target group: English * OPUS readme: [phi-eng](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/phi-eng/README.md) * model: transformer * source language(s): akl_Latn ceb hil ilo pag war * target language(s): eng * model: transformer * pre-processing: normalization + SentencePiece (spm12k,spm12k) * download original weights: [opus2m-2020-08-01.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/phi-eng/opus2m-2020-08-01.zip) * test set translations: [opus2m-2020-08-01.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/phi-eng/opus2m-2020-08-01.test.txt) * test set scores: [opus2m-2020-08-01.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/phi-eng/opus2m-2020-08-01.eval.txt) | 5b2c14d0e7257c0fb1404134ccce8fbd |

apache-2.0 | ['translation'] | false | Benchmarks | testset | BLEU | chr-F | |-----------------------|-------|-------| | Tatoeba-test.akl-eng.akl.eng | 11.6 | 0.321 | | Tatoeba-test.ceb-eng.ceb.eng | 21.7 | 0.393 | | Tatoeba-test.hil-eng.hil.eng | 17.6 | 0.371 | | Tatoeba-test.ilo-eng.ilo.eng | 36.6 | 0.560 | | Tatoeba-test.multi.eng | 21.5 | 0.391 | | Tatoeba-test.pag-eng.pag.eng | 27.5 | 0.494 | | Tatoeba-test.war-eng.war.eng | 17.3 | 0.380 | | 8d996abd191d9f9f1fcf8b449b773994 |

apache-2.0 | ['translation'] | false | System Info: - hf_name: phi-eng - source_languages: phi - target_languages: eng - opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/phi-eng/README.md - original_repo: Tatoeba-Challenge - tags: ['translation'] - languages: ['phi', 'en'] - src_constituents: {'ilo', 'akl_Latn', 'war', 'hil', 'pag', 'ceb'} - tgt_constituents: {'eng'} - src_multilingual: True - tgt_multilingual: False - prepro: normalization + SentencePiece (spm12k,spm12k) - url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/phi-eng/opus2m-2020-08-01.zip - url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/phi-eng/opus2m-2020-08-01.test.txt - src_alpha3: phi - tgt_alpha3: eng - short_pair: phi-en - chrF2_score: 0.391 - bleu: 21.5 - brevity_penalty: 1.0 - ref_len: 2380.0 - src_name: Philippine languages - tgt_name: English - train_date: 2020-08-01 - src_alpha2: phi - tgt_alpha2: en - prefer_old: False - long_pair: phi-eng - helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535 - transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b - port_machine: brutasse - port_time: 2020-08-21-14:41 | b458533f66100ad6d3cd9e6050f2161f |

mit | ['automatic-speech-recognition', 'common_voice', 'generated_from_trainer'] | false | wav2vec2-xls-r-300m-uk This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.0927 - Wer: 0.1222 - Cer: 0.0204 | c574c1a982f64a93ce7bbad01e001c9a |

mit | ['automatic-speech-recognition', 'common_voice', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 40 - eval_batch_size: 40 - seed: 42 - gradient_accumulation_steps: 6 - total_train_batch_size: 240 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 100 - num_epochs: 100 - mixed_precision_training: Native AMP | b345737facb09e501d84d3ac7df0bfd3 |
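
The relation between the batch-size entries above can be checked with a few lines of Python. This is a minimal sketch; the card does not state how many devices were used, so `num_devices=1` is an assumption that happens to reproduce the listed total.

```python
def total_train_batch_size(
    train_batch_size: int,
    gradient_accumulation_steps: int,
    num_devices: int = 1,  # assumption: the card does not state the device count
) -> int:
    """Number of examples that contribute to one optimizer step."""
    return train_batch_size * gradient_accumulation_steps * num_devices


effective_batch = total_train_batch_size(train_batch_size=40, gradient_accumulation_steps=6)
print(effective_batch)
```

With gradient accumulation, gradients from 6 batches of 40 are summed before each optimizer update, which is why the card lists 240 as the total.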

mit | ['automatic-speech-recognition', 'common_voice', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Cer | Validation Loss | Wer | |:-------------:|:-----:|:----:|:------:|:---------------:|:------:| | 9.0008 | 1.68 | 200 | 1.0 | 3.7590 | 1.0 | | 3.4972 | 3.36 | 400 | 1.0 | 3.3933 | 1.0 | | 3.3432 | 5.04 | 600 | 1.0 | 3.2617 | 1.0 | | 3.2421 | 6.72 | 800 | 1.0 | 3.0712 | 1.0 | | 1.9839 | 7.68 | 1000 | 0.1400 | 0.7204 | 0.6561 | | 0.8017 | 9.36 | 1200 | 0.0766 | 0.3734 | 0.4159 | | 0.5554 | 11.04 | 1400 | 0.0583 | 0.2621 | 0.3237 | | 0.4309 | 12.68 | 1600 | 0.0486 | 0.2085 | 0.2753 | | 0.3697 | 14.36 | 1800 | 0.0421 | 0.1746 | 0.2427 | | 0.3293 | 16.04 | 2000 | 0.0388 | 0.1597 | 0.2243 | | 0.2934 | 17.72 | 2200 | 0.0358 | 0.1428 | 0.2083 | | 0.2704 | 19.4 | 2400 | 0.0333 | 0.1326 | 0.1949 | | 0.2547 | 21.08 | 2600 | 0.0322 | 0.1255 | 0.1882 | | 0.2366 | 22.76 | 2800 | 0.0309 | 0.1211 | 0.1815 | | 0.2183 | 24.44 | 3000 | 0.0294 | 0.1159 | 0.1727 | | 0.2115 | 26.13 | 3200 | 0.0280 | 0.1117 | 0.1661 | | 0.1968 | 27.8 | 3400 | 0.0274 | 0.1063 | 0.1622 | | 0.1922 | 29.48 | 3600 | 0.0269 | 0.1082 | 0.1598 | | 0.1847 | 31.17 | 3800 | 0.0260 | 0.1061 | 0.1550 | | 0.1715 | 32.84 | 4000 | 0.0252 | 0.1014 | 0.1496 | | 0.1689 | 34.53 | 4200 | 0.0250 | 0.1012 | 0.1492 | | 0.1655 | 36.21 | 4400 | 0.0243 | 0.0999 | 0.1450 | | 0.1585 | 37.88 | 4600 | 0.0239 | 0.0967 | 0.1432 | | 0.1492 | 39.57 | 4800 | 0.0237 | 0.0978 | 0.1421 | | 0.1491 | 41.25 | 5000 | 0.0236 | 0.0963 | 0.1412 | | 0.1453 | 42.93 | 5200 | 0.0230 | 0.0979 | 0.1373 | | 0.1386 | 44.61 | 5400 | 0.0227 | 0.0959 | 0.1353 | | 0.1387 | 46.29 | 5600 | 0.0226 | 0.0927 | 0.1355 | | 0.1329 | 47.97 | 5800 | 0.0224 | 0.0951 | 0.1341 | | 0.1295 | 49.65 | 6000 | 0.0219 | 0.0950 | 0.1306 | | 0.1287 | 51.33 | 6200 | 0.0216 | 0.0937 | 0.1290 | | 0.1277 | 53.02 | 6400 | 0.0215 | 0.0963 | 0.1294 | | 0.1201 | 54.69 | 6600 | 0.0213 | 0.0959 | 0.1282 | | 0.1199 | 56.38 | 6800 | 0.0215 | 0.0944 | 
0.1286 | | 0.1221 | 58.06 | 7000 | 0.0209 | 0.0938 | 0.1249 | | 0.1145 | 59.68 | 7200 | 0.0208 | 0.0941 | 0.1254 | | 0.1143 | 61.36 | 7400 | 0.0209 | 0.0941 | 0.1249 | | 0.1143 | 63.04 | 7600 | 0.0209 | 0.0940 | 0.1248 | | 0.1137 | 64.72 | 7800 | 0.0205 | 0.0931 | 0.1234 | | 0.1125 | 66.4 | 8000 | 0.0204 | 0.0927 | 0.1222 | | 1a3f48e16949f9294d9fa230beab7b7a |
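
The Wer and Cer columns above are word and character error rates. A minimal sketch of how such rates are commonly computed (edit distance divided by reference length) is shown below; it is not the evaluation script used for this model, and the example sentences are invented.

```python
from typing import Sequence


def edit_distance(ref: Sequence, hyp: Sequence) -> int:
    """Levenshtein distance: minimum substitutions, insertions, and deletions."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edit distance over the reference word count."""
    ref_words = reference.split()
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate: character-level edit distance over the reference length."""
    return edit_distance(list(reference), list(hypothesis)) / len(reference)


sample_ref = "the cat sat on the mat"
sample_hyp = "the cat sit on mat"
sample_wer = wer(sample_ref, sample_hyp)
sample_cer = cer(sample_ref, sample_hyp)
print(sample_wer, sample_cer)
```

Because a single wrong character makes the whole word count as an error, WER is typically higher than CER for the same output, which is consistent with the table above.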

apache-2.0 | ['generated_from_trainer'] | false | distilroberta-base-finetuned-suicide-depression This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.6622 - Accuracy: 0.7158 | 87fb75b45bdc97dcaf7ffa220e65499e |

apache-2.0 | ['generated_from_trainer'] | false | Model description Just a **POC** of a Transformer fine-tuned on [SDCNL](https://github.com/ayaanzhaque/SDCNL) dataset for suicide (label 1) or depression (label 0) detection in tweets. **DO NOT use it in production** | 57da9168df13d43afc91f2977f91e1f6 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 214 | 0.6204 | 0.6632 | | No log | 2.0 | 428 | 0.6622 | 0.7158 | | 0.5244 | 3.0 | 642 | 0.7312 | 0.6684 | | 0.5244 | 4.0 | 856 | 0.9711 | 0.7105 | | 0.2876 | 5.0 | 1070 | 1.1620 | 0.7 | | a94841318001d32fb5495b9518f22968 |

apache-2.0 | ['generated_from_trainer'] | false | tiny-mlm-glue-mnli-target-glue-rte This model is a fine-tuned version of [muhtasham/tiny-mlm-glue-mnli](https://huggingface.co/muhtasham/tiny-mlm-glue-mnli) on the None dataset. It achieves the following results on the evaluation set: - Loss: 1.5419 - Accuracy: 0.6137 | f6029552b014869aed31f17c9c4c63b5 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.6373 | 6.41 | 500 | 0.6751 | 0.5993 | | 0.4271 | 12.82 | 1000 | 0.8148 | 0.6390 | | 0.2621 | 19.23 | 1500 | 0.9962 | 0.6173 | | 0.1589 | 25.64 | 2000 | 1.2448 | 0.6065 | | 0.1002 | 32.05 | 2500 | 1.5419 | 0.6137 | | 4f4154c326df7ed99e0daf0f77ba2d60 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2251 - Accuracy: 0.923 - F1: 0.9232 | 357a4e85dbff759fff614f023dc74e7c |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8243 | 1.0 | 250 | 0.3183 | 0.906 | 0.9019 | | 0.2543 | 2.0 | 500 | 0.2251 | 0.923 | 0.9232 | | 2317afbcfda2bad2386c574bbc27fd94 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-emotion This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset. It achieves the following results on the evaluation set: - Loss: 0.2281 - Accuracy: 0.924 - F1: 0.9240 | e24e19a3a5409c52999852267b6aa630 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8687 | 1.0 | 250 | 0.3390 | 0.9015 | 0.8984 | | 0.2645 | 2.0 | 500 | 0.2281 | 0.924 | 0.9240 | | 245b6a7f21eeee0d3336f5a64354cd7e |

apache-2.0 | ['generated_from_trainer'] | false | medium-mlm-tweet-target-tweet This model is a fine-tuned version of [muhtasham/medium-mlm-tweet](https://huggingface.co/muhtasham/medium-mlm-tweet) on the tweet_eval dataset. It achieves the following results on the evaluation set: - Loss: 1.9066 - Accuracy: 0.7594 - F1: 0.7637 | 8af73ff35ce11330790f12e36ff1493e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.4702 | 4.9 | 500 | 0.8711 | 0.7540 | 0.7532 | | 0.0629 | 9.8 | 1000 | 1.2918 | 0.7701 | 0.7668 | | 0.0227 | 14.71 | 1500 | 1.4801 | 0.7727 | 0.7696 | | 0.0181 | 19.61 | 2000 | 1.5118 | 0.7888 | 0.7870 | | 0.0114 | 24.51 | 2500 | 1.6747 | 0.7754 | 0.7745 | | 0.0141 | 29.41 | 3000 | 1.8765 | 0.7674 | 0.7628 | | 0.0177 | 34.31 | 3500 | 1.9066 | 0.7594 | 0.7637 | | ab001e8395e0af13052d6f37ac284245 |

apache-2.0 | ['generated_from_trainer'] | false | bert-base-uncased-offensive-lm-tapt This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.0002 | 5c395ef6eb29842af2f6a3fd3e237a79 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 8 - eval_batch_size: 28 - seed: 42 - optimizer: Adam with betas=(0.9,0.98) and epsilon=1e-06 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 5 - num_epochs: 16 - mixed_precision_training: Native AMP | e1bf38018957b0d6cc3c877a1ae2290b |
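
The `lr_scheduler_type: linear` and `lr_scheduler_warmup_steps: 5` entries above describe a schedule that ramps the learning rate up linearly and then decays it linearly to zero. A minimal sketch of that shape follows; the total step count is not given in the card, so `total_steps=105` in the example is purely hypothetical.

```python
def linear_schedule_lr(step: int, base_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Learning rate at `step` for linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        # Warmup: grow from 0 to base_lr over `warmup_steps` steps.
        return base_lr * step / max(1, warmup_steps)
    # Decay: shrink from base_lr at the end of warmup to 0 at `total_steps`.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# base_lr and warmup_steps come from the list above; total_steps is an assumption.
schedule = [linear_schedule_lr(s, base_lr=1e-4, warmup_steps=5, total_steps=105) for s in range(106)]
print(schedule[0], schedule[5], schedule[55], schedule[105])
```

With only 5 warmup steps the ramp is very short, so almost the entire run is spent in the decay phase.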

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.219 | 0.07 | 100 | 0.0728 | | 0.0358 | 0.13 | 200 | 0.0090 | | 0.0106 | 0.2 | 300 | 0.0033 | | 0.0056 | 0.26 | 400 | 0.0020 | | 0.0053 | 0.33 | 500 | 0.0015 | | 0.003 | 0.39 | 600 | 0.0012 | | 0.0029 | 0.46 | 700 | 0.0009 | | 0.0022 | 0.52 | 800 | 0.0010 | | 0.0025 | 0.59 | 900 | 0.0008 | | 0.002 | 0.65 | 1000 | 0.0006 | | 0.0016 | 0.72 | 1100 | 0.0006 | | 0.0015 | 0.78 | 1200 | 0.0006 | | 0.0015 | 0.85 | 1300 | 0.0004 | | 0.0012 | 0.92 | 1400 | 0.0006 | | 0.0013 | 0.98 | 1500 | 0.0002 | | 0.0013 | 1.05 | 1600 | 0.0003 | | 0.0008 | 1.11 | 1700 | 0.0002 | | 0.0013 | 1.18 | 1800 | 0.0006 | | 0.0019 | 1.24 | 1900 | 0.0004 | | 0.001 | 1.31 | 2000 | 0.0002 | | 0.0007 | 1.37 | 2100 | 0.0003 | | 0.0009 | 1.44 | 2200 | 0.0003 | | 0.0012 | 1.5 | 2300 | 0.0002 | | 2e1a154f9a1907bb9834d0120172c417 |

apache-2.0 | ['LABEL-0 = NONE', 'LABEL-1 = B-DATE', 'LABEL-2 = I-DATE', 'LABEL-3 = B-TIME', 'LABEL-4 = I-TIME', 'LABEL-5 = B-DURATION', 'LABEL-6 = I-DURATION', 'LABEL-7 = B-SET', 'LABEL-8 = I-SET'] | false | Bio-RoBERTime This model is a fine-tuned version of [PlanTL-GOB-ES/roberta-base-biomedical-clinical-es](https://huggingface.co/PlanTL-GOB-ES/roberta-base-biomedical-clinical-es) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.0177 - Precision: 0.8121 - Recall: 0.8854 - F1: 0.8472 - Accuracy: 0.9919 | 63ef28193f6fcc8e42ea099a932226f0 |

apache-2.0 | ['LABEL-0 = NONE', 'LABEL-1 = B-DATE', 'LABEL-2 = I-DATE', 'LABEL-3 = B-TIME', 'LABEL-4 = I-TIME', 'LABEL-5 = B-DURATION', 'LABEL-6 = I-DURATION', 'LABEL-7 = B-SET', 'LABEL-8 = I-SET'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 8e-05 - train_batch_size: 16 - eval_batch_size: 16 - seed: 72 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 24 | 04ad0b4b9b527333505b317865719fc1 |

apache-2.0 | ['LABEL-0 = NONE', 'LABEL-1 = B-DATE', 'LABEL-2 = I-DATE', 'LABEL-3 = B-TIME', 'LABEL-4 = I-TIME', 'LABEL-5 = B-DURATION', 'LABEL-6 = I-DURATION', 'LABEL-7 = B-SET', 'LABEL-8 = I-SET'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.0433 | 1.0 | 12 | 0.0443 | 0.4948 | 0.5 | 0.4974 | 0.9800 | | 0.0234 | 2.0 | 24 | 0.0221 | 0.4082 | 0.7257 | 0.5225 | 0.9732 | | 0.0055 | 3.0 | 36 | 0.0159 | 0.4768 | 0.7847 | 0.5932 | 0.9797 | | 0.0089 | 4.0 | 48 | 0.0153 | 0.5317 | 0.8160 | 0.6438 | 0.9813 | | 0.0033 | 5.0 | 60 | 0.0131 | 0.7229 | 0.8333 | 0.7742 | 0.9896 | | 0.008 | 6.0 | 72 | 0.0129 | 0.6649 | 0.8681 | 0.7530 | 0.9885 | | 0.0063 | 7.0 | 84 | 0.0146 | 0.7523 | 0.8542 | 0.8 | 0.9904 | | 0.0086 | 8.0 | 96 | 0.0150 | 0.7470 | 0.8715 | 0.8045 | 0.9906 | | 0.0009 | 9.0 | 108 | 0.0139 | 0.7658 | 0.8854 | 0.8213 | 0.9910 | | 0.0031 | 10.0 | 120 | 0.0159 | 0.8031 | 0.8924 | 0.8454 | 0.9919 | | 0.0011 | 11.0 | 132 | 0.0158 | 0.7649 | 0.8924 | 0.8237 | 0.9909 | | 0.0006 | 12.0 | 144 | 0.0153 | 0.7398 | 0.8785 | 0.8032 | 0.9902 | | 0.0013 | 13.0 | 156 | 0.0157 | 0.7815 | 0.8819 | 0.8287 | 0.9910 | | 0.0008 | 14.0 | 168 | 0.0154 | 0.7822 | 0.8854 | 0.8306 | 0.9908 | | 0.0008 | 15.0 | 180 | 0.0164 | 0.7778 | 0.875 | 0.8235 | 0.9910 | | 0.0007 | 16.0 | 192 | 0.0168 | 0.7864 | 0.8819 | 0.8314 | 0.9912 | | 0.0018 | 17.0 | 204 | 0.0173 | 0.7870 | 0.8854 | 0.8333 | 0.9912 | | 0.0006 | 18.0 | 216 | 0.0178 | 0.7730 | 0.875 | 0.8208 | 0.9914 | | 0.0012 | 19.0 | 228 | 0.0171 | 0.8013 | 0.8819 | 0.8397 | 0.9916 | | 0.0006 | 20.0 | 240 | 0.0181 | 0.8137 | 0.8646 | 0.8384 | 0.9916 | | 0.0007 | 21.0 | 252 | 0.0186 | 0.8137 | 0.8646 | 0.8384 | 0.9918 | | 0.0012 | 22.0 | 264 | 0.0188 | 0.8137 | 0.8646 | 0.8384 | 0.9919 | | 0.0006 | 23.0 | 276 | 0.0178 | 0.8121 | 0.8854 | 0.8472 | 0.9919 | | 0.0009 | 24.0 | 288 | 0.0177 | 
0.8121 | 0.8854 | 0.8472 | 0.9919 | | 39cafa8fca799c4ebd3b0e59465888d9 |
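
The F1 column above is the harmonic mean of the Precision and Recall columns, which can be verified against the final row. This is a small sanity-check sketch, not the evaluation code used to produce the table.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall taken from the last row of the table above.
final_f1 = f1_score(precision=0.8121, recall=0.8854)
print(round(final_f1, 4))
```

The harmonic mean penalizes imbalance, so a row with high recall but low precision (such as epoch 2) still ends up with a modest F1.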

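unknown | [] | false | karaokeroom.safetensors [<img width="480" src="https://i.imgur.com/hclI0vj.jpg">](https://i.imgur.com/hclI0vj.jpg) [<img width="480" src="https://i.imgur.com/8H3c7eE.jpg">](https://i.imgur.com/8H3c7eE.jpg) This is a LoRA trained on the atmosphere of karaoke parlor rooms. Load the LoRA and write **karaokeroom** in the prompt. Adding tags such as 1girl, karaoke, microphone to the prompt produces pictures that look like someone singing karaoke. Note: applying this LoRA appears to affect how people are drawn and the art style. Adjusting the weight at which the LoRA is applied limits the effect on the art style. If the effect bothers you, try something like \<lora:karaokeroom:0.6\> instead of \<lora:karaokeroom:1\>. Note: even with prompts such as karaokeroom, 1girl, karaoke, the result is sometimes only the room, with no person drawn properly. There is an element of luck, and the LoRA may not work well with some models. In that case, patiently generate several images or try a different model. | df9bbc68bcefe6f4766dfdac206c13a0 |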

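unknown | [] | false | Motivation for this experiment For example, writing shibuya,city, in the prompt produces a picture of a Shibuya-like scene. I take this to mean the model knows the concept "Shibuya". However, writing Nishinomiya does not produce a Nishinomiya-like scene. I take this to mean the model does not know the concept "Nishinomiya". Recently, LoRA has made additional training possible even on low-spec PCs (GPUs), and many people are training characters, outfits, and other things that existing models cannot draw. So I wondered whether training on a handful of landscape photos would teach the model the concept of that "place". I prepared about 20 photos of karaoke parlor rooms and tagged them with WD14-tagger. I then added the word karaokeroom, at the very beginning of every resulting txt file. Since the base model does not know the concept karaokeroom, I expected this training to make it acquire karaokeroom as a new concept. With the resulting LoRA loaded, the karaokeroom prompt described above does generate pictures that look like karaoke parlor rooms. I would say the experiment in teaching the concept of a place was a success. If a reliable way of teaching places with LoRA can be established, training on a variety of Japanese scenery would make it possible to generate illustrations of familiar places. This is the first step. | 3697d917068a5563f344c147cd135e02 |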
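
The caption-preparation step described above (adding the word `karaokeroom,` to the beginning of every tagger-generated txt file) could be scripted roughly as follows. This is a sketch under assumptions: the helper name `prepend_trigger`, the file layout, and the sample caption are hypothetical, and the author's actual tooling is not documented in the card.

```python
import tempfile
from pathlib import Path


def prepend_trigger(caption_dir: Path, trigger: str = "karaokeroom") -> int:
    """Prepend `trigger, ` to every .txt caption in `caption_dir`; return files changed."""
    updated = 0
    for caption_file in sorted(caption_dir.glob("*.txt")):
        text = caption_file.read_text(encoding="utf-8").strip()
        if text.startswith(trigger + ","):
            continue  # already tagged, keep the operation idempotent
        new_text = f"{trigger}, {text}" if text else f"{trigger},"
        caption_file.write_text(new_text, encoding="utf-8")
        updated += 1
    return updated


# Demo on a throwaway directory with one made-up caption file.
with tempfile.TemporaryDirectory() as tmp:
    demo_dir = Path(tmp)
    (demo_dir / "photo_001.txt").write_text("indoors, sofa, table, no humans", encoding="utf-8")
    changed_first = prepend_trigger(demo_dir)
    changed_second = prepend_trigger(demo_dir)  # second run should change nothing
    demo_caption = (demo_dir / "photo_001.txt").read_text(encoding="utf-8")

print(demo_caption)
```

Skipping files that already start with the trigger word makes it safe to rerun the script after adding more photos.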

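unknown | [] | false | Problems and future work The karaoke parlor scenery can now be reproduced. However, when this LoRA is applied to generate a picture of a girl singing karaoke, the way the person is drawn and the art style can be affected. This is probably because the LoRA learned not only the concept of the place but also the style of the source photos. For now, lowering the weight at which the LoRA is applied reduces the effect, but I am wondering whether the training method or the way the LoRA is applied could reduce it as well. ・Can the effect be limited by adjusting weights per U-Net layer? To be honest, I do not understand this well myself (!), but as many people have tried all kinds of model merges with block-weighted merging (Merge Block Weighted), it has become apparent that adjusting the individual U-Net layers that generate the image allows various adjustments to the output (?). For example, there are various theories: "the upper IN layers handle realistic style and the lower IN layers handle anime style", "M_00 has a large overall effect on characters, clothing, backgrounds, and so on", "the upper OUT layers affect everything other than the main subject (for example, the background)", and "OUT04, OUT05, and OUT06 have a very strong effect on faces". A setting such as karaokeroom:0.6 mentioned above lowers the influence across the entire U-Net (probably), but if we knew which U-Net layers are most involved in backgrounds, suppressing the LoRA's contribution to the other layers might let the existing model's character rendering coexist with the additionally trained background LoRA. I have been investigating with a script that adjusts weights per U-Net layer (sd-webui-lora-block-weight:https://github.com/hako-mikan/sd-webui-lora-block-weight ), creating XY Plot images with various values, but so far all I have learned is that I understand nothing, and nothing clear has emerged. ・Adjust the training photos carefully ・Could the way captions (tags) are written for training keep the style from being learned? ・Could preparing regularization images help tune the training? Various ideas like these come to mind, but I am still at the trial-and-error stage, information is scarce, and I have not found a good solution. It looks quite difficult, but it would be nice to find a way to teach only the concept of the place. | 63834e374ea177892e89069b5a282cb4 |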

apache-2.0 | ['generated_from_trainer'] | false | swin-finetuned-food101 This model is a fine-tuned version of [microsoft/swin-base-patch4-window7-224](https://huggingface.co/microsoft/swin-base-patch4-window7-224) on the food101 dataset. It achieves the following results on the evaluation set: - Loss: 0.2772 - Accuracy: 0.9210 | 8a4e587dd8a1eb17548f9aea5a6743fb |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.5077 | 1.0 | 1183 | 0.3851 | 0.8893 | | 0.3523 | 2.0 | 2366 | 0.3124 | 0.9088 | | 0.1158 | 3.0 | 3549 | 0.2772 | 0.9210 | | 3d3905e11f91de506b088fc08bf195ca |

apache-2.0 | ['automatic-speech-recognition', 'zh-CN'] | false | exp_w2v2t_zh-cn_unispeech_s784 Fine-tuned [microsoft/unispeech-large-1500h-cv](https://huggingface.co/microsoft/unispeech-large-1500h-cv) for speech recognition using the train split of [Common Voice 7.0 (zh-CN)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | f610eec628957738cb829496aec331ba |
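
The 16 kHz requirement above can be checked before running inference. The sketch below uses only Python's standard `wave` module and therefore only handles uncompressed WAV data; the helper names are hypothetical and this is not part of HuggingSound. Audio at another rate would need to be resampled with a separate tool.

```python
import io
import wave

EXPECTED_SAMPLE_RATE = 16_000  # the model expects speech sampled at 16 kHz


def wav_sample_rate(wav_bytes: bytes) -> int:
    """Read the sampling rate from the header of an in-memory WAV file."""
    with wave.open(io.BytesIO(wav_bytes), "rb") as wav_file:
        return wav_file.getframerate()


def is_16khz(wav_bytes: bytes) -> bool:
    """True if the WAV data is sampled at the rate the model expects."""
    return wav_sample_rate(wav_bytes) == EXPECTED_SAMPLE_RATE


def make_silent_wav(sample_rate: int, num_frames: int = 160) -> bytes:
    """Build a tiny mono 16-bit silent WAV, used here only for demonstration."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(b"\x00\x00" * num_frames)
    return buffer.getvalue()


ok_clip = make_silent_wav(16_000)
bad_clip = make_silent_wav(44_100)
print(is_16khz(ok_clip), is_16khz(bad_clip))
```

Checking the header up front gives a clear error early instead of silently degraded transcriptions from mismatched audio.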

mit | [] | false | Fast_DreamBooth_AMLO on Stable Diffusion via Dreambooth trained on the [fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook | 8f56780d5e29813ef4670d8043f06233 |

mit | [] | false | model by mrcrois This is the Stable Diffusion model fine-tuned on the Fast_DreamBooth_AMLO concept, taught to Stable Diffusion with Dreambooth. It can be used by modifying the `instance_prompt(s)`: **AMLO17.jpg, AMLO21.jpg, AMLO9.jpg, AMLO18.jpg, AMLO2.jpg, AMLO1.jpg, AMLO13.jpg, AMLO15.jpg, AMLO14.jpg, AMLO22.jpg, AMLO4.jpg, AMLO16.jpg, AMLO11.jpg, AMLO7.jpg, AMLO8.jpg, AMLO19.jpg, AMLO10.jpg, AMLO6.jpg, AMLO20.jpg, AMLO12.jpg, AMLO5.jpg** You can also train your own concepts and upload them to the library by using [the fast-DreamBooth.ipynb by TheLastBen](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb). You can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts) Here are the images used for training this concept: AMLO5.jpg AMLO12.jpg AMLO20.jpg AMLO6.jpg AMLO10.jpg AMLO19.jpg AMLO8.jpg AMLO7.jpg AMLO11.jpg AMLO16.jpg AMLO4.jpg AMLO22.jpg AMLO14.jpg AMLO15.jpg AMLO13.jpg AMLO1.jpg AMLO2.jpg AMLO18.jpg AMLO9.jpg AMLO21.jpg AMLO17.jpg | 995a48dc72c75a9387f01a4ea4558241 |

mit | [] | false | German ELECTRA large Released in Oct 2020, this is a German ELECTRA language model trained collaboratively by the makers of the original German BERT (aka "bert-base-german-cased") and the dbmdz BERT (aka bert-base-german-dbmdz-cased). In our [paper](https://arxiv.org/pdf/2010.10906.pdf), we outline the steps taken to train our model and show that it is the state-of-the-art German language model. | bbd7dd557a0a7a55075d8ebb0850799e |

mit | [] | false | Performance ``` GermEval18 Coarse: 80.70 GermEval18 Fine: 55.16 GermEval14: 88.95 ``` See also: deepset/gbert-base deepset/gbert-large deepset/gelectra-base deepset/gelectra-large deepset/gelectra-base-generator deepset/gelectra-large-generator | 04cb0cd1268f18b9a03328b4a118ab1b |

apache-2.0 | ['generated_from_trainer'] | false | xlsr-53-bemba-15hrs This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.2789 - Wer: 0.3751 | f69404d183957973055fa4acab0f970a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | 3.4138 | 0.71 | 400 | 0.4965 | 0.7239 | | 0.5685 | 1.43 | 800 | 0.2939 | 0.4839 | | 0.4471 | 2.15 | 1200 | 0.2728 | 0.4467 | | 0.3579 | 2.86 | 1600 | 0.2397 | 0.3965 | | 0.3087 | 3.58 | 2000 | 0.2427 | 0.4015 | | 0.2702 | 4.29 | 2400 | 0.2539 | 0.4112 | | 0.2406 | 5.01 | 2800 | 0.2376 | 0.3885 | | 0.2015 | 5.72 | 3200 | 0.2492 | 0.3844 | | 0.1759 | 6.44 | 3600 | 0.2562 | 0.3768 | | 0.1572 | 7.16 | 4000 | 0.2789 | 0.3751 | | 2bfd681c3b4bdf2cf50c3b389b19e326 |

apache-2.0 | [] | false | ParsBERT (v2.0) A Transformer-based Model for Persian Language Understanding We reconstructed the vocabulary and fine-tuned the ParsBERT v1.1 on the new Persian corpora in order to provide some functionalities for using ParsBERT in other scopes! Please follow the [ParsBERT](https://github.com/hooshvare/parsbert) repo for the latest information about previous and current models. | d6084e05eb0e4d637642e23b7f45a273 |

apache-2.0 | [] | false | DigiMag A total of 8,515 articles scraped from [Digikala Online Magazine](https://www.digikala.com/mag/). This dataset includes seven different classes. 1. Video Games 2. Shopping Guide 3. Health Beauty 4. Science Technology 5. General 6. Art Cinema 7. Books Literature | Label | | 83a10f14734e380fd891f24e4b2b490b |

apache-2.0 | [] | false | | |:------------------:|:----:| | Video Games | 1967 | | Shopping Guide | 125 | | Health Beauty | 1610 | | Science Technology | 2772 | | General | 120 | | Art Cinema | 1667 | | Books Literature | 254 | **Download** You can download the dataset from [here](https://drive.google.com/uc?id=1YgrCYY-Z0h2z0-PfWVfOGt1Tv0JDI-qz) | 292c57662cf27a3b93c86a1839c0299e |

apache-2.0 | [] | false | Results The following table summarizes the F1 score obtained by ParsBERT as compared to other models and architectures. | Dataset | ParsBERT v2 | ParsBERT v1 | mBERT | |:-----------------:|:-----------:|:-----------:|:-----:| | Digikala Magazine | 93.65* | 93.59 | 90.72 | | 8e0b426a81a8cf123df4a8fae82172bb |

apache-2.0 | [] | false | How to use :hugs: | Task | Notebook | |---------------------|---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------| | Text Classification | [](https://colab.research.google.com/github/hooshvare/parsbert/blob/master/notebooks/Taaghche_Sentiment_Analysis.ipynb) | | a27931c938dedece7c772e82d961b473 |

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-0'] | false | MultiBERTs Seed 0 Checkpoint 1400k (uncased) Seed 0 intermediate checkpoint 1400k MultiBERTs (pretrained BERT) model on English language using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/pdf/2106.16163.pdf) and first released in [this repository](https://github.com/google-research/language/tree/master/language/multiberts). This is an intermediate checkpoint. The final checkpoint can be found at [multiberts-seed-0](https://hf.co/multberts-seed-0). This model is uncased: it does not make a difference between english and English. Disclaimer: The team releasing MultiBERTs did not write a model card for this model so this model card has been written by [gchhablani](https://hf.co/gchhablani). | 7847d451871d7b8033c6de73e9fdbfa1 |

apache-2.0 | ['exbert', 'multiberts', 'multiberts-seed-0'] | false | How to use Here is how to use this model to get the features of a given text in PyTorch: ```python from transformers import BertTokenizer, BertModel tokenizer = BertTokenizer.from_pretrained('multiberts-seed-0-1400k') model = BertModel.from_pretrained("multiberts-seed-0-1400k") text = "Replace me by any text you'd like." encoded_input = tokenizer(text, return_tensors='pt') output = model(**encoded_input) ``` | 876b2745bf089990dfc4ba458a94bbba |

unknown | [] | false | Stable Diffusion Model Trained using Dreambooth on the original sprites of the character Rika Furude from Higurashi <br> The tag to trigger Rika generation is "furude_rika" Example Images: <img src="https://i.imgur.com/4Rsf4WI.png" alt="Girl in a jacket" > <b> DISCLAIMER: I am not responsible for what images you produce or what you do with them. By downloading this model you consent to taking full responsibility for the images you produce with it. </b> | 5fc2df744e71622da44f393b084e523a |

mit | ['generated_from_keras_callback'] | false | sachinsahu/Warsaw_Pact-clustered This model is a fine-tuned version of [nandysoham16/12-clustered_aug](https://huggingface.co/nandysoham16/12-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0968 - Train End Logits Accuracy: 0.9688 - Train Start Logits Accuracy: 0.9792 - Validation Loss: 0.1022 - Validation End Logits Accuracy: 1.0 - Validation Start Logits Accuracy: 1.0 - Epoch: 0 | b989f745953d82967505048108d5ad0d |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.0968 | 0.9688 | 0.9792 | 0.1022 | 1.0 | 1.0 | 0 | | 8b0e5d75c3f5db2828ad1c0ba35bdd05 |

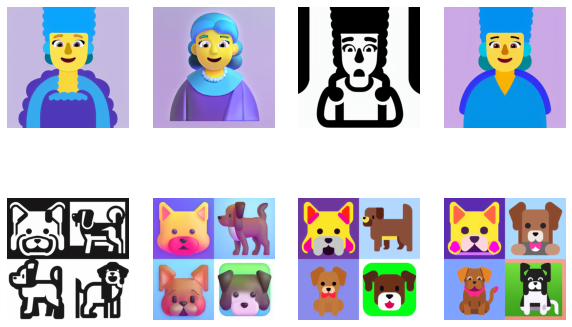

creativeml-openrail-m | ['text-to-image', 'stable-diffusion', 'stable-diffusion-diffusers'] | false | The Emoji file names were converted to become the text descriptions. It made the model learn a few special words: "flat", "high contrast" and "color"  | 76c1a70e76b55d1f5a307a2da78def84 |

apache-2.0 | [] | false | SnappFood [Snappfood](https://snappfood.ir/) (an online food delivery company) user comments containing 70,000 comments with two labels (i.e. polarity classification): 1. Happy 2. Sad | Label | | d38e277d465f64b4c229d94f3e681268 |

apache-2.0 | [] | false | Results The following table summarizes the F1 score obtained as compared to other models and architectures. | Dataset | ALBERT-fa-base-v2 | ParsBERT-v1 | mBERT | DeepSentiPers | |:------------------------:|:-----------------:|:-----------:|:-----:|:-------------:| | SnappFood User Comments | 85.79 | 88.12 | 87.87 | - | | d65cc09c95927179ccdbfa6164c8b9b3 |

apache-2.0 | ['G2P', 'Grapheme-to-Phoneme', 'speechbrain', 'text2text-generation'] | false | SoundChoice: Grapheme-to-Phoneme Models with Semantic Disambiguation This repository provides all the necessary tools to perform English grapheme-to-phoneme conversion with a pretrained SoundChoice G2P model using SpeechBrain. It is trained on LibriG2P training data derived from [LibriSpeech Alignments](https://zenodo.org/record/2619474 | d2108d079a52dd5d3a163e6a95c2deb0 |

apache-2.0 | ['G2P', 'Grapheme-to-Phoneme', 'speechbrain', 'text2text-generation'] | false | Install SpeechBrain First of all, please install SpeechBrain and Transformers with the following commands (local installation): ```bash pip install speechbrain pip install transformers ``` We encourage you to read our tutorials and learn more about [SpeechBrain](https://speechbrain.github.io). | c67d432602e008d633dd2e3cd1087289 |

apache-2.0 | ['G2P', 'Grapheme-to-Phoneme', 'speechbrain', 'text2text-generation'] | false | Perform G2P Conversion Please follow the example below to perform grapheme-to-phoneme conversion with a high-level wrapper. ```python from speechbrain.pretrained import GraphemeToPhoneme g2p = GraphemeToPhoneme.from_hparams("speechbrain/soundchoice-g2p") text = "To be or not to be, that is the question" phonemes = g2p(text) ``` Given below is the expected output ```python >>> phonemes ['T', 'UW', ' ', 'B', 'IY', ' ', 'AO', 'R', ' ', 'N', 'AA', 'T', ' ', 'T', 'UW', ' ', 'B', 'IY', ' ', 'DH', 'AE', 'T', ' ', 'IH', 'Z', ' ', 'DH', 'AH', ' ', 'K', 'W', 'EH', 'S', 'CH', 'AH', 'N'] ``` To perform G2P conversion on a batch of text, pass an array of strings to the interface: ```python items = [ "All's Well That Ends Well", "The Merchant of Venice", "The Two Gentlemen of Verona", "The Comedy of Errors" ] transcriptions = g2p(items) ``` Given below is the expected output: ```python >>> transcriptions [['AO', 'L', 'Z', ' ', 'W', 'EH', 'L', ' ', 'DH', 'AE', 'T', ' ', 'EH', 'N', 'D', 'Z', ' ', 'W', 'EH', 'L'], ['DH', 'AH', ' ', 'M', 'ER', 'CH', 'AH', 'N', 'T', ' ', 'AH', 'V', ' ', 'V', 'EH', 'N', 'AH', 'S'], ['DH', 'AH', ' ', 'T', 'UW', ' ', 'JH', 'EH', 'N', 'T', 'AH', 'L', 'M', 'IH', 'N', ' ', 'AH', 'V', ' ', 'V', 'ER', 'OW', 'N', 'AH'], ['DH', 'AH', ' ', 'K', 'AA', 'M', 'AH', 'D', 'IY', ' ', 'AH', 'V', ' ', 'EH', 'R', 'ER', 'Z']] ``` | a5eb2bc2b159063979a3e62b765e9902 |

apache-2.0 | ['G2P', 'Grapheme-to-Phoneme', 'speechbrain', 'text2text-generation'] | false | Training The model was trained with SpeechBrain (aa018540). To train it from scratch, follow these steps: 1. Clone SpeechBrain: ```bash git clone https://github.com/speechbrain/speechbrain/ ``` 2. Install it: ``` cd speechbrain pip install -r requirements.txt pip install -e . ``` 3. Run Training: ``` cd recipes/LibriSpeech/G2P python train.py hparams/hparams_g2p_rnn.yaml --data_folder=your_data_folder ``` Adjust hyperparameters as needed by passing additional arguments. | c1265ae063ac9c9f67e59e9a9cbed7f7 |

apache-2.0 | ['G2P', 'Grapheme-to-Phoneme', 'speechbrain', 'text2text-generation'] | false | **Citing SpeechBrain** Please, cite SpeechBrain if you use it for your research or business. ```bibtex @misc{speechbrain, title={{SpeechBrain}: A General-Purpose Speech Toolkit}, author={Mirco Ravanelli and Titouan Parcollet and Peter Plantinga and Aku Rouhe and Samuele Cornell and Loren Lugosch and Cem Subakan and Nauman Dawalatabad and Abdelwahab Heba and Jianyuan Zhong and Ju-Chieh Chou and Sung-Lin Yeh and Szu-Wei Fu and Chien-Feng Liao and Elena Rastorgueva and François Grondin and William Aris and Hwidong Na and Yan Gao and Renato De Mori and Yoshua Bengio}, year={2021}, eprint={2106.04624}, archivePrefix={arXiv}, primaryClass={eess.AS}, note={arXiv:2106.04624} } ``` Also please cite the SoundChoice G2P paper on which this pretrained model is based: ```bibtex @misc{ploujnikov2022soundchoice, title={SoundChoice: Grapheme-to-Phoneme Models with Semantic Disambiguation}, author={Artem Ploujnikov and Mirco Ravanelli}, year={2022}, eprint={2207.13703}, archivePrefix={arXiv}, primaryClass={cs.SD} } ``` | c276efb62954fa2da4dc55320bc9ec69 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-few-shot-sentiment-model This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.6819 - Accuracy: 0.75 - F1: 0.8 | 79fb7ec497ae08c368d3b03f72e3de98 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.00015 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 10 | 998c071729b857c4710c7270c0471aba |

apache-2.0 | ['generated_from_trainer'] | false | distilbert_add_GLUE_Experiment_logit_kd_wnli_96 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the GLUE WNLI dataset. It achieves the following results on the evaluation set: - Loss: 0.3442 - Accuracy: 0.5634 | 874f2d89542c93c904cc910606f0517e |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.3478 | 1.0 | 3 | 0.3444 | 0.5634 | | 0.3472 | 2.0 | 6 | 0.3445 | 0.5634 | | 0.3467 | 3.0 | 9 | 0.3444 | 0.5634 | | 0.3476 | 4.0 | 12 | 0.3442 | 0.5634 | | 0.3476 | 5.0 | 15 | 0.3442 | 0.5634 | | 0.3471 | 6.0 | 18 | 0.3446 | 0.5634 | | 0.3473 | 7.0 | 21 | 0.3449 | 0.5634 | | 0.3471 | 8.0 | 24 | 0.3451 | 0.5634 | | 0.3477 | 9.0 | 27 | 0.3452 | 0.5634 | | 0.3469 | 10.0 | 30 | 0.3451 | 0.5634 | | ae598eee6ba02cf52ecac35e5fe49936 |

apache-2.0 | [] | false | <a name="introduction"></a> BERTweet.BR: A Pre-Trained Language Model for Tweets in Portuguese Using the same architecture as [BERTweet](https://huggingface.co/docs/transformers/model_doc/bertweet), we trained our model from scratch following the [RoBERTa](https://huggingface.co/docs/transformers/model_doc/roberta) pre-training procedure on a corpus of approximately 9GB containing 100M Portuguese Tweets. | 828a7f321335afff6849e053fe1fcaa3 |

apache-2.0 | [] | false | Normalized Inputs ```python import torch from transformers import AutoModel, AutoTokenizer model = AutoModel.from_pretrained('melll-uff/bertweetbr') tokenizer = AutoTokenizer.from_pretrained('melll-uff/bertweetbr', normalization=False) | beea19903102e1dd87adbfe91096e74a |

apache-2.0 | [] | false | INPUT TWEETS ALREADY NORMALIZED! inputs = [ "Procuro um amor , que seja bom pra mim ... vou procurar , eu vou até o fim :nota_musical:", "Que jogo ontem @USER :mãos_juntas:", "Demojizer para Python é :polegar_para_cima: e está disponível em HTTPURL"] encoded_inputs = tokenizer(inputs, return_tensors="pt", padding=True) with torch.no_grad(): last_hidden_states = model(**encoded_inputs) | e7da89b022b01d57bb40ac8595fd697a |

apache-2.0 | [] | false | CLS Token of last hidden states. Shape: (number of input sentences, hidden size of the model) last_hidden_states[0][:,0,:] tensor([[-0.1430, -0.1325, 0.1595, ..., -0.0802, -0.0153, -0.1358], [-0.0108, 0.1415, 0.0695, ..., 0.1420, 0.1153, -0.0176], [-0.1854, 0.1866, 0.3163, ..., -0.2117, 0.2123, -0.1907]]) ``` | 68151f34a21f516ef66c7fe4c48e4fae |

apache-2.0 | [] | false | Normalize raw input Tweets ```python from emoji import demojize import torch from transformers import AutoModel, AutoTokenizer model = AutoModel.from_pretrained('melll-uff/bertweetbr') tokenizer = AutoTokenizer.from_pretrained('melll-uff/bertweetbr', normalization=True) inputs = [ "Procuro um amor , que seja bom pra mim ... vou procurar , eu vou até o fim 🎵", "Que jogo ontem @cristiano 🙏", "Demojizer para Python é 👍 e está disponível em https://pypi.org/project/emoji/"] tokenizer.demojizer = lambda x: demojize(x, language='pt') [tokenizer.normalizeTweet(s) for s in inputs] | fb41c8551187cecb1f2e25e194c4768b |

apache-2.0 | [] | false | Tokenizer first normalizes tweet sentences ['Procuro um amor , que seja bom pra mim ... vou procurar , eu vou até o fim :nota_musical:', 'Que jogo ontem @USER :mãos_juntas:', 'Demojizer para Python é :polegar_para_cima: e está disponível em HTTPURL'] encoded_inputs = tokenizer(inputs, return_tensors="pt", padding=True) with torch.no_grad(): last_hidden_states = model(**encoded_inputs) | 8f3ee5dcffb639c7e1f10ee1178f59ba |

apache-2.0 | [] | false | Mask Filling with Pipeline ```python from transformers import AutoTokenizer, pipeline model_name = 'melll-uff/bertweetbr' tokenizer = AutoTokenizer.from_pretrained('melll-uff/bertweetbr', normalization=False) filler_mask = pipeline("fill-mask", model=model_name, tokenizer=tokenizer) filler_mask("Rio é a <mask> cidade do Brasil.", top_k=5) | 37647749d4c29c0ce1830e51a7a67ab5 |

apache-2.0 | [] | false | Output [{'sequence': 'Rio é a melhor cidade do Brasil.', 'score': 0.9871652126312256, 'token': 120, 'token_str': 'm e l h o r'}, {'sequence': 'Rio é a pior cidade do Brasil.', 'score': 0.005050931591540575, 'token': 316, 'token_str': 'p i o r'}, {'sequence': 'Rio é a maior cidade do Brasil.', 'score': 0.004420778248459101, 'token': 389, 'token_str': 'm a i o r'}, {'sequence': 'Rio é a minha cidade do Brasil.', 'score': 0.0021856199018657207, 'token': 38, 'token_str': 'm i n h a'}, {'sequence': 'Rio é a segunda cidade do Brasil.', 'score': 0.0002110043278662488, 'token': 667, 'token_str': 's e g u n d a'}] ``` | 8786514f638e8095c5777b37ec87cab8 |

apache-2.0 | ['automatic-speech-recognition', 'en'] | false | exp_w2v2r_en_xls-r_age_teens-0_sixties-10_s225 Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (en)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | 49c06652636adcad5d0b06043140824f |

apache-2.0 | ['question-answering'] | false | Model Overview This is an ELECTRA-Large QA Model trained from https://huggingface.co/google/electra-large-discriminator in two stages. First, it is trained on synthetic adversarial data generated using a BART-Large question generator, and then it is trained on SQuAD and AdversarialQA (https://arxiv.org/abs/2002.00293) in a second stage of fine-tuning. | ca38937a39e0d65967b13b1bccd81580 |

apache-2.0 | ['generated_from_trainer'] | false | data-augmentation-whitenoise-timit-1155 This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.5458 - Wer: 0.3324 | c30db7f0bef0635b082bc1a98042a518 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 3.5204 | 0.8 | 500 | 1.6948 | 0.9531 | | 0.8435 | 1.6 | 1000 | 0.5367 | 0.5113 | | 0.4449 | 2.4 | 1500 | 0.4612 | 0.4528 | | 0.3182 | 3.21 | 2000 | 0.4314 | 0.4156 | | 0.2328 | 4.01 | 2500 | 0.4250 | 0.4031 | | 0.1897 | 4.81 | 3000 | 0.4630 | 0.4023 | | 0.1628 | 5.61 | 3500 | 0.4445 | 0.3922 | | 0.1472 | 6.41 | 4000 | 0.4452 | 0.3793 | | 0.1293 | 7.21 | 4500 | 0.4715 | 0.3847 | | 0.1176 | 8.01 | 5000 | 0.4267 | 0.3757 | | 0.1023 | 8.81 | 5500 | 0.4494 | 0.3821 | | 0.092 | 9.62 | 6000 | 0.4501 | 0.3704 | | 0.0926 | 10.42 | 6500 | 0.4722 | 0.3643 | | 0.0784 | 11.22 | 7000 | 0.5033 | 0.3765 | | 0.077 | 12.02 | 7500 | 0.5165 | 0.3684 | | 0.0704 | 12.82 | 8000 | 0.5138 | 0.3646 | | 0.0599 | 13.62 | 8500 | 0.5664 | 0.3674 | | 0.0582 | 14.42 | 9000 | 0.5188 | 0.3575 | | 0.0526 | 15.22 | 9500 | 0.5605 | 0.3621 | | 0.0512 | 16.03 | 10000 | 0.5400 | 0.3585 | | 0.0468 | 16.83 | 10500 | 0.5471 | 0.3603 | | 0.0445 | 17.63 | 11000 | 0.5168 | 0.3555 | | 0.0411 | 18.43 | 11500 | 0.5772 | 0.3542 | | 0.0394 | 19.23 | 12000 | 0.5079 | 0.3567 | | 0.0354 | 20.03 | 12500 | 0.5427 | 0.3613 | | 0.0325 | 20.83 | 13000 | 0.5532 | 0.3572 | | 0.0318 | 21.63 | 13500 | 0.5223 | 0.3514 | | 0.0269 | 22.44 | 14000 | 0.6002 | 0.3460 | | 0.028 | 23.24 | 14500 | 0.5591 | 0.3432 | | 0.0254 | 24.04 | 15000 | 0.5837 | 0.3432 | | 0.0235 | 24.84 | 15500 | 0.5571 | 0.3397 | | 0.0223 | 25.64 | 16000 | 0.5470 | 0.3383 | | 0.0193 | 26.44 | 16500 | 0.5611 | 0.3367 | | 0.0227 | 27.24 | 17000 | 0.5405 | 0.3342 | | 0.0183 | 28.04 | 17500 | 0.5205 | 0.3330 | | 0.017 | 28.85 | 18000 | 0.5512 | 0.3330 | | 0.0167 | 29.65 | 18500 | 0.5458 | 0.3324 | | 63973428c47350987172470c1746c42e |
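The `Wer` column above is the word error rate. As a minimal sketch of how such a value is usually computed (this is not the evaluation code used for this model, and the function name `wer` and the example sentences are assumptions for illustration), the word-level edit distance between a reference and a hypothesis transcript is divided by the number of reference words:

```python
def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: word-level edit distance / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # deleting all i reference words
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical transcripts: one substitution and one deletion over six words.
score = wer("the cat sat on the mat", "the cat sit on mat")
print(f"WER: {score:.4f}")
```

The values in the table above appear to be fractions (0.3324 rather than 33.24), which matches the scale this sketch returns.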

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | Wav2Vec2-Large-XLSR-53-Spanish-With-LM This is a copy of the [Wav2Vec2-Large-XLSR-53-Spanish](https://huggingface.co/jonatasgrosman/wav2vec2-large-xlsr-53-spanish) model that has language model support. This model card can be seen as a demo for the [pyctcdecode](https://github.com/kensho-technologies/pyctcdecode) integration with Transformers led by [this PR](https://github.com/huggingface/transformers/pull/14339). The PR explains in detail how the integration works. In a nutshell: This PR adds a new Wav2Vec2WithLMProcessor class as a drop-in replacement for Wav2Vec2Processor. The only change from the existing ASR pipeline will be: ```diff import torch from datasets import load_dataset from transformers import AutoModelForCTC, AutoProcessor import torchaudio.functional as F model_id = "patrickvonplaten/wav2vec2-xlsr-53-es-kenlm" sample = next(iter(load_dataset("common_voice", "es", split="test", streaming=True))) resampled_audio = F.resample(torch.tensor(sample["audio"]["array"]), 48_000, 16_000).numpy() model = AutoModelForCTC.from_pretrained(model_id) processor = AutoProcessor.from_pretrained(model_id) input_values = processor(resampled_audio, return_tensors="pt").input_values with torch.no_grad(): logits = model(input_values).logits -prediction_ids = torch.argmax(logits, dim=-1) -transcription = processor.batch_decode(prediction_ids) +transcription = processor.batch_decode(logits.numpy()).text | b78de20cfee256d6e7f7dc832f8b5f23 |

apache-2.0 | ['audio', 'automatic-speech-recognition', 'speech', 'xlsr-fine-tuning-week'] | false | => 'bien y qué regalo vas a abrir primero' ``` **Improvement** This model has been compared on 512 speech samples from the Spanish Common Voice Test set and gives a nice *20 %* performance boost: The results can be reproduced by running *from this model repository*: | Model | WER | CER | | ------------- | ------------- | ------------- | | patrickvonplaten/wav2vec2-xlsr-53-es-kenlm | **8.44%** | **2.93%** | | jonatasgrosman/wav2vec2-large-xlsr-53-spanish | **10.20%** | **3.24%** | ``` bash run_ngram_wav2vec2.py 1 512 ``` ``` bash run_ngram_wav2vec2.py 0 512 ``` with `run_ngram_wav2vec2.py` being https://huggingface.co/patrickvonplaten/wav2vec2-large-xlsr-53-spanish-with-lm/blob/main/run_ngram_wav2vec2.py | 38549ccfae4b1d0481e85499dbeeffe2 |

apache-2.0 | ['generated_from_trainer'] | false | opus-mt-tr-en-finetuned-en-to-tr This model is a fine-tuned version of [Helsinki-NLP/opus-mt-tr-en](https://huggingface.co/Helsinki-NLP/opus-mt-tr-en) on the wmt16 dataset. It achieves the following results on the evaluation set: - Loss: 1.9429 - Bleu: 6.471 - Gen Len: 56.1688 | f74eab9602166c5c867bd37b918c2cab |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:------:|:-------:| | 1.5266 | 1.0 | 12860 | 2.2526 | 4.5834 | 55.6563 | | 1.2588 | 2.0 | 25720 | 2.0113 | 5.9203 | 56.3506 | | 1.1878 | 3.0 | 38580 | 1.9429 | 6.471 | 56.1688 | | 66c3ae094bad30c3634fb0c31482f471 |

cc-by-4.0 | [] | false | Sentiment Classification in Polish ```python import numpy as np from transformers import AutoTokenizer, AutoModelForSequenceClassification id2label = {0: "negative", 1: "neutral", 2: "positive"} tokenizer = AutoTokenizer.from_pretrained("Voicelab/herbert-base-cased-sentiment") model = AutoModelForSequenceClassification.from_pretrained("Voicelab/herbert-base-cased-sentiment") input = ["Ale fajnie, spadł dzisiaj śnieg! Ulepimy dziś bałwana?"] encoding = tokenizer( input, add_special_tokens=True, return_token_type_ids=True, truncation=True, padding='max_length', return_attention_mask=True, return_tensors='pt', ) output = model(**encoding).logits.to("cpu").detach().numpy() prediction = id2label[np.argmax(output)] print(input, "--->", prediction) ``` Predicted output: ```python ['Ale fajnie, spadł dzisiaj śnieg! Ulepimy dziś bałwana?'] ---> positive ``` | 4d5cc69c6473821a39423b954a76d447 |
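The example above picks the label with `np.argmax` over the raw logits. If class probabilities are also wanted, a softmax can be applied first. The following is a minimal standard-library sketch; the logit values are hypothetical and were not produced by the model:

```python
import math


def softmax(logits):
    """Convert raw logits to probabilities (numerically stable form)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


id2label = {0: "negative", 1: "neutral", 2: "positive"}

# Hypothetical logits for one sentence, in the order of id2label.
example_logits = [-1.2, 0.3, 2.5]

probs = softmax(example_logits)
best = max(range(len(probs)), key=probs.__getitem__)
print(id2label[best], [round(p, 3) for p in probs])
```

Softmax is monotonic, so the predicted label is the same as with argmax over the logits; only the scores become interpretable as probabilities.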

cc-by-4.0 | [] | false | Overview - **Language model:** [allegro/herbert-base-cased](https://huggingface.co/allegro/herbert-base-cased) - **Language:** pl - **Training data:** Reviews + own data - **Blog post:** [Sentiment analysis - COVID-19 – the source of the heated discussion](https://voicelab.ai/covid-19-the-source-of-the-heated-discussion) | 6d2f8f6c0f85fd2669573966cba83641 |

apache-2.0 | ['generated_from_trainer'] | false | my_awesome_qa_model This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the None dataset. It achieves the following results on the evaluation set: - Loss: 4.2177 | 628ce027283c7b0bacbba1423bfccfac |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | No log | 1.0 | 266 | 4.1357 | | 3.5667 | 2.0 | 532 | 4.1447 | | 3.5667 | 3.0 | 798 | 4.2177 | | 43bb22dd4d39bc94ed35c5676a9f9d7a |

mit | [] | false | **Hyperparameters:** - learning rate: 2e-5 - weight decay: 0.01 - per_device_train_batch_size: 8 - per_device_eval_batch_size: 8 - gradient_accumulation_steps:1 - eval steps: 50000 - max_length: 512 - num_epochs: 1 - hidden_dropout_prob: 0.3 - attention_probs_dropout_prob: 0.25 **Dataset version:** - tasky_or_not/10xp3nirstbbflanseuni_10xc4 **Checkpoint:** - 300000 steps. **Results on Validation set:** | **Step** | **Training Loss** | **Validation Loss** | **Accuracy** | **Precision** | **Recall** | **F1** | |:--------:|:-----------------:|:-------------------:|:------------:|:-------------:|:----------:|:--------:| | 50000 | 0.020800 | 0.192550 | 0.970363 | 0.990686 | 0.949654 | 0.969736 | | 100000 | 0.015200 | 0.264168 | 0.969427 | 0.994374 | 0.944196 | 0.968636 | | 150000 | 0.012900 | 0.146541 | 0.981440 | 0.994599 | 0.968138 | 0.981190 | | 200000 | 0.011100 | 0.319310 | 0.970516 | 0.998871 | 0.942097 | 0.969654 | | 250000 | 0.008000 | 0.204103 | 0.976309 | 0.996226 | 0.956241 | 0.975824 | | 300000 | 0.006100 | 0.096262 | 0.988053 | 0.994676 | 0.981358 | 0.987972 | | 350000 | 0.005800 | 0.162989 | 0.983663 | 0.994730 | 0.972478 | 0.983478 | **Wandb logs:** - https://wandb.ai/manandey/taskydata/runs/y3j3fbkh?workspace=user-manandey | 5fc9d36b711ad3d3b6dd51047b557632 |
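The F1 column in the table above is consistent with the usual definition of F1 as the harmonic mean of precision and recall. A minimal sketch that recomputes it for the 300000-step checkpoint (the helper name `f1_score` is an assumption for illustration, not part of the original training code):

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall reported for step 300000 in the table above.
f1_300k = f1_score(0.994676, 0.981358)
print(f"{f1_300k:.6f}")
```

Because the harmonic mean is pulled toward the smaller of the two values, the F1 scores in the table track recall more closely than precision.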

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-05 - train_batch_size: 8 - eval_batch_size: 8 - seed: 2 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_ratio: 0.1 - training_steps: 200 | 0586356265699b3bb1a1d5fa15cca9e9 |
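To make the scheduler settings above concrete, here is a minimal sketch of a linear schedule with warmup: the learning rate rises linearly from 0 to the base rate during warmup, then decays linearly to 0 at the final step. It assumes that a warmup ratio of 0.1 over 200 training steps corresponds to 20 warmup steps; the function name `linear_schedule_lr` is hypothetical and this is not the trainer's own implementation:

```python
def linear_schedule_lr(step: int, base_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Learning rate at `step` for linear warmup followed by linear decay."""
    if step < warmup_steps:
        # Warmup phase: scale from 0 up to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay phase: scale from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


BASE_LR = 3e-05      # learning_rate from the list above
TOTAL_STEPS = 200    # training_steps from the list above
WARMUP_STEPS = 20    # assumed: 0.1 * 200

for step in (0, 10, 20, 110, 200):
    print(step, linear_schedule_lr(step, BASE_LR, WARMUP_STEPS, TOTAL_STEPS))
```

Under these assumptions the peak rate of 3e-05 is reached at step 20, so 90% of the run is spent in the decay phase.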

apache-2.0 | ['generated_from_trainer'] | false | t5-base-finetuned-eli5-a This model is a fine-tuned version of [ammarpl/t5-base-finetuned-xsum-a](https://huggingface.co/ammarpl/t5-base-finetuned-xsum-a) on the eli5 dataset. It achieves the following results on the evaluation set: - Loss: 3.1773 - Rouge1: 14.6711 - Rouge2: 2.2878 - Rougel: 11.3676 - Rougelsum: 13.1805 - Gen Len: 18.9892 | 20158034179e005bc3a5f19f78fbb546 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:| | 3.3417 | 1.0 | 17040 | 3.1773 | 14.6711 | 2.2878 | 11.3676 | 13.1805 | 18.9892 | | 03cdc9bdb75bfbc72ee1c5d9e046a61e |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Whisper medium Croatian El Greco This model is a fine-tuned version of [openai/whisper-medium](https://huggingface.co/openai/whisper-medium) on the google/fleurs hr_hr dataset. It achieves the following results on the evaluation set: - Loss: 0.3374 - Wer: 14.6133 | 109e7083979169f5b47709a08511f68b |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 3e-06 - train_batch_size: 32 - eval_batch_size: 16 - seed: 42 - distributed_type: multi-GPU - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 1000 | 9f505320498d0b2ad35581ae1ebd94a5 |

apache-2.0 | ['whisper-event', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.0106 | 4.61 | 1000 | 0.3374 | 14.6133 | | d9808f09b416e724e8c7e1fc4b5fbc3d |

apache-2.0 | ['part-of-speech', 'token-classification'] | false | XLM-RoBERTa base Universal Dependencies v2.8 POS tagging: Vietnamese This model is part of our paper called: - Make the Best of Cross-lingual Transfer: Evidence from POS Tagging with over 100 Languages Check the [Space](https://huggingface.co/spaces/wietsedv/xpos) for more details. | 50d6b0bb1644cd18213620b536a828e2 |

apache-2.0 | ['part-of-speech', 'token-classification'] | false | Usage ```python from transformers import AutoTokenizer, AutoModelForTokenClassification tokenizer = AutoTokenizer.from_pretrained("wietsedv/xlm-roberta-base-ft-udpos28-vi") model = AutoModelForTokenClassification.from_pretrained("wietsedv/xlm-roberta-base-ft-udpos28-vi") ``` | 821d6a0fd888fab9de3396d658095c42 |

mit | [] | false | Model description This model builds on the XLM-Roberta-base model, whose pre-training was continued on a large multilingual corpus of Twitter data. It was developed following a strategy similar to the one introduced as part of the [Tweet Eval](https://github.com/cardiffnlp/tweeteval) framework. The model was further fine-tuned on the English part of the XNLI training dataset. | f3d7c0023b1558de8b778486e55742ec |

mit | [] | false | Intended Usage This model was developed for zero-shot text classification in the realm of hate speech detection. It focuses on English, since it was fine-tuned on English data. Because the base model was pre-trained on 100 different languages, it has shown some effectiveness in other languages as well. Please refer to the list of languages in the [XLM Roberta paper](https://arxiv.org/abs/1911.02116). | 908fcc0cf322ecb7022d5d23cc029ac6 |

mit | [] | false | Usage with Zero-Shot Classification pipeline ```python from transformers import pipeline classifier = pipeline("zero-shot-classification", model="morit/english_xlm_xnli") ``` After loading the model you can classify sequences in the languages mentioned above. You can specify your sequences and a matching hypothesis to be able to classify your proposed candidate labels. ```python sequence_to_classify = "I think Rishi Sunak is going to win the elections" | 429fad3c3d33891d32b8f694634caa3c |

mit | [] | false | Training This model was pre-trained on a set of 100 languages and then further trained on 198M multilingual tweets, as described in the original [paper](https://arxiv.org/abs/2104.12250). It was then fine-tuned on the English portion of the XNLI training set, which is a machine-translated version of the MNLI dataset. It was trained for 5 epochs on the XNLI train set and evaluated on the XNLI eval dataset at the end of every epoch to find the best-performing model. The model with the highest accuracy on the eval set was chosen at the end. - learning rate: 2e-5 - batch size: 32 - max sequence length: 128 Training used a GPU (NVIDIA GeForce RTX 3090) and took 1h 47 mins. | 8ef617fb069f13167afe5abeadf28148 |
