license | tags | is_nc | readme_section | hash |

|---|---|---|---|---|

apache-2.0 | ['automatic-speech-recognition', 'ar'] | false | exp_w2v2t_ar_hubert_s693 Fine-tuned [facebook/hubert-large-ll60k](https://huggingface.co/facebook/hubert-large-ll60k) for speech recognition using the train split of [Common Voice 7.0 (ar)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech input is sampled at 16kHz. This model has been fine-tuned with the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool. | eed0c1bb5dadef4680e625ee3f1cb23a |
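The card asks for 16kHz input. As a minimal sketch, assuming the audio is stored as WAV files, the standard-library `wave` module can check the sampling rate before transcription. `require_16khz` and `make_silent_wav` are hypothetical helpers, not part of HuggingSound or `transformers`, and resampling itself is left to a dedicated audio library.

```python
import io
import wave

TARGET_RATE = 16_000  # sampling rate the card asks for


def require_16khz(source) -> int:
    """Return the WAV frame rate, raising ValueError unless it is 16 kHz.

    `source` may be a file path string or a binary file-like object.
    """
    with wave.open(source, "rb") as wav:
        rate = wav.getframerate()
    if rate != TARGET_RATE:
        raise ValueError(f"expected {TARGET_RATE} Hz audio, got {rate} Hz; resample first")
    return rate


def make_silent_wav(rate: int, seconds: float = 0.1) -> io.BytesIO:
    """Build an in-memory mono 16-bit WAV of silence (demonstration only)."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 2 bytes per sample = 16-bit PCM
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * int(rate * seconds))
    buf.seek(0)  # rewind so the buffer can be read back
    return buf


print(require_16khz(make_silent_wav(16_000)))
```

Checking the header up front fails fast with a clear message, instead of letting a 44.1kHz recording silently degrade the transcription.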

mit | [] | false | belize-blue-sofa on Stable Diffusion This is the `<belize-blue-sofa>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:      | 06e86b400b0db2941644abbb27b68c28 |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Whisper Small Hi - Swedish This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 11.0 dataset. It achieves the following results on the evaluation set: - Loss: 0.3260 - Wer: 19.8946 | c589568f86ce0a944f78812e654a5d6d |

apache-2.0 | ['hf-asr-leaderboard', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:-------:| | 0.3144 | 0.65 | 500 | 0.3244 | 24.0623 | | 0.1321 | 1.29 | 1000 | 0.2977 | 21.5563 | | 0.1318 | 1.94 | 1500 | 0.2788 | 20.9190 | | 0.052 | 2.59 | 2000 | 0.2852 | 20.3329 | | 0.0203 | 3.23 | 2500 | 0.3017 | 19.8677 | | 0.0174 | 3.88 | 3000 | 0.3008 | 19.9941 | | 0.0083 | 4.53 | 3500 | 0.3216 | 20.0022 | | 0.0039 | 5.17 | 4000 | 0.3260 | 19.8946 | | b5506bd3fda0a7444fc96cbc89b1cf74 |
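The Whisper cards in this table report WER as a percentage (19.8946 means roughly 19.9% of reference words are wrong), while the wav2vec2 cards report it as a fraction (for example 0.1897). Below is a minimal sketch of the standard word-level definition, WER = (substitutions + deletions + insertions) / number of reference words, computed with an edit distance over whitespace-split words. The training scripts may also normalize text (casing, punctuation) before scoring; that step is not shown here.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # At the start of iteration i, prev[j] is the edit distance between the
    # first i-1 reference words and the first j hypothesis words (Levenshtein DP).
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion of a reference word
                curr[j - 1] + 1,         # insertion of a hypothesis word
                prev[j - 1] + (r != h),  # substitution (free when words match)
            )
        prev = curr
    return prev[-1] / len(ref)


wer = word_error_rate("the cat sat on the mat", "the cat sit on mat")
print(f"{wer:.4f} as a fraction, {100 * wer:.2f} as a percentage")
# prints: 0.3333 as a fraction, 33.33 as a percentage
```

Because insertions count against the number of reference words, WER is not capped at 1.0; one of the Arabic checkpoints below logs 1.0057 early in training.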

apache-2.0 | ['lexical normalization'] | false | Fine-tuned ByT5-small for MultiLexNorm (Slovenian version)  This is the official release of the fine-tuned models for **the winning entry** to the [*W-NUT 2021: Multilingual Lexical Normalization (MultiLexNorm)* shared task](https://noisy-text.github.io/2021/multi-lexnorm.html), which evaluates lexical-normalization systems on 12 social media datasets in 11 languages. Our system is based on [ByT5](https://arxiv.org/abs/2105.13626), which we first pre-train on synthetic data and then fine-tune on authentic normalization data. It achieves the best performance by a wide margin in intrinsic evaluation, and also the best performance in extrinsic evaluation through dependency parsing. In addition to these fine-tuned models, we also release the source files on [GitHub](https://github.com/ufal/multilexnorm2021) and an interactive demo on [Google Colab](https://colab.research.google.com/drive/1rxpI8IlKk-D2crFqi2hdzbTBIezqgsCg?usp=sharing). | a15caae36ab9a8b71cf40095160c908e |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-my_hindi_home-latest-colab This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the common_voice dataset. | 061c44403950b6813c84d058ede016f4 |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_7_0', 'generated_from_trainer', 'mt', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | wav2vec2-large-xls-r-300m-maltese This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_7_0 - MT dataset. It achieves the following results on the evaluation set: - Loss: 0.2005 - Wer: 0.1897 | a33eeea8b59476ca036d3d68f4bd859b |

apache-2.0 | ['automatic-speech-recognition', 'mozilla-foundation/common_voice_7_0', 'generated_from_trainer', 'mt', 'robust-speech-event', 'model_for_talk', 'hf-asr-leaderboard'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 1.2238 | 18.02 | 2000 | 0.3911 | 0.4310 | | 0.7871 | 36.04 | 4000 | 0.2063 | 0.2309 | | 0.6653 | 54.05 | 6000 | 0.1960 | 0.2091 | | 0.5861 | 72.07 | 8000 | 0.1986 | 0.2000 | | 0.5283 | 90.09 | 10000 | 0.1993 | 0.1909 | | 529fa0d6ea0f44daa3f73119e102a042 |

apache-2.0 | ['ar', 'automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_7_0', 'robust-speech-event'] | false | This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_7_0 - AR dataset. It achieves the following results on the evaluation set: - Loss: 0.4502 - Wer: 0.4783 | e03cd03b326db3a2a85b96b90f4f6698 |

apache-2.0 | ['ar', 'automatic-speech-recognition', 'generated_from_trainer', 'hf-asr-leaderboard', 'mozilla-foundation/common_voice_7_0', 'robust-speech-event'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 4.7972 | 0.43 | 500 | 5.1401 | 1.0 | | 3.3241 | 0.86 | 1000 | 3.3220 | 1.0 | | 3.1432 | 1.29 | 1500 | 3.0806 | 0.9999 | | 2.9297 | 1.72 | 2000 | 2.5678 | 1.0057 | | 2.2593 | 2.14 | 2500 | 1.1068 | 0.8218 | | 2.0504 | 2.57 | 3000 | 0.7878 | 0.7114 | | 1.937 | 3.0 | 3500 | 0.6955 | 0.6450 | | 1.8491 | 3.43 | 4000 | 0.6452 | 0.6304 | | 1.803 | 3.86 | 4500 | 0.5961 | 0.6042 | | 1.7545 | 4.29 | 5000 | 0.5550 | 0.5748 | | 1.7045 | 4.72 | 5500 | 0.5374 | 0.5743 | | 1.6733 | 5.15 | 6000 | 0.5337 | 0.5404 | | 1.6761 | 5.57 | 6500 | 0.5054 | 0.5266 | | 1.655 | 6.0 | 7000 | 0.4926 | 0.5243 | | 1.6252 | 6.43 | 7500 | 0.4946 | 0.5183 | | 1.6209 | 6.86 | 8000 | 0.4915 | 0.5194 | | 1.5772 | 7.29 | 8500 | 0.4725 | 0.5104 | | 1.5602 | 7.72 | 9000 | 0.4726 | 0.5097 | | 1.5783 | 8.15 | 9500 | 0.4667 | 0.4956 | | 1.5442 | 8.58 | 10000 | 0.4685 | 0.4937 | | 1.5597 | 9.01 | 10500 | 0.4708 | 0.4957 | | 1.5406 | 9.43 | 11000 | 0.4539 | 0.4810 | | 1.5274 | 9.86 | 11500 | 0.4502 | 0.4783 | | 81b8fc7bca9bd068535ffa00f485fc0b |

mit | ['generated_from_trainer'] | false | finetuned_gpt2-medium_sst2_negation0.0005_pretrainedTrue This model is a fine-tuned version of [gpt2-medium](https://huggingface.co/gpt2-medium) on the sst2 dataset. It achieves the following results on the evaluation set: - Loss: 3.4480 | ab0564a6f4511f41f256b0e8c026856c |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.8212 | 1.0 | 1059 | 3.3124 | | 2.5361 | 2.0 | 2118 | 3.3864 | | 2.3876 | 3.0 | 3177 | 3.4480 | | 2ab2bb48e8f0125c499fa475886cfbfd |
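Assuming the reported validation loss is the mean per-token cross-entropy in nats (the usual setup for causal language models trained with the Trainer; the card does not say), it converts to perplexity via exp(loss). The table also shows validation loss rising each epoch while training loss falls, which suggests the first-epoch checkpoint generalizes best.

```python
import math

# Validation losses copied from the training-results table above.
val_loss_by_epoch = {1: 3.3124, 2: 3.3864, 3: 3.4480}


def perplexity(mean_cross_entropy_nats: float) -> float:
    """Perplexity of a causal LM given its mean per-token cross-entropy (natural log)."""
    return math.exp(mean_cross_entropy_nats)


for epoch, loss in val_loss_by_epoch.items():
    print(f"epoch {epoch}: loss {loss:.4f} -> perplexity {perplexity(loss):.2f}")
```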

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Introduction ptt5-base-msmarco-pt-10k-v2 is a T5-based model pretrained on the BrWaC corpus and fine-tuned on a Portuguese-translated version of the MS MARCO passage dataset. In the v2 version, the Portuguese dataset was translated using Google Translate. This model was fine-tuned for 10k steps. Further information about the dataset and the translation method can be found in our paper [**mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset**](https://arxiv.org/abs/2108.13897) and in the [mMARCO](https://github.com/unicamp-dl/mMARCO) repository. | 7a02453f6dd1d38661878d81c1a425f3 |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Usage ```python from transformers import T5Tokenizer, T5ForConditionalGeneration model_name = 'unicamp-dl/ptt5-base-msmarco-pt-10k-v2' tokenizer = T5Tokenizer.from_pretrained(model_name) model = T5ForConditionalGeneration.from_pretrained(model_name) ``` | 4eb5891f4e9330bb28e386233a6a76e2 |

mit | ['msmarco', 't5', 'pytorch', 'tensorflow', 'pt', 'pt-br'] | false | Citation If you use ptt5-base-msmarco-pt-10k-v2, please cite: @misc{bonifacio2021mmarco, title={mMARCO: A Multilingual Version of MS MARCO Passage Ranking Dataset}, author={Luiz Henrique Bonifacio and Vitor Jeronymo and Hugo Queiroz Abonizio and Israel Campiotti and Marzieh Fadaee and Roberto Lotufo and Rodrigo Nogueira}, year={2021}, eprint={2108.13897}, archivePrefix={arXiv}, primaryClass={cs.CL} } | 6db5eefeeb69a362f5556ffe686c4966 |

apache-2.0 | ['generated_from_trainer'] | false | Article_500v7_NER_Model_3Epochs_AUGMENTED This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article500v7_wikigold_split dataset. It achieves the following results on the evaluation set: - Loss: 0.1961 - Precision: 0.7235 - Recall: 0.7613 - F1: 0.7419 - Accuracy: 0.9420 | 4cd8f38b14a16cbfd92a2d66a19a715a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:| | No log | 1.0 | 162 | 0.1924 | 0.6942 | 0.7087 | 0.7014 | 0.9358 | | No log | 2.0 | 324 | 0.1958 | 0.7165 | 0.7540 | 0.7348 | 0.9403 | | No log | 3.0 | 486 | 0.1961 | 0.7235 | 0.7613 | 0.7419 | 0.9420 | | 2c0f583e40698da3cab2f4005d47b899 |
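F1 is the harmonic mean of precision and recall, so each row of the table can be cross-checked; for the final epoch, 2 × 0.7235 × 0.7613 / (0.7235 + 0.7613) ≈ 0.7419. A short sketch of that check follows. Small differences in the fourth decimal are expected, because the logged F1 is computed from unrounded precision and recall.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) for each epoch in the table above.
rows = [(0.6942, 0.7087, 0.7014), (0.7165, 0.7540, 0.7348), (0.7235, 0.7613, 0.7419)]
for p, r, reported in rows:
    print(f"P={p} R={r} -> F1={f1_score(p, r):.4f} (reported {reported})")
```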

mit | ['generated_from_trainer'] | false | BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext-finetuned-pubmedqa-1 This model is a fine-tuned version of [microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext](https://huggingface.co/microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.6660 - Accuracy: 0.7 | 379062be8db3905b9508970404e9fb53 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | No log | 1.0 | 57 | 0.8471 | 0.58 | | No log | 2.0 | 114 | 0.8450 | 0.58 | | No log | 3.0 | 171 | 0.7846 | 0.58 | | No log | 4.0 | 228 | 0.8649 | 0.58 | | No log | 5.0 | 285 | 0.7220 | 0.68 | | No log | 6.0 | 342 | 0.7395 | 0.66 | | No log | 7.0 | 399 | 0.7198 | 0.72 | | No log | 8.0 | 456 | 0.6417 | 0.72 | | 0.7082 | 9.0 | 513 | 0.6265 | 0.74 | | 0.7082 | 10.0 | 570 | 0.6660 | 0.7 | | 9e5b4405f227673663a358ffc005a6af |

apache-2.0 | [] | false | PaddlePaddle/uie-micro Information extraction (IE) suffers from varying targets, heterogeneous structures, and demand-specific schemas. The unified text-to-structure generation framework, UIE, can universally model different IE tasks, adaptively generate targeted structures, and collaboratively learn general IE abilities from different knowledge sources. Specifically, UIE uniformly encodes different extraction structures via a structured extraction language, adaptively generates target extractions via a schema-based prompt mechanism (the structural schema instructor), and captures common IE abilities via a large-scale pre-trained text-to-structure model. Experiments show that UIE achieves state-of-the-art performance on 4 IE tasks and 13 datasets, in all supervised, low-resource, and few-shot settings, for a wide range of entity, relation, event, and sentiment extraction tasks and their unification. These results verify the effectiveness, universality, and transferability of UIE. UIE paper: https://arxiv.org/abs/2203.12277 PaddleNLP released the UIE model series for information extraction from texts and multimodal documents; the models use ERNIE 3.0 as the pre-trained language model and were fine-tuned on a large amount of information extraction data. | 0a99ab03755316bb63454c24bbaf519e |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-cased-finetuned-squad_v2 This model is a fine-tuned version of [distilbert-base-cased](https://huggingface.co/distilbert-base-cased) on the squad_v2 dataset. It achieves the following results on the evaluation set: - Loss: 1.4225 | 1e38c583714526e6b6db3138f38443aa |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:-----:|:---------------:| | 1.2416 | 1.0 | 8255 | 1.2973 | | 0.9689 | 2.0 | 16510 | 1.3242 | | 0.7803 | 3.0 | 24765 | 1.4225 | | 7f0d7fc0240728799cc0e0acd4d019bb |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | kornwtp/ConGen-Multilingual-DistilBERT This is a [ConGen](https://github.com/KornWtp/ConGen) model: it maps sentences to a 768-dimensional dense vector space and can be used for tasks like semantic search. | a49ec1334f0126458c957bd3f1ea8cdf |

apache-2.0 | ['sentence-transformers', 'feature-extraction', 'sentence-similarity', 'transformers'] | false | Usage Using this model becomes easy when you have [ConGen](https://github.com/KornWtp/ConGen) installed: ``` pip install -U git+https://github.com/KornWtp/ConGen.git ``` Then you can use the model like this: ```python from sentence_transformers import SentenceTransformer sentences = ["This is an example sentence", "Each sentence is converted"] model = SentenceTransformer('kornwtp/ConGen-Multilingual-DistilBERT') embeddings = model.encode(sentences) print(embeddings) ``` | 241d78b70c40b886e78e24fb32f3f382 |
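For semantic search, the 768-dimensional vectors returned by `model.encode` are usually compared with cosine similarity. A minimal dependency-free sketch of ranking a corpus against a query is shown below; the toy 3-dimensional vectors are assumptions that only stand in for real embeddings, and the functions also accept the NumPy rows that `encode` returns, since they only iterate over values.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cannot compare a zero vector")
    return dot / (norm_a * norm_b)


def rank_by_similarity(query: Sequence[float], corpus: List[Sequence[float]]) -> List[Tuple[int, float]]:
    """Return (corpus index, score) pairs sorted from most to least similar."""
    scores = [(i, cosine_similarity(query, doc)) for i, doc in enumerate(corpus)]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Toy vectors standing in for model.encode(...) output.
query = [1.0, 0.0, 1.0]
corpus = [[0.0, 1.0, 0.0], [1.0, 0.1, 0.9], [-1.0, 0.0, -1.0]]
print(rank_by_similarity(query, corpus))
```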

mit | [] | false | dq10-anrushia on Stable Diffusion This is the `<anrushia>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb). Here is the new concept you will be able to use as an `object`:        | 6931d002a2d3327709d97590b712dc94 |

mit | ['generated_from_keras_callback'] | false | recklessrecursion/Cardinal__Catholicism_-clustered This model is a fine-tuned version of [nandysoham16/11-clustered_aug](https://huggingface.co/nandysoham16/11-clustered_aug) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.2711 - Train End Logits Accuracy: 0.9306 - Train Start Logits Accuracy: 0.9167 - Validation Loss: 0.1084 - Validation End Logits Accuracy: 1.0 - Validation Start Logits Accuracy: 1.0 - Epoch: 0 | 0392f1278b766899a16493d3ce776885 |

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch | |:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:| | 0.2711 | 0.9306 | 0.9167 | 0.1084 | 1.0 | 1.0 | 0 | | 1ced43c84f999f80da688ab97f3288f2 |
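The start and end logits accuracies score the two answer boundaries separately. At inference time, an extractive QA head typically turns the two logit vectors into one span by choosing the highest-scoring pair with start ≤ end. Below is a minimal sketch of that decoding step; the `max_answer_len` cap and the toy logits are assumptions, and the exact post-processing used for this model is not stated.

```python
from typing import Sequence, Tuple


def best_span(start_logits: Sequence[float], end_logits: Sequence[float],
              max_answer_len: int = 30) -> Tuple[int, int]:
    """Return (start, end) token indices maximizing start_logit + end_logit.

    Only spans with start <= end and at most max_answer_len tokens are considered.
    """
    if len(start_logits) != len(end_logits):
        raise ValueError("logit sequences must have equal length")
    best, best_score = (0, 0), float("-inf")
    for s, s_logit in enumerate(start_logits):
        for e in range(s, min(s + max_answer_len, len(end_logits))):
            score = s_logit + end_logits[e]
            if score > best_score:
                best, best_score = (s, e), score
    return best


tokens = ["who", "wrote", "it", "?", "the", "cardinal", "wrote", "it"]
start = [0.1, 0.0, 0.0, 0.0, 2.0, 1.5, 0.0, 0.0]
end = [0.0, 0.0, 0.0, 0.0, 0.2, 3.0, 0.5, 0.1]
s, e = best_span(start, end)
print(tokens[s:e + 1])
```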

apache-2.0 | ['vision', 'depth-estimation', 'generated_from_trainer'] | false | glpn-nyu-finetuned-diode-221223-094145 This model is a fine-tuned version of [vinvino02/glpn-nyu](https://huggingface.co/vinvino02/glpn-nyu) on the diode-subset dataset. It achieves the following results on the evaluation set: - Loss: 0.4077 - Mae: 0.4032 - Rmse: 0.6201 - Abs Rel: 0.3554 - Log Mae: 0.1594 - Log Rmse: 0.2173 - Delta1: 0.4530 - Delta2: 0.6868 - Delta3: 0.8071 | 309b3255c5a9d9db15e52555295cb8bb |

apache-2.0 | ['vision', 'depth-estimation', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Mae | Rmse | Abs Rel | Log Mae | Log Rmse | Delta1 | Delta2 | Delta3 | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:-------:|:-------:|:--------:|:------:|:------:|:------:| | 1.0433 | 1.0 | 72 | 0.5885 | 0.5648 | 0.7732 | 0.4665 | 0.2691 | 0.3222 | 0.2134 | 0.4070 | 0.5668 | | 0.4529 | 2.0 | 144 | 0.4284 | 0.4217 | 0.6232 | 0.3846 | 0.1702 | 0.2214 | 0.3935 | 0.6428 | 0.7958 | | 0.415 | 3.0 | 216 | 0.4221 | 0.4049 | 0.6164 | 0.3800 | 0.1603 | 0.2180 | 0.4499 | 0.6735 | 0.8070 | | 0.3643 | 4.0 | 288 | 0.4430 | 0.4172 | 0.6176 | 0.4419 | 0.1671 | 0.2265 | 0.4208 | 0.6489 | 0.8077 | | 0.3927 | 5.0 | 360 | 0.4186 | 0.4072 | 0.6199 | 0.3646 | 0.1623 | 0.2199 | 0.4362 | 0.6675 | 0.8077 | | 0.389 | 6.0 | 432 | 0.4093 | 0.4018 | 0.6168 | 0.3515 | 0.1592 | 0.2155 | 0.4499 | 0.6753 | 0.8111 | | 0.3521 | 7.0 | 504 | 0.4320 | 0.4112 | 0.6165 | 0.4061 | 0.1646 | 0.2226 | 0.4358 | 0.6569 | 0.8062 | | 0.3324 | 8.0 | 576 | 0.4056 | 0.3977 | 0.6132 | 0.3570 | 0.1566 | 0.2148 | 0.4556 | 0.7006 | 0.8157 | | 0.3183 | 9.0 | 648 | 0.4187 | 0.4036 | 0.6151 | 0.3667 | 0.1607 | 0.2172 | 0.4472 | 0.6664 | 0.8095 | | 0.3052 | 10.0 | 720 | 0.4149 | 0.4031 | 0.6171 | 0.3683 | 0.1601 | 0.2191 | 0.4469 | 0.6815 | 0.8073 | | 0.3071 | 11.0 | 792 | 0.4168 | 0.4111 | 0.6252 | 0.3587 | 0.1647 | 0.2218 | 0.4322 | 0.6643 | 0.8019 | | 0.3358 | 12.0 | 864 | 0.4161 | 0.4029 | 0.6171 | 0.3650 | 0.1600 | 0.2189 | 0.4507 | 0.6789 | 0.8092 | | 0.3385 | 13.0 | 936 | 0.4116 | 0.4051 | 0.6215 | 0.3565 | 0.1609 | 0.2190 | 0.4478 | 0.6770 | 0.8053 | | 0.316 | 14.0 | 1008 | 0.4092 | 0.3982 | 0.6138 | 0.3618 | 0.1569 | 0.2157 | 0.4577 | 0.6951 | 0.8109 | | 0.3301 | 15.0 | 1080 | 0.4159 | 0.4056 | 0.6199 | 0.3654 | 0.1619 | 0.2204 | 0.4462 | 0.6743 | 0.8056 | | 0.3076 | 16.0 | 1152 | 0.4130 | 0.4051 | 0.6200 | 0.3612 | 0.1612 | 0.2195 | 0.4470 | 
0.6787 | 0.8076 | | 0.3001 | 17.0 | 1224 | 0.4134 | 0.4071 | 0.6244 | 0.3579 | 0.1621 | 0.2210 | 0.4487 | 0.6771 | 0.8045 | | 0.3293 | 18.0 | 1296 | 0.4091 | 0.4031 | 0.6182 | 0.3552 | 0.1601 | 0.2174 | 0.4501 | 0.6786 | 0.8065 | | 0.3023 | 19.0 | 1368 | 0.4089 | 0.3990 | 0.6143 | 0.3633 | 0.1573 | 0.2160 | 0.4518 | 0.6966 | 0.8137 | | 0.3288 | 20.0 | 1440 | 0.4067 | 0.4006 | 0.6166 | 0.3538 | 0.1580 | 0.2155 | 0.4529 | 0.6895 | 0.8122 | | 0.2988 | 21.0 | 1512 | 0.4061 | 0.4060 | 0.6221 | 0.3491 | 0.1614 | 0.2183 | 0.4480 | 0.6777 | 0.8059 | | 0.3037 | 22.0 | 1584 | 0.4081 | 0.4025 | 0.6204 | 0.3582 | 0.1587 | 0.2174 | 0.4523 | 0.6905 | 0.8093 | | 0.3284 | 23.0 | 1656 | 0.4080 | 0.4062 | 0.6209 | 0.3545 | 0.1615 | 0.2184 | 0.4409 | 0.6794 | 0.8060 | | 0.3261 | 24.0 | 1728 | 0.4092 | 0.4044 | 0.6208 | 0.3562 | 0.1602 | 0.2183 | 0.4512 | 0.6807 | 0.8061 | | 0.3039 | 25.0 | 1800 | 0.4079 | 0.4005 | 0.6159 | 0.3576 | 0.1585 | 0.2167 | 0.4611 | 0.6827 | 0.8095 | | 0.2843 | 26.0 | 1872 | 0.4072 | 0.4045 | 0.6212 | 0.3548 | 0.1603 | 0.2182 | 0.4502 | 0.6856 | 0.8079 | | 0.2828 | 27.0 | 1944 | 0.4110 | 0.4089 | 0.6248 | 0.3578 | 0.1631 | 0.2211 | 0.4419 | 0.6756 | 0.8031 | | 0.3212 | 28.0 | 2016 | 0.4063 | 0.3981 | 0.6148 | 0.3547 | 0.1569 | 0.2157 | 0.4651 | 0.6891 | 0.8102 | | 0.2936 | 29.0 | 2088 | 0.4087 | 0.4099 | 0.6243 | 0.3547 | 0.1638 | 0.2202 | 0.4366 | 0.6711 | 0.8038 | | 0.2999 | 30.0 | 2160 | 0.4067 | 0.3996 | 0.6161 | 0.3547 | 0.1581 | 0.2166 | 0.4624 | 0.6880 | 0.8082 | | 0.3052 | 31.0 | 2232 | 0.4044 | 0.3983 | 0.6149 | 0.3517 | 0.1571 | 0.2149 | 0.4591 | 0.6923 | 0.8124 | | 0.3082 | 32.0 | 2304 | 0.4069 | 0.4044 | 0.6224 | 0.3530 | 0.1597 | 0.2179 | 0.4533 | 0.6872 | 0.8058 | | 0.3077 | 33.0 | 2376 | 0.4072 | 0.4061 | 0.6218 | 0.3545 | 0.1612 | 0.2189 | 0.4462 | 0.6821 | 0.8057 | | 0.3043 | 34.0 | 2448 | 0.4063 | 0.4002 | 0.6170 | 0.3551 | 0.1579 | 0.2166 | 0.4575 | 0.6932 | 0.8101 | | 0.2933 | 35.0 | 2520 | 0.4097 | 0.4054 | 0.6228 | 0.3562 | 0.1606 | 
0.2188 | 0.4485 | 0.6857 | 0.8051 | | 0.2996 | 36.0 | 2592 | 0.4059 | 0.4025 | 0.6194 | 0.3544 | 0.1590 | 0.2171 | 0.4522 | 0.6902 | 0.8087 | | 0.3123 | 37.0 | 2664 | 0.4058 | 0.4024 | 0.6207 | 0.3538 | 0.1588 | 0.2171 | 0.4573 | 0.6893 | 0.8079 | | 0.318 | 38.0 | 2736 | 0.4069 | 0.4028 | 0.6187 | 0.3555 | 0.1594 | 0.2172 | 0.4528 | 0.6876 | 0.8075 | | 0.2938 | 39.0 | 2808 | 0.4065 | 0.4031 | 0.6228 | 0.3557 | 0.1584 | 0.2167 | 0.4545 | 0.6902 | 0.8096 | | 0.294 | 40.0 | 2880 | 0.4059 | 0.4003 | 0.6170 | 0.3570 | 0.1577 | 0.2162 | 0.4576 | 0.6940 | 0.8098 | | 0.3139 | 41.0 | 2952 | 0.4072 | 0.4048 | 0.6202 | 0.3556 | 0.1605 | 0.2181 | 0.4484 | 0.6847 | 0.8075 | | 0.2953 | 42.0 | 3024 | 0.4080 | 0.4042 | 0.6208 | 0.3560 | 0.1598 | 0.2176 | 0.4514 | 0.6855 | 0.8067 | | 0.3093 | 43.0 | 3096 | 0.4076 | 0.4040 | 0.6216 | 0.3553 | 0.1596 | 0.2180 | 0.4532 | 0.6871 | 0.8076 | | 0.2843 | 44.0 | 3168 | 0.4073 | 0.4058 | 0.6225 | 0.3547 | 0.1609 | 0.2183 | 0.4482 | 0.6816 | 0.8070 | | 0.3064 | 45.0 | 3240 | 0.4069 | 0.4047 | 0.6215 | 0.3545 | 0.1601 | 0.2179 | 0.4512 | 0.6856 | 0.8076 | | 0.3027 | 46.0 | 3312 | 0.4073 | 0.4042 | 0.6228 | 0.3557 | 0.1596 | 0.2179 | 0.4542 | 0.6880 | 0.8075 | | 0.304 | 47.0 | 3384 | 0.4069 | 0.4063 | 0.6239 | 0.3546 | 0.1609 | 0.2186 | 0.4481 | 0.6829 | 0.8059 | | 0.297 | 48.0 | 3456 | 0.4063 | 0.4032 | 0.6202 | 0.3550 | 0.1590 | 0.2171 | 0.4543 | 0.6879 | 0.8089 | | 0.3036 | 49.0 | 3528 | 0.4057 | 0.4031 | 0.6217 | 0.3545 | 0.1588 | 0.2170 | 0.4551 | 0.6896 | 0.8093 | | 0.2949 | 50.0 | 3600 | 0.4077 | 0.4032 | 0.6201 | 0.3554 | 0.1594 | 0.2173 | 0.4530 | 0.6868 | 0.8071 | | 5e0355dd7502da08a9900a4341597fd0 |
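The depth-estimation columns follow the usual conventions for this task, but the card does not define them, so what follows is a sketch of the common definitions rather than the exact evaluation code. With predicted depth d and ground truth d* over valid pixels: Mae and Rmse are the mean absolute and root-mean-square errors, Abs Rel is the mean of |d - d*| / d*, and Delta1/2/3 are the fractions of pixels where max(d / d*, d* / d) is below 1.25, 1.25² and 1.25³. Log Mae and Log Rmse are omitted because the log base is not stated, and treating only pixels where both depths are positive as valid is a simplifying assumption.

```python
import math
from typing import Dict, Sequence


def depth_metrics(pred: Sequence[float], gt: Sequence[float]) -> Dict[str, float]:
    """Common monocular-depth metrics over pixels where both depths are positive."""
    pairs = [(p, g) for p, g in zip(pred, gt) if p > 0 and g > 0]
    if not pairs:
        raise ValueError("no valid pixels")
    n = len(pairs)
    abs_err = [abs(p - g) for p, g in pairs]
    ratios = [max(p / g, g / p) for p, g in pairs]
    return {
        "mae": sum(abs_err) / n,
        "rmse": math.sqrt(sum(e * e for e in abs_err) / n),
        "abs_rel": sum(e / g for e, (_, g) in zip(abs_err, pairs)) / n,
        # Share of pixels whose ratio error is below 1.25, 1.25**2 and 1.25**3.
        "delta1": sum(r < 1.25 for r in ratios) / n,
        "delta2": sum(r < 1.25 ** 2 for r in ratios) / n,
        "delta3": sum(r < 1.25 ** 3 for r in ratios) / n,
    }


print(depth_metrics(pred=[1.0, 2.2, 3.0, 8.0], gt=[1.0, 2.0, 4.0, 4.0]))
```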

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-fr-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.1631 - F1 Score: 0.8579 | 7bd7ae445f2f1d7ed39bcee48d5dc482 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 Score | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.2878 | 1.0 | 715 | 0.1840 | 0.8247 | | 0.1456 | 2.0 | 1430 | 0.1596 | 0.8473 | | 0.0925 | 3.0 | 2145 | 0.1631 | 0.8579 | | ad318495e0f42f1bdec6cd3aebcfc0e0 |

cc0-1.0 | ['conversational'] | false | Chizuru Ichinose as a DialoGPT model This model is a fine-tuned version of [DialoGPT-medium](https://huggingface.co/microsoft/DialoGPT-medium/) trained on the [Chizuru Ichinose conversational dataset](https://huggingface.co/datasets/alexandreteles/chizuru-ichinose). We recommend using one of the Transformers pre-built pipelines to keep context without too much work when running this or any DialoGPT model: ```python from transformers import AutoTokenizer, AutoModelForCausalLM, pipeline, Conversation ``` | 92d2d93eec589740b68a847b97fc9639 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | DeathCharacter Dreambooth model trained by LaCambre with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook. Test the concept via the A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb), or run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Write "DeathCharacter" in your prompt to use the concept. Sample pictures of this concept: | 1923c94c5627ed8cedc9b9615f4d30ab |

apache-2.0 | ['speech'] | false | Wav2Vec2-Conformer-Large with Relative Position Embeddings Wav2Vec2 Conformer with relative position embeddings, pretrained on 960 hours of Librispeech on 16kHz sampled speech audio. When using the model, make sure that your speech input is also sampled at 16kHz. **Note**: This model does not have a tokenizer as it was pretrained on audio alone. To use this model for **speech recognition**, a tokenizer should be created and the model should be fine-tuned on labeled text data. Check out [this blog](https://huggingface.co/blog/fine-tune-wav2vec2-english) for a more detailed explanation of how to fine-tune the model. **Paper**: [fairseq S2T: Fast Speech-to-Text Modeling with fairseq](https://arxiv.org/abs/2010.05171) **Authors**: Changhan Wang, Yun Tang, Xutai Ma, Anne Wu, Sravya Popuri, Dmytro Okhonko, Juan Pino The results of Wav2Vec2-Conformer can be found in Table 3 and Table 4 of the [official paper](https://arxiv.org/abs/2010.05171). The original model can be found at https://github.com/pytorch/fairseq/tree/master/examples/wav2vec | 762696b4848684d4c1a7e1d5d4083b03 |

cc-by-4.0 | ['answer extraction'] | false | Model Card of `lmqg/mt5-base-frquad-ae` This model is a fine-tuned version of [google/mt5-base](https://huggingface.co/google/mt5-base) for answer extraction on the [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) (dataset_name: default) via [`lmqg`](https://github.com/asahi417/lm-question-generation). | 8e3b7116348cdb8c4a747c195466553d |

cc-by-4.0 | ['answer extraction'] | false | model prediction answers = model.generate_a("Créateur » (Maker), lui aussi au singulier, « le Suprême Berger » (The Great Shepherd) ; de l'autre, des réminiscences de la théologie de l'Antiquité : le tonnerre, voix de Jupiter, « Et souvent ta voix gronde en un tonnerre terrifiant », etc.") ``` - With `transformers` ```python from transformers import pipeline pipe = pipeline("text2text-generation", "lmqg/mt5-base-frquad-ae") output = pipe("Pourtant, la strophe spensérienne, utilisée cinq fois avant que ne commence le chœur, constitue en soi un vecteur dont les répétitions structurelles, selon Ricks, relèvent du pur lyrisme tout en constituant une menace potentielle. Après les huit sages pentamètres iambiques, l'alexandrin final <hl> permet une pause <hl>, « véritable illusion d'optique » qu'accentuent les nombreuses expressions archaïsantes telles que did swoon, did seem, did go, did receive, did make, qui doublent le prétérit en un temps composé et paraissent à la fois « très précautionneuses et très peu pressées ».") ``` | 8e2c9f9eefd952a96a540d07690913f9 |
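In the `transformers` example above, part of the paragraph is wrapped in `<hl>` tokens to mark where the model should look. As a sketch, assuming inputs are always built this way (inferred from the example; the exact span convention behind the `paragraph_sentence` input type is not documented here), a hypothetical `highlight` helper can produce such strings from a plain paragraph.

```python
def highlight(paragraph: str, span: str, marker: str = "<hl>") -> str:
    """Wrap the first occurrence of `span` in `paragraph` with highlight markers.

    Produces the "... <hl> span <hl> ..." layout seen in the example input.
    """
    start = paragraph.find(span)
    if start == -1:
        raise ValueError("span not found in paragraph")
    end = start + len(span)
    return f"{paragraph[:start]}{marker} {span} {marker}{paragraph[end:]}"


text = "Après les huit sages pentamètres iambiques, l'alexandrin final permet une pause, « véritable illusion d'optique »."
print(highlight(text, "permet une pause"))
```

The resulting string can then be passed to the `pipe(...)` call from the example.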

cc-by-4.0 | ['answer extraction'] | false | Evaluation - ***Metric (Answer Extraction)***: [raw metric file](https://huggingface.co/lmqg/mt5-base-frquad-ae/raw/main/eval/metric.first.answer.paragraph_sentence.answer.lmqg_qg_frquad.default.json) | | Score | Type | Dataset | |:-----------------|--------:|:--------|:-----------------------------------------------------------------| | AnswerExactMatch | 3.92 | default | [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) | | AnswerF1Score | 19.32 | default | [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) | | BERTScore | 64.97 | default | [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) | | Bleu_1 | 7.64 | default | [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) | | Bleu_2 | 5.8 | default | [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) | | Bleu_3 | 4.65 | default | [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) | | Bleu_4 | 3.8 | default | [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) | | METEOR | 14.28 | default | [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) | | MoverScore | 50.67 | default | [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) | | ROUGE_L | 13.02 | default | [lmqg/qg_frquad](https://huggingface.co/datasets/lmqg/qg_frquad) | | dd47e4d235b6be8d6679a57ba6f267bd |
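AnswerExactMatch and AnswerF1Score are presumably SQuAD-style scores: an exact string match, and token-overlap F1 between the extracted and reference answers after normalization. The normalization below (lowercasing, replacing ASCII punctuation with spaces) is a simplified assumption. SQuAD's official script also removes English articles, which would not suit French, and French guillemets are left untouched here.

```python
import string
from collections import Counter
from typing import List

# Map every ASCII punctuation character to a space.
_PUNCT_TO_SPACE = str.maketrans(string.punctuation, " " * len(string.punctuation))


def normalize(text: str) -> List[str]:
    """Lowercase, replace ASCII punctuation with spaces, and split into tokens."""
    return text.lower().translate(_PUNCT_TO_SPACE).split()


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the normalized token sequences are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token-level precision and recall (multiset overlap)."""
    pred, ref = normalize(prediction), normalize(reference)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


print(exact_match("Permet une pause.", "permet une pause"), token_f1("une pause", "permet une pause"))
```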

cc-by-4.0 | ['answer extraction'] | false | Training hyperparameters The following hyperparameters were used during fine-tuning: - dataset_path: lmqg/qg_frquad - dataset_name: default - input_types: ['paragraph_sentence'] - output_types: ['answer'] - prefix_types: None - model: google/mt5-base - max_length: 512 - max_length_output: 32 - epoch: 15 - batch: 8 - lr: 0.0001 - fp16: False - random_seed: 1 - gradient_accumulation_steps: 8 - label_smoothing: 0.15 The full configuration can be found at [fine-tuning config file](https://huggingface.co/lmqg/mt5-base-frquad-ae/raw/main/trainer_config.json). | bdb6efc6afb95cf7427572e2edde7315 |
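With gradient accumulation, gradients from several forward/backward passes are accumulated before each optimizer update, so the effective batch size is the per-step batch multiplied by the accumulation steps. Assuming training on a single device (the card does not state the device count), the listed values give:

```latex
B_{\text{eff}} = \text{batch} \times \text{gradient\_accumulation\_steps} = 8 \times 8 = 64
```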

apache-2.0 | ['generated_from_trainer'] | false | distilbert_add_GLUE_Experiment_logit_kd_pretrain_cola This model is a fine-tuned version of [gokuls/distilbert_add_pre-training-complete](https://huggingface.co/gokuls/distilbert_add_pre-training-complete) on the GLUE COLA dataset. It achieves the following results on the evaluation set: - Loss: 0.6826 - Matthews Correlation: 0.0 | 4c3e221d1df32486af8f30d16a54cc3a |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Matthews Correlation | |:-------------:|:-----:|:----:|:---------------:|:--------------------:| | 0.8045 | 1.0 | 34 | 0.6851 | 0.0 | | 0.7928 | 2.0 | 68 | 0.6826 | 0.0 | | 0.7843 | 3.0 | 102 | 0.6896 | 0.0 | | 0.7612 | 4.0 | 136 | 0.7137 | -0.0293 | | 0.7314 | 5.0 | 170 | 0.7249 | 0.0677 | | 0.698 | 6.0 | 204 | 0.7111 | 0.0632 | | 0.6717 | 7.0 | 238 | 0.7198 | 0.0829 | | c473da18244797333d8d84ce7a7d064f |
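A Matthews correlation of exactly 0.0, as logged for the first three epochs, most likely means the classifier predicted a single class for every sentence. In that case one factor in the denominator is zero, and the usual convention (followed, for example, by scikit-learn's `matthews_corrcoef`) is to report 0. A minimal sketch from binary confusion-matrix counts follows; the counts are hypothetical.

```python
import math


def matthews_corrcoef(tp: int, tn: int, fp: int, fn: int) -> float:
    """Binary MCC from confusion-matrix counts; returns 0.0 when undefined."""
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        return 0.0  # e.g. every example predicted as the same class
    return (tp * tn - fp * fn) / denominator


# Hypothetical counts: a model that labels every sentence "acceptable" (positive).
print(matthews_corrcoef(tp=700, tn=0, fp=300, fn=0))
# Hypothetical counts: a model with some real signal.
print(matthews_corrcoef(tp=600, tn=150, fp=150, fn=100))
```

Unlike accuracy, MCC does not reward a majority-class predictor on an imbalanced set like CoLA, which is why the score only moves once the model starts predicting both classes.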

cc-by-sa-4.0 | [] | false | Danish BERT for hate speech classification The BERT HateSpeech model classifies offensive Danish text into 4 categories: * `Særlig opmærksomhed` (special attention, e.g. threat) * `Personangreb` (personal attack) * `Sprogbrug` (offensive language) * `Spam & indhold` (spam) This model is intended to be used after the [BERT HateSpeech detection model](https://huggingface.co/alexandrainst/da-hatespeech-detection-base). It is based on the pretrained [Danish BERT](https://github.com/certainlyio/nordic_bert) model by BotXO and has been fine-tuned on social media data. See the [DaNLP documentation](https://danlp-alexandra.readthedocs.io/en/latest/docs/tasks/hatespeech.html#bertdr) for more details. | a478cf7feb93886fd8c989feb78f8f87 |

cc-by-sa-4.0 | [] | false | Here is how to use the model: ```python from transformers import BertTokenizer, BertForSequenceClassification model = BertForSequenceClassification.from_pretrained("alexandrainst/da-hatespeech-classification-base") tokenizer = BertTokenizer.from_pretrained("alexandrainst/da-hatespeech-classification-base") ``` | 75fe4f23b179f32eefd1da01f19b3d7b |

apache-2.0 | ['generated_from_trainer', 'hf-asr-leaderboard', 'whisper-event'] | false | openai/whisper-base This is an automatic speech recognition model that also does punctuation and casing. See the [project to improve the Whisper models](https://www.softcatala.org/projectes/millora-del-catala-dels-models-del-reconeixement-de-la-parla-whisper/) (in Catalan). This model is a fine-tuned version of [openai/whisper-base](https://huggingface.co/openai/whisper-base) on the mozilla-foundation/common_voice_11_0 ca dataset. It achieves the following results on the evaluation set: - Loss: 0.3608 - Wer: 16.1510 | 3d674aa345686b4f955e222e8b137a9f |

apache-2.0 | ['generated_from_trainer', 'hf-asr-leaderboard', 'whisper-event'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 1e-05 - train_batch_size: 2 - eval_batch_size: 1 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - training_steps: 40000 - mixed_precision_training: Native AMP | 4fd43fb1aeefc9f2c97e616bb6b604c5 |
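The `linear` scheduler with warmup raises the learning rate linearly from 0 to its peak over the warmup steps, then decays it linearly to 0 at the last training step. The sketch below plugs in this card's values; it mirrors the formula behind `transformers.get_linear_schedule_with_warmup` but is reimplemented here without dependencies.

```python
PEAK_LR = 1e-05       # learning_rate
WARMUP_STEPS = 500    # lr_scheduler_warmup_steps
TOTAL_STEPS = 40_000  # training_steps


def linear_warmup_lr(step: int, peak: float = PEAK_LR,
                     warmup: int = WARMUP_STEPS, total: int = TOTAL_STEPS) -> float:
    """Learning rate at an optimizer step: linear warmup, then linear decay to 0."""
    if step < warmup:
        return peak * step / max(1, warmup)
    return peak * max(0.0, (total - step) / max(1, total - warmup))


for step in (0, 250, 500, 20_250, 40_000):
    print(step, linear_warmup_lr(step))
```

Halfway through the decay phase (step 20,250) the rate is back to half its peak, which is why the later rows of the results table still improve only slowly.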

apache-2.0 | ['generated_from_trainer', 'hf-asr-leaderboard', 'whisper-event'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:-------:| | 0.4841 | 0.1 | 4000 | 0.5078 | 26.7974 | | 0.3116 | 0.2 | 8000 | 0.4524 | 22.9455 | | 0.3971 | 0.3 | 12000 | 0.4281 | 21.5427 | | 0.2965 | 0.4 | 16000 | 0.4037 | 20.3082 | | 0.2634 | 1.09 | 20000 | 0.3875 | 18.7980 | | 0.2163 | 1.19 | 24000 | 0.3754 | 17.8170 | | 0.3182 | 1.29 | 28000 | 0.3695 | 16.8587 | | 0.2201 | 1.39 | 32000 | 0.3613 | 16.5785 | | 0.155 | 2.08 | 36000 | 0.3633 | 16.3959 | | 0.0904 | 2.18 | 40000 | 0.3608 | 16.1510 | | 2b214223357216e75ae103e387632c3c |

apache-2.0 | ['image-classification', 'timm'] | false | Model Details - **Model Type:** Image classification / feature backbone - **Model Stats:** - Params (M): 66.3 - GMACs: 8.7 - Activations (M): 21.6 - Image size: 224 x 224 - **Papers:** - A ConvNet for the 2020s: https://arxiv.org/abs/2201.03545 - **Original:** https://github.com/facebookresearch/ConvNeXt - **Dataset:** ImageNet-22k | 38c97883b9425630b1d6e8e0c77281ea |

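apache-2.0 | ['image-classification', 'timm'] | false | Image Classification ```python from urllib.request import urlopen from PIL import Image import timm import torch img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model('convnext_small.fb_in22k', pretrained=True) model = model.eval() data_config = timm.data.resolve_model_data_config(model) transforms = timm.data.create_transform(**data_config, is_training=False) output = model(transforms(img).unsqueeze(0)) top5_probabilities, top5_class_indices = torch.topk(output.softmax(dim=1) * 100, k=5) ``` | 89bf628b3a6ba08a6f93fad39c44c6db |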

apache-2.0 | ['image-classification', 'timm'] | false | Feature Map Extraction ```python from urllib.request import urlopen from PIL import Image import timm img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model( 'convnext_small.fb_in22k', pretrained=True, features_only=True, ) model = model.eval() data_config = timm.data.resolve_model_data_config(model) transforms = timm.data.create_transform(**data_config, is_training=False) output = model(transforms(img).unsqueeze(0)) for o in output: print(o.shape) ``` | f43c6d51cc8b645b6b1c930aa3a10413 |

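apache-2.0 | ['image-classification', 'timm'] | false | Image Embeddings ```python from urllib.request import urlopen from PIL import Image import timm img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model( 'convnext_small.fb_in22k', pretrained=True, num_classes=0, ) model = model.eval() data_config = timm.data.resolve_model_data_config(model) transforms = timm.data.create_transform(**data_config, is_training=False) output = model(transforms(img).unsqueeze(0)) ``` | d0d9d15eff3bd1c4f1d86d727edeed03 |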

mit | ['generated_from_trainer'] | false | xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset. It achieves the following results on the evaluation set: - Loss: 0.1352 - F1: 0.8591 | fdcf67c4c3e0cce0916805df11b629b8 |

mit | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.257 | 1.0 | 525 | 0.1512 | 0.8302 | | 0.1305 | 2.0 | 1050 | 0.1401 | 0.8447 | | 0.0817 | 3.0 | 1575 | 0.1352 | 0.8591 | | 84bc447f337386e6ff0dc20e8e298cd0 |

apache-2.0 | ['translation'] | false | opus-mt-fi-fr * source languages: fi * target languages: fr * OPUS readme: [fi-fr](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fi-fr/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-02-26.zip](https://object.pouta.csc.fi/OPUS-MT-models/fi-fr/opus-2020-02-26.zip) * test set translations: [opus-2020-02-26.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-fr/opus-2020-02-26.test.txt) * test set scores: [opus-2020-02-26.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fi-fr/opus-2020-02-26.eval.txt) | 0ebe6105aae7121954aa6fe6766bcaaa |

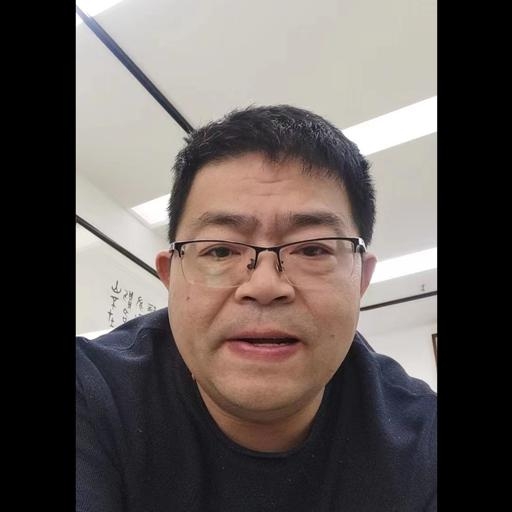

creativeml-openrail-m | ['text-to-image'] | false | cy0208 Dreambooth model trained by aichina with the [Hugging Face Dreambooth Training Space](https://huggingface.co/spaces/multimodalart/dreambooth-training) using the v1-5 base model. You can run your new concept via `diffusers` with the [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb). Don't forget to use the concept prompts! Sample pictures of: cy0208 (use that in your prompt) | 87e643ecdfaebd697494e922aeb9570d |

apache-2.0 | ['text-classfication', 'int8', 'Intel® Neural Compressor', 'neural-compressor', 'PostTrainingStatic'] | false | PyTorch This is an INT8 PyTorch model quantized with [huggingface/optimum-intel](https://github.com/huggingface/optimum-intel) using [Intel® Neural Compressor](https://github.com/intel/neural-compressor). The original fp32 model is the fine-tuned model [Intel/bert-base-uncased-mrpc](https://huggingface.co/Intel/bert-base-uncased-mrpc). The calibration dataloader is the train dataloader, and the calibration sampling size is 1000. The linear module **bert.encoder.layer.9.output.dense** falls back to fp32 to keep the relative accuracy loss within 1%. | 79427f4301a55aab42a65830b63f2a82 |
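Post-training static quantization works as follows. Calibration batches are run through the fp32 model to observe activation ranges. Those ranges fix an int8 scale and zero point ahead of inference. The sketch below shows asymmetric min/max affine quantization under that assumption. The calibration values are hypothetical, and Intel Neural Compressor's actual calibration algorithms, per-channel options and accuracy-driven fp32 fallback are not reproduced. The fallback is the step that kept `bert.encoder.layer.9.output.dense` in fp32.

```python
def calibrate(values, qmin=-128, qmax=127):
    """Derive an int8 scale and zero point from observed min/max (asymmetric)."""
    lo = min(min(values), 0.0)   # keep 0.0 exactly representable
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0  # avoid a zero scale for all-zero data
    zero_point = round(qmin - lo / scale)
    return scale, zero_point


def quantize(x, scale, zero_point, qmin=-128, qmax=127):
    """Map a float to an int8 code, clamping to the representable range."""
    return max(qmin, min(qmax, round(x / scale) + zero_point))


def dequantize(q, scale, zero_point):
    """Map an int8 code back to an approximate float."""
    return (q - zero_point) * scale


# Hypothetical activation values observed while running calibration batches.
calibration_activations = [-1.0, -0.25, 0.0, 0.6, 2.0]
scale, zp = calibrate(calibration_activations)
for v in calibration_activations:
    q = quantize(v, scale, zp)
    print(v, q, dequantize(q, scale, zp))
```

Within the calibrated range, the round-trip error is at most half a quantization step (`scale / 2`). Values outside it are clamped, which is why the calibration data should resemble real inputs.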

apache-2.0 | ['text-classfication', 'int8', 'Intel® Neural Compressor', 'neural-compressor', 'PostTrainingStatic'] | false | Load with Intel® Neural Compressor: ```python from optimum.intel.neural_compressor import IncQuantizedModelForSequenceClassification int8_model = IncQuantizedModelForSequenceClassification.from_pretrained( 'Intel/bert-base-uncased-mrpc-int8-static', ) ``` | 7655f8ae7cb55cbcc5f4bfe24291b9af |

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-xls-r-bengali This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 3.0518 - Wer: 1.0 | 365ec9281a885be3ee74cada2b60bda5 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 2 - mixed_precision_training: Native AMP | 444633e7e94a0797e040b8003db26317 |

apache-2.0 | ['translation'] | false | opus-mt-csn-es * source languages: csn * target languages: es * OPUS readme: [csn-es](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/csn-es/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-15.zip](https://object.pouta.csc.fi/OPUS-MT-models/csn-es/opus-2020-01-15.zip) * test set translations: [opus-2020-01-15.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/csn-es/opus-2020-01-15.test.txt) * test set scores: [opus-2020-01-15.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/csn-es/opus-2020-01-15.eval.txt) | 7531a203cfc3b73bca2f48a8ac6b15f6 |

mit | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 5e-05 - train_batch_size: 1 - eval_batch_size: 2 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 4 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 1 | bc42ec631f376ff8bec7429f076002a5 |

apache-2.0 | ['translation'] | false | opus-mt-lv-sv * source languages: lv * target languages: sv * OPUS readme: [lv-sv](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/lv-sv/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-09.zip](https://object.pouta.csc.fi/OPUS-MT-models/lv-sv/opus-2020-01-09.zip) * test set translations: [opus-2020-01-09.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/lv-sv/opus-2020-01-09.test.txt) * test set scores: [opus-2020-01-09.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/lv-sv/opus-2020-01-09.eval.txt) | 1301297e033dccda3102bbfa7f13166a |

apache-2.0 | ['translation'] | false | opus-mt-fr-en * source languages: fr * target languages: en * OPUS readme: [fr-en](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/fr-en/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-02-26.zip](https://object.pouta.csc.fi/OPUS-MT-models/fr-en/opus-2020-02-26.zip) * test set translations: [opus-2020-02-26.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/fr-en/opus-2020-02-26.test.txt) * test set scores: [opus-2020-02-26.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/fr-en/opus-2020-02-26.eval.txt) | 20b123501b110598e36b386b9bc8e4d5 |

apache-2.0 | ['translation'] | false | Benchmarks | testset | BLEU | chr-F | |-----------------------|-------|-------| | newsdiscussdev2015-enfr.fr.en | 33.1 | 0.580 | | newsdiscusstest2015-enfr.fr.en | 38.7 | 0.614 | | newssyscomb2009.fr.en | 30.3 | 0.569 | | news-test2008.fr.en | 26.2 | 0.542 | | newstest2009.fr.en | 30.2 | 0.570 | | newstest2010.fr.en | 32.2 | 0.590 | | newstest2011.fr.en | 33.0 | 0.597 | | newstest2012.fr.en | 32.8 | 0.591 | | newstest2013.fr.en | 33.9 | 0.591 | | newstest2014-fren.fr.en | 37.8 | 0.633 | | Tatoeba.fr.en | 57.5 | 0.720 | | 5b610452011f620787976b59220bcc06 |

['cc0-1.0'] | ['fastai', 'resnet', 'computer-vision', 'classification', 'image-classification', 'binary-classification'] | false | Model Description This is a resnet34 model fine-tuned with fastai to [classify real and fake Pokemon cards (dataset)](https://www.kaggle.com/datasets/ongshujian/real-and-fake-pokemon-cards). Here is a colab notebook that shows how the model was trained and pushed to the hub: [link](https://github.com/mindwrapped/pokemon-card-checker/blob/main/pokemon_card_checker.ipynb). | 127415cc6629d149c05ea9b8883a1fa9 |

creativeml-openrail-m | ['text-to-image', 'stable-diffusion'] | false | pkmdlhs_2500_300 Dreambooth model trained by ifif with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook. Test the concept via the A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb). Sample pictures of this concept: | 8f7075f025427f2ad154accc2bc29a04 |

apache-2.0 | ['unity-ml-agents', 'ml-agents', 'deep-reinforcement-learning', 'reinforcement-learning', 'ML-Agents-PushBlock'] | false | Watch your Agent play You can watch your agent **playing directly in your browser:** 1. Go to https://huggingface.co/spaces/unity/ML-Agents-PushBlock 2. Step 1: Write your model_id: unity/ML-Agents-PushBlock 3. Step 2: Select your *.nn or *.onnx file 4. Click on "Watch the agent play" 👀 | 21e9bed7e26707f959bc466eeaa006cf |

mit | ['ælæctra', 'pytorch', 'danish', 'ELECTRA-Small', 'replaced token detection'] | false | Ælæctra - A Step Towards More Efficient Danish Natural Language Processing **Ælæctra** is a Danish Transformer-based language model created to enhance the variety of Danish NLP resources with a more efficient model compared to previous state-of-the-art (SOTA) models. Initially, a cased and an uncased model are released. It was created as part of a Cognitive Science bachelor's thesis. Ælæctra was pretrained with the ELECTRA-Small (Clark et al., 2020) pretraining approach on the Danish Gigaword Corpus (Strømberg-Derczynski et al., 2020) and evaluated on Named Entity Recognition (NER) tasks. Since NER only presents a limited picture of Ælæctra's capabilities, I am very interested in further evaluations. Therefore, if you employ it for any task, feel free to reach out with your findings! As mentioned, Ælæctra was created to enhance Danish NLP capabilities, so please note that GitHub still does not support the Danish characters "*Æ, Ø and Å*": the title of this repository becomes "*-l-ctra*". How ironic.🙂 Here is an example of how to load both the cased and the uncased Ælæctra model in [PyTorch](https://pytorch.org/) using the [🤗Transformers](https://github.com/huggingface/transformers) library: ```python from transformers import AutoTokenizer, AutoModelForPreTraining tokenizer = AutoTokenizer.from_pretrained("Maltehb/-l-ctra-cased") model = AutoModelForPreTraining.from_pretrained("Maltehb/-l-ctra-cased") ``` ```python from transformers import AutoTokenizer, AutoModelForPreTraining tokenizer = AutoTokenizer.from_pretrained("Maltehb/-l-ctra-uncased") model = AutoModelForPreTraining.from_pretrained("Maltehb/-l-ctra-uncased") ``` | 394331274094b7858d44bd192acbb968 |
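ELECTRA-style pretraining uses replaced token detection. A small generator proposes replacements at selected positions, and the discriminator (the model released here) learns to label each token as original or replaced. The sketch below only builds the discriminator's targets. The "generator" is a hypothetical lookup table rather than a masked language model, and the Danish tokens are a toy example. As in ELECTRA, a position where the generator happens to reproduce the original token counts as original.

```python
from typing import Callable, List, Set


def corrupt(tokens: List[str], positions: Set[int],
            sample: Callable[[List[str], int], str]) -> List[str]:
    """Replace each selected position with a token proposed by a (toy) generator."""
    return [sample(tokens, i) if i in positions else tok
            for i, tok in enumerate(tokens)]


def rtd_labels(original: List[str], corrupted: List[str]) -> List[int]:
    """Discriminator targets: 1 = replaced, 0 = original."""
    if len(original) != len(corrupted):
        raise ValueError("sequences must be aligned token by token")
    # A generator sample equal to the original token is labeled as original (0).
    return [int(o != c) for o, c in zip(original, corrupted)]


# Hypothetical generator proposals, keyed by position.
proposals = {1: "vil", 3: "kaffe"}
original = ["jeg", "kan", "lide", "kaffe"]
corrupted = corrupt(original, {1, 3}, lambda toks, i: proposals[i])
print(corrupted)                        # ['jeg', 'vil', 'lide', 'kaffe']
print(rtd_labels(original, corrupted))  # [0, 1, 0, 0]
```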

mit | ['ælæctra', 'pytorch', 'danish', 'ELECTRA-Small', 'replaced token detection'] | false | Evaluation of current Danish Language Models Ælæctra, Danish BERT (DaBERT) and multilingual BERT (mBERT) were evaluated: | Model | Layers | Hidden Size | Params | AVG NER micro-f1 (DaNE-testset) | Average Inference Time (Sec/Epoch) | Download | | --- | --- | --- | --- | --- | --- | --- | | Ælæctra Uncased | 12 | 256 | 13.7M | 78.03 (SD = 1.28) | 10.91 | [Link for model](https://www.dropbox.com/s/cag7prs1nvdchqs/%C3%86l%C3%A6ctra.zip?dl=0) | | Ælæctra Cased | 12 | 256 | 14.7M | 80.08 (SD = 0.26) | 10.92 | [Link for model](https://www.dropbox.com/s/cag7prs1nvdchqs/%C3%86l%C3%A6ctra.zip?dl=0) | | DaBERT | 12 | 768 | 110M | 84.89 (SD = 0.64) | 43.03 | [Link for model](https://www.dropbox.com/s/19cjaoqvv2jicq9/danish_bert_uncased_v2.zip?dl=1) | | mBERT Uncased | 12 | 768 | 167M | 80.44 (SD = 0.82) | 72.10 | [Link for model](https://storage.googleapis.com/bert_models/2018_11_03/multilingual_L-12_H-768_A-12.zip) | | mBERT Cased | 12 | 768 | 177M | 83.79 (SD = 0.91) | 70.56 | [Link for model](https://storage.googleapis.com/bert_models/2018_11_23/multi_cased_L-12_H-768_A-12.zip) | On [DaNE](https://danlp.alexandra.dk/304bd159d5de/datasets/ddt.zip) (Hvingelby et al., 2020), Ælæctra scores slightly worse than both cased and uncased Multilingual BERT (Devlin et al., 2019) and Danish BERT (Danish BERT, 2019/2020); however, Ælæctra is less than one third the size and uses significantly fewer computational resources to pretrain and instantiate. For a full description of the evaluation and specification of the model, read the thesis: 'Ælæctra - A Step Towards More Efficient Danish Natural Language Processing'. | 69c6134c606ef8d11d69b8a61a19876a |

mit | ['ælæctra', 'pytorch', 'danish', 'ELECTRA-Small', 'replaced token detection'] | false | Contact For help or further information feel free to connect with the author Malte Højmark-Bertelsen on [hjb@kmd.dk](mailto:hjb@kmd.dk?subject=[GitHub]%20Ælæctra) or any of the following platforms: [<img align="left" alt="MalteHB | Twitter" width="22px" src="https://cdn.jsdelivr.net/npm/simple-icons@v3/icons/twitter.svg" />][twitter] [<img align="left" alt="MalteHB | LinkedIn" width="22px" src="https://cdn.jsdelivr.net/npm/simple-icons@v3/icons/linkedin.svg" />][linkedin] [<img align="left" alt="MalteHB | Instagram" width="22px" src="https://cdn.jsdelivr.net/npm/simple-icons@v3/icons/instagram.svg" />][instagram] <br /> </details> [twitter]: https://twitter.com/malteH_B [instagram]: https://www.instagram.com/maltemusen/ [linkedin]: https://www.linkedin.com/in/malte-h%C3%B8jmark-bertelsen-9a618017b/ | e583031a19a42bcc1da93dc15c6af7b9 |

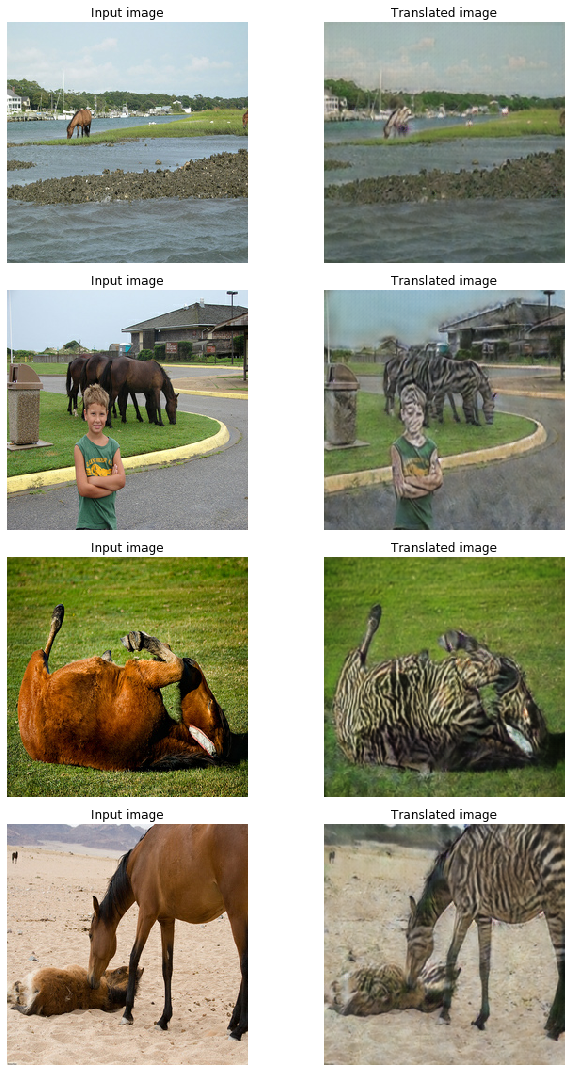

['cc0-1.0'] | ['gan', 'computer vision', 'horse to zebra'] | false | cycle_ganhorse2zebra) 🐴 -> 🦓 This repo contains the model and the notebook for [this Keras example on CycleGAN](https://keras.io/examples/generative/cyclegan/). Full credits to: [Aakash Kumar Nain](https://twitter.com/A_K_Nain) | 222901c54eff434c7ae79d405cd59069 |

['cc0-1.0'] | ['gan', 'computer vision', 'horse to zebra'] | false | Background Information CycleGAN is a model that aims to solve the image-to-image translation problem. The goal of the image-to-image translation problem is to learn the mapping between an input image and an output image using a training set of aligned image pairs. However, obtaining paired examples isn't always feasible. CycleGAN tries to learn this mapping without requiring paired input-output images, using cycle-consistent adversarial networks.  | 5a85c0b41ef3d2c5486b828dd33fd38a |
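The "cycle-consistent" part can be made concrete. CycleGAN trains two generators, G: horse to zebra and F: zebra to horse. Alongside the adversarial losses, a cycle-consistency term penalizes F(G(x)) drifting from x and G(F(y)) drifting from y, which is what removes the need for paired images. The sketch below computes only that term. It uses toy "images" (lists of pixel values) and hypothetical hand-written generators. The weight λ = 10 follows the original CycleGAN paper and is an assumption about this Keras example. The adversarial and identity losses are omitted.

```python
from typing import Callable, List

Image = List[float]  # toy stand-in: an image flattened to pixel values


def l1(a: Image, b: Image) -> float:
    """Mean absolute difference between two equally sized images."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def cycle_consistency_loss(xs: List[Image], ys: List[Image],
                           G: Callable[[Image], Image],
                           F: Callable[[Image], Image],
                           lam: float = 10.0) -> float:
    """lam * (mean |F(G(x)) - x|_1 + mean |G(F(y)) - y|_1)."""
    forward = sum(l1(F(G(x)), x) for x in xs) / len(xs)   # horse -> zebra -> horse
    backward = sum(l1(G(F(y)), y) for y in ys) / len(ys)  # zebra -> horse -> zebra
    return lam * (forward + backward)


# Hypothetical toy "generators" that shift pixel intensities.
def G(img: Image) -> Image:
    return [p + 0.5 for p in img]


def F_good(img: Image) -> Image:  # exact inverse of G
    return [p - 0.5 for p in img]


def F_bad(img: Image) -> Image:   # leaves a 0.1 residue in each direction
    return [p - 0.4 for p in img]


horses = [[0.1, 0.2, 0.3]]
zebras = [[0.9, 0.8, 0.7]]
print(cycle_consistency_loss(horses, zebras, G, F_good))  # approximately 0.0
print(cycle_consistency_loss(horses, zebras, G, F_bad))   # approximately 2.0
```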

apache-2.0 | ['generated_from_trainer'] | false | wav2vec2-large-xls-r-300m-dutch-baseline This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.5107 - Wer: 0.2674 - Cer: 0.0863 | 16dc348c6a1bb557f94debd28628d0a3 |

apache-2.0 | ['generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 4 - eval_batch_size: 4 - seed: 42 - distributed_type: multi-GPU - num_devices: 2 - gradient_accumulation_steps: 4 - total_train_batch_size: 32 - total_eval_batch_size: 8 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 500 - num_epochs: 30 - mixed_precision_training: Native AMP | 3a6e62bcd3b75ee8ab1c4e5bd36f2c65 |
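The `total_train_batch_size` and `total_eval_batch_size` entries are derived values. They are the per-device batch size multiplied by the number of devices and, for training only, by the gradient accumulation steps. A minimal sketch of that arithmetic follows (the helper name is hypothetical). The same rule reproduces the totals in the other hyperparameter lists above: 16 × 2 = 32 and 1 × 4 = 4.

```python
def total_batch_size(per_device: int, num_devices: int = 1,
                     grad_accum_steps: int = 1) -> int:
    """Number of examples contributing to one optimizer update (or one eval step)."""
    return per_device * num_devices * grad_accum_steps


print(total_batch_size(4, num_devices=2, grad_accum_steps=4))  # 32 (train)
print(total_batch_size(4, num_devices=2))                      # 8 (eval: no accumulation)
```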

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Wer | Cer | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:| | 3.655 | 1.31 | 400 | 0.9337 | 0.7332 | 0.2534 | | 0.42 | 2.61 | 800 | 0.5018 | 0.4115 | 0.1374 | | 0.2267 | 3.92 | 1200 | 0.4776 | 0.3791 | 0.1259 | | 0.1624 | 5.23 | 1600 | 0.4807 | 0.3590 | 0.1208 | | 0.135 | 6.54 | 2000 | 0.4899 | 0.3417 | 0.1121 | | 0.1179 | 7.84 | 2400 | 0.5096 | 0.3445 | 0.1133 | | 0.1035 | 9.15 | 2800 | 0.4563 | 0.3455 | 0.1129 | | 0.092 | 10.46 | 3200 | 0.5061 | 0.3382 | 0.1127 | | 0.0804 | 11.76 | 3600 | 0.4969 | 0.3285 | 0.1088 | | 0.0748 | 13.07 | 4000 | 0.5274 | 0.3380 | 0.1114 | | 0.0669 | 14.38 | 4400 | 0.5201 | 0.3115 | 0.1028 | | 0.0588 | 15.69 | 4800 | 0.5238 | 0.3212 | 0.1054 | | 0.0561 | 16.99 | 5200 | 0.5273 | 0.3185 | 0.1044 | | 0.0513 | 18.3 | 5600 | 0.5577 | 0.3032 | 0.1010 | | 0.0476 | 19.61 | 6000 | 0.5298 | 0.3050 | 0.1008 | | 0.0408 | 20.91 | 6400 | 0.5725 | 0.2982 | 0.0984 | | 0.0376 | 22.22 | 6800 | 0.5605 | 0.2953 | 0.0966 | | 0.0339 | 23.53 | 7200 | 0.5419 | 0.2865 | 0.0938 | | 0.0315 | 24.84 | 7600 | 0.5530 | 0.2782 | 0.0915 | | 0.0286 | 26.14 | 8000 | 0.5354 | 0.2788 | 0.0917 | | 0.0259 | 27.45 | 8400 | 0.5245 | 0.2715 | 0.0878 | | 0.0231 | 28.76 | 8800 | 0.5107 | 0.2674 | 0.0863 | | 83fd543c7893287f0d649c9265b6d800 |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'pytorch', 'speechbrain'] | false | CRDNN with CTC/Attention trained on CommonVoice French (No LM) This repository provides all the necessary tools to perform automatic speech recognition from an end-to-end system pretrained on CommonVoice (French Language) within SpeechBrain. For a better experience, we encourage you to learn more about [SpeechBrain](https://speechbrain.github.io). The performance of the model is the following: | Release | Test CER | Test WER | GPUs | |:-------------:|:--------------:|:--------------:| :--------:| | 07-03-21 | 6.54 | 17.70 | 2xV100 16GB | | 9584946f3ee502e24f321d374bb07df1 |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'pytorch', 'speechbrain'] | false | Pipeline description This ASR system is composed of two different but linked blocks: - Tokenizer (unigram) that transforms words into subword units, trained on the train transcriptions (train.tsv) of CommonVoice (FR). - Acoustic model (CRDNN + CTC/Attention). The CRDNN architecture is made of N blocks of convolutional neural networks with normalization and pooling on the frequency domain. Then, a bidirectional LSTM is connected to a final DNN to obtain the final acoustic representation that is given to the CTC and attention decoders. The system is trained with recordings sampled at 16kHz (single channel). The code will automatically normalize your audio (i.e., resampling + mono channel selection) when calling *transcribe_file* if needed. | 01211fea2c610b68b4e3a327922eadca |
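The CTC branch emits one distribution per audio frame over the subword vocabulary plus a special blank symbol. The simplest way to turn those frames into tokens is greedy (best-path) decoding: take the top token per frame, merge consecutive repeats, then drop blanks. A blank between two identical tokens keeps them as separate outputs. The sketch below illustrates only that collapse rule. SpeechBrain's `EncoderDecoderASR` combines CTC and attention scores during beam search, which is not reproduced here. The three-symbol vocabulary and frame probabilities are hypothetical.

```python
from typing import List, Sequence


def argmax(row: Sequence[float]) -> int:
    """Index of the largest value (first one on ties)."""
    return max(range(len(row)), key=row.__getitem__)


def ctc_greedy_decode(frame_probs: List[Sequence[float]], blank: int = 0) -> List[int]:
    """Best-path CTC decoding: top token per frame, merge repeats, drop blanks."""
    out: List[int] = []
    prev = None
    for row in frame_probs:
        tok = argmax(row)
        if tok != blank and tok != prev:
            out.append(tok)
        prev = tok  # a blank resets prev, so "a <blank> a" yields two a's
    return out


vocab = {0: "<blank>", 1: "a", 2: "b"}
frames = [
    [0.1, 0.8, 0.1],    # a
    [0.2, 0.7, 0.1],    # a (repeat, merged)
    [0.9, 0.05, 0.05],  # blank
    [0.1, 0.6, 0.3],    # a (new token after the blank)
    [0.1, 0.2, 0.7],    # b
    [0.2, 0.1, 0.7],    # b (repeat, merged)
]
ids = ctc_greedy_decode(frames)
print("".join(vocab[i] for i in ids))  # aab
```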

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'pytorch', 'speechbrain'] | false | Transcribing your own audio files (in French) ```python from speechbrain.pretrained import EncoderDecoderASR asr_model = EncoderDecoderASR.from_hparams(source="speechbrain/asr-crdnn-commonvoice-fr", savedir="pretrained_models/asr-crdnn-commonvoice-fr") asr_model.transcribe_file("speechbrain/asr-crdnn-commonvoice-fr/example-fr.wav") ``` | 8e8a2d7a7009b7146ed491abbdf090df |

apache-2.0 | ['automatic-speech-recognition', 'CTC', 'Attention', 'pytorch', 'speechbrain'] | false | Training The model was trained with SpeechBrain (986a2175). To train it from scratch, follow these steps: 1. Clone SpeechBrain: ```bash git clone https://github.com/speechbrain/speechbrain/ ``` 2. Install it: ```bash cd speechbrain pip install -r requirements.txt pip install -e . ``` 3. Run Training: ```bash cd recipes/CommonVoice/ASR/seq2seq python train.py hparams/train_fr.yaml --data_folder=your_data_folder ``` You can find our training results (models, logs, etc.) [here](https://drive.google.com/drive/folders/13i7rdgVX7-qZ94Rtj6OdUgU-S6BbKKvw?usp=sharing) | 4ce741495ed33e63c20c40758410f1ba |

apache-2.0 | ['generated_from_keras_callback'] | false | en-fr-translation This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.7838 - Validation Loss: 1.5505 - Epoch: 1 | 873bf815bf1ebdd2e2692088ebbafb1a |

mit | [] | false | Model Description A CLIP model pairing a frozen ViT-H/14 image encoder with an XLM-RoBERTa large text encoder, trained on LAION-5B (https://laion.ai/blog/laion-5b/) using OpenCLIP (https://github.com/mlfoundations/open_clip). Model training was done by Romain Beaumont on the [stability.ai](https://stability.ai/) cluster. | f142260a75d84662714391af872f5f6d |

mit | [] | false | Training Procedure Trained with a batch size of 90k for 13B samples of LAION-5B; see https://wandb.ai/rom1504/open-clip/reports/xlm-roberta-large-unfrozen-vit-h-14-frozen--VmlldzoyOTc3ODY3 The model uses H/14 on the visual side and XLM-RoBERTa large, initialized with pretrained weights, on the text side. The H/14 was initialized from https://huggingface.co/laion/CLIP-ViT-H-14-laion2B-s32B-b79K and kept frozen during training. | c575bf57aff908f59d3318c75449758a |

mit | [] | false | Results The model achieves 77.0% on ImageNet-1k (vs 78% for the English H/14). On zero-shot classification on ImageNet with translated prompts, this model reaches: * 56% in Italian (vs 21% for https://github.com/clip-italian/clip-italian) * 53% in Japanese (vs 54.6% for https://github.com/rinnakk/japanese-clip) * 55.7% in Chinese (to be compared with https://github.com/OFA-Sys/Chinese-CLIP) This model reaches strong results in both English and other languages. | 89a8ea639f6cf8cbe062707c8b6ce6b8 |
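Zero-shot classification with translated prompts works as follows. Each class name is inserted into a prompt in the target language and embedded with the text encoder. The image is embedded with the image encoder, and the predicted label is the prompt with the highest cosine similarity. The sketch below shows only that final step. The 3-dimensional vectors are hypothetical stand-ins for real encoder outputs, and the Italian prompt wording is illustrative.

```python
import math
from typing import Dict, List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def zero_shot_classify(image_emb: List[float],
                       prompt_embs: Dict[str, List[float]]) -> Tuple[str, Dict[str, float]]:
    """Pick the label whose prompt embedding is most similar to the image embedding."""
    scores = {label: cosine(image_emb, emb) for label, emb in prompt_embs.items()}
    return max(scores, key=scores.get), scores


# Hypothetical embeddings standing in for text-encoder outputs of prompts such as
# "una foto di un gatto" and "una foto di un cane".
prompts = {"gatto": [0.9, 0.1, 0.2], "cane": [0.1, 0.9, 0.3]}
label, scores = zero_shot_classify([0.8, 0.2, 0.1], prompts)
print(label)  # gatto
```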

openrail | [] | false | Hypernetworks of the Musical Isotope girls: Kafu, Sekai, Rime, Coko, and Haru.      | 656f300f9c01ccd4a1374079768b7e68 |

mit | ['generated_from_keras_callback'] | false | juro95/xlm-roberta-finetuned-ner-5-with-skills This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.1148 - Validation Loss: 0.1330 - Epoch: 2 | 2041e0b828bca478b1f0bcc45b476c84 |

mit | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 65502, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | 5067a7a292690c2a0721e021372073d5 |
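The optimizer's learning rate follows a Keras `PolynomialDecay` schedule with `power: 1.0` and `cycle: False`. That is a straight line from `initial_learning_rate` down to `end_learning_rate` over `decay_steps` updates, held at the end value afterwards. A minimal re-implementation of that formula is sketched below, with this card's values as defaults. The function name is hypothetical, and it is not the Keras class itself.

```python
def polynomial_decay(step: int, initial_lr: float = 2e-05, decay_steps: int = 65502,
                     end_lr: float = 0.0, power: float = 1.0) -> float:
    """Learning rate after `step` optimizer updates (non-cycling polynomial decay)."""
    step = min(step, decay_steps)  # with cycle=False the rate stays at end_lr afterwards
    return (initial_lr - end_lr) * (1 - step / decay_steps) ** power + end_lr


print(polynomial_decay(0), polynomial_decay(32751), polynomial_decay(65502))
```

The mt5-small card further down uses the same schedule with `initial_lr=5.6e-05` and `decay_steps=92170`.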

mit | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 0.3044 | 0.1808 | 0 | | 0.1626 | 0.1459 | 1 | | 0.1148 | 0.1330 | 2 | | f00dd65976c81df7bacdbfc063681cea |

apache-2.0 | ['generated_from_trainer'] | false | bert-bert-cased-first512-Conflict-SEP This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.6806 - F1: 0.6088 - Accuracy: 0.5914 - Precision: 0.5839 - Recall: 0.6360 | 957f140f6e2520a07217cbaf421ac84e |
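The reported F1 is the harmonic mean of precision and recall, which can be checked against the rounded values: 2 · 0.5839 · 0.6360 / (0.5839 + 0.6360) ≈ 0.6088. A minimal sketch follows. In the results table below, epochs 2 and 3 have precision 0.5, recall 1.0 and accuracy 0.5. That combination is consistent with the model predicting the positive class for every example on a balanced evaluation set, which is why F1 reads 0.6667 there.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


print(round(f1_score(0.5839, 0.6360), 4))  # 0.6088 (final epoch)
print(round(f1_score(0.5, 1.0), 4))        # 0.6667 (epochs 2 and 3)
```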

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | F1 | Accuracy | Precision | Recall | |:-------------:|:-----:|:----:|:---------------:|:------:|:--------:|:---------:|:------:| | 0.7027 | 1.0 | 685 | 0.6956 | 0.6018 | 0.5365 | 0.5275 | 0.7003 | | 0.7009 | 2.0 | 1370 | 0.6986 | 0.6667 | 0.5 | 0.5 | 1.0 | | 0.7052 | 3.0 | 2055 | 0.6983 | 0.6667 | 0.5 | 0.5 | 1.0 | | 0.6987 | 4.0 | 2740 | 0.6830 | 0.5235 | 0.5636 | 0.5764 | 0.4795 | | 0.6761 | 5.0 | 3425 | 0.6806 | 0.6088 | 0.5914 | 0.5839 | 0.6360 | | e53f533c7e8514fd4548535a98d44742 |

apache-2.0 | ['translation', 'generated_from_trainer'] | false | En-Af This model is a fine-tuned version of [Helsinki-NLP/opus-mt-en-af](https://huggingface.co/Helsinki-NLP/opus-mt-en-af) on an unknown dataset. It achieves the following results on the evaluation set: Before training: - 'eval_bleu': 35.055184951449 - 'eval_loss': 2.225693941116333 After training: - Loss: 2.0057 - Bleu: 44.2309 | d3f53d0b1c915875fd52e868cd8001c5 |

apache-2.0 | ['translation', 'generated_from_trainer'] | false | Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-05 - train_batch_size: 32 - eval_batch_size: 64 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 20 | 32dc57684897a45246b9d2fdffb16d37 |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased-finetuned-ft1500_norm500_aug4 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.5706 - Mse: 3.1412 - Mae: 1.0811 - R2: 0.3860 - Accuracy: 0.4382 | 611a726d7f8b31da3426a53ee52e4953 |
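This evaluation reports MSE, MAE and R2 side by side. The sketch below gives their standard definitions on hypothetical toy labels. R2 compares the squared error with the variance of the true labels, so 0 means "no better than predicting the mean". The card does not state how its accuracy figure was derived from the regression outputs, so that metric is left out.

```python
from typing import Dict, List


def regression_metrics(y_true: List[float], y_pred: List[float]) -> Dict[str, float]:
    """Mean squared error, mean absolute error and coefficient of determination."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    sse = sum(e * e for e in errors)
    mean = sum(y_true) / n
    ss_tot = sum((t - mean) ** 2 for t in y_true)  # variance of labels times n
    return {
        "mse": sse / n,
        "mae": sum(abs(e) for e in errors) / n,
        "r2": 1 - sse / ss_tot,
    }


print(regression_metrics([1, 2, 3, 4], [1, 2, 3, 5]))
```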

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Mse | Mae | R2 | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:--------:| | 1.1391 | 1.0 | 3952 | 1.5706 | 3.1412 | 1.0811 | 0.3860 | 0.4382 | | d60ab2814f9fd02f0366157bc3c4dda3 |

apache-2.0 | ['generated_from_keras_callback'] | false | Sohini17/mt5-small-finetuned-amazon-en-es This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 3.1301 - Validation Loss: 2.6937 - Epoch: 3 | 8dbf0e77d8b46cc4d632f3c30e531806 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training hyperparameters The following hyperparameters were used during training: - optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 5.6e-05, 'decay_steps': 92170, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01} - training_precision: mixed_float16 | fdef9596ab9078400d23bc11c80e2287 |

apache-2.0 | ['generated_from_keras_callback'] | false | Training results | Train Loss | Validation Loss | Epoch | |:----------:|:---------------:|:-----:| | 5.1520 | 3.1004 | 0 | | 3.5274 | 2.8682 | 1 | | 3.2465 | 2.7544 | 2 | | 3.1301 | 2.6937 | 3 | | c52c264728929ea8d154cdbfbe90e1be |

apache-2.0 | ['generated_from_trainer'] | false | distilbert-base-uncased__sst2__train-32-0 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 0.8558 - Accuracy: 0.7183 | d83fe30260ad665311c6f2ea99d1d7e3 |

apache-2.0 | ['generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.7088 | 1.0 | 13 | 0.6819 | 0.6154 | | 0.635 | 2.0 | 26 | 0.6318 | 0.7692 | | 0.547 | 3.0 | 39 | 0.5356 | 0.7692 | | 0.3497 | 4.0 | 52 | 0.4456 | 0.6923 | | 0.1979 | 5.0 | 65 | 0.3993 | 0.7692 | | 0.098 | 6.0 | 78 | 0.3613 | 0.7692 | | 0.0268 | 7.0 | 91 | 0.3561 | 0.9231 | | 0.0137 | 8.0 | 104 | 0.3755 | 0.9231 | | 0.0083 | 9.0 | 117 | 0.4194 | 0.7692 | | 0.0065 | 10.0 | 130 | 0.4446 | 0.7692 | | 0.005 | 11.0 | 143 | 0.4527 | 0.7692 | | 0.0038 | 12.0 | 156 | 0.4645 | 0.7692 | | 0.0033 | 13.0 | 169 | 0.4735 | 0.7692 | | 0.0033 | 14.0 | 182 | 0.4874 | 0.7692 | | 0.0029 | 15.0 | 195 | 0.5041 | 0.7692 | | 0.0025 | 16.0 | 208 | 0.5148 | 0.7692 | | 0.0024 | 17.0 | 221 | 0.5228 | 0.7692 | | 46d3e2e6640b8196ce319f91a26cb28a |

apache-2.0 | ['generated_from_trainer'] | false | distilled-mt5-small-b0.1 This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset. It achieves the following results on the evaluation set: - Loss: 2.8190 - Bleu: 7.497 - Gen Len: 44.5613 | b95a0fd23ba05d1c3bffb16cb409fd13 |

apache-2.0 | ['translation'] | false | opus-mt-sl-sv * source languages: sl * target languages: sv * OPUS readme: [sl-sv](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sl-sv/README.md) * dataset: opus * model: transformer-align * pre-processing: normalization + SentencePiece * download original weights: [opus-2020-01-16.zip](https://object.pouta.csc.fi/OPUS-MT-models/sl-sv/opus-2020-01-16.zip) * test set translations: [opus-2020-01-16.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sl-sv/opus-2020-01-16.test.txt) * test set scores: [opus-2020-01-16.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sl-sv/opus-2020-01-16.eval.txt) | 1f6d58cc2846447ee44f8afaf4a823a3 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | mt5-small-finetuned-amazon-en-es This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 3.0300 | 2da9b1e77852b03b2742138e35d38995 |

apache-2.0 | ['summarization', 'generated_from_trainer'] | false | Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 6.6964 | 1.0 | 1209 | 3.3036 | | 3.9031 | 2.0 | 2418 | 3.1324 | | 3.5802 | 3.0 | 3627 | 3.0846 | | 3.4212 | 4.0 | 4836 | 3.0613 | | 3.3216 | 5.0 | 6045 | 3.0606 | | 3.2427 | 6.0 | 7254 | 3.0392 | | 3.2081 | 7.0 | 8463 | 3.0344 | | 3.1806 | 8.0 | 9672 | 3.0300 | | d964361bd550ac45038d812ad49f91a0 |
